A Comprehensive Guide to Method Validation in Organic Chemistry: From ICH Guidelines to Advanced HPLC Techniques



Abstract

This article provides a definitive guide to analytical method validation tailored for researchers, scientists, and drug development professionals working in organic chemistry. It systematically covers the foundational principles of method validation as defined by major regulatory bodies (ICH, USP, FDA), detailed methodologies for developing and applying robust analytical procedures, advanced troubleshooting and optimization strategies for techniques like HPLC, and comprehensive validation protocols including comparative assessment and technology transfer. By integrating current guidelines, practical case studies, and emerging trends such as computational screening and innovative validation metrics, this resource aims to equip practitioners with the knowledge to ensure their analytical methods are accurate, precise, reliable, and fully compliant for pharmaceutical and biomedical applications.

Understanding Method Validation: Core Principles and Regulatory Requirements

Method validation is the formal, documented process of demonstrating that an analytical procedure is suitable for its intended purpose, ensuring the reliability, accuracy, and consistency of test results throughout drug development and manufacturing [1]. Within the pharmaceutical industry, these analytical methods are indispensable tools for assessing the identity, strength, quality, purity, and potency of drug substances and products. The process provides scientific evidence that the method consistently delivers results that are a true measure of the quality attribute being tested for the target substance, known as the analyte.

Method validation does not occur in isolation but is a core component of the Chemistry, Manufacturing, and Controls (CMC) framework, which encompasses the rigorous systems and procedures governing drug development, production, and quality assurance [2]. This integration ensures that analytical activities are aligned with manufacturing realities and regulatory expectations. For researchers and drug development professionals, understanding method validation principles is essential for developing robust, defensible analytical methods that support product quality from initial development through commercial manufacturing, ultimately ensuring patient safety and product efficacy.

Core Objectives of Analytical Method Validation

The primary objective of method validation is to demonstrate that a specific analytical procedure is suitable for its intended use, providing a high degree of assurance that the data generated are both reliable and meaningful [1]. This foundational goal is achieved through several specific, interconnected objectives that collectively establish the method's fitness for purpose.

- Ensuring Data Quality and Integrity: Validation confirms that a method produces accurate (true) and precise (repeatable) results, forming the basis for sound decision-making in drug development [3]. This is crucial for assessing critical quality attributes (CQAs) like purity, potency, and impurity levels [2].

- Establishing Method Robustness and Ruggedness: A key objective is to demonstrate that the method's performance remains unaffected by small, deliberate variations in method parameters (robustness) and when performed under different conditions, such as by different analysts or instruments (ruggedness) [4].

- Supporting Regulatory Compliance and Submissions: Regulatory agencies worldwide require validated methods as a condition for drug approval [4]. Validation generates the documented evidence needed to show compliance with guidelines from the International Council for Harmonisation (ICH), FDA, and other bodies [1].

- Guiding Phase-Appropriate Development: The rigor of validation evolves with the drug development lifecycle. In early phases, methods may be partially validated, while later stages and commercial production require full validation to support marketing applications [2].

Key Validation Parameters and Regulatory Standards

The validation of an analytical method involves testing a defined set of performance characteristics or parameters. The specific parameters required depend on the type of analytical procedure, whether it is used for identification, testing for impurities, or assay of the main component [1]. The table below summarizes the core validation parameters and their definitions as outlined by major regulatory guidelines.

Table 1: Key Parameters for Analytical Method Validation

| Parameter | Definition | Primary Purpose |

|---|---|---|

| Accuracy [1] [3] | The closeness of agreement between a test result and the true or accepted reference value. | Demonstrates the method measures the analyte correctly without bias. |

| Precision [1] [3] | The closeness of agreement among a series of measurements obtained from multiple samplings of the same homogeneous sample. Includes repeatability and intermediate precision. | Ensures consistency of results under normal operating conditions. |

| Specificity [1] [3] | The ability to assess the analyte unequivocally in the presence of other components like impurities, degradants, or matrix. | Confirms the method can distinguish and accurately measure the target analyte. |

| Linearity [1] [3] | The ability of the method to obtain test results directly proportional to analyte concentration within a given range. | Establishes the proportional relationship between response and concentration. |

| Range [1] [3] | The interval between the upper and lower concentrations of analyte for which suitable levels of precision, accuracy, and linearity are demonstrated. | Defines the concentrations over which the method is applicable. |

| Detection Limit (LOD) [1] [3] | The lowest amount of analyte in a sample that can be detected, but not necessarily quantified. | Determines trace-level detection capability for impurities. |

| Quantitation Limit (LOQ) [1] [3] | The lowest amount of analyte in a sample that can be quantitatively determined with acceptable precision and accuracy. | Determines the lower limit for precise impurity quantification. |

| Robustness [1] [3] | A measure of the method's capacity to remain unaffected by small, deliberate variations in procedural parameters. | Evaluates the method's reliability during normal use and transfer. |

Regulatory bodies have harmonized requirements for these parameters. The ICH Q2(R1) guideline serves as the international standard, followed by the FDA and European Medicines Agency (EMA) [1] [3]. These guidelines ensure that methods developed in different regions meet consistent standards of quality, facilitating global drug development and registration.
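The detection and quantitation limits in Table 1 are often estimated from a calibration curve. A minimal sketch follows, assuming the ICH Q2(R1) approach based on the standard deviation of the response (σ) and the slope of the calibration curve (S), where LOD = 3.3σ/S and LOQ = 10σ/S. The calibration data, the choice of the residual standard deviation as σ, and the function names are illustrative assumptions, not values from a real study.

```python
import math


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def residual_sd(x, y, slope, intercept):
    """Residual standard deviation of the fit, using n - 2 degrees of freedom."""
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    return math.sqrt(ss_res / (len(x) - 2))


def lod_loq(sigma, slope):
    """ICH Q2(R1) estimates: LOD = 3.3 * sigma / S, LOQ = 10 * sigma / S."""
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical low-level calibration data (concentration in ug/mL, peak area).
conc = [0.5, 1.0, 2.0, 4.0, 8.0]
area = [10.4, 19.8, 40.5, 79.6, 160.2]

slope, intercept = fit_line(conc, area)
sigma = residual_sd(conc, area, slope, intercept)  # assumed choice of sigma
lod, loq = lod_loq(sigma, slope)
print(f"slope = {slope:.3f}, residual SD = {sigma:.3f}")
print(f"LOD = {lod:.3f} ug/mL, LOQ = {loq:.3f} ug/mL")
```

Estimates obtained this way are usually confirmed experimentally by analyzing samples prepared near the calculated limits.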

The Integral Link Between Method Validation and CMC

Method validation is deeply embedded within the CMC framework, providing the critical data needed to establish and maintain control over the drug product's quality [2]. The relationship is symbiotic: CMC activities define what a method must measure, and the validated method provides the data to control the manufacturing process and final product.

- Defining Critical Quality Attributes (CQAs): CMC development identifies a product's CQAs—such as purity, potency, and impurity profiles. Method validation then ensures the analytical procedures for monitoring these CQAs are scientifically sound and fit-for-purpose [2]. For example, if a CMC study identifies a potential process impurity, a validated method must be able to detect and quantify it with specificity and sufficient sensitivity [2].

- Phase-Appropriate Validation Rigor: The level of validation rigor aligns with the stage of CMC development. Early development may only require method qualification to monitor basic safety and feasibility. As the process is refined and scaled up for late-stage trials and commercial readiness, full method validation is necessary to support the more fixed and robust control strategy [2].

- Adapting to CMC Changes: Drug development is iterative, with frequent process improvements and formulation changes. Any change in synthesis, excipients, or scale can introduce new impurities or alter degradation pathways. CMC change control mechanisms require that analytical methods are revalidated or supplementally validated to ensure they remain capable of assuring product quality after such changes [2].

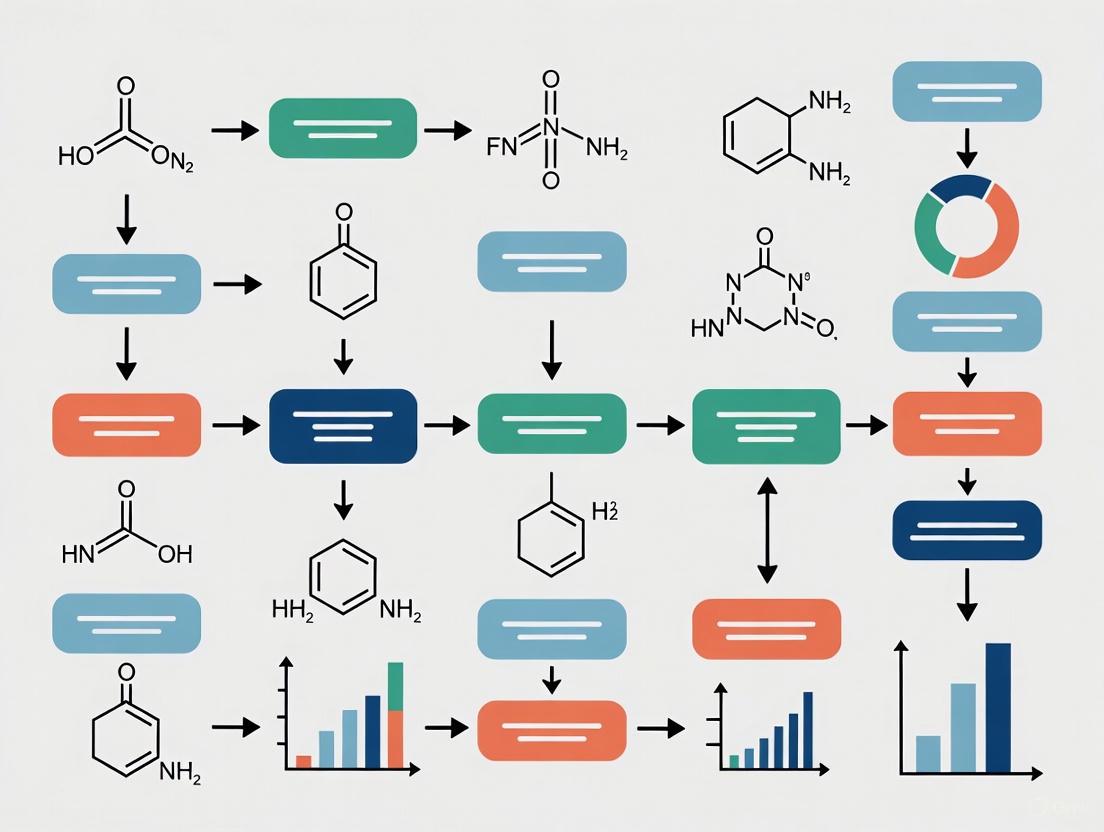

The following diagram illustrates the logical workflow of method validation within the CMC framework, from development to ongoing control.

Experimental Protocols for Key Validation Parameters

This section provides detailed methodologies for conducting experiments to validate the core parameters of an analytical method, using High-Performance Liquid Chromatography (HPLC) as a common example.

Protocol for Accuracy, Linearity, and Range

This experiment is designed to determine the accuracy, linearity, and working range of an assay method simultaneously through a recovery study [1] [4].

- Objective: To verify that the method provides results proportional to analyte concentration (linearity), is accurate across a specified range, and to define that range.

- Materials: Drug substance (analyte) of known high purity, placebo/excipients matching the drug product formulation, appropriate solvents and reagents, HPLC system with suitable detector.

- Procedure:

- Prepare a stock solution of the analyte at a concentration near the upper end of the anticipated range.

- Prepare a placebo solution containing all excipients at their expected concentration in the final drug product.

- From the stock solution, prepare a series of at least five standard solutions spanning the intended range (e.g., 50%, 75%, 100%, 125%, 150% of the target concentration).

- Prepare sample solutions by spiking the placebo with the analyte at the same concentration levels as the standards (e.g., 50%, 75%, 100%, 125%, 150%). Each level should be prepared in triplicate.

- Inject each solution into the HPLC system in a randomized sequence.

- Record the peak response (e.g., area) for the analyte.

- Data Analysis:

- Linearity: Plot the average peak response of the standard solutions against their concentration. Calculate the regression line (y = mx + c) and the coefficient of determination (R²). An R² value ≥ 0.999 is typically expected for a linear relationship [4].

- Accuracy: For each spike level, calculate the percent recovery: (Measured Concentration / Theoretical Concentration) * 100. The mean recovery at each level should be within 98.0-102.0% for the assay of a drug substance.

- Range: The range is established as the interval between the lowest and highest concentration levels for which linearity and accuracy have been demonstrated.
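The data analysis for this protocol can be sketched in a few lines. The example below fits the standards by least squares, computes R², back-calculates the spiked placebo concentrations from the fitted line, and reports the mean recovery per level. All peak areas are hypothetical, and the function names are assumptions chosen for illustration; the acceptance limits are the ones quoted above.

```python
def fit_line(x, y):
    """Least-squares fit of y = m * x + c; returns (m, c, r_squared)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    m = sxy / sxx
    c = mean_y - m * mean_x
    ss_res = sum((yi - (m * xi + c)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return m, c, 1.0 - ss_res / ss_tot


def mean_recovery(areas, theoretical, m, c):
    """Mean percent recovery for replicate spiked samples at one level."""
    recoveries = [((a - c) / m) / theoretical * 100.0 for a in areas]
    return sum(recoveries) / len(recoveries)


# Hypothetical standards: concentration (% of target) -> mean peak area.
standards = {50: 1004.0, 75: 1506.5, 100: 2003.0, 125: 2507.0, 150: 3004.5}
# Hypothetical spiked placebo samples, three preparations per level.
spiked = {
    50: [1001.0, 1008.0, 996.0],
    75: [1500.0, 1510.0, 1495.0],
    100: [2010.0, 1995.0, 2001.0],
    125: [2500.0, 2512.0, 2490.0],
    150: [3010.0, 2995.0, 3001.0],
}

levels = sorted(standards)
m, c, r2 = fit_line(levels, [standards[lv] for lv in levels])
print(f"y = {m:.3f}x + {c:.3f}, R^2 = {r2:.6f}, linear: {r2 >= 0.999}")

recovery_by_level = {lv: mean_recovery(spiked[lv], lv, m, c) for lv in levels}
for lv, rec in recovery_by_level.items():
    print(f"{lv}% level: mean recovery {rec:.2f}% (pass: {98.0 <= rec <= 102.0})")

# The demonstrated range spans the levels that met both criteria.
passing = [lv for lv, rec in recovery_by_level.items() if 98.0 <= rec <= 102.0]
print(f"Demonstrated range: {min(passing)}% to {max(passing)}% of target")
```

Back-calculating against the fitted line is one reasonable choice; a laboratory may instead quantify spiked samples against a single-point standard if that is how the method will be run routinely.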

Protocol for Precision (Repeatability and Intermediate Precision)

Precision is validated through experiments that assess variability under different conditions [1].

- Objective: To demonstrate the consistency of results under normal operating conditions (repeatability) and when conditions change within a laboratory (intermediate precision).

- Materials: Homogeneous sample of drug substance or product, standard solutions, HPLC system.

- Procedure for Repeatability:

- Prepare six independent sample preparations from a single, homogeneous batch at 100% of the test concentration.

- Have a single analyst analyze all six samples on the same day, using the same instrument.

- Calculate the % Relative Standard Deviation (%RSD) of the six results.

- Procedure for Intermediate Precision:

- To incorporate variation, a second analyst repeats the procedure on a different day, using a different HPLC instrument (if available).

- The data from both analysts and both days are combined.

- The overall %RSD is calculated, or an analysis of variance (ANOVA) can be performed to separate the sources of variation.

- Data Analysis: The method is considered precise if the %RSD for repeatability is ≤ 1.0% and for intermediate precision is ≤ 2.0% for a drug substance assay.
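As a rough illustration of the calculation, the sketch below computes %RSD for one analyst (repeatability) and for the pooled data from two analysts (intermediate precision), then compares each against the limits quoted above. The assay results are invented for the example.

```python
import statistics


def percent_rsd(values):
    """Relative standard deviation in percent (sample SD / mean * 100)."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


# Hypothetical assay results (% of label claim), six preparations each.
analyst_1_day_1 = [99.8, 100.2, 99.5, 100.4, 100.1, 99.9]
analyst_2_day_2 = [100.6, 99.7, 100.9, 100.3, 99.4, 100.8]

repeatability_rsd = percent_rsd(analyst_1_day_1)
intermediate_rsd = percent_rsd(analyst_1_day_1 + analyst_2_day_2)

print(f"Repeatability %RSD: {repeatability_rsd:.2f} (pass: {repeatability_rsd <= 1.0})")
print(f"Intermediate precision %RSD: {intermediate_rsd:.2f} (pass: {intermediate_rsd <= 2.0})")
```

`statistics.stdev` uses the n - 1 denominator, which is the usual convention for %RSD on a small set of replicates.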

Protocol for Specificity

This protocol ensures the method can distinguish the analyte from other components [1].

- Objective: To demonstrate that the peak response for the analyte is free from interference from impurities, degradants, and the sample matrix.

- Materials: Purified analyte (drug substance), placebo/excipients, known impurities and degradation products (if available), stressed samples (e.g., exposed to heat, light, acid, base, oxidant).

- Procedure:

- Inject a blank (solvent) and record the chromatogram.

- Inject the placebo solution and record the chromatogram.

- Inject a standard solution of the analyte and record the chromatogram.

- Inject individual solutions of available impurities and degradation products.

- Inject a sample that has been intentionally stressed to force degradation.

- Compare all chromatograms to check for co-elution (overlapping peaks).

- Data Analysis: The method is specific if there is no interference from the blank or placebo at the retention time of the analyte, and the analyte peak is baseline-resolved from all known impurity and degradation peaks. Peak purity tests using a diode array or mass spectrometry detector provide strong evidence of specificity [1].
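Baseline resolution can be checked numerically once retention times and peak widths are known. The sketch below assumes the widely used formula Rs = 2(t2 - t1) / (w1 + w2), with widths measured at the baseline, and treats Rs ≥ 1.5 as the threshold for baseline separation; that threshold is a common convention rather than a value stated in this article. Peak names, times, and widths are hypothetical.

```python
def resolution(t_r1, w1, t_r2, w2):
    """Resolution between two peaks from retention times and baseline widths."""
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


def worst_resolution(analyte, others):
    """Return (Rs, name) for the neighbouring peak closest to co-elution."""
    _, t_a, w_a = analyte
    return min((resolution(t_a, w_a, t, w), name) for name, t, w in others)


# Hypothetical peaks: (name, retention time in min, baseline width in min).
analyte = ("analyte", 6.20, 0.30)
others = [("impurity A", 5.40, 0.28), ("degradant B", 6.95, 0.32)]

rs, closest = worst_resolution(analyte, others)
BASELINE_RS = 1.5  # assumed acceptance threshold
print(f"Closest peak: {closest}, Rs = {rs:.2f}, baseline-resolved: {rs >= BASELINE_RS}")
```

A passing resolution value does not rule out a hidden co-eluting species, which is why peak purity checks by diode array or mass spectrometry remain valuable.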

Essential Research Reagent Solutions for Method Validation

The successful development and validation of analytical methods rely on a suite of high-quality reagents, standards, and instruments. The following table details the essential materials required for these activities.

Table 2: Key Reagent Solutions and Materials for Analytical Method Validation

| Material / Solution | Function in Method Development & Validation |

|---|---|

| High-Purity Reference Standards [5] | Serves as the benchmark for identifying the analyte and for quantifying potency, purity, and impurities. Essential for establishing method accuracy and linearity. |

| Chromatographic Systems (HPLC, GC) [4] | Provide the platform for separating the analyte from other components in a mixture. Critical for assessing specificity, precision, and robustness. |

| Mass Spectrometry (MS) Detectors [1] | Coupled with HPLC or GC, MS provides definitive structural identification of analytes and impurities, and is a powerful tool for confirming peak purity and method specificity. |

| Validated Mobile Phase Solvents & Reagents | The chemical environment for the analysis. Their quality and consistency are vital for achieving reproducible retention times, baseline stability, and robust method performance. |

| Characterized Impurity Standards [1] | Used to challenge the method's ability to detect and quantify impurities in the presence of the main analyte, directly supporting validation of specificity, LOD, and LOQ. |

Method validation is a foundational and non-negotiable scientific discipline within drug development and the CMC framework. It transforms an analytical procedure from a simple laboratory technique into a validated tool capable of generating reliable data to support critical decisions regarding product quality, safety, and efficacy. As outlined, the process is governed by clearly defined objectives and a comprehensive set of performance parameters aligned with international regulatory standards.

The dynamic interplay between method validation and CMC ensures that product quality is consistently built into the manufacturing process and verified through testing. From early development to commercial production and lifecycle management, a rigorous, phase-appropriate approach to method validation provides the evidence-based assurance required by regulators and, ultimately, by patients who rely on the quality and safety of their medicines. For researchers and drug development professionals, mastering these principles is essential for navigating the complex pathway from API to clinic and beyond.

For researchers in organic chemistry and drug development, demonstrating that an analytical method is reliable and fit for purpose is a critical regulatory requirement. Method validation provides documented evidence that a specific analytical procedure consistently yields results that accurately measure the characteristic it is intended to measure. Several key guidelines govern this process, primarily the International Council for Harmonisation (ICH) Q2(R1) guideline, various standards from the United States Pharmacopeia (USP), and guidance documents from the U.S. Food and Drug Administration (FDA). While these frameworks share the common goal of ensuring data quality and patient safety, their scope, focus, and application exhibit important differences. Understanding this complex regulatory landscape is essential for designing robust validation protocols, avoiding costly non-compliance, and accelerating the development of quality medicines.

The ICH Q2(R1) guideline, titled "Validation of Analytical Procedures: Text and Methodology," serves as the international benchmark for the validation of analytical methods. It harmonizes the requirements for registration applications across the European Union, Japan, and the United States [6]. The USP publishes legally recognized standards for medicines, including detailed monographs for drug substances and general chapters that describe analytical procedures and their validation requirements [7]. The FDA, in turn, issues product-specific guidance for various regulated products, such as tobacco, which builds upon the foundational principles of ICH and USP [8]. This guide will objectively compare these frameworks, detail their experimental demands, and place them within the practical context of organic chemistry research.

Comparative Analysis of ICH, USP, and FDA Guidelines

The following table provides a structured, point-by-point comparison of the core attributes of the ICH Q2(R1), USP, and FDA guidelines for analytical method validation.

| Feature | ICH Q2(R1) | USP Standards | FDA Guidance (e.g., for Tobacco Products) |

|---|---|---|---|

| Primary Role & Scope | Definitive, international standard for method validation in pharmaceutical marketing applications [6]. | Legally recognized compendia of quality specifications and validated methods for drugs and ingredients in the United States [7]. | Product-specific recommendations for validating methods used in premarket applications for regulated products like tobacco [8]. |

| Key Validation Parameters | Defines precision, accuracy, specificity, detection limit, quantitation limit, linearity, range, and robustness [6]. | Covers similar parameters to ICH; heavily relies on the use of USP Reference Standards to ensure accuracy and reproducibility [7]. | Recommends validation of accuracy, precision, specificity, and range, tailored to the unique matrix of tobacco products [8]. |

| Regulatory Status | Harmonized guideline for ICH regions; adopted by regulatory bodies like the TGA [9]. | Official and legally enforceable in the U.S. under the Federal Food, Drug, and Cosmetic Act [7]. | Issued as "guidance," representing the FDA's current thinking on a topic, but not legally binding [8]. |

| Typical Application Context | Registration of pharmaceuticals for human use (New Drug Applications, Marketing Authorisation Applications) [6]. | Quality control testing and compliance of marketed pharmaceuticals and compounded preparations with USP monographs [7] [10]. | Premarket applications for specific product categories (e.g., Premarket Tobacco Product Applications, Substantial Equivalence Reports) [8]. |

| Experimental Material Requirements | Does not specify particular reference standards. | Mandates the use of official USP Reference Standards for compendial testing to ensure analytical rigor [7]. | Does not specify a single source for reference materials, but they must be suitably qualified. |

Experimental Protocols for Key Validation Parameters

A successful method validation study requires a structured plan that defines quality requirements, selects appropriate experiments, and establishes statistical criteria for acceptability [11]. The following section outlines detailed experimental methodologies for the core validation parameters defined in ICH Q2(R1), which also form the basis for USP and FDA requirements.

Precision

Precision, the degree of scatter in a series of measurements from the same homogeneous sample, is typically validated at three levels.

- Protocol for Repeatability: Prepare a homogeneous sample of the analyte at 100% of the test concentration. Using a single analyst and the same analytical system, analyze a minimum of six independent replicates. The relative standard deviation (RSD) of the results is calculated and compared against pre-defined acceptance criteria.

- Protocol for Intermediate Precision: Demonstrate the impact of within-laboratory variations by having a second analyst repeat the repeatability study on a different day, using a different instrument of the same type, if available. The combined data from both analysts is used to calculate an overall RSD.

- Protocol for Reproducibility: This is assessed through a collaborative inter-laboratory study, which is typically required for standardization of methods, such as for USP monographs.
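A single pooled RSD hides how much of the scatter comes from changing analyst, day, or instrument. One option, sketched here under the assumption of a balanced design (the same number of preparations per analyst), is a one-way analysis of variance that splits the variance into a within-group (repeatability) component and a between-group component. The data and function name are hypothetical, and this is only one of several acceptable statistical treatments.

```python
import math
import statistics


def variance_components(groups):
    """One-way ANOVA variance components for a balanced design.

    Returns (within-group variance, between-group variance, grand mean).
    """
    k = len(groups)
    n = len(groups[0])
    if any(len(g) != n for g in groups):
        raise ValueError("balanced design required: equal replicates per group")
    group_means = [statistics.mean(g) for g in groups]
    grand_mean = statistics.mean(group_means)
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, group_means) for v in g)
    ms_within = ss_within / (k * (n - 1))
    ms_between = n * sum((m - grand_mean) ** 2 for m in group_means) / (k - 1)
    # A negative estimate is truncated to zero, as is conventional.
    var_between = max(0.0, (ms_between - ms_within) / n)
    return ms_within, var_between, grand_mean


# Hypothetical assay results (% of label claim), one list per analyst/day.
groups = [
    [99.8, 100.2, 99.5, 100.4, 100.1, 99.9],
    [100.6, 99.7, 100.9, 100.3, 99.4, 100.8],
]

var_within, var_between, grand = variance_components(groups)
rsd_repeatability = math.sqrt(var_within) / grand * 100.0
rsd_intermediate = math.sqrt(var_within + var_between) / grand * 100.0
print(f"Repeatability RSD: {rsd_repeatability:.2f}%")
print(f"Intermediate precision RSD: {rsd_intermediate:.2f}%")
```

With only two groups the between-group estimate is very uncertain, so the result is best read as indicative rather than definitive.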

Accuracy

Accuracy expresses the closeness of agreement between the measured value and a value accepted as a true or reference value.

- Protocol via Spiked Recovery: Prepare the sample matrix without the analyte. Spike this blank matrix with known quantities of the analyte across the specified range (e.g., 50%, 100%, 150% of the target concentration). Each level should be analyzed in triplicate. The accuracy is calculated as the percentage recovery of the known, added amount:

(Measured Concentration / Known Concentration) * 100%.

Specificity and Selectivity

Specificity is the ability to assess the analyte unequivocally in the presence of components that may be expected to be present, such as impurities, degradants, or matrix.

- Protocol for Chromatographic Methods: Inject and analyze the following solutions separately: the analyte standard, a placebo or blank matrix, samples spiked with potential impurities and degradation products (generated by stressing the sample), and a mixture of all components. The method should demonstrate that the analyte peak is baseline resolved from all other peaks and that no interfering peaks are present at the retention time of the analyte in the blank.

Linearity and Range

Linearity is the ability of the method to obtain test results proportional to the concentration of the analyte.

- Protocol: Prepare a minimum of five standard solutions spanning the intended range of the method (e.g., 50%, 75%, 100%, 125%, 150%). Plot the instrumental response against the known concentration of the analyte. The data is evaluated by linear regression analysis. The correlation coefficient (r), y-intercept, and slope of the regression line are reported. The range is the interval between the upper and lower concentration levels for which linearity, accuracy, and precision have been demonstrated.

Robustness

Robustness is a measure of the method's capacity to remain unaffected by small, deliberate variations in procedural parameters.

- Protocol for HPLC: A method is typically challenged with deliberate variations in parameters such as mobile phase pH (±0.2 units), organic composition (±2%), column temperature (±5°C), and flow rate (±10%). A single set of samples is analyzed under these slightly modified conditions, and the results are compared to those obtained under nominal conditions. Key performance indicators, such as resolution from the closest peak, tailing factor, and capacity factor, should remain within specified limits.
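To make the robustness design concrete, the sketch below builds a one-factor-at-a-time run list from a set of nominal conditions and the variations listed above. The nominal values are hypothetical, and two interpretations are assumptions: the ±2% organic change is treated as absolute percentage points, and the ±10% flow change as relative to nominal. One-factor-at-a-time is used here for simplicity; fractional factorial designs are a common alternative.

```python
# Hypothetical nominal HPLC conditions.
NOMINAL = {"ph": 3.0, "organic_pct": 40.0, "temp_c": 30.0, "flow_ml_min": 1.0}

# Variation per parameter: ("abs", size) adds/subtracts size directly,
# ("rel", fraction) adds/subtracts that fraction of the nominal value.
DELTAS = {
    "ph": ("abs", 0.2),
    "organic_pct": ("abs", 2.0),  # assumed to mean percentage points
    "temp_c": ("abs", 5.0),
    "flow_ml_min": ("rel", 0.10),
}


def ofat_conditions(nominal, deltas):
    """Build a one-factor-at-a-time run list: nominal plus low/high per factor."""
    runs = [("nominal", dict(nominal))]
    for name, (kind, size) in deltas.items():
        step = size if kind == "abs" else nominal[name] * size
        for sign, label in ((-1, "low"), (1, "high")):
            condition = dict(nominal)
            condition[name] = round(nominal[name] + sign * step, 3)
            runs.append((f"{name}_{label}", condition))
    return runs


runs = ofat_conditions(NOMINAL, DELTAS)
for run_name, condition in runs:
    print(run_name, condition)
```

Each run in the list would then be executed with the same sample set, and the resulting resolution, tailing factor, and capacity factor compared against the limits set in the validation protocol.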

The following workflow diagram visualizes the logical sequence of a typical analytical method validation process, integrating the parameters described above.

The Scientist's Toolkit: Essential Reagents and Materials

The execution of a validated analytical method relies on high-quality, well-characterized materials. The following table details key research reagent solutions and their critical functions in ensuring the accuracy and reproducibility of analytical data.

| Item | Function & Importance |

|---|---|

| USP Reference Standards | Highly characterized specimens of drug substances, impurities, and excipients. They serve as the primary benchmark for confirming the identity, strength, quality, and purity of pharmaceuticals during compendial testing as per USP-NF [7]. |

| Chromatographic Columns | The stationary phase for HPLC and GC separations. The specific type (e.g., C18, phenyl) and its properties are critical for achieving the resolution, peak shape, and selectivity specified in the validated method. |

| High-Purity Solvents & Reagents | Essential for preparing mobile phases, standards, and samples. Impurities can cause high background noise, baseline drift, ghost peaks, and interfere with the detection or quantification of the analyte, compromising accuracy. |

| Certified Mass Spectrometry Standards | Used for instrument calibration and confirming mass accuracy in LC-MS or GC-MS workflows. These standards are traceable to a primary reference and are crucial for generating reliable qualitative and quantitative data. |

| System Suitability Standards | A reference preparation tailored to the method that is used to verify that the chromatographic system is adequate for the intended analysis. It typically tests for parameters like plate count, tailing factor, and resolution, ensuring the system is performing as it did during validation [7]. |
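The system suitability parameters named in the table can be computed directly from peak measurements. The sketch below assumes the pharmacopoeial tangent-width formula for plate count, N = 16(tR/W)², and the tailing factor T = W0.05 / (2f), where W0.05 is the peak width at 5% of peak height and f is the distance from the leading edge to the peak maximum at that height. The peak measurements and the acceptance limits are hypothetical; actual limits come from the validated method or monograph.

```python
def plate_count(t_r, w_base):
    """Theoretical plates from retention time and tangent baseline width."""
    return 16.0 * (t_r / w_base) ** 2


def tailing_factor(w_005, f):
    """Tailing factor from width at 5% height and front half-width f."""
    return w_005 / (2.0 * f)


# Hypothetical measurements for the analyte peak (all in minutes).
t_r, w_base = 8.0, 0.40
w_005, f = 0.30, 0.12

# Hypothetical acceptance limits; real limits are method-specific.
MIN_PLATES = 2000
MAX_TAILING = 2.0

n_plates = plate_count(t_r, w_base)
tailing = tailing_factor(w_005, f)
print(f"Plate count: {n_plates:.0f} (pass: {n_plates >= MIN_PLATES})")
print(f"Tailing factor: {tailing:.2f} (pass: {tailing <= MAX_TAILING})")
```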

Data Presentation and Reporting Standards

Publishing or reporting data from validated methods requires meticulous documentation to enable verification and reproduction. The Royal Society of Chemistry and other publishing bodies mandate that experimental procedures be described in sufficient detail for a skilled researcher to replicate the work [12]. The following outlines key reporting requirements.

- Experimental Details: The description must include all critical procedural details. For organic compounds, this includes yields, melting points, and full spectroscopic characterization (e.g., NMR, IR, MS) [12]. For example, NMR data should be reported with δ values, specifying instrument frequency, solvent, and standard, while mutually coupled protons must be quoted with precisely matching J values [12].

- Data Presentation and Uncertainty: All data must be reported with the correct number of significant figures. Figures, such as chromatograms or calibration curves, should include error bars where appropriate, and results must be accompanied by an analysis of experimental uncertainty [12].

- Adherence to FAIR Principles: Supporting data should be made as Findable, Accessible, Interoperable, and Reusable as possible. This often involves depositing primary data (e.g., raw chromatograms, spectra) in an appropriate repository and including a formal data availability statement [12].

Navigating the requirements of ICH Q2(R1), USP, and FDA is fundamental to successful drug development and organic chemistry research. While these guidelines are complementary, they serve distinct roles: ICH Q2(R1) provides the foundational, internationally-harmonized validation methodology; USP supplies the legally-recognized documentary standards and physical reference materials for quality testing; and FDA offers product-specific application guidance. A robust validation strategy begins with ICH Q2(R1) as its core framework, incorporates USP Reference Standards for compendial methods or to ensure analytical rigor, and is finalized with a careful review of any relevant FDA product-specific guidance. By integrating these elements into a coherent experimental plan, researchers can generate high-quality, reliable data that meets regulatory expectations, ensures patient safety, and brings quality medicines to market efficiently.

In the pharmaceutical sciences, the reliability of any analytical method is paramount. Method validation provides documented evidence that a specific analytical procedure is suitable for its intended use, ensuring the identity, purity, potency, and performance of drug substances and products. For researchers and drug development professionals, this process is not merely a regulatory checkbox but a fundamental component of scientific excellence and data integrity. It forms the bedrock upon which quality control, stability studies, and regulatory submissions are built.

This guide focuses on four core validation parameters—Accuracy, Precision, Specificity, and Linearity—that are universally recognized as essential by international guidelines such as the International Council for Harmonisation (ICH) Q2(R1) [13] [3]. These parameters are critically examined through the lens of modern reversed-phase high-performance liquid chromatography (RP-HPLC) applications, a cornerstone technique in pharmaceutical analysis. The objective is to provide a comparative overview of how these parameters are demonstrated and assessed in practice, supported by experimental data from recent studies, to aid in the development and evaluation of robust analytical methods.

Core Principles and Comparative Analysis of Validation Parameters

The following section dissects each of the four essential validation parameters, defining their role, explaining their evaluation, and presenting comparative experimental data from contemporary research.

Accuracy

Accuracy refers to the closeness of agreement between a test result and the accepted reference value (the true value). It is typically expressed as percent recovery of a known, spiked amount of analyte in a sample [13] [3]. Accuracy and precision are distinct properties: a method can be precise yet biased, so accuracy must be demonstrated separately as a direct measure of correctness.

- Experimental Protocol for Assessment: Accuracy is determined by spiking a sample matrix (e.g., a placebo or a blank formulation) with known concentrations of the analyte at multiple levels, usually covering the specified range (e.g., 80%, 100%, and 120% of the target concentration). The sample is then analyzed using the developed method. The measured concentration is compared to the spiked concentration, and the percent recovery is calculated. Recovery rates between 98% and 102% are generally considered acceptable for pharmaceutical assays [13] [14].

- Comparative Experimental Data: The following table summarizes accuracy data from multiple RP-HPLC method validations.

Table 1: Comparative Accuracy Data from Recent RP-HPLC Method Validations

| Analytes | Sample Matrix | Spiking Levels | Average Recovery (%) | Citation |

|---|---|---|---|---|

| Favipiravir | Laboratory-prepared tablets | Not Specified | RSD < 2% (implied high accuracy) | [15] |

| Metoclopramide & Camylofin | Pharmaceutical dosage forms | Multiple levels across the range | 98.2 - 101.5 | [13] |

| Brimonidine Tartrate | Ophthalmic dosage forms | 100-500 ppm | 99.42 - 99.82 | [14] |

| Timolol Maleate | Ophthalmic dosage forms | 250-1250 ppm | 98.71 - 101.10 | [14] |

Precision

Precision describes the closeness of agreement between a series of measurements obtained from multiple sampling of the same homogeneous sample under prescribed conditions. It is a measure of the method's repeatability and reproducibility and is usually expressed as the relative standard deviation (RSD) or coefficient of variation [3]. Precision is investigated at multiple levels.

- Experimental Protocol for Assessment:

- Repeatability: Expresses the precision under the same operating conditions over a short interval of time. It is assessed by analyzing six replicate preparations of a single homogeneous sample at 100% of the test concentration.

- Intermediate Precision: Demonstrates the reliability of results within the same laboratory under varying conditions, such as different days, different analysts, or different equipment [16]. The data from these variations are combined, and the RSD is calculated.

- Comparative Experimental Data: A key benchmark for a precise method is an RSD value of less than 2% for the assay of drug substances in pharmaceutical dosage forms.

Table 2: Precision Data from Validated HPLC Methods

| Analytes | Precision Level | RSD Value (%) | Citation |

|---|---|---|---|

| Favipiravir | Repeatability | < 2 | [15] |

| Metoclopramide & Camylofin | Intra-day & Inter-day | < 2 | [13] |

| Brimonidine Tartrate & Timolol Maleate | Repeatability & Intermediate Precision | < 2 | [14] |

Specificity

Specificity is the ability of a method to assess the analyte unequivocally in the presence of other components that may be expected to be present, such as impurities, degradants, or matrix components [3]. A specific method provides confidence that the peak being measured is indeed the analyte of interest and nothing else.

- Experimental Protocol for Assessment: Specificity is demonstrated by analyzing a blank sample (e.g., placebo without the active ingredient), a standard of the pure analyte, and a sample spiked with potential interferents (e.g., impurities or excipients). The gold standard for proving specificity is through forced degradation studies (stress testing), where the sample is subjected to harsh conditions like acid/base hydrolysis, oxidative stress, thermal stress, and photolytic stress. The method should be able to separate the analyte peak from any degradation products, proving its stability-indicating capability [14].

- Case Study Example: In the development of a method for Brimonidine Tartrate and Timolol Maleate, forced degradation studies under acid/base hydrolysis and oxidation successfully separated the active drugs from their degradation products. While both drugs were stable to heat and light, Timolol Maleate showed significant degradation under hydrolytic and oxidative conditions, confirming the method's specificity in detecting the analyte amidst impurities [14].
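
Separation of the analyte peak from its nearest degradant is often quantified as chromatographic resolution. The sketch below assumes the widely used baseline-width definition, Rs = 2(t2 - t1)/(w1 + w2); the retention times and peak widths are hypothetical and are not taken from the cited studies.

```python
def resolution(t_r1: float, t_r2: float, w1: float, w2: float) -> float:
    """Resolution between two peaks from retention times and baseline
    peak widths, all expressed in the same time units."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t_r2 - t_r1) / (w1 + w2)


# Hypothetical example: degradant at 4.20 min, analyte at 5.10 min,
# with baseline widths of 0.40 min and 0.45 min.
rs = resolution(4.20, 5.10, 0.40, 0.45)
print(f"Rs = {rs:.2f}")
```

A value of roughly 1.5 or higher is commonly read as baseline separation, although the acceptance limit for a given method is set in its validation protocol.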

Linearity

Linearity of an analytical method is its ability to produce test results that are directly proportional to the concentration of the analyte in a defined range. The range is the interval between the upper and lower concentrations for which the method has suitable levels of accuracy, precision, and linearity.

- Experimental Protocol for Assessment: Linearity is established by preparing and analyzing a series of standard solutions at a minimum of five concentration levels across the claimed range. The peak response (e.g., area) is plotted against the concentration, and a regression line is calculated using the least-squares method. The coefficient of determination (R²) is a key indicator, with a value greater than 0.999 being desirable for assay methods. The y-intercept and slope of the line are also evaluated [13] [14].

- Comparative Experimental Data:

Table 3: Linearity and Range Data from Validated Methods

| Analytes | Linear Range | Coefficient of Determination (R²) | Citation |

|---|---|---|---|

| Favipiravir | Not Specified | Excellent (per report) | [15] |

| Metoclopramide | 0.375 - 2.7 μg/mL | > 0.999 | [13] |

| Camylofin | 0.625 - 4.5 μg/mL | > 0.999 | [13] |

| Brimonidine Tartrate | 100 - 500 ppm | Excellent (per report) | [14] |

| Timolol Maleate | 250 - 1250 ppm | Excellent (per report) | [14] |
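
The least-squares evaluation described in the linearity protocol can be sketched in a few lines. This is a minimal illustration using only the standard library; the five concentration levels and peak areas are hypothetical and do not come from the cited studies.

```python
def linear_fit(x, y):
    """Ordinary least-squares fit y = intercept + slope * x.
    Returns (slope, intercept, r_squared)."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need at least three paired points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical calibration data: concentration (ug/mL) vs. peak area.
conc = [10, 20, 30, 40, 50]
area = [1520, 3010, 4550, 6020, 7540]

slope, intercept, r2 = linear_fit(conc, area)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r2:.5f}")
print("meets R^2 > 0.999:", r2 > 0.999)
```

Inspecting the residuals alongside R² is worthwhile, because a high R² alone does not rule out curvature at the ends of the range.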

Advanced Concepts: LOD/LOQ and Robustness

Beyond the four core parameters, a comprehensive validation includes other critical characteristics.

- Limit of Detection (LOD) and Limit of Quantification (LOQ): The LOD is the lowest amount of analyte that can be detected, but not necessarily quantified. The LOQ is the lowest amount that can be quantified with acceptable precision and accuracy [17]. These are crucial for impurity methods. Strategies for determining them include signal-to-noise ratio (3:1 for LOD, 10:1 for LOQ) or using the standard deviation of the response and the slope of the calibration curve. Advanced graphical approaches like the uncertainty profile are emerging as more realistic and reliable for their assessment [17].

- Robustness: Robustness is a measure of a method's capacity to remain unaffected by small, deliberate variations in procedural parameters (e.g., mobile phase pH, flow rate, column temperature) and provides an indication of its reliability during normal usage [16]. It is typically evaluated using multivariate experimental designs (e.g., full factorial, fractional factorial, or Plackett-Burman designs) during the method development phase. This allows for the simultaneous study of multiple factors to identify critical parameters and establish system suitability limits, supporting the method's reliability over its lifecycle [15] [16].

Experimental Protocols in Practice

Workflow for a Validation Study

The following diagram outlines a generalized experimental workflow for validating an analytical method, integrating the core parameters discussed.

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents and materials commonly used in the development and validation of RP-HPLC methods for pharmaceutical analysis, as evidenced in the cited studies.

Table 4: Essential Reagents and Materials for HPLC Method Validation

| Item | Typical Function & Specification | Application Example |

|---|---|---|

| HPLC Grade Solvents (Acetonitrile, Methanol) | Mobile phase component; high purity minimizes background noise and detector interference. | Organic modifier in mobile phase for eluting analytes [15] [13]. |

| Buffer Salts (e.g., Disodium hydrogen phosphate, Ammonium acetate) | Provides consistent pH in aqueous mobile phase, controlling analyte ionization and retention. | 20 mM Ammonium acetate buffer, pH 3.5, for separation of metoclopramide and camylofin [13]. |

| pH Adjusting Agents (e.g., Ortho-phosphoric acid, Glacial acetic acid) | Fine-tunes mobile phase pH, critical for reproducibility and robustness [16]. | Glacial acetic acid used to adjust buffer pH to 3.5 [13]. |

| C18 Stationary Phase Columns | The most common RP-HPLC column; separates analytes based on hydrophobicity. | Inertsil ODS-3 C18 column used for favipiravir quantification [15]. |

| Reference Standards (High Purity Analytes) | Serves as the benchmark for identity, potency, and quantification during method validation. | Metoclopramide and Camylofin standards from Tokyo Chemical Industry [13]. |

The parameters of Accuracy, Precision, Specificity, and Linearity are non-negotiable pillars of a reliable analytical method. As demonstrated by experimental data from contemporary research, the validation process is a rigorous, data-driven endeavor. The trend in the field is moving towards more robust and sustainable methods, often developed using principles of Analytical Quality by Design (AQbD) [15] and Green Analytical Chemistry (GAC) [14] [18]. Furthermore, advanced statistical and graphical tools are being adopted for a more realistic determination of critical parameters like LOD/LOQ [17] and robustness [16]. For researchers in drug development, a deep understanding of these principles is essential not only for regulatory compliance but also for ensuring the safety and efficacy of pharmaceutical products brought to the market.

In the field of analytical chemistry, particularly for pharmaceutical analysis, method validation provides the foundational evidence that an analytical procedure is suitable for its intended purpose. While parameters such as accuracy, precision, and specificity form the core validation criteria, supplementary parameters including the Limit of Detection (LOD), Limit of Quantitation (LOQ), Range, Ruggedness, and Robustness provide critical additional assurance of method reliability [19]. These parameters ensure methods perform consistently at their operational limits and under varied realistic conditions, forming an essential component of compliance with global regulatory standards such as the International Council for Harmonisation (ICH) guidelines [20] [19].

This guide objectively compares the performance characteristics of these critical supplementary parameters through experimental data and established protocols, providing researchers and drug development professionals with practical insights for implementing these concepts within organic chemistry method validation frameworks.

Parameter Definitions and Regulatory Significance

Core Definitions

Limit of Detection (LOD): The lowest concentration of an analyte in a sample that can be detected, but not necessarily quantitated, under the stated experimental conditions [20] [21]. It is typically estimated as the concentration that gives a signal-to-noise ratio of 3:1 [20].

Limit of Quantitation (LOQ): The lowest concentration of an analyte in a sample that can be quantitatively determined with acceptable precision and accuracy [20] [21]. It is typically estimated as the concentration that gives a signal-to-noise ratio of 10:1 [20].

Range: The interval between the upper and lower concentrations of an analyte (inclusive) that has been demonstrated to be determined with acceptable precision, accuracy, and linearity using the method as written [20].

Robustness: A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [21]. It provides an indication of the method's reliability during normal usage [13] [22].

Ruggedness: The degree of reproducibility of test results obtained by the analysis of the same samples under a variety of normal expected conditions, such as different laboratories, analysts, instruments, reagent lots, elapsed assay times, assay temperature, or days [20]. The term "ruggedness" is not used in the ICH guidelines, which address the same concept under "intermediate precision" and "reproducibility" [20].

Regulatory Context and Guidelines

The ICH Q2(R2) guideline provides the primary framework for analytical method validation for pharmaceutical applications, with the revised version effective June 2024 [19]. These guidelines harmonize requirements across regulatory bodies including the FDA and European Medicines Agency (EMA). The United States Pharmacopeia (USP) General Chapter <621> provides specific chromatography requirements, with updated sections on system sensitivity and peak symmetry becoming effective May 1, 2025 [23].

Comparative Analysis of Performance Characteristics

Acceptance Criteria Across Analytical Applications

Table 1: Comparative Acceptance Criteria for Supplementary Validation Parameters

| Parameter | Assay Methods | Impurity Methods | Identification Methods |

|---|---|---|---|

| LOD | Typically not required for main analyte | Signal-to-noise ratio ≥ 3:1 [20] | Not the primary focus |

| LOQ | Typically not required for main analyte | Signal-to-noise ratio ≥ 10:1 [20]; Must demonstrate precision (RSD < 10-20%) and accuracy (80-120%) [20] [21] | Not applicable |

| Range | 80-120% of test concentration [21] | LOQ to 120% of specification level [21] | Not applicable |

| Robustness | Method performance remains within acceptance criteria despite deliberate variations [13] | Same as assay methods | Method maintains specificity despite variations |

| Ruggedness (Intermediate Precision) | RSD typically ≤ 2% for different analysts, instruments, days [21] | RSD criteria wider than assay, dependent on concentration level | Consistent identification confirmed across variations |

Experimental Data from Validated Methods

Table 2: Experimental Data from Published Method Validations

| Analyte/ Method | LOD | LOQ | Range | Robustness Assessment | Precision (Repeatability) |

|---|---|---|---|---|---|

| Metoclopramide (MET) & Camylofin (CAM) RP-HPLC [13] | MET: 0.23 μg/mL; CAM: 0.15 μg/mL | MET: 0.35 μg/mL; CAM: 0.42 μg/mL | MET: 0.375-2.7 μg/mL; CAM: 0.625-4.5 μg/mL | Deliberate variations in flow rate (0.9-1.1 mL/min), column temperature (35-45°C); RSD < 2% | Intra- and inter-day RSD < 2% |

| Mesalamine RP-HPLC [24] | 0.22 μg/mL | 0.68 μg/mL | 10-50 μg/mL (R² = 0.9992) | Robust under slight method variations (RSD < 2%) | Intra- and inter-day RSD < 1% |

Experimental Protocols and Methodologies

Determination of LOD and LOQ

Signal-to-Noise Method: This approach is particularly applicable to chromatographic methods where baseline noise can be measured [20]. The LOD is determined as the analyte concentration that produces a signal-to-noise ratio of 3:1, while the LOQ is determined as the concentration producing a signal-to-noise ratio of 10:1 [20]. This method was successfully employed in the validation of methods for both metoclopramide and mesalamine [13] [24].
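
As a rough illustration of the signal-to-noise check, the sketch below assumes the convention S/N = 2H/h, where H is the peak height measured from the baseline and h is the peak-to-peak range of the baseline noise. That convention, the function names, and the numbers are assumptions made for this example; a chromatography data system may compute noise differently.

```python
def signal_to_noise(peak_height: float, noise_range: float) -> float:
    """S/N under the assumed 2H/h convention (same units for both inputs)."""
    if noise_range <= 0:
        raise ValueError("noise range must be positive")
    return 2.0 * peak_height / noise_range


def classify(s_n: float) -> str:
    """Compare a signal-to-noise ratio with the 3:1 and 10:1 criteria."""
    if s_n >= 10.0:
        return "quantifiable (meets the 10:1 LOQ criterion)"
    if s_n >= 3.0:
        return "detectable (meets the 3:1 LOD criterion)"
    return "below detection criterion"


# Hypothetical readings in mAU: peak height 0.90, baseline noise range 0.15.
s_n = signal_to_noise(peak_height=0.90, noise_range=0.15)
print(f"S/N = {s_n:.1f}: {classify(s_n)}")
```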

Standard Deviation/Slope Method: This calculation-based approach uses the formulas LOD = 3.3 × σ/S and LOQ = 10 × σ/S, where σ is the standard deviation of the response (estimated, for example, from blank measurements or from the residuals of the calibration regression) and S is the slope of the calibration curve [20] [21]. It rests on calibration statistics rather than on a visual noise estimate, which makes it particularly useful when baseline noise is difficult to measure consistently.
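
The standard deviation/slope calculation is simple enough to show directly. In this sketch σ is taken as the sample standard deviation of replicate blank responses, which is one of several acceptable estimates; the blank readings and the slope are hypothetical.

```python
import statistics


def lod_loq(sigma: float, slope: float) -> tuple[float, float]:
    """Return (LOD, LOQ) as 3.3*sigma/S and 10*sigma/S."""
    if slope <= 0:
        raise ValueError("calibration slope must be positive")
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical blank responses (area units) and calibration slope
# (area units per ug/mL).
blank_responses = [3.1, 2.8, 3.4, 2.9, 3.3, 3.0]
calibration_slope = 2.5

sigma = statistics.stdev(blank_responses)
lod, loq = lod_loq(sigma, calibration_slope)
print(f"sigma={sigma:.4f}, LOD={lod:.3f} ug/mL, LOQ={loq:.3f} ug/mL")
```

Whichever estimate of σ is chosen, the calculated limits are normally confirmed by analyzing samples prepared near those concentrations.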

Establishing Range

The range is established by demonstrating that the method exhibits suitable levels of linearity, accuracy, and precision across the entire interval [20]. For assay methods, this typically spans 80-120% of the target test concentration, while for impurity methods, it extends from the LOQ to 120% of the specification level [21]. The mesalamine method validation demonstrated a range of 10-50 μg/mL with excellent linearity (R² = 0.9992) [24].

Robustness Testing Methodology

Robustness is verified by introducing small, deliberate variations in method parameters and evaluating system performance [13]. Key variables to test include:

- Chromatographic conditions: Flow rate (±10%), column temperature (±5°C), mobile phase composition (±2-3% absolute), pH (±0.2 units) [13] [22]

- Sample preparation: Extraction time, solvent composition, stability in solution

- Instrument parameters: Detection wavelength, injection volume

The metoclopramide study demonstrated robustness by testing flow rate variations from 0.9-1.1 mL/min and column temperature from 35-45°C while maintaining RSD values below 2% [13].

Ruggedness (Intermediate Precision) Assessment

Ruggedness is evaluated through intermediate precision studies that incorporate variations expected during routine method use [20]. A standard protocol includes:

- Different analysts performing the analysis

- Different instruments or HPLC systems

- Different days of analysis

- Different reagent lots

Each analyst prepares their own standards and solutions, and results are statistically compared (e.g., using Student's t-test) to determine if significant differences exist between the means obtained under different conditions [20].
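
A minimal sketch of the two-analyst comparison follows, using a pooled-variance Student's t-test written with the standard library only. The assay results are hypothetical, equal variances are assumed, and the critical value 2.228 is the two-sided 5% value for 10 degrees of freedom; a different sample size needs a different critical value.

```python
import math
import statistics


def pooled_t(a, b):
    """Two-sample Student's t statistic with pooled variance.
    Returns (t, degrees_of_freedom)."""
    na, nb = len(a), len(b)
    df = na + nb - 2
    pooled_var = ((na - 1) * statistics.variance(a)
                  + (nb - 1) * statistics.variance(b)) / df
    std_err = math.sqrt(pooled_var * (1.0 / na + 1.0 / nb))
    return (statistics.mean(a) - statistics.mean(b)) / std_err, df


# Hypothetical assay results (% of label claim), six preparations each.
analyst_1 = [99.8, 100.2, 99.5, 100.1, 99.9, 100.3]
analyst_2 = [100.4, 99.9, 100.6, 100.0, 100.5, 100.2]

t_stat, df = pooled_t(analyst_1, analyst_2)
T_CRITICAL = 2.228  # two-sided, alpha = 0.05, df = 10
significant = abs(t_stat) > T_CRITICAL
print(f"t = {t_stat:.3f}, df = {df}, significant difference: {significant}")
```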

Visualization of Parameter Relationships and Workflows

Method Validation Parameter Relationships

LOD and LOQ Determination Workflow

The Researcher's Toolkit: Essential Reagents and Equipment

Table 3: Essential Research Reagents and Equipment for Method Validation

| Item | Function/Purpose | Examples/Specifications |

|---|---|---|

| HPLC System with UV/Vis Detector | Separation and detection of analytes | Shimadzu systems with SPD-20A detector [13]; Binary pump systems capable of precise flow rates (0.0001-10 mL/min) [24] |

| Chromatography Columns | Analytical separation | Phenyl-hexyl columns [13]; C18 columns (150 mm × 4.6 mm, 5 μm) [24] |

| Reference Standards | Method calibration and accuracy determination | Certified reference materials with documented purity (e.g., 99.8% for Mesalamine API) [24] |

| HPLC-Grade Solvents | Mobile phase preparation | Methanol, acetonitrile, water (HPLC grade, 99.0% purity) [13] [24] |

| Buffer Components | Mobile phase modification | Ammonium acetate (analytical grade) [13]; Glacial acetic acid for pH adjustment [13] |

| pH Meter | Mobile phase pH adjustment and control | Digital pH meter with calibration capabilities [13] |

| Analytical Balance | Precise weighing of standards and samples | Balance with 0.1 mg readability [13] |

| Membrane Filters | Mobile phase and sample filtration | 0.45 μm nylon membrane filters [13] [24] |

| Ultrasonic Bath | Mobile phase degassing and sample dissolution | Equipment for consistent degassing (5 minutes typical) [13] [24] |

| Volumetric Glassware | Precise solution preparation | Class A volumetric flasks and pipettes |

The Role of Method Validation in Ensuring Product Quality, Safety, and Efficacy

Method validation is the process of providing documented evidence that an analytical procedure does what it is intended to do [25]. In regulated industries such as pharmaceuticals, laboratories must perform analytical method validation (AMV) to comply with regulations, but conducting AMV is also fundamentally sound science [25]. The primary purpose of method validation is to demonstrate that an established method is "fit for the purpose," meaning it will provide data that meets the criteria set during the planning phase for its intended use [26]. This process ensures that analytical methods consistently produce reliable, accurate, and precise results that safeguard product quality, ensure patient safety, and confirm therapeutic efficacy.

For analytical methods used in organic chemistry techniques, validation is not a single event but a structured, iterative process often performed during method development and finalized before routine use [26] [16]. It is considered unacceptable for analysts to use a published 'validated method' without demonstrating their own capability and the method's performance in their specific laboratory environment [26]. This verification confirms that the method will perform as expected under local conditions, providing a critical foundation for decision-making in drug development and manufacturing.

Performance Characteristics of Method Validation

A robust method validation study systematically investigates several key performance characteristics. The specific parameters evaluated depend on the type of method and its intended application, but core characteristics have been defined by regulatory guidelines from bodies like the International Council for Harmonisation (ICH, formerly the International Conference on Harmonisation) and the USP [25] [16].

Table 1: Key Performance Characteristics in Method Validation

| Characteristic | Definition | Typical Validation Approach |

|---|---|---|

| Specificity | The ability to measure the analyte accurately and specifically in the presence of other components [25]. | Demonstration of resolution between peaks; peak-purity tests using photodiode-array or mass spectrometry [25]. |

| Accuracy | The closeness of test results to the true value [25]. | Comparison to a standard reference material or a second, well-characterized method; recovery studies of spiked samples [26] [25]. |

| Precision | The degree of agreement among test results from repeated applications to multiple samplings of a homogeneous sample [25]. | Measured as repeatability (same conditions), intermediate precision (different days, analysts), and reproducibility (different labs); reported as %RSD [25]. |

| Linearity & Range | The ability to provide results proportional to analyte concentration within a given interval [25]. | Minimum of five concentration levels; data reported as equation for the calibration curve, coefficient of determination (r²), and residuals [25]. |

| Limit of Detection (LOD) | The lowest concentration of an analyte that can be detected [25]. | Signal-to-noise ratio of 3:1 is common in chromatography [25]. |

| Limit of Quantitation (LOQ) | The lowest concentration that can be quantified with acceptable precision and accuracy [25]. | Signal-to-noise ratio of 10:1 is common in chromatography [25]. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in procedural parameters [25] [16]. | Intentional variation of method parameters (e.g., mobile phase pH, temperature, flow rate) to study effects on results [16]. |

The approach for formulating a validation plan involves defining a quality requirement for the test, selecting experiments to reveal analytical errors, collecting data, performing statistical calculations, and comparing observed errors to allowable error to judge acceptability [11].

Experimental Protocols for Key Validation Tests

Protocol for Determining Accuracy

The accuracy of a method is best established through the analysis of a certified reference material (CRM) [26]. When a CRM is not available, alternative approaches are used in the following order of preference:

- Comparison to a Validated Independent Method: Data obtained from a second, well-characterized method serves as a reference [26].

- Inter-laboratory Comparison: Results are compared with those from other accredited laboratories [26].

- Spike Recovery Experiments: This is a common protocol for drug products and involves analyzing synthetic mixtures spiked with known quantities of the analyte [26] [25].

Detailed Spike Recovery Protocol:

- Sample Preparation: Prepare synthetic mixtures containing all excipient materials in the correct proportions but without the analyte (blank matrix).

- Spiking: Spike the blank matrix with known quantities of the analyte across a minimum of three concentration levels covering the specified range of the method [25].

- Analysis and Data Collection: Analyze a minimum of nine determinations (e.g., three replicates at each of the three concentration levels) [25].

- Data Reporting: Report data as the percent recovery of the known, added amount, or as the difference between the mean and the true value with confidence intervals (e.g., ±1 standard deviation) [25]. Acceptability criteria are user-defined but often fall within 97–103% of the nominal value [25].
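
The spike recovery protocol above can be sketched as a small calculation over nine determinations (three replicates at three levels). The spiked and measured amounts are hypothetical, and the 97-103% window is used only because it is the example limit quoted in the protocol.

```python
def percent_recovery(found: float, added: float) -> float:
    """Recovery of a known spiked amount, in percent."""
    if added <= 0:
        raise ValueError("spiked amount must be positive")
    return 100.0 * found / added


# Hypothetical data: level (% of target) -> list of (added, found) in ug/mL.
spiked = {
    80: [(8.0, 7.92), (8.0, 8.05), (8.0, 7.98)],
    100: [(10.0, 10.03), (10.0, 9.95), (10.0, 10.08)],
    120: [(12.0, 11.90), (12.0, 12.10), (12.0, 12.02)],
}

LOW, HIGH = 97.0, 103.0  # example acceptance window

recoveries = {
    level: [percent_recovery(found, added) for added, found in pairs]
    for level, pairs in spiked.items()
}
all_values = [r for values in recoveries.values() for r in values]

for level, values in recoveries.items():
    mean = sum(values) / len(values)
    print(f"{level}% level: mean recovery {mean:.2f}%")
print("all within limits:", all(LOW <= r <= HIGH for r in all_values))
```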

Protocol for Assessing Precision

Precision is measured at different levels, with repeatability being the most fundamental.

Detailed Repeatability Protocol:

- Sample Preparation: Prepare a single, homogeneous sample.

- Analysis: Apply the method repeatedly to multiple samplings of this homogeneous sample.

- Data Collection: Conduct a minimum of nine determinations covering the specified range of the procedure, or a minimum of six determinations at 100% of the test concentration [25].

- Data Analysis: Calculate the standard deviation and report results as the percent relative standard deviation (%RSD). A common acceptability criterion is less than 2% RSD, though less than 5% can be acceptable for minor components [25].
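
The repeatability calculation reduces to the sample standard deviation divided by the mean. A minimal sketch with six hypothetical determinations at 100% of the test concentration:

```python
import statistics


def percent_rsd(values) -> float:
    """Percent relative standard deviation (sample SD / mean * 100)."""
    if len(values) < 2:
        raise ValueError("need at least two determinations")
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


# Hypothetical assay results (% of label claim) from six preparations.
results = [100.1, 99.6, 100.4, 99.9, 100.2, 99.8]

rsd = percent_rsd(results)
print(f"%RSD = {rsd:.3f}")
print("meets < 2% criterion:", rsd < 2.0)
```

Note that `statistics.stdev` uses the n - 1 denominator, which is the usual choice for a small set of replicate determinations.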

Protocol for Evaluating Robustness

Robustness testing investigates the method's reliability when small, deliberate changes are made to operational parameters. A multivariate experimental design is more efficient than a univariate (one-factor-at-a-time) approach, as it allows for the observation of interactions between parameters [16].

Screening Design for Robustness (Plackett-Burman):

- Factor Selection: Identify critical operational parameters (e.g., mobile phase pH, organic solvent proportion, flow rate, column temperature, detection wavelength) [16].

- Set Levels: For each factor, define a nominal (target) value, as well as a high (+) and low (-) value that represent small, realistic variations expected in a laboratory setting [16].

- Experimental Design: Use a Plackett-Burman design, an efficient screening design that identifies critical factors without testing all possible combinations. The number of runs is a multiple of four [16] (e.g., 8, 12, or 20), so a design with N runs can screen up to N − 1 factors.

- Execution and Analysis: Perform the runs as per the experimental design matrix. Analyze the data to identify which factors have a significant effect on critical method responses (e.g., resolution, retention time, peak area). System suitability parameters are often established based on the results of robustness studies [16].
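
The screening steps above can be sketched with an 8-run Plackett-Burman design built from the standard generator row (+ + + - + - -) by cyclic shifts plus a final all-low run. The factor names follow the examples in the list, with two unassigned dummy columns; the responses are simulated from an assumed model purely so the effect estimates can be checked, and do not represent real data.

```python
GENERATOR = [1, 1, 1, -1, 1, -1, -1]  # standard 8-run Plackett-Burman row

FACTORS = ["pH", "organic %", "flow rate", "column temp",
           "wavelength", "dummy 1", "dummy 2"]


def plackett_burman_8():
    """Seven cyclic shifts of the generator plus one all-low run."""
    n = len(GENERATOR)
    rows = [[GENERATOR[(j - i) % n] for j in range(n)] for i in range(n)]
    rows.append([-1] * n)
    return rows


def main_effects(design, responses):
    """Effect of each column: mean response at +1 minus mean at -1."""
    half = len(design) / 2
    return [
        sum(row[col] * y for row, y in zip(design, responses)) / half
        for col in range(len(design[0]))
    ]


design = plackett_burman_8()

# Simulated resolution values from an assumed model: pH raises the
# response, flow rate lowers it slightly, all other factors are inert.
responses = [2.0 + 0.15 * row[0] - 0.05 * row[2] for row in design]

effects = main_effects(design, responses)
for name, effect in zip(FACTORS, effects):
    print(f"{name:12s} effect = {effect:+.3f}")
```

Because this design does not separate main effects from two-factor interactions, factors flagged here are usually studied further with a higher-resolution design.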

Comparative Analysis of Method Performance

Method validation provides the quantitative data necessary to objectively compare the performance of a new or alternative analytical method against an established benchmark. This comparison is crucial for method selection, transfer, and optimization.

Table 2: Quantitative Comparison of Hypothetical HPLC Methods for Assaying Active Pharmaceutical Ingredient (API)

| Performance Characteristic | Compendial Method (Benchmark) | New UPLC Method (Alternative) | Acceptance Criteria |

|---|---|---|---|

| Accuracy (% Recovery) | 98.5 - 101.2% | 99.2 - 100.8% | 97 - 103% [25] |

| Repeatability (%RSD, n=6) | 0.8% | 0.5% | ≤ 2.0% |

| Intermediate Precision (%RSD) | 1.5% | 1.1% | ≤ 3.0% |

| Specificity (Resolution) | Resolution > 2.0 from closest eluting impurity | Resolution > 2.5 from all impurities | Resolution ≥ 1.5 |

| Linearity (r²) | 0.999 | 0.9995 | ≥ 0.998 |

| Range | 50-150% of test concentration | 25-150% of test concentration | As per ICH guidelines |

| Analysis Time | 15 minutes | 5 minutes | N/A |

| Solvent Consumption | 15 mL per run | 4 mL per run | N/A |

The data in Table 2 illustrate how a new Ultra Performance Liquid Chromatography (UPLC) method can be compared with a compendial High-Performance Liquid Chromatography (HPLC) method. In this hypothetical example, the UPLC method meets every acceptance criterion and also cuts analysis time from 15 to 5 minutes and solvent consumption from 15 to 4 mL per run, supporting its adoption on grounds of both reliability and sustainability.

The Scientist's Toolkit: Essential Research Reagent Solutions

The reliability of method validation is contingent upon the quality of materials used. The following table details key reagents and materials essential for conducting validation experiments in organic chemistry analysis.

Table 3: Essential Research Reagent Solutions for Method Validation

| Reagent/Material | Function in Validation | Critical Quality Attributes |

|---|---|---|

| Certified Reference Material (CRM) | Serves as the primary standard for establishing method accuracy and trueness [26]. | Certified purity and stability; traceability to a national metrology institute. |

| High-Purity Analytical Standards | Used for preparing calibration curves (linearity), spiking solutions (accuracy), and determining LOD/LOQ [25]. | High chemical purity; well-characterized identity and structure; appropriate stability. |

| Chromatography-Mobile Phase Solvents | The liquid medium that carries the sample through the chromatographic system; variations are tested in robustness studies [16]. | HPLC or UPLC grade; low UV absorbance; minimal particulate matter. |

| Chromatography-Buffers & Additives | Modify mobile phase properties to control selectivity, pH, and efficiency; pH and concentration are critical robustness factors [16]. | High purity; specified pH and concentration; compatibility with the analytical column. |

| Sample Matrix Placebo | A blend of all excipient materials without the active analyte; used for specificity testing and spike recovery experiments [25]. | Represents the final product formulation; confirmed to be free of interfering components. |

| Analytical Columns | The stationary phase where chemical separation occurs; different lots and brands are tested for robustness [16]. | Specified chemistry (C18, C8, etc.); lot-to-lot reproducibility; stable performance over time. |

Method validation is an indispensable, scientifically rigorous discipline that forms the bedrock of product quality, safety, and efficacy. By systematically characterizing performance attributes such as specificity, accuracy, precision, and robustness, scientists generate the defensible data required to trust the analytical results upon which critical decisions are made. The structured experimental protocols and comparative frameworks outlined in this guide provide a pathway for researchers to not only comply with regulatory standards but also to advance analytical science through the development of more reliable, efficient, and sustainable methods. In the highly regulated world of drug development, a thoroughly validated method is more than a procedural requirement—it is a fundamental commitment to scientific integrity and public health.

Developing and Implementing Validated Organic Chemistry Methods

A Step-by-Step Framework for Analytical Method Development and Validation

In pharmaceutical development and organic chemistry research, the generation of reliable, reproducible, and accurate data is paramount. Analytical method development and validation constitute a systematic process to ensure that the procedures used to identify, quantify, and characterize substances deliver consistent results that stand up to regulatory scrutiny. These processes are foundational for ensuring product quality and safety, supporting regulatory submissions, facilitating batch release and stability testing, and aiding in formulation and process development [22]. Inaccurate or poorly validated methods can lead to costly delays in development timelines, regulatory rejections, product recalls, or the release of ineffective or dangerous products into the market [22].

This guide provides a comprehensive, step-by-step framework for analytical method development and validation, objectively comparing traditional one-variable-at-a-time (OVAT) approaches with modern, efficient strategies like Analytical Quality by Design (AQbD) and High-Throughput Experimentation (HTE). The content is structured within the broader context of method validation guidelines for organic chemistry techniques research, providing drug development professionals and scientists with the experimental protocols and comparative data needed to select the optimal strategy for their analytical challenges.

Comparative Strategic Approaches: AQbD and HTE vs. Traditional Methods

The paradigm for developing and optimizing analytical methods has shifted from traditional, empirical approaches to systematic, science-based, and risk-managed frameworks. The table below compares the core characteristics of these strategic approaches.

Table 1: Comparison of Strategic Approaches to Method Development and Optimization

| Feature | Traditional OVAT Approach | Modern AQbD Approach | High-Throughput Experimentation (HTE) |

|---|---|---|---|

| Core Philosophy | Empirical, one-variable-at-a-time | Systematic, risk-based, predefined objectives | Data-driven, leveraging automation and large-scale screening |

| Experimental Design | Linear, sequential variation of factors | Multivariate experiments (e.g., DoE) to understand interactions | Highly parallelized screening of numerous conditions simultaneously |

| Primary Advantage | Simple, intuitive, low initial planning | Robust, defines a Method Operable Design Region (MODR) | Rapid exploration of vast experimental spaces; captures valuable negative data |

| Key Limitation | Inefficient; misses factor interactions; poor robustness | Requires greater upfront investment in planning and statistical expertise | High initial setup cost for automation; data analysis complexity; can be more qualitative |

| Regulatory Alignment | Basic compliance | Highly encouraged by FDA/ICH (Q14 lifecycle approach) | Supports data-rich submissions; emerging best practices |

| Best Application | Simple methods with few critical variables | Complex methods requiring high robustness and reliability | Optimization of methods with many variables or poorly understood spaces |

The Traditional OVAT Approach involves changing a single parameter at a time while holding others constant. While simple, this method is inefficient and often fails to detect interactions between variables, potentially resulting in a method that is not robust [16].

The Analytical Quality by Design (AQbD) approach is a systematic, risk-based framework that builds quality into the method from the start. It emphasizes predefined objectives and uses multivariate experimental design to understand the interaction of method parameters and their combined impact on performance. A case study for an RP-HPLC method for favipiravir used a risk assessment to identify high-risk factors, a D-optimal experimental design to study their impact, and Monte Carlo simulation to establish a robust Method Operable Design Region (MODR) [15].

High-Throughput Experimentation (HTE) utilizes automation to rapidly execute a vast number of experiments in parallel. This is particularly powerful for probing complex "reactomes" and identifying hidden relationships between reaction components and outcomes [27]. HTE can significantly improve the understanding of organic chemistry by systematically interrogating reactivity across diverse chemical spaces, providing both positive and valuable negative data [27].

A Step-by-Step Framework for Analytical Method Development

The development of an analytical method is an iterative, data-driven process that evolves from initial conception to an optimized and reproducible protocol [22]. The following workflow outlines the critical stages.

Step 1: Define the Analytical Target Profile (ATP)

The ATP is a formal statement that defines the method's purpose, its performance requirements, and the conditions under which it will operate [22]. It specifies the goal, such as "quantify an active pharmaceutical ingredient (API) in a tablet matrix," and defines the required performance levels for accuracy, precision, and resolution.
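
One way to make an ATP concrete is to record it as a small data structure that later results can be checked against. The sketch below is only an illustration: the class name `AnalyticalTargetProfile`, its fields, and the numeric limits are assumptions, not requirements taken from any guideline.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AnalyticalTargetProfile:
    """Hypothetical container for an ATP's purpose and performance limits."""
    purpose: str
    recovery_range_pct: tuple  # (lowest, highest) acceptable mean recovery
    max_rsd_pct: float         # precision requirement
    min_resolution: float      # separation requirement

    def is_met(self, recovery_pct, rsd_pct, resolution):
        """Return True when all three measured values satisfy the profile."""
        low, high = self.recovery_range_pct
        return (low <= recovery_pct <= high
                and rsd_pct <= self.max_rsd_pct
                and resolution >= self.min_resolution)

# Illustrative limits only; real limits depend on the product and its specification.
atp = AnalyticalTargetProfile(
    purpose="Quantify an API in a tablet matrix",
    recovery_range_pct=(98.0, 102.0),
    max_rsd_pct=2.0,
    min_resolution=2.0,
)
print(atp.is_met(recovery_pct=99.5, rsd_pct=0.8, resolution=2.6))
```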

Step 2: Select the Appropriate Analytical Technique

The choice of technique, such as HPLC, GC, or UV-Vis, is guided by the compound's physical and chemical properties (e.g., polarity, volatility, stability) and the ATP's requirements [22]. For instance, a simultaneous determination of five COVID-19 antivirals with diverse properties was achieved using RP-HPLC with a C18 column [28].

Step 3: Risk Assessment and Initial Scoping

A risk assessment identifies factors that could significantly impact method performance. In the AQbD approach for a favipiravir method, factors such as the solvent ratio, buffer pH, and column type were classified as high risk and selected for further study [15]. Preliminary testing then evaluates feasibility, retention time, and peak shape.

Step 4: Multivariate Optimization

Instead of OVAT, this stage employs structured experimental designs (DoE) to optimize multiple parameters simultaneously, which also reveals interactions between variables. For example, a robustness study might use a fractional factorial design to efficiently test the impact of pH, flow rate, and mobile phase composition [16].

Step 5: Final Method Protocol and Robustness Testing

The optimized parameters are consolidated into a final method protocol. A robustness study is then conducted to measure the method's capacity to remain unaffected by small, deliberate variations in method parameters (e.g., flow rate ±0.1 mL/min, temperature ±2°C) [16]. This confirms the method's reliability under normal operating variation.

Step 6: System Suitability Testing

Before moving to validation, the method's readiness is confirmed using system suitability tests. These tests, which may include parameters like resolution, tailing factor, and theoretical plate count, ensure the system is performing adequately at the time of analysis [22].
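
The three system suitability parameters named above can be computed from basic peak measurements. The sketch below assumes the commonly used pharmacopoeial-style formulas: resolution from baseline peak widths, tailing factor from the width at 5% of peak height, and plate count by the half-height method. The peak measurements and the acceptance limits in `check_system_suitability` are hypothetical examples; actual limits are set per method.

```python
def resolution(t_r1, t_r2, w1, w2):
    """Rs = 2 (tR2 - tR1) / (W1 + W2), using baseline peak widths."""
    return 2.0 * (t_r2 - t_r1) / (w1 + w2)

def tailing_factor(w_005, f_005):
    """T = W0.05 / (2 f): width at 5% height over twice the front half-width."""
    return w_005 / (2.0 * f_005)

def plate_count(t_r, w_half):
    """N = 5.54 (tR / W_half)^2, using the peak width at half height."""
    return 5.54 * (t_r / w_half) ** 2

def check_system_suitability(rs, tailing, plates,
                             min_rs=2.0, max_tailing=2.0, min_plates=2000):
    """Compare each parameter with its limit (limits here are assumed examples)."""
    return {
        "resolution": rs > min_rs,
        "tailing": tailing <= max_tailing,
        "plates": plates >= min_plates,
    }

# Hypothetical measurements in minutes for two adjacent peaks.
rs = resolution(t_r1=4.2, t_r2=5.6, w1=0.40, w2=0.45)
tf = tailing_factor(w_005=0.30, f_005=0.13)
n = plate_count(t_r=5.6, w_half=0.20)
print(check_system_suitability(rs, tf, n))
```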

Core Validation Parameters and Experimental Protocols

Once a method is developed, it must be validated to ensure it is fit for its intended purpose. The International Council for Harmonisation (ICH) guideline Q2(R1) sets out the key validation parameters and their definitions; typical experimental protocols and acceptance criteria are summarized below [22].

Table 2: Core Analytical Method Validation Parameters and Acceptance Criteria

| Validation Parameter | Definition | Experimental Protocol & Acceptance Criteria |

|---|---|---|

| Specificity | Ability to assess analyte unequivocally in the presence of potential interferences (excipients, impurities) [22]. | Inject blank, placebo, standard, and sample. Check for peak interference. Resolution > 2.0 between analyte and closest eluting peak. |

| Accuracy | Closeness of test results to the true value or accepted reference value [22]. | Spike known amounts of analyte into placebo matrix at multiple levels (e.g., 80%, 100%, 120%). Calculate % recovery. Typically 98–102% recovery for drug substance. |

| Precision | Degree of agreement among individual test results. Includes repeatability and intermediate precision [22]. | Repeatability: 6 injections of 100% standard. RSD < 1.0%. Intermediate Precision: 2 analysts/days/instruments. RSD < 2.0%. |

| Linearity | Ability to obtain test results proportional to analyte concentration [22]. | Prepare and analyze standards at 5+ concentration levels across the range (e.g., 50–150%). Calculate the coefficient of determination (R²). Typically R² ≥ 0.999. |

| Range | Interval between upper and lower concentration with demonstrated precision, accuracy, and linearity [22]. | Derived from linearity and precision studies. Must encompass all intended test concentrations. |

| Robustness | Capacity to remain unaffected by small, deliberate variations in method parameters [16]. | Deliberately vary parameters (e.g., flow rate ±0.1 mL/min, temp ±2°C, pH ±0.1). Monitor system suitability criteria. |
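
The accuracy, precision, and linearity calculations in Table 2 reduce to a few lines of arithmetic. The following sketch uses only the Python standard library; all numeric data are invented for illustration, and the function names are not taken from any cited source.

```python
import statistics

def percent_recovery(found, added):
    """Accuracy: amount found as a percentage of the amount spiked."""
    return 100.0 * found / added

def percent_rsd(values):
    """Precision: sample standard deviation as a percentage of the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def linear_fit(x, y):
    """Ordinary least squares; returns slope, intercept, and R squared."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot

# Hypothetical spike-recovery data at 80%, 100%, and 120% of target.
added = [80.0, 100.0, 120.0]
found = [79.4, 100.6, 119.1]
recoveries = [percent_recovery(f, a) for f, a in zip(found, added)]

# Hypothetical peak areas from six replicate injections of the 100% standard.
areas = [15012, 15040, 14985, 15021, 15003, 14996]

# Hypothetical five-level calibration (concentration in % of target vs. area).
conc = [50, 75, 100, 125, 150]
resp = [7510, 11240, 15020, 18790, 22480]
slope, intercept, r2 = linear_fit(conc, resp)

print([round(r, 2) for r in recoveries], round(percent_rsd(areas), 3), round(r2, 5))
```

Each result would then be compared with the acceptance criterion in the corresponding table row.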

Experimental Protocol: Specificity and Forced Degradation

A key protocol for demonstrating specificity, especially for stability-indicating methods, is forced degradation studies.

- Objective: To demonstrate that the method can accurately quantify the analyte of interest and resolve it from degradation products generated under stress conditions.

- Procedure: Subject the drug substance to stress conditions including acid hydrolysis (e.g., 0.1M HCl), base hydrolysis (e.g., 0.1M NaOH), oxidative stress (e.g., 3% H₂O₂), thermal stress, and photolytic stress [22]. The samples are then analyzed using the developed method.

- Data Analysis: Chromatograms are examined for the appearance of degradation peaks. The method must demonstrate that the analyte peak is pure and baseline-separated from all degradation products, proving its ability to monitor the analyte's stability over time.

Experimental Protocol: Robustness via Experimental Design

A multivariate approach to robustness is more efficient than OVAT.

- Objective: To identify method parameters that have a significant effect on performance and to establish permissible ranges for these parameters.

- Procedure: Select critical factors (e.g., mobile phase pH, flow rate, column temperature, % organic solvent) and define a high/low value for each. Use a screening design (e.g., a Plackett-Burman design or a fractional factorial design) to create a set of experimental runs that efficiently varies all factors simultaneously [16].

- Data Analysis: For each experimental run, measure key responses (e.g., retention time, resolution, tailing factor). Use statistical analysis (e.g., ANOVA) to determine which factors have a significant effect. This data is used to set system suitability limits and define controlled parameters in the method.
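
As a rough sketch of this protocol, the code below builds a two-level half-fraction design for four factors (eight runs instead of sixteen) and estimates main effects from the responses. The generator D = ABC is one common choice, assumed here; with it, main effects are aliased only with three-factor interactions. The factor names and simulated responses are hypothetical, and the effect estimate is a simple difference of means rather than the full ANOVA mentioned above.

```python
from itertools import product

FACTORS = ["pH", "flow", "temp", "organic"]

def half_fraction_design():
    """2^(4-1) design: full factorial in the first three factors, fourth = A*B*C."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]

def main_effects(design, responses):
    """Effect of each factor = mean response at high level minus mean at low level."""
    effects = {}
    for j, name in enumerate(FACTORS):
        high = [r for run, r in zip(design, responses) if run[j] == 1]
        low = [r for run, r in zip(design, responses) if run[j] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects

design = half_fraction_design()

# Simulated resolution values standing in for measured data (illustrative only):
# pH has a large effect, % organic a small one, flow and temperature none.
responses = [3.0 + 0.4 * run[0] - 0.1 * run[3] for run in design]

for name, effect in main_effects(design, responses).items():
    print(f"{name}: {effect:+.2f}")
```

Coded levels of -1 and +1 map back to the low and high settings chosen for each factor, for example pH 3.0 and 3.2.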

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and reagents essential for developing and executing a robust analytical method, illustrated with examples from the cited research.

Table 3: Essential Research Reagent Solutions for HPLC Method Development

| Item | Function / Role | Exemplary Application in Research |

|---|---|---|

| C18 Chromatographic Column | Reversed-phase stationary phase for separating non-polar to medium-polarity compounds. | Inertsil ODS-3 C18 (250 mm, 4.6 mm, 5 μm) for favipiravir [15]; Hypersil BDS C18 (150 mm, 4.6 mm, 5 μm) for COVID-19 antivirals [28]. |

| Buffers (e.g., Phosphate) | Control mobile phase pH to ensure reproducible retention times and peak shape. | Disodium hydrogen phosphate anhydrous buffer (20 mM, pH 3.1) for favipiravir method [15]. |

| HPLC-Grade Organic Solvents | Act as the strong solvent in the mobile phase to elute compounds from the column. | Acetonitrile and Methanol are most common. Methanol used in water:methanol (30:70 v/v) mobile phase for COVID-19 antivirals [28]. |

| Diode Array Detector (DAD) | Detects analytes across a range of wavelengths, allowing for peak purity assessment and optimal wavelength selection. | Detection at 323 nm for favipiravir [15] and 230 nm for a mixture of five antivirals [28]. |

| Reference Standards | Highly pure characterized substances used to confirm identity and prepare calibration standards for quantification. | Pure reference standards of favipiravir (99.55%), molnupiravir (98.86%), etc., were used for method validation [28]. |

The evolution from traditional OVAT to structured, data-driven frameworks like AQbD and HTE represents a significant advancement in analytical science. The AQbD approach, as demonstrated in the favipiravir case study, provides a systematic, risk-based path to a robust and well-understood method with a defined MODR [15]. Meanwhile, HTE offers a powerful tool for rapidly exploring complex parameter spaces and uncovering hidden chemical insights, though it requires sophisticated data analysis frameworks like HiTEA [27].

This step-by-step framework underscores that a rigorous, planned approach to method development and validation is not merely a regulatory hurdle but a critical scientific endeavor. It ensures the generation of reliable data that underpins drug efficacy and patient safety, from the research bench to quality control in manufacturing. By adopting these modern principles and tools, scientists and drug development professionals can enhance the efficiency, robustness, and regulatory compliance of their analytical methods.

Defining Analytical Objectives and Critical Quality Attributes (CQAs)

For researchers in organic chemistry and drug development, the reliability of analytical data is paramount. This guide provides a structured framework for defining analytical objectives and Critical Quality Attributes (CQAs)—the physical, chemical, biological, or microbiological properties that must be within an appropriate limit, range, or distribution to ensure the desired product quality [29]. We will objectively compare the performance of established and emerging analytical techniques, supported by experimental data and detailed protocols, to guide your method validation strategies.

Understanding CQAs and Analytical Objectives

In any analytical method development, the primary analytical objective is to ensure that the method is fit-for-purpose, providing accurate, precise, and reliable data for decision-making. This process is intrinsically linked to the identification and monitoring of CQAs [29].

A CQA is a property or characteristic that, when controlled within a predefined limit, helps ensure the final product meets its quality standards. The table below outlines common types of CQAs relevant to pharmaceutical development and organic chemistry.

Table 1: Categories and Examples of Critical Quality Attributes (CQAs)

| Category | Description | Specific Examples |

|---|---|---|

| Product-Related | Variants of the molecular entity itself [29] | Size, charge, glycan patterns, oxidation state [29] |

| Process-Related | Impurities introduced during manufacturing [29] | Host cell proteins, DNA, leachables from equipment [29] |

| Regulatory | Aspects related to composition, strength, and safety [29] | pH, excipient concentration, osmolality, bioburden, endotoxin levels [29] |

The core objective of an analytical method is to measure these CQAs with trueness (the closeness of agreement between the average value obtained from a large series of test results and an accepted reference value) and precision (the closeness of agreement between independent test results obtained under stipulated conditions) [30] [31]. Method validation, particularly through comparison studies, is the key process for demonstrating that a new or alternative method can be used interchangeably with an established one without affecting patient results or scientific conclusions [30].

Analytical Technique Comparison: Performance and Data

Selecting the right analytical technique depends on the CQA being measured, the required sensitivity, and the context of the analysis. The following table summarizes the performance characteristics of different techniques based on comparative studies.

Table 2: Comparison of Analytical Technique Performance

| Analytical Technique | Typical Application / CQA Measured | Key Performance Findings | Reference Method |

|---|---|---|---|

| LC-MS/MS | Confirmatory analysis of contaminants (e.g., Ochratoxin A) [32] | More specific and sensitive for confirmation at sub-ppb levels [32] | HPLC with fluorescence detection [32] |

| HPLC-FL | Quantitative analysis of contaminants (e.g., Ochratoxin A) [32] | Reliable for quantification; may require derivatization [32] | LC-MS/MS for confirmation [32] |

| Process Analytical Technology (PAT) | In-line monitoring of drug concentration & morphology in solid dispersions [33] | Raman/NIR sensors & AI models enable rapid, non-destructive analysis [33] | Off-line techniques (e.g., SEM) [33] |

| Coupled-Cluster Theory CCSD(T) | Computational prediction of molecular properties [34] | "Gold standard" for quantum chemistry; high accuracy but computationally expensive [34] | Density Functional Theory (DFT) [34] |

| AI/ML Models | Prediction of free energy, kinetics, and reaction outcomes [35] | Achieve high accuracy with reduced computational cost; offer high-speed retrosynthetic planning [35] | Traditional ab initio methods and manual design [35] |

Protocols for Method Comparison and Validation

A robust method-comparison study is fundamental to demonstrating that two methods can be used interchangeably. The following provides a detailed protocol based on established guidelines [36] [30] [31].

Experimental Objectives and Scope