A Practical Guide to Selecting Factors for DoE in Organic Synthesis


Abstract

This article provides a comprehensive guide for researchers and development scientists on strategically selecting factors for Design of Experiments (DoE) in organic synthesis. It covers foundational principles, moving beyond inefficient one-variable-at-a-time (OVAT) approaches, and delves into advanced methodologies for incorporating complex factor types like mixtures and solvents. The content offers practical troubleshooting advice for common experimental roadblocks and outlines frameworks for validating and comparing different DoE designs to ensure robust, reproducible, and efficient synthetic processes, ultimately accelerating development in pharmaceutical and related fields.

Why Factor Selection is the Bedrock of Successful Synthesis DoE

The Critical Shift from OVAT to Multivariate Factor Analysis

Traditional One-Variable-at-a-Time (OVAT) experimentation has long been the default approach in organic synthesis, where researchers systematically alter a single factor while holding all others constant. While intuitively straightforward, this method contains fundamental flaws that limit its efficiency and effectiveness in complex chemical systems. The OVAT approach fails to capture interaction effects between factors—critical relationships where the effect of one variable depends on the level of another [1]. Furthermore, OVAT requires a substantial number of experiments to explore even a modest experimental space, often leading to suboptimal conditions and missed opportunities for process improvement [2].

In contrast, Multivariate Factor Analysis (MFA) and Design of Experiments (DoE) provide a structured framework for simultaneously investigating multiple factors and their interactions, maximizing information gain while minimizing experimental costs [3]. This systematic approach is particularly valuable in organic synthesis, where numerous factors (including temperature, catalyst loading, solvent composition, concentration, and reaction time) can interact in complex ways to influence yield, purity, and selectivity.

Table 1: Comparison of OVAT vs. Multivariate Approaches

| Characteristic | OVAT Approach | Multivariate Factor Analysis |
|---|---|---|
| Experimental Efficiency | Low (requires many runs) | High (maximizes information per experiment) |
| Interaction Detection | Cannot detect interactions | Explicitly models and estimates interactions |
| Optimum Identification | Often finds local, not global, optimum | Maps response surface to find true optimum |
| Statistical Validity | Limited, no estimate of experimental error | Provides rigorous estimate of error and significance |
| Scope of Inference | Limited to tested factor levels | Can predict behavior across entire experimental region |

Fundamental Principles of Multivariate Experimental Design

Core Concepts and Terminology

Multivariate experimental design rests upon several key principles that distinguish it from traditional OVAT approaches. Understanding these concepts is essential for proper implementation in organic synthesis research:

- Factors: Input variables or parameters that can be controlled or varied in an experiment (e.g., temperature, concentration, catalyst type) [3].

- Levels: Specific values or settings at which factors are maintained during experimentation [1].

- Responses: Measurable outputs or outcomes of experimental trials (e.g., yield, purity, selectivity) [3].

- Interactions: Occur when the effect of one factor depends on the level of another factor [1].

- Experimental Domain: The bounded region of factor space defined by the ranges of each factor to be studied [2].

- Randomization: The practice of running experimental trials in random order to minimize the effects of lurking variables and external influences [3].

The Mathematics of Multivariate Analysis

Multivariate approaches employ mathematical models to represent the relationship between factors and responses. A general second-order model for a response Y with k factors can be represented as:

Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣΣβᵢⱼXᵢXⱼ + ε

Where β₀ is the intercept, βᵢ are linear coefficients, βᵢᵢ are quadratic coefficients, βᵢⱼ are interaction coefficients, and ε represents random error [1]. This model enables prediction of responses across the entire experimental space, not just at the points where data were collected.
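To make the model concrete, the following is a minimal sketch of fitting the second-order equation by ordinary least squares. It assumes NumPy is available; the helper name `quadratic_design_matrix`, the two-factor grid, and the "true" coefficients are hypothetical and only serve to generate noise-free synthetic data.

```python
import numpy as np
from itertools import combinations

def quadratic_design_matrix(X):
    """Columns: intercept, linear terms, squared terms, then interactions (i < j)."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    cols = [np.ones(n)]
    cols += [X[:, i] for i in range(k)]
    cols += [X[:, i] ** 2 for i in range(k)]
    cols += [X[:, i] * X[:, j] for i, j in combinations(range(k), 2)]
    return np.column_stack(cols)

# Hypothetical two-factor example in coded units on a 3 x 3 grid.
levels = [-1.0, 0.0, 1.0]
X = np.array([[a, b] for a in levels for b in levels])

# Illustrative coefficients (not from any real reaction): b0, b1, b2, b11, b22, b12
true_beta = np.array([80.0, 5.0, -3.0, -4.0, -2.0, 1.5])
y = quadratic_design_matrix(X) @ true_beta  # synthetic, noise-free response

# Least-squares estimate of the model coefficients.
beta_hat, *_ = np.linalg.lstsq(quadratic_design_matrix(X), y, rcond=None)
print(np.round(beta_hat, 3))
```

With real data the response vector would contain experimental error, so the estimates would scatter around the underlying values rather than reproduce them exactly.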

Key Experimental Designs for Organic Synthesis

Screening Designs: Identifying Influential Factors

When facing complex organic syntheses with numerous potential factors, screening designs help identify which variables have significant effects on responses, allowing researchers to focus optimization efforts on the most important parameters.

- Two-Level Factorial Designs: These designs study k factors at two levels (typically coded as -1 and +1) requiring 2^k experiments. They efficiently estimate main effects and interactions but cannot detect curvature in responses [3].

- Fractional Factorial Designs: When the number of factors is large, fractional factorials (2^(k-p)) reduce experimental burden by examining a carefully chosen subset of the full factorial, sacrificing higher-order interactions that are typically negligible [3].

- Plackett-Burman Designs: Extremely efficient for screening large numbers of factors with very few runs, these are useful for early-stage exploration but have limited ability to resolve interactions [2].
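The run counts for the factorial designs above can be illustrated with a short sketch. The function names are hypothetical, and the half fraction uses one common generator choice (last factor equal to the product of all the others); other generators are possible.

```python
from itertools import product

def full_factorial(k):
    """All 2**k combinations of coded levels -1/+1 for k factors."""
    return [list(run) for run in product((-1, 1), repeat=k)]

def half_fraction(k):
    """2**(k-1) runs: a full factorial in the first k-1 factors, with the
    last factor set to the product of the others (assumed generator)."""
    runs = []
    for base in full_factorial(k - 1):
        last = 1
        for level in base:
            last *= level
        runs.append(base + [last])
    return runs

# Run counts grow quickly with k; the half fraction needs half as many runs.
for k in (3, 5, 7):
    print(k, len(full_factorial(k)), len(half_fraction(k)))
```

The price of the smaller design is confounding: in the half fraction, the last factor's main effect cannot be separated from the highest-order interaction of the other factors.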

Table 2: Screening Designs for Initial Factor Selection in Organic Synthesis

| Design Type | Number of Factors | Minimum Runs | Can Detect Interactions? | Best Use Case in Organic Synthesis |
|---|---|---|---|---|
| Full Factorial | 2-5 | 2^k | Yes, all | Early-stage reactions with few variables |
| Fractional Factorial | 5+ | 2^(k-p) | Yes, but partially confounded | Reaction screening with medium complexity |
| Plackett-Burman | 7+ | Multiple of 4 | No | High-throughput screening of many parameters |
| D-Optimal | Any | Flexible | Yes | Irregular experimental regions or constraint systems |

Response Surface Methodology: Modeling and Optimization

After identifying critical factors through screening, Response Surface Methodology (RSM) designs characterize the relationship between factors and responses more precisely, enabling true process optimization.

- Central Composite Designs (CCD): These designs augment two-level factorials with center points and axial points to efficiently estimate second-order effects, making them ideal for locating optima [2].

- Box-Behnken Designs: An alternative to CCD that uses fewer runs by combining two-level factorial with incomplete block designs, often advantageous when extreme factor combinations are problematic or expensive [2].

- Three-Level Factorial Designs: Full factorial designs with three levels per factor can directly estimate quadratic effects but require more experimental runs than CCD or Box-Behnken [1].
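As an illustration of how a central composite design is assembled from factorial, axial, and center points, here is a minimal sketch. The function names are hypothetical, and the factor ranges are borrowed from Protocol 2 of this guide. Setting `alpha=1.0` gives a face-centered variant in which every factor takes exactly three levels; that choice is an assumption, not something the source specifies.

```python
from itertools import product

def central_composite(k, alpha=1.0, n_center=6):
    """Coded CCD: 2**k factorial points, 2*k axial points, n_center center points."""
    factorial = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

def decode(coded, low, high):
    """Map a coded level (-1..+1) back to natural units."""
    return (high + low) / 2 + coded * (high - low) / 2

# Ranges taken from Protocol 2 (temperature, time, catalyst/substrate ratio).
ranges = {"temperature_C": (60.0, 100.0), "time_h": (4.0, 20.0), "catalyst_pct": (0.5, 1.5)}

coded_runs = central_composite(k=3, alpha=1.0, n_center=6)
natural_runs = [
    {name: decode(c, lo, hi) for c, (name, (lo, hi)) in zip(run, ranges.items())}
    for run in coded_runs
]
print(len(natural_runs))
print(natural_runs[0])
```

A rotatable design would use a larger `alpha`, which pushes the axial points outside the factorial ranges and therefore requires checking that those settings are chemically and practically feasible.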

Detailed Methodological Protocols

Protocol 1: Screening Critical Factors in Catalytic Reaction Using Fractional Factorial Design

Objective: Identify significant factors affecting yield and enantioselectivity in an asymmetric catalytic reaction from seven potential variables.

Experimental Factors and Levels:

- Catalyst loading (0.5-2.0 mol%)

- Temperature (0-25°C)

- Solvent polarity (Dielectric constant 4-20)

- Additive (None vs. Molecular sieves)

- Concentration (0.1-0.5 M)

- Base (None vs. 1.1 equiv.)

- Mixing speed (300-900 rpm)

Procedure:

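- Select a 2^(7-4) fractional factorial design (resolution III, so main effects are aliased with two-factor interactions) requiring 8 experimental runs plus 3 center point replicates (11 total runs); because additive and base are two-level categorical factors, set the center points at the midpoints of the five continuous factors with each categorical factor held at one fixed level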

- Randomize run order to minimize systematic error

- Prepare reaction mixtures according to the design matrix specifications

- Conduct reactions under inert atmosphere with precise temperature control

- Monitor reaction completion by TLC or GC/MS

- Work up reactions using standardized purification protocols

- Analyze yields by quantitative NMR and enantioselectivity by chiral HPLC

- Statistically analyze results using ANOVA with α=0.05 significance level

Statistical Analysis:

- Calculate main effects for each factor

- Perform half-normal probability plot analysis to identify significant effects

- Construct Pareto charts of standardized effects

- Develop first-order model for each response

- Validate model with center point replicates and lack-of-fit testing
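The main-effect calculation for an 8-run, seven-factor design can be sketched as follows. The generators (D = AB, E = AC, F = BC, G = ABC) are one standard choice and are an assumption here, as is the synthetic response; the example deliberately includes an A×B interaction to show how, in a resolution III design, it appears as an apparent effect of factor D.

```python
import random
from itertools import product

# 2^(7-4) design: full factorial in A, B, C; remaining columns from generators.
design = [[a, b, c, a * b, a * c, b * c, a * b * c]
          for a, b, c in product((-1, 1), repeat=3)]

def main_effects(design, y):
    """Effect of each column: mean response at +1 minus mean response at -1."""
    n = len(design)
    k = len(design[0])
    return [sum(run[j] * yi for run, yi in zip(design, y)) / (n / 2)
            for j in range(k)]

# Synthetic response (illustrative only): A and B matter, plus an A*B interaction.
y = [70.0 + 5.0 * run[0] - 3.0 * run[1] + 4.0 * run[0] * run[1] for run in design]

effects = main_effects(design, y)
for name, eff in zip("ABCDEFG", effects):
    print(name, eff)

# Randomized execution order (seeded only so the sketch is reproducible).
run_order = random.Random(0).sample(range(len(design)), len(design))
print(run_order)
```

Factor D has no real effect in this synthetic model, yet its estimated effect equals the A×B interaction. This is why significant effects from a resolution III screen are usually confirmed with a follow-up design before conclusions are drawn.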

Protocol 2: Reaction Optimization Using Central Composite Design

Objective: Optimize yield and impurity profile for a key synthetic transformation using Response Surface Methodology.

Experimental Factors and Levels (after screening reduced factors to three critical variables):

- Temperature (Three levels: 60°C, 80°C, 100°C)

- Reaction time (Three levels: 4h, 12h, 20h)

- Catalyst/substrate ratio (Three levels: 0.5%, 1.0%, 1.5%)

Procedure:

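- Implement a face-centered Central Composite Design (axial points on the faces of the factorial cube, so each factor stays at the three levels listed above) with 20 runs (8 factorial points, 6 axial points, 6 center points)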

- Randomize execution order to mitigate time-dependent biases

- Set up parallel reactions in controlled heating blocks with accurate temperature monitoring

- Quench reactions at predetermined times using standardized protocols

- Analyze crude reaction mixtures by UPLC-MS for yield and impurity quantification

- Perform response surface regression to develop second-order models

- Generate contour plots and response surface plots for visualization

- Apply desirability functions for multi-response optimization

- Conduct confirmation experiments at predicted optimum conditions
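The desirability step in the procedure above can be sketched as follows. This uses the commonly cited Derringer-Suich form (individual desirabilities between 0 and 1, combined by a geometric mean); the function names, limits, and response values are hypothetical placeholders rather than values from the protocol.

```python
import math

def d_maximize(y, low, target, weight=1.0):
    """Desirability for a response to maximize: 0 at or below low, 1 at or above target."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight

def d_minimize(y, target, high, weight=1.0):
    """Desirability for a response to minimize: 1 at or below target, 0 at or above high."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight

def overall_desirability(ds):
    """Geometric mean; any completely unacceptable response gives 0 overall."""
    if any(d == 0.0 for d in ds):
        return 0.0
    return math.exp(sum(math.log(d) for d in ds) / len(ds))

# Hypothetical predicted responses at one candidate condition.
d_yield = d_maximize(85.0, low=70.0, target=95.0)     # yield in %
d_impurity = d_minimize(0.5, target=0.1, high=1.1)    # impurity in area %
D = overall_desirability([d_yield, d_impurity])
print(round(D, 3))
```

In practice the overall desirability would be evaluated across the fitted response surfaces and the condition with the highest value carried forward to the confirmation experiments.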

Analysis Methods:

- Fit full quadratic model: Y = β₀ + β₁A + β₂B + β₃C + β₁₂AB + β₁₃AC + β₂₃BC + β₁₁A² + β₂₂B² + β₃₃C²

- Perform stepwise regression or all-subsets analysis to reduce non-significant terms

- Calculate lack-of-fit and R² statistics to assess model adequacy

- Use canonical analysis to characterize stationary points
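Canonical analysis of a fitted quadratic model can be sketched in a few lines. Writing the model as y = b0 + bᵀx + xᵀBx, where B holds the quadratic coefficients on its diagonal and half of each interaction coefficient off the diagonal, the stationary point is x_s = -½ B⁻¹ b and the signs of the eigenvalues of B classify it. The coefficients below are hypothetical and chosen only for illustration; NumPy is assumed.

```python
import numpy as np

# Hypothetical fitted coefficients for two coded factors A and B.
b0 = 80.0
b = np.array([1.0, 0.5])              # linear coefficients
beta_11, beta_22, beta_12 = -2.0, -1.5, 1.0

# Symmetric matrix of second-order terms (interaction split across the off-diagonal).
B = np.array([[beta_11, beta_12 / 2],
              [beta_12 / 2, beta_22]])

# Stationary point: gradient b + 2 B x = 0.
x_s = -0.5 * np.linalg.solve(B, b)
y_s = b0 + 0.5 * b @ x_s

eigenvalues = np.linalg.eigvalsh(B)
if np.all(eigenvalues < 0):
    kind = "maximum"
elif np.all(eigenvalues > 0):
    kind = "minimum"
else:
    kind = "saddle point"

print(np.round(x_s, 3), round(float(y_s), 3), kind)
```

If the stationary point falls outside the coded region of -1 to +1, the model is extrapolating and the predicted optimum should be treated with caution.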

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents for Multivariate Analysis in Organic Synthesis

| Reagent/Material | Function in Experimental Design | Application Example | Considerations for DoE |
|---|---|---|---|
| Experimental Design Software (JMP, Design-Expert, R) | Creates design matrices, analyzes results, generates models | All stages from screening to optimization | Enables randomization, analysis, and visualization |
| High-Throughput Reaction Equipment | Parallel synthesis of design points | Screening multiple conditions simultaneously | Critical for efficient execution of multifactor designs |
| In-Line Analytical Technologies (FTIR, Raman) | Real-time monitoring of multiple responses | Kinetic profiling of reactions | Provides rich dataset for multivariate modeling |
| Design Templates (ASQ DOE Template) | Standardized worksheets for recording data | Ensuring consistent execution across experiments | Maintains experimental integrity and organization |
| Catalyst Libraries | Systematic variation of catalytic systems | Screening ligand effects in metal-catalyzed reactions | Enables categorical factor studies |
| Solvent Selection Kits | Controlled variation of solvent environment | Studying solvent effects on yield and selectivity | Allows mixture designs for solvent optimization |

Advanced Multivariate Techniques for Complex Systems

Multivariate Factor Analysis for Latent Variable Modeling

In complex organic syntheses where numerous correlated responses are measured, Multivariate Factor Analysis (FA) can identify underlying latent variables that explain observed patterns in the data:

Model Structure: X = Λξ + δ

Where X is the vector of observed variables, Λ is the matrix of factor loadings, ξ represents the latent factors, and δ represents unique variances [4]. This approach is particularly valuable when dealing with multiple, correlated quality attributes in pharmaceutical development.

Bayesian Approaches for Enhanced Inference

Bayesian methods offer advantages in experimental design through their ability to incorporate prior knowledge and naturally account for uncertainty in model parameters:

Posterior Distribution: p(θ|y) ∝ p(y|θ) × p(θ)

Where p(θ|y) is the posterior distribution of parameters, p(y|θ) is the likelihood function, and p(θ) is the prior distribution [4]. This framework is especially powerful when dealing with limited data or when integrating information from previous experimental campaigns.
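A small worked case shows how prior knowledge and new data combine. The sketch below uses the conjugate normal model with known measurement standard deviation, which has a closed-form posterior; this simplification, and every number in it (prior belief about mean yield, assay noise, three new yields), is a hypothetical assumption made for illustration.

```python
import math

def normal_posterior(prior_mean, prior_sd, data, noise_sd):
    """Posterior for a mean with a normal prior and known normal measurement noise."""
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = len(data) / noise_sd ** 2
    post_precision = prior_precision + data_precision
    post_mean = (prior_mean * prior_precision + sum(data) / noise_sd ** 2) / post_precision
    return post_mean, math.sqrt(1.0 / post_precision)

# Hypothetical prior from an earlier campaign: mean yield about 75% (sd 5).
# Hypothetical new runs at the same conditions, assay noise sd of 2.
post_mean, post_sd = normal_posterior(75.0, 5.0, [82.0, 84.0, 83.0], 2.0)
print(round(post_mean, 2), round(post_sd, 2))
```

The posterior mean lies between the prior mean and the sample mean, pulled toward whichever carries more precision, and the posterior standard deviation is smaller than either source alone would give.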

Implementation Framework for Organic Synthesis Research

Strategic Factor Selection Methodology

Choosing appropriate factors for multivariate analysis requires systematic consideration of chemical knowledge and practical constraints:

- Mechanistic Plausibility: Factors should have credible connection to reaction mechanism through established physical organic chemistry principles

- Practical Adjustability: Factors must be controllable within available equipment and resource constraints

- Range Selection: Factor ranges should be wide enough to detect effects but narrow enough to avoid catastrophic failure or safety issues

- Categorical vs. Continuous: Distinguish between discrete categorical factors (e.g., solvent type, catalyst class) and continuous factors (e.g., temperature, concentration)

Case Study: Pharmaceutical Intermediate Synthesis Optimization

Background: Optimization of a Pd-catalyzed cross-coupling reaction for the synthesis of a drug candidate intermediate with challenging purity requirements.

Initial OVAT Approach: 45 experiments varying catalyst, ligand, base, solvent, temperature, and concentration individually identified suboptimal conditions (72% yield, 94% purity).

Multivariate Strategy:

- Screening design (16 runs) identified catalyst loading, temperature, and base equivalents as critical factors

- Central Composite Design (20 runs) modeled quadratic effects and interactions

- Multi-response optimization balanced yield and purity requirements

Results: Identified optimum conditions achieving 89% yield and 99.2% purity in 36 designed runs (16 screening plus 20 Central Composite Design runs), 20% fewer experiments than the 45-run OVAT campaign.

The critical shift from OVAT to Multivariate Factor Analysis represents a paradigm change in how organic synthesis research should be conducted. By embracing systematic experimental design, researchers can efficiently navigate complex factor spaces, uncover critical interactions, and develop robust synthetic processes with fewer resources. The structured methodologies outlined in this guide provide a framework for implementing these powerful approaches in diverse synthetic contexts, from early reaction screening to final process optimization. As the field of organic synthesis continues to emphasize efficiency, sustainability, and quality-by-design principles, multivariate approaches will become increasingly essential tools in the synthetic chemist's arsenal.

Defining Continuous, Categorical, and Mixture Factors in a Synthetic Context

In organic synthesis, the strategic selection and definition of experimental factors is a critical foundation for effective Design of Experiments (DoE). Factors are the variables that researchers deliberately modify to observe their effect on reaction outcomes such as yield, purity, or selectivity [1]. The systematic approach of DoE represents a paradigm shift from traditional one-factor-at-a-time experimentation (OFAT, also called OVAT), which fails to detect interactions between variables and often leads to suboptimal conclusions [1] [5]. Within synthetic chemistry, factors can be broadly classified into three fundamental types (continuous, categorical, and mixture), each with distinct characteristics and implications for experimental design.

The appropriate classification and handling of these factor types enables researchers to efficiently navigate complex experimental spaces, a capability particularly valuable in pharmaceutical development where process optimization directly impacts drug quality, development timelines, and manufacturing costs [6]. This guide provides a comprehensive technical framework for defining these factor types within synthetic contexts, supporting the broader objective of implementing statistically sound and resource-efficient experimentation strategies.

Theoretical Foundations of Factor Classification

Continuous Factors

Continuous factors are quantitative variables that can assume any value within a specified range [5]. These factors are measured on a continuous numerical scale and allow for interpolation between tested levels. In synthetic chemistry, continuous factors frequently include parameters such as temperature, reaction time, pressure, concentration, and pH [1] [5]. A key advantage of continuous factors is their compatibility with mathematical modeling and optimization techniques, including Response Surface Methodology (RSM), which enables researchers to predict optimal conditions even between experimentally tested points [1] [7].

Categorical Factors

Categorical factors represent qualitative attributes that divide experimental runs into distinct groups or categories [5]. These factors lack inherent numerical meaning and cannot be interpolated between levels. Categorical factors in synthetic chemistry might include catalyst type, solvent identity, reagent vendor, or reactor material [5] [8]. Categorical factors can be further subdivided into nominal categories (no inherent order, e.g., solvent type) and ordinal categories (meaningful sequence but inconsistent intervals, e.g., gene order in a cluster) [5]. The inclusion of categorical factors expands the investigative scope of DoE beyond merely "how much" to "what kind" or "which type."

Mixture Factors

Mixture factors occur in experimental situations where the components collectively sum to a constant total, creating a dependent relationship where changing one component necessarily alters the proportions of others [8]. In synthetic contexts, this most commonly applies to formulations where ingredients sum to 100%, such as solvent blends, catalyst mixtures, or combinatorial reagent systems. The distinctive characteristic of mixture factors is that the response depends on the relative proportions of components rather than their absolute amounts [8]. These factors require specialized experimental designs that accommodate the constraint that the sum of all components must equal one.

Table 1: Comparative Analysis of Fundamental Factor Types in Synthetic DoE

| Factor Type | Definition | Key Characteristics | Synthetic Examples | Modeling Considerations |
|---|---|---|---|---|
| Continuous | Quantitative variables on a measurable scale | Infinite values between boundaries; interpolatable | Temperature, time, pressure, concentration, pH [5] | Fits regression models; suitable for RSM [7] |
| Categorical | Qualitative attributes defining distinct groups | Discrete, non-numeric categories; no interpolation | Catalyst type, solvent identity, vendor, reactor material [5] [8] | Requires dummy variables; compared to reference category |
| Mixture | Components summing to a constant total | Proportional dependence; constrained design space | Solvent blends, catalyst mixtures, reagent combinations [8] | Specialized designs (e.g., simplex); proportion-based effects |

Methodological Framework for Factor Definition

Systematic Approach to Factor Selection

Defining factors for synthetic DoE requires a structured methodology that aligns with overall experimental objectives. The process begins with clear definition of the study's purpose, whether screening influential factors, understanding interaction effects, or optimizing reaction conditions [8] [6]. Researchers must then identify all potential factors through comprehensive process mapping of the synthetic procedure, including materials, equipment, and environmental conditions [6]. A risk assessment follows to prioritize factors based on their potential impact on critical reaction outcomes, ultimately yielding a refined set of factors for experimental investigation [6].

Practical Protocols for Factor Definition

Protocol for Defining Continuous Factors:

- Identify quantitatively adjustable parameters with potentially nonlinear effects on responses [1].

- Establish minimum and maximum boundaries based on practical constraints (e.g., solvent boiling points, safety limits) or prior knowledge [8].

- Select appropriate level increments based on the expected curvature of response and available experimental resources [7].

- Document the operational procedure for precise factor adjustment (e.g., calibration protocols, measurement techniques) to ensure reproducibility.
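Once minimum and maximum boundaries are fixed, continuous factors are usually converted to coded units (-1 at the low boundary, +1 at the high boundary) so that effects are comparable across factors. A minimal sketch follows; the function names are hypothetical, and the example ranges are the temperature, catalyst loading, and concentration ranges listed in Protocol 1 of this guide.

```python
def to_coded(x, low, high):
    """Natural units -> coded units: low maps to -1, midpoint to 0, high to +1."""
    return (x - (high + low) / 2) / ((high - low) / 2)

def to_natural(c, low, high):
    """Coded units -> natural units (inverse of to_coded)."""
    return (high + low) / 2 + c * (high - low) / 2

print(to_coded(12.5, 0.0, 25.0))   # temperature midpoint (range 0-25 °C)
print(to_coded(2.0, 0.5, 2.0))     # catalyst loading at its high boundary (mol%)
print(to_natural(0.0, 0.1, 0.5))   # concentration at the coded center (M)
```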

Protocol for Defining Categorical Factors:

- Identify qualitatively distinct options for materials, methods, or equipment [5].

- Enumerate all relevant categories based on scientific rationale or practical availability.

- Establish consistent implementation protocols for each category to minimize operational variability.

- Consider potential ordering effects and implement randomization where appropriate.
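For modeling, each categorical factor is typically expanded into dummy (indicator) variables relative to a reference category. The sketch below shows one way to do this in plain Python; the function name and the solvent list are hypothetical examples.

```python
def dummy_code(values, reference):
    """Return the non-reference levels and one 0/1 indicator row per observation.

    The reference category is encoded as all zeros, so each model coefficient
    is interpreted as a difference from the reference.
    """
    levels = sorted(set(values) - {reference})
    rows = [[1 if v == level else 0 for level in levels] for v in values]
    return levels, rows

# Hypothetical solvent assignments for four runs, with THF as the reference.
solvents = ["THF", "MeCN", "toluene", "THF"]
levels, rows = dummy_code(solvents, reference="THF")
print(levels)
for solvent, row in zip(solvents, rows):
    print(solvent, row)
```

A factor with m categories needs m - 1 indicator columns, which is one reason the number of categories per factor is usually kept small in screening designs.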

Protocol for Defining Mixture Factors:

- Identify component systems where the total proportion is constrained (typically to 100%) [8].

- Define minimum and maximum boundaries for individual components based on chemical compatibility or functional requirements.

- Account for component interactions that may create non-linear blending effects.

- Select appropriate mixture design (e.g., simplex lattice, simplex centroid) aligned with experimental objectives.
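As an illustration of the simplex lattice design named in the last step, the sketch below enumerates a {q, m} lattice: every blend of q components whose proportions are multiples of 1/m and sum to one. The function name is hypothetical, and `fractions.Fraction` is used so that the sum-to-one constraint holds exactly.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """All q-component blends with proportions in {0, 1/m, ..., 1} summing to 1."""
    return [
        tuple(Fraction(i, m) for i in combo)
        for combo in product(range(m + 1), repeat=q)
        if sum(combo) == m
    ]

# {3, 2} lattice for a hypothetical three-solvent blend:
# three pure solvents plus three 50:50 binary blends.
points = simplex_lattice(3, 2)
for p in points:
    print([str(x) for x in p])
```

Component-level minimum and maximum bounds, as described in the second step, would restrict this lattice to a sub-region and generally call for a constrained or computer-generated design instead.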

Table 2: Experimental Design Alignment with Factor Types and Research Objectives

| Research Objective | Recommended Design Type | Continuous Factors | Categorical Factors | Mixture Factors | Key Considerations |
|---|---|---|---|---|---|
| Initial Screening | Fractional Factorial, Plackett-Burman [7] [5] | 2 levels (high/low) | 2 categories if binary; minimal practical categories | Not typically addressed | Focus on main effects; resolution III-IV designs [7] |
| Characterization & Optimization | Full Factorial, Response Surface Methodology (RSM) [7] | 3+ levels (enables curvature detection) | Included as blocking factors; limited categories | Specialized mixture designs (e.g., simplex) [8] | Models interactions; Central Composite or Box-Behnken for RSM [7] |
| Robustness Testing | Taguchi Methods, Space-Filling Designs [7] [8] | Multiple levels across operating range | Noise factors included in outer array | Not typically primary focus | Assesses sensitivity to variation; identifies robust conditions |

Integrated Experimental Workflow

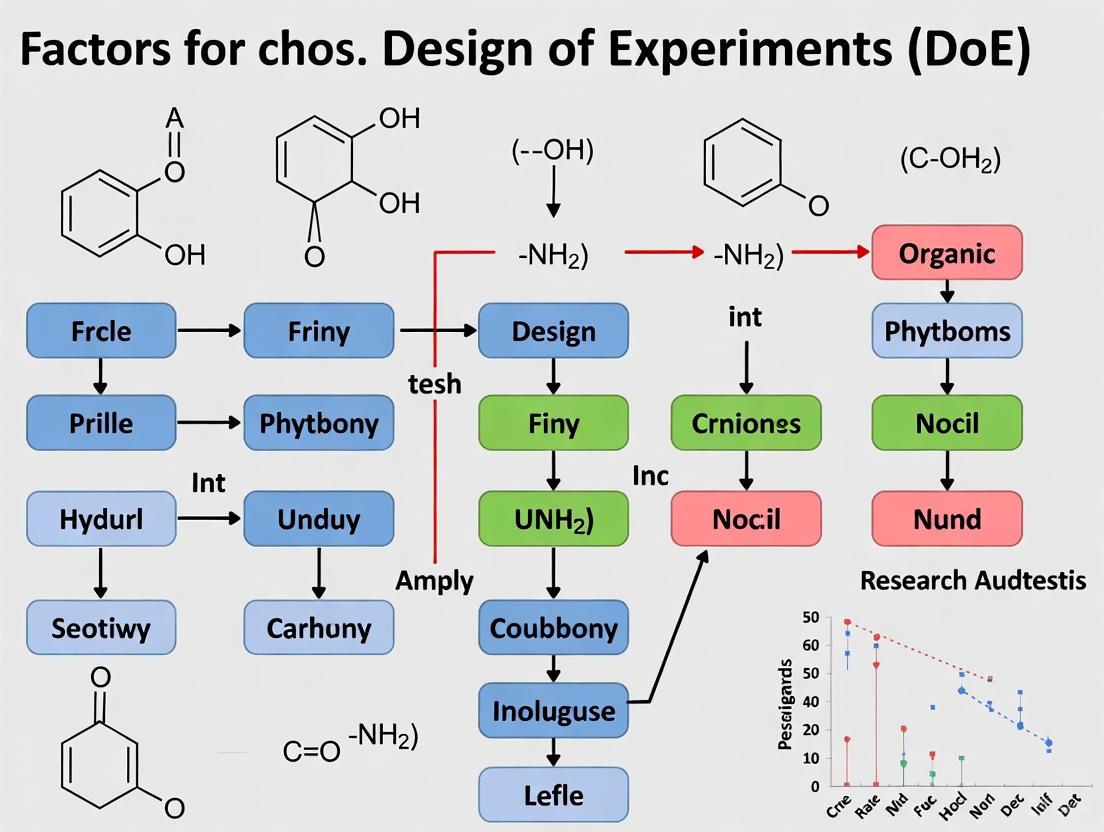

The following workflow diagram illustrates the systematic process for defining factors and selecting appropriate DoE methodologies within synthetic optimization contexts:

Advanced Considerations in Factor Management

Factor Interaction Effects

A fundamental advantage of DoE over OFAT approaches is the ability to detect and quantify interaction effects between factors [1]. Interactions occur when the effect of one factor depends on the level of another factor, creating non-additive behavior that can significantly impact optimization outcomes. For example, in a synthetic transformation, the optimal temperature might differ substantially depending on the catalyst type employed—a categorical-continuous interaction [1]. The systematic variation inherent in factorial designs enables detection and modeling of these interactions, providing more accurate predictions of system behavior across the experimental space [1].
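The temperature-by-catalyst example can be made numerical with a 2 × 2 table. The yields below are invented purely for illustration; the calculation shows how a factor can have a zero average effect while still mattering strongly through its interaction.

```python
# Hypothetical yields (%) for two temperatures and two catalyst types.
yields = {
    ("low", "A"): 60.0, ("high", "A"): 70.0,
    ("low", "B"): 65.0, ("high", "B"): 55.0,
}

# Effect of raising temperature, evaluated separately for each catalyst.
effect_T_at_A = yields[("high", "A")] - yields[("low", "A")]
effect_T_at_B = yields[("high", "B")] - yields[("low", "B")]

# Main effect: the average of the two conditional effects.
main_effect_T = (effect_T_at_A + effect_T_at_B) / 2

# Interaction: half the difference between the conditional effects.
interaction_T_catalyst = (effect_T_at_B - effect_T_at_A) / 2

print(effect_T_at_A, effect_T_at_B, main_effect_T, interaction_T_catalyst)
```

Here heating helps with catalyst A and hurts with catalyst B, so an experimenter who varied temperature with only one catalyst would draw a conclusion that does not transfer to the other.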

Resource-Aware Experimental Design

Practical experimentation inevitably faces resource constraints that influence factor selection and experimental design. As the number of factors increases, full factorial designs become exponentially more resource-intensive, making fractional factorial designs a pragmatic alternative [7] [8]. Strategic factor screening during early experimentation stages helps prioritize the most influential variables for subsequent optimization phases [7] [5]. Recent advances in automated synthesis platforms and machine learning-guided optimization further enhance resource efficiency by enabling adaptive experimentation strategies that focus on promising regions of the experimental space [9] [10].

Method Validation and Regulatory Considerations

In pharmaceutical development, analytical method validation requires careful factor consideration to establish method robustness [6]. Controlled factors might include HPLC parameters (e.g., mobile phase pH, column temperature, gradient profile), while uncontrolled factors (e.g., analyst, day, instrument) should be monitored as potential noise variables [6]. The International Conference on Harmonisation (ICH) Q2(R1) guideline provides a framework for validation parameters (specificity, accuracy, precision, etc.) that should guide factor selection when developing analytical methods supporting synthetic chemistry [6].

Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Synthetic DoE Implementation

| Reagent/Material | Function in DoE Context | Factor Type Association | Implementation Considerations |
|---|---|---|---|
| Solvent Systems | Reaction medium; impacts solubility, kinetics, and mechanism | Categorical (single solvent); Mixture (blends) | Polarity, protic/aprotic character, environmental impact |
| Catalysts | Alters reaction pathway and activation energy | Categorical (type); Continuous (loading) | Ligand architecture, coordination geometry, recycling potential |
| Reagents & Building Blocks | Participates in bond formation/transformation | Categorical (identity); Continuous (stoichiometry) | Electrophilicity/nucleophilicity, stability, commercial availability |
| Acid/Base Modulators | Adjusts pH or reaction equilibrium | Continuous (concentration, pKa) | Aqueous vs. organic compatibility, buffering capacity |
| Temperature Control Systems | Governs reaction kinetics and thermodynamics | Continuous (temperature, ramp rate) | Heating/cooling capability, stability, monitoring accuracy |

The precise definition of continuous, categorical, and mixture factors establishes a critical foundation for effective experimental design in synthetic chemistry. By understanding the distinct characteristics, applications, and methodological requirements for each factor type, researchers can develop strategically sound experimentation approaches that efficiently extract maximum information from limited resources. The integration of this factor classification framework within a structured DoE methodology enables comprehensive exploration of complex synthetic landscapes, ultimately accelerating process optimization in pharmaceutical development and related fields. As synthetic methodologies continue to evolve alongside automation and machine learning technologies [9] [10], the principled definition and management of experimental factors will remain essential for advancing synthetic efficiency and sustainability.

This guide provides a structured framework for selecting and optimizing critical factors in organic synthesis using Design of Experiments (DoE). Tailored for researchers and drug development professionals, it addresses the systematic approach required for efficient reaction optimization.

Traditional One-Variable-at-a-Time (OVAT) approaches to reaction optimization are inefficient and can easily miss optimal conditions due to interactions between factors [11]. For example, optimizing reagent equivalents at one temperature, then optimizing temperature at the fixed reagent level, may completely miss the true optimum combination of high temperature and low reagent loading [11]. Design of Experiments (DoE) is a statistical methodology that overcomes these limitations by systematically varying multiple factors simultaneously to map the reaction space, identify significant variables, and understand complex interaction effects [11] [12]. This approach is particularly valuable in pharmaceutical development where it accelerates process optimization and provides comprehensive process understanding for regulatory filings.
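The failure mode described above can be reproduced with a toy simulation. The response function below is entirely hypothetical: it is built with a strong temperature-by-reagent interaction so that the best conditions are high temperature with low reagent loading, both expressed in coded units.

```python
from itertools import product

def simulated_yield(t, r):
    """Hypothetical yield (%) with a strong temperature x reagent interaction."""
    return 70 + 3 * t - 3 * r - 8 * t * r

levels = (-1, 0, 1)

# OVAT: start at low temperature, optimize reagent equivalents first...
t_fixed = -1
best_r = max(levels, key=lambda r: simulated_yield(t_fixed, r))
# ...then optimize temperature at that fixed reagent level.
best_t = max(levels, key=lambda t: simulated_yield(t, best_r))
ovat_result = (best_t, best_r, simulated_yield(best_t, best_r))

# Two-level full factorial: all four corner combinations.
corners = list(product((-1, 1), repeat=2))
best_corner = max(corners, key=lambda tr: simulated_yield(*tr))
factorial_result = (*best_corner, simulated_yield(*best_corner))

print("OVAT:     ", ovat_result)
print("Factorial:", factorial_result)
```

In this constructed case the OVAT path settles on low temperature with high reagent loading, while the four-run factorial includes the high-temperature, low-reagent corner and finds a markedly higher simulated yield.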

Core Input Parameters in Synthetic DoE

The first critical step in any DoE study is the selection of factors to investigate. The following parameters are most frequently optimized in synthetic chemistry studies.

Quantitative Reaction Parameters

These continuous numerical factors are fundamental to nearly all reaction optimizations.

- Catalyst Loading: Often a primary driver of reaction rate and yield. Its significance can depend on other factors like pressure and temperature [12].

- Temperature: Directly influences reaction kinetics and can affect selectivity and impurity formation.

- Reaction Time: Must be balanced against decomposition or side reactions.

- Concentration: Can impact reaction rate, selectivity, and safety profile.

- Stoichiometry of Reagents: Optimizing equivalents is crucial for cost-effective and sustainable processes.

Qualitative Reaction Parameters

These categorical factors require specialized experimental designs for effective screening.

- Solvent Environment: Arguably one of the most influential factors, as it can affect reaction rate, mechanism, and equilibrium [11] [13].

- Catalyst/Ligand Identity: A key choice that defines reaction pathway and selectivity.

- Base/Additive Selection: Can influence kinetics, intermediate stability, and product distribution.

Systematic Solvent Selection Methodology

Solvent choice is a complex multi-dimensional problem. A systematic approach moves beyond trial-and-error to efficiently navigate "solvent space."

Physical Properties and Solvent Effects

Solvents influence reactions through their physicochemical properties, which can be grouped by their primary effect.

Table 1: Key Solvent Properties and Their Impact on Reactions

| Property | Chemical Impact | Process Consideration |
|---|---|---|
| Polarity (ε) | Affects solubility of polar intermediates/transition states; influences SN1 vs SN2 pathways [13] | Determines reactant solubility, boiling point for T control |
| Hydrogen Bonding | Can stabilize or destabilize transition states; may act as a chemical participant | Miscibility with aqueous phases for workup |
| Dipole Moment | Interacts with polar functional groups; influences reaction equilibrium [13] | - |
| Vapor Pressure | - | Determines pressure build-up in sealed vessels; evaporation losses |
| Viscosity | - | Impacts mixing efficiency, particularly in flow systems |

Navigating Solvent Space with PCA Maps

To simplify solvent selection, Principal Component Analysis (PCA) can condense multiple solvent properties into 2-3 principal components, creating a "solvent map" where solvents with similar properties cluster together [11]. In a DoE context, solvents are selected from different regions of this map to ensure a diverse representation of chemical properties. The effect of each principal component on the reaction outcome is then modeled, pinpointing the optimal region of solvent space [11]. This method also facilitates the identification of safer, more sustainable solvent alternatives to traditional toxic/hazardous options [11].
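A minimal sketch of building such a solvent map is shown below, using standardization followed by a singular value decomposition (one standard way to compute PCA). NumPy is assumed. The three descriptors and the property values are approximate, rounded literature figures included only for illustration; a real solvent map would use many more descriptors and a vetted data source.

```python
import numpy as np

solvents = ["water", "methanol", "acetonitrile", "DMSO",
            "THF", "dichloromethane", "toluene", "hexane"]

# Columns: dielectric constant, dipole moment (D), boiling point (°C).
# Approximate values for illustration only.
properties = np.array([
    [78.4, 1.85, 100.0],
    [32.7, 1.70, 65.0],
    [37.5, 3.92, 82.0],
    [46.7, 3.96, 189.0],
    [7.6, 1.75, 66.0],
    [8.9, 1.60, 40.0],
    [2.4, 0.36, 111.0],
    [1.9, 0.00, 69.0],
])

# Standardize each property so that no single unit dominates.
Z = (properties - properties.mean(axis=0)) / properties.std(axis=0, ddof=1)

# PCA via SVD: rows of Vt are the principal axes, scores are the map coordinates.
U, S, Vt = np.linalg.svd(Z, full_matrices=False)
scores = U * S
explained = S ** 2 / np.sum(S ** 2)

print(np.round(explained, 3))
for name, (pc1, pc2) in zip(solvents, scores[:, :2]):
    print(f"{name:16s} PC1={pc1:6.2f} PC2={pc2:6.2f}")
```

For a DoE, solvents would then be chosen from well-separated regions of the PC1/PC2 plane, and the principal component scores used as continuous factors in the model.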

Computer-Aided Molecular Design (CAMD)

Advanced approaches use Computer-Aided Molecular Design (CAMD) to frame solvent selection as an optimization problem. CAMD uses property prediction models (e.g., group contribution methods, COSMO-based models) and mixed-integer nonlinear programming (MINLP) to identify or design optimal solvent molecules based on predicted reaction performance, considering both kinetic and thermodynamic effects [13].

Catalyst Screening and Optimization

Catalyst selection and loading are often the most critical and costly factors in a catalytic transformation.

High-Throughput Experimentation (HTE) Screening

High-Throughput Experimentation (HTE) involves miniaturizing and parallelizing reactions to rapidly screen large numbers of catalysts or conditions [14]. A case study on reducing a halogenated nitroheterocycle demonstrates this process: initial screening of 15 different catalysts from three suppliers under standard conditions identified a platinum-based catalyst that increased conversion from 60% to 98.8% while reducing reaction time from 21 hours to 6 hours [12]. This highlights how a broad primary screen can dramatically improve process performance.

DoE for Catalyst Loading Optimization

After identifying a promising catalyst, a focused DoE study can precisely optimize its loading. In the same reduction case study, a two-level factorial DoE with three variables (catalyst load, temperature, pressure) including a center point revealed that catalyst loading was the most significant factor [12]. The model further showed that loading could be reduced if pressure and temperature were increased, providing a design space for future scale-up [12].

Designing an Integrated DoE Workflow

A robust DoE workflow integrates the screening of both qualitative and quantitative factors to efficiently find a process optimum.

Case Study: Impurity Control in a Reduction Reaction

A development project for a halogenated nitroheterocycle reduction showcases a staged DoE approach [12]:

- Factor Scoping: Initial studies assessed substrate solubility and stability, identifying incompatibility with nucleophilic solvents.

- Catalyst Screening: A primary HTE screen of 15 catalysts identified a Pt-catalyst that minimized dehalogenation impurity.

- DoE Optimization: A two-level, three-factor (catalyst load, temperature, pressure) DoE with a center point (9 total experiments) quantified factor significance and interactions, confirming catalyst loading as the dominant factor [12].
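The 9-run layout described above (eight factorial corners plus one center point) can be sketched as follows. The factor names and ranges are hypothetical placeholders, since the case study's actual levels are not given here.

```python
from itertools import product

# Hypothetical ranges (low, high); not taken from the case study
factors = {
    "catalyst_load_mol_pct": (1.0, 5.0),
    "temperature_C": (30.0, 60.0),
    "pressure_bar": (2.0, 10.0),
}

def two_level_design_with_center(factors):
    """Return all 2^k corner runs plus one center point in real units."""
    names = list(factors)
    coded_runs = [list(levels) for levels in product((-1, 1), repeat=len(names))]
    coded_runs.append([0] * len(names))          # single center point
    runs = []
    for coded in coded_runs:
        run = {}
        for name, c in zip(names, coded):
            low, high = factors[name]
            mid, half = (low + high) / 2, (high - low) / 2
            run[name] = mid + c * half           # decode -1/0/+1 to real units
        runs.append(run)
    return runs

design = two_level_design_with_center(factors)
for i, run in enumerate(design, 1):
    print(i, run)
```

The run list is generated in standard order; it would normally be randomized before execution.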

Recommended Research Reagent Solutions

Table 2: Essential Toolkit for Synthesis DoE

| Reagent / Material | Function in DoE | Application Notes |

|---|---|---|

| Heterogeneous Catalysts (Pt, Pd, Ni) | Hydrogenation; reduction reactions | Screen multiple types (e.g., 15+) to find optimal activity/selectivity [12] |

| Solvent Library (PCA-Selected) | Covering diverse chemical space | Select 5-7 solvents from different PCA map regions for initial screening [11] |

| Design-Ease / Design-Expert Software | Statistical design and data analysis | Critical for designing experiments and modeling complex factor interactions [12] |

| Microtiter Plates (MTP) | High-Throughput Experimentation (HTE) | Enable parallel reaction execution; be mindful of spatial bias in heating/lighting [14] |

Adopting a systematic strategy for identifying key inputs—from sophisticated solvent selection using PCA maps to structured catalyst screening with HTE—transforms reaction optimization from an empirical art into a data-driven science. Integrating these parameters into a structured DoE framework allows researchers to not only find robust optimal conditions but also to develop a deep understanding of their synthetic processes, ultimately leading to more efficient, sustainable, and scalable chemical synthesis.

Understanding Factor Interactions and Their Impact on Reaction Outcome

In the pursuit of optimizing organic syntheses for drug development, researchers traditionally relied on One-Factor-At-A-Time (OFAT) approaches. However, this method harbors a fundamental flaw: it inherently fails to account for interactions between experimental factors, often leading to suboptimal results and a misleading understanding of the reaction system [11]. In contrast, a Design of Experiments (DoE) framework provides a statistical methodology for simultaneously varying multiple factors, enabling the efficient exploration of the reaction space and, most importantly, the detection and quantification of factor interactions [5] [11]. This guide details the nature of factor interactions, methodologies for their study, and their pivotal role in informing factor selection for effective DoE in organic synthesis.

Defining and Visualizing Factor Interactions

A factor interaction occurs when the effect of one factor on the response variable depends on the level of another factor. In other words, the factors are not independent; they work in concert. The failure of OFAT to find a true optimum is a direct consequence of unmeasured interactions [11].

Methodologies for Detecting and Quantifying Interactions

The experimental strategy for studying interactions depends on the project phase: initial screening or subsequent optimization.

Screening Designs (Identifying Important Factors): The primary goal is to efficiently distinguish significant main effects from negligible ones. Screening designs, such as Fractional Factorial or Plackett-Burman designs, use a subset of the full factorial runs to achieve this [15] [5]. A key trade-off is that these designs often confound (alias) interaction effects with main effects, meaning they may not cleanly separate the two [15]. They operate under the initial assumption that higher-order interactions are negligible. Definitive Screening Designs (DSDs) offer a more advanced alternative, capable of estimating main effects and some two-factor interactions efficiently [15] [5].

Optimization Designs (Characterizing Interactions): Once critical factors are identified, Response Surface Methodology (RSM) designs, like Central Composite Design (CCD) or Box-Behnken Design (BBD), are employed [5]. These designs explicitly include experiments that allow for the modeling of interaction terms (e.g., A*B) and quadratic effects in a mathematical model, providing a detailed map of the response surface around the optimum [11].

Table 1: DoE Design Types and Their Capability for Interaction Analysis

| Design Type | Primary Purpose | Example Methods | Interaction Analysis Capability | Best Used When |

|---|---|---|---|---|

| Screening | Identify vital few factors from many | Plackett-Burman, Fractional Factorial [15] [5] | Limited; interactions are often confounded with main effects [15] | Early stage, >5 potential factors |

| Optimization | Model relationship and find optimum | Central Composite (CCD), Box-Behnken (BBD) [5] | High; can model and quantify specific interaction terms | After screening, for 2-4 key factors |

| Definitive Screening | Hybrid screening & optimization | Definitive Screening Design (DSD) [5] | Moderate; can estimate some two-factor interactions clearly | When both screening and initial modeling are needed |

Experimental Protocol: A Two-Stage DoE Workflow for Organic Synthesis

The following integrated protocol is framed within the context of optimizing a novel catalytic reaction.

Stage 1: Screening DoE to Identify Critical Factors & Potential Interactions

- Define Objective & Factors: Select 5-8 potential factors (e.g., catalyst loading (mol%), ligand equivalents, temperature, solvent type, concentration, reaction time).

- Choose Design: For 6 factors, select a Resolution IV fractional factorial design. This allows estimation of all main effects unconfounded by two-factor interactions, though two-factor interactions may be confounded with each other [11].

- Set Levels: Define a low (-) and high (+) level for each continuous factor (e.g., 2 mol% vs. 5 mol% catalyst). For categorical factors like solvent, use a "solvent map" based on Principal Component Analysis (PCA) to choose representatives from different regions of solvent property space [11].

- Execute Experiments: Perform the set of experimental runs (e.g., 16 runs for a 6-factor quarter-fraction, 2^(6-2)) in randomized order so that drift and lurking variables are not mistaken for factor effects.

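- Analyze Data: Use statistical software to analyze the yield/selectivity data. Identify factors with significant main effects. Warning: In a Resolution IV design, main effects are clear of two-factor interactions, but each estimated two-factor interaction is aliased with at least one other, so a large interaction effect cannot be assigned to a specific factor pair without follow-up runs. In a lower-resolution (Resolution III) screen, a large apparent main effect could actually be a strong interaction confounded with it. Note the aliasing structure in either case.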
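To make the aliasing structure of Stage 1 concrete, the sketch below builds a 16-run, six-factor design and checks which effects are confounded. The generators E = ABC and F = BCD are one common choice for a Resolution IV design and are an assumption here; statistical software may pick different ones.

```python
from itertools import combinations, product

names = "ABCDEF"
runs = []
for a, b, c, d in product((-1, 1), repeat=4):   # full factorial in A-D
    e = a * b * c                                # generator E = ABC
    f = b * c * d                                # generator F = BCD
    runs.append((a, b, c, d, e, f))

def column(term):
    """Contrast column for a term such as 'A' or 'AB'."""
    col = []
    for run in runs:
        value = 1
        for letter in term:
            value *= run[names.index(letter)]
        col.append(value)
    return col

def dot(x, y):
    return sum(i * j for i, j in zip(x, y))

two_fis = ["".join(p) for p in combinations(names, 2)]

# Main effects are clear if they are orthogonal to every two-factor interaction
main_clear = all(dot(column(m), column(t)) == 0 for m in names for t in two_fis)

# Two interactions are fully aliased if their columns are identical (or opposite)
aliased_pairs = [(s, t) for s, t in combinations(two_fis, 2)
                 if abs(dot(column(s), column(t))) == len(runs)]

print("runs:", len(runs), "| main effects clear of 2FIs:", main_clear)
print("aliased 2FI pairs:", aliased_pairs)
```

The output lists pairs such as AB and CE that share one column, which is why an apparent interaction from this stage needs follow-up runs before it is attributed to a specific pair of factors.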

Stage 2: Optimization DoE to Model Interactions and Find Optimum

- Refine Factors: Select the 2-3 most significant factors from Stage 1.

- Choose Design: For 3 factors, implement a Central Composite Design (CCD) with center points.

- Set Levels: Expand the range around the promising region identified in Stage 1 to include axial points, creating 5 levels for each factor.

- Execute & Analyze: Run the CCD experiments. Fit a quadratic model, e.g., Yield = β₀ + β₁A + β₂B + β₃C + β₁₂AB + β₁₃AC + β₂₃BC + β₁₁A² + ...

- Interpret Interaction: The sign and magnitude of coefficients like β₁₂ (for interaction A*B) quantify the interaction. A positive coefficient indicates synergy, while a negative one indicates antagonism between factors. Visualize using interaction plots or 3D response surfaces.
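The Stage 2 fit can be sketched with ordinary least squares. The example below assumes a rotatable three-factor CCD with six center points (20 runs) and uses a synthetic response generated from made-up coefficients, so the numbers illustrate the mechanics only.

```python
import numpy as np
from itertools import product

alpha = (2 ** 3) ** 0.25      # rotatable axial distance for 3 factors, about 1.682
factorial = [list(p) for p in product((-1.0, 1.0), repeat=3)]
axial = []
for i in range(3):
    for sign in (-alpha, alpha):
        pt = [0.0, 0.0, 0.0]
        pt[i] = sign
        axial.append(pt)
center = [[0.0, 0.0, 0.0]] * 6
X = np.array(factorial + axial + center)   # 8 + 6 + 6 = 20 runs, coded units

def model_matrix(X):
    """Columns: intercept, A, B, C, AB, AC, BC, A^2, B^2, C^2."""
    A, B, C = X.T
    return np.column_stack([np.ones(len(X)), A, B, C,
                            A * B, A * C, B * C, A**2, B**2, C**2])

# Synthetic "yield": invented coefficients plus random noise (not real data)
true_beta = np.array([80.0, 5.0, 3.0, -2.0, 4.0, 0.0, -1.5, -6.0, -3.0, -1.0])
rng = np.random.default_rng(0)
y = model_matrix(X) @ true_beta + rng.normal(0.0, 0.5, size=len(X))

beta_hat, *_ = np.linalg.lstsq(model_matrix(X), y, rcond=None)
terms = ["b0", "A", "B", "C", "AB", "AC", "BC", "A^2", "B^2", "C^2"]
for t, b in zip(terms, beta_hat):
    print(f"{t:4s} {b:+.2f}")
```

Here the fitted AB coefficient should land near the generating value of +4, meaning the benefit of raising A grows as B increases within the coded range.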

The Scientist's Toolkit: Research Reagent Solutions for DoE

Table 2: Essential Materials and Tools for Conducting DoE in Organic Synthesis

| Item / Solution | Function in DoE Context |

|---|---|

| Statistical Software (JMP, Design-Expert, Minitab, R) | Creates randomized run orders, analyzes data, calculates significance (p-values), fits models, and generates predictive response surfaces. |

| Solvent Property Database & PCA Map [11] | Enables rational, systematic selection of diverse solvents for "solvent" as a categorical factor, moving beyond trial-and-error. |

| Automated Liquid Handling/Synthesis Platforms | Ensures precision and reproducibility in preparing the many slight variations of reaction conditions required by a DoE matrix. |

| High-Throughput Analytics (UPLC, GC-MS automation) | Provides rapid, quantitative yield and purity data for the large number of samples generated in a screening DoE. |

| Design Table (Run Sheet) | The core experimental protocol listing each run's specific combination of factor levels in a randomized order to mitigate bias. |

Data Analysis: From p-values to Practical Significance with Effect Size

Statistical significance (p-value < 0.05) indicates that an effect as large as the one observed (e.g., a main effect or interaction) would be unlikely if the true effect were zero. However, for decision-making in development, practical significance is paramount. This is assessed using Effect Size measures [16].

Table 3: Interpreting Effect Size Measures for DoE Results [16]

| Effect Size Measure | Typical Context in DoE | Small Effect | Medium Effect | Large Effect |

|---|---|---|---|---|

| Cohen's d (or similar) | Comparing mean response between two factor levels (e.g., High vs. Low Temp) | 0.20 | 0.50 | 0.80 |

| η² (Eta-squared) | Proportion of total variance explained by a factor (or interaction) in ANOVA | 0.01 | 0.06 | 0.14 |

| Coefficient in Coded Model | The estimated change in response per one-unit change in the coded factor, i.e., half the change observed on going from the low (-1) to the high (+1) level. | Context-dependent; must be compared to overall variability and business-relevant delta. |

Protocol for Analysis: After conducting a DoE, perform ANOVA. For each significant factor and interaction term, report both the p-value and an effect size measure (like η²). A factor with a very low p-value but a trivial η² (<0.01) may be statistically significant but practically irrelevant for process control [16]. Conversely, a potential interaction with a modest p-value (e.g., 0.06) but a sizable effect should be investigated further, not dismissed.
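As a rough illustration of the effect-size step, the sketch below computes η² for every term of an unreplicated 2³ factorial from its contrast. The yields are invented, and because the design has no replicates it gives effect sizes only, not p-values.

```python
from itertools import combinations, product

names = "ABC"
runs = list(product((-1, 1), repeat=3))       # coded levels for A, B, C
# Synthetic yields (%), invented for illustration; same order as `runs`
y = [52.0, 54.0, 60.0, 63.0, 70.0, 71.0, 88.0, 90.0]

# All main effects and interactions: A, B, C, AB, AC, BC, ABC
terms = ["".join(c) for r in (1, 2, 3) for c in combinations(names, r)]

def contrast(term):
    """Sum of responses weighted by the product of the term's coded levels."""
    total = 0.0
    for run, value in zip(runs, y):
        sign = 1
        for letter in term:
            sign *= run[names.index(letter)]
        total += sign * value
    return total

n = len(y)
mean_y = sum(y) / n
ss_total = sum((v - mean_y) ** 2 for v in y)

# For a balanced two-level design, SS(term) = contrast^2 / N
eta_sq = {t: contrast(t) ** 2 / n / ss_total for t in terms}

for t, e in sorted(eta_sq.items(), key=lambda kv: -kv[1]):
    print(f"{t:4s} eta^2 = {e:.3f}")
```

Terms whose η² falls below the thresholds in Table 3 can be deprioritized even if a replicated analysis later shows them to be statistically significant.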

Strategic Factor Selection Guided by Interaction Understanding

The overarching thesis for choosing factors in organic synthesis DoE is: Select factors where interactions are chemically or mechanistically plausible and strategically important to understand. Do not waste degrees of freedom on trivial interactions.

- Prioritize Factors with Plausible Interactions: Focus on factors likely to interact (e.g., catalyst & ligand, temperature & solvent, pH & reagent stoichiometry). Prior mechanistic knowledge is crucial.

- Use Screening Wisely: In initial screening with many factors, accept the confounding of interactions. The goal is risk reduction—ensuring no critical main effect is missed.

- Plan for Sequential Learning: A DoE project is iterative. Use results from a screening design to make an informed decision about which factors and their potential interactions merit a detailed, optimization-focused DoE.

- Leverage DSDs for Complex Systems: When dealing with a moderate number of factors (6-12) in a new, poorly understood system, consider Definitive Screening Designs, which provide clearer information on some interactions without the run count of a full factorial [5].

- Quantify to Decide: Ultimately, the quantified interaction coefficient from an optimization DoE provides a powerful, numerical basis for process understanding and control strategy, far exceeding the qualitative guesses derived from OFAT approaches.

Strategic Methodologies for Selecting and Screening Key Factors

A Step-by-Step Framework for Initial Factor Screening

Factor screening represents the critical first phase in the application of Design of Experiments (DoE) within organic synthesis and drug development research. This systematic process enables researchers to efficiently identify the few truly influential factors from many potential variables that significantly impact reaction outcomes, yield, and selectivity. In pharmaceutical development, where time and resources are constrained, effective screening prevents wasted experimentation on insignificant variables while ensuring critical process parameters are not overlooked.

Traditional one-variable-at-a-time (OVAT) approaches remain prevalent in academic synthetic chemistry but contain fundamental flaws for multi-factor systems. As demonstrated in Figure 1, OVAT methodology can completely miss optimal conditions when factor interactions exist, potentially leading researchers to abandon promising synthetic routes prematurely [11]. Implementing statistical screening designs transforms this process by exploring multi-dimensional reaction space efficiently, capturing interaction effects, and building foundational process understanding early in development.

Fundamental Concepts and Definitions

Key Terminology

- Factors: Input variables or conditions that can be manipulated in an experiment and may influence the output. In organic synthesis, this includes temperature, catalyst loading, solvent, concentration, and reagent equivalents [11].

- Responses: Measurable outputs or outcomes of experimental interest. Common responses in synthetic chemistry include chemical yield, enantiomeric excess, purity, and reaction rate [11].

- Factor Interactions: Situation where the effect of one factor on the response depends on the level of one or more other factors [11].

- Experimental Space: The multi-dimensional region defined by the ranges of all factors being studied [11].

- Screening Design: A specialized experimental arrangement that allows simultaneous evaluation of multiple factors with minimal experimental runs [11].

Classification of Factor Types

Table 1: Classification of Experimental Factor Types in Organic Synthesis

| Factor Type | Description | Examples in Organic Synthesis |

|---|---|---|

| Continuous | Can assume any value within a specified range | Temperature, concentration, catalyst loading |

| Discrete (categorical) | Limited to distinct, separate values | Solvent identity, catalyst type, reagent source |

| Qualitative | Non-numerical categories or classes | Solvent class (protic/aprotic), atmosphere (N₂/air) |

| Quantitative | Measurable numerical values | Reaction time, temperature, pressure |

Pre-Screening Phase: Foundational Preparation

Define Experimental Objectives and Constraints

Clearly articulate the primary goal of the screening study, which typically falls into these categories:

- Factor Prioritization: Distinguishing the vital few factors from the trivial many

- Factor Mapping: Understanding direction and magnitude of factor effects

- Constraint Identification: Determining operational boundaries and limitations

Simultaneously, document practical constraints including safety limitations, material availability, equipment capabilities, and budgetary restrictions. This establishes realistic boundaries for the experimental program.

Establish Critical Quality Attributes (CQAs)

Identify and prioritize measurable responses that define successful synthetic outcomes. For pharmaceutical applications, typical CQAs include:

- Primary CQAs: Chemical yield, product purity, enantioselectivity

- Secondary CQAs: Reaction completion time, cost indicators, safety parameters

- Tertiary CQAs: Process robustness, scalability potential, environmental impact

Each CQA should have a clearly defined measurement protocol with established precision and accuracy to ensure reliable data generation.

Compile Potential Factor List

Conduct thorough scientific assessment to identify all potentially influential factors through:

- Literature analysis of analogous synthetic transformations

- Mechanistic considerations based on proposed reaction pathways

- Historical data from similar chemical systems

- Theoretical knowledge of physical organic chemistry principles

- Stakeholder input from multidisciplinary team members

A typical factor compilation for a metal-catalyzed cross-coupling might include 10-15 potential variables before screening.

Statistical Design Selection for Screening

Design Comparison and Selection Criteria

Table 2: Comparison of Screening Designs for Organic Synthesis Applications

| Design Type | Factors Screened | Runs Required | Strengths | Limitations |

|---|---|---|---|---|

| Fractional Factorial | 4-15 | 8-32 | Excellent efficiency; estimates main effects and some 2FI | Aliasing of interactions |

| Plackett-Burman | 5-31 | 12-36 | Highly efficient for many factors | Cannot estimate interactions |

| Definitive Screening | 6-50 | 13-101 | Identifies active main effects and 2FI; robust to outliers | Larger run size when the number of factors is small |

| Resolution IV | 5-8 | 16-32 | All main effects clear of 2FI | Requires more runs than minimal designs |

Solvent Screening Using Principal Component Analysis

Solvent selection represents a particularly challenging categorical factor in organic synthesis optimization. The principal component analysis (PCA) approach transforms numerous solvent properties into a simplified "solvent space map" containing 136 solvents characterized by diverse physicochemical properties [11]. This statistical technique enables:

- Systematic solvent selection from different regions of solvent property space

- Identification of safer alternatives to toxic/hazardous solvents

- Structured exploration of solvent effects beyond traditional trial-and-error [11]

For screening purposes, solvents are selected from the extremes (vertices) of the principal component map to maximize property diversity, followed by focused investigation in promising regions.

Figure 1: Factor Screening Workflow for Organic Synthesis

Practical Implementation Protocol

Experimental Design Execution

Implement the selected statistical design with careful attention to experimental rigor:

- Randomization: Execute experimental runs in random order to minimize confounding from lurking variables

- Center Points: Include 3-5 center point replicates to estimate pure error and check for curvature

- Blocking: Account for potential batch effects when experiments must be performed across multiple time periods

For a 6-8 factor screening study in medicinal chemistry, this typically requires 16-32 individual experiments, including necessary controls and replicates.
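A randomized run sheet with center-point replicates can be generated in a few lines. The sketch below uses a three-factor full factorial and a fixed seed purely as an example; blocking is not handled, and the function name is a hypothetical helper rather than part of any DoE package.

```python
import random
from itertools import product

def randomized_run_sheet(n_factors, n_center, seed):
    """Full two-level factorial plus center replicates, in random run order."""
    runs = [list(p) for p in product((-1, 1), repeat=n_factors)]
    runs += [[0] * n_factors for _ in range(n_center)]
    rng = random.Random(seed)    # fixed seed so the sheet can be regenerated
    rng.shuffle(runs)
    return runs

sheet = randomized_run_sheet(n_factors=3, n_center=3, seed=42)
for order, levels in enumerate(sheet, 1):
    print(order, levels)
```

Recording the seed alongside the run sheet lets the same order be reproduced later when the data are audited.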

Data Collection and Management

Establish systematic data recording protocols that capture:

- Controlled factors with actual versus target values

- Measured responses with appropriate precision

- Observational data including color changes, precipitates, and unexpected phenomena

- Environmental conditions such as humidity and ambient temperature

- Raw analytical data for potential retrospective analysis

Utilize electronic laboratory notebooks with structured data templates to ensure consistency and enable efficient statistical analysis.

Analysis and Interpretation Framework

Statistical Analysis Methods

Apply appropriate statistical techniques to identify significant factors:

- Half-normal probability plots to visually identify significant effects

- Analysis of Variance (ANOVA) to quantify statistical significance

- Model adequacy checking through residual analysis

- Effect size estimation to determine practical significance

Focus interpretation on both statistical significance (p-values) and practical importance (effect size) relative to the Critical Quality Attributes established during planning.
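The coordinates of a half-normal plot can be computed with the Python standard library alone. In this sketch the effect estimates are invented, and the plotting position 0.5 + 0.5(i - 0.5)/m is one common convention that other software may vary slightly.

```python
from statistics import NormalDist

def half_normal_points(effects):
    """Return (term, |effect|, half-normal quantile), sorted by |effect|."""
    ordered = sorted(effects.items(), key=lambda kv: abs(kv[1]))
    m = len(ordered)
    points = []
    for i, (term, value) in enumerate(ordered, start=1):
        p = 0.5 + 0.5 * (i - 0.5) / m          # plotting position
        points.append((term, abs(value), NormalDist().inv_cdf(p)))
    return points

# Hypothetical effect estimates from a screening design
effects = {"A": 22.5, "B": 13.5, "C": 2.0, "AB": 5.0,
           "AC": -0.5, "BC": 0.5, "ABC": 0.2}

points = half_normal_points(effects)
for term, size, q in points:
    print(f"{term:4s} |effect|={size:5.1f}  quantile={q:.2f}")
```

Inactive effects fall roughly on a straight line through the origin when |effect| is plotted against the quantile; terms that sit well above that line are the candidates to carry forward.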

Decision Making and Factor Selection

Implement structured decision criteria for factor prioritization:

- Primary Factors: Strong statistical significance with large effect on key CQAs

- Secondary Factors: Moderate statistical significance or impact on less critical CQAs

- Interactions: Statistically significant interaction terms between important main effects

- Noise Factors: Statistically insignificant factors that can be fixed at economical levels

Typically, screening identifies 3-5 vital factors from an initial 8-15 potential variables to carry forward into optimization studies.

Case Study: SNAr Reaction Screening

A published case study demonstrates the application of this framework to the optimization of a nucleophilic aromatic substitution (SNAr) reaction [11]. The systematic approach included:

- Initial factor selection of 8 potential variables including solvent, base, temperature, and stoichiometry

- Resolution IV design implementation requiring 19 experimental runs

- Solvent optimization using the PCA solvent map to explore diverse chemical space

- Identification of 3 significant factors for subsequent optimization

- Development of robust conditions with demonstrated substrate scope

This methodology enabled identification of improved conditions with reduced environmental impact compared to traditional optimization approaches [11].

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Synthetic DoE Studies

| Reagent Category | Specific Examples | Function in Screening | Considerations |

|---|---|---|---|

| Catalyst Systems | Pd(PPh₃)₄, Ni(COD)₂, RuPhos, BrettPhos | Facilitate key bond formations; significant cost and performance factors | Air sensitivity, commercial availability, cost |

| Solvent Libraries | DMAc, NMP, DMSO, THF, 2-MeTHF, CPME | Solvation, stability, and reaction rate effects | Green chemistry metrics, safety profile, boiling point |

| Activation Reagents | HATU, T3P, DCC, EDC·HCl, CDI | Coupling efficiency, racemization minimization | Cost, byproduct properties, handling characteristics |

| Base Selection Sets | K₂CO₃, Cs₂CO₃, DIPEA, DBU, NaOH | Acidity manipulation, intermediate stabilization | Solubility, nucleophilicity, safety considerations |

Integration with Subsequent Development

Effective factor screening establishes the foundation for subsequent reaction optimization and robustness testing. The vital few factors identified through screening become the focus of response surface methodology (RSM) studies to locate true optima and understand response curvature. This sequential approach maximizes resource efficiency while building comprehensive process understanding.

For pharmaceutical development, the screening data generated provides crucial regulatory documentation demonstrating scientific understanding of critical process parameters and their impact on drug substance quality. This knowledge directly supports Quality by Design (QbD) initiatives and regulatory filings.

Figure 2: DoE Workflow Integration from Screening to Control

This framework provides synthetic chemists with a systematic approach to initial factor screening that maximizes information gain while conserving precious resources. By implementing these structured methodologies, researchers in drug development can accelerate process development while building the fundamental scientific understanding required for robust pharmaceutical manufacturing.

In the design of experiments (DoE) for organic synthesis, particularly in pharmaceutical development, mixture factors such as solvent blends and precursor compositions present a unique class of variables. Unlike independent factors, these components interact in complex, non-linear ways that directly dictate reaction pathways, intermediate phase formation, and ultimate product properties. Framing solvent and precursor selection within a DoE context requires a deep understanding of these chemical interactions and physical kinetics. This guide synthesizes advanced methodologies for rational ink design, focusing on the interplay between solvent coordination, evaporation kinetics, and precursor solubility to enable predictive control over crystallization pathways and material properties in scalable synthesis.

Quantitative Analysis of Solvent and Precursor Properties

The physical properties of solvents and their coordination strength with precursors are primary factors that dictate the kinetics and pathway of crystallization. The following table summarizes key quantitative parameters for common solvents used in hybrid perovskite synthesis, though the principles apply broadly to organic crystallization processes.

Table 1: Physical Properties and Crystallization Kinetics for Common Solvents in Precursor Solutions [17]

| Solvent | Vapor Pressure (Pa) at 28°C | Evaporation Rate (mol m⁻¹ s⁻¹) at 28°C | Crystallization Onset Time (min) | Initial Solvent Molecules per PbI₂ (N solv start) | Solvent Molecules per PbI₂ at Crystallization (N solv cryst) |

|---|---|---|---|---|---|

| DMF | 596 | 3.51 × 10⁻⁶ | 3.75 | 12.9 | 8.8 |

| GBL | 402 | 2.36 × 10⁻⁶ | 5.75 | 13.0 | 8.9 |

| DMSO | 110 | 6.45 × 10⁻⁷ | 15.0 | 13.5 | 9.7 |

| NMP | 97 | 5.69 × 10⁻⁷ | >30 (No crystallization at 28°C) | 14.2 | - |

The data reveals a strong correlation between a solvent's vapor pressure and the onset of crystallization, with more volatile solvents (higher vapor pressure) leading to faster supersaturation and nucleation. Furthermore, the decrease in solvent molecules per precursor unit (N solv start to N solv cryst) indicates a consistent desolvation threshold required for nucleation across different solvent systems, a critical parameter for DoE factor levels.
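The two trends noted above can be checked directly from the tabulated values. The short script below uses only the numbers in Table 1; the dictionary keys are illustrative names.

```python
# Values copied from Table 1 (28 deg C); None marks entries not reported
table = {
    "DMF":  {"p_vap": 596, "evap": 3.51e-6, "onset_min": 3.75, "n_start": 12.9, "n_cryst": 8.8},
    "GBL":  {"p_vap": 402, "evap": 2.36e-6, "onset_min": 5.75, "n_start": 13.0, "n_cryst": 8.9},
    "DMSO": {"p_vap": 110, "evap": 6.45e-7, "onset_min": 15.0, "n_start": 13.5, "n_cryst": 9.7},
    "NMP":  {"p_vap": 97,  "evap": 5.69e-7, "onset_min": None, "n_start": 14.2, "n_cryst": None},
}

# Evaporation rate per unit vapor pressure: near-constant means proportionality
evap_per_pa = {s: row["evap"] / row["p_vap"] for s, row in table.items()}

# Fraction of the initial solvent still present when crystallization starts
retained = {s: row["n_cryst"] / row["n_start"]
            for s, row in table.items() if row["n_cryst"] is not None}

for s in table:
    line = f"{s:5s} evap/p_vap = {evap_per_pa[s]:.2e}"
    if s in retained:
        line += f"  retained at onset = {retained[s]:.2f}"
    print(line)
```

The evaporation rate per pascal is nearly identical for all four solvents, and crystallization begins once roughly 68-72% of the initial solvent per PbI₂ remains, which supports treating vapor pressure and a desolvation threshold as candidate factors.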

Experimental Protocols for Pathway Analysis

In Situ Grazing-Incidence Wide-Angle X-Ray Scattering (GIWAXS)

Objective: To monitor the evolution of solution species, intermediate solvate phases, and final crystalline material in real-time during the drying process [17].

Detailed Methodology:

- Solution Preparation: Dissolve methylammonium iodide (MAI) and PbI₂ powders in a 1:1 molar ratio in anhydrous solvents (e.g., DMF, GBL, DMSO, NMP) under a N₂ atmosphere to achieve a 1 M precursor solution. Shake solutions at 60°C for 12 hours to ensure complete dissolution and complex formation.

- Sample Deposition & Environment Control: Dispense 5 μL of the precursor solution and spread it uniformly via blade-coating onto a clean glass substrate. The substrate is placed on a temperature-controlled stage (e.g., Anton Paar heating stage) within a N₂-filled environment (6 L h⁻¹ flow) to precisely control atmosphere and humidity.

- Data Acquisition: Use synchrotron radiation (e.g., 8048 eV, equivalent to Cu Kα1) at a shallow incidence angle (e.g., one degree). Collect one diffraction pattern frame at short intervals (e.g., every 14.2 seconds) throughout the drying and thermal treatment process. The temperature program may include steps (e.g., 28°C, 40°C, 100°C) to simulate thermal annealing.

- Data Analysis: Integrate 2D GIWAXS patterns to 1D diffractograms. Track the appearance, shift, and disappearance of diffraction peaks corresponding to the amorphous sol-gel phase, intermediate crystalline solvate phases (e.g., (DMF)₂(MA)₂Pb₃I₈), and the final perovskite phase (MAPbI₃).

Analysis of Solvent Coordination via Absorbance Spectroscopy

Objective: To probe the formation of polyhalido plumbate complexes in solution, which act as building blocks for intermediate phases [17].

Detailed Methodology:

- Sample Preparation: Prepare precursor solutions with a reduced concentration (e.g., 0.1 M) to avoid signal saturation in the spectrometer.

- Measurement: Place the solution in a short path length quartz cuvette (e.g., 10 μm) and acquire absorbance spectra across the UV-Vis range.

- Interpretation: Shifts in the absorption onset and changes in the absorption profile indicate the specific coordination of solvent molecules with the lead-halide precursor, forming complexes like [PbI₂(Solvent)ₓ]ⁿ. This coordination strength is a key determinant in the stability of subsequent intermediate phases.

Visualizing Crystallization Pathways and DoE Factor Interplay

The following diagrams map the complex relationships and workflows involved in managing mixture factors, from the molecular interactions to the experimental decision process.

Diagram 1: Crystallization pathway and influencing factors.

Diagram 2: DoE factor framework for mixture and process variables.

The Scientist's Toolkit: Essential Research Reagent Solutions

The selection of solvents and precursors is foundational to designing experiments involving mixture factors. The following table details key reagents, their functions, and strategic considerations for their use in a DoE context.

Table 2: Key Research Reagents for Precursor and Solvent Formulation [17]

| Reagent | Function & Role in Formulation | Key Considerations for DoE |

|---|---|---|

| DMF (Dimethylformamide) | Primary solvent; coordinates with PbI₂ via carbonyl group to form solvated complexes. | High volatility dictates fast crystallization kinetics; factor in evaporation rate when blending. |

| DMSO (Dimethyl Sulfoxide) | Strongly coordinating solvent; forms stable intermediate phases (e.g., (DMSO)₂PbI₂). | Slower evaporation can delay crystallization; useful for controlling film formation kinetics in blends. |

| GBL (Gamma-Butyrolactone) | Primary solvent; similar coordination to DMF via carbonyl, forming analogous intermediate phases. | Moderate volatility and low toxicity make it suitable for large-scale deposition techniques. |

| NMP (N-Methyl-2-pyrrolidone) | Strongly coordinating solvent with low volatility. | Can inhibit crystallization at room temperature; a key factor for widening process windows. |

| MAI (Methylammonium Iodide) | Organic precursor; reacts with lead halide to form the hybrid perovskite structure. | Stoichiometric ratio with PbI₂ is a critical mixture factor; directly impacts phase purity. |

| PbI₂ (Lead Iodide) | Inorganic precursor; forms the metal-halide framework of the perovskite. | Solubility and complex formation are solvent-dependent; source purity is a critical noise factor. |

| DMAc (Dimethylacetamide) | Alternative solvent for polymer-precursor systems (e.g., PAN-lignin blends) [18]. | High boiling point suitable for solution casting; consider for specialized polymer precursor inks. |

Strategic Implementation in DoE for Synthesis

Integrating these elements into a robust DoE requires a strategic approach:

- Define the Mixture Factor Space: Treat the entire solvent system as a mixture factor whose component fractions are constrained to sum to 100%. Individual solvents (DMF, DMSO, GBL) are the components of this mixture. Similarly, precursor ratios (MAI:PbI₂) constitute another mixture factor.

- Correlate Physical Properties with Responses: Use data from Table 1 to hypothesize relationships. For instance, a model might predict that increasing the proportion of high vapor pressure solvents in a blend will linearly decrease crystallization onset time, a hypothesis to be tested.

- Account for Complex Interactions: The structure of intermediate phases in solvent blends is not an average of its components but is determined by the strongest coordinating solvent available upon nucleation [17]. This non-linear interaction must be a focal point of the experimental design.

- Layer Process Factors: Introduce process factors like drying gas flow rate (affecting evaporation) and annealing temperature orthogonally to the mixture factors to study their interacting effects on final material properties, as visualized in Diagram 2.
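As a rough illustration of the first and last steps above, the sketch below builds a simplex-lattice design for the three-solvent blend and crosses it with two process factors. The lattice degree, the coded process levels, and the names `gas_flow` and `anneal_temp` are assumptions chosen for illustration, not values taken from the cited work.

```python
from fractions import Fraction
from itertools import product


def simplex_lattice(n_components, degree):
    """All blends whose fractions are multiples of 1/degree and sum to 1."""
    points = []
    for combo in product(range(degree + 1), repeat=n_components):
        if sum(combo) == degree:
            points.append(tuple(Fraction(k, degree) for k in combo))
    return points


solvents = ("DMF", "DMSO", "GBL")

# Hypothetical process factors in coded units (low = -1, high = +1).
# Real ranges depend on the deposition setup and are not given in the text.
process_levels = {"gas_flow": (-1, 1), "anneal_temp": (-1, 1)}

blends = simplex_lattice(len(solvents), degree=2)
process_runs = list(product(*process_levels.values()))

# Cross every blend with every process setting so that mixture and
# process effects (and their interactions) can be estimated together.
design = [
    {**dict(zip(solvents, blend)), **dict(zip(process_levels, proc))}
    for blend in blends
    for proc in process_runs
]

print(len(blends), "blends x", len(process_runs), "process settings =", len(design), "runs")
for run in design[:4]:
    print({name: float(value) for name, value in run.items()})
```

A full crossing like this grows quickly; in practice a fraction of the crossed design or an optimal design is often used instead, which this sketch does not attempt.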

By applying this structured, data-driven approach to solvent and precursor selection, researchers can move beyond empirical optimization. This enables the predictive design of synthesis pathways, ensuring the reproducible formation of high-purity materials with targeted properties, which is the ultimate goal of a well-constructed Design of Experiments.

The choice of solvent is a critical factor in organic synthesis, profoundly influencing reaction efficiency, selectivity, and scalability. Traditional solvent optimization, often based on iterative, one-variable-at-a-time approaches, is inefficient and can overlook significant solvent-solvent interactions. This whitepaper details a systematic methodology employing Design of Experiments (DoE) and Principal Component Analysis (PCA) to navigate solvent space rationally. By mapping solvents based on their physicochemical properties, researchers can select optimal, safer, and more effective reaction media in a fraction of the time required by conventional methods, thereby accelerating development in drug discovery and other synthetic domains.

In the development of new synthetic methodologies, the selection of an appropriate solvent is paramount. The solvent can drastically alter the reaction rate, mechanism, and product distribution. Despite its importance, solvent optimization is frequently conducted in a non-systematic manner, relying heavily on a chemist's intuition and previous laboratory experience [19]. This approach is not only time-consuming and resource-intensive but also carries a high risk of failing to identify the true optimum, especially when complex interactions between multiple factors exist.

The integration of Design of Experiments (DoE) and Principal Component Analysis (PCA) provides a powerful framework to overcome these limitations. This guide outlines a robust, data-driven protocol for creating a map of solvent space and utilizing it for efficient reaction optimization, directly addressing the broader thesis of establishing rational, factor-based selection for organic synthesis DoE research.

Theoretical Foundation: PCA for Solvent Mapping

The Rationale for a Property-Based Approach

Every solvent possesses a set of intrinsic physicochemical properties—such as dielectric constant, dipole moment, hydrogen-bond donor/acceptor ability, and polarity parameters—that determine its behavior in a chemical reaction. Instead of testing a haphazard list of solvents, a property-based approach allows for the exploration of a wide, continuous "solvent space." The challenge is that this space is multi-dimensional, making it difficult to visualize and navigate.

Principal Component Analysis (PCA) as a Dimensionality Reduction Tool

PCA is a statistical technique that transforms a large set of correlated variables into a smaller, uncorrelated set of variables called principal components (PCs). The first principal component (PC1) captures the greatest possible variance in the data, the second component (PC2) captures the next greatest variance, and so on. When applied to solvent properties, PCA reduces the numerous physicochemical descriptors to two or three composite dimensions that can be easily visualized as a 2D or 3D map [19]. Solvents with similar properties will cluster together on this map, while dissimilar solvents will be far apart, creating a rational basis for selection.

Experimental Methodology: A Step-by-Step Guide

Solvent and Property Selection

The first step is to assemble a comprehensive library of solvents relevant to synthetic chemistry. A recently developed map for this purpose incorporates 136 solvents characterized by a wide range of properties [19]. Key properties for inclusion typically encompass:

- Polarity and Solvation Parameters: Dielectric constant (ε), Dipole moment (μ), Reichardt's ET(30), Kamlet-Taft parameters (α, β, π*).

- Physical Properties: Boiling point, Vapor pressure, Viscosity, Surface tension.

- Hazard and Safety: Carcinogenicity, Mutagenicity, Flammability.

Table 1: Key Physicochemical Properties for Solvent PCA

| Property Category | Specific Parameter | Role in Reaction Performance |

|---|---|---|

| Polarity | Dielectric Constant (ε) | Influences ion solvation and stability; critical for polar mechanisms. |

| Polarity | Dipole Moment (μ) | Affects interactions with polar molecules and transition states. |

| Hydrogen-Bonding | Kamlet-Taft α (HBD acidity) | Measures ability to donate a hydrogen bond. |

| Hydrogen-Bonding | Kamlet-Taft β (HBA basicity) | Measures ability to accept a hydrogen bond. |

| Polarizability | Kamlet-Taft π* | Measures dipolarity/polarizability. |

| Physical Property | Boiling Point | Informs on reaction temperature range and ease of removal. |

Data Preprocessing and PCA Execution

- Data Matrix Construction: Compile a data matrix where rows represent the 136 solvents and columns represent the selected physicochemical properties.

- Data Standardization: Normalize the data for each property to a common scale (e.g., mean of 0, standard deviation of 1) to prevent variables with larger numerical ranges from dominating the analysis.

- PCA Calculation: Perform the PCA using statistical software (e.g., R, Python with scikit-learn, or commercial statistical packages). The output will include:

- Loadings: The contribution of each original property to each principal component. This reveals what each PC represents chemically (e.g., PC1 might be a "polarity" axis, PC2 a "hydrogen-bonding" axis).

- Scores: The coordinates of each solvent on the new principal component axes, which are used to create the solvent map.
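The preprocessing and PCA steps above can be sketched with NumPy as follows. This is a minimal example on eight solvents and three properties rather than the 136-solvent library, and the property values are rounded, illustrative figures that should be checked against a vetted database before any real use.

```python
import numpy as np

# Illustrative, rounded values only (assumption): dielectric constant,
# dipole moment (D), boiling point (deg C).
solvents = ["water", "methanol", "acetonitrile", "DMF",
            "DMSO", "THF", "toluene", "hexane"]
properties = ["dielectric", "dipole", "boiling_point"]
X = np.array([
    [78.4, 1.85, 100.0],
    [32.7, 1.70, 64.7],
    [37.5, 3.92, 81.6],
    [36.7, 3.82, 153.0],
    [46.7, 3.96, 189.0],
    [7.6, 1.75, 66.0],
    [2.4, 0.36, 110.6],
    [1.9, 0.00, 68.7],
])

# Standardize each property to mean 0 and standard deviation 1 so that
# large-valued properties (e.g. boiling point) do not dominate.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# PCA via singular value decomposition of the standardized matrix.
U, s, Vt = np.linalg.svd(Z, full_matrices=False)
explained = s**2 / np.sum(s**2)  # fraction of variance per component
loadings = Vt.T                  # rows: properties, columns: PCs
scores = Z @ Vt.T                # rows: solvents, columns: PCs

print("explained variance ratio:", np.round(explained, 3))
for name, row in zip(properties, loadings):
    print(f"{name:>14s} loadings on PC1, PC2:", np.round(row[:2], 2))
for name, row in zip(solvents, scores):
    print(f"{name:>14s} scores on PC1, PC2:", np.round(row[:2], 2))
```

The sign of each component is arbitrary, so a map drawn from these scores may appear mirrored relative to one produced by another package; the relative positions of the solvents are what matter.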

The following workflow diagram illustrates the core process of creating and utilizing the solvent map.

DoE for Reaction Optimization on the Solvent Map

Once the solvent map is established, it becomes the foundation for a highly efficient DoE.

- Selecting Solvent Candidates: Choose a diverse subset of 5-7 solvents that are widely dispersed across the PCA map to ensure a broad exploration of chemical space [19]. This is far more efficient than testing 5-7 structurally similar solvents.

- Designing the Experiment: A typical approach is to use the scores of the first two principal components (PC1 and PC2) as the continuous factors in a response surface methodology (RSM) design, such as a central composite design (CCD). This treats solvent composition as a continuous, multi-property variable. Because real solvents occupy discrete points on the map, each design point is matched to the nearest available solvent.

- Execution and Analysis: Run the reactions as per the experimental design. Measure the critical responses (e.g., yield, conversion, selectivity). Fit the data to a statistical model to generate a response surface, which predicts the performance of any solvent within the mapped space, even those not experimentally tested.
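One way to make "widely dispersed" concrete in the candidate-selection step is a greedy maximin (farthest-point) pick on the PC1/PC2 scores. The sketch below is only one possible heuristic, not the procedure of the cited study, and the solvent labels and coordinates are hypothetical placeholders standing in for real map scores.

```python
import math


def pick_diverse(scores, k):
    """Greedy maximin selection of k well-separated points.

    Starts from the point farthest from the centroid, then repeatedly adds
    the candidate whose nearest already-chosen neighbor is farthest away.
    """
    names = list(scores)
    cx = sum(p[0] for p in scores.values()) / len(names)
    cy = sum(p[1] for p in scores.values()) / len(names)
    chosen = [max(names, key=lambda n: math.dist(scores[n], (cx, cy)))]
    while len(chosen) < k:
        remaining = [n for n in names if n not in chosen]
        chosen.append(
            max(
                remaining,
                key=lambda n: min(math.dist(scores[n], scores[c]) for c in chosen),
            )
        )
    return chosen


# Hypothetical (PC1, PC2) scores: a central cluster plus four outer regions.
pc_scores = {
    "solvent_A": (0.0, 0.1), "solvent_B": (0.1, 0.0),
    "solvent_C": (-0.1, -0.1), "solvent_D": (3.0, 2.5),
    "solvent_E": (-3.2, 2.0), "solvent_F": (2.8, -2.7),
    "solvent_G": (-2.5, -3.0), "solvent_H": (3.1, 2.4),
    "solvent_I": (0.2, 0.2), "solvent_J": (-3.0, 2.2),
}

selected = pick_diverse(pc_scores, k=5)
print(selected)
```

With this layout the heuristic takes one representative from each outer region and one from the center, which is roughly the corner-plus-center coverage a response surface design needs.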

Case Study: Optimization of an SNAr Reaction

The application of this methodology was demonstrated in the optimization of a nucleophilic aromatic substitution (SNAr) reaction [19]. By using the novel PCA solvent map, the research team was able to systematically identify solvents that promoted high yield and selectivity. The model built from the DoE results allowed them to understand which combination of solvent properties (as defined by the principal components) was critical for success. Furthermore, the map facilitated the identification of safer, less hazardous solvent alternatives that performed as well as or better than traditional, more problematic solvents, thereby supporting the development of greener synthetic processes.

A separate case study involving the optimization of a hydrogenation reaction for a halogenated nitroheterocycle further underscores the power of DoE. While initially focused on catalyst screening, the subsequent optimization stage used a factorial design to efficiently understand the impact and interactions of catalyst loading, temperature, and pressure, identifying catalyst loading as the most significant factor [12].
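To show how a factorial design separates main effects from interactions, the sketch below sets up a two-level, three-factor full factorial and estimates effects from contrasts. The response values are invented solely to make the arithmetic easy to follow; they are not data from the cited study, and the factor names are used only as labels.

```python
import math
from itertools import product

factors = ("catalyst_loading", "temperature", "pressure")

# 2^3 full factorial in coded units (-1 = low, +1 = high): 8 runs.
design = list(product((-1, 1), repeat=len(factors)))

# Invented conversions (%) in the same run order as `design`.
response = [52, 55, 60, 66, 78, 80, 88, 95]


def effect(columns):
    """Effect estimate for a main effect or interaction.

    `columns` lists the factor indices whose coded levels are multiplied
    to form the contrast; the result is mean(high) - mean(low).
    """
    contrast = sum(
        y * math.prod(run[c] for c in columns)
        for run, y in zip(design, response)
    )
    return contrast / (len(design) / 2)


for i, name in enumerate(factors):
    print(f"main effect of {name}: {effect((i,))}")
print("catalyst_loading x temperature interaction:", effect((0, 1)))
```

A one-variable-at-a-time series over the same factors would give no estimate of the interaction term, which is the practical argument for the factorial layout.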

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and resources required to implement this solvent optimization strategy.

Table 2: Essential Research Reagent Solutions for Solvent Mapping and DoE

| Item Name | Function / Purpose | Specification / Notes |

|---|---|---|

| Solvent Library | Provides the chemical space for experimental testing. | Should include 100+ solvents covering a wide range of polarities, hydrogen-bonding capabilities, and structures [19]. |

| Statistical Software | For performing PCA, designing DoE, and building response models. | Examples: R, Python (with pandas, scikit-learn), JMP, Design-Expert, Minitab. |

| Physicochemical Database | Source of numerical properties for each solvent in the library. | Databases: PubChem, CRC Handbook, solvent supplier technical data. |

| DoE Consumables | High-throughput experimentation equipment. | Includes vial racks, automated liquid handlers, and multi-place reaction stations for parallel synthesis. |

| Analytical Instrumentation | For quantifying reaction outcomes (yield, conversion). | HPLC, GC-MS, or NMR spectroscopy for accurate and precise analysis. |

The integration of PCA-based solvent mapping with DoE represents a paradigm shift in reaction optimization for organic synthesis. This methodology moves solvent selection from an art based on anecdotal experience to a science driven by data and statistical modeling. It enables researchers to efficiently explore a vast chemical space, uncover complex relationships, and identify superior solvent systems with confidence. For drug development professionals operating under stringent time and resource constraints, adopting this systematic approach is not just an advantage—it is a necessity for maintaining a competitive edge in modern synthetic chemistry.

Leveraging Definitive Screening Designs for High-Dimensional Factor Spaces

The optimization of organic synthesis is a fundamental process in pharmaceutical research and development, traditionally governed by labor-intensive, time-consuming methods that require the exploration of a high-dimensional parametric space [9]. Historically, this has been accomplished through manual experimentation guided by chemist intuition or via one-factor-at-a-time (OFAT) approaches, where reaction variables are modified sequentially to find optimal conditions for a specific reaction outcome [5]. The OFAT method, while straightforward, suffers from significant limitations: it is resource-intensive, becomes impractical as system complexity grows, and crucially, fails to detect interactions between factors, often resulting in suboptimal conditions [5].

The paradigm is shifting with advances in lab automation and the introduction of machine learning algorithms, enabling the synchronous optimization of multiple reaction variables [9]. Within this modern framework, Design of Experiments (DoE) emerges as a powerful statistical modeling strategy for planning and analyzing experiments that simultaneously investigates multiple factors [5]. For organic synthesis, where factors can include temperature, catalyst loading, concentration, solvent composition, and more, selecting the optimal experimental design is paramount. This guide focuses on Definitive Screening Designs (DSDs), a specialized class of DoE that offers unique advantages for navigating the high-dimensional factor spaces typical in organic synthesis optimization.

What Are Definitive Screening Designs?

Definitive Screening Designs are a modern class of experimental designs that share characteristics with three traditional types of DoE: screening designs, factorial designs, and response surface designs [20]. They are continuous, three-level designs constructed from conference matrices that allow for the efficient investigation of a large number of factors in a minimal number of experimental runs [21] [20].

Key Characteristics and Structure

The core structure of a DSD involves a specific arrangement of factor levels:

- Three-Level Structure: Each continuous factor is run at a low level (−1), a high level (+1), and a center point (0). This is a fundamental difference from two-level screening designs and enables the detection of curvature in the response [21] [20].

- Run Structure: For a design with m continuous factors, the total number of runs in a single block is n = 2m' + 1, where m' = m if m is even, and m' = m + 1 if m is odd [21]. This makes DSDs highly efficient; for example, 6 factors can be screened in only 13 runs.

- Mirror Image Pairs: The design rows (excluding the center point) consist of pairwise mirror images, where one row is the sign-folded version of another. This is a known technique to convert a screening design into a resolution IV factorial design, protecting main effects from confounding [20].
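The run-count rule and the mirror-image structure can be checked with a small sketch. It builds a 6-factor DSD by stacking a conference matrix, its sign-folded copy, and a center run. The Paley construction used here for the conference matrix is an assumption made for illustration; statistical packages may use a different matrix and will generally randomize the run order.

```python
def dsd_runs(m):
    """Run count n = 2m' + 1, with m' = m for even m and m + 1 for odd m."""
    m_prime = m if m % 2 == 0 else m + 1
    return 2 * m_prime + 1


def paley_conference_matrix(q):
    """Symmetric conference matrix of order q + 1 (q prime, q % 4 == 1)."""
    squares = {(x * x) % q for x in range(1, q)}

    def chi(a):
        # Quadratic character mod q: 0 for 0, +1 for squares, -1 otherwise.
        a %= q
        return 0 if a == 0 else (1 if a in squares else -1)

    rows = [[0] + [1] * q]
    for i in range(q):
        rows.append([1] + [chi(i - j) for j in range(q)])
    return rows


def definitive_screening_design(conference):
    """Stack C, its mirror image -C, and one all-zero center run."""
    mirror = [[-x for x in row] for row in conference]
    return conference + mirror + [[0] * len(conference[0])]


design = definitive_screening_design(paley_conference_matrix(5))

print("runs for 6 factors:", dsd_runs(6), "| rows built:", len(design))
for row in design:
    print(" ".join(f"{x:+d}" if x else " 0" for x in row))
```

Each column contains exactly three zeros (the center level), and every pair of columns has a zero dot product, so the main-effect estimates are mutually orthogonal.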

Table 1: Comparison of Common DoE Types for High-Dimensional Spaces

| Design Type | Primary Purpose | Factor Levels | Key Advantage | Key Limitation | Ideal Use Case |

|---|---|---|---|---|---|