Achieving Precision: A Comprehensive Guide to Temperature Control Reproducibility in Parallel Droplet Reactors

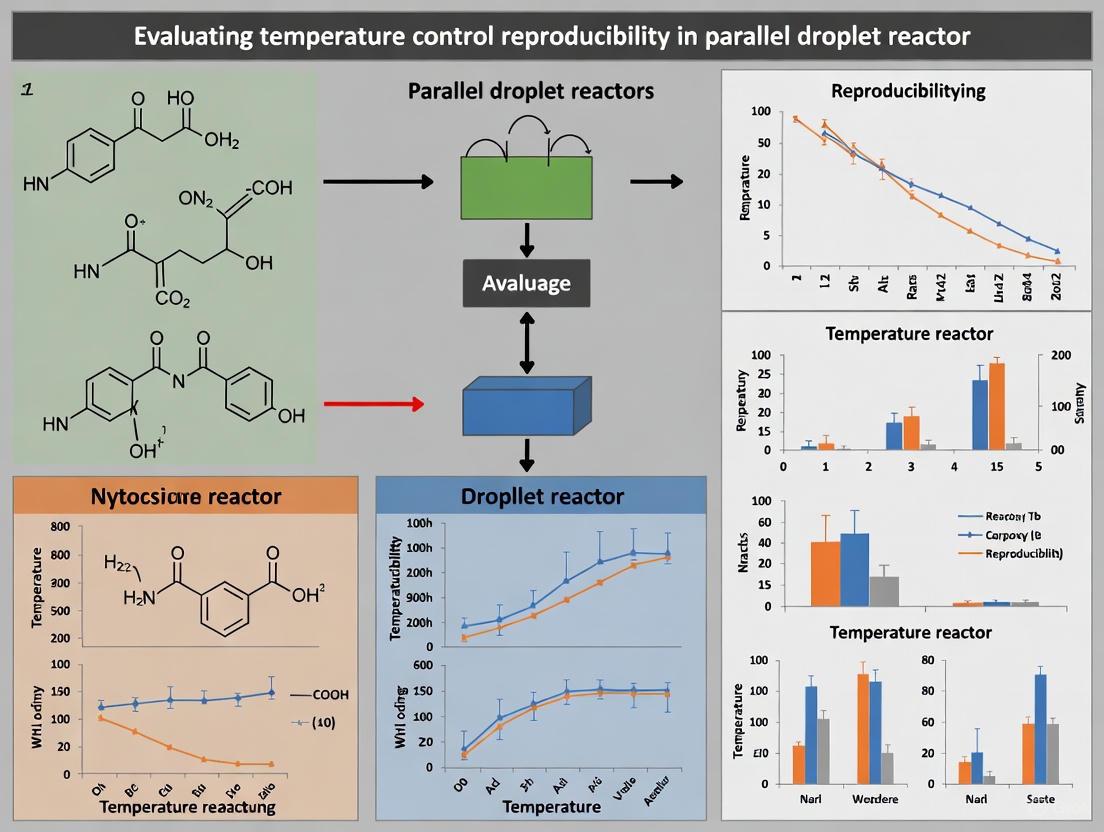

Abstract

This article provides a thorough evaluation of temperature control reproducibility in parallel droplet microfluidic reactors, a critical technology for high-throughput screening in drug development and biomedical research. It explores the fundamental principles governing thermal management at the microscale, details the mechanisms and integration of advanced heating technologies, and presents robust strategies for troubleshooting common issues like channel clogging and thermal crosstalk. By synthesizing foundational knowledge with practical methodological applications and validation frameworks, this guide equips researchers with the knowledge to achieve precise, reliable, and scalable thermal control, thereby enhancing experimental reproducibility and accelerating innovation in areas such as synthetic chemistry and single-cell analysis.

Fundamentals of Thermal Management in Microscale Droplet Systems

Why Temperature Precision is Non-Negotiable in Microfluidic Reactors

In the realm of parallel droplet reactors, temperature precision is not merely a desirable attribute but a foundational requirement for experimental integrity and reproducibility. Microfluidic reactors manipulate nanoliter to femtoliter fluid volumes within microchannels, where low Reynolds numbers keep flow laminar and short diffusion distances enhance mass and heat transfer [1]. This miniaturization enables efficient analyses with greatly reduced sample and reagent consumption, and allows multiple laboratory processes to be integrated into single, compact "lab-on-a-chip" platforms [1]. The same miniaturization, however, makes thermal control both exceptionally challenging and vitally important, because even minor temperature deviations can markedly alter reaction kinetics, product yields, and experimental conclusions.

The high surface-area-to-volume ratio that enables rapid heat transfer also accelerates heat loss to the surroundings, leaving these systems susceptible to temperature fluctuations [1]. For researchers in pharmaceutical development and chemical synthesis working with parallel reactor systems, understanding and implementing precise temperature control is therefore non-negotiable for generating reliable, reproducible data. This guide examines the relationship between temperature precision and experimental outcomes in microfluidic environments and compares control methodologies in terms of their implications for research reproducibility.
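The scaling behind this vulnerability is purely geometric. For a sphere, S/V = 6/d, so shrinking a droplet from 1 cm to 100 µm raises its surface-to-volume ratio a hundredfold. The minimal Python sketch below assumes idealized spherical droplets (real in-channel droplets are often plug-shaped), and the diameters are illustrative choices rather than values from the cited studies:

```python
import math


def sphere_surface_to_volume(diameter_m: float) -> float:
    """Surface-area-to-volume ratio (1/m) of an ideal sphere; equals 6/d."""
    radius = diameter_m / 2.0
    surface = 4.0 * math.pi * radius**2
    volume = (4.0 / 3.0) * math.pi * radius**3
    return surface / volume


if __name__ == "__main__":
    # Illustrative diameters: 1 cm, 1 mm, 100 um, 10 um (assumed, not from the source)
    for diameter in (1e-2, 1e-3, 1e-4, 1e-5):
        ratio = sphere_surface_to_volume(diameter)
        print(f"d = {diameter * 1e6:>8.0f} um -> S/V = {ratio:.3g} 1/m")
```

Because heat exchange with the surroundings scales with surface area while stored heat scales with volume, this hundredfold jump in S/V is what lets a small droplet both heat and lose heat quickly.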

Temperature Control Techniques: A Comparative Analysis

Various approaches have been developed to regulate temperature within microfluidic systems, each with distinct advantages, limitations, and performance characteristics. The choice of technique significantly influences the precision, accuracy, and applicability of microfluidic reactors for different experimental needs.

External Heating Methods

External heating techniques employ commercial heating elements positioned outside the microfluidic device. The most common approach utilizes Peltier elements (thermoelectric coolers) which can both heat and cool, making them suitable for applications requiring thermal cycling [1] [2]. These systems typically achieve temperature ramp rates from 4-100°C/s for heating and 5-90°C/s for cooling, with accuracy ranging from ±0.1°C to ±0.5°C [2] [3]. Another external method uses pre-heated liquids flowing through control channels adjacent to reaction channels, capable of switching between 5°C and 45°C in less than 10 seconds [3].

External methods are relatively straightforward to implement and offer good temperature uniformity, but because the heat source sits outside the chip, response times and spatial resolution can be limited [3]. The thermal mass of the system components can also slow cooling and contribute to temperature overshoot [1].

Integrated Heating Approaches

Integrated heating methods incorporate heating elements directly within the microfluidic device, enabling more precise localized control. Joule heating uses electrical current passed through the fluid or integrated structures to generate heat, achieving ramp rates up to 20°C/s and temperatures from 25°C to 130°C with accuracy of ±0.2°C [3]. Integrated resistive heaters using thin-film metals can reach temperatures from 20°C to 96°C with power requirements of approximately 1000 mW [3].

More advanced integrated approaches include micro-Peltier junctions as small as 0.6 × 0.6 × 1 mm³ integrated directly into devices, generating temperatures from -3°C to 120°C with 0.2°C accuracy and exceptional ramp rates of 106°C/s for heating and 89°C/s for cooling [2]. Emerging technologies also incorporate liquid metal-based sensing and carbon nanotubes infused with gallium for innovative thermal monitoring capabilities [1].
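To see what these ramp rates mean in practice, the sketch below estimates the pure ramping time for one two-step thermal cycle. The 95°C/60°C setpoints are assumed PCR-like values, not figures from the cited studies; the rates are the slow end of the external Peltier range (4°C/s heating, 5°C/s cooling) and the micro-Peltier figures (106°C/s heating, 89°C/s cooling). Overshoot, settling, and hold times are ignored.

```python
def ramp_time_s(start_c: float, end_c: float, heat_rate: float, cool_rate: float) -> float:
    """Time (s) to move between setpoints at a constant ramp rate (degC/s)."""
    delta = end_c - start_c
    rate = heat_rate if delta > 0 else cool_rate
    return abs(delta) / rate


def two_step_cycle_ramp_s(high_c: float, low_c: float, heat_rate: float, cool_rate: float) -> float:
    """Ramping time for one high -> low -> high cycle (hold times excluded)."""
    return (ramp_time_s(high_c, low_c, heat_rate, cool_rate)
            + ramp_time_s(low_c, high_c, heat_rate, cool_rate))


if __name__ == "__main__":
    HIGH_C, LOW_C = 95.0, 60.0  # assumed PCR-like setpoints
    external = two_step_cycle_ramp_s(HIGH_C, LOW_C, heat_rate=4.0, cool_rate=5.0)
    micro = two_step_cycle_ramp_s(HIGH_C, LOW_C, heat_rate=106.0, cool_rate=89.0)
    print(f"external Peltier (slow end): {external:.2f} s per cycle")
    print(f"integrated micro-Peltier:    {micro:.2f} s per cycle")
```

Under these assumptions, ramping alone takes about 15.8 s per cycle at the slow end of the external range versus about 0.7 s with the integrated micro-Peltier device.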

Integrated methods generally offer faster response times and better localization but increase fabrication complexity and may introduce compatibility issues with biological samples or specific solvents.

Advanced and Emerging Techniques

Recent advances in temperature control include microwave heating, which can achieve extremely rapid ramp rates of up to 2,000°C/s, although maintaining temperature stability can be challenging [3]. Artificial intelligence-driven feedback systems represent the cutting edge, enabling adaptive, real-time thermal optimization that can significantly enhance precision and responsiveness [1].

Additive manufacturing technologies now allow direct integration of heating elements and sensors during microchip fabrication, improving thermal efficiency and device compactness [1]. Additionally, non-contact thermal monitoring using temperature-sensitive quantum dots provides innovative approaches for real-time thermal sensing without physical intrusion [1].

Table 1: Performance Comparison of Microfluidic Temperature Control Techniques

| Control Method | Temperature Range (°C) | Heating/Cooling Rate (°C/s) | Accuracy (±°C) | Integration Level | Key Applications |
|---|---|---|---|---|---|
| Peltier Elements | -3 to 120 | 4-100 (heat), 5-90 (cool) | 0.1-0.5 | Low to Moderate | PCR, general thermal cycling |
| Pre-heated Liquids | 5 to 45 | ~0.5-4 | 0.3-1 | Low | Cell analysis, continuous flow |
| Joule Heating | 25 to 130 | Up to 20 | 0.2-2 | High | Chemical synthesis, droplet control |
| Integrated Micro-Peltier | -3 to 120 | 106 (heat), 89 (cool) | 0.2 | High | High-speed PCR, nanoliter reactions |
| Microwave Heating | 20 to 70 | Up to 2,000 | Not stable | Moderate | Ultra-rapid heating applications |
| AI-Driven Control | Application-dependent | Adaptive | <0.1 (potential) | High | Complex optimization tasks |

Experimental Evidence: Connecting Temperature Precision to Reproducible Outcomes

The critical importance of temperature precision in microfluidic reactors is demonstrated through specific experimental investigations across various applications. These studies quantitatively link thermal control to experimental reproducibility and outcome reliability.

Case Study 1: Parallelized Droplet Reactor Performance

Recent research with parallelized droplet reactor platforms highlights exacting standards for temperature control. These systems, designed for both thermal and photochemical reactions, specifically target reproducibility with less than 5% standard deviation in reaction outcomes—a benchmark that demands precise thermal management [4]. The platform operates across a broad temperature range (0-200°C, solvent-dependent) while maintaining independent temperature control for each of ten parallel reactor channels [4].
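A benchmark like this is straightforward to check once each channel's outcome is recorded. The sketch below computes the mean, sample standard deviation, and relative standard deviation (RSD) of yields across ten channels. The yields are made-up illustrative values, and because the 5% figure could be read as absolute (yield percentage points) or relative, the threshold check here assumes absolute percentage points.

```python
import statistics


def channel_spread(yields_pct):
    """Return (mean, sample SD, RSD %) of per-channel yields given in percent."""
    mean = statistics.mean(yields_pct)
    sd = statistics.stdev(yields_pct)  # sample SD (n - 1 denominator)
    return mean, sd, 100.0 * sd / mean


def meets_benchmark(yields_pct, max_sd_points=5.0):
    """Assumed reading of the benchmark: SD below 5 yield percentage points."""
    return channel_spread(yields_pct)[1] < max_sd_points


if __name__ == "__main__":
    # Hypothetical yields (%) from ten parallel channels under nominally identical conditions
    yields = [71.2, 73.0, 70.5, 72.4, 71.9, 69.8, 72.7, 70.9, 71.5, 72.1]
    mean, sd, rsd = channel_spread(yields)
    print(f"mean = {mean:.1f}%, SD = {sd:.2f} points, RSD = {rsd:.2f}%")
    print("meets <5% SD benchmark:", meets_benchmark(yields))
```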

This independent control is crucial for valid experimental comparisons, as it eliminates temperature as a confounding variable when assessing other reaction parameters. The integration of Bayesian optimization algorithms with the temperature control system further enhances efficiency by leveraging preexisting reaction information to guide experimental conditions [4]. This approach demonstrates how precise thermal control enables high-fidelity reaction screening and optimization while using minimal material.

Case Study 2: Droplet Stability Under Thermal Stress

Investigations into the dynamics of temperature-actuated droplets within microfluidics reveal how thermal precision directly impacts system stability. Research shows that for every 10°C increase in temperature, droplet diameter increases by approximately 5.7% for pure oil and 4.2% for oil with surfactant due to changes in aqueous phase density [5].

This thermal expansion has profound implications for experimental reproducibility. Without accounting for these predictable volume changes, concentration calculations and reaction rates become significantly skewed. The study further demonstrated that droplets become increasingly unstable when transported at temperatures above 60°C, with instability manifesting as irregular movement and coalescence risk [5]. The addition of SPAN 20 surfactant improved droplet stability at higher temperatures, but the fundamental relationship between temperature control and system behavior remained critical.
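Because droplet volume scales with the cube of diameter, the reported diameter changes translate into considerably larger volume changes. A short sketch, assuming droplets remain spherical and treating the reported percentages as the change for a single 10°C step:

```python
def volume_change_percent(diameter_change_percent: float) -> float:
    """Percent volume change of a sphere for a given percent change in diameter (V ~ d**3)."""
    scale = 1.0 + diameter_change_percent / 100.0
    return 100.0 * (scale**3 - 1.0)


if __name__ == "__main__":
    # Diameter increases per 10 degC reported in the study discussed above
    for label, dd in (("pure oil", 5.7), ("oil + surfactant", 4.2)):
        print(f"{label}: +{dd}% diameter -> +{volume_change_percent(dd):.1f}% volume")
```

A 5.7% diameter increase therefore corresponds to roughly 18% more volume per droplet (about 13% with surfactant), so any per-droplet quantity calculated from the nominal volume would be off by a similar margin.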

Perhaps most significantly, this research developed a validated 3D numerical model that accounts for temperature-dependent properties including surface tension, density, and viscosity of both phases—highlighting the complex interplay between thermal conditions and droplet physics [5].

Case Study 3: Ceramic Microreactors for High-Temperature Syntheses

The development of ceramic microreactors for high-temperature applications illustrates the material considerations essential for thermal precision. These systems, based on Low Temperature Cofired Ceramics (LTCC) technology, integrate microfluidics with embedded heaters and sensors to perform reactions at temperatures up to 300°C [6].

The monolithic design enables precise thermal management for applications such as quantum dot synthesis, where temperature directly determines nanoparticle size and properties. The use of a dedicated digital PID controller implemented on a PIC18F4431 microcontroller illustrates the level of sophistication required to maintain thermal stability in these systems [6]. The high thermal and chemical resistance of ceramic materials enables reproducible performance even with organic solvents and reagents that would compromise polymer-based systems.

Table 2: Impact of Temperature Precision on Experimental Parameters in Microfluidic Reactors

| Experimental Parameter | Effect of Temperature Variation | Consequence for Reproducibility |
|---|---|---|
| Droplet Volume/Size | 5.7% diameter increase per 10°C for pure oil systems | Altered reagent concentrations, changed reaction kinetics |
| Reaction Kinetics | Exponential change with temperature (Arrhenius equation) | Inconsistent reaction rates, variable conversion yields |
| Material Properties | Changed viscosity, density, surface tension | Altered flow profiles, mixing efficiency, droplet stability |
| Biological Activity | Enzyme denaturation, cell viability impacts | Inconsistent bioassay results, variable cell responses |
| Nanoparticle Synthesis | Determines crystal size, size distribution, morphology | Batch-to-batch variability in material properties |
| PCR Efficiency | Specific temperature requirements for denaturation, annealing, extension | False positives/negatives, quantitative inaccuracies |
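The Arrhenius row is the easiest to quantify. From k = A exp(-Ea/RT), the ratio of rate constants at two temperatures is exp[(Ea/R)(1/T1 - 1/T2)]. The sketch below assumes an activation energy of 50 kJ/mol and a 60°C setpoint; both are illustrative values, not taken from the cited studies.

```python
import math

GAS_CONSTANT = 8.314  # J/(mol*K)


def rate_ratio(ea_j_per_mol: float, temp_c: float, delta_c: float) -> float:
    """k(T + dT) / k(T) from the Arrhenius equation; temperatures in degC."""
    t1 = temp_c + 273.15
    t2 = t1 + delta_c
    return math.exp(ea_j_per_mol / GAS_CONSTANT * (1.0 / t1 - 1.0 / t2))


if __name__ == "__main__":
    EA = 50e3  # assumed activation energy, J/mol
    for error_c in (0.1, 0.5, 1.0, 2.0):
        change = 100.0 * (rate_ratio(EA, 60.0, error_c) - 1.0)
        print(f"+{error_c:.1f} degC at 60 degC -> rate constant +{change:.2f}%")
```

Under these assumptions, a 1°C error shifts the rate constant by roughly 5-6%, the same order as the <5% reproducibility target discussed above, whereas a 0.1°C error keeps the shift near 0.5%.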

Implementation Framework: Protocols for Precision

Achieving temperature precision in microfluidic reactors requires systematic implementation across device design, control systems, and operational protocols. The following methodologies represent best practices derived from experimental studies.

Temperature Controller Implementation

For ceramic microreactors requiring high-temperature operation, researchers have successfully implemented a digital PID controller using a PIC18F4431 microcontroller with computer monitoring [6]. This approach separates the control electronics from high-temperature zones to prevent heat damage while maintaining precise regulation. The PID algorithm continuously adjusts heating power based on the difference between setpoint and measured temperatures, with proportional, integral, and derivative terms optimized for the specific thermal mass and insulation characteristics of the microreactor assembly.
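The control loop described above can be sketched as a discrete PID controller with clamping anti-windup, driving a lumped first-order thermal model of a heated reactor. Every number here is hypothetical: the heat capacity, thermal resistance, heater power, and gains are illustrative, and this is not the firmware used in [6].

```python
from typing import List, Optional, Tuple


class PID:
    """Discrete PID controller whose output is a normalized heater duty in [0, 1]."""

    def __init__(self, kp: float, ki: float, kd: float,
                 out_min: float = 0.0, out_max: float = 1.0) -> None:
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        self.integral += error * dt
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        if output > self.out_max or output < self.out_min:
            # Clamping anti-windup: undo this step's integration while saturated
            self.integral -= error * dt
            output = min(max(output, self.out_min), self.out_max)
        return output


def simulate(setpoint_c: float = 80.0, t_end_s: float = 120.0,
             dt_s: float = 0.05) -> List[Tuple[float, float]]:
    """Euler simulation of C*dT/dt = P_max*u - (T - T_amb)/R_th under PID control."""
    heat_capacity = 0.5    # J/K, hypothetical lumped thermal mass
    thermal_res = 20.0     # K/W, hypothetical resistance to ambient
    p_max = 10.0           # W, hypothetical full-scale heater power
    t_amb = 25.0           # degC
    pid = PID(kp=0.05, ki=0.01, kd=0.005)
    temp = t_amb
    history: List[Tuple[float, float]] = []
    for _ in range(int(round(t_end_s / dt_s))):
        duty = pid.update(setpoint_c, temp, dt_s)
        net_power = p_max * duty - (temp - t_amb) / thermal_res
        temp += dt_s * net_power / heat_capacity
        history.append((temp, duty))
    return history


if __name__ == "__main__":
    trace = simulate()
    peak = max(t for t, _ in trace)
    final_temp, final_duty = trace[-1]
    print(f"final T = {final_temp:.3f} degC, duty = {final_duty:.3f}, peak T = {peak:.2f} degC")
```

The anti-windup clamp matters here because the heater runs at full power during the initial ramp; without it, the integral term would keep accumulating error while saturated, which tends to cause overshoot as the setpoint is approached.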

Microfluidic Device Design for Thermal Management

Device architecture significantly influences thermal performance. Key considerations include:

Material Selection: Polydimethylsiloxane (PDMS) has a low thermal conductivity (typically about 0.15 W/(m·K)), so it acts as an insulator: heat from a nearby source is delivered to the liquid rather than spreading through the chip, minimizing energy losses [3]. Ceramic substrates provide high-temperature stability but different heat transfer characteristics.

Integration Approach: Modular designs allow easy exchangeability but may sacrifice thermal performance compared to monolithic systems that integrate heating elements directly during fabrication [6].

Geometric Factors: Channel diameter, wall thickness, and heater placement all influence thermal response times and gradient formation. Research shows heater placement significantly affects droplet stability, with optimal performance achieved when heaters are positioned downstream from droplet generation sites [5].

System Validation and Calibration Protocols

Robust temperature validation is essential for reproducible research. Effective methodologies include:

Direct Sensing: Integration of thin-film platinum resistance sensors (50 nm thick), whose electrical resistance changes nearly linearly with temperature, enabling real-time monitoring [3].

Thermocouple Calibration: Ensuring all thermocouples are calibrated and identically positioned relative to reaction zones [4].

Performance Verification: Conducting thermal characterization under actual operating conditions to verify response times, stability, and gradient formation.
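For the near-linear platinum sensors, a common working model is R(T) = R₀(1 + αT), with T in °C. Because thin films can deviate from bulk coefficients, the sketch below derives R₀ and α from a two-point calibration against a reference thermometer instead of assuming a handbook value. The calibration readings are hypothetical; in a parallel system, each channel's sensor would receive its own fit.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinearRTD:
    """Linear resistance-temperature model R(T) = R0 * (1 + alpha * T), T in degC."""
    r0_ohm: float       # resistance extrapolated to 0 degC
    alpha_per_c: float  # temperature coefficient of resistance, 1/degC

    @classmethod
    def from_two_points(cls, t1_c: float, r1_ohm: float,
                        t2_c: float, r2_ohm: float) -> "LinearRTD":
        """Fit R0 and alpha from two (reference temperature, resistance) readings."""
        slope = (r2_ohm - r1_ohm) / (t2_c - t1_c)  # ohm per degC
        r0 = r1_ohm - slope * t1_c
        return cls(r0_ohm=r0, alpha_per_c=slope / r0)

    def resistance_ohm(self, temp_c: float) -> float:
        return self.r0_ohm * (1.0 + self.alpha_per_c * temp_c)

    def temperature_c(self, r_ohm: float) -> float:
        return (r_ohm / self.r0_ohm - 1.0) / self.alpha_per_c


if __name__ == "__main__":
    # Hypothetical calibration: sensor read against a reference thermometer at 25 and 85 degC
    sensor = LinearRTD.from_two_points(25.0, 107.0, 85.0, 128.0)
    print(f"R0 = {sensor.r0_ohm:.2f} ohm, alpha = {sensor.alpha_per_c:.5f} 1/degC")
    print(f"114.0 ohm -> {sensor.temperature_c(114.0):.2f} degC")
```

A two-point fit only enforces linearity between the reference points; checking a third reference temperature is a cheap way to confirm that the linear model holds over the operating range.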

Figure: Temperature Control Impact Pathway (key relationships between temperature control components and experimental outcomes in microfluidic reactors)

The Scientist's Toolkit: Essential Solutions for Thermal Precision

Successful implementation of precise temperature control in microfluidic reactors requires specific materials and instrumentation. The following toolkit identifies essential components and their functions for researchers designing thermally stable microfluidic systems.

Table 3: Research Reagent Solutions for Microfluidic Temperature Control

| Toolkit Component | Function | Performance Considerations |
|---|---|---|
| Peltier Elements (TECs) | Solid-state heat pumping for heating/cooling | Compact size, rapid response, susceptible to overshoot |
| Platinum Resistance Sensors | Temperature measurement via resistance change | Near-linear temperature response, high accuracy |
| PID Control Systems | Algorithmic temperature regulation | Minimizes overshoot when well tuned, maintains setpoint stability |
| Ceramic Microreactors (LTCC) | High-temperature reaction platforms | Withstand up to 300°C, chemical resistance |
| PDMS Microfluidic Chips | Flexible, low-thermal-conductivity substrates | k ≈ 0.15 W/(m·K); insulating, limits heat loss from integrated heaters |
| Surfactants (e.g., SPAN 20) | Stabilize droplets at elevated temperatures | Improves stability above 60°C, reducing coalescence risk |
| AI-Driven Optimization | Adaptive experimental design | Leverages prior data for thermal parameter optimization |
| Microfluidic Distributor Chips | Precise flow distribution to parallel reactors | <0.5% RSD in flow between channels supports uniform conditions across reactors |
| Individual Reactor Pressure Control | Compensates for pressure drop variations | Maintains consistent flow despite changes in catalyst pressure drop |

Temperature precision in microfluidic reactors transcends technical preference to become a fundamental requirement for experimental validity. The evidence consistently demonstrates that thermal control directly influences essential parameters including reaction kinetics, droplet stability, product quality, and ultimately, data reproducibility. As microfluidic systems continue to enable more complex experimental designs with parallelization and miniaturization, the demand for sophisticated temperature management will only intensify.

Emerging technologies including AI-driven control systems, advanced nanomaterials for sensing, and innovative fabrication techniques promise enhanced thermal precision for future microfluidic platforms. However, the principles remain constant: rigorous validation, appropriate system design, and understanding of temperature-dependent phenomena are all essential components of reliable research using microfluidic reactors. For scientists in drug development and chemical research, investing in robust temperature control infrastructure is not merely optimizing experimental conditions—it is safeguarding the very integrity of their scientific conclusions.

In the development of modern technologies, from advanced drug discovery platforms to powerful microelectronics, the ability to control heat at the microscale has become fundamentally important. Thermal management in microfluidic systems and parallel reactors is particularly crucial for applications such as high-throughput screening in pharmaceutical development, where precise temperature control directly impacts reaction kinetics, reproducibility, and ultimately, the validity of experimental results. At these diminutive scales, heat transfer phenomena differ significantly from macroscale behavior due to the dramatically increased surface-area-to-volume ratio, which amplifies the effects of surface interactions and makes heat loss to the surroundings a dominant factor [7] [8].

The core challenge in microscale heat transfer stems from the breakdown of classical theoretical frameworks. Fourier's Law of heat conduction, which reliably describes heat transfer at macroscopic scales, often fails to accurately predict thermal behavior when the system dimensions become comparable to or smaller than the mean free path of thermal carriers (phonons and electrons) [8]. This deviation from classical behavior necessitates specialized measurement techniques, innovative materials, and sophisticated control strategies to manage thermal processes in microfluidic and parallel reactor systems effectively. Understanding these principles is especially critical for applications requiring high temperature control reproducibility, such as in parallel droplet reactors used for drug screening and development.

Fundamental Physics of Microscale Heat Transfer

Departure from Classical Macroscale Behavior

At the microscale, the physics of heat transfer undergoes a significant transformation. The primary reason for this shift is the dramatically increased surface-to-volume ratio, which makes surface effects dominant over bulk material properties [8]. In macroscopic systems, heat transfer follows well-established continuum models in which Fourier's Law provides accurate predictions. However, when system dimensions approach the mean free path of energy carriers (typically 1-100 nm for phonons in non-metallic systems), these classical models become inadequate [8]. Channels and droplets in typical droplet reactors (tens to hundreds of micrometers) are much larger than these mean free paths, so this breakdown mainly concerns nanostructured materials and components rather than the bulk fluid in the channels.

The failure of Fourier's Law at microscales can be quantitatively identified through the parameterization of thermal conductivity as κ ~ L^β, where L represents the characteristic length and β indicates deviation from classical behavior (β = 0 indicates perfect agreement with Fourier's Law) [8]. Experimental and computational studies have demonstrated significant deviations in various microscale systems, including multiwalled carbon nanotubes, boron-nitride nanotubes, superlattice structures, and silicon nanowires [8]. This deviation presents both challenges and opportunities—while complicating thermal management, it enables the development of materials with extremely low thermal conductivity for applications such as solid-state refrigeration devices [8].
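Given measurements of κ at several characteristic lengths, β is the slope of log κ versus log L. The least-squares sketch below is checked against synthetic data generated with a known exponent; the numbers are not measurements from [8].

```python
import math
from typing import Sequence


def fit_beta(lengths: Sequence[float], conductivities: Sequence[float]) -> float:
    """Least-squares slope of ln(kappa) vs ln(L); beta = 0 means Fourier-like behavior."""
    if len(lengths) != len(conductivities) or len(lengths) < 2:
        raise ValueError("need at least two paired (L, kappa) values")
    xs = [math.log(v) for v in lengths]
    ys = [math.log(v) for v in conductivities]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    return sxy / sxx


if __name__ == "__main__":
    # Synthetic check: kappa = 3.0 * L**0.4 (arbitrary units) should return beta = 0.4
    lengths = [1e-7, 3e-7, 1e-6, 3e-6, 1e-5]
    kappas = [3.0 * length**0.4 for length in lengths]
    print(f"estimated beta = {fit_beta(lengths, kappas):.3f}")
```

With real data, the scatter of the points around the fitted line is as informative as β itself, since a small β within measurement noise is consistent with Fourier's Law.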

Key Challenges in Microscale Thermal Management

Several interconnected challenges define the landscape of microscale heat transfer management. First, rapid heat dissipation to the surroundings presents a major obstacle because the high surface-area-to-volume ratio that characterizes microscale systems facilitates extremely efficient thermal exchange with the environment [7]. This effect is particularly pronounced in microfluidic droplet systems, where reaction heat can quickly dissipate into the surrounding oil and chip walls, potentially quenching thermal signatures of reactions [9].

Second, non-uniform thermal distributions emerge due to the difficulty of maintaining consistent temperature fields across microscale geometries. The substantial thermal gradients that can develop within microchannels or droplets significantly impact reaction kinetics and yields [10]. This challenge is especially critical in exothermic reactions like the oxidative coupling of methane, where hotspot formation can lead to undesirable side reactions and reduced product selectivity [10].

Third, measurement limitations constrain researchers' ability to characterize thermal phenomena at microscales. Traditional embedded sensors like thermocouples and thin-film resistors are confined to single-point measurements and cannot provide full-field temperature mapping [7]. Even advanced techniques like Raman spectroscopy, while offering high spatial resolution (approximately 500 nm), suffer from slow capture speeds (approximately 0.5 points per second), making them unsuitable for capturing dynamic thermal processes [7].

Experimental Approaches for Measuring Microscale Temperatures

Advanced Temperature Sensing Techniques

Thermochromic Liquid Crystal (TLC) Calorimetry

Experimental Protocol: Microfluidic optical calorimetry using thermochromic liquid crystals (TLCs) involves several carefully orchestrated steps. First, a microfluidic droplet generation chip is fabricated with separate inlet channels for the aqueous reactants, so that the streams do not contact each other until immediately before droplet formation [9]. Commercially available microencapsulated chiral nematic TLCs, supplied as a 40% (w/w) slurry, are diluted to 4% (w/w) in an appropriate buffer containing 0.015% (w/v) Triton X-100 to prevent non-specific interactions [9]. The diluted slurry is filtered through a 20 μm nylon net filter, yielding TLC particles with an average size of 7 μm [9].

During operation, aqueous reactant streams containing TLCs in one stream converge at a junction with immiscible fluoropolymer oil flows, forming discrete aqueous droplets approximately 100 μm in diameter (≈500 pL volume) surrounded by an oil sheath [9]. The fluoropolymer oil serves as both a droplet stabilization medium and thermal insulator, maximizing heat retention within droplets [9]. As reactions occur inside droplets, temperature changes induce color shifts in the TLCs, with reflectance spectra shifting at rates up to 200 nm/K [9]. These spectral changes are recorded using a sensitive wavelength shift detector as droplets travel through the detection region, achieving temperature resolution of approximately 6 mK [9].
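Several numbers in this protocol can be cross-checked with simple arithmetic: the 10-fold TLC dilution, the volume of a 100 μm droplet, the spectral shift corresponding to 6 mK, and the smallest heat release that resolution implies. The heat estimate assumes water-like density and heat capacity and a perfectly insulated droplet; both are simplifying assumptions, not values from [9].

```python
import math

WATER_DENSITY = 1000.0  # kg/m^3, assumed water-like droplet
WATER_CP = 4184.0       # J/(kg*K), assumed water-like droplet


def stock_mass_for_dilution(c_stock: float, c_final: float, total_mass_g: float) -> float:
    """Mass of stock slurry (g) such that c_stock * m_stock = c_final * m_total."""
    return total_mass_g * c_final / c_stock


def sphere_volume_pl(diameter_um: float) -> float:
    """Volume of a spherical droplet in picoliters (1 m^3 = 1e15 pL)."""
    return math.pi / 6.0 * (diameter_um * 1e-6) ** 3 * 1e15


def min_detectable_heat_nj(diameter_um: float, resolution_k: float) -> float:
    """Heat (nJ) that raises an adiabatic, water-like droplet by resolution_k."""
    mass_kg = sphere_volume_pl(diameter_um) * 1e-15 * WATER_DENSITY
    return mass_kg * WATER_CP * resolution_k * 1e9


if __name__ == "__main__":
    print(f"stock per 1 g of 4% slurry: {stock_mass_for_dilution(40.0, 4.0, 1.0):.2f} g")
    print(f"100 um droplet: {sphere_volume_pl(100.0):.0f} pL")
    print(f"spectral shift at 6 mK: {200.0 * 0.006:.1f} nm")
    print(f"heat for 6 mK rise: {min_detectable_heat_nj(100.0, 0.006):.1f} nJ")
```

The ≈524 pL result is consistent with the ≈500 pL quoted for a 100 μm droplet, and under these assumptions a 6 mK resolution corresponds to detecting roughly 13 nJ of reaction heat per droplet.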

Table 1: Performance Comparison of Microscale Temperature Measurement Techniques

| Technique | Spatial Resolution | Temperature Resolution | Temporal Resolution | Key Advantages | Primary Limitations |
|---|---|---|---|---|---|
| TLC Calorimetry [9] | Single droplet level (≈100 μm) | ≈6 mK | Limited by droplet flow rate | High sensitivity, suitable for reaction enthalpy measurements | Requires particle incorporation in droplets |
| Rhodamine B Functionalized PDMS (RAP) [7] | 5 μm (after processing) | 2-6°C | 550 ms | 3D mapping capability, excellent stability | Lower temperature resolution |
| Fluorescence Thermometry (RhB in solution) [7] | Sub-micrometer | Not specified | Microsecond | High spatial and temporal resolution | Photobleaching, solvent absorption, potential interference with reactions |
| Raman Spectroscopy [7] | ≈500 nm | Not specified | 0.5 points/second | Excellent spatial resolution | Very slow capture speed |

Rhodamine B Functionalized PDMS (RAP) for 3D Thermal Mapping

Experimental Protocol: The RAP method begins with synthesizing the temperature-sensitive material by grafting Rhodamine B to polydimethylsiloxane (PDMS) using allyl glycidyl ether (AGE) as a molecular connector [7]. The chemical process involves three sequential reactions: initial partial polymerization of PDMS, platinum-catalyzed hydrosilylation between the remaining Si-H groups and the vinyl groups of AGE, and final epoxy ring opening to link RhB to PDMS after full curing [7]. Optimization studies identified the best parameters as a 25-hour incubation time, 0.02 wt% RhB, and 2-4 wt% AGE, which preserve the mechanical properties and bonding strength of the resulting microfluidic chip [7].

For temperature mapping, the calibrated intensity-temperature relationship (fluorescence intensity decreases linearly with temperature increases from 20 to 100°C) is applied to convert fluorescence intensity measurements to temperature values [7]. A confocal microscope performs optical sectioning of the RAP material surrounding microchannels or chambers, enabling reconstruction of three-dimensional temperature fields [7]. The method achieves spatial resolution of approximately 5 μm after adjacent-averaging processing, temporal resolution of 550 ms, and temperature resolution between 2-6°C depending on capture parameters [7].
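The conversion step can be sketched as a least-squares linear calibration of intensity against temperature, its inversion, and a simple adjacent-averaging filter along a line profile. The calibration data and the 3-point window are hypothetical; the published method applies these ideas to confocal image stacks rather than a single profile.

```python
from typing import List, Sequence, Tuple


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares y = intercept + slope * x; returns (intercept, slope)."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sxx
    return y_mean - slope * x_mean, slope


def intensity_to_temperature(intensity: float, intercept: float, slope: float) -> float:
    """Invert the linear calibration I = intercept + slope * T."""
    return (intensity - intercept) / slope


def adjacent_average(values: Sequence[float], window: int = 3) -> List[float]:
    """Centered moving average; the window shrinks at the edges of the profile."""
    if window < 1 or window % 2 == 0:
        raise ValueError("window must be a positive odd integer")
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        segment = values[max(0, i - half): i + half + 1]
        smoothed.append(sum(segment) / len(segment))
    return smoothed


if __name__ == "__main__":
    # Hypothetical calibration: intensity falls linearly from 20 to 100 degC
    temps_c = [20.0, 40.0, 60.0, 80.0, 100.0]
    intensities = [920.0, 840.0, 760.0, 680.0, 600.0]
    intercept, slope = fit_line(temps_c, intensities)
    profile = [800.0, 790.0, 810.0, 700.0, 720.0, 710.0]  # hypothetical line scan
    temps = [intensity_to_temperature(v, intercept, slope) for v in profile]
    print([round(t, 1) for t in adjacent_average(temps)])
```

Averaging trades spatial resolution for noise reduction, which is consistent with the ≈5 μm resolution being quoted after adjacent-averaging processing.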

Figure 1: Experimental workflow for 3D temperature mapping using Rhodamine B functionalized PDMS (RAP)

Research Reagent Solutions for Microscale Thermal Studies

Table 2: Essential Research Reagents and Materials for Microscale Thermal Experiments

| Reagent/Material | Function | Application Examples | Key Characteristics |
|---|---|---|---|
| Thermochromic Liquid Crystals (TLCs) [9] | Temperature transduction via color shift | Optical calorimetry in microfluidic droplets | 7-10 μm diameter, 200 nm/K spectral shift, ≈6 mK resolution |
| Rhodamine B Functionalized PDMS (RAP) [7] | 3D temperature mapping material | Full-field thermal monitoring in microchannels | Grafted structure, linear intensity-temperature response, excellent stability |
| Fluoropolymer Oil [9] | Continuous (carrier) phase and thermal insulator | Microfluidic droplet calorimetry | Immiscible with aqueous solutions, low thermal conductivity |
| Nanodiamond Nanofluids [11] | Heat transfer enhancement | Microchannel cooling systems | Particle size ≈10 nm; diamond itself has very high thermal conductivity (up to 3320 W/m·K) |
| Carboxylated Nanodiamonds [11] | Stable nanofluid formulation | Advanced thermal management | Surface functionalization improves dispersion stability |

Heat Transfer Enhancement Strategies at Microscale

Passive Flow Manipulation Techniques

Geometric modifications to microchannels represent a powerful approach to enhancing heat transfer without external energy input. Introducing helical connectors at the inlet of microchannels has shown significant potential for improving heat transfer coefficients, particularly at low Reynolds numbers, where traditional enhancement methods are ineffective [11]. Experiments have revealed that helical connectors can act either as flow stabilizers or as mixers, depending on their geometry relative to the flow conditions [11]. When functioning as mixers, these connectors promote secondary flows that enhance fluid mixing and thereby thermal transport [11].

The efficacy of geometric modifications depends strongly on specific design parameters. In mini twisted oval tubes, for instance, varying the cross-sectional aspect ratio (major axis diameter divided by minor axis diameter) while holding the hydraulic diameter constant significantly changes the heat transfer enhancement [11]. These geometric strategies are particularly valuable in microelectronics cooling, where they improve thermal management without increasing system complexity or power requirements [11].
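Hydraulic diameter, D_h = 4A/P (cross-sectional area A, wetted perimeter P), is the quantity held fixed in that comparison, and it also sets the Reynolds number Re = ρuD_h/μ that determines whether flow is in the low-Re regime discussed above. The sketch assumes a rectangular 100 μm × 50 μm channel carrying water at 10 mm/s; these values are illustrative, not from [11].

```python
def hydraulic_diameter_rect(width_m: float, height_m: float) -> float:
    """D_h = 4A/P for a rectangular channel."""
    area = width_m * height_m
    wetted_perimeter = 2.0 * (width_m + height_m)
    return 4.0 * area / wetted_perimeter


def reynolds_number(density: float, velocity: float, d_h: float, viscosity: float) -> float:
    """Re = rho * u * D_h / mu (SI units)."""
    return density * velocity * d_h / viscosity


if __name__ == "__main__":
    d_h = hydraulic_diameter_rect(100e-6, 50e-6)
    # Water near room temperature: rho ~ 1000 kg/m^3, mu ~ 1.0e-3 Pa*s (approximate)
    re = reynolds_number(1000.0, 0.01, d_h, 1.0e-3)
    print(f"D_h = {d_h * 1e6:.1f} um, Re = {re:.2f}")
```

An Re below 1, as here, indicates strongly laminar flow, the regime in which the text above notes that traditional enhancement methods lose their effectiveness.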

Nanofluids for Enhanced Thermal Performance

Nanofluids—base fluids containing suspended nanoparticles—offer another promising approach to microscale heat transfer enhancement. Experimental studies with diamond-deionized water nanofluids at 0.1 wt% concentration have demonstrated substantially improved heat transfer coefficients compared to pure base fluids [11]. The enhancement mechanism involves increased thermal conductivity, greater surface area for heat exchange, and intensified particle collisions due to nanoparticle presence [11].

However, nanofluid performance depends critically on multiple factors. Higher nanoparticle concentrations generally improve thermal conductivity (up to 17.8% enhancement reported for 1.0% nanodiamond in ethylene glycol/water mixtures) but simultaneously increase viscosity, which can impede fluid flow and diminish heat transfer benefits [11]. Temperature also plays a crucial role, with elevated temperatures typically reducing viscosity while enhancing thermal conductivity [11]. Most importantly, dispersion stability fundamentally determines nanofluid efficacy, as aggregation and sedimentation lead to inconsistent thermophysical properties and potential channel blockages [11]. Surface functionalization strategies, such as carboxylation of nanodiamonds, combined with ultrasonication have proven effective for achieving homogeneous dispersions with stable thermal performance [11].
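The conductivity-viscosity trade-off can be illustrated with two classical dilute-suspension models: Maxwell's model for the effective conductivity of well-dispersed spheres and Einstein's relation for viscosity. These are textbook baselines chosen here for illustration, not the analysis in [11]; they use volume fraction rather than wt%, and measured nanofluid enhancements often exceed Maxwell's prediction. The particle and fluid conductivities below are assumed values.

```python
def maxwell_conductivity_ratio(k_particle: float, k_fluid: float, phi: float) -> float:
    """Maxwell effective-medium ratio k_nf / k_fluid for dilute spheres (phi = volume fraction)."""
    numerator = k_particle + 2.0 * k_fluid + 2.0 * phi * (k_particle - k_fluid)
    denominator = k_particle + 2.0 * k_fluid - phi * (k_particle - k_fluid)
    return numerator / denominator


def einstein_viscosity_ratio(phi: float) -> float:
    """Einstein relation mu_nf / mu_fluid = 1 + 2.5 * phi, valid for very dilute suspensions."""
    return 1.0 + 2.5 * phi


if __name__ == "__main__":
    K_DIAMOND = 2000.0  # W/(m*K), assumed particle conductivity
    K_WATER = 0.6       # W/(m*K), approximate base-fluid conductivity
    for phi in (0.001, 0.005, 0.01):
        k_gain = maxwell_conductivity_ratio(K_DIAMOND, K_WATER, phi) - 1.0
        mu_gain = einstein_viscosity_ratio(phi) - 1.0
        print(f"phi = {phi:.3f}: conductivity +{100 * k_gain:.2f}%, viscosity +{100 * mu_gain:.2f}%")
```

In these idealized models both properties rise roughly linearly with loading, which illustrates why higher concentrations do not automatically yield better heat transfer.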

Figure 2: Microscale heat transfer enhancement strategies and their pathways to performance improvement

Implications for Parallel Reactor Systems and Temperature Control Reproducibility

Precision Control in Parallel Systems

In parallel reactor systems, maintaining consistent temperature conditions across multiple reaction channels presents substantial technical challenges. The fundamental requirement for reproducible results in high-throughput experimentation is precise fluid distribution and thermal uniformity, which becomes increasingly difficult to achieve as system scale decreases [12]. Advanced microfluidic distribution systems have been developed to address this challenge, with proprietary distributor chips guaranteeing flow distribution precision of <0.5% RSD between channels [12].

The critical importance of individual reactor pressure control has been recognized as essential for maintaining distribution precision when catalyst pressure drop varies between reactors or changes over time due to blockages [12]. Systems incorporating individual Reactor Pressure Control (RPC) modules can actively compensate for such variations by maintaining equal reactor inlet pressures across all reactors, thereby ensuring consistent flow distribution and thermal conditions [12]. This capability is particularly valuable for low-pressure processes like oxidative coupling of methane, where even minor pressure variations significantly impact product yields [12].
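The value of passive distribution and active pressure control can be illustrated with a lumped hydraulic-resistance model (pressure drop = flow × resistance, in arbitrary units). The sketch compares three hypothetical cases for eight reactors whose catalyst beds differ by up to ±20%: no flow restrictor, a high-resistance restrictor upstream of each reactor, and an idealized control scheme that holds every reactor inlet at the same pressure. This is an assumption-laden analogy; the internal design of the commercial distributor chips and RPC modules in [12] is not described in the source.

```python
import statistics
from typing import List, Sequence, Tuple


def rsd_percent(values: Sequence[float]) -> float:
    """Relative standard deviation (%) using the sample SD."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def passive_flows(p_source: float, r_restrictor: float,
                  bed_resistances: Sequence[float]) -> List[float]:
    """Each branch: restrictor in series with its catalyst bed, outlets at zero gauge pressure."""
    return [p_source / (r_restrictor + r_bed) for r_bed in bed_resistances]


def pressure_controlled_flows(p_source: float, r_restrictor: float, p_inlet: float,
                              bed_resistances: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Idealized inlet-pressure control: every reactor inlet is held at p_inlet.

    Flow is then set by the restrictor alone; each outlet back-pressure absorbs
    that reactor's bed pressure drop. Returns (flows, required outlet pressures).
    """
    flow = (p_source - p_inlet) / r_restrictor
    outlets = [p_inlet - flow * r_bed for r_bed in bed_resistances]
    if min(outlets) < 0.0:
        raise ValueError("inlet setpoint too low for the largest bed pressure drop")
    return [flow] * len(bed_resistances), outlets


if __name__ == "__main__":
    beds = [1.0, 1.1, 0.9, 1.05, 0.95, 1.2, 0.8, 1.0]  # hypothetical bed resistances
    print(f"no restrictor:        {rsd_percent(passive_flows(1.0, 0.0, beds)):.2f}% RSD")
    print(f"100x restrictor:      {rsd_percent(passive_flows(101.0, 100.0, beds)):.3f}% RSD")
    flows, _ = pressure_controlled_flows(101.0, 100.0, 1.5, beds)
    print(f"equal inlet pressure: {rsd_percent(flows):.3f}% RSD")
```

In this toy model, the restrictor shrinks a flow spread of more than 12% RSD to roughly 0.1%, and holding inlet pressures equal removes the remaining dependence on bed resistance, at the cost of adjusting each reactor's outlet back-pressure.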

Reproducibility Challenges in Biological Systems

The complex interplay between heat transfer and biological systems introduces additional reproducibility challenges in parallel reactor applications. Studies of parallel continuous dark fermentation systems for biohydrogen production have demonstrated that even under strictly controlled identical conditions, complete consistency across reactors is difficult to achieve [13]. While key performance indicators and core microbial features show broad reproducibility, variations in microbial community structure and relative abundances inevitably occur between reactors over time [13].

Operational disturbances of the kind also encountered in microscale systems, including feed line clogging, pH control failures, and mixing interruptions, further complicate thermal management and process reproducibility [13]. These findings highlight the sensitivity of biological systems to subtle variations in operating conditions, including temperature, and underscore the need for refined control strategies to translate laboratory results into stable, high-performance real-world systems [13].

The specialized field of microscale heat transfer presents unique challenges that demand sophisticated measurement techniques and enhancement strategies. The departure from classical macroscopic behavior necessitates approaches like TLC calorimetry and RAP-based 3D thermal mapping to characterize thermal phenomena at these diminutive scales. The integration of passive geometric modifications and nanofluids offers promising pathways for performance improvement, while advanced microfluidic distribution and pressure control systems enable the precision required for reproducible parallel reactor operation. As technologies continue to miniaturize, developing a comprehensive understanding of core physical principles governing heat transfer at the microscale will remain essential for advancing applications across drug discovery, materials synthesis, and microelectronics cooling.

In the pursuit of efficient chemical discovery and development, automated reaction platforms have emerged as transformative tools. Among these, parallel droplet reactors represent a significant advancement, enabling high-throughput experimentation (HTE) with minimal material consumption. Within this context, reproducibility, assessed through both the accuracy (closeness to the true value) and the precision (repeatability of measurements) of reaction outcomes, becomes a paramount metric for evaluating platform performance. Accurate temperature control is a foundational element, as it directly influences reaction kinetics and yield. This guide objectively compares the performance of a next-generation parallel droplet reactor platform against conventional well-plate systems, providing supporting experimental data to define reproducibility in practice.

Platform Comparison: Technical Specifications and Performance Metrics

Parallel Droplet Reactor Platform

The parallelized droplet reactor platform, developed from an oscillatory droplet flow reactor foundation, uses a bank of independent parallel reactor channels constructed from fluoropolymer tubes [4]. This design provides high surface-area-to-volume ratios for efficient heat and mass transfer. A key feature is the integration of selector valves upstream and downstream of the reactor bank, enabling the distribution of droplets to assigned reactors and their subsequent collection for analysis [4]. The platform runs customized control software that synchronizes all hardware operations through a scheduling algorithm designed to ensure both droplet integrity and operational efficiency [4].

Table 1: Key Specifications of the Parallel Droplet Reactor Platform

| Parameter | Specification |
|---|---|
| Number of Reactors | 10 independent parallel channels [4] |
| Temperature Range | 0 to 200 °C (solvent-dependent) [4] |
| Operating Pressure | Up to 20 atm [4] |
| Reproducibility (Precision) | <5% standard deviation in reaction outcomes [4] |
| Reaction Modes | Thermal and photochemical transformations [4] |
| Analytical Integration | On-line HPLC with minimal delay between reaction completion and evaluation [4] |
| Experimental Design | Integrated Bayesian optimization algorithm for iterative experimentation [4] |

Conventional Well-Plate Systems

In contrast, conventional high-throughput screening often relies on well-plate approaches adopted from life sciences. These systems typically utilize 96- or 384-well plates with well volumes around 300 μL [14]. A significant limitation of these systems is that all reactions on a particular plate are often confined to the same temperature and reaction time, restricting the exploration of continuous variables [4] [14]. Furthermore, the compatibility of well-plate materials with diverse organic solvents can be limited, and operating at elevated temperatures and pressures is challenging [4] [14].

Table 2: Performance Comparison of Reactor Systems

| Performance Characteristic | Parallel Droplet Reactor | Conventional Well-Plate System |
|---|---|---|
| Temperature Control Independence | Full individual control per channel [4] | Typically uniform across a plate [4] [14] |
| Throughput | Moderate but flexible [4] | High (hundreds to thousands) [4] |
| Material Consumption | Microscale, material-efficient [4] [15] | ~300 μL per well [14] |
| Process Window (T, P) | Wide (0 to 200 °C, up to 20 atm) [4] | Limited by solvent boiling points and plate material [4] |
| Reaction Outcome Reproducibility | High (<5% standard deviation) [4] | Subject to greater variability due to less controlled mixing and thermal gradients [4] |
| Optimization Capability | Integrated closed-loop optimization [4] [15] | Typically requires separate, sequential optimization |

Experimental Protocols for Reproducibility Assessment

Protocol for Validating Single-Channel Reproducibility

As a precursor to parallelization, the single-channel version of the droplet platform underwent rigorous validation to ensure its performance met strict reproducibility criteria [4].

- System Calibration: All thermocouples are calibrated and positioned in identical locations on the reactor plate to ensure temperature measurement accuracy [4].

- Droplet Generation and Operation: Reaction mixtures are prepared as discrete droplets within fluoropolymer tubing. While the original design used oscillatory motion for mixing, the platform was switched to stationary operation to mitigate solvent loss issues, relying on diffusion and the platform's design for mixing [4].

- Temperature Control Verification: The reactor is set to a target temperature, and the stability and homogeneity of the temperature within the reaction droplet are verified using calibrated sensors [4].

- Reaction Execution and Analysis: A model reaction is run repeatedly under identical conditions. After the reaction is complete, the droplet is transported to an internal injection valve, where a nanoliter-scale sample (20 nL, 50 nL, or 100 nL) is injected directly into an on-line HPLC system for analysis [4].

- Data Processing: The raw HPLC-DAD (Diode Array Detector) data is automatically exported and analyzed. Advanced data analysis tools, such as the MOCCA (Multivariate Online Chromatogram Classification and Analysis) open-source Python project, can be employed. MOCCA uses automated peak deconvolution routines to accurately quantify reaction outcomes, even in the presence of overlapped peaks from impurities or side products, ensuring analytical accuracy [15].

- Precision Calculation: The standard deviation of the reaction outcomes (e.g., yield or conversion) across multiple replicate runs is calculated. The platform was validated to achieve a standard deviation of less than 5% [4].
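The precision calculation in the final step can be sketched as follows. Because "<5% standard deviation" can be read either as an absolute spread in percentage points of yield or as a relative standard deviation, the helper reports both and leaves it to the caller to choose which one the criterion refers to; the replicate yields in the example are hypothetical.

```python
from statistics import mean, stdev


def replicate_precision(outcomes_percent, limit=5.0, relative=False):
    """Summarize replicate runs of one model reaction under identical conditions.

    outcomes_percent: yield or conversion in %, one value per replicate.
    relative=False compares the sample SD (percentage points) with `limit`;
    relative=True compares the relative SD (SD / mean x 100) instead.
    Which reading the <5% criterion uses is an assumption left to the caller.
    """
    if len(outcomes_percent) < 2:
        raise ValueError("at least two replicates are needed for a standard deviation")
    m = mean(outcomes_percent)
    sd = stdev(outcomes_percent)  # sample (n - 1) standard deviation
    rsd = 100.0 * sd / m
    metric = rsd if relative else sd
    return {"mean": m, "sd": sd, "rsd": rsd, "passes": metric < limit}


# Hypothetical replicate yields (%), not data from the cited study.
print(replicate_precision([78.2, 80.1, 79.5, 77.9, 80.6]))
```

The sample (n − 1) standard deviation is used because replicate runs are a sample of the platform's behaviour rather than the whole population.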

Protocol for a Comparative Reaction Optimization Campaign

This protocol demonstrates how the parallel droplet platform can be used for closed-loop optimization, directly showcasing its capability to generate precise and accurate data efficiently.

- Objective Definition: The goal is to optimize the yield of a model reaction, such as a Pd-catalyzed C-N coupling, by varying continuous parameters (e.g., temperature, residence time, concentration) and categorical parameters (e.g., solvent, ligand) [15].

- Algorithm Integration: A Bayesian optimization algorithm (e.g., EDBO) is integrated into the platform's control software. The algorithm proposes a set of experimental conditions for the next batch of reactions [4] [15].

- Parallelized Execution: The control software translates the proposed parameters into an experimental protocol. Using the liquid handler and selector valves, reaction droplets are prepared and dispatched to the available parallel reactor channels, each operating at its independently controlled set of conditions [4].

- Scheduled Analysis: Upon reaction completion, a scheduling algorithm orchestrates the transport of droplets to the on-line HPLC for sequential analysis, minimizing delay and eliminating the need for manual quenching [4].

- Feedback Loop: The HPLC-DAD raw data is automatically analyzed (e.g., by MOCCA), and the results (e.g., yield) are fed back to the Bayesian optimizer. The algorithm then uses this data to propose the next set of experiments, closing the loop [15].

- Performance Benchmarking: The optimization efficiency and the reproducibility of the final optimized conditions are compared against results obtained from a traditional well-plate screening campaign. The droplet platform is reported to reach optimized conditions with fewer overall experiments and higher data quality per experiment, owing to its tighter control and integrated analytics [4] [15].

The workflow diagram below illustrates the closed-loop optimization process.

Diagram Title: Closed-Loop Reaction Optimization Workflow
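The closed loop described above has a simple skeleton: propose a batch, execute it across the parallel reactors, analyze, and feed the results back. In this sketch the proposer is plain random search standing in for a Bayesian optimizer such as EDBO (whose API is not reproduced here), and a made-up yield surface stands in for reactor execution plus HPLC/MOCCA analysis; only the loop structure is meant to carry over.

```python
import random


def run_campaign(propose, run_and_analyze, n_batches, batch_size):
    """Generic closed loop: propose -> execute in parallel -> analyze -> feed back."""
    history = []  # (conditions, yield) pairs observed so far
    for _ in range(n_batches):
        batch = propose(history, batch_size)               # optimizer step
        results = [run_and_analyze(cond) for cond in batch]  # reactors + on-line analysis
        history.extend(zip(batch, results))                # feedback to the optimizer
    best = max(history, key=lambda pair: pair[1])
    return best, history


def make_random_proposer(seed=0):
    """Stand-in for a Bayesian optimizer: uniform random search in a hypothetical box."""
    rng = random.Random(seed)

    def propose(history, batch_size):
        # A real Bayesian optimizer would use `history`; random search ignores it.
        return [{"temp_C": rng.uniform(25.0, 150.0), "time_min": rng.uniform(1.0, 60.0)}
                for _ in range(batch_size)]

    return propose


def simulated_reaction(cond):
    """Hypothetical smooth yield surface (%) replacing reactor execution and HPLC analysis."""
    t, tau = cond["temp_C"], cond["time_min"]
    return max(0.0, 90.0 - 0.01 * (t - 110.0) ** 2 - 0.05 * (tau - 30.0) ** 2)


best, history = run_campaign(make_random_proposer(), simulated_reaction, n_batches=5, batch_size=10)
print(f"{len(history)} experiments, best yield {best[1]:.1f}% at {best[0]}")
```

Swapping in a real optimizer means replacing `make_random_proposer` with a function that actually uses `history` to choose the next batch; the batch size of 10 mirrors the ten reactor channels.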

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key components and reagents essential for operating and validating a parallel droplet reactor system.

Table 3: Key Research Reagent Solutions for Droplet Reactor Platforms

| Item | Function |
|---|---|
| Fluoropolymer Tubing (e.g., PFA) | Serves as the reactor channel; provides broad chemical compatibility and ability to withstand elevated pressures [4]. |
| Selector Valves | Enables distribution of reaction droplets to and from multiple independent reactor channels, facilitating parallelization [4]. |
| Six-Port, Two-Position Valves | Allows isolation of individual reaction droplets within a reactor channel during the reaction period [4]. |
| On-line HPLC with DAD | Provides immediate, automated analysis of reaction outcomes, crucial for real-time feedback and kinetic studies [4] [15]. |
| Bayesian Optimization Software | An algorithm for autonomous experimental design, enabling efficient exploration of complex parameter spaces for optimization [4] [15]. |
| MOCCA (Open-Source Python Tool) | Analyzes complex HPLC-DAD raw data; performs automated peak deconvolution to ensure accurate quantification of reaction components [15]. |

The defining characteristic of a next-generation parallel droplet reactor platform is its ability to deliver high-fidelity data through exceptional control over reaction conditions, particularly temperature. This translates into superior reproducibility, characterized by both high precision (<5% standard deviation) and accuracy, as verified against known model reactions. While conventional well-plate systems offer high throughput for specific applications, they often sacrifice independent control of continuous variables, limiting the depth and quality of information obtained. The integration of parallel operation with closed-loop optimization and advanced analytics makes the droplet platform a powerful tool for researchers and drug development professionals who prioritize data quality and efficient reaction understanding over sheer experimental volume.

Impact of Thermal Fluctuations on Biochemical Kinetics and Assay Results

Reproducible temperature control is a foundational requirement in life sciences research, particularly in the context of high-throughput biochemical assays and reaction optimization. Thermal fluctuations, even of small magnitude, can significantly alter enzymatic kinetics, protein structural ensembles, and ultimately, experimental results. The emergence of parallelized droplet-based microreactors represents a significant advancement, offering the potential for high-fidelity, high-throughput experimentation under independently controlled conditions [4] [15]. This guide provides an objective comparison of this technology against traditional methods, focusing on its capacity to mitigate the impact of thermal fluctuations. Framed within a broader thesis on evaluating temperature control reproducibility, we present experimental data and methodologies that underscore the critical importance of precise thermal management for reliable drug development and biochemical research.

The Critical Link Between Temperature and Biochemical Kinetics

Temperature exerts a profound and complex influence on biochemical systems. Understanding its mechanisms is essential for appreciating the value of advanced reactor technologies.

Fundamental Kinetic and Thermodynamic Effects

At the most basic level, the rate of a biochemical reaction increases with temperature, as described by the Arrhenius equation [16]. This relationship assumes that underlying thermodynamic parameters remain constant. However, for enzymes, this is often an oversimplification. Unlike small-molecule catalysts, enzymes exhibit temperature-dependent structural ensembles [17]. As temperature increases, the distribution of enzyme conformations shifts, which can modulate activity independent of the Arrhenius effect. Multi-temperature X-ray crystallography has shown that even within a linearly increasing Arrhenius plot, enzymes can undergo small but significant structural changes that populate more catalytically competent conformations [17].
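To make this sensitivity concrete, the Arrhenius equation $k = A\,e^{-E_a/RT}$ gives the ratio of rate constants at two temperatures without needing $A$. The sketch below assumes an activation energy of 50 kJ/mol, a value chosen for illustration rather than taken from [16].

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1


def arrhenius_ratio(ea_j_per_mol, t1_k, t2_k):
    """k(T2) / k(T1) for k = A exp(-Ea / (R T)); the pre-exponential A cancels."""
    return math.exp(ea_j_per_mol / R * (1.0 / t1_k - 1.0 / t2_k))


EA = 50e3  # J/mol, illustrative assumption
for dt in (0.1, 0.5, 1.0, 2.0):
    change = 100.0 * (arrhenius_ratio(EA, 310.0, 310.0 + dt) - 1.0)
    print(f"+{dt} K at 310 K: rate constant changes by {change:.2f}%")
```

Under that assumption, a 1 K offset near 310 K changes the rate constant by roughly 6%, and even 0.1 K shifts it by about 0.6%, which is why channel-to-channel temperature differences translate directly into scatter in outcomes.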

Non-Monotonic Responses and Enzyme Denaturation

A hallmark of enzymatic reactions is their non-monotonic temperature response; rates increase to an optimum point ($T_{\text{opt}}$) before declining sharply [16]. This decline has traditionally been attributed to irreversible thermal denaturation. However, contemporary theories emphasize the role of thermally reversible enzyme denaturation [16]. Due to ceaseless thermal motion, a fraction of enzyme molecules spontaneously unfold into inactive states at any given temperature, with this fraction increasing as temperature rises. This equilibrium between active and inactive states explains the plateau and subsequent decrease in reaction rates observed well below the threshold for irreversible damage [16].
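The reversible active/inactive equilibrium can be illustrated with a simplified two-state sketch: the catalytic rate constant follows Arrhenius, the inactive fraction follows a van 't Hoff equilibrium, and the observed rate is their product. This is a toy version in the spirit of the Equilibrium Model listed in the table that follows, not the published model itself; it ignores irreversible denaturation, and all parameter values are hypothetical.

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1


def relative_rate(t_k, ea=50e3, dh_eq=300e3, t_eq=330.0):
    """Relative rate of a toy two-state (active <-> inactive) enzyme model.

    k_cat follows Arrhenius; the inactive/active ratio K_eq follows van 't Hoff
    with K_eq = 1 at t_eq (half the enzyme inactive there). Parameter values are
    hypothetical and irreversible denaturation is ignored.
    """
    k_cat = math.exp(-ea / (R * t_k))                      # pre-exponential factor set to 1
    k_eq = math.exp(dh_eq / R * (1.0 / t_eq - 1.0 / t_k))  # grows with temperature
    fraction_active = 1.0 / (1.0 + k_eq)
    return k_cat * fraction_active


temps = [280.0 + 0.1 * i for i in range(801)]  # 280 to 360 K in 0.1 K steps
t_opt = max(temps, key=relative_rate)
print(f"apparent T_opt = {t_opt:.1f} K (half-inactivation at 330 K)")
```

With these parameters the apparent optimum falls near 325 K, about 5 K below the half-inactivation temperature. At the optimum roughly one sixth of the enzyme is already inactive, so the rate turns down well before bulk unfolding.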

Table 1: Models for Temperature Dependence in Enzymatic Systems

| Model | Core Principle | Explanation for Rate Decline at High T |
|---|---|---|
| Arrhenius/Eyring-Polanyi | Rate increase driven by increased kinetic energy and collision frequency [16]. | Not originally addressed; assumes linearity in log(k) vs. 1/T plots. |
| Macromolecular Rate Theory (MMRT) | A negative heat capacity change of activation ($\Delta C_p^{\ddagger}$) between ground and transition states makes the activation free energy temperature dependent [17] [16]. | Downward curvature in Arrhenius plots due to thermodynamic effects, not denaturation. |
| Equilibrium Model | Thermal equilibrium between active and inactive enzyme states [16]. | Shift in equilibrium towards increasing population of inactive states prior to denaturation. |
| Chemical Kinetics Theory | Combines mass action, diffusion-limited theory, and transition state theory with reversible denaturation [16]. | Plateau and fall-off caused by reversible enzyme denaturation and temperature-dependent binding affinity (K). |
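For the MMRT entry, the temperature dependence can be written out explicitly. A worked derivation, using the commonly cited MMRT form in which the activation enthalpy and entropy vary with temperature through a constant $\Delta C_p^{\ddagger}$ referenced to an arbitrary temperature $T_0$ ($k_B$ is Boltzmann's constant, $h$ Planck's constant):

$$
\ln k = \ln\frac{k_B T}{h} - \frac{\Delta H^{\ddagger}_{T_0} + \Delta C_p^{\ddagger}\,(T - T_0)}{RT} + \frac{\Delta S^{\ddagger}_{T_0} + \Delta C_p^{\ddagger}\,\ln(T/T_0)}{R}
$$

$$
\frac{d\ln k}{dT} = \frac{1}{T} + \frac{\Delta H^{\ddagger}_{T_0} + \Delta C_p^{\ddagger}\,(T - T_0)}{RT^2} = 0
\quad\Longrightarrow\quad
T_{\text{opt}} = \frac{\Delta C_p^{\ddagger}\,T_0 - \Delta H^{\ddagger}_{T_0}}{\Delta C_p^{\ddagger} + R}
$$

The stationary point is a maximum when $\Delta C_p^{\ddagger} < -R$, so a sufficiently negative heat capacity of activation alone produces a rate optimum and the downward curvature in the Arrhenius plot, without invoking denaturation.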

Technology Comparison: Parallel Droplet Reactors vs. Conventional Systems

The choice of experimental platform is critical for controlling temperature and ensuring reproducible kinetics data. The following comparison contrasts a conventional well-plate system with an automated parallel droplet reactor.

Experimental Protocol for Droplet Reactor Performance

Objective: To validate the performance of a parallelized droplet reactor platform against predefined design criteria, including temperature control reproducibility and operational range [4].

- Platform Setup: The system consists of a bank of ten independent parallel reactor channels constructed from fluoropolymer tubing. Each channel is equipped with individual temperature control and a six-port, two-position valve to isolate reaction droplets [4].

- Temperature Control: Reactors are situated on a thermostatically controlled plate. Each thermocouple is calibrated and identically positioned to ensure uniformity [4].

- Reaction Execution: Droplets containing reagent mixtures are dispensed into the reactor channels via a liquid handler. A scheduling algorithm orchestrates the movement and timing for each droplet to ensure consistent reaction times and prevent cross-contamination [4].

- Analysis: On-line HPLC with an internal injection valve (20-100 nL injection volume) is used for immediate analysis upon reaction completion, eliminating the need for quenching and preserving sample integrity [4] [15]. Data analysis is performed with specialized open-source software (MOCCA) for automated peak deconvolution [15].
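One way to honour both constraints in the steps above (exact reaction times without quenching, and a single on-line HPLC) is to delay each droplet's start rather than let a finished droplet wait for the instrument. This greedy sketch is a hypothetical illustration, not the platform's published scheduling algorithm; it assumes at least as many free reactors as droplets in the batch, a sequential liquid handler, and a fixed HPLC cycle time.

```python
from dataclasses import dataclass


@dataclass
class Slot:
    droplet: str
    start_min: float     # droplet enters its reactor
    complete_min: float  # droplet leaves the reactor and is injected into the HPLC


def schedule(reaction_times_min, load_min, analysis_min):
    """Greedy plan: each droplet gets exactly its reaction time and no two
    injections reach the single HPLC less than analysis_min apart."""
    handler_free = 0.0  # earliest time the liquid handler can load the next droplet
    hplc_free = 0.0     # earliest time the HPLC can accept the next injection
    plan = []
    for name, tau in reaction_times_min.items():
        # Finish no earlier than loading allows and no earlier than the HPLC is free.
        complete = max(handler_free + tau, hplc_free)
        start = complete - tau  # shift the start instead of letting the droplet wait
        plan.append(Slot(name, start, complete))
        handler_free = start + load_min
        hplc_free = complete + analysis_min
    return plan


# Hypothetical batch: three droplets with different target reaction times (min).
for slot in schedule({"A": 30.0, "B": 10.0, "C": 20.0}, load_min=1.0, analysis_min=8.0):
    print(slot)
```

Because droplets are handled in the given order, the total campaign time depends on that order; a production scheduler could reorder or interleave batches, which this sketch does not attempt.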

Comparative Performance Data

Table 2: Platform Comparison for Temperature Control and Reproducibility

| Feature | Traditional Well-Plate System | Parallel Droplet Reactor Platform |
|---|---|---|
| Temperature Range | Often limited by material compatibility (e.g., plastic) and evaporative loss [4]. | 0 to 200 °C (solvent-dependent) [4]. |
| Per-Channel Temperature Control | All reactions on a plate are typically confined to the same temperature [4]. | Fully independent control for each reactor channel [4] [15]. |
| Pressure Tolerance | Limited to atmospheric or low pressure. | Up to 20 atm [4]. |
| Reaction Reproducibility | Susceptible to evaporative loss and positional effects on a plate. | <5% standard deviation in reaction outcomes [4]. |
| Mixing | Dependent on orbital shaking, leading to potential variability. | Reproducible mixing via droplet oscillation or stationary operation [4] [15]. |
| Throughput vs. Flexibility | High throughput but with constrained variable control (e.g., one temperature per plate) [4]. | Moderate throughput (e.g., 10 channels) with maximum flexibility for independent condition screening [4]. |

Diagram 1: Closed-loop optimization workflow in automated droplet platforms.

Essential Research Reagent Solutions

The following reagents and materials are fundamental to conducting experiments in droplet-based systems and studying temperature-dependent kinetics.

Table 3: Key Research Reagent Solutions for Kinetic Studies

| Reagent/Material | Function in Experimental Context |
|---|---|
| Fluoropolymer Tubing | Reactor material offering broad chemical solvent compatibility and operating up to 200 °C and 20 atm [4]. |
| Ionic Liquids | Used as reaction medium in droplet synthesis; colloidally stabilize nanoparticles and allow solvent recycling [18]. |
| 3-Phosphoglycerate Kinase (PGK) | Model monomeric enzyme used to study temperature effects on $k_{\text{cat}}$ and $K_m$ in thermophilic and mesophilic isolates [19]. |
| rcPEPCK Enzyme | A mesophilic GTP-dependent phosphoenolpyruvate carboxykinase used in multi-temperature crystallography studies to probe structural changes [17]. |
| MOCCA Software | Open-source Python-based tool for automated deconvolution of HPLC-DAD raw data, critical for accurate analysis in feedback loops [15]. |
| Bayesian Optimization Algorithm | An optimal experimental design tool integrated into control software for efficient iterative experimentation and reaction optimization [4]. |

The data and methodologies presented herein lead to a clear conclusion: parallel multi-droplet reactor platforms offer a superior approach for managing thermal fluctuations and ensuring reproducibility in biochemical kinetics studies. While traditional well-plates provide high throughput, they do so at the cost of experimental flexibility and individual reaction control, making them susceptible to temperature-induced variability.

The defining advantages of the droplet platform—independent temperature control for each channel, a broad operating range (0-200°C, 20 atm), and integrated analytics coupled with Bayesian optimization—create a closed-loop system that actively compensates for variability and efficiently navigates complex parameter spaces [4] [15]. For researchers in drug development and related fields, where the fidelity of kinetic and optimization data is paramount, adopting such technologies is a critical step toward more predictive, reliable, and efficient research outcomes.

Advanced Heating Mechanisms and Their Integration in Droplet Platforms

In the field of parallel droplet reactors, precise and reproducible temperature control is a critical parameter for ensuring experimental reliability, particularly in applications such as high-throughput catalyst screening, single-cell analysis, and drug development. Temperature influences reaction kinetics, biomolecular interactions, and material properties, making its control paramount for generating statistically relevant and reproducible data. This guide provides an objective comparison of four primary heating technologies (resistive, Peltier, photothermal, and induction), evaluating their performance within the specific context of droplet microreactors. The analysis synthesizes current experimental data and documented protocols to assist researchers and drug development professionals in selecting the most appropriate temperature control methodology for their high-throughput experimentation needs.

In microfluidic systems, especially those handling droplet-based reactions, heating technologies can be broadly categorized into integrated and external methods. Integrated methods, such as resistive and photothermal heating, incorporate heating elements directly onto or within the microfluidic chip, enabling localized and rapid temperature control. External methods, including Peltier elements and oil baths, heat the entire device or platform from the outside. The choice between these approaches significantly impacts the thermal response time, spatial resolution, and compatibility with high-throughput parallel operations. The following sections and comparative tables detail the specific characteristics, experimental protocols, and performance data of each technology in the context of droplet microreactors.

Comparative Analysis of Heating Technologies

The table below summarizes the core characteristics and a qualitative performance assessment of the four heating technologies based on current implementations and literature.

Table 1: Comparative overview of heating technologies for droplet microreactors

| Technology | Integration Type | Heating Principle | Max Reported Temp (°C) | Relative Response Speed | Relative Spatial Resolution |
|---|---|---|---|---|---|
| Resistive | Integrated | Joule heating | ≥500 [20] | Very Fast | High |
| Peltier | External | Peltier effect | Not reported | Moderate | Low |
| Photothermal | Integrated | Light absorption | Not reported | Fast (theoretical) | Very High (theoretical) |
| Induction | External/Contact-less | Eddy currents and magnetic hysteresis losses | Not reported | Fast (theoretical) | Moderate (theoretical) |

Resistive Heating

Principle and Implementation: Resistive, or Joule heating, functions by passing an electric current through a resistive element, generating heat. In droplet microreactors, this is typically achieved using thin-film metallic microheaters fabricated in close proximity to the microfluidic channels. Materials like platinum are preferred for their stability at high temperatures and consistent resistive properties [20]. An integrated Resistance Temperature Detector (RTD) is often used in conjunction, providing real-time temperature feedback by monitoring the resistance change of the platinum structure, enabling precise closed-loop control [20].
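The RTD feedback loop can be sketched as follows, assuming a linear RTD model $R = R_0(1 + \alpha T)$ and a first-order lumped thermal model of the heated chip region. The nominal α = 0.00385 K⁻¹ is the standard industrial platinum value (IEC 60751); thin-film microheaters generally need their own calibration. The heater power limit, thermal capacitance, thermal resistance, and PI gains are all hypothetical, and the simulated plant stands in for real hardware.

```python
ALPHA_PT = 0.00385  # 1/K, nominal IEC 60751 platinum coefficient (assumed; calibrate thin films)
R0_OHM = 100.0      # hypothetical RTD resistance at 0 °C


def rtd_resistance(temp_c, r0_ohm=R0_OHM, alpha=ALPHA_PT):
    """Linear approximation of the platinum RTD curve: R = R0 (1 + alpha T)."""
    return r0_ohm * (1.0 + alpha * temp_c)


def rtd_temperature(resistance_ohm, r0_ohm=R0_OHM, alpha=ALPHA_PT):
    """Invert the linear RTD model to get temperature in °C."""
    return (resistance_ohm / r0_ohm - 1.0) / alpha


def simulate_pi(setpoint_c=95.0, ambient_c=25.0, duration_s=120.0, dt_s=0.1,
                kp=0.05, ki=0.005, p_max_w=1.0, c_j_per_k=0.05, r_th_k_per_w=200.0):
    """PI control of a lumped heated region; all plant and gain values are hypothetical."""
    temp_c = ambient_c
    integral_w = 0.0
    trace = []
    for step in range(round(duration_s / dt_s)):
        # Feedback path: read the RTD resistance (simulated here) and convert it to temperature.
        measured_c = rtd_temperature(rtd_resistance(temp_c))
        error = setpoint_c - measured_c
        candidate = integral_w + ki * error * dt_s
        power_w = kp * error + candidate
        if 0.0 <= power_w <= p_max_w:
            integral_w = candidate  # integrate only while unsaturated (anti-windup)
        power_w = min(max(power_w, 0.0), p_max_w)
        # Lumped plant: C dT/dt = P - (T - T_ambient) / R_th, explicit Euler step.
        temp_c += dt_s * (power_w - (temp_c - ambient_c) / r_th_k_per_w) / c_j_per_k
        trace.append(((step + 1) * dt_s, temp_c))
    return trace


trace = simulate_pi()
print(f"temperature after {trace[-1][0]:.0f} s: {trace[-1][1]:.2f} °C")
```

The integrator is frozen while the heater is saturated (a simple anti-windup scheme), so in this model the loop approaches the 95 °C setpoint from below without overshoot. On real hardware the simulated plant would be replaced by a measured resistance and a power-stage command.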

Experimental Protocol and Performance Data: A documented application involved a droplet microreactor designed for high-throughput acidity screening of individual fluid catalytic cracking (FCC) catalyst particles [20]. The protocol required an on-chip oligomerization reaction at a stable temperature of 95°C. The system utilized an integrated thin-film platinum microheater with a dedicated RTD for temperature measurement and control. This setup demonstrated the capability to operate at a throughput of 1 catalyst particle every 2.4 seconds, successfully detecting approximately 1000 particles with high stability throughout the experiment [20]. The technology is reported to be stable for operations up to at least 500°C [20].

Table 2: Quantitative performance data for resistive heating in microreactors

| Parameter | Reported Value / Capability |
|---|---|
| Temperature Stability | Suitable for precise control of on-chip reactions at 95 °C [20] |
| Throughput | ~1,000 catalyst particles analyzed at a rate of 1 particle/2.4 s [20] |
| Heater Stability | Stable up to at least 500 °C [20] |
| Key Advantage | Fast temperature cycling and localized heating [20] |

Peltier Heating

Principle and Implementation: Peltier devices, or thermoelectric coolers (TECs), use the Peltier effect, in which a heat flux develops at the junction between two dissimilar materials when an electric current passes through it. A single Peltier module can both heat and cool by reversing the current direction, which is a significant advantage for applications requiring sub-ambient temperatures or precise thermocycling. In microfluidics, they are typically used as an external heating method, with the module placed in contact with the microfluidic chip or a segment of the flow system to control its temperature.
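The heat/cool asymmetry of a Peltier module follows from the lumped heat balance commonly used for thermoelectric modules. With $S$ the module's effective Seebeck coefficient, $I$ the drive current, $R_e$ its electrical resistance, $K$ its thermal conductance, and $T_c$, $T_h$ the absolute temperatures of the two faces:

$$
Q_c = S\,I\,T_c - \tfrac{1}{2} I^2 R_e - K\,(T_h - T_c)
$$

$$
Q_h = S\,I\,T_h + \tfrac{1}{2} I^2 R_e - K\,(T_h - T_c)
$$

$$
P_{\text{el}} = Q_h - Q_c = S\,I\,(T_h - T_c) + I^2 R_e
$$

Reversing $I$ flips the sign of the Peltier term $S I T_c$, so the face in contact with the chip switches from being cooled to being heated, but the Joule term $\tfrac{1}{2} I^2 R_e$ keeps its sign. The module therefore heats more readily than it cools, and the conduction term $K (T_h - T_c)$ leaks heat back whenever a temperature difference is held; together these bound how far below ambient a single module can hold a chip.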

Experimental Protocol and Performance Data: The cited sources do not include a detailed experimental protocol for Peltier heating in droplet reactors, although this technology is commonly employed in commercial instruments and lab-built setups for plate-based HTE. Its strength lies in providing robust, if slower, heating and cooling for well-plates or larger sections of microfluidic devices, rather than localized, rapid heating of individual droplets.

Photothermal Heating

Principle and Implementation: Photothermal heating relies on the absorption of light (often from a laser) by a material, which then dissipates the energy as heat. This is a non-contact method that can achieve extremely high spatial resolution, theoretically targeting individual droplets or specific regions within a channel. The heating efficiency depends on the absorption cross-section of the target material.

Experimental Protocol and Performance Data: The cited sources do not provide specific experimental data for photothermal heating within droplet microreactors; its application in this context is largely emergent. The theoretical benefit is the potential for unparalleled spatial and temporal control, allowing researchers to apply rapid heat pulses to specific droplets in a high-throughput stream without affecting neighboring ones.

Induction Heating

Principle and Implementation: Induction heating generates heat in a conductive or magnetic material placed in a high-frequency alternating magnetic field, through eddy currents and, in magnetic materials, hysteresis losses. For droplet microreactors, this would involve dispersing magnetic nanoparticles (e.g., iron oxide) into the droplets (the dispersed phase) or incorporating a ferromagnetic element near the reaction zone. Heating is contact-less and can be very rapid.

Experimental Protocol and Performance Data: The cited sources do not contain specific examples or performance data for induction heating in parallel droplet reactors. Like photothermal heating, it is an advanced approach with potential for fast, localized heating, but its practical implementation and reproducibility in high-throughput screening workflows remain poorly documented in the literature reviewed here.

Experimental Protocols for Temperature Control

Workflow for Integrated Resistive Heating and Sensing

The following diagram illustrates the experimental workflow for implementing and validating a resistive heating system in a droplet microreactor, as derived from a published acidity screening study [20].

(Diagram 1: Workflow for resistive heating in droplet microreactors)

Key Reagents and Materials for Droplet Reactor Experiments

The table below lists essential research reagents and materials commonly used in experiments with heated droplet microreactors, as identified in the cited studies.

Table 3: Research reagent solutions for droplet microreactor experiments

| Item | Function / Description | Example Application |
|---|---|---|
| Thin-Film Platinum Microheater/RTD | Provides localized heating and real-time temperature sensing. | On-chip temperature control for chemical reactions [20]. |
| Fluid Catalytic Cracking (FCC) Catalyst Particles | Heterogeneous catalyst particles with Brønsted acid sites. | Acidity screening via model reactions in droplets [20]. |
| 4-Methoxystyrene | A reagent for oligomerization reactions catalyzed by acid sites. | Fluorescence-based probing of single-catalyst-particle acidity [20]. |
| CNFCPEG (α-cyanostilbene derivative) | A self-assembling, dual-emissive fluorophore. | Stabilizing complex emulsions and acting as a transducer in sensing [21]. |
| Hydrocarbon/Fluorocarbon/Oil Phases | Forms the dispersed and continuous phases for droplet generation. | Creating stable O/W, W/O, or complex (e.g., H/F/W) emulsions [20] [21]. |

Based on the available experimental data, resistive (Joule) heating is currently the most mature and documented integrated heating solution for achieving reproducible temperature control in parallel droplet reactors. Its combination with thin-film RTDs enables the fast, localized, and feedback-controlled heating necessary for high-throughput applications like single-catalyst-particle screening [20]. The documented stability at high temperatures and successful integration into functional analytical platforms make it a robust choice.

In contrast, while Peltier devices offer the unique benefit of active cooling, their use as an external heating method typically results in slower thermal response and lower spatial resolution, making them less suitable for applications requiring rapid heating of individual droplets. Photothermal and induction heating present compelling theoretical advantages for ultra-localized and contact-less heating but lack extensive, publicly available experimental validation in the context of high-throughput, reproducible droplet reactor research.

For researchers prioritizing reproducibility, throughput, and precise thermal management in parallelized systems, integrated resistive heating with real-time sensing presents a strongly supported option. Future work should focus on generating direct comparative experimental data for all four technologies under standardized conditions to further guide the scientific community.

In the field of parallel droplet reactors, achieving and reproducing precise temperature conditions is a cornerstone of experimental reliability. Temperature control is not merely a technical requirement but a fundamental parameter that influences reaction kinetics, biomolecular stability, and ultimately, the validity of high-throughput screening data [1]. The evolution from bulky external heating apparatus to sophisticated, integrated on-chip systems represents a significant stride toward addressing the unique thermal challenges at the microscale, such as rapid heat dissipation, non-uniform temperature distribution, and the intricate interplay between fluid flow and heat transfer [1] [22]. This guide objectively compares the performance of different temperature control strategies, providing researchers with a structured framework to select the optimal technology for ensuring reproducibility in their droplet-based experiments.

Comparative Analysis of Temperature Control Strategies

The choice of a heating strategy involves trade-offs between integration level, response time, spatial control, and system complexity. The following table summarizes the key characteristics of prevalent methods.

Table 1: Performance Comparison of Temperature Control Strategies for Microfluidic Systems

| Strategy | Heating Mechanism | Typical Heating/Cooling Rates | Temperature Range | Spatial Resolution | Key Advantages | Key Limitations for Reproducibility |
|---|---|---|---|---|---|---|
| External Peltier Elements | Thermoelectric heating/cooling of the entire chip or a block. | 4–100 °C/s (heating); 5–90 °C/s (cooling) [2] | -3 °C to 120 °C [2] | Low (device-level) | Simple setup, active cooling capability, good for uniform bulk heating [2] [23]. | High thermal mass slows response; prone to thermal crosstalk between parallel reactors; less suitable for localized heating [23]. |
| Integrated Resistive (Joule) Heaters | Joule heating from patterned thin-film metals (e.g., Pt) on the chip substrate. | Up to 2000 °C/s (theoretical ramp rates) [2] | Up to 500 °C (with Pt) [20] | High (sub-millimeter) | Very fast response, excellent for localized heating and creating gradients; enables precise in-situ thermal cycling [24] [20]. | Challenging to achieve uniform temperature over large areas; requires sophisticated fabrication [24] [1]. |
| Photothermal Heating | Light absorption (laser, IR) by the sample or integrated nanomaterials (e.g., gold nanostructures). | Sub-second modulation; 40 PCR cycles in ~370 s [24] [23] | Up to 95 °C and beyond [24] | Very High (focused spot) | Ultra-fast, contact-less heating; no thermal mass added to the chip [24] [23]. | Risk of sample overheating; non-uniform heating in droplets; complex optical setup required [23]. |
| Pre-heated Liquid Flow | Flowing a thermally controlled liquid through a channel adjacent to the reaction channel. | Switching between 5–45 °C in <10 s [2] | Limited by heat exchanger fluid | Medium (channel-level) | Can establish stable, accurate temperature gradients [2]. | System complexity; risk of heat transfer lag; can consume significant chip real estate. |
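The "high thermal mass slows response" limitation in the table can be made concrete with a lumped-capacitance model: a heated region with heat capacity C (J/K), coupled to its surroundings through a thermal conductance G (W/K), relaxes toward a new steady state with time constant τ = C/G, so settling within ±b after a step of size ΔT takes about τ·ln(ΔT/b). The sketch below assumes purely first-order, open-loop behavior; the two parameter sets are hypothetical and only contrast a bulky block with a small thin-film heater zone.

```python
import math

def time_constant_s(heat_capacity_j_per_k: float, conductance_w_per_k: float) -> float:
    """Lumped-capacitance time constant tau = C / G, in seconds."""
    return heat_capacity_j_per_k / conductance_w_per_k

def time_to_band_s(tau_s: float, step_c: float, band_c: float) -> float:
    """Time for a first-order step response, T(t) = T_final - step * exp(-t / tau),
    to come within +/- band_c of its final value."""
    if band_c >= step_c:
        return 0.0
    return tau_s * math.log(step_c / band_c)

# Hypothetical parameters, chosen only to contrast two very different thermal masses.
block_tau = time_constant_s(heat_capacity_j_per_k=20.0, conductance_w_per_k=0.5)   # bulky heated block
film_tau = time_constant_s(heat_capacity_j_per_k=0.002, conductance_w_per_k=0.01)  # small thin-film heater zone

for name, tau in [("block", block_tau), ("thin film", film_tau)]:
    t_settle = time_to_band_s(tau, step_c=35.0, band_c=0.5)
    print(f"{name}: tau = {tau:.2f} s, time to +/-0.5 C after a 35 C step = {t_settle:.2f} s")
```

With these assumed values the block needs roughly three minutes and the thin-film zone under a second. Closed-loop control with heater overdrive shortens both, so the useful output is the ratio between the two cases rather than the absolute times.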

Experimental Protocols for Assessing Thermal Performance

To ensure temperature control reproducibility, specific experimental methodologies are employed to characterize and validate system performance.

Protocol for Characterizing Dynamic Thermal Response

This protocol is used to measure the speed and accuracy of temperature transitions, which is critical for applications like droplet digital PCR (ddPCR).

- Sensor Integration: Integrate a thin-film Resistance Temperature Detector (RTD), typically made of platinum, directly into the microfluidic chip adjacent to the reaction chamber or channel. The RTD's resistance changes predictably and approximately linearly with temperature [20].

- Setup Calibration: Calibrate the RTD reading against a NIST-traceable thermocouple under static temperature conditions in a controlled thermal environment.

- Stimulus Application: For on-chip heaters, apply a voltage step function to the integrated microheater. For external systems, trigger a temperature setpoint change on the Peltier controller.

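- Data Acquisition: Use a high-speed data acquisition system to record the RTD resistance at a frequency of at least 10 Hz, converting the resistance values to temperature in real time. Faster heaters need proportionally faster sampling: at a 100 °C/s ramp, 10 Hz leaves 10 °C between consecutive samples.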

- Data Analysis: Plot temperature versus time. Calculate the heating and cooling ramp rates (°C/s) and the settling time—the time required for the system to reach and stay within ±0.5 °C of the target temperature [2].
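The conversion and analysis steps above can be sketched as follows. This assumes the linear RTD model R(T) = R0·(1 + α·T), with R0 and α taken from the thermocouple calibration step; α ≈ 0.00385 /°C is the nominal value for standard industrial platinum, but thin-film values often differ, which is why the calibration matters. The ±0.5 °C band follows the settling-time definition in the protocol; the function names and the synthetic trace are hypothetical.

```python
import math
from typing import List, Optional

def resistance_to_temperature(r_ohm: float, r0_ohm: float, alpha_per_c: float) -> float:
    """Invert the linear RTD model R = R0 * (1 + alpha * T), with T in deg C."""
    return (r_ohm / r0_ohm - 1.0) / alpha_per_c

def ramp_rate(times_s: List[float], temps_c: List[float], low_c: float, high_c: float) -> float:
    """Average heating rate (deg C/s) between the first samples at or above low_c and high_c."""
    t_low = next(t for t, temp in zip(times_s, temps_c) if temp >= low_c)
    t_high = next(t for t, temp in zip(times_s, temps_c) if temp >= high_c)
    if t_high <= t_low:
        raise ValueError("sampling too coarse to resolve this ramp")
    return (high_c - low_c) / (t_high - t_low)

def settling_time(times_s: List[float], temps_c: List[float], target_c: float,
                  band_c: float = 0.5, t_step_s: float = 0.0) -> Optional[float]:
    """Time after the step at which the trace enters the +/- band_c window around
    target_c and stays there until the end of the record; None if it never settles."""
    entered_at = None
    for t, temp in zip(times_s, temps_c):
        if abs(temp - target_c) <= band_c:
            if entered_at is None:
                entered_at = t
        else:
            entered_at = None  # left the band, so any earlier entry does not count
    return None if entered_at is None else entered_at - t_step_s

# Synthetic 10 Hz trace of a first-order step from 25 to 60 deg C (tau = 0.5 s), for illustration.
times = [i * 0.1 for i in range(50)]
temps = [60.0 - 35.0 * math.exp(-t / 0.5) for t in times]
print(f"ramp 30-50 C: {ramp_rate(times, temps, 30.0, 50.0):.1f} C/s")
print(f"settling time: {settling_time(times, temps, 60.0):.1f} s")
```

On this synthetic trace the sketch reports an average 30–50 °C ramp of about 33 °C/s and a settling time of about 2.2 s. Both values are quantized to the 0.1 s sample interval, which is why faster heaters call for faster acquisition.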

Supporting Experimental Data: Studies using integrated platinum microheaters and sensors have demonstrated temperature control up to 500 °C with high stability and sensitivity, enabling precise feedback for rapid thermal cycling [20]. External Peltier systems have been documented to achieve heating rates of 100 °C/s and cooling rates of 90 °C/s for nanoliter volumes [2].

Protocol for Mapping Temperature Uniformity in Parallel Reactors

This protocol assesses thermal crosstalk and gradient formation across multiple reaction sites, which is essential for the reproducibility of parallelized experiments.

- Fluorophore Preparation: Prepare a solution of a temperature-sensitive fluorescent dye, such as Rhodamine B, in the buffer or solvent used for droplets. The fluorescence intensity of Rhodamine B decreases with increasing temperature [23].

- Device Loading: Load the dye solution into all parallel droplet reactors or channels of the microfluidic device.

- Image Acquisition: Set the temperature control system to a stable target temperature (e.g., 60 °C for LAMP assays). Use a fluorescence microscope with a filter set matched to the excitation and emission bands of Rhodamine B to capture an image of all reactors simultaneously.

- Calibration Curve: Generate a separate calibration curve by measuring the fluorescence intensity of the dye at a series of known, stable temperatures.

- Image Analysis: Convert the fluorescence intensity values from the acquired image to temperature values using the calibration curve. Analyze the data to determine the mean temperature, standard deviation, and maximum temperature difference across the reactor array.

Supporting Experimental Data: Research has shown that temperature gradients can be effectively managed using advanced control strategies such as adaptive fuzzy PID control, which minimizes temperature fluctuations and enhances uniformity compared to conventional PID controllers [1]. Materials with low thermal conductivity, such as PDMS (0.15 W/m·K), also help isolate thermal domains [2].
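Why a low-conductivity material isolates thermal domains can be estimated with steady one-dimensional Fourier conduction, q = k·A·ΔT/L. The sketch below compares the heat conducted through the same hypothetical wall between a heated reactor and its neighbor for PDMS (0.15 W/m·K, as above) and, for contrast, borosilicate glass (roughly 1.1 W/m·K) and silicon (roughly 150 W/m·K). The wall geometry and temperature difference are assumptions, and convection and lateral spreading are ignored.

```python
def conducted_power_w(k_w_per_m_k: float, area_m2: float, delta_t_k: float, thickness_m: float) -> float:
    """Steady 1D Fourier conduction through a slab: q = k * A * dT / L."""
    return k_w_per_m_k * area_m2 * delta_t_k / thickness_m

# Hypothetical geometry: 2 mm x 0.1 mm wall cross-section, 1 mm thick, reactors 30 K apart.
AREA_M2 = 2e-3 * 1e-4
THICKNESS_M = 1e-3
DELTA_T_K = 30.0

for material, k in [("PDMS", 0.15), ("borosilicate glass", 1.1), ("silicon", 150.0)]:
    q_mw = conducted_power_w(k, AREA_M2, DELTA_T_K, THICKNESS_M) * 1e3
    print(f"{material:>18}: {q_mw:.3f} mW")
```

For these assumed dimensions the PDMS wall passes about 0.9 mW, glass about 6.6 mW, and silicon about 900 mW, a thousandfold spread set entirely by k. The same low conductivity also slows heat delivery from an external heater into the droplets, so isolation and response speed trade off against each other.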

Protocol for Evaluating Droplet Stability Under Thermal Stress

This protocol tests the physical integrity of droplets at elevated temperatures, a common failure point in thermal droplet assays.

- Droplet Generation: Generate a monodisperse water-in-oil emulsion using a flow-focusing or T-junction droplet generator on-chip. The continuous oil phase should contain a surfactant (e.g., SPAN 20) at a defined concentration [5].

- Thermal Exposure: Direct the droplet stream over an integrated microheater or through a temperature-controlled zone set to the target stress temperature (e.g., 90 °C for PCR).

- High-Speed Imaging: Use a high-speed camera to record the droplets immediately before, during, and after the heating zone.

- Stability Quantification: Analyze the recordings to measure droplet diameter and inter-droplet spacing. Monitor for instances of coalescence (merging of two droplets) or breakup. The stability is quantified by the percentage of droplets that maintain their integrity and size through the thermal zone.
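The stability percentage in the last step can be computed per droplet from tracked diameters. The sketch assumes the image analysis has already paired each droplet before and after the heating zone, and counts a droplet as intact if it is neither flagged as merged or broken nor changes diameter by more than a chosen tolerance. The 10% tolerance, the data layout, and the helper names are hypothetical; the tolerance should exceed the expected temperature-driven swelling so that normal expansion is not counted as failure. The coefficient of variation (CV) of diameters is included as the usual monodispersity measure.

```python
import statistics
from dataclasses import dataclass
from typing import List

@dataclass
class TrackedDroplet:
    d_before_um: float
    d_after_um: float
    merged: bool = False   # coalescence observed in the heating zone
    broken: bool = False   # breakup observed in the heating zone

def is_intact(d: TrackedDroplet, rel_tol: float) -> bool:
    """Intact = no coalescence or breakup, and diameter change within rel_tol."""
    if d.merged or d.broken:
        return False
    return abs(d.d_after_um - d.d_before_um) / d.d_before_um <= rel_tol

def stability_percent(droplets: List[TrackedDroplet], rel_tol: float = 0.10) -> float:
    intact = sum(is_intact(d, rel_tol) for d in droplets)
    return 100.0 * intact / len(droplets)

def diameter_cv_percent(diameters_um: List[float]) -> float:
    """Coefficient of variation (sample SD / mean) of droplet diameters, in percent."""
    return 100.0 * statistics.stdev(diameters_um) / statistics.mean(diameters_um)

# Hypothetical tracking results for four droplets.
example = [
    TrackedDroplet(50.0, 52.0),
    TrackedDroplet(50.0, 51.0),
    TrackedDroplet(50.0, 70.0, merged=True),
    TrackedDroplet(50.0, 49.0),
]
print(stability_percent(example))
```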

Supporting Experimental Data: Experiments have demonstrated that droplets in surfactant-free oil become unstable and prone to coalescence at temperatures above 60 °C. Adding SPAN 20 surfactant significantly improves stability at higher temperatures (up to 90 °C), though it can also slightly increase the initial droplet size [5]. For every 10 °C increase, droplet diameter can expand by approximately 4.2–5.7% due to reduced aqueous phase density, a factor that must be accounted for in reactor design [5].
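Because droplet volume scales with the cube of diameter, the reported diameter growth is larger in volume terms: a 4.2% increase in diameter corresponds to about 13.1% more volume, and 5.7% to about 18.1%. The sketch below projects diameter and volume for a 10 °C rise. It assumes, as an illustration rather than a claim from [5], that the per-10 °C growth compounds geometrically if applied over larger temperature ranges; the 50 µm starting diameter is hypothetical.

```python
import math

def projected_diameter_um(d_ref_um: float, t_ref_c: float, t_c: float, growth_per_10c: float) -> float:
    """Assumption: diameter grows by the same fraction for every 10 deg C (geometric compounding)."""
    return d_ref_um * (1.0 + growth_per_10c) ** ((t_c - t_ref_c) / 10.0)

def sphere_volume_pl(d_um: float) -> float:
    """Volume of a sphere of diameter d_um micrometres, in picolitres (1 pL = 1000 um^3)."""
    return math.pi * d_um ** 3 / 6.0 / 1000.0

D_REF_UM = 50.0  # hypothetical droplet diameter at the 25 deg C reference
for growth in (0.042, 0.057):
    d_new = projected_diameter_um(D_REF_UM, 25.0, 35.0, growth)
    volume_gain = sphere_volume_pl(d_new) / sphere_volume_pl(D_REF_UM) - 1.0
    print(f"{growth:.1%} per 10 C: d = {d_new:.2f} um, volume +{volume_gain:.1%}")
```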

Diagram 1: Experimental workflow for thermal characterization

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of thermal control strategies relies on a suite of specialized materials and reagents.

Table 2: Key Reagents and Materials for Temperature-Controlled Droplet Reactors

| Item | Function/Description | Application in Thermal Control |
|---|---|---|
| SPAN 20 Surfactant | A non-ionic surfactant added to the continuous oil phase. | Stabilizes droplets against coalescence at elevated temperatures (up to 90 °C), which is critical for assay reproducibility [5]. |
| Platinum Thin-Film Structures | Micropatterned metal layers fabricated on the chip substrate. | Serves as both a microheater (Joule heating) and an RTD temperature sensor, enabling fast, localized heating and direct feedback [20]. |
| Rhodamine B | A temperature-sensitive fluorescent dye. | Acts as a non-contact molecular thermometer for mapping temperature uniformity within channels and droplets via fluorescence intensity [23]. |
| Polydimethylsiloxane (PDMS) | A common elastomer for rapid prototyping of microfluidic devices. | Its low thermal conductivity (~0.15 W/m·K) provides thermal insulation, helping to isolate heated regions and minimize crosstalk [2]. |
| Magnetic Nanoparticles (e.g., Iron Oxide) | Nanoparticles suspended in the reaction mixture. | Enable induction heating when exposed to an alternating magnetic field, offering a mechanism for direct volumetric heating of the reactor content [24]. |

Integrated System Architecture and Future Directions

The highest level of reproducibility is achieved by moving beyond discrete components to fully integrated systems. These architectures combine heaters, sensors, and control algorithms into a single, automated platform.

Diagram 2: Integrated control system architecture

Advanced control algorithms are crucial for maintaining stability. Proportional-Integral-Derivative (PID) controllers are widely used, but adaptive fuzzy PID controllers have demonstrated superior performance in microfluidic systems, minimizing temperature fluctuations and overshoot in the face of dynamic loads [1]. The integration of artificial intelligence (AI) and machine learning (ML) is an emerging trend. These systems can predict thermal behavior, autonomously optimize setpoints, and compensate for disturbances in real time, paving the way for self-driving laboratories that can ensure reproducible outcomes with minimal human intervention [1] [25].
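Adaptive fuzzy PID schemes tune the gains of a conventional PID loop online, so the conventional loop is a useful reference point. Below is a textbook positional PID with output clamping, derivative-on-measurement, and conditional-integration anti-windup, driving a hypothetical first-order thermal plant. It is not the adaptive fuzzy controller of [1], and the gains and plant parameters are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class PID:
    """Positional PID with output clamping and conditional-integration anti-windup."""
    kp: float
    ki: float
    kd: float
    dt: float
    out_min: float = 0.0   # heater duty-cycle limits (0 = off, 1 = full power)
    out_max: float = 1.0
    _integral: float = field(default=0.0, init=False, repr=False)
    _prev_measured: Optional[float] = field(default=None, init=False, repr=False)

    def update(self, setpoint: float, measured: float) -> float:
        error = setpoint - measured
        # Derivative on the measurement avoids a "derivative kick" when the setpoint jumps.
        if self._prev_measured is None:
            derivative = 0.0
        else:
            derivative = -(measured - self._prev_measured) / self.dt
        self._prev_measured = measured
        candidate = self._integral + error * self.dt
        u = self.kp * error + self.ki * candidate + self.kd * derivative
        if self.out_min < u < self.out_max:
            self._integral = candidate  # integrate only while the output is not saturated
        return min(max(u, self.out_min), self.out_max)

def simulate(pid: PID, setpoint_c: float, steps: int, t_ambient_c: float = 25.0,
             tau_s: float = 2.0, gain_c_per_duty: float = 80.0) -> List[float]:
    """Hypothetical first-order plant dT/dt = (T_amb + K*u - T) / tau, integrated with explicit Euler."""
    temp = t_ambient_c
    trace = []
    for _ in range(steps):
        u = pid.update(setpoint_c, temp)
        temp += pid.dt * (t_ambient_c + gain_c_per_duty * u - temp) / tau_s
        trace.append(temp)
    return trace

trace = simulate(PID(kp=0.05, ki=0.05, kd=0.0, dt=0.1), setpoint_c=60.0, steps=400)
print(f"final temperature: {trace[-1]:.2f} C, peak: {max(trace):.2f} C")
```

With these assumed values the simulated temperature settles at the 60 °C setpoint well within the 40 s simulated. An adaptive scheme would, in effect, retune `kp`, `ki`, and `kd` as the thermal load changes instead of keeping them fixed.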

Future developments are focused on novel materials and fabrication techniques. Additive manufacturing (3D printing) allows for the direct integration of complex heating and cooling channels within reactor geometries [1] [25]. Furthermore, nanomaterials like gallium-infused carbon nanotubes and temperature-sensitive quantum dots are being explored for next-generation non-invasive thermal sensing and management [1].

In the pursuit of accelerated drug development and high-throughput reaction screening, parallel droplet reactors have emerged as a transformative technology. These systems enable researchers to conduct numerous experiments simultaneously, drastically reducing both time and material consumption [4]. However, the fidelity of the data generated by these platforms hinges on one critical factor: the precise and uniform distribution of fluidic samples across all parallel channels. Any inconsistency in this distribution can introduce variability in reaction outcomes, compromising data integrity and leading to erroneous conclusions.

This guide objectively evaluates the performance of various microfluidic flow distributor chips, the core components responsible for achieving this uniformity. The analysis is framed within the broader thesis of evaluating temperature control reproducibility in parallel droplet reactors, as both factors—thermal management and fluidic distribution—are interdependent in ensuring experimental fidelity [4]. We present comparative experimental data on different distributor designs, detail the methodologies for assessing their performance, and provide a curated list of essential research tools. This resource is designed to aid researchers, scientists, and drug development professionals in selecting the optimal flow distributor technology for their specific application needs, thereby enhancing the reliability and reproducibility of their experimental results.

Flow Distributor Chip Technologies: A Comparative Analysis

Microfluidic flow distributor chips are engineered to split a single incoming fluid stream into multiple identical streams with minimal variation. The design and fabrication of these chips directly influence the uniformity of flow, which in turn affects droplet size, reaction time, and ultimately, reaction yield across parallel channels. Below, we compare the key performance characteristics of common distributor chip technologies.

Table 1: Performance Comparison of Microfluidic Flow Distributor Chip Technologies

| Chip Technology / Feature | Material Compatibility | Typical Number of Outputs | Reported Droplet Size Uniformity (CV) | Key Advantages | Documented Limitations |
|---|---|---|---|---|---|
| Tree-like Distributor | Glass, Silicon [26] | 8–64+ | <2% [27] | Excellent scalability, symmetric channel design minimizes flow resistance variation. | Complex fabrication; larger footprint; difficult to reconfigure. |
| Flow-Focusing Design | PDMS, Glass [28] [27] | 1 (per unit) | <1–3% [27] [29] | High monodispersity; integrable with droplet generation. | Primarily for droplet generation, not bulk distribution; pressure sensitivity. |
| Manifold/Valve-Based | Fluoropolymer, PEEK [4] | 10 (customizable) | Linked to system reproducibility (<5% reaction outcome deviation) [4] | High flexibility; independent channel control; suitable for diverse reaction conditions. | System complexity; requires sophisticated control software and scheduling algorithms [4]. |

The choice of distributor technology is not one-size-fits-all. Tree-like distributors are ideal for applications requiring a very high degree of parallelism and uniformity, such as large-scale screening campaigns where all reactions are run under identical conditions. In contrast, manifold/valve-based systems, like the 10-channel platform developed for reaction kinetics and optimization, offer superior flexibility [4]. They enable each channel to operate under entirely independent conditions (e.g., different temperatures, reaction times, or reagents), which is crucial for experimental design algorithms that propose diverse reaction conditions simultaneously. This independence, however, comes with the added complexity of requiring advanced control software to orchestrate all parallel operations without cross-contamination or timing conflicts [4].
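Why symmetric, well-controlled channel geometry matters for flow uniformity can be illustrated with the standard lubrication-theory approximation for the hydraulic resistance of a rectangular channel, R ≈ 12μL / [w·h³·(1 − 0.63·h/w)], valid for h < w. If N outlet channels share a common inlet and outlet pressure, each carries Q_i = ΔP/R_i, so channel-to-channel variation in R appears directly in the flows. The sketch estimates the flow CV caused by a hypothetical ±1 µm variation in etch depth; all dimensions, the viscosity, and the pressure are assumptions rather than values from the cited studies, and junction effects and droplet-laden flow are ignored.

```python
import statistics
from typing import List

def rect_channel_resistance(mu_pa_s: float, length_m: float, width_m: float, height_m: float) -> float:
    """Approximate hydraulic resistance (Pa*s/m^3) of a rectangular channel, valid for height < width."""
    if height_m >= width_m:
        raise ValueError("approximation assumes height < width")
    return 12.0 * mu_pa_s * length_m / (width_m * height_m ** 3 * (1.0 - 0.63 * height_m / width_m))

def parallel_flows(delta_p_pa: float, resistances: List[float]) -> List[float]:
    """Flow in each channel when all share the same inlet/outlet pressure: Q_i = dP / R_i."""
    return [delta_p_pa / r for r in resistances]

def cv_percent(values: List[float]) -> float:
    """Population coefficient of variation, in percent."""
    return 100.0 * statistics.pstdev(values) / statistics.mean(values)

# Hypothetical 8-outlet distributor: 100 um wide, nominally 50 um deep, 10 mm long,
# carrying a fluid with water-like viscosity (1 mPa*s) under a 10 kPa pressure drop.
depths_um = [50.0, 51.0, 49.0, 50.0, 50.5, 49.5, 51.0, 49.0]
resistances = [rect_channel_resistance(1e-3, 10e-3, 100e-6, d * 1e-6) for d in depths_um]
flows = parallel_flows(1e4, resistances)
print(f"depth CV = {cv_percent(depths_um):.2f}%, flow CV = {cv_percent(flows):.2f}%")
```

Because Q scales roughly as h³·(1 − 0.63·h/w), a 1.5% depth CV becomes a flow CV about 2.5 times larger for these dimensions, which is why fabrication tolerance on channel depth weighs heavily in distributor design.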

Quantitative Experimental Verification of Distribution Performance

Empirical validation is paramount when selecting a flow distributor. The following data, synthesized from recent studies, provides a benchmark for expected performance.

Table 2: Experimental Performance Data for Flow Distributor Systems

| System Description | Measured Parameter | Reported Performance | Experimental Conditions | Source |
|---|---|---|---|---|