Achieving Uniform Temperature Distribution in Parallel Reactor Arrays: Strategies for High-Throughput Experimentation

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on achieving and maintaining uniform temperature distribution in parallel reactor arrays, a critical factor for reproducibility and efficiency in high-throughput experimentation (HTE). It covers the fundamental principles of heat transfer and multiphysics coupling in reactor design, explores advanced methodological approaches including computational modeling and machine learning optimization, addresses common troubleshooting and performance optimization challenges, and outlines rigorous validation and comparative analysis techniques. By synthesizing insights from multiphysics simulations, computational fluid dynamics, and autonomous laboratory platforms, this review serves as a strategic resource for enhancing the reliability and throughput of parallelized chemical synthesis and process development.

Fundamentals of Heat Transfer and Temperature Uniformity in Parallel Systems

The Critical Impact of Temperature Gradients on Reaction Yield and Reproducibility

In the pursuit of efficient and sustainable chemical processes, achieving uniform temperature distribution is a foundational challenge, particularly within parallel reactor arrays used for high-throughput experimentation (HTE). Temperature gradients—systematic variations in temperature across a reaction vessel or between parallel reactors—can significantly impact reaction kinetics, product selectivity, and overall yield. In fields such as pharmaceutical development, where reproducibility is paramount, uncontrolled gradients can lead to misleading data and failed scale-up attempts. This Application Note details the sources and effects of temperature heterogeneity and provides validated protocols for its characterization and control, enabling researchers to secure robust and reproducible reaction outcomes.

Quantitative Data on Temperature Control Systems

The selection of an appropriate temperature control system is critical for minimizing unwanted gradients. The following table compares common methods used in parallel reactor systems.

Table 1: Comparison of Temperature Control Methods for Parallel Reactor Systems [1]

| Control Method | Principle | Typical Temperature Range | Heating/Cooling Rate | Uniformity | Best Use Cases |
|---|---|---|---|---|---|
| Peltier-Based | Thermoelectric effect | Limited by heat sinks | Rapid | High (for small scales) | Small-scale parallel photoreactors; rapid thermal cycling |
| Liquid Circulation | Heat transfer via fluid | Broad (solvent-dependent) | Moderate | High (with good design) | Large-scale or exothermic reactions; high-heat-load applications |
| Air Cooling | Convective dissipation | Ambient to moderate | Slow | Low | Low-heat-load reactions; cost-sensitive applications |
| Matrix-in-Batch | Resistive heating spots | 0°C to 200°C (solvent-dependent) [2] | Configurable | Excellent (via active rotation) [3] | Versatile applications requiring high uniformity in batch mode |

The performance of a reactor system is often quantified by its thermal mixing efficiency, a metric used to evaluate the uniformity of temperature distribution. Computational Fluid Dynamics (CFD) studies on advanced systems like the OnePot reactor have shown that an optimal geometric pitch between heating spots (approximately 36% of the vessel diameter) can maximize this efficiency. Such configurations can prevent the formation of large, cold "islands" within the reaction medium, even at high fluid viscosities [3].

Experimental Protocols

Protocol: Determination of Optimal Annealing Temperature Using a Gradient Thermal Cycler

This protocol is adapted from standard molecular biology practices for PCR optimization and exemplifies the constructive use of thermal gradients for parameter screening [4].

Research Reagent Solutions [4]

- Primer Pair Solution: Forward and reverse primers, resuspended in nuclease-free water to a stock concentration of 100 µM.

- DNA Template: Purified DNA containing the target sequence, diluted to a working concentration.

- PCR Master Mix: A commercial or laboratory-prepared mixture containing thermostable DNA polymerase, dNTPs, MgCl₂, and reaction buffers.

- Agarose Gel: Typically 1-2% agarose in TAE or TBE buffer, stained with a DNA-intercalating dye.

Procedure:

- Define Gradient Range: Calculate the theoretical melting temperature (Tm) for your primer pair. Set the thermal cycler's gradient to span a range of approximately 10–12°C, centered on this Tm. For example, for a Tm of 60°C, set a gradient from 55°C to 65°C [4].

- Prepare Reaction Mixtures:

- In a single master mix tube, combine the following components per reaction:

- 10.0 µL of 2X PCR Master Mix

- 1.0 µL of Primer Pair Solution (forward and reverse, 100 µM stock)

- 1.0 µL of DNA Template

- 8.0 µL of Nuclease-free Water

- Mix thoroughly by pipetting and gently vortexing.

- Aliquot 20 µL of the master mix into each well of a PCR plate that corresponds to the desired temperature gradient columns.

- Run PCR Program:

- Execute the following program on the gradient thermal cycler:

- Initial Denaturation: 95°C for 3 minutes.

- Amplification Cycles (35 cycles):

- Denaturation: 95°C for 30 seconds.

- Annealing: Use the gradient setting for 30 seconds.

- Extension: 72°C for 1 minute per kb of product.

- Final Extension: 72°C for 5 minutes.

- Hold: 4°C.

- Analyze Results:

- Analyze the PCR products using agarose gel electrophoresis or capillary electrophoresis.

- Identify the optimal annealing temperature (Ta) as the one that produces the brightest, single band of the expected size with minimal or no non-specific amplification or primer-dimer formation.

- Narrow the Range (Optional): If the optimal temperature is at the extreme end of the initial gradient, perform a second, narrower gradient run to pinpoint the Ta with greater precision.
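The gradient and master-mix steps above can be sketched in a few lines of Python. This is a minimal illustration, not a vendor method: the function names, the 12-column block, the evenly spaced (linear) gradient, and the 10% pipetting overage are all assumptions. Real gradient cyclers report their own per-column temperatures, which should take precedence.

```python
# Per-reaction volumes in microliters, taken from the protocol above.
PER_REACTION_UL = {
    "2X PCR Master Mix": 10.0,
    "Primer Pair Solution": 1.0,
    "DNA Template": 1.0,
    "Nuclease-free Water": 8.0,
}


def gradient_temperatures(tm_c, span_c=10.0, n_columns=12):
    """Evenly spaced annealing temperatures centered on tm_c (assumed linear)."""
    if n_columns < 2:
        raise ValueError("need at least two gradient columns")
    low = tm_c - span_c / 2.0
    step = span_c / (n_columns - 1)
    return [round(low + i * step, 2) for i in range(n_columns)]


def scale_master_mix(n_reactions, overage=0.10):
    """Scale each component for n_reactions plus a pipetting overage (assumed 10%)."""
    factor = n_reactions * (1.0 + overage)
    return {name: round(vol * factor, 2) for name, vol in PER_REACTION_UL.items()}
```

For a Tm of 60°C, `gradient_temperatures(60.0)` spans 55°C to 65°C, matching the example in the protocol, and `scale_master_mix(12)` gives the bulk volumes for one gradient row.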

Protocol: CFD-Assisted Optimization of Temperature Distribution in a Batch Reactor

This protocol outlines a computational approach to predict and optimize temperature uniformity in a custom reactor design [3].

Procedure:

- System Definition:

- Create a 2D or 3D geometric model of the reactor vessel and its internal heating elements (e.g., rotating spots, jackets).

- Define the fluid properties (e.g., water, argon) including density, viscosity, and specific heat capacity.

- Mathematical Modeling:

- Apply the governing conservation equations for momentum (Navier-Stokes) and energy.

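$$\rho\left(\frac{\partial \mathbf{u}}{\partial t} + \mathbf{u}\cdot\nabla\mathbf{u}\right) = \nabla\cdot\left[-p\mathbf{I} + \mu\left(\nabla\mathbf{u} + (\nabla\mathbf{u})^{T}\right)\right] \quad \text{(Momentum)}$$

$$\rho C_p\left(\frac{\partial T}{\partial t} + \mathbf{u}\cdot\nabla T\right) = \nabla\cdot(-\mathbf{q}) + Q \quad \text{(Energy)}$$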

- Set Boundary Conditions:

- Assign a fixed temperature or heat flux to the heating elements.

- Set the reactor walls to adiabatic or fixed-temperature conditions as appropriate.

- Define the rotational velocity of any moving parts (e.g., 250 rpm for a rotating head [3]).

- Mesh Generation and Simulation:

- Generate a computational mesh for the model domain.

- Run a transient CFD simulation until a steady-state temperature field is achieved.

- Post-Processing and Analysis:

- Visualize the temperature field and velocity streamlines.

- Calculate a thermal mixing efficiency (η) to quantify uniformity. This can be defined as $\eta = 1 - \sigma_T / \Delta T_{\mathrm{avg}}$, where $\sigma_T$ is the standard deviation of temperature within the vessel and $\Delta T_{\mathrm{avg}}$ is the average temperature difference from the set point [3].

- Iteratively adjust the reactor geometry (e.g., pitch between heating spots) or operating parameters to maximize η.
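The post-processing step can be illustrated with a short Python sketch that evaluates η from temperatures sampled at mesh cells or probe points. The function name is hypothetical. Two readings are assumptions here: "average temperature difference from the set point" is taken as the mean absolute deviation from the set point, and the samples are taken to be equally weighted (a real CFD export would normally be volume-weighted).

```python
from statistics import fmean, pstdev


def thermal_mixing_efficiency(temps_c, setpoint_c):
    """eta = 1 - sigma_T / dT_avg for equally weighted temperature samples."""
    temps = list(temps_c)
    sigma_t = pstdev(temps)  # population standard deviation within the vessel
    # Assumption: dT_avg is the mean absolute deviation from the set point.
    delta_t_avg = fmean([abs(t - setpoint_c) for t in temps])
    if delta_t_avg == 0.0:
        raise ValueError("field sits exactly at the set point; eta is undefined")
    return 1.0 - sigma_t / delta_t_avg
```

With this reading, η approaches 1 when the spread inside the vessel is small compared with the remaining offset from the set point, and it can turn negative when the spread dominates.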

Visualization of Workflows

Gradient Optimization Workflow

Reactor Temperature Uniformity Analysis

Achieving uniform temperature distribution is a critical challenge in the design and operation of parallel reactor arrays, directly impacting product yield, quality, and safety in pharmaceutical and chemical manufacturing. This application note details protocols and methodologies for implementing multiphysics coupling to optimize thermal management and reaction kinetics. By integrating thermal-hydraulic modeling with material behavior and reaction dynamics, researchers can predict and control hot spots, minimize temperature gradients, and enhance process reliability.

Multiphysics coupling simultaneously solves interacting physical phenomena—neutronics, thermal-hydraulics, material corrosion, and reaction kinetics—that exhibit strong feedback relationships. In reactor systems, power distribution determines thermal-hydraulic parameters like fuel and coolant temperatures, which in turn affect material macroscopic cross-sections and corrosion rates, creating a tightly coupled system [5]. The fidelity of these simulations has advanced significantly through unified computational frameworks that avoid spatial mapping errors between different physical modules [5].

Key Coupling Methodologies and Numerical Approaches

Unified Coupling Frameworks

Advanced multiphysics coupling leverages unified computational frameworks where multiple physical modules are integrated within a single codebase, sharing the same mesh system and time steps. This approach eliminates interpolation errors and conservation issues associated with traditional mapping techniques.

The Operator Splitting Semi-Implicit (OSSI) method solves each physical field once per time step, in sequence and without iterating between modules, so it needs small time increments for temporal convergence [5]. The Picard method extends OSSI by adding convergence checks and iterative loops within each time step until the exchanged parameters converge [5]. The Jacobian-free Newton-Krylov (JFNK) method solves all coupled equations simultaneously as a single tightly coupled nonlinear system, offering superior accuracy at greater computational expense [5].

Table 1: Comparison of Multiphysics Coupling Methods

| Method | Implementation Complexity | Computational Cost | Accuracy | Stability Requirements |
|---|---|---|---|---|
| OSSI | Low | Low | Moderate | Small time steps |
| Picard | Moderate | Moderate | Good | Relaxation factors needed |
| JFNK | High | High | Excellent | Robust |
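The difference between a single OSSI pass and a Picard loop can be seen on a toy two-field problem. The sketch below is illustrative only: the lumped "neutronics" and "thermal-hydraulics" functions, their coefficients, and the relaxation factor are invented for the example and do not come from the cited framework.

```python
def power(temp_k, p0=100.0, alpha=0.01, t_ref=300.0):
    """Toy 'neutronics': power falls linearly as temperature rises."""
    return p0 * (1.0 - alpha * (temp_k - t_ref))


def temperature(power_w, t_cool=300.0, resistance=0.5):
    """Toy 'thermal-hydraulics': lumped temperature for a given power."""
    return t_cool + resistance * power_w


def ossi_step(temp_k):
    """One operator-splitting pass: each field is solved once, in sequence."""
    return temperature(power(temp_k))


def picard_solve(temp_k, tol=1e-10, relax=0.7, max_iter=200):
    """Repeat the sequential pass with under-relaxation until converged."""
    for iteration in range(1, max_iter + 1):
        new_temp = (1.0 - relax) * temp_k + relax * ossi_step(temp_k)
        if abs(new_temp - temp_k) < tol:
            return new_temp, iteration
        temp_k = new_temp
    raise RuntimeError("Picard iteration did not converge")
```

Starting from 300 K, one OSSI pass returns 350 K, while the Picard loop settles on the self-consistent value of 1000/3 K. This is why OSSI relies on small time steps to keep the lagged feedback error small.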

Thermal-Hydraulic Modeling in Sub-Channels

Sub-channel thermal-hydraulics (SCTH) analysis remains the predominant approach for fuel assembly and reactor core simulation, balancing accuracy with computational efficiency. Advanced SCTH codes incorporate closure models for:

- Transversal exchange phenomena between sub-channels including turbulent mixing, void drift, and wire wrap induced sweeping flow [6]

- Circumferential non-uniform heat transfer in tight lattice fuel assemblies (pitch-to-diameter ratio <1.25) where significant temperature variations occur around fuel pin surfaces [6]

- Post-dryout (PDO) heat transfer and rewetting behavior during boiling crisis conditions [6]

Computational Fluid Dynamics (CFD) approaches provide high-resolution modeling of sub-channel phenomena, particularly for single-phase flow in rod bundles with bare rods or wire wraps [6]. Coupling SCTH with system thermal-hydraulics (STH) or CFD enables comprehensive reactor analysis across scales [6].

Protocols for Multiphysics Analysis of Reactor Systems

Protocol 1: Implementation of Unified Neutronic/Thermal-Hydraulic/Material Corrosion Coupling

Application: High-fidelity simulation of nuclear reactor cores with corrosion feedback for lifetime analysis.

Principle: This protocol integrates neutron diffusion theory, conjugate heat transfer, and material corrosion models within a unified OpenFOAM framework, enabling high-resolution multiphysical coupling without spatial mapping [5].

Table 2: Research Reagent Solutions for Multiphysics Reactor Simulation

| Component | Function | Implementation Example |
|---|---|---|
| OpenFOAM | Open-source C++ CFD library providing foundation for multiphysics coupling | Base platform for module integration [5] |
| Neutron Diffusion Solver | Calculates 3D neutron flux and power distribution | Steady-state and transient neutron diffusion equations [5] |
| Conjugate Heat Transfer Solver | Determines temperature field distribution in fluid-solid systems | Multi-region CHT solver with CFD [5] |
| Material Corrosion Module | Models oxidation growth and thermal resistance | Corrosion growth model and corrosion thermal resistance model [5] |
| OSSI Coupling Method | Coordinates data exchange between physics modules | Sequential solving with small time steps [5] |

Procedure:

- Mesh Generation: Create a unified 3D mesh system shared by all physics modules to prevent spatial mapping errors [5]

- Neutronics Module Implementation:

- Solve steady-state and transient neutron diffusion equations

- Calculate 3D high-resolution neutron flux and power distributions

- Map power distribution to thermal-hydraulic module as heat source

- Thermal-Hydraulics Module Implementation:

- Implement conjugate heat transfer solver for solid-fluid interfaces

- Calculate temperature field distribution using CFD approaches

- Pass temperature feedback to neutronics module for cross-section updates

- Material Corrosion Module Implementation:

- Solve corrosion growth model for oxide thickness

- Calculate thermal resistance of corrosion products

- Update material properties and geometry for heat transfer calculations

- Coupling Configuration:

- Apply OSSI method with time steps of 0.1-1.0 seconds

- Implement parameter transfer through underlying C++ code

- Execute sequential solving: neutronics → thermal-hydraulics → corrosion

- Validation and Verification:

- Compare with single-physics codes for module verification

- Validate against experimental data for coupled phenomena

- Perform sensitivity analysis on key parameters

Diagram 1: Unified Multiphysics Coupling Methodology

Protocol 2: Temperature Uniformity Optimization in Multi-Channel Reactor Arrays

Application: Design and optimization of parallel reactor channels for pharmaceutical applications with stringent temperature control requirements.

Principle: This protocol employs arborescent (tree-like) flow distribution networks combined with multiphysics optimization to achieve temperature uniformity across multiple parallel reaction channels [7].

Procedure:

- Arborescent Distributor Design:

- Design bifurcating channel structures that provide identical flow paths from inlet to outlet

- Optimize channel dimensions using scaling laws to maintain uniform flow resistance

- Fabricate using modern methods (3D printing, SLA, DMLS) for complex geometries [7]

- Flow Distribution Characterization:

- Perform CFD simulations to quantify flow distribution at operating conditions

- Conduct visualization experiments with tracer particles to validate distribution uniformity

- Verify maximum flowrate deviation less than 10% across channels [7]

- Thermal-Hydraulic Coupling:

- Implement conjugate heat transfer modeling between reactor channels and cooling/heating jackets

- Calculate overall heat transfer coefficients (2000-5000 W/(m²·°C)) and volumetric heat exchange capability (~200 kW/(m³·°C)) [7]

- Incorporate circumferential non-uniform heat transfer correlations for tight lattice arrangements [6]

- Temperature Control Implementation:

- Install multiple temperature sensors along reactor channels

- Implement multi-zone heating control with independent power supplies

- Develop control algorithms to adjust heater powers based on temperature measurements [8]

- Validation with Exothermic Reactions:

- Conduct neutralization reactions between acid and basic solutions as test cases

- Measure temperature profiles across reactor array under different cooling conditions

- Verify isothermal operation can be maintained with proper coolant flowrates [7]
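The multi-zone control step can be sketched as one independent proportional-integral (PI) loop per heater zone. This is a generic illustration rather than the control scheme of the cited work: the class name, gains, power limit, and the simple anti-windup rule are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class ZonePI:
    """PI controller for one heater zone (hypothetical gains and limits)."""
    kp: float
    ki: float
    max_power_w: float
    integral: float = 0.0

    def update(self, setpoint_c, measured_c, dt_s):
        error = setpoint_c - measured_c
        candidate = self.integral + error * dt_s
        raw = self.kp * error + self.ki * candidate
        power = min(max(raw, 0.0), self.max_power_w)
        # Anti-windup: keep the integrated error only when the output is unsaturated.
        if power == raw:
            self.integral = candidate
        return power


def update_zones(controllers, setpoint_c, measured_c, dt_s):
    """Compute one heater power per zone from that zone's own sensor reading."""
    return [c.update(setpoint_c, t, dt_s) for c, t in zip(controllers, measured_c)]
```

Each zone reacts only to its own sensor, so a cold channel receives more power without disturbing its neighbors. Gains would need tuning against the thermal time constants of the actual hardware.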

Table 3: Performance Metrics for Multi-Channel Reactor Temperature Uniformity

| Parameter | Target Value | Measurement Method | Validation Criteria |
|---|---|---|---|
| Flow Distribution Uniformity | <10% deviation between channels | CFD simulation + tracer visualization | Maximum flowrate difference |
| Overall Heat Transfer Coefficient | 2000-5000 W/(m²·°C) | Heat exchange experiments | Temperature measurements |
| Volumetric Heat Exchange Capability | ~200 kW/(m³·°C) | Thermal performance tests | Energy balance |
| Temperature Uniformity | ±0.5°C across susceptor | Multiple thermocouples | Standard deviation <0.2°C [8] |
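A short Python sketch shows how measured channel data might be checked against the flow and temperature targets in the table. The function names are hypothetical, and measuring flow deviation relative to the mean channel flowrate is an assumption, since the source does not state the reference value.

```python
from statistics import fmean, pstdev


def max_flow_deviation(flows):
    """Largest relative deviation of any channel from the mean flowrate."""
    mean_flow = fmean(flows)
    return max(abs(q - mean_flow) for q in flows) / mean_flow


def temperature_uniformity(temps_c, setpoint_c):
    """Worst absolute offset from the set point and the population std. dev."""
    worst = max(abs(t - setpoint_c) for t in temps_c)
    return worst, pstdev(temps_c)


def meets_targets(flows, temps_c, setpoint_c,
                  flow_tol=0.10, temp_tol_c=0.5, sigma_tol_c=0.2):
    """Compare measurements with the table targets; returns one flag per metric."""
    worst, sigma = temperature_uniformity(temps_c, setpoint_c)
    return {
        "flow": max_flow_deviation(flows) < flow_tol,
        "temperature_band": worst <= temp_tol_c,
        "temperature_sigma": sigma < sigma_tol_c,
    }
```

Returning one flag per metric keeps a failing flow distribution distinguishable from a failing temperature band when an array is being commissioned.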

Protocol 3: Power-Frequency Coordinated Optimization for Microwave Reactors

Application: Microwave-assisted reaction systems where electromagnetic field distribution critically affects temperature uniformity.

Principle: This protocol coordinates multiple microwave sources with varying power and frequency to create alternating hot spot patterns that compensate for inherent temperature non-uniformities [9].

Procedure:

- Electromagnetic-Thermal Coupling:

- Solve Maxwell's equations for electric field distribution within cavity

- Map dielectric loss ($Q_e = \pi f \varepsilon_0 \varepsilon''_r \lvert E\rvert^2$) as heat source in thermal model

- Solve heat conduction equation with convective boundary conditions [9]

- Regional Hot Spot Alternation Algorithm:

- Divide heated material into multiple regions (typically 4-8 zones)

- Heat at fixed input power (200 W) with different frequencies (2.41-2.50 GHz)

- Record temperature ranking for each region at each frequency

- Determine frequency sequence that alternates hot spots between regions [9]

- Sequential Quadratic Programming (SQP) Optimization:

- Define objective function to minimize temperature uniformity index (UI)

- Apply constraints on maximum power and frequency ranges

- Solve optimization problem to determine power allocation across frequencies [9]

- Experimental Validation:

- Heat SiC materials in high-power microwave reactor

- Measure temperature distribution using thermal imaging

- Verify improvements in uniformity index (56.8-94.3% for single-material, 44.4-76.6% for multi-material) [9]
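The heat-source term and the idea of alternating hot spots can be sketched as follows. This is not the SQP optimization of the cited work. It is a simple greedy stand-in that picks, at each step, the frequency whose heating pattern leaves the smallest temperature spread between regions. The function names and the per-frequency heating patterns are hypothetical inputs, and the field value is taken as the peak amplitude.

```python
import math

EPSILON_0 = 8.8541878128e-12  # vacuum permittivity, F/m


def dielectric_loss_density(freq_hz, eps_r_imag, e_field_v_per_m):
    """Q_e = pi * f * eps0 * eps_r'' * |E|^2, in W/m^3 (E as peak amplitude)."""
    return math.pi * freq_hz * EPSILON_0 * eps_r_imag * abs(e_field_v_per_m) ** 2


def alternate_hot_spots(heating_patterns, n_steps):
    """Greedy frequency sequence that keeps the regional temperature spread small.

    heating_patterns maps a frequency to the temperature rise it causes in each
    region during one heating step (hypothetical, e.g. from simulation).
    """
    n_regions = len(next(iter(heating_patterns.values())))
    temps = [0.0] * n_regions
    sequence = []
    for _ in range(n_steps):
        def spread_after(freq):
            trial = [t + dt for t, dt in zip(temps, heating_patterns[freq])]
            return max(trial) - min(trial)

        best = min(heating_patterns, key=spread_after)
        temps = [t + dt for t, dt in zip(temps, heating_patterns[best])]
        sequence.append(best)
    return sequence, temps
```

With two frequencies whose hot spots fall in opposite regions, the greedy rule alternates between them, which is the qualitative behavior the regional alternation step aims for before power allocation is refined by optimization.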

Diagram 2: Microwave Heating Optimization Workflow

Advanced Applications and Case Studies

Matrix-in-Batch OnePot Reactor Temperature Optimization

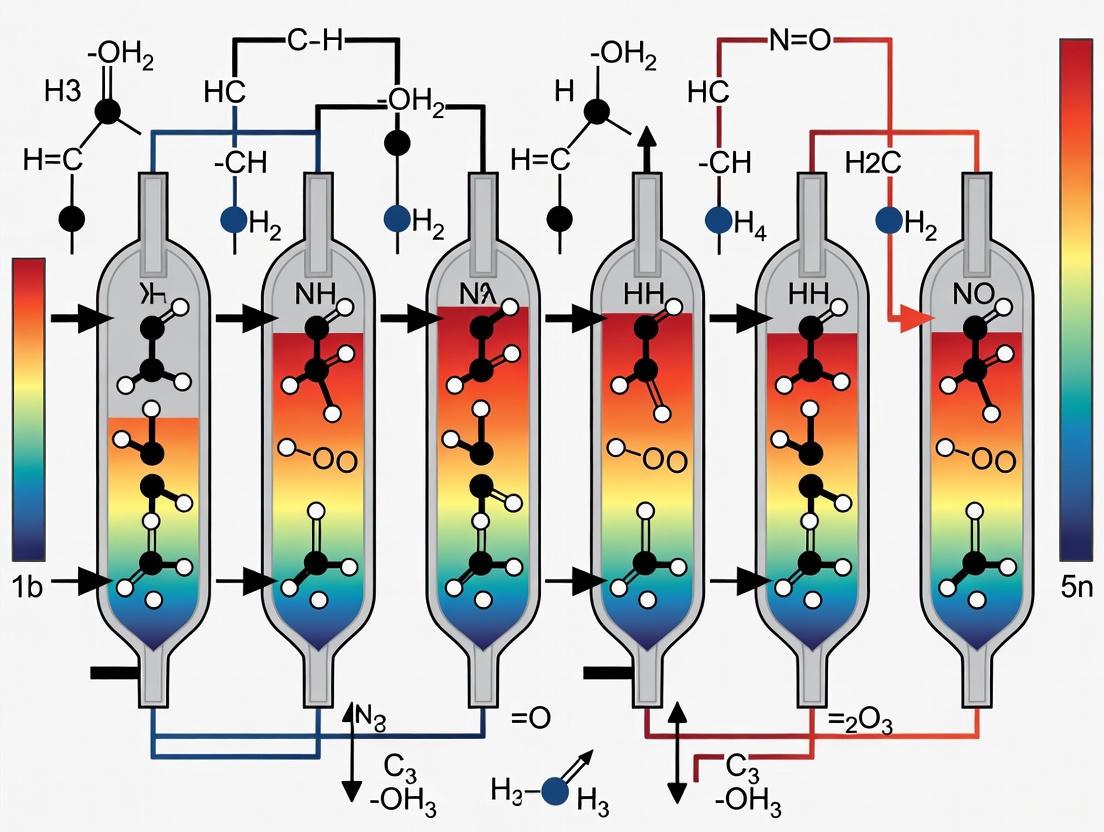

The novel OnePot reactor implements a "matrix-in-batch" heating approach with seven rotating thermal spots that discretize the reaction volume into smaller, continuously mixed cells [3]. Optimization studies reveal:

- Optimal pitch configuration: Approximately 36% of vessel diameter for both water and argon fluids [3]

- Alternate spot arrangement: Superior temperature distribution compared to uniform spacing, particularly at high viscosities [3]

- Thermal mixing efficiency: Quantitative metric for optimizing temperature distribution uniformity [3]

CFD simulations of the 2D cross-section model solve Navier-Stokes equations with energy balance to determine velocity and temperature fields, enabling spot arrangement optimization without expensive experimental iterations [3].

MOCVD Reactor Multi-Zone Temperature Control

Metal-Organic Chemical Vapor Deposition (MOCVD) reactors require stringent temperature control (±0.5°C) for uniform film deposition in LED manufacturing [8]. A systematic approach to heater zone optimization includes:

- Finite Element Model Development: 2D axisymmetric model incorporating conduction, convection, and radiation heat transfer [8]

- Process Window Definition: Temperature (1100°C), pressure (100-500 Torr), flow rate (5-50 slm) parameter ranges [8]

- Flow Pattern Analysis: Identification of recirculation cells at higher pressures that create radial variations in convective heat transfer [8]

- Zone Configuration Testing: Comparison of single-zone, two-zone, and six-zone control schemes [8]

Results demonstrate that six-zone independent control successfully maintains temperature uniformity within design specifications across the entire process window, while simplified approaches fail at extreme operating conditions [8].

Embryo Chamber Heating Element Optimization

Quantitative optimization of metal foil heating elements in embryo chambers reduces temperature gradients from 0.5°C to less than 0.1°C, critical for consistent embryonic development [10]. The methodology involves:

- Isothermal Region Segmentation: Dividing chamber structure based on temperature distribution at thermal equilibrium [10]

- Resistance Adjustment Calculation: Using energy conservation principles to determine required resistance changes (R' = k·A·h·ΔT·R_a/U₀²) [10]

- Geometric Modification: Adjusting foil length or width to achieve target resistance values in different regions [10]

This approach systematically addresses temperature non-uniformities inherent in complex chamber geometries with multiple heat transfer mechanisms (conduction, convection, radiation) [10].

Multiphysics coupling approaches provide powerful methodologies for achieving temperature uniformity in parallel reactor arrays through integrated simulation of thermal-hydraulics, reaction kinetics, and electromagnetic phenomena. The protocols outlined enable researchers to implement unified computational frameworks, optimize flow distribution networks, and coordinate multi-parameter control strategies. Case studies across nuclear, chemical, and biomedical applications demonstrate consistent improvements in temperature uniformity ranging from 35.7% to 94.3% through systematic application of these methods. Continued advancement in multiphysics coupling will further enhance process control capabilities for pharmaceutical development and other precision manufacturing applications requiring exacting thermal management.

Challenges of Non-Conservative Field Transfers and Spatial Accuracy Losses in Dissimilar Meshes

In advanced nuclear reactor systems, achieving uniform temperature distribution across parallel reactor arrays is critical for both operational safety and efficiency. Multi-physics simulations play an indispensable role in optimizing these systems, yet they face fundamental challenges when transferring data between component models employing spatially dissimilar meshes. These simulations typically couple thermal-hydraulics, neutronics, and structural mechanics, each utilizing distinct spatial discretizations tailored to their specific physical requirements. Non-conservative field transfers between these non-matching meshes introduce spatial accuracy losses that directly compromise temperature uniformity predictions. Research indicates that the interpolation errors at fluid-structure interfaces can trigger unphysical oscillations in transferred fields, particularly affecting pressure and temperature distributions critical to reactor performance [11]. Within the MOOSE framework, experiences coupling applications for nuclear reactor analysis have revealed significant challenges with non-conservation problems and order-of-accuracy losses when transferring fields between dissimilar meshes [12]. This application note details these challenges and provides structured protocols to mitigate accuracy degradation in multi-physics simulation of parallel reactor arrays.

Theoretical Foundations of Field Transfer Challenges

Classification of Remapping Approaches

Field transfer between non-matching meshes operates under two primary paradigms with distinct mathematical constraints and physical guarantees:

Conservative transfers preserve the integral of the transferred field across the interface, ensuring that quantities like mass, energy, or momentum are exactly conserved between source and target domains. This approach typically employs a transformation matrix H that satisfies strict conservation constraints, often through a weak formulation of coupling conditions [11].

Consistent (non-conservative) transfers prioritize pointwise accuracy and field smoothness without guaranteeing integral preservation. These methods utilize independent transformation operators for different field types, potentially offering superior accuracy for state variable mapping at the cost of exact conservation [11].

The selection between these approaches involves fundamental trade-offs. Research demonstrates that while conservative methods prevent artificial mass/energy sources or sinks, they can introduce unphysical oscillations in the received pressure and temperature fields at flexible structures [11]. Conversely, consistent approaches typically produce smoother fields but may violate fundamental conservation laws, potentially introducing systematic errors in coupled energy balances.

Mathematical Framework and Accuracy Metrics

The remapping operation between source mesh $\Omega_s$ and target mesh $\Omega_t$ is mathematically represented as:

$$\psi^t = R\,\psi^s$$

where $\psi^s \in \mathbb{R}^{f_s}$ and $\psi^t \in \mathbb{R}^{f_t}$ are discrete field values on the source and target meshes with $f_s$ and $f_t$ degrees of freedom respectively, and $R$ is the remapping operator [13].
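For intuition, a remapping operator can be built explicitly in one dimension. The sketch below constructs a first-order, overlap-weighted operator between two non-matching sets of cells and applies it to cell-averaged values. It is a minimal illustration with hypothetical function names, not the algorithm of any particular remapping library.

```python
def overlap(a0, a1, b0, b1):
    """Length of the intersection of intervals [a0, a1] and [b0, b1]."""
    return max(0.0, min(a1, b1) - max(a0, b0))


def conservative_remap_matrix(source_edges, target_edges):
    """R[i][j] = |target_i intersect source_j| / |target_i| for 1D cell averages."""
    rows = []
    for t0, t1 in zip(target_edges[:-1], target_edges[1:]):
        rows.append([overlap(t0, t1, s0, s1) / (t1 - t0)
                     for s0, s1 in zip(source_edges[:-1], source_edges[1:])])
    return rows


def apply_remap(matrix, source_values):
    """Matrix-vector product psi_t = R psi_s."""
    return [sum(r * v for r, v in zip(row, source_values)) for row in matrix]


def integral(edges, values):
    """Integral of a piecewise-constant field over the mesh."""
    return sum((b - a) * v for a, b, v in zip(edges[:-1], edges[1:], values))
```

When both meshes cover the same interval, each row of this operator sums to one, so a constant field is reproduced exactly, and the integral of the field is the same on both meshes. Higher-order or pointwise interpolation operators trade away one of these properties for accuracy.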

Spatial accuracy is quantified using standardized error metrics:

Table 1: Key Accuracy Metrics for Remapping Operations

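| Metric | Mathematical Definition | Physical Interpretation |
|---|---|---|
| L¹ Error | $I_t[\lvert R D_s(\psi) - D_t(\psi)\rvert] \,/\, I_t[\lvert D_t(\psi)\rvert]$ | Measures relative error in field integrals |
| L² Error | $\sqrt{I_t[\lvert R D_s(\psi) - D_t(\psi)\rvert^2] \,/\, I_t[\lvert D_t(\psi)\rvert^2]}$ | Root-mean-square relative error |
| L∞ Error | $\max\lvert R D_s(\psi) - D_t(\psi)\rvert \,/\, \max\lvert D_t(\psi)\rvert$ | Worst-case pointwise relative error |
| Extrema Errors | $(\min\lvert R D_s(\psi)\rvert - \min\lvert D_t(\psi)\rvert) \,/\, \min\lvert D_t(\psi)\rvert$ and $(\max\lvert R D_s(\psi)\rvert - \max\lvert D_t(\psi)\rvert) \,/\, \max\lvert D_t(\psi)\rvert$ | Measures preservation of field bounds |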

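Together these metrics give a comprehensive assessment of remapping accuracy. For temperature uniformity analysis in reactor arrays, the L∞ error and extrema preservation deserve particular attention, because they capture the worst local deviation rather than an average [13]. In the definitions of Table 1, the symbols follow the usual convention of the remapping literature: the operators written with subscripts s and t sample the continuous field ψ onto the source and target meshes, and the operator I with subscript t is the discrete integral over the target mesh.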
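The metrics can be evaluated directly once the remapped field and a reference field are both available on the target mesh. The sketch below follows the definitions in the table literally. The function name is hypothetical, the discrete integral is taken as a weighted sum over target cells, and the reference field is assumed to be nonzero somewhere (and nonzero everywhere for the minimum-based extrema error).

```python
import math


def remap_errors(remapped, reference, weights):
    """L1, L2, Linf and extrema errors of a remapped field on the target mesh."""
    abs_err = [abs(r - d) for r, d in zip(remapped, reference)]
    abs_ref = [abs(d) for d in reference]
    abs_rem = [abs(r) for r in remapped]

    l1 = (sum(w * e for w, e in zip(weights, abs_err))
          / sum(w * a for w, a in zip(weights, abs_ref)))
    l2 = math.sqrt(sum(w * e * e for w, e in zip(weights, abs_err))
                   / sum(w * a * a for w, a in zip(weights, abs_ref)))
    linf = max(abs_err) / max(abs_ref)

    min_ref, max_ref = min(abs_ref), max(abs_ref)
    # The minimum-based extrema error is undefined when the reference touches zero.
    l_min = (min(abs_rem) - min_ref) / min_ref if min_ref > 0 else None
    l_max = (max(abs_rem) - max_ref) / max_ref
    return {"L1": l1, "L2": l2, "Linf": linf, "Lmin": l_min, "Lmax": l_max}
```

For temperature fields, a positive `Lmax` flags an overshoot of the hottest value, which is the quantity most directly tied to hot-spot predictions.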

Meshing Strategies and Their Impact on Temperature Uniformity

Mesh Typology for Reactor Simulations

Nuclear reactor multi-physics simulations employ diverse mesh types tailored to specific physics requirements:

- Structured meshes with regular connectivity patterns, typically employed in computational fluid dynamics for their numerical efficiency

- Unstructured triangular/tetrahedral meshes offering geometrical flexibility for complex reactor core geometries

- Adaptive moving meshes that dynamically refine based on solution characteristics, particularly valuable for capturing steep thermal gradients [14]

- Lagrangian meshes that move with material deformation, essential for fuel performance analysis under thermal cycling

The fundamental challenge emerges from the inherent dissimilarity between optimal meshing strategies for different physics. For example, thermal-hydraulics typically requires fine boundary layer resolution near fuel pins, while neutronics benefits from homogeneous pin-cell averaging, and structural mechanics prioritizes accurate fuel cladding discretization [12].

Remeshing and Data Transfer in Adaptive Methods

Adaptive meshing techniques introduce additional complexities through remeshing procedures that dynamically modify mesh resolution and topology. In Lagrangian methods, nodes move with material deformation, necessitating periodic insertion, removal, or reconnection of nodes to maintain mesh quality. This process fundamentally alters the state vector dimension, creating significant challenges for consistent field transfer between physics components [14].

The sea-ice model neXtSIM exemplifies these challenges, employing a 2-D unstructured triangular adaptive moving mesh with remeshing to capture localized deformation features. Similar approaches show promise for reactor thermal analysis but require specialized data transfer methodologies to handle the changing state space dimensionality [14].

Diagram Title: Adaptive Mesh Field Transfer Challenge

Quantitative Assessment of Remapping Errors

Analytical Test Problems

Studies comparing conservative and consistent approaches employ analytical test problems to quantify interpolation characteristics. A sinusoidal test function $q_e = 0.2\sin(2\pi x)$ with $x \in [-0.5, 0.5]$, evaluated on non-matching source and target meshes, reveals fundamental performance differences:

Table 2: Performance Comparison of Transfer Approaches for Analytical Problems

| Transfer Approach | Smooth Field Accuracy | Discontinuous Field Handling | Oscillation Tendency | Conservation Properties |
|---|---|---|---|---|
| Conservative | High (2nd order) | Excellent with limiters | High (unphysical oscillations) | Exact conservation |
| Consistent | Very High | Poor (Gibbs phenomenon) | Minimal | No guarantees |
| Clip and Assured Sum (CAAS) | Moderate | Excellent | Controlled | Adjusted conservation |

For smooth fields typical of temperature distributions in homogeneous reactor regions, consistent approaches generally outperform conservative methods in pointwise accuracy. However, near material interfaces or steep thermal gradients, conservative methods with monotonicity limiters provide superior stability despite introducing numerical diffusion [11].

Impact on Reactor Temperature Uniformity

In quasi-1D fluid-structure interaction problems representative of reactor channel analysis, the choice of transfer method significantly impacts predicted temperature distributions:

- Conservative approaches generate unphysical oscillations in transferred temperature and pressure fields when applied to flexible structures, directly impacting temperature uniformity predictions [11]

- Consistent approaches maintain smoother temperature distributions but may introduce artificial energy imbalances of up to 3-5% over multiple transfer cycles

- Hybrid methods that apply conservation constraints only to specific conserved quantities (mass, energy) while using consistent transfer for state variables offer a promising compromise

The spatial accuracy degradation compounds temporally in transient simulations, with initial transfer errors of 1-2% potentially amplifying to 10-15% after several coupling iterations, severely compromising temperature uniformity predictions in reactor arrays [12].

Experimental Protocols for Transfer Method Validation

Monotone Conservative Remapping Protocol

Purpose: Validate boundedness preservation for physically constrained fields (e.g., species concentrations between 0-1, non-negative temperatures)

Procedure:

- Initialize source field with discontinuous or near-boundary values

- Apply remapping operator R to transfer to target mesh

- Post-process using Clip and Assured Sum (CAAS) methodology:

- Clip out-of-bounds values to physical limits

- Adjust field to preserve integral conservation via assured summation

- Quantify bounds preservation using the extrema error metrics ($L_{\min}$ and $L_{\max}$) from Table 1

- Evaluate accuracy degradation relative to unlimited remapping

Applications: Species transport in reactor coolants, radiative heat transfer with non-negative intensities, turbulent combustion with bounded progress variables [13]
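The clip-then-redistribute step of the procedure can be sketched as below. The source only states that values are clipped and the integral is then restored. The specific rule used here, spreading the lost mass in proportion to each cell's remaining room inside the bounds, is an assumption, as are the function name and the use of uniform bounds.

```python
def clip_and_assured_sum(values, weights, lower, upper):
    """Clip values to [lower, upper], then restore the weighted sum."""
    target_mass = sum(w * v for w, v in zip(weights, values))
    total_weight = sum(weights)
    if not (lower * total_weight <= target_mass <= upper * total_weight):
        raise ValueError("target mass cannot be met within the bounds")

    clipped = [min(max(v, lower), upper) for v in values]
    deficit = target_mass - sum(w * c for w, c in zip(weights, clipped))

    # Room available in the direction the correction has to move each cell.
    if deficit > 0:
        room = [upper - c for c in clipped]
    else:
        room = [c - lower for c in clipped]
    capacity = sum(w * r for w, r in zip(weights, room))
    if capacity == 0.0:
        return clipped
    # Assumption: distribute the deficit in proportion to each cell's room.
    return [c + deficit * r / capacity for c, r in zip(clipped, room)]
```

Because the correction applied to each cell never exceeds that cell's room, the result stays inside the bounds while the weighted sum matches the unclipped field.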

Multi-Mesh Coupling Validation Protocol

Purpose: Characterize error accumulation in multi-physics simulations with three or more coupled meshes

Procedure:

- Establish high-fidelity reference solution on unified mesh

- Configure partitioned simulation with dedicated meshes for each physics

- Implement bidirectional transfer between thermal-hydraulics, neutronics, and structural mechanics

- Quantify transfer errors at each interface using L¹, L², and L∞ metrics

- Monitor global conservation errors over multiple coupling iterations

Validation Metrics:

- Global energy balance discrepancy (< 1% per coupling cycle)

- Maximum pointwise temperature error relative to reference solution

- Spatial correlation of error distributions across reactor domain [12]
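The interface error metrics and the energy balance check listed above can be computed as in the following sketch. It assumes fields sampled at matching points with cell weights (for example, volumes); the function names and sample values are illustrative only.

```python
import math


def transfer_error_norms(transferred, reference, weights):
    """Weighted L1, L2, and L-infinity norms of the pointwise transfer error."""
    diffs = [abs(t - r) for t, r in zip(transferred, reference)]
    total = sum(weights)
    l1 = sum(w * d for w, d in zip(weights, diffs)) / total
    l2 = math.sqrt(sum(w * d * d for w, d in zip(weights, diffs)) / total)
    linf = max(diffs)
    return l1, l2, linf


def energy_balance_discrepancy(source_energy, target_energy):
    """Relative difference between integrated energy before and after transfer."""
    return abs(target_energy - source_energy) / abs(source_energy)


l1, l2, linf = transfer_error_norms([1.0, 2.0, 4.0], [1.0, 2.0, 3.0], [1.0, 1.0, 2.0])
# Compare against the < 1% per coupling cycle criterion listed above.
passes_energy_check = energy_balance_discrepancy(100.0, 99.5) < 0.01
```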

Diagram: Multi-Mesh Coupling Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Mesh Transfer Research

| Tool/Reagent | Function | Application Context |

|---|---|---|

| TempestRemap | Conservative, consistent, and monotone remapping between spherical meshes | Climate modeling adapted to reactor thermal analysis [13] |

| MOOSE Framework | Multiphysics object-oriented simulation environment with field transfer utilities | Nuclear reactor multiphysics coupling [12] |

| BAMG Library | Bidimensional anisotropic mesh generator for adaptive remeshing | Localized mesh refinement for thermal gradients [14] |

| ESMF Remapping | Earth System Modeling Framework conservative remapping utilities | Structured/unstructured mesh interpolation [13] |

| CAAS Algorithm | Clip and Assured Sum method for bounds-preserving remapping | Physically constrained field transfers [13] |

| EnKF with Reference Mesh | Ensemble Kalman Filter with fixed reference mesh for varying dimensions | Data assimilation with adaptive meshing [14] |

Implementation Protocol: Reference Mesh Strategy for Adaptive Meshes

Background: Adaptive meshing with remeshing operations causes state vector dimension changes, preventing direct ensemble-based analysis as required in data assimilation and uncertainty quantification.

Procedure:

- Forward Mapping: Before analysis, map all ensemble members from individual adapted meshes to a fixed, uniform reference mesh

- Reference Mesh Selection:

  - High-Resolution (HR) reference: Resolution determined by minimum remeshing tolerance

  - Low-Resolution (LR) reference: Resolution determined by maximum remeshing tolerance

- Analysis Operation: Perform ensemble analysis (data assimilation, uncertainty propagation) on the common reference mesh

- Backward Mapping: Map updated ensemble members back to their individual adapted meshes for continued simulation

Applications: Uncertainty quantification in reactor thermal analysis, data assimilation for fuel performance modeling, parameter estimation with adaptive discretizations [14]

Validation Studies: Implemented for 1D Burgers and Kuramoto-Sivashinsky equations, demonstrating effective error reduction despite dimension changes, with HR strategy generally outperforming LR at increased computational cost.
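The forward mapping, analysis, and backward mapping steps can be illustrated in one dimension. The sketch below assumes piecewise-linear interpolation as the mapping operator and uses a placeholder analysis step (replacing members by the ensemble mean) rather than an actual Ensemble Kalman Filter update; all function names and the choice of reference mesh are hypothetical.

```python
from bisect import bisect_right


def interp_linear(x_src, y_src, x_new):
    """Piecewise-linear interpolation of (x_src, y_src) onto x_new (sorted 1D)."""
    out = []
    for x in x_new:
        if x <= x_src[0]:
            out.append(y_src[0])
        elif x >= x_src[-1]:
            out.append(y_src[-1])
        else:
            j = bisect_right(x_src, x)  # x_src[j-1] <= x < x_src[j]
            t = (x - x_src[j - 1]) / (x_src[j] - x_src[j - 1])
            out.append(y_src[j - 1] + t * (y_src[j] - y_src[j - 1]))
    return out


def analyze_on_reference(members, x_ref, analysis):
    """members: list of (x_mesh, field) pairs on individually adapted meshes."""
    # Forward mapping: bring every member onto the fixed reference mesh.
    on_ref = [interp_linear(x, y, x_ref) for x, y in members]
    # Analysis on the common mesh (all members now share one dimension).
    updated = analysis(on_ref)
    # Backward mapping: return each updated member to its own adapted mesh.
    return [(x, interp_linear(x_ref, u, x)) for (x, _), u in zip(members, updated)]


def shift_to_ensemble_mean(on_ref):
    """Placeholder analysis (NOT an EnKF): replace each member by the mean."""
    n = len(on_ref)
    mean = [sum(col) / n for col in zip(*on_ref)]
    return [list(mean) for _ in on_ref]


# Two members on different adapted meshes, both sampling T(x) = 2x.
coarse = [0.0, 0.5, 1.0]
fine = [0.0, 0.25, 0.5, 0.75, 1.0]
members = [(coarse, [2 * x for x in coarse]), (fine, [2 * x for x in fine])]
x_ref = fine  # assumed HR-style reference: as fine as the finest member
result = analyze_on_reference(members, x_ref, shift_to_ensemble_mean)
```

Because every member is mapped to the same reference mesh, the analysis function always receives vectors of equal length, which is the point of the strategy.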

The challenges of non-conservative field transfers and spatial accuracy losses in dissimilar meshes represent significant obstacles for high-fidelity prediction of temperature uniformity in parallel reactor arrays. Analytical and empirical studies demonstrate that no single transfer approach dominates across all application scenarios, necessitating physics-informed selection of conservative, consistent, or hybrid methodologies. The reference mesh strategy for adaptive meshing shows particular promise for uncertainty-aware reactor analysis, while bounded remapping techniques ensure physical realizability of transferred fields.

Future research directions should prioritize machine-learning-enhanced transfer operators, non-intrusive coupling schemes with error control, and standardized validation protocols specific to nuclear reactor multi-physics simulation. These advancements will directly support the development of more predictable and uniform temperature distributions in advanced reactor systems, ultimately enhancing both safety and performance.

Inherent Design Limitations of Microtiter Plates and Standard Reactor Vessels

Achieving uniform temperature distribution is a foundational challenge in the design and operation of parallel reactor arrays for pharmaceutical and chemical research. This Application Note details the inherent design limitations of two ubiquitous systems: microtiter plates and standard reactor vessels. Framed within broader research on achieving thermal homogeneity in parallel setups, we dissect the root causes of temperature gradients, present quantitative data on their effects, and provide validated experimental protocols to characterize and mitigate these critical limitations. The pursuit of uniform temperature is not merely a technical objective but a prerequisite for obtaining reliable, reproducible, and scalable data in high-throughput experimentation and process development.

Design Limitations of Microtiter Plates

Microtiter plates (MTPs) are workhorses of high-throughput screening but are prone to significant spatial temperature variations that can compromise experimental integrity.

Core Thermal Challenges

The fundamental architecture of MTPs creates an inherent conflict between high-throughput capacity and precise thermal control. The primary limitations include:

- Edge Effects and Evaporation: Wells on the perimeter of the plate exchange more heat with the ambient environment than internal wells, which produces persistent temperature gradients. One study documented that internal wells were approximately 0.14°C warmer than outer wells at 25°C, a discrepancy that widened to 0.68°C at 37°C [15]. Differential evaporation, which is more pronounced in edge wells, exacerbates this gradient.

- Inefficient Heat Transfer: Standard incubators or heating blocks often provide inadequate conductive or convective heat transfer to the entire plate simultaneously. The use of MTPs in temperature-controlled rooms or standard incubators represents the simplest control method but is insufficient for eliminating intra-plate gradients [16].

- Limitations of Conventional Control Systems: Systems that circulate temperature-controlled fluid through the base of an MTP chamber improve uniformity but are inherently limited in operating parallel reactors at different temperatures, thus restricting experimental design flexibility [16].

Quantitative Analysis of Thermal Performance

The following table summarizes key quantitative findings from studies investigating temperature distribution in microtiter plates.

Table 1: Quantitative Data on Microtiter Plate Temperature Uniformity

| Parameter | Findings | Experimental Conditions | Source |

|---|---|---|---|

| Well-to-Well Variation | Internal wells ~0.14°C warmer than outer wells at 25°C; ~0.68°C warmer at 37°C. | Custom incubator; 96-well plate. | [15] |

| Overall Uncertainty | ±0.4°C at 25°C; ±0.7°C at 37°C (95% confidence interval). | Custom incubator; 96-well plate. | [15] |

| Single Well Uniformity | Standard error of ±0.02°C within a single well. | Custom incubator. | [15] |

| Minimum Working Volume | Cultivation results replicable at volumes as low as 400 µL. | 96-deep-well plates (round & square). | [17] |
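An interior-versus-edge comparison of the kind reported in the table can be computed from a per-well temperature map as sketched below. The plate data are synthetic, chosen only to mirror the magnitude of the reported 37°C offset, and the helper names are hypothetical.

```python
from statistics import mean

ROWS, COLS = "ABCDEFGH", range(1, 13)  # standard 96-well layout (8 x 12)


def is_edge_well(row, col):
    """A well is on the perimeter if it sits in the first/last row or column."""
    return row in ("A", "H") or col in (1, 12)


def interior_minus_edge(temps):
    """temps maps (row, col) -> temperature in deg C; returns the mean offset."""
    edge = [t for (r, c), t in temps.items() if is_edge_well(r, c)]
    interior = [t for (r, c), t in temps.items() if not is_edge_well(r, c)]
    return mean(interior) - mean(edge)


# Synthetic plate: interior wells at 37.00 deg C, edge wells 0.68 deg C cooler.
temps = {(r, c): (36.32 if is_edge_well(r, c) else 37.0) for r in ROWS for c in COLS}
offset = interior_minus_edge(temps)
n_edge = sum(is_edge_well(r, c) for r in ROWS for c in COLS)
```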

Diagram 1: MTP thermal gradient mechanism.

Design Limitations of Standard Reactor Vessels

Scaling up from microtiter plates to standard reactor vessels introduces a different set of challenges for temperature uniformity, primarily driven by larger volumes and more complex fluid dynamics.

Scale-Up and Heat Transfer Hurdles

The transition from pilot-scale to industrial-scale reactors is a critical point where temperature control often fails. Key limitations include:

- Geometric and Mixing Inefficiencies: A geometrical configuration that provides excellent heat transfer and mixing on a small scale may be ineffective on a larger scale. Variations in temperature, pressure, and mixing intensity can significantly impact reaction performance, product consistency, and safety [18].

- Hot Spot Formation: In large-scale vessels, inadequate mixing or insufficient heat dissipation can lead to localized "hot spots." This is particularly dangerous in highly exothermic reactions, where it can trigger a thermal runaway reaction, presenting a critical safety hazard [18].

- Flow Distribution in Parallel Channels: In reactor systems employing parallel channels or tubes to increase throughput, achieving uniform flow distribution is a major challenge. Non-uniform flow leads directly to non-uniform heat transfer and residence times, resulting in variable product quality. Computational Fluid Dynamics (CFD) studies show that smaller channel sizes (<300 µm) and higher fluid viscosity can improve flow uniformity, but the design of inlet and outlet manifolds is critically important [19].

Quantitative Analysis of Reactor Performance

The table below consolidates data on operational challenges in standard reactor vessels.

Table 2: Operational Challenges in Standard Reactor Vessels

| Challenge Category | Specific Limitation | Impact on Process | Source |

|---|---|---|---|

| Heat Transfer | Formation of hot/cold spots in large-scale operations. | Inhibits reaction efficiency, product consistency, and poses safety risks (e.g., thermal runaway). | [18] |

| Mixing & Mass Transfer | Poor mixing creates concentration gradients; highly viscous fluids require robust mixing. | Uneven reaction rates, product inconsistencies, and exacerbation of thermal control challenges. | [18] |

| Flow Distribution | Non-uniform flow across parallel channels; pressure equalization slots reduced the standard deviation in flow by almost 90%. | Directly affects heat transfer coefficient and mean residence time in parallel channels. | [19] |

| Catalyst Deactivation | Poisoning, fouling, sintering, and thermal degradation over time. | Reduced reactor efficiency, increased operational costs, need for frequent regeneration/replacement. | [18] |

Experimental Protocols

This section provides detailed methodologies for characterizing and addressing temperature distribution limitations in both microtiter plates and reactor systems.

Protocol 1: High-Throughput Temperature Profiling in Microtiter Plates

This protocol uses fluorescence thermometry to map temperature profiles across a 96-well MTP [16].

Key Research Reagent Solutions:

Table 3: Reagents for Fluorescence Thermometry

| Item | Function | Specification |

|---|---|---|

| Rhodamine B (RhB) | Temperature-sensitive fluorophore. | 1 g/L stock in methanol. |

| Rhodamine 110 (Rh110) | Temperature-insensitive internal reference fluorophore. | 1 g/L stock in methanol. |

| Measuring Solution | Working solution for temperature calibration. | 10 mg/L each of RhB and Rh110 in water. |

Procedure:

- Preparation of Fluorescent Solution: Prepare fresh stock solutions of RhB and Rh110 in methanol at 1 g/L. Mix and dilute with water to create a working solution with a final concentration of 10 mg/L for each dye.

- Plate Loading: Pipette 200 µL of the prepared measuring solution into each well of a 96-well MTP.

- Instrument Setup: Place the MTP into an on-line monitoring device (e.g., a BioLector) equipped with a customized temperature control unit. The unit should consist of a thermostating block connected to separate heating and cooling water circulation systems.

- Temperature Calibration:

  - Insert a calibrated PT100 temperature sensor into a designated reference well (e.g., well A2).

  - Program the temperature control unit to execute a specific temperature profile.

  - For the reference well, record the fluorescence signals (RhB and Rh110) simultaneously with the physical temperature reading from the PT100 sensor.

  - Generate a calibration curve by plotting the fluorescence ratio (RhB/Rh110) against the measured temperature.

- Experimental Profiling:

  - Replace the solution in the MTP with your actual reaction mixture (e.g., microbial culture, enzymatic reaction).

  - Expose the MTP to the desired temperature profile.

  - The BioLector device will continuously monitor the fluorescence signals from all wells. Use the pre-determined calibration curve to convert the fluorescence ratio in each well to a real-time temperature value.

Diagram 2: MTP temperature profiling workflow.
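The calibration and conversion steps of Protocol 1 can be sketched as a straight-line fit followed by its inversion. The sketch assumes that the RhB/Rh110 ratio varies approximately linearly with temperature over the calibrated range, which should be checked against the recorded calibration data; the numbers below are synthetic and the function names are hypothetical.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def ratio_to_temperature(ratio, slope, intercept):
    """Invert the calibration line to recover temperature from a ratio."""
    return (ratio - intercept) / slope


# Synthetic calibration points from the reference well (PT100 vs. RhB/Rh110).
pt100_temps = [25.0, 30.0, 35.0, 40.0]
ratios = [1.5, 1.4, 1.3, 1.2]
slope, intercept = fit_line(pt100_temps, ratios)

# Convert a ratio measured in another well to a temperature estimate.
well_temperature = ratio_to_temperature(1.35, slope, intercept)
```

If the calibration data show curvature, a higher-order fit or a lookup table would be the natural replacement for `fit_line`.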

Protocol 2: Investigating Flow Distribution in Parallel Channel Reactors

This protocol employs CFD and experimental validation to diagnose and mitigate flow non-uniformity, a primary cause of temperature maldistribution in reactor arrays [19].

Procedure:

- CFD Model Setup:

  - Geometry Creation: Develop a 3D computational model of the parallel channel reactor, including the precise geometry of the inlet and outlet manifolds and all individual channels.

  - Mesh Generation: Create a computational mesh, ensuring sufficient mesh density in critical regions like the manifolds and channel entrances/exits.

  - Boundary Conditions: Define inlet boundary conditions (e.g., velocity or mass flow rate) and outlet conditions (e.g., pressure outlet). Set fluid properties (density, viscosity) corresponding to the working fluid.

  - Solver Configuration: Use a pressure-based solver in CFD software (e.g., ANSYS Fluent) with a k-ε turbulence model if applicable. Run the simulation until convergence is achieved.

- Flow Analysis:

  - Extract the mass flow rate or velocity data for each individual parallel channel from the converged solution.

  - Calculate the standard deviation of the flow across all channels to quantify the degree of non-uniformity.

- Design Modification - Pressure Equalization:

  - To overcome inequality in pressure distribution, modify the reactor design by incorporating 'pressure equalization slots' (PES).

  - In the CFD model, add at least two PES at an equal distance from the inlet and outlet that open into the respective manifolds. The width and distance of the PES from the channel entrance should be greater than 7 times the channel size for optimal effect.

  - Re-run the simulation with the modified geometry and compare the new standard deviation of flow to the baseline case. The literature reports a reduction of almost 90% [19].

- Experimental Validation:

  - Fabricate the reactor geometry, both conventional and PES-modified.

  - Use syringe pumps to provide a steady flow of water through the system.

  - To monitor flow velocities in individual channels, dose a colored fluid as a tracer and measure its velocity, or use residence time distribution (RTD) studies.

  - Compare the experimentally observed flow distribution with the CFD predictions to validate the model and the efficacy of the PES modification.
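The flow analysis and the baseline-versus-PES comparison in Protocol 2 reduce to a few statistics on the per-channel flow rates. The sketch below normalizes the standard deviation by the mean flow (an assumption made here so that designs with different total flow remain comparable) and uses synthetic channel flows, not data from [19].

```python
from statistics import mean, pstdev


def relative_flow_std(channel_flows):
    """Standard deviation of channel flows divided by the mean flow."""
    return pstdev(channel_flows) / mean(channel_flows)


def percent_reduction(baseline, modified):
    """Percentage by which the modified design lowers the baseline metric."""
    return 100.0 * (baseline - modified) / baseline


# Synthetic per-channel flow rates (arbitrary units) extracted from CFD.
baseline_flows = [0.8, 1.0, 1.2, 1.0]
pes_flows = [0.98, 1.0, 1.02, 1.0]

reduction = percent_reduction(
    relative_flow_std(baseline_flows), relative_flow_std(pes_flows)
)
```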

The Scientist's Toolkit

This section details essential reagents, materials, and equipment for implementing the protocols described in this note.

Table 4: Key Research Reagent Solutions and Materials

| Item | Function / Application | Key Specifications / Notes |

|---|---|---|

| Rhodamine B & Rhodamine 110 | Fluorescent dyes for temperature profiling via fluorescence thermometry in MTPs. | Requires an optical monitoring device (e.g., BioLector) with appropriate filter sets. |

| Silicon Carbide (SiC) Heated Platforms | Enables high-temperature/pressure sealed vessel reactions and extractions in MTP format. | Provides rapid, homogeneous heating; allows use of standard HPLC/GC vials as vessels [20]. |

| Computational Fluid Dynamics (CFD) Software | Virtual prototyping and analysis of flow and temperature distribution in reactor designs. | Essential for diagnosing maldistribution and testing design modifications like PES before fabrication. |

| Pressure Equalization Slots (PES) | A design modification to equalize pressure in inlet/outlet manifolds of parallel channel reactors. | At least two PES, positioned equidistant from inlet/outlet, can drastically improve flow uniformity [19]. |

| Oxygen Transfer Rate (OTR) Monitoring | Non-invasive online tool for monitoring cell density and activity in microbioreactors. | Can be used as a scale-up parameter from MTPs to stirred tank reactors [17]. |

Advanced Computational and Experimental Methods for Thermal Management

In high-throughput chemistry for drug development, maintaining consistent thermal conditions across parallel reactor arrays is a fundamental challenge. Non-uniform temperature distribution can severely impact experimental validity, leading to irreproducible results and failed reactions. Computational Fluid Dynamics (CFD) provides powerful tools to address this challenge through high-fidelity modeling and intelligent simplification. This application note details a structured methodology, from establishing highly accurate CFD models to creating efficient porous media approximations, specifically framed within ongoing thesis research on achieving tight temperature uniformity (±1°C) in parallel reactor systems. These protocols enable researchers to predict, analyze, and optimize thermal performance while balancing computational accuracy with practical efficiency.

Establishing a High-Fidelity CFD Model

The foundation of reliable thermal analysis is a verified high-fidelity CFD model. This protocol ensures minimal error between simulation and physical reality, which is crucial for predicting temperature distribution in sensitive chemical processes.

Model Setup and Calibration Protocol

Established CFD guidelines [21] call for a rigorous setup and calibration process:

- Geometry Preparation: Create a detailed 3D model of the reactor array, including all vessels, heating/cooling elements, and surrounding components. Use dimensions matching the physical apparatus (e.g., 28.0 mm × 8.0 mm × 1.2 mm reactor cell as used in spacer studies [22]).

- Mesh Generation: Create a structured or unstructured computational mesh, ensuring sufficient refinement in critical regions (e.g., near reactor walls and heat transfer surfaces). Perform a mesh sensitivity study to ensure results are independent of element size [21].

- Boundary Conditions: Define all boundary conditions, including:

  - Inlet/outlet flow rates and temperatures

  - Heat fluxes from heating elements

  - Thermal properties of all materials

- Solver Settings: Select appropriate physical models (e.g., turbulent flow, heat transfer, species transport). Set convergence criteria tightly to minimize iteration error [21].

Model Validation Against Experimental Data

To achieve high accuracy, CFD models must be validated with experimental measurements:

- Instrumentation: Place temperature sensors at multiple locations within the reactor array, including center and peripheral positions.

- Data Collection: Record temperature data under various operational conditions (different setpoints, flow rates, power levels).

- Error Quantification: Calculate the percentage error between simulated and experimental values. Following the protocol in [22], errors can be minimized to approximately 1.0% through careful calibration.

- Model Refinement: Adjust uncertain parameters (e.g., contact resistances, material properties) within physical limits to improve agreement with experimental data.
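The error quantification step can be sketched as a per-sensor percentage error between simulated and measured temperatures. The sensor names and values are hypothetical. Note that a percentage error in temperature depends on the scale used (°C versus K), so the scale should be stated when reporting it.

```python
def percent_errors(simulated, measured):
    """Per-sensor percentage error of simulated relative to measured values."""
    return {
        name: 100.0 * abs(simulated[name] - measured[name]) / abs(measured[name])
        for name in measured
    }


# Hypothetical sensor readings in deg C at center and peripheral positions.
measured = {"center": 50.0, "edge": 50.0}
simulated = {"center": 50.5, "edge": 49.0}

errors = percent_errors(simulated, measured)
worst_sensor = max(errors, key=errors.get)
```

Tracking the worst sensor as well as the average helps show whether the remaining disagreement is concentrated at peripheral positions.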

Table 1: CFD Model Error Sources and Mitigation Strategies

| Error Type | Description | Mitigation Strategy |

|---|---|---|

| Modeling Error | Difference between true physics and modeled equations [21] | Select appropriate turbulence and heat transfer models |

| Discretization Error | Induced by solving equations on finite grid points [21] | Perform mesh sensitivity analysis |

| Convergence Error | Due to finite convergence level [21] | Set tight convergence criteria (e.g., 10⁻⁶) |

| Input Error | From uncertain boundary conditions or material properties [21] | Validate with experimental measurements |

High-Fidelity CFD Application: Reactor Thermal Analysis

With a validated model, high-fidelity CFD can reveal critical insights into thermal performance and guide optimization strategies.

Quantitative Analysis of Temperature Distribution

Simulations quantify the extent and pattern of temperature variation. For example, standard reactor blocks can exhibit thermal gradients as high as ±13°C [23], while properly designed temperature-controlled reactors (TCRs) achieve uniformity of ±1°C [23]. Key analysis parameters include:

- Maximum Temperature Difference (ΔT_max): The difference between the hottest and coldest points in the array

- Standard Deviation of Temperature: Statistical measure of uniformity

- Thermal Maps: Visual representation of temperature distribution identifying hot/cold spots
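The first two analysis parameters can be computed directly from simulated or measured reactor temperatures, as in the sketch below. The ±1°C tolerance check against a setpoint is an interpretation of the uniformity target, and the function name and readings are illustrative.

```python
from statistics import pstdev


def uniformity_metrics(temps, setpoint, tolerance=1.0):
    """Return (delta_t_max, standard deviation, all-within-tolerance flag)."""
    delta_t_max = max(temps) - min(temps)  # hottest minus coldest position
    std = pstdev(temps)                    # population standard deviation
    within = all(abs(t - setpoint) <= tolerance for t in temps)
    return delta_t_max, std, within


# Hypothetical temperatures (deg C) at four reactor positions, setpoint 60.
delta_t_max, std, within = uniformity_metrics([59.5, 60.0, 60.5, 60.0], 60.0)
```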

Table 2: Key Performance Indicators for Reactor Thermal Analysis

| Performance Indicator | Target Value | Measurement Protocol |

|---|---|---|

| Temperature Uniformity | ±1°C [23] | Standard deviation across all reactor positions |

| Wall Shear Stress | Optimized for mixing | CFD simulation of fluid dynamics [22] |

| Flow Channel Pressure Drop | Minimized for energy efficiency [22] | CFD simulation of hydraulic performance [22] |

| Thermal Response Time | Application-dependent | Time to reach steady state after temperature change |

Flow and Spacer Optimization

In flow reactors, spacers significantly impact temperature distribution by influencing flow patterns and mixing. Recent research demonstrates that optimized spacer geometries can enhance wall shear stress by 52.6% and reduce pressure drop by 31.4% [22] compared to conventional designs. The protocol for spacer optimization includes:

- Parametric Modeling: Create multiple spacer designs varying key geometric parameters (filament shape, thickness, orientation angle)

- CFD Simulation: Evaluate each design for hydraulic and thermal performance

- Multi-objective Optimization: Balance competing factors (pressure drop vs. heat transfer) to identify optimal configurations

- Experimental Validation: Verify performance improvements with physical prototypes
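The multi-objective step can be illustrated with a simple Pareto filter over candidate spacer designs, keeping only those that no other design beats on both pressure drop (lower is better) and wall shear stress (higher is better). The design names and values are hypothetical, and a real study would typically use a dedicated optimizer.

```python
def pareto_front(designs):
    """Return the designs that are not dominated by any other design."""

    def dominates(a, b):
        # a is at least as good as b on both objectives...
        no_worse = a["pressure_drop"] <= b["pressure_drop"] and a["shear"] >= b["shear"]
        # ...and strictly better on at least one of them.
        better = a["pressure_drop"] < b["pressure_drop"] or a["shear"] > b["shear"]
        return no_worse and better

    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Hypothetical CFD results for four spacer geometries (arbitrary units).
designs = [
    {"name": "A", "pressure_drop": 100.0, "shear": 1.0},
    {"name": "B", "pressure_drop": 80.0, "shear": 1.2},
    {"name": "C", "pressure_drop": 120.0, "shear": 1.5},
    {"name": "D", "pressure_drop": 130.0, "shear": 1.4},
]
front = [d["name"] for d in pareto_front(designs)]
```

Designs on the front represent different trade-offs; choosing among them still requires an engineering judgment about how much pressure drop is acceptable.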

Porous Media Approximations for System-Level Modeling

While high-fidelity models provide detailed insights, their computational cost can be prohibitive for system-level optimization. Porous media approximations offer an efficient alternative for representing complex components.

Theoretical Foundation of Porous Media Approach

Porous media modeling represents volumes where structured solids and fluids are interspersed, accounting for macro-scale effects of flow resistance and heat transfer without resolving microscopic details [24]. The pressure loss through porous media is modeled using a momentum source term:

$$ S_i = -\left( \frac{\mu}{\alpha}\, v_i + C_2\, \rho\, |v|\, v_i \right) $$

Where:

- S_i = momentum source term (pressure loss per unit length)

- μ = fluid viscosity

- α = permeability (viscous loss coefficient)

- ρ = fluid density

- v_i = fluid velocity vector (|v| is its magnitude)

- C₂ = inertial pressure loss coefficient [24]

Protocol for Determining Porous Media Coefficients

Two methods can be used to determine the permeability (α) and inertial loss coefficient (C₂):

A. Experimental Method

- Measure pressure drop across the actual reactor component at multiple flow velocities

- Plot pressure drop per unit length (pressure drop divided by the component thickness in the flow direction) versus velocity and fit a second-order polynomial

- Extract linear and quadratic coefficients from the curve fit

- Calculate α and C₂ using the equations:

  - α = μ/A (where A is the linear coefficient)

  - C₂ = B/ρ (where B is the quadratic coefficient) [24]

B. Numerical Method

- Create a detailed CFD model of a representative section of the component

- Simulate flow at different velocities and record pressure drop

- Follow the same curve-fitting procedure as the experimental method [24]

This approach can reduce mesh count by a factor of 1000 or more while maintaining acceptable accuracy for system-level modeling [24].
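The curve-fitting procedure shared by both methods can be sketched as a least-squares fit of pressure drop per unit length against velocity. The sketch assumes a fit without a constant term (zero pressure drop at zero velocity) and applies the conversions exactly as listed above (α = μ/A, C₂ = B/ρ). Some solvers define the inertial term with an additional factor of ½, in which case the conversion for C₂ changes accordingly. The function name and the data are hypothetical.

```python
def fit_porous_coefficients(velocities, dp_per_length, viscosity, density):
    """Fit dp/L = A*v + B*v**2 by least squares; return (alpha, c2, A, B)."""
    s2 = sum(v ** 2 for v in velocities)
    s3 = sum(v ** 3 for v in velocities)
    s4 = sum(v ** 4 for v in velocities)
    b1 = sum(v * y for v, y in zip(velocities, dp_per_length))
    b2 = sum(v * v * y for v, y in zip(velocities, dp_per_length))

    # Solve the 2x2 normal equations for the linear (A) and quadratic (B) terms.
    det = s2 * s4 - s3 ** 2
    a_lin = (b1 * s4 - b2 * s3) / det
    b_quad = (s2 * b2 - s3 * b1) / det

    alpha = viscosity / a_lin  # permeability, from alpha = mu / A
    c2 = b_quad / density      # inertial coefficient, from C2 = B / rho
    return alpha, c2, a_lin, b_quad


# Synthetic data generated from A = 2000 and B = 5000 (SI units assumed).
velocities = [0.1, 0.2, 0.3, 0.4]                          # m/s
dp_per_length = [2000 * v + 5000 * v ** 2 for v in velocities]  # Pa/m
alpha, c2, a_lin, b_quad = fit_porous_coefficients(
    velocities, dp_per_length, viscosity=1.0e-3, density=1000.0
)
```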

Integrated Workflow: From Detailed Analysis to System Optimization

Combining high-fidelity and porous media approaches creates a comprehensive workflow for reactor thermal management.

Table 3: Essential Resources for CFD-Enhanced Reactor Thermal Management

| Resource | Function/Application | Specifications/Requirements |

|---|---|---|

| ANSYS Fluent CFD Software | 3D simulation of fluid flow and heat transfer [22] | With User Defined Functions (UDF) capability for custom boundary conditions [22] |

| Temperature Controlled Reactor (TCR) | Experimental validation of thermal performance [23] | Capable of ±1°C temperature uniformity, -40°C to 82°C range [23] |

| Heat Transfer Fluids | Thermal management in TCR systems [23] | Water (down to 5°C), silicone-based fluids, ethylene glycol, polypropylene glycol [23] |

| High-Performance Computing (HPC) | Execution of high-fidelity CFD simulations [25] | Sufficient memory for billions of grid points, parallel processing capability [21] |

| SolidWorks | 3D geometry creation for reactor components [22] | Compatibility with CFD meshing tools |

This integrated approach to reactor thermal management, which combines high-fidelity CFD with efficient porous media approximations, provides researchers with a powerful methodology for achieving tight temperature uniformity in parallel reactor arrays. The protocols outlined enable both deep physical insight and practical system optimization, supporting accelerated drug development through more reliable and reproducible reaction conditions. By implementing these application notes, scientists can significantly improve the validity of high-throughput experimentation while developing a fundamental understanding of the thermal phenomena governing their systems.

Implementing Multiphysics Object-Oriented Simulation Environment (MOOSE) for Coupled Physics

This application note provides a detailed protocol for implementing the Multiphysics Object-Oriented Simulation Environment (MOOSE) framework to investigate uniform temperature distribution in parallel reactor arrays. MOOSE offers a robust, high-fidelity platform for solving fully-coupled, fully-implicit multiphysics problems, enabling dimension-independent physics simulations with automated parallelization capabilities that have achieved runs exceeding 100,000 CPU cores [26]. Within the context of advanced nuclear reactor analysis, this document outlines systematic procedures for installation, application configuration, multiphysics coupling, and execution of reactor array simulations, with particular emphasis on the MultiApp and Transfer systems that facilitate complex data exchange between coupled physics solutions [27]. The methodologies presented herein establish a foundation for achieving predictive simulation of temperature uniformity critical to the safety and efficiency of advanced nuclear systems.

System Requirements and Installation

Minimum System Specifications

Before implementing MOOSE, verify that your computational environment meets the following minimum requirements:

Table 1: Minimum System Requirements for MOOSE Implementation

| Component | Specification |

|---|---|

| Operating System | POSIX compliant Unix-like OS (Modern Linux distribution or last two macOS releases) |

| CPU Architecture | x86_64 or ARM (Apple Silicon) |

| Memory | 8 GB (16 GB recommended for debug compilation) |

| Disk Space | 30 GB minimum |

| Compiler (GCC) | Version 9.0.0 - 13.3.1 |

| LLVM/Clang | Version 14.0.6 - 19 |

| Python | Version 3.10 - 3.13 |

| Python Packages | packaging, pyaml, jinja2 |

Installation Protocol

For most research applications, the Conda pre-built MOOSE distribution is recommended for its stability and its suitability for training use [28]. The installation protocol consists of the following key stages:

- Environment Validation: Confirm compiler compatibility and Python version adherence to specifications in Table 1.

- Distribution Selection: Download and install the Conda pre-built package, which provides pre-compiled binaries that significantly reduce setup complexity.

- Verification Testing: Execute built-in test suites to validate proper installation and functionality.

- Application Configuration: Initialize the MOOSE environment and prepare for application development specific to reactor array simulations.

Researchers requiring custom configurations or specific HPC cluster deployments should consult the extended installation instructions available in the official MOOSE documentation [28].
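The environment validation stage can be partly automated. The sketch below checks the running Python interpreter against the version range in Table 1 and looks for the listed Python packages. It assumes that each package's import name matches the name given in the table, which may not hold in every environment, and it does not check compilers. The helper names are hypothetical and are not part of MOOSE.

```python
import sys
from importlib.util import find_spec


def version_in_range(version, minimum, maximum):
    """Compare the (major, minor) part of a version tuple, inclusive."""
    return minimum <= tuple(version[:2]) <= maximum


def missing_packages(names=("packaging", "pyaml", "jinja2")):
    """Return the package names that cannot be located for import."""
    return [name for name in names if find_spec(name) is None]


python_ok = version_in_range(sys.version_info, (3, 10), (3, 13))
missing = missing_packages()

print("Python version within range:", python_ok)
print("Missing packages:", missing or "none")
```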

MOOSE Framework Fundamentals for Reactor Physics

MOOSE is a finite-element, multiphysics framework primarily developed by Idaho National Laboratory that provides a high-level interface to sophisticated nonlinear solver technology [26]. Its architecture is particularly suited for nuclear reactor simulations due to several foundational capabilities:

- Fully-coupled, fully-implicit multiphysics solver enabling simultaneous solution of interacting physical phenomena

- Dimension-independent physics allowing seamless transition between 1D, 2D, and 3D representations

- Automatically parallel design distributing computations across CPU cores with minimal user intervention

- Modular development approach facilitating code reuse and specialized application development

- Built-in mesh adaptivity dynamically refining computational meshes based on solution characteristics

These capabilities are implemented through MOOSE's core C++ infrastructure, which presents a straightforward API aligned with engineering problem-solving approaches [26].

MOOSE-Based Reactor Physics Ecosystem

The MOOSE framework serves as the foundation for multiple specialized nuclear engineering applications, creating an integrated ecosystem for reactor analysis:

Table 2: MOOSE-Based Applications for Nuclear Reactor Multiphysics

| Application | Primary Physics | Role in Reactor Analysis |

|---|---|---|

| Griffin | Neutronics | Solves neutron transport equation with depletion and precursors [29] |

| BISON | Fuel Performance | Analyzes thermomechanical behavior in solid fuel structures [27] |

| Pronghorn | Multidimensional Thermal-Hydraulics | Models coolant flow and heat transfer in reactor cores [27] |

| SAM | Systems Thermal-Hydraulics | Provides system-level thermal-fluid analysis [27] |

Griffin, as a MOOSE-based reactor physics application, exemplifies the framework's flexibility, offering various finite element methods for solving the neutron transport equation and having been applied to fast reactors, pebble bed reactors, molten salt reactors, and microreactor designs [29].

Multiphysics Coupling Methodology

MultiApp System for Operator Splitting

The MultiApp system enables operator splitting approaches where each physics simulation is performed independently and coupled through fixed-point iterations [27]. This methodology addresses the challenge of differing spatial and temporal discretization requirements across physics domains. The implementation protocol involves:

- Parent Application Designation: Establish a primary MOOSE application that will coordinate the multiphysics simulation.

- Child Application Creation: Spawn specialized physics applications (e.g., Griffin for neutronics, Pronghorn for thermal-hydraulics) as MultiApps within the parent.

- Hierarchical Organization: Structure MultiApps in potentially multi-level hierarchies, such as a Griffin neutronics simulation spawning multiple BISON MultiApps for individual fuel pin calculations [27].

- Execution Scheduling: Define the sequence of MultiApp executions to optimize convergence and computational efficiency.

Diagram: MultiApp hierarchy with sibling transfers (parent application managing multiple child MultiApps with direct sibling transfers).
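The operator-splitting idea behind this protocol, in which each physics is solved independently and the solutions are coupled through fixed-point iterations, can be illustrated with a toy two-physics problem. The sketch below is plain Python and does not use the MOOSE API; the linear power and thermal models and all parameter values are invented for illustration.

```python
def solve_coupled(power_model, thermal_model, t_guess, tol=1e-8, max_iter=100):
    """Fixed-point (Picard) iteration between two independently solved models."""
    temperature = t_guess
    for iteration in range(1, max_iter + 1):
        power = power_model(temperature)        # "neutronics" solve
        new_temperature = thermal_model(power)  # "thermal-hydraulics" solve
        if abs(new_temperature - temperature) < tol:
            return new_temperature, power, iteration
        temperature = new_temperature
    raise RuntimeError("fixed-point iteration did not converge")


# Toy models: power falls as temperature rises (negative feedback), and
# temperature rises linearly with power through a thermal resistance.
P0, A_FEEDBACK, T_REF = 100.0, 0.002, 300.0
T_INLET, R_THERMAL = 300.0, 0.5


def power_model(temperature):
    return P0 * (1.0 - A_FEEDBACK * (temperature - T_REF))


def thermal_model(power):
    return T_INLET + R_THERMAL * power


temperature, power, iterations = solve_coupled(power_model, thermal_model, 300.0)
```

The iteration converges here because the feedback is weak (each pass shrinks the temperature change by a factor of about 0.1); strongly coupled problems may need relaxation or a tighter coupling scheme.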

Transfer System for Data Exchange

The Transfer system manages all data exchange between applications in a MOOSE multiphysics simulation [27]. For reactor array temperature distribution studies, the following transfer types are essential:

- Field-to-field transfers: Move multidimensional data fields between applications, handling projection across dissimilar meshes and different finite element representations

- Scalar transfers: Communicate reduced quantities (integrated values, extremes) computed from field variables

- Sibling transfers: Enable direct data exchange between child applications without routing through parent, simplifying coupling schemes and reducing data duplication [27]

The transfer implementation protocol consists of:

- Field Identification: Designate source and target fields for each physics coupling (e.g., power density from neutronics to thermal-hydraulics, temperature feedback from thermal-hydraulics to neutronics).

- Transfer Type Selection: Choose appropriate transfer algorithms based on mesh compatibility and conservation requirements.

- Conservation Enforcement: Apply mathematical techniques to preserve integral quantities across non-matching meshes.

- Parallel Communication Optimization: Configure data exchange for efficient operation across distributed memory systems.
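
As a minimal illustration of conservation enforcement across non-matching meshes, the sketch below moves a one-dimensional power-density profile from a fine mesh to a coarse one and then rescales it so the integrated power is preserved. This is a generic NumPy example, not the MOOSE Transfer API; the function names, the cosine profile, and the global-rescaling strategy are assumptions chosen for brevity.

```python
import numpy as np


def integrate(x, values):
    """Trapezoidal integral of nodal values over the mesh x."""
    return float(np.sum(0.5 * (values[1:] + values[:-1]) * np.diff(x)))


def conservative_transfer(x_src, q_src, x_dst):
    """Interpolate q_src onto x_dst, then rescale to match the source integral."""
    q_dst = np.interp(x_dst, x_src, q_src)
    source_total = integrate(x_src, q_src)
    target_total = integrate(x_dst, q_dst)
    if target_total == 0.0:
        raise ValueError("cannot rescale a field with zero integral")
    return q_dst * (source_total / target_total)


# Cosine-shaped power density on a fine source mesh, sent to a coarse mesh.
x_fine = np.linspace(0.0, 1.0, 201)
q_fine = np.cos(np.pi * (x_fine - 0.5))
x_coarse = np.linspace(0.0, 1.0, 7)
q_coarse = conservative_transfer(x_fine, q_fine, x_coarse)
```

Plain interpolation alone would lose roughly two percent of the integrated power on this coarse mesh. The single global scale factor restores the total but does not guarantee local conservation, which may require a projection-based transfer instead.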

Experimental Protocol for Reactor Array Temperature Analysis

Application Development and Workflow Setup

This protocol outlines the complete procedure for using MOOSE to investigate temperature distribution in parallel reactor arrays:

Application Creation

- Execute MOOSE application generation script to establish custom application structure

- Implement custom objects (Kernels, Boundary Conditions, Materials) specific to reactor array physics

- Register new objects within the MOOSE factory system for instantiation

Input File Configuration

- Define mesh specifications reflecting parallel reactor array geometry

- Establish material properties for all reactor components

- Configure boundary conditions and initial values

- Set up MultiApp blocks for each physics component (neutronics, thermal-hydraulics, fuel performance)

- Specify Transfer blocks for all required field exchanges

Multiphysics Coupling

- Implement power density transfer from neutronics to thermal-hydraulics

- Configure temperature feedback from thermal-hydraulics to neutronics

- Establish sibling transfers between concurrently executing applications where appropriate

- Set up convergence criteria for fixed-point iterations

Execution and Monitoring

- Launch simulation using MPI for parallel execution

- Monitor convergence of coupled system through MOOSE console output

- Track field variables of interest (temperature distribution, power profile, fluid flow)

Post-processing and Analysis

- Extract temperature field data across reactor array

- Calculate uniformity metrics (standard deviation, peak-to-average ratio)

- Visualize results using ParaView or Peacock GUI tools
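
The uniformity metrics named in the post-processing step can be computed with a few lines of NumPy once temperatures have been extracted. The sketch below is an assumption about how one might organize that calculation; the function name, the dictionary keys, and the sample values are hypothetical, and the temperatures are assumed to be absolute (kelvin) so that the peak-to-average ratio is meaningful.

```python
import numpy as np


def uniformity_metrics(temperatures):
    """Return simple uniformity statistics for a set of temperatures."""
    t = np.asarray(temperatures, dtype=float)
    if t.size == 0:
        raise ValueError("need at least one temperature value")
    mean = float(t.mean())
    return {
        "mean": mean,
        "std_dev": float(t.std(ddof=0)),  # population standard deviation
        "peak_to_average": float(t.max() / mean),
        "max_spread": float(t.max() - t.min()),
    }


# Hypothetical per-reactor temperatures in kelvin.
reactor_temps = [348.0, 350.0, 352.0, 350.0]
metrics = uniformity_metrics(reactor_temps)
```

For field data on an unstructured mesh, a volume-weighted mean and standard deviation would be more appropriate than the unweighted statistics used here.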

Advanced Meshing Techniques for Reactor Geometries

Recent advancements in MOOSE meshing capabilities offer significant improvements for reactor array simulations:

- Spline-based meshing: Coreform technology enables geometrically exact spline meshes with C¹ smoothness, reducing faceting artifacts in cylindrical reactor geometries [30]

- U-spline support: Enhanced libMesh capabilities allow consumption of Bezier Extraction (.bext) file formats for superior geometric representation

- Reactor Module tools: Specialized meshing utilities for nuclear reactor components facilitate accurate representation of complex array geometries

Implementation of these advanced meshing techniques has demonstrated superior contact resolution in fuel pellet simulations, eliminating striation artifacts observed in traditional linear FEA meshing and providing smoothly varying contact pressures that accurately reflect cylindrical geometries [30].

Research Reagent Solutions: Computational Tools

Table 3: Essential Computational Tools for MOOSE Reactor Simulations

| Tool | Function | Application in Reactor Analysis |
|---|---|---|
| libMesh | Finite element library | Core discretization infrastructure for MOOSE applications [27] |
| CUBIT/Coreform Trelis | Mesh generation | Geometry creation and mesh preparation for reactor components [30] |
| Griffin | Neutronics solver | Particle transport with depletion for power distribution [29] |
| BISON | Fuel performance | Thermomechanical analysis in fuel elements [27] |
| Pronghorn | Thermal-hydraulics | Multidimensional coolant flow and heat transfer [27] |
| ParaView | Visualization | Results processing and field variable analysis |
| Peacock | MOOSE GUI | Input file generation and simulation monitoring [31] |

Molten Salt Reactor Coupling Workflow

The following diagram illustrates a specific implementation for molten salt reactor multiphysics coupling, demonstrating advanced sibling transfer capabilities:

Diagram: MSR Coupling with Sibling Transfers. Data exchange pattern for molten salt reactor analysis, showing direct transfers between physics.

This coupling scheme for molten salt reactor analysis exemplifies advanced MOOSE capabilities where:

- Neutronics provides power density to thermal-hydraulics

- Thermal-hydraulics returns temperature feedback to neutronics

- Velocity fields are transferred from thermal-hydraulics to precursor equations

- Fission source is communicated from neutronics to precursor equations

- Precursor concentration completes the coupling loop back to neutronics

The sibling transfer capability enables this efficient organization without duplicating fields or requiring transfers to route through the parent application [27].
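
To make the saving concrete, the sketch below counts the transfer hops and intermediate field copies needed for the five exchanges listed above, with and without sibling transfers. It is a bookkeeping illustration only, not MOOSE code. It assumes that neutronics, thermal-hydraulics, and precursor transport are all child applications of one parent, a layout the source does not specify, and the application and field names are hypothetical labels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exchange:
    source: str
    target: str
    field: str


def plan_transfers(exchanges, use_sibling):
    """Expand child-to-child exchanges into individual transfer hops."""
    hops = []
    parent_fields = set()
    for ex in exchanges:
        if use_sibling:
            hops.append((ex.source, ex.target, ex.field))
        else:
            # Without sibling transfers the parent holds an intermediate copy.
            hops.append((ex.source, "parent", ex.field))
            hops.append(("parent", ex.target, ex.field))
            parent_fields.add(ex.field)
    return hops, parent_fields


exchanges = [
    Exchange("neutronics", "thermal_hydraulics", "power_density"),
    Exchange("thermal_hydraulics", "neutronics", "temperature"),
    Exchange("thermal_hydraulics", "precursors", "velocity"),
    Exchange("neutronics", "precursors", "fission_source"),
    Exchange("precursors", "neutronics", "precursor_concentration"),
]

routed_hops, routed_copies = plan_transfers(exchanges, use_sibling=False)
sibling_hops, sibling_copies = plan_transfers(exchanges, use_sibling=True)
```

Under this assumed layout, routing through the parent takes ten hops and five duplicated fields, whereas sibling transfers take five hops and none.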

Verification and Validation Protocol

Solution Verification

MOOSE provides built-in capabilities for solution verification essential for confirming temperature distribution results:

- Method of Manufactured Solutions (MMS): Implementation of MMS for code verification confirms proper implementation of governing equations [32]

- Analytical solution comparison: For simplified geometries, comparison to known analytical solutions validates computational approaches

- Mesh convergence studies: Systematic refinement of computational mesh ensures results are independent of discretization
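
The manufactured-solution and mesh-convergence checks listed above can be combined in a small standalone example. The sketch below does not use MOOSE's own verification tooling. It solves steady one-dimensional conduction, -u'' = f, with second-order central differences, uses the assumed manufactured solution u = sin(πx), and estimates the observed order of accuracy from successive refinements.

```python
import numpy as np


def solve_conduction(n_cells):
    """Solve -u'' = f on [0, 1], u(0) = u(1) = 0, with central differences."""
    x = np.linspace(0.0, 1.0, n_cells + 1)
    h = x[1] - x[0]
    f = np.pi**2 * np.sin(np.pi * x[1:-1])  # source from manufactured solution
    n = n_cells - 1                         # number of interior nodes
    a = (2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)) / h**2
    u = np.zeros_like(x)
    u[1:-1] = np.linalg.solve(a, f)
    return x, u


def max_error(n_cells):
    """Maximum nodal error against the manufactured solution sin(pi x)."""
    x, u = solve_conduction(n_cells)
    return float(np.max(np.abs(u - np.sin(np.pi * x))))


cells = [8, 16, 32, 64]
errors = [max_error(n) for n in cells]
# Observed order: log2 of the error ratio between successive refinements.
orders = [float(np.log2(errors[i] / errors[i + 1])) for i in range(len(errors) - 1)]
```

An observed order close to the scheme's theoretical order (two here) indicates that the discretization is implemented consistently. A lower value usually points to a coding error or a mesh that is not yet in the asymptotic range.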

Conservation Verification

For reactor array simulations, maintaining conservation across physics couplings is critical:

- Integral quantity tracking: Monitor global conservation of energy across transfers between applications

- Boundary flux consistency: Verify matching fluxes at coupled boundaries between physics domains

- Scalar transfer validation: Use integrated quantities to confirm proper field transfer implementation

This application note has established comprehensive protocols for implementing MOOSE to investigate temperature distribution uniformity in parallel reactor arrays. The MOOSE framework, with its sophisticated MultiApp and Transfer systems, provides a robust foundation for multiphysics nuclear reactor simulations capable of addressing the complex coupled physics inherent in advanced reactor designs. The methodologies outlined—from installation through advanced coupling techniques—enable researchers to construct high-fidelity simulations that accurately capture the interdependent phenomena governing temperature distribution in reactor arrays. As MOOSE continues to evolve with enhanced spline support, improved transfer algorithms, and expanded physics modules, it remains an essential tool for advancing nuclear energy simulation capabilities.

Multi-App Hierarchies and Sibling Transfers for Efficient Data Exchange Between Solvers

Achieving uniform temperature distribution is a paramount objective in the design and operation of parallel reactor arrays, a common architecture in pharmaceutical and fine chemical production. Non-uniform temperatures can lead to inconsistent product quality, reduced yield, and potential safety risks. Traditional simulation approaches that solve for neutronics, thermal-hydraulics, and fuel performance in a single, coupled system often prove inefficient or unworkable due to the vastly different spatial and temporal discretization requirements of each physical phenomenon [27]. This application note details a robust computational methodology, employing multi-app hierarchies and sibling transfers, to enable high-fidelity, spatially resolved multiphysics simulations. By facilitating efficient data exchange between specialized solvers, this approach allows researchers to precisely model and optimize temperature distribution, thereby accelerating the development of safer and more efficient reactor systems.

Background: The Challenge of Temperature Uniformity

In advanced reactor analysis, high-fidelity simulations must resolve the coupling between physics spatially. Lower-fidelity models may use integrated quantities for coupling, but for precise temperature control, a spatially resolved approach is essential [27]. The challenge of temperature distribution is not unique to nuclear systems; it is a critical factor in various reactor technologies. For instance, studies on novel power-to-heat batch reactors have highlighted the importance of optimizing thermal spot configuration to maximize thermal mixing efficiency and prevent the formation of large cold "islands" [3]. Similarly, thermal management in complex systems like data centers, which share a conceptual similarity with reactor arrays in managing heat load distribution, requires multi-scale optimization of layout parameters to improve thermal uniformity and mitigate adverse hotspot effects [33]. These parallels underscore the universal importance of advanced computational techniques for thermal optimization.

Core Concepts: Multi-App Hierarchies and Sibling Transfers

The Multiphysics Object-Oriented Simulation Environment (MOOSE) framework provides a sophisticated infrastructure for coupling multiple physics solvers. This is primarily achieved through two core systems: MultiApps and Transfers [27].

The MultiApp System

Instead of solving all equations within a single numerical system, the MultiApp system allows a parent application to create and manage multiple child applications. Each child application, such as a dedicated solver for neutronics, thermal-hydraulics, or fuel performance, operates independently with the discretization and numerical methods best suited to its physics. A key advantage is flexible parallel execution: child applications within a MultiApp can be solved concurrently, with processes distributed among them to maximize computational resource utilization [27]. This hierarchy can be nested, enabling complex multi-scale simulations.

The Transfer System and Sibling Transfers

Once simulations are decoupled via MultiApps, the Transfer system manages the exchange of data between them. This includes field variables (e.g., temperature, power density) and scalar quantities. Transfers handle complex operations such as projecting fields between non-matching meshes and managing communication between applications running on different numbers of processes [27].

A significant advancement is the introduction of sibling transfers, which enable direct data exchange between two child applications that are part of different MultiApps [27]. Previously, transferring data between such applications required a two-step process: first from child A to the parent, and then from the parent to child B. Sibling transfers streamline this into a single, direct communication, simplifying the coupling scheme and avoiding unnecessary duplication of fields in the parent application's memory.

Diagram: Simplified Molten Salt Reactor Coupling Scheme with Sibling Transfers

Application to Reactor Temperature Distribution

The multi-app and sibling transfer paradigm is directly applicable to the core challenge of achieving uniform temperature distribution in parallel reactor arrays. The coupling scheme for a molten salt reactor provides an excellent example of these concepts in practice [27]. In this multiphysics problem, several critical data exchanges are necessary, as outlined in the protocol below.

Table: Key Data Transfers for Reactor Thermal Analysis

| Source Application | Destination Application | Transferred Field | Impact on Temperature Distribution |
|---|---|---|---|
| Neutronics (Griffin) | Thermal-Hydraulics (Pronghorn) | Power Density / Fission Heat Source | Provides the volumetric heat generation term, the primary driver of the temperature field. |
| Thermal-Hydraulics (Pronghorn) | Neutronics (Griffin) | Temperature Field | Impacts neutron cross-sections, creating a crucial feedback loop for coupled neutronics-thermal simulations. |
| Thermal-Hydraulics (Pronghorn) | Precursor Transport | Velocity Field | Enables accurate modeling of precursor advection in the coolant, affecting the delayed neutron source. |
| Neutronics (Griffin) | Precursor Transport | Fission Source | Defines the production term for delayed neutron precursors. |
| Precursor Transport | Neutronics (Griffin) | Delayed Neutron Precursor Concentration | Closes the feedback loop by providing the delayed neutron contribution to the total fission source. |

Experimental Protocol: Implementing a Coupled Simulation

This protocol outlines the steps to set up a coupled simulation for analyzing temperature distribution in a reactor core, using the MOOSE framework.

Step 1: Problem Definition and Application Selection

- Define the reactor geometry and physical phenomena to be modeled.

- Select the appropriate MOOSE-based applications (e.g., Griffin for neutronics, Pronghorn for thermal-hydraulics, BISON for fuel performance) [27].

Step 2: Input File Configuration

- Create Parent Input File: The main input file defines the overall execution settings and creates the MultiApps.

- Declare MultiApps: Within the parent input file, create a [MultiApps] block for each child application to be spawned.

- Configure Transfers: In the