Advanced Thermal Control Protocols for Parallel Synthesis: Enhancing Efficiency and Reproducibility in Drug Development


Abstract

This article provides a comprehensive guide to thermal control protocols in parallel synthesis, a critical enabling technology for modern drug discovery and development. Aimed at researchers, scientists, and process development professionals, it covers the foundational principles of heat management in automated reactor systems, explores advanced methodological applications including automated platforms and machine learning-driven optimization, addresses common troubleshooting and optimization challenges, and outlines validation and comparative analysis techniques. By synthesizing the latest advancements, this resource aims to equip practitioners with the knowledge to implement robust thermal management strategies that accelerate development timelines, improve product quality, and ensure the reproducibility of chemical synthesis.

The Critical Role of Temperature in Parallel Synthesis: Principles and Impact on Reaction Outcomes

Why Thermal Control is a Bottleneck in High-Throughput Experimentation

In the drive to accelerate discovery and optimization in chemical synthesis and drug development, high-throughput experimentation (HTE) has become an indispensable paradigm. However, this pursuit of speed and parallelization has exposed a critical and often limiting factor: thermal control. This application note details why precise thermal management constitutes a significant bottleneck in HTE for parallel synthesis reactions and provides structured data, actionable protocols, and visual guides to help researchers overcome these challenges. The inability to perfectly scale thermal processes from traditional batch reactors to miniature, parallelized systems introduces substantial variability, compromising the integrity and reproducibility of experimental data. We frame this discussion within the broader thesis that advanced thermal control protocols are not merely supportive but foundational to reliable and meaningful parallel synthesis research.

The Thermal Bottleneck: Core Challenges in HTE

The transition from single-batch synthesis to parallelized, miniaturized HTE platforms fundamentally alters the thermal landscape. The core challenges can be categorized as follows:

Spatial Thermal Gradients: In a multi-reactor block, maintaining a uniform temperature across all reaction vessels is notoriously difficult. Peripheral reactors often experience different thermal conditions compared to those in the center, leading to inter-reactor variability. Even with advanced heater blocks, temperature differences of several degrees Celsius are common, which can dramatically alter reaction kinetics and outcomes [1].

Limited Heat Transfer in Miniaturized Volumes: As reaction volumes are scaled down to the microliter level, as in the Photoredox Optimization (PRO) reactor, which uses <10 μL reaction volumes, the surface-area-to-volume ratio increases [1]. This favors rapid heat exchange with the vessel wall, but the thermal mass of each reaction is also very small, so the liquid temperature responds almost immediately to any imbalance between heat generation and heat removal. Exothermic reactions can therefore produce rapid and significant local temperature increases, which are difficult to mitigate without active and responsive cooling systems.

Power Density and Coupling Challenges: The close stacking of electronics and reaction modules in compact HTE systems creates challenges in dissipating internally generated heat, potentially creating thermal cross-talk between adjacent reaction vessels or between a system's electronics and its reaction blocks [2].

Real-Time Monitoring and Control Limitations: Many conventional HTE systems lack integrated, per-vessel temperature monitoring and feedback control. Instead, they often rely on a single block temperature measurement, which fails to capture the true thermal profile of each individual reaction, especially during rapid exotherms or endotherms [1].

Table 1: Quantitative Thermal Control Specifications in Modern HTE Systems

| System/Study | Reaction Volume | Reported Thermal Performance | Key Thermal Control Feature |
|---|---|---|---|
| Automated Photoredox (PRO) Reactor [1] | <10 μL | Precise irradiance control to temperature-controlled reaction volumes | High-intensity laser illumination with temperature control |
| 12-Parallel Reactor System [3] | ~4 g precursor per batch | Consistency and reliability in catalyst synthesis | Magnetically suspended stirring in miniature vessels |
| Space Camera Mirror [4] | N/A | Stable at ~20 °C, radial temperature difference <1 °C | Passive thermal management with active compensation |

Detailed Experimental Protocol: Thermal Control for Parallel Photoredox Cross-Couplings

This protocol is adapted from the work of Gesmundo et al. (2025) on an automated photoredox optimization reactor, which exemplifies the implementation of precise thermal control in a high-throughput setting [1].

Background and Principle

Photoredox catalysis reactions are particularly sensitive to both light irradiance and temperature. This protocol enables the execution and optimization of challenging decarboxylative cross-couplings in a 384-reaction array format. The core innovation is the integration of precise light control with active temperature regulation of optically thin reaction volumes, ensuring that thermal energy does not become a confounding variable.

Materials and Equipment

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for High-Throughput Photoredox Experimentation

| Item | Function/Benefit |
|---|---|
| Automated Photoredox Optimization (PRO) Reactor | Provides precise control over light irradiance and temperature for optically thin reaction volumes [1]. |
| Halogen Lamp or Laser Illumination System | Acts as a controlled, high-intensity light source for photoredox reactions [1]. |
| Infrared (IR) Camera (e.g., FLIR quantum) | Enables non-contact, high-frequency (300 Hz) thermal mapping and monitoring of reaction progress or product quality [5]. |
| Infrared Matrix-Assisted Laser Desorption Electrospray Ionization Mass Spectrometry (IR-MALDESI-MS) | Allows for rapid, high-throughput analysis of 384 reactions in under 6 minutes, quantifying yields from miniature volumes [1]. |
| Sealed Quartz Ampules | Used for reactions requiring an inert atmosphere or controlled pressure, enabling high-temperature solid-state reactions (e.g., 1000 °C for 48 h) [6]. |
| Microplates and Automated Liquid Handling Systems | Facilitate the miniaturization, parallelization, and automated transfer of reaction mixtures and crude products. |

Step-by-Step Procedure

Reaction Plate Preparation:

- Using an automated liquid handler, dispense all reaction components (photocatalyst, substrates, solvent, etc.) into the wells of a specialized microplate designed for optical clarity and thermal conductivity. The total reaction volume in each well should be <10 μL.

System Calibration and Sealing:

- Place the reaction plate into the PRO reactor and ensure secure thermal contact with the Peltier-controlled base plate.

- Calibrate the light source (laser or halogen lamp) to deliver the desired irradiance (in mW/cm²) across the entire plate.

- Seal the plate with an optically transparent, thermally stable membrane to prevent solvent evaporation.

Reaction Execution with Thermal Monitoring:

- Initiate the reaction protocol, which simultaneously triggers the light source and sets the base plate to the target temperature (e.g., 25°C).

- The PRO reactor's control system actively manages the Peltier element to maintain the set temperature, countering the heating effects of the light source and any reaction exotherms.

- Optional: Use an integrated IR camera to monitor the real-time thermal profile of each well, confirming the absence of hot spots or thermal gradients [5].

Reaction Quenching and Analysis:

- After the prescribed reaction time, automatically transfer the crude reaction mixtures from the PRO reactor to a standard 384-well analysis plate.

- Analyze the plate using IR-MALDESI-MS to quantify reaction conversion and yield for all 384 reactions in under 6 minutes [1].

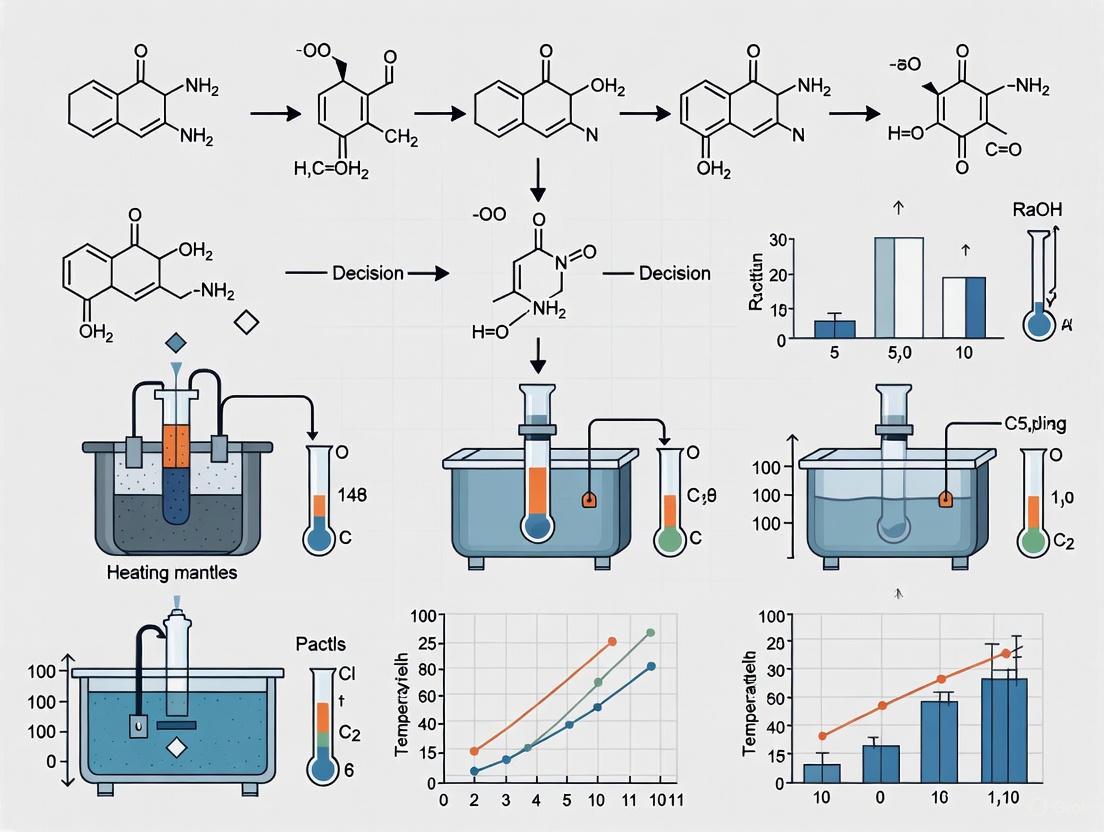

Diagram 1: High-Throughput Photoredox Reaction Workflow

Advanced Thermal Control Strategies and Solutions

Overcoming the thermal bottleneck requires a move beyond simple heating blocks to integrated control strategies.

Thermal Control System Design

Effective thermal control in HTE systems, much like in spacecraft or semiconductor manufacturing, often employs a hybrid approach [4] [7].

- Passive Thermal Management: This includes the use of materials with high thermal conductivity (e.g., aluminum or copper for reactor blocks) to promote even heat distribution. Phase Change Materials (PCMs) can be incorporated to absorb and release thermal energy during phase transitions, effectively buffering against temperature fluctuations [2].

- Active Thermal Control: This involves dynamic, sensor-driven systems. Thermoelectric Coolers (TECs or Peltier elements) are ideal for HTE as they can both heat and cool rapidly, enabling precise temperature control and management of exotherms. Proportional-Integral-Derivative (PID) control algorithms are used to minimize overshoot and maintain setpoints with high stability [8]. For the most complex systems, Model Predictive Control (MPC) can anticipate thermal disturbances and adjust control actions preemptively [8].
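To make the PID description above concrete, the following is a minimal sketch of a discrete PID loop driving a Peltier element against a simple first-order thermal model. The class name, gains, heat capacity, thermal resistance, and lamp power are all hypothetical illustration values, not parameters of any system cited here; a real controller would need tuning against the actual hardware.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    """Discrete PID controller with output clamping and conditional anti-windup."""
    kp: float
    ki: float
    kd: float
    out_min: float
    out_max: float
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        # No derivative action on the very first sample.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        clamped = max(self.out_min, min(self.out_max, output))
        # Anti-windup: accumulate the integral only while the output is unsaturated.
        if clamped == output:
            self.integral = candidate
        return clamped


def simulate(setpoint_c=25.0, ambient_c=22.0, lamp_w=0.5, steps=3000, dt=0.1):
    """Lumped plate model: C * dT/dt = P_tec + P_lamp - (T - T_ambient) / R."""
    heat_capacity = 20.0  # J/K, assumed block plus plate
    resistance = 5.0      # K/W, assumed loss path to ambient
    pid = PID(kp=8.0, ki=0.8, kd=0.5, out_min=-10.0, out_max=10.0)  # assumed gains
    temp = ambient_c
    history = []
    for _ in range(steps):
        power = pid.update(setpoint_c, temp, dt)  # W; negative values mean cooling
        d_temp = (power + lamp_w - (temp - ambient_c) / resistance) / heat_capacity
        temp += d_temp * dt
        history.append(temp)
    return history


if __name__ == "__main__":
    trace = simulate()
    print(f"final temperature: {trace[-1]:.2f} C, peak: {max(trace):.2f} C")
```

The integral term is what absorbs the constant heat load from the light source in this sketch; the output clamp and anti-windup guard stand in for the finite heating and cooling power of a real thermoelectric module.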

Diagram 2: Thermal Bottleneck Resolution Strategy

Quantitative Thermal Analysis and Monitoring

Non-contact methods like infrared thermography are powerful for HTE as they do not interfere with reactions. An IR camera can map temperatures across an entire reaction plate with high spatial and temporal resolution (e.g., 300 Hz), identifying gradients and hot spots that would otherwise go undetected [5]. This data is crucial for validating thermal control systems and for post-hoc analysis of reaction outcomes. For instance, a study on composite tape inspection applied IR thermography to tapes scrolling at 25 cm/s, using thermal signatures to detect and characterize defects such as fiber content variations in real time [5]. This principle is directly transferable to monitoring the thermal signature of flowing or parallel reactions.
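As an illustration of how a per-well temperature map might be screened for gradients and hot spots, the sketch below takes a grid of well temperatures (for example, values extracted from an IR image) and reports the plate spread, the edge-versus-interior offset, and any wells that deviate from the plate median by more than a tolerance. The function names, the 1 °C tolerance, and the example readings are assumptions made for illustration only.

```python
from statistics import mean, median


def well_name(row: int, col: int) -> str:
    """Convert zero-based (row, col) to a plate label such as 'B3'."""
    return f"{chr(ord('A') + row)}{col + 1}"


def analyze_plate(temps, tolerance_c=1.0):
    """Summarize a 2-D grid of well temperatures (list of rows, in deg C)."""
    rows, cols = len(temps), len(temps[0])
    flat = [t for row in temps for t in row]
    reference = median(flat)
    outliers = {}
    edge, interior = [], []
    for r in range(rows):
        for c in range(cols):
            t = temps[r][c]
            on_edge = r in (0, rows - 1) or c in (0, cols - 1)
            (edge if on_edge else interior).append(t)
            if abs(t - reference) > tolerance_c:
                outliers[well_name(r, c)] = round(t - reference, 2)
    return {
        "median_c": reference,
        "spread_c": max(flat) - min(flat),
        "edge_minus_interior_c": mean(edge) - mean(interior),
        "outliers": outliers,
    }


# Synthetic 4 x 6 plate: cooler edge wells and one hot spot at B3.
example = [
    [24.6, 24.6, 24.6, 24.6, 24.6, 24.6],
    [24.6, 25.0, 27.1, 25.0, 25.0, 24.6],
    [24.6, 25.0, 25.0, 25.0, 25.0, 24.6],
    [24.6, 24.6, 24.6, 24.6, 24.6, 24.6],
]

if __name__ == "__main__":
    print(analyze_plate(example))
```

A negative edge-minus-interior value in this summary indicates cooler peripheral wells, the pattern described earlier for multi-reactor blocks.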

Thermal control remains a critical bottleneck in high-throughput experimentation because the fundamental physics of heat transfer do not scale linearly with reaction volume, and the penalties for non-uniformity are severe in data-driven research. Addressing this challenge is not a matter of simple instrumentation but requires a systematic approach combining passive thermal design, active control algorithms, and advanced thermal monitoring. The protocols and strategies outlined here provide a framework for researchers to implement robust thermal control protocols, thereby ensuring that the high throughput of experimentation is matched by the high quality and reliability of the generated data.

Fundamental Thermodynamic Principles Governing Reaction Energetics and Heat Transfer

In modern drug development, the push for accelerated discovery timelines necessitates highly efficient synthetic methodologies. Parallel synthesis, which allows for the simultaneous execution of numerous reactions, has become a cornerstone of this effort. However, the fundamental thermodynamic principles of reaction energetics and heat transfer are often overlooked challenges in these systems. The precise thermal control of multiple concurrent reactions is not merely an engineering concern but a critical variable that directly influences reaction kinetics, yield, selectivity, and ultimately, the success of drug discovery campaigns. This Application Note details the core thermodynamic concepts, measurement protocols, and thermal management strategies essential for reliable parallel synthesis research, providing scientists with a framework to optimize thermal control protocols.

Theoretical Foundations: Core Thermodynamic Concepts

Understanding the energy landscape of chemical reactions is paramount for predicting and controlling their behavior, especially when scaled to parallel platforms.

Reaction Energetics and Kinetics

The energy profile of a reaction coordinate is defined by enthalpic (ΔH) and entropic (ΔS) changes, which together determine the Gibbs free energy (ΔG) and the reaction's feasibility at a given temperature. The relationship is given by:

ΔG = ΔH - TΔS

where T is the absolute temperature in kelvin. A negative ΔG indicates a spontaneous reaction. Temperature directly influences the reaction rate constant (k), as described by the Arrhenius equation:

k = A e^(-Ea/RT)

where A is the pre-exponential factor, Ea is the activation energy, and R is the universal gas constant. This underscores that even minor temperature fluctuations across a reaction plate can lead to significant variances in reaction rates and yields [9].
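A short numerical sketch can show how sensitive the rate constant is to small temperature offsets between wells. The activation energy of 80 kJ/mol and the 3 K offset below are hypothetical values chosen only for illustration; the pre-exponential factor cancels when two temperatures are compared for the same reaction.

```python
import math

R = 8.314462618  # universal gas constant, J/(mol K)


def gibbs_free_energy(delta_h, delta_s, temp_k):
    """Return dG = dH - T*dS (J/mol) for dH in J/mol and dS in J/(mol K)."""
    return delta_h - temp_k * delta_s


def rate_ratio(ea_j_per_mol, t1_k, t2_k):
    """Return k(T2) / k(T1) from the Arrhenius equation; A cancels out."""
    return math.exp(-ea_j_per_mol / R * (1.0 / t2_k - 1.0 / t1_k))


if __name__ == "__main__":
    # Assumed example: Ea = 80 kJ/mol, one well 3 K warmer than its neighbor.
    ratio = rate_ratio(80_000.0, 298.15, 301.15)
    print(f"rate ratio for a 3 K offset: {ratio:.2f}")  # about 1.38
```

Under these assumed values, a 3 K offset changes the rate constant by roughly 38%, which is large enough to distort a yield comparison between otherwise identical wells.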

Fundamentals of Heat Transfer in Reaction Vessels

Heat transfer in chemical systems occurs through three primary mechanisms: conduction, convection, and radiation. In parallel synthesis, conductive heat transfer through reactor materials and convective heat transfer to the surrounding environment are most critical. The basic calorimetric relation is also highly relevant:

δQ = c δT

It states that the heat absorbed or released (δQ) is proportional to the resulting temperature change (δT), with the heat capacity (c) of the material or system as the proportionality constant [10]. Ensuring uniform heat distribution across all wells in a parallel reactor requires managing these factors to prevent thermal gradients.

Advanced thermal management systems, such as Pulsating Heat Pipes (PHPs), leverage these principles. PHPs are passive devices that use the latent heat of vaporization and sensible heat of liquid slugs to efficiently transfer heat away from a source, maintaining temperature uniformity. Recent studies on double-layered closed-loop PHPs (CLPHPs) have demonstrated a 12.8–15.1% reduction in thermal resistance compared to single-layered systems, highlighting the importance of system design in thermal performance [11].

Quantitative Data on Thermal Influences in Chemical Systems

Empirical data is crucial for modeling thermodynamic behavior. The following table summarizes key quantitative findings from recent investigations into temperature-dependent phenomena.

Table 1: Quantitative Data on Thermal Influences in Chemical and Cluster Systems

| System Studied | Key Thermodynamic Observation | Quantitative Impact | Reference |
|---|---|---|---|
| Na₃₉ Clusters (Neutral, Cationic, Anionic) | Energy dependence on temperature and cluster charge state. | A single electron change (charge state) significantly alters the cluster's energy response to temperature, as confirmed by multiple linear regression analysis (p < 0.05). | [12] |
| Ni-catalyzed Suzuki Reaction (96-well HTE) | Optimization of yield and selectivity via ML-guided thermal control. | Identified conditions achieving >95% area percent (AP) yield and selectivity, a result not found by traditional methods. | [9] |
| Double-Layered Pulsating Heat Pipe (PHP) | Thermal resistance under different orientations. | Thermal resistance improved by 12.8–15.1% compared to a single-layered PHP, enhancing heat dissipation efficiency. | [11] |
| Fused Enhancer Constructs (eve37/eve46) | Thermodynamic modeling of gene expression. | A "two-tier" model, which treats regulatory segments as independent modules, better fit experimental readouts than a simple "bag of sites" model. | [13] |

Further analysis of sodium clusters revealed distinct thermodynamic grouping. Fuzzy clustering analysis applied to the energy data of Na₃₉ clusters verified that each cluster type (neutral, cationic, anionic) could be divided into three distinct groups based on the temperatures used to investigate their properties (120 K to 400 K) [12]. This illustrates how underlying thermodynamic states can be classified through statistical analysis of energy data.

Experimental Protocols for Thermodynamic Analysis

Protocol: High-Throughput Reaction Optimization with Integrated Thermal Control

This protocol leverages machine learning (ML) to efficiently navigate complex reaction spaces, including temperature, for parallel synthesis optimization [9].

1. Reaction Setup and Initialization:

- Define Search Space: Enumerate all plausible reaction conditions, including categorical variables (e.g., solvent, ligand) and continuous variables (e.g., temperature, catalyst loading). Implement automatic filters to exclude impractical conditions (e.g., temperatures exceeding solvent boiling points).

- Initial Batch Selection: Use algorithmic quasi-random Sobol sampling to select an initial batch of 24, 48, or 96 experiments. This maximizes the coverage of the reaction condition space and increases the probability of discovering regions containing optimal conditions.

2. Automated Execution and Data Acquisition:

- Parallel Reaction Execution: Utilize an automated high-throughput experimentation (HTE) platform to carry out the batch of reactions in parallel (e.g., in a 96-well plate format).

- In-Situ Reaction Monitoring: Employ techniques such as in-situ FTIR or Raman spectroscopy to monitor reaction progression and heat generation in real-time.

- Product Analysis: Analyze reaction outcomes (e.g., yield, selectivity) using automated analytical techniques like UPLC/MS. Ensure all data is formatted for ML processing.

3. Machine Learning-Guided Optimization:

- Model Training: Train a Gaussian Process (GP) regressor on the accumulated experimental data to predict reaction outcomes and their associated uncertainties for all possible conditions in the search space.

- Next-Batch Selection: Use a scalable multi-objective acquisition function (e.g., q-NParEgo, TS-HVI, q-NEHVI) to select the next batch of experiments. This function balances the exploration of uncertain regions of the search space with the exploitation of known high-performing conditions.

- Iteration: Repeat steps 2 and 3 for multiple cycles until convergence is achieved, improvement stagnates, or the experimental budget is exhausted.
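The search-space definition and initial batch selection in step 1 can be sketched as follows. This is a minimal illustration, not the workflow of [9]: the solvent list, ligand labels, temperature levels, and catalyst loadings are hypothetical, and a Halton sequence is used as a standard-library stand-in for Sobol sampling (both are quasi-random, low-discrepancy sequences; a Sobol generator from a numerical library could be substituted).

```python
from itertools import product

# Assumed example factors; boiling points are for 1 atm.
SOLVENT_BP_C = {"THF": 66.0, "MeCN": 82.0, "DMF": 153.0}
LIGANDS = ["L1", "L2", "L3"]           # placeholder ligand labels
TEMPS_C = [40, 60, 80, 100, 120]
LOADINGS_MOL_PCT = [1.0, 2.5, 5.0]


def build_search_space():
    """Enumerate all conditions, excluding temperatures at or above the solvent boiling point."""
    return [
        (solvent, ligand, temp, loading)
        for solvent, ligand, temp, loading in product(
            SOLVENT_BP_C, LIGANDS, TEMPS_C, LOADINGS_MOL_PCT
        )
        if temp < SOLVENT_BP_C[solvent]
    ]


def radical_inverse(index: int, base: int) -> float:
    """Van der Corput radical inverse, the building block of a Halton sequence."""
    result, frac = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * frac
        index //= base
        frac /= base
    return result


def initial_batch(batch_size: int):
    """Pick distinct, valid conditions using one Halton dimension per factor."""
    factors = [list(SOLVENT_BP_C), LIGANDS, TEMPS_C, LOADINGS_MOL_PCT]
    bases = [2, 3, 5, 7]  # one prime base per factor
    valid = set(build_search_space())
    if batch_size > len(valid):
        raise ValueError("batch size exceeds the number of valid conditions")
    chosen, index = [], 1
    while len(chosen) < batch_size:
        point = tuple(
            levels[min(int(radical_inverse(index, base) * len(levels)), len(levels) - 1)]
            for levels, base in zip(factors, bases)
        )
        # Skip filtered-out conditions and repeats.
        if point in valid and point not in chosen:
            chosen.append(point)
        index += 1
    return chosen


if __name__ == "__main__":
    print(len(build_search_space()), "valid conditions")
    for condition in initial_batch(24):
        print(condition)
```

Filtering before sampling keeps impractical conditions out of the batch entirely, so no plate positions are spent on experiments that would be discarded.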

Protocol: Measuring Thermodynamic Properties of Molecular Clusters using Born-Oppenheimer Molecular Dynamics (BOMD)

This protocol describes a method for obtaining energy and structural data used in thermodynamic analysis, as applied to sodium clusters [12].

1. System Preparation and Equilibrium Structure Search:

- Generate approximately 200 different initial isomer configurations for the cluster of interest. These can be derived from ab initio constant-temperature runs at temperatures near the cluster's melting point and beyond.

- Employ density functional theory (DFT) methods, such as those implemented in the VASP package, using ultrasoft pseudopotentials and appropriate approximations (e.g., Local Density Approximation).

- Optimize all geometries to locate the equilibrium structures.

2. Thermodynamic Data Collection via BOMD:

- For each cluster of interest, carry out BOMD calculations at a minimum of 10-12 different temperatures across a relevant range (e.g., 120 K to 400 K).

- Use a thermostat (e.g., Nosé–Hoover thermostat) to maintain constant temperature during the simulations.

- For each temperature, collect trajectory data for a minimum of 240 ps. Discard the initial 30 ps of data to allow for system thermalization.

3. Data Extraction for Statistical Analysis:

- Extract the time-series energy data (total energy, potential energy) from the thermally equilibrated portions of the BOMD trajectories.

- This data serves as the direct input for subsequent statistical analysis, such as multiple linear regression with dummy variables or fuzzy clustering, to quantify the impact of variables like temperature and charge state.
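The data-extraction step can be illustrated with a small sketch that discards the thermalization window from an energy time series and encodes charge state as dummy variables for a later regression. The function names, the neutral baseline, and the synthetic trajectory are assumptions for illustration; they are not taken from [12].

```python
from statistics import mean, stdev


def equilibrated_energy(times_ps, energies, discard_ps=30.0):
    """Return (mean, standard deviation) of energies recorded at t >= discard_ps."""
    kept = [e for t, e in zip(times_ps, energies) if t >= discard_ps]
    if len(kept) < 2:
        raise ValueError("not enough equilibrated samples")
    return mean(kept), stdev(kept)


def design_row(temp_k, charge_state):
    """Regression row [intercept, T, is_cation, is_anion]; neutral is the baseline."""
    if charge_state not in ("neutral", "cation", "anion"):
        raise ValueError(f"unknown charge state: {charge_state}")
    return [
        1.0,
        float(temp_k),
        1.0 if charge_state == "cation" else 0.0,
        1.0 if charge_state == "anion" else 0.0,
    ]


# Synthetic 240 ps trajectory sampled every 1 ps: drift for 30 ps, then fluctuation.
times = list(range(240))
energies = [
    -10.0 - t / 15.0 if t < 30 else -12.0 + (0.1 if t % 2 == 0 else -0.1)
    for t in times
]

if __name__ == "__main__":
    avg, spread = equilibrated_energy(times, energies)
    print(f"mean energy after thermalization: {avg:.3f} (std {spread:.3f})")
    print(design_row(300, "cation"))
```

With two dummy columns for three charge states, the fitted coefficients on those columns express the energy offset of the cationic and anionic clusters relative to the neutral one at a given temperature.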

Visualization of Workflows and System Designs

Machine Learning-Driven Reaction Optimization Workflow

The following diagram illustrates the iterative, closed-loop workflow for optimizing parallel reactions, integrating automation, experimentation, and machine learning as described in the protocol [9].

Thermal Management System of a Double-Layered Pulsating Heat Pipe

This diagram visualizes the design and operating principle of a double-layered Closed-Loop Pulsating Heat Pipe (CLPHP), an advanced system for managing heat in compact spaces, which can be analogous to thermal control in parallel reactor blocks [11].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of thermodynamic control in parallel synthesis relies on specific materials and tools. The following table lists key solutions used in the featured experiments.

Table 2: Key Research Reagent Solutions for Thermodynamic Studies and Parallel Synthesis

| Item | Function / Application | Experimental Context |
|---|---|---|
| High-Throughput Experimentation (HTE) Robotic Platform | Enables highly parallel execution of numerous reactions at miniaturized scales, making the exploration of vast condition spaces time- and cost-efficient. | Used for automated setup of 96-well reaction plates in ML-guided optimization campaigns [9]. |
| Pulsating Heat Pipe (PHP) | A passive heat transfer device that uses the oscillatory flow of liquid-vapor slugs to achieve high heat flux and temperature uniformity with minimal resistance. | Double-layered PHP design showed 12.8–15.1% lower thermal resistance than single-layered designs [11]. |
| Nosé–Hoover Thermostat | An algorithm used in molecular dynamics simulations to maintain a system at a constant temperature, mimicking a thermodynamic bath. | Critical for performing BOMD calculations to collect energy data at specific temperatures for thermodynamic analysis [12]. |
| Gaussian Process (GP) Regressor | A machine learning model that predicts reaction outcomes and, importantly, quantifies the uncertainty of its predictions, guiding experimental design. | Core component of the ML framework (Minerva) for selecting the most informative next experiments in reaction optimization [9]. |
| Earth-Abundant Metal Catalysts (e.g., Nickel) | Lower-cost, sustainable alternatives to precious metal catalysts (e.g., Palladium) for cross-coupling reactions, aligning with green chemistry principles. | Successfully optimized in a Ni-catalyzed Suzuki reaction using the ML-driven HTE workflow [9]. |

Within the context of thermal control protocols for parallel synthesis reactions, the transition from small-scale research to industrial production presents significant challenges. Traditional manual methods for reaction optimization, particularly concerning temperature parameters, are increasingly inadequate for modern drug discovery and development demands. These manual approaches struggle with error rates, reproducibility, and scalability, creating critical bottlenecks in pharmaceutical research and development. This document details these challenges and presents automated solutions through structured data comparison, experimental protocols, and visual workflows specifically framed for researchers, scientists, and drug development professionals focused on thermal control in parallel synthesis systems.

Quantitative Analysis: Manual vs. Automated Screening

Traditional manual screening requires operators to evaluate numerous reaction parameters—including pressure, pH, temperature, and catalysts—through extensive experimentation. The significant number of possible parameter combinations makes manual methods highly impractical for industrial processes that require speed, accuracy, and reproducibility [14]. Manual preparation, execution, and analysis are prone to human error, leading to delayed results and additional resource consumption when experiments require repetition [14].

Table 1: Comparative Analysis of Screening Method Performance Characteristics

| Performance Characteristic | Traditional Manual Screening | Automated High-Throughput Screening |
|---|---|---|
| Experimental Throughput | Low (sequential testing) | High (simultaneous testing) [14] |
| Parameter Control Precision | Moderate to Low (manual adjustment) | High (real-time automated control) [14] |
| Reproducibility Between Experiments | Low (operator-dependent) | High (systematic operation) [14] |
| Human Error Incidence | High (manual handling) | Minimal (reduced intervention) [14] |
| Resource Consumption per Experiment | High | Optimized |
| Scalability to Production | Limited and challenging | Enhanced (tight control facilitates scale-up) [14] |
| Data Consistency | Variable (dependent on technician skill) | Consistent (standardized protocols) |
| Thermal Gradient Management | Inconsistent across reaction vessels | Precise and uniform control |

Automated high-throughput screening addresses these limitations by improving efficiency, maintaining consistency, and ensuring reproducibility [14]. The implementation of automated reactors with real-time monitoring and control enables precise adjustments to optimize chemical synthesis, including thermal parameters critical to parallel synthesis reactions [14].

Experimental Protocol: Thermal Control Optimization for Parallel Synthesis

Objective

To establish a standardized protocol for optimizing thermal control parameters in parallel synthesis reactions using automated high-throughput screening systems, enabling efficient identification of optimal temperature conditions while ensuring reproducibility and scalability.

Materials and Equipment

Table 2: Research Reagent Solutions and Essential Materials

| Item | Function/Application |
|---|---|
| Parallel Reactor System | Independently controlled reactors for simultaneous testing of different thermal conditions [14] |
| Temperature Control Module | Precise regulation and monitoring of reaction temperature across multiple vessels |
| Real-Time Monitoring Software | Live measurement of reaction parameters and data collection [14] |
| Robotic Liquid-Handling System | Automated reagent addition with improved precision and speed [14] |
| Catalyst Library | Diverse catalytic materials for reaction optimization screening |
| Solvent System | Appropriate reaction medium with defined temperature stability profile |
| pH Adjustment Reagents | Buffer solutions for maintaining specific reaction environments |
| Calibration Standards | Reference materials for instrument validation and measurement verification |

Methodology

Experimental Setup

- System Initialization: Power on the automated parallel reactor system and allow temperature control units to stabilize.

- Reactor Configuration: Load identical reaction vessels into all positions of the parallel reactor system.

- Reagent Preparation: Prepare stock solutions of all reaction components according to standardized concentration protocols.

- Liquid Handling Programming: Program robotic liquid-handling systems for precise reagent addition across all reaction vessels.

- Temperature Gradient Establishment: Program the thermal control system to establish different temperature set points across reactor vessels, covering the range of 25°C to 150°C with 5°C increments.

Reaction Execution

- Automated Reagent Dispensing: Use robotic systems to dispense precise volumes of reagents into all reaction vessels simultaneously.

- Thermal Protocol Initiation: Start the temperature ramping sequence according to the pre-established gradient profile.

- Real-Time Monitoring: Activate software controls for continuous monitoring of temperature stability, reaction progress, and byproduct formation.

- Parameter Adjustment: Implement feedback loops to automatically adjust thermal parameters when deviations from optimal conditions are detected.

- Reaction Termination: Automatically quench reactions simultaneously across all vessels once predetermined endpoints are reached.

Data Collection and Analysis

- Performance Metrics Recording: Document conversion rates, byproduct formation, and reaction kinetics for each temperature condition.

- Statistical Analysis: Apply statistical models to identify optimal temperature parameters that maximize yield and minimize impurities.

- Reproducibility Assessment: Conduct triplicate runs of optimal conditions to verify reproducibility across different reactor positions.

- Scale-Up Projection: Utilize data to model reaction behavior at pilot and production scales, with particular attention to thermal transfer considerations.
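The set-point gradient from the experimental setup (25 °C to 150 °C in 5 °C increments) and the replicate-based analysis can be sketched in a few lines. The yield values and function names below are invented for illustration, and the mean-yield criterion is only one simple choice of statistical model.

```python
from statistics import mean, stdev


def setpoints(start_c=25, stop_c=150, step_c=5):
    """Temperature set points covering start_c to stop_c inclusive."""
    return list(range(start_c, stop_c + 1, step_c))


def best_temperature(yields_by_temp):
    """Return the set point with the highest mean yield across replicates."""
    return max(yields_by_temp, key=lambda t: mean(yields_by_temp[t]))


def percent_cv(replicates):
    """Coefficient of variation (%) as a simple reproducibility metric."""
    return 100.0 * stdev(replicates) / mean(replicates)


# Hypothetical triplicate yields (%) at three set points.
example_yields = {
    60: [70.0, 72.0, 71.0],
    80: [88.0, 90.0, 89.0],
    100: [80.0, 60.0, 75.0],
}

if __name__ == "__main__":
    print(len(setpoints()), "set points")
    best = best_temperature(example_yields)
    print(f"best set point: {best} C, CV = {percent_cv(example_yields[best]):.2f}%")
```

Reporting the coefficient of variation alongside the mean helps separate a condition that is genuinely optimal from one that scored well in a single reactor position.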

Safety Considerations

- Implement automated pressure relief systems for reactions under elevated temperatures

- Establish containment protocols for volatile or hazardous reagents

- Program emergency shutdown procedures for thermal runaway scenarios

- Install redundant temperature monitoring systems for high-temperature reactions
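The shutdown and redundancy items above could be combined in a simple software interlock, sketched here under assumed thresholds. The limit temperature, allowed rate of rise, and sensor-disagreement tolerance are hypothetical placeholders; actual values depend on the chemistry and equipment, and software checks of this kind supplement rather than replace hardware safety devices.

```python
def interlock(
    primary_c,
    backup_c,
    prev_primary_c,
    dt_s,
    limit_c=160.0,            # assumed absolute temperature limit
    max_rate_c_per_s=2.0,     # assumed runaway rate threshold
    max_disagreement_c=3.0,   # assumed tolerance between redundant sensors
):
    """Return a list of shutdown reasons; an empty list means continue."""
    reasons = []
    if max(primary_c, backup_c) > limit_c:
        reasons.append("over-temperature")
    if abs(primary_c - backup_c) > max_disagreement_c:
        reasons.append("sensor disagreement")
    if (primary_c - prev_primary_c) / dt_s > max_rate_c_per_s:
        reasons.append("runaway rate")
    return reasons


if __name__ == "__main__":
    print(interlock(100.0, 100.5, 99.9, 1.0))   # normal operation
    print(interlock(120.0, 121.0, 110.0, 1.0))  # fast temperature rise
```

Treating sensor disagreement as a shutdown condition means a failed probe stops the run instead of silently feeding a wrong value to the controller.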

Workflow Visualization: Automated Thermal Control Screening

Thermal Control Screening Workflow: This diagram illustrates the automated workflow for optimizing thermal parameters in parallel synthesis reactions, highlighting the critical path from protocol definition to scale-up projection.

Implementation and Technological Integration

Advanced automation technology introduces innovative solutions that enhance throughput in chemical synthesis screening. These include automated reactors that enable real-time monitoring and control of reaction conditions, allowing for precise adjustments to optimize chemical synthesis [14]. Software control solutions facilitate live measurements and data collection, while feedback loops can swiftly correct detected variations from optimal conditions [14]. This effective hardware-software integration provides comprehensive insights into reaction performance and increases efficiency. The use of robotic implements and liquid-handling systems improves the precision and speed of sample preparation and reagent addition, further increasing accuracy and throughput [14].

The integration of these automated systems addresses the fundamental challenges of traditional manual methods by ensuring that all tests required to achieve safe and efficient chemical processes are conducted under controlled and reproducible conditions. This facilitates a smoother transition between development stages and avoids unexpected complications during scale-up [14]. For thermal control protocols specifically, this means maintaining precise temperature parameters across multiple parallel reactions while systematically collecting performance data essential for both optimization and subsequent production scaling.

In modern chemical research, particularly in pharmaceutical development, the shift toward parallel synthesis has necessitated advanced thermal control protocols. This approach enables the rapid generation of molecular libraries, as evidenced by methods for synthesizing 96 different hexapeptides within 24 hours [15]. The reproducibility and success of these high-throughput experimentation (HTE) platforms are fundamentally governed by the precise management of thermal energy. Temperature (T), enthalpy change (ΔH), and heat capacity at constant pressure (Cp) are interdependent thermodynamic parameters that collectively dictate the rate, yield, and selectivity of chemical transformations. In parallel synthesis, where reaction scales are miniaturized and volumes are reduced, the thermal mass of the system is significantly lower. Reactions are therefore more susceptible to exothermic or endothermic events, which cause substantial temperature fluctuations if not properly controlled. A thorough understanding and meticulous regulation of these key parameters are therefore not merely beneficial but essential for developing robust, scalable, and transferable synthetic protocols [16] [9].

Theoretical Foundation of Key Thermodynamic Parameters

Fundamental Definitions and Relationships

Heat Capacity (C) and Specific Heat Capacity (c): Heat capacity (C) is an extensive property of a body of matter, defined as the quantity of heat (q) it absorbs or releases when it experiences a temperature change (ΔT) of 1 degree Celsius or 1 Kelvin: C = q / ΔT [17]. Specific heat capacity (c), an intensive property, is the heat capacity per unit mass. It is the amount of heat that must be added to one unit of mass of a substance to cause an increase of one unit in temperature. Its SI unit is joule per kilogram per Kelvin (J⋅kg⁻¹⋅K⁻¹) [18]. The formal definition is c = Q / (m × ΔT), where Q is the heat added, m is the mass, and ΔT is the temperature change [19].

Specific Heat at Constant Pressure (Cp) and Constant Volume (Cv): The specific heat capacity of a substance depends on whether it is measured at constant pressure or constant volume [18] [20].

- Cp (Isobaric Heat Capacity): This is the energy required to raise the temperature of a unit mass of a material by one degree in a process where pressure is held constant. It is denoted as Cp [20] [21].

- Cv (Isochoric Heat Capacity): This is the energy required to raise the temperature of a unit mass of a material by one degree in a process where volume is held constant. It is denoted as Cv [20] [21].

- For solids and liquids, which are largely incompressible, the difference between Cp and Cv is usually negligible. However, for gases, the difference is significant. When a gas is heated at constant pressure, it expands and does work on its surroundings, requiring more energy input than when heated at constant volume. The relationship between Cp and Cv for an ideal gas is given by Cp = Cv + R, where R is the specific gas constant [21].

Enthalpy (H) and Enthalpy Change (ΔH): Enthalpy (H) is a state function defined as H = U + PV, where U is internal energy, P is pressure, and V is volume [17]. For a constant-pressure process, the change in enthalpy (ΔH) is equal to the heat transferred (qP): ΔH = qP [17]. This makes enthalpy the natural thermodynamic potential for characterizing heat effects in chemical reactions, which are typically carried out at constant pressure (e.g., open to the atmosphere).

The Interplay of ΔH, Cp, and Temperature in Reaction Optimization

The temperature dependence of the enthalpy change for a reaction is directly governed by the difference in heat capacities between the products and reactants. This relationship is formalized by Kirchhoff's law: ΔH(T₂) = ΔH(T₁) + ∫ΔCp dT, where ΔCp is the sum of the heat capacities of the products minus the sum of the heat capacities of the reactants (ΣCp(products) - ΣCp(reactants)) [17]. This equation is critical for predicting thermodynamic driving forces across a range of temperatures in optimization campaigns. A reaction's inherent thermal signature—whether it is exothermic (ΔH < 0) or endothermic (ΔH > 0)—combined with the heat capacities of the reaction mixture, determines the magnitude of adiabatic temperature rise or fall. In parallel synthesis, where heat transfer is rapid due to high surface-to-volume ratios, this relationship must be carefully calibrated to maintain the target isothermal conditions essential for reproducible results [16].
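As a rough illustration of the two relationships above, the following sketch evaluates Kirchhoff's law under the simplifying assumption that ΔCp does not vary with temperature, and then estimates the adiabatic temperature change from ΔT_ad = -ΔH·n / (m·cp). The function names and all numerical inputs are hypothetical and chosen only for the example.

```python
def enthalpy_at_temperature(dh_t1, delta_cp, t1, t2):
    """Kirchhoff's law assuming a temperature-independent delta_cp.

    dh_t1 in J/mol, delta_cp in J/(mol*K), temperatures in K.
    """
    return dh_t1 + delta_cp * (t2 - t1)


def adiabatic_temperature_change(dh_rxn, moles, mass_kg, cp):
    """Temperature change if all reaction heat stays in the mixture.

    dh_rxn in J/mol, cp in J/(kg*K). Positive result means heating.
    """
    return -dh_rxn * moles / (mass_kg * cp)


# Hypothetical inputs: an exothermic reaction run in 1 g of water.
dh_298 = -80_000.0   # J/mol at 298.15 K (assumed value)
delta_cp = -50.0     # J/(mol*K), products minus reactants (assumed value)

dh_353 = enthalpy_at_temperature(dh_298, delta_cp, 298.15, 353.15)
dt_ad = adiabatic_temperature_change(dh_353, moles=1e-4, mass_kg=1e-3, cp=4184.0)

print(f"dH(353.15 K) = {dh_353:.0f} J/mol")
print(f"Adiabatic temperature rise = {dt_ad:.2f} K")
```

When ΔCp varies appreciably over the temperature range, the integral would need to be evaluated numerically or with a fitted Cp(T) expression instead of the constant used here.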

Table 1: Specific Heat Capacities (Cp) of Common Substances in Chemical Synthesis

| Substance | State | Specific Heat Capacity (Cp) | Relevance to Synthesis |
|---|---|---|---|
| Water | Liquid | 4184 J·kg⁻¹·K⁻¹ [18] | Common solvent; high Cp provides excellent temperature stability and heat transfer [19]. |
| Steam | Gas | 2016.69 J·kg⁻¹·K⁻¹ [20] | Critical for design of steam-based heating systems and distillation processes. |
| Iron (Fe) | Solid | 449 J·kg⁻¹·K⁻¹ [17] [19] | Material for reactor components and catalyst; influences heating/cooling rates of equipment. |
| Copper (Cu) | Solid | 385 J·kg⁻¹·K⁻¹ [19] | Used in heat exchangers and cooling coils due to high thermal conductivity. |
| Air (Dry) | Gas | ~1005 J·kg⁻¹·K⁻¹ [20] | Medium for convective heat transfer in ovens and dryers. |

Diagram 1: Thermal Parameter Interplay. This diagram illustrates the logical relationships between the key thermodynamic parameters in a reacting system.

Experimental Protocols for Thermal Parameter Determination

Protocol A: Determination of Reaction Enthalpy (ΔH) via Calorimetry

Principle: This protocol uses a calorimeter to directly measure the heat flow (q) associated with a chemical reaction at constant pressure, thereby determining the enthalpy change (ΔH) [17] [20].

Materials:

- Reaction calorimeter (e.g., differential scanning calorimeter or equivalent)

- Solvents and reagents (high purity, degassed if necessary)

- Syringes or automated dispensers

- Temperature calibration standards (e.g., indium)

Methodology:

- Calibration: Calibrate the calorimeter's temperature and heat flow sensors using a standard of known melting point and enthalpy of fusion (e.g., indium) according to the manufacturer's instructions [20].

- Baseline Establishment: Load the solvent or reference material into the sample cell. Run a temperature program matching the planned reaction conditions to establish a stable baseline.

- Reaction Execution: a. For a solution-phase reaction, introduce the reactants into the calorimeter cell at the initial temperature (T₁). b. Initiate the reaction (e.g., by breaking an ampoule, injecting a catalyst, or starting agitation). c. Monitor the heat flow (q) as a function of time throughout the reaction until the signal returns to baseline, indicating completion.

- Data Analysis: a. Integrate the area under the heat flow versus time curve to obtain the total heat evolved or absorbed (qP). b. Since the process occurs at constant pressure, ΔH = qP. c. Normalize the calculated ΔH by the number of moles of the limiting reagent to report the enthalpy change in kJ·mol⁻¹.
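The data analysis step of Protocol A can be sketched as a trapezoidal integration followed by normalization. This is a minimal example, assuming a baseline-subtracted heat flow trace in watts, with heat absorbed by the sample counted as positive (so an exotherm appears as negative heat flow). The trace and the mole quantity are invented for illustration.

```python
def integrate_heat_flow(times_s, heat_flow_w):
    """Trapezoidal integral of heat flow (W) over time (s), giving heat in J."""
    total = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        total += 0.5 * (heat_flow_w[i] + heat_flow_w[i - 1]) * dt
    return total


def molar_enthalpy_kj(q_joules, moles_limiting):
    """Normalize heat at constant pressure to kJ per mole of limiting reagent."""
    return q_joules / moles_limiting / 1000.0


# Hypothetical baseline-subtracted trace (exothermic, so heat flow is negative).
times = [0.0, 10.0, 20.0, 30.0, 40.0]
heat_flow = [0.0, -0.2, -0.4, -0.2, 0.0]

q_p = integrate_heat_flow(times, heat_flow)
dh = molar_enthalpy_kj(q_p, moles_limiting=1e-4)

print(f"q_p = {q_p:.1f} J, dH = {dh:.1f} kJ/mol")
```

Real traces are sampled far more densely; the integration logic is unchanged, but the choice of baseline and integration limits usually dominates the uncertainty.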

Protocol B: Measurement of Specific Heat Capacity (Cp) Using Differential Scanning Calorimetry (DSC)

Principle: DSC measures the heat flow difference between a sample and an inert reference as a function of temperature, allowing for the accurate determination of Cp [20].

Materials:

- Differential Scanning Calorimeter (DSC)

- Standard alumina (Al₂O₃) crucibles

- Sample of the material (e.g., reaction solvent, mixture, or product)

- Reference material (typically an empty crucible or one filled with inert material)

Methodology:

- Instrument Preparation: Purge the DSC cell with an inert gas (e.g., N₂) at a specified flow rate. Allow the instrument to stabilize.

- Baseline Run: Load two identical, empty crucibles into the sample and reference holders. Run a temperature ramp over the desired range (e.g., 25°C to 80°C) to obtain a baseline.

- Standard Run: Replace the sample crucible with one containing a known mass of a standard reference material (e.g., sapphire) of known heat capacity. Repeat the temperature ramp.

- Sample Run: Replace the standard with a known mass of your sample. Repeat the identical temperature ramp.

- Data Analysis: a. The instrument software calculates the heat capacity of the sample (Cp,sample) by comparing the heat flow signals from the sample, standard, and baseline runs, using the formula: Cp,sample = (Dsample / Dstandard) × (mstandard / msample) × Cp,standard b. Here D is the measured heat flow displacement from the baseline and m is the mass. The mass ratio is inverted relative to the displacement ratio because, at a fixed heating rate, the displacement scales with m × Cp.
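For readers who want to check the instrument software by hand, the ratio method can be sketched as follows. Because the heat flow displacement at a fixed heating rate is proportional to mass times specific heat, the sample's specific heat is the standard's value multiplied by the displacement ratio (sample over standard) and by the mass ratio (standard over sample). The displacements and masses below are hypothetical, and the sapphire value is an approximate room-temperature figure used only for illustration.

```python
def cp_from_dsc(d_sample, d_standard, m_sample, m_standard, cp_standard):
    """Specific heat from the three-run DSC ratio method.

    d_* are baseline-corrected heat flow displacements (same units),
    m_* are masses (same units), cp_standard sets the output units.
    """
    return (d_sample / d_standard) * (m_standard / m_sample) * cp_standard


# Hypothetical readings at a single temperature.
cp_sapphire = 0.775  # J/(g*K), approximate value near room temperature
cp_sample = cp_from_dsc(
    d_sample=4.0,      # mW
    d_standard=2.0,    # mW
    m_sample=10.0,     # mg
    m_standard=20.0,   # mg
    cp_standard=cp_sapphire,
)

print(f"Cp,sample = {cp_sample:.2f} J/(g*K)")
```

In practice the calculation is repeated at each temperature of the ramp, using the tabulated heat capacity of the standard at that temperature.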

Protocol C: In-situ Thermal Monitoring for Parallel Synthesis Optimization

Principle: This protocol integrates real-time temperature monitoring within a parallel synthesis reactor (e.g., a 96-well plate system) to ensure isothermal conditions and profile reaction exotherms [15] [16].

Materials:

- Parallel synthesis reactor with temperature control (e.g., microwave reactor) [15]

- Multi-well reaction plates (e.g., 96-well polypropylene filter plates)

- Fiber-optic temperature probes or infrared thermal imaging

- Automated liquid handling system

Methodology:

- System Setup: Arrange the fiber-optic probes in designated wells across the reaction plate to capture spatial temperature variations. Alternatively, calibrate the IR thermal imager for the plate material.

- Reaction Initiation: Using an automated pipettor, dispense reactants and solvents into the wells according to the experimental design [15].

- Thermal Monitoring and Control: a. Initiate the reaction under the defined conditions (e.g., microwave irradiation, heating block) [15]. b. Record temperature data from all probes/imaging at high frequency throughout the reaction duration. c. Utilize the reactor's feedback control system to adjust power output in response to measured exotherms or endotherms, maintaining the set-point temperature.

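- Data Correlation: a. Correlate the maximum temperature deviation (ΔTmax) in each well with the reaction outcome (yield, selectivity). b. Use the measured ΔTmax and the total heat capacity of the reaction mixture (Ctotal ≈ msolvent × Cp,solvent) to estimate the heat released or absorbed: q ≈ Ctotal × ΔTmax. Dividing q by the moles of limiting reagent gives an approximate reaction enthalpy. Because some heat is lost to the plate and surroundings, treat the result as a lower-bound estimate of the magnitude.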
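The data correlation step can be sketched in a few lines. The example below estimates q ≈ Ctotal × ΔTmax for each well and computes a Pearson correlation coefficient between ΔTmax and yield. The per-well values are invented, the solvent is assumed to be water, and the helper names are hypothetical.

```python
import math


def estimate_heat_j(m_solvent_kg, cp_solvent, dt_max):
    """q ~ C_total * dT_max, with C_total approximated by the solvent alone."""
    return m_solvent_kg * cp_solvent * dt_max


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-well data: 0.5 mL of water per well.
dt_max_k = [0.5, 1.0, 1.5, 2.0]
yields_pct = [20.0, 40.0, 60.0, 80.0]

heats = [estimate_heat_j(0.0005, 4184.0, dt) for dt in dt_max_k]
r = pearson_r(dt_max_k, yields_pct)

print([round(q, 3) for q in heats])
print(f"Pearson r between dT_max and yield: {r:.3f}")
```

A strong correlation suggests the exotherm tracks conversion for that chemistry; a weak one may indicate heat losses, side reactions, or probe placement effects.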

Application in Automated Reaction Optimization

The integration of thermal parameters into machine learning (ML)-driven optimization loops represents the cutting edge of reaction development. As demonstrated by the Minerva framework, Bayesian optimization can efficiently navigate high-dimensional search spaces—including continuous variables like temperature and categorical variables like solvent choice—to identify optimal conditions for multiple objectives such as yield and selectivity [9]. In such a workflow, thermal data are not merely observational but are active inputs for the model. For instance, the magnitude of an exotherm (ΔH) can serve as a proxy for conversion in near-real-time, while the system's overall heat capacity (Cp) informs heat transfer and scaling calculations. This approach was successfully deployed in a 96-well HTE campaign for a nickel-catalyzed Suzuki reaction, where the ML-driven workflow identified high-performing conditions (76% yield, 92% selectivity) that eluded traditional, chemist-designed screens [9]. This demonstrates that a quantitative understanding of T, ΔH, and Cp enables not just control but also intelligent optimization, dramatically accelerating process development timelines from months to weeks [9].

Table 2: The Scientist's Toolkit: Essential Reagents and Materials for Thermal Analysis and Control

| Item | Function/Benefit | Application Example |
|---|---|---|
| Differential Scanning Calorimeter (DSC) | Precisely measures heat flow differences to determine Cp, ΔH, and phase transitions [20]. | Protocol B: Determining the Cp of a new solvent or reagent mixture. |
| Reaction Calorimeter | Measures heat flow in real-time under conditions mimicking large-scale reactors [20]. | Protocol A: Determining the enthalpy change (ΔH) of a novel catalytic reaction. |
| Microwave Reactor with Parallel Synthesis | Enables rapid, temperature-controlled heating of multiple reactions simultaneously [15]. | Protocol C: Optimizing peptide coupling reactions in a 96-well plate format [15]. |
| High-Throughput Automation Platform | Robotic liquid handling and solid dispensing for highly parallel experiment execution [9]. | Enables the setup of hundreds of reactions varying temperature, concentration, and solvent for ML-driven optimization [9]. |
| Fiber-Optic Temperature Probes | Inert, precise, and capable of monitoring temperature inside individual small-scale reaction vessels. | Protocol C: Real-time thermal profiling of exothermic events in parallel synthesis wells. |

Diagram 2: ML-Driven Thermal Optimization Workflow. This workflow chart outlines the iterative cycle of automated experimentation and machine learning used to optimize reactions based on yield, selectivity, and thermal data [9].

The systematic integration of temperature, enthalpy, and heat capacity as controlled variables and measured responses is fundamental to advancing the field of parallel synthesis. As this application note has detailed, protocols for determining ΔH and Cp provide critical quantitative data that feed into predictive models and optimization algorithms. Framing experimental workflows within the context of these thermodynamic principles ensures that reactions are optimized not only for yield and selectivity but also for safety and scalability from the outset. The future of drug development and chemical process research lies in the seamless fusion of automated high-throughput experimentation, real-time thermal analytics, and machine intelligence. Any rigorous treatment of thermal control protocols must therefore be anchored in these three parameters (T, ΔH, and Cp) to build a robust, data-driven foundation for the next generation of synthetic methodologies.

Automated lab reactors represent a significant advancement in laboratory technology, enabling greater efficiency, precision, and scalability in chemical synthesis and process development [22]. These systems integrate advanced software and hardware to automate the monitoring and control of chemical reactions, providing unparalleled command over reaction parameters such as temperature, pressure, and mixing [22]. For researchers in parallel synthesis, particularly within pharmaceutical development, the implementation of robust thermal control protocols is essential for ensuring reaction reproducibility, optimizing yield, and accelerating development timelines [9] [22]. This document details the application of automated reactor systems, with a focus on thermal management and real-time analytics, to provide standardized protocols for researchers.

Key System Capabilities and Specifications

Automated reactor systems offer a suite of features designed to enhance control and data capture. The table below summarizes the core capabilities relevant to parallel synthesis and thermal control.

Table 1: Key Capabilities of Automated Reactor Systems

| Feature | Description | Benefit for Parallel Synthesis & Thermal Control |
|---|---|---|
| Precision Temperature Control | Advanced jacketed reactors manage thermal process safety and ensure uniform temperature distribution [22]. | Enables accurate study of reaction kinetics and thermal hazards; essential for screening reactions at different temperatures in parallel [22] [23]. |
| Real-Time, Non-Invasive Temperature Monitoring | Use of advanced thermopile technology for contactless temperature measurement inside the reactor [23]. | Allows for precise thermal profiling of exothermic/endothermic reactions without invasive probes, ensuring process stability [23]. |
| Integrated Automated Sampling | Systems like ReactALL use SmartCap technology for fully automated, unattended sampling, quenching, dilution, and transfer to HPLC vials [23]. | Streamlines workflow for kinetic studies; provides representative, quenched samples for analysis without manual intervention [23]. |
| Fully Automated Liquid Dosing | Modules like DoseALL allow for automated addition of reagents [23]. | Critical for optimizing reagent addition protocols and for conducting multi-step reactions reproducibly [22] [23]. |
| Real-Time Analytics | In-line analytics such as color cameras for particle visualization and optional Raman spectroscopy [23]. | Provides immediate insights into reaction progress, phase changes, and composition without perturbation or cross-contamination [23]. |
| Independent Parallel Reactors | Multiple reactors (e.g., 5 in ReactALL) operating independently with individual temperature control [23]. | Allows for highly efficient reaction screening and optimization of multiple conditions simultaneously [9] [23]. |
| Machine Learning Integration | Frameworks like Minerva use Bayesian optimization for highly parallel multi-objective reaction optimisation [9]. | Dramatically reduces experimental cycles needed to identify optimal process conditions, navigating complex reaction landscapes effectively [9]. |

Experimental Protocols

Protocol: High-Throughput Reaction Optimization using Machine Learning and Automated Reactors

This protocol describes a methodology for optimizing a chemical reaction using a machine-learning-driven automated workflow, as demonstrated in recent studies [9].

1. Reaction Condition Space Definition

- Guidance: Define the discrete combinatorial set of potential reaction conditions. Parameters should include reagents, solvents, catalysts, ligands, and temperatures deemed plausible for the transformation by a chemist.

- Thermal Control Note: Set feasible temperature ranges considering solvent boiling points and reaction safety. The algorithm can automatically filter unsafe combinations (e.g., temperatures exceeding solvent boiling points) [9].
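The condition-space definition and boiling-point filter described above can be sketched as a Cartesian product followed by a safety check. This is an illustrative sketch rather than the Minerva implementation: the ligand labels are placeholders, the boiling points are approximate normal boiling points, and the rule "temperature must not exceed the boiling point" is the only constraint applied.

```python
from itertools import product

# Approximate normal boiling points in degrees Celsius (illustrative values).
BOILING_POINT_C = {"MeCN": 82.0, "DMSO": 189.0, "EtOH": 78.0}

temperatures_c = [60.0, 80.0, 100.0]
ligands = ["L1", "L2"]  # placeholder labels, not real ligand names


def is_thermally_safe(solvent, temperature_c, margin_c=0.0):
    """True if the set temperature stays at or below the boiling point minus a margin."""
    return temperature_c <= BOILING_POINT_C[solvent] - margin_c


# Full combinatorial space, then the subset that passes the thermal filter.
all_conditions = list(product(BOILING_POINT_C, ligands, temperatures_c))
safe_conditions = [
    (solvent, ligand, temp)
    for solvent, ligand, temp in all_conditions
    if is_thermally_safe(solvent, temp)
]

print(f"{len(safe_conditions)} of {len(all_conditions)} combinations retained")
```

Sealed or pressurized vessels can tolerate temperatures above the normal boiling point, so a real campaign would replace this rule with limits specific to its hardware.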

2. Initial Experimental Batch via Sobol Sampling

- Procedure: Use algorithmic quasi-random Sobol sampling to select the initial batch of experiments (e.g., a 96-well plate). This aims to maximally diversify coverage of the reaction condition space [9].
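To show how a quasi-random design spreads an initial plate across the condition space, the sketch below uses a Halton sequence as a dependency-free stand-in for Sobol sampling. The two are different low-discrepancy sequences, so this is not the sampler used in [9]; SciPy's `scipy.stats.qmc.Sobol` would be the closer match where SciPy is available. The temperature and solvent lists are hypothetical.

```python
def radical_inverse(index, base):
    """Van der Corput radical inverse of a positive integer in the given base."""
    result = 0.0
    fraction = 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result


def halton_points(n_points, bases=(2, 3)):
    """First n_points of a Halton sequence in the unit square (index starts at 1)."""
    return [
        tuple(radical_inverse(i, base) for base in bases)
        for i in range(1, n_points + 1)
    ]


def pick_conditions(points, temperatures, solvents):
    """Map unit-square points onto discrete temperature and solvent levels."""
    picks = []
    for u, v in points:
        temp = temperatures[min(int(u * len(temperatures)), len(temperatures) - 1)]
        solvent = solvents[min(int(v * len(solvents)), len(solvents) - 1)]
        picks.append((temp, solvent))
    return picks


temperatures_c = [40, 60, 80, 100]          # hypothetical levels
solvents = ["DMAc", "NMP", "DMSO", "MeCN"]  # hypothetical subset

plate = pick_conditions(halton_points(96), temperatures_c, solvents)
print(plate[:4])
```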

3. Reaction Execution & Data Acquisition

- Procedure: Execute the batch of reactions using the automated reactor system (e.g., HTE platform).

- Analysis: Analyze reaction outcomes (e.g., yield, selectivity) using standard analytical techniques (e.g., HPLC, UPLC, or inline NMR [24]).

4. Machine Learning Model Training & Next-Batch Selection

- Procedure: Input experimental results into the ML framework (e.g., Minerva). A Gaussian Process (GP) regressor is trained to predict reaction outcomes and their uncertainties for all possible conditions [9].

- Acquisition Function: An acquisition function (e.g., q-NEHVI, q-NParEgo) evaluates all conditions to balance exploration of uncertain regions and exploitation of known high-performing areas, selecting the next most promising batch of experiments [9].

5. Iteration

- Procedure: Repeat steps 3 and 4 for as many iterations as desired, terminating upon convergence, stagnation, or exhaustion of the experimental budget [9].

Protocol: Real-Time Reaction Monitoring and Self-Optimization using Inline NMR

This protocol outlines the setup for a self-optimizing flow reactor using inline NMR analytics, based on a published application note [24].

1. System Setup

- Components: Assemble a flow reactor system (e.g., Ehrfeld MMRS), a benchtop NMR spectrometer (e.g., Magritek Spinsolve Ultra), an automation control system (e.g., HiTec Zang LabManager/LabVision), and syringe pumps [24].

- Configuration: Connect the reactor outlet to the NMR flow cell. Ensure the automation software can trigger NMR measurements and receive results.

2. Analytical Method Development

- qNMR Method: Develop a quantitative NMR (qNMR) template in the NMR software. The example used a 1D EXTENDED+ protocol with 4 scans, 6.55 s acquisition time, and a 15 s repetition time [24].

- Integration Regions: Define chemical shift integration regions for reactant and product signals. For the referenced Knoevenagel condensation, these were: aromatic region (reference, 6.6-8.10 ppm), aldehyde proton from starting material (9.90-10.20 ppm), and double-bond proton from product (8.46-8.71 ppm) [24].

3. Optimization Loop Configuration

- Steady-State Check: Program the system to conduct consecutive NMR measurements until three consecutive readings show no significant change in conversion/yield, indicating steady-state [24].

- Algorithmic Control: Feed the yield data to the Bayesian optimization algorithm within the control software. The algorithm then calculates and sets new reaction parameters (e.g., flow rates, temperature) for the next experiment [24].
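The steady-state check can be sketched as a test on the spread of the most recent readings. The source specifies three consecutive readings with no significant change; the numerical tolerance used here (1 percentage point) is an assumption, as are the example readings.

```python
def is_steady_state(readings, window=3, tolerance=1.0):
    """True when the last `window` readings differ by no more than `tolerance`.

    readings: conversion or yield values in percent, oldest first.
    """
    if len(readings) < window:
        return False
    recent = readings[-window:]
    return max(recent) - min(recent) <= tolerance


# Hypothetical sequence of inline NMR yield readings after a parameter change.
nmr_yields = [40.0, 62.0, 71.0, 71.4, 71.2]

print(is_steady_state(nmr_yields[:3]))  # still rising
print(is_steady_state(nmr_yields))      # last three readings agree
```

In a control loop, the value passed to the optimizer would typically be the mean of the readings in the steady-state window rather than the last reading alone.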

4. Execution

- Procedure: Start the system. The automation will continuously run the optimization loop, adjusting parameters and recording data until a predefined number of iterations or a performance threshold is met. The trade-off between exploration and exploitation is managed by the algorithm [24].

Workflow Visualization

Diagram 1: ML-Driven Reaction Optimization Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents commonly used in advanced reaction optimization campaigns, particularly those involving non-precious metal catalysis and parallel screening.

Table 2: Key Research Reagent Solutions for Parallel Synthesis Optimization

| Reagent / Material | Function in Optimization | Application Note |
|---|---|---|
| Nickel Catalysts | Earth-abundant, lower-cost alternative to palladium for cross-coupling reactions (e.g., Suzuki, Buchwald-Hartwig) [9]. | Central to campaigns tackling challenges in non-precious metal catalysis; requires specific ligand partners for stability and activity [9]. |
| Ligand Libraries | Modulate catalyst activity, selectivity, and stability; a critical categorical variable in ML-driven optimization [9]. | Exploration of diverse ligand structures is key to finding optimal conditions, especially for challenging substrate pairs [9]. |
| Solvent Sets | Affect reaction rate, solubility, and mechanism; a key dimension in HTE screening [9]. | Selection often guided by pharmaceutical industry guidelines for environmental, health, and safety considerations [9]. |
| Piperidine | Common organic base catalyst for condensation reactions (e.g., Knoevenagel) [24]. | Used in self-optimization flow reactor demonstrations; its concentration can be a variable [24]. |
| Deuterated Solvents | Required for traditional NMR analysis of reaction outcomes. | Not needed for benchtop NMR in online monitoring when using solvent suppression techniques [24]. |

Implementing Thermal Control: From Automated Reactors to Digital Synthesis Platforms

Automated High-Throughput Screening Systems for Simultaneous Multi-Condition Testing

Automated High-Throughput Screening (HTS) has become a cornerstone technology in modern drug discovery and biomedical research, enabling the rapid testing of thousands to millions of chemical or biological compounds against therapeutic targets [25]. This approach significantly accelerates the path from initial concept identification to viable candidate selection, making it indispensable for pharmaceutical and biotechnology industries facing increasing pressure to reduce development timelines. The core principle of HTS involves the miniaturized and parallelized execution of assays, allowing researchers to efficiently explore vast experimental spaces that would be intractable with traditional one-factor-at-a-time approaches.

The integration of automation, specialized hardware, and advanced software has transformed HTS into a highly sophisticated platform capable of generating enormous datasets in remarkably short timeframes. Current market analyses project the global HTS market to reach USD 26.12 billion in 2025, growing at a compound annual growth rate (CAGR) of 10.7% to reach USD 53.21 billion by 2032 [26]. This growth is fueled by increasing adoption across pharmaceutical, biotechnology, and chemical sectors, all driven by the persistent need for faster drug discovery and development processes. North America continues to lead the market with a 39.3% share in 2025, while the Asia-Pacific region demonstrates the fastest growth trajectory with 24.5% market share [26].

System Architecture and Core Components

Automated HTS systems comprise specialized hardware and software components working in harmony to enable simultaneous multi-condition testing. These integrated systems function as complete workflows from sample preparation through data analysis, with each component playing a critical role in overall system performance.

Hardware Components

The physical infrastructure of automated HTS systems consists of several integrated instruments that handle liquid manipulation, environmental control, and signal detection:

Robotic Liquid Handlers: These systems automate the precise dispensing and mixing of small sample volumes, with capabilities ranging from microliters to nanoliters. This precision is vital for maintaining consistency across thousands of screening reactions. Recent advancements have focused on improving speed, accuracy, and reliability while operating at progressively smaller scales [25] [26]. For instance, Beckman Coulter's Cydem VT Automated Clone Screening System reduces manual steps in cell line development by up to 90%, significantly accelerating monoclonal antibody screening [26].

Microplate Handlers and Readers: Automated systems process miniaturized assay plates in 96-, 384-, 1536- or even higher-density formats to maximize throughput while minimizing reagent consumption [25]. High-sensitivity detectors and readers then capture biological signals from these plates, with recent systems like the iQue 5 High-Throughput Screening Cytometer offering continuous 24-hour runtime and measurement of up to 27 channels simultaneously [26].

Thermal Control Units: Precise temperature regulation systems maintain optimal reaction conditions across all wells, a critical factor for reaction consistency and reliability. These systems integrate with microplate platforms to ensure uniform thermal distribution, preventing edge effects or gradient formation that could compromise data quality [9].

Software and Data Management

Sophisticated software components control hardware operations, manage data collection, and analyze results:

Automation Control Software: These platforms coordinate the movements and operations of robotic components, scheduling tasks to maximize throughput and minimize conflicts in resource usage [25].

Data Analysis Pipelines: Advanced algorithms, including machine learning approaches, process raw data to identify promising compounds by filtering out noise and false positives [25] [9]. For quantitative HTS (qHTS), specialized statistical models like the Hill equation fit concentration-response data to estimate parameters such as AC50 (potency) and Emax (efficacy) [27].

Laboratory Information Management Systems (LIMS): These platforms manage the vast datasets generated during screening campaigns, with cloud-based systems increasingly enabling collaboration across teams and institutions [25]. Modern systems emphasize standards and interoperability, supporting APIs that allow integration with various hardware and software components while ensuring compliance with regulatory standards like 21 CFR Part 11 for data integrity and security [25].

Table 1: Key Market Segments in High-Throughput Screening (2025 Projections)

| Segment Category | Specific Segment | Projected Market Share (2025) | Key Drivers |
|---|---|---|---|
| Product & Services | Instruments (Liquid Handling, Detectors, Readers) | 49.3% | Advancements in automation precision and miniaturization |
| Technology | Cell-Based Assays | 33.4% | Better physiological relevance for drug discovery |
| Application | Drug Discovery | 45.6% | Need for rapid, cost-effective candidate identification |
| Region | North America | 39.3% | Established biopharma ecosystem and R&D funding |
| Region | Asia Pacific | 24.5% | Expanding pharmaceutical industry and government initiatives |

Thermal Control in Parallel Synthesis Reactions

Thermal control represents a critical parameter in parallel synthesis reactions within HTS workflows, directly influencing reaction kinetics, yield, and selectivity. Maintaining precise and uniform temperature across all reaction vessels ensures consistent conditions for meaningful comparison between different experimental conditions.

Importance of Thermal Management

In the context of parallel synthesis, thermal regulation ensures that reactions proceed under their optimal temperature conditions, which is particularly important when screening diverse chemical transformations simultaneously. The Design-Make-Test-Analyse (DMTA) cycle – a fundamental framework in drug discovery – relies heavily on reproducible synthesis conditions, where temperature control plays a crucial role in the "Make" step [28]. Inconsistent thermal profiles can introduce significant variability, compromising data quality and potentially leading to false positives or negatives in screening outcomes.

Advanced HTS systems incorporate precision thermal control modules that maintain setpoint temperatures within narrow tolerances across all wells of microtiter plates. This capability is especially valuable when exploring temperature-sensitive reactions or when employing catalysts with specific thermal activation requirements. The integration of these thermal control systems with robotic automation enables sequential or parallel screening at multiple temperatures, providing valuable kinetic data and thermodynamic parameters alongside primary screening results.

Integration with Automated Workflows

Modern automated platforms seamlessly integrate thermal control into overall system operation. Temperature regulation modules interface with scheduling software to precondition plates before liquid handling steps and maintain stability throughout incubation periods. Sophisticated systems can even implement dynamic temperature profiles, ramping between setpoints to explore different reaction phases or simulate physiological conditions within a single screening run.

The Minerva ML framework for reaction optimization exemplifies the importance of thermal parameters, incorporating temperature as a key variable in its Bayesian optimization approach [9]. By including temperature in the multidimensional search space, these systems can identify optimal thermal conditions alongside other reaction parameters such as solvent, catalyst, and concentration.

Experimental Protocols for Multi-Condition HTS

Quantitative HTS (qHTS) Concentration-Response Protocol

Quantitative HTS represents an advanced screening approach that generates concentration-response data simultaneously for thousands of compounds, providing richer datasets for candidate prioritization [27].

Materials and Reagents:

- Compound libraries dissolved in DMSO at 10 mM concentration

- Assay-specific buffers and reagents

- Cell lines or protein targets relevant to therapeutic area

- Detection reagents (fluorogenic, chromogenic, or luminescent)

Procedure:

- Plate Preparation: Dispense 5 nL of each compound solution into 1536-well assay plates using acoustic dispensing technology, creating a concentration series via serial dilution.

- Reagent Addition: Add assay reagents in volumes of 5-10 μL using automated liquid handlers, ensuring precise mixing without cross-contamination.

- Incubation: Maintain plates under controlled thermal conditions (typically 37°C for cell-based assays) for predetermined durations using precision incubators.

- Signal Detection: Measure endpoint or kinetic signals using plate readers appropriate for detection modality (fluorescence, absorbance, luminescence).

- Data Processing: Fit concentration-response curves using the Hill equation: Ri = E0 + (E∞ - E0) / [1 + exp(-h (logCi - logAC50))], where Ri is response at concentration Ci, E0 is baseline, E∞ is maximal response, h is shape parameter, and AC50 is half-maximal activity concentration [27].
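The Hill model in the data processing step can be sketched as follows. The example evaluates the equation exactly as written above and recovers AC50 from noise-free synthetic data with a coarse grid search. Two assumptions are made: the logarithm is taken as base 10, and the other parameters (E0, E∞, h) are held fixed at known values, whereas a real qHTS fit would estimate all four parameters with a nonlinear least-squares routine.

```python
import math


def hill_response(conc, e0, e_inf, h, ac50):
    """Hill model in the logistic form given in the protocol (log base 10 assumed)."""
    exponent = -h * (math.log10(conc) - math.log10(ac50))
    return e0 + (e_inf - e0) / (1.0 + math.exp(exponent))


def fit_ac50_grid(concs, responses, e0, e_inf, h):
    """Coarse grid search for AC50 minimizing the sum of squared errors."""
    candidates = [10 ** (-9 + k / 10) for k in range(61)]  # 1 nM to 1 mM
    def sse(ac50):
        return sum(
            (r - hill_response(c, e0, e_inf, h, ac50)) ** 2
            for c, r in zip(concs, responses)
        )
    return min(candidates, key=sse)


# Synthetic, noise-free concentration series (molar) with a true AC50 of 1 uM.
concs = [1e-8, 1e-7, 1e-6, 1e-5, 1e-4]
responses = [hill_response(c, 0.0, 100.0, 1.5, 1e-6) for c in concs]

best_ac50 = fit_ac50_grid(concs, responses, e0=0.0, e_inf=100.0, h=1.5)
print(f"Response at AC50: {hill_response(1e-6, 0.0, 100.0, 1.5, 1e-6):.1f}")
print(f"Recovered AC50: {best_ac50:.2e} M")
```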

Quality Control:

- Include reference controls on each plate (positive, negative, vehicle)

- Monitor Z-factor values to ensure assay robustness (Z > 0.5)

- Implement replicate testing for confirmation of active compounds
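The Z-factor check in the quality control list can be computed directly from the plate controls. The sketch below uses the standard control-based definition, Z = 1 - 3(σpos + σneg) / |μpos - μneg|, with sample standard deviations. The control readings are invented for illustration.

```python
from statistics import mean, stdev


def z_factor(positive_controls, negative_controls):
    """Z-factor from control wells: 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    spread = stdev(positive_controls) + stdev(negative_controls)
    return 1.0 - 3.0 * spread / separation


# Hypothetical control signals from one plate (arbitrary units).
positive = [100.0, 102.0, 98.0, 101.0, 99.0]
negative = [10.0, 12.0, 8.0, 11.0, 9.0]

z = z_factor(positive, negative)
print(f"Z = {z:.3f}, passes threshold: {z > 0.5}")
```

Temperature gradients across a plate tend to inflate the control standard deviations, so a falling Z-factor can be an early sign of a thermal uniformity problem.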

Machine Learning-Guided Reaction Optimization Protocol

The integration of machine learning with HTS enables more efficient exploration of complex reaction parameter spaces, particularly valuable for optimizing challenging chemical transformations [9].

Materials and Reagents:

- Building block libraries (typically 1000-3000 compounds)

- Catalyst systems (e.g., nickel or palladium catalysts for cross-couplings)

- Solvent collections covering diverse polarity and coordination properties

- Additives (bases, ligands, activators)

Procedure:

- Experimental Design: Define reaction space including categorical (solvent, ligand, additive) and continuous (temperature, concentration, time) parameters.

- Initial Sampling: Select initial batch of experiments (typically 96 conditions) using quasi-random Sobol sampling to maximize space coverage.

- Automated Execution: Perform reactions in parallel using liquid handling robots and thermal control modules.

- Analysis and Model Training: Quantify reaction outcomes (yield, selectivity) and train Gaussian Process regressors to predict outcomes for all possible conditions.

- Iterative Optimization: Use acquisition functions (q-NEHVI, q-NParEgo, or TS-HVI) to select subsequent batches balancing exploration and exploitation [9].

- Validation: Confirm optimal conditions in scale-up experiments.
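The Experimental Design and Initial Sampling steps above can be illustrated with a small, dependency-free sketch. It substitutes a Halton sequence for the Sobol sequence named in the protocol, since both are quasi-random, space-filling designs; in practice a library Sobol generator such as SciPy's `scipy.stats.qmc.Sobol` would be the natural choice. The ligand names and the temperature and concentration bounds are hypothetical placeholders.

```python
def radical_inverse(index, base):
    """Van der Corput radical inverse of `index` in `base`, a value in [0, 1)."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        index, digit = divmod(index, base)
        result += digit * fraction
        fraction /= base
    return result

SOLVENTS = ["DMAc", "NMP", "DMSO", "MeCN"]   # categorical parameter
LIGANDS = ["L1", "L2", "L3"]                 # categorical, placeholder names
TEMP_RANGE_C = (40.0, 100.0)                 # continuous, hypothetical bounds
CONC_RANGE_M = (0.05, 0.50)                  # continuous, hypothetical bounds

def scale(u, low, high):
    """Map a unit-interval value onto [low, high)."""
    return low + u * (high - low)

def initial_batch(n):
    """Return n quasi-random conditions spread over the mixed parameter space."""
    batch = []
    for i in range(1, n + 1):
        # One Halton dimension per parameter, using distinct prime bases.
        u = [radical_inverse(i, base) for base in (2, 3, 5, 7)]
        batch.append({
            "solvent": SOLVENTS[int(u[0] * len(SOLVENTS))],
            "ligand": LIGANDS[int(u[1] * len(LIGANDS))],
            "temperature_c": scale(u[2], *TEMP_RANGE_C),
            "concentration_m": scale(u[3], *CONC_RANGE_M),
        })
    return batch

conditions = initial_batch(96)  # one 96-condition starting batch
print(conditions[0])
```

Each categorical parameter is handled by binning a unit-interval coordinate, which keeps the levels roughly balanced across the batch. The model-training and acquisition steps are not shown here.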

Quality Control:

- Include internal standards for reaction quantification

- Implement LC-MS or HPLC analysis for yield determination

- Monitor model performance metrics throughout optimization campaign

Table 2: Key Research Reagent Solutions for HTS Implementation

| Reagent Category | Specific Examples | Function in HTS Workflows | Application Notes |
|---|---|---|---|
| Building Blocks | Enamine MADE collection, eMolecules, Chemspace | Provide structural diversity for library synthesis | Virtual catalogs expand accessible chemical space; pre-weighed options reduce handling [28] |
| Detection Reagents | Fluorogenic substrates, luminescent probes | Enable signal generation for activity measurement | Must be compatible with miniaturized formats and detection systems |
| Cell-Based Assay Systems | Reporter cells, primary cells, iPSCs | Provide physiologically relevant screening contexts | Melanocortin receptor assays exemplify target-specific systems [26] |
| Catalyst Systems | Ni-catalysts for Suzuki coupling, Pd-catalysts for Buchwald-Hartwig | Enable key bond-forming reactions in library synthesis | Earth-abundant alternatives (Ni vs Pd) gaining importance [9] |
| Solvent Collections | DMAc, NMP, DMSO, MeCN, alcoholic solvents | Create diverse reaction environments for optimization | Must be compatible with plasticware and automation components |

Data Analysis and Interpretation

Quantitative HTS Data Processing

The analysis of qHTS data presents unique statistical challenges, particularly when fitting nonlinear models to concentration-response data [27]. The Hill equation, while widely used, requires careful interpretation as parameter estimates can be highly variable when experimental designs fail to capture both asymptotes of the response curve.

Critical considerations for robust data analysis include:

Parameter Estimation Reliability: AC50 estimates show poor repeatability when concentration ranges fail to establish both upper and lower response asymptotes [27]. Simulation studies demonstrate that AC50 confidence intervals can span several orders of magnitude in such cases, complicating compound prioritization.

Impact of Replication: Increasing sample size through experimental replicates significantly improves parameter estimation precision. For instance, increasing from single to quintuplicate measurements reduces AC50 confidence intervals from spanning 1.47×10^4 to 4.63 for challenging compounds with AC50 = 0.001 μM and Emax = 25% [27].

Multi-Objective Optimization: Advanced screening campaigns increasingly monitor multiple endpoints simultaneously (yield, selectivity, cost). The hypervolume metric provides a comprehensive optimization performance measure by calculating the volume of objective space enclosed by identified reaction conditions [9].
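To make the hypervolume metric concrete, the sketch below computes it for the two-objective case, where it reduces to the area dominated by the observed results relative to a reference point. It assumes both objectives are maximized and uses (0, 0) as the reference. The function name and the example data are illustrative and are not drawn from [9].

```python
def hypervolume_2d(points, reference=(0.0, 0.0)):
    """Area dominated by `points` and bounded below by `reference`.

    Both objectives are maximized. Points that are not strictly better
    than the reference in both objectives contribute nothing.
    """
    ref_x, ref_y = reference
    candidates = [(x, y) for x, y in points if x > ref_x and y > ref_y]
    # Sweep from the best first objective downwards, adding a new strip
    # of area each time the second objective improves.
    candidates.sort(key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, ref_y
    for x, y in candidates:
        if y > best_y:
            area += (x - ref_x) * (y - best_y)
            best_y = y
    return area

# Made-up (yield %, selectivity %) results for three reaction conditions.
results = [(90.0, 60.0), (70.0, 85.0), (50.0, 50.0)]
hv = hypervolume_2d(results)
print(f"hypervolume = {hv:.0f}")
```

The third condition is dominated by the other two, so it adds no area. A growing hypervolume across batches indicates that the campaign is still finding better trade-offs.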

Machine Learning-Enhanced Analysis

Machine learning approaches significantly enhance HTS data analysis by identifying complex patterns beyond conventional curve-fitting.

Advanced Applications and Case Studies

Pharmaceutical Process Development

Automated HTS systems have demonstrated remarkable success in accelerating pharmaceutical process development. In one notable case study, the Minerva ML framework optimized both a Ni-catalyzed Suzuki coupling and a Pd-catalyzed Buchwald-Hartwig reaction, identifying multiple conditions achieving >95% yield and selectivity [9]. This approach directly translated to improved process conditions at scale, achieving in 4 weeks what previously required 6 months of development time.

The application of these systems in process chemistry addresses more rigorous demands than academic settings, encompassing economic, environmental, health, and safety considerations alongside traditional yield and selectivity objectives [9]. This comprehensive optimization capability makes automated HTS particularly valuable for industrial applications where multiple constraints must be satisfied simultaneously.

DNA-Encoded Library Screening

DNA-encoded library (DEL) technology represents a powerful convergence of combinatorial chemistry and HTS principles. The "split and pool" synthesis method enables creation of billion-member compound libraries with only 3000 coupling steps, compared to the 3 billion steps required for parallel synthesis of similarly sized libraries [29]. This enormous efficiency advantage makes DEL approaches particularly valuable for exploring vast chemical spaces.
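The step counts quoted above follow from simple arithmetic. The sketch below reproduces them under the assumption of three split-and-pool cycles with 1,000 building blocks each. That configuration is not stated explicitly in the text, but it is consistent with the cited figures.

```python
cycles = 3                # assumed number of split-and-pool cycles
blocks_per_cycle = 1000   # assumed building blocks used in each cycle

# Every combination of one block per cycle yields a distinct compound.
library_size = blocks_per_cycle ** cycles

# Split-and-pool: one coupling per building block in each cycle.
split_pool_steps = cycles * blocks_per_cycle

# Parallel synthesis: each compound needs its own sequence of couplings.
parallel_steps = cycles * library_size

print(f"library size:     {library_size:,}")
print(f"split-and-pool:   {split_pool_steps:,} coupling steps")
print(f"parallel:         {parallel_steps:,} coupling steps")
```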

Screening these massive libraries presents unique challenges, as traditional well-based HTS would require 1 billion wells and cost between $50 million and $1 billion for a comprehensive screen [29]. Affinity-based selection methods coupled with high-throughput DNA sequencing overcome this limitation, enabling efficient screening of enormous compound collections that would be intractable with conventional approaches.

Troubleshooting and Technical Considerations

Common Challenges and Solutions

Implementation of automated HTS systems presents several technical challenges that require careful management:

Liquid Handling Accuracy: Inaccurate pipetting represents a primary source of variability in HTS data. Regular calibration, maintenance, and verification of liquid handlers using dye-based or gravimetric methods are essential for maintaining data quality. Implementing acoustic dispensing technology can improve accuracy for nanoliter-volume transfers.

Thermal Uniformity: Edge effects and thermal gradients across microplates can introduce significant variability. Using dedicated thermal control plates rather than ambient air incubators, allowing sufficient equilibration time, and periodically rotating plates during extended incubations can improve thermal uniformity.

Data Quality Assessment: Monitoring assay performance metrics such as Z-factor (≥0.5 indicates an excellent assay), signal-to-background ratio, and coefficient of variation ensures robust screening performance. Implementing control charting for these parameters helps identify declining performance before it compromises screen integrity.

Compound Interference: False positives from compound autofluorescence, quenching, or chemical reactivity with assay components represent common challenges in HTS. Implementing orthogonal assays, counterscreens, and label-free detection methods can mitigate these issues.
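The control charting mentioned under Data Quality Assessment can be sketched with a simple Shewhart-style chart. It assumes ±3σ limits derived from a set of baseline plates; the limit choice, function names, and Z-factor values are illustrative assumptions.

```python
from statistics import mean, stdev

def control_limits(baseline, n_sigma=3.0):
    """Lower and upper control limits from baseline plate metrics."""
    center = mean(baseline)
    spread = n_sigma * stdev(baseline)
    return center - spread, center + spread

def out_of_control(values, limits):
    """Indices of plates whose metric falls outside the control limits."""
    low, high = limits
    return [i for i, v in enumerate(values) if v < low or v > high]

# Made-up per-plate Z-factors from an initial qualification run.
baseline_z = [0.72, 0.75, 0.70, 0.74, 0.73, 0.71, 0.76, 0.72]
limits = control_limits(baseline_z)

# Made-up Z-factors from later screening plates.
new_plates = [0.73, 0.70, 0.58, 0.74]
flagged = out_of_control(new_plates, limits)
print(f"limits = ({limits[0]:.3f}, {limits[1]:.3f}), flagged plates = {flagged}")
```

In this example the third plate still clears the 0.5 acceptance threshold but falls below the lower control limit, which is the kind of early drift a control chart is meant to expose.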

Future Directions and Emerging Technologies

The HTS landscape continues to evolve with several emerging technologies shaping future capabilities:

AI Integration: Artificial intelligence is rapidly reshaping the HTS landscape by enhancing efficiency, lowering costs, and driving automation in drug discovery [26]. Companies like Schrödinger, Insilico Medicine, and Thermo Fisher Scientific are leveraging AI-driven screening to optimize compound libraries, predict molecular interactions, and streamline assay design.

Advanced Detection Technologies: New detection methods including high-content imaging, mass spectrometry-based readouts, and single-cell analysis provide richer data from screening campaigns, moving beyond simple endpoint measurements to multidimensional characterization.

Miniaturization and Microfluidics: Continued progression toward smaller assay volumes (nanoliter and picoliter) increases throughput and reduces reagent costs. Microfluidic approaches enable sophisticated assay designs with temporal control and complex fluid manipulations not possible in well-based formats.

Human-Relevant Models: The FDA's push toward non-animal testing approaches is driving adoption of more physiologically relevant models including organ-on-chip systems, 3D organoids, and iPSC-derived cells for HTS applications [26]. These systems improve clinical translatability of early screening data.

Liquid-Cooled vs. Air-Cooled Architectures for Thermal Management Systems

Thermal management is a critical consideration in scientific instrumentation, particularly for parallel synthesis reactors where precise temperature control directly impacts reaction kinetics, yield, and product purity. This document provides application notes and experimental protocols for selecting and implementing air-cooled versus liquid-cooled thermal control architectures. These systems are essential for maintaining optimal operating temperatures in research-scale chemical synthesis equipment, ensuring experimental reproducibility and safeguarding sensitive instrumentation from heat-related degradation.

The escalating thermal demands of modern research equipment, driven by higher processing intensities and increased miniaturization, have rendered traditional cooling methods insufficient for many advanced applications. This analysis draws upon engineering principles from high-performance computing and energy storage to inform thermal control strategies in pharmaceutical research and development.

Comparative Analysis of Cooling Architectures

Fundamental Operating Principles

Air-Cooled Systems utilize fans or blowers to circulate ambient air over heat-generating components. Heat is conducted from critical components into attached heat sinks, which increase the available surface area, and is then carried away by convection as the fans move air across them [30]. The resulting setup is mechanically simple.

Liquid-Cooled Systems employ a closed-loop circuit where a coolant absorbs heat directly from components. In direct-to-chip cooling, cold plates mounted directly onto heat sources remove heat at the source [31]. More comprehensively, immersion cooling submerges entire systems in a dielectric fluid for maximum heat absorption [32] [31]. Liquid cooling leverages the superior heat capacity and thermal conductivity of liquids: a given volume of liquid coolant can carry approximately 3,500 times more heat than the same volume of air [33].
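As a rough plausibility check on the 3,500-fold figure, the sketch below compares the volumetric heat capacity (density × specific heat) of water and air. It assumes the cited figure refers to water versus air on a per-volume basis. The property values are approximate textbook numbers near 20 °C and atmospheric pressure and are not taken from [33].

```python
# Approximate textbook properties near 20 degC and 1 atm (assumed values).
WATER = {"density_kg_m3": 998.0, "cp_j_kg_k": 4182.0}
AIR = {"density_kg_m3": 1.204, "cp_j_kg_k": 1005.0}

def volumetric_heat_capacity(fluid):
    """Heat stored per cubic metre per kelvin, in J/(m^3*K)."""
    return fluid["density_kg_m3"] * fluid["cp_j_kg_k"]

ratio = volumetric_heat_capacity(WATER) / volumetric_heat_capacity(AIR)
print(f"water / air volumetric heat capacity ratio: {ratio:.0f}")
```

The ratio comes out in the mid-3,000s, consistent in magnitude with the quoted figure. Thermal conductivity differs by a much smaller factor, so the two properties should not be conflated.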

Quantitative Performance Comparison

Table 1: Quantitative Comparison of Air-Cooled vs. Liquid-Cooled Systems

| Performance Characteristic | Air-Cooled Systems | Liquid-Cooled Systems |
|---|---|---|
| Heat Transfer Efficiency | Low to moderate; suitable for low to medium heat loads [32] | Very high; coolant carries roughly 3,500× more heat per unit volume than air [33] |
| Temperature Control Precision | Moderate; susceptible to ambient temperature fluctuations [34] | High (±2°C); precise thermal regulation [34] |
| Typical Power Density Support | <25 kW/rack (in data center contexts) [32] | 80-120 kW/rack and beyond [31] [35] |
| Noise Level | High (can exceed 80 dB) [32] | Low (virtually silent operation) [32] |
| Energy Consumption | Higher; cooling can consume ~38% of total system energy [32] | Lower; Power Usage Effectiveness (PUE) can reach <1.2 [31] |
| Space Requirements | Bulky; requires significant space for airflow management [32] | Compact; high energy density allows smaller footprints [31] [34] |

Table 2: Application-Based Suitability Analysis

| Application Scenario | Recommended Architecture | Rationale |
|---|---|---|
| Small-scale, Low-Power Synthesis | Air-Cooled | Cost-effective for thermal loads <1 kW; simpler maintenance [34] |
| High-Throughput Parallel Synthesis | Liquid-Cooled | Superior heat flux management; precise temperature uniformity [31] |
| Temperature-Sensitive Catalytic Reactions | Liquid-Cooled | Enhanced temperature stability (±2°C) protects reaction integrity [34] |
| Portable or Field Research Equipment | Air-Cooled | No fluid circulation system; fewer leak-related risks [34] |
| Process Intensification & Scale-up Studies | Liquid-Cooled | Manages >700 W thermal design power for advanced reactors [35] |

Implementation Protocols

Protocol 1: Thermal Load Assessment for Reactor Systems

Objective: To quantitatively determine the heat generation profile of parallel synthesis reactors to inform appropriate cooling architecture selection.

Materials and Equipment:

- Thermocouples (K-type): For direct temperature measurement at reactor junctions

- Heat Flux Sensors: To measure rate of heat energy transfer

- Power Analyzer: For correlating electrical input with thermal output

- Data Acquisition System: For continuous thermal profiling

- Infrared Thermography Camera: For identifying hotspot locations and distributions

Methodology:

- Instrument Calibration: Calibrate all temperature and power measurement devices against certified standards.

- Baseline Profiling: Operate the reactor system at 25%, 50%, 75%, and 100% of maximum designed capacity.

- Data Collection:

  - Record temperature measurements at 5-second intervals at a minimum of 8 critical locations, covering reaction vessels, heating elements, control systems, and power modules.

  - Simultaneously record power consumption using the power analyzer.

  - Document thermal distribution using IR thermography at each operational level.

- Heat Load Calculation:

  - Calculate steady-state heat load using the formula Q = m × Cp × ΔT, where Q is the heat load (W), m is the mass flow rate (kg/s), Cp is the specific heat capacity (J/(kg·K)), and ΔT is the temperature differential (K).
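A short worked example of the heat load formula follows. It assumes a water coolant loop, and the flow rate and temperature rise are hypothetical values chosen only to show the arithmetic and units.

```python
def heat_load_w(mass_flow_kg_s, cp_j_kg_k, delta_t_k):
    """Steady-state heat load Q = m * Cp * dT, returned in watts."""
    return mass_flow_kg_s * cp_j_kg_k * delta_t_k

# Hypothetical measurement: water coolant (Cp about 4182 J/(kg*K))
# flowing at 0.05 kg/s and warming by 4 K across the reactor block.
q_watts = heat_load_w(mass_flow_kg_s=0.05, cp_j_kg_k=4182.0, delta_t_k=4.0)
print(f"steady-state heat load: {q_watts:.0f} W")  # about 836 W
```

Repeating the calculation at each operating level (25%, 50%, 75%, and 100% of capacity) gives the load profile against which a cooling architecture can be sized.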