Analytical Method Validation for Organic Compounds: A Comprehensive Guide from Development to Compliance


Abstract

This article provides a systematic framework for validating analytical methods used to determine organic compounds in pharmaceuticals, environmental samples, and biological matrices. Tailored for researchers, scientists, and drug development professionals, it covers foundational principles, methodological applications across different sectors, troubleshooting for complex matrices, and comparative validation strategies. By integrating current regulatory guidelines, green chemistry considerations, and advanced instrumental techniques, this guide aims to ensure the generation of reliable, accurate, and reproducible data crucial for quality control, regulatory submissions, and environmental monitoring.

Core Principles and Regulatory Landscape of Analytical Method Validation

In regulated environments such as pharmaceutical development, environmental monitoring, and food safety, analytical method validation serves as a foundational process that provides documented evidence that an analytical method is suitable for its intended purpose [1] [2]. This rigorous demonstration ensures that testing methods are accurate, consistent, and reliable across different conditions, products, and analysts, forming the bedrock of data integrity in scientific research and quality control [3]. Method validation establishes, through laboratory studies, that the performance characteristics of a method meet the requirements for its specific analytical application, providing assurance of reliability during normal use [1]. In essence, it is "the process of providing documented evidence that the method does what it is intended to do" [4].

The scope of method validation extends across various analytical applications, from drug discovery and development to environmental pollutant monitoring [5]. Globally recognized guidelines from organizations like the International Council for Harmonisation (ICH), FDA, and USP provide frameworks for validation protocols, emphasizing that validated methods are not merely regulatory obligations but fundamental components of good science [1] [2]. For researchers and drug development professionals, understanding method validation's purpose and scope is essential for ensuring regulatory compliance, consumer safety, and product quality in highly regulated industries [3].

Core Principles: Validation Versus Verification

A critical distinction in quality assurance practices lies between method validation and method verification, processes often confused but serving different roles in the analytical workflow [3]. Understanding this distinction is essential for proper implementation in regulated environments.

Method validation is a comprehensive, documented process that proves an analytical method is acceptable for its intended use through rigorous testing and statistical evaluation [3]. It is typically required when developing new methods, significantly modifying existing methods, or transferring methods between labs or instruments [3]. During validation, parameters such as accuracy, precision, specificity, detection limit, quantitation limit, linearity, and robustness are systematically assessed against predefined acceptance criteria [3].

In contrast, method verification is the process of confirming that a previously validated method performs as expected under specific laboratory conditions [3]. It is employed when adopting standard methods (e.g., compendial or published methods) in a new lab or with different instruments [3]. Verification involves limited testing—focusing on critical parameters like accuracy, precision, and detection limits—to ensure the method performs within predefined acceptance criteria in the new environment [3].

Table: Comparison of Method Validation and Verification

| Comparison Factor | Method Validation | Method Verification |
|---|---|---|
| Purpose | Prove method suitability for intended use | Confirm validated method works in specific lab |
| Scope | Comprehensive assessment of all parameters | Limited testing of critical parameters |
| When Performed | Method development, significant changes | Adopting standard methods in new environment |
| Regulatory Status | Required for new methods/submissions | Acceptable for standard methods in established workflows |
| Resource Intensity | High (time, cost, expertise) | Moderate (faster, more economical) |
| Documentation | Extensive validation protocol and report | Verification report demonstrating performance |

Key Performance Parameters in Method Validation

Method validation systematically evaluates specific performance characteristics to demonstrate that a method is reliable. The ICH Q2(R2) guideline outlines seven key criteria that together ensure a testing method works like a reliable recipe, performing consistently regardless of who runs the test or under what reasonable conditions [2].

Specificity and Selectivity

Specificity is the ability to measure accurately and specifically the analyte of interest in the presence of other components that may be expected to be present in the sample [1] [4]. For chromatographic methods, specificity ensures that a peak's response is due to a single component, typically demonstrated through resolution measurements and peak purity tests using photodiode-array detection or mass spectrometry [1] [4]. In pharmaceutical analysis, specificity must account for interference from other active ingredients, excipients, impurities, and degradation products [1].

Accuracy and Precision

Accuracy measures the exactness of an analytical method, or the closeness of agreement between an accepted reference value and the value found [1] [4]. For drug substances, accuracy is measured as the percent of analyte recovered by the assay, typically requiring data from a minimum of nine determinations over three concentration levels covering the specified range [1]. Precision, expressed as repeatability, intermediate precision, and reproducibility, measures the closeness of agreement among individual test results from repeated analyses of a homogeneous sample [1] [4]. Repeatability (intra-assay precision) requires a minimum of nine determinations covering the specified range, while intermediate precision assesses within-laboratory variations due to different days, analysts, or equipment [1]. ICH Q2 also accepts a minimum of six determinations at 100% of the test concentration as an alternative design for repeatability.

Linearity and Range

Linearity is the ability of the method to provide test results that are directly proportional to analyte concentration within a given range [1]. Range is the interval between the upper and lower concentrations of an analyte that have been demonstrated to be determined with acceptable precision, accuracy, and linearity [1] [4]. Guidelines specify that a minimum of five concentration levels be used to determine range and linearity, with the data reported as the equation for the calibration curve line and the coefficient of determination (r²) [1].
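As a minimal sketch of how the calibration equation and r² might be computed, the Python snippet below fits an ordinary least-squares line to a five-level calibration. The function name `linear_fit` and the concentration/response values are hypothetical illustrations, not data from the cited studies.

```python
from statistics import fmean


def linear_fit(conc: list[float], resp: list[float]) -> tuple[float, float, float]:
    """Ordinary least-squares fit of resp = slope * conc + intercept.

    Returns (slope, intercept, r2), where r2 is the coefficient of determination.
    """
    if len(conc) != len(resp) or len(conc) < 3:
        raise ValueError("need paired data with at least three points")
    mx, my = fmean(conc), fmean(resp)
    sxx = sum((x - mx) ** 2 for x in conc)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, resp))
    ss_tot = sum((y - my) ** 2 for y in resp)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Hypothetical five-level calibration spanning 50-150 % of the target concentration
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
resp = [1010.0, 1498.0, 2005.0, 2490.0, 3012.0]

slope, intercept, r2 = linear_fit(conc, resp)
residuals = [y - (slope * x + intercept) for x, y in zip(conc, resp)]
print(f"y = {slope:.3f}x + {intercept:.2f}, r2 = {r2:.5f}")
print("residuals:", [round(r, 2) for r in residuals])
```

The residuals are printed alongside r² because a high r² on its own can hide curvature; plotting residuals against concentration is a quick check for systematic lack of fit.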

Detection and Quantitation Limits

The limit of detection (LOD) is defined as the lowest concentration of an analyte in a sample that can be detected, but not necessarily quantitated, while the limit of quantitation (LOQ) is the lowest concentration that can be quantitated with acceptable precision and accuracy under stated operational conditions [1]. In chromatography laboratories, the most common determination uses signal-to-noise ratios (3:1 for LOD and 10:1 for LOQ) [1] [4]. Regardless of the method used, an appropriate number of samples must be analyzed at the limit to fully validate method performance [1].
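The signal-to-noise check can be sketched as follows. This assumes the pharmacopoeial convention S/N = 2H/h (H: peak height above the extrapolated baseline; h: peak-to-peak range of the baseline noise in a blank window), as used in the European Pharmacopoeia; some laboratories instead divide by the standard deviation of the noise, so the convention should be fixed in the protocol. The function names and baseline readings are hypothetical.

```python
def signal_to_noise(peak_height: float, baseline: list[float]) -> float:
    """S/N = 2H / h, with h the peak-to-peak range of the blank baseline."""
    h = max(baseline) - min(baseline)
    if h <= 0:
        raise ValueError("baseline segment shows no noise; check the data window")
    return 2.0 * peak_height / h


def limit_status(sn: float) -> str:
    """Compare an S/N value with the 3:1 (LOD) and 10:1 (LOQ) conventions."""
    if sn >= 10.0:
        return "quantifiable (S/N >= 10)"
    if sn >= 3.0:
        return "detectable only (3 <= S/N < 10)"
    return "not detected (S/N < 3)"


# Hypothetical detector readings (mAU) from a blank baseline window
baseline = [0.10, -0.05, 0.08, -0.12, 0.03, 0.06, -0.09]
for height in (0.25, 0.40, 1.20):
    sn = signal_to_noise(height, baseline)
    print(f"H = {height:.2f} mAU -> S/N = {sn:.1f}: {limit_status(sn)}")
```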

Robustness

The robustness of an analytical procedure is defined as a measure of its capacity to obtain comparable and acceptable results when perturbed by small but deliberate variations in procedural parameters [1] [4]. Robustness provides an indication of the method's suitability and reliability during normal use, typically tested by intentionally varying method parameters like eluent composition, gradient, and detector settings to study effects on analytical results [4].

Table: Analytical Performance Characteristics and Validation Methodologies

| Performance Characteristic | Definition | Typical Validation Methodology |
|---|---|---|
| Specificity | Ability to measure analyte accurately in presence of potential interferents | Resolution, peak purity tests (PDA/MS), spiked samples |
| Accuracy | Closeness of agreement between accepted reference value and value found | Percent recovery studies, comparison to reference materials |
| Precision | Closeness of agreement between a series of measurements | Repeatability (9 determinations), intermediate precision (different analysts/days) |
| Linearity | Ability to obtain results proportional to analyte concentration | Minimum 5 concentration levels, coefficient of determination (r²) |
| Range | Interval between upper and lower concentration with demonstrated precision, accuracy, linearity | Established based on linearity studies and intended application |
| LOD/LOQ | Lowest concentration that can be detected (LOD) or quantified with acceptable precision and accuracy (LOQ) | Signal-to-noise ratios (3:1 and 10:1), or standard deviation of the response and slope |
| Robustness | Capacity to remain unaffected by small, deliberate variations in parameters | Deliberate changes to method parameters (pH, temperature, flow rate) |

Experimental Protocols for Method Validation

Method Comparison Protocols

The comparison of methods experiment is critical for assessing systematic errors that occur with real patient specimens [6]. This experiment involves analyzing patient samples by both the new method (test method) and a comparative method, then estimating systematic errors based on observed differences [6]. Key considerations include selecting an appropriate comparative method (preferably a reference method), using a minimum of 40 patient specimens selected to cover the entire working range, and analyzing specimens within two hours of each other to ensure stability [6]. Data analysis should include both graphical representation (difference plots or comparison plots) and statistical calculations (linear regression for wide analytical ranges or paired t-tests for narrow ranges) [6].

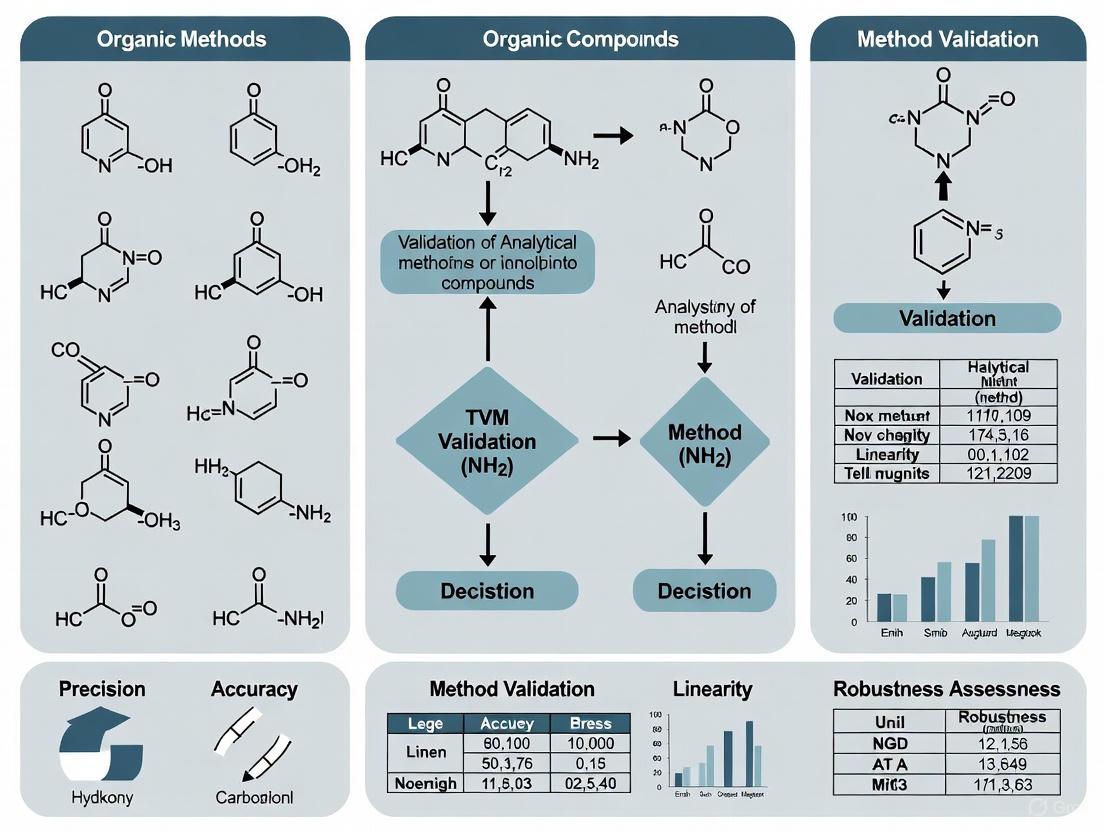

Method Comparison Workflow: This diagram outlines the sequential steps for conducting a proper method comparison study, from selecting reference methods to documentation.
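The statistics named above (paired differences for narrow ranges, linear regression for wide ranges) could be computed along these lines. This is a minimal sketch with hypothetical function names and data; it uses ordinary least squares, whereas some laboratories prefer Deming or Passing-Bablok regression when the comparative method also carries appreciable error.

```python
import math
from statistics import fmean, stdev


def compare_methods(test: list[float], comp: list[float]) -> dict[str, float]:
    """Paired-difference and regression summary for a method comparison study."""
    if len(test) != len(comp) or len(test) < 3:
        raise ValueError("need paired results for at least three specimens")
    diffs = [t - c for t, c in zip(test, comp)]
    bias = fmean(diffs)                                  # mean systematic difference
    sd_diff = stdev(diffs)                               # sample SD of the differences
    t_stat = bias / (sd_diff / math.sqrt(len(diffs)))    # paired t statistic
    # Ordinary least-squares regression of test (y) on comparative (x) results
    mx, my = fmean(comp), fmean(test)
    sxx = sum((x - mx) ** 2 for x in comp)
    slope = sum((x - mx) * (y - my) for x, y in zip(comp, test)) / sxx
    return {"bias": bias, "sd_diff": sd_diff, "t_stat": t_stat,
            "slope": slope, "intercept": my - slope * mx}


# Hypothetical paired results (a real study would use at least 40 specimens)
comparative = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]
test_method = [10.4, 20.1, 30.9, 40.6, 51.2, 60.8]
summary = compare_methods(test_method, comparative)
for key, value in summary.items():
    print(f"{key:>9}: {value:.4f}")
```

With five degrees of freedom the two-sided 5% critical t value is about 2.571, so this hypothetical bias would be judged statistically significant; whether a bias of that size is acceptable is a separate decision against the predefined allowable error.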

Validation Experimental Designs

For a full method validation, a systematic approach is essential. The process begins with defining the method's purpose, scope, and critical parameters [2]. Feasibility testing follows to determine if the method works with the specific product or matrix [2]. A robust validation plan then outlines how each validation criterion will be tested, including experimental designs and acceptance criteria [2]. Full validation constitutes the core of the process, where the method is rigorously tested against the seven ICH Q2(R2) criteria [2]. For instance, accuracy is typically evaluated by analyzing synthetic mixtures spiked with known quantities of components, while precision is demonstrated through repeatability and intermediate precision studies [1].
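One way to make "predefined acceptance criteria" concrete is to record them as data before any experiments are run and then check results against them mechanically. The sketch below assumes that approach; the `Criterion` class, the parameter keys, and every numeric limit are hypothetical placeholders that a real validation plan would have to justify.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Criterion:
    """One predefined acceptance criterion from a validation plan."""
    parameter: str
    minimum: Optional[float] = None  # inclusive lower limit (None = no lower limit)
    maximum: Optional[float] = None  # inclusive upper limit (None = no upper limit)

    def passes(self, value: float) -> bool:
        if self.minimum is not None and value < self.minimum:
            return False
        if self.maximum is not None and value > self.maximum:
            return False
        return True


def evaluate(plan: list[Criterion], results: dict[str, float]) -> dict[str, bool]:
    """Check each planned criterion; a parameter with no reported result fails."""
    return {c.parameter: (c.parameter in results and c.passes(results[c.parameter]))
            for c in plan}


# Placeholder limits for an assay method; real limits must be justified in the plan
plan = [
    Criterion("r2", minimum=0.999),
    Criterion("mean_recovery_pct", minimum=98.0, maximum=102.0),
    Criterion("repeatability_rsd_pct", maximum=2.0),
]
results = {"r2": 0.9995, "mean_recovery_pct": 100.4, "repeatability_rsd_pct": 0.8}
outcome = evaluate(plan, results)
print(outcome, "-> PASS" if all(outcome.values()) else "-> FAIL")
```

Treating a missing result as a failure mirrors the idea that every criterion in the plan must be explicitly demonstrated, not silently skipped.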

Case Studies in Method Validation

Pharmaceutical Impurity Profiling

A recent study detailed the development and validation of an RP-HPLC method for organic impurity profiling in baclofen using a Quality-by-Design (QbD) approach [7]. The method employed a Waters Symmetry C18 column with gradient elution and demonstrated linearity (R² > 0.999), accuracy (recoveries of 97.1%-102.5%), precision (RSD ≤ 5.0%), sensitivity, and specificity [7]. Forced degradation studies under acid, base, oxidative, thermal, and photolytic conditions were performed on the drug product in line with ICH Q2 criteria, and the final method conditions were assessed with a full-factorial design to identify robust operating conditions [7].

Environmental Pharmaceutical Monitoring

Another study developed and validated a green/blue UHPLC-MS/MS method for trace pharmaceutical monitoring of carbamazepine, caffeine, and ibuprofen in water and wastewater [8]. Following ICH Q2(R2) guidelines, the method proved specific, linear (correlation coefficients ≥ 0.999), precise (RSD < 5.0%), and accurate (recovery rates ranging from 77 to 160%) [8]. The method reached limits of quantification of 1000 ng/L for caffeine, 600 ng/L for ibuprofen, and 300 ng/L for carbamazepine, and incorporated sustainable principles by omitting energy-intensive evaporation steps after solid-phase extraction [8].

Multi-Residue Analytical Methods

Research on multi-residue analytical methods highlights the particular challenges in validating methods for diverse compounds. One study developed a novel protocol for 285 polar and non-polar organic pollutants in passive air samplers, combining accelerated solvent extraction and solid-phase extraction [9]. Method validation confirmed excellent linearity (r² > 0.99 within 1–1000 ng), sensitivity, robustness, and precision (relative standard deviation <30% for most compounds) [9]. The method demonstrated varying recovery rates, with approximately 60% of target compounds achieving recoveries above 60%, highlighting the importance of establishing compound-specific performance characteristics in multi-residue methods [9].

Essential Research Reagents and Materials

The following table details key research reagent solutions and essential materials used in analytical method validation for organic compounds research, along with their specific functions in the validation process.

Table: Essential Research Reagents and Materials for Analytical Method Validation

| Reagent/Material | Function in Validation | Application Example |
|---|---|---|
| Reference Standards | Provide known purity materials for accuracy, linearity, and precision studies | High-purity (>98%) individual standard solutions [9] |
| Chromatography Columns | Stationary phases for separation; critical for specificity demonstrations | Waters Symmetry C18 column for pharmaceutical impurity profiling [7] |
| Mass Spectrometry Reagents | Enable detection and quantification; essential for LOD/LOQ studies | Mobile phase additives for UHPLC-MS/MS pharmaceutical monitoring [8] |
| Derivatization Agents | Improve volatility and stability of polar analytes for GC analysis | MtBSTFA for silylation of compounds with hydroxyl, amino, or carboxyl groups [9] |
| Solid-Phase Extraction Cartridges | Sample cleanup and concentration; impact recovery and precision | CHROMABOND HLB cartridges for diverse analyte purification [9] |
| Sorbent Materials | Sample collection and retention; affect method sensitivity and robustness | N-doped carbon-coated silicon carbide foam for passive air sampling [9] |

Method validation represents an indispensable discipline in regulated scientific environments, serving as the critical bridge between analytical method development and reliable implementation. Through systematic assessment of performance characteristics including specificity, accuracy, precision, linearity, and robustness, validation provides documented evidence that methods consistently produce reliable results suitable for their intended purposes [1] [2] [4]. The distinction between full validation for new methods and verification for established methods allows for efficient resource allocation while maintaining quality standards [3].

For researchers and drug development professionals, understanding validation principles and protocols is not merely a regulatory requirement but a fundamental aspect of scientific rigor. As analytical challenges continue to evolve with increasing demands for sensitivity, specificity, and sustainability [8], the principles of method validation remain constant—ensuring that the "recipes" for analytical testing produce reliable, accurate, and reproducible results regardless of who performs the tests or under what reasonable conditions they are conducted [2]. This foundation enables scientific progress while protecting public health and maintaining the integrity of research and quality control in regulated industries.

The development and validation of robust analytical methods are fundamental to the advancement of research on organic compounds, particularly in the pharmaceutical sector. These methods provide the critical data required to ensure the identity, potency, quality, and purity of drug substances and products. Validation is a formal, required process that establishes, through extensive laboratory studies, that the performance characteristics of an analytical method are suitable for its intended analytical application [1].

This process is governed by international guidelines, primarily the International Council for Harmonisation (ICH) Q2(R2) guideline, which defines the key parameters that must be evaluated [2] [10]. Among these, accuracy, precision, specificity, limit of detection (LOD), limit of quantitation (LOQ), linearity, and robustness form the essential core set. This guide provides a detailed comparison of these parameters, outlining their experimental protocols and showcasing application data to equip researchers and drug development professionals with the knowledge to implement them effectively.

Comparative Analysis of Key Validation Parameters

The table below summarizes the purpose, experimental methodology, and common acceptance criteria for the seven key validation parameters, providing a quick-reference overview for scientists.

| Parameter | Purpose / Definition | Key Experimental Methodology | Typical Acceptance Criteria |
|---|---|---|---|
| Accuracy | Closeness of agreement between the accepted reference value and the value found [1] [11]. | Analysis of a minimum of 9 determinations over 3 concentration levels covering the specified range (e.g., 3 concentrations, 3 replicates each) [1]. | Reported as % recovery of the known, added amount. Specific criteria depend on the method [1]. |
| Precision | Closeness of agreement among individual test results from repeated analyses of a homogeneous sample [1]. | Repeatability: multiple analyses under identical conditions [2]. Intermediate precision: different days, analysts, or equipment [2] [1]. | Expressed as % Relative Standard Deviation (% RSD). Specific criteria are method-dependent [1]. |
| Specificity | Ability to assess the analyte unequivocally in the presence of other components that may be expected to be present (e.g., impurities, degradants, matrix) [1] [11]. | Demonstration of the separation of the target analyte from closely eluting compounds, impurities, or degradants. Use of peak purity tests (e.g., photodiode-array or mass spectrometry) is recommended [1]. | The method must detect only the target analyte without interference; resolution of critical pairs should be demonstrated [2]. |
| LOD | The lowest concentration of an analyte that can be detected, but not necessarily quantitated, under the stated experimental conditions [1]. | Signal-to-Noise ratio (typically 3:1) or based on the standard deviation of the response and the slope of the calibration curve (LOD = 3.3 × SD/S) [1]. | The analyte peak should be detectable and distinguishable from baseline noise. |
| LOQ | The lowest concentration of an analyte that can be quantified with acceptable precision and accuracy under the stated experimental conditions [1]. | Signal-to-Noise ratio (typically 10:1) or based on the standard deviation of the response and the slope of the calibration curve (LOQ = 10 × SD/S) [1]. | At the LOQ, the method should demonstrate acceptable accuracy (e.g., 80-120% recovery) and precision (e.g., RSD ≤ 20%) [8]. |
| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte in a given range [1]. | A minimum of 5 concentration levels across the specified range [1]. The response is plotted against concentration to generate a calibration curve. | The coefficient of determination (r²) is typically ≥ 0.990 [2] [8]. Visual inspection of the residual plot is also used. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [1] [12]. | Deliberate variation of parameters (e.g., mobile phase pH, flow rate, column temperature, wavelength) using experimental designs like full factorial or Plackett-Burman [12]. | System suitability criteria must still be met despite variations. Results are evaluated for consistency [12]. |

Experimental Protocols for Parameter Evaluation

Protocol for Accuracy and Precision

Accuracy and precision are often evaluated concurrently in a single inter-related study [1].

- Sample Preparation: Prepare a minimum of nine samples of a known, homogeneous reference material. These should cover three concentration levels (e.g., 80%, 100%, 150% of the target concentration) with three replicates at each level.

- Analysis: Analyze all samples using the method under validation.

- Accuracy Calculation: For each concentration level, calculate the mean measured value. Accuracy is expressed as the percentage recovery [1]:

% Recovery = (Mean Measured Concentration / Known Concentration) × 100

- Precision Calculation: Calculate the standard deviation and the % Relative Standard Deviation (%RSD) for the replicate measurements at each concentration level:

%RSD = (Standard Deviation / Mean) × 100

This evaluates repeatability. Intermediate precision is assessed by repeating the study on a different day, with a different analyst, or on a different instrument [2] [1].
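The two formulas above can be applied level by level as in the following minimal sketch. It assumes the sample standard deviation (n - 1 denominator) is used for %RSD; the function name and the replicate values are hypothetical, with the levels following the 80%, 100%, and 150% example.

```python
from statistics import fmean, stdev


def recovery_and_rsd(known: float, measured: list[float]) -> tuple[float, float]:
    """Return (% recovery of the mean, % RSD) for the replicates at one level."""
    if len(measured) < 2:
        raise ValueError("need at least two replicates for an RSD")
    mean = fmean(measured)
    recovery = mean / known * 100.0
    rsd = stdev(measured) / mean * 100.0  # sample SD (n - 1 denominator)
    return recovery, rsd


# Hypothetical 3 levels x 3 replicates; target concentration = 100 (arbitrary units)
levels = {
    80.0: [79.2, 80.1, 80.6],
    100.0: [99.5, 100.8, 100.2],
    150.0: [149.1, 151.0, 150.4],
}
for known, reps in levels.items():
    rec, rsd = recovery_and_rsd(known, reps)
    print(f"{known:6.1f}: recovery {rec:6.2f} %, RSD {rsd:4.2f} %")
```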

Protocol for Specificity

Specificity ensures the method is measuring only the intended analyte [2].

- Analysis of Blank: Inject a blank sample (the matrix without the analyte) to demonstrate the absence of interfering peaks at the retention time of the analyte.

- Analysis of Standard: Inject a standard solution of the pure analyte to confirm its retention time and response.

- Forced Degradation Studies: Stress the sample (e.g., with acid, base, oxidant, heat, or light) to generate degradants. Analyze the stressed sample to demonstrate that the analyte peak is pure and unaffected by degradant peaks [7].

- Peak Purity Assessment: Use a photodiode-array detector (PDA) to collect spectra across the entire analyte peak. The software then compares these spectra to confirm that the peak is homogeneous and free from co-eluting impurities [1].
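Separation of the analyte from its closest degradant or impurity is usually expressed numerically as chromatographic resolution. The sketch below assumes the tangent (baseline) peak-width form, Rs = 2(t2 - t1)/(w1 + w2); pharmacopoeias also give a half-height form, Rs = 1.18(t2 - t1)/(w0.5,1 + w0.5,2), so the chosen convention should be stated in the protocol. Names and values are hypothetical.

```python
def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Rs = 2 (t2 - t1) / (w1 + w2), using tangent (baseline) peak widths.

    Retention times and widths must share the same unit (e.g. minutes).
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)


# Hypothetical critical pair: analyte and nearest degradant from a stressed sample
rs = resolution(t1=6.20, t2=6.85, w1=0.30, w2=0.32)
print(f"Rs = {rs:.2f}")
```

A value of about 1.5 or more is commonly taken to indicate baseline separation of similar-sized Gaussian peaks; the actual limit belongs in the method's system suitability criteria.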

Protocol for LOD and LOQ

The LOD and LOQ can be determined based on the standard deviation of the response and the slope of the calibration curve.

- Calibration Curve: Run a linearity study with a minimum of 5 concentrations.

- Standard Deviation Calculation: Determine the standard deviation (SD) of the y-intercept of the regression line, or of the response for multiple low-concentration samples.

- Slope Determination: Obtain the slope (S) of the calibration curve.

- Calculation [1]:

LOD = 3.3 × (SD / S)

LOQ = 10 × (SD / S)

- Verification: Experimentally verify the calculated LOD and LOQ by analyzing samples at those concentrations to confirm they can be reliably detected and quantified, respectively [1].
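The calculation steps above might be scripted as follows. This is a minimal sketch assuming SD is taken as the standard error of the regression y-intercept, s_a = s_yx × sqrt(Σx² / (n × Sxx)); ICH Q2 also allows the residual standard deviation of the regression line (s_yx itself) instead. The function name and calibration data are hypothetical, and the results are estimates that still need the experimental verification step.

```python
import math
from statistics import fmean


def lod_loq(conc: list[float], resp: list[float]) -> tuple[float, float]:
    """LOD = 3.3*SD/S and LOQ = 10*SD/S, with SD taken as the standard error
    of the regression y-intercept and S as the calibration slope."""
    n = len(conc)
    if n != len(resp) or n < 3:
        raise ValueError("need paired data with at least three points")
    mx, my = fmean(conc), fmean(resp)
    sxx = sum((x - mx) ** 2 for x in conc)
    slope = sum((x - mx) * (y - my) for x, y in zip(conc, resp)) / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(conc, resp))
    s_yx = math.sqrt(ss_res / (n - 2))  # residual standard deviation
    sd_intercept = s_yx * math.sqrt(sum(x * x for x in conc) / (n * sxx))
    return 3.3 * sd_intercept / slope, 10.0 * sd_intercept / slope


# Hypothetical low-level calibration (concentration in ug/mL, response in area units)
conc = [0.5, 1.0, 2.0, 3.0, 4.0, 5.0]
resp = [52.0, 101.0, 205.0, 298.0, 402.0, 497.0]
lod, loq = lod_loq(conc, resp)
print(f"LOD ~ {lod:.3f} ug/mL, LOQ ~ {loq:.3f} ug/mL")
```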

Protocol for Robustness

Robustness testing uses design of experiments (DoE) to study multiple factors efficiently [12].

- Factor Selection: Identify the method parameters to be varied (e.g., mobile phase pH ±0.2 units, flow rate ±0.1 mL/min, column temperature ±2°C, organic solvent composition ±2%).

- Experimental Design: Employ a screening design, such as a fractional factorial or Plackett-Burman design. This allows for the simultaneous variation of multiple factors in a controlled set of experimental runs, making the process highly efficient [12].

- Execution: Perform the analysis according to the set of experimental conditions defined by the design.

- Evaluation: For each run, monitor critical performance criteria (e.g., retention time, resolution, tailing factor, plate count). Statistical analysis of the results will identify which parameters have a significant effect on the method's performance [12].

- System Suitability: Establish system suitability test limits based on the findings of the robustness study to ensure the method remains valid during routine use [12].
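The screening step above can be illustrated with a two-level fractional factorial. The sketch below assumes a 2^(4-1) half fraction for the four example factors, built from a full 2^3 design with the generator D = ABC, and estimates each main effect as the mean response at +1 minus the mean at -1. A Plackett-Burman design would be analysed the same way. Factor names and responses are hypothetical.

```python
from itertools import product
from statistics import fmean

# Coded levels (-1/+1) stand for e.g. pH +/-0.2, flow +/-0.1 mL/min,
# column temperature +/-2 C and organic content +/-2 %.
FACTORS = ("pH", "flow", "temp", "organic")


def half_fraction() -> list[tuple[int, int, int, int]]:
    """2^(4-1) fractional factorial: full 2^3 in A, B, C plus generator D = ABC."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]


def main_effects(design: list[tuple[int, ...]], responses: list[float]) -> dict[str, float]:
    """Effect of each factor = mean response at +1 minus mean response at -1."""
    effects = {}
    for j, name in enumerate(FACTORS):
        high = [y for run, y in zip(design, responses) if run[j] == 1]
        low = [y for run, y in zip(design, responses) if run[j] == -1]
        effects[name] = fmean(high) - fmean(low)
    return effects


design = half_fraction()
# Hypothetical resolution of the critical pair measured in each of the 8 runs
rs_values = [1.88, 1.92, 1.78, 1.82, 2.18, 2.22, 2.08, 2.12]
for name, effect in main_effects(design, rs_values).items():
    print(f"{name:>8}: {effect:+.3f}")
```

In this half fraction each main effect is aliased with a three-factor interaction, which is usually acceptable for robustness screening where such interactions are assumed negligible.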

Experimental Workflow and Logical Relationships

The following diagram illustrates the logical sequence and interrelationships between the key validation parameters and the overall method lifecycle.

Research Reagent Solutions for Analytical Validation

The table below lists essential materials and reagents commonly used in the development and validation of chromatographic methods for organic compounds.

| Reagent / Material | Function / Application | Example from Literature |
|---|---|---|
| Symmetry C18 Column | A common reversed-phase HPLC column stationary phase used for separating a wide range of organic compounds. | Used for impurity profiling of Baclofen [7]. |
| Orthophosphoric Acid | Used to adjust the pH of the aqueous mobile phase in reversed-phase chromatography, which can be critical for controlling selectivity and peak shape. | Component of Mobile Phase A in the Baclofen method [7]. |
| Tetrabutylammonium Hydroxide | An ion-pairing reagent. It can be added to the mobile phase to improve the separation of ionic or ionizable compounds by forming neutral pairs with the analytes. | Component of Mobile Phase A in the Baclofen method [7]. |
| 1-Octane Sulfonic Acid Sodium Salt | Another type of ion-pairing reagent used to modify the retention behavior of ionic analytes in reversed-phase chromatography. | Component of Mobile Phase A in the Baclofen method [7]. |
| Methanol & Water | Fundamental solvents used to create the mobile phase in reversed-phase HPLC and UHPLC. The ratio of organic to aqueous solvent is a primary factor controlling analyte retention. | Used in a green UHPLC-MS/MS method for pharmaceutical contaminants in water [8]. |
| Reference Standards | Highly purified, well-characterized compounds used to prepare calibration standards for determining linearity, accuracy, LOD, and LOQ. | Known concentrations of salicylic acid used to validate a method for an OTC acne cream [2]. |

The validation of analytical methods is a critical pillar in pharmaceutical development and quality control, ensuring that products consistently meet predefined standards for identity, strength, quality, and purity. For researchers working with organic compounds, navigating the complex landscape of regulatory requirements presents a significant challenge. Three primary regulatory bodies establish complementary yet distinct frameworks governing these activities: the International Council for Harmonisation (ICH), the U.S. Food and Drug Administration (FDA), and the United States Pharmacopeia (USP). The ICH provides internationally recognized guidelines adopted by regulatory authorities across the United States, Europe, and Japan. Simultaneously, the FDA issues binding regulations and non-binding guidance documents specific to the U.S. market, while the USP establishes legally recognized compendial standards for drugs and dietary supplements.

Understanding the nuanced relationships and specific requirements among these organizations is essential for successful regulatory compliance. This guide provides a detailed comparative analysis of the ICH, FDA, and USP frameworks specifically contextualized for analytical method validation in organic compounds research. We examine the core principles, validation parameters, experimental protocols, and practical implementation strategies across these regulatory systems, supported by structured data comparisons and procedural workflows to assist researchers, scientists, and drug development professionals in constructing compliant and scientifically robust analytical approaches.

Comparative Analysis of ICH, FDA, and USP Requirements

Core Focus and Regulatory Authority

Table 1: Regulatory Scope and Authority Comparison

| Aspect | ICH | FDA | USP |
|---|---|---|---|
| Primary Focus | International harmonization of technical requirements | Public health protection through regulation | Public quality standards for medicines and foods |
| Legal Status | Guidance (adopted by regulatory authorities) | Regulations (binding) & Guidance (non-binding) | Officially recognized in U.S. law (Food, Drug & Cosmetic Act) |
| Geographic Scope | International (US, EU, Japan, and others) | United States | Primarily United States (internationally influential) |
| Key Documents | Q2(R2), Q14, Q12 | Guidance documents (product-specific & general) | USP-NF compendium, General Chapters |
| Enforcement Mechanism | Through adopting regulatory agencies | Application review, inspections, approvals | Standards enforced by FDA |

The ICH functions as a harmonization body whose guidelines gain legal authority only when adopted by regulatory agencies like the FDA. Its Q2(R2) guideline on analytical procedure validation provides the foundational scientific principles for validation studies, while Q14 addresses analytical procedure development, together forming a comprehensive lifecycle approach [13]. The FDA implements these ICH guidelines while also issuing its own product-specific guidance documents. For instance, in March 2024, the FDA published two final guidance documents based on ICH Q2(R2) and Q14, providing recommendations on validation and development of analytical procedures to facilitate regulatory evaluations [13]. The USP establishes public standards through its USP-NF compendium, which contains monographs for specific substances and general chapters describing tests, procedures, and acceptance criteria [14]. These standards are officially recognized by the Federal Food, Drug, and Cosmetic Act, giving them legal force in the United States.

Analytical Method Validation Parameters

Table 2: Validation Parameter Requirements Across Frameworks

| Validation Parameter | ICH Q2(R2) | FDA Recommendations | USP General Chapters |
|---|---|---|---|
| Specificity/Selectivity | Required | Required (including stability-indicating properties) | <1220> Analytical Procedure Life Cycle, <1210> Statistical Tools |
| Accuracy | Required with % recovery data | Required with justification of acceptance criteria | Required with statistical confidence intervals |
| Precision (Repeatability, Intermediate Precision) | Required with statistical measures | Required, including multiple analysts, instruments, days | Required with detailed statistical analysis |
| Detection Limit (LOD) | Required for impurity methods | Required with determination methodology described | Multiple approaches described in <1210> |
| Quantitation Limit (LOQ) | Required for impurity quantification | Required with determination methodology and accuracy at LOQ | Multiple approaches described in <1210> |
| Linearity & Range | Required with correlation coefficient, residual plots | Required with demonstrated suitable range | Required with statistical measures of fit |
| Robustness | Recommended during development | Recommended, should be documented | System suitability parameters established |
| Solution Stability | Recommended | Required for sample and standard solutions | Addressed in specific monographs |

The ICH Q2(R2) guideline outlines the fundamental validation characteristics that demonstrate an analytical procedure is suitable for its intended purpose, serving as the foundational document adopted by regulatory authorities worldwide [13]. The FDA incorporates these ICH principles while adding specific contextual requirements based on product type and regulatory submission pathway. For example, the FDA's recent final guidance on "Validation and Verification of Analytical Testing Methods Used for Tobacco Products" demonstrates how ICH principles are adapted to specific product categories, addressing premarket tobacco product applications, substantial equivalence reports, and modified risk tobacco product applications [15] [16]. The USP provides detailed implementation guidance through general chapters such as <1220> Analytical Procedure Life Cycle, which incorporates both validation and verification concepts, and <1210> Statistical Tools, which offers specific methodologies for evaluating validation data [14].

Experimental Protocols for Method Validation

Protocol for Analytical Method Validation According to ICH Q2(R2)

Objective: To establish documented evidence that an analytical procedure consistently produces results that meet predetermined specifications and quality attributes when testing organic compounds.

Materials and Equipment:

- Reference standards of known purity

- Test samples representative of production material

- Appropriate chromatographic system (HPLC/UPLC), spectrophotometer, or other analytical instrumentation

- Certified reference materials for accuracy determination

- Class A volumetric glassware and analytical balance

Procedure:

Specificity Determination:

- Inject individual blank solutions to demonstrate absence of interference at retention times of interest

- Analyze samples spiked with potential impurities to demonstrate separation capability

- For stability-indicating methods, subject the sample to stress conditions (acid, base, oxidation, heat, light) and demonstrate separation of degradants from main peak

Linearity and Range Evaluation:

- Prepare a minimum of five concentration levels spanning the claimed range (e.g., 50-150% of target concentration)

- Analyze each concentration in triplicate

- Plot mean response against concentration and calculate regression statistics

- The correlation coefficient should typically be >0.999 for assay methods and >0.99 for impurity methods

- The y-intercept, expressed as a percentage of the response at the target concentration, should be small and not statistically significantly different from zero
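The regression statistics in the linearity step can be computed with the Python standard library alone. The following is a minimal sketch. It assumes the triplicate responses at each level have already been averaged. The function name `linearity_summary` and the example peak areas are hypothetical. The sketch reports the slope, intercept, correlation coefficient r, and the y-intercept as a percentage of the fitted response at the target level.

```python
import math


def linearity_summary(conc, resp, target):
    """Least-squares fit of mean response against concentration.

    conc   -- concentration levels (e.g. % of target), at least five
    resp   -- mean response at each level, in the same order as conc
    target -- target (100%) concentration, in the same units as conc
    """
    n = len(conc)
    if n != len(resp) or n < 5:
        raise ValueError("need at least five paired concentration levels")
    mx, my = sum(conc) / n, sum(resp) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    syy = sum((y - my) ** 2 for y in resp)
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, resp))
    slope = sxy / sxx
    intercept = my - slope * mx
    r = sxy / math.sqrt(sxx * syy)  # Pearson correlation coefficient
    # y-intercept as a percentage of the fitted response at the target level
    intercept_pct = 100.0 * intercept / (slope * target + intercept)
    return {"slope": slope, "intercept": intercept, "r": r,
            "intercept_pct": intercept_pct}


# Hypothetical mean peak areas at 50-150% of target
summary = linearity_summary([50, 75, 100, 125, 150],
                            [1010, 1510, 2010, 2510, 3010], target=100)
print(summary["r"] > 0.999, round(summary["intercept_pct"], 2))
```

The sketch only reports the size of the intercept. Judging whether the intercept is statistically significant would additionally require its confidence interval from the regression, which is not computed here.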

Accuracy Assessment:

- Prepare recovery samples at three levels (e.g., 80%, 100%, 120%) in triplicate using placebo spiked with known amounts of analyte

- Compare each measured value with the theoretical (spiked) value and calculate the percent recovery

- Mean recovery should be 98-102% for assay methods, 90-107% for impurity methods depending on level
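The recovery calculation can be sketched as follows. The sketch assumes the limits are applied to the mean recovery at each spiking level, with the document's 98-102% assay range as the default. The function `recovery_summary`, the level labels, and the example amounts are hypothetical.

```python
from statistics import mean


def recovery_summary(added, found, low=98.0, high=102.0):
    """Percent recovery for spiked-placebo accuracy samples.

    added -- dict: level label -> list of theoretical (spiked) amounts
    found -- dict: level label -> list of measured amounts, same order
    low, high -- acceptance limits applied to each level's mean recovery
    """
    per_level, all_recoveries = {}, []
    for level, theoretical in added.items():
        recoveries = [100.0 * m / t for m, t in zip(found[level], theoretical)]
        per_level[level] = mean(recoveries)
        all_recoveries.extend(recoveries)
    return {
        "per_level": per_level,
        "overall": mean(all_recoveries),
        "passes": all(low <= v <= high for v in per_level.values()),
    }


# Hypothetical amounts (mg) at 80%, 100% and 120% of target, triplicate
added = {"80%": [8.0] * 3, "100%": [10.0] * 3, "120%": [12.0] * 3}
found = {"80%": [7.96, 8.02, 7.99],
         "100%": [10.05, 9.97, 10.01],
         "120%": [11.94, 12.03, 12.00]}
print(recovery_summary(added, found))
```

For impurity methods, the limits would be passed explicitly (e.g. `low=90.0`). Those wider limits depend on the impurity level.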

Precision Evaluation:

- Repeatability: Analyze six independent preparations at 100% of the test concentration, performed by the same analyst on the same day

- Intermediate Precision: A different analyst, on a different day and using a different instrument, analyzes six independent preparations of the same sample

- Calculate the %RSD for each set; acceptance limits are typically ≤1% for assay methods and ≤5-10% for impurities, depending on the level
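A minimal sketch of the precision evaluation follows. It computes the sample %RSD (n − 1 denominator) for the repeatability set, the intermediate-precision set, and all twelve results pooled. Pooling is one common way of summarizing intermediate precision, and it is an assumption here. The function names and example results are hypothetical. The default 1% limit follows the assay criterion above.

```python
from statistics import mean, stdev


def percent_rsd(values):
    """Sample relative standard deviation in percent (n - 1 denominator)."""
    return 100.0 * stdev(values) / mean(values)


def precision_summary(repeatability, intermediate, limit=1.0):
    """%RSD for each set of six results and for all twelve combined."""
    combined = list(repeatability) + list(intermediate)
    result = {
        "repeatability_rsd": percent_rsd(repeatability),
        "intermediate_rsd": percent_rsd(intermediate),
        "combined_rsd": percent_rsd(combined),
    }
    # Every reported %RSD must be at or below the limit
    result["passes"] = all(v <= limit for v in result.values())
    return result


# Hypothetical assay results (% of label claim)
analyst_1 = [99.8, 100.1, 100.0, 99.9, 100.2, 100.0]
analyst_2 = [100.3, 100.1, 99.9, 100.4, 100.2, 100.0]
print(precision_summary(analyst_1, analyst_2))
```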

Quantitation and Detection Limits:

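- Signal-to-Noise Approach: Inject a series of diluted solutions and determine the concentrations at which S/N ≈ 3 (LOD) and S/N ≈ 10 (LOQ)

- Standard Deviation of the Response and the Slope: Calculate LOD = 3.3σ/S and LOQ = 10σ/S, where S is the slope of the calibration curve and σ is the standard deviation of the response, estimated from the standard deviation of the y-intercepts of regression lines or from the residual standard deviation of the regression line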
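The standard-deviation-and-slope approach can be sketched as follows. The sketch takes σ as the residual standard deviation of a least-squares calibration line (n − 2 degrees of freedom), which is one of the two options listed above. The function name and the low-level calibration data are hypothetical.

```python
import math


def lod_loq_from_calibration(conc, resp):
    """Estimate LOD = 3.3*sigma/S and LOQ = 10*sigma/S.

    sigma is the residual standard deviation of the least-squares line
    (n - 2 degrees of freedom) and S is its slope.
    """
    n = len(conc)
    if n != len(resp) or n < 3:
        raise ValueError("need at least three paired points")
    mx, my = sum(conc) / n, sum(resp) / n
    sxx = sum((x - mx) ** 2 for x in conc)
    slope = sum((x - mx) * (y - my) for x, y in zip(conc, resp)) / sxx
    intercept = my - slope * mx
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(conc, resp))
    sigma = math.sqrt(ss_res / (n - 2))
    return 3.3 * sigma / slope, 10.0 * sigma / slope


# Hypothetical calibration near the expected limits (ug/mL vs. peak area)
lod, loq = lod_loq_from_calibration([0.05, 0.10, 0.20, 0.30, 0.40],
                                    [1.9, 4.1, 8.0, 12.2, 15.9])
print(f"LOD ~ {lod:.3f} ug/mL, LOQ ~ {loq:.3f} ug/mL")
```

Limits estimated this way are usually confirmed by analysing samples prepared at or near the calculated concentrations. The calibration should also be made in the low range where the limits are expected to fall.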

Robustness Testing:

- Deliberately vary method parameters (mobile phase pH ±0.2 units, column temperature ±5°C, flow rate ±10%)

- Evaluate system suitability and chromatographic resolution under varied conditions

- Establish system suitability criteria to ensure method remains valid within defined operational ranges
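The deliberate variations above can be laid out as an experimental design before any injections are made. The following sketch assumes a full-factorial design with three settings (low, nominal, high) per parameter. The nominal HPLC conditions, the parameter names, and the function `robustness_conditions` are hypothetical.

```python
from itertools import product


def robustness_conditions(nominal, deltas, relative=frozenset()):
    """Full-factorial grid of (low, nominal, high) settings for each parameter.

    nominal  -- dict: parameter name -> nominal setting
    deltas   -- dict: parameter name -> deliberate variation
    relative -- names whose delta is a fraction of the nominal value
                (e.g. 0.10 for a +/-10% flow-rate change); others are absolute
    """
    levels = {}
    for name, value in nominal.items():
        d = deltas[name] * value if name in relative else deltas[name]
        levels[name] = (value - d, value, value + d)
    names = list(levels)
    return [dict(zip(names, combo))
            for combo in product(*(levels[n] for n in names))]


# Hypothetical nominal HPLC conditions with the variations listed above
runs = robustness_conditions(
    nominal={"pH": 3.0, "temp_C": 30.0, "flow_mL_min": 1.0},
    deltas={"pH": 0.2, "temp_C": 5.0, "flow_mL_min": 0.10},
    relative={"flow_mL_min"},
)
print(len(runs), "runs; first:", runs[0])
```

A full factorial grows as 3^k with k parameters. When more parameters are studied, fractional factorial or Plackett-Burman designs are commonly used to reduce the number of runs.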

Documentation: Maintain complete records of all raw data, chromatograms, calculations, and statistical analysis. Document any deviations from protocol with scientific justification.

FDA-Recommended Verification of Compendial Methods

Objective: To verify that a compendial USP method is suitable for use with a specific material under actual conditions of use, as required for ANDA submissions for generic drug products [17].

Materials and Equipment:

- USP reference standards

- Test samples from at least three representative batches

- All reagents, columns, and equipment as specified in USP monograph

- System suitability reference mixture

Procedure:

Method Familiarization:

- Review each step of the USP method thoroughly, including sample preparation, chromatographic conditions, and detection parameters

Specificity Verification:

- Demonstrate that the analytical procedure can unequivocally assess the analyte in the presence of components that may be expected to be present

- For assay and impurity methods, demonstrate separation from known and potential impurities

- For dissolution methods, demonstrate absence of interference from the dissolution medium and from capsule shells or tablet excipients

Precision (Repeatability) Under Actual Conditions:

- Prepare six independent test preparations from a homogeneous sample by the same analyst

- Calculate the %RSD of the results; it should not exceed the monograph limit or, if none is specified, typical acceptance criteria

Accuracy/Recovery for Assay:

- Spike placebo with known quantity of analyte at 80%, 100%, and 120% of test concentration (n=3 each level)

- Calculate the percent recovery; it should be 98.0-102.0% for both drug substance and drug product

Accuracy for Impurities:

- Spike placebo or drug substance with impurities at specification level(s)

- Recovery should be established for each impurity, typically 90-110% depending on level

Filter Compatibility (for HPLC methods):

- Compare results for filtered and centrifuged samples; they should agree within 98.0-102.0%

- Test at least two different filter types if significant adsorption is suspected

Solution Stability:

- Analyze standard and sample solutions over time (typically 24-48 hours) under storage conditions (ambient and refrigerated)

- Results should be within 98.0-102.0% of initial value
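The solution-stability evaluation reduces to expressing each time point as a percentage of the initial result. The following sketch assumes the document's 98.0-102.0% limits. It reports the last time point before the first out-of-limit result, which is one reasonable way to state a stability period. The function name and the ambient and refrigerated data are hypothetical.

```python
def stability_check(initial, timepoints, low=98.0, high=102.0):
    """Express each later result as a percentage of the initial (time-zero) value.

    initial    -- result at time zero
    timepoints -- dict: hours since preparation -> result
    Returns the % of initial at each time and the last time point before
    the first out-of-limit result (None if the first point already fails).
    """
    pct = {t: 100.0 * r / initial for t, r in sorted(timepoints.items())}
    stable_until = None
    for t, p in pct.items():
        if not low <= p <= high:
            break
        stable_until = t
    return pct, stable_until


# Hypothetical sample-solution results (same units as the initial value)
ambient = {6: 99.8, 24: 99.1, 48: 97.5}
refrigerated = {6: 100.1, 24: 99.9, 48: 99.6}
for label, data in (("ambient", ambient), ("refrigerated", refrigerated)):
    pct, until = stability_check(100.0, data)
    print(label, "stable through", until, "h")
```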

System Suitability:

- Confirm all system suitability parameters specified in monograph are met

- If no parameters specified, establish appropriate criteria based on method type

Documentation: Prepare formal verification report including protocol, raw data, results summary, and conclusion regarding method suitability. Include in ANDA submission Module 3.2.P.5 [17].

Visualization of Analytical Method Lifecycle

Analytical Method Lifecycle Flow

This diagram illustrates the integrated analytical procedure lifecycle approach described in ICH Q14 and Q2(R2), showing the continuous process from initial design through routine monitoring with feedback mechanisms for continuous improvement [13].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Analytical Method Validation

| Item | Function | Specific Application Examples | Quality/Regulatory Considerations |
|---|---|---|---|
| Certified Reference Standards | Method calibration and accuracy determination | USP reference standards for drug compounds; CRM for impurities | Certified purity with uncertainty statement; traceable to SI units |
| Chromatography Columns | Separation of analytes from matrix components | C18, C8, phenyl, HILIC for different selectivity | Multiple manufacturers from same phase; column efficiency testing |
| HPLC/UPLC Grade Solvents | Mobile phase preparation | Acetonitrile, methanol, water, buffer salts | Low UV absorbance; specified purity; particle-free |
| Volumetric Glassware | Precise solution preparation | Class A pipettes, volumetric flasks, burettes | Certified tolerance; calibration documentation |
| pH Meters and Buffers | Mobile phase pH control | Standard buffers (pH 4, 7, 10) for calibration | Regular calibration with NIST-traceable buffers |
| Filters | Sample clarification | Nylon, PVDF, PTFE membranes; various pore sizes | Compatibility testing required; extractables profile |
| System Suitability Mixtures | Daily verification of method performance | Resolution mixtures, tailing factor standards | Stability data; defined acceptance criteria |

The selection of appropriate reagents and materials forms the foundation of reliable analytical method validation. Certified reference standards provide the metrological traceability required for accurate quantification, with USP standards being legally recognized for compendial methods [14]. Chromatography column selection critically impacts method specificity, requiring evaluation of multiple columns from different manufacturers to establish robust operational ranges, as recommended in the ICH Q14 enhanced approach [13]. Filter compatibility must be experimentally verified, because adsorption of the analyte onto the filter membrane can significantly impair accuracy, particularly for low-dose compounds [17].

For regulatory submissions, comprehensive documentation of all materials used in validation studies is essential. This includes certificates of analysis for reference standards, column specifications, and verification of equipment calibration. The FDA's controlled correspondence Q&A emphasizes that appropriate justification should be provided for all critical method components, including column selection and mobile phase composition [17].

Implementation Strategies and Compliance Considerations

Successful navigation of the ICH, FDA, and USP requirements demands a strategic approach that integrates quality by design principles with practical regulatory intelligence. The following implementation framework ensures comprehensive compliance while maintaining scientific rigor:

Integrated Validation Strategy: Develop a unified validation protocol that simultaneously addresses ICH Q2(R2) validation parameters, FDA submission requirements for specific product types, and relevant USP general chapter recommendations. This integrated approach eliminates redundant testing while ensuring all regulatory expectations are met. For tobacco product applications, for instance, the FDA's final guidance on analytical testing methods provides specific recommendations on presenting validated and verified data, which should be incorporated alongside general ICH principles [15] [16].

Knowledge Management: Implement robust knowledge management systems to capture method development and validation data, as recommended in the ICH Q14 enhanced approach. This includes documenting the analytical target profile (ATP), risk assessments, experimental designs, and control strategies. This knowledge forms the basis for establishing appropriate controls and facilitates more efficient management of post-approval changes through established protocols, as described in ICH Q12 [13].

Lifecycle Management: Adopt a complete analytical procedure lifecycle approach rather than treating validation as a one-time activity. This includes ongoing monitoring of method performance through system suitability tests, trending of quality control data, and periodic method reassessment. The USP's updated publication model, which transitions to six official publications annually beginning July 2025, supports this approach by providing more frequent updates to compendial standards [18].

Regulatory Intelligence: Maintain current awareness of evolving regulatory expectations through monitoring of FDA guidance issuances, USP revision announcements, and ICH implementation updates. The FDA frequently issues product-specific guidances and Q&A documents that clarify validation expectations for particular analytical challenges, such as the recent Q&A on bacterial endotoxins testing acceptance criteria for finished drug products [17].

By implementing this comprehensive framework, researchers can establish analytically sound methods that satisfy the overlapping requirements of ICH, FDA, and USP while maintaining the flexibility needed for continuous improvement throughout the method lifecycle.

The Role of Validation in Drug Development Lifecycle (Preclinical to Commercial)

Validation serves as the foundational pillar ensuring quality, safety, and efficacy throughout the multi-stage drug development journey. From initial discovery to commercial launch and beyond, demonstrating that analytical methods, processes, and data are reliable and fit for their intended purpose is not merely good scientific practice but a regulatory requirement [19] [5]. This process provides documented evidence that an analytical method consistently performs as intended, offering assurance that results can be trusted for critical decision-making, from selecting a candidate molecule in preclinical stages to releasing a commercial batch for patient use [1]. The approach to validation is not monolithic; it strategically evolves in a phase-appropriate manner, balancing scientific rigor with resource efficiency as a product advances through the pipeline [19]. This guide examines the role and requirements of validation at each stage, providing a comparative framework for professionals navigating this complex landscape.

The Drug Development Lifecycle: A Validation Perspective

The journey of a new drug from concept to market is a long, complex, and costly endeavor, typically taking 10 to 15 years and costing over $1 billion [20]. Only about 8-10% of drug candidates entering preclinical testing ultimately achieve FDA approval [21]. Validation acts as a critical quality gate at each stage, ensuring that resources are invested only in viable candidates and that patient safety is never compromised.

The following workflow illustrates the drug development lifecycle and the corresponding focus of validation activities at each stage:

Phase-Appropriate Validation: A Comparative Analysis

The stringency and scope of validation activities escalate as a drug candidate progresses through development, reflecting the increasing stakes and data requirements. This risk-based approach ensures efficient resource allocation while safeguarding product quality and patient safety [19].

Preclinical Stage Validation

During preclinical research, the primary goal is to establish a compound's safety profile and biological activity using in vitro and in vivo models [20]. This stage may last from one to six years and requires compliance with Good Laboratory Practice (GLP) regulations [22] [21]. Validation at this stage focuses on ensuring that analytical methods are sufficiently reliable to support early safety assessments and the decision to proceed to human trials.

Key Validation Activities:

- Test Method Qualification: Ensuring methods provide accurate and reliable results for pharmacokinetic and toxicological studies [19].

- Safety Profile Determination: Assessing efficacy, toxicity, and dose parameters in non-human models [22].

- GLP Compliance: Adhering to FDA regulations for preclinical laboratory studies (21 CFR Part 58) [21].

Clinical Stage Validation

As a drug moves into human trials, validation requirements become increasingly comprehensive. The table below summarizes the evolution of validation activities across clinical development phases:

Table 1: Comparative Analysis of Validation Requirements Across Clinical Development Phases

| Development Phase | Primary Objectives | Typical Study Size | Validation Focus & Rigor | Key Validation Activities |
|---|---|---|---|---|
| Phase I [19] [21] | Safety, tolerability, pharmacokinetics | 20-100 volunteers | Method Qualification: Minimum regulatory requirements; focus on safety parameters. | Qualified facility production; test method qualification; sterilization validation (for injectables) |
| Phase II [19] | Efficacy, optimal dosing, side effects | Up to several hundred patients | Intermediate Validation: Expanded parameters; reliability for clinical decision-making. | Analytical procedure validation (accuracy, precision, linearity); validation master plan; small-scale development batch validation |
| Phase III [19] [21] | Confirm efficacy, monitor adverse reactions | 300-3,000 volunteers | Full Validation: Stringent validation for regulatory approval; high resource investment. | Production-scale process validation; product-specific validation (media fills, filters); terminal sterilization validation; conformance batch production |

This phase-appropriate approach is strategic. Approximately 70% of drugs proceed from Phase I to Phase II, 33% from Phase II to Phase III, and only 25-30% from Phase III to FDA review [21]. Given this attrition, matching validation effort to the development phase prevents over-investment in candidates unlikely to succeed.

Commercial Stage Validation

Upon FDA approval, validation enters its most rigorous and maintenance-focused phase. This includes Post-Marketing Surveillance (Phase IV) where real-world evidence is collected to monitor long-term safety and efficacy in diverse patient populations [19]. Continued monitoring of method robustness and reliability is essential, and any changes to analytical methods require careful comparability assessments to demonstrate equivalent or better performance compared to the approved method [23].

Analytical Method Validation: Core Principles & Parameters

Analytical method validation provides the documented evidence that a specific analytical procedure is suitable for its intended use [1] [5]. The International Council for Harmonisation (ICH) guideline Q2(R2) outlines the core validation parameters, whose stringency of assessment aligns with the phase-appropriate approach [19] [5].

Key Validation Parameters & Experimental Protocols

For researchers designing validation studies, the following parameters must be assessed through structured experimental protocols:

Table 2: Key Analytical Method Validation Parameters and Assessment Methodologies

| Validation Parameter | Definition | Experimental Protocol & Assessment Methodology |
|---|---|---|
| Accuracy [1] | Closeness of agreement between accepted reference and measured values. | Analyze a minimum of 9 determinations across 3 concentration levels. Report as % recovery of known, added amount. |
| Precision [1] | Closeness of agreement between individual test results from repeated analyses. | Repeatability: 9 determinations over the specified range or 6 at 100% of target; Intermediate Precision: vary days, analysts, and equipment, and report % RSD; Reproducibility: collaborative inter-laboratory studies. |
| Specificity/Selectivity [1] [5] | Ability to measure analyte accurately in presence of potential interferents (impurities, degradants, matrix). | For chromatographic methods: Demonstrate resolution of closely eluted compounds. Use peak purity tools (PDA, MS). Spiked samples prove discrimination. |
| Linearity & Range [1] | Ability to obtain results proportional to analyte concentration within a given range. | Minimum of 5 concentration levels. Report calibration curve, regression equation, coefficient of determination (r²). |
| LOD & LOQ [1] | Lowest concentration that can be detected (LOD) or quantitated with precision and accuracy (LOQ). | Signal-to-Noise: 3:1 for LOD, 10:1 for LOQ. Standard Deviation Method: LOD=3.3(SD/S), LOQ=10(SD/S), where SD is the standard deviation of the response and S is the slope of the calibration curve. |
| Robustness [1] | Capacity of a method to remain unaffected by small, deliberate variations in method parameters. | Measure impact of changes (e.g., mobile phase pH, column temperature, flow rate) on results. Establishes method's operational ranges. |

Experimental Case Study: Comparative Method Validation

A 2024 study compared two analytical techniques for quantifying Metoprolol Tartrate (MET) in tablets, illustrating a real-world validation approach [24]. The study highlights how method choice involves trade-offs between performance, cost, and environmental impact.

Experimental Protocol:

- Techniques Compared: Ultra-Fast Liquid Chromatography-Diode Array Detector (UFLC-DAD) versus UV Spectrophotometry.

- Method Validation: Both methods were validated for specificity, sensitivity, linearity, accuracy, precision, and robustness.

- Statistical Analysis: ANOVA and Student's t-test at 95% confidence level were used to compare results.

- Greenness Assessment: The Analytical GREEnness (AGREE) metric evaluated environmental impact.
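The Student's t-test used to compare the two methods can be sketched with the standard library. This is not the study's analysis, and the replicate results below are hypothetical, not the published data. The sketch assumes equal variances (pooled variance). It compares |t| with the tabulated two-sided 95% critical value for 10 degrees of freedom (2.228), which matches six replicates per method.

```python
import math
from statistics import mean, variance


def student_t(a, b):
    """Two-sample Student's t statistic with pooled variance (equal variances assumed)."""
    na, nb = len(a), len(b)
    pooled = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    t = (mean(a) - mean(b)) / math.sqrt(pooled * (1 / na + 1 / nb))
    return t, na + nb - 2


# Two-sided 95% critical value of Student's t for 10 degrees of freedom (tables)
T_CRIT_DF10 = 2.228

# Hypothetical assay results (% of label claim), six replicates per method
uflc_dad = [99.6, 100.2, 100.4, 99.8, 100.1, 99.9]
uv_spec = [100.3, 99.7, 100.6, 100.0, 99.5, 100.2]

t, df = student_t(uflc_dad, uv_spec)
print(f"t = {t:.3f}, df = {df}, significant at 95%: {abs(t) > T_CRIT_DF10}")
```

If an F-test suggests the variances differ, Welch's unequal-variance t-test is the usual alternative to the pooled form shown here.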

Key Findings:

- UFLC-DAD: Offered superior specificity and sensitivity, successfully analyzing both 50 mg and 100 mg MET tablets.

- UV Spectrophotometry: Provided simplicity, precision, and lower cost, but was limited to analyzing 50 mg tablets due to concentration constraints.

- Conclusion: For quality control of MET tablets where specificity is achievable, the UV spectrophotometric method presented a cost-effective and environmentally friendly alternative to UFLC [24]. This demonstrates the importance of aligning method selection with the specific needs of the analytical application.

The Scientist's Toolkit: Essential Reagents & Materials

Successful method validation relies on high-quality, well-characterized materials. The following table details essential reagents and their functions in analytical procedures for drug development.

Table 3: Essential Research Reagent Solutions for Analytical Method Validation

| Reagent / Material | Function & Role in Validation |
|---|---|
| Analytical Reference Standards | High-purity analyte used to prepare calibration standards for establishing linearity, range, accuracy, and LOD/LOQ. Serves as the benchmark for all quantitative measurements [1]. |
| Chromatographic Columns & Supplies | Stationary phases (e.g., C18) and mobile phase components for separation. Critical for demonstrating specificity, resolution, and robustness of chromatographic methods [24] [23]. |
| Mass Spectrometry-Grade Solvents | High-purity solvents for mobile phase preparation and sample reconstitution. Minimize background noise and ion suppression, essential for achieving required sensitivity (LOD/LOQ) and accuracy in LC-MS methods [25]. |
| System Suitability Standards | Defined mixtures used to verify that the total analytical system (instrument, reagents, column) is functioning adequately at the time of testing. Checks parameters like retention time, peak tailing, and resolution before sample analysis [1]. |
| Impurity & Degradant Standards | Isolated impurities or forced degradation products used to validate method specificity and the stability-indicating nature of an assay. Prove the method can resolve the main analyte from its potential impurities [1] [5]. |

Advanced Techniques & Future Directions

The landscape of analytical method validation continues to evolve with technological advancements. Techniques like LC-NMR-MS and HPLC-HRMS-SPE-NMR represent powerful hyphenated platforms that combine separation power with structural elucidation, enabling direct structural and biological activity characterization of metabolites from crude extracts [25]. Furthermore, the adoption of a risk-based approach for analytical method comparability is gaining traction, guiding how changes to methods (e.g., transitioning from HPLC to UHPLC) are managed in registration and post-approval stages with a focus on scientific justification rather than mere regulatory compliance [23].

Validation is not a single event but a continuous, phase-appropriate process that underpins every stage of the drug development lifecycle. From foundational method qualification in preclinical studies to full validation for market approval and ongoing comparability assessments post-launch, it provides the critical data integrity required to ensure that new therapeutics are both safe and effective. As technologies advance and regulatory frameworks evolve, the principles of validation remain constant: documented evidence, scientific rigor, and an unwavering focus on patient safety.

Within organic compounds research and drug development, the intended use of any new analytical method must be rigorously supported by experimental evidence. This process is formalized through meticulous documentation and protocol design, which serve as the foundational framework for proving a method's reliability and fitness for its specific purpose [26] [27]. In the context of validating analytical methods for organic compounds, this practice moves beyond simple record-keeping; it is an integral part of the scientific evidence, demonstrating that the method consistently produces results that are accurate, precise, and specific for the measurement of the target analyte [27]. The principles of this validation are closely aligned with those in clinical research, where documents like the study protocol, Case Report Form (CRF), and Informed Consent Form (ICF) provide the structure for ensuring data integrity and participant safety [26].

The validation process for a new analytical method must be guided by a "fit-for-purpose" approach, where the extent of validation is commensurate with the intended application of the data [27]. This article provides a comparative guide for researchers and scientists, offering objective performance data and detailed experimental protocols to support the selection and validation of analytical methods in organic chemistry and biomarker development.

Performance Benchmarking of Analytical Techniques

Selecting an appropriate analytical technique requires a clear understanding of its performance characteristics relative to alternatives. The following benchmarks are critical for evaluating methods used in the analysis of organic compounds, such as during the qualification of a new biomarker or the characterization of a synthesized molecule [27].

Key Performance Metrics for Analytical Methods

The table below summarizes core performance metrics that should be evaluated during method validation.

Table 1: Key Performance Metrics for Analytical Method Validation

| Performance Metric | Description | Typical Acceptance Criteria |
|---|---|---|
| Accuracy | The closeness of agreement between a measured value and a known true value. | Recovery of 90-110% for validation standards. |
| Precision | The closeness of agreement between a series of measurements. | Relative Standard Deviation (RSD) < 15% (or < 20% at LLOQ). |
| Specificity/Selectivity | The ability to assess the analyte unequivocally in the presence of components that may be expected to be present. | No interference from blank matrix or other compounds. |
| Linearity & Range | The ability to obtain test results proportional to the concentration of analyte over a specified range. | Coefficient of determination (R²) > 0.99. |
| Limit of Detection (LOD) | The lowest concentration of an analyte that can be detected. | Signal-to-noise ratio ≥ 3:1. |
| Limit of Quantification (LOQ) | The lowest concentration of an analyte that can be quantified with acceptable precision and accuracy. | Signal-to-noise ratio ≥ 10:1; precision and accuracy within ±20%. |
| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters. | Method performance remains within specified criteria. |

Comparison of Chromatographic Techniques

Liquid chromatography is a cornerstone of organic compound analysis. The following table provides a generalized comparison of common techniques.

Table 2: Comparison of Chromatographic Techniques for Organic Compound Analysis

| Technique | Optimal Use Case | Key Performance Differentiators | Limitations |
|---|---|---|---|
| HPLC (High-Performance Liquid Chromatography) | Analysis of a wide range of semi-volatile and non-volatile compounds. | Robust, cost-effective; well-understood. | Lower peak capacity and resolution compared to UHPLC. |
| UHPLC (Ultra-HPLC) | High-throughput analysis; complex mixtures requiring high resolution. | Higher speed, sensitivity, and resolution due to smaller particle sizes (<2 µm) and higher pressures. | Higher instrument cost; more stringent requirements for sample cleanliness. |
| LC-MS/MS (Liquid Chromatography-Tandem Mass Spectrometry) | Quantitative analysis of trace-level analytes in complex matrices (e.g., biomarkers in plasma). | Superior specificity and sensitivity; enables structural elucidation. | High instrument cost and operational complexity; requires skilled personnel. |

Experimental Protocols for Method Validation

This section provides detailed methodologies for key experiments cited in the performance benchmarking, allowing for the reproduction of results and ensuring the reliability of the validation data.

Protocol for Determining Accuracy and Precision

This protocol is designed to assess the accuracy and intra-day precision of an analytical method for quantifying a small organic molecule, such as a drug candidate or biomarker.

1. Objective: To evaluate the accuracy and precision of the analytical method over three concentration levels (low, medium, high) across five replicates per level within a single day.

2. Materials and Reagents:

- Analyte reference standard of known purity.

- Appropriate biological or solvent matrix (e.g., plasma, mobile phase).

- Volumetric flasks, pipettes, and other standard laboratory glassware.

- HPLC or LC-MS/MS system with validated instrumental conditions.

3. Procedure:

   1. Prepare a stock solution of the analyte at a concentration that is accurately known.
   2. Serially dilute the stock solution to prepare working standard solutions.
   3. Spike the working standards into the blank matrix to generate Quality Control (QC) samples at three concentrations: Low QC (near the LOQ), Medium QC (mid-range of the calibration curve), and High QC (near the upper limit of quantification).
   4. Process all QC samples (n=5 per concentration level) according to the established sample preparation procedure (e.g., protein precipitation, liquid-liquid extraction).
   5. Analyze the processed QC samples in a single batch alongside a freshly prepared calibration curve.
   6. Calculate the measured concentration for each QC sample using the calibration curve.

4. Data Analysis:

- Accuracy: For each QC level, calculate the mean measured concentration. Accuracy is expressed as % Bias = [(Mean Measured Concentration - Nominal Concentration) / Nominal Concentration] x 100.

- Precision: For each QC level, calculate the standard deviation (SD) and the Relative Standard Deviation (RSD). Precision is expressed as % RSD = (SD / Mean Measured Concentration) x 100.

- The method is typically considered acceptable if the % Bias is within ±15% and the % RSD is ≤15% at every level except the LOQ, where ±20% and ≤20%, respectively, are often acceptable.
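The % Bias and % RSD formulas above, together with the ±15% (±20% at the LOQ) criteria, can be applied per QC level as in the following sketch. The level labels (`"LLOQ"`, `"LQC"`, and so on), the choice of which labels receive the wider limit, and the replicate data are hypothetical.

```python
from statistics import mean, stdev


def qc_level_stats(nominal, measured):
    """% Bias and % RSD for one QC level, using the formulas above."""
    m = mean(measured)
    bias = 100.0 * (m - nominal) / nominal
    rsd = 100.0 * stdev(measured) / m
    return bias, rsd


def evaluate_qc(levels, wide_levels=("LLOQ",), limit=15.0, wide_limit=20.0):
    """levels: dict label -> (nominal concentration, list of measured replicates).

    Labels in wide_levels are judged against wide_limit; all others against limit.
    """
    report = {}
    for label, (nominal, measured) in levels.items():
        bias, rsd = qc_level_stats(nominal, measured)
        lim = wide_limit if label in wide_levels else limit
        report[label] = {"bias": bias, "rsd": rsd,
                         "passes": abs(bias) <= lim and rsd <= lim}
    return report


# Hypothetical intra-day results (ng/mL), five replicates per level
levels = {
    "LLOQ": (1.0, [0.92, 1.12, 1.05, 0.88, 1.10]),
    "LQC": (3.0, [2.85, 3.10, 2.95, 3.05, 2.90]),
    "MQC": (50.0, [48.9, 51.2, 50.4, 49.6, 50.8]),
    "HQC": (80.0, [77.5, 82.1, 79.4, 81.0, 78.8]),
}
for label, row in evaluate_qc(levels).items():
    print(label, f"bias {row['bias']:+.1f}%", f"RSD {row['rsd']:.1f}%", row["passes"])
```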

Protocol for Establishing the Limit of Quantification (LOQ)

1. Objective: To determine the lowest concentration of the analyte that can be quantified with acceptable precision and accuracy.

2. Materials and Reagents: As for the accuracy and precision protocol above.

3. Procedure:

   1. Prepare at least five independent samples of the analyte at a concentration presumed to be near the LOQ in the required matrix.
   2. Process and analyze these samples through the entire analytical procedure.
   3. Calculate the concentration for each sample using a calibration curve that includes lower concentrations.

4. Data Analysis:

- Calculate the % RSD (precision) and % Bias (accuracy) for the five replicates.

- The LOQ is confirmed if the % RSD is ≤20% and the % Bias is within ±20%. If these criteria are not met, repeat the experiment at a higher concentration until they are satisfied.
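The confirm-or-step-up logic of this protocol can be sketched as a scan over candidate concentrations in ascending order. The sketch returns the lowest candidate whose replicates meet the ≤20% RSD and ±20% bias criteria. The function name and the candidate data are hypothetical.

```python
from statistics import mean, stdev


def confirm_loq(candidates, max_rsd=20.0, max_bias=20.0):
    """Return the lowest candidate concentration meeting both criteria, else None.

    candidates -- dict: nominal concentration -> list of >= 5 measured results
    """
    for nominal in sorted(candidates):
        results = candidates[nominal]
        if len(results) < 5:
            raise ValueError(f"need at least five replicates at {nominal}")
        m = mean(results)
        rsd = 100.0 * stdev(results) / m
        bias = 100.0 * (m - nominal) / nominal
        if rsd <= max_rsd and abs(bias) <= max_bias:
            return nominal
    return None  # no candidate qualified; test a higher concentration


# Hypothetical replicate results (ng/mL) at two candidate LOQ levels
candidates = {
    0.5: [0.2, 0.9, 0.4, 0.8, 0.3],
    1.0: [0.95, 1.05, 1.0, 0.9, 1.1],
}
print("Confirmed LOQ:", confirm_loq(candidates))
```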

Diagram 1: Analytical Method Validation Workflow.

The Biomarker Qualification Pathway

The validation of analytical methods is a critical component of the broader biomarker qualification process, which establishes the evidentiary link between a biomarker and a biological process or clinical endpoint [27]. This pathway, as outlined by regulatory bodies, provides a structured framework for building evidence for intended use.

Diagram 2: Biomarker Qualification Pathway.

Table 3: Stages of Biomarker Qualification and Evidence Requirements

| Qualification Stage | Definition | Level of Evidence Required | Example from Oncology |
|---|---|---|---|
| Exploratory Biomarker | Used to fill gaps in understanding disease targets or variability in drug response. | Foundational scientific rationale; method is under development. | Use of gene expression panels for preclinical safety evaluation [27]. |
| Probable Valid Biomarker | Measured with a well-characterized assay; scientific evidence suggests predictive value. | Established analytical performance; evidence elucidates biological/clinical significance from a single or limited number of studies. | EGFR mutations predicting response in Non-Small Cell Lung Cancer (NSCLC) in initial clinical trials [27]. |
| Known Valid Biomarker | Widely accepted by the scientific community to predict clinical outcome. | Broad consensus achieved through independent replication and cross-validation at different sites. | HER2/neu overexpression for selecting patients for trastuzumab therapy in breast cancer [27]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

The reliability of analytical data is contingent upon the quality of materials used. The following table details key reagents and their functions in method development and validation for organic compounds research.

Table 4: Essential Research Reagents and Materials for Analytical Method Validation

| Item | Function/Application | Critical Quality Attributes |
|---|---|---|
| Reference Standard | Serves as the benchmark for identifying and quantifying the target analyte. | High purity (>95%), certificate of analysis, defined storage conditions. |
| Stable Isotope-Labeled Internal Standard | Corrects for variability in sample preparation and ionization efficiency in LC-MS/MS. | Isotopic purity, chemical stability, identical chromatographic behavior to the analyte. |
| Appropriate Matrix (e.g., Human Plasma) | Used to prepare calibration standards and QCs to mimic the test samples. | Source-relevant, free of interference for the analyte, appropriate anticoagulant. |
| HPLC-Grade Solvents | Used for mobile phase and sample preparation to prevent column damage and background noise. | Low UV absorbance, high purity, minimal particulate matter. |
| Solid-Phase Extraction (SPE) Cartridges | For sample clean-up and pre-concentration of analytes from complex matrices. | Selective sorbent chemistry, high and reproducible recovery of the analyte. |

Comprehensive documentation and rigorous protocol design are not merely administrative tasks. They are the means by which scientists provide documented evidence that an analytical method is fit for its intended use. From the initial development of a research assay to its final qualification as a known valid biomarker, each step must be captured in a detailed protocol, and its performance must be benchmarked against objective, predefined acceptance criteria [26] [27]. This systematic, evidence-based approach ensures that methods used in organic compound research and drug development are reliable, reproducible, and fit for purpose, ultimately supporting the development of safe and effective therapeutics. As the field advances, applying these principles to new technologies and data-analysis frameworks will continue to raise the standards of analytical science.

Developing and Applying Validated Methods Across Matrices and Instruments

The accuracy and sensitivity of analyses of organic compounds in complex matrices depend fundamentally on the sample preparation stage. Efficient extraction and cleanup isolate target analytes from interfering substances, which is essential for reliable chromatographic or spectrometric determination. Solid-Phase Extraction (SPE), Matrix Solid-Phase Dispersion (MSPD), and Liquid-Liquid Extraction (LLE) are three foundational techniques, each with distinct principles and application domains. Validating analytical methods for organic compound research therefore requires a sound understanding of the strengths, limitations, and protocols of each technique. This guide compares the three techniques, drawing on experimental data and detailed methodologies, to help researchers, scientists, and drug development professionals select and optimize their sample preparation workflows.

Solid-Phase Extraction (SPE)

SPE is a widely adopted sample preparation technique that separates analytes from a liquid matrix based on their affinity for a solid sorbent. The basic procedure involves loading a solution onto a solid phase that retains the target compounds, washing away undesired components, and then eluting the purified analytes with a stronger solvent [28]. Compared with traditional techniques such as LLE, its primary advantages are reduced solvent consumption, higher selectivity, and more efficient removal of matrix interferences [29]. SPE is highly versatile and is routinely used in environmental, pharmaceutical, clinical, and food analysis [28] [29].

Matrix Solid-Phase Dispersion (MSPD)

MSPD is a specialized technique particularly suited for solid, semi-solid, and viscous biological samples. It involves the direct mechanical blending of a sample with a solid sorbent material to create a homogeneous mixture. This process simultaneously disrupts the sample's structure and disperses its components onto the sorbent surface. The resulting blended material is then packed into a column, and analytes are eluted after a wash step. A key advantage of MSPD is its ability to perform extraction and cleanup in a single procedure, eliminating the need for multiple sample preparation steps such as homogenization, filtration, and precipitation [30] [31]. It has proven highly effective for complex matrices like animal tissues, plant materials, and foodstuffs [32].

Liquid-Liquid Extraction (LLE)

LLE, also known as solvent extraction, is one of the oldest and most fundamental separation methods. It relies on partitioning: compounds are separated according to their relative solubilities in two immiscible liquids, typically an aqueous phase and an organic solvent [33] [34]. The distribution of a solute between the two phases is described by its partition coefficient (K_D, for a single chemical species) or its distribution ratio (D, which accounts for all forms of the solute in each phase) [33]. LLE is simple, well established, and effective for non-polar and semi-polar analytes, but it has notable drawbacks: high solvent consumption, labor-intensive handling, and the potential formation of emulsions that complicate phase separation [29] [35].
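The distribution ratio determines how much analyte one extraction recovers. Let D be the distribution ratio, V_aq the aqueous volume, and V_org the organic volume used in each step. Each extraction leaves a fraction q = V_aq / (D·V_org + V_aq) in the aqueous phase, so n successive extractions with fresh solvent leave q^n. The sketch below applies this standard relationship. It assumes D is constant and that the phases are fully immiscible. The value D = 5 is hypothetical, and the volumes follow the 1:4 solvent-to-sample ratio used in the LLE protocol.

```python
def fraction_extracted(d_ratio, v_aq, v_org, n=1):
    """Fraction of solute moved into the organic phase after n successive extractions,
    each with a fresh organic volume v_org, assuming a constant distribution ratio D."""
    if d_ratio < 0 or v_aq <= 0 or v_org <= 0 or n < 1:
        raise ValueError("D must be >= 0, volumes > 0 and n >= 1")
    remaining = v_aq / (d_ratio * v_org + v_aq)  # fraction left in the aqueous phase per step
    return 1.0 - remaining ** n

# Hypothetical D = 5 and a 400 mL aqueous sample (1:4 solvent-to-sample)
single = fraction_extracted(5, v_aq=400, v_org=100)          # one 100 mL portion
split = fraction_extracted(5, v_aq=400, v_org=100 / 3, n=3)  # three ~33 mL portions
print(f"single: {single:.1%}, three portions: {split:.1%}")
```

Using the same total solvent volume, three smaller portions recover more analyte than a single large one. This is why LLE protocols often repeat the extraction with fresh solvent.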

Comparative Performance Analysis

The choice of sample preparation technique depends on the requirements of the analysis, chiefly the physical state of the sample, the required selectivity, the acceptable solvent consumption, and the need for automation. The table below summarizes the key characteristics of SPE, MSPD, and LLE to facilitate a direct comparison.

Table 1: Comparative overview of SPE, MSPD, and LLE techniques.

| Aspect | Solid-Phase Extraction (SPE) | Matrix Solid-Phase Dispersion (MSPD) | Liquid-Liquid Extraction (LLE) |
|---|---|---|---|
| Principle | Affinity for solid sorbent [28] | Mechanical blending & dispersion on sorbent [30] | Partitioning between immiscible liquids [33] |
| Primary Function | Selective isolation & concentration [35] | Simultaneous disruption & extraction [31] | Solvent-based partitioning [35] |
| Typical Sample Types | Liquid samples (environmental, biological) [29] | Solid & semi-solid samples (tissues, food) [30] | Liquid samples [34] |
| Selectivity | High [35] | Moderate to High [30] | Moderate [35] |
| Solvent Consumption | Low to Moderate [35] | Low [32] | High [29] [35] |
| Automation Potential | High [29] [35] | Low to Moderate | Low [35] |
| Key Advantage | High selectivity, automation, low solvent use [29] [35] | Simplicity for solid samples, minimal required equipment [30] | Simplicity, suitability for large volumes [35] |
| Key Limitation | Requires method development, cartridge cost [35] | May require optimization for new matrices | High solvent use, emulsion formation, labor-intensive [29] [35] |

Supporting Experimental Data

The theoretical comparisons are best understood when contextualized with performance data from real-world applications. The following table compiles quantitative results from studies that validated these methods for specific analytes and matrices.

Table 2: Experimental performance data for SPE, MSPD, and LLE from cited studies.

| Technique | Analytes | Matrix | Performance Data | Source |
|---|---|---|---|---|
| MSPD | 11 Endocrine-disrupting chemicals (Bisphenols, Alkylphenols, Phthalates) [30] | Mussel tissue | Recoveries: 80-100%; LOQ: 0.25-16.20 µg/kg; RSD: <7% | [30] |
| MSPD | >70 Semi-volatile organic compounds (SOCs; Pesticides, PAHs) [32] | Tadpole tissue | Recoveries: Improved vs. pressurized liquid extraction (PLE); Advantages: less time, less solvent, and more SOCs measured than PLE | [32] |
| SPE | Hydrocarbon Oxidation Products (HOPs) [36] | Groundwater | Selectivity: More effective than LLE at isolating highly oxidized, polar HOPs; LLE underrepresented polar HOPs. | [36] |
| LLE | Hydrocarbon Oxidation Products (HOPs) [36] | Groundwater | Selectivity: Selectively recovered aliphatic-like compounds. Precision: Less precise and less representative of polar HOPs than SPE. | [36] |
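Recovery figures such as those in Table 2 are typically obtained by spiking a known amount of analyte into the matrix and comparing the measured increase with the amount added. The sketch below shows that arithmetic. It assumes a single unspiked (native) result is subtracted from each spiked replicate. The function name and all values are hypothetical and are not data from the cited studies.

```python
from statistics import mean, stdev

def spike_recovery(spiked_results, unspiked_result, amount_added):
    """Per-replicate % recovery of a known spike, with the mean and %RSD of the recoveries."""
    recoveries = [100.0 * (r - unspiked_result) / amount_added for r in spiked_results]
    avg = mean(recoveries)
    rsd = 100.0 * stdev(recoveries) / avg  # sample SD relative to the mean recovery
    return recoveries, avg, rsd

# Hypothetical tissue data (µg/kg): native level 0.5, spike 10.0, five replicates
recoveries, avg, rsd = spike_recovery([9.6, 10.1, 9.2, 9.9, 10.3], unspiked_result=0.5, amount_added=10.0)
print([round(r, 1) for r in recoveries], round(avg, 1), round(rsd, 1))
```

A mean recovery and a %RSD computed this way are the quantities reported in summaries like "Recoveries: 80-100%; RSD: <7%".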

Detailed Experimental Protocols

To ensure the reproducibility of analytical methods, it is essential to document detailed experimental protocols. The workflows for each technique are also visualized in the diagrams below.

MSPD Protocol for Organic Contaminants in Biological Tissue

This protocol, adapted from a study analyzing endocrine-disrupting chemicals in mussels, outlines the key steps for a typical MSPD procedure [30].

Diagram Title: MSPD Workflow

Procedure:

- Sample Preparation: Precisely weigh a representative portion of the biological sample (e.g., 0.5 g of mussel tissue) [30].

- Dispersion: Place the sample in a glass mortar and blend it thoroughly with an appropriate sorbent (e.g., C18, silica) using a glass pestle until a homogeneous, dry-looking mixture is achieved. The mass ratio of sorbent to sample is a critical optimization parameter [30].

- Column Packing: Transfer the blended mixture to an empty solid-phase extraction column or a syringe barrel. Gently compress the material to form a packed bed and place a frit on top to secure it.

- Washing: Pass a washing solvent (e.g., a buffer or a weak solvent) through the column to remove undesired matrix components like fats and proteins.

- Elution: Elute the target analytes by passing a small volume of an appropriate organic solvent (e.g., acetonitrile, ethyl acetate) through the column. Collect the eluate for analysis.

- Analysis: The eluate can often be directly analyzed or may require mild concentration before analysis by techniques such as High-Performance Liquid Chromatography with a Diode-Array Detector (HPLC-DAD) [30].
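Because MSPD starts from a weighed solid sample, the concentration measured in the eluate must be converted back to a concentration in the tissue. One nanogram per gram equals one microgram per kilogram, which is the unit used for the mussel LOQs in Table 2. The sketch below assumes the eluate is brought to a known final volume. It applies an optional dilution factor and no recovery correction. The function name and the numbers are hypothetical.

```python
def tissue_concentration(c_extract_ng_per_ml, final_volume_ml, sample_mass_g, dilution=1.0):
    """Analyte concentration in the original tissue; ng/g is numerically equal to µg/kg."""
    if sample_mass_g <= 0:
        raise ValueError("sample mass must be positive")
    analyte_ng = c_extract_ng_per_ml * final_volume_ml * dilution  # total analyte in the extract
    return analyte_ng / sample_mass_g

# Hypothetical: 0.5 g mussel tissue, eluate taken to 1.0 mL, measured at 4.0 ng/mL
print(tissue_concentration(4.0, 1.0, 0.5))  # prints 8.0 (µg/kg)
```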

SPE Protocol for Aqueous Samples

This general protocol for isolating organic compounds from water samples can be adapted based on the specific sorbent and analytes of interest [36] [29].

Diagram Title: SPE Workflow

Procedure:

- Conditioning: Pass several column volumes of an organic solvent (e.g., methanol) through the SPE sorbent bed to wet and activate it. This is followed by a volume of water or a buffer to create an optimal environment for analyte retention [29].

- Sample Loading: Apply the aqueous sample to the column. The sample pH may need adjustment to suppress ionization and thereby enhance the retention of ionizable analytes on reversed-phase sorbents. Pass the sample through the column under gentle vacuum or positive pressure.

- Washing: Rinse the sorbent bed with a weak solvent or buffer (e.g., water or a 5% methanol solution) to remove weakly retained interferences without displacing the target analytes.

- Drying: A drying step (e.g., under vacuum or by centrifugation) may be incorporated to remove residual water, especially if the elution solvent is immiscible with water.

- Elution: The analytes are recovered by passing a small volume of a strong, appropriate solvent (e.g., pure methanol, acetonitrile, or dichloromethane) through the column [36]. The collected eluate contains the purified and concentrated analytes.
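Eluting with a small volume concentrates the analytes. The effective enrichment factor is the ratio of sample volume to final extract volume, scaled by the fractional recovery. The sketch below uses this relationship to estimate how an instrumental LOQ translates into a method LOQ in the original water sample. It assumes that matrix effects and any post-elution evaporation losses are negligible. The function name and all values are hypothetical.

```python
def spe_enrichment(sample_volume_ml, final_extract_ml, recovery_pct=100.0):
    """Effective preconcentration factor: volume ratio scaled by fractional recovery."""
    if sample_volume_ml <= 0 or final_extract_ml <= 0:
        raise ValueError("volumes must be positive")
    return (sample_volume_ml / final_extract_ml) * (recovery_pct / 100.0)

# Hypothetical: 250 mL groundwater, 1.0 mL final extract, 85% recovery
ef = spe_enrichment(250, 1.0, recovery_pct=85)
instrument_loq_ug_per_l = 0.85                 # hypothetical LOQ of the extract measurement
method_loq_ug_per_l = instrument_loq_ug_per_l / ef
print(f"enrichment factor: {ef:.1f}, method LOQ: {method_loq_ug_per_l:.4f} µg/L")
```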

LLE Protocol for Non-Polar Analytes

This protocol follows standard LLE practices, such as those used in EPA methods for water analysis [36] [37].

Diagram Title: LLE Workflow

Procedure:

- pH Adjustment: Adjust the pH of the aqueous sample to a value that ensures the target analytes are in their neutral form. For instance, a pH of 10 is used to extract bases and neutrals, while a pH of 2 is used for acidic compounds [36].

- Solvent Addition: Add a water-immiscible organic solvent (e.g., dichloromethane, pentane, or ethyl acetate) to the sample in a separatory funnel. A typical solvent-to-sample ratio is 1:4 (v/v) [36].

- Equilibration: Seal the vessel and shake it vigorously for several minutes to ensure intimate contact between the two phases and allow the solutes to partition.

- Phase Separation: Allow the mixture to stand until the two liquid phases separate completely. This can be accelerated by centrifugation.

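- Collection: Collect the organic phase (the extract), which contains the extracted analytes. Dichloromethane is denser than water and forms the lower layer, so it can be drained off directly; pentane and ethyl acetate form the upper layer. The extraction can be repeated with fresh solvent, and the extracts combined, to improve recovery.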

- Concentration: The combined organic extracts may be dried with anhydrous sodium sulfate and then concentrated to a small volume by evaporation under a gentle stream of nitrogen or with a rotary evaporator.
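The pH adjustment in the first step works because, to a first approximation, only the neutral form of an acid or base partitions into the organic solvent. The sketch below uses the Henderson-Hasselbalch relationship to estimate the neutral fraction and the resulting distribution ratio, D = K_D × neutral fraction. It assumes a monoprotic analyte and negligible partitioning of ion pairs. For a base, `pka` refers to its conjugate acid. The pKa and K_D values are hypothetical.

```python
def neutral_fraction(ph, pka, acid=True):
    """Un-ionized fraction of a monoprotic acid (or base, acid=False) via Henderson-Hasselbalch.
    For a base, pka refers to its conjugate acid (BH+)."""
    exponent = (ph - pka) if acid else (pka - ph)
    return 1.0 / (1.0 + 10.0 ** exponent)

def distribution_ratio(k_d, ph, pka, acid=True):
    """D = K_D x neutral fraction, assuming only the neutral form enters the organic phase."""
    return k_d * neutral_fraction(ph, pka, acid)

# Hypothetical carboxylic acid (pKa 4.2, K_D 100) and amine (conjugate-acid pKa 9.0, K_D 100)
for ph in (2, 7, 10):
    d_acid = distribution_ratio(100, ph, 4.2)
    d_base = distribution_ratio(100, ph, 9.0, acid=False)
    print(f"pH {ph:>2}: D(acid) = {d_acid:8.3f}, D(base) = {d_base:8.3f}")
```

Under these assumptions, the acid extracts well only at low pH and the base only at high pH. This matches the protocol's use of an acidic extraction for acids and a basic extraction for bases and neutrals.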

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of any extraction method relies on the use of appropriate materials. The following table lists key reagents and their functions in the featured protocols.

Table 3: Essential research reagents and materials for extraction techniques.

| Item | Function/Description | Common Examples / Notes |
|---|---|---|
| C18 Sorbent | A reversed-phase sorbent used to retain non-polar analytes from polar matrices. | Octadecylsilyl (ODS)-silica; used in SPE and as the dispersant in MSPD [30] [32]. |
| Silica Sorbent | A polar, normal-phase sorbent used for retention of polar compounds. | Used for sample cleanup in MSPD and SPE [32] [29]. |