Balancing Act: Multi-Objective Optimization for Maximizing Drug Yield and Minimizing Environmental Impact

Abstract

This article explores the critical application of multi-objective optimization (MOO) in drug discovery and development, addressing the inherent conflict between maximizing therapeutic yield and minimizing environmental impact. Aimed at researchers and drug development professionals, it provides a comprehensive framework from foundational principles to advanced applications. The content covers core MOO concepts like Pareto optimality, surveys methodological approaches including evolutionary algorithms and machine learning, and addresses troubleshooting for high-dimensional problems. Through validation techniques and comparative analysis of real-world case studies, this review serves as a strategic guide for implementing MOO to develop efficacious, safe, and environmentally sustainable pharmaceuticals.

The Core Conflict: Understanding Trade-Offs Between Drug Efficacy, Safety, and Environmental Sustainability

Molecular discovery is fundamentally a multi-objective optimization (MOO) problem that requires identifying molecules which optimally balance multiple, often competing, properties [1]. Traditional drug discovery approaches, which often optimize for a single objective like binding affinity or use a weighted sum (scalarization) to combine goals, struggle to reveal the inherent trade-offs between objectives and impose assumptions about their relative importance early in the design process [1]. In contrast, modern MOO frameworks, particularly Pareto optimization, do not require pre-defined weights and instead map the set of solutions—the Pareto front—where no single objective can be improved without worsening another [2] [1]. This provides medicinal chemists with a comprehensive view of the design landscape, enabling more informed decision-making. The application of MOO in drug discovery has been greatly accelerated by computational approaches, which can navigate the vast chemical space (estimated at ~10^60 molecules) to find candidate compounds that simultaneously satisfy critical objectives such as high efficacy, low toxicity, good solubility, and high binding affinity to target proteins [2] [3].
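
The difference between scalarization and Pareto optimization can be seen on a toy example. The sketch below uses four hypothetical candidates scored on two normalized objectives (the names "potency" and "greenness" and all values are illustrative assumptions, not data from the cited studies). It shows that a weighted sum can miss a Pareto-optimal candidate that lies on a non-convex part of the front.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (potency, greenness), both to be maximized


def dominates(a: Point, b: Point) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points: List[Point]) -> List[Point]:
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def weighted_sum_best(points: List[Point], w: float) -> Point:
    """Pick the best point for weight w on objective 1 and (1 - w) on objective 2."""
    return max(points, key=lambda p: w * p[0] + (1 - w) * p[1])


# Hypothetical candidates: (normalized potency, normalized greenness).
candidates = [(0.9, 0.1), (0.5, 0.4), (0.1, 0.9), (0.3, 0.3)]

front = pareto_front(candidates)
# Sweep weights from 0.0 to 1.0 and collect every point a weighted sum can select.
reachable = {weighted_sum_best(candidates, i / 10) for i in range(11)}

print("Pareto front:", front)
print("Reachable by weighted sum:", sorted(reachable))
```

Here the balanced candidate (0.5, 0.4) is Pareto-optimal but is never selected by any of the tested weights, which is one reason Pareto-based methods are preferred when the shape of the trade-off is not known in advance.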

Key Multi-Objective Optimization Algorithms and Workflows

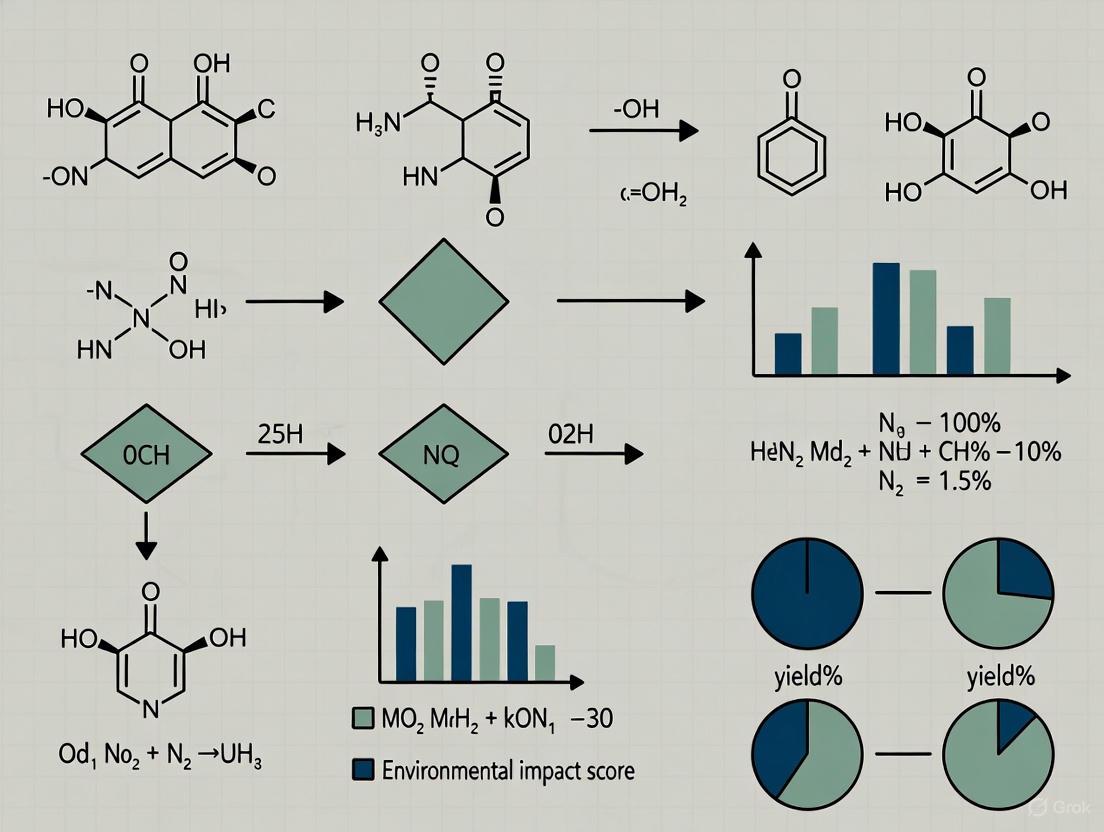

MOO algorithms in drug discovery can be broadly classified into mathematical programming-based and population-based approaches, with the latter, especially evolutionary computation, flourishing since the 1990s [4]. These algorithms typically aim to discover the entire Pareto front or incorporate decision-maker preferences to bias the search toward preferred regions of the objective space [4]. The following workflow diagram illustrates the general stages of a multi-objective molecular optimization process.

Dominant Algorithmic Approaches

Evolutionary Algorithms (EAs): Inspired by natural selection, EAs maintain a population of candidate molecules that undergo selection, crossover, and mutation over multiple generations. They excel at global search and exploring complex chemical landscapes with minimal reliance on large training datasets [2]. Key variants include the Non-dominated Sorting Genetic Algorithm II (NSGA-II) and NSGA-III, renowned for their efficiency and ability to maintain population diversity [2] [5].

Monte Carlo Tree Search (MCTS): This heuristic search procedure navigates the chemical space atom-by-atom or fragment-by-fragment. Algorithms like ParetoDrug use MCTS to find molecules on the Pareto front, balancing exploration of new regions with exploitation of known promising areas guided by pre-trained generative models [6].

Deep Generative Models: Models such as Variational Autoencoders (VAEs) learn a continuous latent representation of molecular structures. Multi-objective optimization is then performed in this latent space, where sampled vectors are decoded into molecules with desired properties [3]. Frameworks like ScafVAE integrate scaffold-aware generation with surrogate models for property prediction [3].

Bayesian Optimization: This model-guided approach is particularly useful when property evaluations are expensive. It builds a probabilistic model to predict molecular performance and strategically selects the most promising candidates for the next evaluation, aiming to maximize the information gain about the Pareto front [1].

Performance Comparison of Leading MOO Algorithms

The following tables consolidate quantitative performance data from benchmark studies, providing a direct comparison of various MOO algorithms used in drug discovery.

Table 1: Performance on GuacaMol Benchmark Tasks (Tasks 1-5) and DAP Kinases Task (Task 6) [2]

| Algorithm | Success Rate (%) | Dominating Hypervolume | Geometric Mean | Internal Similarity |
|---|---|---|---|---|
| MoGA-TA | Higher than baselines | Higher than baselines | Higher than baselines | Data Not Specified |
| NSGA-II | Lower than MoGA-TA | Lower than MoGA-TA | Lower than MoGA-TA | Data Not Specified |
| GB-EPI | Lower than MoGA-TA | Lower than MoGA-TA | Lower than MoGA-TA | Data Not Specified |

Note: The six benchmark tasks require optimization of 3-5 objectives, including Tanimoto similarity to a target drug, TPSA, logP, molecular weight, number of rotatable bonds, and specific biological activities. MoGA-TA's improved crowding distance and population update strategy led to superior performance across metrics [2].

Table 2: Benchmark Results for Target-Aware Molecule Generation (ParetoDrug) [6]

| Metric | ParetoDrug | Ligand-Based Methods | Target-Scoring-Based Methods |
|---|---|---|---|
| Docking Score | Higher (Better) | Lower | Mixed |
| Uniqueness (%) | High | Lower | Lower |
| QED | Optimized | Optimized | Not Explicitly Optimized |
| SA Score | Optimized | Optimized | Not Explicitly Optimized |
| LogP | Within drug-like range | May be optimized | May be optimized |

Note: Benchmark involved 100 protein targets from BindingDB; 10 molecules generated per target. ParetoDrug demonstrated a remarkable ability to generate novel, unique molecules with satisfactory binding affinities and drug-like properties simultaneously [6].

Table 3: Performance Profile of Broader MOO Algorithm Families [7]

| Algorithm Family | Computational Efficiency | Scalability | Interpretability | Best-Suited Scenario |
|---|---|---|---|---|
| Bio-inspired (e.g., NSGA-II/III) | Medium | High | Medium | Complex, non-linear problems |
| ML-Enhanced/Hybrid | Low (Training) / High (Deployment) | Very High | Low | High-dimensional, dynamic environments |
| Mathematical Theory-Driven | High | Low | High | Problems with well-defined mathematical properties |
| Physics-Inspired | Medium | Medium | Medium | Continuous optimization problems |

Detailed Experimental Protocols for Key MOO Methods

MoGA-TA: An Improved Genetic Algorithm for Molecular Optimization

MoGA-TA was developed to overcome limitations of traditional methods, such as high data dependency, computational cost, and the tendency to converge to local optima with low molecular diversity [2].

Detailed Methodology:

- Initialization: The process begins with a population of molecular structures, often based on existing lead compounds.

- Decoupled Crossover and Mutation: Genetic operations are applied within the chemical space. Crossover combines fragments of parent molecules, and mutation introduces structural changes, both designed to produce novel offspring molecules.

- Tanimoto Similarity-based Crowding Distance: Instead of a standard crowding distance, MoGA-TA uses the Tanimoto coefficient (a measure of molecular fingerprint similarity) to calculate the structural diversity within the population. This method more accurately captures molecular structural differences, helping to maintain diversity and prevent premature convergence [2].

- Non-dominated Sorting: The population is ranked into fronts based on Pareto dominance. Molecules in the first front are not dominated by any other molecule, those in the second front are dominated only by those in the first, and so on.

- Dynamic Acceptance Probability Population Update: A novel strategy balances exploration and exploitation. In early generations, a higher probability of accepting less-fit molecules allows for broader exploration of the chemical space. In later stages, the probability decreases, favoring the retention of superior individuals and guiding the population toward the global Pareto front [2].

- Termination: Optimization continues iteratively until a predefined stopping condition is met (e.g., a maximum number of generations or convergence of the Pareto front).
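
Two of the steps above, the Tanimoto-based crowding measure and the dynamic acceptance probability, can be sketched in a few lines. This is only an illustration under stated assumptions: fingerprints are represented as plain sets of on-bits rather than RDKit objects, crowding is taken as the mean Tanimoto distance to the other molecules, and the acceptance probability decays linearly. The exact formulas used by MoGA-TA are not given here, and the function names and default values are hypothetical.

```python
from typing import FrozenSet, List


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient between two fingerprints stored as sets of on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0


def structural_crowding(fps: List[FrozenSet[int]]) -> List[float]:
    """Mean Tanimoto distance (1 - similarity) from each molecule to the others.

    Larger values mean the molecule sits in a less crowded structural region.
    This averaging rule is an assumption made for illustration.
    """
    n = len(fps)
    if n < 2:
        return [float("inf")] * n
    return [
        sum(1.0 - tanimoto(fps[i], fps[j]) for j in range(n) if j != i) / (n - 1)
        for i in range(n)
    ]


def acceptance_probability(generation: int, max_generations: int,
                           p_start: float = 0.5, p_end: float = 0.05) -> float:
    """Linearly decay the chance of keeping a less-fit molecule (assumed schedule)."""
    frac = generation / max(1, max_generations - 1)
    return p_start + (p_end - p_start) * min(1.0, frac)


# Hypothetical fingerprints: two near-duplicates and one structurally distinct molecule.
fps = [frozenset({1, 2, 3, 4}), frozenset({1, 2, 3, 5}), frozenset({10, 11, 12})]
crowding = structural_crowding(fps)
print(crowding)
print(acceptance_probability(0, 100), acceptance_probability(99, 100))
```

The structurally distinct molecule receives the largest crowding value, so a diversity-preserving selection step would favor keeping it, while the decaying probability shifts the search from exploration toward exploitation.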

ParetoDrug: Pareto Monte Carlo Tree Search for Multi-Objective Target-Aware Generation

ParetoDrug addresses a gap in deep learning-based methods, which tend to focus solely on binding affinity, by simultaneously optimizing multiple properties, including drug-likeness [6].

Detailed Methodology:

- Pareto Front Maintenance: The algorithm maintains a global pool of Pareto-optimal molecules. A molecule is added to this pool if it is not dominated by any other molecule in the pool across all property objectives.

- Autoregressive Generation Guidance: ParetoDrug leverages a pre-trained atom-by-atom autoregressive generative model. This model provides a prior understanding of molecular structure and target binding, guiding the MCTS toward chemically valid and target-aware molecules.

- Tree Search with ParetoPUCT: The MCTS is conducted as follows:

  - Selection: Starting from the root, a path is selected by choosing nodes that maximize the ParetoPUCT score, a variant of the Polynomial Upper Confidence Tree score. This scheme balances the exploration of new molecular paths with the exploitation of paths that have historically led to high-performing molecules [6].

  - Expansion: When a leaf node is reached, new child nodes (representing the next possible atoms or fragments) are added to the tree.

  - Simulation: A "rollout" is performed from the new node to complete a molecule, often using the pre-trained generative model for efficiency.

  - Backpropagation: The properties of the generated molecule (e.g., docking score, QED, SA) are evaluated. This information is then propagated back up the tree, updating the performance statistics of all parent nodes.

- Output: After the search is complete, the global pool of Pareto-optimal molecules is returned as the result.
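
The pool-maintenance rule and the selection score described above can be sketched as follows. This is not ParetoDrug's implementation: the pruning of newly dominated pool members is an assumed (though common) archive step, `puct` is the standard scalar PUCT form rather than the ParetoPUCT variant, and the SMILES strings and score tuples are hypothetical.

```python
import math
from typing import Dict, Tuple

Scores = Tuple[float, ...]  # all objectives oriented so that larger is better


def dominates(a: Scores, b: Scores) -> bool:
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def update_pool(pool: Dict[str, Scores], smiles: str, scores: Scores) -> bool:
    """Insert a molecule if nothing in the pool dominates it; return True if added."""
    if any(dominates(s, scores) for s in pool.values()):
        return False
    # Assumed pruning step: drop members that the newcomer dominates.
    for key in [k for k, s in pool.items() if dominates(scores, s)]:
        del pool[key]
    pool[smiles] = scores
    return True


def puct(mean_value: float, prior: float, parent_visits: int,
         child_visits: int, c: float = 1.0) -> float:
    """Standard PUCT: exploitation term plus a prior-weighted exploration bonus."""
    return mean_value + c * prior * math.sqrt(parent_visits) / (1 + child_visits)


pool: Dict[str, Scores] = {}
update_pool(pool, "CCO", (0.4, 0.8))       # hypothetical (docking-derived score, QED)
update_pool(pool, "c1ccccc1", (0.7, 0.5))  # trade-off with CCO, both kept
update_pool(pool, "CCN", (0.3, 0.6))       # dominated by CCO, rejected
update_pool(pool, "CC(=O)O", (0.8, 0.9))   # dominates both members, pool collapses to it
print(pool)
print(puct(mean_value=0.5, prior=0.2, parent_visits=16, child_visits=3))
```

In a full search, the prior would come from the pre-trained generative model and the mean value from backpropagated property evaluations.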

ScafVAE: A Scaffold-Aware Variational Autoencoder

ScafVAE is a graph-based deep generative model designed for multi-objective drug candidate generation, overcoming limitations of atom-based and fragment-based generation [3].

Detailed Methodology:

- Bond Scaffold-Based Generation: Unlike generating atoms or predefined fragments sequentially, ScafVAE first assembles a "bond scaffold"—a molecular skeleton without specified atom types. This scaffold is subsequently "decorated" with specific atom types to form a valid molecule. This approach expands the accessible chemical space while preserving high chemical validity [3].

- Perplexity-Inspired Fragmentation: The encoder learns molecular representations by breaking molecules into fragments. The breaking points are determined by a bond's "perplexity," estimated by a pre-trained masked graph model, which reflects the uncertainty or predictability of a bond.

- Model Augmentation: The surrogate models used for predicting molecular properties are augmented through contrastive learning (which helps learn robust latent representations by pulling similar molecules closer and pushing dissimilar ones apart) and molecular fingerprint reconstruction (an auxiliary task that improves prediction accuracy) [3].

- Latent Space Optimization: Molecules are encoded into a Gaussian-distributed latent vector. Multi-objective optimization is performed by sampling vectors from this latent space and using the augmented surrogate models to predict their properties, guiding the search toward regions that satisfy the multiple objectives.

Table 4: Key Research Reagent Solutions for Computational MOO Experiments

| Tool / Resource | Type | Primary Function in MOO |
|---|---|---|
| RDKit | Open-Source Cheminformatics | Calculates molecular descriptors (e.g., logP, TPSA), fingerprints (ECFP, FCFP), and modifies molecular structures. Essential for evaluating objective functions [2]. |
| smina | Molecular Docking Software | A fork of AutoDock Vina used to compute binding affinity (docking score) between a generated molecule and a target protein, a key objective in target-aware optimization [6]. |
| GuacaMol | Benchmarking Suite | Provides standardized molecular optimization tasks and scoring functions to fairly evaluate and compare the performance of different MOO algorithms [2]. |
| DesignBuilder (EnergyPlus) | Building Performance Simulation | Used in analogous MOO fields (e.g., building retrofit). Simulates energy consumption and environmental metrics; surrogate models can be trained on its data to reduce computational cost in MOO [5]. |
| Galapagos/Octopus | Evolutionary Solver | A genetic algorithm component integrated into parametric design software (e.g., Grasshopper) for optimizing design variables against multiple objectives, demonstrating cross-domain applicability of EA principles [8]. |
| BindingDB | Public Database | A repository of experimental protein-ligand binding affinities. Used as a source of protein targets and data for training and benchmarking target-aware generative models [6]. |

The comparative analysis of multi-objective optimization algorithms reveals a clear trajectory toward hybrid and adaptive systems that balance structural exploration with computational efficiency. Methods like MoGA-TA and ParetoDrug demonstrate that enhancements to classic algorithms—through improved diversity metrics and sophisticated search strategies—can yield significant performance gains in complex molecular landscapes. Furthermore, the rise of deep learning frameworks such as ScafVAE highlights the power of learning meaningful molecular representations to accelerate the search for optimal candidates. The choice of algorithm depends heavily on the specific problem context: evolutionary algorithms offer robustness and global search capabilities, MCTS provides a principled way to balance exploration and exploitation, and deep generative models leverage learned chemical priors for efficient optimization in latent space. As the field matures, the integration of these approaches, along with a stronger emphasis on uncertainty quantification and environmental considerations in the optimization lifecycle, will be critical for delivering more effective, sustainable, and reliable drug discovery outcomes.

The pursuit of a new viable drug candidate necessitates a delicate balancing act between three critical objectives: high biological activity (often quantified as pIC50), favorable ADMET properties (Absorption, Distribution, Metabolism, Excretion, and Toxicity), and adherence to Green Chemistry principles. Historically, the primary focus was on potency and efficacy, often at the expense of environmental considerations in the discovery and development process. Today, a paradigm shift is underway, recognizing that sustainable drug design is not merely an ethical adjunct but a strategic imperative that can enhance efficiency, reduce costs, and mitigate risk [9] [10] [11].

This guide provides a comparative framework for evaluating drug candidates and synthetic processes against these three objectives. It underscores the necessity of a multi-objective optimization strategy, where decisions are made by considering the complex trade-offs and synergies between potency, pharmacokinetics, and environmental impact, ultimately leading to more sustainable and successful drug development pipelines [10].

Comparative Analysis of Key Objectives

The table below provides a structured comparison of the three core objectives, their key metrics, and their role in the drug development process.

Table 1: Comparative Overview of Core Drug Discovery Objectives

| Objective | Core Description & Metrics | Traditional Role | Modern & Integrated Role |
|---|---|---|---|
| Biological Activity (pIC50) | Description: Measures a compound's potency by quantifying the concentration needed to inhibit a biological target. Key Metric: pIC50 = -log10(IC50), where IC50 is the half-maximal inhibitory concentration expressed in mol/L. Higher pIC50 indicates greater potency. | Primary driver for lead selection and optimization. Often the sole initial focus. | One critical parameter among several. A compound with high potency but poor ADMET or a wasteful synthesis will fail. |
| ADMET Properties | Description: A suite of properties defining the compound's pharmacokinetic and safety profile [12] [13]. Key Metrics: Cell permeability (e.g., Caco-2), plasma protein binding (PPB), cytochrome P450 inhibition, half-life, volume of distribution, and toxicity endpoints (e.g., hERG inhibition) [13]. | Assessed later in development, often leading to costly attrition of potent leads. | Integrated early in discovery via predictive computational models and high-throughput screening to de-risk candidates [10] [13]. |
| Green Chemistry Principles | Description: A framework of 12 principles to design chemical processes that reduce waste, hazard, and energy consumption [9] [11]. Key Metrics: Process Mass Intensity (PMI), E-Factor, solvent selection guides, atom economy, and use of renewable feedstocks [11]. | Rarely a consideration in early discovery; applied later, if at all, during process chemistry. | A strategic design constraint from the outset, influencing route selection, solvent choice, and molecular design to reduce environmental and economic costs [10] [11]. |
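
As a worked illustration of the pIC50 metric in the table, the short sketch below converts IC50 values given in nanomolar to pIC50. The function name and the two example concentrations are illustrative; the only assumption is that IC50 is supplied in nM, so that pIC50 = 9 - log10(IC50 in nM).

```python
import math


def pic50_from_nm(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    # 1 nM = 1e-9 mol/L, so -log10(ic50_nm * 1e-9) = 9 - log10(ic50_nm).
    return 9.0 - math.log10(ic50_nm)


potent = pic50_from_nm(10.0)    # a 10 nM inhibitor
weak = pic50_from_nm(1000.0)    # a 1 µM inhibitor
print(potent, weak)
```

A hundredfold drop in IC50 therefore corresponds to a gain of two pIC50 units, which is why potency differences are usually discussed on this logarithmic scale.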

Quantitative Data and Experimental Protocols

In Silico ADMET Prediction Workflow

Machine learning (ML) models for ADMET prediction rely on robust, standardized protocols to ensure reliability and reproducibility. The following workflow, based on current best practices, outlines the key steps from data curation to model deployment [13].

Diagram 1: ADMET Prediction Workflow

Protocol 1: Benchmarking ML for ADMET Prediction [13]

- Data Curation: Obtain datasets from public sources like Therapeutics Data Commons (TDC). Perform rigorous cleaning: remove duplicates, standardize SMILES representations, and resolve conflicting activity labels.

- Feature Engineering: Generate multiple ligand-based representations for each compound (e.g., molecular fingerprints, RDKit descriptors, and deep-learned representations). Systematically evaluate individual and combined feature sets to identify the most informative ones for a specific ADMET endpoint.

- Model Training & Validation:

  - Train multiple ML models (e.g., Random Forest, Gaussian Process Regression).

  - Optimize hyperparameters using dataset-specific tuning.

  - Employ a robust validation strategy using 5-fold cross-validation combined with statistical hypothesis testing (e.g., paired t-tests) to confirm the significance of performance improvements.

- External Validation: Evaluate the final model's performance on a hold-out test set from a different data source to simulate a practical scenario and assess generalizability.
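
The validation step in this protocol, 5-fold cross-validation followed by a paired t-test, can be sketched with the standard library alone. The per-fold scores below are hypothetical placeholders for two models evaluated on the same folds, and the unshuffled interleaved split is a simplification; in practice a shuffled or scaffold-based split and a library routine such as those in scikit-learn or SciPy would normally be used.

```python
import math
import statistics
from typing import List, Sequence, Tuple


def k_fold_indices(n_samples: int, k: int = 5) -> List[Tuple[List[int], List[int]]]:
    """Split range(n_samples) into k (train, validation) index pairs."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    return [
        ([idx for j, fold in enumerate(folds) if j != i for idx in fold], folds[i])
        for i in range(k)
    ]


def paired_t_statistic(scores_a: Sequence[float], scores_b: Sequence[float]) -> float:
    """t statistic for paired per-fold scores of two models (df = n - 1)."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    sd = statistics.stdev(diffs)  # raises if fewer than two folds; zero sd is not handled
    return statistics.mean(diffs) / (sd / math.sqrt(len(diffs)))


# Hypothetical per-fold R^2 values for two models on the same five folds.
model_a = [0.71, 0.69, 0.74, 0.70, 0.72]
model_b = [0.66, 0.67, 0.70, 0.65, 0.69]
t_stat = paired_t_statistic(model_a, model_b)

# Two-sided critical value for df = 4 at alpha = 0.05 is about 2.776.
print(f"t = {t_stat:.3f}, significant: {abs(t_stat) > 2.776}")
```

Pairing the scores fold by fold removes the variance that comes from fold difficulty, so smaller but consistent improvements can still be detected.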

Green Chemistry Metrics and Synthesis Protocols

Evaluating chemical processes requires quantifiable green metrics. Process Mass Intensity (PMI) is a key indicator, measuring the total mass of materials used per kilogram of active pharmaceutical ingredient (API) produced [11]. A lower PMI signifies higher efficiency and less waste.
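
A minimal sketch of the PMI calculation is shown below, together with the related E-factor. The input masses are hypothetical, and the relation E-factor = PMI - 1 assumes that both metrics count the same set of inputs (conventions differ, for example, on whether process water is included).

```python
from typing import Dict


def process_mass_intensity(input_masses_kg: Dict[str, float], product_mass_kg: float) -> float:
    """PMI = total mass of all inputs / mass of isolated product (kg per kg)."""
    if product_mass_kg <= 0:
        raise ValueError("product mass must be positive")
    return sum(input_masses_kg.values()) / product_mass_kg


def e_factor(pmi: float) -> float:
    """E-factor = waste / product, which equals PMI - 1 when the same inputs are counted."""
    return pmi - 1.0


# Hypothetical single-step batch; every value here is illustrative.
inputs = {"substrate": 1.2, "reagent": 0.8, "solvent": 40.0, "workup_water": 18.0}
pmi = process_mass_intensity(inputs, product_mass_kg=1.0)
print(f"PMI = {pmi:.1f} kg/kg, E-factor = {e_factor(pmi):.1f}")
```

In this example the solvent and work-up water account for most of the mass, which is typical of why solvent choice and recycling dominate PMI reduction efforts.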

Table 2: Comparison of Traditional vs. Green Synthetic Approaches for a Model Reaction

| Parameter | Traditional Batch Synthesis | Green Chemistry Approach | Impact & Implication |
|---|---|---|---|
| Process Mass Intensity (PMI) | Often >100 kg/kg API [11] | Can achieve >10-fold reduction [11] | Drastically reduces raw material consumption and waste generation. |
| Solvent Choice | Hazardous solvents (e.g., dichloromethane, THF) [11] | Safer alternatives (water, bio-based solvents) [9] | Reduces environmental toxicity, disposal costs, and safety risks. |
| Catalyst System | Stoichiometric reagents or precious metals (e.g., Palladium) [10] | Sustainable catalysts (Nickel, Biocatalysts) [10] | Nickel catalysts can reduce CO2 emissions and waste by >75% vs. Palladium [10]. |
| Reaction Technology | Conventional flask-based synthesis | Continuous Flow Synthesis [9] | Improves reaction control, safety, and scalability while reducing energy and space. |
| Energy Efficiency | Often requires high heating/cooling [11] | Photocatalysis (visible light) [10] | Enables reactions at ambient temperature, significantly reducing energy demand. |

Protocol 2: Late-Stage Functionalization (LSF) Using Photoredox Catalysis [10]

- Reaction Setup: In a dry glass reactor, add the complex drug-like substrate (e.g., 1 mmol) and the photoredox catalyst (e.g., 2 mol%). Purge the system with an inert gas (e.g., N₂).

- Reagent Addition: Add the desired functionalization reagent (e.g., a radical precursor) and a green solvent mixture (e.g., acetone/water) via syringe.

- Reaction Execution: Stir the reaction mixture under irradiation with blue LEDs at room temperature. Monitor reaction progress in real-time using analytical techniques like TLC or HPLC.

- Work-up and Purification: Upon completion, concentrate the reaction mixture under reduced pressure. Purify the crude product using chromatography or crystallization.

- Green Metrics Calculation: Calculate the PMI and compare it with that of a conventional multi-step linear synthesis route to quantify waste reduction.

The Multi-Objective Optimization Framework

The core challenge is navigating the trade-offs between the three objectives. A compound with excellent potency might be poorly soluble or require a synthetic route with a high PMI. The modern solution is to frame this as a multi-objective optimization problem, seeking a "Pareto-optimal" solution where no single objective can be improved without worsening another [14] [15] [16].

The following diagram illustrates the integrated decision-making framework for balancing pIC50, ADMET, and Green Chemistry.

Diagram 2: Multi-Objective Drug Optimization

Framework Application:

- Computational Triage: Use predictive 4D-QSAR models for pIC50 and ML for ADMET to virtually screen compound libraries, flagging molecules likely to fail early [12] [13].

- Route Selection: When multiple syntheses are possible for a promising candidate, choose the one with superior atom economy, safer solvents, and catalytic methods, even if the yield is marginally lower, as this often lowers the overall cost and risk [11].

- Synergistic Technologies: Leverage techniques like late-stage functionalization and flow chemistry which simultaneously advance multiple objectives. LSF efficiently generates diverse analogues for SAR and metabolite testing from a common intermediate, reducing synthetic steps and waste [10]. Flow chemistry enhances reaction control and safety while reducing PMI [9].

The Scientist's Toolkit: Essential Reagents and Technologies

Table 3: Key Research Reagents and Solutions for Integrated Drug Discovery

| Tool / Reagent | Function / Application | Relevance to Core Objectives |
|---|---|---|
| Machine Learning Platforms (e.g., TDC) | Provides curated benchmarks and datasets for building robust ADMET prediction models [13]. | Primarily ADMET, indirectly supports Green Chemistry by reducing experimental waste. |
| Photoredox Catalysts | Organic dyes or metal complexes that use visible light to catalyze challenging transformations under mild conditions [10]. | Green Chemistry (energy efficiency), enables synthesis of novel scaffolds for pIC50 optimization. |
| Biocatalysts | Engineered enzymes used as highly selective and efficient catalysts for API synthesis [10]. | Green Chemistry (high atom economy, renewable), reduces protective group steps, improves PMI. |
| Late-Stage Functionalization Reagents | Reagents designed to selectively modify complex molecules at the final stages of synthesis [10]. | pIC50 (rapid SAR exploration) and Green Chemistry (shorter synthetic routes, lower PMI). |
| Sustainable Metal Catalysts (e.g., Nickel) | Abundant metal catalysts replacing scarce/precious metals like Palladium in cross-coupling reactions [10]. | Green Chemistry (reduces environmental impact and supply chain risk for key reactions). |
| Process Analytical Technology (PAT) | Tools for real-time, in-process monitoring of reactions (e.g., in-situ spectroscopy) [11]. | Green Chemistry (prevents formation of hazardous substances, ensures high yield), supports Quality by Design. |

The Concept of Pareto Optimality and the Pareto Front in Pharmaceutical Development

Multi-objective optimization represents a critical paradigm shift in pharmaceutical development, where the identification of viable drug candidates necessitates balancing numerous, often competing, properties. This guide compares contemporary computational methodologies that leverage Pareto optimality to navigate this complex design space. We evaluate the performance of algorithms such as Pareto Monte Carlo Tree Search Molecular Generation (PMMG), DrugEx v2, and Bayesian optimization against traditional scalarization techniques, providing supporting quantitative data on success rates, diversity, and hypervolume metrics. The experimental protocols and reagent toolkits underpinning these comparisons are detailed to equip researchers with practical insights for implementing these approaches in drug discovery pipelines focused on optimizing both yield and environmental impact.

The "one drug, one target" paradigm has been superseded by the recognition that effective therapeutics must simultaneously satisfy multiple criteria [17]. An ideal drug candidate requires not only high binding affinity for its primary protein target but also minimal off-target interactions, favorable pharmacokinetics (ADMET), high synthetic accessibility, and low toxicity [18] [19]. Optimizing any single property in isolation is comparatively straightforward; the fundamental challenge lies in the inherent trade-offs between these objectives. For instance, increasing molecular complexity to improve binding affinity may concurrently worsen synthetic accessibility or solubility.

Pareto optimality provides a mathematical framework for this multi-property optimization [20]. A solution is considered Pareto optimal if it is impossible to improve one objective without worsening another. The collection of all such optimal solutions forms the Pareto front, which visually represents the best possible trade-offs between the competing objectives. This allows scientists to explore a spectrum of optimally balanced candidates rather than a single, potentially sub-optimal, point. The following sections compare computational strategies that identify this frontier, enabling a more efficient and holistic approach to molecular design.
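
The verbal definition above can be written compactly. The notation is an assumption introduced here for illustration: a candidate set X and m objectives f_1, ..., f_m, all oriented so that larger values are better (an objective to be minimized, such as PMI, can be negated first).

```latex
% x dominates y: no worse in every objective, strictly better in at least one.
x \succ y \iff \bigl(\forall i \in \{1,\dots,m\}:\ f_i(x) \ge f_i(y)\bigr)
          \ \wedge\ \bigl(\exists j \in \{1,\dots,m\}:\ f_j(x) > f_j(y)\bigr)

% Pareto set (in design space) and Pareto front (its image in objective space).
\mathcal{P}^{*} = \{\, x \in X \;:\; \nexists\, y \in X \text{ with } y \succ x \,\},
\qquad
\mathcal{F}^{*} = \{\, (f_1(x),\dots,f_m(x)) \;:\; x \in \mathcal{P}^{*} \,\}
```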

Comparative Analysis of Multi-Objective Optimization Algorithms

We benchmark the performance of several state-of-the-art algorithms against traditional methods. The evaluation metrics include:

- Hypervolume (HV): Measures the volume of objective space dominated by the computed Pareto front, indicating overall performance.

- Success Rate (SR): The percentage of generated molecules that simultaneously satisfy all objective thresholds.

- Diversity (Div): Assesses the structural and property variety of molecules on the Pareto front.
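
For two objectives, hypervolume and success rate are simple enough to compute by hand. The sketch below assumes both objectives are maximized and normalized, uses the origin as the reference point, and takes made-up score tuples as input; benchmark studies typically use more objectives and their own reference points and thresholds.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def hypervolume_2d(front: List[Point], reference: Point = (0.0, 0.0)) -> float:
    """Area dominated by a 2-D front (both objectives maximized) above `reference`."""
    # Keep points that improve on the reference, sorted by objective 1 descending.
    pts = sorted((p for p in front if p[0] > reference[0] and p[1] > reference[1]),
                 key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, reference[1]
    for x, y in pts:
        if y > best_y:  # points that do not raise objective 2 add no new area
            area += (x - reference[0]) * (y - best_y)
            best_y = y
    return area


def success_rate(scores: Sequence[Sequence[float]], thresholds: Sequence[float]) -> float:
    """Percentage of molecules meeting every objective threshold simultaneously."""
    hits = sum(all(s >= t for s, t in zip(row, thresholds)) for row in scores)
    return 100.0 * hits / len(scores)


# Hypothetical normalized scores for four generated molecules.
molecules = [(0.9, 0.1), (0.5, 0.4), (0.1, 0.9), (0.3, 0.3)]
hv = hypervolume_2d(molecules)
sr = success_rate(molecules, thresholds=(0.3, 0.3))
print(f"HV = {hv:.2f}, SR = {sr:.0f}%")
```

The dominated molecule (0.3, 0.3) contributes nothing to the hypervolume but still counts toward the success rate, which is why the two metrics are reported side by side.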

Table 1: Performance Comparison of Multi-Objective Molecular Optimization Algorithms

| Method | Type | Key Mechanism | Hypervolume (HV) | Success Rate (SR) | Diversity (Div) |
|---|---|---|---|---|---|
| PMMG [18] | SMILES-based | Pareto Monte Carlo Tree Search | 0.569 ± 0.054 | 51.65% ± 0.78% | 0.930 ± 0.005 |
| DrugEx v2 [17] | SMILES-based | RL + Evolutionary Crossover/Mutation | Benchmark-specific results* | Benchmark-specific results* | Benchmark-specific results* |
| MolPAL [19] | Bayesian | Pareto Hypervolume Improvement | Benchmark-specific results* | Benchmark-specific results* | Benchmark-specific results* |
| SMILES-GA [18] | SMILES-based | Genetic Algorithm | 0.184 ± 0.021 | 3.02% ± 0.12% | - |
| REINVENT [18] | SMILES-based | Reinforcement Learning (Scalarized) | Outperformed by PMMG | Outperformed by PMMG | Outperformed by PMMG |
| MARS [18] | Graph-based | MCMC Sampling | Outperformed by PMMG | Outperformed by PMMG | Outperformed by PMMG |

Note: DrugEx v2 and MolPAL demonstrate superior performance over scalarization methods in their respective studies but do not report identical metrics to PMMG for direct numerical comparison in this table.

The data indicate that PMMG outperforms the baselines for which comparable metrics are reported, including genetic algorithms (SMILES-GA) and reinforcement learning with scalarized objectives (REINVENT), achieving a success rate more than 2.5 times higher than other baselines [18]. The core advantage of Pareto-based methods like PMMG and DrugEx v2 over scalarization (e.g., weighted sum of objectives) is their ability to uncover the entire trade-off frontier without requiring pre-defined weights, which can bias the search and mask optimal solutions [19].

Experimental Protocols & Workflows

This section details the standard methodologies for implementing and evaluating multi-objective optimization algorithms in molecular design.

Algorithm Training and Molecular Generation Workflow

The following diagram illustrates the generalized workflow for Pareto-based molecular generation algorithms like PMMG [18] and DrugEx v2 [17].

Detailed Protocol:

- Pre-training: A generative model, typically a Recurrent Neural Network (RNN) or Transformer, is pre-trained on a large database of known molecules (e.g., ChEMBL) represented as SMILES strings to learn valid chemical structures and syntax [18] [17].

- Candidate Generation: The trained model generates a batch of novel candidate molecules.

- Multi-Objective Evaluation: Each candidate is scored against all predefined objective functions. These can include:

  - Biological Activity: Predicted using machine learning QSAR models or molecular docking tools (e.g., smina) to calculate docking scores for target proteins [18] [6].

  - Drug-likeness: Calculated via metrics like Quantitative Estimate of Drug-likeness (QED) [18].

  - Synthetic Accessibility: Estimated with the Synthetic Accessibility Score (SA Score) [18] [19].

  - ADMET & Toxicity: Predicted by specialized classifiers for permeability, metabolic stability, and toxicity [18] [17].

- Pareto Analysis: A non-dominated sorting algorithm ranks the generated molecules. Molecules on the first non-dominated front form the current Pareto front [17] [19].

- Search Guidance: The Pareto ranks are used to compute a reward or otherwise guide the search algorithm.

- Iteration: Steps 2-5 repeat until convergence, typically when the Pareto front stabilizes and no significantly better molecules are discovered over multiple iterations.

Pareto Front Identification and Analysis

The process of identifying the Pareto front from a generated molecular library is crucial for final candidate selection.

Detailed Protocol:

- Virtual Screening: A large virtual library of molecules is generated, either exhaustively or via an optimization algorithm like MolPAL [19].

- Exhaustive Evaluation: All molecules in the library are evaluated on all objective functions (e.g., docking against on-target and off-target proteins).

- Non-Dominated Sorting: The entire set of molecules is sorted into Pareto fronts. The algorithm iteratively identifies the non-dominated set (Pareto front 1), removes it, and finds the next non-dominated set from the remainder (Pareto front 2), and so on [19].

- Trade-off Analysis: The molecules on the first Pareto front (Pareto front 1) represent the optimal trade-offs. Researchers can visually inspect the front and select candidates based on project-specific priorities without being constrained by pre-defined weights [19] [21].
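The non-dominated sorting step described above (identify the front, remove it, repeat) can be sketched in a few lines. This is a simple quadratic-time version for illustration, not the optimized routine used by any particular tool, and the docking values are hypothetical.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Minimization: a is no worse than b everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_sort(points: List[Sequence[float]]) -> List[List[int]]:
    """Return index lists for Pareto front 1, front 2, ... by repeated peeling."""
    remaining = list(range(len(points)))
    fronts = []
    while remaining:
        front = [i for i in remaining
                 if not any(dominates(points[j], points[i]) for j in remaining if j != i)]
        fronts.append(front)
        remaining = [i for i in remaining if i not in front]
    return fronts


# Hypothetical library: (on-target docking score, negated off-target docking score).
# Lower is better for both: strong on-target binding, weak off-target binding.
library = [(-9.0, 5.0), (-8.0, 4.0), (-7.0, 6.0), (-9.5, 7.0), (-6.0, 6.5)]
fronts = non_dominated_sort(library)
print(fronts)
```

The first list holds the indices of the molecules that a chemist would inspect in the trade-off analysis step; later lists are progressively dominated layers.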

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational tools and resources essential for conducting multi-objective molecular optimization experiments.

Table 2: Essential Research Reagents and Tools for Multi-Objective Optimization

| Item Name | Function/Description | Application in Protocol |
|---|---|---|
| ChEMBL Database | A large, open-access database of bioactive molecules with drug-like properties. | Serves as the primary source for pre-training generative models and building predictive QSAR models [17]. |
| SMILES Representation | Simplified Molecular-Input Line-Entry System; a string notation for representing molecules. | The standard representation for SMILES-based generative models (e.g., in PMMG, DrugEx) [18]. |
| smina | A software package for molecular docking, based on AutoDock Vina. | Used to calculate docking scores for evaluating binding affinity to target and off-target proteins [6]. |
| RDKit | Open-source cheminformatics software. | Used for calculating molecular descriptors, fingerprints (ECFP), and properties like QED and SA Score [17]. |
| ProPred | An in silico tool for predicting T-cell epitopes via MHC II binding. | Used in biotherapeutic deimmunization studies to predict and minimize immunogenic potential [22]. |
| Multi-task DNN | A deep neural network trained to predict multiple biological activity endpoints simultaneously. | Functions as the "environment" in reinforcement learning algorithms (e.g., DrugEx) to predict compound properties [17]. |

The adoption of Pareto optimization principles marks a significant advancement in pharmaceutical development, directly addressing the multi-faceted nature of drug efficacy, safety, and sustainability. As evidenced by the quantitative data, algorithms like PMMG that explicitly search for the Pareto front offer a superior and more efficient strategy for molecular design compared to traditional scalarization or single-objective methods. By providing a clear visualization of the inherent trade-offs—be it between binding affinity and synthetic yield, or between drug efficacy and environmental impact—this framework empowers scientists to make more informed decisions. The continued development and application of these tools hold the potential to de-risk and accelerate the journey from concept to viable drug candidate.

Why Single-Objective Optimization Fails in Complex Drug Design

In the resource-intensive landscape of drug discovery, the pursuit of a single, paramount property for a candidate molecule often comes at the expense of other critical parameters. This article explores the fundamental limitations of single-objective optimization (SingleOOP) and demonstrates why multi-objective optimization (MultiOOP) is not merely an enhancement but a necessity for developing viable, safe, and effective drugs.

The Fundamental Flaw: A Narrow Focus in a Multi-Faceted Problem

Single-objective optimization is designed to find the optimal solution that corresponds to the minimum or maximum value of a single objective function. [23] In drug design, this might translate to solely maximizing a compound's binding affinity to a biological target. While this approach can identify potent molecules, it ignores a host of other essential properties that determine a drug's ultimate success.

Traditional drug discovery, which often relies on step-by-step single-objective optimization, suffers from a high failure rate, with more than 90% of drug candidates failing during clinical development. [24] Many of these failures are due to poor biopharmaceutic properties—such as inadequate solubility, limited permeability, or extensive metabolism—which are typically not considered when using a single-objective approach. [24]

In contrast, multi-objective optimization problems involve multiple objective functions and do not generate a single best solution. Instead, they produce an array of best solutions known as the Pareto front. [23] These solutions are non-dominated, meaning that no other solution is at least as good in every objective and strictly better in at least one, so choosing among them forces a conscious trade-off between conflicting goals. [25] [23] Designing a new drug is inherently a problem with diverse, conflicting objectives to be optimized concurrently, such as maximizing potency and structural novelty while minimizing synthesis costs and unwanted side effects. [25]
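A small numerical sketch can make the contrast concrete. Below, three hypothetical candidates are scored on two objectives to be maximized; the names and values are invented for illustration. A weighted sum returns one candidate per weight setting and never selects the balanced candidate C, even though C is non-dominated and therefore belongs to the Pareto front.

```python
# Hypothetical (potency, safety) scores, both to be maximized.
candidates = {"A": (1.0, 0.0), "B": (0.0, 1.0), "C": (0.45, 0.45)}


def dominates(a, b):
    """Maximization: a is no worse than b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


# Pareto set: candidates that no other candidate dominates.
pareto_set = sorted(
    name for name, s in candidates.items()
    if not any(dominates(t, s) for other, t in candidates.items() if other != name)
)

# Weighted-sum scalarization: sweep the weight on potency from 0.0 to 1.0.
picked = set()
for k in range(11):
    w = k / 10
    best = max(candidates, key=lambda n: w * candidates[n][0] + (1 - w) * candidates[n][1])
    picked.add(best)

print("Pareto set:", pareto_set)
print("Selected by some weighted sum:", sorted(picked))
```

C lies in a concave region of the front, which is exactly where linear scalarization is blind regardless of the weights chosen.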

Comparative Experimental Data: Single vs. Multi-Objective Performance

The theoretical superiority of multi-objective optimization is borne out in practical, experimental studies. The table below summarizes key performance comparisons as demonstrated in recent research.

Table 1: Comparative Performance of Single vs. Multi-Objective Optimization in Drug Discovery Applications

| Study Focus | Single-Objective Approach | Multi-Objective Approach | Key Outcome |
|---|---|---|---|
| Virtual Screening (CheapVS Framework) [26] | Sequential screening and post-processing hit selection | Preferential Multi-Objective Bayesian Optimization with human feedback | Recovered 37 known DRD2 drugs while screening only 6% of a 100K compound library, showcasing superior efficiency. |
| Sustained-Release Formulation (Glipizide) [27] | Traditional single-objective transformation with subjective weighting | Integrated NSGA-III, MOGWO, and NSWOA algorithms | Achieved a balanced cumulative release profile (22.75% at 2 h, 64.98% at 8 h, 100.23% at 24 h) that met all pharmacopoeia standards. |
| De Novo Molecular Design (DyRAMO Framework) [28] | Optimization for a single property (e.g., inhibitory activity) risking "reward hacking" | Dynamic reliability adjustment for multiple properties (activity, stability, permeability) | Successfully designed molecules with high predicted values and reliabilities for all three properties, including an approved drug. |

Detailed Experimental Protocols

To illustrate how these comparisons are derived, here are the methodologies from two key studies.

Protocol 1: Preferential Multi-Objective Bayesian Optimization for Virtual Screening

This protocol, based on the CheapVS framework, integrates human expertise into the optimization loop. [26]

- Problem Formulation: Define the multiple objectives (e.g., binding affinity, solubility, synthetic accessibility) for the virtual screening campaign.

- Library Preparation: Curate a large library of chemical compounds (e.g., 100,000 molecules) for screening.

- Preference Elicitation: Present a chemist with pairs of candidate molecules and their property trade-offs. The chemist provides a preference, indicating which molecule is more desirable.

- Bayesian Optimization Loop:

- Model Training: Update a probabilistic model that incorporates the human preferences to balance the multiple objectives.

- Candidate Selection: Using the model, select the most promising batch of molecules for the next round of evaluation (e.g., docking simulation).

- Iteration: Repeat steps 3 and 4 within a limited computational budget (e.g., screening only 6% of the total library).

- Validation: The top-ranked molecules identified by the algorithm are validated by their ability to recover known active drugs from the library.
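The preference-elicitation idea in this protocol can be illustrated with a much simpler model than the one CheapVS uses. The sketch below assumes a linear utility over two normalized objectives and fits its weights from pairwise choices with a logistic (Bradley-Terry style) likelihood. The function name, the data, and the linear form are all assumptions made for illustration; the published framework relies on a probabilistic surrogate inside a Bayesian optimization loop.

```python
import math


def fit_preference_weights(pairs, n_objectives, lr=0.5, epochs=1000):
    """Fit utility weights so preferred candidates score higher (gradient ascent)."""
    w = [0.0] * n_objectives
    for _ in range(epochs):
        grad = [0.0] * n_objectives
        for preferred, rejected in pairs:
            diff = [a - b for a, b in zip(preferred, rejected)]
            z = sum(wi * d for wi, d in zip(w, diff))
            p = 1.0 / (1.0 + math.exp(-z))       # P(preferred beats rejected)
            for k, d in enumerate(diff):
                grad[k] += (1.0 - p) * d         # gradient of the log-likelihood
        w = [wi + lr * g / len(pairs) for wi, g in zip(w, grad)]
    return w


def utility(w, scores):
    return sum(wi * s for wi, s in zip(w, scores))


# Hypothetical chemist choices over (binding affinity, solubility), scaled to [0, 1].
# Each tuple is (preferred candidate, rejected candidate).
pairs = [
    ((0.9, 0.2), (0.5, 0.8)),
    ((0.8, 0.5), (0.6, 0.6)),
    ((0.7, 0.7), (0.7, 0.3)),
    ((0.6, 0.4), (0.3, 0.5)),
]
weights = fit_preference_weights(pairs, n_objectives=2)
print(weights)
```

The fitted weights can then rank unscreened molecules by predicted utility, which is the role the preference model plays when selecting the next batch for docking.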

Protocol 2: Systematic Multi-Objective Optimization of a Sustained-Release Formulation

This protocol outlines a comprehensive strategy for optimizing a complex drug formulation with multiple, time-dependent release objectives. [27]

- Variable Generation and Screening:

- Mixture Design: A glipizide sustained-release tablet formulation with five excipients (HPMC K4M, HPMC K100LV, MgO, lactose, anhydrous CaHPO4) is defined.

- Variable Selection: Use penalized regression methods (LASSO, SCAD, MCP) to identify key excipient interactions from a large set of polynomial variables, avoiding data non-saturation.

- Time-Dependent Modeling:

- Response Definition: The cumulative drug release rates at 2 hours (Y2), 8 hours (Y8), and 24 hours (Y24) are set as the objective functions.

- Model Fitting: A Quadratic Inference Function (QIF) is employed to build a robust statistical model that captures the temporal correlation of the release profile.

- Multi-Objective Global Optimization:

- Algorithm Application: Three multi-objective algorithms—NSGA-III, MOGWO, and NSWOA—are used to generate a set of Pareto-optimal solutions (formulations) that best balance Y2, Y8, and Y24.

- Multi-Criteria Decision Making:

- Solution Ranking: The entropy weight method combined with the TOPSIS technique is applied to the Pareto-optimal set to select the single best formulation, minimizing subjective bias in the final choice.
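The final decision step, entropy weighting followed by TOPSIS, is compact enough to sketch directly. The decision matrix below is hypothetical: each row is a candidate formulation and each column is its absolute deviation from the target release at 2 h, 8 h, and 24 h, so all three criteria are costs. The study's actual criteria and data may differ.

```python
import math


def entropy_weights(matrix):
    """Objective weights: criteria whose values vary more across rows get more weight."""
    m, n = len(matrix), len(matrix[0])
    diversities = []
    for j in range(n):
        col_sum = sum(row[j] for row in matrix)
        entropy = 0.0
        for row in matrix:
            p = row[j] / col_sum
            if p > 0:
                entropy -= p * math.log(p)
        diversities.append(1.0 - entropy / math.log(m))
    total = sum(diversities)
    return [d / total for d in diversities]


def topsis(matrix, weights, is_benefit):
    """Closeness of each row to the ideal solution (1.0 = ideal, 0.0 = anti-ideal)."""
    n = len(matrix[0])
    norms = [math.sqrt(sum(row[j] ** 2 for row in matrix)) for j in range(n)]
    v = [[weights[j] * row[j] / norms[j] for j in range(n)] for row in matrix]
    best = [max(r[j] for r in v) if is_benefit[j] else min(r[j] for r in v) for j in range(n)]
    worst = [min(r[j] for r in v) if is_benefit[j] else max(r[j] for r in v) for j in range(n)]
    scores = []
    for r in v:
        d_best = math.sqrt(sum((r[j] - best[j]) ** 2 for j in range(n)))
        d_worst = math.sqrt(sum((r[j] - worst[j]) ** 2 for j in range(n)))
        scores.append(d_worst / (d_best + d_worst))
    return scores


# Hypothetical deviations from target release (percentage points) at 2 h, 8 h, 24 h.
deviations = [[5.0, 6.0, 4.0], [1.0, 2.0, 1.0], [3.0, 4.0, 2.0]]
weights = entropy_weights(deviations)
closeness = topsis(deviations, weights, is_benefit=[False, False, False])
ranking = sorted(range(len(closeness)), key=lambda i: -closeness[i])
print(ranking, closeness)
```

Because the weights come from the spread of the data rather than from an expert's judgment, the ranking avoids the subjective weighting that the protocol sets out to minimize.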

Visualizing the Optimization Concepts

The core difference between single- and multi-objective optimization, and the challenge of reliability in molecular design, is illustrated in three diagrams.

Diagram: Single vs. Multi-Objective Workflow.

Diagram: The Pareto Front as a Trade-Off.

Diagram: Single-Objective Leading to Reward Hacking.

The Scientist's Toolkit: Key Research Reagents and Solutions

The effective implementation of multi-objective optimization in drug discovery relies on a suite of computational tools and algorithms.

Table 2: Essential Multi-Objective Optimization Tools and Their Functions

| Tool/Algorithm | Type | Primary Function in Drug Design |
|---|---|---|
| NSGA-III (Non-dominated Sorting Genetic Algorithm III) [27] | Evolutionary Algorithm | Solves many-objective optimization problems (≥4 objectives) by finding a diverse set of Pareto-optimal solutions. |
| Bayesian Optimization (e.g., CheapVS) [26] | Probabilistic Model | Efficiently balances exploration and exploitation in high-dimensional spaces, incorporating human preference. |
| DyRAMO (Dynamic Reliability Adjustment) [28] | Optimization Framework | Prevents "reward hacking" by dynamically adjusting the reliability level of multiple property predictions during molecular design. |
| MOLLM (Multi-Objective Large Language Model) [29] | Large Language Model | Leverages domain knowledge and in-context learning to generate and optimize molecules across multiple objectives. |
| QIF (Quadratic Inference Function) [27] | Statistical Model | Provides robust modeling of time-dependent responses (e.g., drug release profiles) with limited sample data. |
| MOGWO / NSWOA (Multi-Objective Grey Wolf/Whale Optimizers) [27] | Bio-Inspired Algorithm | Population-based metaheuristics used for global optimization of complex, non-linear formulation problems. |

The evidence is clear: single-objective optimization is fundamentally ill-suited for the intricate challenges of modern drug design. Its narrow focus inevitably leads to molecules that are optimized for a single characteristic but flawed in others, contributing to the high attrition rates in clinical development. The paradigm is shifting towards multi-objective strategies that explicitly acknowledge and manage the inherent trade-offs between efficacy, safety, and manufacturability. By adopting algorithms and frameworks that generate a Pareto front of candidate solutions, researchers can make more informed decisions, mitigate risks earlier, and ultimately increase the probability of delivering successful new therapeutics to patients.

The development of anti-breast cancer drugs represents a complex multi-objective optimization challenge, requiring careful balance between biological efficacy, pharmacokinetic properties, and increasingly, environmental sustainability. Traditional drug discovery approaches often focus predominantly on biological activity metrics, particularly against well-established targets like estrogen receptor alpha (ERα). However, this narrow focus frequently leads to late-stage failures when candidates demonstrate poor absorption, distribution, metabolism, excretion, or toxicity (ADMET) profiles [30] [31]. Furthermore, the environmental impact of cancer care—from drug production to patient treatment—has emerged as a significant consideration in sustainable healthcare strategies [32].

This case study examines the computational and experimental frameworks being employed to navigate these trade-offs systematically. By integrating machine learning, molecular modeling, and multi-objective optimization algorithms, researchers can simultaneously optimize multiple drug properties that were traditionally addressed sequentially. This integrated approach not only accelerates discovery timelines but also reduces resource consumption and experimental waste, contributing to more environmentally sustainable drug development pipelines [32] [30].

Computational Frameworks for Balanced Drug Design

Machine Learning-Driven QSAR and ADMET Optimization

Recent advances in quantitative structure-activity relationship (QSAR) modeling have enabled researchers to predict both biological activity and ADMET properties early in the discovery process. Zhou Dong et al. (2025) developed a machine learning-based optimization model that identified 20 critical molecular descriptors from an initial set of 729 possibilities to guide anti-breast cancer drug design [33] [30]. Their approach demonstrates how feature selection techniques like grey relational analysis, Spearman correlation, and Random Forest with SHAP values can pinpoint structural characteristics that simultaneously influence multiple drug properties.

The most effective models employed ensemble methods including LightGBM, Random Forest, and XGBoost, achieving an R² of 0.743 for predicting biological activity (pIC50 values) against ERα [30]. For ADMET prediction, the best-performing models achieved F1 scores of 0.8905 for Caco-2 (absorption) and 0.9733 for CYP3A4 (metabolism), indicating strong classification performance for these pharmacokinetic parameters [30].

Table 1: Performance Metrics of Machine Learning Models for Drug Property Prediction

| Prediction Task | Best Model | Performance Metric | Value |
|---|---|---|---|
| ERα Bioactivity | Stacking Ensemble | R² | 0.743 |
| Caco-2 (Absorption) | LightGBM | F1 Score | 0.8905 |
| CYP3A4 (Metabolism) | XGBoost | F1 Score | 0.9733 |
| hERG (Toxicity) | Naive Bayes | F1 Score | Not Specified |
| MN (Toxicity) | XGBoost | F1 Score | Not Specified |
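For readers less familiar with the two metrics in the table, the sketch below computes them from first principles. The pIC50 values and class labels are made up for illustration and are unrelated to the reported results.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - residual sum of squares / total sum of squares."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def f1_binary(labels, preds):
    """F1 for the positive class (label 1): harmonic mean of precision and recall."""
    tp = sum(1 for l, p in zip(labels, preds) if l == 1 and p == 1)
    fp = sum(1 for l, p in zip(labels, preds) if l == 0 and p == 1)
    fn = sum(1 for l, p in zip(labels, preds) if l == 1 and p == 0)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical regression task: measured vs. predicted pIC50.
r2 = r_squared([5.0, 6.0, 7.0, 8.0], [5.5, 6.0, 6.5, 8.0])

# Hypothetical classification task: 1 = permeable, 0 = not permeable.
f1 = f1_binary([1, 1, 1, 0, 0, 1], [1, 1, 0, 0, 1, 1])

print(r2, f1)
```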

Particle Swarm Optimization for Multi-Objective Balancing

To formally address the trade-offs between biological activity and ADMET properties, researchers have implemented Particle Swarm Optimization (PSO) algorithms. This approach treats drug optimization as a multi-objective problem where the goal is to identify chemical structures that maximize anti-cancer activity while satisfying constraints on pharmacokinetics and toxicity [30]. The PSO algorithm navigates this complex chemical space by iteratively updating candidate solutions based on both individual and population best performances, gradually converging toward optimal compromises between competing objectives.

This methodology represents a significant advancement over traditional sequential optimization, where medicinal chemists would first maximize potency before attempting to remedy poor ADMET properties—a process that often diminished hard-won gains in biological activity [30]. The multi-objective approach acknowledges the inherent interconnectedness of these properties and seeks balanced solutions from the outset.
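The particle update described above (each particle is pulled toward its own best position and the swarm's best) can be sketched on a toy problem. Everything below is an assumption for illustration: the two-dimensional "descriptor" space, the activity surface peaking at (1, 1), the single ADMET-like constraint, and the quadratic penalty used to enforce it. The cited study's encoding and constraint handling are not specified here.

```python
import random


def penalized_fitness(x):
    """Toy stand-in: activity peaks at (1, 1); the constraint requires x0 + x1 <= 1."""
    activity = -((x[0] - 1.0) ** 2 + (x[1] - 1.0) ** 2)
    violation = max(0.0, x[0] + x[1] - 1.0)
    return activity - 100.0 * violation ** 2


def pso(fitness, dim=2, n_particles=30, n_iter=200, seed=0,
        inertia=0.7, c_personal=1.5, c_global=1.5, low=-2.0, high=2.0):
    """Maximize `fitness` with a basic global-best particle swarm."""
    rng = random.Random(seed)
    pos = [[rng.uniform(low, high) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_fit = [fitness(p) for p in pos]
    g = max(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                vel[i][d] = (inertia * vel[i][d]
                             + c_personal * rng.random() * (pbest[i][d] - pos[i][d])
                             + c_global * rng.random() * (gbest[d] - pos[i][d]))
                pos[i][d] += vel[i][d]
            fit = fitness(pos[i])
            if fit > pbest_fit[i]:                 # update the particle's own best
                pbest[i], pbest_fit[i] = pos[i][:], fit
                if fit > gbest_fit:                # update the swarm's best
                    gbest, gbest_fit = pos[i][:], fit
    return gbest, gbest_fit


best_x, best_fit = pso(penalized_fitness)
print(best_x, best_fit)
```

The unconstrained optimum (1, 1) violates the constraint, so the swarm should settle near (0.5, 0.5), the best activity attainable while staying close to feasibility. This mirrors the compromise between potency and ADMET limits in miniature.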

Experimental Protocols for Validation

Molecular Docking and Dynamics Simulations

Experimental validation of computational predictions relies heavily on structure-based methods. The following protocol exemplifies current approaches for evaluating candidate drugs targeting breast cancer-related proteins:

Target Preparation: Protein structures are obtained from Protein Data Bank (e.g., PDB ID: 7LD3 for adenosine A1 receptor) and prepared using molecular modeling software. Structures are optimized with the AMBER99SB-ILDN force field and hydrated using TIP3P water models [34].

Molecular Docking: Candidates are docked into binding sites using software such as Discovery Studio with CHARMM force fields. The LibDock scoring function is employed to evaluate binding poses, with scores above 130 typically considered promising [34].

Molecular Dynamics (MD) Simulations: To evaluate binding stability, docked complexes undergo 100 ns MD simulations using GROMACS. Systems are energy-minimized, followed by 150 ps of restrained MD at 298.15 K before unrestrained production simulations. Trajectories are analyzed using VMD software to assess complex stability and interaction persistence [34] [35].

This integrated computational workflow successfully identified stable binding between compound 5 and the adenosine A1 receptor, and guided the design of derivative D3, which showed improved binding energy (-8.14 kcal/mol) compared to tamoxifen (-7.2 kcal/mol) [34] [35].

In Vitro Biological Evaluation

Promising candidates from computational screens undergo experimental validation using established breast cancer cell models:

Cell Culture: MCF-7 (ER+) and MDA-MB-231 (triple-negative) cell lines are maintained under standard conditions (37°C, 5% CO₂) in appropriate media [34] [36].

Proliferation Assays: Cells are treated with candidate compounds across a concentration range (typically 0.1-100 μM) for 48-72 hours. Viability is assessed using MTT or similar assays, and IC₅₀ values are calculated [34].

Mechanistic Studies: Additional assays evaluate apoptosis induction (e.g., caspase activation, Annexin V staining), cell migration (wound healing/transwell assays), and reactive oxygen species generation [36].

This approach validated the potent antitumor activity of Molecule 10, which showed an IC₅₀ value of 0.032 μM against MCF-7 cells, significantly outperforming the positive control 5-FU (IC₅₀ = 0.45 μM) [34].
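As a rough illustration of how an IC₅₀ is read off a proliferation assay, the sketch below interpolates on a log-concentration scale between the two tested concentrations that bracket 50% viability. This is a simplification: in practice a four-parameter logistic curve is usually fitted to the full dose-response data. The concentrations and viability values are hypothetical.

```python
import math


def estimate_ic50(concentrations, viabilities):
    """Log-linear interpolation of the concentration at which viability crosses 50%."""
    points = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            frac = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% viability is not bracketed by the tested range


# Hypothetical MTT read-out: concentration in µM vs. % viability relative to control.
ic50 = estimate_ic50([0.1, 1.0, 10.0, 100.0], [95.2, 66.7, 16.7, 2.0])
print(ic50)

# A compound that never reaches 50% inhibition in the tested range yields None.
no_ic50 = estimate_ic50([0.1, 1.0], [90.0, 80.0])
```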

Signaling Pathways and Molecular Interactions

Diagram: Key Signaling Pathways in Breast Cancer. The diagram illustrates primary molecular pathways involved in breast cancer progression, showing how drug antagonists target critical nodes like ERα.

Research Reagent Solutions for Anti-Breast Cancer Drug Discovery

Table 2: Essential Research Reagents and Computational Tools for Breast Cancer Drug Discovery

| Reagent/Tool | Function | Application Example |
|---|---|---|
| MCF-7 Cell Line | ER+ breast cancer model | In vitro validation of anti-proliferative activity [34] |
| MDA-MB-231 Cell Line | Triple-negative breast cancer model | Studying aggressive breast cancer behavior [34] |
| Discovery Studio | Molecular docking and simulation | LibDock scoring of protein-ligand complexes [34] |
| GROMACS | Molecular dynamics simulations | 100 ns MD simulations for binding stability [34] [35] |
| SwissTargetPrediction | Target prediction | Identifying potential protein targets for compounds [34] [36] |
| GDSC Database | Drug response resource | IC₅₀ values for anticancer agents across cell lines [37] |
| STRING Database | Protein-protein interactions | Constructing PPI networks for mechanism studies [36] |

Environmental Impact Considerations

The environmental sustainability of breast cancer care presents another dimension for optimization. A scoping review published in The Breast (2025) highlighted that hormonal and chemotherapeutic drugs have ecotoxic effects and consume natural resources during production and distribution [32]. This environmental perspective adds a crucial systems-level consideration to drug development trade-offs.

Strategies to improve environmental sustainability include:

- Optimizing resource consumption in operating rooms

- Developing targeted therapies that minimize environmental persistence

- Implementing mobile screening clinics to reduce travel-related emissions

- Adopting green chemistry principles in drug synthesis and formulation [32]

Radiation therapy, particularly travel to centralized care centers, contributes significantly to greenhouse gas emissions, suggesting opportunities for decentralized treatment models [32]. These environmental factors represent emerging considerations in the comprehensive evaluation of anti-breast cancer therapies.

The trade-offs in anti-breast cancer drug development require sophisticated multi-objective optimization strategies that balance potency, pharmacokinetics, and increasingly, environmental impact. Machine learning models, particularly ensemble methods and graph neural networks, now enable simultaneous prediction of biological activity and ADMET properties with considerable accuracy [30] [31]. When combined with experimental validation through molecular dynamics and in vitro assays, these computational approaches facilitate more efficient drug discovery while reducing resource consumption.

The most promising frameworks integrate QSAR modeling, structure-based design, and multi-objective optimization algorithms like PSO to navigate the complex trade-offs between competing drug properties [35] [30]. This integrated approach acknowledges that optimal cancer therapeutics must balance multiple objectives rather than maximizing single parameters, ultimately leading to more developable candidates with favorable efficacy, safety, and environmental profiles.

Diagram: Drug Optimization Workflow. The workflow illustrates the integrated computational and experimental approach for multi-objective optimization of anti-breast cancer candidates.

Algorithmic Solutions: A Guide to MOO Methods in Sustainable Drug Development

Multi-Objective Evolutionary Algorithms (MOEAs) are a class of optimization techniques designed to solve problems with multiple, often conflicting, objectives. Unlike single-objective optimization that yields a single best solution, MOEAs identify a set of optimal solutions, known as the Pareto-optimal front [38]. In this set, no solution is superior to another in all objectives; improvements in one objective necessitate compromises in others. MOEAs simulate natural selection and evolution processes, maintaining a population of potential solutions that evolve over generations through selection, crossover, and mutation operations [39]. Their ability to handle complex, non-linear problems makes them particularly valuable in fields like software engineering, drug development, and sustainable design, where balancing competing goals such as performance, cost, and environmental impact is crucial [40] [16] [15].

Detailed Review of Key MOEAs

Core Algorithms and Their Methodologies

NSGA-II (Non-dominated Sorting Genetic Algorithm II)

NSGA-II is a pioneering and widely used algorithm that employs a fast non-dominated sorting approach to rank solutions into Pareto fronts and a crowding distance operator to maintain diversity within the population [40] [38]. This crowding distance estimates the density of solutions surrounding a particular point in the objective space, favoring individuals in less crowded regions to preserve a spread of solutions. Its effectiveness and relatively low computational requirements have made it a benchmark against which newer algorithms are often measured [40].
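The crowding distance can be computed as follows. This is a minimal sketch of the standard calculation for a single front, with invented objective values; boundary solutions receive an infinite distance so that the extremes of the front are always retained.

```python
def crowding_distance(front):
    """Crowding distance for each solution in one non-dominated front."""
    n = len(front)
    if n == 0:
        return []
    distance = [0.0] * n
    n_obj = len(front[0])
    for m in range(n_obj):
        order = sorted(range(n), key=lambda i: front[i][m])
        f_min, f_max = front[order[0]][m], front[order[-1]][m]
        # Extreme solutions in each objective are always kept.
        distance[order[0]] = distance[order[-1]] = float("inf")
        if f_max == f_min:
            continue
        for k in range(1, n - 1):
            gap = front[order[k + 1]][m] - front[order[k - 1]][m]
            distance[order[k]] += gap / (f_max - f_min)
    return distance


# Hypothetical two-objective front.
front = [(0.0, 1.0), (0.2, 0.6), (0.5, 0.5), (1.0, 0.0)]
distances = crowding_distance(front)
print(distances)
```

When two solutions share the same Pareto rank, NSGA-II prefers the one with the larger crowding distance, here the third point, which sits in the sparser part of the front.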

MOEA/D (Multi-Objective Evolutionary Algorithm based on Decomposition)

MOEA/D introduces a different philosophy by decomposing a multi-objective problem into several single-objective subproblems [38]. These subproblems are optimized simultaneously using information from neighboring subproblems. This approach can be computationally more efficient than Pareto-based sorting and often demonstrates strong performance, particularly on problems with many objectives. Experimental studies have shown MOEA/D to outperform or perform similarly to NSGA-II on various test problems, including multi-objective 0-1 knapsack problems [38].
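The decomposition idea can be illustrated with the Tchebycheff scalarization, one of the approaches commonly paired with MOEA/D. Each weight vector defines a single-objective subproblem. The sketch below only shows how different weight vectors select different trade-off solutions from a fixed candidate set; it omits the evolutionary loop and the neighborhood-based information sharing, and the candidate values are assumptions.

```python
def tchebycheff(objectives, weights, ideal):
    """Weighted Tchebycheff distance to the ideal point (minimization)."""
    return max(w * abs(f - z) for f, w, z in zip(objectives, weights, ideal))


def uniform_weights(n_subproblems):
    """Evenly spaced weight vectors for a two-objective problem."""
    step = 1.0 / (n_subproblems - 1)
    return [(k * step, 1.0 - k * step) for k in range(n_subproblems)]


# Hypothetical candidates with two objectives to be minimized.
candidates = [(0.0, 1.0), (0.3, 0.4), (1.0, 0.0)]
ideal = (0.0, 0.0)

best_per_subproblem = [
    min(range(len(candidates)), key=lambda i: tchebycheff(candidates[i], w, ideal))
    for w in uniform_weights(5)
]
print(best_per_subproblem)
```

Sweeping the weight vectors moves the preferred candidate from one end of the front to the other, which is how a set of scalar subproblems can jointly cover the Pareto front.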

SPEA2 (Strength Pareto Evolutionary Algorithm 2)

SPEA2 is another canonical algorithm that improves upon its predecessor through a fine-grained fitness assignment strategy and a density estimation technique [38]. It maintains an archive of non-dominated solutions and uses a nearest-neighbor method to estimate density, which helps guide the selection process towards a well-distributed Pareto front. Comparative studies have found it to be effective in sampling from along the entire Pareto-optimal front [38].
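The fitness assignment in SPEA2 combines a dominance-based raw fitness with a nearest-neighbor density term. The sketch below follows the usual formulation (strength, raw fitness, and a density of 1 / (distance to the k-th nearest neighbor + 2)) on a small invented population. Archive truncation is left out, and k is fixed at 1 here for simplicity.

```python
import math


def dominates(a, b):
    """Minimization: a is no worse than b everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def spea2_fitness(points, k=1):
    """Lower is better; non-dominated solutions end up with fitness below 1."""
    n = len(points)
    # Strength: how many solutions each point dominates.
    strength = [sum(dominates(points[i], points[j]) for j in range(n) if j != i)
                for i in range(n)]
    fitness = []
    for i in range(n):
        # Raw fitness: summed strength of every solution that dominates point i.
        raw = sum(strength[j] for j in range(n)
                  if j != i and dominates(points[j], points[i]))
        dists = sorted(math.dist(points[i], points[j]) for j in range(n) if j != i)
        density = 1.0 / (dists[k - 1] + 2.0)
        fitness.append(raw + density)
    return fitness


# Hypothetical population with two objectives to be minimized.
population = [(1, 4), (2, 2), (4, 1), (3, 3), (5, 5)]
fitness = spea2_fitness(population)
print(fitness)
```

Because the density term is always at most 0.5, it only breaks ties among solutions with the same raw fitness, steering selection toward less crowded regions without overriding dominance.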

Other Notable Algorithms

Recent years have seen the development of numerous other MOEAs. rNSGA-II (Reference-point based NSGA-II) allows for the incorporation of user preferences [40]. NSGA-III is specifically designed for many-objective problems (those with more than three objectives) by using reference points to ensure diversity [40]. MOPSO (Multi-Objective Particle Swarm Optimization) adapts the particle swarm optimization paradigm for multi-objective problems [40]. Other algorithms like NNIA, SPEAR, HypE, and KnEA offer varied strategies for balancing convergence and diversity in the objective space [40].

Comparative Performance Analysis

A 2023 comparative study evaluated 19 state-of-the-art evolutionary algorithms on the Next Release Problem (NRP), a software engineering optimization task aiming to maximize customer satisfaction while minimizing cost [40]. Performance was measured using the Hyper-volume (HV) indicator, which calculates the volume of objective space dominated by the obtained solutions, and Spread, which measures the extent and uniformity of solution distribution.

Table 1: Algorithm Performance Ranking on the Next Release Problem (NRP) [40]

| Performance Rank | Algorithm | Key Finding |
|---|---|---|
| 1st | NNIA | Achieved the best Hyper-volume (HV) performance, with most values >0.708 |
| 2nd | SPEAR | Showed strong HV performance, with values between 0.706 and 0.708 |
| Best CPU Runtime | NSGA-II | Exhibited the shortest computation time across all test scales |

The study concluded that no single algorithm dominated all others in every metric, but NNIA and SPEAR demonstrated superior convergence and diversity, while NSGA-II remained highly efficient computationally [40].

Table 2: Generalized Comparison of Canonical MOEA Characteristics

| Algorithm | Core Mechanism | Strengths | Weaknesses/Limitations |
|---|---|---|---|
| NSGA-II | Fast non-dominated sorting & Crowding distance | Computational efficiency; Good spread on 2/3-objective problems | Performance degrades with many objectives (>3) |
| MOEA/D | Decomposition & Neighborhood cooperation | High search efficiency; Suitable for many-objective problems | Performance sensitive to weight vectors/neighborhood size |
| SPEA2 | Archive-based & Fine-grained fitness assignment | Effective archive maintenance; Good diversity preservation | Higher computational complexity than NSGA-II |

Experimental Protocols and Evaluation

Standard Experimental Methodology

Rigorous evaluation of MOEAs typically follows a structured protocol to ensure fairness and reproducibility. The standard workflow proceeds as follows:

- Problem and Benchmark Selection: Algorithms are tested on standardized benchmark problems with known Pareto fronts (e.g., ZDT, DTLZ, WFG series) and/or real-world applications (e.g., Next Release Problem, structural design) [40] [38] [15]. These problems vary in difficulty, geometry, and number of objectives.

- Algorithm Selection and Parameterization: The MOEAs to be compared are identified. Critical parameters, such as population size, mutation and crossover rates, and stopping criteria (e.g., number of generations or function evaluations), are set to predetermined values to ensure a fair comparison [40].

- Performance Metric Definition: Quantitative metrics are chosen to evaluate different aspects of performance. Common metrics include [40] [38]:

- Hyper-volume (HV): Measures the volume of the objective space dominated by the obtained Pareto front and bounded by a reference point. A higher HV indicates better convergence and diversity.

- Spread (or Diversity): Assesses the extent and uniformity of the spread of solutions in the Pareto front.

- Inverted Generational Distance (IGD): Measures the average distance from each point in the true Pareto front to the nearest point in the obtained front, evaluating both convergence and diversity.

- Runtime: Records the computational time required by the algorithm.

- Algorithm Execution and Data Collection: Each algorithm is run multiple times (often 20-30 independent runs) to account for stochastic variations. The resulting Pareto fronts and metric values are recorded for each run [40].

- Results Analysis and Statistical Testing: The collected data is analyzed, and often non-parametric statistical tests (e.g., the Wilcoxon signed-rank test) are performed to determine if performance differences between algorithms are statistically significant [40].

- Conclusion and Reporting: Findings are synthesized to rank the algorithms and provide guidance on their suitability for the tested problem domains [40].
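Two of the metrics listed in this protocol, hyper-volume and inverted generational distance, are easy to compute for small two-objective fronts. The sketch below assumes both objectives are minimized and uses invented fronts. Library implementations should be preferred for more than two objectives, where the hyper-volume calculation becomes considerably more involved.

```python
import math


def hypervolume_2d(front, reference):
    """Area dominated by a two-objective minimization front, bounded by `reference`."""
    pts = sorted(p for p in front if p[0] <= reference[0] and p[1] <= reference[1])
    area, prev_f2 = 0.0, reference[1]
    for f1, f2 in pts:
        if f2 < prev_f2:  # points dominated by an earlier one add no area
            area += (reference[0] - f1) * (prev_f2 - f2)
            prev_f2 = f2
    return area


def igd(true_front, obtained_front):
    """Mean distance from each true-front point to its nearest obtained point."""
    return sum(min(math.dist(t, o) for o in obtained_front)
               for t in true_front) / len(true_front)


# Hypothetical fronts: the obtained front misses the middle trade-off solution.
true_front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
obtained = [(1.0, 3.0), (3.0, 1.0)]

hv_true = hypervolume_2d(true_front, reference=(4.0, 4.0))
hv_obtained = hypervolume_2d(obtained, reference=(4.0, 4.0))
igd_value = igd(true_front, obtained)
print(hv_true, hv_obtained, igd_value)
```

The missing middle point lowers the hyper-volume and raises the IGD, showing how both metrics penalize gaps in coverage as well as poor convergence.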

Application in Environmental Impact Research

The experimental MOEA framework is powerfully applied in environmental research. A study on green hydrogen production in Germany (2025) exemplifies this, developing a multi-objective optimization framework to balance environmental impact (carbon footprint) and energy cost per kilogram of hydrogen [16]. The methodology integrated life-cycle assessment (LCA) with machine-learning surrogate models trained on historical data.

Table 3: Key Research Reagents and Computational Tools for MOEA-based Environmental Optimization

| Item/Tool Name | Function in the Research Context | Exemplar Use Case |
|---|---|---|
| Life Cycle Inventory (LCI) Database | Provides emission factors and resource use data for various technologies and materials. | Ecoinvent v3.8 database was used to source environmental impact data [16]. |
| Surrogate Model | A machine-learning model used as a computationally cheap proxy for expensive simulations or models. | Random Forest Regression models were trained to rapidly estimate GWP and cost of hydrogen [16]. |
| Constrained Latin Hypercube Sampling (cLHS) | A statistical method for generating near-random, policy-compliant input parameter samples from a multidimensional distribution. | Used to generate policy-compliant grid-mix scenarios for Germany [16]. |
| ReCiPe 2016 Methodology | A standardized life cycle impact assessment (LCIA) method for translating inventory data into environmental impact scores. | Employed to calculate the Global Warming Potential (GWP) in kg CO₂-eq/kg H₂ [16]. |
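The sampling entry in the table can be illustrated with a plain Latin hypercube followed by a simple constraint filter. This is only one way to obtain constraint-compliant samples and is not necessarily the cLHS procedure used in the study. The three energy sources, the normalization to shares, and the 50% cap are all assumptions made for the example.

```python
import random


def latin_hypercube(n_samples, n_dims, seed=0):
    """One sample per equal-width stratum in every dimension, randomly paired."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        strata = [(k + rng.random()) / n_samples for k in range(n_samples)]
        rng.shuffle(strata)
        columns.append(strata)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


def to_grid_mix(sample):
    """Rescale a raw sample so that the source shares sum to 1."""
    total = sum(sample)
    return tuple(x / total for x in sample)


samples = latin_hypercube(n_samples=10, n_dims=3)
mixes = [to_grid_mix(s) for s in samples]

# Hypothetical policy rule: the first source may supply at most 50% of the mix.
compliant = [m for m in mixes if m[0] <= 0.5]
print(len(compliant), "of", len(mixes), "scenarios satisfy the assumed cap")
```

Note that rescaling and filtering break the strict stratification of the original hypercube, which is one reason dedicated constrained-sampling methods exist.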

The study successfully identified Pareto-optimal grid mix scenarios, demonstrating that cost-effective, low-carbon hydrogen production requires balanced portfolios emphasizing hydropower, biomass, and solar energy [16]. Similarly, in sustainable construction, a multi-objective ant colony algorithm was applied to optimize prefabricated building designs, simultaneously minimizing cost, duration, and carbon emissions [15]. These cases highlight MOEAs' critical role in supporting decisions for sustainable development and environmental impact mitigation.

The field of Multi-Objective Evolutionary Algorithms is dynamic, with a wide array of sophisticated algorithms available. Canonical methods like NSGA-II, MOEA/D, and SPEA2 remain highly relevant due to their proven performance and efficiency, particularly for problems with two or three objectives [40] [38]. However, recent studies indicate that newer algorithms like NNIA and SPEAR can achieve superior results on specific problems and metrics, such as the hyper-volume indicator [40]. The choice of the most suitable MOEA is inherently problem-dependent. There is no single "best" algorithm for all scenarios. Factors such as the number of objectives, the computational cost of function evaluations, the desired balance between convergence and diversity, and the need for computational efficiency must all be considered. The integration of MOEAs with other techniques, such as machine learning surrogate models, is a powerful trend that enhances their applicability to complex real-world problems in sustainability and environmental research [16] [15]. As this field evolves, MOEAs will continue to be indispensable tools for navigating complex trade-offs in science and engineering.

The integration of machine learning (ML) with Quantitative Structure-Activity Relationship (QSAR) modeling marks a transformative shift in computational drug discovery and environmental impact assessment [41]. This evolution, often termed 'deep QSAR', leverages advanced artificial intelligence to enhance the prediction of biological activity, toxicity, and physicochemical properties, thereby accelerating the design of safer and more effective compounds [41]. Within the broader context of multi-objective optimization, which seeks to balance critical parameters like synthetic yield, efficacy, cost, and environmental footprint, these ML-enhanced models provide indispensable tools for informed decision-making [42] [15] [16]. This section compares the performance of traditional and ML-driven QSAR workflows, supported by experimental data, to delineate their advantages and limitations for researchers and drug development professionals.

Core Methodologies and Model Comparison

The validity and predictive power of a QSAR model are paramount, and their assessment has evolved beyond simple metrics. External validation, where a model is tested on a completely independent dataset, is a critical benchmark for reliability [43]. The following sections compare key methodologies.

Traditional QSAR Modeling Workflow

The conventional QSAR pipeline is a multi-stage process. It begins with data collection and curation, followed by calculation of molecular descriptors (e.g., using software like Dragon), model development using statistical techniques like Multiple Linear Regression (MLR), and rigorous internal and external validation [43] [44]. A critical best practice is the separation of data into training and test sets to avoid overfitting and to obtain a true measure of predictive ability [43] [44]. However, reliance on the coefficient of determination (r²) alone for validation is insufficient, as a high r² does not guarantee model robustness or external predictability [43].
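The separation of training and test data described above can be sketched with the standard library alone. The function names, the 80/20 split, and the fixed seed are illustrative assumptions; in practice a library routine such as those in scikit-learn would usually be used instead.

```python
import random
from typing import Iterator, List, Sequence, Tuple


def train_test_split_indices(
    n: int, test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Randomly split compound indices 0..n-1 into (train, test) lists."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)  # seeded for reproducibility
    n_test = max(1, round(n * test_fraction))
    return indices[n_test:], indices[:n_test]


def k_fold(
    train_indices: Sequence[int], k: int = 5
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (fit, validation) index lists for k-fold cross-validation.

    Only training-set indices are passed in, so the held-out test set is
    never seen during model tuning.
    """
    folds = [list(train_indices[i::k]) for i in range(k)]
    for i, validation in enumerate(folds):
        fit = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield fit, validation


train, test = train_test_split_indices(100, test_fraction=0.2)
for fit, validation in k_fold(train, k=5):
    # A model would be fitted on `fit` and scored on `validation` here.
    print(len(fit), len(validation))
```

Keeping the test indices out of the cross-validation loop is what allows the final test-set score to serve as an unbiased estimate of predictive ability.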

Machine Learning-Enhanced QSAR Workflow

Modern "deep QSAR" integrates deep learning and other ML algorithms directly into the modeling fabric [41]. This workflow often uses more complex molecular representations, including learned features from graphs or SMILES strings, as inputs to neural networks [41]. Techniques like Support Vector Machines (SVM) and Neural Networks (NN) are employed to capture non-linear relationships between structure and activity that traditional linear models might miss [45]. The process still emphasizes rigorous validation, descriptor importance analysis, and defining the Applicability Domain (AD) to understand the model's scope [46] [45].

Performance Comparison of Modeling Approaches

The table below summarizes quantitative performance data from various studies, comparing traditional and ML-driven QSAR models across different applications.

Table 1: Performance Comparison of QSAR Modeling Approaches

| Application Domain | Model Type | Key Performance Metric (Test Set) | Key Descriptors/Features | Reference |
|---|---|---|---|---|
| General Biological Activity | Various Traditional (MLR, PLS) | r² range: 0.088 to 0.963 across 44 models; Many with r² > 0.8 showed good predictive power [43]. | Descriptors calculated via Dragon, CODESSA, etc. [43]. | [43] |
| Nanoparticle Mixture Toxicity (E. coli) | SVM-QSAR | R²test = 0.908, RMSEtest = 0.255 [45]. | Metal electronegativity, metal oxide energy descriptors [45]. | [45] |
| Nanoparticle Mixture Toxicity (E. coli) | NN-QSAR | R²test = 0.911, RMSEtest = 0.091 (internal) [45]. | Enthalpy of formation of gaseous cation, metal oxide standard molar enthalpy [45]. | [45] |
| Caco-2 Permeability (Demo) | Random Forest (ML) | Test-set R² = 0.7 with minimal optimization [44]. | RDKit molecular descriptors [44]. | [44] |
| Environmental Persistence (Cosmetics) | BIOWIN (EPISUITE) | High performance for qualitative ready biodegradability prediction [46]. | Fragment-based functional groups [46]. | [46] |
| Bioaccumulation (Log Kow) | ALogP (VEGA), ADMETLab 3.0 | Identified as most appropriate for Log Kow prediction [46]. | Atom/fragment contribution methods, graph-based ML [46]. | [46] |

Key Insights from Comparison:

- Predictive Power: ML models, particularly NN and SVM, can achieve exceptionally high predictive accuracy (R² > 0.9) for complex endpoints like nanoparticle mixture toxicity, often outperforming traditional component-based mixture models [45].

- Descriptor Evolution: While traditional models rely on pre-defined molecular descriptors, deep QSAR models can learn relevant features directly from data, potentially uncovering novel structure-activity insights [41].

- Validation Necessity: Both paradigms underscore that no single metric (e.g., r²) is sufficient for validation. A suite of statistical parameters and external validation are both essential [43].

- Applicability Domain (AD): The AD is crucial for assessing model reliability. Studies show that predictions for compounds within the AD are significantly more reliable, especially for regulatory-grade qualitative assessments (e.g., persistent vs. not persistent) [46].
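As an illustration of the applicability domain idea in the last point, the sketch below uses the simplest possible definition: a compound is inside the AD if each of its descriptors lies within the range spanned by the training set. This bounding-box rule, the function names, and the example descriptor values are assumptions chosen for clarity; the cited tools may use leverage or distance-based definitions instead.

```python
from typing import List, Sequence, Tuple


def descriptor_ranges(training: Sequence[Sequence[float]]) -> List[Tuple[float, float]]:
    """Return the (min, max) of each descriptor column over the training set."""
    columns = zip(*training)
    return [(min(col), max(col)) for col in columns]


def in_domain(compound: Sequence[float], ranges: Sequence[Tuple[float, float]]) -> bool:
    """True if every descriptor of `compound` lies inside the training range."""
    return all(lo <= value <= hi for value, (lo, hi) in zip(compound, ranges))


# Hypothetical descriptor rows: (logP, molecular weight).
training_set = [[1.0, 200.0], [3.0, 350.0], [2.0, 280.0]]
ranges = descriptor_ranges(training_set)

print(in_domain([2.5, 300.0], ranges))  # True: inside both ranges
print(in_domain([4.0, 300.0], ranges))  # False: logP exceeds the training maximum
```

A range check is easy to report alongside each prediction, but it ignores correlations between descriptors, so it tends to be more permissive than leverage-based definitions.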

Detailed Experimental Protocol for QSAR Model Development & Validation

The following protocol synthesizes best practices from the referenced literature for building and validating a robust QSAR model [43] [44] [45].

1. Data Curation and Preparation:
   - Source: Collect experimental biological/toxicity data from literature or in-house studies. Public repositories like Therapeutic Data Commons provide curated datasets [44].
   - Curation: Meticulously standardize chemical structures (e.g., remove salts, correct stereochemistry), check for errors, and identify duplicates. Data quality is the foundation of model reliability [41].
   - Activity Data: Use a consistent endpoint (e.g., IC50, LogP, toxicity value) and express it on a logarithmic scale if appropriate.

2. Molecular Representation:
   - Option A - Traditional Descriptors: Calculate a wide array of 1D, 2D, and 3D molecular descriptors using software like RDKit, Dragon, or PaDEL [44].
   - Option B - Learned Representations: For deep learning models, use SMILES strings, molecular graphs, or fingerprint vectors as direct input [41].

3. Dataset Division:
   - Randomly split the curated dataset into a Training Set (~70-80%) for model building and a held-out Test Set (~20-30%) for final, unbiased validation. More complex methods like sphere exclusion may be used to ensure representativeness [43].

4. Model Training and Internal Validation:
   - Algorithm Selection: Choose based on problem complexity: MLR/PLS for linear relationships; Random Forest, SVM, or Neural Networks for non-linear relationships [44] [45].
   - Feature Selection: Apply methods (e.g., stepwise selection, genetic algorithms) to reduce descriptor number and avoid overfitting.
   - Internal Validation: Perform k-fold cross-validation (e.g., 5-fold) on the training set to tune hyperparameters and assess initial stability.

5. External Validation and Statistical Analysis:
   - Prediction: Apply the final model, trained on the full training set, to predict the activity of the unseen Test Set.
   - Statistical Metrics: Calculate a comprehensive set of metrics: R² (coefficient of determination), R²₀ and R'²₀ (for regression through origin), RMSE (Root Mean Square Error), MAE (Mean Absolute Error) [43] [45].
   - Acceptance Criteria: Use published guidelines. For instance, a model may be considered predictive if R²test > 0.6, and the slopes of regressions through the origin (k and k') are close to 1 [43].

6. Defining the Applicability Domain (AD):
   - Use methods like leverage, distance to model in descriptor space, or ranges of descriptor values to define the chemical space where the model's predictions are reliable. Always report whether new prediction compounds fall within the AD [46].
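The external validation statistics in step 5 can be sketched as follows. This is an illustration under stated assumptions rather than the exact procedure of the cited studies: R² is computed here as 1 − SS_res/SS_tot (some QSAR papers use the squared correlation coefficient instead), k and k' are the least-squares slopes of regressions through the origin, and the `slope_tolerance` of 0.15 is an assumed reading of "close to 1". R²₀ and R'²₀ are left out because their definitions vary between publications.

```python
import math
from typing import Dict, Sequence


def external_validation_metrics(
    y_obs: Sequence[float], y_pred: Sequence[float]
) -> Dict[str, float]:
    """Compute test-set R2, RMSE, MAE and through-origin slopes k and k'."""
    n = len(y_obs)
    if n == 0 or n != len(y_pred):
        raise ValueError("y_obs and y_pred must be non-empty and equally long")
    mean_obs = sum(y_obs) / n
    ss_res = sum((o - p) ** 2 for o, p in zip(y_obs, y_pred))
    ss_tot = sum((o - mean_obs) ** 2 for o in y_obs)
    if ss_tot == 0:
        raise ValueError("observed values must not all be identical")
    cross = sum(o * p for o, p in zip(y_obs, y_pred))
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(o - p) for o, p in zip(y_obs, y_pred)) / n,
        # Slope of observed vs. predicted, regression forced through the origin.
        "k": cross / sum(p * p for p in y_pred),
        # Slope of predicted vs. observed, regression forced through the origin.
        "k_prime": cross / sum(o * o for o in y_obs),
    }


def meets_acceptance_criteria(
    metrics: Dict[str, float], r2_min: float = 0.6, slope_tolerance: float = 0.15
) -> bool:
    """Apply the R2 > 0.6 rule from the text plus an assumed slope tolerance."""
    return (
        metrics["r2"] > r2_min
        and abs(metrics["k"] - 1.0) <= slope_tolerance
        and abs(metrics["k_prime"] - 1.0) <= slope_tolerance
    )


# Hypothetical observed and predicted activities for four test compounds.
observed = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
result = external_validation_metrics(observed, predicted)
print(result)
print(meets_acceptance_criteria(result))
```

Reporting several metrics together guards against the situation described earlier, where a high r² alone masks systematic bias in the predictions.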

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for ML-Enhanced QSAR and Predictive Modeling

| Tool/Solution Category | Example/Name | Primary Function in Workflow |
|---|---|---|
| Cheminformatics & Descriptor Calculation | RDKit, Dragon, PaDEL | Generates numerical molecular descriptors (1D-3D) from chemical structures for model input. |
| Machine Learning Frameworks | scikit-learn, TensorFlow, PyTorch | Provides algorithms (Random Forest, SVM, Neural Networks) and environment for building, training, and validating models. |
| Integrated QSAR Platforms | StarDrop's Auto-Modeller, VEGA, ADMETLab 3.0 | Offers automated, guided workflows for model building, validation, and pre-built models for specific endpoints (ADME, toxicity). |
| Toxicity & Environmental Assessment Databases | Ecoinvent, OPERA, EPISUITE | Supplies environmental fate data, physicochemical properties, and emission factors for life-cycle impact and environmental risk assessment. |
| Multi-Objective Optimization Solvers | Ant Colony Algorithm, Pareto Frontier Analysis | Solves optimization problems with conflicting objectives (e.g., cost vs. environmental impact) to identify optimal trade-off solutions. |

Workflow and Relationship Visualizations

Diagram 1: ML-Enhanced QSAR Predictive Modeling Workflow

Diagram 2: Decision Logic for QSAR Model Selection

De novo molecular design represents a paradigm shift in drug discovery, enabling the computational creation of novel drug-like compounds from scratch without relying on pre-existing templates [47]. In practice, a potential drug candidate must simultaneously satisfy multiple, often conflicting, objectives: it must demonstrate high affinity for its target protein, possess suitable drug-like properties (QED), exhibit low toxicity, and have acceptable synthetic accessibility [48]. The chemical space that must be navigated to find these compounds is astronomically vast, estimated to contain approximately 10^60 different molecules, making brute-force screening approaches impractical [48].

Multi-objective optimization (MOO) provides a computational framework to address this challenge by systematically exploring trade-offs between competing objectives. Instead of combining metrics into a single weighted score, Pareto-based MOO methods identify the set of optimal compromises where no objective can be improved without worsening another [49] [48]. This review compares the performance of leading MOO approaches for de novo molecular design, examining their methodological foundations, experimental validation, and practical implementation for drug development professionals.
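The Pareto criterion described above can be expressed in a few lines. The sketch below assumes every objective is to be maximized, so a quantity to be minimized (such as estimated toxicity) is negated first; the molecule names and scores are invented for illustration.

```python
from typing import Dict, List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` is at least as good as `b` everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(candidates: Dict[str, Sequence[float]]) -> List[str]:
    """Return the names of candidates that no other candidate dominates."""
    return [
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other, scores)
            for other_name, other in candidates.items()
            if other_name != name
        )
    ]


# Hypothetical scores: (affinity, QED, -toxicity), all to be maximized.
molecules = {
    "mol_a": (0.9, 0.4, -0.2),
    "mol_b": (0.7, 0.8, -0.1),
    "mol_c": (0.6, 0.3, -0.5),
    "mol_d": (0.8, 0.4, -0.3),
}
print(pareto_front(molecules))  # ['mol_a', 'mol_b']
```

Here `mol_a` and `mol_b` are both retained because each is better on a different objective, which is exactly the trade-off information a single weighted score would hide. This pairwise filter is quadratic in the number of candidates; methods such as NSGA-II use more efficient non-dominated sorting.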

Methodological Comparison of MOO Approaches

Multiple computational strategies have been developed to tackle the multi-objective optimization challenge in molecular design. The table below compares four distinct methodological approaches, highlighting their core algorithms, optimization strategies, and handling of objective conflicts.

Table 1: Comparison of Multi-Objective Optimization Approaches for De Novo Molecular Design

| Method Name | Core Algorithm | Optimization Strategy | Key Objectives Handled | Conflict Resolution |
|---|---|---|---|---|
| DrugEx v2 [49] | Multi-objective Reinforcement Learning (RL) with RNN | Evolutionary algorithm crossover/mutation + Pareto ranking | Target affinity (A1AR, A2AAR), anti-target avoidance (hERG) | Non-dominated sorting + Tanimoto crowding distance |
| Mothra [48] | Monte Carlo Tree Search (MCTS) + RNN | Pareto multi-objective MCTS with NSGA-II | Docking score, QED, estimated toxicity | Pareto front identification without weight adjustment |
| DPO with Curriculum Learning [50] | Direct Preference Optimization | Reward model training on molecular preference pairs | Multiple drug property benchmarks | Preference likelihood maximization |
| RFpeptides [51] | Denoising diffusion models + cyclic positional encoding | Conditional generation with structural filters | Binding affinity, specificity, structural accuracy | Sequential filtering (iPAE, ddG, SAP, CMS) |
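The Tanimoto-based diversity measure listed for DrugEx v2 builds on the Tanimoto (Jaccard) similarity between molecular fingerprints. The sketch below shows only that underlying similarity, with each fingerprint represented as the set of its "on" bit positions; it is not the DrugEx v2 implementation, and the fingerprints are invented for illustration.

```python
from typing import Set


def tanimoto_similarity(a: Set[int], b: Set[int]) -> float:
    """Shared on-bits divided by total distinct on-bits of two fingerprints."""
    union = len(a | b)
    if union == 0:
        return 1.0  # convention assumed here: two empty fingerprints are identical
    return len(a & b) / union


def tanimoto_distance(a: Set[int], b: Set[int]) -> float:
    """Dissimilarity in [0, 1]; larger values mean more structurally diverse."""
    return 1.0 - tanimoto_similarity(a, b)


# Hypothetical fingerprints given as sets of on-bit indices.
fp_1 = {1, 2, 3, 4}
fp_2 = {3, 4, 5, 6}
print(tanimoto_similarity(fp_1, fp_2))  # 2 shared bits out of 6 distinct bits
print(tanimoto_distance(fp_1, fp_2))
```

Using a structural distance instead of a distance in objective space encourages the optimizer to keep chemically distinct molecules on the Pareto front, not just molecules with different scores.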

The workflow for multi-objective molecular design typically follows a structured pipeline that integrates generation, evaluation, and optimization components, as illustrated below.

Figure 1: Multi-Objective Molecular Design Workflow

Experimental Protocols and Performance Benchmarks

Experimental Validation Frameworks

Rigorous experimental validation is crucial for establishing the real-world utility of computationally designed molecules. The protocols employed across studies typically involve a multi-stage process:

Biochemical Synthesis: Successful designs proceed to chemical synthesis using Fmoc-based solid-phase peptide synthesis for macrocycles [51] or traditional organic synthesis for small molecules. Studies report synthesis success rates as a key feasibility filter, with one study noting 14 of 27 designed macrocycles were synthesizable in sufficient yield for characterization [51].

Binding Affinity Measurement: Surface plasmon resonance (SPR) single-cycle kinetics experiments quantify binding affinity (reported as Kd values) between designed molecules and target proteins [51]. For example, RFpeptides achieved sub-10 nM affinity binders for multiple diverse protein targets [51].

Structural Validation: X-ray crystallography of molecule-target complexes provides the highest validation standard, with successful designs demonstrating close alignment to computational models (Cα root-mean-square deviation < 1.5 Å) [51].