Bayesian Optimization for Organic Synthesis: A Complete Guide to AI-Driven Yield Prediction


Abstract

This comprehensive guide explores Bayesian optimization (BO) as a transformative framework for predicting and maximizing yields in organic synthesis. Designed for researchers, scientists, and drug development professionals, it covers the foundational principles of BO and its unique advantages over traditional high-throughput experimentation (HTE). The article details methodological implementation, including surrogate models (e.g., Gaussian Processes) and acquisition functions, for navigating complex chemical spaces. It provides practical strategies for troubleshooting common pitfalls and optimizing BO workflows. Finally, it evaluates BO's performance against other optimization methods, presents validation case studies from recent literature, and discusses its profound implications for accelerating drug discovery and sustainable chemistry.

What is Bayesian Optimization? Foundations for Revolutionizing Synthesis Planning

Application Notes

The application of Bayesian Optimization (BO) for yield prediction in organic synthesis represents a paradigm shift from heuristic-driven experimentation to a closed-loop, data-efficient design of experiments (DoE). This approach is grounded in a probabilistic framework that quantifies uncertainty, enabling the strategic selection of the next most informative reaction conditions to evaluate.

Core Quantitative Data Summary

Table 1: Comparative Performance of BO vs. Traditional DoE in Yield Optimization

| Method & Study | Reaction Type | Search Space Dimensions | Experiments to >90% Max Yield | Final Reported Yield |

|---|---|---|---|---|

| BO (Expected Improvement) | Palladium-catalyzed C–N cross-coupling | 4 (Cat., Base, Solv., Temp.) | 24 | 92% |

| BO (Upper Confidence Bound) | Nickel-photoredox C–O cross-coupling | 5 (Cat., Ligand, Base, Solv., Time) | 18 | 94% |

| Classical One-at-a-time | Reference C–N cross-coupling | 4 (Cat., Base, Solv., Temp.) | 56+ | 89% |

| Full Factorial Design | Reference C–N cross-coupling | 4 (2 levels each) | 16 (no optimization) | N/A (screening only) |

Table 2: Key Hyperparameters for Gaussian Process Surrogate Models in Synthesis

| Hyperparameter | Typical Setting / Prior | Impact on Yield Prediction Model |

|---|---|---|

| Kernel (Covariance Function) | Matérn 5/2 or ARD RBF | Defines smoothness and feature relevance; ARD kernels automatically identify influential variables (e.g., catalyst loading vs. temperature). |

| Acquisition Function | Expected Improvement (EI) or Noisy EI | Balances exploitation (high predicted yield) and exploration (high uncertainty); Noisy EI accounts for experimental replication error. |

| Initial Design Size | 4–8 points (Latin Hypercube) | Provides the baseline data to build the initial surrogate model prior to BO loop initiation. |

Experimental Protocols

Protocol 1: Initial Dataset Generation via Latin Hypercube Sampling (LHS)

- Define Search Space: For a Suzuki-Miyaura coupling, list variables: Pd catalyst (4 choices), ligand (6 choices), base (5 choices), solvent (8 choices), temperature (30–100 °C), and time (1–24 h). Encode categorical variables numerically.

- Generate LHS Points: Use statistical software (e.g., PyDOE in Python) to generate 6–10 experimental conditions, ensuring maximal stratification across each variable dimension.

- Execute Reactions: Perform reactions in parallel using an automated liquid-handling platform or manually in a glovebox under inert atmosphere.

- Analyze Yields: Quantify yields via UPLC/UV-MS using a calibrated internal standard. Record all data with metadata.
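The sampling step in Protocol 1 can be sketched in a few lines of plain Python. This is a minimal illustration, not the PyDOE implementation: the function names, the dictionary keys, and the choice to map each unit-interval coordinate onto a category index are all assumptions made for the example.

```python
import random

def latin_hypercube(n_samples, n_dims, seed=0):
    """Return n_samples points in [0, 1)^n_dims, one point per stratum in every dimension."""
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        # One random point inside each of n_samples equal-width strata, then shuffle.
        col = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(col)
        columns.append(col)
    return [list(row) for row in zip(*columns)]

# Hypothetical search space mirroring the Suzuki-Miyaura example above.
CATEGORICAL = {"catalyst": 4, "ligand": 6, "base": 5, "solvent": 8}  # number of choices
CONTINUOUS = {"temperature_C": (30.0, 100.0), "time_h": (1.0, 24.0)}

def decode(unit_point):
    """Map a unit-cube point to category indices and physical ranges."""
    names = list(CATEGORICAL) + list(CONTINUOUS)
    conditions = {}
    for name, u in zip(names, unit_point):
        if name in CATEGORICAL:
            n_choices = CATEGORICAL[name]
            conditions[name] = min(int(u * n_choices), n_choices - 1)
        else:
            low, high = CONTINUOUS[name]
            conditions[name] = low + u * (high - low)
    return conditions

design = [decode(point) for point in latin_hypercube(8, 6)]
```

Each entry of `design` is one reaction to run. The category indices would still need to be mapped to actual reagent names before dispensing.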

Protocol 2: Iterative Bayesian Optimization Loop

- Model Training: Train a Gaussian Process (GP) regression model on all accumulated yield data. Use a Matérn 5/2 kernel. Optimize kernel hyperparameters by maximizing the log marginal likelihood.

- Surrogate Prediction & Uncertainty: Use the trained GP to predict the mean and standard deviation (uncertainty) of yield for all untested conditions in the search space.

- Acquisition Function Maximization: Calculate the Expected Improvement (EI) for all candidate conditions. Select the condition with the highest EI value.

- Experimental Evaluation: Execute the reaction(s) at the proposed condition(s), typically in triplicate to estimate experimental noise.

- Data Augmentation & Iteration: Append the new yield result(s) to the training dataset. Return to Step 1. Loop continues until a yield threshold is met or the iteration budget (e.g., 30 experiments) is exhausted.

Visualizations

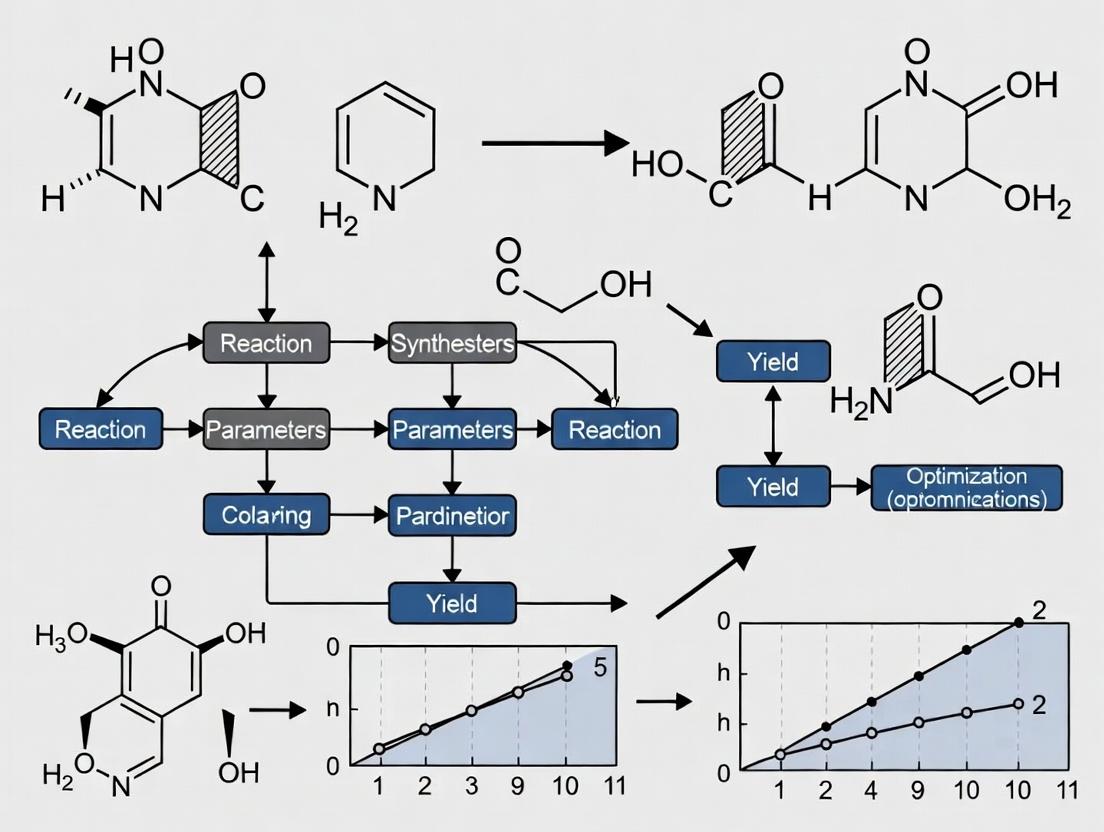

Title: Bayesian Optimization Workflow for Reaction Yield

Title: GP Model Predicts Yield & Uncertainty for Acquisition

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Bayesian Optimization-Driven Synthesis

| Item / Reagent Solution | Function in BO Workflow |

|---|---|

| Automated Parallel Reactor (e.g., Chemspeed, Unchained Labs) | Enables high-fidelity, reproducible execution of the initial LHS and subsequent BO-proposed experiments in parallel, minimizing human error and time. |

| High-Throughput Analysis Suite (UPLC-MS with automated sampling) | Provides rapid, quantitative yield data essential for quick iteration of the BO loop. Integration with LIMS allows direct data streaming to the model. |

| BO Software Platform (e.g., BoTorch, GPyOpt, Scikit-optimize) | Open-source Python libraries that provide the core algorithms for Gaussian Process modeling and acquisition function optimization. |

| Chemical Variable Encoder (Custom scripts for one-hot, ordinal encoding) | Transforms categorical variables (e.g., solvent, ligand type) into numerical representations usable by the GP model kernel. |

| Bench-Stable Catalyst & Ligand Kits (e.g., Pd PEPPSI complexes, Buchwald ligands) | Provides consistent, pre-weighed reagents to reduce preparation variability and accelerate testing of diverse conditions proposed by the BO algorithm. |

Within the broader thesis investigating Bayesian optimization (BO) for organic synthesis yield prediction in drug development, this document details the core iterative philosophy of BO. This approach is critical for efficiently navigating high-dimensional, expensive-to-evaluate chemical spaces to identify optimal reaction conditions, thereby accelerating medicinal chemistry campaigns.

The Iterative Bayesian Optimization Cycle: Core Algorithm

Bayesian optimization is a sequential design strategy for global optimization of black-box functions. It builds a probabilistic surrogate model of the objective function (e.g., chemical reaction yield) and uses an acquisition function to decide where to sample next, balancing exploration and exploitation.

Quantitative Framework & Data Presentation

The BO process relies on two core quantitative components: the surrogate model (typically a Gaussian Process) and the acquisition function.

Table 1: Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mathematical Formulation | Key Property | Best Use-Case in Synthesis |

|---|---|---|---|

| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*), 0)] | Balances improvement probability and magnitude. | General-purpose, robust choice for yield optimization. |

| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κ * σ(x) | Explicit trade-off parameter (κ). | When control over exploration/exploitation balance is needed. |

| Probability of Improvement (PoI) | PoI(x) = P(f(x) ≥ f(x*) + ξ) | Simpler, can be less aggressive. | Early-stage exploration or when seeking incremental gains. |

| Entropy Search (ES) | Maximizes information gain about the optimum. | Information-theoretic, computationally intensive. | When the precise location of the optimum is critical. |

Table 2: Gaussian Process Kernel Functions for Chemical Features

| Kernel Name | Formula | Hyperparameters | Suitability for Reaction Data |

|---|---|---|---|

| Matérn 5/2 | k(r) = σ² (1 + √5r + 5r²/3) exp(-√5r) | Length-scale (l), variance (σ²) | Default choice; accommodates moderate smoothness. |

| Radial Basis Function (RBF) | k(r) = exp(-r² / (2l²)) | Length-scale (l) | Assumes very smooth functions; may over-smooth. |

| Matérn 3/2 | k(r) = σ² (1 + √3r) exp(-√3r) | Length-scale (l), variance (σ²) | For less smooth, more erratic response surfaces. |
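To make the kernel formulas concrete, the sketch below evaluates the three kernels from the table as plain Python functions. It assumes that r is the Euclidean distance already divided by the length-scale, so the RBF here is written as exp(-r²/2) rather than with an explicit l. The function names are illustrative only.

```python
import math

def scaled_distance(x1, x2, lengthscale=1.0):
    """Euclidean distance between two condition vectors, divided by the length-scale."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x1, x2))) / lengthscale

def matern52(r, variance=1.0):
    s = math.sqrt(5.0) * r
    # (sqrt(5) r)^2 / 3 equals 5 r^2 / 3, matching the table.
    return variance * (1.0 + s + s * s / 3.0) * math.exp(-s)

def matern32(r, variance=1.0):
    s = math.sqrt(3.0) * r
    return variance * (1.0 + s) * math.exp(-s)

def rbf(r, variance=1.0):
    # r is already scaled by the length-scale, so no explicit l appears here.
    return variance * math.exp(-0.5 * r * r)

# Correlation between two hypothetical (scaled) condition vectors.
r = scaled_distance([0.2, 0.5], [0.8, 0.1], lengthscale=0.5)
correlations = {"rbf": rbf(r), "matern52": matern52(r), "matern32": matern32(r)}
```

All three kernels equal the variance at r = 0 and decay with distance. They differ in how smooth the resulting yield surface is assumed to be.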

Iterative Learning Protocol

Protocol 1.1: Core Bayesian Optimization Iteration for Reaction Yield Prediction

Objective: To execute one complete cycle of the BO loop for optimizing a chemical reaction yield.

Materials: Historical reaction data (initial design of experiments), surrogate model software (e.g., GPyTorch, scikit-learn), acquisition function optimizer.

Procedure:

Initialization:

- Start with an initial dataset D₁:n = { (x_i, y_i) }, where x_i is a vector of reaction conditions (e.g., catalyst loading, temperature, solvent polarity) and y_i is the corresponding measured yield.

- This set is typically generated via a space-filling design (e.g., Latin Hypercube Sampling) to provide broad initial coverage.

Surrogate Model Training (The "Learn" Phase):

- Train a Gaussian Process (GP) model on D₁:n.

- Model Specification: Define a mean function (often zero or constant) and a covariance kernel (see Table 2). The Matérn 5/2 kernel is recommended as a starting point.

- Hyperparameter Optimization: Optimize the kernel hyperparameters (length-scales, noise variance) by maximizing the log marginal likelihood log p(y | X, θ) using a gradient-based quasi-Newton method (e.g., L-BFGS-B).

- Output: A posterior distribution over functions: f(x) | D₁:n ~ N( μ_n(x), σ_n²(x) ).
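The posterior in the "Learn" phase has a closed form, which the NumPy sketch below computes for a zero-mean GP with a Matérn 5/2 kernel. The hyperparameters are fixed by hand here rather than fitted, and the three data points are invented standardized yields. Treat it as an illustration of the algebra, not a replacement for GPyTorch or scikit-learn.

```python
import numpy as np

def matern52_kernel(A, B, lengthscale=1.0, variance=1.0):
    """Matérn 5/2 covariance between the rows of A and the rows of B."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / lengthscale
    s = np.sqrt(5.0) * d
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(X_train, y_train, X_query, lengthscale=1.0, variance=1.0, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP at X_query."""
    K = matern52_kernel(X_train, X_train, lengthscale, variance)
    K += noise * np.eye(len(X_train))          # observation noise on the diagonal
    K_s = matern52_kernel(X_train, X_query, lengthscale, variance)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mu = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = variance - (v**2).sum(axis=0)
    return mu, np.sqrt(np.maximum(var, 0.0))

# Hypothetical one-dimensional example (e.g., scaled temperature vs. standardized yield).
X_train = np.array([[0.1], [0.4], [0.9]])
y_train = np.array([0.2, 0.8, 0.5])
mu_train, sd_train = gp_posterior(X_train, y_train, X_train, lengthscale=0.3)
mu_new, sd_new = gp_posterior(X_train, y_train, np.array([[0.65]]), lengthscale=0.3)
```

The posterior mean reproduces the observed yields at the training conditions with small uncertainty. The untested condition at 0.65 carries a larger standard deviation, which is the quantity the acquisition function exploits.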

Acquisition Function Maximization (The "Decide" Phase):

- Using the trained GP, compute the chosen acquisition function α(x; D) across the entire input space (see Table 1). Expected Improvement (EI) is a robust default.

- Identify the next candidate point x_n+1 by solving: x_n+1 = argmax_x α(x; D₁:n).

- Optimization Method: This is performed on the acquisition function, which is cheap to evaluate. Use a combination of multi-start quasi-Newton methods (e.g., L-BFGS-B) and random sampling.

Parallel Experimentation & Evaluation (The "Experiment" Phase):

- In a laboratory setting, set up and run the chemical reaction as defined by the proposed conditions x_n+1.

- Critical Step: Purify the product and measure the reaction yield y_n+1 using a standardized analytical technique (e.g., qNMR, HPLC with internal standard).

Data Augmentation (The "Update" Phase):

- Augment the dataset: D₁:n+1 = D₁:n ∪ { (x_n+1, y_n+1) }.

- Return to Step 2 and repeat until a convergence criterion is met (e.g., budget exhausted, yield exceeds target, or successive improvements are below a threshold ϵ).

Visualization 1: The Bayesian Optimization Iterative Cycle

Diagram Title: Bayesian Optimization Core Iterative Loop

Application Protocol: Multi-Objective Optimization for Yield and Purity

Protocol 2.1: Bayesian Optimization for Concurrent Yield and Enantiomeric Excess (ee) Optimization

Objective: To optimize reaction conditions for both high yield and high enantioselectivity in an asymmetric catalysis screen.

Materials: Chiral catalyst library, substrate, analytical chiral HPLC system, multi-objective BO framework (e.g., using ParEGO or Expected Hypervolume Improvement).

Procedure:

- Define Objective Vector: For each experiment i, the output is a vector Y_i = [Yield_i, ee_i]. The goal is to maximize both objectives simultaneously, finding the Pareto front.

- Initial Design: Perform 10-15 initial reactions using a space-filling design across continuous (temperature, time) and categorical (catalyst identity, solvent) variables.

- Surrogate Modeling: Model each objective with an independent GP. For categorical variables, use a transformation (e.g., one-hot encoding) or a dedicated kernel (e.g., Hamming kernel for categorical dimensions).

- Multi-Objective Acquisition: Use the Expected Hypervolume Improvement (EHVI) acquisition function. EHVI measures the expected increase in the hypervolume dominated by the current Pareto front if a candidate condition were evaluated.

- Candidate Selection: Maximize EHVI to propose the next set of reaction conditions x_n+1.

- Evaluation & Update: Run the experiment, measure both yield (by HPLC) and ee (by chiral HPLC), and update the dataset. Iterate for 20-30 cycles.
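The hypervolume underlying EHVI is easy to compute by hand for two objectives. The sketch below extracts the Pareto front from a set of invented (yield, ee) pairs and measures how much the dominated area grows when one new result is added. EHVI itself averages this gain over the GP posterior at a candidate, which is not shown here. The reference point (0, 0) and all data values are assumptions.

```python
def pareto_front(points):
    """Return the non-dominated (yield, ee) pairs when both objectives are maximized."""
    front = []
    for p in points:
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and (q[0] > p[0] or q[1] > p[1])
            for q in points
        )
        if not dominated and p not in front:
            front.append(p)
    return sorted(front)

def hypervolume_2d(front, ref=(0.0, 0.0)):
    """Area dominated by the front and bounded below by the reference point."""
    area, prev_y = 0.0, ref[1]
    # Sweep from the highest first objective to the lowest.
    for x, y in sorted(front, reverse=True):
        if y > prev_y:
            area += (x - ref[0]) * (y - prev_y)
            prev_y = y
    return area

# Hypothetical (yield %, ee %) results from an asymmetric catalysis screen.
observations = [(60.0, 90.0), (80.0, 70.0), (70.0, 60.0), (90.0, 40.0)]
front = pareto_front(observations)
hv_before = hypervolume_2d(front)
hv_after = hypervolume_2d(pareto_front(observations + [(85.0, 85.0)]))
improvement = hv_after - hv_before
```

In this example the new result (85, 85) displaces (80, 70) from the front and increases the dominated area from 7200 to 7725.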

Visualization 2: Multi-Objective BO with EHVI

Diagram Title: Multi-Objective BO with EHVI Workflow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Essential Toolkit for BO-Driven Organic Synthesis Research

| Item / Reagent Solution | Function in BO Context | Example / Specification |

|---|---|---|

| Automated Synthesis Platform (e.g., Chemspeed, Unchained Labs) | Enables high-throughput execution of proposed experiments from the BO algorithm, closing the loop rapidly. | Chemspeed Swing XL with liquid handling and solid dosing. |

| Online Analytical Instrument | Provides immediate in-situ or at-line yield/purity data for fast dataset updating. | ReactIR (FTIR) for reaction profiling, or UHPLC with autosampler. |

| Gaussian Process Software Library | Core engine for building the surrogate probabilistic model. | GPyTorch (for flexibility, GPU acceleration) or scikit-learn (for prototyping). |

| Bayesian Optimization Framework | Provides acquisition functions, candidate selection, and iteration management. | BoTorch (PyTorch-based), Dragonfly, or custom Python scripts. |

| Chemical Descriptor Set | Numerically encodes categorical/discrete variables (e.g., catalysts, ligands) for the model. | DRFP (Depth-based Reaction Fingerprint), Mordred descriptors, or one-hot encoding. |

| Internal Standard for qNMR | Provides accurate, reproducible yield measurements critical for reliable model training. | 1,3,5-Trimethoxybenzene or maleic acid in a dedicated deuterated solvent. |

| Diverse Chemical Stock Library | Ensures the initial space-filling design covers a broad, representative chemical space. | Commercially available catalyst/solvent libraries or in-house compound collections. |

Within the context of a broader thesis on accelerating drug development, this document details the application of Bayesian Optimization (BO) for predicting and optimizing reaction yields in organic synthesis. BO provides a sample-efficient framework for navigating complex, high-dimensional chemical spaces where experiments are resource-intensive. This protocol demystifies its three core components—the surrogate model, the acquisition function, and the optimization loop—providing application notes for their implementation in a chemical research setting.

Key Component 1: The Surrogate Model

The surrogate model is a probabilistic approximation of the unknown function mapping reaction parameters (e.g., temperature, catalyst loading, solvent ratio) to the yield outcome. It provides both a predicted mean and an uncertainty estimate.

Common Models & Comparative Performance:

| Model Type | Key Advantages | Limitations | Typical Use Case in Synthesis |

|---|---|---|---|

| Gaussian Process (GP) | Naturally provides uncertainty quantification; well-calibrated predictions. | Scales poorly with data (O(n³)); sensitive to kernel choice. | Initial optimization phases (<500 data points) with continuous variables. |

| Random Forest (RF) | Handles mixed data types; faster training for larger datasets. | Uncertainty estimates are less reliable than GP. | Larger historical datasets with categorical descriptors (e.g., solvent type). |

| Bayesian Neural Network (BNN) | Scalable to very high dimensions and large datasets. | Complex training; computational overhead. | High-throughput experimentation data with thousands of observations. |

Protocol 2.1: Implementing a Gaussian Process Surrogate with RDKit Features

Objective: To construct a GP surrogate model for predicting yield based on molecular descriptors and reaction conditions.

Materials & Reagents:

- Software: Python (≥3.9), Scikit-learn, GPy or GPflow, RDKit.

- Data: Tabular dataset of previous reactions with columns [SMILES_Reactant, Solvent, Temp(°C), Time(h), Catalyst_Loading(mol%), Yield(%)].

Procedure:

- Feature Engineering:

- For each reactant SMILES string, use RDKit to compute 200-bit Morgan fingerprints (radius=2).

- Standardize continuous variables (Temperature, Time, Loading) using StandardScaler.

- One-hot encode categorical variables (Solvent).

- Concatenate all features into a single vector x_i for each reaction i.
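The scaling, encoding, and concatenation steps can be illustrated with NumPy alone. In this sketch the fingerprint block is a zero-filled placeholder of arbitrary width (in practice it would come from RDKit), the four reactions are invented, and the helper names are hypothetical. The `standardize` helper mirrors the default behavior of scikit-learn's StandardScaler (population standard deviation).

```python
import numpy as np

def standardize(columns):
    """Zero-mean, unit-variance scaling per column."""
    columns = np.asarray(columns, dtype=float)
    mean, std = columns.mean(axis=0), columns.std(axis=0)
    std[std == 0.0] = 1.0                      # leave constant columns unscaled
    return (columns - mean) / std

def one_hot(labels):
    """Return a one-hot matrix and the sorted category list it refers to."""
    categories = sorted(set(labels))
    index = {c: i for i, c in enumerate(categories)}
    encoded = np.zeros((len(labels), len(categories)))
    encoded[np.arange(len(labels)), [index[c] for c in labels]] = 1.0
    return encoded, categories

# Hypothetical reactions: [temperature (°C), time (h), catalyst loading (mol%)].
conditions = [[80.0, 12.0, 2.0], [100.0, 6.0, 1.0], [60.0, 24.0, 5.0], [90.0, 18.0, 2.5]]
solvents = ["DMF", "toluene", "DMF", "dioxane"]
fingerprints = np.zeros((4, 8))                # placeholder for Morgan fingerprint bits

scaled = standardize(conditions)
solvent_onehot, solvent_names = one_hot(solvents)
X = np.hstack([fingerprints, scaled, solvent_onehot])   # one row x_i per reaction
```

The scaler statistics and the category order should be stored and reused when encoding new candidate conditions, so that training and prediction use the same feature layout.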

Model Definition:

- Define a GP prior: f(x) ~ GP(m(x), k(x, x')).

- Set mean function m(x) = 0.

- Select a Matérn 5/2 kernel: k(xi, xj) = σ² (1 + √5r + 5/3 r²) exp(-√5r), where r is the scaled Euclidean distance.

- Initialize kernel variance σ² and lengthscales.

Model Training:

- Partition data into training (90%) and test (10%) sets.

- Maximize the log marginal likelihood log p(y | X) of the GP with respect to the kernel hyperparameters using the L-BFGS-B optimizer.

- Convergence is typically reached within 200 iterations.
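To show what the training objective looks like, the sketch below evaluates the log marginal likelihood of a zero-mean Matérn 5/2 GP and picks the best length-scale from a small grid. The grid search is a deliberately simple stand-in for the L-BFGS-B optimizer named in the protocol, and the sine-shaped data, the noise level, and the candidate values are assumptions chosen for illustration.

```python
import numpy as np

def log_marginal_likelihood(X, y, lengthscale, variance=1.0, noise=1e-2):
    """log p(y | X) for a zero-mean GP with a Matérn 5/2 kernel."""
    d = np.sqrt(((X[:, None, :] - X[None, :, :]) ** 2).sum(-1)) / lengthscale
    s = np.sqrt(5.0) * d
    K = variance * (1.0 + s + s**2 / 3.0) * np.exp(-s) + noise * np.eye(len(X))
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    data_fit = -0.5 * y @ alpha                 # how well the model explains y
    complexity = -np.log(np.diag(L)).sum()      # equals -0.5 * log det K
    constant = -0.5 * len(X) * np.log(2.0 * np.pi)
    return float(data_fit + complexity + constant)

# Hypothetical standardized yields along one scaled reaction variable.
X = np.linspace(0.0, 1.0, 12)[:, None]
y = np.sin(2.0 * np.pi * X[:, 0])

candidates = [0.01, 0.03, 0.1, 0.3, 1.0, 3.0]
scores = {l: log_marginal_likelihood(X, y, l) for l in candidates}
best_lengthscale = max(scores, key=scores.get)
```

A gradient-based optimizer searches the same surface continuously and over all hyperparameters at once. Several restarts are advisable because the likelihood can have multiple local maxima.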

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bayesian Optimization for Synthesis |

|---|---|

| RDKit | Open-source cheminformatics library for converting SMILES to numerical molecular fingerprints (features). |

| GPflow/GPyTorch | Python libraries for flexible, scalable Gaussian Process modeling. |

| Scikit-optimize | Provides off-the-shelf BO loops with GP surrogates and various acquisition functions. |

| High-Throughput Experimentation (HTE) Robot | Automated platform to physically execute the proposed experiments generated by the BO loop. |

| Electronic Lab Notebook (ELN) | Centralized repository for structured reaction data (features X and outcomes y) required for model training. |

Key Component 2: The Acquisition Function

The acquisition function α(x) uses the surrogate's posterior (μ(x), σ(x)) to quantify the utility of evaluating a candidate point x. It balances exploration (high uncertainty) and exploitation (high predicted mean).

Quantitative Comparison of Acquisition Functions:

| Function | Mathematical Form | Balance Parameter | Best For |

|---|---|---|---|

| Probability of Improvement (PI) | α_{PI}(x) = Φ((μ(x) - f(x⁺) - ξ) / σ(x)) | ξ (exploration bias) | Quick, greedy improvement; simple landscapes. |

| Expected Improvement (EI) | α_{EI}(x) = (μ(x) - f(x⁺) - ξ)Φ(Z) + σ(x)φ(Z) | ξ | General-purpose; strong theoretical basis. |

| Upper Confidence Bound (UCB) | α_{UCB}(x) = μ(x) + κ σ(x) | κ | Systematic exploration; theoretical guarantees. |

Protocol 3.1: Optimizing the Expected Improvement (EI) Function

Objective: To select the next reaction conditions x_next by maximizing the Expected Improvement.

Procedure:

- Define Incumbent: Identify the current best observation f(x⁺) from the observed data.

- Compute EI: For a candidate x from the surrogate posterior:

- Calculate standard normal variable Z = (μ(x) - f(x⁺) - ξ) / σ(x).

- Where ξ = 0.01 (default) to encourage slight exploration.

- Compute α_{EI}(x) = (μ(x) - f(x⁺) - ξ)Φ(Z) + σ(x)φ(Z).

- Φ and φ are the CDF and PDF of the standard normal distribution.

- Global Maximization: Use a multi-start strategy (e.g., 50 random starts followed by L-BFGS-B) to find x_next = argmax α_{EI}(x). This step operates in the input space (reaction conditions).
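The closed-form EI above needs only the standard library. The sketch below scores three invented candidates, with yields expressed as fractions so that ξ = 0.01 is a meaningful margin; if yields are in percent, ξ would need rescaling. The candidate labels and posterior values are assumptions.

```python
import math

def expected_improvement(mu, sigma, best, xi=0.01):
    """Closed-form EI for maximization, given the GP posterior mean and std at one point."""
    if sigma <= 0.0:
        # No uncertainty: the improvement is deterministic.
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))           # Φ(Z)
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)    # φ(Z)
    return (mu - best - xi) * cdf + sigma * pdf

# Hypothetical posterior (mean, std) for three untested conditions; incumbent yield 0.82.
candidates = {"A": (0.80, 0.02), "B": (0.78, 0.10), "C": (0.60, 0.05)}
scores = {name: expected_improvement(mu, sd, best=0.82) for name, (mu, sd) in candidates.items()}
x_next = max(scores, key=scores.get)
```

Candidate B is selected even though A has the higher predicted mean: its larger uncertainty leaves more probability mass above the incumbent. That is the exploration term σ(x)φ(Z) at work.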

Key Component 3: The Optimization Loop

The BO loop iteratively couples the surrogate model and acquisition function to guide experimental campaigns.

Protocol 4.1: The Bayesian Optimization Experimental Cycle

Objective: To execute a closed-loop optimization campaign for a Suzuki-Miyaura cross-coupling reaction yield.

Initial Materials:

- Chemical Space: Ligand for the Pd catalyst (SPhos, XPhos), Base (K₂CO₃, Cs₂CO₃), Solvent (1,4-dioxane, DMF, toluene), Temperature (70-120°C).

- Initial Dataset: A space-filling design (e.g., 10 Latin Hypercube samples) for initial model training.

Procedure:

- Initialization: Execute the 10 initial reactions as per the designed conditions. Record yields.

- Loop (Iterations 11 to 60): a. Model Update: Train/update the GP surrogate model (Protocol 2.1) on all available {X, y} data. b. Proposal: Maximize the EI acquisition function (Protocol 3.1) to propose the next reaction condition x_next. c. Execution: Dispatch x_next to the automated synthesis platform for execution. d. Analysis: Measure and record the reaction yield y_next. e. Append Data: X = X ∪ {x_next}; y = y ∪ {y_next}.

- Termination: Halt after a fixed budget (e.g., 60 total experiments) or when yield improvement plateaus (<2% over 5 iterations).
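The termination rule can be written as a small predicate over the yields recorded so far. This sketch reads "plateau" as the best observed yield improving by less than 2 percentage points over the last 5 experiments. That reading, the function name, and the defaults are assumptions.

```python
def should_stop(yields, budget=60, window=5, min_gain=2.0):
    """Return True when the budget is spent or the best yield has plateaued.

    yields: measured yields (%) in the order the experiments were run.
    """
    if len(yields) >= budget:
        return True
    if len(yields) <= window:
        return False                       # not enough history to judge a plateau
    best_now = max(yields)
    best_before = max(yields[:-window])    # best yield before the last `window` runs
    return best_now - best_before < min_gain

# Hypothetical campaign histories.
plateaued = should_stop([50.0, 60.0, 70.0, 71.0, 71.5, 70.0, 69.0, 71.2, 70.5])
improving = should_stop([10.0, 20.0, 30.0, 40.0, 50.0, 60.0, 70.0])
```

Here `plateaued` is True (a gain of 0.5 points over the last five runs) and `improving` is False. With noisy yields, a longer window or replicate averaging may be needed to avoid stopping early.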

Integration with High-Throughput Experimentation: The BO loop's proposal step (x_next) can be formatted as a robot-readable instruction set (e.g., a .csv or .json file), enabling fully autonomous "self-driving" laboratories. The choice of acquisition function becomes critical here, with UCB often preferred for its parameter interpretability.
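As a rough illustration of the hand-off to a robot, the sketch below serializes one proposed condition to JSON and reads it back. The schema (field names, units in the keys, the `run_id` format) is entirely hypothetical. A real platform defines its own instruction format.

```python
import json

def to_instruction(run_id, conditions):
    """Serialize one BO proposal as a JSON string (hypothetical schema)."""
    return json.dumps({"run_id": run_id, "conditions": conditions}, sort_keys=True)

# Hypothetical proposal x_next from the acquisition step.
x_next = {"ligand": "XPhos", "base": "K2CO3", "solvent": "toluene", "temperature_C": 95.0}
payload = to_instruction("bo-iter-011", x_next)
restored = json.loads(payload)
```

Keeping units in the field names and logging every payload alongside the measured yield makes it easier to trace each model update back to the experiment that produced it.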

Handling Failed Reactions: Reactions with no yield (e.g., due to precipitation) should be incorporated into the dataset, not discarded. A sensible approach is to record them at a floor yield (e.g., 0.1%). Because such values are effectively censored, a warped GP or a censored-data likelihood may model them better than a standard Gaussian likelihood.

Conclusion: For the thesis on organic synthesis yield prediction, Bayesian Optimization provides a rigorous, iterative framework that efficiently leverages historical data to guide costly experiments. The surrogate model (GP) forms a probabilistic belief, the acquisition function (EI) directs experimental policy, and the loop integrates them into a workflow that typically reaches high-yield conditions in fewer experiments than random or grid search, accelerating the discovery of optimal synthetic routes in drug development.

Why BO? Advantages Over Grid Search, Random Search, and Traditional DoE.

This application note is framed within a broader thesis on leveraging Bayesian Optimization (BO) for organic synthesis yield prediction. In pharmaceutical research, optimizing reaction conditions to maximize yield is a critical, expensive, and time-consuming multivariate problem. Traditional Design of Experiments (DoE), Grid Search, and Random Search have been standard methodologies. However, BO has emerged as a superior strategy for navigating complex, high-dimensional experimental spaces with expensive function evaluations (e.g., multi-step chemical synthesis). This document details the comparative advantages of BO and provides protocols for its implementation in yield optimization workflows.

Comparative Analysis of Optimization Methods

The core challenge is efficiently finding global optima (e.g., maximum yield) with minimal experiments. The following table summarizes key quantitative and qualitative comparisons.

Table 1: Comparison of Optimization Methodologies for Reaction Yield Prediction

| Feature | Traditional DoE | Grid Search | Random Search | Bayesian Optimization (BO) |

|---|---|---|---|---|

| Core Principle | Pre-defined, structured sampling (e.g., factorial, response surface) | Exhaustive search over a discretized grid | Uniform random sampling at each iteration | Probabilistic model (surrogate) guides sequential sampling |

| Sample Efficiency | Low to Moderate. Requires many initial points. Scales poorly with dimensions. | Very Low. Number of experiments grows exponentially with dimensions. | Low. Better than Grid for high-dimensional spaces but still inefficient. | Very High. Actively selects the most informative next experiment. |

| Handling of Noise | Moderate (model-based analysis). | None. | None. | Excellent. Can explicitly model uncertainty/noise (e.g., via Gaussian Processes). |

| Exploitation vs. Exploration | Fixed by design. | None (pure exhaustion). | None (pure random). | Adaptively balanced. Uses an acquisition function (e.g., EI, UCB). |

| Parallelization Potential | High (all points defined upfront). | High (all points defined upfront). | High (independent random trials). | Moderate. Requires specialized strategies (e.g., batch, hallucinated observations). |

| Best For | Low-dimensional (<5), linear or well-understood systems. Initial screening. | Trivially small, discrete parameter spaces. | Moderately high-dimensional spaces where gradient information is unavailable. | Expensive, black-box, non-convex functions (e.g., chemical reaction yield with >3 continuous variables). |

| Typical Iterations to Optima* | 50-100+ | 1000+ | 200-500 | 10-50 |

*Estimates based on benchmark studies for functions analogous to chemical yield landscapes.

Bayesian Optimization Protocol for Organic Synthesis Yield

Protocol 1: Setting Up a BO Loop for Reaction Optimization

Objective: To maximize the predicted yield of a Pd-catalyzed cross-coupling reaction by optimizing four continuous variables: Temperature, Catalyst Loading, Equivalents of Reagent, and Reaction Time.

Materials & Computational Tools:

- Reaction substrates, catalyst, solvent.

- Automated reactor system for consistent execution (optional but recommended).

- Bayesian Optimization software library (e.g., BoTorch, GPyOpt, scikit-optimize).

Procedure:

- Define Parameter Space: Set feasible bounds for each variable (e.g., Temperature: 25-100 °C, Catalyst Loading: 0.5-5.0 mol%).

- Choose Initial Design: Perform a small space-filling design (e.g., 5-10 points via Latin Hypercube Sampling) to seed the BO model. Execute these experiments and record yields.

- Select Surrogate Model: Fit a Gaussian Process (GP) model to the initial data. A Matérn 5/2 kernel is often a robust default for chemical landscapes.

- Define Acquisition Function: Select Expected Improvement (EI) to balance exploration and exploitation.

- Optimization Loop: a. Using the fitted GP and EI, compute the point in parameter space that maximizes EI. b. Perform the experiment at the suggested conditions. c. Record the yield and update the dataset (X, y). d. Re-fit the GP model to the updated dataset. e. Repeat steps a-d for a set number of iterations (e.g., 20-30) or until yield convergence.

- Analysis: Identify the conditions with the highest observed yield and the highest posterior mean predicted by the final GP model.
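The whole of Protocol 1 can be sketched end to end with NumPy on a simulated reaction. Several simplifications are assumed: the yield surface is an invented smooth function, the initial design is uniform random rather than Latin Hypercube, GP hyperparameters are fixed instead of fitted, and EI is maximized over random candidates instead of with L-BFGS-B. A library such as BoTorch or scikit-optimize would handle these steps properly.

```python
import math
import numpy as np

rng = np.random.default_rng(0)
BOUNDS = np.array([[25.0, 100.0],   # temperature, °C
                   [0.5, 5.0],      # catalyst loading, mol%
                   [1.0, 3.0],      # reagent equivalents
                   [1.0, 24.0]])    # reaction time, h

def simulated_yield(u):
    """Hypothetical yield surface on the unit cube, peaking at 95%."""
    optimum = np.array([0.7, 0.4, 0.6, 0.5])
    return 95.0 * np.exp(-4.0 * np.sum((u - optimum) ** 2))

def kernel(A, B, lengthscale=0.3):
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)) / lengthscale
    s = np.sqrt(5.0) * d
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)       # Matérn 5/2, unit variance

def posterior(X, y, Xq, noise=1e-3):
    L = np.linalg.cholesky(kernel(X, X) + noise * np.eye(len(X)))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    Ks = kernel(X, Xq)
    v = np.linalg.solve(L, Ks)
    return Ks.T @ alpha, np.sqrt(np.maximum(1.0 - (v**2).sum(0), 1e-12))

def expected_improvement(mu, sigma, best, xi=0.01):
    z = (mu - best - xi) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best - xi) * cdf + sigma * pdf

# Initial design: 8 points in the unit cube (scaled reaction variables).
X = rng.random((8, 4))
y = np.array([simulated_yield(u) for u in X])

for _ in range(20):                                   # optimization loop
    y_scaled = (y - y.mean()) / (y.std() + 1e-9)      # standardize yields for the GP
    candidates = rng.random((2000, 4))
    mu, sigma = posterior(X, y_scaled, candidates)
    u_next = candidates[np.argmax(expected_improvement(mu, sigma, y_scaled.max()))]
    X = np.vstack([X, u_next])                        # "run" the experiment and append
    y = np.append(y, simulated_yield(u_next))

best_u = X[np.argmax(y)]
best_conditions = BOUNDS[:, 0] + best_u * (BOUNDS[:, 1] - BOUNDS[:, 0])
```

`best_conditions` holds the best observed point in physical units. In a real campaign the call to `simulated_yield` is replaced by running the reaction and recording the measured yield.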

Protocol 2: Benchmarking BO Against Random Search (In Silico)

Objective: To quantitatively demonstrate the sample efficiency of BO using a simulated reaction yield function.

Procedure:

- Simulate Yield Surface: Use a known test function with local optima and noise (e.g., Branin-Hoo function modified to represent yield between 0-100%).

- Define Optimization Runs: Initialize both BO (GP+EI) and Random Search from the same 5 random starting points.

- Execute Iterations: Run each method for 30 sequential iterations. For each iteration, record the best yield found so far.

- Replicate: Repeat the entire process 20 times with different random seeds.

- Analyze: Plot the average best-found yield (± standard error) vs. iteration number for both methods. A statistical comparison (e.g., the area under each curve) quantifies any difference in convergence speed between BO and Random Search.
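The bookkeeping for this benchmark is shown below for the random-search arm only. A BO arm would produce its traces the same way and be averaged identically. The rescaling of Branin-Hoo into a 0-100% "yield", the clipping at 300, and the noise level are assumptions made for illustration.

```python
import math
import random
import statistics

def branin(x1, x2):
    """Standard Branin-Hoo test function (to be minimized on [-5, 10] x [0, 15])."""
    a, b, c = 1.0, 5.1 / (4 * math.pi**2), 5.0 / math.pi
    r, s, t = 6.0, 10.0, 1.0 / (8 * math.pi)
    return a * (x2 - b * x1**2 + c * x1 - r) ** 2 + s * (1 - t) * math.cos(x1) + s

def simulated_yield(x1, x2, rng, noise_sd=1.0):
    """Flip and rescale Branin so its minima map to roughly 100% yield, then add noise."""
    clean = 100.0 * (1.0 - min(branin(x1, x2), 300.0) / 300.0)
    return max(0.0, min(100.0, clean + rng.gauss(0.0, noise_sd)))

def random_search_trace(seed, n_iter=30):
    """Best yield found so far after each of n_iter uniformly random experiments."""
    rng = random.Random(seed)
    best, trace = 0.0, []
    for _ in range(n_iter):
        y = simulated_yield(rng.uniform(-5.0, 10.0), rng.uniform(0.0, 15.0), rng)
        best = max(best, y)
        trace.append(best)
    return trace

# 20 replicates with different seeds, then mean and standard error per iteration.
traces = [random_search_trace(seed) for seed in range(20)]
mean_curve = [statistics.mean(col) for col in zip(*traces)]
se_curve = [statistics.stdev(col) / math.sqrt(len(col)) for col in zip(*traces)]
area_under_curve = sum(mean_curve)
```

Plotting `mean_curve` with `se_curve` as error bars for each method gives the comparison described above, and `area_under_curve` provides a single summary number per method.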

Visualizing the BO Workflow and Comparative Logic

Title: Bayesian Optimization Loop for Experimentation

Title: Choosing an Optimization Method Decision Tree

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 2: Essential Tools for BO-Driven Synthesis Optimization

| Item / Solution | Category | Function in Research |

|---|---|---|

| Gaussian Process (GP) Model | Computational Model | Serves as the probabilistic surrogate model in BO. Learns from data to predict yield and uncertainty at untested conditions. |

| Expected Improvement (EI) | Acquisition Function | Computes the potential utility of testing a new point, balancing exploration of uncertain regions and exploitation of known high-yield regions. |

| Automated Reactor Platform | Hardware | Enables precise control of reaction parameters (temp, stir, addition) and high-throughput execution of the sequential experiments suggested by BO. |

| Latin Hypercube Sampling | Experimental Design | Generates a space-filling set of initial experiments to seed the BO algorithm, ensuring broad coverage of the parameter space. |

| BoTorch / GPyOpt | Software Library | Specialized Python frameworks for implementing BO loops, featuring state-of-the-art GP models, acquisition functions, and optimization tools. |

| MATLAB (Statistics and Machine Learning Toolbox; Global Optimization Toolbox) | Software Library | Alternative platform for implementing BO (e.g., via the bayesopt function) and comparative benchmarks. |

Application Notes: Bayesian Optimization (BO) in Synthesis Yield Prediction

Bayesian Optimization (BO) has transitioned from a theoretical machine-learning framework to a practical tool accelerating discovery in pharmaceutical and materials research. Its core value lies in intelligently navigating high-dimensional, expensive-to-evaluate experimental spaces—such as reaction conditions or material formulations—to find optimal yields or properties with minimal experimental trials.

Table 1: Current Adoption Metrics Across Research Domains

| Domain / Application | Key Objective | Typical # of BO Iterations | Reported Yield/Performance Improvement | Key BO Surrogate Model Used |

|---|---|---|---|---|

| Pharmaceutical: Small Molecule Synthesis | Maximize yield of API intermediates | 10-20 | 15-40% increase over traditional OFAT/DoE | Gaussian Process (GP) with Matérn kernel |

| Pharmaceutical: Peptide/Catalyst Optimization | Identify optimal conditions (temp, solvent, equiv.) | 15-30 | Often identifies global optimum missed by grid search | Tree-structured Parzen Estimator (TPE) |

| Materials: OLED Emitter Formulation | Maximize device efficiency (cd/A) & lifetime | 20-50 | 2x improvement in efficiency after 40 experiments | Random Forest or GP |

| Materials: MOF/Porous Polymer Synthesis | Optimize BET surface area & pore volume | 30-60 | 25% higher surface area than baseline literature | GP with composite kernel |

Table 2: Comparative Analysis of BO Software Platforms in Use (2024)

| Platform / Tool | Primary Interface | Key Feature for Synthesis | Integration with Lab Automation | Best Suited For |

|---|---|---|---|---|

| BoTorch / Ax | Python library | High flexibility for custom models & constraints | High (via API) | In-house teams with ML expertise |

| Optuna | Python library | Efficient pruning of trials, parallelization | Medium | High-throughput computational screening |

| SigOpt | Commercial SaaS | User-friendly UI, robust experiment tracking | High (native drivers) | Industry R&D with mixed expertise |

| Gryffin / Phoenics | Python library | Physical knowledge integration (via descriptors) | Medium | Materials formulation with prior knowledge |

Detailed Experimental Protocols

Protocol 2.1: Bayesian Optimization for Pd-Catalyzed Cross-Coupling Yield Maximization

Objective: To maximize the isolated yield of a Suzuki-Miyaura cross-coupling reaction using a BO-guided search over a 4-dimensional chemical space.

I. Pre-Experimental Setup & Parameter Definition

- Define Search Space: Create a bounded, continuous/discrete space for key variables:

- Catalyst Loading (mol%): [0.5, 2.5]

- Equivalents of Base: [1.0, 3.0]

- Reaction Temperature (°C): [25, 110]

- Solvent Ratio (Water:THF): [0:1, 1:0] (encoded as %Water [0, 100])

- Select Acquisition Function: Expected Improvement (EI).

- Choose Surrogate Model: Gaussian Process with Matérn 5/2 kernel.

- Initialize: Generate 5 initial data points via Latin Hypercube Sampling (LHS) and run experiments to obtain initial yield data.

II. Iterative BO Loop & Experimental Procedure

- Model Training: Train the GP surrogate model on all existing (condition, yield) data.

- Proposal Generation: The acquisition function (EI) queries the model to propose the next set of reaction conditions predicted to most improve yield.

- Parallel Execution (Optional): For batch BO, use q-EI to propose 3-4 conditions for parallel experimentation.

- Experimental Execution: a. Setup: In a nitrogen-filled glovebox, charge a 2-dram vial with aryl halide (0.1 mmol), boronic acid (0.12 mmol), and Pd catalyst (X mol% as per BO suggestion). b. Add Solvents/Solution: Add the solvent mixture (total 1 mL) as per the BO-suggested Water:THF ratio. Add the base (Y equiv. as per BO suggestion) as an aqueous solution or solid. c. React: Seal the vial, remove from glovebox, and place on a pre-heated magnetic stirrer at the suggested temperature (Z °C) for 18 hours. d. Analyze: Cool the vial. Dilute an aliquot with methanol. Analyze by UPLC to determine conversion and preliminary yield via internal standard. e. Isolate & Confirm: Isolate the product via preparative TLC or automated flash chromatography. Obtain isolated mass for true yield calculation.

- Data Incorporation: Add the new experimental result (conditions, isolated yield) to the dataset.

- Loop: Repeat steps II.1 to II.5 until a predetermined budget (e.g., 30 total experiments) or convergence criterion is met (e.g., <2% yield improvement over 5 consecutive iterations).
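The stopping rule in the final step can be written down explicitly. The sketch below is one possible reading of the criterion: stop when the budget is exhausted, or when the best yield has improved by less than 2 percentage points over the last 5 experiments. The function name and its defaults are assumptions made for illustration.

```python
def should_stop(yields, budget=30, window=5, min_gain=2.0):
    """yields: isolated yields (%) in the order the experiments were run."""
    if len(yields) >= budget:
        return True          # experimental budget exhausted
    if len(yields) <= window:
        return False         # not enough history to judge convergence
    best_before = max(yields[:-window])   # best yield before the last `window` runs
    best_now = max(yields)                # best yield including them
    return (best_now - best_before) < min_gain

history = [10, 40, 62, 80, 85, 86, 86, 85, 86, 86]
print(should_stop(history))
```

Here the best yield moved only from 85% to 86% across the last five runs, so the rule signals convergence. Note that the initial LHS experiments count toward both the history and the budget in this sketch.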

Protocol 2.2: BO-Driven Optimization of Perovskite Film Photoluminescence Quantum Yield (PLQY)

Objective: To optimize the composition and processing of a mixed-cation perovskite film (e.g., FAxMAyCszPbI3, where x, y, and z are the cation fractions) for maximum PLQY via a 5-factor BO campaign.

I. Search Space Definition & Initial Design

- Define Search Space:

- Cation Ratios (x, y, z): Continuous, constrained to x + y + z = 1.

- Anti-Solvent Drop Time (s): [10, 30] after spin-coating start.

- Annealing Temperature (°C): [90, 150].

- Choose Model: Use a Random Forest or GP model with a Dirichlet kernel for the composition variables.

- Initialize: 8 initial compositions/conditions via LHS, ensuring the stoichiometric constraint.
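One simple way to respect the constraint x + y + z = 1 during initialization is to sample compositions directly on the simplex. The sketch below swaps LHS for plain Dirichlet sampling (normalized gamma draws), which satisfies the constraint by construction but is not stratified; the dictionary keys and helper names are hypothetical.

```python
import random

def sample_composition(rng, alpha=(1.0, 1.0, 1.0)):
    """Draw (x, y, z) with x + y + z = 1 from a Dirichlet distribution,
    built from normalized gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return tuple(d / total for d in draws)

def sample_initial_design(n_points=8, seed=0):
    rng = random.Random(seed)
    design = []
    for _ in range(n_points):
        x, y, z = sample_composition(rng)
        design.append({
            "FA": x, "MA": y, "Cs": z,
            # Process variables use the bounds given in the search space.
            "antisolvent_drop_s": rng.uniform(10.0, 30.0),
            "anneal_temp_c": rng.uniform(90.0, 150.0),
        })
    return design

design = sample_initial_design()
for point in design:
    print({k: round(v, 3) for k, v in point.items()})
```

With `alpha=(1, 1, 1)` the compositions are uniform over the simplex; larger values would concentrate samples near equal cation fractions.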

II. Synthesis, Characterization & Iteration

- Film Fabrication: a. Prepare precursor solutions in DMF:DMSO according to the BO-suggested cation ratios. b. Spin-coat onto cleaned glass substrates (3000 rpm for 30s). c. At the suggested anti-solvent drop time, apply chlorobenzene (200 µL). d. Immediately transfer to a hotplate and anneal at the suggested temperature for 10 minutes.

- Characterization: Measure PLQY by the absolute method, using an integrating sphere coupled to a spectrophotometer and a calibrated excitation source (e.g., 450 nm LED).

- Data Incorporation & Iteration: Feed the (conditions, PLQY) datum back into the BO loop. Use the upper confidence bound (UCB) acquisition function to balance exploration and exploitation. Iterate for 40-50 cycles.

Visualization: Workflows & Relationships

Bayesian Optimization for Synthesis Workflow

BO Core Algorithm Components

The Scientist's Toolkit: Research Reagent & Platform Solutions

Table 3: Essential Toolkit for BO-Driven Synthesis Research

| Category / Item | Example Product/System | Function in BO Workflow |

|---|---|---|

| Automated Synthesis Platform | Chemspeed Accelerator SLT-II, Unchained Labs Junior | Executes liquid handling, dosing, and reaction setup for proposed conditions 24/7, enabling rapid iteration. |

| High-Throughput Analytics | UPLC-MS (e.g., Waters ACQUITY), HPLC with autosampler | Provides rapid, quantitative yield/conversion data for each experiment to feed back into the BO model. |

| Reaction Screening Kits | Solvent & Additive Toolkit (e.g., Sigma-Aldrich), Catalyst Library (e.g., Strem) | Pre-formatted, spatially encoded chemical libraries for efficient LHS initialization and variable space exploration. |

| BO Software & Compute | BoTorch (PyTorch backend), Google Cloud Vertex AI | Provides the core ML algorithms, surrogate modeling, and scalable compute for high-dimensional optimization. |

| Data Management & ELN | Titian Mosaic, Benchling | Tracks and structures all experimental metadata (conditions, outcomes, failed runs) for reproducible model training. |

| Specialty Reagents for Key Reactions | Pd PEPPSI-type precatalysts (e.g., Sigma-Aldrich 900970), Buchwald Ligands | Robust, widely applicable catalysts that expand the viable chemical space for BO campaigns in cross-coupling. |

Implementing Bayesian Optimization: A Step-by-Step Guide for Chemists

Application Note

Within a Bayesian optimization (BO) framework for predicting organic synthesis yield, the precise definition of the chemical search space is the critical first step that determines the success or failure of the entire campaign. This space, composed of discrete and continuous variables representing reagents, catalysts, and reaction conditions, is the high-dimensional landscape the BO algorithm will navigate. A well-constructed search space balances breadth (to avoid local optima) with practical constraints (to ensure synthetic feasibility). This note details a systematic protocol for defining this space, grounded in current literature and high-throughput experimentation (HTE) practices, to enable efficient BO-driven discovery.

Quantitative Data on Search Space Parameters

A review of recent BO-driven synthesis studies reveals typical dimensionalities and parameter ranges.

Table 1: Characteristic Ranges for Common Search Space Parameters

| Parameter Category | Specific Variable | Typical Range/Options | Data Type | Notes for BO |

|---|---|---|---|---|

| Reagents | Nucleophile (e.g., Boronic Acid) | 10-50 discrete choices | Categorical (one-hot encoded) | Major driver of yield variance. Pre-filter for commercial availability. |

| Reagents | Electrophile (e.g., Aryl Halide) | 10-50 discrete choices | Categorical | Often paired with nucleophile. |

| Catalyst | Pd Catalyst Ligand | 5-20 discrete choices (e.g., XPhos, SPhos, tBuXPhos, RuPhos) | Categorical | Key optimization target. Ligand property descriptors (e.g., %VBur) can be used as features. |

| Catalyst | Pd Source | [Pd(OAc)2, Pd2(dba)3, Pd(MeCN)2Cl2] | Categorical | Often less impactful than ligand choice. |

| Catalyst | Catalyst Loading (mol%) | 0.5 - 5.0 % | Continuous | Log-scale sampling can be efficient. |

| Base | Base Identity | [Cs2CO3, K3PO4, K2CO3, tBuONa] | Categorical | Solubility and strength are critical. |

| Base | Base Equivalents | 1.0 - 3.0 eq. | Continuous | Linear or log-scale. |

| Solvent | Solvent Identity | [Toluene, dioxane, DMF, MeCN, THF] | Categorical | Can be encoded via solvent descriptors (dipolarity, H-bonding). |

| Conditions | Temperature (°C) | 60 - 120 °C | Continuous | Bounded by solvent boiling point. |

| Conditions | Reaction Time (h) | 1 - 24 h | Continuous | Log-scale sampling is often appropriate. |

Experimental Protocol for Search Space Definition

Protocol: Systematic Construction of a BO-Ready Chemical Search Space for a Suzuki-Miyaura Cross-Coupling Reaction

Objective: To define a discrete and continuous parameter space for the BO algorithm, informed by chemical knowledge and preliminary screening, focusing on a model Suzuki-Miyaura reaction between aryl halides and boronic acids.

I. Pre-Definition Curation & Literature Review

- Define Core Transformation: Clearly specify the reaction (e.g., Suzuki-Miyaura coupling of heteroaryl chlorides with (hetero)aryl boronic acids).

- Literature Mining: Use tools like Reaxys or SciFinder to compile:

- Common Catalysts: List frequently reported Pd precursors and ligands (bisphosphines, SPhos derivatives, N-heterocyclic carbenes).

- Viable Reagent Pools: Identify commercially available substrates with diverse electronic and steric properties. Prioritize vendors (e.g., Sigma-Aldrich, Combi-Blocks, Enamine) with stock availability.

- Typical Conditions: Note common solvents (toluene, water/dioxane mixtures), bases (carbonates, phosphates), and temperature ranges.

II. Preliminary High-Throughput Experimental (HTE) Screening

- Purpose: To empirically validate the feasibility of reagent combinations and identify gross incompatibilities before BO.

- Procedure:

- Design a sparse matrix assay using a liquid handling robot.

- Select a subset (8-12) of the most electronically diverse aryl halides and boronic acids from the curated list.

- Test each substrate pair against 3-4 distinct catalyst/ligand systems (e.g., Pd(OAc)2/XPhos, Pd2(dba)3/RuPhos) in 2-3 different solvents.

- Use standard conditions for base (2.0 eq. Cs2CO3) and temperature (80°C) in this initial screen.

- Analyze yields via UPLC/UV-MS.

- Outcome: Remove any substrate or catalyst that consistently yields <5% conversion across all tested partners, refining the reagent pools.
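The pruning rule in the outcome step can be sketched as a small filter. The result tuples below are made-up placeholders, and the `components_to_remove` helper is a hypothetical name; the 5% threshold is the one stated above.

```python
def components_to_remove(results, threshold=5.0):
    """results: iterable of (aryl_halide, boronic_acid, catalyst, conversion_pct).
    Returns the components whose conversion stayed below threshold with
    every partner they were tested against."""
    best = {}
    for halide, acid, catalyst, conversion in results:
        for key in (("aryl_halide", halide), ("boronic_acid", acid), ("catalyst", catalyst)):
            best[key] = max(best.get(key, 0.0), conversion)
    return {key for key, top in best.items() if top < threshold}

# Placeholder screening data, for illustration only.
screen = [
    ("ArCl-1", "B-1", "Pd(OAc)2/XPhos", 62.0),
    ("ArCl-1", "B-2", "Pd(OAc)2/XPhos", 3.0),
    ("ArCl-2", "B-1", "Pd2(dba)3/RuPhos", 41.0),
    ("ArCl-2", "B-2", "Pd2(dba)3/RuPhos", 1.5),
]
print(components_to_remove(screen))
```

In this toy screen only boronic acid "B-2" is flagged, because every other component reaches at least 5% conversion with some partner.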

III. Parameter Discretization & Encoding for BO

- Categorical Variables:

- Reagents & Catalysts: Finalize the lists from Step II. Each unique chemical becomes a category (e.g., Ligand1, Ligand2, ...).

- Encoding Strategy: Plan for one-hot encoding or use molecular fingerprint vectors (e.g., ECFP4) as continuous feature representations for the BO algorithm's kernel.

- Continuous Variables:

- Define strict, practical bounds (e.g., Temperature: 25°C – Solvent Reflux Temp; Catalyst Loading: 0.1 mol% – 5.0 mol%).

- Decide on a prior distribution (e.g., uniform, log-normal) for the BO algorithm's initial surrogate model.
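The encoding choices in this step can be illustrated with a short sketch: one-hot encoding for a categorical ligand, linear scaling for temperature, and log scaling for catalyst loading. The ligand list and the 110°C reflux limit are example values, and all function names are hypothetical.

```python
import math

LIGANDS = ["XPhos", "SPhos", "tBuXPhos", "RuPhos"]  # example category list

def one_hot(choice, options):
    return [1.0 if choice == option else 0.0 for option in options]

def scale_linear(value, low, high):
    """Map [low, high] onto [0, 1] linearly."""
    return (value - low) / (high - low)

def scale_log(value, low, high):
    """Map [low, high] onto [0, 1] on a logarithmic scale."""
    return (math.log(value) - math.log(low)) / (math.log(high) - math.log(low))

def encode(ligand, temperature_c, loading_mol_pct, reflux_c=110.0):
    """Feature vector: ligand one-hot, scaled temperature, log-scaled loading."""
    return (one_hot(ligand, LIGANDS)
            + [scale_linear(temperature_c, 25.0, reflux_c),
               scale_log(loading_mol_pct, 0.1, 5.0)])

print(encode("SPhos", 80.0, 1.0))
```

Log scaling spreads the low-loading region (0.1 to 1 mol%) over more of the unit interval than linear scaling would, which is the motivation for the log-scale note in Table 1.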

IV. Documentation & Featurization

- Create a master table (see Table 1) listing all variables, their types, and bounds/options.

- For all categorical chemicals (substrates, catalysts, solvents), generate a descriptor file containing calculated physicochemical properties (logP, molar refractivity, TPSA) and molecular fingerprints. This enables more informative distance metrics for the BO model.

V. Final Validation & BO Initiation

- The final search space is a Cartesian product of all allowed combinations, though many will be unexplorable.

- Initiate the BO loop by selecting an initial design (e.g., 20-50 random experiments within the defined space) to seed the Gaussian Process model.
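To make the size of the Cartesian product concrete, the sketch below counts the categorical combinations for the example option lists from Table 1 and draws a random initial design. The variable names and the choice of which continuous variables to include are assumptions for illustration.

```python
import itertools
import random

CATEGORICAL = {
    "ligand": ["XPhos", "SPhos", "tBuXPhos", "RuPhos"],
    "pd_source": ["Pd(OAc)2", "Pd2(dba)3", "Pd(MeCN)2Cl2"],
    "base": ["Cs2CO3", "K3PO4", "K2CO3", "tBuONa"],
    "solvent": ["Toluene", "dioxane", "DMF", "MeCN", "THF"],
}
CONTINUOUS = {"temperature_c": (60.0, 120.0), "base_equiv": (1.0, 3.0)}

# Every allowed combination of the categorical options.
combos = list(itertools.product(*CATEGORICAL.values()))
print(len(combos))  # 4 * 3 * 4 * 5 = 240

def random_design(n, seed=0):
    """Pick n random categorical combinations and fill in continuous values."""
    rng = random.Random(seed)
    design = []
    for _ in range(n):
        point = dict(zip(CATEGORICAL, rng.choice(combos)))
        for name, (low, high) in CONTINUOUS.items():
            point[name] = rng.uniform(low, high)
        design.append(point)
    return design

seed_experiments = random_design(20)
```

Even this reduced example has 240 categorical combinations before any continuous variable is considered, which is why an exhaustive screen quickly becomes impractical.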

Visualization

Diagram 1: Workflow for chemical search space definition.

Diagram 2: Bayesian optimization loop with search space.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Search Space Definition & HTE

| Item | Function in Protocol | Example Product/Catalog |

|---|---|---|

| Liquid Handling Robot | Enables precise, high-throughput dispensing of reagents, catalysts, and solvents for preliminary matrix screening. | Hamilton Microlab STAR, Chemspeed Swing |

| HTE Reaction Blocks | Microtiter-style plates (96- or 384-well) capable of sealing and withstanding heating/mixing for parallel synthesis. | Chemglass CLS-8ML-RDV, J-Kem Cat. No. SPS-24 |

| Pd Catalyst Kits | Pre-weighed, diverse sets of Pd sources and ligands in individual vials to accelerate catalyst space exploration. | Sigma-Aldrich "Suzuki-Miyaura Catalyst Kit" (Product No. 759046) |

| Substrate Libraries | Commercially available sets of diverse, purified building blocks (e.g., aryl halides, boronic acids). | Enamine "Aryl Bromides Building Box", Combi-Blocks "Boronic Acid Library" |

| Automated UPLC/UV-MS System | Provides rapid, quantitative analysis of reaction yields from micro-scale experiments. | Waters Acquity UPLC H-Class with QDa, Agilent 1290 Infinity II |

| Chemical Featurization Software | Calculates molecular descriptors and fingerprints for encoding categorical chemicals. | RDKit (Open Source), Schrödinger Canvas |

| BO Software Platform | Implements the Gaussian process and acquisition function to propose experiments. | Gryffin, Dragonfly, BoTorch (PyTorch-based) |

Within the broader thesis on Bayesian optimization for organic synthesis yield prediction, the selection and encoding of molecular and reaction descriptors form the critical data layer. This step transforms chemical intuition and experimental conditions into a quantifiable feature space, enabling the machine learning model to learn complex structure-yield relationships. The choice of descriptors directly impacts the performance, interpretability, and generalizability of the Bayesian optimization pipeline.

Categories of Descriptors

Molecular Descriptors

These encode the structural and physicochemical properties of reactants, reagents, catalysts, and solvents.

Table 1: Key Molecular Descriptor Categories

| Category | Example Descriptors | Calculation Source/Basis | Relevance to Yield Prediction |

|---|---|---|---|

| 1D/2D (Constitutional/Topological) | Molecular weight, atom count, bond count, logP (octanol-water partition coefficient), topological polar surface area (TPSA), molecular refractivity. | RDKit, Mordred, PaDEL-Descriptor. | Captures bulk properties affecting solubility, reactivity, and steric accessibility. |

| 3D (Geometric/Steric) | Principal moments of inertia, radius of gyration, van der Waals volume, solvent-accessible surface area (SASA). | Conformer generation (e.g., RDKit, Open Babel) followed by calculation. | Encodes steric hindrance and molecular shape critical for transition state energetics. |

| Electronic | HOMO/LUMO energies, dipole moment, partial atomic charges (e.g., Gasteiger), Fukui indices. | Semi-empirical (e.g., PM6, PM7) or DFT calculations (costly). | Directly related to frontier molecular orbital interactions and reaction site reactivity. |

| Fingerprint-Based | Extended-Connectivity Fingerprints (ECFP4, ECFP6), MACCS keys, Path-based fingerprints. | RDKit, CDK. | Substructural patterns; provides a sparse, information-rich representation for similarity. |

Reaction Descriptors

These encode the context of the chemical transformation and experimental conditions.

Table 2: Key Reaction Descriptor Categories

| Category | Example Descriptors | Encoding Method | Relevance to Yield Prediction |

|---|---|---|---|

| Condition Parameters | Temperature (°C), time (h), concentration (mol/L), catalyst/ligand loading (mol%), equivalents of reagents. | Direct numerical encoding, often scaled. | Core optimization variables in Bayesian search. |

| Difference Descriptors | ΔlogP (product - reactants), ΔTPSA, ΔHOMO (product - reactants). | Arithmetic difference of molecular descriptors for reaction components. | Captures net changes in properties through the reaction. |

| Interaction Descriptors | Catalyst-solvent pairwise fingerprints, reactant-catalyst steric clash score. | Concatenation or specifically designed interaction terms. | Models synergistic or antagonistic effects between components. |

| Categorical Encodings | Solvent identity, catalyst class, reaction type (e.g., Suzuki, Buchwald-Hartwig). | One-hot encoding, learned embeddings, or solvent/catalyst property vectors. | Integrates discrete choices into continuous optimization framework. |

Experimental Protocols for Descriptor Generation

Protocol 3.1: Generating a Standard 2D/3D Molecular Descriptor Set Using RDKit and Mordred

Objective: To compute a comprehensive set of ~1800 1D-3D molecular descriptors for all reaction components.

- Input Preparation: Prepare an SDF or SMILES file for each unique molecule (reactants, catalysts, solvents, products) in the reaction dataset.

- Environment Setup: Install `rdkit`, `mordred`, and `numpy` in a Python environment.

- Descriptor Calculation Script:

- Output: A CSV file where rows are molecules and columns are descriptor values. Perform subsequent standardization (e.g., z-score) across the dataset.

Protocol 3.2: Encoding a Chemical Reaction with Condition and Difference Descriptors

Objective: Create a unified feature vector for a single reaction entry.

- Gather Data: For a reaction, list: SMILES for R1, R2, Product; Catalyst SMILES/ID; Solvent name; Temperature (T), Time (t), Concentration (C).

- Encode Molecular Components:

- Compute a fixed set of molecular descriptors (e.g., logP, TPSA, MW) for R1, R2, Product, Catalyst using Protocol 3.1.

- For the solvent, retrieve property vectors (e.g., from a solvent property database: dielectric constant, dipolarity, H-bonding).

- Calculate Difference Descriptors:

- ΔDescriptor = Descriptor(Product) - [Descriptor(R1) + Descriptor(R2)]

- Assemble Reaction Vector:

- Concatenate: [Condition(T, t, C), CatalystDescriptors, SolventProperty_Vector, ΔDescriptors].

- Scale: Apply feature scaling (e.g., MinMaxScaler) fitted on the entire training set.
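The assembly steps of Protocol 3.2 can be sketched without any cheminformatics dependency by treating descriptors as plain dictionaries. All numeric values below are illustrative placeholders rather than measured data, and the helper names are hypothetical; min-max scaling is implemented by hand as a stand-in for a library scaler.

```python
def difference_descriptors(product, reactants):
    """Descriptor(Product) minus the summed reactant descriptors, per key."""
    return {k: product[k] - sum(r[k] for r in reactants) for k in sorted(product)}

def reaction_vector(conditions, catalyst, solvent, product, reactants):
    """Concatenate [conditions, catalyst descriptors, solvent properties, deltas]."""
    delta = difference_descriptors(product, reactants)
    return (list(conditions)
            + [catalyst[k] for k in sorted(catalyst)]
            + [solvent[k] for k in sorted(solvent)]
            + [delta[k] for k in sorted(delta)])

def fit_min_max(rows):
    """Column-wise (min, max) pairs, fitted on the training rows."""
    return [(min(col), max(col)) for col in zip(*rows)]

def apply_min_max(row, ranges):
    """Scale each feature to [0, 1]; constant columns map to 0."""
    return [(v - lo) / (hi - lo) if hi > lo else 0.0
            for v, (lo, hi) in zip(row, ranges)]

# Illustrative numbers only.
r1 = {"logP": 2.0, "TPSA": 0.0}
r2 = {"logP": 0.5, "TPSA": 40.5}
product = {"logP": 3.0, "TPSA": 0.0}
catalyst = {"logP": 4.0, "TPSA": 10.0}
dioxane = {"dielectric": 2.2, "dipolarity": 0.5}
dmf = {"dielectric": 36.7, "dipolarity": 0.9}

rows = [
    reaction_vector((80.0, 12.0, 0.1), catalyst, dioxane, product, [r1, r2]),
    reaction_vector((100.0, 6.0, 0.2), catalyst, dmf, product, [r1, r2]),
]
ranges = fit_min_max(rows)
scaled = [apply_min_max(row, ranges) for row in rows]
print(scaled)
```

Sorting the dictionary keys keeps the feature order stable across reactions, which matters because the surrogate model sees only positions, not names.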

Protocol 3.3: Feature Selection for High-Dimensional Descriptor Spaces

Objective: Reduce dimensionality to mitigate overfitting in the Bayesian model.

- Variance Thresholding: Remove descriptors with variance below a threshold (e.g., <0.01) across the dataset.

- Correlation Filtering: Compute pairwise Pearson correlation. For descriptor pairs with |r| > 0.95, remove one.

- Model-Based Selection: Use LASSO (L1) regression or Random Forest feature importance on a preliminary yield prediction task. Retain top-k features.

- Domain-Knowledge Filter: Curate a final list based on chemical relevance to the reaction class (e.g., prioritize electronic descriptors for cross-coupling).
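The first two filters of Protocol 3.3 (variance thresholding and correlation filtering) can be sketched with the standard library alone. The descriptor table is a toy example, the function names are hypothetical, and the greedy keep-first rule for correlated pairs is one possible choice of which descriptor to drop.

```python
import statistics

def pearson(a, b):
    """Pearson correlation coefficient of two equal-length sequences."""
    ma, mb = statistics.fmean(a), statistics.fmean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5

def filter_descriptors(columns, min_variance=0.01, max_abs_r=0.95):
    """columns: dict of descriptor name -> list of values (one per molecule)."""
    # Step 1: drop near-constant descriptors.
    kept = [n for n, v in columns.items() if statistics.pvariance(v) >= min_variance]
    # Step 2: greedily keep a descriptor only if it is not highly
    # correlated with one that has already been selected.
    selected = []
    for name in kept:
        if all(abs(pearson(columns[name], columns[other])) <= max_abs_r
               for other in selected):
            selected.append(name)
    return selected

# Toy descriptor table, for illustration only.
table = {
    "logP": [1.0, 2.0, 3.0, 4.0],
    "MW": [100.0, 200.0, 300.0, 400.0],   # perfectly correlated with logP here
    "n_rings": [1.0, 1.0, 1.0, 1.0],      # zero variance
    "TPSA": [20.0, 5.0, 40.0, 10.0],
}
print(filter_descriptors(table))
```

In this toy table "n_rings" is removed for zero variance and "MW" for its correlation with "logP". Descriptors should be scaled consistently before a fixed variance threshold such as 0.01 is applied, since raw variances depend on units.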

Visualization of Descriptor Selection and Encoding Workflow

Title: Descriptor Encoding Pipeline for Synthesis Optimization

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Essential Tools for Molecular & Reaction Descriptor Workflow

| Item / Reagent Solution | Function / Purpose in Descriptor Context |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Core functions: molecule parsing, fingerprint generation (ECFP), basic descriptor calculation, and substructure searching. |

| Mordred | Python library that calculates ~1800 1D-3D molecular descriptors directly from SMILES, extending RDKit's capabilities. |

| PaDEL-Descriptor | Standalone software/library for calculating 2D/3D descriptors and fingerprints; useful for large batch processing. |

| Psi4 / Gaussian | Quantum chemistry software for computing high-fidelity electronic descriptors (HOMO/LUMO, charges) when semi-empirical methods are insufficient. |

| Conda/Pip Environment | For dependency management (e.g., rdkit, mordred, pandas, scikit-learn). Ensures reproducible descriptor calculations. |

| Solvent Property Database | Curated table (e.g., from "The Organic Chemist's Book of Solvents") linking solvent names to physicochemical properties (dielectric constant, polarity, etc.) for encoding. |

| Jupyter Notebook / Python Scripts | For scripting the automated feature extraction, fusion, and preprocessing pipeline. |

| Scikit-learn | For critical post-processing: feature scaling (StandardScaler), dimensionality reduction (PCA), and feature selection (VarianceThreshold, SelectFromModel). |

Within Bayesian optimization (BO) frameworks for predicting organic synthesis yields, the surrogate model probabilistically approximates the unknown function mapping reaction conditions to yield. The choice between Gaussian Processes (GPs) and Bayesian Neural Networks (BNNs) fundamentally shapes the optimization's data efficiency, uncertainty quantification, and scalability. This application note provides a comparative analysis and detailed protocols for implementing both models in a chemical synthesis context.

Comparative Quantitative Analysis

Table 1: Core Model Comparison for Chemical Yield Prediction

| Feature | Gaussian Process (GP) | Bayesian Neural Network (BNN) |

|---|---|---|

| Intrinsic Uncertainty | Naturally provides well-calibrated posterior variance. | Uncertainty derived from posterior over weights; often requires approximations. |

| Data Efficiency | Excellent with small datasets (<500 data points). | Typically requires larger datasets (>1000 points) for robust training. |

| Scalability | Poor; exact inference has cubic complexity O(n³) in dataset size. | Good; mini-batch training cost grows roughly linearly with dataset size. |

| Handling High-Dimensions | Can struggle with >20 descriptors without careful kernel design. | Naturally suited for high-dimensional input (e.g., many molecular descriptors). |

| Non-Linearity Capture | Dependent on kernel choice (e.g., Matérn, RBF). | Very flexible; learns complex, hierarchical representations. |

| Interpretability | High via kernel structure and hyperparameters. | Low; acts as a "black box." |

| Implementation Complexity | Moderate (matrix inversions, hyperparameter tuning). | High (stochastic variational inference, MCMC sampling). |

Table 2: Typical Performance Metrics on Benchmark Reaction Datasets

| Model (Kernel/Architecture) | Avg. RMSE (Yield %) | Avg. MAE (Yield %) | Avg. Negative Log Likelihood | Calibration Score (↓ is better) |

|---|---|---|---|---|

| GP (Matérn 5/2) | 4.8 | 3.5 | 1.12 | 0.08 |

| GP (Composite Chemical) | 3.9 | 2.9 | 0.98 | 0.05 |

| BNN (2-Layer, 50 Units) | 5.2 | 3.9 | 1.45 | 0.15 |

| BNN (3-Layer, 100 Units) | 3.5 | 2.6 | 1.21 | 0.12 |

| Deep GP | 3.8 | 2.8 | 1.05 | 0.07 |

Note: Metrics aggregated from recent literature on Suzuki and Ugi reaction yield prediction. Composite kernels combine linear, periodic, and noise terms.

Experimental Protocols

Protocol 1: Implementing a Gaussian Process Surrogate for Reaction Screening

Objective: To build a GP surrogate model using chemical descriptors to predict the yield of a palladium-catalyzed cross-coupling reaction.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Data Preparation:

- Represent each reaction using a feature vector incorporating catalyst identity (one-hot encoded), ligand steric/electronic parameters (e.g., %VBur), aryl halide electronic descriptors (Hammett σp), temperature (scaled), and solvent polarity (logP).

- Split data into training (n=80) and hold-out test (n=20) sets.

- Kernel Selection & Model Definition:

- Define a composite kernel: `k = ConstantKernel() * Matern(length_scale=2.0, nu=2.5) + WhiteKernel(noise_level=0.1)`. The Matérn 5/2 kernel (written in scikit-learn as `Matern` with `nu=2.5`) captures smooth trends, while the white kernel accounts for experimental noise.

- Instantiate a `GaussianProcessRegressor` with the defined kernel.

- Model Training & Hyperparameter Optimization:

- Fit the GP to the training data.

- Optimize kernel hyperparameters by maximizing the log-marginal likelihood using the L-BFGS-B optimizer.

- Prediction & Uncertainty Quantification:

- For a new set of reaction conditions, call `predict()` with `return_std=True` to return the mean predicted yield and its standard deviation.

- The acquisition function (e.g., Expected Improvement) uses this posterior distribution to propose the next experiment.
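To show what the prediction step computes, here is a minimal NumPy sketch of GP posterior inference with a Matérn 5/2 kernel. It is not the scikit-learn implementation: the hyperparameters are fixed rather than optimized by marginal likelihood, the prior mean is zero, and the training data are made-up standardized yields.

```python
import numpy as np

def matern52(a, b, length_scale=2.0, variance=1.0):
    """Matérn 5/2 kernel between point sets a (n, d) and b (m, d)."""
    dist = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))
    s = np.sqrt(5.0) * dist / length_scale
    return variance * (1.0 + s + s ** 2 / 3.0) * np.exp(-s)

def gp_posterior(x_train, y_train, x_new, noise=0.1, **kernel_args):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    k_tt = matern52(x_train, x_train, **kernel_args) + noise * np.eye(len(x_train))
    k_tn = matern52(x_train, x_new, **kernel_args)
    k_nn = matern52(x_new, x_new, **kernel_args)
    chol = np.linalg.cholesky(k_tt)                       # K = L L^T
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    v = np.linalg.solve(chol, k_tn)
    mean = k_tn.T @ alpha
    var = np.clip(np.diag(k_nn) - (v ** 2).sum(axis=0), 0.0, None)
    return mean, np.sqrt(var)

# Made-up data: one scaled input feature, standardized yields.
x_train = np.array([[0.0], [1.0], [2.0], [3.0]])
y_train = np.array([-1.0, 0.2, 1.1, 0.3])
x_new = np.array([[1.5], [50.0]])

mean, std = gp_posterior(x_train, y_train, x_new)
print(mean, std)
```

The second query point lies far from all training data, so its prediction reverts to the prior: mean near zero and standard deviation near one. That growth in uncertainty away from data is what the acquisition function exploits.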

Diagram: GP Surrogate Workflow for Reaction Optimization

Protocol 2: Implementing a Bayesian Neural Network Surrogate

Objective: To train a BNN as a high-capacity surrogate for a heterogeneous library of multi-step reactions.

Procedure:

- Architecture Definition:

- Design a neural network with 3 fully connected hidden layers (128, 64, 32 units) and ReLU activations.

- Place a variational posterior distribution (e.g., mean-field Gaussian) over all network weights.

- Model Training via Stochastic Variational Inference (SVI):

- Define a Gaussian prior over weights and a Gaussian likelihood for yield predictions.

- Use the Evidence Lower Bound (ELBO) as the loss function.

- Minimize the negative ELBO using stochastic gradient descent (e.g., Adam optimizer) with mini-batches.

- Uncertainty Estimation:

- At prediction time, perform multiple stochastic forward passes (e.g., 50) using Monte Carlo Dropout or by sampling weights from the learned posterior.

- Calculate the mean and standard deviation of the predictions across these passes to estimate the predictive posterior.

- Integration with BO:

- Feed the predictive mean and variance from the BNN to the acquisition function to guide the next experiment selection.
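The uncertainty-estimation step can be illustrated with a pure-Python sketch that samples weights from a mean-field Gaussian posterior and aggregates 50 stochastic forward passes. The posterior here is an untrained random placeholder (no SVI is performed), biases are omitted for brevity, and all function names are hypothetical.

```python
import math
import random
import statistics

def make_posterior(layer_sizes, rng, weight_std=0.05):
    """Placeholder mean-field posterior: a (mean, std) pair for every weight."""
    layers = []
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]):
        layers.append([[(rng.gauss(0.0, 1.0 / math.sqrt(n_in)), weight_std)
                        for _ in range(n_in)] for _ in range(n_out)])
    return layers

def forward_sample(x, posterior, rng):
    """One stochastic pass: sample each weight, apply ReLU between layers."""
    h = list(x)
    for depth, layer in enumerate(posterior):
        h = [sum(rng.gauss(m, s) * v for (m, s), v in zip(row, h)) for row in layer]
        if depth < len(posterior) - 1:
            h = [max(0.0, v) for v in h]
    return h[0]

def predictive(x, posterior, n_passes=50, seed=1):
    """Mean and standard deviation across stochastic forward passes."""
    rng = random.Random(seed)
    samples = [forward_sample(x, posterior, rng) for _ in range(n_passes)]
    return statistics.fmean(samples), statistics.stdev(samples)

# 4 scaled input features, hidden layers of 128/64/32 units, 1 output.
posterior = make_posterior([4, 128, 64, 32, 1], random.Random(0))
x = [0.4, 0.5, 0.2, 0.8]
mean, std = predictive(x, posterior)
print(mean, std)
```

In a real workflow the (mean, std) pairs per weight would come from training with a library such as Pyro or TensorFlow Probability; only the aggregation over passes carries over directly from this sketch.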

Diagram: BNN Surrogate Training with Variational Inference

The Scientist's Toolkit

Table 3: Essential Research Reagents & Software for Model Implementation

| Item | Function in Surrogate Modeling | Example Product/ Library |

|---|---|---|

| Chemical Descriptor Calculator | Generates quantitative features (e.g., sterics, electronics) from reactant structures. | RDKit, Dragon, Mordred |

| GP Implementation Library | Provides robust algorithms for GP regression, hyperparameter tuning, and prediction. | GPyTorch, scikit-learn GaussianProcessRegressor, GPflow |

| BNN/VI Implementation Library | Enables construction and training of BNNs using variational inference or MCMC. | Pyro (PyTorch), TensorFlow Probability, Edward2 |

| Bayesian Optimization Suite | Integrates surrogate models with acquisition functions for closed-loop optimization. | BoTorch (PyTorch), Ax, GPyOpt |

| High-Throughput Experimentation (HTE) Data | Provides structured, medium-large scale reaction datasets for training data-intensive models like BNNs. | MIT ORC, NREL High-Throughput Experimental Data |

| Automated Reactor System | Physically executes proposed experiments in an iterative BO loop. | Chemspeed, Unchained Labs, custom flow systems |

In Bayesian optimization (BO) for organic synthesis yield prediction, the acquisition function is the critical decision-making mechanism that guides the search for optimal reaction conditions. It balances the exploration of uncertain regions of the chemical space with the exploitation of known high-yielding conditions. This protocol details the application of three principal acquisition functions—Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI)—within a thesis framework focused on accelerating drug development through machine learning-driven synthesis planning.

Comparative Analysis of Acquisition Functions

The selection of an acquisition function directly influences the efficiency and outcome of the optimization campaign. The table below summarizes their core characteristics, mathematical formulations, and applicability in chemical synthesis contexts.

Table 1: Comparison of Key Acquisition Functions for Yield Optimization

| Acquisition Function | Mathematical Formulation (for maximization) | Key Hyperparameter(s) | Exploration Tendency | Best Suited For |

|---|---|---|---|---|

| Expected Improvement (EI) | EI(x) = E[max(f(x) - f(x*), 0)] where f(x*) is the current best yield. | ξ (jitter parameter) | Balanced, tunable | General-purpose yield optimization; when sample efficiency is critical. |

| Upper Confidence Bound (UCB) | UCB(x) = μ(x) + κ * σ(x) where μ is mean prediction, σ is uncertainty. | κ (balance parameter) | Explicitly controllable via κ | Systematic exploration; noisy yield data; constrained reaction spaces. |

| Probability of Improvement (PI) | PI(x) = P(f(x) ≥ f(x*) + ξ) | ξ (trade-off parameter) | Lower, more exploitative | Quick convergence to a good yield; initial screening phases. |

Note: In all formulations, x represents the reaction conditions (e.g., catalyst, temperature, solvent).
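Under a Gaussian posterior with mean μ(x) and standard deviation σ(x), the three acquisition functions in Table 1 have closed forms. The sketch below implements them for a single candidate using only the standard library; the function names are illustrative, and ξ defaults to 0 for EI so that it matches the formula in the table.

```python
import math

def _pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def _cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best, xi=0.0):
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)   # no uncertainty: improvement is known
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _cdf(z) + sigma * _pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

def probability_of_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0.0:
        return 1.0 if mu >= best + xi else 0.0
    return _cdf((mu - best - xi) / sigma)

# Two hypothetical candidates against a current best yield of 80%.
for mu, sigma in [(82.0, 1.0), (75.0, 10.0)]:
    print(mu, sigma,
          round(expected_improvement(mu, sigma, best=80.0), 3),
          round(upper_confidence_bound(mu, sigma), 1),
          round(probability_of_improvement(mu, sigma, best=80.0), 3))
```

The first candidate is a safe, modest improvement and the second is an uncertain long shot. Comparing their scores shows how PI favors the former while UCB with κ = 2 favors the latter.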

Experimental Protocols for Function Evaluation

Protocol 1: Benchmarking Acquisition Functions on a Known Reaction Landscape

Objective: To empirically determine the most efficient acquisition function for optimizing the yield of a Pd-catalyzed cross-coupling reaction.

- Data Preparation: Curate a historical dataset of ~100 previous experiments for the target reaction, with yields and condition parameters (ligand, temperature, solvent, concentration).

- Surrogate Model Training: Train a Gaussian Process (GP) model using 80% of the data, using a Matérn kernel to capture non-linear effects.

- Optimization Loop: Run three parallel BO loops (each n=20 sequential experiments), one each using EI, UCB (κ=2.0), and PI (ξ=0.01).

- Evaluation Metrics: Track and plot for each iteration:

- Best Observed Yield: To assess convergence speed.

- Inverse Distance to Global Optimum: If known from a full factorial screen.

- Cumulative Regret: The sum of yield differences between the chosen point and the true best point.
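Two of the metrics above are simple running quantities over the sequence of observed yields. A minimal sketch, assuming the global optimum is known from a full factorial screen and using made-up yields:

```python
def best_observed(yields):
    """Running maximum of the yields seen so far."""
    best, trace = float("-inf"), []
    for y in yields:
        best = max(best, y)
        trace.append(best)
    return trace

def cumulative_regret(yields, optimum):
    """Running sum of (optimum - observed yield) over the iterations."""
    total, trace = 0.0, []
    for y in yields:
        total += optimum - y
        trace.append(total)
    return trace

observed = [40, 35, 62, 58, 71]          # illustrative yields (%)
print(best_observed(observed))            # [40, 40, 62, 62, 71]
print(cumulative_regret(observed, 75))    # [35.0, 75.0, 88.0, 105.0, 109.0]
```

A flatter cumulative regret curve indicates that the acquisition function spends fewer experiments far from the optimum, which complements the best-observed-yield trace when comparing EI, UCB, and PI.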

Protocol 2: Tuning Hyperparameters for Chemical Context

Objective: To optimize the balance parameter κ in UCB for a novel, high-uncertainty enzymatic synthesis.

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube) of 15 initial experiments across pH, temperature, and enzyme loading.

- Iterative Tuning: For each of four κ values (0.5, 1.0, 2.0, 3.0), conduct five sequential BO cycles using UCB, running the four campaigns as parallel batches.

- Analysis: Identify the κ value that leads to the most significant yield improvement after the fifth cycle, indicating optimal balance for that specific chemical space.

Logical Workflow for Acquisition Function Selection

Title: Decision Workflow for Selecting an Acquisition Function

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational & Experimental Materials

| Item | Function in Bayesian Optimization for Synthesis |

|---|---|

| Gaussian Process Regression Library (e.g., GPyTorch, scikit-learn) | Provides the probabilistic surrogate model to predict yield and uncertainty at untested conditions. |

| Bayesian Optimization Framework (e.g., BoTorch, Ax, GPflowOpt) | Implements acquisition functions (EI, UCB, PI) and manages the optimization loop. |

| Chemical Descriptor Software (e.g., RDKit, Mordred) | Generates numerical representations (fingerprints, descriptors) of molecules (catalysts, solvents) for the model. |

| High-Throughput Experimentation (HTE) Robotic Platform | Enables automated execution of the suggested experiments from each BO iteration. |

| Standardized Reaction Vessels & Analysis Plates | Ensures experimental consistency and enables parallel yield determination (e.g., via HPLC or UPLC). |

| Liquid Handling Robot | Automates the precise dispensing of reagents and catalysts for the experiments proposed by BO. |

| Online Analytical Instrument (e.g., UPLC-MS) | Provides rapid, quantitative yield data to feedback into the BO loop, minimizing cycle time. |

Within the broader thesis on Bayesian optimization for organic synthesis yield prediction, Step 5 represents the operational core. This phase transforms theoretical models into actionable experimental campaigns, iteratively guiding chemists toward optimal reaction conditions. It integrates initial design-of-experiment (DoE) data with a continuously updated surrogate model to propose high-yield candidates for validation.

Core Algorithmic Protocol: The BO Iteration Cycle

Protocol 2.1: Single Iteration of the Bayesian Optimization Loop

Objective: To execute one complete cycle of candidate proposal and experimental feedback.

Duration: 24-72 hours per cycle (dependent on reaction scale and analysis).

Steps:

- Surrogate Model Update: Using all accumulated experimental data (initial DoE + previous BO runs), retrain the Gaussian Process (GP) regression model. Standard practice uses a Matérn kernel with automatic relevance determination (ARD).

- Acquisition Function Maximization: Calculate the next proposed experiment(s) by maximizing the chosen acquisition function (e.g., Expected Improvement, EI).

- Parameter: For parallel experimentation, use q-EI (batch size, q=4-8).

- Method: Optimize using L-BFGS-B or random sampling with restarts (typically 50-100) across the bounded chemical space.

- Experimental Execution: Synthesize the proposed reaction condition(s) in the laboratory.

- Yield Quantification: Analyze reaction outcome via calibrated HPLC or NMR to obtain precise yield data.

- Data Augmentation: Append the new {condition, yield} pair to the master dataset.

Key Software Tools: BoTorch, GPyTorch, scikit-optimize.
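The iteration cycle above can be sketched end to end on a toy problem. This is a simplified stand-in, not a BoTorch workflow: it uses a one-dimensional candidate grid instead of L-BFGS-B, a fixed-hyperparameter GP without ARD, sequential (not batch) EI, and a hypothetical `run_experiment` function in place of the laboratory.

```python
import math
import numpy as np

def matern52(a, b, length_scale=0.2):
    """Matérn 5/2 kernel for 1-D inputs."""
    s = math.sqrt(5.0) * np.abs(a[:, None] - b[None, :]) / length_scale
    return (1.0 + s + s ** 2 / 3.0) * np.exp(-s)

def gp_predict(x_obs, y_obs, x_cand, noise=1e-3):
    """Zero-mean GP posterior on standardized yields (fixed hyperparameters)."""
    y_mean, y_std = y_obs.mean(), y_obs.std() + 1e-9
    k = matern52(x_obs, x_obs) + noise * np.eye(len(x_obs))
    k_s = matern52(x_obs, x_cand)
    solved = np.linalg.solve(k, k_s)                       # K^-1 K_*
    mu = solved.T @ ((y_obs - y_mean) / y_std)
    var = np.clip(1.0 - (k_s * solved).sum(axis=0), 1e-12, None)
    return mu * y_std + y_mean, np.sqrt(var) * y_std

def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z ** 2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

def run_experiment(x):
    """Hypothetical stand-in for the lab: yield (%) vs. a scaled condition."""
    return 90.0 * math.exp(-((x - 0.7) ** 2) / 0.02) + 5.0

candidates = np.linspace(0.0, 1.0, 201)
x_obs = np.array([0.1, 0.5, 0.9])                          # stand-in for the initial DoE
y_obs = np.array([run_experiment(x) for x in x_obs])

for _ in range(10):
    mu, sigma = gp_predict(x_obs, y_obs, candidates)       # surrogate model update
    ei = expected_improvement(mu, sigma, y_obs.max())      # acquisition scoring
    x_next = candidates[int(np.argmax(ei))]                # next proposed condition
    y_next = run_experiment(x_next)                        # execution and yield quantification
    x_obs = np.append(x_obs, x_next)                       # data augmentation
    y_obs = np.append(y_obs, y_next)

print(round(float(y_obs.max()), 1))
```

Each pass through the loop corresponds to one 24-72 hour cycle in the protocol. For batch operation, the single `argmax` would be replaced by a q-EI proposal of several conditions at once.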

Initial Experimental Design (DoE) Protocol

Protocol 3.1: Generating the Initial Data Set

Objective: To create a diverse, space-filling set of initial experiments to seed the GP model.

Method: Sobol Sequence or Latin Hypercube Sampling (LHS).

Typical Scale: 10-24 experiments, covering 4-7 continuous variables (e.g., temperature, catalyst loading, equivalents, concentration, time).

Procedure:

- Define hard bounds for each continuous variable based on solvent boiling point, reagent solubility, and safety limits.

- Define categorical variables (e.g., ligand identity, solvent class) using one-hot encoding.

- Generate n sample points using a Sobol sequence via `scipy.stats.qmc.Sobol`.

- Scale points to practical laboratory ranges (e.g., temperature: 25°C - 120°C).

- Execute reactions in a randomized order to minimize systematic bias.
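The procedure above might look as follows in code. This is a sketch under stated assumptions: the bounds, the three-ligand list, and the choice of 16 points are illustrative values, and mapping a fifth Sobol coordinate onto the ligand index is one simple way to spread the categorical variable, not a method prescribed by the protocol.

```python
import numpy as np
from scipy.stats import qmc

# Hypothetical bounds: temperature (°C), catalyst loading (mol%),
# base equivalents, concentration (M).
lower = [25.0, 0.5, 1.0, 0.05]
upper = [120.0, 2.0, 3.0, 0.20]
ligands = ["SPhos", "XPhos", "RuPhos"]

n_points = 16  # a power of two preserves the balance properties of Sobol points
sampler = qmc.Sobol(d=len(lower) + 1, scramble=True, seed=0)
unit = sampler.random(n_points)  # points in the unit hypercube [0, 1)^5

# Scale the first four coordinates to laboratory ranges.
continuous = qmc.scale(unit[:, :4], lower, upper)

# Map the last coordinate onto a ligand index, then one-hot encode it.
ligand_idx = np.minimum((unit[:, 4] * len(ligands)).astype(int), len(ligands) - 1)
one_hot = np.eye(len(ligands))[ligand_idx]

design = np.hstack([continuous, one_hot])  # model inputs, shape (16, 7)

# Randomize the bench execution order to limit systematic bias.
run_order = np.random.default_rng(1).permutation(n_points)
for run, i in enumerate(run_order, start=1):
    t, cat, base, conc = continuous[i]
    print(f"run {run:2d}: {t:5.1f} °C, {cat:.2f} mol%, "
          f"{base:.2f} eq, {conc:.3f} M, {ligands[ligand_idx[i]]}")
```

Latin Hypercube Sampling can be substituted by replacing the sampler with `qmc.LatinHypercube(d=5, seed=0)`; the scaling and encoding steps stay the same.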

Table 1: Representative Initial DoE Data for a Pd-Catalyzed Cross-Coupling

| Exp ID | Temp (°C) | Cat. Load (mol%) | Equiv. Base | Conc. (M) | Ligand | Yield (%) |

|---|---|---|---|---|---|---|

| S1 | 45 | 1.5 | 1.8 | 0.15 | SPhos | 22 |

| S2 | 100 | 0.5 | 2.5 | 0.05 | XPhos | 15 |

| S3 | 80 | 2.0 | 1.2 | 0.20 | RuPhos | 65 |

| S4 | 60 | 1.0 | 3.0 | 0.10 | SPhos | 38 |

| ... | ... | ... | ... | ... | ... | ... |

| S20 | 75 | 1.2 | 2.0 | 0.12 | XPhos | 41 |

Data Presentation & Iterative Results

Table 2: Progression of Top Yield Through BO Iterations

| BO Iteration | Experiments Completed | Best Yield Found (%) | Proposed Temp (°C) | Proposed Cat. Load (mol%) |

|---|---|---|---|---|

| 0 (DoE) | 20 | 65 | 80 | 2.0 |

| 1 | 24 | 78 | 92 | 1.8 |

| 3 | 32 | 85 | 88 | 1.6 |

| 5 | 40 | 92 | 86 | 1.4 |

| 10 | 60 | 96 | 85 | 1.1 |

Visualization of the BO Workflow

Diagram 1: Closed-Loop Bayesian Optimization for Synthesis

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Research Reagent Solutions for High-Throughput BO Experimentation

| Item | Function in BO Loop | Example/Notes |

|---|---|---|

| Pre-weighed Reagent Stocks | Enables rapid, precise dispensing of varying amounts for each proposed condition. | Solid aryl halides, ligands in separate vials. |

| Automated Liquid Handler | Precisely dispenses variable volumes of liquid reagents (solvent, base, catalyst stock). | Enables preparation of 96-well reaction blocks. |

| Catalyst Stock Solutions | Consistent source of metal catalyst for varying loadings; prepared fresh daily. | e.g., Pd₂(dba)₃ in dry THF (0.01 M). |

| Inert Atmosphere Glovebox | Essential for handling air-sensitive reagents and setting up reactions. | Maintains <1 ppm O₂ for phosphine ligands. |

| Parallel Reactor Block | Allows simultaneous heating/stirring of multiple (e.g., 24) reaction vials. | Temperature range 25-150°C, with stirring. |

| QC Analytics (UPLC/MS) | Rapid, quantitative yield analysis of crude reaction mixtures. | Enables <30 min analysis of 96 samples. |

| Laboratory Information Management System (LIMS) | Tracks all experimental parameters and outcomes, feeds data directly to BO algorithm. | Critical for data integrity and automation. |

Application Notes

This study details the application of Bayesian optimization (BO) to efficiently optimize the yield of a Suzuki-Miyaura cross-coupling reaction, a critical transformation in pharmaceutical synthesis. The work is framed within a thesis investigating machine learning-guided yield prediction for complex organic reactions. Traditional one-variable-at-a-time (OVAT) approaches are resource-intensive. By treating the reaction as a black-box function, BO uses a Gaussian process surrogate model to predict yield and an acquisition function (Expected Improvement) to propose the next most informative experiment, rapidly converging on the global yield maximum with fewer experiments.
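For reference, Expected Improvement has a standard closed form when the surrogate posterior is Gaussian. The notation is an assumption introduced here, not taken from the study: μ(x) and σ(x) are the GP posterior mean and standard deviation of the yield at conditions x, f⁺ is the best yield observed so far, and Φ and φ are the standard normal CDF and PDF.

```latex
\mathrm{EI}(x) = \mathbb{E}\left[\max\bigl(f(x) - f^{+},\, 0\bigr)\right]
             = \bigl(\mu(x) - f^{+}\bigr)\,\Phi(z) + \sigma(x)\,\phi(z),
\qquad z = \frac{\mu(x) - f^{+}}{\sigma(x)}
```

The first term rewards conditions whose predicted yield exceeds the current best (exploitation); the second rewards conditions where the model is uncertain (exploration).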

Objective: Maximize the yield of the coupling between 4-bromoanisole (A) and 2-formylphenylboronic acid (B) to form biaryl aldehyde (C), a key pharmaceutical intermediate.

Reaction: 4-BrC₆H₄OCH₃ + (2-HCO)C₆H₄B(OH)₂ → (4-CH₃OC₆H₄)-(2-HCOC₆H₄) + Byproducts

Variables Optimized:

- Catalyst loading (mol%)

- Reaction temperature (°C)

- Equivalents of base (K₂CO₃)

- Water content in solvent (THF/H₂O v/v%)

Key Quantitative Results:

Table 1: Bayesian Optimization Performance vs. Traditional Screening

| Optimization Method | Initial Design Points | Total Experiments Needed to Reach >90% Yield | Maximum Yield Achieved |

|---|---|---|---|

| Traditional OVAT Grid Search | 0 | 81 (9x9 grid) | 92% |

| Bayesian Optimization (EI) | 12 (Latin Hypercube) | 24 | 95% |

Table 2: Optimized Reaction Conditions Identified by BO

| Parameter | Low Bound | High Bound | BO-Optimized Value |

|---|---|---|---|

| Pd(PPh₃)₄ Loading | 0.5 mol% | 3.0 mol% | 1.8 mol% |

| Temperature | 50 °C | 120 °C | 85 °C |

| K₂CO₃ Equivalents | 1.5 eq. | 3.5 eq. | 2.4 eq. |

| Water Content | 0% v/v | 50% v/v | 18% v/v |

| Resulting Isolated Yield | n/a | n/a | 95% |

Detailed Experimental Protocol

Protocol 1: General Procedure for Bayesian-Optimized Suzuki-Miyaura Coupling

Materials: See "Scientist's Toolkit" below.

Procedure:

- Setup: In a nitrogen-filled glovebox, charge a 5 mL microwave vial with a magnetic stir bar.

- Weighing: Accurately weigh 4-bromoanisole (93.5 mg, 0.50 mmol, 1.0 eq.) and 2-formylphenylboronic acid (97.5 mg, 0.65 mmol, 1.3 eq.) into the vial.

- Catalyst/Base Addition: Add tetrakis(triphenylphosphine)palladium(0) (10.4 mg, 0.009 mmol, 1.8 mol%) and potassium carbonate (166 mg, 1.20 mmol, 2.4 eq.).

- Solvent Addition: Using a positive displacement pipette, add a degassed mixture of tetrahydrofuran (1.64 mL) and deionized water (0.36 mL) (Total volume: 2.0 mL, 18% v/v H₂O).

- Sealing: Seal the vial with a PTFE-lined crimp cap.

- Reaction: Remove the vial from the glovebox and place it in a pre-heated aluminum heating block at 85 °C. Stir vigorously (800 rpm) for 18 hours.

- Work-up: Cool the vial to room temperature. Dilute the reaction mixture with ethyl acetate (10 mL) and transfer to a separatory funnel. Wash with water (5 mL) and brine (5 mL). Dry the organic layer over anhydrous magnesium sulfate.

- Analysis: Filter and concentrate the organic layer under reduced pressure. Purify the crude product by flash chromatography (silica gel, 9:1 hexanes:ethyl acetate) to yield the pure biaryl aldehyde C as a white solid (101 mg, 95% yield).

- Validation: Characterize the product by ¹H NMR, ¹³C NMR, and HRMS. Data should match literature values.
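Because every BO proposal changes the loadings, it helps to compute the charge table programmatically rather than by hand. The sketch below recomputes the quantities for the optimized conditions (0.50 mmol scale, 1.8 mol% Pd, 2.4 eq. base, 18% v/v water in 2.0 mL). The molecular weights are standard values supplied here as assumptions, and `charge_table` is a hypothetical helper name.

```python
# Molecular weights in g/mol (assumed standard values, not given in the protocol).
MW = {
    "4-bromoanisole": 187.03,
    "2-formylphenylboronic acid": 149.94,
    "Pd(PPh3)4": 1155.56,
    "K2CO3": 138.21,
    "biaryl aldehyde C": 212.24,
}


def charge_table(scale_mmol, boronic_eq, cat_mol_pct, base_eq, water_pct, total_ml):
    """Return reagent masses (mg) and solvent volumes (mL) for one vial."""
    mmol = {
        "4-bromoanisole": scale_mmol,
        "2-formylphenylboronic acid": scale_mmol * boronic_eq,
        "Pd(PPh3)4": scale_mmol * cat_mol_pct / 100.0,
        "K2CO3": scale_mmol * base_eq,
    }
    # mmol x (g/mol) gives mg directly.
    masses = {name: round(n * MW[name], 1) for name, n in mmol.items()}
    water_ml = round(total_ml * water_pct / 100.0, 2)
    volumes = {"THF": round(total_ml - water_ml, 2), "H2O": water_ml}
    return masses, volumes


masses_mg, volumes_ml = charge_table(
    scale_mmol=0.50, boronic_eq=1.3, cat_mol_pct=1.8,
    base_eq=2.4, water_pct=18.0, total_ml=2.0,
)

# Mass of product corresponding to a 95% isolated yield.
theoretical_mg = 0.50 * MW["biaryl aldehyde C"]
isolated_mg_at_95 = round(0.95 * theoretical_mg, 1)

for name, mg in masses_mg.items():
    print(f"{name}: {mg} mg")
print(volumes_ml, "mL")
print("product at 95% yield:", isolated_mg_at_95, "mg")
```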

Protocol 2: Yield Analysis Workflow for Bayesian Learning Loop

- After each reaction (Protocol 1, steps 1-7), take an aliquot (100 µL) of the crude mixture.

- Dilute the aliquot with 1.0 mL of ethyl acetate and filter through a short plug of silica gel.

- Analyze the filtrate by High-Performance Liquid Chromatography (HPLC) using a C18 column and a UV detector at 254 nm.

- Calculate the crude yield by integrating the product peak relative to a calibrated external standard of pure compound C.

- Report the (x, y) data pair (reaction conditions, crude yield) to the Bayesian optimization algorithm.

- The algorithm proposes the next set of conditions for the subsequent experiment.
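The yield calculation in this workflow can be sketched as a linear external-standard calibration followed by a dilution correction. All numbers below are hypothetical: the calibration points are invented for illustration, the product molecular weight (212.24 g/mol) is an assumed value, and the sketch assumes the 100 µL aliquot is representative of a homogeneous 2.0 mL crude mixture.

```python
import numpy as np

# Hypothetical calibration standards of pure compound C (mg/mL) and their
# integrated UV peak areas at 254 nm (arbitrary units).
std_conc = np.array([0.5, 1.0, 2.0, 4.0, 6.0])
std_area = np.array([210.0, 405.0, 812.0, 1598.0, 2410.0])

# Least-squares line: area = slope * conc + intercept.
slope, intercept = np.polyfit(std_conc, std_area, 1)


def crude_yield_pct(peak_area, aliquot_ml=0.100, diluent_ml=1.0,
                    mixture_volume_ml=2.0, limiting_mmol=0.50,
                    product_mw=212.24):
    """Convert an HPLC product peak area into a crude yield (%)."""
    conc_diluted = (peak_area - intercept) / slope      # mg/mL in the HPLC sample
    dilution = (aliquot_ml + diluent_ml) / aliquot_ml   # 11x for 100 µL + 1.0 mL
    product_mg = conc_diluted * dilution * mixture_volume_ml
    return 100.0 * (product_mg / product_mw) / limiting_mmol


# The (x, y) pair reported back to the optimizer for one experiment.
conditions = {"cat_mol_pct": 1.8, "temp_c": 85, "base_eq": 2.4, "water_pct": 18}
record = (conditions, crude_yield_pct(peak_area=1700.0))
print(record)
```

Peak areas outside the calibrated range should be re-measured at a different dilution rather than extrapolated.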

Visualizations

Diagram: Bayesian Optimization Workflow for Reaction

Diagram: Suzuki-Miyaura Catalytic Mechanism

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Materials

| Item | Function in Experiment | Key Details |

|---|---|---|

| Tetrakis(triphenylphosphine)palladium(0) [Pd(PPh₃)₄] | Pre-formed, air-sensitive Pd(0) catalyst. Initiates the catalytic cycle via oxidative addition. | Store under N₂/Ar at -20°C. Weigh rapidly in glovebox. |

| 2-Formylphenylboronic Acid | Nucleophilic coupling partner. Boronic acid must be activated (as borate) by base for transmetalation. | Check for dehydration (anhydride formation); can be re-purified by recrystallization. |

| Anhydrous Potassium Carbonate (K₂CO₃) | Base. Converts the boronic acid to the boronate required for transmetalation and neutralizes the acidic boron byproducts. | Must be finely powdered and thoroughly dried (≥120°C under vacuum) for consistent reactivity. |

| Degassed Mixed Solvent (THF/H₂O) | Reaction medium. THF solubilizes organics; water enhances base solubility and boronate formation. | Degas by sparging with N₂ for 20 min or via freeze-pump-thaw cycles to prevent Pd oxidation. |

| Inert Atmosphere (N₂/Ar) Glovebox | Essential for handling air-sensitive catalyst and ensuring reproducible initial conditions. | Maintain O₂ and H₂O levels <0.1 ppm for reliable catalyst performance. |

| Automated HPLC System with C18 Column | Enables rapid, quantitative yield measurement for the BO data loop. Crucial for high-throughput feedback. | Use an external standard for calibration. Method runtime should be <10 min per sample. |

Overcoming Challenges: Troubleshooting and Advanced BO Strategies

Within the broader thesis on Bayesian optimization (BO) for organic synthesis yield prediction, the quality of training data is paramount. The performance of Gaussian Process (GP) regression, the typical surrogate model in BO, degrades significantly with noisy (high-variance) or sparse (low-volume) yield observations. This pitfall directly impacts the efficiency of closed-loop reaction optimization campaigns, leading to wasted resources and suboptimal conditions. This application note provides protocols to diagnose, mitigate, and design experiments that are robust to these data challenges.

Table 1: Effect of Noise and Data Sparsity on GP Prediction Accuracy (RMSE)

| Data Condition | Number of Initial Points | Noise Level (σ) | Mean RMSE (Yield %) | 95% Confidence Interval |

|---|---|---|---|---|

| Sparse & Clean | 8 | 0.05 | 12.4 | ± 1.8 |

| Sparse & Noisy | 8 | 0.20 | 21.7 | ± 3.5 |

| Moderate & Clean | 16 | 0.05 | 6.1 | ± 0.9 |

| Moderate & Noisy | 16 | 0.20 | 11.3 | ± 2.1 |

| Dense & Clean | 32 | 0.05 | 3.2 | ± 0.5 |

| Dense & Noisy | 32 | 0.20 | 8.9 | ± 1.7 |

Note: Simulated data for a 3-factor Suzuki-Miyaura cross-coupling reaction space. Noise Level represents the standard deviation of added Gaussian noise.

Table 2: Comparative Efficacy of Mitigation Strategies

| Strategy | Sparse Data (n=8) RMSE Improvement | Noisy Data (σ=0.2) RMSE Improvement | Computational Overhead |

|---|---|---|---|

| Heteroscedastic Likelihood Model | 5% | 35% | High |

| Data Augmentation (SMILES) | 25% | 10% | Medium |

| Hierarchical/Multi-task Model | 30%* | 15%* | High |

| Robust Kernels (Matérn 3/2) | 8% | 12% | Low |

| Active Learning for Exploration | 40% | 20% | Medium |

*Improvement relies on related reaction data. Improvement measured after 5 BO iterations.

Experimental Protocols

Protocol 3.1: Diagnosing Data Noise and Sparsity

Objective: Quantify the noise level and sparsity of an existing yield dataset.

Materials: Historical yield data for a reaction of interest (minimum 5 data points).

Procedure:

- Residual Analysis: Fit a preliminary GP model (using a Matérn 5/2 kernel). Extract the residuals (difference between observed and predicted yields).

- Noise Estimation: Calculate the standard deviation of the residuals. If a dedicated noise parameter (α) is provided by the GP library (e.g., `gpytorch` or `scikit-learn`), record its value.

- Sparsity Assessment: Compute the coverage of your experimental space. For a space with d dimensions (e.g., catalysts, temperature, concentration), calculate the normalized distance between all points. A mean nearest-neighbor distance >20% of the maximum possible distance indicates severe sparsity.

- Cross-Validation: Perform 5-fold or leave-one-out cross-validation (leave-one-out is preferable for very small datasets). A high variance in prediction error across folds indicates sensitivity to sparsity.
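The four diagnostics can be combined into one helper. This is a sketch using scikit-learn, with several assumptions: inputs are scaled to the unit hypercube so that the maximum possible distance is √d, the learned `WhiteKernel` noise level stands in for the library's noise parameter, the example data are five continuous rows from the DoE table earlier in this guide, and the bounds and the name `diagnose` are hypothetical.

```python
import numpy as np
from scipy.spatial.distance import cdist
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel
from sklearn.model_selection import LeaveOneOut, cross_val_predict


def diagnose(X, y, bounds):
    """Estimate noise and sparsity for a small yield dataset."""
    bounds = np.asarray(bounds, dtype=float)
    Xn = (np.asarray(X, dtype=float) - bounds[:, 0]) / (bounds[:, 1] - bounds[:, 0])
    y = np.asarray(y, dtype=float)
    d = Xn.shape[1]

    kernel = (ConstantKernel(1.0) * Matern(length_scale=np.ones(d), nu=2.5)
              + WhiteKernel(noise_level=1e-2))
    gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)
    gp.fit(Xn, y)

    # 1-2. Residual spread and the learned noise level, both in yield units.
    residual_sd = float(np.std(y - gp.predict(Xn)))
    # The noise variance is learned on standardized yields; rescale by std(y).
    noise_sd = float(np.sqrt(gp.kernel_.k2.noise_level) * np.std(y))

    # 3. Mean nearest-neighbor distance as a fraction of the unit-cube diagonal.
    dist = cdist(Xn, Xn)
    np.fill_diagonal(dist, np.inf)
    mean_nn_fraction = float(dist.min(axis=1).mean() / np.sqrt(d))

    # 4. Leave-one-out predictions from a fresh model of the same form.
    fresh = GaussianProcessRegressor(kernel=kernel, normalize_y=True, random_state=0)
    loo_pred = cross_val_predict(fresh, Xn, y, cv=LeaveOneOut())

    return {
        "residual_sd": residual_sd,
        "noise_sd": noise_sd,
        "mean_nn_fraction": mean_nn_fraction,
        "severely_sparse": bool(mean_nn_fraction > 0.20),
        "loo_abs_error": np.abs(y - loo_pred),
    }


X = [[45, 1.5, 1.8, 0.15], [100, 0.5, 2.5, 0.05], [80, 2.0, 1.2, 0.20],
     [60, 1.0, 3.0, 0.10], [75, 1.2, 2.0, 0.12]]
y = [22.0, 15.0, 65.0, 38.0, 41.0]
bounds = [(25, 120), (0.5, 2.0), (1.0, 3.0), (0.05, 0.20)]  # hypothetical

report = diagnose(X, y, bounds)
print(report)
```

With so few points the fitted noise level is itself uncertain, so the output is best read as a screening signal rather than a precise estimate.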

Protocol 3.2: Implementing a Heteroscedastic Likelihood Model for Noisy Data

Objective: Build a GP model that accounts for variable noise across the reaction space.

Software: Python with GPyTorch or BoTorch.

Procedure:

- Model Definition: Instead of a standard `GaussianLikelihood` (constant noise), define a heteroscedastic likelihood (written here as `HeteroscedasticLikelihood`; the exact class name depends on the library and version). This involves a second GP or a neural network to model the noise level as a function of input conditions.

- Model Training: Use Type-II Maximum Likelihood Estimation to jointly optimize the hyperparameters of the primary yield GP and the auxiliary noise GP. Use a combined loss function (marginal log likelihood + regularization).

- Acquisition Function Adjustment: When using Expected Improvement (EI) or Upper Confidence Bound (UCB), ensure the acquisition function incorporates the predicted variance from the heteroscedastic model. This prevents over-exploitation of points that appear high-yielding due to high local noise.
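To make the idea concrete without depending on a particular GPyTorch or BoTorch class, the sketch below swaps in a simpler two-stage approximation built with scikit-learn: a second GP is fitted to the log squared residuals of the yield GP, and its predictions are fed back as per-point noise through the `alpha` argument. This is not the joint Type-II maximum likelihood training described in the protocol, the log-squared-residual noise estimate is crude, and the data are synthetic. The name `fit_heteroscedastic_gp` is hypothetical.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel


def fit_heteroscedastic_gp(X, y, n_rounds=3, seed=0):
    """Alternate between a yield GP and a noise GP; return a predict function."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    y_mean, y_std = y.mean(), y.std()
    ys = (y - y_mean) / y_std  # work on standardized yields
    base = ConstantKernel(1.0) * Matern(length_scale=np.ones(X.shape[1]), nu=2.5)

    # Round 0: homoscedastic fit to obtain initial residuals.
    mean_gp = GaussianProcessRegressor(kernel=base + WhiteKernel(0.1),
                                       random_state=seed).fit(X, ys)
    for _ in range(n_rounds):
        # Noise GP: smooth the log squared residuals over the input space.
        log_r2 = np.log((ys - mean_gp.predict(X)) ** 2 + 1e-6)
        noise_gp = GaussianProcessRegressor(kernel=base + WhiteKernel(1.0),
                                            normalize_y=True,
                                            random_state=seed).fit(X, log_r2)
        # Yield GP: per-point noise variance enters through alpha.
        noise_var = np.clip(np.exp(noise_gp.predict(X)), 1e-4, None)
        mean_gp = GaussianProcessRegressor(kernel=base, alpha=noise_var,
                                           random_state=seed).fit(X, ys)

    def predict(X_new):
        """Return mean, model std, and estimated noise std in yield units."""
        X_new = np.asarray(X_new, dtype=float)
        mu, sd = mean_gp.predict(X_new, return_std=True)
        noise_sd = np.sqrt(np.exp(noise_gp.predict(X_new)))
        return y_mean + y_std * mu, y_std * sd, y_std * noise_sd

    return predict


# Synthetic one-dimensional example: noise grows with the input.
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(60, 1))
y = 50 + 30 * np.sin(3 * X[:, 0]) + rng.normal(0.0, 1 + 10 * X[:, 0])

predict = fit_heteroscedastic_gp(X, y)
mu, sd, noise_sd = predict(np.array([[0.1], [0.9]]))
print(mu, sd, noise_sd)
```

For the acquisition step, `sd` is the uncertainty about the underlying yield, while `noise_sd` describes how scattered a single measurement is expected to be at that condition; keeping the two separate is what allows noisy regions to be discounted.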

Protocol 3.3: Data Augmentation via Reaction Representation for Sparse Data

Objective: Generate informative prior data to alleviate sparsity.

Materials: SMILES strings of reactants, products, and catalysts; a pretrained reaction representation model (e.g., rxnfp, Molecular Transformer embeddings).

Procedure: