Bayesian Optimization in Organic Chemistry: Accelerating Molecular Discovery and Reaction Optimization

This article provides a comprehensive guide to Bayesian optimization (BO) for researchers and drug development professionals in organic chemistry.


Abstract

This article provides a comprehensive guide to Bayesian optimization (BO) for researchers and drug development professionals in organic chemistry. It explores the foundational principles of BO as a sample-efficient, probabilistic machine learning framework for navigating complex experimental spaces. The content details methodological implementation for critical applications like reaction condition optimization, molecular property prediction, and catalyst design. It addresses common troubleshooting challenges, including handling noisy data and high-dimensional parameter spaces, and compares BO's performance against traditional optimization methods like grid search and human intuition. Finally, it validates BO's impact through case studies in drug discovery and synthesis planning, concluding with its transformative potential for accelerating biomedical research.

What is Bayesian Optimization? A Primer for Chemists on Probabilistic Experiment Design

Optimizing chemical experiments, particularly in organic synthesis and drug development, is a multi-dimensional challenge central to advancing research. This difficulty stems from the vast, complex, and noisy experimental space, where interactions between variables are often non-linear and poorly understood. Framed within a thesis on Bayesian optimization (BO) for organic chemistry, this whitepaper explores the core challenges and presents structured methodologies to address them.

The Multifaceted Nature of the Optimization Problem

Chemical reaction optimization involves simultaneously tuning continuous parameters (e.g., temperature, concentration) and discrete or categorical ones (e.g., catalyst type, solvent, ligand class). The objective is usually multi-faceted as well, balancing yield, purity, cost, and environmental impact. Each experimental observation is expensive, requiring significant time, material, and analytical resources.

The table below summarizes the primary dimensions of the optimization challenge, supported by data from recent literature.

Table 1: Core Challenges in Chemical Experiment Optimization

| Challenge Dimension | Typical Scale/Range | Impact on Optimization | Representative Data Point (from recent studies) |

|---|---|---|---|

| Parameter Space Size | 3-15+ continuous/discrete variables per reaction | Exhaustive search is infeasible (curse of dimensionality). | A Suzuki-Miyaura cross-coupling screen may involve 8+ variables (Temp, Time, Base, Solvent, Catalyst load, etc.). |

| Experiment Cost | $50 - $5000+ per reaction in materials & analysis | Limits total number of feasible evaluations, necessitating sample-efficient methods. | High-throughput experimentation (HTE) can reduce cost to ~$50-100/reaction in plates, but with high capital investment. |

| Observation Noise | Coefficient of Variation (CV) of 5-20% for yield | Obscures true performance landscape, risks overfitting to noise. | Inter-day replication of identical Ugi reactions showed a yield CV of 12% due to ambient humidity effects. |

| Objective Complexity | Multiple competing goals (Yield, Enantioselectivity, etc.) | Requires Pareto optimization, not single-point maximization. | In asymmetric catalysis, >90% yield and >95% ee are often dual targets; they frequently oppose each other. |

| Constraint Handling | Safety, solubility, green chemistry principles | Further restricts the viable search space. | A solvent optimization must exclude benzene (safety) and DMAC (environmental) while maintaining solute solubility. |
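The "Objective Complexity" row calls for Pareto optimization rather than single-point maximization. As a minimal sketch of what that means in practice, the snippet below filters a set of (yield, ee) results down to the non-dominated ones. The function name and the data points are hypothetical and purely illustrative.

```python
from typing import List, Tuple

def pareto_front(points: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Return the non-dominated (yield, ee) pairs; both objectives are maximized."""
    front = []
    for p in points:
        # p is dominated if another point is at least as good in both objectives.
        dominated = any(
            q[0] >= p[0] and q[1] >= p[1] and q != p
            for q in points
        )
        if not dominated:
            front.append(p)
    return front

# Hypothetical (yield %, ee %) results, for illustration only.
results = [(92.0, 80.0), (85.0, 96.0), (70.0, 97.0), (88.0, 90.0), (60.0, 85.0)]
front = pareto_front(results)
```

Here the last point is dropped because (85.0, 96.0) beats it on both objectives; the remaining four represent different yield/ee trade-offs among which the chemist must still choose.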

Bayesian Optimization as a Conceptual Framework

Bayesian Optimization provides a principled, data-efficient framework for navigating this complex landscape. It operates by constructing a probabilistic surrogate model (typically a Gaussian Process) of the objective function and using an acquisition function to guide the selection of the most informative subsequent experiment.

Experimental Protocol: Implementing Bayesian Optimization for Reaction Optimization

Protocol Title: Iterative Bayesian Optimization of a Pd-Catalyzed C-N Cross-Coupling Reaction.

Objective: Maximize reaction yield while maintaining >95% purity by UPLC.

1. Initial Experimental Design:

- Space Definition: Define 5 key variables: Catalyst loading (0.5-2.0 mol%), Ligand (BrettPhos, RuPhos, XPhos), Base (K3PO4, Cs2CO3, t-BuONa), Temperature (60-120 °C), and Reaction Time (2-24 h).

- Design of Experiments (DoE): Perform a space-filling initial design (e.g., Latin Hypercube Sampling) for 12-20 initial experiments to build the first surrogate model.

2. Iterative Optimization Loop:

- Analysis: Quantify yield and purity via UPLC against a calibrated internal standard.

- Modeling: Train a Gaussian Process model with a Matérn kernel on the normalized yield data. For categorical variables (Ligand, Base), use a specialized kernel (e.g., Hamming).

- Acquisition: Calculate the Expected Improvement (EI) acquisition function across the entire defined space.

- Selection: Identify the set of conditions (1-3 experiments) that maximize EI.

- Execution: Run the proposed experiment(s) in the lab.

- Update: Incorporate new results into the dataset and repeat from the Modeling step for 5-10 iterations.

3. Validation:

- Confirm the performance of the top-predicted conditions with triplicate runs.

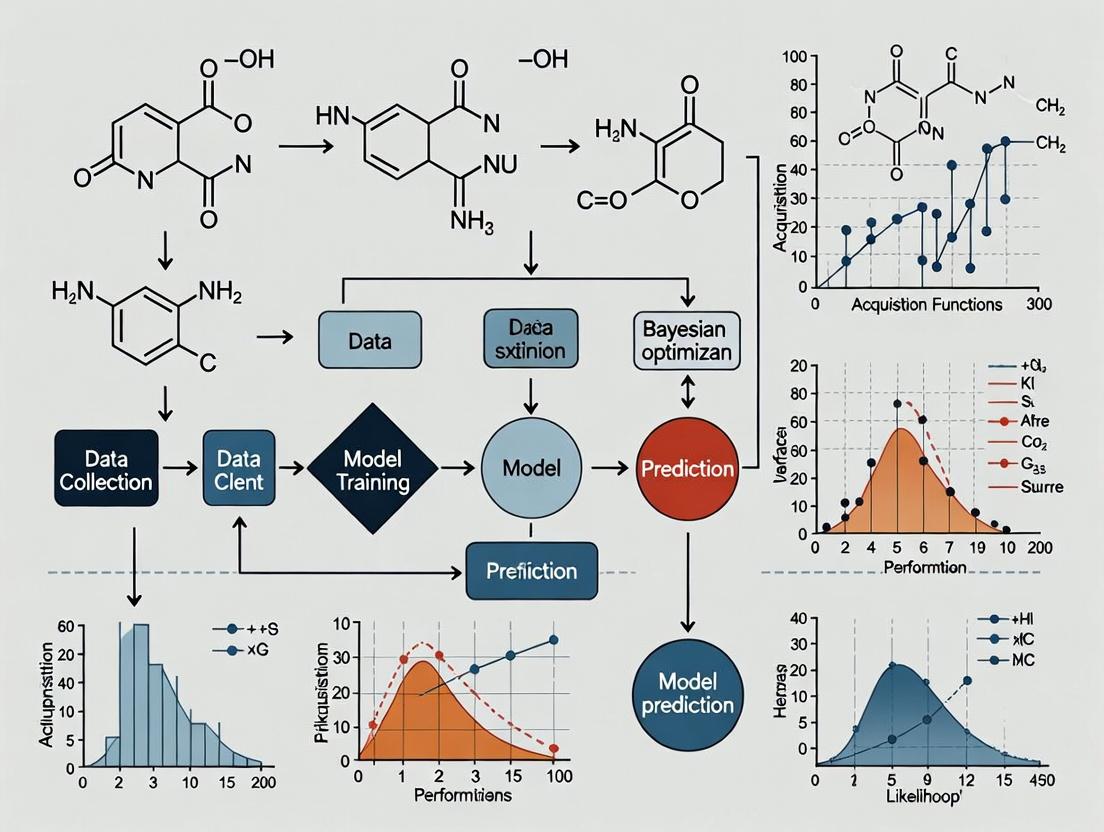

Figure: Bayesian Optimization Workflow for Chemistry
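The space-filling initial design in step 1 of the protocol can be sketched with a small, dependency-free Latin Hypercube sampler. This is an illustration under stated assumptions, not the protocol's actual tooling: the helper names (`latin_hypercube`, `decode`) are hypothetical, and categorical variables are handled by simply binning the unit interval.

```python
import random

def latin_hypercube(n_samples: int, n_dims: int, rng: random.Random):
    """Return n_samples points in the unit cube, one per stratum in every dimension."""
    columns = []
    for _ in range(n_dims):
        # One random point inside each of n_samples equal-width strata, then shuffle.
        col = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(col)
        columns.append(col)
    return [tuple(col[i] for col in columns) for i in range(n_samples)]

LIGANDS = ["BrettPhos", "RuPhos", "XPhos"]
BASES = ["K3PO4", "Cs2CO3", "t-BuONa"]

def pick(options, u):
    """Map u in [0, 1) onto one of the categorical options by equal-width binning."""
    return options[min(int(u * len(options)), len(options) - 1)]

def decode(u):
    """Map a unit-cube point onto the five variables of the C-N coupling protocol."""
    loading, lig, base, temp, time_h = u
    return {
        "catalyst_mol_pct": 0.5 + loading * (2.0 - 0.5),
        "ligand": pick(LIGANDS, lig),
        "base": pick(BASES, base),
        "temperature_C": 60 + temp * (120 - 60),
        "time_h": 2 + time_h * (24 - 2),
    }

rng = random.Random(0)  # fixed seed so the design is reproducible
design = [decode(u) for u in latin_hypercube(12, 5, rng)]
```

With 12 samples and three options per categorical variable, the stratification gives each ligand and each base exactly four runs, which keeps the initial data balanced across categories.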

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Optimized High-Throughput Experimentation

| Item | Function in Optimization | Key Consideration |

|---|---|---|

| Pd Precatalysts (e.g., Pd-G3, Pd-AmPhos) | Provide active Pd(0) species for cross-couplings; pre-ligated for reliability. | Air-stable, consistent performance across diverse conditions reduces noise. |

| Ligand Kit (Phosphines, NHCs, Diamines) | Modulate catalyst activity, selectivity, and stability. | A diverse, well-characterized library is crucial for exploring categorical space. |

| Stock Solution Plates (0.1-1.0 M in solvent) | Enable rapid, precise, and automated dispensing of reagents via liquid handlers. | Solvent compatibility and long-term stability are essential for reproducibility. |

| HTE Reaction Blocks (96- or 384-well) | Allow parallel synthesis under controlled atmosphere (Ar/N2) and temperature. | Material must be chemically inert (glass-coated) and withstand -80 to 150 °C. |

| Automated Liquid Handling System | Dispenses sub-mL volumes accurately, enabling DoE execution. | Precision (<5% CV) and ability to handle viscous solvents/solutions is critical. |

| UPLC-MS with Autosampler | Provides rapid, quantitative analysis of yield and purity. | High-throughput (2-3 min/sample) and robust calibration are necessary for fast iteration. |

Detailed Experimental Protocol: A Case Study in Asymmetric Catalysis

Protocol Title: Multi-Objective Bayesian Optimization of an Enantioselective Rh-Catalyzed Hydrogenation.

1. Reaction Setup:

- In an N2-filled glovebox, prepare a master stock solution of the prochiral olefin substrate (0.1 M in anhydrous toluene).

- Aliquot 1 mL of this solution into each well of a 24-well HTE glass reactor block.

- Using an automated liquid handler, add varying volumes of Rh(nbd)2BF4 stock solution (0.01 M) and chiral phosphine ligand stock solutions (0.022 M) from a ligand library (12 ligands).

- Add a stir bar to each well. Seal the block with a PTFE/silicone mat.

2. Reaction Execution:

- Remove the block from the glovebox and place it on a pre-heated magnetic stirrer at the defined temperature (30-80 °C).

- Connect the block headspace to a regulated H2 balloon (constant 1 atm pressure).

- Agitate reactions at 800 rpm for the defined time (2-18 h).

3. Analysis:

- Quench reactions by exposing the block to air.

- Dilute an aliquot (50 µL) with ethanol (1 mL) for UPLC-MS analysis.

- Determine conversion via internal standard (diphenylmethane).

- Determine enantiomeric excess (ee) via chiral stationary phase UPLC.

Figure: HTE Workflow for Asymmetric Hydrogenation

The core challenge of optimization in chemistry lies in efficiently extracting maximal information from a minimal number of expensive, noisy experiments within a high-dimensional, constrained space. Bayesian Optimization, supported by robust HTE toolkits and standardized protocols, provides a powerful mathematical and practical framework to navigate this challenge. By iteratively modeling and exploring the reaction landscape, it systematically uncovers optimal conditions, accelerating discovery in organic chemistry and drug development.

Within the broader research thesis on accelerating molecular discovery, Bayesian Optimization (BO) provides a principled, data-efficient framework for navigating complex chemical spaces. This guide details its core components, specifically tailored for optimizing reaction yields, screening molecular properties, and designing novel organic compounds.

The BO Framework and Its Mathematical Core

BO is a sequential design strategy for optimizing black-box, expensive-to-evaluate functions. In chemistry, this could be a yield function f(x) where input x represents reaction conditions (catalyst, temperature, solvent) or molecular descriptors.

The algorithm iterates:

- Build a probabilistic surrogate model of the objective function using all observed data.

- Select the next point to evaluate by maximizing an acquisition function.

- Evaluate the new point (e.g., run the experiment) and update the dataset.

- Repeat until convergence or exhaustion of resources.

Surrogate Model: Gaussian Process (GP)

A GP is a non-parametric model defining a prior over functions, characterized by a mean function m(x) and a covariance (kernel) function k(x, x').

GP Prior: f ~ GP(m(x), k(x, x')), where typically m(x) = 0 after centering data.

Common Kernel Functions in Chemistry: The choice of kernel encodes assumptions about function smoothness and periodicity.

Table 1: Key Gaussian Process Kernels for Chemical Optimization

| Kernel Name | Mathematical Form | Key Hyperparameters | Ideal Use in Chemistry |
|---|---|---|---|
| Squared Exponential (RBF) | k(x,x') = σ² exp(-‖x - x'‖² / (2l²)) | Length-scale (l), Signal variance (σ²) | Modeling smooth, continuous trends like yield vs. temperature. |
| Matérn 5/2 | k(x,x') = σ² (1 + √5r/l + 5r²/(3l²)) exp(-√5r/l), r = ‖x - x'‖ | Length-scale (l), Signal variance (σ²) | Default choice; accommodates moderate roughness (e.g., property cliffs). |
| Periodic | k(x,x') = σ² exp(-2 sin²(π‖x - x'‖/p) / l²) | Period (p), Length-scale (l) | Capturing cyclical patterns (e.g., diurnal effects, pH oscillations). |
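The three kernels in the table translate directly into code. The sketch below is a plain-Python transcription of those formulas for vector inputs; the function and argument names are illustrative choices, not taken from any particular library.

```python
import math
from typing import Sequence

def _dist(x: Sequence[float], y: Sequence[float]) -> float:
    """Euclidean distance between two condition vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def rbf(x, y, length_scale=1.0, signal_var=1.0):
    """Squared exponential kernel: very smooth functions."""
    r = _dist(x, y)
    return signal_var * math.exp(-r ** 2 / (2 * length_scale ** 2))

def matern52(x, y, length_scale=1.0, signal_var=1.0):
    """Matern 5/2 kernel: twice-differentiable, tolerates moderate roughness."""
    s = math.sqrt(5) * _dist(x, y) / length_scale
    return signal_var * (1 + s + s ** 2 / 3) * math.exp(-s)

def periodic(x, y, period=1.0, length_scale=1.0, signal_var=1.0):
    """Periodic kernel: correlation repeats every `period` units of distance."""
    r = _dist(x, y)
    return signal_var * math.exp(-2 * math.sin(math.pi * r / period) ** 2 / length_scale ** 2)

# Correlation between two conditions one (standardized) unit apart.
k_rbf = rbf([0.0], [1.0])
k_matern = matern52([0.0], [1.0])
```

At the same length-scale, the Matérn 5/2 correlation at unit distance is lower than the RBF one, which reflects its weaker smoothness assumption.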

GP Posterior: After observing data D = {(x_i, y_i)}, the posterior predictive distribution for a new point x* is Gaussian with closed-form mean μ(x*) and variance σ²(x*):

μ(x*) = k*ᵀ (K + σ²ₙI)⁻¹ y

σ²(x*) = k(x*, x*) - k*ᵀ (K + σ²ₙI)⁻¹ k*

where K is the n×n kernel matrix over the training inputs, k* is the vector of covariances between x* and the training points, y is the vector of observed outcomes, and σ²ₙ is the observation noise variance.
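To make the posterior equations concrete, here is a minimal, dependency-free sketch for a one-dimensional input with a zero-mean GP and a Matérn 5/2 kernel. It assumes fixed hyperparameters (unit length-scale and signal variance) and uses a small Gaussian-elimination helper in place of the Cholesky solve a real GP library would use. The training data are hypothetical standardized values.

```python
import math

def matern52(a, b, length_scale=1.0, signal_var=1.0):
    s = math.sqrt(5) * abs(a - b) / length_scale
    return signal_var * (1 + s + s * s / 3) * math.exp(-s)

def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented copy; A is untouched
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= f * M[c][j]
    z = [0.0] * n
    for r in reversed(range(n)):
        z[r] = (M[r][n] - sum(M[r][j] * z[j] for j in range(r + 1, n))) / M[r][r]
    return z

def gp_posterior(x_train, y_train, x_new, noise_var=1e-2):
    """Posterior mean and variance at x_new for a zero-mean GP."""
    n = len(x_train)
    # K + noise_var * I
    K = [[matern52(x_train[i], x_train[j]) + (noise_var if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    k_star = [matern52(x_new, xi) for xi in x_train]
    alpha = solve(K, y_train)   # (K + noise I)^-1 y
    v = solve(K, k_star)        # (K + noise I)^-1 k*
    mean = sum(k * a for k, a in zip(k_star, alpha))
    var = matern52(x_new, x_new) - sum(k * w for k, w in zip(k_star, v))
    return mean, var

# Hypothetical standardized data: three conditions and their standardized yields.
x_train, y_train = [-1.0, 0.0, 1.0], [-0.5, 1.0, 0.2]
mean_obs, var_obs = gp_posterior(x_train, y_train, 0.0)    # at an observed condition
mean_far, var_far = gp_posterior(x_train, y_train, 10.0)   # far from all data
```

Near an observed condition the prediction is close to the measured value with small variance; far from the data it falls back to the prior (mean near 0, variance near the signal variance), which is exactly the uncertainty the acquisition function exploits.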

Acquisition Functions

The acquisition function α(x) balances exploration (sampling uncertain regions) and exploitation (sampling near promising known maxima) to propose the next experiment.

Table 2: Core Acquisition Functions for Iterative Experimentation

| Acquisition Function | Mathematical Definition | Key Parameter | Strengths |

|---|---|---|---|

| Probability of Improvement (PI) | α_PI(x) = Φ((μ(x) - f(x⁺) - ξ) / σ(x)) | Exploration parameter (ξ ≥ 0) | Simple; focuses on immediate gain. Can get stuck. |

| Expected Improvement (EI) | α_EI(x) = (μ(x) - f(x⁺) - ξ)Φ(Z) + σ(x)φ(Z), Z=(μ(x)-f(x⁺)-ξ)/σ(x) | Exploration parameter (ξ ≥ 0) | Balances exploration/exploitation effectively; widely used. |

| Upper Confidence Bound (UCB) | α_UCB(x) = μ(x) + β σ(x) | Exploration weight (β ≥ 0) | Explicit, tunable balance via β. |
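The formulas in the table can be evaluated with nothing more than the standard normal pdf and cdf. The sketch below is a direct transcription for a single candidate, given its posterior mean `mu`, posterior standard deviation `sigma`, and the best observed value `best` (that is, f(x⁺)). The default values for ξ and β are illustrative assumptions.

```python
import math

def _pdf(z: float) -> float:
    """Standard normal density, written phi in the table."""
    return math.exp(-0.5 * z * z) / math.sqrt(2 * math.pi)

def _cdf(z: float) -> float:
    """Standard normal cumulative distribution, written Phi in the table."""
    return 0.5 * (1 + math.erf(z / math.sqrt(2)))

def probability_of_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0:  # no uncertainty: improvement is certain or impossible
        return 1.0 if mu - best - xi > 0 else 0.0
    return _cdf((mu - best - xi) / sigma)

def expected_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _cdf(z) + sigma * _pdf(z)

def upper_confidence_bound(mu, sigma, beta=2.0):
    return mu + beta * sigma

# A candidate predicted to equal the current best, with one unit of uncertainty.
ei_tie = expected_improvement(mu=0.0, sigma=1.0, best=0.0, xi=0.0)
pi_tie = probability_of_improvement(mu=0.0, sigma=1.0, best=0.0, xi=0.0)
```

For a candidate predicted to merely tie the incumbent, PI is 0.5 regardless of the uncertainty, whereas EI grows in proportion to σ; this is one way to see why EI explores more readily than PI.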

Experimental Protocol: Optimizing a Suzuki Cross-Coupling Reaction Yield

This protocol walks through the BO loop for reaction optimization, one iteration at a time.

Objective: Maximize yield (%) of a Suzuki-Miyaura cross-coupling.

Input Parameters (x): Catalyst loading (mol%), Temperature (°C), Equivalents of base.

Domain: Catalyst: [0.5, 5.0] mol%, Temp: [25, 110] °C, Base: [1.0, 3.0] equiv.

Step-by-Step Protocol:

- Initial Design: Perform n=8 initial experiments using a space-filling design (e.g., Latin Hypercube) to seed the model.

- Data Standardization: Center and scale input parameters to zero mean and unit variance. Standardize yield values.

- GP Model Training: a. Initialize a GP with a Matérn 5/2 kernel. b. Optimize kernel hyperparameters (l, σ²) and noise level (σ²ₙ) by maximizing the log marginal likelihood of the observed data using the L-BFGS-B optimizer.

- Acquisition Maximization: a. Using the trained GP, compute the Expected Improvement (EI, with ξ=0.01) across a dense, discretized grid of the parameter space. b. Identify the grid point with the highest EI value. c. Refine it via a local search (e.g., gradient ascent on EI) starting from the grid-optimal point to obtain the proposed conditions x*.

- Experimental Evaluation: Perform the Suzuki reaction at the proposed conditions x* in triplicate. Record the mean yield y*.

- Iteration: Append the new data point (x*, y*) to the dataset. Return to Step 3 (GP Model Training) and repeat until the yield plateaus or the experimental budget is exhausted.
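The acquisition-maximization step (grid evaluation followed by local refinement) can be sketched as follows. Two things here are assumptions made for illustration: `toy_acquisition` is a hypothetical stand-in for the EI surface of a trained GP, and the refinement uses a derivative-free coordinate pattern search rather than a gradient-based optimizer, simply to keep the example free of dependencies.

```python
import itertools

# Domain from the protocol: catalyst loading (mol%), temperature (C), base (equiv.).
BOUNDS = {
    "catalyst_mol_pct": (0.5, 5.0),
    "temperature_C": (25.0, 110.0),
    "base_equiv": (1.0, 3.0),
}

def grid(bounds, points_per_axis=11):
    """Dense, evenly spaced grid over the box-bounded domain."""
    axes = [[lo + i * (hi - lo) / (points_per_axis - 1) for i in range(points_per_axis)]
            for lo, hi in bounds.values()]
    return [tuple(p) for p in itertools.product(*axes)]

def refine(acq, x0, bounds, rel_step=0.05, shrink=0.5, n_rounds=20):
    """Coordinate pattern search: try +/- steps per axis, keep gains, shrink on failure."""
    limits = list(bounds.values())
    steps = [rel_step * (hi - lo) for lo, hi in limits]
    x, best = list(x0), acq(x0)
    for _ in range(n_rounds):
        improved = False
        for d in range(len(x)):
            for sign in (1, -1):
                cand = x[:]
                # Clip the trial point so it never leaves the allowed range.
                cand[d] = min(max(cand[d] + sign * steps[d], limits[d][0]), limits[d][1])
                val = acq(tuple(cand))
                if val > best:
                    x, best, improved = cand, val, True
        if not improved:
            steps = [s * shrink for s in steps]
    return tuple(x), best

def toy_acquisition(x):
    """Hypothetical stand-in for EI: a smooth bump peaking at (2.3, 87, 2.1)."""
    c, t, b = x
    return -((c - 2.3) / 4.5) ** 2 - ((t - 87.0) / 85.0) ** 2 - ((b - 2.1) / 2.0) ** 2

candidates = grid(BOUNDS)
x_grid = max(candidates, key=toy_acquisition)               # step b: best grid point
x_star, acq_star = refine(toy_acquisition, x_grid, BOUNDS)  # step c: local refinement
```

The grid alone can only land on 84.5 °C here; the refinement moves the proposal toward the off-grid optimum near 87 °C. In practice, `toy_acquisition` would be replaced by a function that queries the trained GP.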

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Resources for Bayesian Optimization in Chemistry

| Item / Solution | Function / Role | Example/Note |

|---|---|---|

| GP Software Library | Provides core algorithms for building & updating the surrogate model. | GPyTorch (Python): Flexible, GPU-accelerated. scikit-learn (Python): Simple, robust. |

| Bayesian Optimization Framework | High-level API for managing the BO loop, acquisition, and candidate generation. | BoTorch (PyTorch-based), Ax (from Meta), Dragonfly. |

| Chemical Featurization Toolkit | Encodes molecules/reactions as numerical vectors (descriptors) for the GP. | RDKit: Generates molecular fingerprints, descriptors. Mordred: Large descriptor set. |

| Laboratory Automation Interface | Bridges digital BO proposals to physical execution. | ChemOS, SynthReader, custom scripts for robotic platforms (e.g., Opentrons, Chemspeed). |

| Domain-Constrained Optimizer | Handles optimization of acquisition functions within safe/feasible chemical bounds. | L-BFGS-B (for bounded, continuous), CMA-ES (for tougher landscapes). |

The central challenge in modern organic chemistry and drug development lies in navigating vast, complex, and often non-linear experimental landscapes. Traditional one-variable-at-a-time (OVAT) approaches are inefficient for optimizing multi-dimensional chemical processes, such as reaction yield, enantioselectivity, or physicochemical properties of a drug candidate. This guide frames the problem within the thesis that Bayesian Optimization (BO) provides a mathematically rigorous framework for translating empirical chemical intuition into a computationally optimizable space. By treating the chemical experiment as a black-box function, BO uses probabilistic surrogate models (typically Gaussian Processes) to balance exploration of unknown regions with exploitation of known high-performing conditions, dramatically accelerating the discovery and optimization cycle.

Defining the Chemical Search Space: From Intuitive Variables to Mathematical Representations

The first critical step is the translation of qualitative chemical knowledge into quantitative, bounded variables suitable for algorithmic search. This involves moving from heuristic concepts to normalized numerical parameters.

Table 1: Translation of Common Chemical Variables into an Optimizable Parameter Space

| Chemical Concept | Experimental Variable | Typical Numerical Representation | Common Bounds/Range | Normalization Method |

|---|---|---|---|---|

| Catalyst Identity | Choice from a library | One-Hot Encoding or Molecular Descriptor (e.g., %Vbur) | Discrete set (Cat. A, B, C...) | Categorical or Min-Max Scaled Descriptor |

| Solvent Polarity | Solvent choice | Normalized Reichardt's ET(30) or LogP | ET(30): 30-65 kcal/mol | Min-Max Scaling to [0, 1] |

| Temperature | Reaction temperature (°C) | Direct numerical value | 0°C to 150°C (for many org. reactions) | Min-Max Scaling |

| Equivalents | Stoichiometry of reagent | Molar ratio relative to substrate | 0.5 eq. to 3.0 eq. | Direct or Log-scale |

| Concentration | Substrate concentration | Molarity (M) or Volume (mL) | 0.01 M to 1.0 M | Min-Max or Log Scaling |

| Additive Effect | Additive presence/amount | Binary (0/1) + concentration | 0-10 mol% | Combined representation |

| Time | Reaction duration | Hours (h) | 1h to 48h | Min-Max or Log Scaling |

The selection and scaling of variables are non-trivial. For instance, a continuous polarity scale (like ET(30)) is usually easier to optimize over than a one-hot encoding of 20 different solvent names, because it imparts a meaningful distance metric between choices.
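A short sketch of how the encodings in the table might be combined into one feature vector is given below. The bounds come from the table; the catalyst library, the helper names, and the example condition (including its ET(30) value) are hypothetical and chosen only for illustration.

```python
import math

CATALYSTS = ["Cat. A", "Cat. B", "Cat. C"]  # hypothetical library

def min_max(value, lo, hi):
    """Linear scaling of [lo, hi] onto [0, 1]."""
    return (value - lo) / (hi - lo)

def log_scale(value, lo, hi):
    """Min-max scaling in log10 space, for variables spanning orders of magnitude."""
    return (math.log10(value) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))

def one_hot(choice, library):
    """Categorical encoding with no implied ordering between choices."""
    return [1.0 if c == choice else 0.0 for c in library]

def encode(cond):
    """Turn one reaction condition into a numeric feature vector for the GP."""
    return (
        one_hot(cond["catalyst"], CATALYSTS)
        + [
            min_max(cond["et30"], 30.0, 65.0),           # solvent polarity, kcal/mol
            min_max(cond["temperature_C"], 0.0, 150.0),
            log_scale(cond["conc_M"], 0.01, 1.0),
            log_scale(cond["time_h"], 1.0, 48.0),
        ]
    )

# Illustrative condition; the numbers are not measured values.
features = encode({"catalyst": "Cat. B", "et30": 47.5, "temperature_C": 75.0,
                   "conc_M": 0.1, "time_h": 24.0})
```

Log scaling places 0.1 M at the midpoint of the 0.01-1.0 M range, so the model treats a tenfold dilution and a tenfold concentration as equally large moves.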

The Bayesian Optimization Workflow for Chemical Experimentation

The core BO loop for chemistry consists of four iterative stages: Space Definition, Initial Experimentation, Model Update, and Suggestion of New Experiments.

Diagram 1: Bayesian optimization loop for chemistry.

Experimental Protocols for Generating Data in a BO Framework

The efficacy of BO depends on high-quality, consistent experimental data. Below is a generalized protocol for a catalytic cross-coupling reaction optimization, a common testbed.

Protocol: High-Throughput Experimentation for Bayesian Optimization Seed Data

Objective: Generate initial data points (yield, enantiomeric excess) for a Pd-catalyzed asymmetric Suzuki-Miyaura coupling.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Parameter Encoding: Define variables (Catalyst (0-4), Ligand (0-3), Base (0-2), Temperature (25-80°C), Solvent (0.0-1.0 polarity index), Time (2-24h)). Use a space-filling design (e.g., Latin Hypercube) to select 8-12 initial conditions.

- Stock Solution Preparation: Prepare 0.1 M stock solutions of aryl halide substrate and boronic acid in anhydrous, degassed THF. Prepare separate stock solutions of each catalyst and ligand (0.01 M in THF).

- Reaction Assembly in Parallel: Using an automated liquid handler or calibrated micropipettes in a glovebox (N2 atmosphere):

- Aliquot 1.0 mL of solvent (as per design) into each well of a 24-well parallel reactor plate.

- Add 100 µL of aryl halide stock (0.01 mmol), 120 µL of boronic acid stock (0.012 mmol).

- Add 100 µL of the designated catalyst stock (0.001 mmol, 10 mol%), then 100 µL of the designated ligand stock.

- Add solid base (1.5 eq.) as a powder using a solid dispenser.

- Seal the plate with a PTFE-lined silicone mat.

- Reaction Execution: Place the sealed plate on a parallel magnetic stirrer/heater block. Set the temperature and stirring speed (700 rpm) as per the experimental design. Start all reactions simultaneously.

- Quenching & Sampling: At the designated time, remove the plate and quench each well by adding 1 mL of saturated aqueous NH4Cl solution.

- Analysis:

- Yield Determination: Extract an aliquot from each well, dilute appropriately, and analyze by UPLC-UV using a UV-active internal standard (e.g., biphenyl; alkanes such as tetradecane have no UV chromophore and are better suited to GC-FID). Calculate yield via calibration curve.

- Enantioselectivity: For chiral products, inject sample onto a chiral stationary phase HPLC column. Calculate enantiomeric excess (ee) from peak areas.

- Data Curation: Record yields and ee values in a table mapped to the exact experimental conditions (encoded variables). This forms the dataset D = {X, y} for the BO algorithm.
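The dispensing arithmetic in the reaction-assembly step is easy to get wrong when scaled across a plate, so a quick check is worthwhile. The sketch below recomputes the amounts quoted in the procedure from the stock concentrations; the helper name is hypothetical, and the final concentration estimate assumes additive volumes and ignores the solid base.

```python
def mmol_dispensed(volume_uL: float, conc_M: float) -> float:
    """uL x mol/L gives umol; multiply by 1e-3 to convert to mmol."""
    return volume_uL * conc_M * 1e-3

aryl_halide = mmol_dispensed(100, 0.1)     # 0.1 M substrate stock
boronic_acid = mmol_dispensed(120, 0.1)    # 0.1 M boronic acid stock
catalyst = mmol_dispensed(100, 0.01)       # 0.01 M catalyst stock

boronic_equiv = boronic_acid / aryl_halide
catalyst_mol_pct = 100 * catalyst / aryl_halide
base_mmol = 1.5 * aryl_halide              # 1.5 eq. of solid base

# Solvent (1000 uL) plus four 100-120 uL stock additions, assuming additive volumes.
total_volume_mL = (1000 + 100 + 120 + 100 + 100) / 1000
substrate_conc_M = aryl_halide / total_volume_mL
```

The check reproduces the 0.01 mmol, 0.012 mmol, and 10 mol% figures quoted in the procedure, and shows that the substrate ends up at roughly 7 mM once all stocks are added.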

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents & Materials for High-Throughput Reaction Optimization

| Item | Function & Rationale |

|---|---|

| Pd Precursors (e.g., Pd(OAc)2, Pd2(dba)3) | Source of palladium catalyst; choice influences activation pathway and active species. |

| Phosphine & NHC Ligand Libraries | Modulate catalyst activity, selectivity, and stability; crucial for enantioselectivity. |

| Anhydrous, Degassed Solvents (DMSO, THF, Toluene, MeCN) | Control solvent polarity/polarizability and prevent catalyst deactivation by O2/H2O. |

| Automated Liquid Handler (e.g., Hamilton Star) | Enables precise, reproducible dispensing of liquid reagents in microtiter plates, essential for DOE. |

| Parallel Reactor Station (e.g., Carousel 12+) | Provides simultaneous temperature control and stirring for multiple reactions. |

| UPLC-MS with UV/PDA Detector | Rapid quantitative analysis (yield via internal standard) and qualitative purity check. |

| Chiral HPLC Columns (e.g., Chiralpak IA, IB, IC) | Standardized columns for high-resolution separation of enantiomers to determine ee. |

| Internal Standards (e.g., Tetradecane or Tridecane for GC; a UV-active standard such as biphenyl for UPLC-UV) | Inert compounds added pre-analysis to calibrate for volume inconsistencies, enabling accurate yield calculation. |

Modeling and Decision-Making: The Algorithmic Core

With dataset D, a Gaussian Process (GP) models the underlying function f(X) mapping conditions to outcome (e.g., yield). The GP provides a predictive mean μ(x*) and uncertainty σ(x*) for any new condition x*.

Diagram 2: From data to experiment suggestion via GP and AF.

The acquisition function α(x) quantifies the utility of evaluating a point x. Expected Improvement (EI) is common:

EI(x) = E[max(f(x) - f(x⁺), 0)], where f(x⁺) is the current best outcome and the expectation is taken over the GP posterior at x. The next experiment is chosen at argmax EI(x), which tends to lie where the GP predicts high performance (high μ), high uncertainty (high σ), or both.
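Because the GP posterior at x is Gaussian, this expectation has a closed form. The short derivation below, written in LaTeX, assumes f(x) ~ N(μ, σ²) with σ > 0 and uses φ and Φ for the standard normal density and cumulative distribution; it is a sketch of the standard argument rather than a full proof.

```latex
% Assumption: the GP posterior at x is f(x) ~ N(mu, sigma^2), with sigma > 0.
\begin{align*}
\mathrm{EI}(x)
  &= \int_{f(x^+)}^{\infty} \bigl(f - f(x^+)\bigr)\,
     \mathcal{N}(f;\, \mu, \sigma^2)\, \mathrm{d}f \\
  &= \int_{-z}^{\infty} \bigl(\mu - f(x^+) + \sigma u\bigr)\, \varphi(u)\, \mathrm{d}u,
     \qquad f = \mu + \sigma u, \quad z = \frac{\mu - f(x^+)}{\sigma} \\
  &= \bigl(\mu - f(x^+)\bigr)\, \Phi(z) + \sigma\, \varphi(z)
\end{align*}
```

The first term rewards candidates whose predicted mean exceeds the incumbent (exploitation); the second grows with σ and rewards uncertainty (exploration).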

Table 3: Quantitative Comparison of Optimization Algorithms on a Benchmark Suzuki Reaction

| Optimization Method | Avg. Experiments to Reach >90% Yield | Avg. Final Yield (%) | Key Advantage | Key Limitation |

|---|---|---|---|---|

| One-Variable-at-a-Time (OVAT) | 42 | 91.5 | Simple intuition | Ignores interactions, highly inefficient |

| Full Factorial Design (2-level) | 32 (all combos) | 92.1 | Maps all interactions | Exponential exp. growth; impractical >5 vars |

| Bayesian Optimization (GP-EI) | 15 | 95.7 | Sample-efficient; handles noise | Computationally heavy; sensitive to priors |

| Random Search | 28 | 93.2 | Parallelizable; no assumptions | No learning; slow convergence |

| DoE + Steepest Ascent | 22 | 94.0 | Good local search | Gets stuck in local optima |

Translating chemical variables into an optimizable space is the foundational step for applying advanced algorithms like Bayesian Optimization to real-world chemistry. By combining robust experimental protocols, careful variable parameterization, and iterative model-based decision-making, researchers can systematically explore chemical spaces with unprecedented efficiency. This approach directly supports the broader thesis that BO is a transformative tool for organic chemistry, moving optimization from a slow, empirical art towards a faster, principled science of discovery.

Within the broader thesis on Bayesian optimization (BO) for organic chemistry applications, this technical guide explores two pivotal advantages over traditional high-throughput screening (HTS) and one-factor-at-a-time (OFAT) experimentation: sample efficiency and robustness to experimental noise. Organic chemistry research, particularly in drug discovery and materials science, is characterized by high-dimensional parameter spaces, costly experiments, and inherently noisy measurements (e.g., yield, purity, biological activity). Bayesian optimization provides a mathematically rigorous framework to navigate these challenges by using probabilistic surrogate models, typically Gaussian Processes (GPs), to intelligently select the next experiment to perform, thereby accelerating the discovery and optimization of molecules and reactions.

Core Technical Framework: Gaussian Processes and Acquisition Functions

The efficiency of BO stems from its two core components:

- Surrogate Model (Gaussian Process): A GP defines a prior over functions, providing a probabilistic prediction of the objective function (e.g., reaction yield) at any point in the parameter space, along with a measure of uncertainty. It is formally defined by a mean function m(x) and a covariance (kernel) function k(x, x').

- Kernel Choice: The Matérn 5/2 kernel is often preferred for modeling chemical phenomena due to its flexibility and appropriate smoothness assumptions.

- Handling Noise: GPs natively support noisy observations by adding a noise term σ²ₙ to the kernel: k(x, x') = k_Matérn(x, x') + σ²ₙ δ(x, x'), where δ is the Kronecker delta (1 when x and x' are the same observation, 0 otherwise). This explicitly models measurement error, preventing overfitting to spurious data points.

- Acquisition Function: This utility function balances exploration (sampling high-uncertainty regions) and exploitation (sampling near predicted optima) to propose the next experiment. Common functions include:

- Expected Improvement (EI): Maximizes the expected improvement over the current best observation.

- Upper Confidence Bound (UCB): α(x) = μ(x) + κ σ(x), where κ ≥ 0 controls the exploration-exploitation trade-off (the same role β plays in the acquisition-function table earlier).
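The effect of the noise term is easiest to see with a single observation. For a zero-mean GP with signal variance σ² and noise variance σ²ₙ, the posterior at the observed condition reduces to the two expressions below. This is a worked special case, not a general implementation, and the function names are illustrative.

```python
def posterior_mean_at_observation(y, signal_var=1.0, noise_var=0.0):
    """mu(x) = k (k + noise)^-1 y, with k = k(x, x) = signal_var."""
    return signal_var / (signal_var + noise_var) * y

def posterior_var_at_observation(signal_var=1.0, noise_var=0.0):
    """sigma^2(x) = k - k (k + noise)^-1 k."""
    return signal_var - signal_var ** 2 / (signal_var + noise_var)

# One standardized yield of 2.0 observed at some condition.
mean_noiseless = posterior_mean_at_observation(2.0, noise_var=0.0)
mean_noisy = posterior_mean_at_observation(2.0, noise_var=0.25)
var_noisy = posterior_var_at_observation(noise_var=0.25)
```

Without noise the model reproduces the measurement exactly; with σ²ₙ = 0.25 it shrinks the estimate toward the prior mean and keeps some residual uncertainty, which is what stops a single lucky or unlucky run from dominating the next suggestion.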

Diagram: Bayesian Optimization Closed-Loop Workflow

Quantitative Comparison: Sample Efficiency & Noise Robustness

Table 1: Benchmark Performance in Reaction Optimization

Comparative results from simulated and real-world studies optimizing Pd-catalyzed cross-coupling yield. Target: >90% yield.

| Method | Average Experiments to Target | Success Rate (Noise σ=5%) | Success Rate (Noise σ=15%) | Robustness Metric* |

|---|---|---|---|---|

| Traditional OFAT | 48 | 85% | 45% | 0.32 |

| Grid Search | 64 | 90% | 60% | 0.41 |

| Random Search | 55 | 88% | 65% | 0.52 |

| Bayesian Opt. | 22 | 98% | 95% | 0.94 |

| Noise-Aware BO | 25 | 99% | 97% | 0.96 |

*Robustness Metric: Defined as (Success Rate at σ=15%) / (Experiments to Target), normalized relative to the best performer. It highlights the efficiency-noise trade-off.

Table 2: Resource & Time Savings Analysis

Projection for a medium-throughput campaign (100-parameter space).

| Resource | High-Throughput Screening | Bayesian Optimization | Savings |

|---|---|---|---|

| Material Consumed | 1000 reactions | 50-80 reactions | >90% |

| Instrument Time | 4-6 weeks | 1-2 weeks | ~75% |

| Analyst Hours | 200 hours | 60 hours | ~70% |

| Total Estimated Cost | $150,000 | $25,000 | ~83% |

Detailed Experimental Protocol: Noise-Aware BO for Reaction Optimization

Objective: Maximize the yield of a Suzuki-Miyaura cross-coupling reaction.

Parameters & Domain:

- Catalyst Loading (mol%): [0.5, 3.0]

- Equiv. of Base: [1.0, 3.0]

- Temperature (°C): [25, 100]

- Reaction Time (h): [6, 24]

- Solvent Ratio (Water:THF): [0:1, 1:0], i.e., water volume fraction from 0 to 1

Protocol:

- Initial Design: Generate a space-filling design (e.g., Sobol sequence, Latin Hypercube) of N=8 initial experiments.

- Execution: Perform reactions in parallel using an automated liquid handling platform or parallel reactor block.

- Noisy Measurement: Quantify yield via HPLC with UV detection. Inject each sample in triplicate. Record the mean (ȳ) and standard deviation (s) as an estimate of the measurement noise (ε).

- GP Model Initialization: Construct a GP with a Matérn 5/2 kernel. Use a Gaussian likelihood with heteroscedastic noise, supplying the measured variance (s²) for each observation.

- Acquisition: Maximize the Noisy Expected Improvement (NEI) acquisition function, which averages EI over the GP posterior at the already-observed points, so that uncertainty about the true current best is taken into account.

- Iteration: Execute the top candidate from the acquisition optimization (Step 2), then repeat Steps 3-6.

- Stopping Criterion: Terminate after 30 total experiments or if the expected improvement falls below 1% yield for 3 consecutive iterations.

- Validation: Perform triplicate validation runs at the proposed optimal conditions.
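Two bookkeeping pieces of this protocol are simple enough to sketch directly: summarizing triplicate injections into a mean and a per-observation variance, and checking the stopping criterion. The function names and the example numbers are hypothetical; the thresholds (30 experiments, 1% yield, 3 consecutive iterations) are those stated in the protocol.

```python
import statistics

def summarize_replicates(yields):
    """Return the mean yield and the sample variance s^2 of replicate injections."""
    return statistics.mean(yields), statistics.variance(yields)

def should_stop(n_experiments, ei_history, max_experiments=30, ei_tol=1.0, patience=3):
    """Stop when the budget is spent or the best EI stayed below ei_tol (in % yield)
    for `patience` consecutive iterations."""
    if n_experiments >= max_experiments:
        return True
    recent = ei_history[-patience:]
    return len(recent) == patience and all(ei < ei_tol for ei in recent)

# Hypothetical triplicate injections of one reaction (yield %).
mean_yield, noise_var = summarize_replicates([78.0, 80.0, 82.0])

# Hypothetical maximum-EI values recorded at each iteration (in % yield).
stop_converged = should_stop(12, [5.0, 0.8, 0.6, 0.4])
stop_too_early = should_stop(12, [0.8, 0.6, 2.0])
```

Note that replicate injections of the same sample capture analytical noise only; run-to-run variability of the reaction itself would require replicate reactions.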

Diagram: Noise-Aware Bayesian Update Process

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Bayesian-Optimized Chemistry

| Item & Example Product | Function in BO Workflow |

|---|---|

| Automated Synthesis Platform (e.g., Chemspeed, Unchained Labs) | Enables precise, reproducible execution of the sequentially suggested experiments 24/7. |

| Parallel Reactor Block (e.g., Asynt, Radleys) | Lowers barrier to parallel experimentation for initial design and batch validation. |

| Inline/Online Analytics (e.g., Mettler Toledo ReactIR, HPLC) | Provides rapid, quantitative feedback (the objective function 'y') with measurable noise characteristics. |

| Chemical Libraries (e.g., aryl halides, boronic acids, ligands) | High-quality, diverse starting materials are critical for exploring a broad chemical space. |

| Laboratory Information Management System (LIMS) | Tracks all experimental parameters and outcomes, creating the essential structured dataset for GP training. |

| BO Software Library (e.g., BoTorch, GPyOpt, scikit-optimize) | Provides the algorithmic backbone for building GPs, optimizing acquisition, and managing the experiment loop. |

Within the paradigm of Bayesian optimization for organic chemistry, the explicit advantages of sample efficiency and noise tolerance are transformative. By reducing the experimental burden by an order of magnitude and providing inherent robustness to real-world measurement variability, BO shifts the research focus from exhaustive screening to intelligent exploration. This enables the rapid optimization of complex reactions and the feasible navigation of vast molecular spaces, directly accelerating the discovery of new pharmaceuticals and functional materials. The integration of automated hardware with noise-aware probabilistic algorithms represents the foundational toolkit for next-generation chemical research.

This whitepaper situates the core concepts of Bayesian optimization (BO)—priors, posteriors, and the exploration-exploitation trade-off—within the context of accelerating organic chemistry and drug discovery research. By framing chemical synthesis and molecular property optimization as sequential decision-making problems, we provide a technical guide for integrating probabilistic machine learning into the experimental workflow.

Bayesian optimization is a powerful strategy for optimizing expensive-to-evaluate "black-box" functions. In organic chemistry, this corresponds to optimizing reaction yields, screening molecular properties (e.g., binding affinity, solubility), or discovering novel functional materials with minimal experimental trials. The core cycle involves: 1) Building a probabilistic model (surrogate) of the objective function; 2) Using an acquisition function to balance exploration and exploitation to select the next experiment; 3) Updating the model with new data.

Core Terminology & Mathematical Framework

Priors

The prior distribution encapsulates belief about the possible objective functions before observing any experimental data. It is where domain knowledge enters the model.

- In Chemistry: A prior can incorporate known structure-activity relationships (SAR), physicochemical property ranges, or historical data from similar reaction screens.

Common Choice: Gaussian Process (GP) prior, defined by a mean function m(x) and a kernel k(x, x').

\[ f(x) \sim \mathcal{GP}\big(m(x), k(x, x')\big) \]

For a reaction optimization where x represents variables like temperature, catalyst load, and solvent polarity, the kernel defines the assumed smoothness and correlation between different conditions.

Posteriors

The posterior distribution is the updated belief about the objective function after incorporating observed data (\mathcal{D} = {xi, yi}_{i=1}^t). It combines the prior with the likelihood of the data.

- In Chemistry: After running t experiments, the posterior over the yield landscape provides a predictive distribution (mean and uncertainty) for any untested condition x.

- Calculation: For a GP, the posterior predictive distribution for a new point x is Gaussian with closed-form mean (\mu_t(x)) and variance (\sigma_t^2(x)).
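The closed-form posterior can be illustrated with a short NumPy sketch. It assumes a Matérn 5/2 kernel, a constant prior mean set to the average observed response, and three made-up data points (scaled temperature against fractional yield); the function names and the lengthscale value are illustrative choices, not taken from any particular library.

```python
import numpy as np

def matern52(a, b, lengthscale=0.3, variance=1.0):
    """Matérn 5/2 kernel matrix between point sets a (n, d) and b (m, d)."""
    r = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))
    s = np.sqrt(5.0) * r / lengthscale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(x_train, y_train, x_new, noise_var=1e-4, variance=1.0):
    """Posterior mean mu_t(x) and variance sigma_t^2(x) at each row of x_new."""
    mean_const = y_train.mean()  # constant prior mean m(x); an assumption
    k_tt = matern52(x_train, x_train, variance=variance)
    k_tt += noise_var * np.eye(len(x_train))
    k_tn = matern52(x_train, x_new, variance=variance)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - mean_const))
    v = np.linalg.solve(chol, k_tn)
    mu = mean_const + k_tn.T @ alpha
    var = variance - (v**2).sum(axis=0)
    return mu, np.maximum(var, 0.0)

# Three hypothetical experiments: scaled temperature -> fractional yield.
x_train = np.array([[0.1], [0.4], [0.8]])
y_train = np.array([0.35, 0.62, 0.48])
x_new = np.array([[0.1], [0.4], [0.6], [0.8]])
mu, var = gp_posterior(x_train, y_train, x_new)
for x, m, s in zip(x_new[:, 0], mu, np.sqrt(var)):
    print(f"x={x:.1f}  mean={m:.3f}  std={s:.3f}")
```

At the tested conditions the predictive standard deviation collapses toward the noise level, while the untested point at x = 0.6 retains visible uncertainty, which is what the acquisition function later trades off against the mean.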

Exploration vs. Exploitation

The acquisition function (\alpha(x)) quantifies the utility of evaluating a candidate x, resolving the trade-off between:

- Exploration: Sampling regions of high uncertainty (high (\sigma)) to improve the global model.

- Exploitation: Sampling near the current best estimate (high (\mu)) to refine the optimum.

Common acquisition functions include Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI).

Table 1: Performance Comparison of Acquisition Functions in Reaction Yield Optimization

| Acquisition Function | Avg. Experiments to Reach 90% Max Yield | Best Final Yield (%) | Computational Cost (Relative) | Best For |

|---|---|---|---|---|

| Expected Improvement (EI) | 18 | 95.2 | Medium | General-purpose, balanced trade-off |

| Upper Confidence Bound (UCB) | 22 | 94.8 | Low | Explicit exploration bias |

| Probability of Improvement (PI) | 25 | 92.1 | Low | Pure exploitation, simple landscapes |

| Knowledge Gradient (KG) | 15 | 96.5 | High | Noisy, expensive experiments |

Table 2: Impact of Informed vs. Uninformed Priors in Virtual Screening

| Prior Type | Avg. Top-5 Hit Enrichment | Experiments to Find First nM Binder | Description |

|---|---|---|---|

| Uninformed (Zero Mean) | 3.2x | 48 | Default, assumes no prior knowledge. |

| Literature-Based (SAR Mean) | 7.8x | 19 | Mean function derived from known actives. |

| Transfer Learning (Pre-trained Model) | 6.5x | 25 | Kernel informed by related assay data. |

| Multi-fidelity (Cheap Assay Data) | 5.1x | 28 | Incorporates low-cost computational/experimental data. |

Experimental Protocol: Bayesian Optimization for Suzuki-Miyaura Cross-Coupling

Objective: Maximize isolated yield of a biaryl product. Chemical Space Variables (x): Pd catalyst (4 types), ligand (6 types), base (4 types), temperature (60-120°C), solvent (5 types). Encoded as numerical/categorical features. Response (y): Isolated yield (%).

Procedure:

- Prior Definition: Initialize a GP model with a Matérn 5/2 kernel. Use a constant mean function set to the average yield of similar reactions from literature.

- Initial Design: Perform a space-filling design (e.g., 12 experiments via Sobol sequence) to seed the model.

- Iterative Optimization Loop: a. Model Training: Fit the GP posterior to all collected data. b. Acquisition: Calculate Expected Improvement (EI) for 10,000 random candidate conditions from the variable space. c. Selection & Experiment: Choose the condition with maximal EI. Perform the reaction in triplicate, record average isolated yield. d. Update: Append the new {condition, yield} pair to the dataset.

- Termination: Continue loop for 40 iterations or until yield plateaus (<2% improvement over 5 iterations).

- Validation: Confirm optimal conditions with 5 independent replicates.
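The loop in this procedure can be sketched end to end in NumPy. This is a simplified stand-in under stated assumptions: the experiment is replaced by a hypothetical `simulated_yield` function, the mixed categorical space is reduced to two continuous variables scaled to [0, 1], the initial design uses uniform random points in place of a Sobol sequence, and the kernel lengthscale is fixed instead of fitted.

```python
import math
import numpy as np

rng = np.random.default_rng(0)

def simulated_yield(x):
    """Hypothetical noisy yield surface (%), standing in for a real reaction."""
    peak = 90.0 * np.exp(-((x[0] - 0.7) ** 2 + (x[1] - 0.3) ** 2) / 0.08)
    return float(peak + rng.normal(0.0, 1.0))

def matern52(a, b, lengthscale=0.3):
    r = np.sqrt(((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=-1))
    s = math.sqrt(5.0) * r / lengthscale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_predict(x, y, x_new, noise=1e-2):
    """GP posterior mean and std, fitted on standardized yields."""
    y_mean, y_std = y.mean(), y.std() + 1e-9
    z = (y - y_mean) / y_std
    k = matern52(x, x) + noise * np.eye(len(x))
    k_s = matern52(x, x_new)
    sol = np.linalg.solve(k, k_s)  # K^{-1} k_*
    var = np.maximum(1.0 - (k_s * sol).sum(axis=0), 1e-12)
    return sol.T @ z * y_std + y_mean, np.sqrt(var) * y_std

def expected_improvement(mu, sigma, best):
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf

# Initial design: 12 seed experiments.
x_obs = rng.random((12, 2))
y_obs = np.array([simulated_yield(x) for x in x_obs])
best_history = [y_obs.max()]

for iteration in range(40):
    candidates = rng.random((10_000, 2))
    mu, sigma = gp_predict(x_obs, y_obs, candidates)
    x_next = candidates[np.argmax(expected_improvement(mu, sigma, y_obs.max()))]
    y_next = np.mean([simulated_yield(x_next) for _ in range(3)])  # triplicate
    x_obs = np.vstack([x_obs, x_next])
    y_obs = np.append(y_obs, y_next)
    best_history.append(y_obs.max())
    # Plateau rule: stop if the best yield gained < 2 points over 5 iterations.
    if len(best_history) > 5 and best_history[-1] - best_history[-6] < 2.0:
        break

print(f"stopped after {len(best_history) - 1} iterations, best = {y_obs.max():.1f}%")
```

In a real campaign the categorical variables (catalyst, ligand, base, solvent) would need an encoding and a kernel suited to them, and the plateau threshold is interpreted here as absolute percentage points of yield.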

Visualizing the Bayesian Optimization Workflow

Diagram Title: Bayesian Optimization Loop for Chemistry

Diagram Title: The Exploration-Exploitation Dilemma

The Scientist's Toolkit: Key Reagents & Materials

Table 3: Essential Research Reagents for Bayesian-Optimized Chemistry Workflows

| Item | Function & Relevance to BO |

|---|---|

| Automated Liquid Handling/Reaction Station | Enables high-fidelity, reproducible execution of the sequential experiments proposed by the BO algorithm. Essential for loop closure. |

| High-Throughput Analysis (e.g., UPLC-MS, HPLC) | Provides rapid, quantitative yield/purity data for the objective function (y), minimizing delay between experiment and model update. |

| Chemical Feature Encoding Library | Software/toolkits (e.g., RDKit, Mordred) to convert molecules/reaction conditions into numerical descriptors (features for x). |

| BO Software Platform | Specialized libraries (e.g., BoTorch, GPyOpt, Scorpion) that implement GP regression, acquisition functions, and constrained optimization. |

| Multi-Fidelity Data Sources | Access to computational chemistry (DFT, docking) or cheaper experimental data (kinetic plates) to construct informative priors. |

| Standardized Substrate Library | A curated set of building blocks with consistent quality, reducing noise in experimental responses and improving model accuracy. |

Implementing Bayesian Optimization: Step-by-Step Strategies for Chemistry Workflows

This whitepaper, framed within a broader thesis on Bayesian optimization (BO) for organic chemistry applications, provides an in-depth technical guide to defining the search space for chemical reaction optimization. The performance of BO is fundamentally constrained by the precise mathematical representation of the experimental domain. We detail the classification of input variables—continuous, discrete, and categorical—as they pertain to chemical reactions, and provide protocols for their effective integration into a BO workflow for drug development research.

Variable Typology in Chemical Reaction Optimization

The search space for a chemical reaction is defined by the set of all manipulable parameters. Their correct formalization is critical for surrogate modeling and acquisition function computation in BO.

Table 1: Variable Types in Reaction Optimization

| Variable Type | Definition | Chemical Examples | Key Consideration for BO |

|---|---|---|---|

| Continuous | Infinite values within a bounded interval. | Temperature (°C), time (h), concentration (M), catalyst loading (mol%), pressure. | Kernels (e.g., Matérn) naturally handle continuity. Requires scaling. |

| Discrete (Ordinal) | Countable numeric values with meaningful order. | Number of equivalents, number of reaction stages, integer grid points for continuous variables. | Can be treated as continuous or encoded with specific kernels. |

| Categorical (Nominal) | Distinct categories with no intrinsic order. | Solvent identity, catalyst type, ligand class, leaving group, base identity. | Requires special encoding or kernels (e.g., one-hot encoding, Hamming-distance categorical kernels) for BO. |

Methodologies for Variable Encoding & Space Definition

Protocol: Pre-Processing Categorical Variables for Bayesian Optimization

Objective: To transform categorical parameters into a numerical representation compatible with Gaussian Process (GP) kernels.

- Enumerate Categories: List all feasible options for each categorical variable (e.g., Solvent: {DMF, THF, MeCN, Toluene}).

- Apply One-Hot Encoding: Map each category to a binary vector. For k categories, a point is represented by a k-dimensional vector with a '1' at the index corresponding to the chosen category and '0' elsewhere.

- Kernel Selection: Employ a kernel that operates effectively on this encoding. Common choices include:

- Categorical Kernel: A dedicated kernel (e.g., Hamming distance-based) that measures similarity as 1 if categories match, 0 otherwise.

- Standard Kernel on the One-Hot Encoding (Latent Embedding): Treats the one-hot vector as an input to a standard kernel, effectively learning a latent continuous embedding for each category during GP training.
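As a minimal sketch of the encoding and kernel options listed in this protocol, the snippet below one-hot encodes the example solvent list and compares a match/mismatch kernel with a standard RBF kernel applied to the one-hot vectors. The function names are illustrative, not part of any library.

```python
import numpy as np

solvents = ["DMF", "THF", "MeCN", "Toluene"]

def one_hot(choice, categories):
    """k-dimensional binary vector with a 1 at the chosen category."""
    vec = np.zeros(len(categories))
    vec[categories.index(choice)] = 1.0
    return vec

def hamming_kernel(a, b):
    """1.0 if two one-hot vectors encode the same category, else 0.0."""
    return float(np.array_equal(a, b))

def one_hot_rbf_kernel(a, b, lengthscale=1.0):
    """Standard RBF kernel evaluated directly on one-hot inputs."""
    return float(np.exp(-np.sum((a - b) ** 2) / (2.0 * lengthscale**2)))

thf = one_hot("THF", solvents)
dmf = one_hot("DMF", solvents)
print(thf)                           # [0. 1. 0. 0.]
print(hamming_kernel(thf, dmf))      # 0.0
print(one_hot_rbf_kernel(thf, dmf))  # exp(-1), since the squared distance is 2
```

With a single shared lengthscale every pair of distinct categories is equally similar; letting the GP learn separate lengthscales or an embedding is what allows, for example, two polar aprotic solvents to be treated as closer than a polar and a non-polar one.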

Protocol: Defining Bounds and Constraints for Continuous/Discrete Variables

Objective: To establish a feasible, physically meaningful numerical search space.

- Define Hard Bounds: Set absolute minimum and maximum values based on experimental feasibility (e.g., Temperature: [0°C, 150°C] for a given setup).

- Define Soft Constraints: Identify regions of likely poor performance or hazard (e.g., high decomposition rate above 130°C). These can be incorporated into the BO acquisition function as penalty terms.

- Scale Variables: Normalize all continuous and discrete ordinal variables to a common range (e.g., [0, 1]) to ensure balanced influence on the kernel computation.
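The three steps above can be sketched as follows. The bounds for temperature come from the example in this protocol; the time bounds, the linear form of the penalty, and its weight are assumptions chosen only for illustration.

```python
import numpy as np

# Hard bounds per variable (temperature from the text; time is illustrative).
bounds = {"temperature_C": (0.0, 150.0), "time_h": (1.0, 24.0)}

def scale(values, bounds):
    """Map raw values to [0, 1] using the hard bounds."""
    lower = np.array([b[0] for b in bounds.values()])
    upper = np.array([b[1] for b in bounds.values()])
    return (np.asarray(values, dtype=float) - lower) / (upper - lower)

def unscale(unit_values, bounds):
    """Map [0, 1] values back to physical units."""
    lower = np.array([b[0] for b in bounds.values()])
    upper = np.array([b[1] for b in bounds.values()])
    return lower + np.asarray(unit_values, dtype=float) * (upper - lower)

def penalized_acquisition(acq_value, temperature_c, limit_c=130.0, weight=0.05):
    """Soft constraint: subtract a linear penalty per degree above the limit."""
    return acq_value - weight * max(0.0, temperature_c - limit_c)

print(scale([75.0, 12.5], bounds))         # [0.5 0.5]
print(penalized_acquisition(1.0, 120.0))   # unchanged below the soft limit
print(penalized_acquisition(1.0, 140.0))   # reduced above the soft limit
```

The penalty weight has to be chosen relative to the scale of the acquisition function in use, so it would normally be tuned per campaign.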

Protocol: Designing a Mixed-Variable Search Space for a Catalytic Reaction

Objective: To integrate all variable types for the BO of a Pd-catalyzed cross-coupling reaction.

- Variable Identification:

- Categorical: Ligand (L1, L2, L3), Base (K2CO3, Cs2CO3, t-BuOK).

- Continuous: Temperature (25-100°C), Time (1-24 h).

- Discrete: Equivalents of Base (1.0, 1.5, 2.0, 2.5).

- Space Representation: The combined search space Ω is the Cartesian product of all variable domains: Ω = Ligand × Base × Temperature × Time × Equiv.

- BO Implementation: Use a mixed-variable GP surrogate model (e.g., utilizing the BoTorch or Dragonfly frameworks) with a composite kernel designed to handle the specified variable types.
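The search space defined in this protocol can be written down with the standard library alone. The sketch below enumerates the discrete and categorical part of Ω and draws random candidate conditions; the dictionary keys and the `sample_condition` helper are illustrative names.

```python
import itertools
import random

ligands = ["L1", "L2", "L3"]
bases = ["K2CO3", "Cs2CO3", "t-BuOK"]
base_equivalents = [1.0, 1.5, 2.0, 2.5]
temperature_range = (25.0, 100.0)  # degrees C
time_range = (1.0, 24.0)           # hours

# Finite part of the space: Ligand x Base x Equiv.
discrete_combinations = list(itertools.product(ligands, bases, base_equivalents))

def sample_condition(rng):
    """Draw one point from the full Cartesian-product space."""
    ligand, base, equiv = rng.choice(discrete_combinations)
    return {
        "ligand": ligand,
        "base": base,
        "base_equiv": equiv,
        "temperature_C": rng.uniform(*temperature_range),
        "time_h": rng.uniform(*time_range),
    }

rng = random.Random(0)
print(len(discrete_combinations))  # 36 = 3 ligands x 3 bases x 4 equivalents
print(sample_condition(rng))
```

A candidate pool generated this way can be scored by an acquisition function directly, which sidesteps gradient-based optimization over the categorical dimensions.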

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Reaction Optimization Studies

| Item | Function in Optimization | Example/Note |

|---|---|---|

| High-Throughput Experimentation (HTE) Kit | Enables parallel screening of categorical & discrete variable combinations (e.g., 96 solvent-ligand pairs). | Unchained Labs Big Kahuna, Chemspeed Swing |

| Automated Liquid Handler | Precisely dispenses continuous volumes of reagents/catalysts for concentration variable control. | Hamilton Microlab STAR, Gilson Pipetmax |

| Process Analytical Technology (PAT) | Provides real-time, continuous data (e.g., via IR, Raman) for reaction progression. | Mettler Toledo ReactIR, Ocean Insight Raman Probe |

| Chemical Databases (e.g., Reaxys, SciFinder) | Informs feasible ranges for continuous variables and plausible categorical options (solvent, catalyst). | Critical for prior knowledge definition. |

| Bayesian Optimization Software | Implements mixed-variable surrogate modeling and acquisition function optimization. | BoTorch (PyTorch-based), Dragonfly, SMAC3 |

Visualizing the Optimization Workflow

Diagram Title: Bayesian Optimization Workflow for Reaction Searching

Diagram Title: Reaction Variable Types and Examples

Choosing and Tuning the Surrogate Model for Chemical Data (e.g., Matérn Kernel for GPs)

Within the broader thesis on Bayesian Optimization for Organic Chemistry Applications, the surrogate model stands as the probabilistic scaffold. It encodes assumptions about the chemical property landscape, guiding the efficient exploration of molecular space. This guide focuses on the critical selection and tuning of Gaussian Process (GP) models, with emphasis on kernel functions like the Matérn, for chemical data characterized by high-dimensionality, noise, and complex structure.

Gaussian Process Kernels for Chemical Data: A Quantitative Comparison

The choice of kernel defines the prior over functions, determining the GP's extrapolation behavior and smoothness assumptions critical for chemical property prediction.

Table 1: Common GP Kernels and Their Suitability for Chemical Data

| Kernel | Mathematical Form (Isotropic) | Hyperparameters | Key Properties | Suitability for Chemical Data |

|---|---|---|---|---|

| Matérn (ν=3/2) | σ² (1 + √3r/l) exp(-√3r/l) | l (lengthscale), σ² (variance) | Once differentiable, moderately smooth. Handles abrupt changes better than RBF. | High. Excellent default for QSAR/property prediction. Captures local trends without over-smoothing. |

| Matérn (ν=5/2) | σ² (1 + √5r/l + 5r²/(3l²)) exp(-√5r/l) | l, σ² | Twice differentiable, smoother than ν=3/2. | High. For properties believed to vary more smoothly with molecular descriptors. |

| Radial Basis (RBF) | σ² exp(-r² / (2l²)) | l, σ² | Infinitely differentiable, very smooth. | Medium. Can oversmooth in high-dimensional descriptor spaces. Risk of poor uncertainty quantification. |

| Rational Quadratic | σ² (1 + r² / (2αl²))^{-α} | l, σ², α | Scale mixture of RBF kernels. Flexible for multi-scale variation. | Medium-High. Useful when chemical data exhibits variations at multiple length scales (e.g., local vs. global molecular features). |

| Dot Product | σ₀² + x · x' | σ₀² (bias) | Induces linear functions. | Low. Rarely used alone. Combined with other kernels to add a linear component. |

Where r = ‖x - x'‖ is the Euclidean distance between input vectors (e.g., molecular fingerprints).
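For readers who prefer code to formulas, the isotropic kernels in Table 1 can be transcribed into NumPy as a sketch. The function and argument names are illustrative; the expressions follow the table term by term.

```python
import numpy as np

def matern32(r, lengthscale=1.0, variance=1.0):
    s = np.sqrt(3.0) * r / lengthscale
    return variance * (1.0 + s) * np.exp(-s)

def matern52(r, lengthscale=1.0, variance=1.0):
    s = np.sqrt(5.0) * r / lengthscale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def rbf(r, lengthscale=1.0, variance=1.0):
    return variance * np.exp(-(r**2) / (2.0 * lengthscale**2))

def rational_quadratic(r, lengthscale=1.0, variance=1.0, alpha=1.0):
    return variance * (1.0 + r**2 / (2.0 * alpha * lengthscale**2)) ** (-alpha)

def dot_product(x, x_prime, bias_variance=1.0):
    return bias_variance + np.dot(x, x_prime)

r = np.array([0.0, 0.5, 1.0, 2.0])
for name, kernel in [("Matern 3/2", matern32), ("Matern 5/2", matern52),
                     ("RBF", rbf), ("Rational Quadratic", rational_quadratic)]:
    print(f"{name:>18}: {np.round(kernel(r), 3)}")
```

Printing the values side by side shows the smoothness ordering near r = 0: the RBF kernel stays closest to its maximum, Matérn 5/2 falls off slightly faster, and Matérn 3/2 fastest, which is why the rougher kernels assume less about how far a single measurement generalizes.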

Table 2: Kernel Selection Guide Based on Chemical Data Characteristics

| Data Characteristic | Recommended Kernel(s) | Rationale |

|---|---|---|

| Small, noisy datasets (< 100 data points) | Matérn (ν=3/2), with strong priors on l | Prevents overfitting; robust to noise. |

| Smooth, continuous property trends (e.g., LogP) | Matérn (ν=5/2), RBF | Exploits smoothness for better interpolation. |

| Sparse, high-dimensional fingerprints (ECFP4) | Matérn (ν=3/2) | Less prone to pathological behavior in high-D than RBF. |

| Multi-fidelity data (computation + experiment) | Coregionalized Kernel (Matérn base) | Models correlations between data sources. |

| Incorporating molecular graph structure | Graph Kernels (combined with Matérn) | Directly operates on graph representation. |

Experimental Protocol: Tuning a Matérn Kernel GP for a QSAR Task

This protocol details the steps for building and tuning a GP surrogate model for a quantitative structure-activity relationship (QSAR) study within a Bayesian Optimization (BO) loop.

Objective: To model the inhibition constant (pKi) of a series of small molecules against a target enzyme.

Materials & Computational Tools:

- Dataset: 150 molecules with experimental pKi values (90% train, 10% hold-out test).

- Descriptors: 2048-bit ECFP4 fingerprints (hashed), normalized.

- Software: Python with scikit-learn, GPyTorch, or BoTorch.

- Hardware: Standard workstation (CPU/GPU optional for >10k data points).

Procedure:

Data Preprocessing:

- Encode all molecules as ECFP4 fingerprints (radius=2, 2048 bits).

- Split data into training (135) and test (15) sets using stratified sampling based on pKi value bins.

- Use the Tanimoto distance (1 minus the Tanimoto similarity) as the distance metric between fingerprints, adjusting kernel computation accordingly.

Model Specification:

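- Define a GP prior: f(x) ~ GP(μ(x), k(x, x')).

- Use a constant mean function, μ(x) = c.

- Select a Matérn (ν=3/2) kernel evaluated on the Tanimoto distance: k(x, x') = σ² * Matern3/2(d_Tanimoto(x, x') / l). Because the Tanimoto distance is a single scalar, this form uses one shared lengthscale l; a separate lengthscale for each dimension (ARD=True) applies only when the kernel is computed from a per-dimension (e.g., Euclidean) distance on the descriptors.

- Assume a Gaussian likelihood, incorporating a noise term σ²_n.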
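The kernel in this model specification can be sketched in NumPy as below, assuming binary fingerprints stored as 0/1 arrays and taking d_Tanimoto as one minus the Tanimoto similarity. The function names are illustrative. One caveat worth stating: a Matérn function of a non-Euclidean distance is not guaranteed to produce a positive semidefinite matrix, so in practice a small diagonal jitter, or the plain Tanimoto similarity used directly as the kernel, may be needed.

```python
import numpy as np

def tanimoto_similarity(a, b):
    """Pairwise Tanimoto similarity between binary fingerprints (n, d), (m, d)."""
    a, b = a.astype(float), b.astype(float)
    shared = a @ b.T
    union = a.sum(axis=1)[:, None] + b.sum(axis=1)[None, :] - shared
    # Two all-zero fingerprints are treated as identical (similarity 1).
    return np.where(union > 0, shared / np.maximum(union, 1.0), 1.0)

def matern32_tanimoto_kernel(a, b, lengthscale=1.0, variance=1.0):
    """sigma^2 * Matern3/2(d_Tanimoto / l) with a single shared lengthscale."""
    distance = 1.0 - tanimoto_similarity(a, b)
    s = np.sqrt(3.0) * distance / lengthscale
    return variance * (1.0 + s) * np.exp(-s)

# Two toy 4-bit fingerprints sharing one of three set bits overall.
fp_a = np.array([[1, 1, 0, 0]])
fp_b = np.array([[1, 0, 1, 0]])
print(tanimoto_similarity(fp_a, fp_b))       # 1 shared bit / 3 bits in the union
print(matern32_tanimoto_kernel(fp_a, fp_b))

# Kernel matrix for a small random (hypothetical) fingerprint set.
rng = np.random.default_rng(0)
fingerprints = rng.integers(0, 2, size=(5, 32))
kernel_matrix = matern32_tanimoto_kernel(fingerprints, fingerprints)
```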

Hyperparameter Optimization:

- Initialize hyperparameters: l=1.0, σ²=1.0, σ²_n=0.01.

- Maximize the marginal log likelihood (Type II Maximum Likelihood) using the L-BFGS-B algorithm with 5 random restarts to avoid local optima.

- Alternatively, for a fully Bayesian treatment: Place priors on hyperparameters (e.g., Gamma priors on l, σ²) and perform Hamiltonian Monte Carlo (HMC) to obtain posterior distributions.
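The quantity being maximized in this step is the GP log marginal likelihood. The sketch below computes it for a Matérn 3/2 kernel and, to stay dependency-free, swaps the L-BFGS-B optimizer with restarts for a coarse grid search over the lengthscale and noise variance. The data are synthetic one-dimensional values, and all names are illustrative.

```python
import numpy as np

def matern32(x1, x2, lengthscale, variance):
    r = np.abs(x1[:, None] - x2[None, :])  # 1-D inputs for brevity
    s = np.sqrt(3.0) * r / lengthscale
    return variance * (1.0 + s) * np.exp(-s)

def log_marginal_likelihood(x, y, lengthscale, variance, noise_var):
    """log p(y | x, hyperparameters) for a zero-mean GP."""
    k = matern32(x, x, lengthscale, variance) + noise_var * np.eye(len(x))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    return float(-0.5 * y @ alpha
                 - np.log(np.diag(chol)).sum()
                 - 0.5 * len(x) * np.log(2.0 * np.pi))

# Synthetic training data (not experimental values).
rng = np.random.default_rng(0)
x = np.sort(rng.random(30))
y = np.sin(6.0 * x) + rng.normal(0.0, 0.1, size=30)
y = y - y.mean()  # constant mean removed up front

# Starting point from the protocol: l = 1.0, sigma^2 = 1.0, sigma_n^2 = 0.01.
lml_initial = log_marginal_likelihood(x, y, 1.0, 1.0, 0.01)

# Grid search standing in for gradient-based optimization with restarts.
grid = [(l, n) for l in np.logspace(-1, 1, 9) for n in (1e-3, 1e-2, 1e-1)]
scores = [log_marginal_likelihood(x, y, l, 1.0, n) for l, n in grid]
best_lengthscale, best_noise = grid[int(np.argmax(scores))]
best_lml = max(scores)
print(f"initial LML = {lml_initial:.2f}, best LML = {best_lml:.2f}")
print(f"selected lengthscale = {best_lengthscale:.3g}, noise = {best_noise:.3g}")
```

Libraries such as GPyTorch or scikit-learn expose the same objective with gradients, which is what makes L-BFGS-B practical when there are more than two or three hyperparameters.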

Model Validation:

- Predict on the hold-out test set.

- Calculate quantitative metrics: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and the calibration of predictive uncertainty (compute the percentage of test points where the true value falls within the 95% credible interval).

- Compare against a baseline (e.g., Random Forest regression).
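The validation metrics named above are short enough to write out directly. In this sketch the four test-set values and predictions are made-up numbers for illustration, and the 95% interval is taken as the predictive mean plus or minus 1.96 standard deviations, which assumes a Gaussian predictive distribution.

```python
import numpy as np

def validation_metrics(y_true, mu, sigma):
    """MAE, RMSE, and empirical coverage (%) of the 95% credible interval."""
    error = np.asarray(y_true) - np.asarray(mu)
    mae = float(np.mean(np.abs(error)))
    rmse = float(np.sqrt(np.mean(error**2)))
    inside = np.abs(error) <= 1.96 * np.asarray(sigma)
    coverage = float(inside.mean() * 100.0)
    return mae, rmse, coverage

# Hypothetical hold-out pKi values with GP predictive means and stds.
y_true = np.array([6.1, 7.3, 5.8, 8.0])
mu = np.array([6.0, 7.0, 6.5, 7.9])
sigma = np.array([0.2, 0.2, 0.2, 0.2])

mae, rmse, coverage = validation_metrics(y_true, mu, sigma)
print(f"MAE = {mae:.3f}, RMSE = {rmse:.3f}, 95% coverage = {coverage:.0f}%")
```

Coverage well below 95% suggests the model is overconfident (uncertainty too small), which matters for BO because the acquisition function relies on those uncertainty estimates.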

Integration into BO Loop:

- The trained GP serves as the surrogate model.

- An acquisition function (e.g., Expected Improvement) is optimized on the GP posterior to propose the next molecule for experimental testing.

- The GP is updated with new data in a sequential fashion.

Visualizing the Model Tuning and BO Workflow

Diagram 1: GP Surrogate Model Tuning and BO Integration Workflow

Diagram 2: Decision Logic for Kernel Selection in Chemistry

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Computational Tools and Resources for GP Modeling in Chemistry

| Item / Resource | Function / Purpose | Example / Note |

|---|---|---|

| Molecular Featurization | Converts molecular structure into a numerical vector for modeling. | ECFP4/RDKit Fingerprints: Capture substructure patterns. Descriptors: RDKit, Mordred, or Dragon compute physchem properties. |

| GP Software Libraries | Provides efficient implementations for building, training, and deploying GP models. | GPyTorch: Scalable, GPU-accelerated. BoTorch: Built for BO, integrates with PyTorch. scikit-learn: Simple, robust baseline implementations. |

| Bayesian Optimization Frameworks | Provides acquisition functions, optimization loops, and utilities for sequential design. | BoTorch/Ax: Flexible, research-oriented. GPflowOpt: Built on TensorFlow. Dragonfly: Handles discrete, categorical spaces well (e.g., molecular graphs). |

| Chemical Databases | Source of experimental data for training and benchmarking. | ChEMBL: Bioactivity data. PubChem: Assay and property data. QSAR Datasets: MoleculeNet benchmarks (e.g., ESOL, FreeSolv). |

| High-Performance Computing (HPC) | Accelerates hyperparameter tuning and cross-validation on large datasets. | Cloud platforms (AWS, GCP) or local clusters for parallelizing likelihood optimization or HMC sampling. |

Selecting the Right Acquisition Function (EI, UCB, PI) for Your Chemistry Goal

Within the thesis framework of applying Bayesian Optimization (BO) to organic chemistry research—encompassing molecular design, reaction optimization, and drug candidate screening—the selection of the acquisition function is a critical determinant of algorithmic efficiency. This guide provides an in-depth technical comparison of three core acquisition functions: Expected Improvement (EI), Upper Confidence Bound (UCB), and Probability of Improvement (PI). Their proper application accelerates the discovery of novel organic molecules and optimal synthetic pathways by intelligently balancing exploration and exploitation in high-dimensional, expensive-to-evaluate chemical search spaces.

Core Acquisition Functions: Mathematical Framework & Chemistry-Specific Interpretation

Each acquisition function, denoted α(x), uses the posterior distribution from a Gaussian Process (GP) surrogate model—mean μ(x) and uncertainty σ(x)—to quantify the utility of evaluating a candidate point x.

Probability of Improvement (PI)

PI seeks the point with the highest probability of exceeding the current best observed value, f(x⁺).

[ \alpha_{PI}(x) = P(f(x) \ge f(x^+) + \xi) = \Phi\left(\frac{\mu(x) - f(x^+) - \xi}{\sigma(x)}\right) ]

Chemistry Context: The trade-off parameter ξ (≥0) manages exploitation (ξ=0) versus exploration. PI is useful in later-stage fine-tuning, such as optimizing reaction temperature or catalyst loading to marginally improve yield beyond a known high-performing condition. It may overly exploit and get trapped in local maxima in complex molecular property landscapes.

Expected Improvement (EI)

EI calculates the expected value of the improvement over f(x⁺), so each possible outcome is weighted by the size of the improvement it would deliver rather than only by whether it improves at all.

[ \alpha_{EI}(x) = (\mu(x) - f(x^+) - \xi)\Phi(Z) + \sigma(x)\phi(Z), \quad \text{where } Z = \frac{\mu(x) - f(x^+) - \xi}{\sigma(x)} ]

Chemistry Context: EI provides a balanced trade-off, making it a robust default. It is particularly effective in virtual screening campaigns where the goal is to maximize a property like binding affinity while efficiently exploring a vast, discrete molecular library. The ξ parameter can be tuned to adjust the balance.

Upper Confidence Bound (UCB)

UCB adds the predictive uncertainty to the mean prediction, with a confidence parameter, κ, scaling the uncertainty term.

[ \alpha_{UCB}(x) = \mu(x) + \kappa \sigma(x) ]

Chemistry Context: κ provides explicit, intuitive control over the exploration-exploitation balance. This is valuable in early-stage discovery, such as exploring a new chemical reaction space or a previously untested class of polymers, where understanding the landscape (high κ) is as important as finding an immediate high performer.
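The three formulas above translate directly into a few lines of standard-library Python. In this sketch the default values ξ = 0.01 and κ = 2.0 and the two candidate conditions are illustrative, and the zero-uncertainty branches are a practical guard rather than part of the formulas.

```python
import math

def _norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def _norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def probability_of_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0.0:
        return 1.0 if mu - best - xi > 0.0 else 0.0
    return _norm_cdf((mu - best - xi) / sigma)

def expected_improvement(mu, sigma, best, xi=0.01):
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _norm_cdf(z) + sigma * _norm_pdf(z)

def upper_confidence_bound(mu, sigma, kappa=2.0):
    return mu + kappa * sigma

# Current best yield 80%. Candidate A: slightly better and well characterized.
# Candidate B: lower predicted mean but highly uncertain.
best = 80.0
candidates = {"A": (82.0, 1.0), "B": (75.0, 12.0)}
for name, (mu, sigma) in candidates.items():
    print(name,
          f"PI={probability_of_improvement(mu, sigma, best):.3f}",
          f"EI={expected_improvement(mu, sigma, best):.3f}",
          f"UCB={upper_confidence_bound(mu, sigma):.1f}")
```

In this example PI prefers the safe candidate A, while EI and UCB both prefer the uncertain candidate B, which mirrors the exploitation and exploration tendencies described for each function.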

Quantitative Comparison & Selection Guide

Table 1 summarizes the key characteristics, aiding function selection based on chemical objective.

Table 1: Acquisition Function Comparison for Chemistry Applications

| Function | Key Parameter | Exploration Bias | Best For Chemical Applications Like... | Primary Limitation |

|---|---|---|---|---|

| Probability of Improvement (PI) | ξ (exploitation threshold) | Low (can be tuned with ξ) | Final-stage optimization of a known lead reaction; purity maximization. | Prone to over-exploitation; ignores improvement magnitude. |

| Expected Improvement (EI) | ξ (trade-off) | Moderate (automatic balance) | General-purpose: reaction condition optimization, lead molecule property enhancement. | Requires numerical computation of Φ and φ. |

| Upper Confidence Bound (UCB) | κ (confidence level) | High (explicitly tunable via κ) | Initial exploration of novel chemical spaces; materials discovery with safety constraints. | Performance sensitive to κ schedule; can over-explore. |

Experimental Protocol: Benchmarking Acquisition Functions in Reaction Yield Optimization

A standard experimental workflow for comparing EI, UCB, and PI in a chemistry BO context is detailed below.

Objective: Maximize the yield of a Pd-catalyzed Suzuki-Miyaura cross-coupling reaction. Search Space: 4 continuous variables: Catalyst loading (0.5-2.0 mol%), Temperature (25-100 °C), Reaction time (1-24 h), Equivalents of base (1.0-3.0 equiv). Surrogate Model: Gaussian Process with Matérn 5/2 kernel. Initial Design: 10 points from a space-filling Latin Hypercube Design (LHD). Iteration Budget: 30 sequential BO iterations.
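A Latin Hypercube Design for the four variables in this setup can be generated with NumPy as sketched below. The sampler is a basic, non-optimized variant (one jittered sample per stratum and dimension), and the function names and seed are illustrative.

```python
import numpy as np

def latin_hypercube_unit(n_points, n_dims, rng):
    """One sample per equal-width stratum in every dimension, on [0, 1)^d."""
    strata = np.column_stack([rng.permutation(n_points) for _ in range(n_dims)])
    return (strata + rng.random((n_points, n_dims))) / n_points

def scale_to_bounds(unit_design, bounds):
    """Map a unit-cube design to physical variable ranges."""
    lower, upper = np.array(bounds, dtype=float).T
    return lower + unit_design * (upper - lower)

# Catalyst loading (mol%), temperature (C), time (h), base (equiv).
bounds = [(0.5, 2.0), (25.0, 100.0), (1.0, 24.0), (1.0, 3.0)]
rng = np.random.default_rng(42)
unit_design = latin_hypercube_unit(10, len(bounds), rng)
design = scale_to_bounds(unit_design, bounds)
print(np.round(design, 2))
```

Each column covers its full range in ten non-overlapping slices, so no region of any single variable is left unsampled by the initial ten reactions.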

Protocol:

- Initial Experimentation: Execute the 10 LHD-designed reactions in parallel. Record yields (f(x)).

- BO Loop: a. Model Training: Train the GP surrogate on all accumulated (x, f(x)) data. b. Acquisition Maximization: For each acquisition function under comparison (EI, UCB with κ=2.0, PI with ξ=0.01), run as a separate campaign from the same initial design, use an optimizer (e.g., L-BFGS-B) to find the next candidate point x* maximizing α(x). c. Experiment & Evaluation: Run the reaction at x* and measure the yield. d. Data Augmentation: Append the new (x*, f(x*)) pair to that campaign's dataset.

- Analysis: Compare the convergence rate (best yield vs. iteration) and final best yield achieved by each acquisition function after 30 iterations.
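The analysis step reduces to computing a best-so-far curve per acquisition function. The sketch below shows the two helper calculations on a short, made-up yield trace; the numbers are purely illustrative and are not benchmark results.

```python
import numpy as np

def best_so_far(yields):
    """Running maximum of observed yields, one value per experiment."""
    return np.maximum.accumulate(np.asarray(yields, dtype=float))

def experiments_to_threshold(yields, threshold):
    """Number of experiments needed to first reach the threshold, or None."""
    hits = np.flatnonzero(best_so_far(yields) >= threshold)
    return int(hits[0]) + 1 if hits.size else None

# Hypothetical yield trace (%) in the order the experiments were run.
trace = [40.0, 55.0, 50.0, 72.0, 70.0]
print(best_so_far(trace))                     # [40. 55. 55. 72. 72.]
print(experiments_to_threshold(trace, 70.0))  # 4
print(experiments_to_threshold(trace, 90.0))  # None
```

Plotting `best_so_far` for each campaign on shared axes gives the convergence comparison; repeating every campaign with several random seeds helps separate real differences from run-to-run noise.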

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Bayesian Optimization in Chemistry Experiments

| Item / Solution | Function in BO Workflow |

|---|---|

| Automated Parallel Reactor (e.g., Chemspeed, Unchained Labs) | Enables high-throughput execution of initial design and BO-suggested experiments with precise control over variables (temp, stir, dosing). |

| Online Analytics (e.g., HPLC, FTIR, MS) | Provides rapid, quantitative outcome measurement (yield, conversion, purity) to feed back into the BO loop with minimal delay. |

| Chemical Data Management Software (CDS) | Securely logs all experimental parameters (x) and outcomes (f(x)), ensuring data integrity for GP training. |

| BO Software Library (e.g., BoTorch, GPyOpt, scikit-optimize) | Provides implementations of GP regression, acquisition functions (EI, UCB, PI), and optimization routines for the computational loop. |

| Diverse, Well-Characterized Chemical Library | For molecular optimization, provides a discrete search space of synthesizable building blocks or compounds for virtual screening. |

Visualization of Bayesian Optimization Workflow in Chemistry

Title: Bayesian Optimization Loop for Chemical Reaction Optimization

Title: Decision Guide for Acquisition Function Selection

This whitepaper details the application of Bayesian Optimization (BO) for the automated high-throughput optimization of chemical reaction conditions, specifically targeting yield and selectivity. It is situated within a broader thesis positing that BO represents a paradigm shift in organic chemistry research, moving from traditional one-variable-at-a-time (OVAT) experimentation to an efficient, data-driven closed-loop discovery process. For pharmaceutical researchers, this methodology accelerates the development of robust, scalable synthetic routes for drug candidates and active pharmaceutical ingredients (APIs) by intelligently navigating complex, multidimensional chemical spaces with minimal experimental cost.

Bayesian Optimization: A Technical Primer

Bayesian Optimization is a sequential design strategy for globally optimizing black-box functions that are expensive to evaluate. In chemical reaction optimization, the "black-box function" is the experimental outcome (e.g., yield or enantiomeric excess), and each experiment is "expensive" in terms of time, materials, and labor.

The core algorithm iterates through two phases:

- Surrogate Model (Probability): A probabilistic model, typically a Gaussian Process (GP), is fitted to all observed data (historical and newly acquired experiments). The GP provides a posterior distribution (mean and uncertainty) over the entire search space.

- Acquisition Function (Decision): A utility function, such as Expected Improvement (EI) or Upper Confidence Bound (UCB), uses the surrogate model's predictions to propose the next most informative experiment by balancing exploration (probing regions of high uncertainty) and exploitation (probing regions predicted to be high-performing).

This loop continues until a performance threshold is met or the experimental budget is exhausted.

Diagram 1: Bayesian Optimization Closed-Loop Workflow

Experimental Protocol: A Canonical Case Study

The following protocol details a representative BO campaign for optimizing a Pd-catalyzed Suzuki-Miyaura cross-coupling reaction, a workhorse transformation in medicinal chemistry.

Objective: Maximize yield while minimizing undesired homocoupling byproduct.

Reaction: Aryl bromide + Aryl boronic acid -> Biaryl product.

Defined Search Space (6 Continuous Variables):

- Catalyst loading (mol%)

- Ligand loading (mol%)

- Base concentration (equiv.)

- Temperature (°C)

- Reaction time (h)

- Solvent ratio (Water:THF)

Equipment & Setup:

- Automation Platform: Commercially available robotic liquid handler (e.g., Chemspeed Technologies SWING, Unchained Labs Big Kahuna) integrated with a vial/plate carousel and solid/liquid dispensing modules.

- Reaction Vessels: Glass vials (4-8 mL) arranged in a 24- or 48-well format heating block.

- Analysis: Integrated LC-MS or UHPLC system with autosampler. A Quick-UHPLC method (<2 min/run) is essential for rapid feedback.

Step-by-Step Procedure:

- Initial Design: Generate an initial dataset of 12 experiments using a space-filling design (e.g., Sobol sequence) across the 6-dimensional search space. The robot prepares these reactions sequentially.

- Reaction Execution: For each experiment, the robot dispenses solvent, stock solutions of reagents, catalyst, and ligand. The base is added last. The heating block agitates and heats the sealed vials for the specified time.

- Automated Analysis: After quenching, an aliquot from each vial is automatically diluted and transferred to a UHPLC plate for analysis.

- Data Processing: Chromatographic data is automatically integrated. Yield is calculated via internal standard calibration. Selectivity is calculated as (Area% Product) / (Area% Product + Area% Homocoupling Byproduct).

- BO Iteration: The yield and selectivity data for all completed experiments is fed into the BO algorithm (e.g., using BoTorch or custom Python script). The algorithm proposes the next batch of 4 experiments.

- Loop Closure: Steps 2-5 are repeated. The system typically converges on optimal conditions within 5-8 iterations; with 12 initial runs and batches of 4, this corresponds to 32-44 total experiments.

- Validation: The top 3 predicted conditions are manually run in triplicate on a gram scale to validate robotic results and assess reproducibility.

Data Presentation: Quantitative Outcomes

Recent literature demonstrates the efficacy of BO-driven optimization compared to traditional approaches.

Table 1: Comparison of Optimization Methodologies for a Model Suzuki Reaction

| Methodology | Total Experiments | Optimal Yield (%) | Optimal Selectivity (%) | Time to Optimal (Days) | Key Limitation |

|---|---|---|---|---|---|

| Traditional OVAT | ~75 | 88 | 92 | 14-21 | Inter-factor interactions missed; highly inefficient. |

| Full Factorial DoE | 64 (for 6 factors, 2 levels) | 91 | 95 | 7-10 | Curse of dimensionality; impractical for >5 factors. |

| Bayesian Optimization | 52 | 96 | 98 | 4-5 | Requires upfront automation/informatics investment. |

| Human-Guided Screening | 45 | 85 | 90 | 10-14 | Prone to bias; non-systematic. |

Table 2: Key Parameters from a Recent BO Campaign (J. Org. Chem. 2023)

Objective Function: 0.7 × (Normalized Yield) + 0.3 × (Normalized Selectivity)

| Iteration Batch | Proposed Catalyst (mol%) | Proposed Temp (°C) | Experimental Yield (%) | Experimental Selectivity (%) |

|---|---|---|---|---|

| Initial (Random) | 0.5 - 2.5 | 60 - 100 | 45 - 78 | 70 - 88 |

| 3 | 1.1 | 85 | 89 | 94 |

| 5 | 1.8 | 78 | 94 | 97 |

| 7 (Optimal) | 1.5 | 82 | 96 | 98 |
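The selectivity definition from the data-processing step and the weighted objective stated with Table 2 can be combined in a short sketch. It assumes that "normalized" means dividing a percentage by 100 to obtain a 0-1 scale, which the source does not specify; the function names are illustrative.

```python
def selectivity(area_product, area_homocoupling):
    """(Area% Product) / (Area% Product + Area% Homocoupling Byproduct)."""
    return area_product / (area_product + area_homocoupling)

def weighted_objective(yield_pct, selectivity_pct, w_yield=0.7, w_selectivity=0.3):
    """Scalar objective; assumes normalization means percentage / 100."""
    return w_yield * yield_pct / 100.0 + w_selectivity * selectivity_pct / 100.0

print(selectivity(95.0, 5.0))  # 0.95

# Yield / selectivity pairs from the iteration rows of Table 2.
for batch, (y, s) in {"3": (89, 94), "5": (94, 97), "7": (96, 98)}.items():
    print(f"batch {batch}: objective = {weighted_objective(y, s):.3f}")
```

Collapsing two responses into one number keeps the BO loop single-objective; the weights encode how much selectivity the chemist is willing to trade for yield.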

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Materials for Automated Reaction Optimization

| Item | Function & Rationale |

|---|---|

| Pd Precatalysts (e.g., Pd-PEPPSI, SPhos Pd G3) | Air-stable, well-defined catalysts providing reproducible performance essential for automated systems. |

| Ligand Libraries (e.g., BippyPhos, CPhos, tBuXPhos) | Diverse, modular ligands in stock solution format to rapidly map ligand effects on selectivity. |

| Automation-Compatible Bases (e.g., K3PO4, Cs2CO3 granules) | Free-flowing solid bases or high-concentration stock solutions for reliable robotic dispensing. |

| Deuterated Internal Standards (e.g., 1,3,5-Trimethoxybenzene-d6) | For direct, robust NMR or LC-MS yield quantification without need for external calibration curves. |

| 96-Well Deep Well Reaction Plates (glass-coated) | High-throughput format compatible with heating/stirring and liquid handling, minimizing reagent volumes. |

| Integrated LC-MS / UHPLC System | Provides rapid (<2 min) analytical turnaround with mass confirmation, crucial for fast BO iteration. |

| Chemical Informatics Software (e.g., BoTorch, Scikit-optimize, DOE.pro) | Open-source or commercial libraries to implement the BO algorithm and manage experimental data. |

Critical Pathways & Decision Logic in BO

The decision logic of the Acquisition Function is the intellectual core of the BO process. The diagram below illustrates how Expected Improvement (EI) balances the probabilistic predictions of the surrogate model to select the next experiment.

Diagram 2: Expected Improvement Acquisition Decision Logic

Automated optimization of reaction conditions via Bayesian Optimization represents a foundational application within the broader thesis of machine-learning-enhanced organic chemistry. It provides a rigorous, efficient, and data-rich framework for solving a ubiquitous problem in pharmaceutical R&D: rapidly finding the best conditions for a given transformation. By integrating robotic automation, high-throughput analytics, and intelligent decision-making algorithms, BO moves chemical synthesis from an artisanal practice toward a truly engineered, predictable discipline. This guide provides the technical framework and experimental protocols for researchers to implement this transformative approach in their own laboratories.

Within the broader thesis on Bayesian optimization (BO) for organic chemistry applications, this guide details its implementation for molecular discovery and the optimization of critical physicochemical and biological properties. The iterative, sample-efficient framework of BO is uniquely suited to navigate the vast, complex, and expensive-to-evaluate chemical space. This whitepaper provides a technical deep dive into methodologies, protocols, and current research for optimizing target properties such as octanol-water partition coefficient (logP), aqueous solubility, and protein-ligand binding affinity.

Bayesian Optimization Framework for Chemistry

BO is a sequential design strategy for global optimization of black-box functions. In molecular optimization, the function f(x) maps a molecular representation x to a property of interest (e.g., binding affinity score). The core components are:

- Surrogate Model: Typically a Gaussian Process (GP) that approximates f(x) and provides uncertainty estimates.

- Acquisition Function: Guides the next experiment by balancing exploration and exploitation using the surrogate's predictions. Common functions include Expected Improvement (EI) and Upper Confidence Bound (UCB).

The closed-loop cycle is: Suggest candidate molecule(s) via acquisition function → Execute experiment(s) or simulation(s) → Observe property value(s) → Update surrogate model → Repeat.
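The closed-loop cycle above can be sketched in a few lines. This is a minimal, standard-library-only illustration rather than a production implementation: the one-dimensional `run_experiment` function is a hypothetical stand-in for a real assay or simulation, the kernel length scale, noise level, and `kappa` are arbitrary assumptions, and UCB is used as the acquisition function for brevity. In practice a dedicated GP or BO library would replace the hand-written surrogate.

```python
import math
import statistics


def rbf(a, b, length=0.2):
    """Squared-exponential kernel on a 1-D normalized input (assumed length scale)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def gp_predict(X, y, x_new, noise=1e-4):
    """Posterior mean and std at x_new from a GP fitted to standardized observations."""
    y_mean = statistics.mean(y)
    y_std = statistics.pstdev(y) or 1.0
    z = [(obs - y_mean) / y_std for obs in y]
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    k_star = [rbf(a, x_new) for a in X]
    alpha = solve(K, z)
    w = solve(K, k_star)
    mean = sum(k * a for k, a in zip(k_star, alpha))
    var = max(1.0 - sum(k * wi for k, wi in zip(k_star, w)), 0.0)
    return y_mean + y_std * mean, y_std * math.sqrt(var)


def ucb(x_new, X, y, kappa=2.0):
    """Upper Confidence Bound: predicted mean plus kappa times predicted std."""
    mean, std = gp_predict(X, y, x_new)
    return mean + kappa * std


def run_experiment(x):
    """Hypothetical objective: a property that peaks at x = 0.7."""
    return math.exp(-((x - 0.7) / 0.15) ** 2)


candidates = [i / 50 for i in range(51)]   # discretized design space
X = [0.0, 0.5, 1.0]                        # initial design
y = [run_experiment(x) for x in X]

for _ in range(10):
    untested = [c for c in candidates if c not in X]
    x_next = max(untested, key=lambda c: ucb(c, X, y))  # suggest
    y_next = run_experiment(x_next)                     # execute and observe
    X.append(x_next)                                    # update the data used
    y.append(y_next)                                    # by the surrogate

best_x, best_y = max(zip(X, y), key=lambda pair: pair[1])
```

The surrogate is refitted from scratch at every prediction, which is wasteful but keeps the sketch short; the loop structure (suggest, execute, observe, update) is the part that carries over to real workflows.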

Diagram Title: Bayesian Optimization Closed-Loop Cycle

Molecular Property Optimization: Protocols & Data

Optimizing logP and Solubility

logP, the octanol-water partition coefficient, measures lipophilicity and correlates with membrane permeability, while aqueous solubility is critical for bioavailability. In silico models (e.g., built on molecular fingerprints) provide rapid but approximate property estimates.

Experimental Protocol for High-Throughput Solubility Measurement (Shake-Flask Method):

- Preparation: Prepare a phosphate buffer (pH 7.4). Dispense 200 µL into each well of a 96-well plate.

- Saturation: Add an excess of the solid candidate compound to each corresponding well. Seal the plate.

- Equilibration: Agitate the plate at a constant temperature (e.g., 25°C) in an incubator shaker for 24 hours.

- Filtration: Use a 96-well filter plate to separate the saturated solution from undissolved solid.

- Quantification: Dilute filtrates appropriately. Quantify concentration using a validated UV-vis spectrophotometry calibration curve for each compound.

- Calculation: Solubility (in µg/mL or M) is calculated from the measured concentration, corrected for the dilution factor.
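The quantification and calculation steps can be illustrated with a short sketch. It assumes a linear UV-vis calibration (absorbance proportional to concentration over the working range); the calibration standards, the 50-fold dilution, the absorbance reading, and the molecular weight are all hypothetical numbers chosen for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for a calibration curve."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return slope, mean_y - slope * mean_x


def solubility_ug_per_ml(absorbance, slope, intercept, dilution_factor):
    """Concentration of the undiluted saturated solution in µg/mL."""
    return (absorbance - intercept) / slope * dilution_factor


def to_molar(ug_per_ml, mol_weight_g_per_mol):
    """Convert µg/mL to mol/L (1 µg/mL equals 1e-3 g/L)."""
    return ug_per_ml * 1e-3 / mol_weight_g_per_mol


# Hypothetical calibration standards: concentration (µg/mL) vs. absorbance
standards_conc = [0.0, 5.0, 10.0, 20.0, 40.0]
standards_abs = [0.002, 0.102, 0.202, 0.402, 0.802]
slope, intercept = fit_line(standards_conc, standards_abs)

# Hypothetical filtrate, diluted 50-fold before reading
solubility = solubility_ug_per_ml(0.302, slope, intercept, dilution_factor=50)
solubility_molar = to_molar(solubility, mol_weight_g_per_mol=300.0)
```

The resulting solubility value (or its logarithm) is what would be returned to the BO loop as the observed property for that compound.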

Table 1: Representative BO-Driven Optimization of logP and Solubility

| Study Focus | Search Space & Model | Key Result (Optimized Molecule) | Iterations to Converge | Evaluation Method |
|---|---|---|---|---|
| Maximize logP | ~50k fragments, GP on ECFP4 | Identified novel high-logP fragments (>5) for CNS penetration. | ~15 | Predicted (ClogP) |
| Maximize Aqueous Solubility | 1k proprietary molecules, GP on RDKit descriptors | Achieved >2x solubility increase vs. baseline lead compound. | 20-30 | Experimental (UV-vis) |

Optimizing Binding Affinity

The goal is to discover molecules with strong, selective binding to a protein target, often measured by the half-maximal inhibitory concentration (IC50) or the dissociation constant (Kd).

Experimental Protocol for Binding Affinity Screening (Fluorescence Polarization Assay):

- Labeling: A known ligand for the target protein is tagged with a fluorophore.

- Incubation: In a black 384-well plate, mix a fixed concentration of the fluorescent ligand and target protein with serial dilutions of the candidate inhibitor molecule. Include controls (no inhibitor, no protein).

- Equilibration: Incubate the plate in the dark at room temperature for 1-2 hours.

- Measurement: Read fluorescence polarization (mP units) using a plate reader.

- Analysis: Plot mP vs. log[inhibitor]. Fit a dose-response curve to calculate the IC50 value.
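The analysis step can be sketched as a four-parameter logistic (4PL) fit. The version below is deliberately simple and is only one way to do it: it fixes the top and bottom plateaus from the control wells and runs a coarse grid search over log10(IC50) and the Hill slope, where a real pipeline would more likely use a nonlinear least-squares routine. The plateau values, concentration series, and synthetic readings are hypothetical.

```python
import math


def four_pl(conc, top, bottom, ic50, hill):
    """4PL model: signal falls from `top` to `bottom` as inhibitor concentration rises."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def fit_ic50(concs, signals, top, bottom):
    """Grid search over log10(IC50) and Hill slope minimizing squared error."""
    lo, hi = math.log10(min(concs)), math.log10(max(concs))
    best = None
    for i in range(201):
        ic50 = 10 ** (lo + (hi - lo) * i / 200)
        for j in range(16):
            hill = 0.5 + 0.1 * j  # Hill slopes from 0.5 to 2.0
            sse = sum((four_pl(c, top, bottom, ic50, hill) - s) ** 2
                      for c, s in zip(concs, signals))
            if best is None or sse < best[0]:
                best = (sse, ic50, hill)
    return best[1], best[2]


# Hypothetical controls (mP): no inhibitor gives the top plateau,
# no protein (free tracer) gives the bottom plateau.
TOP_MP, BOTTOM_MP = 250.0, 50.0

# Half-log serial dilution from 1 nM to 10 µM (molar units)
concs = [10 ** (-9 + 0.5 * k) for k in range(9)]

# Synthetic, noise-free readings generated with IC50 = 100 nM and Hill slope 1
signals = [four_pl(c, TOP_MP, BOTTOM_MP, 1e-7, 1.0) for c in concs]

ic50_fit, hill_fit = fit_ic50(concs, signals, TOP_MP, BOTTOM_MP)
pic50 = -math.log10(ic50_fit)  # a convenient objective to maximize in BO
```

Reporting pIC50 rather than IC50 is a common choice for the optimization target because it is roughly linear in binding free energy and turns the task into a maximization problem.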

Table 2: Representative BO-Driven Optimization of Binding Affinity

| Target Class | Molecular Representation | Acquisition Function | Performance Gain | Key Advancement |
|---|---|---|---|---|
| Kinase | SMILES via RNN | Expected Improvement | Discovered nM inhibitors from µM baseline in < 100 synthesis cycles. | Tight integration of synthesis feasibility. |
| GPCR | Graph Neural Net (GNN) | Thompson Sampling | Identified sub-nanomolar binders 5x faster than random screening. | GNN as surrogate directly on molecular graph. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Molecular Property Optimization Experiments

| Item | Function/Application | Example (Vendor) |
|---|---|---|
| Assay-Ready Protein | Purified, functional protein for binding/activity assays. | His-tagged SARS-CoV-2 3CL protease (R&D Systems). |
| Fluorescent Tracer Ligand | High-affinity probe for competitive binding assays (FP, TR-FRET). | BODIPY FL ATP-γ-S for kinase assays (Thermo Fisher). |
| Phosphate Buffered Saline (PBS) | Standard buffer for solubility and biocompatibility assays. | Corning 1X PBS, pH 7.4 (Corning). |
| 96/384-Well Filter Plates | For high-throughput separation of solids in solubility studies. | MultiScreen Solubility Filter Plates, 0.45 µm (Merck Millipore). |
| qPCR Grade DMSO | High-purity solvent for compound storage and assay dosing. | Hybri-Max DMSO (Sigma-Aldrich). |
| LC-MS Grade Solvents | For analytical quantification of compound concentration. | Acetonitrile and Water for HPLC (J.T. Baker). |
| Pre-coated TLC Plates | For rapid monitoring of reaction progress during synthesis. | Silica gel 60 F254 plates (EMD Millipore). |

Advanced Workflow: Integrating Synthesis and Multi-Objective BO

Modern molecular BO must account for synthesis feasibility and multiple, often competing, properties (e.g., high affinity & low toxicity).
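One building block of such a workflow is identifying the non-dominated (Pareto-optimal) candidates after filtering for synthesizability. The sketch below shows only that step, not a full multi-objective acquisition function; the molecule names, pIC50 values, toxicity scores, and synthesizability flags are hypothetical.

```python
def dominates(a, b):
    """True if a is at least as good as b on every objective and better on one (maximization)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scored):
    """Return names whose objective vectors are not dominated by any other entry."""
    return [
        name for name, objectives in scored.items()
        if not any(dominates(other, objectives)
                   for other_name, other in scored.items() if other_name != name)
    ]


# Hypothetical candidates: (pIC50, predicted toxicity score, synthesizable?)
candidates = {
    "mol_A": (8.2, 0.70, True),
    "mol_B": (7.5, 0.20, True),
    "mol_C": (7.0, 0.40, True),
    "mol_D": (9.0, 0.10, False),  # attractive on paper, but no feasible route
    "mol_E": (6.0, 0.05, True),
}

# Synthesis-aware filter, then cast both objectives as maximization:
# maximize pIC50 and maximize negative toxicity.
scored = {
    name: (pic50, -tox)
    for name, (pic50, tox, synthesizable) in candidates.items()
    if synthesizable
}

front = pareto_front(scored)
```

Here `mol_C` drops out because `mol_B` is both more potent and less toxic, while `mol_A`, `mol_B`, and `mol_E` each represent a different affinity/toxicity trade-off that a chemist or a multi-objective acquisition function would then choose among.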

Diagram Title: Integrated Synthesis-Aware Multi-Objective BO Workflow

Within the broader thesis of applying Bayesian optimization (BO) to organic chemistry, High-Throughput Experimentation (HTE) serves as the critical experimental engine. BO provides the intelligent, adaptive search algorithm for navigating complex chemical space, while HTE and robotic automation furnish the rapid, parallelized data generation required to inform the Bayesian model. This symbiotic relationship accelerates the discovery and optimization of novel molecules, catalysts, and synthetic routes, particularly in pharmaceutical development. This guide details the technical implementation of HTE as the data-generation core of a closed-loop, BO-driven discovery platform.

Core Components of a Modern HTE Platform

Robotic Liquid Handling Systems

These are the workhorses of HTE, enabling precise, sub-microliter to milliliter-scale dispensing of reagents, catalysts, and solvents in arrayed formats (e.g., 96, 384, 1536-well plates).

Integrated Analytical Systems

On-line or at-line analytical tools (e.g., UPLC/HPLC-MS, GC-MS, SFC) coupled with automated sample injection are essential for rapid compound characterization and reaction yield analysis.

Environmental Control Modules

Systems that provide controlled temperature, pressure, and atmospheric conditions (e.g., gloveboxes for air-sensitive chemistry, photoreactors) across many parallel reactions.

Software and Data Management

A central informatics platform, typically an Electronic Lab Notebook (ELN) combined with a Laboratory Information Management System (LIMS), that tracks reagents, protocols, and results, and interfaces directly with the BO algorithm.

Key Experimental Protocols for BO-Informed HTE

Protocol 1: High-Throughput Suzuki-Miyaura Cross-Coupling Optimization

This protocol is typical for BO-driven catalyst/condition optimization.

Objective: Maximize yield of a target biaryl compound by varying Pd catalyst, ligand, base, and solvent.

Methodology:

- Reagent Arraying: A liquid handler dispenses a constant volume of aryl halide substrate solution into all wells of a 96-well plate.

- Variable Addition: Using a pre-designed library from the BO algorithm, the robot adds different combinations of:

  - Pd catalyst stock solutions (e.g., Pd(dppf)Cl₂, Pd(OAc)₂, Pd₂(dba)₃).

  - Ligand stock solutions (e.g., SPhos, XPhos, BrettPhos).

  - Base solutions (e.g., K₂CO₃, Cs₂CO₃, K₃PO₄).

  - Solvents (e.g., 1,4-dioxane, toluene, DMF/H₂O mixture).

- Initiation: Boronic acid substrate solution is added to all wells to initiate reactions simultaneously.

- Incubation: The sealed plate is transferred to a heated shaker for a set reaction time (e.g., 12h at 80°C).

- Quenching & Analysis: The plate is cooled, and an aliquot from each well is automatically diluted and transferred to a UPLC-MS system for yield determination via internal standard.

- Data Return: Yield data is formatted and fed back to the BO algorithm to suggest the next set of condition combinations.
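The bookkeeping behind this protocol, enumerating the categorical search space and assigning a batch of conditions to plate wells, can be sketched as follows. The reagent lists are the examples named in the protocol; the random initial design, the batch size of 20, the fixed seed, and the record format are assumptions made for illustration, and in later cycles the batch would come from the BO algorithm instead of a random sample.

```python
import itertools
import random

catalysts = ["Pd(dppf)Cl2", "Pd(OAc)2", "Pd2(dba)3"]
ligands = ["SPhos", "XPhos", "BrettPhos"]
bases = ["K2CO3", "Cs2CO3", "K3PO4"]
solvents = ["1,4-dioxane", "toluene", "DMF/H2O"]

# Full factorial space of (catalyst, ligand, base, solvent) combinations
search_space = list(itertools.product(catalysts, ligands, bases, solvents))


def well_ids(n_rows=8, n_cols=12):
    """Well labels of a 96-well plate in row-major order: A1..A12, B1..H12."""
    return [f"{chr(ord('A') + r)}{c + 1}" for r in range(n_rows) for c in range(n_cols)]


# Initial design: a random sample stands in for the first batch
rng = random.Random(0)
initial_batch = rng.sample(search_space, 20)

# Map each condition to a well so the liquid handler knows what to dispense
plate_map = dict(zip(well_ids(), initial_batch))

# Records to be completed with UPLC-MS yields and returned to the BO algorithm
records = [
    {"well": well, "catalyst": cat, "ligand": lig, "base": base,
     "solvent": solv, "yield_pct": None}
    for well, (cat, lig, base, solv) in plate_map.items()
]
```

Keeping the well-to-condition map as explicit data makes the data-return step straightforward: once yields are measured, each record already carries the inputs the surrogate model needs.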

Protocol 2: HTE for New Reaction Discovery

Objective: Identify productive reactions between two novel reactant classes.

Methodology:

- Reactant Dispensing: A set of electrophiles (E) is dispensed along the rows of a 384-well plate. A set of nucleophiles (N) is dispensed along the columns.

- Condition Addition: A standard set of reaction conditions (solvent, base, additive) is added to all wells, or varied across plate quadrants as per BO design.

- Execution & Analysis: The plate is processed and analyzed via high-throughput LC-MS.

- Hit Identification: The MS data (e.g., presence of new mass peaks) are converted into a hit score for each well and passed to the BO model, which identifies promising (E, N) pairs and suggests follow-up conditions for scale-up and isolation.
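The plate layout and a simple hit flag for this protocol can be sketched as below. The layout assumes a standard 384-well plate (16 rows by 24 columns); the reactant labels, the expected product m/z, and the mass tolerance are hypothetical, and a real workflow would compute the expected mass from the anticipated product of each (E, N) pair.

```python
import string

ROWS = string.ascii_uppercase[:16]  # rows A-P of a 384-well plate
N_COLS = 24


def layout(electrophiles, nucleophiles):
    """Map well labels to (electrophile, nucleophile) pairs: rows = E, columns = N."""
    if len(electrophiles) > len(ROWS) or len(nucleophiles) > N_COLS:
        raise ValueError("Reactant sets do not fit on a 384-well plate")
    return {
        f"{ROWS[i]}{j + 1}": (e, n)
        for i, e in enumerate(electrophiles)
        for j, n in enumerate(nucleophiles)
    }


def is_hit(observed_mz, expected_mz, tol=0.01):
    """Flag a well if any observed peak lies within `tol` of the expected product m/z."""
    return any(abs(mz - expected_mz) <= tol for mz in observed_mz)


electrophiles = [f"E{i + 1}" for i in range(16)]
nucleophiles = [f"N{j + 1}" for j in range(24)]
plate = layout(electrophiles, nucleophiles)

# Hypothetical peak list for one well and its expected product ion
hit = is_hit([150.05, 312.159], expected_mz=312.16)
```

A binary hit flag like this is the crudest possible readout; a peak-area ratio against an internal standard would give the model a graded signal to learn from.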

Quantitative Data & Reagent Toolkit

Table 1: Representative HTE Data from a BO-Driven Suzuki Optimization

The experiment tested 80 conditions over 4 iterative cycles: a 20-condition initial design followed by three 20-condition batches suggested by a Gaussian Process BO model.

| Cycle | Conditions Tested | Yield Range (%) | Mean Yield (%) | Top Condition Identified |
|---|---|---|---|---|
| 1 | 20 (Initial Design) | 5-72 | 31 | Pd₂(dba)₃ / SPhos / K₃PO₄ / Toluene |
| 2 | 20 | 15-89 | 52 | Pd₂(dba)₃ / BrettPhos / Cs₂CO₃ / 1,4-Dioxane |
| 3 | 20 | 41-94 | 75 | Pd(OAc)₂ / BrettPhos / Cs₂CO₃ / 1,4-Dioxane |
| 4 | 20 | 67-98 | 88 | Pd(OAc)₂ / BrettPhos / Cs₂CO₃ / 1,4-Dioxane |

Table 2: The Scientist's HTE Reagent Toolkit

| Item | Function & Application | Example Suppliers |
|---|---|---|
| Pre-dispensed Catalyst/Ligand Plates | 96- or 384-well plates containing spatially encoded, nano- to milligram quantities of catalysts/ligands. Enables rapid screening. | Sigma-Aldrich (Merck), Strem, Ambeed |
| Stock Solution Libraries | Pre-made, validated solutions of reagents in DMSO or inert solvents, stored under argon in sealed plates. Ensures dispensing accuracy. | Prepared in-house or via custom providers. |
| Automated Solid Dispenser | Accurately weighs mg-µg quantities of solid reagents (bases, salts, substrates) directly into reaction vessels. | Chemspeed, Freeslate, Mettler-Toledo |
| Disposable Reactor Blocks | Polypropylene or glass-filled plates with chemically resistant wells for reactions. Available with seals for heating/pressure. | Porvair, Ellutia, Wheaton |
| LC/MS Vial/Plate Autosampler | Enables direct injection from reaction plates or vials into analytical systems for unattended analysis. | Agilent, Waters, Shimadzu |

Visualizing the BO-HTE Workflow and Molecular Pathways

Diagram 1: BO-HTE Closed-Loop Optimization Cycle

Diagram 2: Key Reaction Pathways in Medicinal HTE

Integration with Bayesian Optimization: A Technical Synopsis

The HTE platform acts as the function evaluator for the BO algorithm. The chemical space (e.g., continuous variables like temperature and concentration; categorical variables like catalyst identity) is the input domain. The observed reaction yield or success metric is the output. The BO's acquisition function (e.g., Expected Improvement), balancing exploration and exploitation, selects the specific set of conditions to be tested in the next HTE cycle. The robotic system executes these experiments with high fidelity, generating the data that updates the surrogate model (typically a Gaussian Process), closing the loop. Because each batch is chosen using everything learned so far, this approach can substantially reduce the number of experiments needed to locate high-performing conditions compared with one-factor-at-a-time or grid searches, although it does not guarantee that the global optimum is found.
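Before a Gaussian Process can be fitted to such a mixed input domain, each condition has to become a numeric vector. One common and simple choice, shown here as a sketch, is to one-hot encode the categorical variables and min-max scale the continuous ones; the variable names, levels, and bounds below are hypothetical, and other encodings (e.g., chemical descriptors for catalysts) are equally valid.

```python
def encode(condition, categories, bounds):
    """Turn one reaction condition into a numeric feature vector.

    Categorical variables are one-hot encoded in the order given by `categories`;
    continuous variables are min-max scaled to [0, 1] using `bounds`.
    """
    features = []
    for name, levels in categories.items():
        features.extend(1.0 if condition[name] == level else 0.0 for level in levels)
    for name, (low, high) in bounds.items():
        features.append((condition[name] - low) / (high - low))
    return features


# Hypothetical search-space definition
categories = {
    "catalyst": ["Pd(dppf)Cl2", "Pd(OAc)2", "Pd2(dba)3"],
    "solvent": ["1,4-dioxane", "toluene", "DMF/H2O"],
}
bounds = {
    "temperature_C": (25.0, 120.0),
    "concentration_M": (0.05, 0.5),
}

condition = {
    "catalyst": "Pd(OAc)2",
    "solvent": "toluene",
    "temperature_C": 80.0,
    "concentration_M": 0.5,
}

x = encode(condition, categories, bounds)
```

Scaling the continuous variables to a common range keeps a single kernel length scale meaningful across dimensions, which matters when the surrogate is a GP with a shared or weakly tuned kernel.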

Overcoming Challenges: Practical Tips for Optimizing Bayesian Workflows in the Lab

1. Introduction

Within the systematic optimization of organic chemistry reactions—be it for novel catalyst discovery, reaction condition screening, or drug candidate synthesis—Bayesian optimization (BO) stands as a cornerstone methodology. Its efficiency in navigating high-dimensional, resource-intensive experimental landscapes is paramount. However, a recurrent failure mode in its application is suboptimal or stagnant performance. This guide diagnoses a primary culprit: the improper definition of the search space, encompassing both excessive dimensionality ("too large") and poor parametric constraints ("ill-defined"). Framed within the thesis of advancing BO for organic chemistry applications, we dissect this problem through quantitative data analysis, provide diagnostic protocols, and offer remediation strategies.

2. Quantitative Impact of Search Space Definition on BO Performance

The performance degradation from an expansive or poorly bounded search space is quantifiable. The table below synthesizes data from recent studies on BO for chemical reaction optimization, illustrating key metrics.

Table 1: Impact of Search Space Characteristics on BO Convergence