Beyond p-Values: A Practical Guide to Statistical Significance in Reaction Optimization for Pharmaceutical Development

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying and interpreting statistical significance in chemical reaction optimization.

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying and interpreting statistical significance in chemical reaction optimization. It bridges foundational statistical concepts with cutting-edge methodologies like machine learning-driven High-Throughput Experimentation (HTE). The content covers core principles of hypothesis testing, p-values, and confidence intervals, explores their practical application in automated workflows, addresses common pitfalls like underpowered tests and false positives, and establishes rigorous validation protocols for comparing traditional and ML-driven optimization strategies. The guide emphasizes the critical distinction between statistical and practical significance to ensure that optimized reactions are not only mathematically sound but also chemically meaningful and scalable for industrial application.

The Statistical Bedrock: Core Principles of Significance Testing for Chemists

Defining the Research Hypothesis and Null Hypothesis in Reaction Optimization

In the rigorous world of chemical synthesis and pharmaceutical development, reaction optimization is a fundamental process for enhancing yield, selectivity, and efficiency while controlling impurities. Within this empirical framework, statistical hypothesis testing provides a structured methodology for making data-driven decisions, guiding researchers on whether observed improvements from new methods or conditions are statistically significant or attributable to random chance [1].

The core of this approach lies in defining two competing hypotheses. The null hypothesis (H₀) represents a default position or a baseline, typically stating that a new optimization method produces no significant improvement over an established standard or control method. The research hypothesis (H₁), also called the alternative hypothesis, posits that a meaningful, statistically significant improvement does exist [1]. For reaction optimization, this translates to a direct comparison of performance metrics (such as yield, purity, or efficiency) between a novel technique (e.g., a machine learning-guided platform) and a conventional benchmark (e.g., traditional Design of Experiments). The objective of the research is to gather sufficient evidence to reject the null hypothesis in favor of the alternative, thereby validating the new method's superiority [1].

This guide objectively compares the performance of two dominant optimization paradigms—Traditional Design of Experiments (DoE) and modern Machine Learning (ML)-Driven Optimization—by framing their comparison within the formal structure of hypothesis testing. We present summarized quantitative data, detailed experimental protocols, and key reagent solutions to equip scientists with the information necessary for critical evaluation.

Formulating Hypotheses for Method Comparison

To concretely illustrate the application of hypothesis testing, we define the specific null and research hypotheses for our comparison. The null hypothesis (H₀) states: "A machine learning-driven optimization platform does not provide a statistically significant improvement in reaction yield over Traditional Design of Experiments for the optimization of catalytic cross-coupling reactions." Conversely, the research hypothesis (H₁) states: "A machine learning-driven optimization platform provides a statistically significant improvement in reaction yield over Traditional Design of Experiments for the optimization of catalytic cross-coupling reactions."

The analysis in the following sections is designed to test these hypotheses. A rejection of the null hypothesis would provide evidence supporting the adoption of ML-driven methods, while a failure to reject it would suggest that the traditional DoE approach remains a statistically valid choice.
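As a minimal sketch of how such a comparison could be tested, the snippet below runs an exact one-sided permutation test on replicate yields from the two methods. The yield values are invented for illustration and do not come from the cited studies; the function name `permutation_p_value` is likewise hypothetical. A permutation test is only one of several reasonable choices here and is used because it needs no distributional assumptions and no external libraries.

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(treatment, control):
    """Exact one-sided permutation test of H1: mean(treatment) > mean(control).

    Returns the observed mean difference and the fraction of all relabelings
    whose mean difference is at least as large as the observed one.
    """
    pooled = list(treatment) + list(control)
    n_t, n_c = len(treatment), len(control)
    observed = mean(treatment) - mean(control)
    total = sum(pooled)
    at_least_as_extreme = 0
    n_relabelings = 0
    for idx in combinations(range(len(pooled)), n_t):
        t_sum = sum(pooled[i] for i in idx)
        diff = t_sum / n_t - (total - t_sum) / n_c
        if diff >= observed - 1e-9:  # small tolerance for float rounding
            at_least_as_extreme += 1
        n_relabelings += 1
    return observed, at_least_as_extreme / n_relabelings


# Hypothetical replicate yields (%), invented for illustration only.
ml_yields = [91.2, 93.5, 92.8, 94.1, 92.0]
doe_yields = [88.4, 90.1, 89.7, 87.9, 90.6]

observed_diff, p_value = permutation_p_value(ml_yields, doe_yields)
print(f"mean yield difference = {observed_diff:.2f} points, p = {p_value:.4f}")
```

With five replicates per arm there are only 252 possible relabelings, so the smallest attainable p-value is 1/252. This illustrates why very small replicate counts limit how strong the evidence against H₀ can be.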

Experimental Data and Performance Comparison

The following table synthesizes key performance data from published case studies and controlled optimization campaigns, providing a quantitative basis for comparison.

Table 1: Performance Comparison of Reaction Optimization Methodologies

| Optimization Method | Reaction Type | Key Performance Metrics | Reported Experimental Scale | Citation |
|---|---|---|---|---|
| Traditional DoE | Catalytic Hydrogenation | Yield improved from ~60% to 98.8%; impurities reduced to <0.1% | 25 g scale | [2] |
| Traditional DoE | Enzyme Assay Optimization | Optimization process reduced from >12 weeks to <3 days | Laboratory assay | [3] |
| ML-Driven (Minerva) | Ni-catalyzed Suzuki Coupling | Identified conditions with >95% yield and selectivity; accelerated process development | 96-well HTE | [4] |
| ML-Driven (Yoneda/Symeres) | Cross-Coupling | Yield improved from ~30% to >90%; process accelerated from months to days | Not specified | [5] |
| Multi-task Bayesian Optimization | C-H Activation | Successfully determined optimal conditions for new substrates; large potential cost reductions | Autonomous flow reactor | [6] |
| Interpretable ML + SA | Biodiesel Production | Identified optimal molar ratio (8.67), catalyst (3.00%), and time (30 min) | Laboratory scale | [7] |

These data show that both methodologies can deliver substantial improvements. ML-driven methods show a pronounced advantage in accelerating development timelines, often reducing optimization from months to days or weeks [4] [5] [6]. Furthermore, ML approaches excel at navigating complex, high-dimensional search spaces, uncovering high-yielding conditions that eluded traditional screening methods [4].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the experimental groundwork underlying the data, this section details the standard workflows for both optimization methodologies.

Protocol for Traditional Design of Experiments (DoE)

The traditional DoE approach is a structured, statistical method that systematically investigates the effects of multiple factors on reaction outcomes [3] [8].

- Screening and Hypothesis Scoping: Initially, critical factors (e.g., catalyst, solvent, temperature, concentration) are identified. The null hypothesis is that varying these factors has no significant effect on the reaction outcome. The research hypothesis is that one or more factors do have a significant effect [2] [9].

- Experimental Design and Execution: A factorial design (e.g., fractional factorial, Box-Behnken) is selected to efficiently explore the factor space. Experiments are conducted according to this design matrix [2] [8].

- Statistical Modeling and Analysis: Data on key responses (yield, selectivity) is collected. A statistical model (e.g., Response Surface Methodology) is built to depict the relationship between inputs and outputs. The significance of each factor is tested, and the model is used to locate optimal conditions [7] [8].

- Validation: The predicted optimal conditions are run experimentally to validate the model's accuracy and the robustness of the solution [2].
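The design and analysis steps above can be sketched in a few lines. The example below builds a two-level full factorial design for three factors and estimates each main effect as the difference between the mean response at the high and low levels. A full factorial is used as the simplest case, not a fractional factorial or Box-Behnken design; the factor names and yield values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from itertools import product
from statistics import mean

# Hypothetical factors, each coded as -1 (low level) or +1 (high level).
factors = ["temperature", "catalyst_loading", "concentration"]

# Two-level full factorial: 2**3 = 8 runs, the first factor varying slowest.
design = list(product([-1, +1], repeat=len(factors)))

# Invented yields (%), one per run, in the same order as `design`.
yields = [62.0, 65.0, 70.0, 74.0, 71.0, 75.0, 80.0, 85.0]


def main_effect(design, response, column):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for run, y in zip(design, response) if run[column] == +1]
    low = [y for run, y in zip(design, response) if run[column] == -1]
    return mean(high) - mean(low)


effects = {name: main_effect(design, yields, i) for i, name in enumerate(factors)}
for name, effect in effects.items():
    print(f"{name}: main effect = {effect:+.2f} yield points")
```

Judging whether an estimated effect is statistically significant additionally requires an estimate of experimental noise, for example from replicated runs or center points, which this sketch omits.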

Protocol for Machine Learning-Driven Optimization

ML-driven optimization uses data-driven algorithms to efficiently guide the search for optimal conditions, starting with the hypothesis that an ML model can outperform human intuition or classical statistical designs [4].

- Problem Definition and Search Space Formulation: The chemical transformation is defined, and a combinatorial set of plausible reaction conditions (reagents, solvents, temperatures) is established, incorporating chemical constraints to filter impractical combinations [4].

- Initial Sampling and Data Generation: An initial set of experiments is selected using algorithmic sampling (e.g., Sobol sampling) to diversely cover the reaction space. These experiments are executed, often in a high-throughput manner [4].

- Model Training and Prediction: A machine learning model (e.g., a Gaussian Process regressor) is trained on the accumulated experimental data. This model learns to predict reaction outcomes and their associated uncertainties for all possible conditions in the search space [4] [7].

- Iterative Experiment Selection and Learning: An acquisition function uses the model's predictions to balance exploration and exploitation, selecting the next most promising batch of experiments to run. This closed-loop cycle repeats until performance converges or the experimental budget is exhausted [4] [10].
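The closed-loop cycle described above can be illustrated with a deliberately simplified sketch. To stay dependency-free, it swaps the Gaussian process surrogate for per-condition running means and uses an upper-confidence-bound rule as the acquisition function. It selects one experiment at a time, not a batch, and replaces the laboratory with a simulated yield function. The ligand and solvent labels, the "true" yields, and the noise level are all invented; this is not the workflow of any cited platform.

```python
import math
import random

# Hypothetical discrete search space: every ligand/solvent pairing.
LIGANDS = ("L1", "L2", "L3")
SOLVENTS = ("DMF", "THF", "MeCN")
CONDITIONS = [(lig, solv) for lig in LIGANDS for solv in SOLVENTS]

# Invented "true" mean yields (%), used only to simulate the laboratory.
TRUE_YIELD = dict(zip(CONDITIONS, [35, 50, 42, 60, 88, 55, 40, 65, 48]))
NOISE_SD = 3.0  # assumed run-to-run noise, in yield points


def run_experiment(condition, rng):
    """Simulated experiment: true mean yield plus Gaussian noise."""
    return TRUE_YIELD[condition] + rng.gauss(0.0, NOISE_SD)


def acquisition(observations, kappa):
    """Upper confidence bound: observed mean plus kappa standard errors."""
    n = len(observations)
    return sum(observations) / n + kappa * NOISE_SD / math.sqrt(n)


def optimize(budget=40, kappa=2.0, seed=0):
    rng = random.Random(seed)
    # Initial sampling: one run per condition (a stand-in for Sobol sampling).
    results = {c: [run_experiment(c, rng)] for c in CONDITIONS}
    for _ in range(budget - len(CONDITIONS)):
        # Pick the condition with the highest acquisition value, run it, update.
        chosen = max(CONDITIONS, key=lambda c: acquisition(results[c], kappa))
        results[chosen].append(run_experiment(chosen, rng))
    best = max(CONDITIONS, key=lambda c: sum(results[c]) / len(results[c]))
    return best, {c: len(v) for c, v in results.items()}


best_condition, run_counts = optimize()
print("best condition:", best_condition)
print("runs per condition:", run_counts)
```

The `kappa` parameter controls the exploration/exploitation balance: larger values favor conditions with few observations, while smaller values concentrate the budget on the current best estimate.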

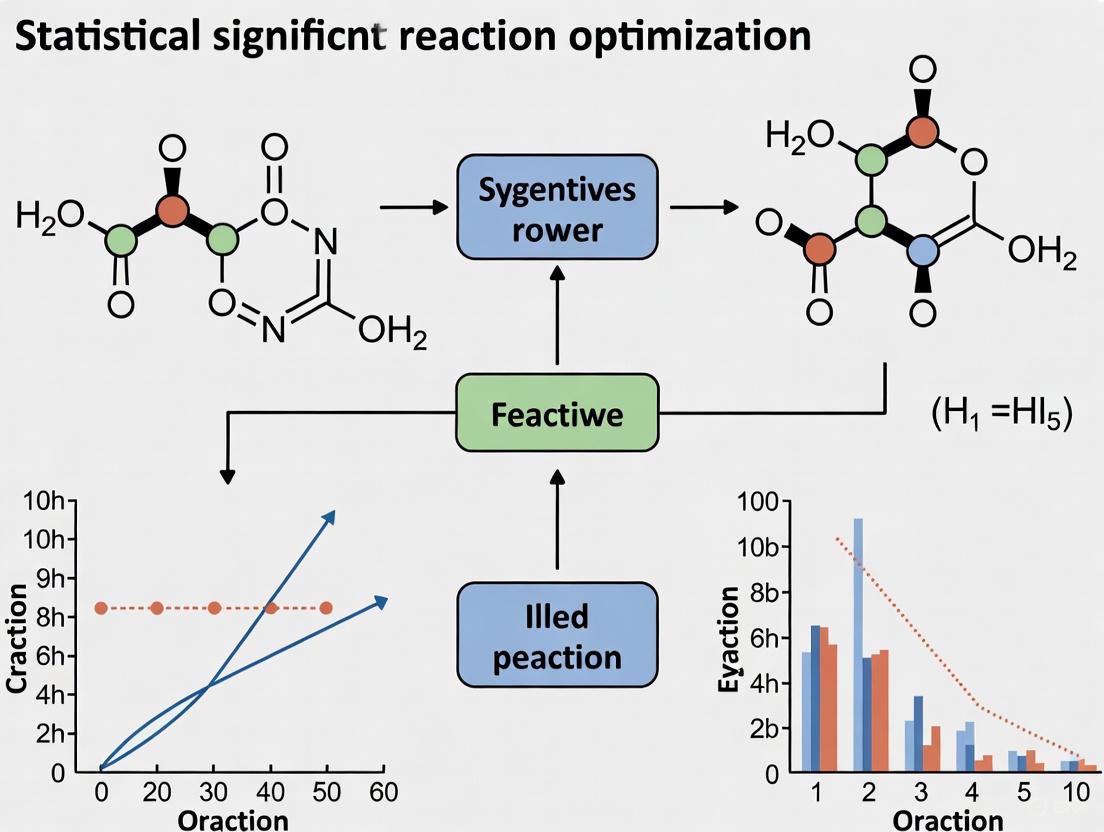

The following diagram visualizes the logical workflow and iterative nature of the ML-driven optimization protocol.

ML-Driven Optimization Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The successful implementation of optimization strategies relies on specific reagents and materials. The following table details essential components featured in the cited studies.

Table 2: Essential Reagents and Materials for Reaction Optimization

| Reagent/Material | Function in Optimization | Example Context |
|---|---|---|
| Heterogeneous Catalysts | Facilitate catalytic hydrogenation; different catalysts are screened for activity and selectivity. | 14 catalysts screened for halonitroheterocycle hydrogenation [2]. |
| Non-Precious Metal Catalysts (Ni) | Earth-abundant, lower-cost alternative to precious metal catalysts like Pd; subject of optimization. | Ni-catalyzed Suzuki reaction [4]. |
| Ligand Libraries | Modulate the steric and electronic properties of a metal catalyst, dramatically influencing yield and selectivity. | Key categorical variable in ML-guided optimization of cross-couplings [4]. |
| Solvent Libraries | Affect solubility, reactivity, and mechanism; a key continuous or categorical variable in screening. | Explored in ML and DoE for their effect on reaction outcome [4] [7]. |
| Palm Fatty Acid Distillate (PFAD) | Renewable feedstock for biodiesel production; its ratio to methanol is a critical optimized parameter. | Methanol:PFAD molar ratio was the most influential factor (41%) [7]. |
| Methanol | Reactant and solvent in transesterification; its molar ratio is a key continuous optimization variable. | Optimized molar ratio for biodiesel production was 8.67 [7]. |

The collective experimental evidence from recent studies indicates that, for many complex reaction optimization challenges, the null hypothesis (H₀) can be rejected. Machine learning-driven methodologies demonstrate a statistically significant capability to not only match but often surpass the performance of Traditional DoE, particularly in terms of speed and efficiency [4] [5]. They achieve this by effectively navigating vast, high-dimensional search spaces that are intractable for exhaustive screening, identifying high-performing conditions with fewer experimental iterations [4].

However, the choice of methodology is context-dependent. Traditional DoE remains a powerful, accessible, and highly effective tool, especially for problems with fewer variables or when a comprehensive understanding of factor interactions is required [2] [8]. The emergence of strategies that combine interpretable ML with optimization algorithms further enriches the toolkit, offering both high performance and mechanistic insights [7]. Ultimately, the most effective approach to reaction optimization will be guided by the specific reaction constraints, available resources, and the strategic objectives of the development campaign.

Understanding P-Values and Confidence Intervals in an Experimental Context

In scientific research, particularly in reaction optimization and drug development, p-values and confidence intervals are fundamental statistical tools used to interpret experimental results and draw conclusions about the role of chance. These concepts provide complementary information for determining whether observed effects are statistically significant or likely represent random variation [11] [12].

The p-value is formally defined as the probability of obtaining a result as extreme as, or more extreme than, the observed result if the null hypothesis were true [11] [13]. In practical terms, it measures how strongly the experimental data contradicts the assumption that there is no real effect or difference. The conventional threshold for statistical significance is p < 0.05, meaning that a result at least this extreme would be expected less than 5% of the time if the null hypothesis were true [11] [13].

Confidence intervals provide a range of values within which the true population parameter is likely to fall with a specified degree of confidence [11] [12]. The most commonly reported interval is the 95% confidence interval, which means that if the same study were repeated multiple times, approximately 95% of the calculated intervals would contain the true population value [12]. Unlike p-values, confidence intervals provide information about both the precision of the estimate and the direction and magnitude of the effect [12] [14].
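The long-run reading of a 95% confidence interval can be checked by simulation. The sketch below repeatedly draws small samples of replicate yields from a normal distribution with a known mean and standard deviation, builds a z-based interval for each sample, and counts how often the interval contains the true mean. The true mean, noise level, and replicate count are arbitrary assumptions. Treating the standard deviation as known is a simplification; with an estimated standard deviation, a t-based interval would be the usual choice.

```python
import random
from statistics import NormalDist, mean


def simulate_coverage(true_mean=85.0, sigma=2.0, n=6,
                      n_studies=5000, level=0.95, seed=1):
    """Fraction of simulated studies whose interval contains the true mean."""
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% interval
    half_width = z * sigma / n ** 0.5          # known-sigma (z) interval
    hits = 0
    for _ in range(n_studies):
        sample = [rng.gauss(true_mean, sigma) for _ in range(n)]
        sample_mean = mean(sample)
        if sample_mean - half_width <= true_mean <= sample_mean + half_width:
            hits += 1
    return hits / n_studies


observed_coverage = simulate_coverage()
print(f"intervals containing the true mean: {observed_coverage:.1%}")
```

The printed fraction should land close to 95%, which is a statement about the procedure across many repetitions and not about any single interval.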

Comparative Analysis: P-Values vs. Confidence Intervals

Conceptual Differences and Complementary Roles

While both p-values and confidence intervals are derived from the same statistical principles and data, they provide different perspectives on the results. The p-value primarily serves as a measure of the strength of evidence against the null hypothesis, whereas the confidence interval estimates the range of plausible values for the parameter of interest [11] [12] [15].

The two approaches are closely linked: for a given test, a 95% confidence interval excludes the null value exactly when the corresponding two-sided p-value falls below 0.05, and for a fixed effect estimate a narrower interval corresponds to a smaller p-value [11]. However, confidence intervals provide additional information about the clinical or practical significance of findings, which p-values alone cannot convey [14]. For this reason, many experts recommend confidence intervals as the preferred method for interpreting and reporting results, as they provide more complete information about both statistical and practical significance [11] [12].

Table 1: Direct Comparison of P-Values and Confidence Intervals

| Aspect | P-Value | Confidence Interval |
|---|---|---|
| Primary Purpose | Measures evidence against null hypothesis [13] | Estimates range of plausible values for true effect [11] |
| Information Provided | Strength of evidence for an effect [13] | Effect size, precision, and direction [12] [14] |
| Interpretation | Probability of data at least as extreme as observed, if the null hypothesis is true [11] | Range likely to contain true population parameter [12] |
| Null Hypothesis Testing | Direct comparison to significance level (α) [13] | Check if interval includes null value (e.g., 0 or 1) [11] |
| Clinical/Practical Relevance | Cannot assess [14] | Can assess via magnitude of effect [12] [14] |
| Influence of Sample Size | Larger samples may yield significance for trivial effects [14] | Larger samples yield narrower intervals [12] |

Interpretation Guidelines and Decision Frameworks

Interpreting p-values requires comparing them to a predetermined significance level (α), typically set at 0.05. When p ≤ 0.05, the result is considered statistically significant, suggesting the observed effect is unlikely due to chance alone [13]. However, p-values between 0.05 and 0.10 may still suggest noteworthy findings, particularly in exploratory research [13].

Confidence intervals are interpreted by examining whether they include the null value (such as 0 for mean differences or 1 for risk ratios) and assessing the range of values within the interval [11] [12]. For example, if a 95% confidence interval for a mean difference does not include 0, the result is statistically significant at the 0.05 level [12]. The width of the interval indicates the precision of the estimate—narrow intervals reflect more precise estimates, while wide intervals suggest considerable uncertainty [12].
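The correspondence between the two readings can be made concrete with a short sketch. It assumes a normally distributed estimate with a known standard error (a z-test), which is a simplification for small replicate counts; the function name `z_summary` and the numeric inputs are hypothetical.

```python
from statistics import NormalDist


def z_summary(estimate, std_error, level=0.95):
    """Two-sided p-value against a null value of 0, plus the matching interval."""
    std_normal = NormalDist()
    z = estimate / std_error
    p_value = 2 * (1 - std_normal.cdf(abs(z)))
    critical = std_normal.inv_cdf(0.5 + level / 2)
    interval = (estimate - critical * std_error, estimate + critical * std_error)
    return p_value, interval


# Hypothetical mean yield differences (in yield points), both with SE = 1.0.
for estimate in (2.5, 1.9):
    p_value, (low, high) = z_summary(estimate, 1.0)
    excludes_zero = not (low <= 0.0 <= high)
    print(f"difference = {estimate}: p = {p_value:.4f}, "
          f"95% CI = ({low:.2f}, {high:.2f}), excludes 0: {excludes_zero}")
```

In both cases the interval excludes 0 exactly when p < 0.05, but only the interval shows how large or small the plausible effect might be.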

Table 2: Interpretation Guidelines for Common Scenarios

| Statistical Result | P-Value Interpretation | Confidence Interval Interpretation | Conclusion |
|---|---|---|---|
| p = 0.03; 95% CI: 1.2 to 3.8 | Statistically significant (p < 0.05) [13] | Does not include null value (0) [11] | Reject null hypothesis [13] |
| p = 0.07; 95% CI: -0.3 to 4.1 | Not statistically significant (p > 0.05) [13] | Includes null value (0) [11] | Fail to reject null hypothesis [13] |
| p = 0.04; 95% CI: 0.1 to 0.5 | Statistically significant (p < 0.05) [13] | Does not include null value (0) but effect is small | Statistical significance but questionable practical importance [14] |
| p = 0.60; 95% CI: -2.1 to 3.5 | Not statistically significant (p > 0.05) [13] | Includes null value (0) and wide range | Inconclusive results [16] |

Application in Reaction Optimization Research

Experimental Protocols and Methodologies

In reaction optimization research, particularly in pharmaceutical development, statistical methods guide the efficient exploration of complex reaction parameter spaces. High-Throughput Experimentation (HTE) platforms enable highly parallel execution of numerous reactions, generating extensive data that requires proper statistical analysis [4]. These approaches have been successfully applied to optimize challenging transformations such as nickel-catalyzed Suzuki couplings and Buchwald-Hartwig aminations, where traditional one-factor-at-a-time (OFAT) approaches may overlook important regions of the chemical landscape [4].

Machine learning frameworks like Bayesian optimization have emerged as powerful tools for reaction optimization, using statistical principles to balance exploration of unknown parameter spaces with exploitation of promising regions [4]. These approaches can efficiently handle large parallel batches, high-dimensional search spaces, and reaction noise present in real-world laboratories [4]. Validation studies have demonstrated that ML-driven optimization can identify conditions achieving >95% yield and selectivity for active pharmaceutical ingredient (API) syntheses, directly translating to improved process conditions at scale [4].

Statistical Workflow in Reaction Optimization

The following diagram illustrates the integrated role of p-values and confidence intervals within a statistical assessment workflow for reaction optimization experiments:

Research Reagent Solutions for Experimental Optimization

Table 3: Essential Research Reagents and Materials in Reaction Optimization

| Reagent/Material | Function in Optimization | Statistical Consideration |
|---|---|---|
| Catalyst Systems (Ni, Pd complexes) | Facilitate bond formation; impact yield and selectivity [4] | Primary categorical variable; requires multiple testing correction [4] |
| Ligand Libraries | Modulate catalyst activity and selectivity [4] | High-dimensional parameter; ML optimization efficient [4] |
| Solvent Arrays | Influence reaction kinetics and mechanism [4] | Categorical variable; affects reproducibility and confidence interval width [12] |
| Substrate Pairs | Core components undergoing transformation | Source of experimental noise; impacts p-value accuracy [4] |
| Additives & Bases | Modify reactivity; quench impurities | Interaction effects require multifactorial design [4] |
| HTE Reaction Blocks | Enable parallel reaction screening [4] | Increase sample size; narrow confidence intervals [12] |

Best Practices for Reporting and Interpretation

Reporting Standards in Scientific Publications

Proper reporting of statistical results is essential for transparent research communication. The CONSORT statement for randomized clinical studies and the QUOROM statement for systematic reviews expressly demand the use of confidence intervals [12]. Leading scientific journals recommend reporting both p-values and confidence intervals to provide a complete picture of the findings [12].

When reporting p-values, researchers should provide exact values to two or three decimal places (e.g., p = 0.034) rather than thresholds (e.g., p < 0.05), with values less than 0.001 reported as p < 0.001 [13]. Confidence intervals should always be presented alongside the point estimate and confidence level (e.g., "the difference was 8.2 units (95% CI: 6.1 to 10.3)") [12] [14].

Avoiding Common Misinterpretations

Several common misconceptions surround p-values and confidence intervals. A p-value does not indicate the probability that the null hypothesis is true or that the results occurred by chance [13] [16]. Similarly, a 95% confidence interval does not mean there is a 95% probability that the true value lies within the interval for a given study; rather, it refers to the long-run frequency of such intervals containing the true value [16].

Researchers should distinguish between statistical significance and practical importance [14]. With large sample sizes, statistically significant results (small p-values) may represent trivial effects with little practical value [14]. Conversely, clinically important effects may not reach statistical significance in small studies [12]. This distinction is particularly relevant in reaction optimization, where small yield improvements may be statistically significant but not economically or practically meaningful [4] [14].
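One way to keep the two questions separate is to compare the confidence interval for an improvement against a pre-specified practical threshold. The sketch below assumes that larger values are better (for example, a yield gain in percentage points) and that the threshold was set before the experiment. The function name, the category wording, and the 2-point threshold are illustrative assumptions and not an established standard.

```python
def classify_result(ci_low, ci_high, practical_threshold, null_value=0.0):
    """Classify an improvement from its confidence interval and a practical threshold."""
    statistically_significant = not (ci_low <= null_value <= ci_high)
    if statistically_significant and ci_low >= practical_threshold:
        return "statistically and practically significant"
    if statistically_significant and ci_high < practical_threshold:
        return "statistically significant, but below the practical threshold"
    if statistically_significant:
        return "statistically significant; practical relevance uncertain"
    if ci_high >= practical_threshold:
        return "inconclusive: a practically relevant effect cannot be ruled out"
    return "no evidence of a practically relevant effect"


# Hypothetical 95% CIs for a yield gain, judged against an assumed 2-point threshold.
for low, high in [(3.0, 6.0), (0.1, 0.5), (1.2, 3.8), (-0.3, 4.1), (-0.4, 0.9)]:
    print((low, high), "->", classify_result(low, high, practical_threshold=2.0))
```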

P-values and confidence intervals serve as complementary tools for interpreting experimental results in reaction optimization research. While p-values provide a measure of evidence against the null hypothesis, confidence intervals offer additional information about the precision, magnitude, and direction of effects [11] [12] [14]. By understanding and applying both concepts appropriately, researchers in drug development and chemical synthesis can make more informed decisions, ultimately accelerating optimization timelines and improving process conditions at scale [4]. The integration of these statistical tools with modern approaches such as high-throughput experimentation and machine learning represents a powerful framework for advancing reaction optimization research [4].

Distinguishing Statistical Significance from Practical (Clinical/Chemical) Significance

In the data-driven landscape of scientific research, particularly in fields like drug development and reaction optimization, the ability to distinguish between statistical significance and practical significance represents a fundamental competency for researchers and scientists. Statistical significance primarily answers whether a research finding or experimental result is likely real and not due to random chance, typically determined through p-values and confidence intervals [17] [18]. In contrast, practical significance—manifested as clinical significance in medical contexts or chemical significance in reaction optimization—addresses whether the finding makes a meaningful difference in real-world applications, patient outcomes, or chemical processes [17] [19].

This distinction is particularly crucial in reaction optimization research and drug development, where the translation of laboratory findings to practical applications depends on more than just mathematical probabilities. A statistically significant effect merely indicates that the finding is unlikely to have occurred by chance, whereas a practically significant effect demonstrates that the finding makes a genuine difference to treatment efficacy, reaction yield, or process efficiency [17] [18]. Understanding this dichotomy prevents the common pitfall of misinterpreting mathematically significant results as inherently valuable to scientific progress or clinical practice.

Core Conceptual Framework

Defining Statistical Significance

Statistical significance is predominantly determined through the p-value, which quantifies the probability of obtaining results at least as extreme as those observed, under the assumption that the null hypothesis is true [18]. The most common threshold in the biomedical and chemical literature is 0.05 (5%). Applying it rigidly reduces the p-value to a dichotomy in which results are considered "statistically significant" when p ≤ 0.05 and otherwise declared "nonsignificant" [18].

Three key factors influence p-values and statistical significance:

- Sample Size: Larger sample sizes reduce random error and variability, making studies more likely to detect a significant relationship if one exists [18]. With extremely large samples, even minuscule, practically meaningless differences can achieve statistical significance [17].

- Magnitude of Relationship: The effect size between compared groups substantially impacts statistical significance. Larger differences between groups are easier to detect and typically yield smaller p-values [18].

- Measurement Error: Both systematic errors (biases that distort results in a specific direction) and random errors (unexplained variability) can influence p-values and potentially lead to misleading conclusions [18].
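The influence of sample size can be seen by holding a small effect fixed and varying only the number of replicates. The sketch below uses a two-sample z-test with an assumed known standard deviation; the 0.3-point yield difference and the 2-point noise level are invented values.

```python
from statistics import NormalDist


def two_sample_z_p(diff, sigma, n_per_group):
    """Two-sided p-value for a mean difference between two equal-sized groups."""
    std_error = sigma * (2 / n_per_group) ** 0.5
    z = diff / std_error
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Same hypothetical effect (0.3 yield points, sigma = 2.0), increasing replicates.
p_by_n = {n: two_sample_z_p(0.3, 2.0, n) for n in (5, 50, 500, 5000)}
for n, p in p_by_n.items():
    print(f"n per group = {n:>4}: p = {p:.4g}")
```

The effect size never changes, yet the p-value falls steadily as replicates are added, which is why a small p-value from a large high-throughput data set says little on its own about whether the improvement matters.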

The American Statistical Association has emphasized that p-values should not be viewed as definitive measures of truth, noting that "P values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone" [18]. This underscores that statistical significance represents just one component of comprehensive result interpretation.

Defining Practical Significance

Practical significance, referred to as clinical significance in medical contexts, assesses whether a research finding or intervention effect makes a meaningful difference in real-world settings [17] [19]. In healthcare, clinically significant outcomes are those that improve patients' quality of life, physical function, mental status, and ability to engage in social life [18]. In chemical research and reaction optimization, practical significance translates to improvements that meaningfully enhance reaction efficiency, yield, cost-effectiveness, or environmental impact beyond marginal statistical improvements.

Practical significance is evaluated using various metrics depending on the field:

- Clinical Research: Minimal clinically important difference (MCID), effect size measures (e.g., Cohen's d), quality of life measures, mortality/morbidity rates, and response rates [17]. For example, a Cohen's d value of 0.7 is considered a moderate effect, while a cancer treatment that prolongs life by an average of 3 months could represent a significant clinical difference for patients [17].

- Chemical Research: Effect size, process efficiency improvements, yield enhancement, cost reduction, environmental impact, and scalability potential.
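As an illustration of one effect size measure named above, the sketch below computes Cohen's d using the pooled standard deviation of two groups. The replicate yields are hypothetical.

```python
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled sample standard deviation."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_variance = (
        (n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)
    ) / (n_a + n_b - 2)
    return (mean(group_a) - mean(group_b)) / pooled_variance ** 0.5


# Hypothetical replicate yields (%) under new and baseline conditions.
new_conditions = [90.0, 92.0, 94.0]
baseline = [89.0, 91.0, 93.0]

d = cohens_d(new_conditions, baseline)
print(f"Cohen's d = {d:.2f}")
```

Because d is expressed in standard deviation units, it still has to be translated back into yield points, cost, or throughput before it can be judged against a chemically meaningful threshold.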

The fundamental distinction lies in their core questions: statistical significance asks "is it real?" while practical significance asks "does it matter?" [20]. This distinction is crucial because, as Stephen Senn cautions, "If you think that statistical significance is a slippery concept, clinical relevance is even worse" [21], requiring careful consideration of what constitutes a meaningful difference in specific contexts.

Comparative Framework: Key Characteristics

Table 1: Fundamental Differences Between Statistical and Practical Significance

| Characteristic | Statistical Significance | Practical Significance |
|---|---|---|
| Core Question | "Is the result real?" [20] | "Does the result matter?" [20] |
| Primary Basis | Probability (p-values, confidence intervals) [17] | Impact magnitude (effect size, MCID) [17] [19] |
| Key Drivers | Sample size, data variability [18] | Relevance to real-world outcomes [20] |
| Interpretation Context | Mathematical rigor [18] | Clinical, chemical, or business context [17] [19] |
| Primary Metrics | P-values, confidence intervals [18] | Effect size, quality of life, yield improvement, cost-benefit [17] |
| Decision Role | Evidence of a genuine effect [17] | Relevance for implementation decisions [20] |

Methodological Approaches and Assessment Protocols

Experimental Protocols for Assessing Statistical Significance

Protocol for Chi-Square Testing of Categorical Outcomes:

- Formulate Hypothesis and Significance Level: Pre-specify the null hypothesis (no difference between groups) and alternative hypothesis (significant difference exists). Decide on significance level (typically α = 0.05) before data collection [22].

- Calculate Expected Frequencies: For each cell in the contingency table, calculate expected frequencies using the formula: Expected = (Row Total × Column Total) / Grand Total [22].

- Compute Chi-Square Statistic: For each cell, calculate (Observed - Expected)² / Expected, then sum these values across all cells to obtain the Chi-Square statistic [22].

- Determine Degrees of Freedom: Calculate as (number of rows - 1) × (number of columns - 1). For a standard 2×2 A/B test, degrees of freedom = 1 [22].

- Interpret Results: Compare the Chi-Square statistic to critical values from Chi-Square distribution tables or calculate the exact p-value. A p-value < 0.05 typically indicates statistical significance [22].
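The steps above can be followed directly in code. The sketch below computes the expected frequencies, the Chi-Square statistic, and the degrees of freedom for a contingency table. The p-value helper covers only the one-degree-of-freedom case, where the statistic is the square of a standard normal variable and the tail probability reduces to erfc(sqrt(χ²/2)). The counts are invented: rows are two hypothetical condition sets and columns are wells that met or missed a purity specification.

```python
import math


def chi_square_statistic(table):
    """Return (chi-square statistic, degrees of freedom) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / grand_total
            chi2 += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, dof


def p_value_one_dof(chi2):
    """Upper-tail p-value, valid only for 1 degree of freedom."""
    return math.erfc(math.sqrt(chi2 / 2))


# Hypothetical counts: [met spec, missed spec] for condition sets A and B.
counts = [[30, 10],
          [20, 20]]

chi2, dof = chi_square_statistic(counts)
p_value = p_value_one_dof(chi2)
print(f"chi-square = {chi2:.3f}, dof = {dof}, p = {p_value:.4f}")
```

Note that the function takes raw counts, in line with the warning below about scaled values. Tables larger than 2×2 would need a general Chi-Square tail function, for example from a statistics library.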

Protocol for T-Tests of Continuous Outcomes:

- Establish Testing Conditions: Determine whether one-tailed or two-tailed testing is appropriate based on research question. Verify assumptions of normality and homogeneity of variance.

- Calculate T-Statistic: Compute the difference between group means divided by the standard error of the difference.

- Determine Degrees of Freedom: Based on sample sizes and specific test type (e.g., independent samples, paired samples).

- Interpret P-Value: Compare calculated p-value to predetermined significance level (typically α = 0.05) to determine statistical significance.
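A minimal, dependency-free sketch of these steps for two independent groups follows. It uses Welch's form of the t-test, which does not assume equal variances. This is a choice made for the example and not the only valid test type. The two-sided p-value is obtained by numerically integrating the t density with Simpson's rule, where a statistics library would normally be used. The yields are hypothetical.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch t-statistic and Welch-Satterthwaite degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    dof = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, dof


def t_pdf(x, dof):
    """Probability density of Student's t distribution."""
    norm = math.gamma((dof + 1) / 2) / (math.sqrt(dof * math.pi) * math.gamma(dof / 2))
    return norm * (1 + x * x / dof) ** (-(dof + 1) / 2)


def two_sided_p(t, dof, steps=2000):
    """Two-sided p-value via Simpson's rule on [0, |t|]; steps must be even."""
    upper = abs(t)
    h = upper / steps
    total = t_pdf(0.0, dof) + t_pdf(upper, dof)
    for k in range(1, steps):
        total += (4 if k % 2 else 2) * t_pdf(k * h, dof)
    central_half = total * h / 3  # probability mass between 0 and |t|
    return max(0.0, 1 - 2 * central_half)


# Hypothetical replicate yields (%), invented for illustration only.
ml_yields = [91.2, 93.5, 92.8, 94.1, 92.0]
doe_yields = [88.4, 90.1, 89.7, 87.9, 90.6]

t_stat, dof = welch_t(ml_yields, doe_yields)
p_value = two_sided_p(t_stat, dof)
print(f"t = {t_stat:.2f}, dof = {dof:.1f}, two-sided p = {p_value:.4f}")
```

For a one-tailed test in the pre-specified direction, the two-sided p-value would be halved, provided the observed difference points in that direction.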

Common pitfalls in statistical testing include data peeking (checking results repeatedly during data collection), which inflates false positive rates, and using scaled values instead of raw counts, which can flip conclusions [22]. With small sample sizes (expected frequencies < 5 in any cell), Chi-Square tests become unreliable, requiring alternative approaches like Fisher's Exact Test or Yates' correction [22].

Experimental Protocols for Assessing Practical Significance

Protocol for Determining Minimal Clinically Important Difference (MCID):

- Define Outcome Metrics: Identify patient-centered outcomes relevant to the condition and treatment, such as pain reduction, functional improvement, or quality of life measures [17].

- Establish Anchor-Based Criteria: Use external indicators of meaningful change, such as patient global impression of change scales, to categorize patients as "improved" or "not improved" [17].

- Calculate Threshold Values: Determine the score difference that best distinguishes between "improved" and "not improved" groups using receiver operating characteristic curves or predictive modeling.

- Validate with Distribution-Based Methods: Correlate anchor-based values with distribution-based measures (e.g., effect size, standard error of measurement) to establish a range of plausible values.

- Contextualize with Clinical Expertise: Incorporate input from clinical experts regarding the practical relevance of the established thresholds [19].

Protocol for Chemical Significance in Reaction Optimization:

- Define Critical Parameters: Identify key reaction metrics including yield, purity, throughput, cost, safety, and environmental impact.

- Establish Baseline Performance: Measure current system performance under standard conditions to establish comparison baseline.

- Determine Meaningful Improvement Thresholds: Define practically meaningful improvements based on industrial standards, economic considerations, or downstream processing requirements.

- Evaluate Trade-offs: Assess whether improvements in one parameter (e.g., yield) negatively impact other important factors (e.g., cost, safety).

- Contextualize with Scalability Assessment: Consider whether laboratory-scale improvements will translate meaningfully to industrial production environments.

Diagram 1: This workflow illustrates the sequential assessment process for evaluating both statistical and practical significance in research findings, highlighting the critical decision points where these concepts diverge.

Integrated Assessment Protocol

A comprehensive protocol for evaluating both statistical and practical significance:

Pre-Study Planning:

- Define both statistical parameters (alpha level, power, sample size) and practical significance thresholds (MCID, minimal important difference) before data collection [21].

- For clinical trials, distinguish between δ1 (the difference you would be happy to find) and δ4 (the difference you would not like to miss), recognizing that δ1 < δ4 [21].

Sequential Evaluation:

- First establish statistical significance using appropriate tests (e.g., t-tests, Chi-Square).

- For statistically significant results, proceed to practical significance assessment using field-specific metrics.

- For non-significant results, consider whether practical significance might still exist, particularly in underpowered studies.

Contextual Interpretation:

- Evaluate findings within the specific research, clinical, or industrial context.

- Consider cost-benefit ratios, potential risks, and implementation feasibility.

- Engage relevant stakeholders (clinicians, patients, industrial chemists) in interpretation.

Experimental Evidence and Comparative Data

Case Studies in Clinical Research

Table 2: Clinical Case Studies Illustrating the Discordance Between Statistical and Practical Significance

| Clinical Scenario | Statistical Significance | Practical Significance Assessment | Interpretation |

|---|---|---|---|

| Blood Pressure Medication [17] | Drug reduces blood pressure by an average of 3.5 mmHg (p < 0.05) | Reduction below established MCID for cardiovascular risk reduction | Statistically significant but not clinically significant - unlikely to meaningfully impact patient outcomes |

| Cancer Therapy Comparison [18] | Both Drug A and Drug B show survival benefit with p = 0.01 | Drug A increases survival by 5 years; Drug B increases survival by 5 months | Both statistically significant but substantially different clinical significance |

| Weight Loss Intervention [17] | Treatment group shows 0.5 kg weight loss (p < 0.05) in 10,000 participants | Weight loss below practical threshold for health benefits | Statistically significant but not clinically significant - large sample size detected trivial difference |
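The weight loss row can be made concrete with a rough calculation. The sketch below assumes the 10,000 participants are split evenly into two arms and that weight change has a common standard deviation of 5 kg. Both figures are assumptions made for illustration and are not reported in the source. It uses a normal-approximation two-sample test.

```python
from math import sqrt
from statistics import NormalDist

diff_kg = 0.5          # mean difference between arms (from the table)
n_per_group = 5_000    # ASSUMPTION: 10,000 participants split evenly
sd_kg = 5.0            # ASSUMPTION: common standard deviation of weight change

# Standard error of the difference between two independent means
standard_error = sd_kg * sqrt(2 / n_per_group)
z = diff_kg / standard_error

# Two-sided p-value from the standard normal distribution
p_two_sided = 2 * (1 - NormalDist().cdf(z))

# Standardized effect size (Cohen's d): difference in units of the SD
cohens_d = diff_kg / sd_kg

print(f"z = {z:.2f}, p = {p_two_sided:.1e}, Cohen's d = {cohens_d:.2f}")
```

Under these assumptions the p-value is far below 0.05 even though the standardized effect is only 0.1, which is the pattern the table describes: a large sample makes a trivial difference statistically detectable.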

Case Studies in Chemical Research and Reaction Optimization

Recent advances in drug discovery provide compelling examples of the statistical versus practical significance distinction in chemical research. A 2025 study demonstrated an integrated medicinal chemistry workflow that effectively diversified hit and lead structures, accelerating the critical hit-to-lead optimization phase [23]. The research employed high-throughput experimentation to generate a comprehensive dataset encompassing 13,490 novel Minisci-type C-H alkylation reactions, using these data to train deep graph neural networks for predicting reaction outcomes [23].

The key findings demonstrated both statistical and practical significance:

Virtual Library Generation: Scaffold-based enumeration of potential Minisci reaction products, starting from moderate inhibitors of monoacylglycerol lipase (MAGL), yielded a virtual library containing 26,375 molecules [23].

Potency Improvement: Reaction prediction, physicochemical property assessment, and structure-based scoring identified 212 MAGL inhibitor candidates, of which 14 synthesized compounds exhibited subnanomolar activity, representing a potency improvement of up to 4500 times over the original hit compound [23].

This case exemplifies practically significant chemical research—the dramatic potency improvement (4500-fold) and favorable pharmacological profiles demonstrated clear practical significance beyond any statistical measures. The integration of high-throughput experimentation with artificial intelligence and multi-dimensional optimization enabled this advance, demonstrating how modern chemical research can simultaneously achieve both statistical rigor and practical impact [23].

The transformation of organic chemistry through laboratory automation and artificial intelligence creates unprecedented opportunities for accelerating chemical discovery and optimization [24]. This convergence enables researchers to rapidly test large numbers of reaction conditions using high-throughput experimentation platforms while employing machine learning algorithms to process complex chemical data and identify promising directions [24]. The most successful approaches combine the rapid exploration capabilities of AI with the deep understanding of experienced chemists, leveraging both human and artificial intelligence to maximize practical significance [24].

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Tools for Significance Assessment Across Disciplines

| Research Tool | Primary Function | Application Context |

|---|---|---|

| High-Throughput Experimentation (HTE) | Rapid testing of large numbers of reaction conditions or treatment options [23] [24] | Chemical reaction optimization, drug candidate screening |

| Deep Graph Neural Networks | Predicting reaction outcomes and molecular properties [23] | Virtual compound library screening, reaction optimization |

| Minisci-Type C-H Alkylation | Late-stage functionalization for diversifying lead structures [23] | Medicinal chemistry, hit-to-lead optimization |

| Effect Size Calculators | Quantifying magnitude of differences between groups | Clinical research, psychological studies |

| MCID Determination Kits | Establishing minimal clinically important differences [17] | Clinical trial design, outcomes research |

| Automated Flow Chemistry Systems | Combining kinetic modeling with experimental optimization [24] | Reaction optimization, process chemistry |

| Statistical Software Packages | Computing p-values, confidence intervals, power analyses [18] | All research disciplines |

Interpretation Framework and Decision-Making

Integrated Decision Matrix

The relationship between statistical and practical significance creates four possible interpretation scenarios:

Statistically Significant and Practically Significant: Ideal scenario where results are both reliable and meaningful. Implementation is typically recommended with appropriate monitoring.

Statistically Significant but Not Practically Significant: Results are reliable but trivial in magnitude. Implementation is generally not warranted unless cumulative effects or secondary benefits exist.

Not Statistically Significant but Practically Significant: The effect size is meaningful but reliability is uncertain. Further research with larger sample sizes or reduced variability may be warranted.

Not Statistically Significant and Not Practically Significant: Clear case for rejecting the intervention or approach.
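The four scenarios above can be sketched as a small decision helper. The function name, the wording of the returned labels, and the example threshold of 5 percentage points of yield are hypothetical choices for illustration. The threshold for a meaningful effect has to come from the process context, not from the data.

```python
def interpret_result(p_value, effect, min_meaningful_effect, alpha=0.05):
    """Place a result in one of the four statistical/practical scenarios."""
    statistically = p_value < alpha
    practically = abs(effect) >= min_meaningful_effect
    if statistically and practically:
        return "significant and meaningful: candidate for implementation"
    if statistically:
        return "significant but trivial: implementation generally not warranted"
    if practically:
        return "meaningful but uncertain: collect more data or reduce variability"
    return "neither significant nor meaningful: deprioritize"

# Hypothetical yield changes (percentage points) with a 5-point relevance threshold
examples = [
    (0.003, 8.0),   # clear, large improvement
    (0.010, 0.6),   # reliable but tiny improvement
    (0.180, 9.0),   # large but noisy improvement
    (0.640, 0.4),   # nothing to see
]
for p, delta in examples:
    print(p, delta, "->", interpret_result(p, delta, min_meaningful_effect=5.0))
```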

Stephen Senn provides crucial guidance for navigating these scenarios, particularly warning against the common mistake of using the same value (δ) both as the difference we would be happy to find and the difference we would not like to miss [21]. When planning trials, researchers should use a more modest definition of clinical relevance (δ1) for judging the practical acceptability of results than that used (δ4) for power calculations, recognizing that δ1 < δ4 [21].

Field-Specific Considerations

Clinical Research Implications: In nursing and medical practice, distinguishing between statistical and clinical significance prevents misinterpretation and misapplication of research findings [19]. While statistical significance provides information about the likelihood of results occurring by chance, it does not necessarily indicate practical importance [19]. Nurses must critically evaluate research studies to determine whether findings are relevant and applicable to their specific patient population, considering clinical context, patient preferences, and values alongside statistical measures [19].

Chemical Research Implications: In reaction optimization and drug discovery, practical significance transcends statistical measures to encompass yield improvements, cost reductions, environmental impact, and scalability. The emergence of adaptive experimentation, automation, and human-AI synergy is reshaping organic chemistry research [24], with successful approaches combining the rapid exploration capabilities of AI with the deep understanding of experienced chemists [24]. This integration represents a new paradigm where practical significance is prioritized alongside statistical rigor.

Business and A/B Testing Implications: In technological contexts, the distinction remains equally crucial. As noted in A/B testing guidance, "Insights that are statistically but not practically significant tell you something true but trivial. Insights that are practically but not statistically significant tell you something useful but uncertain" [20]. With industry estimates suggesting that as many as 80% of A/B tests fail to produce a statistically significant winner, countless resources are wasted acting on "winners" from inconclusive tests [25].

The critical distinction between statistical significance and practical significance represents a fundamental principle in rigorous scientific research, particularly in reaction optimization and drug development. While statistical significance addresses whether an effect can be distinguished from random variation, practical significance determines whether it matters in real-world applications [20]. This distinction is especially crucial in an era of large datasets and high-throughput experimentation, where statistical power can detect minute, meaningless differences [17] [23].

The most effective research approaches incorporate both concepts throughout the experimental process—from planning through interpretation—recognizing that they provide complementary rather than competing information. As the field progresses, successful researchers will be those who master both the mathematical rigor of statistical analysis and the contextual interpretation of practical impact, leveraging emerging technologies like artificial intelligence and high-throughput experimentation while maintaining focus on genuinely meaningful outcomes [23] [24].

The Role of Effect Size and its Interpretation in Yield and Selectivity Improvements

In the pursuit of optimizing chemical reactions, researchers traditionally focus on achieving statistical significance (the p-value) to demonstrate that an observed improvement is not due to random chance. However, in reaction optimization research, a more nuanced and often more critical question is: "Is the observed improvement large enough to be of practical value?" This is the domain of effect size, a quantitative measure of the magnitude of an experimental effect. For researchers and drug development professionals, accurately interpreting effect size is paramount for making informed decisions on process viability, resource allocation, and ultimately, translating laboratory findings into industrially relevant or clinically beneficial applications.

This guide compares the performance of different modern optimization strategies, focusing not just on their ability to find statistically significant effects, but on the substantive yield and selectivity improvements they deliver.
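As a minimal illustration of effect size for yield data, the sketch below computes both the raw difference in mean yield and Cohen's d (the difference divided by the pooled standard deviation). The replicate yields are hypothetical values chosen for easy arithmetic.

```python
from math import sqrt
from statistics import mean, variance

def cohens_d(reference, treatment):
    """Standardized mean difference using the pooled sample variance."""
    n_ref, n_trt = len(reference), len(treatment)
    pooled_var = ((n_ref - 1) * variance(reference)
                  + (n_trt - 1) * variance(treatment)) / (n_ref + n_trt - 2)
    return (mean(treatment) - mean(reference)) / sqrt(pooled_var)

# Hypothetical replicate yields (%) under baseline and optimized conditions
baseline_yields = [70.0, 72.0, 74.0]
optimized_yields = [78.0, 80.0, 82.0]

raw_difference = mean(optimized_yields) - mean(baseline_yields)  # percentage points
d = cohens_d(baseline_yields, optimized_yields)

print(f"raw difference = {raw_difference:.1f} points, Cohen's d = {d:.1f}")
```

Reporting the raw difference alongside the standardized value is usually helpful in chemistry, because a process chemist reasons in percentage points of yield while the standardized value shows how large the change is relative to run-to-run noise.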

Experimental Protocols for Reaction Optimization

The following section details the core methodologies employed in the contemporary studies from which the subsequent comparative data is drawn.

Statistical Design of Experiments (sDoE) for Screening

The Plackett-Burman Design (PBD) is a highly efficient screening method used to identify the most influential factors from a large set of variables before in-depth optimization [26].

- Objective: To simultaneously screen multiple reaction parameters (e.g., ligand properties, catalyst loading, base, solvent) and identify which have a significant effect on the outcome of cross-coupling reactions [26].

- Workflow:

- Factor Selection: Key factors and their two levels (e.g., high/+1 and low/–1) are defined. Examples include ligand electronic effect (νCO) and Tolman’s cone angle, catalyst loading (1 vs. 5 mol%), base strength (Et₃N vs. NaOH), and solvent polarity (DMSO vs. MeCN) [26].

- Experimental Design: A 12-run design is constructed to screen up to 11 factors. Experimental runs are randomized to minimize the influence of uncontrolled variables [26].

- Execution & Analysis: Reactions (e.g., Mizoroki–Heck, Suzuki–Miyaura) are performed, and yields are determined. Statistical analysis of the data ranks the factors based on their main effects, identifying the most critical variables for further optimization [26].
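The workflow above can be sketched in a few lines. The code builds the standard 12-run Plackett-Burman matrix from its cyclic generator row, checks that the columns are balanced and mutually orthogonal, and estimates main effects. The yields are synthetic and noise-free, constructed so that only two columns matter. They are not data from [26], and the mapping of columns to real factors is left open.

```python
import random

# Standard 12-run Plackett-Burman generator row (+1 = high level, -1 = low level)
GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

def plackett_burman_12():
    """Return the 12-run, 11-column design as a list of rows of +1/-1."""
    rows = [GENERATOR[-i:] + GENERATOR[:-i] for i in range(11)]  # cyclic shifts
    rows.append([-1] * 11)                                       # final all-low run
    return rows

def main_effects(design, responses):
    """Main effect per column: mean response at +1 minus mean response at -1."""
    half = len(design) / 2
    return [sum(row[j] * y for row, y in zip(design, responses)) / half
            for j in range(len(design[0]))]

design = plackett_burman_12()

# Synthetic noise-free yields in which only columns 0 and 2 influence the outcome
yields = [50 + 10 * row[0] - 4 * row[2] for row in design]
effects = main_effects(design, yields)

# Randomized execution order for the 12 runs (seed fixed only for reproducibility)
run_order = random.Random(0).sample(range(12), 12)

ranked = sorted(range(11), key=lambda j: abs(effects[j]), reverse=True)
print("columns ranked by |main effect|:", ranked[:3])
```

Because the columns are orthogonal, each main effect is estimated independently of the others. With real, noisy data the inactive columns would show small nonzero effects that serve as a rough gauge of experimental error.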

Machine Learning (ML) with High-Throughput Experimentation (HTE)

This protocol uses automated HTE to generate consistent, high-quality data for training machine learning models to predict reaction yields [27].

- Objective: To accurately predict reaction yields and recommend optimal conditions for novel substrate combinations, thereby minimizing experimental effort [27].

- Workflow:

- Diverse Substrate Selection: A representative set of substrates is selected from virtual chemical spaces (e.g., USPTO dataset) using machine-based sampling to ensure structural diversity [27].

- HTE Data Generation: An automated HTE platform conducts amide coupling reactions across a vast array of pre-determined conditions (e.g., 95 different conditions). Internal standards and replicate experiments are used to ensure data quality and reproducibility [27].

- Model Training with Intermediate Knowledge: A machine learning model is trained not only on reaction inputs and outputs but also on embedded "intermediate knowledge" (e.g., inferred mechanistic or physicochemical features), which significantly enhances its predictive robustness for novel substrates [27].

Forced Dynamic Operation (FDO) of Reactors

FDO is an advanced engineering approach that modulates reactor inputs to overcome fundamental thermodynamic and kinetic limitations [28].

- Objective: To enhance the selectivity-conversion tradeoff in catalytic reactions, such as oxidative dehydrogenation (ODH), to achieve yields beyond the steady-state optimum [28].

- Workflow:

- Catalyst Preparation: Supported metal oxide catalysts (e.g., VOx on Al₂O₃) are synthesized, as their lattice oxygen acts as a selective nucleophilic species [28].

- Dynamic Modulation: The reactor feed composition (e.g., ethane and oxygen concentrations) is deliberately and periodically switched between rich and lean phases, rather than being kept at a constant steady state [28].

- Performance Evaluation: Time-averaged ethylene selectivity and ethane conversion are measured over multiple cycles. The parameters of modulation (frequency, amplitude) are systematically tuned to maximize the time-averaged yield of the desired product [28].

Comparative Performance Data of Optimization Strategies

The table below summarizes the typical effect sizes, characterized by yield and selectivity improvements, achieved by the different optimization protocols.

Table 1: Comparison of Reaction Optimization Strategies and Their Outcomes

| Optimization Strategy | Reaction Type | Key Parameters Optimized | Reported Effect Size (Yield Improvement) | Key Advantages | Limitations |

|---|---|---|---|---|---|

| Statistical DoE (Plackett-Burman) [26] | C-C Cross-Coupling | Ligand, Catalyst, Base, Solvent | Identifies influential factors for future optimization | Highly efficient factor screening; Reduces experimental runs by ~90% vs. OFAT [26] | Screening only; does not provide optimal parameter levels |

| Machine Learning (with HTE) [27] | Amide Coupling | Reagent, Solvent, Additive | R² = 0.71 on external test set; Can recommend high-yield conditions for novel substrates [27] | High predictive accuracy for novel substrates; Guides condition recommendation [27] | High initial HTE investment; Requires data science expertise |

| Forced Dynamic Operation [28] | Ethane ODH | Feed Concentration, Cycling Frequency | 7% absolute increase in ethylene yield over steady-state maximum [28] | Overcomes fundamental selectivity-conversion tradeoff [28] | Complex reactor design and control; Not universally applicable |
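For readers less familiar with the R² figure quoted for the ML model, the sketch below shows how a coefficient of determination is computed on a held-out set. The measured and predicted yields are hypothetical and unrelated to the dataset in [27].

```python
def r_squared(measured, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_measured = sum(measured) / len(measured)
    ss_residual = sum((m - p) ** 2 for m, p in zip(measured, predicted))
    ss_total = sum((m - mean_measured) ** 2 for m in measured)
    return 1 - ss_residual / ss_total

# Hypothetical held-out yields (%) and the corresponding model predictions
measured_yields = [10, 20, 30, 40, 50]
predicted_yields = [12, 18, 33, 37, 50]

r2 = r_squared(measured_yields, predicted_yields)
print(f"R^2 on held-out reactions = {r2:.3f}")
```

An R² value describes how much of the variation in yield the model explains on unseen reactions. It is a measure of predictive quality and is not directly comparable to a yield improvement such as the absolute increase reported for FDO.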

Visualizing Optimization Workflows

The following diagrams illustrate the logical workflows for the key optimization strategies discussed.

sDoE Screening Process

ML Yield Prediction Process

The Scientist's Toolkit: Key Research Reagent Solutions

The successful implementation of these advanced optimization strategies relies on specific reagents and materials.

Table 2: Essential Research Reagents and Their Functions

| Reagent/Material | Function in Optimization | Example Use-Case |

|---|---|---|

| Phosphine Ligands [26] | Modulates steric and electronic properties of catalyst; A key factor screened in sDoE. | Screening in Pd-catalyzed C-C cross-coupling (Suzuki, Heck) [26]. |

| VOx / Al₂O₃ Catalyst [28] | Provides lattice oxygen for selective oxidation; Critical for FDO performance. | Ethane Oxidative Dehydrogenation (ODH) to ethylene [28]. |

| Amine & Acid Substrate Libraries [27] | Provides structurally diverse building blocks for robust ML model training. | Building high-quality HTE datasets for amide coupling reaction optimization [27]. |

| Palladium Catalysts (e.g., K₂PdCl₄) [26] | The central catalytic metal for cross-coupling reactions. | Mizoroki–Heck and Suzuki–Miyaura reactions [26]. |

| Polar Aprotic Solvents (DMSO, MeCN) [26] | Influences reaction rate and pathway; A common factor in screening studies. | Solvent factor in Plackett-Burman screening designs [26]. |

In statistical hypothesis testing, particularly in reaction optimization research and drug development, decisions are made based on sample data. These decisions are inherently probabilistic, carrying the risk of two distinct types of errors: Type I (false positive) and Type II (false negative) [29]. Incorrect conclusions can direct research down unproductive paths, wasting resources and potentially delaying the discovery of optimal reaction conditions or effective therapeutics.

A Type I error, or a false positive, occurs when a statistical test incorrectly rejects a true null hypothesis (H₀) [29] [30]. This is equivalent to concluding that an effect exists, such as a new catalyst or solvent system significantly improving reaction yield, when no real effect exists in the population [31]. The probability of committing a Type I error is denoted by alpha (α) and is also known as the significance level of a test, conventionally set at 0.05 (5%) [29] [32].

A Type II error, or a false negative, occurs when a statistical test fails to reject a false null hypothesis [29] [30]. This represents a missed opportunity, where a researcher fails to identify a genuinely effective optimization parameter or a bioactive compound. The probability of committing a Type II error is denoted by beta (β) [29]. The complement of this probability, 1 – β, is known as the statistical power of a test: the likelihood that it will detect an effect when one truly exists [31] [29].

Comparative Analysis: Error Types and Research Impact

The following table provides a structured comparison of these two fundamental error types, crucial for interpreting experimental data in scientific research.

Table 1: Comparison of Type I and Type II Errors

| Feature | Type I Error (False Positive) | Type II Error (False Negative) |

|---|---|---|

| Core Definition | Rejecting a true null hypothesis [29] [30] | Failing to reject a false null hypothesis [29] [30] |

| Analogy | Convicting an innocent defendant; "crying wolf" when there is no wolf [31] [32] [33] | Acquitting a guilty defendant; missing the wolf when it is actually there [31] [33] |

| Probability | Significance level (α) [29] [32] | Beta (β) [29] |

| Impact in Reaction Optimization | Implementing a reaction parameter (e.g., temperature, catalyst) that is actually ineffective, leading to wasted resources and misguided research directions [34] | Overlooking a parameter that genuinely optimizes yield or selectivity, resulting in missed opportunities for process improvement [35] |

| Impact in Drug Development | Concluding a drug is effective when it is not, potentially leading to costly clinical trials for an ineffective compound and false hope for patients [31] [34] | Failing to identify a truly effective therapeutic, halting the development of a potentially life-saving treatment [31] [36] |

The relationship between these errors and the correct decisions in hypothesis testing is visually and conceptually summarized in the following decision matrix.

The Significance-Power Trade-Off: An Experimental Design Perspective

A fundamental principle in statistics is the trade-off between Type I and Type II errors [29] [36]. This trade-off is governed by the pre-set significance level (α) and its direct influence on statistical power (1 – β). For a fixed sample size and effect size, adjusting the significance level to reduce one type of error increases the risk of the other [29] [32]. This relationship is critical for planning robust experiments in reaction optimization.

Table 2: The Trade-Off Between Significance Level and Power

| Experimental Action | Impact on Type I Error (α) | Impact on Type II Error (β) & Power (1–β) |

|---|---|---|

| Decrease α (e.g., from 0.05 to 0.01) | Decreased Risk [29] [34] | Increased β (Higher Type II Error Risk); Decreased Power [29] [36] |

| Increase α (e.g., from 0.05 to 0.10) | Increased Risk [29] | Decreased β (Lower Type II Error Risk); Increased Power [29] |

| Increase Sample Size (n) | No direct effect | Decreased β (Lower Type II Error Risk); Increased Power [31] [37] [35] |

| Increase Effect Size | No direct effect | Decreased β (Lower Type II Error Risk); Increased Power [37] [35] |
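The rows of this table can be checked numerically. The sketch below uses a normal approximation to the power of a two-sided, two-sample comparison with equal group sizes. The standardized effect size of 0.8 and the group sizes are arbitrary illustrative values, and an exact calculation for a t-test would give slightly lower power at small n.

```python
from math import sqrt
from statistics import NormalDist

def approx_power(effect_size, n_per_group, alpha=0.05):
    """Normal-approximation power of a two-sided two-sample test.

    effect_size is the standardized difference (difference / standard deviation).
    """
    standard_normal = NormalDist()
    z_critical = standard_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size * sqrt(n_per_group / 2)
    # Probability of landing in either rejection region when the effect is real
    return (standard_normal.cdf(shift - z_critical)
            + standard_normal.cdf(-shift - z_critical))

# Illustrative values: standardized effect 0.8, 10 replicates per condition
for alpha in (0.01, 0.05, 0.10):
    print(f"alpha = {alpha:.2f}: power = {approx_power(0.8, 10, alpha):.2f}")

print(f"n = 20 per group:   power = {approx_power(0.8, 20):.2f}")
print(f"effect size 1.2:    power = {approx_power(1.2, 10):.2f}")
```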

The inverse relationship between α and β, and how they are influenced by the study's sample size and the true effect size, can be visualized through their overlapping distributions. The following diagram illustrates how the critical value for rejecting the null hypothesis creates a direct link between the probability of a Type I error (α) and the probability of a Type II error (β).

Experimental Protocols for Error Control in Research

Protocol for Controlling Type I (False Positive) Errors

- A Priori Alpha Level Specification: Before data collection, firmly establish the significance level (α) based on the consequences of a false positive. While 0.05 is conventional, a stricter level (e.g., 0.01) is warranted for high-stakes research, such as initial drug efficacy studies [32] [34].

- Multiple Comparison Corrections: When conducting numerous statistical tests simultaneously (e.g., testing the effect of dozens of reaction conditions on yield), the family-wise error rate inflates. Apply the Bonferroni correction (conservative) to control the family-wise error rate, or a False Discovery Rate (FDR) procedure such as Benjamini-Hochberg to limit the expected proportion of false positives among the results declared significant [31] [34] [36].

- Pre-registration of Studies and Analysis Plans: Publicly registering the experimental hypothesis, design, and primary analysis plan before conducting the study helps prevent data dredging and p-hacking, which are practices that artificially inflate Type I error rates [32].
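The multiple-comparison step above can be sketched as follows. The code implements the Bonferroni rule and the Benjamini-Hochberg step-up procedure (one common way to control the FDR) in plain Python. The six p-values are hypothetical results from a small condition screen.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject a hypothesis only if its p-value is at most alpha / number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]

def benjamini_hochberg(p_values, alpha=0.05):
    """Benjamini-Hochberg step-up procedure controlling the false discovery rate."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose ordered p-value satisfies p_(k) <= (k / m) * alpha
    k_max = 0
    for rank, index in enumerate(order, start=1):
        if p_values[index] <= rank / m * alpha:
            k_max = rank
    rejected = [False] * m
    for index in order[:k_max]:
        rejected[index] = True
    return rejected

# Hypothetical p-values from testing six reaction conditions against a control
p_values = [0.001, 0.008, 0.012, 0.041, 0.20, 0.74]

print("Bonferroni:        ", bonferroni(p_values))
print("Benjamini-Hochberg:", benjamini_hochberg(p_values))
```

In this example Bonferroni retains two conditions and Benjamini-Hochberg retains three, which reflects the usual pattern: FDR control is less conservative and therefore better suited to exploratory screens where a few false leads are tolerable.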

Protocol for Controlling Type II (False Negative) Errors

- A Priori Power Analysis for Sample Size Determination: Before experimentation, conduct a statistical power analysis to determine the minimum sample size required to detect a pre-specified, practically significant effect size with a given power (typically 80% or 90%) and α level [31] [37]. This is the most direct method to ensure a study is sufficiently sensitive. The required sample size increases for detecting smaller effect sizes and for achieving higher power [31] [35].

- Maximizing Effect Size Through Experimental Design: A well-designed experiment can amplify the signal of interest. In reaction optimization, this could involve selecting catalyst candidates with fundamentally different mechanistic properties or solvent systems with large polarity differences, rather than testing structurally similar ligands with minimal expected difference in outcome [35].

- Reducing Measurement Error and Variability: Implement precise measurement techniques and controlled experimental conditions to reduce random noise in the data. In analytical chemistry, this could involve using instrumentation with higher precision, rigorous calibration, and replicating measurements to average out random error, thereby increasing the power to detect true effects [29].
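The a priori power analysis in the first step can be approximated with the standard normal-approximation formula for comparing two means, n per group = 2(z₁₋α/₂ + z₁₋β)² / d², where d is the standardized effect size. The sketch below is a planning aid under that approximation, with a hypothetical function name. An exact t-test calculation typically adds about one replicate per group.

```python
from math import ceil
from statistics import NormalDist

def replicates_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate replicates per condition for a two-sided two-sample comparison.

    effect_size is the smallest practically relevant difference divided by
    the expected run-to-run standard deviation.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_beta = standard_normal.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_beta) / effect_size) ** 2)

# Example: a 5-point yield gain with a 2.5-point standard deviation gives d = 2.0
print(replicates_per_group(2.0))              # large effect, few replicates
print(replicates_per_group(0.5))              # moderate effect
print(replicates_per_group(0.5, power=0.90))  # same effect, higher power
```

The steep growth in required replicates as the effect size shrinks is why reducing measurement variability is often the cheapest route to higher power in a reaction screen.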

The Scientist's Toolkit: Essential Reagents for Robust Statistical Inference

In the context of statistical testing and experimental design, the "research reagents" are the methodological components that ensure the integrity and reliability of conclusions.

Table 3: Essential Methodological Reagents for Hypothesis Testing

| Tool / Reagent | Function in the Experimental Protocol |

|---|---|

| Significance Level (α) | Pre-set threshold (e.g., 0.05) that defines the maximum tolerable risk of a Type I error (false positive) [29] [32]. |

| Statistical Power (1–β) | The probability of correctly rejecting a false null hypothesis; the target sensitivity of the experiment, often set at 0.80 or higher [31] [29]. |

| P-value | The computed probability of obtaining the observed results, or more extreme ones, if the null hypothesis is true. Compared to α to make a reject/fail-to-reject decision [29] [32]. |

| Effect Size | A quantitative measure of the magnitude of a phenomenon, independent of sample size (e.g., difference in means divided by standard deviation). Defines the minimal effect of practical interest [37] [35]. |

| Sample Size (n) | The number of independent experimental replicates (e.g., individual reaction runs, biological replicates). The primary factor under direct researcher control for increasing power [31] [37]. |

| Confidence Interval | A range of values, derived from the sample data, that is likely to contain the true population parameter. Provides an estimate of precision and is useful for assessing practical significance [33] [32]. |

From Theory to the Lab Bench: Implementing Statistical Analysis in HTE and ML Workflows

In the data-driven landscape of modern chemical and pharmaceutical research, reaction optimization remains a critical yet resource-intensive challenge [4]. While machine learning and high-throughput experimentation (HTE) have accelerated discovery, the foundational role of traditional statistical tests in validating results, identifying significant effects, and guiding experimental design is irreplaceable [38]. Misapplication of these tests, however, can lead to incorrect conclusions and wasted resources [38]. This guide provides an objective comparison of three cornerstone statistical tests—Student’s t-test, Analysis of Variance (ANOVA), and the Chi-squared test—within the context of reaction optimization research. We will detail their appropriate use cases, present comparative experimental data, and outline protocols to equip scientists with a reliable framework for establishing statistical significance in their work.

Core Test Comparison: Principles and Applications

The choice of statistical test is fundamentally dictated by the type of data (continuous vs. categorical) and the structure of the research question (e.g., comparing two groups versus multiple groups) [39] [40] [41]. The following table summarizes the key characteristics of each test relevant to reaction optimization.

Table 1: Comparison of Statistical Tests for Reaction Optimization

| Test | Primary Use in Reaction Optimization | Data Type Required | Key Assumptions | Typical Output for Significance |

|---|---|---|---|---|

| Student’s t-test [38] [42] | Comparing the mean outcome (e.g., yield, purity) between two independent experimental conditions or to a target value. | Continuous, numerical data (e.g., yield %, ee, concentration). | Data approximates a normal distribution; groups have roughly equal variances (for independent t-test); observations are independent. | p-value < 0.05 indicates a statistically significant difference between the means. |

| ANOVA [39] [42] | Determining if there is a significant difference in mean outcomes across three or more independent experimental conditions (e.g., multiple catalysts, solvents, or temperature levels). | Continuous, numerical data. | Normality within each group; homogeneity of variances across groups; independence of observations. | p-value < 0.05 indicates that at least one group mean is significantly different. Requires post-hoc tests (e.g., Tukey’s HSD) to identify which specific groups differ. |

| Chi-squared Test [38] [41] | Analyzing relationships between categorical variables or assessing the fit of observed categorical outcomes to an expected distribution (e.g., success/failure rates across different ligand classes, or distribution of product selectivity categories). | Categorical, frequency, or count data (e.g., number of successful reactions per condition). | Observations are independent; expected frequency in each contingency table cell is at least 5. | p-value < 0.05 indicates a significant association between variables or a significant deviation from the expected distribution. |

Methodological Protocols for Key Experiments

The reliable application of these tests requires adherence to sound experimental and analytical protocols. Below are generalized methodologies for implementing each test in a reaction optimization context.

Protocol for Independent Samples t-Test

Objective: To determine if a change in a single two-level reaction factor (e.g., using Ligand A vs. Ligand B) leads to a statistically significant difference in a mean outcome (e.g., yield).

- Experimental Design: Conduct a minimum of 3-5 replicate reactions for each of the two conditions being compared (e.g., Condition A with Ligand A, Condition B with Ligand B), ensuring all other parameters are constant.

- Data Collection: Measure the continuous outcome variable (e.g., yield via HPLC analysis) for each replicate.

- Assumption Checking: Test each dataset for normality (e.g., using Shapiro-Wilk test) and check for homogeneity of variances (e.g., using Levene’s test).

- Test Execution: If assumptions are met, perform an independent two-sample t-test [42]. The test statistic is calculated as:

t = (Mean₁ - Mean₂) / √(s_p²(1/n₁ + 1/n₂)), where s_p² is the pooled variance. A p-value is derived from the t-distribution with n₁ + n₂ - 2 degrees of freedom [43] [42].

- Interpretation: A p-value below the significance threshold (α = 0.05) allows rejection of the null hypothesis, concluding the mean outcomes are significantly different [39].
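The protocol above can be sketched with SciPy. The replicate yields are hypothetical. The manual calculation mirrors the pooled-variance formula, and `scipy.stats.ttest_ind` with `equal_var=True` is used for the p-value. With only five replicates per condition the normality and variance checks have little power, so they are shown as a screening step, not as proof that the assumptions hold.

```python
from math import sqrt
from statistics import mean, variance

from scipy import stats

# Hypothetical HPLC yields (%) from five replicate reactions per ligand
ligand_a = [71.2, 73.5, 72.8, 70.9, 72.1]
ligand_b = [76.4, 78.1, 77.0, 75.8, 77.6]

# Assumption checks: Shapiro-Wilk for normality, Levene for equal variances
shapiro_a = stats.shapiro(ligand_a)
shapiro_b = stats.shapiro(ligand_b)
levene = stats.levene(ligand_a, ligand_b)

# Manual t statistic using the pooled variance s_p^2
n1, n2 = len(ligand_a), len(ligand_b)
pooled_var = ((n1 - 1) * variance(ligand_a)
              + (n2 - 1) * variance(ligand_b)) / (n1 + n2 - 2)
t_manual = (mean(ligand_a) - mean(ligand_b)) / sqrt(pooled_var * (1 / n1 + 1 / n2))

# Library result: independent two-sample t-test assuming equal variances
result = stats.ttest_ind(ligand_a, ligand_b, equal_var=True)

print(f"Shapiro p: A = {shapiro_a.pvalue:.2f}, B = {shapiro_b.pvalue:.2f}")
print(f"Levene p = {levene.pvalue:.2f}")
print(f"t = {result.statistic:.2f} (manual {t_manual:.2f}), p = {result.pvalue:.5f}")
```

If Levene's test suggests unequal variances, passing `equal_var=False` switches to Welch's t-test, which does not rely on the pooled variance.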

Protocol for One-Way ANOVA

Objective: To evaluate the effect of a categorical factor with three or more levels (e.g., Solvent: THF, Dioxane, DME, Toluene) on a continuous reaction outcome.

- Experimental Design: Perform replicated reactions (n≥3) for each level of the categorical factor.

- Data Collection & Assumption Checking: As with the t-test, collect continuous outcome data and check for normality and homogeneity of variances across all groups.

- Test Execution: Compute the F-statistic, which is the ratio of variance between the group means to the variance within the groups:

F = MS(Between) / MS(Within) [42]. Associated degrees of freedom are k - 1 (between groups) and N - k (within groups), where k is the number of groups and N the total sample size.

- Interpretation: A significant p-value (p < 0.05) indicates that not all group means are equal. This must be followed by a post-hoc test like Tukey’s Honest Significant Difference (HSD) to perform pairwise comparisons and identify which specific solvents lead to different yields [39] [42].
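A minimal sketch of the ANOVA calculation follows. It computes the F-statistic by hand from the between-group and within-group mean squares and compares it with `scipy.stats.f_oneway`. The solvent yields are hypothetical triplicates chosen so the arithmetic is easy to follow.

```python
from statistics import mean

from scipy import stats

# Hypothetical triplicate yields (%) for four solvents
yields_by_solvent = {
    "THF": [62.0, 64.0, 63.0],
    "Dioxane": [70.0, 72.0, 71.0],
    "DME": [66.0, 65.0, 67.0],
    "Toluene": [55.0, 57.0, 56.0],
}
groups = list(yields_by_solvent.values())

k = len(groups)                          # number of groups
total_n = sum(len(g) for g in groups)    # total number of reactions
grand_mean = mean([y for g in groups for y in g])

# Sums of squares between and within groups
ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
ss_within = sum((y - mean(g)) ** 2 for g in groups for y in g)

# F = MS(Between) / MS(Within) with k - 1 and N - k degrees of freedom
f_manual = (ss_between / (k - 1)) / (ss_within / (total_n - k))

# Library result for the same data
f_stat, p_value = stats.f_oneway(*groups)

print(f"F = {f_stat:.1f} (manual {f_manual:.1f}), "
      f"df = ({k - 1}, {total_n - k}), p = {p_value:.2e}")
```

A significant result here only says that at least one solvent differs. Recent SciPy releases provide `scipy.stats.tukey_hsd` for the pairwise follow-up.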

Protocol for Chi-squared Test of Independence

Objective: To assess if two categorical factors in an optimization screen are independent (e.g., is "Reaction Success" associated with "Base Type"?).

- Experimental Design: Run a matrix of reactions covering all combinations of categories (e.g., different bases and ligands). Record the outcome for each reaction as a categorical success/failure or by product selectivity class.

- Data Organization: Tally the counts into a contingency table. For example, rows as Base (KOt-Bu, Cs₂CO₃, NaOAc) and columns as Outcome (Success, Failure).

- Expected Frequency Calculation: Calculate the expected count for each cell under the assumption of independence:

E_ij = (Row Total_i * Column Total_j) / Grand Total [42].

- Test Execution: Compute the Chi-squared statistic:

χ² = Σ [(O_ij - E_ij)² / E_ij] across all cells [42]. The degrees of freedom are (rows - 1) * (columns - 1).

- Interpretation: A significant p-value suggests an association between the factors (e.g., the choice of base influences the likelihood of reaction success) [38] [41].
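The expected-count and χ² formulas can be illustrated as follows. The contingency counts are hypothetical (24 reactions per base), and the manual calculation is compared with `scipy.stats.chi2_contingency` as a sanity check.

```python
import numpy as np
from scipy import stats

# Hypothetical counts: rows are bases, columns are (Success, Failure)
observed = np.array([
    [18, 6],   # KOt-Bu
    [12, 12],  # Cs2CO3
    [5, 19],   # NaOAc
])

# E_ij = (Row Total_i * Column Total_j) / Grand Total
row_totals = observed.sum(axis=1, keepdims=True)
col_totals = observed.sum(axis=0, keepdims=True)
expected_manual = row_totals * col_totals / observed.sum()

# Chi-squared statistic summed over all cells, and its degrees of freedom
chi2_manual = ((observed - expected_manual) ** 2 / expected_manual).sum()
dof_manual = (observed.shape[0] - 1) * (observed.shape[1] - 1)

# The same test via SciPy (no continuity correction, to match the formula above)
chi2, p_value, dof, expected = stats.chi2_contingency(observed, correction=False)

print(f"chi2 (manual) = {chi2_manual:.3f}, chi2 (SciPy) = {chi2:.3f}")
print(f"dof = {dof}, p = {p_value:.5f}")
```

The Chi-squared approximation is commonly considered reliable only when expected counts are not too small (a frequent rule of thumb is at least 5 per cell), so inspecting `expected` before interpreting the p-value is a sensible habit.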

Statistical Test Selection Workflow for Reaction Optimization

The logical process for selecting the appropriate statistical test based on the nature of the reaction data and the experimental question can be visualized in the following decision diagram.

Diagram 1: Decision Workflow for Statistical Test Selection in Reaction Optimization.

The Scientist's Toolkit: Essential Research Reagent Solutions

The experimental data fed into statistical analyses are generated from carefully designed reactions. The following table lists key materials and tools commonly employed in modern, data-rich reaction optimization campaigns [4] [44].

Table 2: Key Research Reagent Solutions for Reaction Optimization Screening

| Item | Function in Optimization |

|---|---|

| High-Throughput Experimentation (HTE) Platform [4] | Enables automated, parallel synthesis of hundreds to thousands of reaction variants on micro-scale, generating the large datasets required for robust statistical analysis. |

| Diverse Catalyst & Ligand Libraries | Provides a broad matrix of categorical variables to screen for significant effects on reaction outcomes (e.g., activity, selectivity) using tests like ANOVA or Chi-squared. |

| Solvent Screening Kits | Allows systematic exploration of solvent effects, a critical continuous or categorical parameter, on yield and reaction profile. |

| Internal Standard & Analytical Standards | Ensures accurate and precise quantification of reaction outcomes (yield, conversion, ee) via techniques like HPLC, GC, or NMR, producing the continuous data for t-tests and ANOVA. |

| Statistical Software/Scripting Environment (e.g., Python/R) | Used to perform the statistical tests, calculate p-values, and visualize results, moving from raw data to actionable insights [43]. |

Selecting the correct statistical test is not a mere procedural step but a critical determinant of experimental validity in reaction optimization. The t-test serves as a precise tool for comparing two specific conditions, one-way ANOVA compares three or more levels of a factor in a single test, and the Chi-squared test unravels relationships within categorical outcome spaces like reaction success matrices. By aligning the experimental design with the appropriate test, guided by the data type and research question, researchers can move beyond subjective intuition to make objective, statistically sound decisions that accelerate the development of robust and efficient chemical processes [38] [40]. In an era of automated HTE and machine learning guidance [4], these classical statistical methods remain indispensable for rigorously validating discoveries and ensuring the significance of optimization results is rooted in reliable evidence.

Leveraging Confidence Intervals to Assess Precision in Parameter Estimation

In the empirical sciences, particularly reaction optimization in chemistry and drug development, parameter estimation is fundamental. The movement away from simplistic null hypothesis significance testing toward a focus on estimating effect sizes and building predictive models has made the precision of parameter estimates a central concern [45]. Confidence Intervals (CIs) provide a range of plausible values for an unknown parameter, offering a more nuanced and informative measure of uncertainty than a binary p-value [46]. In the context of reaction optimization—where parameters can represent catalyst loading, optimal temperature, or kinetic constants—accurately quantifying the precision of these estimates is critical for developing robust, scalable, and efficient chemical processes. This guide compares the performance of various CI methodologies, providing researchers with the data needed to select the optimal approach for assessing precision in their parameter estimation tasks.

Foundational Methods for Constructing Confidence Intervals

Several statistical methods exist for constructing confidence intervals, each with unique assumptions, strengths, and limitations. The choice of method directly impacts the reliability of the precision assessment, especially with complex parameters common in reaction optimization data.

- Standard Wald-type Intervals: The most common approach, based on asymptotic normality. It is simple to compute but can perform poorly in small samples or for non-linear parameters, where its symmetry may be unrealistic [45] [47].

- The Delta Method: Used for nonlinear functions of parameters, such as ratios. This method employs a first-order Taylor expansion to approximate the variance. While it typically produces a bounded, symmetric interval, its coverage probability can be inaccurate at moderate sample sizes, and it does not account for the skewness often present in finite-sample distributions [47].

- The Fieller Method: A classical approach specifically for ratios of parameters. It inverts a pivotal test statistic and can produce asymmetric intervals, better reflecting the skewness of a ratio's sampling distribution. A significant limitation is that it can produce unbounded intervals (e.g., the entire real line) when the denominator is not significantly different from zero [47].

- Bootstrap Methods: Resampling techniques (e.g., Percentile Bootstrap (PB) and Reverse Percentile Interval (RPI)) that empirically estimate the sampling distribution of a statistic. They are computationally intensive but make fewer distributional assumptions. Comparative analyses show that the Percentile Bootstrap often outperforms the Reverse Percentile method in terms of coverage accuracy and interval score [48].

- Sup-t Confidence Bands: An extension of confidence intervals to parameter vectors. These are crucial when multiple parameters are estimated simultaneously (e.g., effects on multiple outcomes or effect measure modification). Standard CIs for individual parameters do not provide correct simultaneous coverage, and their collective use understates uncertainty. Sup-t bands adjust for multiple comparisons to guarantee nominal coverage for the entire set of parameters [45].
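To make the contrast between the Delta and Fieller methods concrete, here is a minimal sketch for the ratio of two independent means. Several simplifications are assumptions of this example rather than anything prescribed by the cited sources: the two samples are treated as independent, a normal critical value is used instead of a t quantile, and every unbounded Fieller case is reported simply as the whole real line. The rate data and function names are hypothetical.

```python
import numpy as np
from scipy import stats

def _mean_and_var_of_mean(sample):
    """Sample mean and the estimated variance of that mean."""
    return sample.mean(), sample.var(ddof=1) / len(sample)

def delta_ci(x, y, level=0.95):
    """Symmetric Delta-method interval for mean(x) / mean(y), independent samples."""
    m1, v1 = _mean_and_var_of_mean(x)
    m2, v2 = _mean_and_var_of_mean(y)
    r = m1 / m2
    se = np.sqrt(v1 / m2**2 + m1**2 * v2 / m2**4)  # first-order Taylor variance
    z = stats.norm.ppf(0.5 + level / 2)
    return r - z * se, r + z * se

def fieller_ci(x, y, level=0.95):
    """Fieller interval: values rho with (m1 - rho*m2)^2 <= z^2 (v1 + rho^2 v2)."""
    m1, v1 = _mean_and_var_of_mean(x)
    m2, v2 = _mean_and_var_of_mean(y)
    z = stats.norm.ppf(0.5 + level / 2)
    a = m2**2 - z**2 * v2
    b = -2 * m1 * m2
    c = m1**2 - z**2 * v1
    if a <= 0:
        # Denominator not significantly different from zero: unbounded interval
        # (simplification: reported here as the whole real line)
        return -np.inf, np.inf
    root = np.sqrt(b**2 - 4 * a * c)
    return (-b - root) / (2 * a), (-b + root) / (2 * a)

# Hypothetical initial rates with and without a catalyst additive
rate_cat = np.array([4.1, 3.8, 4.5, 4.0, 4.3, 3.9])
rate_ref = np.array([2.0, 2.3, 1.8, 2.1, 2.2, 1.9])

ratio = rate_cat.mean() / rate_ref.mean()
lo_d, hi_d = delta_ci(rate_cat, rate_ref)
lo_f, hi_f = fieller_ci(rate_cat, rate_ref)
print(f"ratio = {ratio:.3f}")
print(f"Delta CI:   ({lo_d:.3f}, {hi_d:.3f})")
print(f"Fieller CI: ({lo_f:.3f}, {hi_f:.3f})")
```

With positive means, the Fieller interval extends further above the point estimate than below it, which is the asymmetry described in the list above; the Delta interval is centered on the estimate by construction.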

Advanced and Emerging Techniques

Innovations in statistical computing and theory have led to more refined techniques that address the shortcomings of classical methods.

- Bias-Corrected Methods with Edgeworth Expansion: Novel analytical approaches modify the Delta method by using an Edgeworth expansion to correct for skewness arising from non-normal and asymmetric distributions. When combined with a bias-corrected estimator for the parameter itself, these methods produce confidence intervals with a coverage probability that converges to the nominal level at a rate of O(n^{-1/2}) and typically yield bounded intervals [47].

- Bayesian and AI-Guided Frameworks: With the rise of machine learning in optimization, Bayesian approaches are increasingly used. These methods produce credible intervals, the Bayesian analogue of confidence intervals, by combining prior knowledge with experimental data. In AI-guided reaction optimization, understanding the uncertainty of model predictions is critical for calibrating trust and making informed decisions [4] [49].
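As a small illustration of a credible interval, the sketch below uses a conjugate Beta-Binomial model for a reaction success rate. The uniform Beta(1, 1) prior and the counts (18 successes in 24 runs) are assumptions made for this example only; a real campaign would choose the prior from domain knowledge.

```python
from scipy import stats

# Hypothetical screening result: 18 successful reactions out of 24 runs
successes, trials = 18, 24

# Assumed uniform prior Beta(1, 1); the posterior is Beta(a + successes, b + failures)
prior_a, prior_b = 1.0, 1.0
post_a = prior_a + successes
post_b = prior_b + (trials - successes)

# Equal-tailed 95% credible interval from the posterior quantiles
lower, upper = stats.beta.ppf([0.025, 0.975], post_a, post_b)
posterior_mean = post_a / (post_a + post_b)

print(f"Posterior mean success rate: {posterior_mean:.3f}")
print(f"95% credible interval: ({lower:.3f}, {upper:.3f})")
```

Unlike a frequentist confidence interval, this interval is a direct probability statement about the success rate, conditional on the assumed prior and model.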

Table 1: Comparison of Key Confidence Interval Construction Methods

| Method | Primary Use Case | Key Assumptions | Advantages | Disadvantages |

|---|---|---|---|---|

| Standard Wald | Single parameters | Asymptotic normality | Computational simplicity, ease of presentation | Poor small-sample performance, symmetric intervals |

| Delta Method | Nonlinear functions (e.g., ratios) | Asymptotic normality of function | Produces bounded, symmetric intervals | Inaccurate coverage, ignores skewness, unbalanced tail errors [47] |

| Fieller Method | Ratios of parameters | Bivariate normality of numerator/denominator | Can produce realistic asymmetric intervals | Can yield unbounded or disconnected intervals [47] |

| Percentile Bootstrap | General purpose, small samples | Representative sampling | Few distributional assumptions, handles complexity | Computationally intensive, performance can vary [48] |

| Sup-t Confidence Bands | Multiple parameters / comparisons | Multivariate normality | Provides correct simultaneous coverage, easy presentation | Wider than pointwise intervals, requires covariance estimation [45] |

| Bias-Corrected Edgeworth | Nonlinear functions, small samples | Finite moments for expansion | Corrects for skewness, good coverage, bounded | More complex computation, relatively novel [47] |

Experimental Comparison of CI Performance

Simulation Protocol for Method Evaluation

To objectively compare the performance of different CI methods, researchers often conduct simulation studies. A typical protocol involves the following steps, which can be adapted for parameters relevant to reaction optimization (e.g., rate constants, optimal temperatures):

- Data Generation: Simulate thousands of datasets from a known statistical model where the true parameter value, θ, is predefined. For ratio parameters, this involves generating paired data for the numerator and denominator [47] [48].

- Parameter Estimation: For each simulated dataset, compute the point estimate of the parameter, θ_hat.

- Interval Construction: For each method under evaluation (e.g., Delta, Fieller, Bootstrap, Edgeworth), construct a 100(1-α)% confidence interval (e.g., 95% CI) for θ.

- Performance Calculation:

  - Coverage Probability: Calculate the proportion of simulated datasets for which the constructed CI contains the true parameter θ. A well-calibrated method should have a coverage probability close to the nominal level (e.g., 0.95).

  - Average Interval Width: Compute the average width of the CIs across all simulations. Narrower intervals indicate greater precision, but only if coverage is adequate.

  - Interval Score: A composite metric that rewards narrowness but penalizes intervals that miss the true parameter, providing a single measure for comparison [48].
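The simulation protocol can be sketched as below for a ratio of means, comparing a Delta-method interval with a percentile bootstrap. Everything specific here is an assumption of the example: the gamma data-generating model, the true ratio of 2.0, the small simulation and bootstrap counts (kept low so the script runs quickly), and the use of the Winkler-type interval score, which is one common definition and may differ from the one used in [48].

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
TRUE_RATIO = 2.0                      # known parameter theta
n, n_sim, n_boot, alpha = 30, 500, 300, 0.05
z = stats.norm.ppf(1 - alpha / 2)

def delta_interval(x, y):
    """Delta-method CI for mean(x)/mean(y) with paired observations."""
    m1, m2 = x.mean(), y.mean()
    r = m1 / m2
    cov = np.cov(x, y, ddof=1) / len(x)   # covariance matrix of the two means
    var_r = (cov[0, 0] - 2 * r * cov[0, 1] + r**2 * cov[1, 1]) / m2**2
    half = z * np.sqrt(var_r)
    return r - half, r + half

def percentile_bootstrap_interval(x, y):
    """Percentile bootstrap CI, resampling (x_i, y_i) pairs with replacement."""
    idx = rng.integers(0, len(x), size=(n_boot, len(x)))
    ratios = x[idx].mean(axis=1) / y[idx].mean(axis=1)
    lo, hi = np.quantile(ratios, [alpha / 2, 1 - alpha / 2])
    return lo, hi

def interval_score(lo, hi, theta):
    """Width plus a penalty of 2/alpha per unit by which the interval misses theta."""
    return (hi - lo) + (2 / alpha) * (max(lo - theta, 0.0) + max(theta - hi, 0.0))

methods = {"delta": delta_interval, "bootstrap": percentile_bootstrap_interval}
results = {name: [] for name in methods}

for _ in range(n_sim):
    # Data generation: denominator has mean 1, numerator has mean TRUE_RATIO
    y = rng.gamma(shape=4.0, scale=0.25, size=n)
    x = rng.gamma(shape=4.0, scale=0.25 * TRUE_RATIO, size=n)
    for name, make_interval in methods.items():
        lo, hi = make_interval(x, y)
        covered = lo <= TRUE_RATIO <= hi
        results[name].append((covered, hi - lo, interval_score(lo, hi, TRUE_RATIO)))

# Columns: coverage probability, average width, average interval score
summary = {name: np.mean(vals, axis=0) for name, vals in results.items()}
for name, (coverage, width, score) in summary.items():
    print(f"{name:>9}: coverage = {coverage:.3f}, width = {width:.3f}, score = {score:.3f}")
```

For a production study, `n_sim` and `n_boot` would normally be in the thousands, and the data-generating model would be chosen to resemble the noise structure of the actual assay.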

Comparative Performance Data

Simulation studies provide quantitative evidence for selecting a CI method. The following tables summarize typical findings.

Table 2: Simulated Coverage Probabilities (%) for a Ratio Parameter (Nominal Level = 95%)

| Sample Size (n) | Delta Method | Fieller Method | Percentile Bootstrap | Bias-Corrected Edgeworth |

|---|---|---|---|---|

| 30 | 89.2 | 93.5 | 91.8 | 94.1 |

| 50 | 91.5 | 94.3 | 93.2 | 94.8 |

| 100 | 93.1 | 94.9 | 94.5 | 95.1 |

| 500 | 94.6 | 95.0 | 94.9 | 95.0 |

Table 3: Average Width of Confidence Intervals from Simulation

| Sample Size (n) | Delta Method | Fieller Method | Percentile Bootstrap | Bias-Corrected Edgeworth |

|---|---|---|---|---|

| 30 | 1.45 | 1.81 | 1.72 | 1.78 |

| 50 | 1.12 | 1.29 | 1.25 | 1.27 |

| 100 | 0.79 | 0.85 | 0.83 | 0.84 |

| 500 | 0.35 | 0.36 | 0.36 | 0.36 |

Key Findings from Experimental Data:

- Coverage: The standard Delta method often shows under-coverage in small samples (n<100), meaning it is too optimistic about precision. The Fieller, Bootstrap, and Bias-Corrected Edgeworth methods more reliably achieve the nominal coverage level [47].

- Precision vs. Accuracy: While the Delta method produces the narrowest intervals, this is a false precision, as its coverage is inadequate. The slightly wider intervals from other methods are necessary for statistically accurate inference [47].

- Sample Size: As sample size increases, all methods converge toward the nominal coverage and their interval widths become similar, demonstrating the asymptotic validity of these procedures [47].

The Scientist's Toolkit: Essential Reagents for Statistical Precision

Successfully implementing these statistical techniques requires a combination of computational tools and methodological knowledge.

Table 4: Key Research Reagent Solutions for Statistical Analysis

| Tool / Reagent | Function in Analysis | Application Example |

|---|---|---|

| R / Python (statsmodels) | Open-source statistical software for computing CIs | Implementing Delta method, Bootstrap, and custom simulations [45] |

| DoE Software (JMP, MODDE) | Designs efficient experiments to maximize information gain | Generating data that leads to more precise parameter estimates [50] |

| Bootstrap Resampling Code | Automates the process of drawing samples with replacement | Estimating the sampling distribution of a complex kinetic parameter [48] |