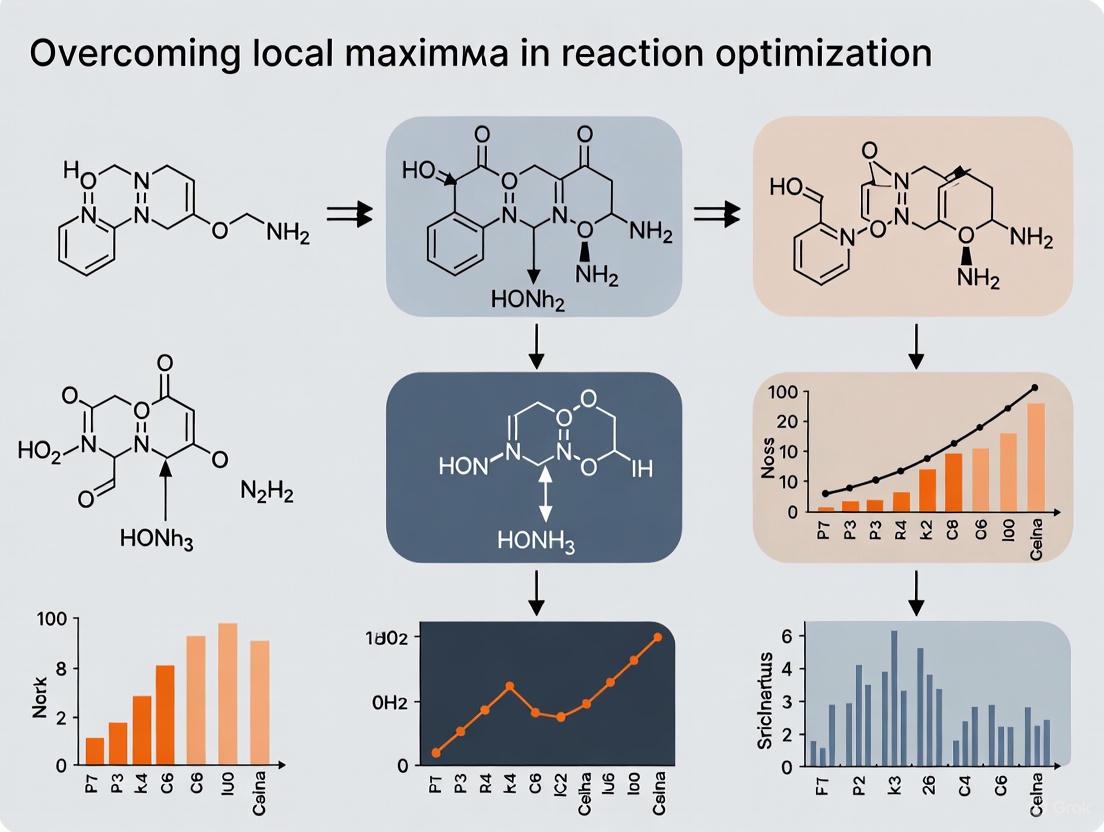

Beyond the Plateau: Advanced Strategies to Overcome Local Maxima in Chemical Reaction and Drug Discovery Optimization

Abstract

This article addresses the pervasive challenge of local maxima in optimization processes critical to chemical synthesis and drug discovery. It explores the fundamental limitations of traditional One-Factor-At-a-Time (OFAT) methods that often converge on suboptimal solutions. The scope encompasses a detailed examination of modern global optimization algorithms—including stochastic, deterministic, and Bayesian methods—and their practical applications in overcoming energy landscape complexities. The content provides a troubleshooting guide for premature convergence and a comparative analysis of algorithmic performance across case studies in pharmaceutical development and materials science. Tailored for researchers, scientists, and drug development professionals, this review synthesizes strategic methodologies to enhance optimization efficiency, improve success rates in lead compound identification, and accelerate the development of viable therapeutic agents.

The Local Optima Problem: Understanding the Fundamental Barrier in Reaction Optimization

Frequently Asked Questions

What is a local maximum in the context of my reaction optimization? A local maximum is a point in your experimental parameter space where the reaction outcome (e.g., yield or selectivity) is higher than all other nearby points. However, it may not be the absolute best possible result (global maximum) for your system. Reaching a local maximum can make it seem like further optimization is impossible, even though better conditions might exist [1] [2] [3].

How can I tell if my optimization has stalled at a local maximum? Your optimization may be stuck at a local maximum if you observe a plateau in performance despite variations in reaction parameters, or if traditional one-factor-at-a-time (OFAT) approaches no longer lead to improvement [1] [4].

What are the main strategies to escape a local maximum? The primary strategies involve broadening your search. This includes exploring high-dimensional parameter spaces (e.g., solvent, catalyst, and temperature simultaneously) instead of varying single factors, and employing machine learning (ML) and high-throughput experimentation (HTE) to efficiently navigate complex reaction landscapes and discover better regions of performance [5] [4].

Why do traditional OFAT methods often get stuck? OFAT methods are limited because they can only explore a small, linear path through the multi-dimensional experimental space. They often miss optimal parameter combinations that exist outside of this narrow path and are ineffective at mapping the complex, interactive effects between different reaction variables [4].

What is the role of machine learning in overcoming this challenge? Machine learning, particularly Bayesian optimization, can model the complex relationship between your reaction parameters and outcomes. It intelligently proposes new experiments by balancing exploration of unknown regions and exploitation of known promising areas, thereby efficiently escaping local maxima and guiding you toward the global optimum [4].

Troubleshooting Guides

Guide 1: Diagnosing a Suspected Local Maximum

1. Understand the Problem

- Verify the Plateau: Confirm that your reaction yield or selectivity has truly stopped improving. Collect sufficient data to ensure that performance fluctuations are not due to experimental noise [6].

- Map a Local Landscape: Slightly but systematically vary two or three key parameters around your current best conditions (e.g., catalyst loading and temperature) to create a small response surface. A concave-down shape in this local map shows that you are sitting on a maximum; on its own it cannot tell you whether that maximum is local or global, which is why the wider checks in the next step are needed [2].

2. Isolate the Issue

- Compare to a Baseline: Benchmark your current results against a known, well-performing reaction system or a different substrate. This helps determine if the performance ceiling is specific to your current setup [6].

- Challenge Assumptions: Re-evaluate fixed parameters you may have taken for granted, such as solvent or catalyst class. A local maximum in one chemical space might not exist in another [4].
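The "verify the plateau" check in step 1 can be sketched numerically. The example below is a minimal illustration, assuming replicate yields are available at the current best condition and at a nearby candidate; the yield values and the rough threshold of 2 are hypothetical placeholders, and a proper significance test should replace the threshold in real work.

```python
import math
import statistics

def welch_t(sample_a, sample_b):
    """Welch's t statistic for the difference in means (b minus a)."""
    mean_a, mean_b = statistics.mean(sample_a), statistics.mean(sample_b)
    var_a, var_b = statistics.variance(sample_a), statistics.variance(sample_b)
    standard_error = math.sqrt(var_a / len(sample_a) + var_b / len(sample_b))
    return (mean_b - mean_a) / standard_error

# Hypothetical replicate yields (%) at the current best and at a nearby condition.
current_best = [71.8, 72.5, 71.2, 72.9]
candidate = [72.6, 71.9, 73.1, 72.2]

t_stat = welch_t(current_best, candidate)

# Assumed rule of thumb: |t| well below ~2 means the difference is
# indistinguishable from replicate noise, so the plateau looks real.
plateau_suspected = abs(t_stat) < 2.0
print(f"t = {t_stat:.2f}, plateau suspected: {plateau_suspected}")
```

If the statistic is small for every neighbouring condition you test, the apparent improvements are noise and the plateau is probably genuine.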

Guide 2: Implementing an ML-Driven Escape Strategy

1. Define Your Search Space

Create a table of all plausible reaction variables and their ranges. This defines the "optimization landscape" you will explore.

| Variable Type | Examples | Range/Options |
|---|---|---|
| Categorical | Solvent, Ligand, Additive | DMSO, Toluene, Ligand A, Ligand B |
| Continuous | Temperature, Concentration, Time | 25 °C to 120 °C; 0.1 M to 1.0 M; 1 h to 24 h |
| Stoichiometric | Catalyst Loading, Equivalents | 1 mol% to 10 mol%; 1.0 eq to 2.0 eq |

2. Select an Initial Sampling Method

- Use a quasi-random sampling method (e.g., Sobol sampling) to select your first batch of experiments. This ensures your initial data points are well-spread across the entire search space, maximizing the chance of discovering promising regions [4].

3. Run the ML Optimization Loop

- Train a Model: Use an algorithm like a Gaussian Process (GP) regressor to learn from your experimental data and predict outcomes for all untested conditions [4].

- Propose New Experiments: Employ an acquisition function (e.g., q-NParEgo, TS-HVI) to select the next batch of experiments that best balance exploring uncertain regions and exploiting known high-yield areas [4].

- Iterate: Repeat the cycle of running experiments, updating the model, and proposing new conditions until performance converges to a satisfactory level.

4. Validate and Scale

- Manually verify the top conditions identified by the ML model in a traditional lab setup to ensure robustness and translatability to scale [4].
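Steps 1 and 2 of this guide can be sketched in a few lines. The example below is a rough illustration, not the workflow of any cited study: the option lists are hypothetical, the boiling points are approximate literature values, and a Halton sequence is used as a standard-library stand-in for Sobol sampling (a dedicated generator such as `scipy.stats.qmc.Sobol` would normally be preferred).

```python
import itertools

# Hypothetical options; boiling points (deg C) are approximate literature values.
SOLVENT_BOILING_POINT_C = {"DMSO": 189, "Toluene": 111, "THF": 66}
LIGANDS = ["Ligand A", "Ligand B"]
TEMPERATURES_C = [25, 60, 90, 120]
CATALYST_MOL_PCT = [1, 5, 10]

def is_feasible(solvent, temperature_c):
    """Reject temperatures above the solvent's boiling point."""
    return temperature_c <= SOLVENT_BOILING_POINT_C[solvent]

def build_search_space():
    """Enumerate every combination that passes the feasibility filter."""
    return [
        (solvent, ligand, temp, loading)
        for solvent, ligand, temp, loading in itertools.product(
            SOLVENT_BOILING_POINT_C, LIGANDS, TEMPERATURES_C, CATALYST_MOL_PCT
        )
        if is_feasible(solvent, temp)
    ]

def halton(index, base):
    """Radical-inverse value of `index` in the given base, in [0, 1)."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        result += (index % base) * fraction
        index //= base
        fraction /= base
    return result

def initial_batch(batch_size):
    """Pick distinct, feasible, well-spread conditions (Halton stand-in for Sobol)."""
    options = [list(SOLVENT_BOILING_POINT_C), LIGANDS, TEMPERATURES_C, CATALYST_MOL_PCT]
    bases = [2, 3, 5, 7]  # one coprime base per dimension
    if batch_size > len(build_search_space()):
        raise ValueError("batch_size exceeds the number of feasible conditions")
    chosen = []
    for i in itertools.count(1):
        condition = tuple(
            opts[min(int(halton(i, base) * len(opts)), len(opts) - 1)]
            for opts, base in zip(options, bases)
        )
        if is_feasible(condition[0], condition[2]) and condition not in chosen:
            chosen.append(condition)
        if len(chosen) == batch_size:
            return chosen

space = build_search_space()
batch = initial_batch(8)
for condition in batch:
    print(condition)
```

Filtering before sampling keeps impractical conditions out of the first plate, and using a different base per dimension spreads the picks across solvents, ligands, temperatures, and loadings instead of clustering them.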

Experimental Protocols & Data

Protocol: High-Throughput Screening for Landscape Exploration

Objective: To efficiently explore a broad reaction space and identify regions of high performance, bypassing local maxima.

Methodology:

- Reaction Setup: Utilize an automated liquid handling system to prepare reactions in a 96-well plate format.

- Parameter Variation: Systematically vary key categorical and continuous parameters across the plate according to a predefined design (e.g., Sobol sequence).

- Analysis: Use high-throughput analytics (e.g., UPLC-MS) to quantify reaction outcomes (yield, selectivity) for all conditions in parallel.

- Data Analysis: Feed all results into an ML-driven optimization pipeline to guide subsequent experimental batches [4].

Key Data from a Model Study (Nickel-catalyzed Suzuki Reaction) [4]:

| Optimization Method | Number of Experiments | Best Identified Yield | Key Outcome |
|---|---|---|---|
| Chemist-Designed HTE | 2 plates (192 reactions) | No successful conditions found | Search remained in a non-productive region of the reaction space |
| ML-Guided HTE | 1 plate (96 reactions) | 76% AP (area percent) | Identified productive conditions missed by the traditional approach |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Optimization |
|---|---|
| Bayesian Optimization Software | Core algorithm that models the reaction landscape and proposes the most informative next experiments [4]. |
| High-Throughput Experimentation (HTE) Robotics | Enables the parallel execution of hundreds of reactions, providing the large datasets needed for ML models [4]. |
| Gaussian Process (GP) Regressor | A type of ML model that predicts reaction outcomes and, crucially, quantifies the uncertainty of its predictions [4]. |
| Acquisition Function | The decision-making engine that uses the GP's predictions to balance exploring new areas vs. refining known good ones [4]. |
| Chemical Descriptors | Numerical representations of categorical variables (e.g., solvents, ligands) that allow ML models to process them [4]. |

Troubleshooting Guides

Common OFAT Experimental Failures and Solutions

Problem: My OFAT experiment yielded seemingly optimal conditions, but the final result is suboptimal.

- Explanation: This is a classic symptom of the "Synergy Gap." OFAT fails to detect interaction effects between factors, meaning it can miss the optimal combination because it doesn't test factors simultaneously [7] [8]. It often finds a local maximum rather than the global optimum.

- Solution: Transition to a Design of Experiments (DOE) approach. Use a factorial design to systematically study multiple factors at once. This allows you to model both main effects and interaction effects, helping you find the true optimal conditions [7].

Problem: After an OFAT optimization, a process is unstable or highly sensitive to minor variations.

- Explanation: OFAT explores the experimental space along a single path, providing a very limited understanding of the overall experimental region. It cannot map the complex relationships and curvatures in the response surface, leaving you vulnerable to unexpected behavior in unexplored areas [7].

- Solution: Implement Response Surface Methodology (RSM). Use designs like Central Composite or Box-Behnken to build a predictive model of your system. This model will help you understand the curvature and find a robust operating region that is less sensitive to noise [7].

Problem: My OFAT screening of multiple drug combinations is resource-intensive and I'm likely missing promising synergistic pairs.

- Explanation: Assessing drug synergy requires a quantitative demonstration that the combined effect is greater than the expected additive effect based on individual drug potencies. OFAT is ill-suited for this as it cannot efficiently explore the vast dose-response landscape of two or more drugs [9] [10].

- Solution: Employ rigorous synergy assessment methods like isobolographic analysis. This method uses the concept of "dose equivalence" to define an additive line (isobole). Dose combinations that yield the same effect but lie significantly below this line indicate synergism (superadditivity) [9] [10].

FAQ: Overcoming the OFAT Mindset

Q: When is it acceptable to use an OFAT approach? A: OFAT may be suitable only in very specific scenarios: when data is cheap and abundant, when you are in the earliest, exploratory stages of investigation with no prior knowledge, or when it is known with certainty that no interactions exist between the factors [8]. In most modern research and development contexts, particularly in drug development and process chemistry, these conditions are rarely met.

Q: What is the core statistical principle I'm violating by using OFAT? A: OFAT violates the fundamental DOE principles of randomization, replication, and blocking [7]. By not randomizing the run order, you risk confounding factor effects with lurking variables (e.g., environmental changes, instrument drift). Without replication, you cannot estimate experimental error, making it impossible to judge if an observed effect is real or just noise [7].

Q: We have limited resources. Isn't DOE more expensive than OFAT? A: This is a common misconception. While a single OFAT run might be cheap, investigating several factors with OFAT often takes more runs in total than an equivalent DOE, and those runs yield less information [7] [8]. DOE is designed for efficiency, extracting the maximum amount of information from a minimal number of experimental runs. It is a more resource-effective strategy in the long run.

Q: How do I justify moving from OFAT to more advanced methods in my organization? A: Frame the argument around risk mitigation and value. Explain that OFAT carries a high risk of:

- Missing optimal conditions, leading to lower-efficacy drugs or less efficient processes.

- Failing to detect interactions, which can cause stability and reproducibility issues later in development, a cost far greater than the initial investment in proper experimental design [11].

OFAT vs. DOE: A Comparative Analysis

Table 1: A direct comparison of the One-Factor-at-a-Time (OFAT) and Design of Experiments (DOE) methodologies.

| Feature | OFAT (One-Factor-at-a-Time) | DOE (Design of Experiments) |
|---|---|---|
| Basic Principle | Varies one factor while holding all others constant [7]. | Varies multiple factors simultaneously according to a structured design [7]. |
| Ability to Detect Interactions | No. This is the primary cause of the "synergy gap" [7] [8]. | Yes. A key strength of factorial designs [7]. |
| Experimental Efficiency | Low. Requires more runs for the same precision in effect estimation [8]. | High. Provides more information and better precision per experimental run [7] [8]. |
| Optimization Capability | Limited. Can only find improvements along a single path, often missing the global optimum [7]. | Strong. Provides a systematic pathway for optimization, including via Response Surface Methodology (RSM) [7]. |
| Statistical Rigor | Low. Lacks principles like randomization and replication, increasing the risk of misleading results [7]. | High. Built on a foundation of randomization, replication, and blocking [7]. |
| Modeling Capability | Cannot generate a predictive model of the system [7]. | Can generate a predictive mathematical model for the response variable(s). |

Key Metrics in Drug Synergy Analysis

Table 2: Core concepts and models used in the quantitative assessment of drug synergy.

| Concept/Model | Description | Formula / Application |
|---|---|---|
| Isobolographic Analysis | A graphical method to assess drug interactions based on dose equivalence [9]. | $\frac{a}{A} + \frac{b}{B} = 1$ defines the additive isobole, where $a$ and $b$ are doses in combination, and $A$ and $B$ are equally effective individual doses [9]. |
| Additive Effect | The expected combined effect if the two drugs do not interact. This is the baseline for synergy detection [9] [10]. | Defined by the chosen model (e.g., Loewe Additivity or Bliss Independence). Synergy is a statistically significant deviation above this expected value [10]. |
| Synergy (Superadditivity) | An effect greater than the expected additive effect [9] [10]. | Experimentally, a dose combination that produces the specified effect but plots as a point below the additive isobole [9]. |
| Zero-Interaction Theory | The concept that the total effect of a non-interacting drug combination can be predicted from the individual dose-effect curves [9]. | Provides the null hypothesis (additivity) that must be rejected to prove synergy or antagonism [10]. |

Experimental Protocols

Protocol 1: Standard Isobolographic Analysis for Drug Synergy

Purpose: To quantitatively determine if two drugs exhibit synergistic interaction at a specific effect level (e.g., ED₅₀, the dose that produces 50% of the maximum effect).

Methodology:

- Generate Individual Dose-Effect Curves:

  - For each drug (A and B), conduct experiments to establish a full dose-effect relationship.

  - Fit a model (e.g., sigmoid Emax model) to the data to determine the potency (e.g., ED₅₀) and efficacy (Emax) of each drug alone [9].

- Define the Additive Isobole:

  - Select a specific effect level (e.g., 50% of maximum effect).

  - The ED₅₀ of Drug A (dose A) and the ED₅₀ of Drug B (dose B) become the intercepts on their respective axes on an isobologram.

  - The line connecting these two points is the additive isobole, representing all dose pairs (a, b) expected to produce the 50% effect additively [9].

- Test Combination Doses:

  - Administer fixed-ratio combinations of the two drugs.

  - Experimentally determine the total dose of the combination required to produce the same 50% effect level.

- Statistical Comparison:

  - Compare the experimentally determined combination dose with the additive dose predicted by the isobole. A combination point that lies significantly below the additive isobole indicates synergy (superadditivity); a point significantly above it indicates antagonism (subadditivity).
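The isobole comparison in this protocol reduces to evaluating a/A + b/B for the combination that reaches the chosen effect level. The sketch below assumes hypothetical ED₅₀ values and doses; the fixed `tolerance` is only a placeholder for the statistical test the protocol calls for.

```python
def interaction_index(dose_a, dose_b, ed50_a, ed50_b):
    """Value of a/A + b/B for a combination that produces the 50% effect."""
    return dose_a / ed50_a + dose_b / ed50_b

def classify(index, tolerance=0.1):
    """Illustrative classification; `tolerance` stands in for a real significance test."""
    if index < 1.0 - tolerance:
        return "synergistic"
    if index > 1.0 + tolerance:
        return "antagonistic"
    return "additive"

# Hypothetical potencies of each drug alone (mg/kg).
ED50_A, ED50_B = 10.0, 40.0

# Hypothetical combination doses found to produce the 50% effect.
observed = interaction_index(2.5, 10.0, ED50_A, ED50_B)     # lies below the isobole
on_isobole = interaction_index(5.0, 20.0, ED50_A, ED50_B)   # lies on the isobole

print(observed, classify(observed))
print(on_isobole, classify(on_isobole))
```

An index of 1 places the point on the additive isobole; values below 1 correspond to points under the line.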

Protocol 2: Factorial Design for Screening Multiple Factors

Purpose: To efficiently screen multiple factors and identify their main effects and interaction effects.

Methodology:

- Select Factors and Levels:

  - Choose the input factors (e.g., temperature, concentration, catalyst type) you wish to investigate.

  - Define a "low" and "high" level for each continuous factor.

- Construct the Design Matrix:

  - For a 2^k factorial design (k = number of factors), create a table that lists all possible combinations of the low and high levels of every factor. This requires 2^k experimental runs.

  - Randomize the run order to minimize the effect of confounding variables [7].

- Run Experiments and Collect Data:

  - Execute the experiments in the randomized order and record the response variable(s) for each run.

- Analyze Data with ANOVA:

  - Perform an Analysis of Variance (ANOVA) on the results.

  - The ANOVA will partition the total variation in the data into components attributable to each main effect and interaction effect.

  - The significance of each effect is tested against the experimental error [7].

- Interpret Results:

  - Use main effects plots and interaction plots to visualize the results.

  - Significant interaction plots (where lines are not parallel) provide direct evidence of factor interdependence that OFAT would miss [7].
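The design-matrix and effect-estimation steps of this protocol can be sketched as follows. This is a minimal illustration with a hypothetical, noise-free response function that contains a temperature × catalyst interaction; it estimates effects from contrasts and does not perform the ANOVA significance test, which requires replicated or noisy data.

```python
import itertools
import random

FACTORS = ["temperature", "concentration", "catalyst"]

def full_factorial(k):
    """All 2^k combinations of coded low (-1) and high (+1) levels."""
    return list(itertools.product([-1, +1], repeat=k))

def simulated_yield(run):
    """Hypothetical response with a temperature x catalyst interaction."""
    temp, conc, cat = run
    return 60 + 5 * temp + 2 * conc + 3 * cat + 4 * temp * cat

def effect(design, responses, columns):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    total = 0.0
    for run, y in zip(design, responses):
        sign = 1
        for col in columns:
            sign *= run[col]
        total += sign * y
    return total / (len(design) / 2)

design = full_factorial(len(FACTORS))

# Randomize the execution order (fixed seed only so the sketch is repeatable).
run_order = design[:]
random.Random(0).shuffle(run_order)
results = {run: simulated_yield(run) for run in run_order}

# Re-align responses with the standard-order design for analysis.
responses = [results[run] for run in design]
main_temperature = effect(design, responses, [0])
main_concentration = effect(design, responses, [1])
interaction_temp_catalyst = effect(design, responses, [0, 2])

print(main_temperature, main_concentration, interaction_temp_catalyst)
```

The non-zero temperature × catalyst contrast is exactly the kind of interdependence that a one-factor-at-a-time series cannot estimate, because no OFAT run changes both factors together.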

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential components for conducting rigorous drug synergy studies.

| Item | Function in Synergy Research |
|---|---|
| Full Agonists with Defined Dose-Effect Curves | Drugs used to establish the baseline potency (e.g., ED₅₀) and efficacy (Emax) required for isobolographic analysis. Their dose-effect relationship must be well-characterized [9]. |
| In Vitro or In Vivo Bioassay System | A reliable and reproducible biological system (e.g., cell-based assay for antiproliferation, animal model for antinociception) for measuring the quantifiable effect of the drugs [9] [10]. |
| Software for Synergy Calculation | Computational tools (e.g., Combenefit, R package Synergy) to perform complex calculations for models like Loewe Additivity and Bliss Independence, and to conduct statistical testing [10]. |
| Statistical Analysis Package | Software capable of performing ANOVA, regression analysis for dose-response curves, and statistical tests (e.g., t-tests) to compare observed combination effects to the predicted additive effect [7] [10]. |

FAQs and Troubleshooting Guides

Comprehending the Parameter Space

Q: The parameter space for my reaction is overwhelmingly large. How can I effectively visualize and understand which areas have been explored?

A: This is a common challenge, as reaction optimization (RO) datasets grow exponentially with the number of parameters [12]. To address this:

- Use Specialized Visual Analytics Tools: Platforms like the open-source CIME4R application are specifically designed to help researchers gain an overview of the RO parameter space. They allow you to see which experiments have been performed and visualize an AI model's predictions across the entire space [12].

- Track Exploration vs. Exploitation: These tools help you discern whether your campaign (or an AI model) is focusing on exploring unseen regions or maximizing outcomes within known high-performing areas, which is critical to avoid getting stuck in local maxima [12].

Investigating Optimization Progression

Q: How can I track how my reaction optimization process develops over multiple iterations and identify if I'm stuck in a local maximum?

A: Understanding temporal changes is key to diagnosing a stalled optimization.

- Visualize the Workflow Iterations: Actively monitor how data accumulates and changes across optimization cycles. A typical iterative workflow involves analyzing data, choosing experiments, performing them, and then augmenting the dataset with new results before repeating the cycle [12]. Tracking this progression can reveal plateaus.

- Implement Robotic Hyperspace Mapping: Robotic platforms can execute and quantify thousands of reactions in parallel. By mapping yield distributions over a multidimensional grid of conditions (e.g., concentrations, temperature), you can visually identify if you've found a global maximum or are confined to a local, suboptimal region [13]. These maps often show that yield distributions are "slow-varying" for continuous variables, helping to contextualize your results [13].

Identifying Critical Factors

Q: I have a large dataset from a high-throughput screen. How do I identify which parameters or combinations of parameters are the most critical for achieving a high yield?

A: Moving from data to insight requires analytical approaches that can handle high-dimensionality.

- Leverage Explainable AI (XAI): Machine learning models can be used not just for prediction, but also for explanation. Techniques like SHAP (SHapley Additive exPlanations) can quantify the importance of each reaction parameter (e.g., solvent, catalyst, temperature) for a given prediction, helping you pinpoint critical factors [12].

- Reconstruct Reaction Networks: In complex reactions, systematically surveying substrate proportions can help reconstruct the underlying reaction network. This can expose hidden intermediates and products, revealing which pathways lead to the desired product and which lead to by-products, even in well-established reactions [13].
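The idea behind SHAP-style attributions can be illustrated with exact Shapley values on a tiny model. The sketch below is not the SHAP library: it brute-forces all feature coalitions against a single baseline condition, and both the yield model and the feature values are hypothetical.

```python
import itertools
import math

def shapley_values(model, instance, baseline):
    """Exact Shapley values; features outside a coalition keep their baseline value."""
    n = len(instance)
    values = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
            for coalition in itertools.combinations(others, size):
                with_i = [
                    instance[j] if (j in coalition or j == i) else baseline[j]
                    for j in range(n)
                ]
                without_i = [
                    instance[j] if j in coalition else baseline[j] for j in range(n)
                ]
                values[i] += weight * (model(with_i) - model(without_i))
    return values

def yield_model(x):
    """Hypothetical fitted model: x = [temperature_C, catalyst_mol_pct, polar_solvent_flag]."""
    temperature, loading, polar = x
    return 20 + 0.2 * temperature + 2 * loading + 0.1 * temperature * polar

baseline = [25, 1, 0]   # reference condition
instance = [75, 5, 1]   # condition whose prediction is being explained

contributions = shapley_values(yield_model, instance, baseline)
for name, value in zip(["temperature", "catalyst loading", "polar solvent"], contributions):
    print(f"{name}: {value:+.2f}")
```

The contributions sum to the difference between the explained prediction and the baseline prediction, and the interaction term is shared between temperature and solvent rather than assigned to either one alone.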

Troubleshooting AI-Guided Optimization

Q: When using an AI to guide my optimization, how can I trust its suggestions and know when to override them?

A: Effective human-AI collaboration is essential for navigating complex landscapes and overcoming local optima.

- Demand Model Understanding: You should be able to understand how an AI model arrived at a particular prediction. Use interactive tools that provide AI explanations, such as feature importance for a prediction or the model's own uncertainty estimates. This helps detect model flaws and calibrates trust [12].

- Combine Strengths: The optimal strategy combines human expertise with AI's computational power. For instance, the ML framework "Minerva" uses Bayesian optimization to balance the exploration of unknown regions with the exploitation of known high-performing areas. Your chemical intuition can be used to judge if the AI's exploratory suggestions are chemically reasonable or if its exploitative focus is too narrow, allowing you to strategically overrule it to escape a local maximum [4].

Experimental Protocols & Data

Protocol 1: Robotic Hyperspace Mapping for Anomaly Detection

This protocol outlines a method for mapping reaction outcomes across thousands of conditions to identify optimal regions and unexpected reactivity [13].

1. Robotic Setup and Execution:

- Platform: A robotic platform capable of handling organic solvents, equipped with liquid handlers and a UV-Vis spectrometer.

- Procedure:

  - The robot examines the hyperspace of conditions at points of an N-dimensional grid (e.g., uniform grid for concentrations, temperature).

  - It sets up reactions and acquires UV-Vis absorption spectra for each condition at the desired time(s).

  - The crudes from all hyperspace points are combined into one mixture.

2. Bulk Analysis and Basis-Set Identification:

- The combined crude mixture is separated by chromatography.

- Isolated fractions are identified by traditional spectroscopic techniques (NMR, MS) to establish the "basis set" of all possible products.

3. Calibration and Spectral Unmixing:

- UV-Vis absorption spectra and concentration-absorbance calibration curves are created for each purified basis-set component.

- The complex UV-Vis spectra from each hyperspace point are fitted via linear combinations (vector decomposition) of the reference spectra from the basis set.

- The fitting procedure rejects solutions that violate reaction stoichiometry.

4. Anomaly Detection:

- The algorithm calculates residuals (differences between experimental and fitted spectra).

- It evaluates the variance of residuals and their autocorrelation using the Durbin-Watson statistic.

- A systematic deviation indicates the formation of an unexpected product in a specific region of the hyperspace, flagging it for further investigation.
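Steps 3 and 4 can be sketched on synthetic data. The example below is a simplified illustration, not the published pipeline: it assumes a basis set of only two components with hypothetical Gaussian absorption bands, fits them by ordinary least squares, and omits the stoichiometry constraint and the residual-variance check. It then computes the Durbin-Watson statistic, where values near 2 indicate uncorrelated residuals and values near 0 indicate systematic structure left unexplained by the basis set.

```python
import math
import random

WAVELENGTHS_NM = list(range(250, 451, 2))

def band(center_nm, width_nm=20.0):
    """Hypothetical Gaussian absorption band sampled on WAVELENGTHS_NM."""
    return [math.exp(-0.5 * ((w - center_nm) / width_nm) ** 2) for w in WAVELENGTHS_NM]

def fit_two_components(spectrum, ref_1, ref_2):
    """Least-squares c1, c2 such that spectrum ~ c1*ref_1 + c2*ref_2 (normal equations)."""
    s11 = sum(a * a for a in ref_1)
    s22 = sum(b * b for b in ref_2)
    s12 = sum(a * b for a, b in zip(ref_1, ref_2))
    t1 = sum(a * y for a, y in zip(ref_1, spectrum))
    t2 = sum(b * y for b, y in zip(ref_2, spectrum))
    det = s11 * s22 - s12 * s12
    return (t1 * s22 - t2 * s12) / det, (t2 * s11 - t1 * s12) / det

def residuals_after_fit(spectrum, ref_1, ref_2):
    c1, c2 = fit_two_components(spectrum, ref_1, ref_2)
    return [y - c1 * a - c2 * b for y, a, b in zip(spectrum, ref_1, ref_2)]

def durbin_watson(residuals):
    """Sum of squared successive differences divided by the sum of squares."""
    numerator = sum(
        (residuals[i] - residuals[i - 1]) ** 2 for i in range(1, len(residuals))
    )
    return numerator / sum(r * r for r in residuals)

rng = random.Random(0)
ref_substrate, ref_product = band(300), band(400)
noise = [rng.gauss(0.0, 0.002) for _ in WAVELENGTHS_NM]

# Spectrum explained by the basis set, plus measurement noise.
expected = [0.6 * a + 0.3 * b + n for a, b, n in zip(ref_substrate, ref_product, noise)]
# Same spectrum with an extra band from a product missing from the basis set.
anomalous = [y + 0.2 * u for y, u in zip(expected, band(350))]

c_substrate, c_product = fit_two_components(expected, ref_substrate, ref_product)
dw_expected = durbin_watson(residuals_after_fit(expected, ref_substrate, ref_product))
dw_anomalous = durbin_watson(residuals_after_fit(anomalous, ref_substrate, ref_product))
print(f"expected: DW = {dw_expected:.2f}; anomalous: DW = {dw_anomalous:.3f}")
```

A smooth, unexplained band leaves strongly autocorrelated residuals, which is what pushes the statistic toward zero and flags that grid point for follow-up.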

Protocol 2: Highly Parallel Bayesian Optimization with Minerva

This protocol describes a machine learning-guided workflow for optimizing reactions in large parallel batches, suitable for high-throughput experimentation (HTE) [4].

1. Define the Combinatorial Search Space:

- Assemble a discrete set of potential reaction conditions (reagents, solvents, catalysts, temperatures) deemed plausible by a chemist.

- Apply automatic filtering to remove impractical or unsafe condition combinations.

2. Initial Experiment Selection:

- Use algorithmic quasi-random Sobol sampling to select an initial batch of experiments. This maximizes the initial coverage of the reaction space.

3. Machine Learning Optimization Loop:

- Train Model: Using the acquired experimental data, train a Gaussian Process (GP) regressor to predict reaction outcomes (e.g., yield) and their uncertainties for all possible conditions.

- Select Next Batch: An acquisition function (e.g., q-NParEgo, TS-HVI) uses the model's predictions and uncertainties to select the next most promising batch of experiments. This function balances exploration (testing uncertain conditions) and exploitation (testing conditions predicted to be high-performing).

- Iterate: The process is repeated for multiple iterations, with the chemist using evolving insights to refine the strategy.
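The optimization loop in step 3 can be illustrated on a one-dimensional toy problem. The sketch below is not Minerva: it swaps the batch, multi-objective acquisition functions named above for a sequential, single-objective upper-confidence-bound (UCB) rule, uses a hand-written Gaussian Process with a fixed RBF kernel, and optimizes a hypothetical response with a local maximum near 0.2 and a higher one near 0.8. All hyperparameters (length scale, jitter, the UCB weight of 2) are assumptions.

```python
import math

def rbf(x1, x2, length_scale=0.1):
    """Squared-exponential kernel with unit prior variance."""
    return math.exp(-0.5 * ((x1 - x2) / length_scale) ** 2)

def solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for row in range(col + 1, n):
            factor = aug[row][col] / aug[col][col]
            for j in range(col, n + 1):
                aug[row][j] -= factor * aug[col][j]
    x = [0.0] * n
    for row in range(n - 1, -1, -1):
        known = sum(aug[row][j] * x[j] for j in range(row + 1, n))
        x[row] = (aug[row][n] - known) / aug[row][row]
    return x

def gp_posterior(xs, ys, x_new, jitter=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP at x_new."""
    gram = [
        [rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
        for i, a in enumerate(xs)
    ]
    k_star = [rbf(a, x_new) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(gram, ys)))
    variance = 1.0 - sum(k * w for k, w in zip(k_star, solve(gram, k_star)))
    return mean, math.sqrt(max(variance, 0.0))

def hidden_yield(x):
    """Hypothetical response: local maximum near 0.2, global maximum near 0.8."""
    local_peak = 0.5 * math.exp(-((x - 0.2) ** 2) / (2 * 0.08 ** 2))
    global_peak = 1.0 * math.exp(-((x - 0.8) ** 2) / (2 * 0.10 ** 2))
    return local_peak + global_peak

candidates = [i / 40 for i in range(41)]                 # discrete, normalized conditions
xs = [candidates[6], candidates[8], candidates[10]]      # start around the local maximum
ys = [hidden_yield(x) for x in xs]

for _ in range(12):
    untested = [x for x in candidates if x not in xs]

    def ucb(x):
        mean, std = gp_posterior(xs, ys, x)
        return mean + 2.0 * std  # exploitation term + exploration term

    x_next = max(untested, key=ucb)
    xs.append(x_next)
    ys.append(hidden_yield(x_next))

best_y = max(ys)
best_x = xs[ys.index(best_y)]
print(f"best condition {best_x:.3f} with response {best_y:.3f}")
```

Because the uncertainty term is large wherever no data exist, the acquisition rule is pulled away from the well-sampled local maximum and into unexplored regions, which is the mechanism the loop relies on to escape a plateau.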

Data Presentation

Table 1: Performance Comparison of Optimization Approaches

| Approach | Key Methodology | Strengths | Limitations / Challenges |
|---|---|---|---|
| Traditional OFAT/DOE [12] [5] | Modifying one variable at a time or using statistical design of experiments. | Low overhead, intuitive. | Inefficient for high-dimensional spaces; prone to missing optimal conditions and getting stuck in local maxima. |
| AI-Guided Bayesian Optimization (e.g., Minerva) [4] | Machine learning (Gaussian Process) with an acquisition function to guide experiments. | Efficiently handles large search spaces and parallel batches; balances exploration and exploitation. | Requires initial data/sampling; model predictions can be hard to interpret without proper tools. |
| Robotic Hyperspace Mapping [13] | Systematic, parallel exploration of a predefined grid of conditions using optical detection and spectral unmixing. | Provides a complete portrait of the reaction landscape; identifies unexpected products and reactivity switches. | Lower throughput than pure ML-guided methods; not suitable for products with no UV-Vis signal. |
| Visual Analytics (CIME4R) [12] | Interactive visualization of RO data and AI predictions. | Facilitates human-AI collaboration; helps comprehend parameter space and model decisions. | Does not execute experiments; is an analysis tool for data generated from other methods. |

Table 2: Research Reagent Solutions for Reaction Optimization

| Reagent / Material | Function in Optimization | Example Use-Case |
|---|---|---|
| High-Fidelity Polymerase (e.g., Q5) [14] | Reduces sequence errors in PCR by providing high replication accuracy. | Optimization of PCR reactions for genetic analysis. |
| PreCR Repair Mix [14] | Repairs damaged template DNA before amplification. | Troubleshooting "No Product" results in PCR when template quality is suspect. |
| Hot Start Polymerase (e.g., OneTaq Hot Start) [14] | Prevents premature replication during reaction setup, reducing non-specific products. | Improving specificity in PCR by minimizing primer-dimer formation and mispriming. |
| GC Enhancer [14] | A specialized additive that facilitates the denaturation of GC-rich DNA templates. | Optimization of PCR reactions targeting complex, GC-rich genomic regions. |
| Nickel & Palladium Catalysts [4] | Non-precious (Ni) and precious (Pd) metal catalysts for cross-coupling reactions (e.g., Suzuki, Buchwald-Hartwig). | Process development for APIs, aiming for cost-effective and high-yielding conditions. |
| Monarch Spin PCR & DNA Cleanup Kit [14] | Purifies template DNA or PCR products to remove inhibitors like salts or proteins. | Troubleshooting "No Product" issues by ensuring reaction purity. |

Workflow Visualizations

Diagram 1: AI-Guided Reaction Optimization Cycle

Diagram 2: Robotic Hyperspace Analysis Workflow

Frequently Asked Questions

Why is the traditional One-Factor-at-a-Time (OFAT) approach unreliable for finding the best reaction conditions? OFAT varies one factor while holding others constant, which fails to capture interaction effects between variables like temperature and catalyst loading. In a simulated study, OFAT found the true process optimum only about 25-30% of the time, despite requiring 19 experimental runs for a two-factor scenario [15]. It often converges on a local maximum, missing the global optimum.

What are the practical consequences of using OFAT for optimizing an SNAr reaction? SNAr reactions can be influenced by multiple interacting parameters—solvent, base, temperature, and concentration. A suboptimal OFAT protocol could result in:

- Misidentified "optimal" conditions that do not maximize yield or selectivity.

- Failure to discover critical solvent-base combinations that unlock superior performance.

- Wasted resources on a lengthy, inefficient screening process that yields non-robust conditions [7].

Which modern approaches effectively overcome the limitations of OFAT?

- Design of Experiments (DOE): A statistical framework that systematically varies all factors simultaneously in a minimal number of runs. One analysis showed that a DOE with only 14 runs successfully found the true optimum and generated a predictive model, outperforming a 19-run OFAT experiment [15].

- Machine Learning (ML) guided Bayesian Optimization: A surrogate model such as a Gaussian Process predicts outcomes and their uncertainty, and an acquisition function uses those predictions to balance the exploration of unknown conditions with the exploitation of promising areas. This is particularly powerful for navigating high-dimensional spaces (e.g., 530 dimensions) and for multi-objective optimization (e.g., maximizing yield while minimizing cost) [4]. Frameworks like Minerva have been successfully deployed in pharmaceutical process development, identifying optimal conditions for API syntheses in a fraction of the traditional time [4].

How can I analyze data from a modern optimization campaign? Interactive visual analytics tools like CIME4R, an open-source web application, are specifically designed to help scientists comprehend complex reaction parameter spaces, investigate how an optimization developed over iterations, and understand the predictions made by AI models [16].

Troubleshooting Guide: Overcoming Local Maxima in SNAr Optimization

| Problem Scenario | Symptoms | Recommended Solution |
|---|---|---|
| Stagnating Yield | OFAT adjustments to a single parameter (e.g., temperature) no longer improve yield. | Switch to a DOE screening design (e.g., fractional factorial) to identify significant factors and their interactions [7]. |
| Poor Reaction Selectivity | Unwanted side products persist despite optimizing for yield alone. | Employ a multi-objective Bayesian optimization workflow to simultaneously maximize yield and selectivity [4]. |
| Irreproducible Results | Conditions deemed "optimal" in the lab fail upon scale-up. | Use a Response Surface Methodology (RSM) design (e.g., Central Composite) to model the response and find a robust, operable region where the outcome is less sensitive to small variations [7]. |
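For the Central Composite design recommended in the table, the run list can be generated in coded units as sketched below. This is a minimal illustration assuming a rotatable design (axial distance equal to the fourth root of the number of factorial runs) and three center points; the temperature levels used for decoding are hypothetical.

```python
import itertools

def central_composite(k, center_points=3):
    """Coded runs of a rotatable central composite design for k factors."""
    alpha = (2 ** k) ** 0.25  # axial distance that makes the design rotatable
    factorial = [tuple(float(v) for v in run) for run in itertools.product([-1, 1], repeat=k)]
    axial = []
    for i in range(k):
        for distance in (-alpha, alpha):
            point = [0.0] * k
            point[i] = distance
            axial.append(tuple(point))
    center = [tuple([0.0] * k) for _ in range(center_points)]
    return factorial + axial + center

def decode(coded, low, high):
    """Map a coded level to real units, where -1 -> low and +1 -> high."""
    midpoint, half_range = (low + high) / 2, (high - low) / 2
    return midpoint + coded * half_range

design = central_composite(k=2)

# Hypothetical factorial levels for temperature: 40 C (coded -1) and 85 C (coded +1).
temperatures_c = [round(decode(run[0], 40, 85), 1) for run in design]
print(len(design), "runs; temperatures:", sorted(set(temperatures_c)))
```

Note that the axial points fall outside the low and high factorial levels, so the factorial levels should be chosen with enough margin that the axial runs remain practical (for example, below the solvent's boiling point).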

Experimental Protocols: From OFAT to Advanced Optimization

Protocol 1: Standard OFAT Baseline for an SNAr Reaction

- Objective: Establish a performance baseline and illustrate the limitations of a one-dimensional search.

- Methodology:

  - Select a fixed combination of solvent (e.g., DMSO) and base.

  - Run a series of reactions where only the temperature is varied.

  - From the perceived "best" temperature, hold it constant and run a new series varying only the base equivalent.

  - Repeat the process for other factors like concentration or catalyst loading.

- Expected Outcome: This process will likely identify a local optimum. Subsequent use of DOE or ML will often reveal a different combination of parameters that delivers a significantly better outcome [15].
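The OFAT limitation described in this protocol can be illustrated with a small sketch. The response surface below is purely hypothetical (a synthetic function with one modest peak and one higher peak, not data from a real SNAr reaction), and the names `simulated_yield`, `ofat`, and `full_grid` are illustrative. It only shows how a one-factor-at-a-time search started from one baseline can stop at the lower peak while a search over factor combinations reaches the higher one.

```python
import math

def simulated_yield(temp_c, base_eq):
    """Hypothetical yield surface (%): a modest peak and a separate, higher peak."""
    local_peak = 60 * math.exp(-((temp_c - 40) / 15) ** 2 - ((base_eq - 1.0) / 0.5) ** 2)
    global_peak = 95 * math.exp(-((temp_c - 90) / 15) ** 2 - ((base_eq - 2.5) / 0.5) ** 2)
    return local_peak + global_peak

TEMPS_C = [30, 40, 50, 60, 70, 80, 90, 100]
BASE_EQS = [1.0, 1.5, 2.0, 2.5, 3.0]

def ofat(start_eq, passes=3):
    """Optimize temperature, then base equivalents, one factor at a time."""
    eq = start_eq
    temp = TEMPS_C[0]
    for _ in range(passes):
        temp = max(TEMPS_C, key=lambda t: simulated_yield(t, eq))
        eq = max(BASE_EQS, key=lambda e: simulated_yield(temp, e))
    return temp, eq, simulated_yield(temp, eq)

def full_grid():
    """Evaluate every temperature/base combination (40 runs)."""
    temp, eq = max(((t, e) for t in TEMPS_C for e in BASE_EQS),
                   key=lambda cond: simulated_yield(*cond))
    return temp, eq, simulated_yield(temp, eq)

ofat_result = ofat(start_eq=1.0)
grid_result = full_grid()
print(f"OFAT: {ofat_result[0]} C, {ofat_result[1]} equiv, yield {ofat_result[2]:.1f}%")
print(f"Grid: {grid_result[0]} C, {grid_result[1]} equiv, yield {grid_result[2]:.1f}%")
```

On this toy surface, repeating the OFAT passes does not help: every single-factor change from the lower peak lowers the yield, which is the defining feature of a local optimum.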

Protocol 2: High-Throughput DOE Screening for an SNAr Reaction

- Objective: Efficiently identify critical factors and interactions in a broad parameter space.

- Methodology [17] [4]:

- Define the Search Space: Select categorical factors (e.g., solvent: DMSO, DMF, 2-Me-THF, EtOAc; base: K₂CO₃, Et₃N, NaOH) and continuous factors (e.g., temperature: 25-100 °C; concentration: 0.1-0.5 M) [18].

- Design the Experiment: Use an automated platform (e.g., Chemspeed SWING robot) and DOE software to generate a fractional factorial or Plackett-Burman design for a 96-well plate [17].

- Execution and Analysis: Run the reactions in parallel. Analyze yields and use statistical software to perform ANOVA, identifying which main effects and interactions are significant.
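As a rough illustration of the design step, the sketch below builds a two-level half-fraction design (2^(4-1), eight runs) with the generator D = ABC, which keeps main effects free of aliasing with two-factor interactions. Reducing each factor to two levels, and the particular levels chosen from the search space above, are assumptions made for the example; dedicated DOE software would normally handle multi-level categorical factors and plate layout.

```python
from itertools import product

# Two illustrative levels per factor, taken from the search space above (an assumption).
FACTORS = {
    "temperature_C": (25, 100),
    "concentration_M": (0.1, 0.5),
    "solvent": ("DMSO", "2-Me-THF"),
    "base": ("K2CO3", "Et3N"),
}

def half_fraction_design():
    """2^(4-1) design: full factorial in A, B, C; D is set by the generator D = A*B*C."""
    return [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]

def decode(run):
    """Map coded levels (-1/+1) to the real factor settings."""
    return {name: levels[(code + 1) // 2]
            for (name, levels), code in zip(FACTORS.items(), run)}

design = half_fraction_design()
for run in design:
    print(run, decode(run))
```

Eight runs replace the sixteen of the full two-level factorial; the price is that each main effect is confounded with a three-factor interaction, which is usually assumed to be negligible at the screening stage.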

Protocol 3: ML-Guided Bayesian Optimization Campaign

- Objective: Find the global optimum for multiple objectives with minimal experimental cycles.

- Methodology (as implemented in the Minerva framework) [4]:

- Define a Discrete Condition Space: Create a large set (e.g., 88,000 combinations) of plausible reaction conditions, automatically filtering out impractical ones (e.g., temperatures above a solvent's boiling point).

- Initial Sampling: Use an algorithm (e.g., Sobol sampling) to select a diverse first batch of 96 experiments that broadly cover the parameter space.

- Iterative Learning:

- Model Training: Train a Gaussian Process regressor on the collected data to predict reaction outcomes and their uncertainty for all possible conditions.

- Next-Batch Selection: An acquisition function (e.g., q-NParEgo for multi-objective) uses the model to select the next batch of experiments that best balances exploration and exploitation.

- Termination: The campaign ends when performance converges or a satisfactory condition is identified (e.g., >95% yield and selectivity) [4].
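The first step of this protocol, building a discrete condition space and filtering out impractical entries, can be sketched in a few lines. This is not the Minerva implementation: the factor levels are illustrative, the boiling points are rounded literature values, and the ester/strong-base exclusion is an assumed example of a second filtering rule.

```python
from itertools import product

# Approximate normal boiling points in deg C (rounded literature values).
BOILING_POINT_C = {"DMSO": 189, "DMF": 153, "2-Me-THF": 80, "EtOAc": 77}
BASES = ["K2CO3", "Et3N", "NaOH"]
TEMPERATURES_C = [25, 40, 60, 80, 100]
CONCENTRATIONS_M = [0.1, 0.2, 0.3, 0.4, 0.5]

# Assumed incompatibility rule: ester solvents are avoided with strong bases.
INCOMPATIBLE = {("EtOAc", "NaOH")}

def build_condition_space():
    """Enumerate all combinations, dropping impractical ones."""
    space = []
    for solvent, base, temp, conc in product(
            BOILING_POINT_C, BASES, TEMPERATURES_C, CONCENTRATIONS_M):
        if temp > BOILING_POINT_C[solvent]:
            continue  # temperature above the solvent's boiling point
        if (solvent, base) in INCOMPATIBLE:
            continue  # chemically incompatible pair
        space.append({"solvent": solvent, "base": base,
                      "temperature_C": temp, "concentration_M": conc})
    return space

condition_space = build_condition_space()
print(len(condition_space), "of", 4 * 3 * 5 * 5, "combinations kept")
```

The surrogate model and acquisition function then only ever score entries of `condition_space`, so the optimizer cannot propose a condition that was ruled out here.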

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| 2-Me-THF | A biorenewable ether solvent with a better life-cycle assessment than THF; suitable for many SNAr reactions [18]. |
| Ethyl Acetate / i-Propyl Acetate | Greener ester solvents that can be used for SNAr reactions, though they are incompatible with strong bases [18]. |
| Cyrene (Dihydrolevoglucosenone) | A biosourced dipolar aprotic solvent promoted as a replacement for solvents like DMF; unstable with strong bases [18]. |
| Liquid Ammonia | A proposed alternative to traditional dipolar aprotic solvents for SNAr reactions [18]. |
| CIME4R Software | An open-source interactive web application for analyzing reaction optimization data and understanding AI model predictions [16]. |
| Minerva Framework | A scalable machine learning framework for highly parallel, multi-objective reaction optimization integrated with automated high-throughput experimentation [4]. |

Experimental Workflow Visualization

The diagram below contrasts the sequential OFAT process with the iterative, data-driven workflow of modern optimization methods.

In drug discovery and reaction optimization, researchers often find their experiments converging on suboptimal results: compounds with adequate but not outstanding potency, or reaction conditions that are good but not the best. This common experience of hitting a local optimum is a significant bottleneck in research progress. A local optimum is a solution that is optimal within an immediate neighborhood of possibilities but is not the best possible solution overall (the global optimum) [19] [20].

The transition from intuition-driven experimentation to algorithm-guided optimization represents a fundamental paradigm shift in scientific research. This shift is characterized by the adoption of principled frameworks like the Multiphase Optimization Strategy (MOST), which systematically balances effectiveness, affordability, scalability, and efficiency (EASE) [21]. This article establishes a technical support framework to help researchers navigate this transition and overcome local optima in their reaction optimization work.

Understanding the Optimization Landscape

What are local optima and why do they occur?

Local optima occur in complex optimization landscapes where multiple interacting variables influence outcomes. In reaction optimization, this might involve temperature, catalyst concentration, reactant ratios, and solvent choices. The algorithm or experimental design becomes "trapped" when any immediate change to parameters appears to worsen outcomes, even though dramatically better solutions exist beyond these immediate neighbors [19] [22].

Mathematical Definition: For a minimization problem, a point x* is a local minimum if there exists a neighborhood N around x* such that: f(x*) ≤ f(x) for all x in N, where f is the objective function being optimized [20].

How can I identify if my experiment is stuck in a local optimum?

- Performance Plateau: Consistent results despite varied parameters

- Inconsistent Improvements: Small parameter changes cause performance degradation

- Literature Discrepancy: Known better solutions exist but cannot be reproduced

- Algorithm Convergence: Optimization algorithms converge rapidly to the same solution from different starting points [19] [22]

Troubleshooting Guide: Overcoming Local Optima

Problem: My reaction optimization has plateaued at suboptimal yield

Possible Causes and Solutions:

Cause: Insufficient exploration of parameter space

- Solution: Implement high-throughput experimentation (HTE) to broadly explore conditions [23]

- Protocol: Set up a 96-well plate system varying catalyst (0.5-5 mol%), temperature (25-100°C), and solvent (DMF, DMSO, MeCN, THF)

Cause: Over-reliance on gradient-based optimization

- Solution: Incorporate non-elitist algorithms (e.g., Metropolis, SSWM) that accept temporarily worse solutions [22]

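- Protocol: Implement the Strong Selection Weak Mutation (SSWM) algorithm, which accepts a candidate with the fixation probability p_fix(Δf) = (1 - exp(-2βΔf)) / (1 - exp(-2NβΔf)) for Δf ≠ 0, where Δf is the fitness difference between the candidate and the current solution, N is the population-size parameter, and β scales the selection strength [22]. Because p_fix is small but nonzero for Δf < 0, the search can occasionally accept worse solutions and cross fitness valleys.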

Problem: My optimization algorithm converges too quickly

Advanced Techniques:

Technique: Simulated Annealing

Technique: Multi-objective Optimization with Decomposition (MOEA/D)

- Implementation: Decompose complex problems into subproblems using weight vectors [24]

- Parameters: Population size = 100, neighborhood size = 20, genetic operators: simulated binary crossover, polynomial mutation
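The decomposition idea behind MOEA/D can be sketched with the Tchebycheff scalarization, one common choice in which each weight vector defines a single-objective subproblem g(x | λ, z*) = max_i λ_i |f_i(x) - z*_i|. The candidate conditions and their objective values below are hypothetical, both objectives are treated as minimized, and the evolutionary loop itself (neighborhoods, crossover, mutation) is omitted.

```python
def weight_vectors(n):
    """Evenly spaced weight vectors for a two-objective problem."""
    return [(i / (n - 1), 1 - i / (n - 1)) for i in range(n)]

def tchebycheff(objectives, weights, ideal):
    """Scalarize a vector of minimized objectives for one weight vector."""
    return max(w * abs(f - z) for f, w, z in zip(objectives, weights, ideal))

# Hypothetical candidates: (100 - yield in %, relative cost), both to be minimized.
CANDIDATES = {"A": (5.0, 9.0), "B": (20.0, 3.0), "C": (40.0, 1.0), "D": (30.0, 8.0)}

# Ideal point z*: the best value seen so far for each objective.
ideal = tuple(min(obj[i] for obj in CANDIDATES.values()) for i in range(2))

assignments = []
for weights in weight_vectors(5):
    best = min(CANDIDATES, key=lambda name: tchebycheff(CANDIDATES[name], weights, ideal))
    assignments.append((weights, best))
    print(weights, "->", best)
```

Different weight vectors select different trade-off solutions (here A, B, and C), while the dominated candidate D wins no subproblem. This spread across subproblems is what helps a decomposition-based search keep diversity along the Pareto front.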

Diagnostic Framework for Local Optima

Figure 1: Diagnostic Framework for Identifying Local Optima

Quantitative Comparison of Optimization Algorithms

Table 1: Performance Characteristics of Optimization Algorithms for Reaction Chemistry

| Algorithm | Mechanism | Local Optima Escape | Best for Problem Type | Computational Cost |
|---|---|---|---|---|
| (1+1) EA [22] | Elitist selection | Poor (exponential in valley length) | Simple landscapes | Low |
| Metropolis/Simulated Annealing [22] | Probabilistic acceptance | Good (depends on valley depth) | Medium complexity | Medium |
| SSWM [22] | Biological inspiration | Good (depends on valley depth) | Fitness valleys | Medium |
| Particle Swarm Optimization [25] | Social behavior | Moderate | High-dimensional spaces | High |
| Genetic Algorithm [25] | Crossover/mutation | Good with diversity | Multimodal problems | High |

Table 2: Algorithm Performance on Standard Benchmark Functions

| Algorithm | Convergence Speed | Solution Quality | Parameter Sensitivity | Implementation Complexity |
|---|---|---|---|---|
| Genetic Algorithm [25] | Medium | High | Medium | Medium |
| Particle Swarm Optimization [25] | Fast | Medium | High | Low |
| Simulated Annealing [22] | Slow | Medium | Low | Low |
| Grey Wolf Optimizer [25] | Fast | High | Medium | Medium |
| Artificial Bee Colony [25] | Medium | High | Low | High |

Advanced Methodologies for Complex Landscapes

Multi-Objective Optimization with MOEA/D Framework

For complex reaction optimization with multiple competing objectives (yield, cost, safety), decomposition-based approaches provide superior performance:

Workflow Implementation:

Figure 2: MOEA/D Optimization Workflow

Reference Point Strategy: The traditional method of reference point selection contributes to local optima convergence. Implement the Weight Vector-Guided and Gaussian-Hybrid method for improved diversity [24].

Integrated Workflow: Combining HTE with Machine Learning

Recent advances demonstrate the power of integrating high-throughput experimentation with deep learning:

Protocol from Recent Literature [23]:

- HTE Data Generation: Perform 13,490+ Minisci-type C-H alkylation reactions

- Model Training: Train deep graph neural networks on reaction outcomes

- Virtual Library Enumeration: Generate 26,375+ virtual molecules

- Multi-dimensional Filtering: Apply reaction prediction, physicochemical assessment, structure-based scoring

- Validation: Synthesize top candidates (14 compounds achieving subnanomolar activity)

Research Reagent Solutions for Optimization Experiments

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Example | Key Characteristics |
|---|---|---|---|
| Deep Graph Neural Networks [23] | Reaction outcome prediction | Minisci reaction optimization | Handles molecular complexity, predicts yield |
| High-Throughput Experimentation Platforms [23] | Rapid empirical testing | Reaction condition screening | 96/384-well format, automated liquid handling |
| SURF-Formatted Data Sets [23] | Standardized reaction data | Machine learning training | 13,490+ reactions, public availability |
| Geometric Machine Learning (PyTorch Geometric) [23] | Molecular property prediction | Virtual compound screening | 3D molecular structure handling |
| Multi-objective Evolutionary Algorithms (MOEA/D) [24] | Pareto front identification | Balancing yield/cost/safety | Weight vector decomposition |

Frequently Asked Questions (FAQs)

Q1: What is the most common mistake researchers make when facing local optima?

The most common error is premature convergence - stopping the optimization process too early because results appear stable. This often stems from insufficient exploration of the parameter space and over-reliance on traditional one-variable-at-a-time approaches rather than systematic design of experiments [21] [6].

Q2: How do I balance exploration (finding new solutions) and exploitation (refining known solutions)?

Implement the Multiphase Optimization Strategy (MOST) framework [21]:

- Preparation Phase: Define conceptual model and identify components

- Optimization Phase: Use factorial designs to evaluate component performance

- Evaluation Phase: Validate optimized intervention package

Q3: What computational resources do I need to run these optimization methods?

Requirements vary significantly:

- Basic: Personal computer for standard evolutionary algorithms (Python, R)

- Intermediate: Workstation for machine learning-guided optimization (GPU recommended)

- Advanced: Cluster computing for large-scale HTE data analysis and deep learning models [23]

Q4: How can I validate that my "optimized" solution isn't just another local optimum?

Employ multiple validation strategies:

- Multiple Restarts: Initialize algorithms from different starting points

- Algorithm Comparison: Test with both elitist (e.g., (1+1) EA) and non-elitist (e.g., SSWM) methods [22]

- Experimental Verification: Physically test predicted optimal conditions

- Cross-validation: Use k-fold validation for computational models

Q5: What metrics should I use to evaluate optimization success in reaction chemistry?

Key performance indicators include:

- Absolute Performance: Yield, selectivity, conversion

- Robustness: Performance under slight parameter variations

- Efficiency: Resource requirements (time, cost, safety)

- Scalability: Translation from microplate to preparative scale

- Pareto Optimality (for multi-objective): Balance between competing objectives [24]
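For the Pareto optimality metric, a minimal sketch of how non-dominated conditions can be extracted from a set of results is given below. The (yield, selectivity) pairs are hypothetical and both objectives are assumed to be maximized.

```python
def dominates(a, b):
    """True if a is at least as good as b everywhere and strictly better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points if q != p)]

# Hypothetical results as (yield %, selectivity %).
results = [(92, 80), (85, 95), (70, 70), (92, 75), (60, 99)]
front = pareto_front(results)
print(front)
```

Every point on the front represents a different trade-off; choosing among them is a chemistry or business decision rather than something the optimizer can settle.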

Moving from intuition-driven to algorithm-guided optimization requires both conceptual understanding and practical implementation. By recognizing the local optima problem, employing appropriate diagnostic frameworks, and leveraging modern optimization strategies, researchers can significantly accelerate reaction optimization and drug discovery. The integration of high-throughput experimentation with machine learning and sophisticated optimization algorithms represents the new standard for research efficiency and effectiveness in pharmaceutical development.

The frameworks and methodologies presented provide a comprehensive toolkit for researchers navigating complex optimization landscapes. By adopting these approaches, the scientific community can systematically overcome the challenge of local optima and achieve truly global optimal solutions in reaction optimization research.

Escaping the Plateau: A Toolkit of Advanced Global Optimization Methods

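Technical Support Center: Q&A and Troubleshooting Guide

This technical support center provides practical guidance for researchers applying stochastic global optimization methods in reaction optimization research. These methods are essential for exploring complex potential energy surfaces (PES) and escaping local-maximum traps, and they are core tools for predicting molecular conformations, crystal polymorphs, and reaction pathways [26].

Frequently Asked Questions (FAQ)

Q1: In my reaction path optimization, I always converge to the same local minimum on the potential energy surface and cannot find the more stable global-minimum structure. Which stochastic optimization method should I choose?

A1: The choice depends on the specific characteristics of your problem. The three main stochastic methods have different emphases:

- Genetic Algorithm (GA): Suited to problems with a large, complex solution space and multiple local optima (that is, "deceptive" landscapes) [27]. It maintains diversity through selection, crossover, and mutation within a population, which enables effective global exploration [26] [28].

- Simulated Annealing (SA): Particularly suited to problems with many local optima [29]. Its core is a temperature-based probabilistic acceptance criterion that allows worse solutions to be accepted early in the optimization, giving the search a chance to leave local-optimum regions [30] [31].

- Particle Swarm Optimization (PSO): Usually converges quickly on continuous optimization problems [27]. Particles search by tracking their own best-known position and the swarm's best-known position, but the method may converge prematurely on high-dimensional, complex problems [32] [33] [34].

Recommendation: For an unknown or structurally complex reaction PES, start with GA or SA to explore thoroughly. If you have some prior knowledge of the energy landscape (such as the approximate region of interest), PSO may be faster.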

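Q2: When using simulated annealing to optimize a catalyst configuration, how should I set the cooling schedule (annealing schedule) to avoid becoming trapped in a local optimum too early?

A2: The cooling strategy is key to SA performance. An inappropriate cooling schedule leads to suboptimal solutions [31].

- Initial temperature: Set it high enough that the probability of accepting worse solutions is large in the initial stage, so the algorithm can explore the solution space broadly [29].

- Cooling rate: Cooling too fast acts like "quenching" and traps the search in a local optimum; cooling too slowly sharply increases computational cost [31]. Geometric cooling is common: T_{k+1} = α * T_k (0 < α < 1). Start testing with a relatively slow cooling rate (e.g., α = 0.95) and adjust based on the results.

- Stopping criterion: Stop at a final temperature threshold, at a maximum number of iterations, or when the solution has not improved for a set number of consecutive iterations [29].

Troubleshooting: If results are unsatisfactory, try raising the initial temperature or slowing the cooling (choosing α closer to 1) so that the algorithm spends more time exploring at high temperature.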

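Q3: When using a genetic algorithm to screen conformations of drug candidate molecules, the population converges too early and loses diversity. What should I do?

A3: This is a typical challenge for GAs, known as "premature convergence" [28].

- Adjust the genetic operators:

- Raise the mutation rate: A moderate increase in mutation probability introduces new genes into the population and breaks the deadlock.

- Adjust the selection pressure: Use tournament selection or tune the roulette-wheel selection strategy so that a "super individual" does not dominate the population too early.

- Use a restart strategy: When population diversity falls below a threshold, keep the current best individual, randomly regenerate the rest, and restart evolution [28].

- Check the parameters: Make sure the population is large enough to maintain the genetic diversity needed to cover promising regions of the solution space.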

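Q4: When using particle swarm optimization to optimize reaction condition parameters (such as temperature, pressure, and concentration), the particle velocities quickly drop to zero and the whole swarm stagnates. What is the cause?

A4: This usually means the swarm has become trapped in a local optimum and has converged prematurely [33] [34].

- Inertia weight: Check whether a decreasing inertia weight is in use. An inertia weight that is too small makes particles lose their exploration ability too quickly. Consider a random decay factor or an adaptive adjustment strategy to balance exploration and exploitation [33].

- Social and cognitive factors: Adjust the acceleration coefficients (c1, c2). Overemphasizing the social factor (c2) makes particles cluster around the current swarm best too quickly; overemphasizing the cognitive factor (c1) makes the search too dispersed. Try different parameter combinations.

- Population diversity: Introduce a diversity-preserving mechanism, such as randomly resetting or mutating some particles when the swarm becomes too clustered.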

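Q5: These stochastic optimization methods are computationally expensive, especially when combined with first-principles calculations. What strategies can speed up the optimization?

A5: Consider the following hybrid or improved strategies:

- Hierarchical optimization: First use a fast, lower-accuracy method (such as a force field) for large-scale preliminary screening, then refine the low-energy candidate structures with a high-accuracy method (such as DFT) [26].

- Integrated machine learning: Use a machine learning model as a surrogate to approximate expensive energy calculations and guide the optimizer toward promising regions [26].

- Hybrid algorithms: Combine the strengths of different algorithms. For example, embedding the probabilistic acceptance mechanism of simulated annealing in particle swarm optimization (SA-PSO) helps particles escape local optima and improves convergence reliability and success rate [35].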

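Key Experimental Protocols and Workflows

Two typical workflows are provided below: one for global conformational search of molecules and one for reaction condition optimization.

Protocol 1: Global Molecular Conformation Search with a Genetic Algorithm and Simulated Annealing

Initialization:

Iterative optimization loop:

- For GA:

a. Evaluation: Compute the energy (fitness) of each individual in the population.

b. Selection: Choose parent individuals according to fitness (e.g., roulette-wheel selection).

c. Crossover: "Hybridize" the geometries of the parents to produce offspring.

d. Mutation: Apply small random perturbations to the offspring geometries (e.g., rotating a dihedral angle).

e. New generation: Form the new population from the offspring (or from the offspring plus elite parents) [26] [28].

- For SA:

a. Perturbation: Randomly perturb the current conformation to produce a new conformation.

b. Evaluation: Compute the energy difference ΔE between the new and current conformations.

c. Metropolis criterion: If ΔE < 0, accept the new conformation; if ΔE > 0, accept it with probability exp(-ΔE / T) [29] [31].

d. Cooling: After a set number of trial moves at one temperature, lower the temperature T according to the schedule.

Convergence and validation:

- Repeat the iterations until a stopping condition is met (e.g., maximum number of generations, temperature below the threshold, or no further improvement).

- Perform local geometry optimization and frequency analysis on the lowest-energy conformations found to confirm that they are true local minima (no imaginary frequencies) [26].

- The lowest-energy structure is taken as the candidate global minimum (GM).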
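The SA branch of this protocol (perturbation, Metropolis acceptance, geometric cooling) can be sketched on a toy problem. The one-dimensional double-well function below is a hypothetical stand-in for a conformational energy, and all parameter values (starting temperature, α = 0.95, step size) are illustrative assumptions rather than recommended settings for a real system.

```python
import math
import random

def energy(x):
    """Hypothetical 1D double well: local minimum near x = 1.35, global minimum near x = -1.47."""
    return x**4 - 4 * x**2 + x

def simulated_annealing(x0, t0=5.0, alpha=0.95, t_min=1e-3,
                        steps_per_temp=50, step=0.5, seed=0):
    rng = random.Random(seed)
    x, e = x0, energy(x0)
    best_x, best_e = x, e
    t = t0
    while t > t_min:
        for _ in range(steps_per_temp):
            x_new = x + rng.uniform(-step, step)       # a. perturbation
            delta_e = energy(x_new) - e                # b. energy difference
            # c. Metropolis criterion: always accept downhill, sometimes accept uphill
            if delta_e <= 0 or rng.random() < math.exp(-delta_e / t):
                x, e = x_new, e + delta_e
                if e < best_e:
                    best_x, best_e = x, e
        t *= alpha                                     # d. geometric cooling
    return best_x, best_e

# Start in the shallower well and check whether the search finds the deeper one.
best_x, best_e = simulated_annealing(x0=1.35)
print(f"best x = {best_x:.2f}, energy = {best_e:.2f}")
```

In a real conformational search, `energy` would call a force field or electronic-structure code and the perturbation would act on dihedral angles or Cartesian coordinates; the acceptance and cooling logic stays the same.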

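Protocol 2: Reaction Condition Parameter Optimization with Particle Swarm Optimization

Problem definition:

Algorithm initialization:

- Randomly initialize the positions and velocities of a swarm (e.g., 30-50 particles) within the parameter bounds.

- Record each particle's position as its personal best (pbest).

- Take the best position among all pbest values as the swarm's global best (gbest) [32].

Iterative update:

a. Velocity update: For each particle i, update its velocity v_i with the standard PSO formula: v_i = w * v_i + c1 * rand() * (pbest_i - x_i) + c2 * rand() * (gbest - x_i), where w is the inertia weight, c1 and c2 are the acceleration constants, and x_i is the current position [32] [34].

b. Position update: x_i = x_i + v_i, keeping the new position within the bounds.

c. Evaluation and update: Compute the fitness at the new position. If it is better than the current pbest_i, update pbest_i; if it is better than the current gbest, update gbest [32].

Termination:

- Repeat the iterations until gbest no longer improves significantly or the maximum number of iterations is reached.

- Output the parameter combination corresponding to gbest as the optimal reaction conditions.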
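The PSO update rules in this protocol can be put together in a compact sketch. The yield surface is hypothetical (a smooth single peak at 80 °C and 0.3 M), and the settings w = 0.7, c1 = c2 = 1.5, 30 particles, and 200 iterations are common textbook-style choices assumed for illustration.

```python
import math
import random

BOUNDS = [(25.0, 100.0), (0.1, 0.5)]  # temperature (deg C), concentration (M)

def simulated_yield(position):
    """Hypothetical smooth yield surface (%) peaking at 80 deg C and 0.3 M."""
    temp_c, conc_m = position
    return 90 * math.exp(-((temp_c - 80) / 20) ** 2 - ((conc_m - 0.3) / 0.1) ** 2)

def pso(n_particles=30, n_iter=200, w=0.7, c1=1.5, c2=1.5, seed=0):
    rng = random.Random(seed)
    # Random positions inside the bounds and small random initial velocities.
    x = [[rng.uniform(lo, hi) for lo, hi in BOUNDS] for _ in range(n_particles)]
    v = [[rng.uniform(-0.1, 0.1) * (hi - lo) for lo, hi in BOUNDS]
         for _ in range(n_particles)]
    pbest = [p[:] for p in x]
    pbest_fit = [simulated_yield(p) for p in x]
    g = max(range(n_particles), key=lambda i: pbest_fit[i])
    gbest, gbest_fit = pbest[g][:], pbest_fit[g]
    for _ in range(n_iter):
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(BOUNDS):
                v[i][d] = (w * v[i][d]
                           + c1 * rng.random() * (pbest[i][d] - x[i][d])
                           + c2 * rng.random() * (gbest[d] - x[i][d]))
                x[i][d] = min(max(x[i][d] + v[i][d], lo), hi)  # clamp to the bounds
            fit = simulated_yield(x[i])
            if fit > pbest_fit[i]:
                pbest[i], pbest_fit[i] = x[i][:], fit
                if fit > gbest_fit:
                    gbest, gbest_fit = x[i][:], fit
    return gbest, gbest_fit

gbest, gbest_fit = pso()
print(f"gbest: {gbest[0]:.1f} C, {gbest[1]:.2f} M, yield {gbest_fit:.1f}%")
```

In an experimental campaign each call to `simulated_yield` would be a real reaction, so the swarm size and iteration count have to be weighed against the experimental budget.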

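Comparison of Core Features of the Stochastic Optimization Methods

The table below summarizes the key characteristics of the three methods to help you compare and choose quickly.

| Feature | Genetic Algorithm (GA) | Simulated Annealing (SA) | Particle Swarm Optimization (PSO) |
|---|---|---|---|
| Core principle | Natural selection and inheritance | Physical annealing process | Social behavior of bird flocks and fish schools |
| Search mode | Population-based | Single-point search | Population-based |
| Uses derivatives | No | No | No |
| Ability to handle local optima | Strong (through mutation and population diversity) | Strong (through probabilistic acceptance of worse solutions) | Moderate (may converge prematurely [34]) |
| Main operators/mechanisms | Selection, crossover, mutation | Metropolis acceptance criterion, temperature cooling | Individual cognition, social learning, velocity-position update |
| Typical use cases | Complex, multimodal, discrete or continuous spaces | Problems with many local optima, combinatorial optimization | Continuous parameter optimization; usually converges quickly |
| Stochastic | Yes | Yes | Yes |
| Parallelizability | High (population evaluation can be parallelized) | Moderate (multiple annealing chains can run in parallel) | High (particle evaluation can be parallelized) |
| Key tuning parameters | Population size, crossover/mutation rates, selection strategy | Initial temperature, cooling rate, chain length | Inertia weight, acceleration constants, swarm size |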

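Visualization: Workflow of Stochastic Optimization Methods for Overcoming Local Maxima

The figure below shows a conceptual framework in which the three methods work together in reaction optimization to overcome local maxima.

The Researcher's Toolkit: Key Algorithm Components and Functions

The table below lists the "research reagents," that is, the core algorithm components needed to apply these stochastic optimization methods, together with their functions.

| Category | Component/Parameter | Function | Laboratory Analogy |
|---|---|---|---|
| Genetic Algorithm | Chromosome encoding | Represents a solution (e.g., a molecular conformation or reaction path) as a string that genetic operators can act on (e.g., atomic coordinates or a sequence of dihedral angles). | DNA template: carries the genetic information of the solution. |
| Genetic Algorithm | Fitness function | Evaluates the quality of a chromosome (typically the potential energy, or the negative of the target product quantity). | Selection marker: used to identify and select successful individuals. |
| Genetic Algorithm | Selection operator | Chooses parent individuals according to fitness to produce offspring (e.g., roulette-wheel or tournament selection). | Selective medium: promotes the growth of particular types of individuals. |
| Genetic Algorithm | Crossover operator | Exchanges parts of the genes of two parent chromosomes to produce new individuals, promoting combinations of favorable traits. | Recombinase: mixes genetic material to generate diversity. |
| Genetic Algorithm | Mutation operator | Randomly changes some genes of a chromosome with low probability, introducing new features and maintaining population diversity. | Mutagen: introduces random mutations to explore new possibilities. |
| Simulated Annealing | Temperature parameter (T) | The key parameter controlling the probability of accepting worse solutions. High temperature allows broad exploration; low temperature focuses on local exploitation. | Furnace temperature controller: precisely sets the system's level of "thermal motion." |
| Simulated Annealing | Metropolis acceptance criterion | Decides whether to accept a new solution with probability min(1, exp(-ΔE/T)); this is the core mechanism for escaping local optima. | Thermodynamic equilibration buffer: lets the system occupy states other than the lowest-energy one with some probability. |
| Simulated Annealing | Cooling schedule | Specifies how the temperature decays from high to low over time; it directly affects algorithm performance [31]. | Programmable cooler: sets the rate of transition from exploration to refinement. |
| Simulated Annealing | Neighborhood function | Defines how a new candidate solution is generated from the current one (e.g., a small perturbation of the geometry). | Micromanipulator: applies controlled, random small adjustments to the current state. |
| Particle Swarm Optimization | Particle position and velocity | Each particle represents a candidate solution; its velocity determines the direction and distance of its move in the next iteration. | Reactant droplet: carries a specific recipe (solution) as it moves through parameter space. |
| Particle Swarm Optimization | Personal best (pbest) | The best position a particle has found in its own history. | Personal lab notebook: each researcher's own best experimental result. |
| Particle Swarm Optimization | Global best (gbest) | The best position found so far by the entire swarm. | Group record: the best finding of the whole team so far. |
| Particle Swarm Optimization | Cognitive factor (c1) and social factor (c2) | Control the acceleration weights toward pbest and gbest respectively, balancing individual experience and collective knowledge [32]. | Confidence and collaboration coefficients: tune the balance between individual innovation and team consensus. |
| Particle Swarm Optimization | Inertia weight (w) | Controls how much of the previous velocity a particle retains; used to balance global exploration and local exploitation. | Momentum regulator: preserves inertia in the search direction while still allowing turns. |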

In reaction optimization research, a significant challenge is the tendency of algorithms to become trapped in local maxima (or minima on the energy surface), failing to locate the global optimum representing the most stable molecular configuration or most efficient reaction pathway. This article explores two approaches designed to systematically navigate complex energy landscapes: Basin Hopping, a stochastic global search built on deterministic local minimization, and Single-Ended Methods, which deterministically follow a chosen coordinate. Within a technical support framework, this guide provides troubleshooting and methodological protocols to help researchers effectively implement these strategies to overcome convergence problems in computational chemistry and drug development.

Understanding the Energy Landscape

The potential energy surface (PES) is a multidimensional hypersurface representing the energy of a molecular system as a function of its nuclear coordinates. Key features include [26]:

- Local Minima: Energetically stable structures, which may represent reactants, products, or intermediates.

- Transition States (TS): First-order saddle points on the PES that represent the highest energy structure along a reaction path.

- Global Minimum (GM): The most thermodynamically stable structure on the PES, which is the target of global optimization.

The number of local minima scales exponentially with system size, making the GM difficult to locate for larger molecules [26].

Basin Hopping Methodology

Basin Hopping (BH) is a global optimization algorithm that transforms the original complex PES into a collection of "basins" corresponding to local minima. It is particularly effective for nonlinear objective functions with multiple optima and is widely used for finding the lowest-energy structures of atomic clusters and macromolecular systems [36] [37].

Algorithm Workflow

The BH algorithm iterates through a cycle of perturbation, local optimization, and acceptance [36] [37]:
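The perturbation, local optimization, and acceptance cycle can be written out explicitly in a minimal from-scratch sketch. The one-dimensional multi-well function is hypothetical, plain gradient descent stands in for a real local optimizer, and the step size and temperature are illustrative assumptions.

```python
import math
import random

def energy(x):
    """Hypothetical 1D multi-well landscape with its global minimum near x = -0.51."""
    return 0.1 * x**2 + math.sin(3 * x)

def gradient(x):
    return 0.2 * x + 3 * math.cos(3 * x)

def local_minimize(x, lr=0.05, steps=300):
    """Plain gradient descent standing in for a real local optimizer."""
    for _ in range(steps):
        x -= lr * gradient(x)
    return x

def basin_hopping(x0, niter=200, stepsize=1.5, temperature=1.0, seed=0):
    rng = random.Random(seed)
    x = local_minimize(x0)
    e = energy(x)
    best_x, best_e = x, e
    for _ in range(niter):
        # 1. Perturb the current minimum, 2. relax to the nearest local minimum.
        trial = local_minimize(x + rng.uniform(-stepsize, stepsize))
        trial_e = energy(trial)
        # 3. Metropolis acceptance on the energies of the relaxed structures.
        if trial_e <= e or rng.random() < math.exp(-(trial_e - e) / temperature):
            x, e = trial, trial_e
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e

best_x, best_e = basin_hopping(x0=3.6)
print(f"lowest minimum found: x = {best_x:.3f}, energy = {best_e:.3f}")
```

Because acceptance compares relaxed energies only, the barriers between basins never enter the decision, which is what lets the walker move between minima that a purely local search could not connect.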

Experimental Protocol

To implement BH using the SciPy library in Python, follow this detailed protocol [36]:

Define the Objective Function: The function must map a vector of coordinates to a scalar energy value.

Set Initial Guess: Define a starting point, often a random sample from the domain.

Configure and Run Minimizer: Key hyperparameters control the algorithm's behavior.

Analyze Results: The result object contains key information about the optimization.
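The four steps above might look as follows with `scipy.optimize.basinhopping`. The objective is a toy multimodal function (not a chemical PES), and the hyperparameter values are illustrative assumptions; the `seed` argument is used only to make the run repeatable.

```python
import numpy as np
from scipy.optimize import basinhopping

# Step 1: objective mapping a coordinate vector to a scalar "energy" (toy function).
def objective(x):
    return np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]

# Step 2: initial guess.
x0 = np.array([1.0])

# Step 3: configure and run the minimizer.
result = basinhopping(
    objective,
    x0,
    niter=200,                                 # number of basin hopping cycles
    T=1.0,                                     # acceptance "temperature"
    stepsize=0.5,                              # maximum random displacement
    minimizer_kwargs={"method": "L-BFGS-B"},   # local optimizer
    seed=0,
)

# Step 4: analyze the result object.
print("lowest-energy coordinates:", result.x)
print("lowest energy:", result.fun)
print("basin hopping iterations:", result.nit)
```

For a molecular system, `objective` would wrap an energy evaluation and `x0` would hold the flattened starting coordinates; it is also common to pass analytic gradients through `minimizer_kwargs` (with `jac=True`) when they are available.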

Key Research Reagents: BH Configuration

The table below summarizes the critical "research reagents" or hyperparameters for a successful BH experiment.

| Hyperparameter | Function | Recommended Setting |
|---|---|---|
| Number of Iterations (niter) | Total number of basin hopping cycles. | 100 - 10,000+ (higher for complex landscapes) [36]. |
| Step Size (stepsize) | Maximum displacement for random perturbation. | ~2.5-5% of the domain size (e.g., 0.5 for a [-5,5] domain) [36]. |
| Temperature (T) | Controls acceptance probability of higher-energy solutions. | Often starts at 1.0; may require tuning. |
| Local Minimizer Method | Algorithm for local optimization (e.g., L-BFGS-B, Nelder-Mead), set through minimizer_kwargs. | "L-BFGS-B" is a common choice (SciPy's minimize selects BFGS by default for unconstrained problems and L-BFGS-B when bounds are given); "nelder-mead" can be used for non-smooth functions [36]. |

Single-Ended Methods Methodology

Single-Ended Methods are designed to locate transition states and explore reaction paths starting from a single molecular geometry, without requiring knowledge of the final product structure. They are crucial for automated reaction network exploration [26] [38].

Algorithm Workflow (Growing String Method, SE-GSM)

The Single-Ended Growing String Method (SE-GSM) starts from a reactant and follows a specified internal coordinate to grow a path toward the transition state and product [38].

Experimental Protocol

A typical protocol for a single-ended transition state search, as implemented in tools like the Growing String Method, involves [39] [38]:

Define Reactant and Driving Coordinate:

- Provide a well-optimized geometry of the reactant.

- Define the driving coordinate, which is an internal coordinate (e.g., a bond length to be formed or broken, or an angle) believed to lead towards the transition state. This aligns reacting groups and initiates the reaction path.

Generate and Rank Initial Structures:

- The algorithm generates multiple initial structures by performing stepwise rotations along selected torsional degrees of freedom to achieve a proper spatial alignment of the reactants.

- These structures are ranked based on geometric criteria, such as the distance between reacting atoms and the absence of steric clashes, to select the most promising starting configuration for the TS search [39].

Execute the SE-GSM Search:

- The string begins to grow from the reactant by adding new nodes along the direction of the driving coordinate.

- Unlike double-ended methods, SE-GSM typically optimizes only the "frontier" node (the leading node of the string) during the growth phase.

- The algorithm continuously checks if the string has passed the transition state (e.g., by monitoring the energy profile along the string).

Complete the Path and Optimize:

- Once the TS region is identified, the string continues to grow and is reparametrized to ensure even spacing between nodes.

- The final product node is located and fully optimized.

- The complete reaction path, including the transition state geometry, is returned.

Troubleshooting Guides and FAQs

Basin Hopping

Q1: My BH run consistently converges to a high-energy local minimum. How can I improve the search?

- Check Step Size: A step size that is too small prevents the algorithm from escaping the current basin. Solution: Increase the stepsize parameter to 5-10% of your variable range to facilitate jumps to new basins [36].

- Increase Iterations: The budget may be insufficient. Solution: Drastically increase niter to 10,000 or more for highly complex, multi-minima surfaces [36].

- Adjust Temperature: A low temperature too readily rejects higher-energy solutions. Solution: Start with a higher T (e.g., 5.0-10.0) to allow more exploratory moves in early stages, analogous to simulated annealing [36] [37].

Q2: The BH algorithm is running too slowly. How can I improve its efficiency?

- Profile Local Optimizer: The local minimization step is often the bottleneck. Solution: Experiment with different, faster local optimizers in minimizer_kwargs. For example, "method": "Nelder-Mead" might be faster than a gradient-based method such as BFGS or L-BFGS-B for some problems, though it may be less robust [36].

- Use a Population Variant: Standard BH is trajectory-based. Solution: Implement a population-based BH variant (BHPOP), which maintains multiple candidate solutions and has been shown to perform well compared to other metaheuristics [40].

Single-Ended Methods

Q3: My single-ended TS search fails to converge or finds an incorrect TS. What could be wrong?

- Poor Driving Coordinate: The chosen internal coordinate may not accurately represent the reaction mode. Solution: Re-examine the suspected reaction mechanism. Consider using multiple driving coordinates or employing automated techniques that generate and rank multiple input structures based on geometric criteria [39].

- Steric Clashes or Incorrect Alignment: The initial approach of reactants can be geometrically unfavorable. Solution: Ensure the initial structure has reacting groups properly aligned and that severe steric repulsions are minimized in the starting geometry. The automated ranking of structures based on geometric criteria is designed to address this [39].

Q4: How do I know if the located transition state is correct?

- Frequency Calculation: A valid first-order saddle point must have exactly one imaginary vibrational frequency, which corresponds to exactly one negative eigenvalue of the Hessian matrix. Solution: Always perform a frequency calculation on the optimized TS structure to confirm the presence of a single imaginary frequency [26].

- Intrinsic Reaction Coordinate (IRC): The TS should connect to the correct reactant and product basins. Solution: Perform an IRC calculation from the TS forward and backward to verify that it smoothly connects to the expected reactant and product structures.

Comparison of Methods

The table below provides a structured comparison of the two methods to guide selection for specific research problems.

| Feature | Basin Hopping | Single-Ended Methods |
|---|---|---|
| Primary Goal | Locate global minimum energy structure [37] [26]. | Locate transition states and reaction paths from a single geometry [26] [38]. |
| Required Input | Single starting structure. | Single reactant structure and a driving coordinate. |
| Nature of Search | Stochastic global optimization with local refinement [36]. | Deterministic, following a defined coordinate. |
| Typical Applications | Molecular conformation search, cluster geometry optimization [36] [26]. | Exploring unknown reaction pathways, automated TS searches [39] [38]. |
| Key Strength | Effective at escaping deep local minima to find the global optimum. | Does not require a known product structure. |
| Main Challenge | Requires careful tuning of step size and temperature. | Success is sensitive to the choice of driving coordinate. |

Technical Support Center: Troubleshooting Guides & FAQs

Context: This support center is framed within a doctoral thesis investigating novel strategies to overcome local maxima—a pervasive challenge where optimization algorithms converge to suboptimal solutions—in chemical reaction optimization research. It addresses common experimental hurdles encountered when applying swarm intelligence algorithms, specifically Manta-Ray Foraging Optimization (MRFO) and its variants, to complex, nonlinear reaction landscapes [41] [42].

FAQ: Algorithm Performance & Convergence

Q1: My optimization run for a chemical equilibrium calculation keeps converging to the same suboptimal set of conditions. The algorithm appears "stuck." Is this a local maxima problem, and how can I escape it?

A1: Yes, this is a classic symptom of convergence to a local optimum. The standard MRFO algorithm, while effective, can suffer from premature convergence and loss of population diversity, especially in high-dimensional or highly nonlinear problems like chemical equilibrium models [41] [43] [44].

Troubleshooting Guide:

- Diagnosis: Check the diversity of your candidate solution population over iterations. If the individuals' positions become very similar early in the run, exploration has ceased prematurely.

- Solution - Enhanced Exploration: Implement an improved MRFO variant that incorporates mechanisms to maintain diversity and escape local traps. Based on recent literature, you can:

- Use Chaotic Mapping for Initialization: Replace random initialization with Tent chaotic mapping. This ensures a more uniform and ergodic distribution of initial candidate solutions across the search space, providing a better starting point for the global search [43] [44].

- Integrate a Hierarchical Structure (HMRFO): Divide your population into elite, average, and low-performing subgroups. Apply different learning strategies to each: Elite Opposition-Based Learning for elites, Dynamic Opposition-Based Learning for average individuals, and Quantum-based Learning for the worst performers. This structured approach enhances both exploitation and exploration simultaneously [41].

- Apply Lévy Flight Dynamics: During the somersault foraging phase, incorporate Lévy flight steps. This strategy allows for occasional long jumps in the search space, helping the algorithm break out of local optima regions [43] [45] [44].

- Protocol - Implementing IMRFO for Reaction Optimization:

- Define Search Space: Set bounds for all reaction parameters (e.g., temperature, concentration, time).

- Initialize Population: Generate initial candidate solutions using Tent chaotic mapping [44].

- Iterative Optimization: For each iteration:

a. Evaluate fitness (e.g., reaction yield, purity).

b. Perform Chain Foraging (Eq. 1-2 from [43]) and Cyclone Foraging (Eq. 3-6 from [43]).

c. Apply a Bidirectional Search Strategy after cyclone foraging to explore both improving and non-improving directions [43] [44].

d. Perform Somersault Foraging (Eq. 7 from [43]) modified with a Lévy flight step.

- Termination: Stop when the maximum number of iterations is reached or convergence criteria are met.
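Two ingredients of this protocol, Tent-map initialization and the Lévy flight step, can be sketched independently of the foraging equations, which are given in [43] and not reproduced here. The skewed Tent map with breakpoint a = 0.7, the seed value x0 = 0.37, the Lévy exponent β = 1.5 generated with Mantegna's algorithm, and the reaction-time bounds are all assumptions made for illustration; the cited papers may use different variants.

```python
import math
import random

def tent_map_population(n_individuals, bounds, x0=0.37, a=0.7):
    """Fill a population from a (skewed) Tent map sequence scaled to the bounds."""
    population, x = [], x0
    for _ in range(n_individuals):
        individual = []
        for lo, hi in bounds:
            x = x / a if x < a else (1.0 - x) / (1.0 - a)
            x = min(max(x, 1e-12), 1.0 - 1e-12)  # guard against round-off at the edges
            individual.append(lo + x * (hi - lo))
        population.append(individual)
    return population

def levy_sigma(beta=1.5):
    """Scale of the numerator Gaussian in Mantegna's algorithm."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    return (num / den) ** (1 / beta)

def levy_step(rng, beta=1.5):
    """One heavy-tailed step: mostly small moves, occasionally a long jump."""
    u = rng.gauss(0.0, levy_sigma(beta))
    v = rng.gauss(0.0, 1.0)
    return u / abs(v) ** (1 / beta)

# Temperature (deg C), concentration (M), time (h); the time range is hypothetical.
BOUNDS = [(25.0, 100.0), (0.1, 0.5), (1.0, 24.0)]
population = tent_map_population(30, BOUNDS)
rng = random.Random(0)
steps = [levy_step(rng) for _ in range(5)]
print(population[0], steps)
```

How the Lévy step enters the somersault update (for example, as a multiplier on the move toward the best individual) depends on the specific IMRFO variant and should be taken from the cited equations.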

Q2: How do I choose between a traditional Design of Experiments (DoE) approach and a swarm intelligence algorithm like MRFO for my reaction optimization?

A2: The choice depends on the complexity of the reaction landscape and your objectives [42].

Comparison Guide:

| Aspect | Design of Experiments (DoE) | Swarm Intelligence (e.g., HMRFO/IMRFO) |
|---|---|---|
| Primary Strength | Excellent for building interpretable statistical models, identifying factor significance, and robustness testing [42]. | Superior for navigating highly nonlinear, rugged landscapes with many potential local optima [41] [46]. |
| Model Assumption | Often assumes a relatively smooth, low-order polynomial response surface. | Makes no assumptions about the landscape's differentiability or smoothness; a model-free optimizer [41] [45]. |
| Efficiency in High Dimensions | Can require many experiments as dimensions grow. | Designed to handle high-dimensional search spaces efficiently [41] [47]. |
| Escaping Local Maxima | Limited; optimal point is inferred from the fitted model. | Core strength; uses stochastic population-based search to explore broadly [41] [43]. |
| Best For | Initial screening, understanding factor effects, optimizing processes with relatively smooth landscapes. | Tackling "black-box," complex optimizations where the functional relationship is unknown or highly nonlinear, such as detailed chemical equilibrium calculations [41] [42]. |

Recommendation: For initial scoping and understanding main effects, start with a fractional factorial DoE. If the response is complex or you suspect multiple local optima, switch to an improved MRFO algorithm to refine and find the global optimum.

FAQ: Experimental Setup & Validation

Q3: I am adapting the Hierarchical MRFO (HMRFO) for a gas-phase reaction equilibrium problem. What are the critical parameters to tune, and how should I validate the results?

A3: Success hinges on proper parameter configuration and rigorous validation against known systems or benchmarks.

Troubleshooting Guide:

- Key Parameters to Calibrate:

- Population Size (N): Start with 30-50 individuals. Increase for more complex landscapes.

- Subpopulation Ratios (for HMRFO): A common starting point is 20% elite, 60% average, and 20% worst individuals [41].

- Somersault Factor (S): Typically set to 2, but can be adjusted to control local search intensity [43] [45].

- Lévy Flight Parameters: Tune the scale parameter of the Lévy distribution to balance local exploitation and global exploration jumps.

- Validation Protocol:

- Benchmark Testing: Before applying to your experimental system, test the configured HMRFO on standard optimization benchmark functions (e.g., CEC2017, CEC2022 suites) and compare its performance with other state-of-the-art metaheuristics like PSO, GWO, and standard MRFO [43] [44]. Use the metrics summarized in the table below (average rank and success rate on multimodal functions) for comparison.

- Chemical Model Validation: Apply the algorithm to a well-studied chemical equilibrium problem with a known theoretical or reliable computational solution (e.g., a simple ideal gas mixture reaction). Compare the algorithm's predicted equilibrium composition and Gibbs free energy minimum with the reference solution.

- Statistical Significance: Run the optimization multiple times (≥30) from different random seeds. Perform statistical tests (e.g., Wilcoxon rank-sum) to confirm that any performance improvement over a baseline method is significant [43].
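The parameters listed above can be made concrete with a small sketch. It assumes minimization, uses the commonly published form of the MRFO somersault update, x_new = x + S (r2 x_best - r3 x), and draws Lévy steps with Mantegna's method. The function names, the default `beta`, and the default `scale` are illustrative assumptions and not values taken from the cited HMRFO work.

```python
import math
import random


def split_population(fitness, elite_frac=0.2, worst_frac=0.2):
    """Rank individuals (lower fitness is better) and return index lists
    for the elite, average, and worst subpopulations."""
    order = sorted(range(len(fitness)), key=lambda i: fitness[i])
    n_elite = max(1, round(elite_frac * len(order)))
    n_worst = max(1, round(worst_frac * len(order)))
    cut = len(order) - n_worst
    return order[:n_elite], order[n_elite:cut], order[cut:]


def somersault_update(x, x_best, S=2.0, rng=random):
    """Somersault foraging move around the best position found so far."""
    r2, r3 = rng.random(), rng.random()
    return [xi + S * (r2 * bi - r3 * xi) for xi, bi in zip(x, x_best)]


def levy_step(beta=1.5, scale=0.01, rng=random):
    """One heavy-tailed step length drawn with Mantegna's algorithm."""
    num = math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
    den = math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2)
    sigma_u = (num / den) ** (1 / beta)
    u = rng.gauss(0.0, sigma_u)
    v = rng.gauss(0.0, 1.0)
    while v == 0.0:  # guard against division by zero
        v = rng.gauss(0.0, 1.0)
    return scale * u / abs(v) ** (1 / beta)


def clip(x, bounds):
    """Keep a candidate inside the allowed (low, high) range per variable."""
    return [min(max(xi, lo), hi) for xi, (lo, hi) in zip(x, bounds)]
```

With a population of 30, `split_population` yields 6 elite, 18 average, and 6 worst individuals. Raising `scale` makes long exploratory jumps more frequent, while lowering `S` shrinks the somersault move toward a finer local search.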

Performance Benchmark Data Summary (Synthetic Functions): The following table summarizes typical findings from recent studies comparing improved MRFO variants against other algorithms on standard test beds [43] [44].

| Algorithm | Average Rank (CEC2017) | Success Rate on Multimodal Functions | Key Strength |

|---|---|---|---|

| Standard MRFO | Mid-Range | Moderate | Fast initial convergence |

| PSO | Mid-Range | Moderate | Simplicity |

| GWO | Mid-Range | Moderate | Exploitation |

| IMRFO (w/ Tent, Lévy) | High (1st-2nd) | High | Escaping local optima |

| HMRFO (Hierarchical) | High (1st-2nd) | Very High | Balanced exploration/exploitation |
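Rankings like those in the table rest on repeated runs compared with a significance test. The sketch below is a minimal two-sided Wilcoxon rank-sum test using the normal approximation, with average ranks for ties and no tie correction in the variance. The numbers are synthetic values made up to exercise the function, not benchmark results. SciPy's `scipy.stats.ranksums` offers a library version of this test.

```python
import math


def rank_sum_test(a, b):
    """Two-sided Wilcoxon rank-sum test (normal approximation).

    Returns (z, p). A negative z means sample `a` tends to have lower values.
    """
    pooled = sorted((v, g) for g, sample in enumerate((a, b)) for v in sample)
    rank_sum_a = 0.0
    i = 0
    while i < len(pooled):
        j = i
        while j < len(pooled) and pooled[j][0] == pooled[i][0]:
            j += 1
        avg_rank = (i + 1 + j) / 2  # mean of the 1-based ranks i+1 .. j
        rank_sum_a += avg_rank * sum(1 for k in range(i, j) if pooled[k][1] == 0)
        i = j
    n1, n2 = len(a), len(b)
    mean = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (rank_sum_a - mean) / sd
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Synthetic final-error values (lower is better), for illustration only.
baseline = [0.52, 0.48, 0.61, 0.55, 0.49, 0.58]
variant = [0.31, 0.35, 0.29, 0.40, 0.33, 0.36]
z, p = rank_sum_test(variant, baseline)
```

Six values per group keep the example short. The normal approximation is only reasonable with larger samples, which is one reason to use 30 or more runs per algorithm.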

Q4: What are the essential computational "reagents" or tools needed to implement these swarm optimization experiments for chemistry?

A4: The core tools, sometimes called the "Scientist's Toolkit," are listed in the table below.

| Research Reagent / Tool | Function in the Experiment |

|---|---|

| Thermodynamic Calculation Core | Software or library (e.g., Cantera, ThermoCalc, custom code) to compute Gibbs free energy, equilibrium constants, and phase compositions for the reacting mixture at each candidate set of conditions. This is the fitness evaluator [41]. |

| Algorithm Implementation Framework | A programming environment (Python with NumPy/SciPy, MATLAB) to code the MRFO, HMRFO, or IMRFO logic, including the chaotic mapping, opposition learning, and Lévy flight modules [41] [43] [45]. |

| Benchmark Problem Suite | A collection of standard optimization functions (e.g., from CEC conferences) to calibrate and validate the algorithm's performance before costly chemical computations [43] [44]. |

| Statistical Analysis Package | Tools (e.g., in R, Python's SciPy) to perform descriptive statistics and hypothesis testing on multiple optimization runs to ensure result robustness [43]. |

| Visualization Library | Tools (Matplotlib, Plotly) to plot convergence curves, population diversity, and the explored reaction parameter space. |

Visualizations

Diagram 1: Workflow for Overcoming Local Maxima with HMRFO in Reaction Optimization

Diagram 2: Logic of Key Strategies to Escape Local Optima

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of using Multi-Objective Bayesian Optimization (MOBO) over optimizing each objective separately? MOBO is designed to find a set of optimal solutions, known as the Pareto front, that represent the best trade-offs between multiple conflicting objectives, such as yield, purity, and efficiency. Instead of finding a single "best" setting, it identifies a range of solutions where improving one objective necessarily worsens another. This allows researchers to understand the fundamental trade-offs and select an operating condition that aligns with their priorities, thus avoiding sub-optimal solutions that can result from separate optimizations [48] [49].
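To make the idea of a Pareto front concrete, the following sketch filters a list of outcomes down to the non-dominated ones, assuming every objective is to be maximized. The (yield, purity) pairs are hypothetical values chosen only to show the behavior.

```python
def dominates(p, q):
    """True if p is at least as good as q everywhere and strictly better
    in at least one objective (all objectives maximized)."""
    return (all(a >= b for a, b in zip(p, q))
            and any(a > b for a, b in zip(p, q)))


def pareto_front(points):
    """Return the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


# Hypothetical (yield %, purity %) outcomes, for illustration only.
outcomes = [(90, 70), (80, 85), (70, 95), (75, 80), (60, 60)]
front = pareto_front(outcomes)
```

Here `(75, 80)` drops out because `(80, 85)` beats it on both objectives. The three remaining points each trade yield against purity, and choosing among them is a decision for the chemist rather than the algorithm.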

FAQ 2: My experimental measurements are noisy. How can MOBO handle this to find reliable solutions? Noise, especially heteroscedastic (input-dependent) noise, can significantly degrade the performance of optimization algorithms. Some MOBO algorithms are designed specifically to be robust to it. One effective approach is Multi-Objective Euclidean Expected Quantile Improvement (MO-E-EQI), which targets improvement in a quantile of the objective distributions rather than the mean. This allows it to find reliable optimal conditions even when experimental uncertainty is significant and varies across the design space [50] [51].
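The intuition behind a quantile-based criterion can be shown in a few lines. This is not the MO-E-EQI acquisition function itself. It only illustrates, under an assumed Gaussian predictive distribution and a maximization objective, how ranking by a conservative lower quantile penalizes conditions with high noise. The candidate values and the choice `beta=0.1` are hypothetical.

```python
from statistics import NormalDist


def predictive_quantile(mean, sd, beta=0.1):
    """Lower beta-quantile of a Gaussian prediction (maximization case)."""
    return mean + NormalDist().inv_cdf(beta) * sd


# Hypothetical (predicted mean, predicted standard deviation) per condition.
candidates = {"A": (0.82, 0.15), "B": (0.78, 0.03)}

best_by_mean = max(candidates, key=lambda k: candidates[k][0])
best_by_quantile = max(candidates,
                       key=lambda k: predictive_quantile(*candidates[k]))
```

Condition A has the higher mean but is much noisier, so the 10% quantile favors condition B, whose outcome is more dependable.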

FAQ 3: How can I incorporate known experimental constraints into the MOBO process? Constraints, such as safety limits or maximum allowable costs, can be integrated directly into the MOBO framework. Advanced methods like Multi-fidelity Joint Entropy Search for Multi-objective Bayesian Optimization with Constraints (MF-JESMOC) model these constraints as expensive black-box functions. The acquisition function is then designed to seek points that are expected to improve the Pareto front while simultaneously satisfying all specified constraints [52]. Another approach uses constrained expected improvement to ensure feasibility [53].

FAQ 4: We need to optimize more than three objectives. Does MOBO scale to "many-objective" problems? Optimizing a large number of objectives (four or more) is challenging due to the curse of dimensionality. However, strategies exist to maintain efficiency. One key approach is the automatic detection and removal of redundant objectives using similarity metrics from Gaussian Process predictive distributions. This simplifies the problem while largely preserving the quality of the Pareto front. Additionally, methods like MORBO partition the high-dimensional search space into local trust regions, making the optimization tractable [49].

FAQ 5: Our experiments are very expensive to run. Can MOBO work with cheaper, lower-fidelity data? Yes, this is possible through Multi-Fidelity MOBO. Methods like MF-JESMOC allow you to leverage cheaper, lower-fidelity experiments (e.g., smaller scale or computational simulations) that are correlated with your high-fidelity, expensive experiments. The algorithm intelligently chooses both the next point to evaluate and the fidelity level at which to evaluate it, maximizing information gain while minimizing total experimental cost [52].

Troubleshooting Common Experimental Issues

Problem 1: The optimization is stuck in a local Pareto front, failing to find globally optimal trade-offs. This is the multi-objective counterpart of stalling at a local maximum.

- Potential Causes:

- Over-exploitation: The acquisition function is too greedy and fails to explore undiscovered regions of the design space.

- Poor Initial Sampling: The initial set of experiments does not cover the design space adequately.

- Solutions:

- Adjust the Acquisition Function: Use acquisition functions that have a better exploration-exploitation balance. qLogNEHVI, the log-transformed variant of batch Noisy Expected Hypervolume Improvement, is known for its improved numerics and performance [54]. Information-theoretic acquisition functions like those used in MF-JESMOC can also help by seeking to reduce uncertainty about the Pareto front globally [52].

- Diversify Initialization: Instead of random initial points, use a maximal diversity initialisation scheme, such as clustering in the representation space, to ensure the initial design is spread out [55].

- Leverage Multi-Fidelity Information: If available, using lower-fidelity data can help the algorithm build a better global model, steering it away from local optima [52].
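One simple way to obtain a spread-out initial design is greedy farthest-point (maximin) selection over a candidate pool. It is used here as a stand-in for the clustering-based scheme mentioned above and is not the method from the cited work. The candidate coordinates are hypothetical points in a normalized two-dimensional descriptor space.

```python
import math


def farthest_point_selection(candidates, n_select, start=0):
    """Greedily pick indices so that each new point is as far as possible
    from the points already chosen (Euclidean distance)."""
    if n_select > len(candidates):
        raise ValueError("cannot select more points than candidates")
    chosen = [start]
    # Distance from every candidate to its nearest chosen point.
    min_dist = [math.dist(c, candidates[start]) for c in candidates]
    while len(chosen) < n_select:
        nxt = max(range(len(candidates)), key=lambda i: min_dist[i])
        chosen.append(nxt)
        min_dist = [min(d, math.dist(c, candidates[nxt]))
                    for d, c in zip(min_dist, candidates)]
    return chosen


# Hypothetical candidate conditions in a normalized descriptor space.
pool = [(0.0, 0.0), (0.1, 0.0), (1.0, 0.0), (0.5, 0.0), (1.0, 1.0)]
initial = farthest_point_selection(pool, n_select=3)
```

The near-duplicate at `(0.1, 0.0)` is skipped in favor of the corners of the space, which is the behavior wanted from an initial design meant to reduce the risk of settling into one region early.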

Problem 2: The algorithm fails to find a diverse set of Pareto-optimal solutions, clustering around a specific trade-off.

- Potential Causes:

- Inadequate Batch Diversity: In batch parallel experiments, selected points are too similar to each other.

- Poor Representation: The chosen chemical representation (e.g., one-hot encoding) does not capture meaningful similarities between different experimental conditions.

- Solutions:

- Use Diversity-Enhanced Batch Selection: Employ batch acquisition functions that explicitly promote diversity in the objective space. Methods like HIPPO use a penalization term to ensure batch points are spread out across the Pareto front. Another approach uses Determinantal Point Processes (DPPs) to enforce diversity [49].

- Improve Chemical Representations: Move beyond simple one-hot encoding. Use informative molecular or reaction descriptors such as Morgan fingerprints, reaction fingerprints (DRFP), or data-driven descriptors like CDDD or ChemBERTa to help the model generalize and navigate the space more effectively [55].
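The weakness of one-hot encoding can be seen with a similarity measure. The sketch below computes Tanimoto similarity on sets of "on" bit indices. The bit sets are made-up stand-ins for fingerprints such as Morgan fingerprints and were not generated by a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity between two sets of on-bit indices.

    Two empty fingerprints are treated as identical by convention here.
    """
    a, b = set(fp_a), set(fp_b)
    union = len(a | b)
    return len(a & b) / union if union else 1.0


# One-hot encoding: each ligand switches on exactly one private bit.
one_hot = {"ligand_1": {0}, "ligand_2": {1}}

# Hypothetical structural fingerprints sharing some substructure bits.
fingerprints = {"ligand_1": {3, 17, 42, 88}, "ligand_2": {3, 17, 42, 91}}

sim_one_hot = tanimoto(one_hot["ligand_1"], one_hot["ligand_2"])
sim_fp = tanimoto(fingerprints["ligand_1"], fingerprints["ligand_2"])
```

Under one-hot encoding every pair of distinct ligands has similarity 0, so a result for one ligand tells the surrogate model nothing about another. A structure-aware representation gives the related pair a similarity of 0.6, which lets the model transfer information between them.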

Problem 3: The optimization process is too slow, and the surrogate model is computationally expensive to train.

- Potential Causes:

- High-Dimensional Inputs: A large number of design variables makes Gaussian Process (GP) training slow.

- Large Dataset Size: Exact GP training cost scales cubically with the number of data points, which becomes a bottleneck as the dataset grows.

- Solutions:

- Dimensionality Reduction: For high-dimensional simulation outputs (e.g., from Finite Element Analysis), use Proper Orthogonal Decomposition (POD) to create a lower-dimensional approximation [53].

- Efficient Surrogate Models: For a moderate to high number of input variables, use Kriging Partial Least Squares (KPLS). This method reduces the number of kernel parameters in the GP model, significantly cutting training time while maintaining accuracy [53].

- Leverage Hardware and Software: Use libraries like BoTorch that provide GPU acceleration and efficient auto-differentiation for acquisition function optimization [54].

Experimental Protocols & Methodologies

Protocol 1: MOBO for Noisy Chemical Reaction Optimization

This protocol is based on the successful application of MO-E-EQI to optimize an esterification reaction for maximum space-time-yield and minimal E-factor under noisy conditions [50] [51].

Problem Formulation:

- Objectives: Maximize Space-Time-Yield (STY), Minimize E-Factor.

- Design Variables: Reaction conditions (e.g., temperature, concentration, catalyst loading).

- Noise Consideration: Acknowledge and model heteroscedastic (input-dependent) noise.

Algorithm Selection: Implement the Multi-Objective Euclidean Expected Quantile Improvement (MO-E-EQI) acquisition function. This is preferred over standard Expected Hypervolume Improvement (EHVI) in noisy settings because it targets improvement in a quantile of the response distribution, leading to more robust solutions.

Experimental Workflow:

- Initialize: Use a space-filling design (e.g., Latin Hypercube Sampling) to conduct 10-20 initial experiments.

- Model: Fit independent Gaussian Process surrogates to each objective (STY and E-Factor), accounting for noise.

- Iterate: For a predetermined number of iterations or until convergence:

- Plan: Find the next experiment by optimizing the MO-E-EQI acquisition function.

- Experiment: Run the chemical reaction at the proposed conditions.

- Analyze: Measure the STY and E-Factor, then update the GP models with the new data.

- Conclude: Return the final approximated Pareto front, allowing the chemist to select the optimal trade-off.
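The workflow above can be outlined as a loop skeleton. The sketch includes a plain-Python Latin Hypercube sampler and leaves surrogate fitting and acquisition optimization behind a caller-supplied `propose_next` function, since those depend on the chosen library. `run_experiment` and `propose_next` are hypothetical callables, and the default sizes are placeholders.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Space-filling design: one sample per equal-width stratum in each
    dimension, with strata shuffled independently per dimension."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = [(i + rng.random()) / n_samples for i in range(n_samples)]
        rng.shuffle(strata)
        columns.append([low + u * (high - low) for u in strata])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


def run_campaign(bounds, run_experiment, propose_next, n_init=12, n_iter=20):
    """Initialize, then alternate Plan / Experiment / Analyze.

    run_experiment(x) -> tuple of objective values (e.g. STY, E-factor)
    propose_next(data, bounds) -> next x; expected to refit the surrogates
        on `data` and optimize the acquisition function.
    """
    data = [(x, run_experiment(x)) for x in latin_hypercube(n_init, bounds)]
    for _ in range(n_iter):
        x_next = propose_next(data, bounds)        # Plan
        y_next = run_experiment(x_next)            # Experiment
        data.append((x_next, y_next))              # Analyze / update
    return data
```

The returned list holds every condition tested with its measured objectives. Filtering it for non-dominated points gives the approximated Pareto front from which the chemist selects a trade-off.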

Protocol 2: High-Dimensional Engineering Design with a Predefined Trade-off

This protocol, derived from bridge girder optimization, is ideal when the full Pareto front is not needed, and a specific balance between objectives (e.g., cost vs. environmental impact) is known [53].

Problem Formulation:

- Objectives: Minimize Financial Cost, Minimize Environmental Cost.

- Constraints: Multiple structural and geometric requirements (e.g., stress limits).

- Design Variables: 15 variables defining the girder's geometry and materials.

Algorithm Selection: Use Constrained Expected Improvement (CEI). The objectives are combined into a single objective using a predefined trade-off function (e.g., a weighted sum based on decision-maker preference). CEI then searches for a single solution that optimizes this composite objective while satisfying all constraints.
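A common textbook form of constrained expected improvement multiplies the expected improvement of the composite objective by the probability that all constraints are satisfied. The sketch below follows that form, assuming Gaussian surrogate predictions, independent constraint models, minimization, and constraints written as g(x) <= 0. The weight `w_fin` and all function names are illustrative assumptions, not details taken from the cited study.

```python
from statistics import NormalDist

_STD = NormalDist()


def weighted_sum(financial, environmental, w_fin=0.5):
    """Combine two costs into one objective with a predefined trade-off."""
    return w_fin * financial + (1 - w_fin) * environmental


def expected_improvement(mu, sigma, best):
    """Expected improvement below `best` for a Gaussian prediction."""
    if sigma <= 0:
        return max(best - mu, 0.0)
    z = (best - mu) / sigma
    return (best - mu) * _STD.cdf(z) + sigma * _STD.pdf(z)


def prob_feasible(constraint_preds):
    """Product of P(g_j(x) <= 0) over (mean, sd) constraint predictions."""
    p = 1.0
    for mu_g, sigma_g in constraint_preds:
        if sigma_g > 0:
            p *= _STD.cdf(-mu_g / sigma_g)
        else:
            p *= 1.0 if mu_g <= 0 else 0.0
    return p


def constrained_ei(mu, sigma, best_feasible, constraint_preds):
    """EI of the composite objective, discounted by feasibility."""
    return (expected_improvement(mu, sigma, best_feasible)
            * prob_feasible(constraint_preds))
```

A design with a promising predicted cost but only a 50% chance of meeting a stress limit has its score halved, so the search is steered toward designs that are both cheap and likely to be feasible.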

Computational Workflow:

- Initial Sampling: Generate an initial dataset using Latin Hypercube Sampling and run Finite Element (FE) simulations for each design.