Beyond Trial and Error: A Practical Guide to Bayesian Optimization for Enzymatic Reaction Optimization in Drug Discovery

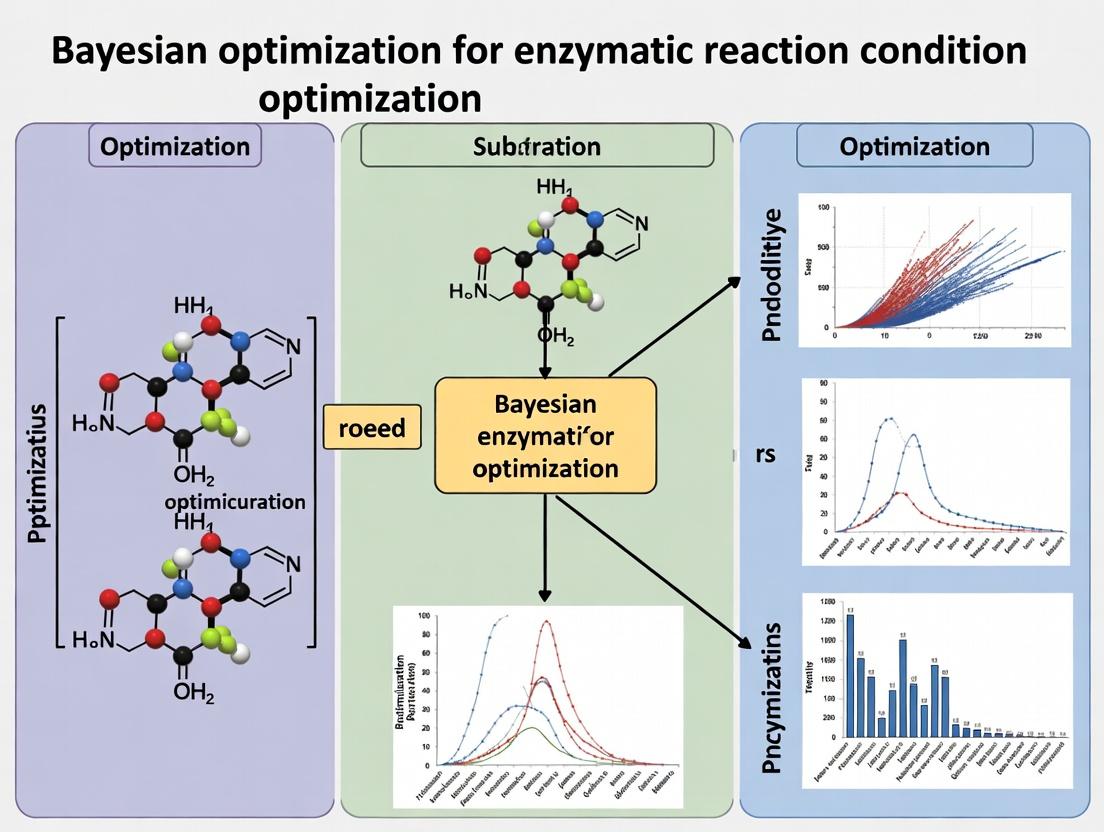

This article provides a comprehensive guide for researchers and drug development professionals on implementing Bayesian optimization (BO) to efficiently discover optimal enzymatic reaction conditions.


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing Bayesian optimization (BO) to efficiently discover optimal enzymatic reaction conditions. We explore the foundational principles that make BO superior to traditional one-factor-at-a-time and design-of-experiments approaches for expensive, multi-parameter biological experiments. The guide details methodological steps from surrogate model selection to acquisition function strategy, illustrated with practical application frameworks for common enzymatic assays. We address key troubleshooting challenges in experimental integration and algorithmic tuning. Finally, we present validation strategies and comparative analyses against other optimization methods, showcasing BO's proven impact on accelerating enzyme engineering, biocatalyst development, and high-value metabolite synthesis in biomedical research.

Why Bayesian Optimization? The Data-Efficient Paradigm for Complex Enzyme Systems

Within the broader thesis on Bayesian optimization for enzymatic reaction optimization, it is critical to first understand the costly paradigm it aims to replace. Traditional enzyme condition screening is a brute-force, high-dimensional exploration problem. Researchers must navigate a vast landscape of variables (pH, temperature, buffer type, cofactors, substrate concentration, ionic strength) to find an optimal combination for activity, stability, or specificity. The cost is multifaceted: heavy reagent consumption, long time investment, and a high rate of inconclusive or suboptimal results, which together slow drug development and biocatalyst engineering.

Quantitative Analysis of Traditional Screening Costs

The following table summarizes key quantitative burdens identified from current high-throughput screening (HTS) literature.

Table 1: Resource & Time Costs of Traditional High-Throughput Screening

| Cost Dimension | Typical Scale for a 3-Variable (e.g., pH, Temp, [Metal]) Screen | Estimated Resource Consumption | Time Investment |

|---|---|---|---|

| Plate-Based Assays | 96-well plate format (80 test wells) | 50-200 µL reaction volume per well; 4-16 mL total enzyme/buffer reagent per plate. | 1-2 days for setup, incubation, and analysis per plate. |

| Reagent Cost | Screening 5 substrates, 3 buffers, 4 temperatures | Enzyme: $0.50-$5.00 per µg; Specialty cofactors: $200-$1000 per gram. Total cost can exceed $2000 per screen. | N/A (Capitalized in reagents) |

| Data Points for Full Factorial Design | 5 pH x 4 Temp x 6 [Substrate] = 120 conditions | Requires >120 discrete reactions, plus replicates (240+). Scales combinatorially. | 3-5 days of experimental work. |

| "Failure" Rate | Literature suggests >85% of conditions yield <20% of max activity. | >85% of reagents and labor yield low-value data. | Wasted time on non-productive experimental runs. |

Table 2: Limitations and Consequences of Traditional Methods

| Limitation | Direct Consequence | Impact on Research |

|---|---|---|

| Sparse Sampling of Search Space | Misses optimal regions between tested grid points. | Suboptimal process conditions identified. |

| "One-Variable-at-a-Time" (OVAT) Approach | Fails to detect critical parameter interactions. | Leads to false optima and unreliable scalability. |

| High Material Consumption per Data Point | Limits screening breadth due to budget/availability. | Constrains exploration, especially with precious enzymes. |

| Long Experimental Cycle Times | Feedback loop between experiment and analysis is slow. | Slows iterative learning and project timelines. |

Experimental Protocols: Exemplar Traditional Screen

Protocol 1: Traditional Grid-Based Screening of pH and Temperature for Enzyme Kinetics

I. Objective: To determine the apparent optimal pH and temperature for a hydrolytic enzyme using a UV-Vis based endpoint assay.

II. Materials (The Scientist's Toolkit)

Table 3: Key Research Reagent Solutions

| Reagent/Material | Function & Specification |

|---|---|

| Recombinant Enzyme (Lyophilized) | Target biocatalyst. Resuspend in recommended storage buffer to create a 1 mg/mL stock. Aliquot and store at -80°C. |

| p-Nitrophenyl Substrate Analogue (pNPP) | Chromogenic substrate. Cleavage releases p-nitrophenol, measurable at 405 nm. Prepare a 10 mM stock in DMSO or assay buffer. |

| Universal Buffer System (e.g., Britton-Robinson) | Covers a wide pH range (e.g., 3.0-9.0) with consistent ionic strength. Prepare 100 mM stock solutions. |

| Multi-Channel Pipettes (8- or 12-channel) | Enables rapid dispensing into 96-well microplates. |

| Clear 96-Well Microplates (Flat-Bottom) | Reaction vessel compatible with plate readers. |

| Microplate Spectrophotometer | For high-throughput absorbance measurement at 405 nm. |

| Thermocycler or Heated Microplate Shaker | For precise temperature control during incubation. |

III. Procedure:

- Experimental Design:

- Define grid: pH (5.0, 6.0, 7.0, 8.0, 9.0) and Temperature (25°C, 30°C, 37°C, 45°C, 55°C).

- This full factorial design yields 25 unique conditions. Perform in triplicate (75 reactions plus controls).

- Reaction Setup in 96-Well Plate:

a. Pre-incubate all buffers and enzyme solutions at the target temperatures for 10 minutes.

b. Using a multichannel pipette, dispense 180 µL of the appropriate pre-warmed buffer into each well.

c. Add 10 µL of enzyme stock solution to each test well. For negative controls, add 10 µL of storage buffer instead.

d. Immediately add 10 µL of pre-warmed 10 mM pNPP substrate stock to all wells to initiate the reaction. Final reaction volume: 200 µL (0.5 mM pNPP).

e. Seal the plate with optically clear film and place it immediately into a pre-heated microplate reader or shaker.

- Data Acquisition:

a. Kinetically measure absorbance at 405 nm every 30 seconds for 10-30 minutes.

b. Alternatively, perform an endpoint read after a fixed incubation time (e.g., 5 minutes).

- Data Analysis:

a. Calculate the initial velocity (V₀) for each well from the linear slope of A405 vs. time (ΔA405/min).

b. Average triplicate V₀ values for each pH/Temp condition.

c. Plot a 3D surface or heatmap (pH vs. Temperature vs. V₀) to identify the apparent optimum.

IV. Critical Limitations of This Protocol:

- The identified "optimum" is only the best of the 25 discrete points tested, not the true continuous optimum.

- No information is gained about the shape of the response surface between points.

- Consumes roughly 15 mL of total reaction mixture (75 reactions × 200 µL, before controls), including about 0.75 mL of enzyme stock, and up to two microplates for a single, two-variable screen.
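The Data Analysis step of this protocol derives V₀ from the linear slope of A405 vs. time. A minimal sketch of that calculation follows, using an ordinary least-squares fit in plain Python. The function name `initial_velocity` and the example readings are hypothetical, and the sketch assumes the readings passed in already come from the linear phase of the reaction.

```python
def initial_velocity(times_min, a405):
    """Least-squares slope of A405 vs. time, in absorbance units per minute."""
    n = len(times_min)
    if n < 2 or n != len(a405):
        raise ValueError("need at least two paired time/absorbance readings")
    t_mean = sum(times_min) / n
    a_mean = sum(a405) / n
    sxx = sum((t - t_mean) ** 2 for t in times_min)
    sxy = sum((t - t_mean) * (a - a_mean) for t, a in zip(times_min, a405))
    return sxy / sxx


# Hypothetical readings taken every 30 s from the linear phase of one well
times = [0.0, 0.5, 1.0, 1.5, 2.0]
absorbance = [0.050, 0.075, 0.100, 0.125, 0.150]
v0 = initial_velocity(times, absorbance)
```

In practice the slope for each replicate well would be computed this way and the triplicate values averaged per pH/temperature condition.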

Visualization: The Traditional Screening Workflow & Its Flaws

Title: Traditional Enzyme Screening Cycle

Title: Search Space Sampling Comparison

Within the broader thesis on advancing enzymatic reaction optimization for biocatalysis and drug development, this document details the application of Bayesian Optimization (BO) as a core philosophy for efficient experimentation. BO transcends traditional one-variable-at-a-time or full-factorial design by implementing an intelligent, sequential, model-guided search. It is particularly suited for optimizing complex, noisy, and expensive-to-evaluate enzymatic reactions where the functional relationship between conditions (e.g., pH, temperature, cofactor concentration) and performance metrics (e.g., yield, enantiomeric excess, turnover number) is unknown.

Foundational Principles

BO operates on a simple yet powerful iterative loop:

- Model: Construct a probabilistic surrogate model (typically a Gaussian Process) of the unknown objective function using all data collected so far.

- Acquire: Use an acquisition function (e.g., Expected Improvement, Upper Confidence Bound) to compute the utility of evaluating any unsampled condition, balancing exploration and exploitation.

- Evaluate: Conduct the experiment at the condition recommended by the acquisition function.

- Update: Incorporate the new result into the dataset and update the surrogate model. This loop continues until a performance threshold is met or the experimental budget is exhausted.
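The four steps above can be sketched in a few dozen lines. The example below is an illustration under stated assumptions, not a production implementation: it uses NumPy only, a zero-mean GP with a Matérn 5/2 kernel and fixed (not fitted) hyperparameters, a UCB acquisition evaluated on a grid of candidates, and a made-up function `run_assay` standing in for the wet-lab experiment on a single variable scaled to [0, 1].

```python
import numpy as np


def matern52(a, b, length_scale=0.2):
    """Matérn 5/2 kernel between 1-D point sets a and b (unit signal variance)."""
    s = np.sqrt(5.0) * np.abs(a[:, None] - b[None, :]) / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def gp_posterior(x_train, y_train, x_query, noise=1e-4):
    """Posterior mean and standard deviation of a zero-mean GP."""
    k_tt = matern52(x_train, x_train) + noise * np.eye(len(x_train))
    k_tq = matern52(x_train, x_query)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    v = np.linalg.solve(chol, k_tq)
    mean = k_tq.T @ alpha
    var = np.clip(1.0 - np.sum(v**2, axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def run_assay(x):
    """Hypothetical stand-in for the experiment: an activity peak at x = 0.55."""
    return float(np.exp(-((x - 0.55) ** 2) / 0.02))


grid = np.linspace(0.0, 1.0, 201)        # candidate conditions, scaled to [0, 1]
x_obs = np.array([0.1, 0.5, 0.9])        # seed experiments
y_obs = np.array([run_assay(x) for x in x_obs])

for _ in range(10):                      # experimental budget
    y_mean = y_obs.mean()
    mu, sigma = gp_posterior(x_obs, y_obs - y_mean, grid)   # Model
    ucb = mu + y_mean + 2.0 * sigma                          # Acquire (UCB, kappa = 2)
    x_next = grid[np.argmax(ucb)]
    y_next = run_assay(x_next)                               # Evaluate
    x_obs = np.append(x_obs, x_next)                         # Update
    y_obs = np.append(y_obs, y_next)

best_x = x_obs[np.argmax(y_obs)]
```

A real workflow would refit the kernel hyperparameters at each iteration and replace `run_assay` with the measured result from the bench.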

Application Notes for Enzymatic Reaction Optimization

Defining the Optimization Problem

The success of BO hinges on precise problem formulation.

- Search Space: Define biologically and chemically plausible ranges for each continuous (temperature, pH) and categorical (buffer type, enzyme variant) variable.

- Objective Function: A single metric to maximize/minimize (e.g., product yield after 1 hour). Often requires careful weighting of multiple outputs (e.g., yield * enantiomeric excess).

- Constraints: Incorporate hard (e.g., pH must be between 5 and 9 for enzyme stability) or soft constraints via penalty functions.
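As an illustration of the problem formulation above, the sketch below combines two outputs into one score (yield multiplied by enantiomeric excess, as in the example given) and applies a soft penalty when pH leaves the stability window. The function name, the linear penalty form, and the `penalty_weight` default are assumptions for illustration; a hard constraint would instead be enforced through the search-space bounds.

```python
def objective(yield_fraction, ee_fraction, ph, ph_bounds=(5.0, 9.0), penalty_weight=1.0):
    """Composite score: yield * ee, minus a soft penalty for leaving the pH window."""
    low, high = ph_bounds
    # Distance outside the allowed window; zero when pH is inside it
    violation = max(0.0, low - ph) + max(0.0, ph - high)
    return yield_fraction * ee_fraction - penalty_weight * violation


inside = objective(0.80, 0.90, ph=7.0)    # no penalty applied
outside = objective(0.80, 0.90, ph=9.5)   # penalized by 0.5 pH units outside the window
```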

Surrogate Model Selection for Biochemical Data

Gaussian Processes (GPs) are the default surrogate model due to their inherent uncertainty quantification. For enzymatic datasets:

- Kernel Choice: The Matérn kernel (ν=5/2) is preferred over the squared exponential, as it accommodates sharper changes common in biochemical response surfaces.

- Handling Noise: Use a Gaussian likelihood to model experimental observation noise, which is critical because replicate biological measurements are rarely identical.

- Recent Advancements: For very high-dimensional spaces (e.g., >20 variables), more scalable surrogates such as Bayesian Neural Networks, random-forest ensembles, or Tree-structured Parzen Estimators are gaining traction.

Acquisition Functions in Practice

The acquisition function guides the search. Key choices include:

Table 1: Comparison of Common Acquisition Functions

| Acquisition Function | Key Principle | Best For Enzymatic Reactions When... | Potential Drawback |

|---|---|---|---|

| Expected Improvement (EI) | Maximizes the expected improvement over the current best. | A balance of progress and efficiency is desired; the most widely used. | Can become overly greedy. |

| Upper Confidence Bound (UCB) | Maximizes the upper confidence bound of the surrogate model. | Explicit exploration is needed; parameter β controls balance. | Requires tuning of the β parameter. |

| Probability of Improvement (PI) | Maximizes the probability of improving over the current best. | Rapid initial progress is critical. | Highly exploitative; can get stuck in local optima. |

| Knowledge Gradient (KG) | Considers the value of information for future steps. | Experiments are very expensive, and a fully sequential, non-myopic strategy is justified. | Computationally intensive. |

A 2023 benchmark study on enzyme kinetic parameter fitting found that EI and UCB performed most robustly across different noise levels and search space dimensions.

Integrating Prior Knowledge

A major strength of BO is the ability to incorporate domain expertise:

- Prior Mean Function: Initialize the GP with a simple mechanistic model (e.g., Arrhenius equation for temperature dependence) to accelerate convergence.

- Informative Priors on Hyperparameters: Set kernel length-scale priors based on known sensitivities of the enzyme class to certain factors.

- Seeding: Start the BO loop with a small, informative dataset from preliminary experiments or literature, rather than purely random points.
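One way to read the prior mean idea above is that the GP only has to learn what the mechanistic model misses. The sketch below assumes an Arrhenius form for the temperature trend and computes the residuals a GP would then be trained on. The function names and the choice to model residuals are illustrative assumptions; the pre-exponential factor and activation energy would come from literature or a preliminary fit.

```python
import math

R_GAS = 8.314  # gas constant, J mol^-1 K^-1


def arrhenius_mean(temp_c, pre_factor, activation_energy):
    """Arrhenius rate k = A * exp(-Ea / (R T)), with T converted from deg C to K."""
    return pre_factor * math.exp(-activation_energy / (R_GAS * (temp_c + 273.15)))


def residuals(temps_c, observed, pre_factor, activation_energy):
    """What is left for the GP to learn once the mechanistic trend is subtracted."""
    return [y - arrhenius_mean(t, pre_factor, activation_energy)
            for t, y in zip(temps_c, observed)]
```

Because the Arrhenius equation alone does not describe thermal inactivation at high temperature, the residuals in that region would be large and negative, which is exactly the structure the GP is left to capture.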

Experimental Protocols

Protocol: Initial Design of Experiments (DoE) for Seeding BO

Objective: Generate an initial dataset to train the first surrogate model. Materials: See "Scientist's Toolkit" (Section 6). Procedure:

- Define the n-dimensional search space (e.g., pH, temperature, [Substrate], [Enzyme]).

- For a low number of initial points (typically 4-10, depending on dimensions), employ a space-filling design:

- Method: Use Latin Hypercube Sampling (LHS) to ensure each variable is sampled uniformly across its range.

- Tools: Generate samples using Python (`pyDOE2`, `skopt`) or commercial software (JMP, Design-Expert).

- Prepare reaction mixtures according to the sampled conditions.

- Run reactions in parallel where possible (e.g., using a thermocycler or parallel bioreactor blocks).

- Quench reactions at the predetermined time point and analyze product formation (e.g., via HPLC or UV/Vis assay).

- Calculate the objective metric (e.g., yield, initial rate) for each condition. This set `{X_initial, y_initial}` forms the first dataset.
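To show what Latin Hypercube Sampling does in the seeding protocol above, here is a small pure-Python sketch rather than a call into any of the named libraries. The function name `latin_hypercube`, the fixed seed, and the example bounds are assumptions for illustration. Each variable's range is cut into `n_samples` equal strata, and every stratum is used exactly once per variable.

```python
import random


def latin_hypercube(bounds, n_samples, seed=0):
    """Return n_samples points; each variable gets one draw per equal-width stratum."""
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        strata = list(range(n_samples))
        rng.shuffle(strata)                      # decouple strata across variables
        width = (high - low) / n_samples
        columns.append([low + (s + rng.random()) * width for s in strata])
    return [tuple(col[i] for col in columns) for i in range(n_samples)]


# Hypothetical search space: pH, temperature (deg C), substrate concentration (mM)
bounds = [(5.0, 9.0), (20.0, 60.0), (1.0, 100.0)]
design = latin_hypercube(bounds, n_samples=8)
```

Each tuple in `design` is one reaction condition to prepare for the initial dataset.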

Protocol: Core Bayesian Optimization Iteration

Objective: To identify the next most informative reaction condition to evaluate. Materials: Initial dataset, BO software environment. Procedure:

- Model Training:

- Standardize input variables (X) and objective values (y).

- Train a Gaussian Process regression model on the current dataset. Optimize kernel hyperparameters (length scales, noise variance) via maximum likelihood estimation.

- Acquisition Optimization:

- Compute the acquisition function (e.g., Expected Improvement) over the entire search space using the trained GP.

- Identify the condition `x_next` that maximizes the acquisition function. This is typically done using gradient-based methods or global optimizers like DIRECT.

- Experimental Evaluation:

- Physically set up the enzymatic reaction at the prescribed condition `x_next`.

- Perform the experiment with appropriate replicates (n≥2) to estimate experimental noise.

- Measure the objective value `y_next`.

- Model Update:

- Append the new data point `(x_next, y_next)` to the dataset: `X = X ∪ x_next`, `y = y ∪ y_next`.

- Repeat from Step 1 (Model Training) until the experimental budget is reached or performance plateaus.
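The Model Training step above (standardize, then pick kernel hyperparameters by maximum likelihood) can be illustrated with NumPy alone. This sketch assumes a one-variable problem, a Matérn 5/2 kernel with unit signal variance, and a coarse grid search standing in for a gradient-based optimizer. The data values are hypothetical.

```python
import numpy as np


def matern52(x, length_scale):
    """Matérn 5/2 covariance matrix for 1-D inputs x (unit signal variance)."""
    s = np.sqrt(5.0) * np.abs(x[:, None] - x[None, :]) / length_scale
    return (1.0 + s + s**2 / 3.0) * np.exp(-s)


def log_marginal_likelihood(x, y, length_scale, noise_var):
    """GP log marginal likelihood of standardized targets y."""
    k = matern52(x, length_scale) + noise_var * np.eye(len(x))
    chol = np.linalg.cholesky(k)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y))
    return float(-0.5 * y @ alpha - np.log(np.diag(chol)).sum()
                 - 0.5 * len(x) * np.log(2.0 * np.pi))


# Hypothetical data: temperature (deg C) vs. measured yield (%)
temp = np.array([25.0, 30.0, 35.0, 40.0, 45.0, 50.0, 55.0])
yield_pct = np.array([22.0, 35.0, 51.0, 63.0, 58.0, 40.0, 18.0])

# Standardize inputs and outputs before fitting
x = (temp - temp.mean()) / temp.std()
y = (yield_pct - yield_pct.mean()) / yield_pct.std()

# Coarse grid over (length scale, noise variance); keep the most likely pair
grid = [(ls, nv) for ls in (0.25, 0.5, 1.0, 2.0, 4.0) for nv in (0.01, 0.05, 0.2)]
best_ls, best_nv = max(grid, key=lambda p: log_marginal_likelihood(x, y, *p))
```

Libraries such as BoTorch or scikit-optimize perform this fit internally; the sketch only makes the objective being maximized explicit.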

Protocol: Post-Optimization Analysis and Validation

Objective: Validate the optimal condition and analyze the learned model. Procedure:

- Identify Optimum: From the final dataset, select the condition `x*` with the best observed objective value `y*`.

- Validation Run: Perform a confirmatory experiment at `x*` with increased replication (n≥3) to obtain a robust estimate of performance and variance.

- Model Interrogation:

- Use the final GP model to generate partial dependence plots for each variable, illustrating its inferred effect on the objective.

- Compute and visualize the model's predicted mean and uncertainty across 2D slices of the search space.

- Compare to Baseline: Compare the performance at `x*` to that achieved under standard literature conditions or a control condition.

Visualizations

Title: Bayesian Optimization Sequential Workflow for Enzyme Screening

Title: Gaussian Process Update from Prior to Posterior

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for BO-Guided Enzymatic Optimization

| Item | Function in BO Experiment | Example/Note |

|---|---|---|

| Enzyme (Lyophilized or Liquid) | The biocatalyst whose performance is being optimized. | Recombinant ketoreductase for asymmetric synthesis. Store at -80°C. |

| Substrate(s) | The molecule(s) transformed by the enzyme. | Prochiral ketone substrate dissolved in DMSO. |

| Cofactor/Coenzyme | Required for enzyme activity (if applicable). | NADPH regenerating system (glucose-6-phosphate/G6PDH). |

| Buffer Components | Maintains reaction pH, a critical optimization variable. | 50 mM HEPES or phosphate buffer, titrated to target pH. |

| Parallel Reaction Vessels | Enables high-throughput evaluation of conditions. | 96-well deep-well plates or micro-reactor blocks. |

| Precision Liquid Handlers | For accurate, automated dispensing of reagents. | Assists in setting up the numerous conditions of seed and BO iterations. |

| Temperature-Controlled Incubator/Shaker | Controls temperature, a key optimization variable. | Thermocycler with heated lid or multi-position incubator shaker. |

| Analytical Instrument (HPLC/GC-MS/Plate Reader) | Quantifies reaction outcome (yield, ee, rate). | UPLC with chiral column for enantiomeric excess determination. |

| BO Software Platform | Implements the surrogate modeling and acquisition logic. | Python (BoTorch, GPyOpt, scikit-optimize) or commercial tools (Siemens PSE gPROMS). |

Bayesian Optimization (BO) is a powerful, sequential strategy for global optimization of expensive black-box functions. Within the context of enzymatic reaction condition optimization—such as finding the optimal pH, temperature, substrate concentration, and enzyme load for maximal yield or turnover number—BO provides a structured, data-efficient framework. It iteratively builds a probabilistic surrogate model of the reaction landscape and uses an acquisition function to decide the most informative condition to test next, dramatically reducing costly wet-lab experiments.

Key Components: Detailed Application Notes

Surrogate Model: Gaussian Processes (GPs)

GPs are the cornerstone surrogate model in BO for enzymatic optimization. They define a prior over functions and provide a posterior distribution after observing experimental data, quantifying both prediction and uncertainty.

Core GP Parameters for Enzymatic Studies:

- Mean Function (`m(x)`): Encodes prior belief about the reaction output (e.g., expected yield at neutral pH). Often set to a constant.

- Kernel/Covariance Function (`k(x, x')`): Dictates the smoothness and shape of the function. Common choices include:

- Matérn 5/2: Default for modeling physical, less smooth processes like reaction yields.

- Radial Basis Function (RBF): For modeling very smooth, continuous landscapes.

- Likelihood: Typically Gaussian, accounting for observational noise (experimental error).

Table 1: Quantitative Comparison of Common GP Kernels for Reaction Optimization

| Kernel | Mathematical Form (Simplified; r = distance / l) | Hyperparameters | Best For Enzymatic Context |

|---|---|---|---|

| Matérn 5/2 | (1 + √5r + 5r²/3)exp(-√5r) | Length-scale (l), Signal Variance (σ²) | Rugged, complex landscapes (e.g., multi-factor interactions) |

| RBF / SE | exp(-r²/2) | Length-scale (l), Signal Variance (σ²) | Very smooth, continuous trends |

| Rational Quadratic | (1 + r²/(2α))^(-α) | Length-scale (l), Scale Mixture (α), Signal Variance (σ²) | Modeling variations at multiple length-scales |
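The three kernel forms in the table can be written directly as functions of the scaled distance r, which makes their different decay behavior easy to compare. This is a plain-Python sketch with unit signal variance assumed; the function names are illustrative, and the signal variance σ² would multiply each result in a full model.

```python
import math


def scaled_distance(x, x_prime, length_scale):
    """r = |x - x'| / l for scalar inputs."""
    return abs(x - x_prime) / length_scale


def matern52(r):
    """Matérn 5/2: (1 + sqrt(5) r + 5 r^2 / 3) * exp(-sqrt(5) r)."""
    s = math.sqrt(5.0) * r
    return (1.0 + s + s * s / 3.0) * math.exp(-s)


def rbf(r):
    """Squared exponential: exp(-r^2 / 2)."""
    return math.exp(-0.5 * r * r)


def rational_quadratic(r, alpha=1.0):
    """Rational quadratic: (1 + r^2 / (2 alpha))^(-alpha)."""
    return (1.0 + r * r / (2.0 * alpha)) ** (-alpha)


# Correlation between two pH values one length-scale apart (hypothetical l = 0.5)
r = scaled_distance(7.0, 7.5, length_scale=0.5)
correlations = {"matern52": matern52(r), "rbf": rbf(r), "rq": rational_quadratic(r)}
```

As α grows large, the rational quadratic kernel approaches the RBF kernel, which is one way to see it as a mixture over length-scales.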

Priors

Priors incorporate domain knowledge into the Bayesian model before data collection.

Types of Priors in Enzymatic BO:

- GP Function Prior: Defined by the mean and kernel. A prior favoring smoother functions might be chosen for well-behaved enzymes.

- Hyperparameter Priors: Placed on kernel parameters (e.g., length-scale). A prior on length-scale can prevent overfitting to sparse initial data.

- Domain Knowledge Priors: Directly inform the search space. For instance, a prior belief that optimal temperature is near 37°C can be encoded by initially sampling more densely in that region.

Table 2: Example Hyperparameter Priors for a Matérn 5/2 Kernel

| Hyperparameter | Suggested Prior (e.g., Gamma) | Justification for Enzymatic Experiments |

|---|---|---|

| Length-scale (l) | Gamma(α=2, β=0.5) | Encourages moderate smoothness; avoids extreme wiggly or flat functions. |

| Signal Variance (σ²) | HalfNormal(σ=5) | Constrains yield/turnover predictions to plausible ranges. |

| Noise Variance (σₙ²) | HalfNormal(σ=0.1) | Reflects typical experimental error margins in HPLC/spectrophotometry assays. |
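To make the role of these priors concrete, the sketch below evaluates their log densities, which would be added to the GP log marginal likelihood when estimating hyperparameters by maximum a posteriori instead of maximum likelihood. One assumption matters here: the table does not say whether β in Gamma(α, β) is a rate or a scale, and this sketch treats it as a rate. The function names are illustrative.

```python
import math


def log_gamma_prior(x, alpha=2.0, beta=0.5):
    """Log density of Gamma(alpha, beta), with beta assumed to be a rate parameter."""
    if x <= 0:
        return -math.inf
    return (alpha * math.log(beta) - math.lgamma(alpha)
            + (alpha - 1.0) * math.log(x) - beta * x)


def log_half_normal_prior(x, sigma):
    """Log density of a half-normal distribution with scale sigma, for x >= 0."""
    if x < 0:
        return -math.inf
    return 0.5 * math.log(2.0 / math.pi) - math.log(sigma) - 0.5 * (x / sigma) ** 2


def log_prior(length_scale, signal_var, noise_var):
    """Sum of the three prior terms from the table above."""
    return (log_gamma_prior(length_scale)
            + log_half_normal_prior(signal_var, 5.0)
            + log_half_normal_prior(noise_var, 0.1))
```

Under the rate reading, the length-scale prior peaks at (α − 1)/β = 2, so length-scales much shorter or much longer than that are penalized.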

The Acquisition Engine

The acquisition function uses the GP posterior to balance exploration (probing uncertain regions) and exploitation (probing regions predicted to be high-performing) to propose the next experiment.

Common Acquisition Functions:

- Expected Improvement (EI): Measures the expected improvement over the current best observation.

- Upper Confidence Bound (UCB): `μ(x) + κσ(x)`, where κ controls the exploration-exploitation trade-off.

- Probability of Improvement (PI): Probability that a point will improve upon the current best.

Table 3: Acquisition Function Performance Metrics

| Function | Key Parameter | Advantage in Enzyme Screening | Potential Drawback |

|---|---|---|---|

| Expected Improvement (EI) | ξ (jitter parameter) | Strong balance; widely used and robust. | Can be greedy in later stages. |

| Upper Confidence Bound (UCB) | κ (trade-off weight) | Explicit, tunable exploration control. | κ requires calibration. |

| PI | ξ (trade-off parameter) | Simple intuition. | Can be overly exploitative. |
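The three acquisition functions compared above have simple closed forms under a Gaussian posterior. The sketch below writes them for a maximization problem, taking the posterior mean `mu`, standard deviation `sigma`, and current best observation `best` as inputs. The default values of `xi` and `kappa` are illustrative assumptions, not recommendations from the source.

```python
import math


def _normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def _normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, best, xi=0.01):
    """EI for maximization: E[max(f - best - xi, 0)] under N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(mu - best - xi, 0.0)
    z = (mu - best - xi) / sigma
    return (mu - best - xi) * _normal_cdf(z) + sigma * _normal_pdf(z)


def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB: mu + kappa * sigma."""
    return mu + kappa * sigma


def probability_of_improvement(mu, sigma, best, xi=0.01):
    """PI: probability that f exceeds best + xi under N(mu, sigma^2)."""
    if sigma <= 0.0:
        return 1.0 if mu > best + xi else 0.0
    return _normal_cdf((mu - best - xi) / sigma)
```

Raising `xi` or `kappa` pushes the search toward uncertain regions; lowering them makes it more exploitative.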

Experimental Protocols

Protocol 1: Initial Experimental Design for BO

Objective: Generate initial data to seed the Gaussian Process model.

- Define Search Space: Specify ranges for each parameter (e.g., pH: 5.0-9.0, Temp: 20-60°C, [S]: 1-100 mM).

- Choose Design: Use a space-filling design (e.g., Latin Hypercube Sampling) to select 5-10 initial reaction conditions.

- Execute Experiments: Perform enzymatic assays (see Protocol 2) at each condition in technical duplicate/triplicate.

- Measure Response: Quantify primary output (e.g., yield via HPLC, initial rate via absorbance).

- Data Preparation: Normalize response values if needed and collate into a matrix `X` (conditions) and vector `y` (responses).

Protocol 2: Standard Microscale Enzymatic Assay for BO Iteration

Objective: Reliably measure enzyme performance at a condition proposed by the acquisition engine. Reagents: See "The Scientist's Toolkit" below. Procedure:

- Buffer Preparation: Prepare appropriate buffer at the target pH.

- Reaction Assembly: In a 1.5 mL microcentrifuge tube or 96-well plate, mix:

- Buffer (to final volume, e.g., 200 µL)

- Substrate stock solution to desired final concentration.

- Enzyme stock solution to desired final load.

- (Optional) Cofactors or necessary additives.

- Incubation: Place reaction mixture in a thermostatted incubator/shaker at the target temperature for the specified time (t).

- Reaction Quenching: Terminate the reaction by heat inactivation (e.g., 95°C for 5 min), acid/base addition, or organic solvent.

- Analysis: Quantify product formation or substrate depletion using calibrated HPLC, LC-MS, or spectrophotometric methods.

- Data Recording: Record the calculated yield, rate, or other metric as the objective value `y_new` for condition `x_new`.

Protocol 3: Single BO Iteration Loop

Objective: Integrate a new experimental result and propose the next condition.

- Model Update: Condition the GP prior on the updated dataset `{X, y}` to obtain the posterior mean `μ(x)` and uncertainty `σ(x)`.

- Acquisition Optimization: Maximize the chosen acquisition function `α(x)` over the defined search space using a numerical optimizer (e.g., L-BFGS-B, multi-start random search).

- Next Proposal: The optimizer's solution `x_next` is the proposed condition for the next experiment.

- Experimental Validation: Execute Protocol 2 at `x_next`.

- Iterate: Repeat until convergence (e.g., negligible improvement over several iterations) or resource exhaustion.

Visualizations

Diagram Title: Bayesian Optimization Workflow for Enzyme Reactions

Diagram Title: Core Bayesian Optimization Logic Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Enzymatic BO Experiments

| Item / Reagent | Function in Optimization Workflow | Example/Note |

|---|---|---|

| Purified Enzyme | The catalyst whose performance is being optimized. | Lyophilized powder or glycerol stock; store at appropriate T. |

| Substrate(s) | Molecule(s) transformed by the enzyme. | High-purity stock solution; may require solubility optimization. |

| Buffer System | Maintains pH and ionic strength. | Choose with pKa near target pH (e.g., phosphate, Tris, HEPES). |

| Cofactors / Cations | Essential for activity of many enzymes. | Mg²⁺, NAD(P)H, ATP, metal ions; include in search space if needed. |

| Quenching Agent | Stops reaction at precise time for accurate kinetics. | Acid (HCl), base (NaOH), organic solvent (MeCN), or heat. |

| Analytical Standard | For quantitative analysis of product/substrate. | Pure compound for HPLC/LC-MS calibration curve generation. |

| Microtiter Plates (96/384) | High-throughput reaction vessel. | Enables parallel assay of multiple conditions. |

| Plate Reader / HPLC | Primary data generation instrument. | Spectrophotometer for rates; HPLC for yield/purity. |

| BO Software Library | Implements GP, acquisition, and optimization. | Python: scikit-optimize, BoTorch, GPyOpt. |

Within the broader thesis on Bayesian optimization (BO) for enzymatic reaction optimization, this application note provides a pragmatic decision framework for experimental design. The primary challenge in developing biocatalytic processes lies in efficiently navigating a high-dimensional parameter space (e.g., pH, temperature, substrate concentration, cofactor loading, enzyme concentration) to maximize yield, selectivity, or activity. Traditional One-Factor-At-a-Time (OFAT) and classical Design of Experiments (DoE) methods are foundational but present limitations in complex, non-linear systems. BO emerges as a powerful machine learning-driven alternative for specific, challenging use-cases.

Decision Framework and Comparative Analysis

The choice between OFAT, DoE, and BO depends on the reaction complexity, prior knowledge, and resource constraints.

Table 1: Decision Framework for Selecting Experimental Optimization Strategy

| Criterion | OFAT | Classical DoE (e.g., RSM) | Bayesian Optimization (BO) |

|---|---|---|---|

| Primary Goal | Identify gross effects; preliminary screening. | Model interaction effects & find optimal within defined space. | Find global optimum with minimal experiments in expensive/high-dim spaces. |

| Number of Variables | Low (1-3). | Moderate (2-5). | High (4+). |

| Assumed Response Surface | Linear, additive. | Quadratic polynomial. | Non-linear, non-convex (handled by surrogate model). |

| Experiment Cost | Very low per experiment. | Low to moderate. | Very high per experiment (justifies smart sampling). |

| Prior Knowledge | Minimal. | Moderate (to define ranges). | Can incorporate strong priors. |

| Iterative Learning | No. Sequential but not adaptive. | Limited (usually one-shot design). | Yes. Core feature. Actively learns from each data point. |

| Best For | Initial scouting, establishing baselines. | Well-behaved systems with clear factors and ranges. | Expensive, noisy, black-box reactions with many factors. |

Table 2: Quantitative Comparison of a Simulated Enzyme Kinetics Optimization

Scenario: Maximizing initial reaction velocity (V₀) by varying pH, Temp, [S], and [E] with a non-linear, interactive response surface. Budget: 40 experimental runs.

| Method | Approx. Runs to Reach 90% of Max V₀ | Final Predicted V₀ (a.u.) | Model Accuracy (R²) | Key Observation |

|---|---|---|---|---|

| OFAT | >40 (not reached) | 72.1 | N/A | Misses critical interactions; fails to converge. |

| DoE (Central Composite) | 30 | 88.5 | 0.79 | Struggles with severe non-linearity; requires all runs upfront. |

| BO (Gaussian Process) | 18 | 94.7 | 0.92 | Superior sample efficiency; model improves with each run. |

Ideal Use-Cases for Bayesian Optimization

- High-Throughput Experimentation (HTE) with Low Throughput Analysis: When robotic synthesis allows for many reactions but analytical costs (e.g., LC-MS) are prohibitive. BO sequentially selects the most informative experiments to run analysis on.

- Optimizing Complex, Non-Additive Responses: Enzymatic reactions with strong interacting effects (e.g., pH-Temperature-ionic strength interactions on stability and activity).

- Black-Box or Poorly Characterized Enzymes: Novel engineered enzymes or multi-enzyme cascades where mechanistic models are unavailable.

- Constrained, Feasibility-Driven Optimization: Maximizing yield while simultaneously minimizing byproduct formation or cost, where the objective is a custom function.

- Resource-Limited Scenarios: When starting materials (enzyme, substrate) are extremely scarce or expensive, demanding absolute minimal experiments.

Detailed Protocol: Bayesian Optimization for a Multi-Factor Enzymatic Hydrolysis

Objective: Maximize the activity (initial velocity, V₀) of a hydrolytic reaction catalyzed by a novel lipase.

Reagents & Materials (The Scientist's Toolkit):

Table 3: Key Research Reagent Solutions

| Item | Function/Description |

|---|---|

| Purified Recombinant Lipase | Enzyme of interest, lyophilized. Store at -80°C. |

| p-Nitrophenyl Ester Substrate | Chromogenic substrate. Dissolve in anhydrous DMSO for stock. |

| Assay Buffer (Britton-Robinson) | Universal buffer for precise pH control across range 4.0-9.0. |

| Microplate Reader (UV-Vis) | For high-throughput kinetic analysis (monitor p-nitrophenol release at 405 nm). |

| Robotic Liquid Handler | For precise, reproducible setup of reaction conditions in 96-well plate format. |

| BO Software Platform | e.g., custom Python (GPyTorch, BoTorch) or commercial (SIGMA, Synthia). |

Protocol Steps:

Step 1: Define Parameter Space & Objective

- Define the bounded search space for 4 key factors:

- pH: [5.0, 8.5] (continuous)

- Temperature: [25°C, 55°C] (continuous)

- Substrate Concentration ([S]): [0.1, 5.0] mM (continuous)

- Enzyme Loading ([E]): [0.01, 0.5] mg/mL (continuous)

- Define objective function: Maximize Initial Velocity (V₀, derived from linear slope of A405 vs. time over first 10% of reaction).
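The bounded search space from Step 1 can be captured as a small data structure so that the optimizer works on a unit hypercube while the lab receives real units. The class name `Factor`, the helper `decode`, and the unit-cube convention are assumptions for illustration; the bounds are those listed in Step 1.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Factor:
    name: str
    low: float
    high: float
    unit: str

    def to_unit(self, value):
        """Map a physical value onto [0, 1]."""
        return (value - self.low) / (self.high - self.low)

    def from_unit(self, u):
        """Map a value in [0, 1] back to physical units."""
        return self.low + u * (self.high - self.low)


SEARCH_SPACE = (
    Factor("pH", 5.0, 8.5, ""),
    Factor("temperature", 25.0, 55.0, "degC"),
    Factor("substrate", 0.1, 5.0, "mM"),
    Factor("enzyme", 0.01, 0.5, "mg/mL"),
)


def decode(unit_point):
    """Convert a point in the unit hypercube into named reaction conditions."""
    return {f.name: f.from_unit(u) for f, u in zip(SEARCH_SPACE, unit_point)}


center = decode((0.5, 0.5, 0.5, 0.5))   # midpoint of every range
```

Scaling every factor to the same range keeps a single kernel length-scale from being dominated by the factor with the largest numeric span.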

Step 2: Initial Design (Space-Filling)

- Perform a Latin Hypercube Design (LHD) or Sobol sequence to select 8-10 initial experimental points. This ensures a sparse but uniform coverage of the entire parameter space.

- Procedure:

- Program liquid handler to prepare reactions in a 96-well plate according to LHD conditions.

- Pre-incubate buffer/substrate and enzyme separately at target temperature for 5 min.

- Initiate reaction by mixing enzyme into substrate/buffer solution.

- Immediately transfer plate to pre-warmed microplate reader.

- Monitor A405 kinetically for 10 minutes, record slope (V₀).

Step 3: Bayesian Optimization Loop

- Model Training: Train a Gaussian Process (GP) surrogate model using all collected data (parameter sets → observed V₀). The GP models the mean and uncertainty of V₀ across the entire space.

- Acquisition Function Maximization: Use an Expected Improvement (EI) function to compute the "promise" of each unexplored condition. EI balances exploring high-uncertainty regions and exploiting known high-performance regions.

- Select Next Experiment: The condition with the maximum EI value is selected as the next experiment to run.

- Run Experiment & Update: Execute the chosen experiment(s) in the lab, measure V₀, and append the new data point to the dataset.

- Convergence Check: Repeat steps 1-4 until convergence (e.g., <2% improvement in predicted optimum over 3 consecutive iterations) or until experimental budget is exhausted.
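The convergence criterion in the last step can be read in more than one way. The sketch below takes one reading: stop when each of the last three iterations improved the tracked optimum by less than 2% relative to the previous value. The function name, the input format (a running history of the predicted optimum), and this interpretation are assumptions.

```python
def has_converged(optimum_history, rel_tol=0.02, patience=3):
    """True when each of the last `patience` iterations improved by less than rel_tol."""
    if len(optimum_history) <= patience:
        return False
    recent = optimum_history[-(patience + 1):]
    for prev, curr in zip(recent, recent[1:]):
        # A non-positive previous value makes relative improvement undefined
        if prev <= 0 or (curr - prev) / prev >= rel_tol:
            return False
    return True


# Hypothetical history of the predicted optimum V0 (a.u.) after each iteration
history = [10.0, 50.0, 80.0, 80.5, 81.0, 81.2]
stop = has_converged(history)
```

The experimental budget check from the same step would sit alongside this test in the outer loop.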

Step 4: Validation

- Run triplicate experiments at the optimal conditions predicted by the final BO model. Compare the observed V₀ with the model's prediction to validate the result.

Workflow and Pathway Diagrams

BO Experimental Workflow for Enzyme Optimization

Decision Pathway: OFAT vs DoE vs BO

The application of Bayesian Optimization (BO) in biochemistry and pharmaceutics has evolved from a conceptual niche to a core methodology for navigating complex experimental landscapes. This evolution is contextualized within the broader thesis that BO represents a paradigm shift for enzymatic reaction optimization, enabling efficient exploration of high-dimensional parameter spaces where traditional Design of Experiments (DoE) fails.

Application Notes

Note 1: Transition from High-Throughput Screening to Smart Exploration

Early drug discovery relied on brute-force High-Throughput Screening (HTS). BO introduced an active learning framework, where each experiment is chosen to maximize the reduction in uncertainty about the location of the optimum (e.g., maximum reaction yield, highest enzyme activity). This drastically reduced the number of experiments required.

Note 2: Integration with Mechanistic Models

Modern BO in enzymatics is not purely black-box. It increasingly functions as a grey-box optimizer, where a probabilistic surrogate model (e.g., Gaussian Process) is informed by partial mechanistic knowledge (e.g., known kinetic constraints, pH activity profiles). This prior knowledge accelerates convergence.

Note 3: Handling Multi-Fidelity and Cost-Aware Experiments

BO protocols now incorporate data from inexpensive, low-fidelity experiments (e.g., microplate reader assays) to guide the selection of costly, high-fidelity experiments (e.g., HPLC quantification). The acquisition function is weighted by cost, optimizing the resource-to-information gain ratio.

Protocols

Protocol 1: BO for Initial Rate Optimization of a Kinase Enzyme

Objective: Find the combination of [Substrate], [Mg²⁺], and pH that maximizes the initial reaction rate (V₀).

Workflow:

- Define Domain: Set bounds: [Substrate] = 0.1-10 mM, [Mg²⁺] = 0.5-20 mM, pH = 6.5-8.5.

- Initial Design: Perform a space-filling design (e.g., Latin Hypercube) with 8-12 initial experiments. Measure V₀ via absorbance at 340 nm (coupled NADH depletion assay).

- Model Fitting: Construct a Gaussian Process (GP) surrogate model with a Matérn kernel, using the experimental data (parameters → V₀).

- Acquisition: Compute the Expected Improvement (EI) across the domain.

- Iteration: Execute the experiment with parameters maximizing EI. Update the GP with the new result. Repeat steps 3-5 (model fitting, acquisition, experiment) for 15-20 iterations.

- Validation: Confirm the BO-predicted optimum with triplicate experiments.
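The initial design step of Protocol 1 can be generated with SciPy's quasi-Monte Carlo module. The sketch below assumes `scipy.stats.qmc` is available (SciPy 1.7 or later) and uses the bounds from the "Define Domain" step; the seed and the choice of 10 points are illustrative.

```python
import numpy as np
from scipy.stats import qmc

# Bounds from the "Define Domain" step: [Substrate] (mM), [Mg2+] (mM), pH.
lower = np.array([0.1, 0.5, 6.5])
upper = np.array([10.0, 20.0, 8.5])

sampler = qmc.LatinHypercube(d=3, seed=42)
unit_design = sampler.random(n=10)             # 10 points in the unit cube
design = qmc.scale(unit_design, lower, upper)  # rescale to physical units

for substrate_mM, mg_mM, pH in design:
    print(f"[S]={substrate_mM:5.2f} mM  [Mg2+]={mg_mM:5.2f} mM  pH={pH:4.2f}")
```

Because the substrate range spans two orders of magnitude, it may be worth sampling log10([Substrate]) instead of the raw concentration; that is a design choice this sketch does not make.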

Protocol 2: Multi-Objective BO for Protein Purification Condition Screening

Objective: Optimize a purification buffer for a recombinant antibody fragment to simultaneously maximize Yield and Purity while minimizing Aggregate Formation.

Workflow:

- Parameterization: Define inputs: [NaCl] = 50-500 mM, [Imidazole] = 0-50 mM, pH = 5.8-7.5, [Additive X] = 0-5%.

- Objective Function: For each condition, run small-scale IMAC purification. Quantify yield (mg/L), purity (% by SDS-PAGE densitometry), and aggregates (% by SEC-HPLC).

- Multi-Objective Model: Fit a GP model for each objective.

- Acquisition: Use the Expected Hypervolume Improvement (EHVI) to select conditions predicted to Pareto-optimize the three competing objectives.

- Decision: After 25 iterations, analyze the Pareto front to select a condition offering the best trade-off.
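For the final decision step, the Pareto front can be extracted from the measured results with a simple non-dominated filter. The sketch below uses only NumPy; the four result rows are hypothetical values chosen for illustration, and aggregates are negated so that all three objectives are maximized.

```python
import numpy as np

def pareto_mask(objectives):
    """Boolean mask of non-dominated rows; every column is to be maximized."""
    n = objectives.shape[0]
    mask = np.ones(n, dtype=bool)
    for i in range(n):
        # Row j dominates row i if it is >= in every objective and > in at least one.
        at_least_as_good = np.all(objectives >= objectives[i], axis=1)
        strictly_better = np.any(objectives > objectives[i], axis=1)
        mask[i] = not np.any(at_least_as_good & strictly_better)
    return mask

# Hypothetical results: yield (mg/L), purity (%), aggregates (%).
results = np.array([
    [12.0, 90.0, 5.0],
    [15.0, 85.0, 8.0],
    [10.0, 88.0, 6.0],   # dominated by the first row
    [14.0, 92.0, 9.0],
])
# Negate aggregates so that all three objectives are maximized.
signed = results * np.array([1.0, 1.0, -1.0])
front = results[pareto_mask(signed)]
print(front)
```

The filter is quadratic in the number of conditions, which is negligible for the few dozen experiments a BO campaign typically produces.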

Visualizations

Title: BO Iterative Workflow for Experiment Optimization

Title: Multi-Fidelity Bayesian Optimization Workflow

Table 1: Comparative Performance of BO vs. Traditional DoE in Enzymatic Optimization

| Study Focus | Method (Dimensions) | Experiments to Optimum | Improvement Over Baseline | Key Reference (Year) |

|---|---|---|---|---|

| Glycosidase pH/Temp Stability | BO (3) | 18 | Yield: +42% | Shields et al. (2015) |

| P450 Monooxygenase Activity | Grid Search (4) | 100 | Yield: +25% | (Comparison Baseline) |

| P450 Monooxygenase Activity | BO (4) | 32 | Yield: +28% | Same study |

| Transaminase Solvent Screening | BO (5) | 25 | ee: +15%, Yield: +35% | Häse et al. (2018) |

| mAb Formulation Stability | DoE (4) | 30 | Aggregates: -20% | (Comparison Baseline) |

| mAb Formulation Stability | BO (4) | 16 | Aggregates: -22% | Lima et al. (2022) |

Table 2: Typical Parameter Spaces in Pharmaceutical BO Applications

| Application Area | Common Parameters (Ranges) | Objective(s) | Typical Evaluation Method |

|---|---|---|---|

| Enzymatic Reaction Optimization | [Substrate], [Cofactor], pH, Temp, % Cosolvent | Maximize initial rate (V₀) or total yield | UV/Vis Spectroscopy, HPLC |

| Cell Culture Media Optimization | [Glucose], [Glutamine], [Pluronic], DO, pH | Maximize viable cell density (VCD) or product titer | Bioanalyzer, Metabolomics |

| Chromatography Purification | [Salt], pH, [Modifier], Gradient Slope, Temp | Maximize resolution, purity; Minimize aggregate formation | SDS-PAGE, SEC-HPLC |

| Drug Formulation | [API], [Excipient A, B], pH, Ionic Strength, Storage Temp | Maximize solubility & shelf-life; Minimize degradation | Stability-indicating HPLC |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in BO-Driven Experimentation |

|---|---|

| Gaussian Process Modeling Software (e.g., GPyTorch, scikit-optimize) | Core library for building the surrogate probabilistic model that underpins the BO algorithm. |

| Acquisition Function Library (e.g., BoTorch, Ax Platform) | Provides implementations of EI, UCB, PoI, and complex functions like EHVI for multi-objective problems. |

| Automated Microfluidic Reactor Systems (e.g., Chemspeed, Unchained Labs) | Enables rapid, automated execution of the small-scale reaction conditions proposed by the BO algorithm. |

| High-Throughput Analytics (e.g., UPLC/HPLC with autosamplers, plate readers) | Generates the quantitative fitness data (yield, titer, activity) required to update the BO model. |

| Benchling or Dotmatics ELN/LIMS | Critical for systematically logging the high volume of interconnected experimental data and parameters generated by iterative BO cycles. |

| Custom Python Scripting Environment | Essential for integrating laboratory instrumentation data outputs with the BO recommendation engine. |

Building Your Bayesian Optimization Pipeline: A Step-by-Step Framework for Enzyme Scientists

This application note details the initial and critical step in employing Bayesian optimization (BO) for enzymatic reaction optimization: defining the search space. For an enzyme-catalyzed reaction, the search space is the multidimensional region defined by the bounds of each critical reaction parameter. A precisely defined space is paramount for BO efficiency, ensuring it explores a physically and biologically plausible region to find the global optimum of a performance metric, such as initial velocity (V₀) or product yield. This protocol is framed within a thesis focused on developing BO frameworks for high-throughput biocatalysis and drug development.

Critical Parameters and Typical Experimental Bounds

Based on current literature and enzyme kinetics databases, the following four parameters are most frequently targeted for optimization of single-step enzymatic reactions. The recommended initial bounds are conservative to maintain enzyme activity while enabling efficient exploration.

Table 1: Critical Parameters and Recommended Initial Search Bounds

| Parameter | Symbol | Typical Lower Bound | Typical Upper Bound | Justification & Notes |

|---|---|---|---|---|

| pH | - | 5.5 | 9.0 | Spans common optima for most enzymes (6-8). Can be narrowed with prior knowledge (e.g., pH 7-8 for dehydrogenases). |

| Temperature | T | 20°C | 50°C | Balances reaction rate increase with thermal denaturation risk. Thermostable enzymes permit bounds up to 90°C. |

| Substrate Concentration | [S] | 0.1 × KM* | 10 × KM* | Essential to explore both the near-first-order ([S] ≪ KM) and near-zero-order ([S] ≫ KM) kinetic regimes. |

| Co-factor Concentration | [C] | 0.1 × Kd* | 10 × Kd* | Applicable for NAD(P)H, ATP, metal ions (Mg²⁺). Prevents limitation or inhibition by excess. |

*KM (Michaelis constant) and Kd (dissociation constant) are enzyme-specific. Literature or preliminary experiments (e.g., saturation kinetics) are required to establish approximate values before setting bounds.

Protocol: Defining the Search Space for a Novel Enzyme

Objective: To establish robust initial bounds for pH, Temperature, [Substrate], and [Co-factor] for a novel hydrolase (Enzyme X) to be optimized via Bayesian Optimization.

I. Materials & Reagent Solutions

Table 2: Research Reagent Solutions Toolkit

| Item | Function & Specification |

|---|---|

| Enzyme X Lyophilized Powder | Target enzyme, store at -80°C. Reconstitute in assay buffer without substrate/co-factor. |

| Universal Buffer System (e.g., HEPES, Tris, Phosphate) | 1M stock solutions, pH-adjusted to cover range 5.0-10.0, for initial pH scouting. |

| Substrate Stock Solution | High-purity substrate in DMSO or H₂O. Prepare 100x of anticipated maximum test concentration. |

| Co-factor Stock Solution (e.g., MgCl₂, NAD⁺) | Aqueous, 100x stock. Filter-sterilized, stored at -20°C if labile. |

| Detection Reagent | Fluorogenic/Chromogenic coupled assay system or direct product detection (HPLC standards). |

| Microplate Reader & Thermally-Controlled Plate Incubator | For high-throughput kinetic assay in 96- or 384-well format. |

II. Preliminary Experiments to Inform Bounds

A. Determination of Apparent KM for Substrate

- Procedure: In optimal known buffer (or pH 7.4), with saturating co-factor, perform a substrate saturation experiment.

- Method: Set up reactions with [S] ranging from 0.1 µM to 1000 µM (log-spaced). Initiate with Enzyme X.

- Analysis: Measure initial velocities (V₀). Fit data to the Michaelis-Menten model (e.g., using GraphPad Prism) to derive apparent KM.

- Outcome: Set [S] bounds as [0.1 × KM, 10 × KM].
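As an alternative to a GUI package, the Michaelis-Menten fit can be scripted. The sketch below assumes SciPy's `curve_fit`; the V₀ values are synthetic (generated from Vmax = 50 and KM = 25 µM with 3% noise) and serve only to show the mechanics.

```python
import numpy as np
from scipy.optimize import curve_fit

def michaelis_menten(s, vmax, km):
    """Michaelis-Menten rate law: V0 = Vmax * [S] / (KM + [S])."""
    return vmax * s / (km + s)

# Log-spaced substrate concentrations (µM), as in the Method step.
s = np.logspace(-1, 3, 9)

# Synthetic V0 data for illustration only (true Vmax = 50, KM = 25 µM).
rng = np.random.default_rng(1)
v0 = michaelis_menten(s, 50.0, 25.0) * (1 + rng.normal(0, 0.03, s.size))

# Non-negative parameters; start from the largest observed rate and the median [S].
(vmax_fit, km_fit), _ = curve_fit(
    michaelis_menten, s, v0, p0=[v0.max(), np.median(s)], bounds=(0, np.inf)
)

# Search bounds for the BO domain: [0.1 x KM, 10 x KM].
s_bounds = (0.1 * km_fit, 10 * km_fit)
print(f"apparent KM = {km_fit:.1f} µM, [S] bounds = {s_bounds[0]:.1f}-{s_bounds[1]:.1f} µM")
```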

B. Determination of Apparent Kd for Co-factor

- Procedure: Vary co-factor concentration at fixed, saturating [S].

- Method: Set up reactions with [C] ranging from 1 nM to 100 mM (dependent on co-factor type). Initiate with Enzyme X.

- Analysis: Fit V₀ vs. [C] to a binding isotherm or saturation kinetics model to derive apparent Kd.

- Outcome: Set [C] bounds as [0.1 × Kd, 10 × Kd].

C. Broad pH and Temperature Scouting

- Procedure: Perform a coarse grid search.

- Method:

  - Use universal buffer at pH 5.5, 6.0, 6.5, 7.0, 7.5, 8.0, 8.5, 9.0.

  - Run assays at 20°C, 30°C, 40°C, 50°C.

  - Use a single intermediate [S] (~KM) and [C] (~Kd).

- Analysis: Plot V₀ as a heatmap (pH vs. Temp). Identify the region where activity is >20% of maximum observed.

- Outcome: Set pH and Temperature bounds to encompass this active region.
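The ">20% of maximum" rule from the scouting analysis translates directly into array operations. In this sketch the V₀ grid is hypothetical (a bell-shaped pH profile multiplied by a made-up temperature profile); with real data, `v0` would be the measured 8 × 4 matrix.

```python
import numpy as np

ph_levels = np.array([5.5, 6.0, 6.5, 7.0, 7.5, 8.0, 8.5, 9.0])
temps_c = np.array([20, 30, 40, 50])

# Hypothetical V0 grid (rows: pH, columns: temperature), arbitrary units.
ph_profile = np.exp(-((ph_levels - 7.5) / 0.8) ** 2)
temp_profile = np.array([0.5, 0.8, 1.0, 0.15])
v0 = np.outer(ph_profile, temp_profile)

# Active region: conditions with more than 20% of the maximum observed activity.
active = v0 > 0.20 * v0.max()
ph_active = ph_levels[active.any(axis=1)]
temp_active = temps_c[active.any(axis=0)]

ph_bounds = (ph_active.min(), ph_active.max())
temp_bounds = (temp_active.min(), temp_active.max())
print("pH bounds:", ph_bounds, "temperature bounds (°C):", temp_bounds)
```

Taking the bounding box of the active region is a simplification; if the region is irregular, the box will include some low-activity corners, which BO can tolerate.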

Workflow Diagram: From Parameter Definition to Bayesian Optimization

Title: Bayesian Optimization Workflow for Enzyme Reaction Optimization

Integration with Bayesian Optimization Framework

The defined 4D search space (pH, T, [S], [C]) becomes the domain for the BO algorithm. Each point in this space is a unique reaction condition. The BO's surrogate model (e.g., Gaussian Process) learns the complex, non-linear relationship between these parameters and the enzymatic performance metric from sequentially acquired data. Narrow, well-informed bounds drastically reduce the number of experiments required for convergence to the global optimum, accelerating the development cycle in biocatalyst and therapeutic enzyme engineering.

Within the overarching thesis on Bayesian optimization for enzymatic reaction condition optimization, the selection of the surrogate model is a critical inflection point. Gaussian Process Regression (GPR) emerges as the preeminent choice due to its inherent quantification of uncertainty, a cornerstone of Bayesian optimization. GPR provides not just a prediction of enzymatic performance (e.g., yield, activity) at untested conditions but a full posterior probability distribution, enabling the calculation of acquisition functions like Expected Improvement. This deep dive outlines the theoretical justification, practical configuration protocols, and integration into an automated workflow for enzymatic optimization, targeting parameters such as pH, temperature, substrate concentration, and cofactor molar ratios.

Core Theoretical Components & Configuration Parameters

GPR is defined by a mean function, \( m(\mathbf{x}) \), and a covariance (kernel) function, \( k(\mathbf{x}, \mathbf{x}') \), governing the smoothness and structure of the response surface over the input space \( \mathbf{x} \). For enzymatic optimization, common configurations are summarized below.

Table 1: Standard Kernel Functions for Enzymatic Reaction Modeling

| Kernel Name | Mathematical Form | Hyperparameters | Best For Enzymatic Parameter | Notes |

|---|---|---|---|---|

| Radial Basis Function (RBF) | \( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \exp\left(-\frac{1}{2} \sum_{d=1}^{D} \frac{(x_d - x'_d)^2}{l_d^2}\right) \) | Length-scales (\( l_d \)), Output variance (\( \sigma_f^2 \)) | Continuous, smooth parameters (Temp., pH) | Default choice; assumes isotropic or anisotropic smoothness. |

| Matérn 5/2 | \( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \left(1 + \sqrt{5}\,r + \frac{5}{3}r^2\right) \exp\left(-\sqrt{5}\,r\right), \; r = \sqrt{\sum_{d=1}^{D} \frac{(x_d - x'_d)^2}{l_d^2}} \) | Length-scales (\( l_d \)), Output variance (\( \sigma_f^2 \)) | Parameters with moderate roughness (e.g., ionic strength) | Less smooth than RBF; more flexible for real-world noise. |

| Rational Quadratic (RQ) | \( k(\mathbf{x}, \mathbf{x}') = \sigma_f^2 \left(1 + \frac{1}{2\alpha} \sum_{d=1}^{D} \frac{(x_d - x'_d)^2}{l_d^2}\right)^{-\alpha} \) | Length-scales (\( l_d \)), \( \alpha \), \( \sigma_f^2 \) | Multi-scale phenomena (e.g., reaction kinetics across scales) | Can model variations at different length-scales. |

| Composite (RBF + WhiteKernel) | \( k_{\text{total}} = k_{\text{RBF}} + k_{\text{White}} \) | \( l_d, \sigma_f^2, \sigma_{\text{noise}}^2 \) | All experimental data, accounting for measurement noise | Recommended Default. WhiteKernel captures homoscedastic experimental error. |

Table 2: GPR Hyperparameter Optimization & Model Selection Criteria

| Aspect | Common Approach | Protocol Recommendation for Enzymatic BO |

|---|---|---|

| Mean Function | Often set to zero or constant. | Use a constant mean (e.g., average observed yield). Simpler, lets kernel capture structure. |

| Likelihood | Gaussian (inherent). | Assume Gaussian observation noise, modeled via WhiteKernel or a fixed noise level. |

| Hyperparameter Optimization | Maximize Log-Marginal Likelihood (LML): \( \log p(\mathbf{y} \mid \mathbf{X}) \) | Use L-BFGS-B or conjugate gradient. Run the optimizer from 10 random restarts to avoid local optima. |

| Model Selection (Kernel Choice) | Cross-Validation (CV) or Bayesian Information Criterion (BIC). | Use 5-fold CV on existing data. Prefer Matérn 5/2 or RBF + WhiteKernel for robustness. |

| Critical Note on Scale | Inputs must be normalized. | Standardize all reaction condition parameters (e.g., pH 5-9 → 0-1 scale) to improve kernel performance and LML convergence. |

Experimental Protocol: Implementing GPR for Enzymatic Reaction Optimization

Protocol 3.1: Initial GPR Model Configuration from a Preliminary Dataset

Purpose: To establish a robust surrogate model from an initial space-filling design (e.g., 10-20 experiments) of enzymatic reaction conditions.

Materials: See "The Scientist's Toolkit" (Section 5.0).

Procedure:

- Data Preparation:

  - Standardize each input variable (e.g., temperature, [S], pH) to zero mean and unit variance.

  - Standardize the objective function output (e.g., product yield in µmol) to zero mean and unit variance.

- Kernel Initialization:

  - Construct a base kernel: `Matern(length_scale=1.0, nu=2.5) + WhiteKernel(noise_level=0.1)`.

  - Set all initial length-scales to 1.0 (on standardized data).

- Model Instantiation:

  - Define the GPR model using a `ConstantMean` function and the kernel from step 2.

  - Fix the hyperparameters of the `ConstantMean` to the mean of the standardized output data.

- Hyperparameter Training:

  - Maximize the log-marginal likelihood using the L-BFGS-B optimizer.

  - Set bounds for hyperparameters: `length_scale_bounds=(1e-2, 1e2)`, `noise_level_bounds=(1e-5, 1e1)`.

  - Run the optimization from 10 different random starting points to find the global optimum.

- Model Validation (Pre-BO):

  - Perform 5-fold cross-validation on the initial dataset.

  - Calculate the standardized mean squared error (SMSE) and mean standardized log loss (MSLL). Accept models with MSLL ≤ 0.

- Integration into BO Loop: The trained GPR model is now ready to predict the mean and standard deviation at any candidate point for the acquisition function.
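Protocol 3.1 can be sketched with scikit-learn as follows. Several things here are assumptions: the preliminary dataset is synthetic, and scikit-learn's `GaussianProcessRegressor` has no explicit mean-function object, so the constant mean is approximated by standardizing the output (a zero-mean GP on standardized data). The MSLL is computed against a trivial Gaussian predictor built from the training mean and variance.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel
from sklearn.model_selection import KFold
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
# Hypothetical preliminary dataset: 16 runs over temperature (°C), [S] (mM), pH.
X_raw = rng.uniform([20, 0.5, 5.5], [50, 10, 9.0], size=(16, 3))
y_raw = 40 * np.exp(-((X_raw[:, 2] - 7.5) ** 2)) + 0.5 * X_raw[:, 1] + rng.normal(0, 1, 16)

def make_gpr():
    kernel = (Matern(length_scale=[1.0] * 3, length_scale_bounds=(1e-2, 1e2), nu=2.5)
              + WhiteKernel(noise_level=0.1, noise_level_bounds=(1e-5, 1e1)))
    # The default optimizer is L-BFGS-B; n_restarts_optimizer adds 10 random restarts.
    return GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=10, random_state=0)

smse, msll = [], []
for train, test in KFold(n_splits=5, shuffle=True, random_state=0).split(X_raw):
    # Fit scalers on the training fold only, then apply them to the test fold.
    x_scaler = StandardScaler().fit(X_raw[train])
    y_scaler = StandardScaler().fit(y_raw[train].reshape(-1, 1))
    X_tr, X_te = x_scaler.transform(X_raw[train]), x_scaler.transform(X_raw[test])
    y_tr = y_scaler.transform(y_raw[train].reshape(-1, 1)).ravel()
    y_te = y_scaler.transform(y_raw[test].reshape(-1, 1)).ravel()

    mu, sd = make_gpr().fit(X_tr, y_tr).predict(X_te, return_std=True)
    var = sd ** 2
    smse.append(np.mean((y_te - mu) ** 2) / np.var(y_te))
    # Negative log predictive density of the GP minus that of the trivial
    # predictor N(0, 1), i.e. the training mean and variance after standardization.
    gp_loss = 0.5 * np.log(2 * np.pi * var) + (y_te - mu) ** 2 / (2 * var)
    trivial_loss = 0.5 * np.log(2 * np.pi) + y_te ** 2 / 2.0
    msll.append(np.mean(gp_loss - trivial_loss))

print(f"SMSE = {np.mean(smse):.2f}, MSLL = {np.mean(msll):.2f}")
```

With only 16 points, each test fold holds three or four experiments, so the per-fold scores are noisy; the averages are more informative than any single fold.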

Protocol 3.2: Iterative Model Refitting Within the Bayesian Optimization Loop

Purpose: To update the GPR surrogate model after each new experiment (or batch of experiments) in the sequential BO process.

Procedure:

- Append New Data: After completing the enzymatic reaction experiment(s) proposed by the acquisition function, append the new `(conditions, yield)` pair to the historical dataset.

- Re-standardization: Re-standardize the entire (updated) input and output data based on the new combined dataset's mean and variance.

- Warm-Start Refitting: Initialize the GPR hyperparameters with the optimal values from the previous iteration. Refit the model by maximizing LML, using the previous optimum as the starting point and running 1-2 additional random restarts.

- Convergence Check: After 15-20 iterations, assess convergence by tracking the best observed value over iterations. A plateau may indicate a near-optimal region.
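A warm-start refit along these lines might look like the sketch below. It assumes scikit-learn, where the fitted kernel (with optimized hyperparameters) is exposed as `gp.kernel_` and can be passed back in as the starting kernel of a new model. The `refit` helper and the toy objective `f` are hypothetical names introduced for illustration.

```python
import numpy as np
from sklearn.base import clone
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import Matern, WhiteKernel
from sklearn.preprocessing import StandardScaler

def refit(X_raw, y_raw, previous_kernel=None):
    """Re-standardize all data and refit, starting from the previous hyperparameters."""
    x_scaler = StandardScaler().fit(X_raw)
    y_scaler = StandardScaler().fit(y_raw.reshape(-1, 1))
    if previous_kernel is None:
        kernel = Matern(length_scale=1.0, nu=2.5) + WhiteKernel(noise_level=0.1)
        restarts = 10                      # cold start: search broadly
    else:
        kernel = clone(previous_kernel)    # warm start from the last optimum
        restarts = 2                       # plus a couple of random restarts
    gp = GaussianProcessRegressor(kernel=kernel, n_restarts_optimizer=restarts, random_state=0)
    gp.fit(x_scaler.transform(X_raw), y_scaler.transform(y_raw.reshape(-1, 1)).ravel())
    return gp, x_scaler, y_scaler

# Toy data standing in for (conditions, yield) pairs.
rng = np.random.default_rng(0)
f = lambda X: np.sin(3 * X[:, 0]) + X[:, 1] ** 2
X = rng.uniform(0, 1, size=(12, 2))
y = f(X) + rng.normal(0, 0.05, 12)
gp, _, _ = refit(X, y)

# One BO iteration later: append the new result and warm-start the refit.
x_new = np.array([[0.4, 0.9]])
X, y = np.vstack([X, x_new]), np.append(y, f(x_new) + rng.normal(0, 0.05))
gp, x_scaler, y_scaler = refit(X, y, previous_kernel=gp.kernel_)

# Running best observed value, for the plateau-based convergence check.
best_so_far = np.maximum.accumulate(y)
```

Because the data are re-standardized at every iteration, the previous hyperparameters are only an approximate starting point; the extra random restarts guard against a stale optimum.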

Mandatory Visualizations

Diagram 1: GPR Surrogate Model Update in BO Loop

Diagram 2: GPR Kernel Composition & Hyperparameters

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for GPR-Driven Enzymatic BO

| Item / Reagent Solution | Function in GPR/BO Workflow | Example/Supplier/Implementation Note |

|---|---|---|

| Scikit-learn Library (v1.3+) | Primary Python library for implementing GPR. Provides GaussianProcessRegressor with various kernels and optimizers. | sklearn.gaussian_process |

| GPy or GPflow | Alternative, advanced libraries offering more flexibility for specialized kernels and large-scale GPR. | Useful for advanced research variants. |

| Enzyme & Substrate | The biological system under optimization. Must be stable enough for sequential testing. | Lyophilized enzyme, synthetic substrate. |

| High-Throughput Screening Assay | Enables rapid quantification of the objective function (yield/activity). | Fluorescence, absorbance, or LC-MS microplate assay. |

| Parameter Standardization Module | Critical pre-processing step to ensure stable GPR performance. | sklearn.preprocessing.StandardScaler |

| L-BFGS-B Optimizer | The standard algorithm for maximizing the GPR log-marginal likelihood. | Accessed via scipy.optimize.minimize. |

| Cross-Validation Framework | Used for initial kernel selection and model validation. | sklearn.model_selection.KFold |

| Laboratory Automation Software | Interfaces the BO algorithm output with liquid handling robots for experimental execution. | Custom Python scripts or platforms like Momentum. |

Within the context of Bayesian optimization (BO) for enzymatic reaction condition optimization, selecting an acquisition function is a critical step that determines the efficiency of the sequential experimental design. It navigates the exploration-exploitation trade-off, guiding the search for optimal reaction conditions (e.g., pH, temperature, substrate concentration, cofactor levels) by proposing the next experiment based on the surrogate model's posterior distribution. This note details three prominent functions: Expected Improvement (EI), Upper Confidence Bound (UCB), and Knowledge Gradient (KG).

Acquisition Functions: Core Principles & Quantitative Comparison

Mathematical Definitions

The acquisition function, denoted α(x; D), quantifies the desirability of evaluating a candidate point x given existing observational data D.

Expected Improvement (EI): Measures the expected amount by which the objective (e.g., reaction yield, enzyme activity) improves over the current best observation \( f^* \). \[ EI(x) = \mathbb{E}[\max(0, f(x) - f^*)] \] For a Gaussian process surrogate with mean \( \mu(x) \) and standard deviation \( \sigma(x) \), and with an exploration offset \( \xi \) subtracted from the improvement, this has the closed form: \[ EI(x) = (\mu(x) - f^* - \xi)\Phi(Z) + \sigma(x)\phi(Z), \quad \text{if } \sigma(x) > 0 \] and \( EI(x) = 0 \) if \( \sigma(x) = 0 \), where \( Z = \frac{\mu(x) - f^* - \xi}{\sigma(x)} \), and \( \Phi \) and \( \phi \) are the CDF and PDF of the standard normal distribution. \( \xi \) is a small positive tuning parameter that controls exploration.

Upper Confidence Bound (UCB): Selects points based on an optimistic estimate of the possible objective value. \[ UCB(x) = \mu(x) + \kappa \sigma(x) \] The parameter \( \kappa \geq 0 \) balances exploration (high \( \kappa \), high \( \sigma \)) and exploitation (low \( \kappa \), high \( \mu \)).

Knowledge Gradient (KG): Measures the expected increase in the maximum of the posterior mean after incorporating a hypothetical observation at x. \[ KG(x) = \mathbb{E}\left[ \max_{x' \in \mathcal{X}} \mu_{t+1}(x') - \max_{x' \in \mathcal{X}} \mu_t(x') \mid x_t = x \right] \] It directly quantifies the expected improvement in the optimal predicted value of the surrogate model, not just over the current best observation.
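The two analytic acquisition functions are short enough to write out directly. The sketch below assumes NumPy and SciPy and takes the posterior mean and standard deviation as plain arrays; the three candidate values are made up for illustration. KG is omitted because it has no comparably simple closed form.

```python
import numpy as np
from scipy.stats import norm

def expected_improvement(mu, sigma, f_best, xi=0.01):
    """Closed-form EI for maximization; defined as 0 wherever sigma == 0."""
    mu = np.atleast_1d(np.asarray(mu, dtype=float))
    sigma = np.atleast_1d(np.asarray(sigma, dtype=float))
    improvement = mu - f_best - xi
    ei = np.zeros_like(mu)
    pos = sigma > 0
    z = improvement[pos] / sigma[pos]
    ei[pos] = improvement[pos] * norm.cdf(z) + sigma[pos] * norm.pdf(z)
    return ei

def upper_confidence_bound(mu, sigma, kappa=2.0):
    """UCB(x) = mu(x) + kappa * sigma(x)."""
    return np.asarray(mu, dtype=float) + kappa * np.asarray(sigma, dtype=float)

# Hypothetical posterior at three candidate conditions (normalized yield).
mu = np.array([0.80, 0.60, 0.75])
sigma = np.array([0.00, 0.30, 0.05])

print("EI :", expected_improvement(mu, sigma, f_best=0.78))
print("UCB:", upper_confidence_bound(mu, sigma))
```

In this example the second candidate has the lowest predicted mean but the highest EI and UCB, because its large uncertainty leaves room for improvement; this is the exploration side of the trade-off.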

Comparative Analysis

Table 1: Comparative analysis of acquisition functions for enzymatic optimization.

| Feature | Expected Improvement (EI) | Upper Confidence Bound (UCB) | Knowledge Gradient (KG) |

|---|---|---|---|

| Core Principle | Expectation over improvement beyond f* | Optimistic bound on performance (μ + κσ) | Expected improvement in the belief about the optimum |

| Exploration/Exploitation | Balanced; tuned by ξ | Explicit balance via κ | Implicitly balanced; values information gain |

| Computational Cost | Low (analytic form) | Very Low (analytic form) | High (requires nested optimization & integration) |

| Handling Noise | Moderate (can use noisy f* versions) | Good (can be modified as GP-UCB) | Excellent (natively handles noisy observations) |

| Best For | General-purpose, limited budgets | Simple tuning, rapid iteration | Noisy, expensive experiments where information value is paramount |

| Key Parameter(s) | ξ (exploration weight) | κ (confidence level) | None (often parameter-free in basic form) |

Table 2: Typical parameter ranges from recent literature (2023-2024).

| Acquisition Function | Typical Parameter Range | Common Heuristic |

|---|---|---|

| EI | ξ ∈ [0.01, 0.1] | Start with 0.01, increase if search is too greedy. |

| GP-UCB | Iteration-dependent schedule (e.g., κ_t² = 2 log(t^{d/2+2}π²/(3δ)), which grows slowly with t) | Theoretical schedules exist; often κ ∈ [1.0, 3.0] fixed in practice. |

| KG | None | Often used in its one-step optimal form without tuning parameters. |

Application Protocol for Enzymatic Reaction Optimization

This protocol outlines the integration of an acquisition function into a BO loop for optimizing a multi-parameter enzymatic reaction (e.g., transaminase activity).

Pre-Optimization Setup

A. Define Search Space (X):

- Parameters: pH (5.0-9.0), Temperature (°C, 25-60), Substrate Concentration (mM, 10-100), Enzyme Loading (mg/mL, 0.1-5.0).

- Normalize all parameters to [0, 1] for stable GP modeling.

B. Initialize Dataset (D₀):

- Perform a space-filling design (e.g., Latin Hypercube Sampling) for `n_init` points (typically 5-10 times the dimensionality).

- Execute enzymatic assays in triplicate for each condition. Measure primary objective (e.g., yield at 1 hour) and record variance.

C. Configure Gaussian Process (GP) Surrogate Model:

- Kernel: Use a Matérn 5/2 kernel to model smooth but possibly rugged response surfaces.

- Likelihood: For noisy data, use a Gaussian likelihood with a learned noise parameter (homoscedastic or heteroscedastic).

- Mean Function: Use a constant mean function.

Sequential Optimization Loop

Table 3: Iterative optimization cycle protocol.

| Step | Action | Details & Notes |

|---|---|---|

| 1. Model Update | Fit/update the GP surrogate model to the current dataset D_t. | Use maximum likelihood or Markov Chain Monte Carlo (MCMC) for hyperparameter estimation. |

| 2. Acquisition Maximization | Compute and maximize the chosen acquisition function α(x) over X. | EI/UCB: Use multi-start gradient-based optimizers (e.g., L-BFGS-B). KG: Requires stochastic optimization (e.g., one-shot KG via stochastic gradient ascent). |

| 3. Experiment Proposal | Select the point x_t = argmax α(x) for the next experiment. | Include proposed condition in the experimental queue. |

| 4. Experimental Execution | Conduct the enzymatic assay at condition x_t. | Follow standardized assay protocol (see 3.3). Record objective y_t and its standard error. |

| 5. Data Augmentation | Augment dataset: D_{t+1} = D_t ∪ {(x_t, y_t)}. | Log all metadata (batch, operator, instrument IDs). |

| 6. Convergence Check | Evaluate stopping criteria. | Loop from Step 1 until: a) Max iterations (e.g., 50) reached, b) Improvement < threshold (e.g., <1% over 5 iterations), or c) budget exhausted. |
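Step 2 of the cycle, multi-start maximization of the acquisition function, can be sketched with SciPy's L-BFGS-B. The helper name `maximize_acquisition` and the two-bump `toy_acquisition` are hypothetical; in a real loop the callable would evaluate EI or UCB from the fitted GP on the normalized search space.

```python
import numpy as np
from scipy.optimize import minimize

def maximize_acquisition(acq, bounds, n_starts=20, seed=0):
    """Maximize acq(x) over a box by running L-BFGS-B from several random starts."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    starts = rng.uniform(bounds[:, 0], bounds[:, 1], size=(n_starts, len(bounds)))
    best_x, best_val = None, -np.inf
    for x0 in starts:
        # SciPy minimizes, so the acquisition function is negated.
        res = minimize(lambda x: -acq(x), x0, method="L-BFGS-B",
                       bounds=list(map(tuple, bounds)))
        if -res.fun > best_val:
            best_x, best_val = res.x, -res.fun
    return best_x, best_val

def toy_acquisition(x):
    """Hypothetical alpha(x) with a global bump near 0.7 and a local bump near 0.2."""
    return np.exp(-5 * np.sum((x - 0.7) ** 2)) + 0.5 * np.exp(-5 * np.sum((x - 0.2) ** 2))

# Four normalized parameters: pH, temperature, substrate concentration, enzyme loading.
x_next, value = maximize_acquisition(toy_acquisition, [(0.0, 1.0)] * 4)
print("next condition (normalized):", np.round(x_next, 3), "alpha =", round(value, 3))
```

A single gradient run can stall on the local bump; restarting from several random points and keeping the best result is what makes the search reasonably robust on multimodal acquisition surfaces.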

Detailed Experimental Assay Protocol

Title: Microplate-Based Enzymatic Activity Assay for BO Iterations.

Objective: Quantify reaction yield/activity from a proposed condition x_t.

Reagents: See "The Scientist's Toolkit" below.

Procedure:

- Buffer Preparation: Prepare assay buffer at the target pH specified by `x_t`.

- Reaction Assembly: In a 96-well deep-well plate, add buffer, substrate stock, and cofactors. Pre-incubate at the target temperature `T` for 5 min.

- Reaction Initiation: Add the enzyme stock (concentration per `x_t`) to initiate the reaction. Final volume: 500 µL.

- Incubation: Incubate in a thermomixer at temperature `T` with shaking at 500 rpm for the defined reaction time (e.g., 1 h).

- Quenching: Remove 50 µL aliquots at `t=0` and `t=1h` into a 96-well PCR plate containing 50 µL of quenching solution (e.g., 1 M HCl).

- Analysis: Dilute quenched samples appropriately and analyze product formation via HPLC-UV/Vis or a calibrated colorimetric assay.

- Data Processing: Calculate yield/activity from standard curves. Report mean and standard deviation of `n=3` technical replicates.

Visual Workflows

Diagram Title: Bayesian Optimization Loop for Enzyme Reactions

Diagram Title: Acquisition Function Selection Decision Tree

The Scientist's Toolkit

Table 4: Key research reagents and materials for enzymatic BO experiments.

| Item | Function/Description | Example Supplier/Catalog |

|---|---|---|

| Recombinant Enzyme | The biocatalyst of interest; lyophilized powder or glycerol stock. | In-house expression/purification or commercial (e.g., Sigma-Aldrich). |

| Substrate(s) | The target molecule(s) transformed by the enzyme. | Custom synthesis or TCI America. |

| Cofactor (e.g., PLP, NADH) | Essential non-protein compound for enzyme activity. | Roche Diagnostics or MilliporeSigma. |

| Assay Buffer System | Maintains pH and ionic strength (e.g., HEPES, Tris, Phosphate). | Thermo Fisher Scientific. |

| Quenching Solution | Stops the enzymatic reaction instantly for accurate timing (e.g., acid, base, inhibitor). | Prepared in-lab (e.g., 1M HCl). |

| Analytical Standard (Product) | Pure compound for quantifying reaction yield via calibration curve. | Sigma-Aldrich or Cayman Chemical. |

| 96-Deep Well Plates | High-throughput reaction vessel for parallel condition screening. | Corning or Eppendorf. |

| Thermomixer | Provides precise temperature control and shaking during incubation. | Eppendorf ThermoMixer C. |

| HPLC-UV/Vis System | Primary analytical tool for separating and quantifying reaction components. | Agilent 1260 Infinity II. |

| Microplate Reader | For colorimetric or spectrophotometric endpoint/kinetic assays. | BioTek Synergy H1. |

Within the broader thesis on Bayesian optimization for enzymatic reaction condition optimization, this protocol details the implementation of a closed-loop, automated experimentation system. This system integrates high-throughput plate reader data acquisition with a Bayesian optimization (BO) model that iteratively proposes new experimental conditions. The loop enables the autonomous optimization of enzymatic reaction parameters (e.g., pH, temperature, substrate concentration, cofactor levels) to maximize yield or activity.

The Core Automated Loop: Workflow Diagram

Diagram Title: Automated Bayesian Optimization Loop for Enzymatic Reactions

Key Research Reagent Solutions & Materials

| Item | Function in the Automated Loop |

|---|---|

| 384-Well Microplate | High-throughput reaction vessel; compatible with plate readers and liquid handlers. |

| Liquid Handling Robot | Automates reagent dispensing for precise, reproducible setup of reaction conditions. |

| Multimode Plate Reader | Measures enzymatic output (e.g., fluorescence, absorbance, luminescence) in real-time or endpoint. |

| Enzyme & Substrate Stocks | Core reaction components. Prepared in stable, buffered solutions for robotic dispensing. |

| Buffer System Library | Pre-formulated buffers covering a range of pH and ionic strength for condition screening. |

| Cofactor/Inhibitor Libraries | Chemical modulators to test for optimal enzymatic activity. |

| Laboratory Information Management System (LIMS) | Tracks sample identity, well location, and metadata throughout the workflow. |

| Data Processing Scripts (Python/R) | Automate raw data normalization, background subtraction, and kinetic parameter calculation. |

Detailed Protocols

Protocol: Automated Plate Setup for Enzymatic Reaction Screening

Objective: To robotically prepare a microplate with varying conditions (factors) as defined by the BO algorithm.

Materials: Liquid handling robot, 384-well plate, source plates containing enzyme, substrates, buffers, cofactors.

Procedure:

- Receive Instruction File: The BO algorithm outputs a `.csv` file with volumes for each component per well.

- Initialize Robot: Load labware definitions and aspirate/dispense protocols.

- Dispense Buffers: First, transfer variable volumes of different pH buffers to assigned wells.

- Dispense Cofactors/Inhibitors: Add modulating compounds according to the design.

- Dispense Substrate: Add substrate solution to all wells. Mix by repeated aspiration/dispensing.

- Initiate Reaction: Finally, add a fixed volume of enzyme solution to each well to start the reaction. The plate is immediately transferred to the pre-heated plate reader.

Protocol: Plate Reader Data Acquisition & Export

Objective: To measure reaction kinetics and export structured data.

Materials: Temperature-controlled multimode plate reader.

Procedure:

- Pre-heat: Set reader temperature to the defined point (e.g., 30°C).

- Load Protocol: Configure kinetic measurement (e.g., absorbance at 405 nm every 30 sec for 10 min).

- Load Plate & Run: Place the prepared plate and start the kinetic read.

- Automated Export: Configure the reader software to export results as a structured `.csv` file with columns `[Plate_ID, Well, Time_s, Absorbance, Temperature]` to a dedicated network folder.

Protocol: Automated Data Processing for Model Input

Objective: Transform raw kinetic data into a single response variable (e.g., initial velocity) for the BO model.

Software: Python script executed automatically upon file detection.

Procedure:

- File Watchdog: A directory listener detects a new data file and triggers the processing script.

- Load & Annotate: Script loads the raw data `.csv` and merges it with the experimental design `.csv` using the well location as the key.

- Calculate Response: For each well, the linear portion of the kinetic curve is identified. The slope (ΔAbsorbance/Δtime) is calculated, converted to velocity (using the extinction coefficient of the absorbing species), and normalized if required.

- Format Output: Script creates a final dataframe with columns `[Experiment_ID, Factor1_pH, Factor2_[Cofactor], Factor3_Temp, Response_Velocity]` and saves it as `ready_for_BO.csv`.
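The response calculation can be sketched with pandas and NumPy. The assumptions here are loud ones: the "linear portion" is taken to be simply the first six time points, the extinction coefficient (10,000 M⁻¹cm⁻¹) and 1 cm path length are placeholder values, and the raw and design tables are tiny hypothetical examples using the column names from the export and output steps.

```python
import numpy as np
import pandas as pd

def initial_velocity(time_s, absorbance, epsilon_M_cm, path_cm=1.0, window=6):
    """Slope of the first `window` points, converted from AU/s to µM/s (Beer-Lambert)."""
    slope, _ = np.polyfit(time_s[:window], absorbance[:window], deg=1)
    return slope / (epsilon_M_cm * path_cm) * 1e6

# Hypothetical raw export (one well shown) and experimental design table.
t = np.arange(0, 300, 30.0)
raw = pd.DataFrame({"Well": "A1", "Time_s": t, "Absorbance": 0.05 + 0.002 * t})
design = pd.DataFrame({"Well": ["A1"], "Factor1_pH": [7.5], "Factor3_Temp": [30.0]})

# One velocity per well, computed from that well's time-ordered kinetic trace.
velocities = (
    raw.sort_values("Time_s")
       .groupby("Well")[["Time_s", "Absorbance"]]
       .apply(lambda g: initial_velocity(g["Time_s"].to_numpy(),
                                         g["Absorbance"].to_numpy(),
                                         epsilon_M_cm=10_000.0))
       .rename("Response_Velocity")
       .reset_index()
)

# Merge with the design on well location to produce the model-ready table.
ready_for_bo = design.merge(velocities, on="Well")
print(ready_for_bo)
```

A fixed window is the simplest choice; for fast reactions that leave the linear regime early, selecting the window per well (for example by checking the fit residuals) would be more robust. Microplate path lengths are also typically shorter than 1 cm and depend on fill volume.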

Protocol: Bayesian Model Update & Next Experiment Proposal

Objective: Update the surrogate model and propose the next batch of experimental conditions.

Software: Python with libraries (e.g., scikit-optimize, BoTorch, GPyOpt).

Procedure:

- Load Data: The BO script loads the historical dataset, including the latest results.

- Train/Update Gaussian Process (GP) Model: The GP surrogate model is trained on all data, mapping the multi-dimensional factor space to the reaction velocity.

- Optimize Acquisition Function: The Expected Improvement (EI) function is computed over a candidate grid. The point(s) maximizing EI (balancing exploration and exploitation) are selected.

- Generate Instructions: The chosen factor levels are formatted into a new instruction `.csv` for the liquid handler and logged. The loop returns to Section 4.1.
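The update-and-propose steps above can be illustrated with scikit-learn and SciPy. The sketch below is one possible arrangement under stated assumptions: factors are pre-scaled to [0, 1], the objective is maximized, and the names `expected_improvement`, `propose_next`, `noise_var`, and `xi` are illustrative rather than taken from the original workflow.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern


def expected_improvement(mu, sigma, best_y, xi=0.01):
    """Expected Improvement for maximization; `xi` is a small exploration margin."""
    sigma = np.maximum(sigma, 1e-12)  # guard against division by zero
    improvement = mu - best_y - xi
    z = improvement / sigma
    return improvement * norm.cdf(z) + sigma * norm.pdf(z)


def propose_next(X, y, candidates, n_points=1, noise_var=1e-4, seed=0):
    """Fit a GP to (X, y) and return the candidate rows with the highest EI.

    X and candidates are assumed to be scaled to [0, 1] per factor.
    With normalize_y=True, `noise_var` applies to the standardized response.
    """
    kernel = ConstantKernel(1.0) * Matern(length_scale=np.ones(X.shape[1]), nu=2.5)
    gp = GaussianProcessRegressor(
        kernel=kernel,
        alpha=noise_var,
        normalize_y=True,
        n_restarts_optimizer=5,
        random_state=seed,
    )
    gp.fit(X, y)
    mu, sigma = gp.predict(candidates, return_std=True)
    ei = expected_improvement(mu, sigma, best_y=y.max())
    top = np.argsort(ei)[::-1][:n_points]  # indices sorted by descending EI
    return candidates[top], ei[top]
```

A candidate grid for three factors can be built with `np.meshgrid` and reshaped to an `(n, 3)` array. Taking the top few EI values directly tends to return near-duplicate conditions, so batch proposals usually need an additional diversification step.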

System Integration & Data Flow Architecture

Diagram Title: Automated Experiment Loop Data Architecture

Representative Data Output Table

Table 1: Example Iteration Data from an Automated BO Run for Enzyme Optimization

| Iteration | Well ID | pH | [Mg²⁺] (mM) | Temp (°C) | Initial Velocity (μM/s) | Model Uncertainty (σ) | Acquisition Value (EI) |
|---|---|---|---|---|---|---|---|
| 0 | A1 | 7.0 | 2.0 | 25 | 12.5 | 4.21 | N/A (Initial Design) |
| 0 | A2 | 7.0 | 5.0 | 30 | 18.7 | 4.15 | N/A |
| ... | ... | ... | ... | ... | ... | ... | ... |
| 5 | G7 | 8.2 | 3.8 | 28 | 45.6 | 1.89 | 2.34 |
| 5 | G8 | 8.5 | 4.1 | 29 | 52.1 | 2.05 | 2.87 |
| 6 | H1 | 8.4 | 4.2 | 28.5 | 49.8 | 0.95 | 1.12 |

This table illustrates how key quantitative data flow through the loop. The BO algorithm uses the model's predicted velocity and uncertainty at each candidate condition to calculate the Expected Improvement (EI), which guides the selection of conditions for the next iteration (e.g., well H1 in Iteration 6).
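For reference, the standard closed form of EI for a maximization problem is given below. Here $\mu(x)$ and $\sigma(x)$ are the GP posterior mean and standard deviation at condition $x$, $f^{*}$ is the best response observed so far, $\Phi$ and $\varphi$ are the standard normal CDF and PDF, and $\xi \ge 0$ is an optional exploration margin (an assumption here; the workflow above does not specify one).

$$
\mathrm{EI}(x) = \bigl(\mu(x) - f^{*} - \xi\bigr)\,\Phi(z) + \sigma(x)\,\varphi(z),
\qquad
z = \frac{\mu(x) - f^{*} - \xi}{\sigma(x)}
$$

When $\sigma(x) = 0$, EI is taken to be zero. The first term rewards candidates predicted to beat the current best (exploitation), while the second rewards candidates where the model is uncertain (exploration).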

Application Notes

This application note details the integration of Bayesian Optimization (BO) into high-throughput experimentation (HTE) platforms for the rapid optimization of enzymatic reaction conditions. Framed within a broader thesis on adaptive design of experiments (DoE) for biocatalysis, this template demonstrates a closed-loop workflow for maximizing yield and turnover number (TON) in kinase- or hydrolase-catalyzed transformations critical to pharmaceutical synthesis.

The core challenge is the high-dimensional parameter space (pH, temperature, co-solvent concentration, enzyme loading, substrate equivalence, etc.), where traditional one-factor-at-a-time (OFAT) or full-factorial DoE approaches are inefficient. BO addresses this by building a probabilistic surrogate model (typically a Gaussian Process) of the reaction performance landscape. It then uses an acquisition function (e.g., Expected Improvement) to intelligently select the next set of conditions to test, balancing exploration of unknown regions and exploitation of known high-performance areas.

A recent application involved optimizing a tyrosine kinase (Src) reaction for the phosphorylation of a peptide substrate. The primary objective was to maximize conversion yield (%) within a 96-well plate microreactor format. After an initial D-optimal design of 24 experiments, a BO loop was run for 5 sequential rounds of 8 experiments each.

Table 1: Bayesian Optimization Results for Src Kinase Reaction

| Optimization Round | Conditions Tested (Cumulative) | Best Yield Identified (%) | Key Parameters for Best Yield |
|---|---|---|---|
| Initial Design (D-Optimal) | 24 | 42 | pH 7.2, 10% DMSO, 2 mol% Enzyme |
| BO Cycle 1 | 32 | 67 | pH 7.8, 15% DMSO, 1.5 mol% Enzyme |
| BO Cycle 2 | 40 | 78 | pH 7.5, 12% DMSO, 1 mol% Enzyme |
| BO Cycle 3 | 48 | 82 | pH 7.6, 10% DMSO, 1.2 mol% Enzyme, 1.5 eq. ATP |
| BO Cycle 4 | 56 | 84 | pH 7.6, 8% DMSO, 1 mol% Enzyme, 2.0 eq. ATP |
| BO Cycle 5 (Final) | 64 | 85 | pH 7.5, 10% DMSO, 1.1 mol% Enzyme, 1.8 eq. ATP |

The BO-driven approach achieved an 85% yield, roughly double the best result from the initial design (42%), using only 64 experiments in total. By comparison, a full-factorial exploration of just 5 parameters at 3 levels would require 3⁵ = 243 experiments.
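The arithmetic behind this comparison is easy to check. The short snippet below only restates the figures from the table and text; the variable names are illustrative.

```python
# Experiment budget used by the BO campaign: initial design + 5 rounds of 8.
bo_runs = 24 + 5 * 8

# Full-factorial design: every combination of 5 parameters at 3 levels each.
n_levels, n_factors = 3, 5
full_factorial_runs = n_levels ** n_factors

# Relative improvement of the final best yield over the initial best yield.
initial_best, final_best = 42.0, 85.0
relative_gain_pct = (final_best - initial_best) / initial_best * 100

print(bo_runs, full_factorial_runs, round(relative_gain_pct, 1))
```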

Detailed Experimental Protocols

Protocol 1: Initial High-Throughput Reaction Setup for Bayesian Optimization

Objective: To establish a robust, miniaturized reaction screen for generating the initial dataset.

- Reagent Preparation: Prepare stock solutions in 1.5 mL Eppendorf tubes: Substrate peptide (10 mM in Milli-Q H₂O), ATP (100 mM in H₂O, pH adjusted to 7.0), Src kinase (1 mg/mL in storage buffer), and assay buffer (50 mM HEPES, 10 mM MgCl₂, 1 mM DTT, 0.01% Brij-35).

- Plate Setup: Using a liquid handler (e.g., Beckman Coulter Biomek), dispense 80 μL of assay buffer into each well of a 96-well polypropylene plate.

- Parameter Variation: Program the liquid handler to add variable volumes of co-solvent (DMSO, 0-20% v/v final), substrate (0.1-0.5 mM final), and ATP (1.0-3.0 eq. final) according to an initial D-optimal design array. Mix by aspirating/dispensing 50 μL, 5 times.

- Reaction Initiation: Start reactions by adding a defined volume of enzyme solution (0.5-2.5 mol% final). Seal the plate and incubate in a thermostated microplate shaker (30°C, 500 rpm) for 90 minutes.

- Reaction Quenching: Add 20 μL of 10% (v/v) aqueous trifluoroacetic acid (TFA) to each well to stop the reaction.

Protocol 2: Analytical Quantification via UPLC-UV

Objective: To quantify conversion yield for each reaction condition.

- Sample Preparation: Transfer 80 μL of each quenched reaction mixture to a 96-well analysis plate. Dilute with 120 μL of H₂O containing 0.1% TFA.

- UPLC Method:

  - Column: Acquity UPLC BEH C18 (1.7 μm, 2.1 x 50 mm)

  - Mobile Phase A: H₂O + 0.1% TFA

  - Mobile Phase B: Acetonitrile + 0.1% TFA

  - Gradient: 5% B to 95% B over 3.0 minutes, hold at 95% B for 0.5 min.

  - Flow Rate: 0.6 mL/min

  - Detection: UV at 214 nm

  - Injection Volume: 5 μL

- Data Analysis: Integrate peak areas for substrate and phosphorylated product. Calculate conversion yield as $\text{Yield (\%)} = \dfrac{A_{\text{product}}}{A_{\text{substrate}} + A_{\text{product}}} \times 100$, where $A$ denotes the integrated peak area.
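The yield calculation in the final step can be expressed as a small helper. This is a sketch only: the function name is hypothetical. Like the formula itself, it implicitly assumes that substrate and product have comparable UV response at 214 nm.

```python
def conversion_yield(area_substrate: float, area_product: float) -> float:
    """Return conversion yield (%) from integrated UPLC peak areas."""
    total = area_substrate + area_product
    if total <= 0:
        # No detectable peaks: flag the well instead of returning a misleading 0 %.
        raise ValueError("Total peak area must be positive.")
    return area_product / total * 100.0


# Example: a well with peak areas of 150 (substrate) and 850 (product).
example_yield = conversion_yield(150.0, 850.0)
```

Raising an error on an empty chromatogram keeps failed injections from entering the BO dataset as apparent zero-yield conditions.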

Protocol 3: Bayesian Optimization Loop Execution

Objective: To iteratively select and test new reaction conditions.

- Data Aggregation: Compile all experimental data (parameters + yield) into a single `.csv` file.

- Surrogate Modeling: Using a Python script (with libraries such as `scikit-learn` or `BoTorch`), train a Gaussian Process regression model on all data. The kernel is typically a Matérn 5/2 kernel.

- Acquisition Function Maximization: Calculate the Expected Improvement (EI) across the entire predefined parameter space. Identify the set of 8 conditions that maximize EI.

- Experimental Execution: Program the liquid handler to set up these 8 new conditions in duplicate, following Protocol 1.

- Analysis & Iteration: Analyze yields via Protocol 2, append new data to the master file, and repeat from the Surrogate Modeling step for the desired number of cycles (typically 4-10).
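Choosing 8 conditions per round requires some batch strategy, because the 8 highest-EI points from a single model fit often sit next to each other. One common heuristic, shown below as a sketch rather than as the method used in this study, is the greedy "Kriging believer" approach: after each pick, the GP's own prediction is treated as if it had been observed, the model is refit, and the next point is chosen. The function names, the `noise_var` default, and the assumption that inputs are scaled to [0, 1] are all illustrative.

```python
import numpy as np
from scipy.stats import norm
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern


def _ei(mu, sigma, best):
    """Expected Improvement for maximization."""
    sigma = np.maximum(sigma, 1e-12)
    z = (mu - best) / sigma
    return (mu - best) * norm.cdf(z) + sigma * norm.pdf(z)


def select_batch(X, y, candidates, batch_size=8, noise_var=1e-4, seed=0):
    """Greedy Kriging-believer batch selection over a finite candidate set."""
    X_aug, y_aug = X.copy(), y.copy()
    available = np.ones(len(candidates), dtype=bool)
    chosen = []
    for _ in range(batch_size):
        kernel = ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5)
        gp = GaussianProcessRegressor(
            kernel=kernel, alpha=noise_var, normalize_y=True, random_state=seed
        )
        gp.fit(X_aug, y_aug)
        mu, sigma = gp.predict(candidates, return_std=True)
        # Improvement is measured against the best *real* observation.
        ei = _ei(mu, sigma, y.max())
        ei[~available] = -np.inf  # never pick the same candidate twice
        idx = int(np.argmax(ei))
        chosen.append(idx)
        available[idx] = False
        # "Believe" the model: add its prediction as a pseudo-observation.
        X_aug = np.vstack([X_aug, candidates[idx]])
        y_aug = np.append(y_aug, mu[idx])
    return candidates[chosen]
```

Because each pseudo-observation shrinks the predicted uncertainty around the point just chosen, later picks are pushed toward other regions, which spreads the batch across the plate.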

Mandatory Visualizations

Bayesian Optimization Closed-Loop Workflow

Kinase Catalytic Phosphotransfer Reaction

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| HEPES Buffer (1M, pH 7.0-8.0) | Provides stable pH control in the physiological range critical for kinase activity, with minimal metal ion chelation. |
| Adenosine 5'-Triphosphate (ATP), Magnesium Salt | The essential phosphate donor for kinase reactions. The magnesium salt ensures Mg²⁺ cofactor availability for catalysis. |
| Recombinant Human Kinase (e.g., Src, PKA) | Catalyzes the phosphoryl transfer. Commercial sources provide high purity and well-characterized activity (U/mg). |
| LC-MS Grade Acetonitrile & Water with 0.1% FA/TFA | Essential for UPLC/HPLC analysis. High purity minimizes background noise; acid modifiers improve peptide chromatographic separation. |
| Dimethyl Sulfoxide (DMSO), Anhydrous | Common co-solvent for solubilizing hydrophobic substrates in aqueous reaction mixtures. Concentration is a key optimization parameter. |
| Dithiothreitol (DTT) | Reducing agent used in assay buffers to maintain cysteine residues in the kinase in a reduced, active state. |
| 96-Well Polypropylene Microplates | Chemically resistant plates for miniaturized reaction setup, compatible with organic solvents and automated liquid handling. |
| Automated Liquid Handler (e.g., Biomek i7) | Enables precise, rapid dispensing of variable reagent volumes for high-throughput setup of DoE/BO experiment arrays. |

Navigating Pitfalls: Expert Tips for Robust and Efficient Bayesian Optimization Runs

Within the broader thesis on applying Bayesian optimization (BO) for enzymatic reaction condition optimization, three prevalent failure modes critically impact performance: Noisy Data, Model Mismatch, and Stagnation in Low-Dimensional Spaces. These failures can lead to inefficient resource use, suboptimal reaction yields, and a lack of convergence to the true enzymatic optimum. This document provides detailed application notes and protocols to diagnose and address these issues in a biochemical research context.

Failure Mode Analysis & Application Notes

Noisy Data

- Description: In enzymatic optimization, noise arises from stochastic biological variation, measurement error in analytical platforms (e.g., HPLC, spectrophotometry), and minor, unrecorded fluctuations in reaction setup (pipetting, temperature).

- Impact on BO: Noise confounds the Gaussian Process (GP) surrogate model, leading to inaccurate estimation of the mean and uncertainty (σ). The acquisition function may over-exploit spurious high-performance regions or over-explore due to inflated uncertainty.

- Diagnosis: High variance in replicate measurements at the same condition; a GP model with poor predictive accuracy on held-out test points despite a seemingly good fit.

| Noise Source | Typical Magnitude (CV%) | Primary Measurement Method | Mitigation Strategy |
|---|---|---|---|
| Biological Replicate Variance | 5-15% | Standard deviation of 3+ enzyme batch preps | Use normalized activity, robust enzyme purification |
| Analytical (HPLC/UV-Vis) | 1-5% | Repeated measurement of standard sample | Internal standards, calibration curves, replicate reads |
| Microplate Pipetting | 3-8% | Dye dilution assay across plate | Use liquid handlers, tip calibration, sufficient mixing |
| Ambient Temperature Fluctuation | 1-4% (∆Activity) | Data logger in incubator | Use Peltier-controlled thermal blocks |

Model Mismatch

- Description: Occurs when the prior assumptions of the GP surrogate model (e.g., choice of kernel, trend function) do not align with the true, unknown response surface of the enzymatic reaction.

- Impact on BO: The model fails to capture complex interactions (e.g., pH-enzyme cofactor synergy) or sharp discontinuities (e.g., activity cliff at a critical temperature). This results in the BO algorithm proposing suboptimal or uninformative experiments.

- Diagnosis: Systematic residuals in model predictions; failure to improve yield after several BO iterations despite low apparent noise.

Table 2: Kernel Selection Guide for Enzymatic Response Surfaces

| Kernel Type | Assumption about Reactivity Landscape | Best for Enzymatic Variables Like... | Risk of Mismatch |
|---|---|---|---|
| Squared Exponential (RBF) | Smooth, infinitely differentiable functions | Temperature, Ionic Strength | Oversmooths sharp transitions |
| Matérn 3/2 or 5/2 | Less smooth than RBF, more flexible | pH, Substrate Concentration | Moderate fit for most common cases |
| Linear / Polynomial | Global linear or polynomial trend | Dilution series, additive effects | Misses local optima entirely |
| Composite (RBF + Periodic) | Repeating patterns superimposed on smooth trend | Stirring rate, cyclical processes | Over-parameterization if periodicity absent |
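One way to check the kernel choice against actual data is to fit a GP with each candidate kernel and compare their log marginal likelihoods. The sketch below uses scikit-learn. The function name and the candidate set are illustrative, and the added `WhiteKernel` term is an assumption that lets each model estimate its own noise level. With few data points the differences between kernels can be small, so the ranking is a hint, not a verdict.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import RBF, ConstantKernel, Matern, WhiteKernel


def rank_kernels(X, y, seed=0):
    """Fit one GP per candidate kernel; rank by log marginal likelihood (higher is better)."""
    base_kernels = {
        "RBF": RBF(length_scale=1.0),
        "Matern 3/2": Matern(length_scale=1.0, nu=1.5),
        "Matern 5/2": Matern(length_scale=1.0, nu=2.5),
    }
    scores = {}
    for name, base in base_kernels.items():
        # Signal amplitude * shape kernel + a learned noise term.
        kernel = ConstantKernel(1.0) * base + WhiteKernel(noise_level=1e-2)
        gp = GaussianProcessRegressor(
            kernel=kernel, normalize_y=True, n_restarts_optimizer=3, random_state=seed
        )
        gp.fit(X, y)
        scores[name] = gp.log_marginal_likelihood_value_
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

Inspecting residuals on held-out points remains a useful complement, since systematic residuals (the diagnosis described above) can persist even for the best-ranked kernel.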

Stagnation in Low-Dimensional Spaces

- Description: Paradoxically, BO can stagnate or converge to a local optimum too quickly when searching spaces with few variables (e.g., just pH and temperature). This is often due to over-exploitation from an overconfident model.

- Impact on BO: The algorithm becomes trapped, repeatedly sampling near the current best point without exploring potentially superior, distant regions of the simple space.

- Diagnosis: Sequential suggestions cluster tightly; acquisition function value plateaus at near-zero; minimal improvement over 3-5 iterations.
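The diagnostic criteria above can be turned into a simple automated check. The sketch below flags stagnation when recent suggestions cluster tightly and the running best has barely improved. The function name and all thresholds (`window`, `min_spread`, `min_gain`) are hypothetical and would need tuning for a given assay. Suggestions are assumed to be scaled to [0, 1] per factor.

```python
import numpy as np


def is_stagnating(suggestions, best_so_far, window=4, min_spread=0.05, min_gain=0.01):
    """Return True if the last `window` iterations look stagnant.

    suggestions : (n_iterations, n_factors) array of proposed conditions in [0, 1]
    best_so_far : (n_iterations,) running best response after each iteration
    """
    suggestions = np.asarray(suggestions, dtype=float)
    best_so_far = np.asarray(best_so_far, dtype=float)
    if len(suggestions) < window or len(best_so_far) < window:
        return False  # not enough history to judge

    # Largest per-factor standard deviation among the recent suggestions.
    spread = suggestions[-window:].std(axis=0).max()

    # Relative gain in the running best across the window.
    start, end = best_so_far[-window], best_so_far[-1]
    gain = (end - start) / max(abs(start), 1e-12)

    return bool(spread < min_spread and gain < min_gain)
```

When the check fires, typical responses include raising the exploration margin in EI, switching to a more exploratory acquisition function, or injecting a few space-filling points before resuming the loop.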

Experimental Protocols

Protocol 3.1: Quantifying and Integrating Noise for Robust BO

Objective: To empirically determine noise variance (sigma_noise^2) for integration into the GP model.

- Replicate Design: Select 3-5 representative reaction conditions spanning your experimental space (e.g., center point, extreme corners).

- Execution: Perform a minimum of `n=4` technical replicates for each selected condition within the same experimental block.

- Analysis: For each condition, calculate the mean yield and variance. Pool variances to estimate a global `sigma_noise^2`.

- GP Integration: Set the `alpha` or `noise` parameter in your GP regression (e.g., `GaussianProcessRegressor(alpha=sigma_noise**2)` in scikit-learn) to this value, expressed in the same units as the response the model is trained on. This tells the model how much scatter to attribute to noise, so it does not try to fit every noisy measurement exactly.
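The analysis and integration steps of this protocol might look like the following in scikit-learn. This is a sketch under stated assumptions: the helper names are hypothetical, the pooled estimate weights each condition by its degrees of freedom, and `normalize_y=False` is used so that `alpha` stays in squared response units (which means the response should be centered before fitting).

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern


def pooled_noise_variance(replicates):
    """Pool sample variances across conditions, weighting by degrees of freedom.

    replicates : list of 1-D arrays, one array of replicate yields per condition
    """
    weighted_sum = sum((len(r) - 1) * np.var(r, ddof=1) for r in replicates)
    dof = sum(len(r) - 1 for r in replicates)
    return weighted_sum / dof


def make_noise_aware_gp(sigma_noise_sq, seed=0):
    """Build a GP whose diagonal noise term is fixed to the measured variance."""
    kernel = ConstantKernel(1.0) * Matern(length_scale=1.0, nu=2.5)
    # normalize_y=False keeps alpha in the response's own squared units;
    # subtract the mean response from y before calling fit().
    return GaussianProcessRegressor(
        kernel=kernel, alpha=sigma_noise_sq, normalize_y=False, random_state=seed
    )
```

If the response is instead standardized (for example with `normalize_y=True`), the measured variance should be divided by the variance of the training responses before being passed as `alpha`.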