Beyond Trial and Error: A Systematic DoE Framework for Optimizing Low-Yielding Chemical Reactions in Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Design of Experiments (DoE) to diagnose and optimize low-yielding reactions. Moving beyond inefficient one-variable-at-a-time (OVAT) approaches, it details a structured framework from foundational principles to advanced application. Readers will learn how to systematically identify critical factors, uncover hidden variable interactions, and implement robust screening and optimization designs. The guide covers practical troubleshooting strategies, validation techniques to ensure reproducibility, and a comparative analysis of DoE's advantages over traditional methods, ultimately enabling more efficient and predictable synthetic route development for pharmaceuticals.

Why One-Variable-at-a-Time Fails: Laying the DoE Foundation for Reaction Understanding

The Critical Limitations of OVAT Optimization in Complex Synthesis

Frequently Asked Questions

What is the primary weakness of the OVAT approach in reaction optimization? The most critical weakness is that OVAT cannot detect interaction effects between factors [1]. In complex syntheses, factors like temperature, catalyst loading, and solvent often interact; varying them independently fails to reveal these synergies or antagonisms, potentially leading researchers to miss the true optimal conditions entirely [2] [1].

My reaction works poorly under my "optimized" OVAT conditions when I scale up or change substrates. Why? This is a common problem. OVAT-optimized conditions are often specific to a single substrate and set of fixed parameters [2]. When you change the substrate or process scale, underlying factor interactions that OVAT could not detect become significant, causing the reaction to fail or yield poorly [2] [3]. A DoE approach provides a broader understanding of the reaction landscape, making it more robust to such changes.

I already have a high-yielding reaction from OVAT. Why should I switch to DoE? While OVAT might find a workable solution, it may not find the best or most robust one [4] [1]. DoE can help you understand the precise influence of each variable and their interactions. This knowledge is invaluable for troubleshooting, scaling up, and making informed changes for different substrate classes, ultimately saving time and resources in the long run [2] [5].

Is DoE more expensive and time-consuming than OVAT? Usually not. A single DoE may require more experiments up front than a quick OVAT test. However, it explores the experimental space with fewer total runs than a comprehensive OVAT study of several factors, because in a factorial design every run contributes to the estimate of every factor effect [1]. More importantly, it helps avoid costly dead-ends and re-development by locating a better optimum sooner [6] [7].

Troubleshooting Guides

Problem: Inconsistent Reaction Yields

Symptoms: A reaction gives high yields with one batch of starting material but low yields with another, despite the OVAT protocol being followed exactly.

Root Cause: OVAT failed to identify a critical interaction between a factor you controlled (e.g., temperature) and an uncontrolled, lurking variable (e.g., slight variations in substrate purity or moisture content) [3].

Solution:

- Switch to a DoE Screening Design: Use a fractional factorial or definitive screening design to test multiple factors simultaneously. Include suspected sources of variation that can be measured or deliberately varied, such as substrate lot or water content [3].

- Identify Key Interactions: The analysis will reveal which factors interact and have the largest effect on yield variability.

- Establish a Robust Operating Window: Use Response Surface Methodology (RSM) to find a region of factor settings where the yield is consistently high and less sensitive to minor variations in raw materials [8].

Problem: Failed Scale-Up

Symptoms: A reaction optimized in small-scale R&D vials fails or yields poorly in a larger reactor.

Root Cause: OVAT conditions were optimal for small-scale mass/heat transfer properties but are not suitable for the different transfer dynamics of a larger vessel. OVAT cannot capture these complex, non-linear interactions [2].

Solution:

- Incorporate Scale-Relevant Factors: In your DoE, include factors like agitation speed, dosing rate, and vessel geometry early in the process development.

- Model the Process: A well-designed DoE can create a mathematical model that predicts how the reaction will behave under different scale-related conditions, enabling a smoother and more reliable scale-up [5].

Problem: Optimal Solvent Not Identified

Symptoms: After testing a handful of common solvents via OVAT, you suspect a better, safer, or cheaper solvent might exist but cannot find it.

Root Cause: OVAT solvent selection is non-systematic and limited to a chemist's intuition and experience, failing to explore the vast "solvent space" effectively [2].

Solution:

- Use a Solvent Map: Take a principled approach by selecting solvents from a principal component analysis (PCA) map of solvent properties, which groups solvents by their physicochemical properties [2].

- Design a Solvent DoE: Choose a small set of solvents (e.g., 5-7) that are far apart on the solvent map to maximally represent different chemical environments [2].

- Analyze and Optimize: The DoE will identify the region of solvent space that is optimal for your reaction, allowing you to select the best-performing solvent or even identify safer, more sustainable alternatives you hadn't considered [2].
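One simple way to pick solvents that are "far apart" on a map is a greedy maximin rule. Start from one solvent, then repeatedly add the candidate whose distance to its nearest already-chosen solvent is largest. The sketch below assumes two principal-component scores per solvent. The names and coordinates are hypothetical placeholders, not values from a published solvent map. Greedy maximin is only one reasonable heuristic and is not necessarily the selection method used in [2].

```python
import math

# Hypothetical (PC1, PC2) scores; replace with values from your own solvent map.
SOLVENT_SCORES = {
    "solvent_A": (-2.0, 1.5),
    "solvent_B": (-1.8, 1.2),
    "solvent_C": (0.1, -2.2),
    "solvent_D": (2.3, 1.9),
    "solvent_E": (2.1, -1.7),
    "solvent_F": (0.0, 0.2),
    "solvent_G": (-2.4, -1.9),
}


def pick_spread_solvents(scores, k, start):
    """Greedy maximin selection of k solvents, beginning with `start`."""
    chosen = [start]
    while len(chosen) < k:
        # For each remaining solvent, find the distance to its nearest chosen solvent,
        # then take the solvent for which that distance is largest.
        best = max(
            (name for name in scores if name not in chosen),
            key=lambda name: min(math.dist(scores[name], scores[c]) for c in chosen),
        )
        chosen.append(best)
    return chosen


print(pick_spread_solvents(SOLVENT_SCORES, 5, start="solvent_F"))
# ['solvent_F', 'solvent_G', 'solvent_D', 'solvent_E', 'solvent_A']
```

On this toy map, solvent_B sits right next to solvent_A and is left out. The rule avoids spending runs on near-duplicate chemical environments.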

Comparison of OVAT and DoE Approaches

The table below summarizes the fundamental differences between the OVAT and DoE methodologies, explaining why DoE is superior for optimizing complex systems.

| Characteristic | OVAT Approach | DoE Approach | Implication for Complex Synthesis |
|---|---|---|---|
| Factor Interactions | Cannot detect interactions [4] [1]. | Systematically identifies and quantifies interactions [2] [3]. | Prevents missing the true optimum caused by factor synergy [2]. |
| Experimental Efficiency | Low; requires many runs to study multiple factors, and precision can be poor [1]. | High; explores multiple factors simultaneously with greater precision per run [1] [3]. | Saves time and resources, especially with many variables [7]. |
| Optimal Condition | Can easily miss the global optimum [4] [1]. | Statistically models the entire space to locate a robust optimum [2] [8]. | Achieves higher yields, purity, and process robustness [5]. |
| Error Estimation | Difficult to estimate experimental error without repetition [2]. | Built-in replication (e.g., center points) allows for error estimation [2] [1]. | Provides confidence in results and significance of factor effects. |
| Problem-Solving Power | Limited to simple, linear cause-and-effect. | Powerful for troubleshooting complex, multi-factorial problems [3]. | Effectively diagnoses root causes of yield variation and scale-up failure. |

Experimental Protocol: Transitioning from OVAT to DoE for Reaction Optimization

This protocol provides a step-by-step methodology for moving from a baseline OVAT result to a systematically optimized process using Design of Experiments.

Objective: To optimize a low-yielding SNAr reaction by replacing a hazardous solvent and finding robust optimal conditions for catalyst loading, temperature, and pressure.

Background: Based on a case study where DoE and a solvent map were used to successfully optimize a synthetic reaction and identify a safer solvent [2].

Materials and Reagents

| Item | Function | Example/Note |
|---|---|---|
| Substrates | Reacting species | e.g., Haloaromatic compound, Nucleophile |
| Catalyst | Accelerates reaction rate | e.g., Commercial platinum-based catalyst [5] |
| Solvent Library | Reaction medium | Selected from a PCA-based solvent map (e.g., 5-7 solvents covering different regions) [2] |
| DoE Software | Design creation & data analysis | e.g., Design-Expert, JMP, or R statistical package |

Procedure

Define Objective and Factors:

- Objective: Maximize reaction yield and conversion while reducing or eliminating a toxic solvent.

- Select Factors: Choose 3-4 key factors to vary. For this example:

  - Factor A: Solvent (Categorical, 5 types from solvent map)

  - Factor B: Catalyst Loading (Numerical, e.g., 0.5 - 1.5 mol%)

  - Factor C: Temperature (Numerical, e.g., 60 - 100 °C)

  - Factor D: Pressure (Numerical, if applicable, e.g., 1 - 5 bar) [5]
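DoE software normally analyzes numerical factors in coded units, where the low and high settings map to -1 and +1. Below is a minimal sketch of that conversion for factors B to D, using the example ranges above. The dictionary keys and function names are illustrative. The categorical solvent factor has no coded scale and is handled separately.

```python
# Example ranges (natural units) for the numerical factors; keys are illustrative.
FACTOR_RANGES = {
    "catalyst_mol_pct": (0.5, 1.5),
    "temperature_C": (60.0, 100.0),
    "pressure_bar": (1.0, 5.0),
}


def to_coded(name, value):
    """Map a natural-unit setting onto the -1..+1 coded scale."""
    low, high = FACTOR_RANGES[name]
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_natural(name, coded):
    """Inverse of to_coded: turn a coded level back into a lab setting."""
    low, high = FACTOR_RANGES[name]
    return (low + high) / 2 + coded * (high - low) / 2


print(to_coded("temperature_C", 90.0))     # 0.5
print(to_natural("catalyst_mol_pct", -1))  # 0.5
```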

Select and Execute an Experimental Design:

- A screening design (e.g., a Fractional Factorial design) is suitable for initially identifying the most important factors [3].

- For optimization, a Response Surface Methodology (RSM) design like a Central Composite Design (CCD) or Box-Behnken Design is ideal [1] [8].

- Input your factor ranges and levels into the software to generate a randomized run sheet.

- Execute the experiments in the randomized order to minimize the effects of lurking variables [1].
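The sketch below roughly illustrates what the software does when it generates a randomized run sheet. It builds a two-level full factorial in catalyst loading, temperature, and pressure (coded units), adds three center points, and then shuffles the run order. It assumes a single solvent. The seed value is arbitrary and only makes the sheet reproducible.

```python
import itertools
import random

FACTORS = ["catalyst", "temperature", "pressure"]


def run_sheet(n_center=3, seed=2024):
    """Return a randomized 2^3 factorial plus center points, in coded units."""
    runs = [dict(zip(FACTORS, levels)) for levels in itertools.product((-1, 1), repeat=3)]
    runs += [dict.fromkeys(FACTORS, 0) for _ in range(n_center)]
    for std_order, run in enumerate(runs, start=1):
        run["std_order"] = std_order  # keep the standard order for later analysis
    rng = random.Random(seed)  # fixed seed so the same sheet can be regenerated
    rng.shuffle(runs)
    return runs


for run_order, run in enumerate(run_sheet(), start=1):
    print(run_order, run)
```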

Analyze the Data and Build a Model:

- Input the experimental results (e.g., yield, purity) into the software.

- Use ANOVA (Analysis of Variance) to identify which factors and interactions are statistically significant.

- The software will generate a predictive mathematical model (e.g., a quadratic equation) for the response.

Interpret Results and Find Optimum:

- Use contour plots and 3D surface plots to visualize the relationship between factors and the response.

- Identify the "sweet spot"—the combination of factor settings that maximizes your yield.

- The model might reveal, for instance, that a specific, less toxic solvent performs best at a moderately high temperature and medium catalyst loading [2].
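The following minimal pure-Python sketch makes the modeling step less of a black box. It fits a two-factor quadratic model by ordinary least squares and locates its stationary point, which is the candidate "sweet spot." The data come from a hypothetical, noise-free surface so that the fit can be checked. Real DoE software also provides ANOVA, lack-of-fit tests, and diagnostics, which this sketch omits. The is_maximum check matters because a stationary point can also be a minimum or a saddle.

```python
import itertools


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (small systems only)."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def quad_terms(x1, x2):
    """Model terms: intercept, x1, x2, x1*x2, x1^2, x2^2."""
    return [1.0, x1, x2, x1 * x2, x1 * x1, x2 * x2]


def fit_quadratic(points, y):
    """Ordinary least squares via the normal equations (X'X) b = X'y."""
    rows = [quad_terms(*pt) for pt in points]
    n_terms = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(n_terms)] for i in range(n_terms)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(n_terms)]
    return solve(xtx, xty)


def stationary_point(b):
    """Set both partial derivatives of the fitted quadratic to zero and solve for (x1, x2)."""
    _, b1, b2, b12, b11, b22 = b
    return solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])


def is_maximum(b):
    """True if the Hessian [[2*b11, b12], [b12, 2*b22]] is negative definite."""
    _, _, _, b12, b11, b22 = b
    return b11 < 0 and 4 * b11 * b22 - b12 ** 2 > 0


# Hypothetical, noise-free "true" surface in coded units (not real data).
TRUE_B = [80.0, 4.0, 2.0, -3.0, -6.0, -5.0]
design = list(itertools.product((-1, 0, 1), repeat=2)) + [(0, 0), (0, 0)]
yields = [sum(c * t for c, t in zip(TRUE_B, quad_terms(*pt))) for pt in design]

b = fit_quadratic(design, yields)
print("coefficients:", [round(v, 3) for v in b])
print("stationary point:", stationary_point(b), "maximum:", is_maximum(b))
```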

Confirm the Prediction:

- Run 2-3 confirmation experiments at the predicted optimal conditions.

- If the experimental results match the model's prediction, your optimization is successful and validated.

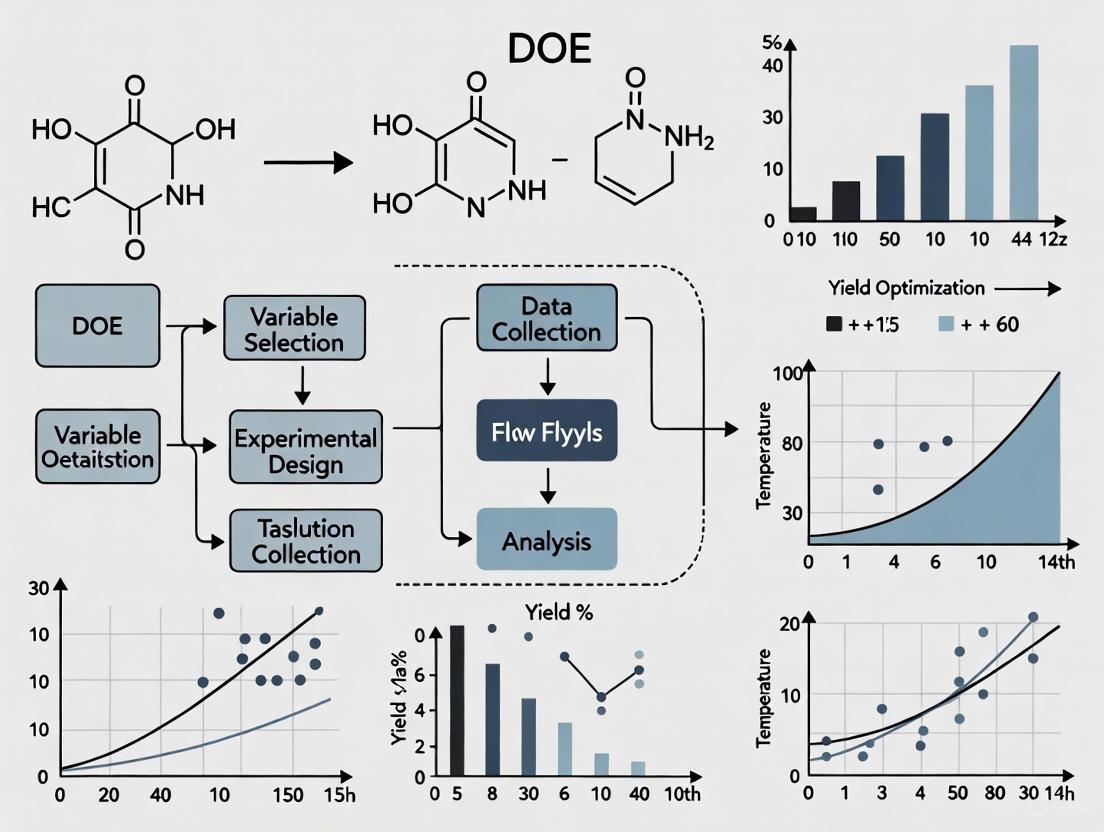

The DoE Optimization Workflow

The following diagram illustrates the logical, iterative process of optimizing a reaction using Design of Experiments.

Design of Experiments (DOE) is a systematic, statistical approach to process optimization that allows researchers to study the effects of multiple input factors on a desired output (response) simultaneously [9] [10]. In the context of improving low-yielding reactions in pharmaceutical research, DOE provides a structured methodology to efficiently identify key reaction parameters, optimize conditions, and understand complex factor interactions that traditional one-factor-at-a-time (OFAT) approaches often miss [9] [2].

For drug development professionals, implementing DOE enables more efficient screening of reaction conditions, reduces experimental time and costs, and provides a deeper understanding of the reaction landscape through statistical modeling [11]. This technical support center addresses common challenges researchers face when implementing DOE in their experimental workflows.

Core Principles and Terminology

Fundamental DOE Principles

DOE is built upon several key statistical principles that ensure experimental validity and reliability:

Randomization: The random assignment of experimental units to different treatment groups helps eliminate potential biases and distributes the effects of uncontrolled variables randomly across the experiment [12] [13]. For chemical reactions, this means performing experimental runs in a random order to mitigate the impact of environmental fluctuations or instrument drift.

Replication: Repeating experimental treatments allows researchers to estimate variability and improve the precision of effect estimates [12] [10]. In reaction optimization, replication helps distinguish significant factor effects from experimental noise.
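Replicates are what make the noise measurable. The sketch below estimates pure error from replicated center points. It then converts that estimate into the standard error of an effect in a balanced two-level design, using Var(effect) = 4σ²/N for an N-run design. The yields are hypothetical.

```python
import math
import statistics

# Hypothetical yields (%) from four replicate runs at the center point.
center_yields = [71.2, 69.8, 72.5, 70.9]

pure_error_sd = statistics.stdev(center_yields)  # sample SD with n - 1 degrees of freedom
df = len(center_yields) - 1


def effect_standard_error(sd, n_runs):
    """SE of an effect (mean at +1 minus mean at -1) in a balanced two-level design."""
    return 2 * sd / math.sqrt(n_runs)


print(f"pure-error SD = {pure_error_sd:.2f} (% yield) on {df} df")
print(f"SE of an effect in a 16-run design = {effect_standard_error(pure_error_sd, 16):.2f}")
```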

Blocking: This technique accounts for known sources of nuisance variation by grouping experimental units into homogeneous blocks [12] [13]. For example, blocking by different reagent batches or laboratory technicians can remove these sources of variation from the experimental error.
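A standard way to block a two-level factorial is to confound the highest-order interaction with the block variable. The sketch below splits a 2³ design into two blocks of four runs (for example, two reagent batches) according to the sign of the ABC interaction. Main effects and two-factor interactions stay balanced within each block. The cost is that ABC can no longer be estimated. The factor names A, B, and C are generic.

```python
import itertools


def blocked_2cubed():
    """Split a 2^3 factorial into two blocks by confounding the ABC interaction with blocks."""
    runs = []
    for a, b, c in itertools.product((-1, 1), repeat=3):
        block = 1 if a * b * c == -1 else 2  # e.g. block 1 = reagent batch 1
        runs.append({"A": a, "B": b, "C": c, "block": block})
    return runs


for run in blocked_2cubed():
    print(run)
```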

Multifactorial Designs: Unlike OFAT approaches, DOE simultaneously varies multiple factors to efficiently explore the experimental space and detect interactions between factors [12] [2].

Key DOE Terminology

Table: Essential DOE Terminology for Reaction Optimization

| Term | Definition | Pharmaceutical Research Example |
|---|---|---|
| Factors | Input variables controlled by the researcher | Temperature, catalyst concentration, solvent type, reaction time |
| Levels | Specific values or settings assigned to a factor | Temperature: 25°C, 50°C, 75°C |
| Responses | Measurable outputs of experimental results | Reaction yield, purity, byproduct formation |
| Experimental Space | Multidimensional region defined by the ranges of all factors | All possible combinations of factor levels being studied |
| Interactions | Situation where the effect of one factor depends on the level of another factor | Temperature effect on yield varies with different solvent types |
| Confounding | When the effect of one factor cannot be distinguished from another | Unable to separate mixing speed effect from catalyst effect due to experimental design |

Experimental Design Types and Selection

Common DOE Designs

Different experimental designs serve specific purposes throughout the optimization process:

Screening Designs: Fractional factorial designs efficiently identify the most influential factors from a large set of potential variables with minimal experimental runs [14]. These are particularly valuable in early reaction development when many factors may affect the outcome.

Full Factorial Designs: These investigate all possible combinations of factors and their levels, allowing complete characterization of all main effects and interactions [10] [14]. While comprehensive, the number of runs grows exponentially with additional factors.
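Enumerating a full factorial directly shows how quickly the run count grows. Here is a minimal sketch using itertools.product. The factor levels are illustrative.

```python
import itertools


def full_factorial(levels_per_factor):
    """Every combination of the given factor levels, one tuple per run."""
    return list(itertools.product(*levels_per_factor))


# Illustrative levels: temperature (°C), catalyst loading (mol%), solvent.
design = full_factorial([(25, 50, 75), (0.5, 1.0), ("solvent_1", "solvent_2")])
print(len(design))  # 12 runs = 3 x 2 x 2

# With two levels per factor, each added factor doubles the run count (2^k).
for k in range(2, 9):
    print(f"{k} factors: {2 ** k} runs")
```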

Response Surface Methodology (RSM): Designs such as Central Composite or Box-Behnken help model curvature in responses and locate optimal conditions [14]. These are ideal for final optimization stages when working with a few critical factors.

Space-Filling Designs: Useful when prior knowledge of the system is limited, these designs sample broadly across the experimental space without assumptions about the underlying model structure [14].

Design Selection Workflow

The following diagram illustrates the strategic selection of DOE designs throughout a typical reaction optimization campaign:

Troubleshooting Common DOE Implementation Issues

FAQ 1: How do I determine appropriate factor ranges for my reaction screening?

Problem: Researchers often struggle to define realistic minimum and maximum values for reaction factors, potentially missing optimal conditions or wasting resources on impractical regions.

Solution:

- Conduct preliminary scoping experiments to establish feasible ranges

- Utilize space-filling designs when prior knowledge is limited [14]

- Consider practical constraints (solvent boiling points, equipment limitations, safety concerns)

- For continuous factors (temperature, concentration), select ranges wide enough to detect effects but narrow enough to be practically relevant

Protocol: Start with a broad screening design using wide factor ranges, then progressively narrow the experimental space based on initial results. For a reaction with unknown optimal temperature, test from ambient to solvent reflux temperature rather than arbitrarily selecting a narrow window.

FAQ 2: My DOE results show unexpected factor interactions. How should I proceed?

Problem: Significant interaction effects between factors complicate interpretation and may contradict established mechanistic understanding of the reaction.

Solution:

- Verify the statistical significance of interactions through p-values and effect sizes

- Visualize interactions using interaction plots or 3D surface plots

- Consider whether interactions represent true chemical phenomena or confounding with uncontrolled variables

- If interactions are statistically significant and chemically plausible, incorporate them into your process model

Protocol: For an identified temperature-catalyst interaction, run confirmation experiments at the predicted optimal conditions and at adjacent points to validate the response surface model [9].

FAQ 3: How can I effectively handle categorical factors like solvent or catalyst type in my DOE?

Problem: Traditional response surface methods assume continuous factors, creating challenges when including categorical variables like solvent choice or catalyst type.

Solution:

- Use specialized solvent maps based on principal component analysis (PCA) to incorporate solvent selection systematically [2]

- For catalyst screening, employ factorial designs that treat catalyst type as a categorical factor

- Consider split-plot designs when some factors are harder to change than others

Protocol: When optimizing solvent and temperature simultaneously, select solvents from different regions of solvent property space (polar, non-polar, protic, aprotic) rather than similar solvents to maximize information gain [2].

FAQ 4: What is the minimum number of experimental runs needed for reliable results?

Problem: Resource constraints often necessitate minimizing experimental runs while maintaining statistical validity.

Solution:

- For screening 5-8 factors, fractional factorial designs with 16-32 runs typically provide sufficient resolution [14]

- Include center points to detect curvature and estimate pure error

- Balance run numbers with required precision using power analysis

- Consider sequential experimentation rather than attempting complete optimization in one design

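Protocol: When screening 6 factors for a new reaction, a 16-run resolution IV fractional factorial design (2⁶⁻²) plus 3 center points estimates main effects free of aliasing with two-factor interactions while managing resource constraints. In a resolution IV design, however, the two-factor interactions are aliased with one another. A significant interaction may therefore need follow-up runs to identify which pair of factors is responsible.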
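Here is a sketch of how such a 16-run design can be constructed. Write out a full 2⁴ factorial for factors A to D, then set E = ABC and F = BCD. These generators are one standard choice and give the defining relation I = ABCE = BCDF = ADEF. The column helper (a hypothetical name) lets you confirm the aliasing, for example that the AB and CE interaction columns are identical.

```python
import itertools
from math import prod


def frac_fact_6_2(n_center=3):
    """2^(6-2) resolution IV design in coded units: generators E = ABC, F = BCD."""
    runs = []
    for a, b, c, d in itertools.product((-1, 1), repeat=4):
        runs.append((a, b, c, d, a * b * c, b * c * d))
    # Center points (all factors at 0) for curvature checks and pure error.
    runs += [(0,) * 6] * n_center
    return runs


def column(runs, factors):
    """Element-wise product of the named columns (e.g. 'AB') over the 16 factorial runs."""
    idx = ["ABCDEF".index(f) for f in factors]
    return [prod(run[i] for i in idx) for run in runs if 0 not in run]


runs = frac_fact_6_2()
print(len(runs))                                 # 19 = 16 factorial + 3 center
print(column(runs, "AB") == column(runs, "CE"))  # True: AB is aliased with CE
```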

FAQ 5: How do I address poor model fit or low predictive power in my DOE analysis?

Problem: The statistical model derived from experimental data shows poor fit statistics or fails validation experiments.

Solution:

- Check for outliers or influential points that may distort the model

- Verify that important factors weren't omitted from the original design

- Consider whether transformation of the response variable is appropriate

- Ensure the experimental region includes the optimum; otherwise, add experiments in promising directions

- Confirm measurement system reliability and response variability

Protocol: If R² is low even though significant factors were identified, and the center points indicate curvature, add axial points to the factorial design. This converts it into a central composite (response surface) design, which provides additional information about curvature in the experimental region [14].
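Augmenting a factorial with axial points produces a central composite design (CCD). The sketch below generates CCD points in coded units. The existing factorial and center runs can usually be reused, so only the axial points need to be added, perhaps with a few new center points, often run as a separate block. The default α = (2^k)^(1/4) is the rotatable choice for a full-factorial core. Setting α = 1 gives a face-centered design, which is useful when settings beyond the original range are impractical, for example above a solvent's boiling point.

```python
import itertools


def ccd(k, n_center=4, alpha=None):
    """Central composite design in coded units: 2^k corners + 2k axial points + centers."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable choice for a full-factorial core
    corners = [list(p) for p in itertools.product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-alpha, alpha):
            point = [0.0] * k
            point[i] = sign  # one factor at +/- alpha, all others at the center
            axial.append(point)
    centers = [[0.0] * k for _ in range(n_center)]
    return corners + axial + centers


design = ccd(3)
print(len(design))             # 18 = 8 corners + 6 axial + 4 center
print(round(design[8][0], 3))  # -1.682, the first axial point
```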

Factor-Response Relationships and Experimental Space

Mapping the Experimental Space

The experimental space represents all possible combinations of factor levels under investigation. Efficiently exploring this space requires understanding the relationship between factors and responses:

Quantitative Analysis of Factor Effects

Table: Calculating Main Effects and Interactions from a 2² Factorial Design

| Experiment | Temperature | Catalyst Loading | Yield (%) | Calculations |
|---|---|---|---|---|
| 1 | Low (-1) | Low (-1) | 65 | Main Effect Temp = (Y₂+Y₄)/2 - (Y₁+Y₃)/2 |
| 2 | High (+1) | Low (-1) | 78 | Main Effect Catalyst = (Y₃+Y₄)/2 - (Y₁+Y₂)/2 |
| 3 | Low (-1) | High (+1) | 72 | Interaction = (Y₁+Y₄)/2 - (Y₂+Y₃)/2 |
| 4 | High (+1) | High (+1) | 92 | |

In this example, the main effect of temperature is (78+92)/2 - (65+72)/2 = 85 - 68.5 = 16.5 percentage points, indicating that increasing temperature generally improves yield. The main effect of catalyst loading is (72+92)/2 - (65+78)/2 = 82 - 71.5 = 10.5 percentage points. The interaction effect is (65+92)/2 - (78+72)/2 = 78.5 - 75 = 3.5 percentage points, suggesting a mild synergistic effect between temperature and catalyst loading [10].
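The same calculations can be written as contrasts. Each effect is the mean response where a ±1 column is positive minus the mean response where it is negative. The interaction column is the element-wise product of the two factor columns. Here is a short sketch that reproduces the numbers above:

```python
# 2^2 example from the table above, runs in standard order.
temp = [-1, 1, -1, 1]
cat = [-1, -1, 1, 1]
yields = [65, 78, 72, 92]


def effect(contrast, y):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    plus = [yi for c, yi in zip(contrast, y) if c > 0]
    minus = [yi for c, yi in zip(contrast, y) if c < 0]
    return sum(plus) / len(plus) - sum(minus) / len(minus)


interaction = [t * c for t, c in zip(temp, cat)]
print(effect(temp, yields))         # 16.5
print(effect(cat, yields))          # 10.5
print(effect(interaction, yields))  # 3.5
```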

Research Reagent Solutions for DOE Implementation

Table: Essential Materials and Tools for Reaction Optimization DOE

| Reagent/Equipment | Function in DOE | Application Example |
|---|---|---|
| Statistical Software | Experimental design generation and data analysis | JMP, Design-Expert, or R with specialized DOE packages |
| Automated Reactor Systems | Precise control of reaction parameters and high-throughput experimentation | Parallel reactor systems for simultaneous experimentation under different conditions |
| Solvent Libraries | Systematic variation of solvent environment | Curated solvent sets representing different polarity, hydrogen bonding, and polarizability parameters [2] |
| In Situ Analytics | Real-time reaction monitoring for multiple responses | FTIR, Raman spectroscopy, or HPLC for kinetic profiling |
| Design Templates | Standardized documentation of experimental plans | Customized spreadsheets or electronic lab notebooks with predefined DOE templates [10] |

Advanced DOE Applications in Pharmaceutical Research

Case Study: Reaction Optimization with Significant Interaction Effects

A pharmaceutical development team optimized a low-yielding SNAr reaction using DOE after traditional OFAT approaches failed to identify satisfactory conditions [2]. The team employed a fractional factorial design to screen six factors simultaneously:

- Factors included: solvent, temperature, base equivalents, nucleophile concentration, reaction time, and catalyst type

- Responses measured: yield, purity, and byproduct formation

- Key finding: Significant interaction between solvent and temperature explained why previous optimization attempts failed

- Outcome: Identified conditions that improved yield from 10% to 33% while reducing hazardous reagent use [11]

Sequential DOE for Multistep Optimization

For complex reactions with multiple competing responses (e.g., yield, purity, cost), a sequential approach is most effective:

- Screening Phase: Fractional factorial design to identify critical factors

- Optimization Phase: Response surface design around promising region

- Robustness Testing: Verify performance under small variations in factor settings

- Validation: Confirm optimal conditions with replication

This approach efficiently moves from broad screening to precise optimization while building comprehensive process understanding [14].

Implementing DOE principles for improving low-yielding reactions requires careful attention to factor selection, experimental design, and statistical analysis. By moving beyond one-factor-at-a-time approaches and embracing multifactorial experimentation with proper randomization, replication, and blocking, researchers can efficiently optimize complex reactions while developing deeper process understanding. The troubleshooting guidance provided in this technical support center addresses common implementation challenges, enabling more effective application of DOE methodologies in pharmaceutical research and development.

How DoE Efficiently Uncovers Hidden Factor Interactions

Why didn't my One-Factor-at-a-Time (OFAT) experiment find the optimal reaction conditions?

OFAT experiments, where you change only one variable while holding others constant, are inefficient and cannot detect interactions between factors [9] [15]. An interaction occurs when the effect of one factor (e.g., Temperature) on the response (e.g., Yield) depends on the level of another factor (e.g., pH) [9].

For example, an OFAT approach to maximize a chemical yield might conclude that a Temperature of 30°C and a pH of 6 is optimal, achieving an 86% yield [9]. However, a properly designed Design of Experiments (DOE) that systematically varies both factors together found the true optimum was at 45°C and a pH of 7, with a predicted yield of 92% [9]. The OFAT method completely missed this because it could not see how Temperature and pH interact [9].
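This failure mode is easy to reproduce on a toy problem. The sketch below uses a hypothetical response surface with a diagonal ridge, meaning a strong interaction between two factors a and b, each on nine coded settings. It compares an OFAT search with an exhaustive grid. It does not reproduce the temperature/pH numbers from [9]; it only shows the mechanism. The exhaustive grid stands in for the true optimum and is not itself a DoE. In practice, a designed experiment plus a fitted model would be used to approximate that optimum with far fewer runs.

```python
import itertools

LEVELS = range(0, 9)  # nine coded settings per factor (hypothetical)


def response(a, b):
    """Hypothetical response surface with a diagonal ridge (strong a x b interaction)."""
    return 100 - 4 * (a - b) ** 2 - (a + b - 8) ** 2


def ofat(start_b=0):
    """Optimize a with b held fixed, then optimize b with a held at its 'best' value."""
    best_a = max(LEVELS, key=lambda a: response(a, start_b))
    best_b = max(LEVELS, key=lambda b: response(best_a, b))
    return (best_a, best_b), response(best_a, best_b)


def grid_search():
    """Evaluate every combination (feasible here only because the surface is a toy)."""
    best = max(itertools.product(LEVELS, LEVELS), key=lambda p: response(*p))
    return best, response(*best)


print("OFAT:", ofat())         # OFAT: ((2, 3), 87)
print("Grid:", grid_search())  # Grid: ((4, 4), 100)
```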

What is a Design of Experiments (DOE) and how does it find interactions?

DOE is a systematic, statistical approach for planning, conducting, and analyzing controlled tests to evaluate the factors that influence a process [15]. Unlike OFAT, a DOE changes multiple factors simultaneously according to a structured plan (a design matrix) [15]. This allows you to:

- Estimate the individual effect of each factor on your response.

- Identify and quantify interactions between factors.

- Develop a predictive model to find optimal factor settings, even between the tested points [9].

The following diagram illustrates the core workflow of a DOE, from design to discovery.

How do I set up a basic DOE to test for interactions?

For a two-factor system, a full factorial design is an excellent starting point. It tests all possible combinations of the factors' levels [15].

Step-by-Step Protocol: Two-Factor Full Factorial DOE

- Define Factors and Levels: Select two factors you want to investigate (e.g., Reaction Temperature and Catalyst Concentration). Choose a realistic "low" and "high" level for each factor to investigate [15].

- Create the Design Matrix: This matrix outlines the exact experimental runs needed. For two factors at two levels, you need 2² = 4 runs [15].

- Run Experiments: Perform the experiments in a randomized order to avoid confounding the results with unknown, time-related variables [15].

- Analyze the Results: Calculate the main effects and the interaction effect from your experimental data.

The table below shows a sample design matrix and results for a chemical reaction, where the response is Yield (%).

Table 1: Design Matrix and Results for a Two-Factor DOE

| Experimental Run | Temperature (°C) | Catalyst Concentration (%) | Yield (%) |
|---|---|---|---|
| 1 | 100 (Low) | 1.0 (Low) | 21 |
| 2 | 100 (Low) | 2.0 (High) | 42 |
| 3 | 200 (High) | 1.0 (Low) | 51 |
| 4 | 200 (High) | 2.0 (High) | 57 |

Using the data in Table 1, you can calculate the effects [15]:

- Main Effect of Temperature: (Average Yield at High Temp) - (Average Yield at Low Temp) = [(51 + 57)/2] - [(21 + 42)/2] = 54 - 31.5 = +22.5 percentage points

- Main Effect of Concentration: (Average Yield at High Conc.) - (Average Yield at Low Conc.) = [(42 + 57)/2] - [(21 + 51)/2] = 49.5 - 36 = +13.5 percentage points

To calculate the interaction effect, you need to expand the design matrix to include an interaction column. The coded levels for the interaction are found by multiplying the levels of Temperature and Concentration for each run [15].

Table 2: Design Matrix with Interaction Term

| Run | Temp (T) | Conc (C) | T x C Interaction | Yield (%) |
|---|---|---|---|---|
| 1 | -1 (Low) | -1 (Low) | (-1) * (-1) = +1 | 21 |
| 2 | -1 (Low) | +1 (High) | (-1) * (+1) = -1 | 42 |
| 3 | +1 (High) | -1 (Low) | (+1) * (-1) = -1 | 51 |
| 4 | +1 (High) | +1 (High) | (+1) * (+1) = +1 | 57 |

- Interaction Effect (T x C): (Average Yield where Interaction is +1) - (Average Yield where Interaction is -1) = [(21 + 57)/2] - [(42 + 51)/2] = 39 - 46.5 = -7.5 percentage points

This negative interaction effect reveals that the effect of temperature is less pronounced at higher catalyst concentrations, and vice versa. This hidden relationship is impossible to detect with OFAT. The diagram below visualizes this concept.
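The interaction can also be checked numerically by computing the temperature effect separately at each catalyst level from Table 1. The interaction effect is half the difference between these two conditional effects, and their average is the main effect of temperature.

```python
# Table 1 data, keyed by (temperature level, catalyst level) in coded units.
yields = {(-1, -1): 21, (-1, 1): 42, (1, -1): 51, (1, 1): 57}

# Effect of raising temperature, evaluated separately at each catalyst level.
temp_effect_low_cat = yields[(1, -1)] - yields[(-1, -1)]  # 51 - 21 = 30
temp_effect_high_cat = yields[(1, 1)] - yields[(-1, 1)]   # 57 - 42 = 15

# The interaction effect is half the difference between the conditional effects.
interaction = (temp_effect_high_cat - temp_effect_low_cat) / 2
print(temp_effect_low_cat, temp_effect_high_cat, interaction)  # 30 15 -7.5

# Averaging the two conditional effects recovers the main effect of temperature.
print((temp_effect_low_cat + temp_effect_high_cat) / 2)  # 22.5
```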

What are the practical steps for executing a successful DOE?

Follow this five-step protocol to ensure your DOE is robust and provides actionable results [6].

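- Identify and Isolate Changes: When validating a candidate fix, implement one change or solution at a time so that its effect can be attributed unambiguously. Making several modifications at once during validation complicates analysis and can introduce new issues [6]. This differs from the screening stage, where factors are deliberately varied together.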

- Determine Sample Size: Test enough units for statistical significance. As a rule of thumb, to validate a fix for an issue with a failure rate of p, test at least n = 3/p units with zero failures. This gives about 95% confidence (α = 0.05) that the failure rate is now below p. For a 10% failure rate, plan to test 30 units [6].

- Execute with Vigilance: Keep extremely accurate records and be hyper-vigilant during assembly to prevent kitting and configuration errors that can invalidate your results [6].

- Validate in the Real World: Ensure your test setup accurately simulates real-world conditions. A test is only valuable if its results predict actual performance in the field [6].

- Deploy and Confirm at Scale: After finding a solution, validate the change at full scale with all relevant performance and reliability tests before final implementation [6].
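The n = 3/p rule of thumb comes from requiring (1 - p)^n ≤ 0.05, where (1 - p)^n is the probability of seeing zero failures if the failure rate were still p. Since (1 - p)^n ≈ e^(-np) and ln(20) ≈ 3.0, this gives n ≈ 3/p. Here is a short sketch comparing the approximation with the exact bound:

```python
import math


def rule_of_three_n(p):
    """Rule-of-thumb number of zero-failure units: n = 3 / p, rounded up."""
    return math.ceil(3 / p)


def exact_zero_failure_n(p, alpha=0.05):
    """Smallest n with (1 - p)**n <= alpha: zero failures would be unlikely if p were unchanged."""
    return math.ceil(math.log(alpha) / math.log(1 - p))


for p in (0.10, 0.05, 0.01):
    print(f"p = {p}: rule of three {rule_of_three_n(p)}, exact {exact_zero_failure_n(p)}")
```

For a 10% failure rate the exact bound is 29 units, so the rule of three's 30 is slightly conservative.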

The Scientist's Toolkit: Essential DOE Research Reagents

Table 3: Key Components for a Successful DOE Initiative

| Item | Function in DOE |
|---|---|
| Design Matrix | A structured table that defines the set of experimental runs. It is the blueprint for your DOE, ensuring efficient and systematic data collection [15]. |
| Statistical Software | Tools (e.g., JMP, R, Python libraries) used to randomize the run order, analyze the results, calculate effects, build predictive models, and visualize interaction effects [9]. |
| Randomization Protocol | A procedure to run experimental trials in a random sequence. This is critical to eliminate the influence of confounding, uncontrolled variables (e.g., ambient humidity, reagent age) [15]. |
| Predictive Model | A mathematical equation (often a polynomial) that describes the relationship between your factors and the response. It allows you to predict outcomes and find optima within the experimental region [9]. |
| Response Surface Plot | A 3D visualization of the predictive model. This graph makes it easy to see the shape of the response and identify interactions and optimal settings [9]. |

Frequently Asked Questions (FAQs)

What is the primary advantage of using DoE over a traditional One-Variable-at-a-Time (OVAT) approach for optimizing a low-yielding reaction?

The primary advantage is the ability to efficiently identify optimal conditions and understand interactions between multiple factors simultaneously. A traditional OVAT approach, where only one variable is changed while others are held constant, can be inefficient and may miss the true optimum due to factor interactions [2]. In contrast, DoE is a systematic approach that allows scientists to screen a large number of reaction parameters in a relatively small number of experiments, enabling them to model the effect of each variable and their interactions to find the best possible outcome [11] [2].

A DoE study suggested that higher temperature and lower reagent equivalents are optimal for my reaction, which contradicts my initial hypothesis. Should I trust the model?

Provisionally, yes. Counter-intuitive optima are a common and useful outcome of a DoE analysis, because DoE is designed to uncover non-intuitive interactions that are easily missed with OVAT, and the statistical model is based on empirical data from your experiments [2]. Treat the prediction as a hypothesis, though. Run a verification experiment at the predicted optimal conditions (high temperature, low reagent equivalents) to confirm the model's accuracy. A verification experiment that reproduces the predicted result validates the model and provides a robust set of conditions [2].

My chemical reaction generates multiple problematic byproducts. Can DoE help with this beyond just improving yield?

Absolutely. DoE is exceptionally well-suited for improving reaction selectivity and reducing byproducts. By systematically varying parameters and analyzing the outcomes, you can identify conditions that favor the formation of your desired product while suppressing the pathways that lead to byproducts [11]. For example, one case study involved a reaction generating five structurally similar byproducts. A DoE exercise was used to adjust the reaction conditions. This more than tripled the desired product yield (from 10% to 33%) and also reduced the proportion of these hard-to-remove byproducts [11].

How can I use DoE to find a safer or more sustainable solvent for my reaction?

DoE can be coupled with a "solvent map" to systematically explore solvent space. Instead of testing a random selection of solvents, a solvent map based on Principal Component Analysis (PCA) groups solvents by their key physical properties [2]. You can select a few solvents from different regions of this map for your DoE screening. The results will show you which area of solvent space (e.g., polar aprotic, non-polar, etc.) is optimal for your reaction, allowing you to identify high-performing, safer, or more sustainable solvents you might not have otherwise considered [2].

After implementing new conditions from a DoE, how can I ensure my process remains robust and consistent over time?

Once optimal conditions are identified, Statistical Process Control (SPC) is the ideal methodology for ensuring long-term process robustness and consistency [16]. SPC uses control charts to monitor process behavior over time, distinguishing between common cause variation (inherent to the process) and special cause variation (indicating a problem) [16]. This data-driven approach allows for proactive problem-solving, helping you maintain control over your optimized process and prevent deviations before they lead to failed batches or variations in product quality [16] [17].

Troubleshooting Guide

Poor Model Fit or Insignificant Factors

Symptoms: The statistical model from your DoE has a low R² value (poor fit) or a low predicted R² (poor predictive power), or analysis of variance (ANOVA) shows that most factors are not statistically significant.

| Potential Cause | Solution |
|---|---|
| Insufficient factor range | The chosen high and low values for your factors were too close together, resulting in a signal that is too weak to detect over the background noise. Widen the ranges for key factors in a subsequent DoE round. |
| High experimental noise | Uncontrolled variables or measurement errors are obscuring the effects of your factors. Improve experimental consistency, ensure proper calibration of equipment, and consider replicating center points to better estimate noise. |
| Missing key factors | The variables you chose to study may not be the most impactful ones for this specific reaction. Conduct further fundamental research on the reaction mechanism to identify more critical factors to test. |

Failure to Verify the Model's Predictions

Symptoms: When you run a new experiment at the optimal conditions predicted by the DoE model, the result (e.g., yield) does not match the model's prediction.

| Potential Cause | Solution |
|---|---|
| Model extrapolation | The verification point may lie outside the experimental region used to build the model. Models are reliable for interpolation (within the factor space studied) but not for extrapolation. Ensure your verification point lies within the bounds of your original experimental design. |
| Presence of curvature | The model may be too simple (e.g., linear) for a process that has significant curvature. Add center points to your initial design to detect curvature. If present, augment your design with additional experiments to create a higher-order (e.g., quadratic) model. |
| Uncontrolled factor drift | An unmeasured variable (e.g., raw material purity, ambient humidity) changed between the initial DoE and the verification run. Strictly control laboratory conditions and document all potential sources of variation. |
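To check for the curvature described above, the standard approach with a two-level design is a single-degree-of-freedom test that compares the mean of the factorial runs with the mean of the replicated center points. The sketch below assumes at least two center-point replicates and uses them alone to estimate pure error. The function name and the example yields are hypothetical. DoE software reports an equivalent curvature or lack-of-fit test.

```python
from statistics import mean, variance


def curvature_test(factorial_y, center_y):
    """Curvature check for a two-level design with replicated center points.

    Returns (curvature_estimate, ss_curvature, f_statistic, df_pure_error).
    Compare f_statistic with the F(1, df_pure_error) critical value.
    """
    n_f, n_c = len(factorial_y), len(center_y)
    if n_c < 2:
        raise ValueError("need at least two center-point replicates to estimate pure error")
    y_f, y_c = mean(factorial_y), mean(center_y)
    # Sum of squares for curvature (1 df): contrast of factorial mean vs center mean
    ss_curv = n_f * n_c * (y_f - y_c) ** 2 / (n_f + n_c)
    # Pure-error mean square, estimated only from the replicated center points
    ms_pure = variance(center_y)
    return y_f - y_c, ss_curv, ss_curv / ms_pure, n_c - 1
```

For example, `curvature_test([60, 70, 65, 75], [80, 81, 79])` gives F ≈ 268 on (1, 2) degrees of freedom. That far exceeds the 5% critical value of about 18.5, so a linear model would be inadequate for these illustrative numbers.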

High Variation in Replicate Experiments

Symptoms: Replicate runs of the same experimental conditions (e.g., center points) show unacceptably high variability.

| Potential Cause | Solution |
|---|---|
| Inconsistent procedure | The experimental protocol may leave too much to operator judgment. Create a highly detailed, step-by-step procedure and ensure all lab personnel are trained on it. |
| Unreliable analytical method | The method used to measure the output (e.g., yield, purity) may not be precise. Perform a method validation to ensure the analytical technique is fit for purpose before starting the DoE. |
| Poor raw material control | The starting materials or reagents may have inconsistent quality or purity. Source materials from a single, reliable batch for the entire DoE study to eliminate this source of variation. |

Quantitative Benefits of DoE

The table below summarizes documented quantitative benefits of implementing DoE for process optimization.

| Benefit | Metric | Quantitative Improvement | Context / Source |
|---|---|---|---|
| Yield Improvement | Percentage Yield | Increased from 10% to 33% (roughly 3-fold increase) | Optimization of a complex API step with multiple byproducts [11]. |
| Resource Efficiency | Raw Material Usage | Reduced quantity of required materials | DoE-optimized conditions minimized use of expensive/hazardous reagents [11]. |
| Process Safety | Hazardous Chemical Use | Reduced use of hazardous chemicals | Mitigated risks by identifying safer process windows [11]. |
| Experimental Efficiency | Number of Experiments to Optimize | 19 experiments to explore up to 8 factors with interactions | Resolution IV DoE design versus dozens to hundreds of OVAT experiments [2]. |

Experimental Protocol: A Basic DoE Workflow for Reaction Optimization

Objective: To systematically optimize a low-yielding chemical reaction by evaluating the impact of and interactions between three critical factors: Reaction Temperature, Catalyst Loading, and Solvent Type.

Step 1: Define the Problem and Objectives

- Clearly state the primary response (e.g., reaction yield).

- Define secondary responses (e.g., purity, byproduct level).

Step 2: Select Factors and Ranges

- Factor 1: Temperature. Range: 50°C to 100°C.

- Factor 2: Catalyst Loading. Range: 1 mol% to 5 mol%.

- Factor 3: Solvent. Choose 3-4 solvents from different regions of a solvent property map (e.g., Toluene, THF, DMF) [2].

Step 3: Select an Experimental Design

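- A full factorial design is recommended for 3 factors. With Temperature and Catalyst Loading at two levels each and three solvents, this requires 2 × 2 × 3 = 12 experiments (16 with four solvents, or 8 experiments, 2³, if only two contrasting solvents are used). Add 2-3 replicate center points (75°C, 3 mol%) to estimate experimental error; because solvent is categorical, run these in a chosen reference solvent or repeat them in each solvent.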

Step 4: Run the Experiments

- Execute all experiments in a randomized order to avoid systematic bias.

- Adhere to a strict, written procedure for consistency.
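Steps 3 and 4 can be sketched as code that builds the run sheet and randomizes it. This is a minimal illustration, not the output of any particular DoE package. It assumes the three example solvents are treated as a categorical factor and that one center point (mid temperature, mid catalyst loading) is run in each solvent. The function and field names are hypothetical.

```python
import itertools
import random


def build_design(temps=(50, 100), loadings=(1, 5),
                 solvents=("Toluene", "THF", "DMF"),
                 center_reps_per_solvent=1, seed=None):
    """Full factorial (temperature x catalyst loading x solvent) plus center points.

    Center points use the midpoint of each continuous factor. Solvent is
    categorical and has no midpoint, so center points are repeated in every solvent.
    """
    runs = [
        {"temp_C": t, "cat_mol_pct": c, "solvent": s, "type": "factorial"}
        for t, c, s in itertools.product(temps, loadings, solvents)
    ]
    mid_t = (min(temps) + max(temps)) / 2
    mid_c = (min(loadings) + max(loadings)) / 2
    for s in solvents:
        runs += [{"temp_C": mid_t, "cat_mol_pct": mid_c, "solvent": s, "type": "center"}
                 for _ in range(center_reps_per_solvent)]
    # Randomized run order guards against time-related drift (lurking variables)
    rng = random.Random(seed)
    rng.shuffle(runs)
    for order, run in enumerate(runs, start=1):
        run["run_order"] = order
    return runs
```

Passing a fixed `seed` makes the randomized order reproducible, so the printed run sheet can be regenerated exactly for the study record.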

Step 5: Analyze the Data

- Use statistical software to perform ANOVA.

- Identify which factors and interactions are significant.

- Generate a statistical model and contour plots to visualize the relationship between factors and the response.
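Behind the ANOVA output, the effects in a two-level full factorial are simple contrasts. Each effect is the mean response at the +1 level of its column minus the mean at the -1 level. The sketch below assumes every factor is coded -1/+1, so a three-level categorical factor such as solvent would instead need regression with indicator variables. It estimates effects only and does not test significance. The function name is hypothetical.

```python
import itertools
import math


def factorial_effects(design, y):
    """Main effects and interactions from a coded two-level full factorial.

    design: list of runs, each a tuple of -1/+1 levels (one entry per factor).
    y: responses in the same run order.
    Returns {"A": effect, "AB": effect, ...}.
    """
    k = len(design[0])
    names = [chr(ord("A") + i) for i in range(k)]
    half = len(y) / 2
    effects = {}
    for order in range(1, k + 1):
        for combo in itertools.combinations(range(k), order):
            # Contrast column: elementwise product of the coded columns in this term
            column = [math.prod(run[i] for i in combo) for run in design]
            # Effect = mean(y where column = +1) - mean(y where column = -1)
            effects["".join(names[i] for i in combo)] = (
                sum(c * yi for c, yi in zip(column, y)) / half
            )
    return effects
```

As a self-check on synthetic (not experimental) data, a response built as y = 50 + 5A + 3B + 2AB over a 2³ design returns effects of 10 for A, 6 for B and 4 for AB, and zero for the other terms. Each effect is twice the corresponding regression coefficient.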

Step 6: Validate the Model

- Run 1-2 additional experiments at the predicted optimal conditions.

- Compare the actual result with the model's prediction. A close match confirms a reliable model.

Process Optimization Workflow

Research Reagent Solutions

The following table details key materials and their functions in a typical DoE study for reaction optimization.

| Item | Function in DoE Context |
|---|---|
| Solvent Map | A statistical tool (based on Principal Component Analysis) that groups solvents by physical properties, enabling systematic selection of a diverse set of solvents to screen in a DoE, moving beyond trial and error [2]. |
| Catalyst Library | A collection of commercially available or synthetically accessible catalysts. A DoE can efficiently screen different metal centers or ligand structures to identify the most active and selective catalyst for a transformation. |
| Statistical Software | Essential for generating the set of experiments for a given design (e.g., factorial, response surface) and for analyzing the resulting data to build predictive models and identify significant factors and interactions [11]. |
| Design Matrix | The pre-defined set of experimental conditions generated by statistical software. It serves as the rigorous protocol for the DoE study, ensuring efficient and systematic data collection [11] [2]. |

Defining the Problem and Setting Clear, Measurable Objectives for Yield Improvement

Frequently Asked Questions: Problem Definition and Objectives

Q1: Why is it crucial to define the problem and set objectives before starting a Design of Experiments (DoE) for a low-yielding reaction?

A clearly defined problem and objective are the foundation of a successful DoE. Without them, experiments can become unfocused, waste resources, and fail to identify a solution. A precise objective ensures that the experimental design is structured to collect the right data to solve your specific yield problem [18] [19]. It guides the selection of factors to study and the responses to measure, keeping the project aligned with the ultimate goal of yield improvement.

Q2: How can I determine if my low-yielding reaction is a good candidate for a DoE study?

A reaction is a good candidate for DoE if it is stable and repeatable, even if the yield is low. Before starting DoE, you should ensure that the process is under statistical control. This means that when you run the reaction multiple times at the same conditions, the results are consistent. If the yield varies wildly under identical conditions, the underlying process instability must be addressed first, as it will be difficult to distinguish the effect of your experimental factors from random process noise [18].

Q3: What are some common mistakes when setting objectives for yield improvement?

Common mistakes include:

- Vague Goals: Setting an objective like "improve yield" is not measurable. A good objective is specific, such as "increase the reaction yield from 20% to at least 40%."

- Ignoring Process Stability: Conducting a DoE on an unstable process, leading to unreliable results [18].

- Ignoring Critical Responses: Focusing only on yield while ignoring other critical responses like purity, byproduct formation, or cost. A yield increase is not beneficial if it leads to an impure product that is difficult to isolate [11] [19].

Q4: What does a well-structured, measurable objective for a yield improvement DoE look like?

A well-structured objective is Specific, Measurable, Achievable, Relevant, and Time-bound (SMART). For example: "To identify and model the effect of temperature, catalyst charge, and reaction time on the yield and purity of API XYZ-123. The goal is to define a design space that provides a consistent yield of >85% with a purity of >99.5% and reduces the current level of critical byproduct ABC by 90%, within the next 4 weeks." This objective clearly states the factors, responses, and desired targets [11] [19].

Troubleshooting Guide: Problem Definition & Objective Setting

This guide helps you diagnose and resolve common issues encountered during the initial phase of a yield improvement project.

| Problem Area | Symptom | Likely Cause | Corrective Action |
|---|---|---|---|
| Unclear Problem Scope | The team cannot agree on the primary goal; the experiment seems to be expanding to cover too many things. | The problem has not been sufficiently bounded or broken down. | Refocus on the primary issue. Use tools like a SIPOC diagram (Suppliers, Inputs, Process, Outputs, Customers) to map the process and isolate the step with the yield problem [3]. |
| Unmeasurable Objective | Success cannot be quantified. There is debate after the experiment about whether the goal was met. | The objective lacks specific, numerical targets for key responses. | Define measurable responses. Instead of "improve yield," set a target like "achieve a mean yield of 35%." Also, define measurable targets for other Critical Quality Attributes (e.g., reduce specified byproduct to <1.5%) [19]. |
| Unstable Baseline | High variation in yield even when reaction parameters are kept constant. | The process is influenced by uncontrolled lurking variables (e.g., raw material variability, equipment calibration drift, operator technique) [3] [18]. | Stabilize the process first. Use Statistical Process Control (SPC) charts to establish a baseline. Control and standardize all input materials, equipment settings, and operator procedures before beginning the DoE [18]. |
| Overlooked Interactions | The optimized conditions from the DoE do not perform as expected in validation runs. | The experimental objective was too narrow, focusing only on main effects and ignoring how factors interact (e.g., the effect of temperature might depend on the catalyst charge) [11]. | Include interaction effects in the objective. State that the goal is to understand not just the main effects of factors but also their two-factor interactions on the yield [11] [20]. |

Experimental Protocol: Establishing a Baseline for Yield Improvement

Before designing any experiments, you must establish a reliable baseline for your low-yielding reaction.

Objective: To confirm process stability and determine the baseline mean and variability of the reaction yield under current standard operating conditions.

Methodology:

- Standardize Conditions: Document and fix all reaction parameters (e.g., reagent concentrations, stirring speed, temperature, reaction vessel) based on existing procedures [18].

- Replicate Runs: Perform a minimum of n=5 independent experimental runs using the exact standardized conditions. A larger sample size (e.g., n=10) will provide a more reliable estimate of variability.

- Randomize Order: Execute the runs in a random order to protect against unknown lurking variables that may change over time [3] [21].

- Execute and Measure: Carry out each run carefully, ensuring the product is isolated and purified using the standard workup procedure. Measure the final yield for each run.

Data Analysis:

- Calculate the mean yield and standard deviation.

- Create a control chart (e.g., an Individuals and Moving Range chart) with the yield data to assess statistical process control. A process is considered stable if no points fall outside the control limits and no non-random patterns are observed [3] [18].

Example of Baseline Data Table:

| Run Order | Yield (%) | Notes |
|---|---|---|
| 1 | 22.5 | |
| 2 | 24.1 | |
| 3 | 19.8 | Material from new batch used |
| 4 | 23.3 | |
| 5 | 20.5 | |
| Mean | 22.0 | |
| Std. Dev. | 1.8 | |

In this example, the low yield of Run 3 could be investigated as a potential special cause related to the new material batch, highlighting the importance of controlling inputs [18].
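The Individuals and Moving Range calculation for this baseline can be sketched as follows. It uses the standard constants for moving ranges of span 2: 2.66 (that is, 3/d2 with d2 = 1.128) for the Individuals chart and D4 = 3.267 for the Moving Range chart. The function name is hypothetical. With only five runs, the limits should be read as a rough first estimate; control charts normally rely on considerably more points.

```python
from statistics import mean, stdev


def imr_limits(values):
    """Individuals and Moving Range (I-MR) chart statistics and limits."""
    mr = [abs(b - a) for a, b in zip(values, values[1:])]  # moving ranges of span 2
    x_bar, mr_bar = mean(values), mean(mr)
    return {
        "mean": x_bar,
        "sd": stdev(values),
        "i_lcl": x_bar - 2.66 * mr_bar,  # Individuals chart lower limit
        "i_ucl": x_bar + 2.66 * mr_bar,  # Individuals chart upper limit
        "mr_bar": mr_bar,
        "mr_ucl": 3.267 * mr_bar,        # Moving Range chart upper limit (LCL = 0)
        "moving_ranges": mr,
    }


baseline = [22.5, 24.1, 19.8, 23.3, 20.5]  # yields from the example table
lim = imr_limits(baseline)
out_of_control = [run for run, y in enumerate(baseline, start=1)
                  if not lim["i_lcl"] <= y <= lim["i_ucl"]]
```

For these data the mean is 22.04% (SD ≈ 1.83%), the average moving range is 3.05, and the Individuals limits are roughly 13.9% to 30.2%. Every run, including Run 3, falls inside those limits, so in this example it is the batch note, not the chart, that prompts investigation.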

The Scientist's Toolkit: Key Research Reagent Solutions

Selecting and controlling reagents is critical for a successful yield improvement study. The table below lists essential material categories and their functions.

| Research Reagent / Material | Function in Optimization | Key Consideration for DoE |
|---|---|---|
| High-Purity Starting Materials | The foundation of the reaction; impurities can catalyze side reactions, consuming reactants and lowering yield. | Use a single, consistent batch for the entire DoE to eliminate raw material variability as a source of noise [18]. |
| Catalysts | Substances that increase the reaction rate and selectivity without being consumed, directly impacting yield and byproduct formation. | A primary factor to study. Systematically vary the type (if screening) or charge (mol%) as a factor in the experimental design [11]. |
| Solvents | The medium in which the reaction occurs; can influence reaction rate, mechanism, and selectivity. | A key factor to study. Vary solvent identity (e.g., polarity, protic/aprotic) or volume to find optimal reaction conditions. |
| Reagents & Ligands | Substances used in stoichiometric amounts or to modify catalyst properties, critical for achieving high selectivity. | Systematically test different reagents or ligand structures to suppress byproduct pathways and improve yield [11]. |

DoE Objective Setting Workflow

The following diagram outlines the logical workflow for moving from a vague problem to a clear, actionable DoE objective.

Your Practical Playbook: Implementing Screening and Optimization DoE Designs

FAQs and Troubleshooting Guide

FAQ 1: When should I use a Full Factorial design over a Fractional Factorial design for my reaction optimization?

| Design Type | When to Use | Key Advantages | Key Limitations |
|---|---|---|---|
| Full Factorial | The number of factors (k) is small (e.g., ≤ 4) [3] [22]; a complete understanding of all interaction effects is required [22]; sufficient resources (time, materials) are available for a large number of runs | Provides comprehensive insights into all main effects and interactions, with no aliasing [22]; unambiguously reveals how the effect of one variable depends on the level of another [22] | The number of runs grows exponentially with the number of factors (2^k for two-level designs) [22]; can be resource-intensive (cost, time, materials) [22]; a two-level design cannot model curvature without added center or axial points |
| Fractional Factorial | Screening a larger number of factors (e.g., 5 or more) to identify the most influential ones [3] [23]; resources are limited and experimental efficiency is critical [24] [23] | Drastically reduces the number of runs required (e.g., a half or quarter fraction) [23]; highly efficient for identifying the vital few significant factors [23] | Introduces aliasing (some effects are confounded and cannot be separated) [23]; lower-resolution designs may confound main effects with two-factor interactions [23] |

Troubleshooting: A common mistake is using a Resolution III fractional factorial design, where main effects are confounded with two-factor interactions, making interpretation difficult. If your initial fractional factorial results are ambiguous, consider folding the design—a technique that adds a mirror-image set of runs to break the alias chains and separate these confounded effects [23].
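The effect of a full fold-over can be demonstrated on the classic eight-run 2^(7-4) Resolution III design (generators D = AB, E = AC, F = BC, G = ABC). The sketch below builds that design, appends its mirror image (every sign reversed), and measures how strongly any main-effect column aligns with any two-factor-interaction column (1 = fully aliased, 0 = orthogonal). The generators are one standard choice and the function names are hypothetical.

```python
import itertools


def res3_design():
    """Eight-run 2^(7-4) Resolution III design with D=AB, E=AC, F=BC, G=ABC."""
    return [(a, b, c, a * b, a * c, b * c, a * b * c)
            for a, b, c in itertools.product((-1, 1), repeat=3)]


def fold_over(runs):
    """Full fold-over: the mirror-image runs with every factor's sign reversed."""
    return [tuple(-x for x in run) for run in runs]


def max_main_vs_2fi_alias(runs):
    """Largest |x_m . (x_i * x_j)| / n over main effects m and 2FIs i*j (m not in i, j)."""
    k, n = len(runs[0]), len(runs)
    worst = 0.0
    for m in range(k):
        for i, j in itertools.combinations(range(k), 2):
            if m in (i, j):
                continue
            dot = sum(r[m] * r[i] * r[j] for r in runs)
            worst = max(worst, abs(dot) / n)
    return worst
```

On the original eight runs the measure is 1.0 (for example, A is identical to the BD interaction column). After appending the fold-over (16 runs), it drops to 0.0. Every main-effect-by-2FI product involves three sign-reversed columns, so the two halves cancel exactly.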

FAQ 2: What is a Definitive Screening Design (DSD) and when is it most beneficial?

Answer: A Definitive Screening Design (DSD) is an advanced, highly efficient experimental design that allows you to screen a large number of factors while requiring a very small number of runs. Its key differentiator is the ability to identify active factors and estimate their curvilinear (quadratic) effects simultaneously, which is not possible with standard two-level screening designs [3].

When to use a DSD:

- You need to screen many factors (e.g., 6 or more) [3].

- You suspect the relationship between a factor and your response (like reaction yield) might be non-linear [3].

- Experimental runs are exceptionally expensive or time-consuming, and you need the maximum information from a minimal number of experiments [3].

Troubleshooting: A significant advantage of DSDs is that their analysis is often more straightforward than that of highly fractionated factorial designs, as main effects are not confounded with each other or with two-factor interactions [3]. This makes them an excellent choice for researchers who may be less familiar with complex alias structures.
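The orthogonality properties described above follow from how a DSD is built. In the conference-matrix construction, the design stacks a conference matrix C (zero diagonal, ±1 elsewhere, C Cᵀ = (k - 1)I), its fold-over -C, and one center run. The sketch below handles only k = 6, using the Paley construction over GF(5), which yields 13 runs. The function names are hypothetical. Statistical software handles other factor counts, and for odd k the usual approach drops a column from the next larger design.

```python
def paley_conference_6():
    """Symmetric conference matrix of order 6 (Paley construction, q = 5)."""
    q = 5
    residues = {(x * x) % q for x in range(1, q)}  # quadratic residues mod 5: {1, 4}

    def chi(a):
        """Quadratic character: 0 for 0, +1 for residues, -1 otherwise."""
        a %= q
        return 0 if a == 0 else (1 if a in residues else -1)

    jacobsthal = [[chi(j - i) for j in range(q)] for i in range(q)]
    return [[0] + [1] * q] + [[1] + row for row in jacobsthal]


def definitive_screening_design(conf):
    """DSD rows in coded units: C, then its fold-over -C, then one all-zero center run."""
    k = len(conf[0])
    return [list(r) for r in conf] + [[-x for x in r] for r in conf] + [[0] * k]
```

Each column of the resulting 13 × 6 design takes the levels -1, 0 and +1, which is what lets a DSD detect curvature. The fold-over structure makes every main-effect column orthogonal to every other main effect and to every two-factor interaction.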

FAQ 3: My initial DoE yielded ambiguous results. How can I augment my design to get clearer answers?

Answer: If your initial design, particularly a fractional factorial, has confounded effects that you cannot separate, you do not need to abandon your work. You can augment your design with additional runs [23].

- Folding a Fractional Factorial Design: This involves adding a new set of runs that is a mirror image (where factor levels are swapped) of your original design. Folding on all factors can separate all main effects from two-factor interactions in a Resolution III design [23].

- Sequential Experimentation: DoE is often most effective when done sequentially. Start with a screening design (e.g., Fractional Factorial or DSD) to identify vital factors. Then use a more focused Response Surface Methodology (RSM) design, such as a central composite design, to model curvature and find the precise optimum around those vital factors [24].

Experimental Protocol: A Sequential DoE Strategy for Reaction Optimization

This protocol outlines a two-stage approach to efficiently optimize a low-yielding chemical reaction, moving from screening to optimization.

Stage 1: Factor Screening with a Definitive Screening Design (DSD)

- Define Factors and Ranges: List all potential factors (e.g., temperature, catalyst loading, solvent, concentration) and define a high and low level for each that represents a reasonable but wide operating range [25].

- Generate the Design: Use statistical software (e.g., JMP, Minitab, R with the daewr package) to create a DSD for your number of factors [3].

- Execute Experiments: Run the experiments in a fully randomized order to mitigate the effects of lurking variables [22] [26].

- Analyze Data: Fit a model to identify which factors have a statistically significant main or quadratic effect on your response (e.g., reaction yield).

Stage 2: Response Optimization with a Full Factorial or RSM Design

- Select Vital Factors: Choose the 2 or 3 most significant factors identified in Stage 1.

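- Create Optimization Design: Build a full factorial or an RSM design (e.g., a central composite design) for the selected factors.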

- Execute and Analyze: Run the experiments in random order. Use regression analysis and ANOVA to build a predictive model and identify the optimal factor settings that maximize yield [22].

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function in DoE for Reaction Optimization |
|---|---|
| Statistical Software (e.g., JMP, Minitab, R) | Used to generate the experimental design matrix, randomize the run order, and perform statistical analysis (ANOVA, regression) to interpret results [24]. |
| Solvent Map (via Principal Component Analysis) | A plot that groups solvents by their physical properties. Allows for the systematic selection of a few, diverse solvents to efficiently represent the entire "solvent space" in a DoE, moving beyond trial-and-error [2]. |
| Center Points | Replicate experiments run at the midpoint level of all continuous factors. They are essential for estimating pure experimental error and testing for the presence of curvature in the response surface [27] [2]. |
| "Folded" Design | A follow-up set of experimental runs that is a mirror image of an initial fractional factorial design. Used to resolve ambiguity by separating confounded effects (aliases) [23]. |

Workflow Diagram: Selecting Your Experimental Design

The diagram below outlines a logical decision pathway to help you select the most appropriate experimental design for your project.

Troubleshooting Guides

Why is my screening design not identifying any significant factors?

This often occurs due to incorrect factor ranges or a missing critical factor.

Potential Cause & Solution: The chosen ranges for your factors might be too narrow to produce a detectable effect on the response. Re-visit your process knowledge and consider widening the high and low levels for your factors. Additionally, use a cause-and-effect diagram in your planning phase to ensure no critical factor has been overlooked [3].

Verification Protocol:

- Check the model's analysis of variance (ANOVA). A low model F-value and a high p-value indicate the model is not significant.

- Examine the Pareto chart of effects; no factors will exceed the significance line.

- If resources allow, re-run the screening design with wider factor ranges.

How do I handle a large number of potential factors with a limited experimental budget?

Use highly efficient screening designs such as Plackett-Burman designs or Definitive Screening Designs (DSD).

Potential Cause & Solution: A full factorial design for many factors is prohibitively large. A Plackett-Burman design is an efficient screening design that assumes interactions are negligible compared to main effects, allowing you to screen a large number of factors (N-1 factors in N runs, where N is a multiple of 4) [19] [28]. For more advanced analysis, Definitive Screening Designs can handle a large number of factors with three levels each in a minimal number of runs and allow for the detection of curvature [3].

Verification Protocol:

- Select a design type (e.g., Plackett-Burman) in your statistical software (e.g., JMP, Minitab).

- Specify all your potential factors and set them to two levels.

- The software will generate an experimental run sheet with a fraction of the runs required for a full factorial study. Execute these runs [28].
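For N = 12, the run sheet the software produces is conventionally built by cyclically shifting the classic generator row (+ + − + + + − − − + −) and adding a final row with every factor at its low level. The sketch below reproduces that construction. It assumes that generator row and uses a hypothetical function name. Software may order runs or assign columns differently.

```python
# Classic N = 12 Plackett-Burman generator row (+1 = high level, -1 = low level)
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """12-run Plackett-Burman design for up to 11 two-level factors.

    Rows 1-11 are cyclic shifts of the generator; row 12 sets all factors low.
    """
    g, n = PB12_GENERATOR, len(PB12_GENERATOR)
    rows = [g[n - i:] + g[:n - i] for i in range(n)]  # rotate right by i places
    rows.append([-1] * n)
    return rows
```

Every column is balanced (six high and six low settings), and every pair of columns is orthogonal. That allows 11 main effects to be estimated from 12 runs, provided interactions are negligible. If fewer than 11 factors are studied, the unused columns can serve as dummy columns that give a rough estimate of experimental error.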

What does significant "lack of fit" mean in my screening design analysis?

This indicates your linear model might be missing important curvature, often from a quadratic effect.

Potential Cause & Solution: The relationship between a factor and your response is not linear but curved. This can be detected by including center points in your two-level screening design. A significant lack of fit test suggests you may need to move to an optimization design, like a Response Surface Methodology (RSM), that can model this curvature [28].

Verification Protocol:

- Ensure your screening design included 3-5 replicate runs at the center point (all continuous factors set to their mid-level).

- In your software, run the analysis and check the "Lack of Fit" p-value in the ANOVA output. A p-value less than 0.05 indicates significant lack of fit.

- The design can be augmented with axial points to create a central composite design for response surface analysis.
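The augmented central composite design can be sketched in coded units. It has three parts: the 2^k factorial core (often already run), 2k axial points at ±α on each axis, and center points. The sketch assumes the factorial part is a full factorial in the retained factors. Its parameter names are hypothetical. α = (2^k)^(1/4) makes the design rotatable, while α = 1 gives a face-centered design that stays within the original factor ranges. Use the face-centered option when settings beyond the original high and low levels are not feasible.

```python
import itertools


def central_composite(k, n_center=4, rotatable=True):
    """Coded central composite design: 2^k factorial + 2k axial + center runs.

    Returns (runs, alpha). alpha = (2^k) ** 0.25 for a rotatable CCD,
    alpha = 1.0 for a face-centered CCD.
    """
    alpha = (2 ** k) ** 0.25 if rotatable else 1.0
    factorial = [list(p) for p in itertools.product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # one factor at +/- alpha, the rest at center
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center, alpha
```

For two factors with four center points, this gives 4 + 4 + 4 = 12 runs with α ≈ 1.414. For three factors, α ≈ 1.682.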

My screening results are inconsistent with known process knowledge. What went wrong?

This can stem from assembly errors, uncontrolled noise variables, or a flawed testing method.

Potential Cause & Solution: Errors during the assembly of experimental units can introduce unexpected variation. Be hyper-vigilant during assembly to ensure each unit is built to the exact specification for its run [6]. Furthermore, an uncontrolled lurking variable (e.g., raw material batch, ambient humidity) may be influencing your results. Finally, validate that your test method accurately simulates real-world conditions [6].

Verification Protocol:

- Review assembly records and procedures for kitting errors.

- Brainstorm potential lurking variables using a SIPOC (Suppliers, Inputs, Process, Outputs, Customers) diagram and control them in future experiments [3].

- Correlate your test results with a known, real-world outcome to validate your test method.

Frequently Asked Questions (FAQs)

Q1: When should I use a screening design versus an optimization design? Screening designs are used early in experimentation when you have many potential factors and need to identify the "vital few" that have the largest impact. Optimization designs (e.g., RSM) are used later to model the response in detail and find the precise factor settings that produce an optimal result after the important factors are known [28] [3].

Q2: How many experimental runs do I need for a screening design? The number of runs depends on the number of factors and the specific design. For example, a Plackett-Burman design can screen up to 11 factors in 12 runs, or 7 factors in 8 runs. Definitive Screening Designs screen k factors in 2k+1 runs when k is even [3]; for odd k, the standard construction uses the next even design, giving 2k+3 runs. The key is that either design needs only a fraction of the runs of a full factorial, which would require 2^k runs.
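The run-count arithmetic above can be captured in a small helper for planning. It covers three cases. Plackett-Burman uses the smallest N that is a multiple of 4 with N - 1 ≥ k. The DSD uses the conference-matrix construction and assumes a conference matrix of the needed order exists, which does not hold for every even order. The full factorial is 2^k. Center points and any extra runs added by software are not counted. The function name is hypothetical.

```python
import math


def screening_run_counts(k):
    """Minimum runs for k factors under common screening designs (no center points)."""
    return {
        "full_factorial": 2 ** k,
        "plackett_burman": 4 * math.ceil((k + 1) / 4),  # N multiple of 4, N - 1 >= k
        "dsd": 2 * k + 1 if k % 2 == 0 else 2 * k + 3,  # conference-matrix construction
    }
```

For seven factors, this returns 128 runs for the full factorial, 8 for Plackett-Burman and 17 for a DSD.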

Q3: Can screening designs detect interactions between factors? Some can, but with limitations. Traditional screening designs like Plackett-Burman assume interactions are negligible. However, modern designs like Definitive Screening Designs (DSD) or certain fractional factorials have better capabilities to identify active two-factor interactions without being confounded with main effects, which is a significant advantage [28] [3].

Q4: What is the "sparsity of effects" principle? This is a key principle underlying screening designs. It states that in most systems, only a relatively small number of factors (the "vital few") will have significant main effects, while most will have little to no effect (the "trivial many") [28]. Screening designs are built to efficiently find these vital few.

Q5: Our one-variable-at-a-time (OVAT) approach has worked so far. Why switch to screening designs? OVAT is inefficient and can miss critical interactions between factors. For example, changing the level of Factor A might have a different effect depending on the setting of Factor B. A screening design varies all factors simultaneously in a controlled pattern, allowing you to detect these interactions and find better optima with fewer total experiments [29]. A study on copper-mediated fluorination showed that DoE provided a more than two-fold increase in experimental efficiency compared to the OVAT approach [29].

Experimental Protocols

Protocol 1: Setting Up a Definitive Screening Design (DSD) for a Chemical Reaction

This protocol outlines the steps for using a DSD to screen factors affecting the yield of a low-yielding organic synthesis reaction.

1. Objective Definition:

- Primary Goal: Identify factors significantly affecting the reaction yield (%).

- Secondary Goal: Check for any strong two-factor interactions and curvature.

2. Factor and Level Selection:

- Select 5 continuous factors believed to influence the reaction (e.g., Temperature, Catalyst Amount, Reaction Time, Solvent Volume, Stirring Rate).

- Set each factor to three levels: Low (-1), Middle (0), and High (+1). The middle level is critical for detecting curvature.

3. Experimental Design Generation:

- Using statistical software (e.g., JMP Pro), select the "Definitive Screening Design" type.

- Input the 5 factors. The standard DSD construction requires an even number of factors, so a 5-factor DSD is built from the 6-factor design with one column dropped, giving 2 × 6 + 1 = 13 experimental runs.

- Randomize the run order to minimize the impact of lurking variables.

4. Execution and Data Collection:

- Conduct each reaction according to the randomized run sheet.

- Precisely control and record all factor levels for each run.

- Measure and record the response (reaction yield) for each run using a consistent analytical method (e.g., HPLC).

5. Data Analysis:

- Input the response data into the software.

- Fit a model using the software's automated analysis for DSDs.

- Identify significant factors by examining the Pareto chart and the p-values in the ANOVA table (typically p < 0.05).

- Use the model to understand the direction of each factor's effect and to predict settings for follow-up optimization.

Protocol 2: Executing a Plackett-Burman Screening Design for a Formulation

This protocol is for a formulation scientist needing to screen many excipients and process parameters to improve drug dissolution.

1. Objective Definition:

- Goal: Screen 7 formulation factors to identify those critically affecting the dissolution similarity factor (f2).

2. Factor and Level Selection:

- Choose 7 factors (e.g., Polymer Type [A or B], Binder Level [Low/High], Disintegrant Level [Low/High], Compression Force [Low/High], Lubricant Amount [Low/High], Mixing Time [Low/High], Moisture Content [Low/High]).

- Set each to two levels.

3. Experimental Design Generation:

- In software like Minitab or MODDE, select a Plackett-Burman design for 7 factors.

- The smallest Plackett-Burman design for 7 factors has 8 experimental runs (equivalent to a saturated 2^(7-4) fractional factorial).

- Include 2 additional center point runs (for continuous factors) to check for curvature and estimate pure error, for a total of 10 runs.

- Randomize the run order.

4. Execution and Data Collection:

- Prepare 10 batches of the formulation according to the design matrix.

- Perform dissolution testing on all batches according to pharmacopeial standards, collecting data at multiple time points.

- Calculate the f2 value for each batch against a reference profile.
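The f2 calculation in the last step follows the standard definition: f2 = 50 · log10(100 / √(1 + mean squared difference)). The mean is taken over the n shared time points of the reference (R) and test (T) profiles. The sketch below assumes both profiles are mean percent dissolved at identical time points. The function name is hypothetical, and it does not enforce the additional rules that regulatory guidance places on which time points may be used.

```python
import math


def similarity_f2(reference, test):
    """Dissolution similarity factor f2 from mean % dissolved at shared time points."""
    if len(reference) != len(test) or not reference:
        raise ValueError("profiles must be non-empty and share the same time points")
    msd = sum((r - t) ** 2 for r, t in zip(reference, test)) / len(reference)
    return 50 * math.log10(100 / math.sqrt(1 + msd))
```

Identical profiles give f2 = 100. A constant 10-point difference at every time point gives f2 ≈ 49.9, just below the commonly used similarity threshold of 50.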

5. Data Analysis:

- Perform multiple linear regression to fit a main-effects-only model with the f2 value as the response.

- The half-normal plot or a Pareto chart of effects will visually highlight the most influential factors.

- Factors far from the "noise" line or with low p-values (<0.05) are considered significant and should be investigated further in an optimization study.

Data Presentation

Table 1: Comparison of Common Screening Design Types

| Design Type | Number of Runs for k Factors | Can Estimate Main Effects? | Can Estimate Interactions? | Can Detect Curvature? | Best Use Case |
|---|---|---|---|---|---|
| Plackett-Burman | N, a multiple of 4 with N ≥ k + 1 (e.g., 8 runs for 7 factors) | Yes | No (assumed negligible; they are partially or fully aliased with main effects) | No (requires center points) | Initial screening of a very large number of factors with a tight budget [19] [28] |
| Fractional Factorial (2^(k-p)) | 2^(k-p) (e.g., 8 runs for 4 factors) | Yes | Yes, but some are confounded (aliased) with other effects | No (requires center points) | Screening when some information on two-factor interactions is needed [3] |
| Definitive Screening Design (DSD) | 2k + 1 for even k (odd k typically uses the design for k + 1 factors, i.e., 2k + 3 runs) | Yes | Partially: two-factor interactions are not aliased with main effects, but are correlated with one another | Yes (each factor is run at three levels) | The modern recommended choice for screening 6-12 factors; highly efficient and informative [3] |
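The run-count column can be turned into small helper functions for quick budgeting. This is a sketch under stated assumptions: the Plackett-Burman rule is "smallest multiple of 4 greater than k", and the odd-k rule for definitive screening designs (borrowing the design for k + 1 factors) follows the original construction, though some software offers other sizes, so check it against your tool.

```python
def plackett_burman_runs(k):
    """Smallest multiple of 4 that exceeds the number of factors k."""
    return 4 * ((k + 4) // 4)


def fractional_factorial_runs(k, p):
    """Runs in a 2^(k-p) fractional factorial."""
    return 2 ** (k - p)


def definitive_screening_runs(k):
    """2k + 1 for even k; odd k assumed to use the (k + 1)-factor design."""
    return 2 * k + 1 if k % 2 == 0 else 2 * (k + 1) + 1


if __name__ == "__main__":
    for k in (6, 7, 8, 12):
        print(k, plackett_burman_runs(k), definitive_screening_runs(k))
```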

Table 2: Research Reagent Solutions for a Model Copper-Mediated Radiofluorination

This table details key materials used in a model reaction optimized via a screening design, as referenced in the literature [29].

| Reagent / Material | Function in the Experiment |
|---|---|
| Arylstannane Precursor | The substrate molecule that undergoes radiofluorination; its structure is a key variable [29]. |
| Copper Mediator (e.g., Cu(OTf)₂(py)₄) | Facilitates the transfer of the fluoride ion to the aromatic ring, essential for the reaction to proceed [29]. |
| [¹⁸F]Fluoride Ion | The radioactive isotope introduced into the precursor molecule to create the PET tracer [29]. |
| Anion Exchange Cartridge (QMA) | Used to process and purify the cyclotron-produced [¹⁸F]fluoride ion before the reaction [29]. |
| Base (e.g., K₂CO₃) | Used to elute the [¹⁸F]fluoride from the QMA cartridge and make it chemically reactive [29]. |
| Azeotropic Solvent (e.g., MeCN) | Used to dry the [¹⁸F]fluoride to remove water, which is critical for achieving high reactivity [29]. |
| Organic Solvent (e.g., DMF, DMSO) | The reaction medium that dissolves all components and provides a suitable environment for the fluorination [29]. |

Visualizations

Screening Design Selection Workflow

Screening Design Conceptual Framework

Advanced Optimization with Response Surface Methodology (RSM) and Central Composite Designs

Response Surface Methodology (RSM) is a collection of statistical techniques for designing experiments, building models, and exploring factor relationships to optimize processes. Within RSM, Central Composite Design (CCD) is a widely used experimental design for fitting second-order models, which are essential for identifying optimal conditions in complex chemical processes. For researchers working on improving low-yielding reactions, this sequential methodology provides a structured path from initial screening to final optimization, moving efficiently through experimental space to find factor combinations that maximize desired outcomes. This technical guide addresses common implementation challenges and provides frameworks for successful application in pharmaceutical development contexts.

RSM Fundamentals: Core Concepts FAQ

What is the fundamental objective of Response Surface Methodology? RSM aims to find the optimal factor level combinations that achieve a specific process goal, such as maximum yield, minimum cost, or target specifications. Unlike screening designs that identify important factors, RSM focuses on optimization, often involving second-order models to capture curvature in the response surface and locate stationary points [30].
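Locating the stationary point of a fitted second-order model is a small linear-algebra step. For two coded factors, setting both partial derivatives of \(\hat{y} = b_0 + b_1x_1 + b_2x_2 + b_{12}x_1x_2 + b_{11}x_1^2 + b_{22}x_2^2\) to zero gives a 2 × 2 linear system, and the signs of the eigenvalues of the matrix with diagonal \(b_{11}, b_{22}\) and off-diagonal \(b_{12}/2\) indicate a maximum, minimum, or saddle. The sketch below assumes the coefficients are already estimated; the example coefficients are hypothetical.

```python
import math


def stationary_point(b0, b1, b2, b11, b22, b12):
    """Stationary point of y = b0 + b1*x1 + b2*x2 + b12*x1*x2 + b11*x1**2 + b22*x2**2.

    Zero gradient gives:  2*b11*x1 + b12*x2 = -b1
                          b12*x1 + 2*b22*x2 = -b2
    solved here by Cramer's rule.
    """
    det = 4 * b11 * b22 - b12 ** 2
    if abs(det) < 1e-12:
        raise ValueError("singular system: ridge, no unique stationary point")
    x1 = (-2 * b1 * b22 + b12 * b2) / det
    x2 = (-2 * b11 * b2 + b12 * b1) / det
    y_hat = b0 + b1 * x1 + b2 * x2 + b12 * x1 * x2 + b11 * x1 ** 2 + b22 * x2 ** 2
    # Eigenvalues of [[b11, b12/2], [b12/2, b22]] classify the stationary point.
    mid = (b11 + b22) / 2
    rad = math.sqrt(((b11 - b22) / 2) ** 2 + (b12 / 2) ** 2)
    low, high = mid - rad, mid + rad
    if high < 0:
        kind = "maximum"
    elif low > 0:
        kind = "minimum"
    else:
        kind = "saddle"
    return (x1, x2), y_hat, kind


if __name__ == "__main__":
    # Hypothetical coefficients in coded units.
    print(stationary_point(b0=80, b1=2, b2=3, b11=-1, b22=-2, b12=1))
```

For these hypothetical coefficients the stationary point is a maximum at roughly (1.57, 1.14), outside the ±1 factorial cube, so it would be extrapolation and would need confirmation runs before being trusted.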

How does RSM improve upon the "one variable at a time" (OVAT) approach? Traditional OVAT optimization varies one factor while holding others constant, which frequently fails to identify true optimum conditions when factor interactions exist. RSM varies multiple factors simultaneously, efficiently exploring "reaction space" and capturing interaction effects that OVAT approaches miss [2]. For example, temperature and reagent equivalents might interact such that higher temperatures allow fewer equivalents to achieve better yields—a relationship OVAT would likely overlook.
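The interaction argument can be made concrete with a hypothetical response surface in coded units. The sketch below runs an OVAT search (optimize \(x_1\) with \(x_2\) fixed at its starting level, then optimize \(x_2\) at that \(x_1\)) and compares it with an exhaustive search of the same 5 × 5 grid. The model coefficients, starting point, and grid are invented for illustration; the exhaustive grid only exposes the true optimum of this toy surface and is not a recommended experimental design.

```python
# Coded levels tried for each factor (hypothetical).
LEVELS = [-1.0, -0.5, 0.0, 0.5, 1.0]


def yield_model(x1, x2):
    """Hypothetical surface with a positive x1*x2 interaction (e.g., temperature x equivalents)."""
    return 70 + 2 * x1 + 2 * x2 + 5 * x1 * x2 - 4 * x1 ** 2 - 4 * x2 ** 2


def ovat(start_x2=-1.0):
    """Optimize x1 with x2 held at its start level, then x2 with x1 held at that best value."""
    best_x1 = max(LEVELS, key=lambda a: yield_model(a, start_x2))
    best_x2 = max(LEVELS, key=lambda b: yield_model(best_x1, b))
    return best_x1, best_x2, yield_model(best_x1, best_x2)


def joint_grid():
    """Vary both factors together over every grid combination."""
    best = max(((a, b) for a in LEVELS for b in LEVELS), key=lambda p: yield_model(*p))
    return best[0], best[1], yield_model(*best)


if __name__ == "__main__":
    print("OVAT:", ovat())
    print("Joint:", joint_grid())
```

On this surface OVAT stops at (-0.5, 0) with a predicted yield of 68.0, while the joint search reaches (0.5, 0.5) at 71.25, because the positive \(x_1 x_2\) term rewards raising both factors together, something a one-factor path starting from low \(x_2\) never sees.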

What is the typical sequential process for implementing RSM? RSM generally follows a staged approach:

- Screening: Identify significant factors using first-order designs

- Steepest Ascent/Descent: March toward the optimum region using linear models

- Optimization: Characterize the optimum region with second-order models when curvature becomes significant [30]

This progression from an initial first-order model, \(y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \varepsilon\), to a full second-order model, \(y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \beta_{12} x_1 x_2 + \beta_{11} x_1^2 + \beta_{22} x_2^2 + \varepsilon\), uses resources efficiently: the extra runs needed to estimate the quadratic terms are spent only once the experiments are near the optimum region [30].
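The steepest ascent step moves each factor in proportion to its first-order coefficient. A common convention, assumed here, is to move the factor with the largest \(|\beta_j|\) by one coded unit per step and scale the others accordingly. The coefficients in the example (\(\beta_1 = 4\), \(\beta_2 = 2\)) are hypothetical.

```python
def steepest_ascent_path(coefficients, base_step=1.0, n_steps=5, maximize=True):
    """Points along the path of steepest ascent (or descent) in coded units.

    The factor with the largest |coefficient| moves base_step coded units per
    step; every other factor moves in proportion to its own coefficient.
    """
    base = max(range(len(coefficients)), key=lambda j: abs(coefficients[j]))
    scale = base_step / abs(coefficients[base])
    direction = 1.0 if maximize else -1.0
    increments = [direction * b * scale for b in coefficients]
    return [[step * d for d in increments] for step in range(n_steps + 1)]


if __name__ == "__main__":
    # Hypothetical fitted coefficients: beta1 (temperature) = 4, beta2 (equivalents) = 2.
    for point in steepest_ascent_path([4.0, 2.0]):
        print(point)
```

Runs are made at successive points along the path until the response stops improving; a new first-order design (or a CCD, if curvature appears) is then placed around the best point.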

Central Composite Design: Technical Implementation

What is the structure of a Central Composite Design? CCD combines factorial points, axial (star) points, and center points to efficiently estimate second-order models. With a full factorial core, the total number of experimental runs in a CCD is \(N = 2^k + 2k + n_0\), where \(k\) is the number of factors, \(2^k\) is the number of factorial points, \(2k\) is the number of axial points, and \(n_0\) is the number of center point replicates [31]. For example, with 3 factors and 6 center points, \(N = 2^3 + 2(3) + 6 = 8 + 6 + 6 = 20\) runs [31].
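The run-count formula and the three groups of CCD points can be generated directly in coded units. This plain-Python sketch assumes a full \(2^k\) factorial core; dedicated DoE software adds options such as fractional cores, blocking, and randomization.

```python
import itertools


def ccd_run_count(k, n_center):
    """N = 2^k + 2k + n0 for a CCD with a full 2^k factorial core."""
    return 2 ** k + 2 * k + n_center


def central_composite(k, alpha, n_center):
    """Coded CCD points: 2^k corners, 2k axial points at +/-alpha, n0 center points."""
    factorial = [list(p) for p in itertools.product((-1.0, 1.0), repeat=k)]
    axial = []
    for j in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[j] = sign * alpha
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center


if __name__ == "__main__":
    points = central_composite(k=3, alpha=8 ** 0.25, n_center=6)
    print(len(points), ccd_run_count(3, 6))
```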

What role do center points play in CCD? Center points provide three critical functions:

- Estimating pure experimental error

- Checking for model curvature

- Assessing reproducibility of results

The test for curvature compares the average response at the center points with the predicted response from the first-order model at the center of the design space [30].
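A standard single-degree-of-freedom version of this check uses \(SS_{\text{curv}} = n_F n_C (\bar{y}_F - \bar{y}_C)^2 / (n_F + n_C)\), where \(\bar{y}_F\) is the factorial-point average (equal to the first-order prediction at the center for an orthogonal two-level design) and \(\bar{y}_C\) is the center-point average, and divides it by the pure-error variance of the center runs. The sketch below computes that F statistic; the yields are hypothetical. The result is compared with the F(1, \(n_C - 1\)) critical value, which for five center runs is about 7.71 at the 5% level.

```python
import statistics


def curvature_test(factorial_y, center_y):
    """F statistic for curvature in a two-level design with replicated center points.

    SS_curv = nF * nC * (mean_F - mean_C)**2 / (nF + nC), with 1 df;
    the error term is the sample variance of the center runs (nC - 1 df).
    """
    n_f, n_c = len(factorial_y), len(center_y)
    if n_c < 2:
        raise ValueError("need at least two center runs to estimate pure error")
    diff = statistics.mean(factorial_y) - statistics.mean(center_y)
    ss_curv = n_f * n_c * diff ** 2 / (n_f + n_c)
    ms_pure_error = statistics.variance(center_y)
    return ss_curv / ms_pure_error, (1, n_c - 1)


if __name__ == "__main__":
    # Hypothetical yields (%) from a 2^2 factorial plus five center runs.
    f_stat, df = curvature_test([40.0, 42.0, 44.0, 46.0], [50.0, 49.0, 51.0, 50.0, 50.0])
    print(round(f_stat, 1), df)
```

Here the center runs average well above the factorial runs, so a large F points to a curved surface and, plausibly, a maximum inside the region.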

How are axial point positions determined in CCD? The distance \(\alpha\) of the axial points from the design center depends on the desired design properties:

- Face-centered (\(\alpha = 1\)): Points lie on the faces of the cube; practical when factor limits cannot be exceeded

- Rotatable (\(\alpha = (2^k)^{1/4}\), or \(n_F^{1/4}\) in general for a factorial core with \(n_F\) points): Prediction variance is the same at all points equidistant from the center

- Spherical (\(\alpha = \sqrt{k}\)): Points lie on a sphere of radius \(\sqrt{k}\) [32]

The choice affects both statistical properties and practical implementation constraints.
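The three \(\alpha\) options reduce to one-line formulas. The sketch below computes them; the optional `n_factorial` argument is an illustrative addition for fractional factorial cores, where the rotatable value is \(n_F^{1/4}\).

```python
import math


def ccd_alpha(k, design="rotatable", n_factorial=None):
    """Axial distance for a CCD with k factors.

    n_factorial defaults to 2**k (full factorial core); pass 2**(k - p)
    for a fractional core when computing the rotatable value.
    """
    if design == "face-centered":
        return 1.0
    if design == "rotatable":
        n_f = 2 ** k if n_factorial is None else n_factorial
        return n_f ** 0.25
    if design == "spherical":
        return math.sqrt(k)
    raise ValueError(f"unknown design type: {design}")


if __name__ == "__main__":
    for k in (2, 3, 4):
        print(k, round(ccd_alpha(k), 3), round(ccd_alpha(k, "spherical"), 3))
```

For two factors the rotatable and spherical choices coincide (\(\sqrt{2}\)); from three factors on they diverge, for example 1.682 versus 1.732 for \(k = 3\).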

Figure 1: Central Composite Design Structure for 2 Factors

Troubleshooting Common RSM Implementation Challenges

Model Inadequacy and Curvature Detection

Problem: After conducting initial experiments, the model shows significant lack of fit or the center points indicate substantial curvature.

Solution:

- Test for curvature by comparing center point averages with predicted values from the first-order model [30]

- If curvature is significant, augment the factorial runs with axial points (forming a CCD) and fit a second-order model rather than continuing steepest ascent

- Consider transforming the response variable or adding higher-order terms if the second-order model still exhibits lack of fit
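Lack of fit is usually judged by splitting the residual sum of squares into pure error (from replicated runs) and lack of fit, then forming \(F = MS_{\text{LOF}} / MS_{\text{PE}}\). The sketch below shows that split; the residual sum of squares (12.0 on 8 df) and the replicate values are hypothetical and would normally come from the fitted model and the design's center runs.

```python
def pure_error(replicate_groups):
    """Sum of squares and degrees of freedom from groups of runs at identical settings."""
    ss, df = 0.0, 0
    for group in replicate_groups:
        if len(group) < 2:
            continue
        mean = sum(group) / len(group)
        ss += sum((y - mean) ** 2 for y in group)
        df += len(group) - 1
    return ss, df


def lack_of_fit_f(ss_residual, df_residual, ss_pe, df_pe):
    """Split residual SS into lack of fit + pure error; return F = MS_LOF / MS_PE and its df."""
    ss_lof = ss_residual - ss_pe
    df_lof = df_residual - df_pe
    if df_lof <= 0 or df_pe <= 0:
        raise ValueError("need replicated runs and more distinct settings than model terms")
    return (ss_lof / df_lof) / (ss_pe / df_pe), (df_lof, df_pe)


if __name__ == "__main__":
    ss_pe, df_pe = pure_error([[50.0, 49.0, 51.0, 50.0, 50.0]])  # hypothetical center runs
    print(lack_of_fit_f(12.0, 8, ss_pe, df_pe))                 # hypothetical residual SS
```

A large F relative to the F(\(df_{\text{LOF}}, df_{\text{PE}}\)) critical value suggests the model form, not just noise, explains the residuals.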

Case Example: In optimizing a hydrogenation reaction, researchers found significant curvature in initial screening. They implemented a CCD that revealed an interaction between temperature and catalyst loading, raising the yield from 10% to 33% (roughly 3.3-fold) while reducing hazardous reagent use [5].

Factor Range Selection and Scaling

Problem: Poor choice of factor ranges leads to insignificant effects or failure to capture the optimal region.

Solution:

- Use coded variables (\(x_1, x_2\)) scaled so that the center of the experimental region is 0 and the low and high levels are -1 and +1 [30]

- Conduct preliminary range-finding experiments before committing to full CCD

- For factors with uncertain optimal ranges, consider a sequential approach with multiple smaller CCDs
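Converting between natural and coded units is a linear rescaling around the range midpoint. The sketch below shows both directions for a hypothetical temperature range of 30-40 °C; it also makes visible that rotatable axial points (\(\alpha \approx 1.682\) for three factors) land outside the chosen low/high range, which is one reason range-finding runs matter.

```python
def to_coded(value, low, high):
    """Natural units -> coded units: low -> -1, midpoint -> 0, high -> +1."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_natural(coded, low, high):
    """Coded units -> natural units (inverse of to_coded)."""
    return (low + high) / 2 + coded * (high - low) / 2


if __name__ == "__main__":
    low, high = 30.0, 40.0  # hypothetical temperature range, deg C
    print(to_coded(35.0, low, high), to_coded(40.0, low, high))
    print(to_natural(-1.682, low, high), to_natural(1.682, low, high))
```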

Case Example: In Haemophilus influenzae biomass optimization, researchers used CCD with factors pH (5.15-9.25), temperature (33.6-40.0°C), and agitation (49-300 rpm) to successfully identify optimum conditions at pH 8.5, 35°C, and 250 rpm, achieving 5470 mg/L dry biomass [33].

Experimental Constraints and Disallowed Combinations

Problem: Physical, safety, or procedural constraints prevent testing certain factor combinations in a standard CCD.

Solution:

- Use optimal (computer-generated) designs instead of classical CCD when facing complex constraints

- Use principal component analysis (PCA) to convert categorical factors such as solvent identity into continuous principal-component coordinates that can enter the design [2]

- Implement constraint-handling methods such as penalty functions or search algorithms that respect boundaries
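Optimal-design tools typically handle disallowed combinations by starting from a candidate set of allowed points and choosing the runs that maximize a criterion such as D-optimality, \(\det(X^\top X)\). The sketch below shows that idea at toy scale: a hypothetical constraint \(x_1 + x_2 \le 1\) (coded units, e.g. high temperature with long time judged unsafe) removes the high-high corner, and an exhaustive search picks the 4-run subset that maximizes \(\det(X^\top X)\) for a first-order model. Exhaustive search is only feasible for tiny candidate sets; real software uses exchange algorithms instead.

```python
import itertools

LEVELS = (-1.0, 0.0, 1.0)


def is_allowed(x1, x2):
    """Hypothetical safety constraint in coded units."""
    return x1 + x2 <= 1.0


def candidate_set():
    """All 3 x 3 grid points that satisfy the constraint."""
    return [p for p in itertools.product(LEVELS, repeat=2) if is_allowed(*p)]


def det3(m):
    """Determinant of a 3 x 3 matrix by cofactor expansion."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def d_criterion(points):
    """det(X'X) for the first-order model y = b0 + b1*x1 + b2*x2."""
    rows = [(1.0, x1, x2) for x1, x2 in points]
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    return det3(xtx)


def exact_d_optimal(n_runs):
    """Exhaustively search all n_runs-point subsets (no repeats) of the allowed candidates."""
    return max(itertools.combinations(candidate_set(), n_runs), key=d_criterion)


if __name__ == "__main__":
    best = exact_d_optimal(4)
    print(best, d_criterion(best))
```

The selected design keeps the three allowed corners plus an edge point next to the excluded corner, which is the kind of compromise a classical CCD cannot make.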

Case Example: In solvent optimization for an SNAr reaction, researchers used a solvent map based on PCA to systematically explore solvent space while avoiding toxic/hazardous solvents, successfully identifying safer alternatives with comparable performance [2].

Table 1: CCD Experimental Ranges for Different Applications

| Application Area | Factors Investigated | Factor Ranges | Response Variable | Optimum Conditions | Citation |
|---|---|---|---|---|---|
| Hib Biomass Production | pH, Temperature, Agitation | pH: 5.15-9.25, Temp: 33.6-40.0°C, Agitation: 49-300 rpm | Dry biomass (mg/L) | pH 8.5, 35°C, 250 rpm (5470 mg/L) | [33] |
| Cr(VI) Biosorption | Contact time, pH, Initial concentration | Time: 30-210 min, pH: 2-10, Conc: 10-90 mg/L | Adsorption capacity (mg/g) | 120 min, pH 8.0, 50 mg/L (2.355 mg/g) | [31] |
| HfB2 Nanofiber Synthesis | PVP conc., Voltage, Flow rate, Distance, B/Hf ratio | PVP: 6-14 wt%, Voltage: 10-22 kV, Flow: 4-16 μL/min | Fiber diameter, quality | Specific combination for narrow distribution | [34] |
| Halogenated Nitroheterocycle Reduction | Catalyst load, Temperature, Pressure | Specific ranges not provided | Conversion, Impurity profile | Platinum catalyst, optimized loading | [5] |

Research Reagent Solutions and Essential Materials

Table 2: Key Research Materials for RSM Optimization Experiments

| Material Category | Specific Examples | Function in Optimization | Application Context |
|---|---|---|---|
| Catalysts | Raney nickel, Platinum-based catalysts | Facilitate reaction pathways; significant impact on yield and impurity profile | Hydrogenation reactions [5] |
| Solvents | 1-dodecanol, ethanol, specialized solvent matrices | Extraction, reaction medium; selected via PCA-based solvent maps | Dispersive-solidification liquid-liquid microextraction [35] |
| Polymer Carriers | Polyvinylpyrrolidone (PVP) | Fiber formation, precursor carrier | Electrospinning for nanofibrous composites [34] |
| Metal Precursors | HfCl4, H3BO3 | Ceramic precursor materials | Synthesis of HfB2-based composite nanofibers [34] |
| Biosorbents | Arachis hypogaea husk | Heavy metal adsorption | Cr(VI) removal from aqueous media [31] |
| Culture Components | β-NAD, protoporphyrin IX, dialyzed yeast extract | Microbial growth media components | Haemophilus influenzae biomass production [33] |

Advanced Applications and Methodological Extensions

Solvent Optimization Using PCA-Based Maps

Challenge: Traditional solvent selection relies on trial-and-error, potentially overlooking optimal solvents and defaulting to familiar but suboptimal or hazardous options.

Solution:

- Build a solvent map by applying Principal Component Analysis (PCA) to property data for 136 solvents spanning a wide range of properties [2]

- Select solvents from different map regions to systematically explore solvent space

- Identify safer alternatives to toxic/hazardous solvents while maintaining performance

Implementation: For an SNAr reaction optimization, researchers used this approach to select solvents from different PCA map regions, enabling identification of both optimal solvent characteristics and specific solvent recommendations [2].
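The solvent-map idea rests on PCA: standardize a table of solvent descriptors, find the directions of greatest variance, and place each solvent by its scores on the first two components. The sketch below does this in dependency-free Python using power iteration with deflation (a linear-algebra library such as NumPy would be the usual choice in practice). It illustrates the technique only; it is not the published 136-solvent map from [2], and the five-solvent descriptor table is made up.

```python
import math
import random


def standardize(rows):
    """Center each descriptor column and scale it to unit sample standard deviation."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / (len(c) - 1)) for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]


def correlation_matrix(z):
    """Sample covariance of standardized data, i.e. the correlation matrix."""
    n, p = len(z), len(z[0])
    return [[sum(r[i] * r[j] for r in z) / (n - 1) for j in range(p)] for i in range(p)]


def top_eigenpair(m, iters=1000, seed=0):
    """Largest eigenvalue and unit eigenvector of a symmetric PSD matrix by power iteration."""
    p = len(m)
    rng = random.Random(seed)
    v = [rng.uniform(-1.0, 1.0) for _ in range(p)]  # random start avoids symmetric dead ends
    for _ in range(iters):
        w = [sum(m[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            return 0.0, v
        v = [x / norm for x in w]
    value = sum(v[i] * sum(m[i][j] * v[j] for j in range(p)) for i in range(p))
    return value, v


def pca_scores(rows, n_components=2):
    """Eigenvalues and per-solvent PC scores (the sign of each PC is arbitrary)."""
    z = standardize(rows)
    m = correlation_matrix(z)
    components = []
    for _ in range(n_components):
        value, vec = top_eigenpair(m)
        components.append((value, vec))
        # Deflate: remove the found component before searching for the next one.
        m = [[m[i][j] - value * vec[i] * vec[j] for j in range(len(m))] for i in range(len(m))]
    scores = [[sum(zi * vi for zi, vi in zip(row, vec)) for _, vec in components] for row in z]
    return [value for value, _ in components], scores


if __name__ == "__main__":
    # Hypothetical descriptor table: rows = solvents, columns = descriptors (not real data).
    names = ["S1", "S2", "S3", "S4", "S5"]
    descriptors = [[1.0, 2.0, 0.5], [2.0, 1.0, 1.5], [3.0, 4.0, 1.0],
                   [4.0, 3.0, 2.5], [5.0, 5.0, 2.0]]
    eigenvalues, scores = pca_scores(descriptors)
    print([round(e, 3) for e in eigenvalues])
    for name, (pc1, pc2) in zip(names, scores):
        print(f"{name}: PC1={pc1:+.2f} PC2={pc2:+.2f}")
```

Choosing solvents from well-separated regions of the (PC1, PC2) plane is what spreads a small solvent screen across solvent space rather than clustering it among similar, familiar solvents.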

Dealing with Multiple Responses and Constraints

Problem: Most real-world optimizations require balancing multiple responses (yield, purity, cost) simultaneously, often with conflicting optimal conditions.

Solution:

- Use desirability functions to combine multiple responses into a single objective

- Implement constrained optimization approaches within RSM framework

- Consider sequential optimization of most critical responses first
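Desirability functions (the Derringer-Suich approach) map each response onto a 0-1 scale and combine them with a geometric mean, so a single unacceptable response drives the overall score to zero. The sketch below uses linear (s = 1) one-sided functions by default; the yield and impurity limits in the example are hypothetical, and weights or two-sided targets would need additional terms.

```python
import math


def d_maximize(y, low, target, s=1.0):
    """'Larger is better': 0 at or below low, 1 at or above target."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** s


def d_minimize(y, target, high, s=1.0):
    """'Smaller is better': 1 at or below target, 0 at or above high."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** s


def overall_desirability(ds):
    """Unweighted geometric mean; any individual d of 0 makes the overall D zero."""
    if any(d == 0.0 for d in ds):
        return 0.0
    return math.exp(sum(math.log(d) for d in ds) / len(ds))


if __name__ == "__main__":
    d_yield = d_maximize(75.0, low=50.0, target=90.0)        # hypothetical yield, %
    d_impurity = d_minimize(1.5, target=0.5, high=3.0)       # hypothetical impurity, %
    print(round(overall_desirability([d_yield, d_impurity]), 3))
```

An optimizer (or a grid search over the fitted RSM models) then looks for factor settings that maximize the overall D rather than any single response.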

Figure 2: Sequential RSM Process for Reaction Optimization

Cross-Platform Implementation Considerations

Software Tools: Various software platforms implement RSM and CCD differently. For example, JMP's custom designer may not include star points by default, using optimal design algorithms instead of classical CCD templates [32].

Recommendation: Understand whether your software uses classical versus optimal design approaches. Classical designs (CCD, Box-Behnken) offer predictable properties, while optimal designs provide flexibility for constrained situations [32].