Box-Behnken Design for Reaction Optimization: A Comprehensive Guide for Pharmaceutical Research

Abstract

This article provides a comprehensive guide to Box-Behnken Design (BBD), a powerful Response Surface Methodology (RSM) design for optimizing chemical and enzymatic reactions in pharmaceutical research and drug development. It covers foundational principles, from its structure as a three-level, spherical design that avoids extreme factorial points, to its application in real-world scenarios like nanomilling, chromatographic separation, and green extraction. The content details a step-by-step methodological workflow for implementation, addresses common troubleshooting challenges, and offers a comparative analysis with other optimization models like I-optimal design and Artificial Neural Networks (ANN). Aimed at researchers and scientists, this guide equips professionals with the knowledge to efficiently design experiments, build predictive models, and achieve robust, optimized reaction conditions.

What is a Box-Behnken Design? Understanding the Core Principles of this RSM Workhorse

Box-Behnken Design (BBD) is a class of highly efficient, rotatable or nearly rotatable response surface methodology (RSM) designs devised by George E. P. Box and Donald Behnken in 1960 [1]. These three-level incomplete factorial designs are specially constructed to fit full quadratic (second-order) models, making them ideal for optimization studies where the goal is to understand the curvature of a response surface and identify optimal process conditions [1] [2] [3]. A key characteristic of BBD is that it avoids combining all factors at their extreme levels simultaneously, making it particularly advantageous when such extreme points are dangerous, physically impossible, or too expensive to run [4] [3]. This guide details the principles, protocols, and practical applications of BBD, providing a structured reference for researchers in drug development and related scientific fields.

Box-Behnken designs are independent quadratic designs that do not contain an embedded factorial design [5]. Instead, the treatment combinations are located at the midpoints of the edges of the process space and at the center [5]. For example, for three factors, the design consists of a (usually replicated) central point and the midpoints of the 12 edges of the factorial cube [6]. This geometry is spherical: all non-center design points are equidistant from the center. The design is also rotatable or nearly rotatable, so the prediction variance depends only, or almost only, on the distance from the center of the design [1] [4].

The design achieves several key goals [1]:

- Each independent variable is placed at one of three equally spaced values, usually coded as -1, 0, +1.

- The design is sufficient to fit a quadratic model containing squared terms, products of two factors, linear terms, and an intercept.

- It maintains a reasonable ratio of experimental points to the number of coefficients in the quadratic model (typically between 1.5 and 2.6).
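The ratio in the last bullet is simple arithmetic: a full quadratic model in k factors has one intercept, k linear terms, k squared terms and k(k-1)/2 two-factor interactions, i.e. (k+1)(k+2)/2 coefficients. A minimal Python sketch, using the lower run counts from Table 1 as inputs (the function and dictionary names are only illustrative), makes the count explicit:

```python
def n_quadratic_coefficients(k: int) -> int:
    """Coefficients in a full quadratic model with k factors.

    1 intercept + k linear + k squared + k(k-1)/2 two-factor interaction terms,
    which equals (k + 1)(k + 2) / 2.
    """
    return 1 + 2 * k + k * (k - 1) // 2


# Lower "Typical Total Runs" values from Table 1 (center points included).
typical_runs = {3: 15, 4: 27, 5: 46, 6: 54}

for k, n_runs in typical_runs.items():
    p = n_quadratic_coefficients(k)
    print(f"k={k}: {p} coefficients, {n_runs} runs, runs/coefficients = {n_runs / p:.2f}")
```

For three factors with 3 center points this gives 15/10 = 1.5, the lower end of the quoted range; adding center points raises the ratio.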

Comparative Efficiency and Design Structure

Comparison of Required Experimental Runs

Table 1: Number of Experimental Runs Required in Box-Behnken Designs

| Number of Factors | Number of Coefficients in Quadratic Model | Typical Total Runs (with Center Points) | Notable Structure |
|---|---|---|---|
| 3 | 10 | 15, 17 | Combines 2 factors at 4 factorial points while third is at center [1] |
| 4 | 15 | 27, 29 | |
| 5 | 21 | 46, 48 | |
| 6 | 28 | 54, 56 | |

Box-Behnken Design vs. Central Composite Design

Table 2: Comparison Between Box-Behnken and Central Composite Designs

| Feature | Box-Behnken Design (BBD) | Central Composite Design (CCD) |
|---|---|---|
| Levels per Factor | 3 levels [3] | Up to 5 levels [3] |
| Extreme Points | Avoids all corner points [7] | Includes corner points and axial (star) points [7] |
| Embedded Factorial | Does not contain an embedded factorial design [3] | Contains an embedded factorial or fractional factorial design [3] |
| Sequential Experimentation | Not suited for sequential experiments [3] | Well-suited for sequential experimentation [3] |
| Experimental Region | Spherical [4] | Cuboidal or spherical depending on axial point placement [2] |
| Primary Advantage | Safer and more practical when extreme points are problematic [7] [4] | Can be built upon previous factorial experiments; allows fitting of higher-order models [2] [3] |

Experimental Protocol: A Practical Guide

This protocol outlines the steps for designing, executing, and analyzing a Box-Behnken Design experiment, using a generic template applicable across various research domains.

Phase 1: Experimental Design and Setup

Step 1: Define the Experimental Goal and Variables

- Identify Response Variable: Clearly define the measurable output to be optimized (e.g., percentage removal of a contaminant, drug yield, purity) [8].

- Select Continuous Factors: Choose the key process factors (typically 3 to 5) that are believed to influence the response. BBD is only for continuous factors [4].

- Define Factor Ranges: Establish the low (-1), middle (0), and high (+1) levels for each factor based on practical knowledge, preliminary experiments, or scientific literature [9].
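Because the three levels are equally spaced, coded and natural units are related linearly: natural value = center + coded × half-range. The sketch below assumes that linear coding and uses the factor ranges from the Hg-removal case study later in this article; the function names and dictionary keys are hypothetical.

```python
def to_natural(coded: float, low: float, high: float) -> float:
    """Map a coded level (-1, 0, +1) to natural units for a factor spanning [low, high]."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


def to_coded(value: float, low: float, high: float) -> float:
    """Inverse mapping: natural units back to the coded scale."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


# Factor ranges (low, high) taken from the Hg-removal case study.
ranges = {
    "seaweed_density_g_per_L": (1.0, 5.0),
    "salinity": (15.0, 35.0),
    "initial_hg_ug_per_L": (10.0, 190.0),
}

for name, (low, high) in ranges.items():
    levels = [to_natural(c, low, high) for c in (-1, 0, 1)]
    print(name, levels)
```

This reproduces the three levels listed for each factor in the case study (for example 10, 100 and 190 µg/L for initial Hg).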

Step 2: Generate the Design Matrix

- Select a Design Template: Use statistical software (e.g., JMP, Minitab, Design-Expert) or an online calculator to generate the BBD matrix for your number of factors [6].

- Determine Replications and Center Points: Include an appropriate number of center points (often 3 to 6) to estimate pure error and check for curvature [1] [7]. For example, a three-factor design with 3 center points has 15 runs [7].

- Randomize Run Order: Randomize the order of experimental runs to minimize the effects of lurking variables and noise [9].

Table 3: Research Reagent Solutions and Essential Materials

| Item/Category | Function in BBD Experiment | Example from Literature |
|---|---|---|
| Biological Agent | The active material whose response is being measured. | Living macroalga Ulva sp. for Hg removal [8]. |
| Target Analyte/Substrate | The substance to be processed, transformed, or removed. | Mercury (Hg) in a simulated industrial effluent [8]. |
| Culture Medium/Buffer | Provides the necessary ionic strength and pH environment for the process. | Salinity control (15, 25, 35) in aqueous solution [8]. |
| Process Equipment | Apparatus to control and apply the independent factors. | UV-light strobe system controlling treatment time, distance, and voltage [9]. |
| Analytical Instrumentation | Used to quantify the response variable accurately. | Equipment for measuring logarithmic reduction of fungal spores [9]. |

Phase 2: Execution and Data Collection

Step 3: Execute the Experiments

- Follow the randomized test plan meticulously.

- Conduct all runs, including the center points, under the specified conditions.

- Precisely measure and record the response value for each run.

Step 4: Data Entry and Initial Model Fitting

- Enter the response data into the software corresponding to the design matrix.

- Fit the full quadratic model, which includes:

- Linear terms (e.g., A, B, C)

- Two-factor interaction terms (e.g., AB, AC, BC)

- Quadratic terms (e.g., A², B², C²) [4]
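The full quadratic model in Step 4 is an ordinary least-squares fit on ten columns built from the coded design. The sketch below assumes a three-factor BBD with 3 center points and a synthetic, noise-free response generated from assumed coefficients (not data from any cited study); it shows how the columns are assembled and that `numpy.linalg.lstsq` recovers the coefficients. The helper names `bbd3` and `quadratic_model_matrix` are hypothetical.

```python
import itertools
import numpy as np


def bbd3(n_center: int = 3) -> np.ndarray:
    """Coded 3-factor Box-Behnken design: a 2^2 factorial on each factor pair, third factor at 0."""
    runs = []
    for i, j in itertools.combinations(range(3), 2):
        for a, b in itertools.product((-1.0, 1.0), repeat=2):
            row = [0.0, 0.0, 0.0]
            row[i], row[j] = a, b
            runs.append(row)
    runs += [[0.0, 0.0, 0.0]] * n_center
    return np.array(runs)


def quadratic_model_matrix(X: np.ndarray) -> np.ndarray:
    """Columns: intercept, A, B, C, AB, AC, BC, A^2, B^2, C^2."""
    A, B, C = X.T
    return np.column_stack([np.ones(len(X)), A, B, C, A * B, A * C, B * C, A**2, B**2, C**2])


X = bbd3()
M = quadratic_model_matrix(X)

# Synthetic, noise-free response built from assumed coefficients (illustration only).
true_beta = np.array([80.0, 5.0, -2.0, 3.0, 1.5, 0.0, -1.0, -4.0, -2.5, -1.0])
y = M @ true_beta

beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)
print(np.round(beta_hat, 6))  # recovers true_beta
```

The center points are not optional here: every edge run has exactly two factors at ±1, so without center points A² + B² + C² = 2 in every row, and the squared columns become collinear with the intercept (the model matrix drops to rank 9).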

Phase 3: Analysis and Optimization

Step 5: Statistical Analysis and Model Reduction

- Analyze Variance (ANOVA): Check the significance of the overall model and individual model terms using p-values (typically with a significance level α = 0.05) [9].

- Reduce the Model: If necessary, remove non-significant terms (except those required for hierarchy) to create a simpler, more predictive model [9].

- Check Model Adequacy: Examine R² (coefficient of determination), adjusted R², and predicted R² to assess how well the model fits the data and predicts new observations [2].
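The three adequacy statistics in Step 5 can be computed directly from the least-squares residuals. This is a minimal sketch that assumes the common PRESS-based definition of predicted R² (leave-one-out errors e_i/(1 - h_ii) from the hat-matrix leverages); statistical packages may report these values with their own conventions. The response is synthetic (an assumed surface plus seeded noise), and `fit_statistics` is a hypothetical helper name.

```python
import itertools
import numpy as np

# Coded 3-factor BBD (12 edge runs + 3 center points) and its full quadratic model matrix.
edges = []
for i, j in itertools.combinations(range(3), 2):
    for a, b in itertools.product((-1.0, 1.0), repeat=2):
        row = [0.0, 0.0, 0.0]
        row[i], row[j] = a, b
        edges.append(row)
X = np.array(edges + [[0.0, 0.0, 0.0]] * 3)
A, B, C = X.T
M = np.column_stack([np.ones(len(X)), A, B, C, A * B, A * C, B * C, A**2, B**2, C**2])


def fit_statistics(M: np.ndarray, y: np.ndarray) -> dict:
    """R^2, adjusted R^2 and PRESS-based predicted R^2 for an ordinary least-squares fit."""
    n, p = M.shape
    beta, *_ = np.linalg.lstsq(M, y, rcond=None)
    resid = y - M @ beta
    ss_res = float(resid @ resid)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    # Leverages h_ii: diagonal of the hat matrix H = M (M'M)^-1 M'.
    h = np.diag(M @ np.linalg.solve(M.T @ M, M.T))
    # PRESS: sum of squared leave-one-out prediction errors e_i / (1 - h_ii).
    press = float(((resid / (1.0 - h)) ** 2).sum())
    return {
        "R2": 1.0 - ss_res / ss_tot,
        "adj_R2": 1.0 - (ss_res / (n - p)) / (ss_tot / (n - 1)),
        "pred_R2": 1.0 - press / ss_tot,
    }


# Synthetic response: an assumed quadratic surface plus seeded Gaussian noise (illustration only).
rng = np.random.default_rng(0)
y = 80 + 5 * A - 2 * B + 3 * C - 4 * A**2 - 2.5 * B**2 + 1.5 * A * B + rng.normal(0.0, 1.0, len(X))
stats = fit_statistics(M, y)
print({name: round(value, 3) for name, value in stats.items()})
```

Predicted R² can never exceed R², because each leave-one-out error is at least as large as the ordinary residual; a large gap between the two usually signals an over-parameterized model.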

Step 6: Optimization and Validation

- Interpret the Model: Use response surface plots and contour plots to visualize the relationship between factors and the response [2].

- Find Optimal Settings: Utilize the software's numerical optimization function to find the factor levels that achieve the desired response goal (e.g., maximize, minimize, or target) [9].

- Confirmatory Experiment: Conduct one or more additional experiments at the predicted optimal conditions to validate the model's accuracy [9].
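Before (or alongside) the software's numerical optimizer, a fitted quadratic can be examined analytically. Writing the model as Y = b₀ + bᵀx + xᵀBx, the gradient b + 2Bx vanishes at the stationary point x_s = -½B⁻¹b, and the signs of B's eigenvalues classify it. The sketch below assumes a three-factor model in coded units with the coefficient ordering shown in the docstring; the coefficients are assumed values for illustration, not results from any study, and `stationary_point` is a hypothetical helper.

```python
import numpy as np


def stationary_point(beta: np.ndarray):
    """Stationary point and canonical analysis of a fitted 3-factor quadratic model.

    beta is ordered as [b0, bA, bB, bC, bAB, bAC, bBC, bAA, bBB, bCC] (coded units).
    """
    b = beta[1:4]
    # Symmetric matrix: squared-term coefficients on the diagonal, half of each interaction off it.
    Bm = np.array([
        [beta[7], beta[4] / 2, beta[5] / 2],
        [beta[4] / 2, beta[8], beta[6] / 2],
        [beta[5] / 2, beta[6] / 2, beta[9]],
    ])
    x_s = -0.5 * np.linalg.solve(Bm, b)  # where the gradient b + 2 B x is zero
    eig = np.linalg.eigvalsh(Bm)          # eigenvalue signs classify the stationary point
    if np.all(eig < 0):
        kind = "maximum"
    elif np.all(eig > 0):
        kind = "minimum"
    else:
        kind = "saddle or ridge"
    inside = bool(np.all(np.abs(x_s) <= 1.0))  # within the coded cube [-1, 1]^3?
    return x_s, kind, inside, Bm


# Assumed coefficients for illustration only.
beta = np.array([80.0, 5.0, -2.0, 3.0, 1.5, 0.0, -1.0, -4.0, -2.5, -1.0])
x_s, kind, inside, Bm = stationary_point(beta)
print(np.round(x_s, 3), kind, inside)
```

For these assumed coefficients the surface has a maximum, but its C coordinate lies beyond +1, outside the experimental region; in that case the constrained numerical optimization described in Step 6 is what locates the best setting within the tested ranges.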

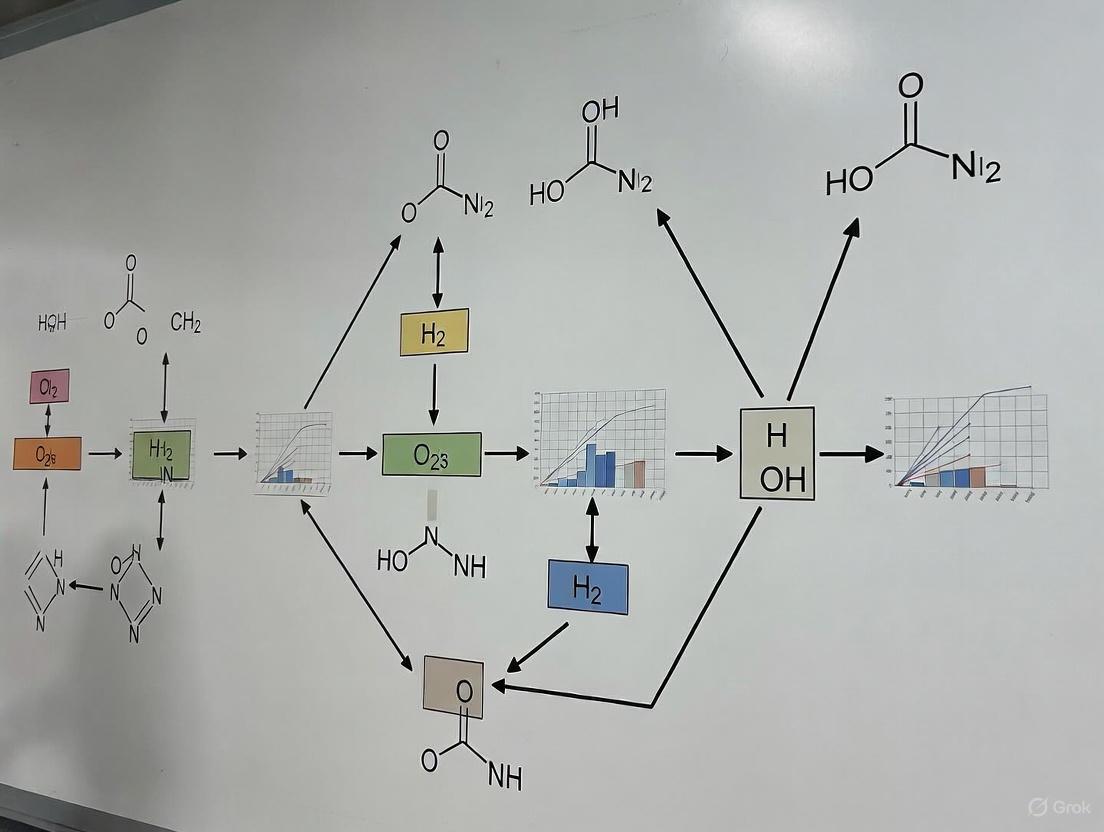

Diagram 1: BBD Experimental Workflow. This flowchart outlines the sequential phases from initial design to final validation.

Case Study: Optimization of Hg Removal by Ulva sp.

A study applied BBD to optimize the removal of mercury (Hg) from a complex aqueous solution using the macroalga Ulva sp. [8].

Experimental Setup:

- Response Variable: Hg removal efficiency (%).

- Factors and Levels:

- A: Seaweed stock density (1.0, 3.0, 5.0 g/L)

- B: Salinity (15, 25, 35)

- C: Initial Hg concentration (10, 100, 190 µg/L)

- Design: A 3-factor, 3-level BBD was used.

Results and Conclusions:

- The design successfully modeled the process, with removal efficiencies ranging from 69% to 97% [8].

- 3-D surface analysis revealed that seaweed stock density was the most impactful variable, with higher densities leading to higher removal [8].

- The application of RSM provided the optimal operating conditions for removing virtually 100% of Hg from waters with high ionic strength, demonstrating the power of BBD for scaling up this remediation biotechnology [8].

Critical Considerations for Researchers

When to Use a Box-Behnken Design:

- The primary goal is optimization and mapping of a response surface.

- You need to fit a quadratic model to detect curvature.

- Runs with all factors at extreme levels (corner points) are unsafe, impossible, or too expensive [4] [3].

- The process is well-understood, and the experimental region of interest is expected to be spherical, with the optimum near the center [2].

Limitations and Alternatives:

- BBD is not ideal for sequential experimentation. If you plan to build on a previous factorial experiment, a Central Composite Design (CCD) is more appropriate [3].

- BBD cannot estimate pure cubic terms because, with only three levels, x³ takes exactly the same values as x; a CCD, with its five levels, can support some higher-order terms [2].

- Prediction variance near the vertices (corners) of the design space can be higher since no data is collected there [4].
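The three-level limitation noted above can be checked in a couple of lines: at the levels -1, 0 and +1, a cubic column is identical to the linear column, so the two effects cannot be separated. The sketch assumes a rotatable three-factor CCD with a full 2³ factorial, whose axial distance is α = (2³)^(1/4) ≈ 1.682, for comparison.

```python
import numpy as np

bbd_levels = np.array([-1.0, 0.0, 1.0])
alpha = 8 ** 0.25  # rotatable axial distance for a 3-factor CCD with a full 2^3 factorial
ccd_levels = np.array([-alpha, -1.0, 0.0, 1.0, alpha])

# With three levels, x**3 equals x at every level, so a pure cubic term is aliased
# with the linear term; the axial levels of a CCD break this identity.
print(np.array_equal(bbd_levels ** 3, bbd_levels))  # True
print(np.array_equal(ccd_levels ** 3, ccd_levels))  # False
```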

Diagram 2: BBD Conceptual Geometry. This diagram illustrates the spherical arrangement of points in a BBD, highlighting the central and edge points while showing the absence of corner points.

The Box-Behnken Design (BBD) is a class of experimental designs developed by statisticians George E. P. Box and Donald W. Behnken in 1960 for use in Response Surface Methodology (RSM) [10]. It serves as an efficient approach for fitting second-order quadratic models and optimizing processes involving multiple continuous quantitative factors [10]. BBD employs three coded levels for each factor (-1, 0, +1) while deliberately avoiding the extreme corner points of the factorial space, making it particularly valuable when testing factor extremes is physically impossible, prohibitively expensive, or potentially hazardous [4] [10].

Compared to other RSM designs like Central Composite Designs (CCD), Box-Behnken designs offer superior efficiency in terms of the number of experimental runs required, especially when dealing with three to seven factors [10]. For instance, a three-factor BBD requires only 15 runs (12 edge midpoints plus 3 center points) compared to the 27 runs needed for a full three-level factorial [10] [7]. This efficiency makes BBD particularly valuable in resource-constrained research environments such as pharmaceutical development, materials science, and chemical engineering, where experimental runs are costly or time-consuming [10].

Key Structural Characteristics

Spherical Design Configuration

Box-Behnken designs are characterized by their spherical geometry, where all non-center design points are placed at equal distances from the center of the experimental region [4] [10]. Unlike cuboidal designs that include points at the vertices of a cube, BBD places its non-center points on the surface of a sphere (or hypersphere for higher dimensions) centered in the factorial cube; for the three-factor design, this sphere passes through the midpoints of the cube's edges at a coded radius of √2 [7]. This arrangement creates a balanced distribution of points across the experimental region [4].

The spherical structure is achieved through a specific construction method that concatenates multiple 2² full factorial designs, each corresponding to a pair of factors varying at their low and high levels (±1), while the remaining factors are held constant at their center level (0) [10]. For k factors, this pairwise construction (standard for three to five factors) uses k(k-1)/2 blocks of four runs, completed with center points where all factors are set to their coded center value of 0 [10].
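The pairwise construction described above can be sketched with `itertools`. This is a minimal illustration assuming the pairwise scheme (which gives the standard run sets for three to five factors, ignoring any blocking); the function name `box_behnken_pairwise` is hypothetical rather than taken from a DOE package. The last two lines check the geometric properties discussed in this section: all non-center points share one radius, and no run sits at a cube corner.

```python
import itertools
import numpy as np


def box_behnken_pairwise(k: int, n_center: int) -> np.ndarray:
    """Coded BBD built from a 2^2 factorial on every factor pair (other factors held at 0).

    Designs for six or more factors use larger factor blocks and are not built here.
    """
    if not 3 <= k <= 5:
        raise ValueError("pairwise construction shown here only for 3 <= k <= 5")
    runs = []
    for i, j in itertools.combinations(range(k), 2):        # k(k-1)/2 factor pairs
        for a, b in itertools.product((-1, 1), repeat=2):   # 4 runs per pair
            row = [0] * k
            row[i], row[j] = a, b
            runs.append(row)
    runs.extend([[0] * k] * n_center)                       # replicated center points
    return np.array(runs, dtype=float)


design = box_behnken_pairwise(3, n_center=3)
edge = design[np.any(design != 0, axis=1)]
print(design.shape)                                         # (15, 3)
print(np.unique(np.linalg.norm(edge, axis=1)))              # a single radius, sqrt(2)
print(bool(np.any(np.all(np.abs(design) == 1, axis=1))))    # any corner run? False
```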

Avoidance of Extreme Corner Points

A defining feature of BBD is its systematic avoidance of extreme corner points [4] [10]. The design specifically excludes treatment combinations where all factors are simultaneously at their high or low levels (±1 for all factors) [10]. This characteristic is particularly advantageous in experimental scenarios where combined factor extremes could lead to:

- Dangerous conditions that risk equipment damage or personnel safety [10]

- Physically impossible combinations of factor levels [4]

- Prohibitively expensive experimental runs [4]

- Undesirable side effects or degraded system performance [4]

For pharmaceutical researchers, this means avoiding conditions that might degrade active ingredients, create unsafe reaction conditions, or waste expensive reagents [11] [12]. The avoidance of extremes makes BBD particularly suitable for initial optimization studies where the experimental boundaries are not fully known, as it reduces risks while still providing comprehensive data for modeling the response surface [7].

Rotatability and Prediction Variance

Box-Behnken designs are either rotatable or nearly rotatable, meaning the prediction variance depends only on the distance from the center of the design and not on the direction [10] [7]. Rotatability ensures that the precision of predictions is consistent in all directions from the center point, providing a balanced capability to explore the response surface in any direction with equal confidence [7].

This property is particularly valuable for optimization studies where the direction of improvement is unknown beforehand. The variance of the predicted values remains consistent at all points equidistant from the design center, allowing researchers to navigate the response surface without bias introduced by uneven prediction variance [10] [7]. While perfect rotatability requires a specific choice of alpha in central composite designs, BBDs achieve near-rotatability through their spherical structure and balanced point distribution [7].
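Near-rotatability can be inspected numerically with the scaled prediction variance, SPV(x) = N·f(x)ᵀ(XᵀX)⁻¹f(x), where f(x) holds the quadratic model terms at point x. For an exactly rotatable design, the SPV at a given radius is the same in every direction; any spread among the printed values shows how far the design departs from rotatability at that radius. This sketch assumes the three-factor BBD with 3 center points and a full quadratic model; the direction labels and radius are arbitrary choices.

```python
import itertools
import numpy as np


def model_row(x) -> np.ndarray:
    """Full quadratic model terms for one coded point (A, B, C)."""
    a, b, c = x
    return np.array([1.0, a, b, c, a * b, a * c, b * c, a * a, b * b, c * c])


# Coded 3-factor BBD: 2^2 factorial on each factor pair plus 3 center points.
design = []
for i, j in itertools.combinations(range(3), 2):
    for s, t in itertools.product((-1.0, 1.0), repeat=2):
        row = [0.0, 0.0, 0.0]
        row[i], row[j] = s, t
        design.append(row)
design += [[0.0, 0.0, 0.0]] * 3

M = np.array([model_row(x) for x in design])
XtX_inv = np.linalg.inv(M.T @ M)
N = len(design)


def spv(x) -> float:
    """Scaled prediction variance N * f(x)' (X'X)^-1 f(x), in units of sigma^2."""
    f = model_row(x)
    return float(N * f @ XtX_inv @ f)


radius = 1.0
directions = {
    "A axis": np.array([1.0, 0.0, 0.0]),
    "A-B diagonal": np.array([1.0, 1.0, 0.0]) / np.sqrt(2.0),
    "A-B-C diagonal": np.array([1.0, 1.0, 1.0]) / np.sqrt(3.0),
}
for name, d in directions.items():
    print(f"{name:>15}: SPV = {spv(radius * d):.3f}")
```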

Experimental Design and Optimization Workflow

The following diagram illustrates the standard workflow for employing Box-Behnken Design in experimental optimization:

Application Case Studies in Pharmaceutical and Materials Research

Case Study 1: Optimization of Phenolic Compound Extraction

A 2022 study published in Scientific Reports demonstrated the application of BBD for optimizing the extraction of phenolic compounds from Leontodon hispidulus, a wild plant with potential pharmaceutical applications [11]. The research aimed to maximize extraction yield and total phenolic content while evaluating antioxidant, anti-inflammatory, and cytotoxic activities of the optimized extract [11].

Table 1: BBD Factors and Levels for Extraction Optimization

| Factor | Low Level (-1) | Center Point (0) | High Level (+1) |
|---|---|---|---|
| Ethanol/Water Ratio | 50% | 62.5% | 75% |
| Material/Solvent Ratio | 1:10 | 1:15 | 1:20 |
| Extraction Time | 24 hours | 48 hours | 72 hours |

The study employed a 3-factor BBD with 15 experimental runs, including 3 center points [11]. Through response surface analysis, researchers identified optimal conditions: 74.5% ethanol/water ratio, material/solvent ratio of 1:13.5, and extraction time of 72 hours [11]. The optimized extract demonstrated significant biological activity, with 80% free radical inhibition in antioxidant assays, 83.5% inhibition of carrageenan-induced edema in anti-inflammatory tests, and potent cytotoxicity against prostate carcinoma cell lines (IC₅₀ = 16.5 μg/mL) [11].

Case Study 2: Development of Chitosan-Based Topical Films

A 2025 pharmaceutical study utilized BBD to optimize chitosan films plasticized with glycerol for topical delivery of ascorbic acid and metronidazole [12]. The research focused on developing a green fabrication approach that used an aqueous ascorbic acid solution as the solvent, eliminating the need for additional mineral or organic acids [12].

Table 2: BBD Factors and Responses for Film Formulation Optimization

| Factor | Low Level | Center Point | High Level |
|---|---|---|---|
| Chitosan (X₁) | 0.5% w/w | 1.0% w/w | 1.5% w/w |
| Ascorbic Acid (X₂) | 0.5% w/w | 1.0% w/w | 1.5% w/w |
| Glycerol (X₃) | 30 wt% | 40 wt% | 50 wt% |

Response variables: ultimate tensile strength (Y₁), elongation at break (Y₂), and surface pH (Y₃).

The BBD approach enabled researchers to efficiently explore the complex interactions between formulation components and identify optimal compositions that balanced mechanical properties with desired drug release characteristics [12]. Fourier-transform infrared spectroscopic analysis confirmed the formation of chitosan ascorbate and interactions between chitosan and glycerol in the optimized film [12].

Case Study 3: Optimization of Energy Consumption and Mechanical Properties in 3D Printing

A 2023 study published in Heliyon employed BBD to optimize the energy consumption and tensile strength of Polyetheretherketone (PEEK) in Material Extrusion (MEX) 3D-printing [13]. This research addressed the critical need for energy efficiency in production engineering while maintaining part functionality, particularly for high-performance polymers used in biomedical, automotive, and aerospace applications [13].

Table 3: BBD Factors for 3D Printing Optimization

| Factor | Low Level | Center Point | High Level |
|---|---|---|---|
| Nozzle Temperature | 360°C | 380°C | 400°C |
| Printing Speed | 20 mm/s | 30 mm/s | 40 mm/s |
| Layer Thickness (LT) | 0.1 mm | 0.2 mm | 0.3 mm |

Key findings:

- Layer thickness was the most decisive factor for tensile strength.

- An LT of 0.1 mm maximized strength (~74 MPa).

- The minimum LT also caused the highest energy use (~0.58 MJ).

The study implemented a three-level BBD with five replicates for each experimental run, enabling a double optimization strategy that simultaneously targeted energy minimization and strength maximization [13]. The statistical analysis revealed that layer thickness was the most decisive control parameter for mechanical strength; however, the thinnest layers, which maximized strength, also produced the highest energy consumption, highlighting the trade-offs common in multi-response optimization [13].

Detailed Experimental Protocol for Box-Behnken Design

Stage 1: Pre-Experimental Planning

Step 1: Define Optimization Objectives and Response Variables

- Clearly identify the primary response variable(s) to be optimized (e.g., yield, purity, mechanical strength)

- Establish measurable metrics for each response variable

- Determine acceptable ranges for each response

Step 2: Identify Critical Factors and Experimental Ranges

- Select continuous factors that potentially influence the responses

- Establish feasible low, middle, and high levels for each factor based on preliminary experiments or literature data

- Ensure selected ranges cover the region of interest while avoiding impractical or dangerous conditions

Step 3: Select Appropriate BBD Configuration

- Determine the number of factors (k) to include in the design

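- Calculate the required number of experimental runs: N = 2k(k-1) + C₀, where C₀ is the number of center points (typically 3-6) [10]. This formula applies to the pairwise construction used for three to five factors; standard designs for six or more factors use larger factor blocks (e.g., 48 non-center runs for six factors).

- For 3 factors: 15 runs (3 center points); 4 factors: 27 runs (3 center points); 5 factors: 46 runs (6 center points) [10]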
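The run-count formula follows from the construction: k(k-1)/2 factor pairs, each contributing the four runs of a 2² factorial, plus the center points. A short sketch (the function name is hypothetical, and it deliberately refuses k outside the pairwise range) compares the result with a full 3^k factorial:

```python
def bbd_runs_pairwise(k: int, n_center: int) -> int:
    """Total runs N = 2k(k-1) + C0 for the pairwise BBD construction (3 <= k <= 5).

    k(k-1)/2 factor pairs, each contributing the 4 runs of a 2^2 factorial,
    plus C0 center points.
    """
    if not 3 <= k <= 5:
        raise ValueError("formula shown here only for the pairwise construction, 3 <= k <= 5")
    n_pairs = k * (k - 1) // 2
    return 4 * n_pairs + n_center


for k, c0 in [(3, 3), (4, 3), (5, 6)]:
    n = bbd_runs_pairwise(k, c0)
    print(f"k={k}, C0={c0}: BBD {n} runs vs. full 3^k factorial {3 ** k} runs")
```

With the center-point counts listed above, this gives 15, 27 and 46 runs, against 27, 81 and 243 runs for the corresponding full three-level factorials.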

Stage 2: Design Implementation and Data Collection

Step 4: Randomize Experimental Run Order

- Randomize the order of all experimental runs to minimize confounding from extraneous variables

- Include center points distributed throughout the experimental sequence to account for process drift
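Fully randomizing the run order and deliberately spreading center points through the sequence pull in slightly different directions. One possible compromise, shown here as an illustrative assumption rather than a prescribed procedure, is to shuffle the edge runs and place the center runs at evenly spaced positions (first, middle, last), so that drift shows up as a trend in the center-point responses. The run labels (`E1`, `C`), the seed, and the function name are hypothetical.

```python
import random


def randomized_order(n_edge: int, n_center: int, seed: int = 2024) -> list[str]:
    """Return run labels in execution order.

    Edge runs ("E1", "E2", ...) are shuffled with a seeded generator for reproducibility;
    center runs ("C") are placed at evenly spaced positions across the sequence.
    """
    rng = random.Random(seed)
    edge_runs = [f"E{i + 1}" for i in range(n_edge)]
    rng.shuffle(edge_runs)
    total = n_edge + n_center
    if n_center == 0:
        slots = set()
    elif n_center == 1:
        slots = {total // 2}
    else:
        # Integer spacing keeps the slot positions distinct and includes the first and last runs.
        slots = {i * (total - 1) // (n_center - 1) for i in range(n_center)}
    order, remaining = [], iter(edge_runs)
    for pos in range(total):
        order.append("C" if pos in slots else next(remaining))
    return order


print(randomized_order(12, 3))
```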

Step 5: Execute Experiments and Collect Data

- Conduct experiments according to the randomized design matrix

- Precisely measure and record all response variables for each run

- Document any unusual observations or process deviations

Stage 3: Data Analysis and Optimization

Step 6: Fit Second-Order Response Surface Model

- Use multiple linear regression to fit the model: Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣβᵢⱼXᵢXⱼ [4]

- Where Y is the predicted response, β₀ is the constant coefficient, βᵢ are linear coefficients, βᵢᵢ are quadratic coefficients, and βᵢⱼ are interaction coefficients, with the interaction sum running over all factor pairs i < j [4]

Step 7: Evaluate Model Adequacy

- Perform Analysis of Variance (ANOVA) to assess model significance [14]

- Use the lack-of-fit test to check model adequacy

- Examine residuals for violations of regression assumptions

- Calculate R² (coefficient of determination) and adjusted R² values
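The lack-of-fit test in Step 7 relies on the replicated center points: the residual sum of squares is split into pure error (scatter among replicates) and lack of fit (the remainder), and their mean squares are compared with an F ratio. The sketch below assumes a three-factor BBD with 3 center points, a full quadratic model, and a synthetic response (assumed surface plus seeded noise); it reports only F and its degrees of freedom. A p-value could then be obtained from the F distribution, for example with `scipy.stats.f.sf(F, df_lof, df_pe)`, which is not called here.

```python
import itertools
import numpy as np

# Coded 3-factor BBD: 12 edge runs + 3 replicated center points.
edges = []
for i, j in itertools.combinations(range(3), 2):
    for a, b in itertools.product((-1.0, 1.0), repeat=2):
        row = [0.0, 0.0, 0.0]
        row[i], row[j] = a, b
        edges.append(row)
X = np.array(edges + [[0.0, 0.0, 0.0]] * 3)
A, B, C = X.T
M = np.column_stack([np.ones(len(X)), A, B, C, A * B, A * C, B * C, A**2, B**2, C**2])


def lack_of_fit(M: np.ndarray, X: np.ndarray, y: np.ndarray):
    """F statistic for lack of fit, with its numerator and denominator degrees of freedom."""
    n, p = M.shape
    beta, *_ = np.linalg.lstsq(M, y, rcond=None)
    ss_res = float(((y - M @ beta) ** 2).sum())
    # Pure error: scatter of replicated runs around their own group means.
    groups = {}
    for row, yi in zip(map(tuple, X), y):
        groups.setdefault(row, []).append(yi)
    ss_pe = sum(float(((np.array(v) - np.mean(v)) ** 2).sum()) for v in groups.values())
    df_pe = n - len(groups)   # runs minus distinct design points
    df_lof = len(groups) - p  # distinct design points minus model parameters
    ss_lof = ss_res - ss_pe
    F = (ss_lof / df_lof) / (ss_pe / df_pe)
    return F, df_lof, df_pe


rng = np.random.default_rng(1)
y = 70 + 4 * A + 2 * B - 3 * C**2 + rng.normal(0.0, 1.0, len(X))  # synthetic data
F, df_lof, df_pe = lack_of_fit(M, X, y)
print(f"F_LOF = {F:.2f} on ({df_lof}, {df_pe}) df")
```

With only three center replicates, pure error has just 2 degrees of freedom, which is one reason software defaults often add more center points.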

Step 8: Generate Response Surface and Contour Plots

- Visualize the relationship between factors and responses using 3D surface plots [14]

- Use contour plots to identify regions of optimal performance [14]

- Interpret interaction effects through examination of the plots

Step 9: Identify Optimal Conditions

- Utilize numerical optimization techniques or desirability functions to identify factor settings that optimize all responses simultaneously [4]

- Balance potential trade-offs between competing responses
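One widely used way to combine several responses in Step 9 is the Derringer-Suich desirability approach: each predicted response is mapped to a desirability between 0 and 1, and the overall desirability is their geometric mean, so any fully unacceptable response drives the total to zero. This is a minimal sketch of the linear (weight s = 1) form; the response names, bounds and candidate values are hypothetical, loosely modelled on a strength-versus-energy trade-off, and are not data from the cited studies.

```python
import numpy as np


def d_maximize(y: float, low: float, high: float, s: float = 1.0) -> float:
    """Desirability for a response to maximize: 0 at or below low, 1 at or above high."""
    return float(np.clip((y - low) / (high - low), 0.0, 1.0) ** s)


def d_minimize(y: float, low: float, high: float, s: float = 1.0) -> float:
    """Desirability for a response to minimize: 1 at or below low, 0 at or above high."""
    return float(np.clip((high - y) / (high - low), 0.0, 1.0) ** s)


def overall_desirability(ds) -> float:
    """Geometric mean of individual desirabilities."""
    ds = np.asarray(ds, dtype=float)
    return float(np.prod(ds) ** (1.0 / len(ds)))


# Hypothetical predicted responses at two candidate settings.
candidates = {
    "setting 1": {"strength": 70.0, "energy": 0.50},
    "setting 2": {"strength": 60.0, "energy": 0.30},
}
for name, r in candidates.items():
    D = overall_desirability([
        d_maximize(r["strength"], low=50.0, high=75.0),
        d_minimize(r["energy"], low=0.20, high=0.60),
    ])
    print(name, round(D, 3))
```

In this made-up example the weaker but far more energy-efficient setting scores higher, illustrating how the bounds chosen for each response encode the trade-off.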

Step 10: Verify Model Predictions

- Conduct confirmation experiments at the predicted optimal conditions

- Compare predicted and observed values to validate model accuracy

- Refine model if discrepancies exceed acceptable limits
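A simple way to compare confirmation runs with model predictions in Step 10 is the relative prediction error. The ±5% acceptance limit and the run values below are assumptions for illustration; the appropriate limit depends on the application and on the model's own prediction interval.

```python
def percent_prediction_error(observed: float, predicted: float) -> float:
    """Relative deviation of a confirmation run from the model prediction, in percent."""
    return 100.0 * (observed - predicted) / predicted


# Hypothetical confirmation runs (observed, predicted) and an assumed acceptance limit.
runs = [(96.1, 98.0), (97.4, 98.0), (95.0, 98.0)]
TOLERANCE_PERCENT = 5.0
for observed, predicted in runs:
    err = percent_prediction_error(observed, predicted)
    verdict = "OK" if abs(err) <= TOLERANCE_PERCENT else "refine model"
    print(f"observed {observed}, predicted {predicted}: {err:+.2f}% -> {verdict}")
```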

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Materials for BBD Experiments

| Category | Specific Examples | Function in BBD Experiments | Application Context |
|---|---|---|---|
| Chemical Reagents | Ethanol, organic solvents, acids, catalysts | Process factors or extraction media | Chemical synthesis, extraction optimization [11] [14] |
| Pharmaceutical Compounds | Ascorbic acid, metronidazole, bioactive molecules | Active ingredients or response indicators | Drug formulation development [12] |
| Polymeric Materials | Chitosan, glycerol, plasticizers | Matrix formers or structural components | Biomaterial and film formulation [12] |
| Engineering Materials | PEEK filaments, activated carbon, zeolite 5A | Primary materials for process optimization | 3D printing, adsorption processes [15] [13] |
| Analytical Tools | HPLC systems, FTIR spectrometers, mechanical testers | Response measurement instruments | Quantitative analysis of experimental outcomes [11] [12] |
| Statistical Software | Design-Expert, JMP, Minitab, R | Experimental design and data analysis | Design construction, model fitting, optimization [4] [12] |

Advantages and Limitations in Research Applications

Key Advantages for Research Optimization

Box-Behnken designs offer several significant advantages for research optimization, particularly in pharmaceutical and materials science applications:

Experimental Efficiency: BBD requires fewer runs than full three-level factorial designs, reducing resource requirements while maintaining model robustness [10]. For example, with four factors, BBD requires only 27 runs compared to 81 for a full factorial [10].

Risk Mitigation: The avoidance of extreme factor combinations prevents potentially dangerous or impractical experimental conditions, enhancing laboratory safety and reducing material waste [4] [10].

Quadratic Modeling Capability: BBD efficiently estimates second-order model coefficients, enabling identification of curvature in response surfaces and more accurate optimization [10].

Rotatability: The spherical design ensures consistent prediction precision in all directions from the center, providing unbiased exploration of the response surface [10] [7].

Important Limitations and Considerations

Despite their advantages, Box-Behnken designs have limitations that researchers should consider:

Lack of Extreme Point Data: The absence of corner points means BBD cannot directly model behavior at factor extremes, which may be important in some applications [10] [7].

Limited Factor Interactions: BBD may not capture all complex interactions in systems with more than five factors, where other designs might be more appropriate [10].

Prediction Variance: Although BBDs are rotatable or nearly rotatable, they may exhibit higher prediction variance near the cube vertices where no data points exist [4].

Sequential Experimentation: BBD cannot be built up sequentially from factorial designs, requiring researchers to commit to a specific design structure from the outset [4].

When applied appropriately with consideration of these characteristics, Box-Behnken Design serves as a powerful tool for systematic optimization across diverse research domains, particularly in pharmaceutical development, materials science, and process engineering where efficiency and safety are paramount concerns.

Box-Behnken Design (BBD) is a class of response surface methodology (RSM) designs that provide an efficient, systematic framework for optimizing processes through experimental design. Its core strength lies in its ability to estimate the coefficients of a second-order (quadratic) model with a significantly reduced number of experimental runs compared to other designs like the central composite design (CCD), especially as the number of factors increases [16] [2]. This makes BBD particularly valuable in resource-intensive fields such as pharmaceutical development, analytical chemistry, and material science, where experimentation is costly and time-consuming.

The efficiency is primarily achieved by avoiding extreme experimental conditions. Unlike full factorial or central composite designs, BBD does not include runs where all factors are simultaneously set at their highest or lowest levels (corner points of the experimental cube) [7] [16]. This not only reduces the number of runs but also provides a practical safety advantage by preventing experiments in regions where responses might be unstable, dangerous, or prohibitively expensive [16]. The design is structured to explore the experimental space using points on the edges of the cube and includes replicated center points to estimate pure error, ensuring robust model fitting with minimal experimental effort [7] [2].

Structural Framework of the BBD Matrix

Core Architectural Principle

The BBD is constructed for three or more factors, with each factor tested at three levels (coded as -1 for low, 0 for middle, and +1 for high). Its fundamental structure is built by combining two-level factorial designs with incomplete block designs. For each pair of factors (the standard construction for three to five factors), the design is generated by setting those two factors to a two-level factorial arrangement (the four corners of a square) while simultaneously holding all other factors at their mid-level (0) [7] [16] [2]. This systematic approach ensures that the non-center design points are located on a sphere (or hypersphere for more factors) within the experimental region, maximizing the information gained from each run.

Illustrative Three-Factor Matrix

The classic and most straightforward BBD is for three factors. This design requires only 15 experimental runs, comprising 12 edge-point runs and 3 replicated center points, to fit a quadratic model. The design matrix is structured as follows [7]:

Table 1: Standard Box-Behnken Design Matrix for Three Factors

| Run | Block | Factor A | Factor B | Factor C |
|---|---|---|---|---|
| 1 | 1 | -1 | -1 | 0 |
| 2 | 1 | +1 | -1 | 0 |
| 3 | 1 | -1 | +1 | 0 |
| 4 | 1 | +1 | +1 | 0 |
| 5 | 1 | -1 | 0 | -1 |
| 6 | 1 | +1 | 0 | -1 |
| 7 | 1 | -1 | 0 | +1 |
| 8 | 1 | +1 | 0 | +1 |
| 9 | 1 | 0 | -1 | -1 |
| 10 | 1 | 0 | +1 | -1 |
| 11 | 1 | 0 | -1 | +1 |
| 12 | 1 | 0 | +1 | +1 |
| 13 | 1 | 0 | 0 | 0 |
| 14 | 1 | 0 | 0 | 0 |
| 15 | 1 | 0 | 0 | 0 |

This structure demonstrates the BBD's key feature: in every single run, at least one factor is always held at its center point (0). No run corresponds to a vertex (e.g., +1, +1, +1) where all factors are at their extremes [7]. This design is sufficient to efficiently estimate the 10 coefficients in a full quadratic model for three factors (constant, 3 linear, 3 quadratic, and 3 two-factor interaction terms).

Scalability and Run Efficiency

The efficiency of BBD becomes more pronounced as the number of factors increases. The number of required runs grows at a more manageable rate compared to a full factorial approach. For instance, a five-factor BBD can be conducted with 46 experimental runs [7]. This efficiency is a primary reason for its widespread adoption in optimization studies.

Table 2: Comparison of BBD Run Requirements

| Number of Factors | Base Runs in BBD | Example Total Runs (with Center Points) |
|---|---|---|
| 3 | 12 | 15 [7] |
| 4 | 24 | 27 [7] |
| 5 | 40 | 46 [7] |
| 6 | 48 | 54 [7] |

Experimental Protocols for BBD Implementation

Protocol 1: Optimization of Chromatographic Methods

The application of BBD in analytical method development, particularly for High-Performance Liquid Chromatography (HPLC), exemplifies its practical utility. The following protocol is adapted from studies optimizing the separation of fluoroquinolones and the estimation of Thymoquinone [17] [18].

1. Define Objective and Identify Critical Quality Attributes (CQAs):

- Objective: Develop a robust, precise, and accurate HPLC method.

- CQAs: These are the model responses. Common CQAs include retention time, peak resolution (Rs), tailing factor, and the number of theoretical plates (N) [17] [18].

2. Select Critical Method Parameters (CMPs) and Ranges:

- CMPs: These are the independent variables. Typical factors include:

- Ranges for each CMP are determined via preliminary univariate experiments.

3. Generate BBD Matrix and Execute Experiments:

- Using statistical software (e.g., Design-Expert, Minitab), generate the experimental matrix for the selected number of factors.

- Perform the HPLC runs in a randomized order to minimize the effects of uncontrolled variables.

4. Model Fitting and Data Analysis:

- Input the experimental response data (CQAs) into the software.

- Fit a second-order polynomial model. The generic form for three factors is:

Y = β₀ + β₁A + β₂B + β₃C + β₁₂AB + β₁₃AC + β₂₃BC + β₁₁A² + β₂₂B² + β₃₃C²

where Y is the predicted response, β₀ is the constant, β₁ to β₃ are linear coefficients, β₁₂ to β₂₃ are interaction coefficients, and β₁₁ to β₃₃ are quadratic coefficients [2].

- Use Analysis of Variance (ANOVA) to assess the model's significance and the lack-of-fit. A high F-value and a p-value < 0.05 typically indicate a significant model, while a non-significant lack-of-fit supports model adequacy.

5. Validation and Optimization:

- Validate the model by performing confirmation experiments at the predicted optimal conditions.

- The method is considered optimized if the results from the confirmation runs fall within the predicted confidence intervals of the model [18].
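Among the CQAs listed in step 1, peak resolution is commonly calculated from retention times and baseline peak widths as Rs = 2(t₂ - t₁)/(w₁ + w₂), with Rs of about 1.5 or more usually taken to indicate baseline separation. The sketch below assumes that definition (pharmacopoeial and software conventions, such as half-height widths, can differ); the retention times and widths are hypothetical values, not data from the cited studies.

```python
def resolution(t1: float, t2: float, w1: float, w2: float) -> float:
    """Resolution of two adjacent peaks from retention times and baseline widths (same units)."""
    return 2.0 * (t2 - t1) / (w1 + w2)


# Hypothetical retention times and baseline widths (min) for two adjacent peaks.
rs = resolution(t1=4.20, t2=5.10, w1=0.40, w2=0.45)
print(f"Rs = {rs:.2f}", "(baseline separated)" if rs >= 1.5 else "(co-elution risk)")
```

Each BBD run would yield one such Rs value, which then serves as a response in the quadratic model.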

Protocol 2: Optimization of Material Synthesis and Application

This protocol is adapted from research optimizing the synthesis of a CoO–Fe₂O₃/SiO₂/TiO₂ (CIST) nanocomposite for environmental remediation [20].

1. Define Objective and Responses:

- Objective: Maximize the removal efficiency of a target pollutant (e.g., methylene blue dye, copper ions).

- Response: Percentage removal efficiency of the pollutant.

2. Select Process Parameters and Ranges:

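- Parameters: the variables set in each run, such as solution pH, adsorbent amount, initial pollutant concentration, and contact time [20].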

3. Experimental Execution:

- Prepare solutions according to the BBD matrix.

- For each run, mix the adsorbent with the pollutant solution at the specified pH and concentration for the designated contact time (e.g., at 150 rpm, 25°C).

- Separate the adsorbent via centrifugation or filtration and analyze the supernatant for residual pollutant concentration using UV-Vis spectrophotometry or atomic absorption spectroscopy [20].

4. Data Analysis and Optimization:

- Fit the removal efficiency data to a quadratic model.

- Use the model's response surface and contour plots to identify the interaction between factors (e.g., pH and adsorbent amount) and to pinpoint the region of maximum removal efficiency.

- The optimal conditions are those that maximize the removal percentage while considering practical and economic constraints [20].
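When the fitted surface peaks outside the experimental region, software optimizers search within the design bounds instead. A simple, transparent stand-in is an exhaustive grid search over the coded cube, sketched below in Python with NumPy. The coefficients are hypothetical (chosen so that the optimum sits on the boundary A = +1), and a practical study would add its economic or operational constraints as extra filters on the grid.

```python
import numpy as np

def predict(beta, A, B, C):
    """Evaluate a fitted three-factor quadratic model at coded settings."""
    b0, b1, b2, b3, b12, b13, b23, b11, b22, b33 = beta
    return (b0 + b1 * A + b2 * B + b3 * C
            + b12 * A * B + b13 * A * C + b23 * B * C
            + b11 * A ** 2 + b22 * B ** 2 + b33 * C ** 2)

def grid_maximum(beta, n=41):
    """Exhaustive search for the largest prediction on an n x n x n grid in [-1, 1]^3."""
    g = np.linspace(-1.0, 1.0, n)
    A, B, C = np.meshgrid(g, g, g, indexing="ij")
    Y = predict(beta, A, B, C)
    idx = np.unravel_index(np.argmax(Y), Y.shape)
    return (float(A[idx]), float(B[idx]), float(C[idx])), float(Y[idx])

# Hypothetical removal-efficiency model (%): the strong linear A term pushes
# the unconstrained optimum to A = 2, outside the coded region
beta = [85.0, 8.0, 0.0, 0.0, 0.0, 0.0, 0.0, -2.0, -1.0, -1.0]
best_x, best_y = grid_maximum(beta)
print(best_x, best_y)
```

A finer grid (larger `n`) trades run time for resolution; for more than three or four factors a numerical optimizer is usually more practical than a full grid.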

Visualizing the BBD Workflow and Efficiency

The following diagrams illustrate the logical flow of a BBD study and its core structural principle.

Diagram 1: The BBD Optimization Workflow. This flowchart outlines the sequential steps for conducting a successful Box-Behnken Design-based study, from problem definition to model validation.

Diagram 2: Structural Comparison of BBD and CCD for Three Factors. The BBD (right) uses only edge-centered points (green) and center points (blue), avoiding the extreme corner points (red) and axial points (yellow) found in the CCD (left). This visualizes the core efficiency and safety feature of the BBD structure.

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key materials and reagents commonly employed in BBD-driven experiments across different scientific domains, particularly in pharmaceutical and environmental analytical chemistry.

Table 3: Key Research Reagent Solutions for BBD Experiments

| Reagent/Material | Function/Application | Example Use in BBD Context |
|---|---|---|
| High Molecular Weight Chitosan | Biopolymer film former for topical drug delivery systems. | Used as a factor (e.g., concentration) in optimizing the mechanical properties and drug release of topical films [12]. |
| Acetonitrile (HPLC Grade) | Organic modifier in reverse-phase chromatography mobile phase. | A critical independent variable (% content) optimized to affect analyte retention time and peak resolution [19] [17] [18]. |
| Phosphate Buffer (pH-adjusted) | Aqueous component of HPLC mobile phase to control pH. | The pH is a key factor optimized to influence peak shape, selectivity, and separation efficiency [19] [17]. |
| Methanol (HPLC Grade) | Organic solvent for extraction and mobile phase component. | Used for extracting active compounds (e.g., Thymoquinone) and as a factor in chromatographic optimization [18]. |
| Triethylamine (TEA) | Ion-pair reagent / masking agent in HPLC. | A factor optimized to reduce peak tailing of ionic analytes (e.g., fluoroquinolones) by interacting with residual silanols on the stationary phase [17]. |
| Magnetic Nanocomposites (e.g., CoO–Fe₂O₃/SiO₂/TiO₂) | Adsorbent for pollutant removal. | The adsorbent amount is a key factor optimized to maximize the removal efficiency of dyes and heavy metals from aqueous solutions [20]. |

Response Surface Methodology (RSM) is a set of advanced design of experiments (DOE) techniques used to better understand and optimize responses. The core difference between a standard factorial design and RSM is the addition of squared (quadratic) terms, which enables the modeling of curvature in the response surface [3]. This makes RSM indispensable for understanding complex regions of a response surface, finding factor levels that optimize a response, and selecting operating conditions to meet specifications. Within RSM, the two main types of designs are Central Composite Design (CCD) and Box-Behnken Design (BBD). While both can fit a full quadratic model, their structural differences and practical implications significantly influence the choice between them for a given experimental goal [3] [21].

The Central Composite Design (CCD) is built upon a factorial or fractional factorial design, augmented with center points and a group of axial points (star points) that enable the estimation of curvature [3]. This structure allows CCD to efficiently estimate first- and second-order terms and is particularly valuable for sequential experimentation, as it can build upon previous factorial experiments by simply adding axial and center points [3] [21]. Conversely, the Box-Behnken Design (BBD) takes a different approach. It is a three-level design that does not contain an embedded factorial design. Instead, its treatment combinations are derived from balanced incomplete block designs: each run sets a subset of the factors (for three factors, a pair) at their high or low levels while holding the remaining factors at their midpoints. For three factors, these runs lie at the midpoints of the edges of the experimental cube, deliberately avoiding the extreme corner points [3] [7]. This fundamental difference in structure is the source of the distinct comparative advantages of the BBD.

Key Comparative Advantages of Box-Behnken Design

Operational Safety and Practical Feasibility

A primary advantage of the Box-Behnken Design is its inherent avoidance of extreme factor combinations. BBD never includes runs where all factors are simultaneously set at their highest or lowest extreme levels [3]. This characteristic is critically important when experimenting near the limits of safe operating conditions.

- Avoiding Hazardous Conditions: In processes involving chemical reactions, high pressure, or elevated temperatures, simultaneously pushing all factors to their limits could be dangerous, damage equipment, or produce undesirable outcomes [21]. BBD ensures all design points fall within a safe operating zone.

- Preventing Resource Waste: When using expensive reagents or materials, testing at extreme corners can be wasteful if those conditions are known to be suboptimal or impractical. BBD's structure is more economical in such scenarios [2] [21].

- Biological System Constraints: In biological or pharmaceutical applications (e.g., drug formulation, biomolecule optimization), extreme conditions might denature proteins or kill cells. BBD allows for optimization within a biologically relevant range [22] [23].

For example, in optimizing a drug formulation, a combination of very high binder concentration, very high disintegrant concentration, and very high compression force might produce a tablet that is too hard or fails other quality tests. BBD naturally avoids these risky extremes [23].

Run Efficiency and Cost-Effectiveness

For a given number of factors, BBD often requires fewer experimental runs than a standard CCD, which makes it more cost-effective and less resource-intensive, although the size of the saving depends on the number of factors. The table below compares the run counts of BBD and CCD for three to six factors.

Table 1: Comparison of Experimental Run Requirements for BBD and CCD [24] [21] [7]

| Number of Factors | Box-Behnken Design (BBD) Runs | Central Composite Design (CCD) Runs |
|---|---|---|
| 3 | 15 | 17 (Full) or 20 (Circumscribed) |
| 4 | 27 | 27 (Full) or 30 (Circumscribed) |
| 5 | 43 | 45 (Full) or 52 (Circumscribed) |
| 6 | 63 | 79 (Full) or 90 (Circumscribed) |

As shown, BBD requires fewer runs for 3, 5, and 6 factors, with the largest saving (63 versus 79 runs) at 6 factors. This efficiency is a major driver for its selection in projects with constrained budgets, time, or material availability. For 4 factors, however, the minimal run counts are identical (27 runs each). Furthermore, CCD can use a fractional factorial for its core, which reduces its run count, although this may compromise its ability to estimate all interactions [21] [7].
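The run counts in Table 1 follow from two simple counting rules, assuming three center points. A pairwise BBD places every pair of factors in a 2 × 2 factorial (four runs) with the other factors at their midpoints, and a CCD with a full factorial core uses 2^k corner points plus 2k axial points. The Python sketch below reproduces the BBD column and the first CCD figure in each row. Some software builds designs for six or more factors from larger factor blocks, so counts for those cases should be checked in the package being used.

```python
from math import comb

def bbd_runs_pairwise(k, center_points=3):
    """Pairwise Box-Behnken construction: 4 runs for each of the C(k, 2) factor pairs."""
    return 4 * comb(k, 2) + center_points

def ccd_runs(k, center_points=3):
    """CCD on a full 2^k factorial core: corners + 2k axial (star) points + centers."""
    return 2 ** k + 2 * k + center_points

for k in range(3, 7):
    print(f"{k} factors: BBD {bbd_runs_pairwise(k)} runs, CCD {ccd_runs(k)} runs")
# 3 factors: BBD 15 runs, CCD 17 runs
# 4 factors: BBD 27 runs, CCD 27 runs
# 5 factors: BBD 43 runs, CCD 45 runs
# 6 factors: BBD 63 runs, CCD 79 runs
```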

Ideal Application Contexts for BBD

Based on its strengths, BBD is the preferred design in the following scenarios:

- Refinement of Well-Understood Processes: When prior knowledge (e.g., from screening experiments) has already identified the critical factors and their approximate operational ranges, BBD is excellent for fine-tuning and finding the optimum point [21] [23].

- Strictly Bounded Design Spaces: When the experimental region is physically or practically constrained, and venturing beyond the original "cube" defined by the factor levels is impossible or undesirable. A face-centered CCD (CCF) is an alternative in this case, but it still tests all extreme corners [3] [24].

- Costly or Difficult Experimental Runs: When each experimental run is expensive, time-consuming, or requires scarce materials, the lower run count of BBD provides a direct and substantial benefit [2].

The following decision flowchart synthesizes the key criteria for selecting between BBD and CCD.

Experimental Protocol for a Box-Behnken Design

This section provides a detailed, step-by-step protocol for planning, executing, and analyzing a Box-Behnken Design, using a typical three-factor optimization as a model.

Stage 1: Pre-Experimental Planning

Step 1: Define the Problem and Responses

Clearly state the optimization objective. Define the Critical Quality Attributes (CQAs) or responses that will be measured. These must be quantifiable (e.g., percentage yield, particle size, dissolution rate, impurity level). For example, in a nanoparticle formulation study, the responses could be particle size (nm), polydispersity index (PDI), and zeta potential (mV) [22].

Step 2: Identify and Select Factors

Based on prior knowledge (e.g., from literature, preliminary screening designs, or risk assessment), select the Critical Process Parameters (CPPs) and Critical Material Attributes (CMAs) to be investigated. For a BBD, each factor must be continuous. Define the low (-1), middle (0), and high (+1) levels for each factor.

Example from Nanoparticle Optimization [22]:

- Factor X1: Chitosan to tripolyphosphate ratio (e.g., 2:1, 3:1, 4:1)

- Factor X2: pH of chitosan solution (e.g., 4.5, 5.0, 5.5)

- Factor X3: Ultrasonication amplitude (e.g., 40%, 60%, 80%)
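Software handles the conversion between coded and actual levels automatically, but the arithmetic is simple: the center is the mean of the low and high settings, and one coded unit equals half the range. The Python sketch below applies it to the three example factors, treating the chitosan:TPP ratio by its first term (2, 3, 4), which is an assumption made for illustration.

```python
def coded_to_actual(coded, low, high):
    """Map a coded level (-1, 0, +1, or anything in between) to actual units."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

def actual_to_coded(actual, low, high):
    """Inverse mapping: actual units back to the coded scale."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (actual - center) / half_range

# (low, high) settings from the nanoparticle example
factors = {
    "X1 chitosan:TPP ratio": (2.0, 4.0),   # ratio expressed as its first term, x:1
    "X2 pH": (4.5, 5.5),
    "X3 amplitude (%)": (40.0, 80.0),
}

run = (-1, 0, 1)  # one BBD row in coded units
actual = {name: coded_to_actual(c, lo, hi)
          for c, (name, (lo, hi)) in zip(run, factors.items())}
print(actual)  # {'X1 chitosan:TPP ratio': 2.0, 'X2 pH': 5.0, 'X3 amplitude (%)': 80.0}
```

Working in coded units keeps the model coefficients on a common scale, so their magnitudes can be compared directly across factors.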

Step 3: Generate the Experimental Design Matrix

Using statistical software (e.g., Minitab, Design-Expert, JMP), generate the BBD matrix. For 3 factors, this will yield 15 experimental runs, including 3 center points to estimate pure error and model lack-of-fit [24] [7]. The standard design matrix for three factors is shown below.

Table 2: Standard Box-Behnken Design Matrix for Three Factors [24] [7]

| Standard Run Order | Factor X1 | Factor X2 | Factor X3 |
|---|---|---|---|
| 1 | -1 | -1 | 0 |
| 2 | +1 | -1 | 0 |
| 3 | -1 | +1 | 0 |
| 4 | +1 | +1 | 0 |
| 5 | -1 | 0 | -1 |
| 6 | +1 | 0 | -1 |
| 7 | -1 | 0 | +1 |
| 8 | +1 | 0 | +1 |
| 9 | 0 | -1 | -1 |
| 10 | 0 | +1 | -1 |
| 11 | 0 | -1 | +1 |
| 12 | 0 | +1 | +1 |
| 13 | 0 | 0 | 0 |
| 14 | 0 | 0 | 0 |
| 15 | 0 | 0 | 0 |
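The matrix in Table 2 can be generated with a few lines of Python. The sketch below uses the pairwise construction (every pair of factors forms a 2 × 2 factorial while the others stay at 0); for three factors it reproduces Table 2 row for row, including the standard order. For larger numbers of factors, statistical packages may use different block structures, so this function is illustrative rather than a replacement for the software step described above.

```python
from itertools import combinations

def box_behnken_pairwise(k, center_points=3):
    """Pairwise Box-Behnken construction in coded units.

    For every pair of factors (i, j), run a 2 x 2 factorial on that pair
    while holding all other factors at 0, then append the center points.
    """
    runs = []
    for i, j in combinations(range(k), 2):
        for b in (-1, 1):          # level of the second factor in the pair
            for a in (-1, 1):      # level of the first factor varies fastest
                row = [0] * k
                row[i], row[j] = a, b
                runs.append(row)
    runs.extend([0] * k for _ in range(center_points))
    return runs

design = box_behnken_pairwise(3)
for std_order, row in enumerate(design, start=1):
    print(std_order, row)
```

Every non-center run has exactly one factor at 0, so all of them lie at the same distance (√2 in coded units) from the center, which is why the three-factor BBD is described as spherical.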

Stage 2: Execution and Data Collection

Step 4: Randomize and Execute Runs

Randomize the run order provided by the software to minimize the impact of uncontrolled, lurking variables. Execute the experiments precisely as specified by the design matrix and measure the response(s) for each run.
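If the run order has to be randomized outside the design software, a seeded shuffle is enough. In this minimal Python sketch the seed value is arbitrary; recording it in the lab notebook lets the same execution order be regenerated later.

```python
import random

def randomized_run_order(n_runs, seed):
    """Return the standard-order run numbers 1..n_runs in a random execution order.

    Fixing the seed makes the order reproducible and easy to document.
    """
    order = list(range(1, n_runs + 1))
    random.Random(seed).shuffle(order)
    return order

execution_order = randomized_run_order(15, seed=2024)
print(execution_order)
```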

Step 5: Record Data Meticulously

Record all response data alongside the corresponding factor level settings. Note any unusual observations or deviations from the protocol during the experiment.

Stage 3: Data Analysis and Optimization

Step 6: Model Fitting and ANOVA

Input the experimental data into the statistical software. Fit the data to a second-order (quadratic) model:

Y = β₀ + β₁X₁ + β₂X₂ + β₃X₃ + β₁₂X₁X₂ + β₁₃X₁X₃ + β₂₃X₂X₃ + β₁₁X₁² + β₂₂X₂² + β₃₃X₃²

Perform Analysis of Variance (ANOVA) to assess the significance and adequacy of the model. Key outputs to check include:

- Model F-value and p-value: A significant p-value (typically < 0.05) indicates the model is significant compared to noise [22] [25].

- Lack-of-fit test: A non-significant lack-of-fit (p-value > 0.05) is desirable, suggesting the model adequately fits the data.

- Coefficient of Determination (R² and Adjusted R²): Values closer to 1.0 (e.g., > 0.80) indicate the model explains a large portion of the variability in the response [22] [25].
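The ANOVA quantities listed above can be computed directly from a least-squares fit. The Python/NumPy sketch below (responses are hypothetical) calculates R², adjusted R², and the lack-of-fit F statistic, taking pure error from the replicated center points. With 15 runs, 10 model terms, and 3 center points, lack of fit has 3 degrees of freedom and pure error has 2. Converting the F statistic to a p-value needs the F distribution, for example `scipy.stats.f.sf(F_lof, df_lof, df_pe)` if SciPy is available.

```python
import numpy as np

def quadratic_model_matrix(X):
    """Columns: 1, X1, X2, X3, X1X2, X1X3, X2X3, X1^2, X2^2, X3^2."""
    X = np.asarray(X, dtype=float)
    x1, x2, x3 = X[:, 0], X[:, 1], X[:, 2]
    return np.column_stack([np.ones(len(X)), x1, x2, x3,
                            x1 * x2, x1 * x3, x2 * x3,
                            x1 ** 2, x2 ** 2, x3 ** 2])

def fit_statistics(X, y):
    """R^2, adjusted R^2 and the lack-of-fit F statistic for a full quadratic fit."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    M = quadratic_model_matrix(X)
    n, p = M.shape
    beta, *_ = np.linalg.lstsq(M, y, rcond=None)
    resid = y - M @ beta
    ss_res = float(resid @ resid)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    r2 = 1.0 - ss_res / ss_tot
    adj_r2 = 1.0 - (ss_res / (n - p)) / (ss_tot / (n - 1))

    # Pure error: scatter among replicated settings (here, the center points)
    groups = {}
    for row, value in zip(map(tuple, X), y):
        groups.setdefault(row, []).append(value)
    ss_pe, df_pe = 0.0, 0
    for values in groups.values():
        if len(values) > 1:
            v = np.array(values)
            ss_pe += float(((v - v.mean()) ** 2).sum())
            df_pe += len(v) - 1

    # Lack of fit: the part of the residual not explained by pure error
    ss_lof = ss_res - ss_pe
    df_lof = (n - p) - df_pe
    f_lof = (ss_lof / df_lof) / (ss_pe / df_pe)
    return {"R2": r2, "adj_R2": adj_r2, "F_lof": f_lof,
            "df_lof": df_lof, "df_pe": df_pe,
            "ss_res": ss_res, "ss_lof": ss_lof, "ss_pe": ss_pe}

bbd = [[-1, -1, 0], [1, -1, 0], [-1, 1, 0], [1, 1, 0],
       [-1, 0, -1], [1, 0, -1], [-1, 0, 1], [1, 0, 1],
       [0, -1, -1], [0, 1, -1], [0, -1, 1], [0, 1, 1],
       [0, 0, 0], [0, 0, 0], [0, 0, 0]]
# Hypothetical responses (e.g., yield, %) in standard order
y = [62.1, 70.4, 58.9, 69.8, 60.2, 71.5, 63.0, 72.9,
     66.4, 64.1, 68.0, 66.2, 75.3, 74.6, 75.9]
stats = fit_statistics(bbd, y)
print({k: round(v, 4) for k, v in stats.items()})
```

Adjusted R² penalizes the number of model terms, so a large gap between R² and adjusted R² is a hint that some terms could be removed.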

Step 7: Interpret Results via Diagnostic Plots

- Pareto Chart of Effects: Quickly identifies which linear, interaction, and quadratic terms have the most significant effects on the response [2].

- Response Surface and Contour Plots: Visualize the relationship between factors and the response. These 3D surfaces and 2D contours are invaluable for understanding the nature of the optimum (maximum, minimum, or saddle point) [2].
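Whether the optimum is a maximum, minimum, or saddle point can also be checked numerically through a canonical analysis of the fitted coefficients. Write the quadratic part as a symmetric matrix B (squared-term coefficients on the diagonal, half of each interaction coefficient off the diagonal), locate the stationary point x_s = -½ B⁻¹b, and inspect the signs of the eigenvalues of B. The Python sketch below assumes the coefficient order of the model equation above, a non-singular B, and hypothetical coefficients; if x_s falls outside the coded region, the optimum lies on the boundary and a constrained search is needed.

```python
import numpy as np

def canonical_analysis(beta):
    """Stationary point and surface type for a three-factor quadratic model.

    beta order: b0, b1, b2, b3, b12, b13, b23, b11, b22, b33
    """
    b0, b1, b2, b3, b12, b13, b23, b11, b22, b33 = beta
    b = np.array([b1, b2, b3], dtype=float)
    B = np.array([[b11, b12 / 2, b13 / 2],
                  [b12 / 2, b22, b23 / 2],
                  [b13 / 2, b23 / 2, b33]], dtype=float)
    x_s = -0.5 * np.linalg.solve(B, b)       # solves gradient b + 2 B x = 0
    y_s = b0 + 0.5 * float(b @ x_s)          # predicted response at x_s
    eigenvalues = np.linalg.eigvalsh(B)      # B is symmetric
    if np.all(eigenvalues < 0):
        kind = "maximum"
    elif np.all(eigenvalues > 0):
        kind = "minimum"
    else:
        kind = "saddle point"                # eigenvalues of mixed sign
    return x_s, y_s, eigenvalues, kind

# Hypothetical fitted coefficients (coded units)
beta = [80.0, 2.0, -1.0, 0.5, 0.0, 0.0, 0.0, -2.0, -1.0, -0.5]
x_s, y_s, eigenvalues, kind = canonical_analysis(beta)
print(x_s, y_s, kind)
```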

Step 8: Find Optimal Conditions and Validate

Use the software's numerical optimization feature to find the factor levels that produce the most desirable response values. The software will provide one or more solutions. Crucially, perform confirmation experiments at the predicted optimal settings to validate the model's accuracy. Calculate the percent error between the predicted and actual observed values to confirm the model's predictive power [22] [25].
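The percent error used in validation is simple arithmetic. One common definition, assumed in this short Python sketch, divides the absolute deviation by the predicted value; some studies divide by the observed value instead, so the convention should be stated when reporting. The numbers below are hypothetical.

```python
def percent_error(predicted, observed):
    """Absolute prediction error as a percentage of the predicted value."""
    return abs(observed - predicted) / abs(predicted) * 100.0

# Hypothetical confirmation run: predicted 150.0 nm, observed 157.5 nm
err = percent_error(150.0, 157.5)
print(f"{err:.2f}%")  # 5.00%
```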

Case Study: Optimization of Chitosan Nanoparticles using BBD

A study aimed to optimize the preparation of chitosan nanoparticles using an ionic gelation-ultrasonication method provides an excellent example of BBD's effective application [22].

Research Reagent Solutions and Materials

Table 3: Key Research Reagents and Materials for Chitosan Nanoparticle Formulation [22]

| Reagent/Material | Function in the Experiment |
|---|---|
| Chitosan | A natural biopolymer serving as the primary matrix-forming material for the nanoparticles. |
| Sodium Tripolyphosphate (TPP) | A cross-linking agent that ionically gels with chitosan to form solid nanoparticles. |
| Acetic Acid Solution | Solvent used to dissolve chitosan and adjust the pH of the solution, a critical factor for nanoparticle formation. |
| Ultrasonic Homogenizer | Equipment used to apply controlled energy input (amplitude) to the mixture, determining the final nanoparticle size and distribution. |

Experimental Setup and Results: The investigators selected three factors: Chitosan:TPP ratio (X1), pH (X2), and Ultrasonication Amplitude (X3). A three-factor, three-level BBD was employed, requiring only 15 experimental runs. The responses measured were particle size, polydispersity index (PDI), and zeta potential [22].

The resulting quadratic models for particle size and PDI showed an excellent fit, with R² values of 0.9992 and 0.9955, respectively. The model for zeta potential was less predictive (R² = 0.7857), which is not unusual for responses that are sensitive to minor, uncontrolled variations. The analysis, supported by surface plots, revealed that the chitosan ratio was the most significant factor affecting particle size and PDI, while ultrasonication amplitude predominantly influenced zeta potential [22].

Using the optimization function, the software predicted optimal factor settings. A confirmation run at these settings produced results with a low percent error (within 5.22% for the primary response), successfully validating the model's robustness and the effectiveness of BBD for this nanotechnological application [22].

The Box-Behnken Design is not a one-size-fits-all solution but a powerful and specialized tool within the RSM toolkit. Its comparative advantages are clearest when the experimental goal is the efficient and safe optimization of a well-characterized system. The avoidance of extreme factor combinations makes it the design of choice for processes with inherent safety or feasibility constraints, while its lower run requirement for specific numbers of factors provides tangible cost and resource benefits.

The choice between BBD and CCD is a strategic one. CCD retains the advantage for sequential experimentation and exploring less-understood systems where the experimental region might need to be expanded [21] [23]. However, for researchers and drug development professionals operating within known safe boundaries and aiming to refine a process to its peak performance, the Box-Behnken Design offers a robust, efficient, and practical path to success.

Implementing Box-Behnken Design: A Step-by-Step Protocol for Pharmaceutical Applications

In a Box-Behnken design (BBD) reaction optimization study, the foundational and most critical phase is the precise identification of critical factors and the scientific definition of their experimental ranges. This initial step determines the entire experimental space, directly influencing the model's accuracy, its predictive power, and the ultimate success of the optimization [16] [26]. This protocol details a systematic methodology for executing this crucial first step, tailored for researchers in chemical synthesis, pharmaceutical development, and process engineering.

Theoretical Foundation: The Importance of Factor Selection and Ranges in BBD

Box-Behnken designs are spherical response surface methodology (RSM) designs used to fit second-order (quadratic) models [4]. Unlike full factorial designs, BBDs efficiently explore the experimental region by combining factors at their mid-levels with other factors at high or low levels, deliberately avoiding extreme corner points where processes might be unstable or hazardous [16]. This structure makes the pre-experimental definition of the feasible and relevant region, bounded by the chosen low and high levels for each factor, absolutely paramount. An incorrectly defined range can lead to a model that misses the true optimum or has high prediction variance near the region of interest [4].

Detailed Protocol for Identifying Critical Factors and Defining Ranges

Phase 1: Critical Factor Identification

The goal is to screen a larger set of potential variables to identify the few (typically 3-4) that exert the most significant influence on the response (e.g., yield, purity, conversion rate).

- Literature & Prior Knowledge Review: Conduct a thorough review of analogous reactions or processes. For instance, in optimizing a catalytic reaction, factors like catalyst load, temperature, and time are commonly critical [14]. In chromatographic method development, factors include mobile phase pH, flow rate, and composition [18].

- Mechanistic Understanding: Base hypotheses on the underlying chemical or physical mechanism. For example, knowing that membrane dehydration limits fuel cell performance at high temperatures directly informs the selection of temperature as a critical factor with an upper bound [16].

- Preliminary Screening Designs: Employ two-level factorial or Plackett-Burman designs to statistically screen 5-7 potential factors. The factors showing statistically significant main effects (p-value < 0.05 or 0.1) are selected for deeper optimization via BBD [26].
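For two-level screening designs, each factor's main effect is the difference between the mean response at its high and low levels. The Python sketch below applies that contrast to a full 2³ factorial standing in for a screening design, with hypothetical, noise-free responses generated from y = 50 + 4x1 - x3. Judging significance (the p-values mentioned above) would additionally require an error estimate, for example from replicates or the ANOVA in statistical software.

```python
from itertools import product

def main_effects(design, y):
    """Main effect of each factor: mean(y at +1) minus mean(y at -1)."""
    effects = []
    for j in range(len(design[0])):
        high = [yi for row, yi in zip(design, y) if row[j] == 1]
        low = [yi for row, yi in zip(design, y) if row[j] == -1]
        effects.append(sum(high) / len(high) - sum(low) / len(low))
    return effects

# Full 2^3 factorial in coded units (8 runs)
design = [list(levels) for levels in product((-1, 1), repeat=3)]
# Hypothetical noise-free responses: x1 matters, x2 does not, x3 matters a little
y = [50 + 4 * x1 - 1 * x3 for x1, x2, x3 in design]
print(main_effects(design, y))  # [8.0, 0.0, -2.0]
```

Each effect is twice the corresponding regression coefficient, because moving a factor from -1 to +1 spans two coded units.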

Phase 2: Defining Factor Ranges (Low, Center, High)

Once critical factors (e.g., A, B, C) are identified, their operational ranges must be set with scientific rationale.

- Establish Practical Boundaries:

- Lower Bound (-1): The minimum feasible or interesting value. This could be defined by practical constraints (e.g., room temperature ~25°C), economic considerations (minimal catalyst use), or reaction kinetics (a temperature below which the reaction is impractically slow).

- Upper Bound (+1): The maximum feasible or safe value. Limits may be set by equipment limits, safety concerns (avoiding decomposition or dangerous pressures), solubility limits, or diminishing returns observed in preliminary tests [16].

- Conduct Scouting Experiments:

- Perform a small set of one-factor-at-a-time (OFAT) experiments or a very sparse factorial design around the suspected optimal region.

- Objective: To empirically verify that the response of interest (e.g., yield) changes meaningfully across the proposed range. The range should be wide enough to capture curvature but narrow enough that the optimum is contained within it [26].

- Set the Center Point (0): The center point is the arithmetic mean of the low and high levels for each continuous factor. It is crucial for estimating pure error and detecting curvature in the model. Multiple replicates (typically 3-6) at the center point are highly recommended [16] [4].

The following table consolidates quantitative data on factor selection and level definition from various published BBD optimization studies, illustrating the application of the above protocol.

Table 1: Critical Factors and Defined Ranges in Exemplary Box-Behnken Optimization Studies

| Application Field & Goal | Critical Factors (Independent Variables) | Low Level (-1) | Center Point (0) | High Level (+1) | Key Response (Dependent Variable) | Source |
|---|---|---|---|---|---|---|
| Organic Synthesis: maximize yield of dihydropyrimidinones | A: Catalyst Amount (mg); B: Reaction Time (min); C: Temperature (°C) | A: 10; B: 55; C: 60 | A: 20; B: 67.5; C: 70 | A: 30; B: 80; C: 80 | Product Yield (%) | [14] |
| Environmental Engineering: maximize COD removal from wastewater | A: Current (A); B: Pyrite Mass (g); C: Electrolysis Time (min) | A: 0.3; B: 0.1; C: 60 | A: 0.5; B: 0.2; C: 75 | A: 0.7; B: 0.3; C: 90 | COD Abatement Rate (%) | [27] |
| Pharmaceutical Formulation: optimize mechanical properties of chitosan film | A: Chitosan (% w/w); B: Ascorbic Acid (% w/w); C: Glycerol (wt%) | A: 1.0; B: 1.0; C: 20 | A: 1.5; B: 2.0; C: 40 | A: 2.0; B: 3.0; C: 60 | Tensile Strength, Elongation at Break, pH | [12] |
| Analytical Chemistry: optimize HPLC method for thymoquinone | A: Flow Rate (mL/min); B: Buffer pH; C: Wavelength (λmax, nm) | A: 0.8; B: 3.5; C: 247 | A: 0.9; B: 4.0; C: 249 | A: 1.0; B: 4.5; C: 251 | Retention Time, Tailing Factor | [18] |
| Materials Science: optimize Gd nanoparticle synthesis | A: Gd₂O₃ Mass (g); B: Temperature (°C); C: Time (h) | A: 0.4; B: 160; C: 5 | A: 0.45; B: 170; C: 6 | A: 0.5; B: 180; C: 7 | Nanoparticle Size (nm) | [28] |

Experimental Protocols for Key Steps

Protocol 1: Preliminary Scouting Experiment for Range Finding

Objective: To empirically determine the approximate region where the response changes significantly for a single critical factor.

Materials: Standard reaction setup or analytical system.

Procedure:

- Hold all other potential factors constant at a reasonable baseline.

- For the factor under investigation (e.g., temperature), run a series of experiments across a broad, safe spectrum (e.g., 30°C, 50°C, 70°C, 90°C).

- Measure the response (e.g., conversion after 1 hour).

- Plot the response versus the factor level. Identify the region where the response begins to plateau or decrease—this indicates a potential boundary. The range for the BBD should span this region of change.

- Repeat for each identified critical factor, time permitting, or use a very limited factorial design to assess interactions crudely.

Protocol 2: Verifying the Feasibility of the Design Space

Objective: To ensure all combinations within the proposed BBD are physically and safely executable.

Procedure:

- List all unique factor-level combinations from the planned BBD matrix (e.g., for 3 factors: (A high, B low, C center), (A low, B high, C center), etc.) [16] [4].

- Conduct a hazard and operability (HAZOP) review for each combination, especially those involving multiple factors at non-center levels.

- Verify that equipment can maintain the simultaneous conditions (e.g., achieving both maximum temperature and maximum stirring speed).

- Crucial Check: Confirm that the extreme vertices of the cuboidal region (e.g., High-High-High) are not required, as BBD avoids them. This is a safety advantage but must be consciously acknowledged [16], because no corner runs are made and model predictions at those vertices are therefore less reliable.
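Part of this check can be automated by translating the planned BBD into actual units and screening each run against known equipment or safety limits. In the Python sketch below, the factor ranges and the limit in `is_feasible` are hypothetical placeholders (a reactor assumed unable to exceed 75 °C at stirring speeds above 500 rpm); real limits would come from the HAZOP review and equipment specifications.

```python
from itertools import combinations

def box_behnken_pairwise(k, center_points=3):
    """Coded BBD: a 2 x 2 factorial on each factor pair, others at 0, plus centers."""
    runs = []
    for i, j in combinations(range(k), 2):
        for b in (-1, 1):
            for a in (-1, 1):
                row = [0] * k
                row[i], row[j] = a, b
                runs.append(row)
    runs.extend([0] * k for _ in range(center_points))
    return runs

def to_actual(row, levels):
    """Convert one coded row to actual units using (low, high) for each factor."""
    return [low + (c + 1) * (high - low) / 2 for c, (low, high) in zip(row, levels)]

# Hypothetical ranges: temperature (°C), stirring speed (rpm), time (h)
levels = [(60.0, 80.0), (200.0, 600.0), (1.0, 3.0)]

def is_feasible(temp_c, rpm, hours):
    """Hypothetical equipment limit: no operation above 75 °C at more than 500 rpm."""
    return not (temp_c > 75 and rpm > 500)

design = box_behnken_pairwise(3)
infeasible = [row for row in design if not is_feasible(*to_actual(row, levels))]
print("Runs violating limits:", infeasible)               # [[1, 1, 0]]
print("All-high vertex in design:", [1, 1, 1] in design)  # False
```

A flagged run usually means the factor ranges need to be narrowed before the design is finalized, rather than that the run should simply be dropped, since removing runs weakens the design's balance.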

Visualization: The BBD Optimization Workflow

Diagram Title: BBD Reaction Optimization Workflow with Feedback Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Reagents for BBD Reaction Optimization Studies

| Item / Solution | Function in Optimization Context | Example from Case Studies |
|---|---|---|
| Heterogeneous Catalyst | To accelerate reactions; amount is often a critical continuous factor for optimization. | Eggshell-supported transition metal catalysts (NiCl₂, ZnCl₂) for organic synthesis [14]. |
| Solid Support / Matrix | Provides a high-surface-area, inert platform for catalysts or actives; its properties can influence outcomes. | Chitosan polymer for forming drug-loaded topical films [12]; γ-Al₂O₃ support for Ru-Fe-Ce methanation catalysts [29]. |
| Model Substrate / Analyte | The compound whose transformation or detection is the goal of the optimization. | Benzophenone for Schiff base synthesis [14]; Thymoquinone for HPLC method development [18]; Naproxen/Diclofenac for adsorption studies [30]. |
| Critical Solvent / Mobile Phase | Medium for reaction or separation; its composition, pH, or flow rate are common optimized factors. | Ethanol as reaction solvent [14]; Methanol:Acetonitrile:Buffer mixtures in HPLC [18]. |
| Chemical Dopant / Additive | Used to modify material properties or process efficiency; concentration is an optimizable factor. | Glycerol as a plasticizer in film formulation [12]; Polyethylene glycol (PEG) as a nanoparticle stabilizer [28]; Ceria (CeO₂) as a promoter in catalysis [29]. |
| Advanced Electrode Material | In electrochemical optimization, the anode material is a key (sometimes categorical) factor affecting efficiency and cost. | Boron-Doped Diamond (BDD) vs. Platinum (Pt) anodes in electro-Fenton wastewater treatment [27]. |
| Statistical Software | Essential for generating the design matrix, randomizing runs, performing ANOVA, and generating response surface plots. | Tools like JMP [4], Design-Expert [12], or Minitab [14] are standard. |

The Box-Behnken Design (BBD) is a classical, independent quadratic response surface design that is constructed by combining two-level factorial designs with incomplete block designs [1]. It is a highly efficient experimental framework for optimizing processes and products, particularly in pharmaceutical and drug development research, as it requires only three levels for each factor (coded as -1, 0, +1) and does not involve any experiments where all factors are simultaneously at their extreme high or low levels [6] [4] [31]. This characteristic makes it exceptionally valuable when such extreme combinations are prohibitively expensive, physically impossible, or dangerous to run [4] [7]. The primary goal of a BBD is to fit a quadratic model, enabling researchers to identify and model curvature in the response surface and thereby locate the optimum conditions for a given process [1].

This protocol provides a detailed, step-by-step guide for creating a Box-Behnken Design matrix using modern statistical software tools, with a specific focus on JMP and a contextual example from drug formulation development. The workflow from design creation to final analysis is summarized in the diagram below.

Software Platform Selection and Comparison

Various software packages can generate a Box-Behnken Design, each with distinct capabilities and workflows. For pharmaceutical researchers, the choice of platform can depend on the need for a predefined classical design versus the flexibility to accommodate non-standard constraints.

Table 1: Comparison of Software Tools for Generating Box-Behnken Designs

| Software Tool | Recommended Workflow for BBD | Key Characteristics and Advantages | Considerations for Researchers |
|---|---|---|---|
| JMP | DOE > Classic > RSM [32] [33] | Provides a dedicated platform for classical response surface designs, including BBD. The design table includes a built-in script to automatically fit the correct quadratic model. | The classical design platform is limited to continuous factors and a maximum of eight factors. It cannot construct a BBD within the Custom Design platform [32] [33]. |
| JMP Custom Design | DOE > Custom Design (then click RSM button) [33] | Offers maximum flexibility. Ideal for non-standard scenarios, such as when the design space has restrictions, when categorical factors are involved, or when the number of runs must be customized. | The generated design will differ from a classical BBD. It is an optimal design tailored to your specific constraints and model, not a pre-defined BBD structure [32]. |
| NCSS | Response Surface Designs [34] | Includes procedures for generating both Box-Behnken and Central-Composite designs. Offers various analysis tools alongside design generation. | The interface and workflow may differ from other statistical packages. |
| Other Tools (e.g., Design-Expert, MINITAB) | Varies by platform (e.g., in MINITAB: Stat > DOE > Response Surface > Create Response Surface Design) | Many dedicated statistical packages offer streamlined workflows for generating and analyzing standard designs like the BBD. | Capabilities and default settings (e.g., number of center points) may vary. |

A key consideration is that while JMP's Custom Design platform is extremely powerful and flexible, it will not generate a classical Box-Behnken design. If the specific structure of a BBD is required for methodological consistency or comparison with prior literature, the Classic Response Surface Design platform must be used [32].

Detailed Experimental Protocol: Creating a BBD in JMP

This protocol uses a case study involving the optimization of a polymeric nanoparticle (PLGA) formulation for drug delivery, a common application in pharmaceutical sciences [35]. The goal is to understand how different process parameters affect the size of the nanoparticles, a critical quality attribute.

Research Reagent Solutions and Materials

Table 2: Essential Materials and Reagents for the Featured Nanoparticle Formulation Experiment

| Item Name | Function/Description | Research Application in Example |
|---|---|---|
| Poly(lactic-co-glycolic acid) (PLGA) | A biodegradable and biocompatible polymer. | Serves as the matrix material for the nanoparticle drug carrier system [35]. |
| Polyvinyl Alcohol (PVA) | An emulsifier and stabilizer. | Prevents coalescence of emulsion droplets during the formulation process, controlling particle size and distribution [35]. |
| Dichloromethane (DCM) | An organic solvent. | Dissolves the PLGA polymer to form the organic phase in the single emulsion-solvent evaporation method [35]. |
| Active Pharmaceutical Ingredient (API) | The drug or bioactive compound to be encapsulated. | In the featured study, a coffee extract was used as a model bioactive compound with antioxidant and anticancer properties [35]. |
| Ultra-Pure Water | Aqueous phase solvent. | Forms the continuous phase into which the polymer solution is emulsified [35]. |

Step-by-Step Procedure

Step 1: Define the Response and Factors

Clearly state the objective. In this case, it is to understand the influence of three critical process parameters on the particle size (Y1) of PLGA nanoparticles. Select the continuous factors and their ranges based on preliminary experiments or literature. The factors for this example are:

- X1: PVA concentration (%) (Low: 0.5%, High: 2.5%)

- X2: Homogenization speed (rpm) (Low: 10,000, High: 20,000)

- X3: Homogenization time (min) (Low: 5, High: 7.5) [35].

Step 2: Launch the RSM Design Platform in JMP

- Open JMP and navigate to the

DOEmenu. - Select

Classicand thenResponse Surface Design[4] [33]. - This will open a design wizard to guide you through the setup.

Step 3: Specify Factors and Responses

- In the design wizard, first define your response. Click Add Response and name it (e.g., "Particle Size (nm)"). You can specify a goal (e.g., Minimize) and lower/upper limits if desired [4].

- Next, define your factors. Click Add Factor and choose Continuous. Add the three factors (X1, X2, X3) and input their low and high values [4] [31].

Step 4: Select the Box-Behnken Design Type

- After defining the factors and response, JMP will present a list of available classical designs.

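- Select Box-Behnken from the list. The software will automatically display the number of runs for the design (e.g., 15 runs for 3 factors, including 3 center points) [4] [7].

- The number of center points can often be adjusted. Center points are crucial for estimating pure error and the curvature (quadratic) terms [7]: every non-center run has the same sum of squared coded levels (for three factors, exactly two factors at ±1 and one at 0), so without center points the quadratic terms cannot be separated from the intercept.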
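To see where the 15 runs come from, the sketch below builds the three-factor BBD in coded units: each pair of factors is run as a 2 × 2 factorial at ±1 while the remaining factor sits at 0, and replicated center points are appended. This pairwise construction reproduces the standard three-factor design; treat it as an illustration only for larger numbers of factors, where some published BBDs use different block structures, and rely on the software there.

```python
from itertools import combinations, product


def box_behnken(k: int, n_center: int = 3) -> list[tuple[int, ...]]:
    """Coded BBD sketch: every factor pair at (+/-1, +/-1), others at 0, plus center points."""
    runs: list[tuple[int, ...]] = []
    for i, j in combinations(range(k), 2):       # every pair of factors
        for a, b in product((-1, 1), repeat=2):  # 2 x 2 factorial for that pair
            run = [0] * k
            run[i], run[j] = a, b
            runs.append(tuple(run))
    runs.extend([(0,) * k] * n_center)           # replicated center points
    return runs


if __name__ == "__main__":
    design = box_behnken(3)
    print(len(design), "runs")  # 12 edge midpoints + 3 center points
    for run in design:
        print(run)
```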

Step 5: Generate and Review the Design Matrix

- Click Continue and then OK to generate the design. JMP will create a new data table containing the experimental run matrix [4].

- The design table is typically presented in a randomized run order to minimize the effects of lurking variables. The table will have columns for your factors, coded with the values -1, 0, and +1, and a column for recording the response [4] [7].

- A key characteristic of a BBD is visible in this matrix: no run places all factors at their extreme levels simultaneously, so cube corners such as (-1, -1, -1) or (+1, +1, +1) never appear [4] [31].
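The same two properties can be checked outside JMP. This sketch rebuilds the 15-run design in standard order, shuffles it into a randomized run sheet with a fixed seed (an assumption, chosen so the order is reproducible), and confirms that no run is a cube corner. The function names are hypothetical helpers, not part of any DOE package.

```python
import random
from itertools import combinations, product


def bbd3(n_center: int = 3) -> list[tuple[int, int, int]]:
    """Three-factor BBD in coded units in standard order (edge midpoints, then centers)."""
    runs = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1, 1), repeat=2):
            run = [0, 0, 0]
            run[i], run[j] = a, b
            runs.append(tuple(run))
    return runs + [(0, 0, 0)] * n_center


def randomized_run_sheet(runs, seed: int):
    """Return (run number, standard-order index, coded settings) in random order."""
    order = list(range(len(runs)))
    random.Random(seed).shuffle(order)
    return [(n + 1, std + 1, runs[std]) for n, std in enumerate(order)]


def has_cube_corner(runs) -> bool:
    """True if any run sets every factor to an extreme level (+/-1)."""
    return any(all(abs(v) == 1 for v in run) for run in runs)


if __name__ == "__main__":
    design = bbd3()
    print("corner runs present:", has_cube_corner(design))  # False for a BBD
    for row in randomized_run_sheet(design, seed=2024):
        print(row)
```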

Step 6: Execute Experiments and Record Data

- Execute the experiments in the randomized order specified by the design table.

- Precisely control the factor levels for each run and carefully measure the response (particle size, in this case).

- Record the results in the response column of the JMP data table.

Step 7: Analyze the Data and Build the Model

- The JMP design table includes a built-in Model script. Running this script automatically launches the Fit Model dialog with the correct model structure for a BBD: a full quadratic model including all main effects, two-factor interactions, and quadratic terms [4] [33].

- Click Run to fit the model. The software will provide an Analysis of Variance (ANOVA) table, parameter estimates, and various diagnostic plots.

- Statistically insignificant terms (typically based on a p-value threshold, e.g., 0.05 or 0.10) may be removed to refine the model, a process known as model reduction [31].
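Model reduction can be sketched as backward elimination: fit the full quadratic model, drop the term with the largest p-value if it exceeds the threshold, and refit. The response below is simulated from hypothetical coefficients plus small fixed perturbations standing in for noise; it is not data from [35]. This simple version ignores model hierarchy, whereas many practitioners keep a main effect whenever its interaction or squared term stays in the model.

```python
from itertools import combinations, product

import numpy as np
from scipy import stats


def bbd3(n_center: int = 3) -> np.ndarray:
    """Three-factor BBD in coded units (12 edge midpoints + center points)."""
    rows = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            row = [0.0, 0.0, 0.0]
            row[i], row[j] = a, b
            rows.append(row)
    return np.array(rows + [[0.0, 0.0, 0.0]] * n_center)


def quadratic_terms(X: np.ndarray) -> dict[str, np.ndarray]:
    """All non-intercept columns of the full quadratic model."""
    x1, x2, x3 = X.T
    return {"X1": x1, "X2": x2, "X3": x3,
            "X1X2": x1 * x2, "X1X3": x1 * x3, "X2X3": x2 * x3,
            "X1^2": x1**2, "X2^2": x2**2, "X3^2": x3**2}


def backward_eliminate(X: np.ndarray, y: np.ndarray, alpha: float = 0.05) -> list[str]:
    """Drop the least significant term (two-sided t-test) until all p-values <= alpha."""
    terms = quadratic_terms(X)
    kept = list(terms)
    while kept:
        M = np.column_stack([np.ones(len(y))] + [terms[t] for t in kept])
        beta, *_ = np.linalg.lstsq(M, y, rcond=None)
        resid = y - M @ beta
        df = len(y) - M.shape[1]
        sigma2 = resid @ resid / df
        se = np.sqrt(np.diag(sigma2 * np.linalg.inv(M.T @ M)))
        p = 2 * stats.t.sf(np.abs(beta / se), df)[1:]  # skip the intercept
        worst = int(np.argmax(p))
        if p[worst] <= alpha:
            break
        kept.pop(worst)
    return kept


X = bbd3()
x1, x2, x3 = X.T
perturb = np.array([0.3, -0.2, 0.1, -0.4, 0.2, 0.0, -0.1, 0.3,
                    -0.3, 0.1, 0.2, -0.2, 0.1, -0.1, 0.0])
# Hypothetical particle-size response (nm) in coded units.
y = 180 - 10 * x1 - 15 * x2 + 5 * x1 * x3 + 8 * x1**2 + perturb
print("retained terms:", backward_eliminate(X, y))
```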

Step 8: Optimize the Process

- Use the Optimization and Desirability profiler tools within JMP to identify the factor settings that produce the most desirable response, in this case the smallest particle size [4] [31].

- The profiler allows you to visually and numerically explore the response surface and find the optimal process parameters.
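Conceptually, the profiler maximizes a desirability function over the coded design region. The sketch below does the same by brute-force grid search, using a hypothetical fitted particle-size model (not the model from [31] or [35]) and a linear "smaller is better" desirability of the Derringer-Suich type with an assumed acceptable range of 150 to 300 nm. JMP uses its own desirability settings and optimizer, so this is only an analogue.

```python
import numpy as np


def predicted_size(x1, x2, x3):
    """Hypothetical fitted particle-size model (nm) in coded units."""
    return (250 - 20 * x1 - 40 * x2 - 10 * x3
            + 20 * x1**2 + 10 * x2**2 + 10 * x3**2)


def desirability_minimize(y, low, high):
    """Linear 'smaller is better' desirability: 1 at or below low, 0 at or above high."""
    return np.clip((high - y) / (high - low), 0.0, 1.0)


grid = np.linspace(-1.0, 1.0, 41)                      # coded levels in steps of 0.05
X1, X2, X3 = np.meshgrid(grid, grid, grid, indexing="ij")
Y = predicted_size(X1, X2, X3)
D = desirability_minimize(Y, low=150.0, high=300.0)   # assumed acceptable range (nm)

idx = np.unravel_index(np.argmax(D), D.shape)
best_x = np.array([X1[idx], X2[idx], X3[idx]])
best_y = float(Y[idx])
print("coded optimum:", best_x.round(2), "predicted size:", round(best_y, 1),
      "desirability:", round(float(D[idx]), 3))
```

With these hypothetical coefficients the optimum is at coded (0.5, 1, 0.5), which the Step 1 ranges translate to 2.0 % PVA, 20,000 rpm and 6.875 min. Because X2 sits on its upper boundary, such a result would suggest the best speed may lie outside the studied range.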

The logical relationships and decision points within this experimental process are illustrated below.

Expected Outcomes and Data Interpretation

Upon completing the experimental runs and analysis, the researcher will obtain a predictive quadratic model. For the nanoparticle example, the final model for particle size (Y) in terms of coded factors might take the following form [31]:

Y = β₀ + β₁X₁ + β₂X₂ + β₃X₃ + β₁₂X₁X₂ + β₁₃X₁X₃ + β₂₃X₂X₃ + β₁₁X₁² + β₂₂X₂² + β₃₃X₃²

The ANOVA table will indicate the overall significance of the model, and the parameter estimates will reveal the magnitude and direction of each factor's effect. For instance, a negative coefficient for the linear term of homogenization speed (X₂) would suggest that increasing speed generally decreases particle size. A significant positive interaction between PVA concentration and homogenization time (X₁X₃) would indicate that the effect of one factor depends on the level of the other.
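The interaction statement can be made concrete by differentiating the quadratic model: the local effect (slope) of X₁ is ∂Y/∂X₁ = β₁ + β₁₂X₂ + β₁₃X₃ + 2β₁₁X₁, so a nonzero β₁₃ makes the PVA effect change with homogenization time. The coefficients below are hypothetical, chosen only to pair a negative β₁ with a positive β₁₃.

```python
# Hypothetical coded-unit coefficients (illustration only; not reported values).
coef = {"b1": -12.0, "b12": 0.0, "b13": 6.0, "b11": 4.0}


def slope_x1(x1: float, x2: float, x3: float, b: dict[str, float]) -> float:
    """dY/dX1 of the full quadratic model evaluated at a coded point."""
    return b["b1"] + b["b12"] * x2 + b["b13"] * x3 + 2 * b["b11"] * x1


for x3 in (-1, 0, 1):
    print(f"X3 = {x3:+d}: dY/dX1 at X1 = X2 = 0 is {slope_x1(0, 0, x3, coef):+.1f}")
```

With these numbers, raising PVA lowers the predicted particle size at every time level, but the slope is -18 at the short time (X₃ = -1) and only -6 at the long time (X₃ = +1).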

Using the optimization tools, the researcher can then identify the precise combination of PVA concentration, homogenization speed, and time that is predicted to yield the target nanoparticle size with the highest desirability.

Troubleshooting and Technical Notes

- Avoiding Extreme Points: The primary advantage of a BBD is also a potential limitation. If the process optimum is expected to lie at a vertex (a combination of all factor extremes), the BBD, which lacks these points, will have higher prediction variance in the corners compared to a Central Composite Design (CCD) [7] [33].

- Sequential Experimentation: Unlike Central Composite Designs, a BBD cannot be built up sequentially from a simpler factorial design. The entire experiment must be planned and executed as a single set of runs [4].

- Software-Specific Output: The exact run order and number of center points might vary slightly between software packages. Always review the generated design matrix before beginning experimentation.

Within the framework of Box-Behnken Design (BBD) reaction optimization research, the construction and validation of a second-order polynomial model is a critical step that transforms raw experimental data into a powerful predictive tool. This model captures the complex, non-linear relationships between independent process factors and the experimental response, enabling researchers to navigate the optimization landscape efficiently. The general form of this model for k independent factors is expressed in Equation 1 [36] [1]:

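Equation 1: General Second-Order Polynomial Model

$$Y = \beta_0 + \sum_{i=1}^{k}\beta_i X_i + \sum_{i=1}^{k}\beta_{ii} X_i^2 + \sum_{i=1}^{k-1}\sum_{j=i+1}^{k}\beta_{ij} X_i X_j + \varepsilon$$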

Where:

- Y is the measured response; the fitted model without ε gives the predicted response Ŷ.

- β₀ is the constant intercept term.

- βᵢ are the linear coefficients.

- βᵢᵢ are the quadratic coefficients.

- βᵢⱼ are the interaction coefficients (for i < j).

- Xᵢ and Xⱼ are the coded values (-1, 0, +1) of the independent factors.

- ε is the random error term.

This model is particularly suited for BBD because the design's structure, with its three levels for each factor, is specifically created to allow for the efficient estimation of these quadratic coefficients and interaction effects, providing a comprehensive map of the response surface [1].

Mathematical Foundation and Model Building

Model Building Protocol

The process of building the model involves a structured protocol to ensure robustness and accuracy.

Step 1: Coefficient Estimation. The coefficients (β) of the polynomial model are estimated from the experimental data by least squares regression, which chooses the coefficient values that minimize the sum of the squared residuals (the differences between observed and predicted values). Because the model is linear in its coefficients, this is ordinary multiple linear regression even though the fitted response surface is curved. Modern statistical software packages (e.g., Design-Expert, Minitab, R) perform these computations automatically [19] [14].
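A minimal NumPy sketch of the least-squares step for a three-factor BBD: it builds the ten-column model matrix (intercept, three linear, three interaction and three squared terms) and solves it with `numpy.linalg.lstsq`. The response is generated without noise from hypothetical coefficients, so the fit should recover them exactly. The last line shows that the same design without center points is rank-deficient, so not all ten coefficients could be estimated.

```python
from itertools import combinations, product

import numpy as np


def bbd3(n_center: int = 3) -> np.ndarray:
    """Three-factor BBD in coded units (12 edge midpoints + center points)."""
    rows = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            row = [0.0, 0.0, 0.0]
            row[i], row[j] = a, b
            rows.append(row)
    return np.array(rows + [[0.0, 0.0, 0.0]] * n_center)


def model_matrix(X: np.ndarray) -> np.ndarray:
    """Columns: 1, X1, X2, X3, X1X2, X1X3, X2X3, X1^2, X2^2, X3^2."""
    x1, x2, x3 = X.T
    return np.column_stack([np.ones(len(X)), x1, x2, x3,
                            x1 * x2, x1 * x3, x2 * x3,
                            x1**2, x2**2, x3**2])


X = bbd3()
M = model_matrix(X)
true_beta = np.array([50.0, 4.0, -3.0, 2.0, 1.5, 0.0, -1.0, -5.0, -2.0, 0.5])  # hypothetical
y = M @ true_beta

beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)  # minimizes ||y - M beta||^2
print(np.round(beta_hat, 3))
print("rank with 3 center points:", np.linalg.matrix_rank(M))                      # 10
print("rank without center points:", np.linalg.matrix_rank(model_matrix(bbd3(0))))  # 9
```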

Step 2: Model Fitting and Expression. The estimated coefficients are substituted into the general model structure, resulting in a specific empirical model for the process under investigation.

Example from an HPLC Method Optimization Study [19]: In a study optimizing an RP-HPLC method for simultaneous drug determination, the resolution between peaks (R2) was modeled as a function of pH, percentage of acetonitrile (%ACN), and flow rate. The final fitted model, based on coded factors, would take a form similar to:

R2 = 5.25 + 0.15A - 0.32B + 0.08C - 0.11AB + 0.05AC - 0.03BC - 0.45A² - 0.28B² - 0.12C²

Here, A, B, and C represent the coded factors for pH, %ACN, and flow rate, respectively.
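Once fitted, the coded model is simply evaluated to predict the response at any setting inside the design region. A sketch using the illustrative resolution model above, with the coefficients as printed and A, B, C in coded units between -1 and +1; the function name is hypothetical.

```python
def resolution_r2(A: float, B: float, C: float) -> float:
    """Illustrative coded-unit model for peak resolution (coefficients from the text)."""
    return (5.25 + 0.15 * A - 0.32 * B + 0.08 * C
            - 0.11 * A * B + 0.05 * A * C - 0.03 * B * C
            - 0.45 * A**2 - 0.28 * B**2 - 0.12 * C**2)


# Design center and one edge-midpoint run (A high, B low, C at its midpoint).
print(round(resolution_r2(0, 0, 0), 2))   # 5.25
print(round(resolution_r2(1, -1, 0), 2))  # 5.1
```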

Step 3: Manual Coefficient Calculation (Illustrative Example). For a simple system with one factor X, the model is Y = β₀ + β₁X + β₁₁X². The coefficients are obtained by solving the normal equations below; for models with more terms, software solves the same system in matrix form, (XᵀX)β = XᵀY:

- β₀ = (∑Yᵢ / N) - β₁(∑Xᵢ / N) - β₁₁(∑Xᵢ² / N)

- The values for β₁ and β₁₁ are solved simultaneously from the normal equations:

- ∑XᵢYᵢ = β₀∑Xᵢ + β₁∑Xᵢ² + β₁₁∑Xᵢ³

- ∑Xᵢ²Yᵢ = β₀∑Xᵢ² + β₁∑Xᵢ³ + β₁₁∑Xᵢ⁴
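The three normal equations form a 3 × 3 linear system that can be solved numerically. The seven observations below are hypothetical (two runs at X = -1, three center runs, two runs at X = +1). Because the model has three coefficients and the data have three distinct levels, the fitted curve passes through the three level means (5.0, 10.0, 9.0), giving β₀ = 10, β₁ = 2 and β₁₁ = -3.

```python
import numpy as np

x = np.array([-1.0, -1.0, 0.0, 0.0, 0.0, 1.0, 1.0])
y = np.array([5.1, 4.9, 10.2, 9.9, 9.9, 9.0, 9.0])  # hypothetical responses


def power_sum(p: int) -> float:
    """Sum of X_i**p over all runs."""
    return float(np.sum(x**p))


# Normal equations for Y = b0 + b1*X + b11*X^2; the first row is the b0 equation times N.
A = np.array([
    [len(x),       power_sum(1), power_sum(2)],
    [power_sum(1), power_sum(2), power_sum(3)],
    [power_sum(2), power_sum(3), power_sum(4)],
])
rhs = np.array([y.sum(), (x * y).sum(), (x**2 * y).sum()])
b0, b1, b11 = np.linalg.solve(A, rhs)
print(f"b0 = {b0:.3f}, b1 = {b1:.3f}, b11 = {b11:.3f}")
```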

The following workflow diagram illustrates the sequential protocol for building and validating the second-order model.

Model Validation and Statistical Analysis

Once the model is built, its statistical significance and adequacy must be rigorously validated before it can be used for prediction and optimization. This is primarily done using Analysis of Variance (ANOVA).

Analysis of Variance (ANOVA) Protocol

ANOVA partitions the total variability in the observed response data into components attributable to the model and to random error [37] [14].

Step 1: Determine Model Significance (Overall F-test).

- Null Hypothesis (H₀): All model coefficients (except β₀) are zero, meaning the model has no explanatory power.

- Alternative Hypothesis (H₁): At least one coefficient is not zero.

- Decision Rule: A p-value for the overall F-test below 0.05 (or the chosen alpha level) indicates that the model is statistically significant, i.e., it explains significantly more of the response variation than an intercept-only model [37].
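As a check on the arithmetic behind the overall F-test, the sketch below recomputes the model F-value from the Model and Residual rows of Table 2 (sums of squares and degrees of freedom as reported) and obtains its p-value from the upper tail of the F distribution with `scipy.stats.f.sf`.

```python
from scipy import stats

# Model and Residual rows of Table 2 [14].
ss_model, df_model = 11250.5, 9
ss_resid, df_resid = 1505.6, 15

ms_model = ss_model / df_model   # mean square for the model
ms_resid = ss_resid / df_resid   # mean square error
f_value = ms_model / ms_resid
p_value = stats.f.sf(f_value, df_model, df_resid)  # upper-tail probability
print(f"F = {f_value:.2f}, p = {p_value:.2e}")
```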

Step 2: Evaluate Individual Parameter Significance (t-tests).

- The significance of each model term (linear, quadratic, interaction) is tested individually.

- Null Hypothesis (H₀): The specific coefficient βᵢ = 0.

- Decision Rule: A p-value less than 0.05 for a term suggests it is a significant contributor to the model and should be retained. Insignificant terms may be considered for removal to simplify the model, though this should be done cautiously [19].
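For a term with one degree of freedom, the t-test on its coefficient and the F-test reported in an ANOVA table are equivalent: t² = F, using the same residual degrees of freedom. A sketch using three F-values from Table 2 (15 residual degrees of freedom); the sign of t cannot be recovered from F, so only |t| is computed.

```python
import math

from scipy import stats

df_resid = 15
f_values = {"A-Catalyst": 18.42, "C-Temperature": 9.46, "AB": 1.20}  # from Table 2 [14]

for term, f in f_values.items():
    t_abs = math.sqrt(f)
    p_t = 2 * stats.t.sf(t_abs, df_resid)  # two-sided t-test
    p_f = stats.f.sf(f, 1, df_resid)       # F-test with 1 and 15 df
    print(f"{term:14s} |t| = {t_abs:.2f}  p(t) = {p_t:.4f}  p(F) = {p_f:.4f}")
```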

Step 3: Assess the Lack-of-Fit Test.

- This test splits the residual error into pure error (the replication error from center points) and lack-of-fit, then compares the lack-of-fit mean square with the pure-error mean square.

- A non-significant lack-of-fit (p-value > 0.05) is desired: it indicates no significant evidence of systematic variation beyond what the model explains, i.e., the model's deviations from the data are comparable to the replication error [19] [37].
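The split behind the lack-of-fit test can be sketched with hypothetical numbers: a 17-run BBD (five center points) fitted with the ten-term quadratic model leaves 7 residual degrees of freedom. The five center replicates supply 4 of them as pure error, and the remaining 3 belong to lack of fit. The residual sum of squares (1.2) and the center-point responses are invented for illustration.

```python
import numpy as np
from scipy import stats

n_runs, n_params = 17, 10
ss_resid = 1.2                                       # hypothetical, from the fitted model
center = np.array([50.1, 49.8, 50.4, 50.0, 49.7])    # hypothetical center-point responses

ss_pe = float(np.sum((center - center.mean())**2))   # pure error
df_pe = len(center) - 1
df_resid = n_runs - n_params
ss_lof, df_lof = ss_resid - ss_pe, df_resid - df_pe  # lack of fit

f_lof = (ss_lof / df_lof) / (ss_pe / df_pe)
p_lof = stats.f.sf(f_lof, df_lof, df_pe)
print(f"SS_pe = {ss_pe:.2f}, F_lof = {f_lof:.2f}, p = {p_lof:.3f}")
```

Here F_lof is 4.0 on (3, 4) degrees of freedom, so p is above 0.05 and the lack of fit is not significant.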

Key Goodness-of-Fit Metrics

The following metrics are used to quantify how well the model fits the experimental data.

Table 1: Key Goodness-of-Fit Metrics for Model Validation

| Metric | Formula / Description | Acceptance Criteria | Interpretation |
|---|---|---|---|
| Coefficient of Determination (R²) | R² = SS_Regression / SS_Total | Closer to 1.0 is better (e.g., > 0.90) [14] | Proportion of total variance in the response explained by the model. |
| Adjusted R² | Adj R² = 1 - [(1 - R²)(N - 1)/(N - P - 1)] | Closer to 1.0 is better; should be close to R². | Adjusts R² for the number of model terms P (excluding the intercept), so adding uninformative terms can lower it; guards against overfitting. |
| Predicted R² | 1 - PRESS / SS_Total, where PRESS is the sum of squared leave-one-out prediction errors. | Reasonable agreement with Adjusted R² (within 0.2) [19]. | Measures the model's predictive power for new data. |
| Adequate Precision | Signal-to-noise ratio = (max Ŷ - min Ŷ) / √(average variance of Ŷ) | > 4 is desirable [19]. | Indicates an adequate signal for model navigation. |
| Coefficient of Variation (C.V. %) | C.V. % = (Standard Deviation / Mean) × 100%, with Standard Deviation = √MS_Residual | Lower values indicate higher reproducibility. | Measures experimental error relative to the mean response. |
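The metrics in Table 1 can be computed directly from a least-squares fit. The sketch below uses a simulated 15-run BBD response (hypothetical coefficients plus fixed perturbations), counts P as the nine non-intercept terms as in the Adjusted R² formula, and obtains Predicted R² from PRESS via the hat-matrix leverages. For Adequate Precision it takes the average prediction variance as 10σ²/n (ten parameters including the intercept), which is the mean of the leverages times σ²; that this matches Design-Expert's exact convention is an assumption.

```python
from itertools import combinations, product

import numpy as np


def bbd3(n_center: int = 3) -> np.ndarray:
    """Three-factor BBD in coded units (12 edge midpoints + center points)."""
    rows = []
    for i, j in combinations(range(3), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            row = [0.0, 0.0, 0.0]
            row[i], row[j] = a, b
            rows.append(row)
    return np.array(rows + [[0.0, 0.0, 0.0]] * n_center)


def model_matrix(X: np.ndarray) -> np.ndarray:
    """Full quadratic model matrix (intercept first)."""
    x1, x2, x3 = X.T
    return np.column_stack([np.ones(len(X)), x1, x2, x3, x1 * x2, x1 * x3,
                            x2 * x3, x1**2, x2**2, x3**2])


M = model_matrix(bbd3())
perturb = np.array([0.8, -0.5, 0.3, -0.9, 0.6, -0.2, 0.4, -0.7,
                    0.5, 0.1, -0.6, 0.2, 0.3, -0.4, 0.1])
y = M @ np.array([80.0, 6.0, -9.0, 3.0, 2.0, 0.0, -1.5, -4.0, -3.0, 1.0]) + perturb

beta, *_ = np.linalg.lstsq(M, y, rcond=None)
fitted = M @ beta
resid = y - fitted
n, n_params = M.shape
P = n_params - 1                                   # model terms excluding the intercept

ss_total = float(np.sum((y - y.mean())**2))
ss_resid = float(resid @ resid)
r2 = 1 - ss_resid / ss_total                       # equals SS_regression / SS_total
adj_r2 = 1 - (1 - r2) * (n - 1) / (n - P - 1)

h = np.diag(M @ np.linalg.inv(M.T @ M) @ M.T)      # leverages
press = float(np.sum((resid / (1 - h))**2))        # leave-one-out prediction errors
pred_r2 = 1 - press / ss_total

sigma2 = ss_resid / (n - n_params)
adeq_precision = (fitted.max() - fitted.min()) / np.sqrt(n_params * sigma2 / n)
cv_pct = 100 * np.sqrt(sigma2) / y.mean()

print(f"R2={r2:.4f} adjR2={adj_r2:.4f} predR2={pred_r2:.4f} "
      f"AdeqPrec={adeq_precision:.1f} CV={cv_pct:.2f}%")
```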

Table 2: Exemplary ANOVA Table from a BBD Study on Catalytic Synthesis [14]

| Source | Sum of Squares | Degrees of Freedom | Mean Square | F-Value | p-Value |
|---|---|---|---|---|---|
| Model | 11250.5 | 9 | 1250.1 | 12.45 | < 0.001 |
| A-Catalyst | 1850.2 | 1 | 1850.2 | 18.42 | 0.001 |
| B-Time | 2450.8 | 1 | 2450.8 | 24.40 | < 0.001 |
| C-Temperature | 950.5 | 1 | 950.5 | 9.46 | 0.008 |
| AB | 120.5 | 1 | 120.5 | 1.20 | 0.292 |
| AC | 65.3 | 1 | 65.3 | 0.65 | 0.433 |
| BC | 45.1 | 1 | 45.1 | 0.45 | 0.513 |
| A² | 2850.4 | 1 | 2850.4 | 28.38 | < 0.001 |
| B² | 1850.1 | 1 | 1850.1 | 18.42 | 0.001 |
| C² | 750.3 | 1 | 750.3 | 7.47 | 0.015 |
| Residual | 1505.6 | 15 | 100.4 | | |
| Lack-of-Fit | 1405.2 | 10 | 140.5 | 4.68 | 0.051 |
| Pure Error | 100.4 | 5 | 20.1 | | |
| Cor Total | 12756.1 | 24 | | | |

Interpretation of Table 2: The model is highly significant (overall F-value of 12.45, p < 0.001). The linear terms (A, B, C) and quadratic terms (A², B², C²) are significant, while the interaction terms (AB, AC, BC) are not. The lack-of-fit is not significant at α = 0.05 (p = 0.051), so there is no significant evidence of lack of fit, although the margin is narrow. The R² value for this study was reported as 71.2% [14].

Diagnostic Analysis and Model Adequacy Checking

Beyond summary statistics, diagnostic analysis of residuals (the differences between observed and predicted values) is crucial for verifying model assumptions.

Step 1: Check for Normal Distribution of Residuals.

- Protocol: Create a Normal Probability Plot of the residuals.

- Acceptance Criterion: The points should roughly follow a straight line.

- Deviation: A non-linear pattern suggests a non-normal distribution of errors, which may violate a key assumption for ANOVA.

Step 2: Check for Constant Variance (Homoscedasticity).

- Protocol: Plot the residuals against the predicted values.

- Acceptance Criterion: The residuals should be randomly scattered in a band of constant width around zero (no obvious patterns like funnels or curves).

- Deviation: A funnel-shaped pattern indicates non-constant variance (heteroscedasticity).

Step 3: Check for Independence.

- Protocol: Plot the residuals against the run order of the experiments.

- Acceptance Criterion: Random scatter.

- Deviation: A trend or pattern suggests that time-related factors may be influencing the response.
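The three plots have simple numeric companions that can flag problems before plotting. A sketch with hypothetical residuals and fitted values listed in run order: `scipy.stats.probplot` returns the normal-probability-plot coordinates and the correlation of the points with a fitted straight line (values near 1 support normality). The correlation between |residual| and fitted value hints at a funnel, and the correlation with run order hints at a time trend. These summaries supplement inspection of the plots rather than replace it.

```python
import numpy as np
from scipy import stats

# Hypothetical residuals and fitted values, listed in the order the runs were performed.
resid = np.array([0.4, -0.3, 0.1, -0.5, 0.2, 0.6, -0.2, 0.0,
                  -0.4, 0.3, -0.1, 0.5, -0.6, 0.2, -0.2])
fitted = np.array([212.0, 240.0, 198.0, 255.0, 230.0, 205.0, 248.0, 221.0,
                   236.0, 201.0, 219.0, 244.0, 227.0, 209.0, 233.0])
run_order = np.arange(1, len(resid) + 1)

# 1) Normality: normal probability plot coordinates and straight-line fit.
(theoretical_q, ordered_resid), (slope, intercept, r_normal) = stats.probplot(resid, dist="norm")

# 2) Constant variance: does the residual spread grow with the fitted value?
r_funnel = np.corrcoef(fitted, np.abs(resid))[0, 1]

# 3) Independence: is there a drift with run order?
r_trend = np.corrcoef(run_order, resid)[0, 1]

print(f"probability-plot r = {r_normal:.3f}")
print(f"corr(fitted, |resid|) = {r_funnel:+.2f}, corr(run order, resid) = {r_trend:+.2f}")
```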

The following diagram illustrates the logical relationships and decision points in the model validation process.

Application Notes and Exemplary Protocols

Case Study 1: Optimization of an RP-HPLC Method

In the development of an RP-HPLC method for simultaneous determination of methocarbamol, indomethacin, and betamethasone, a BBD with three factors (pH, %ACN, flow rate) and two responses (peak resolutions) was employed [19].

Protocol: