Central Composite Design in Organic Synthesis: A Strategic Framework for Optimization in Pharmaceutical Research

Abstract

This article provides a comprehensive guide to the application of Central Composite Design (CCD) in organic synthesis and pharmaceutical development. Tailored for researchers and drug development professionals, it explores the foundational principles of CCD as a powerful Response Surface Methodology (RSM) tool. The scope spans from core concepts and experimental planning to advanced methodological applications in synthesizing nanomaterials, optimizing analytical techniques, and developing drug formulations. It further addresses critical troubleshooting aspects and offers a comparative analysis with other Design of Experiments (DOE) approaches, equipping scientists with the knowledge to efficiently optimize complex processes, enhance predictive capability, and ensure robust, sustainable outcomes in biomedical research.

Understanding Central Composite Design: Core Principles and Strategic Advantages for Synthetic Chemistry

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques for developing, improving, and optimizing processes [1]. It is particularly valuable for modeling and analyzing problems in which several independent variables influence a dependent response, with the objective of optimizing that response [1]. RSM uses experimental designs to fit empirical models. A first-order model is adequate when the response is well approximated by a linear function of the factors; when the true response surface is curved, a higher-degree polynomial, such as a second-order model, must be used [2].

Central Composite Design (CCD) is the most prevalent and widely used experimental design for fitting second-order response surface models [2] [3] [4]. Originally developed by Box and Wilson, it serves as an efficient alternative to the more extensive three-level factorial designs [4] [5]. A CCD is a mathematically structured design that allows for the estimation of curvature and the modeling of a response with a second-order equation without requiring a complete three-level factorial experiment, which can be prohibitively expensive in terms of experimental runs, especially as the number of factors increases [2] [6] [4]. The design is constructed around a central point, making it ideal for sequential experimentation, as it can augment a pre-existing two-level factorial or fractional factorial design [2].

The Structure and Types of Central Composite Design

A Central Composite Design is composed of three distinct sets of experimental runs, which together enable the efficient estimation of a second-order model [6] [5]:

- Factorial Points: A two-level full factorial or fractional factorial design forms the core of the CCD. These points, located at the corners of the experimental space (coded as ±1), are used primarily to estimate linear and interaction effects [2] [5].

- Axial Points (or Star Points): These points are located on the axes of the coordinate system, symmetrically at a distance α from the center point, with all other factors set to zero (coded as (±α, 0,..., 0), (0, ±α,..., 0), etc.) [2] [5]. The introduction of these points is what allows for the estimation of the quadratic terms in the model [2].

- Center Points: Several replicates are performed at the center of the design space (coded as (0, 0,..., 0)) [2]. These points provide an independent estimate of pure experimental error and are crucial for detecting curvature in the response surface [2] [6].

The value of α, the distance of the axial points from the center, is a critical parameter that defines the geometry and properties of the CCD. Based on the chosen α value, three primary types of CCD are recognized [4]:

- Circumscribed CCD (CCC): This is the original form of CCD where the axial points are located outside the "cube" formed by the factorial points (|α| > 1). This design requires five levels for each factor and provides a spherical or hyperspherical experimental region. It is often a rotatable design, meaning it offers constant prediction variance at all points equidistant from the design center [2] [4].

- Face-Centered CCD (CCF): In this design, the axial points are placed precisely on the faces of the factorial cube (α = ±1). This results in only three levels for each factor and is useful when it is impractical to set factor levels beyond the -1 and +1 ranges. However, this design is not rotatable [2] [4].

- Inscribed CCD (CCI): Here, the factorial points are scaled down to fit within the space defined by the axial points, which are set at the operational limits of the factors. The entire design is thus inscribed within the original cube. This is applicable when the experimental region is strictly constrained [4].
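The three point sets and the three design types described above can be made concrete with a short sketch. The function names `ccd_points` and `levels_per_factor` are hypothetical helpers written for this illustration, not part of any statistical package, and the default α assumes a full factorial core with the rotatable choice.

```python
from itertools import product


def ccd_points(k, kind="CCC", alpha=None, n_center=1):
    """Return coded CCD runs for k factors as a list of tuples.

    kind: "CCC" (axial points at +/-alpha, outside the cube),
          "CCF" (axial points on the cube faces, alpha = 1),
          "CCI" (CCC rescaled so the axial points sit at +/-1).
    """
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable choice for a full factorial core
    if kind == "CCF":
        corner, axial = 1.0, 1.0
    elif kind == "CCC":
        corner, axial = 1.0, alpha
    elif kind == "CCI":
        corner, axial = 1.0 / alpha, 1.0
    else:
        raise ValueError(f"unknown CCD type: {kind}")

    # Factorial ("cube") points: every +/- combination of the corner level.
    factorial = [tuple(corner * s for s in signs)
                 for signs in product((-1.0, 1.0), repeat=k)]
    # Axial ("star") points: one factor at +/-axial, all others at 0.
    star = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * axial
            star.append(tuple(point))
    # Replicated center points.
    center = [(0.0,) * k] * n_center
    return factorial + star + center


def levels_per_factor(points, factor=0, ndigits=6):
    """Distinct coded levels taken by one factor across the design."""
    return sorted({round(p[factor], ndigits) for p in points})


for kind in ("CCC", "CCF", "CCI"):
    design = ccd_points(3, kind, n_center=3)
    print(kind, len(design), levels_per_factor(design))
```

For three factors, the sketch shows five distinct levels per factor for CCC and CCI and three for CCF, which matches the descriptions in the list above.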

The table below summarizes the key characteristics of these CCD types and provides the formula for calculating a rotatable design.

Table 1: Types of Central Composite Designs and Their Properties

| Design Type | Alpha (α) Value | Factor Levels | Key Property | Typical Use Case |

|---|---|---|---|---|

| Circumscribed (CCC) | α > 1 (often α = (nF)^(1/4) for rotatability) [6] [4] | 5 | Rotatable [4] | Exploring a spherical region; sequential experimentation [4] |

| Face-Centered (CCF) | α = ±1 [2] [4] | 3 | Non-rotatable [4] | Region of interest is a cube; cannot run experiments outside factorial limits [2] |

| Inscribed (CCI) | α > 1 (factorial points scaled to ±1/α) [4] | 5 | Rotatable [4] | Experimentation is limited to a constrained, cubical region [4] |

| Rotatable Design | α = (nF)^(1/4), where nF is the number of points in the factorial part [6] | Varies | Equal prediction variance at equal distances from center [2] [6] | When uniform precision of prediction is desired across the design space [6] |

The total number of experiments (N) required for a CCD with k factors is N = 2^k + 2k + nc (with 2^(k-p) replacing 2^k when a fractional factorial core is used), where nc is the number of center points [6]. The number of center points (typically 3-6) matters because replicated center runs help stabilize the prediction variance across the experimental region [6].
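As a quick planning aid, the run-count formula and the rotatability condition can be evaluated directly. This is a minimal sketch; `ccd_run_count` and `rotatable_alpha` are hypothetical helper names, and `p` denotes the degree of fractionation of the factorial core.

```python
def ccd_run_count(k, n_center, p=0):
    """N = 2^(k-p) + 2k + n_c for a CCD with a 2^(k-p) factorial core."""
    if not 0 <= p < k:
        raise ValueError("p must satisfy 0 <= p < k")
    return 2 ** (k - p) + 2 * k + n_center


def rotatable_alpha(k, p=0):
    """alpha = n_F^(1/4), where n_F = 2^(k-p) is the number of factorial points."""
    return (2 ** (k - p)) ** 0.25


# Run counts (with 6 center points) and rotatable alpha for 2 to 5 factors.
for k in (2, 3, 4, 5):
    print(k, ccd_run_count(k, n_center=6), round(rotatable_alpha(k), 3))
```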

Mathematical Foundation and Model Fitting

The relationship between the independent variables and the response in RSM is typically approximated by a second-order polynomial regression model. For k number of factors, the full quadratic model can be expressed as shown in Equation 1 [3] [4]:

Equation 1: Second-Order Polynomial Model

Y = β₀ + ∑βᵢXᵢ + ∑βᵢᵢXᵢ² + ∑∑βᵢⱼXᵢXⱼ + ε

(single sums run over i = 1, ..., k; the double sum runs over all pairs i < j)

Where:

- Y is the predicted response.

- β₀ is the constant (intercept) term.

- βᵢ are the linear coefficients.

- βᵢᵢ are the quadratic coefficients.

- βᵢⱼ are the interaction coefficients.

- Xᵢ and Xⱼ are the coded values of the independent factors.

- ε is the random error term.
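To make the general form concrete, Equation 1 can be written out for the smallest case, k = 2, together with the count of coefficients that the design must be able to estimate. The symbol p for the number of coefficients is introduced here for illustration only.

```latex
% Equation 1 expanded for k = 2 factors
Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2
      + \beta_{11} X_1^2 + \beta_{22} X_2^2
      + \beta_{12} X_1 X_2 + \varepsilon

% Number of coefficients in the full quadratic model:
% 1 intercept + k linear + k quadratic + k(k-1)/2 interaction terms
p = 1 + 2k + \frac{k(k-1)}{2} = \frac{(k+1)(k+2)}{2}
\qquad (k = 2 \Rightarrow p = 6, \quad k = 3 \Rightarrow p = 10)
```

A design therefore needs at least p distinct runs, plus replicates if pure error is to be estimated.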

The use of coded factor levels (e.g., -1, 0, +1) instead of natural units is standard practice. This coding avoids issues with multicollinearity, improves the computational accuracy of the regression, and allows for the direct comparison of the magnitude of the regression coefficients, making it easier to judge the relative importance of each factor [1].
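The coding transformation itself is a simple linear rescaling. In the sketch below, U_i denotes the natural (uncoded) value of factor i; this symbol is an assumption made here to keep it distinct from the coded X_i of Equation 1.

```latex
X_i = \frac{U_i - U_{i,0}}{\Delta U_i}, \qquad
U_{i,0} = \frac{U_i^{\mathrm{high}} + U_i^{\mathrm{low}}}{2}, \qquad
\Delta U_i = \frac{U_i^{\mathrm{high}} - U_i^{\mathrm{low}}}{2}

% Illustrative temperature range of 60 to 80 degC:
% U_0 = 70, \Delta U = 10, so
% 60 \mapsto -1, \quad 65 \mapsto -0.5, \quad 70 \mapsto 0, \quad 80 \mapsto +1
```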

After conducting the experiments as per the CCD matrix, the data is analyzed using multiple regression analysis to fit the second-order model. The adequacy of the fitted model is then rigorously tested using Analysis of Variance (ANOVA). Key metrics evaluated during this validation include [7] [1]:

- Coefficient of Determination (R² and Adjusted R²): Measures the proportion of variation in the response explained by the model.

- Lack-of-Fit Test: Determines whether the selected model adequately fits the data or if a more complex model is needed.

- Residual Analysis: Checks the underlying assumptions of the regression model (e.g., normality, constant variance).
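The first of these metrics is straightforward to compute by hand once fitted values are available. The following is a minimal sketch; `r_squared` is a hypothetical helper, and `n_params` is assumed to count every estimated coefficient including the intercept.

```python
def r_squared(y_obs, y_fit, n_params):
    """Return (R2, adjusted R2) for a fitted model.

    n_params counts every estimated coefficient, including the intercept.
    """
    n = len(y_obs)
    if n != len(y_fit):
        raise ValueError("y_obs and y_fit must have the same length")
    if n <= n_params:
        raise ValueError("need more runs than model coefficients")
    mean_y = sum(y_obs) / n
    ss_total = sum((y - mean_y) ** 2 for y in y_obs)
    ss_resid = sum((y - f) ** 2 for y, f in zip(y_obs, y_fit))
    r2 = 1.0 - ss_resid / ss_total
    # Adjusted R2 penalizes model size via residual and total degrees of freedom.
    adj_r2 = 1.0 - (ss_resid / (n - n_params)) / (ss_total / (n - 1))
    return r2, adj_r2


# Made-up observed and fitted values for a two-coefficient model.
print(r_squared([1, 2, 3, 4, 5], [1.1, 1.9, 3.0, 4.1, 4.9], n_params=2))
```

Adjusted R² is the more useful of the two when comparing a full quadratic model with a reduced one, because plain R² can never decrease when terms are added.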

Once a statistically adequate model is established, it can be used to navigate the response surface and identify optimal conditions.

Experimental Protocol for Implementing a CCD in Organic Synthesis

The following protocol outlines a step-by-step methodology for applying a Central Composite Design to optimize a generic organic synthesis reaction, such as a catalytic process or a pharmaceutical intermediate synthesis.

Phase 1: Pre-Experimental Planning

- Define the Optimization Objective: Clearly state the goal. For organic synthesis, the Response (Y) could be reaction yield, product purity, selectivity, or the concentration of a key impurity. The objective is to maximize, minimize, or achieve a target value for this response.

- Identify Critical Process Factors (X): Based on prior knowledge or screening experiments, select the key independent variables to be optimized. For a synthetic reaction, typical factors include:

- X₁: Reaction Temperature (°C)

- X₂: Reaction Time (hours)

- X₃: Catalyst Loading (mol%)

- X₄: Reactant Molar Ratio

- Determine Factor Levels and Code Them: Define the low (-1) and high (+1) levels for each factor. Center point (0) is the midpoint. For a Face-Centered CCD with three factors, the levels would be:

- Temperature: Low (60°C), Center (70°C), High (80°C) → Coded: -1, 0, +1

- Time: Low (1 h), Center (2 h), High (3 h) → Coded: -1, 0, +1

- Catalyst Loading: Low (1 mol%), Center (2 mol%), High (3 mol%) → Coded: -1, 0, +1

- Select a CCD Type and Generate the Design Matrix: Using statistical software (e.g., Minitab, Design-Expert), select the appropriate CCD type (e.g., CCF for a constrained region) and generate the experimental run sheet. A three-factor CCF with 3 center points will yield 17 experimental runs (2³ factorial points + 2*3 axial points + 3 center points). Randomize the run order to minimize the effects of lurking variables.

Phase 2: Experimental Workflow and Data Collection

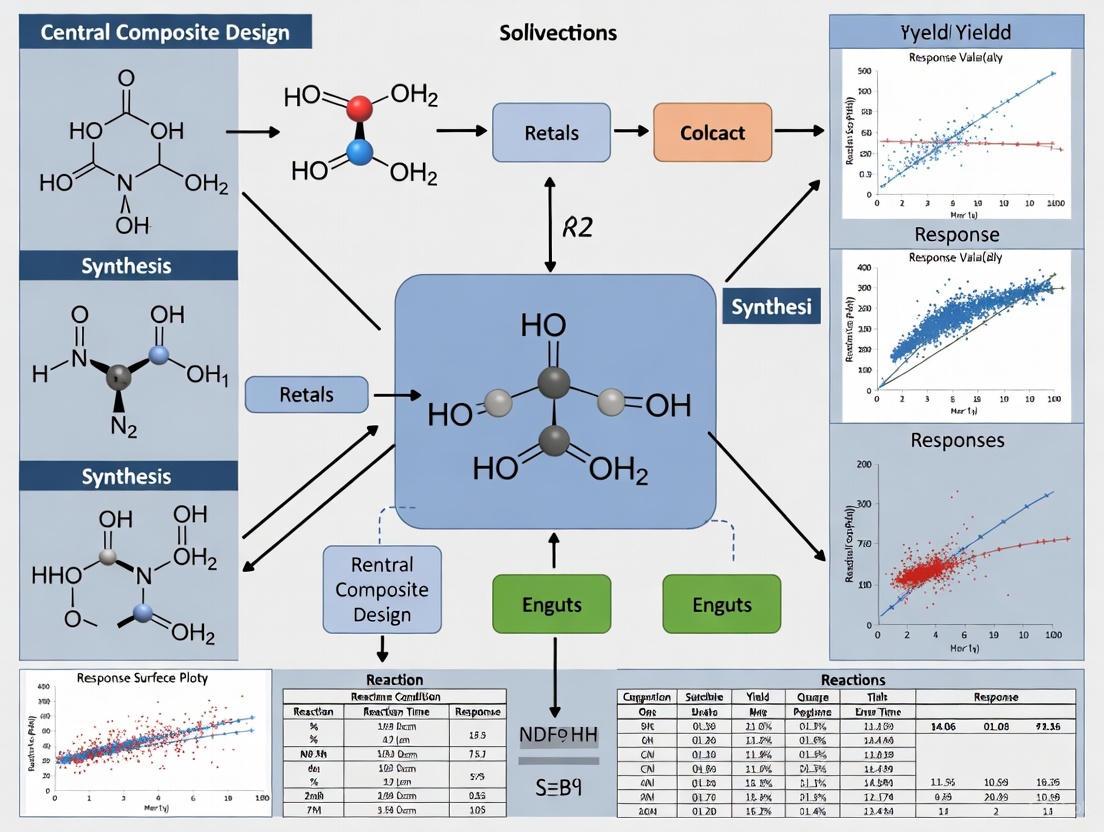

The following diagram illustrates the sequential workflow for conducting the CCD-based optimization.

- Execute Experiments: Perform the synthesis reactions according to the randomized design matrix. For example, for a run with coded values (X₁, X₂, X₃) = (-1, +1, 0), set the temperature to the low level (60°C), the time to the high level (3 h), and the catalyst loading to the center point (2 mol%).

- Measure the Response: After each reaction, work up the product and measure the defined response (e.g., calculate the percentage yield after purification and analysis by HPLC or NMR).

Phase 3: Data Analysis, Optimization, and Validation

- Model Fitting and Validation: Input the experimental data into the statistical software. Perform multiple regression analysis to fit the second-order model (Equation 1). Conduct ANOVA to check the model's significance and the lack-of-fit test. Examine R² values and residual plots to confirm model adequacy.

- Locate the Optimum: Use the software's optimization tools to navigate the generated response surface. This can involve examining contour plots or using numerical optimization techniques (e.g., the desirability function) to find the factor levels that produce the maximum predicted yield.

- Confirmatory Experiment: Run the synthesis reaction at the predicted optimal conditions. Compare the experimentally observed response with the model's prediction. A close agreement validates the model and the optimization process.
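The desirability approach mentioned in the optimization step can be sketched as follows. This assumes the common larger-is-better form, in which a response scores 0 at or below a lower limit and 1 at or above a target, with individual scores combined by a geometric mean. The function names, limits, and candidate predictions are all made up for illustration.

```python
def desirability_max(y, low, target, weight=1.0):
    """Larger-is-better desirability: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def overall_desirability(values):
    """Geometric mean of individual desirabilities (0 if any one is 0)."""
    product = 1.0
    for d in values:
        product *= d
    return product ** (1.0 / len(values))


# Hypothetical model predictions (yield %, purity %) at two candidate settings.
candidates = {"setting 1": (90.0, 93.0), "setting 2": (85.0, 97.0)}
scores = {
    name: overall_desirability([
        desirability_max(y_yield, low=70.0, target=95.0),
        desirability_max(y_purity, low=90.0, target=99.0),
    ])
    for name, (y_yield, y_purity) in candidates.items()
}
best = max(scores, key=scores.get)
print(scores, best)
```

The geometric mean is what makes the combined score drop to zero whenever any single response is unacceptable, so a high yield cannot compensate for an out-of-specification purity.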

Research Reagent Solutions for a CCD in Organic Synthesis

Table 2: Essential Research Reagents and Materials for a Catalytic Reaction Optimization

| Reagent / Material | Function in the Experiment | Specification / Handling Notes |

|---|---|---|

| Organic Substrate(s) | The core starting material(s) undergoing the synthetic transformation. | High purity (e.g., >98%); may require purification (recrystallization, distillation) before use to ensure reproducibility. |

| Catalyst | Substance that increases the reaction rate and selectivity without being consumed. | Precise weighing is critical (e.g., to 0.1 mg). Store as per manufacturer guidelines (e.g., under inert atmosphere if air-sensitive). |

| Solvent | The medium in which the reaction occurs. Can influence reaction rate, mechanism, and selectivity. | Anhydrous grade if required; degassed with an inert gas (N₂, Ar) for air-sensitive reactions. |

| Reagents / Additives | Additional chemicals required for the reaction (e.g., bases, acids, oxidants, reducing agents). | Solution concentrations should be accurately prepared. Handle with appropriate safety measures (e.g., in a fume hood). |

| Internal Standard | For quantitative analysis (e.g., by GC or HPLC). | A chemically inert, non-volatile compound that does not co-elute with reactants or products. |

| Deuterated Solvent | For reaction monitoring or product characterization by NMR spectroscopy. | Stored under inert atmosphere; used in high-precision NMR tubes. |

Applications in Pharmaceutical Research and Organic Synthesis

CCD has been extensively and successfully applied in various domains of pharmaceutical and organic chemistry research, demonstrating its versatility and power:

- Optimization of Analytical Methods: A significant application is in the optimization of analytical procedures for determining analytes in food and pharmaceutical samples. CCD is used to optimize parameters like extraction time, temperature, solvent composition, and pH to maximize recovery and analytical performance [8].

- Drug Formulation and Process Optimization: In pharmaceutical development, CCD is employed to optimize drug formulations to achieve desired dissolution profiles, stability, and bioavailability. It is also used to refine manufacturing processes like tableting and lyophilization (freeze-drying) to control critical quality attributes [4] [1].

- Reaction Optimization: A study on the Photo-Fenton degradation of the antibiotic Tylosin used a CCD with three factors (H₂O₂ concentration, pH, and Fe²⁺ concentration) to optimize the Total Organic Carbon (TOC) removal. The model identified pH and Fe²⁺ concentration as the most critical parameters and successfully predicted optimal conditions for maximum degradation [7].

- Material Science and 3D Printing: In advanced manufacturing, CCD has been used to solve complex problems like color accuracy in PolyJet 3D printing. Researchers used CCD to develop a model that predicts color deviation and determines the optimal color input needed in the printer software to achieve a target color on the printed object [9].

Comparison with Other RSM Designs

While CCD is the most popular design, the Box-Behnken Design (BBD) is another efficient alternative for fitting second-order models. The table below provides a comparative overview.

Table 3: Comparison of Central Composite Design (CCD) and Box-Behnken Design (BBD)

| Feature | Central Composite Design (CCD) | Box-Behnken Design (BBD) |

|---|---|---|

| Structure | Combines factorial, axial, and center points [2]. | Combines two-level factorial designs with incomplete block designs; points are at midpoints of edges of the process space [2]. |

| Levels per Factor | Can have up to 5 levels (CCC), or 3 levels (CCF) [2]. | Always 3 levels per factor [2]. |

| Embedded Factorial | Contains a full or fractional factorial design [2]. | Does not contain an embedded factorial design [2]. |

| Sequentiality | Ideal for sequential experimentation; can build on a previous factorial design [2]. | Not suited for sequential experimentation; is a standalone design [2]. |

| Number of Runs | Generally as many or more runs for the same number of factors (e.g., 14 non-center runs for 3 factors, so 15 with 1 center point). | Often fewer design points than CCD for the same number of factors (e.g., 12 non-center runs for 3 factors, so 15 with 3 center points) [2]. |

| Axial Points | Includes axial points outside the factorial space (except CCF) [2]. | No axial points; all points are within a safe operating cube [2]. |

| Primary Advantage | Flexibility, rotatability, and suitability for sequential studies. | Economical (fewer runs); ensures all factors are within safe operating limits simultaneously [2]. |

Within the methodological framework of a thesis investigating the optimization of complex organic synthesis pathways, the Central Composite Design (CCD) emerges as a pivotal response surface methodology. It efficiently builds a second-order (quadratic) model, essential for locating optimal reaction conditions—such as maximizing yield or purity—without the prohibitive cost of a full three-level factorial experiment [10]. This application note deconstructs the core architecture of the CCD, providing researchers and drug development professionals with detailed protocols and visual tools for implementation.

Anatomical Deconstruction of the CCD

A standard CCD is composed of three distinct sets of experimental runs, each serving a specific statistical and exploratory purpose [11] [10].

- Factorial Points (The "Cube"): This core is a two-level full factorial or a Resolution V fractional factorial design. The factor levels are coded as -1 (low) and +1 (high), defining the primary region of interest or the "cube" of the design space [11] [12]. For k factors, a full factorial contributes 2^k points.

- Axial Points (The "Star"): These are 2k points located on the axes defined by each factor. For each factor, two runs are performed where that factor is set to ±α (with α > 1 typically), and all other factors are set at their center point (0) [11] [10]. These points allow for the estimation of pure quadratic curvature.

- Center Points: These are multiple replicate runs where all factors are set at their midpoint (coded level 0). They provide an estimate of pure experimental error, stabilize the prediction variance across the design space, and allow for a check of model curvature [11] [6].

The following diagram illustrates the integration of these components for a two-factor system, a common scenario in screening reaction parameters like temperature and catalyst loading.

Diagram 1: CCD structure for two factors showing factorial (blue), axial (red), and center (green) points.

Quantitative Design Data and Selection

The choice of the axial distance α and the number of center points (n_c) are critical design decisions that affect properties like rotatability (constant prediction variance at equal distances from the center) and orthogonal blocking [11] [12]. The following tables synthesize key quantitative data for planning.

Table 1: Common α Values and Design Properties for Different CCD Types

| CCD Type | Abbreviation | α Value | Factor Levels | Key Property | Application Context in Synthesis |

|---|---|---|---|---|---|

| Circumscribed | CCC | α > 1 (e.g., (2^k)^(1/4) for rotatability) | 5 | Rotatable; explores largest space [11] | When the operational region can be safely extended beyond initial factorial bounds. |

| Face-Centered | CCF | α = 1 | 3 | Axial points at face centers; not rotatable [11] | When the ±1 levels represent hard practical or safety limits (e.g., solvent boiling point). |

| Inscribed | CCI | α = 1 (factorial points scaled in) | 5 | Rotatable; explores smallest space [11] | When the star points represent absolute limits of operability. |

Table 2: Run Count Comparison for k Factors [13] [6]

| Number of Factors (k) | Factorial Points (2^k) | Axial Points (2k) | Recommended Center Points (n_c) | Total CCD Runs | Equivalent 3-Level Full Factorial (3^k) |

|---|---|---|---|---|---|

| 2 | 4 | 4 | 5-6 | 13-14 | 9 |

| 3 | 8 | 6 | 5-6 | 19-20 | 27 |

| 4 | 16 | 8 | 6 | 30 | 81 |

| 5 | 32 (or 16 for frac. factorial) | 10 | 6 | 48 (or 32 with the fractional factorial) | 243 |

Table 3: Example α Values for Rotatable Designs with Full Factorial Core [11]

| Number of Factors (k) | Factorial Portion | α = (2^k)^(1/4) |

|---|---|---|

| 2 | 2^2 | 1.414 |

| 3 | 2^3 | 1.682 |

| 4 | 2^4 | 2.000 |

| 5 | 2^5 | 2.378 |

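The efficiency of a CCD relative to a full three-level factorial grows rapidly with the number of factors, as shown in Table 2: for two factors the 3^2 factorial is actually the smaller design, but from three factors onward the CCD requires markedly fewer runs. This makes it a powerful tool for optimizing multi-parameter organic reactions where experimental runs (syntheses) are resource-intensive [13].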

Experimental Protocol: Implementing a CCD for Reaction Optimization

This protocol outlines the steps to design, execute, and analyze a CCD, using the optimization of a hypothetical palladium-catalyzed cross-coupling reaction as a case study. Key factors might include temperature (Factor A), catalyst loading (Factor B), and reaction time (Factor C).

Protocol Part A: Design Phase

- Define Factor Ranges: Based on preliminary screening (e.g., from a prior factorial design), set the low (-1) and high (+1) levels for each continuous factor in uncoded units (e.g., Temperature: 80°C to 120°C) [13] [12].

- Choose CCD Type and α: For a first optimization where limits are not rigid, a rotatable CCC design is preferred. For 3 factors, use α = 1.682 (from Table 3). If factors have strict bounds (e.g., solvent reflux temperature), use a CCF design (α=1) [11] [13].

- Generate Design Matrix: Use statistical software (e.g., Minitab, JMP, R). Specify 3 factors, select "Central Composite" design, and choose the appropriate α. The software will generate a randomized run order to minimize confounding from lurking variables [14]. An example design table is shown in Table 4.

- Incorporate Center Points: Include 4-6 replicated center points to adequately estimate pure error and ensure good prediction variance properties in the region of most interest [6].

Table 4: Example CCD Design Table for 3 Factors (Randomized Run Order) [14] [6]

| Run Order | Block | Point Type | A: Temp (Coded) | B: Catalyst (Coded) | C: Time (Coded) | A (Uncoded °C) | B (Uncoded mol%) | C (Uncoded h) |

|---|---|---|---|---|---|---|---|---|

| 1 | 1 | Factorial | -1 | 1 | -1 | 80 | 3.0 | 12 |

| 2 | 1 | Center | 0 | 0 | 0 | 100 | 2.0 | 18 |

| 3 | 1 | Axial | 0 | 0 | -1.682 | 100 | 2.0 | 7.9 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... |

| 20 | 2 | Axial | 0 | 1.682 | 0 | 100 | 3.68 | 18 |

Protocol Part B: Execution & Analysis Phase

- Conduct Experiments: Perform the synthesis reactions exactly as specified by the randomized design table. Measure the response variable(s) of interest (e.g., reaction yield, purity by HPLC).

- Fit the Quadratic Model: Using the experimental data, perform multiple linear regression to fit a second-order model: Yield = β₀ + β₁A + β₂B + β₃C + β₁₂AB + β₁₃AC + β₂₃BC + β₁₁A² + β₂₂B² + β₃₃C²

- Model Reduction & ANOVA: Use backward elimination or forward selection based on p-values (e.g., a significance level of 0.05, which is unrelated to the axial distance α) to arrive at a reduced model that retains only the significant terms. Analyze the resulting Analysis of Variance (ANOVA) table to assess the model's significance and lack-of-fit [13].

- Interpretation & Optimization: Use contour plots and response surface plots from the fitted model to visualize the relationship between factors and the response. Locate the factor settings that predict the maximum yield or desired purity profile.

- Validation: Run 2-3 confirmation experiments at the predicted optimal conditions to validate the model's accuracy.
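The model-fitting step above can be sketched without any statistical package. The example below builds the ten-term model matrix in the same term order as the equation in the protocol, solves the normal equations with a small Gaussian elimination routine, and checks that known coefficients are recovered from noise-free synthetic data. All function names and the coefficient values are made up for illustration; in practice a dedicated regression routine in statistical software would normally be used instead.

```python
from itertools import combinations, product


def model_row(x):
    """Full quadratic model terms for one coded run: 1, x_i, x_i*x_j (i<j), x_i^2."""
    k = len(x)
    row = [1.0] + list(x)
    row += [x[i] * x[j] for i, j in combinations(range(k), 2)]
    row += [xi ** 2 for xi in x]
    return row


def solve(a, b):
    """Solve a @ beta = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: design cannot estimate all terms")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    beta = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * beta[c] for c in range(r + 1, n))
        beta[r] = (m[r][n] - s) / m[r][r]
    return beta


def fit_quadratic(runs, y):
    """Ordinary least squares via the normal equations (X'X) beta = X'y."""
    X = [model_row(x) for x in runs]
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


# Rotatable three-factor CCD: 8 factorial + 6 axial + 6 center runs.
alpha = 8 ** 0.25
runs = [tuple(float(s) for s in signs) for signs in product((-1, 1), repeat=3)]
for i in range(3):
    for s in (-alpha, alpha):
        runs.append(tuple(s if j == i else 0.0 for j in range(3)))
runs += [(0.0, 0.0, 0.0)] * 6

# Made-up coefficients in the order b0, b1, b2, b3, b12, b13, b23, b11, b22, b33.
true_beta = [80.0, 3.0, 5.0, -1.0, 1.5, 0.0, -0.5, -4.0, -2.0, -1.0]
y = [sum(b * t for b, t in zip(true_beta, model_row(x))) for x in runs]
beta = fit_quadratic(runs, y)
print([round(b, 6) for b in beta])
```

Solving the normal equations directly is adequate for a small, well-conditioned coded design like this one; for larger or poorly scaled problems a QR- or SVD-based least-squares routine is the safer choice.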

The workflow from design to validation is summarized in the following diagram.

Diagram 2: Step-by-step workflow for implementing a CCD in organic synthesis optimization.

The Scientist's Toolkit: Essential Materials for a CCD-Based Study

Table 5: Key Research Reagent Solutions and Materials

| Item | Function/Explanation | Example in Context |

|---|---|---|

| Statistical Software | Used to generate the randomized CCD matrix, perform regression analysis, ANOVA, and create response surface plots. Essential for design and data interpretation. | Minitab, JMP, Design-Expert, R (with rsm package). |

| Controlled Reactor System | Provides precise control over key continuous factors like temperature and stirring rate, ensuring experimental consistency across all design points. | Heated stirrer plates with temperature probes, jacketed reactors connected to circulators. |

| Analytical Instrumentation | Measures the response variable(s) quantitatively and reliably. High precision is critical for detecting the effects modeled by the CCD. | HPLC for purity/ yield, GC-MS, NMR spectroscopy. |

| Standardized Reagents & Solvents | High-purity, consistently sourced materials minimize unexplained variance (noise) in responses, improving the signal-to-noise ratio for the model. | Anhydrous solvents, catalysts from single lot numbers, standardized substrate solutions. |

| (Optional) Automated Platform | For high-throughput experimentation, automated liquid handlers and reaction stations can execute the many runs of a CCD with superior precision and reproducibility. | Automated synthesis robots. |

For a thesis centered on optimizing intricate organic syntheses, the structured deconstruction of a CCD into its factorial, axial, and center point components provides a rigorous, efficient framework. By strategically selecting the design type (CCC, CCF) and parameters (α, n_c), researchers can construct a predictive quadratic model that reliably maps the response surface. This approach enables the identification of optimal reaction conditions—maximizing yield, minimizing byproducts, or balancing multiple critical quality attributes—with significantly fewer experimental runs than traditional one-factor-at-a-time or full factorial methods [13] [6]. The provided protocols, data tables, and toolkit serve as a direct blueprint for integrating this powerful DOE strategy into drug development and complex molecule synthesis workflows.

The Strategic Advantage over One-Variable-at-a-Time (OVAT) Optimization

In the landscape of organic synthesis research, particularly within pharmaceutical development, the optimization of chemical processes represents a critical activity for both process development and library production groups. Traditionally, this has been accomplished through the One-Variable-at-a-Time (OVAT) approach, a method where researchers vary a single factor while holding all others constant. While historically prevalent due to its conceptual simplicity, OVAT reveals significant limitations when applied to complex, modern synthetic pathways where factors frequently interact in non-linear ways [15] [16].

The OVAT method fundamentally assumes that factors do not interact, an assumption often violated in complex chemical systems. This approach fails to capture interaction effects between variables such as temperature, catalyst load, and solvent ratio, which can profoundly influence reaction outcomes including yield, purity, and enantiomeric excess. Consequently, OVAT can lead to misleading conclusions and suboptimal process conditions. Furthermore, OVAT is an inefficient use of resources, requiring a large number of experimental runs to explore the experimental space, which is particularly problematic when reactions are time-consuming or expensive [15] [17]. It also offers a limited scope for true optimization, as it only investigates factor levels along a single path rather than exploring the entire experimental region to find a global optimum [15].
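A toy example shows how an interaction can mislead an OVAT search. The response function below is entirely made up (two coded factors with a strong a×b interaction); it is a sketch of the failure mode, not a model of any real reaction.

```python
from itertools import product


def response(a, b):
    """Made-up yield surface with a strong a*b interaction (coded units)."""
    return 60 + 20 * a * b - 5 * a ** 2 - 5 * b ** 2


LEVELS = (-1, 0, 1)


def ovat(start=(0, 0)):
    """Optimize a with b fixed, then b with a fixed at its best level."""
    a, b = start
    a = max(LEVELS, key=lambda v: response(v, b))
    b = max(LEVELS, key=lambda v: response(a, v))
    return (a, b), response(a, b)


def full_grid():
    """Evaluate every combination of levels (a 3^2 factorial)."""
    best = max(product(LEVELS, repeat=2), key=lambda p: response(*p))
    return best, response(*best)


print("OVAT:", ovat())
print("Grid:", full_grid())
```

Starting from the center, each one-factor sweep sees only a downward-curving profile and leaves the factor where it is, so the OVAT path stops at a response of 60. Varying both factors together reveals the corners where the response reaches 70.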

The Design of Experiments (DOE) Alternative

Design of Experiments (DOE) provides a systematic, statistically sound framework that addresses the shortcomings of the OVAT approach. DOE involves the simultaneous variation of multiple input factors to study their main effects and, crucially, their interaction effects on one or more output responses [15] [16]. This methodology is rooted in several key principles:

- Randomization: The random order of experimental runs minimizes the impact of lurking variables and systematic biases.

- Replication: Repeating experimental runs under identical conditions allows for estimation of experimental error and improves the precision of effect estimates.

- Blocking: This technique accounts for known sources of variability (e.g., different batches of starting materials), improving the precision of the experiment [15].
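These three principles translate directly into how a run sheet is prepared. The sketch below assumes runs have already been assigned to blocks (for example, one block per batch of starting material) and randomizes the order within each block; `randomized_run_sheet` and the block labels are hypothetical.

```python
import random


def randomized_run_sheet(blocks, seed=None):
    """Shuffle run order within each block; blocks are executed in the given order.

    blocks: dict mapping a block label to the list of runs assigned to it.
    Returns a list of (run_order, block_label, run) tuples.
    """
    rng = random.Random(seed)  # a fixed seed makes the sheet reproducible
    sheet = []
    order = 1
    for label, runs in blocks.items():
        shuffled = list(runs)
        rng.shuffle(shuffled)
        for run in shuffled:
            sheet.append((order, label, run))
            order += 1
    return sheet


# Two-factor example: factorial runs in one block, axial runs in another,
# with replicated center points (0, 0) in both blocks.
blocks = {
    "batch 1": [(-1, -1), (1, -1), (-1, 1), (1, 1), (0, 0), (0, 0)],
    "batch 2": [(-1.414, 0), (1.414, 0), (0, -1.414), (0, 1.414), (0, 0), (0, 0)],
}
for row in randomized_run_sheet(blocks, seed=2024):
    print(row)
```

Recording the seed alongside the results keeps the randomization auditable without making the order predictable in advance.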

The adoption of DOE, coupled with advances in parallel synthesis equipment and high-throughput analytical techniques, has led to its growing acceptance in pharmaceutical industry laboratories, where compressed development timelines and increasingly complex drug candidate structures demand more efficient optimization strategies [16].

Quantitative Comparison: OVAT vs. DOE

The table below summarizes the critical differences between the OVAT and DOE methodologies, highlighting the strategic advantage of DOE.

Table 1: A Comparative Analysis of OVAT and DOE Methodologies

| Feature | OVAT Approach | DOE Approach | Strategic Implication |

|---|---|---|---|

| Basic Principle | Varies one factor at a time; holds others constant [15] [17]. | Varies multiple factors simultaneously according to a structured design [15] [16]. | DOE efficiently explores the multi-dimensional factor space. |

| Interaction Effects | Cannot detect or quantify interactions between factors [15] [16]. | Explicitly models and quantifies interaction effects [15] [16]. | DOE reveals synergistic or antagonistic effects, preventing suboptimal conclusions. |

| Experimental Efficiency | Low; requires many runs for few factors (e.g., 16 runs for 4 factors) [15]. | High; extracts more information from the same budget (e.g., the 16 runs of a 4-factor full factorial estimate all main effects and interactions) [15]. | DOE saves time and resources, enabling more rapid process development. |

| Optimization Capability | Limited; identifies improved conditions along a single path, not a global optimum [15]. | Strong; enables systematic optimization and identification of robust optimal conditions [15] [16]. | DOE leads to higher-performing, more reliable synthetic processes. |

| Statistical Robustness | Low; often lacks replication and proper error estimation [15]. | High; built on principles of randomization, replication, and blocking [15]. | DOE results are more reliable and reproducible. |

| Model Output | Provides a series of point estimates for individual factor effects [17]. | Generates a mathematical model (e.g., a polynomial) describing the response surface [15] [18]. | The model allows for prediction and deeper process understanding. |

Central Composite Design: A Protocol for Reaction Optimization

For in-depth optimization of organic reactions, Response Surface Methodology (RSM) is the DOE tool of choice. RSM employs mathematical models to map the relationship between input factors and output responses, with the goal of locating optimal factor settings [15]. The most common design used in RSM is the Central Composite Design (CCD).

A CCD is ideally suited for fitting a second-order (quadratic) model, which can capture curvature in the response surface—a common phenomenon in chemical processes. This design is composed of three distinct elements [15]:

- Factorial or Fractional Factorial Points: These form the core of the design and are used to estimate the linear and interaction effects of the factors.

- Axial (or Star) Points: These points are located at a distance α from the center along each factor axis and allow for the estimation of quadratic effects.

- Center Points: Multiple replicates at the center of the design space are used to estimate pure experimental error and check for model curvature.

Diagram: Structure of a Central Composite Design for two factors, showing how the factorial, axial, and center points work together to map the response surface.

Detailed Protocol: Implementing a CCD for a Catalytic Reaction

This protocol outlines the steps for using a CCD to optimize a hypothetical asymmetric catalytic reaction, where the goal is to maximize enantiomeric excess (EE) and yield.

Objective: To determine the optimal combination of Reaction Temperature (°C), Catalyst Loading (mol%), and Solvent Ratio (Water:EtOH) that maximizes the enantiomeric excess and yield of the product.

Step 1: Define Factors and Experimental Domain

Based on prior screening experiments (e.g., using a factorial design), the following ranges are established for the optimization:

Table 2: Experimental Factors and Levels for a Central Composite Design

| Factor Name | Low Level (-1) | Center Point (0) | High Level (+1) | Axial Levels (±α = ±1.682) |

|---|---|---|---|---|

| A: Temperature (°C) | 20 | 35 | 50 | 9.8 and 60.2 |

| B: Catalyst Loading (mol%) | 1.0 | 2.0 | 3.0 | 0.32 and 3.68 |

| C: Solvent Ratio (Water:EtOH) | 1:1 | 3:1 | 5:1 | 6.4:1 at +α; the -α level works out to about -0.4:1, which is not physically realizable, so the range for C must be narrowed or a smaller α used |

Note: The axial distance α is often set to (2^k)^(1/4) for a rotatable design, where k is the number of factors. For k=3, α ≈ 1.682 [15] [18].
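The axial settings in real units follow from the usual linear coding, actual = center + coded × half-range. The sketch below applies that conversion to the three factor ranges of this protocol. It assumes the solvent ratio can be treated as a single number x in "x:1", which is an illustrative simplification, and the helper name `coded_to_actual` is hypothetical.

```python
def coded_to_actual(coded, low, high):
    """Convert a coded level to real units, given the -1 and +1 settings."""
    center = (high + low) / 2.0
    half_range = (high - low) / 2.0
    return center + coded * half_range

k = 3
alpha = (2 ** k) ** 0.25  # rotatable axial distance for a full 2^k factorial

# (low, high) settings corresponding to the coded levels -1 and +1.
factors = {
    "Temperature (degC)": (20.0, 50.0),
    "Catalyst loading (mol%)": (1.0, 3.0),
    "Water:EtOH ratio (x:1)": (1.0, 5.0),  # assumption: ratio treated as the number x
}

axial_levels = {
    name: (coded_to_actual(-alpha, lo, hi), coded_to_actual(alpha, lo, hi))
    for name, (lo, hi) in factors.items()
}

for name, (lo_ax, hi_ax) in axial_levels.items():
    # A negative setting cannot be run in the lab, so flag it.
    flag = "  <- not realizable" if lo_ax < 0 else ""
    print(f"{name}: -alpha -> {lo_ax:.2f}, +alpha -> {hi_ax:.2f}{flag}")
```

Running the conversion before committing to a design is a cheap way to catch axial points that fall outside what the chemistry allows; when that happens, the factorial range can be narrowed or a face-centered design (α = 1) chosen instead.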

Step 2: Construct the CCD Matrix

A three-factor CCD requires 20 experimental runs: 8 factorial points (2^3), 6 axial points (2*3), and 6 center points. The experimental matrix is constructed as follows:

Table 3: Central Composite Design Matrix and Hypothetical Results

| Run Order | A: Temp | B: Catalyst | C: Solvent | Response 1: Yield (%) | Response 2: EE (%) |

|---|---|---|---|---|---|

| 1 | -1 | -1 | -1 | 75 | 85 |

| 2 | +1 | -1 | -1 | 82 | 78 |

| 3 | -1 | +1 | -1 | 88 | 90 |

| 4 | +1 | +1 | -1 | 90 | 85 |

| 5 | -1 | -1 | +1 | 70 | 80 |

| 6 | +1 | -1 | +1 | 78 | 75 |

| 7 | -1 | +1 | +1 | 85 | 88 |

| 8 | +1 | +1 | +1 | 87 | 82 |

| 9 | -α | 0 | 0 | 68 | 92 |

| 10 | +α | 0 | 0 | 85 | 70 |

| 11 | 0 | -α | 0 | 65 | 75 |

| 12 | 0 | +α | 0 | 92 | 91 |

| 13 | 0 | 0 | -α | 80 | 82 |

| 14 | 0 | 0 | +α | 78 | 84 |

| 15 | 0 | 0 | 0 | 83 | 87 |

| 16 | 0 | 0 | 0 | 84 | 86 |

| 17 | 0 | 0 | 0 | 82 | 88 |

| 18 | 0 | 0 | 0 | 83 | 87 |

| 19 | 0 | 0 | 0 | 84 | 86 |

| 20 | 0 | 0 | 0 | 83 | 87 |

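Note: The runs above are listed in standard order for readability. The actual execution order should be randomized so that time-dependent systematic effects are not confounded with factor effects.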
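The coded matrix can also be generated programmatically rather than typed by hand. The following is a minimal sketch using only the Python standard library; the function name `central_composite` and the random seed are illustrative choices, not part of any statistical package.

```python
import itertools
import random

def central_composite(k, alpha, n_center):
    """Return the coded CCD runs in standard order as a list of tuples."""
    # Factorial corners; reversing makes the first factor vary fastest.
    factorial = [tuple(float(v) for v in reversed(signs))
                 for signs in itertools.product((-1, 1), repeat=k)]
    # Axial (star) points: one factor at -alpha or +alpha, the rest at 0.
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [(0.0,) * k] * n_center
    return factorial + axial + center

design = central_composite(k=3, alpha=(2 ** 3) ** 0.25, n_center=6)

# Randomize the execution order; a fixed seed keeps the run sheet reproducible.
order = list(range(len(design)))
random.Random(2024).shuffle(order)

for run_number, std_index in enumerate(order, start=1):
    a, b, c = design[std_index]
    print(f"run {run_number:2d} (std {std_index + 1:2d}): "
          f"A={a:+.3f} B={b:+.3f} C={c:+.3f}")
```

Keeping both the standard-order index and the randomized run number on the run sheet makes it easy to map results back to the design matrix during analysis.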

Step 3: Experimental Execution

- Reagent Solutions: Prepare stock solutions of the substrate and catalyst to ensure consistent dosing across all experiments.

- Parallel Synthesis: Utilize a parallel synthesis reactor block to conduct reactions simultaneously, ensuring consistent heating and stirring for all vessels. This dramatically reduces the time required to complete the design [16].

- Workup & Analysis: Quench reactions in a pre-defined order and analyze yields and enantiomeric excess using a high-throughput analytical method, such as UPLC-MS with a chiral stationary phase.

Step 4: Data Analysis and Model Fitting

Using statistical software (e.g., JMP, Minitab, or R), fit a quadratic model to each response. The general form of the model is:

Y = β₀ + β₁A + β₂B + β₃C + β₁₂AB + β₁₃AC + β₂₃BC + β₁₁A² + β₂₂B² + β₃₃C²

The analysis of variance (ANOVA) will identify significant linear, interaction, and quadratic terms. Contour plots and 3D response surface plots are generated from the model to visualize the relationship between factors and responses.
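For readers who prefer a scriptable route, the quadratic model can be fitted by ordinary least squares. The sketch below uses NumPy (an assumption; the protocol itself names JMP, Minitab, and R) and the hypothetical yield data from the design matrix above. It reports coefficients in coded units and omits the ANOVA significance tests that dedicated software provides.

```python
import numpy as np

a = (2 ** 3) ** 0.25  # rotatable axial distance for three factors

# Coded settings (A, B, C) and hypothetical yield (%) for the 20 runs.
runs = [
    (-1, -1, -1, 75), (1, -1, -1, 82), (-1, 1, -1, 88), (1, 1, -1, 90),
    (-1, -1, 1, 70), (1, -1, 1, 78), (-1, 1, 1, 85), (1, 1, 1, 87),
    (-a, 0, 0, 68), (a, 0, 0, 85), (0, -a, 0, 65), (0, a, 0, 92),
    (0, 0, -a, 80), (0, 0, a, 78),
    (0, 0, 0, 83), (0, 0, 0, 84), (0, 0, 0, 82),
    (0, 0, 0, 83), (0, 0, 0, 84), (0, 0, 0, 83),
]
A, B, C, y = np.array(runs, dtype=float).T

# Model matrix: intercept, linear, interaction, and quadratic columns.
terms = ["1", "A", "B", "C", "AB", "AC", "BC", "A^2", "B^2", "C^2"]
X = np.column_stack([np.ones_like(A), A, B, C,
                     A * B, A * C, B * C,
                     A ** 2, B ** 2, C ** 2])

beta, *_ = np.linalg.lstsq(X, y, rcond=None)

y_hat = X @ beta
ss_res = float(np.sum((y - y_hat) ** 2))
ss_tot = float(np.sum((y - y.mean()) ** 2))
r_squared = 1.0 - ss_res / ss_tot

for name, coefficient in zip(terms, beta):
    print(f"{name:>4s}: {coefficient:8.3f}")
print(f"R^2 = {r_squared:.3f}")
```

Because the factors are coded, the magnitudes of the linear coefficients can be compared directly to rank factor importance; the same script can be rerun with the EE column to obtain the second response model.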

Step 5: Optimization and Validation

Employ a desirability function to identify factor settings that simultaneously maximize both yield and EE. The software will suggest one or more optimal solutions. Finally, conduct confirmatory experiments (n=3) at the predicted optimal conditions to validate the model. A successful model will have a low prediction error between the average observed response and the model's prediction.
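One common way to combine several responses is a Derringer-type desirability: each response is mapped to a score between 0 and 1, and the scores are combined by a geometric mean. The sketch below shows the "larger is better" form. The lower limits and targets are hypothetical values chosen for illustration, and in practice the function would be evaluated on model predictions across the factor space rather than on two observed runs.

```python
def desirability_max(value, low, target, weight=1.0):
    """Larger-is-better desirability: 0 at or below low, 1 at or above target."""
    if value <= low:
        return 0.0
    if value >= target:
        return 1.0
    return ((value - low) / (target - low)) ** weight

def overall_desirability(scores):
    """Geometric mean of individual desirabilities; zero if any score is zero."""
    product = 1.0
    for score in scores:
        product *= score
    return product ** (1.0 / len(scores))

# Hypothetical acceptance limits (assumptions, not from the protocol).
YIELD_LOW, YIELD_TARGET = 60.0, 95.0
EE_LOW, EE_TARGET = 70.0, 95.0

# Two candidate conditions, given as (yield %, EE %).
candidates = {"run 3": (88.0, 90.0), "run 12": (92.0, 91.0)}

scores = {}
for label, (yield_pct, ee_pct) in candidates.items():
    d_yield = desirability_max(yield_pct, YIELD_LOW, YIELD_TARGET)
    d_ee = desirability_max(ee_pct, EE_LOW, EE_TARGET)
    scores[label] = overall_desirability([d_yield, d_ee])
    print(f"{label}: D = {scores[label]:.3f}")
```

The geometric mean is used so that a condition failing either response outright (a score of zero) is rejected regardless of how well it performs on the other.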

The Scientist's Toolkit: Essential Research Reagents & Materials

The successful implementation of a DOE strategy relies on specific reagents and technologies that enable efficient, parallel experimentation.

Table 4: Essential Reagents and Materials for Parallel Synthesis and DOE

| Item | Function/Application in DOE |

|---|---|

| Parallel Synthesis Reactor | A reaction block capable of conducting multiple reactions in parallel under controlled temperature and stirring, essential for executing a multi-run design efficiently [16]. |

| Automated Liquid Handler | Provides precise, high-throughput dispensing of reagents, catalysts, and solvents, ensuring accuracy and reproducibility across all experimental runs [16]. |

| Hertz-Mindlin with Parallel Bonding Model | A specific contact model used in Discrete Element Method (DEM) simulations to model flexible materials like crop stems; an example of a sophisticated model parameterized using DOE (e.g., DSD and CCD) [18]. |

| Polymer-Bound Reagents | Reagents like polymer-bound N-hydroxybenzotriazole, used to simplify workup and purification in parallel synthesis, facilitating the rapid development of robust processes [16]. |

| Definitive Screening Design (DSD) | A type of statistical screening design used to evaluate a large number of factors with a minimal number of runs. It is particularly useful in early-stage parameterization, such as calibrating DEM models or screening many potential reaction variables [18]. |

| High-Throughput UPLC-MS | An analytical system capable of rapidly analyzing the composition and purity of hundreds of samples generated from parallel synthesis, providing the data required for DOE modeling [16]. |

The strategic advantage of Design of Experiments over the traditional OVAT approach is clear and compelling. By enabling the efficient exploration of complex factor spaces, capturing critical interaction effects, and providing a robust framework for true optimization, DOE represents a fundamental shift in how organic synthesis is developed and optimized. The application of Central Composite Designs, supported by parallel synthesis technologies and high-throughput analytics, allows researchers in drug development to rapidly achieve superior process understanding and performance, ultimately compressing development timelines and delivering more robust and efficient synthetic routes for active pharmaceutical ingredients [16]. The move from OVAT to DOE is not merely a technical improvement but a strategic necessity in modern organic synthesis research.

In the realm of organic synthesis and drug development, Central Composite Design (CCD) serves as a powerful response surface methodology (RSM) for process optimization, modeling quadratic relationships, and identifying optimal experimental conditions [19]. The parameter Alpha (α), also termed the axial distance, is a fundamental metric that defines the geometry and statistical properties of a CCD [20] [6]. Establishing an appropriate α-value, in conjunction with a well-defined experimental domain, is critical for generating robust predictive models. A CCD is structured around three core sets of design points: a two-level factorial or fractional factorial design that screens factors efficiently, a set of axial (or star) points that estimate curvature, and center points that quantify pure error and stability [19] [21]. The placement of the axial points, governed by α, determines whether the design can model a spherical, rotatable, or cuboidal experimental region, directly impacting the quality of predictions across the factor space [6] [21].

The Significance of Alpha (α) and the Experimental Domain

The value of α directly influences the geometric and statistical characteristics of a CCD, dictating the region over which the model can reliably predict responses. The experimental domain refers to the specific range of values—from lower to upper limits—within which each input factor (e.g., temperature, concentration, pH) is studied [21]. Establishing this domain requires careful consideration of practical constraints, such as solvent boiling points or reagent stability in organic synthesis, and scientific judgment. The interplay between α and the defined experimental domain is crucial; it determines the location of the star points relative to the center and factorial points, thereby controlling the volume and shape of the explorable region [20]. Selecting an appropriate α ensures that the design possesses desirable properties for optimization, such as rotatability—where the prediction variance is constant at all points equidistant from the center—enabling an unbiased exploration of the response surface [6].

Classifying Central Composite Designs and Their Alpha Values

The choice of α gives rise to three primary, distinct types of Central Composite Designs, each with unique properties and applications in laboratory research. The table below summarizes these key design types and their characteristics.

Table 1: Classification and Properties of Central Composite Designs

| Design Type | Terminology | Alpha (α) Value | Factor Levels | Key Characteristics and Applications |

|---|---|---|---|---|

| Circumscribed CCD | CCC | ( \alpha > 1 ) (Often ( \alpha = (2^k)^{1/4} ) for rotatability) [6] [21] | 5 levels: -α, -1, 0, +1, +α [19] | The original form of CCD; explores the largest process space and is ideal when factors can be extended beyond the original factorial range [20]. |

| Face-Centered CCD | CCF | ( \alpha = 1 ) [21] | 3 levels: -1, 0, +1 [19] [20] | Star points are located at the center of the faces of the factorial cube. Used when the experimental domain is strictly limited to the original -1 and +1 factor levels [20]. |

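| Inscribed CCD | CCI | Star points at ±1; factorial points scaled inward to ±1/α (α taken from the corresponding CCC) | 5 levels: -1, -1/α, 0, +1/α, +1 | A scaled-down CCC design in which every level is divided by α, so the star points sit at the limits of the domain and the factorial points lie inside it. Applied when the experimental domain is truly limited and the extreme settings are -1 and +1 [20]. |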

Calculating Alpha for Specific Properties

For a rotatable design, where the prediction variance is equal for all points at the same distance from the center, α is calculated as the fourth root of the number of points in the factorial portion of the design, \(n_F\): \( \alpha = (n_F)^{1/4} \) [6]. For a full factorial design with \(k\) factors, \(n_F = 2^k\), making the formula \( \alpha = (2^k)^{1/4} \) [21]. For a spherical design, where all factorial and axial points lie on a sphere of radius \(\sqrt{k}\), the value is set to \( \alpha = \sqrt{k} \) [6]. The following table provides standard α values for designs with different factor numbers.

Table 2: Standard Alpha Values for Different Numbers of Factors

| Number of Factors (\(k\)) | Factorial Points (\(2^k\)) | Star Points (\(2k\)) | Rotatable α, \((2^k)^{1/4}\) [6] | Spherical α, \(\sqrt{k}\) [6] |

|---|---|---|---|---|

| 2 | 4 | 4 | 1.414 | 1.414 |

| 3 | 8 | 6 | 1.682 | 1.732 |

| 4 | 16 | 8 | 2.000 | 2.000 |

| 5 | 32 | 10 | 2.378 | 2.236 |
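The tabulated values can be reproduced in a few lines, which is also convenient when the factorial portion is a fraction rather than a full 2^k design. This is a small sketch with hypothetical helper names; the fractional-factorial case simply passes the actual number of factorial runs.

```python
import math

def rotatable_alpha(n_factorial):
    """Axial distance giving rotatability: fourth root of the factorial run count."""
    return n_factorial ** 0.25

def spherical_alpha(k):
    """Axial distance placing star points on the sphere through the cube corners."""
    return math.sqrt(k)

alpha_table = {
    k: (round(rotatable_alpha(2 ** k), 3), round(spherical_alpha(k), 3))
    for k in range(2, 6)
}

for k, (rotatable, spherical) in alpha_table.items():
    print(f"k={k}: rotatable={rotatable:.3f}, spherical={spherical:.3f}")

# Five factors with a half-fraction (2^(5-1) = 16 factorial runs).
half_fraction_alpha = rotatable_alpha(2 ** (5 - 1))
print(f"k=5, half-fraction: rotatable alpha = {half_fraction_alpha:.3f}")
```

Note that the two criteria coincide for k = 2 and k = 4 but diverge otherwise, so the choice between them matters most for three-factor and five-factor designs.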

Experimental Protocol for Establishing Alpha and the Experimental Domain

This protocol provides a step-by-step methodology for designing and executing a Central Composite Design in organic synthesis research, with a focus on determining α and the experimental domain.

Step 1: Define the Experimental Domain and Code Factor Levels

- Identify Critical Factors: From prior screening experiments (e.g., Plackett-Burman designs), select key continuous factors (e.g., reaction temperature, catalyst loading, pH) for optimization [21].

- Set Factor Boundaries: Define the lower and upper limits for each factor based on scientific knowledge and practical constraints. For instance, in a synthesis step, the lower limit for temperature may be defined by reaction initiation energy, while the upper limit is constrained by solvent reflux or compound degradation [21].

- Code Factor Levels: Assign the low and high levels of the factorial part of the design as -1 and +1, respectively. The center point is coded as 0 [6].

Step 2: Select the Type of CCD and Calculate Alpha

- Choose a Design Type:

- Select a Circumscribed CCD (CCC) if the experimental region can be extended beyond the original factorial boundaries to fit a spherical region and achieve rotatability [20].

- Select a Face-Centered CCD (CCF) if the experimental domain is rigidly fixed at the -1 and +1 levels, requiring only three levels per factor [19] [20].

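- Calculate or Set Alpha: For a rotatable CCC, use α = (2^k)^(1/4); for a spherical CCC, use α = √k; for a CCF, set α = 1 [6] [21].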

Step 3: Generate the Experimental Design Matrix

- Determine Number of Runs: The total number of experimental runs \(N\) is given by \( N = 2^k + 2k + n_c \), where \(2^k\) is the factorial portion, \(2k\) is the axial portion, and \(n_c\) is the number of center point replicates [6]. A typical recommendation is 4 to 6 center points to ensure a good estimate of pure error and balance prediction variance across the design space [19] [6].

- Create the Matrix: Use statistical software (e.g., Minitab, Stat-Ease, Design-Expert) to generate the design matrix. The software will output a table with coded values for all experimental runs [6].
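The run-count formula is easy to tabulate, which helps when budgeting materials before the design is generated. The sketch below compares a CCD with a full three-level factorial (3^k runs) for two to five factors; the function name and the optional `fraction` argument (for a 2^(k-p) factorial core) are illustrative.

```python
def ccd_run_count(k, n_center, fraction=0):
    """Total CCD runs: factorial core + 2k axial points + center replicates."""
    return 2 ** (k - fraction) + 2 * k + n_center

run_counts = {k: (ccd_run_count(k, n_center=6), 3 ** k) for k in range(2, 6)}

for k, (ccd_runs, three_level_runs) in run_counts.items():
    print(f"k={k}: CCD with 6 center points = {ccd_runs} runs, "
          f"full 3-level factorial = {three_level_runs} runs")
```

For small k the two designs are similar in size, but the gap widens quickly: the factorial grows as 3^k while the CCD grows roughly as 2^k, and a fractional core reduces it further.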

Step 4: Execute Experiments and Analyze Data

- Randomize Runs: Perform all experiments in a randomized order to minimize the effects of lurking variables and noise.

- Record Responses: Measure the critical response(s) for each run (e.g., reaction yield, purity, particle size).

- Model and Interpret: Fit the data to a second-order polynomial model and use analysis of variance (ANOVA) to assess model significance. Utilize response surface plots to visualize the relationship between factors and identify optimum conditions [22] [23].

The following workflow diagram illustrates the key decision points in this protocol.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, materials, and software commonly employed in experimental design and optimization studies within organic synthesis and pharmaceutical development.

Table 3: Key Research Reagent Solutions and Essential Materials

| Item / Solution | Function / Application in CCD Research |

|---|---|

| Statistical Software (e.g., Minitab, Stat-Ease, Design-Expert) | Used to generate the CCD matrix, randomize runs, perform ANOVA, fit quadratic models, and create response surface plots [6]. |

| pH Adjusters (e.g., NaOH, HCl solutions) | Critical for optimizing processes where pH is a key factor, such as in coagulation-flocculation or hydrolysis reactions, to maintain the experimental domain [22]. |

| Metal Salt and Oxide Precursors (e.g., ZnCl₂, Al₂O₃) | Used in the synthesis of doped nanocomposites as catalysts or adsorbents, where their concentration is a factor optimized via CCD [23]. |

| Organic Solvents (e.g., Acetonitrile, Methanol) | Employed as reaction media or in mobile phases for analytical monitoring (e.g., HPLC). Their composition or percentage can be a critical factor in a CCD [24]. |

| Bio-coagulants/Adsorbents (e.g., Aloe vera, nanocomposites) | Serve as sustainable, process-specific materials. Their dosage is a primary independent variable optimized in environmental remediation CCD studies [22] [23]. |

| Supporting Electrolytes (e.g., NaCl, Na₂SO₄) | Essential in electrocoagulation processes to enhance conductivity; their concentration can be a factor in a CCD for wastewater treatment optimization [24]. |

Application in Organic Synthesis and Drug Development: A Case Study

The application of CCD with a well-defined α is exemplified in the optimization of ketoprofen removal using a hybrid electrocoagulation-adsorption process, a relevant model for pharmaceutical wastewater treatment [24]. In this study, researchers applied a CCD to model and optimize four independent variables: pH, initial ketoprofen concentration, current density, and adsorbent dose [24]. The design, likely incorporating a rotatable or face-centered α, enabled the team to efficiently navigate the multi-factor experimental space. By analyzing the response surface model, they identified optimal conditions (adsorbent dose of 0.63–0.99 g, current density of 12.32–14.68 mA·cm⁻², pH of 6.5) that achieved complete (100%) removal of the pharmaceutical compound [24]. This case underscores the power of CCD in pinpointing precise operational parameters that maximize process efficiency—a common goal in drug development, from optimizing API synthesis conditions to purifying intermediates. The structured approach ensures that development resources are used efficiently to find the best possible conditions for a desired outcome.

Interpreting Quadratic Models for Reaction Optimization

In the realm of organic synthesis research, the optimization of reaction conditions is paramount for achieving high yields, purity, and process efficiency. Central Composite Design (CCD) has emerged as a powerful statistical technique within Response Surface Methodology (RSM) for building second-order (quadratic) models for response variables without requiring a complete three-level factorial experiment [25] [26] [10]. This approach enables researchers to efficiently explore the effects of multiple factors and their interactions on desired outcomes, providing a mathematical foundation for predicting optimal reaction conditions.

CCD combines a two-level factorial or fractional factorial design with center points and axial points, creating a structured experimental framework that captures linear, interaction, and quadratic effects [10]. For organic synthesis, this means systematically varying critical parameters such as temperature, reactant ratios, catalyst loading, and reaction time to develop a comprehensive model that predicts performance within the defined experimental space. The resulting quadratic model takes the general form:

Y = b₀ + ΣbᵢXᵢ + ΣbᵢᵢXᵢ² + ΣbᵢⱼXᵢXⱼ

Where Y represents the predicted response, b₀ is the constant coefficient, bᵢ represents linear coefficients, bᵢᵢ represents quadratic coefficients, and bᵢⱼ represents interaction coefficients [25] [27]. This polynomial equation serves as the cornerstone for interpreting complex relationships between process variables and reaction outcomes in organic synthesis.

Mathematical Foundation of Quadratic Models

Model Components and Interpretation

The quadratic model derived from CCD experiments provides invaluable insights into reaction behavior through its various coefficients. The linear terms (bᵢ) represent the direct effect of each factor on the response, indicating whether increasing a factor increases or decreases the outcome measure. The quadratic terms (bᵢᵢ) capture curvature in the response surface, revealing whether factors have diminishing or accelerating effects at extreme values. The interaction terms (bᵢⱼ) quantify how the effect of one factor depends on the level of another factor, uncovering synergistic or antagonistic relationships between variables [25] [28].

For example, in the optimization of urea-formaldehyde fertilizer synthesis, the quadratic model revealed that urea:formaldehyde molar ratio was the most significant factor affecting cold-water-insoluble nitrogen content, with both linear and interaction terms showing statistical significance [28]. This type of detailed coefficient analysis allows researchers to move beyond simple linear relationships and understand the complex, non-linear behavior typical of organic reactions.

Assessment of Model Adequacy

Before interpreting a quadratic model's coefficients, it is essential to verify its statistical adequacy. Several diagnostic metrics serve this purpose:

- R-Squared (R²) values indicate the proportion of variance in the response variable explained by the model, with values closer to 1.0 representing better fit [29] [28].

- Adjusted R-Squared accounts for the number of predictors in the model, providing a more conservative estimate of model fit [28].

- F-value and p-value from Analysis of Variance (ANOVA) determine the overall statistical significance of the model [25] [28].

- Lack of fit tests assess whether the model adequately describes the observed data or if a more complex model is needed [28].
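These diagnostics can be computed directly from the least-squares fit. The sketch below is a minimal illustration with NumPy on a two-factor rotatable CCD; the responses are synthetic, generated from an assumed quadratic plus random noise, and the function names are hypothetical. It returns F statistics only; converting them to p-values requires the F distribution (for example `scipy.stats.f.sf`).

```python
import numpy as np

def quadratic_matrix(x1, x2):
    """Model matrix for a full two-factor quadratic model."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1 ** 2, x2 ** 2])

def adequacy(X, y):
    """R^2, adjusted R^2, model F, and lack-of-fit F for a least-squares fit."""
    n, p = X.shape
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta
    ss_res = float(residuals @ residuals)
    ss_tot = float(np.sum((y - y.mean()) ** 2))
    # Pure error: scatter among replicates run at identical settings.
    groups = {}
    for row, value in zip(np.round(X, 8), y):
        groups.setdefault(tuple(row), []).append(value)
    ss_pe = sum(float(np.sum((np.array(g) - np.mean(g)) ** 2))
                for g in groups.values())
    df_pe = n - len(groups)    # replicate degrees of freedom
    df_lof = len(groups) - p   # distinct settings minus model terms
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "adj_r2": 1.0 - (ss_res / (n - p)) / (ss_tot / (n - 1)),
        "f_model": ((ss_tot - ss_res) / (p - 1)) / (ss_res / (n - p)),
        "f_lof": ((ss_res - ss_pe) / df_lof) / (ss_pe / df_pe),
        "df_lof": df_lof,
        "df_pe": df_pe,
    }

# Two-factor rotatable CCD: 4 factorial, 4 axial, 5 center runs.
a = 2 ** 0.5
x1 = np.array([-1, 1, -1, 1, -a, a, 0, 0, 0, 0, 0, 0, 0], dtype=float)
x2 = np.array([-1, -1, 1, 1, 0, 0, -a, a, 0, 0, 0, 0, 0], dtype=float)

# Synthetic response (assumption): known quadratic plus Gaussian noise.
rng = np.random.default_rng(0)
y = (80 + 5 * x1 - 3 * x2 + 1.5 * x1 * x2 - 4 * x1 ** 2 - 2 * x2 ** 2
     + rng.normal(0.0, 0.5, size=x1.size))

result = adequacy(quadratic_matrix(x1, x2), y)
for key, value in result.items():
    print(f"{key}: {value:.4g}")
```

The lack-of-fit test is only possible because the center point is replicated: without replicates there is no pure-error estimate to compare the residual variation against.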

In the flux-cored arc welding optimization study, the quadratic model demonstrated exceptional adequacy with an R-Squared value of 0.985 and no significant lack of fit, providing high confidence in the model's predictive capabilities [29]. Similarly, in the urea-formaldehyde study, R² values exceeding 0.97 for both response variables confirmed the models' excellent fit to experimental data [28].

Experimental Design and Protocol

Central Composite Design Configuration

Implementing CCD requires careful consideration of design parameters to ensure rotatability and uniform precision. The protocol involves three distinct sets of experimental runs: (1) factorial points from a 2^k design representing all combinations of factor levels, (2) center points with all factors set at their median values, and (3) axial points where one factor is set at ±α while others remain at center points [10]. The total number of experimental runs (N) is determined by the equation:

N = 2^k + 2k + n₀

Where k represents the number of factors and n₀ the number of center point replicates [25] [27]. The distance of the axial points (α) from the design center depends on the desired properties; common choices include rotatable designs (α = F^(1/4), where F is the number of factorial points) and orthogonal designs [10] [30].

Table 1: Types of Central Composite Designs and Their Properties

| Design Type | Rotatable | Factor Levels | Uses Points Outside ±1 | Accuracy of Estimates |

|---|---|---|---|---|

| Circumscribed (CCC) | Yes | 5 | Yes | Good over entire design space |

| Inscribed (CCI) | Yes | 5 | No | Good over central subset of design space |

| Face-Centered (CCF) | No | 3 | No | Fair over entire design space; poor for pure quadratic coefficients |
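The differences between the three design types come down to where the factorial and axial points sit in coded units. The following sketch generates both sets of points for each type and counts the distinct levels per factor. The function name is hypothetical, and the inscribed design is built by dividing the circumscribed design's levels by α, which is the usual construction.

```python
import itertools

def ccd_points(k, kind, alpha=None):
    """Return (factorial, axial) coded points for a CCC, CCI, or CCF design."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable value for a full factorial core
    if kind == "CCC":
        corner, star = 1.0, alpha          # star points outside the cube
    elif kind == "CCI":
        corner, star = 1.0 / alpha, 1.0    # whole CCC shrunk to fit within +/-1
    elif kind == "CCF":
        corner, star = 1.0, 1.0            # star points on the cube faces
    else:
        raise ValueError(f"unknown design type: {kind}")
    factorial = [tuple(corner * s for s in signs)
                 for signs in itertools.product((-1, 1), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * star
            axial.append(tuple(point))
    return factorial, axial

def levels_per_factor(k, kind):
    """Count distinct settings of the first factor, center point included."""
    factorial, axial = ccd_points(k, kind)
    points = factorial + axial + [(0.0,) * k]
    return len({round(p[0], 6) for p in points})

level_counts = {kind: levels_per_factor(2, kind) for kind in ("CCC", "CCI", "CCF")}
print(level_counts)
```

Plotting the returned points for k = 2 is a quick way to see why only the circumscribed design requires settings beyond the original ±1 range.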

Step-by-Step Experimental Protocol

The following protocol outlines the systematic approach for implementing CCD in organic synthesis optimization:

Factor Selection and Level Determination: Identify critical process variables through preliminary screening experiments or literature review. Define low (-1), center (0), and high (+1) levels for each factor based on practical constraints and scientific rationale [25] [29].

Experimental Design Generation: Select the appropriate CCD type based on the number of factors and desired properties. Statistical software packages such as Design Expert, MATLAB, or R can generate the design matrix with randomized run order to minimize systematic error [25] [30].

Experimental Execution: Conduct experiments according to the generated design matrix, strictly adhering to the specified factor levels. Replicate center points to estimate pure error and assess experimental reproducibility [25] [10].

Response Measurement: Accurately measure response variables of interest (e.g., yield, purity, conversion) for each experimental run. Employ analytical techniques with demonstrated precision and accuracy [29] [28].

Model Development and Validation: Fit the quadratic model to experimental data using regression analysis. Evaluate model adequacy through statistical diagnostics and residual analysis [25] [28].

Optimization and Verification: Utilize response surface plots and optimization algorithms to identify factor settings that maximize or minimize the response as desired. Confirm model predictions through verification experiments at the identified optimum conditions [29].

Diagram 1: Experimental workflow for reaction optimization using Central Composite Design. The process begins with objective definition and proceeds through iterative model development until adequate predictive capability is achieved.

Case Studies in Organic Synthesis

Biodiesel Production Optimization

In a study optimizing biodiesel yield from the transesterification of vegetable oil with methanol over an eggshell-derived catalyst, CCD was employed to investigate the effects of reaction time, methanol-to-oil ratio, catalyst loading, and reaction temperature [25]. The researchers used Design Expert 13 software to develop a reduced quadratic model with an F-value of 3.57 and a p-value of 0.0325. In other words, there is only a 3.25% probability that an F-value this large could arise from noise alone, so the model is significant at the 5% level.

The optimization revealed that all studied factors significantly affected biodiesel yield, with optimal conditions identified at a temperature of approximately 61°C, a methanol-to-oil ratio of 22.13, and a catalyst loading of 3.7 wt%. Under these conditions, a biodiesel yield of approximately 91% was achieved. Notably, the CCD approach required 18 experimental runs, compared with the 20 runs originally used in the referenced work, demonstrating the efficiency of the experimental design [25].

Urea-Formaldehyde Fertilizer Synthesis

CCD was successfully applied to optimize the synthesis of urea-formaldehyde slow-release fertilizers, with specific focus on maximizing cold-water-insoluble nitrogen (CWIN) while minimizing hot-water-insoluble nitrogen (HWIN) [28]. Three critical factors were investigated: urea:formaldehyde molar ratio (X₁), reaction temperature (X₂), and reaction time (X₃). The resulting quadratic models were:

CWIN = 93.75 - 44.05X₁ - 1.65X₂ + 13.92X₃ + 0.95X₁X₂ - 10.27X₁X₃ + 0.10X₂X₃ - 3.11X₁² + 0.003X₂² - 1.39X₃²

HWIN = 216.64 - 235.59X₁ - 1.68X₂ + 15.32X₃ + 0.40X₁X₂ - 8.87X₁X₃ - 0.11X₂X₃ + 72.12X₁² + 0.016X₂² + 0.46X₃²

Statistical analysis revealed that the urea:formaldehyde molar ratio was the most significant factor, with both linear and quadratic terms showing high significance. The models exhibited excellent predictive capability with R² values of 0.9789 and 0.9721 for CWIN and HWIN, respectively. Optimization identified ideal conditions at a molar ratio of 1.33, temperature of 43.5°C, and reaction time of 1.64 hours, yielding CWIN of 22.14% and HWIN of 9.87% [28].
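A fitted polynomial is only useful if it can be evaluated, so it is worth checking the published equations against the reported optimum. The sketch below does this for both responses. It assumes the coefficients are expressed in actual (uncoded) units, which the source does not state explicitly; because the coefficients are rounded, the predictions agree with the reported values only approximately.

```python
def quadratic_response(coefficients, x1, x2, x3):
    """Evaluate a three-factor quadratic model with the given coefficients."""
    (b0, b1, b2, b3, b12, b13, b23, b11, b22, b33) = coefficients
    return (b0 + b1 * x1 + b2 * x2 + b3 * x3
            + b12 * x1 * x2 + b13 * x1 * x3 + b23 * x2 * x3
            + b11 * x1 ** 2 + b22 * x2 ** 2 + b33 * x3 ** 2)

# Coefficients in the order: intercept, X1, X2, X3, X1X2, X1X3, X2X3,
# X1^2, X2^2, X3^2 (transcribed from the two model equations).
CWIN_MODEL = (93.75, -44.05, -1.65, 13.92, 0.95, -10.27, 0.10, -3.11, 0.003, -1.39)
HWIN_MODEL = (216.64, -235.59, -1.68, 15.32, 0.40, -8.87, -0.11, 72.12, 0.016, 0.46)

# Reported optimum: molar ratio, temperature (degC), time (h).
optimum = (1.33, 43.5, 1.64)

cwin = quadratic_response(CWIN_MODEL, *optimum)
hwin = quadratic_response(HWIN_MODEL, *optimum)
print(f"Predicted CWIN at optimum: {cwin:.2f} %")
print(f"Predicted HWIN at optimum: {hwin:.2f} %")
```

The same function can be evaluated over a grid of two factors, holding the third fixed, to produce the data behind a contour or response surface plot.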

Flux-Cored Arc Welding Process Optimization

While not strictly an organic synthesis application, the optimization of flux-cored arc welding parameters demonstrates the universal applicability of CCD and quadratic model interpretation [29]. This study investigated four factors—current, voltage, stick out, and angle—on tensile strength of welded joints. The resulting quadratic model showed exceptional adequacy with an R-Squared value of 0.985 and no significant lack of fit.

Through response surface analysis and numerical optimization, the ideal parameters were identified as a current of 300 A, a voltage of 30 V, a stick-out of 45 mm, and an angle of 63.255°. The model predicted a tensile strength of 7,716.9811 kgf at these settings, which was verified through confirmation experiments [29]. This case highlights how CCD can effectively handle multi-factor optimization even in non-chemical contexts.

Table 2: Comparative Analysis of CCD Applications Across Different Domains

| Application Domain | Factors Studied | Response Variable | Optimal Conditions | Model Performance |

|---|---|---|---|---|

| Biodiesel Production [25] | Reaction Time, Temperature, Methanol-to-Oil Ratio, Catalyst Loading | Biodiesel Yield | Temp: ~61°C, Ratio: 22.13, Catalyst: 3.7 wt% | p-value: 0.0325, F-value: 3.57 |

| Urea-Formaldehyde Synthesis [28] | Molar Ratio, Temperature, Time | Cold/Hot Water Insoluble Nitrogen | Ratio: 1.33, Temp: 43.5°C, Time: 1.64h | R²: 0.9789/0.9721 for CWIN/HWIN |

| Welding Optimization [29] | Current, Voltage, Stick Out, Angle | Tensile Strength | Current: 300A, Voltage: 30V, Stick Out: 45mm, Angle: 63.26° | R²: 0.985, No significant lack of fit |

Visualization of Response Surfaces

Response surface plots provide powerful visualization tools for interpreting quadratic models and identifying optimal conditions. These three-dimensional surfaces represent the relationship between factors and response, enabling researchers to observe curvature, interaction effects, and locate regions of maximum or minimum response [29].

Diagram 2: Structural components of Central Composite Design showing how factorial, center, and axial points are combined to enable development of full quadratic models capable of capturing complex response surfaces.

When interpreting response surface plots, several characteristic shapes provide insights into factor effects:

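- Elliptical contours whose axes are tilted relative to the factor axes indicate interaction between factors, where the effect of one factor depends on the level of another.

- Circular contours, or ellipses aligned with the factor axes, suggest minimal interaction between the plotted factors.

- Stationary points are locations where the fitted surface is flat in every direction. A maximum or minimum marks an optimum, whereas a saddle point does not: the response rises in some directions and falls in others.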

- Ridge systems occur when the response remains constant along a particular direction, suggesting multiple combinations of factors can achieve similar results.
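The shape of a fitted quadratic surface can also be determined numerically rather than by eye. Writing the model as y = b₀ + xᵀb + xᵀBx, where B holds the quadratic coefficients on its diagonal and half of each interaction coefficient off the diagonal, the stationary point is x_s = -½ B⁻¹ b, and the signs of the eigenvalues of B classify it. The sketch below uses NumPy with hypothetical two-factor coefficients chosen purely for illustration.

```python
import numpy as np

def stationary_point(b, B):
    """Locate and classify the stationary point of y = b0 + x.b + x.B.x."""
    x_s = -0.5 * np.linalg.solve(B, b)
    eigenvalues = np.linalg.eigvalsh(B)  # B is symmetric
    if np.all(eigenvalues < 0):
        kind = "maximum"
    elif np.all(eigenvalues > 0):
        kind = "minimum"
    else:
        kind = "saddle"
    return x_s, eigenvalues, kind

# Hypothetical coded-unit model (assumption):
# y = 80 + 5*x1 - 3*x2 - 4*x1^2 - 2*x2^2 + 1.5*x1*x2
b = np.array([5.0, -3.0])
B = np.array([[-4.0, 0.75],
              [0.75, -2.0]])  # off-diagonal entries are half the interaction term

x_s, eigenvalues, kind = stationary_point(b, B)
print("stationary point (coded units):", np.round(x_s, 3))
print("eigenvalues:", np.round(eigenvalues, 3))
print("type:", kind)
```

An eigenvalue close to zero signals a ridge: the response changes very little along the corresponding eigenvector, so a range of factor combinations performs almost equally well. A stationary point that falls far outside the coded region studied should be treated as an extrapolation and confirmed experimentally.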

In the welding optimization study, examination of response surfaces revealed significant interaction between current and voltage, with elliptical contours indicating these factors could not be optimized independently [29]. Similarly, in the urea-formaldehyde study, the pronounced curvature in response surfaces confirmed the importance of quadratic terms in the model [28].

Research Reagent Solutions and Materials

Table 3: Essential Research Reagents and Materials for CCD Implementation in Organic Synthesis

| Reagent/Material | Function in Optimization | Application Example |

|---|---|---|

| Statistical Software (Design Expert, MATLAB, R) | Experimental design generation, regression analysis, model visualization, and optimization | All case studies utilized specialized software for design generation and analysis [25] [29] [30] |

| Catalyst Systems | Variable factor affecting reaction rate and selectivity | Eggshell-derived catalyst in biodiesel production [25] |

| Reactants with Adjustable Stoichiometry | Factor manipulation through molar ratios | Methanol-to-oil ratio in biodiesel; urea-formaldehyde ratio in fertilizer synthesis [25] [28] |

| Temperature Control Systems | Precise manipulation of reaction temperature | Temperature as factor in biodiesel and urea-formaldehyde optimization [25] [28] |

| Analytical Instruments (HPLC, GC, FTIR, etc.) | Accurate response measurement | Inline FT-IR for real-time monitoring in imine synthesis [31] |

Advanced Applications and Methodological Considerations

Real-Time Optimization and Process Analytical Technology

Recent advances have integrated CCD with real-time optimization approaches and Process Analytical Technology (PAT). For instance, researchers have developed fully automated microreactor systems equipped with inline FT-IR spectroscopy that perform multi-variate optimizations in real-time [31]. This approach combines the structured experimental framework of CCD with continuous reaction monitoring, enabling rapid identification of optimal conditions while simultaneously collecting kinetic data.

In the optimization of imine synthesis from benzaldehyde and benzylamine, this integrated approach demonstrated significant advantages over traditional one-variable-at-a-time methods, including improved efficiency, better detection of factor interactions, and the ability to respond dynamically to process disturbances [31]. The integration of real-time analytics with experimental design represents a cutting-edge application of CCD principles in modern organic synthesis.

Comparison with Alternative Optimization Methods

While CCD offers numerous advantages for reaction optimization, researchers should consider alternative approaches based on specific research goals:

- Box-Behnken Designs are rotatable or nearly rotatable, avoid extreme factor combinations (no runs at the corners of the design cube), and may require fewer runs for small numbers of factors [25] [30].

- Simplex Algorithms offer model-free optimization that can efficiently locate local optima without developing explicit mathematical models [31].

- One-Variable-at-a-Time (OVAT) approaches, while intuitively simple, fail to capture factor interactions and typically require more experiments to locate optima [31].
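To make the comparison with Box-Behnken designs concrete, the sketch below builds a Box-Behnken design for three to five factors and compares its size with the non-center portion of a full CCD. The pairwise construction used here (every pair of factors at ±1 with the remaining factors at 0) is valid for three to five factors only; larger Box-Behnken designs use different incomplete-block arrangements, so the function refuses other values. Function names are illustrative.

```python
import itertools

def box_behnken(k):
    """Non-center runs of a Box-Behnken design for 3 to 5 factors."""
    if not 3 <= k <= 5:
        raise ValueError("pairwise construction shown here covers k = 3, 4, 5 only")
    runs = []
    for i, j in itertools.combinations(range(k), 2):
        for level_i, level_j in itertools.product((-1, 1), repeat=2):
            point = [0] * k
            point[i], point[j] = level_i, level_j
            runs.append(tuple(point))
    return runs

def ccd_non_center_runs(k):
    """Factorial plus axial runs of a CCD with a full 2^k core."""
    return 2 ** k + 2 * k

comparison = {k: (len(box_behnken(k)), ccd_non_center_runs(k)) for k in (3, 4, 5)}
for k, (bbd_runs, ccd_runs) in comparison.items():
    print(f"k={k}: Box-Behnken = {bbd_runs} runs, CCD = {ccd_runs} runs "
          f"(center points excluded)")
```

Every Box-Behnken run keeps at least one factor at its center level, which is why the design never visits the cube corners; this is useful when the simultaneous extremes of all factors are unsafe or impractical.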

CCD is particularly advantageous when a comprehensive understanding of the response surface is desired, when factor interactions are suspected, and when the goal includes developing a predictive model for the process [25] [10]. The method's structured approach ensures efficient exploration of the factor space while providing the data necessary for rigorous statistical analysis.

Quadratic models derived from Central Composite Designs provide organic chemists with powerful tools for interpreting complex reaction landscapes and identifying optimal conditions. Through careful experimental design, rigorous statistical analysis, and thoughtful interpretation of model coefficients, researchers can efficiently navigate multi-dimensional factor spaces while developing comprehensive mathematical relationships between process variables and outcomes. The continued integration of these approaches with real-time analytics and automation promises to further enhance their utility in advancing synthetic methodology across pharmaceutical, materials, and chemical industries.

CCD in Action: Practical Applications from Nanomaterial Synthesis to Drug Formulation

Optimizing Reaction Parameters in Nanocomposite Synthesis

The synthesis of high-performance nanocomposites requires precise control over reaction parameters to optimize material properties such as mechanical strength, thermal stability, and electrical conductivity. Central Composite Design (CCD), a response surface methodology (RSM) design, provides a systematic framework for optimizing these multi-variable processes with a comparatively small number of experimental runs [32]. This approach is particularly valuable in organic synthesis research, where traditional one-factor-at-a-time methods cannot capture interaction effects between critical parameters such as temperature, reaction time, nanofiller concentration, and mixing intensity.

Within nanocomposite research, CCD enables researchers to efficiently navigate complex parameter spaces to identify optimal synthesis conditions while quantifying individual factor effects and interaction terms [33]. When augmented with advanced modeling techniques such as Artificial Neural Networks (ANN) coupled with Genetic Algorithms (GA), CCD can generate highly accurate predictive models that surpass the capabilities of traditional response surface methodology alone [33]. This integrated approach is particularly valuable for pharmaceutical and materials research professionals seeking to develop robust nanocomposite synthesis protocols with defined design spaces.

Central Composite Design Fundamentals

Core Principles and Structure

Central Composite Design augments a basic factorial design with additional points that enable estimation of curvature in the response surface [32]. The structure consists of three types of design points:

- Factorial Points: A two-level full factorial or fractional factorial design that forms the core of the experiment, covering the corners of the experimental space [32]

- Axial Points (Star Points): Points positioned along axes at a distance α from the center, which allow for estimation of quadratic effects [32]

- Center Points: Multiple replicates at the center of the design space that provide an estimate of pure error and model stability [32]

For a full two-level factorial core, the total number of experimental runs required in a CCD is N = 2^k + 2k + C, where k is the number of input variables and C is the number of center point replicates [32]. This efficient design structure enables researchers to fit a second-order response surface model while maintaining a manageable number of experimental trials.
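The three point types and the run-count formula can be sketched in a few lines of Python. This is a minimal illustration, assuming a full two-level factorial core; the function names `ccd_points` and `ccd_run_count` and the chosen values of `alpha` and `n_center` are illustrative assumptions, not part of the cited protocol.

```python
from itertools import product


def ccd_points(k, alpha, n_center):
    """Return the runs of a CCD in coded units as a list of k-tuples."""
    # Factorial points: every corner of the coded cube (-1/+1 for each factor).
    factorial = [tuple(float(v) for v in corner)
                 for corner in product((-1, 1), repeat=k)]
    # Axial (star) points: one factor at -alpha or +alpha, all others at 0.
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    # Center points: replicated runs with every factor at its mid level.
    center = [(0.0,) * k for _ in range(n_center)]
    return factorial + axial + center


def ccd_run_count(k, n_center):
    """N = 2^k + 2k + C for a full factorial core."""
    return 2 ** k + 2 * k + n_center


design = ccd_points(k=3, alpha=1.682, n_center=6)
print(len(design), ccd_run_count(3, 6))
```

For three factors with six center points, both the generated list and the formula give 8 + 6 + 6 = 20 runs.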

CCD Variants and Applications

Central Composite Designs are categorized into several variants, each with distinct characteristics and applications in nanocomposite synthesis optimization:

Table: Comparison of Central Composite Design Types

| CCD Type | Axial Distance (α) | Factor Levels | Key Characteristics | Research Applications |

|---|---|---|---|---|

| Circumscribed (CCC) | α > 1 | 5 levels | Star points extend beyond factorial levels; rotatable when α = (2^k)^(1/4); spherical symmetry [32] | Exploring broad process spaces where factor ranges can be extended [32] |

| Face-Centered (CCF) | α = ±1 | 3 levels | Star points at face centers; not rotatable; requires only 3 factor levels [32] | Situations with fixed factor boundaries; most common in preliminary screening [32] |

| Inscribed (CCI) | Star points at ±1; factorial points scaled to ±1/α (α taken from the CCC) | 5 levels | Scaled-down CCC where star points define experiment boundaries [32] | Truly limited factor settings where settings cannot exceed specified limits [32] |

The selection of an appropriate CCD type depends on research objectives and practical constraints. CCC designs explore the largest process space, while CCI designs explore the smallest [32]. For nanocomposite synthesis, where parameter boundaries are often well-defined, the Face-Centered CCD (CCF) offers practical advantages: it requires only three levels for each factor while still enabling quadratic effect estimation.
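The difference in factor levels between the three variants can be made concrete with a short sketch. It assumes a full factorial core, for which the rotatable axial distance is the fourth root of the number of factorial points, and it treats the CCI as a CCC divided through by α. The helper names `rotatable_alpha` and `coded_levels` are hypothetical.

```python
def rotatable_alpha(k):
    """Axial distance that makes a CCD with a full 2^k core rotatable."""
    return (2 ** k) ** 0.25


def coded_levels(design_type, k):
    """Return the sorted coded levels each factor takes in the given variant."""
    a = rotatable_alpha(k)
    if design_type == "CCC":
        # Star points lie outside the factorial range.
        levels = {-a, -1.0, 0.0, 1.0, a}
    elif design_type == "CCF":
        # Star points sit on the faces of the cube, so only three levels occur.
        levels = {-1.0, 0.0, 1.0}
    elif design_type == "CCI":
        # CCC scaled by 1/alpha: star points at the limits, corners pulled inward.
        levels = {-1.0, -1.0 / a, 0.0, 1.0 / a, 1.0}
    else:
        raise ValueError(f"unknown design type: {design_type}")
    return sorted(levels)


for name in ("CCC", "CCF", "CCI"):
    print(name, [round(v, 3) for v in coded_levels(name, k=3)])
```

The CCC and CCI variants each use five distinct levels per factor, while the CCF uses three, which is why the CCF is convenient when intermediate settings are hard to realize on the equipment.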

Experimental Protocol: CCD for Nanocomposite Synthesis

Pre-Experimental Planning Phase

Objective Definition: Clearly define primary response variables relevant to nanocomposite performance, such as tensile strength, electrical conductivity, thermal stability, or dispersion quality. Establish minimum important differences for each response to determine practical significance of factor effects [34].

Factor Selection: Identify critical process parameters with suspected nonlinear effects on responses. For polymer nanocomposite synthesis, typical factors include:

- Nanofiller concentration (0.5-5.0 wt%)

- Reaction temperature (60-120°C)

- Mixing speed (200-800 rpm)

- Reaction time (2-24 hours)

- Solvent-to-polymer ratio (5:1-20:1)

Experimental Domain Definition: Establish appropriate upper and lower limits for each factor based on preliminary experiments and literature review. Avoid excessively narrow ranges that may miss optimal regions, or overly broad ranges that produce impractical processing conditions.
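Once the limits are fixed, each factor is usually expressed in coded units so that the low and high limits map to -1 and +1. The sketch below shows this standard linear conversion using two of the example ranges listed above; the function names `to_actual` and `to_coded` are illustrative assumptions.

```python
def to_actual(coded, low, high):
    """Convert a coded level to real units, with -1 -> low and +1 -> high."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


def to_coded(actual, low, high):
    """Convert a real setting to coded units (inverse of to_actual)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (actual - center) / half_range


# Example ranges taken from the factor list above.
temperature_range = (60.0, 120.0)   # degrees C
nanofiller_range = (0.5, 5.0)       # wt%

for coded in (-1.0, 0.0, 1.0):
    print(coded,
          to_actual(coded, *temperature_range),
          to_actual(coded, *nanofiller_range))
```

With these ranges the center point corresponds to 90 °C and 2.75 wt% nanofiller. Any axial distance larger than 1 would fall outside the stated limits, which is one reason a face-centered design suits tightly bounded factors.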

CCD Implementation Protocol

Step 1: Design Construction

- Select appropriate CCD type based on factor constraints (typically CCF for constrained systems)

- Determine number of center points (minimum 3-5 for adequate pure error estimation)

- Randomize run order to minimize systematic bias

- Include adequate replication for error estimation
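Randomizing the run order while keeping the standard order for later analysis can be sketched as follows. The two-factor face-centered design with three center points and the seed value are hypothetical placeholders; a fixed seed is used only so the run sheet can be regenerated.

```python
import random

# Hypothetical face-centered design for two factors, in standard order (coded units).
design = [(-1, -1), (1, -1), (-1, 1), (1, 1),
          (-1, 0), (1, 0), (0, -1), (0, 1),
          (0, 0), (0, 0), (0, 0)]


def randomized_run_sheet(points, seed):
    """Return (run number, standard order, coded settings) in shuffled order."""
    rng = random.Random(seed)                 # local generator, reproducible
    standard_order = list(range(1, len(points) + 1))
    rng.shuffle(standard_order)               # in-place random permutation
    return [(run, std, points[std - 1])
            for run, std in enumerate(standard_order, start=1)]


sheet = randomized_run_sheet(design, seed=2024)
for run, std, point in sheet:
    print(f"run {run:2d}  standard order {std:2d}  coded settings {point}")
```

Keeping the standard-order index next to each run makes it straightforward to sort the results back into design order before regression.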

Step 2: Experimental Execution

- Prepare nanocomposites according to randomized run order

- Maintain precise control over all identified factors

- Record all response measurements with appropriate precision

- Document any unexpected observations or process deviations

Step 3: Response Measurement

- Characterize nanocomposite properties using standardized analytical techniques

- Ensure measurement precision through instrument calibration and replicate measurements

- Normalize response data if necessary to address scale differences

Step 4: Data Analysis

- Perform multiple regression to develop second-order polynomial models

- Evaluate model adequacy through residual analysis and lack-of-fit testing

- Identify significant factors through ANOVA and Pareto analysis

- Generate response surface and contour plots to visualize factor effects

Step 5: Optimization and Validation

- Utilize desirability functions for multi-response optimization

- Confirm model predictions with confirmation experiments at identified optimum

- Establish design space boundaries for robust process operation
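For the multi-response step, one widely used formulation is the larger-is-better desirability function of Derringer and Suich, combined across responses by a geometric mean. The sketch below assumes that formulation; the acceptable limits, targets, and response values are hypothetical numbers chosen only for illustration.

```python
import math


def desirability_maximize(y, low, target, weight=1.0):
    """Larger-is-better desirability: 0 at or below low, 1 at or above target."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def overall_desirability(values):
    """Geometric mean of individual desirabilities (0 if any response fails)."""
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))


# Hypothetical predicted responses and acceptance limits.
d_strength = desirability_maximize(58.0, low=40.0, target=70.0)     # MPa
d_conductivity = desirability_maximize(0.8, low=0.1, target=1.0)    # S/m
print(d_strength, d_conductivity,
      overall_desirability([d_strength, d_conductivity]))
```

Because the overall value is a geometric mean, a single unacceptable response drives the combined desirability to zero, which prevents a strong result on one property from masking a failure on another.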

Advanced Modeling Integration

For enhanced predictive capability, CCD results can be integrated with Artificial Neural Networks (ANN) as demonstrated in radiolabeling process optimization [33]. The hybrid approach follows this workflow:

- Use CCD data to train ANN models with architecture optimized for specific response prediction

- Apply Genetic Algorithms to ANN models to identify global optima within the experimental space

- Validate hybrid model predictions with confirmation experiments

- Compare performance of RSM versus ANN-GA approaches using metrics such as Mean Squared Error and R² values [33]

ANN models can outperform traditional RSM in predictive capability: one study reported an MSE of 9.08 for ANN versus 12.36 for RSM, and R² values of 0.99 for ANN versus 0.93 for RSM [33].
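The two comparison metrics are simple to compute from observed and predicted responses. The sketch below uses small made-up vectors purely to show the calculation; they are not data from the cited study, and the function names are illustrative.

```python
def mean_squared_error(observed, predicted):
    """Average squared difference between observed and predicted values."""
    return sum((o - p) ** 2 for o, p in zip(observed, predicted)) / len(observed)


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1 - ss_res / ss_tot


# Illustrative numbers only (hypothetical observations and two model outputs).
observed = [1.0, 2.0, 3.0, 4.0]
model_a = [1.1, 1.9, 3.2, 3.9]
model_b = [2.0, 2.0, 3.0, 3.0]

for name, predicted in (("model A", model_a), ("model B", model_b)):
    print(name, mean_squared_error(observed, predicted),
          r_squared(observed, predicted))
```

For a fair comparison, both metrics should be evaluated on the same runs for each model, ideally on confirmation experiments that were not used for fitting or training.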

Research Reagent Solutions for Nanocomposite Synthesis

Table: Essential Materials for Nanocomposite Synthesis and Characterization

| Material Category | Specific Examples | Function in Nanocomposite Synthesis |

|---|---|---|

| Carbon-Based Nanomaterials | Carbon nanotubes (CNTs), Graphene, Fullerenes [35] | Primary reinforcement; enhance electrical conductivity (10^2-10^4 S/cm), mechanical strength (500-1000 MPa tensile strength), and thermal stability [35] |

| Inorganic Nanomaterials | Silver nanoparticles, Silica nanoparticles, Titanium oxide, Nanoclay [35] | Provide antimicrobial properties, barrier properties, UV protection, and improve mechanical strength [35] |

| Organic Nanomaterials | Nanocellulose, Dendrimers, Liposomes [35] | Biocompatible reinforcement, drug delivery carriers, and templates for hierarchical structures [35] |

| Polymer Matrices | Thermoplastics, Thermosets, Biopolymers [35] | Continuous phase that transfers stress to reinforcement; determines processability and environmental resistance [35] |

| Solvents & Dispersants | Dimethylformamide, Tetrahydrofuran, Surfactants [35] | Aid nanomaterial dispersion and prevent aggregation; critical for achieving percolation threshold [35] |

| Coupling Agents | Silanes, Titanates, Functionalized polymers [35] | Improve interfacial adhesion between nanomaterials and polymer matrix; critical for stress transfer [35] |

Data Presentation and Visualization Framework

Effective data presentation is critical for communicating research findings in nanocomposite optimization studies. Tables should present exact values while figures provide overall trends and relationships [34].

Table: Exemplary Data Summary Table for Nanocomposite Optimization Results

| Standard Order | Factor A: Nanofiller (%) | Factor B: Temp (°C) | Response 1: Strength (MPa) | Response 2: Conductivity (S/m) |

|---|---|---|---|---|

| 1 | -1 (1.0) | -1 (80) | 45.2 ± 2.1 | 0.05 ± 0.01 |

| 2 | +1 (3.0) | -1 (80) | 62.8 ± 1.7 | 0.89 ± 0.05 |

| 3 | -1 (1.0) | +1 (120) | 38.5 ± 3.2 | 0.03 ± 0.02 |

| 4 | +1 (3.0) | +1 (120) | 58.3 ± 2.8 | 0.76 ± 0.07 |

| 5 | -α (0.5) | 0 (100) | 32.1 ± 2.5 | 0.01 ± 0.01 |

| 6 | +α (3.5) | 0 (100) | 65.4 ± 1.9 | 1.12 ± 0.08 |

| 7 | 0 (2.0) | -α (70) | 52.7 ± 2.3 | 0.32 ± 0.03 |

| 8 | 0 (2.0) | +α (130) | 48.9 ± 2.7 | 0.28 ± 0.04 |

| 9 | 0 (2.0) | 0 (100) | 55.3 ± 1.5 | 0.45 ± 0.02 |

| 10 | 0 (2.0) | 0 (100) | 54.8 ± 1.8 | 0.43 ± 0.03 |

Tables should be self-explanatory with clear titles, properly defined abbreviations in footnotes, and consistent formatting throughout all research documentation [34]. Present data in meaningful order with comparisons arranged from left to right to facilitate interpretation [34].
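As an illustration of how such a table feeds the regression step, the sketch below fits a full second-order model to the strength column of the exemplary table using ordinary least squares in NumPy. The coded axial distance of 1.5 is inferred from the listed actual levels (for example 0.5 and 3.5 wt% around a center of 2.0 with a half-range of 1.0); the coefficient labels are illustrative.

```python
import numpy as np

# Coded settings and strength values (MPa) from the exemplary table above.
A = np.array([-1, 1, -1, 1, -1.5, 1.5, 0, 0, 0, 0], dtype=float)
B = np.array([-1, -1, 1, 1, 0, 0, -1.5, 1.5, 0, 0], dtype=float)
strength = np.array([45.2, 62.8, 38.5, 58.3, 32.1, 65.4, 52.7, 48.9, 55.3, 54.8])

TERMS = ("b0", "bA", "bB", "bAB", "bAA", "bBB")


def quadratic_model_matrix(a, b):
    """Columns: intercept, A, B, A*B, A^2, B^2."""
    return np.column_stack([np.ones_like(a), a, b, a * b, a ** 2, b ** 2])


def fit_quadratic(a, b, y):
    """Least-squares estimates of the second-order model coefficients."""
    X = quadratic_model_matrix(a, b)
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return dict(zip(TERMS, (float(c) for c in coef)))


coefficients = fit_quadratic(A, B, strength)
for term, value in coefficients.items():
    print(f"{term}: {value:.3f}")
```

In coded units, the sign and size of each coefficient can be compared directly: here the linear nanofiller term is positive and much larger than the temperature term, matching the trend visible in the table.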

Optimization Workflow Visualization

[Figure: CCD Optimization Workflow for Nanocomposite Synthesis]

Advanced Modeling Integration Diagram

[Figure: Hybrid CCD-ANN-GA Modeling Approach]

Case Study: Optimization of Polymer-CNT Nanocomposite Synthesis

Experimental Design and Parameters

A practical application of CCD in nanocomposite synthesis involves optimizing the preparation of polypropylene-carbon nanotube composites for enhanced electrical conductivity and mechanical strength. The following parameters were investigated:

- Factor A: CNT concentration (0.5-3.0 wt%)

- Factor B: Melt compounding temperature (180-220°C)

- Factor C: Screw speed in twin-screw extruder (200-400 rpm)

- Factor D: Residence time (3-7 minutes)

A Face-Centered CCD with 30 experimental runs (including 6 center points) was implemented to study these four factors. The experimental domain was constrained based on preliminary trials and processing limitations.

Results and Optimization

Table: ANOVA Summary for Electrical Conductivity Response

| Source | Sum of Squares | Degrees of Freedom | Mean Square | F-Value | p-Value |

|---|---|---|---|---|---|

| Model | 15.82 | 14 | 1.13 | 28.75 | < 0.0001 |

| A-CNT Concentration | 10.45 | 1 | 10.45 | 265.82 | < 0.0001 |

| B-Temperature | 0.87 | 1 | 0.87 | 22.13 | 0.0003 |

| C-Screw Speed | 0.42 | 1 | 0.42 | 10.68 | 0.0051 |

| D-Residence Time | 0.38 | 1 | 0.38 | 9.67 | 0.0072 |

| AB Interaction | 0.65 | 1 | 0.65 | 16.54 | 0.0010 |

| A² | 2.15 | 1 | 2.15 | 54.70 | < 0.0001 |

| Residual | 0.59 | 15 | 0.039 | | |

| Lack of Fit | 0.48 | 10 | 0.048 | 2.18 | 0.1945 |

| Pure Error | 0.11 | 5 | 0.022 | | |

The regression analysis revealed a significant quadratic model (R² = 0.964, Adjusted R² = 0.931) with CNT concentration demonstrating the most pronounced effect on electrical conductivity. The response surface model identified an optimum at 2.4 wt% CNT concentration, 205°C processing temperature, 350 rpm screw speed, and 5.2 minutes residence time, yielding a predicted conductivity of 1.85 S/m with a desirability function value of 0.92.
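The summary statistics quoted above follow directly from the sums of squares and degrees of freedom in the ANOVA table. The sketch below recomputes them; the function name `anova_summary` is an assumption, and p-values are omitted because they would require an F-distribution routine from a statistics library.

```python
def anova_summary(ss_model, df_model, ss_resid, df_resid,
                  ss_lof, df_lof, ss_pe, df_pe):
    """Recompute mean squares, F-ratios, R^2 and adjusted R^2 from ANOVA sums."""
    ms_model = ss_model / df_model
    ms_resid = ss_resid / df_resid
    ss_total = ss_model + ss_resid
    df_total = df_model + df_resid
    return {
        "F_model": ms_model / ms_resid,
        # Lack-of-fit F compares lack-of-fit variation with pure error.
        "F_lack_of_fit": (ss_lof / df_lof) / (ss_pe / df_pe),
        "R2": ss_model / ss_total,
        "adj_R2": 1 - ms_resid / (ss_total / df_total),
    }


# Values taken from the ANOVA table above.
summary = anova_summary(ss_model=15.82, df_model=14,
                        ss_resid=0.59, df_resid=15,
                        ss_lof=0.48, df_lof=10,
                        ss_pe=0.11, df_pe=5)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

The recomputed values agree with the table and the reported R² and adjusted R² to within rounding. The total of 29 degrees of freedom is also consistent with a 30-run design.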

Model Validation

Confirmation experiments at the identified optimum conditions produced an average conductivity of 1.79 ± 0.12 S/m, about 97% of the predicted 1.85 S/m, which supports the adequacy of the CCD model. The optimized nanocomposite also demonstrated a tensile strength of 48.3 MPa, a 65% improvement over the pure polymer matrix, while maintaining adequate processability.

Central Composite Design provides a powerful statistical framework for efficient optimization of nanocomposite synthesis parameters. Through systematic experimentation and modeling, researchers can identify optimal processing conditions while quantifying complex interaction effects between critical factors. The integration of CCD with advanced computational techniques such as Artificial Neural Networks and Genetic Algorithms further enhances predictive capability and optimization precision [33].

For successful implementation in organic synthesis research, we recommend: