Closed-Loop Optimization with Machine Learning DoE: Accelerating Drug Discovery and Formulation

Abstract

This article explores the integration of machine learning (ML) with Design of Experiments (DoE) in closed-loop optimization systems for biomedical research. Written for researchers, scientists, and drug development professionals, it gives an overview of how these frameworks are reshaping traditional R&D pipelines. We cover the foundational principles of ML-driven DoE, detail key methodological approaches such as Bayesian optimization and their application in formulation design and molecular discovery, address troubleshooting and optimization challenges, and present validation and comparative analyses that quantify the acceleration in development timelines and success rates. Together, these four parts offer an actionable guide for implementing data-driven strategies to overcome the high costs and attrition rates of modern pharmaceutical development.

The Foundations of Machine Learning DoE and Closed-Loop Systems

The pursuit of optimal outcomes in research and development has long been guided by the principles of Design of Experiments (DoE). Traditional DoE provides a structured, statistical framework for investigating the relationship between multiple input factors and output responses, moving beyond inefficient one-factor-at-a-time approaches [1]. By systematically exploring factor interactions, it enables the creation of robust methods and processes [1]. However, as scientific challenges grow in complexity—encompassing vast, multidimensional design spaces—the limitations of traditional DoE become apparent. Its requirement for a predefined experimental grid and the exponential growth in experiments needed with increasing factors restrict its effectiveness for high-dimensional problems [2].

This landscape is being transformed by machine learning (ML)-driven closed-loop optimization. This paradigm integrates predictive ML models with automated experimentation, creating an iterative cycle of prediction, testing, and learning. The system uses algorithms to select the most informative experiments to run based on accumulating data, focusing resources on the most promising regions of the design space [2]. This approach has demonstrated remarkable efficiency, achieving performance targets with 50%-90% fewer experiments than traditional methods [2]. The following sections detail this paradigm shift through quantitative comparisons, specific application protocols, and practical implementation resources.

Comparative Analysis: Traditional DoE vs. ML-Driven Closed Loops

Table 1: A comparative summary of Traditional DoE and ML-Driven Closed Loops.

| Feature | Traditional DoE | ML-Driven Closed Loops |

|---|---|---|

| Core Philosophy | Structured, pre-planned experimental grid; "one-shot" design. | Iterative, adaptive learning loop; guided sequential discovery. |

| Underlying Mechanism | Statistical analysis of variance (ANOVA), response surface methodology. | Machine learning (e.g., Gaussian processes, Bayesian optimization). |

| Handling of High-Dimensionality | Number of experiments in a full factorial design grows exponentially with the number of factors; becomes inefficient. | Experiments are chosen adaptively, so the required number typically grows far more slowly with the number of factors; better suited to large spaces. |

| Exploration vs. Exploitation | Focuses on building a global model over a predefined space. | Actively balances exploring uncertain regions and exploiting known promising areas. |

| Data Utilization | Relies solely on data from the current, pre-defined experiment set. | Can leverage historical data and transfer learning from related projects. |

| Optimal Use Case | Local optimization, screening, and problems with a small number of factors. | Global optimization over vast, complex design spaces and for "black-box" problems. |

| Typical Experimental Reduction | Baseline (defines the standard number of experiments required). | 50% - 90% reduction compared to traditional DoE [2]. |
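
To make the high-dimensionality row concrete, the short sketch below counts the runs a full factorial grid needs at three levels per factor and compares that with a fixed adaptive budget. The 100-run budget is a hypothetical figure chosen for illustration, not a value from the cited studies, and fractional factorial designs would need fewer runs than the full grid shown here.

```python
def full_factorial_runs(levels: int, factors: int) -> int:
    """Runs needed to test every combination of `factors` inputs at `levels` settings each."""
    return levels ** factors


# Hypothetical fixed budget for an adaptive (closed-loop) campaign.
ADAPTIVE_BUDGET = 100

for k in (2, 4, 6, 8, 10):
    grid = full_factorial_runs(3, k)
    share = min(1.0, ADAPTIVE_BUDGET / grid)
    print(f"{k:>2} factors: {grid:>6} grid runs; a {ADAPTIVE_BUDGET}-run budget covers {share:.2%}")
```

The point of the comparison is only that an adaptive method cannot rely on coverage once the grid becomes large; it has to decide where to sample.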

Application Notes: ML-Driven Closed-Loop Optimization in Action

Case Study 1: Accelerated Discovery of Organic Photoredox Catalysts

This study demonstrated a two-step, data-driven approach for the targeted synthesis of organic photoredox catalysts (OPCs) and the subsequent optimization of a metallophotocatalysis reaction [3].

- Objective: Discover a high-performance organic photocatalyst from a virtual library of 560 candidates and optimize its reaction conditions for a decarboxylative cross-coupling reaction.

- Challenge: The combinatorial complexity made exhaustive synthesis and testing impractical. Predicting catalytic activity from first principles was also infeasible due to multivariate complexity.

- Implementation: A closed-loop Bayesian optimization (BO) workflow was employed. The algorithm used a Gaussian process as a surrogate model to predict reaction yield based on molecular descriptors. It then selected the most promising batch of molecules to synthesize and test next, iteratively improving the model with each cycle.

- Outcome: The workflow reached a reaction yield of 88% after evaluating only 107 of 4,500 possible sets of reaction conditions (~2.4% of that space) [3]. This illustrates how efficiently an ML-guided approach can navigate a very large experimental landscape.

Detailed Experimental Protocol: Catalyst Discovery via Bayesian Optimization

Table 2: Key research reagents for organic photoredox catalyst discovery and optimization.

| Research Reagent | Function in the Experiment |

|---|---|

| Cyanopyridine (CNP) Core | The central molecular scaffold for constructing the virtual library of organic photocatalysts. |

| Ra (β-keto nitrile) & Rb (Aromatic Aldehydes) | Variable side-chain groups used to combinatorially generate a diverse virtual library of 560 molecules. |

| NiCl₂·glyme | The transition-metal catalyst precursor in the dual photoredox/nickel catalysis system. |

| dtbbpy (4,4′-di-tert-butyl-2,2′-bipyridine) | A ligand that coordinates with nickel, tuning its catalytic activity and stability. |

| Cs₂CO₃ | A base used to facilitate the decarboxylative step in the cross-coupling reaction. |

| DMF (Dimethylformamide) | The solvent for the reaction, chosen for its ability to dissolve the reactants and catalysts. |

| Blue LED | The light source for photoexciting the organic photocatalyst, initiating the photoredox cycle. |

Procedure:

1. Virtual Library Construction: Define a virtual library of 560 synthesizable molecules based on a cyanopyridine core, combining 20 β-keto nitriles (Ra) and 28 aromatic aldehydes (Rb) [3].

2. Molecular Descriptor Calculation: Compute 16 molecular descriptors (e.g., optoelectronic properties, redox potentials) for each candidate to numerically encode the chemical space.

3. Initial Design: Select a small, diverse set of initial molecules (e.g., 6 candidates) using an algorithm like Kennard-Stone to ensure broad coverage of the chemical space.

4. Synthesis & Testing:

   - Synthesize the selected CNP molecules.

   - Test their photocatalytic performance under standardized reaction conditions: 4 mol% CNP, 10 mol% NiCl₂·glyme, 15 mol% dtbbpy, 1.5 equiv. Cs₂CO₃ in DMF under blue LED irradiation.

   - Measure the reaction yield (e.g., via HPLC or LC-MS) as the objective function for optimization.

5. Model Building & Candidate Selection:

   - Train a Bayesian Optimization model (e.g., with a Gaussian process surrogate) using the accumulated yield data and molecular descriptors.

   - Use an acquisition function (e.g., Expected Improvement) to select the next batch of ~12 molecules that balance high predicted yield (exploitation) and high uncertainty (exploration).

6. Iterative Loop: Repeat steps 4 and 5 until a performance target is met or the experimental budget is exhausted. The model is updated with new data after each iteration.
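
The loop in this procedure can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the implementation used in [3]: the descriptor matrix is random, `run_experiment` is a hypothetical stand-in for synthesis and yield measurement, the kernel length scale is fixed by hand, the initial set is drawn at random rather than by Kennard-Stone, and the batch is simply the 12 untested candidates with the highest Expected Improvement.

```python
import math
import numpy as np


def rbf_kernel(A, B, length_scale):
    """Squared-exponential kernel between the rows of A and the rows of B."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)


def gp_posterior(X_train, y_train, X_query, length_scale=4.0, noise=1e-3):
    """Posterior mean and standard deviation of a zero-mean, unit-variance GP."""
    K = rbf_kernel(X_train, X_train, length_scale) + noise * np.eye(len(X_train))
    K_s = rbf_kernel(X_train, X_query, length_scale)
    solved = np.linalg.solve(K, K_s)
    mean = solved.T @ y_train
    var = 1.0 - np.sum(K_s * solved, axis=0)
    return mean, np.sqrt(np.maximum(var, 1e-12))


def expected_improvement(mean, std, best):
    """Expected Improvement for a maximisation problem."""
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf


rng = np.random.default_rng(0)
X = rng.normal(size=(560, 16))   # hypothetical standardised descriptors, one row per candidate
target = rng.normal(size=16)     # hidden optimum of the simulated response


def run_experiment(idx):
    """Stand-in for synthesis plus yield measurement (hypothetical response, in %)."""
    return 90.0 * np.exp(-np.sum((X[idx] - target) ** 2, axis=-1) / 40.0)


tested = rng.choice(len(X), size=6, replace=False).tolist()   # initial design
yields = run_experiment(tested).tolist()

for _ in range(4):                                            # four rounds of 12 candidates
    mask = np.ones(len(X), dtype=bool)
    mask[tested] = False
    untested = np.flatnonzero(mask)
    y = np.array(yields)
    y_scaled = (y - y.mean()) / (y.std() + 1e-9)              # match the GP's zero mean, unit variance
    mean, std = gp_posterior(X[tested], y_scaled, X[untested])
    ei = expected_improvement(mean, std, y_scaled.max())
    batch = untested[np.argsort(ei)[-12:]]                    # greedy top-12 by EI
    tested.extend(batch.tolist())
    yields.extend(run_experiment(batch).tolist())

best_candidate = tested[int(np.argmax(yields))]
print(f"best simulated yield {max(yields):.1f}% (candidate {best_candidate}) after {len(tested)} runs")
```

Picking the top 12 by EI is the simplest batch rule; it can select near-duplicate candidates, which is why dedicated batch acquisition strategies exist.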

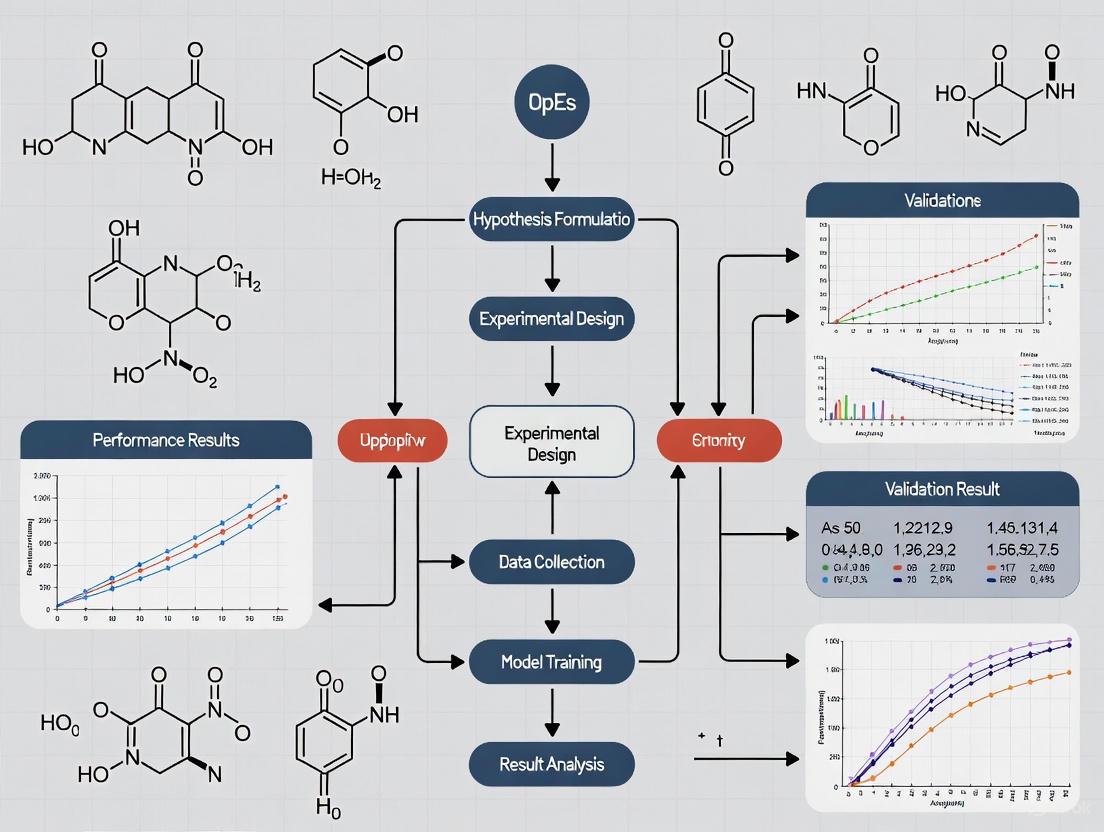

Diagram 1: Closed-loop catalyst discovery workflow.
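
The initial-design step of the procedure names Kennard-Stone selection. A minimal sketch of that algorithm follows: it starts from the two most distant candidates and then repeatedly adds the candidate farthest from everything already chosen. The random descriptor matrix is a hypothetical placeholder for the 560 × 16 table described above.

```python
import numpy as np


def kennard_stone(X, n_select):
    """Return indices of `n_select` rows of X chosen by the Kennard-Stone max-min rule."""
    sq_norms = np.sum(X**2, axis=1)
    sq_dist = sq_norms[:, None] + sq_norms[None, :] - 2.0 * X @ X.T
    dist = np.sqrt(np.maximum(sq_dist, 0.0))
    # Seed with the pair of points that are farthest apart.
    i, j = np.unravel_index(np.argmax(dist), dist.shape)
    selected = [int(i), int(j)]
    while len(selected) < n_select:
        # Distance from every point to its nearest already-selected point.
        min_dist = dist[:, selected].min(axis=1)
        min_dist[selected] = -1.0          # never re-select a chosen point
        selected.append(int(np.argmax(min_dist)))
    return selected


rng = np.random.default_rng(0)
descriptors = rng.normal(size=(560, 16))   # hypothetical standardised descriptors
initial_set = kennard_stone(descriptors, 6)
print(initial_set)
```

Descriptors should be put on a common scale before this step, since the rule is driven entirely by Euclidean distance.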

Case Study 2: Sustainable Cement Formulation with Life-Cycle Assessment

This research addressed the carbon footprint of cement by incorporating carbon-negative algal biomatter, a complex design problem with competing objectives [4].

- Objective: Discover a green cement formulation that minimizes global warming potential (GWP) while maintaining functional strength requirements.

- Challenge: Navigating the complex hydration-strength relationships introduced by the biomatter within a combinatorial design space.

- Implementation: An ML-guided closed-loop framework integrated with life-cycle assessment (LCA). The system used real-time testing of algal cements with early-stopping criteria to accelerate the optimization process.

- Outcome: The approach achieved the target strength and secured a 21% reduction in GWP within just 28 days of experiment time, attaining 93% of the achievable improvement [4]. This demonstrates the power of ML-driven loops for rapid, sustainable material development.

Detailed Experimental Protocol: Multi-Objective Cement Optimization

Table 3: Key research reagents and materials for sustainable cement formulation.

| Research Reagent | Function in the Experiment |

|---|---|

| Ordinary Portland Cement (OPC) | The baseline cementitious binder used as the control and base for mixtures. |

| Whole Macroalgae Biomatter | A carbon-negative substitute material intended to reduce the GWP of the final formulation. |

| Water | The hydrating agent for the cementitious reactions; water-to-cement ratio is a key factor. |

| Standard Sand (e.g., ISO 679) | The aggregate used for creating standardized mortar specimens for strength testing. |

| Compressive Strength Tester | Equipment to measure the mechanical performance (the key functional constraint). |

| Life-Cycle Assessment (LCA) Database | Software/tool providing emission factors to calculate the GWP of each formulation. |

Procedure:

1. Factor and Objective Definition: Define input factors (e.g., % algal substitution, water-to-cement ratio, curing conditions) and the two key objectives: maximize compressive strength and minimize GWP.

2. Initial DoE: Create a small initial dataset, potentially using a sparse factorial design to cover a broad range of the factor space.

3. Specimen Preparation & Early Testing:

   - Prepare cement mortar specimens according to the defined mixture proportions.

   - Begin curing specimens and perform early-age strength tests (e.g., at 1, 3, or 7 days). Use these early-strength results to predict 28-day strength, applying early-stopping criteria to abandon poorly performing mixtures without completing full curing.

4. Objective Calculation: For each tested formulation, measure the final compressive strength and calculate the GWP using LCA software and associated databases.

5. Multi-Objective Optimization Loop:

   - Train a machine learning model (e.g., an Amortized Gaussian Process) on all collected data (formulation factors → strength & GWP).

   - Use a multi-objective acquisition function to select the next set of formulations to test, aiming to improve both objectives simultaneously.

6. Iteration: Repeat steps 3-5 until the optimization target (such as a specific strength threshold and a maximum GWP) is achieved.
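
Because the two objectives compete, the useful output of each round is the set of non-dominated formulations rather than a single winner. The sketch below shows that Pareto filter and a final pick under a strength constraint. All mixture names, strength values, GWP values, and the 35 MPa threshold are hypothetical and are not taken from [4].

```python
def pareto_front(formulations):
    """Keep formulations that no other one beats on both strength (higher) and GWP (lower)."""
    front = []
    for a in formulations:
        dominated = any(
            b["strength_mpa"] >= a["strength_mpa"]
            and b["gwp"] <= a["gwp"]
            and (b["strength_mpa"] > a["strength_mpa"] or b["gwp"] < a["gwp"])
            for b in formulations
        )
        if not dominated:
            front.append(a)
    return front


# Hypothetical test results; GWP is expressed relative to the baseline mix "A".
mixes = [
    {"name": "A", "strength_mpa": 45.0, "gwp": 1.00},
    {"name": "B", "strength_mpa": 40.0, "gwp": 0.85},
    {"name": "C", "strength_mpa": 38.0, "gwp": 0.90},
    {"name": "D", "strength_mpa": 30.0, "gwp": 0.70},
    {"name": "E", "strength_mpa": 42.0, "gwp": 0.80},
]

front = pareto_front(mixes)
MIN_STRENGTH_MPA = 35.0   # hypothetical functional requirement
feasible = [m for m in front if m["strength_mpa"] >= MIN_STRENGTH_MPA]
best = min(feasible, key=lambda m: m["gwp"])
print([m["name"] for m in front], "->", best["name"])
```

A multi-objective acquisition function works on model predictions rather than measured values, but it rewards candidates for the same thing: extending this front.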

Diagram 2: Cement optimization with early-stopping.

Implementation Guide: Core Components of a Closed-Loop System

Building an effective ML-driven closed-loop optimization system requires the integration of several key components.

The Model: Gaussian Process and Bayesian Optimization

The Gaussian Process (GP) is a cornerstone of many closed-loop systems, as it provides a robust probabilistic surrogate model. It excels in modeling complex, non-linear relationships and, crucially, provides an uncertainty estimate for its own predictions [3]. Bayesian Optimization (BO) uses this GP model to decide which experiments to run next. An acquisition function, such as Expected Improvement (EI) or Upper Confidence Bound (UCB), uses the GP's prediction and uncertainty to balance exploring new areas of the space and exploiting known high-performing regions [3].
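
For reference, both acquisition functions have standard closed forms when the surrogate's prediction at a point is Gaussian. The notation is introduced here and does not come from the cited sources: μ(x) and σ(x) are the GP posterior mean and standard deviation, f⁺ is the best response observed so far (maximization), Φ and φ are the standard normal CDF and PDF, and κ ≥ 0 is a user-chosen exploration weight.

```latex
\mathrm{EI}(\mathbf{x}) = \bigl(\mu(\mathbf{x}) - f^{+}\bigr)\,\Phi(z) + \sigma(\mathbf{x})\,\varphi(z),
\qquad z = \frac{\mu(\mathbf{x}) - f^{+}}{\sigma(\mathbf{x})}

\mathrm{UCB}(\mathbf{x}) = \mu(\mathbf{x}) + \kappa\,\sigma(\mathbf{x})
```

In EI the first term rewards a high predicted mean (exploitation) and the second rewards high uncertainty (exploration); in UCB the same trade-off is set directly by κ.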

The Actuator: Automated Experimentation

The "controller" in the loop is the BO algorithm that decides the next experiment. The "actuator" is the mechanism that physically performs the experiment. This can range from a manual system where a scientist receives a list from the algorithm and performs the lab work, to a fully integrated robotic system that receives instructions and conducts experiments autonomously [5].

The Sensor: Data Generation and Processing

The "sensor" is the analytical method used to measure the outcome or response of each experiment. This could be a chromatograph measuring yield, a mass spectrometer identifying products, or a mechanical tester measuring strength [4] [3]. The quality and speed of this feedback are critical for the efficiency of the overall loop.

Diagram 3: Core components of a closed-loop system.

The paradigm of scientific research, particularly in fields like drug development and materials science, is undergoing a radical transformation through the integration of machine learning (ML) with Design of Experiments (DoE). This shift moves beyond traditional one-factor-at-a-time or high-throughput trial-and-error methods towards intelligent, closed-loop optimization systems. At the heart of this "self-driving lab" revolution lies the sophisticated interplay between three core components: sensors for data acquisition, algorithms for decision-making, and actuators for physical intervention. This application note details their roles, integration protocols, and quantitative performance within the context of ML-DoE closed-loop optimization research, providing a practical guide for implementing automated experimentation platforms.

The Trifecta of Automated Experimentation: Definitions and Roles

A closed-loop ML-DoE system functions as an autonomous scientist. The loop initiates with sensors gathering multidimensional data from an experiment. This data is processed by algorithms which generate hypotheses, optimize parameters, and design the next experiment. Finally, actuators precisely execute the designed experimental steps. This cycle repeats, converging rapidly towards an optimal solution [6] [7].
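
The division of labour described above maps onto a small software interface: one callable each for sensing, deciding, and actuating. The sketch below is illustrative only. `SimulatedRig`, `hill_climb`, and the response curve are hypothetical stand-ins; in a real platform they would wrap instrument drivers and an optimizer such as Bayesian optimization.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

History = List[Tuple[float, float]]   # (setting, measured response) pairs


@dataclass
class ClosedLoop:
    actuate: Callable[[float], None]    # actuator: apply a setting to the rig
    sense: Callable[[], float]          # sensor: read back the response
    propose: Callable[[History], float] # algorithm: choose the next setting
    history: History = field(default_factory=list)

    def run(self, n_cycles: int) -> Tuple[float, float]:
        """Run propose -> actuate -> sense for n_cycles and return the best pair seen."""
        for _ in range(n_cycles):
            setting = self.propose(self.history)
            self.actuate(setting)
            self.history.append((setting, self.sense()))
        return max(self.history, key=lambda pair: pair[1])


class SimulatedRig:
    """Hypothetical bench whose response peaks at a setting of 37.0."""

    def __init__(self) -> None:
        self.setting = 0.0

    def actuate(self, setting: float) -> None:
        self.setting = setting

    def sense(self) -> float:
        return -(self.setting - 37.0) ** 2


def hill_climb(history: History, start: float = 30.0, step: float = 1.0) -> float:
    """Toy decision rule: step upward from the best setting seen so far."""
    if not history:
        return start
    best_setting, _ = max(history, key=lambda pair: pair[1])
    return best_setting + step


rig = SimulatedRig()
loop = ClosedLoop(actuate=rig.actuate, sense=rig.sense, propose=hill_climb)
best = loop.run(10)
print(best)
```

Keeping the three roles behind separate callables means the decision rule can be swapped without touching the hardware code, and the same loop can run against a simulator or a manual "scientist in the loop" actuator.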

Sensors are the system's perceptual organs. They convert physical, chemical, and biological states into quantitative digital data. In bio-manufacturing, this includes online sensors for pH, dissolved oxygen (DO), temperature, and pressure in bioreactors [8]. Advanced platforms employ multi-modal sensing, such as multi-spectral cameras and LiDAR for 3D digital twin modeling of lab spaces, achieving 98.7% accuracy in dynamic bench-top modeling [7]. Real-time process gas analyzers monitor microbial metabolic states, providing the critical data stream for adaptive control [8].

Algorithms are the system's brain. They encompass ML models for prediction, optimization algorithms for DoE, and control algorithms for real-time adjustment. For instance, SurFF, a foundation model for predicting surface energy and morphology of intermetallic crystals, accelerates screening by over 100,000 times compared to traditional density functional theory (DFT) calculations [9]. In fermentation, machine learning models use sensor data to predict optimal feeding strategies and dynamically adjust parameters like agitation speed [7]. Multi-agent systems can orchestrate the entire research process, with specialized agents for literature analysis, experimental planning, coding, and safety checks [6].

Actuators are the system's hands. They translate digital commands from algorithms into precise physical actions. This includes robotic arms for liquid handling, automated bioreactor control valves for nutrient feeding, high-throughput strain pickers, and automated seed culture inoculators [8] [7]. The precision and reliability of actuators directly determine the fidelity with which the algorithm's experimental design is realized.

Quantitative Performance Data

The integration of these components yields dramatic improvements in research efficiency and outcomes. The table below summarizes key quantitative findings from recent implementations.

Table 1: Performance Metrics of Automated Experimentation Components & Systems

| Component / System | Metric | Performance Improvement / Outcome | Source / Context |

|---|---|---|---|

| Algorithm (SurFF Model) | Screening Efficiency | >100,000x faster than DFT calculations | Catalyst surface property prediction [9] |

| Algorithm (AI Co-Scientist) | Problem-Solving Speed | Solved a multi-year DNA transfer puzzle in 2 days | Biological discovery [6] |

| Algorithm (CaTS Framework) | Transition State Search | ~10,000x efficiency increase vs. conventional methods | Catalytic reaction kinetics [9] |

| Sensor Fusion System | Anomaly Detection Response | Reduced from 45 minutes to 8.2 seconds | BIOBot project in bio-processes [7] |

| Digital Twin System | Process Validation Cycle | Reduced from 18 months to 5.7 months | Pharmaceutical pilot-scale-up [7] |

| Closed-loop Fermentation | Unplanned Downtime | Reduced to 0.3% | AI-driven predictive control [7] |

| Hybrid Decision System | R&D Efficiency | Increased by 40% | AI-human collaborative framework [7] |

| Automated Strain Engineering | Optimization Cycle | Reduced from ~6 months to 22 days | Multi-omics data integration [7] |

| 3D Digital Twin Modeling | Workspace Modeling Accuracy | 98.7% accuracy | Lab space monitoring with LiDAR/cameras [7] |

| Full Automation (High-Use) | Return on Investment (ROI) | Up to 1:8.3 | When experiment frequency >50/week [7] |

Experimental Protocols for Key Applications

Protocol 1: Closed-Loop Optimization of Microbial Fermentation for Metabolite Production

Objective: To maximize the titer of a target compound (e.g., 2'-FL, ARA) using an ML-driven adaptive control system.

Materials: Bioreactor with integrated pH, DO, temperature, and off-gas sensors; automated feeding pumps; cell density probe; high-performance liquid chromatography (HPLC) or equivalent for product quantification; central control server running ML models.

Procedure:

- Initial DoE & Model Training: Execute a small-scale, model-informed DoE (e.g., 15-20 batches) varying key parameters (e.g., feed rate, induction timing, temperature). Use sensor and endpoint product data to train a Gaussian Process Regression or Random Forest model that predicts final titer based on process parameters.

- Closed-Loop Operation:

   a. Sensing: During a production run, sensors stream real-time data (pH, DO, CO2 evolution rate, optical density) to the control server [8].

   b. Algorithmic Decision: The trained model, combined with a Bayesian optimization or reinforcement learning algorithm, analyzes the real-time trajectory. It predicts the optimal adjustment for the next control interval (e.g., adjust feed pump rate) to maximize the predicted final titer.

   c. Actuation: The control server sends commands to the actuators (precise dosing pumps) to implement the adjustment.

- Iteration & Model Update: After each batch, the final product titer is added to the training dataset, and the predictive model is retrained, refining its accuracy for subsequent runs. This loop continues until performance plateaus or the target is met.
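
The batch-to-batch part of this protocol (train, choose the next setting, run, retrain) can be illustrated with a deliberately simple surrogate. In the sketch below a quadratic least-squares fit stands in for the Gaussian Process or Random Forest model named above, only one parameter (feed rate) is varied, and `run_batch` is a hypothetical simulator rather than a real fermentation. Within-run control is not modelled.

```python
import numpy as np

rng = np.random.default_rng(1)


def run_batch(feed_rate):
    """Stand-in for one fermentation run plus HPLC titer measurement (hypothetical, g/L)."""
    return 12.0 - 0.5 * (feed_rate - 6.0) ** 2 + rng.normal(scale=0.05)


# Initial DoE: a handful of batches spread over the feed-rate range.
feed_rates = [2.0, 4.0, 8.0, 10.0]
titers = [run_batch(f) for f in feed_rates]

grid = np.linspace(1.0, 11.0, 101)   # candidate feed rates the pumps could deliver

for _ in range(5):
    # Retrain the surrogate on every batch collected so far.
    coeffs = np.polyfit(feed_rates, titers, deg=2)
    # Choose the feed rate with the highest predicted titer.
    next_feed = float(grid[np.argmax(np.polyval(coeffs, grid))])
    # Run the batch and add the measured titer to the training data.
    feed_rates.append(next_feed)
    titers.append(run_batch(next_feed))

print(f"last proposed feed rate: {feed_rates[-1]:.1f}, best titer: {max(titers):.2f} g/L")
```

This rule is purely exploitative; a GP with an acquisition function would add the uncertainty term that keeps the loop from settling too early on a poorly sampled region.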

Protocol 2: AI-Driven High-Throughput Catalyst Screening

Objective: To discover novel single-atom catalysts for CO2 electroreduction to methanol.

Materials: Pre-trained atomic foundation model (e.g., M3GNet); DFT calculation software; active learning pipeline; robotic synthesis and characterization platforms.

Procedure:

- Initial Knowledge Embedding: Start with a pre-trained atomic foundation model capable of predicting material properties from structure [9].

- Active Learning Loop:

   a. Algorithmic Proposal: The model screens a vast virtual library of candidate catalyst structures (e.g., 3000+). Using an acquisition function (e.g., expected improvement), it selects a small batch (e.g., 10-20) with the most promising predicted activity/selectivity or highest uncertainty.

   b. Actuation & Sensing: A robotic synthesis platform (actuator) prepares the selected catalysts. Automated characterization tools (sensors) measure key performance indicators (e.g., Faradaic efficiency, current density for methanol).

   c. Data Feedback & Model Retraining: The new experimental data is fed back to the algorithm. The foundation model is fine-tuned with this small but high-value dataset, dramatically improving its predictive accuracy for the next round of screening.

- Validation: Top candidates identified through multiple active learning cycles are validated through detailed, manual electrochemical testing.
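
The proposal step of the active learning loop reduces to ranking untested candidates by a score that mixes predicted performance and uncertainty. The sketch below uses an upper-confidence-bound score for that ranking (a simpler alternative to the expected improvement mentioned in the protocol) and takes the uncertainty from the spread of a model ensemble. The ensemble predictions are random placeholders, not outputs of M3GNet or any other real model.

```python
import numpy as np


def select_batch(pred_mean, pred_std, tested, batch_size=10, kappa=1.0):
    """Return indices of the `batch_size` untested candidates with the highest mean + kappa * std."""
    score = pred_mean + kappa * pred_std
    already_tested = np.isin(np.arange(len(score)), list(tested))
    score = np.where(already_tested, -np.inf, score)
    return np.argsort(score)[::-1][:batch_size].tolist()


rng = np.random.default_rng(2)
# Hypothetical predictions from 5 ensemble members for a 3000-structure library.
ensemble_preds = rng.normal(size=(5, 3000))
tested = {0, 1, 2}   # indices already synthesised and characterised

batch = select_batch(ensemble_preds.mean(axis=0), ensemble_preds.std(axis=0), tested)
print(batch)
```

Setting `kappa` to zero gives pure exploitation of the model's current beliefs; raising it shifts the batch toward structures the model is least sure about.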

System Architecture & Workflow Diagrams

Diagram 1: Closed-loop ML-DoE Optimization Workflow

Diagram 2: Sensor-Algorithm-Actuator Interaction Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Building an Automated Experimentation Platform

| Category | Item / Solution | Function in Automated Experimentation | Example/Note |

|---|---|---|---|

| Sensing & Monitoring | Multi-parameter Bioreactor Probes | Real-time monitoring of pH, DO, temperature, pressure for process control. | Foundation for adaptive fermentation control [8]. |

| | Process Gas Analyzer (Mass Spectrometer) | Online analysis of O2, CO2 in off-gas for metabolic flux estimation. | Enables real-time metabolic feedback [8]. |

| | High-throughput Single-cell Raman Flow Sorter | Rapid, label-free screening and sorting of microbial cells based on biochemical composition. | Accelerates strain selection in synthetic biology [8]. |

| | Multi-spectral Camera + LiDAR | Creates dynamic 3D digital twin of lab environment for collision prediction and process monitoring. | Achieves 98.7% modeling accuracy [7]. |

| Algorithm & Software | Active Learning Pipeline | Intelligently selects the most informative experiments to perform next, maximizing knowledge gain. | Core to efficient catalyst/material discovery [9]. |

| | Domain-specific Foundation Models | Pre-trained models (e.g., for protein structure, material surfaces) provide strong prior knowledge. | SurFF for surfaces [9]; AlphaFold for proteins [10]. |

| | Bayesian Optimization Library | Efficient global optimization algorithm for guiding experiments in continuous parameter spaces. | Preferred for black-box, expensive-to-evaluate functions. |

| | Laboratory Information Management System | Centralized, standardized data management for all experimental data and metadata. | Critical for reproducibility and model training [7]. |

| Actuation & Hardware | Automated Liquid Handling Robot | Performs precise, high-throughput pipetting for assay preparation, serial dilutions, etc. | Enables standardization and scales sample preparation. |

| | Automated Bioreactor Control System | Integrates sensors and actuators (pumps, valves) for fully controlled fermentation runs. | Platform for closed-loop bioprocess optimization. |

| | Robotic Arm for Sample Transit | Moves labware (plates, flasks) between instruments (incubators, readers, etc.). | Connects discrete automation modules into a workflow. |

| | Modular High-throughput Experimentation Platform | Integrated systems for specific tasks (e.g., colony picking, PCR setup). | Increases experimental throughput by orders of magnitude. |

The synergy between sensors, algorithms, and actuators forms the operational backbone of next-generation automated laboratories. As evidenced by the protocols and data, this integration enables a shift from linear, human-paced research to parallel, adaptive, and data-driven discovery cycles. Successful implementation requires careful selection of tools from the scientist's toolkit, design of robust workflows as depicted in the diagrams, and a hybrid approach that leverages the scale and speed of AI while incorporating essential human oversight for validation and complex decision-making [6] [7]. This framework is central to advancing machine learning DoE closed-loop optimization research, promising to dramatically accelerate innovation in drug development, materials science, and beyond.

The integration of machine learning (ML) into Design of Experiments (DoE) represents a paradigm shift in scientific research, particularly within drug development and materials science. This fusion creates intelligent experimental systems capable of navigating complex parameter spaces with unprecedented efficiency. Traditional DoE approaches, while statistically sound, often require numerous iterative experiments when exploring multifaceted systems. ML-enhanced DoE introduces adaptive learning, where each experiment informs the next in a continuous, closed-loop manner, significantly accelerating the optimization cycle.

The core of this approach lies in creating a data-model-experiment closed loop in which predictive models guide experimental planning, and experimental results continuously refine the models [10]. This is especially valuable in fields like pharmaceutical development, where the relationships between molecular structures, processing parameters, and desired functional outcomes are exceptionally complex. AI-driven systems can now act as "co-researchers," managing the intricate data analysis and experimental iteration, thus freeing human scientists for higher-level interpretation and strategy [6].

Core ML Paradigms in Experimental Design

Supervised Learning for Predictive Modeling

Supervised learning operates on labeled historical data to build predictive models that map input experimental parameters to known outputs. In experimental design, these models serve as surrogate models or digital twins of the experimental system, allowing researchers to predict outcomes of untested conditions without conducting physical experiments.

- Function Approximation: Learning the complex function \( f(\mathbf{x}) \) that maps input variables \( \mathbf{x} \) (e.g., temperature, concentration, pH) to output targets \( \mathbf{y} \) (e.g., yield, purity, bioactivity) [11].

- Quantitative Structure-Activity Relationship (QSAR) Modeling: Predicting biological activity or physicochemical properties of compounds based on their structural descriptors or fingerprints, a cornerstone in computer-aided drug design [11].

- Response Surface Modeling: Moving beyond traditional polynomial models, ML algorithms like Gaussian Process Regression can model complex, non-linear response surfaces with inherent uncertainty quantification, ideal for process optimization [12].
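
As a minimal instance of function approximation, the sketch below fits a ridge-regularized linear surrogate in closed form and uses it to predict an untested condition. A linear model is the simplest possible choice and is used here only for illustration; a Gaussian process or neural network would take its place for non-linear response surfaces. The three standardized inputs and the "true" coefficients are hypothetical.

```python
import numpy as np


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression; returns [intercept, w_1, ..., w_d]."""
    Xb = np.hstack([np.ones((len(X), 1)), X])   # prepend a column of ones for the intercept
    penalty = alpha * np.eye(Xb.shape[1])
    penalty[0, 0] = 0.0                         # do not shrink the intercept
    return np.linalg.solve(Xb.T @ Xb + penalty, Xb.T @ y)


def predict(weights, X):
    """Evaluate the fitted surrogate at the rows of X."""
    return weights[0] + X @ weights[1:]


rng = np.random.default_rng(3)
# Hypothetical standardised inputs: temperature, concentration, pH.
X = rng.normal(size=(40, 3))
true_w = np.array([2.0, -1.0, 0.5])
y = 50.0 + X @ true_w + rng.normal(scale=0.1, size=40)   # simulated yield in %

weights = fit_ridge(X, y, alpha=1e-3)
untested = np.array([[0.5, -1.0, 0.0]])
print(weights.round(2), predict(weights, untested).round(2))
```

Unlike a GP, this surrogate returns no uncertainty estimate, so it can rank candidates but cannot by itself drive an exploration-aware acquisition function.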

Table 1: Supervised Learning Algorithms in Experimental Design

| Algorithm | Primary Use Case | Key Advantages | Typical Experimental Context |

|---|---|---|---|

| Gaussian Process Regression | Response surface modeling, Bayesian optimization | Provides uncertainty estimates, handles small datasets | Process parameter optimization, catalyst design |

| Graph Neural Networks (GNNs) | Molecular property prediction, protein-ligand binding | Naturally handles graph-structured data (molecules) | Drug candidate screening, material property prediction [10] [11] |

| Random Forests / Gradient Boosting | Feature importance analysis, initial screening | Robust to outliers, handles mixed data types | High-throughput screening data analysis, preliminary hypothesis testing |

| Transformer Models | Protein function prediction, reaction yield prediction | Processes sequence data (e.g., SMILES, protein sequences) [10] | Protein engineering, retrosynthetic planning [10] |

Unsupervised Learning for Experimental Exploration

Unsupervised learning techniques are invaluable in the early stages of experimental investigation when labeled data is scarce or when the objective is to discover inherent patterns, clusters, or anomalies within the data.

- Dimensionality Reduction: Techniques like Principal Component Analysis (PCA) and t-SNE project high-dimensional experimental data (e.g., spectral data, molecular descriptors) into 2D or 3D visualizations. This allows scientists to identify natural groupings, outliers, and the overall structure of their data space. For instance, PCA has been used to create structural similarity maps of materials, such as titanium dioxide polymorphs, where each point represents a distinct atomic configuration, colored by properties like enthalpy, providing an intuitive overview of the phase landscape [12].

- Clustering for Formulation Stratification: Identifying distinct subgroups within a library of experimental formulations or compounds. This can reveal sub-populations with similar characteristics, guiding targeted optimization strategies.

- Anomaly Detection: Flagging anomalous experimental results that deviate significantly from the norm, which could indicate measurement errors, contaminated samples, or potentially novel and interesting phenomena worthy of further investigation.
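
The dimensionality-reduction step can be written directly with a singular value decomposition. The sketch below projects a synthetic 10-feature dataset onto its first two principal components and reports the share of variance each captures. The data are generated from two hidden factors purely for illustration.

```python
import numpy as np


def pca(X, n_components=2):
    """Project X onto its top principal components; return scores and explained-variance ratios."""
    Xc = X - X.mean(axis=0)                          # centre each feature
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    scores = Xc @ Vt[:n_components].T                # coordinates in the reduced space
    explained = S**2 / np.sum(S**2)
    return scores, explained[:n_components]


rng = np.random.default_rng(4)
# Hypothetical measurements: 100 samples, 10 features driven by 2 hidden factors plus noise.
latent = rng.normal(size=(100, 2))
loadings = rng.normal(size=(2, 10))
X = latent @ loadings + 0.05 * rng.normal(size=(100, 10))

scores, explained = pca(X, n_components=2)
print(scores.shape, explained.round(3))
```

Features measured in different units should be standardized first; otherwise the component directions mostly reflect whichever feature has the largest numeric range.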

Table 2: Unsupervised Learning Techniques in Experimental Analysis

| Technique | Primary Function | Interpretation Aid | Application Example |

|---|---|---|---|

| Principal Component Analysis (PCA) | Data visualization, noise reduction | Identifies dominant patterns of variance | Mapping crystal structure landscapes [12] |

| t-SNE / UMAP | Visualizing high-dimensional clusters | Reveals non-linear manifolds and local structure | Exploring molecular dynamics trajectories [12] |

| K-Means Clustering | Grouping similar experiments | Partitions data into distinct sub-populations | Categorizing spectroscopic profiles of formulations |

| Autoencoders | Learning compressed representations | Latent space can reveal intrinsic factors | Anomaly detection in high-throughput screening |

Reinforcement Learning for Sequential Decision-Making

Reinforcement Learning (RL) frames experimental design as a sequential decision-making process where an agent learns to choose optimal actions (experimental conditions) through interaction with an environment (the experimental system) to maximize a cumulative reward (the objective function).

- Closed-Loop Optimization: RL is the engine behind fully autonomous experimental systems. The agent proposes an experiment, the experiment is executed (often via robotics), results are obtained, and the agent's policy is updated based on the reward. This creates the perception-cognition-decision-execution-feedback loop that defines an AI scientist [11].

- Adaptive Resource Allocation: RL algorithms can dynamically allocate limited resources (e.g., expensive reagents, instrument time) to the most promising experimental directions, maximizing the information gain per unit cost.

- Multi-Objective Optimization: Many experimental goals are multi-faceted (e.g., maximize yield while minimizing cost and impurity). RL can effectively handle these competing objectives, finding a Pareto-optimal set of conditions.

Protocol 2: Reinforcement Learning for Reaction Optimization

- Define State Space (S): Represent the experimental state, e.g., current reaction conditions (temperature, catalyst concentration, solvent ratio), and past experimental outcomes.

- Define Action Space (A): Specify the adjustable parameters and their permissible ranges (e.g., ±10°C temperature change, ±5 mol% catalyst).

- Define Reward (R): Formulate a reward function that quantifies the experimental goal, e.g., R = (Final Yield %) - (Penalty for High Impurity) - (Cost of Reagents).

- Initialize Policy (π): Start with a pre-trained policy or an exploration-heavy strategy (e.g., ε-greedy).

- Run Optimization Loop:

   a. The agent selects an action (new experimental conditions) based on its current policy.

   b. The action is executed in the lab (manually or via automation).

   c. The outcome is measured, and the reward is calculated.

   d. The agent's policy (e.g., a neural network) is updated using an RL algorithm (e.g., Proximal Policy Optimization).

   e. Repeat until convergence or resource exhaustion.
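
The protocol above can be illustrated in its simplest form as a multi-armed bandit with an ε-greedy policy. This is a much lighter technique than Proximal Policy Optimization and has no state: each action is a fixed temperature setting. The simulated reaction, the impurity weight of 2.0, and the reagent cost are all hypothetical.

```python
import random

rng = random.Random(0)
ACTIONS = [40, 50, 60, 70, 80]   # candidate temperatures in °C (hypothetical)


def reward(yield_pct, impurity_pct, reagent_cost):
    """R = yield - impurity penalty - reagent cost, with a hypothetical impurity weight."""
    return yield_pct - 2.0 * impurity_pct - reagent_cost


def run_reaction(temp_c):
    """Stand-in for a lab run: returns (yield %, impurity %, reagent cost)."""
    yield_pct = 80.0 - 0.05 * (temp_c - 60) ** 2 + rng.gauss(0.0, 0.5)
    impurity_pct = max(0.0, 0.1 * (temp_c - 50))
    return yield_pct, impurity_pct, 5.0


def epsilon_greedy(n_steps=200, epsilon=0.1):
    """Estimate the mean reward of each action while mostly choosing the current best."""
    counts = {a: 0 for a in ACTIONS}
    values = {a: 0.0 for a in ACTIONS}
    for _ in range(n_steps):
        # Explore at random until every action has been tried, then with probability epsilon.
        if min(counts.values()) == 0 or rng.random() < epsilon:
            action = rng.choice(ACTIONS)
        else:
            action = max(ACTIONS, key=values.get)
        r = reward(*run_reaction(action))
        counts[action] += 1
        values[action] += (r - values[action]) / counts[action]   # running mean
    return values, counts


values, counts = epsilon_greedy()
best_action = max(values, key=values.get)
print(best_action, {a: round(v, 1) for a, v in values.items()})
```

In this simulation the highest yield and the highest reward both fall at 60 °C, but the impurity penalty narrows the margin over 50 °C, which shows how the reward definition, not the raw yield, shapes what the agent learns.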

Integrated Closed-Loop Workflows

The true power of ML in experimental design emerges when these paradigms are integrated into a cohesive, closed-loop workflow. This represents the operational backbone of modern "AI scientists" [6].

Diagram 1: ML-Driven Closed-Loop Experimentation

These systems can operate at a scale and speed unattainable by humans. For example, the Coscientist system can autonomously retrieve literature, design a synthesis plan, write code for robotic execution, and analyze results in response to a single natural-language command [6]. Similarly, multi-agent systems, like the one described by Yaghi, employ several specialized AI agents (e.g., a "Literature Analyst," "Algorithm Coder," and "Robot Controller") that collaborate to solve complex materials science problems, such as optimizing the crystallization of COF-323 [6].

The Scientist's Toolkit: Essential Research Reagents & Solutions

The implementation of ML-driven experimental design relies on a suite of computational and physical tools.

Table 3: Key Research Reagents & Solutions for ML-Driven Experimentation

| Category / Item | Function / Description | Example Tools / Formats |

|---|---|---|

| Data Representation | | |

| SMILES Strings | A line notation for representing molecular structures as text, enabling sequence models to process chemical information. [13] [11] | "CCO" for ethanol |

| Molecular Graph | Represents a molecule as a graph with atoms as nodes and bonds as edges, processed by Graph Neural Networks (GNNs). [11] | Adjacency matrix + node features |

| SOAP Descriptors | A powerful descriptor for atomic environments, enabling quantitative comparison of local structures in materials and molecules. [12] | Smooth Overlap of Atomic Positions |

| Software & Libraries | | |

| Gaussian Process Tools | Libraries for building surrogate models with uncertainty estimates for Bayesian optimization. | scikit-learn, GPy, GPflow |

| Deep Learning Frameworks | Platforms for building and training complex models like GNNs and Transformers. | PyTorch, TensorFlow, JAX |

| Cheminformatics Libraries | Tools for handling molecular data, generating fingerprints, and calculating descriptors. | RDKit, OpenBabel |

| Experimental Infrastructure | | |

| Automated Liquid Handlers | Robotics for executing the physical experiments proposed by the ML agent. | High-throughput screening systems |

| Laboratory Information Management System (LIMS) | Software for tracking samples, associated metadata, and experimental results, creating structured data for ML. | Benchling, other ELN/LIMS |

Advanced Applications & Protocols

Case Study: AI-Driven Protein Design

The field of protein engineering has been revolutionized by the integration of ML-guided DoE. The process involves a tight loop between predictive models and experimental validation.

Protocol 3: Closed-Loop De Novo Protein Design

- Objective Specification: Define the target protein function (e.g., "bind to target antigen X with high affinity").

- Inverse Folding with RFdiffusion: Use generative models (e.g., RFdiffusion, ProGen) to create novel protein backbones or sequences that are predicted to achieve the desired function. This inverts the traditional structure-function paradigm [10].

- Stability & Expression Prediction: Filter generated candidates using supervised models (e.g., AlphaFold 3, OpenComplex-2, ESMFold) to predict stability, solubility, and expressibility [10].

- High-Priority Candidate Selection: Use a Bayesian optimization policy to select a diverse, high-potential subset of candidates for experimental testing.

- Parallelized Synthesis & Testing: Synthesize genes and express proteins, testing them for the desired function (e.g., binding affinity via SPR).

- Model Retraining: Feed the experimental results (successes and failures) back into the predictive and generative models to improve their accuracy for the next design cycle. This entire pipeline, from objective to tested candidates, can now be completed in weeks rather than years [10].

Case Study: Autonomous Materials Discovery

A prime example is the discovery of novel materials, such as metal-organic frameworks (MOFs) or organic electronic materials, where the performance is determined by a complex interplay of composition, structure, and processing.

Diagram 2: Autonomous Materials Discovery Loop

Challenges and Future Directions

Despite the promising advances, several challenges remain in the full adoption of ML-driven DoE. The "black box" nature of complex models like deep neural networks poses a significant hurdle, as scientific discovery often requires not just a prediction but a causal, interpretable understanding [6]. Efforts in explainable AI (XAI) are crucial to address this. Furthermore, the quality and quantity of available data are often limiting factors, necessitating robust methods for small-data learning and the development of sophisticated data infrastructure in laboratories.

The future points towards more collaborative human-AI science, where AI systems handle high-volume, repetitive optimization and hypothesis generation, while human scientists provide creative direction, deep domain insight, and ethical oversight [6]. As these tools become more accessible and integrated into laboratory instrumentation, ML-driven DoE will become the standard, rather than the exception, for research and development across the chemical, materials, and pharmaceutical sciences.

For over half a century, the pharmaceutical industry has been trapped by Eroom’s Law—the observation that the cost and time required to bring a new drug to market increase exponentially despite technological advances, with current costs exceeding $2.6 billion and timelines stretching beyond a decade [14]. A core driver of this crisis is the high attrition rate in clinical development, where failures in late-stage trials due to lack of efficacy or toxicity consume immense resources [14]. This article, framed within a broader thesis on machine learning-driven Design of Experiment (DoE) closed-loop optimization, argues that intelligent, adaptive closed-loop systems represent a paradigm shift capable of bending this curve. By integrating real-time data acquisition, predictive AI models, and automated control, these systems can enhance precision in both drug discovery and therapeutic administration, directly targeting the inefficiencies and risks underpinning Eroom's Law [15] [16].

Quantitative Impact: The Scale of the Problem and the Measurable Benefit of Closed-Loop Approaches

The following tables summarize the key quantitative challenges of the current paradigm and the emerging evidence for closed-loop system efficacy.

Table 1: The Eroom's Law Challenge & AI's Potential Impact

| Metric | Traditional Paradigm Performance | AI/Closed-Loop Potential Impact | Data Source |

|---|---|---|---|

| Drug Development Cost | > $2.6 billion per new drug [14] | AI estimated to reduce discovery costs by 25-50% in preclinical stages [17] | [14] [17] |

| Development Timeline | Often > 10 years [14] | AI can reduce timelines by 25-50%; examples: AI-designed candidate to trials in <30 months (vs. ~60-month average) [14] [17] | [14] [17] |

| Clinical Trial Attrition | High failure rates, especially in Phase II/III due to efficacy/toxicity [14] | AI improves target selection, patient stratification, and predictive toxicology to lower failure rates [15] | [14] [15] |

| Pharmacokinetic Variability | BSA-based dosing leads to order-of-magnitude variations in drug exposure [16] | Closed-loop systems can maintain drug concentration within target range, reducing variability [16] | [16] |

Table 2: Documented Performance of Closed-Loop Drug Delivery Systems

| System / Drug Target | Key Performance Metric vs. Manual Control | Certainty of Evidence | Context |

|---|---|---|---|

| Closed-loop for Noradrenaline | Reduced duration of blood pressure outside target by 14.9% (95% CI 9.6-20.2%) [18] | Low to very low [18] | ICU/Operating Room [18] |

| Closed-loop for Vasodilators | Reduced duration of blood pressure outside target by 7.4% (95% CI 5.2-9.7%) [18] | Low to very low [18] | ICU/Operating Room [18] |

| CLAUDIA for 5-FU Chemotherapy | Maintained plasma concentration within target range vs. BSA-based dosing causing 7x overdose in animal model [16] | Foundational research [16] | Preclinical, in vivo [16] |

| Closed-loop for Propofol | Reduced recovery time by 1.3 minutes (95% CI 0.4-2.1 min) [18] | Low [18] | Anesthesia [18] |

Application Notes & Detailed Experimental Protocols

This section outlines concrete methodologies that exemplify the closed-loop, AI-driven approach to countering attrition and inefficiency.

Protocol: Integrated Machine Learning & High-Throughput Screening (HTS) for Probe Discovery

This protocol, based on the work by Yasgar et al. [19], details a closed-loop cycle of experimental data generation and model refinement to rapidly identify selective chemical probes.

Aim: To discover isoform-selective chemical probe candidates for the Aldehyde Dehydrogenase (ALDH) enzyme family.

Workflow Overview:

- Initial Experimental Data Generation (qHTS): Perform quantitative High-Throughput Screening of ~13,000 annotated compounds against multiple ALDH isoforms in both biochemical and cell-based assays.

- Model Training & Virtual Screening: Use the resulting activity dataset to train Machine Learning (ML) and Pharmacophore (PH4) models. Employ these models to perform a virtual screen of a larger, chemically diverse library (~174,000 compounds).

- Hit Expansion & Validation: Select top virtual hits for experimental validation in biochemical and cellular assays. Confirm target engagement using cellular thermal shift assays (CETSA).

- Feedback Loop: Integrate new validation data to refine the ML/PH4 models for subsequent iteration.

Detailed Materials & Methods:

- Library: Annotated chemical library (~13,000 compounds) for primary screening; diverse virtual library (~174,000 compounds).

- Assays: Recombinant ALDH isoform biochemical activity assays; cell-based viability/activity assays; Cellular Thermal Shift Assay (CETSA) for target engagement.

- Modeling: Standard QSAR/ML software (e.g., Random Forest, Deep Neural Networks); Pharmacophore modeling suite.

- Procedure:

- Execute qHTS in 384-well or 1536-well format, generating dose-response curves for all compounds against each ALDH isoform.

- Curate data to create a clean training set labeled with compound structures and isoform-specific activity metrics.

- Train separate ML models for each ALDH isoform. Develop PH4 models based on active compound structures.

- Apply models to score the large virtual library. Prioritize compounds with high predicted activity and selectivity, and desirable chemical diversity.

- Procure and test the top 100-500 virtual hits in the same experimental assays used in step 1.

- For confirmed selective hits, perform CETSA: treat cells with compound, heat lysates, and quantify remaining soluble target protein via immunoblotting to confirm direct binding.

- Add new experimental results to the training set and retrain models to close the loop.

Protocol: Closed-Loop Automated Drug Infusion for Personalized Chemotherapy (CLAUDIA)

This protocol, based on the CLAUDIA system [16], describes a physiologically closed-loop control system to personalize chemotherapy dosing in real-time.

Aim: To maintain a target plasma concentration of 5-Fluorouracil (5-FU) in a living subject, irrespective of inter- and intra-individual pharmacokinetic (PK) variability.

Workflow Overview:

- Continuous Sensing: The system continuously draws blood from the subject and analyzes it by inline high-performance liquid chromatography-mass spectrometry (HPLC-MS) to measure the current plasma drug concentration.

- Control Algorithm Computation: A controller (e.g., model-predictive control algorithm) compares the measured concentration to the target concentration-time profile (which could be constant or chronomodulated).

- Automated Actuation: The controller dynamically adjusts the infusion rate of a syringe pump delivering 5-FU to minimize the error between measured and target concentrations.

- Feedback Loop: The updated infusion rate changes the subject's plasma concentration, which is again measured by the sensor, closing the loop.

Detailed Materials & Methods:

- System Components: HPLC-MS system (sensor); Syringe pump with drug reservoir (actuator); Custom software with control algorithm (controller); Blood withdrawal and reinfusion lines with anticoagulant; Animal or human subject model.

- Control Algorithm: A pharmacokinetic model of the drug (e.g., a two-compartment model for 5-FU) is embedded within a model-predictive control (MPC) framework. The MPC algorithm uses the model to predict future drug concentrations based on past infusion rates and measurements, then calculates the optimal infusion trajectory to reach the target.

- Procedure (Preclinical In Vivo Validation):

- Establish a target plasma concentration range for 5-FU (e.g., therapeutic window).

- Implant venous access in an animal model (e.g., rabbit) for continuous blood withdrawal and drug infusion.

- Prime the CLAUDIA system, connecting the HPLC-MS for real-time analysis, the controller running the MPC algorithm, and the infusion pump.

- Initiate the experiment. The system begins with a standard infusion or a bolus calculated from population PK.

- The system operates autonomously: blood is sampled, analyzed (HPLC-MS cycle time ~5-7 minutes), concentration is fed to the controller, which computes and sets a new infusion rate.

- Compare outcomes to a control group receiving standard body-surface-area (BSA)-based dosing. Key metrics include: percentage time within target concentration range, incidence of toxic over-exposure, and antitumor efficacy.
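The sense-compute-actuate cycle above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the CLAUDIA controller: it uses a one-compartment model instead of the two-compartment model mentioned above, a one-step-ahead model prediction with an integral correction in place of full MPC, and hypothetical values for volume, elimination rates, and target. The subject's true elimination rate is set deliberately different from the population model to mimic PK variability.

```python
import math

def simulate(k_true, k_model, volume_l=1.0, target=1.0, dt_min=6.0,
             n_steps=40, closed_loop=True, ki=0.3):
    """Return the plasma concentration (mg/L) after each sampling interval."""
    # Exact discretization of dC/dt = u/V - k*C for a constant infusion rate u.
    a_true = math.exp(-k_true * dt_min)
    b_true = (1.0 - a_true) / (k_true * volume_l)
    a_mod = math.exp(-k_model * dt_min)
    b_mod = (1.0 - a_mod) / (k_model * volume_l)

    conc, integral, trace = 0.0, 0.0, []
    for _ in range(n_steps):
        if closed_loop:
            integral += target - conc  # accumulated tracking error
            # Model-based one-step prediction plus integral term that
            # compensates for the mismatch between model and subject.
            rate = (target - a_mod * conc) / b_mod + (ki / b_mod) * integral
        else:
            rate = k_model * volume_l * target  # fixed rate from the population model
        rate = max(rate, 0.0)                   # a pump cannot infuse a negative rate
        conc = a_true * conc + b_true * rate    # the subject responds with its true PK
        trace.append(conc)
    return trace

closed = simulate(k_true=0.10, k_model=0.06)
open_loop = simulate(k_true=0.10, k_model=0.06, closed_loop=False)
print(f"closed-loop final concentration: {closed[-1]:.3f} mg/L")
print(f"fixed-rate final concentration:  {open_loop[-1]:.3f} mg/L")
```

With these illustrative numbers the fixed-rate infusion settles well below the target because the subject clears the drug faster than the population model predicts, while the feedback controller converges to the target despite using the same mismatched model.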

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Closed-Loop Optimization | Example/Context |

|---|---|---|

| AI/ML Model Platforms (e.g., Labguru AI Assistant, Sonrai Discovery) | Embedded in R&D software to automate data search, experiment comparison, and workflow generation; integrates multi-omic data for insight generation [20]. | Used for smarter data mining and hypothesis generation within a digital lab notebook environment [20]. |

| Quantitative High-Throughput Screening (qHTS) Platforms | Generates rich, dose-response datasets essential for training accurate ML models for compound activity prediction [19]. | Foundation for the integrated ML/HTS probe discovery protocol [19]. |

| Closed-Loop Drug Delivery System (e.g., CLAUDIA) | Integrates real-time biosensing (HPLC-MS) with adaptive control algorithms to personalize drug dosing in vivo [16]. | Research tool for maintaining precise chemotherapeutic drug levels [16]. |

| Automated Liquid Handlers (e.g., Tecan Veya, Eppendorf systems) | Provides the robotic physical interface to execute experiments with high reproducibility, feeding consistent data into analytical loops [20]. | Enables walk-up automation for assay execution, freeing scientist time [20]. |

| 3D Cell Culture Automation (e.g., mo:re MO:BOT) | Standardizes production of human-relevant tissue models (organoids) for more predictive efficacy and toxicity screening, reducing late-stage attrition [20]. | Automates seeding and feeding of organoids for high-content screening [20]. |

| Foundation Models for Biology (e.g., Bioptimus, Evo) | Trained on massive multi-omic datasets to uncover biological "rules," predict novel targets, and accelerate preclinical pipeline decisions [21]. | Used for target identification and mechanism of action prediction [21]. |

Visualizations: Workflows and System Architectures

Diagram 1: Eroom's Law Crisis & Closed-Loop Solution Pathways

Diagram 2: Integrated ML & HTS Closed-Loop Workflow

Diagram 3: CLAUDIA Closed-Loop Chemotherapy Dosing System

The integration of machine learning (ML), automated experimentation, and robotic hardware is establishing a new paradigm for scientific discovery. This paradigm is exemplified by the closed-loop optimization system, a workflow that autonomously iterates between computational design and physical experimental testing to rapidly identify optimal solutions. In the context of drug development and materials science, this approach directly addresses the dual challenges of navigating high-dimensional parameter spaces and managing limited experimental resources [22]. The core of this workflow is a Machine Learning Design of Experiments (ML-DoE) model that continuously learns from experimental outcomes. It uses this knowledge to propose new, informative experiments, thereby accelerating the search for high-performing candidates, such as therapeutic molecules or functional materials, while minimizing costly trial-and-error. This application note provides a detailed protocol for implementing such a workflow, from constructing a vast virtual chemical library to deploying a robotic system for synthesis and validation.

Virtual Chemical Library Creation

The first critical component of the workflow is the generation of a high-quality, synthesizable virtual chemical library. This library serves as the expansive search space from which the ML-DoE algorithm will select candidates.

Protocol: Building a DIY Virtual Library

The following protocol, adapted from the Do-It-Yourself (DIY) combinatorial chemistry approach, enables research groups to construct large, novel, and cost-effective virtual libraries [23].

Step 1: Building Block Curation

- Source: Collect commercially available building blocks from chemical supplier databases. Using a curated data set, where compounds are rigorously checked and standardized, is beneficial to avoid structural errors that can negatively impact in silico studies [23].

- Selection Criteria: Filter for reagents based on cost (e.g., <$10/gram), reactivity, and structural diversity. An initial set might include ~4,500 molecules [23].

- Output: A curated list of building blocks, typically featuring common reactive functional groups like amines, carboxylic acids, alcohols, and aryl halides.

Step 2: Reaction Rule Definition

- Define a set of robust chemical reactions frequently used in medicinal chemistry. The DIY library example utilizes four main reaction categories [23]:

- These reactions are encoded as SMIRKS patterns for computational enumeration.

Step 3: Virtual Library Enumeration

- Tool: Use a combinatorial chemistry enumeration algorithm (e.g., ARCHIE) [23].

- Process: The algorithm checks pairwise combinations of building blocks from the curated set. If two reagents match a main reaction SMIRKS pattern and do not trigger any "side reaction" patterns, a product is generated.

- Multi-step Synthesis: The process can be extended to consecutive reaction steps. For example, intermediates generated in the first step can be reacted with original building blocks in a second step, creating products from three reagents over two steps [23].

- Result: The described protocol, starting from 1,000 selected building blocks, can yield a virtual library of over 14 million novel compounds [23].
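The pairwise enumeration logic of Step 3 can be sketched without any cheminformatics dependency. This toy version is an assumption-laden illustration, not ARCHIE: functional-group tags stand in for SMIRKS pattern matching, the building blocks and rule names are hypothetical, and the "side reaction" check is reduced to rejecting reagents that carry both partner groups of a rule.

```python
from itertools import combinations

# Hypothetical building blocks: name -> set of reactive functional groups.
BUILDING_BLOCKS = {
    "benzoic acid": {"carboxylic_acid"},
    "benzylamine": {"amine"},
    "4-bromoanisole": {"aryl_halide"},
    "phenylboronic acid": {"organoborane"},
    "4-aminobenzoic acid": {"amine", "carboxylic_acid"},
    "ethanol": {"alcohol"},
}

# Reaction rules as pairs of required groups (stand-ins for SMIRKS patterns).
REACTIONS = {
    "amide_formation": ("carboxylic_acid", "amine"),
    "suzuki_coupling": ("aryl_halide", "organoborane"),
    "ester_formation": ("carboxylic_acid", "alcohol"),
}

def is_ambiguous(groups_a, groups_b, rule):
    """Crude side-reaction filter: reject a pair if either reagent carries
    both partner groups and could therefore react with itself."""
    both = set(rule)
    return both <= groups_a or both <= groups_b

def enumerate_products(blocks, reactions):
    products = []
    for (name_a, ga), (name_b, gb) in combinations(blocks.items(), 2):
        for rxn, (g1, g2) in reactions.items():
            matches = (g1 in ga and g2 in gb) or (g1 in gb and g2 in ga)
            if matches and not is_ambiguous(ga, gb, (g1, g2)):
                products.append((rxn, name_a, name_b))
    return products

products = enumerate_products(BUILDING_BLOCKS, REACTIONS)
for rxn, a, b in products:
    print(f"{rxn}: {a} + {b}")
```

Because the number of candidate pairs grows quadratically with the number of building blocks, and further with each added reaction step, even a modest curated set can expand into a very large virtual library.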

Step 4: Library Characterization and Focused Library Generation

- Analyze the enumerated library for physicochemical properties (e.g., molecular weight, lipophilicity) and structural diversity.

- Generate focused sub-libraries tailored to specific biological targets or therapeutic areas based on prior knowledge.

Table 1: Key Reagents for a DIY Virtual Library

| Reagent Functionality | Example Reaction | Role in Synthesis |

|---|---|---|

| Carboxylic Acids | Amide Bond Formation | Serves as the acylating agent to form the core amide scaffold. |

| Amines | Amide Bond Formation | Acts as the nucleophile, coupling with carboxylic acids. |

| Aryl Halides | Suzuki-Miyaura Coupling | Provides the electrophilic partner for palladium-catalyzed cross-coupling. |

| Organoboranes | Suzuki-Miyaura Coupling | Provides the nucleophilic partner for cross-coupling. |

| Alcohols | Ester Formation | Reacts with carboxylic acids to form ester linkages. |

AI-Accelerated Virtual Screening and Design

With an ultra-large virtual library in place, the next step is to computationally identify the most promising candidates for synthesis and testing. AI-accelerated virtual screening is critical for this task.

Protocol: Structure-Based Virtual Screening with RosettaVS

This protocol uses the open-source OpenVS platform and the RosettaVS method to screen a multi-billion compound library against a protein target of interest [24].

Step 1: Target Preparation

- Obtain a high-resolution 3D structure of the target protein (e.g., via X-ray crystallography or homology modeling).

- Define the binding site coordinates based on known ligand interactions or structural analysis.

Step 2: Pre-screening with Active Learning

- Objective: To avoid the prohibitive cost of docking every compound in a billion-member library.

- Method: Employ an active learning strategy. A target-specific neural network is trained simultaneously during docking computations to predict promising compounds. This model triages the library, selecting only the most likely binders for more expensive docking calculations [24].

Step 3: Hierarchical Docking with RosettaVS

- Virtual Screening Express (VSX) Mode: Perform rapid initial docking of the pre-selected compound subset. This mode uses a simplified energy function and limited conformational sampling for speed [24].

- Virtual Screening High-Precision (VSH) Mode: Re-dock the top-ranking hits from VSX mode. VSH incorporates full receptor side-chain flexibility and limited backbone movement, providing a more accurate ranking of binding affinities using the improved RosettaGenFF-VS force field, which combines enthalpy (∆H) and entropy (∆S) estimates [24].

Step 4: Hit Identification and Analysis

- Rank compounds based on their predicted binding affinity from the VSH stage.

- Select the top candidates for visual inspection and further computational analysis (e.g., interaction fingerprinting) before proceeding to synthesis.

Table 2: Performance of RosettaVS on Standard Benchmarks

| Benchmark Test | Metric | RosettaVS Performance | Comparative Advantage |

|---|---|---|---|

| CASF-2016 Docking Power | Success Rate (2Å) | Leading Performance | Superior at identifying native-like binding poses [24] |

| CASF-2016 Screening Power | Enrichment Factor (EF~1%~) | 16.72 | Outperforms second-best method (EF~1%~ = 11.9) [24] |

| DUD Dataset | AUC & ROC Enrichment | State-of-the-Art | Effectively distinguishes true binders from decoys [24] |
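The enrichment factor reported in the table is commonly defined as the hit rate among the top-ranked fraction of a library divided by the hit rate of the whole library. The sketch below computes it for a synthetic ranking; it assumes that a higher score means a better predicted binder (many docking programs use the opposite sign convention), and the scores and activity labels are invented for illustration.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """EF at a given fraction: (actives in top / n_top) / (total actives / n)."""
    n = len(scores)
    n_top = max(1, round(fraction * n))
    total_actives = sum(is_active)
    if total_actives == 0:
        raise ValueError("enrichment factor is undefined without any actives")
    # Rank compounds from best to worst predicted score.
    ranked = sorted(zip(scores, is_active), key=lambda p: p[0], reverse=True)
    actives_in_top = sum(active for _, active in ranked[:n_top])
    return (actives_in_top / n_top) / (total_actives / n)

# Synthetic library: 1,000 compounds, 20 actives, 4 of them ranked in the top 10.
n_compounds = 1000
scores = [float(n_compounds - i) for i in range(n_compounds)]  # compound 0 scores best
active_idx = {0, 2, 5, 9} | set(range(100, 116))
is_active = [1 if i in active_idx else 0 for i in range(n_compounds)]

ef_1pct = enrichment_factor(scores, is_active, fraction=0.01)
print(f"EF1% = {ef_1pct:.1f}")
```

An EF of 1 corresponds to random selection, so a value near 17 at 1% means the top-ranked compounds are roughly 17 times richer in true binders than the library as a whole.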

Robotic Synthesis and Testing

The computationally selected "virtual hits" must be synthesized and tested physically. This is achieved through a high-throughput, automated experimental platform.

Protocol: High-Throughput Robotic System for Membrane Development

This protocol, validated for the development of porous polymeric membranes, demonstrates a fully automated workflow for fabrication and characterization, readily adaptable to organic synthesis and other material systems [25].

Step 1: Automated Solution Preparation and Casting

- System: Integrated robotic platform with automated liquid handlers.

- Process:

- The system prepares polymer solutions according to predefined recipes, controlling parameters like polymer concentration and solvent type (e.g., using the green solvent PolarClean) [25].

- A robotic blade coater casts the solution into a thin film under controlled ambient conditions (e.g., humidity) [25].

Step 2: Controlled Phase Inversion

- The cast film is transferred to a coagulation bath (e.g., water) for nonsolvent-induced phase separation (NIPS), a process controlled by the robotic system to ensure reproducibility [25].

Step 3: High-Throughput Characterization

- Method: Instead of slow, traditional methods, the protocol uses automated compression testing [25].

- Analysis: The system performs compression tests on the membrane samples and automatically analyzes the stress-strain curves to estimate properties like stiffness, which serves as a proxy for porosity and intra-sample uniformity [25].

Step 4: Data Logging

- All fabrication parameters (e.g., concentration, humidity) and resulting characterization data are automatically recorded in a centralized database, ensuring data integrity for the ML model [25].
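One lightweight way to implement such a centralized record is a small relational table that stores each run's inputs next to its measured response. The sketch below uses Python's built-in `sqlite3` module; the schema, column names, and example values are hypothetical and would need to be adapted to the actual platform.

```python
import sqlite3

def open_log(path=":memory:"):
    """Open (or create) the run log. An in-memory database is used by default."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS runs (
               run_id INTEGER PRIMARY KEY AUTOINCREMENT,
               polymer_wt_pct REAL NOT NULL,
               solvent TEXT NOT NULL,
               humidity_pct REAL NOT NULL,
               stiffness_mpa REAL  -- NULL until characterization finishes
           )"""
    )
    return conn

def log_run(conn, polymer_wt_pct, solvent, humidity_pct, stiffness_mpa=None):
    """Record one fabrication run and return its identifier."""
    cur = conn.execute(
        "INSERT INTO runs (polymer_wt_pct, solvent, humidity_pct, stiffness_mpa) "
        "VALUES (?, ?, ?, ?)",
        (polymer_wt_pct, solvent, humidity_pct, stiffness_mpa),
    )
    conn.commit()
    return cur.lastrowid

def training_rows(conn):
    """Return only completed runs, which are the ones usable for model fitting."""
    return conn.execute(
        "SELECT polymer_wt_pct, humidity_pct, stiffness_mpa FROM runs "
        "WHERE stiffness_mpa IS NOT NULL ORDER BY run_id"
    ).fetchall()

conn = open_log()
first_id = log_run(conn, 15.0, "PolarClean", 40.0, stiffness_mpa=2.1)
pending_id = log_run(conn, 18.0, "PolarClean", 60.0)  # not yet characterized
rows = training_rows(conn)
print(rows)
```

Keeping failed and pending runs in the same table, rather than discarding them, preserves the negative results that the model needs in later cycles.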

Table 3: The Scientist's Toolkit: Key Reagents and Materials

| Item | Function/Description | Application Note |

|---|---|---|

| Combinatorial Building Blocks | Low-cost, reactive molecules for library construction. | Select for cost (<$10/g) and diverse functional groups to maximize library size and novelty [23]. |

| RosettaVS Software Suite | Open-source platform for physics-based virtual screening. | Uses RosettaGenFF-VS force field; allows for receptor flexibility, critical for accurate screening [24]. |

| Automated Liquid Handler | Robotic system for nanoliter-scale liquid dispensing. | Enables miniaturized, reproducible assay setup and solution preparation for HTS [25] [26]. |

| Blade Coater/Casting System | Automated device for producing uniform thin films. | Precisely controls membrane/solid sample thickness; integrated into a larger robotic workflow [25]. |

| Automated Compression Tester | High-throughput mechanical characterization instrument. | Provides rapid, automated proxy measurement for material properties like porosity and stiffness [25]. |

| Microplates (1536-well) | Miniaturized assay platforms. | Foundation for uHTS, allowing for testing of >300,000 compounds per day with low reagent volumes [26]. |

The Closed-Loop: Integrating ML-DoE with Robotic Validation

The final and most transformative stage is closing the loop, where experimental results directly inform the next cycle of computational design.

Protocol: Implementing Deep Active Optimization

The DANTE (Deep Active optimization with Neural-surrogate-guided Tree Exploration) pipeline provides a robust framework for closed-loop optimization in high-dimensional, data-limited scenarios [27].

Step 1: Initial Data Collection and Surrogate Model Training

- Start with a small initial dataset (e.g., ~200 data points) from initial robotic experiments.

- Train a Deep Neural Network (DNN) as a surrogate model to approximate the complex relationship between input parameters (e.g., chemical structure, fabrication parameters) and the target output (e.g., locomotion speed, binding affinity, porosity) [27].

Step 2: Neural-Surrogate-Guided Tree Exploration (NTE)

- Objective: Find the global optimum with minimal experimental samples.

- Process:

- Conditional Selection: A tree search algorithm, modulated by a data-driven Upper Confidence Bound (DUCB), explores the search space. It uses the DNN to evaluate potential candidates and balances exploration (trying new areas of parameter space) and exploitation (refining known good candidates) [27].

- Local Backpropagation: When a promising candidate is identified via the tree search, its value information is used to update the DUCB of nearby nodes. This mechanism helps the algorithm escape local optima by preventing repeated visits to the same suboptimal candidate [27].

Step 3: Robotic Validation and Database Update

- The top candidates proposed by the NTE algorithm are synthesized and tested using the automated robotic platform described under "Robotic Synthesis and Testing" above.

- The newly generated experimental data, consisting of the input parameters and the measured outcome, is added to the database [27].

Step 4: Iterative Closed-Loop Optimization

- The surrogate DNN is retrained on the expanded dataset.

- The NTE process is repeated, using the improved surrogate model to propose the next batch of candidates.

- This loop continues until a performance target is met or the experimental budget is exhausted.
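The four steps above share a generic propose-test-update skeleton, sketched below in one dimension. This is not the DANTE algorithm: an inverse-distance-weighted interpolator stands in for the DNN surrogate, a distance-based bonus stands in for the DUCB-guided tree search, and `run_robot_experiment` is a hypothetical simulated response surface with its optimum near x = 0.7.

```python
import random

def run_robot_experiment(x, rng):
    """Hypothetical stand-in for synthesis plus measurement; optimum near x = 0.7."""
    return 1.0 - (x - 0.7) ** 2 + rng.gauss(0, 0.005)

def surrogate(x, data):
    """Inverse-distance-weighted prediction and a distance-based uncertainty proxy."""
    weights = [1.0 / (abs(x - xi) + 1e-6) ** 2 for xi, _ in data]
    pred = sum(w * yi for w, (_, yi) in zip(weights, data)) / sum(weights)
    uncertainty = min(abs(x - xi) for xi, _ in data)
    return pred, uncertainty

def acquisition(x, data, kappa):
    """Upper-confidence-style score: predicted value plus an exploration bonus."""
    pred, uncertainty = surrogate(x, data)
    return pred + kappa * uncertainty

def closed_loop(budget=25, n_init=4, kappa=2.0, target=0.995, seed=1):
    rng = random.Random(seed)
    candidates = [i / 100 for i in range(101)]
    # Step 1: small initial dataset from random experiments.
    data = [(x, run_robot_experiment(x, rng)) for x in rng.sample(candidates, n_init)]
    # Steps 2-4: propose, test, update, until the target or the budget is reached.
    while len(data) < budget and max(y for _, y in data) < target:
        tested = {x for x, _ in data}
        untested = [x for x in candidates if x not in tested]
        x_next = max(untested, key=lambda x: acquisition(x, data, kappa))
        data.append((x_next, run_robot_experiment(x_next, rng)))
    best_x, best_y = max(data, key=lambda p: p[1])
    return best_x, best_y, len(data)

best_x, best_y, n_used = closed_loop()
print(f"best x = {best_x:.2f}, response = {best_y:.3f}, experiments used = {n_used}")
```

The two stopping conditions in the `while` loop correspond directly to the performance target and the experimental budget named in Step 4.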

Diagram 1: Closed-Loop Optimization Workflow. The workflow integrates computational design (yellow), machine learning (blue), and robotic experimentation (green) in an iterative cycle, driven by a central database.

Workflow Performance and Validation

The integrated closed-loop workflow has been demonstrated to significantly accelerate the discovery of optimal solutions across various domains.

Table 4: Quantitative Performance of the Closed-Loop Workflow

| Application Domain | Workflow Input | Key Outcome | Experimental Duration |

|---|---|---|---|

| Vibration-Driven Robot Morphology [22] | Tetris-inspired polyomino encoding for robot shape. | 69% gain in max locomotion speed (to 25.27 mm/s) after 30 optimization rounds. | N/A |

| Drug Discovery (KLHDC2 Target) [24] | Multi-billion compound virtual library. | 14% hit rate with single-digit µM binding affinity; 7 discovered hits. | < 7 days |

| Drug Discovery (Na~V~1.7 Target) [24] | Multi-billion compound virtual library. | 44% hit rate with single-digit µM binding affinity; 4 discovered hits. | < 7 days |

| Complex System Optimization (DANTE) [27] | High-dimensional problems with limited initial data (~200 points). | Outperformed state-of-the-art methods by 10-20%, finding superior solutions in up to 2000 dimensions. | N/A |

Experimental Validation: The effectiveness of the virtual screening and design steps is confirmed by high-resolution experimental validation. For instance, an X-ray crystallographic structure of a discovered hit compound bound to its protein target (KLHDC2) showed remarkable agreement with the docking pose predicted by the RosettaVS method, confirming the predictive power of the computational protocol [24]. Similarly, in morphological optimization, the emergence of physically intelligible "forelimb-torso-tail" configurations in evolved robots clarifies the structure-function links learned by the algorithm [22].

Methodologies and Real-World Applications in Drug Discovery and Formulation

In the realm of machine learning-driven design of experiments (DoE), closed-loop optimization represents a paradigm shift toward autonomous experimental systems. These systems intelligently iterate between proposing experiments, executing them, and learning from the results to maximize a desired objective. Bayesian Optimization (BO) has emerged as a cornerstone of this framework, providing a sample-efficient strategy for optimizing expensive, noisy, or black-box functions. Its power derives from a Bayesian probabilistic model, typically a Gaussian Process (GP), which maps parameters to objectives, and an acquisition function, which guides the selection of subsequent experiments by balancing the exploration of uncertain regions with the exploitation of known promising areas. This article details the principles of BO, with a focus on Thompson Sampling and Gaussian Processes, and provides practical application notes and protocols for its implementation in closed-loop optimization research, particularly in scientific domains like drug development.

Theoretical Foundations: Gaussian Processes and Thompson Sampling

Gaussian Process as a Probabilistic Surrogate

At the heart of BO lies the surrogate model, a probabilistic approximation of the unknown objective function. The Gaussian Process is a dominant choice for this role due to its flexibility and well-calibrated uncertainty estimates. A GP defines a distribution over functions, where any finite set of function values has a joint Gaussian distribution. It is fully specified by a mean function, often set to zero, and a kernel function \(k(x, x')\) that encodes the covariance between function values at input points \(x\) and \(x'\) [28].

The kernel function dictates the smoothness and properties of the functions modeled by the GP. For a set of observed data points \(\mathcal{D}_{1:t} = \{(x_i, y_i)\}_{i=1}^{t}\), the GP posterior distribution provides a predictive mean \(\mu_t(x)\) and variance \(\sigma_t^2(x)\) for any new input \(x\). This posterior distribution forms the belief about the objective function upon which the acquisition function operates.
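For reference, under the zero-mean prior mentioned above and the additional assumption of independent Gaussian observation noise with variance \(\sigma_n^2\) (a symbol introduced here, not in the text above), the posterior mean and variance take the standard closed form

\[
\mu_t(x) = \mathbf{k}_t(x)^\top \left(\mathbf{K}_t + \sigma_n^2 \mathbf{I}\right)^{-1} \mathbf{y}_{1:t},
\qquad
\sigma_t^2(x) = k(x, x) - \mathbf{k}_t(x)^\top \left(\mathbf{K}_t + \sigma_n^2 \mathbf{I}\right)^{-1} \mathbf{k}_t(x),
\]

where \(\mathbf{K}_t\) is the \(t \times t\) matrix with entries \([\mathbf{K}_t]_{ij} = k(x_i, x_j)\), \(\mathbf{k}_t(x) = [k(x, x_1), \dots, k(x, x_t)]^\top\), and \(\mathbf{y}_{1:t} = [y_1, \dots, y_t]^\top\). The variance shrinks toward zero near observed inputs and returns to the prior value \(k(x, x)\) far from them, which is what lets the acquisition function distinguish explored from unexplored regions.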

Thompson Sampling for Acquisition

Thompson Sampling (TS) is a classic yet powerful acquisition strategy that naturally balances exploration and exploitation. The core principle of TS is to randomly sample a function from the current posterior distribution of the surrogate model and then select the next evaluation point that maximizes this sampled function [29]. In the context of a GP surrogate, this involves drawing a sample from the GP posterior and choosing (x_{t+1} = \arg\max_x \hat{f}(x)), where (\hat{f}) is the sampled function.

A key advantage of Thompson Sampling is its property that a candidate (x) is selected with a probability equal to its probability of maximality (PoM)—the posterior probability that it is the true optimum [29]. This property makes TS particularly well-suited for batched or parallel Bayesian optimization, as independent samples from the posterior will naturally yield a diverse set of evaluation points, efficiently exploring the space without additional mechanisms [29] [30].
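A minimal sketch of this idea on a finite candidate set is shown below. It assumes the surrogate's posterior over the candidates is summarized by a mean vector and covariance matrix (toy values here); the helper names `thompson_batch` and `estimate_pom` are hypothetical. Each independent posterior draw nominates its own maximizer, and the frequency with which a candidate wins across many draws is a Monte Carlo estimate of its PoM.

```python
import numpy as np

def thompson_batch(mean, cov, batch_size, rng):
    """Select one candidate index per independent posterior draw.

    Duplicates are possible when the posterior is concentrated.
    """
    draws = rng.multivariate_normal(mean, cov, size=batch_size)
    return draws.argmax(axis=1)

def estimate_pom(mean, cov, n_draws, rng):
    """Monte Carlo estimate of each candidate's probability of maximality."""
    draws = rng.multivariate_normal(mean, cov, size=n_draws)
    winners = draws.argmax(axis=1)
    return np.bincount(winners, minlength=len(mean)) / n_draws

# Toy posterior (assumed): candidate 1 is well characterized and good,
# candidate 2 looks slightly worse on average but is highly uncertain.
mean = np.array([0.0, 1.0, 0.9])
cov = np.diag([0.01, 0.01, 1.0])

rng = np.random.default_rng(0)
batch = thompson_batch(mean, cov, batch_size=4, rng=rng)
pom = estimate_pom(mean, cov, n_draws=20000, rng=rng)
```

In this toy posterior the uncertain candidate receives a substantial share of the selections even though its mean is lower, which is the exploration behavior described above.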

Table 1: Key Components of Bayesian Optimization

| Component | Description | Common Choices / Definition |
|---|---|---|
| Surrogate Model | A probabilistic model that approximates the black-box objective function. | Gaussian Process (GP), Random Forests, Bayesian Neural Networks [28]. |
| Acquisition Function | A function that uses the surrogate's posterior to select the next point to evaluate by trading off exploration and exploitation. | Thompson Sampling (TS), Expected Improvement (EI), Upper Confidence Bound (UCB) [28] [30]. |
| Kernel Function | Defines the covariance structure of a GP, influencing the smoothness of the functions it can model. | Radial Basis Function (RBF), Matérn [28]. |
| Probability of Maximality (PoM) | The posterior probability that a given point is the true global optimum. Directly utilized by Thompson Sampling [29]. | (\mathrm{PoM}(x \mid \mathrm{data}) := \mathbb{P}[R_x = R^* \mid \mathrm{data}]) |
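For the two deterministic acquisition functions listed in Table 1, the following sketch evaluates the standard closed-form expressions under a Gaussian posterior, assuming a maximization problem. The parameter names `xi` (an optional improvement margin) and `beta` (the exploration weight) and their default values are assumptions for illustration.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def normal_pdf(z):
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))

def expected_improvement(mean, std, best_so_far, xi=0.0):
    """EI = (mu - f_best - xi) * Phi(z) + sigma * phi(z), z = (mu - f_best - xi) / sigma."""
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    improvement = mean - best_so_far - xi
    safe_std = np.where(std > 0, std, 1.0)  # avoid division by zero
    z = improvement / safe_std
    ei = improvement * normal_cdf(z) + safe_std * normal_pdf(z)
    # With zero predictive uncertainty, EI reduces to the plain improvement.
    return np.where(std > 0, ei, np.maximum(improvement, 0.0))

def upper_confidence_bound(mean, std, beta=2.0):
    """UCB = mu + beta * sigma; larger beta favors exploration."""
    return np.asarray(mean, dtype=float) + beta * np.asarray(std, dtype=float)

# Toy posterior summaries (assumed) for three candidates.
ei = expected_improvement([1.0, 0.5, 0.2], [1.0, 0.0, 0.0], best_so_far=1.0)
ucb = upper_confidence_bound([1.0], [0.5])
```

Both functions score candidates from the same posterior summaries, so either can be swapped into a loop that otherwise uses Thompson Sampling.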

Advanced Methodologies and Scalable Extensions

Standard BO faces challenges in high-dimensional spaces, large unstructured domains (e.g., molecular sequences), and with limited evaluation budgets. Recent research has produced several advanced methodologies to address these limitations.

- Deep Kernel Learning: Combines the expressiveness of deep neural networks with the calibrated uncertainty of GPs. A neural network maps high-dimensional inputs to a latent representation, and a GP acts on this representation. This is particularly useful for optimizing complex structures like electrode microstructures [31] or molecular designs.

- Satisficing Thompson Sampling: For time-sensitive problems where finding the absolute optimum is infeasible, the target is shifted from the optimal to a "satisficing" solution—one that is good enough and requires less information to identify. The BLASTS-PBO algorithm uses a rate–distortion function to formalize this trade-off, making it highly suitable for applications like fast-charging battery design [30].

- Thompson Sampling via Fine-Tuning (ToSFiT): This approach scales TS to large, unstructured discrete spaces (e.g., protein sequences, quantum circuit code) by parameterizing the probability of maximality using a large language model (LLM). The LLM is initialized with prior knowledge and incrementally fine-tuned toward the posterior, avoiding intractable acquisition function maximization [29].

- Time-Series-Informed BO: In controller tuning, each experiment is a temporal trajectory. Standard BO treats the entire experiment as a single black-box evaluation. TSI-BO aligns the fidelity dimension in multi-fidelity BO with closed-loop time, allowing partial episode data to be incorporated as lower-fidelity observations. This enables probabilistic early stopping of unpromising experiments, drastically improving resource efficiency [32].

Application Notes: Protocols from Recent Research

Protocol 1: Closed-Loop Optimization of Composition-Spread Films for the Anomalous Hall Effect

This protocol demonstrates a specialized BO workflow for combinatorial materials science [33].

1. Problem Formulation:

- Objective: Maximize the anomalous Hall resistivity ((\rho_{yx}^{A})) of a five-element alloy system (Fe, Co, Ni, and two from Ta, W, Ir).

- Search Space: 18,594 candidate compositions defined by atomic percent ranges.

- Constraint: Composition gradients can only be applied to pairs of elements (3d-3d or 5d-5d) to ensure film uniformity.

2. Experimental Setup & Reagents:

- Deposition System: Combinatorial sputtering system.

- Substrate: Thermally oxidized Si (SiO2/Si) substrates.

- Fabrication: Laser patterning system for photoresist-free device fabrication.

- Measurement: Customized multichannel probe for simultaneous AHE measurement of 13 devices.

3. Bayesian Optimization Workflow: The following diagram illustrates the closed-loop optimization workflow for composition-spread films.

Diagram 1: Closed-loop optimization workflow for composition-spread films, adapted from [33].

4. Algorithmic Steps:

a. Initialization: Populate candidates.csv with all possible compositions.

b. Composition Selection (using nimo.selection in COMBI mode):

i. Select a base composition with the highest acquisition function value (e.g., Expected Improvement).

ii. For all valid element pairs, propose L compositions with evenly spaced mixing ratios of the two elements, keeping others fixed.

iii. Score each pair by averaging the acquisition function values across its L compositions.

iv. Propose the element pair with the highest score for the next composition-spread film.

c. Experiment Execution: Automatically generate a sputter recipe and execute thin-film deposition, laser patterning, and AHE measurement.

d. Data Assimilation (using nimo.analysis_output):

i. Remove candidate compositions within the range of the tested composition-spread film.

ii. Add the actual experimental compositions and their measured (\rho_{yx}^{A}) values to candidates.csv.

e. Iteration: Repeat steps (b) to (d) until the experimental budget is exhausted or performance converges.

5. Key Outcome: This closed-loop exploration achieved a maximum anomalous Hall resistivity of 10.9 µΩ cm in a Fe44.9Co27.9Ni12.1Ta3.3Ir11.7 amorphous thin film [33].
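The pair-scoring logic of step 4b can be sketched as follows. This is not the NIMO implementation: the helper names, the toy acquisition function, and the choice to hold the pair's combined atomic fraction constant while varying the mixing ratio are all assumptions made for illustration.

```python
import numpy as np

def spread_compositions(base, pair, n_points):
    """Generate n_points compositions that redistribute the combined
    fraction of `pair` with evenly spaced mixing ratios, others fixed."""
    first, second = pair
    total = base[first] + base[second]
    spreads = []
    for ratio in np.linspace(0.0, 1.0, n_points):
        comp = dict(base)
        comp[first] = ratio * total
        comp[second] = (1.0 - ratio) * total
        spreads.append(comp)
    return spreads

def select_pair(base, valid_pairs, acquisition, n_points):
    """Score each valid pair by its mean acquisition value and return the best."""
    scores = {}
    for pair in valid_pairs:
        values = [acquisition(c) for c in spread_compositions(base, pair, n_points)]
        scores[pair] = float(np.mean(values))
    best = max(scores, key=scores.get)
    return best, scores

# Hypothetical base composition (at.%) and valid 3d-3d / 5d-5d pairs.
base = {"Fe": 40.0, "Co": 30.0, "Ni": 15.0, "Ta": 5.0, "Ir": 10.0}
valid_pairs = [("Fe", "Co"), ("Fe", "Ni"), ("Co", "Ni"), ("Ta", "Ir")]

# Toy acquisition that simply rewards Ta content; a real workflow would
# evaluate the surrogate-based acquisition function here.
def toy_acquisition(comp):
    return comp["Ta"]

best_pair, pair_scores = select_pair(base, valid_pairs, toy_acquisition, n_points=5)
```

Averaging over the whole spread, rather than scoring only the base composition, rewards gradients along which many compositions look promising at once.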

Protocol 2: Batch Bayesian Optimization for Molecular Design with Pretrained Joint Predictions

This protocol outlines a scalable BO method for drug discovery using foundation models [34].

1. Problem Formulation:

- Objective: Discover small-molecule inhibitors with high binding affinity for a target protein (e.g., EGFR).

- Challenge: The search space is extremely large (e.g., a virtual library of millions of compounds), and evaluations (synthesis and testing) are costly and performed in batches.

2. Surrogate Model: Epistemic Neural Networks (ENNs)

- Architecture: An ENN (f_\theta(x, z)) is used, where (x) is a molecular representation and (z) is a latent epistemic index drawn from a distribution (p_Z(z)).

- Pretrained Prior: The ENN incorporates a pretrained prior network, frozen and conditioned on the same input (x), to improve the quality of the joint predictive distribution. This prior is pretrained on synthetic data or a large chemical corpus.

- Joint Predictions: The model provides a joint predictive distribution (p(y_{1:N} \mid x_{1:N}) = \int_z \delta\big([f_\theta(x_i, z)]_{1:N} = y_{1:N}\big)\, p_Z(z)\, dz), which is crucial for batch selection.

3. Batch Acquisition Functions:

- q-Probability of Improvement (qPOI): Selects the batch that has the highest probability of containing the global maximum. It hedges against correlations within the batch.

- Expected Maximum (EMAX): Selects the batch with the highest expected maximum value. It also penalizes correlations and is computationally more efficient for very large pools.

4. Workflow Diagram:

Diagram 2: Batch BO workflow for molecular design using pretrained ENNs.

5. Experimental Steps:

a. Representation: Encode molecules using a structure-informed foundation model (e.g., COATI [34]).

b. Model Training: Train the ENN surrogate model on available binding affinity data, incorporating the pretrained prior.

c. Batch Selection: For a given batch size (B), sample multiple functions (particles) from the ENN. Use these samples to approximate the qPOI or EMAX acquisition function and select the batch of (B) molecules that maximizes it.

d. Experiment & Update: Synthesize and test the selected batch, then add the new data to the training set.

e. Iteration: Repeat until a potent inhibitor is identified or resources are exhausted.

6. Key Outcome: This approach led to the rediscovery of known potent EGFR inhibitors in up to 5x fewer iterations and potent inhibitors from a real-world library in up to 10x fewer iterations compared to baseline methods [34].
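The batch selection in step 5c can be illustrated with a small sketch. It assumes the surrogate has already produced a matrix of joint samples, one row per particle and one column per candidate molecule; the Gaussian toy samples stand in for ENN outputs, and the greedy construction of the batch is an assumed approximation rather than the procedure reported in [34].

```python
import numpy as np

def emax_score(samples, batch):
    """Monte Carlo estimate of the expected maximum value within the batch."""
    return samples[:, batch].max(axis=1).mean()

def qpoi_score(samples, batch):
    """Fraction of particles whose pool-wide maximizer lies in the batch."""
    winners = samples.argmax(axis=1)
    return np.isin(winners, batch).mean()

def greedy_emax_batch(samples, batch_size):
    """Greedily add the candidate that most increases the EMAX estimate."""
    n_particles, _ = samples.shape
    running_max = np.full(n_particles, -np.inf)
    batch = []
    for _ in range(batch_size):
        # EMAX of (current batch + each candidate), evaluated for all candidates.
        gains = np.maximum(samples, running_max[:, None]).mean(axis=0)
        if batch:
            gains[batch] = -np.inf  # do not pick the same candidate twice
        pick = int(gains.argmax())
        batch.append(pick)
        running_max = np.maximum(running_max, samples[:, pick])
    return batch

# Toy joint samples (assumed): candidates 0 and 1 are perfectly correlated,
# candidate 2 is independent with a slightly lower mean.
rng = np.random.default_rng(0)
z_shared = rng.standard_normal(5000)
z_indep = rng.standard_normal(5000)
samples = np.column_stack([1.0 + z_shared, 0.95 + z_shared, 0.8 + z_indep])

batch = greedy_emax_batch(samples, batch_size=2)
```

Because candidates 0 and 1 rise and fall together, adding candidate 1 to a batch that already contains candidate 0 contributes nothing to the expected maximum, so the independent candidate 2 is chosen instead. This is the hedging against within-batch correlation that joint predictions make possible.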

Table 2: Summary of Bayesian Optimization Applications and Outcomes

| Application Domain | Optimization Challenge | BO Method & Key Features | Reported Outcome |
|---|---|---|---|
| Electrode Microstructure Design [31] | Generate 3D microstructures with optimal morphological & transport properties. | Deep Kernel BO: GAN latent space + GP surrogate. Constrained optimization. | Simultaneous maximization of correlated properties (surface area & diffusivity). |
| Fast Charging Battery Design [30] | Large strategy space, time-sensitive degradation testing. | BLASTS-PBO: Satisficing TS with Gaussian Processes. Parallel evaluations. | Outperformed sequential & parallel TS in identifying effective charging strategies. |
| Anomalous Hall Effect Optimization [33] | Vast composition space for a 5-element alloy. | Custom BO for Combinatorial Films. Manages composition-spread proposals. | Achieved 10.9 µΩ cm Hall resistivity in an amorphous film. |
| Controller Tuning [32] | Resource-intensive closed-loop experiments; temporal structure. | Time-Series-Informed BO. Uses partial trajectories, enables early stopping. | Achieved comparable performance with ~50% fewer resources. |
| Molecular Design [34] | Extremely large search space; batch synthesis & testing. | Batch BO with Pretrained ENNs. Enables joint predictions for hedging. | 5x-10x faster discovery of potent inhibitors. |

Table 3: Key Research Reagent Solutions for Closed-Loop Bayesian Optimization

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| PHYSBO (Bayesian Optimization Package) [33] | A Python library for physics-based BO, providing core GP and acquisition function capabilities. | Used as the optimization engine in the closed-loop exploration of composition-spread films [33]. |
| NIMO (NIMS Orchestration System) [33] | Orchestration software to support autonomous closed-loop exploration by integrating experiment control, analysis, and BO. | Manages the entire workflow from proposal generation to experimental input file creation [33]. |
| Summit [28] | A Python framework for self-optimizing chemical reactions, integrating multiple BO strategies and benchmarks. | Used for multi-objective optimization of chemical reactions (e.g., using TSEMO algorithm) [28]. |
| Gaussian Process Prior VAE [35] | A conditional generative model that uses a GP prior in a VAE for efficient high-dimensional BO. | Projects high-dimensional data to a structured latent space where GP-based BO is performed effectively [35]. |
| Epistemic Neural Network (ENN) [34] | A neural network architecture that provides efficient joint predictive distributions by marginalizing over a latent index. | Enables scalable batch BO for molecular design by allowing rapid sampling of correlated batch properties [34]. |
| Combinatorial Sputtering System [33] | A deposition system capable of fabricating thin-film libraries with controlled composition gradients on a single substrate. | High-throughput fabrication of composition-spread films for materials discovery [33]. |

The development of commercial liquid formulations represents a significant challenge in the pharmaceutical and chemical industries, characterized by complex mixtures of ingredients where predictive physical models for desired properties are often unavailable [36]. This complexity, combined with the pressure to reduce time-to-market, necessitates innovative approaches to formulation design and optimization [37]. Traditional formulation development is a time-consuming, iterative process that depends heavily on researcher expertise and often yields suboptimal results due to resource and time constraints [38].

The integration of robotic platforms with machine learning-driven experimental design constitutes a paradigm shift in formulation science. This approach enables the rapid exploration of vast formulation spaces through automated, high-throughput experimentation guided by intelligent algorithms that learn from each experimental iteration [39]. This case study examines the application of this integrated technological framework to optimize a commercial liquid formulation, demonstrating how the synergy between robotics and AI can simultaneously address multiple, potentially competing objectives to identify optimal formulations with unprecedented efficiency.

Key Results and Quantitative Performance