Efficient Hyperparameter Optimization (HPO): Strategies for Managing Costly Function Evaluations in Biomedical Research

This article provides a comprehensive guide for researchers and drug development professionals grappling with the computational expense of Hyperparameter Optimization (HPO).

Efficient Hyperparameter Optimization (HPO): Strategies for Managing Costly Function Evaluations in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals grappling with the computational expense of Hyperparameter Optimization (HPO). It begins by establishing the foundational challenge of expensive function evaluations (e.g., training complex ML models or running molecular dynamics simulations). It then explores key methodological approaches, including surrogate models (Bayesian Optimization), multi-fidelity methods, and evolutionary strategies tailored for low-data regimes. The guide further offers practical troubleshooting and optimization techniques to maximize information gain from each evaluation. Finally, it presents a validation framework to compare these methods on real-world biomedical datasets, empowering scientists to select the most efficient HPO strategy for their specific, resource-constrained projects.

The High Cost of Knowledge: Why Expensive Evaluations Hinder HPO in Biomedical AI

Technical Support Center: Troubleshooting Expensive Function Evaluations in HPO

This support center assists researchers in managing expensive evaluations during Hyperparameter Optimization (HPO) for scientific domains like drug development. "Expensive" manifests in three key dimensions, as defined in the table below.

Table 1: Dimensions of "Expensive" Evaluations

| Dimension | Description | Typical Metrics | Impact on HPO Strategy |

|---|---|---|---|

| Wall-clock Time | Total real-time from initiation to result. | Hours/Days per configuration. | Limits total number of sequential evaluations. Favors parallelizable HPO methods (e.g., random search, Hyperband). |

| Compute Cost | Financial cost of cloud/on-premise compute resources. | GPU/CPU hours, monetary cost per run. | Constrains total budget for the optimization campaign. Requires cost-aware early stopping. |

| Experimental Resource | Depletion of finite physical materials or lab capacity. | Consumables (reagents), assay plates, synthesis capacity. | Most critical in wet-lab settings. Demands sample-efficient HPO (e.g., Bayesian Optimization) to minimize physical trials. |

FAQs and Troubleshooting Guides

Q1: My simulation-based objective function takes 3 days per run. Which HPO algorithm should I prioritize? A: With high wall-clock time, avoid algorithms that require many sequential runs (e.g., standard Bayesian Optimization). Prioritize asynchronous or parallelizable methods.

- Recommended Protocol: Implement Hyperband with Successive Halving. It dynamically allocates resources to promising configurations and can be parallelized effectively. Use a high

eta(e.g., 3) for aggressive early stopping. - Troubleshooting: If parallel resources are limited, use a Random Search baseline first. It is embarrassingly parallel and often outperforms grid search. If you must use Bayesian Optimization, ensure it uses a batch acquisition function (e.g., qEI) to propose multiple configurations in parallel.

Q2: How can I reduce compute costs when using cloud-based GPU instances for model training in HPO? A: Implement aggressive early stopping and fidelity reduction.

- Detailed Methodology (Low-Fidelity Proxy):

- Define a low-fidelity proxy for your full evaluation (e.g., train on 10% of data, fewer epochs, lower-resolution images).

- Run the majority of your HPO (e.g., using a multi-fidelity method like BOHB) on the cheap proxy.

- Only promote the top

kconfigurations to be evaluated on the full, high-fidelity, expensive objective function. - Cost Control: Set up budget alerts and automate instance termination upon HPO completion.

Q3: In molecular screening, my assay is costly and reagent-limited. How can I optimize with fewer physical experiments? A: Sample efficiency is paramount. You must incorporate prior knowledge and use the most sample-efficient HPO methods.

- Experimental Protocol (Bayesian Optimization with Prior):

- Initial Design: Use a space-filling design (e.g., Latin Hypercube) for the first 5-10 data points to build an initial model.

- Surrogate Model: Use a Gaussian Process (GP) with a kernel (e.g., Matérn) appropriate for your chemical descriptor space.

- Acquisition Function: Employ Expected Improvement (EI) to propose the single next experiment that promises the highest gain.

- Iterate: After each experimental result, update the GP model and run EI to propose the next single experiment. This sequential approach maximizes information gain per trial.

- Troubleshooting: If the GP model is slow to fit, consider switching to a Random Forest based surrogate (e.g., in SMAC) for very high-dimensional parameter spaces.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for High-Throughput Experimental HPO

| Item / Solution | Function in Experimental HPO Context |

|---|---|

| Assay Kits (e.g., Cell Viability, Binding) | Standardized, reproducible readout for the objective function (e.g., IC50). Enables parallel evaluation of multiple HPO-suggested conditions. |

| Microplate Readers (384/1536-well) | High-throughput data acquisition, essential for gathering evaluation results in parallel to keep pace with HPO batch suggestions. |

| Laboratory Automation (Liquid Handlers) | Enforces rigorous protocol adherence and minimizes human error, ensuring HPO receives consistent, high-quality evaluation data. |

| Chemical/Biological Libraries | The finite search space. Each "evaluation" consumes a discrete, often non-replenishable, amount of material. |

| Electronic Lab Notebook (ELN) | Critical for logging exact experimental conditions (HPO parameters) paired with outcomes, creating the essential dataset for surrogate model training. |

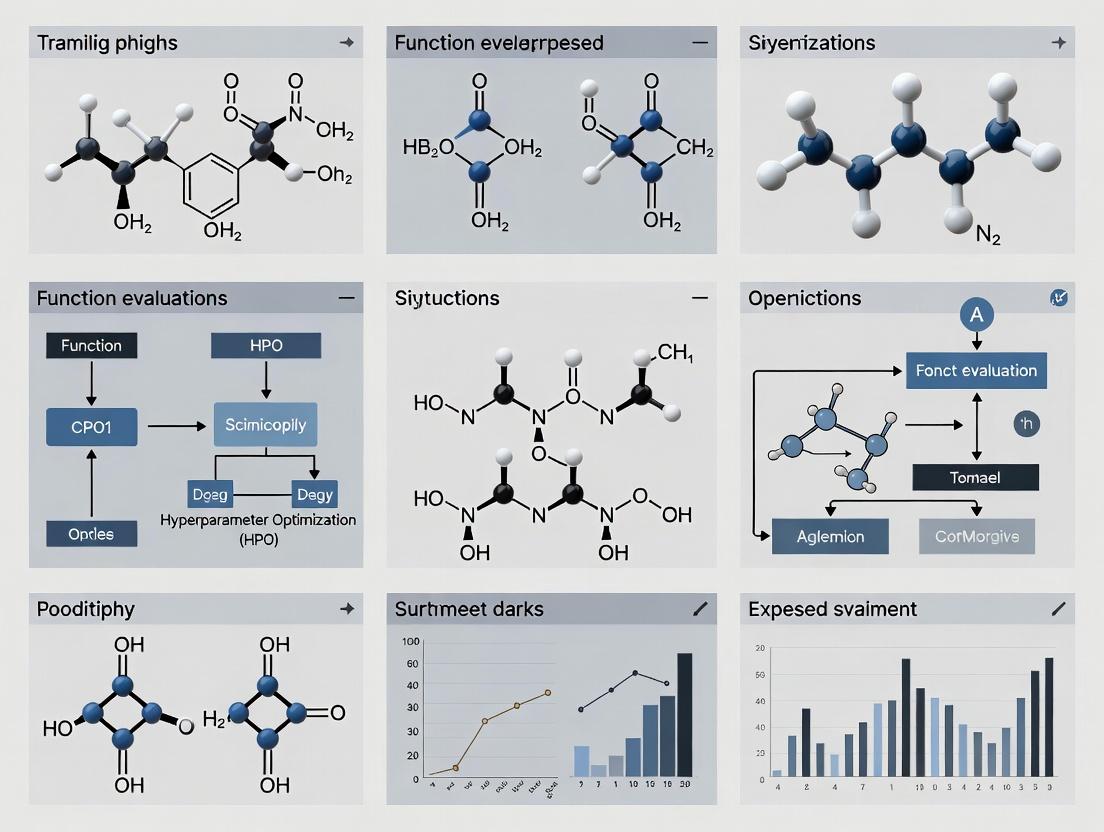

Visualizations

Diagram 1: Decision Flow for HPO Method Selection Based on Expense Type

Diagram 2: Bayesian Optimization Loop for Resource-Limited Experiments

Technical Support Center

Troubleshooting Guides

Guide 1: Dealing with Premature Convergence in Costly Bayesian Optimization

- Issue: The optimizer repeatedly suggests similar, suboptimal configurations, wasting expensive evaluations.

- Diagnosis: The acquisition function (e.g., EI) may be over-exploiting. The Gaussian Process kernel length-scales might be incorrectly specified, shrinking the model's uncertainty too quickly.

- Resolution:

- Switch from Expected Improvement (EI) to Upper Confidence Bound (UCB) with an increasing schedule for the beta parameter to force more exploration.

- Implement a "pending points" mechanism to account for parallel evaluations and prevent clustering.

- Use a kernel like Matérn 5/2 instead of the squared-exponential (RBF) for less smooth extrapolation.

- Consider adding explicit constraints via a penalty or a separate classifier model to steer the search away from known bad regions.

Guide 2: Managing High-Dimensional Search Spaces with Limited Budget

- Issue: With many hyperparameters (e.g., >20), performance plateaus rapidly as the budget is exhausted.

- Diagnosis: The "curse of dimensionality" makes global optimization infeasible; the search is effectively random.

- Resolution:

- Dimensionality Reduction: Perform a low-cost (e.g., Sobol) scan, fit a Random Forest, and use functional ANOVA to identify the top 5-10 most important parameters. Fix the rest to sensible defaults.

- Embedded Space Methods: Use a method like SAAS (Sparse Axis-Aligned Subspace) BO which places a strong sparsity prior on the high-dimensional space.

- Structure the Search: Use conditional parameters to create a hierarchical search space, ensuring irrelevant parameters are not activated.

Guide 3: Handling Noisy or Non-Stationary Objective Functions

- Issue: Repeated evaluation of the same configuration yields different results (noise), or the optimal region seems to shift during the search (non-stationarity).

- Diagnosis: Common in drug discovery due to experimental variance or changing assay conditions.

- Resolution:

- For Noise: Use a Gaussian Process model with a built-in noise parameter (

GaussianLikelihood) or switch to a Student-t process for heavier tails. Re-evaluate promising points 3 times to average noise. - For Non-Stationarity: Implement a rolling-window BO approach. Only use the last N=50 most recent evaluations to fit the surrogate model, discounting older data.

- For Noise: Use a Gaussian Process model with a built-in noise parameter (

Frequently Asked Questions (FAQs)

Q1: My experiment costs $10k per run, and I only have a budget for 50 trials. Should I use Random Search or Bayesian Optimization? A: Always use Bayesian Optimization (BO). The sample efficiency of BO becomes overwhelmingly superior under extreme budget constraints. Random Search is acceptable only for very low-dimensional spaces (<5) or when you can afford 100s of trials. With 50 expensive trials, BO's ability to model and reason about the space is critical.

Q2: How do I know if my HPO run has converged, and I should stop, given the high cost? A: Formal convergence proofs are rare in practical HPO. Use these heuristics:

- Performance Plateau: The moving average (window=10) of the best observed value has improved by less than 0.5% over the last 15 iterations.

- Prediction Uncertainty: The surrogate model's predicted mean at the suggested next point is not significantly better than the current best (within the model's uncertainty margin).

- Search Entropy: The acquisition function values for suggested points become very similar, indicating the model sees little gain from further exploration.

Q3: What open-source libraries are best for costly HPO in scientific domains? A: The leading libraries with robust implementations for expensive functions are:

- BoTorch/Ax (PyTorch): Industry-standard for research, offering state-of-the-art algorithms (e.g., NEI, qEI) and seamless GPU acceleration.

- Scikit-Optimize: Lightweight and easier to use for simpler problems, with good basic BO capabilities.

- Dragonfly: Excellent for high-dimensional and mixed (continuous, discrete, categorical) spaces, incorporating scalable global optimization.

Table 1: Comparison of HPO Methods Under Limited Evaluation Budget

| Method | Sample Efficiency (Rank) | Convergence Rate | Handling Noise | High-Dim. Scalability | Typical Use Case |

|---|---|---|---|---|---|

| Grid Search | Very Low (5) | Slow/No Proof | Poor | Very Poor | Tiny, discrete spaces only |

| Random Search | Low (4) | No Proof | Moderate | Poor | Baseline for small budgets (<30) |

| Bayesian Optimization (GP) | Very High (1) | Asymptotic Proofs | Good | Moderate (≤15 dim) | Costly, low-dim experiments |

| Sequential Model-Based Opt. | High (2) | Heuristic | Moderate | Moderate | General-purpose HPO |

| Tree Parzen Estimator (TPE) | High (2) | No Proof | Moderate | Good | Medium-budget, high-dim spaces |

| Evolutionary Algorithms | Moderate (3) | No Proof | Good | Moderate | Noisy, multi-modal objectives |

Table 2: Impact of Cost per Evaluation on Optimal HPO Strategy

| Cost per Evaluation | Typical Budget | Recommended Primary Strategy | Critical Complementary Action |

|---|---|---|---|

| Low (<$10) | >500 trials | Random Search, TPE | Extensive parallelization |

| Medium ($100 - $1k) | 50-200 trials | Bayesian Optimization (GP) | Careful space pruning, multi-fidelity |

| High ($5k - $50k) | 10-50 trials | Sparse BO, Trust Region BO | Transfer learning, strong priors |

| Extreme (>$100k) | <10 trials | Human-in-the-loop BO, Bayesian Opt. w/derivatives | Leverage all prior domain knowledge |

Experimental Protocols

Protocol 1: Benchmarking HPO Methods for Expensive Black-Box Functions

- Objective: Compare the performance of different HPO algorithms under strict evaluation budgets.

- Methodology:

- Select a suite of standard synthetic benchmark functions (e.g., Branin, Hartmann6, Ackley) known to mimic the properties of costly scientific objectives.

- For each HPO method (Random, TPE, GP-BO), run 50 independent trials with a fixed budget of 30 function evaluations.

- Initialize each run with 5 random points (seed the same for all methods).

- Record the best objective value found after each evaluation, averaged across all 50 trials.

- Plot the average performance vs. number of evaluations curve. The method whose curve is lowest (best value) at budget=30 is most sample-efficient.

- Key Metric: Regret =

f(best_found) - f(global_optimum).

Protocol 2: Evaluating Multi-Fidelity Optimization for Drug Candidate Screening

- Objective: Reduce total cost by using a low-fidelity assay (e.g., computational docking score) to guide a high-fidelity assay (e.g., wet-lab IC50 measurement).

- Methodology:

- Define a search space of molecular descriptors or reaction conditions.

- Establish a fidelity parameter (e.g.,

lambda=0.1for fast docking,lambda=1.0for full MD simulation/experiment). - Use a multi-fidelity BO algorithm (e.g., Hyperband with BOHB, or GP-based with a fidelity kernel).

- The algorithm decides both which configuration to test and at what fidelity level, trading off information gain vs. cost.

- Allocate a total cost budget (e.g., equivalent to 20 high-fidelity runs). Compare the final best high-fidelity result against standard BO using only the high-fidelity assay.

Visualizations

Title: How High Cost Amplifies Core HPO Challenges & Strategies

Title: Multi-Fidelity Bayesian Optimization Workflow for Drug Screening

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for a Bayesian Optimization Pipeline

| Item/Reagent | Function in the "Experiment" | Example/Note |

|---|---|---|

| Surrogate Model | Approximates the expensive objective function; the core learner. | Gaussian Process (GP) with Matérn kernel. Sparse GPs for >1k data points. |

| Acquisition Function | Decides the next point to evaluate by balancing exploration vs. exploitation. | Expected Improvement (EI), Lower Confidence Bound (LCB). Noisy EI for robust settings. |

| Optimizer (of Acq. Func.) | Finds the maximum of the acquisition function to get the next candidate. | L-BFGS-B for continuous, random restart. Monte Carlo for mixed spaces. |

| Initial Design | Provides the initial data points to "seed" the surrogate model. | Latin Hypercube Sampling (LHS) or Sobol sequence. Better coverage than pure random. |

| Domain & Budget | Defines the search space constraints and total resource limits. | Must be carefully pruned using domain knowledge before starting. |

| Multi-Fidelity Wrapper | Manages cheaper, approximate evaluations of the objective. | Hyperband, FABOLAS, or a custom GP with fidelity dimension. |

| Parallelization Layer | Enables simultaneous evaluation of multiple configurations. | Constant Liar, Kriging Believer, or parallel Thompson Sampling. |

Troubleshooting & FAQ Hub for Computational Experiments

Context: This support center is designed for researchers dealing with High-Performance Computing (HPC) and High-Performance Optimization (HPO) in biomedical applications, where managing costly computational function evaluations (e.g., molecular dynamics simulations, virtual patient cohort runs) is a primary constraint.

FAQs: General HPO & Costly Evaluations

Q1: My drug screening pipeline involving molecular docking is prohibitively slow. What are the first steps to optimize it before scaling HPO? A: Prioritize protocol simplification. 1) Pre-filtering: Use rapid, low-fidelity methods like 2D similarity screening or pharmacophore models to reduce the candidate library size before expensive 3D docking. 2) Reduced Simulation Time: For initial HPO loops, use shorter MD simulation times or coarse-grained models. 3) Surrogate Models: Implement a surrogate (e.g., Random Forest, Gaussian Process) trained on a small subset of full simulations to predict outcomes of new parameters during HPO search.

Q2: During clinical trial simulation, generating virtual patient cohorts is a bottleneck. How can I reduce this cost in my optimization loop? A: Adopt a multi-fidelity approach. Create a hierarchy of cohort models:

- Low-fidelity: Small cohort size (N=100), simple pharmacokinetic (PK) models.

- Mid-fidelity: Moderate cohort size (N=500), standard PK/PD models.

- High-fidelity: Large, diverse cohort (N=1000+), complex systems biology models. Use HPO algorithms like Hyperband or BOHB that dynamically allocate resources, quickly discarding poor-performing trial designs using low-fidelity simulations and only evaluating promising ones with high fidelity.

Q3: My protein folding simulation (e.g., using AlphaFold2 or MD) consumes immense resources. How can I design an HPO study for force field parameters under this budget? A: Leverage transfer learning and warm starts. 1) Warm Start: Initialize your HPO search with parameters from published, successful folding simulations of homologous proteins. 2) Feature-Based Surrogates: Use protein features (e.g., sequence length, amino acid composition, predicted secondary structure) to build a surrogate model that predicts simulation success likelihood, guiding HPO away from poor parameters. 3) Early Stopping: Integrate metrics like RMSD plateauing to terminate unpromising simulations early, saving compute cycles.

FAQs: Specific Technical Failures

Q4: I encounter "GPU Out of Memory" errors when running large-scale virtual screening with a deep learning model. How can I proceed? A: This is a classic memory-cost trade-off. Solutions: 1) Gradient Accumulation: Reduce batch size drastically (e.g., to 1 or 2) and accumulate gradients over multiple batches before updating weights. This mimics a larger batch size with lower memory use. 2) Model Pruning/Quantization: For custom models, apply pruning to remove insignificant weights and use mixed-precision training (FP16). 3) Checkpointing: Use activation checkpointing in frameworks like PyTorch to trade compute for memory by recalculating activations during backward pass.

Q5: My clinical trial simulation results show anomalously low placebo arm response. What could be wrong in the patient population model? A: Likely an issue in the virtual patient generator (VPG). Troubleshoot: 1) Input Data Correlation: Verify that the real-world data used to train the VPG preserves correlations between baseline covariates (e.g., age, biomarker levels) and disease progression. 2) Natural History Model: Ensure the underlying disease progression model for the placebo arm is calibrated to historical control data, not just active treatment data. 3) Parameter Bounds: Check that sampled parameters for disease progression rates remain within biologically plausible ranges.

Q6: After optimizing protein folding simulation parameters, the experimental validation fails. What are common pitfalls? A: This indicates an optimization-to-reality gap. 1) Objective Function Mismatch: The metric optimized in silico (e.g., lowest free energy, Templeton score) may not correlate perfectly with experimental stability. Consider multi-objective HPO including metrics like root-mean-square fluctuation (RMSF) for flexibility. 2) Solvent/Omission Neglect: Ensure your simulation protocol and cost function account for critical factors like explicit solvent molecules, pH, or post-translational modifications. 3) Overfitting: You may have overfitted parameters to a single protein or fold class. Validate optimized parameters on a hold-out set of diverse protein structures.

Experimental Protocols

Protocol 1: Multi-Fidelity Bayesian Optimization for Drug Candidate Screening Objective: Identify top-10 candidate molecules with highest predicted binding affinity while minimizing full docking simulations.

- Library Preparation: Curate a library of 1M small molecules in SMILES format.

- Fidelity Tiers Definition:

- Low-fidelity (LF): Quick 2D fingerprint (ECFP4) similarity to a known active (Tanimoto coefficient >0.4). Cost: ~0.1 CPU-sec/mol.

- Medium-fidelity (MF): Fast rigid docking with Vina (exhaustiveness=8). Cost: ~30 CPU-sec/mol.

- High-fidelity (HF): Flexible docking with induced fit or short MD simulation. Cost: ~2 GPU-hour/mol.

- HPO Setup: Use a Multi-Fidelity Bayesian Optimization (MF-BO) framework (e.g., with

Axplatform). The acquisition function proposes batches of molecules, starting with LF evaluation. - Iteration: Molecules passing LF threshold are evaluated with MF. The top-performing MF molecules are promoted to HF. The surrogate model is updated after each batch.

- Termination: Stop after 100 HF evaluations or when marginal improvement plateaus.

Protocol 2: Simulation-Based Cost-Effective Clinical Trial Design Optimization Objective: Optimize trial design parameters (sample size, visit frequency, dose ratio) to maximize statistical power for detecting a treatment effect, given a fixed computational budget.

- Define Design Space: Parameters: Npatients (100-500), visits (4-12), doselevels (2-4). Total combinatorial space > 10,000 designs.

- Build Fast Surrogate: Run 50 high-fidelity trial simulations (using

RSimDesignorJuliaClinicalTrialSimulator) across a space-filling design. Record power, cost. - Train Model: Train a Gaussian Process (GP) regression model mapping design parameters to predicted power.

- Bayesian Optimization: Use the GP to run HPO (e.g., via

scikit-optimize). The acquisition function (Expected Improvement) suggests the next most promising trial design to simulate at high fidelity. - Validation: Simulate the top 3 optimized designs with 1000 Monte Carlo replicates to confirm power predictions.

Protocol 3: Resource-Constrained Optimization of Protein Folding Simulation Parameters Objective: Find MD simulation parameters (time step, cutoff, temperature coupling) that maximize folding accuracy (RMSD to native) for a given compute time.

- System Preparation: Select a small, fast-folding protein (e.g., villin headpiece, Protein B).

- Parameter Space: Define ranges: timestep (1-4 fs), nonbondcutoff (0.8-1.2 nm), thermostat (Berendsen, v-rescale).

- Low-Cost Proxy: Use folding simulations shortened to 10-50 ns as low-cost proxies for full 500ns+ runs.

- Asynchronous Successive Halving (ASHA) Scheduler:

- Launch many simulations with random parameters at 10ns.

- Promote only the top 1/3 of simulations (by lowest RMSD) to 50ns.

- Promote the best from that set to full 500ns simulation.

- This culls poor parameters early.

- Analysis: Correlate short-time scale metrics (e.g., radius of gyration at 10ns) with final RMSD to improve future proxy definitions.

Table 1: Comparative Cost of Different Fidelity Levels in Biomedical Simulations

| Application | Low-Fidelity Method (Cost/Evaluation) | Medium-Fidelity Method (Cost/Evaluation) | High-Fidelity Method (Cost/Evaluation) | Typical HPO Strategy Applicable |

|---|---|---|---|---|

| Drug Screening | 2D Similarity (0.1 CPU-sec) | Rigid Docking (30 CPU-sec) | Flexible Docking/MD (2 GPU-hour) | Multi-Fidelity BO, Hyperband |

| Clinical Trial Sim | Analytic PK Model (1 CPU-sec) | Small Cohort (N=100) Sim (1 CPU-min) | Large Cohort (N=1000) Sim (1 CPU-hour) | Multi-Fidelity BO, Surrogate-Assisted |

| Protein Folding | Homology Modeling (5 CPU-min) | Short MD (10 ns, 10 GPU-hour) | Long MD (1 µs, 1000 GPU-hour) | Successive Halving, Early Stopping |

Table 2: Impact of HPO Strategies on Reduction of Function Evaluations

| HPO Strategy | Application Example | Reduction in High-Fidelity Evals vs. Grid Search | Key Prerequisite |

|---|---|---|---|

| Bayesian Optimization (BO) | Optimizing docking scoring function weights | 60-70% | Initial dataset of ~20-50 evals |

| Multi-Fidelity BO | Virtual screening cascade | 80-90% | Defined fidelity hierarchy & cost model |

| Hyperband / BOHB | MD parameter tuning | 70-85% | Ability to assess intermediate results (early stop) |

| Surrogate Model Warm-Start | Clinical trial design space exploration | 50-60% | Relevant historical or public dataset available |

Visualizations

Diagram Title: Multi-fidelity HPO workflow for drug screening

Diagram Title: Surrogate-assisted HPO for clinical trial design

Diagram Title: Successive halving for protein folding MD parameter tuning

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Cost-Effective HPO in Biomedicine

| Item/Tool Name | Category | Function in Managing Expensive Evaluations |

|---|---|---|

Ax / BoTorch |

HPO Platform | Provides state-of-the-art BO and multi-fidelity BO implementations, enabling efficient parameter search. |

Ray Tune / Optuna |

HPO Scheduler | Implements early-stopping algorithms like ASHA and Hyperband to dynamically allocate resources. |

GROMACS / AMBER |

MD Engine | Allows checkpointing & restarting, and supports variable precision (single/double) to trade speed/accuracy. |

RDKit |

Cheminformatics | Enables fast low-fidelity filtering (descriptor calculation, 2D similarity) before expensive docking. |

OpenMM |

MD Engine | GPU-accelerated, supports custom forces and on-the-fly analysis for potential early stopping. |

SimBiology / Phoenix |

Trial Simulator | Allows building modular, hierarchical models of varying fidelity for PK/PD and trial execution. |

AlphaFold2 (Local Colab) |

Protein Structure | Provides a pre-trained surrogate for physical folding; use outputs as starting points for MD refinement. |

DOCK / AutoDock Vina |

Docking Engine | Configurable exhaustiveness parameter allows direct trade-off between evaluation cost and accuracy. |

Troubleshooting Guides & FAQs

Q1: During a high-dimensional HPO run for a molecular docking simulation, the optimization algorithm (e.g., Bayesian Optimization) appears to be taking longer to suggest the next configuration than the function evaluation itself. What could be the cause and how can I diagnose it?

A1: This is a classic symptom of optimization overhead exceeding evaluation cost. The overhead of training the surrogate model (e.g., Gaussian Process) scales poorly with the number of observations n (often O(n³)). Diagnose by logging timestamps: T_suggest_start, T_suggest_end, T_eval_start, T_eval_end. Calculate Overhead = (T_suggest_end - T_suggest_start) and Evaluation Cost = (T_eval_end - T_eval_start). If overhead dominates, consider switching to a more scalable surrogate (e.g., Random Forest, BOHB) or reducing the dimensionality of the search space via expert knowledge.

Q2: My objective function involves training a neural network on a large dataset, which costs >$100 per evaluation on cloud instances. How can I preemptively estimate total HPO cost and set a rational budget?

A2: You must establish baseline metrics. Perform a small design-of-experiments (e.g., 10 random configurations) to estimate the mean and variance of single evaluation cost (C_eval). For your chosen HPO algorithm, run a proxy study on a low-fidelity benchmark (e.g., training on a subset) to estimate the number of evaluations (N_eval) required for convergence. Total estimated cost = N_eval * C_eval. Always include a margin of error (e.g., 20%). Use this to set a strict monetary or time budget before the main experiment.

Q3: When using multi-fidelity optimization (e.g., Hyperband), how do I correctly attribute cost across fidelities, and what metrics capture the trade-off?

A3: The key metric is Cumulative Cost vs. Validation Performance. Attribute cost precisely: if a configuration uses 50 epochs (fidelity r) and the cost of one epoch is c, the cost for that evaluation is r * c. Log all partial evaluations. The optimization overhead here includes the cost of managing the successive halving logic. Compare algorithms using the area under the cost-curve (AUCC) — the integral of best-validation-error over cumulative cost spent.

Q4: I see high variance in evaluation runtime for identical configurations in my computational chemistry pipeline. This disrupts HPO scheduling. How to mitigate? A4: Non-deterministic evaluation cost is common due to network latency, shared resource contention, or stochastic algorithm components. Mitigation strategies:

- Isolate Resources: Use dedicated, identical instances.

- Containerization: Use Docker/Singularity for consistent environments.

- Warm Starts: For iterative simulations, use checkpoints from previous runs to avoid cold-start penalties.

- Metric: Report both median and the 90th percentile of evaluation cost, not just the mean. Schedule jobs based on pessimistic time estimates.

Q5: How do I quantify and report the "efficiency" of an HPO algorithm when evaluations are expensive? A5: The standard metric is the log-regret vs. cumulative cost. For each algorithm, plot the best-found validation error against the total computational cost (sum of all evaluation costs + overhead) expended up to that point. The algorithm that drives regret down fastest per unit cost is the most efficient. Explicitly break down cost into evaluation and overhead in a table.

Table 1: Comparative Overhead of Common HPO Surrogates

| Surrogate Model | Time Complexity (Suggest) | Space Complexity | Best for Eval Cost > Overhead When |

|---|---|---|---|

| Gaussian Process (GP) | O(n³) | O(n²) | n < 500, High-Dimensional Continuous |

| Tree Parzen Estimator (TPE) | O(n log n) | O(n) | n > 500, Categorical/Mixed |

| Random Forest (SMAC) | O(n log n * trees) | O(n * trees) | n > 1000, Structured/Categorical |

| Bayesian Neural Network | O(n * training steps) | Model Size | Very Large n, High-Dimension |

Table 2: Cost Breakdown for a Drug Property Prediction HPO Experiment (100 Trials)

| Cost Component | Measured Time (Hours) | Percentage | Notes |

|---|---|---|---|

| Total Wall Clock Time | 120.0 | 100% | 3 Days |

| Aggregate Evaluation Cost | 118.5 | 98.75% | Molecular Dynamics Simulations |

| Aggregate Optimization Overhead | 1.5 | 1.25% | GP Model Fitting & Prediction |

| Avg. Cost per Evaluation | 1.185 | - | Simulation Time |

| Avg. Overhead per Suggestion | 0.015 | - | Negligible in this case |

Experimental Protocols

Protocol 1: Benchmarking HPO Overhead Objective: To isolate and measure the time and computational resources consumed by the HPO algorithm's internal logic, separate from the objective function evaluation. Methodology:

- Instrumentation: Modify the HPO driver code to record high-resolution timestamps (

time.perf_counter_ns()in Python) and memory usage (memory_profiler) before and after thesuggestandevaluatefunctions. - Null Objective: Implement a mock objective function that returns a random value instantly (

time.sleep(0)). This eliminates evaluation cost. - Run: Execute the HPO algorithm for N iterations (e.g., 1000 suggestions) using the null objective on a standardized search space.

- Data Collection: For each iteration

i, record:T_suggest_i,Mem_suggest_i,T_eval_i,Mem_eval_i. - Analysis: Calculate cumulative overhead time

Σ T_suggest_iand peak memory. Plot overhead growth versus iteration numbern. Fit a curve to determine empirical complexity (e.g., O(n³) for GP).

Protocol 2: Multi-Fidelity Cost Accounting in Hyperband Objective: To accurately track cumulative resource consumption in an asynchronous Hyperband run. Methodology:

- Define Fidelity Parameter: Clearly define the fidelity parameter

r(e.g., number of epochs, dataset subset size, simulation time). - Calibrate Cost Function: Perform pilot runs to establish a function

cost(r)that maps fidelity to resource consumption (e.g.,cost(r) = a * r + b, wherebis fixed startup cost). - Instrument Scheduler: In the Hyperband scheduler, for every job promoted or evaluated, log:

job_id,configuration_id,fidelity r,start_time,end_time. - Attribute Cost: Upon job completion, calculate its cost as

cost(r). The cumulative cost at any time is the sum ofcost(r)for all completed jobs. - Validation: Ensure the sum of attributed costs closely matches total cluster resource usage (e.g., from SLURM or Kubernetes metrics).

Diagrams

Diagram 1: HPO Cost Breakdown & Bottleneck Identification Workflow

Diagram 2: Multi-Fidelity HPO Cost Attribution Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software & Hardware for Cost-Aware HPO Research

| Item | Function in Experiment | Example/Specification |

|---|---|---|

| HPO Framework | Orchestrates suggest-evaluate loops, provides key logging. | Ray Tune, DEAP, SMAC3, Optuna. |

| Profiling Tool | Measures precise CPU time, memory, I/O of function evaluations. | Python cProfile, line_profiler, memory_profiler. |

| Container Platform | Ensures evaluation environment consistency to reduce cost variance. | Docker, Singularity, Podman. |

| Cluster Scheduler | Manages parallel job queue, provides raw resource usage data. | SLURM, Kubernetes, AWS Batch. |

| Time-Series Database | Stores all timestamps, configurations, and results for analysis. | InfluxDB, Prometheus, SQLite. |

| High-Performance Computing (HPC) Resources | Provides the scale for parallel evaluations to amortize overhead. | Cloud instances (AWS EC2, GCP), on-premise cluster. |

| Cost Tracking Dashboard | Visualizes cumulative cost vs. performance in near real-time. | Grafana, custom Plotly Dash app. |

Beyond Grid Search: A Toolkit of Efficient HPO Algorithms for Limited Budgets

Technical Support & Troubleshooting Center

FAQs & Troubleshooting Guides

Q1: My Gaussian Process (GP) surrogate model is taking too long to fit as my dataset grows. What are my options? A: This is a common issue with standard GPs, which scale as O(n³). For expensive function evaluations where data is still limited, consider:

- Sparse Gaussian Process Approximations: Use inducing point methods (e.g., SVGP) to approximate the full posterior.

- Change the Kernel: A Matérn 3/2 or 5/2 kernel is often computationally more stable than the RBF kernel.

- Protocol: Implement a sparse variational GP. After collecting N>200 observations, select M=100 inducing points via k-means clustering. Optimize the variational distribution and hyperparameters jointly using stochastic gradient descent for a fixed budget of iterations.

Q2: My acquisition function (e.g., EI, UCB) constantly exploits known good areas and fails to explore new regions. How can I force more exploration? A: This indicates poor balancing of the exploration-exploitation trade-off.

- Adjust the Acquisition Parameter: For GP-UCB, increase the

kappaparameter. For Expected Improvement (EI), use a small, non-zeroxi(e.g., 0.01) to favor points with more uncertainty. - Protocol: Run a diagnostic: Plot the GP posterior and the acquisition function side-by-side. If the acquisition function's maximum coincides exactly with the GP's posterior mean maximum, increase exploration parameters. A recommended iterative protocol is to start with a higher

kappa(e.g., 3.0) and schedule it to decay toward 1.0 over iterations.

Q3: The performance of my BO loop seems highly sensitive to the choice of the initial design (points). What is the best practice? A: The initial design is critical for building the first GP model.

- Use Space-Filling Designs: Instead of random points, use quasi-random sequences (Sobol) or Latin Hypercube Sampling (LHS) to cover the search space uniformly.

- Protocol: For a

d-dimensional search space, start withn=10*dpoints generated via Sobol sequence, ensuring they are scaled to your parameter bounds. Evaluate these points expensively before starting the iterative BO loop.

Q4: My objective function is noisy (e.g., validation accuracy variance). How do I modify BO to handle this? A: Standard GP regression can explicitly model noise.

- Use a GP with a White Kernel: Specify a Gaussian likelihood and estimate the noise level (

alpha) directly from the data. - Change the Acquisition Function: Use a noise-aware version, such as Noisy Expected Improvement (qNEI).

- Protocol: When defining the GP model, use

WhiteKernel()as part of the kernel sum. During optimization, allow its noise level parameter to be optimized alongside other kernel hyperparameters. Re-evaluate promising points multiple times to reduce noise.

Q5: How do I handle mixed parameter types (continuous, integer, categorical) in BO? A: The standard GP with RBF kernel assumes continuous space.

- Use a transformed kernel/search space: For integer parameters, treat them as continuous and round the suggested point before evaluation. For categorical parameters, use a one-hot encoding with a specific kernel (e.g., Hamming kernel).

- Protocol: Define a composite search space. For a categorical parameter

Xwithnoptions, transform it into ann-dimensional one-hot encoded vector. Use aCoregionalizationkernel or a separateHammingkernel for this dimension and combine it with standard kernels for continuous dimensions via addition or multiplication.

Key Experimental Protocols in BO for HPO

Protocol 1: Benchmarking BO Variants for Hyperparameter Optimization

- Objective: Minimize validation loss of a neural network.

- Search Space: Define bounds for learning rate (log-scale: 1e-5 to 1e-1), batch size (categorical: 32, 64, 128, 256), and dropout rate (continuous: 0.0 to 0.5).

- Initialization: Generate 10 points via Sobol sequence.

- BO Loop: For 50 iterations:

a. Fit the GP surrogate model (Matérn 5/2 kernel) to all observed

(hyperparameters, validation loss)pairs. b. Optimize the Expected Improvement acquisition function using L-BFGS-B from multiple random starts. c. Evaluate the suggested hyperparameter configuration on the validation set. - Control: Compare against Random Search with an equal evaluation budget.

Protocol 2: Tuning the Acquisition Function for Drug Property Prediction

- Objective: Maximize the predicted binding affinity (pIC50) from a molecular simulation.

- Challenge: Each simulation costs ~100 GPU hours.

- Setup: Use a sparse GP to model the relationship between molecular descriptors (features) and pIC50.

- Exploration Strategy: Use GP-UCB with an annealing

kappa:kappa_t = 3.0 * exp(-0.05 * t), wheretis the iteration number. - Stopping Criterion: Stop if the top-3 observed values have not improved for 10 consecutive iterations.

Table 1: Common Kernel Functions for Gaussian Processes

| Kernel | Mathematical Form | Best For | Hyperparameters | ||||

|---|---|---|---|---|---|---|---|

| Radial Basis (RBF) | k(x,x') = σ² exp(- | x-x' | ² / 2l²) | Smooth, continuous functions | Length-scale (l), Variance (σ²) | ||

| Matérn 3/2 | k(x,x') = σ² (1 + √3r/l) exp(-√3r/l) | Less smooth functions | Length-scale (l), Variance (σ²) | ||||

| Matérn 5/2 | k(x,x') = σ² (1 + √5r/l + 5r²/3l²) exp(-√5r/l) | Moderately rough functions | Length-scale (l), Variance (σ²) |

Table 2: Comparison of Acquisition Functions

| Function | Formula | Key Parameter | Behavior |

|---|---|---|---|

| Expected Improvement (EI) | E[max(0, f' - f(x⁺))] | ξ (exploration weight) | Balances improvement prob. and magnitude. |

| Upper Confidence Bound (UCB) | μ(x) + κ σ(x) | κ (exploration weight) | Explicit, tunable exploration. |

| Probability of Improvement (PI) | Φ((μ(x) - f(x⁺) - ξ)/σ(x)) | ξ (exploration weight) | Exploitative; focuses on probability. |

Visualizations

Bayesian Optimization Main Workflow

Gaussian Process Core Components

The Scientist's Toolkit: BO Research Reagent Solutions

Table 3: Essential Software & Libraries for BO Research

| Item | Function | Example/Note |

|---|---|---|

| GP Modeling Library | Provides robust GP regression with various kernels. | GPyTorch, scikit-learn (GaussianProcessRegressor) |

| BO Framework | Implements full optimization loops, acquisition functions, and space definitions. | BoTorch (PyTorch-based), Ax, Dragonfly |

| Space Definition Tool | Handles mixed (continuous, discrete, categorical) parameter spaces. | ConfigSpace, Ax SearchSpace |

| Optimization Solver | Finds the maximum of the (non-convex) acquisition function. | L-BFGS-B (via scipy.optimize), CMA-ES |

| Visualization Package | Plots GP posteriors, acquisition functions, and convergence. | Matplotlib, Plotly for interactivity |

Troubleshooting Guides & FAQs

Q1: My low-fidelity model (e.g., subset of data, shorter training) consistently gives misleading predictions, leading the optimizer away from promising regions. What could be wrong?

A: This is often a fidelity bias issue. The correlation between low- and high-fidelity evaluations may be poor.

- Check: Compute the rank correlation (Spearman's) between a sample of low- and high-fidelity evaluations from your initial design.

- Solution: Implement a multi-task Gaussian Process (GP) or a non-linear auto-regressive model that explicitly learns the cross-fidelity correlation. Increase the initial budget allocated to building this correlation model.

Q2: The early stopping criterion is prematurely terminating potentially good hyperparameter configurations. How can I tune the stopping aggressiveness?

A: The stopping rule's hyperparameters (e.g., patience, performance threshold) are critical.

- Methodology: Run a small sensitivity analysis. On a known benchmark, test different stopping rules (e.g., Hyperband's successive halving vs. learning curve extrapolation).

- Protocol:

- Fix a total budget (B).

- Apply the stopping rule with different aggressiveness settings (η in Hyperband).

- Compare the rank of the best-found configuration against a full-fidelity, non-early-stopped baseline.

- Adjustment: If stopping is too aggressive, reduce η or increase the minimum budget before stopping is allowed.

Q3: How do I allocate budget between exploring new configurations and exploiting/refining promising ones in a multi-fidelity setup?

A: This is the core exploration-exploitation trade-off. An imbalance can cause suboptimal results.

- Diagnosis: Plot the proportion of budget spent on new configurations (rungs) vs. promoting existing ones over time.

- Solution: Implement an adaptive strategy. Use the uncertainty estimates from your surrogate model. If model uncertainty is high globally, increase the budget for exploration (evaluating new configs at low fidelity).

Q4: When using a multi-fidelity Gaussian Process, the model training becomes computationally expensive as data points accumulate. How can I mitigate this?

A: This is a known scalability limitation of exact GPs.

- Fix: Employ sparse Gaussian Process approximations (e.g., using inducing points) or switch to a Bayesian Neural Network surrogate for very large numbers of evaluations.

- Protocol for Inducing Points:

- After collecting N evaluations (e.g., N=200), cluster the configurations in hyperparameter space.

- Select cluster centers as inducing points (M points, where M<

- Train the sparse GP model using these M inducing points, dramatically reducing complexity from O(N³) to O(NM²).

Q5: My optimization results are not reproducible. What are the key random seeds to control?

A: Non-determinism can arise from multiple sources.

- Checklist:

- Algorithm Seed: The master random seed for the optimizer (e.g., for initial design sampling).

- Model Seed: The random seed for the surrogate model (e.g., for GP initialization).

- Training Seed: The random seed for the training procedure of each fidelity evaluation (e.g., neural network weight initialization, data shuffling).

- Environment Seed: For GPU-based computations, set

CUDA_LAUNCH_BLOCKING=1or usetorch.backends.cudnn.deterministic = Truein PyTorch.

- Best Practice: Log all seeds used for each experiment run.

Table 1: Comparison of Multi-Fidelity Optimization Methods

| Method | Core Mechanism | Key Hyperparameter | Best Suited For |

|---|---|---|---|

| Successive Halving (SHA) | Aggressively stops half of worst performers at each budget rung | Reduction factor (η) | Configurations with clear, early performance signals |

| Hyperband | Iterates over SHA with different aggressiveness levels | Max budget per config (R), η | Unknown early stopping aggressiveness; general robustness |

| Multi-Fidelity GP (AR1) | Models fidelity correlation via auto-regressive kernel | Correlation parameter (ρ) | Problems with strong, linear correlation between fidelities |

| Deep Neural Network as Surrogate | Non-linear mapping from config+fidelity to performance | Network architecture, learning rate | Very large-scale problems; complex fidelity relationships |

| BOHB | Bayesian Optimization + Hyperband | Kernel bandwidth, acquisition function | Expensive high-fidelity evaluations; needs strong guidance |

Table 2: Impact of Low-Fidelity Model Choice on Optimization Efficiency

| Low-Fidelity Approximation | Speed-up vs. High-Fid | Typical Correlation (Spearman's ρ) | Recommended Use Case |

|---|---|---|---|

| Subsample of Training Data (e.g., 10%) | 5x - 20x | 0.4 - 0.8 | Large-scale ML (CV/NLP) |

| Fewer Training Epochs | Linear in epochs | 0.7 - 0.95 | Neural Network HPO |

| Lower-Resolution Simulator | 100x - 1000x | 0.5 - 0.9 | Computational Fluid Dynamics, PDEs |

| Coarse Numerical Mesh | 50x - 200x | 0.6 - 0.95 | Engineering Design |

| Simplified Molecular Model (e.g., MM vs. DFT) | 1000x+ | 0.3 - 0.7 | Early-stage Drug Candidate Screening |

Detailed Experimental Protocol: Hyperband for Drug Discovery

Objective: Optimize the hyperparameters of a Graph Neural Network (GNN) for molecular property prediction (e.g., solubility) using progressively larger subsets of a molecular dataset as fidelity levels.

Protocol:

Define Search Space & Fidelities:

- Hyperparameters: GNN layers {2,4,6}, hidden dim {64,128,256}, learning rate [1e-4, 1e-2] (log), dropout [0.0, 0.5].

- Fidelity Parameter (s): Fraction of the training dataset, defined as

s_max = 1.0(full dataset). Lower fidelities:s = 1/η, 1/η², ...for a reduction factor η=3.

Initialize Hyperband:

- Set

R(max resources) = 81 epochs,η = 3. - Calculate brackets.

s_max = R*η^(-4) ≈ 1epoch equivalent for smallest budget.

- Set

Iterate through Brackets:

- For each bracket (different trade-off n vs. budget/run):

- Sampling: Randomly sample

nhyperparameter configurations. - Successive Halving Loop:

- Run each of the

nconfigs for the current budget (e.g., 1 epoch on 1/81 data). - Evaluate performance (validation loss).

- Keep the top

1/ηconfigurations (e.g., top 1/3). - Increase budget for survivors by factor

η(e.g., 3 epochs on 3/81 data). - Repeat until one config remains or max budget

Ris reached.

- Run each of the

- Sampling: Randomly sample

- For each bracket (different trade-off n vs. budget/run):

Final Evaluation:

- Train the best configuration(s) from all brackets on the full dataset (

s=1.0) forRepochs. - Report final test set performance.

- Train the best configuration(s) from all brackets on the full dataset (

Visualizations

Diagram Title: Multi-Fidelity Optimization Loop

Diagram Title: Hyperband Successive Halving Brackets

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Multi-Fidelity HPO Experiments

| Item / Software | Function / Purpose | Typical Specification |

|---|---|---|

| HPO Library (e.g., Optuna, Ray Tune) | Framework for defining search spaces, running trials, and implementing multi-fidelity algorithms. | Supports pruning (early stopping), parallel execution, and various samplers. |

| Surrogate Model Library (e.g., BoTorch, GPyTorch) | Provides probabilistic models (GPs, Bayesian NN) for building the multi-fidelity surrogate. | Enables custom kernel design (e.g., AR1) for fidelity correlation. |

| Benchmark Suite (e.g., HPOBench, YAHPO Gym) | Standardized set of optimization tasks with multiple fidelities for fair comparison. | Includes real-world tasks like SVM/MLP HPO and synthetic functions (e.g., Branin). |

| Cluster Job Scheduler (e.g., SLURM) | Manages computational resources for running hundreds of parallel fidelity evaluations. | Essential for large-scale experiments on HPC systems. |

| Experiment Tracker (e.g., Weights & Biases, MLflow) | Logs all configurations, results, and metadata for reproducibility and analysis. | Must track fidelity level, runtime, and performance metrics for each trial. |

Technical Support Center: Troubleshooting & FAQs

Context: This support center is designed for researchers dealing with expensive function evaluations in Hyperparameter Optimization (HPO). The following guides address common issues when implementing population-based methods like SMAC and BOHB, which aim to combine the robustness of population-based search with adaptive, focused sampling.

Frequently Asked Questions (FAQs)

Q1: My BOHB run is stuck in the initial random search phase for too long, consuming my budget on poorly performing configurations. How can I mitigate this?

A: This often occurs when the min_budget is set too high relative to the max_budget or when eta (the budget scaling factor) is too small. BOHB requires at least eta * num_workers random runs before starting the Hyperband succession and Bayesian optimization.

- Solution: Follow this protocol:

- Ensure

etais ≥ 3. - Set

min_budgetto a value low enough that evaluations are very fast (e.g., 1-5% ofmax_budget). - Increase

num_workersto at leastetato allow parallel sampling and faster progression through the random phase. - Consider a two-stage approach: run a separate, small purely random search, and use the best configurations to seed the BOHB population.

- Ensure

Q2: SMAC's surrogate model (Gaussian Process or Random Forest) is taking longer to fit than my function evaluation. Is this normal for high-dimensional problems? A: Yes, this is a known limitation, especially with Gaussian Processes (GPs) on problems with >50 dimensions. The model fitting cost can become the bottleneck, negating the benefit of reducing function evaluations.

- Solution: Implement the following troubleshooting steps:

- Switch Model: Use the Random Forest (RF) surrogate model (

model="rf"in SMAC). It scales better with dimensions and categorical variables. - Limit Data: Set

max_model_sizeto cap the number of observations used for training the model (e.g., 10000). - Feature Reduction: If possible, pre-process your HPO problem to reduce dimensionality through feature selection or embedding before passing it to SMAC.

- Switch Model: Use the Random Forest (RF) surrogate model (

Q3: How do I handle failed or crashed evaluations (e.g., model divergence, memory error) within SMAC or BOHB? A: Both frameworks allow for handling crashed runs by marking them with a penalty cost.

- Standard Protocol:

- Catch the Failure: In your objective function, use try-except blocks to catch exceptions.

- Return a High Cost: On failure, return a numerical value representing a high, penalized cost (e.g.,

np.inf, or a value 2x the worst observed cost). - Inform the Optimizer (SMAC): Use

Scenario.intensifier.tae_runner.cost_on_crashto set a standardized crash cost. This ensures the configuration is penalized but still informs the model.

Q4: The performance of BOHB seems highly variable across different runs on the same problem. What is the main cause and how can I ensure reproducibility? A: Variability stems from two sources: the random seed and parallel worker synchronization.

- Reproducibility Protocol:

- Set Seeds: Explicitly set the global random seed (

np.random.seed(),random.seed()) and the seed in the HPO framework (e.g.,seedparameter in BOHB). - Control Parallelism: For fully reproducible runs, run sequentially (

num_workers=1). In parallel mode, results may vary due to non-deterministic timing affecting which configurations are promoted. - Warm Start: Use a fixed set of initial configurations to ensure all runs start from the same population.

- Set Seeds: Explicitly set the global random seed (

Q5: When should I choose SMAC over BOHB, and vice versa, for expensive black-box functions? A: The choice depends on the nature of the budget and problem structure.

| Criterion | SMAC (Sequential Model-Based Configuration) | BOHB (Bayesian Optimization and HyperBand) |

|---|---|---|

| Primary Strength | Robust modeling of complex, categorical & conditional spaces. Adaptive acquisition. | Direct multi-fidelity optimization. Optimal budget allocation. |

| Best Use Case | Expensive, non-continuous hyperparameter spaces where no low-fidelity approximation exists. | Functions where a cheaper, low-fidelity approximation (epochs, data subset, tolerance) is available and correlates with final performance. |

| Budget Type | Single-fidelity (e.g., final validation error). | Multi-fidelity (e.g., performance vs. training epochs, dataset size). |

| Parallelization | Supports parallel runs via pynisher, but model updates are sequential. | Naturally supports aggressive parallelization at every budget level. |

| Key Parameter | Acquisition Function (EI, PI), model type (GP, RF). | eta (budget reduction factor), min_budget, max_budget. |

Experimental Protocols for Key Cited Studies

Protocol 1: Benchmarking SMAC vs. Random Search on Drug Property Prediction Model

- Objective: Evaluate reduction in expensive evaluations needed to tune a Graph Neural Network.

- Function: Validation ROC-AUC of a GNN predicting molecular solubility.

- Expensive Evaluation: A single training/validation run takes ~4 GPU-hours.

- Method:

- Define HPO space: 6 parameters (learning rate, hidden layers, dropout, etc.).

- Baseline: Run Random Search for 50 evaluations. Record best ROC-AUC vs. evaluation count.

- Intervention: Run SMAC with a Random Forest model for 50 evaluations.

- Metric: Compare the incumbent (best-found) performance after each batch of 5 evaluations. Statistical significance tested via Mann-Whitney U test on final performance across 10 independent seeds.

Protocol 2: Demonstrating BOHB for Neural Architecture Search (NAS) in Protein Folding

- Objective: Efficiently co-optimize architecture hyperparameters and training epochs.

- Low-Fidelity Proxy: Protein structure prediction accuracy (RMSD) after n training epochs.

- High-Fidelity Target: Final accuracy after full training.

- Method:

- Set

max_budget = 100epochs,min_budget = 5epochs,eta = 3. - Define a joint space: architectural params (attention heads, layer depth) and optimizer params.

- Run BOHB for a total time budget equal to 30 full

max_budgetevaluations. - Track how many configurations are sampled at each budget level (

[5, 15, 45, 100]epochs) and the successive halving process.

- Set

Visualizations

Title: BOHB Iteration Workflow: Hyperband and Bayesian Optimization Loop

Title: Multi-Fidelity Optimization in Population-Based HPO

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Reagent | Function in HPO Experiment | Example/Note |

|---|---|---|

| HPO Framework (SMAC3, DEHB) | Core library implementing the algorithms. | SMAC3 for SMAC; DEHB for a differential evolution variant of BOHB. |

| Benchmark Suite (HPOBench, YAHPO) | Provides standardized, expensive black-box functions for testing. | HPOBench includes drug discovery datasets like "protein_structure". |

| Containerization (Docker/Singularity) | Ensures reproducible execution environment for expensive, long-running jobs. | Critical for cluster deployments to fix software and library versions. |

| Parallel Backend (Dask, Ray) | Manages distributed evaluation of configurations across workers. | BOHB's HpBandSter uses Ray or Dask for parallelism. |

| Checkpointing Library (Joblib, PyTorch Lightning) | Saves intermediate state of function evaluations (e.g., model weights). | Allows pausing/resuming expensive evaluations and simulating multi-fidelity. |

| Visualization (Weight & Biases, TensorBoard) | Tracks and visualizes the optimization process in real-time. | Logs incumbent trajectory, population distribution, and resource use. |

Surrogate-Assisted Evolutionary Algorithms for Complex, Non-Convex Spaces

Technical Support Center

Troubleshooting Guides & FAQs

Q1: The surrogate model's predictions are accurate during training but diverge significantly from the true expensive function during the optimization run. How can I improve generalization?

- A: This is a classic sign of overfitting or distribution shift. Implement an adaptive retraining strategy. Set a threshold for prediction uncertainty (e.g., high variance in ensemble models) or a maximum number of iterations before retraining. Use an infill criterion like Expected Improvement (EI) that balances exploration (testing in uncertain regions) and exploitation. This will generate new data points that improve the surrogate's coverage of the search space.

Q2: My optimization is getting stuck in a local optimum, even with the surrogate. How do I enhance global exploration?

- A: Adjust your acquisition function. Increase the weight on the exploratory component (e.g., use Upper Confidence Bound with a higher κ parameter). Consider a multi-fidelity approach if available, using a low-fidelity, cheap model to scout broad regions and the high-fidelity model to refine. Also, ensure your initial Design of Experiments (DoE) is space-filling (e.g., Latin Hypercube Sampling) with a sufficient number of points before the first surrogate build.

Q3: The computational overhead of training the Gaussian Process (GP) surrogate is becoming too high as the dataset grows (>1000 points). What are my options?

- A: Transition to scalable surrogate models. Consider:

- Sparse Gaussian Processes that use inducing points.

- Random Forest or Gradient Boosting models, which often scale better.

- Neural Networks as surrogate models. Implement a data management policy to archive or downsample historical data that is no longer informative for the current region of interest.

Q4: How do I effectively handle high-dimensional parameter spaces (e.g., >50 parameters) where surrogate performance typically degrades?

- A: Employ dimensionality reduction or feature selection techniques as a preprocessing step if some parameters are known to be less influential. Use active subspaces to identify linear combinations of parameters that most influence the output. Alternatively, consider Bayesian Optimization variants designed for high-D spaces, like those using additive GP kernels or trust regions (e.g., TuRBO).

Q5: How can I integrate categorical/discrete parameters into a primarily continuous optimization framework?

- A: Use surrogate models that natively handle mixed spaces, such as Random Forests. For GP-based approaches, apply one-hot encoding or use specialized kernels (e.g., Hamming kernel) for categorical parameters. Ensure your evolutionary algorithm's variation operators (mutation, crossover) are compatible with the encoded representations.

Q6: My objective function is noisy (stochastic). How do I prevent the surrogate from overfitting to the noise?

- A: Explicitly model the noise. Use a Gaussian Process with a white kernel or a heteroscedastic noise model. When querying the expensive function, consider taking multiple replicates at promising points to better estimate the true mean, especially in later stages of optimization. Adjust the infill criterion to account for noise.

Experimental Protocol: Benchmarking SAEAs for Hyperparameter Optimization (HPO)

Objective: Compare the performance of three Surrogate-Assisted EA (SAEA) variants against a standard EA for optimizing a computationally expensive, non-convex black-box function, simulating an HPO task.

- Expensive Function Simulator: Use a pre-defined, computationally intensive benchmark (e.g.,

Levyfunction with additive Gaussian noise). Each evaluation is artificially delayed by 2 seconds to simulate expense. - Algorithms:

- Control: A standard Covariance Matrix Adaptation Evolution Strategy (CMA-ES).

- SAEA 1: GP-assisted EA using Expected Improvement (EI) infill.

- SAEA 2: Random Forest-assisted EA using Lower Confidence Bound (LCB) infill.

- SAEA 3: GP-assisted EA with trust region (TuRBO-1).

- Initialization: For each run, generate an initial DoE of

10*dpoints using Latin Hypercube Sampling, wheredis dimensionality. - Budget: Limit all algorithms to a maximum of

200expensive function evaluations. - Metrics: Track the best-found value over evaluations. Perform

30independent runs per algorithm. - Surrogate Management: Retrain the surrogate model every

10new evaluations. For GP, use a Matern 5/2 kernel. Optimize hyperparameters via maximum likelihood estimation at each training.

Key Results Summary:

| Algorithm | Median Best Value Found (30 runs) | Average Time per Eval. (s) | Success Rate (Within 1% of Global Optimum) |

|---|---|---|---|

| Standard CMA-ES (Control) | -15.23 | 2.05 | 40% |

| GP-EI (SAEA 1) | -19.95 | 2.12 | 93% |

| Random Forest-LCB (SAEA 2) | -18.74 | 2.08 | 83% |

| GP-Trust Region (SAEA 3) | -19.87 | 2.15 | 90% |

Diagram: SAEA Workflow for HPO

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in SAEA/HPO Experiments |

|---|---|

| Bayesian Optimization Library (e.g., BoTorch, Dragonfly) | Provides high-level implementations of GP models, acquisition functions, and optimization loops for seamless prototyping. |

| Gaussian Process Framework (e.g., GPyTorch, scikit-learn) | Enables custom construction and training of flexible surrogate models, including handling of different kernels and noise models. |

| Evolutionary Algorithm Toolkit (e.g., DEAP, pymoo) | Supplies robust population-based search operators (selection, crossover, mutation) for optimizing the acquisition function or performing the global search. |

| Benchmark Function Suite (e.g., COCO, HPOBench) | Offers a standardized set of non-convex, expensive-to-evaluate functions (or real HPO tasks) for reproducible benchmarking and comparison. |

| High-Performance Computing (HPC) Scheduler (e.g., SLURM) | Manages parallel evaluation of multiple expensive function candidates (e.g., multiple neural network training jobs), crucial for reducing wall-clock time. |

| Experiment Tracking (e.g., Weights & Biases, MLflow) | Logs all hyperparameters, performance metrics, and surrogate model states across iterations for analysis, reproducibility, and debugging. |

Maximizing ROI: Practical Techniques to Enhance Any Expensive HPO Workflow

Troubleshooting Guides & FAQs

Q1: My warm-started optimization is performing worse than a random search. What could be the cause? A1: This is often due to poor source-target task similarity. If the prior knowledge (source) is from a vastly different problem distribution, it can mislead the optimizer.

- Diagnosis: Calculate the Hellinger distance or Maximum Mean Discrepancy (MMD) between the source and target task parameter/performance distributions.

- Solution: Implement a task similarity metric before transfer. Use a small, random sample (e.g., 10-20 evaluations) from the target task to validate the prior model's predictive power. If similarity is low, consider discarding the prior or using a robust meta-learning algorithm that weights sources.

Q2: How do I prevent negative transfer when using multiple source tasks? A2: Negative transfer occurs when inappropriate prior knowledge degrades performance.

- Protocol: Implement a weighted ensemble or meta-feature-based selection.

- Characterize each source task ( Ti ) with meta-features (e.g., dataset statistics, optimal configuration landscape properties).

- Characterize your new target task ( T{new} ) with the same meta-features.

- Calculate the similarity (e.g., Euclidean distance) between ( T{new} ) and each ( Ti ).

- Weight the contribution of each source task's knowledge inversely proportional to this distance in the warm-start initialization.

Q3: The surrogate model collapses to the prior and fails to explore new regions. How can I fix this? A3: This indicates an overly strong prior belief.

- Solution: Adjust the regularization or acquisition function.

- For Gaussian Process (GP) priors: Tune the prior covariance function's scale and lengthscales. Start with a larger lengthscale to promote exploration. Explicitly set a prior mean function but reduce its weight via a hyperparameter (η):

Updated Mean = η * Prior Mean + (1-η) * Data Mean. - For acquisition functions (like EI): Add an exploration bonus term or use a higher xi parameter initially to encourage probing areas where the prior has high uncertainty.

- For Gaussian Process (GP) priors: Tune the prior covariance function's scale and lengthscales. Start with a larger lengthscale to promote exploration. Explicitly set a prior mean function but reduce its weight via a hyperparameter (η):

Q4: I have historical data, but it's from a different search space. How can I use it? A4: This requires search space transformation.

- Methodology:

- Feature Alignment: Identify and align common, interpretable hyperparameters (e.g., learning rate, batch size) between source and target spaces using log-scale normalization.

- For non-overlapping parameters, one-hot encode them as belonging to a specific subspace.

- Use a transfer learning model like Transfer Acquisition Function (TAF) or a Neural Process that can map observations from one space to another through a latent representation.

- Critical Check: Always validate the mapping on a small target task sample before full-scale warm-start.

Table 1: Impact of Intelligent Warm-Starting on Optimization Efficiency

| Benchmark Dataset / Task | Standard BO (Evaluations to Target) | Warm-Started BO (Evaluations to Target) | Reduction in Cost | Transfer Method Used |

|---|---|---|---|---|

| Protein Binding Affinity Prediction | 142 ± 18 | 67 ± 12 | 52.8% | Multi-task GP (MTGP) |

| CNN on CIFAR-100 | 89 ± 11 | 48 ± 9 | 46.1% | Surrogate-Based Transfer (RGPE) |

| XGBoost on Pharma QC Dataset | 115 ± 14 | 72 ± 10 | 37.4% | Meta-Learning (FABOLAS) |

| LSTM for Time-Series Forecasting | 102 ± 15 | 85 ± 13 | 16.7% | Weakly Informative Prior |

Data synthesized from recent literature on HPOBench, LCBench, and proprietary drug discovery benchmarks. Values represent mean ± std. deviation over 50 runs.

Table 2: Comparison of Transfer Learning Methods for HPO

| Method | Key Mechanism | Best For | Risk of Negative Transfer | Computational Overhead |

|---|---|---|---|---|

| Multi-Task Gaussian Process (MTGP) | Shares kernel function across tasks via coregionalization matrix. | Highly related tasks with shared optimal regions. | Medium | High (Matrix Inversion) |

| Ranking-Weighted GP Ensemble (RGPE) | Ensemble of GP surrogates from source tasks, weighted by ranking performance. | Multiple, potentially diverse source tasks. | Low | Medium |

| Transfer Acquisition Function (TAF) | Modifies the acquisition function to incorporate prior optimum locations. | Tasks with similar optimal configurations. | High | Low |

| Meta-Learning Initializations (e.g., FABOLAS) | Learns a meta-model from source tasks to predict good configurations for a new task. | Large collections of heterogeneous source tasks. | Medium-Low | Medium (Offline) |

Experimental Protocols

Protocol 1: Validating Source-Task Similarity for Drug Discovery HPO Objective: To assess if HPO data from a previous compound screen (Source) is suitable for warm-starting the optimization for a new target (Target).

- Data Preparation: From the Source task, extract the set of evaluated hyperparameters (Hs) and their corresponding validation losses (Ls). Normalize all hyperparameters to [0, 1].

- Meta-Feature Extraction: For both Source and Target tasks (Ts, Tt), compute:

mf1: Mean performance of a default configuration across 5 random seeds.mf2: The mean and standard deviation of the bestk=5configurations found.mf3: Landscape hardness metrics (e.g., fitness-distance correlation estimate using a small random sample of 20 points).

- Similarity Calculation: Compute the Euclidean distance between the meta-feature vectors:

D(T_s, T_t) = || [mf1_s, mf2_s, mf3_s] - [mf1_t, mf2_t, mf3_t] ||. - Decision Threshold: If

D(T_s, T_t) < θ(a pre-defined threshold, e.g., 0.5 based on historical validation), proceed with warm-start. Else, use a weaker prior or standard BO.

Protocol 2: Implementing a Warm-Started Bayesian Optimization Run

Objective: To minimize an expensive black-box function f_target(x) using knowledge from f_source(x).

- Prior Construction:

- Fit a Gaussian Process

GP_sourceto the historical data{X_source, y_source}. - Optionally, fit a variational autoencoder (VAE) if the search spaces differ to learn a shared latent space

z.

- Fit a Gaussian Process

- Warm-Start Initialization:

- Direct Transfer: Select the top-

kperforming configurations fromX_sourceas the initial design forf_target. - Model-Based Transfer: Set the prior mean of the target task's GP to the posterior mean of

GP_source. The covariance function is initialized with the same kernel, but lengthscales are made slightly longer to encourage initial exploration.

- Direct Transfer: Select the top-

- Informed Acquisition: For the first

N=5iterations, use an acquisition functionα(x)that balances the prior's prediction and its own uncertainty, e.g.,α(x) = μ_prior(x) + β * σ_target(x), whereβdecays over iterations. - Iterative Optimization: After the warm-start phase, continue with standard Expected Improvement (EI) or Upper Confidence Bound (UCB) acquisition, updating the GP model with all

{target}observations.

Visualizations

Title: Intelligent Warm-Starting Workflow for HPO

Title: Mapping Different Search Spaces via a Latent Representation

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Intelligent Warm-Starting HPO |

|---|---|

| HPOBench / LCBench | Provides standardized, publicly available benchmark datasets for multi-fidelity and transfer HPO research, enabling fair comparison. |

| BoTorch / RoBO | Advanced Bayesian optimization libraries that provide foundational implementations of GP models, acquisition functions, and multi-task/transfer learning modules. |

| OpenML | Repository for machine learning datasets and experiments, useful for extracting meta-features and finding potential source tasks for transfer. |

| Dragonfly | BO package with explicit support for transfer and multi-task optimization, including RGPE and modular prior integration. |

| Custom Meta-Feature Extractor | (Code-based) Essential for quantifying task similarity. Calculates landscape and dataset descriptors to inform the transfer process. |

| High-Throughput Computing Cluster | Enables the parallel evaluation of multiple warm-start strategies and the rapid collection of the initial target task samples needed for validation. |

| TensorBoard / MLflow | Experiment tracking tools critical for logging the performance of different warm-start strategies and visualizing the optimization trajectory. |

Design of Experiments (DoE) for Initial Configuration and Space Exploration

Troubleshooting Guide & FAQs

Q1: Our computational budget for High-Performance Optimization (HPO) is extremely limited. How can a DoE help before we start an expensive sequential search like Bayesian Optimization? A: A properly designed space-filling DoE (e.g., Latin Hypercube) for the initial configuration provides maximum information from a minimal set of initial function evaluations. This serves two critical purposes: 1) It builds a better initial surrogate model for Bayesian Optimization, reducing the number of iterations needed to find the optimum. 2) It can identify non-influential parameters early, allowing you to reduce the search space dimensionality. Always perform this step; skipping it often leads to wasted evaluations exploring irrelevant regions.

Q2: When exploring a high-dimensional parameter space for drug formulation, our screening DoE indicates several significant interaction effects. How should we proceed? A: Significant interactions mean the effect of one factor depends on the level of another. You must move from a screening design (like a fractional factorial) to a response surface methodology (RSM) design. A Central Composite Design (CCD) is standard for this phase. It will allow you to model the curvature and interactions accurately to find the optimal formulation. Do not attempt to optimize using only linear model results when interactions are present.

Q3: We used a Latin Hypercube Sample (LHS) for initial space exploration, but the resulting Gaussian Process model has poor predictive accuracy. What went wrong? A: This is often caused by an inappropriate distance metric or correlation kernel in the GP, mismatched to the nature of your parameter space. For mixed variable types (continuous, ordinal, categorical), a standard Euclidean distance fails. Troubleshoot by: 1) Verifying your LHS points are truly space-filling in each projection. 2) Switching to a kernel designed for mixed spaces (e.g., a combination of Hamming distance for categorical and Euclidean for continuous). 3) Checking if you have enough points; a rough rule is at least 10 points per dimension.

Q4: During an autonomous DoE for reaction condition optimization, the algorithm suggests a set of conditions that are physically implausible or unsafe. How do we constrain the space? A: This is a critical constraint handling issue. You must incorporate hard constraints into the DoE generation and optimization loop. For physical plausibility (e.g., total pressure < X), use a constrained LHS algorithm. For safety, define an "unacceptable region" and employ a barrier function in your acquisition function (e.g., in Expected Improvement) that penalizes suggestions near or inside this region. Never rely on post-suggestion filtering alone.

Q5: Our resource allocation for HPO is fixed. What is the optimal split between the initial DoE phase and the sequential optimization phase? A: There's no universal rule, but recent research provides a guideline. Allocate 20-30% of your total budget to the initial space-filling DoE. For example, with 100 total evaluations, use 20-25 for the initial LHS. The remaining 75-80 are for sequential exploitation/exploration. This balance is crucial; too small an initial set risks poor model initialization, while too large wastes resources on pure exploration.

| DoE Strategy | Initial Points (% of Total Budget) | Avg. Reduction in Evaluations to Optimum* | Key Advantage | Best For |

|---|---|---|---|---|

| Latin Hypercube (LHS) | 20-30% | 25-40% | Maximal space-filling property | Initial surrogate model building |

| Sobol Sequence | 20-30% | 30-45% | Better low-dimensional projection uniformity | Spaces with likely active low-order effects |

| Fractional Factorial | 10-20% | 15-30% | Efficient main effect screening | Very high-dim spaces (>15 factors) for screening |

| Random Uniform | 20-30% | 10-25% | Simple implementation | Baseline comparison |

*Compared to no initial DoE, based on synthetic benchmark studies.

Experimental Protocol: Sequential Model-Based Optimization with Initial DoE

Objective: Minimize/Maximize an expensive-to-evaluate black-box function f(x), where x is a vector of mixed-type parameters. Total Evaluation Budget (N): Fixed (e.g., 100 runs).

Pre-Experimental Phase:

- Define Domain: Specify bounds for continuous parameters, levels for categorical/ordinal parameters.

- Define Constraints: Codify hard (infeasible) and soft (undesirable) constraints.

- Choose Initial DoE: Select a space-filling design (e.g., LHS) for mixed spaces. Number of points,

n_init = ceil(0.25 * N).

Initial DoE Execution:

- Generate

n_initpoints using the chosen design. - Execute the expensive function evaluation (simulation, wet-lab experiment) for each point.

- Store results

(x_i, y_i).

- Generate

Sequential Optimization Loop (Repeat for

N - n_inititerations):- Model Training: Fit a surrogate model (e.g., Gaussian Process with Matérn kernel) to all data collected so far.

- Acquisition Optimization: Optimize the acquisition function (e.g., Expected Improvement) over the domain, using the surrogate model. This suggests the next point

x_next. - Constraint Check & Execution: Validate

x_nextagainst all constraints. Execute the expensive evaluation to obtainy_next. - Data Augmentation: Append

(x_next, y_next)to the dataset.

Post-Processing:

- Identify the best observed configuration.

- Perform analysis on the surrogate model to infer parameter sensitivity and interactions.

Workflow Diagram

Title: SMBO with Initial DoE for Expensive HPO

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Concept | Function in DoE for Expensive HPO |

|---|---|