Full Factorial Design in Reaction Optimization: A Comprehensive Guide for Pharmaceutical Scientists

Abstract

This article provides a complete guide to applying full factorial design (FFD) in reaction and process optimization for researchers, scientists, and drug development professionals. It covers foundational principles, demonstrating how FFD systematically investigates all possible combinations of factor levels to evaluate main effects and interaction effects simultaneously. The content details methodological implementation from factor selection to statistical analysis, explores troubleshooting and optimization strategies for robust process development, and validates the approach through comparative analysis with other experimental designs. Supported by case studies from pharmaceutical development, including analytical method optimization and drug formulation, this resource equips scientists with the knowledge to efficiently design experiments, accelerate development timelines, and enhance product quality.

What is Full Factorial Design? Unlocking Core Principles for Efficient Experimentation

In the realm of reaction optimization research, particularly in pharmaceutical development, the ability to efficiently understand and control multiple variables simultaneously is paramount. Full Factorial Design (FFD) stands as a robust, systematic methodology for investigating the effects of multiple factors and their interactions on a response variable. Unlike traditional one-factor-at-a-time (OFAT) approaches, which can overlook critical interaction effects, FFD examines all possible combinations of factor levels, providing a comprehensive understanding of complex system behaviors [1] [2]. This comprehensive approach is especially valuable in drug development, where processes are inherently multivariate and interactions between factors like temperature, pH, and concentration can significantly impact critical quality attributes such as yield, purity, and stability [3].

The fundamental strength of Full Factorial Design is that it captures how variables act jointly, including cases where the effect of one factor changes with the level of another [2]. By accounting for these interactions, researchers avoid oversimplified conclusions and can make better-informed decisions throughout the development lifecycle. From initial screening to final process optimization, FFD provides a structured framework for extracting maximum information from experimental data, ultimately accelerating development timelines and enhancing process robustness in pharmaceutical manufacturing [3] [2].

Core Principles of Full Factorial Design

Fundamental Concepts and Terminology

At its core, Full Factorial Design is an experimental strategy that systematically investigates the effects of multiple independent variables (factors) on a dependent variable (response) by testing all possible combinations of factor levels [2] [4]. This approach enables researchers to determine not only the individual impact of each factor (main effects) but also how factors interact with one another (interaction effects) [1].

The key components of any factorial design include:

- Factors: These are the independent variables or inputs that are deliberately manipulated during the experiment to observe their effect on the response variable. Factors can be either quantitative (e.g., temperature, pressure, time) or qualitative (e.g., catalyst type, material supplier) [2] [4].

- Levels: Each factor is studied at two or more discrete values or settings called levels. For example, a temperature factor might be studied at low (50°C) and high (70°C) levels in a two-level design [2].

- Treatment Combinations: These represent the unique experimental conditions formed by combining one level from each factor. In a full factorial experiment, all possible treatment combinations are tested [1].

- Response Variable: This is the output or measured outcome of interest that is hypothesized to be influenced by the factors. Common response variables in reaction optimization include yield, impurity level, reaction time, and selectivity [2].

The Mathematics of Full Factorial Design

The foundation of Full Factorial Design is mathematical, relying on the principle of orthogonality, which ensures that factor effects can be estimated independently. For a design with k factors, each having L levels, the total number of experimental runs required is L^k [5]. This exponential relationship highlights both the comprehensiveness and the potential resource intensity of full factorial experiments.

The mathematical model for a two-level full factorial design with k factors can be represented as:

$$Y = \beta_0 + \sum_{i=1}^{k} \beta_i X_i + \sum_{i<j} \beta_{ij} X_i X_j + \dots + \varepsilon$$

where $Y$ is the response variable, $\beta_0$ is the overall mean response, $\beta_i$ is the coefficient for the main effect of factor $i$, $\beta_{ij}$ is the coefficient for the interaction between factors $i$ and $j$, $X_i$ and $X_j$ are the coded factor levels (-1 for the low level, +1 for the high level), and $\varepsilon$ is the experimental error [1] [2].
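The coded levels in this model come from centering and scaling each factor's natural units. The sketch below assumes the usual linear coding and reuses the 50°C/70°C temperature range from the levels example earlier; the helper names `to_coded` and `to_natural` are illustrative and not taken from any particular library.

```python
def to_coded(value, low, high):
    """Map a natural-unit setting onto the coded scale (low -> -1, high -> +1)."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_natural(coded, low, high):
    """Inverse mapping: coded level back to natural units."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


# Temperature studied at 50 °C (low) and 70 °C (high)
print(to_coded(50, 50, 70))    # -1.0
print(to_coded(70, 50, 70))    # 1.0
print(to_natural(0, 50, 70))   # 60.0
```

Working in coded units puts all factors on the same scale, so coefficient magnitudes can be compared directly across factors measured in different units.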

For three-level designs, which can detect curvature in the response surface, the model expands to include quadratic terms:

$$Y = \beta_0 + \sum_{i=1}^{k} \beta_i X_i + \sum_{i=1}^{k} \beta_{ii} X_i^2 + \sum_{i<j} \beta_{ij} X_i X_j + \varepsilon$$

This ability to model nonlinear relationships makes three-level full factorial designs particularly valuable for optimization studies where the optimal conditions may lie inside the experimental region rather than at its boundaries [5].
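To see why a third level is needed for the quadratic terms, the following sketch builds the quadratic model matrix for two factors on a 3^2 and a 2^2 design and compares their ranks. It assumes NumPy is available; the coefficient values in `beta_true` are hypothetical and serve only to generate noise-free synthetic responses.

```python
from itertools import product

import numpy as np


def quadratic_model_matrix(design):
    """Columns: intercept, x1, x2, x1^2, x2^2, x1*x2 (two-factor case)."""
    x1, x2 = design[:, 0], design[:, 1]
    return np.column_stack([np.ones(len(design)), x1, x2, x1**2, x2**2, x1 * x2])


three_level = np.array(list(product((-1, 0, 1), repeat=2)), dtype=float)  # 3^2 = 9 runs
two_level = np.array(list(product((-1, 1), repeat=2)), dtype=float)       # 2^2 = 4 runs

X3 = quadratic_model_matrix(three_level)
X2 = quadratic_model_matrix(two_level)

# Full column rank (6) means every term of the quadratic model is estimable.
rank3 = np.linalg.matrix_rank(X3)
# With only -1/+1 levels, x^2 is always 1 and cannot be separated from the intercept.
rank2 = np.linalg.matrix_rank(X2)

# Hypothetical coefficients, used only to generate noise-free synthetic responses.
beta_true = np.array([90.0, 2.0, -1.0, -4.0, -3.0, 0.5])
y = X3 @ beta_true
beta_hat, *_ = np.linalg.lstsq(X3, y, rcond=None)

print(rank3, rank2)
print(np.round(beta_hat, 3))
```

On the two-level design the squared columns duplicate the intercept column, which is the algebraic reason a 2^k design cannot detect curvature.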

Types of Full Factorial Designs

Full factorial designs can be classified based on the number of levels used for each factor and the nature of the factors themselves. Understanding these variations is crucial for selecting the appropriate experimental strategy for specific research objectives.

Two-Level Full Factorial Designs

The two-level full factorial design (2^k), where each of the k factors is investigated at two levels (typically coded as -1 for low level and +1 for high level), is one of the most widely used experimental designs, particularly for screening experiments [2] [4]. These designs are efficient for identifying the most significant factors influencing a response variable before conducting more detailed investigations.

Key characteristics:

- Number of runs: 2^k

- Can estimate main effects and all interaction effects

- Assumes linear relationship between factors and response within the experimental region

- Cannot detect curvature in the response surface

- Often used as a foundation for response surface methodologies

For example, a 2^3 full factorial design with three factors would require 8 runs and would allow estimation of 3 main effects, 3 two-factor interactions, and 1 three-factor interaction [2].
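The run and effect counts quoted above can be checked with a few lines of standard-library Python. This is a minimal sketch; the function names are illustrative.

```python
from itertools import product
from math import comb


def two_level_design(k):
    """Return all 2^k runs as tuples of coded levels (-1 or +1)."""
    return list(product((-1, 1), repeat=k))


def effect_counts(k):
    """Number of estimable effects by order: 1 = main effects, 2 = two-factor interactions, ..."""
    return {order: comb(k, order) for order in range(1, k + 1)}


runs = two_level_design(3)
counts = effect_counts(3)

print(len(runs))   # 8
print(counts)      # {1: 3, 2: 3, 3: 1}
```

The seven effects plus the overall mean account for all eight runs, so an unreplicated 2^3 design leaves no degrees of freedom for estimating error unless some effects are assumed negligible or runs are replicated.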

Three-Level Full Factorial Designs

Three-level full factorial designs (3^k) include three levels for each factor (typically coded as -1, 0, +1), enabling researchers to investigate quadratic (curvilinear) effects and model curvature in the response surface [5]. These designs are essential when the relationship between factors and response is nonlinear or when the optimal conditions are expected to lie within the experimental region rather than at its boundaries.

Key characteristics:

- Number of runs: 3^k

- Can estimate main effects, interaction effects, and quadratic effects

- Requires more resources than two-level designs

- Particularly useful for optimization studies

The three-level design is especially valuable in reaction optimization research, where factors like temperature, pH, and concentration often exhibit quadratic effects on reaction outcomes [5]. However, as the number of factors increases, the number of runs required grows exponentially (3, 9, 27, 81, ... for 1, 2, 3, 4, ... factors), making these designs potentially resource-intensive [5].

Mixed-Level Full Factorial Designs

In many real-world applications, especially in pharmaceutical research, experiments involve a combination of factors with different numbers of levels. Mixed-level full factorial designs accommodate this reality by allowing researchers to investigate both categorical and continuous factors simultaneously [2] [4].

Common scenarios include:

- Combining two-level and three-level factors

- Incorporating categorical factors (e.g., catalyst type, solvent system) with continuous factors (e.g., temperature, concentration)

- Handling constraints that prevent certain factor combinations

These designs provide flexibility while maintaining a comprehensive understanding of the system, though they require careful planning to ensure balanced designs and interpretable results [2].

Table 1: Comparison of Full Factorial Design Types

| Design Type | Number of Runs | Effects That Can Be Estimated | Common Applications |

|---|---|---|---|

| Two-Level (2^k) | 2^k | Main effects, all interactions | Screening experiments, preliminary studies |

| Three-Level (3^k) | 3^k | Main effects, interactions, quadratic effects | Response surface modeling, optimization |

| Mixed-Level | Product of level counts | Varies by design | Real-world constraints, combined factor types |

Implementing Full Factorial Design: A Step-by-Step Methodology

Implementing a successful full factorial experiment requires meticulous planning, execution, and analysis. The following methodology provides a structured approach applicable to reaction optimization and pharmaceutical development.

Experimental Planning and Design

Step 1: Define Clear Experimental Objectives. Clearly articulate the research questions and what you hope to learn from the experiment. In reaction optimization, this might include identifying critical process parameters, understanding their effects on critical quality attributes, or determining optimal operating conditions [3] [2].

Step 2: Select Factors and Levels. Identify which factors to include and determine appropriate levels for each based on prior knowledge, literature, or preliminary experiments. Consider practical constraints and ensure levels span a range wide enough to detect effects but narrow enough to be operationally feasible [3] [4].

Table 2: Example Factor Selection for HPLC Method Development [3]

| Factor | Type | Level (-1) | Level (0) | Level (+1) |

|---|---|---|---|---|

| Flow Rate (mL/min) | Continuous | 0.8 | 1.0 | 1.2 |

| Wavelength (nm) | Continuous | 248 | 250 | 252 |

| pH of Buffer | Continuous | 2.8 | 3.0 | 3.2 |

Step 3: Determine the Appropriate Design Type. Select between two-level, three-level, or mixed-level designs based on the research objectives, number of factors, and available resources. For initial screening, two-level designs are often sufficient, while three-level designs are better suited for detailed optimization [5] [2].

Step 4: Establish Response Variables. Define what will be measured and how. In pharmaceutical applications, common responses include yield, purity, retention time, tailing factor, theoretical plates, and peak area [3].

Step 5: Address Practical Considerations. Plan for replication to estimate experimental error, randomization to minimize bias, and blocking to account for known sources of variability [2].

Statistical Analysis Framework

Once experimental data has been collected, rigorous statistical analysis is essential for extracting meaningful insights. The following analytical approaches are commonly employed:

Analysis of Variance (ANOVA). ANOVA is used to partition the total variability in the response data into components attributable to each factor and their interactions, determining which effects are statistically significant [3] [2]. The ANOVA table provides F-statistics and p-values for hypothesis testing about each effect's significance.

Regression Analysis. Regression modeling fits a mathematical equation to the experimental data, relating the response variable to the factors and their interactions [2]. This model can then be used for prediction and optimization within the experimental region.

Graphical Analysis. Visual tools like main effects plots, interaction plots, and contour plots help interpret the results and communicate findings effectively [2]. Interaction plots are particularly useful for understanding how the effect of one factor depends on the level of another factor.
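As a rough illustration of how regression and ANOVA fit together, the sketch below analyzes a replicated 2^2 design with NumPy. The yield values are hypothetical and chosen only to make the arithmetic easy to follow; in practice a statistics package would also report p-values from the F distribution with (1, error) degrees of freedom.

```python
import numpy as np

# Hypothetical yields (%) from a replicated 2^2 design; coded levels for factors A and B.
A = np.array([-1, -1, 1, 1, -1, -1, 1, 1], dtype=float)
B = np.array([-1, -1, -1, -1, 1, 1, 1, 1], dtype=float)
y = np.array([60, 62, 70, 72, 65, 63, 85, 87], dtype=float)

# Regression model: y = b0 + bA*A + bB*B + bAB*A*B
X = np.column_stack([np.ones_like(A), A, B, A * B])
terms = ["Intercept", "A", "B", "AB"]
beta, *_ = np.linalg.lstsq(X, y, rcond=None)

# Error mean square, estimated from the scatter of replicates around the fitted cell means.
residuals = y - X @ beta
df_error = len(y) - X.shape[1]
mse = float(residuals @ residuals) / df_error

# For orthogonal -1/+1 columns, each 1-df sum of squares equals N * coefficient^2.
anova = {}
for name, b in zip(terms[1:], beta[1:]):
    ss = len(y) * b**2
    anova[name] = {"coef": float(b), "SS": float(ss), "F": float(ss / mse)}

for name, row in anova.items():
    print(name, row)
```

With these made-up data, factor A has the largest F-ratio, and the non-zero AB term indicates that the benefit of raising A is larger when B is also high.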

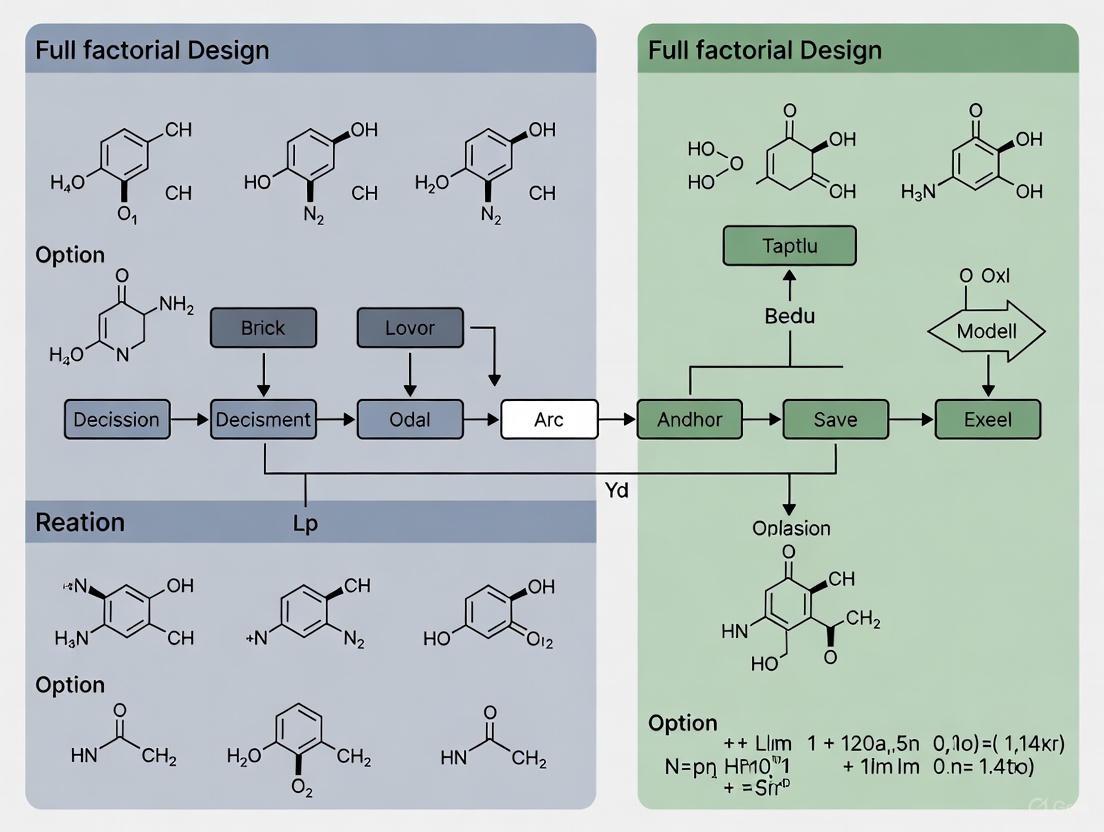

The following diagram illustrates the complete experimental workflow for a full factorial design in reaction optimization contexts:

[Figure: Experimental Workflow for Full Factorial Design]

Case Study: HPLC Method Development for Valsartan Analysis

A published study on the development and validation of an HPLC method for analyzing valsartan in nano-formulations provides an excellent example of full factorial design application in pharmaceutical research [3]. This case study illustrates the practical implementation and value of this methodology.

Experimental Design

The researchers employed a three-level full factorial design (3^3) to optimize the HPLC method parameters. The factors and levels investigated were:

Table 3: Experimental Design for HPLC Method Optimization [3]

| Factor | Symbol | Level (-1) | Level (0) | Level (+1) |

|---|---|---|---|---|

| Flow Rate (mL/min) | A | 0.8 | 1.0 | 1.2 |

| Wavelength (nm) | B | 248 | 250 | 252 |

| pH of Buffer | C | 2.8 | 3.0 | 3.2 |

The design required 27 experimental runs (3^3 = 27), and three critical responses were measured for each run: peak area (R1), tailing factor (R2), and number of theoretical plates (R3) [3].

Results and Statistical Analysis

Analysis of Variance (ANOVA) revealed several statistically significant effects:

- Peak area: the quadratic effects of flow rate and wavelength, individually and in interaction, were highly significant (p < 0.0001 and p < 0.0086)

- Tailing factor: the quadratic effect of pH was the most significant (p < 0.0001)

- Number of theoretical plates: the quadratic effects of flow rate and of wavelength were each significant (p = 0.0006 and p = 0.0265) [3]

These findings demonstrated that the relationships between factors and responses were nonlinear, justifying the use of a three-level design over a simpler two-level approach.

Optimization and Outcomes

Based on the experimental results and statistical analysis, the optimal HPLC conditions were determined to be:

- Flow rate: 1.0 mL/min

- Wavelength: 250 nm

- pH of buffer: 3.0

Under these optimized conditions, the retention time of valsartan was found to be 10.177 minutes, and the percent recovery for valsartan nanoparticles ranged from 98.57% to 100.27%, demonstrating excellent accuracy [3]. This case study exemplifies how full factorial design enables systematic optimization of analytical methods critical to pharmaceutical development.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing full factorial designs in reaction optimization research requires specific instrumentation, software, and reagents. The following table catalogues essential materials and their functions based on the valsartan case study and related research.

Table 4: Essential Research Reagents and Equipment for Pharmaceutical Experimentation

| Item | Specification/Example | Function in Research |

|---|---|---|

| HPLC System | Shimadzu LC-2010CHT with PDA detector [3] | Separation, identification, and quantification of chemical compounds |

| Analytical Column | HyperClone C18 column (250 mm × 4.6 mm id, 5 μm) [3] | Stationary phase for chromatographic separation |

| Buffer Reagents | Ammonium formate, formic acid [3] | Mobile phase components that maintain pH and improve peak characteristics |

| Organic Solvents | Acetonitrile HPLC Grade, Methanol HPLC Grade [3] | Mobile phase components that elute compounds from the column |

| pH Meter | Eutech Instruments pH 510 with glass electrode [3] | Precise measurement and adjustment of buffer pH |

| Filtration Apparatus | Millipore glass filter (0.22 μm) with vacuum pump [3] | Removal of particulate matter from mobile phases |

| Sonication Equipment | Ultrasonic Cleaner [3] | Degassing of mobile phases by removing dissolved gases |

| Statistical Software | ANOVA, regression analysis capabilities [3] [2] | Experimental design generation and statistical analysis of results |

Advantages and Limitations in Reaction Optimization Contexts

Key Advantages

Full Factorial Design offers several significant benefits for reaction optimization research:

Comprehensive Insight. By studying all possible factor combinations, FFD provides a complete picture of main effects and interactions and, when factors are run at three or more levels, of response surface curvature [2]. This comprehensiveness is invaluable in pharmaceutical development, where overlooking interactions could lead to suboptimal processes or unexpected scale-up issues.

Interaction Detection. Unlike one-factor-at-a-time approaches, FFD explicitly accounts for interactions between factors [1] [2]. This capability is particularly important in complex reaction systems where the effect of one factor (e.g., temperature) often depends on the level of another factor (e.g., catalyst concentration).

Optimization Capability. With a comprehensive understanding of main effects and interactions, researchers can estimate optimal variable settings for desired outcomes [2] [4]. This facilitates development of robust, well-understood processes aligned with Quality by Design (QbD) principles.

Model Building. The data from full factorial experiments can be used to build mathematical models that predict system behavior at untested conditions within the experimental region [2]. These models support design space establishment and control strategy development in regulatory submissions.

Potential Limitations

Despite its advantages, Full Factorial Design presents certain challenges:

Resource Intensity. As the number of factors and levels increases, the number of experimental runs grows exponentially, increasing costs, time, and resource requirements [5] [2]. This can be particularly challenging in pharmaceutical development where experiments may involve expensive materials or lengthy procedures.

Large Sample Sizes. FFD often requires substantial experimentation to ensure statistical validity, which may be impractical when resources are limited or experimental conditions are difficult to replicate [2].

Data Complexity. The comprehensiveness of FFD can generate large, complex datasets that require advanced statistical expertise to analyze and interpret correctly [2].

Alternative Designs and Future Directions

Comparison with Other Experimental Designs

When a full factorial design is impractical due to resource constraints or a large number of factors, several alternative strategies exist:

Fractional Factorial Designs. These designs study only a fraction of the full factorial combinations. In exchange for fewer runs, some interaction effects can no longer be estimated separately because they are aliased with other effects. They are particularly useful for screening many factors to identify the most influential ones [2].

Central Composite Designs (CCD). CCDs combine a two-level factorial design with additional center and axial points, enabling efficient estimation of second-order (quadratic) effects with fewer runs than a three-level full factorial [6] [7]. For example, a CCD with 3 factors requires 16-20 runs compared to 27 for a full 3^3 design [7].

Box-Behnken Designs. These are spherical designs, rotatable or nearly rotatable, that also require fewer runs than three-level full factorial designs while still supporting quadratic model estimation [5].
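The run counts quoted for the central composite design follow from its structure: a two-level factorial core, two axial points per factor, and a chosen number of center points. The sketch below assumes a full (unfractionated) 2^k core; the center-point counts of 2 and 6 are assumptions picked to reproduce the 16-20 range cited above, not values taken from the reference.

```python
def full_factorial_runs(levels, k):
    """Runs in a full factorial with k factors at the same number of levels."""
    return levels ** k


def ccd_runs(k, center_points):
    """Runs in a CCD built on a full 2^k core: factorial + axial + center points."""
    return 2 ** k + 2 * k + center_points


print(full_factorial_runs(3, 3))   # 27
print(ccd_runs(3, 2))              # 16
print(ccd_runs(3, 6))              # 20
```

The saving grows quickly with the number of factors: for k = 4, the same formula gives 24 runs plus center points against 81 for a 3^4 full factorial.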

The following diagram illustrates the relationship between different experimental designs and their applications in reaction optimization:

[Figure: Experimental Design Selection Strategy]

Emerging Trends and Future Applications

The application of Full Factorial Design continues to evolve, particularly in pharmaceutical and chemical development. Future directions include:

Integration with High-Throughput Experimentation. Automation and miniaturization technologies enable execution of full factorial designs with large numbers of factors more efficiently, expanding their applicability [3].

Hybrid Approaches. Combining full factorial elements with other design strategies creates more efficient experimental sequences tailored to specific development stages [6].

Integration with Multivariate Data Analysis. Linking designed experiments with advanced multivariate analysis techniques enhances understanding of complex systems with multiple correlated responses [3] [2].

Artificial Intelligence and Machine Learning. Incorporating AI and ML with traditional DOE enables more adaptive experimental strategies that learn from ongoing results to refine factor selection and level setting [2].

Full Factorial Design represents a powerful, systematic approach for optimizing chemical reactions and pharmaceutical processes. By exploring all possible combinations of factor levels, this methodology reveals main effects and interactions and, with three or more levels per factor, response surface curvature, addressing fundamental limitations of one-factor-at-a-time experimentation. While resource intensive for studies with many factors or levels, FFD remains invaluable for characterizing complex systems where interactions between variables significantly impact outcomes.

In the context of reaction optimization research, the rigorous understanding generated through full factorial experiments supports development of robust, well-characterized processes aligned with modern quality paradigms. When complemented with appropriate statistical analysis and modern experimental technologies, Full Factorial Design continues to be a cornerstone methodology for efficient, effective pharmaceutical development and optimization.

Within the rigorous framework of Design of Experiments (DOE), the systematic optimization of chemical reactions—a cornerstone of modern drug development—relies on a foundational lexicon. This guide explicates the core terminology of Factors, Levels, and Experimental Runs, framing them within the essential methodology of full factorial design for reaction optimization research [2] [8]. Mastery of these concepts enables researchers to deconstruct complex synthetic challenges into structured, efficient experimental campaigns that illuminate main effects and critical interactions between variables [9] [10].

Defining the Core Terminology

A Factor (or independent variable) is a controllable variable hypothesized to influence the outcome, or response, of an experiment [2] [1]. In reaction optimization, factors are the "knobs" a chemist can turn, such as catalyst type, temperature, concentration, solvent, or ligand [8].

Each factor is investigated at specific settings known as Levels [2]. Levels represent the discrete or continuous values a factor assumes during the experiment. For a temperature factor, levels could be 25°C and 80°C; for a categorical factor like catalyst, levels could be "Palladium" and "Copper" [9] [11]. The choice of levels defines the experimental space being explored.

An Experimental Run (or trial) is a single execution of the experiment under one unique combination of factor levels [9] [12]. The complete set of all possible combinations constitutes a Full Factorial Design. The total number of runs is the product of the number of levels for each factor [12]. For example, a reaction with three factors (A, B, C), each at two levels, requires 2 x 2 x 2 = 8 experimental runs to form a full factorial design [13].

Quantitative Relationships in Full Factorial Designs

The relationship between factors, levels, and runs is quantitatively precise. The tables below summarize the scalability and requirements of full factorial designs, which are critical for planning resource-intensive optimization campaigns in pharmaceutical development [11].

Table 1: Run Requirements for 2-Level Full Factorial Designs

| Number of Factors (k) | Number of Experimental Runs (2^k) |

|---|---|

| 2 | 4 |

| 3 | 8 |

| 4 | 16 |

| 5 | 32 |

| 6 | 64 |

| 7 | 128 |

| 8 | 256 |

| 10 | 1024 |

Note: The number of runs grows exponentially with added factors, often limiting practical full factorial studies to ~6 factors or fewer [11] [14].

Table 2: Example of a Mixed-Level Full Factorial Design

| Factor Name | Type | Level 1 | Level 2 | Level 3 |
|---|---|---|---|---|
| Reaction Temp. | Numerical | 25 °C | 60 °C | - |
| Catalyst Loading | Numerical | 1 mol% | 5 mol% | - |
| Solvent | Categorical | DMF | DMSO | THF |

Total runs: 2 x 2 x 3 = 12

This mixed-level design investigates two 2-level factors and one 3-level factor, requiring 12 runs for a full factorial exploration [2] [12].
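The 12-run sheet for this mixed-level example can be enumerated directly from the factor levels in the table. This is a minimal standard-library sketch; the dictionary keys are illustrative names, and the runs come out in standard (unrandomized) order.

```python
from collections import Counter
from itertools import product
from math import prod

factors = {
    "temperature_C": (25, 60),
    "catalyst_loading_mol_pct": (1, 5),
    "solvent": ("DMF", "DMSO", "THF"),
}

# Total runs = product of the number of levels of each factor.
n_runs = prod(len(levels) for levels in factors.values())

# One dict per treatment combination.
design = [dict(zip(factors, combo)) for combo in product(*factors.values())]

# Balance check: every solvent should appear in the same number of runs.
solvent_counts = Counter(run["solvent"] for run in design)

print(n_runs, len(design))   # 12 12
for run in design[:3]:
    print(run)
```

Each solvent appears in four runs and each temperature in six, which is the balance that lets every factor's effect be estimated independently of the others.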

Role in Reaction Optimization & Experimental Protocol

In reaction optimization, a full factorial design systematically maps how factors like ligand, base, and solvent interact to affect yield or selectivity [8]. The protocol involves:

- Defining Objectives & Factors: Identify the critical response (e.g., reaction yield) and select k factors to investigate based on mechanistic understanding [2].

- Setting Levels: Choose relevant level settings (e.g., high/low for continuous factors, specific types for categorical ones) that span a region of interest [1].

- Design Generation: List all 2^k or L1 x L2 x ... x Lk unique factor-level combinations. This is the experimental run sheet [12].

- Randomization & Execution: Randomize the run order to mitigate confounding from lurking variables, then conduct experiments [2].

- Analysis: Use Analysis of Variance (ANOVA) to quantify the significance of main effects and interaction effects from the collected response data [2].
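The design-generation and randomization steps can be sketched as follows. The factor names and levels are hypothetical placeholders, and `randomized_run_sheet` is an illustrative helper rather than a function from any DOE package; a fixed seed is used only so the shuffled order can be reproduced.

```python
import random
from itertools import product


def randomized_run_sheet(factors, replicates=1, seed=None):
    """Build every factor-level combination, replicate it, and shuffle the run order."""
    rng = random.Random(seed)
    combos = list(product(*factors.values()))
    # A fresh dict per run, so adding the run order does not touch other replicates.
    sheet = [dict(zip(factors, combo)) for _ in range(replicates) for combo in combos]
    rng.shuffle(sheet)
    for order, run in enumerate(sheet, start=1):
        run["run_order"] = order
    return sheet


# Hypothetical factors and levels, for illustration only.
factors = {
    "ligand": ("L1", "L2"),
    "base": ("K2CO3", "Cs2CO3"),
    "solvent": ("DMF", "THF"),
}

sheet = randomized_run_sheet(factors, replicates=2, seed=42)
for run in sheet[:3]:
    print(run)
print(len(sheet))   # 16
```

Shuffling the replicated list as a whole gives a completely randomized design; if replicates must be run on different days, shuffling within each day instead would treat the day as a block.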

The following workflow diagram illustrates this structured process from planning to analysis.

[Figure: Workflow for Full Factorial Reaction Optimization]

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials commonly manipulated as factors in reaction optimization experiments, particularly in high-throughput experimentation (HTE) for drug development [8].

Table 3: Research Reagent Solutions in Reaction Optimization

| Reagent Category | Example Function in Experiment | Typical Role as a Factor |

|---|---|---|

| Catalysts | Facilitates bond formation; different metals/ligands alter pathway kinetics. | Categorical factor (e.g., Pd vs. Cu) |

| Ligands | Modifies catalyst activity and selectivity. | Categorical factor (e.g., Phosphine library) |

| Bases | Scavenges protons, influencing reaction rate and mechanism. | Categorical/Numerical factor (e.g., type, equiv.) |

| Solvents | Affects solubility, stability, and reaction polarity. | Categorical factor (e.g., DMF, THF, EtOH) |

| Substrates | The starting materials whose reactivity is being profiled. | Often a fixed variable or blocking factor. |

| Reagents | Direct coupling partners or transforming agents (e.g., fluorinating agents). | Categorical factor (e.g., reagent A, B, C) [8] |

Advanced Consideration: The Value of Interactions

A pivotal advantage of a full factorial design is its ability to estimate interaction effects between factors [9] [1]. An interaction occurs when the effect of one factor on the response depends on the level of another factor. For instance, a specific ligand (Factor A) may only give high yield at a high temperature (Factor B), but not at a low temperature—an effect completely invisible in "one-factor-at-a-time" studies [9]. The following diagram contrasts these experimental approaches.

[Figure: Comparing OFAT and Full Factorial Approaches]
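The ligand and temperature example above can be made concrete with a small calculation. The yields below are hypothetical numbers invented to show the pattern, and the OFAT prediction assumes both one-at-a-time changes are made from the same baseline (ligand L1 at low temperature).

```python
# Hypothetical yields (%) for a 2 x 2 study of ligand and temperature.
y = {
    ("L1", "low"): 40.0,
    ("L2", "low"): 42.0,
    ("L1", "high"): 45.0,
    ("L2", "high"): 85.0,
}

# Effect of switching ligand, evaluated separately at each temperature.
ligand_effect_low = y[("L2", "low")] - y[("L1", "low")]
ligand_effect_high = y[("L2", "high")] - y[("L1", "high")]

# Interaction effect: half the difference between the two conditional effects.
interaction = (ligand_effect_high - ligand_effect_low) / 2

# OFAT from the baseline: change one factor at a time and add up the gains.
baseline = y[("L1", "low")]
temp_effect_at_L1 = y[("L1", "high")] - baseline
ofat_prediction = baseline + ligand_effect_low + temp_effect_at_L1

print(ligand_effect_low, ligand_effect_high)    # 2.0 40.0
print(interaction)                              # 19.0
print(ofat_prediction, y[("L2", "high")])       # 47.0 85.0
```

An OFAT study starting from this baseline would never run the L2/high-temperature combination and would predict a yield far below what the factorial design actually observes there.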

For the researcher engaged in reaction optimization, factors, levels, and experimental runs are not merely abstract terms but the fundamental building blocks of efficient inquiry. Employing a full factorial design by manipulating these elements provides a complete, unbiased map of the experimental landscape [2] [10]. While the required number of runs can become prohibitive for many factors—leading to the use of fractional factorial or optimal designs for screening—the full factorial remains the gold standard for comprehensively understanding interactions within a focused set of critical variables, directly accelerating the development of robust synthetic routes in pharmaceutical science [11] [14].

In the realm of reaction optimization research, the ability to systematically explore multiple factors simultaneously is paramount for efficient process development. The 2^k factorial design stands as a fundamental screening design used to discover the vital few factors among the trivial many that influence a process [15] [16]. This framework refers to designs with k factors, each investigated at two levels, typically denoted as high (+1) and low (-1) [16]. By exploring all possible combinations of these factor levels, the 2^k design enables researchers to not only estimate the individual effect of each factor but also to uncover potential interactions between factors—where the effect of one factor depends on the level of another [17]. This approach provides a major set of building blocks for many experimental designs and is often the first stage in an experimental sequence, frequently followed by more detailed optimization studies such as response surface methodology [16].

The power of the 2^k framework lies in its structured efficiency. For a process with k factors, a full factorial design requires 2^k experimental runs. This comprehensive exploration allows for the estimation of all main effects and all interaction effects, from two-way interactions up to the k-way interaction [16]. In the context of reaction optimization, factors can be continuous (e.g., temperature, concentration) or categorical (e.g., catalyst type, solvent), though the initial screening often focuses on identifying which factors have a significant impact before proceeding to optimize their levels [15]. The application of this methodology has been demonstrated across various chemical processes, including pharmaceutical development and catalytic cracking, where it helps systematically navigate complex experimental spaces [18] [19].

Key Components and Quantitative Relationships

Yates Notation and the Design Matrix

A unique and efficient notation system, known as Yates notation, is employed to denote the various treatment combinations in a 2^k factorial design [16]. In this system, the presence of a lowercase letter indicates that the corresponding factor is at its high level, while its absence signifies the low level. The special case where all factors are at their low levels is denoted by (1). The table below illustrates this notation for a 2^3 factorial design (three factors, each at two levels).

Table 1: Treatment Combinations and Yates Notation for a 2^3 Factorial Design

| Run | Factor A | Factor B | Factor C | Yates Notation |

|---|---|---|---|---|

| 1 | - | - | - | (1) |

| 2 | + | - | - | a |

| 3 | - | + | - | b |

| 4 | + | + | - | ab |

| 5 | - | - | + | c |

| 6 | + | - | + | ac |

| 7 | - | + | + | bc |

| 8 | + | + | + | abc |

This notation is particularly valuable because each column in the design matrix (representing factors and their interactions) contains an equal number of plus and minus signs, forming contrasts that are used to compute the effects of factors and their interactions [16].
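The Yates labels and the balance property described here can be verified programmatically. The sketch below generates the 2^3 design in standard order (factor A changing fastest), derives the labels, and checks that every main-effect and interaction column sums to zero and is orthogonal to every other column. The function and variable names are illustrative.

```python
from itertools import combinations, product
from math import prod


def yates_label(run, names="abc"):
    """Lowercase letter for each factor at its high level; '(1)' if all are low."""
    label = "".join(name for name, level in zip(names, run) if level == 1)
    return label or "(1)"


# Standard (Yates) order: reverse each tuple so the first factor changes fastest.
runs = [tuple(reversed(r)) for r in product((-1, 1), repeat=3)]
labels = [yates_label(run) for run in runs]

# Contrast column for each effect = elementwise product of the factor columns involved.
columns = {}
for order in range(1, 4):
    for idx in combinations(range(3), order):
        name = "".join("ABC"[i] for i in idx)
        columns[name] = [prod(run[i] for i in idx) for run in runs]

balanced = all(sum(col) == 0 for col in columns.values())
orthogonal = all(
    sum(u * v for u, v in zip(columns[p], columns[q])) == 0
    for p, q in combinations(columns, 2)
)

print(labels)                 # ['(1)', 'a', 'b', 'ab', 'c', 'ac', 'bc', 'abc']
print(balanced, orthogonal)   # True True
```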

Calculating Effects and Sum of Squares

In the 2^k framework, the effect of a factor is defined as the difference in the mean response between the high and low levels of that factor [16]. This differs from the model coefficient (αi) used in standard linear models: because moving from the low (-1) to the high (+1) level spans two coded units, the Yates effect is twice the size of the estimated coefficient αi. The general form for calculating an effect for k factors with n replicates is given by:

$$\text{Effect} = \frac{1}{2^{k-1}\,n}\,[\text{contrast of the totals}]$$ [16]

Similarly, the sum of squares (SS), which quantifies the variation attributable to each effect, is calculated as:

$$SS(\text{Effect}) = \frac{(\text{contrast})^2}{2^{k}\,n}$$ [16]

The variance of an effect is given by σ² / (2^(k-2)n), where σ² represents the variance of the experimental error [16]. These calculations form the basis for determining the statistical significance of the observed effects through hypothesis testing, typically using t-tests or analysis of variance (ANOVA).
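The variance expression follows directly from the effect formula. A short derivation, assuming independent observations with a common error variance σ² (the symbols c_i and T_i are introduced here for illustration):

```latex
\begin{aligned}
\text{Contrast} &= \sum_{i=1}^{2^k} c_i T_i,
  \qquad c_i = \pm 1,\quad T_i = \text{total of the } n \text{ replicates of run } i \\
\operatorname{Var}(\text{Contrast}) &= \sum_{i=1}^{2^k} c_i^2 \operatorname{Var}(T_i)
  = 2^k \, n \, \sigma^2 \\
\operatorname{Var}(\text{Effect}) &= \frac{\operatorname{Var}(\text{Contrast})}{\left(2^{k-1} n\right)^2}
  = \frac{2^k n \sigma^2}{2^{2k-2} n^2}
  = \frac{\sigma^2}{2^{k-2} n}
\end{aligned}
```

In practice σ² is unknown and is replaced by the mean squared error (MSE) from the replicated runs.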

Table 2: Effect Calculations for a 2^2 Factorial Design with n=3 Replicates

| Factor Combination | Yates Notation | Total Yield (Example Data) | Calculation | Effect |

|---|---|---|---|---|

| A low, B low | (1) | 80 | A = (100 + 90)/6 - (80 + 60)/6 = 190/6 - 140/6 | 8.33 |

| A high, B low | a | 100 | B = (60 + 90)/6 - (80 + 100)/6 = 150/6 - 180/6 | -5.00 |

| A low, B high | b | 60 | AB = (90 + 80)/6 - (100 + 60)/6 | 1.67 |

| A high, B high | ab | 90 | | |

Note: Example data adapted from PS [16]. The effects are calculated based on the difference in averages between high and low levels.
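The effect and sum-of-squares formulas can be checked with a few lines of Python. The sketch below assumes treatment totals of 80, 100, 60 and 90 for (1), a, b and ab, which are the values implied by the calculation column of Table 2. The divisor 6 in that column is 2^(k-1) × n = 2 × 3. The function name `effects_and_ss` is hypothetical.

```python
from itertools import combinations


def effects_and_ss(totals, factors, n):
    """Effects and sums of squares for a 2^k design from treatment totals.

    totals  : dict mapping Yates labels ("(1)", "a", "ab", ...) to totals over n replicates
    factors : string of lowercase factor letters, e.g. "ab"
    """
    k = len(factors)
    results = {}
    for size in range(1, k + 1):
        for term in combinations(factors, size):
            # A run enters with a plus sign when an even number of the term's
            # factors are at the low level, otherwise with a minus sign.
            contrast = 0
            for label, total in totals.items():
                sign = 1
                for f in term:
                    sign *= 1 if f in label else -1
                contrast += sign * total
            effect = contrast / (2 ** (k - 1) * n)
            ss = contrast ** 2 / (2 ** k * n)
            results["".join(term).upper()] = (effect, ss)
    return results


# Assumed treatment totals (n = 3 replicates per run).
totals = {"(1)": 80, "a": 100, "b": 60, "ab": 90}
results = effects_and_ss(totals, "ab", n=3)
for name, (effect, ss) in results.items():
    print(f"{name}: effect = {effect:.2f}, SS = {ss:.2f}")
```

The same function works for larger designs, provided the totals dictionary contains all 2^k Yates labels.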

Experimental Protocol and Implementation

Step-by-Step Workflow for Design and Execution

Implementing a 2^k factorial design in reaction optimization follows a systematic workflow that ensures reliable and interpretable results. The following diagram illustrates the key stages of this process.

Workflow for 2^k Factorial Design

Step 1: Define Research Objectives and Factors

Clearly articulate the goals of the study and identify the k factors to be investigated. Determine appropriate low and high levels for each factor based on scientific knowledge and practical constraints. In reaction optimization, typical factors include temperature, concentration, reaction time, catalyst loading, and solvent type [17].

Step 2: Select Design Type and Replication Strategy

Choose between a full factorial design (all 2^k combinations) or a fractional factorial design if resource constraints warrant a reduced number of runs. Determine the number of replicates (n) based on the desired statistical power and practical considerations. Include center points to test for curvature and estimate pure error [17].

Step 3: Randomize and Execute Experiments

Randomize the run order to protect against lurking variables such as time-based drift in equipment or environmental conditions [15]. Execute the experiments according to the randomized schedule, carefully controlling all non-investigated factors.

Step 4: Data Collection and Analysis

Collect response data for each experimental run. Analyze the data using statistical methods to estimate factor effects, compute sum of squares, and determine statistical significance [16].
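The design, replication and randomization steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions rather than a prescribed procedure: the function name `randomized_plan`, the coded units (-1, 0, +1) and the seed are choices made for the example.

```python
import random
from itertools import product


def randomized_plan(k, n_replicates=1, n_center=0, seed=None):
    """Return a randomized run list for a replicated 2^k design in coded units.

    Corner runs use -1/+1; center points (all factors at 0) are only
    meaningful for continuous factors.
    """
    corners = [combo[::-1] for combo in product((-1, 1), repeat=k)]
    plan = [run for run in corners for _ in range(n_replicates)]
    plan += [(0,) * k for _ in range(n_center)]
    # A seeded generator keeps the schedule reproducible for the lab notebook.
    random.Random(seed).shuffle(plan)
    return plan


plan = randomized_plan(k=3, n_replicates=2, n_center=3, seed=42)
for order, run in enumerate(plan, start=1):
    print(order, run)
```

Shuffling the complete list, replicates and center points included, spreads any time-based drift across all treatment combinations instead of concentrating it in one block of runs.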

Research Reagent Solutions and Essential Materials

Table 3: Key Research Reagents and Materials for Factorial Experiments

| Item | Function in Experimental Context | Application Example |

|---|---|---|

| Catalyst | Substance that alters reaction rate without being consumed; a common factor in optimization | Nickel or palladium catalysts in coupling reactions [19] |

| Solvent System | Medium in which reaction occurs; can significantly influence yield and selectivity | Solvent selection guided by pharmaceutical guidelines for greener alternatives [19] |

| Starting Materials/Reagents | Reactants whose concentrations are often investigated as factors | Concentration of starting materials in chemical synthesis [17] |

| Analytical Instruments | Equipment for quantifying response variables (e.g., yield, purity) | HPLC for measuring area percent yield and selectivity [19] |

| High-Throughput Experimentation Platforms | Automated systems for highly parallel reaction execution | 96-well plates for screening numerous reaction conditions [19] |

Data Analysis and Interpretation

Statistical Analysis and Significance Testing

Once experimental data is collected, statistical analysis begins with estimating the effects of each factor and their interactions. The significance of these effects can be evaluated using a t-test, where the test statistic is calculated as:

t* = Effect / √(MSE/(n×2^(k-2))) with 2^k(n-1) degrees of freedom [16]

This tests the null hypothesis that the true effect is zero. Alternatively, analysis of variance (ANOVA) can be used to partition the total variability in the data into components attributable to each effect and the residual error. Effects with p-values below a predetermined significance level (typically 0.05) are considered statistically significant.
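A minimal sketch of the t-statistic calculation for a replicated 2^2 design follows. The replicate values are hypothetical and chosen only for illustration. The code computes the MSE from the within-run variation, then applies the formula above to the main effect of A.

```python
from math import sqrt
from statistics import mean

# Hypothetical replicate data for a 2^2 design with n = 3 (illustrative values).
data = {
    "(1)": [28, 25, 27],
    "a": [36, 32, 32],
    "b": [18, 19, 23],
    "ab": [31, 30, 29],
}
k, n = 2, 3

# Pooled within-run variation gives the mean squared error (MSE).
sse = sum((y - mean(ys)) ** 2 for ys in data.values() for y in ys)
df_error = 2 ** k * (n - 1)
mse = sse / df_error

# Main effect of A: average response at A high minus average at A low.
totals = {label: sum(ys) for label, ys in data.items()}
contrast_a = totals["a"] + totals["ab"] - totals["(1)"] - totals["b"]
effect_a = contrast_a / (2 ** (k - 1) * n)

standard_error = sqrt(mse / (n * 2 ** (k - 2)))
t_star = effect_a / standard_error
print(f"MSE = {mse:.3f}, df = {df_error}, t* = {t_star:.2f}")
```

The magnitude of t* is then compared with the critical value of the t distribution for the error degrees of freedom (about 2.306 for 8 degrees of freedom at a two-sided 0.05 level).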

For unreplicated factorial designs (n=1), where there is no independent estimate of error variance, normal probability plots or half-normal plots are often used to identify significant effects. In these plots, non-significant effects tend to fall along a straight line, while significant effects deviate from this line [16].
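The coordinates of a half-normal plot can be computed without any plotting library. The sketch below uses illustrative effect estimates for an unreplicated 2^4 design (the numbers are assumptions for the example) and one common choice of plotting position, 0.5 + 0.5(i - 0.5)/m. Other conventions exist.

```python
from statistics import NormalDist

# Illustrative effect estimates from an unreplicated 2^4 design (assumed values).
effects = {
    "A": 21.6, "B": 3.1, "C": 9.9, "D": 14.6,
    "AB": 0.1, "AC": -18.1, "AD": 16.6, "BC": 2.4,
    "BD": -0.4, "CD": -1.1, "ABC": 1.9, "ABD": 4.1,
    "ACD": -1.6, "BCD": -2.6, "ABCD": 1.4,
}


def half_normal_points(effects):
    """Return (name, |effect|, half-normal quantile) sorted by |effect|."""
    ordered = sorted(effects.items(), key=lambda item: abs(item[1]))
    m = len(ordered)
    standard_normal = NormalDist()
    points = []
    for i, (name, value) in enumerate(ordered, start=1):
        p = 0.5 + 0.5 * (i - 0.5) / m  # plotting position in (0.5, 1)
        points.append((name, abs(value), standard_normal.inv_cdf(p)))
    return points


points = half_normal_points(effects)
for name, magnitude, quantile in points:
    print(f"{name:>5}  |effect| = {magnitude:5.1f}  quantile = {quantile:.2f}")
```

Plotting |effect| against the quantile, the small effects cluster near a line through the origin, while the few large ones (here A, AC, AD, D and C) fall well off it.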

Practical Application in Reaction Optimization

In a practical case study involving a waferboard manufacturer needing to reduce formaldehyde concentration in an adhesive-filtration operation, a 2^4 full factorial design was implemented to identify key factors affecting filtration rate [15]. The design included four factors (A, B, C, D) with the goal of maximizing filtration rate while reducing formaldehyde concentration (Factor C). The experimenters recorded filtration rates (in gallons/hour) at each combination of process settings.

Preliminary analysis through data sorting and scatter plots revealed that temperature (Factor A) had a strong correlation with filtration rate, while pressure (Factor B) showed little impact [15]. Coloring the scatter plot by formaldehyde concentration (Factor C) suggested a potential interaction between temperature and concentration, where the effect of temperature on filtration rate differed depending on the concentration level. This interaction effect would be formally quantified during the statistical analysis of the full factorial model.

Advanced Applications and Integration with Modern Approaches

The traditional 2^k factorial framework has evolved through integration with modern computational and automation technologies. In contemporary reaction optimization, especially within pharmaceutical development, 2^k designs often serve as the initial screening phase within larger machine learning-driven workflows [19]. These approaches combine the structured design of experiments with Bayesian optimization to efficiently navigate complex chemical spaces.

Hybrid modeling approaches have emerged that integrate mechanistic understanding with data-driven models [18]. In these frameworks, the "mechanism-driven model" typically forms the core, while the "data-driven model" helps solve parameters or function expressions, retaining the physical significance of the mechanism-driven model to the greatest extent [18]. This integration is particularly valuable in chemical process optimization, where first principles understanding can guide the experimental design while empirical data refines the model predictions.

The integration of 2^k factorial designs with high-throughput experimentation (HTE) has significantly accelerated reaction optimization in pharmaceutical process development [19]. HTE platforms, utilizing miniaturized reaction scales and automated robotic tools, enable highly parallel execution of numerous reactions, making it feasible to explore broader experimental spaces than traditional approaches. When combined with machine learning optimization, this synergy enables efficient data-driven search strategies with highly parallel screening of numerous reactions, offering promising prospects for automated and accelerated chemical process optimization [19].

The following diagram illustrates how traditional factorial designs integrate with modern optimization approaches.

Integration of Traditional and Modern Methods

In one pharmaceutical application, this integrated approach was deployed for a Ni-catalyzed Suzuki coupling and a Pd-catalyzed Buchwald-Hartwig reaction, where it successfully identified multiple reaction conditions achieving >95 area percent (AP) yield and selectivity [19]. This led to improved process conditions at scale in just 4 weeks compared to a previous 6-month development campaign, demonstrating the powerful synergy between traditional factorial design principles and modern optimization technologies [19].

The 2^k factorial design remains a cornerstone methodology in reaction optimization research, providing a systematic framework for screening multiple factors and identifying significant main effects and interactions. Its structured approach enables efficient exploration of experimental spaces while maintaining statistical rigor. The integration of this classical methodology with modern technologies—including high-throughput experimentation, machine learning optimization, and hybrid modeling—has further enhanced its power and applicability in contemporary research environments. For scientists and engineers engaged in process development and optimization, mastery of the 2^k framework provides an essential foundation for efficient and effective experimental strategy, serving as a critical first step in the journey from initial screening to optimized process conditions.

In the realm of reaction optimization research, particularly within pharmaceutical development, the selection of an experimental design is a critical determinant of a study's success and efficiency. Among the various methodologies available, the full factorial design stands out as a foundational and powerful approach for systematically investigating complex processes. This whitepaper delineates the three core advantages of full factorial design—comprehensiveness, interaction detection, and efficiency—framed within the context of drug development and formulation science. By enabling researchers to simultaneously explore multiple factors and their intricate interrelationships, full factorial design provides a complete picture of the reaction or formulation landscape, moving beyond the limitations of traditional one-factor-at-a-time (OFAT) experimentation [2] [4]. This systematic approach is indispensable for accelerating development timelines, optimizing product quality, and ensuring robust, scalable processes in pharmaceutical manufacturing.

Core Advantages of Full Factorial Design

The full factorial design distinguishes itself through three pivotal advantages that cater to the complex demands of reaction optimization research.

Comprehensiveness

A full factorial design is characterized by its systematic examination of all possible combinations of the levels of each factor under investigation [2] [11]. This exhaustive approach ensures that the entire experimental space is mapped, providing a holistic understanding of the system's behavior. Unlike other screening designs that might explore only a fraction of the possible combinations, full factorial design guarantees that no combination of the chosen levels is overlooked, thereby casting light on the underlying realities of complex systems [2]. This comprehensiveness is crucial in pharmaceutical formulation, where critical quality attributes (CQAs) such as drug dissolution, stability, and bioavailability can be influenced by multiple interacting factors [20] [21]. The design offers a robust methodology for process understanding, allowing researchers to obtain a complete picture of the main effects and interactions and, when factors are run at three or more levels or center points are added, of potential curvature in the response surface, which is foundational for subsequent optimization phases [2] [4].

Interaction Detection

Perhaps the most significant strength of the full factorial design is its ability to detect and quantify interactions between factors [2] [4] [11]. An interaction occurs when the effect of one factor on the response variable depends on the level of another factor [4]. In practical terms, this means that the optimal level of one process parameter, such as temperature, might be different at varying levels of another parameter, such as catalyst concentration.

- Realistic Modeling: This capability allows for a more realistic emulation of process dynamics, where variables often interact in non-linear and complex ways [2]. By accounting for these interplays, the design guards against the oversimplification that can stem from OFAT experiments or screening designs that confound interactions.

- Informed Decision-Making: Identifying significant interactions provides profound insights for informed decision-making. For instance, in drug formulation, an interaction between a binder and a disintegrant can critically influence the disintegration time of a tablet [20] [21]. Understanding such interactions is essential for optimizing the formulation and ensuring consistent product performance.

Efficiency

Despite requiring a larger number of runs than fractional factorial designs for a given number of factors, the full factorial design is highly efficient in its use of data [2] [22]. Its efficiency is derived from several key aspects:

- Simultaneous Factor Evaluation: Multiple factors are evaluated simultaneously in a single, integrated experiment, which is more efficient and informative than conducting a series of separate OFAT experiments [4].

- Data Maximization: The entire dataset from the experiment is used to estimate every main effect and interaction. In a 2^k factorial design, the complete sample size (N) is used to test the effect of each factor and their interactions, providing strong statistical power for these estimates [22]. This contrasts with conventional two-arm randomized controlled trials (RCTs), which might only compare a single active treatment to a control.

When compared to other common designs, the advantages of full factorial become clear. The table below summarizes a comparative analysis based on a study optimizing metronidazole immediate-release tablets [21].

Table 1: Comparison of Experimental Designs in Formulation Optimization

| Design Type | Primary Use | Key Advantage | Key Limitation | Suitability for Reaction Optimization |

|---|---|---|---|---|

| Full Factorial | Screening & Initial Optimization | Comprehensiveness; detects all interactions | Runs grow exponentially with factors [11] | Ideal for initial studies with few (<5) critical factors [2] [21] |

| Fractional Factorial | Screening | Reduces runs when many factors are present | Confounds (aliases) interactions, leading to potential loss of information [23] | Best for screening many factors to identify vital few |

| Central Composite (CCD) | Optimization | Examines quadratic effects; good for response surface modeling | Extreme factor levels (α points) may exceed practical limits [21] | Excellent for final optimization and modeling curvature |

| Box-Behnken (BBD) | Optimization | Avoids extreme factor levels; requires fewer runs than CCD | Less efficient than CCD for studying quadratic effects [21] | Practical and cost-efficient for optimization within safe factor ranges |

Quantitative Analysis of Full Factorial Design

The structure and resource requirements of a full factorial design are mathematically precise. The number of experimental runs is a direct function of the factors and their levels.

Experimental Run Calculations

The total number of unique experimental runs (N) required for a full factorial design is calculated as: N = L^k, where L is the number of levels per factor and k is the number of factors.

For the common two-level design (2^k), this leads to the following exponential growth in runs:

Table 2: Number of Runs Required for a 2-Level Full Factorial Design

| Number of Factors (k) | Number of Runs (2^k) |

|---|---|

| 2 | 4 [24] |

| 3 | 8 [11] [24] |

| 4 | 16 [11] |

| 5 | 32 [11] |

| 6 | 64 [11] |

| 7 | 128 [11] |

| 10 | 1024 [11] |

This exponential relationship is the primary reason full factorial designs are typically limited to a maximum of 4-6 factors in practice, as the number of runs quickly becomes unmanageable [11]. For factors with more than two levels, the number of runs increases even more rapidly. For example, a 3^3 design (three factors, each with three levels) requires 27 experimental runs [24].
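The run counts in Table 2 and the 3^3 example can be reproduced with a short helper. The sketch below generalizes N = L^k to mixed-level designs by multiplying the level counts of the individual factors. The function name `full_factorial_runs` is illustrative.

```python
from math import prod


def full_factorial_runs(levels, replicates=1):
    """Number of runs in a full factorial design.

    levels     : list with the number of levels of each factor
    replicates : how many times each treatment combination is repeated
    """
    return prod(levels) * replicates


for k in (2, 3, 4, 5, 6, 7, 10):
    print(f"2^{k}: {full_factorial_runs([2] * k)} runs")
print("3^3:", full_factorial_runs([3, 3, 3]), "runs")
print("2^4 with 3 replicates:", full_factorial_runs([2] * 4, replicates=3), "runs")
```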

Statistical Power and Sample Size

To reliably detect a specific effect size amidst natural process variability, replication is often necessary. The required sample size can be approximated using statistical power analysis. The underlying principle is that larger sample sizes are needed to detect smaller effects or when the inherent variability (standard deviation) of the system is high [11]. Sufficient replication ensures that the estimates of main effects and interactions are reliable and precise, reducing the risk of drawing incorrect conclusions from the experimental data [2].
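As a rough planning aid, the number of replicates can be approximated from the variance of an effect in a two-level design, which equals 4σ²/N for N total runs. The sketch below uses a normal approximation and therefore ignores the error degrees of freedom of the t-test, so it tends to be slightly optimistic for small designs. The function name and default values are assumptions for illustration.

```python
from math import ceil
from statistics import NormalDist


def replicates_needed(k, sigma, delta, alpha=0.05, power=0.80):
    """Approximate replicates per run for a 2^k design (normal approximation).

    sigma : estimated standard deviation of the experimental error
    delta : smallest effect (high-level mean minus low-level mean) worth detecting
    """
    z = NormalDist()
    z_sum = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    # Var(effect) = 4 * sigma^2 / N, so N >= 4 * (z_sum * sigma / delta)^2.
    total_runs = 4 * (z_sum * sigma / delta) ** 2
    return max(1, ceil(total_runs / 2 ** k))


print(replicates_needed(k=3, sigma=2.0, delta=2.0))  # effect equal to one sigma
print(replicates_needed(k=3, sigma=2.0, delta=4.0))  # effect equal to two sigma
```

Halving the detectable effect roughly quadruples the required number of runs, which is why the target effect size should be fixed before the design is finalized.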

Experimental Protocol for a Full Factorial Design

Implementing a full factorial design involves a structured sequence of steps, from initial planning to final analysis. The following workflow and protocol outline this process for a typical reaction optimization study.

Diagram 1: Full Factorial Experimental Workflow

Step-by-Step Methodology

Step 1: Identify Factors and Levels

- Determine Factors: Select the independent variables (factors) to be investigated. These can be continuous (e.g., temperature, concentration) or categorical (e.g., catalyst type, solvent) [2] [4]. In pharmaceutical formulation, Critical Material Attributes (CMAs) and Critical Process Parameters (CPPs) are chosen based on their risk to Critical Quality Attributes (CQAs) [21].

- Define Levels: Choose the specific values (levels) for each factor. For a 2-level design, these are typically "low" (-1) and "high" (+1) values, selected to cover a realistic and relevant range of operation [4]. For example, in a drug formulation study, factors could include the concentration of a binder (e.g., 10 mg vs. 20 mg) and a disintegrant (e.g., 20 mg vs. 40 mg) [21].

Step 2: Create the Experimental Design Matrix

- The design matrix is a table that systematically lists all possible combinations of the factor levels [4]. For a 2^3 design, this matrix would have 8 rows (runs) and 3 columns (factors). Each row represents a unique experimental condition to be tested.

Step 3: Determine Sample Size and Randomize Runs

- Calculate Total Runs: The base number of runs is determined by L^k. To account for experimental error and improve precision, replication (repeating the same experimental run multiple times) is incorporated [2] [11]. The number of replicates depends on the desired level of statistical confidence.

- Randomization: Once all runs (including replicates) are defined, the order of experimentation must be randomized. This helps to mitigate the impact of lurking variables and ensures that the factor effects are not confounded with uncontrolled sources of variation [2].

Step 4: Execute Experiments and Collect Data

- Conduct the experiments in the predetermined random order.

- Precisely measure the response variable(s) of interest for each run. In reaction optimization, responses could include yield, purity, or reaction time. In formulation, responses are often dissolution rate, disintegration time, or tablet hardness [21].

Step 5: Analyze Data using Statistical Techniques

- Analysis of Variance (ANOVA): Use ANOVA to partition the total variability in the response data into components attributable to each main effect and interaction. This test determines the statistical significance of these effects (typically at p < 0.05) [2].

- Regression Analysis: Fit a mathematical model to the experimental data. For a 2-level design, this is typically a linear model that relates the response variable to the factors and their interactions. This model can then be used for prediction and optimization [2].
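For a balanced two-level design in coded units, fitting the regression model does not require a matrix solver. A minimal sketch follows, assuming a 2^2 design and hypothetical mean responses per run. The function name `fit_coded_model` is illustrative.

```python
def fit_coded_model(design, y):
    """Least-squares coefficients for y = b0 + bA*A + bB*B + bAB*A*B.

    Because the -1/+1 columns of a balanced full factorial are mutually
    orthogonal, each coefficient reduces to sum(column * y) / N.
    """
    n_runs = len(y)
    columns = {
        "intercept": [1 for _ in design],
        "A": [a for a, _ in design],
        "B": [b for _, b in design],
        "AB": [a * b for a, b in design],
    }
    return {
        name: sum(c * yi for c, yi in zip(col, y)) / n_runs
        for name, col in columns.items()
    }


design = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
y = [80 / 3, 100 / 3, 60 / 3, 90 / 3]  # hypothetical mean response of each run
coef = fit_coded_model(design, y)
for name, value in coef.items():
    print(f"{name}: {value:.3f}")
```

Each coefficient is half of the corresponding factorial effect, because the coded factor moves two units between its low and high levels.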

Step 6: Interpret Effects and Optimize Settings

- Main Effects Plots: Visualize the individual impact of each factor on the response.

- Interaction Plots: Graphically examine the nature of significant interactions. Parallel lines indicate no interaction, while non-parallel or crossing lines suggest an interaction is present [2].

- Optimization: Use the fitted regression model to identify the factor level combinations that maximize or minimize the response variable, leading to the optimal process settings [2] [4].
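The interpretation steps above can be illustrated numerically. The sketch below assumes a hypothetical fitted model in coded units, computes the simple effect of A at each level of B (the numerical counterpart of an interaction plot), and searches the design corners for the highest predicted response.

```python
from itertools import product

# Hypothetical coded-unit model: y = b0 + bA*A + bB*B + bAB*A*B
coef = {"intercept": 27.5, "A": 4.17, "B": -2.5, "AB": 0.83}


def predict(a, b):
    """Predicted response at coded settings a and b."""
    return coef["intercept"] + coef["A"] * a + coef["B"] * b + coef["AB"] * a * b


# Simple effect of A at each level of B. Equal values would correspond to
# parallel lines in an interaction plot; unequal values indicate an interaction.
simple_effect_a = {b: predict(1, b) - predict(-1, b) for b in (-1, 1)}
print("Effect of A at B low: ", round(simple_effect_a[-1], 2))
print("Effect of A at B high:", round(simple_effect_a[1], 2))

# A model with only main effects and a two-factor interaction attains its
# extremes at the corners of the design region, so a corner search suffices.
best = max(product((-1, 1), repeat=2), key=lambda run: predict(*run))
print("Best corner (A, B):", best, "predicted response:", round(predict(*best), 2))
```

If curvature is suspected, a corner search is no longer sufficient and a response surface design is the usual next step.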

Essential Research Reagent Solutions

The application of full factorial design in pharmaceutical reaction and formulation optimization involves the careful selection and control of critical materials. The following table details key reagent solutions and their functions, as exemplified in a metronidazole immediate-release tablet case study [21].

Table 3: Key Research Reagents in Pharmaceutical Formulation Optimization

| Reagent / Material | Function in Optimization | Example from Case Study |

|---|---|---|

| Active Pharmaceutical Ingredient (API) | The drug substance whose delivery and efficacy are being optimized. | Metronidazole [21] |

| Binder (e.g., Povidone K30) | Promotes granule formation and provides mechanical strength to the tablet. | Concentration identified as a Critical Material Attribute (CMA) [21] |

| Super Disintegrant (e.g., Crospovidone) | Facilitates tablet breakdown in fluid, critical for drug release. | Concentration optimized to achieve minimum disintegration time [21] |

| Glidant/Lubricant (e.g., Magnesium Stearate) | Improves powder flow and prevents adhesion to tooling during compression. | Concentration identified as a CMA and optimized [21] |

| Solvent (for wet granulation) | Facilitates the granulation process; typically evaporated and not present in final product. | Not specified in the case study, but essential for the wet granulation method used [21] |

Within the rigorous and resource-conscious field of drug development, the full factorial design emerges as a cornerstone methodology for reaction and formulation optimization. Its triad of key advantages—comprehensiveness, robust interaction detection, and statistical efficiency—provides researchers and scientists with an unparalleled tool for mapping complex experimental landscapes. By systematically investigating all possible factor combinations, this design uncovers not only the individual main effects but also the critical interactions that dictate process behavior, which are often missed by less thorough approaches. While its resource demands necessitate careful factor selection, its application in the initial stages of process development yields a deep, foundational understanding that enables precise optimization and robust validation. As the pharmaceutical industry continues to embrace structured, quality-by-design frameworks, the full factorial design remains an indispensable component of the scientist's toolkit for accelerating development and ensuring the delivery of high-quality, effective medicines.

In the fields of reaction optimization, drug development, and clinical research, the pursuit of efficiency is paramount. The traditional approach to experimentation, known as One-Factor-at-a-Time (OFAT), involves varying a single variable while holding all others constant [25]. This method has been largely superseded by more sophisticated strategies rooted in the Design of Experiments (DOE) framework, chief among them being the Full Factorial Design (FFD) [4]. A Full Factorial Design is an experimental strategy that systematically investigates the effects of multiple factors (independent variables) and their interactions on a response variable by testing all possible combinations of the levels assigned to each factor [9]. This in-depth technical guide will demonstrate the clear superiority of simultaneous testing via Full Factorial Designs over the OFAT approach, particularly within the critical context of reaction optimization research. The core thesis is that FFD provides a more efficient, informative, and robust framework for understanding complex systems, ultimately accelerating the pace of scientific discovery and industrial development.

Fundamental Concepts and Definitions

What is One-Factor-at-a-Time (OFAT)?

OFAT is a classical experimentation method where a researcher investigates the effect of one input factor on a response while maintaining all other factors at fixed, constant levels. Once the effect of that factor is determined, the process is repeated for the next factor [25]. The procedure is as follows:

- Select a baseline set of conditions for all factors.

- Vary one factor across its chosen levels while keeping all other factors rigidly fixed at their baseline.

- Observe and record the response.

- Return the varied factor to its baseline before selecting the next factor to vary. This cycle continues until all factors of interest have been tested individually [25].

What is Full Factorial Design (FFD)?

A Full Factorial Design is a systematic DOE approach that investigates the effects of multiple factors simultaneously. In an FFD, every possible combination of the levels from all factors is tested [4] [9]. This completeness allows for a comprehensive exploration of the experimental space.

- Factors: The independent variables or inputs that the researcher wishes to investigate (e.g., temperature, catalyst concentration, reaction time) [4].

- Levels: The specific values or settings chosen for each factor (e.g., for temperature: 30°C and 60°C) [4].

- Runs/Treatment Combinations: Each unique combination of factor levels in the design. For k factors each at 2 levels, the total number of runs is 2^k [22] [9].

Table: Types of Full Factorial Designs

| Design Type | Description | Best Use Cases |

|---|---|---|

| 2-Level Full Factorial | Each factor has two levels (e.g., high/low). Allows estimation of main effects and interactions but cannot detect curvature. | Screening experiments to identify vital few factors from many potential factors [4]. |

| 3-Level Full Factorial | Each factor has three levels. Allows estimation of main effects, interactions, and quadratic effects (curvature). | Modeling and optimizing systems where a non-linear (curved) response is suspected [4]. |

| Mixed-Level Full Factorial | Different factors have different numbers of levels. Allows for combining continuous and categorical factors. | Studying systems with a mix of factor types (e.g., catalyst type (categorical) and temperature (continuous)) [4]. |
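A mixed-level design matrix is straightforward to enumerate. The sketch below combines two continuous factors at two levels with one categorical factor at three levels. The factor names and level values are illustrative assumptions, not settings taken from a specific study.

```python
from itertools import product

# Illustrative factors: two 2-level continuous factors and one 3-level categorical factor.
factors = {
    "catalyst_loading_mol_pct": [1, 5],
    "solvent": ["DMF", "Toluene", "THF"],
    "temperature_C": [30, 60],
}


def full_factorial(factors):
    """Return every combination of factor levels as a list of dicts (one per run)."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]


design = full_factorial(factors)
print(len(design), "runs")
for run in design:
    print(run)
```

The run count is the product of the level counts (2 × 3 × 2 = 12), and the run order should still be randomized before execution.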

The Critical Limitations of the OFAT Approach

The OFAT method, while intuitively simple, possesses several critical flaws that limit its effectiveness in studying complex, modern systems.

- Failure to Capture Interaction Effects: OFAT's most significant limitation is its fundamental assumption that factors act independently on the response. It is incapable of detecting interactions, which occur when the effect of one factor depends on the level of another factor [25] [9]. In reality, factors often interact, and these interactions can be the key to major process improvements. As demonstrated in the SKF bearing case study, an OFAT approach would have missed a crucial interaction between heat treatment and osculation that led to a fivefold increase in bearing life [9].

- Inefficient Use of Resources: Although OFAT appears straightforward, it requires a large number of experimental runs to study multiple factors, and the data collected provides limited information. This makes it a time-consuming and resource-intensive strategy, particularly as the number of factors increases [25].

- Lack of Optimization Capabilities and Risk of Misleading Conclusions: The OFAT method is ill-suited for finding optimal process settings. Because it does not explore the combined space of all factors, it can easily converge on a local optimum, completely missing a far superior global optimum [25]. Furthermore, the conclusions drawn from OFAT can be highly misleading if interactions are present, leading researchers to incorrect conclusions about how a system truly behaves [9].
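A small numerical illustration makes the interaction problem concrete. The sketch below uses hypothetical responses for a two-factor system in which the response is high only when both factors are at their high level, and compares the setting an OFAT procedure would select with the one found by testing all combinations. Both function names are illustrative.

```python
from itertools import product

# Hypothetical responses with a strong interaction: the response is high
# only when both factors are at their high level.
response = {(-1, -1): 20, (1, -1): 18, (-1, 1): 19, (1, 1): 100}


def ofat_choice(response, k):
    """Vary one factor at a time from an all-low baseline, returning each factor
    to baseline before the next, then combine the individually best levels."""
    baseline = (-1,) * k
    chosen = list(baseline)
    for i in range(k):
        trial = list(baseline)
        trial[i] = 1
        if response[tuple(trial)] > response[baseline]:
            chosen[i] = 1
    return tuple(chosen)


def factorial_choice(response, k):
    """Evaluate every combination of levels and return the best one."""
    return max(product((-1, 1), repeat=k), key=lambda run: response[run])


print("OFAT picks:", ofat_choice(response, 2))
print("Full factorial picks:", factorial_choice(response, 2))
```

Because neither factor improves the response on its own, the OFAT procedure stays at the baseline, while the factorial search finds the high-high combination.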

The Superior Power of Full Factorial Design

Full Factorial Designs were developed to directly address the shortcomings of OFAT. Their advantages are rooted in statistical principles and have been proven across countless industries.

Key Statistical and Practical Advantages

- Revealing Interactions: The primary advantage of FFD is its ability to quantify interactions between factors [4] [9] [26]. This is often the most crucial finding in an experiment, as it reveals how the system works holistically. A factorial design is required to detect such interactions; use of OFAT when interactions are present can lead to a serious misunderstanding of how the response changes with the factors [9].

- Greater Efficiency and Broader Validity: Factorial designs are highly efficient. They provide significantly more information—on main effects and all interactions—with the same number of runs or fewer than a comparable OFAT study [22] [26]. This efficiency allows researchers to "ask Nature multiple questions at once" [9]. Additionally, because factors are tested over a range of other factor levels, the conclusions from a factorial design are valid over a broader range of experimental conditions, enhancing external validity [9] [26].

- A Pathway to Optimization: The comprehensive data from an FFD allows researchers to build a statistical model of the process. This model can then be used to estimate the optimal settings for the independent variables to achieve the best possible outcome for the response variable, a capability OFAT lacks [4].

Table: Quantitative Comparison of Experimental Runs: OFAT vs. FFD

| Number of Factors | Levels per Factor | OFAT Runs Required | Full Factorial Runs Required (All Combinations) |

|---|---|---|---|

| 2 | 2 | 4 | 4 |

| 3 | 2 | 9 | 8 |

| 4 | 2 | 16 | 16 |

| 5 | 2 | 25 | 32 |

| 3 | 3 | 15 | 27 |

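Note: The OFAT run count assumes a baseline and testing each factor individually. On raw run count alone, the two approaches are comparable for two to four two-level factors, and the full factorial requires more runs beyond that (for example, 32 versus 25 at five factors). The efficiency gain of FFD lies in the information obtained per run: FFD uses all data to estimate every main effect and interaction, whereas OFAT data is only used for one factor at a time [22] [9].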

A Concrete Example: The Bearing Life Experiment

A classic example from the bearing manufacturer SKF powerfully illustrates the advantage of FFD. Engineers wanted to test a new, cheaper cage design. A statistician, Christer Hellstrand, showed them how to test two additional factors (heat treatment and outer ring osculation) "for free" within their budget of eight experimental runs by using a 2x2x2 full factorial design. The results were revealing [9]:

- The new cage design itself made little difference.

- However, the experiment uncovered a dramatic interaction: when both heat treatment and osculation were set to their "high" condition, the bearing life increased fivefold. This profound discovery, which would have been completely missed by an OFAT study, led to a massive product improvement and demonstrated how interactions are often the key to unlocking major breakthroughs [9].

Implementing Full Factorial Design in Reaction Optimization

The implementation of FFD is a structured process that, when followed carefully, yields highly reliable and actionable results.

A Step-by-Step Experimental Protocol

- Identify Factors and Levels: Begin by selecting the key variables (factors) to investigate and choose appropriate levels for each. This decision should be based on prior knowledge, scientific judgment, and the goals of the experiment [4].

- Create the Design Matrix: Construct a table that lists all possible combinations of the factor levels. This matrix serves as the blueprint for the experimental runs [4].

- Determine Sample Size and Replicates: Calculate the total number of runs from the design (e.g., 2^k for a two-level design with k factors). To estimate experimental error and improve precision, include replicates—multiple runs of the same experimental conditions [4].

- Randomize Run Order: To minimize the impact of lurking variables and systematic biases, the order in which the experimental runs are conducted should be randomized [25].

- Execute Experiments and Collect Data: Run the experiments according to the randomized design matrix, carefully measuring and recording the response variable(s) for each run.

- Analyze Results: Use statistical methods to analyze the data:

  - Evaluate Main Effects: Determine the individual impact of each factor on the response [4].

  - Evaluate Interaction Effects: Identify and interpret significant interactions between factors [4].

- Optimize Settings: Use the resulting model to identify the factor level combinations that produce the optimal response [4].
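The analysis steps above can be sketched in a few lines. The example below is a minimal illustration, not a substitute for a full ANOVA: it assumes a hypothetical 2^2 experiment (coded temperature A and catalyst loading B) with two replicate yields per run, and every number in it is invented. Each effect is computed as the average response at the +1 setting of a contrast minus the average at the -1 setting.

```python
# Hypothetical 2^2 experiment: coded temperature (A) and catalyst loading (B),
# with two replicate yields (%) per run. All values are invented for illustration.
runs = [
    # (A, B, [replicate yields])
    (-1, -1, [62.0, 64.0]),
    (+1, -1, [70.0, 72.0]),
    (-1, +1, [66.0, 68.0]),
    (+1, +1, [88.0, 90.0]),
]


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


def effect(contrast):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [mean(y) for a, b, y in runs if contrast(a, b) > 0]
    low = [mean(y) for a, b, y in runs if contrast(a, b) < 0]
    return mean(high) - mean(low)


main_A = effect(lambda a, b: a)        # main effect of temperature
main_B = effect(lambda a, b: b)        # main effect of catalyst loading
inter_AB = effect(lambda a, b: a * b)  # two-factor interaction

# Run with the highest mean yield among the tested combinations
best = max(runs, key=lambda r: mean(r[2]))

print(f"A = {main_A:.1f}, B = {main_B:.1f}, AB = {inter_AB:.1f}")
print("Best tested setting (A, B):", best[:2])
```

With these invented data the interaction term is of similar size to the main effects, which is the situation an OFAT study would be unable to detect. In practice, the replicate spread would be used to judge whether each effect is statistically significant.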

Figure: FFD Implementation Workflow

The Scientist's Toolkit: Essential Reagent Solutions for a Catalysis Optimization FFD

When applying FFD to a reaction optimization, such as a catalytic reaction, the choice of materials and their functions is critical. The following table details key research reagent solutions for such a study.

Table: Essential Research Reagents for a Catalysis FFD

| Reagent / Material | Function in Experiment |
|---|---|
| Catalyst (e.g., Pd(PPh3)4) | The substance that increases the rate of the reaction; a primary factor whose loading is often varied (e.g., 1 mol% vs. 5 mol%). |
| Solvent (e.g., DMF, Toluene, THF) | The medium in which the reaction occurs; a categorical factor whose identity can profoundly influence reaction rate and selectivity. |
| Ligand (e.g., BINAP, XPhos) | A molecule that binds to the catalyst and can modify its activity and selectivity; often studied for its interaction with the catalyst and solvent. |
| Substrate | The starting material upon which the reaction is performed; its purity is controlled, and its structure is kept constant in a single study. |
| Base (e.g., K2CO3, Cs2CO3, Et3N) | A reagent used to neutralize byproducts or deprotonate substrates; a factor whose type and concentration can be critical. |
| Automated Parallel Reactor System | A platform for conducting multiple reaction experiments simultaneously under controlled conditions (temperature, stirring), ensuring reproducibility and enabling high-throughput screening [27]. |

Advanced Applications and Future Directions

The principles of FFD are amplified when integrated with modern technologies and advanced statistical methodologies.

- Integration with Automation and High-Throughput Screening: The power of FFD is fully realized when combined with automated reactor systems and high-throughput screening. These systems can execute the multiple experimental runs required by an FFD simultaneously and with high precision, drastically reducing the time and potential for human error associated with manual methods [27]. This is particularly crucial in drug discovery and chemical synthesis, where speed and reproducibility are essential [27].

- Connection to Response Surface Methodology (RSM): Full factorial designs, particularly 2-level designs, are often the foundation for further optimization. They are used as screening designs to identify important factors, which are then investigated in more detail using Response Surface Methodology (RSM) [25] [4]. RSM employs designs like Central Composite Designs (CCD) to model curvature in the response and pinpoint a precise optimum, building directly on the insights gained from the initial factorial experiment [25].
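To make the link between a 2-level factorial and a Central Composite Design concrete, the sketch below augments the factorial corner points with axial and center points in coded units. It is an illustration under stated assumptions: the function name `central_composite_points` is hypothetical, the rotatable axial distance alpha = (2^k)^(1/4) is one common choice among several, and the default of three center points is arbitrary.

```python
from itertools import product


def central_composite_points(k, n_center=3):
    """Coded points of a central composite design for k continuous factors.

    Assumes a rotatable design, i.e. axial distance alpha = (2**k) ** 0.25.
    """
    alpha = (2 ** k) ** 0.25

    # Corner points: the original 2^k full factorial
    factorial = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]

    # Axial (star) points: one factor at +/- alpha, all others at 0
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))

    # Replicated center points
    center = [tuple([0.0] * k)] * n_center

    return factorial + axial + center


points = central_composite_points(2)
for p in points:
    print(p)
```

The factorial portion is reused unchanged, which is why results from an initial 2-level experiment can carry over into the response surface study rather than being discarded.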

Figure: FFD in the Optimization Workflow

Compared with the One-Factor-at-a-Time approach, Full Factorial Design has clear advantages. While OFAT offers superficial simplicity, it is a risky and inefficient strategy because it cannot estimate interactions between factors and can therefore misidentify the optimum of a complex system. In contrast, FFD provides a structured framework for understanding and optimizing processes: it uses experimental resources efficiently, uncovers interaction effects, and provides a solid foundation for further optimization. For researchers, scientists, and drug development professionals seeking robust, optimal outcomes, mastering Full Factorial Design is therefore well worth the effort.

Implementing Full Factorial Design: A Step-by-Step Guide for Pharmaceutical Development

The Foundation of Full Factorial Design

In the realm of reaction optimization research, a Full Factorial Design (FFD) is a systematic methodology that enables the simultaneous investigation of multiple process parameters, or factors, and their complex interplay on a critical outcome, or response variable [2] [28]. This approach involves experimentally testing every possible combination of the levels assigned to each factor [9]. The foundational step—identifying these critical factors and defining their relevant levels—is paramount. A meticulously executed FFD provides a complete map of the experimental space, allowing for the precise determination of main effects (the individual impact of each factor) and interaction effects (how the effect of one factor changes across the levels of another) [2] [4]. This comprehensive understanding is crucial for developing robust, efficient, and scalable chemical processes in drug development.

A Systematic Methodology for Factor and Level Selection

The process of identifying factors and defining levels requires a disciplined, science-driven approach to ensure the experimental design is both efficient and informative.

1.2.1 Identifying Critical Factors: The selection of factors should be guided by prior knowledge, including preliminary research, historical process data, and mechanistic understanding of the reaction. The goal is to narrow the focus to the variables most likely to have a significant impact on the response. In a pharmaceutical context, typical critical factors for a chemical reaction might include Temperature, Reaction Time, Catalyst Loading, and Reactant Concentration [28]. It is essential to distinguish between continuous factors (e.g., temperature, pressure) that can be set to any value within a range, and categorical factors (e.g., solvent type, catalyst species) which represent distinct, non-numerical categories [2] [4].

1.2.2 Defining Relevant Levels: For each continuous factor, two or more levels are selected to span a realistic and relevant range of operation. A 2-level design (e.g., low/high) is highly efficient for screening and identifying significant linear effects [2] [29]. To estimate curvature, that is, nonlinear (quadratic) effects in the response, at least three levels per factor (e.g., low/medium/high) are needed [2] [28]; a 2-level design can only reveal that curvature is present, and only if center points are added. The chosen range must be wide enough to provoke a measurable change in the response, yet not so extreme as to force the reaction into an impractical or unsafe operating regime. For a 2-level FFD, levels are often coded as -1 (low) and +1 (high) to simplify mathematical modeling and analysis [29] [28].

Table 1: Example of Factor and Level Definition for a Hypothetical Catalytic Reaction

| Factor Name | Factor Type | Low Level (-1) | High Level (+1) | Units |
|---|---|---|---|---|
| Reaction Temperature | Continuous | 60 | 100 | °C |
| Catalyst Loading | Continuous | 1.0 | 2.0 | mol% |
| Solvent Polarity | Categorical | Toluene | Acetonitrile | - |
| Mixing Speed | Continuous | 400 | 800 | rpm |
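The -1/+1 coding for continuous factors is a linear rescaling around the midpoint of the range. The helper functions below are a minimal sketch of that conversion; the names `to_coded` and `to_actual` are hypothetical, and the ranges are taken from Table 1.

```python
def to_coded(value, low, high):
    """Map an actual setting onto the coded scale (low -> -1, high -> +1)."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range


def to_actual(coded, low, high):
    """Map a coded level back to the actual setting."""
    center = (low + high) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


# Reaction temperature from Table 1: 60 to 100 °C
print(to_coded(60, 60, 100))    # low level
print(to_coded(100, 60, 100))   # high level
print(to_actual(0, 60, 100))    # center of the temperature range
print(to_actual(0, 1.0, 2.0))   # center of the catalyst loading range
```

Categorical factors such as the solvent have no midpoint, so the coded values -1 and +1 are simply labels for the two options rather than positions on a numeric scale.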

Experimental Protocol: Implementing the Design

Once factors and levels are defined, the experimental plan is formalized.

Construct the Design Matrix: For a 2-level FFD with k factors, the total number of unique experimental runs is 2^k [29] [28]. The matrix lists every possible combination of the low (-1) and high (+1) levels for all factors. This is often presented in standard order [29] [28].

Incorporate Replication and Randomization: To obtain an estimate of experimental error and ensure the reliability of the results, the entire set of runs is replicated [2] [29]. Furthermore, the run order should be fully randomized to protect against the influence of lurking variables (e.g., ambient humidity, reagent degradation over time) that could bias the results [2] [29].

Consider Center Points (for continuous factors): Adding experimental runs at the center point (coded level 0 for all continuous factors) is a critical best practice. These points do not change the estimates of the main or interaction effects but provide a direct check for curvature in the response surface and a more robust pure-error estimate [29].
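A simple curvature check compares the mean response at the center points with the mean response at the factorial (corner) points. The sketch below assumes a hypothetical unreplicated 2^2 design plus three center-point runs; all yields are invented, and the replicated center points supply the pure-error estimate.

```python
from math import sqrt
from statistics import mean, stdev

# Hypothetical yields (%), invented for illustration
factorial_yields = [63.0, 71.0, 67.0, 89.0]  # four corner runs of a 2^2 design
center_yields = [78.0, 79.0, 77.5]           # three replicate center-point runs

# Curvature estimate: center mean minus corner mean.
# Under a purely linear (plus interaction) model the two means should agree.
curvature = mean(center_yields) - mean(factorial_yields)

# Pure-error standard deviation from the replicated center points
pure_error_sd = stdev(center_yields)

# Standard error of the difference between the two means
std_error = pure_error_sd * sqrt(1 / len(factorial_yields) + 1 / len(center_yields))

# Ratio to compare against a t distribution with len(center_yields) - 1 degrees of freedom
t_ratio = curvature / std_error

print(f"curvature estimate: {curvature:.2f}")
print(f"pure-error SD:      {pure_error_sd:.2f}")
print(f"t ratio:            {t_ratio:.2f}")
```

A large ratio suggests that a first-order model is inadequate in the studied region, which is the usual trigger for moving to a design that can estimate quadratic terms.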

Table 2: Full Factorial Design Matrix (2³) with Replication and Randomization for a Catalytic Reaction

| Standard Order | Random Run Order | Temperature (X₁) | Catalyst Loading (X₂) | Solvent (X₃) |
|---|---|---|---|---|
| 1 | 7 | -1 | -1 | -1 |
| 2 | 12 | +1 | -1 | -1 |
| 3 | 4 | -1 | +1 | -1 |
| 4 | 9 | +1 | +1 | -1 |
| 5 | 2 | -1 | -1 | +1 |
| 6 | 15 | +1 | -1 | +1 |
| 7 | 11 | -1 | +1 | +1 |
| 8 | 5 | +1 | +1 | +1 |
| (Center Point) | 1 | 0 | 0 | -1 or +1 |

Replicates and additional center points follow the same randomized pattern. Because Solvent is categorical and has no midpoint, each center point sets X₁ and X₂ to 0 at one of the two solvent levels.
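A run plan like Table 2 can be generated programmatically. The sketch below builds the eight 2³ combinations in standard order, duplicates them for two replicates, and shuffles the run order. The replicate count and the random seed are assumptions chosen for illustration, and center points are left out for brevity.

```python
import random
from itertools import product

factors = ["Temperature", "Catalyst Loading", "Solvent"]
n_replicates = 2  # assumed number of full replicates

# product() varies the LAST position fastest; reversing each tuple makes the
# FIRST factor alternate fastest, which matches standard order.
base_runs = [tuple(reversed(levels))
             for levels in product((-1, +1), repeat=len(factors))]

# One entry per physical experiment
plan = []
for replicate in range(1, n_replicates + 1):
    for std_order, levels in enumerate(base_runs, start=1):
        plan.append({"std_order": std_order,
                     "replicate": replicate,
                     "levels": dict(zip(factors, levels))})

# Randomize the execution order; a fixed seed keeps the plan reproducible
rng = random.Random(2024)
rng.shuffle(plan)
for run_order, run in enumerate(plan, start=1):
    run["run_order"] = run_order

for run in plan:
    print(run["run_order"], run["std_order"], run["replicate"], run["levels"])
```

Shuffling all 16 runs together gives a completely randomized design. If the replicates must be run on different days or with different reagent lots, randomizing within each replicate (treating it as a block) may be the better choice.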

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents essential for conducting high-quality optimization experiments.

Table 3: Key Research Reagent Solutions for Reaction Optimization

| Item | Function / Relevance |
|---|---|
| Anhydrous Solvents | To control reaction medium polarity and prevent undesirable side reactions with water, ensuring reproducibility [28]. |
| High-Purity Catalysts | To ensure consistent activity and selectivity; variations in purity can be a significant source of uncontrolled variability [28]. |
| Certified Reference Standards | For accurate calibration of analytical equipment (e.g., HPLC, GC) to ensure precise and accurate quantification of yield and purity [29]. |
| Inert Atmosphere Glove Box | For handling air- and/or moisture-sensitive reagents and catalysts, a critical requirement for many modern synthetic methodologies [29]. |

Visualizing the Full Factorial Workflow

The following diagram illustrates the logical workflow for the initial phase of a Full Factorial Design, from planning to execution.

Diagram 1: Factorial Design Setup Workflow

In the realm of reaction optimization research, the transition from a conceptual experimental plan to a tangible, executable setup is achieved through the construction of the Experimental Design Matrix. This matrix serves as the fundamental blueprint for any Full Factorial Design (FFD), systematically encoding the combinations of factor levels to be tested. It is the structured framework that enables researchers to efficiently explore complex experimental spaces and extract meaningful insights about main effects and interaction effects [2] [28].

Constructing the matrix transforms the abstract principles of Design of Experiments (DOE) into a practical plan capable of revealing the intricate, often non-linear relationships that govern chemical reactions and process outcomes [30]. For professionals in drug development and other research-intensive fields, mastering the construction of this matrix is a critical skill for achieving robust, optimized, and well-understood processes.

Fundamental Concepts of the Design Matrix

The Experimental Design Matrix is a mathematical representation of the experimental plan. In a Full Factorial Design, every possible combination of all factors across their specified levels is included, making it a comprehensive approach to process investigation [2].

- Factors and Levels: Independent variables manipulated by the experimenter are termed factors. These can be numerical (e.g., temperature, pressure) or categorical (e.g., catalyst type, solvent). Each factor is assigned specific levels, which are the values or settings at which it will be tested during the experiment [2].

- Runs and Treatments: Each row in the design matrix represents a unique run or treatment, a specific combination of factor levels to be executed in the laboratory. The total number of runs in a full factorial design is given by the product of the number of levels for all factors. For k factors, each at 2 levels, this results in 2^k experimental runs [28].

- Coding Scheme: To simplify calculations and unify the scale for factors with different physical units, factor levels are often coded. The standard practice is to code the low level as -1 (or sometimes -), the high level as +1 (or +), and, if applicable, a center point as 0 [31] [3]. This coded matrix is also known as the model matrix or analysis matrix [31].
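The run-count rule (product of the number of levels across all factors) can be checked by enumerating the treatments directly. The sketch below uses a hypothetical mixed-level design; the factor names echo Table 1, but the 80 °C middle level is an assumption added for illustration.

```python
from itertools import product
from math import prod

# Hypothetical mixed-level design: one 3-level and two 2-level factors
levels = {
    "Temperature (°C)": [60, 80, 100],
    "Catalyst Loading (mol%)": [1.0, 2.0],
    "Solvent": ["Toluene", "Acetonitrile"],
}

# Number of runs = product of the number of levels of every factor
n_runs = prod(len(settings) for settings in levels.values())

# Each treatment is one combination of factor settings (one row of the matrix)
treatments = list(product(*levels.values()))

print(n_runs)          # 3 * 2 * 2
print(treatments[0])   # first row of the uncoded design matrix
```

For k two-level factors the same calculation reduces to 2^k, so each added factor doubles the number of runs.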

The Property of Orthogonality

A key characteristic of a properly constructed design matrix for a 2-level factorial design is orthogonality. This means that the columns representing the main effects and interactions are all pairwise uncorrelated; the sum of the products of their corresponding entries is zero [31]. The immense practical value of orthogonality is that it eliminates correlation between the estimates of the main effects and interactions. This allows each effect to be estimated independently and with maximum precision [31].
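Orthogonality can be verified numerically. The sketch below builds the main-effect and interaction columns of a 2³ design in coded units and checks that every pair of columns has a zero dot product. The factor labels A, B, and C are placeholders.

```python
from itertools import combinations, product
from math import prod

k = 3
names = "ABC"  # placeholder factor labels
runs = list(product((-1, 1), repeat=k))  # all 2^k coded runs

# One column per main effect (A, B, C) and per interaction (AB, AC, BC, ABC);
# an interaction column is the element-wise product of its parent columns.
columns = {}
for size in range(1, k + 1):
    for idx in combinations(range(k), size):
        label = "".join(names[i] for i in idx)
        columns[label] = [prod(run[i] for i in idx) for run in runs]


def dot(u, v):
    """Sum of products of corresponding entries."""
    return sum(a * b for a, b in zip(u, v))


all_orthogonal = all(dot(columns[p], columns[q]) == 0
                     for p, q in combinations(columns, 2))
all_balanced = all(sum(col) == 0 for col in columns.values())

print(sorted(columns))
print("pairwise orthogonal:", all_orthogonal)
print("balanced columns:   ", all_balanced)
```

Dropping runs from the design, for example after a failed experiment, generally breaks this property, and the effect estimates then become correlated.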

Constructing a Two-Level Full Factorial Design Matrix

The two-level full factorial design (2^k) is one of the most prevalent forms in scientific research, particularly valuable for screening influential factors and quantifying interaction effects [2] [28].

The Standard Order Algorithm

A systematic method for generating the design matrix for a 2^k factorial design is to follow the standard order. This algorithm ensures a structured and non-arbitrary arrangement of experimental runs [31].

- Rule for Standard Order: The first column (Factor A) alternates signs with each run: -1, +1, -1, +1, .... The second column (Factor B) alternates signs every two runs: -1, -1, +1, +1, .... The third column (Factor C) alternates signs every four runs, and so on. In general, the i-th column starts with 2^(i-1) repeats of -1 followed by 2^(i-1) repeats of +1 [31].
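The standard-order rule translates directly into code: the sign in column i flips every 2^(i-1) runs. The function below is a minimal sketch of that rule; the name `standard_order` is hypothetical.

```python
def standard_order(k):
    """Design matrix of a 2^k full factorial in standard order (coded -1/+1)."""
    matrix = []
    for run in range(2 ** k):
        row = []
        for i in range(k):          # i = 0 corresponds to the first factor (A)
            block = 2 ** i          # number of consecutive runs sharing one sign
            # Even-numbered blocks are -1, odd-numbered blocks are +1
            row.append(-1 if (run // block) % 2 == 0 else +1)
        matrix.append(tuple(row))
    return matrix


for std, row in enumerate(standard_order(3), start=1):
    print(std, row)
```

For k = 3 this reproduces the eight factorial rows of Table 2 in the order listed there.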