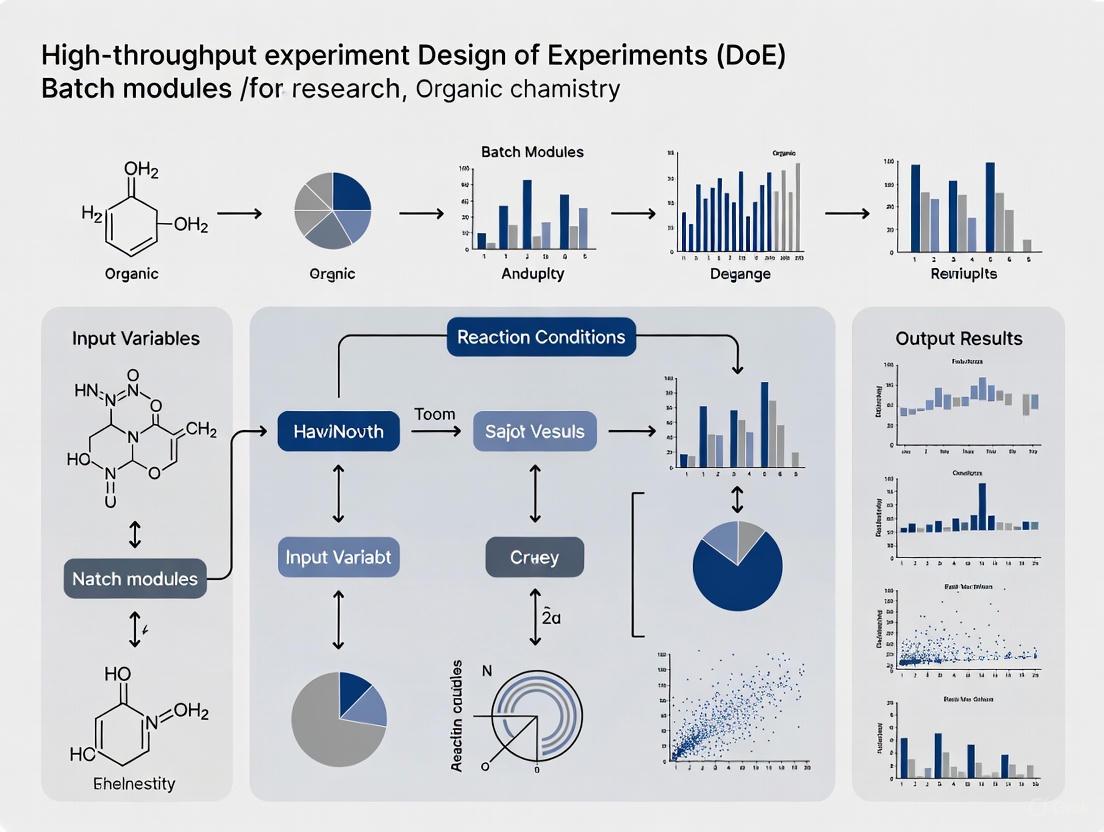

High-Throughput Experimentation Batch Modules: A Guide to Accelerated Discovery and Optimization

This article explores the transformative role of High-Throughput Experimentation (HTE) batch modules in accelerating chemical research and drug development.

Abstract

This article explores the transformative role of High-Throughput Experimentation (HTE) batch modules in accelerating chemical research and drug development. It provides a comprehensive overview of HTE fundamentals, detailing how the miniaturization and parallelization of reactions in platforms like 96-well plates enable rapid screening. The piece delves into practical methodologies and diverse applications, from organic synthesis and catalysis to pharmaceutical process development, and addresses common troubleshooting and optimization strategies. Furthermore, it examines the critical validation of HTE results and presents comparative analyses with other technologies like flow chemistry, highlighting how HTE, especially when enhanced by machine learning and automation, is revolutionizing efficiency and data-driven decision-making in scientific discovery.

What is High-Throughput Experimentation? Unlocking the Principles of Batch Module Screening

High-Throughput Experimentation (HTE) has emerged as a transformative approach across chemical and biological research, fundamentally changing how compounds are discovered, screened, and optimized. By systematically implementing the core principles of miniaturization, parallelization, and automation, HTE enables researchers to efficiently explore vast experimental spaces that would be impractical with traditional methods. This methodology is particularly crucial in drug discovery, where it can reduce screening time for thousands of compounds from years to weeks [1]. The following sections detail these core concepts, supported by quantitative data, experimental protocols, and visual workflows that define modern HTE practices.

Core Concepts and Foundational Principles

HTE represents a paradigm shift from traditional one-experiment-at-a-time approaches to highly efficient, data-rich research methodologies. This transformation is built upon three interconnected pillars.

Miniaturization

Miniaturization refers to the systematic reduction of reaction or assay volumes, typically performed in microtiter plates with well volumes of ∼300 μL or less [1]. This principle directly addresses resource constraints by dramatically reducing reagent consumption, cost, and waste generation while maintaining—or even enhancing—experimental integrity. In chemical synthesis, miniaturization allows for the rapid investigation of diverse reaction parameters with minimal material input [2]. Biologically, it enables the creation of scalable, homogeneous model systems, such as 2D human intestinal organoid (HIO) monolayers in 96-well plates, which provide greater reproducibility for high-throughput phenotypic studies compared to their 3D counterparts [3].

Parallelization

Parallelization enables the simultaneous execution of hundreds to thousands of experiments. This "brute force" approach allows a wide chemical or biological space to be explored concurrently rather than sequentially [1]. While traditional plate-based systems (96-, 384-, or 1536-well formats) remain prevalent [4], flow chemistry has emerged as a powerful complementary approach. Flow systems enable continuous variables such as temperature, pressure, and reaction time to be dynamically altered and investigated in a high-throughput manner, which is challenging in batch-wise systems [1]. This capability is particularly valuable for optimizing chemical processes, as parameters identified in flow systems often require less re-optimization when scaling up [1].

Automation

Automation integrates robotic systems, software, and hardware to perform repetitive tasks with minimal human intervention. This principle is crucial for achieving the scale, precision, and reproducibility required for HTE. Modern platforms range from simple, ergonomic pipettes for daily tasks to fully integrated, multi-robot workflows that can operate unattended [5]. The primary benefit of automation is the replacement of human variation with a stable system that generates reliable, reproducible data [5]. This not only increases throughput but also frees researchers from manual tasks, allowing them to focus on experimental design and data analysis [5].

Table: Core HTE Principles and Their Impact

| Principle | Key Implementation | Primary Benefits | Typical Scale |
|---|---|---|---|
| Miniaturization | Microtiter plates, chip reactors | Reduces reagent consumption and cost; improves heat/mass transfer [1] | 96- to 1536-well plates (up to ∼300 µL/well) [1] |
| Parallelization | Multi-well reactors, parallel flow systems | Enables simultaneous testing of thousands of conditions; drastically reduces discovery time [1] | 3000+ compounds screened in 3-4 weeks vs. 1-2 years [1] |
| Automation | Robotic liquid handlers, automated platforms | Enhances reproducibility, reduces human error; enables 24/7 operation [5] | Fully automated protein production (DNA to protein in <48 hrs) [5] |

Diagram 1: The three core principles of High-Throughput Experimentation (HTE) and their primary outcomes. These concepts work synergistically to accelerate research.

High-Throughput Experimentation (HTE) Protocols

Protocol 1: Miniaturized HTE for Reaction Screening and Optimization

This protocol outlines a methodology for rapidly screening and optimizing chemical reactions using miniaturized high-throughput experimentation, adapted from a study that generated a dataset of 13,490 Minisci-type C-H alkylation reactions [6].

2.1.1 Research Reagent Solutions

Table: Essential Materials for Miniaturized Reaction Screening

| Item | Function | Specifications |
|---|---|---|
| 96- or 384-Well Microtiter Plate | Reaction vessel for parallel experimentation | Chemically resistant; typical well volume ~300 μL [1] |
| Automated Liquid Handling System | Precise dispensing of reagents and solvents | Enables nanoliter to microliter volume transfers [4] |
| Inert Atmosphere Capability | Maintains anhydrous/anaerobic conditions | Critical for air- and moisture-sensitive reactions [2] |
| Plate Seals | Prevents solvent evaporation and contamination | Compatible with a range of organic solvents |
| Plate Reader or LC-MS | High-throughput analysis of reaction outcomes | Enables rapid quantification of conversion and yield [6] |

2.1.2 Step-by-Step Procedure

Experimental Design: Define the experimental space to be investigated, which may include variables such as catalysts, ligands, bases, and solvents. Using Design of Experiments (DoE) methodologies is recommended for efficient parameter space exploration [1].

Plate Preparation: Using an automated liquid handler, dispense stock solutions of solid reagents (e.g., catalysts, bases) into the designated wells of a dry microtiter plate in nanoliter to microliter quantities.

Solvent and Substrate Addition: Add the appropriate solvent to each well via the liquid handler. Finally, introduce the substrate solution to initiate the reactions simultaneously across the plate.

Reaction Incubation: Seal the plate and place it in a temperature-controlled incubator or agitator for the desired reaction time. For photochemical reactions, employ a dedicated multi-well batch photoreactor [1].

Reaction Quenching and Analysis: After the set time, automatically add a quenching solution to each well. Analyze the reaction outcomes using high-throughput analytical techniques, such as liquid chromatography-mass spectrometry (LC-MS) [1] or plate reader spectroscopy.

Data Processing: Convert analytical data into quantitative metrics (e.g., conversion, yield). The resulting dataset, such as the 13,490-reaction set for Minisci-type reactions, can be used for immediate analysis or to train machine learning models for reaction prediction [6].

Diagram 2: A high-throughput workflow for miniaturized reaction screening and optimization. This protocol enables the rapid generation of large datasets for empirical optimization or machine learning.
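The experimental design and plate preparation steps above can be sketched in code. The example below is a minimal illustration, not the method used in the cited study: it enumerates a full-factorial design over four categorical factors and assigns each condition to a well of a 96-well plate in row-major order. The function names (`well_ids`, `full_factorial_plate`) and all factor levels are hypothetical placeholders; a real campaign would often use a fractional or optimized DoE design instead of the full grid.

```python
from itertools import product
from string import ascii_uppercase


def well_ids(n_rows=8, n_cols=12):
    """Return well IDs in row-major order (A1..A12, B1..H12 for 96 wells).

    Single-letter row labels limit this helper to plates with at most 26 rows.
    """
    return [f"{ascii_uppercase[r]}{c + 1}" for r in range(n_rows) for c in range(n_cols)]


def full_factorial_plate(factors, n_rows=8, n_cols=12):
    """Assign every combination of factor levels to one well of the plate."""
    names = list(factors)
    combos = list(product(*(factors[name] for name in names)))
    wells = well_ids(n_rows, n_cols)
    if len(combos) > len(wells):
        raise ValueError(f"{len(combos)} conditions exceed {len(wells)} wells")
    return {well: dict(zip(names, combo)) for well, combo in zip(wells, combos)}


# Hypothetical levels, chosen only so that 4 x 3 x 2 x 4 = 96 fills one plate.
factors = {
    "catalyst": ["cat_1", "cat_2", "cat_3", "cat_4"],
    "ligand": ["lig_1", "lig_2", "lig_3"],
    "base": ["base_1", "base_2"],
    "solvent": ["solv_1", "solv_2", "solv_3", "solv_4"],
}
plate = full_factorial_plate(factors)
print(len(plate), plate["A1"], plate["H12"])
```

The resulting dictionary can serve as a dispense list for a liquid handler and as the key that links analytical results back to conditions.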

Protocol 2: Automated Imaging and Phenotypic Analysis of Human Intestinal Organoids

This protocol describes an automated pipeline for rapidly imaging and quantifying fluorescent labeling in 2D human intestinal organoid (HIO) cultures plated in 96-well plates, enabling high-throughput phenotypic screening [3] [7].

2.2.1 Research Reagent Solutions

Table: Essential Materials for HIO Phenotypic Screening

| Item | Function | Specifications |
|---|---|---|
| 96-Well Plate | Platform for growing 2D HIO monolayers | Optically clear glass or plastic bottom (e.g., Corning 3595) [3] |
| Collagen IV | Extracellular matrix coating for cell adhesion | Stock solution of 1 mg/mL in 100 mM acetic acid, diluted 1:30 [3] |
| HIO Culture Medium | Supports organoid growth and differentiation | L-WRN conditioned medium is commonly used [3] |
| Fixation and Staining Reagents | For cell labeling and immunostaining | Paraformaldehyde, permeabilization buffer, fluorescent antibodies/dyes |
| High-Throughput Confocal Microscope | Automated image acquisition | Spinning disk confocal system for fast z-stack imaging [3] |
| Image Analysis Software | Quantitative analysis of fluorescence | Open-source software (e.g., ImageJ) or commercial packages [3] |

2.2.2 Step-by-Step Procedure

Surface Coating: Dilute a stock solution of Collagen IV 1:30 in sterile deionized water. Add 100 μL of this solution to each inner well of a 96-well plate. Incubate the plate for 90 minutes at 37°C, then aspirate the solution, leaving a coated surface [3].

Cell Seeding: Harvest 3D HIOs cultured for 5-7 days by washing with an ice-cold 0.5M EDTA solution in PBS. Dissociate the organoids into a single-cell suspension and seed them onto the collagen IV-coated 96-well plate. Culture the cells to form a confluent 2D monolayer [3].

Experimental Treatment: Apply the compounds, microbial products, or other experimental stimuli to the HIO monolayers according to the experimental design. Include appropriate controls in designated wells.

Fixation and Staining: At the endpoint, wash the cells and fix them with paraformaldehyde. Permeabilize the cells if required for intracellular targets, and then incubate with fluorescently labeled antibodies or dyes (e.g., for cell identity markers or proliferation) [3].

Automated Imaging: Place the plate in a high-throughput spinning disk confocal microscope. Use an automated stage and predefined acquisition settings to image all wells of the plate, capturing multiple z-stacks per well to account for the 3D structure of cells [3].

Image and Quantitative Analysis: Use image analysis software to perform quantitative profiling. This can include measuring fluorescence intensity in different channels (nuclear or cytoplasmic), counting specific cell types, or analyzing morphological features. The pipeline can quantify inter-donor variability and cell-specific responses to treatments [3].
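The intensity measurement in the final step can be illustrated with a short NumPy sketch. This is not the pipeline from the cited work, which relies on dedicated image analysis software. It assumes each well has already been loaded as a (z, y, x) array for one channel, collapses the z-stack by maximum-intensity projection, and reports a background-subtracted mean. The function name, the fixed background value, and the synthetic arrays are all hypothetical.

```python
import numpy as np


def well_mean_intensity(z_stack, background=0.0):
    """Max-project a (z, y, x) stack and return the background-subtracted mean."""
    stack = np.asarray(z_stack, dtype=float)
    if stack.ndim != 3:
        raise ValueError("expected a (z, y, x) array")
    projection = stack.max(axis=0)                 # collapse the z dimension
    corrected = np.clip(projection - background, 0.0, None)
    return float(corrected.mean())


# Synthetic stacks standing in for one control well and one treated well.
control = np.full((3, 4, 4), 100.0)
treated = control.copy()
treated[1] = 250.0                                 # brighter signal in the middle slice

control_signal = well_mean_intensity(control, background=50.0)
treated_signal = well_mean_intensity(treated, background=50.0)
fold_change = treated_signal / control_signal
print(control_signal, treated_signal, fold_change)
```

In practice the background would be estimated per plate or per image (for example from unstained wells), and segmentation would be needed before counting cells or separating nuclear from cytoplasmic signal.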

Application in Integrated Drug Discovery

The power of HTE is fully realized when its principles are integrated into a seamless discovery workflow. A seminal study demonstrated this by combining miniaturized HTE with deep learning to dramatically accelerate the hit-to-lead optimization phase in drug discovery [6].

Researchers first generated an extensive HTE dataset of 13,490 Minisci-type C–H alkylation reactions [6]. This large-scale experimental data was used to train deep graph neural networks, creating a predictive model for reaction outcomes. Subsequently, scaffold-based enumeration of potential products from moderately active starting compounds yielded a virtual library of 26,375 molecules [6]. This library was screened in silico using the trained model, alongside physicochemical property assessment and structure-based scoring, to identify 212 high-priority candidates for synthesis [6]. Of these, 14 compounds were synthesized and exhibited subnanomolar activity, representing a potency improvement of up to 4,500-fold over the original hit compound [6]. This integrated approach, powered by initial HTE, significantly reduces cycle times in critical early drug discovery stages.

Diagram 3: An integrated HTE and machine learning workflow for accelerated drug discovery. This process leverages large-scale experimental data to train predictive models that efficiently identify potent lead compounds.
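To make the selectivity of this funnel concrete, the short calculation below computes the fraction of molecules retained at each stage, using only the counts quoted above. The stage labels and the helper name `stage_fractions` are illustrative choices, not terms from the study.

```python
# Counts taken from the text; stage labels are illustrative.
funnel = [
    ("virtual library", 26_375),
    ("prioritized candidates", 212),
    ("synthesized, subnanomolar", 14),
]


def stage_fractions(stages):
    """Return (name, count, fraction of previous stage, fraction of first stage)."""
    first = stages[0][1]
    previous = first
    rows = []
    for name, count in stages:
        rows.append((name, count, count / previous, count / first))
        previous = count
    return rows


rows = stage_fractions(funnel)
for name, count, vs_previous, vs_library in rows:
    print(f"{name:<26}{count:>7}  {vs_previous:8.2%} of previous  {vs_library:8.3%} of library")
```

In silico prioritization keeps about 0.8% of the enumerated library, which is where most of the saved synthesis effort comes from.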

Advanced HTE Platforms and Technologies

Flow Chemistry as an HTE Tool

Flow chemistry addresses several limitations of traditional batch-wise HTE, particularly for challenging chemical transformations. It provides superior heat and mass transfer, enables safe handling of hazardous reagents, and allows easy pressurization to access superheated solvents [1] [8]. A key advantage is the ability to dynamically investigate continuous variables like temperature and residence time, which is difficult in batch systems [1]. Furthermore, scale-up is often more straightforward in flow, as increasing operating time can yield larger quantities of material without changing the reactor geometry, minimizing re-optimization [1]. This makes flow chemistry especially powerful for high-throughput screening in areas like photochemistry, where it ensures uniform light penetration [1].

The nELISA Platform for High-Plex Proteomic Screening

The nELISA platform represents a significant innovation for high-throughput, high-plex protein quantification. It overcomes the primary barrier to multiplexing in traditional sandwich immunoassays—reagent-driven cross-reactivity (rCR)—by preassembling antibody pairs on target-specific, barcoded beads [9]. This spatial separation prevents noncognate interactions. The platform incorporates a DNA-mediated detection mechanism, resulting in sub-picogram-per-milliliter sensitivity across a wide dynamic range [9]. In a demonstration of its high-throughput capability, nELISA was used to profile 191 proteins across 7,392 peripheral blood mononuclear cell (PBMC) samples, generating approximately 1.4 million protein measurements in under one week [9]. This scalability and efficiency make it a powerful tool for large-scale phenotypic screening in drug discovery.
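The "approximately 1.4 million" figure follows directly from the panel size and sample count. The snippet below reproduces the arithmetic and derives a lower bound on daily throughput. The seven-day divisor is an assumption based on the phrase "under one week", so the true daily rate would be at least this high.

```python
proteins = 191
samples = 7_392

total_measurements = proteins * samples
# "Under one week" implies at least this many measurements per day.
min_per_day = total_measurements / 7

print(f"{total_measurements:,} measurements, at least {min_per_day:,.0f} per day")
```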

High-Throughput Experimentation (HTE) has revolutionized research and development in the life sciences and chemical industries. This methodology enables the rapid testing of thousands of reactions or assays in parallel, dramatically accelerating the pace of discovery and optimization. The evolution of HTE represents a journey from its early foundations in biological screening towards its modern application in streamlined chemical synthesis. This progression is intrinsically linked to the adoption of sophisticated Design of Experiments (DoE) principles and batch modules, which allow for the efficient exploration of complex variable spaces. This Application Note details this technological evolution, providing structured protocols, data, and visualizations framed within contemporary HTE research paradigms for an audience of researchers, scientists, and drug development professionals.

Quantitative Comparison of Methodological Approaches

The transition from biological to chemical applications of HTE is characterized by distinct advantages and challenges. The following tables summarize the core characteristics and performance metrics of these approaches, providing a clear, comparative overview.

Table 1: Core Characteristics of Biological and Chemical HTE Approaches

| Feature | Biological HTE Assays | Modern Chemical HTE Synthesis |
|---|---|---|
| Primary Focus | Evaluation of biological activity (e.g., enzyme inhibition) [10]. | Optimization of chemical reaction conditions and discovery of new synthetic pathways [11]. |
| Typical Readout | Metabolic activity, luminescence/fluorescence, cell viability. | Chemical yield, conversion, purity (e.g., via LC/UV/MS, NMR) [11]. |
| Data Complexity | High biological variability; complex, multi-parametric outputs. | Structured data on yields, kinetics, and byproducts; ideal for AI/ML [11]. |
| Automation Focus | Liquid handling, cell culture, assay plating. | Robotic reactors, automated dispensing, high-throughput analysis [11]. |
| Key Challenge | Connecting cellular phenotypes to specific mechanistic actions [10]. | Integrating and automatically processing heterogeneous analytical data [11]. |

Table 2: Performance and Practical Considerations

| Consideration | Biological Assays | Chemical Synthesis |
|---|---|---|
| Throughput | Very High (10⁴ - 10⁶ samples/day) | High (10² - 10³ reactions/batch) |
| Reaction Scale | Micrograms - Milligrams | Milligrams - Grams |
| Environmental Impact | Often generates biological waste. | Chemical methods can face environmental concerns (e.g., metal catalysts); biological methods offer greener alternatives [12]. |
| Resource Intensity | High cost of reagents and cell cultures. | High initial investment in robotics and analytics [11]. |
| Data Integration | Software often not designed for complex chemical intelligence [11]. | Platforms like Katalyst D2D integrate design, execution, and analysis, capturing data for AI/ML [11]. |

Experimental Protocols

Protocol A: A Representative Modern Chemical HTE Workflow — Synthesis of Lactobionic Acid

This protocol exemplifies a modern HTE approach to optimizing a chemical synthesis, in this case, the production of lactobionic acid (LBA), a valuable polyhydroxy acid with applications in pharmaceuticals and cosmetics [12].

1. Experimental Design (DoE Phase)

- Objective: Systematically explore the effect of catalyst type, temperature, and pressure on the yield and selectivity of lactose oxidation to LBA.

- Action: Utilize HTE software (e.g., Katalyst D2D) to design a DoE. The software will generate a set of experiments varying numerical parameters (e.g., temperature: 30-70°C, pressure: 1-5 bar) and categorical parameters (e.g., catalyst: Pd/Bi, Au, Mn/Ce oxides) [12] [11]. The Bayesian Optimization module can be employed to reduce the number of experiments required to find optimal conditions [11].
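As a rough illustration of what such a design contains, the sketch below builds a full-factorial grid over the numerical and categorical parameters named above. It does not use or imitate the Katalyst D2D interface. The three levels per numerical factor are assumptions picked from within the quoted ranges, and the helper `full_factorial` is hypothetical. A Bayesian optimization module would instead propose a smaller, adaptive subset of these runs.

```python
from itertools import product

# Levels are assumptions chosen within the ranges given in the text.
temperatures_c = [30, 50, 70]
pressures_bar = [1, 3, 5]
catalysts = ["Pd/Bi", "Au", "Mn/Ce oxide"]


def full_factorial(**factors):
    """Return one dict per run covering every combination of factor levels."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


design = full_factorial(
    temperature_c=temperatures_c,
    pressure_bar=pressures_bar,
    catalyst=catalysts,
)

replicates_per_plate = 96 // len(design)   # how many full copies fit in 96 wells
print(len(design), design[0], replicates_per_plate)
```

With 27 unique conditions, a 96-well reactor block leaves room for triplicates plus control wells.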

2. Reaction Setup & Execution

- Stock Solution Preparation: Prepare stock solutions of lactose and the various catalysts.

- HTE Plate Dispensing: Use an automated liquid handler to dispense the specified volumes of lactose stock solution into the wells of a high-throughput reactor block (e.g., 96-well format).

- Catalyst & Condition Assignment: Following the DoE template, add the designated catalyst to each well. The HTE software can automatically generate an instruction list for this step [11].

- Initiating Reactions: Transfer the reactor block to a parallel pressure reactor system. Program the system to pressurize with oxygen and heat each well to its designated temperature for a set reaction time (e.g., 2-12 hours).

3. Analysis & Data Processing

- Automated Analysis: Upon reaction completion, the samples are automatically transferred (or the data files are automatically swept) to integrated analytical instruments, such as LC/UV/MS or NMR [11].

- Data Processing: The software automatically processes the raw analytical data, calculating conversion and yield for each well. The results are linked back to the experimental conditions in the software interface [11].

- Reprocessing (if needed): If initial data processing is suboptimal (e.g., a key peak was not integrated), the entire plate or select wells can be directly reanalyzed within the platform without manually reopening each dataset [11].
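The conversion and yield calculation in the data processing step can be sketched as follows. This is one common way to do it, not a description of what the HTE software does internally. It assumes external calibration with a linear response factor (peak area per µmol) for both substrate and product, a known substrate loading per well, and 1:1 stoichiometry. All names and numbers are hypothetical.

```python
def quantify_well(area_substrate, area_product, rf_substrate, rf_product, loaded_umol):
    """Convert calibrated peak areas into conversion, yield, and selectivity.

    rf_* are response factors in area units per µmol (linear calibration assumed).
    """
    remaining_umol = area_substrate / rf_substrate
    formed_umol = area_product / rf_product
    conversion = (loaded_umol - remaining_umol) / loaded_umol
    yield_fraction = formed_umol / loaded_umol          # assumes 1:1 stoichiometry
    selectivity = yield_fraction / conversion if conversion > 0 else float("nan")
    return {
        "conversion": conversion,
        "yield": yield_fraction,
        "selectivity": selectivity,
    }


# Hypothetical well: 10 µmol substrate loaded, 2 µmol left, 6 µmol product formed.
result = quantify_well(
    area_substrate=200.0,
    area_product=300.0,
    rf_substrate=100.0,
    rf_product=50.0,
    loaded_umol=10.0,
)
print(result)
```

Reporting selectivity alongside yield helps separate wells that convert substrate into product from wells that mainly form byproducts.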

4. Decision & Insight Generation

- Visualization: Use the heat map and well-size visualization tools within the HTE platform to quickly identify high-performing conditions.

- Modeling: Export the structured, high-quality experimental data (conditions, yields, byproducts) for use in AI/ML frameworks to build predictive models and guide future experimental campaigns [11].
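A plate heat map is simply the per-well results arranged on the plate grid. The sketch below is independent of any particular HTE platform: it places hypothetical yields keyed by well ID into an 8 × 12 NumPy array and looks up the best-performing well. The function names and the example yields are illustrative, and the resulting array could be passed to any plotting library for display.

```python
import numpy as np

ROWS = "ABCDEFGH"   # row labels of a 96-well plate


def plate_matrix(values_by_well, n_rows=8, n_cols=12):
    """Arrange per-well values on the plate grid; empty wells stay NaN."""
    grid = np.full((n_rows, n_cols), np.nan)
    for well, value in values_by_well.items():
        row = ROWS.index(well[0])
        col = int(well[1:]) - 1
        grid[row, col] = value
    return grid


def best_well(grid):
    """Return the ID and value of the highest-scoring well, ignoring NaN."""
    row, col = np.unravel_index(np.nanargmax(grid), grid.shape)
    return f"{ROWS[row]}{col + 1}", float(grid[row, col])


# Hypothetical yields for three wells.
yields = {"A1": 0.12, "B7": 0.83, "H12": 0.45}
grid = plate_matrix(yields)
top_well, top_yield = best_well(grid)
print(top_well, top_yield)
```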

Protocol B: Correlating Biological and Chemical Assays in HTE

This protocol outlines a comparative approach for assays common in pharmaceutical development, ensuring robust data correlation.

1. Objective

- Establish a correlation between a biological assay (e.g., measuring antimicrobial activity via a bioassay) and a chemical assay (e.g., quantifying drug concentration via HPLC) for a compound and its metabolites in test samples [13].

2. Parallel Assay Execution

- Bioassay: Test serially diluted samples against a target organism (e.g., M. tuberculosis). The bioassay measures total antimicrobial activity, which includes contributions from the parent drug and any active metabolites [13].

- Chemical Assay (HPLC): Simultaneously, analyze the same samples using a validated HPLC method to quantify the concentration of the parent drug specifically [13].

3. Data Correlation & Analysis

- Plot the bioassay results (e.g., zone of inhibition) against the HPLC results (parent drug concentration). A discrepancy, where the bioassay overestimates the concentration compared to the parent-drug-only HPLC assay, indicates the presence of active metabolites [13].

- A stronger correlation is typically observed when bioassay data is compared with the sum of the parent drug and its active metabolites as measured by HPLC [13]. This correlative data is essential for setting accurate antibiotic susceptibility test breakpoints.
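The comparison described above can be expressed as two Pearson correlations. The sketch below uses invented numbers purely to show the calculation and does not reproduce data from the cited work. It also assumes the bioassay readout has already been converted to an apparent concentration through a standard curve, which is an extra step not detailed in this protocol.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation coefficient between two 1-D sequences."""
    return float(np.corrcoef(x, y)[0, 1])


# Hypothetical concentrations in µg/mL for four samples.
hplc_parent = np.array([1.0, 2.0, 4.0, 8.0])
hplc_metabolite = np.array([0.5, 0.5, 3.0, 1.0])
bioassay_apparent = np.array([1.6, 2.4, 7.2, 8.9])

r_parent_only = pearson_r(bioassay_apparent, hplc_parent)
r_parent_plus_metabolite = pearson_r(bioassay_apparent, hplc_parent + hplc_metabolite)
mean_overestimate = float(np.mean(bioassay_apparent - hplc_parent))

print(round(r_parent_only, 3), round(r_parent_plus_metabolite, 3), mean_overestimate)
```

A positive mean overestimate together with a higher correlation for the summed HPLC values is the pattern the protocol interprets as evidence of active metabolites.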

Visualization of HTE Workflows and Signaling Pathways

The following diagrams, created using Graphviz DOT language, illustrate the logical relationships and workflows central to HTE.

Diagram 1: Integrated HTE Workflow

This diagram outlines the core cycle of a modern, integrated HTE platform.

Diagram 2: Biological vs. Chemical Assay Correlation

This diagram visualizes the process of correlating data from different assay types, a key step in validation.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for HTE in Synthesis and Screening

| Item | Function/Application | Example in Context |
|---|---|---|
| Heterogeneous Catalysts | Facilitate selective oxidation/reduction reactions. Essential for exploring green chemistry pathways. | Pd-Bi, Au, Mn/Ce oxides: Used in the selective catalytic oxidation of lactose to lactobionic acid [12]. |
| Redox Mediator Systems | Enable electron transfer in multi-enzymatic cascade reactions for biosynthesis. | Systems combining cellobiose dehydrogenase (CDH) and laccase for enzymatic LBA production [12]. |
| Immobilization Supports | Enhance enzyme stability and enable reuse in biocatalytic processes. | Chitosan, porous silica: Used as carriers for the co-immobilization of enzymatic systems [12]. |
| HTE Software Platform | Integrates DoE, inventory, automated reactor control, and data analysis in a chemically intelligent interface. | Katalyst D2D: Manages the entire workflow from design to decision, linking analytical results to each well [11]. |
| Integrated AI/ML Modules | Reduce experimental burden by intelligently predicting the most informative next experiments. | Bayesian Optimization (e.g., EDBO): Integrated into HTE software for reaction optimization [11]. |

High-Throughput Experimentation (HTE) has revolutionized drug discovery and development by enabling the rapid screening and optimization of vast chemical libraries. This approach allows researchers to conduct thousands of parallel experiments, dramatically accelerating the identification of lead compounds and optimal reaction conditions [14]. At the core of modern HTE lies the integrated batch platform—a sophisticated synergy of liquid handling, reactor blocks, and analytical systems designed to maximize throughput while maintaining data integrity and reproducibility.

The strategic value of HTE extends beyond mere speed. By applying statistical design of experiments (DoE) principles, HTE platforms generate high-quality, data-rich outcomes that are ideal for building predictive machine learning models [11]. This closed-loop workflow from design to decision is becoming essential for pharmaceutical and biotechnology industries facing increasing pressure to reduce development costs and timelines while improving success rates [15] [16]. This application note details the key components and protocols for implementing an effective HTE batch platform within a broader DoE batch modules research framework.

Core Component 1: Advanced Liquid Handling Systems

System Types and Specifications

Liquid handling systems form the operational backbone of any HTE platform, responsible for the precise transfer and manipulation of liquid reagents and samples. The choice of technology depends on the required volume range, throughput, and application specificity.

Table 1: Liquid Handling Technologies for HTE Applications

| Technology Type | Volume Range | Throughput Capability | Key Applications | Advantages |
|---|---|---|---|---|
| Non-contact Acoustic Dispensing [15] | Nanoliter to microliter | Ultra-high-throughput (1536-well formats) | Compound screening, dose-response assays | Minimal carryover, gentle handling, low dead volume |
| Piston-driven Automated Handlers [15] | Microliter to milliliter | High-throughput (96- to 384-well formats) | qPCR, ELISA, library prep | Robust liquid classes, lower carryover, verifiable QC |
| Manual/Semi-Automated Electronic [16] | Microliter to milliliter | Medium-throughput | Academic research, assay development | Lower initial investment, flexibility for method development |

Quantitative Performance Metrics

The performance of liquid handling systems is quantifiable through specific metrics that directly impact experimental reproducibility and data quality. For microliter-range liquid handling, modern automated systems achieve coefficients of variation (CV) below 5%, and precision improves further with advanced calibration protocols and real-time verification methods [15]. For nanoliter-range acoustic dispensing, CVs below 10% are achievable, although precision depends strongly on solvent properties and environmental controls [15]. System accuracy is typically validated through gravimetric analysis or absorbance-based dye assays across the entire operational volume range.

Protocol: Liquid Handler Calibration and QC

Principle: Regular calibration ensures volumetric accuracy and precision, critical for generating reproducible HTE data, especially in dose-response experiments and reagent additions where small volumetric errors can significantly impact outcomes.

Materials:

- Automated liquid handler

- Analytical balance (0.0001 g sensitivity)

- Distilled water

- Appropriate tips or disposables

- Temperature and humidity monitor

Procedure:

- Environmental Stabilization: Allow the liquid handler and all reagents to equilibrate to ambient temperature (20-25°C) for at least 2 hours before calibration.

- Gravimetric Setup: Tare a clean, dry weigh boat on the analytical balance.

- Dispensing Protocol: Program the liquid handler to dispense water across the operational volume range (e.g., 0.5, 1, 5, 10, 50, 100 µL) into the tared container, recording the actual dispensed mass for each volume.

- Data Collection: Repeat each volume measurement 10 times to establish precision.

- Calculation: Convert mass to volume using water density at the recorded temperature, then calculate accuracy (% of target volume) and precision (%CV) for each volume level.

- Documentation: Record all data and adjust instrument calibration factors if values fall outside manufacturer specifications (typically ±2% for accuracy, <5% CV for precision).
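The calculation and documentation steps above reduce to a few lines of arithmetic. The sketch below is illustrative only: it converts replicate masses to volumes using a fixed water density of 0.9982 g/mL (a value for roughly 20 °C, so adjust it for the recorded temperature), then checks the result against the ±2% accuracy and <5% CV limits quoted in the procedure. It ignores evaporation and air-buoyancy corrections, and the function name and replicate masses are hypothetical.

```python
import statistics


def calibration_stats(target_ul, masses_mg, density_g_per_ml=0.9982,
                      accuracy_tol_pct=2.0, max_cv_pct=5.0):
    """Summarize gravimetric replicates for one target volume.

    mg divided by g/mL gives µL, so no further unit conversion is needed.
    """
    volumes_ul = [mass / density_g_per_ml for mass in masses_mg]
    mean_ul = statistics.mean(volumes_ul)
    cv_pct = statistics.stdev(volumes_ul) / mean_ul * 100      # sample standard deviation
    accuracy_pct = mean_ul / target_ul * 100
    passes = abs(accuracy_pct - 100) <= accuracy_tol_pct and cv_pct < max_cv_pct
    return {
        "mean_ul": mean_ul,
        "accuracy_pct": accuracy_pct,
        "cv_pct": cv_pct,
        "passes": passes,
    }


# Ten hypothetical replicate masses (mg) for a 10 µL target dispense.
masses = [9.95, 10.02, 9.98, 10.05, 9.97, 10.01, 9.99, 10.03, 9.96, 10.00]
stats = calibration_stats(10.0, masses)
print(stats)
```

Running the same function for each volume level in the dispensing protocol gives the table of accuracy and precision values to record.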

Core Component 2: Reactor Block Systems

Microplate Formats and Specifications

Reactor blocks provide the miniature reaction environments where chemical or biological transformations occur. The evolution toward higher-density microplates has been instrumental in increasing HTE throughput while reducing reagent consumption.

Table 2: Microplate Formats for HTE Applications

| Microplate Format | Well Count | Working Volume | Common Applications | Compatibility Notes |
|---|---|---|---|---|
| Standard Well Plates [14] | 96-well | 50-200 µL | Biochemical assays, cell-based screens | Broad equipment compatibility |
| High-Density Plates [14] | 384-well | 5-50 µL | Primary screening, kinetic studies | Requires compatible instrumentation |
| Ultra-High-Throughput [14] | 1536-well | 2-10 µL | Large compound library screening | Specialized equipment needed |
| Emerging Formats [14] | 3456-well | 1-2 µL | Specialized ultra-HTS applications | Limited commercial availability |

Material Considerations and Environmental Control

Reactor block material selection critically impacts chemical compatibility and experimental outcomes. Polypropylene remains the most common material due to its broad chemical resistance, while glass-filled polymers provide enhanced thermal stability for high-temperature applications. For specialized applications involving aggressive solvents or extreme temperatures, stainless steel reactor blocks offer superior durability but at higher cost.

Environmental control within reactor blocks is maintained through integrated heating/cooling systems capable of maintaining temperatures from 4°C to 150°C with uniformity of ±1°C across the block. For reactions requiring inert atmosphere, modular glovebox integration or on-deck gas manifolds maintain oxygen and moisture levels below 10 ppm during critical liquid handling and incubation steps [11].

Protocol: Reaction Setup in 384-Well Format

Principle: This protocol standardizes the setup of chemical reactions in 384-well microplates, ensuring consistent component addition and mixing for reproducible high-throughput experimentation.

Materials:

- 384-well polypropylene microplates

- Stock solutions of reactants, catalysts, and solvents

- Automated liquid handler with 384-well capability

- Plate sealer or mat

- Inert atmosphere enclosure (if required)

Procedure:

- Plate Layout Design: Create a plate map using experimental design software, randomizing condition positions to minimize positional bias.

- Solvent Addition: Using the liquid handler, dispense appropriate solvents to each well according to the experimental design, maintaining a minimum of 20% headspace for mixing.

- Reagent Dispensing: Add substrates, catalysts, and other reagents in order of increasing reactivity, with thorough mixing between additions if required by chemistry.

- Initiator Addition: For reactions requiring initiation, add the final initiating reagent (e.g., base, initiator, catalyst) last to start all reactions simultaneously.

- Sealing and Incubation: Immediately seal the plate with a chemically compatible seal and transfer to a controlled environment (temperature, atmosphere) for the prescribed reaction time.

- Quenching: For time-sensitive reactions, add quenching solution automatically at precise time intervals using programmed liquid handling.
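The plate layout step of this procedure, randomizing condition positions, can be sketched with the standard library alone. The example below is a minimal illustration and not the output of any specific experimental design package: it shuffles the 384 well positions with a fixed seed so the layout is random yet reproducible, then pairs each condition with a well. The function name and the placeholder condition labels are hypothetical.

```python
import random
from string import ascii_uppercase


def randomized_plate_map(conditions, n_rows=16, n_cols=24, seed=0):
    """Assign conditions to randomly ordered wells (A1..P24 for 384 wells)."""
    wells = [f"{ascii_uppercase[r]}{c + 1}" for r in range(n_rows) for c in range(n_cols)]
    if len(conditions) > len(wells):
        raise ValueError(f"{len(conditions)} conditions exceed {len(wells)} wells")
    rng = random.Random(seed)          # fixed seed keeps the layout reproducible
    rng.shuffle(wells)
    return dict(zip(wells, conditions))


# Placeholder labels standing in for 384 designed reaction conditions.
conditions = [f"condition_{i:03d}" for i in range(384)]
plate_map = randomized_plate_map(conditions, seed=42)
print(len(plate_map), plate_map["A1"], plate_map["P24"])
```

Storing the seed with the experiment record allows the exact layout to be regenerated later. Edge wells can be reserved for controls by removing them from the list before shuffling.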

Core Component 3: Integrated Analytics and Data Management

Analytical Techniques for HTE

The analytical subsystem transforms physical experiments into quantifiable data, with selection dependent on the required sensitivity, throughput, and information content.

Table 3: Analytical Methods for HTE Applications

| Analytical Method | Throughput (Samples/Day) | Information Gained | Typical Applications |
|---|---|---|---|
| LC/UV/MS [11] | 100-1,000 | Conversion, yield, purity, identity | Reaction screening, optimization |
| NMR Spectroscopy [11] | 10-100 | Structural information, conversion | Reaction discovery, mechanism elucidation |
| Fluorescence Detection [14] | 1,000-10,000 | Enzyme activity, binding affinity | Biochemical screening, enzymatic assays |
| Absorbance Spectroscopy [14] | 1,000-5,000 | Concentration, reaction progress | Cell-based assays, protein quantification |

Data Management and AI/ML Integration

Modern HTE platforms generate massive datasets that require sophisticated data management and analysis tools. The key challenge lies in integrating disparate data sources—experimental designs, analytical results, and chemical structures—into a unified, chemically intelligent database [11].

Specialized software platforms like Katalyst address this by providing integrated environments that connect experimental designs with analytical results while maintaining chemical intelligence through structure-rendering capabilities [11]. These systems enable automatic data processing and interpretation, with results linked directly to each well in the HTE plate, eliminating manual data transcription errors and accelerating decision-making.

For AI/ML integration, HTE platforms must export structured, normalized data in formats compatible with machine learning frameworks. The partnership between experimental design software and AI experts creates no-code solutions that use historical HTE data to guide future experimental designs through Bayesian optimization algorithms, progressively focusing on the most promising regions of chemical space [11].

Protocol: Automated LC/UV/MS Analysis and Data Processing

Principle: This protocol outlines a standardized workflow for high-throughput LC/UV/MS analysis of reaction mixtures, enabling rapid quantification of conversion and yield across large experimental arrays.

Materials:

- UHPLC system with autosampler and diode array detector

- Mass spectrometer with electrospray ionization

- Analytical columns (e.g., C18, 2.1 × 30 mm, 1.7 µm)

- Mobile phases (aqueous and organic) with appropriate modifiers

- Data processing software with batch capability

Procedure:

- Method Development: Establish a fast gradient method (3-5 minutes) that provides adequate separation of starting materials, products, and potential byproducts.

- Plate Mapping: Program the autosampler to access samples directly from the HTE microplate, maintaining the well-to-data association.

- Injection Sequence: Set up the sequence with randomized injection order to account for instrument drift, including quality control standards at regular intervals.

- Data Acquisition: Run the sequence with simultaneous UV and MS detection, monitoring appropriate wavelengths and mass ranges for expected compounds.

- Automated Processing: Apply integration parameters consistently across all samples, using UV traces for quantification and MS data for compound identification.

- Results Export: Automatically compile results (peak areas, retention times, molecular weight confirmation) into a structured data table linked to the original experimental design.
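
The injection-sequence step can be illustrated with a short sketch. It assumes a 96-well plate, a single QC standard labelled `"QC"`, and a QC injection after every 12 samples; these values and the function name `build_injection_sequence` are hypothetical and would be replaced by the settings of the actual instrument method.

```python
import random


def build_injection_sequence(wells, qc_label="QC", qc_every=12, seed=0):
    """Return a randomized injection order with QC standards interleaved.

    A QC injection is placed at the start, after every `qc_every` samples,
    and at the end, so that instrument drift can be tracked across the run.
    """
    order = list(wells)
    random.Random(seed).shuffle(order)   # decouple drift from plate position
    sequence = [qc_label]
    for i, well in enumerate(order, start=1):
        sequence.append(well)
        if i % qc_every == 0 and i != len(order):
            sequence.append(qc_label)
    sequence.append(qc_label)
    return sequence


# Hypothetical 96-well plate: wells A1..H12.
wells = [f"{row}{col}" for row in "ABCDEFGH" for col in range(1, 13)]
sequence = build_injection_sequence(wells)
```

Because each entry in the sequence is still a well name, the well-to-data association required in the plate-mapping step is preserved after randomization.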

Integrated Workflow and Visualization

The power of an HTE batch platform emerges from the seamless integration of its components into a unified workflow. This integration enables a closed-loop cycle from experimental design through execution and analysis to decision-making and model building.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagent Solutions for HTE

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Chemical Libraries [14] | Diverse compound collections for screening | Typically 10,000-100,000 compounds; maintained in DMSO stocks at -20°C |

| Enzyme Preparations [14] | Biological catalysts for biocatalytic screening | Optimized for stability in HTE formats; often lyophilized for longevity |

| Catalyst Kits [11] | Pre-dispensed catalyst arrays for reaction screening | Available in microplates with 1-3 mg per well; covers diverse chemical space |

| Detection Reagents [14] | Fluorescent or colorimetric assay components | Homogeneous formats preferred (e.g., FRET, HTRF) for minimal processing |

| Metabolite Standards [14] | Analytical standards for quantification | Used for calibration curves in LC/UV/MS quantification |

| Stem Cell-Derived Models [14] | Biologically relevant systems for toxicity screening | hESC and iPSC-derived models compatible with industrial HTS formats |

The modern HTE batch platform represents a sophisticated integration of liquid handling, reactor block, and analytical technologies that together enable the rapid generation of high-quality chemical data. When implemented with the standardized protocols outlined in this application note, these systems provide researchers with a powerful tool for accelerating drug discovery and development timelines. The future of HTE lies in increasingly autonomous systems where AI-driven experimental design directly interfaces with robotic execution and analysis, creating self-optimizing discovery platforms that continuously learn from each experimental cycle [11] [15]. As these technologies continue to evolve toward greater integration and intelligence, HTE will solidify its position as an indispensable component of modern chemical and pharmaceutical research.

Within high-throughput experimentation (HTE) and Design of Experiments (DoE) batch modules, the selection of microplate format is a foundational technical decision that directly impacts the capacity, cost, and quality of research. High-Throughput Screening (HTS) efficiently assays multiple discrete biological reactions using multi-well microplates, enabling the rapid testing of thousands of compounds or conditions [17]. The evolution from the first 72-well plexiglass plate conceived by Dr. Gyula Takatsy in 1950 to today's standardized 96-, 384-, and 1536-well plates represents a continuous drive toward miniaturization and automation in biomedical research [17]. This progression is critical in fields like drug discovery, where overcoming bottlenecks in synthesis and analysis is paramount; while modern instrumentation can run thousands of reactions weekly, data analysis can still take a week of manual work, underscoring the need for efficient workflows [18]. The standardization of plate footprints by the Society for Biomolecular Screening (SBS) and American National Standards Institute (ANSI) ensures compatibility with automated instrumentation, making these plates the ubiquitous workhorses of modern laboratories [17].

Technical Specifications and Selection Criteria

Selecting the optimal microplate is a critical, multi-factorial process that balances assay requirements with practical constraints. The primary decision flow involves determining whether an assay is cell-based or cell-free, which then dictates needs for surface treatment, sterilization, and optical properties [17]. Key properties for any microplate include dimensional stability under various temperatures, chemical compatibility with assay reagents (e.g., DMSO stability), low binding surface energy to prevent adsorption, low autofluorescence, and support for cell viability and growth where applicable [17].

The following table summarizes the core quantitative specifications for the three standard plate formats, providing a basis for direct comparison and initial selection.

Table 1: Standard Microplate Specifications for High-Throughput Experimentation

| Specification | 96-Well Plate | 384-Well Plate | 1536-Well Plate |

|---|---|---|---|

| Total Well Number | 96 | 384 | 1536 |

| Standard Well Spacing (mm) | 9.0 | 4.5 | 2.25 |

| Typical Working Volume (μL) | 50-200 | 10-50 | 2-10 |

| Minimum Dispense Volume (μL) | ~2.0 [19] | ~0.5 [19] | ~0.5 [19] |

| Common Assay Volume (Example) | | 35 μL (Gene Transfection) [20] | 8 μL (Gene Transfection) [20] |

| Throughput Advantage | Baseline | 4x 96-well | 16x 96-well |

| Primary Application Examples | Cell culture, ELISA, initial assays [17] [21] | HTS, compound screening, dose-response [18] [22] | Ultra-HTS, large-scale library screening [18] |
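
The well counts and spacings in Table 1 follow from the standard grid layouts (8 × 12, 16 × 24, and 32 × 48). The sketch below shows how a well name and its position relative to well A1 can be derived from those numbers; the helper names `row_label` and `well_position` are illustrative, and absolute offsets from the plate edge are deliberately left out.

```python
# Rows, columns, and center-to-center well spacing (mm) for each format.
PLATE_FORMATS = {
    96: (8, 12, 9.0),
    384: (16, 24, 4.5),
    1536: (32, 48, 2.25),
}


def row_label(index):
    """Convert a 0-based row index to a letter label (A..Z, then AA, AB, ...)."""
    label = ""
    index += 1
    while index > 0:
        index, rem = divmod(index - 1, 26)
        label = chr(ord("A") + rem) + label
    return label


def well_position(plate, row, col):
    """Return (well name, x offset, y offset) in mm relative to well A1."""
    rows, cols, pitch = PLATE_FORMATS[plate]
    if not (0 <= row < rows and 0 <= col < cols):
        raise ValueError("well outside plate")
    return f"{row_label(row)}{col + 1}", col * pitch, row * pitch
```

Halving the spacing in both directions quadruples the number of wells on the same footprint, which is the source of the 4x and 16x throughput figures in the table.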

Beyond the specifications in Table 1, other crucial factors influence microplate selection. The plate material (e.g., polystyrene (PS), polypropylene (PP), or cyclic olefin copolymer (COC)) affects chemical resistance, autofluorescence, and protein binding [17]. The plate bottom (e.g., clear, solid white, or black) must be selected for compatibility with the detection method, such as fluorescence, luminescence, or absorbance. For cell-based assays, surface treatments like plasma etching or covalent coatings (e.g., poly-D-lysine) are often essential for cell attachment and growth [17]. While cost is a factor, the primary driver should always be assay performance, as a more expensive but optimal plate can save money on expensive reagents in the long run [17].

Key Applications and Associated Protocols in Drug Discovery

The standardized microplate formats enable a wide array of critical experiments in the drug discovery pipeline. The following applications and their detailed protocols highlight the practical implementation of these tools in a high-throughput context.

Application 1: Miniaturized Gene Transfection and Reporter Assay

Objective: To determine the optimal parameters for gene delivery and expression in immortalized and primary cells, miniaturized from a 96-well format to 384-well and 1536-well plates to achieve higher throughput and reduce reagent costs [20].

Background: Gene transfection assays are fundamental for studying gene function and protein expression. Miniaturization into 384- and 1536-well formats greatly economizes on expenses and allows for much higher throughput when transfecting both immortalized and primary cells [20].

Table 2: Research Reagent Solutions for Gene Transfection Assays

| Reagent/Material | Function/Description |

|---|---|

| Polyethylenimine (PEI) | A polymeric cationic transfection reagent that complexes with DNA to facilitate cellular uptake. |

| Calcium Phosphate (CaPO₄) | A precipitation-based method for transfection, particularly effective for primary hepatocytes [20]. |

| Reporter Constructs (Luciferase/GFP) | Plasmid DNA encoding easily detectable proteins (e.g., luciferase, green fluorescent protein) to quantify transfection efficiency. |

| Cell Lines (e.g., HepG2, CHO, NIH 3T3) | Immortalized cells used for assay development and optimization. |

| Primary Hepatocytes | Freshly isolated liver cells, representing a more physiologically relevant but challenging model for transfection [20]. |

| Luciferin | The substrate for the firefly luciferase enzyme, which produces bioluminescence upon reaction. |

Protocol:

- Cell Seeding: Seed mammalian cells (e.g., HepG2, CHO, NIH 3T3) in 384-well plates at a density of 250-5,000 cells per well in a total volume of 35 μL, or in 1536-well plates in a total volume of 8 μL. Allow cells to adhere overnight under standard culture conditions (37°C, 5% CO₂) [20].

- Complex Formation: Prepare DNA-transfection reagent complexes. For PEI, use optimized reagent-to-DNA ratios (e.g., 1:1 to 5:1) in a serum-free medium. Incubate for 10-15 minutes at room temperature to allow polyplex formation [20].

- Transfection: Add the polyplex solution directly to the cells in the microplates. For 384-well plates, a common approach is to add 5-10 μL of the polyplex mixture to the existing 35 μL medium.

- Incubation: Incubate the cells with the complexes for 4-24 hours, then replace the transfection mixture with fresh complete growth medium.

- Reporter Quantification:

- For Luciferase: 24-72 hours post-transfection, add a luciferin substrate solution to the wells. Measure luminescent signal immediately using a compatible microplate reader [20].

- For GFP: 24-72 hours post-transfection, visualize and quantify fluorescence using a high-content imager or fluorescence microplate reader.

- Data Analysis: Calculate transfection efficiency based on the relative light units (RLU) for luciferase or fluorescence intensity for GFP. Normalize data to untreated control wells. A Z' factor of >0.5, as achieved in 384-well formats, indicates an assay robust enough for high-throughput screening [20].
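
The Z' factor mentioned in the data-analysis step is commonly defined as Z' = 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|, where μ and σ are the mean and standard deviation of the positive and negative control wells. The following sketch applies that definition; the RLU values are invented for illustration and are not data from [20].

```python
from statistics import mean, stdev


def z_prime(positive, negative):
    """Z' = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive) - mean(negative))
    if separation == 0:
        raise ValueError("control means are identical")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / separation


# Hypothetical luciferase readings (RLU) from control wells.
transfected = [9800, 10100, 10050, 9900, 10150]
untreated = [480, 520, 510, 495, 495]
score = z_prime(transfected, untreated)
```

A value above 0.5 means the control distributions are separated by a wide margin relative to their spread, which is why it is used as the acceptance threshold here.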

The workflow for this protocol, from cell preparation to data analysis, is visualized below.

Application 2: High-Throughput Transporter Inhibition Assay for Drug Safety

Objective: To screen drug candidates for potential inhibition of key human drug transporters (e.g., P-gp, BCRP, OATs, OATPs) in a 384-well format to assess the risk of clinical drug-drug interactions (DDIs) and hepatic toxicities early in the discovery process [23].

Background: Transporter proteins play a critical role in the absorption, distribution, metabolism, and excretion (ADME) of drugs. Their inhibition can lead to serious DDIs and safety issues, prompting regulatory agencies to require such testing [23].

Protocol:

- Cell and Vesicle Preparation: Use transporter-overexpressing cell lines for uptake assays or membrane vesicles for efflux assays. Plate cells in 384-well, tissue culture-treated plates at a density ensuring confluence at the time of assay.

- Pre-incubation: Remove culture medium and wash cells with a pre-warmed assay buffer.

- Inhibition Reaction:

- For Uptake Inhibition (Cells): Incubate cells with a solution containing a known transporter substrate (e.g., a fluorescent probe) and the test compound at various concentrations.

- For Efflux Inhibition (Vesicles): Incubate vesicles with the substrate, test compound, and ATP (to energize the transport process).

- Termination and Washing: After a defined incubation period (e.g., 5-30 minutes), rapidly stop the reaction by removing the incubation solution and washing the cells or vesicles multiple times with ice-cold buffer.

- Substrate Quantification: Lyse the cells or vesicles. Quantify the accumulated substrate using an appropriate detection method, such as fluorescence, radiometry, or mass spectrometry.

- Data Analysis: Calculate the percentage of transporter inhibition by the test compound relative to a positive control (full inhibition) and a negative control (vehicle only). Fit dose-response data to determine IC₅₀ values.
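
The data-analysis step can be sketched as follows. This is a simplified illustration: percent inhibition is scaled between the two controls, and the IC₅₀ is estimated by log-linear interpolation between the two concentrations that bracket 50% inhibition, as a stand-in for a full dose-response fit (for example, a four-parameter logistic model). All readings and function names are hypothetical.

```python
import math


def percent_inhibition(signal, vehicle, full_inhibition):
    """Scale a raw signal between vehicle (0 %) and full-inhibition (100 %) controls."""
    return 100.0 * (vehicle - signal) / (vehicle - full_inhibition)


def ic50_by_interpolation(concentrations, inhibitions):
    """Estimate IC50 by log-linear interpolation around the 50 % crossing.

    Returns None if the inhibition data never cross 50 %.
    """
    points = sorted(zip(concentrations, inhibitions))
    for (c_lo, i_lo), (c_hi, i_hi) in zip(points, points[1:]):
        if i_lo <= 50.0 <= i_hi and i_hi > i_lo:
            frac = (50.0 - i_lo) / (i_hi - i_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None


# Hypothetical fluorescence readings for one compound (concentrations in uM).
vehicle, full = 1000.0, 100.0
concs = [0.1, 1.0, 10.0, 100.0]
signals = [955.0, 775.0, 325.0, 145.0]
inhib = [percent_inhibition(s, vehicle, full) for s in signals]
ic50 = ic50_by_interpolation(concs, inhib)
```

Interpolation is adequate for ranking compounds in a primary screen; compounds of interest would normally be refit with a proper curve model and more concentration points.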

Application 3: RNA-Seq in Drug Treatment Studies Using 384-Well Plates

Objective: To profile global gene expression changes in response to compound treatment in a 384-well plate format, enabling high-throughput analysis of drug effects, mode of action, and biomarker discovery [22].

Background: RNA sequencing (RNA-Seq) is a powerful, unbiased tool applied throughout the drug discovery workflow. Using 384-well plates for cell culture and treatment allows for the efficient processing of large sample numbers, which is crucial for robust statistical power in dose-response and compound combination studies [22].

Protocol:

- Experimental Design and Plate Layout:

- Define a clear hypothesis and aims. Include sufficient biological replicates (ideally 4-8 per treatment group) to account for natural variation [22].

- Design the 384-well plate layout to randomize treatment groups and control for batch effects, which are systematic non-biological variations [22].

- Include appropriate controls: untreated controls, vehicle controls (e.g., DMSO), and potentially spike-in RNA controls (e.g., SIRVs) for normalization and quality control [22].

- Cell Treatment and Lysis:

- Seed cells in a 384-well plate. The following day, treat with compounds across a range of concentrations and time points.

- After treatment, remove medium and lyse cells directly in the plate. For large-scale studies, extraction-free RNA-Seq library preparation (e.g., 3'-Seq) can be performed directly from the lysate to save time and cost [22].

- RNA Extraction and Library Prep:

- If required, perform total RNA extraction using a method that is suitable for the sample type and retains the RNA species of interest.

- Perform library preparation. For gene expression analysis in large-scale screens, 3' mRNA-Seq methods (e.g., QuantSeq) are often ideal due to their cost-effectiveness and compatibility with early sample pooling [22].

- Sequencing and Data Analysis:

- Pool libraries and sequence on an appropriate NGS platform.

- Process the data through a bioinformatics pipeline for quality control, alignment, and differential expression analysis. The planned layout from step 1 will enable statistical correction for any remaining batch effects [22].

The plate layout is a critical component for a successful RNA-Seq experiment, as it mitigates confounding variables.

Essential Tools and Best Practices for High-Throughput Workflows

The effective use of high-density microplates is enabled by specialized liquid handling equipment and adherence to strict best practices.

Liquid Handling Systems

Accurate and precise liquid handling is non-negotiable in HTE. Systems suitable for 384 and 1536-well plates must dispense sub-microliter volumes reliably.

- Acoustic Liquid Handlers (e.g., Beckman Echo): Use sound energy to transfer nanoliter-volume droplets without tips, ideal for compound reformatting and stamping [24].

- Micro-Diaphragm Pump Dispensers (e.g., Formulatrix Mantis, Tempest): Employ tipless, microfluidic chips with integrated pumps to dispense volumes from 100 nL with high precision (CV < 2%), suitable for a wide range of reagents including viscous liquids and cells [25].

- Automated Liquid Handlers (e.g., Agilent Bravo, Hamilton Starlet): Use 384-channel pipetting heads to transfer liquids from source to destination plates. Proper alignment and spring-loaded plate nests are critical for accuracy in 1536-well formats [24].

- Bulk Reagent Dispensers (e.g., Welljet, Multidrop): Rapidly fill entire columns or plates with a single reagent, excellent for assays like ELISA or adding PCR master mixes [24].

Best Practices for Microplate Handling

- Mixing: Ensure homogeneity of reagents and cells in wells post-dispensing, using orbital shaking or plate vibration.

- Incubation: Maintain stable temperature and humidity, particularly for cell-based assays and enzymatic reactions, using controlled incubators or environmental chambers.

- Centrifugation: Briefly spin plates (e.g., 500-1000 rpm for 1 minute) to collect liquid at the well bottom and remove bubbles, which is critical for accurate optical measurements.

- Troubleshooting: Be aware of common issues such as well-to-well contamination (e.g., from poorly sealed plates), edge effects (evaporation in peripheral wells), and lot-to-lot variability in microplate performance [17]. Using plate seals and maintaining consistent environmental conditions can mitigate these problems.
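
Because the centrifugation guideline above is given in rpm, the force actually applied depends on the rotor. The conversion below uses the standard relation RCF = 1.118 × 10⁻⁵ × r × rpm², with r in centimetres; the 10 cm radius in the example is an assumed value for a plate rotor, not a figure from this article.

```python
def rpm_to_rcf(rpm, radius_cm):
    """Relative centrifugal force (x g) from rotor speed and radius in cm."""
    return 1.118e-5 * radius_cm * rpm ** 2


# Assumed 10 cm rotor radius, at the two ends of the suggested speed range.
rcf_low = rpm_to_rcf(500, 10)
rcf_high = rpm_to_rcf(1000, 10)
```

Reporting the spin as a force rather than a speed makes the step transferable between centrifuges with different rotors.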

The systematic exploration of chemical space is no longer a luxury but a necessity for accelerating innovation in modern research and development.

For decades, the one-variable-at-a-time (OVAT) approach has been a staple in experimental optimization across chemical synthesis and process development. While intuitively simple, this method, which holds all variables constant except one, is inherently inefficient, ignores critical factor interactions, and often fails to locate the true global optimum for a process [26] [27].

The integration of High-Throughput Experimentation (HTE) with statistical Design of Experiments (DoE) presents a paradigm shift. HTE enables the miniaturization and parallel execution of hundreds of experiments, while DoE provides a statistical framework for systematically selecting which experiments to run to maximize information gain [28] [2]. This powerful combination allows researchers to efficiently map complex experimental landscapes, transforming the speed and quality of scientific optimization.

This application note details the distinct advantages of the HTE-DoE approach over traditional OVAT, supported by quantitative comparisons and a detailed protocol for implementing these methods in reaction optimization.

Comparative Advantages of HTE-DoE over OVAT

The limitations of OVAT become particularly pronounced when optimizing complex, multi-factor systems common in catalysis and pharmaceutical development. Table 1 summarizes the key comparative advantages of using an integrated HTE-DoE strategy.

Table 1: Core Advantages of HTE-DoE over the OVAT Approach

| Aspect | Traditional OVAT Approach | Integrated HTE-DoE Approach | Impact and References |

|---|---|---|---|

| Experimental Efficiency | Low; requires many sequential runs. A 5-factor study requires many more individual experiments. [27] | High; screens factors simultaneously. A 5-factor screening can be achieved in as few as 8-16 experiments. [26] [27] | Up to 2-3x greater experimental efficiency; accelerates development cycles by 30%. [27] [29] |

| Detection of Factor Interactions | Cannot detect interactions between variables. [26] | Systematically identifies and quantifies synergistic or antagonistic factor effects. [26] [27] | Prevents development of suboptimal systems; provides deeper mechanistic understanding. [26] |

| Quality of Resulting Data | Prone to finding local optima; results are often not reproducible. [28] [27] | Generates robust, reproducible data; maps the response surface to find a global optimum. [28] [27] | Creates reliable, scalable processes and provides rich datasets for machine learning. [28] [30] [29] |

| Resource Utilization | Consumes more time, material, and resources per unit of information. [26] | Minimizes material use (e.g., nanomole scale) and maximizes information per experiment. [28] | Reduces experimental costs by 15-25% and minimizes waste. [28] [29] |

The radar graph below provides a visual comparison of the two methodologies across eight critical criteria as evaluated by chemists from academia and industry, clearly illustrating the superior performance profile of HTE [28].

Case Study: Optimizing a Key Step in Flortaucipir Synthesis

Background and Objective

Flortaucipir is an FDA-approved imaging agent for Alzheimer's disease diagnosis. [28] The synthesis of its core structure involves a challenging catalytic step that required optimization for yield and reproducibility. Traditional OVAT optimization was proving inefficient for this multi-variable system.

The objective of this HTE-DoE campaign was to systematically optimize this key catalytic step by screening critical factors simultaneously to identify not only main effects but also significant interactions. [28]

Experimental Protocol

Materials and Reagent Solutions

Table 2: Key Research Reagent Solutions and Materials

| Reagent/Material | Specification/Function | Supplier/Example |

|---|---|---|

| Catalyst Library | Varies electronic properties & steric bulk (Tolman's cone angle) to map catalyst space. [26] | E.g., P(4-F-C6H4)3, P(4-OMe-C6H4)3, P(4-Me-C6H4)3, P(tBu)3. [26] |

| Solvent Library | Screens polarity, hydrogen bonding, and other solvent parameters. [26] | Dimethylsulfoxide (DMSO), Acetonitrile (MeCN), etc. [26] |

| Base Library | Investigates the effect of base strength and nature on reaction outcome. [26] | Sodium hydroxide (NaOH), Triethylamine (Et3N), etc. [26] |

| 96-Well Reaction Plate | 1 mL vials for miniaturized, parallel reaction execution. [28] | Analytical Sales and Services (e.g., 8 × 30 mm vials #884001). [28] |

| Internal Standard | Enables accurate quantitative analysis by UPLC/MS. [28] | Biphenyl in MeCN. [28] |

HTE-DoE Workflow and Execution

The following diagram outlines the core workflow for a combined HTE-DoE campaign, from design to analysis.

Step 1: Experimental Design

- Identify key factors to investigate (e.g., catalyst, ligand, base, solvent, temperature, concentration). [31]

- Select an appropriate DoE. For initial screening of many factors, a Plackett-Burman Design (PBD) or fractional factorial design is ideal for identifying the most influential variables with minimal runs. [26] For subsequent optimization of the critical few factors, a Response Surface Methodology (RSM) design such as a Central Composite Design (CCD) is used. [26] [27]

- Use software to generate a randomized experimental run order to minimize bias. [28]
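
As an illustration of how a screening design keeps the run count low, the sketch below builds an 8-run, two-level fractional factorial for five factors and randomizes its run order. The generators D = A·B and E = A·C are one conventional choice assumed here; the factor letters are placeholders, and this is not the design used in [28].

```python
import random
from itertools import product


def fractional_factorial_2_5_2():
    """Build an 8-run, two-level design for five factors (A-E).

    A, B, C form a full 2^3 factorial; D and E are generated as D = A*B
    and E = A*C, so main effects are aliased with two-factor interactions
    (a resolution III screening design).
    """
    runs = []
    for a, b, c in product((-1, 1), repeat=3):
        runs.append({"A": a, "B": b, "C": c, "D": a * b, "E": a * c})
    return runs


design = fractional_factorial_2_5_2()
full_factorial_runs = 2 ** 5            # 32 runs if every combination were tested

# Randomize the execution order (fixed seed so the order can be reproduced).
run_order = list(range(len(design)))
random.Random(0).shuffle(run_order)
```

The price of running 8 experiments instead of 32 is aliasing: this design can rank main effects but cannot separate them from two-factor interactions, which is why a follow-up RSM design is run on the few factors that matter.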

Step 2: HTE Campaign Execution

- Prepare stock solutions of all reagents and catalysts. [28]

- Using liquid handling systems (manual pipettes, multipipettes, or robotics), dispense reagents according to the DoE matrix into a 96-well plate. [28]

- Seal the plate and place it in a parallel reactor system (e.g., Paradox reactor) with precise temperature control and homogeneous stirring using tumble stirrers. [28]

- Quench reactions after the set time.

Step 3: Analysis and Data Processing

- Dilute samples uniformly with a solution containing an internal standard (e.g., biphenyl in MeCN) for quantitative analysis. [28]

- Analyze samples via UPLC/PDA-MS. [28]

- Calculate reaction conversion and yield based on the Area Under the Curve (AUC) ratios relative to the internal standard. [28]

- Input the response data (e.g., yield) into DoE software for statistical analysis.
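
The yield calculation in Step 3 can be sketched as below. It assumes a response factor for the product relative to the internal standard has been determined beforehand from a calibration with authentic material; the function name and all numerical values are hypothetical.

```python
def yield_from_auc(product_auc, istd_auc, response_factor, istd_mmol, limiting_mmol):
    """Estimate percent yield from UV peak areas using an internal standard.

    response_factor is the area ratio (product / internal standard) observed
    per unit molar ratio (product / internal standard), taken from a
    prior calibration.
    """
    product_mmol = (product_auc / istd_auc) / response_factor * istd_mmol
    return 100.0 * product_mmol / limiting_mmol


# Hypothetical well: area ratio 1.5, response factor 1.2,
# 0.010 mmol internal standard added, 0.020 mmol limiting reagent.
example_yield = yield_from_auc(1500.0, 1000.0, 1.2, 0.010, 0.020)
```

Because the same amount of internal standard is added to every well during dilution, the area ratio corrects for well-to-well differences in injection volume and dilution.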

The HTE-DoE campaign successfully identified the key factors and their interactions influencing the Flortaucipir synthesis step. The model derived from the data allowed researchers to pinpoint a set of optimal conditions that would have been extremely difficult to discover using OVAT. [28]

This approach provided a robust, data-rich understanding of the reaction, ensuring a more reproducible and scalable process for producing this critical diagnostic agent. [28]

Discussion and Future Outlook

The synergy between HTE and DoE is a cornerstone of modern research efficiency. By combining the parallel processing power of HTE with the intelligent design of DoE, researchers can navigate complex experimental spaces with unprecedented speed and depth. This methodology not only accelerates reaction optimization and discovery but also generates high-quality, reproducible datasets that are invaluable for training machine learning models, thereby creating a virtuous cycle of continuous improvement and prediction in chemical research. [30] [29] [2]

Future directions point towards even tighter integration of automation, real-time analytics, and adaptive machine learning algorithms, further reducing the need for human intervention and accelerating the design-make-test-analyze cycle. [1] [2] As these technologies become more accessible and user-friendly, their adoption as the standard for experimental optimization is likely to spread beyond big pharma into academia and smaller enterprises, where it will be a key driver of innovation across the chemical sciences. [28]

HTE Batch Modules in Action: Methodologies and Real-World Applications in Research

High-Throughput Experimentation (HTE) is a transformative paradigm that accelerates the discovery and optimization of compounds and synthetic processes by enabling the rapid, parallel screening of vast reaction spaces [1]. Within the context of a broader thesis on Design of Experiments (DoE) batch modules research, an HTE campaign represents a systematic workflow that integrates strategic planning, automated execution, and data-driven analysis. This protocol provides a detailed, step-by-step guide for researchers and drug development professionals to establish a robust HTE campaign, from initial design to final analytical interpretation.

Campaign Design & Strategic Planning

The success of an HTE campaign hinges on meticulous upfront planning, which aligns experimental goals with available resources and defines the parameters of the chemical space to be explored.

2.1. Defining Objectives and Scope

Every campaign must begin with a clear objective, such as "Identify optimal photocatalyst and base for a fluorodecarboxylation reaction" or "Screen 100 substrate combinations for novel activity" [1]. This objective dictates the campaign's scope, including the number of variables, desired throughput, and material requirements.

2.2. Selection of HTE Platform

Choosing the appropriate platform is critical. While traditional plate-based methods (e.g., 96- or 384-well plates) offer high parallelism for initial screening, flow chemistry platforms provide superior control over continuous variables (temperature, pressure, residence time) and facilitate easier scale-up without re-optimization [1]. The decision should be based on the reaction requirements, such as the need for specialized conditions (e.g., photochemistry, pressurized systems) or hazardous reagents.

2.3. Experimental Design (DoE)

A structured DoE approach is superior to one-factor-at-a-time testing. This involves:

- Factor Identification: Selecting independent variables (e.g., catalyst loading, solvent ratio, temperature).

- Response Selection: Defining measurable outputs (e.g., yield, conversion, selectivity).

- Design Matrix: Implementing a factorial or response surface methodology (RSM) design to efficiently explore the variable space with a minimal number of experiments [1].

Table 1: Quantitative Benefits of HTE and Automation

| Metric | Traditional Approach | HTE/Automated Approach | Benefit | Source |

|---|---|---|---|---|

| Time for 3000-compound screen | 1–2 years | 3–4 weeks | ~95% reduction | [1] |

| Time saved on campaign management | N/A | Up to 80% | Major efficiency gain | [32] |

| Increase in qualified leads (marketing context) | Baseline | 451% | Dramatic process improvement | [32] |

Experimental Protocol: A Photoredox Reaction Case Study

This protocol adapts a published workflow for a flavin-catalysed photoredox fluorodecarboxylation reaction, illustrating the transition from plate-based screening to flow optimization and scale-up [1].

3.1. Primary HTE Screening (Plate-Based)

- Objective: Rapid identification of hit conditions from broad variable spaces.

- Materials: 96-well photoreactor plate, liquid handling robot, stock solutions of photocatalysts (24), bases (13), and fluorinating agents (4).

- Method:

- Prepare reaction plates via automated liquid handling, dispensing into each well the combination of photocatalyst, base, and fluorinating agent specified by the DoE matrix.

- Seal the plate and place it in the photoreactor, irradiating under consistent wavelength and power.

- Quench reactions in parallel.

- Analyze outcomes via high-throughput LC-MS or NMR to identify combinations yielding high conversion.
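
A quick count shows why a design matrix is needed for this screen. The sketch below enumerates every combination of the 24 photocatalysts, 13 bases, and 4 fluorinating agents listed under Materials; the component labels are placeholders, and the source does not state how many of these combinations were actually run.

```python
from itertools import product
from math import ceil

photocatalysts = [f"PC{i}" for i in range(1, 25)]      # 24 photocatalysts
bases = [f"B{i}" for i in range(1, 14)]                # 13 bases
fluorinating_agents = [f"F{i}" for i in range(1, 5)]   # 4 fluorinating agents

# Every possible photocatalyst/base/fluorinating-agent combination.
all_combinations = list(product(photocatalysts, bases, fluorinating_agents))

# Number of 96-well plates an exhaustive screen would occupy.
plates_needed = ceil(len(all_combinations) / 96)
```

An exhaustive screen would need 24 × 13 × 4 = 1,248 wells, so a designed subset of combinations is the practical route to a plate-scale primary screen.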

3.2. Secondary Optimization & Validation (Batch/Flow)

- Objective: Refine hit conditions and assess scalability.

- Method:

- Validation: Transfer hit conditions from the plate to a stirred batch reactor for verification [1].

- DoE Optimization: Conduct a focused DoE study around the hits to map the response surface and locate the true optimum [1].

- Stability & Feed Study: Perform stability studies of reaction components to determine the number and composition of feed solutions required for continuous flow.

- Flow Translation: Transfer the optimized conditions to a flow photochemical reactor (e.g., Vapourtec UV150). Begin at a small scale (2 g), optimizing flow-specific parameters (light intensity, residence time, temperature) [1].

- Scale-up: Gradually increase production scale by extending run time, culminating in kilogram-scale synthesis (e.g., 1.23 kg product) [1].

Workflow Automation & Execution

Implementing automation is key to a reproducible and efficient HTE campaign. The workflow should move seamlessly from planning to execution.

Data Analysis, Modeling, and Interpretation

The raw data from HTE campaigns must be processed into actionable knowledge.

5.1. Data Processing Pipeline

- Normalization: Standardize analytical data (e.g., peak areas) against internal standards.

- Data Aggregation: Compile results from parallel experiments into a structured database, linking each well or flow experiment to its specific condition set and outcome.

5.2. Statistical Modeling & Visualization

- Model Fitting: Use multivariate regression (e.g., partial least squares) or machine learning algorithms to build models predicting response variables from input factors.

- Visualization: Generate contour plots, response surfaces, and heatmaps to visualize the relationship between factors and outcomes, identifying optimal regions and interaction effects.
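
Before fitting a multivariate model, it is often useful to look at plain factorial effects. The sketch below estimates the two main effects and the interaction effect from a 2² factorial in coded units; it is a simpler stand-in for the regression methods named above, and the yields and factor assignments are invented for illustration.

```python
def factorial_effects(runs):
    """Estimate main and interaction effects from a 2^2 factorial in coded units.

    Each run is (x1, x2, response) with x1, x2 in {-1, +1}. An effect is the
    mean response at the +1 level minus the mean response at the -1 level
    of the corresponding contrast column.
    """
    def effect(contrast):
        high = [y for c, y in contrast if c > 0]
        low = [y for c, y in contrast if c < 0]
        return sum(high) / len(high) - sum(low) / len(low)

    return {
        "x1": effect([(x1, y) for x1, x2, y in runs]),
        "x2": effect([(x2, y) for x1, x2, y in runs]),
        "x1*x2": effect([(x1 * x2, y) for x1, x2, y in runs]),
    }


# Hypothetical yields (%) for catalyst loading (x1) and temperature (x2).
runs = [(-1, -1, 40.0), (1, -1, 50.0), (-1, 1, 45.0), (1, 1, 75.0)]
effects = factorial_effects(runs)
```

A non-zero `x1*x2` term means the benefit of raising one factor depends on the level of the other, which is exactly the kind of interaction that one-factor-at-a-time testing cannot reveal.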

5.3. Establishing Feedback Loops

The analysis phase must feed directly back into the planning stage, creating a continuous improvement cycle [33]. Insights from one campaign should refine the hypotheses and experimental designs of the next.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials and Tools for an HTE Campaign

| Item | Function/Description | Application Context |
|---|---|---|
| Microtiter Plates (96/384-well) | Enable parallel reaction screening in small volumes (~300 µL). | Primary, brute-force screening of diverse conditions or compound libraries [1]. |
| Flow Chemistry Reactor | Tubular or chip-based reactor allowing continuous processing with precise control of time, temperature, and pressure. | Optimization, scale-up, and reactions requiring harsh conditions or improved mass/heat transfer [1]. |
| Automated Liquid Handling System | Robot for accurate, high-speed dispensing of reagents into wells or flow system feeds. | Essential for reproducibility and throughput in both plate and flow preparation [1]. |
| In-line Process Analytical Technology (PAT) | Analytical probes (e.g., FTIR, UV-Vis) integrated directly into the flow stream for real-time monitoring. | Provides immediate feedback for kinetic studies and autonomous optimization in flow HTE [1]. |
| High-Throughput LC-MS/NMR | Analytical systems configured for rapid, sequential analysis of many samples from plate-based screens. | Critical for generating the quantitative data (conversion, yield) that feeds the analysis phase. |
| Statistical Software (e.g., JMP, R, Python) | Platform for designing experiments (DoE), performing multivariate data analysis, and building predictive models. | Transforms raw data into interpretable models and visualizations to guide decision-making. |
| Project Management/ELN Software | Digital system for documenting protocols, tracking reagent inventories, and managing experimental data. | Maintains workflow organization, ensures reproducibility, and creates visibility for the team [33]. |

A well-structured HTE campaign is a powerful engine for research acceleration. By adhering to a disciplined workflow encompassing strategic DoE-based design, automated execution via appropriate platforms, and rigorous data analysis that informs iterative learning, researchers can systematically navigate complex chemical spaces. This protocol, grounded in contemporary flow chemistry and automation advances [1], provides a replicable framework that aligns with the overarching goals of modern high-throughput DoE research, ultimately driving faster innovation in drug discovery and materials science.

High-Throughput Experimentation (HTE) has emerged as a transformative methodology that enables researchers to conduct numerous experiments in parallel, dramatically accelerating the pace of scientific discovery across pharmaceuticals, materials science, and energy storage research. This approach represents a fundamental shift from traditional single-experiment methodologies, allowing for the rapid screening of vast arrays of reaction conditions while requiring only minimal material [34]. The integration of automation technologies has been pivotal in realizing the full potential of HTE, facilitating precise, reproducible, and efficient experimental workflows that would be impossible to execute manually.

Within the context of Design of Experiments (DoE) batch modules research, automation serves as the critical bridge between experimental design and data acquisition. Automated and semi-manual systems span a spectrum from sophisticated commercial platforms to flexible custom-built rigs, each offering distinct advantages for specific research applications. These systems enable researchers to systematically explore complex experimental spaces, optimize reaction conditions, and generate high-quality data at unprecedented scales, ultimately supporting more robust scientific conclusions and accelerating innovation cycles [35] [36].

Commercial Automated Platforms

Integrated Commercial Systems

Commercial automated platforms offer fully integrated, validated solutions for high-throughput experimentation, providing comprehensive functionality with minimal development overhead. These systems are characterized by their robustness, user-friendly interfaces, and extensive support infrastructure, making them particularly suitable for industrial laboratories and research facilities operating under compressed timelines.

Companies such as Chemspeed Technologies, Unchained Labs, Tecan, Mettler-Toledo AutoChem, and Hamilton offer integrated systems built from collections of modules that can execute complex workflows [35]. These commercial platforms are typically expensive but require minimal development if factory acceptance testing is performed effectively. For instance, Evotec has implemented a comprehensive HTE platform with screening templates that can be quickly adapted to specific project requirements. The platform plays a critical role in both drug discovery and development by enabling rapid collection of large amounts of data while using minimal quantities of valuable materials [34].

The appeal of these integrated systems lies in their potential for increased efficiency through offloading repetitive tasks, enhanced reproducibility due to the high precision of robotic tools, and improved safety when working with hazardous materials [35]. These advantages are particularly valuable in regulated industries like pharmaceutical development, where consistency and documentation are paramount.

Application Note: Automated RAFT Polymerization Screening

Objective: To develop an automated workflow for screening Reversible Addition-Fragmentation chain Transfer (RAFT) copolymerization conditions using a commercial Chemspeed robotic platform.

Experimental Protocol:

- System Setup: Configure Chemspeed robotic platform with liquid handling modules, solid dispensers, and temperature-controlled reaction stations within an inert atmosphere glove box.

- Monomer Preparation: Prepare stock solutions of oligo(ethylene glycol) acrylate, benzyl acrylate (control), and fluorescein o-acrylate in toluene, THF, and DMF.

- Reaction Initiation: Employ automated liquid handling to dispense monomer solutions, RAFT agent, and initiator into designated reaction vials under inert conditions.

- Parallel Reaction Execution: Conduct copolymerizations using three distinct methodologies:

  - Batch: Single addition of all monomers

  - Incremental: Sequential addition of monomers at predetermined time intervals

  - Continuous Flow: Controlled continuous monomer addition at feed rates of 0.3-1.0 mL/hr

- Reaction Monitoring: Withdraw samples at defined time intervals using an automated sampling system for analysis via ¹H NMR spectroscopy.

- Data Collection: Track conversion rates, molecular weight distributions, and copolymer compositions across all conditions.
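The screening matrix implied by this protocol (three solvents crossed with three addition modes) can be enumerated programmatically before it is handed to the robot. The sketch below is only an illustration: the vial numbering and the intermediate feed rate of 0.65 mL/hr are assumptions, and nothing here reflects the Chemspeed control software.

```python
from itertools import product

SOLVENTS = ["toluene", "THF", "DMF"]      # solvents named in the protocol
FEED_RATES_ML_PER_HR = [0.3, 0.65, 1.0]   # endpoints from the protocol; midpoint assumed


def build_conditions():
    """Cross every solvent with every addition mode and assign a vial number."""
    modes = [("batch", None), ("incremental", None)]
    modes += [("continuous", rate) for rate in FEED_RATES_ML_PER_HR]
    conditions = []
    for vial, (solvent, (mode, rate)) in enumerate(product(SOLVENTS, modes), start=1):
        conditions.append(
            {"vial": vial, "solvent": solvent, "mode": mode, "feed_rate_mL_per_hr": rate}
        )
    return conditions


conditions = build_conditions()
print(len(conditions))  # 3 solvents x 5 mode/rate combinations = 15 vials
for row in conditions[:5]:
    print(row)
```

Generating the matrix in code keeps the vial-to-condition mapping explicit, which later lets each NMR sample be matched to its solvent and addition mode.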

Results and Discussion: The automated screening revealed that DMF offered the most consistent performance across all polymerization methodologies due to its high boiling point and enhanced solubility for both monomers, resulting in improved feed control and kinetic stability [37]. Continuous flow reactions demonstrated tunable composition based on feed rates, illustrating the potential for scalable synthesis of fluorescent copolymers. This automated workflow provided a robust platform for reproducible kinetic profiling, copolymer design, compositional control, and material property profiling with minimal manual intervention, enabling high-throughput polymerization strategies that would be impractical to execute manually.

Table 1: Commercial Automated Platforms for HTE

| Platform/Vendor | Key Features | Applications | Throughput Capacity |
|---|---|---|---|
| Chemspeed Technologies | Solid/liquid handling, temperature control, inert atmosphere capability | Polymerization screening, catalyst optimization, formulation studies | 96+ reactions per batch |
| Tecan | Liquid handling, plate handling, integration with analytical instruments | Drug discovery, biochemical assays, compound screening | 200+ experiments per day |
| Hamilton | Automated pipetting, sample preparation, custom application development | Liquid dispensing, compound management, assay readiness | Varies by configuration |
| Mettler-Toledo AutoChem | Gravimetric solid dispensing, reaction calorimetry, process development | Reaction optimization, kinetic studies, safety testing | 10-100 reactions per batch |

Custom-Built Automated Systems

Development Considerations for Custom Automation

Custom-built automated systems offer researchers maximum flexibility to address specialized experimental requirements that may not be adequately served by commercial platforms. The development of these systems involves careful consideration of multiple factors, including the specific experimental workflows, available budget, technical expertise, and integration requirements with existing laboratory infrastructure.

The decision to build a custom system involves navigating the fundamental trade-off between flexibility and development investment. Building modules from individual components provides the highest degree of customization but requires significant development time and technical expertise to ensure adequate performance [35]. This approach is particularly common in academic settings where budgets may be constrained but timelines are more flexible, and where specialized graduate student labor is available for system development. As noted in the perspective "Automation isn't automatic," this process involves iterative refinement rather than straightforward implementation, with researchers often encountering unexpected challenges related to solvent compatibility, materials degradation, and system synchronization [35].

Key technical considerations for custom system development include:

- Module Selection: Identification of required unit operations (solid dispensing, liquid handling, temperature control, stirring, etc.)

- Control System Architecture: Development of software orchestration capable of coordinating multiple devices

- Materials Compatibility: Selection of components resistant to chemical degradation

- Integration Framework: Design of interfaces between custom and commercial components

- Data Management: Implementation of systems for capturing, processing, and storing experimental data
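The control-system and data-management considerations above can be made concrete with a small orchestration sketch. Everything in it is hypothetical: the device names, the `Step` and `Orchestrator` classes, and the simulated handlers stand in for whatever drivers a real rig would use, and no vendor API is implied.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Step:
    device: str   # key of the device handler to call
    action: str   # name of the unit operation
    params: dict  # operation parameters


def simulated_device(name):
    """Return a stand-in handler; a real one would talk to hardware."""
    def handle(action, params):
        return {"status": "ok", "detail": f"{name}:{action}:{sorted(params.items())}"}
    return handle


@dataclass
class Orchestrator:
    devices: Dict[str, Callable[[str, dict], dict]]
    log: List[dict] = field(default_factory=list)

    def run(self, steps):
        """Execute steps in order and record every outcome in the log."""
        for index, step in enumerate(steps):
            if step.device not in self.devices:
                raise KeyError(f"no handler registered for {step.device!r}")
            result = self.devices[step.device](step.action, step.params)
            self.log.append(
                {"index": index, "device": step.device, "action": step.action, **result}
            )
            if result["status"] != "ok":
                break  # stop so later steps never run on a failed state
        return self.log


rig = Orchestrator(devices={
    "solid_dispenser": simulated_device("solid_dispenser"),
    "liquid_handler": simulated_device("liquid_handler"),
    "heater": simulated_device("heater"),
})
log = rig.run([
    Step("solid_dispenser", "dispense", {"vial": 1, "mass_mg": 5.0}),
    Step("liquid_handler", "dispense", {"vial": 1, "volume_uL": 300}),
    Step("heater", "set_temperature", {"temp_C": 60}),
])
print(len(log), log[-1]["status"])
```

Routing every operation through one `run` loop gives a single place to capture the experimental record and to halt the sequence when a device reports a problem.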

Application Note: Custom Automated Electrolyte Formulation and Battery Assembly (ODACell)

Objective: To develop an automated system for electrolyte formulation and coin cell assembly to minimize human error and reduce cell-to-cell variability in lithium-ion battery research.

Experimental Protocol:

- System Configuration: Implement ODACell system comprising three 4-axis robotic arms (Dobot MG400) and one liquid handling robot (Opentrons OT-2).

- Specialized End-Effectors: Equip each robotic arm with unique tooling:

  - Vacuum head for component pickup

  - Custom claw for component stack manipulation

  - Electric gripper for general handling tasks

- Electrolyte Formulation: Utilize liquid handling robot to precisely mix stock solutions of 2 mol kg⁻¹ LiClO₄ in DMSO and 2 mol kg⁻¹ LiClO₄ in water to create desired electrolyte compositions.

- Coin Cell Assembly: Execute automated workflow:

  - Retrieve coin cell components from custom trays

  - Dispense precisely controlled electrolyte volumes

  - Assemble component stacks (cathode, separator, anode)

  - Crimp cells using modified electric crimper

- Electrochemical Testing: Transfer assembled cells to cycling stations for automated performance evaluation.

Results and Discussion: The ODACell system demonstrated a conservative fail rate of 5% and a relative standard deviation of 2% in discharge capacity after 10 cycles for the studied LiFePO₄‖Li₄Ti₅O₁₂ system [38]. This reproducibility made it possible to detect subtle effects, such as the overlapping performance trends of electrolytes with 2 vol% and 4 vol% added water, which highlight the nontrivial relationship between water contamination and cycling performance. The modular design allowed adaptation to different electrochemical systems with minimal alterations, providing a versatile platform for high-throughput electrolyte screening. Operating in ambient atmosphere rather than in a dry room significantly reduced operational costs while maintaining data quality.
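The two reproducibility metrics quoted here, fail rate and relative standard deviation (RSD) of discharge capacity, are straightforward to compute from per-cell results. The sketch below assumes that a failed cell is recorded as `None` and uses invented capacities; the cited study's own failure criterion and data are not reproduced.

```python
from statistics import mean, stdev


def summarize_cells(capacities):
    """Summarize a batch of cells.

    capacities -- discharge capacity per cell after a fixed number of cycles,
                  with None marking a cell that failed (assumed convention).
    """
    valid = [c for c in capacities if c is not None]
    fail_rate_pct = 100.0 * (len(capacities) - len(valid)) / len(capacities)
    # RSD = sample standard deviation divided by the mean, in percent
    rsd_pct = 100.0 * stdev(valid) / mean(valid)
    return {"n_cells": len(capacities), "fail_rate_pct": fail_rate_pct, "rsd_pct": rsd_pct}


# Hypothetical capacities in mAh/g, for illustration only
capacities = [100.0, 102.0, 98.0, 100.0, None]
stats = summarize_cells(capacities)
print(stats)
```

Reporting both numbers together matters: a low RSD computed only over surviving cells can hide a high fail rate, and the reverse is also true.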

Table 2: Custom-Built Automation Systems in Research

| System Name | Key Components | Application Domain | Performance Metrics |
|---|---|---|---|
| ODACell | Dobot MG400 arms, Opentrons OT-2, custom end-effectors | Battery electrolyte formulation and coin cell assembly | 5% fail rate, 2% capacity RSD |
| PNNL HTE System | Robotic platforms in nitrogen/argon boxes, solid/liquid dispensers | Energy storage materials discovery | 200+ experiments per day |
| Academic HTE (UBC) | Custom-built modules for solid/liquid handling, temperature control | Reaction optimization, catalyst screening | Varies by configuration |

Semi-Automated and Hybrid Approaches

Principles of Semi-Automated Workflow Design