High-Throughput Temperature Screening in Parallel Reactors: A Foundational Guide for Accelerated Reaction Optimization

Abstract

This article provides a comprehensive overview of high-throughput temperature screening in parallel reactor systems, a key methodology for researchers and drug development professionals. It covers the foundational principles of High-Throughput Experimentation (HTE) and the pivotal role of temperature control in reaction outcomes. It then turns to practical methodologies, including the integration of automation and machine learning into experimental design, analyzes common temperature-control challenges and their solutions, and closes with frameworks for validating and comparing screening data to ensure robust and scalable process development.

The Principles and Critical Role of Temperature in High-Throughput Experimentation

High-Throughput Screening (HTS) and its evolution into Quantitative High-Throughput Screening (qHTS) represent a paradigm shift in chemical and biological research, enabling the rapid evaluation of thousands of compounds or reaction conditions in parallel. Traditional HTS methodologies, adapted from biological screening approaches, initially focused on testing compounds at a single concentration to identify hits based on activity thresholds [1] [2]. However, this approach suffered from significant limitations, including frequent false positives and false negatives, necessitating extensive follow-up testing [1]. The field has since progressed to qHTS, which generates complete concentration-response curves for every compound tested, providing rich datasets that enable immediate identification of reliable biological activities and structure-activity relationships directly from primary screens [1].

The technological advancement of HTS has been complemented by the development of High-Throughput Experimentation (HTE) in organic chemistry, which utilizes miniaturized and parallelized reactions to accelerate diverse compound library generation, optimize reaction conditions, and enable data collection for machine learning applications [2]. Modern HTE platforms, leveraging automation and sophisticated data analysis tools, have transformed reaction optimization from a resource-intensive process relying on chemical intuition and one-factor-at-a-time approaches to an efficient, data-driven exploration of chemical space [3]. This convergence of methodologies has created a powerful framework for comprehensive chemical genomics and accelerated drug discovery.

Key Principles and Technological Foundations

From Traditional HTS to Quantitative HTS

Traditional HTS operates by testing each compound in a library at a single concentration, classifying compounds as "active" or "inactive" based on whether their response exceeds a predefined threshold [1]. While this approach enabled the screening of large compound collections, it proved inadequate for comprehensively profiling biological activities due to its inability to detect partial agonism/antagonism and its susceptibility to false classifications from minor variations in sample preparation or assay conditions [1].

Quantitative HTS addresses these limitations through a titration-based approach where each compound is tested at multiple concentrations (typically seven or more), generating concentration-response curves that provide detailed pharmacological profiles [1] [4]. This methodology enables precise classification of compounds based on curve characteristics, including potency (AC50), efficacy, and response quality [1]. The implementation of qHTS with 60,000+ compounds demonstrated remarkable precision, with control compounds showing consistent response curves and statistical quality measures (Z' factor) averaging 0.87 throughout the screen [1].

Core Principles of Parallel Reactors

Parallel reactors enable high-throughput screening by allowing multiple reactions to proceed simultaneously under controlled conditions. The fundamental principles include:

- Miniaturization: Reactions are conducted at micro- to nanomole scales in multi-well plates (96, 384, or 1536 wells) or parallel reaction stations, significantly reducing reagent consumption and costs [2] [5].

- Parallelization: Multiple experiments proceed simultaneously rather than sequentially, dramatically increasing throughput [2].

- Environmental Control: Precise regulation of temperature, irradiation, and atmospheric conditions across all reaction vessels ensures reproducibility [5] [6].

- Automation: Robotic systems handle liquid dispensing, plate handling, and other repetitive tasks, improving precision and efficiency [2] [3].

Advanced parallel photoreactors have been developed specifically for photochemical applications, incorporating features such as high-intensity LED arrays with even photon distribution, safety interlocks, and active cooling systems to manage heat generated by irradiation [5] [6]. These systems enable systematic investigation of the complex interplay between parameters such as light intensity, catalyst loading, and reaction stoichiometry on nanomole scales [5].

Experimental Design and Workflow

Quantitative HTS Protocol for Enzyme Modulation Screening

The following protocol outlines a qHTS approach for identifying enzyme modulators, adapted from a pyruvate kinase screening campaign [1]:

Materials and Reagents:

- Compound library prepared as titration series (7 concentrations, 5-fold dilutions)

- Assay components: enzyme, substrates, coupling enzymes, detection reagents

- 1,536-well plates

- Liquid handling systems (pin tool or dispenser)

- Detection instrument (luminescence plate reader)

Procedure:

- Plate Preparation: Prepare compound titration plates spanning the nM to μM range. For a typical 7-point titration with 5-fold dilutions, final concentrations after transfer to assay plates may range from 3.7 nM to 57 μM [1].

Assay Assembly:

- Dispense 4 μL assay buffer to each well of 1,536-well plates

- Transfer compounds via pin tool (~23 nL)

- Initiate reaction by adding enzyme/substrate mixture

- Incubate under appropriate conditions (time, temperature)

Signal Detection: Measure endpoint or kinetic signals using appropriate detection method (e.g., luminescence for ATP-coupled assays)

Data Analysis:

- Normalize data to controls (positive/negative)

- Fit concentration-response curves using the four-parameter Hill equation

- Classify curves based on quality (r²), efficacy, and asymptotes

- Calculate AC50 values for active compounds
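The curve-fitting step can be illustrated with a short, dependency-free Python sketch. It is not the analysis software used in the cited screen: it assumes the four-parameter Hill form $y = \text{bottom} + (\text{top} - \text{bottom}) / (1 + (\mathrm{AC50}/x)^n)$, searches a coarse, hypothetical grid of log AC50 and Hill-slope values, and at each grid point solves for the two asymptotes exactly by linear least squares (for fixed AC50 and $n$ the model is linear in them). The 7-point, 5-fold series starting at 57 µM reproduces the 3.7 nM lower bound quoted above.

```python
def titration_series(top_um=57.0, fold=5.0, points=7):
    """Concentrations (uM) of a serial dilution, highest first."""
    return [top_um / fold ** k for k in range(points)]

def hill(x, bottom, top, ac50, n):
    """Four-parameter Hill equation."""
    return bottom + (top - bottom) / (1.0 + (ac50 / x) ** n)

def fit_hill(conc, resp, log_ac50_grid, n_grid):
    """Grid search over (AC50, n); asymptotes solved by linear least squares."""
    best = None
    for log_ac50 in log_ac50_grid:
        ac50 = 10.0 ** log_ac50
        for n in n_grid:
            # For fixed AC50 and n the model is linear: y = bottom*(1 - f) + top*f
            f = [1.0 / (1.0 + (ac50 / x) ** n) for x in conc]
            a11 = sum((1 - fi) ** 2 for fi in f)
            a12 = sum((1 - fi) * fi for fi in f)
            a22 = sum(fi * fi for fi in f)
            b1 = sum((1 - fi) * y for fi, y in zip(f, resp))
            b2 = sum(fi * y for fi, y in zip(f, resp))
            det = a11 * a22 - a12 * a12
            if abs(det) < 1e-12:
                continue  # asymptotes not identifiable at this grid point
            bottom = (b1 * a22 - b2 * a12) / det
            top = (a11 * b2 - a12 * b1) / det
            sse = sum((y - hill(x, bottom, top, ac50, n)) ** 2
                      for x, y in zip(conc, resp))
            if best is None or sse < best[0]:
                best = (sse, bottom, top, ac50, n)
    sse, bottom, top, ac50, n = best
    mean = sum(resp) / len(resp)
    sst = sum((y - mean) ** 2 for y in resp)
    return {"bottom": bottom, "top": top, "ac50": ac50, "hill": n,
            "efficacy": abs(top - bottom), "r2": 1.0 - sse / sst}

# Usage with simulated (not measured) data: an inhibitor with AC50 = 1 uM, n = 1
conc = titration_series()
resp = [hill(x, 0.0, -100.0, 1.0, 1.0) for x in conc]
grid = [round(-3 + 0.1 * i, 10) for i in range(51)]  # log10 AC50 in uM
fit = fit_hill(conc, resp, grid, [0.5, 1.0, 1.5, 2.0])
print(round(fit["ac50"], 3), fit["hill"], round(fit["r2"], 4))
```

A production fit would normally refine the grid result with a nonlinear optimizer; the grid keeps the example transparent and free of external libraries.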

Controls and Quality Assessment:

- Include control activators and inhibitors on every plate (e.g., ribose-5-phosphate as activator, luteolin as inhibitor for pyruvate kinase)

- Monitor assay performance using statistical parameters (Z' factor >0.5 acceptable, >0.7 excellent)

- Evaluate reproducibility through replicate measurements

Color-Mixing Strategy for Variant Detection

For genetic variant detection, a color-mixing strategy using toehold probes enables highly multiplexed analysis in a single reaction [7]:

Materials and Reagents:

- Asymmetric PCR primers

- Multiplex double-stranded toehold probes (fluorophore and quencher modified)

- DNA template

- iTaq Universal Probes Supermix or equivalent

- Thermal cycler with fluorescence detection capability

Procedure:

- Reaction Assembly:

- Prepare 30 μL reactions containing asymmetric primers, toehold probes, DNA template, and master mix [7]

- Utilize four fluorescence channels (ROX, CY5, FAM, HEX) with distinct emission spectra

Thermal Cycling:

- Initial denaturation: 95°C for 3 minutes

- 50 cycles of: 95°C for 30 seconds (denaturation), 64°C for 30 seconds (annealing), 72°C for 30 seconds to 2 minutes (extension, dependent on amplicon length)

- Measure background fluorescence during the first 8 cycles

Probe Hybridization:

- Heat to 95°C for 5 minutes to dissociate double strands

- Cool to 40°C for probe binding

- Measure fluorescence at 40°C for 20 cycles

Data Analysis:

- Calculate final signals as raw signals minus background for each channel

- Assign color codes based on fluorescence patterns (on/off states)

- Identify variants based on specific color code combinations
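The decoding step can be sketched as a lookup from on/off patterns to variant calls. The channel names follow the protocol; the codebook, per-channel thresholds, and fluorescence values below are hypothetical placeholders, since the actual code assignments depend on the probe panel described in [7].

```python
CHANNELS = ("ROX", "CY5", "FAM", "HEX")

# Hypothetical codebook: on/off pattern per channel -> variant call
CODEBOOK = {
    (0, 0, 0, 0): "no variant detected",
    (1, 0, 0, 0): "variant 1",
    (0, 1, 0, 0): "variant 2",
    (1, 1, 0, 0): "variant 3",
    (0, 0, 1, 1): "variant 4",
}

def color_code(raw, background, threshold):
    """Background-subtract each channel, then call it on (1) or off (0)."""
    return tuple(int(raw[ch] - background[ch] > threshold[ch]) for ch in CHANNELS)

def call_variant(raw, background, threshold, codebook=CODEBOOK):
    code = color_code(raw, background, threshold)
    return code, codebook.get(code, "unassigned pattern")

background = {"ROX": 100, "CY5": 95, "FAM": 110, "HEX": 105}  # early-cycle signal
threshold = {ch: 300 for ch in CHANNELS}                       # assumed cut-off
raw = {"ROX": 900, "CY5": 850, "FAM": 130, "HEX": 120}
print(call_variant(raw, background, threshold))
```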

Toehold Probe Design Considerations:

- Design protector and complementary strands with appropriate toehold, branch migration, and nonhomologous regions

- Calculate standard free energy of binding to ensure discrimination between variant and wild-type sequences

- Incorporate C3 spacer at 3' end of complementary strand to prevent polymerase extension
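To see why the binding free energy governs discrimination, consider a simplified toehold-exchange equilibrium $X + PC \rightleftharpoons XC + P$ with equal starting amounts of target $X$ and probe complex $PC$ and no initial products. Then $K = \chi^2/(1-\chi)^2$, so the equilibrium yield is $\chi = \sqrt{K}/(1+\sqrt{K})$ with $K = \exp(-\Delta G^\circ/RT)$. The sketch below evaluates this at the 40 °C probe-binding temperature; the ±2 kcal/mol values are illustrative assumptions, not designed probe energies.

```python
import math

R_KCAL = 1.987e-3  # gas constant, kcal mol^-1 K^-1

def exchange_yield(dg_kcal_per_mol, temp_c=40.0):
    """Equilibrium fraction displaced in X + PC <=> XC + P.

    Assumes equal starting amounts of X and PC and no initial products,
    so K = chi**2 / (1 - chi)**2 and chi = sqrt(K) / (1 + sqrt(K)).
    """
    k_eq = math.exp(-dg_kcal_per_mol / (R_KCAL * (temp_c + 273.15)))
    root = math.sqrt(k_eq)
    return root / (1.0 + root)

# Hypothetical design: matched variant at -2 kcal/mol, mismatched wild type at +2 kcal/mol
for label, dg in [("variant", -2.0), ("wild type", 2.0)]:
    print(label, round(exchange_yield(dg), 3))
```

Because the two assumed energies are symmetric about zero, the yields sum to one. The yield is most sensitive to $\Delta G^\circ$ around zero, so a mismatch penalty shifts it most when the matched target's $\Delta G^\circ$ lies near that point.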

Diagram 1: Generalized qHTS workflow showing key stages from compound preparation to hit identification.

Equipment and Material Specifications

Quantitative HTS and Parallel Reactor Systems

Table 1: Comparison of High-Throughput Screening and Reaction Systems

| System Type | Throughput Capacity | Key Features | Applications | References |
|---|---|---|---|---|
| Quantitative HTS Platform | 60,000+ compounds in 30 hours | 1,536-well format, 7+ concentration titration, automated data analysis | Enzyme modulation screening, toxicological assessment, chemical genomics | [1] [4] |
| High-Intensity Parallel Photoreactor | 1,536 reactions in parallel | High photon flux, uniform illumination, efficient heat removal, temperature control | Photochemical reaction screening, reaction optimization | [5] |
| Illumin8 Parallel Photoreactor | 8 reactions simultaneously | Interchangeable LED modules (365-660 nm), magnetic stirring, heating to 80°C, safety interlocks | Benchtop photochemistry screening, method development | [6] |
| Machine Learning-Driven HTE | 96-well batch optimization | Bayesian optimization, multi-objective acquisition functions, automated experimentation | Reaction optimization, process chemistry, API synthesis | [3] |

Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for High-Throughput Screening

| Reagent/Material | Function | Application Notes | References |
|---|---|---|---|
| Toehold Probes | Sequence-specific variant detection | Fluorophore-quencher modified, designed with toehold and branch migration regions for discrimination | [7] |
| Titrated Compound Libraries | Concentration-response testing | Typically 7 concentrations with 5-fold dilutions, covering 4 orders of magnitude | [1] |
| iTaq Universal Probes Supermix | qPCR reaction component | Provides enzymes, dNTPs, buffers for probe-based detection methods | [7] |
| Luminescence Detection Reagents | ATP-coupled assay detection | Enables detection of kinase activity through coupled luciferase reaction | [1] |
| Asymmetric PCR Primers | Preferential strand amplification | Enriches one strand for subsequent probe hybridization | [7] |

| Asymmetric PCR Primers | Preferential strand amplification | Enriches one strand for subsequent probe hybridization | [7] |

Data Analysis and Quality Control

Concentration-Response Curve Classification

In qHTS, concentration-response curves are systematically classified based on quality and characteristics [1]:

Class 1 Curves (Complete Response)

- Class 1a: Well fit (r² ≥ 0.9), full response (efficacy >80%), upper and lower asymptotes

- Class 1b: Same as 1a but with a partial response (efficacy 30-80%)

Class 2 Curves (Incomplete Response)

- Class 2a: Good fit (r² ≥ 0.9), sufficient response (>80% efficacy) to calculate inflection point

- Class 2b: Weaker response (efficacy <80%, r² < 0.9)

Class 3 Curves (Marginally Active)

- Activity only at highest concentration tested with efficacy >30%

Class 4 Curves (Inactive)

- Insufficient response (efficacy <30%) or no activity

This classification system enables rapid prioritization of compounds for follow-up studies and facilitates structure-activity relationship analysis directly from primary screening data [1].
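The classification rules can be expressed as a small decision function. This is a simplified reading of the criteria listed above: the descriptor names are hypothetical, and a real pipeline would derive them (asymptotes, activity confined to the top concentration) from the fitted curve and the assay's noise level rather than take them as inputs.

```python
def classify_curve(r2, efficacy, two_asymptotes, active_only_at_top):
    """Simplified qHTS curve class from fit descriptors.

    r2: goodness of fit; efficacy: % response; two_asymptotes: upper and
    lower plateaus both defined; active_only_at_top: activity seen only at
    the highest tested concentration.
    """
    if efficacy < 30:
        return "4"  # inactive
    if active_only_at_top:
        return "3"  # marginally active
    if two_asymptotes and r2 >= 0.9:
        return "1a" if efficacy > 80 else "1b"  # complete curve, full vs partial
    if r2 >= 0.9 and efficacy > 80:
        return "2a"  # incomplete curve, good fit and strong response
    return "2b"      # incomplete curve, weaker response or poorer fit

print(classify_curve(0.95, 95, True, False), classify_curve(0.8, 60, False, False))
```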

Quality Control Procedures

Robust quality control is essential for reliable qHTS data interpretation. The CASANOVA (Cluster Analysis by Subgroups using ANOVA) method provides automated quality control by identifying and filtering out compounds with multiple cluster response patterns [4]. This approach addresses the challenge of potency estimate variability that can arise from experimental factors such as chemical supplier, institutional site preparing chemical libraries, concentration-spacing, and compound purity [4].
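The one-way ANOVA that CASANOVA's name refers to can be sketched for a single compound: group its potency estimates by a factor such as supplier and compare between-group to within-group variance. This shows only that building block; the clustering and decision rules of the published CASANOVA method [4] are not reproduced, and the log10 AC50 values below are hypothetical.

```python
from statistics import mean

def one_way_anova_f(groups):
    """F statistic comparing group means (e.g., log10 AC50 grouped by supplier)."""
    values = [v for g in groups for v in g]
    grand = mean(values)
    k, n = len(groups), len(values)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

# Hypothetical log10 AC50 (M) for one compound, grouped by supplier
consistent = [[-6.0, -6.1, -5.9], [-6.05, -5.95, -6.0]]
split = [[-6.0, -6.1, -5.9], [-4.0, -4.1, -3.9]]
print(one_way_anova_f(consistent), one_way_anova_f(split))
```

A large F, as in the second case, flags a compound whose potency depends on where it came from, which is the kind of multi-cluster pattern the text describes filtering out.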

Statistical measures for assay quality assessment include:

- Z' Factor: Measures separation between positive and negative controls (values >0.5 indicate acceptable assays, >0.7 indicate excellent assays) [1]

- Signal-to-Background Ratio: Should be sufficient for reliable detection (e.g., 9.6 as reported in pyruvate kinase screen) [1]

- Minimum Significance Ratio (MSR): Evaluates reproducibility of control compounds (e.g., 1.2-1.7 for control activators/inhibitors) [1]
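These metrics are straightforward to compute from control wells. The sketch uses the standard definition $Z' = 1 - 3(\sigma_p + \sigma_n)/|\mu_p - \mu_n|$, signal-to-background as the ratio of mean signals, and the form $\mathrm{MSR} = 10^{2\sqrt{2}\,s}$, where $s$ is the standard deviation of log10 potency estimates for a control compound across runs; the control readings are hypothetical.

```python
import math
from statistics import mean, stdev

def z_prime(pos, neg):
    """Z' = 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))

def signal_to_background(signal, background):
    """Ratio of mean control signal to mean background signal."""
    return mean(signal) / mean(background)

def msr(sd_log10_potency):
    """Minimum significant ratio, MSR = 10**(2*sqrt(2)*s)."""
    return 10.0 ** (2.0 * math.sqrt(2.0) * sd_log10_potency)

# Hypothetical control-well readings (arbitrary luminescence units)
pos = [100.0, 102.0, 98.0]
neg = [10.0, 12.0, 8.0]
print(round(z_prime(pos, neg), 3), signal_to_background(pos, neg), round(msr(0.05), 2))
```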

Diagram 2: Data analysis workflow for qHTS, showing progression from raw data to hit selection with quality control checkpoints.

Advanced Applications and Integration with Machine Learning

The integration of machine learning with HTE has created powerful frameworks for reaction optimization and discovery. The Minerva system exemplifies this approach, combining Bayesian optimization with automated HTE to efficiently navigate complex reaction spaces [3]. This system addresses key challenges in chemical optimization, including:

- High-Dimensional Search Spaces: Handling up to 530 dimensions representing various reaction parameters

- Multi-Objective Optimization: Simultaneously optimizing yield, selectivity, cost, and other parameters

- Batch Constraints: Accommodating laboratory practicalities and experimental design limitations

- Chemical Noise: Maintaining robust performance despite experimental variability

In pharmaceutical process development, ML-driven HTE has demonstrated significant advantages over traditional approaches. For a challenging nickel-catalyzed Suzuki reaction exploring 88,000 possible conditions, the ML approach identified conditions with 76% yield and 92% selectivity, whereas chemist-designed HTE plates failed to find successful conditions [3]. Similarly, for active pharmaceutical ingredient synthesis, ML-guided optimization identified conditions achieving >95% yield and selectivity in significantly reduced timelines compared to traditional development campaigns [3].

The implementation of scalable multi-objective acquisition functions (q-NParEgo, TS-HVI, q-NEHVI) enables efficient optimization with batch sizes compatible with standard HTE workflows (24, 48, or 96 wells) [3]. Performance evaluation using the hypervolume metric, which quantifies the volume of objective space dominated by the selected reaction conditions relative to a reference point, demonstrates the effectiveness of these approaches in rapidly identifying optimal regions of chemical space [3].
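For two objectives, the hypervolume reduces to the area dominated by the selected conditions above a reference point. The sketch below assumes both objectives (here yield and selectivity, in %) are maximized and uses a reference point of (0, 0); the data are hypothetical. It sweeps points in order of decreasing yield and adds a rectangular strip each time a higher selectivity appears. Problems with more than two objectives need more elaborate algorithms.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` (both objectives maximized) above reference `ref`."""
    pts = sorted((p for p in points if p[0] > ref[0] and p[1] > ref[1]),
                 key=lambda p: p[0], reverse=True)
    area, best_y = 0.0, ref[1]
    for x, y in pts:
        if y > best_y:  # point extends the dominated region upward
            area += (x - ref[0]) * (y - best_y)
            best_y = y
    return area

# Hypothetical (yield %, selectivity %) pairs from two successive batches
batch1 = [(40.0, 60.0), (55.0, 50.0)]
batch2 = batch1 + [(70.0, 90.0), (55.0, 97.0)]
print(hypervolume_2d(batch1, (0.0, 0.0)), hypervolume_2d(batch2, (0.0, 0.0)))
```

The increase from the first to the second batch is what a hypervolume-based comparison of optimization campaigns tracks.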

Troubleshooting and Technical Considerations

Common Challenges and Solutions

Spatial Bias in Microtiter Plates

- Problem: Edge effects causing uneven temperature distribution or evaporation

- Solution: Randomize sample placement, use control wells distributed across plates, implement plate mapping corrections [2]
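Randomized placement with distributed controls can be generated in a few lines. This sketch assumes a standard 8 × 12 (96-well) layout and hypothetical sample and control names; a fixed seed makes the map reproducible for the experimental record. It covers only the randomization step, not plate-position corrections.

```python
import random
import string

def randomized_plate_map(samples, controls, rows=8, cols=12, seed=None):
    """Assign samples and controls to randomly chosen wells (96-well default)."""
    wells = [f"{r}{c}" for r in string.ascii_uppercase[:rows] for c in range(1, cols + 1)]
    items = list(samples) + list(controls)
    if len(items) > len(wells):
        raise ValueError("more items than wells")
    rng = random.Random(seed)
    rng.shuffle(wells)
    return dict(zip(wells, items))  # any unused wells stay empty

samples = [f"cmpd-{i:02d}" for i in range(80)]
controls = ["pos-ctrl"] * 8 + ["neg-ctrl"] * 8
plate = randomized_plate_map(samples, controls, seed=42)
edge = {w for w in plate if w[0] in "AH" or w[1:] in ("1", "12")}
print(len(plate), sum(1 for w in edge if plate[w].endswith("ctrl")))
```

The second number printed is the count of control wells that landed on the plate edge, a quick check that controls sample both edge and interior positions.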

Compound Library Quality

- Problem: Inconsistent results from sample preparation variations or compound degradation

- Solution: Implement quality control checks, purchase compounds from reliable suppliers, use fresh solutions [1] [4]

Photochemical Reaction Consistency

- Problem: Uneven light irradiation in parallel photoreactors

- Solution: Use reactors with uniform photon distribution, incorporate light-sensitive controls, calibrate light sources regularly [5] [6]

Data Quality and Reproducibility

- Problem: Inconsistent response patterns across experimental repeats

- Solution: Implement rigorous quality control procedures like CASANOVA, standardize protocols, account for experimental factors in statistical models [4]

Future Directions and Emerging Trends

The field of high-throughput screening continues to evolve with several promising directions:

- Ultra-HTE: Scaling to 1536 reactions simultaneously for even greater throughput [2]

- AI Integration: Enhanced machine learning algorithms for experimental design and data analysis [2] [3]

- Standardized Data Formats: Adoption of formats like Simple User-Friendly Reaction Format (SURF) for improved data sharing and reproducibility [3]

- Perceptual Contrast Algorithms: Adoption of contrast models such as APCA (Accessible Perceptual Contrast Algorithm), which estimates the perceived contrast between text or graphical elements and their background, to make data visualizations more legible and easier to interpret [8]

- Democratization of HTE: Development of more accessible platforms and protocols for broader adoption in academic and small laboratory settings [2]

These advancements promise to further accelerate discovery timelines, enhance reproducibility, and expand the application of high-throughput methodologies across chemical and biological research domains.

In high-throughput experimentation (HTE) for chemical synthesis and process development, temperature is a fundamental parameter that exerts direct and profound influence over the reaction kinetics, selectivity, and ultimate yield. Unlike traditional one-factor-at-a-time optimization, modern HTE approaches, often enhanced by machine learning, enable the systematic exploration of temperature alongside other critical variables in highly parallelized systems [3] [9]. This allows researchers to rapidly map complex reaction landscapes and identify optimal conditions that satisfy multiple objectives simultaneously.

The integration of flow chemistry with HTE is particularly powerful for temperature screening, as it allows for precise dynamic control of temperature and access to conditions far beyond the boiling point of solvents at atmospheric pressure [9]. Understanding and controlling temperature is therefore not merely a practical necessity but a strategic tool for accelerating the discovery and development of robust chemical processes, especially in demanding fields like pharmaceutical development [3]. These application notes detail the core principles, quantitative data, and practical protocols for implementing high-throughput temperature screening.

Core Principles: Temperature's Role in Reaction Outcomes

Reaction Kinetics and the Arrhenius Law

The rate of a chemical reaction is intrinsically linked to temperature, a relationship classically described by the Arrhenius equation:

$$k = A \exp\left(-\frac{E_a}{RT}\right)$$

where $k$ is the rate constant, $A$ is the pre-exponential factor, $E_a$ is the activation energy, $R$ is the gas constant, and $T$ is the absolute temperature in Kelvin. A modest increase in temperature can lead to a dramatic increase in the reaction rate, effectively reducing reaction times from hours to minutes.
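Because $A$ cancels when two temperatures are compared, $k(T_2)/k(T_1) = \exp[(E_a/R)(1/T_1 - 1/T_2)]$. The sketch below assumes $A$ and $E_a$ are temperature-independent and uses illustrative activation energies: with $E_a = 50$ kJ/mol a 10 °C rise from 25 °C roughly doubles the rate (about 1.9-fold), and with $E_a = 80$ kJ/mol heating from 25 °C to 85 °C speeds the reaction more than 200-fold, enough to turn a 6 h reaction into one of a couple of minutes.

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1

def rate_ratio(ea_j_per_mol, t1_c, t2_c):
    """k(T2)/k(T1) from the Arrhenius equation; A cancels in the ratio."""
    t1, t2 = t1_c + 273.15, t2_c + 273.15
    return math.exp(ea_j_per_mol / R * (1.0 / t1 - 1.0 / t2))

print(round(rate_ratio(50e3, 25, 35), 2))  # 10 degree step, assumed Ea = 50 kJ/mol
print(round(rate_ratio(80e3, 25, 85)))     # 60 degree step, assumed Ea = 80 kJ/mol
```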

Table 1: Experimentally Determined Arrhenius Parameters for Selected Reactions

| Reaction System | Temperature Range (K) | Arrhenius Expression ($T$ in K) | Application Context | Citation |
|---|---|---|---|---|
| Prenol + OH radical | 273 - 353 | $k = (1.43 \pm 0.28) \times 10^{-11} \exp\left(\frac{691 \pm 59}{T}\right)$ cm³ molecule⁻¹ s⁻¹ | Atmospheric chemistry, biofuel oxidation | [10] |
| Prenol + OH radical (extended) | 273 - 1290 | $k = 1.46 \times 10^{-10} \left(\frac{T}{300}\right)^{-2.18} + 1.14 \times 10^{-10} \exp\left(-\frac{2961}{T}\right)$ cm³ molecule⁻¹ s⁻¹ | Combustion & atmospheric conditions | [10] |
| Mn²⁺ + hydrated electron ($e_{aq}^-$) | 274 - 333 | Rate constant at 25°C: $2.4 \times 10^7\ \text{M}^{-1}\,\text{s}^{-1}$; follows Arrhenius behavior | Radiation chemistry, reactor coolant systems | [11] |

Selectivity and Yield

Because competing parallel or consecutive reactions generally have different activation energies, a change in temperature alters their rates to different extents, making temperature a powerful handle for controlling regioselectivity and stereoselectivity. Higher temperatures may favor the thermodynamically controlled product, while lower temperatures often favor the kinetically controlled product. In complex reactions, such as catalytic cross-couplings, temperature optimization is essential for suppressing side reactions and maximizing the yield of the desired product [3]. In one case study, a machine-learning-driven HTE campaign for a nickel-catalyzed Suzuki reaction identified conditions that achieved a 76% area percent yield and 92% selectivity, a result that eluded traditional experimentalist-driven approaches [3].
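For two parallel pathways from a common starting material, both first order in that material and operating under kinetic control (no interconversion of products), the product ratio equals $k_1/k_2 = (A_1/A_2)\exp[-(E_{a,1} - E_{a,2})/RT]$. The sketch assumes a hypothetical side reaction with a 20 kJ/mol higher barrier but a 100-fold larger pre-exponential factor; raising the temperature then erodes selectivity for the desired product, from about 97% at 25 °C to about 86% at 100 °C.

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1

def product_ratio(a_main, ea_main, a_side, ea_side, temp_c):
    """k_main / k_side for two parallel first-order pathways (kinetic control)."""
    t = temp_c + 273.15
    return (a_main / a_side) * math.exp(-(ea_main - ea_side) / (R * t))

def selectivity_pct(ratio):
    """Share of the desired product, in %, given the main/side product ratio."""
    return 100.0 * ratio / (1.0 + ratio)

# Hypothetical pathways: side reaction has a 20 kJ/mol higher Ea but 100x larger A
for temp in (25, 60, 100):
    r = product_ratio(1.0, 60e3, 100.0, 80e3, temp)
    print(temp, round(selectivity_pct(r), 1))
```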

High-Throughput Workflow for Temperature Screening

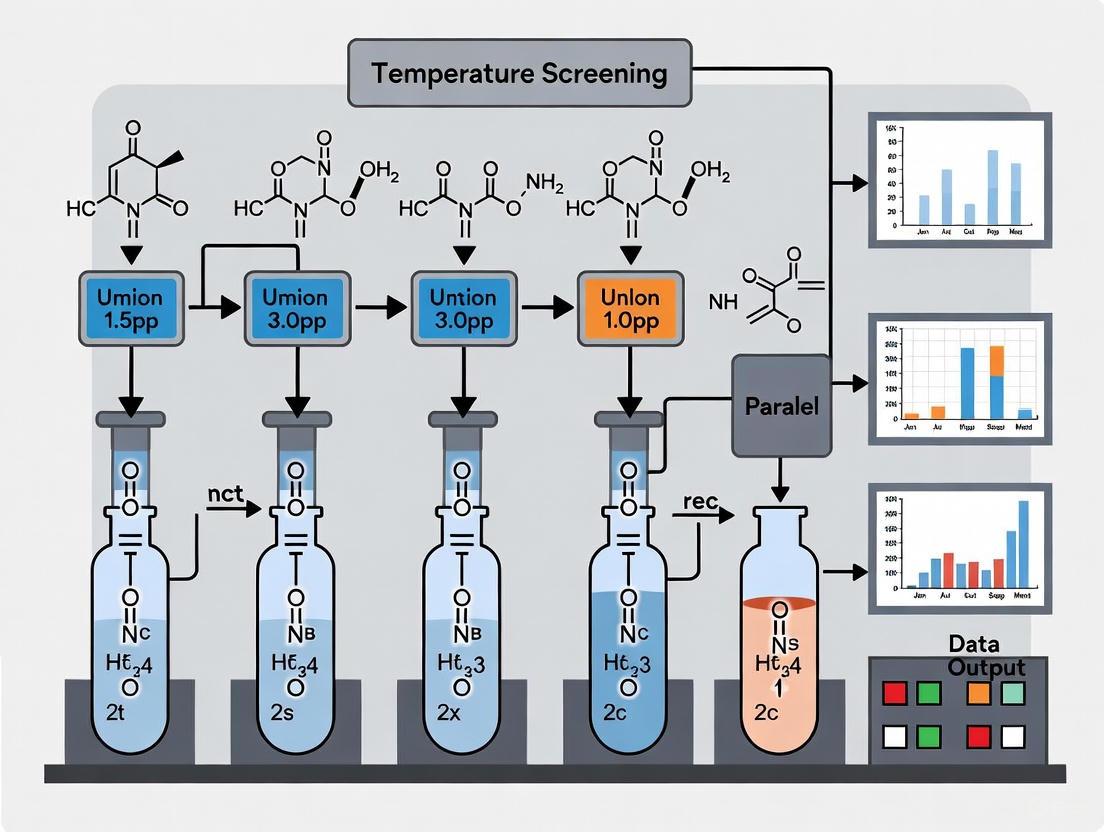

The following diagram illustrates a generalized, automated workflow for a high-throughput reaction optimization campaign that integrates temperature as a key variable, leveraging machine intelligence for experimental design.

Experimental Protocols

Protocol: High-Throughput Temperature Screening in Parallel Batch Reactors

This protocol is adapted from methodologies used in machine-learning-guided HTE campaigns for reaction optimization [3].

Objective: To systematically investigate the effect of temperature on the yield and selectivity of a model Ni-catalyzed Suzuki coupling reaction in a 96-well plate format.

Research Reagent Solutions:

- Catalyst Stock Solution: NiCl₂·glyme (0.1 M in anhydrous DMF)

- Ligand Stock Solution: 1,1'-Ferrocenediyl-bis(tert-butylphosphine) (0.2 M in anhydrous DMF)

- Base Stock Solution: Cs₂CO₃ (1.0 M in H₂O)

- Substrate Solutions: Aryl halide (1.0 M in DMF) and Boronic acid (1.0 M in DMF)

- Solvent: Anhydrous DMF

Procedure:

- Experimental Design: Use a Sobol sampling algorithm or a predefined temperature gradient to assign a specific temperature (e.g., 30°C, 50°C, 70°C, 90°C, 110°C) to each well or group of wells on a 96-well plate [3].

- Liquid Handling: Using an automated liquid handler, dispense into each well:

- 100 µL of DMF.

- 20 µL of Catalyst Stock Solution.

- 25 µL of Ligand Stock Solution.

- 50 µL of Aryl halide Solution.

- 50 µL of Boronic acid Solution.

- 50 µL of Base Stock Solution.

- Final volume: 295 µL.

- Sealing and Mixing: Seal the plate with a pressure-sensitive adhesive film and mix thoroughly on an orbital shaker for 1 minute.

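- Parallel Reaction Execution: Place the plate in an HTE system with independently controlled heating zones (or a gradient heating block) so that each well or group of wells reaches its assigned temperature; a thermostated agitating incubator holds the entire plate at a single temperature, so with that equipment run one plate per temperature. Run the reactions for the predetermined time (e.g., 6 hours).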

- Quenching and Analysis: After the reaction time:

- Automatically quench reactions by adding 300 µL of a 1:1 MeOH/H₂O mixture (the combined volume of about 595 µL requires a deep-well plate).

- Centrifuge the plate to sediment any particulates.

- Analyze the supernatant directly via UPLC-MS or HPLC to determine conversion and selectivity using a calibrated internal standard.
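The dispensing volumes above can be checked with a short calculation. This sketch simply restates the protocol's volumes and stock concentrations and takes the aryl halide as the limiting reagent: it confirms the 295 µL total and shows 4 mol% Ni and 10 mol% ligand (a 2.5:1 ligand-to-nickel ratio), 1.0 equiv each of boronic acid and base, and an aryl halide concentration of about 0.17 M in the well.

```python
# Dispense plan from the protocol: name -> (volume in uL, stock concentration in M)
DISPENSE = {
    "DMF": (100.0, 0.0),
    "NiCl2-glyme": (20.0, 0.1),
    "ligand": (25.0, 0.2),
    "aryl halide": (50.0, 1.0),
    "boronic acid": (50.0, 1.0),
    "Cs2CO3": (50.0, 1.0),
}

def well_summary(plan, limiting="aryl halide"):
    """Total volume, amounts, equivalents and final concentrations for one well."""
    total_ul = sum(vol for vol, _ in plan.values())
    # uL x mol/L = umol
    umol = {name: vol * conc for name, (vol, conc) in plan.items() if conc > 0}
    ref = umol[limiting]
    equiv = {name: n / ref for name, n in umol.items()}
    # umol / uL = mol/L; x1000 gives mM
    final_mm = {name: 1000.0 * n / total_ul for name, n in umol.items()}
    return total_ul, umol, equiv, final_mm

total, umol, equiv, final_mm = well_summary(DISPENSE)
print(total, equiv["NiCl2-glyme"], equiv["ligand"], round(final_mm["aryl halide"], 1))
```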

Protocol: Investigating Temperature-Dependent Kinetics via Pulse Radiolysis

This protocol is based on studies of temperature-dependent reactions in aqueous systems, such as those between Mn²⁺ and water radiolysis products [11].

Objective: To determine the rate constant and Arrhenius parameters for the reaction between a metal ion (e.g., Mn²⁺) and the hydroxyl radical (•OH) over a temperature range of 1°C to 60°C.

Research Reagent Solutions:

- Analyte Solution: Mn(ClO₄)₂ hydrate (0.1 - 10 mM) in ultrapure water (18 MΩ·cm).

- Scavenger Gas: High-purity N₂O gas for saturating solutions to convert hydrated electrons into additional •OH radicals.

- Dosimetry Solution: 10 mM KSCN in N₂O-saturated water.

Procedure:

- Solution Preparation: Prepare a stock solution of the manganese salt in ultrapure water. Adjust the pH to the desired value (e.g., pH 5) using a non-interfering acid or base.

- Gas Saturation: Saturate the analyte solution with N₂O gas for at least 20 minutes prior to irradiation to remove dissolved oxygen and ensure complete conversion of $e_{aq}^-$ to •OH.

- Temperature Equilibration: Load the solution into a flow-through quartz optical cell (1.0 cm pathlength) connected to the pulse radiolysis system. Use a thermoelectric cuvette holder to equilibrate the solution to the target temperature (e.g., 1°C, 25°C, 40°C, 60°C).

- Pulse Irradiation and Detection: Irradiate the solution with a short, high-energy electron pulse (e.g., 10 ns, 24.8 Gy). Monitor the formation and decay of transient species in real-time using a spectroscopic detection system, recording full transient absorption spectra or kinetics at a specific wavelength (e.g., 290 nm for H atom reaction) [11].

- Data Fitting: Fit the kinetic traces to exponential functions to obtain pseudo-first-order rate constants ($k'$). Plot $k'$ against the Mn²⁺ concentration; the slope of the linear fit is the second-order rate constant ($k$) at each temperature.

- Arrhenius Analysis: Plot $\ln(k)$ versus $1/T$ for the measured rate constants. The slope of the linear fit is $-E_a/R$, and the intercept is $\ln(A)$.
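The two fitting steps can be checked end to end on synthetic data. The sketch assumes $E_a = 20$ kJ/mol, $A = 10^{11}$ M⁻¹ s⁻¹, and a concentration-independent background decay of $2 \times 10^3$ s⁻¹ (all hypothetical), generates $k'$ at four of the protocol temperatures, recovers $k$ from the slope of $k'$ versus [Mn²⁺], and then recovers $E_a$ and $A$ from the $\ln k$ versus $1/T$ fit.

```python
import math

R = 8.314  # gas constant, J mol^-1 K^-1

def linear_fit(x, y):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

def second_order_k(conc_m, k_obs_per_s):
    """Slope of k' (s^-1) versus [Mn2+] (M) is k (M^-1 s^-1)."""
    return linear_fit(conc_m, k_obs_per_s)[0]

def arrhenius_parameters(temps_k, k_values):
    """Fit ln k = ln A - (Ea/R)(1/T); return (Ea in J/mol, A)."""
    slope, intercept = linear_fit([1.0 / t for t in temps_k],
                                  [math.log(k) for k in k_values])
    return -slope * R, math.exp(intercept)

# Synthetic data from assumed parameters (not measured values)
EA_TRUE, A_TRUE = 20e3, 1e11
temps = [274.15, 298.15, 313.15, 333.15]  # 1, 25, 40, 60 deg C
concs = [0.1e-3, 1e-3, 5e-3, 10e-3]       # M, within the 0.1-10 mM range
k_fit = []
for t in temps:
    k_true = A_TRUE * math.exp(-EA_TRUE / (R * t))
    k_obs = [k_true * c + 2.0e3 for c in concs]  # assumed background decay 2e3 s^-1
    k_fit.append(second_order_k(concs, k_obs))
ea, a = arrhenius_parameters(temps, k_fit)
print(round(ea / 1e3, 2), f"{a:.3g}")
```

With real traces, the scatter in $k'$ would propagate into uncertainties on both $E_a$ and $A$, which is why the protocol measures several concentrations at each temperature.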

The Scientist's Toolkit

Table 2: Essential Reagents and Equipment for High-Throughput Temperature Screening

| Category | Item | Function & Application Notes |
|---|---|---|
| Reactor Systems | Automated Parallel Batch Reactors (e.g., 96-well) | Enables highly parallel reaction execution with independent thermal and mixing control for screening [3]. |
| | Flow Chemistry Reactor Module | Provides precise temperature control and access to superheated conditions by pressurizing the system [9]. |
| | Pulse Radiolysis System | Allows direct measurement of reaction kinetics with short-lived species at varied temperatures [11]. |
| Temperature Control | Thermostated Agitating Incubator | Provides stable, uniform heating for microtiter plates. |
| | Peltier-Based Cuvette Holder | Enables rapid and precise temperature control for cuvette-based kinetics studies [11]. |
| Analytical & Enabling Tech | Automated Liquid Handling Robot | Ensures precision and reproducibility in reagent dispensing for HTE [3]. |
| | UPLC-MS / HPLC | Provides high-throughput, automated analysis for yield and selectivity determination. |
| | Machine Learning Platform (e.g., Minerva) | Guides experimental design by selecting promising reaction conditions (incl. temperature) for subsequent batches [3]. |

Data Analysis and Visualization

The relationship between temperature, kinetics, and final reaction outcomes can be visualized as a multi-parameter optimization landscape. The following diagram outlines the logical connections and decision points in this process.

Parallel reactor systems are specialized laboratory apparatuses designed to conduct multiple chemical or biological reactions simultaneously under controlled conditions. Unlike traditional single-reactor setups, these stations feature multiple independent reaction chambers, allowing researchers to rapidly screen reaction parameters such as temperature, pressure, stirring speed, and reaction time across different channels [12]. This capability significantly accelerates process development timelines across pharmaceuticals, specialty chemicals, and materials science by enabling high-throughput experimentation (HTE) [9]. The fundamental principle behind parallel reactor systems is the miniaturization and parallelization of reaction vessels, creating high-throughput laboratories that can generate extensive experimental data while consuming minimal resources [13] [14].

These systems have evolved from simple well-plate approaches to sophisticated integrated platforms featuring advanced control systems, real-time monitoring, and automation capabilities [12]. The growing demand for efficient and scalable reaction testing has made these systems essential in research laboratories focused on innovation and product development, particularly where optimizing yield, selectivity, and process safety are critical [15]. This document outlines common configurations, experimental protocols, and applications of parallel reactor systems within the context of high-throughput temperature screening for chemical and bioprocess development.

Common System Configurations and Their Characteristics

Parallel reactor systems are categorized based on their operational principles, scale, and design characteristics. The configuration selection depends on specific research needs, including the reaction type, parameters of interest, and required throughput.

Table 1: Classification and Characteristics of Parallel Reactor Systems

| Reactor Type | Scale/Volume | Key Features | Control Capabilities | Typical Applications |
|---|---|---|---|---|
| Shaken Bioreactors (Shake flask, Microtiter plates) [13] | ~300 μL to ~100 mL [9] [13] | Simple operation, high parallelization (96-384 wells) | Limited control over continuous variables; temperature often uniform per plate [9] [14] | Initial screening, cell culture studies, bioprocess development [13] |
| Stirred-Tank Reactors (Stirred-tank, Stirred column) [13] | ~mL to ~100 mL [13] | Individual stirring for each vessel, improved heat/mass transfer | Individual pH and dissolved oxygen (pO2) control, fed-batch operation [13] | Microbial fed-batch processes, reaction optimization where mixing is critical [13] |
| Sparged Bioreactors (Small-scale bubble column) [13] | ~mL to ~100 mL [13] | Gas introduction via sparging, no moving parts | Control of gas flow rate and composition | Gas-liquid reactions, aerobic fermentations [13] |
| Droplet-Based Microfluidic Reactors [14] | Nanoliter to Microliter scale [14] | Fluoropolymer tubing, stationary or oscillatory droplets, high surface-to-volume ratio | Totally independent conditions per channel, pressure (up to 20 atm), temperature (0-200°C) [14] | High-fidelity reaction screening, kinetic studies, thermal and photochemical transformations [14] |
| Flow Chemistry Reactors [9] | Continuous flow in narrow tubing | Enhanced heat/mass transfer, safe use of hazardous reagents, pressurization | Precise control of residence time, temperature, and pressure; wide process windows [9] | Photochemistry, electrochemistry, process intensification, scale-up studies [9] |

Table 2: Quantitative Performance Metrics of Advanced Parallel Reactor Systems

| Performance Parameter | Droplet-Based Microfluidic Platform [14] | Advanced Stirred Bioreactor Systems [13] | ML-Driven Automated HTE (Minerva) [3] |
|---|---|---|---|
| Throughput (Number of Parallel Reactors) | 10 independent channels | 48 stirred-tank reactors | 96-well plate format |
| Temperature Range | 0 °C to 200 °C (solvent-dependent) | Not specified | Not specified |
| Pressure Range | Up to 20 atm | Not specified | Accessible via pressurization |
| Reproducibility | <5% standard deviation | Not specified | Outperforms traditional methods |
| Reaction Scale | Nanoliter to Microliter scale | Milliliter scale | ~300 μL per well |
| Key Technological Integrations | On-line HPLC, Bayesian optimization, scheduling algorithm | Individual pH- and pO2-controls, automation, liquid handling | Machine learning (Minerva), robotic fluid handling, multi-objective optimization |

Configuration Workflow Diagram

The following diagram illustrates the general workflow for selecting and implementing a parallel reactor system for high-throughput temperature screening:

Experimental Protocols for High-Throughput Temperature Screening

Protocol: Automated Multi-Objective Reaction Optimization Using Machine Learning

This protocol details the use of a machine learning-driven workflow for reaction optimization with parallel reactors, capable of handling large experimental batches and high-dimensional search spaces [3].

1. Pre-Experimental Planning

- Define Reaction Objectives: Clearly specify primary and secondary optimization targets (e.g., yield, selectivity, cost, safety) [3].

- Delineate Reaction Condition Space: Compile a discrete combinatorial set of plausible reaction conditions including catalysts, ligands, solvents, and additives. Apply chemical knowledge filters to exclude impractical combinations (e.g., temperatures exceeding solvent boiling points, unsafe reagent combinations) [3].

- Select Platform and Scale: Choose an appropriate parallel reactor system (e.g., 96-well HTE platform or droplet-based microreactors) based on throughput needs and parameter control requirements [3] [14].
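As an illustration of the condition-space step, the sketch below enumerates a small discrete space with `itertools.product` and applies one chemical-knowledge filter: any temperature that is not at least a safety margin below the solvent's normal boiling point is discarded. The ligand labels, temperature grid, and 10 °C margin are hypothetical; the boiling points are approximate values at 1 atm, and the filter only makes sense for unpressurized vessels.

```python
from itertools import product

# Approximate normal boiling points at 1 atm (°C).
BOILING_POINT_C = {"DMF": 153.0, "MeCN": 82.0, "THF": 66.0, "toluene": 111.0}


def build_condition_space(catalysts, ligands, solvents, temperatures_c, margin_c=10.0):
    """Enumerate every combination, keeping only temperatures at least
    `margin_c` below the solvent's boiling point (open-vessel filter)."""
    space = []
    for catalyst, ligand, solvent, temp_c in product(catalysts, ligands, solvents, temperatures_c):
        if temp_c <= BOILING_POINT_C[solvent] - margin_c:
            space.append(
                {"catalyst": catalyst, "ligand": ligand, "solvent": solvent, "temperature_c": temp_c}
            )
    return space


# Hypothetical factor levels, for illustration only.
space = build_condition_space(
    catalysts=["Ni", "Pd"],
    ligands=["L1", "L2", "L3"],
    solvents=list(BOILING_POINT_C),
    temperatures_c=[40, 60, 80, 100, 120],
)
full_size = 2 * 3 * len(BOILING_POINT_C) * 5
print(f"{len(space)} of {full_size} combinations pass the boiling-point filter")
```

Further filters (for example, known incompatible reagent pairs) would be added as extra conditions in the same loop.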

2. Initial Experimental Setup

- Algorithmic Initial Sampling: Employ quasi-random Sobol sampling to select the initial batch of experiments. This approach maximizes coverage of the reaction space, increasing the likelihood of discovering regions containing optimal conditions [3].

- Plate Preparation: Utilize automated liquid handlers to prepare reaction mixtures according to the initial experimental design in 96-well plates or microfluidic reactor channels [3] [14].

- Reactor Parameter Programming: Program individual reactor vessels with assigned temperatures, pressures, and other continuous variables as per the experimental design.
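The Sobol initialization can be sketched with SciPy's `scipy.stats.qmc.Sobol` sampler. The mapping from the unit cube to conditions is an assumption made for illustration: one dimension picks a solvent, one picks a ligand, and the third sets a temperature inside a window capped 10 °C below the chosen solvent's boiling point. Drawing a power-of-two batch with `random_base2` preserves the balance properties of the Sobol sequence.

```python
from scipy.stats import qmc

# Hypothetical factor levels; boiling points are approximate values at 1 atm (°C).
SOLVENTS = {"DMF": 153.0, "MeCN": 82.0, "THF": 66.0, "toluene": 111.0}
LIGANDS = ["L1", "L2", "L3"]
T_MIN_C, T_MAX_C, MARGIN_C = 30.0, 120.0, 10.0


def pick(levels, u):
    """Map u in [0, 1) onto one of the discrete levels."""
    return levels[min(int(u * len(levels)), len(levels) - 1)]


def initial_batch(m=3):
    """Return 2**m conditions from an unscrambled 3-D Sobol sequence."""
    sampler = qmc.Sobol(d=3, scramble=False)
    points = sampler.random_base2(m=m)
    solvent_names = list(SOLVENTS)
    batch = []
    for u_solvent, u_ligand, u_temp in points:
        solvent = pick(solvent_names, u_solvent)
        t_hi = min(T_MAX_C, SOLVENTS[solvent] - MARGIN_C)  # respect the boiling-point filter
        batch.append({
            "solvent": solvent,
            "ligand": pick(LIGANDS, u_ligand),
            "temperature_c": round(T_MIN_C + float(u_temp) * (t_hi - T_MIN_C), 1),
        })
    return batch


for condition in initial_batch():
    print(condition)
```

Scrambling is often preferred in practice; it is switched off here only to keep the example deterministic.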

3. Iterative Optimization Cycle

- Execute Reactions: Run parallel reactions under specified conditions with precise temperature control.

- Analyze Outcomes: Employ inline, online, or offline analytical techniques (e.g., HPLC, UPLC, GC) to quantify reaction outcomes [14].

- Train Machine Learning Model: Using acquired experimental data, train a Gaussian Process (GP) regressor to predict reaction outcomes and their uncertainties for all possible conditions in the predefined space [3].

- Select Next Experiment Batch: Apply a multi-objective acquisition function (e.g., q-NParEgo, TS-HVI, q-NEHVI) to identify the most promising next batch of experiments, balancing exploration of uncertain regions with exploitation of known promising conditions [3].

- Repeat: Conduct additional optimization cycles until convergence, performance stagnation, or exhaustion of the experimental budget.
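A compact way to see the train-then-select cycle is a one-dimensional Gaussian Process over temperature combined with a batch upper-confidence-bound (UCB) rule. This is a deliberately simplified, single-objective stand-in: the multi-objective acquisition functions named above (q-NParEgo, TS-HVI, q-NEHVI) are considerably more involved. The RBF kernel and its hyperparameters, the noise level, `beta`, and the yield values are all assumptions, and taking the top-k UCB points is a naive batch heuristic rather than a true q-batch acquisition.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=15.0, variance=1.0):
    """Squared-exponential kernel between two 1-D arrays of temperatures (°C)."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-2):
    """Posterior mean and standard deviation of a zero-mean GP fitted to centered y."""
    y_mean = y_train.mean()
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train - y_mean))
    mean = y_mean + k_qt @ alpha
    v = np.linalg.solve(chol, k_qt.T)
    var = np.diag(rbf_kernel(x_query, x_query)) - np.sum(v**2, axis=0)
    return mean, np.sqrt(np.clip(var, 0.0, None))


def propose_batch(x_train, y_train, candidates, batch_size=4, beta=2.0):
    """Pick the untried candidates with the highest upper confidence bound."""
    mean, std = gp_posterior(x_train, y_train, candidates)
    ucb = mean + beta * std
    ucb[np.isin(candidates, x_train)] = -np.inf  # do not repeat finished runs
    order = np.argsort(ucb)[::-1]
    return candidates[order[:batch_size]]


# Hypothetical first-round results: temperature (°C) -> yield (fraction).
x_done = np.array([30.0, 60.0, 90.0])
y_done = np.array([0.20, 0.60, 0.40])
grid = np.arange(20.0, 121.0, 5.0)
print(propose_batch(x_done, y_done, grid))
```

Raising `beta` shifts the batch toward unexplored temperatures (exploration); lowering it concentrates runs near the current best prediction (exploitation).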

4. Data Analysis and Validation

- Identify Optimal Conditions: Select reaction conditions that best meet all objectives from the Pareto front of non-dominated solutions.

- Laboratory Validation: Confirm performance of identified optimal conditions in traditional laboratory glassware to verify transferability.

- Scale-Up Studies: Translate optimized conditions to larger scales using flow chemistry or traditional batch reactors [9].
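Extracting the Pareto front from a completed campaign needs nothing more than a dominance check. The sketch assumes every objective is maximized (cost-type objectives would be negated first); the run IDs and values are made up for illustration.

```python
OBJECTIVES = ("yield", "selectivity")  # both maximized in this sketch


def dominates(a, b):
    """True if `a` is at least as good as `b` on every objective and strictly better on one."""
    return all(a[k] >= b[k] for k in OBJECTIVES) and any(a[k] > b[k] for k in OBJECTIVES)


def pareto_front(results):
    """Return the non-dominated results, preserving input order."""
    return [r for r in results if not any(dominates(other, r) for other in results if other is not r)]


runs = [
    {"id": "A", "yield": 0.82, "selectivity": 0.90},
    {"id": "B", "yield": 0.91, "selectivity": 0.75},
    {"id": "C", "yield": 0.78, "selectivity": 0.88},  # dominated by A
    {"id": "D", "yield": 0.60, "selectivity": 0.97},
    {"id": "E", "yield": 0.85, "selectivity": 0.70},  # dominated by B
]
print([r["id"] for r in pareto_front(runs)])
```

The pairwise check is quadratic in the number of runs, which is unproblematic at the scale of a few plates.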

Protocol: High-Throughput Temperature Screening for Photochemical Reactions

This protocol specifically addresses temperature screening for photochemical applications using parallel flow reactor systems [9].

1. System Configuration

- Reactor Selection: Configure a parallel droplet-based microfluidic platform or a continuous flow system with integrated photoirradiation capabilities [14] [9].

- Light Source Integration: Install and calibrate LED arrays or other controlled light sources with appropriate wavelengths for the photocatalytic system.

- Temperature Control Module: Implement precise temperature control for individual reactor channels using Peltier elements or circulating baths, ensuring each channel can maintain independent set points.

2. Experimental Execution

- Reagent Preparation: Prepare stock solutions of substrates, photocatalysts, and other reagents in appropriate solvents.

- Droplet/Flow Path Setup: For microfluidic systems, generate discrete reaction droplets separated by an immiscible carrier fluid. For continuous flow, establish stable flow rates through photoreactor channels [14].

- Parallel Reaction Execution: Simultaneously expose reactions across multiple channels to controlled light irradiation while maintaining individual temperature set points.

- Residence Time Control: Precisely control reaction times through flow rate adjustment (continuous flow) or droplet stationing times (microfluidic systems).
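For continuous-flow channels, residence time control reduces to the relation τ = V/Q between reactor volume V and volumetric flow rate Q. The sketch below computes the volume of a tubular photoreactor and the flow rates needed for several target residence times. The 1.0 mm inner diameter and 2 m length are hypothetical, and the calculation ignores dead volume in fittings and mixers.

```python
import math


def tubing_volume_ml(inner_diameter_mm, length_m):
    """Internal volume of a straight tube; 1 mL = 1000 mm^3."""
    radius_mm = inner_diameter_mm / 2.0
    return math.pi * radius_mm**2 * (length_m * 1000.0) / 1000.0


def flow_rate_ml_min(volume_ml, residence_time_min):
    """Volumetric flow rate that gives the target residence time (tau = V / Q)."""
    return volume_ml / residence_time_min


volume = tubing_volume_ml(inner_diameter_mm=1.0, length_m=2.0)
for tau_min in (2, 5, 10, 20):
    print(f"tau = {tau_min:>2} min -> Q = {flow_rate_ml_min(volume, tau_min):.3f} mL/min")
```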

3. Analysis and Optimization

- Inline Analysis: Utilize integrated analytical systems (e.g., on-line HPLC with automated injection valves) for real-time reaction monitoring [14].

- Data Correlation: Correlate reaction outcomes (conversion, selectivity) with temperature profiles across all parallel channels.

- Temperature Optima Identification: Identify temperature conditions that maximize desired outcomes while minimizing decomposition or side reactions.
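One simple way to turn the correlated data into a temperature optimum is to fit a low-order model of yield against temperature and take its maximum. The quadratic model is an assumption that only holds near a single, smooth optimum, and the yields below are synthetic values generated for illustration, not measurements. The function falls back to the best tested temperature when the fit has no interior maximum.

```python
import numpy as np


def temperature_optimum(temps_c, yields):
    """Fit yield = a*T^2 + b*T + c and return the temperature of maximum predicted yield."""
    temps_c = np.asarray(temps_c, dtype=float)
    yields = np.asarray(yields, dtype=float)
    a, b, _ = np.polyfit(temps_c, yields, deg=2)
    if a < 0:
        vertex = -b / (2.0 * a)
        if temps_c.min() <= vertex <= temps_c.max():
            return float(vertex)
    # No interior maximum: report the best tested temperature instead.
    return float(temps_c[np.argmax(yields)])


# Synthetic yields, one channel per temperature set point.
temps = [30, 40, 50, 60, 70]
yields = [0.6064, 0.7424, 0.7984, 0.7744, 0.6704]
print(f"Estimated optimum: {temperature_optimum(temps, yields):.1f} °C")
```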

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of parallel reactor screening requires carefully selected reagents and materials compatible with miniaturized, automated formats.

Table 3: Essential Research Reagents and Materials for Parallel Reaction Screening

| Reagent/Material Category | Specific Examples | Function in Parallel Screening | Compatibility Notes |
|---|---|---|---|
| Catalysts | Nickel catalysts [3], Palladium catalysts [3], Flavin photocatalysts [9] | Facilitate chemical transformations; non-precious metal alternatives (e.g., Ni) offer cost and sustainability advantages [3] | Compatibility with microfluidics; homogeneous catalysts preferred to avoid clogging [9] |
| Ligands | Diverse phosphine ligands, N-heterocyclic carbenes | Modulate catalyst activity and selectivity; key categorical variable in optimization [3] | Solubility in selected solvent systems crucial for performance |
| Solvents | Dimethylformamide (DMF), Acetonitrile, Tetrahydrofuran (THF), Toluene | Medium for reaction; significantly influences outcome; categorical screening variable [3] | Must be compatible with reactor materials (e.g., fluoropolymer tubing [14]); volatility considerations for open-well systems |
| Additives | Bases (e.g., carbonates, phosphates), Acids, Salts | Modify reaction environment; affect kinetics and selectivity [3] | Screening various bases identified optimal conditions in photoredox fluorodecarboxylation [9] |
| Analytical Standards | Internal standards for HPLC/GC, Calibration solutions | Enable accurate quantification of reaction outcomes [14] | Must be stable and compatible with automated sampling systems |
| Solid Handling Aids | Glass microvials [14], Solid-dispensing robots [3] | Enable accurate dispensing of small quantities of solids for library synthesis | Essential for preparing diverse reaction conditions in 96/384-well formats |

Workflow Integration Diagram

The following diagram illustrates the integrated workflow combining parallel reactor systems with machine intelligence for automated optimization:

Fundamentals of Heat Transfer and Temperature Uniformity in Miniaturized Reactors

The adoption of miniaturized reactors, including micro- and milli-reactors, has transformed high-throughput temperature screening in pharmaceutical and fine chemical research. These systems enable researchers to perform rapid, parallelized experimentation under tightly controlled conditions, dramatically accelerating reaction optimization and catalyst development [16]. The core principle enabling this advancement is the superior heat transfer inherent at reduced scales, which provides better temperature uniformity and control than traditional batch reactors. This application note details the fundamental heat transfer principles, experimental protocols, and practical implementation strategies essential for using miniaturized reactor technology effectively in high-throughput parallel reactor research.

Within the context of a broader thesis on high-throughput screening, mastering these fundamentals is critical for generating reproducible, scalable, and kinetically meaningful data. The ability to maintain precise and uniform temperature across multiple parallel reactors directly impacts critical process parameters such as reaction rate, selectivity, product yield, and ultimately, the reliability of the data used for scale-up decisions [17] [14]. This document provides a structured framework for understanding and applying these principles in practice.

Technical Background

Heat Transfer Fundamentals in Miniaturized Systems

The exceptional heat transfer performance in miniaturized reactors stems from their high surface-area-to-volume ratio. As reactor dimensions decrease, this ratio increases significantly, bringing a greater proportion of the reaction volume into close proximity with the heat transfer surface. This geometry fundamentally enhances heat dissipation and acquisition, minimizing internal temperature gradients and enabling rapid thermal equilibration [16]. This characteristic is particularly vital for exothermic reactions, where it prevents the formation of hot spots that can lead to side reactions, safety hazards, and inaccurate kinetic data.
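The scaling argument can be made concrete for a cylindrical channel or vial of radius r: the wall area per unit volume (end caps neglected) is 2πrL/(πr²L) = 2/r, and the time for heat to conduct across the radius scales roughly as r²/α, where α is the thermal diffusivity of the liquid. The sketch compares a 0.5 mm channel with a 20 mm vial. The diameters are illustrative, α ≈ 1.4e-7 m²/s is an approximate value for water near room temperature, and the conduction time is only an order-of-magnitude estimate for an unstirred liquid (stirring or flow shortens it considerably).

```python
WATER_DIFFUSIVITY_M2_S = 1.4e-7  # approximate thermal diffusivity of water near 25 °C


def surface_to_volume_per_mm(inner_diameter_mm):
    """Wall area per unit volume of a long cylinder, 2/r, in mm^-1."""
    return 2.0 / (inner_diameter_mm / 2.0)


def conduction_time_s(inner_diameter_mm, diffusivity_m2_s=WATER_DIFFUSIVITY_M2_S):
    """Order-of-magnitude conduction time r^2 / alpha for an unstirred liquid."""
    radius_m = inner_diameter_mm / 2.0 / 1000.0
    return radius_m**2 / diffusivity_m2_s


for label, d_mm in (("microchannel", 0.5), ("vial", 20.0)):
    print(f"{label:>12}: S/V = {surface_to_volume_per_mm(d_mm):5.2f} mm^-1, "
          f"t ~ {conduction_time_s(d_mm):7.1f} s")
```

A 40-fold reduction in diameter raises S/V 40-fold and cuts the conduction time by a factor of 1600, because the latter scales with r².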

Effective heat transfer management is a prerequisite for achieving the key technical indicators in reactor design: power-flow ratio, limit quality (resistance to flow instability), and critical heat flux (CHF) [17]. In single-phase flow, which dominates many continuous-flow applications, heat transfer is improved by promoting turbulence and continuously disturbing the thermal boundary layer. In two-phase boiling flow, the objectives shift to suppressing vapor aggregation, which delays the boiling crisis caused by departure from nucleate boiling (DNB), and to promoting rewetting of the heating surface, which raises the CHF [17].

Strategies for Temperature Uniformity in Parallel Systems

In parallel reactor configurations, ensuring temperature uniformity across individual reactor units is as important as maintaining it within a single unit. Inconsistencies can invalidate comparative screening results. Two primary engineering approaches address this:

- Passive Heat Transfer Enhancement: This involves design modifications to the reactor structure itself. Technologies include micro-channel designs and longitudinal vortex generators, which increase the specific surface area, enhance turbulent mixing, and disturb the near-wall thermal boundary layer [17]. These technologies are mature and widely applicable.

- Active Heat Transfer Enhancement: This involves external intervention, such as the application of magnetic or electric fields to influence coolant properties and flow fields. While effective for both single- and two-phase heat transfer, these technologies are predominantly in the research phase and lack broad engineering application [17].

For parallel systems, a common challenge is maintaining precise gas feed distribution when catalyst bed pressure drops vary between reactors. Individual Reactor Pressure Control (RPC) technology solves this by actively controlling the pressure at each reactor's outlet to ensure equal inlet pressures, thereby guaranteeing identical feed distribution and preserving reaction condition integrity across the entire bank [18].
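The idea behind individual reactor pressure control can be expressed as a setpoint calculation: if every reactor should see the same inlet pressure, each outlet regulator is set to that inlet pressure minus its own bed pressure drop. The sketch assumes the bed pressure drops are known from measurement and roughly independent of outlet pressure, which is only an approximation for gas flow; the numbers are hypothetical.

```python
def rpc_outlet_setpoints(bed_dp_bar, min_outlet_bar=1.0):
    """Return (common inlet pressure, per-reactor outlet setpoints), all in bar.

    The common inlet pressure is chosen so that the reactor with the largest
    bed pressure drop still has at least `min_outlet_bar` at its outlet.
    """
    p_inlet = max(bed_dp_bar) + min_outlet_bar
    return p_inlet, [p_inlet - dp for dp in bed_dp_bar]


# Hypothetical pressure drops across four catalyst beds (bar).
drops = [0.20, 0.50, 0.35, 0.80]
p_in, outlets = rpc_outlet_setpoints(drops)
for i, (dp, p_out) in enumerate(zip(drops, outlets), start=1):
    print(f"reactor {i}: dP = {dp:.2f} bar, outlet setpoint = {p_out:.2f} bar, inlet = {p_out + dp:.2f} bar")
```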

The following tables consolidate key performance metrics and design parameters for miniaturized reactor systems, providing a reference for experimental planning and system selection.

Table 1: Performance Characteristics of Miniaturized Reactor Systems

| Parameter | Droplet-Based Platform [14] | Parallel Reactor System (e.g., PolyBLOCK) [19] | High-Pressure Screening System (e.g., PolyCAT) [20] |
|---|---|---|---|
| Reactor Channels | 10 independent parallel channels | 4 or 8 independent zones | 8 independent reactors |
| Typical Volume | Microliter to nanoliter scale (droplets) | Up to 500 mL (PB4) or 120 mL (PB8) | 16 mL (standard), up to 50 mL |
| Temperature Range | 0 °C to 200 °C (solvent dependent) | -40 °C to +200 °C | Ambient to 250 °C (with options down to -40 °C) |
| Pressure Range | Up to 20 atm | Not specified | Up to 200 bar |
| Key Feature | Online HPLC analysis; Bayesian optimization | Small footprint; flexible vessel options | Individual pressure control up to 200 bar |

Table 2: Heat Transfer Enhancement Technologies & Performance [17]

| Technology | Classification | Mechanism of Action | Primary Application | Maturity |
|---|---|---|---|---|
| Micro-channel / Structure Innovation | Passive | Increases surface-area-to-volume ratio; disturbs thermal boundary layer. | Single-phase & two-phase conditions; reactor core & heat exchangers. | High |
| Surface Micro-/Nano-structuring | Passive | Affects bubble dynamics and two-phase interface evolution. | Boiling / two-phase heat transfer. | Medium |
| Longitudinal Vortex Generators | Passive | Enhances turbulent mixing of the flow field. | Single-phase & two-phase conditions. | High |
| Magnetic/Electric Fields | Active | Alters coolant physical properties and flow field via external fields. | Single-phase & two-phase conditions (laboratory scale). | Low (R&D) |

Experimental Protocols

Protocol: Validating Temperature Uniformity Across a Parallel Reactor Block

This protocol is designed to verify and ensure temperature consistency across all zones of a parallel reactor system before commencing critical high-throughput screening campaigns.

The Scientist's Toolkit: Essential Materials for Temperature Uniformity Validation

| Item | Function | Example/Notes |
|---|---|---|
| Parallel Reactor System | Provides the multi-zone testing platform. | e.g., PolyBLOCK 4 or 8 [19]. |
| Calibrated Thermocouples | Accurate temperature measurement in each reactor vessel. | Ensure calibration is current. |
| Reference Fluid | A thermally stable fluid with known properties. | e.g., Silicone oil or a standard solvent. |
| Data Logging Software | Records temperature from all zones simultaneously. | e.g., labCONSOL or equivalent [20]. |

Methodology:

- System Setup: Install identical reaction vessels in all zones of the parallel reactor system (e.g., PolyBLOCK). Fill each vessel with the same volume of a reference fluid with known heat capacity.

- Sensor Calibration and Placement: Place pre-calibrated thermocouples in each vessel, ensuring consistent depth and position relative to the vessel geometry and heating mantle.

- Temperature Ramp: Program the reactor controller to execute a temperature ramp from ambient to a standard target temperature (e.g., 100 °C) at a defined rate (e.g., 2 °C/min) for all zones simultaneously.

- Data Collection: Use the data logging software to record the temperature in each zone at high frequency (e.g., every 10 seconds) throughout the ramp and during a subsequent 1-hour hold at the target temperature.

- Data Analysis: Calculate the mean temperature and standard deviation across all zones at the steady-state hold. A well-performing system should exhibit an inter-zone temperature standard deviation of < 1.0 °C. Plot the temperature trajectories for all zones to identify any laggards or outliers.
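The data-analysis step can be sketched with the standard-library `statistics` module: average each zone over the steady-state hold, compute the standard deviation of those zone means, compare it with the 1.0 °C criterion, and flag zones whose mean sits far from the block average. The 1.0 °C outlier threshold and the readings are assumptions for illustration. With these numbers the block passes the standard-deviation criterion while zone Z4 is still flagged, which is why individual zones should be inspected alongside the summary statistic.

```python
from statistics import mean, stdev


def uniformity_report(hold_readings, max_sd_c=1.0, outlier_c=1.0):
    """Summarize steady-state hold data given as {zone: [temperatures in °C]}."""
    zone_means = {zone: mean(values) for zone, values in hold_readings.items()}
    means = list(zone_means.values())
    block_mean = mean(means)
    inter_zone_sd = stdev(means)
    outliers = [zone for zone, m in zone_means.items() if abs(m - block_mean) > outlier_c]
    return {
        "block_mean_c": block_mean,
        "inter_zone_sd_c": inter_zone_sd,
        "passes_sd_criterion": inter_zone_sd < max_sd_c,
        "outlier_zones": outliers,
    }


# Hypothetical readings logged during the hold at a 100 °C set point.
readings = {
    "Z1": [100.1, 100.0, 100.2],
    "Z2": [99.9, 100.0, 99.8],
    "Z3": [100.0, 100.1, 99.9],
    "Z4": [98.5, 98.4, 98.3],
}
print(uniformity_report(readings))
```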

Protocol: High-Throughput Reaction Kinetics Study in a Droplet Platform

This protocol leverages an automated droplet-based platform to efficiently collect kinetic data for a model photochemical or thermal reaction.

The Scientist's Toolkit: Essential Materials for Droplet-Based Kinetics

| Item | Function | Example/Notes |
|---|---|---|
| Droplet Reactor Platform | Executes reactions in discrete, controlled droplets. | Platform with parallel channels and online analytics [14]. |
| Liquid Handler | Prepares and injects reagent solutions with high precision. | Integrated with the droplet platform. |
| On-line HPLC with UV/Vis | Provides real-time reaction conversion data. | Equipped with a nanoliter-scale injection valve [14]. |
| Bayesian Optimization Software | Intelligently selects subsequent experimental conditions. | Integrated into the platform's control software [14]. |

Methodology:

- Reagent Preparation: Prepare stock solutions of reactants in appropriate solvents. Utilize the liquid handler to formulate reaction mixtures according to an initial experimental design, which may vary concentration, catalyst loading, or other factors.

- Droplet Generation and Scheduling: The platform forms discrete reaction droplets and, using selector valves, directs each droplet to an assigned reactor channel. Each channel is pre-set to a specific temperature (for thermal reactions) or equipped with a photoirradiation source (for photochemical reactions). A scheduling algorithm orchestrates the movement of droplets to ensure integrity and efficiency [14].

- In-situ Reaction Monitoring: At designated time intervals, the system automatically samples the reaction droplet from a channel via a nanoliter-scale injection valve and transfers it to the on-line HPLC for analysis. This minimizes the delay between reaction quenching and analysis.

- Iterative Experimental Design: The Bayesian optimization algorithm analyzes the collected conversion/yield data and proposes a new set of reaction conditions (e.g., temperature, time) to maximize the information gain towards the objective (e.g., determining rate constants). This closed-loop process continues automatically until the kinetic model is sufficiently refined [14].
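Once conversion-time data are available at several temperatures, a conventional way to extract kinetic parameters is a pair of linear fits: ln(1 - X) = -kt at each temperature, assuming first-order kinetics, and then ln k = ln A - Ea/(RT) across temperatures. This standard linearized analysis is shown for illustration and is not the platform's Bayesian routine. The data are synthetic, generated from assumed values Ea = 60 kJ/mol and A = 1e7 s⁻¹, so the fit should recover them.

```python
import math

R_J_PER_MOL_K = 8.314


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def first_order_rate_constant(times_s, conversions):
    """k from the slope of ln(1 - X) versus t (first-order kinetics assumed)."""
    slope, _ = linear_fit(times_s, [math.log(1.0 - x) for x in conversions])
    return -slope


def arrhenius_fit(temps_c, rate_constants):
    """Return (Ea in J/mol, A in 1/s) from ln k = ln A - Ea / (R T)."""
    inv_t = [1.0 / (t + 273.15) for t in temps_c]
    slope, intercept = linear_fit(inv_t, [math.log(k) for k in rate_constants])
    return -slope * R_J_PER_MOL_K, math.exp(intercept)


# Synthetic droplet time courses generated from assumed Ea and A.
EA_TRUE, A_TRUE = 60_000.0, 1.0e7
times = [0, 60, 120, 180, 240, 300]
temps = [40, 50, 60, 70]
ks = []
for t_c in temps:
    k_true = A_TRUE * math.exp(-EA_TRUE / (R_J_PER_MOL_K * (t_c + 273.15)))
    conversions = [1.0 - math.exp(-k_true * t) for t in times]
    ks.append(first_order_rate_constant(times, conversions))

ea, a = arrhenius_fit(temps, ks)
print(f"Ea = {ea / 1000:.1f} kJ/mol, A = {a:.2e} 1/s")
```

With real data, residuals from both fits are worth inspecting, since curvature in either plot signals that the first-order or single-Arrhenius assumption does not hold.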

Implementation Workflows

The following diagrams illustrate the logical and experimental workflows central to operating and leveraging miniaturized parallel reactor systems.

Diagram 1: High-Throughput Screening Workflow. This flowchart outlines the end-to-end process for conducting a reliable screening campaign, highlighting the critical step of temperature validation before kinetic studies.

Diagram 2: System Architecture for an Automated Parallel Screening Platform. This diagram shows the integration of key components, including the microfluidic distributor for precise flow splitting and the individual reactor pressure control (RPC) for maintaining distribution integrity, all under the supervision of an optimization algorithm.

The successful implementation of high-throughput temperature screening in miniaturized reactors hinges on a deep understanding of heat transfer fundamentals and a meticulous approach to experimental execution. The high surface-area-to-volume ratio of these systems provides an inherent advantage for achieving superior temperature control and uniformity, which is a critical prerequisite for generating high-quality, reproducible data. By following the protocols for temperature-uniformity validation and kinetic studies, and by using the advanced capabilities of modern parallel reactor systems, such as individual reactor pressure control and integrated Bayesian optimization, researchers can significantly accelerate drug development and catalyst screening. The data and workflows provided herein serve as a foundational guide for the effective application of these technologies in a research environment.

Implementing Temperature Control: Systems, Automation, and Workflow Integration

Temperature control is a critical parameter in high-throughput screening and parallel reactor research, directly influencing reaction kinetics, yield, and selectivity in chemical and pharmaceutical development. This application note provides a detailed comparative analysis of three predominant temperature control methodologies—Peltier, liquid circulation, and air cooling—within the context of parallel reactor systems. Each method offers distinct operational principles, performance characteristics, and suitability for specific experimental requirements. The analysis synthesizes current technical data and establishes standardized protocols to guide researchers and drug development professionals in selecting and implementing optimal thermal management strategies for their high-throughput workflows. By framing this comparison around quantitative performance metrics and practical implementation guidelines, this document aims to support robust experimental design and enhance reproducibility in accelerated research environments.

Fundamental Principles and System Architectures

Peltier (Thermoelectric) Systems

Peltier systems, or Thermoelectric Coolers (TECs), are solid-state heat pumps that utilize the Peltier effect to transfer heat from one side of the device to the other when an electrical current is applied [21] [22]. Inside a Peltier element, the Peltier effect (Qp) creates a temperature difference between two sides when DC current flows. This core effect is superimposed with heat backflow (QRth) from the hot to the cold side and Joule heating losses (QRv) from the device's electrical resistance [21]. The direction of heat pumping reverses with the direction of the electrical current, enabling both cooling and heating without mechanical reconfiguration [22]. A typical Peltier module comprises numerous n-type and p-type semiconductor pillars (commonly Bismuth Telluride) arranged electrically in series and thermally in parallel, sandwiched between ceramic substrates [22] [23]. This solid-state architecture provides a compact, reversible, and precisely controllable heat pump mechanism ideal for integrating into multi-reactor instrumentation, such as the Crystal16 parallel crystallizer, which uses Peltier elements for temperature control across 16 reactors [24].
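The three contributions named above combine into the standard steady-state heat balance of a thermoelectric module. With Seebeck coefficient α, electrical resistance R, thermal conductance K, current I, and cold- and hot-side temperatures T_c and T_h (in kelvin), the heat pumped from the cold side is Q_c = αIT_c - ½I²R - K(T_h - T_c), the electrical input is P = αI(T_h - T_c) + I²R, and COP = Q_c/P. The sketch evaluates these for hypothetical module parameters (α = 0.05 V/K, R = 2 Ω, K = 0.5 W/K) and treats them as temperature-independent, which real modules are not.

```python
ALPHA_V_PER_K = 0.05   # module Seebeck coefficient (hypothetical)
R_OHM = 2.0            # module electrical resistance (hypothetical)
K_W_PER_K = 0.5        # module thermal conductance (hypothetical)


def cooling_power_w(current_a, t_cold_k, t_hot_k):
    """Q_c = Peltier term - half the Joule heat - conductive backflow."""
    peltier = ALPHA_V_PER_K * current_a * t_cold_k
    joule_to_cold = 0.5 * current_a**2 * R_OHM
    backflow = K_W_PER_K * (t_hot_k - t_cold_k)
    return peltier - joule_to_cold - backflow


def input_power_w(current_a, t_cold_k, t_hot_k):
    """Electrical input: Seebeck voltage term plus Joule heating."""
    return ALPHA_V_PER_K * current_a * (t_hot_k - t_cold_k) + current_a**2 * R_OHM


def cop(current_a, t_cold_k, t_hot_k):
    """Coefficient of performance, Q_c / P."""
    return cooling_power_w(current_a, t_cold_k, t_hot_k) / input_power_w(current_a, t_cold_k, t_hot_k)


T_COLD, T_HOT = 288.15, 308.15  # 15 °C block, 35 °C heat sink
i_max = ALPHA_V_PER_K * T_COLD / R_OHM  # current that maximizes Q_c in this model
for current in (3.0, 6.0, i_max, 10.0):
    print(f"I = {current:5.2f} A: Q_c = {cooling_power_w(current, T_COLD, T_HOT):6.2f} W, "
          f"COP = {cop(current, T_COLD, T_HOT):.2f}")
```

In this model, raising the current from 3 A to 6 A increases Q_c but cuts the COP by more than half, and pushing beyond I_max reduces Q_c, because Joule heating grows with I² while the Peltier term grows only linearly.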

Liquid Circulation Systems

Liquid circulation systems manage temperature by transferring heat via a pumped fluid, typically a water-glycol mixture, through a network of channels or jackets in contact with reaction vessels [25]. These systems operate on forced convection principles, where coolant absorbs heat from the reactor and rejects it to an external chiller or heat exchanger. The thermal performance is governed by coolant properties (e.g., specific heat capacity, thermal conductivity, flow rate), channel geometry, and heat exchanger efficiency [25]. Advanced systems, like the serpentine-channel cold plates studied for battery thermal management, demonstrate the critical impact of parameters such as channel depth, width, and flow rate on achieving temperature uniformity and managing significant heat loads [25]. This method excels in applications requiring high heat flux removal and precise temperature stability across multiple reaction sites.
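The governing energy balance for the coolant loop is Q = ṁ c_p ΔT, where Q is the heat load removed, ṁ the coolant mass flow, c_p its specific heat, and ΔT the coolant temperature rise between inlet and outlet. A small allowed ΔT keeps reactor positions along the loop at nearly the same temperature, at the cost of a higher flow rate. The property values below are approximate figures for a 50/50 water-ethylene glycol mixture near room temperature, and the heat load and ΔT values are hypothetical.

```python
CP_J_PER_KG_K = 3400.0   # approx. specific heat, 50/50 water-ethylene glycol
DENSITY_KG_PER_L = 1.07  # approx. density, 50/50 water-ethylene glycol


def required_flow_l_min(heat_load_w, coolant_rise_k):
    """Volumetric flow (L/min) that removes `heat_load_w` with the given coolant temperature rise."""
    mass_flow_kg_s = heat_load_w / (CP_J_PER_KG_K * coolant_rise_k)
    return mass_flow_kg_s / DENSITY_KG_PER_L * 60.0


for rise_k in (0.5, 1.0, 2.0):
    print(f"500 W with a {rise_k} K rise needs {required_flow_l_min(500.0, rise_k):.1f} L/min")
```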

Air Cooling Systems

Air cooling represents the most straightforward thermal management approach, relying on forced convection of ambient or conditioned air across heating elements or reactor surfaces to dissipate heat [26]. A typical implementation involves fans that blow air across target surfaces, with fan speed often modulated by temperature feedback to maintain a setpoint [26]. Its operation is fundamentally simple, but its effectiveness is highly dependent on the temperature differential between the target surface and the ambient air, as well as the airflow rate and pathway [26]. While mechanically simple and cost-effective, its cooling capacity and stability can be limited by fluctuating ambient conditions and relatively low heat transfer coefficients compared to liquid-based or solid-state systems.

Comparative Performance Analysis

The selection of an appropriate temperature control method requires a thorough understanding of performance boundaries, efficiency, and operational constraints. The following table synthesizes key quantitative and qualitative characteristics of the three methods, drawing from current technical data and application notes.

Table 1: Comparative Performance Analysis of Temperature Control Methods

| Parameter | Peltier (TEC) | Liquid Circulation | Air Cooling |
|---|---|---|---|
| Typical Temperature Range | -20°C to 150°C [24] | -25°C to >150°C (chiller-dependent) [24] | Near-ambient to >150°C (limited cooling) [26] |
| Max. Temperature Difference (ΔT) | ~70°C for single-stage [22] | Limited only by chiller capacity | Highly dependent on ambient temperature [26] |
| Cooling/Heating Rate | High (0 - 20 °C/min) [24] | Moderate to High (depends on flow rate and pump) | Low to Moderate [26] |
| Temperature Stability | High (±0.1°C or better) [23] | High (±0.5°C or better) [24] | Moderate (susceptible to ambient fluctuations) [26] |
| Coefficient of Performance (COP) | Low (typically <1) [21] [22] | Moderate to High | High (for cooling near ambient) [26] |
| Heat Load Capacity | Medium (up to hundreds of Watts) [23] | High (kW range possible) | Low [26] |
| Spatial Uniformity | Good (per reactor block) | Excellent (with optimized channel design) [25] | Poor to Fair |
| Key Advantage(s) | Bidirectional control, compact, no moving parts in module [22] [23] | High heat flux handling, excellent for large/exothermic loads [25] | Simplicity, low cost, maintenance-free [26] |
| Primary Limitation(s) | Low efficiency at high ΔT, self-heating (Joule effect) [21] [22] | System complexity, potential for leaks, higher cost | Low capacity, noisy, external temperature sensitivity [26] |

The performance data reveals a clear trade-off between control precision, heat load capacity, and system complexity. Peltier devices offer superior bidirectional control and precision in a compact form factor, making them ideal for medium-throughput platforms where rapid cycling and sub-ambient cooling are required, but they struggle with efficiency under high thermal loads [21] [23]. Liquid circulation systems provide the highest capacity and stability for managing large heat fluxes, as evidenced by their use in high-capacity battery modules and reactors requiring tight thermal uniformity, albeit with increased infrastructure and cost [25]. Air cooling remains a viable, cost-effective solution for applications with minimal heat loads and where operational temperatures are close to ambient, though its susceptibility to environmental changes makes it less suitable for critical, sensitive reactions [26].

Table 2: Suitability for High-Throughput Reactor Applications

| Application Scenario | Recommended Method | Rationale |
|---|---|---|
| Rapid Temperature Cycling (e.g., PCR, crystallization kinetics) | Peltier (TEC) | Inherent bidirectional control enables very fast heating and cooling rates [24] [23]. |
| High Exothermic/Endothermic Reactions | Liquid Circulation | High heat flux capacity prevents reactor runaway and maintains setpoint [25]. |
| Solubility Studies & Metastable Zone Width | Peltier (TEC) | High stability and precision allow for accurate clear/cloud point detection [24]. |
| Budget-Constrained, Near-Ambient Screening | Air Cooling | Lowest initial cost and system complexity for suitable applications [26]. |
| Operations Requiring Sub-Ambient Cooling | Peltier or Liquid Circulation | Both can achieve sub-ambient temperatures; choice depends on required ΔT and heat load [24]. |
| Large-Footprint or Distributed Reactors | Liquid Circulation | Centralized chiller can service multiple remote reactor blocks efficiently. |

Experimental Protocols for High-Throughput Screening

Protocol: Temperature Calibration and Uniformity Mapping

This protocol is essential for validating the performance of any temperature control system prior to critical experiments, ensuring data integrity and reproducibility.

1. Purpose and Scope: To verify the accuracy and spatial uniformity of temperature setpoints across all reactor positions in a parallel system. This method applies to Peltier, liquid circulation, and air-cooled systems.

2. Research Reagent Solutions & Essential Materials:

- Temperature Calibration Standard: Traceable precision thermometer (e.g., PT100 RTD) with a known uncertainty (e.g., ±0.05°C).

- Mapping Sensors: Multiple calibrated thermocouples (Type T or K) or a multi-channel data acquisition system.

- Thermal Simulant Fluid: A heat transfer fluid with similar viscosity and thermal capacity to typical reaction solvents (e.g., silicone oil, ethylene glycol/water mix).

- Reactor Vessels: Standard, clean, and dry reactor vials or vessels used in the platform.

3. Step-by-Step Workflow:

- Setup: Place the thermal simulant fluid in all reactor vessels to the standard working volume. Insert the mapping sensors into the fluid of selected vessels, ensuring consistent depth and placement across the reactor block.

- Data Acquisition: Set the control system to a series of target temperatures (e.g., 10°C, 40°C, 70°C). At each setpoint, allow the system to stabilize for a duration ≥ 5 times the system's reported time constant.

- Measurement: Record temperatures from all mapping sensors and the calibration standard simultaneously over a stable period (e.g., 10 minutes). Calculate the average temperature and standard deviation for each reactor position.

- Analysis: Generate a uniformity map. The system passes if the maximum deviation from the setpoint and the inter-reactor temperature spread are within the required tolerance for the intended application (e.g., ±0.5°C).
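Two quantities in this protocol lend themselves to a short calculation: the time constant that sets the stabilization wait, and the pass/fail check. The sketch estimates the time constant τ from a logged step response as the time to cover 1 - e⁻¹ ≈ 63.2 % of the change. This assumes an approximately first-order response and a log long enough that its last value is the settled temperature. After five time constants a first-order system has completed about 99.3 % of the change. The step-response data are synthetic (τ = 120 s), the ±0.5 °C tolerance is the example value from the protocol, and applying the same 0.5 °C limit to the spread is an assumption.

```python
import math


def estimate_time_constant_s(times_s, temps_c):
    """Time to reach 63.2 % of a step change, by linear interpolation."""
    start, final = temps_c[0], temps_c[-1]
    target = start + (1.0 - math.exp(-1.0)) * (final - start)
    for i in range(1, len(times_s)):
        if (temps_c[i] - target) * (final - start) >= 0:  # crossed, for rising or falling steps
            t_a, t_b = times_s[i - 1], times_s[i]
            y_a, y_b = temps_c[i - 1], temps_c[i]
            return t_a + (target - y_a) * (t_b - t_a) / (y_b - y_a)
    raise ValueError("response never reached 63.2 % of the step")


def mapping_passes(position_means_c, setpoint_c, tolerance_c=0.5, spread_limit_c=0.5):
    """Pass if every position is within tolerance of the set point and the spread is small."""
    max_dev = max(abs(m - setpoint_c) for m in position_means_c)
    spread = max(position_means_c) - min(position_means_c)
    return max_dev <= tolerance_c and spread <= spread_limit_c


# Synthetic step from 25 °C to 40 °C with tau = 120 s, logged every 10 s.
times = list(range(0, 1201, 10))
temps = [25.0 + 15.0 * (1.0 - math.exp(-t / 120.0)) for t in times]
tau = estimate_time_constant_s(times, temps)
print(f"tau ~ {tau:.0f} s; wait at least {5 * tau / 60:.1f} min per set point")
print(mapping_passes([40.1, 39.9, 40.2, 39.8], setpoint_c=40.0))
```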

Protocol: Determining System-Specific Ramp Rates

Determining the maximum non-destructive ramp rate is critical for optimizing cycle times without triggering thermal runaway.

1. Purpose: To empirically determine the maximum heating and cooling ramp rate for a specific reaction load without causing control instability or thermal runaway.

2. Research Reagent Solutions & Essential Materials:

- Reaction Simulant: A solution with thermal properties (heat capacity, density) mimicking the actual reaction mixture.

- Data Logging Software: The system's native software or an external DAQ to record temperature and control output.

3. Step-by-Step Workflow:

- Initialization: Load all reactors with the reaction simulant. Set the controller to a moderate starting ramp rate (e.g., 5°C/min).

- Ramp Execution: Initiate a temperature cycle (e.g., from 25°C to 80°C and back to 25°C). Monitor the controller output (e.g., current for TEC, valve position for liquid).

- Observation: If the controller output saturates at 100% for a prolonged period and the actual temperature ramp deviates significantly from the setpoint, the rate is too aggressive. Thermal runaway in TECs is indicated when increasing current leads to increased temperature on the cold side due to dominant Joule heating [23].

- Iteration: Repeat with adjusted ramp rates. The maximum safe rate is the fastest one where the controller does not saturate and the actual temperature profile closely follows the setpoint.
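The observation and iteration steps can be automated over logged runs. The sketch treats a ramp rate as acceptable only if the controller output never stays saturated longer than a set time and the tracking error stays small, then returns the fastest acceptable rate. The 99.5 % saturation threshold, the 60 s and 1.0 °C limits, the 1 s logging interval, and the example logs are assumptions. Output is expressed as a signed percentage so that full cooling on a bidirectional Peltier controller also counts as saturation.

```python
def ramp_is_acceptable(samples, dt_s=1.0, max_saturated_s=60.0, max_error_c=1.0, sat_pct=99.5):
    """samples: list of (setpoint_c, actual_c, output_pct) logged every dt_s seconds."""
    longest_run = run = 0
    for _, _, output_pct in samples:
        run = run + 1 if abs(output_pct) >= sat_pct else 0
        longest_run = max(longest_run, run)
    worst_error = max(abs(sp - actual) for sp, actual, _ in samples)
    return longest_run * dt_s <= max_saturated_s and worst_error <= max_error_c


def fastest_acceptable_rate(logs_by_rate):
    """logs_by_rate: {ramp_rate_c_per_min: samples}. Returns None if no rate qualifies."""
    ok = [rate for rate, samples in logs_by_rate.items() if ramp_is_acceptable(samples)]
    return max(ok) if ok else None


# Hypothetical logs: 5 °C/min tracks well; 10 °C/min saturates for 2 min and lags by 3 °C.
logs = {
    5.0: [(40.0, 39.8, 62.0)] * 300,
    10.0: [(60.0, 57.0, 100.0)] * 120 + [(80.0, 79.5, 70.0)] * 180,
}
print(fastest_acceptable_rate(logs))
```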

Protocol: High-Throughput Solubility and Crystallization Screening

This protocol leverages the strengths of Peltier-based parallel reactors for efficient material science research.

1. Purpose: To automatically generate solubility curves and metastable zone width (MSZW) data for multiple solvent-solute systems in parallel.

2. Research Reagent Solutions & Essential Materials:

- Analyte: High-purity compound of interest.

- Solvent Library: A selection of anhydrous solvents and solvent mixtures.

- Parallel Reactor System: A system with integrated turbidity sensing and temperature control (e.g., Crystal16) [24].

3. Step-by-Step Workflow:

- Preparation: Dispense standardized volumes of different solvents and a known mass of the analyte into each reactor vessel.

- Dissolution: Heat the blocks with stirring to a temperature ensuring complete dissolution of the solute in all reactors.

- Turbidity Tuning: Tune the integrated transmissivity probes against the clear solutions [24].

- Temperature Profile Execution: Program a controlled cooling ramp (e.g., 0.1-0.5°C/min). The software will automatically record the "cloud point" (temperature of first detection of particles) and the "clear point" upon subsequent heating.

- Data Analysis: The software typically generates solubility curves and calculates the MSZW from the clear and cloud point data, allowing for rapid comparison across solvents [24].
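The analysis behind the last step is straightforward once clear and cloud points are available: the clear point on heating approximates the saturation temperature for that concentration (one point on the solubility curve), and the metastable zone width is the clear-point temperature minus the cloud-point temperature. The sketch averages repeated heating/cooling cycles per solvent and concentration. The data layout and values are hypothetical and do not reflect any particular software's export format.

```python
from collections import defaultdict
from statistics import mean


def summarize_cycles(records):
    """records: (solvent, conc_mg_ml, clear_point_c, cloud_point_c), one tuple per cycle."""
    grouped = defaultdict(list)
    for solvent, conc, clear_c, cloud_c in records:
        grouped[(solvent, conc)].append((clear_c, cloud_c))
    summary = {}
    for key, cycles in sorted(grouped.items()):
        clear = mean(c[0] for c in cycles)
        cloud = mean(c[1] for c in cycles)
        summary[key] = {"saturation_c": clear, "mszw_c": clear - cloud}
    return summary


records = [
    ("ethanol", 50, 42.1, 30.5), ("ethanol", 50, 41.9, 29.9),
    ("ethanol", 100, 55.2, 46.0), ("ethanol", 100, 55.0, 45.6),
    ("toluene", 50, 61.4, 52.3), ("toluene", 50, 61.6, 52.7),
]
for (solvent, conc), row in summarize_cycles(records).items():
    print(f"{solvent:8s} {conc:4d} mg/mL  T_sat ~ {row['saturation_c']:.1f} °C  MSZW ~ {row['mszw_c']:.1f} °C")
```

Plotting `saturation_c` against concentration for each solvent gives the solubility curve; because MSZW depends on the cooling rate, it should be compared only across runs using the same ramp.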

System Integration and Control Logic

Effective temperature control requires more than just a heating/cooling element; it demands a well-integrated system with logical feedback. The following diagram illustrates the core control architecture common to all three methods, highlighting the closed-loop logic that ensures stability.

The control logic begins with a user-defined temperature setpoint. A sensor measures the actual reactor temperature, and this value is compared to the setpoint within a Proportional-Integral-Derivative (PID) controller. The controller computes a corrective signal sent to the method-specific actuator. For Peltier systems, this actuator adjusts the magnitude and direction of DC current [23]; for liquid systems, it modulates pump speed or a control valve [25]; and for air systems, it adjusts fan speed and/or auxiliary heater power [26]. The process heat is either added or removed, and the resulting temperature is measured again, closing the loop. Critically, all methods require a heat sink (ambient air, a chiller, or a liquid-cooled radiator) to dissipate the waste heat generated during operation.
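The loop described above can be sketched as a discrete PID controller acting on a simple first-order thermal model, in which the reactor temperature relaxes toward ambient plus a gain times the actuator output. The plant model, its 60 s time constant and gain, the PID gains, and the ±100 % bidirectional output range (as for a Peltier driver) are all assumptions chosen for illustration. Conditional integration is used as anti-windup, which is one common choice among several.

```python
def simulate_pid(setpoint_c, kp, ki, kd, ambient_c=25.0, plant_tau_s=60.0,
                 plant_gain_c_per_pct=0.5, dt_s=1.0, duration_s=900.0,
                 out_min_pct=-100.0, out_max_pct=100.0):
    """Return the temperature trace of a PID loop driving a first-order thermal model."""
    temp_c = ambient_c
    integral = 0.0
    prev_error = setpoint_c - temp_c
    trace = []
    for _ in range(int(duration_s / dt_s)):
        # 1. Compare the measurement with the set point.
        error = setpoint_c - temp_c
        derivative = (error - prev_error) / dt_s
        # 2. PID law, clamped to the actuator range (negative output = cooling).
        raw = kp * error + ki * integral + kd * derivative
        output = min(max(raw, out_min_pct), out_max_pct)
        if output == raw:  # conditional integration: freeze the integral while saturated
            integral += error * dt_s
        prev_error = error
        # 3. Plant: temperature relaxes toward ambient + gain * output.
        temp_c += dt_s / plant_tau_s * (ambient_c + plant_gain_c_per_pct * output - temp_c)
        trace.append(temp_c)
    return trace


trace = simulate_pid(setpoint_c=60.0, kp=10.0, ki=0.2, kd=5.0)
print(f"after 5 min: {trace[299]:.2f} °C, after 15 min: {trace[-1]:.2f} °C")
```

Freezing the integral while the output is clamped prevents the large initial error from accumulating into a big overshoot once the temperature approaches the set point.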

The Scientist's Toolkit: Essential Materials and Reagents

Successful implementation of temperature control protocols relies on a foundation of key materials and instruments. The following table details these essential components.

Table 3: Essential Research Reagent Solutions and Materials for Temperature Control Experiments

| Item Name | Function/Description | Application Notes |
|---|---|---|
| Calibrated Precision Thermometer | Provides a traceable reference for validating sensor accuracy. | Use for initial calibration of integrated sensors; essential for GxP compliance. |
| Thermal Interface Material (TIM) | Improves thermal contact between surfaces (e.g., reactor vial and block). | Includes thermal greases, pads, or phase-change materials; reduces thermal resistance [23]. |
| Standardized Solvent Library | A curated set of high-purity solvents for solubility and crystallization screening. | Ensures reproducibility in high-throughput material science studies [24]. |
| Heat Transfer Fluid | Circulating medium for liquid-based systems. | 50/50 water-ethylene glycol is common; check chemical compatibility and operating range [25]. |
| Data Acquisition (DAQ) System | Logs temperature data from multiple sensors for uniformity mapping. | Critical for characterizing system performance beyond the built-in controller readings. |
| Reaction Simulant Solution | A solution with known thermal properties (heat capacity Cp, density ρ) to mimic real reaction loads. | Used for system testing and ramp rate optimization without consuming valuable compounds. |
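When a simulant of known density and heat capacity is used for ramp-rate testing, a sensible-heat balance gives a first estimate of the power a ramp demands: P = (m·cp + C_block)·dT/dt. The sketch below applies it; the block heat capacity parameter and the example values are assumptions, and heat losses to ambient are ignored, so the result is a lower bound.

```python
def ramp_power_w(volume_ml: float, density_g_per_ml: float, cp_j_per_g_k: float,
                 ramp_k_per_min: float, vessels: int = 1,
                 block_heat_capacity_j_per_k: float = 0.0) -> float:
    """Sensible-heat power (W) needed to ramp the liquid charges plus an optional block mass.
    Heat losses to ambient are ignored, so real requirements are higher."""
    liquid_heat_capacity = vessels * volume_ml * density_g_per_ml * cp_j_per_g_k  # J/K
    total_heat_capacity = liquid_heat_capacity + block_heat_capacity_j_per_k      # J/K
    return total_heat_capacity * ramp_k_per_min / 60.0  # convert K/min to K/s


def max_ramp_k_per_min(available_power_w: float, total_heat_capacity_j_per_k: float) -> float:
    """Upper bound on the ramp rate for a given net heating power (losses ignored)."""
    return available_power_w * 60.0 / total_heat_capacity_j_per_k
```

For 16 vessels holding 1 mL of a water-like simulant (ρ ≈ 1.0 g/mL, cp ≈ 4.18 J/(g·K)), a 0.5 °C/min ramp needs only about 0.56 W for the liquid itself, which illustrates why the thermal mass of the block and heat losses can dominate the real power budget.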

The comparative analysis presented in this application note underscores that there is no universally superior temperature control method; rather, the optimal choice is dictated by the specific demands of the high-throughput application. Peltier (thermoelectric) systems offer an unparalleled combination of bidirectional control, rapid cycling, and compact integration, making them ideal for precision tasks like solubility screening and polymorph research in parallel reactor platforms [24]. Liquid circulation systems excel in managing high heat fluxes and maintaining exceptional temperature uniformity, proving indispensable for scaling up exothermic reactions or managing large thermal masses [25]. Air cooling remains a pragmatic and cost-effective solution for applications operating near ambient conditions with low thermal loads [26].

The provided experimental protocols for calibration, ramp rate determination, and solubility screening furnish a framework for robust implementation, ensuring data quality and reproducibility. Ultimately, the convergence of these reliable thermal management technologies with automated platforms and intelligent control systems is foundational to advancing high-throughput research, accelerating the pace of discovery and development in the chemical and pharmaceutical sciences.

High-throughput experimentation (HTE) has revolutionized research and development in chemistry and pharmaceuticals, enabling the rapid parallel assessment of thousands of reaction conditions. Within this framework, temperature screening represents a critical parameter optimization domain, as temperature profoundly influences reaction kinetics, selectivity, yield, and scalability. Traditional one-factor-at-a-time (OFAT) temperature studies are prohibitively time-consuming and resource-intensive for exploring complex, multi-dimensional experimental spaces. This Application Note provides a detailed protocol for designing and executing a systematic temperature screening campaign within parallel reactor systems, contextualized within a broader thesis on advancing HTE methodologies. The integration of precision temperature control with automated platforms and machine intelligence, as exemplified by the Minerva framework [3], enables researchers to efficiently navigate high-dimensional optimization challenges and accelerate development timelines for chemical processes and active pharmaceutical ingredients (APIs).

Key Concepts and Scientific Rationale

The Role of Temperature in Reaction Optimization

Temperature is a fundamental physical parameter that directly affects molecular interactions and reaction pathways. In synthetic chemistry, temperature influences:

- Reaction Rate: According to the Arrhenius equation, k = A·exp(-Ea/RT), the rate constant depends exponentially on the reciprocal of the absolute temperature.

- Reaction Selectivity: Differential activation energies for parallel pathways can make selectivity highly temperature-dependent.

- Catalyst Stability and Performance: Many catalytic systems, particularly non-precious metal catalysts like nickel, exhibit optimal activity within specific temperature windows [3].

- Phase Behavior: Temperature affects solubility, gas absorption, and mass transfer rates, particularly in multiphase systems.
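The rate and selectivity points above can be made quantitative. With the Arrhenius form k = A·exp(-Ea/RT), the ratio of rate constants at two temperatures depends only on Ea, and for two parallel pathways with the same rate law the product ratio under kinetic control is k1/k2. The activation energies and pre-exponential factors used below are illustrative, not data from the cited work.

```python
import math

R = 8.314  # gas constant, J/(mol·K)


def rate_constant(a: float, ea_j_per_mol: float, temp_c: float) -> float:
    """Arrhenius equation: k = A * exp(-Ea / (R T)), with T in kelvin."""
    return a * math.exp(-ea_j_per_mol / (R * (temp_c + 273.15)))


def rate_ratio(ea_j_per_mol: float, t1_c: float, t2_c: float) -> float:
    """k(T2) / k(T1); the pre-exponential factor A cancels."""
    t1, t2 = t1_c + 273.15, t2_c + 273.15
    return math.exp(-ea_j_per_mol / R * (1.0 / t2 - 1.0 / t1))


def selectivity(a1: float, ea1: float, a2: float, ea2: float, temp_c: float) -> float:
    """k1 / k2 for two parallel pathways with the same rate law (kinetic product ratio)."""
    return rate_constant(a1, ea1, temp_c) / rate_constant(a2, ea2, temp_c)
```

For Ea = 50 kJ/mol, `rate_ratio(50e3, 25.0, 35.0)` is about 1.9, close to the familiar rule of thumb that rates roughly double per 10 °C. If the desired pathway has the lower activation energy, `selectivity` falls as temperature rises, which is why selectivity-driven screens often favor lower temperatures at the cost of rate.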

High-Throughput Screening (HTS) Fundamentals

High-throughput screening involves the parallelized execution of numerous experiments using automated platforms and miniaturized reaction scales. Effective HTS campaigns require:

- Experimental Design: Strategic selection of factor combinations to maximize information gain within experimental constraints.

- Automation and Robotics: Enabling precise liquid handling, temperature control, and reaction setup.

- Analytical Integration: High-throughput analytical techniques for rapid product characterization.

- Data Analysis: Computational methods for extracting meaningful patterns and optimal conditions from large datasets.

The Z-factor is a key statistical parameter for assessing the quality and suitability of HTS assays, reflecting both the assay signal dynamic range and data variation associated with signal measurements [27].
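The formula is not given here, but the Z-factor is commonly computed from positive and negative controls as Z' = 1 - 3(σp + σn)/|μp - μn|. A minimal sketch, assuming sample standard deviations and plain lists of control readings:

```python
from statistics import mean, stdev
from typing import Sequence


def z_prime(positive_controls: Sequence[float], negative_controls: Sequence[float]) -> float:
    """Z' = 1 - 3 (sd_pos + sd_neg) / |mean_pos - mean_neg|, using sample standard deviations."""
    spread = 3.0 * (stdev(positive_controls) + stdev(negative_controls))
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - spread / window
```

With positive controls of 100, 98, and 102 and negative controls of 10, 12, and 8, Z' is about 0.87. Values between 0.5 and 1 are conventionally taken to indicate an excellent assay window.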

Temperature Control Challenges in HTE

Maintaining precise and uniform temperature across multiple parallel reactions presents significant technical challenges:

- Thermal Gradients: Temperature variations across reactor blocks can lead to inconsistent results [28].

- Heat Transfer Limitations: Inefficient heat transfer through reactor materials and variable reaction volumes.

- Exothermic Reactions: Self-heating effects that create local temperature hotspots.

- Ambient Influences: Proximity to external ambient temperatures or nearby heat sources can significantly affect thermal performance [28].

Advanced thermal management systems address these challenges through closed-loop thermal control, thermal profiling, and design optimization to minimize impacts from heat sources [28].
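The self-heating risk of an exothermic reaction can be bounded with the adiabatic temperature rise, ΔT_ad = (-ΔH_r)·c·X/(ρ·cp), which assumes that no heat is removed at all. This is a standard process-safety estimate rather than anything specific to the cited systems; the example values are hypothetical.

```python
def adiabatic_temperature_rise(delta_h_kj_per_mol: float, conc_mol_per_l: float,
                               density_kg_per_l: float, cp_kj_per_kg_k: float,
                               conversion: float = 1.0) -> float:
    """Worst-case rise (K) if all reaction heat stays in the liquid (no heat removal).
    delta_h is negative for an exothermic reaction."""
    heat_kj_per_l = -delta_h_kj_per_mol * conc_mol_per_l * conversion
    return heat_kj_per_l / (density_kg_per_l * cp_kj_per_kg_k)


def exceeds_limit(process_temp_c: float, delta_t_ad: float, limit_c: float) -> bool:
    """True if the worst-case temperature would pass a limit such as the solvent boiling point."""
    return process_temp_c + delta_t_ad > limit_c
```

For ΔH_r = -100 kJ/mol at 1.0 mol/L in a solvent with ρ = 0.9 kg/L and cp = 2.0 kJ/(kg·K), ΔT_ad is about 56 K, so a reaction started at 40 °C in THF (bp 66 °C) could in the worst case exceed the solvent's boiling point. Small, well-stirred vessels in a thermostatted block remove much of this heat, so the real rise is smaller, but a large ΔT_ad flags wells where hotspots deserve monitoring.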

Experimental Design and Workflow

Strategic Planning

A successful temperature screening campaign requires meticulous preliminary planning:

Define Clear Objectives: Establish primary optimization goals (e.g., yield maximization, impurity minimization, selectivity enhancement) and any constraints (e.g., temperature limits for thermally labile compounds).

Select Temperature Range and Intervals: Base the range on chemical feasibility and hardware capabilities. For many synthetic applications, a range of -20°C to +80°C can be explored using advanced temperature-controlled photoreactors [29]. The specific range should account for solvent boiling points, reagent stability, and catalyst activation requirements.

Determine Parallelization Scale: Standard HTE platforms typically operate in 24-, 48-, 96-, or 384-well formats, with the 96-well format being common in pharmaceutical process development [3].

Establish Experimental Budget: Define the total number of experiments feasible within time and resource constraints.
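The range, interval, and budget decisions above can be sketched in a few lines: evenly spaced setpoints capped below the solvent boiling point, a full-factorial combination with other factors, and a plate count. The boiling-point margin, factor names, and plate size are hypothetical, and full factorials grow quickly, which is one motivation for machine-learning-guided designs such as Minerva [3].

```python
import itertools
from typing import Dict, List, Optional


def temperature_setpoints(t_min_c: float, t_max_c: float, n_levels: int,
                          solvent_bp_c: Optional[float] = None,
                          bp_margin_c: float = 10.0) -> List[float]:
    """Evenly spaced setpoints; drops any within bp_margin_c of the solvent boiling point
    (assumes unpressurized vessels)."""
    if n_levels < 2:
        return [float(t_min_c)]
    step = (t_max_c - t_min_c) / (n_levels - 1)
    levels = [t_min_c + i * step for i in range(n_levels)]
    if solvent_bp_c is not None:
        levels = [t for t in levels if t <= solvent_bp_c - bp_margin_c]
    return levels


def full_factorial(**factors: List) -> List[Dict]:
    """Every combination of the given factor levels, one dict per experiment."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in itertools.product(*factors.values())]


def plates_needed(n_experiments: int, wells_per_plate: int = 96) -> int:
    """Ceiling division: plates required to hold all experiments."""
    return -(-n_experiments // wells_per_plate)
```

For example, four levels from 20 to 80 °C with THF (bp 66 °C) and a 10 °C margin leave 20 and 40 °C; crossing those with three catalysts and four ligands gives 24 experiments, which fits on one 96-well plate. On hardware where temperature is set per block or zone rather than per well, the temperature factor maps to blocks, so runs should be grouped by setpoint.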

Temperature Screening Workflow

The following diagram illustrates the comprehensive workflow for a high-throughput temperature screening campaign:

Figure 1: High-Throughput Temperature Screening Workflow. The iterative optimization loop enables continuous model improvement based on experimental results.

Temperature Range Selection Guidelines

Table 1: Recommended Temperature Ranges for Different Reaction Types

| Reaction Category | Typical Range (°C) | Considerations |
|---|---|---|
| Biocatalytic Transformations | 20-45 | Limited by enzyme stability and activity |
| Photoredox Catalysis [29] | -20 to +80 | Temperature control critical for reproducibility |
| Transition Metal Catalysis [3] | 25-100 | Catalyst activation and stability dependent |
| Organometallic Reactions | -78 to 25 | Cryogenic conditions for unstable intermediates |
| Aqueous-Mediated Processes | 50-150 | Enhanced kinetics; above 100°C, sealed pressure-rated vessels are needed to avoid boiling |
| Solid-State Synthesis | 100-300 | Materials synthesis and crystallization |

Materials and Equipment

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Materials and Equipment for High-Throughput Temperature Screening

| Item | Function/Purpose | Specifications |
|---|---|---|
| Temperature-Controlled Parallel Reactor [29] | Parallel execution of reactions at defined temperatures | Precise control from -20°C to +80°C, 96-well format |
| Automated Liquid Handling System | Precise reagent dispensing across multiple reaction vessels | Nanoliter to milliliter volume range, temperature-controlled deck |
| Chemical Libraries | Diverse reactant sets for comprehensive screening | May include catalysts, ligands, substrates, additives |
| Thermal Stability Reference Standards | Monitoring and validation of temperature accuracy | Certified reference materials with known melting points |
| Sealed Reaction Vessels | Prevent solvent evaporation and atmospheric moisture ingress | Chemically resistant, temperature-stable materials |
| Inline Analytical Capability | Real-time reaction monitoring | HPLC, GC, FTIR, or MS detection compatible with flow systems |
| High-Throughput Flow Cytometry [30] [31] | Biological screening applications | Multi-parameter detection with compensation controls |
| Data Analysis Software | Processing and modeling of screening results | Machine learning algorithms for multi-objective optimization [3] |

Equipment Calibration and Validation

Proper calibration of temperature control systems is essential for generating reliable data:

- Regular Verification: Use calibrated thermocouples or resistance temperature detectors (RTDs) to verify setpoint accuracy across all reactor positions.

- Spatial Uniformity Mapping: Document temperature distribution across the entire reactor block to identify potential hotspots or cold spots [28].

- Dynamic Response Characterization: Assess heating and cooling rates to inform experimental timelines.

- Automated Calibration: Implement automated calibration procedures to baseline each thermal cell, applying offset values to ensure precise temperature control across all thermal locations [28].
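The offset-based calibration and uniformity mapping described above can be sketched as follows, assuming steady-state readings from a calibrated reference probe at each position and an error that behaves as a constant additive offset at the calibration temperature. Position labels and values are hypothetical.

```python
from statistics import mean, pstdev
from typing import Dict


def calibration_offsets(setpoint_c: float, reference_readings: Dict[str, float]) -> Dict[str, float]:
    """Per-position offset = setpoint minus the reference-probe reading at steady state."""
    return {pos: setpoint_c - t for pos, t in reference_readings.items()}


def corrected_setpoint(setpoint_c: float, offset_c: float) -> float:
    """Setpoint to send to a position so the vessel reaches the intended temperature,
    assuming the error is a constant additive offset near this temperature."""
    return setpoint_c + offset_c


def uniformity_report(reference_readings: Dict[str, float]) -> Dict[str, object]:
    """Summary statistics for a spatial uniformity map of the block."""
    values = list(reference_readings.values())
    return {
        "mean_c": mean(values),
        "spread_c": max(values) - min(values),  # hottest minus coldest position
        "stdev_c": pstdev(values),
        "hottest": max(reference_readings, key=reference_readings.get),
        "coldest": min(reference_readings, key=reference_readings.get),
    }
```

If position A1 reads 49.5 °C at a 50.0 °C setpoint, its offset is +0.5 °C and the corrected setpoint is 50.5 °C. Because offsets often vary with temperature, repeating the mapping at several setpoints across the working range and interpolating between them is a safer approach.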

Step-by-Step Experimental Protocol

Pre-Screening Preparation

Reactor System Setup

- Verify calibration of temperature control systems and thermal uniformity across all positions.

- Confirm clean, dry reaction vessels are properly seated in reactor blocks.

- Program temperature control method with defined setpoints, ramping rates, and stabilization periods.

Reaction Mixture Preparation

- Prepare master stocks of reagents, catalysts, and solvents at appropriate concentrations.

- Utilize automated liquid handlers to dispense reagents into reaction vessels according to experimental design.

- Implement appropriate mixing protocols to ensure homogeneity while considering potential solvent evaporation.

Temperature Gradient Establishment

- Program temperature controller with desired screening temperatures.

- Allow sufficient time for thermal equilibration before reaction initiation (typically 10-30 minutes depending on system).

- Verify stabilization using inline temperature probes in reference wells.
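A simple way to decide that a reference well has stabilized is to require the logged temperature to stay within a tolerance band around the setpoint for a minimum hold time. The tolerance and hold time below are illustrative defaults, not recommended values.

```python
from typing import Optional, Sequence


def equilibrated_at(times_s: Sequence[float], temps_c: Sequence[float], setpoint_c: float,
                    tolerance_c: float = 0.5, hold_s: float = 300.0) -> Optional[float]:
    """First time (s) at which the trace has stayed within ±tolerance_c of the setpoint
    for at least hold_s seconds, or None if it never does."""
    window_start = None
    for t, temp in zip(times_s, temps_c):
        if abs(temp - setpoint_c) <= tolerance_c:
            if window_start is None:
                window_start = t  # entered the band: start timing the hold
            if t - window_start >= hold_s:
                return t
        else:
            window_start = None  # left the band: reset the hold timer
    return None
```

With readings logged every 60 s, a trace that enters the ±0.5 °C band at 240 s and stays there is reported as equilibrated at 540 s.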

Screening Execution

Reaction Initiation

- For time-sensitive reactions, use automated reagent addition at temperature.

- Record precise reaction start times for each vessel to account for sequential processing.
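Recording start times makes it possible either to correct reaction times after a common quench or to stagger quenches so that every vessel reacts for the same duration. A minimal sketch with hypothetical vessel labels:

```python
from datetime import datetime, timedelta
from typing import Dict


def reaction_durations(start_times: Dict[str, datetime], quench_time: datetime) -> Dict[str, float]:
    """Actual reaction time (minutes) per vessel when all vessels are quenched together."""
    return {v: (quench_time - t).total_seconds() / 60.0 for v, t in start_times.items()}


def staggered_quench_schedule(start_times: Dict[str, datetime],
                              target_duration: timedelta) -> Dict[str, datetime]:
    """Quench time per vessel so that every vessel reacts for the same target_duration."""
    return {v: t + target_duration for v, t in start_times.items()}
```

A vessel started 45 s after the first one and quenched at the same moment reacts for 119.25 min instead of 120 min; whether that difference matters depends on the reaction rate.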

Process Monitoring

- Monitor temperature stability throughout reaction duration.

- For extended reactions, implement periodic mixing to maintain homogeneity.

- Document any deviations from set conditions for subsequent data analysis.
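Documenting deviations is easier if out-of-tolerance periods are extracted automatically from the temperature log. The sketch below groups consecutive out-of-band samples into excursions together with their worst deviation; the tolerance is a hypothetical default.

```python
from typing import List, Sequence, Tuple


def find_excursions(times_s: Sequence[float], temps_c: Sequence[float], setpoint_c: float,
                    tolerance_c: float = 1.0) -> List[Tuple[float, float, float]]:
    """Group consecutive out-of-tolerance samples into excursions.
    Returns a list of (start_s, end_s, worst_signed_deviation_c)."""
    excursions = []
    current = None  # [start, end, worst deviation] of the excursion in progress
    for t, temp in zip(times_s, temps_c):
        dev = temp - setpoint_c
        if abs(dev) > tolerance_c:
            if current is None:
                current = [t, t, dev]
            else:
                current[1] = t
                if abs(dev) > abs(current[2]):
                    current[2] = dev
        elif current is not None:
            excursions.append(tuple(current))
            current = None
    if current is not None:  # trace ended during an excursion
        excursions.append(tuple(current))
    return excursions
```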

Reaction Quenching

- Program automated quenching at predetermined timepoints.

- Alternatively, rapidly cool all reactions simultaneously to arrest reactivity.

- Ensure quenching method is compatible with subsequent analysis.

Analysis and Data Processing

Sample Processing

- Transfer reaction aliquots to analysis plates using automated liquid handlers.

- Dilute samples as needed to fit analytical method dynamic range.

- Include appropriate calibration standards and quality controls.
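Dilution and calibration standards come together when back-calculating concentrations. The sketch assumes a linear external calibration over a known range and refuses to extrapolate beyond it; internal-standard methods, which are also common in HTE analysis, would replace the raw response with an analyte-to-standard ratio.

```python
from typing import Optional, Sequence, Tuple


def fit_calibration(concs: Sequence[float], responses: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares line: response = slope * conc + intercept."""
    n = len(concs)
    mx = sum(concs) / n
    my = sum(responses) / n
    sxx = sum((x - mx) ** 2 for x in concs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(concs, responses))
    slope = sxy / sxx
    return slope, my - slope * mx


def sample_concentration(response: float, slope: float, intercept: float,
                         dilution_factor: float = 1.0,
                         linear_range: Optional[Tuple[float, float]] = None) -> Optional[float]:
    """Undiluted concentration, or None if the diluted sample falls outside the calibrated range."""
    diluted = (response - intercept) / slope
    if linear_range is not None and not (linear_range[0] <= diluted <= linear_range[1]):
        return None  # re-dilute and re-run rather than extrapolate
    return diluted * dilution_factor
```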

High-Throughput Analysis

Data Extraction and Management

- Automate data extraction from analytical instruments to structured databases.

- Apply necessary correction factors for background interference or matrix effects.

- Document all metadata including lot numbers, preparation dates, and any deviations.
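One way to keep results and metadata together is a flat per-well record written to a structured file or database table. The field names and lot-number format below are hypothetical; a real schema would follow the laboratory's data-management system.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from typing import List


@dataclass
class WellRecord:
    plate_id: str
    well: str
    setpoint_c: float
    measured_mean_c: float
    reagent_lots: str        # hypothetical format, e.g. "cat=LOT123;ligand=LOT456"
    prep_date: str           # ISO 8601 date of stock preparation
    result_area_pct: float
    deviations: str = ""     # free-text note of any deviation from set conditions


def to_csv(records: List[WellRecord]) -> str:
    """Serialize records to CSV text with a header row taken from the dataclass fields."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(WellRecord)])
    writer.writeheader()
    for rec in records:
        writer.writerow(asdict(rec))
    return buf.getvalue()
```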

Data Analysis and Interpretation

Response Surface Modeling

The complex relationship between temperature and reaction outcomes is best understood through response surface methodology: