Mastering Temperature and Solvent Interactions: A DoE Guide for Optimized Drug Development

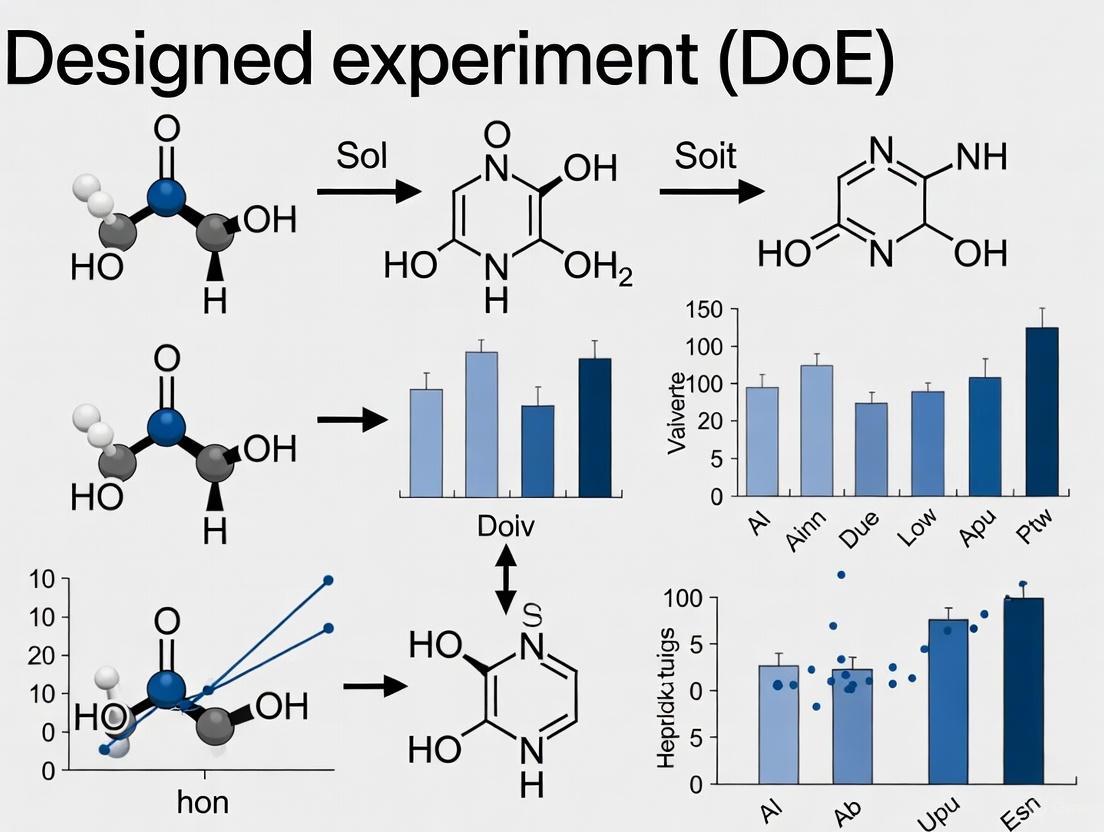


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Design of Experiments (DoE) to systematically investigate the critical interaction effects between temperature and solvent in chemical processes. Moving beyond traditional one-variable-at-a-time (OVAT) approaches, we explore the foundational principles of these interactions, detail methodological frameworks for efficient experimental design, and offer advanced troubleshooting and optimization strategies. Through validation and comparative analysis, we demonstrate how a robust DoE approach can accelerate process development, enhance reproducibility, and improve yields in complex systems such as API synthesis and radiochemistry, ultimately leading to more efficient and scalable pharmaceutical processes.

The Critical Interplay: Uncovering How Temperature and Solvent Properties Govern Chemical Outcomes

| Category | Item | Function & Application |

|---|---|---|

| Solvent Selection | ACS GCI Solvent Selection Tool [1] | Interactive tool using Principal Component Analysis (PCA) to select solvents based on physical properties, environmental, and safety data. |

| Solvent Classes | Polar Protic (e.g., Water, Methanol), Polar Aprotic (e.g., DMSO, DMF), Non-polar (e.g., Hexane) [2] [3] | Used to screen solvent space; different classes stabilize charges in transition states to different extents, critically affecting reaction rate and mechanism. [2] |

| Model Compounds | Paracetamol, Allopurinol, Furosemide, Budesonide [4] | Poorly water-soluble pharmaceutical compounds with established solubility data in various solvents and temperatures for model development and validation. |

| Statistical Software | DoE Software (e.g., for Response Surface Methodology) [5] | Enables design of efficient experiments and modeling of complex interactions between factors like pressure, temperature, and co-solvent concentration. |

| Thermodynamic Model | NRTL-SAC (Nonrandom Two-Liquid Segment Activity Coefficient) [4] | A thermodynamic framework for correlating and predicting drug solubility in pure and mixed solvents using conceptual segments. |

Frequently Asked Questions: Troubleshooting Temperature-Solvent Effects

Why did my reaction yield drop or my API precipitate when I scaled up the process?

This is a classic sign of unoptimized temperature-solvent interactions. Small-scale reactions in vials can have very different heat transfer and mixing dynamics than larger batches. A solvent that provides adequate solubility at small scale and a specific temperature may not do so in a larger vessel where local temperatures can vary. Furthermore, the enthalpy of dissolution is a key factor [6]. If dissolution is endothermic, higher temperatures increase solubility; if it is exothermic, higher temperatures decrease it. A temperature shift during scale-up can therefore lead to precipitation.

- Solution: Use a DoE approach to systematically map solubility versus temperature for your compound in the chosen solvent. Investigate the use of co-solvents, which can alter the thermodynamic profile of the solution and improve solubility across a wider temperature range [5] [4].

How can I make my nucleophilic substitution reaction proceed faster?

The answer depends on whether your reaction follows an SN1 or SN2 pathway, and the solvent choice is critical [2] [3].

- For suspected SN1 reactions: The rate-determining step is the formation of a carbocation. Polar protic solvents (e.g., water, alcohols) stabilize the ionic transition state and intermediate through strong solvation, dramatically increasing the reaction rate [2] [3].

- For suspected SN2 reactions: The reaction is bimolecular and typically involves an anionic nucleophile. Polar aprotic solvents (e.g., DMSO, DMF, CH₃CN) are optimal because they solvate the nucleophile's counter-cation but leave the anion largely "naked" and highly reactive, significantly accelerating the rate [2].

A summary of solvent effects on substitution reactions is provided in Table 1.

My supercritical fluid extraction (SFE) yield is low, even with a co-solvent. Which parameter should I adjust first?

In SFE, the parameters interact rather than act independently. Research on SFE of bioactive compounds shows that while higher pressure and co-solvent (e.g., ethanol) levels increase yield, higher temperature can sometimes have a negative effect [5]. The optimal temperature is a balance between increasing solute volatility and decreasing CO₂ fluid density.

- Solution: Do not adjust one factor at a time. Employ a Response Surface Methodology (RSM) to optimize the interactions. For instance, one study found the optimal conditions to be 250 bar, 45 °C, and 100% ethanol as a co-solvent [5]. Increasing temperature beyond a certain point without adjusting pressure can be detrimental.

How can I find a safer, "greener" solvent alternative without sacrificing reaction performance?

Systematic solvent mapping is the most effective strategy. The ACS GCI Solvent Selection Tool uses Principal Component Analysis (PCA) to map solvents based on their physical properties [1]. You can:

- Identify the solvent you are currently using on the map.

- Locate several solvents that are clustered nearby, as they have similar properties and are likely to behave similarly in your reaction.

- Select a safer or greener alternative from this cluster for testing [7] [1].

This method has been successfully used to replace toxic/hazardous solvents in SNAr reactions [7].

Why does the equilibrium of my tautomeric compound (e.g., a 1,3-dicarbonyl) shift when I change solvents?

This is due to differential stabilization of the tautomers by the solvent. For keto-enol tautomerism, the enol form can often stabilize itself via intramolecular hydrogen bonding. In non-polar solvents that cannot compete for H-bonding, this intramolecular H-bond is very stable, and the enol form is favored. In polar protic solvents (e.g., water), the solvent molecules effectively H-bond with the carbonyl groups, destabilizing the intramolecular H-bond in the enol and shifting the equilibrium toward the diketo form [2]. Table 2 provides quantitative data on this effect.

Experimental Data and Protocols

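Table 1: Solvent effects on the relative rates of nucleophilic substitution reactions

| Reaction Type | Solvent Type | Example Solvent (Dielectric Constant) | Relative Rate | Mechanistic Reason |
|---|---|---|---|---|
| SN1 | Polar Protic | Water (78) | 150,000 | Stabilizes carbocation transition state and intermediate. |
| SN1 | Polar Protic | Methanol (33) | 4 | Stabilizes carbocation transition state and intermediate. |
| SN1 | Polar Aprotic | n/a | n/a | Less effective at stabilizing the cationic intermediate. |
| SN2 | Polar Protic | Water (78) | 7 | Solvates and stabilizes the anionic nucleophile, making it less reactive. |
| SN2 | Polar Protic | Methanol (33) | 1 (Baseline) | Solvates and stabilizes the anionic nucleophile, making it less reactive. |
| SN2 | Polar Aprotic | Dimethyl sulfoxide (49) | 1,300 | Poorly solvates anions, resulting in a "naked" and highly reactive nucleophile. |
| SN2 | Polar Aprotic | Dimethylformamide (37) | 2,800 | Poorly solvates anions, resulting in a "naked" and highly reactive nucleophile. |
| SN2 | Polar Aprotic | Acetonitrile (38) | 5,000 | Poorly solvates anions, resulting in a "naked" and highly reactive nucleophile. |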

Table 2: Equilibrium constant KT = [cis-enol] / [diketo] for a 1,3-dicarbonyl compound

| Solvent | Polarity | KT |

|---|---|---|

| Gas Phase | N/A | 11.7 |

| Cyclohexane | Very Low | 42.0 |

| Benzene | Low | 14.7 |

| Dichloromethane | Medium | 4.2 |

| Ethanol | High (Protic) | 5.8 |

| Water | Very High (Protic) | 0.23 |

Response: Total Extraction Yield from Thai Fingerroot

| Factor | Low Level | High Level | Effect on Yield (Summary) |

|---|---|---|---|

| Pressure | 200 bar | 300 bar | Increase |

| Temperature | 35 °C | 55 °C | Negative effect (in this range) |

| CO₂ Flow Rate | 1 L/min | 3 L/min | Increase |

| Ethanol Co-solvent | 0% | 100% | Increase |

Optimal condition combination: 250 bar, 45 °C, 3 L/min, 100% ethanol → yield: 28.67%

Objective: To determine the solubility of a pharmaceutical compound (e.g., Paracetamol) in various pure solvents across a temperature range.

Materials:

- Active Pharmaceutical Ingredient (API) of high purity.

- Selected pure solvents (e.g., water, ethanol, acetone, ethyl acetate, n-hexane).

- Thermostatted water bath or incubator shaker.

- HPLC system with UV detector for analysis.

Method:

- Preparation: Pre-saturate a known volume of each solvent in separate sealed vials by adding an excess of the API.

- Equilibration: Place the vials in a thermostatted shaker. Agitate continuously at a constant temperature (e.g., 298.2 K) for a sufficient time (typically >24 hours) to ensure equilibrium is reached.

- Sampling: After equilibration, allow the undissolved solid to settle. Withdraw a sample of the saturated supernatant solution using a pre-warmed syringe to prevent precipitation.

- Analysis: Dilute the sample appropriately and analyze by HPLC. The concentration is determined by comparison with a standard calibration curve.

- Replication: Repeat the experiment at different temperatures (e.g., from 298.2 K to 315.2 K) to gather data for thermodynamic modeling.

- Modeling: Use the solubility versus temperature data to determine the thermodynamic properties of dissolution (Gibbs energy, enthalpy, entropy) and to evaluate thermodynamic models like NRTL-SAC [4].
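The modeling step can be illustrated with a simple van't Hoff analysis, which treats ln(x) as linear in 1/T: ln x = -ΔH/(RT) + ΔS/R. The sketch below is a minimal example under that assumption. It is not the NRTL-SAC model, and the mole-fraction values are invented placeholders rather than measured paracetamol data. The function names are hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def linear_fit(xs, ys):
    """Ordinary least-squares line y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def vant_hoff(temps_k, mole_fractions):
    """Apparent dissolution enthalpy (J/mol) and entropy (J/(mol*K))."""
    inv_t = [1.0 / t for t in temps_k]
    ln_x = [math.log(x) for x in mole_fractions]
    slope, intercept = linear_fit(inv_t, ln_x)
    return -slope * R, intercept * R


# Placeholder data (assumption): saturated mole fractions at each temperature.
temps = [298.2, 303.2, 308.2, 313.2, 315.2]
x_sat = [0.0500, 0.0580, 0.0670, 0.0770, 0.0815]

dH, dS = vant_hoff(temps, x_sat)
dG_298 = dH - 298.2 * dS  # apparent Gibbs energy of dissolution at 298.2 K
print(f"dH = {dH / 1000:.1f} kJ/mol, dS = {dS:.1f} J/(mol*K), dG = {dG_298 / 1000:.1f} kJ/mol")
```

A positive fitted ΔH corresponds to solubility that rises with temperature. Curvature in the ln x versus 1/T plot would suggest that the constant-enthalpy assumption does not hold over the range studied.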

Objective: To systematically find the optimal solvent and temperature for a new synthetic reaction, avoiding a One-Variable-at-a-Time (OVAT) approach.

Materials:

- Substrates and reagents.

- A selection of solvents covering the PCA "solvent space" [7] [1].

- DoE software.

Method:

- Factor Selection: Identify key factors to optimize (e.g., Solvent, Temperature, Catalyst Loading).

- Solvent Selection: Using a PCA solvent map, choose 4-8 solvents that are located at the extreme vertices of the map to maximize the diversity of solvent properties screened [7].

- Experimental Design: Create a statistical design (e.g., a Full Factorial or Central Composite Design) that includes the chosen solvents (as categorical factors) and temperature (as a numerical factor). The design will include recommended experiments, including center points.

- Execution: Run the experiments as specified by the design matrix.

- Analysis: Input the results (e.g., yield, conversion) into the DoE software. The model will identify which factors and factor interactions are statistically significant.

- Prediction & Validation: Use the model to predict the optimal combination of solvent and temperature. Run a validation experiment at the predicted optimum to confirm the result.
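The design step above can be sketched in a few lines. This is a minimal illustration, not a replacement for DoE software: it builds a full factorial of a categorical solvent factor against a two-level temperature factor, adds replicated temperature center points for each solvent, and randomizes the run order. The solvent names, temperature limits, and the `build_design` helper are assumptions chosen for illustration.

```python
import itertools
import random


def build_design(solvents, temp_low, temp_high, center_reps=1, seed=0):
    """Full factorial (solvent x two temperature levels) plus center points.

    Solvent is categorical, so a "center" exists only for temperature;
    the center temperature is therefore replicated within each solvent.
    """
    temp_center = (temp_low + temp_high) / 2
    runs = [
        {"solvent": s, "temp_c": t, "point": "factorial"}
        for s, t in itertools.product(solvents, (temp_low, temp_high))
    ]
    for s in solvents:
        runs.extend(
            {"solvent": s, "temp_c": temp_center, "point": "center"}
            for _ in range(center_reps)
        )
    # Randomize run order so drift over time is not confounded with a factor.
    random.Random(seed).shuffle(runs)
    return runs


# Hypothetical solvent picks from different regions of a PCA solvent map.
solvents = ["water", "methanol", "DMSO", "n-hexane"]
design = build_design(solvents, temp_low=25.0, temp_high=65.0, center_reps=2)

for run_no, run in enumerate(design, start=1):
    print(f"{run_no:2d}  {run['solvent']:<9} {run['temp_c']:5.1f} C  {run['point']}")
```

Replicated center points give an estimate of pure error and a first check for curvature in the temperature response. Adding a third factor such as catalyst loading only requires extending the `itertools.product` call.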


FAQs: Core Principles and Troubleshooting

FAQ 1: How does temperature generally affect the solubility of solid solutes in liquid solvents? The relationship is not universal. While increasing temperature often increases the solubility of solid solutes, the extent varies dramatically. For example, the solubility of potassium nitrate in water increases significantly with temperature, whereas the solubility of sodium chloride remains largely unchanged. In some cases, as with cerium(III) sulfate, solubility can even decrease with rising temperature [8]. This variability is a critical consideration for mixtures, as you cannot assume all analytes will behave similarly.

FAQ 2: Why does my headspace analysis yield inconsistent results when I change the extraction temperature? Temperature has a non-uniform effect on the volatility of different compounds in a mixture. As temperature increases, it drives more analytes into the headspace, but the degree of change is analyte-dependent. This can alter the relative composition of the vapor phase. For instance, during the headspace analysis of aromatic hydrocarbons in olive oil using SPME, the response for different compounds changes in a complex, non-linear manner with temperature. This can skew quantitative results and impact selectivity. Always document and carefully control your extraction temperature to ensure reproducibility [8].

FAQ 3: I've observed unexpected solute behavior in supercritical fluid extraction (SFE) when adjusting temperature. Is this normal? Yes, this is a known complexity of SFE. The solvating power of a supercritical fluid is tied to its density. At a constant pressure, increasing the temperature typically decreases the fluid density, which would lower solubility. However, solute fugacity also plays a role, leading to non-intuitive outcomes. For example, the solubility of soybean oil in supercritical CO₂ remains low until a threshold temperature (60–70 °C) is reached, after which it increases substantially. In SFE, pressure is often the more straightforward variable to control for modulating solubility [8].

FAQ 4: How does temperature influence the partitioning of a solute between two immiscible solvents? The effect is governed by the heat of solution. You can apply Le Chatelier’s principle: if the dissolution process is exothermic (releases heat), the partition coefficient (e.g., KOW) will decrease with increasing temperature. Conversely, if the process is endothermic (absorbs heat), the partition coefficient will increase. The magnitude of the change is proportional to the molar heat of solution for the system [8].

FAQ 5: What is the risk of thermal degradation when using high-temperature extraction techniques? Thermal degradation is a valid concern. Research on techniques like Accelerated Solvent Extraction (ASE) has shown that while some stable compounds show no degradation at 100°C, others with known thermal sensitivity, like dicumyl peroxide, can begin to decompose at 150°C. A good practice is to run well-characterized standards or control samples at your intended method temperature and check for the formation of degradation products [8].

FAQ 6: Are dispersion interactions like CH–π bonds significantly affected by the solvent environment? Recent research indicates that solvent attenuation of dispersion interactions is remarkably consistent across a wide range of solvents. Studies using rigid molecular balances found that these interactions are attenuated to about 20-25% of their gas-phase strength (75-80% attenuation) in both polar solvents like DMSO and methanol and non-polar solvents. This suggests that while solvents consistently dampen these forces, the effect itself is not highly sensitive to solvent polarity [9].

Key Experimental Data and Protocols

Table 1: Temperature Dependence of Air-Water Partitioning for Neutral PFAS

| Compound | log Kaw at 25°C | Molar Internal Energy Change of Partitioning, ΔU (kJ/mol) |

|---|---|---|

| CF3-O-ALC | -2.6 to -1.0 | 20 - 37 |

| CF3-S-ALC | -2.6 to -1.0 | 20 - 37 |

| C3F7-O-ALC | ~ -1.0 (approx. 1.5 log units higher than CF3-) | 20 - 37 |

| C3F7-S-ALC | ~ -1.0 (approx. 1.5 log units higher than CF3-) | 20 - 37 |

| 4:2 FTOH | Matched previous studies | Matched previous studies |

Data sourced from a 2025 study on PFAS air-water partitioning [10].

Table 2: Observed Solubility Trends for Various Solutes in Water

| Solute | Observed Solubility Trend with Increasing Temperature |

|---|---|

| Potassium Nitrate | Significant increase |

| Sugar (Sucrose) | Moderate increase (approx. doubles with a 40°C increase) |

| Sodium Chloride | Negligible change |

| Cerium(III) Sulfate | Decrease above room temperature |

Data summarized from chromatographyonline.com [8].

Detailed Experimental Protocol: Determining Air-Water Partition Coefficient (Kaw)

This protocol is adapted from a 2025 study that used a modified static headspace method with analysis via the aqueous phase [10].

Objective: To determine the dimensionless air-water partition coefficient (Kaw) of a neutral chemical at various temperatures.

Principle: An aqueous solution of the analyte is equilibrated in vials with varying headspace-to-liquid volume ratios. The concentration of the analyte in the aqueous phase after equilibrium is used to calculate Kaw.

Materials and Reagents:

- Test Chemical: Pure standard of the neutral analyte.

- Water: High-purity water (e.g., Milli-Q water).

- Glass Vials: Multiple vials with sealed septa, of the same volume but prepared with varying solution volumes to create different Vhs/Vsol ratios.

- Temperature-Controlled Bath or Incubator: For precise temperature control.

- Analytical Instrument: LC-MS system for quantifying analyte concentration in the aqueous phase.

Procedure:

- Preparation: Prepare an aqueous stock solution of the test chemical.

- Sample Setup: Pipette different volumes of the stock solution into multiple vials of the same size and seal them. This creates a series of samples with identical initial concentrations but different headspace/solution volume (Vhs/Vsol) ratios.

- Equilibration: Place all vials in a temperature-controlled environment and allow them to equilibrate until the chemical has partitioned between the water and headspace.

- Sampling: After equilibrium is reached, sample the aqueous phase from each vial.

- Analysis: Analyze the aqueous samples using LC-MS to determine the equilibrium concentration (csol) in each vial.

Data Analysis: The relationship between the measured LC-MS peak area and the volume ratio is given by:

\[ \text{Area} = \frac{\text{RF} \cdot c_0}{1 + K_{aw} \cdot \frac{V_{hs}}{V_{sol}}} \]

Where:

- Area is the LC-MS peak area.

- RF is the response factor of the instrument.

- c0 is the initial concentration of the solution.

- Kaw is the air-water partition coefficient.

- Vhs/Vsol is the headspace-to-solution volume ratio.

Fit the measured Area and Vhs/Vsol data to this equation using nonlinear regression analysis to determine the value of Kaw and its confidence interval.
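As a quick cross-check before running the nonlinear regression, the same equation can be linearized by taking reciprocals: 1/Area = 1/(RF·c0) + [Kaw/(RF·c0)]·(Vhs/Vsol), so Kaw is the slope divided by the intercept. The sketch below uses this linearized form, which is a swapped-in simplification and not the nonlinear fit described above. The peak areas are synthetic, generated from assumed values of Kaw and RF·c0, and the function name is hypothetical.

```python
def fit_kaw_linearized(ratios, areas):
    """Estimate Kaw and RF*c0 from a straight-line fit of 1/Area vs Vhs/Vsol."""
    inv_area = [1.0 / a for a in areas]
    n = len(ratios)
    mean_x = sum(ratios) / n
    mean_y = sum(inv_area) / n
    sxx = sum((x - mean_x) ** 2 for x in ratios)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(ratios, inv_area))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope / intercept, 1.0 / intercept  # (Kaw, RF*c0)


# Synthetic, noise-free example (assumed values, for illustration only).
true_kaw = 0.05
true_rf_c0 = 1.0e6
ratios = [0.5, 1.0, 2.0, 4.0, 9.0]  # Vhs/Vsol for each vial
areas = [true_rf_c0 / (1.0 + true_kaw * r) for r in ratios]

kaw_fit, rf_c0_fit = fit_kaw_linearized(ratios, areas)
print(f"Kaw = {kaw_fit:.4f}, RF*c0 = {rf_c0_fit:.3e}")
```

Only the product RF·c0 can be identified from these data, not RF and c0 separately. Because the reciprocal transform reweights measurement noise, the linearized estimate is best used as a starting value, with the nonlinear fit supplying the final Kaw and its confidence interval.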

Temperature Dependence: Repeat the entire experiment at several temperatures. The temperature dependence is quantified using a Van't Hoff-like equation:

\[ \ln K_{aw} = -\frac{\Delta U}{RT} + \text{constant} \]

Plot \(\ln K_{aw}\) against \(1/T\) (where T is temperature in Kelvin). The slope of the resulting line is \(-\Delta U / R\), from which the molar internal energy change of air-water partitioning (ΔU) can be calculated [10].
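To see what a given ΔU means in practice, the same relation can be used to shift a known Kaw from one temperature to another. The sketch below assumes ΔU is constant over the interval. It uses the upper log Kaw value (-1.0 at 25 °C) and the ΔU range (20 to 37 kJ/mol) reported in Table 1 as inputs. The 5 °C target temperature and the function names are illustrative assumptions.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def delta_u_from_slope(slope):
    """Convert the slope of ln(Kaw) vs 1/T (in K) into delta U (J/mol)."""
    return -slope * R


def log10_kaw_at(log10_kaw_ref, t_ref_k, t_new_k, delta_u_j_mol):
    """Shift log10(Kaw) from t_ref_k to t_new_k, assuming constant delta U."""
    ln_shift = -(delta_u_j_mol / R) * (1.0 / t_new_k - 1.0 / t_ref_k)
    return log10_kaw_ref + ln_shift / math.log(10)


# Inputs taken from Table 1; the 5 C target is an arbitrary example.
for delta_u in (20e3, 37e3):
    shifted = log10_kaw_at(-1.0, 298.15, 278.15, delta_u)
    print(f"dU = {delta_u / 1000:.0f} kJ/mol -> log Kaw at 5 C = {shifted:.2f}")
```

With a positive ΔU, cooling lowers Kaw, so the compound partitions more strongly into water at lower temperatures. A larger ΔU makes that shift steeper.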


The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Materials for Thermodynamic Solvent Interaction Studies

| Reagent / Material | Function / Application |

|---|---|

| Rigid Molecular Balances (e.g., N-phenylsuccinimide scaffolds) | Quantifying weak noncovalent interactions (e.g., CH–π dispersion) and their solvent attenuation in solution [9]. |

| Neutral PFAS Alcohols (e.g., CF3-O-ALC, 4:2 FTOH) | Model compounds for studying temperature-dependent air-water partitioning and volatility of neutral PFAS transformation products [10]. |

| Deuterated Solvents (CDCl3, DMSO-d6, etc.) | Solvents for NMR-based conformational analysis of molecular balances to determine folding equilibria and interaction energies [9]. |

| High-Purity Sealed Vials | Essential for static headspace experiments with variable headspace/solution ratios to determine air-water partition coefficients (Kaw) [10]. |

| Supercritical CO₂ | The most common solvent for Supercritical Fluid Extraction (SFE); its density and solvating power are highly dependent on temperature and pressure [8]. |

| Subcritical Water | Water heated above 200°C under pressure; its lowered dielectric constant allows it to solubilize non-polar solutes, useful for specialized extractions [8]. |

FAQs: Temperature Effects in Pharmaceutical Development

1. Why does temperature increase the solubility of some solid drugs but decrease the solubility of others?

The effect of temperature on solubility is determined by whether the overall dissolution process is endothermic (absorbs heat) or exothermic (releases heat). For most ionic solids and salts, dissolution is endothermic. The energy required to break up the crystal lattice (endothermic) is greater than the energy released when ions are solvated (exothermic). Increasing temperature supplies this energy, enhancing solubility [11]. However, for salts where the solvation energy is very large, making the overall process exothermic, Le Chatelier’s principle dictates that increasing temperature will decrease solubility [11]. The solubility of gases in liquids, in contrast, typically decreases with increasing temperature, as the process of dissolution is usually exothermic.

2. How can we systematically optimize reaction temperature and solvent for a new API synthesis?

Using a "one variable at a time" (OVAT) approach can miss optimal conditions due to interactions between factors like temperature and solvent. A Design of Experiments (DoE) methodology is recommended. This involves:

- Mapping Solvent Space: Using a solvent property map based on Principal Component Analysis (PCA) to select solvents that represent a wide range of properties, rather than relying on a small, familiar set [12].

- Statistical Design: Running a structured set of experiments where multiple factors (e.g., temperature, solvent identity, concentration) are varied simultaneously. This efficiently explores the "reaction space" and identifies optimal conditions, even when factor interactions are present [12].
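The way an interaction can hide the optimum from an OVAT search is easiest to see in a 2×2 factorial. The sketch below uses invented yields for two hypothetical solvents (A and B) at a low and a high temperature. It computes the two main effects and the interaction from coded levels (-1, +1). All numbers and names are assumptions for illustration.

```python
# Each run: (solvent level, temperature level, yield %).
# Coded levels: solvent A = -1, solvent B = +1; low temp = -1, high temp = +1.
runs = [
    (-1, -1, 50.0),  # solvent A, low temperature
    (-1, +1, 55.0),  # solvent A, high temperature
    (+1, -1, 45.0),  # solvent B, low temperature
    (+1, +1, 80.0),  # solvent B, high temperature
]


def effect(runs, contrast):
    """Average response at contrast = +1 minus average at contrast = -1."""
    half = len(runs) / 2
    return sum(contrast(s, t) * y for s, t, y in runs) / half


solvent_effect = effect(runs, lambda s, t: s)
temp_effect = effect(runs, lambda s, t: t)
interaction = effect(runs, lambda s, t: s * t)

print(f"solvent main effect:     {solvent_effect:+.1f}")
print(f"temperature main effect: {temp_effect:+.1f}")
print(f"solvent x temperature:   {interaction:+.1f}")
```

In this made-up data set, an OVAT search starting from solvent A at low temperature would reject solvent B (45% versus 50%), then raise the temperature and stop at 55%. The factorial design also tests solvent B at high temperature and finds 80%, which shows up as a large interaction term.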

3. We need to inject large sample volumes in our analytical method, but this broadens the peaks. How can temperature help?

Temperature-Assisted On-Column Solute Focusing (TASF) is a technique that uses temperature to compress injection bands in capillary chromatography. The process is:

- Focusing: A short segment at the head of the column is transiently cooled (e.g., to 5°C). The lower temperature increases solute retention, causing the analytes to focus into a narrow band [13].

- Separation: The column is rapidly heated to the separation temperature (e.g., 60°C), releasing the focused band for analysis. This method can effectively manage injection volumes up to 130% of the column's fluid volume with minimal peak broadening and is particularly useful when solvent-based focusing is not feasible [13].

4. Beyond solubility, how does temperature directly affect the stability of a drug molecule?

Increasing temperature intensifies molecular vibrations, which can lead to degradation and instability. Computational studies show that for molecules like sinapic acid, increasing temperature within the range of 100 to 1000 Kelvin leads to a rise in heat capacity, enthalpy, and entropy. These thermodynamic changes indicate a higher energy state that can push the molecule toward decomposition, adversely affecting its shelf-life and efficacy [14].

Troubleshooting Guides

Problem: Inconsistent Solute Diffusivity in Multi-Component Systems

Background In complex systems like alloys or concentrated formulations, the diffusion rate of a solute can be unexpectedly enhanced or hindered by the presence of other solute atoms, affecting the material's properties [15].

Investigation and Solution

- Step 1: Identify Key Interactions. Use atomic-scale simulations (e.g., Density Functional Theory) to calculate the activation energy (Q) and diffusion prefactor (D0) for your solute pairs. The activation energy is often governed by impurity volume (strain effects), while the prefactor is influenced by chemical bonding and electron localization [15].

- Step 2: Determine the Mechanism.

- Step 3: Multi-scale Modeling. Incorporate the findings from step 1 into higher-scale models (e.g., phase-field methods) to predict and design stable microstructures under operational temperature cycles [15].

Problem: Unintended Changes in Selectivity During High-Temperature Extraction

Background Applying heat to enhance analytical extractions (e.g., Accelerated Solvent Extraction, headspace analysis) can change the relative extraction yield of different compounds, altering the perceived composition [16].

Investigation and Solution

- Step 1: Check Solute-Specific Solubility Trends. Do not assume all solubilities increase equally with temperature. Consult solubility curves for your specific analytes. While the solubility of sucrose increases dramatically with temperature, the solubility of sodium chloride remains almost constant [11] [16].

- Step 2: Analyze Thermodynamic Drivers. The change in a solute's partition coefficient (Kow) with temperature is proportional to its molar heat of solution. For exothermic dissolution, Kow decreases with temperature; for endothermic dissolution, it increases [16].

- Step 3: Optimize with DoE. For complex mixtures, apply Design of Dynamic Experiments (DoDE): choose "plant-friendly" input signals (e.g., temperature profiles) and use the measured responses to systematically identify the temperature that gives the best compromise between yield and selectivity for your specific analyte mixture [17].

- Step 4: Validate with Standards. Always run well-characterized standards or samples at the elevated temperature to check for thermal degradation products, which can confound selectivity measurements [16].

Data Presentation

Table 1: Solubility Trends of Common Solutes in Water

Data presented as grams of solute per 100 grams of water [11].

| Solute | 0°C | 20°C | 40°C | 60°C | 80°C | 100°C | Overall Trend |

|---|---|---|---|---|---|---|---|

| Sucrose | 179 | 204 | 241 | 288 | 363 | 487 | Strong increase |

| Potassium Nitrate (KNO₃) | ~14 | ~32 | ~64 | ~110 | ~169 | ~246 | Strong increase |

| Sodium Chloride (NaCl) | 35.5 | 36.0 | 36.5 | 37.5 | 38.0 | 39.0 | Slight increase |

| Lithium Sulfate (Li₂SO₄) | ~36 | ~35 | ~34 | ~33 | ~32 | ~31 | Slight decrease |

Table 2: Effect of a Secondary Solute on Diffusion Parameters in FCC Nickel

Data based on Density Functional Theory calculations showing how a secondary solute can alter the diffusion of a primary solute [15].

| Migrating Solute | Secondary Solute | Effect on Activation Energy (Q) | Effect on Prefactor (D₀) | Proposed Dominant Mechanism |

|---|---|---|---|---|

| Aluminium (Al) | Aluminium (Al) | Reduction | Increase | Strain relaxation & bond stiffening/softening |

| Aluminium (Al) | Cobalt (Co) | Reduction | Increase | Strain relaxation & bond stiffening/softening |

| Cobalt (Co) | Cobalt (Co) | Increase | Decrease | Strain & magnetic interactions |

| Cobalt (Co) | Aluminium (Al) | Significant deviation | Significant deviation | Complex electronic interactions |

Experimental Protocols

Protocol 1: Investigating Temperature-Dependent Solubility

Objective: To accurately measure and model the solubility of a solid solute in a solvent across a temperature range.

Materials:

- Research Reagent Solutions (see table below)

- Temperature-controlled water bath or hot plate with stirring

- Glass vessels (e.g., jacketed reactor)

- Analytical balance

- Filter assembly (for hot filtration)

- Analytical method for concentration quantification (e.g., HPLC, gravimetric analysis).

Procedure:

- Saturation: For each temperature point, add an excess of the solute to the solvent in the temperature-controlled vessel. Stir continuously for a prolonged period to ensure equilibrium is reached [18].

- Equilibration: Maintain a constant temperature with high precision (±0.1°C). The time to reach equilibrium can vary from hours to days and must be determined empirically.

- Sampling: Once equilibrium is achieved, sample the saturated solution. For high-temperature points, use hot filtration to prevent precipitation during sampling.

- Analysis: Quantify the concentration of the solute in the saturated solution using your chosen analytical method.

- Data Fitting: Plot solubility (e.g., mole fraction or g/100g solvent) versus temperature. For many systems, especially n-alkanes, a log-linear relationship between solubility and temperature provides a good fit for regression analysis [18].
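The data-fitting step can be sketched as a log-linear regression, ln(S) = a + b·T. The example below uses the sucrose row of Table 1 (g per 100 g water) purely as a convenient data set. Whether a log-linear form is adequate for a given solute is an assumption to check against the residuals, and the function names are hypothetical.

```python
import math

# Sucrose solubility from Table 1 (g solute per 100 g water).
temps_c = [0, 20, 40, 60, 80, 100]
solubility = [179, 204, 241, 288, 363, 487]


def fit_log_linear(temps, sol):
    """Least-squares fit of ln(S) = intercept + slope * T; returns slope, intercept, R^2."""
    ys = [math.log(s) for s in sol]
    n = len(temps)
    mean_t = sum(temps) / n
    mean_y = sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in temps)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(temps, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_t
    ss_res = sum((y - (intercept + slope * t)) ** 2 for t, y in zip(temps, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1.0 - ss_res / ss_tot


def predict(temp_c, slope, intercept):
    """Predicted solubility at temp_c from the fitted log-linear model."""
    return math.exp(intercept + slope * temp_c)


slope, intercept, r2 = fit_log_linear(temps_c, solubility)
print(f"slope = {slope:.5f} per C, R^2 = {r2:.3f}")
for t, s in zip(temps_c, solubility):
    print(f"{t:3d} C  measured {s:4d}  fitted {predict(t, slope, intercept):6.1f}")
```

Comparing measured and fitted values row by row shows whether the residuals change sign systematically across the range. If they do, a curved model may describe the data better than a straight line in ln(S).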

Protocol 2: Computational Analysis of Temperature and Solvent Effects

Objective: To use Density Functional Theory (DFT) to predict how solvent polarity and temperature affect a drug molecule's structure and properties.

Materials:

- Software: Gaussian 09W or similar, GaussView or similar for visualization [14].

- Hardware: High-performance computing cluster.

- Molecule of interest (e.g., Sinapic Acid).

Procedure:

- Geometry Optimization: Optimize the molecular structure of the compound in the gas phase using DFT (e.g., B3LYP method) with a suitable basis set (e.g., 6-311++G(d,p)) [14].

- Solvent Modeling: Re-optimize the geometry using a solvation model (e.g., IEFPCM) to simulate different solvent environments (e.g., chloroform, ethanol, water) [14].

- Frequency Calculation: Perform a vibrational frequency analysis on the optimized structures to confirm a true minimum (no imaginary frequencies) and to obtain thermodynamic properties (enthalpy, entropy, heat capacity) [14].

- Thermodynamic Analysis: Calculate the thermodynamic parameters (heat capacity, Cp, enthalpy, H, and entropy, S) over a temperature range (e.g., 100-1000 K). The software outputs these values directly, which can be analyzed to understand energy and stability changes [14].

- Electronic Analysis: Calculate the HOMO-LUMO energy gap, dipole moment, and chemical reactivity indices from the optimized structures to understand changes in stability and reactivity due to solvent and temperature [14].

Visualizations

Diagram: Temperature-Solvent DoE Workflow

Diagram: Molecular-Level Temperature Effects

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Temperature-Solvent Interaction Studies

| Reagent / Material | Function / Application |

|---|---|

| Principal Component Analysis (PCA) Solvent Map | A statistical tool that groups solvents by multiple properties, enabling systematic selection of diverse solvents for DoE studies instead of relying on intuition [12]. |

| Density Functional Theory (DFT) | A computational method for modeling the electronic structure of atoms and molecules. It is used to predict atomic-scale properties like activation energy for diffusion, solute-solute interactions, and the effect of temperature on molecular structure [15] [14]. |

| Integral Equation Formalism Polarizable Continuum Model (IEFPCM) | A solvation model used in computational chemistry to simulate the effect of a solvent on a molecule's electronic structure, geometry, and energy, allowing for the study of solvent polarity effects [14]. |

| Pseudorandom Binary Sequence (PRBS) / Schroeder-Phase Signal | Types of input signals used in Design of Dynamic Experiments (DoDE) to persistently excite a system. This helps in efficiently capturing process dynamics and identifying model parameters with minimal experimental duration [17]. |

| Temperature-Controlled Capillary Column | A chromatographic column where a short segment at the inlet can be rapidly cooled and heated. It is the core component for Temperature-Assisted On-Column Solute Focusing (TASF) to mitigate volume overload [13]. |

What is the core concept behind mapping "solvent space"? Mapping solvent space is a dimensionality reduction technique that transforms complex, multi-property solvent data into a simplified, visual map. Each solvent is described by numerous physical and chemical properties (e.g., boiling point, dipole moment, hydrogen-bonding capacity). Principal Component Analysis (PCA) condenses these many dimensions into two or three primary principal components (PCs), which capture the most significant variance in the data. Solvents with similar properties cluster together on the resulting map, while dissimilar solvents are positioned far apart, providing an intuitive visual tool for comparing solvents and identifying potential substitutes. [19] [20]
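A toy version of this mapping fits in a short script. The sketch below standardizes three descriptors for six solvents and extracts the first two principal components by power iteration on the correlation matrix. It is a minimal illustration, not the ACS GCI tool's method or data set. The dielectric constants follow the values quoted earlier in this guide, while the dipole moments and boiling points are approximate literature values added as assumptions.

```python
import math

# Descriptors: dielectric constant, dipole moment (D), boiling point (deg C).
# Dipole moments and boiling points are approximate (assumption).
SOLVENTS = {
    "water": (78.0, 1.85, 100.0),
    "methanol": (33.0, 1.70, 65.0),
    "DMSO": (49.0, 3.96, 189.0),
    "DMF": (37.0, 3.82, 153.0),
    "acetonitrile": (38.0, 3.92, 82.0),
    "n-hexane": (1.9, 0.0, 69.0),
}


def standardize(rows):
    """Scale each descriptor column to zero mean and unit sample variance."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / (len(c) - 1))
            for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def matvec(mat, vec):
    return [dot(row, vec) for row in mat]


def leading_eigenpair(mat, iters=500):
    """Largest eigenvalue and its unit eigenvector via power iteration."""
    vec = [1.0] * len(mat)
    for _ in range(iters):
        new = matvec(mat, vec)
        norm = math.sqrt(dot(new, new))
        vec = [v / norm for v in new]
    return dot(vec, matvec(mat, vec)), vec


def pca_2d(rows):
    """Scores on the first two PCs and the fraction of variance they capture."""
    z = standardize(rows)
    n_obs, n_var = len(z), len(z[0])
    cov = [[sum(r[i] * r[j] for r in z) / (n_obs - 1) for j in range(n_var)]
           for i in range(n_var)]
    lam1, v1 = leading_eigenpair(cov)
    # Deflation: remove the first component, then repeat for the second.
    deflated = [[cov[i][j] - lam1 * v1[i] * v1[j] for j in range(n_var)]
                for i in range(n_var)]
    lam2, v2 = leading_eigenpair(deflated)
    scores = [(dot(r, v1), dot(r, v2)) for r in z]
    explained = (lam1 + lam2) / sum(cov[i][i] for i in range(n_var))
    return scores, explained


names = list(SOLVENTS)
scores, explained = pca_2d([SOLVENTS[name] for name in names])
for name, (pc1, pc2) in zip(names, scores):
    print(f"{name:>12}: PC1 = {pc1:+.2f}, PC2 = {pc2:+.2f}")
print(f"variance captured by two PCs: {explained:.0%}")
```

Solvents with similar descriptors, such as DMSO and DMF, land close together in the PC1/PC2 plane, while n-hexane sits far from both. The signs of the components are arbitrary, so only relative positions on the map carry meaning.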

How does this fit into a broader thesis on temperature and solvent interaction effects? Within a Design of Experiments (DoE) framework, understanding solvent space is a foundational step. Before optimizing reaction parameters like temperature, you must first select the candidate solvents to test. A PCA-based solvent map enables a rational, pre-screening selection of structurally diverse solvents for your DoE studies. This ensures that your experimental design efficiently explores the true range of solvent effects on your reaction, leading to more robust and predictive models of how temperature and solvent interactions influence yield, selectivity, and other critical responses. [21]

Table 1: Essential Research Tools and Resources for PCA-Based Solvent Selection

| Tool or Resource Name | Type | Key Function | Source/Reference |

|---|---|---|---|

| ACS GCI Solvent Selection Tool | Interactive Web Tool | Interactive PCA of 272 solvents based on 70 properties; allows filtering by functionality and greenness. | American Chemical Society Green Chemistry Institute Pharmaceutical Roundtable [20] |

| AI4Green / Solvent Surfer | Open-Source Software | An electronic laboratory notebook feature using interactive kernel PCA, allowing users to reshape the map with expert knowledge. | PMC [19] |

| CHEM21 Solvent Selection Guide | Database/Guide | Heuristic ranking of solvents as "Recommended", "Problematic", "Hazardous", or "Highly Hazardous" based on GHS hazards. | Pharmaceutical Roundtable Innovative Medicines Initiative [19] |

| Hansen Solubility Parameters (δD, δP, δH) | Descriptor Set | Quantifies dispersion forces, polar interactions, and hydrogen-bonding ability to predict solubility. | [19] |

| Kamlet–Abboud–Taft Parameters (α, β, π*) | Descriptor Set | Describes solvent hydrogen-bond acidity, basicity, and dipolarity/polarizability for linear solvation energy relationships. | [19] |

Table 2: Core Physical Property Descriptors for PCA [19]

| Descriptor | Units | Typical Range (Mean) | What It Represents |

|---|---|---|---|

| Molecular Weight | g mol⁻¹ | 18 - 179 (91) | Molecular size |

| Boiling Point | °C | 35 - 248 (120) | Volatility |

| Dielectric Constant | - | 1.8 - 89.8 (18.4) | Polarity |

| Dipole Moment | Debye | 0 - 4.8 (2.1) | Polarity |

| Vapor Pressure | mmHg | 0 - 538 (75) | Evaporation rate |

| Viscosity | cP | 0.2 - 16 (1.7) | Resistance to flow |

| Log P | - | -1.4 - 4.7 (0.8) | Hydrophobicity |

Experimental Protocols & Methodologies

Protocol: Building a Static PCA Solvent Map

Objective: To create a 2D map of solvents based on their inherent physical properties for initial substitute screening.

Materials & Data:

- Solvent List: A comprehensive list of target solvents (e.g., 57 to 272 solvents from research and process chemistry) [19] [20].

- Descriptor Set: A matrix of standardized numerical data for each solvent. The ACS tool uses 70 properties, while a more focused set may include 16 core properties, such as those listed in Table 2 [19] [20].

- Software: A statistical software package (e.g., Python with scikit-learn, R, Spotfire, or the pre-built ACS web tool).

Method:

- Data Collection & Standardization: Compile a data matrix where rows are solvents and columns are descriptor values. Standardize the data (e.g., Z-score normalization) to ensure all descriptors contribute equally, regardless of their original units [19].

- Perform PCA: Submit the standardized data matrix to PCA. The algorithm will calculate new, orthogonal variables (Principal Components) that are linear combinations of the original descriptors. The first PC (PC1) captures the greatest variance in the data, followed by PC2, and so on [19].

- Generate the 2D Map: Create a scatter plot using the scores of PC1 and PC2. Each point on the plot represents a single solvent.

- Interpretation: Analyze the loadings of the original descriptors on PC1 and PC2. Descriptors with high absolute loadings for a specific PC are the most influential in determining the solvent positions along that axis. Solvents close to each other on the map are functionally similar [20].
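
The four steps above can be sketched in a few lines. This is a minimal illustration rather than the ACS tool's implementation: it uses NumPy's SVD instead of scikit-learn, only three descriptors, and rounded textbook-style property values that are assumptions included purely to make the example run.

```python
import numpy as np

# Rounded, textbook-style values for illustration only (not a curated dataset).
# Columns: boiling point (deg C), dielectric constant, dipole moment (D).
solvents = ["water", "methanol", "ethanol", "acetone",
            "DMSO", "acetonitrile", "toluene", "hexane"]
X = np.array([
    [100.0, 80.1, 1.85],
    [64.7, 32.7, 1.70],
    [78.4, 24.5, 1.69],
    [56.1, 20.7, 2.88],
    [189.0, 46.7, 3.96],
    [81.6, 37.5, 3.92],
    [110.6, 2.4, 0.36],
    [68.7, 1.9, 0.00],
])

def pca_scores(data, n_components=2):
    """Z-score the columns, then project onto the leading principal components."""
    z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)
    # SVD of the standardized matrix: rows of vt are the PC loading vectors.
    u, s, vt = np.linalg.svd(z, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    loadings = vt[:n_components].T
    explained = (s ** 2) / np.sum(s ** 2)
    return scores, loadings, explained[:n_components]

scores, loadings, explained = pca_scores(X)
for name, (pc1, pc2) in zip(solvents, scores):
    print(f"{name:>12s}  PC1={pc1:+.2f}  PC2={pc2:+.2f}")
print("explained variance ratio:", np.round(explained, 2))
```

Plotting the two score columns against each other gives the 2D map; the `loadings` array shows which descriptors drive each axis.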

Protocol: Applying Interactive Knowledge-Based Kernel PCA

Objective: To tailor the solvent map by incorporating domain-specific knowledge or experimental results, creating a custom model for a particular reaction or process.

Materials & Data:

- An initial PCA solvent map (from the "Building a Static PCA Solvent Map" protocol above).

- Expert knowledge or experimental data (e.g., reaction yield, solubility) for a subset of "control point" solvents.

Method:

- Define Control Points: Select a few solvents on the existing map for which you have experimental data or strong expert knowledge.

- Impart Knowledge: Interactively drag these control points to new positions on the map that reflect their known performance. For example, group high-yielding solvents together in one region and poor-performing solvents in another [19].

- Recalculate the Map: The kernel PCA algorithm solves an optimization problem that maximizes the variance explained by the principal components while respecting the new user-defined constraints (the positions of your control points). The mathematical formulation is:

$$\max \; \mathrm{Var}(f_s) + \Omega\big(y_{si},\, f_s(x_i)\big)$$

where $\Omega$ is the term that incorporates the control point constraints [19].

- Identify New Substitutes: The map recalculates, and the positions of all other solvents shift based on the newly imposed relationships. Potential substitute solvents are those that now appear close to your high-performing control points [19].

Troubleshooting Common Experimental Issues

FAQ: My reaction performance doesn't correlate well with the standard PCA map. Why? The generic PCA map is based on broad physical properties, which may not capture the specific molecular interactions governing your reaction. Solution: Use the interactive kernel PCA approach (see the "Applying Interactive Knowledge-Based Kernel PCA" protocol). By providing just a few data points from your own reaction, you can reshape the map to reflect your specific "activity domain," making it a more accurate predictor for your system [19].

FAQ: I found a potential substitute solvent on the map, but it caused my polymer/resin to precipitate. What went wrong? The PCA map groups solvents by global similarity. Your formulation may be sensitive to a specific property like "solvent activity" or "solvation power" for your particular polymer. Solution: Cross-reference the PCA suggestion with a direct measurement of solvent activity. Prepare concentrated solutions of your polymer in the original and substitute solvents and measure their viscosities. The solvent that provides comparable viscosity reduction at the same concentration is the better functional substitute, even if it appears slightly farther away on the PCA map [22].

FAQ: How do I handle solvent blends in a PCA framework? PCA maps are typically built from data on pure solvents. Predicting the properties of a blend is non-trivial. Solution: Do not average solvent properties linearly. For key properties like evaporation rate, interactions between solvent molecules can make the blend's behavior very different from the weighted average. Use specialized software or laboratory testing to verify the properties of any solvent blend identified as a potential substitute [22].

FAQ: The "greenest" solvent on the map is too expensive or not available in my lab. What should I do? Solution: Use the PCA map's spatial relationships. Identify the cluster containing the ideal green solvent. Then, look for other solvents within the same cluster that have a better cost profile or are readily available to you. The CHEM21 guide within tools like AI4Green can help you quickly assess the greenness of these alternatives [19].

Integrating PCA with DoE for Temperature and Solvent Interaction Studies

How do I combine solvent mapping with DoE for a temperature study? A sequential, rational approach is most effective, as shown in the workflow below.

- Initial Screening: Select 4-6 solvents from the PCA map that are widely dispersed across the 2D space. This ensures maximum diversity in solvent properties for your initial DoE [21].

- Design the Experiment: Create a DoE where the factors include solvent (a categorical factor), temperature, and any other relevant process parameters. A response surface design (e.g., Central Composite Design) for the continuous factors, run at each level of the categorical solvent factor, is often appropriate.

- Run & Model: Execute the experiments and fit a statistical model to your response data (e.g., yield, purity). This model will contain interaction terms between solvent and temperature, quantitatively revealing how the solvent effect changes with temperature.

- Refine the Map: Feed the performance data from your DoE back into an interactive kernel PCA model. This will create a powerful, customized solvent map that visually encodes the complex solvent-temperature interactions you discovered, guiding all future solvent selections for this specific reaction [19].
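
The initial screening step, picking solvents that are widely dispersed across the map, can be approximated with a greedy farthest-point selection on the PC1/PC2 scores. The sketch below is one possible heuristic, not a method prescribed by the cited sources; the function name `pick_dispersed` and the score values are hypothetical.

```python
import numpy as np

def pick_dispersed(scores, k):
    """Greedy farthest-point selection of k rows from an (n, 2) score array."""
    scores = np.asarray(scores, dtype=float)
    # Start from the point farthest from the centroid of the map.
    centroid = scores.mean(axis=0)
    chosen = [int(np.argmax(np.linalg.norm(scores - centroid, axis=1)))]
    while len(chosen) < k:
        # Distance from every point to its nearest already-chosen point.
        d = np.linalg.norm(scores[:, None, :] - scores[chosen][None, :, :], axis=2)
        nearest = d.min(axis=1)
        nearest[chosen] = -1.0  # never re-pick a chosen point
        chosen.append(int(np.argmax(nearest)))
    return chosen

# Hypothetical PC1/PC2 scores for six solvents (made-up numbers).
demo_scores = np.array([[0.0, 0.0], [0.1, 0.1], [3.0, 0.0],
                        [0.0, 3.0], [3.0, 3.0], [1.5, 1.4]])
picked = pick_dispersed(demo_scores, 4)
print("selected row indices:", picked)
```

In this toy map the four corner points are selected, while the near-duplicate of the first point and the central point are skipped, which is the behavior wanted for a diverse screening set.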

Technical Support Center: Troubleshooting Guides & FAQs

This resource is designed to support researchers conducting experiments on solvent-solute interactions within a Design of Experiments (DoE) framework for drug development, focusing on the thermodynamic analysis of hydrophobic and hydrophilic effects [23] [24].

Frequently Asked Questions (FAQs)

Q1: My molecular dynamics (MD) simulations of solute association show erratic Gibbs free energy (ΔG) values. What could be wrong? A: Fluctuations in ΔG, or the potential of mean force (PMF), often stem from inadequate system equilibration or sampling. Ensure your simulation follows a rigorous protocol: use a sufficient equilibration period (e.g., >10 ns) in the NPT ensemble, employ a Langevin thermostat/piston to maintain correct temperature and pressure (e.g., 1 atm) [23], and verify that your production run is long enough to achieve convergence. High uncertainty can also arise from force-field parameters; cross-check the Lennard-Jones and Coulombic parameters for your solutes against established libraries [23].

Q2: When measuring solubility or association constants, my experimental results deviate significantly from published models (e.g., PC-SAFT). How should I proceed? A: First, verify your experimental conditions. For solubility measurements, ensure temperature control is precise (±0.1 K) using calibrated instruments, as small temperature changes greatly affect hydrophobic interactions [25] [24]. Second, confirm solvent purity and sample preparation. Models like PC-SAFT or Jouyban-Acree require accurate binary interaction parameters (kij). If using a predictive model (kij=0), expect larger deviations; fitting kij to at least four experimental data points per solvent system improves accuracy significantly [25].

Q3: My spectrophotometer readings for sample concentration are noisy, especially after changing lamps. How do I diagnose this? A: Noisy or erratic readings are classic signs of a failing lamp source. Spectrophotometer lamps have a finite lifespan, and light intensity fades over time, introducing "noise" [26]. To mitigate this:

- Turn off the lamp when the instrument is not in use to extend its life.

- Perform regular calibration using NIST-traceable standards at known absorbance values to verify linearity and wavelength accuracy [26].

- Ensure the sample compartment and cuvettes are meticulously clean, as contaminants in the light path cause erroneous readings [26] [27].

Q4: I am investigating protein cold denaturation. Why do hydrophobic interaction measurements at low temperatures sometimes show contradictory trends? A: The temperature dependence of hydrophobic interactions depends on solute size [24]. For small hydrophobic solutes (e.g., methane), the strength of interaction (negative ΔG) typically increases with temperature [23] [24]. However, for larger hydrophobic surfaces, the relationship can be non-monotonic. Your observations may be valid if your system crosses a critical size threshold. Re-examine the critical radius (Rc) for your solute; Rc decreases with increasing temperature, affecting whether the process is entropy-driven at high temperature or enthalpy-driven at low temperature [24]. Ensure your analysis separates the enthalpy (ΔH) and entropy (-TΔS) contributions from your PMF data [23].

Q5: During thermal analysis of my protein or polymer system, the software solver fails to converge or warns of an invalid temperature distribution. What steps can I take? A: This is common in models with high thermal gradients or mismatched material properties. Follow this checklist:

- Geometry & Mesh: Check for and repair poor-quality elements and ensure correct element normals [28].

- Thermal Couplings: Avoid "Perfect Contact" definitions. Use a "Thermal Coupling" with a high Heat Transfer Coefficient (e.g., 1e5 W/m²·°C) for interfaces where meshes don't match [28].

- Solver Parameters: Add a diagonal rescaling parameter (e.g., `GPARAM 12 731 -1E36`) to aid convergence when conductance values vary widely [28].

- Relaxation Factor: If the temperature difference doesn't decrease, reduce the solver's relaxation factor, especially with many temperature-dependent properties [28].

Protocol 1: Molecular Dynamics Calculation of PMF for Solute Association

This protocol is derived from studies on methane (hydrophobic) and water (hydrophilic) solutes [23].

- System Setup: Create an initial simulation box (e.g., 42.0 Å × 28.0 Å × 28.0 Å) with a pair of solutes and ~3000 solvent water molecules (e.g., TIP3P model).

- Force Field: Use explicit atomic parameters for solutes (e.g., OPLS-AA for methane) and water. Apply a switching function for non-bonded interactions between 10.0 Å and 12.0 Å cutoffs.

- Simulation Run: Perform simulations in the NPT ensemble. Maintain temperature (280-360 K) using a Langevin thermostat (damping coefficient ~1 ps⁻¹) and pressure (1 atm) using a Langevin piston.

- Umbrella Sampling: Restrain the distance between solute centers-of-mass with a harmonic potential. Run simulations at incremental distances (e.g., from 14.0 Å to contact in 0.25 Å steps). Each window should be simulated for >5 ns after equilibration.

- Data Analysis: Use the weighted histogram analysis method (WHAM) to unbias and combine the data from all windows into a single free-energy profile, giving the PMF (ΔG) as a function of solute separation.
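
Once an unbiased distance distribution P(r) is available (for example from WHAM), the PMF follows from Boltzmann inversion, W(r) = -RT ln P(r), referenced to zero at large separation. The sketch below illustrates only that last step on synthetic data. It assumes P(r) has already been corrected for the radial Jacobian, and the well depth and position are made-up numbers, not results from [23].

```python
import numpy as np

R_GAS = 8.314462618e-3  # gas constant in kJ mol^-1 K^-1

def pmf_from_distribution(prob, temperature):
    """Boltzmann inversion W(r) = -RT ln P(r), shifted to zero at the largest separation."""
    prob = np.asarray(prob, dtype=float)
    pmf = -R_GAS * temperature * np.log(prob)
    return pmf - pmf[-1]

# Synthetic check: build P(r) from a made-up contact well and recover it.
r = np.linspace(3.5, 14.0, 43)                    # separations in angstrom, 0.25 steps
w_true = -2.8 * np.exp(-((r - 3.9) / 0.5) ** 2)   # hypothetical well, kJ/mol
temperature = 320.0                               # K
p = np.exp(-w_true / (R_GAS * temperature))
p /= p.sum()                                      # normalize like a histogram
w_rec = pmf_from_distribution(p, temperature)
print(f"recovered minimum: {w_rec.min():.2f} kJ/mol at r = {r[w_rec.argmin()]:.2f} angstrom")
```

Repeating the analysis at several temperatures gives ΔG(T) at the contact minimum, which is the input needed for an enthalpy and entropy decomposition.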

Protocol 2: Experimental Solubility Measurement for DoE Modeling

Adapted from artemisinin solubility studies [25].

- Sample Preparation: Prepare binary solvent mixtures (e.g., toluene with n-heptane or ethanol) at varying volume fractions. Add excess solid solute (e.g., artemisinin) to each mixture.

- Equilibration: Place samples in sealed vials in a temperature-controlled agitated bath. Hold at constant temperature (e.g., 278.15 to 313.15 K) for >24 hours to reach equilibrium.

- Sampling & Analysis: Draw a saturated aliquot, filter rapidly to remove undissolved solid, and dilute as needed. Analyze concentration using a calibrated analytical method (e.g., HPLC, UV-Vis spectrophotometry [26] [27]).

- Data Fitting: Fit experimental solubility data to models like Jouyban-Acree (requires ~10 data points for reliable parametrization) or PC-SAFT (can use fewer points with fitted kij) [25].
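
For the data-fitting step, the Jouyban-Acree model expresses the mixture solubility as ln x_m = f1 ln x1 + f2 ln x2 + (f1 f2 / T) Σ J_i (f1 - f2)^i, where f1 and f2 are the solvent fractions in the solute-free mixture and x1, x2 are the solubilities in the pure solvents at the same temperature. Because the model is linear in the J_i constants, they can be estimated by ordinary least squares. The sketch below uses synthetic data generated from made-up constants to show the mechanics; the function names and all numerical values are assumptions, not values from [25].

```python
import numpy as np

def ja_terms(f1, temp, order=2):
    """Columns f1*f2*(f1-f2)^i / T for i = 0..order (Jouyban-Acree excess terms)."""
    f1 = np.asarray(f1, dtype=float)
    temp = np.asarray(temp, dtype=float)
    f2 = 1.0 - f1
    return np.column_stack([f1 * f2 * (f1 - f2) ** i / temp for i in range(order + 1)])

def fit_jouyban_acree(f1, temp, ln_x_mix, ln_x1, ln_x2, order=2):
    """Least-squares estimate of the J_i constants from measured log-solubilities."""
    f1 = np.asarray(f1, dtype=float)
    excess = np.asarray(ln_x_mix) - (f1 * ln_x1 + (1.0 - f1) * ln_x2)
    coeffs, *_ = np.linalg.lstsq(ja_terms(f1, temp, order), excess, rcond=None)
    return coeffs

# Synthetic data set: 5 solvent fractions x 3 temperatures = 15 points.
f1, temp = np.meshgrid([0.1, 0.3, 0.5, 0.7, 0.9], [278.15, 298.15, 313.15])
f1, temp = f1.ravel(), temp.ravel()
ln_x1 = 2.0 - 2000.0 / temp          # made-up van't Hoff lines for the pure solvents
ln_x2 = 1.0 - 2500.0 / temp
j_true = np.array([500.0, -200.0, 100.0])   # made-up model constants
ln_x_mix = f1 * ln_x1 + (1.0 - f1) * ln_x2 + ja_terms(f1, temp) @ j_true

j_fit = fit_jouyban_acree(f1, temp, ln_x_mix, ln_x1, ln_x2)
print("fitted J constants:", np.round(j_fit, 3))
```

With real measurements the fitted constants carry experimental noise, so the residuals should be inspected before the model is used for interpolation.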

Quantitative Data Summary

Table 1: Gibbs Free Energy (ΔG) of Association for Different Solute Pairs at Selected Temperatures

Data inferred from PMF minima in MD simulations [23].

| Solute Pair | Interaction Type | ΔG at 280 K (kJ/mol) | ΔG at 320 K (kJ/mol) | ΔG at 360 K (kJ/mol) | Trend with ↑ Temp |

|---|---|---|---|---|---|

| Methane-Methane | Hydrophobic (HϕO) | -2.1 | -2.8 | -3.5 | ΔG more negative |

| Water-Water (Bridged) | Hydrophilic-Bridged (HϕI) | -10.5 | -9.8 | -9.0 | ΔG less negative |

| Water-Water (H-Bond) | Direct H-Bond | -15.0 | -15.8 | -16.5 | ΔG more negative |

Table 2: Thermodynamic Components for Methane Association at 320 K

Based on dissection of the PMF into enthalpy and entropy contributions [23].

| Component | Contribution (kJ/mol) | Molecular Interpretation |

|---|---|---|

| Total ΔG | -2.8 | Favorable association |

| ΔH (Total) | +4.0 | Unfavorable enthalpic change |

| -TΔS (Total) | -6.8 | Very favorable entropic change |

| ΔH (Solute-Solvent) | +9.5 | Loss of favorable solute-water VDW contacts |

| ΔH (Solvent-Solvent) | -5.5 | Compensation from improved water-water H-bonds |
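
The rows of this table are linked by ΔG = ΔH - TΔS, and the two enthalpy components should sum to the total ΔH. A short consistency check, using only the values transcribed from the table, is a useful habit whenever a decomposition like this is reported. The implied entropy change is derived here from the tabulated -TΔS and is not a value quoted in [23].

```python
import math

# Values transcribed from the table above (kJ/mol, methane association at 320 K).
temperature = 320.0          # K
dG_total = -2.8
dH_total = 4.0
minus_TdS = -6.8
dH_solute_solvent = 9.5
dH_solvent_solvent = -5.5

# Enthalpy components must add up to the total enthalpy change.
assert math.isclose(dH_solute_solvent + dH_solvent_solvent, dH_total, abs_tol=1e-9)
# Gibbs energy must equal enthalpy plus the (-T*dS) term.
assert math.isclose(dH_total + minus_TdS, dG_total, abs_tol=1e-9)

# Entropy change implied by the tabulated -T*dS, converted to J mol^-1 K^-1.
dS_implied = -minus_TdS / temperature * 1000.0
print(f"implied entropy change: {dS_implied:.2f} J/(mol K)")
```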

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Thermodynamic Interaction Studies

| Item | Function / Relevance | Example/Note |

|---|---|---|

| Molecular Dynamics Software | To compute PMFs, enthalpies, and entropies via atomistic simulation. | NAMD [23], GROMACS. Critical for mechanistic insight. |

| Calibrated Thermostatic Bath | To maintain precise, stable temperatures for solubility/kinetics experiments. | Required for protocols in [25]. Accuracy < ±0.1 K. |

| Spectrophotometer & Cuvettes | For quantitative concentration analysis in solubility or binding assays. | Ensure regular lamp checks and calibration [26] [27]. Use quartz for UV. |

| NIST-Traceable Calibration Standards | To validate instrument accuracy (wavelength, absorbance) for reliable data. | Holmium oxide filter for wavelength; absorbance standards [26]. |

| PC-SAFT / Thermodynamic Model Software | To predict and correlate solubility in solvent mixtures, reducing experimental load. | Useful for DoE research on solvent effects [25]. |

| High-Purity Hydrophobic/Hydrophilic Probes | Model solutes for foundational studies. | Methane (HϕO) [23] [24]; Water, alcohols (HϕI) [23]. |

| Contact Temperature Measurement Kit | To diagnose experimental setup issues (e.g., thermal gradients). | Multimeter, RTD, thermocouple for troubleshooting [29]. |

Visualization of Concepts and Workflows

Diagram 1: MD PMF Study Workflow

Diagram 2: Temperature Effects on Protein Stability

Beyond Trial and Error: A Practical Framework for DoE Implementation in Process Development

Frequently Asked Questions

What is the main advantage of DoE over the OVAT approach? DoE allows you to study multiple variables and their interactions simultaneously. This is more efficient and reveals complex interaction effects that OVAT misses, such as how temperature and solvent polarity can jointly influence yield and compound integrity [30].

My experimental runs are expensive. How can DoE help with this? DoE is designed for efficiency. Strategic designs like Box-Behnken or Central Composite require fewer experimental runs to model complex, multi-factor systems, optimizing resource use while maximizing information gain [30].

I've designed my experiment, but the results show a lot of noise. What could be wrong? Uncontrolled environmental factors or measurement inconsistencies are likely causing this. Implement Quality by Design (QbD) principles and risk assessment tools like Failure Mode and Effects Analysis (FMEA) early in your workflow to identify and control these sources of variation [30].

How do I validate that my DoE model accurately predicts real-world outcomes? Use confirmation experiments. Run a few additional experiments at the optimal conditions predicted by your model. If the experimental results closely match the predictions, your model is considered validated and reliable.

A key piece of equipment failed during one of my experimental runs. How should I handle this? Do not simply ignore the failed run or substitute a value. Document the failure and its suspected cause. You may need to exclude the point from analysis and, if it creates a significant gap in your experimental design, potentially rerun it to maintain the model's integrity.

Troubleshooting Common Experimental Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Poor Model Fit | Significant factor interactions were not included in the initial model. | Re-analyze your data and add relevant interaction terms (e.g., Temperature*Solvent) to the model [30]. |

| Low Predictive Power | The experimental range for factors (e.g., temperature, solvent ratio) is too narrow. | Consider expanding the factor ranges in a subsequent experimental design, such as a Central Composite design, to better explore the response surface [30]. |

| Unexplained Variance in Results | Uncontrolled external factors or poor protocol consistency. | Introduce stricter process controls and utilize risk assessment tools (e.g., HACCP) within a QbD framework to ensure consistency [30]. |

| Difficulty Scaling Up | Optimal conditions from lab-scale DoE do not translate to larger systems. | Incorporate scale-up parameters (e.g., agitation rate, heating/cooling time) as factors in your DoE from the beginning to build a more robust model. |
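
The "Poor Model Fit" row can be made concrete with a small comparison: fit a main-effects-only model and a model that includes the Temperature*Solvent term, then compare the residual sums of squares. The example below is a sketch on synthetic, noise-free data with made-up coefficients and two solvents coded as -1 and +1; it is not taken from [30].

```python
import numpy as np

# Coded factors: temperature T in {-1, 0, +1}; solvent S = -1 or +1 (two solvents).
T, S = np.meshgrid([-1.0, 0.0, 1.0], [-1.0, 1.0])
T, S = T.ravel(), S.ravel()

# Synthetic, noise-free yields generated from made-up coefficients.
y = 60.0 + 5.0 * T + 3.0 * S + 4.0 * T * S

def fit(columns, response):
    """Ordinary least squares; returns coefficients and residual sum of squares."""
    X = np.column_stack(columns)
    beta, *_ = np.linalg.lstsq(X, response, rcond=None)
    rss = float(np.sum((response - X @ beta) ** 2))
    return beta, rss

ones = np.ones_like(T)
beta_main, rss_main = fit([ones, T, S], y)
beta_full, rss_full = fit([ones, T, S, T * S], y)
print("main effects only: RSS =", round(rss_main, 3))
print("with T*S term:     RSS =", round(rss_full, 3),
      "| interaction coefficient =", round(float(beta_full[3]), 3))
```

A large drop in residual error when the product term is added is the signature of a genuine interaction. With more than two solvents, the solvent factor needs dummy (indicator) coding and one product column per indicator.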

Experimental Protocols & Data Presentation

Protocol: Optimizing Phytochemical Extraction Using a Box-Behnken Design

This protocol outlines a generalized methodology for applying DoE to optimize extraction processes, focusing on temperature and solvent interactions [30].

- Define Objective and Responses: Clearly state your goal (e.g., "Maximize yield of sinapic acid"). Define measurable responses (e.g., extraction yield, purity, antioxidant activity).

- Select Critical Factors: Identify and select the factors to study. For this context, key factors are:

- Factor A: Extraction Temperature

- Factor B: Solvent Polarity (e.g., ethanol/water ratio)

- Factor C: Extraction Time

- Design the Experiment: Use statistical software to generate a Box-Behnken design for these three factors. This design is efficient for modeling quadratic response surfaces with fewer runs than a full factorial design.

- Execute Experiments: Perform the extractions in a randomized order to minimize the effects of uncontrolled variables.

- Analyze Data and Build Model: Input your response data into the software. Perform analysis of variance (ANOVA) to identify significant factors and generate a regression model.

- Validate the Model: Conduct confirmation experiments at the predicted optimal conditions to validate the model's accuracy.
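
As an illustration of the design and execution steps, the sketch below builds a three-factor Box-Behnken design in coded units (every pair of factors at ±1 with the third at its center, plus replicated center points), converts it to real settings, and randomizes the run order. Statistical software would normally do this for you. The factor centers and half-ranges are hypothetical and are not taken from [30].

```python
import itertools
import numpy as np

def box_behnken_3(n_center=3):
    """Three-factor Box-Behnken design in coded units (-1, 0, +1)."""
    runs = []
    for i, j in itertools.combinations(range(3), 2):
        for a, b in itertools.product((-1, 1), repeat=2):
            row = [0, 0, 0]
            row[i], row[j] = a, b      # two factors at their extremes, third at center
            runs.append(row)
    runs.extend([[0, 0, 0]] * n_center)  # replicated center points
    return np.array(runs)

design = box_behnken_3()

# Hypothetical settings: temperature (deg C), ethanol in water (% v/v), time (min).
centers = np.array([60.0, 60.0, 30.0])
half_ranges = np.array([20.0, 30.0, 20.0])
actual = centers + design * half_ranges

rng = np.random.default_rng(seed=1)
run_order = rng.permutation(len(design))   # randomized execution order
for run in run_order:
    print(f"run {run:2d}: T={actual[run, 0]:.0f} C, "
          f"EtOH={actual[run, 1]:.0f} %, t={actual[run, 2]:.0f} min")
```

This gives 15 runs, compared with 27 for a three-level full factorial in three factors.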

Quantitative Data on DoE Advantages

The following table summarizes the demonstrated benefits of moving from OVAT to a DoE-driven approach in green extraction technologies [30].

| Metric | OVAT Performance | DoE Performance (with Case Studies) | Improvement |

|---|---|---|---|

| Extraction Efficiency | Baseline | Up to 500% increase | ~5x improvement [30] |

| Solvent Consumption | Baseline | Significant reduction | More sustainable process [30] |

| Extraction Time | Baseline | Shortened | Faster research & development cycles [30] |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Sinapic Acid | A model hydroxycinnamic acid compound with proven antioxidant, anti-inflammatory, and neuroprotective properties; ideal for studying solvent and temperature effects [14]. |

| Solvents of Varying Polarity (e.g., water, ethanol, methanol, acetonitrile, chloroform) | Used to create a polarity gradient to investigate how solute-solvent interactions affect extraction yield, stability, and photophysical properties [14]. |

| Design Expert Software | A specialized software platform for designing experiments (e.g., Box-Behnken, Central Composite), performing statistical analysis, and building optimization models [30]. |

| Gaussian 09W & GaussView | Computational chemistry software used for molecular structure optimization and predicting molecular, photophysical, and thermodynamic properties via Density Functional Theory (DFT) [14]. |

Experimental Workflow Diagram

The diagram below illustrates the logical workflow for transitioning from a traditional OVAT approach to a systematic DoE methodology.

Frequently Asked Questions (FAQs)

General DoE Concepts

What is the main advantage of using Design of Experiments (DoE) over the one-factor-at-a-time (OFAT, also called OVAT) approach? DoE studies multiple factors simultaneously, which saves time and resources and provides deeper insights into process behavior, including how factors interact with one another. In contrast, OFAT can miss these critical interactions and is less efficient [31].

When should I use a screening design versus an optimization design? Screening designs, like fractional factorial designs, are used in the early stages of experimentation to identify which factors have the most significant effect on your response. Optimization designs, like Response Surface Methodology (RSM), are used later to model complex relationships and find optimal factor settings, especially when curvature is suspected in the response [32].

Fractional Factorial Designs

What is aliasing, and why is it important in fractional factorial designs? Aliasing, or confounding, occurs when two or more effects cannot be distinguished from each other because you haven't run every possible combination of factor levels. This is a fundamental characteristic of fractional factorial designs. When analyzing data, you may not be able to tell if a significant effect is due to a main effect or its aliased interaction. You must use your process knowledge to decide or perform follow-up experiments [32] [33].

What does the "resolution" of a fractional factorial design mean? Resolution indicates the degree of confounding in your design and what effects you can estimate clearly.

- Resolution III: Main effects are not confounded with each other but are confounded with two-factor interactions.

- Resolution IV: Main effects are not confounded with each other or with two-factor interactions, but two-factor interactions may be confounded with each other.

- Resolution V: Main effects and two-factor interactions are not confounded with each other [33].

Response Surface Methodology (RSM)

What is the goal of Response Surface Methodology? RSM aims to optimize a response (output variable) by exploring the relationships between several input variables. It is used to find factor settings that either maximize or minimize a response, and to understand the shape of the response surface, which may include curvature [34] [35].

My factors are categorical (e.g., different catalyst types). Can I use RSM? RSM designs are generally not applied to categorical factors. They are best suited for quantitative, continuous factors [32].

Troubleshooting Guides

Issue 1: Unclear or Confusing Results from a Screening Experiment

Problem: After running a fractional factorial design, the analysis shows that several effects are significant, but due to aliasing, you cannot determine the exact cause.

Solution:

- Apply the Sparsity Principle: Assume that not all effects are important. Higher-order interactions (e.g., three-factor interactions) are less likely to be significant than main effects and two-factor interactions [36] [33].

- Apply the Heredity Principle: An interaction effect is more likely to be significant if its parent main effects are also significant. Use this to help de-alias confounded effects [33].

- Perform Follow-up Experiments: Augment your initial design with additional experimental runs. This could involve testing the missing runs from the full factorial or adding only the runs needed to de-alias the specific effects of interest [33].

Issue 2: Failed Optimization Using RSM

Problem: You have performed an RSM analysis, but the predicted optimum does not yield the expected results in the lab, or the optimization algorithm seems stuck.

Solution:

- Check for Curvature: RSM is appropriate when there is curvature in the response. If you used a two-level factorial design initially and found no significant curvature, the relationship might be purely linear, and a simpler model is sufficient [32].

- Beware of Local Optima: Traditional RSM optimization using deterministic models can sometimes converge to a local optimum instead of the global best. A modern solution is to enhance RSM with metaheuristic algorithms (e.g., Differential Evolution, Particle Swarm Optimization), which are better at exploring the entire response surface and escaping local optima [35].

- Verify Model Adequacy: Check the fit of your response surface model. A lack-of-fit test or examining residual plots can tell you if the model is a poor predictor for certain regions of the experimental space.

Issue 3: Designing an Efficient DoE Campaign for a New Process

Problem: You are beginning a study on temperature and solvent interaction effects and are unsure how to structure your experiments efficiently.

Solution: Implement a sequential DoE strategy, as shown in the workflow below. This approach is highly efficient for moving from many factors to an optimized process.

Sequential DoE Workflow for Temperature and Solvent Studies

A real-world study on building performance provides a clear template. Researchers initially had eight factors (e.g., window-to-wall ratio and roof overhang on four orientations). They started with a Resolution V fractional factorial design (2^(8-2) = 64 runs) to screen for active factors. This screening identified three key factors, which they then used in a subsequent RSM optimization to find the optimal solution [37]. This demonstrates how a large number of potential factors can be efficiently reduced to a manageable set for in-depth optimization.

Experimental Protocols & Data Presentation

Protocol: Screening with a Fractional Factorial Design

This protocol is adapted from a case study investigating factors affecting a temperature field during a manufacturing process [38].

1. Define Objective and Response: Clearly state the goal. Example: "Screen factors affecting the maximum temperature in a chemical process."

2. Select Factors and Levels: Choose factors you suspect influence the response. For an initial screen, two levels (low and high) are sufficient.

Table: Example Factors and Levels for a Solvent & Temperature Study

| Factor | Low Level (-1) | High Level (+1) |

|---|---|---|

| Reaction Temperature | 50 °C | 70 °C |

| Solvent Polarity | Toluene | Acetonitrile |

| Catalyst Loading | 1 mol% | 2 mol% |

| Stirring Rate | 300 rpm | 600 rpm |

3. Select the Specific Design: Use statistical software to select a fractional factorial design with an appropriate resolution. For 4 factors, a 2^(4-1) design (8 runs) is a common starting point [33].

4. Randomize and Execute Runs: Randomize the order of experiments to avoid bias from lurking variables.

5. Analyze Data: Fit a statistical model and use half-normal plots or Pareto charts to identify significant effects. Remember to consider the aliasing structure [33].
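
The 2^(4-1) design mentioned in step 3 can be written out by hand, which also makes its aliasing structure visible. The sketch below assumes the common generator D = ABC (defining relation I = ABCD) and maps the coded levels onto the example factors from the table above; the choice of generator is an assumption, since statistical packages may pick a different but equivalent fraction.

```python
import itertools
import numpy as np

# Full 2^3 factorial in A, B, C; the fourth factor is generated as D = ABC.
base = np.array(list(itertools.product((-1, 1), repeat=3)))
A, B, C = base.T
D = A * B * C
design = np.column_stack([A, B, C, D])

# Low/high levels from the example table in this protocol.
levels = {
    "Reaction Temperature (deg C)": (50, 70),
    "Solvent": ("Toluene", "Acetonitrile"),
    "Catalyst Loading (mol%)": (1, 2),
    "Stirring Rate (rpm)": (300, 600),
}
for row in design:
    # Coded -1 maps to index 0 (low), +1 to index 1 (high).
    print([lv[(c + 1) // 2] for c, lv in zip(row, levels.values())])

# Aliasing check: with I = ABCD, the AB column is identical to the CD column.
print("AB aliased with CD:", np.array_equal(A * B, C * D))
```

Because the AB and CD columns are identical, a significant effect on that column cannot be attributed to either interaction from this design alone, which is why the aliasing structure has to be considered in step 5.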

Protocol: Optimization with Response Surface Methodology

This protocol is based on methodologies used to optimize chemical and biological processes [35] [37].

1. Define Objective: Example: "Maximize reaction yield by optimizing the three key factors identified during screening."

2. Select an RSM Design: Common choices are Central Composite Design (CCD) or Box-Behnken Design (BBD). CCDs are often built upon an existing two-level factorial design by adding axial and center points [32].

3. Define Factor Ranges: Set the low, center, and high levels for each factor based on your screening results.

4. Execute Runs: Perform the experiments in random order. RSM designs require more runs per factor than screening designs.

5. Model and Optimize:

- Fit a second-order (quadratic) model to the data.

- Use the model to generate a 3D response surface plot and a 2D contour plot to visualize the relationship between factors and the response [34].

- Find the factor settings that produce the maximum (or minimum) response. For multi-objective optimization, use a desirability function approach to balance multiple goals [37].
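
The modeling and optimization step can be sketched for two coded factors: fit the second-order model y = b0 + x'b + x'Bx by least squares, then locate the stationary point at x_s = -½ B⁻¹ b and check the eigenvalues of B to see whether it is a maximum. The example uses a rotatable central composite design and a synthetic, noise-free response with made-up coefficients. It illustrates the calculation and does not reproduce any cited study.

```python
import numpy as np

def quad_terms(x1, x2):
    """Model matrix for a full second-order model in two coded factors."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])

# Two-factor central composite design: 4 factorial, 4 axial, 3 center points.
a = np.sqrt(2.0)
pts = np.array([[-1, -1], [1, -1], [-1, 1], [1, 1],
                [-a, 0], [a, 0], [0, -a], [0, a],
                [0, 0], [0, 0], [0, 0]], dtype=float)
x1, x2 = pts[:, 0], pts[:, 1]

# Synthetic response from made-up coefficients: b0, b1, b2, b11, b22, b12.
true_beta = np.array([80.0, 3.0, -1.2, -3.0, -2.0, 0.5])
y = quad_terms(x1, x2) @ true_beta

beta, *_ = np.linalg.lstsq(quad_terms(x1, x2), y, rcond=None)
b = beta[1:3]
B = np.array([[beta[3], beta[5] / 2.0],
              [beta[5] / 2.0, beta[4]]])
x_stat = -0.5 * np.linalg.solve(B, b)                # stationary point (coded units)
is_max = bool(np.all(np.linalg.eigvalsh(B) < 0))     # all eigenvalues negative => maximum
y_stat = float(quad_terms(x_stat[:1], x_stat[1:]) @ beta)
print("stationary point (coded):", np.round(x_stat, 3),
      "| predicted response:", round(y_stat, 2), "| maximum:", is_max)
```

If the stationary point falls outside the design region, or the eigenvalues have mixed signs (a saddle), the prediction should not be trusted as an optimum, and a follow-up design in the indicated direction is the usual next step.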

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Temperature and Solvent Interaction DoE

| Item | Function in DoE |

|---|---|

| Solvent Library | A collection of solvents with varying polarity (e.g., cyclohexane, toluene, DMSO, methanol) to systematically study solvent effects on reaction outcomes like yield or purity [9]. |

| Temperature-Controlled Reactor | Provides precise and uniform control of reaction temperature as a key continuous factor in the experimental design [31]. |

| Statistical Software (e.g., JMP, R, Minitab) | Used to generate the design matrix, randomize run order, analyze results, build models, and create optimization plots [37] [33]. |

| Fractional Factorial Design | A structured experimental plan that allows for the efficient screening of a large number of factors with a minimal number of runs by strategically confounding high-order interactions [32] [33]. |

| Response Surface Design (e.g., CCD) | An experimental design used for optimization that models curvature and identifies optimal factor settings by fitting a quadratic model [32] [37]. |

Key Comparisons and Diagrams

The table below summarizes the core differences between full and fractional factorial designs, a distinction that is critical when selecting a screening approach.

Table: Comparison of Full vs. Fractional Factorial Designs

| Feature | Full Factorial Design | Fractional Factorial Design |

|---|---|---|

| Purpose | Identify all main effects and all interaction effects. | Screen many factors to identify the vital few; assumes sparsity of effects [36] [32]. |

| Number of Runs | 2^k (e.g., 4 factors = 16 runs). | 2^(k-p) (e.g., 4 factors = 8 runs for a 1/2 fraction) [32] [33]. |

| Aliasing | No aliasing; all effects can be estimated independently. | Effects are aliased (confounded); depending on the design resolution, certain interactions cannot be distinguished from main effects or from one another [33]. |

| Best For | When the number of factors is small (e.g., <5) or when all interactions must be estimated. | Early screening phases with many factors or when experimental resources are limited [32] [39]. |
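
The run counts and the aliasing behavior in the table can be checked directly. The sketch below builds a full 2^4 design and a half fraction, assuming the common generator D = ABC (an illustrative choice, not one taken from the cited sources), and compares contrast columns to see which effects become indistinguishable.

```python
from itertools import product

# Full 2^4 factorial: every combination of four two-level factors.
full = list(product((-1, 1), repeat=4))

# Half fraction 2^(4-1): a full 2^3 in A, B, C with D set to A*B*C.
half = [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]


def column(design, *idx):
    """Contrast column for the interaction of the factors at positions idx."""
    col = []
    for run in design:
        value = 1
        for i in idx:
            value *= run[i]
        col.append(value)
    return col


A, B, C, D = 0, 1, 2, 3

# In the half fraction, identical columns mean the effects are aliased.
ab_equals_cd = column(half, A, B) == column(half, C, D)
a_equals_bcd = column(half, A) == column(half, B, C, D)
a_equals_b = column(half, A) == column(half, B)

# In the full factorial the same two-factor interactions stay separate.
ab_equals_cd_full = column(full, A, B) == column(full, C, D)
```

With this generator the AB and CD columns coincide and A coincides with BCD, while main effects remain distinct from one another. If A were temperature and B solvent, their interaction could not be separated from CD in this fraction, which is worth checking before committing to a design.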

The following diagram illustrates the core philosophy of RSM, which is to model a curved response surface to find an optimum, unlike the linear models from a simple two-level factorial.

Evolution of Experimental Strategy

Frequently Asked Questions (FAQs)

Q1: Why is the strategic selection of factors like temperature and solvent identity so critical in Design of Experiments (DoE) for pharmaceutical development? The careful selection of factors is fundamental because they directly control critical quality attributes of the final product. Temperature and solvent identity, in particular, have a profound impact on reaction kinetics, solubility, and final product properties. Integrating these factors correctly in a DoE allows researchers to understand not just their individual effects, but also their complex interactions, leading to more robust and optimized processes while reducing experimental time and costs [40] [41] [42].
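
To make the idea of an interaction concrete, the following sketch computes main and interaction effects from a two-level, two-factor design. The four yield values are invented for illustration only, and the coding of temperature and solvent as -1/+1 is an assumption of the example.

```python
# Hypothetical yields (%) keyed by coded (temperature, solvent) levels.
yields = {
    (-1, -1): 60.0,  # low temperature, solvent A
    (+1, -1): 70.0,  # high temperature, solvent A
    (-1, +1): 65.0,  # low temperature, solvent B
    (+1, +1): 90.0,  # high temperature, solvent B
}


def effect(contrast):
    """Average response change between the +1 and -1 levels of a contrast."""
    return sum(contrast(t, s) * y for (t, s), y in yields.items()) / 2


temp_effect = effect(lambda t, s: t)       # 17.5
solvent_effect = effect(lambda t, s: s)    # 12.5
interaction = effect(lambda t, s: t * s)   # 7.5

# The same interaction seen directly: raising temperature gains 10 points
# in solvent A but 25 points in solvent B.
gain_in_a = yields[(1, -1)] - yields[(-1, -1)]
gain_in_b = yields[(1, 1)] - yields[(-1, 1)]
```

A one-variable-at-a-time study run only in solvent A would report the 10-point gain and miss the larger benefit available in solvent B.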

Q2: How can I model complex, non-linear relationships between process parameters and outcomes without an unmanageable number of experiments? Advanced machine learning models and surrogate modeling techniques are highly effective for this. For instance, Bayesian Neural Networks (BNN) and Neural Oblivious Decision Ensembles (NODE) have demonstrated excellent accuracy in capturing non-linear patterns, such as pharmaceutical solubility in binary solvents, even with limited data. Furthermore, employing optimal design criteria (like D-optimality) for selecting your training set of experiments ensures you gather the most informative data points, maximizing model reliability from a minimal number of runs [41] [42].

Q3: What is a practical method for optimizing multiple, potentially conflicting, responses simultaneously? The desirability function approach is a widely used and practical method for multi-response optimization. It involves transforming each response into an individual desirability value between 0 (undesirable) and 1 (fully desirable). These individual values are then combined into a single composite desirability score. Process parameters are then adjusted to maximize this composite score, thereby finding the best possible compromise to satisfy all your objectives at once [43].
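
A minimal sketch of this calculation is shown below. It assumes linear desirability ramps and a geometric mean for the composite score, which is a common formulation but not necessarily the one used in [43]; the response names, limits, and values are hypothetical.

```python
def desirability_max(y, low, target):
    """Larger-is-better: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return (y - low) / (target - low)


def desirability_min(y, target, high):
    """Smaller-is-better: 1 at or below `target`, 0 at or above `high`."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return (high - y) / (high - target)


def composite(ds):
    """Geometric mean of individual desirabilities (assumed combination rule)."""
    prod = 1.0
    for d in ds:
        prod *= d
    return prod ** (1.0 / len(ds))


# Hypothetical responses at one candidate setting.
d_yield = desirability_max(85.0, low=70.0, target=95.0)      # 0.6
d_impurity = desirability_min(1.0, target=0.5, high=2.0)     # about 0.667
overall = composite([d_yield, d_impurity])

# Any fully unacceptable response drives the composite score to zero.
rejected = composite([d_yield, desirability_min(2.5, target=0.5, high=2.0)])
```

The geometric mean is used here because it returns zero whenever a single response is unacceptable, so a high yield cannot compensate for an out-of-limit impurity.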

Q4: My process involves internal defects that are difficult to model. How can I optimize parameters in such a scenario? A data-driven approach combining a prediction model with a multi-objective optimization algorithm is well-suited for this challenge. For example, you can use a Random Forest model, which can establish a non-explicit relationship between process parameters and quality levels (including internal defects like porosity). This model can then be used as the objective function for a multi-objective optimization algorithm like NSGA-II to find the set of process parameters (Pareto solutions) that minimize these defects [44].
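
The notion of Pareto solutions can be illustrated without the full algorithm. The sketch below is not NSGA-II and contains no Random Forest model; it only shows the non-dominated filtering step on a handful of hypothetical candidates, where each candidate is a pair of objectives to be minimized (for example, predicted porosity and processing time).

```python
def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and strictly better
    in at least one objective (all objectives are minimized)."""
    no_worse = all(x <= y for x, y in zip(a, b))
    better = any(x < y for x, y in zip(a, b))
    return no_worse and better


def pareto_front(points):
    """Keep only the candidates that no other candidate dominates."""
    return [
        p for p in points
        if not any(dominates(q, p) for q in points if q != p)
    ]


# Hypothetical (porosity %, processing time in min) predictions.
candidates = [
    (1.0, 50.0),
    (2.0, 30.0),
    (1.5, 40.0),
    (2.5, 45.0),  # worse than (2.0, 30.0) on both objectives
    (1.0, 60.0),  # same porosity as (1.0, 50.0) but slower
]

front = pareto_front(candidates)
```

In a real workflow the objective values would come from the trained prediction model, and an evolutionary algorithm such as NSGA-II would generate and refine the candidates rather than filtering a fixed list.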

Troubleshooting Guides

Issue 1: Poor Predictive Accuracy of a Surrogate Model

Problem: A regression model developed to predict a key outcome (e.g., reaction rate, solubility) is performing poorly, leading to unreliable optimization.

| Potential Cause | Recommended Action | Relevant Example |

|---|---|---|

| Non-linear patterns in the data are not captured by a simple linear model. | Employ advanced machine learning models capable of handling non-linearities, such as Bayesian Neural Networks (BNN) or the Neural Oblivious Decision Ensemble (NODE) method. Fine-tune hyperparameters using algorithms like Stochastic Fractal Search (SFS). [42] | A study on rivaroxaban solubility showed BNN achieved a test R² of 0.9926, far superior to a polynomial model's R² of 0.8200. [42] |

| Suboptimal selection of training data points (e.g., solvents for a model). | Apply statistical optimality criteria like D-optimality when choosing your training set from the available options. A D-optimal set maximizes the information content, making it more likely to produce a reliable model with a small number of data points. [41] | For a model of solvent effects on reaction kinetics, selecting a D-optimal set of solvents from a space of possibilities was found to correlate strongly with good surrogate-model performance. [41] |

| Inadequate data preprocessing, leading to model instability or bias. | Implement a robust preprocessing pipeline: use one-hot encoding for categorical variables (e.g., solvent identity), normalize feature scales (e.g., Min-Max scaling), and detect/remove outliers using methods like the Elliptic Envelope. [42] | In solubility modeling, solvent types were one-hot encoded, and feature ranges were normalized to [0,1] using Min-Max scaling before model training. [42] |
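
The D-optimality idea in the second row can be sketched with a small exhaustive search: among all subsets of a given size, pick the one that maximizes det(XᵀX) for the intended model. The example below assumes a first-order model with an intercept and two descriptors per solvent. The solvent labels S1 to S6 and their descriptor values are placeholders, not measured solvatochromic parameters, and exhaustive search is only practical for small candidate sets.

```python
from itertools import combinations


def det(matrix):
    """Determinant by Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    n = len(m)
    result = 1.0
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(m[r][c]))
        if abs(m[pivot][c]) < 1e-12:
            return 0.0  # singular: the subset cannot support the model
        if pivot != c:
            m[c], m[pivot] = m[pivot], m[c]
            result = -result
        result *= m[c][c]
        for r in range(c + 1, n):
            f = m[r][c] / m[c][c]
            for j in range(c, n):
                m[r][j] -= f * m[c][j]
    return result


def information_det(rows):
    """det(X^T X) for a model matrix with an intercept column prepended."""
    X = [[1.0] + list(r) for r in rows]
    p = len(X[0])
    xtx = [[sum(x[i] * x[j] for x in X) for j in range(p)] for i in range(p)]
    return det(xtx)


def d_optimal_subset(candidates, n):
    """Exhaustively pick the n candidates that maximize det(X^T X)."""
    return max(
        combinations(candidates, n),
        key=lambda names: information_det([candidates[s] for s in names]),
    )


# Placeholder solvents with two scaled descriptors each (invented values).
solvents = {
    "S1": (0.0, 0.0),
    "S2": (1.0, 0.0),
    "S3": (0.0, 1.0),
    "S4": (1.0, 1.0),
    "S5": (0.5, 0.5),
    "S6": (0.4, 0.6),
}

best = d_optimal_subset(solvents, 4)
```

For these placeholder values the search selects the four solvents at the corners of the descriptor space, which reflects the general tendency of D-optimal sets to favor well-spread, extreme candidates over clustered ones.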

Issue 2: Suboptimal Process Outcomes Despite Parameter Adjustment

Problem: Even after varying known parameters, the process output (e.g., product strength, surface finish, temperature) does not meet the desired targets.

| Potential Cause | Recommended Action | Relevant Example |

|---|---|---|

| Ignored parameter interactions; factors are being optimized in isolation. | Use a Response Surface Methodology (RSM) design to fit a second-order model. This model can capture interaction effects between parameters (e.g., between temperature and holding time) and identify a true optimum that one-factor-at-a-time experiments would miss. [40] [43] | In heat treatment of dual-phase steel, a UDD-RSM model revealed how temperature and holding time interact, with optimal mechanical properties achieved at 800°C and 60 minutes. [40] |

| Key influencing factor has been overlooked in the experimental design. | Re-evaluate the system and include all suspected critical parameters. For example, in machining, the tool nose radius is a crucial factor alongside speed, feed, and depth of cut. Excluding it can prevent finding the true optimum. [43] | In CNC turning of Al 6061, the ideal parameter combination included a specific tool nose radius of 0.84 mm to achieve the minimum temperature of 23.6°C. [43] |

| Single-objective focus is causing trade-offs in other critical quality areas. | Adopt a multi-objective optimization framework. Define all critical responses and use a method like the desirability function or an algorithm like NSGA-II to find a parameter set that offers the best overall balance. [43] [44] | A method combining a Random Forest prediction model with the NSGA-II algorithm was used to optimize a laser metal deposition process for multiple quality levels and internal defects simultaneously. [44] |

Experimental Protocol: Optimizing a Process Using Response Surface Methodology (RSM)

This protocol outlines the key steps for using RSM to understand and optimize process parameters, integrating temperature and solvent effects.

1. Define Objectives and Parameters: Clearly state the primary objective (e.g., "Maximize yield," "Minimize internal defects"). Identify the critical process parameters (e.g., temperature, solvent composition, feed rate, holding time) and the responses to be measured (e.g., yield, hardness, surface roughness). [40] [43]

2. Select an Experimental Design: Choose an appropriate RSM design, such as Central Composite Design (CCD) or a User-Defined Design (UDD). This design will specify the number of experimental runs and the combination of factor levels for each run, ensuring the data is suitable for fitting a quadratic model. [40] [43]

3. Execute Experiments and Collect Data: Run the experiments in a randomized order to avoid systematic bias. Precisely control parameters like temperature and solvent composition and accurately measure the corresponding responses for each run. [40]

4. Develop and Validate the Model: Using statistical software, fit a second-order regression model to the experimental data. Validate the model's accuracy and significance through Analysis of Variance (ANOVA). Check that the R² value is acceptably high and that the lack-of-fit test is not significant. [40] [43]

5. Perform Optimization and Verification: Use the validated model to locate the optimal parameter settings. This can be done by analyzing response surface plots or using numerical optimization techniques like desirability function analysis. Finally, conduct a confirmation experiment at the predicted optimum to verify the model's accuracy. [43]
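
Steps 4 and 5 can be sketched for two coded factors as follows. The example fits the six-term quadratic model by ordinary least squares, computes R², and solves for the stationary point. The design is a CCD with three center points, and the response is generated from an invented, noise-free quadratic so that the fit can be checked against known coefficients. It is not data from [40] or [43], and it does not perform ANOVA or a lack-of-fit test, which statistical software would normally provide.

```python
def solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for c in range(n):
        pivot = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[pivot] = m[pivot], m[c]
        for r in range(n):
            if r != c:
                f = m[r][c] / m[c][c]
                for j in range(c, n + 1):
                    m[r][j] -= f * m[c][j]
    return [m[i][n] / m[i][i] for i in range(n)]


def quad_terms(x1, x2):
    """Model terms: intercept, x1, x2, x1*x2, x1^2, x2^2."""
    return [1.0, x1, x2, x1 * x2, x1 * x1, x2 * x2]


def predict(b, x1, x2):
    return sum(c * t for c, t in zip(b, quad_terms(x1, x2)))


def fit_quadratic(points, y):
    """Least-squares coefficients from the normal equations X^T X b = X^T y."""
    X = [quad_terms(*p) for p in points]
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)


def r_squared(points, y, b):
    mean = sum(y) / len(y)
    ss_res = sum((yi - predict(b, *p)) ** 2 for p, yi in zip(points, y))
    ss_tot = sum((yi - mean) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


def stationary_point(b):
    """Set the gradient of the fitted surface to zero and solve for x1, x2."""
    _, b1, b2, b12, b11, b22 = b
    return solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])


# CCD in coded units: 4 factorial, 4 axial, 3 center points.
a = 2 ** 0.5
points = [(-1, -1), (1, -1), (-1, 1), (1, 1),
          (-a, 0), (a, 0), (0, -a), (0, a),
          (0, 0), (0, 0), (0, 0)]

# Invented "true" surface used only to generate example responses.
true_b = [80.0, 3.0, -2.0, 1.5, -4.0, -3.0]
y = [predict(true_b, *p) for p in points]

b = fit_quadratic(points, y)
r2 = r_squared(points, y, b)
x_opt = stationary_point(b)

# Both pure quadratic terms negative and a positive Hessian determinant
# indicate that the stationary point is a maximum.
is_maximum = b[4] < 0 and (4 * b[4] * b[5] - b[3] ** 2) > 0
```

The center points matter for more than estimating pure error: with a rotatable two-factor CCD, all non-center points lie on one circle, so the two squared terms cannot be separated from the intercept without them. The predicted optimum should still be confirmed experimentally, as step 5 states.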

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and computational tools frequently used in the strategic optimization of processes involving temperature and solvent effects.

| Item Name / Category | Function / Application in Optimization |

|---|---|

| Solvatochromic Parameters (e.g., π*, α, β) | Empirical solvent descriptors used to build Linear Free Energy Relationships (LFERs) and multivariate regression models for predicting solvent effects on reaction rates and equilibria. [41] |

| Bayesian Neural Network (BNN) | A machine learning model that treats weights as probability distributions, providing robust predictions and quantifying uncertainty, which is ideal for data-scarce environments like pharmaceutical solubility prediction. [42] |

| Pseudorandom Binary Sequence (PRBS) | A designed input signal for dynamic testing in pilot plants; it efficiently provides rich spectral content for precise model parameter estimation and captures confounding effects of multiple variables. [17] |

| D-Optimal Design Criterion | A statistical criterion used to select the most informative set of experiments from a discrete set of options (e.g., solvents), maximizing model reliability from a minimal number of data points. [41] |

| One-Hot Encoding | A data preprocessing technique used to convert categorical variables (e.g., solvent identity) into a binary numerical format, allowing them to be incorporated into machine learning models without implying false order. [42] |

| NSGA-II (Non-dominated Sorting Genetic Algorithm II) | A powerful multi-objective optimization algorithm used to find a set of Pareto-optimal solutions when balancing multiple, competing process objectives, such as quality and productivity. [44] |

| Carbide Cutting Tools (e.g., Al₂O₃ coated) | Used in machining process optimization (e.g., CNC turning) where tool nose radius is a critical parameter interacting with speed and feed to influence outcomes like temperature and surface finish. [43] |

| Elliptic Envelope Algorithm | A statistical technique for outlier detection that assumes a multivariate normal distribution, helping to clean datasets and improve the reliability of data-driven models before training. [42] |
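
Two of the preprocessing items in the table, one-hot encoding and Min-Max scaling, are simple enough to sketch without a library. The helper names and the four example runs below are hypothetical; in practice a library such as scikit-learn would typically be used, and outlier detection is not shown here.

```python
def one_hot(values):
    """Encode categorical labels as binary indicator rows.

    Returns the sorted category list and one indicator row per value.
    """
    categories = sorted(set(values))
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows


def min_max(values):
    """Rescale numeric values linearly onto the interval [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


# Hypothetical experimental runs: solvent identity and temperature (degC).
solvents = ["DMSO", "methanol", "toluene", "DMSO"]
temperatures = [25.0, 40.0, 60.0, 80.0]

categories, solvent_columns = one_hot(solvents)
temperature_scaled = min_max(temperatures)

# Feature rows ready for model training: indicators plus scaled temperature.
features = [cols + [t] for cols, t in zip(solvent_columns, temperature_scaled)]
```

Each solvent gets its own indicator column, so the model is not led to treat the solvents as if they were points on an ordered scale.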

Strategic Factor Selection Workflow

The following diagram illustrates a systematic workflow for strategic factor selection and process optimization, integrating the methodologies discussed.

Quantitative Data from Key Experiments

Table 1: Optimization of Mechanical Properties in Dual-Phase Steel via Temperature and Holding Time [40]

| Temperature (°C) | Holding Time (min) | Hardness (HV) | Ultimate Tensile Strength (MPa) | Yield Strength (MPa) |

|---|---|---|---|---|

| 650 | 30 | 143.26 | 500.641 | 257.333 |

| 800 | 60 | 168.82 | 598.317 | 303.246 |

Table 2: Performance Comparison of Machine Learning Models for Pharmaceutical Solubility Prediction [42]

| Model Type | Test R² | Mean Squared Error (MSE) | Mean Absolute Percentage Error (MAPE) |

|---|---|---|---|

| Bayesian Neural Network (BNN) | 0.9926 | 3.07 × 10⁻⁸ | Not Specified |

| Neural Oblivious Decision Ensemble (NODE) | 0.9413 | Not Specified | 0.1835 |

| Polynomial Regression | 0.8200 | Higher error rates | Higher error rates |

Table 3: Optimized Machining Parameters for Minimum Temperature in CNC Turning [43]

| Parameter | Optimal Value |

|---|---|

| Cutting Speed | 98.0 m/min |

| Feed Rate | 0.26 mm/rev |

| Depth of Cut | 0.893 mm |

| Tool Nose Radius | 0.84 mm |

| Resulting Temperature | 23.615 °C |

Technical Support Center: Troubleshooting Guides & FAQs