Method Validation Parameters for Organic Analytical Techniques: A Guide to ICH Compliance and Best Practices



Abstract

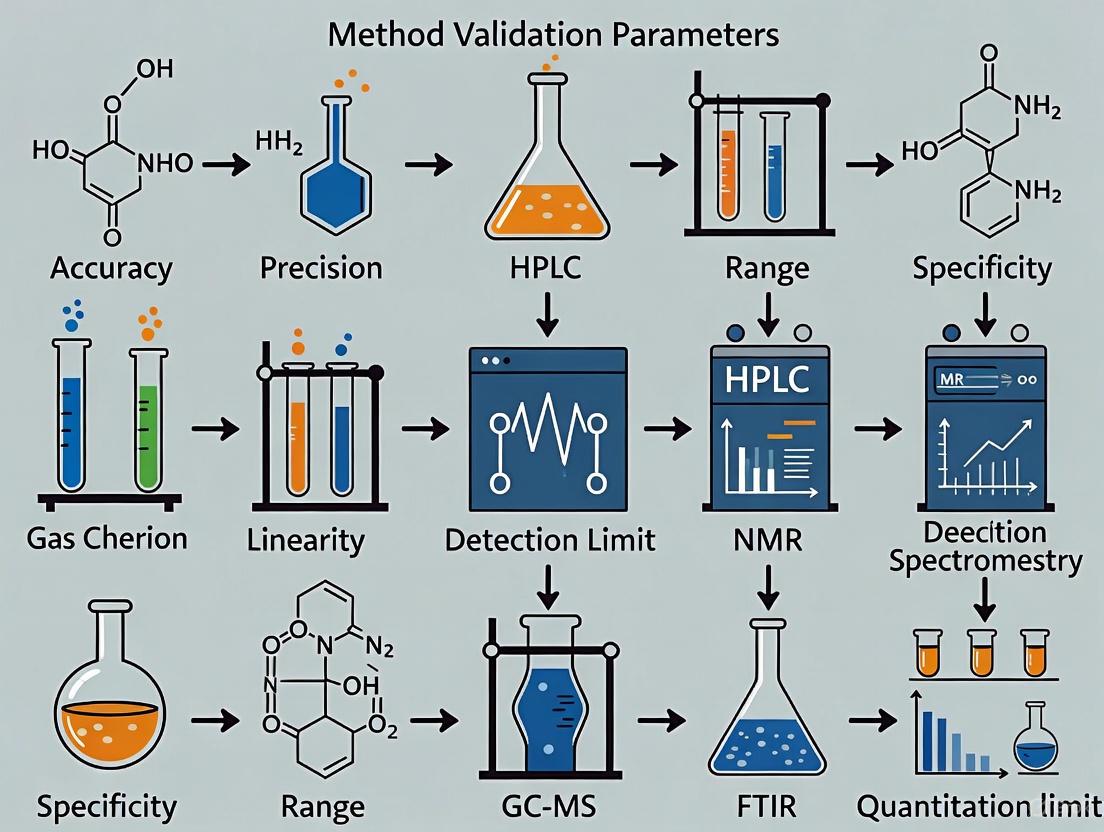

This article provides a comprehensive guide to method validation for researchers, scientists, and drug development professionals employing organic analytical techniques. It covers the foundational principles per ICH Q2(R2) and FDA guidelines, detailing core validation parameters like accuracy, precision, and specificity. The content extends to practical methodologies for HPLC and spectrophotometry, strategies for troubleshooting and robustness testing, and a comparative analysis of techniques. By embracing the modern, lifecycle-focused approach outlined in ICH Q14, this resource aims to empower professionals in developing reliable, compliant, and efficient analytical methods that ensure data integrity and patient safety.

The Pillars of Reliability: Understanding ICH and FDA Guidelines for Analytical Method Validation

FAQs on Analytical Method Validation

Q1: What is method validation and why is it necessary? Method validation is the process of proving that an analytical procedure is suitable for its intended purpose. It provides documented, objective evidence that a method consistently delivers results that meet pre-defined standards of accuracy and reliability [1]. It is a fundamental regulatory requirement [1] [2] and an essential part of Good Manufacturing Practice (GMP) to ensure the identity, strength, quality, purity, and potency of drug substances and products [3] [1].

Q2: When is analytical method validation required? Method validation is required in several key scenarios:

- Prior to the use of the method in routine testing [1].

- When the method is part of a regulatory submission, such as a New Drug Application (NDA) or Abbreviated New Drug Application (ANDA) [1].

- When significant changes are made to a previously validated method that are outside the original scope [1].

Q3: What are the key parameters evaluated during method validation? According to ICH Q2(R1) guidelines, the core validation characteristics include [1] [4]:

- Specificity: The ability to assess the analyte unequivocally in the presence of other components.

- Accuracy: The closeness of agreement between the accepted true value and the value found.

- Precision: The closeness of agreement between a series of measurements (repeatability, intermediate precision).

- Linearity: The ability to obtain test results proportional to the concentration of the analyte.

- Range: The interval between the upper and lower concentrations for which suitable levels of precision, accuracy, and linearity are demonstrated.

- Detection Limit (LOD): The lowest amount of analyte that can be detected.

- Quantitation Limit (LOQ): The lowest amount of analyte that can be quantified.

- Robustness: A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters.

Q4: How does 'fitness-for-purpose' influence validation? The "fitness-for-purpose" approach means that the level of validation rigor should be aligned with the method's intended application [5]. The method's position on the spectrum from a research tool to a critical clinical endpoint dictates the stringency of experimental proof required [5]. The validation must demonstrate that the method fulfills the specific requirements for its particular use [5].

Q5: What is the difference between method validation and verification?

- Method Validation establishes that a newly developed method is suitable for its intended use [1].

- Method Verification is the process of demonstrating that a compendial method (e.g., from USP) is suitable for use under the actual conditions in a specific laboratory [1].

Troubleshooting Common Method Validation Issues

Specificity and Interference Problems

| Problem | Root Cause | Solution |
|---|---|---|
| Inadequate Peak Separation | Insufficient method development; not all potential interferences considered. | Perform a thorough review of all potential interferences (sample matrix, solvents, buffers) during protocol design [4]. |
| Failing Acceptance Criteria | Use of generic, non-justified acceptance criteria from an SOP without assessing method capability [4]. | Review all acceptance criteria against known method performance data from development studies. Ensure they are scientifically sound [4]. |
| Method not Stability-Indicating | Failure to consider how the sample matrix may change over time (e.g., degradation) [4]. | For methods used in stability testing, include forced degradation studies in the validation to prove the method can separate degradation products [4]. |

Accuracy and Precision Failures

| Problem | Root Cause | Solution |
|---|---|---|
| High Imprecision (%CV) | Sample complexity causing interference; instrumentation issues; inadequate method optimization [2]. | Simplify sample preparation, optimize method parameters (e.g., mobile phase, column temperature), and ensure instrument qualification. |
| Inaccurate Results (Bias) | Poorly characterized reference standards; matrix effects; insufficient method robustness [2] [6]. | Use fully characterized, certified reference materials. Perform robustness testing during development to identify critical parameters. |
| Failed QC During Routine Use | Method not adequately optimized or validated for real-world variability; lack of system suitability testing [1]. | Incorporate system suitability tests as an integral part of the analytical procedure to ensure the system is working correctly at the time of analysis [1]. |

General Planning and Regulatory Mistakes

| Problem | Root Cause | Solution |
|---|---|---|
| Regulatory Deficiencies | Using a "cookie-cutter" approach; not considering the uniqueness of each New Chemical Entity (NCE) or API [3]. | Design the validation study based on a deep understanding of the molecule's physicochemical properties (solubility, pH, pKa, stability) [3] [2]. |
| Inefficient Tech Transfer | Not thinking ahead to method transfer during the initial validation [3]. | Plan for peer, QA, and regulatory review from the start. Optimize methods so they can be easily validated and transferred to a QC lab [3]. |
| Incomplete Reporting | Only reporting results that fall within acceptable limits during a regulatory submission [2]. | Report all validation data, both passing and failing. The FDA may request a complete dataset for review [2]. |

Experimental Protocols for Key Validation Tests

Protocol for Specificity (For a Stability-Indicating Method)

Objective: To demonstrate that the method can accurately quantify the analyte in the presence of other components like impurities, degradation products, or matrix components.

Materials:

- Analyte Standard: High-purity reference standard.

- Placebo/Blank: Sample matrix without the analyte.

- Stressed Samples: Analyte samples subjected to forced degradation (acid, base, oxidation, heat, light).

Procedure:

- Inject the placebo/blank preparation. The chromatogram should show no interfering peaks at the retention time of the analyte.

- Inject the analyte standard to confirm its retention time and peak characteristics.

- Inject individually stressed samples. The analyte peak should be resolved from any degradation peaks, typically with a resolution (Rs) of not less than 2.0 [4].

- Assess peak purity using a Diode Array Detector (DAD).

Acceptance Criteria:

- The placebo/blank shows no interference.

- The analyte peak is pure and baseline separated from all degradation peaks (Rs ≥ 2.0).

- The assay result for each stressed sample is calculated from the analyte peak alone, excluding all degradation peaks.
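The Rs ≥ 2.0 criterion above can be checked numerically. The sketch below assumes the common pharmacopoeial formula based on baseline (tangent) peak widths, Rs = 2(t2 - t1)/(w1 + w2). The function name and the retention data are hypothetical, and many chromatography data systems report a half-height variant instead.

```python
def resolution(t1, w1, t2, w2):
    """Resolution between two peaks from retention times and baseline
    peak widths, all in the same time units (assumed tangent-width formula)."""
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)


# Hypothetical peaks: closest degradant at 6.10 min, analyte at 7.00 min,
# both 0.40 min wide at the baseline.
rs = resolution(6.10, 0.40, 7.00, 0.40)
meets_criterion = rs >= 2.0
print(f"Rs = {rs:.2f}, meets Rs >= 2.0: {meets_criterion}")
```

In practice the check would be repeated for every stressed sample, using the degradation peak that elutes closest to the analyte.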

Protocol for Linearity and Range

Objective: To demonstrate that the analytical procedure produces results that are directly proportional to the concentration of the analyte within a given range.

Materials:

- Stock Solution: A primary stock solution of the analyte at a concentration near the top of the expected range.

- Dilutions: A series of minimum 5 concentrations prepared from the stock solution, covering the entire range (e.g., 50% to 150% of the target concentration).

Procedure:

- Prepare each linearity level in duplicate or triplicate.

- Inject each level into the chromatographic system.

- Plot the mean peak response (e.g., area) against the concentration.

- Perform a linear regression analysis on the data to obtain the correlation coefficient (r), slope, and y-intercept.

Acceptance Criteria:

- A correlation coefficient (r) of not less than 0.999 is typically expected for assay methods.

- The y-intercept should not be significantly different from zero.
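As an illustration of the regression step, the following minimal sketch computes the slope, y-intercept, and correlation coefficient by ordinary least squares using only the standard library. The concentrations and peak areas are invented for the example, and expressing the intercept as a percentage of the 100% response is one common convention rather than a requirement stated in the protocol.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares fit; returns (slope, intercept, r)."""
    n = len(x)
    if n != len(y) or n < 5:
        raise ValueError("need at least 5 paired concentration/response values")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


# Hypothetical data: % of target concentration vs. mean peak area.
conc = [50.0, 75.0, 100.0, 125.0, 150.0]
area = [1002.0, 1498.0, 2005.0, 2496.0, 3001.0]

slope, intercept, r = linear_fit(conc, area)
# Intercept relative to the fitted response at the 100% level (assumed convention).
intercept_pct = 100.0 * intercept / (slope * 100.0 + intercept)
print(f"slope={slope:.3f} intercept={intercept:.3f} r={r:.5f} "
      f"intercept={intercept_pct:.2f}% of 100% response")
```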

Protocol for Accuracy (Recovery)

Objective: To establish the closeness of agreement between the measured value and the true value.

Materials:

- Placebo/Blank: Known amount of the sample matrix without the analyte.

- Analyte Standard: To spike the placebo at three concentration levels (e.g., 80%, 100%, 120%), with a minimum of three replicates per level.

Procedure:

- Spike known quantities of the analyte into the placebo.

- Analyze each sample using the validated method.

- Calculate the recovery (%) for each sample using the formula: (Measured Concentration / Theoretical Concentration) x 100.

Acceptance Criteria:

- Mean recovery at each level should be between 98.0% and 102.0% for drug substance assay.

- The Relative Standard Deviation (RSD) for the replicates at each level should be NMT 2.0%.
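The recovery formula and acceptance limits above translate directly into a short calculation. This is a sketch with made-up spiked-placebo results; the dictionary layout and function names are assumptions for illustration only.

```python
from statistics import mean, stdev


def recovery_percent(measured, theoretical):
    """(Measured Concentration / Theoretical Concentration) x 100."""
    return measured / theoretical * 100.0


def percent_rsd(values):
    """Relative standard deviation (sample SD / mean) in percent."""
    return stdev(values) / mean(values) * 100.0


# Hypothetical data: level -> (theoretical amount, three measured replicates).
spiked = {
    "80%": (80.0, [79.6, 80.3, 79.9]),
    "100%": (100.0, [99.5, 100.4, 100.1]),
    "120%": (120.0, [119.2, 120.5, 120.9]),
}

summary = {}
for level, (theoretical, measured) in spiked.items():
    recoveries = [recovery_percent(m, theoretical) for m in measured]
    mean_rec = mean(recoveries)
    rsd = percent_rsd(recoveries)
    # Limits taken from the acceptance criteria above (98.0-102.0%, RSD NMT 2.0%).
    passed = 98.0 <= mean_rec <= 102.0 and rsd <= 2.0
    summary[level] = (mean_rec, rsd, passed)
    print(f"{level}: mean recovery {mean_rec:.2f}%, RSD {rsd:.2f}%, pass={passed}")
```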

Method Validation Workflow and Decision Pathway

The following diagram illustrates the key stages and decision points in the analytical method lifecycle, from development through to routine use.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials required for successful method development and validation, particularly for chromatographic techniques like HPLC.

| Item | Function & Importance | Key Considerations |
|---|---|---|
| Certified Reference Standards | Serves as the benchmark for quantifying the analyte and establishing method accuracy [5]. | Must be of high and documented purity, fully characterized, and representative of the analyte [1] [5]. |
| Chromatographic Column | The heart of the separation; critical for achieving specificity, resolution, and reproducibility. | Column chemistry (C18, C8, etc.), dimensions, and particle size must be specified. Robustness testing should evaluate column lot-to-lot variability [7]. |
| High-Purity Solvents & Reagents | Used to prepare the mobile phase and sample solutions. | Impurities can cause baseline noise, ghost peaks, and interfere with detection, compromising accuracy and LOD/LOQ [2]. |
| System Suitability Standards | Verifies that the total chromatographic system is adequate for the intended analysis at the time of testing. | A mixture containing the analyte and key impurities is used to measure parameters like plate count, tailing factor, and resolution before a run [1]. |
| Stable Sample Matrix | Essential for accuracy (recovery) studies, especially for complex formulations. | The placebo or blank matrix must be free of the analyte and representative of the final product composition to reliably assess interference [2]. |

The International Council for Harmonisation (ICH) is a unique project that brings together regulatory authorities and the pharmaceutical industry to discuss the scientific and technical aspects of pharmaceutical product development and registration. Its mission is to achieve greater harmonization worldwide to ensure that safe, effective, and high-quality medicines are developed and registered in the most resource-efficient manner [8] [9]. Launched in 1990, the ICH's work is accomplished through the development of internationally harmonized guidelines [8].

The U.S. Food and Drug Administration (FDA) has participated in the ICH as a Founding Member since 1990 and implements all ICH Guidelines as FDA Guidance. The FDA's Center for Drug Evaluation and Research (CDER) plays a pivotal leadership role within the ICH framework, proposing new topics, leading expert working groups, and adopting final guidelines [8] [9].

Key Benefits of Harmonization

Regulatory harmonization through the ICH provides significant benefits [8] [9]:

- Reduced duplication of clinical testing and animal studies

- More efficient regulatory review processes

- Faster patient access to new medicines

- Promotion of public health by minimizing unnecessary testing

- Prevention of unnecessary duplication of clinical trials in humans

ICH Guidelines: Core Principles and Structures

The ICH develops guidelines through an established process involving technical expert working groups. As of 2022, over 700 experts from regulatory agencies and industry were involved across 34 working groups [9].

Primary Areas of Harmonization

ICH guidelines cover four primary areas of technical requirements [9]:

- Quality (Q Series): Addressing stability, impurities, manufacturing, and pharmaceutical development.

- Safety (S Series): Covering carcinogenicity, genotoxicity, reproductive toxicology, and other non-clinical studies.

- Efficacy (E Series): Encompassing good clinical practices, clinical trial design, and therapeutic area evaluation.

- Multidisciplinary (M Series): Including computational modeling, electronic standards, terminology, and biopharmaceutics.

Frequently Asked Questions (FAQs)

Which specific ICH guidelines are most critical for analytical method validation?

For analytical method validation, the most critical ICH guideline is ICH Q2(R1) - Validation of Analytical Procedures. This guideline defines key validation parameters and their acceptance criteria that ensure your analytical methods are suitable for their intended use. Additional relevant guidelines include ICH Q1 (Stability Testing), ICH Q3 (Impurities), and ICH M7 (Assessment and Control of DNA Reactive (Mutagenic) Impurities in Pharmaceuticals to Limit Potential Carcinogenic Risk) [10] [9].

How does the FDA's adoption of ICH guidelines impact method validation requirements?

When the FDA adopts an ICH guideline, it becomes part of the FDA's official guidance for industry. This means that compliance with ICH Q2(R1) is effectively a regulatory requirement for FDA submissions. The FDA encourages global implementation of ICH guidelines to facilitate mutual acceptance of clinical data and reduce redundant testing across different regions [8] [11].

What are the essential validation parameters required for chromatographic methods?

For chromatographic methods like HPLC, you must validate a core set of performance characteristics as defined in ICH Q2(R1). The essential parameters are often referred to as the key steps of analytical method validation [10]:

Table 1: Essential Method Validation Parameters for Chromatographic Methods

| Validation Parameter | Definition | Typical Acceptance Criteria |
|---|---|---|
| Accuracy | Closeness of agreement between accepted reference value and value found. | Measured as % recovery; 9 determinations over 3 concentration levels [10]. |
| Precision | Closeness of agreement between individual test results from repeated analyses. | Includes repeatability (intra-assay) and intermediate precision (inter-assay); reported as %RSD [10]. |
| Specificity | Ability to measure analyte accurately in the presence of other components. | Demonstrated by resolution, plate count, tailing factor, and peak purity tests [10]. |
| LOD/LOQ | Lowest concentration of analyte that can be detected (LOD) or quantitated (LOQ). | LOD: S/N ≈ 3:1; LOQ: S/N ≈ 10:1 [10]. |
| Linearity | Ability of method to obtain results proportional to analyte concentration. | Minimum of 5 concentration levels; reported with coefficient of determination (r²) [10]. |
| Range | Interval between upper and lower concentrations with acceptable precision, accuracy, and linearity. | Defined based on method type (e.g., assay: 80-120% of target concentration) [10]. |
| Robustness | Capacity of method to remain unaffected by small, deliberate variations in method parameters. | Measure of reliability during normal use [10]. |

How should I document accuracy and precision for my analytical method?

- Accuracy: Document by collecting data from a minimum of nine determinations over a minimum of three concentration levels covering the specified range. Report as the percent recovery of the known, added amount, or as the difference between the mean and true value with confidence intervals [10].

- Precision: Document at multiple levels:

  - Repeatability: Analyze a minimum of nine determinations covering the specified range or six determinations at 100% of the target concentration. Report as %RSD.

  - Intermediate Precision: Demonstrate the effects of random events (different days, analysts, equipment) using an experimental design. Compare results statistically (e.g., Student's t-test) [10].

What is the recommended approach for establishing system suitability?

System suitability is a critical step that verifies the analytical system's performance before and during the analysis. While parameters vary by method, they typically include precision, resolution, tailing factor, and plate count based on a standard solution. System suitability tests confirm that the entire system (instrument, reagents, columns, and analyst) is functioning correctly and can generate reliable data [10].
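A system suitability check of this kind can be sketched as a few calculations compared against limits. The example assumes the widely used pharmacopoeial formulas for plate count from the baseline (tangent) peak width, N = 16(tR/W)², and tailing factor, T = W0.05/(2f). The limits, peak measurements, and names below are hypothetical; real limits come from the validated method or the applicable monograph.

```python
from statistics import mean, stdev


def plate_count(t_r, w_base):
    """Theoretical plates from retention time and baseline (tangent) peak width."""
    return 16.0 * (t_r / w_base) ** 2


def tailing_factor(w_005, f_005):
    """Peak width at 5% height divided by twice the front half-width at 5% height."""
    return w_005 / (2.0 * f_005)


def percent_rsd(values):
    """Relative standard deviation in percent."""
    return stdev(values) / mean(values) * 100.0


# Hypothetical limits, for illustration only.
LIMITS = {"plates_min": 2000.0, "tailing_max": 2.0, "rsd_max": 2.0}

# Hypothetical measurements from replicate injections of a standard solution.
areas = [20051.0, 20102.0, 19987.0, 20044.0, 20075.0]
n_plates = plate_count(t_r=7.0, w_base=0.40)
tailing = tailing_factor(w_005=0.30, f_005=0.13)
area_rsd = percent_rsd(areas)

sst_pass = (
    n_plates >= LIMITS["plates_min"]
    and tailing <= LIMITS["tailing_max"]
    and area_rsd <= LIMITS["rsd_max"]
)
print(f"N={n_plates:.0f}, T={tailing:.2f}, area %RSD={area_rsd:.2f}, pass={sst_pass}")
```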

Troubleshooting Common Method Validation Issues

Problem: Failure to Meet Precision Criteria

Potential Causes and Solutions:

- Cause 1: Inconsistent sample preparation.

- Solution: Standardize and rigorously control sample preparation techniques. Ensure all analysts are trained using the same protocol.

- Cause 2: Instrument fluctuations (flow rate, temperature, detector noise).

- Solution: Perform robust instrument qualification (IQ/OQ/PQ). Establish and monitor system suitability criteria with tighter control limits during the method development phase.

- Cause 3: Column variability.

- Solution: Specify column brand, dimensions, and particle size in the method. Consider qualifying multiple columns or suppliers.

Problem: Lack of Specificity/Resolution

Potential Causes and Solutions:

- Cause 1: Co-elution of the analyte peak with impurities or matrix components.

- Solution 1: Optimize chromatographic conditions (mobile phase composition, pH, gradient profile, temperature).

- Solution 2: Employ a peak purity test using a Photodiode-Array (PDA) detector or Mass Spectrometry (MS) to confirm a single component. MS detection provides unequivocal peak purity information [10].

- Cause 2: Inadequate method development.

- Solution: Conduct forced degradation studies (stress testing) on the API and drug product to ensure the method can separate degradants from the main peak.

Problem: Poor Recovery (Accuracy)

Potential Causes and Solutions:

- Cause 1: Incomplete extraction of the analyte from the matrix.

- Solution: Re-optimize the extraction procedure (e.g., solvent strength, volume, sonication time, homogenization speed).

- Cause 2: Analyte degradation or adsorption during sample preparation.

- Solution: Use stabilized solvents, control temperature, and use appropriate container materials to prevent adsorption.

Experimental Protocol: Conducting a Full Method Validation

This protocol outlines the key experiments for validating a chromatographic method (e.g., HPLC-UV) for a small molecule drug substance, following ICH Q2(R1) principles [10].

Scope Definition

- Define the method's purpose (e.g., assay, related substances).

- Define the analytical range.

Specificity

- Procedure: Inject blank (matrix without analyte), standard, sample, and samples spiked with potential impurities/degradants.

- Data Analysis: Ensure the analyte peak is pure and free from interference. Use PDA or MS for peak purity confirmation. Report resolution between the analyte and the closest eluting peak.

Linearity and Range

- Procedure: Prepare and analyze a minimum of 5 concentrations of analyte solution spanning the defined range (e.g., 50-150% of target concentration for assay).

- Data Analysis: Plot peak response vs. concentration. Calculate the regression line (y = mx + b) and the coefficient of determination (r²). Evaluate residuals.

Accuracy

- Procedure: Prepare and analyze samples in triplicate at three concentration levels (e.g., 80%, 100%, 120%) within the range. For drug products, spike known amounts of analyte into a placebo mixture.

- Data Analysis: Calculate the mean % recovery and the relative standard deviation (%RSD) at each level.

Precision

- A. Repeatability:

  - Procedure: Analyze six independent samples at 100% of the test concentration by the same analyst on the same day with the same equipment.

  - Data Analysis: Calculate the %RSD of the results.

- B. Intermediate Precision:

  - Procedure: Repeat the repeatability study on a different day, with a different analyst, and on a different instrument (a full or partial factorial design can be used).

  - Data Analysis: Calculate the overall %RSD combining both sets of data. Statistically compare the means from the two analysts (e.g., using a t-test).
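The precision data analysis can be illustrated with a short calculation. The sketch below computes %RSD per analyst, the combined %RSD, and a pooled-variance two-sample t statistic. The assay values are invented, the equal-variance form of the t-test is an assumption, and the critical value 2.228 applies only to this case (two-sided, α = 0.05, 10 degrees of freedom).

```python
from math import sqrt
from statistics import mean, stdev, variance


def percent_rsd(values):
    """Relative standard deviation in percent."""
    return stdev(values) / mean(values) * 100.0


def pooled_t_statistic(a, b):
    """Two-sample t statistic assuming equal variances (pooled variance)."""
    n1, n2 = len(a), len(b)
    pooled_var = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / (n1 + n2 - 2)
    return (mean(a) - mean(b)) / sqrt(pooled_var * (1.0 / n1 + 1.0 / n2))


# Hypothetical assay results (% label claim), six preparations per analyst.
analyst_1 = [99.8, 100.2, 100.1, 99.7, 100.4, 99.9]
analyst_2 = [100.3, 99.9, 100.6, 100.1, 100.5, 100.0]

rsd_1 = percent_rsd(analyst_1)
rsd_2 = percent_rsd(analyst_2)
overall_rsd = percent_rsd(analyst_1 + analyst_2)
t_stat = pooled_t_statistic(analyst_1, analyst_2)

T_CRIT = 2.228  # two-sided, alpha = 0.05, df = 6 + 6 - 2 = 10
means_differ = abs(t_stat) > T_CRIT
print(f"RSD1={rsd_1:.2f}% RSD2={rsd_2:.2f}% overall={overall_rsd:.2f}% "
      f"t={t_stat:.3f} significant={means_differ}")
```

If the variances of the two data sets differ markedly, Welch's unequal-variance test would be the safer choice.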

LOD and LOQ

- Procedure: Prepare serial dilutions of a standard solution.

- Data Analysis:

  - Signal-to-Noise (S/N): Inject diluted solutions and calculate S/N. LOD is typically S/N ≥ 3, LOQ is S/N ≥ 10.

  - Standard Deviation of Response: LOD = 3.3(SD/S), LOQ = 10(SD/S), where SD is the standard deviation of the response and S is the slope of the calibration curve.
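Both approaches can be expressed in a few lines. In this sketch the S/N calculation assumes the pharmacopoeial convention S/N = 2H/h (peak height over peak-to-peak baseline noise), and SD is estimated from replicate blank responses, which is only one of several accepted ways to obtain it. All numbers and names are hypothetical.

```python
from statistics import stdev


def signal_to_noise(peak_height, noise_peak_to_peak):
    """S/N as 2H/h (assumed convention: H = peak height, h = peak-to-peak noise)."""
    if noise_peak_to_peak <= 0:
        raise ValueError("noise must be positive")
    return 2.0 * peak_height / noise_peak_to_peak


def lod_loq_from_sd(sd_response, slope):
    """LOD = 3.3(SD/S) and LOQ = 10(SD/S), in concentration units."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    return 3.3 * sd_response / slope, 10.0 * sd_response / slope


# Hypothetical blank responses (area counts) and calibration slope (area per ug/mL).
blank_responses = [3.1, 2.8, 3.4, 2.9, 3.3, 3.0, 3.2]
slope = 250.0

lod, loq = lod_loq_from_sd(stdev(blank_responses), slope)
sn = signal_to_noise(peak_height=42.0, noise_peak_to_peak=8.0)
print(f"LOD={lod:.4f} ug/mL, LOQ={loq:.4f} ug/mL, S/N at candidate LOQ={sn:.1f}")
```

Whichever estimate is used, the calculated LOQ is normally confirmed by analyzing samples prepared at that concentration.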

Robustness

- Procedure: Deliberately introduce small variations in method parameters (e.g., mobile phase pH ±0.2, flow rate ±10%, column temperature ±5°C).

- Data Analysis: Monitor the effect on critical performance attributes (e.g., resolution, tailing factor, retention time). This defines the method's operable range.
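One way to organize the deliberate variations is a two-level full factorial design, sketched below with `itertools.product`. The nominal settings are hypothetical, the deltas mirror the examples in the procedure, and a full factorial is only one option; fractional or Plackett-Burman designs are often used when more factors are involved.

```python
from itertools import product

# Hypothetical nominal method settings and the small deliberate variations.
nominal = {"mobile_phase_pH": 3.0, "flow_mL_min": 1.0, "column_temp_C": 30.0}
deltas = {"mobile_phase_pH": 0.2, "flow_mL_min": 0.1, "column_temp_C": 5.0}  # flow: 10% of 1.0


def full_factorial(nominal, deltas):
    """Return every low/high combination of the factors as a list of dicts."""
    names = list(nominal)
    levels = [
        (round(nominal[n] - deltas[n], 3), round(nominal[n] + deltas[n], 3))
        for n in names
    ]
    return [dict(zip(names, combo)) for combo in product(*levels)]


runs = full_factorial(nominal, deltas)
for i, run in enumerate(runs, start=1):
    print(i, run)
```

Each run would then be executed and the resolution, tailing factor, and retention time recorded against the system suitability limits.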

Method Validation Workflow

The following diagram illustrates the logical sequence of the key stages in the analytical method validation lifecycle, from initial preparation to final reporting.

The Scientist's Toolkit: Key Reagents and Materials

Table 2: Essential Research Reagent Solutions for Analytical Method Validation

| Item | Function / Purpose |
|---|---|
| Reference Standard | Highly characterized substance used to prepare the standard solutions for quantification; essential for accuracy and linearity [10]. |
| Placebo Matrix | The formulation blank (excipients without API); critical for demonstrating specificity and accuracy in drug product methods [10]. |
| Forced Degradation Samples | Samples stressed under acid, base, oxidative, thermal, and photolytic conditions; used to validate method specificity and stability-indicating properties [10]. |
| System Suitability Solution | A reference solution used to verify that the chromatographic system is adequate for the intended analysis before the run [10]. |
| Mass Spectrometry (MS) Grade Solvents | High-purity solvents for LC-MS applications to minimize ion suppression and background noise, crucial for sensitivity and peak purity assessment [10]. |

Technical Support Center: Method Validation Troubleshooting

This guide addresses common challenges encountered when validating analytical methods for organic analysis. The following FAQs and protocols are designed to help researchers diagnose and resolve experimental issues.

Frequently Asked Questions (FAQs)

Q1: My method shows high overall accuracy, but I'm missing critical impurities. Which parameter should I investigate? A: This indicates a potential issue with Specificity. High accuracy in the main analyte assay does not guarantee the method can distinguish the analyte from closely eluting impurities or matrix components [12] [10]. You must demonstrate that the method can "assess unequivocally the analyte in the presence of components which may be expected to be present" [12]. A lack of specificity leads to false positives or an inability to detect impurities [13] [10].

- Troubleshooting Protocol: Perform a peak purity test using a photodiode-array (PDA) detector or mass spectrometry (MS) to check for co-eluting peaks [10]. Analyze samples spiked with known impurities or stress-degraded samples to confirm resolution and the absence of interference.

Q2: My replicate analyses show unacceptably high variation. What does this mean, and how do I pinpoint the cause? A: This is a Precision problem. Precision measures "the closeness of agreement among individual test results from repeated analyses" [10]. High variation can stem from multiple sources.

- Troubleshooting Protocol: Systematically assess different precision measures:

- Repeatability (Intra-assay): Have the same analyst perform multiple injections of a homogeneous sample in one session. High variability here points to issues with instrument stability, injection technique, or sample preparation inconsistency.

- Intermediate Precision: Have a different analyst repeat the assay on a different day or with a different instrument. Variability introduced here suggests the method is sensitive to normal laboratory variations [10].

- Check system suitability parameters like %RSD of peak areas, which should typically be <2% [14].

Q3: How do I know if my calibration curve is acceptable, and what do I do if it's not linear? A: This concerns Linearity and Range. Linearity is "the ability to obtain test results which are directly proportional to the concentration of analyte" [12] [15].

- Troubleshooting Protocol:

- Prepare a minimum of five standard concentrations across the expected range [10].

- Plot response vs. concentration and perform linear regression.

- Acceptance Criteria: A coefficient of determination (r²) ≥ 0.998 is often expected for assays. Visually inspect the residual plot for random scatter; patterns indicate non-linearity.

- If Non-Linear: Verify standard preparation accuracy. Ensure the detector response is within its linear dynamic range. The analyte or matrix may exhibit non-linear behavior at high concentrations; consider narrowing the validated range or using a weighted regression model.
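The residual inspection and the weighted regression option mentioned above can be sketched as follows. The 1/x² weighting is an assumption chosen because it is common for wide calibration ranges; the data and function names are hypothetical.

```python
def weighted_linear_fit(x, y, weights):
    """Weighted least-squares straight line; returns (slope, intercept)."""
    sw = sum(weights)
    swx = sum(w * xi for w, xi in zip(weights, x))
    swy = sum(w * yi for w, yi in zip(weights, y))
    swxx = sum(w * xi * xi for w, xi in zip(weights, x))
    swxy = sum(w * xi * yi for w, xi, yi in zip(weights, x, y))
    denom = sw * swxx - swx ** 2
    slope = (sw * swxy - swx * swy) / denom
    intercept = (swy - slope * swx) / sw
    return slope, intercept


def residuals(x, y, slope, intercept):
    """Observed minus fitted response at each calibration level."""
    return [yi - (slope * xi + intercept) for xi, yi in zip(x, y)]


# Hypothetical calibration spanning two orders of magnitude.
conc = [1.0, 5.0, 10.0, 50.0, 100.0]
resp = [2.6, 11.1, 21.4, 105.9, 209.8]
weights = [1.0 / c ** 2 for c in conc]  # assumed 1/x^2 weighting

slope, intercept = weighted_linear_fit(conc, resp, weights)
res = residuals(conc, resp, slope, intercept)
# Relative residuals make low-level deviations visible; a trend in sign
# (e.g., all negative at the top of the range) suggests curvature.
rel_res = [100.0 * r / yi for r, yi in zip(res, resp)]
print(f"slope={slope:.4f} intercept={intercept:.4f}")
print("relative residuals (%):", [round(v, 2) for v in rel_res])
```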

Q4: What is the practical difference between Accuracy and Precision? A: Accuracy and Precision are distinct but complementary parameters crucial for method validity [12].

- Accuracy is "the closeness of agreement between the value found and the true value" [12]. It measures correctness (trueness).

- Precision is "the closeness of agreement among a series of measurements" [12]. It measures reproducibility (scatter).

A method can be precise (consistent results) but inaccurate (consistently wrong), or accurate on average but imprecise (high scatter). A reliable method must demonstrate both [10].

Q5: My method works perfectly for the API, but fails for low-level impurity quantification. Which parameters are most critical here? A: For trace analysis, Specificity, Limit of Quantitation (LOQ), and Precision at the low end of the Range are paramount [14] [15].

- Specificity: Ensure the impurity peak is fully resolved from noise and other peaks.

- LOQ: Validate that the lowest impurity level can be quantified with acceptable accuracy and precision. The LOQ is typically defined by a signal-to-noise ratio of 10:1 or a calculated value based on the standard deviation of the response and the slope of the calibration curve [10].

- Range: The validated range must extend down to the LOQ [15].

The table below summarizes the key parameters, their definitions, and core experimental approaches based on ICH/FDA guidelines [12] [10] [15].

| Parameter | Definition | Key Experimental Protocol & Acceptance Criteria |
|---|---|---|
| Accuracy | Closeness of agreement between the measured value and the true/accepted reference value [12] [10]. | Analyze a minimum of 9 samples over 3 concentration levels within the range (e.g., 80%, 100%, 120%). Report as % recovery of the known added amount. Recovery should typically be 98-102% for assays [10]. |
| Precision | Closeness of agreement among a series of measurements from multiple sampling [12] [10]. | Repeatability: 6 injections at 100% concentration or 9 determinations across the range. %RSD < 2% for assay [10]. Intermediate Precision: Different analyst, day, or equipment. Compare means statistically (e.g., t-test). |
| Specificity | Ability to measure the analyte unequivocally in the presence of expected components like impurities or matrix [12] [10]. | Inject blank matrix, analyte standard, and samples spiked with potential interferents (impurities, degradants). Demonstrate baseline resolution (Rs > 2.0) and use PDA/MS for peak purity verification [10]. |
| Linearity | Ability to obtain results directly proportional to analyte concentration [12] [15]. | Prepare ≥5 standard solutions across the stated range. Perform linear regression. Report slope, intercept, correlation coefficient (r), and coefficient of determination (r²). r² ≥ 0.998 is common for assays. |
| Range | The interval between upper and lower concentration levels where linearity, accuracy, and precision are demonstrated [12] [15]. | Defined by the linearity and accuracy/precision experiments. For assay methods, a typical minimum range is 80-120% of the target concentration [10]. |

Visualizing Parameter Relationships and Workflows

Validation Parameter Hierarchy for a Quantitative Assay

Sequential Workflow for Core Parameter Validation

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Validation |
|---|---|
| Certified Reference Standard (CRS) | Provides the "true value" for accuracy assessments. A high-purity, well-characterized analyte is essential [10]. |
| Blank Matrix | The sample material without the analyte. Critical for testing specificity (ensuring no interference) and establishing the baseline for LOD/LOQ [12]. |
| Spiked/Placebo Samples | Samples where a known amount of analyte is added to the blank matrix. Used for accuracy (recovery) and precision studies [10]. |
| Impurity/Degradant Standards | When available, these are used to challenge method specificity and demonstrate resolution from the main peak [10]. |
| Calibration Standards | A series of solutions at known concentrations spanning the intended range. Used to establish linearity and the calibration model [10]. |
| HPLC/UPLC Column | The stationary phase. Different chemistries (C18, phenyl, etc.) are screened and selected to achieve the required specificity and separation [14]. |
| MS-Grade Solvents & Buffers | High-purity mobile phase components minimize background noise, which is crucial for sensitivity (LOD/LOQ) and robust baseline [14]. |
| System Suitability Test Solution | A standard mixture used to verify chromatographic system performance (plate count, tailing, resolution) before validation runs [14] [10]. |

Fundamental Concepts and Regulatory Framework

What are LOD and LOQ, and why are they critical for method validation?

The Limit of Detection (LOD) is defined as the lowest amount of analyte in a sample that can be detected—but not necessarily quantified as an exact value—by the analytical procedure. Conversely, the Limit of Quantification (LOQ) is the lowest concentration of an analyte that can be quantitatively determined with acceptable precision and accuracy under the stated experimental conditions [16] [17]. These parameters are not merely academic exercises; they are fundamental requirements of global regulatory authorities, including the International Council for Harmonisation (ICH), the United States Environmental Protection Agency (USEPA), and the Food and Drug Administration (FDA) [16] [18] [19].

Understanding the distinction between these limits, along with the related Limit of Blank (LOB), is essential for characterizing the capabilities of any analytical method. A simple analogy can clarify these concepts:

- LOB: No one is talking, only the background noise of a jet engine is present.

- LOD: One person detects that another is speaking (lips are moving) but cannot understand the words over the engine noise.

- LOQ: The engine noise is sufficiently low that every word is heard and understood [16].

These limits define the lower end of an analytical method's working range, situated between the region where no signal can be detected and the linear quantitative range [16]. Determining them reliably ensures your method is "fit for purpose" and capable of supporting decisions in research, drug development, and quality control.

What is the difference between instrument detection limit and method detection limit?

It is crucial to distinguish between instrumental and methodological detection limits, as the latter provides a more realistic picture of analytical performance in practice.

- Instrument Detection Limit: This is determined under ideal conditions, typically using pure solvent standards and short-term measurement precision. It reflects the best-case scenario for the instrument's sensitivity [17] [20].

- Method Detection Limit (MDL): This accounts for the entire analytical process, including sample preparation, potential matrix effects, and all sources of variability introduced by the method itself. The USEPA defines the MDL as "the minimum measured concentration of a substance that can be reported with 99% confidence that the measured concentration is distinguishable from method blank results" [19]. The method detection limit is generally higher than the instrumental detection limit because it incorporates the "noise" from the entire analytical procedure, not just the instrument [17] [20].

Calculation Methodologies and Experimental Protocols

What are the primary methods for calculating LOD and LOQ?

There are multiple approaches endorsed by various regulatory bodies, each with specific applications based on the nature of the analytical method and the presence of background noise. The following table summarizes the most common calculation criteria.

Table 1: Common Criteria for LOD and LOQ Calculation [16] [18] [19]

| Methodology | Basis of Calculation | Typical LOD | Typical LOQ | Best Suited For |

|---|---|---|---|---|

| Standard Deviation of the Blank | Mean and standard deviation (Stdev) of blank sample measurements. | Mean~blank~ + 3.3 × Stdev~blank~ [16] | Mean~blank~ + 10 × Stdev~blank~ [16] | Quantitative assays where a blank matrix is available. |

| Standard Deviation of the Response & Slope | Standard error of the regression (σ or s~y/x~) and the slope (S) of the calibration curve. | 3.3 × σ / S [16] | 10 × σ / S [16] | Quantitative assays without significant background noise. |

| Signal-to-Noise (S/N) | Ratio of the analyte signal to the background noise. | S/N = 2 or 3 [16] [17] | S/N = 10 [17] | Chromatographic and spectroscopic techniques with measurable baseline noise. |

| Visual Evaluation | Determination by an analyst or instrument of the lowest concentration that can be reliably detected. | Concentration at ~99% detection rate (via logistic regression) [16] | Concentration at ~99.9% detection rate [16] | Non-instrumental methods (e.g., visual color change, particle detection). |
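
For the signal-to-noise row, one widely used convention expresses S/N as 2H/h, where H is the peak height and h is the peak-to-peak noise of a blank baseline segment. The sketch below assumes that convention and applies the thresholds from the table (3 for detection, 10 for quantification). The function names and the baseline data are hypothetical.

```python
from typing import Sequence


def signal_to_noise(peak_height: float, baseline: Sequence[float]) -> float:
    """S/N = 2H / h, with h the peak-to-peak noise of a blank baseline segment."""
    h = max(baseline) - min(baseline)
    if h <= 0:
        raise ValueError("baseline segment shows no measurable noise")
    return 2.0 * peak_height / h


def classify(sn: float, lod_threshold: float = 3.0, loq_threshold: float = 10.0) -> str:
    """Place an S/N value relative to the detection and quantification thresholds."""
    if sn >= loq_threshold:
        return "quantifiable"
    if sn >= lod_threshold:
        return "detected, not quantifiable"
    return "not detected"


# Hypothetical detector readings from a blank region near the analyte peak.
baseline = [0.02, -0.01, 0.03, -0.02, 0.01, 0.00]
sn = signal_to_noise(peak_height=0.25, baseline=baseline)
print(f"S/N = {sn:.1f} -> {classify(sn)}")
```

Because the result depends on which baseline window is chosen, the window length and position should be fixed in the method and reported with the value.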

Detailed Experimental Protocol: Standard Deviation of the Blank and Calibration Curve

Selecting the appropriate method is only the first step. Proper experimental design is critical for obtaining reliable and defensible limits.

1. Experimental Design for Blank Method

- Study Design: Analyze a sufficient number of independent blank samples (a matrix without the analyte). IUPAC recommends at least 20 determinations to robustly estimate the mean and standard deviation [20].

- Procedure:

- Prepare and analyze at least 10 blank samples, and preferably 20, in the appropriate matrix [16].

- Measure the response for each blank.

- Calculate the mean (X̄~b~) and standard deviation (SD~b~) of these blank responses.

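- Calculation: LOD = X̄~b~ + 3.3 × SD~b~ and LOQ = X̄~b~ + 10 × SD~b~, following the blank-based criterion in Table 1 [16].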
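
The blank-based criterion from Table 1 (mean of the blanks plus 3.3 or 10 standard deviations) can be sketched in a few lines. The function name and the blank responses are hypothetical. Note that the result is in response units, so it would still need converting to a concentration through the calibration function.

```python
import statistics


def blank_based_limits(blank_responses):
    """Return (LOD, LOQ) in response units from replicate blank measurements."""
    if len(blank_responses) < 10:
        raise ValueError("at least 10 blank determinations are expected")
    mean_b = statistics.mean(blank_responses)
    sd_b = statistics.stdev(blank_responses)  # sample standard deviation (n - 1)
    lod = mean_b + 3.3 * sd_b
    loq = mean_b + 10.0 * sd_b
    return lod, loq


# Hypothetical blank responses (e.g., absorbance units) from 10 independent blanks.
blanks = [0.012, 0.015, 0.010, 0.013, 0.011, 0.014, 0.012, 0.016, 0.009, 0.013]
lod, loq = blank_based_limits(blanks)
print(f"LOD signal = {lod:.4f}, LOQ signal = {loq:.4f}")
```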

2. Experimental Design for Calibration Curve Method

- Study Design: Prepare a calibration curve using samples in the range of the expected LOD/LOQ. Use a minimum of five concentrations, each with six or more replicates, to adequately characterize the standard error of the regression [16].

- Procedure:

- Prepare a series of standard solutions at low concentrations covering the expected LOD/LOQ range.

- Analyze each concentration level multiple times (six or more replicates, per the study design above) to build a calibration curve.

- Perform linear regression (y = a + bx) on the data to obtain the slope (b) and the standard error of the estimate (s~y/x~ or σ), which represents the standard deviation of the residuals [16] [18].

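- Calculation: LOD = 3.3 × s~y/x~ / b and LOQ = 10 × s~y/x~ / b, where b is the slope and s~y/x~ the standard error of the estimate from the regression [16].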
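
The calibration-curve criterion from Table 1 (3.3 or 10 times the standard error of the regression, divided by the slope) can be worked through with an ordinary least-squares fit. This is a minimal sketch: the function name and the data are hypothetical, and only single responses per level are shown to keep it short, whereas the study design calls for replicates.

```python
import math


def calibration_limits(conc, resp):
    """Fit y = a + b*x by least squares and derive LOD/LOQ from s_y/x and b."""
    n = len(conc)
    if n != len(resp) or n < 3:
        raise ValueError("need matching x/y data with at least 3 points")
    mean_x = sum(conc) / n
    mean_y = sum(resp) / n
    sxx = sum((x - mean_x) ** 2 for x in conc)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(conc, resp))
    b = sxy / sxx                      # slope
    a = mean_y - b * mean_x            # intercept
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(conc, resp))
    s_yx = math.sqrt(ss_res / (n - 2))  # standard deviation of the residuals
    return {
        "slope": b,
        "intercept": a,
        "s_yx": s_yx,
        "lod": 3.3 * s_yx / b,
        "loq": 10.0 * s_yx / b,
    }


# Hypothetical low-level standards (concentration in ug/mL, response in area units).
conc = [1.0, 2.0, 3.0, 4.0, 5.0]
resp = [2.1, 3.9, 6.2, 7.8, 10.1]
fit = calibration_limits(conc, resp)
print({key: round(value, 4) for key, value in fit.items()})
```

Unlike the blank-based limits, these values come out directly in concentration units because the residual scatter is divided by the slope.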

The workflow below illustrates the logical process for determining and verifying LOD and LOQ.

Troubleshooting Common Issues (FAQs)

FAQ 1: Why do my calculated LOD and LOQ values vary widely when using different criteria?

This is a frequently encountered scenario. Different calculation methods are based on diverse theoretical and empirical assumptions and utilize different amounts and types of experimental data (e.g., blank data vs. low-concentration fortified samples) [18]. For instance:

- The blank standard deviation method is highly dependent on the variability of your blank matrix.

- The calibration curve method depends on the precision of your low-level standards and the robustness of your linear regression in that range.

- The signal-to-noise method is a direct but sometimes less statistically rigorous estimate.

These approaches are not expected to yield identical results. The key is to consistently apply a single, justified methodology that aligns with your analytical technique and regulatory guidelines. When reporting LOD/LOQ, always specify the criterion used for calculation to ensure transparency and allow for fair method comparison [18].

FAQ 2: How does a complex sample matrix affect LOD/LOQ, and how can I address it?

The sample matrix is one of the most significant factors elevating the method detection limit above the instrumental detection limit. Components in the matrix can:

- Increase baseline noise or cause interfering signals.

- Suppress or enhance the analyte signal (e.g., ion suppression in mass spectrometry).

- Increase the variability (standard deviation) of measurements at low concentrations.

Solutions:

- Use a Proper Blank: The blank should mimic the sample matrix as closely as possible but without the analyte. For endogenous analytes, this can be challenging, and a "surrogate" blank or a background subtraction technique may be necessary [18].

- Implement Sample Cleanup: Techniques like solid-phase extraction (SPE) or liquid-liquid extraction can remove interfering matrix components and reduce noise.

- Utilize the Standard Addition Method: This can help compensate for matrix effects by adding known quantities of the analyte directly to the sample.

- Follow EPA Guidelines: The USEPA MDL procedure explicitly requires the use of method blanks to calculate the MDL~b~, ensuring that background contamination and matrix effects are accounted for. The reported MDL is the higher of the values derived from spiked samples (MDL~S~) or method blanks (MDL~b~) [19].
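
The "higher of MDL~S~ or MDL~b~" rule can be sketched as follows. The formulas reflect a reading of the USEPA procedure (spiked-sample standard deviation multiplied by the one-sided 99% Student's t value, and blank mean plus t times the blank standard deviation) and should be checked against the official text before use. The function names and data are hypothetical, and the special cases for blanks without numerical results are not handled.

```python
import statistics

# One-sided 99% Student's t values keyed by degrees of freedom (n - 1).
T_99 = {6: 3.143, 7: 2.998, 8: 2.896, 9: 2.821, 10: 2.764}


def _t_value(n):
    try:
        return T_99[n - 1]
    except KeyError:
        raise ValueError("this sketch only tabulates 7 to 11 replicates") from None


def mdl_spiked(spiked_results):
    """MDL_S: t times the standard deviation of low-level spiked replicates."""
    return _t_value(len(spiked_results)) * statistics.stdev(spiked_results)


def mdl_blank(blank_results):
    """MDL_b: blank mean (floored at zero, an assumption here) plus t times blank SD."""
    mean_b = max(statistics.mean(blank_results), 0.0)
    return mean_b + _t_value(len(blank_results)) * statistics.stdev(blank_results)


def reported_mdl(spiked_results, blank_results):
    """The reported MDL is the higher of MDL_S and MDL_b."""
    return max(mdl_spiked(spiked_results), mdl_blank(blank_results))


# Hypothetical results in ug/L: seven spiked replicates and seven method blanks.
spiked = [0.48, 0.52, 0.50, 0.47, 0.53, 0.51, 0.49]
method_blanks = [0.02, 0.01, 0.03, 0.00, 0.02, 0.01, 0.02]
print(f"MDL_S = {mdl_spiked(spiked):.3f}")
print(f"MDL_b = {mdl_blank(method_blanks):.3f}")
print(f"Reported MDL = {reported_mdl(spiked, method_blanks):.3f}")
```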

FAQ 3: My blank values are high and variable. What should I do?

High and variable blanks directly inflate the LOD and LOQ calculated via the blank standard deviation method (as SD~b~ increases). To address this:

- Identify the Source: Systematically investigate and eliminate sources of contamination. Common culprits include:

- Reagents: Use higher purity solvents and chemicals.

- Water: Ensure the purity of laboratory water.

- Glassware and Containers: Use appropriate cleaning protocols and ensure containers are not a source of leachates.

- Laboratory Environment: Contamination from dust, vapors, or previous samples.

- Document and Justify: If a high blank is unavoidable, document its source and justify its acceptance. Regulatory procedures like the EPA's MDL allow for the exclusion of blank results associated with a documented instance of gross failure [19].

- Consider Alternative Methods: If the blank issue cannot be resolved, consider using the calibration curve or signal-to-noise method for determining LOD/LOQ, provided they are appropriate for your technique.

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key materials and their functions in establishing LOD and LOQ, particularly for chromatographic assays.

Table 2: Key Research Reagent Solutions for LOD/LOQ Studies [16] [19] [20]

| Item | Function / Purpose |

|---|---|

| High-Purity Analytical Standards | To prepare accurate calibration standards and spiked samples for determining the slope and standard error of the calibration curve. |

| Matrix-Matched Blank | A sample of the biological or chemical matrix free of the analyte, critical for evaluating background noise, interference, and for calculating LOD via the blank method. |

| High-Purity Solvents | To minimize baseline noise and ghost peaks in chromatographic systems that can interfere with detection and inflate blank values. |

| Stock Solutions for Fortification | Used to prepare low-level spiked samples at concentrations near the expected LOD/LOQ for empirical determination and verification. |

| Quality Control (QC) Samples | Low-concentration QCs (near the LOQ) are used to continuously verify that the method's sensitivity and precision remain acceptable over time. |

Frequently Asked Questions (FAQs)

Q1: What is the main difference between the old ICH Q2(R1) and the new ICH Q2(R2) and Q14?

The fundamental difference is a paradigm shift from a one-time validation event to a comprehensive lifecycle approach [15] [21]. The old ICH Q2(R1) provided a static, "check-the-box" framework for validating analytical procedures post-development [22]. The new guidelines, ICH Q2(R2) and ICH Q14, introduce a modernized, continuous process that begins with proactive development and extends throughout the method's operational life [15] [21]. This is supported by the introduction of the Analytical Target Profile (ATP) and a greater emphasis on risk management and science-based decision-making [15].

Q2: What is an Analytical Target Profile (ATP) and why is it important?

The Analytical Target Profile (ATP) is a prospective summary that describes the intended purpose of an analytical procedure and defines its required performance characteristics [15]. As defined in ICH Q14, creating the ATP is the first step in method development.

- Function: It ensures the method is designed to be "fit-for-purpose" from the very beginning [15].

- Benefit: A well-defined ATP provides clear targets for development and validation, facilitates a more scientific approach, and allows for more flexible post-approval changes [15] [21].

Q3: Our lab has methods already validated per ICH Q2(R1). Do we need to revalidate them all?

Not necessarily. The transition focuses on adopting the new lifecycle principles for methods going forward and during significant updates [21]. However, a strategic recommendation is to reassess existing analytical methods and validation processes against the new guidelines to identify areas for improvement and integrate lifecycle management principles where beneficial [21]. This is part of a proactive compliance strategy.

Q4: What are "Established Conditions" and how do they relate to change management?

Established Conditions (ECs) are the legally binding, validated parameters that define the method [23]. ICH Q14 and ICH Q12 provide a framework for a more flexible, risk-based change management system [23] [21]. By understanding the method's robustness and critical parameters thoroughly during the enhanced development process (as per Q14), sponsors can make minor changes within pre-defined ranges without extensive regulatory filings, provided a sound scientific rationale exists [15] [23].

Q5: Where can I find official training on these new guidelines?

The ICH itself has released comprehensive training materials. On 8 July 2025, the ICH published a series of training modules covering both Q2(R2) and Q14, which are available for download from the official ICH Q2(R2)/Q14 Implementation Working Group (IWG) webpage and the ICH Training Library [23]. These modules cover fundamental principles, practical applications, and case studies.

Troubleshooting Common Implementation Challenges

Challenge 1: Defining a Meaningful Analytical Target Profile (ATP)

- Problem: The ATP is too vague (e.g., "measure concentration") and does not provide clear, measurable performance criteria for development.

- Solution: Develop a quantitative ATP. Before starting development, clearly define the analyte, the expected concentration range, and the required performance criteria for accuracy, precision, and other relevant validation parameters [15].

- Example Protocol: For a potency assay, the ATP could be: "The method must quantify the active ingredient in the range of 70-130% of the label claim with an accuracy (mean recovery) of 98-102% and a precision (RSD) of ≤2.0%."
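
A quantitative ATP like the one above is easy to turn into an automated check. The sketch below encodes the example criteria (mean recovery of 98-102%, RSD of at most 2.0%) and evaluates a set of recovery results against them. The dictionary keys, function name, and recovery values are hypothetical.

```python
import statistics

# Criteria taken from the example ATP statement; the key names are illustrative.
ATP = {"recovery_min": 98.0, "recovery_max": 102.0, "rsd_max": 2.0}


def evaluate_against_atp(recoveries, atp=ATP):
    """Compare mean recovery and %RSD of replicate results with the ATP criteria."""
    mean_recovery = statistics.mean(recoveries)
    rsd = 100.0 * statistics.stdev(recoveries) / mean_recovery
    accuracy_ok = atp["recovery_min"] <= mean_recovery <= atp["recovery_max"]
    precision_ok = rsd <= atp["rsd_max"]
    return {
        "mean_recovery": mean_recovery,
        "rsd": rsd,
        "accuracy_ok": accuracy_ok,
        "precision_ok": precision_ok,
        "passes": accuracy_ok and precision_ok,
    }


# Hypothetical percent recoveries from six spiked preparations.
recoveries = [99.1, 100.4, 98.7, 101.2, 99.8, 100.6]
result = evaluate_against_atp(recoveries)
print(f"mean recovery = {result['mean_recovery']:.2f}%, RSD = {result['rsd']:.2f}%")
print("meets ATP" if result["passes"] else "does not meet ATP")
```

Keeping the criteria in one data structure means the same check can be reused during development, validation, and routine monitoring.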

Challenge 2: Transitioning from a Minimal to an Enhanced Approach

- Problem: Organizations are accustomed to the minimal, empirical approach and struggle with the systematic, science-based "enhanced approach" described in ICH Q14.

- Solution: Adopt Analytical Quality by Design (AQbD) principles and tools [24].

- Experimental Protocol:

- Define the ATP: As described above.

- Identify Critical Method Attributes (CMAs): Determine which method parameters (e.g., column temperature, mobile phase pH, flow rate) are critical to meeting the ATP.

- Perform Risk Assessment: Use a tool like Failure Mode and Effects Analysis (FMEA) to systematically identify and rank potential sources of variability [21].

- Design of Experiments (DoE): Instead of testing one factor at a time, use a structured DoE to understand the relationship and interactions between CMAs and the method's performance. This builds a method operable design space (MODS).

- Develop a Control Strategy: Define the ranges for the CMAs to ensure the method consistently meets the ATP [24].
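
To illustrate the DoE step, the sketch below builds a two-level full factorial design for three of the parameters mentioned above and estimates each main effect as the difference between the mean response at the high and low levels. The factor ranges and the resolution values are hypothetical, and a real study would typically rely on dedicated DoE software and also examine interactions.

```python
from itertools import product
from statistics import mean

# Hypothetical low/high settings for three method parameters.
FACTORS = {
    "column_temp_C": (28.0, 32.0),
    "mobile_phase_pH": (2.9, 3.3),
    "flow_mL_min": (0.9, 1.1),
}


def full_factorial(factors):
    """All 2^k combinations of coded levels (-1 = low, +1 = high)."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product((-1, 1), repeat=len(names))]


def to_actual(run, factors):
    """Translate a coded run into actual instrument settings."""
    return {name: factors[name][0 if level == -1 else 1] for name, level in run.items()}


def main_effects(runs, responses):
    """Main effect per factor: mean response at +1 minus mean response at -1."""
    effects = {}
    for name in runs[0]:
        high = [y for run, y in zip(runs, responses) if run[name] == 1]
        low = [y for run, y in zip(runs, responses) if run[name] == -1]
        effects[name] = mean(high) - mean(low)
    return effects


runs = full_factorial(FACTORS)
# Hypothetical measured resolution for each of the 8 runs, in run order.
responses = [2.6, 2.4, 2.1, 1.9, 2.7, 2.5, 2.2, 2.0]
for run, y in zip(runs, responses):
    print(to_actual(run, FACTORS), "->", y)
print(main_effects(runs, responses))
```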

Challenge 3: Demonstrating Robustness as a Continuous Activity

- Problem: Treating robustness testing as a single, pre-validation experiment, rather than an ongoing lifecycle activity.

- Solution: Integrate robustness assessment into the method's control strategy and ongoing monitoring.

- Troubleshooting Guide:

- Symptom: Method performance drifts over time.

- Investigation: Revisit the robustness studies and the defined MODS. Check if actual operating conditions (e.g., new reagent lot, slight instrument drift) are still within the validated parameter ranges.

- Action: Use the knowledge from the enhanced development to adjust the method within the MODS or implement additional controls, rather than a full revalidation [15] [21].

Challenge 4: Managing the Increased Documentation Burden

- Problem: The enhanced, science-based approach requires more thorough documentation, which can be perceived as a burden.

- Solution: Strengthen documentation practices by implementing robust systems from the start.

- Action Plan: Ensure all phases of method development, validation, and any subsequent changes are thoroughly documented. This includes detailed records of the risk assessments, DoE studies, rationale for setting parameter ranges, and the method’s performance over time. This investment facilitates easier troubleshooting and streamlines regulatory audits [21].

Key Parameters for Validation: Traditional vs. Modern Lifecycle View

The core validation parameters have been expanded and their application is now viewed through the lens of the method's entire lifecycle.

Table 1: Comparison of Validation Parameters in the Traditional vs. Modern Lifecycle Context

| Validation Parameter | Traditional View (ICH Q2(R1)) | Modern Lifecycle View (ICH Q2(R2) / Q14) |

|---|---|---|

| Accuracy & Precision | Validated once for the procedure. | Continuously monitored; intra- and inter-laboratory studies are emphasized to ensure reproducibility [21]. |

| Linearity & Range | Range is the interval where linearity, accuracy, and precision are confirmed. | Range is directly linked to the ATP; requirements for statistical evaluation are more comprehensive [21]. |

| Robustness | Often an informal study. | Now a compulsory, formalized part of development and lifecycle management, tied to the control strategy [15] [21]. |

| Specificity | Ability to assess analyte in the presence of expected components. | Expanded to include modern techniques; assessment is more rigorous, especially for complex biologics [15] [21]. |

| Lifecycle Stage | Treated as a one-time event before method use. | A continuous process from development through retirement, managed via an ATP and control strategy [15]. |

The Analytical Procedure Lifecycle Workflow

The following diagram illustrates the continuous, science-based workflow for managing an analytical procedure under ICH Q2(R2) and ICH Q14, from initial conception through post-approval management.

Essential Research Reagent Solutions for Modern Method Development

Implementing the enhanced approach requires specific tools and materials. The following table details key solutions used in modern, Q14-compliant analytical development.

Table 2: Essential Research Reagent Solutions for AQbD and Method Lifecycle Management

| Item / Solution | Function / Application in Modern Validation |

|---|---|

| Certified Reference Materials (CRMs) | Essential for demonstrating method accuracy and ensuring metrological traceability during validation and ongoing verification [25]. |

| Quality Risk Management Software | Software tools that facilitate systematic risk assessment (e.g., FMEA) to identify Critical Method Attributes during development, as recommended by ICH Q14 [21]. |

| Design of Experiments (DoE) Software | Enables efficient and scientific exploration of factor interactions to build a robust method operable design space (MODS), a core part of the enhanced approach [24]. |

| Stable Reagent Suppliers | Critical for ensuring the consistency of Critical Method Attributes (CMAs) identified during development. Using qualified suppliers is part of a robust control strategy. |

| Data Integrity & Management Systems | Robust electronic lab notebooks (ELNs) and LIMS help manage the enhanced documentation and data integrity expectations associated with ICH Q2(R2) and Q14 [21]. |

FAQ: Core Concepts of the Analytical Target Profile

What is an Analytical Target Profile (ATP)?

An Analytical Target Profile (ATP) is a prospective summary of the performance characteristics that describes the intended purpose and the anticipated performance criteria of an analytical measurement [26]. In simpler terms, it is a formal document that outlines what an analytical procedure needs to achieve—in terms of quality and reliability—before the method is even developed [27]. The ATP ensures the procedure remains "fit for purpose" throughout its entire lifecycle, from development to routine use [26].

How does the ATP differ from the Quality Target Product Profile (QTPP)?

The ATP is the analytical counterpart to the QTPP. The QTPP defines the quality characteristics of the drug product, while the ATP defines the performance requirements for the analytical procedure used to measure those characteristics [28]. The ATP provides the critical link between a product's Critical Quality Attributes (CQAs), defined in the QTPP, and the analytical methods needed to verify them [29].

What is the regulatory basis for the ATP?

The ATP is a key concept in two major guidelines:

- ICH Q14: "Analytical Procedure Development" defines the ATP and describes science and risk-based approaches for development and lifecycle management [28] [26].

- USP <1220>: "Analytical Procedure Lifecycle" frames the ATP as a fundamental component for ensuring the quality of reportable values [26].

Why is implementing an ATP important?

Using an ATP offers several key benefits [27]:

- Systematic Development: Provides a focused, systematic approach to method development and validation.

- Regulatory Communication: Facilitates clearer and more effective communication with regulatory authorities.

- Lifecycle Management: Serves as a foundation for monitoring procedure performance and managing changes post-approval.

Troubleshooting Guide: Common ATP Challenges and Solutions

| Challenge | Root Cause | Proposed Solution & Experimental Protocol |

|---|---|---|

| Unclear Method Purpose | The link between the analytical method and the product's Critical Quality Attribute (CQA) is not defined. | Action: Revise the ATP to explicitly state the intended purpose and its connection to the specific CQA [28]. Protocol: Review the Quality Target Product Profile (QTPP) to confirm all relevant CQAs have a corresponding analytical procedure with a defined ATP. |

| Poor Method Robustness | The ATP did not prospectively define robustness as a required performance characteristic, or the acceptance criteria were too narrow. | Action: Use a risk assessment to identify factors (e.g., column temperature, mobile phase pH) that may impact method performance [7]. Protocol: Employ experimental designs (e.g., Design of Experiments) to systematically study the impact of these factors and establish a Method Operable Design Region (MODR) to define robust operating conditions [7]. |

| Inadequate Control Strategy | The Analytical Control Strategy (ACS) for ongoing method verification is not aligned with the performance criteria in the ATP. | Action: Develop an ACS based on the ATP's performance characteristics [27]. Protocol: Define specific elements for the ACS, including System Suitability Testing (SST) parameters and frequency, procedures for routine equipment maintenance and calibration, and a plan for monitoring quality control sample data over time [27]. |

| High Uncertainty in Reportable Results | The ATP did not set sufficiently strict limits for the combined uncertainty (accuracy and precision) of the reportable value. | Action: Revisit the ATP to define the maximum allowable uncertainty for the reportable result needed to support quality decisions [29]. Protocol: Conduct method validation studies that treat accuracy and precision as a combined, holistic uncertainty characteristic, rather than as separate parameters [29]. |

Experimental Protocol: Developing an ATP and a Corresponding Control Strategy

The following workflow outlines the key stages in the analytical procedure lifecycle, driven by the ATP.

Phase 1: Define the ATP

The process begins by defining the ATP based on the needs of the QTPP. The table below provides a template for documenting an ATP [28] [27].

Table: Analytical Target Profile (ATP) Template

| ATP Component | Description and Criteria |

|---|---|

| Intended Purpose | e.g., "Quantitation of the active ingredient in drug product release testing." |

| Technology Selected | e.g., "Reversed-Phase High-Performance Liquid Chromatography (RP-HPLC)." |

| Link to CQAs | e.g., "To ensure the drug product potency is within specification limits." |

| Performance Characteristic: Accuracy | Acceptance Criterion: e.g., "Recovery of 98-102%." |

| Performance Characteristic: Precision | Acceptance Criterion: e.g., "RSD < 2.0%." |

| Performance Characteristic: Specificity | Acceptance Criterion: e.g., "No interference from placebo or known impurities." |

| Performance Characteristic: Reportable Range | Acceptance Criterion: e.g., "50% to 150% of the target concentration." |

Phase 2: Method Development and Validation

- Risk Assessment: Identify critical method parameters (e.g., buffer pH, column temperature) that may significantly impact the performance characteristics defined in the ATP [7].

- Experimental Design (DoE): Use a structured approach, such as a D-optimal design, to study the impact of the high-risk factors. For an HPLC method, factors could include the ratio of solvent (X1), pH of the buffer (X2), and column type (X3). Output responses (e.g., retention time, peak area, tailing factor) are measured against the ATP criteria [7].

- Define the Method Operable Design Region (MODR): Using software and simulation (e.g., Monte Carlo), establish the MODR—the multidimensional combination of analytical procedure parameters that ensure method performance meets ATP requirements [7].

- Method Validation: Perform validation studies per ICH Q2(R2) to demonstrate that the method meets all the pre-defined performance characteristics in the ATP [28].
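
The Monte Carlo step used to establish the MODR can be sketched as follows. Given a fitted response model, the simulation estimates the probability that a performance criterion is met at each candidate set point once realistic setting errors and measurement noise are included. Everything specific here is an assumption for illustration: the model in `predicted_resolution`, the noise levels, the resolution criterion of 2.0, and the 95% probability cut-off.

```python
import random


def predicted_resolution(pct_organic, ph):
    """Hypothetical fitted model of resolution versus % organic and buffer pH."""
    return 2.5 - 0.08 * (pct_organic - 30.0) - 1.5 * abs(ph - 3.1)


def probability_of_meeting_criterion(pct_organic, ph, n_sim=2000, seed=0):
    """Fraction of simulated runs with resolution >= 2.0 at a nominal set point."""
    rng = random.Random(seed)  # fixed seed keeps the sketch reproducible
    hits = 0
    for _ in range(n_sim):
        organic = rng.gauss(pct_organic, 0.5)  # assumed mobile-phase preparation error
        actual_ph = rng.gauss(ph, 0.05)        # assumed pH adjustment error
        observed = predicted_resolution(organic, actual_ph) + rng.gauss(0.0, 0.1)
        if observed >= 2.0:
            hits += 1
    return hits / n_sim


# Candidate set points; those with at least 95% probability form the sketched MODR.
candidates = [(org, ph) for org in (28.0, 30.0, 32.0, 34.0) for ph in (2.9, 3.1, 3.3)]
modr = [point for point in candidates if probability_of_meeting_criterion(*point) >= 0.95]
print("Set points inside the sketched MODR:", modr)
```

In practice the response model would come from the DoE results, and several responses (for example tailing factor and retention time) would be evaluated jointly.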

Phase 3: Establish an Analytical Control Strategy (ACS)

The ACS is a planned set of controls to ensure the analytical procedure performs as defined by the ATP throughout its lifecycle [27]. Key components include:

- System Suitability Testing (SST): Establish criteria (e.g., resolution, tailing factor, precision) verified before each use to ensure the analytical system is performing correctly [27].

- Method Performance Monitoring: Track key performance indicators from quality control samples and validation data over time to detect trends or deviations [27].

- Equipment Maintenance and Calibration: Adhere to a strict schedule for instrument calibration and preventive maintenance [27].
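
Method performance monitoring is often implemented as a simple control chart on quality control sample results. The sketch below derives 3-sigma limits from a baseline set, flags later results outside those limits, and measures the longest run on one side of the centre line as a drift indicator. The function names, the 3-sigma rule, and the data are illustrative assumptions rather than requirements stated in the guidelines.

```python
import statistics


def control_limits(baseline_results):
    """Return (lower, centre, upper) as mean +/- 3 sample standard deviations."""
    centre = statistics.mean(baseline_results)
    sd = statistics.stdev(baseline_results)
    return centre - 3.0 * sd, centre, centre + 3.0 * sd


def out_of_limits(new_results, limits):
    """List (index, value) for every result outside the control limits."""
    lower, _, upper = limits
    return [(i, v) for i, v in enumerate(new_results) if not lower <= v <= upper]


def longest_one_sided_run(results, centre):
    """Length of the longest run of consecutive results on one side of the centre."""
    longest = current = 0
    previous_side = 0
    for value in results:
        side = (value > centre) - (value < centre)  # +1 above, -1 below, 0 on the line
        if side != 0 and side == previous_side:
            current += 1
        else:
            current = 1 if side != 0 else 0
        previous_side = side
        longest = max(longest, current)
    return longest


# Hypothetical QC recoveries (%): a baseline set from validation, then routine results.
baseline = [100.2, 99.8, 100.1, 99.9, 100.0, 100.3, 99.7, 100.1, 99.9, 100.0]
routine = [100.1, 100.2, 100.9, 99.9]
limits = control_limits(baseline)
print("limits:", tuple(round(x, 2) for x in limits))
print("outside limits:", out_of_limits(routine, limits))
print("longest one-sided run:", longest_one_sided_run(routine, limits[1]))
```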

The Scientist's Toolkit: Essential Research Reagent Solutions

Table: Key Reagents and Materials for HPLC Method Development (Illustrative)

| Item | Function / Rationale |

|---|---|

| Inertsil ODS-3 C18 Column | A specific, well-characterized reversed-phase column used for the separation of small molecules like favipiravir, providing a known functioning state [7]. |

| Disodium Hydrogen Phosphate Anhydrous Buffer | Used to prepare the aqueous component of the mobile phase. Controlling its pH and molar concentration (e.g., 20 mM, pH 3.1) is critical for achieving consistent retention times and peak shape [7]. |

| HPLC-Grade Acetonitrile | A common organic solvent used in the mobile phase for reversed-phase chromatography. Its high purity is essential to minimize baseline noise and ghost peaks [7]. |

| Quality Control Samples | Samples with known concentrations of the analyte, used to continuously monitor the method's accuracy and precision during routine analysis, ensuring it remains fit for purpose [27]. |

From Theory to Lab Bench: Implementing Validation for HPLC and Spectrophotometric Methods

This guide provides a structured framework for designing an analytical method validation protocol that complies with global regulatory standards, specifically within the context of organic analytical techniques research.

Troubleshooting Guides and FAQs

Common Experimental Issues and Solutions

Issue 1: Poor Method Precision

- Problem: High variability in results when the same homogeneous sample is analyzed multiple times.

- Investigation & Solution:

- Check instrument performance and ensure system suitability tests are met before analysis [1].

- Review sample preparation steps for consistency; ensure analysts are properly trained on the method [30].

- Evaluate the analytical procedure using a risk assessment to identify steps that may influence precision [30].

Issue 2: Inaccurate Calibration Curve

- Problem: The calibration curve lacks linearity, showing a poor coefficient of determination (R²).

- Investigation & Solution:

- Verify the preparation of standard solutions, including serial dilutions.

- Confirm that the concentration range used is appropriate for the analyte and falls within the validated range of the method [15].

- Check for instrument malfunctions, such as a faulty detector or inconsistent flow rates in chromatographic systems.

Issue 3: Failing Specificity/Selectivity

- Problem: The method cannot distinguish the analyte from interferents present in the sample matrix.

- Investigation & Solution:

- Analyze a blank sample (placebo, if available) to identify interfering peaks or signals.

- If using chromatography, optimize the separation conditions (e.g., mobile phase composition, gradient, column temperature) to improve resolution [30].

- Consider using a different detection technique or wavelength that is more specific to the analyte [15].

Issue 4: Low Analytical Recovery

- Problem: The amount of analyte recovered from a spiked sample is unacceptably low.

- Investigation & Solution:

- Investigate potential analyte degradation during sample preparation or analysis (e.g., due to light, heat, or pH).

- Examine the sample extraction process for incomplete extraction or chemical losses.

- Ensure the reference standard is pure, qualified, and properly stored [1].

Frequently Asked Questions (FAQs)

Q1: What is the core difference between method validation and method verification?

- A: Validation is the process of confirming that a newly developed analytical method is suitable for its intended purpose. Verification is the process of demonstrating that a compendial or previously validated method works satisfactorily under the actual conditions of use in a specific laboratory [31] [1].

Q2: When is a full method validation required?

- A: A full validation is typically required for new analytical methods, especially when they are part of a regulatory submission like a New Drug Application (NDA) or Abbreviated New Drug Application (ANDA). It is also necessary when an existing method undergoes significant changes that are outside the original scope [15] [1].

Q3: What is an Analytical Target Profile (ATP)?

- A: The ATP, introduced in ICH Q14, is a prospective summary that describes the intended purpose of an analytical procedure and its required performance criteria. Defining the ATP at the start of method development ensures the method is designed to be fit-for-purpose from the very beginning [15].

Q4: How is the robustness of a method determined?

- A: Robustness is evaluated by introducing small, deliberate variations in method parameters (e.g., pH, temperature, flow rate) and observing the effect on the method's results. It demonstrates the method's reliability during normal usage [15].

Q5: What is the role of a risk assessment in method validation?

- A: A risk assessment (as per ICH Q9) is used to identify potential sources of variability during method development and validation. This helps in designing robustness studies and defining a suitable control strategy, ensuring resources are focused on the most critical aspects of the method [15] [30].

Core Validation Parameters

The table below summarizes the fundamental performance characteristics that must be evaluated to demonstrate a method is fit-for-purpose, as defined by ICH Q2(R2) [15] [1].

Table 1: Core Analytical Method Validation Parameters as per ICH Q2(R2)

| Parameter | Definition | Typical Methodology & Acceptance Criteria |

|---|---|---|

| Accuracy | The closeness of agreement between the measured value and a true or accepted reference value [15]. | Analyzed by spiking a placebo with known amounts of analyte or using a certified reference material. Reported as percent recovery (%Recovery). |

| Precision | The degree of agreement among individual test results when the procedure is applied repeatedly to multiple samplings of a homogeneous sample [15]. | Repeatability: Multiple analyses of the same sample by the same analyst. Intermediate Precision: Different days, different analysts, different equipment. Reported as relative standard deviation (%RSD). |

| Specificity | The ability to assess the analyte unequivocally in the presence of other components like impurities, degradants, or matrix components [15]. | Compare chromatograms or spectra of a blank sample, a standard, and a sample spiked with potential interferents. Demonstrate baseline separation or lack of signal interference. |

| Linearity | The ability of the method to obtain test results that are directly proportional to the concentration of the analyte [15]. | Analyze a series of standard solutions across the claimed range. The correlation coefficient (r), slope, and y-intercept are reported. |

| Range | The interval between the upper and lower concentrations of analyte for which the method has suitable linearity, accuracy, and precision [15]. | Derived from the linearity study. Must be specified and justified based on the intended use of the method. |

| Limit of Detection (LOD) | The lowest amount of analyte that can be detected, but not necessarily quantified [15]. | Based on signal-to-noise ratio (e.g., 3:1) or standard deviation of the response. |

| Limit of Quantitation (LOQ) | The lowest amount of analyte that can be quantified with acceptable accuracy and precision [15]. | Based on signal-to-noise ratio (e.g., 10:1) or standard deviation of the response, confirmed by analyzing samples at LOQ for acceptable accuracy and precision. |

| Robustness | A measure of the method's capacity to remain unaffected by small, deliberate variations in method parameters [15]. | Small changes in parameters (e.g., pH ±0.2, temperature ±2°C) are introduced. System suitability criteria must still be met. |
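
To make the "standard deviation of the response" approach for LOD and LOQ concrete, the sketch below applies the commonly used ICH expressions LOD = 3.3σ/S and LOQ = 10σ/S, where σ is the standard deviation of the response and S is the slope of the calibration curve. The function name and the numeric inputs are hypothetical. How σ is estimated (for example, from blank responses or regression residuals) is left to the validation protocol.

```python
from typing import Tuple


def detection_limits(sigma: float, slope: float) -> Tuple[float, float]:
    """Return (LOD, LOQ) from the response standard deviation and calibration slope."""
    if slope <= 0:
        raise ValueError("slope must be positive")
    if sigma < 0:
        raise ValueError("sigma must be non-negative")
    lod = 3.3 * sigma / slope   # limit of detection
    loq = 10.0 * sigma / slope  # limit of quantitation
    return lod, loq


# Hypothetical inputs: sigma in detector response units,
# slope in response units per ug/mL.
lod, loq = detection_limits(sigma=0.5, slope=25.0)
print(f"LOD ~ {lod:.3f} ug/mL, LOQ ~ {loq:.3f} ug/mL")
```

Values estimated this way are starting points. The LOQ in particular still needs to be confirmed by analyzing samples at that level for acceptable accuracy and precision.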

Experimental Protocol: A Step-by-Step Roadmap

The following workflow outlines the key stages in designing and executing a compliant validation protocol, integrating principles from ICH Q2(R2) and Q14 [15] [30].

Step 1: Define the Analytical Target Profile (ATP) Before any development, clearly define the purpose of the method and its required performance criteria in an ATP. This includes the analyte, its expected concentration range, and the required levels of accuracy, precision, and other relevant characteristics [15].

Step 2: Conduct a Risk Assessment Use a systematic process (e.g., Failure Mode and Effects Analysis - FMEA) to identify and evaluate potential sources of variability in the analytical procedure. This assessment directly informs which parameters require the most attention during development and validation [30].
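
One conventional way to rank the outputs of an FMEA is the Risk Priority Number (RPN), the product of severity, occurrence, and detection scores. The sketch below assumes a 1 to 10 scale for each score. The failure modes and their scores are hypothetical examples, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class FailureMode:
    description: str
    severity: int     # impact on the reported result (1 = negligible, 10 = severe)
    occurrence: int   # likelihood of occurring (1 = rare, 10 = frequent)
    detection: int    # difficulty of detecting it (1 = always caught, 10 = never caught)

    @property
    def rpn(self) -> int:
        """Risk Priority Number: severity x occurrence x detection."""
        return self.severity * self.occurrence * self.detection


# Hypothetical failure modes and scores, for illustration only.
modes = [
    FailureMode("Mobile phase pH drift", 7, 5, 4),
    FailureMode("Column temperature fluctuation", 5, 3, 3),
    FailureMode("Sample degradation in autosampler", 8, 4, 6),
]

# Highest RPN first: these parameters get the most attention in development.
ranked = sorted(modes, key=lambda mode: mode.rpn, reverse=True)
for mode in ranked:
    print(f"{mode.rpn:4d}  {mode.description}")
```

The highest-ranked items are natural candidates for the robustness study and the control strategy.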

Step 3: Develop a Detailed Validation Protocol Create a formal document that outlines:

- The objective and scope of the validation.

- A detailed description of the analytical procedure.

- A list of validation characteristics to be tested (e.g., accuracy, precision).

- The experimental design for each characteristic.

- Pre-defined acceptance criteria for each parameter [15] [1].

Step 4: Execute Validation Experiments Perform the experiments as stipulated in the protocol. This involves:

- Accuracy: Typically assessed by analyzing samples spiked with known quantities of analyte across the specified range (e.g., at 3 levels, in triplicate). Calculate the mean percent recovery [15].

- Precision:

  - Repeatability: Analyze a minimum of 6 determinations at 100% of the test concentration. Calculate the %RSD.

  - Intermediate Precision: Have a second analyst repeat the study on a different day and/or with different equipment. The combined %RSD should meet the pre-defined acceptance criteria [15].

- Linearity & Range: Prepare a minimum of 5 concentration levels spanning the declared range. Inject each level in duplicate. Plot response versus concentration and perform linear regression analysis [15].

- Specificity: Demonstrate that the analyte response is free from interference by analyzing blanks, placebo, and samples spiked with potential interferents (degradants, impurities) [15].

- Robustness: Intentionally vary parameters like column temperature (±2°C), flow rate (±0.1 mL/min), or mobile phase pH (±0.2 units) in a controlled way. Evaluate the impact on system suitability criteria [15].
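
The calculations behind the accuracy, precision, and linearity experiments above can be sketched in a few lines. All data below are hypothetical, and the helper names are illustrative. Pooling both analysts' results is only one simple reading of "combined %RSD"; a protocol may instead call for a variance-component analysis.

```python
import math
import statistics
from typing import Sequence, Tuple


def percent_recovery(measured: float, spiked: float) -> float:
    """Recovery of a spiked sample as a percentage of the amount added."""
    return 100.0 * measured / spiked


def percent_rsd(values: Sequence[float]) -> float:
    """Relative standard deviation (%), using the sample (n - 1) standard deviation."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)


def linear_fit(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float, float]:
    """Ordinary least-squares slope, intercept, and correlation coefficient r."""
    mean_x, mean_y = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / math.sqrt(sxx * syy)
    return slope, intercept, r


# Accuracy: triplicate at one spike level (hypothetical values, ug/mL).
spiked_amount = 10.0
measured = [9.9, 10.1, 10.0]
mean_recovery = statistics.mean([percent_recovery(m, spiked_amount) for m in measured])

# Repeatability: six determinations at 100% of the test concentration.
repeatability = [100.2, 99.8, 100.5, 99.6, 100.1, 99.8]
repeatability_rsd = percent_rsd(repeatability)

# Intermediate precision: second analyst's results pooled with the first set.
second_analyst = [99.9, 100.4, 99.7, 100.3, 100.0, 99.5]
intermediate_rsd = percent_rsd(repeatability + second_analyst)

# Linearity: five levels spanning the range (% of target vs. detector response).
concentrations = [50.0, 75.0, 100.0, 125.0, 150.0]
responses = [100.8, 151.5, 200.9, 251.2, 300.6]
slope, intercept, r = linear_fit(concentrations, responses)

print(f"Mean recovery: {mean_recovery:.1f}%")
print(f"Repeatability %RSD: {repeatability_rsd:.2f}")
print(f"Intermediate precision %RSD: {intermediate_rsd:.2f}")
print(f"slope={slope:.4f}, intercept={intercept:.3f}, r={r:.5f}")
```

Each computed value would then be compared against the acceptance criteria fixed in the protocol before the experiments were run.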

Step 5: Document Results and Finalize the Report Compile all data into a final validation report. The report should include a summary of the results, a comparison against the pre-defined acceptance criteria, a discussion of any deviations, and a final conclusion on the method's fitness for its intended purpose [1].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following materials are critical for successfully developing and validating analytical methods for organic compounds.

Table 2: Essential Materials for Analytical Method Development and Validation

| Item | Function & Importance |

|---|---|

| Certified Reference Standards | High-purity, well-characterized analyte substances used to prepare calibration standards. Essential for establishing method accuracy, linearity, and for qualifying analysts [1] [30]. |

| Chromatographic Columns | The stationary phase for separation (e.g., C18, phenyl). Different selectivities are required to achieve resolution of the analyte from impurities and matrix components, which is critical for specificity [30]. |

| High-Purity Solvents & Reagents | Used for mobile phases and sample preparation. Impurities can cause baseline noise, ghost peaks, and interfere with detection, adversely affecting accuracy and LOD/LOQ [1]. |

| Stable Matrix/Placebo Samples | The analyte-free sample matrix. Used to prepare spiked samples for accuracy, precision, and recovery studies, and to demonstrate specificity by proving the absence of interfering signals [15] [1]. |

| System Suitability Standards | A reference preparation used to confirm that the chromatographic system and procedure are capable of providing data of acceptable quality. Tests often include parameters like plate count, tailing factor, and resolution [31] [1]. |

In pharmaceutical analysis, specificity is the ability of a method to accurately measure the analyte in the presence of other components like impurities, degradation products, or matrix components [32] [33]. Demonstrating specificity is a fundamental requirement for analytical method validation as per ICH Q2(R1) guidelines [34] [32].

Forced Degradation Studies (FDS) are the primary experimental tool for proving that an analytical method is stability-indicating [35] [34]. These studies involve intentionally exposing a drug substance or product to harsh stress conditions to accelerate its degradation. The goal is to generate samples containing potential degradants, which are then used to verify that the analytical method can distinguish the active ingredient from its breakdown products [34] [36]. A well-executed FDS provides critical data on degradation pathways and products, which informs formulation development, packaging choices, and storage conditions, ultimately ensuring drug safety and efficacy [35] [37].

Frequently Asked Questions (FAQs)

1. What is the core regulatory purpose of a forced degradation study?

The core purpose is threefold [35] [34]:

- Identify Degradation Products and Pathways: To understand how the drug substance breaks down under various stress conditions and to identify the resulting degradation products. This is crucial for assessing potential toxicity risks [35].

- Verify Stability-Indicating Methods: To generate samples that prove the developed analytical method (e.g., HPLC) can accurately quantify the active ingredient without interference from degradation products or impurities, as required by ICH Q2(R1) [34] [36].

- Support Product Development: The findings inform formulation design, selection of packaging, and establishment of retest periods and storage conditions [35].

2. How much degradation should we aim for in a stress study?

The generally accepted target for small molecule drugs is 5–20% degradation of the active pharmaceutical ingredient (API) [34]. This range ensures that sufficient degradants are generated to challenge the analytical method without causing excessive secondary degradation, which may not be relevant to real-world stability [34].
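
A simple check against this target window could look like the sketch below. It assumes degradation is expressed as the loss of API assay relative to the unstressed sample. The function names, labels, and example values are hypothetical.

```python
def percent_degradation(initial_assay: float, stressed_assay: float) -> float:
    """Loss of API relative to the unstressed sample, in percent."""
    return 100.0 * (initial_assay - stressed_assay) / initial_assay


def classify_stress(degradation: float, low: float = 5.0, high: float = 20.0) -> str:
    """Label a stress condition relative to the 5-20% degradation target."""
    if degradation < low:
        return "under-stressed"
    if degradation > high:
        return "over-stressed"
    return "within target"


# Hypothetical assay results (% label claim) before and after acid stress.
degradation = percent_degradation(initial_assay=100.0, stressed_assay=88.0)
print(f"{degradation:.1f}% degradation: {classify_stress(degradation)}")
```

An "under-stressed" result suggests increasing the severity or duration of the condition. An "over-stressed" result suggests milder conditions, to avoid secondary degradants.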

3. What are the key stress conditions required by ICH guidelines?

ICH Q1A(R2) recommends investigating the drug's susceptibility to [35] [34]:

- Hydrolytic Stress: Exposure to acidic and basic conditions (e.g., 0.1-1.0 M HCl or NaOH) at elevated temperatures (40-80°C) [35] [34].

- Oxidative Stress: Treatment with oxidizing agents like hydrogen peroxide (3-30%) at room or elevated temperature [35].

- Thermal Stress: Exposure to elevated temperatures (e.g., 40-80°C) in solid state or solution [35] [34].

- Photolytic Stress: Exposure to UV and visible light as per the conditions outlined in ICH Q1B [34].

4. What is peak purity analysis and why is it critical?

Peak Purity Analysis (PPA) is an assessment to confirm that a chromatographic peak (typically from an HPLC analysis) represents a single, pure compound and is not a mixture of co-eluting substances, such as the API and a degradant [36]. It is a critical piece of evidence to demonstrate that a method is truly stability-indicating. If a degradant co-elutes with the main peak, the method cannot accurately measure the purity or potency of the drug over time [36].
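
UV-based peak purity generally rests on comparing spectra taken at different points across a peak. One frequently described measure is the spectral contrast angle between two spectra treated as vectors. The sketch below is a simplified illustration of that idea with made-up absorbance values. It is not any vendor's purity algorithm, which would typically also apply baseline correction and noise-based thresholds.

```python
import math
from typing import Sequence


def spectral_contrast_angle(a: Sequence[float], b: Sequence[float]) -> float:
    """Angle in degrees between two spectra sampled at the same wavelengths."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    cosine = dot / (norm_a * norm_b)
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding just outside [-1, 1]
    return math.degrees(math.acos(cosine))


# Hypothetical absorbance values at five wavelengths.
apex = [0.10, 0.40, 0.90, 0.50, 0.20]
upslope_same_shape = [0.05, 0.20, 0.45, 0.25, 0.10]  # same shape, half the intensity
tail_distorted = [0.06, 0.20, 0.40, 0.35, 0.20]      # shape changed by a co-eluting species

print(spectral_contrast_angle(apex, upslope_same_shape))  # close to zero
print(spectral_contrast_angle(apex, tail_distorted))      # clearly larger
```

Because the angle depends only on spectral shape, an impurity whose UV spectrum closely matches the API's produces almost no angle, so a UV-based check may fail to see it.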

5. My peak purity assessment passed, but I suspect a co-eluting impurity. What could be the cause?

This is a potential false negative result. The most common causes are [36]:

- The co-eluting impurity has a nearly identical UV spectrum to the parent API.

- The impurity is present at a very low concentration (e.g., <0.1%).

- The impurity elutes very close to the peak apex of the main compound.

In such cases, techniques with higher discriminating power, such as Mass Spectrometry (MS), should be employed for peak purity assessment [36].

Troubleshooting Guides

Guide: Overcoming Challenges in Forced Degradation Study Design

Problem: Inconsistent or excessive degradation, leading to irrelevant degradation products.

| Challenge | Solution & Best Practices |

|---|---|

| Determining Optimal Stress Severity | Use a Design of Experiments (DoE) approach to systematically optimize factors like concentration, temperature, and time. Start with milder conditions and increase severity incrementally to achieve the 5-20% degradation target [34]. |

| Handling Highly Stable Molecules | For molecules that show little degradation, consider extending exposure times (up to 14 days in solution) or employing more aggressive conditions, such as higher temperatures or stronger acid/base concentrations, with scientific justification [34]. |

| Justifying Conditions to Regulators | Base your study design on the molecule's chemical structure and known reactive functional groups (e.g., esters for hydrolysis, phenols for oxidation). Refer to emerging regulatory guidelines, such as Anvisa RDC 964/2025, which allows for scientific justification of the approach [37]. |

Guide: Troubleshooting Peak Purity Analysis

Problem: Inconclusive or failing peak purity results during method validation.

| Symptom | Potential Cause | Investigation & Resolution |

|---|---|---|

| Purity Angle > Purity Threshold (Impurity detected) | True Co-elution: A degradant is not fully separated from the main peak. | Action: Modify the chromatographic method (e.g., adjust gradient, change column, modify pH of mobile phase) to improve resolution [36]. |

| Purity Angle > Purity Threshold (Impurity detected) | False Positive: A significant baseline shift due to a mobile phase gradient, suboptimal integration, or noise at extreme wavelengths (<210 nm) [36]. | Action: Re-process data with careful baseline placement. If the issue persists, consider using a mobile phase that produces a flatter baseline. |

| Purity Angle < Purity Threshold (No impurity detected) but other data suggests impurity. | False Negative: The co-eluting impurity has a nearly identical UV spectrum or a very poor UV response [36]. | Action: Employ an orthogonal technique for PPA, such as Mass Spectrometry (MS). MS can detect co-eluting compounds based on mass differences, even when UV spectra are identical [36]. |

| Poor Mass Balance (Assay + Impurities outside 90-110%) | Undetected Degradants: Degradation products may be forming that are not detected by the chosen analytical method (e.g., no chromophore for UV detection) [35] [36]. | Action: Use a universal detector like a Corona Charged Aerosol Detector (CAD) or combine UV with MS detection to identify and quantify non-UV absorbing degradants [36]. |
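
The mass balance check in the last row can be sketched as follows. This version assumes assay and impurity levels are expressed on the same percentage basis and that degradants respond like the API (relative response factors of 1), which is often not strictly true. The function names and example numbers are hypothetical.

```python
from typing import Sequence


def mass_balance(stressed_assay: float,
                 impurities: Sequence[float],
                 initial_assay: float = 100.0) -> float:
    """Assay plus total impurities after stress, as a percentage of the initial assay."""
    return 100.0 * (stressed_assay + sum(impurities)) / initial_assay


def mass_balance_ok(value: float, low: float = 90.0, high: float = 110.0) -> bool:
    """True when mass balance falls inside the 90-110% window."""
    return low <= value <= high


# Hypothetical stressed-sample results (% of label claim).
good = mass_balance(stressed_assay=88.0, impurities=[6.5, 3.0])
poor = mass_balance(stressed_assay=85.0, impurities=[2.0])

print(f"{good:.1f}% -> acceptable: {mass_balance_ok(good)}")
print(f"{poor:.1f}% -> acceptable: {mass_balance_ok(poor)}")
```

A result below the window, as in the second case, is the cue to look for degradants the detector is not seeing.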

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key materials used in forced degradation studies and analytical method validation [38] [35] [39].

| Reagent / Material | Function & Application in Analysis |

|---|---|

| Hydrochloric Acid (HCl) | Used in acid hydrolysis stress studies to simulate degradation under acidic conditions [35]. |

| Sodium Hydroxide (NaOH) | Used in base hydrolysis stress studies to simulate degradation under basic conditions [35]. |

| Hydrogen Peroxide (H₂O₂) | The most common reagent for oxidative stress studies to force the formation of oxidative degradants [35]. |

| High-Quality HPLC Solvents (ACN, MeOH) | Used in the preparation of the mobile phase and sample solutions. Purity is critical for achieving low baseline noise and reproducible results [38] [39]. |