Multi-Factor Design of Experiments (DoE): A Strategic Framework for Accelerating Drug Development

Abstract

This article provides a comprehensive guide to multi-factor Design of Experiments (DoE) for researchers, scientists, and professionals in drug development. It covers the foundational principles of moving beyond one-factor-at-a-time (OFAT) approaches, explores advanced methodological frameworks like factorial and response surface designs for complex process optimization, offers practical strategies for troubleshooting and improving robustness, and concludes with validation techniques and comparative analyses of successful industry case studies. The content is designed to equip teams with the knowledge to enhance process understanding, reduce development timelines, and improve the success rate of bringing new therapies to market.

Beyond One-Factor-at-a-Time: Building a Foundation for Multi-Factor Experimentation

The Critical Limitations of OFAT in Complex Biological Systems

Frequently Asked Questions (FAQs)

Q1: What is OFAT and why is it commonly used in biological research? OFAT, or One-Factor-at-a-Time, is a traditional experimental approach where researchers vary a single factor while keeping all other variables constant. After observing the outcome, they reset conditions before testing the next factor [1]. Its popularity stems from its straightforward, intuitive nature and ease of implementation, requiring no advanced statistical knowledge for initial setup [1] [2].

Q2: What are the main critical limitations of OFAT in complex biological systems? OFAT possesses several critical limitations that are particularly problematic in biology:

- Inability to Detect Interactions: This is the most significant flaw. OFAT assumes factors act independently, but biological systems are defined by complex, emergent interactions between genetic and environmental factors [3] [2]. OFAT can completely miss these interactions, leading to incorrect conclusions. For example, the optimal amount of a growth factor may be different for each cell line; testing them independently would miss this critical detail [2].

- Inefficient Use of Resources: OFAT requires a large number of experimental runs to investigate multiple factors, consuming significant time, costly reagents, and other resources. This is especially detrimental when working with precious biological samples [1] [2].

- High Risk of Misleading Results: By failing to account for interactions, OFAT can identify a sub-optimal state that is far from the true optimum. It is easy to conclude a factor has no effect, or to misjudge the direction of its effect, when it is studied in isolation from its interacting partners [3] [2].

- Lack of Optimization Capability: OFAT is primarily suited for understanding individual effects, not for finding the optimal combination of factors to maximize or minimize a desired response (e.g., protein yield or cell growth) [1].

Q3: How does Design of Experiments (DoE) overcome these limitations? DoE is a statistical framework that systematically tests multiple factors simultaneously. Its advantages over OFAT include [3] [1] [2]:

- Reveals Interaction Effects: DoE is specifically designed to identify and quantify how factors interact.

- Greater Efficiency and Confidence: It extracts more information from fewer experiments, saving time and resources. The structured approach and statistical analysis provide higher confidence in the results.

- Robust Optimization: Using methods like Response Surface Methodology (RSM), DoE can navigate a complex design space to find true optimal conditions, including robust "plateaus" that are insensitive to small variations [2].

Q4: My biological system is very complex with many unknown variables. Can DoE still help? Yes. In fact, DoE is particularly powerful in such scenarios. Its empirical nature helps tackle complexity without the bias of pre-existing theoretical frameworks. You can start with screening designs (e.g., fractional factorial designs) to efficiently identify which factors, from a large list of possibilities, have a material impact on your response. This allows you to focus resources on the most important variables [4] [2].

Q5: What should I do if I cannot control all the factors in my biological experiment? This is a common challenge. DoE handles it through specific design principles [4]:

- Blocking: If you know a source of variability (e.g., different days, different technicians, different reagent batches), you can group experiments into homogeneous "blocks" to isolate and account for this nuisance factor.

- Randomization: Running your experimental trials in a random order helps minimize the impact of lurking, uncontrolled variables.

- Covariates: If you can measure but not control a factor (e.g., ambient humidity), you can record it and include it as a covariate in your statistical analysis.
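As a minimal sketch of how blocking and randomization work together, the snippet below spreads a two-level full factorial over two blocks (for example, two days) and shuffles the run order inside each block. The factor names, the two-day split, and the choice to assign blocks by the sign of the highest-order interaction are illustrative assumptions, not part of the cited guidance.

```python
import itertools
import random

def blocked_run_order(factors, seed=0):
    """Split a 2-level full factorial into two blocks and randomize within each."""
    rng = random.Random(seed)  # fixed seed so the run sheet is reproducible
    runs = list(itertools.product([-1, 1], repeat=len(factors)))
    blocks = {1: [], 2: []}
    for run in runs:
        # The sign of the highest-order interaction decides the block, so only
        # that interaction is confounded with the block (day) difference.
        sign = 1
        for level in run:
            sign *= level
        blocks[1 if sign < 0 else 2].append(dict(zip(factors, run)))
    for block_runs in blocks.values():
        rng.shuffle(block_runs)  # randomize run order inside each block
    return blocks

# Hypothetical factors for a cell culture study.
plan = blocked_run_order(["pH", "temperature", "glucose"])
for day, day_runs in plan.items():
    for run in day_runs:
        print(f"day {day}: {run}")
```

Each block contains every factor at its high and low level equally often, so a shift between days does not bias the main-effect estimates.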

Troubleshooting Guides

Problem 1: Inconsistent or Irreproducible Experimental Results

Potential Cause: Unidentified interactions between factors are leading you to operate in a sensitive region of your design space, where small, uncontrolled variations have large effects on the outcome [2].

Solution Steps:

- Suspect Factor Interactions: If your OFAT results are difficult to reproduce, interactions are a likely culprit.

- Switch to a Factorial Design: Implement a DoE screening design, such as a 2-level full or fractional factorial design, to systematically test your key factors together.

- Analyze for Interactions: Use the statistical analysis from the DoE to create an interaction plot. This visualization will clearly show if the effect of one factor depends on the level of another.

- Find a Robust Region: Use a follow-up response surface design to locate a factor setting that is on a "plateau" of high performance, which is more robust to variation than a sharp "peak" [2].
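To make the idea of an interaction concrete, here is a small sketch that computes main and interaction effects from a 2x2 factorial. The titer values and the meaning given to factors A and B are invented for illustration only.

```python
def factorial_effects(y):
    """Main and interaction effects for a 2x2 factorial.

    y maps coded (A, B) levels, each -1 or +1, to the measured response.
    """
    effect_a = ((y[(1, -1)] + y[(1, 1)]) - (y[(-1, -1)] + y[(-1, 1)])) / 2
    effect_b = ((y[(-1, 1)] + y[(1, 1)]) - (y[(-1, -1)] + y[(1, -1)])) / 2
    # Interaction: half the difference between A's effect at high B and at low B.
    effect_ab = ((y[(1, 1)] - y[(-1, 1)]) - (y[(1, -1)] - y[(-1, -1)])) / 2
    return effect_a, effect_b, effect_ab

# Hypothetical titers (g/L): A = growth factor dose, B = cell line.
titer = {(-1, -1): 2.0, (1, -1): 3.0, (-1, 1): 2.5, (1, 1): 1.5}
a, b, ab = factorial_effects(titer)
print(f"A effect: {a:+.2f}, B effect: {b:+.2f}, AxB interaction: {ab:+.2f}")
```

In this made-up data set the average effect of A is zero, yet A raises the titer in one cell line and lowers it in the other. An OFAT study run in only one cell line would report a clear effect of A that does not hold in the other.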

Problem 2: Failing to Achieve Optimal System Performance (e.g., Low Titer, Poor Growth)

Potential Cause: The OFAT approach has led you to a local optimum and missed the global optimum because it cannot navigate the complex, multi-dimensional relationship between factors [2].

Solution Steps:

- Map the Design Space: Employ a Response Surface Methodology (RSM) design, such as a Central Composite Design or Box-Behnken Design [5] [1].

- Build a Predictive Model: Fit a quadratic model to your experimental data. This model will describe the curvature in your response.

- Locate the True Optimum: Use the model's graphical outputs, like contour plots and 3D surface plots, to identify the combination of factor levels that yields the maximum (or minimum) response.

- Validate the Model: Run a small number of confirmation experiments at the predicted optimal settings to verify the model's accuracy.

Problem 3: Experimentation Is Too Slow or Resource-Intensive

Potential Cause: The sequential nature of OFAT is inherently inefficient for studying multiple factors, leading to an explosion in the required number of experimental runs [1] [2].

Solution Steps:

- Adopt a Sequential DoE Strategy:

  - Step 1: Screening: Use a highly fractional factorial design or a Plackett-Burman design to quickly screen a large number of factors and identify the vital few.

  - Step 2: Characterization: Perform a more detailed factorial design on the important factors to characterize main effects and interactions.

  - Step 3: Optimization: Use RSM on the most critical factors to find the optimum [5] [4].

- Utilize Software: Leverage statistical software (e.g., JMP, Minitab, R with DoE packages) to generate and analyze efficient experimental designs [4] [6].
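The run-count arithmetic behind the screening step can be sketched as follows. The function names are hypothetical helpers, and the (k, p) pairs are example choices rather than recommendations.

```python
def full_factorial_runs(k, levels=2):
    """Runs needed to test every combination of k factors."""
    return levels ** k

def fractional_factorial_runs(k, p):
    """Runs in a two-level 2^(k-p) fractional factorial."""
    return 2 ** (k - p)

def plackett_burman_runs(k):
    """Smallest Plackett-Burman size: a multiple of 4 with at least k + 1 runs."""
    return 4 * (k // 4 + 1)

# Example factor counts with an illustrative fraction for each.
for k, p in [(4, 1), (7, 4), (11, 7)]:
    print(
        f"{k} factors: full = {full_factorial_runs(k)}, "
        f"2^({k}-{p}) = {fractional_factorial_runs(k, p)}, "
        f"Plackett-Burman = {plackett_burman_runs(k)}"
    )
```

The savings come at the price of aliasing: the more aggressive the fraction, the fewer interactions can be separated from main effects, which is why screening is followed by a characterization step.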

Data Presentation

The table below quantifies the key differences between OFAT and DoE approaches.

Table 1: A Quantitative Comparison of OFAT and DoE

| Feature | OFAT Approach | DoE Approach | Implication for Biological Research |
|---|---|---|---|
| Detection of Interactions | Cannot detect interactions [1] [2] | Explicitly quantifies interaction effects [1] [2] | Prevents misleading conclusions in complex networks |
| Experimental Efficiency | Low; requires many runs (e.g., 16 for 4 factors) [1] | High; fewer runs for same information (e.g., 8 for 4 factors) [2] | Saves time, reagents, and biological materials |
| Statistical Robustness | Low; no inherent estimation of experimental error [1] | High; built on randomization, replication, & blocking [1] | Provides confidence in results and their reproducibility |
| Optimization Capability | Limited to understanding individual effects [1] | Powerful for single and multi-response optimization [5] [2] | Finds true optimal conditions for yield, growth, etc. |
| Risk of Sub-Optimal Result | High; easily misses global optimum [2] | Low; systematically explores design space [3] | Leads to better-performing biological systems |

Experimental Protocols

Protocol 1: Screening for Critical Factors Using a Fractional Factorial Design

Objective: To efficiently identify the most influential factors from a large set of potential variables in a cell culture medium optimization study.

Methodology:

- Define Factors and Ranges: List all factors to be investigated (e.g., pH, temperature, concentration of nutrients, trace elements). Define a high and low level for each based on prior knowledge.

- Select Design: Use a fractional factorial design, such as a 2^(k-p) design, where k is the number of factors and p determines the fraction. This allows studying many factors with a fraction of the runs of a full factorial.

- Randomize and Execute: Randomize the run order provided by the design to minimize confounding from lurking variables. Perform the experiments according to this randomized list.

- Analyze Results: Use statistical software to perform an Analysis of Variance (ANOVA). Identify significant main effects and two-factor interactions by examining Pareto charts and normal probability plots of the effects.
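A half-fraction design of the kind described in this protocol can be generated in a few lines. This is a sketch that assumes four generic factors A to D and the common generator D = ABC; in practice a statistics package would usually produce the design and its alias structure.

```python
import itertools

def half_fraction(base_factors, extra_factor):
    """2^(k-1) design: a full factorial in the base factors, with the extra
    factor set to the product of all base-factor levels (e.g. D = ABC)."""
    design = []
    for levels in itertools.product([-1, 1], repeat=len(base_factors)):
        run = dict(zip(base_factors, levels))
        product = 1
        for level in levels:
            product *= level
        run[extra_factor] = product  # generator column
        design.append(run)
    return design

# Illustrative 2^(4-1) design: 8 runs instead of the full 16.
design = half_fraction(["A", "B", "C"], "D")
for run in design:
    print(run)
```

With the generator D = ABC, main effects are aliased only with three-factor interactions, while two-factor interactions are aliased with each other in pairs (for example AB with CD), so follow-up runs may be needed to separate them.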

Protocol 2: Process Optimization Using a Central Composite Design (RSM)

Objective: To model the relationship between critical factors and a key response (e.g., protein expression titer) and locate the optimal factor settings.

Methodology:

- Define Critical Factors: Select the 2-4 most important factors identified from the screening phase.

- Create Design: Generate a Central Composite Design (CCD). A CCD includes a factorial part, axial points (to estimate curvature), and center points (to estimate pure error). It is highly efficient for fitting a quadratic model [5] [1].

- Run Experiments: Execute the experiments in a randomized order.

- Model and Optimize: Fit a second-order polynomial model to the data. Use ANOVA to check the model's significance and lack-of-fit. Visualize the response surface with contour and 3D plots. Use the desirability function to find factor settings that simultaneously optimize multiple responses [6].
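The structure of a Central Composite Design can be sketched directly in coded units. The snippet assumes a rotatable design, where the axial distance is the fourth root of the number of factorial runs, and four center points; both are common defaults rather than requirements of this protocol.

```python
import itertools

def central_composite(k, n_center=4):
    """Coded design points for a rotatable CCD with k factors."""
    alpha = (2 ** k) ** 0.25  # axial distance that makes the design rotatable
    factorial = [list(p) for p in itertools.product([-1.0, 1.0], repeat=k)]
    axial = []
    for i in range(k):
        for distance in (-alpha, alpha):
            point = [0.0] * k
            point[i] = distance  # move along one factor axis only
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]  # replicated center points
    return factorial + axial + center

design = central_composite(2)
for point in design:
    print([round(x, 3) for x in point])
```

For two factors this gives 4 factorial, 4 axial, and 4 center runs. The run order should still be randomized before execution, and coded levels must be mapped back to real units (for example, pH or temperature).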

Pathway and Workflow Visualizations

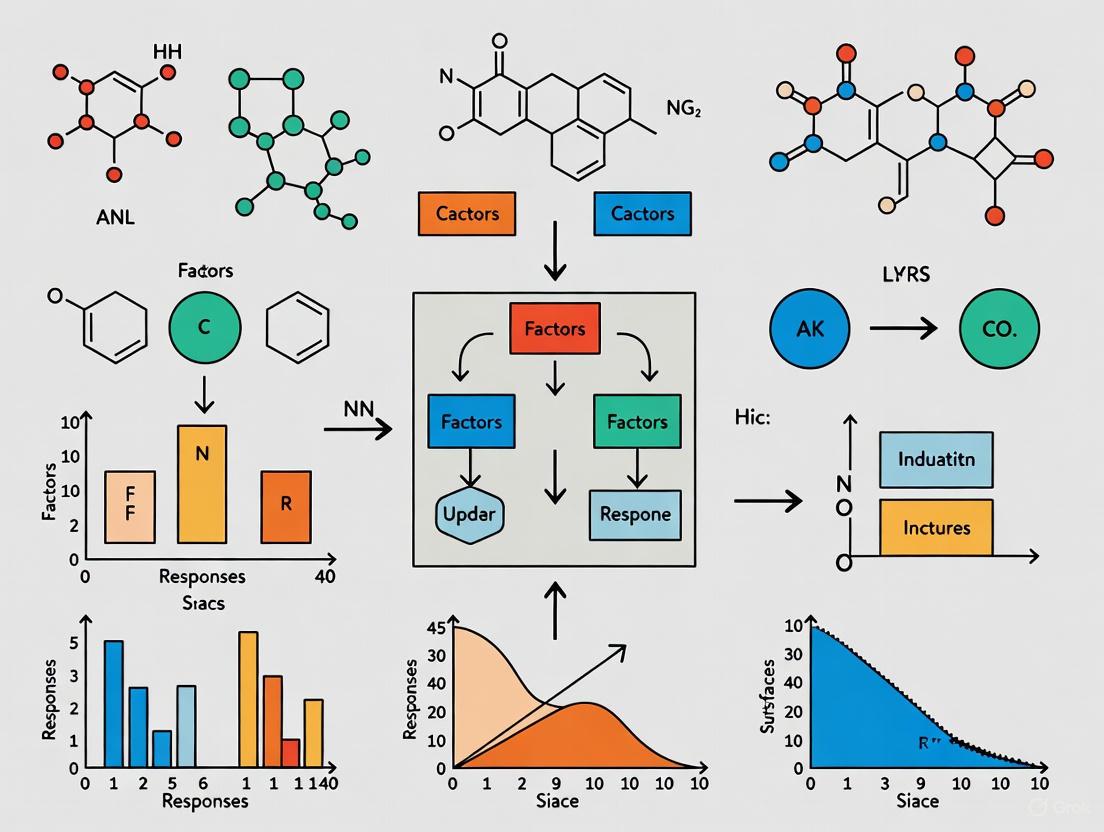

Diagram Title: Workflow Comparison of OFAT and DoE

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for DoE Implementation

| Reagent/Material | Function in DoE Context |
|---|---|
| Cell Culture Media Components | The factors to be optimized (e.g., glucose, amino acids, growth factors). Their concentrations are systematically varied in the experimental design. |
| Statistical Software (JMP, Minitab, R) | Crucial for generating efficient experimental designs, randomizing run orders, and performing complex statistical analyses (ANOVA, regression) to interpret results [4] [6]. |
| High-Throughput Screening Plates | Enable the parallel execution of multiple experimental runs from a DoE matrix, drastically reducing hands-on time and improving consistency. |
| Precision Liquid Handling Systems | Ensure accurate and reproducible dispensing of reagents and cells across all experimental runs, which is critical for reducing experimental error. |
| DoE Screening Designs | Pre-defined statistical templates (e.g., Plackett-Burman, Fractional Factorial) used to efficiently identify the most important factors from a long list with minimal runs [2]. |
| Response Surface Designs | Pre-defined statistical templates (e.g., Central Composite, Box-Behnken) used to model curvature and locate optimal factor settings after screening [5] [1]. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My experimental results are inconsistent and not reproducible. What core DoE principle might I be violating? A: This issue commonly stems from inadequate Replication [7]. Replication involves running multiple independent experimental units under the same treatment conditions. It increases statistical power, quantifies experimental noise, and improves the reliability of effect estimates. Ensure your design includes sufficient replicates to distinguish true signal from random variation.

Q2: I suspect an unknown external factor is biasing my results. How can DoE principles guard against this? A: You should apply Randomization [7]. This principle involves randomly assigning treatments or running experimental trials in a random order. It ensures that the influence of unknown or uncontrollable "nuisance" factors (e.g., instrument drift, ambient temperature fluctuations) is distributed evenly across all treatments, preventing them from being confounded with your factor effects.

Q3: I have a known source of variability (e.g., different reagent batches, day of week) that I cannot eliminate. How can I account for it in my design? A: Use Local Control (Blocking) [7]. Group similar experimental units into blocks (e.g., all experiments using the same reagent batch). By comparing treatments within the same block, you isolate and remove the block's effect from the experimental error, leading to a more precise analysis of the factors you care about.

Q4: I'm screening many factors. How do I efficiently identify the few that truly matter? A: Leverage the Effect Sparsity principle [7]. In most systems, only a small subset of factors and their low-order interactions have significant effects. Use screening designs (e.g., fractional factorials, Plackett-Burman) to efficiently test many factors with few runs, focusing resources on characterizing the vital few.

Q5: When analyzing a factorial experiment, which effects should I prioritize in my model? A: Follow the Effect Hierarchy principle [7]. Main effects (individual factors) are most likely to be significant, followed by two-factor interactions, then higher-order interactions. Prioritize identifying and estimating lower-order effects before considering complex interactions.

Q6: Can I include an interaction term in my model if the corresponding main effects are not significant? A: This is guided by Effect Heredity [7]. Strong heredity suggests an interaction should only be considered if both parent main effects are significant. Weak heredity allows it if at least one parent is significant. These are guidelines to prevent overfitting and build more interpretable models.
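The heredity guideline can be expressed as a simple filter over candidate two-factor interactions. The function name and the example factor labels below are hypothetical; the rule itself follows the strong and weak definitions given in the answer above.

```python
from itertools import combinations

def candidate_interactions(factors, active_main_effects, heredity="strong"):
    """Two-factor interactions worth considering under a heredity rule."""
    active = set(active_main_effects)
    keep = []
    for a, b in combinations(factors, 2):
        n_active = (a in active) + (b in active)  # how many parents are active
        if heredity == "strong" and n_active == 2:
            keep.append((a, b))
        elif heredity == "weak" and n_active >= 1:
            keep.append((a, b))
    return keep

# Illustrative case: four factors, with A and C found active in screening.
factors = ["A", "B", "C", "D"]
strong = candidate_interactions(factors, ["A", "C"], "strong")
weak = candidate_interactions(factors, ["A", "C"], "weak")
print("strong heredity:", strong)
print("weak heredity:", weak)
```

Strong heredity keeps only the A-C interaction here, while weak heredity keeps every pair that involves A or C. Either way, the rule narrows the model search rather than proving that an excluded interaction is absent.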

Q7: My traditional OFAT approach failed to find optimal conditions. Why would a multifactorial DoE be better? A: OFAT methods cannot detect interactions between factors [8]. In a system where factors interact, the effect of one factor depends on the level of another. DoE systematically varies all factors simultaneously, allowing you to model these interactions and uncover a true optimal region that OFAT would miss, as demonstrated in the Temperature/pH Yield example [8].

Q8: How does DoE contribute to regulatory goals like Quality by Design (QbD) in pharma? A: DoE is a foundational tool for implementing QbD [9]. It provides the statistical framework to build a design space, a multidimensional region where critical process parameters (CPPs) and critical material attributes (CMAs) are shown to produce material meeting Critical Quality Attributes (CQAs). This moves quality assurance from end-product testing to being built into the process through deep process understanding.

Data Presentation: OFAT vs. DoE Efficiency

Table 1: Comparison of Experimental Effort and Insight for a Two-Factor Optimization

Scenario: Maximizing yield with the factors Temperature (T) and pH.

| Metric | One-Factor-At-a-Time (OFAT) Approach [8] | Design of Experiments (DoE) Approach [8] |
|---|---|---|
| Total Experiments | 13 runs (7 for T + 6 for pH) | 12 runs (9 treatment combos + 3 replicates) |
| Identified Maximum Yield | 86% (at T=30°C, pH=6) | 92% (Predicted at T=45°C, pH=7) |
| Ability to Detect Interaction (T*pH) | No | Yes |
| Coverage of Experimental Region | Limited to two lines | Comprehensive surface model |
| Resource Efficiency | Lower (missed optimum, no interaction data) | Higher (found a better optimum with fewer runs than OFAT: 12 vs. 13) |

Table 2: Core DoE Principles for Robust Experimentation [7]

| Principle | Purpose | Key Action |
|---|---|---|
| Replication | Increase precision, estimate error. | Run multiple independent units per treatment condition. |
| Randomization | Neutralize unknown bias, validate error estimates. | Randomly assign treatments/run order. |
| Blocking (Local Control) | Eliminate known nuisance variation. | Group similar units; randomize within blocks. |
| Effect Sparsity | Focus resources on vital factors. | Use screening designs for many factors. |
| Effect Hierarchy | Prioritize model terms. | Model main effects before interactions. |
| Effect Heredity | Guide model building for interactions. | Link interactions to their parent main effects. |

Experimental Protocols

Protocol 1: Conducting a Screening DoE for Assay Development

Objective: Identify critical factors (e.g., reagent concentration, incubation time, temperature) affecting an assay's precision and accuracy.

- Define Purpose & Responses: Clearly state the goal (e.g., improve precision). Define measurable responses (e.g., %CV, signal-to-noise ratio) [10].

- Perform Risk Assessment: List all potential factors from materials, equipment, methods, and analysts. Use risk ranking to select 4-8 most likely influential factors for the study [10].

- Select Design: For 5-8 factors, use a fractional factorial or Plackett-Burman screening design. These designs use a minimal number of runs to identify active main effects [10].

- Implement Error Control: Include replicates (full, independent repeats of a run, not repeated measurements of the same run) to estimate pure error. Randomize the run order of all experiments [7].

- Execute & Analyze: Run experiments as per randomized list. Analyze data using multiple regression. Apply the Effect Sparsity principle to identify the 2-4 most significant factors [7].

- Plan Next Steps: Use significant factors from screening in a more detailed optimization design (e.g., Response Surface Methodology).
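For the 5-8 factor case mentioned in this protocol, a 12-run Plackett-Burman design is one option. The sketch below builds it from the standard 12-run generator row by cyclic shifting; which columns are assigned to which assay factor is left to the user, and unused columns can serve as a rough check on noise.

```python
# Standard generator row for the 12-run Plackett-Burman design.
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

def plackett_burman_12():
    """12-run, 11-column Plackett-Burman design in coded -1/+1 levels."""
    rows = []
    row = list(PB12_GENERATOR)
    for _ in range(11):
        rows.append(row)
        row = [row[-1]] + row[:-1]  # cyclic shift right by one position
    rows.append([-1] * 11)  # final run with every factor at its low level
    return rows

design = plackett_burman_12()
for run in design:
    print(" ".join(f"{level:+d}" for level in run))
```

Every column is balanced (six high, six low) and orthogonal to every other column, which is what lets main effects be estimated independently. Two-factor interactions are partially aliased with main effects in this design, so it is suited to screening rather than to modeling interactions.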

Protocol 2: Executing a Response Surface DoE for Process Optimization

Objective: Model curvature and find optimal setpoints for Critical Process Parameters (CPPs) identified during screening.

- Define Design Space: Set low and high levels for each CPP (e.g., 2-3 factors) based on screening results or prior knowledge.

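- Select Design: Use a Central Composite Design (CCD) or Box-Behnken Design [8]. A CCD combines factorial points, axial points (for estimating quadratic effects), and replicated center points (for estimating pure error and checking curvature). A Box-Behnken design instead places runs at the midpoints of the edges of the factor space, plus replicated center points, so it avoids the extreme corner combinations.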

- Randomization & Blocking: Fully randomize run order. If experiments must be done over multiple days, use "block" as a factor to account for day-to-day variation [7].

- Build a Predictive Model: Perform regression analysis, fitting a model with main effects, interactions, and quadratic terms (e.g., $\text{Yield} = \beta_0 + \beta_1 A + \beta_2 B + \beta_{12} AB + \beta_{11} A^2 + \beta_{22} B^2$) [8].

- Define the Design Space: Use the model to create contour plots. The design space is the region where predictions meet all CQA criteria [9]. Conduct confirmation runs at the predicted optimum to validate the model.
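The model-building step can be illustrated with a dependency-free least-squares fit of the two-factor quadratic model, followed by solving for its stationary point. The design points, the coefficients used to simulate the response, and the helper names are all assumptions made for the example; real analyses would normally use statistical software and include noise, ANOVA, and lack-of-fit checks.

```python
def solve(matrix, rhs):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [list(row) + [b] for row, b in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        tail = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - tail) / aug[r][r]
    return x

def fit_quadratic(points, y):
    """Least-squares fit of b0 + b1*A + b2*B + b12*A*B + b11*A^2 + b22*B^2."""
    X = [[1.0, a, b, a * b, a * a, b * b] for a, b in points]
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(6)] for i in range(6)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(6)]
    return solve(xtx, xty)  # normal equations: (X'X) beta = X'y

def stationary_point(beta):
    """Coded (A, B) where both partial derivatives of the model are zero."""
    _, b1, b2, b12, b11, b22 = beta
    return solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])

# Hypothetical noise-free yields on a 3x3 grid in coded units.
points = [(a, b) for a in (-1.0, 0.0, 1.0) for b in (-1.0, 0.0, 1.0)]
true_beta = [80.0, 4.0, 2.0, -1.0, -5.0, -3.0]  # made-up coefficients
y = [true_beta[0] + true_beta[1] * a + true_beta[2] * b + true_beta[3] * a * b
     + true_beta[4] * a * a + true_beta[5] * b * b for a, b in points]

beta = fit_quadratic(points, y)
a_opt, b_opt = stationary_point(beta)
print("fitted coefficients:", [round(v, 3) for v in beta])
print(f"stationary point (coded): A = {a_opt:.3f}, B = {b_opt:.3f}")
```

The stationary point is a maximum only when the fitted surface curves downward in every direction (here both quadratic coefficients are negative and the interaction is small). A saddle or minimum is also possible, so the contour plot should always be inspected.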

Visualizations

[Figure: DoE Workflow for Process Optimization]

[Figure: OFAT vs DoE: Finding the True Optimum]

The Scientist's Toolkit: Research Reagent & Solution Essentials

Table 3: Key Materials for DoE-Driven Assay & Process Development

| Item | Function in DoE Context | Relevance to Protocol |
|---|---|---|
| Reference Standards [10] | Well-characterized materials used to determine method accuracy (bias). Essential for defining the "true" value when optimizing an analytical method as a process. | Critical for Protocol 1 (Assay Dev). |
| Liquid Handling System (e.g., non-contact dispenser) [11] | Enables precise, high-throughput dispensing of multiple reagents across many DoE runs. Automation enhances reproducibility, minimizes human error, and makes complex multifactorial experiments feasible. | Supports execution of both Protocols. |
| Cell Suspensions / Biological Reagents [11] [12] | The variable "material attributes" in biological DoE (e.g., cell type, media composition). DoE optimizes their expansion/activity (e.g., CAR-T cells) [12]. | Central to biological optimization in Protocol 2. |
| Buffer & Solvent Components [11] | Factors in formulation or assay condition DoE. Their concentrations, pH, and ionic strength are systematically varied to understand impact on stability or performance. | Key factors in both screening and optimization designs. |
| DOE Software Platform [11] [8] | Tools for designing statistically sound experiments, randomizing run orders, and performing advanced regression analysis to build predictive models and visualize design spaces. | Required for the Design and Analysis phases of all Protocols. |

Core Terminology in Pharmaceutical DoE

Factors

In Design of Experiments (DoE), factors are the input variables or conditions that an experimenter deliberately changes to observe their effect on the output (response) [13]. In a pharmaceutical context, these are variables that can influence a drug's effect, development process, or manufacturing outcome.

Table: Classification of Common Factors in Pharmaceutical Research

| Factor Category | Description | Pharmaceutical Examples |
|---|---|---|
| Controllable Process Factors | Variables that can be directly set and maintained by the researcher during development or manufacturing. | Temperature, pressure, concentration, flow rate, agitation [14]. |
| Drug-Related Factors | Inherent properties of the active pharmaceutical ingredient or formulation. | Dosage, route of administration, release profile [15] [16]. |
| Patient-Related Factors | Variables related to the individual taking the medication that can affect drug response. | Age, body size, genetic factors, presence of kidney or liver disease [16]. |
| Concomitant Factors | Other substances consumed by the patient that can interact with the drug. | Use of other prescription medications, dietary supplements, consumption of food or beverages [15] [16]. |

Responses

Responses are the measurable outputs or outcomes of an experiment. They are the critical quality attributes that are influenced by the changes in the input factors [13]. In pharmaceuticals, monitoring response to medications is crucial, as everyone responds to medications differently due to the many factors involved [16].

Table: Types of Responses in Pharmaceutical Development

| Response Type | Description | Pharmaceutical Examples |
|---|---|---|
| Primary Efficacy Response | The primary measure of a drug's intended therapeutic effect. | Reduction in viral load, tumor size reduction, pain score improvement. |
| Pharmacokinetic (PK) Response | Measurements related to the drug's absorption, distribution, metabolism, and excretion (ADME). | Serum drug concentration, half-life, area under the curve (AUC), time to maximum concentration (Tmax) [15]. |
| Safety & Toxicity Response | Measures of adverse effects or potential harm. | Severity of side effects, changes in liver enzymes, drug-induced toxicity. |
| Process Quality Attributes | Measurements of the physical or chemical properties of the drug product during manufacturing. | Tablet hardness, dissolution rate, impurity level (e.g., Host Cell Protein - HCP), stability [17]. |

Interactions

Interactions occur when the effect of one factor depends on the level of another factor. Identifying these is a key advantage of multi-factor DoE over one-factor-at-a-time (OFAT) experimentation [13]. In pharmacology, this often refers to how one substance affects another.

Table: Types of Interactions in DoE and Pharmacology

| Interaction Type | Description | Implications |
|---|---|---|
| Factor-Factor Interaction | When the effect of one input factor on the response depends on the level of a second input factor. | Allows for process optimization; reveals complex relationships that would be missed in OFAT studies [13]. |
| Drug-Drug Interaction | A change in a drug's effects due to recent or concurrent use of another drug(s) [15]. | Can increase or decrease the effects of one or both drugs, potentially causing adverse effects or therapeutic failure [15]. |
| Drug-Nutrient Interaction | A change in a drug's effects due to the ingestion of food [15]. | Can alter drug absorption (e.g., taking with food) and requires specific administration instructions. |
| Synergistic Effect | An interaction where the combined effect of factors is greater than the sum of their individual effects. | Can be used therapeutically (e.g., lopinavir and ritonavir coadministration increases serum lopinavir concentrations [15]). |
| Antagonistic Effect | An interaction where the combined effect of factors is less than the sum of their individual effects. | Can lead to therapeutic failure and may require dosage adjustments or medication changes [15]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why should I use a multi-factor DoE approach instead of the traditional one-factor-at-a-time (OFAT) method in my pharmaceutical research?

Multi-factor DoE is significantly more efficient and informative. It allows you to manipulate multiple input factors simultaneously to identify important interactions that would be missed in OFAT experimentation [13]. For example, a drug's efficacy (response) might be high at a specific combination of dosage and patient age that you would not discover if you only varied one factor at a time. OFAT is inefficient and can lead to incorrect conclusions about the key factors in a process [13].

Q2: My DoE results are unexpected or show high variability. What are the most likely causes and how can I resolve them?

Unexpected results often stem from uncontrolled factors or issues with the measurement system.

- Cause: Presence of a lurking variable (an unmeasured factor affecting the response). In pharmaceuticals, this could be an unnoticed drug interaction [15] or a patient-specific factor like liver function [16].

- Solution: Review your process map with subject matter experts to identify all potential input factors. Use blocking in your experimental design to account for known but uncontrollable sources of variation (e.g., different raw material batches) [13].

- Cause: Unreliable measurement of the response.

- Solution: Ensure your measurement system (e.g., ELISA for HCP quantification) is stable and repeatable. Use control samples across the analytical range for quality control [17]. For variable responses, a precise measure is preferable to a pass/fail attribute [13].

Q3: How can I model the relationship between factors and responses to find an optimal formulation or process?

After initial screening designs have identified the key factors, Response Surface Methodology (RSM) can be used. RSM is designed to model the response and locate the region of factor settings where the process is close to its optimum [13]. This involves:

- Running a designed experiment (e.g., a central composite design) that varies the key factors around the suspected optimum.

- Fitting a mathematical model (often a quadratic polynomial) to the data.

- Using the model to create a response surface plot and perform numerical optimization to find the factor settings that produce the most desirable response(s) [13].
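When several responses must be balanced, one widely used approach is a desirability function in the style of Derringer and Suich: each predicted response is mapped to a score between 0 and 1, and the scores are combined by a geometric mean. The limits, targets, and predicted values below are hypothetical, and this sketch is not necessarily the exact formulation used by any particular software package.

```python
import math

def desirability_maximize(y, low, target, weight=1.0):
    """Score for a larger-is-better response: 0 at or below low, 1 at or above target."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight

def desirability_minimize(y, target, high, weight=1.0):
    """Score for a smaller-is-better response: 1 at or below target, 0 at or above high."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight

def overall_desirability(scores):
    """Geometric mean, so any fully unacceptable response gives an overall 0."""
    if any(d == 0.0 for d in scores):
        return 0.0
    return math.exp(sum(math.log(d) for d in scores) / len(scores))

# Hypothetical model predictions at one candidate factor setting.
d_titer = desirability_maximize(4.0, low=2.0, target=5.0)    # titer in g/L
d_hcp = desirability_minimize(60.0, target=20.0, high=100.0)  # HCP in ppm
overall = overall_desirability([d_titer, d_hcp])
print(f"titer: {d_titer:.3f}, HCP: {d_hcp:.3f}, overall: {overall:.3f}")
```

Numerical optimization then amounts to searching the factor space for the setting with the highest overall score, using the fitted models to predict each response.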

Q4: In a clinical context, what are the most common factors that lead to variable drug response among patients?

The way a person responds to a medication is affected by many factors [16], including:

- Age: Infants and older adults have less effective liver and kidney function, leading to medication accumulation [16].

- Body Size: Affects drug distribution and dosage requirements [16].

- Concomitant Medications & Supplements: Drug-drug interactions can increase or decrease effects [15] [16].

- Food & Beverages: Drug-nutrient interactions can alter absorption [15] [16].

- Disease State: Conditions like kidney or liver disease impair the body's ability to metabolize and eliminate drugs [16].

Troubleshooting Common Experimental Issues

Problem: High Background Noise in Analytical Assays (e.g., ELISA)

- Potential Cause: Non-specific binding or sample matrix effects.

- Solution: Modify the assay protocol, such as adjusting sample volume or incubation times. It is critical to qualify that these changes achieve acceptable accuracy, specificity, and precision [17]. Always run positive and negative controls to assess performance [18].

Problem: A Drug-Drug Interaction is Suspected in Clinical Data

- Action: Consider the interaction as a possible cause of any unexpected problems [15].

- Investigation: Determine serum concentrations of the selected medications, consult the literature or an expert in drug interactions, and adjust the dosage until the desired effect is produced. If dosage adjustment is ineffective, the medication should be replaced by one that does not interact with other medications being taken [15].

Problem: Process Optimization Does Not Yield a Robust Solution

- Potential Cause: Key factor interactions were not adequately modeled, or the experimental region was too narrow.

- Solution: Apply an iterative (sequential) DoE approach. Start with a screening design to narrow the field of variables, followed by a full factorial design to study all combinations, and finally a response surface design to model the response for optimization [13].

Detailed Experimental Protocols

Protocol: Performing a Screening DoE for a Bioprocess

Objective: To identify the key factors (e.g., temperature, pressure, concentration) that significantly affect a critical quality attribute (response) like impurity level (HCP) or yield.

Methodology:

- Define Inputs and Outputs: Acquire a full understanding of the inputs (factors) and outputs (responses) using a process flowchart. Consult with subject matter experts [13].

- Select Factors and Levels: Choose the factors to investigate and determine realistic high (+1) and low (-1) levels for each [13].

- Create Design Matrix: Use a fractional factorial design (e.g., a 2^(n-1) design) to efficiently screen a large number of factors with a reduced number of experimental runs. For 4 factors, this requires 8 runs instead of the full 16 [13].

- Randomize and Run: Perform the experimental runs in a randomized order so that unknown or uncontrolled variables are not confounded with the factor effects [13].

- Analyze Results: Calculate the main effect of each factor and the interaction effects. Plot the effects in a Pareto chart to visually identify which factors are most significant [13].

- Statistical Analysis: Use DOE software to perform an analysis of variance (ANOVA) to determine the statistical significance of the effects.
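The effect calculation and Pareto-style ranking in this protocol can be sketched as below. The 8-run design uses the generator D = ABC as an assumption, and the HCP responses are simulated from a made-up model so that the expected ranking is known in advance.

```python
import itertools

def estimate_effects(design, response):
    """Main effect of each factor: mean response at +1 minus mean at -1."""
    effects = {}
    for name in design[0]:
        high = [y for run, y in zip(design, response) if run[name] == 1]
        low = [y for run, y in zip(design, response) if run[name] == -1]
        effects[name] = sum(high) / len(high) - sum(low) / len(low)
    return effects

# 2^(4-1) fractional factorial with the assumed generator D = ABC.
design = [
    {"A": a, "B": b, "C": c, "D": a * b * c}
    for a, b, c in itertools.product([-1, 1], repeat=3)
]

# Hypothetical HCP levels (ppm) simulated from an invented model.
response = [100 + 10 * r["A"] - 4 * r["C"] + 1 * r["D"] for r in design]

effects = estimate_effects(design, response)
# Sort by absolute size, as a Pareto chart of effects would.
ranked = sorted(effects.items(), key=lambda item: abs(item[1]), reverse=True)
for name, effect in ranked:
    print(f"{name}: {effect:+.1f}")
```

With real data the effects would not separate this cleanly, which is why the ranking is followed by ANOVA or a normal probability plot to judge which effects exceed the noise.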

Protocol: Qualifying a Modified Analytical Assay

Objective: To qualify an ELISA or similar assay after modifying its protocol (e.g., changing incubation times) to ensure it remains fit for purpose [17].

Methodology:

- Define Modification: Clearly state the change to the protocol (e.g., "reduction of Sample Incubation Time from 2 hours to 1 hour").

- Assess Specificity: Ensure the assay specifically measures the intended analyte without interference.

- Determine Accuracy via Spike Recovery:

- Prepare samples spiked with known quantities of the analyte.

- Calculate the percentage of the known amount that the assay recovers. Acceptable recovery rates (e.g., 80-120%) should be pre-defined based on the assay's requirements [17].

- Establish Precision: Run multiple replicates (e.g., n=6) of at least two control samples (a low and a high control) across multiple days. Calculate the %CV for within-run and between-run precision [17].

- Re-establish Sensitivity: Determine the new Limit of Detection (LOD) and Limit of Quantitation (LOQ) for the modified protocol [17].
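The accuracy and precision calculations in this protocol are simple enough to script. The sketch below assumes recovery is computed against the spiked amount after subtracting any endogenous signal; the function names, the 80-120% default limits, and all numbers are illustrative assumptions, not values from the cited kit documentation.

```python
import statistics

def percent_recovery(measured, endogenous, spiked):
    """Spike recovery (%) = (measured - endogenous) / spiked amount * 100."""
    return (measured - endogenous) * 100 / spiked

def percent_cv(values):
    """%CV = sample standard deviation / mean * 100."""
    return statistics.stdev(values) / statistics.mean(values) * 100

def within_limits(recovery, low=80.0, high=120.0):
    """Check a recovery value against pre-defined acceptance limits."""
    return low <= recovery <= high

# Made-up example values (e.g., ng/mL).
recovery = percent_recovery(measured=58.0, endogenous=10.0, spiked=50.0)
low_control_replicates = [9.8, 10.1, 10.3, 9.9, 10.0, 9.9]  # n = 6, one run
cv = percent_cv(low_control_replicates)

print(f"recovery = {recovery:.1f}%, acceptable = {within_limits(recovery)}")
print(f"within-run %CV = {cv:.2f}%")
```

The same `percent_cv` helper can be applied to the per-day means of each control to estimate between-run precision.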

Process Visualization & Workflows

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for DoE in Biopharmaceutical Development

| Item / Solution | Function / Application | Key Considerations |

|---|---|---|

| DoE Software (JMP, Minitab, Design-Expert) | Simplifies the design, analysis, and visualization of complex factorial experiments [14]. | Enables numerical optimization, generates 3D response surface plots, and calculates interaction effects [14] [13]. |

| Host Cell Protein (HCP) ELISA Kits | Quantifies process-related impurities (HCPs) in biotherapeutic products, a critical quality attribute [17]. | Assays are semi-quantitative; quality control requires running controls made with your specific analyte and matrix [17]. |

| Control Samples (e.g., PPIB, dapB) | Used as positive and negative controls in assays like RNAscope or ELISA to assess sample quality and assay performance [18]. | A positive control (e.g., PPIB) should generate a known score; a negative control (e.g., dapB) should show little to no signal [18]. |

| HybEZ Hybridization System | Maintains optimum humidity and temperature during in-situ hybridization (ISH) assays like RNAscope [18]. | Required for specific workflow steps to ensure consistent and reliable assay results [18]. |

| ImmEdge Hydrophobic Barrier Pen | Creates a barrier on slides to contain reagents during staining procedures [18]. | Essential for preventing tissue drying and ensuring consistent reagent coverage throughout the assay [18]. |

The Role of DoE in Implementing Quality by Design (QbD)

Troubleshooting Guides

Issue 1: Selecting the Wrong Type of DoE Design

Problem Description Researchers often struggle to choose the appropriate experimental design for their specific QbD stage, leading to inefficient experiments, overlooked interactions, or an unmanageable number of runs [19] [20].

Diagnosis and Solution

- Diagnosis: The experiment requires excessive resources, fails to identify key variables, or cannot model curvature in responses [19] [20].

- Solution: Select the design based on your project phase and objectives [20]:

- Early Phase (Screening): Use fractional factorial or Plackett-Burman designs to identify the few critical factors from many candidates with minimal runs [19] [20].

- Mid Phase (Refinement): Use full factorial designs to understand main effects and interactions for a focused set of factors [20].

- Final Phase (Optimization): Use Response Surface Methodology (RSM) designs like Central Composite or Box-Behnken to model complex, nonlinear relationships and find the optimal process settings [5] [20].

Issue 2: Inability to Detect Factor Interactions

Problem Description The "one-factor-at-a-time" (OFAT) approach is inefficient and fails to reveal how factors interact, resulting in a process that is fragile and performs poorly under real-world variability [21].

Diagnosis and Solution

- Diagnosis: Process performance changes unpredictably when multiple input parameters deviate slightly from their set points [21].

- Solution: Implement a structured DoE that systematically tests factor combinations [21] [20].

- Factorial Designs are the primary tool for detecting interactions [20].

- A 2³ full factorial design (3 factors, 2 levels each) requires only 8 runs to quantify all main effects and two- and three-factor interactions [21].

- Analyze data using statistical software to generate interaction plots and Pareto charts, which visually highlight significant interactions impacting your CQAs [22].

Issue 3: Managing High Experimental Effort and Cost

Problem Description A full factorial design becomes prohibitively expensive and time-consuming as the number of factors increases, making comprehensive experimentation impractical [19] [20].

Diagnosis and Solution

- Diagnosis: The number of experimental runs required for a full factorial design grows exponentially with factors [20].

- Solution: Implement a sequential DoE strategy [19] [20]:

- Screening: Use a highly fractional factorial or Plackett-Burman design to filter out insignificant factors [19] [20].

- Optimization: Apply a more detailed design (e.g., RSM) only on the vital few factors identified [5] [20].

- This approach can reduce experimental runs by over 50% while retaining the ability to find optimal conditions [19].

Frequently Asked Questions (FAQs)

General DoE and QbD Principles

Q1: What is the fundamental connection between DoE and QbD? A1: DoE is the primary statistical engine that makes QbD possible. QbD is a systematic framework for building quality into products based on sound science and risk management. DoE provides the structured methodology to gain the necessary process understanding required by QbD. It enables the precise definition of Critical Process Parameters (CPPs) and their functional relationships with Critical Quality Attributes (CQAs), leading to the establishment of a validated design space [22] [23] [21].

Q2: At what stage in drug development should we start applying DoE within a QbD framework? A2: Systematic DoE application is most valuable beginning at the end of Phase II clinical trials. At this stage, sufficient knowledge of the drug substance exists to intelligently select factors and levels for comprehensive process development. This includes defining a design space for unit operations and considering advanced control strategies like Real-Time Release Testing (RTRT) [24].

Technical Execution of DoE

Q3: What is the critical difference between a screening design and an optimization design? A3: The key difference is their objective and, consequently, their complexity and run count [19] [20].

- Screening Designs (e.g., Fractional Factorial, Plackett-Burman) aim to separate the "vital few" important factors from the "trivial many." They are efficient and use fewer runs, but they confound interactions and cannot model curvature [19] [20].

- Optimization Designs (e.g., Central Composite, Box-Behnken) aim to model the response surface in detail. They require more runs but can identify nonlinear effects and pinpoint a precise optimum [5] [20].

Q4: How do we handle both continuous and categorical factors in a single DoE? A4: A mixed-level approach is often effective [5]:

- First, use a design like Taguchi to identify the optimal level for the categorical factors (e.g., choice of excipient type, filter membrane material).

- Then, with the categorical factor fixed at its optimal level, perform a Response Surface Methodology (RSM) design like Central Composite to optimize the continuous factors (e.g., mixing speed, temperature) [5].

Data Analysis and Interpretation

Q5: In a fractional factorial design, what is "aliasing" and how should I address it? A5: Aliasing (or confounding) occurs when the design is unable to distinguish between the effects of two or more factors or interactions [20]. It's a trade-off for reducing run numbers.

- Addressing Aliasing: The strategy relies on the sparsity of effects principle—the belief that higher-order interactions (three-way and above) are negligible. If analysis suggests a significant effect is aliased with a less plausible interaction, you can often safely attribute the effect to the main factor or two-way interaction. If uncertainty remains, "folding" the design (adding a second set of runs) can de-alias the effects [19] [20].
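A small numerical example can make aliasing and folding concrete. The sketch below assumes a 2^(3-1) Resolution III half fraction generated with C = A·B (an illustrative choice) and shows that a full foldover, in which every run is repeated with all signs reversed, separates the main effect C from the AB interaction.

```python
import itertools

def dot(u, v):
    """Inner product of two design columns."""
    return sum(x * y for x, y in zip(u, v))

# 2^(3-1) Resolution III half fraction: column C is generated as A*B.
half = [(a, b, a * b) for a, b in itertools.product([-1, 1], repeat=2)]

# Full foldover: append every run again with all signs reversed.
folded = half + [tuple(-x for x in run) for run in half]

def c_and_ab_columns(design):
    """Return the C column and the A*B interaction column of a design."""
    col_c = [c for _, _, c in design]
    col_ab = [a * b for a, b, _ in design]
    return col_c, col_ab

c_half, ab_half = c_and_ab_columns(half)
c_fold, ab_fold = c_and_ab_columns(folded)

# Identical columns: the effect of C cannot be told apart from AB.
aliased_before = c_half == ab_half
# Orthogonal columns: both effects can now be estimated separately.
orthogonal_after = dot(c_fold, ab_fold) == 0

print(aliased_before, orthogonal_after)
```

In this small case the eight folded runs form the complete 2³ factorial; for larger designs a foldover typically raises a Resolution III design to Resolution IV rather than removing all aliasing.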

Q6: What is a "design space" in QbD, and how is it different from a proven acceptable range (PAR)? A6: A design space is a multidimensional combination of input variables (e.g., material attributes) and process parameters that have been demonstrated to provide assurance of quality. It is established through rigorous DoE studies. Operating within the design space is not considered a change, thus offering regulatory flexibility [22]. A Proven Acceptable Range (PAR) is typically a univariate range for a single parameter that produces a product meeting quality criteria. It does not account for factor interactions and offers less operational and regulatory flexibility than a multivariate design space [22].

Experimental Protocols and Data Presentation

Protocol 1: Screening DoE for Identifying Critical Process Parameters

Objective: To efficiently identify the CPPs from a list of potential factors that significantly impact a CQA [19].

Methodology:

- Define Inputs: Select 5-8 potential process factors to investigate.

- Choose Design: Select a 2-level fractional factorial or Plackett-Burman design [19] [20].

- Execute: Run experiments in a randomized order to avoid bias.

- Analyze: Use statistical software to perform analysis of variance (ANOVA). Identify factors with p-values < 0.05 as significant.

Protocol 2: Optimization DoE for Establishing a Design Space

Objective: To model the relationship between CPPs and CQAs and define a robust design space [22] [5].

Methodology:

- Define Inputs: Use the 2-4 significant CPPs identified from screening.

- Choose Design: Select a Central Composite Design (CCD), a type of RSM design [5] [20].

- Execute: Run the CCD, which includes factorial, axial, and center points.

- Analyze: Fit a quadratic model to the data. Use contour plots and response surfaces to visualize the design space where all CQAs are met.

Table 1: Comparison of Common DoE Designs in Pharmaceutical QbD

| Design Type | Primary Purpose | Key Strength | Key Limitation | Typical Run Number for 5 Factors |

|---|---|---|---|---|

| Full Factorial | Understanding all main effects and interactions | Provides complete information on all interactions | Number of runs grows exponentially | 32 (2⁵) |

| Fractional Factorial | Screening many factors efficiently | Drastically reduces experimental runs | Aliasing (confounding) of interactions | 8-16 |

| Plackett-Burman | Screening a very large number of factors | Extreme efficiency for main effects screening | Cannot estimate any interactions | 12 |

| Central Composite (RSM) | Final optimization and design space mapping | Can model curvature (nonlinear effects) | Requires more runs than screening designs | ~32 (half-fraction core; more than 40 with a full 2⁵ core) |

Table 2: Essential Research Reagent Solutions for QbD-Based DoE Studies

| Material / Solution | Function in Experiment | QbD Context & Considerations |

|---|---|---|

| Multivariate Modeling Software | Statistical analysis, model building, and prediction of optimal conditions. | Critical for analyzing DoE data, generating predictive models, and visualizing the design space [22]. |

| Process Analytical Technology (PAT) | Enables real-time monitoring of CQAs during process development and manufacturing [22]. | Provides rich, continuous data streams for DoE models. Key enabler for Real-Time Release Testing (RTRT) [22]. |

| Designated Reference Standards | Calibrate analytical methods and ensure data integrity across all experimental runs. | Essential for ensuring that CQAs are measured accurately and consistently throughout the DoE campaign. |

| Stable Drug Substance/API | The core material under investigation in formulation and process DoE studies. | A Critical Material Attribute (CMA); consistency in its properties is vital for obtaining reliable DoE results [22]. |

Workflow and Relationship Visualizations

DoE Workflow in QbD

QbD and DoE Relationship

Frequently Asked Questions (FAQs)

Q1: What is the foundational relationship between QTPP, CQAs, and DoE in process optimization? A1: The Quality Target Product Profile (QTPP) is the strategic starting point. It is a prospective summary of the quality characteristics a drug product must possess to ensure safety and efficacy, considering elements like dosage form, strength, and stability [25] [26]. The QTPP guides the identification of Critical Quality Attributes (CQAs), which are the physical, chemical, or microbiological properties that must be controlled within specific limits to achieve the QTPP [27] [28]. Design of Experiments (DoE) is the primary methodological tool used to systematically investigate and model the relationship between process inputs—like Critical Material Attributes (CMAs) and Critical Process Parameters (CPPs)—and these CQAs. This data-driven understanding is essential for building a robust, optimized process [25] [29].

Q2: How do I choose the right DoE design for screening factors that affect my CQAs? A2: The choice depends on the number and type of factors. For initial screening of a large number of factors (both continuous and categorical), a fractional factorial or Plackett-Burman design is highly efficient for identifying the most impactful variables without testing all possible combinations [29]. If resources allow and the number of factors is small, a full factorial design provides complete interaction data, but at a higher cost [29]. For scenarios with many continuous factors, it is recommended to use a screening design first to eliminate insignificant factors [5].

Q3: We've identified key factors. What's the best DoE approach for final optimization towards our CQA targets? A3: For optimization focusing on a smaller set of critical continuous factors, Response Surface Methodology (RSM) is the standard. Within RSM, Central Composite Designs (CCD) are often the best performers for building a predictive polynomial model to find optimal factor settings, and recent studies indicate that they generally perform best overall in multi-objective optimization of complex systems [5]. An alternative is the Box-Behnken Design (BBD), which avoids the extreme corner combinations of the factor space.

Q4: How should we handle experiments with both continuous and categorical factors (e.g., different excipient grades or reactor types)? A4: A hybrid strategy is most effective. First, apply a Taguchi design or a suitable factorial design to handle all levels of the categorical factors and represent continuous factors in a two-level format. This helps determine the optimal level for each categorical factor [5]. Once the categorical factors are fixed at their optimal levels, follow up with a Central Composite Design (CCD) on the remaining continuous factors for the final optimization stage [5].

Q5: Our DoE model suggests an optimal operating point. How do we validate this and ensure it consistently meets CQAs? A5: Validation involves both confirmatory experiments and control strategy implementation. Run a small set of experiments at the predicted optimal conditions from your DoE model and compare the measured CQAs to the predictions. Subsequently, the knowledge gained is used to establish a control strategy. This includes setting validated ranges for CPPs, defining specifications for CMAs and CQAs, and implementing appropriate Process Analytical Technology (PAT) for monitoring [25] [30]. The control strategy ensures the process remains in a state of control, consistently delivering product that meets the QTPP [28].

Troubleshooting Guides

Issue 1: Unclear or Unmeasurable CQAs

- Problem: Difficulty in defining which attributes are truly "critical" or in measuring them effectively.

- Solution:

- Revisit the QTPP: Ensure every CQA can be directly traced back to a patient-centric goal in the QTPP (e.g., efficacy linked to dissolution, safety linked to impurity levels) [27].

- Conduct a Formal Risk Assessment: Use tools like Failure Mode and Effects Analysis (FMEA). Criticality is based on the severity of harm to the patient if the attribute is out of range. Probability and detectability inform risk but do not change the attribute's inherent criticality [30].

- Invest in Analytical Development: If a CQA is hard to measure, develop or employ advanced PAT tools for real-time or near-real-time analysis to enable control [28].

Issue 2: DoE Results are Inconclusive or Model Fit is Poor

- Problem: The experimental data does not yield a clear, statistically significant model relating factors to CQAs.

- Solution:

- Check Factor Ranges: The chosen "levels" for your factors may be too narrow to produce a detectable change in the response. Reassess them based on prior knowledge and widen the experimental region appropriately [29].

- Assess Measurement Error: High variability in measuring your response (CQA) can swamp the factor effects. Improve analytical method precision or increase replication within the DoE.

- Consider a Screening Design: You may have too many irrelevant factors. Use a Plackett-Burman screening design to filter out inactive factors before optimization [29].

- Verify Assumptions: Ensure the underlying assumptions of your model (e.g., linearity for factorial designs) are valid for the system. You may need a more complex design like a CCD to capture curvilinear relationships [5].
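One quick way to test the linearity assumption is to compare replicated center points with the factorial points: if the response is linear, the two averages should agree within experimental error. The sketch below is an illustration with made-up data. It uses the center-point replicates as the pure-error estimate, and the resulting statistic would be compared against a t distribution with (number of center points - 1) degrees of freedom.

```python
import math
import statistics

def curvature_check(factorial_responses, center_responses):
    """Return (curvature estimate, t statistic).

    Curvature estimate = mean of factorial points - mean of center points.
    The standard error uses the standard deviation of the replicated
    center points as the pure-error estimate.
    """
    n_f = len(factorial_responses)
    n_c = len(center_responses)
    diff = (statistics.mean(factorial_responses)
            - statistics.mean(center_responses))
    pure_error_sd = statistics.stdev(center_responses)
    std_err = pure_error_sd * math.sqrt(1 / n_f + 1 / n_c)
    return diff, diff / std_err

# Made-up yields (%) from a 2^2 factorial plus four center-point replicates.
factorial_y = [70, 72, 71, 73]
center_y = [78.0, 79.0, 77.0, 78.0]

curvature, t_stat = curvature_check(factorial_y, center_y)
print(f"curvature = {curvature:+.2f}, t = {t_stat:.2f}")
```

A large absolute t value, as in this example, suggests that a two-level model is inadequate and that axial points should be added to support a quadratic model.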

Issue 3: Difficulty Scaling Up an Optimal Lab-Scale Process

- Problem: The factor settings that optimize CQAs in the lab fail to produce the same results at pilot or manufacturing scale.

- Solution:

- Incorporate Scale-Dependent Factors Early: Include factors known to be scale-sensitive (e.g., mixing power input, heat transfer rate) as variables in your development-stage DoE, even at small scale, using surrogate models.

- Employ a Risk-Based Scale-Up Strategy: Use Quality Risk Management (QRM) to assess risks from equipment differences, raw material variability, and environmental controls. Your DoE studies provide the scientific rationale for this assessment [30].

- Conduct a Confirmation DoE at Scale: Perform a limited, focused DoE at the larger scale to confirm or slightly adjust the design space established at lab scale [30].

Experimental Protocols

Protocol 1: Screening Design for Initial Factor Identification

- Objective: Identify which of many potential CMAs and CPPs have a significant effect on a key CQA.

- Methodology:

- Define Factors & Levels: List all potential material attributes (e.g., API particle size distribution, excipient viscosity) and process parameters (e.g., mixing time, temperature). Assign a "high" and "low" level to each continuous factor, or specific options to categorical factors.

- Select Design: Choose a Plackett-Burman or a Resolution III fractional factorial design to minimize the number of experimental runs.

- Execute Runs: Randomize the order of experiments to avoid confounding with unknown time-based variables.

- Analyze Data: Use statistical software to perform analysis of variance (ANOVA). Factors with p-values below a chosen significance level (e.g., 0.05) are considered significant and retained for optimization.

Protocol 2: Central Composite Design (CCD) for Response Surface Optimization

- Objective: Model the curvilinear relationship between 3-5 critical continuous factors and a CQA to find the optimum.

- Methodology:

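- Define Critical Factors: Use results from Protocol 1. For each factor, define a center point, high/low factorial points, and an axial distance (alpha). For a rotatable design with a full factorial core, alpha = (2^k)^(1/4): ±1.414 for 2 factors, ±1.682 for 3 factors, and ±2.000 for 4 factors.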

- Construct CCD: The design consists of:

- A full or fractional factorial cube (2^k points for a full factorial, 2^(k-p) for a fraction).

- Center points (n_c, for estimating pure error).

- Axial (star) points (2k points).

- Execute & Analyze: Run the randomized experiments. Fit a second-order polynomial model (e.g., Y = β0 + ΣβiXi + ΣβiiXi² + ΣβijXiXj). Use the model to generate contour plots and locate the optimum operating region that meets all CQA targets.
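To illustrate the model-fitting step, the sketch below fits the second-order polynomial to a two-factor rotatable CCD by ordinary least squares and then solves for the stationary point. It is a dependency-free illustration: the coefficients used to generate the responses are made up, the data are noise-free, and in practice a statistics package would also report ANOVA and lack-of-fit diagnostics.

```python
import math

def model_row(x1, x2):
    """Terms of Y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2."""
    return [1.0, x1, x2, x1 * x1, x2 * x2, x1 * x2]

def solve(a, b):
    """Solve the linear system a*x = b (Gaussian elimination, partial pivoting)."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def fit_quadratic(points, y):
    """Least squares via the normal equations (X'X) b = X'y."""
    X = [model_row(x1, x2) for x1, x2 in points]
    p = len(X[0])
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(p)]
    return solve(xtx, xty)

alpha = math.sqrt(2)  # rotatable axial distance for k = 2
points = ([(a, b) for a in (-1, 1) for b in (-1, 1)]            # factorial
          + [(-alpha, 0), (alpha, 0), (0, -alpha), (0, alpha)]  # axial
          + [(0, 0)] * 3)                                       # center

# Made-up "true" coefficients used to simulate noise-free responses.
true_b = [80.0, 2.0, -1.0, -3.0, -2.0, 0.5]
y = [sum(b * t for b, t in zip(true_b, model_row(x1, x2))) for x1, x2 in points]

coeffs = fit_quadratic(points, y)
b0, b1, b2, b11, b22, b12 = coeffs

# Stationary point: set both partial derivatives of the fitted model to zero.
x1_opt, x2_opt = solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])
print(f"stationary point (coded units): x1 = {x1_opt:.3f}, x2 = {x2_opt:.3f}")
```

Because both quadratic coefficients are negative in this example, the stationary point is a maximum; with real data the sign pattern should be checked before treating it as the optimum.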

Table 1: Comparison of Common DoE Designs for CQA-Based Optimization

| DoE Design | Primary Purpose | Key Strength | Key Limitation | Best For Stage |

|---|---|---|---|---|

| Full Factorial | Screening & Modeling | Estimates all main effects and interactions | Number of runs grows exponentially (2^k) | Small number of factors (<5) [29] |

| Fractional Factorial / Plackett-Burman | Screening | Highly efficient for identifying vital few factors | Aliasing (confounding) of effects; no curvature estimation | Initial screening of many factors [29] |

| Taguchi Design | Robust Parameter Design | Efficient handling of categorical factors; signal-to-noise ratios | Less reliable for precise prediction; statistical criticism | Identifying optimal level of categorical factors [5] |

| Central Composite (CCD) | Response Surface Optimization | Excellent for fitting quadratic models; good coverage of space | Requires more runs than Box-Behnken | Final optimization of continuous factors [5] |

| Box-Behnken (BBD) | Response Surface Optimization | Fewer runs than CCD for 3+ factors; avoids extreme corners | Poor prediction at factorial corners of space | Optimization when extreme factor combinations are risky |

Table 2: Example CQAs and Linked DoE Objectives for a Solid Oral Dosage Form

| QTPP Element | Derived CQA | Potential CPPs/CMAs | Typical DoE Objective |

|---|---|---|---|

| Therapeutic Efficacy | Dissolution Rate | API particle size, lubricant concentration, compression force | Optimize CPPs to achieve target dissolution profile. |

| Dose Uniformity | Content Uniformity | Mixing time & speed, granule particle size distribution | Screen CMAs/CPPs to minimize variance in assay. |

| Patient Safety | Impurity Level | Reaction temperature/time, raw material purity | Model the effect of CPPs on impurity formation to keep it below threshold. |

| Stability | Degradation Products | Moisture content, excipient grade | Understand interaction of CMA (moisture) and CPP (blending) on stability CQA. |

Visualizations

Diagram 1: QTPP-CQA-DoE Logical Framework

Diagram 2: Multi-Stage DoE Experimental Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in DoE for CQA Development |

|---|---|

| Statistical Software (e.g., JMP, Design-Expert, Minitab) | Essential for designing orthogonal arrays, randomizing runs, analyzing ANOVA results, fitting response surface models, and generating optimization plots. |

| Process Analytical Technology (PAT) Probes | Enables real-time measurement of CQAs (e.g., NIR for potency, FBRM for particle size) or CPPs (pH, temp) during DoE runs, providing rich, continuous data. |

| Characterized Raw Material Libraries | For studying CMAs, having batches of excipients or API with well-documented variations in key attributes (particle size, polymorphism) is crucial. |

| High-Throughput Experimentation (HTE) Systems | Automates the execution of many DoE runs (e.g., 96-well plates for formulation), making large screening or optimization designs practically feasible. |

| Designated DoE Experiment Batches | Dedicated, small-scale batches (e.g., in lab reactors or blenders) that allow for precise, independent manipulation of CPPs as per the design matrix. |

| Stability Chambers | Required to assess the long-term CQA "stability" as a response in DoE studies, linking CPPs/CMAs to shelf-life performance. |

Strategic DoE Frameworks: From Screening to Optimization in Development

Frequently Asked Questions

What is the primary goal of a Screening Design? The main purpose of a Screening Design of Experiments (DOE) is to efficiently identify the most critical factors influencing a process or product from a large set of potential variables. This allows researchers to focus subsequent, more detailed investigations on the factors that truly matter, saving significant time and resources [19].

When should I use a Full Factorial design over a Fractional Factorial? A Full Factorial design is the most comprehensive approach and should be used when the number of factors is small (typically less than 5) and it is feasible to test all possible combinations. It is necessary when you must understand all interaction effects between factors. A Fractional Factorial is a practical alternative when investigating a larger number of factors, as it requires only a fraction of the runs, though this comes at the cost of confounding (or aliasing) some interactions [19].

Can these designs be applied in pharmaceutical development? Yes. Factorial analysis is a valuable tool in pharmaceutical development. For example, it has been applied to optimize stability study designs for parenteral drug products, helping to identify critical factors like batch, container orientation, and filling volume, which can lead to a significant reduction in long-term stability testing [31].

What are the common pitfalls to avoid when running a Screening DOE?

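- Changing too many variables without a structured design: Avoid making numerous unplanned modifications simultaneously, as this makes it difficult to pinpoint the effective solution [32]. A screening design does vary many factors at once, but only according to a balanced design matrix that keeps their effects separable.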

- Inadequate sample size: Testing too few units can lead to results that are not statistically significant. Scale the number of tests to the failure rate of the problem you are studying [32].

- Poor record keeping: Maintain extremely accurate records of all configurations to avoid errors and successfully isolate variables [32].

Experimental Protocols for Key Designs

Protocol for a 2-Level Full Factorial Design

A Full Factorial Design is used to comprehensively study the effects of multiple factors and their interactions.

- Define Factors and Levels: Select k factors you wish to investigate and assign two levels (e.g., high/low, present/absent) to each.

- Determine Experimental Runs: The total number of unique experimental runs required is 2^k. For example, 3 factors require 2^3 = 8 runs.

- Randomize Run Order: Randomize the order in which you perform the experimental runs to avoid the influence of confounding variables.

- Execute Experiments & Collect Data: Conduct the experiments according to the randomized schedule and measure the response variable(s) of interest.

- Analyze Data: Use statistical analysis (e.g., Analysis of Variance - ANOVA) to determine the main effects of each factor and the interaction effects between factors.
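The full factorial protocol can be sketched as follows. The example builds the 2^3 design matrix and estimates every main effect and interaction from contrasts; the factor labels A, B, C and the simulated responses are assumptions for illustration, and a real analysis would add ANOVA to judge significance.

```python
import itertools
import math

def full_factorial(k):
    """All 2^k combinations of coded levels -1/+1."""
    return list(itertools.product([-1, 1], repeat=k))

def effect_estimates(design, responses, names):
    """Estimate all main effects and interactions.

    Each effect = contrast / (N / 2), where the contrast column is the
    product of the coded levels of the factors involved.
    """
    n = len(design)
    effects = {}
    for order in range(1, len(names) + 1):
        for combo in itertools.combinations(range(len(names)), order):
            label = "".join(names[i] for i in combo)
            contrast = sum(math.prod(run[i] for i in combo) * y
                           for run, y in zip(design, responses))
            effects[label] = contrast / (n / 2)
    return effects

design = full_factorial(3)

# Made-up responses generated from y = 60 + 5*A + 2*B + 3*A*B (C is inert).
responses = [60 + 5 * a + 2 * b + 3 * a * b for a, b, c in design]

effects = effect_estimates(design, responses, ["A", "B", "C"])
for label, value in effects.items():
    print(f"{label:>3}: {value:+.1f}")
```

Note that each effect is twice the corresponding regression coefficient, because it measures the change in response when a factor moves from -1 to +1.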

Protocol for a Screening DOE using a Fractional Factorial Design

This protocol is designed to screen a large number of factors efficiently [19].

- Identify Potential Factors: List all potential factors that could influence the process. This list can be extensive (e.g., 7-15 factors).

- Select a Fractional Factorial Design: Choose a specific fractional factorial design (e.g., a 2^(k-p) design) or a Plackett-Burman design, which uses a very small number of runs to estimate main effects.

- Define the Design Resolution: Understand the resolution of your design (e.g., Resolution III, IV), which indicates which interactions are confounded with main effects. A Resolution III design confounds main effects with two-way interactions, while a Resolution IV design confounds two-way interactions with each other [19].

- Run the Experiment: Execute the greatly reduced set of experimental runs in a randomized order.

- Analyze Main Effects: Analyze the data to identify which factors have statistically significant main effects on the response.

- Plan Follow-up Experiments: Use the results to eliminate insignificant factors. The significant factors can then be investigated more thoroughly in a subsequent, optimized Full Factorial or Response Surface Methodology (RSM) design.
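As an illustration of how compact a Plackett-Burman design is, the sketch below constructs the 12-run design, which can screen up to 11 two-level factors. It assumes the commonly tabulated generator row and the standard cyclic-shift construction; DoE software normally builds this matrix for you, so the code is only meant to show the structure.

```python
# Commonly tabulated generator row for the 12-run Plackett-Burman design.
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

def plackett_burman_12():
    """Return the 12 x 11 design: 11 cyclic shifts of the generator row
    followed by a final row with every factor at its low level."""
    g = PB12_GENERATOR
    rows = [g[-i:] + g[:-i] for i in range(len(g))]
    rows.append([-1] * len(g))
    return rows

design = plackett_burman_12()
columns = list(zip(*design))

# Each column is balanced (six high, six low) and orthogonal to every other
# column, which is what allows main effects to be estimated independently.
balanced = all(sum(col) == 0 for col in columns)
orthogonal = all(
    sum(x * y for x, y in zip(columns[i], columns[j])) == 0
    for i in range(len(columns)) for j in range(i + 1, len(columns))
)
print(len(design), balanced, orthogonal)
```

When fewer than 11 factors are screened, the unused columns are simply left unassigned and can serve as a rough estimate of experimental error.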

Protocol for Applying Factorial Analysis to Pharmaceutical Stability Studies

This methodology outlines how factorial design can reduce long-term stability testing for registration batches [31].

- Select Product and Define Factors: Select the parenteral drug product and define the factors to be studied (e.g., batch, container orientation, filling volume, drug substance supplier).

- Conduct Accelerated Stability Study: Perform a full factorial design under accelerated storage conditions (e.g., 40°C ± 2°C/75% RH ± 5% RH), testing at 0, 3, and 6 months [31].

- Perform Factorial Analysis: Statistically analyze the accelerated stability data to identify the factors that have a significant influence on critical quality attributes and to determine the "worst-case" scenarios.

- Design Reduced Long-Term Study: Based on the analysis, propose a strategically reduced long-term stability study (e.g., reducing the number of samples tested by 50% or more) by focusing on the worst-case combinations of factors [31].

- Validate with Long-Term Data: Confirm the validity of the reduced design by comparing its predictions with actual data from the conventional long-term stability study using regression analysis [31].

Comparison of Design of Experiments (DOE) Types

The table below summarizes the key characteristics of different DOE approaches to help you select the most appropriate one.

| Feature | Full Factorial | Fractional Factorial | Screening (Plackett-Burman) |

|---|---|---|---|

| Primary Goal | Understand all main and interaction effects | Screen many factors efficiently with fewer runs | Screen a very large number of factors with minimal runs |

| Information Obtained | All main effects and all interactions | Main effects, but some interactions are confounded (aliased) | Main effects only (assumes interactions are negligible) [19] |

| Number of Runs | 2^k (e.g., 5 factors = 32 runs) | 2^(k-p) (e.g., 5 factors = 16 runs) | As few as k+1 runs [19] |

| Best Use Case | Small number of factors (<5), critical interactions | 5-10 factors, initial investigation | Very large number of factors, initial screening [19] |

| Key Limitation | Number of runs grows exponentially with factors | Some interaction effects are confounded with main effects or other interactions | Cannot detect interactions; may miss important effects if assumption is wrong [19] |
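The run counts in the table can be compared directly for any number of factors. The helper below is a simple sketch; note that the Plackett-Burman function rounds k + 1 up to the next multiple of 4, since those designs exist only in run sizes that are multiples of 4 (a detail added here, not stated in the table).

```python
import math

def full_factorial_runs(k):
    """Runs for a two-level full factorial with k factors."""
    return 2 ** k

def fractional_factorial_runs(k, p):
    """Runs for a 2^(k-p) fractional factorial."""
    return 2 ** (k - p)

def plackett_burman_runs(k):
    """Smallest multiple of 4 that is at least k + 1."""
    return 4 * math.ceil((k + 1) / 4)

print("k  full  half-fraction  Plackett-Burman")
for k in (5, 7, 11):
    print(f"{k:<2} {full_factorial_runs(k):<5} "
          f"{fractional_factorial_runs(k, 1):<14} {plackett_burman_runs(k)}")
```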

The Scientist's Toolkit: Essential Research Reagent Solutions

This table lists key materials and their functions in the context of the cited pharmaceutical stability study [31].

| Item | Function in the Experiment |

|---|---|

| Parenteral Dosage Form | The drug product being studied for stability (e.g., solution for injection/infusion) [31]. |

| Type I Glass Vials | Primary packaging material; its chemical inertness and light-protective properties are critical for product stability [31]. |

| Bromobutyl Rubber Stoppers | Used to seal vials; tested for compatibility and to ensure no leachables impact product stability [31]. |

| Active Pharmaceutical Ingredient (API) | The drug substance; its properties and potential variability from different suppliers are key factors in the stability study [31]. |

| Stability Chambers | Provide controlled long-term (e.g., 25°C/60% RH) and accelerated (e.g., 40°C/75% RH) storage conditions for ICH-compliant testing [31]. |

DOE Selection Workflow

The following diagram outlines the logical decision process for selecting the appropriate experimental design.

Screening DOE Implementation Process

This diagram details the workflow for successfully implementing a Screening Design of Experiments.

Central Composite Designs (CCD) for Response Surface Modeling and Robust Optimization

Central Composite Design (CCD) is a cornerstone of Response Surface Methodology (RSM), specifically developed to fit second-order polynomial models which are essential for process optimization. As an evolution of factorial designs, CCD systematically explores the relationship between multiple input variables (factors) and one or more output responses. This makes it exceptionally valuable for researchers and scientists engaged in multi-factor Design of Experiments (DoE) research, particularly in fields like pharmaceutical development where understanding complex interactions is crucial for achieving robust, optimal processes [33] [34].

The power of CCD lies in its structured approach to modeling curvature—a limitation of simpler two-level factorial designs. It achieves this through a strategic combination of three distinct types of experimental points, allowing it to efficiently map a response surface with a manageable number of experimental runs [33] [35]. This methodology is inherently sequential; it often follows initial screening experiments to identify vital factors, then focuses on refining the process region to locate optimum conditions [36].

Core Components and Types of CCD

A standard CCD is composed of three sets of experimental runs, each serving a specific purpose in modeling the response surface:

- Factorial Points: A full or fractional two-level factorial design that forms the "cube" of the experiment. These points estimate linear and interaction effects [33] [34].

- Axial (Star) Points: Points located on the axes of the design, at a distance α from the center. These points are crucial for estimating the quadratic effects that capture the curvature of the response surface [33] [37].

- Center Points: Multiple replicates at the center of the design space. These are used to estimate pure experimental error and to check for model curvature [33] [34].

The value of α (alpha), the distance of the star points from the center, is a key design parameter. It determines the geometry and properties of the design. Based on the chosen α, CCDs are primarily classified into three types, each with distinct characteristics and applications [33] [35]:

Types of Central Composite Designs

| Design Type | Abbreviation | Alpha (α) Value | Key Characteristics | Best Use Cases |
|---|---|---|---|---|
| Circumscribed CCD | CCC | α > 1 | Five levels per factor; axial points lie outside the cube; rotatable when α = (2^k)^(1/4) [33]. | Ideal when the region of interest is spherical or the experimental range can be extended beyond the original factorial levels [33] [35]. |
| Face-Centered CCD | CCF | α = 1 | Three levels per factor; axial points are on the faces of the cube [33]. | Practical when the experimental factor levels are fixed and cannot be easily extended beyond the high/low settings [33]. |
| Inscribed CCD | CCI | α < 1 | Five levels per factor; the factorial points are scaled to fit within the original design space [33]. | Suitable when the experimental limits are strict and every run must stay within the original high/low settings [33] [35]. |

The total number of experimental runs (N) required for a CCD with k factors is given by the equation: N = 2^k + 2k + C₀, where 2^k is the number of factorial points, 2k is the number of axial points, and C₀ is the number of center point replicates [33] [35].
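The three point types and the run-count formula can be checked with a short sketch. It assumes a full two-level factorial core and works in coded units; the function name `ccd_points` and its arguments are hypothetical and do not correspond to any particular DoE package.

```python
from itertools import product


def ccd_points(k, alpha, n_center):
    """Return the coded runs of a CCD built on a full 2^k factorial."""
    # Factorial ("cube") points: every combination of -1 and +1.
    factorial = [tuple(float(v) for v in combo)
                 for combo in product((-1, 1), repeat=k)]
    # Axial ("star") points: +/- alpha on one axis, 0 on all others.
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))
    # Center points: replicated runs at the origin.
    center = [tuple([0.0] * k)] * n_center
    return factorial + axial + center


# Face-centered example: k = 3 factors, alpha = 1, four center replicates.
design = ccd_points(k=3, alpha=1.0, n_center=4)
n_runs = len(design)
print(n_runs)  # 2**3 + 2*3 + 4 = 18
```

In practice the rows would be converted from coded to actual factor levels and executed in randomized order.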

Experimental Protocol and Workflow

Implementing a CCD involves a series of methodical steps, from initial planning to final optimization.

[Figure: complete sequential workflow for a CCD-based optimization study.]

Step-by-Step Protocol:

- Problem Definition and Screening: Clearly define the optimization goal (e.g., maximize yield, minimize impurity). Use prior knowledge or preliminary screening designs (e.g., Plackett-Burman) to identify the Critical Process Parameters (CPPs) that significantly impact your Critical Quality Attributes (CQAs) [33] [36].

- Design Setup: For the selected k factors, establish the low (-1) and high (+1) levels. Choose the appropriate type of CCD (CCC, CCI, or CCF) based on operational constraints. Statistical software then generates a design matrix showing the coded values for each experimental run; execute the runs in a randomized order to minimize bias [38].

- Model Fitting: After executing the experiments and recording the responses for each run, a second-order polynomial model is fitted to the data [33]. The general form of the model for two factors (X₁, X₂) is:

Y = β₀ + β₁X₁ + β₂X₂ + β₁₂X₁X₂ + β₁₁X₁² + β₂₂X₂² + ε

where Y is the predicted response, β₀ is the intercept, β₁ and β₂ are linear coefficients, β₁₂ is the interaction coefficient, β₁₁ and β₂₂ are quadratic coefficients, and ε is the error term [33] [36].

- Model Analysis and Validation: The fitted model is analyzed using Analysis of Variance (ANOVA) to determine its statistical significance and to check for lack-of-fit [38]. Diagnostic plots (e.g., residuals vs. predicted) are used to verify model adequacy. The model is then validated using checkpoints not included in the original design [39].

- Optimization and Design Space Exploration: Using the validated model, response surface plots and contour plots are generated to visualize the relationship between factors and responses [38]. Numerical optimization techniques (e.g., desirability function) are used to identify factor settings that jointly optimize all responses, leading to the establishment of a robust design space [38].
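The model-fitting step of the protocol above can be sketched with ordinary least squares. This is a minimal illustration, assuming NumPy is available. The responses are synthetic, generated from made-up coefficients plus random noise, and the names `model_matrix`, `true_beta`, and `beta_hat` are hypothetical.

```python
import numpy as np

# Coded two-factor CCD: 4 factorial, 4 axial (alpha = sqrt(2)), 3 center runs.
a = np.sqrt(2.0)
X1 = np.array([-1, 1, -1, 1, -a, a, 0, 0, 0, 0, 0], dtype=float)
X2 = np.array([-1, -1, 1, 1, 0, 0, -a, a, 0, 0, 0], dtype=float)


def model_matrix(x1, x2):
    """Columns: intercept, X1, X2, X1*X2, X1^2, X2^2."""
    return np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1**2, x2**2])


M = model_matrix(X1, X2)

# Synthetic responses (illustration only, not real assay or process data).
true_beta = np.array([80.0, 3.0, -2.0, 1.5, -4.0, -2.5])
rng = np.random.default_rng(0)
y = M @ true_beta + rng.normal(scale=0.3, size=len(X1))

# Least-squares estimates of b0, b1, b2, b12, b11, b22.
beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)

# Coefficient of determination as a first, coarse check of fit quality.
y_hat = M @ beta_hat
r_squared = 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)
print(np.round(beta_hat, 2), round(float(r_squared), 3))
```

A dedicated statistics package would additionally report the ANOVA table, coefficient p-values, and diagnostic plots described in the protocol; this sketch covers only the coefficient estimates.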

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines essential materials and reagents commonly employed in experimental studies utilizing CCD, with examples drawn from pharmaceutical and chemical optimization research.

| Item Category | Specific Examples | Function in the Experiment |
|---|---|---|
| Pharmaceutical Actives | Diacerein [39], Protopine [40] | The drug substance or active pharmaceutical ingredient (API) whose formulation or analytical method is being optimized. |
| Lipids & Surfactants | Cholesterol, Span 40/60/80, Tween 20/80, Brij series [39] | Used to form vesicular structures like niosomes; act as emulsifiers, stabilizers, and penetration enhancers. |
| Solvents | Chloroform, Methanol, Acetonitrile, Diethylamine [39] [40] | Used for dissolving active ingredients and excipients, and as components of the mobile phase in analytical methods. |
| Catalysts & Reagents | Ferrous sulfate (FeSO₄·7H₂O), Hydrogen Peroxide (H₂O₂) [41] | Act as catalysts and oxidizing agents in chemical processes like the Photo-Fenton reaction for wastewater treatment. |
| Buffer Components | Disodium hydrogen phosphate, Potassium dihydrogen phosphate [39] | Used to prepare buffer solutions that maintain a specific pH during the experiment, a critical process parameter. |

Troubleshooting Guides & FAQs

FAQ 1: How do I choose the correct alpha (α) value for my CCD?

The choice of α is fundamental and depends on your design goals and operational constraints.

- For Rotatability: A design is rotatable if the prediction variance is the same for all points equidistant from the center. The α value for rotatability is calculated as α = (2^k)^(1/4) for a full factorial base. For example, for k = 2 factors, α = 4^(1/4) ≈ 1.414 [37] [35]. This is a key feature of the Circumscribed (CCC) design.

- For Practicality: If you cannot experiment beyond the limits of your factorial points (e.g., due to physical constraints or safety reasons), a Face-Centered (CCF) design with α = 1 is the most practical choice, as it uses only three levels for each factor [33].

- For Orthogonality: An orthogonal design ensures the quadratic effect estimates are uncorrelated. The α value for orthogonality depends on the number of center points and is often the default in statistical software [33].
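The rotatability formula above is easy to tabulate. The sketch below is illustrative; the helper name `rotatable_alpha` is hypothetical. The optional argument `p` is an addition not discussed in the text: it covers a fractional factorial core with 2^(k-p) runs, using the standard rule that α is the fourth root of the number of factorial points.

```python
def rotatable_alpha(k, p=0):
    """Axial distance giving a rotatable CCD on a 2^(k-p) factorial core."""
    n_factorial = 2 ** (k - p)  # number of factorial ("cube") points
    return n_factorial ** 0.25


for k in (2, 3, 4, 5):
    print(k, round(rotatable_alpha(k), 3))
# 2 1.414
# 3 1.682
# 4 2.0
# 5 2.378
```

Note how quickly α grows with k: for four factors the axial runs sit at twice the coded high/low distance, which is often the practical reason for falling back to a face-centered design.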

FAQ 2: My model shows a significant "Lack of Fit." What are the potential causes and remedies?

A significant lack-of-fit test (typically with a p-value < 0.05) indicates that the model is not adequately describing the systematic variation in the data.

- Potential Causes:

  - Missing Important Terms: The model may be missing higher-order terms (e.g., cubic effects) or complex interactions that are present in the real process.

  - Insufficient Model Scope: The experimental region might be too large for a single second-order model to fit well.

  - Uncontrolled Variables: The presence of a lurking variable or an unaccounted background factor that is influencing the response [42] [36].

- Remedial Actions:

  - Verify Data Integrity: Check for data entry errors or outliers.

  - Consider Model Transformation: Explore if transforming the response variable (e.g., log(Y)) improves the fit.

  - Collect More Data: If the region is too large, consider breaking the study into smaller sequential parts. Adding more center points can also help better estimate pure error [36].

  - Investigate Other Factors: Re-examine the process for potential influential variables not included in the initial design.
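To make the lack-of-fit test concrete, the sketch below splits the residual sum of squares into pure error (from replicated runs) and lack of fit, then forms the F ratio. It assumes NumPy. The data are synthetic and deliberately include a cubic term that a second-order model cannot capture. The function name `lack_of_fit` and all numeric values are hypothetical.

```python
import numpy as np


def lack_of_fit(design, M, y):
    """Return (F statistic, df lack-of-fit, df pure error) for a linear model.

    design: (n, k) coded factor settings; M: (n, p) model matrix; y: (n,).
    """
    n, p = M.shape
    beta, *_ = np.linalg.lstsq(M, y, rcond=None)
    ss_resid = float(np.sum((y - M @ beta) ** 2))
    # Pure error: scatter among runs that share identical factor settings.
    _, group = np.unique(design, axis=0, return_inverse=True)
    group = group.ravel()
    m = int(group.max()) + 1  # number of distinct design points
    ss_pe = sum(float(np.sum((y[group == g] - y[group == g].mean()) ** 2))
                for g in range(m))
    df_lof, df_pe = m - p, n - m
    f_stat = ((ss_resid - ss_pe) / df_lof) / (ss_pe / df_pe)
    return f_stat, df_lof, df_pe


# Two-factor CCD (alpha = sqrt(2)) with three center replicates.
a = np.sqrt(2.0)
x1 = np.array([-1, 1, -1, 1, -a, a, 0, 0, 0, 0, 0], dtype=float)
x2 = np.array([-1, -1, 1, 1, 0, 0, -a, a, 0, 0, 0], dtype=float)
design = np.column_stack([x1, x2])
M = np.column_stack([np.ones_like(x1), x1, x2, x1 * x2, x1**2, x2**2])

# Synthetic response with an unmodeled cubic effect in x1.
rng = np.random.default_rng(1)
y = (80 + 3 * x1 - 2 * x2 - 4 * x1**2 - 2.5 * x2**2 + 2.0 * x1**3
     + rng.normal(scale=0.3, size=len(x1)))

f_stat, df_lof, df_pe = lack_of_fit(design, M, y)
print(df_lof, df_pe)  # 3 2
```

The F statistic is compared with the F distribution on (df_lof, df_pe) degrees of freedom. With only two degrees of freedom for pure error the test has little power, which is one reason extra center points help.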

FAQ 3: The contour plot for my optimization shows a "ridge" or "saddle point" instead of a clear peak. What does this mean?

- Ridge System: This indicates that the optimum is not a single point but a line or region where similar optimal responses are achieved. This is often a beneficial situation as it provides a robust operating region. You can choose a set of factor levels along the ridge that are easiest or most cost-effective to control in a manufacturing setting [36].

- Saddle Point: This represents a stationary point that is neither a maximum nor a minimum. It acts as a maximum in one direction and a minimum in another. In this case, the true optimum likely lies on the boundary of your experimental region. You may need to shift your experimental domain to find a true maximum or minimum [36].
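Whether a fitted surface has a peak, a saddle, or a ridge can be read from the eigenvalues of its quadratic coefficients, a procedure usually called canonical analysis. The sketch below assumes NumPy and a two-factor model written as Y = b0 + x'b + x'Bx. The function name `canonical_analysis`, the threshold `ridge_tol`, and the example coefficients are hypothetical choices made for illustration.

```python
import numpy as np


def canonical_analysis(b1, b2, b12, b11, b22, ridge_tol=0.05):
    """Classify the stationary point of a fitted two-factor quadratic model."""
    b = np.array([b1, b2], dtype=float)
    # Symmetric matrix of second-order terms: the interaction is split in half.
    B = np.array([[b11, b12 / 2.0], [b12 / 2.0, b22]], dtype=float)
    eigvals = np.linalg.eigvalsh(B)
    largest = np.max(np.abs(eigvals))
    if largest == 0.0:
        raise ValueError("model has no second-order terms")
    # An eigenvalue near zero means the surface is almost flat in one
    # direction (a ridge); the stationary point is then poorly defined.
    if np.min(np.abs(eigvals)) < ridge_tol * largest:
        return "ridge", None, eigvals
    x_s = -0.5 * np.linalg.solve(B, b)  # point where the gradient vanishes
    if np.all(eigvals < 0):
        kind = "maximum"
    elif np.all(eigvals > 0):
        kind = "minimum"
    else:
        kind = "saddle"
    return kind, x_s, eigvals


kind, x_s, eigvals = canonical_analysis(3.0, -2.0, 1.5, -4.0, -2.5)
print(kind, np.round(x_s, 3))
```

Eigenvalues of mixed sign indicate a saddle, and the stationary point `x_s` is in coded units, so a value far outside the design range suggests the optimum lies beyond the region studied.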

FAQ 4: How many center point replicates are sufficient, and why are they necessary?

Center points are crucial for several reasons, and 3 to 5 replicates are generally considered sufficient [35].

- Estimate Pure Error: Replication at the center provides an independent estimate of the inherent, random variability of the experimental process, which is used to test for model lack-of-fit and the significance of model terms [33] [36].

- Check for Curvature: A significant difference between the average response at the center points and the response predicted by a first-order model (with only linear and interaction terms) is a clear indicator that curvature is present in the system, justifying the need for the quadratic terms in the CCD model [36].

- Stabilize Prediction Variance: Replicated center points lower the prediction variance near the center of the design, keeping the model's precision more uniform across the design space.
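The curvature check described above reduces to a single-degree-of-freedom F test. The sketch below assumes unreplicated factorial points, so pure error comes only from the center replicates. The response values are invented for illustration, and the function name `curvature_f_statistic` is hypothetical.

```python
from statistics import mean, variance


def curvature_f_statistic(factorial_y, center_y):
    """F statistic comparing the factorial average with the center average."""
    n_f, n_c = len(factorial_y), len(center_y)
    diff = mean(factorial_y) - mean(center_y)
    # Single-degree-of-freedom sum of squares for pure quadratic curvature.
    ss_curvature = n_f * n_c * diff**2 / (n_f + n_c)
    # Pure-error mean square from the center replicates (n_c - 1 df).
    ms_pure_error = variance(center_y)
    return ss_curvature / ms_pure_error, (1, n_c - 1)


# Hypothetical responses from a 2^2 factorial plus five center replicates.
factorial_y = [62.0, 71.0, 66.0, 79.0]
center_y = [78.0, 80.0, 79.0, 81.0, 77.0]

f_stat, dof = curvature_f_statistic(factorial_y, center_y)
print(round(f_stat, 2), dof)  # 80.22 (1, 4)
```

An F statistic well above the critical value for (1, n_c - 1) degrees of freedom, as in this invented example, indicates that a first-order model is inadequate and that the axial points of a CCD are worth adding.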

In the development of biologics, immunoassays are critical for characterizing therapeutic proteins, monitoring pharmacokinetics, and detecting anti-drug antibodies (ADAs). Traditional one-factor-at-a-time (OFAT) optimization is inefficient and often fails to identify interactions between critical factors, leading to suboptimal assay performance [29]. Design of Experiments (DoE) is a powerful statistical approach that systematically investigates the effect of multiple factors and their interactions on key assay outputs simultaneously [43].

A Hybrid DoE approach combines different experimental designs—such as screening and optimization designs—to efficiently navigate the complex parameter space of an immunoassay. This method is particularly valuable for optimizing assays in complex matrices like cerebrospinal fluid (CSF), where sample volume may be limited and interfering factors can complicate development [44]. By implementing a structured DoE strategy, researchers can develop robust, sensitive, and reliable immunoassays with fewer resources and in a shorter timeframe.

Key DoE Designs and Their Application: A Comparative Table

The following table summarizes the primary DoE designs used in a hybrid approach for immunoassay optimization, their purposes, and typical applications.

| DoE Design Type | Primary Purpose | Key Characteristics | Application in Immunoassay Development |
|---|---|---|---|
| Full Factorial | Screening | Tests all possible combinations of factors and levels; identifies all main effects and interactions. | Best for a small number of factors (e.g., initial assessment of 2-4 critical reagents). |
| Fractional Factorial / Plackett-Burman | Screening | Efficiently screens a large number of factors using a fraction of the full factorial runs; identifies significant factors. | Ideal for initial screening of many potential factors (e.g., buffer pH, ionic strength, incubation time, temperature, blocking agents) to find the most influential ones [29]. |
| Central Composite Design (CCD) | Optimization | A type of Response Surface Methodology (RSM); models curvature and identifies optimal factor settings. | Used after screening to fine-tune continuous factors (e.g., concentration of detection antibody, bead density) for performance outcomes like sensitivity [5]. |
| Taguchi Design | Handling Categorical Factors | Effective for identifying optimal levels of categorical factors with minimal experimental runs. | Useful for comparing different types of reagents (e.g., different brands of plates, buffer compositions, or sample types) [5]. |

Implementing a Hybrid DoE Strategy: A Practical Workflow

A hybrid DoE strategy sequentially applies different designs to move efficiently from a large set of potential factors to a finely tuned, optimized assay.

Phase 1: Screening with Fractional Factorial Designs

Objective: To identify the few critical factors from a large set of potential variables that significantly impact assay performance (e.g., sensitivity, background signal).

Methodology:

- Define Input Factors and Ranges: List all potential factors (e.g., detector antibody concentration, antigen incubation time, temperature, buffer pH, blocking agent concentration) and assign realistic high/low levels for each.

- Select a Screening Design: A Plackett-Burman or fractional factorial design is typically chosen to minimize the number of experimental runs while still estimating the main effects of each factor [29].