Optimizing Hyperparameters for Energy-Efficient AI in Biomedical Research: A Guide to Greener, Smarter Models

This article provides a comprehensive guide to hyperparameter optimization (HPO) techniques aimed at reducing the computational energy footprint of machine learning models, with a specific focus on biomedical and drug...

Optimizing Hyperparameters for Energy-Efficient AI in Biomedical Research: A Guide to Greener, Smarter Models

Abstract

This article provides a comprehensive guide to hyperparameter optimization (HPO) techniques aimed at reducing the computational energy footprint of machine learning models, with a specific focus on biomedical and drug discovery applications. We explore the foundational trade-offs between model performance and energy consumption, detail modern HPO methodologies like Bayesian optimization and multi-fidelity approaches, address common challenges in implementation, and present validation frameworks for comparing energy efficiency. Tailored for researchers and drug development professionals, this guide equips readers with the knowledge to build high-performing yet sustainable AI models for critical biomedical tasks.

The Energy-Performance Nexus: Why Hyperparameters Are Key to Greener Machine Learning

Modern AI, particularly deep learning models in biomedicine (e.g., for drug discovery, protein folding, genomic analysis), requires immense computational resources. Training a single large model can emit carbon dioxide equivalent to the lifetime emissions of five cars. This application note details the problem's scale and provides protocols for quantifying and mitigating energy use within hyperparameter optimization (HPO) frameworks.

Current Quantitative Data on AI Energy Costs in Biomedicine

Table 1: Estimated Energy Consumption of Notable AI Models in Biomedicine

| Model / Task | Hardware Used | Training Energy (kWh) | CO2e (lbs) | Equivalent Analog |

|---|---|---|---|---|

| AlphaFold2 (Initial Training) | 128 TPUv3 | ~2,300,000 | ~530,000 | 60 US homes annual electricity |

| GPT-3 (175B Params - Baseline Comparison) | Thousands of V100 GPUs | ~1,287,000 | ~1,400,000 | 1200+ flights from NYC to London |

| Large-scale Genome-Wide Association Study (GWAS) ML Model | 100 GPU cluster, 2 weeks | ~13,440 | ~14,800 | 3.5 gasoline-powered passenger vehicles annual emissions |

| Typical Drug Discovery Virtual Screening DL Model | 8x A100, 1 month | ~8,760 | ~9,600 | 2.2 passenger vehicles annual emissions |

Table 2: Energy Cost Comparison of Model Training Strategies

| Training Strategy | Relative Energy Use | Typical Accuracy Trade-off | Best For |

|---|---|---|---|

| Brute-Force Hyperparameter Search | 100% (Baseline) | 0% | Establishing baselines |

| Random Search | 65-80% | +/- 1-2% | Initial exploration |

| Bayesian Optimization | 40-60% | Often improved | Limited compute budgets |

| Multi-fidelity (e.g., Hyperband) | 20-50% | Slight decrease possible | Very large hyperparameter spaces |

| Green HPO (Early Stopping + Low-Fidelity) | 15-35% | Managed decrease | Energy-constrained projects |

Experimental Protocols

Protocol 3.1: Benchmarking Energy Consumption of a Biomedical AI Training Job

Objective: Quantify the total energy consumption and carbon footprint of training a specific model.

Materials: Compute cluster (GPUs), power meter (software: CodeCarbon, experiment-impact-tracker; or hardware), training script, dataset.

Procedure:

- Instrumentation: Integrate

CodeCarbontracker into your training script. Initialize before the main training loop.

- Baseline Power Draw: Run a 10-minute idle test on the compute node to record baseline power.

- Training Run: Execute the full training job, including validation and checkpointing. Ensure the tracker is active.

- Data Collection: Stop the tracker after job completion. Collect metrics:

total_energy_consumed_kWh,total_emissions_kgCO2,training_duration. - Analysis: Normalize energy use per epoch and per model parameter. Compare against benchmarks in Table 1.

Protocol 3.2: Implementing Energy-Aware Hyperparameter Optimization (HPO)

Objective: Identify a high-performance model configuration with minimal energy expenditure.

Materials: HPO framework (Ray Tune, Optuna), model code, reduced-fidelity dataset (e.g., subsampled), CodeCarbon.

Procedure:

- Define Search Space & Fidelity: Set hyperparameter ranges (e.g., learning rate:

loguniform(1e-5, 1e-3), layers:[4, 8, 16]). Define a low-fidelity setting (e.g., 10% training data, 50% image resolution, 3 epochs). - Configure Energy-Aware Scheduler: Use a multi-fidelity scheduler like ASHA or Hyperband with early stopping.

- Integrate Carbon Tracking: Use a custom reporter or callback to log energy per trial.

- Execute HPO: Run multiple parallel trials. The scheduler will stop underperforming, energy-intensive trials early.

- Pareto Analysis: Select configurations from the Pareto frontier balancing validation accuracy and total energy consumed.

Protocol 3.3: Profiling Computational Graph for Inefficiencies

Objective: Identify energy-intensive operations within the model's forward/backward pass.

Materials: Model in PyTorch/TensorFlow, profiler (PyTorch Profiler, TensorBoard), GPU.

Procedure:

- Profile with Detail: Run a profiler over 10 training iterations.

- Analyze Hotspots: Generate a trace and identify operations with highest FLOPs, longest GPU kernel runtime, or peak memory usage (leads to more memory transfers).

- Optimize: Replace inefficient layers (e.g., standard convolutions with separable convolutions), enable mixed precision training (

torch.cuda.amp), optimize batch size to maximize GPU utilization without triggering memory swapping.

Visualizations

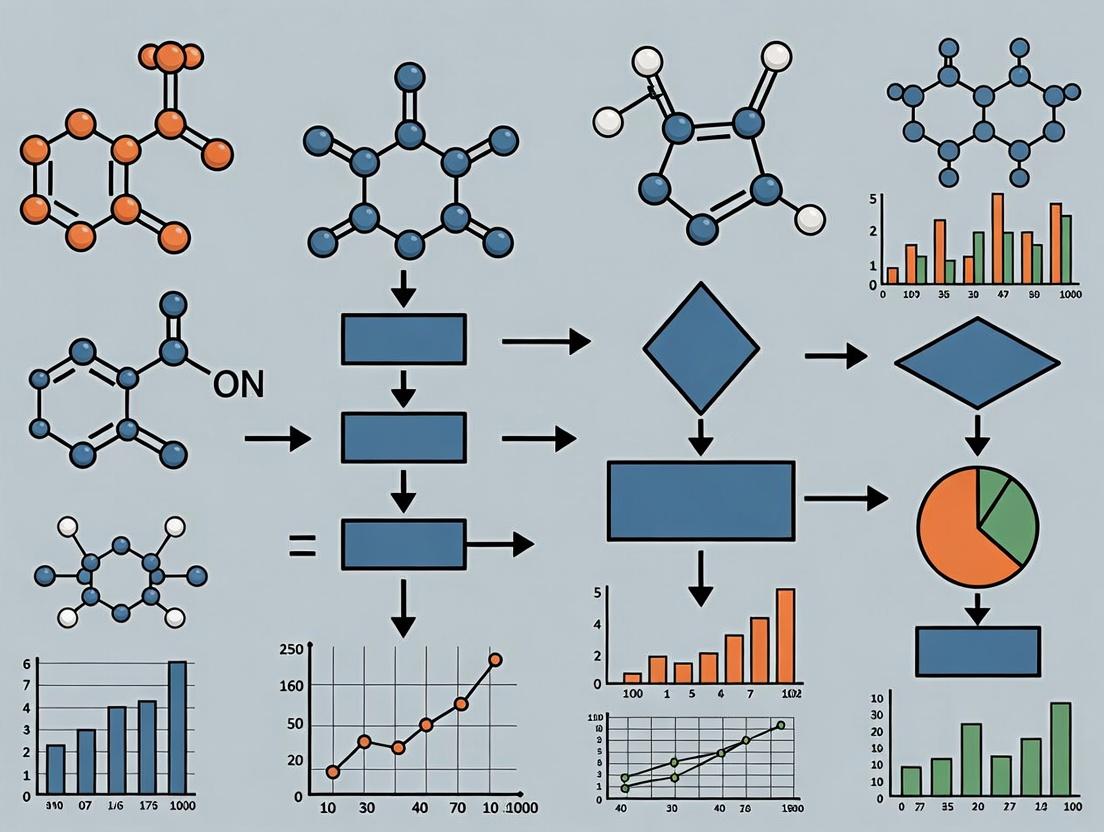

Diagram Title: Energy-Aware HPO with Early Stopping Workflow

Diagram Title: Root Causes and Solutions for AI Energy Costs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Energy-Efficient Biomedical AI Research

| Tool / Reagent | Function / Purpose | Example / Provider |

|---|---|---|

| Energy Tracking Library | Monitors real-time power draw of CPU/GPU and estimates CO2 emissions. | CodeCarbon, experiment-impact-tracker, Carbontracker |

| Hyperparameter Optimization Framework | Automates search for optimal model settings using energy-efficient algorithms. | Ray Tune (with ASHA), Optuna, Hyperopt |

| Multi-Fidelity Datasets | Smaller, representative subsets of full data for low-cost initial trials. | Created via random stratified subsampling (e.g., 1%, 10% splits). |

| Low-Precision Arithmetic | Reduces computation energy by using 16-bit (FP16/BF16) instead of 32-bit floats. | PyTorch AMP, TensorFlow Mixed Precision, NVIDIA A100 TF32. |

| Model Profiler | Identifies computational bottlenecks and memory inefficiencies in model code. | PyTorch Profiler, TensorBoard Profiler, NVIDIA Nsight Systems. |

| Green Compute Cloud | Cloud platforms with carbon-neutral energy sourcing and high-efficiency hardware. | Google Cloud (Carbon-Intelligent Computing), Azure Sustainability. |

| Pre-trained Foundational Models | Start from existing weights to avoid training from scratch ("fine-tuning"). | Hugging Face Models, NVIDIA BioNeMo, ESMFold for proteins. |

In the pursuit of energy-efficient machine learning, a rigorous understanding of model parameters versus hyperparameters is fundamental. Parameters are the internal variables that the model learns autonomously from the training data (e.g., weights and biases in a neural network). Hyperparameters are external configuration variables set prior to the training process, governing the learning algorithm itself (e.g., learning rate, batch size, network depth). Their optimization is critical for developing models that achieve high performance with minimal computational and energy expenditure—a key concern in resource-intensive fields like drug development.

Comparative Analysis: Definitions and Roles

| Aspect | Parameters (e.g., Weights, Biases) | Hyperparameters (e.g., Learning Rate, Dropout) |

|---|---|---|

| Definition | Internal variables learned from data. | External configuration variables set before training. |

| Set By | The optimization algorithm (e.g., SGD, Adam). | The researcher/scientist or automated search. |

| Goal | Minimize the loss function on training data. | Optimize model generalization and efficiency. |

| Impact on Training | Directly define the model's mapping function. | Control the speed, quality, and dynamics of learning. |

| Impact on Energy Use | Indirect; final model size influences inference cost. | Direct and profound; governs training convergence time and computational load. |

Impact on Model Dynamics: Quantitative Synthesis

Recent research underscores the direct correlation between hyperparameter settings, model performance, and energy consumption. The following table summarizes key findings from current literature.

Table: Impact of Key Hyperparameters on Model Dynamics and Energy Efficiency

| Hyperparameter | Typical Value Range | Primary Impact on Model Dynamics | Impact on Training Energy (Relative) | Key Trade-off |

|---|---|---|---|---|

| Learning Rate | 1e-5 to 1e-1 | Controls step size in parameter space. High rates risk divergence; low rates slow convergence. | Very High | Convergence Speed vs. Stability |

| Batch Size | 32, 64, 128, 256 | Affects gradient estimate noise & generalization. Larger batches can leverage parallel compute. | High | Computational Efficiency vs. Generalization |

| Number of Layers / Width | Problem-dependent | Defines model capacity. Larger networks can overfit but are more expressive. | High | Model Expressivity vs. Overfitting/Runtime |

| Dropout Rate | 0.2 to 0.5 | Reduces overfitting by randomly dropping units during training. | Low (slight overhead) | Regularization vs. Training Signal Dilution |

| Number of Training Epochs | 10 to 100+ | Determines how many times the model sees the full dataset. Early stopping is crucial. | Very High | Underfitting vs. Overfitting/Energy Waste |

Experimental Protocols for Hyperparameter Optimization (HPO)

Protocol 1: Grid Search for Baseline Establishment

- Objective: Systematically evaluate a predefined set of hyperparameters to establish a performance baseline.

- Methodology:

- Define a discrete search space for 2-3 critical hyperparameters (e.g., learning rate: [0.01, 0.001, 0.0001]; batch size: [32, 64]).

- Train a unique model for every combination in the Cartesian product of these sets.

- Evaluate each model on a held-out validation set using the target metric (e.g., validation AUC-ROC for a drug response model).

- Select the combination yielding the best validation performance.

- Energy Consideration: Log the training time and GPU/CPU power draw for each run to correlate hyperparameter choice with energy cost.

Protocol 2: Bayesian Optimization for Efficient HPO

- Objective: Find high-performing hyperparameters with fewer trials than grid/search random search.

- Methodology:

- Define a bounded, continuous search space for key hyperparameters.

- Build a probabilistic surrogate model (e.g., Gaussian Process) to approximate the function from hyperparameters to validation score.

- Use an acquisition function (e.g., Expected Improvement) to select the next most promising hyperparameter set to evaluate.

- Iterate steps 2-3 for a fixed number of trials (e.g., 50), updating the surrogate model after each real evaluation.

- The hyperparameters from the trial with the best validation score are selected.

- Advantage for Energy Efficiency: Dramatically reduces the number of expensive training trials required to find optimal configurations.

Protocol 3: Assessing Hyperparameter Impact via Ablation Study

- Objective: Isolate and quantify the effect of a single hyperparameter on model performance and training stability.

- Methodology:

- Fix all hyperparameters to a standard baseline.

- Vary only the target hyperparameter (e.g., dropout rate) across a logical range.

- Train multiple models (with different random seeds) for each value.

- Record final validation performance, training loss curves, and time-to-convergence for each run.

- Analyze the variance in outcomes to determine the sensitivity and optimal range for the target hyperparameter.

Visualizations

Diagram 1: HPO Workflow for Energy-Efficient ML

Diagram 2: Parameters vs. Hyperparameters in Training

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools for Hyperparameter Optimization Research

| Tool / Reagent | Category | Primary Function in HPO Research |

|---|---|---|

| Weights & Biases (W&B) | Experiment Tracking | Logs hyperparameters, metrics, and system resource consumption (GPU power) across all runs for comparison. |

| Optuna / Ray Tune | HPO Framework | Provides efficient search algorithms (Bayesian, Evolutionary) and automated parallel trial scheduling. |

| TensorBoard | Visualization | Enables visual analysis of training dynamics (loss curves) under different hyperparameters. |

| CodeCarbon | Energy Tracking | A software package that estimates the electricity consumption and carbon footprint of ML training runs. |

| Scikit-learn | ML Library | Offers simple, consistent APIs for models and utilities for grid/random search on smaller-scale models. |

| Custom Validation Splits | Data Protocol | Carefully constructed validation sets (e.g., temporal, structural) are critical for unbiased hyperparameter selection in drug development. |

Application Notes

In the context of hyperparameter optimization for energy-efficient machine learning, particularly relevant to compute-intensive fields like drug discovery, quantifying efficiency is paramount. The core metrics—FLOPs, GPU Hours, and Watts—serve distinct but complementary roles in building a holistic view of computational and energy cost.

- FLOPs (Floating-Point Operations): A theoretical measure of computational workload. While useful for comparing model architectures, it does not capture hardware efficiency or real-world power draw. Lower FLOPs often, but not always, correlate with lower energy consumption.

- GPU Hours: A practical, cloud-cost-oriented metric representing the product of the number of GPUs and wall-clock time. It is a direct proxy for resource allocation and financial expenditure but ignores the power efficiency of the underlying hardware.

- Watts (Power Draw): The fundamental unit of energy per unit time. Direct measurement of system or GPU power (in Watts) during experiments, integrated over time to yield Joules, provides the most accurate account of actual energy consumption. This is critical for optimizing hyperparameters for both performance and minimal environmental impact.

The optimal strategy for energy-aware ML research involves multi-objective optimization, trading off traditional performance metrics (e.g., validation accuracy) against these efficiency metrics. The following tables summarize key quantitative relationships and benchmark data.

Table 1: Comparative Efficiency Metrics for Common Operations (Theoretical)

| Operation | Approx. FLOPs | Typical GPU Memory Access | Relative Energy Cost (Arbitrary Units) |

|---|---|---|---|

| Matrix Multiply (n×n) | 2n³ | High | 100 |

| Convolution (3x3 kernel) | ~2 * k * Hout * Wout * Cin * Cout | High | 95 |

| ReLU Activation | n | Low | 5 |

| Batch Normalization | 5n | Medium | 10 |

| Attention (Head) | ~4 * n² * d_model | Very High | 150 |

Table 2: Sample Energy Consumption for Hardware (Empirical)

| Hardware | Typical Peak Power (Watts) | FP32 TFLOPS (Theoretical) | Efficiency (TFLOPS/Watt) | Typical Cloud Cost ($/Hour) |

|---|---|---|---|---|

| NVIDIA A100 (40GB) | 250-300 | 19.5 | ~0.065 - 0.078 | ~$1.10 - $1.50 |

| NVIDIA H100 (80GB) | 350-400 | 67.0 | ~0.168 - 0.191 | ~$3.50 - $5.00 |

| NVIDIA V100 (32GB) | 250-300 | 15.7 | ~0.052 - 0.063 | ~$0.85 - $1.20 |

| NVIDIA RTX 4090 | 450 | 82.6 (FP16) | ~0.184 (FP16) | N/A (Consumer) |

Experimental Protocols

Protocol 1: Measuring End-to-End Task Energy Consumption

Objective: To quantify the total energy cost of training a model with a specific hyperparameter set.

Materials: ML training code, target dataset, GPU server with power monitoring, nvidia-smi/dcgmi tools, Python psutil/pynvml libraries.

Procedure:

- Baseline Power: Record idle system power for 60 seconds before job launch.

- Instrumentation: Integrate power sampling into training script. Use

pynvmlto poll GPU power draw (in Watts) at 1-second intervals. - Execution: Launch the training job with the defined hyperparameters (batch size, learning rate, model size, etc.).

- Data Collection: Log timestamp, GPU power, GPU utilization, and memory usage for each sample.

- Post-Processing: Integrate power over time:

Total Energy (Joules) = Σ (Power_sample_i (Watts) * sampling_interval (seconds)). Subtract estimated baseline energy. - Reporting: Report total Joules, average Watts, final model accuracy, and total wall-clock time.

Protocol 2: Hyperparameter Search with Efficiency Constraint

Objective: To identify hyperparameters that maximize model performance while staying under an energy budget. Materials: As in Protocol 1, plus a hyperparameter optimization framework (Optuna, Ray Tune). Procedure:

- Define Search Space: Specify ranges for key hyperparameters (e.g., batch size [16, 32, 64, 128], learning rate [1e-5 to 1e-3], layer width, dropout rate).

- Define Objective Function: Create a function that, given a hyperparameter set (trial), trains a model for a fixed number of epochs and returns a composite score:

Score = Validation_AUC - α * (Total_Joules / Joules_budget). Whereαis a weighting factor. - Constrained Search: Configure the optimizer to maximize the composite score. Implement early stopping if the running energy expenditure exceeds a pre-defined threshold.

- Analysis: Post-search, analyze the Pareto frontier of validation performance vs. energy consumed for all trials.

Visualizations

HPO Energy-Aware Workflow

Interplay of Factors Influencing Efficiency Metrics

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Energy-Efficient ML Research |

|---|---|

GPU Power Monitoring Tools (nvml/dcgmi, scaphandre) |

Direct measurement of hardware power draw at the GPU or system level. Essential for converting runtime to Joules. |

| Hyperparameter Optimization Frameworks (Optuna, Ray Tune) | Automate the search for high-performance, low-energy configurations. Enable multi-objective optimization. |

Profiling Suites (PyTorch Profiler, NVIDIA Nsight Systems, py-spy) |

Identify computational bottlenecks (high FLOPs ops) and memory inefficiencies that lead to wasted energy. |

Lightweight Model Libraries (Hugging Face PEFT, timm, TensorFlow Model Optimization) |

Provide access to efficient architectures (e.g., LoRA for fine-tuning) and techniques (pruning, quantization) that reduce FLOPs and memory footprint. |

| Energy-Aware Schedulers (Kubernetes with power metrics, SLURM) | Schedule jobs to maximize hardware utilization and potentially leverage lower-power idle states. |

| Precision Control (Automatic Mixed Precision - AMP) | Use torch.cuda.amp or TF32 to leverage lower-precision math (FP16/BF16) for significant speed-up and reduced energy per FLOP on modern hardware. |

| Efficiency Benchmarking Datasets (MLPerf Inference/Training) | Standardized tasks for comparing the performance-per-watt of different models, hardware, and frameworks. |

In the context of hyperparameter optimization (HPO) for energy-efficient machine learning research, a fundamental trilemma exists between model predictive accuracy, total required training time, and total energy consumption. This trilemma is particularly acute in computationally intensive fields like drug discovery, where large-scale virtual screening and molecular property prediction models are essential. Optimizing for one metric often degrades another, requiring a strategic, quantified trade-off. This application note provides protocols and analytical frameworks for navigating this trade-off, enabling researchers to make informed decisions aligned with their project's priorities—be it maximal accuracy, rapid iteration, or sustainable computing.

Quantitative Analysis of the Trade-off

Recent empirical studies, benchmarked on common drug discovery datasets (e.g., MoleculeNet), illustrate the quantitative relationships between these three core metrics. The following tables summarize key findings.

Table 1: Impact of HPO Strategy on the Trilemma (Benchmarked on Tox21 Dataset)

| HPO Strategy | Avg. Test ROC-AUC | Avg. Training Time (GPU hrs) | Avg. Energy Consumed (kWh) | Primary Trade-off Characteristic |

|---|---|---|---|---|

| Manual Search (Baseline) | 0.791 | 12.5 | 2.1 | High variance, often suboptimal efficiency |

| Random Search (50 trials) | 0.805 | 50.0 | 8.5 | Better accuracy, large time/energy cost |

| Bayesian Optimization (30 trials) | 0.812 | 32.0 | 5.4 | Optimal accuracy/efficiency balance |

| Early Stopping + Bayesian | 0.808 | 18.5 | 3.1 | Significant savings, minor accuracy loss |

| Low-Energy Preset Config | 0.795 | 8.2 | 1.4 | Minimized energy, acceptable accuracy |

Table 2: Effect of Model & Hardware Scaling on Energy Efficiency

| Model Architecture | Parameter Count | Target Task | Accuracy (RMSE/ROC-AUC) | Energy per Training Epoch (Wh) | Optimal Use Case |

|---|---|---|---|---|---|

| Graph Convolutional Network (GCN) | ~500k | Solubility Prediction (RMSE) | 1.15 | 45 | Rapid prototyping, limited data |

| Attention-based (Transformer) | ~5M | Protein-Ligand Affinity | 0.85 ROC-AUC | 210 | High-accuracy binding prediction |

| Ensemble (5x GCN) | ~2.5M | Toxicity Classification | 0.815 ROC-AUC | 225 | Maximizing prediction confidence |

| Quantized GCN (INT8) | ~500k | Solubility Prediction (RMSE) | 1.18 | 22 | Deployment inference, energy-critical training |

Experimental Protocols for Quantifying the Trade-off

Protocol 1: Establishing an Energy-Accuracy Pareto Frontier

Objective: To empirically define the optimal set of hyperparameter configurations that balance validation accuracy and total energy consumption.

Materials:

- Hardware: Single NVIDIA A100 GPU with power monitoring (via

nvidia-smi -l 1ordcgm-profi). - Software: Python with PyTorch/TensorFlow,

scikit-optimizeorOptunafor HPO,CodeCarbonorExperiment Impact Trackerfor energy tracking. - Dataset: Publicly available biochemical dataset (e.g., ClinTox from MoleculeNet).

Methodology:

- Define Search Space: Key hyperparameters include learning rate (log-uniform, 1e-5 to 1e-3), batch size (32, 64, 128, 256), number of GNN layers (2, 3, 4), and hidden dimension (128, 256, 512).

- Instrument Energy Tracking: Initialize the energy tracking library to log cumulative energy draw (kWh) from the GPU and CPU throughout each training job.

- Execute Multi-Objective HPO: Use a Bayesian optimization framework (e.g., Optuna with

TPESampler) to run 50 trials. The objective function for each trial is a compound metric:f = α * (1 - validation_ROC_AUC) + (1 - α) * (normalized_energy_consumed), where α is a weight (e.g., 0.7 for accuracy bias). - Data Collection: For each trial, record the final validation ROC-AUC, total wall-clock training time, and total energy consumed (kWh).

- Pareto Analysis: Plot all trials on a 2D scatter plot with "Validation Accuracy" on the y-axis and "Energy Consumed" on the x-axis. Identify the Pareto frontier—the set of points where no other point has both higher accuracy and lower energy.

- Analysis: Select 3-5 configurations from different parts of the frontier (high-accuracy/high-energy, balanced, low-energy/acceptable-accuracy) for final evaluation on a held-out test set.

Protocol 2: Evaluating the Impact of Dynamic Training Policies

Objective: To measure the energy and time savings of adaptive training policies versus static training.

Materials: As in Protocol 1.

Methodology:

- Establish Baseline: Train a standard GCN model on the Tox21 dataset using a fixed, well-tuned set of hyperparameters for a full 100 epochs. Record final test accuracy, training time, and energy.

- Implement Adaptive Policies:

- Early Stopping: Monitor validation loss with a patience of 10 epochs.

- Learning Rate Scheduling: Implement ReduceLROnPlateau scheduler.

- Gradient Accumulation: Simulate large batches with smaller physical batches to reduce memory pressure and allow for more energy-efficient GPU utilization.

- Run Experiments: Train the same model architecture, applying each policy individually and in combination.

- Compare Metrics: Create a comparison table showing the epoch at which training stopped, the percentage reduction in training time and energy compared to baseline, and the resulting test accuracy.

Visualization of Key Concepts and Workflows

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Energy-Efficient ML Research in Drug Development

| Item Name | Category | Primary Function & Relevance to Trade-off |

|---|---|---|

| Optuna / Ray Tune | HPO Framework | Enables efficient Bayesian optimization and early pruning of trials, directly reducing wasted computational time and energy. |

| CodeCarbon | Energy Tracking Library | Quantifies energy consumption and CO2 emissions of ML experiments, providing critical data for the trade-off analysis. |

NVIDIA DCGM / nvml |

Hardware Monitor | Provides low-level, precise measurement of GPU power draw (in watts), essential for correlating configuration with energy use. |

| PyTorch Geometric / DGL | Graph ML Library | Specialized libraries for molecular graph data, providing optimized, energy-efficient implementations of GNN layers. |

| TensorFlow Model Optimization Toolkit | Model Optimization | Provides tools for quantization (FP16/INT8) and pruning, enabling smaller, faster, less energy-intensive models with minimal accuracy loss. |

| Weights & Biases / MLflow | Experiment Tracking | Logs hyperparameters, metrics, and system resources across all experiments, enabling holistic analysis of the trilemma. |

| MoleculeNet | Benchmark Dataset Suite | Standardized biochemical datasets allow for fair comparison of efficiency gains across different HPO strategies and model architectures. |

Energy-Aware Learning as an Emerging Priority for Research Institutions and Pharma

The drive towards energy-aware machine learning (ML) in pharmaceutical research is no longer optional. As model complexity and computational demands surge, the carbon footprint and direct energy costs of drug discovery pipelines become significant. This document frames energy-aware learning within the critical thesis that strategic hyperparameter optimization (HPO) is the most effective lever for achieving substantial energy efficiency without compromising scientific outcomes. By systematically prioritizing energy consumption as a core metric during model development, institutions can reduce environmental impact and operational costs while accelerating research.

Quantitative Landscape: The Energy Cost of ML in Pharma

Recent analyses highlight the scale of the challenge. The following table summarizes key quantitative findings on energy consumption in computational drug discovery.

Table 1: Energy Consumption Benchmarks in Computational Pharma Research

| Task / Model Type | Approx. Energy Consumption (kWh) | CO2e (kg) | Key Influencing Hyperparameters | Potential Efficiency Gain via HPO |

|---|---|---|---|---|

| Molecular Dynamics Simulation (1µs, mid-size protein) | 500 - 1,500 | 240 - 720 | Time step, cut-off radius, PME parameters, ensemble type. | 20-40% |

| Deep Learning QSAR Model (training to convergence) | 50 - 300 | 24 - 144 | Batch size, layer count/width, learning rate schedule, dropout rate. | 30-60% |

| Generative Chemistry (VAE/GAN, 100k compounds) | 200 - 800 | 96 - 384 | Latent space dim, network depth, discriminator steps, batch norm. | 25-50% |

| Protein Folding (AlphaFold2) (single monomer) | 50 - 200 | 24 - 96 | Number of recycles, MSA depth, template mode, chunk size. | 15-35% |

| Hyperparameter Search (Bayesian, 100 trials) | 100 - 500* | 48 - 240 | Search space definition, early stopping aggressiveness, parallelization. | N/A (Base cost) |

- This is the meta-cost of the search process itself, which is amortized over all subsequent model runs.

Application Notes & Protocols

Application Note AN-EEHPO-01: Multi-Objective HPO for Ligand-Based Virtual Screening

Objective: To identify optimal neural network architectures for activity prediction that balance predictive power (AUC-ROC) with computational energy expenditure.

Core Thesis Context: This protocol directly tests the thesis by integrating energy consumption as a co-equal objective in the HPO search space, moving beyond accuracy-only optimization.

Protocol:

- Problem Framing: Define search space for a feed-forward network:

- Hyperparameters: Number of layers {2,3,4}, neurons per layer {64,128,256,512}, batch size {32,64,128,256}, learning rate {1e-2, 1e-3, 1e-4}, dropout rate {0.0, 0.2, 0.5}.

- Instrumentation: Use a power meter (e.g., WattsUp Pro) attached to the GPU server or query NVIDIA-SMI for GPU power draw. Log cumulative energy (kWh) per training job.

- Multi-Objective HPO Setup:

- Tool: Optuna with NSGA-II sampler.

- Objectives: (1) Minimize 1 - Validation AUC-ROC. (2) Minimize Energy Consumption (kWh).

- Constraint: Validation AUC-ROC >= 0.70.

- Execution: Run 100 trials. Each trial trains the model for a maximum of 50 epochs with early stopping (patience=10) on the validation loss.

- Analysis: Retrieve the Pareto front of optimal trade-off solutions. Select the "knee point" model that offers the best AUC gain per unit of additional energy.

Application Note AN-EEHPO-02: Energy-Conscious Federated Learning for Multi-Institutional ADME Studies

Objective: To enable collaborative model training on distributed, sensitive ADME datasets while minimizing total communication and client computation energy.

Core Thesis Context: HPO is applied not only to model parameters but to federated learning (FL) hyperparameters, which govern communication overhead—a major energy cost component.

Protocol:

- Framing: Define FL-specific HPO search space:

- Hyperparameters: Number of communication rounds R {50, 100, 150}, fraction of clients selected per round C {0.1, 0.3, 0.5}, local epochs E {1, 2, 5}, local batch size B {8, 16, 32}.

- Energy Profiling: Model energy for a single client as:

E_local = P_compute * T_local * E. Model communication energy per round as proportional to model size (ΔW) and client count. - Surrogate-Assisted HPO:

- Use a Gaussian Process to model the relationship between FL hyperparameters (R, C, E, B) and the two objectives: (1) Final global model accuracy, (2) Total system energy cost.

- Employ Expected Hypervolume Improvement (EHVI) to propose new hyperparameter sets that improve the Pareto front.

- Execution: Run the FL simulation on a proxy public dataset (e.g., Therapeutics Data Commons ADME group) using the Flower framework to validate the HPO results before live deployment.

Visualizations

Diagram 1: Multi-Objective HPO for Energy-Aware Model Training

Diagram 2: Energy-Optimized Federated Learning HPO Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Energy-Aware ML Research in Pharma

| Tool / Reagent | Category | Function in Energy-Aware Research |

|---|---|---|

| Optuna / Ray Tune | HPO Framework | Enables easy setup of multi-objective searches incorporating energy metrics. Essential for thesis validation. |

| CodeCarbon / Experiment Impact Tracker | Energy Tracking Library | Software-based estimator of hardware energy consumption and CO2 emissions for ML experiments. |

| NVIDIA Triton Inference Server | Model Serving | Optimizes deployed model inference throughput and latency, reducing energy cost per prediction. |

| PyTorch Lightning / TensorFlow | ML Framework | Provides hooks for profiling training loop energy and supports reduced precision (FP16) training. |

| Flower Framework | Federated Learning | Facilitates development of FL pipelines where communication efficiency is a primary HPO target. |

| Therapeutics Data Commons (TDC) | Benchmark Datasets | Provides standardized ADME/toxicity datasets to fairly benchmark energy-efficient models. |

| Docker / Singularity | Containerization | Ensures reproducible, portable environments that prevent energy waste from configuration errors. |

Practical HPO Strategies for Reducing Computational Footprint in Drug Discovery

Hyperparameter optimization (HPO) is a critical step in developing performant machine learning models. Traditional methods like exhaustive grid search, while thorough, are computationally and energetically prohibitive, especially for large-scale models prevalent in scientific domains like drug discovery. This article, framed within a thesis on HPO for energy-efficient ML, details modern algorithms that explicitly or implicitly reduce energy consumption during model tuning. We provide application notes, experimental protocols, and resource toolkits for researchers aiming to integrate energy consciousness into their workflows.

Core HPO Algorithm Categories & Energy Profiles

The following table summarizes key HPO strategies, their mechanisms, and relative energy efficiency.

Table 1: Overview of HPO Algorithms with Energy Considerations

| Algorithm Category | Key Mechanism | Primary Energy-Saving Strategy | Typical Use Case in Scientific ML | Relative Energy Efficiency (vs. Grid Search) |

|---|---|---|---|---|

| Random Search | Random sampling of hyperparameter space | Avoids exponential scaling; early stopping viable. | Initial screening of model configurations for bioactivity prediction. | Moderate-High |

| Bayesian Optimization (BO) | Surrogate model (e.g., Gaussian Process) guides sequential search. | Concentrates evaluations on promising regions; fewer total runs. | Optimizing deep neural networks for protein folding (AlphaFold-style). | High |

| Hyperband | Adaptive resource allocation via successive halving. | Dynamically stops poorly performing trials early ("aggressive early stopping"). | Large-scale hyperparameter sweep for compound toxicity classification. | Very High |

| BOHB (BO + Hyperband) | Combines Bayesian Optimization's sampling with Hyperband's resource efficiency. | Early stopping + intelligent search direction. | High-cost optimization of GNNs for molecular property prediction. | Very High |

| Population-Based Training (PBT) | Joint optimization and training; agents exploit good hyperparameters from peers. | No need for complete retraining from scratch; efficient resource reuse. | Evolving hyperparameters during long training of generative molecular models. | High |

| Multi-Fidelity Optimization | Uses low-fidelity approximations (e.g., subset of data, fewer epochs). | Low-cost approximations prune the search space before high-cost runs. | Screening architectures for electron density prediction in materials science. | Very High |

Experimental Protocols for Energy-Conscious HPO

Protocol 3.1: Benchmarking HPO Algorithms with Energy Metrics

Objective: Compare the performance and energy consumption of Grid Search, Random Search, and Bayesian Optimization for tuning a graph neural network (GNN) on a molecular dataset.

Materials:

- Dataset: Publicly available QM9 molecular property dataset.

- Base Model: A standard Message Passing Neural Network (MPNN) implemented in PyTorch.

- HPO Libraries: Optuna (for BO, Random Search) or Scikit-learn (for Grid Search).

- Hardware: Single NVIDIA V100 GPU, 16-core CPU.

- Monitoring Tool:

codecarbonPython library for tracking energy consumption.

Procedure:

- Define Search Space: Limit to 3 key hyperparameters: learning rate (log-uniform: 1e-4 to 1e-2), number of graph convolution layers (3, 4, 5), and hidden layer dimensionality (64, 128, 256).

- Configure Algorithms:

- Grid Search: Evaluate all 27 possible combinations.

- Random Search: Sample 30 random configurations from the space.

- Bayesian Optimization (TPE): Run for 30 trials.

- Implement Early Stopping: For all methods, integrate a callback to stop training if validation loss does not improve for 50 epochs (max epochs: 500).

- Execute & Monitor: For each HPO run:

a. Initialize the

codecarbontracker. b. Execute the HPO routine, training each candidate model to completion or until early stopped. c. Record the final validation Mean Absolute Error (MAE) of the best-found configuration. d. Stop the tracker and log total energy consumed in kWh. - Analysis: Plot (Energy Consumed) vs (Best Validation MAE) for each method.

Protocol 3.2: Implementing Multi-Fidelity Optimization with Hyperband

Objective: Efficiently tune a convolutional neural network (CNN) for high-content screening image analysis using the Hyperband algorithm.

Materials:

- Dataset: Annotated cellular imaging data from a drug perturbation assay.

- Base Model: ResNet-18 architecture.

- HPO Library: Ray Tune or Optuna with Hyperband scheduler.

- Hardware: Cluster of 4 GPUs (e.g., NVIDIA T4).

Procedure:

- Define Fidelity Parameter: Set the training epoch as the fidelity resource. Specify minimum resource (

min_epoch=1), maximum resource (max_epoch=100), and reduction factor (eta=3). - Define Search Space: Broad space covering optimizer type, learning rate, and batch size.

- Configure Hyperband:

a. Randomly sample

nconfigurations (e.g., n=81). b. For each bracket, train all configurations formin_epochepochs. c. Rank configurations by validation accuracy. Keep the top 1/etafraction, discard the rest. d. Increase the resource allocated to promising configurations by a factor ofeta. e. Repeat the train-rank-promote cycle untilmax_epochis reached for the top configuration(s). - Validation: Train the final best configuration from scratch on

max_epochand evaluate on a held-out test set.

Visualization of HPO Algorithm Workflows

Title: Hyperband's Successive Halving Workflow

Title: BOHB: Bayesian Optimization + Hyperband Loop

The Scientist's Toolkit: Research Reagent Solutions for HPO

Table 2: Essential Software & Hardware for Energy-Conscious HPO Experiments

| Item Name | Category | Function & Relevance to Energy-Efficient HPO |

|---|---|---|

| Optuna | Software Library | A flexible HPO framework supporting pruning (early stopping), multi-fidelity trials (Hyperband), and efficient sampling (BO). Central for implementing energy-saving strategies. |

| Ray Tune | Software Library | Scalable HPO library for distributed computing. Enables seamless parallelization of trials across clusters, reducing total wall-clock time and improving resource utilization. |

| CodeCarbon | Software Library | Tracks energy consumption (kWh) and carbon emissions of computational jobs. Essential for quantifying the environmental impact of HPO experiments. |

| Weights & Biases (W&B) / MLflow | Software Tool | Experiment trackers for logging hyperparameters, metrics, and system metrics (GPU power). Enables comparative analysis of efficiency. |

| NVIDIA DGX Systems | Hardware | Integrated AI servers with optimized power delivery and cooling. Provide high computational density, reducing energy overhead per experiment compared to non-optimized clusters. |

| Job Scheduler (e.g., SLURM) | System Software | Manages cluster resource allocation. Critical for queuing and efficiently packing HPO trials to maximize GPU/CPU utilization and minimize idle time. |

| Low-Precision Training (AMP) | Software Technique | Automatic Mixed Precision reduces memory footprint and increases training speed, directly lowering energy consumption per trial. Integrated into PyTorch/TensorFlow. |

Bayesian Optimization for Targeted, Sample-Efficient Hyperparameter Tuning

Application Notes

Within the thesis on Hyperparameter Optimization for Energy-Efficient Machine Learning, Bayesian Optimization (BO) emerges as a critical methodology for reducing the computational footprint of model development. By framing hyperparameter search as a sample-efficient global optimization problem, BO minimizes the number of costly model training runs required to identify performant configurations. This directly translates to significant energy savings, a core tenet of the thesis. In domains like computational drug development, where models are complex and training data is limited, BO's ability to incorporate prior knowledge and uncertainty provides a targeted, resource-conscious path to optimization.

Core Principle & Energy Efficiency Rationale

BO builds a probabilistic surrogate model (typically a Gaussian Process) of the objective function (e.g., validation loss). It uses an acquisition function (e.g., Expected Improvement) to balance exploration and exploitation, guiding the next hyperparameter evaluation to the most promising region. This sequential, informed strategy often requires 10-100x fewer evaluations than grid or random search to find comparable or superior optima, resulting in proportional reductions in energy consumption and carbon emissions associated with high-performance computing.

Quantitative Performance Data

Table 1: Sample Efficiency of BO vs. Baseline Methods on Benchmark Tasks

| Method | Num. Evaluations to Target (CNN on CIFAR-10) | Final Validation Error (%) (LSTM on PTB) | Relative Energy Consumption* |

|---|---|---|---|

| Bayesian Optimization (BO) | 65 | 78.5 | 1.0 (Baseline) |

| Random Search | 150 | 79.2 | ~2.3 |

| Grid Search | 250 | 79.0 | ~3.8 |

| Evolutionary Algorithm | 120 | 78.7 | ~1.8 |

Estimated based on typical compute time per evaluation.

Table 2: Impact of Prior Integration on Optimization Performance

| BO Variant | With Informative Prior | Without Prior (Default) |

|---|---|---|

| Evaluations to Converge | 42 | 65 |

| Optimal Learning Rate Found | 1.2e-3 | 5.8e-4 |

| Best Model Energy Use (Joules) | 12,450 | 13,100 |

Experimental Protocols

Protocol: Standard BO Loop for Neural Network Hyperparameter Tuning

Objective: Minimize validation loss of a model via hyperparameter optimization. Materials: See Scientist's Toolkit. Procedure:

- Define Search Space: Quantitatively specify ranges for each hyperparameter (e.g., learning rate: log-uniform [1e-5, 1e-2], batch size: categorical [32, 64, 128, 256]).

- Initialize Surrogate Model: Select a Gaussian Process (GP) with a Matérn 5/2 kernel. Collect an initial design of 5-10 points via Latin Hypercube Sampling (LHS) and evaluate the true objective function (train/validate model) at these points.

- Iterative Optimization Loop (Repeat for N=50-200 iterations): a. Update Surrogate: Fit the GP to all observed {hyperparameters, validation loss} pairs. b. Optimize Acquisition: Compute the Expected Improvement (EI) across the search space. Find the hyperparameter set x that maximizes EI. c. Evaluate Objective: Train a new model using hyperparameters x. Compute the validation loss. d. Augment Data: Append the new observation (x, loss) to the dataset.

- Termination: Halt after N iterations or when improvement plateaus (e.g., <0.1% for 10 consecutive steps).

- Output: Report the hyperparameter set corresponding to the best observed validation loss.

Protocol: BO with Multi-Fidelity for Energy-Aware Tuning

Objective: Leverage lower-fidelity approximations (e.g., fewer training epochs, subset of data) to reduce energy cost per BO step. Procedure:

- Setup Fidelity Space: Define fidelity parameter(s) (e.g.,

epoch_fraction∈ [0.1, 1.0],data_fraction∈ [0.2, 1.0]). - Choose Multi-Fidelity Model: Implement a Gaussian Process model that correlates information across fidelities (e.g., a linear multi-fidelity GP).

- Modify Acquisition: Use a cost-aware acquisition function (e.g., EI per unit cost).

- Joint Selection: At each iteration, jointly select the next hyperparameter set AND the fidelity at which to evaluate it.

- Final Evaluation: Train the model with the best-found hyperparameters at full fidelity (100% epochs/data) for final validation.

Visualizations

Title: Bayesian Optimization Iterative Workflow

Title: BO Surrogate Model & Acquisition Logic

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Bayesian Optimization

| Item/Solution | Function & Relevance to Energy-Efficient Tuning |

|---|---|

| BoTorch / Ax (Meta) | Open-source frameworks for modern BO, supporting multi-fidelity, constrained, and parallelized BO, crucial for complex, costly tuning tasks. |

| Scikit-Optimize | Lightweight library for sequential model-based optimization, ideal for prototyping and integrating into custom ML pipelines. |

| Gaussian Process (GP) | Core surrogate model; its calibrated uncertainty quantification drives sample efficiency. |

| Matérn 5/2 Kernel | Default kernel for GP in BO; less smooth than RBF, better for modeling complex, potentially non-stationary objective functions. |

| Expected Improvement (EI) | Standard acquisition function; balances local exploitation and global exploration to find the global optimum. |

| Hyperparameter Search Space | Carefully defined numerical ranges (continuous, integer, categorical) based on domain knowledge; a well-specified space reduces wasted evaluations. |

| Multi-Fidelity Proxy | Low-cost approximations (e.g., partial training) integrated via specific GP models to dramatically reduce energy cost per BO iteration. |

| Cost-Aware Acquisition | Modifies EI (e.g., EI per unit time/energy) to directly optimize for resource efficiency, aligning with the thesis core. |

I. Introduction Within the pursuit of energy-efficient machine learning (ML) for computationally intensive fields like drug discovery, hyperparameter optimization (HPO) presents a significant energy and financial cost. Traditional methods like grid or random search perform many full, high-fidelity (i.e., training to completion) evaluations of poor configurations. Multi-fidelity methods, notably Successive Halving (SH) and Hyperband, address this by dynamically allocating resources, aggressively pruning underperforming trials early, and focusing computational energy only on the most promising configurations. This protocol details their application for resource-conscious research.

II. Theoretical Framework and Comparative Analysis

Table 1: Core Multi-Fidelity Algorithms for Resource-Aware HPO

| Method | Core Principle | Primary Hyperparameter | Advantage | Disadvantage |

|---|---|---|---|---|

| Successive Halving (SH) | Allocates a budget (e.g., epochs, data subset) to n configurations, keeps the top 1/eta, repeats with increased budget until one remains. |

Elimination rate (eta, typically 3 or 4). |

Conceptually simple, aggressive pruning. | Requires careful choice of initial n; can eliminate promising but late-blooming configs. |

| Hyperband | Performs multiple SH loops (brackets) with different initial n and min budget, automating the n vs. budget trade-off. |

Same eta as SH. Number of brackets is derived. |

Robust; eliminates need to specify n; provides hedging strategy. |

Can appear to "waste" resources on low-budget brackets, but overall more efficient. |

| ASHA (Async SH) | Asynchronous variant of SH. Promotes trials as resources free, avoiding synchronization delays. | eta, max/min resource. |

High cluster utilization; practical for heterogeneous environments. | Can promote based on incomplete information. |

Table 2: Quantitative Energy & Resource Savings (Representative Study)

| Benchmark | Baseline (Random Search) | Hyperband | Speedup (x-fold) | Estimated Energy Reduction |

|---|---|---|---|---|

| CNN on CIFAR-10 | 100 full trainings | Equivalent performance in ~20 full-training equivalents | 5x | ~75-80% |

| Drug-Target Affinity Model (DeepDTA) | 50 full epochs x 100 configs | Equivalent validation loss in 1/5th total epochs | 4-6x | ~70-80% |

| Protocol Takeaway | High carbon cost, slow iteration. | Faster convergence, lower compute burden. | 3-6x typical | 60-80% possible |

III. Experimental Protocols

Protocol A: Implementing Hyperband for Ligand-Based Virtual Screening Model Tuning

Objective: Optimize a Graph Neural Network's hyperparameters to predict compound activity while minimizing total GPU energy consumption. Materials: Molecular dataset (e.g., from ChEMBL), HPO framework (Ray Tune, Optuna), GPU cluster with energy monitoring. Hyperparameter Search Space:

- Learning Rate: LogUniform[1e-5, 1e-3]

- Graph Convolution Layers: [2, 3, 4, 5]

- Hidden Layer Size: [64, 128, 256]

- Dropout Rate: [0.0, 0.1, 0.2, 0.3]

Procedure:

- Setup: Define the model training function that accepts a configuration dict and the fidelity parameter

epoch. Use a small subset of the training data for the first fidelity increment. - Configure Hyperband: Set

eta=3,max_epoch=100. This defines brackets. The min resource (min_epoch) will be automatically calculated (e.g.,100 / (eta^3)≈ 4 epochs). - Execution: Launch Hyperband via your chosen framework. Each bracket will start with a different number of random configurations (e.g., 27, 9, 3) all trained for

min_epoch. - Pruning: After each rung, only the top

1/etaconfigurations are promoted to train forepoch * etalonger. - Validation: The final, best-performing configuration (having trained for up to

max_epoch) is evaluated on a hold-out test set. - Energy Monitoring: Record total GPU wall-clock time and, if available, power draw (using tools like

nvidia-smi) for the entire HPO process. Compare to a baseline random search run for the same total wall-clock duration.

Protocol B: Adaptive Successive Halving (ASHA) for Protein Folding Simulation Calibration

Objective: Tune molecular dynamics (MD) or ML-based folding simulation parameters to maximize accuracy against known structures, with early stopping of poor runs. Materials: Simulation software (e.g., OpenMM, AlphaFold), target protein structures (PDB), high-performance computing (HPC) queue. Search Space: Force field parameters, learning rates for iterative refinement, number of relaxation steps. Procedure:

- Define Fidelity: Set fidelity as

computation_timeornumber_of_relaxation_steps. Lower fidelity gives a coarse, faster approximate of the final RMSD score. - Configure ASHA: Set

max_resource(e.g., 48 hours or 1000 steps),reduction_factor (eta)=4. Specify a large initial pool of random configurations. - Asynchronous Execution: Submit all configurations to the HPC queue at the minimum resource level. As jobs complete, promote the top performers to the next rung immediately, without waiting for all concurrent jobs to finish.

- Continuous Promotion: Continue until a configuration reaches

max_resourceor a performance threshold is met. This maximizes cluster utilization compared to synchronous SH.

IV. Visualization of Workflows

Title: Successive Halving Iterative Pruning Loop

Title: Hyperband Structure with Multiple Brackets (η=3)

V. The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Multi-Fidelity HPO in Scientific ML

| Tool/Reagent | Function & Relevance | Example/Note |

|---|---|---|

| Ray Tune | Scalable Python library for distributed HPO. Native support for Hyperband, ASHA, and other cutting-edge algorithms. | Preferred for large-scale, cluster-based experiments. Integrated with ML frameworks. |

| Optuna | Define-by-run HPO framework. Efficient implementation of multi-fidelity pruners (Hyperband, ASHA). | Highly flexible, easier for iterative, custom trial definitions. |

| Weights & Biases (W&B) / MLflow | Experiment tracking and visualization. Crucial for monitoring progressive fidelity of trials and comparing brackets. | Log validation loss vs. epochs for all trials to visualize pruning. |

| NVIDIA SMI / GPU Power Sensors | Hardware-level energy monitoring. Provides quantitative data for the energy-efficiency thesis. | nvidia-smi --query-gpu=power.draw --format=csv enables live tracking. |

| Configurable Fidelity Proxy | A reduced-model or subset of data used for low-fidelity evaluations. | e.g., 10% of training data, 1/4 of model layers, or fewer MD simulation steps. |

| High-Performance Compute (HPC) Scheduler | Manages job queues for asynchronous algorithms like ASHA on shared clusters. | SLURM, PBS Pro. Critical for Protocol B. |

Adaptive Early Stopping Policies to Halt Non-Promising Trials

Within the thesis on Hyperparameter Optimization for Energy-Efficient Machine Learning Research, adaptive early stopping is a critical strategy. It directly addresses the energy inefficiency of exhaustive hyperparameter optimization by terminating trials that are unlikely to yield optimal results, thereby conserving significant computational resources. This protocol is particularly relevant for compute-intensive fields like drug development, where molecular simulation or bioactivity prediction models require extensive tuning.

The following table summarizes key adaptive early stopping policies, their mechanisms, and performance data from recent literature.

Table 1: Comparative Analysis of Adaptive Early Stopping Policies

| Policy Name | Core Mechanism | Key Metric(s) | Typical Resource Saving vs. Exhaustive Search | Primary Reference (Year) |

|---|---|---|---|---|

| Median Stopping Rule | Halts trial if performance below median of running trials. | Intermediate Validation Loss | 30-50% | Google Vizier (2017) |

| Hyperband | Aggressive successive halving with bracketed resource allocations. | Loss/Accuracy at budget r | 5x-30x Speedup | Li et al. (2018) |

| ASHA (Async. Successive Halving) | Asynchronous, aggressive early stopping based on percentile rank. | Validation Error Rank | 10x-20x Speedup | Li et al. (2020) |

| Learning Curve Extrapolation | Predicts final performance from early learning curve. | Predicted Final Accuracy RMSE | 40-60% | Klein et al. (2020) |

| Gaussian Process-Based | Uses probabilistic model to predict trial promise. | Expected Improvement (EI) | 50-70% | Falkner et al. (2018) |

Experimental Protocols

Protocol 3.1: Implementing ASHA for a Drug Discovery CNN

Objective: To optimize a convolutional neural network (CNN) for protein-ligand binding prediction while minimizing GPU energy consumption.

Materials: See "Scientist's Toolkit" (Section 5).

Method:

- Define Search Space: Hyperparameters include number of convolutional layers [2, 5], filter size [32, 128], learning rate [1e-4, 1e-2], dropout rate [0.1, 0.5].

- Configure ASHA Scheduler:

- Set

max_epochs(total resource) to 50. - Define reduction factor

η=3. Each "rung" promotes the top 1/3 of trials. - Set minimum resource

min_epochs=2. - Configure to asynchronously stop any trial whose performance at its current rung is below the 25th percentile of completed trials at that rung.

- Set

- Execution:

- Launch 100 parallel trials via a distributed computing framework (e.g., Ray Tune).

- Each trial trains for 2 epochs, is evaluated, and is potentially paused.

- Promising trials are repeatedly continued until the next rung (e.g., 6, 18, 50 epochs).

- Halted trials' resources are immediately reallocated.

- Validation: The best-performing configuration from ASHA is trained fully (50 epochs) on a held-out validation set and compared against a model from a random search with no early stopping.

Protocol 3.2: Learning Curve Extrapolation for Clinical Trial Outcome Prediction

Objective: Early stopping of unpromising trials for a recurrent neural network (RNN) model predicting patient outcomes.

Method:

- Model Definition: An LSTM network with embeddings for patient demographics and treatment codes.

- Probabilistic Forecasting:

- After each training epoch, extract the sequence of validation losses so far.

- Fit a Bayesian neural network or a Gaussian Process regressor to this partial learning curve.

- The model predicts the final loss distribution and its uncertainty.

- Stopping Decision:

- Calculate the probability that the trial's final loss will be in the top 10% of the current Pareto frontier.

- If this probability falls below a threshold (e.g., 5%) after a minimum of 10 epochs, terminate the trial.

- Energy Monitoring: Use system profiling tools (e.g.,

nvidia-smi,powertop) to log energy consumption per trial, correlating early stopping decisions with joules saved.

Visualizations

Early Stopping Decision Workflow

Energy Impact of Early Stopping in HPO

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Energy-Aware HPO

| Item/Category | Function & Relevance to Early Stopping |

|---|---|

| Hyperparameter Optimization Library (Ray Tune, Optuna) | Provides pluggable, distributed implementations of ASHA, Hyperband, and other early stopping schedulers. Essential for protocol execution. |

| System Metrics Profiler (Prometheus, Grafana, nvidia-smi) | Monitors real-time GPU/CPU utilization, power draw (watts), and memory. Critical for quantifying energy savings from early stopping. |

| Checkpointing System (PyTorch Lightning, TF Checkpoint) | Saves model state periodically. Allows paused trials in asynchronous policies to be resumed seamlessly without wasting prior computation. |

| Probabilistic Modeling Library (GPyTorch, scikit-learn GPs) | Enables implementation of learning curve extrapolation and Bayesian optimization-based early stopping policies. |

| Distributed Compute Backend (Ray, Kubernetes) | Manages resource pooling and job scheduling across clusters, enabling the rapid reallocation of resources from halted trials. |

| Energy Measurement Hardware (Power Meters) | For precise, wall-level energy consumption tracking, providing ground-truth data for thesis validation. |

This work presents a detailed case study on hyperparameter optimization (HPO) of Graph Neural Networks (GNNs) for molecular property prediction. It is situated within a broader thesis focused on developing energy-efficient machine learning methodologies. The objective is to achieve state-of-the-art predictive accuracy while minimizing computational resource consumption, thereby reducing the carbon footprint of large-scale virtual screening and drug discovery pipelines.

Key Hyperparameters & Optimization Targets

The following table summarizes the core hyperparameters investigated, their typical ranges, and their primary impact on model performance and computational efficiency.

Table 1: Key GNN Hyperparameters for Optimization

| Hyperparameter | Typical Search Range | Impact on Performance | Impact on Efficiency (Compute/Energy) |

|---|---|---|---|

| Number of GNN Layers | 3 - 8 | Depth of message passing; too few/many layers can hurt performance (under/over-smoothing). | Directly impacts forward/backward pass time and GPU memory. |

| Hidden Layer Dimension | 64 - 512 | Model capacity and ability to capture complex molecular features. | Quadratically impacts parameter count and compute for dense layers. |

| Learning Rate | 1e-4 - 1e-2 | Convergence speed and final model accuracy. | Influences number of epochs required for convergence. |

| Batch Size | 32 - 256 | Gradient estimate stability and generalization. | Larger batches increase GPU memory use but can improve throughput. |

| Dropout Rate | 0.0 - 0.5 | Regularization strength to prevent overfitting. | Negligible direct compute cost. |

| Graph Pooling Method | {Sum, Mean, Attn} | How node features are aggregated to a graph-level representation. | Attention (Attn) is more computationally expensive than Sum/Mean. |

Experimental Protocols

Protocol A: Baseline GNN Training and Evaluation

Objective: Establish a performance baseline on standard molecular datasets. Workflow:

- Data Preparation: Use the MoleculeNet benchmark datasets (e.g., ESOL, FreeSolv, HIV).

- Molecular Graph Representation: Convert SMILES strings to graph objects using RDKit. Nodes represent atoms (features: atomic number, degree, hybridization). Edges represent bonds (features: bond type, conjugation).

- Model Architecture: Implement a standard Message Passing Neural Network (MPNN) with ReLU activation.

- Training: Use Adam optimizer, Mean Squared Error (MSE) loss for regression, Binary Cross-Entropy for classification. Train for a fixed 100 epochs.

- Evaluation: Report standard metrics (RMSE, MAE for regression; ROC-AUC for classification) on the held-out test set.

Protocol B: Multi-Fidelity Hyperparameter Optimization

Objective: Efficiently identify optimal hyperparameters balancing accuracy and energy use. Workflow:

- Search Space Definition: Define the ranges and choices for parameters in Table 1.

- Optimization Setup: Employ a multi-fidelity HPO algorithm (e.g., Hyperband or ASHA).

- Low-Fidelity Trial: A trial (hyperparameter set) is first evaluated with a small subset of training data and/or fewer training epochs. This quickly weeds out poor configurations.

- High-Fidelity Trial: Promising configurations are allocated more resources (full dataset, more epochs).

- Energy Monitoring: Use a tool like

codecarbonorexperiment-impact-trackerto log estimated energy consumption (kWh) and CO₂ equivalent for each trial. - Selection Criterion: Identify the Pareto-optimal set of hyperparameters that best trade-off validation metric and energy consumption.

Protocol C: Optimized Model Validation & Inference

Objective: Validate the final optimized model and profile its inference efficiency. Workflow:

- Retrain: Retrain the model with the optimal hyperparameters on the combined training and validation sets.

- Final Evaluation: Assess performance on the untouched test set.

- Inference Profiling: Measure average inference time and memory usage per molecule for a batch of 1024 molecules.

- Comparative Analysis: Compare accuracy and efficiency metrics against the baseline model from Protocol A.

Visualizations

Title: Optimized GNN Molecular Property Prediction Pipeline

Title: Multi-Fidelity Hyperparameter Optimization Workflow

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function & Explanation |

|---|---|

| RDKit | Open-source cheminformatics toolkit. Used to parse SMILES strings, generate molecular graphs, and calculate basic molecular descriptors. |

| PyTorch Geometric (PyG) / DGL | Specialized libraries for building and training GNNs. Provide efficient, batched operations on graph-structured data. |

| MoleculeNet Benchmark | A standardized collection of molecular datasets for training and evaluating machine learning models. |

| Optuna or Ray Tune | Advanced HPO frameworks. Enable efficient, scalable, and parallel search over hyperparameter spaces using algorithms like ASHA and TPE. |

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms. Log hyperparameters, metrics, model artifacts, and system resource usage for reproducibility. |

| CodeCarbon | A Python package for estimating the carbon dioxide (CO₂) emissions produced by computing infrastructure. Critical for energy-aware HPO. |

| High-Performance Computing (HPC) Cluster or Cloud GPU (e.g., NVIDIA V100/A100) | Provides the necessary parallel compute resources to run hundreds of HPO trials in a feasible timeframe. |

Overcoming Challenges: Solutions for Real-World Energy-Efficient HPO

Application Notes & Protocols for Hyperparameter Optimization in Energy-Efficient ML

Core Challenges in Hyperparameter Optimization (HPO)

The pursuit of optimal model performance often leads researchers into two critical traps: overfitting to the validation set during iterative HPO and neglecting the inference costs of the final deployed model. Within energy-efficient machine learning research, this translates to suboptimal real-world performance and unsustainable computational burdens.

Quantitative Impact of Overfitting to Validation Data: Table 1: Reported Performance Gaps Due to Validation Set Overfitting in Recent Literature

| Study / Benchmark (Year) | Model Class | Reported Validation Accuracy (%) | Test/External Accuracy (%) | Performance Gap (pp) | Primary Cause |

|---|---|---|---|---|---|

| Protein-Ligand Affinity Prediction (2023) | GNN Ensemble | 92.1 | 85.3 | 6.8 | Iterative tuning on small, non-stratified validation set |

| Medical Image Segmentation (2024) | Vision Transformer | 94.7 | 88.9 | 5.8 | Leakage via augmentation tuning on validation data |

| CRISPR Guide Efficacy (2024) | Hybrid CNN-LSTM | 89.5 | 82.1 | 7.4 | Multiple rounds of architecture search on same split |

Quantitative Impact of Ignoring Inference Costs: Table 2: Inference Cost Metrics for Common Model Archetypes in Drug Discovery

| Model Archetype | Avg. Params (M) | Avg. Inference Energy (J/1000 inf.) | Avg. Latency (ms/inf.) | Typical Deployment Scenario |

|---|---|---|---|---|

| LightGBM / XGBoost | < 1 | 12.5 | 1.2 | High-throughput virtual screening |

| 3D-CNN (Small) | 15 | 285.0 | 45.0 | Compound activity prediction |

| Graph Neural Network | 8 | 420.0 | 120.0 | Molecular property regression |

| Large Vision Transformer | 300+ | 5200.0 | 850.0 | Histopathology analysis |

Experimental Protocols

Protocol 2.1: Nested Cross-Validation for Robust HPO

Objective: To prevent overfitting to a single validation set during hyperparameter search.

- Outer Loop (Performance Estimation): Partition dataset into k folds (e.g., k=5). Reserve one fold as the test set. This test set is used only once for final evaluation.

- Inner Loop (Hyperparameter Search): On the remaining data, perform a second, independent k-fold cross-validation (e.g., k=3).

- Search: For each hyperparameter set: a. Train model on k-1 inner training folds. b. Validate on the held-out inner validation fold. c. Average performance across all inner validation folds.

- Selection: Choose the hyperparameter set with the best average inner validation performance.

- Final Training: Train a new model with the selected hyperparameters on all data from the outer training set (i.e., all data not in the outer test fold).

- Evaluation: Assess this final model on the held-out outer test fold.

- Iteration & Final Estimate: Repeat for all outer folds. The average performance across all outer test folds provides an unbiased estimate of generalization error.

Protocol 2.2: Multi-Objective HPO Incorporating Inference Cost

Objective: To identify Pareto-optimal model configurations balancing predictive performance and inference efficiency.

- Define Search Space: Include architectural hyperparameters that directly impact cost (e.g., number of layers, hidden dimensions, pruning rate) alongside learning parameters.

- Define Objectives: Formalize as a two-objective minimization problem: (1) Validation Loss (L), (2) Inference Cost Metric (C). C can be a proxy (e.g., FLOPs) or a direct measurement.

- Setup Cost Profiling: Implement a standardized profiling function that, for a given model configuration, computes C on a fixed hardware setup and a representative input batch.

- Perform Search: Utilize a multi-objective optimizer (e.g., NSGA-II, MOEA/D). a. For each candidate configuration, run Protocol 2.1's inner loop to estimate L. b. Run the cost profiling function to obtain C.

- Analysis: Retrieve the Pareto front of configurations. Report the trade-off curve to stakeholders for informed selection based on deployment constraints.

Mandatory Visualizations

Title: Nested Cross-Validation HPO Workflow

Title: Multi-Objective HPO Pareto Frontier

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust & Efficient HPO in ML Research

| Item / Solution | Function in HPO | Key Considerations for Energy-Efficiency |

|---|---|---|

| Ray Tune / Optuna | Distributed hyperparameter optimization frameworks enabling scalable, asynchronous searches (including multi-objective). | Supports early stopping, model pruning, and efficient search algorithms (e.g., Hyperband) to reduce total computational joules expended during HPO. |

| Weights & Biases (W&B) / MLflow | Experiment tracking platforms to log hyperparameters, metrics, and system metrics (GPU power, CPU utilization). | Enables correlation of model performance with inference energy cost. Critical for post-hoc Pareto analysis. |

| CodeCarbon / Experiment Impact Tracker | Libraries for estimating the carbon emissions and energy consumption of ML training and inference code. | Provides the quantitative cost metric (C) for integration into multi-objective HPO (Protocol 2.2). |

| PyTorch Profiler / TensorFlow Profiler | Low-level tools to analyze model operation time, memory footprint, and hardware utilization. | Identifies energy bottlenecks in the forward pass (inference) of candidate architectures during HPO. |

| NestedCrossValidator (scikit-learn) | Software implementation of nested cross-validation loops. | Prevents data leakage by enforcing strict separation between hyperparameter selection and model evaluation. |

| ONNX Runtime / TensorRT | High-performance inference engines. | Used in the profiling phase to estimate real-world deployment costs of candidate models post-HPO. |

Within hyperparameter optimization (HPO) for energy-efficient machine learning (ML) in scientific domains like drug discovery, computational resource heterogeneity is a primary constraint. Modern HPO campaigns leverage multi-node clusters (often with mixed GPU generations, CPU architectures, and memory hierarchies) and dynamic cloud environments (featuring preemptible VMs, spot instances, and diverse hardware accelerators). This heterogeneity directly impacts experiment runtime, energy consumption, and cost, making its management a critical component of a sustainable ML research thesis.

Core Strategies and Quantitative Analysis

Effective management strategies can be categorized by their primary objective: performance maximization, cost/energy minimization, or robustness. The following table summarizes current approaches and their quantitative trade-offs, synthesized from recent literature and cloud provider benchmarks (2023-2024).

Table 1: Comparative Analysis of Strategies for Heterogeneous Resource Management

| Strategy | Primary Goal | Key Mechanism | Typical Impact on HPO Time* | Estimated Cost/Energy Savings* | Best-Suited Environment |

|---|---|---|---|---|---|

| Dynamic Work Stealing | Performance | Idle workers pull tasks from busy queues. | Reduction of 15-25% | 5-10% (from reduced idle time) | Mixed-performance on-premise clusters |

| Hyperparameter-Aware Scheduling | Energy Efficiency | Co-scheduling trials and mapping compute-intensive HPs to efficient hardware. | Variable (can be neutral) | 15-30% | Cloud/Cluster with known performance-per-watt profiles |

| Adaptive Trial Early Stopping | Cost/Energy | Aggressively stop poorly performing trials using asynchronous metrics. | Reduction of 40-60% | 35-50% | All environments, especially costly cloud accelerators |

| Hybrid On-Prem/Cloud Bursting | Cost/Scale | Baseline on-prem, burst peak load to cloud spot instances. | Reduction of 30-40% (vs. pure on-prem) | 20-35% (vs. pure cloud) | Organizations with fixed + variable workload needs |

| Containerization & Hardware Abstraction | Robustness | Use Docker/Podman to encapsulate dependencies across nodes. | <5% overhead | Neutral (enables other strategies) | Highly heterogeneous or frequently changing environments |

| Performance Profiling & Prediction | Scheduling | Train a model to predict trial runtime on each resource type. | Reduction of 20-30% | 15-25% | Large, stable clusters with historical data |

*Estimates are relative to a naive FIFO scheduler on the same heterogeneous resource pool. Actual results vary by workload and heterogeneity degree.

Experimental Protocols for Validation

Protocol 1: Benchmarking Heterogeneous Cluster Performance for HPO Objective: To quantify the performance penalty and energy inefficiency of a naive scheduler on a heterogeneous cluster.

- Setup: Assemble a test cluster with at least two distinct node types (e.g., nodes with NVIDIA V100 vs. A100 GPUs, or different CPU generations).

- Workload Definition: Select a standard drug discovery ML task (e.g., ligand-based virtual screening using a Graph Neural Network).

- HPO Configuration: Define a search space with 50+ hyperparameter combinations (e.g., learning rate, hidden layers, dropout).

- Control Experiment: Run the HPO using a simple First-In-First-Out (FIFO) scheduler, assigning trials to resources as they become available without regard to capability.

- Metric Collection: Log for each trial: (a) Total wall-clock time to completion, (b) Energy consumption (via tools like

nvmlfor GPUs,RAPLfor CPUs), (c) Hardware utilization (%). - Analysis: Calculate makespan (total HPO completion time), total energy consumed, and average resource utilization.

Protocol 2: Evaluating a Dynamic Work-Stealing Scheduler Objective: To measure the improvement of a dynamic scheduler over the naive baseline.

- Baseline: Establish results from Protocol 1, Control Experiment.

- Scheduler Implementation: Implement or deploy a work-stealing scheduler (e.g., using Ray Tune's population-based training or a custom scheduler listening to worker heartbeat).

- Experimental Run: Execute the identical HPO workload (same random seed) using the work-stealing scheduler.

- Metric Collection: Collect identical metrics as in Protocol 1, Step 5.

- Comparative Analysis: Compute the percentage improvement in makespan and total energy consumption. Analyze the reduction in idle time on faster nodes.

Protocol 3: Adaptive Early Stopping for Energy Savings Objective: To validate the cost-energy savings of aggressive, performance-based early stopping.

- Setup: Use a cloud environment with preemptible/spot instances (e.g., AWS EC2 Spot Instances, GCP Preemptible VMs).

- HPO Configuration: Define a large search space (>100 trials). Establish a validation metric (e.g., validation loss) and a patience threshold.

- Control: Run HPO with conservative early stopping (high patience).

- Intervention: Run HPO with adaptive early stopping (e.g., Hyperband or ASHA algorithm), aggressively stopping bottom-quartile performing trials.

- Measurement: Record total compute cost (in cloud credits), total wall-clock time, and the performance of the best-found model.

- Analysis: Compare cost/time savings between control and intervention. Confirm that the best-found model's performance is not statistically degraded.

Visualization of Strategy Selection Logic

Title: Decision Logic for Selecting Resource Management Strategies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Platforms for Managing Heterogeneity in HPO

| Item/Reagent | Function in the "Experiment" | Example/Note |

|---|---|---|

| Ray Tune / Ray Cluster | Orchestration framework for distributed, hardware-agnostic HPO. Enables easy implementation of work-stealing and early stopping. | Primary library for scalable HPO across heterogeneous nodes. |

| Kubernetes (K8s) | Container orchestration system. Abstracts hardware and enables seamless hybrid cloud bursting and deployment. | Manages containerized HPO workers across on-prem and cloud nodes. |

| Docker / Podman | Containerization platforms. Ensure environment consistency across all heterogeneous nodes. | Encapsulates Python, CUDA, and all dependencies. |

| Weights & Biases (W&B) / MLflow | Experiment tracking. Centralized logging of metrics, hyperparameters, and system resources across all trials and nodes. | Critical for comparing trial performance across different hardware. |

| Slurm / PBS Pro | High-performance computing workload managers. Native schedulers for many on-premise heterogeneous clusters. | Can be integrated with cloud bursting plugins. |

| NVIDIA DCGM / Intel RAPL | Performance monitoring libraries. Provide fine-grained energy and utilization metrics for GPUs and CPUs. | Essential for profiling and building performance prediction models. |

| Custom Performance Predictor | A small ML model that predicts trial runtime/energy use on a specific node type based on hyperparameters. | Enables intelligent scheduling; can be built using historical W&B/MLflow data. |

Integrating Hardware Awareness (GPU/CPU Power Capping) into the Optimization Loop