Optimizing Pharmaceutical Processes: A Design of Experiments (DoE) Framework for Comparing Solvent Effects

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Design of Experiments (DoE) to systematically compare and optimize solvent effects. It covers foundational principles, demonstrating how DoE overcomes the limitations of one-variable-at-a-time approaches by efficiently exploring complex solvent interactions. The content explores methodological applications, from screening key factors with Plackett-Burman designs to optimizing solvent systems using Principal Component Analysis (PCA) maps. It further addresses troubleshooting and optimization strategies for challenging systems, including data-sparse modeling and computational solvent optimization. Finally, the article presents validation and comparative frameworks, evaluating DoE performance against traditional methods across diverse pharmaceutical applications such as lipid-based formulations, API crystallization, and green extraction of bioactive compounds, offering a validated pathway to enhance solubility, bioavailability, and process efficiency.

Beyond Trial and Error: Foundational Principles of DoE for Solvent Effect Analysis

The Critical Role of Solvent Selection in Pharmaceutical Development and Bioavailability

In pharmaceutical development, solvent selection is a critical determinant of product quality, process efficiency, and ultimately, therapeutic efficacy. Over 70% of new chemical entities (NCEs) exhibit poor aqueous solubility, presenting significant bioavailability challenges that often necessitate strategic formulation interventions [1] [2]. Solvents function not merely as inert carriers but as active participants that influence crystal form, dissolution kinetics, and membrane permeability—factors directly impacting drug absorption. The selection process is further complicated by toxicological considerations, requiring manufacturers to minimize both the number and potential toxicity of solvents employed in pharmaceutical processes [3].

Traditional solvent selection approaches, often based on experience and analogy, are increasingly insufficient for modern drug development pipelines. Contemporary strategies now integrate systematic thermodynamic principles with advanced screening methodologies to optimize solvent systems for specific bioavailability challenges [3]. This paradigm shift recognizes that solvents are dynamic participants in pharmaceutical systems, with localized, time-resolved interactions governing many chemical and biological processes essential to drug performance [4]. Within this framework, Design of Experiments (DoE) has emerged as a powerful structured approach for investigating multiple solvent parameters simultaneously, enabling researchers to quantify cause-and-effect relationships and design optimal, robust formulation processes [5].

Solvent Functions and Thermodynamic Principles in Pharmaceutical Systems

Multifunctional Roles of Solvents in Drug Development

Solvents serve diverse, critical functions throughout the pharmaceutical development lifecycle. As summarized in Table 1, their roles extend far beyond simple dissolution to encompass nearly every aspect of drug product creation and performance.

Table 1: Pharmaceutical Functions of Solvents

| Function Category | Specific Applications | Impact on Development |
|---|---|---|
| Process Solvents | Reaction media, crystallization solvents, extraction solvents | Influence yield, purity, crystal form, and particle characteristics |
| Formulation Solvents | Co-solvents in liquid formulations, solvent-based dispersion systems | Affect solubility, stability, and bioavailability of final product |
| Processing Aids | Cleaning solvents, coating solvents | Impact manufacturing efficiency and product quality |
| Analytical Solvents | Mobile phases, extraction solvents | Affect accuracy and reproducibility of quality control methods |

Thermodynamic Basis for Solvent Selection

The solubility of pharmaceutical compounds is governed by fundamental thermodynamic principles that dictate solute-solvent interactions. Synthetic pharmaceuticals are typically medium-sized molecules (10-50 non-hydrogen atoms) composed of aromatic cores with multiple heteroatom substituents (N, O, S, P, halogens) [3]. These structural characteristics create molecules that are highly polarizable and conformationally flexible, requiring special consideration during solvent selection.

Key thermodynamic parameters influencing solvent selection include:

- Activity coefficients, which measure deviation from ideal solution behavior

- Solvent-solute interaction parameters, including the polarization effects common between drug-like molecules and small polar solvents

- Hydrogen-bonding capacity, which significantly affects the solubility of pharmaceutical compounds containing heteroatoms

- Solvent polarity and polarizability, which must complement the electronic characteristics of the drug molecule
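To make the first parameter concrete: at saturation, the activity coefficient γ links the observed mole-fraction solubility x to the ideal solubility through x·γ = x_ideal, and x_ideal can be estimated from melting data with the simplified van 't Hoff (Schröder) equation, ln x_ideal = -(ΔH_fus/R)(1/T - 1/T_m). The sketch below assumes the same solid form in every solvent (no solvates or polymorph changes) and neglects the heat-capacity term; the enthalpy, melting point, and solubility values are hypothetical.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def ideal_solubility(dh_fus, t_melt, t):
    """Ideal mole-fraction solubility from the simplified van 't Hoff (Schroeder)
    equation, ignoring the heat-capacity term: ln x = -(dH_fus/R)(1/T - 1/Tm)."""
    return math.exp(-(dh_fus / R) * (1.0 / t - 1.0 / t_melt))


def activity_coefficient(x_ideal, x_observed):
    """At saturation x * gamma = x_ideal, so gamma = x_ideal / x_observed."""
    return x_ideal / x_observed


# Hypothetical API: enthalpy of fusion 25 kJ/mol, melting point 450 K, at 25 degC
x_id = ideal_solubility(25_000.0, 450.0, 298.15)

# Hypothetical measured mole-fraction solubilities in two candidate solvents
measured = {"solvent_A": 0.005, "solvent_B": 0.02}
gammas = {name: activity_coefficient(x_id, x) for name, x in measured.items()}

for name, g in gammas.items():
    # gamma > 1: positive deviation, i.e. less soluble than the ideal prediction
    print(f"{name}: gamma = {g:.2f}")
```

Because x_ideal depends only on the solid and the temperature, comparing γ across solvents isolates the solvent-specific part of the solubility; the solvent with the lower γ interacts more favorably with the solute.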

The complexity of these interactions necessitates moving beyond simple solubility parameters to models that account for the dynamic, fluctuating nature of solvent-solute interactions [4]. Emerging approaches treat solvents as dynamic solvation fields characterized by fluctuating local structure, evolving electric fields, and time-dependent response functions [4].

Traditional versus Modern Approaches to Solvent Selection

Limitations of Traditional Solvent Descriptors

Traditional solvent selection has relied heavily on bulk parameters such as dielectric constant, donor number, and polarity scales. While valuable for initial screening, these static averages fail to account for localized, time-resolved interactions that govern many chemical transformations critical to pharmaceutical performance [4]. These conventional descriptors cannot adequately capture:

- Fluctuating local solvent structure around pharmaceutical molecules

- Transition state stabilization during dissolution processes

- Nonequilibrium reactivity in gastrointestinal environments

- Interfacial chemical processes at biological membranes

Furthermore, traditional approaches often overlook the multifunctional nature of pharmaceutical molecules, which frequently contain multiple aromatic rings and heteroatoms capable of diverse solvent interactions [3].

Systematic Methodologies and DoE-Driven Approaches

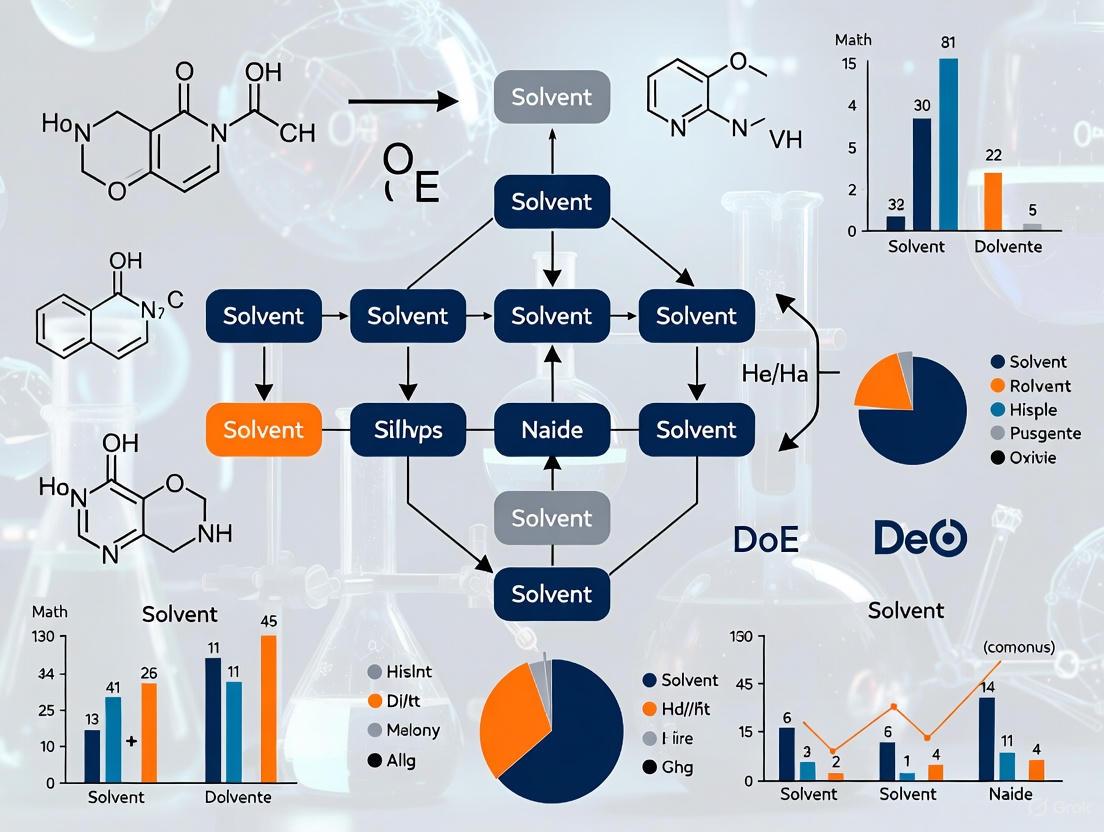

Modern solvent selection employs systematic methodologies that combine theoretical prediction with experimental validation. As illustrated in Figure 1, this integrated approach leverages both computational and empirical tools to optimize solvent systems for bioavailability enhancement.

Figure 1: Integrated workflow for systematic solvent selection incorporating DoE methodology

Critical components of modern solvent selection include:

- Computational prediction methods including quantum-mechanical/COSMO-RS calculations and group contribution methods [3]

- Systematic solubility databases of mono- and bifunctional compounds based on core molecular fragments of common drugs [3]

- DoE-driven optimization that simultaneously evaluates multiple solvent parameters and their interactions [6] [7]

- Bio-relevant testing incorporating physiological conditions and bioavailability assessment [8]

This systematic approach is particularly valuable for identifying crystallization solvents and antisolvents, where solvent selection directly impacts crystal form and purity—critical factors in dissolution behavior and bioavailability [3].

DoE-Enabled Formulation Technologies for Bioavailability Enhancement

Lipid-Based Formulations and Self-Emulsifying Systems

Lipid-based drug delivery systems represent a prominent formulation strategy for poorly soluble drugs, with solvent selection playing a crucial role in their performance. The Lipid Formulation Classification System (LFCS) categorizes these formulations into four types based on composition and emulsification properties, as detailed in Table 2 [6].

Table 2: Lipid Formulation Classification System (LFCS) and Solvent Requirements

| Formulation Type | Composition | Droplet Size After Dispersion | Solvent Considerations | Bioavailability Challenges |
|---|---|---|---|---|
| Type I | 100% oils (triglycerides) | Coarse dispersion | Requires digestible oils; limited solvent capacity | Dependent on lipase digestion; poor for drugs with log P < 2 |
| Type II | 40-80% oils + 20-60% lipophilic surfactants (HLB < 12) | 0.25-2.0 µm | Water-insoluble oils with self-emulsifying properties | Coarser emulsion may limit absorption |
| Type III | 40-80% oils + 20-40% surfactants + 0-40% co-solvents | 100-250 nm (IIIa), 50-100 nm (IIIb) | Balance between oil content and self-emulsification | Possible loss of solvent capacity on dispersion |
| Type IV | Hydrophilic surfactants (HLB > 12) + co-solvents | <50 nm | Oil-free; relies on surfactant/co-solvent mixtures | Risk of drug precipitation upon dispersion |

DoE approaches have proven particularly valuable for optimizing self-microemulsifying drug delivery systems (SMEDDS), which represent Type III lipid formulations [6]. Through careful experimental design, researchers can identify optimal ratios of oil, surfactant, and co-solvent to create robust formulations that spontaneously form fine microemulsions upon aqueous dilution, significantly enhancing drug solubility and absorption.
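One common way to structure an oil/surfactant/co-solvent study is a mixture design, in which the factors are proportions that must sum to 1. The sketch below, which is an illustration and not the design used in [6], builds a {3,2} simplex-lattice in L-pseudo-components and maps it back to actual proportions with x_i = L_i + (1 - ΣL)·z_i; the lower bounds are hypothetical.

```python
from fractions import Fraction
from itertools import product


def simplex_lattice(q, m):
    """All q-component blends whose proportions are multiples of 1/m and sum to 1."""
    return [
        tuple(Fraction(c, m) for c in combo)
        for combo in product(range(m + 1), repeat=q)
        if sum(combo) == m
    ]


def to_actual(pseudo, lower):
    """Map L-pseudo-components z back to actual proportions:
    x_i = L_i + (1 - sum(L)) * z_i."""
    free = 1 - sum(lower)
    return tuple(l + free * z for l, z in zip(lower, pseudo))


# Hypothetical lower bounds (mass fractions) for oil, surfactant, co-solvent
LOWER = (Fraction(20, 100), Fraction(30, 100), Fraction(10, 100))
COMPONENTS = ("oil", "surfactant", "co-solvent")

design = [to_actual(z, LOWER) for z in simplex_lattice(q=3, m=2)]
for blend in design:
    print({name: float(x) for name, x in zip(COMPONENTS, blend)})
```

Exact fractions keep every blend summing to exactly 1. If the region also has upper bounds, it is usually no longer a simplex, and an extreme-vertices design is the more common choice.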

Amorphous Solid Dispersions and Spray Drying Processes

Amorphous solid dispersions (ASDs) represent another major technology for bioavailability enhancement, with solvent selection critically influencing both manufacturing process and final product performance. ASDs utilize polymers to maintain drugs in amorphous, high-energy states that demonstrate faster dissolution rates and increased apparent solubility [2].

The spray drying process for ASD manufacturing presents particular solvent challenges, especially for compounds with low organic solubility. Innovative approaches to address these limitations include:

- Temperature Shift Processes: Heating spray solutions above the boiling point of the solvent to increase drug solubility, enabling 8- to 14-fold increases in throughput for challenging compounds like alectinib HCl [9]

- Volatile Processing Aids: Using volatile acids (e.g., acetic acid) or bases (e.g., ammonia) to ionize drugs in organic solvents, followed by removal during drying processes, achieving 10- to 40-fold solubility improvements for compounds like gefitinib and piroxicam [9]

DoE methodologies enable systematic optimization of critical spray drying parameters including solvent composition, drug-polymer ratio, and processing temperatures to ensure complete dissolution of all components before spray drying while maintaining product stability and performance [2] [9].
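As an illustration of how such factors can be laid out, the sketch below generates a two-level full factorial for three spray-drying factors, converts coded levels to actual settings, and randomizes the run order. The factor names and ranges are hypothetical placeholders, not values from [2] or [9].

```python
import random
from itertools import product

# Hypothetical factor ranges (low, high); not taken from the cited studies
FACTORS = {
    "organic_fraction": (0.80, 0.95),  # organic solvent fraction of spray solution
    "drug_loading_pct": (20.0, 40.0),  # drug as % of drug + polymer
    "inlet_temp_C": (70.0, 100.0),     # spray dryer inlet temperature
}


def decode(level, low, high):
    """Convert a coded level (-1 or +1) to the actual factor setting."""
    center, half_range = (high + low) / 2, (high - low) / 2
    return center + level * half_range


def full_factorial(factors, seed=None):
    """2^k full factorial in standard order, returned in randomized run order."""
    names = list(factors)
    runs = []
    for std_order, levels in enumerate(product((-1, 1), repeat=len(names)), start=1):
        settings = {n: decode(lv, *factors[n]) for n, lv in zip(names, levels)}
        runs.append({"std_order": std_order, "coded": levels, **settings})
    # Randomize execution order to protect against drift and other lurking variables
    rng = random.Random(seed)
    rng.shuffle(runs)
    return runs


for run in full_factorial(FACTORS, seed=7):
    print(run)
```

Keeping the standard-order index alongside the randomized sequence makes it easy to reassemble the design matrix for analysis after the runs are executed.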

Experimental Design and Analytical Methodologies

DoE Implementation for Solvent System Optimization

Design of Experiments provides a structured, efficient approach to solvent selection by employing statistical techniques to investigate multiple factors simultaneously. The DoE process for solvent optimization typically follows a sequential approach, as implemented in the development of a mixed micellar chromatographic method [7]:

- Screening Phase: Fractional factorial designs (FFD) efficiently identify critical factors from numerous potential solvent parameters with minimal experimental runs

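- Optimization Phase: Box-Behnken designs (BBD) or central composite designs (CCD) determine optimal solvent conditions by modeling curvature; a BBD studies each parameter at three levels, while a CCD uses five levels (three in its face-centered variant)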

- Robustness Testing: Evaluating method performance under slight variations in solvent conditions to ensure reliability
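For the screening phase, a fractional factorial can cover five factors in eight runs. The sketch below builds a 2^(5-2) design with the generators D = AB and E = AC (a standard resolution III choice, assumed here rather than taken from [7]) and lists which main effects are aliased with two-factor interactions, which is the price paid for the reduced run count. Factor letters stand for hypothetical solvent parameters.

```python
from itertools import combinations, product

FACTORS = ["A", "B", "C", "D", "E"]


def fractional_factorial_5_2():
    """2^(5-2) screening design in 8 runs.
    A, B, C form a full 2^3 factorial; D = AB and E = AC are generated columns
    (defining relation I = ABD = ACE = BCDE, resolution III)."""
    return [(a, b, c, a * b, a * c) for a, b, c in product((-1, 1), repeat=3)]


def aliases(design):
    """Return (main effect, two-factor interaction) pairs whose columns coincide."""
    cols = {f: tuple(run[i] for run in design) for i, f in enumerate(FACTORS)}
    found = []
    for f in FACTORS:
        for g, h in combinations(FACTORS, 2):
            if f in (g, h):
                continue
            interaction = tuple(x * y for x, y in zip(cols[g], cols[h]))
            if interaction == cols[f]:
                found.append((f, g + h))
    return found


design = fractional_factorial_5_2()
print(len(design), "runs")
for main, inter in aliases(design):
    print(f"{main} is aliased with {inter}")
```

Factors that look important in such a screen are then carried into the optimization phase, where their interactions can be separated.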

This sequential screening, optimization, and robustness approach enables researchers to model complex interactions between solvent parameters and critical quality attributes, establishing design spaces rather than single-point optima [7].
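For the optimization phase, the sketch below generates a three-factor Box-Behnken design with three center points and fits a full second-order model by least squares. The response is simulated from hypothetical coefficients without noise, so the fit should recover them exactly; with real data the residuals would feed the ANOVA, and the fitted surface would be used to map the design space.

```python
from itertools import combinations, product

import numpy as np


def box_behnken(k, n_center=3):
    """Box-Behnken design: each pair of factors at (+/-1, +/-1) with the others
    at 0, plus center points (needed to separate the intercept from the
    pure quadratic terms)."""
    runs = []
    for i, j in combinations(range(k), 2):
        for a, b in product((-1, 1), repeat=2):
            row = [0] * k
            row[i], row[j] = a, b
            runs.append(row)
    runs += [[0] * k for _ in range(n_center)]
    return np.array(runs, dtype=float)


def quadratic_terms(X):
    """Model matrix for a full second-order model: intercept, linear,
    pure quadratic, and two-factor interaction columns."""
    k = X.shape[1]
    cols = [np.ones(len(X))]
    cols += [X[:, i] for i in range(k)]
    cols += [X[:, i] ** 2 for i in range(k)]
    cols += [X[:, i] * X[:, j] for i, j in combinations(range(k), 2)]
    return np.column_stack(cols)


X = box_behnken(3)
M = quadratic_terms(X)

# Hypothetical "true" coefficients, used only to simulate a noiseless response
true_beta = np.array([50, 4, -3, 2, -5, -2, -1, 1.5, 0.0, -2.5])
y = M @ true_beta

beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)
print(np.round(beta_hat, 3))
```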

Advanced Analytical and Bio-relevant Assessment Methods

Contemporary solvent selection incorporates sophisticated analytical and bio-relevant assessment methods to predict in vivo performance, as summarized in Table 3.

Table 3: Advanced Methodologies for Evaluating Solvent-Enhanced Formulations

| Methodology Category | Specific Techniques | Application in Solvent Selection | Regulatory Relevance |
|---|---|---|---|
| In Vitro Permeability Assays | PAMPA (Parallel Artificial Membrane Permeability Assay) | Predicts passive transcellular permeability; cost-effective high-throughput screening | Early development decision-making |
| Bio-relevant Dissolution | FaSSGF/FeSSGF (Fasted/Fed State Simulated Gastric Fluid), FaSSIF/FeSSIF (Fasted/Fed State Simulated Intestinal Fluid) | Mimics gastrointestinal environment; evaluates precipitation risk | IVIVC (In Vitro-In Vivo Correlation) development |
| Advanced Characterization | DSC (differential scanning calorimetry), XRPD (X-ray powder diffraction), PLM (polarized light microscopy), HSM (hot-stage microscopy) | Determines solid-state properties, crystallinity, and stability | Quality by Design (QbD) documentation |
| Process Analytical Technology | In-line spectroscopy, particle size analysis | Monitors solvent effects in real time during manufacturing | Process validation and control |

These methodologies enable formulators to select solvent systems that not only enhance solubility but also maintain supersaturation and prevent precipitation in the gastrointestinal environment—critical factors for bioavailability enhancement [8].

The Scientist's Toolkit: Key Research Reagent Solutions

Successful implementation of solvent-based bioavailability enhancement strategies requires carefully selected materials and reagents. Table 4 details essential components and their functions in formulation development.

Table 4: Key Research Reagent Solutions for Solvent-Enhanced Formulations

| Reagent Category | Specific Examples | Function in Formulation | Bioavailability Considerations |
|---|---|---|---|
| Lipid Phase Components | Medium-chain triglycerides (Miglyol 812, Captex 355), Long-chain triglycerides (soybean, corn oil) | Enhance lymphatic transport; increase solubilization capacity | MCTs offer better self-dispersing properties; LCTs have higher solubilization after digestion |
| Surfactants | Non-ionic surfactants (Gelucire 44/14, Labrasol), Polyoxylglycerides | Stabilize emulsions; reduce interfacial tension; enhance permeability | HLB value determines emulsion type; concentration affects toxicity profile |
| Polymeric Carriers | HPMC, HPMCAS, PVP, PVP-VA | Inhibit crystallization; maintain supersaturation; stabilize amorphous form | Polymer selection affects dissolution profile and stability |
| Solvents & Co-solvents | Ethanol, PEG, glycerin, triacetin | Enhance solvent capacity; modify viscosity | Volatile solvents require removal; non-volatile solvents remain in final product |
| Volatile Processing Aids | Acetic acid, ammonia | Temporarily ionize drug molecules to enhance organic solubility | Removed during processing; regenerate original API form |

Solvent selection represents a critical bridge between API properties and therapeutic performance, particularly for the growing percentage of poorly soluble drug candidates. The evolution from experience-based selection to systematic, DoE-driven approaches has significantly enhanced the pharmaceutical scientist's ability to optimize bioavailability through rational solvent and formulation design.

The most successful solvent strategies integrate thermodynamic principles, physiological considerations, and manufacturing practicality within a Quality by Design framework. This comprehensive approach ensures that solvent systems not only enhance solubility but also maintain drug stability, facilitate absorption, and enable robust manufacturing processes.

As pharmaceutical molecules continue to increase in complexity, emerging technologies—including dynamic solvation field modeling, machine-learned potentials, and bio-relevant in vitro models—will further refine solvent selection paradigms [4] [10]. By embracing these advanced methodologies within a systematic DoE framework, pharmaceutical scientists can effectively address the critical challenge of bioavailability enhancement through optimized solvent selection.

Limitations of One-Variable-at-a-Time (OVAT) Approaches in Complex Solvent Systems

In the realm of chemical research and drug development, understanding solvent effects is paramount for optimizing reactions, purification processes, and formulation development. For decades, the One-Variable-at-a-Time (OVAT) approach has been a common methodological staple in experimental workflows. This technique involves testing factors, or causes, one at a time while holding all other variables constant [11]. Also known as one-factor-at-a-time (OFAT), this method has been favored by non-experts, particularly in situations where data is cheap and abundant, or where the mental effort required for complex multi-factor analysis exceeds the effort required to acquire extra data [11] [12].

However, the rising complexity of modern solvent systems in pharmaceutical development has exposed significant limitations in the OVAT approach. Complex solvent systems typically involve multiple interacting variables, including temperature, concentration, pH, polarity, and molecular structure, creating a multidimensional parameter space that OVAT methodologies struggle to navigate efficiently. When comparing solvent effects, it is therefore essential to recognize these limitations and to adopt experimental design frameworks that better capture the intricate relationships within complex chemical systems.

Fundamental Limitations of the OVAT Approach

The OVAT method suffers from several critical shortcomings when applied to complex solvent systems, each contributing to suboptimal experimental outcomes and potential misinterpretations of solvent effects.

Inability to Detect Factor Interactions

The most significant limitation of OVAT in complex solvent systems is its fundamental inability to detect interactions between factors [11] [13]. In solvent chemistry, factors rarely operate in isolation; instead, they frequently interact in complex ways. For example, the effect of temperature on solubility often depends on pH, and the efficacy of a mixed solvent system can depend on synergistic relationships between its components. OVAT methodologies miss these interaction effects because they vary only one factor while holding the others constant [13]. As [11] puts it, "OFAT cannot estimate interactions" between factors, which leaves an incomplete understanding of the system being studied.
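A hypothetical 2×2 example shows what an interaction looks like numerically. Temperature and pH are each run at two coded levels, and every effect is computed as a difference of averages; the solubility values are invented for illustration.

```python
# Hypothetical 2x2 factorial: temperature (T) and pH at coded levels -1/+1,
# response = solubility in mg/mL (illustrative numbers only)
runs = {
    (-1, -1): 2.0,
    (+1, -1): 3.0,
    (-1, +1): 4.0,
    (+1, +1): 9.0,
}


def effect(runs, contrast):
    """Average response where the contrast is +1 minus average where it is -1."""
    hi = [y for x, y in runs.items() if contrast(x) > 0]
    lo = [y for x, y in runs.items() if contrast(x) < 0]
    return sum(hi) / len(hi) - sum(lo) / len(lo)


main_T = effect(runs, lambda x: x[0])
main_pH = effect(runs, lambda x: x[1])
interaction = effect(runs, lambda x: x[0] * x[1])

# What OVAT sees: the temperature effect measured only at the baseline pH (-1)
ovat_T = runs[(+1, -1)] - runs[(-1, -1)]
print(main_T, main_pH, interaction, ovat_T)
```

Here the interaction is 2.0 mg/mL: the temperature effect is 1.0 mg/mL at low pH but 5.0 mg/mL at high pH, so an OVAT study run at the low-pH baseline would report only 1.0 mg/mL and miss the synergy entirely.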

Suboptimal Parameter Estimation

OVAT requires more experimental runs for the same precision in effect estimation compared to more sophisticated experimental designs [11]. This inefficiency stems from the sequential nature of OVAT testing, where each variable is explored independently without leveraging the information gain that can come from simultaneous variation of multiple factors. In complex solvent systems with numerous potentially influential factors, this approach becomes prohibitively resource-intensive, requiring substantially more time, materials, and analytical resources to achieve the same level of understanding as multifactor approaches.
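The precision argument can be made explicit for k two-level factors, assuming independent errors with constant variance σ² and defining each effect E_i as a difference of averages. In a two-level orthogonal design with N runs, each effect compares two halves of the data; in OVAT with n replicates per setting, each effect compares one changed setting with the baseline:

```latex
\begin{aligned}
\text{Factorial: } \hat{E}_i &= \bar{y}_{i+} - \bar{y}_{i-},
&\operatorname{Var}(\hat{E}_i) &= \frac{\sigma^2}{N/2} + \frac{\sigma^2}{N/2} = \frac{4\sigma^2}{N},\\
\text{OVAT: } \hat{E}_i &= \bar{y}_{i} - \bar{y}_{0},
&\operatorname{Var}(\hat{E}_i) &= \frac{\sigma^2}{n} + \frac{\sigma^2}{n} = \frac{2\sigma^2}{n},\\
\frac{4\sigma^2}{N} = \frac{2\sigma^2}{n} &\;\Longrightarrow\; N = 2n,
&\frac{\text{OVAT runs}}{\text{factorial runs}} &= \frac{(k+1)\,n}{2n} = \frac{k+1}{2}.
\end{aligned}
```

For k = 3, a 2^3 factorial (N = 8) matches the precision of an OVAT plan with n = 4, which needs 16 runs, and the factorial additionally estimates the interactions.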

Risk of False Optima

Perhaps the most dangerous limitation of OVAT in solvent optimization is its high chance of identifying false optimal conditions [11] [13]. When multiple factors interact to influence an outcome, the apparent optimum found by varying one factor at a time may be substantially different from the true global optimum. This occurs because OVAT cannot account for the interaction effects that significantly influence system behavior in higher-dimensional spaces. As noted in Six Sigma literature, OVAT has "high chances of False optimum (when 2+ factors considered) which can mislead" researchers [13].
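The mechanism behind a false optimum can be reproduced with a hypothetical two-factor response whose best region lies along a diagonal ridge, which is what a strong x1·x2 interaction produces. The sketch below runs a two-step OVAT search from the center point and compares it with evaluating every combination of the same five coded levels; the response function is invented for illustration.

```python
LEVELS = [-1.0, -0.5, 0.0, 0.5, 1.0]  # coded levels for both factors


def yield_pct(x1, x2):
    """Hypothetical response with a strong x1*x2 interaction: a ridge along x1 = x2.
    Illustrative only; not fitted to any real solvent system."""
    return 80 - 30 * (x1 - x2) ** 2 - 5 * (x1 + x2 - 1.2) ** 2


def ovat(baseline=(0.0, 0.0)):
    """Optimize x1 with x2 fixed at baseline, then x2 with x1 fixed at its 'best' value."""
    x2 = baseline[1]
    x1 = max(LEVELS, key=lambda v: yield_pct(v, x2))
    x2 = max(LEVELS, key=lambda v: yield_pct(x1, v))
    return (x1, x2), yield_pct(x1, x2)


def grid_search():
    """Evaluate every combination of levels (a 5x5 full factorial)."""
    best = max(((a, b) for a in LEVELS for b in LEVELS), key=lambda p: yield_pct(*p))
    return best, yield_pct(*best)


print("OVAT optimum:", ovat())
print("Grid optimum:", grid_search())
```

OVAT stops at the center point (about 72.8 on the simulated scale) because neither factor improves the response when changed alone, while the full grid finds (0.5, 0.5) at about 79.8. The 5×5 grid is used only because it is easy to read; a response-surface design would locate the ridge with far fewer runs.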

Curvature Estimation Challenges

Complex solvent systems often exhibit nonlinear responses to factor changes, creating curvature in the response surface that OVAT methods struggle to characterize effectively [13]. While OVAT can be used to estimate curvature in individual factors, it does so inefficiently and may miss important curvature effects that only become apparent when multiple factors are varied simultaneously. As noted in expert comparisons, "If there is curvature, estimation is done by augmenting into central composite design" in Design of Experiments (DOE) approaches, whereas OVAT lacks such robust mechanisms for curvature characterization [13].
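The standard check that triggers this augmentation compares the mean of the factorial points with the mean of replicated center points. A minimal sketch with hypothetical data, using the single-degree-of-freedom curvature sum of squares SS = n_F·n_C·(ȳ_F - ȳ_C)² / (n_F + n_C) and the center-point replicates as the pure-error estimate:

```python
from statistics import mean, variance

# Hypothetical responses (e.g. % yield) from a 2^2 factorial and 4 center points
factorial_y = [70.1, 75.3, 72.4, 78.0]
center_y = [79.2, 78.6, 79.5, 78.9]


def curvature_test(yf, yc):
    """Single-degree-of-freedom curvature check (factorial mean vs center mean),
    using the replicated center points as the pure-error estimate."""
    nf, nc = len(yf), len(yc)
    diff = mean(yf) - mean(yc)
    ss_curv = nf * nc * diff ** 2 / (nf + nc)
    ms_pure_error = variance(yc)  # sample variance, nc - 1 degrees of freedom
    return diff, ss_curv / ms_pure_error


F_CRIT_1_3 = 10.13  # tabulated F(0.05; 1, 3) for 4 center points

diff, f_stat = curvature_test(factorial_y, center_y)
print(f"curvature estimate = {diff:.2f}, F = {f_stat:.1f}")
if f_stat > F_CRIT_1_3:
    print("Curvature is significant: augment to a central composite design.")
```

With these numbers the factorial mean lies about 5.1 units below the center mean and the F ratio far exceeds the critical value, which is the situation in which axial points are added to turn the factorial into a central composite design.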

Table 1: Fundamental Limitations of OVAT in Complex Solvent Systems

| Limitation | Impact on Solvent Research | Consequence |
|---|---|---|
| Inability to Detect Interactions | Misses synergistic/antagonistic effects between solvent components | Incomplete understanding of solvent system behavior |
| Suboptimal Parameter Estimation | Requires more experiments for same precision | Increased time and resource costs |
| Risk of False Optima | May identify local rather than global optima | Suboptimal process conditions and formulations |
| Curvature Estimation Challenges | Poor characterization of nonlinear responses | Inaccurate modeling of solvent system behavior |

OVAT Versus Design of Experiments: A Comparative Analysis

Design of Experiments (DOE) represents a fundamentally different approach to experimental design that systematically varies multiple factors simultaneously according to predetermined mathematical structures known as experimental designs [13]. This approach stands in stark contrast to the sequential, isolated factor testing characteristic of OVAT methodologies.

Philosophical and Methodological Differences

The core philosophical difference between OVAT and DOE lies in their approach to factor variation. OVAT adopts a restrictive approach where "we hold 1 factor as constant and alter 2nd variable level" in a sequential manner [13]. In contrast, DOE allows "multiple (more than 2 factors) to be manipulated" simultaneously within a structured framework [13]. This fundamental difference in experimental structure enables DOE to capture the complex interactions that OVAT necessarily misses.

In practical terms, OVAT gives the experimenter discretion over the number and sequence of experiments, whereas in DOE, "the number of experiments is selected by the design itself" based on statistical principles [13]. This design-based approach ensures that experimental resources are allocated efficiently to maximize information gain while maintaining the statistical power needed to detect both main effects and interactions.

Quantitative Comparison of Experimental Efficiency

The efficiency advantages of DOE over OVAT become more pronounced as the number of experimental factors increases. For a relatively simple system with 3 factors, OVAT might require 15 experimental runs yet still deliver inferior prediction quality compared to a properly designed DOE with the same number of runs [13]. The efficiency gap widens as the factor count grows, making DOE particularly valuable for complex solvent systems with numerous potentially influential factors.

Table 2: OVAT vs. DOE Methodological Comparison

| Characteristic | OVAT Approach | DOE Approach |
|---|---|---|
| Factor Manipulation | Sequential, one factor at a time | Simultaneous, multiple factors together |
| Experiment Count | Experimenter's decision | Determined by statistical design |
| Interaction Estimation | Cannot estimate interactions between factors | Systematically estimates interactions |
| Precision | Low precision in effect estimation | High precision in effect estimation |
| Optimal Conditions | High chance of false optima | High chance of finding true optimum |
| Curvature Detection | Limited ability to characterize curvature | Enhanced curvature detection through specialized designs |
| Experimental Design | No formal design structure | Structured designs (full/fractional factorial, etc.) |
| Prediction Quality | Poor prediction due to limited data spread | Better prediction with comprehensive data coverage |

Visualizing the Experimental Space Coverage

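OVAT and DOE differ fundamentally in how they explore the experimental space. In a two-factor space, OVAT places its runs along two lines that cross at the baseline, so the corners of the experimental region, where interaction effects are most visible, are never visited; a factorial design places runs at those corners and can therefore detect interactions.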

Modern Alternatives to OVAT for Solvent System Analysis

Several sophisticated experimental design approaches have emerged as powerful alternatives to OVAT, particularly for complex solvent systems where multiple factors and their interactions significantly influence outcomes.

Design of Experiments (DOE) Frameworks

DOE methodologies provide structured approaches for simultaneously investigating multiple factors in solvent systems. The foundational principle of DOE is that by intentionally varying multiple factors according to specific mathematical patterns, researchers can efficiently characterize both main effects and interaction effects with minimal experimental runs [13]. Common DOE designs applicable to solvent research include full factorial designs (which study all possible combinations of factor levels), fractional factorial designs (which efficiently screen large numbers of factors), response surface methodologies (which optimize processes by modeling nonlinear responses), and definitive screening designs (which efficiently untangle important effects when considering many factors) [14].

The statistical robustness of DOE comes from its orthogonal design principles, which ensure that factor effects can be estimated independently despite being varied simultaneously [13]. This orthogonality, combined with careful design selection, enables researchers to build mathematical models of solvent system behavior that predict performance across the studied experimental region, not just along individual factor axes.
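Orthogonality can be checked directly: for a two-level full factorial in coded units, the model matrix X (intercept, main effects, and interactions) satisfies XᵀX = N·I, so the coefficient estimates are uncorrelated. The sketch below verifies this for a 2^3 design and contrasts it with a hypothetical four-run OVAT plan (a baseline plus one change per factor).

```python
from itertools import combinations, product

import numpy as np

# 2^3 full factorial in coded units (-1/+1)
runs = np.array(list(product((-1, 1), repeat=3)), dtype=float)

# Model matrix: intercept, main effects A, B, C, interactions AB, AC, BC, ABC
cols = [np.ones(len(runs))] + [runs[:, i] for i in range(3)]
cols += [runs[:, i] * runs[:, j] for i, j in combinations(range(3), 2)]
cols.append(runs[:, 0] * runs[:, 1] * runs[:, 2])
X = np.column_stack(cols)

# Orthogonal design: X^T X = N * I, so each coefficient is estimated
# independently with variance sigma^2 / N
XtX = X.T @ X
print(np.allclose(XtX, len(runs) * np.eye(X.shape[1])))

# For comparison: a hypothetical OVAT plan (baseline plus one change per factor)
ovat = np.array([[-1, -1, -1], [1, -1, -1], [-1, 1, -1], [-1, -1, 1]], dtype=float)
X_ovat = np.column_stack([np.ones(len(ovat)), ovat])
G = X_ovat.T @ X_ovat
print(G)  # non-zero intercept/main-effect off-diagonals: those estimates are correlated
```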

High-Throughput Experimentation (HTE)

High-Throughput Experimentation (HTE) represents a paradigm shift in experimental science, enabling the rapid miniaturization and parallelization of reactions [15]. This approach stands in direct contrast to OVAT by facilitating "the exploration of multiple factors simultaneously" [15]. In solvent system research, HTE allows researchers to test hundreds or even thousands of solvent combinations, ratios, and conditions in parallel, dramatically accelerating the optimization process.

Modern HTE platforms have evolved significantly from their origins in biological screening. Today's systems incorporate advanced automation, specialized microtiter plates compatible with diverse organic solvents, and sophisticated analytical interfaces that enable rapid analysis of reaction outcomes [15]. The integration of artificial intelligence and machine learning with HTE has further enhanced its capabilities, with AI-driven approaches "leveraging HTE data to not only refine conditions but also to uncover reactivity patterns by analyzing large data sets across diverse substrates, catalysts, and reagents" [15].

AI-Enhanced Experimental Design

A more recent alternative to OVAT comes from integrating artificial intelligence with experimental design. Platforms like Quantum Boost utilize "cutting-edge AI to ensure target achievement with the least experiments" [16]. This approach goes beyond traditional DOE by employing machine learning algorithms to adaptively design experiments based on accumulating results, continuously refining the experimental focus toward optimal regions of the parameter space.

AI-enhanced experimental design is particularly valuable for complex solvent systems with many potential factors because it can prioritize which factors and interactions to explore based on preliminary results, whereas traditional DOE typically requires a fixed experimental design before experimentation begins. According to [16], this adaptive capability can reduce experimental burden by 2-5x compared with traditional DOE approaches, a substantial efficiency gain for pharmaceutical companies and research institutions working with complex solvent systems.

Software Tools for Advanced Experimental Design

Several software platforms have been developed specifically to facilitate DOE and HTE approaches, providing researchers with user-friendly interfaces for designing, executing, and analyzing multifactor experiments.

Table 3: Experimental Design Software Comparison

| Software | Key Features | Best For | Pricing |
|---|---|---|---|
| Quantum Boost | AI-driven design, project flexibility, user-friendly interface | Rapid optimization with minimal experiments | Starting at $95/month [16] |
| JMP | Visual analysis, SAS integration, diverse statistical models | Complex analysis with advanced statistical needs | Starting at $1,200/year [16] |
| DesignExpert | Accessible interface, design versatility, visual interpretation | Users seeking DOE without excessive complexity | Starting at $1,035/year [16] |
| Minitab | Guided analysis, visual capabilities, robust data examination | Comprehensive data analysis with statistical rigor | Starting at $1,780/year [16] |
| MODDE Go | Classical factorial designs, online knowledge base, effective graphics | Researchers needing economical DOE solution | Starting at $399 [16] |

These software platforms significantly lower the barrier to implementing sophisticated experimental designs, making DOE methodologies accessible to researchers who may not have advanced statistical training. Their visualization capabilities also enhance interpretation of complex interaction effects, helping researchers develop deeper insights into their solvent systems.

Experimental Protocols for Solvent System Characterization

Implementing robust experimental methodologies for solvent system analysis requires careful planning and execution. The following protocols outline key methodological considerations for both traditional and advanced approaches.

Standardized OVAT Protocol for Solvent Comparison

For researchers beginning with OVAT approaches, a standardized protocol ensures consistency and reproducibility:

- Factor Identification: Identify all potentially influential factors in the solvent system (e.g., solvent ratio, temperature, pH, concentration, mixing speed).

- Baseline Establishment: Establish baseline conditions using historically optimal or literature values for all factors.

- Sequential Variation: Systematically vary each factor of interest while maintaining all other factors at baseline levels.

- Response Measurement: Measure relevant responses (e.g., solubility, reaction yield, selectivity, stability) for each experimental condition.

- Data Analysis: Analyze results by plotting response versus factor level for each individually varied factor.

- Optimum Selection: Select the apparent optimum level for each factor based on individual response curves.

This protocol, while straightforward, contains the inherent limitations discussed previously, particularly the inability to detect interactions between factors and the risk of identifying false optima.

Comprehensive DOE Protocol for Solvent System Optimization

A robust DOE protocol for solvent system characterization provides a more comprehensive approach:

- Objective Definition: Clearly define experimental objectives (screening, optimization, or robustness testing).

- Factor Selection: Identify critical factors and their plausible ranges based on prior knowledge or preliminary experiments.

- Experimental Design Selection: Choose an appropriate experimental design (e.g., full factorial, fractional factorial, central composite design) based on the number of factors and experimental constraints.

- Randomized Execution: Execute experimental runs in randomized order to minimize confounding from external factors.

- Response Measurement: Measure all relevant responses for each experimental condition.

- Statistical Analysis: Analyze results using statistical methods (ANOVA, regression analysis) to identify significant main effects and interaction effects.

- Model Validation: Validate predictive models using confirmation experiments at predicted optimal conditions.

This structured approach enables comprehensive characterization of solvent system behavior while efficiently using experimental resources.
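The design-selection and randomized-execution steps can be sketched in a few lines of Python. This is a minimal illustration that assumes a replicated two-level full factorial. The factor names and levels are hypothetical placeholders, and in practice dedicated DoE software would usually generate this run sheet.

```python
import itertools
import random

# Hypothetical factors, two levels each (placeholders, not recommended settings).
FACTORS = {
    "temperature_C": (50, 70),
    "cosolvent_fraction": (0.1, 0.3),
    "catalyst_mol_pct": (0.5, 1.5),
}


def full_factorial_run_sheet(factors, replicates=1, seed=None):
    """Return all 2^k level combinations per replicate, in randomized run order."""
    names = list(factors)
    runs = []
    for rep in range(1, replicates + 1):
        combos = itertools.product(*factors.values())
        for combo_id, combo in enumerate(combos, start=1):
            runs.append({"combo_id": combo_id, "replicate": rep,
                         **dict(zip(names, combo))})
    rng = random.Random(seed)  # a fixed seed makes the schedule reproducible
    rng.shuffle(runs)          # randomize execution order across all replicates
    for run_order, run in enumerate(runs, start=1):
        run["run_order"] = run_order
    return runs


sheet = full_factorial_run_sheet(FACTORS, replicates=2, seed=42)
for run in sheet[:4]:
    print(run)
```

The sheet shuffles replicates together rather than running them as blocks. This keeps drift in instruments or reagents from lining up with any one factor level.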

Integrated HTE Protocol for High-Throughput Solvent Screening

For maximum efficiency in screening large numbers of solvent combinations:

Plate Design: Design microtiter plates with predefined solvent combinations and concentrations.

Automated Dispensing: Use automated liquid handling systems to dispense solvents and reagents into plate wells.

Condition Control: Implement precise environmental control (temperature, atmosphere) for entire plates.

Parallel Reaction: Execute all reactions in parallel under controlled conditions.

High-Throughput Analysis: Employ automated analysis techniques (HPLC-MS, GC-MS, UV-Vis) for rapid response measurement.

Data Integration: Compile results into structured databases for pattern recognition and modeling.

Machine Learning Integration: Apply machine learning algorithms to identify complex relationships and predict optimal conditions.

This protocol is particularly valuable for pharmaceutical companies screening large solvent libraries for specific applications, such as crystallization optimization or formulation development.
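The plate-design step can be implemented in several ways; the sketch below shows one. It places a hypothetical library of binary solvent mixtures at random positions on a 96-well plate, so that edge or position effects are not confounded with particular solvents. The solvent list, mixing ratios, control count, and well-naming scheme are all assumptions made for illustration.

```python
import itertools
import random
import string

# Hypothetical screening library: binary solvent pairs at three volume ratios.
SOLVENTS = ["MeOH", "EtOH", "IPA", "MeCN", "THF", "EtOAc", "toluene", "2-MeTHF"]
RATIOS = ["25:75", "50:50", "75:25"]


def plate_map(conditions, rows=8, cols=12, n_controls=4, seed=0):
    """Place conditions and control wells at random positions on a rows x cols plate."""
    wells = [f"{string.ascii_uppercase[r]}{c + 1}"
             for r in range(rows) for c in range(cols)]
    items = list(conditions) + [("CONTROL", None)] * n_controls
    if len(items) > len(wells):
        raise ValueError(f"{len(items)} items exceed {len(wells)} wells")
    rng = random.Random(seed)
    rng.shuffle(items)  # spreads edge/position effects randomly across conditions
    return dict(zip(wells, items))  # the last wells in row order stay empty


pairs = list(itertools.combinations(SOLVENTS, 2))                   # 28 pairs
conditions = [(pair, ratio) for pair in pairs for ratio in RATIOS]  # 84 conditions
layout = plate_map(conditions)
print(f"{len(conditions)} conditions + 4 controls placed in {len(layout)} of 96 wells")
for well in ["A1", "A2", "H12"]:
    print(well, layout.get(well, "empty"))
```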

Research Reagent Solutions for Solvent System Experimentation

Implementing advanced experimental approaches requires specific reagents, materials, and equipment. The following toolkit outlines essential resources for comprehensive solvent system characterization.

Table 4: Essential Research Reagent Solutions for Solvent System Characterization

| Category | Specific Examples | Function in Solvent Research |
|---|---|---|
| Organic Solvents | Aliphatic hydrocarbons, Aromatic hydrocarbons, Esters, Ethers, Ketones, Chlorinated hydrocarbons [17] | Primary media for solubility and reaction studies |
| Solvent Additives | Co-solvents, Surfactants, Ionic liquids, Deep eutectic solvents | Modifying solvent properties and enhancing solvation |
| Analytical Standards | Reference compounds, Internal standards, Certified materials | Quantification and method validation |
| HTE Equipment | Microtiter plates, Automated liquid handlers, Robotic systems | Enabling high-throughput parallel experimentation |
| Detection Reagents | Chromogenic compounds, Fluorogenic substrates, NMR shift reagents | Visualizing and quantifying reaction outcomes |
| Statistical Software | JMP, Design-Expert, Minitab, MODDE Go [16] | Designing experiments and analyzing complex results |

The One-Variable-at-a-Time approach has significant limitations for characterizing complex solvent systems. It cannot detect factor interactions, it tends to identify false optima, and it uses experimental resources inefficiently. OVAT may remain suitable for simple systems whose factors do not interact, or for teaching [12]. Modern solvent research in pharmaceutical development, however, demands more sophisticated approaches.

Design of Experiments, High-Throughput Experimentation, and AI-enhanced experimental design are powerful alternatives. They characterize complex solvent systems with greater efficiency and statistical rigor. Moving from OVAT to these methods is more than a technical shift. It changes how chemical and pharmaceutical research is approached: instead of examining factors in isolation, researchers treat systems as integrated wholes.

Within the broader thesis of comparing solvent effects through Design of Experiments (DoE), the strategic application of screening designs is paramount. Researchers and drug development professionals often begin with a large set of candidate factors, including solvent choice, temperature, concentration, and catalyst loading, any of which could influence a critical response such as chemical yield or purity [18] [19]. Traditional one-factor-at-a-time (OFAT) approaches are inefficient. They can also miss optimal conditions entirely, because they do not account for factor interactions [19] [20]. This guide objectively compares the core methodologies for simultaneous factor screening and interaction analysis. It also provides the experimental protocols and data frameworks needed for informed solvent effect studies.

Comparative Performance of Screening Design Strategies

The primary objective of a screening DoE is to efficiently separate the "vital few" influential factors from the "trivial many" [18]. Different design strategies offer varying capabilities in achieving this while also probing for interactions, with direct implications for solvent optimization studies.

Table 1: Comparison of Screening Design Types for Solvent Effect Studies

| Design Type | Key Principle | Ability to Estimate Main Effects | Ability to Estimate 2-Factor Interactions | Typical Run Efficiency (for 6-8 factors) | Best Use Case in Solvent Research |
|---|---|---|---|---|---|
| Plackett-Burman | Assumes interactions are negligible [21]. | High (explicit focus) [22]. | Very Low (severely confounded) [21]. | Very High (e.g., 12 runs for 11 factors) [21]. | Initial ultra-high-throughput screening of many solvent properties and process variables. |
| 2-Level Fractional Factorial | Sparsity, Hierarchy, Heredity principles [18]. | High. | Medium (depends on design resolution) [21]. | High (e.g., 16 runs for 6-8 factors) [18]. | General-purpose screening to identify critical solvents and process parameters with some interaction insight. |
| Definitive Screening Design (DSD) | Projection property; allows estimation of curvatures [18]. | High. | High (for interactions involving active main effects) [18] [21]. | Medium (e.g., 17 runs for 6 factors) [18]. | When curvature from solvent effects is suspected or when follow-up optimization is planned without additional screening. |

The choice of design involves a trade-off between run economy and information gain. For instance, a Plackett-Burman design might identify "Temperature" and "Solvent Polarity" as vital main effects, but it cannot reliably indicate whether their interaction is significant. A Resolution IV fractional factorial estimates those main effects free of two-factor interactions and can flag an active interaction. However, each two-factor interaction in such a design is aliased with other two-factor interactions. Attributing a flagged interaction unambiguously therefore requires a Resolution V (or higher) design or follow-up runs [21]. A Definitive Screening Design offers a robust middle ground: it efficiently provides data that can model both main effects and quadratic effects. This matters when solvent composition or properties cause non-linear changes in the response [18].

Experimental Protocols for Key Screening Methodologies

Protocol 1: Fractional Factorial Design for Solvent and Process Parameter Screening

This protocol is adapted from classic DoE applications in synthetic chemistry [19] and process development [18].

- Define Objectives & Factors: Clearly state the response (e.g., reaction yield, impurity level). List all potential factors (X) including continuous (e.g., temperature, solvent ratio, time) and categorical (e.g., solvent class A/B/C, catalyst type) variables [18]. In solvent studies, categorical factors are common.

- Select Factor Levels: For continuous factors, set a scientifically justified high (+1) and low (-1) level. For categorical factors, assign two distinct options (e.g., Polar Protic vs. Polar Aprotic) [19].

- Choose Design Resolution: Based on the number of factors (k) and the need to detect interactions, select a 2^(k-p) fractional factorial design. A Resolution IV design (e.g., 2^(6-2) with 16 runs) ensures main effects are not confounded with two-factor interactions, though some two-factor interactions are confounded with each other [21].

- Randomize & Execute Runs: Generate the experimental run order using software or a random number table to minimize bias from lurking variables [23]. Execute reactions strictly according to the randomized schedule.

- Analyze with Half-Normal Plot: Perform a multiple linear regression analysis. Construct a half-normal probability plot of the absolute estimated effects [22]. Factors that deviate significantly from the straight line formed by the majority of near-zero effects are deemed active (see Figure 3 in [22]).

- Model Reduction & Interpretation: Build a statistical model using only the active factors. Analyze the model coefficients to determine the direction and relative magnitude of each effect. Check for significant interaction terms in the model.
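The sketch below walks through Protocol 1 for six two-level factors. It assumes the following:

- The 16-run 2^(6-2) design is built from a full factorial in A-D using the generators E = ABC and F = BCD. This is one common choice that gives Resolution IV; other generator sets exist.
- Responses are simulated from an invented model in which only A, C, and E are active. The model and its noise level are hypothetical.
- Half-normal coordinates use the common convention of plotting the i-th smallest of m absolute effects against the quantile Phi^-1(0.5 + 0.5(i - 0.5)/m). This convention is not necessarily the one used in [22].

```python
import itertools
import random
from statistics import NormalDist

BASE = "ABCD"


def column(word, run):
    """Product of the coded levels of the factors named in `word` (e.g. 'ABC')."""
    value = 1
    for factor in word:
        value *= run[factor]
    return value


# Full 2^4 factorial in A-D, then E = ABC and F = BCD (I = ABCE = BCDF = ADEF):
# the shortest defining word has four letters, so the design is Resolution IV.
base_runs = [dict(zip(BASE, lv)) for lv in itertools.product((-1, 1), repeat=4)]
design = [{**r, "E": column("ABC", r), "F": column("BCD", r)} for r in base_runs]

# Hypothetical responses: only A, C and E are active; noise sd = 0.5.
rng = random.Random(1)
y = [60 + 6 * r["A"] + 4 * r["C"] - 2 * r["E"] + rng.gauss(0, 0.5) for r in design]


def effect(word):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    signs = [column(word, r) for r in design]
    hi = [v for s, v in zip(signs, y) if s > 0]
    lo = [v for s, v in zip(signs, y) if s < 0]
    return sum(hi) / len(hi) - sum(lo) / len(lo)


# The 15 estimable contrasts are products of the base factors; ABC and BCD are
# relabelled E and F, and every other multi-letter word stands for an alias chain.
words = ["".join(c) for k in range(1, 5) for c in itertools.combinations(BASE, k)]
effects = {{"ABC": "E", "BCD": "F"}.get(w, w): effect(w) for w in words}

# Half-normal plot coordinates: the i-th smallest |effect| against the quantile
# Phi^-1(0.5 + 0.5 * (i - 0.5) / m).
ranked = sorted(effects.items(), key=lambda kv: abs(kv[1]))
m = len(ranked)
half_normal = [(name, abs(est), NormalDist().inv_cdf(0.5 + 0.5 * (i - 0.5) / m))
               for i, (name, est) in enumerate(ranked, start=1)]
for name, size, q in half_normal[-4:]:
    print(f"{name:>4}: |effect| = {size:5.2f}  quantile = {q:.2f}")
```

Plotting the `half_normal` pairs shows the three simulated active factors well above the line through the near-zero contrasts. This is the pattern the half-normal step of the protocol looks for.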

Protocol 2: Definitive Screening Design (DSD) with Solvent Property Mapping

This modern protocol integrates solvent space exploration with screening, as demonstrated in synthetic chemistry optimization [19].

- Map Solvent Space: Use a solvent selection guide or principal component analysis (PCA) to create a map of solvent properties [19] [24]. Select 3-5 representative solvents that span this map (e.g., one from each "corner" and a central point like an alcohol).

- Define Continuous Process Factors: Alongside the categorical solvent factor, identify 4-5 continuous process variables (e.g., temperature, pH, concentration).

- Generate DSD Matrix: Using statistical software, generate a Definitive Screening Design for the combined set of factors. A DSD for 6 factors typically requires 13 runs plus 2-4 center point replicates [18].

- Incorporate Center Points: Center points (where all continuous factors are at their midpoint) are crucial for estimating pure error and testing for curvature in the response [18].

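- Execute & Analyze: Run the designed experiments in random order and analyze the data using least squares regression. In a DSD, main effects are estimated free of aliasing with two-factor interactions and quadratic terms. A small DSD cannot estimate every interaction and quadratic term at once. Two-factor interactions and pure quadratic terms for continuous factors are therefore added to the model for the few factors that prove active.

- Project to Follow-Up: If only a few factors (typically up to three) are found active, the DSD projects onto those factors as a design that can fit a full quadratic response surface model. This may allow optimization without a separate screening stage. When more precision is needed, the runs can also be augmented toward a dedicated response surface design such as a central composite design [18].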
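A DSD for six continuous factors can be built from a conference matrix. A conference matrix is a square matrix with zeros on the diagonal, ±1 elsewhere, and mutually orthogonal columns. The sketch below assumes the order-6 Paley conference matrix. It stacks that matrix with its fold-over and center runs, then checks the orthogonality properties that make DSDs attractive: main effects are orthogonal to each other, to all two-factor interactions, and to all quadratic terms. All six factors are treated as continuous here. A categorical solvent factor, as used in the protocol, needs the categorical extension that statistical software provides.

```python
from itertools import combinations
from math import prod

# Order-6 symmetric conference matrix (Paley construction, q = 5): zero diagonal,
# +/-1 elsewhere, and C^T C = 5 I.
C = [
    [0, 1, 1, 1, 1, 1],
    [1, 0, 1, -1, -1, 1],
    [1, 1, 0, 1, -1, -1],
    [1, -1, 1, 0, 1, -1],
    [1, -1, -1, 1, 0, 1],
    [1, 1, -1, -1, 1, 0],
]


def definitive_screening_design(conf, extra_centers=0):
    """Stack the conference matrix, its fold-over (-C), and center runs."""
    k = len(conf)
    runs = [list(row) for row in conf]
    runs += [[-x for x in row] for row in conf]
    runs += [[0] * k for _ in range(1 + extra_centers)]  # one center run is built in
    return runs


dsd = definitive_screening_design(C, extra_centers=3)  # 13 + 3 = 16 runs
k = len(C)


def col(*idx):
    """Model column: product of the listed factor columns (col(i, i) is x_i^2)."""
    return [prod(run[i] for i in idx) for run in dsd]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


main = [col(i) for i in range(k)]
two_fi = [col(i, j) for i, j in combinations(range(k), 2)]
quad = [col(i, i) for i in range(k)]

main_vs_main = max(abs(dot(main[i], main[j])) for i, j in combinations(range(k), 2))
main_vs_2fi = max(abs(dot(m, t)) for m in main for t in two_fi)
main_vs_quad = max(abs(dot(m, q)) for m in main for q in quad)
print(f"{len(dsd)} runs; max |dot| main-main {main_vs_main}, "
      f"main-2FI {main_vs_2fi}, main-quadratic {main_vs_quad}")
```

The fold-over pairs each run with its mirror image. As a result, every product of an odd number of factor columns sums to zero, which is why main effects stay clear of interactions and quadratic terms.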

Visualizing the Screening Workflow and Solvent Analysis

The following diagrams, created using Graphviz DOT language, illustrate the logical flow of a screening study and the conceptual mapping of solvent space—a critical tool for designing efficient experiments.

Diagram 1: Decision Workflow for Selecting a Screening DoE Strategy

Diagram 2: Mapping Solvents in a 2D Property Space for DoE Selection

The Scientist's Toolkit: Essential Reagents & Solutions for DoE-Driven Solvent Studies

Table 2: Key Research Reagent Solutions for Solvent Effect DoE

| Item | Function in Experiment | Relevance to Screening & Interaction Analysis |
|---|---|---|
| Solvent Selection Guide (e.g., ACS GCI, CHEM21) | Provides ranked lists of solvents based on environmental, health, and safety (EHS) criteria [24]. | Used to define the categorical solvent factor levels, ensuring greener alternatives are systematically evaluated against traditional options. |
| Solvent Property Database (e.g., PubChem, Sigma-Aldrich Solvent Center) | Source of numerical descriptors (dielectric constant, log P, dipole moment, etc.) for PCA. | Essential for creating a "solvent map" to rationally choose representative solvents for the experimental design [19]. |
| Statistical Software (JMP, Design-Expert, Minitab) | Platform for generating design matrices, randomizing runs, and performing regression analysis. | Critical for analyzing interaction effects: the software calculates interaction-term coefficients and performs significance tests (p-values) [18] [20]. |
| Central Composite Design (CCD) or Box-Behnken Design (BBD) Template | Pre-defined experimental layouts for response surface methodology (RSM). | Not used in the initial screening; it is the direct follow-up, used to optimize the vital few factors identified by screening [25]. |
| Standardized Substrate & Catalyst | A well-characterized chemical reaction (e.g., a common cross-coupling or hydrolysis). | Serves as a reliable model system to test the effect of solvent changes. Consistency here reduces noise, making it easier to detect significant factor effects [19]. |

The integration of these tools enables a rigorous comparison of solvent effects. For example, a screening DoE might reveal that for a specific reaction, the interaction between "Solvent Type" (green vs. traditional) and "Temperature" is statistically significant [19] [20]. This means the optimal temperature differs depending on the solvent class—a finding impossible to discover via OFAT methodology. The supporting quantitative data, structured as in Table 1, allows researchers to objectively select the most efficient screening approach for their specific thesis question, balancing the need for interaction analysis against practical constraints of time and material.

In pharmaceutical development, understanding solvent effects is critical for optimizing reaction yields, purity, and process efficiency. Design of Experiments (DoE) provides a systematic framework for investigating multiple factors simultaneously, offering a more efficient approach than traditional one-factor-at-a-time methods [26]. This guide compares three fundamental DoE designs essential for solvent research: Plackett-Burman designs for initial screening, Full Factorial designs for comprehensive factor interaction analysis, and Response Surface Methodology (RSM) for final process optimization.

These methodologies enable researchers to efficiently navigate complex experimental spaces, revealing not only individual factor effects but also interactive effects between different solvent parameters that might otherwise remain undetected. When applied to solvent selection and optimization, DoE can identify critical interactions between factors such as solvent polarity, temperature, concentration, and reaction time, leading to more robust and reproducible pharmaceutical processes [27].

The table below summarizes the primary characteristics, applications, and limitations of the three DoE designs discussed in this guide, providing a quick reference for researchers selecting an appropriate experimental strategy.

Table 1: Key Characteristics of DoE Designs for Solvent Research

| Design Aspect | Plackett-Burman | Full Factorial | Response Surface Methodology (RSM) |
|---|---|---|---|
| Primary Purpose | Factor screening [28] [29] | Comprehensive effect and interaction analysis [30] | Process optimization and modeling [31] [32] |
| Experimental Context | Early phase with many potential factors [27] | Middle phase with known critical factors [30] | Final phase for locating optimum conditions [31] |
| Factor Interactions | Not estimated (assumed negligible) [28] [29] | All interactions can be estimated [30] [26] | Quadratic and interaction effects modeled [32] |
| Typical Model | First-order (main effects only) [28] | First-order with interactions [30] | Second-order polynomial [31] [32] |
| Design Efficiency | Very high (N-1 factors in N runs) [28] [29] | Low (number of runs grows exponentially) [30] [26] | Medium (requires special designs like CCD) [31] |
| Key Limitations | Main effects confounded with interactions [29] | Resource-intensive with many factors [30] | Requires prior knowledge of important factors [32] |

Detailed Design Methodologies

Plackett-Burman Designs

Purpose and Applications

Plackett-Burman designs are screening designs specifically developed for efficiently identifying the "vital few" influential factors from a "trivial many" potential factors when resources are limited [28] [29]. These designs are particularly valuable in early-stage solvent research where numerous factors—such as solvent type, concentration, temperature, mixing speed, and pH—may potentially influence outcomes, but only a few are genuinely significant [27].

These designs belong to the Resolution III family, meaning that while main effects are not confounded with each other, they are partially confounded with two-factor interactions [28] [29]. This characteristic makes Plackett-Burman designs most appropriate when interaction effects are assumed to be negligible compared to main effects, which is often a reasonable assumption during initial screening phases.

Experimental Design Protocol

Step 1: Determine Design Size. Plackett-Burman designs require the number of experimental runs (N) to be a multiple of 4 (e.g., 4, 8, 12, 16, 20, 24) [28] [29]. The design can screen up to N-1 factors in N runs. For example, a 12-run design can efficiently investigate 11 potential factors [29].

Step 2: Assign Factors and Levels. Each factor is tested at two levels, typically coded as -1 (low) and +1 (high) [28]. For solvent-related factors, these might represent:

- Solvent polarity: low vs. high

- Temperature: lower bound vs. upper bound

- Concentration: minimum vs. maximum practical value

Step 3: Generate Design Matrix. The design matrix is constructed from a tabulated generator row of +1/-1 levels [28]. For N = 12, the first N-1 runs are successive cyclic shifts of that row, and the final run sets every factor to -1. Each factor is therefore tested an equal number of times at its high and low levels. Every pair of factor columns is also orthogonal, so each main effect is estimated independently of the others.

Step 4: Randomize Run Order. All experimental runs should be performed in random order to protect against systematic bias and minimize the impact of lurking variables [28].

Step 5: Analyze Results. Calculate main effects by contrasting the average response when each factor is at its high level versus its low level [28]. Statistically significant effects can be identified using normal probability plots, half-normal plots, or analysis of variance (ANOVA).
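Step 3 can be illustrated for N = 12. The sketch assumes the generator row commonly tabulated for the 12-run design (+ + - + + + - - - + -). It builds the 11 cyclic shifts plus a final all-minus run, then checks the balance and orthogonality described above. The factor names reuse the six factors of the example below. The five unassigned columns are kept as hypothetical dummy columns that can serve for error estimation.

```python
# Generator row commonly tabulated for the 12-run Plackett-Burman design.
GENERATOR_12 = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]


def plackett_burman_12():
    """Runs 1-11 are cyclic shifts of the generator; run 12 sets every column to -1."""
    n = len(GENERATOR_12)
    rows = [[GENERATOR_12[(j - i) % n] for j in range(n)] for i in range(n)]
    rows.append([-1] * n)
    return rows


design = plackett_burman_12()
columns = list(zip(*design))

# Balance: each column has six +1 and six -1 entries.
balanced = all(sum(c) == 0 for c in columns)
# Orthogonality: every pair of columns has zero dot product.
orthogonal = all(
    sum(a * b for a, b in zip(columns[i], columns[j])) == 0
    for i in range(len(columns)) for j in range(i + 1, len(columns))
)
print("balanced:", balanced, "| orthogonal:", orthogonal)

# Assign six real factors to the first six columns; keep five dummy columns.
header = ["Temp", "pH", "Conc", "MixTime", "SolventType", "Catalyst"] + [
    f"Dummy{i}" for i in range(1, 6)
]
for run_no, row in enumerate(design[:3], start=1):
    print(run_no, dict(zip(header, row)))
```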

Table 2: Example 12-Run Plackett-Burman Design for 6 Solvent Factors

| Run | Temp | pH | Conc | MixTime | SolventType | Catalyst | Yield |
|---|---|---|---|---|---|---|---|
| 1 | +1 | +1 | -1 | +1 | +1 | +1 | 85.2 |
| 2 | -1 | +1 | +1 | -1 | +1 | +1 | 72.6 |
| 3 | +1 | -1 | +1 | +1 | -1 | +1 | 88.4 |
| 4 | -1 | +1 | -1 | +1 | +1 | -1 | 69.7 |
| 5 | -1 | -1 | +1 | -1 | +1 | +1 | 75.3 |
| 6 | -1 | -1 | -1 | +1 | -1 | +1 | 68.9 |
| 7 | +1 | -1 | -1 | -1 | +1 | -1 | 81.5 |
| 8 | +1 | +1 | -1 | -1 | -1 | +1 | 90.1 |
| 9 | +1 | +1 | +1 | -1 | -1 | -1 | 92.4 |
| 10 | -1 | +1 | +1 | +1 | -1 | -1 | 74.8 |
| 11 | +1 | -1 | +1 | +1 | +1 | -1 | 86.7 |
| 12 | -1 | -1 | -1 | -1 | -1 | -1 | 65.3 |
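Applying the Step 5 calculation to the illustrative yields in Table 2 gives the main effects below. The design and yields are transcribed directly from the table. The code is a plain-Python sketch of the high-minus-low contrast, not the output of any particular DoE package.

```python
# Coded design and yields transcribed from Table 2 (runs 1-12).
FACTORS = ["Temp", "pH", "Conc", "MixTime", "SolventType", "Catalyst"]
DESIGN = [
    [+1, +1, -1, +1, +1, +1],
    [-1, +1, +1, -1, +1, +1],
    [+1, -1, +1, +1, -1, +1],
    [-1, +1, -1, +1, +1, -1],
    [-1, -1, +1, -1, +1, +1],
    [-1, -1, -1, +1, -1, +1],
    [+1, -1, -1, -1, +1, -1],
    [+1, +1, -1, -1, -1, +1],
    [+1, +1, +1, -1, -1, -1],
    [-1, +1, +1, +1, -1, -1],
    [+1, -1, +1, +1, +1, -1],
    [-1, -1, -1, -1, -1, -1],
]
YIELD = [85.2, 72.6, 88.4, 69.7, 75.3, 68.9, 81.5, 90.1, 92.4, 74.8, 86.7, 65.3]


def main_effects(design, response, names):
    """Mean response at +1 minus mean response at -1, for each factor column."""
    effects = {}
    for j, name in enumerate(names):
        hi = [y for row, y in zip(design, response) if row[j] > 0]
        lo = [y for row, y in zip(design, response) if row[j] < 0]
        effects[name] = sum(hi) / len(hi) - sum(lo) / len(lo)
    return effects


effects = main_effects(DESIGN, YIELD, FACTORS)
for name, est in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>12}: {est:+.2f}")
```

With these yields, temperature dominates at about +16.3 yield units from low to high, and every other effect is below 5. Deciding which of the smaller effects are real needs an error estimate. That estimate could come from the five columns the 12-run design leaves unassigned, or from replicate runs.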

Full Factorial Designs

Purpose and Applications

Full factorial designs investigate all possible combinations of factors and their levels, providing comprehensive information about both main effects and interaction effects [30] [26]. These designs are particularly valuable in solvent research when studying how different solvent parameters interact to influence reaction outcomes.

The key advantage of full factorial designs over one-factor-at-a-time (OFAT) experiments is their ability to detect and estimate interaction effects [30] [26]. For example, a full factorial design can reveal whether the effect of changing solvent polarity depends on the temperature setting—information that would be missed in OFAT experimentation.

Experimental Design Protocol

Step 1: Select Factors and Levels. Typically, 2-level full factorial designs are used (coded as -1 and +1), though 3-level designs can detect curvature in the response [30]. Common solvent-related factors include temperature, pH, solvent composition, and catalyst concentration.

Step 2: Determine Number of Runs. For k factors each at 2 levels, the number of runs required is 2^k [30] [26]. For example:

- 3 factors: 8 runs

- 4 factors: 16 runs

- 5 factors: 32 runs

Step 3: Create Design Matrix. The design matrix includes all possible combinations of factor levels. For example, a 2^3 full factorial for solvent research would include all combinations of temperature, pH, and solvent concentration.

Step 4: Include Replication. Replication (running the same combination multiple times) is essential for estimating experimental error and determining statistical significance [30].

Step 5: Randomize Run Order. As with all experimental designs, randomization helps minimize the effects of uncontrolled variables [30].

Step 6: Analyze Results. Use analysis of variance (ANOVA) to determine the statistical significance of main effects and interaction effects [30]. Regression analysis can develop a predictive model, and interaction plots can visualize significant interactions between factors.

Table 3: 2³ Full Factorial Design for Solvent Study with Results

| Standard Order | Temp (°C) | Solvent Ratio | Catalyst (%) | Yield (%) | Purity (%) |
|---|---|---|---|---|---|
| 1 | 50 (-1) | 70:30 (-1) | 0.5 (-1) | 65.2 | 92.1 |
| 2 | 70 (+1) | 70:30 (-1) | 0.5 (-1) | 72.4 | 90.3 |
| 3 | 50 (-1) | 90:10 (+1) | 0.5 (-1) | 68.7 | 94.2 |
| 4 | 70 (+1) | 90:10 (+1) | 0.5 (-1) | 80.3 | 92.8 |
| 5 | 50 (-1) | 70:30 (-1) | 1.5 (+1) | 74.1 | 89.5 |
| 6 | 70 (+1) | 70:30 (-1) | 1.5 (+1) | 79.6 | 87.9 |
| 7 | 50 (-1) | 90:10 (+1) | 1.5 (+1) | 77.8 | 93.4 |
| 8 | 70 (+1) | 90:10 (+1) | 1.5 (+1) | 88.9 | 91.7 |
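Table 3 lists all eight combinations in standard order, so every main effect and interaction can be estimated from signed contrasts. The sketch below transcribes the yield column and computes each effect as its contrast divided by half the number of runs. It is a minimal illustration, not a substitute for the ANOVA of Step 6.

```python
from itertools import combinations
from math import prod

NAMES = ["Temp", "Ratio", "Cat"]
# Coded levels (standard order) and yields (%) transcribed from Table 3.
RUNS = [(-1, -1, -1), (+1, -1, -1), (-1, +1, -1), (+1, +1, -1),
        (-1, -1, +1), (+1, -1, +1), (-1, +1, +1), (+1, +1, +1)]
YIELD = [65.2, 72.4, 68.7, 80.3, 74.1, 79.6, 77.8, 88.9]


def factorial_effects(runs, y, names):
    """Main effects and interactions of an unreplicated 2^k design."""
    half = len(runs) / 2
    effects = {}
    for order in range(1, len(names) + 1):
        for idx in combinations(range(len(names)), order):
            # Sign column for this term is the product of its factor columns.
            contrast = sum(prod(run[i] for i in idx) * yi for run, yi in zip(runs, y))
            effects["*".join(names[i] for i in idx)] = contrast / half
    return effects


effects = factorial_effects(RUNS, YIELD, NAMES)
for term, est in effects.items():
    # In the coded regression model, each coefficient equals effect / 2.
    print(f"{term:<14} effect {est:+6.2f}   coefficient {est / 2:+6.3f}")
```

For these data, temperature (8.85) and catalyst loading (8.45) have the largest effects, followed by solvent ratio (6.10). The Temp × Ratio interaction is 2.50, and the remaining interactions are below 1 in magnitude. Table 3 is unreplicated, so these estimates cannot be tested against pure error without the replicate runs called for in Step 4.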

Response Surface Methodology (RSM)

Purpose and Applications

Response Surface Methodology (RSM) is a collection of mathematical and statistical techniques used for empirical model building and process optimization [31] [32] [33]. When applied to solvent research, RSM helps identify the optimal combination of solvent parameters that produces the best possible response (e.g., maximum yield, highest purity, or minimal impurities).

RSM is typically employed after screening experiments have identified the critical few factors that significantly impact the response [32]. The methodology is particularly valuable for understanding and modeling nonlinear relationships between factors and responses, which are common in solvent-dependent chemical processes.

Experimental Design Protocol

Step 1: Define Optimization Goal. Clearly specify the objective, such as maximizing yield, minimizing impurity formation, or achieving a target solubility profile [32].

Step 2: Select Factors and Ranges. Choose 2-4 critical factors identified from previous screening studies and establish appropriate experimental ranges based on prior knowledge [32].

Step 3: Choose RSM Design. Common RSM designs include:

- Central Composite Design (CCD): Combines factorial points, center points, and axial points to estimate curvature [31] [33]

- Box-Behnken Design (BBD): For 3 factors, requires fewer runs than a CCD. It has no corner or axial points, so no run combines all factors at their extremes or goes beyond the ±1 levels [33]

Step 4: Conduct Experiments. Perform experiments according to the design matrix, typically including center point replicates to estimate pure error [31].

Step 5: Develop Empirical Model. Fit a second-order polynomial model to the experimental data using regression analysis [32] [33]: Y = β₀ + ∑βᵢXᵢ + ∑βᵢᵢXᵢ² + ∑βᵢⱼXᵢXⱼ + ε, where the last sum runs over factor pairs with i < j and ε is the random error.

Step 6: Validate Model. Check model adequacy using statistical measures (R², adjusted R², lack-of-fit test) and residual analysis [32].

Step 7: Optimize and Confirm. Use optimization techniques (e.g., steepest ascent/descent, canonical analysis) to locate optimum conditions and perform confirmation experiments [31] [32].
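For two factors, the canonical analysis in Step 7 comes down to two tasks: solving the gradient equations of the fitted second-order model, and checking the eigenvalues of its quadratic part. The coefficients below are hypothetical and serve only to show the arithmetic.

```python
import math

# Hypothetical coded-unit coefficients (illustrative only) for
# Y = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2
b0, b1, b2 = 80.0, 3.0, 2.0
b11, b22, b12 = -4.0, -2.5, 1.0


def predict(x1, x2):
    return b0 + b1 * x1 + b2 * x2 + b11 * x1**2 + b22 * x2**2 + b12 * x1 * x2


# Stationary point: set dY/dx1 = dY/dx2 = 0 and solve by Cramer's rule
#   2*b11*x1 +   b12*x2 = -b1
#     b12*x1 + 2*b22*x2 = -b2
det = 4 * b11 * b22 - b12**2
if det == 0:
    raise ValueError("no unique stationary point (singular quadratic part)")
x1_s = (-2 * b1 * b22 + b2 * b12) / det
x2_s = (-2 * b2 * b11 + b1 * b12) / det

# Canonical analysis: eigenvalues of the symmetric matrix [[b11, b12/2], [b12/2, b22]].
centre = (b11 + b22) / 2
radius = math.hypot((b11 - b22) / 2, b12 / 2)
eigenvalues = (centre - radius, centre + radius)

if all(ev < 0 for ev in eigenvalues):
    kind = "maximum"
elif all(ev > 0 for ev in eigenvalues):
    kind = "minimum"
else:
    kind = "saddle point or ridge"

print(f"stationary point x1 = {x1_s:.3f}, x2 = {x2_s:.3f} ({kind})")
print(f"predicted response there: {predict(x1_s, x2_s):.2f}")
print("eigenvalues:", [round(ev, 3) for ev in eigenvalues])
```

For these assumed coefficients, the stationary point is at x1 = 17/39 ≈ 0.44 and x2 = 19/39 ≈ 0.49 in coded units. Both eigenvalues are negative, so the point is a maximum inside the studied region. A stationary point outside the region, or eigenvalues of mixed sign, would instead call for ridge analysis or further experiments before confirmation runs.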

Table 4: Central Composite Design (CCD) for Solvent Optimization

| Run Type | Runs | Description | Purpose |
|---|---|---|---|
| Factorial | 2^k or 2^(k-1) | All combinations of ±1 factor levels | Estimate main effects and interactions |
| Axial (Star) | 2k | Points at (±α, 0, 0), (0, ±α, 0), etc. | Estimate curvature |
| Center | 3-6 | All factors at midpoint (0, 0, 0) | Estimate pure error and check model adequacy |
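The CCD run structure in Table 4 can be generated directly. This sketch assumes a full 2^k factorial core. By default it uses the rotatable axial distance α = (2^k)^(1/4). Passing alpha=1 instead gives a face-centered CCD, which keeps every level within ±1.

```python
import itertools


def central_composite(k, n_center=4, alpha=None):
    """Coded CCD runs: 2^k factorial points, 2k axial points, n_center center points.

    With alpha=None the rotatable distance (2^k) ** 0.25 is used (full factorial
    core assumed); alpha=1 gives a face-centred design that stays within +/-1.
    """
    n_factorial = 2**k
    if alpha is None:
        alpha = n_factorial ** 0.25
    factorial = [list(p) for p in itertools.product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha  # one factor at +/-alpha, the rest at center
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center, alpha


points, alpha = central_composite(3, n_center=6)
print(f"{len(points)} runs, alpha = {alpha:.3f}")
for p in points[8:10]:
    print("axial point:", p)
```

For three factors with six center points this gives 8 + 6 + 6 = 20 runs, with α ≈ 1.682.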

Experimental Workflow and Decision Pathway

The following diagram illustrates the sequential relationship between the three DoE methodologies in a comprehensive solvent optimization study.

Essential Research Reagents and Materials

The table below details key reagents, solvents, and materials commonly used in DoE studies of solvent effects, along with their primary functions in pharmaceutical research.

Table 5: Essential Research Reagents for Solvent Effect Studies

| Reagent/Material | Function in DoE Studies | Application Example |
|---|---|---|
| Poly(ethylene oxide) | Polymer matrix for controlled release studies [27] | Extended-release dosage forms [27] |
| Ethylcellulose | Hydrophobic polymer for release modification [27] | Controlling drug release in combination with hydrophilic polymers [27] |
| Theophylline/Caffeine | Model drugs with different solubility profiles [27] | Studying solubility effects on drug release [27] |
| Citric Acid | Drug release modifying agent [27] | Creating channels in polymer matrices for enhanced release [27] |
| Sodium Chloride | Release modifier through diffusion/erosion mechanisms [27] | Adjusting ionic strength to modify release rates [27] |
| Polyethylene Glycol | Plasticizer for polymer processing [27] | Improving processability of polymers in hot melt extrusion [27] |
| Glycerin | Plasticizer for flexibility enhancement [27] | Reducing extrusion temperature and improving flexibility [27] |

Plackett-Burman, Full Factorial, and Response Surface Methodology represent a powerful sequence of DoE approaches that, when applied strategically to solvent research, can significantly accelerate pharmaceutical development. Plackett-Burman designs provide an efficient screening mechanism to identify critical factors from a large set of possibilities. Full Factorial designs then characterize these critical factors in detail, revealing important interactions that might otherwise be overlooked. Finally, Response Surface Methodology locates optimal operating conditions, enabling researchers to maximize desired outcomes while minimizing undesirable effects.

This systematic approach to solvent research ensures efficient resource utilization while providing comprehensive process understanding—essential elements for developing robust, reproducible pharmaceutical processes in today's competitive landscape.

A Practical Workflow: Methodological Application of DoE for Solvent Screening and Optimization

Within the broader context of investigating solvent effects using Design of Experiment (DoE) methodologies, the systematic comparison and selection of critical reaction parameters is paramount. This guide objectively compares the performance impact of three fundamental factors—catalyst loading, base, and solvent polarity—in palladium-catalyzed cross-coupling reactions, which are cornerstone transformations in pharmaceutical and fine chemical synthesis [34]. Traditional one-factor-at-a-time (OFAT) approaches are inefficient and often miss critical factor interactions [34]. Statistical DoE (sDoE), particularly screening designs like Plackett-Burman (PBD), enables the simultaneous evaluation of multiple factors, providing a robust framework for comparison and optimization [34] [35]. This case study synthesizes experimental data from high-throughput sDoE studies to delineate the individual and comparative effects of these three key parameters.

Comparative Analysis of Key Factors

The following analysis is based on a Plackett-Burman Design study screening five factors across Mizoroki–Heck, Suzuki–Miyaura, and Sonogashira–Hagihara reactions. The quantitative effects of catalyst loading, base strength, and solvent polarity were ranked, providing a direct performance comparison [34].

Table 1: Factor Effects Ranking Across Different Cross-Coupling Reactions. Data derived from a 12-run PBD evaluating factor levels (High: +1, Low: -1). Factors are ranked by the magnitude of their main effect, i.e., the change in reaction outcome (e.g., yield) when moving from the low to the high level of the factor.

| Reaction Type | Primary Influential Factor (Rank 1) | Secondary Influential Factor (Rank 2) | Tertiary Influential Factor (Rank 3) | Notes on Factor Interaction |
|---|---|---|---|---|
| Mizoroki–Heck | Phosphine Ligand Electronic Effect | Catalyst Loading | Base | Solvent polarity showed a lesser individual effect within the screened range [34]. |
| Suzuki–Miyaura | Phosphine Ligand Sterics (Cone Angle) | Solvent Polarity | Base | Catalyst loading was less influential than base and solvent in this system [34]. |
| Sonogashira–Hagihara | Phosphine Ligand Electronic Effect | Catalyst Loading | Solvent Polarity | Base strength had a minimal individual effect under the conditions tested [34]. |

Key Comparative Insights:

- Catalyst Loading (1 vs. 5 mol%): Exhibited a strong, positive effect on yield for the Mizoroki–Heck and Sonogashira–Hagihara reactions, indicating that achieving sufficient active catalytic species is critical for these transformations [34]. Its relative importance was lower in the Suzuki–Miyaura reaction under the conditions studied.

- Base Strength (Triethylamine vs. Sodium Hydroxide): Ranked third for the Suzuki–Miyaura reaction, behind ligand sterics and solvent. There, the stronger base (NaOH, +1 level) generally promoted higher conversion [34]. Base also ranked third for the Mizoroki–Heck reaction but had only a minimal individual effect in the Sonogashira–Hagihara coupling within the two-level screening, suggesting that the optimal base is highly reaction-specific.

- Solvent Polarity (DMSO vs. MeCN): Demonstrated a clear and significant effect on the Suzuki–Miyaura reaction, with the +1 level (MeCN) being favorable [34]. MeCN has the lower dielectric constant of the pair (ε = 37.5 vs. 46.7 for DMSO; see Table 2). This result is therefore better read as a DMSO-versus-MeCN contrast than as evidence that higher polarity helps. Both solvents are polar aprotic, a class that can facilitate the activation of organoboron reagents and the transfer of anionic species [36]. The difference between them may therefore reflect properties beyond bulk polarity, such as coordinating ability. The solvent effect was less pronounced in the Heck and Sonogashira couplings in this screening, although solvent properties such as coordination ability and polarity fundamentally influence reaction pathways, solubility, and catalyst stability [37] [38].

Detailed Experimental Protocols

The comparative data presented are derived from the following standardized high-throughput experimental workflow [34].

General Procedure for Cross-Coupling Screening via PBD:

- Experimental Design: A 12-run, two-level Plackett-Burman Design was constructed using dedicated statistical software. Factors (A-E) were assigned as follows: Ligand Electronic Effect, Ligand Cone Angle, Catalyst Loading, Base, Solvent Polarity. Columns F-K served as dummy factors for error estimation. High (+1) and Low (-1) levels were defined for each factor (see Table 2) [34].

- Reaction Setup: Reactions were performed in Carousel reaction tubes. For Mizoroki–Heck and Suzuki–Miyaura reactions: Substrate (2 mmol), nucleophile (2.4 mmol), catalyst precursor (K₂PdCl₄, 1 or 5 mol%), ligand (0.2 mmol), base (4 mmol), and solvent (5 mL) were combined. For Sonogashira–Hagihara: Substrate (1 mmol), phenylacetylene (1.2 mmol), catalyst precursor (Pd(OAc)₂, 1 or 5 mol%), ligand (0.1 mmol), base (2 mmol), and solvent (5 mL) were combined [34].

- Reaction Execution: The carousel was placed in a pre-heated oil bath at 60°C and stirred for 24 hours. The temperature was chosen to be compatible with both DMSO (b.p. 189°C) and MeCN (b.p. 82°C) [34].

- Analysis: Reactions were cooled, and an internal standard (dodecane) was added. Yields were determined quantitatively by Gas Chromatography (GC) or GC-Mass Spectrometry (GC-MS) [34].

- Data Analysis: The yield data for each of the 12 runs were entered into statistical analysis software. The main effect of each physical factor was calculated by subtracting the average yield at its low level from the average yield at its high level. Statistical significance (p-value) was assessed relative to the variation estimated from the dummy factors [34].
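The dummy-factor significance test in the data-analysis step can be sketched as follows. No factor is assigned to a dummy column, so its estimated effect reflects only noise. The standard error of an effect is therefore the root mean square of the dummy effects, with degrees of freedom equal to the number of dummy columns (six here, F-K). All effect values below are invented for illustration. The critical value is the two-sided 5 % point of Student's t with 6 degrees of freedom.

```python
import math

# Invented main effects (change in yield, %) for the five physical factors A-E
# and the six dummy columns F-K of a 12-run Plackett-Burman design.
effects = {
    "Ligand electronic (A)": 12.0,
    "Ligand cone angle (B)": 3.1,
    "Catalyst loading (C)": 7.5,
    "Base (D)": 1.8,
    "Solvent (E)": -2.0,
}
dummy_effects = [1.2, -0.8, 0.5, -1.5, 0.9, -0.4]  # columns F-K

# Dummy columns have no factor assigned, so their effects estimate pure noise:
# SE(effect) = sqrt(mean of squared dummy effects), df = number of dummy columns.
se = math.sqrt(sum(d * d for d in dummy_effects) / len(dummy_effects))
T_CRIT = 2.447  # two-sided 5% critical value of Student's t with 6 df

significant = set()
for name, est in effects.items():
    t = est / se
    if abs(t) > T_CRIT:
        significant.add(name)
    print(f"{name:<22} effect {est:+6.2f}  t = {t:+6.2f}  "
          f"{'significant' if abs(t) > T_CRIT else 'not significant'}")
print(f"SE from {len(dummy_effects)} dummy columns: {se:.3f}")
```

With these invented numbers, the root-mean-square dummy effect is about 0.96. The ligand electronic, cone angle, and catalyst loading effects exceed the critical value, while base and solvent do not.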

Table 2: Factor Levels in the Plackett-Burman Design Case Study

| Factor | Low Level (-1) | High Level (+1) | Justification for Levels |
|---|---|---|---|
| Catalyst Loading | 1 mol% | 5 mol% | Tests sufficiency of catalytic sites vs. cost/impurity concerns. |
| Base | Triethylamine (Et₃N) | Sodium Hydroxide (NaOH) | Represents a weak organic base vs. a strong inorganic base. |
| Solvent Polarity | DMSO (ε=46.7) | MeCN (ε=37.5) | Both are dipolar aprotic; DMSO has higher polarity/polarizability but MeCN was the "+1" level in the design framework [34]. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in the Cross-Coupling Screening Context |
|---|---|
| Palladium Precursors (K₂PdCl₄, Pd(OAc)₂) | Source of the active Pd(0) catalyst, generated in situ. Different precursors may influence the initial reduction step and catalyst speciation [34]. |
| Phosphine Ligands (PPh₃, etc.) | Stabilize the active palladium species, modulate its electronic and steric properties, and are critical for catalytic cycle turnover [34]. |
| Aryl Halides (PhI, PhBr) | Electrophilic coupling partners. Halide identity (I, Br) affects oxidative addition rates, a key step in the catalytic cycle. |
| Nucleophiles (Alkenes, Boronic Acids, Alkynes) | The coupling partner that transfers to the aryl group. Its structure and functional groups critically impact reactivity and selectivity. |
| Bases (Et₃N, NaOH) | Essential for neutralizing acid byproducts (e.g., HX) and often participating in key mechanistic steps like transmetalation in Suzuki reactions [34] [36]. |
| Dipolar Aprotic Solvents (DMSO, MeCN) | Dissolve organic and inorganic components, stabilize charged intermediates or transition states, and can influence reaction mechanism and rate via polarity and coordination [34] [37] [38]. |
| Internal Standard (Dodecane) | An inert compound added in known quantity post-reaction to enable accurate quantitative yield analysis by GC-FID. |

Figure: Workflow for Comparative Factor Analysis Using DoE.

Figure: How Key Factors Drive Cross-Coupling Performance.

In the competitive landscape of drug discovery and formulation development, high-throughput screening (HTS) has emerged as an indispensable approach for rapidly evaluating large numbers of compounds, formulations, and process parameters. The global HTS market, estimated to be worth USD 26.12 billion in 2025 and projected to reach USD 53.21 billion by 2032, reflects the critical importance of these technologies in accelerating research and development timelines [39]. Within this context, statistical design of experiment (sDoE) methodologies, particularly Plackett-Burman designs (PBD), have gained prominence as powerful tools for efficient experimental planning. These designs enable researchers to systematically screen numerous factors while minimizing experimental runs, thereby conserving valuable resources and time.

Plackett-Burman designs represent a specific class of two-level fractional factorial screening designs developed by statisticians Robin Plackett and J.P. Burman in the 1940s [28]. Their fundamental strength lies in their ability to study up to N-1 factors using only N experimental runs, where N is a multiple of 4. This economical approach makes PBD particularly valuable during initial investigation phases when researchers must identify the "vital few" influential factors from a "trivial many" potential variables [40]. Unlike one-factor-at-a-time (OFAT) approaches, which change a single factor while holding the others fixed and can therefore miss or misattribute interaction effects, PBD varies all factors simultaneously across a balanced set of runs, so every main effect is estimated from all N runs [34].

The pharmaceutical industry increasingly leverages PBD within HTS frameworks to address diverse challenges, from optimizing drug nanocrystal production to screening solvent systems for separation processes [41] [42]. As automation, artificial intelligence, and advanced data analytics continue to transform laboratory workflows, the integration of efficient experimental designs like PBD becomes increasingly vital for maintaining competitive advantage in drug development [43] [44]. This guide explores the practical application of Plackett-Burman designs in high-throughput screening environments, with particular emphasis on evaluating solvent effects in pharmaceutical research and development.

Fundamental Principles and Methodology

Core Characteristics of Plackett-Burman Designs

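Plackett-Burman designs belong to the family of Resolution III fractional factorial designs, meaning that while main effects are not confounded with other main effects, they are aliased with two-factor interactions [28]. This characteristic makes PBD particularly suitable for initial screening experiments where the primary objective is identifying significant main effects rather than precisely quantifying interactions between factors. The designs are balanced, meaning each factor is tested an equal number of times at its high (+1) and low (-1) levels, and their columns are mutually orthogonal, so each main effect is estimated independently of the others [28] [40]. For common run sizes such as N = 12 and N = 20, the design is built by cyclically shifting a generator row of +1/-1 entries to form the first N-1 runs and appending a final run with every factor at its low level. In designs whose run count is not a power of two, such as the 12-run PBD, the aliasing is partial: each main effect is partly correlated with many two-factor interactions that do not involve it, rather than fully confounded with one.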
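Balance, orthogonality, and the aliasing with two-factor interactions can be checked numerically. The sketch below assumes the standard 12-run generator row (+ + - + + + - - - + -) and uses illustrative helper names; it confirms that every column is balanced and that all columns are mutually orthogonal, then correlates each two-factor interaction column x_a·x_b with every main-effect column it does not involve.

```python
from itertools import combinations

def pb12_design():
    """12-run Plackett-Burman matrix (+1/-1), 11 columns."""
    gen = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]
    rows = [gen[-i:] + gen[:-i] for i in range(11)]  # cyclic shifts of the generator
    rows.append([-1] * 11)                           # final all-low run
    return rows

def column(design, j):
    return [row[j] for row in design]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def check_balance_and_orthogonality(design):
    """Balanced: each column sums to zero. Orthogonal: every pair of columns has dot product zero."""
    k = len(design[0])
    balanced = all(sum(column(design, j)) == 0 for j in range(k))
    orthogonal = all(dot(column(design, a), column(design, b)) == 0
                     for a, b in combinations(range(k), 2))
    return balanced, orthogonal

def interaction_aliasing(design):
    """Correlation of each interaction column x_a*x_b with every main-effect
    column c not involved in it. All columns are centred +/-1 vectors,
    so the correlation is simply dot / N."""
    n, k = len(design), len(design[0])
    corr = {}
    for a, b in combinations(range(k), 2):
        inter = [x * y for x, y in zip(column(design, a), column(design, b))]
        for c in range(k):
            if c not in (a, b):
                corr[(a, b, c)] = dot(inter, column(design, c)) / n
    return corr

if __name__ == "__main__":
    design = pb12_design()
    print(check_balance_and_orthogonality(design))       # (True, True)
    corr = interaction_aliasing(design)
    print(sorted({round(abs(r), 4) for r in corr.values()}))  # [0.3333]
```

Every correlation has magnitude 1/3: no main effect is fully aliased with a single two-factor interaction, but each is partly biased by all 45 interactions that do not involve it, which is why significant screening results are normally confirmed in a follow-up design.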

The economy of Plackett-Burman designs stems from their saturated nature, where all degrees of freedom are utilized to estimate effects. For example, a 12-run Plackett-Burman design can efficiently screen up to 11 different factors, while a full factorial design for the same number of factors would require 2,048 runs [40]. This dramatic reduction in experimental workload enables researchers to rapidly narrow their focus to the most critical parameters before conducting more detailed optimization studies using response surface methodologies or other advanced experimental designs [34].
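The run-count arithmetic can be sketched as follows. The function assumes a Plackett-Burman design of the computed size is available (as it is for common sizes such as 12, 20, and 24 in standard DoE software); `pb_runs` and its `n_dummies` parameter are illustrative names. Reserving dummy columns is one way to obtain an error estimate: five physical factors alone would fit in an 8-run design, but adding six dummy columns requires 12 runs.

```python
import math

def pb_runs(n_factors, n_dummies=0):
    """Smallest Plackett-Burman size N (a multiple of 4) with at least
    n_factors + n_dummies columns, since an N-run design has N - 1 columns."""
    columns_needed = n_factors + n_dummies
    return 4 * math.ceil((columns_needed + 1) / 4)

def full_factorial_runs(n_factors):
    """Runs in a two-level full factorial: 2**k."""
    return 2 ** n_factors

if __name__ == "__main__":
    for k, dummies in [(11, 0), (5, 0), (5, 6), (19, 0)]:
        n = pb_runs(k, dummies)
        full = full_factorial_runs(k)
        print(f"k={k:2d}, dummies={dummies}: PBD {n:3d} runs vs "
              f"full factorial {full:7d} (saves {full - n})")
```

For 11 factors this gives 12 runs against 2,048 for the full factorial; for 5 factors plus 6 dummy columns it gives 12 runs against 32, a saving of 20 runs.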

Comparative Analysis of DoE Approaches

Table 1: Comparison of Different Experimental Design Approaches

| Design Type | Number of Runs for k Factors | Main Effects | Interaction Effects | Primary Application |
|---|---|---|---|---|
| Full Factorial | 2^k | Fully estimated | All estimated | Comprehensive study of small factor sets |
| Fractional Factorial | 2^(k-p) | Estimated | Some aliased with main effects (Resolution III) or with each other (Resolution IV) | Balancing detail and efficiency |
| Plackett-Burman | N, the smallest multiple of 4 with N ≥ k+1 | Estimated | Not estimated; two-factor interactions partially aliased with main effects | Initial screening of many factors |
| Response Surface | Varies (typically >k) | Estimated | Estimated with curvature | Optimization of critical factors |

Plackett-Burman designs occupy a specific niche in the design of experiments landscape, particularly when compared to other common approaches. While full factorial designs provide comprehensive information about all main effects and interactions, they become prohibitively resource-intensive as the number of factors increases [28]. Fractional factorial designs offer a compromise, but their run counts are restricted to powers of two, whereas Plackett-Burman run counts need only be multiples of four; for example, 11 factors can be screened in 12 PBD runs, while the smallest regular two-level fractional factorial for 11 factors needs 16. The key distinction of Plackett-Burman designs is their extreme efficiency in screening applications, making them ideal for the initial stages of investigation when numerous potential factors must be evaluated with minimal experimental investment [34] [40].

Implementation Workflow

The implementation of a Plackett-Burman design follows a systematic workflow that begins with careful factor selection and level determination. Researchers must identify all potential factors that might influence the response variable and assign appropriate high and low levels for each based on practical considerations and preliminary knowledge [28]. The experimental runs are then randomized to protect against systematic biases, and the resulting data is analyzed to identify statistically significant effects [34]. Normal probability plots and statistical significance testing are commonly used to distinguish active factors from those with negligible influence [28].
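Two steps of this workflow, randomizing the run order and preparing a normal probability plot of the effects, can be sketched with Python's standard library. The seed value, the (i - 0.5)/m plotting position, and the effect values are assumptions for illustration; other plotting positions (such as Blom's) are also in common use.

```python
import random
from statistics import NormalDist

def randomized_run_order(n_runs, seed):
    """Random execution order for standard-order runs 1..n_runs.
    Recording the seed lets the same order be reproduced later."""
    order = list(range(1, n_runs + 1))
    random.Random(seed).shuffle(order)
    return order

def normal_plot_points(effects):
    """(normal quantile, factor, effect) triples for a normal probability
    plot, using the (i - 0.5) / m plotting position on the sorted effects."""
    ranked = sorted(effects.items(), key=lambda item: item[1])
    m = len(ranked)
    std_normal = NormalDist()  # mean 0, standard deviation 1
    return [(std_normal.inv_cdf((i + 0.5) / m), name, effect)
            for i, (name, effect) in enumerate(ranked)]

if __name__ == "__main__":
    print("Run order:", randomized_run_order(12, seed=2024))
    # Hypothetical main effects from an 11-column, 12-run screen.
    effects = {"A": 9.5, "B": -1.2, "C": 0.8, "D": -7.9, "E": 14.1, "F": 0.3,
               "G": -0.6, "H": 1.1, "I": -0.2, "J": 0.5, "K": -0.9}
    for q, name, eff in normal_plot_points(effects):
        print(f"{q:6.2f}  {name}  {eff:6.1f}")
```

When the quantiles are plotted against the effects, inactive effects fall roughly on a straight line through the origin; effects lying well off that line (here, the hypothetical E, A, and D) are candidates for active factors.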

Figure 1: The systematic workflow for implementing Plackett-Burman designs in high-throughput screening applications, from initial factor identification through to optimization of significant factors.

Experimental Protocols and Applications

Protocol 1: Screening Solvent Effects in Cross-Coupling Reactions

A recent study demonstrated the application of Plackett-Burman design for screening solvent effects in carbon-carbon (C–C) cross-coupling reactions, which are fundamental transformations in pharmaceutical synthesis [34]. The research employed a 12-run PBD to evaluate five critical factors across three different cross-coupling reactions: Mizoroki-Heck, Suzuki-Miyaura, and Sonogashira-Hagihara reactions.

Materials and Equipment:

- Reagents: Bromobenzene (PhBr, 99%), iodobenzene (PhI, 98%), butyl acrylate (99%), 4-fluorophenylboronic acid (95%), and phenylacetylene (98%)

- Catalysts: Potassium tetrachloropalladate(II) (K₂PdCl₄, 98%) and palladium acetate [Pd(OAc)₂, 99%]

- Bases: Sodium hydroxide (NaOH, 99.08%) and triethylamine (Et₃N, ≥99%)

- Solvents: Dimethylsulfoxide (DMSO, ≥99.9%), acetonitrile (MeCN, ≥99.8%)

- Equipment: Carousel reaction tubes, heating apparatus, analytical instrumentation for yield determination

Experimental Procedure:

- Factor Selection: Five key factors were identified: electronic effect of phosphine ligands, Tolman's cone angle of phosphine ligands, catalyst loading, base strength, and solvent polarity.

- Level Assignment: Each factor was assigned high (+1) and low (-1) levels based on preliminary knowledge and practical considerations.

- Experimental Matrix: A 12-run PBD was constructed with randomized run order to minimize systematic bias.

- Reaction Execution: Reactions were performed at 60°C for 24 hours in carousel tubes, using 2 mmol of substrate (Mizoroki–Heck and Suzuki–Miyaura) or 1 mmol of substrate (Sonogashira–Hagihara) in 5 mL of solvent.

- Response Measurement: Reaction yields were determined by GC or GC-MS, with dodecane as an internal standard.

- Data Analysis: Main effects were calculated and statistically significant factors identified using regression analysis and normal probability plots.
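Because PBD columns are balanced and orthogonal, the regression analysis reduces to simple arithmetic. The sketch below (illustrative names, hypothetical yields) fits an intercept plus the five physical factors A-E by least squares. Since X'X = N·I, each coefficient is the dot product of its column with y divided by N, and each main effect equals twice its coefficient. With A-E in the model and F-K left out, the residual sum of squares has 6 degrees of freedom and contains exactly the dummy-column variation, so these t-statistics coincide with a dummy-based error estimate.

```python
import math

def pb12_design():
    """12-run Plackett-Burman matrix (+1/-1), columns A..K."""
    gen = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]
    rows = [gen[-i:] + gen[:-i] for i in range(11)]
    rows.append([-1] * 11)
    return rows

def fit_orthogonal_ols(design, y, cols):
    """Least-squares fit of y = b0 + sum_j b_j * x_j over the chosen columns.

    The +/-1 columns are balanced and mutually orthogonal, so X'X = N * I and
    the normal equations give b_j = sum_i x_ij * y_i / N directly.
    """
    n = len(y)
    b0 = sum(y) / n
    coefs = {j: sum(row[j] * yi for row, yi in zip(design, y)) / n for j in cols}
    fitted = [b0 + sum(coefs[j] * row[j] for j in cols) for row in design]
    sse = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    df_resid = n - 1 - len(cols)              # 12 - 1 - 5 = 6 here
    se_coef = math.sqrt(sse / df_resid / n)   # Var(b_j) = sigma^2 / N
    t = {j: coefs[j] / se_coef for j in cols}
    return b0, coefs, t, df_resid

if __name__ == "__main__":
    design = pb12_design()
    yields = [72, 65, 40, 80, 58, 61, 35, 30, 44, 70, 38, 33]  # hypothetical
    b0, coefs, t, df = fit_orthogonal_ols(design, yields, cols=range(5))
    for j in range(5):
        print(f"{'ABCDE'[j]}: coefficient {coefs[j]:6.2f}, "
              f"effect {2 * coefs[j]:6.2f}, t = {t[j]:6.2f} ({df} d.o.f.)")
```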

The PBD approach identified solvent polarity and phosphine ligand properties as dominant factors influencing reaction yields, although the solvent effect was clearest for the Suzuki–Miyaura reaction and less pronounced for the Mizoroki–Heck and Sonogashira–Hagihara couplings, providing valuable guidance for subsequent optimization studies [34].

Protocol 2: Nanocrystal Formulation Screening

In pharmaceutical formulation development, a study utilized Plackett-Burman design to screen parameters for producing drug nanocrystals using dual asymmetric centrifugation (DAC) [41]. This research aimed to identify critical factors affecting particle size and polydispersity in nanocrystal formulations.

Materials and Equipment:

- Model drugs: Albendazole, metronidazole, and curcumin

- Equipment: Dual asymmetric centrifuge, zirconia beads (milling media), particle size analyzer, transmission electron microscope, differential scanning calorimeter

- Excipients: Various stabilizers and surfactants

Experimental Procedure:

- Factor Identification: Eleven potential factors were identified, including drug concentration, stabilizer concentration, bead size, bead volume, centrifugation speed, and processing time.

- Design Implementation: A 12-run PBD was employed to screen these factors using three model drugs with different physicochemical properties.

- Nanocrystal Production: DAC processing was conducted using 1-minute milling cycles with zirconia beads as the milling media.

- Characterization: Particle size, polydispersity index, crystallinity, and morphology were characterized for each experimental run.

- Data Analysis: Plackett-Burman analysis identified significant factors, which were subsequently optimized using response surface methodology.
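The source does not state how significance was judged in this screen. If all eleven factors occupy the eleven columns of the 12-run design, the design is saturated and has no dummy columns, so one commonly used option (a substitution here, not necessarily the authors' method) is Lenth's (1989) pseudo standard error (PSE). The sketch below implements Lenth's rule with hypothetical effects and placeholder factor labels X1-X11.

```python
from statistics import median

def lenth_pse(effects):
    """Lenth's pseudo standard error for the effects of an unreplicated
    two-level design that leaves no degrees of freedom for error."""
    abs_eff = [abs(e) for e in effects]
    s0 = 1.5 * median(abs_eff)                      # initial robust scale estimate
    trimmed = [a for a in abs_eff if a < 2.5 * s0]  # drop likely-active effects
    return 1.5 * median(trimmed)

def lenth_ratios(effects):
    """Return the PSE and effect / PSE for each named effect."""
    pse = lenth_pse(list(effects.values()))
    return pse, {name: e / pse for name, e in effects.items()}

if __name__ == "__main__":
    # Hypothetical effects on particle size (nm) for 11 placeholder factors.
    effects = {"X1": -120.0, "X2": 15.0, "X3": -85.0, "X4": 9.0, "X5": -6.0,
               "X6": 12.0, "X7": -4.0, "X8": 7.0, "X9": -10.0, "X10": 3.0,
               "X11": -8.0}
    pse, ratios = lenth_ratios(effects)
    print(f"PSE = {pse:.2f}")
    for name, r in sorted(ratios.items(), key=lambda kv: -abs(kv[1])):
        print(f"{name:4s} effect/PSE = {r:6.2f}")
```

An effect is usually flagged as active when |effect/PSE| exceeds the t quantile with m/3 degrees of freedom (m = number of effects), which can be computed with, for example, `scipy.stats.t.ppf(0.975, 11 / 3)`.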

The study demonstrated that DAC could produce drug nanocrystals in just 1 minute of processing time—a dramatic reduction compared to conventional methods requiring hours or days. The PBD identified stabilizer concentration and bead size as the most critical factors influencing nanocrystal characteristics [41].

Key Reagent Solutions and Research Materials

Table 2: Essential Research Reagents and Materials for PBD Implementation in Solvent Screening

| Category | Specific Examples | Function in Experimental Design |
|---|---|---|
| Solvent Systems | DMSO, Acetonitrile, n-Heptane, Ethanol, Ionic Liquids, Deep Eutectic Solvents | Varied as factors to evaluate solvent effects on extraction, crystallization, or reaction yields [34] [42] [45] |
| Pharmaceutical Compounds | Artemisinin, Albendazole, Metronidazole, Curcumin | Model compounds for studying solubility, crystallization behavior, and formulation parameters [41] [45] |
| Catalysts/Ligands | K₂PdCl₄, Pd(OAc)₂, Various phosphine ligands | Factors in reaction optimization studies; evaluated for electronic and steric effects [34] |
| Analytical Tools | HPLC, GC, Particle Size Analyzer, DSC, TEM | Response measurement instruments for quantifying yield, particle size, crystallinity, and morphology [41] [34] |
| Process Equipment | Dual Asymmetric Centrifuge, Liquid Handlers, Automated Reactors | Enable high-throughput execution of experimental designs with minimal manual intervention [41] [43] |

Data Presentation and Analysis

Quantitative Results from Case Studies

Table 3: Comparative Performance Metrics of Plackett-Burman Design in Pharmaceutical Applications

| Application Area | Number of Factors Screened | Runs Saved vs Full Factorial | Key Significant Factors Identified | Reference |
|---|---|---|---|---|
| Cross-Coupling Reactions | 5 factors | 20 runs saved per reaction (12 PBD runs vs. 32 for a 2^5 full factorial) | Solvent polarity, Ligand properties | [34] |