Parallel Reactor Temperature Control: Principles, Optimization, and Advanced Applications for Biomedical Research



Abstract

This article provides a comprehensive guide to parallel reactor temperature control, a critical technology for accelerating and scaling biomedical research and drug development. It covers foundational principles of heat transfer and reactor design, explores advanced methodological implementations including photoredox chemistry and microfluidic systems, and details strategies for troubleshooting and performance optimization. The content also addresses validation frameworks and comparative analyses of different control configurations, offering researchers and scientists a practical resource to enhance reproducibility, efficiency, and scalability in their experimental workflows.

Core Principles and System Architectures: Building a Foundation for Parallel Reactor Temperature Control

Fundamental Heat Transfer Modes in Parallel Reactor Systems

Parallel reactor systems have become indispensable in modern research and development, particularly in pharmaceuticals and drug development, where they enable high-throughput experimentation for rapid compound screening and optimization. The core principle of parallel synthesis involves conducting multiple chemical reactions simultaneously under carefully controlled conditions [1]. The ability to precisely manage heat transfer within these systems is fundamental to their success, as it directly impacts reaction kinetics, selectivity, product yield, and ultimately, the reproducibility and validity of experimental data [2]. This guide provides an in-depth examination of the fundamental heat transfer modes employed in parallel reactor systems, detailing their operational principles, implementation methodologies, and critical considerations for researchers.

Fundamental Heat Transfer Principles in Reactor Design

Heat transfer in parallel reactors, as in all thermal systems, occurs through three primary modes: conduction, convection, and radiation. In most reactor designs, these modes operate in combination. For instance, heat is typically transferred from a heating block to a reactor vial wall via conduction and from the inner wall to the reaction mixture via convection; radiation may also contribute, mainly at high temperatures or under intense illumination.

A key concept in designing and analyzing heat exchangers for reactor temperature control is the Log Mean Temperature Difference (LMTD). The LMTD represents the driving force for heat transfer in flow systems and is needed to calculate the heat removal or addition required. For a counter-flow heat exchanger (often the more efficient arrangement), the LMTD is calculated as follows, where ΔT₁ and ΔT₂ are the temperature differences between the two fluids at each end of the exchanger [3]:

\[ \text{LMTD} = \frac{\Delta T_1 - \Delta T_2}{\ln\left(\frac{\Delta T_1}{\Delta T_2}\right)} \]

The overall heat transfer rate (Q) can then be determined using the equation:

\[ Q = U \times A \times \text{LMTD} \]

Where U is the overall heat transfer coefficient and A is the heat transfer area [3]. The overall heat transfer coefficient accounts for the conductive and convective resistances throughout the entire assembly, from the heat transfer fluid to the reactor wall and finally to the reaction mixture [3].
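As a worked illustration of the two relations above, the following minimal Python sketch evaluates the LMTD from the two end temperature differences and then the heat transfer rate. The function names and the numerical values (U, A, and the two temperature differences) are hypothetical and chosen only for illustration; they are not taken from the cited sources.

```python
import math


def lmtd(delta_t1: float, delta_t2: float) -> float:
    """Log mean temperature difference from the two end differences (K)."""
    if delta_t1 <= 0 or delta_t2 <= 0:
        raise ValueError("both end temperature differences must be positive")
    if math.isclose(delta_t1, delta_t2):
        # Limit of the formula when both ends have the same difference
        return delta_t1
    return (delta_t1 - delta_t2) / math.log(delta_t1 / delta_t2)


def heat_rate(u: float, area: float, delta_t1: float, delta_t2: float) -> float:
    """Q = U * A * LMTD, in W when U is in W/(m^2 K) and A is in m^2."""
    return u * area * lmtd(delta_t1, delta_t2)


# Hypothetical jacket: U = 500 W/(m^2 K), A = 0.02 m^2,
# end temperature differences of 30 K and 10 K (assumed values)
q = heat_rate(500.0, 0.02, 30.0, 10.0)
print(f"LMTD = {lmtd(30.0, 10.0):.2f} K, Q = {q:.1f} W")
```

The equal-difference branch is included because the logarithmic formula becomes 0/0 when ΔT₁ equals ΔT₂, a case that does occur in balanced counter-flow operation.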

Table 1: Comparison of Flow Arrangements in Heat Exchangers for Reactor Systems

| Flow Arrangement | Principle | Advantages | Disadvantages | Common Reactor Applications |
|---|---|---|---|---|
| Parallel Flow | Hot and cold fluids flow in the same direction. | Design simplicity; large initial temperature difference minimizes surface area needed initially. | Large thermal stress due to high initial temperature difference; cold fluid exit temperature cannot approach hot fluid inlet temperature. | Less common; used when fluids need to be brought to nearly the same temperature [3]. |
| Counter-Flow | Hot and cold fluids flow in opposite directions. | More uniform temperature difference minimizes thermal stress; higher average ΔT allows for greater heat transfer efficiency; cold fluid exit can approach hot fluid inlet temperature. | Slightly more complex design. | Standard for most jacketed reactor systems and condensers; ideal for precise temperature control [3]. |

The choice between parallel and counter-flow designs significantly impacts the efficiency and control of reactor temperature. The counter-flow arrangement is generally preferred for its superior performance and more uniform rate of heat transfer [3].

Heat Transfer Methods in Parallel Reactor Systems

Various active temperature control methods are employed in parallel reactors, each with distinct mechanisms for heat transfer. The selection of a method depends on the specific reaction requirements, including temperature range, precision, heat load, and scalability.

Active Temperature Control Modalities

Liquid Circulation Systems utilize a heat transfer fluid (e.g., water, silicone oil, or glycol mixtures) pumped through a jacketed reactor block. This method offers high heat capacity and excellent temperature uniformity across the reactor block [2] [4]. One implementation is the Temperature Controlled Reactor (TCR), a fluid-filled, 24- or 48-position reactor capable of maintaining temperatures from -40°C to 82°C with a well-to-well uniformity of ±1°C [4]. These systems are particularly valuable for managing heat loads from external sources such as high-powered LEDs in photochemistry [4].

Peltier-Based (Thermoelectric) Systems employ solid-state heat pumps that use the Peltier effect to either heat or cool. When an electric current flows through the junctions of two dissimilar semiconductors, heat is absorbed on one side (cooling) and released on the other (heating). Their key advantages are compact design, rapid temperature changes, and the ability to both heat and cool without moving parts [2]. However, their efficiency decreases with larger temperature differentials, and they may require auxiliary cooling for prolonged use, so they are best suited to small-scale laboratory reactors [2].

Air Cooling Systems represent a simpler, more cost-effective method that relies on fans or natural convection to dissipate heat, often augmented with heat sinks. While easy to implement and maintain, air cooling is less effective for precise temperature regulation or for reactions that generate significant exotherms [2]. Its use is typically confined to low-heat-load applications.

Table 2: Performance Characteristics of Active Temperature Control Methods

| Parameter | Liquid Circulation | Peltier-Based Systems | Air Cooling |
|---|---|---|---|
| Typical Temperature Range | -40°C to +150°C or higher (fluid dependent) [4] | Limited by heat sink; efficient for small ΔT [2] | Ambient to moderate cooling/heating |
| Temperature Uniformity | High (±1°C achievable) [4] | Good for small volumes | Low |
| Best for Heat Load | High & Exothermic reactions [2] | Low to Moderate | Very Low |
| Scalability | Excellent for industrial scale [2] | Good for lab scale | Poor |
| Relative Cost & Maintenance | Higher initial cost & maintenance [2] | Moderate | Low [2] |
| Primary Advantage | High heat capacity & uniformity | Compact, reversible heating/cooling | Simplicity & low cost |

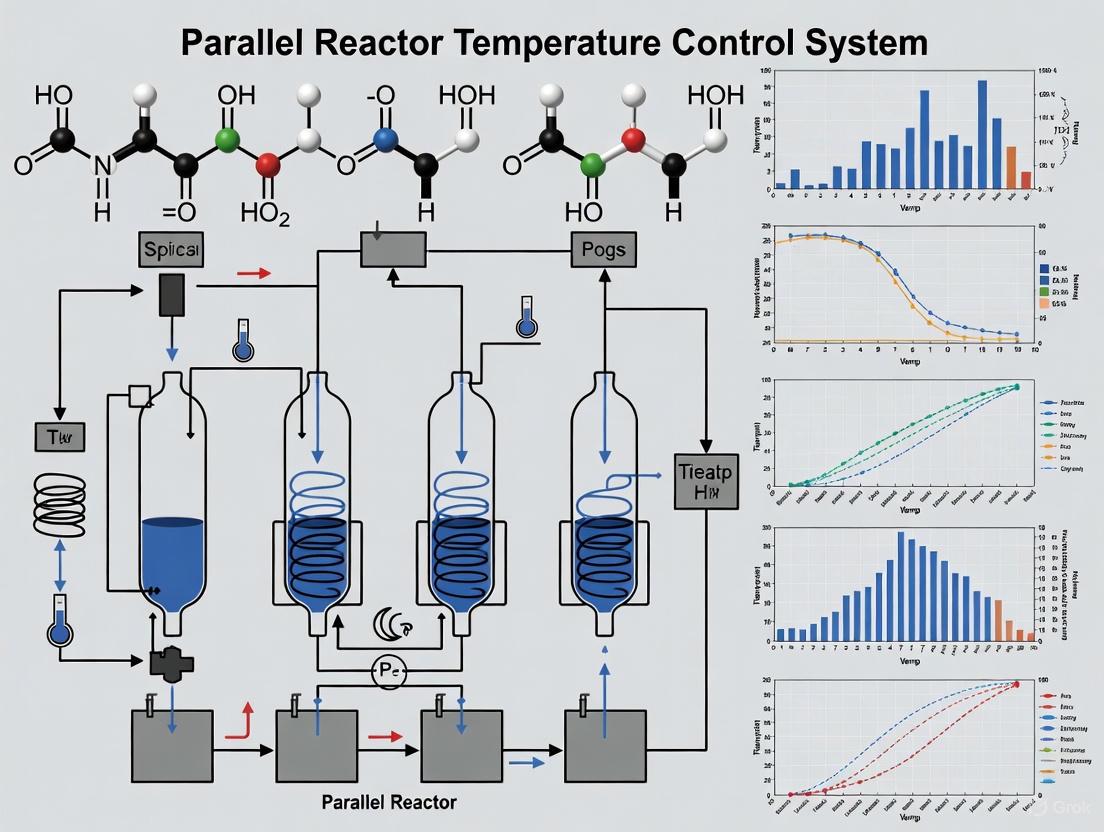

The accompanying diagram illustrates the logical decision-making process for selecting an appropriate temperature control method based on key reaction parameters [2].

System-Specific Heat Transfer Configurations

Heat transfer configurations are often tailored to specialized reactor types. In parallel photochemistry, temperature control must manage not only reaction enthalpy but also heat from high-intensity light sources [1] [4]. Systems like the Illumin8 or Lighthouse photoreactors incorporate cooling directly into their design to counteract radiative heating from LEDs, ensuring that temperature remains a controlled variable [1].

In parallel pressure reactors (e.g., for hydrogenation), systems like the Multicell run multiple reactions at elevated pressures in a single module [1]. Heat transfer in these systems must be designed to handle exothermic reactions safely, often incorporating robust heating blocks and, in some cases, cooling capabilities alongside pressure safety features like release valves [1].

Droplet-based microfluidic platforms represent another advanced configuration, where heat transfer occurs to or from individual nanoliter to microliter-scale reaction droplets flowing through a fluoropolymer tube [5]. The high surface-area-to-volume ratio enables very rapid heat transfer, allowing for precise thermal control and excellent reproducibility of fast, small-scale reactions [5].

Experimental Protocols for Heat Transfer Analysis

To ensure reliable and reproducible results in parallel synthesis, standardized protocols for verifying and utilizing heat transfer performance are essential.

Protocol: Verification of Temperature Uniformity in a Parallel Reactor Block

This protocol is designed to empirically validate the temperature uniformity of a reactor block, a critical factor for experimental consistency [4].

- Equipment Setup: Prepare the parallel reactor system (e.g., a Temperature Controlled Reactor, DrySyn SnowStorm MULTI, or similar) according to the manufacturer's instructions. Connect the temperature probe to a calibrated data logger or multimeter [6] [4].

- System Stabilization: Fill identical reactor vials with a thermally stable solvent (e.g., silicone oil) to mimic a standard reaction volume. Place the vials in all positions of the reactor block. Set the control temperature and allow the system to stabilize for a duration sufficient to reach a steady state (typically 30-60 minutes, depending on the system) [4].

- Data Collection: Using a fine-gauge thermocouple or RTD probe, measure the temperature of the solvent in the center of each vial. Record the temperature for every reactor position simultaneously or in rapid succession to minimize temporal drift.

- Data Analysis: Calculate the average temperature across all positions. Determine the maximum deviation from the average and the standard deviation of all measurements. A high-performance system should demonstrate a uniformity of ±1°C or better across the block [4].
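The data analysis step of this protocol can be sketched in a few lines of Python. The function name, the dictionary keys, and the six example readings are hypothetical; the ±1°C default tolerance mirrors the uniformity figure quoted above.

```python
import statistics


def uniformity_report(readings_c, tolerance_c=1.0):
    """Summarize per-position temperatures (°C) measured across a reactor block."""
    mean = statistics.fmean(readings_c)
    # Largest absolute deviation of any single position from the block average
    max_dev = max(abs(t - mean) for t in readings_c)
    return {
        "mean": mean,
        "max_deviation": max_dev,
        "stdev": statistics.stdev(readings_c),  # sample standard deviation
        "within_tolerance": max_dev <= tolerance_c,
    }


# Hypothetical readings from six positions at a 25 °C setpoint
report = uniformity_report([24.8, 25.1, 25.3, 24.9, 25.0, 25.4])
print(report)
```

Comparing the maximum deviation, not only the standard deviation, against the tolerance ensures that a single outlying well is not hidden by otherwise tight readings.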

Protocol: Performing a Parallel Synthesis with Controlled Low-Temperature Cooling

This methodology outlines the steps for executing exothermic or sub-ambient parallel reactions using an actively cooled system [6].

- Reactor Preparation: Mount the cooling unit (e.g., a DrySyn SnowStorm MULTI) on a magnetic stirrer plate and connect it to a refrigerated circulator. Set the circulator to the desired sub-ambient temperature (e.g., -30°C) and pre-cool the system [6].

- Reaction Mixture Assembly: In an inert atmosphere if necessary, charge the reaction vessels (e.g., 3 x 50 mL round-bottom flasks) with reactants and solvent. Equip each vessel with a stirrer bar [6].

- Initiating the Reaction: Once the reactor block has reached the target temperature, carefully place the charged reaction vessels into their positions. Start the magnetic stirrer to ensure efficient mixing and heat transfer.

- Reaction Monitoring: Maintain constant cooling and stirring for the required reaction time. Monitor the reaction progress using in-situ analytical techniques (e.g., Raman spectroscopy) or by periodically extracting samples for off-line analysis such as TLC or HPLC [5].

- Sampling and Quenching: Upon completion, halt the reaction by removing the vessels or introducing a quench solution. The system's ability to hold constant sub-ambient temperatures enables safe handling of highly exothermic reactions and supports unattended operation, such as overnight reactions [6].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and equipment essential for implementing effective heat transfer control in parallel reactor experiments.

Table 3: Essential Materials and Equipment for Parallel Reactor Temperature Control

| Item | Function/Description | Key Considerations |
|---|---|---|
| Temperature Controlled Reactor (TCR) Block | A fluid-filled, multi-position reactor block that circulates a thermal fluid to maintain consistent temperature around samples [4]. | Provides superior well-to-well uniformity (±1°C); crucial for mitigating heat gradients in high-throughput photocatalysis [4]. |
| Refrigerated/Heating Circulator | An external device that pumps a heat transfer fluid at a precisely controlled temperature through a reactor jacket or block [6]. | Enables active cooling and heating; essential for maintaining sub-ambient temperatures (e.g., down to -30°C) for extended periods [6]. |
| Heat Transfer Fluids | Fluids such as water, silicone-based fluids (e.g., SYLTHERM), ethylene glycol, or polypropylene glycol used as the heat transfer medium [4]. | Selection depends on the working temperature range, viscosity, and chemical compatibility; water is suitable down to 5°C, while glycols are for lower temperatures [4]. |
| Parallel Photoreactor | A system like the Illumin8 or Lighthouse that allows multiple photochemical reactions to run simultaneously with controlled light irradiation and temperature [1]. | Integrated temperature control is vital to counteract heat from high-power LEDs, preventing unwanted thermal side reactions [1] [4]. |
| Microfluidic Droplet Reactor Platform | A system using discrete droplets suspended in a carrier fluid within tubing to perform reactions in nanoliter volumes [5]. | The high surface-area-to-volume ratio facilitates extremely rapid heat transfer, enabling high-fidelity screening with minimal material usage [5]. |
| Calibrated Temperature Probe | A precision sensor (e.g., thermocouple, RTD) for verifying the actual temperature within a reaction vessel or block. | Critical for empirical validation of setpoint temperatures and mapping thermal uniformity across a reactor block [4]. |

The precise control of heat transfer is a cornerstone of successful parallel reactor operation. Understanding the fundamental modes of heat transfer and the practical implementations of temperature control systems—from liquid circulation and Peltier devices to specialized configurations for photochemistry and microfluidics—empowers researchers to design more reliable and efficient experiments. The selection of an appropriate temperature control method must be guided by a clear understanding of reaction requirements, including heat load, desired precision, and scalability. By adhering to rigorous experimental protocols and utilizing the appropriate toolkit of reagent solutions, scientists and drug development professionals can leverage the full potential of parallel synthesis to accelerate research and development while ensuring the highest standards of data quality and reproducibility.

In the realm of thermal management systems for advanced reactors, the selection of an appropriate flow configuration within heat exchangers is a critical design decision with far-reaching implications for efficiency, safety, and operational stability. This analysis provides a comprehensive technical comparison between parallel flow and counter-flow configurations, framing this examination within the broader context of reactor temperature control research. Effective temperature control is fundamental to reactor safety, efficiency, and longevity, particularly in sensitive applications ranging from nuclear energy to pharmaceutical production where thermal precision dictates process success [7] [8].

The fundamental distinction between these configurations lies in fluid directionality: in parallel flow (or cocurrent flow), both hot and cold fluids move in the same direction, whereas in counter-flow (or countercurrent flow), the fluids move in opposite directions [9] [10]. While this difference appears simple, it creates significantly different thermal-hydraulic phenomena that directly impact the performance and safety of reactor temperature control systems. This section details these differences through quantitative data, experimental methodologies, and visualizations tailored for researchers, scientists, and drug development professionals engaged in thermal system design.

Theoretical Foundations of Flow Configurations

Fundamental Principles and Temperature Distribution Profiles

The underlying thermodynamics of the two flow configurations create distinctly different temperature distribution patterns along the heat exchanger length, which directly influence their operational characteristics and suitability for various applications.

In a parallel flow arrangement, the hot and cold fluids enter at the same end and move concurrently. This results in a large initial temperature difference at the inlet, which decreases exponentially along the flow path as the fluids approach thermal equilibrium [9]. This decaying temperature differential creates a fundamental limitation: the outlet temperature of the cold fluid can approach but never exceed the outlet temperature of the hot fluid, and it therefore remains well below the hot fluid inlet temperature. The significant temperature difference at the inlet can also induce substantial thermal stresses at the entrance region, potentially compromising material integrity over time [9] [10].

In a counter-flow arrangement, the fluids enter from opposite ends. The hot fluid transfers heat to the cold fluid along the entire exchange path, and the temperature difference between the two fluids remains more consistent throughout the device [9] [11]. This more uniform gradient allows the cold fluid outlet temperature to approach the hot fluid inlet temperature much more closely, a thermodynamic advantage that makes counter-flow configurations particularly valuable in processes requiring precise high-temperature control or maximum heat recovery [11] [12].

Mathematical Basis for Performance Comparison

The performance superiority of counter-flow configurations can be quantified mathematically through the concept of Log Mean Temperature Difference (LMTD), which represents the driving force for heat transfer in exchangers [12]. For a counter-flow heat exchanger, the LMTD is calculated as:

\[ \text{LMTD} = \frac{(T_{h,i} - T_{c,o}) - (T_{h,o} - T_{c,i})}{\ln\left(\frac{T_{h,i} - T_{c,o}}{T_{h,o} - T_{c,i}}\right)} \]

Where \(T_{h,i}\) and \(T_{h,o}\) are the hot fluid inlet and outlet temperatures, and \(T_{c,i}\) and \(T_{c,o}\) are the cold fluid inlet and outlet temperatures. For parallel flow, the calculation changes to:

\[ \text{LMTD} = \frac{(T_{h,i} - T_{c,i}) - (T_{h,o} - T_{c,o})}{\ln\left(\frac{T_{h,i} - T_{c,i}}{T_{h,o} - T_{c,o}}\right)} \]

For the same inlet and outlet temperatures, the counter-flow arrangement yields an LMTD at least as high as that of parallel flow, enabling greater heat transfer in an equivalently sized apparatus [12]. This mathematical foundation explains the higher thermal efficiency observed in counter-flow systems, which can reach efficiencies up to 85% in well-designed applications [11].
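To make the comparison concrete, the short Python sketch below evaluates both LMTD expressions for one set of terminal temperatures. The function names and the four temperatures are illustrative assumptions, not data from the cited studies.

```python
import math


def _log_mean(dt_a: float, dt_b: float) -> float:
    """Logarithmic mean of two positive temperature differences (K)."""
    if math.isclose(dt_a, dt_b):
        return dt_a  # limiting value when both ends are equal
    return (dt_a - dt_b) / math.log(dt_a / dt_b)


def lmtd_counter_flow(th_in, th_out, tc_in, tc_out):
    # Hot inlet faces cold outlet; hot outlet faces cold inlet
    return _log_mean(th_in - tc_out, th_out - tc_in)


def lmtd_parallel_flow(th_in, th_out, tc_in, tc_out):
    # Both inlets at one end, both outlets at the other
    return _log_mean(th_in - tc_in, th_out - tc_out)


# Hypothetical duty: hot stream 90 -> 60 °C, cold stream 20 -> 50 °C
temps = (90.0, 60.0, 20.0, 50.0)
counter = lmtd_counter_flow(*temps)
parallel = lmtd_parallel_flow(*temps)
print(f"counter-flow LMTD  = {counter:.2f} K")
print(f"parallel-flow LMTD = {parallel:.2f} K")
print(f"area ratio (parallel/counter) for equal U and Q = {counter / parallel:.2f}")
```

Because Q = U × A × LMTD, the ratio of the two LMTD values is also the ratio of heat transfer areas needed to deliver the same duty with the same overall coefficient.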

Quantitative Comparative Analysis

The theoretical advantages of counter-flow configurations manifest in measurable performance improvements across multiple operational parameters. The following tables consolidate quantitative findings from comparative studies, providing researchers with concrete data for design decisions.

Table 1: Thermal-Hydraulic Performance Comparison in Reactor Applications

| Performance Parameter | Parallel Flow Configuration | Counter-Flow Configuration | Experimental Context |
|---|---|---|---|
| Heat Transfer Efficiency | Lower heat transfer rates; gradual temperature equalization [13] | Higher efficiency; consistent temperature gradient maintained [13] | DFR Mini Demonstrator CFD simulations [13] |
| Temperature Approach | Cold fluid outlet temperature cannot exceed hot fluid outlet temperature [9] | Cold fluid can approach hottest temperature of incoming fluid [9] [11] | Industrial heat exchanger performance analysis [9] [10] |
| Thermal Stress | Large temperature differences at ends cause significant thermal stresses [9] | More uniform temperature difference minimizes thermal stresses [9] [10] | Material stress analysis in nuclear applications [13] |
| Swirling Effects | Intense swirling in fuel pipes enhancing local heat transfer but increasing mechanical stress [13] | Reduced swirling effects in fuel pipes, decreasing mechanical stress [13] | DFR fuel flow velocity analysis [13] |
| Temperature Distribution | Less uniform coolant temperature distribution; higher risk of localized overheating [13] | More uniform coolant temperature distribution across core [13] | Liquid lead coolant analysis in DFR [13] |

Table 2: Application-Specific Considerations for Flow Configuration Selection

| Application Domain | Preferred Configuration | Technical Rationale | Performance Notes |
|---|---|---|---|
| Nuclear Reactors (DFR) | Counter-flow | Higher heat transfer efficiency; more uniform flow velocity; reduced swirling and mechanical stresses [13] | Enhanced reactor safety and operational performance [13] |
| Pharmaceutical Industry | Parallel-flow | Gentler thermal transfer prevents product alteration; no thermal shocks [8] | Preserves quality of heat-sensitive compounds [8] |
| Chemical Processes | Counter-flow | Efficient heat recovery between process streams; maximum temperature utilization [11] | High efficiency up to 85% [11] |
| Ventilation & AC | Counter-flow | Efficient heat transfer between incoming and outgoing air streams [11] | Energy recovery in air handling systems [11] |

Experimental Protocols for Flow Configuration Analysis

Computational Fluid Dynamics (CFD) Methodology for Reactor Analysis

Advanced computational methods provide detailed insights into thermal-hydraulic behavior without requiring full-scale physical prototypes. The following protocol outlines a validated methodology for comparing flow configurations in nuclear reactor contexts, based on published research using the Dual Fluid Reactor (DFR) Mini Demonstrator (MD) as a test case [13].

Computational Model Setup:

- Geometry Definition: Create a 3D model representing the reactor core internals. The DFR MD model included 7 fuel pipes and 12 coolant pipes (6 large diameter, 6 small diameter). To optimize computational resources, leverage geometric symmetry by simulating only a quarter of the full domain [13].

- Mesh Generation: Develop a structured computational mesh with refined elements near pipe walls to resolve boundary layer effects. Implement mesh sensitivity analysis to ensure results are grid-independent.

Governing Equations and Physical Models:

- Solve the time-averaged mass, momentum, and energy conservation equations:

- Continuity: \( \frac{\partial \rho}{\partial t} + \frac{\partial (\rho U_i)}{\partial x_i} = 0 \)

- Momentum: \( \frac{\partial (\rho U_i)}{\partial t} + \frac{\partial (\rho U_j U_i)}{\partial x_j} = -\frac{\partial P}{\partial x_i} + \frac{\partial}{\partial x_j} \left[ \mu \left( \frac{\partial U_i}{\partial x_j} + \frac{\partial U_j}{\partial x_i} \right) - \rho \overline{u'_i u'_j} \right] \)

- Energy: \( \frac{\partial (\rho T)}{\partial t} + \frac{\partial (\rho U_j T)}{\partial x_j} = \frac{\partial}{\partial x_j} \left[ \left( \frac{\lambda}{c_p} + \frac{\mu_t}{\sigma_t} \right) \frac{\partial T}{\partial x_j} - \rho \overline{u'_j T'} \right] \) [13]

Specialized Modeling for Liquid Metal Coolants:

- Implement a variable turbulent Prandtl number model to accurately capture heat transfer in liquid metals with low Prandtl numbers. Use the empirical correlation \( Pr_t = 0.85 + \frac{0.7}{Pe_t} \), where \( Pe_t \) is the turbulent Peclet number (\( Pe_t = \frac{\nu_t}{\nu} Pr \)) [13].

- Apply appropriate wall functions validated for liquid metal flows to bridge viscous sublayer regions.
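The variable turbulent Prandtl number correlation quoted above is simple to evaluate directly. The following Python sketch is only an illustration of the formula: the function names are hypothetical, and the molecular Prandtl number of 0.02 and the viscosity ratios are assumed values of the order expected for a liquid metal, not figures from the cited study.

```python
def turbulent_peclet(viscosity_ratio: float, prandtl: float) -> float:
    """Pe_t = (nu_t / nu) * Pr, with viscosity_ratio = nu_t / nu."""
    return viscosity_ratio * prandtl


def turbulent_prandtl(viscosity_ratio: float, prandtl: float) -> float:
    """Pr_t = 0.85 + 0.7 / Pe_t, the correlation given in the text."""
    pe_t = turbulent_peclet(viscosity_ratio, prandtl)
    if pe_t <= 0:
        raise ValueError("turbulent Peclet number must be positive")
    return 0.85 + 0.7 / pe_t


# Assumed molecular Prandtl number for a liquid metal (illustrative only)
PR_LIQUID_METAL = 0.02

for ratio in (10.0, 100.0, 1000.0):
    pr_t = turbulent_prandtl(ratio, PR_LIQUID_METAL)
    print(f"nu_t/nu = {ratio:6.0f}  ->  Pr_t = {pr_t:.3f}")
```

The loop shows the expected trend: where turbulence is weak (small viscosity ratio) the turbulent Prandtl number rises well above 0.85, and it tends toward 0.85 as the turbulent Peclet number grows.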

Boundary Conditions and Simulation Parameters:

- Set mass flow inlet and pressure outlet boundaries for both fuel and coolant streams.

- Define operating temperatures representative of reactor conditions (e.g., 600-800°C for fuel, 400-600°C for coolant).

- Configure opposing flow directions for counter-flow analysis and same-direction flows for parallel flow assessment.

- Implement the k-ω SST turbulence model with curvature correction to capture swirling flows accurately.

Data Collection and Analysis Metrics:

- Extract temperature distributions throughout the domain to identify thermal hotspots and gradients.

- Quantify velocity profiles and swirling intensity using vorticity magnitude calculations.

- Calculate wall shear stresses to evaluate mechanical loading on components.

- Determine overall heat transfer coefficients and effectiveness for both configurations.
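As one example of these metrics, vorticity magnitude can be computed at a point from the local velocity gradient tensor. The sketch below assumes the gradients have already been exported from the CFD solver as a 3×3 nested list with `grad_u[i][j]` equal to ∂U_i/∂x_j; that layout and the function name are assumptions made for illustration, not part of the cited methodology.

```python
import math


def vorticity_magnitude(grad_u) -> float:
    """Magnitude of the curl of velocity from a 3x3 gradient tensor (1/s).

    grad_u[i][j] is assumed to hold dU_i/dx_j with axes ordered (x, y, z).
    """
    wx = grad_u[2][1] - grad_u[1][2]  # dw/dy - dv/dz
    wy = grad_u[0][2] - grad_u[2][0]  # du/dz - dw/dx
    wz = grad_u[1][0] - grad_u[0][1]  # dv/dx - du/dy
    return math.sqrt(wx * wx + wy * wy + wz * wz)


# Check case: solid-body rotation about z at 3 rad/s (u = -3y, v = 3x)
omega = 3.0
solid_body = [
    [0.0, -omega, 0.0],
    [omega, 0.0, 0.0],
    [0.0, 0.0, 0.0],
]
print(vorticity_magnitude(solid_body))
```

Solid-body rotation is a convenient check because its vorticity magnitude is exactly twice the angular velocity.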

Experimental Validation Protocol for Laboratory-Scale Heat Exchangers

While computational studies provide valuable insights, experimental validation remains essential for confirming theoretical predictions. The following protocol describes a laboratory-scale approach for comparing flow configurations using representative heat exchanger test platforms.

Experimental Apparatus:

- Test Section: Utilize a concentric tube heat exchanger with transparent sections for flow visualization, or instrumented industrial plate heat exchanger modules.

- Flow System: Implement separate loops for hot and cold fluids with precision pumps for flow control.

- Heating and Cooling Systems: Incorporate electric heaters with PID control for the hot loop and a chiller unit for the cold loop.

- Instrumentation: Install resistance temperature detectors (RTDs) or thermocouples at all inlets and outlets, with additional temperature sensors along the flow path if possible. Include flow meters, pressure transducers, and differential pressure sensors to characterize hydraulic performance.

Experimental Procedure:

- System Preparation: Fill both loops with appropriate working fluids (water for initial validation, specialized coolants for application-specific testing).

- Flow Configuration Setup: Arrange piping and valves to establish either parallel or counter-flow configuration while maintaining identical flow paths and components.

- Steady-State Operation: For each test condition, adjust flow rates to desired values, activate heating and cooling systems, and allow the apparatus to reach thermal steady-state (confirmed by stable temperature readings over 10-15 minutes).

- Data Collection: Record all temperature, pressure, and flow rate measurements at steady-state conditions across a range of flow rates (e.g., 0.5-5.0 L/min) and inlet temperature combinations.

- Configuration Change: Carefully reconfigure the system to the alternate flow arrangement while maintaining all other system parameters, and repeat the measurement sequence.
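The steady-state criterion in the procedure (stable temperature readings over 10-15 minutes) can be automated with a simple check on logged data. In this Python sketch the function name, the 0.2°C stability band, and the synthetic warm-up trace are assumptions for illustration; only the 10-minute window comes from the procedure itself.

```python
import math


def is_steady(samples, window_s=600.0, band_c=0.2):
    """Return True if readings over the last `window_s` seconds stay within `band_c`.

    samples: list of (time_s, temperature_c) tuples sorted by time.
    """
    if not samples:
        return False
    t_end = samples[-1][0]
    if t_end - samples[0][0] < window_s:
        return False  # not enough history to judge stability
    recent = [temp for t, temp in samples if t >= t_end - window_s]
    return max(recent) - min(recent) <= band_c


# Synthetic trace: first-order approach from 20 °C to 60 °C, logged every 10 s
trace = [(10.0 * i, 60.0 - 40.0 * math.exp(-10.0 * i / 120.0)) for i in range(181)]

print(is_steady(trace[:71]))  # after 700 s the outlet is still warming
print(is_steady(trace))       # after 1800 s the last 10 min are flat
```

In practice the same check would be applied to every inlet and outlet sensor, and data collection would start only once all of them pass.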

Data Analysis Methods:

- Calculate heat transfer rates from both fluid sides: \( Q_h = \dot{m}_h c_{p,h} (T_{h,i} - T_{h,o}) \) and \( Q_c = \dot{m}_c c_{p,c} (T_{c,o} - T_{c,i}) \)

- Determine overall heat transfer coefficient (U) using the formula: \( Q = U \times A \times \text{LMTD} \)

- Evaluate thermal effectiveness for both configurations: \( \varepsilon = \frac{Q}{Q_{\text{max}}} = \frac{\text{Actual Heat Transfer}}{\text{Maximum Possible Heat Transfer}} \)

- Correlate pressure drop with flow rate to characterize hydraulic performance.

- Quantify temperature profile uniformity and identify any localized hotspots through detailed sensor arrays.
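The first three analysis steps can be combined into one routine, sketched below in Python. Several choices here are assumptions rather than part of the protocol: the function and key names, averaging the hot-side and cold-side duties to obtain Q, and taking the maximum possible heat transfer as the smaller heat capacity rate multiplied by the inlet temperature difference (the standard definition, which the text does not spell out). The numerical values are hypothetical water-to-water measurements.

```python
import math


def analyse_run(m_h, cp_h, th_in, th_out, m_c, cp_c, tc_in, tc_out,
                area, counter_flow=True):
    """Reduce one steady-state data point (SI units, temperatures in °C)."""
    q_h = m_h * cp_h * (th_in - th_out)   # heat released by the hot stream, W
    q_c = m_c * cp_c * (tc_out - tc_in)   # heat absorbed by the cold stream, W
    q = 0.5 * (q_h + q_c)                 # assumed: average of both sides
    if counter_flow:
        dt1, dt2 = th_in - tc_out, th_out - tc_in
    else:
        dt1, dt2 = th_in - tc_in, th_out - tc_out
    lmtd = dt1 if math.isclose(dt1, dt2) else (dt1 - dt2) / math.log(dt1 / dt2)
    # Assumed standard definition: Q_max = C_min * (T_h,i - T_c,i)
    q_max = min(m_h * cp_h, m_c * cp_c) * (th_in - tc_in)
    return {
        "q_hot": q_h,
        "q_cold": q_c,
        "imbalance": abs(q_h - q_c) / q,  # energy balance closure check
        "lmtd": lmtd,
        "u": q / (area * lmtd),           # W/(m^2 K)
        "effectiveness": q / q_max,
    }


# Hypothetical counter-flow run, water on both sides (cp ~ 4180 J/(kg K))
result = analyse_run(0.05, 4180.0, 60.0, 45.0,
                     0.05, 4180.0, 20.0, 34.5,
                     area=0.05, counter_flow=True)
for key, value in result.items():
    print(f"{key:>13}: {value:.4g}")
```

Reporting the energy balance imbalance alongside U and the effectiveness helps flag runs where heat losses or sensor errors make the two sides disagree.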

Visualization of Thermal-Hydraulic Phenomena

To enhance understanding of the fundamental differences between flow configurations, the following diagrams illustrate key concepts, relationships, and experimental workflows.

Diagram 1: Thermal Performance Characteristics Comparison

Diagram 2: CFD Analysis Workflow for Flow Configuration Assessment

The experimental and computational analysis of flow configurations requires specialized tools, materials, and computational approaches. The following table details essential resources referenced in the studies analyzed for this technical guide.

Table 3: Research Reagent Solutions and Essential Materials for Thermal-Hydraulic Experiments

| Resource Category | Specific Examples | Function/Application | Technical Notes |
|---|---|---|---|
| Computational Fluid Dynamics Software | ANSYS CFX, OpenFOAM, STAR-CCM+ | Simulation of thermal-hydraulic phenomena in complex geometries [13] | Requires specialized turbulence models for low Prandtl number fluids [13] |
| Advanced Coolants | Liquid lead, Lead-Bismuth Eutectic (LBE), Sodium | High-temperature reactor coolant with superior heat transfer properties [13] | Low Prandtl number requires modified simulation approaches [13] |
| Turbulence Models | k-ω SST with curvature correction, Variable Prandtl number models | Accurate prediction of heat transfer in liquid metal flows [13] | \( Pr_t = 0.85 + 0.7/Pe_t \) correlation for liquid metals [13] |
| Experimental Test Facilities | NACIE-UP Loop (ENEA), LIFUS5 Facility (ENEA), EAGLE (JAEA) | Experimental validation of thermal-hydraulic performance [13] | Provide benchmark data for computational model validation [13] |
| Temperature Measurement | Resistance Temperature Detectors (RTDs), Thermocouples | Precise temperature mapping in experimental setups | Critical for validating temperature distribution predictions |
| Flow Characterization | Coriolis flow meters, Laser Doppler Velocimetry, Particle Image Velocimetry | Flow rate measurement and velocity field mapping | Essential for quantifying swirling effects and flow distribution [13] |

Application Contexts and Configuration Selection Guidelines

Nuclear Reactor Temperature Control Applications

Within nuclear reactor systems, particularly advanced Generation IV designs like the Dual Fluid Reactor (DFR), thermal-hydraulic performance directly impacts safety, efficiency, and operational longevity. Research conducted on the DFR Mini Demonstrator reveals significant performance differences between flow configurations that inform design decisions [13].

The counter-flow configuration demonstrates distinct advantages in nuclear contexts, including higher heat transfer efficiency, more uniform flow velocity distributions, and reduced swirling effects in fuel pipes. These characteristics collectively reduce mechanical stresses on components, enhancing reactor safety and potentially extending service life [13]. The more uniform temperature distribution achieved in counter-flow arrangements mitigates the risk of localized overheating (hot spots) that can accelerate material degradation and compromise safety margins.

Conversely, parallel flow configurations in nuclear applications exhibit intense swirling in some fuel pipes, which while enhancing local heat transfer, simultaneously increases mechanical stress on components. This swirling phenomenon, combined with less uniform temperature distributions, presents challenges for long-term operational stability in high-temperature nuclear environments [13].

Pharmaceutical and Chemical Process Applications

In pharmaceutical manufacturing and specialized chemical processes, thermal considerations extend beyond efficiency to encompass product stability and quality preservation. Unlike nuclear applications where maximum heat transfer is often prioritized, pharmaceutical processes frequently require gentle, controlled thermal treatment to prevent product degradation [8].

For these applications, parallel flow configurations offer distinct advantages despite their lower thermodynamic efficiency. The progressively decreasing temperature differential along the flow path provides a gentler thermal environment that minimizes the risk of thermal shock to sensitive compounds [8]. This "softer" thermal transfer profile helps maintain molecular integrity in heat-sensitive pharmaceuticals, biologics, and specialty chemicals where excessive or rapid temperature changes could alter product characteristics.

Counter-flow configurations in pharmaceutical contexts are typically reserved for utility applications where product contact is not direct, such as initial heating or cooling of heat transfer fluids that subsequently interact with products through secondary exchangers. This approach leverages the efficiency benefits of counter-flow arrangements while maintaining precise control over product thermal history [11] [8].

The comparative analysis of parallel and counter-flow configurations reveals a consistent thermodynamic superiority of counter-flow arrangements in applications prioritizing maximum heat transfer efficiency and temperature utilization. The maintained temperature differential across the entire heat exchanger length enables performance unattainable with parallel flow designs, particularly in high-temperature nuclear reactor applications where thermal efficiency directly correlates with safety and operational effectiveness.

However, parallel flow configurations retain significant value in specialized applications where gentle thermal treatment outweighs efficiency considerations, such as in pharmaceutical manufacturing processes involving heat-sensitive compounds. The selection between these configurations ultimately represents a multi-variable optimization problem balancing thermal efficiency, hydraulic performance, mechanical stress, material compatibility, and process requirements.

For reactor temperature control systems specifically, the evidence strongly favors counter-flow configurations, which provide more uniform temperature distributions, reduced thermal stresses, and minimized localized overheating risks. These advantages translate directly to enhanced safety margins and potentially longer operational lifespans in critical nuclear applications. As thermal-hydraulic modeling capabilities continue advancing through improved computational methods and validated experimental data, further refinement of these flow configurations will emerge, enabling increasingly sophisticated temperature control strategies for next-generation reactor systems.

Parallel reactor systems are engineered platforms that enable researchers to conduct multiple chemical reactions simultaneously under carefully controlled conditions. These systems are fundamental to accelerating research and development in fields such as pharmaceutical discovery, catalyst testing, and materials science, where high-throughput experimentation is critical [1]. Their core value lies in rapidly generating reproducible and comparable data, which significantly reduces the time and resources required compared with traditional sequential experimentation. This technical guide examines the key components of these systems, with particular attention to temperature control. Effective temperature management is the cornerstone of reliable parallel reactor operation: each reaction vessel must hold its specified thermal environment independently, without interference from neighboring reactors, so that results remain comparable across vessels.

Core Components of a Parallel Reactor System

A parallel reactor system is an integrated assembly of several critical subsystems. Each component must be carefully selected and configured to work in harmony, ensuring precise control over reaction parameters and enabling high-fidelity, high-throughput experimentation [5].

Reaction Vessels and Materials of Construction

The reaction vessels are the primary containment units where chemical transformations occur. The material selection for these vessels is paramount for ensuring both chemical compatibility and operational safety, especially when dealing with corrosive reagents, elevated temperatures, and high pressures.

Common Alloys: The choice of alloy directly impacts the system's resistance to corrosion and its maximum operating temperature.

- 316 Stainless Steel: This is the most commonly used material, composed of iron, chromium, nickel, and molybdenum. The molybdenum addition enhances corrosion resistance, making it suitable for a wide range of applications [14].

- Inconel: A nickel-chromium-based superalloy known for its ability to retain strength and corrosion resistance at a high fraction of its melting point, ideal for extremely high-temperature processes [14].

- Hastelloy: This nickel-chromium-molybdenum alloy offers the highest corrosion resistance among the three, often selected for processes involving highly aggressive media [14].

Protective Liners: To further protect the reactor's internal structure, removable liners can be employed. These are typically made from borosilicate glass or PTFE (Polytetrafluoroethylene), providing an inert barrier between the reaction mixture and the metal vessel [14].

Heating and Temperature Control

Precise and uniform temperature control is one of the most critical aspects of parallel reactor design, directly influencing reaction kinetics and outcomes. Systems employ various methods to achieve this, often tailored to the specific application.

- Heating Methods: Common techniques include heating mantles, integrated hotplates, and aluminum heating blocks that accommodate multiple reaction vials or flasks [14] [1]. For very high-temperature applications beyond 800 °C, specialized systems such as molten salt reactors are custom-designed [14].

- Cooling: To quench reactions or manage exothermic processes, cooling is typically provided by a refrigerated circulator that pumps a coolant through jackets or blocks surrounding the reaction vessels [14].

- Temperature Uniformity: Advanced systems housed in a single furnace, like the BenchCAT example, can maintain multiple reactors at identical high temperatures (e.g., 1000 °C) with minimal gradient, which is essential for valid comparative screening [15].

Agitation and Mixing

Efficient mixing is essential for achieving homogeneity in the reaction mixture, which is critical for consistent heat and mass transfer. Parallel systems offer different agitation mechanisms to suit various viscosities and reaction types.

- Magnetic Stirring: This common method uses stir bars driven by a rotating magnet beneath the reaction vessel. It is suitable for many standard applications [14].

- Overhead Stirring: For reactions involving high viscosity or high solids content, overhead stirrers with mechanical seals provide more robust and reliable mixing torque [14].

- Droplet-Based Oscillation: In microfluidic droplet platforms, mixing is achieved by oscillating the reaction droplet back and forth within a tubular reactor, ensuring efficient mass transfer at a very small scale [5].

Pressure Management

Many advanced chemical reactions, such as hydrogenations and carbonylations, require elevated pressures to increase gas solubility and enhance reaction rates. Parallel systems are designed to safely contain and control these pressures.

- Pressure Control: Systems can be configured for independent pressure control in each reactor cell, allowing for the screening of pressure as a variable, or they can be manifolded together to run all reactions at the same pressure [14] [1].

- Safety Features: To ensure operational safety, these systems are equipped with pressure release valves and burst disks that activate if the internal pressure exceeds a predetermined safe limit [1].

- Operating Range: Standard parallel reactors, such as the Multicell PLUS, routinely operate at pressures up to 50 bar, with high-pressure options available up to 200 bar and beyond [14].

Automation, Control, and Sensor Networks

The integration of automation, sensors, and control software is what transforms a collection of reactors into a sophisticated high-throughput experimentation platform.

- Automated Fluid Handling: Liquid handlers and selector valves are used for precise, automated dosing of reagents into individual reactor channels, enabling the preparation of reaction mixtures without manual intervention [5].

- Sensor Networks: A critical component for process understanding and control. These networks typically include:

- Thermocouples: For accurate temperature monitoring of each reactor.

- Pressure Transducers: To monitor and provide feedback for pressure control systems.

- Scales: Integrated onto liquid feed vessels to enable mass balance calculations [15].

- Process Control Systems: These systems orchestrate all hardware operations, including scheduling, and can integrate Bayesian optimization algorithms for closed-loop, iterative experimental design. This allows the platform to autonomously propose and execute the next set of experiments based on previous outcomes [5].

- On-line Analytics: Integration with analytical instruments like HPLC or GC allows for real-time, automated analysis of reaction outcomes, minimizing delays between reaction completion and evaluation [5].

Table 1: Key Specifications of Commercial Parallel Reactor Systems

| System Name | Number of Reactors | Reactor Volume | Max Temperature | Max Pressure | Key Features |
|---|---|---|---|---|---|
| Quadracell [14] | 4 | 10 mL | 250 °C | 50-200 bar | Small footprint, Stainless Steel or Hastelloy construction. |
| Multicell [14] | 10 | 30 mL | 200 °C | 50 bar | Standardized 10-position screening. |
| Multicell PLUS [14] | 4, 6, 8, or 10 | Up to 100 mL | 200-300+ °C | 50-200 bar | Highly customizable, individual cell control options. |
| Integrity 10 [14] | 10 | N/A | N/A | 100 bar (std) | Parallel Pressure Reactor Module system. |
| Automated Droplet Platform [5] | 10 | Microscale | 0-200 °C | 20 atm | Independent channels, on-line HPLC, photochemistry capability. |
| Custom BenchCAT [15] | 6 | N/A | 1000 °C | N/A | Single furnace, dedicated MFCs per station, mass balance capability. |

Experimental Protocol for a High-Pressure Parallel Catalysis Screening

The following methodology details a representative experiment for screening catalysis reaction conditions using a parallel high-pressure reactor system, incorporating best practices for temperature control and data collection.

Experimental Setup and Preparation

- System Configuration: Select a parallel reactor system, such as a 10-position Multicell, constructed from 316 Stainless Steel, with independent temperature and pressure control for each cell [14].

- Reactor Preparation: Fit each reactor cell with the appropriate protective liner (e.g., PTFE). Ensure all vessels are clean, dry, and free of contaminants.

- Catalyst and Reagent Loading: In an inert atmosphere glovebox, weigh and load the solid catalyst candidates (e.g., 5 mg ± 0.1 mg) directly into the individual reactor cells. Subsequently, add the liquid substrate solution (e.g., 5 mL of a 0.1 M concentration in an appropriate solvent) to each cell using a positive displacement pipette for accuracy.

- Sealing and Leak Checking: Securely seal all reactor cells according to the manufacturer's instructions. Pressurize the system with an inert gas (e.g., N₂) to 10 bar and monitor the pressure gauge for 15 minutes to confirm there are no leaks before proceeding.

Process Execution and Data Acquisition

- Initialization and Purging: Initiate the system's software and create a new experiment profile. Purge the headspace of each reactor three times with the process gas (e.g., H₂ for hydrogenation) to ensure an oxygen-free environment.

- Parameter Setting and Reaction Start: Set the desired experimental parameters for each cell via the control software (e.g., Temperature: 150 °C, Stirring Rate: 750 rpm, Pressure: 50 bar H₂). Commence the experiment, noting ( t = 0 ) when the set temperature is reached in all cells.

- In-Process Monitoring: Throughout the reaction, the sensor network will continuously log data. Monitor the temperature of each cell in real-time to ensure it remains within ±1.0 °C of the setpoint, confirming the system's temperature control fidelity [5].

- Sampling and Analysis: For systems with in-line analytics, automated sampling will occur at pre-defined intervals. For manual systems, after a 2-hour reaction time, rapidly quench the reactions by activating the cooling circulator. Once at ambient temperature, carefully depressurize each cell and extract a representative sample (e.g., 100 µL) from each for off-line analysis by GC-MS.
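The timing and tolerance checks in the steps above can be sketched in a few lines of Python. This is a minimal illustration rather than vendor software: the log layout, the function names, and the sample readings are all hypothetical, and only the 150 °C setpoint and the ±1.0 °C band come from the protocol.

```python
def first_time_all_at_setpoint(log, setpoint, tol=1.0):
    """Return the first timestamp at which every cell is within tol of setpoint.

    log is a list of (time_s, [temperature per cell]) tuples (assumed layout).
    """
    for time_s, temps in log:
        if all(abs(temp - setpoint) <= tol for temp in temps):
            return time_s
    return None


def excursions(log, setpoint, t_zero, tol=1.0):
    """List (time_s, cell_index, temperature) for readings outside the band after t_zero."""
    return [
        (time_s, cell, temp)
        for time_s, temps in log
        if time_s >= t_zero
        for cell, temp in enumerate(temps)
        if abs(temp - setpoint) > tol
    ]


# Hypothetical two-cell log in deg C, sampled every 60 s (illustrative values only).
log = [
    (0, [25.0, 25.0]),
    (60, [120.0, 118.0]),
    (120, [149.5, 148.7]),
    (180, [150.2, 149.4]),
    (240, [150.1, 151.3]),
]

t_zero = first_time_all_at_setpoint(log, setpoint=150.0)
flagged = excursions(log, setpoint=150.0, t_zero=t_zero)
print("t = 0 at", t_zero, "s; excursions:", flagged)
```

Defining ( t = 0 ) from the logged data, rather than from the moment heating starts, keeps reaction times comparable between cells that heat at slightly different rates.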

Data Analysis and Interpretation

- Conversion and Yield Calculation: Process the chromatographic data to calculate the conversion of the starting material and the yield of the desired product for each catalyst candidate.

- Performance Comparison: Compare the results across all 10 reactors to identify the lead catalyst. Advanced systems with integrated Bayesian optimization would use this data to automatically propose the next set of conditions for further optimization [5].

- Final Reporting: The final report should document the performance of each catalyst under the controlled conditions, highlighting the reproducibility of the parallel system, which should demonstrate a standard deviation of less than 5% in reaction outcomes for replicate experiments [5].
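A short sketch of the calculations behind these three steps is given below. It assumes that molar amounts have already been obtained from calibrated GC-MS peak areas, and it interprets the "less than 5%" criterion as a relative standard deviation across replicates; both are assumptions, as are the function names and the numbers.

```python
from statistics import mean, stdev


def conversion_pct(n_start, n_remaining):
    """Percentage of starting material consumed."""
    return 100.0 * (n_start - n_remaining) / n_start


def yield_pct(n_product, n_start, stoich=1.0):
    """Percentage yield; stoich is moles of product expected per mole of substrate."""
    return 100.0 * n_product / (n_start * stoich)


def relative_std_pct(values):
    """Relative standard deviation (sample standard deviation / mean) in percent."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical replicate yields (%) for one catalyst run in three cells.
replicate_yields = [81.0, 79.5, 80.5]
rsd = relative_std_pct(replicate_yields)
print(f"mean yield {mean(replicate_yields):.1f}%, RSD {rsd:.2f}%")
print("reproducibility criterion met:", rsd < 5.0)
```

If the criterion is instead meant as an absolute standard deviation in percentage points of yield, `stdev(replicate_yields)` can be compared with 5 directly.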

System Architecture and Workflow

The logical flow of operation in an automated parallel reactor system, from experimental design to data acquisition, can be visualized as a continuous cycle. The following diagram illustrates the integrated relationship between the hardware components and the control software.

Diagram 1: Automated parallel reactor control loop.

The Scientist's Toolkit: Essential Research Reagent Solutions

The successful operation of a parallel reactor system relies on more than just the hardware. This table details key reagents, materials, and software solutions that constitute the essential toolkit for researchers in this field.

Table 2: Essential Research Reagent Solutions and Materials

| Item Name | Function / Purpose | Application Context |
|---|---|---|
| PTFE Liners [14] | Provides an inert, non-stick, and corrosion-resistant barrier inside the metal reactor vessel. | Essential for reactions with corrosive reagents or when metal catalysis interference must be avoided. |
| Borosilicate Glass Liners [14] | Offers chemical inertness and visual access to the reaction mixture. | Ideal for non-ablative reactions where visual monitoring of precipitation or color change is beneficial. |
| Standard Catalyst Libraries | Pre-selected collections of homogeneous or heterogeneous catalysts for rapid screening. | Used in catalyst discovery and optimization campaigns to identify the most active and selective catalyst for a given transformation. |
| High-Purity Process Gases | Reactants or inert atmospheres for pressure reactions (e.g., H₂, CO, CO₂, N₂). | Critical for hydrogenation, carbonylation, and other gas-liquid reactions where solubility and purity directly impact results. |
| Bayesian Optimization Software [5] | An algorithm integrated into the control system for intelligent, closed-loop experimental design. | Proposes the most informative next experiments based on previous results, dramatically accelerating reaction optimization. |

The pursuit of uniform irradiation is a critical objective in the design of advanced photochemical reactors, particularly for applications in pharmaceutical development and high-throughput experimentation where reproducibility and scalability are paramount. Achieving this uniformity requires the deliberate application of fundamental optical principles, primarily the Inverse Square Law and Lambert's Cosine Law [16] [17]. These laws govern how light intensity distributes itself spatially and angularly from a source, directly impacting reaction kinetics and product yields in photochemically-driven processes.

Within the broader context of parallel reactor temperature control research, precise optical management serves as a complementary and equally vital parameter. Just as thermal energy must be uniformly distributed to prevent hot spots and ensure consistent reaction rates, so too must photonic energy be evenly delivered to all reaction vessels or channels [18]. This guide provides an in-depth examination of how these optical laws inform reactor design, supported by quantitative data, validated experimental protocols, and essential implementation tools.

Fundamental Optical Principles

The Inverse Square Law

The Inverse Square Law is a foundational principle of radiometry that describes the geometric dilution of light intensity with distance from a point source. It states that the intensity of light is inversely proportional to the square of the distance from the source.

Mathematical Formulation: The law is expressed as: ( I = \frac{P}{4\pi r^2} ) Where:

- ( I ) is the irradiance (radiant power per unit area) at distance ( r ), assuming a point source that emits uniformly in all directions

- ( P ) is the total power emitted by the source

- ( r ) is the distance from the source

Design Implications: In reactor design, this law implies that small variations in the distance between a light source and a reaction vessel can lead to significant differences in incident light intensity [19]. For example, doubling the distance from the source reduces the irradiance to a quarter of its original value. This effect is particularly critical in parallel reactor systems where multiple vessels must receive identical irradiation; a failure to maintain equal source-to-vessel distances will result in inconsistent reaction outcomes.
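The sensitivity to distance can be checked numerically. The sketch below assumes an ideal isotropic point source; the 10 W source power and the distances are illustrative values, not taken from the cited work.

```python
import math


def irradiance(power_w, distance_m):
    """Irradiance (W/m^2) at distance_m from an isotropic point source of power_w."""
    return power_w / (4.0 * math.pi * distance_m ** 2)


power = 10.0       # hypothetical source power in W
nominal = 0.020    # hypothetical source-to-vessel distance in m

# Doubling the distance leaves one quarter of the irradiance.
ratio_doubled = irradiance(power, 2 * nominal) / irradiance(power, nominal)

# A vessel sitting 5% farther away than its neighbours receives about 9% less light.
ratio_5pct = irradiance(power, 1.05 * nominal) / irradiance(power, nominal)

print(f"doubling distance: {ratio_doubled:.2f} of nominal irradiance")
print(f"5% farther away:   {ratio_5pct:.3f} of nominal irradiance")
```

Because the ratio depends only on the relative change in distance, the same percentages apply at any nominal spacing within the point-source approximation.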

Lambert's Cosine Law

Lambert's Cosine Law governs the angular distribution of light emitted or reflected from a surface. For a Lambertian (ideal diffuse) surface or emitter, the observed radiant intensity is directly proportional to the cosine of the angle θ between the observer's line of sight and the surface normal [20] [21].

Mathematical Formulation: The law is expressed as: ( I = I_0 \cdot \cos\theta ) Where:

- ( I ) is the intensity observed at angle θ

- ( I_0 ) is the intensity observed normal to the surface (at θ=0°)

Design Implications: This law has two primary consequences for reactor design [19] [21]:

- Surface Orientation: Reaction vessels or flow cells must be positioned normal to the incident light direction to maximize photon capture. A surface tilted at 60° receives only half the irradiance (( \cos60° = 0.5 )) of a normally-oriented surface.

- Apparent Brightness: For diffuse reflecting surfaces inside a reactor chamber, the perceived brightness remains constant regardless of viewing angle, which aids in creating uniform illumination environments.

Table 1: Quantitative Relationship Between Angle and Relative Intensity According to Lambert's Cosine Law

| Angle θ (degrees) | cos(θ) | Relative Intensity (I/I₀) |
|---|---|---|
| 0 | 1.000 | 100.0% |
| 15 | 0.966 | 96.6% |
| 30 | 0.866 | 86.6% |
| 45 | 0.707 | 70.7% |
| 60 | 0.500 | 50.0% |
| 75 | 0.259 | 25.9% |
| 90 | 0.000 | 0.0% |

Reactor Design Applications and Optimization

The strategic application of these optical laws enables the creation of photochemical platforms that deliver high irradiance intensity and exceptional uniformity across well plates, flow reactors, and droplet stop-flow systems [19].

Design Parameters and Optimization

Comprehensive ray-tracing simulations have been employed to optimize key design parameters for planar light sources comprising multiple LEDs [19]:

- LED Arrangement: Grid and offset grid patterns outperform concentric circles and spirals, particularly at higher irradiance intensities.

- Number of LEDs: Increasing LED count consistently improves performance; simulations tested configurations from 4 to 81 LEDs.

- Source Height: An optimal height of approximately 20 mm above the reaction surface minimizes normalized irradiance standard deviation, balancing hotspot reduction against intensity falloff.

- Pattern Width: Wider LED patterns enhance uniformity but with diminishing returns, creating a tradeoff between irradiance intensity and uniformity.

Table 2: Optimization Parameters for Planar LED Array Design from Ray-Tracing Analysis [19]

| Design Parameter | Tested Range | Optimal Value/Strategy | Impact on Performance |
|---|---|---|---|
| LED Arrangement Pattern | Concentric circles, spirals, grid, offset grid | Grid or offset grid | Superior uniformity at high mean irradiance |
| Number of LEDs | 4 to 81 LEDs | Maximize number within constraints | Always beneficial for both intensity and uniformity |
| Height Above Surface | 10-150 mm | ~20 mm | Minimizes normalized standard deviation of irradiance |
| Pattern Width | 75-150 mm | Wider patterns preferred | Improves uniformity with diminishing returns |
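The trends in this table can be explored with a much simpler model than the ray tracing used in [19]. The sketch below treats each LED as a Lambertian point emitter facing a flat reaction plane, so that the inverse square law and the cosine law combine to give an irradiance contribution of ( I_0 h^2 / d^4 ). The function names, the square grid layout, and the 100 mm target area are assumptions for illustration; the model ignores mirrors, diffusers, and real LED beam shapes, so it should not be expected to reproduce the published optimum.

```python
from statistics import mean, pstdev


def led_grid(n_side, width_mm):
    """Positions of an n_side x n_side LED grid centred on the origin."""
    if n_side == 1:
        return [(0.0, 0.0)]
    step = width_mm / (n_side - 1)
    half = width_mm / 2.0
    return [(-half + i * step, -half + j * step)
            for i in range(n_side) for j in range(n_side)]


def irradiance_at(x, y, leds, height_mm, i0=1.0):
    """Sum of Lambertian point-source contributions on a plane height_mm below the LEDs.

    Each LED contributes i0 * cos^2(theta) / d^2 = i0 * h^2 / d^4 (arbitrary units).
    """
    total = 0.0
    for led_x, led_y in leds:
        d_squared = (x - led_x) ** 2 + (y - led_y) ** 2 + height_mm ** 2
        total += i0 * height_mm ** 2 / d_squared ** 2
    return total


def coefficient_of_variation(leds, height_mm, target_mm, samples=21):
    """Standard deviation / mean of irradiance sampled on a square grid of the target."""
    half = target_mm / 2.0
    points = [-half + k * target_mm / (samples - 1) for k in range(samples)]
    values = [irradiance_at(x, y, leds, height_mm) for x in points for y in points]
    return pstdev(values) / mean(values)


# Compare the smallest and largest LED counts from the tested range (4 and 81 LEDs).
for n_side in (2, 9):
    cv = coefficient_of_variation(led_grid(n_side, 100.0), height_mm=20.0, target_mm=100.0)
    print(f"{n_side * n_side:>2} LEDs: normalized std dev = {cv:.2f}")
```

Sweeping `height_mm` or `width_mm` in the same loop gives a quick feel for the intensity-versus-uniformity trade-off before committing to a full optical simulation.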

Optical Elements for Enhanced Performance

Incorporating optical elements can further refine irradiance profiles [19]:

- Mirrored Surfaces: Surrounding LED arrays with mirrors on all four sides contains and redistributes light.

- Diffusing Layers: Ground glass diffusers placed between lights and the reaction surface scatter light to eliminate hotspots. Ray-tracing simulations show optimal placement closer to the light source.

Experimental Validation Protocols

Radiometric Validation of Uniform Irradiance

Purpose: To quantitatively measure irradiance intensity and distribution across the reaction plane to validate design uniformity [19].

Materials:

- Optical power meter with calibrated radiometer probe

- Translation stage for precise positional control

- Data acquisition system

Methodology:

- Secure the photochemical platform in a fixed position

- Mount the radiometer probe on a translation stage capable of XY movement

- Position the probe at a defined height corresponding to the reaction plane

- Measure irradiance at multiple points across the entire reaction surface using a predefined grid pattern

- Record measurements with sufficient density to characterize intensity gradients (typically 100+ points for a 100 mm × 100 mm area)

- Calculate mean irradiance, standard deviation, and coefficient of variation (standard deviation/mean) across all points

Data Analysis:

- Generate 2D contour plots of irradiance distribution

- Calculate uniformity metric: ( U = (1 - \frac{\sigma}{\mu}) \times 100\% ), where σ is standard deviation and μ is mean irradiance

- Compare experimental results with ray-tracing simulations
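The summary statistics and the uniformity metric ( U ) can be computed directly from the grid of radiometer readings. The sketch below uses the population standard deviation, which is an assumption because the protocol does not say whether the population or sample form is intended; the readings are made-up values.

```python
from statistics import mean, pstdev


def uniformity_report(readings):
    """Return mean, standard deviation, coefficient of variation, and U (%)."""
    mu = mean(readings)
    sigma = pstdev(readings)  # population form; swap for stdev() if preferred
    cv = sigma / mu
    return {"mean": mu, "std": sigma, "cv": cv, "uniformity_pct": (1.0 - cv) * 100.0}


# Hypothetical irradiance readings in mW/cm^2 from a few grid positions.
readings = [48.2, 50.1, 51.0, 49.5, 50.7, 47.9, 50.3, 49.8]
report = uniformity_report(readings)
print(f"mean = {report['mean']:.1f} mW/cm^2, U = {report['uniformity_pct']:.1f}%")
```

With 100 or more points the difference between population and sample standard deviation is small, but stating which one was used makes reported ( U ) values comparable between laboratories.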

Chemical Actinometry for Photon Flux Quantification

Purpose: To measure the total photon flux incident on reaction vessels using a standardized chemical reaction [16].

Materials:

- Potassium ferrioxalate solution (standard chemical actinometer)

- Spectrophotometer

- Reaction vessels identical to those used in experimental applications

Methodology:

- Prepare potassium ferrioxalate solution according to established protocols

- Fill reaction vessels with actinometer solution

- Expose to the photochemical platform for precisely measured time intervals

- Analyze the formation of Fe²⁺ complexes spectrophotometrically

- Calculate photon flux based on known quantum yield of the actinometric reaction

Data Analysis:

- Determine photon flux for each reaction vessel position

- Map spatial variation of photon flux across the reactor platform

- Correlate with radiometric measurements
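The photon flux calculation follows from the Beer-Lambert law and the definition of quantum yield. The sketch below is a generic outline under stated assumptions: the molar absorptivity, quantum yield, volumes, and absorbance are placeholders that must be replaced with values from the actinometry protocol and wavelength actually used, and the function names are hypothetical.

```python
def moles_fe2(absorbance, epsilon, path_cm, v_measured_l, v_irradiated_l, v_aliquot_l):
    """Moles of Fe2+ formed in the irradiated solution.

    absorbance      blank-corrected absorbance of the developed Fe2+ complex
    epsilon         molar absorptivity of that complex (L mol^-1 cm^-1)
    v_measured_l    final volume of the diluted, developed sample (L)
    v_irradiated_l  total volume of actinometer solution irradiated (L)
    v_aliquot_l     volume of irradiated solution taken for development (L)
    """
    concentration = absorbance / (epsilon * path_cm)  # Beer-Lambert law, mol/L
    return concentration * v_measured_l * (v_irradiated_l / v_aliquot_l)


def photon_flux(n_fe2_mol, quantum_yield, time_s, fraction_absorbed=1.0):
    """Photon flux in mol photons per second (einstein/s)."""
    return n_fe2_mol / (quantum_yield * time_s * fraction_absorbed)


# Placeholder inputs for illustration only; look up epsilon and quantum_yield
# for the specific complex and irradiation wavelength.
n_fe2 = moles_fe2(absorbance=0.555, epsilon=11100.0, path_cm=1.0,
                  v_measured_l=0.010, v_irradiated_l=0.003, v_aliquot_l=0.001)
flux = photon_flux(n_fe2, quantum_yield=1.0, time_s=60.0)
print(f"Fe2+ formed: {n_fe2:.2e} mol, photon flux: {flux:.2e} einstein/s")
```

Running the same calculation for every vessel position gives the spatial map of photon flux called for in the data analysis steps.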

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Materials and Equipment for Uniform Irradiation Reactor Implementation [19] [16]

| Item | Function/Application | Implementation Example |
|---|---|---|
| High-Power LEDs | Primary light source with tunable intensity and multiple wavelength options | Array of visible light LEDs (avoiding UV for safety); computer-controlled for integration with automation platforms [19] |
| Mirrored Surfaces | Reflect and redistribute light to enhance uniformity | Placed on all four sides of LED array to contain and direct light toward reaction plane [19] |
| Ground Glass Diffusers | Scatter light to eliminate hotspots and create uniform illumination | 300 mm × 300 mm layer placed between LEDs and reaction surface [19] |
| Optical Power Meter | Quantify irradiance and validate uniformity | Measure photon flux with display integrated into reactor control system [16] |
| Aluminum Reflectors | Broad-band light reflection to improve photon efficiency | Incorporated to redirect otherwise lost photons toward reaction vessels [16] [17] |
| Cooling Systems | Manage heat from high-power LEDs to prevent thermal effects on reactions | Active cooling solutions to maintain temperature control alongside optical optimization [19] |

Implementation Framework and Temperature Control Integration

Successful implementation requires systematic consideration of both optical and thermal factors:

- Hierarchical Design Approach: Begin with optical layout based on ray-tracing simulations, then integrate thermal management around this optical core [19].

- Control Systems Integration: Implement PID control algorithms for both LED output (intensity tuning) and temperature regulation, preferably with self-tuning capabilities for simplified operation [18].

- Validation Protocol: Employ both radiometric measurements and chemical actinometry to confirm performance before proceeding to experimental use.
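For readers unfamiliar with PID control, the temperature-regulation part of the framework above can be sketched as follows. This is a textbook discrete PID loop with output clamping, driving a hypothetical first-order thermal model; the gains, the plant parameters, and the class and function names are assumptions chosen for illustration, and no self-tuning is implemented.

```python
class PID:
    """Discrete PID controller with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd, out_min=0.0, out_max=100.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        # Anti-windup: keep the new integral only while the output is not saturated.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate
        return min(max(output, self.out_min), self.out_max)


def simulate(setpoint=150.0, ambient=25.0, gain=2.0, tau=120.0, dt=1.0, steps=3600):
    """Hypothetical heater block: tau * dT/dt = gain * heater_pct - (T - ambient)."""
    pid = PID(kp=2.0, ki=0.05, kd=0.0)
    temp = ambient
    history = []
    for _ in range(steps):
        heater_pct = pid.update(setpoint, temp, dt)  # heater duty in percent
        temp += dt * (gain * heater_pct - (temp - ambient)) / tau
        history.append(temp)
    return history


history = simulate()
print(f"temperature after {len(history)} s: {history[-1]:.2f} deg C")
```

The same controller structure can regulate LED drive current against a photodiode reading; only the measurement, the output limits, and the gains change.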

The integration of optical and thermal control systems creates a reactor environment in which both photonic and thermal energy are precisely managed, which supports high reproducibility in photochemical research and development [19] [18].

The deliberate application of the Inverse Square Law and Lambert's Cosine Law provides the foundational framework for designing photochemical reactors capable of delivering uniform irradiation. Through strategic LED arrangement, optimized source-to-surface distances, and incorporation of reflective and diffusive elements, researchers can create systems that ensure consistent reaction conditions across all vessels in parallel setups.

When these optical principles are integrated with precision temperature control systems, the resulting platforms allow researchers in pharmaceutical development and other high-value chemical sectors to conduct photochemical reactions with high reproducibility and scalability. Managing photonic and thermal energy together is therefore a sound basis for robust parallel reactor systems in advanced chemical research and development.

Impact of Temperature Gradients, Flow Patterns, and Swirling Effects on System Performance

The precise control of temperature and fluid dynamics is a cornerstone of efficient and safe operation across numerous industrial and research systems, from chemical reactors to energy generation equipment. This section examines the critical interplay between temperature gradients, flow patterns, and swirling effects on overall system performance. Understanding these coupled phenomena is essential for researchers and drug development professionals aiming to optimize reaction yields, enhance operational safety, and improve the scalability of processes. The following sections provide a detailed analysis of these parameters, supported by computational and experimental studies, and present structured data, experimental protocols, and visualization tools to guide further research and development.

Theoretical Foundations and Key Concepts

Flow Configurations and Heat Transfer Efficiency

The arrangement of fluid flows within a system is a primary determinant of its thermal performance. Two fundamental configurations are prevalent:

- Parallel Flow: In this configuration, the hot and cold fluids move in the same direction. The two streams approach a common temperature along the flow path, so the temperature difference that drives heat transfer shrinks from inlet to outlet. While simpler to implement, this configuration typically yields lower heat transfer rates [22].

- Counter Flow: Here, the hot and cold fluids enter from opposite ends. This arrangement maintains a more consistent temperature gradient across the entire length of the heat exchanger, typically achieving higher heat transfer efficiency and allowing for more compact designs. It is widely used in high-temperature and cryogenic processes [22].
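One common way to quantify the difference between the two configurations is the log-mean temperature difference (LMTD), a standard heat-exchanger measure that is not part of the cited study. The sketch below compares both arrangements for the same four stream temperatures, which are illustrative assumptions.

```python
import math


def lmtd(dt_a, dt_b):
    """Log-mean temperature difference for terminal differences dt_a and dt_b (K)."""
    if dt_a <= 0 or dt_b <= 0:
        raise ValueError("terminal temperature differences must be positive")
    if math.isclose(dt_a, dt_b):
        return dt_a  # limiting value when both ends have the same difference
    return (dt_a - dt_b) / math.log(dt_a / dt_b)


# Hypothetical stream temperatures in deg C.
hot_in, hot_out = 150.0, 90.0
cold_in, cold_out = 30.0, 70.0

# Parallel flow: both inlets share one end, both outlets share the other.
lmtd_parallel = lmtd(hot_in - cold_in, hot_out - cold_out)

# Counter flow: the hot inlet faces the cold outlet.
lmtd_counter = lmtd(hot_in - cold_out, hot_out - cold_in)

print(f"parallel flow LMTD: {lmtd_parallel:.1f} K")  # 55.8 K
print(f"counter flow LMTD:  {lmtd_counter:.1f} K")   # 69.5 K
```

For a given heat duty and overall heat transfer coefficient, the required exchange area scales inversely with LMTD, which is why the counter-flow arrangement allows a more compact design.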

The Role and Implications of Swirling Flows

Swirling flows, intentionally generated by devices like twisted-tape inserts or axial-vane swirlers, are a key passive method for heat transfer intensification [23].

- Heat Transfer Intensification: The primary mechanism involves the creation of secondary flows and vortices that disrupt the thermal boundary layer at the tube wall, enhancing the mixing between the core of the flow and the wall region. This leads to a significant increase in the heat transfer coefficient [23].

- Trade-offs and Challenges: A universal drawback of heat transfer intensification is an increased pressure drop, which elevates the pumping power required. The efficiency of any intensification method must therefore be evaluated based on a combination of the improved heat transfer and the accompanying pressure loss [23]. Furthermore, in reactor systems, intense swirling can induce high mechanical stresses on components and create complex, unstable flow structures that may lead to undesirable temperature fluctuations [22].

Temperature Distribution and System Safety

Non-uniform temperature distributions pose significant risks to system integrity and performance.

- Hot-Spots: These are localized regions of excessively high temperature that can develop due to inadequate mixing, uneven flow distribution, or excessive heat generation. In pharmaceutical reactors, hot-spots can degrade product quality, while in nuclear applications, they threaten structural integrity through thermal fatigue [22] [24].

- Outlet Temperature Distribution Factor (OTDF): In combustors, the OTDF is a critical performance indicator that characterizes the temperature uniformity at the turbine inlet. A high OTDF, indicating significant non-uniformity, can lead to overheating of turbine blades and reduced component lifespan [25].
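The text above does not give a formula for the OTDF. A commonly used definition, adopted here as an assumption, divides the difference between the peak and mean outlet temperatures by the mean temperature rise across the combustor. The sketch below applies that definition to made-up outlet temperatures.

```python
from statistics import mean


def otdf(outlet_temps, inlet_temp):
    """Outlet temperature distribution factor under the assumed common definition:

    (T_max_outlet - T_mean_outlet) / (T_mean_outlet - T_inlet)
    """
    t_mean = mean(outlet_temps)
    return (max(outlet_temps) - t_mean) / (t_mean - inlet_temp)


# Hypothetical outlet temperature samples and inlet temperature in K.
outlet = [1500.0, 1600.0, 1700.0]
value = otdf(outlet, inlet_temp=800.0)
print(f"OTDF = {value:.3f}")
```

An area- or mass-weighted mean is often used in place of the simple mean when the sampling points do not represent equal portions of the outlet flow.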

Quantitative Analysis of System Performance

The following tables synthesize key quantitative findings from various studies on flow configurations and swirling flows.

Table 1: Performance Comparison of Parallel vs. Counter Flow Configurations in a Dual Fluid Reactor Mini Demonstrator (DFR MD) [22]

| Performance Parameter | Parallel Flow Configuration | Counter Flow Configuration |
|---|---|---|
| Heat Transfer Efficiency | Lower | Higher |
| Temperature Gradient | Decreasing along flow path | More consistent and stable |
| Flow Velocity Uniformity | Less uniform | More uniform |
| Swirling Effects | Intense in fuel pipes | Significantly reduced |
| Mechanical Stress | Higher | Lower |
| Thermal Hot-Spot Risk | Higher | Lower |

Table 2: Influence of Swirl Number on Combustor Performance and Flow Features [25]

| Parameter / Feature | Low Swirl Number | High Swirl Number |
|---|---|---|
| Outlet Temperature Uniformity | Lower (less uniform, higher OTDF) | Higher (more uniform, lower OTDF) |
| Precessing Vortex Core (PVC) Dynamics | Lower intensity | More pronounced, altered dynamics |
| Recirculation Zone Structure | Standard two vortices | Altered and strengthened |
| Hot-Spot Migration | Axial accumulation likely | Suppressed, promotes radial mixing |
| Mixing Efficiency | Standard | Enhanced |

Table 3: Generalized Heat Transfer and Friction Correlations for Swirling Flows in Tubes with Twisted Tape Inserts [23]

| Flow Regime | Nusselt Number (Nu) Correlation | Friction Factor (λ) Correlation |
|---|---|---|
| Turbulent Flow | ( Nu = 0.023 Re^{0.8} Pr^{0.4} \left(1 + \frac{0.769}{s/d}\right) ) | ( \lambda = \frac{0.0791}{Re^{0.25}} \left(1 + \frac{2.752}{(s/d)^{1.29}}\right) ) |
| Laminar Flow | ( Nu = 4.612 \left(1 + 0.0951 Gz^{0.894}\right)^{2.5} ) (Complex dependency on Sw) | ( \lambda = \frac{15.767}{Re} \left(1 + 10^{-6} Sw^{2.55}\right)^{0.16} ) |
| Transition Flow | ( Nu = 0.3 Re^{0.6} Pr_f^{0.43} \left(0.5 + \frac{8}{\pi^2}(s/d)^2\right)^{-0.135} ) | ( \lambda = \frac{6.34}{Re^{0.474}} \left(0.5 + \frac{8}{\pi^2}(s/d)^2\right)^{-0.263} + \frac{25.6}{Re} ) |

Note: ( Re ) = Reynolds number; ( Pr ) = Prandtl number; ( s/d ) = twist ratio (swirl pitch / tube diameter); ( Gz ) = Graetz number; ( Sw ) = Swirl parameter [23].
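As a rough illustration of how the turbulent-flow row of Table 3 might be evaluated, the sketch below codes the two correlations exactly as tabulated. The function names and the sample values of Re, Pr, and s/d are illustrative assumptions; the validity range of the correlations should be taken from [23].

```python
def nusselt_turbulent(re: float, pr: float, twist_ratio: float) -> float:
    """Turbulent-flow Nusselt number for a tube with a twisted tape insert.

    twist_ratio is s/d (swirl pitch divided by tube diameter).
    """
    return 0.023 * re**0.8 * pr**0.4 * (1.0 + 0.769 / twist_ratio)


def friction_turbulent(re: float, twist_ratio: float) -> float:
    """Turbulent-flow friction factor for the same geometry."""
    return 0.0791 / re**0.25 * (1.0 + 2.752 / twist_ratio**1.29)


if __name__ == "__main__":
    re, pr = 1.0e4, 7.0  # assumed operating point (water-like fluid)
    for twist_ratio in (2.5, 5.0, 10.0):  # assumed tape geometries
        nu = nusselt_turbulent(re, pr, twist_ratio)
        lam = friction_turbulent(re, twist_ratio)
        print(f"s/d={twist_ratio:4.1f}  Nu={nu:7.2f}  lambda={lam:.5f}")
```

As s/d grows large, both bracketed factors tend to 1 and the expressions reduce to their plain-tube forms, so a tighter twist (smaller s/d) raises both Nu and λ in this correlation.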

Experimental Protocols and Methodologies

Protocol 1: Comparative Thermal-Hydraulic Analysis of Flow Configurations

This protocol outlines the methodology for comparing parallel and counter-flow configurations using Computational Fluid Dynamics (CFD), as applied to a Dual Fluid Reactor [22].

- Objective: To evaluate and compare the thermal-hydraulic performance, including heat transfer efficiency, temperature gradients, velocity profiles, and swirling effects, between parallel and counter-flow configurations.

- Computational Model:

  - Geometry: A 3D model of the reactor core is created. To save computational resources, simulations can often be performed on a symmetric segment (e.g., a quarter) of the full domain.

  - Governing Equations: The time-averaged mass, momentum, and energy conservation equations are solved.

  - Turbulence and Heat Transfer Model:

    - The Reynolds-Averaged Navier-Stokes (RANS) framework is employed.

    - A variable turbulent Prandtl number model is critical for accurate simulation of fluids with low Prandtl numbers (e.g., liquid metals). The Kays correlation is recommended: \( Pr_t = 0.85 + \frac{0.7}{Pe_t} \), where \( Pe_t \) is the turbulent Péclet number [22].

  - Boundary Conditions: Set inlet flow rates and temperatures for hot and cold streams, and specify pressure or outflow conditions at the outlets.

- Simulation and Analysis:

  - Run steady-state simulations for both configurations.

  - Post-process the results to extract:

    - Temperature and velocity field contours.

    - Profiles of temperature and velocity along specified paths.

    - Quantification of swirling intensity and identification of vortex structures.

    - Calculation of global performance metrics like heat transfer rate and pressure drop.
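The variable turbulent Prandtl number in Protocol 1 can be sketched as a small helper. The version below assumes the common definition of the turbulent Péclet number as the eddy-to-molecular viscosity ratio multiplied by the molecular Prandtl number; that definition, the function names, and the sample values are assumptions and should be checked against [22] and the CFD solver in use.

```python
def turbulent_peclet(viscosity_ratio: float, prandtl: float) -> float:
    """Turbulent Peclet number, assumed here to be (nu_t / nu) * Pr."""
    return viscosity_ratio * prandtl


def kays_turbulent_prandtl(pe_t: float) -> float:
    """Kays correlation: Pr_t = 0.85 + 0.7 / Pe_t."""
    if pe_t <= 0.0:
        raise ValueError("Pe_t must be positive")
    return 0.85 + 0.7 / pe_t


if __name__ == "__main__":
    viscosity_ratio = 10.0  # assumed local nu_t / nu
    for label, pr in (("liquid metal", 0.025), ("water", 7.0)):  # assumed Pr values
        pe_t = turbulent_peclet(viscosity_ratio, pr)
        print(f"{label:12s}  Pe_t={pe_t:6.2f}  Pr_t={kays_turbulent_prandtl(pe_t):.3f}")
```

With these sample inputs the liquid-metal case gives a turbulent Prandtl number well above the constant value of about 0.85 often used for ordinary fluids, while the water-like case stays close to it. This is why the protocol singles out low-Prandtl fluids.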

Protocol 2: Experimental Analysis of Swirling Flow and Heat Transfer

This protocol describes an experimental approach to characterize the performance of different swirlers in a heat exchanger setup [23].

- Objective: To determine the heat transfer enhancement and pressure drop associated with different swirlers (e.g., constant vs. variable twist pitch) under controlled conditions.

- Experimental Setup:

  - Apparatus: A vertical "pipe-in-pipe" heat exchanger with counter-current coolant flow. The test section is equipped with a swirler insertion port.

  - Instrumentation:

    - Thermocouples: For measuring the temperature field along the length and at the outlet of the test section.

    - Pressure Transducers: For measuring the pressure drop across the test section.

    - Flow Meters: For measuring the flow rates of the hot and cold streams.

- Procedure:

  - Install a specific swirler into the test section.

  - Set the flow rates of the hot internal fluid and the cold external coolant to desired values.

  - Allow the system to reach steady-state conditions.

  - Record temperature readings at all thermocouples and the pressure drop.

  - Repeat measurements for various combinations of flow rates.

  - Repeat the entire procedure for each swirler geometry under investigation.

- Data Analysis:

  - Calculate the heat transfer coefficient and Nusselt number for each test case.

  - Calculate the friction factor.

  - Plot Nu and λ against Reynolds number for each swirler.

  - Establish an efficiency criterion (e.g., \( \frac{Nu/Nu_{smooth}}{(\lambda/\lambda_{smooth})^{1/3}} \)) to compare the overall performance of different swirlers against a smooth pipe [23].
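The final data-analysis step can be sketched as follows, assuming the efficiency criterion takes the commonly used form (Nu/Nu_smooth) / (λ/λ_smooth)^(1/3). The swirler names and all numerical values below are hypothetical placeholders, not measurements from [23].

```python
def performance_factor(nu: float, nu_smooth: float,
                       lam: float, lam_smooth: float) -> float:
    """Thermal performance factor relative to a smooth pipe.

    Values above 1 suggest the heat transfer gain outweighs the
    friction penalty under this particular criterion.
    """
    return (nu / nu_smooth) / (lam / lam_smooth) ** (1.0 / 3.0)


if __name__ == "__main__":
    # Hypothetical smooth-pipe baseline at one Reynolds number.
    nu_smooth, lam_smooth = 80.0, 0.0080

    # Hypothetical swirler results at the same Reynolds number.
    swirlers = {
        "constant pitch": {"nu": 120.0, "lam": 0.0200},
        "variable pitch": {"nu": 112.0, "lam": 0.0150},
    }

    for name, data in swirlers.items():
        eta = performance_factor(data["nu"], nu_smooth, data["lam"], lam_smooth)
        print(f"{name:15s}  performance factor = {eta:.3f}")
```

In practice the baseline and swirler values would be evaluated at matching Reynolds numbers across the whole tested range, so the factor can be plotted alongside Nu and λ.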

Visualization of Core Concepts and Workflows

Relationship Between Flow, Heat, and Swirl

The following diagram illustrates the logical relationships and feedback loops between flow patterns, swirling effects, and temperature distribution, which collectively determine system performance.

Diagram 1: Interplay of key parameters affecting system performance.

Workflow for CFD-Based Reactor Analysis

This diagram outlines the structured workflow for conducting a computational analysis of a reactor system, as detailed in Experimental Protocol 1.

Diagram 2: CFD analysis workflow for reactor design.

The Scientist's Toolkit: Essential Research Reagents and Materials

This section details key components and reagents used in experimental setups for studying temperature gradients and flow patterns, particularly in the context of parallel reactor systems [5] [1] [26].

Table 4: Essential Research Reagent Solutions and Materials

| Item | Function / Application | Key Characteristics |
|---|---|---|
| Parallel Reactor Stations | Enables high-throughput screening of reactions under controlled, parallel conditions. | Multiple independent reaction vessels; independent control of T, P, and stirring [26]. |
| Twisted Tape Swirlers | Passive heat transfer intensifier; induces swirling flow in tubular reactors and heat exchangers. | Simple, low-cost insert; defined by twist ratio (s/d); creates secondary flows [23]. |
| Bayesian Optimization Algorithm | Data-driven control software for automated reaction optimization over continuous & categorical variables. | Enables iterative experimental design; reduces time and material consumption [5]. |
| Fluoropolymer Tubing Reactor | Flexible and chemically resistant material for constructing microreactors. | High surface-to-volume ratio; excellent heat transfer; broad chemical compatibility [5]. |
| On-line HPLC System | Integrated analytics for real-time evaluation of reaction outcomes. | Provides immediate feedback; eliminates need for manual quenching and sampling [5]. |
| Liquid Handling Robot | Automated preparation and dosing of reaction mixtures. | Improves reproducibility; enables high-throughput experimentation [5]. |

The control of temperature gradients, flow patterns, and swirling effects is a complex but essential aspect of optimizing system performance in research and industrial applications. The studies reviewed in this section indicate that counter-flow configurations generally offer superior heat transfer efficiency and temperature uniformity compared to parallel flow, while swirling flows are a powerful tool for enhancing mixing and heat transfer, albeit at the cost of increased pressure drop. The quantitative data, experimental protocols, and workflow descriptions provided here offer a foundation for researchers to design, analyze, and optimize their systems. For drug development professionals, applying these principles through tools such as parallel reactors and machine learning-driven optimization can shorten discovery and development cycles, supported by a deeper understanding of the underlying thermal-fluid processes.

Implementation and Workflow Integration: Advanced Methodologies for Precision Temperature Control

Precise temperature control is a fundamental requirement in microfluidic technology, enabling advancements in a wide range of biological applications from rapid nucleic acid amplification and targeted cancer therapy to efficient cellular lysis [27]. The evolution of lab-on-a-chip devices necessitates the integration of robust, miniaturized thermal management systems that can deliver accurate spatial and temporal temperature profiles. Among the various techniques developed, induction, photothermal, and electrothermal (Joule) heating have emerged as prominent mechanisms for integrated thermal control. These methods facilitate direct, rapid, and localized heating within microfluidic systems, overcoming limitations of conventional external heaters [28] [29]. This guide provides a technical examination of these three core heating mechanisms, detailing their operating principles, implementation protocols, and performance characteristics to support research and development in parallel reactor temperature control.

Core Heating Mechanisms: Principles and Comparisons

The selection of a heating mechanism is critical in microfluidic design, with induction, photothermal, and electrothermal methods each offering distinct advantages for different application scenarios.

Electrothermal or Joule heating operates on the principle of power dissipation when an electric current passes through a resistive conductor. The generated power (P) is given by \( P = I^2R \) or \( P = V^2/R \), where I is the current, V is the voltage, and R is the electrical resistance. This heat is then transferred to the fluid within the microchannel through conduction [28] [29]. Joule heating enables rapid temperature ramp rates, exceeding 1000 °C/s in some implementations, and can achieve temperatures from ambient to 130 °C, making it suitable for applications like on-chip PCR [28].
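The power relations above can be checked with a short calculation. The sketch below also estimates an idealized ramp rate from dT/dt = P / (m c_p), which ignores all heat losses and the thermal mass of the chip, so it is only an upper bound. The heater resistance, drive current, fluid volume, and function names are assumed values for illustration.

```python
def joule_power_from_current(current_a: float, resistance_ohm: float) -> float:
    """P = I^2 * R, in watts."""
    return current_a**2 * resistance_ohm


def joule_power_from_voltage(voltage_v: float, resistance_ohm: float) -> float:
    """P = V^2 / R, in watts."""
    return voltage_v**2 / resistance_ohm


def adiabatic_ramp_rate(power_w: float, mass_kg: float, cp_j_per_kg_k: float) -> float:
    """Idealized heating rate dT/dt = P / (m * c_p), in K/s (no losses)."""
    return power_w / (mass_kg * cp_j_per_kg_k)


if __name__ == "__main__":
    resistance = 100.0   # ohm, assumed microheater resistance
    current = 0.1        # A, assumed drive current
    voltage = current * resistance  # V, from Ohm's law

    p_i = joule_power_from_current(current, resistance)
    p_v = joule_power_from_voltage(voltage, resistance)
    print(f"P from current: {p_i:.3f} W, P from voltage: {p_v:.3f} W")

    # Assumed load: 1 microlitre of water (about 1e-6 kg, c_p about 4186 J/(kg K)).
    rate = adiabatic_ramp_rate(p_i, 1.0e-6, 4186.0)
    print(f"Idealized ramp rate: {rate:.0f} K/s")
```

Both power expressions give the same result when V and I are related by Ohm's law. The idealized ramp rate shows why small thermal masses allow fast heating even at power levels around one watt.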

Photothermal heating utilizes electromagnetic radiation, typically from lasers or LEDs, to excite nanoparticles or dyes within the fluid. These photothermal agents absorb photon energy and convert it to thermal energy through non-radiative relaxation processes [27]. The heating is highly localized to the vicinity of the nanoparticles, enabling precise thermal patterning without significantly heating the entire device substrate. Gold nanorods, for instance, have achieved heating rates of 12 °C/s under 808 nm laser irradiation [30].

Induction heating employs alternating magnetic fields to generate eddy currents within conductive materials, such as embedded metal nanoparticles or micro-electrodes. These currents encounter electrical resistance, resulting in Joule heating of the material [27]. The inductive coupling allows for non-contact heating through the device substrate, enabling efficient thermal transfer while isolating the power source from the fluidic pathways.

Table 1: Comparative Analysis of Microfluidic Heating Mechanisms

| Heating Mechanism | Operating Principle | Typical Temp. Range | Max. Ramp Rate | Spatial Resolution | Integration Level | Key Applications |
|---|---|---|---|---|---|---|
| Electrothermal (Joule) | Current through resistive element [28] | 25–130 °C [28] | >1000 °C/s [28] | Moderate (channel-level) [29] | High (on-chip) [28] | PCR, temperature gradient focusing (TGF), mixing [28] [29] |
| Photothermal | Light absorption by nanoparticles [27] | Ambient to >100 °C [27] | ~12 °C/s [30] | High (sub-cellular) [27] | Moderate (external source) [27] | Cellular lysis, cancer therapy [27] |
| Induction | Magnetic field on nanoparticles [27] | Not reported | Not reported | Moderate to High [27] | High (on-chip) [27] | Hyperthermia, droplet control [27] |

Table 2: Typical Power Requirements and Control Characteristics

| Heating Mechanism | Power Requirement | Control Method | Response Time | Temperature Homogeneity | Gradient Generation Capability |
|---|---|---|---|---|---|
| Electrothermal (Joule) | Up to 2.2 W [28] | PID on current/voltage [30] | Milliseconds to seconds [28] | High with design [29] | Yes (via electrode patterning) [28] |
| Photothermal | ~500 mW (laser) [28] | PID on laser power [27] | Seconds [30] | Localized to nanoparticles [27] | Yes (via beam shaping) [27] |
| Induction | Varies with coil design [27] | PWM on magnetic field [27] | Seconds [27] | Dependent on nanoparticle distribution [27] | Possible with field focusing [27] |

Experimental Implementation and Protocols

Successful implementation of heating mechanisms requires careful attention to material selection, fabrication techniques, and control systems. Below are detailed methodologies for integrating each heating approach.

Electrothermal (Joule) Heating System

Integrated Microheater Fabrication:

- Substrate Preparation: Begin with a clean glass or silicon wafer. Deposit a 50–200 nm layer of platinum or indium tin oxide (ITO) via sputtering or evaporation. These materials are preferred for their stable resistive properties and biocompatibility [29].

- Patterning: Apply photoresist and pattern microheater designs using standard photolithography. The design typically features serpentine patterns to maximize resistance and heating uniformity within the target microchannel area.

- Etching: Use wet or dry etching to remove excess metal, creating the final microheater structure.

- Insulation: Deposit a thin dielectric layer (e.g., silicon nitride, SU-8) over the microheater for electrical insulation and fluid compatibility.

- Bonding: Bond the substrate containing microheaters with the PDMS microfluidic channel layer using oxygen plasma treatment [29].

Temperature Control Protocol:

- Calibration: Correlate electrical resistance of the microheater with temperature by measuring its resistance at known reference temperatures. Platinum's linear resistance-temperature relationship simplifies this process [29].

- Controller Implementation: Employ a PID feedback controller. Connect the microheater in a Wheatstone bridge configuration, using the imbalance voltage to drive a power amplifier that supplies the microheater.

- Validation: Use an infrared camera or integrated fluorescent dyes (e.g., Rhodamine B) to map temperature distribution during operation, verifying setpoint accuracy and homogeneity [28].
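The calibration and control steps above can be sketched in software. The example below fits a linear resistance-temperature calibration, then runs a discrete PID loop against a simple first-order thermal model. It reads the heater resistance directly rather than through a Wheatstone bridge, which is a simplification. The heater coefficients, thermal parameters, controller gains, power limit, and all names are assumed values for illustration, not taken from [28] or [29].

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

CAL_TEMPS = (25.0, 50.0, 75.0, 100.0)  # assumed reference temperatures, °C


def fit_line(temps: Sequence[float], resistances: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of R = slope * T + intercept."""
    n = len(temps)
    mean_t = sum(temps) / n
    mean_r = sum(resistances) / n
    sxx = sum((t - mean_t) ** 2 for t in temps)
    sxy = sum((t - mean_t) * (r - mean_r) for t, r in zip(temps, resistances))
    slope = sxy / sxx
    return slope, mean_r - slope * mean_t


def heater_resistance(temp_c: float) -> float:
    """Hypothetical linear heater: 100 ohm at 0 °C, 0.385 ohm/°C (assumed)."""
    return 100.0 * (1.0 + 0.00385 * temp_c)


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    out_min: float = 0.0
    out_max: float = 2.0  # assumed heater power limit, W
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        if self.out_min <= output <= self.out_max:
            self.integral = candidate  # integrate only while unsaturated (anti-windup)
        return min(max(output, self.out_min), self.out_max)


def simulate(setpoint: float = 95.0, duration: float = 5.0, dt: float = 0.001) -> float:
    """Run the loop on a first-order thermal model; return the final temperature."""
    slope, intercept = fit_line(CAL_TEMPS, [heater_resistance(t) for t in CAL_TEMPS])
    pid = PID(kp=0.5, ki=2.0, kd=0.0)
    ambient, r_th, c_th = 25.0, 50.0, 0.01  # °C, K/W, J/K (all assumed)
    temp = ambient
    for _ in range(int(duration / dt)):
        measured = (heater_resistance(temp) - intercept) / slope  # resistance -> temperature
        power = pid.update(setpoint, measured, dt)
        temp += dt * (power - (temp - ambient) / r_th) / c_th  # explicit Euler step
    return temp


if __name__ == "__main__":
    print(f"Final temperature: {simulate():.2f} °C")
```

In a real device the calibration points would come from measurements at known reference temperatures, and the gains would be tuned against the measured thermal response rather than this assumed model.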

Photothermal Heating System

Nanoparticle Synthesis and Functionalization:

- Synthesis: Prepare gold nanorods using seed-mediated growth. First, create a seed solution by reducing chloroauric acid with sodium borohydride in the presence of cetyltrimethylammonium bromide (CTAB). Then, add seeds to a growth solution containing chloroauric acid, CTAB, and silver nitrate, with ascorbic acid as a reducing agent [30].