Precision Temperature Control in Parallel Reactors: Ensuring Reproducibility, Efficiency, and Product Quality in Pharmaceutical Development

Abstract

This article explores the critical role of precise temperature control in parallel reactor systems for pharmaceutical research and drug development. It establishes the fundamental impact of temperature on reaction kinetics and product yield, details advanced control methodologies from PID to AI-driven systems, and provides strategies for troubleshooting common thermal management challenges. By presenting validation frameworks and comparative analyses of control architectures, this guide equips scientists with the knowledge to design robust, reproducible, and efficient reaction screening and optimization campaigns, ultimately accelerating the development of new therapeutics.

The Critical Role of Temperature in Reaction Outcomes and System Design

Impact on Kinetics, Selectivity, and Product Distribution

In parallel reactor research, precise temperature control is not merely an operational detail but a fundamental determinant of experimental success. It directly governs reaction kinetics, product selectivity, and final product distribution, especially when multiple reactions compete for the same reactant. Modern automated platforms, such as the droplet reactor system featuring multiple independent parallel channels, enable high-throughput screening under meticulously controlled conditions [1]. The ability to independently control each reactor's temperature is crucial for generating reproducible, high-fidelity data essential for both reaction optimization and kinetic studies [1]. This technical guide examines the profound impact of temperature control within the context of parallel reactors, providing researchers with the theoretical foundation and practical methodologies needed to harness its full potential.

Theoretical Foundations: Temperature, Kinetics, and Selectivity

Temperature Dependence of Reaction Kinetics

The rate of a chemical reaction exhibits a strong, exponential dependence on temperature, as described by the Arrhenius equation, $k = A e^{-E_a/RT}$, where $k$ is the rate constant, $A$ is the pre-exponential factor (not to be confused with reactant A below), $E_a$ is the activation energy, $R$ is the gas constant, and $T$ is the absolute temperature [2]. In a parallel reaction system where a single reactant A can form products B and C via two pathways with rate constants $k_1$ and $k_2$, each first order in A, the rate of formation of each product is given by:

- $\frac{d[B]}{dt} = k_1[A]$

- $\frac{d[C]}{dt} = k_2[A]$ [2]
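At constant temperature ($k_1$ and $k_2$ fixed), these rate laws have a closed-form solution: A decays with the combined constant $k_1 + k_2$, and the consumed A is split between B and C in proportion to the two rate constants. The Python sketch below evaluates that solution; the rate constants and time are hypothetical values chosen only for illustration, and the model assumes no B or C at $t = 0$ and no other side reactions.

```python
import math
from typing import Tuple


def parallel_first_order(a0: float, k1: float, k2: float, t: float) -> Tuple[float, float, float]:
    """Analytic ([A], [B], [C]) at time t for A -> B (k1) and A -> C (k2), both first order in A.

    Assumes [B] = [C] = 0 at t = 0 and constant temperature (fixed k1, k2).
    """
    k_total = k1 + k2
    a = a0 * math.exp(-k_total * t)     # A decays with the combined rate constant
    converted = a0 - a                   # total A consumed so far
    b = converted * k1 / k_total         # share routed through pathway 1
    c = converted * k2 / k_total         # share routed through pathway 2
    return a, b, c


a, b, c = parallel_first_order(a0=1.0, k1=0.02, k2=0.01, t=60.0)
print(f"[A]={a:.3f}  [B]={b:.3f}  [C]={c:.3f}  [B]/[C]={b / c:.2f}")
# prints: [A]=0.165  [B]=0.556  [C]=0.278  [B]/[C]=2.00
```

The ratio [B]/[C] equals $k_1/k_2$ at every time point, independent of conversion.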

Temperature as a Tool for Controlling Selectivity

In parallel reactions, the product distribution is determined by the ratio of the rate constants. For the aforementioned system, because both rate laws depend on [A] in the same way, integrating them from an initial state containing no B or C gives:

- Ratio of products: $\frac{[B]}{[C]} = \frac{k_1}{k_2}$ [2]

Consequently, any factor affecting the relative values of $k_1$ and $k_2$ will directly influence selectivity. Since the activation energy ($E_a$) governs a rate constant's sensitivity to temperature, the pathway with the higher $E_a$ will experience a proportionally larger rate increase with rising temperature [2]. This principle allows researchers to manipulate selectivity by strategically adjusting the reaction temperature: raising it favors the higher-$E_a$ pathway, while lowering it favors the lower-$E_a$ pathway.
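The temperature dependence of selectivity can be made concrete by combining the Arrhenius equation with the product ratio above. In this sketch the pre-exponential factors and activation energies for the two pathways are hypothetical; only the form $k = A e^{-E_a/RT}$ and the relation $[B]/[C] = k_1/k_2$ come from the text.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def rate_constant(pre_exp: float, ea_j_per_mol: float, temp_k: float) -> float:
    """Arrhenius equation: k = A * exp(-Ea / (R * T))."""
    return pre_exp * math.exp(-ea_j_per_mol / (R * temp_k))


# Hypothetical pathways: B has the higher activation energy, C the lower one.
PATH_B = (1.0e10, 80_000.0)   # (pre-exponential factor in 1/s, Ea in J/mol)
PATH_C = (1.0e8, 60_000.0)


def selectivity_ratio(temp_c: float) -> float:
    """[B]/[C] = k1/k2 for the parallel first-order scheme at a given temperature."""
    temp_k = temp_c + 273.15
    return rate_constant(*PATH_B, temp_k) / rate_constant(*PATH_C, temp_k)


for temp_c in (25.0, 60.0, 100.0):
    print(f"{temp_c:5.1f} C  ->  [B]/[C] = {selectivity_ratio(temp_c):.4f}")
```

With these made-up parameters the ratio rises about fivefold between 25 and 100 °C because pathway B has the higher $E_a$, yet C remains the major product, so temperature alone would not invert the selectivity in this case.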

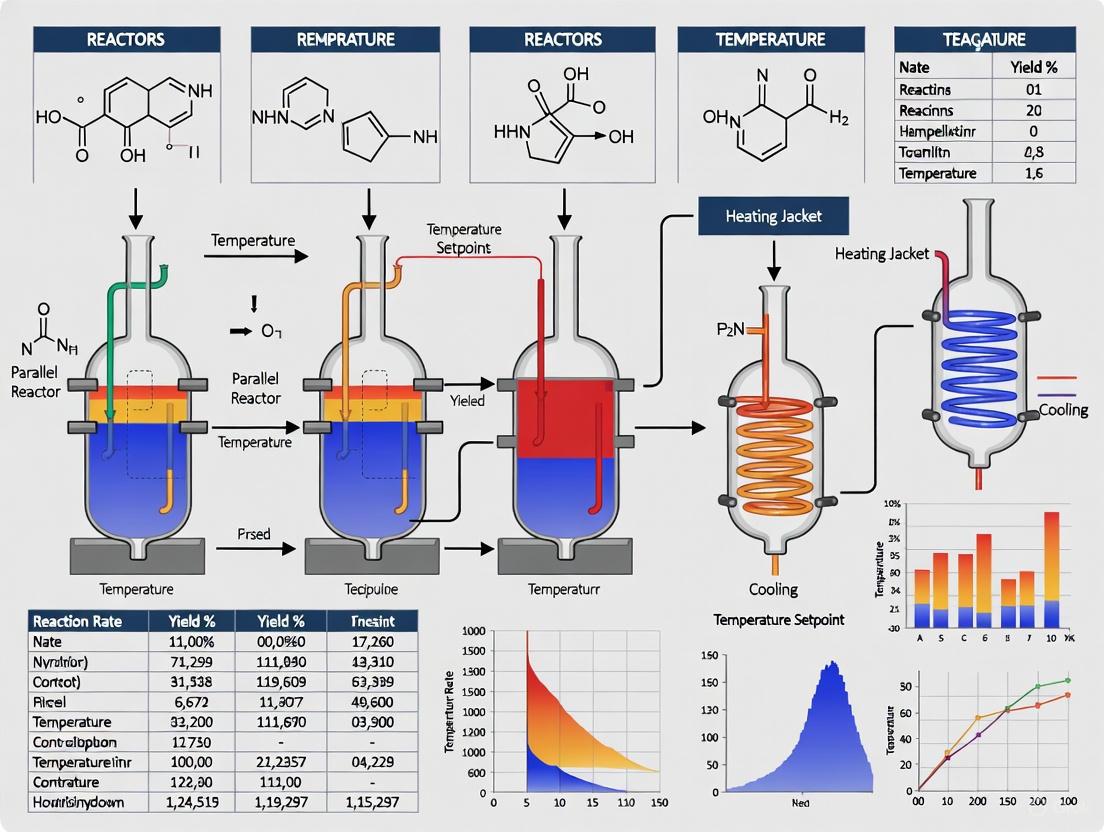

The diagram below illustrates how temperature influences the competition between parallel reaction pathways and the resulting product distribution.

Temperature Control Methods for Parallel Photoreactors

Selecting an appropriate temperature control system is critical for the performance of parallel photoreactors. The choice depends on reaction requirements, scalability, energy efficiency, and cost [3].

Table 1: Comparison of Temperature Control Methods for Parallel Photoreactors

| Method | Mechanism | Best For | Advantages | Limitations |
|---|---|---|---|---|
| Peltier-Based Systems [3] | Thermoelectric effect for heating/cooling | Small-scale reactions, rapid temperature changes | Compact design, precise control, no moving parts | Efficiency decreases at high ΔT, may need auxiliary cooling |
| Liquid Circulation [3] | Heat transfer fluid (e.g., water, oil) | Large-scale or exothermic reactions | High heat capacity, uniform temperature distribution | Requires more infrastructure and maintenance |
| Air Cooling [3] | Fans or natural convection | Low-heat-load applications | Simple, cost-effective, easy to maintain | Less effective for precise regulation or high-heat-load reactions |

Advanced parallel reactor platforms, like the automated droplet system with ten independent reactor channels, integrate these control methods to maintain precise temperatures (0–200 °C, solvent-dependent) across all experiments simultaneously [1]. This independent control is vital for meaningful reaction optimization and kinetics investigation, as it allows each reaction to proceed at its ideal temperature without cross-talk or compromise between parallel experiments [1].
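Independent per-channel control can be pictured as one controller and one thermal model per position, with no shared state between them. The sketch below is a hypothetical illustration, not the control scheme of the cited platform: it assumes a lumped first-order thermal model for each channel, a bidirectional (Peltier-style) actuator scaled to ±1, and hand-picked PID gains.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PID:
    """Discrete PID controller with output clamping and conditional-integration anti-windup."""
    kp: float
    ki: float
    kd: float = 0.0
    out_min: float = -1.0   # full cooling (bidirectional actuator assumed)
    out_max: float = 1.0    # full heating
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate + self.kd * derivative
        if self.out_min < output < self.out_max:
            self.integral = candidate        # integrate only while the actuator is not saturated
        return max(self.out_min, min(self.out_max, output))


@dataclass
class Channel:
    """Lumped thermal model of one reactor position (hypothetical parameters)."""
    temp_c: float = 25.0
    heat_capacity: float = 50.0   # J/K
    ua: float = 0.5               # W/K, loss to the surroundings
    max_power: float = 40.0       # W delivered at |u| = 1
    ambient_c: float = 25.0

    def step(self, u: float, dt: float) -> None:
        q_net = self.max_power * u - self.ua * (self.temp_c - self.ambient_c)
        self.temp_c += q_net / self.heat_capacity * dt   # explicit Euler step


def simulate(setpoints: List[float], seconds: float = 1800.0, dt: float = 1.0) -> List[float]:
    """Run every channel with its own PID loop and return the final temperatures."""
    channels = [Channel() for _ in setpoints]
    controllers = [PID(kp=0.2, ki=0.005) for _ in setpoints]
    for _ in range(int(seconds / dt)):
        for sp, channel, pid in zip(setpoints, channels, controllers):
            channel.step(pid.update(sp, channel.temp_c, dt), dt)
    return [channel.temp_c for channel in channels]


final = simulate([10.0, 40.0, 60.0, 90.0])
print([round(t, 2) for t in final])
```

Because each channel owns its controller and model, `simulate([60.0])` reproduces the third channel of the four-channel run exactly. In real hardware, heat conduction through a shared block would couple neighbouring positions, which is why independent thermal paths matter.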

Experimental Protocols and Workflow

Integrating precise temperature control into an automated, machine-learning-driven workflow significantly accelerates reaction optimization. The following protocol outlines this process for a parallel reactor system.

Protocol: Automated Reaction Optimization with Integrated Temperature Control

Objective: To efficiently identify optimal reaction conditions (including temperature) that maximize yield and selectivity for a given transformation using a parallelized reactor platform and machine learning guidance.

Materials and Equipment:

- Parallel reactor platform with independent temperature control per channel (e.g., 10-channel droplet reactor [1])

- Automated liquid handler

- On-line HPLC or similar analytical system

- Integrated control software and scheduling algorithm

Procedure:

1. Reaction Space Definition: Define the combinatorial reaction condition space, including categorical variables (e.g., solvent, ligand) and continuous variables (e.g., temperature, concentration). Apply filters to exclude impractical or unsafe condition combinations [4].

2. Initial Experiment Selection: Use an algorithmic sampling method (e.g., Sobol sampling) to select an initial batch of experiments. This ensures the reaction space is explored widely and increases the likelihood of finding promising regions [4].

3. Experiment Execution and Analysis:
   - The control software schedules operations, orchestrating the liquid handler and reactor bank.
   - Reactions are executed in parallel reactors, with each channel maintaining its specified temperature [1].
   - Upon completion, reaction outcomes (e.g., yield, selectivity) are automatically analyzed by the on-line HPLC [1].

4. Machine Learning-Guided Iteration:
   - The experimental data are used to train a machine learning model (e.g., a Gaussian Process regressor) to predict reaction outcomes and their uncertainties for all possible conditions [4].
   - A multi-objective acquisition function (e.g., q-NParEgo, TS-HVI) uses the model's predictions to select the next batch of experiments that best balance exploration of unknown conditions and exploitation of currently promising ones [4].

5. Iteration and Termination: Steps 3 and 4 are repeated. The campaign terminates when performance converges, objectives are met, or the experimental budget is exhausted [4].
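The reaction-space definition and initial-selection steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the solvents, ligands, ranges, and the boiling-point filter are hypothetical (and deliberately conservative, since a pressurized platform can run above atmospheric boiling points), and a Halton sequence, a simpler low-discrepancy sequence that needs only the standard library, stands in for the Sobol sampling named in the protocol. The Gaussian Process and acquisition steps are not shown.

```python
import itertools
from typing import Dict, List, Tuple

# Hypothetical reaction space; names and values are illustrative only.
SOLVENTS = {"MeCN": 82.0, "toluene": 111.0, "DMAc": 165.0}   # solvent -> boiling point, degC
LIGANDS = ["L1", "L2", "L3"]                                   # placeholder ligand labels
TEMP_RANGE = (0.0, 200.0)     # degC, the platform window quoted in the text
CONC_RANGE = (0.05, 0.50)     # mol/L, hypothetical


def radical_inverse(index: int, base: int) -> float:
    """Van der Corput radical inverse of `index` in `base` (one Halton coordinate)."""
    result, fraction = 0.0, 1.0 / base
    while index > 0:
        index, digit = divmod(index, base)
        result += digit * fraction
        fraction /= base
    return result


def is_allowed(solvent: str, temp_c: float) -> bool:
    """Example safety filter: stay at least 10 degC below the solvent's boiling point."""
    return temp_c <= SOLVENTS[solvent] - 10.0


def initial_batch(n: int) -> List[Dict[str, object]]:
    """Spread n conditions over the space with a 3-D Halton sequence, skipping filtered ones."""
    categories: List[Tuple[str, str]] = list(itertools.product(SOLVENTS, LIGANDS))
    batch: List[Dict[str, object]] = []
    i = 1
    while len(batch) < n:
        u_temp, u_conc, u_cat = radical_inverse(i, 2), radical_inverse(i, 3), radical_inverse(i, 5)
        solvent, ligand = categories[int(u_cat * len(categories))]   # third dimension picks the combo
        temp = TEMP_RANGE[0] + u_temp * (TEMP_RANGE[1] - TEMP_RANGE[0])
        conc = CONC_RANGE[0] + u_conc * (CONC_RANGE[1] - CONC_RANGE[0])
        if is_allowed(solvent, temp):
            batch.append({"solvent": solvent, "ligand": ligand,
                          "temp_c": round(temp, 1), "conc_M": round(conc, 3)})
        i += 1
    return batch


for condition in initial_batch(10):
    print(condition)
```

Drawing the categorical choice from its own Halton dimension keeps it from correlating with the temperature and concentration coordinates; replacing the Halton points with scrambled Sobol points would not change the rest of the structure.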

The workflow diagram below visualizes this iterative, closed-loop optimization process.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for Parallel Reaction Optimization

| Item | Function / Relevance | Example / Note |
|---|---|---|
| Non-Precious Metal Catalysts [4] | Lower-cost, earth-abundant alternatives to precious metals like Palladium. | Nickel catalysts for Suzuki and Buchwald-Hartwig couplings. |
| Solvent Library [4] | Screening solvent effects on kinetics and selectivity; adheres to pharmaceutical guidelines. | A diverse set selected for broad chemical compatibility and varying polarity. |
| Ligand Library [4] | Critical for modulating catalyst activity and selectivity, especially with non-precious metals. | A key categorical variable in ML-driven optimization campaigns. |
| Heat Transfer Fluids [3] | Medium for temperature regulation in liquid circulation systems. | Water or specialized oils, chosen for operational temperature range. |

Case Studies in Pharmaceutical Process Development

The integration of precise temperature control with highly parallelized experimentation and machine learning has led to groundbreaking successes in industrial process development.

Case Study 1: Nickel-Catalyzed Suzuki Reaction Optimization: A traditional, chemist-designed HTE approach failed to find successful conditions for this challenging transformation. However, an ML-driven workflow exploring an 88,000-condition search space in a 96-well HTE format identified conditions achieving a 76 area percent (AP) yield and 92% selectivity. The algorithm's ability to navigate complex variable interactions, including temperature, was key to this success [4].

Case Study 2: Accelerated API Synthesis: In the process development for an Active Pharmaceutical Ingredient (API) involving a Ni-catalyzed Suzuki coupling and a Pd-catalyzed Buchwald-Hartwig reaction, the ML-driven approach identified multiple conditions achieving >95% yield and selectivity for both transformations. This approach led to improved process conditions at scale in just 4 weeks, compared to a previous 6-month development campaign, dramatically accelerating the timeline [4].

Temperature control is a cornerstone of effective parallel reactor research, wielding direct and powerful influence over kinetic rates, reaction selectivity, and ultimate product distribution. As the case studies demonstrate, coupling this precise environmental control with the throughput of parallelized systems and the intelligence of machine learning creates a transformative paradigm for chemical research and development. This synergistic approach enables researchers to navigate vast reaction spaces with unprecedented efficiency, accelerating the discovery and optimization of chemical processes from laboratory curiosity to scalable industrial reality.

In the pursuit of accelerated chemical research and drug development, parallel reactor systems have become indispensable. These systems enable the simultaneous execution of multiple experiments, dramatically increasing throughput for reaction screening and optimization [1] [5]. The performance of these systems, and consequently the validity of the data they produce, rests upon three fundamental technical criteria: reproducibility, range, and fidelity. Within a parallel reactor, these criteria are profoundly influenced by the precision and stability of temperature control. Temperature is not merely a setting; it is a core reaction parameter that dictates kinetics, selectivity, yield, and mechanism. Inadequate temperature control introduces variability that undermines experimental integrity, making it impossible to distinguish true chemical effects from system-induced artifacts. This whitepaper defines these critical performance criteria, details their dependence on temperature management, and provides researchers with the methodological frameworks for their rigorous assessment.

Core Performance Criteria in Parallel Reactors

Reproducibility

Reproducibility refers to the ability of a parallel reactor system to yield consistent results under identical nominal conditions across its multiple reaction channels and over repeated experimental runs. It is the foundation for reliable and statistically significant data.

Quantifying Reproducibility: Performance is typically measured by the standard deviation in reaction outcomes (e.g., yield, conversion) across parallel channels. High-performance systems, like the automated droplet platform developed by MIT and Pfizer, target a standard deviation of less than 5% in reaction outcomes, a benchmark for excellent reproducibility [1] [6]. This low variability ensures that observed differences in outcome are due to intentional changes in reaction parameters rather than system noise.

The Critical Link to Temperature Control: Reproducibility is inextricably linked to temperature uniformity. In a study of a continuous fermentative biohydrogen process using three parallel reactors, even under strictly controlled conditions, full consistency was not achieved, underscoring the sensitivity of chemical and biological processes to operational disturbances [7]. Precise temperature control ensures that each reaction vessel in a parallel block experiences the same thermal environment. Inconsistent heating or cooling across vessels directly leads to divergent reaction rates and outcomes, invalidating comparative studies. Furthermore, stable temperature control prevents fluctuations that can alter reaction pathways during an experiment.

Range

Range defines the spectrum of operating conditions a parallel reactor system can accommodate. A broad range allows researchers to explore a more extensive experimental space, from mild to extreme conditions.

Key Operational Ranges:

- Temperature: Modern systems aim for a broad operating window. For instance, the parallel droplet reactor platform was designed for reaction temperatures from 0 to 200 °C (solvent-dependent) [1]. The PolyBLOCK 8 parallel reactor has been characterized to achieve internal reactor temperatures up to 180 °C [8].

- Pressure: Systems may support pressures up to 20 atm or higher, enabling reactions with volatile solvents or gaseous reagents [1].

- Reaction Scale: Systems can operate from nanoliter-scale droplets to milliliters [1] [9].

The Role of Temperature Range: The ability to accurately control temperature across a wide range is crucial for mimicking diverse reaction conditions, from sub-ambient reactions to high-temperature thermal transformations. This allows for the comprehensive investigation of reaction kinetics and the identification of optimal conditions for a given synthesis [1]. A wide temperature range also future-proofs equipment against evolving research needs.

Fidelity

Fidelity is the degree to which the conditions set by the researcher (e.g., setpoint temperature) are faithfully replicated and maintained within the actual reaction mixture. It reflects the "truthfulness" of the system's control.

Defining Fidelity: High fidelity means the recorded and controlled parameters match the actual experimental environment. Low fidelity introduces a hidden variable, as the assumed reaction conditions differ from the true conditions.

Temperature Fidelity in Practice: Achieving high temperature fidelity is an engineering challenge. Factors such as reactor material (glass vs. metal), solvent volume, and heating mechanism create a difference between the setpoint and the actual reaction temperature. A characterization of the PolyBLOCK 8 revealed that the maximum difference between the internal reactor temperature and the external oil-bath circulator could be as high as 90 °C [8]. Furthermore, smaller reactor volumes (e.g., 8 mL in a 16 mL reactor) showed different heating profiles and a reduced maximum temperature difference of 80 °C [8]. This highlights that the reactor material and solvent volume are critical considerations in experimental design to ensure fidelity.

Table 1: Quantitative Performance Targets for Parallel Reactor Systems

| Performance Criterion | Target Metric | Exemplary System Performance |
|---|---|---|
| Reproducibility | Standard Deviation in Reaction Outcome | <5% [1] [6] |
| Temperature Range | Minimum to Maximum Operating Temperature | 0°C to 200°C [1] |
| Heating Rate | Ramp Rate Under Control | Up to 6°C/min with no significant overshoot [8] |
| Pressure Range | Maximum Operating Pressure | Up to 20 atm [1] |

Experimental Protocols for Performance Validation

To ensure data quality, researchers must routinely validate the performance of their parallel reactor systems. The following protocols provide a framework for this essential activity.

Protocol for Assessing Reproducibility

Aim: To determine the inter-reactor variability of the parallel system by running a standardized reaction across all channels under identical nominal conditions.

Materials:

- Parallel reactor station (e.g., PolyBLOCK 8 [8] or a custom droplet platform [1])

- A well-characterized, robust probe reaction (e.g., a known catalytic transformation or hydrolysis reaction)

- Analytical instrument (e.g., HPLC, GC)

Method:

- Preparation: Prepare a large, homogeneous master batch of the reaction mixture for the probe reaction.

- Loading: Dispense identical volumes of the reaction mixture into each reactor vessel.

- Operation: Set all reactors to the same predetermined conditions (temperature, stirring speed, pressure). For temperature, use a setpoint within the common working range (e.g., 80°C).

- Execution: Initiate the reactions simultaneously and allow them to proceed for a fixed duration.

- Sampling & Analysis: Quench and sample each reaction mixture at the end of the run. Analyze each sample using the chosen analytical method to determine the reaction outcome (e.g., percent yield or conversion).

Data Analysis:

- Calculate the average and standard deviation of the reaction outcome across all reactors.

- High reproducibility is indicated by a low standard deviation and a low coefficient of variation (CV = standard deviation / mean × 100%). The benchmark cited for the droplet platform is a standard deviation of less than 5% in reaction outcome [1].
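The data-analysis step reduces to a few summary statistics. The sketch below uses Python's standard `statistics` module on hypothetical yields; reading the "<5%" benchmark as a standard deviation below 5 yield percentage points is an assumption, since the cited figure does not say whether it is absolute or relative.

```python
import statistics
from typing import Dict, List


def reproducibility_summary(outcomes_pct: List[float]) -> Dict[str, float]:
    """Mean, sample standard deviation, and coefficient of variation across channels."""
    mean = statistics.mean(outcomes_pct)
    sd = statistics.stdev(outcomes_pct)          # sample SD (n - 1); needs at least 2 channels
    return {"mean": mean, "sd": sd, "cv_pct": sd / mean * 100.0}


# Hypothetical end-point yields (%) from eight positions running the same probe reaction.
yields = [81.2, 79.8, 80.5, 82.0, 78.9, 80.1, 81.6, 80.7]
summary = reproducibility_summary(yields)

# Assumption: the "<5%" benchmark is read here as an SD below 5 yield percentage points.
meets_benchmark = summary["sd"] < 5.0
print({key: round(value, 2) for key, value in summary.items()}, meets_benchmark)
```

Reporting both the SD and the CV avoids ambiguity when yields are low, where a small absolute SD can still be a large relative spread.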

Protocol for Characterizing Temperature Fidelity and Range

Aim: To map the relationship between the system setpoint temperature and the actual temperature achieved within individual reactor vessels across the operational range.

Materials:

- Parallel reactor station

- External, calibrated temperature probes (e.g., fine-wire thermocouples) traceable to a national standard

- Data logger

Method:

- Setup: Fill reactor vessels with liquids representative of your research (e.g., water or DMSO, and silicone oil for the high-temperature points). Fit each vessel with a calibrated temperature probe immersed in the liquid.

- Data Collection:

  - Set the system to a series of temperature setpoints (e.g., 40, 80, 120, 160°C) covering its claimed range, keeping each setpoint below the boiling point of the liquid at the operating pressure.

  - For each setpoint, record both the system's internal sensor reading (if available) and the reading from the calibrated external probe once thermal equilibrium is reached.

  - Repeat this for different reactor positions and with different solvent volumes to understand spatial and volume-dependent effects [8].

- Ramp Rate Test: Program a linear temperature ramp (e.g., +4°C/min and +6°C/min) and record the actual temperature profile. This assesses the system's ability to track dynamic setpoints without overshoot [8].
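The ramp-rate test produces a time series that can be reduced to three numbers: the ramp rate actually achieved, the worst lag behind the programmed ramp, and any overshoot past the final setpoint. In this sketch the probe log is synthetic (a hypothetical first-order lag with a 0.5 min time constant), standing in for real logger data.

```python
import math
from typing import Dict, List


def slope(xs: List[float], ys: List[float]) -> float:
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


def ramp_metrics(times_min: List[float], temps_c: List[float], start_c: float,
                 rate_c_per_min: float, final_c: float) -> Dict[str, float]:
    """Achieved ramp rate, worst lag behind the programmed ramp, and overshoot of the final setpoint."""
    ramp_end = (final_c - start_c) / rate_c_per_min
    on_ramp = [(t, y) for t, y in zip(times_min, temps_c) if t <= ramp_end]
    return {
        "achieved_rate": slope([t for t, _ in on_ramp], [y for _, y in on_ramp]),
        "max_lag_c": max(start_c + rate_c_per_min * t - y for t, y in on_ramp),
        "overshoot_c": max(0.0, max(temps_c) - final_c),
    }


# Hypothetical log: a probe that lags a +6 degC/min ramp (20 -> 80 degC) as a first-order
# system with a 0.5 min time constant, then settles at the final setpoint.
TAU, RATE, START, FINAL = 0.5, 6.0, 20.0, 80.0
RAMP_END = (FINAL - START) / RATE


def simulated_probe(t: float) -> float:
    if t <= RAMP_END:
        return START + RATE * (t - TAU * (1.0 - math.exp(-t / TAU)))
    at_end = simulated_probe(RAMP_END)
    return FINAL - (FINAL - at_end) * math.exp(-(t - RAMP_END) / TAU)


times = [i * 0.1 for i in range(151)]          # 0 to 15 min, logged every 6 s
temps = [simulated_probe(t) for t in times]
print(ramp_metrics(times, temps, START, RATE, FINAL))
```

For a pure first-order lag the steady tracking error equals ramp rate × time constant (3 °C here), so the lag metric also gives a rough estimate of the thermal time constant of the real system.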

Data Analysis:

- Plot actual temperature vs. setpoint temperature. The deviation from the y=x line represents the system's fidelity error.

- Calculate the average offset and the maximum deviation observed. This data is critical for applying corrections to future experimental setpoints.
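The offset statistics and a setpoint correction can be computed as follows. The characterization data are hypothetical, and the linear gain-plus-offset model is an assumption that should be checked against the actual plot; it should not be extrapolated beyond the mapped range.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Least-squares fit ys = gain * xs + offset; returns (gain, offset)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    gain = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return gain, my - gain * mx


# Hypothetical characterization data for one position: setpoint vs. calibrated probe at equilibrium.
setpoints = [40.0, 80.0, 120.0, 160.0]
measured = [39.1, 77.6, 116.0, 154.5]

deviations = [m - s for s, m in zip(setpoints, measured)]
average_offset = sum(deviations) / len(deviations)
max_deviation = max(deviations, key=abs)
gain, offset = fit_line(setpoints, measured)


def corrected_setpoint(target_c: float) -> float:
    """Setpoint to request so the probe should read target_c; only valid inside the mapped range."""
    return (target_c - offset) / gain


print(f"average offset {average_offset:+.2f} C, max deviation {max_deviation:+.2f} C")
print(f"to hold 100.0 C in the vessel, request {corrected_setpoint(100.0):.1f} C")
```

A separate fit per position and per fill volume is advisable, since the cited characterization shows that vessel volume changes the heating behaviour [8].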

Table 2: Research Reagent Solutions for Parallel Reactor Characterization

| Item | Function & Importance |
|---|---|
| Calibrated Fine-Wire Thermocouple | Provides ground-truth measurement of the actual reaction temperature, essential for validating system fidelity and identifying calibration offsets. |
| Well-Characterized Probe Reaction | A chemically robust reaction with known kinetics used as a diagnostic tool to measure reproducibility and inter-reactor variability across the platform. |
| Silicone Oil Heat Transfer Fluid | A common heat transfer fluid with a broad liquid phase temperature range, enabling system characterization across a wide range of temperatures [8]. |
| Swappable Nanoliter-Scale Injection Rotors | Enables direct, automated sampling from microreactors for online analysis (e.g., HPLC), eliminating the need for dilution and preserving reaction integrity [1]. |

System Integration and Workflow

The integration of hardware, software, and experimental design is what transforms a parallel reactor from a simple heater-stirrer into an intelligent experimentation platform. The workflow below visualizes how reproducibility, range, and fidelity are embedded throughout a modern, automated optimization campaign.

This workflow is embodied in platforms like the one described by Eyke et al., which integrates a bank of parallel microfluidic reactors with an online HPLC and a Bayesian optimization algorithm [1] [6]. The scheduling algorithm orchestrates all parallel hardware operations to ensure both droplet integrity and overall efficiency. This closed-loop system exemplifies how high-fidelity control over parameters like temperature directly feeds into the generation of high-quality data, which the machine learning model uses to efficiently navigate the complex reaction landscape and identify optimal conditions with minimal experimental iterations [4].

Reproducibility, range, and fidelity are not isolated specifications but interconnected pillars supporting the integrity of high-throughput experimentation in parallel reactors. As this whitepaper establishes, precise temperature control is the unifying thread that binds these criteria together. It is the foundational element that enables researchers to trust their data, confidently explore vast experimental spaces, and accelerate the development of new pharmaceuticals and chemicals. The ongoing integration of advanced temperature control systems with machine learning and automation, as seen in platforms like Minerva [4] and the MIT/Pfizer droplet reactor [1], promises to further enhance the performance and capabilities of these essential research tools. By adhering to the validation protocols and understanding the critical importance of these performance criteria, researchers can fully leverage parallel reactor technology to drive innovation.

In parallel reactor research, where multiple experiments are conducted simultaneously to accelerate development, precise temperature control is not merely convenient but foundational to scientific integrity. The ability to maintain uniform thermal conditions across all reaction vessels is a critical determinant of success, directly impacting the reliability of kinetic studies, the accuracy of catalyst screening, and the reproducibility of synthetic pathways. Thermal gradients (spatial variations in temperature within a single reactor) and hotspots (localized areas of significantly elevated temperature) introduce profound risks that can compromise data quality and derail scale-up efforts. Effective thermal management ensures that each reactor in a parallel array operates under identical, well-defined conditions, enabling high-throughput experimentation (HTE) to generate statistically significant and comparable data. This technical analysis explores the mechanisms by which thermal non-uniformity jeopardizes experimental outcomes, details methodologies for its characterization and mitigation, and provides a quantitative framework for assessing its impact, thereby underscoring why meticulous temperature control is indispensable in parallel reactor research.

Mechanisms and Risks of Thermal Non-Uniformity

Thermal gradients and hotspots arise from complex interplays between reaction engineering, fluid dynamics, and heat transfer. Understanding their underlying mechanisms is the first step toward effective mitigation.

Fundamental Formation Mechanisms

- Inhomogeneous Mixing: Inefficient mixing of reactants and initiators can create localized microenvironments with varying concentrations. This non-uniformity leads to disparate reaction rates, causing some regions to generate heat much faster than others. In polymerization processes, such as in Low-Density Polyethylene (LDPE) tubular reactors, poor initiator dispersion is a primary cause of localized hotspots that can trigger dangerous thermal runaways [10].

- Exothermic Reactions: Many industrial chemical reactions are exothermic. If the heat generated by the reaction exceeds the system's heat removal capacity, the temperature rises, which accelerates the reaction and its heat release further; this positive feedback loop is known as thermal runaway [11] [12].

- Inefficient Heat Transfer: The physical design of a reactor and the properties of its materials dictate its heat transfer efficiency. Systems with low thermal conductivity or inadequate heat exchange surface area struggle to remove heat uniformly, leading to the establishment of persistent thermal gradients [13].

- External Radiant Heating: In applications like photocatalysis, the high-powered light sources used to drive reactions can themselves be significant, non-uniform heat sources. This can create severe "heat island" effects, where samples directly under the light source become drastically hotter than their neighbors [14].
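The runaway condition described under exothermic reactions can be illustrated with a classic Semenov-style heat balance: heat generation grows exponentially with temperature (Arrhenius), while heat removal to the coolant grows only linearly. A stable operating point exists only if removal catches up with generation somewhere above the coolant temperature. All parameter values below are hypothetical, and reactant depletion is ignored, which makes the check conservative.

```python
import math
from typing import Optional

R = 8.314          # J/(mol*K)
EA = 80_000.0      # J/mol, hypothetical activation energy
T_REF = 300.0      # K, reference temperature
Q_REF = 20.0       # W, hypothetical heat release at T_REF
UA = 10.0          # W/K, hypothetical heat-transfer coefficient times area


def heat_generated(temp_k: float) -> float:
    """Arrhenius-scaled heat release (W); reactant depletion is ignored (worst case)."""
    return Q_REF * math.exp(-EA / R * (1.0 / temp_k - 1.0 / T_REF))


def heat_removed(temp_k: float, coolant_k: float) -> float:
    """Heat removed to the coolant (W), linear in the temperature difference."""
    return UA * (temp_k - coolant_k)


def stable_point(coolant_k: float, window_k: float = 200.0, step_k: float = 0.1) -> Optional[float]:
    """Lowest temperature above the coolant at which removal catches up with generation.

    Returns None when generation outruns removal across the whole window (runaway).
    """
    for i in range(1, int(window_k / step_k) + 1):
        temp_k = coolant_k + i * step_k
        if heat_removed(temp_k, coolant_k) >= heat_generated(temp_k):
            return temp_k
    return None


for coolant in (300.0, 310.0):
    point = stable_point(coolant)
    verdict = "runaway" if point is None else f"settles near {point:.1f} K"
    print(f"coolant at {coolant:.0f} K: {verdict}")
```

With these numbers a 10 K warmer coolant is enough to remove the stable point entirely, which is the sensitivity that makes runaway so abrupt in practice.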

Consequences for Data Integrity and Scalability

The impacts of poor temperature control permeate every aspect of research and development.

- Compromised Reaction Kinetics and Selectivity: Temperature directly influences reaction rate constants and pathways. A gradient across a reactor vessel means that the reaction proceeds at different rates in different locations, making accurate kinetic modeling impossible. Furthermore, many complex reactions have competing pathways with different activation energies. A hotspot can favor a secondary, undesirable reaction, altering product selectivity and yielding misleading results about the system's true behavior [12].

- Irreproducible Results and False Conclusions: In parallel reactors, the primary value is comparative. If thermal gradients differ from well to well, the same reaction condition may yield different outcomes across the block. This lack of reproducibility makes it difficult to identify genuine trends, such as the performance of different catalysts, leading to false conclusions and poor decision-making [14].

- Accelerated Material Degradation and Safety Hazards: Sustained high temperatures at hotspots can degrade sensitive catalysts or reaction components, invalidating long-term stability studies. More critically, localized overheating can initiate exothermic decomposition reactions, potentially leading to a thermal runaway. This presents a severe safety risk at any scale, from benchtop to production [12] [10].

- Failed Scale-Up (The "Scale-Up Paradox"): A process optimized in a small-scale reactor with uncharacterized or uncontrolled hotspots is a prime candidate for failure upon scale-up. Larger reactors have fundamentally different heat and mass transfer characteristics. A thermal gradient that was negligible at 100 mL can become a catastrophic 50°C hotspot in a 10,000 L production vessel. This scale-up paradox is a major source of cost and delay in process development, underscoring that a process developed under non-uniform conditions is not truly optimized at all [11].
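The scale-up paradox follows from geometry: heat generation scales with volume, but heat removal through a jacket scales with wetted area. The sketch below assumes geometrically similar cylindrical vessels filled to a height equal to the diameter (a hypothetical simplification) and compares 100 mL with 10,000 L.

```python
import math


def area_to_volume(volume_m3: float) -> float:
    """Wetted area / volume (1/m) for a cylinder filled to a height equal to its diameter.

    Wetted area counts the side wall plus the flat bottom: A = pi*D*H + pi*D**2/4.
    """
    diameter = (4.0 * volume_m3 / math.pi) ** (1.0 / 3.0)   # from V = pi*D**2/4 * H with H = D
    height = diameter
    area = math.pi * diameter * height + math.pi * diameter ** 2 / 4.0
    return area / volume_m3


lab = area_to_volume(100e-6)     # 100 mL
plant = area_to_volume(10.0)     # 10,000 L = 10 m^3
print(f"lab: {lab:.1f} 1/m, plant: {plant:.2f} 1/m, ratio: {lab / plant:.1f}x")
```

For similar shapes the ratio is the cube root of the volume ratio ($10^{5/3} \approx 46$), so each litre of the plant batch has roughly 46 times less cooling surface than each litre of the lab batch.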

Table 1: Quantified Impact of Thermal Non-Uniformity in Various Systems

| System/Context | Observed Thermal Issue | Consequence | Source |
|---|---|---|---|
| Standard 96-well Photoredox Reactor | Heat gradient of up to ±13°C from LED array | Severe heat island effects; invalid comparison between wells | [14] |
| LDPE Tubular Reactor | Localized hotspots from poor initiator mixing | Fluctuations in local temperature, potential for ethylene decomposition and thermal runaway | [10] |
| PEM Fuel Cell (Large-load) | Temperature deviation under load fluctuations | Risk of membrane dehydration, local hotspots, and membrane perforation | [15] |
| Temperature Controlled Reactor (TCR) | Uniformity controlled to ±1°C | Enables reproducible high-throughput experimentation | [14] |
| Bench-scale Exothermic Reaction | Thermal runaway in larger vessels due to lower surface-to-volume ratio | Hazardous conditions requiring careful scale-up safety testing | [11] |

Experimental Characterization and Monitoring Methodologies

Accurately characterizing thermal landscapes is essential for diagnosing problems and validating solutions. The following experimental protocols and tools are critical for this task.

Protocol 1: Mapping Temperature Uniformity in a Parallel Reactor Block

Objective: To quantitatively assess the spatial temperature distribution across a parallel reactor block under standard operating conditions.

Materials:

- Parallel reactor system (e.g., 48-position block)

- Multi-channel temperature data logger

- Calibrated fine-gauge thermocouples (e.g., T-type) or RTDs (e.g., PT100), one for each monitored position

- Heat-transfer fluid circulator (e.g., JULABO Presto)

- Insulating mat

Methodology:

- Setup: Place the reactor block on the heating/cooling platform. Select a representative subset of reaction vessel positions (e.g., the four corners and the center) for monitoring.

- Sensor Placement: Insert a temperature sensor into each selected vessel, ensuring identical depth and placement. For dry runs, suspend the sensor in the center of the well. For wet runs, immerse the sensor in a solvent with similar thermal properties to the reaction mixture.

- Conditioning: Set the circulator to the desired target temperature (e.g., 70°C). Allow the system to reach a steady state as indicated by the master sensor of the circulator.

- Data Acquisition: Record the temperature from all sensors simultaneously at 5-second intervals for a minimum of 30 minutes after the system has stabilized.

- Data Analysis: Calculate the average temperature, the standard deviation, and the range (max-min) across all sensors. Visualize the data as a heat map to identify spatial patterns of gradients.
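The data-analysis step of Protocol 1 can be sketched with the standard `statistics` module. The position labels and readings are hypothetical and truncated to three samples per position; the heat-map visualization would be produced separately from the per-position means.

```python
import statistics
from typing import Dict, List


def uniformity_summary(readings: Dict[str, List[float]]) -> Dict[str, object]:
    """Summarize block uniformity from stabilized readings logged at each monitored position."""
    per_position = {pos: statistics.mean(vals) for pos, vals in readings.items()}
    means = list(per_position.values())
    return {
        "average_c": statistics.mean(means),
        "sd_c": statistics.stdev(means),                 # position-to-position spread
        "range_c": max(means) - min(means),
        "hottest": max(per_position, key=per_position.get),
        "coldest": min(per_position, key=per_position.get),
    }


# Hypothetical readings (degC) at a 70 degC setpoint, shortened to three samples per position;
# a real log at 5 s intervals over 30 min would hold about 360 samples per position.
log = {
    "A1 (corner)": [69.8, 69.9, 69.7],
    "A8 (corner)": [70.1, 70.2, 70.0],
    "F1 (corner)": [69.5, 69.6, 69.4],
    "F8 (corner)": [69.9, 70.0, 69.8],
    "C4 (center)": [70.6, 70.7, 70.5],
}
print(uniformity_summary(log))
```

Averaging each position over time first separates the spatial gradient (what this protocol targets) from sensor noise and control oscillation, which can be reported separately as the within-position standard deviation.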

Protocol 2: Investigating Mixing-Induced Hotspots via CFD

Objective: To simulate and visualize the formation of temperature hotspots resulting from inadequate mixing of reactants, as exemplified in LDPE tubular reactor studies [10].

Materials:

- Computational Fluid Dynamics (CFD) software (e.g., ANSYS Fluent, COMSOL)

- Workstation with sufficient processing power

- Geometry of the reactor and mixer (e.g., intrusive tee-junction)

- Physical properties of reactants (density, viscosity, thermal conductivity)

Methodology:

- Model Setup: Create a 3D geometric model of the reactor, including the precise details of the mixing element (e.g., insertion length and diameter of a side-feed pipe).

- Mesh Generation: Discretize the geometry into a computational mesh, refining it in critical areas like the mixing zone to ensure accuracy.

- Physics Definition:

  - Select appropriate turbulence models (e.g., k-ε or k-ω).

  - Activate the Energy Equation to model heat transfer.

  - Activate the Species Transport Equation to model reactant concentration.

  - Define a Volumetric Reaction source term that links the reaction rate to local species concentration and temperature, incorporating the reaction enthalpy.

- Boundary Conditions: Set inlet flow rates and temperatures for the main and side feeds. Define wall boundaries, often as no-slip and adiabatic or with a fixed heat flux.

- Simulation & Analysis: Run the simulation until convergence. Post-process the results to visualize contours of temperature and species concentration. Identify recirculation zones or stagnant areas where reactants can accumulate and form hotspots. The study in [10] used this approach to discover a "climbing mixing" pattern that can influence hotspot formation.
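The volumetric reaction source term in the physics definition is, at its core, a function of local temperature and concentration. The sketch below writes it out for a hypothetical first-order exothermic reaction with made-up kinetic parameters; in the CFD packages named above the same expression would be supplied through the solver's own source-term or user-defined-function mechanism, whose API is not shown here.

```python
import math

R = 8.314  # J/(mol*K)


def volumetric_heat_source(temp_k: float, conc_mol_m3: float,
                           pre_exp_per_s: float = 1.0e8,
                           ea_j_per_mol: float = 90_000.0,
                           dh_j_per_mol: float = -95_000.0) -> float:
    """Heat released per unit volume (W/m^3) by a hypothetical first-order reaction.

    rate = k(T) * C with k(T) = A * exp(-Ea / (R*T))   [mol/(m^3*s)]
    q    = -dH * rate                                   (dH < 0 for an exothermic step)
    """
    k = pre_exp_per_s * math.exp(-ea_j_per_mol / (R * temp_k))
    return -dh_j_per_mol * k * conc_mol_m3


# A 10 K local excursion raises the heat release even at unchanged concentration,
# which is the feedback that lets a poorly mixed pocket turn into a hotspot.
base = volumetric_heat_source(450.0, 500.0)
hot = volumetric_heat_source(460.0, 500.0)
print(f"{base:.3e} W/m^3 -> {hot:.3e} W/m^3 (x{hot / base:.2f})")
```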

Diagram 1: CFD Hotspot Analysis Workflow

Mitigation Strategies and Control Systems

A multi-faceted approach is required to effectively combat thermal gradients and hotspots, encompassing hardware design, advanced control algorithms, and strategic process operation.

Advanced Reactor Hardware and Design

- Integrated Fluid Circulation Systems: Temperature Controlled Reactors (TCRs) that circulate a heat-transfer fluid through a built-in path within the reactor block are highly effective. Because the fluid passes every vessel position, heat is added or removed evenly across the block. This gives well-to-well temperature uniformity as tight as ±1°C, compared with the ±13°C gradients reported for standard blocks [14].

- Optimized Mixer Configurations: For tubular and continuous flow reactors, the design of the mixing element is paramount. CFD studies have shown that intrusive tee-junctions with specific geometries can induce flow patterns like "climbing mixing" that enhance the dispersion of a side-stream initiator into the main flow, thereby preventing the concentration pockets that lead to hotspots [10].

- Jacketed Reactors and Micro-Finned Tubes: Incorporating double jackets for coolant flow or using tubes with extended internal surfaces increases the effective heat transfer area, improving the system's capacity to maintain a uniform temperature [12] [16].
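The benefit of jackets and extended surfaces follows from the standard heat-exchanger relation Q = U·A·ΔT_lm. At a fixed overall coefficient U and a fixed driving temperature difference, removable heat scales with area A. The following minimal sketch uses hypothetical values for U, area, and temperatures. It also assumes a 2.5× effective-area gain for the finned case; real fins have an efficiency below 1, so their effective-area gain is smaller than their geometric-area gain.

```python
import math

def lmtd(dt_end_a: float, dt_end_b: float) -> float:
    """Log-mean temperature difference (K) between process and coolant."""
    if math.isclose(dt_end_a, dt_end_b):
        return dt_end_a  # limit of the formula when both end differences are equal
    return (dt_end_a - dt_end_b) / math.log(dt_end_a / dt_end_b)

def heat_duty(u_w_m2k: float, area_m2: float, dt_end_a: float, dt_end_b: float) -> float:
    """Heat-removal capacity Q = U * A * LMTD, in W."""
    return u_w_m2k * area_m2 * lmtd(dt_end_a, dt_end_b)

# Hypothetical jacket: U = 300 W/(m^2 K), 0.05 m^2, process-coolant difference 30 K -> 10 K
plain = heat_duty(300.0, 0.05, 30.0, 10.0)
# Same jacket with an assumed 2.5x effective area from internal fins (U held constant)
finned = heat_duty(300.0, 0.05 * 2.5, 30.0, 10.0)
print(f"plain jacket: {plain:.0f} W, finned: {finned:.0f} W")
```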

Sophisticated Control Algorithms

Moving beyond simple Proportional-Integral-Derivative (PID) control can substantially improve robustness, especially under dynamic load conditions.

- Cascade Internal Model Control (IMC): This strategy uses a nested loop architecture. The outer loop calculates the required cooling action from the temperature error, while the inner loop rapidly adjusts the coolant valve to deliver that action, so disturbances in the coolant system are corrected before they reach the controlled temperature. In a 150 kW PEM fuel cell study, combining cascade IMC with current feedforward to actuators such as cooling fans substantially shortened the delay in responding to load changes and kept temperature deviations within ±0.6°C under large load steps [15].

- Model Predictive Control (MPC): MPC uses a dynamic model of the process to predict future temperatures and proactively computes optimal control actions. This is particularly effective for managing the thermal inertia of large systems and for constraint handling, preventing temperature overshoot [15] [12].

- Modified Smith Predictors: For systems with significant and variable time delays (e.g., slow fluid transport), a Smith predictor can effectively compensate for the delay, improving stability. A modified version integrated into a cascade IMC structure has demonstrated enhanced rejection of delayed disturbances [15].
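The Smith predictor idea can be illustrated on a discrete first-order-plus-dead-time plant. In this sketch, the PI controller is tuned IMC-style for the delay-free model (Kc = τ/(Kλ), τI = τ, with λ = 2 s). All plant numbers are hypothetical, and the internal model is assumed to be perfect. Without the predictor, these gains act directly on the delayed measurement. With the predictor, the controller instead sees the delay-free model output plus a correction for any plant/model mismatch. This is the basic textbook structure, not the modified version used in [15].

```python
import math

def simulate(use_smith: bool, steps: int = 200, setpoint: float = 1.0) -> list[float]:
    """PI control of a discrete FOPDT plant y[k+1] = a*y[k] + b*u[k-d].

    With use_smith=True the PI controller acts on a Smith-predictor feedback
    signal built from an internal model assumed identical to the plant.
    """
    gain, tau, dt, delay_steps = 1.0, 10.0, 1.0, 5   # hypothetical plant
    lam = 2.0                                        # IMC closed-loop time constant
    kc, ti = tau / (gain * lam), tau                 # IMC-style PI for the delay-free model
    a = math.exp(-dt / tau)
    b = gain * (1.0 - a)

    u_line = [0.0] * delay_steps    # inputs still travelling through the dead time
    ym_line = [0.0] * delay_steps   # the same dead time applied to the model output
    y = ym = integral = 0.0         # plant output, delay-free model output, PI integral
    trajectory = []
    for _ in range(steps):
        if use_smith:
            # delay-free model prediction plus the measured plant/model mismatch
            feedback = ym + (y - ym_line[0])
        else:
            feedback = y
        err = setpoint - feedback
        integral += err * dt
        u = kc * (err + integral / ti)

        u_line.append(u)
        y = a * y + b * u_line.pop(0)   # plant sees the input from delay_steps ago
        ym_line.append(ym)
        ym_line.pop(0)
        ym = a * ym + b * u             # model sees the input immediately
        trajectory.append(y)
    return trajectory

def iae(trajectory: list[float], setpoint: float = 1.0, dt: float = 1.0) -> float:
    """Integral of absolute error, approximated by a rectangle sum."""
    return sum(abs(setpoint - y) * dt for y in trajectory)

plain = simulate(use_smith=False)
smith = simulate(use_smith=True)
print(f"IAE without predictor: {iae(plain):.3g}; with Smith predictor: {iae(smith):.3g}")
```

With these assumed numbers, the proportional gain of 5 is above the ultimate gain of this plant (about 3.8 for proportional-only control), so the loop without the predictor is expected to be unstable. The predictor loop behaves like the delay-free design, shifted by the dead time. With an imperfect model, the correction term carries the mismatch, which is why practical variants add filtering or robustness margins.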

Table 2: Performance Comparison of Advanced Thermal Management Strategies

| Control Strategy | Application Context | Key Performance Metric | Advantages | Limitations | Reference |
|---|---|---|---|---|---|
| Cascade IMC with Current Feedforward (CS3) | 150 kW PEM Fuel Cell | Limits deviation to ±0.6 °C under large-load steps | Best responsiveness to load changes; reduces time delay | Not stated | [15] |
| Double Inner-Loop Cascade IMC with Smith Predictor (CS2) | 150 kW PEM Fuel Cell | Strongest temperature tracking under voltage decay and disturbances | Best robustness and delayed disturbance rejection | Slightly worse convergence than CS3 | [15] |
| PID with Peltier Elements | Microfluidic PCR Chip | Ramp rates of ~100 °C/s for heating, ~90 °C/s for cooling | Fast cycling; mature, widely available technology | Can require complex tuning; performance can degrade with non-linearities | [13] |
| Active Disturbance Rejection Control (ADRC) | General Nonlinear Systems | Estimates and compensates for "total disturbance" in real time | Does not require a highly accurate process model | Complex to configure with multiple parameters; high computational cost | [15] |

The Scientist's Toolkit: Essential Solutions for Thermal Management

Table 3: Key Research Reagent Solutions for Thermal Management

| Item / Solution | Function in Thermal Management | Key Characteristics |
|---|---|---|
| Temperature Controlled Reactor (TCR) [14] | Provides a fluid-filled block to maintain consistent temperature around all samples in a parallel array. | Achieves well-to-well uniformity of ±1°C; compatible with various heat-transfer fluids; operates from -40°C to 82°C. |
| High-Precision Circulator (e.g., JULABO) [12] | Pumps a thermostatic fluid through a reactor jacket or TCR to add/remove heat with high accuracy. | Features self-tuning PID control; integrates with PT100 sensors; capable of complex temperature profiles. |
| PT100 Resistance Temperature Detector (RTD) [12] | Provides high-precision temperature monitoring and feedback for control systems. | High accuracy and stability over time; preferred for precise measurements in circulators. |
| Heat-Transfer Fluids (e.g., SYLTHERM, Glycols) [14] | Medium that transfers thermal energy between the circulator and the reactor block. | Varieties cover wide temperature ranges; selected for thermal stability, viscosity, and safety. |
| Computational Fluid Dynamics (CFD) Software [10] | Models fluid flow, heat transfer, and reactions to predict and diagnose thermal gradients and hotspots. | Enables virtual prototyping of mixers and reactors; identifies problematic flow patterns. |
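A PT100 sensor reports temperature as a change in resistance. The minimal sketch below performs that conversion using the Callendar-Van Dusen coefficients standardized in IEC 60751, which apply at 0 °C and above. Below 0 °C (relevant because the TCR operates down to -40 °C), the standard adds a further C(t - 100)t³ term, which this sketch omits. The function names are illustrative.

```python
import math

# Callendar-Van Dusen coefficients for industrial platinum RTDs (IEC 60751), t >= 0 degC
A = 3.9083e-3
B = -5.775e-7
R0 = 100.0  # PT100: 100 ohm at 0 degC

def pt100_resistance(t_c: float) -> float:
    """Resistance in ohm for 0 <= t_c <= 850 degC."""
    return R0 * (1.0 + A * t_c + B * t_c * t_c)

def pt100_temperature(r_ohm: float) -> float:
    """Invert R = R0 (1 + A t + B t^2) for r_ohm >= R0 (positive root of the quadratic)."""
    disc = A * A - 4.0 * B * (1.0 - r_ohm / R0)
    return (-A + math.sqrt(disc)) / (2.0 * B)

def pt100_sensitivity(t_c: float) -> float:
    """dR/dt in ohm per degC at temperature t_c."""
    return R0 * (A + 2.0 * B * t_c)

r100 = pt100_resistance(100.0)
print(f"R(100 degC) = {r100:.4f} ohm")
print(f"inverse check: {pt100_temperature(r100):.6f} degC")
print(f"sensitivity at 80 degC: {pt100_sensitivity(80.0):.4f} ohm/degC")
```

Near typical reaction temperatures, the sensitivity is roughly 0.38 Ω per °C. Resolving 0.1 °C therefore means resolving about 0.04 Ω, which is why lead-wire resistance and the quality of the measurement circuit matter in precision circulators.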

Mitigating the risks posed by thermal gradients and hotspots requires a holistic strategy that integrates design, control, and characterization. The journey begins with selecting appropriate hardware, such as fluid-cooled parallel reactors designed for intrinsic thermal uniformity. The next layer of defense is implementing sophisticated control algorithms like cascade IMC or MPC, which provide the robustness needed to maintain setpoints despite internal and external disturbances. Underpinning all these efforts is rigorous experimental and computational characterization of the thermal environment, which ensures that gradients are not merely hidden but are understood and minimized.

Diagram 2: Integrated Strategy for Thermal Management

This integrated approach transforms parallel reactor research from a high-speed screening tool into a reliable engine of discovery and development. By systematically controlling the thermal variable, researchers can generate data with uncompromised integrity, build accurate kinetic models, and develop processes that transition smoothly from benchtop to production, thereby fully realizing the promise of high-throughput methodologies in advancing science and technology.

Temperature control is a foundational element in chemical reaction engineering, directly influencing reaction kinetics, product yield, and safety. In parallel reactor systems, the need for precise temperature control is magnified: the task shifts from maintaining a single setpoint to ensuring tight thermal uniformity across multiple simultaneous reaction vessels. This whitepaper examines the unique thermal challenges inherent in parallel systems. These are not merely scaled versions of single-reactor problems; they present distinct obstacles related to heat distribution, load variation, and system interdependency. We detail the critical consequences of thermal imprecision, including unreliable catalyst evaluation and flawed scale-up data, and provide a technical guide to the methodologies and technologies that enable researchers to overcome these challenges. The content is framed within the core thesis that mastering thermal control in parallel reactors is not an operational detail but a fundamental prerequisite for generating high-fidelity, reproducible research data that can confidently inform drug development and commercial process design.

In the drive for accelerated catalyst screening and reaction optimization, parallel reactor systems have become an indispensable tool for researchers and drug development professionals. These systems allow for the high-throughput testing of multiple catalysts or reaction conditions simultaneously. However, their performance is critically dependent on a single, often underestimated factor: thermal control uniformity. The central thesis of this discussion is that without meticulous thermal management, the fundamental advantage of parallel systems—the generation of directly comparable, high-quality data—is compromised.

Temperature is a primary variable affecting reaction rate, selectivity, and mechanism. In a single reactor system, the challenge is to maintain a consistent temperature throughout the reaction volume. In a parallel system, this challenge is compounded. The requirement shifts from controlling one temperature to ensuring that multiple reactors operate at the same temperature within a tight tolerance, despite potential variations in catalyst activity, fluid flow, and heat loss between individual vessels. Even minor temperature differences between reactors can lead to significant differences in reaction outcomes. This can make it difficult or impossible to distinguish a truly superior catalyst from one that merely operated at a slightly higher temperature. Precision thermal control is therefore not a peripheral support function; it is the bedrock upon which valid and reliable parallel reactor research is built [17].
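How strongly the rate responds to small temperature offsets follows from the Arrhenius equation, k = A·exp(-Ea/RT), in which the pre-exponential factor cancels in a ratio. The sketch below computes the rate ratio for offsets of 1, 5, and 13 K. The 80 kJ/mol activation energy and the 80 °C operating point are assumptions chosen only for illustration.

```python
import math

R_GAS = 8.314  # J/(mol K)

def rate_ratio(ea_j_mol: float, t_k: float, delta_t_k: float) -> float:
    """k(T + dT) / k(T) for k = A exp(-Ea / (R T)); the pre-exponential factor cancels."""
    return math.exp(ea_j_mol / R_GAS * (1.0 / t_k - 1.0 / (t_k + delta_t_k)))

EA = 80_000.0     # hypothetical activation energy, J/mol
T_SET = 353.15    # 80 degC in kelvin

for offset in (1.0, 5.0, 13.0):
    print(f"+{offset:4.1f} K -> rate x {rate_ratio(EA, T_SET, offset):.2f}")
```

For these assumed values, a 1 K offset raises the rate by roughly 8%. A 13 K offset, the size of the gradient reported for standard blocks in [14], more than doubles it.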

Comparative Analysis: Single vs. Parallel Reactor Systems

The transition from single to parallel reactor architectures fundamentally transforms the nature of thermal management. The table below summarizes the key distinctions that define the unique control challenges in parallel systems.

Table 1: Thermal Control Challenges in Single vs. Parallel Reactor Systems

| Aspect | Single Reactor System | Parallel Reactor System |
|---|---|---|
| Primary Control Objective | Maintain stable temperature at a single setpoint. | Ensure uniform temperature across all reactors simultaneously. |
| System Complexity | Relatively low; a single control loop. | High; multiple, potentially interacting control loops. |
| Impact of Heat Load Variation | Managed for one reactor; no cross-reactor impact. | A varying load in one reactor can disrupt the thermal equilibrium of the entire system. |
| Heat Distribution Challenge | Ensuring internal uniformity within one vessel. | Overcoming inherent physical layout differences (e.g., edge effects) to achieve inter-reactor uniformity. |
| Data Comparability | Not applicable. | Directly contingent on thermal uniformity; non-uniformity introduces critical experimental error. |
| Scalability of Solution | Standard heating mantles, jackets, or internal coils. | Requires specialized, integrated systems like microfluidic distributors and individual reactor control [17]. |

The core challenge in parallel systems is distributing process conditions equally, which can be framed in terms of accuracy and precision. Accuracy is the closeness of the measured temperature in any reactor to the desired setpoint. Precision is the closeness of the temperature measurements across all reactors to each other. A system must be both accurate and precise to generate truly comparable data. Traditional systems that use capillaries for flow distribution are susceptible to manual tuning errors and cannot actively compensate for changes during operation, which leads to a loss of precision [17].
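These two definitions translate directly into numbers computed from a single snapshot of per-reactor temperatures. In this sketch, accuracy is reported as the worst-case deviation from setpoint together with the mean bias, and precision is reported as the sample standard deviation across reactors. The readings are hypothetical: block A is precise but inaccurate, and block B is accurate on average but imprecise.

```python
import statistics

def accuracy_and_precision(temps_c: list[float], setpoint_c: float) -> dict:
    """Accuracy and precision of one snapshot of per-reactor temperatures."""
    errors = [t - setpoint_c for t in temps_c]
    return {
        "worst_error": max(abs(e) for e in errors),   # accuracy: worst reactor vs setpoint
        "mean_bias": statistics.mean(errors),         # accuracy: systematic offset of the block
        "spread": statistics.stdev(temps_c),          # precision: reactor-to-reactor scatter
    }

SETPOINT = 60.0
precise_not_accurate = [61.9, 62.1, 62.0, 61.8, 62.2, 62.0, 61.9, 62.1]
accurate_not_precise = [58.5, 61.5, 60.0, 59.0, 61.0, 60.5, 59.5, 60.0]
for name, temps in (("block A", precise_not_accurate), ("block B", accurate_not_precise)):
    print(name, accuracy_and_precision(temps, SETPOINT))
```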

Unique Thermal Control Challenges in Parallel Systems

The architecture of parallel reactor systems introduces specific, compounded thermal challenges that are absent in single-reactor setups.

The Interdependency of Flow and Thermal Distribution

In parallel systems, fluid flow and heat transfer are intrinsically linked. A common feed flow is distributed to multiple reactors, often through a network of capillaries or, in more advanced systems, a microfluidic flow distributor chip [17]. The precision of this flow distribution is a prerequisite for thermal uniformity. If the flow rate to one reactor differs from the others, its residence time and the heat-capacity flow rate of its fluid stream change, directly producing a temperature discrepancy. Furthermore, changes in catalyst bed pressure drop or partial blockages over time can alter flow distribution dynamically, making sustained thermal precision difficult to achieve with passive hardware alone.
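A steady-state energy balance shows why flow maldistribution becomes a temperature error. For simplicity, assume an adiabatic reactor whose reaction heat Q is carried away entirely by the fluid, so that T_out = T_in + Q/(ṁ·c_p). The heat release, flow rate, and heat capacity below are hypothetical.

```python
def outlet_temperature(t_in_c: float, heat_w: float, mass_flow_kg_s: float, cp_j_kgk: float) -> float:
    """Adiabatic steady-state balance: T_out = T_in + Q / (m_dot * cp)."""
    return t_in_c + heat_w / (mass_flow_kg_s * cp_j_kgk)

# Hypothetical reactor: 2 W reaction heat, 1 g/min nominal feed, cp = 2000 J/(kg K)
NOMINAL_FLOW = 1.0e-3 / 60.0   # kg/s
for deviation in (-0.05, 0.0, 0.05):
    flow = NOMINAL_FLOW * (1.0 + deviation)
    t_out = outlet_temperature(25.0, 2.0, flow, 2000.0)
    print(f"flow {deviation:+.0%}: T_out = {t_out:.1f} degC")
```

With these numbers, the nominal stream warms by 60 K, and a ±5% flow deviation shifts the outlet temperature by roughly 3 K in the opposite direction. In a real heated block, heat exchange with the wall partly damps this effect, but the error still points in the same direction.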

Dynamic and Variable Heat Loads

Unlike a single reactor running a homogeneous reaction, a parallel system may contain reactors with different catalysts, each exhibiting unique reaction kinetics and exothermicity. This creates a scenario of highly variable and dynamic heat loads across the reactor block. A highly active catalyst in one reactor may generate significant exothermic heat, while a less active one in an adjacent reactor may require constant heating. A traditional single-zone heating system for the entire block is incapable of managing this variation, leading to severe temperature imbalances that invalidate the experimental results.

The Critical Need for Individual Reactor Control

The challenges of interdependency and variable heat loads culminate in the requirement for individual reactor control. Passive systems, such as carefully balanced capillary networks, lack the feedback mechanism to respond to changing conditions during an experiment. As noted in research on parallel reactor systems, a change in pressure drop in one reactor "will have a direct impact on the precision of the feed distribution," subsequently affecting thermal performance [17]. The solution is active, per-reactor control. Technologies such as the Reactor Pressure Control (RPC) module demonstrate this principle by actively controlling the inlet pressure at each reactor to maintain precise flow distribution, which is a direct analogue to the requirement for individual thermal control to maintain temperature uniformity [17].
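The case for per-reactor control can be illustrated with a lumped thermal model of four reactors that share one block but carry different exotherms. This is a hypothetical sketch: heat capacities, loss coefficients, loads, and PI gains are all assumed, and thermal cross-talk between wells is ignored. When one shared heater is driven by the block-average temperature, each reactor settles at a different temperature. When each reactor has its own PI loop, every reactor reaches the setpoint.

```python
def simulate_block(individual: bool, t_end_s: float = 6000.0, dt: float = 1.0) -> list[float]:
    """Lumped reactors: C dT/dt = Q_heat + Q_rxn - UA (T - T_amb), explicit Euler.

    individual=True: one PI loop per reactor.
    individual=False: one PI loop on the block-average temperature drives the
    same heater power into every reactor (single-zone control).
    Returns the final per-reactor temperatures in degC.
    """
    c_jk, ua_wk, t_amb, setpoint = 200.0, 0.5, 25.0, 80.0   # hypothetical values
    q_rxn = [0.0, 1.0, 2.0, 4.0]       # W; different exotherms per catalyst
    kc, ti, q_max = 4.0, 200.0, 50.0   # PI gain (W/K), integral time (s), heater limit (W)
    temps = [t_amb] * len(q_rxn)
    integrals = [0.0] * (len(q_rxn) if individual else 1)

    def pi_output(loop: int, measured: float) -> float:
        err = setpoint - measured
        u = kc * (err + integrals[loop] / ti)
        if 0.0 < u < q_max:             # conditional integration: a simple anti-windup
            integrals[loop] += err * dt
        return min(max(u, 0.0), q_max)  # heaters cannot cool or exceed their rating

    for _ in range(int(t_end_s / dt)):
        if individual:
            q_heat = [pi_output(i, t) for i, t in enumerate(temps)]
        else:
            shared = pi_output(0, sum(temps) / len(temps))
            q_heat = [shared] * len(temps)
        temps = [t + dt * (qh + qr - ua_wk * (t - t_amb)) / c_jk
                 for t, qh, qr in zip(temps, q_heat, q_rxn)]
    return temps

per_reactor_temps = simulate_block(individual=True)
single_zone_temps = simulate_block(individual=False)
print("individual: ", [round(t, 2) for t in per_reactor_temps])
print("single zone:", [round(t, 2) for t in single_zone_temps])
```

In this model, the single-zone spread settles at the difference in reaction heat divided by the loss coefficient (4 W / 0.5 W/K = 8 K here). No tuning of the shared loop can remove it.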

Methodologies for Effective Thermal Control

Addressing the challenges outlined requires a combination of advanced hardware design and sophisticated control strategies.

System Architecture and Hardware Solutions

The foundation of effective thermal management is laid by the physical design of the system.

- High-Precision Microfluidic Distributors: These chips, often made of silicon or glass, are engineered with micro-scale channels to provide a guaranteed flow distribution with high precision (< 0.5% RSD as reported in one system) [17]. This establishes a uniform starting point for thermal conditions across all reactors.

- Integrated Heating and Cooling Loops: Drawing from best practices in other industries, effective Thermal Management Systems (TMS) often segregate components into different cooling loops based on their heat load magnitudes and temperature requirements [18]. For example, a high-power motor-inverter loop might be separate from a battery-converter loop. In a chemical reactor context, this translates to separate thermal fluid loops for high-exothermicity reactors versus low-energy reactions, or for different temperature zones.

- Active and Passive Cooling Strategies: A robust system employs multiple cooling strategies. Passive cooling through natural convection and radiation requires no power and adds no complexity but is often insufficient for high heat loads. Active cooling, using pumped fluids or refrigerants, provides powerful heat removal but consumes energy and adds subsystems. The optimal approach is often a hybrid strategy that uses passive cooling where possible and seamlessly engages active cooling when heat loads exceed a threshold, thereby minimizing total power consumption while ensuring control [18].
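The hybrid strategy described above amounts to a supervisory switch. The minimal sketch below assumes that the heat load can be estimated and that passive cooling can remove up to a fixed capacity. The hysteresis band is a common design choice rather than something taken from [18]; it keeps the active loop from chattering when the load hovers near the threshold. All thresholds are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class HybridCoolingSupervisor:
    """Switch active cooling on above passive capacity, and off again below a lower threshold."""
    passive_capacity_w: float = 15.0   # hypothetical heat that convection/radiation can remove
    hysteresis_w: float = 3.0          # hypothetical band that prevents on/off chattering
    active_on: bool = False

    def update(self, heat_load_w: float) -> bool:
        """Return True when the active (pumped-fluid) cooling loop should run."""
        if not self.active_on and heat_load_w > self.passive_capacity_w:
            self.active_on = True
        elif self.active_on and heat_load_w < self.passive_capacity_w - self.hysteresis_w:
            self.active_on = False
        return self.active_on

supervisor = HybridCoolingSupervisor()
loads_w = [5.0, 14.0, 16.0, 13.0, 12.5, 11.0, 16.5]   # hypothetical load estimates
states = [supervisor.update(q) for q in loads_w]
print(list(zip(loads_w, states)))
```

At 13 W and 12.5 W, the active loop stays on even though a rising load of the same size would not have switched it on. That asymmetry is the hysteresis.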

Control Strategies and Experimental Protocols

Hardware must be directed by intelligent software and validated experimental protocols.

- Model Predictive Control (MPC): Advanced control strategies like MPC use a dynamic model of the reactor system to predict future temperatures and proactively adjust heating or cooling inputs. This can manage the thermal inertia and cross-talk of a tightly packed parallel reactor block more effectively than purely reactive PID controllers.

- Protocol for Thermal Performance Validation: Before commencing catalytic testing, researchers should execute a validation protocol.

  - Step 1: With reactors loaded with an inert material, set all reactors to a common target temperature.

  - Step 2: Use the system's data logging to record the temperature in each reactor until the readings are stable.

  - Step 3: Calculate the mean temperature and standard deviation across all reactors. The standard deviation is a direct measure of the system's inter-reactor thermal precision, and the offset of the mean from the setpoint indicates its accuracy.

  - Step 4: Repeat this process at multiple temperature setpoints across the intended operating range to fully characterize system performance.
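Steps 2 and 3 of the protocol can be automated. This sketch assumes logged traces sampled at a fixed interval and treats a reactor as stable once its last 30 samples stay within ±0.1 °C. It then reports the mean offset from setpoint (accuracy) and the standard deviation across reactors (precision) for each setpoint. The window, tolerance, and synthetic data are assumptions.

```python
import math
import statistics

def is_stable(trace: list[float], window: int = 30, tol_c: float = 0.1) -> bool:
    """A reactor counts as stable when its last `window` samples span at most 2*tol_c."""
    tail = trace[-window:]
    return len(tail) == window and max(tail) - min(tail) <= 2.0 * tol_c

def summarize_setpoint(setpoint_c: float, traces: list[list[float]], window: int = 30) -> dict:
    """Protocol steps 2-3 for one setpoint: per-reactor stable means, then block statistics."""
    if not all(is_stable(tr, window) for tr in traces):
        raise ValueError(f"not all reactors are stable at {setpoint_c} degC")
    per_reactor = [statistics.mean(tr[-window:]) for tr in traces]
    block_mean = statistics.mean(per_reactor)
    return {
        "setpoint": setpoint_c,
        "offset": block_mean - setpoint_c,                          # accuracy
        "std": statistics.stdev(per_reactor),                       # inter-reactor precision
        "worst": max(abs(t - setpoint_c) for t in per_reactor),     # worst single reactor
    }

# Step 4 with synthetic logs: four reactors with fixed offsets plus a small ripple
offsets = [0.3, -0.2, 0.1, 0.0]   # hypothetical per-reactor offsets, degC
report = []
for sp in (40.0, 80.0):
    traces = [[sp + off + 0.05 * math.sin(k + i) for k in range(120)]
              for i, off in enumerate(offsets)]
    report.append(summarize_setpoint(sp, traces))
for row in report:
    print(row)
```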

The following diagram illustrates a generalized workflow for achieving and maintaining thermal control in a parallel reactor system, integrating both the hardware components and the logical decision processes.

Diagram: Parallel Reactor Thermal Control Workflow. This diagram outlines the decision process for managing thermal modes in a parallel reactor system to maintain uniformity.

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing effective thermal control requires more than just reactors and heaters. The following table details key components and their functions in a typical advanced parallel reactor setup.

Table 2: Essential Components for Thermal Control in Parallel Reactor Systems

| Component | Function | Key Consideration |
|---|---|---|
| Microfluidic Distributor Chip | Precisely splits a common feed flow into multiple equal streams for each reactor, establishing a baseline for uniform conditions [17]. | Guaranteed flow distribution precision (e.g., < 0.5% RSD). |
| Individual Cartridge Heaters | Provides independent heating for each reactor vessel, allowing compensation for variable exothermicity and heat loss. | Response time and maximum power output. |
| In-line Temperature Sensors | PT100 or thermocouple sensors placed at the inlet and outlet of each reactor for real-time, per-reactor temperature monitoring. | Accuracy, response time, and chemical compatibility. |
| Coolant Control Valve Bank | A set of electronically controlled valves to adjust coolant flow through individual reactor jackets or heat exchangers. | Valve actuation speed and flow control resolution. |
| Back Pressure Regulator (BPR) | Maintains an elevated and consistent pressure within the reactor system, preventing solvent boil-off and ensuring consistent fluid properties [19]. | Setpoint accuracy and corrosion resistance. |
| Reactor Pressure Control (RPC) Module | Actively controls individual reactor inlet/outlet pressures to maintain precise flow distribution, indirectly securing thermal stability [17]. | Ability to compensate for catalyst pressure drop changes. |
| Thermal Insulation | Minimizes heat loss to the environment from each reactor and transfer lines, reducing external influences on reactor temperature. | Thermal conductivity and maximum service temperature. |

The pursuit of efficient and reliable research in catalysis and drug development has made parallel reactor systems a cornerstone of modern R&D. However, this paper has demonstrated that the data generated by these systems are only as valid as the thermal uniformity maintained across the reactor block. The challenges of flow-thermal interdependency, variable heat loads, and the need for individual control are unique to the parallel architecture and demand dedicated solutions. These solutions—ranging from precision microfluidic distributors and hybrid cooling strategies to advanced control protocols—are not mere accessories but essential components of a robust experimental setup. By recognizing thermal control as a fundamental research variable and investing in the appropriate "Scientist's Toolkit," researchers can ensure that their parallel reactor studies produce data of the highest fidelity, enabling confident decision-making in the journey from laboratory discovery to commercial application.

Advanced Control Architectures and Hardware for Parallel Reactor Platforms

The Critical Role of Temperature Control in Parallel Reactors

In parallel reactor systems, precise temperature control is not merely a convenience but a fundamental requirement for successful research and development. These systems, which enable the simultaneous execution of multiple experiments under varying conditions, rely on temperature stability to ensure reproducible results, effective scaling from laboratory to production, and comprehensive kinetic studies. The PolyBLOCK 8, a representative parallel reactor system, demonstrates this critical dependence through its capability to maintain different temperature setpoints across multiple reactors, typically achieving an 80°C range between the lowest and highest reactor temperatures [20]. This precise thermal management allows researchers to efficiently explore parameter spaces and accelerate development timelines for pharmaceutical compounds and specialty chemicals.

The consequences of inadequate temperature control are particularly pronounced in exothermic reactions, where the heat released can quickly lead to thermal runaway if not properly managed. This is especially dangerous during transitions from heating to cooling modes in batch operations [21]. Temperature variations also affect reaction rates and selectivity. In one pharmaceutical attenuation study, for example, a 10°C increase (from 25°C to 35°C) raised the removal rates of specific pharmaceuticals by 5-12% under certain redox conditions [22]. In parallel systems, where multiple reactions proceed simultaneously, independent and precise temperature control of each reactor is essential for obtaining reliable, comparable data across all experimental conditions.

Fundamental Control Strategies

PID Control

Proportional-Integral-Derivative (PID) controllers represent the most widely deployed control algorithm in industrial processes, including chemical reactors. These controllers calculate the difference between a measured process variable and a desired setpoint (error), then apply correction based on proportional, integral, and derivative terms. The Parr 4848 Reactor Controller exemplifies modern PID implementation in reactor systems, featuring auto-tuning capabilities for precise temperature control with minimal overshoot, along with ramp and soak programming for complex temperature profiles [23].
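A generic discrete PID with ramp-and-soak setpoint programming might look like the sketch below. It uses a textbook positional form with derivative on measurement, output clamping, and conditional-integration anti-windup; it is not the Parr 4848's internal algorithm. The plant model, gains, and temperature program are hypothetical.

```python
class PID:
    """Positional PID: derivative on measurement, clamped output, conditional-integration anti-windup."""

    def __init__(self, kp: float, ki: float, kd: float, dt: float,
                 out_min: float = 0.0, out_max: float = 100.0):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0          # accumulated integral contribution, in output units
        self.prev_meas = None

    def update(self, setpoint: float, measurement: float) -> float:
        err = setpoint - measurement
        # Differentiating the measurement (not the error) avoids kicks at setpoint steps
        deriv = 0.0 if self.prev_meas is None else -(measurement - self.prev_meas) / self.dt
        self.prev_meas = measurement
        trial_integral = self.integral + self.ki * err * self.dt
        out = self.kp * err + trial_integral + self.kd * deriv
        if self.out_min <= out <= self.out_max:
            self.integral = trial_integral   # only accept integration while unsaturated
        return min(max(out, self.out_min), self.out_max)

def ramp_soak_setpoint(t_s: float, segments: list[tuple[float, float, float]], start_c: float) -> float:
    """Setpoint at time t_s for segments of (target_degC, rate_degC_per_min, soak_min)."""
    t_min = t_s / 60.0
    current = start_c
    for target, rate, soak in segments:
        ramp_min = abs(target - current) / rate
        if t_min < ramp_min:
            step = rate * t_min
            return current + step if target > current else current - step
        t_min -= ramp_min
        if t_min < soak:
            return target
        t_min -= soak
        current = target
    return current  # hold the final target after the program ends

# Hypothetical program: 20 -> 80 degC at 2 degC/min, hold 30 min, then down to 40 degC at 1 degC/min, hold 10 min
segments = [(80.0, 2.0, 30.0), (40.0, 1.0, 10.0)]
pid = PID(kp=5.0, ki=0.05, kd=0.0, dt=1.0)
temp = 20.0
log = []
for step in range(3500):
    sp = ramp_soak_setpoint(step * 1.0, segments, start_c=20.0)
    power = pid.update(sp, temp)
    # Hypothetical first-order vessel: +1 degC per % heater at steady state, tau = 300 s
    temp += (1.0 * power - (temp - 20.0)) / 300.0
    log.append((sp, temp))
print(f"setpoint {log[-1][0]:.1f} degC, temperature {log[-1][1]:.2f} degC")
```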

Despite their widespread use, PID controllers face significant limitations when applied to nonlinear systems like chemical reactors. As operating conditions change, a single set of fixed PID parameters often proves inadequate. This challenge is particularly evident in Continuous Stirred Tank Reactors (CSTRs), where a PID controller designed for one operating point may perform poorly, or even destabilize the loop, at another [24]. Research shows that a family of PID controllers, each tuned for a specific operating point defined by the output concentration (C = 2 through 9), performs considerably better than a single controller across all conditions [24]. Even so, the open-loop unstable operating points (C = 4 to 7) still show large overshoot (Table 1).

Table 1: PID Controller Performance at Different Operating Points in a CSTR

| Output Concentration (C) | Plant Stability | Controller Parameters (Kp, Ki, Kd) | Closed-Loop Performance |
|---|---|---|---|
| 2 | Stable | Tuned for C=2 | Satisfactory |
| 3 | Stable | Tuned for C=3 | Satisfactory |
| 4 | Unstable | Tuned for C=4 | Large overshoot |
| 5 | Unstable | Tuned for C=5 | Large overshoot |
| 6 | Unstable | Tuned for C=6 | Large overshoot |
| 7 | Unstable | Tuned for C=7 | Large overshoot |
| 8 | Stable | Tuned for C=8 | Satisfactory |
| 9 | Stable | Tuned for C=9 | Satisfactory |
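In code, a family of controllers like the one summarized in Table 1 becomes a gain table plus a selection rule. The sketch below switches to the controller designed for the nearest operating point. The gain values are hypothetical placeholders, not the tunings from [24]. A production scheduler would also need bumpless transfer, or gain interpolation, to avoid output jumps at each switch.

```python
import bisect

# (design point C, Kp, Ki, Kd): hypothetical placeholders, NOT the tunings reported in [24]
GAIN_TABLE = [
    (2.0, 1.20, 0.10, 0.05),
    (5.0, 0.80, 0.06, 0.10),
    (8.0, 1.00, 0.08, 0.05),
]
DESIGN_POINTS = [row[0] for row in GAIN_TABLE]   # must stay sorted for bisect

def scheduled_gains(c_measured: float) -> tuple[float, float, float]:
    """Return (Kp, Ki, Kd) of the controller designed nearest to the current operating point."""
    i = bisect.bisect_left(DESIGN_POINTS, c_measured)
    if i == 0:
        return GAIN_TABLE[0][1:]
    if i == len(GAIN_TABLE):
        return GAIN_TABLE[-1][1:]
    lower, upper = GAIN_TABLE[i - 1], GAIN_TABLE[i]
    # Ties go to the lower design point
    return lower[1:] if c_measured - lower[0] <= upper[0] - c_measured else upper[1:]

for c in (1.5, 3.4, 3.6, 6.9, 9.5):
    print(c, scheduled_gains(c))
```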

Cascade Control

Cascade control architectures handle complex dynamics by arranging multiple control loops hierarchically. In reactor temperature control, a common implementation uses a fast secondary (slave) loop on the jacket temperature to reject jacket-side disturbances, while a primary (master) loop maintains the core reactor temperature by adjusting the secondary loop's setpoint. Compared with a single-loop configuration, this structure typically corrects disturbances that enter through the jacket or coolant supply much faster, before they significantly affect the reactor temperature.
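The benefit of a cascade can be sketched with a two-lag model: a fast jacket (τ = 20 s) feeding a slow reactor (τ = 200 s), with a step drop in coolant supply temperature entering at the jacket. The PI gains below were chosen with simple IMC-style tuning rules for these hypothetical time constants. The integral absolute error (IAE) of the reactor temperature is accumulated so that the single-loop and cascade configurations can be compared.

```python
def simulate_loop(cascade: bool, t_end_s: float = 3000.0, dt: float = 1.0) -> tuple[float, float]:
    """Jacket (tau_j) feeding reactor (tau_r); coolant-supply step disturbance at t = 100 s.

    Returns (final reactor temperature, IAE of the reactor temperature).
    """
    tau_j, tau_r, setpoint = 20.0, 200.0, 50.0   # hypothetical time constants (s) and setpoint (degC)
    tr = tj = 50.0                                # start at steady state
    i_outer = i_inner = 0.0
    iae = 0.0
    for k in range(int(t_end_s / dt)):
        d = -10.0 if k * dt >= 100.0 else 0.0    # coolant supply suddenly 10 K colder
        e_r = setpoint - tr
        i_outer += e_r * dt
        if cascade:
            tj_sp = 50.0 + 16.2 * (e_r + i_outer / 50.0)   # primary PI -> jacket setpoint
            e_j = tj_sp - tj
            i_inner += e_j * dt
            u = 50.0 + 4.0 * (e_j + i_inner / 20.0)         # secondary PI -> jacket drive
        else:
            u = 50.0 + 10.5 * (e_r + i_outer / 80.0)        # single PI on reactor temperature
        tj += dt * (u + d - tj) / tau_j    # the disturbance enters at the jacket
        tr += dt * (tj - tr) / tau_r
        iae += abs(e_r) * dt
    return tr, iae

tr_single, iae_single = simulate_loop(cascade=False)
tr_cascade, iae_cascade = simulate_loop(cascade=True)
print(f"single loop IAE = {iae_single:.1f} K*s, cascade IAE = {iae_cascade:.1f} K*s")
```

Because the secondary loop corrects the jacket within seconds, the reactor temperature moves much less in the cascade case. The single loop reacts only after the disturbance has propagated through the slow reactor lag.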

Recent research has introduced innovative parallel cascade control structures (PCCS) for nonlinear CSTRs, demonstrating superior performance over traditional series cascade configurations. In PCCS, both primary and secondary loops receive the same error signal simultaneously, enabling faster response to disturbances as the manipulated variable affects both responses concurrently [25]. For a third-order unstable CSTR model, the secondary loop controller is designed for enhanced regulatory performance, while the primary loop controller optimizes setpoint tracking [25]. This architecture provides greater flexibility in control design with reduced risk of controller interaction.

Figure 1: Parallel Cascade Control Structure for Reactor Temperature Control

Further advancements combine traditional PID with modern learning algorithms. In fluidized bed reactors for polyethylene production, a PID-DRL cascade control scheme places a Deep Reinforcement Learning controller in the secondary loop, outperforming conventional PID-only cascade control by reducing integral absolute error (IAE) by more than 50% [26].

Advanced Control: Model Predictive Control

Model Predictive Control (MPC) represents a significant advancement in control strategy: it uses a dynamic process model to predict future system behavior and computes optimal control actions through online optimization. Unlike PID controllers, which react to current errors, MPC determines control moves proactively by solving a constrained optimization problem at each time step. This makes it particularly suited to processes with complex dynamics, constraints, and significant time delays.
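The receding-horizon idea can be shown in its simplest form. The sketch below assumes a first-order model of a heated vessel and a control horizon of a single held move. With that restriction, the constrained quadratic cost has a closed-form minimizer that is simply clipped to the heater limits. A constant output-disturbance estimate (measured minus model output) removes steady-state offset even though the plant gain deliberately differs from the model's. All numbers are hypothetical, and practical MPC tools solve a quadratic program over several future moves.

```python
import math

def mpc_move(y_model: float, d_hat: float, u_prev: float, setpoint: float,
             a: float, k_gain: float, t_amb: float, horizon: int, lam: float,
             u_min: float, u_max: float) -> float:
    """One receding-horizon step with a single held move (control horizon 1).

    Model: y[j+1] = a*y[j] + (1-a)*(t_amb + k_gain*u). For a constant u the
    j-step prediction is f_j + g_j*u, so the cost
        sum_j (prediction_j - setpoint)^2 + lam*(u - u_prev)^2
    is a convex parabola in u; clipping its minimiser to [u_min, u_max]
    therefore gives the exact constrained optimum.
    """
    num, den = lam * u_prev, lam
    for j in range(1, horizon + 1):
        aj = a ** j
        f_j = aj * y_model + (1.0 - aj) * t_amb + d_hat   # free response incl. disturbance
        g_j = k_gain * (1.0 - aj)                          # step response to u
        num += g_j * (setpoint - f_j)
        den += g_j * g_j
    return min(max(num / den, u_min), u_max)

# Hypothetical heated vessel: tau = 300 s, sampled every 5 s, ambient 20 degC
dt, tau, t_amb, setpoint = 5.0, 300.0, 20.0, 60.0
a = math.exp(-dt / tau)
k_model, k_true = 0.6, 0.5   # degC per % heater; the model deliberately overestimates the gain
y_plant = y_model = t_amb
u = 0.0
history = []
for _ in range(800):
    d_hat = y_plant - y_model   # constant output-disturbance estimate for offset-free tracking
    u = mpc_move(y_model, d_hat, u, setpoint, a, k_model, t_amb,
                 horizon=20, lam=0.01, u_min=0.0, u_max=100.0)
    # Apply only the first move, then advance the plant and the internal model one sample
    y_plant = a * y_plant + (1.0 - a) * (t_amb + k_true * u)
    y_model = a * y_model + (1.0 - a) * (t_amb + k_model * u)
    history.append((u, y_plant))
print(f"final heater {history[-1][0]:.1f} %, temperature {history[-1][1]:.2f} degC")
```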

In batch reactor applications, MPC has demonstrated exceptional capability in handling the challenging transition from heating to cooling modes in exothermic reactions. Traditional control strategies often struggle with this switching, but MPC can effectively manage the entire temperature trajectory from initial heat-up to setpoint maintenance [21]. For a highly nonlinear batch reactor system with parallel exothermic reactions, MPC using multiple reduced models running in series has shown robust performance even in the presence of plant/model mismatch [21].

Table 2: MPC Performance in Batch Reactor Temperature Control

| Control Aspect | Traditional Dual-Mode Control | Single-Model MPC | Multiple Reduced-Model MPC |
|---|---|---|---|
| Heating Phase | Open-loop, no feedback | Controlled using single model | Controlled using series of models |
| Cooling Phase | Switching to maximum cooling | Controlled using single model | Controlled using series of models |
| Model Mismatch Handling | Poor, no allowance for errors | Performance degradation | Robust performance |
| Computational Complexity | Low | Moderate | Higher but manageable |

The implementation of MPC typically involves three key steps in batch reactor control: (1) reference profile determination to establish the desired temperature trajectory, (2) operating condition selection at various points along the profile, and (3) model reduction to eliminate uncontrollable or unobservable states [21]. This approach ensures that the controller adapts to the changing dynamics throughout the batch process, maintaining precise temperature control despite the non-stationary operating conditions.

Emerging Approaches: Machine Learning and AI Integration

Reinforcement Learning in Reactor Control

Deep Reinforcement Learning (DRL) represents a paradigm shift in process control, combining the learning capabilities of neural networks with the decision-making framework of reinforcement learning. In the actor-critic framework specifically applied to reactor temperature control, the DRL agent (actor) interacts with the reactor environment, observing states and selecting actions to maximize cumulative reward, with an additional critic network evaluating the quality of the selected actions [26].

For fluidized bed polyethylene reactors, which exhibit significant time delays (approximately 5 minutes) and nonlinear dynamic behavior, a PID-DRL cascade control scheme has demonstrated substantial improvements over conventional approaches. The DRL controller in the secondary loop is trained using the Deep Deterministic Policy Gradient (DDPG) algorithm, with careful design of state, action, and reward functions to capture the system characteristics [26]. This hybrid approach leverages the reliability of PID control while incorporating the adaptability of DRL, resulting in improved setpoint tracking and disturbance rejection capabilities.

Machine Learning for Reaction Optimization

Beyond direct control, machine learning plays an increasingly important role in experimental optimization for parallel reactor systems. The Minerva framework exemplifies this approach, combining Bayesian optimization with automated high-throughput experimentation (HTE) to navigate complex reaction spaces efficiently [4]. This methodology is particularly valuable in pharmaceutical process development, where it identified conditions for Ni-catalyzed Suzuki coupling and Pd-catalyzed Buchwald-Hartwig reactions that achieved >95% yield and selectivity [4].

The ML optimization workflow typically begins with quasi-random Sobol sampling to select initial experiments that maximally cover the reaction space [4]. A Gaussian Process regressor then predicts reaction outcomes and uncertainties for all possible conditions, guiding the selection of subsequent experiments through acquisition functions that balance exploration and exploitation. This approach has successfully navigated search spaces of up to 530 dimensions with batch sizes of 96 parallel reactions, dramatically accelerating process optimization timelines from months to weeks [4].
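A one-dimensional toy version of this loop is sketched below. A base-2 van der Corput sequence (the one-dimensional analogue of Sobol sampling) picks the initial conditions. A Gaussian Process with a squared-exponential kernel then models the response, and an upper-confidence-bound (UCB) acquisition picks each next experiment, one at a time. The objective, kernel settings, and UCB weight are assumptions. Minerva's own acquisition functions and its batch selection over many categorical and continuous variables are not reproduced here.

```python
import math

def van_der_corput(n: int, base: int = 2) -> list[float]:
    """First n points of the van der Corput sequence (the 1-D analogue of Sobol sampling)."""
    points = []
    for i in range(1, n + 1):
        x, denom, k = 0.0, 1.0, i
        while k:
            k, digit = divmod(k, base)
            denom *= base
            x += digit / denom
        points.append(x)
    return points

def cholesky(m: list[list[float]]) -> list[list[float]]:
    """Lower-triangular L with L L^T = m (m symmetric positive definite)."""
    n = len(m)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(low[i][k] * low[j][k] for k in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low

def forward_sub(low, b):
    """Solve L x = b for lower-triangular L."""
    x = []
    for i, row in enumerate(low):
        x.append((b[i] - sum(row[k] * x[k] for k in range(i))) / row[i])
    return x

def backward_sub(low, b):
    """Solve L^T x = b, reusing the lower-triangular factor L."""
    n = len(low)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(low[k][i] * x[k] for k in range(i + 1, n))) / low[i][i]
    return x

def gp_posterior(xs, ys, queries, length=0.15, noise=1e-4):
    """Posterior mean and std of a GP with a unit-variance RBF kernel on mean-centred data."""
    def kern(p, q):
        return math.exp(-((p - q) ** 2) / (2.0 * length ** 2))
    gram = [[kern(p, q) + (noise if i == j else 0.0) for j, q in enumerate(xs)]
            for i, p in enumerate(xs)]
    low = cholesky(gram)
    y_mean = sum(ys) / len(ys)
    alpha = backward_sub(low, forward_sub(low, [y - y_mean for y in ys]))
    result = []
    for q in queries:
        k_star = [kern(q, p) for p in xs]
        mu = y_mean + sum(k * w for k, w in zip(k_star, alpha))
        v = forward_sub(low, k_star)
        var = max(1.0 - sum(t * t for t in v), 1e-12)
        result.append((mu, math.sqrt(var)))
    return result

def objective(x: float) -> float:
    """Hypothetical yield vs. normalised temperature; unknown to the optimiser."""
    return math.exp(-((x - 0.62) ** 2) / 0.02)

xs = van_der_corput(4)                  # space-filling initial design
ys = [objective(x) for x in xs]
grid = [i / 200 for i in range(201)]    # candidate conditions
for _ in range(10):                     # one new experiment per iteration
    post = gp_posterior(xs, ys, grid)
    ucb = [mu + 2.0 * sd for mu, sd in post]   # mean + 2 sd trades exploitation against exploration
    x_next = grid[max(range(len(grid)), key=ucb.__getitem__)]
    xs.append(x_next)
    ys.append(objective(x_next))
best_y, best_x = max(zip(ys, xs))
print(f"best observed: x = {best_x:.3f}, yield = {best_y:.3f}")
```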

Figure 2: Machine Learning Optimization Workflow for Reaction Optimization

Experimental Protocols & Methodologies

Parallel Cascade Controller Synthesis for Nonlinear CSTR

The design of parallel cascade controllers for nonlinear CSTRs involves specific methodological steps to ensure robust performance:

System Identification: Model the dynamic behavior of the CSTR with a recirculating jacket heat transfer system as a third-order unstable transfer function [25].

Controller Synthesis: Apply model matching techniques in the frequency domain to design both secondary and primary loop controllers without approximating to lower-order systems [25].

Performance Validation: Conduct simulations using the nonlinear differential equations of the nonlinear CSTR (NCSTR) rather than simplified transfer function models to ensure realistic performance assessment [25].

Robustness Testing: Evaluate controller performance under nominal, perturbed, and noisy conditions to verify disturbance rejection capabilities [25].

The parallel cascade control structure specifically employs a PI controller in the secondary loop designed for enhanced regulatory performance, and a PID controller in the primary loop optimized for setpoint tracking [25]. This configuration demonstrates satisfactory performance across all tested conditions, outperforming both series cascade control and simple parallel control structures.

Temperature Control in Exothermic Batch Reactors

For exothermic batch reactors, particularly those with complex reaction networks, temperature control requires specialized methodologies:

Reference Profile Determination: Establish closed-loop reference profiles using adaptive MPC controllers with specific tuning parameters (input weighting: 0.5, prediction horizon: 30, control horizon: 20) [21].

Operating Condition Selection: Identify pseudo steady-state conditions at various points along the reference profiles based on overall closed-loop system poles [21].

Model Reduction: Develop minimal-phase state-space models by eliminating uncontrollable and unobservable states through subspace identification methods [21].

This methodology successfully handles the challenging heating-cooling transition in exothermic batch reactors, achieving precise temperature control during both heating (0 ≤ t ≤ 17.1 minutes) and cooling (t ≥ 17.1 minutes) phases while minimizing temperature overshoot [21].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagent Solutions for Parallel Reactor Systems

| Reagent/Equipment | Function in Research | Application Example |
|---|---|---|
| PolyBLOCK 8 Parallel Reactor System | Provides eight independently controlled reaction zones for high-throughput experimentation | Enables a temperature range of 80 °C across reactors, with heating rates up to 6 °C/min [20] |
| Silicone Oil (Huber P20-275) | Heat transfer fluid for temperature control in jacketed reactors | Maintains temperature stability across a broad operating range in parallel reactors [20] |
| Mn-Na₂WO₄/SiO₂ Catalyst | Metal oxide catalyst for oxidative coupling of methane (OCM) reactions | Achieves C₂ selectivity of 23% in packed bed membrane reactors [27] |
| BSCF (Ba₀.₅Sr₀.₅Co₀.₈Fe₀.₂O₃−δ) | Oxygen carrier material for chemical looping reactors | Enhances O₂ storage capacity and improves C₂ yield in OCM reactions [27] |
| Ni-catalyzed Suzuki Reaction Kit | Earth-abundant metal catalysis for cross-coupling reactions | Pharmaceutical process development with >95% yield achieved through ML optimization [4] |

Temperature control in parallel reactor systems has evolved significantly from basic PID algorithms to sophisticated model-based and learning-driven approaches. The fundamental limitation of a single PID controller for nonlinear systems operating across wide ranges has been addressed through advanced strategies including gain-scheduled PID families, cascade control architectures, model predictive control, and emerging deep reinforcement learning methods. The integration of machine learning with automated high-throughput experimentation further accelerates reaction optimization, enabling rapid identification of optimal conditions for complex chemical transformations. As parallel reactor technology continues to advance, combining traditional control fundamentals with modern artificial intelligence approaches is likely to yield increasingly powerful tools for chemical research and pharmaceutical development.

In parallel reactor research, precise temperature control is not merely a convenience but a fundamental prerequisite for success. It underpins reproducibility, faster process development, and operational safety, particularly in critical fields such as pharmaceutical drug development. Parallel Pressure Reactors (PPR) run 2 to 6 reactions simultaneously, supporting catalyst screening and the optimization of processes such as hydrogenations and carbonylations at pressures up to 150 bar and temperatures from -20 °C to +300 °C [28]. In this context, the accuracy of temperature measurement directly determines the reliability of the data generated, so the choice of sensor technology (typically Pt100 RTDs or thermocouples) and the rigor of its calibration become paramount. Measurement errors can produce flawed kinetic data, inaccurate scale-up predictions, and ultimately failed experiments that waste valuable resources and time. This guide gives researchers and scientists an in-depth technical understanding of these sensor technologies and of best practices for their calibration.

Sensor Technologies: A Technical Deep Dive

Pt100 Resistance Temperature Detectors (RTDs)

Principle of Operation: A Pt100 is a type of Resistance Temperature Detector (RTD) whose operation is based on the predictable increase in the electrical resistance of platinum with rising temperature. Specifically, the "100" denotes a resistance of 100 ohms at 0 °C [29].
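The resistance-temperature relationship of industrial platinum sensors is conventionally described by the Callendar-Van Dusen equation, which the text above does not spell out. The sketch below uses the standard IEC 60751 coefficients (an addition to the source) to convert between temperature and resistance; the inverse uses the quadratic formula and is therefore only valid for 0 °C and above, where the cubic C term vanishes.

```python
import math

# IEC 60751 Callendar-Van Dusen coefficients for industrial platinum RTDs
R0 = 100.0        # Pt100: 100 ohms at 0 degC
A = 3.9083e-3     # 1/degC
B = -5.775e-7     # 1/degC^2
C = -4.183e-12    # 1/degC^4, used only below 0 degC


def pt100_resistance(t_c):
    """Resistance in ohms at temperature t_c (degC)."""
    if t_c >= 0.0:
        return R0 * (1.0 + A * t_c + B * t_c ** 2)
    return R0 * (1.0 + A * t_c + B * t_c ** 2 + C * (t_c - 100.0) * t_c ** 3)


def pt100_temperature(r_ohm):
    """Invert the t >= 0 branch: solve B*t^2 + A*t + (1 - r/R0) = 0 for the physical root."""
    if r_ohm < R0:
        raise ValueError("below 0 degC the cubic term applies; use a numerical solver")
    return (-A + math.sqrt(A ** 2 - 4.0 * B * (1.0 - r_ohm / R0))) / (2.0 * B)


print(f"{pt100_resistance(100.0):.4f} ohm at 100 degC")
print(f"{pt100_temperature(138.5055):.4f} degC at 138.5055 ohm")
```

The roughly 0.385 Ω change per degree near room temperature is what makes lead-wire resistance matter, as the wiring note below explains.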

Key Characteristics:

- Accuracy and Linearity: Pt100 sensors are known for their high accuracy and excellent stability over time, and their response is more linear than that of thermocouples over a wide temperature range [29].

- Temperature Range: They are typically used within a range of -200 °C to +420 °C, making them suitable for most parallel reactor applications [29].

- Wiring Configurations: The accuracy of a Pt100 is influenced by its wiring configuration. To mitigate the effect of lead wire resistance, a 3-wire or 4-wire system is essential. A 2-wire system is generally avoided for precision measurement as it cannot compensate for lead resistance [30].
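To put a number on the lead-wire effect, the sketch below compares a 2-wire reading with an idealized 3-wire compensation. Assumptions: each lead has a hypothetical resistance of 0.5 Ω, all leads are identical (the condition 3-wire compensation relies on), and the conversion uses the IEC 60751 Callendar-Van Dusen coefficients for 0 °C and above.

```python
import math

R0, A, B = 100.0, 3.9083e-3, -5.775e-7   # IEC 60751 coefficients, t >= 0 branch


def r_from_t(t_c):
    return R0 * (1.0 + A * t_c + B * t_c ** 2)


def t_from_r(r_ohm):
    return (-A + math.sqrt(A ** 2 - 4.0 * B * (1.0 - r_ohm / R0))) / (2.0 * B)


R_LEAD = 0.5          # hypothetical resistance of each lead wire, ohms
true_t = 100.0
r_element = r_from_t(true_t)

# 2-wire: the instrument sees the element plus both leads in series.
two_wire_error = t_from_r(r_element + 2 * R_LEAD) - true_t

# Idealized 3-wire: a second loop measures the resistance of a lead pair,
# which is subtracted from the element loop (assumes equal lead resistances).
r_loop = r_element + 2 * R_LEAD
r_lead_pair = 2 * R_LEAD
three_wire_error = t_from_r(r_loop - r_lead_pair) - true_t

print(f"2-wire error: {two_wire_error:+.2f} degC, 3-wire error: {three_wire_error:+.2e} degC")
```

Just 1 Ω of total lead resistance shifts a 2-wire reading by roughly 2.6 °C at 100 °C, which is larger than the Class B tolerance at that temperature. A 4-wire connection removes the equal-lead assumption altogether by sensing the voltage directly across the element.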

Accuracy and Specifications: Pt100 sensors are available in different tolerance classes defined by international standards (IEC 60751). The two most common classes are:

- Class A: Permissible deviation of ±(0.15 + 0.002|t|) °C. This is used for higher-precision applications.

- Class B: Permissible deviation of ±(0.30 + 0.005|t|) °C [29].

Here, |t| is the absolute value of the temperature in °C. For example, at 100 °C a Class B sensor has a tolerance of ±(0.30 + 0.005 × 100) = ±0.8 °C, while a Class A sensor has a tolerance of ±0.35 °C.
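The two tolerance formulas translate directly into a small helper. The sketch below evaluates both classes and, as a hypothetical convenience function (`best_class` is not a standard API), reports the tightest class whose tolerance covers a deviation observed during calibration.

```python
# IEC 60751 tolerance classes: +/-(base + slope * |t|) degC
TOLERANCE_COEFFS = {"A": (0.15, 0.002), "B": (0.30, 0.005)}


def pt100_tolerance(t_c, tol_class):
    """Permissible deviation (degC) at temperature t_c for the given class."""
    base, slope = TOLERANCE_COEFFS[tol_class]
    return base + slope * abs(t_c)


def best_class(t_c, deviation_c):
    """Hypothetical helper: tightest class (A before B) covering a deviation, else None."""
    for tol_class in ("A", "B"):
        if abs(deviation_c) <= pt100_tolerance(t_c, tol_class):
            return tol_class
    return None


for t in (0.0, 100.0, 200.0):
    print(f"{t:6.1f} degC  Class A +/-{pt100_tolerance(t, 'A'):.2f}  "
          f"Class B +/-{pt100_tolerance(t, 'B'):.2f}")
```

Because the tolerance widens with |t|, a sensor that meets Class A near ambient can fall outside Class A at the upper end of a reactor's operating range.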

Thermocouples

Principle of Operation: A thermocouple operates on the Seebeck effect, where a voltage is generated when two dissimilar metal wires are joined at a junction and there is a temperature difference between this measuring ("hot") junction and the reference ("cold") junction [31].

Key Characteristics:

- Wide Temperature Range: Certain thermocouple types (e.g., Type R, S) can measure extremely high temperatures, far beyond the range of Pt100 sensors.

- Ruggedness and Cost: They are generally more rugged and less expensive than Pt100 sensors.

- Complexity and Drift: Their output is less linear than that of RTDs, and they are susceptible to drift over time due to chemical changes in the metal wires (e.g., oxidation) [31]. They also require stable and accurate reference junction compensation.
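Reference (cold) junction compensation follows from the fact that the instrument only sees the difference between the EMFs of the two junctions, so the EMF corresponding to the measured cold-junction temperature must be added back. The sketch below assumes a linearized Type K response: an average sensitivity derived from the NIST reference value of 4.096 mV at 100 °C. Real instruments use the full ITS-90 reference polynomials instead.

```python
# Linearised Type K model (assumption): average sensitivity between 0 and 100 degC,
# from the NIST reference value of 4.096 mV at 100 degC (0 degC reference junction).
SEEBECK_K = 4.096e-3 / 100.0   # volts per degC


def emf(t_c):
    """Thermocouple EMF relative to a 0 degC reference junction (linearised)."""
    return SEEBECK_K * t_c


def measured_voltage(t_hot, t_cold):
    """The instrument only sees the difference between the two junction EMFs."""
    return emf(t_hot) - emf(t_cold)


def compensated_temperature(v, t_cold):
    """Cold junction compensation: add back the EMF of the measured cold junction."""
    return (v + emf(t_cold)) / SEEBECK_K


v = measured_voltage(100.0, 25.0)
with_cjc = compensated_temperature(v, 25.0)
without_cjc = compensated_temperature(v, 0.0)   # wrongly assumes a 0 degC cold junction
print(f"with CJC: {with_cjc:.1f} degC, without CJC: {without_cjc:.1f} degC")
```

Omitting compensation makes the reading low by the full cold-junction temperature, here 25 °C, which is why a stable, accurately measured reference junction is essential.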

Pt100 vs. Thermocouple: A Quantitative Comparison for Reactor Applications

The choice between a Pt100 and a thermocouple depends on the specific requirements of the parallel reactor experiment. The following table summarizes the key differences to guide this decision.

Table 1: Comparative Analysis of Pt100 and Thermocouple Sensors

| Feature | Pt100 RTD | Thermocouple (Type K) |
|---|---|---|
| Principle | Electrical resistance change of platinum [29] | Thermoelectric voltage (Seebeck effect) [31] |
| Typical Range | -200 °C to 420 °C [29] | -200 °C to 1260 °C (Type K) [32] |
| Accuracy (at 100 °C) | High; ±0.8 °C (Class B) or better [29] | Moderate; ±2.2 °C (Standard Limit of Error) [32] |
| Stability & Drift | Excellent long-term stability [29] | Prone to drift due to oxidation and aging [31] |
| Linearity | Good linearity | Moderate non-linearity |
| Response Time | Slower (depends on sheath diameter) [29] | Faster (junction is typically exposed) |
| Cost | Higher | Lower |
| Ideal Use Case | High-precision process development, catalyst screening, reproducible DoE studies [28] | High-temperature reactions, non-critical monitoring, where cost is a primary driver |

For parallel reactor systems where reproducibility and data integrity are critical—such as in Design of Experiments (DoE) and Quality by Design (QbD) initiatives for pharmaceutical development—the Pt100 is often the preferred sensor due to its superior accuracy and stability [28] [30].

Calibration Best Practices for Measurement Integrity

Calibration is the process of verifying and documenting the accuracy of a temperature sensor against a known reference standard. For a Pt100, this process is essentially a validation, as the sensor itself cannot be adjusted [30].

Calibration Methodologies

There are two primary methods for calibrating temperature sensors in a laboratory setting:

- Fluid-Filled Baths: A stirred fluid bath provides a highly stable and uniform temperature environment. It offers intimate contact between the sensor and the medium, leading to high accuracy and the ability to calibrate multiple sensors of different sizes simultaneously [30].

- Dry-Block Calibrators: A dry-block calibrator uses a metal block with drilled holes. It is portable and suitable for on-site calibration. However, air gaps between the sensor and the block can lengthen response time and reduce accuracy, and dry blocks are less suitable for calibrating short sensors or several probes of different diameters at once [30].

Table 2: Comparison of Temperature Calibration Methods

| Method | Uniformity & Accuracy | Portability | Throughput | Key Consideration |
|---|---|---|---|---|
| Fluid-Filled Bath | High [30] | Low (fixed installation) [30] | High (multiple sensors) [30] | Temperature range limited by fluid properties [30] |
| Dry-Block Calibrator | Moderate (due to air gaps) [30] | High [30] | Low (limited by block holes) [30] | Must fully insert sensor; block size must match probe diameter [30] |

Step-by-Step Calibration Protocol for a Pt100 Sensor

The following workflow outlines a standardized procedure for calibrating a Pt100 sensor, incorporating best practices to minimize error.

Figure: Pt100 Sensor Calibration Workflow

Detailed Protocol Steps:

- Preparation and Visual Inspection: Check the Pt100 sensor and its thermowell for any physical damage or corrosion. Ensure the connection head is secure and the cables are intact.

- Reference System Setup: Select a calibrated, high-accuracy reference thermometer and indicator. Choose the temperature points for calibration; typically, a minimum of three points (e.g., low, medium, high) that are representative of your process temperature range is recommended [30].

- Sensor Immersion: Place both the reference sensor and the Pt100 sensor under test (SUT) into the calibration bath or dry-block. To minimize stem conduction errors, immerse the sensors to a sufficient depth—a general rule is 10 times the stem diameter plus the sensing length of the element [30]. Position the sensors close to each other to ensure they experience the same temperature.

- Achieve Temperature Stability: Allow the system to stabilize at each set temperature point, and do not rush this step. The entire sensor assembly can take 15 minutes or longer to reach a uniform temperature after the bath or block indicates stability [30].

- Record Measurement Data: Once stability is achieved, record the readings from both the reference standard and the SUT. For higher accuracy, take multiple readings and use the average value to reduce the influence of random errors [33].

- Generate Calibration Certificate: The recorded data is used to populate a calibration certificate. This certificate will detail the measured values and the associated errors or deviations at each temperature point, providing traceability to national standards [30].
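Two of the protocol steps above reduce to simple checks that can be scripted: the immersion-depth rule of thumb (10 times the stem diameter plus the sensing length) and a test that readings have settled before they are recorded. In the sketch below, the stability criterion (the last few readings must span no more than a threshold) and its window and threshold values are hypothetical choices, not part of the cited protocol, and such a check complements rather than replaces the waiting time recommended in [30].

```python
def min_immersion_depth_mm(stem_diameter_mm, sensing_length_mm):
    """Rule of thumb from the protocol: 10 x stem diameter + sensing element length."""
    return 10.0 * stem_diameter_mm + sensing_length_mm


def is_stable(readings_c, window, max_span_c):
    """Hypothetical stability test: the last `window` readings span no more than max_span_c."""
    if len(readings_c) < window:
        return False
    recent = readings_c[-window:]
    return max(recent) - min(recent) <= max_span_c


depth = min_immersion_depth_mm(6.0, 30.0)   # hypothetical 6 mm probe with a 30 mm element
settling = [99.2, 99.7, 99.93, 99.98, 100.01, 100.00, 100.02, 100.01]   # hypothetical degC log
stable_now = is_stable(settling, window=4, max_span_c=0.05)
print(f"minimum immersion: {depth:.0f} mm, stable: {stable_now}")
```

Logging readings and applying an explicit criterion makes the "wait for stability" step auditable, which helps when the data feed a calibration certificate.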

Error Analysis and Correction

Understanding potential errors is crucial for accurate temperature measurement. Errors can be categorized as systematic or random.

- Systematic Errors: These are consistent, repeatable errors often caused by inaccuracies in the reference standard or poor temperature uniformity in the calibration bath. They can be quantified and corrected for in the final results [33]. For example, if a reference thermometer has a known deviation of +0.3 °C, this offset can be subtracted from the final measurement.

- Random Errors: These are non-repeatable fluctuations caused by environmental noise, small variations in the measuring instrument, or operator influence. Their impact can be reduced by taking the average of multiple measurements at each calibration point [33].
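The two error types call for different treatments: a known systematic offset of the reference is subtracted, while random scatter is reduced by averaging, with the standard error of the mean shrinking as 1/√n. The sketch below applies both to one calibration point. The readings are hypothetical, and the sign convention (offset = indicated minus true) is an assumption that matches the +0.3 °C example in the text.

```python
import math
import statistics


def corrected_deviation(ref_readings, sut_readings, ref_offset):
    """Deviation of the SUT from the corrected reference at one calibration point.

    ref_offset is the reference thermometer's known deviation (indicated - true),
    e.g. +0.3 degC, so it is subtracted from the mean reference reading.
    """
    true_temp = statistics.mean(ref_readings) - ref_offset
    return statistics.mean(sut_readings) - true_temp


def standard_error(readings):
    """Standard error of the mean: shrinks as 1/sqrt(n) for purely random scatter."""
    return statistics.stdev(readings) / math.sqrt(len(readings))


ref = [100.31, 100.29, 100.30, 100.30]   # hypothetical reference readings, degC
sut = [100.52, 100.47, 100.50, 100.51]   # hypothetical SUT readings, degC
deviation = corrected_deviation(ref, sut, ref_offset=0.3)
sem = standard_error(sut)
print(f"SUT deviation: {deviation:+.3f} degC (standard error {sem:.3f} degC)")
```

Averaging does nothing for the systematic part: had the 0.3 °C reference offset been ignored, the SUT would appear to read only 0.2 °C high instead of 0.5 °C, however many readings were taken.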

For thermocouples, additional common errors include:

- Sensor Type Mismatch: Configuring the transmitter for the wrong thermocouple type (e.g., selecting Type K for a Type J sensor) will cause significant inaccuracies [31].

- Polarity Errors: Reversing the positive and negative thermocouple wires will produce incorrect readings [31].

- Reference Junction Errors: Fluctuations in the temperature at the connection point (cold junction) of the thermocouple wires to the measuring instrument will introduce error. Using instruments with built-in cold junction compensation is essential [31].

- Drift and Aging: Over time, thermocouples can drift from their initial specifications due to metallurgical changes caused by high temperatures and chemical exposure. Regular calibration and scheduled replacement are necessary to mitigate this [31].
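The size of the first three thermocouple errors can be estimated with linearized sensitivities. The sketch below assumes average sensitivities over 0 to 100 °C derived from the NIST reference values at 100 °C (4.096 mV for Type K, 5.269 mV for Type J); these are approximations, not the full reference functions. It models an instrument that applies cold junction compensation using whichever type it has been configured for.

```python
# Linearised sensitivities (assumption): averages over 0-100 degC from the NIST
# reference values of 4.096 mV (Type K) and 5.269 mV (Type J) at 100 degC.
SENSITIVITY = {"K": 4.096e-3 / 100.0, "J": 5.269e-3 / 100.0}   # volts per degC


def terminal_voltage(t_hot, t_cold, sensor_type, reversed_leads=False):
    """Voltage at the instrument terminals; reversed leads flip its sign."""
    v = SENSITIVITY[sensor_type] * (t_hot - t_cold)
    return -v if reversed_leads else v


def indicated_temperature(v, t_cold, configured_type):
    """Instrument applies cold junction compensation using the *configured* type."""
    return t_cold + v / SENSITIVITY[configured_type]


T_HOT, T_COLD = 100.0, 25.0
correct = indicated_temperature(terminal_voltage(T_HOT, T_COLD, "K"), T_COLD, "K")
type_mismatch = indicated_temperature(terminal_voltage(T_HOT, T_COLD, "J"), T_COLD, "K")
polarity = indicated_temperature(
    terminal_voltage(T_HOT, T_COLD, "K", reversed_leads=True), T_COLD, "K")
print(f"correct {correct:.1f}, J read as K {type_mismatch:.1f}, reversed {polarity:.1f} degC")
```

Under these assumptions, a Type J sensor read as Type K overstates a 100 °C reactor by about 21 °C, and reversed leads turn a 75 °C rise above the cold junction into a 75 °C drop. Both errors are large enough to be caught by a single-point check against a reference, which is why calibrating the complete loop, not just the sensor, is worthwhile.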

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists key components and reagents used in advanced, automated parallel reactor systems, illustrating the ecosystem in which these temperature sensors operate.

Table 3: Research Reagent Solutions for Parallel Reactor Experimentation

| Item | Function | Application Example in Parallel Reactors |
|---|---|---|
| Parallel Pressure Reactor (PPR) | Enables simultaneous, automated execution of multiple pressurized reactions with individual parameter control [28]. | Core platform for catalyst screening, hydrogenations, and process development [28]. |
| Catalyst Library | Substances that increase the rate of a chemical reaction without being consumed. | Parallel testing of different catalysts (e.g., Ni vs. Pd) to identify the most effective and cost-efficient option [4]. |
| Ligand Library | Molecules that bind to a metal catalyst, modifying its reactivity and selectivity. | Optimizing challenging metal-catalyzed couplings (e.g., Suzuki, Buchwald-Hartwig) by screening ligand structures in parallel [4]. |
| Solvent Library | The medium in which a reaction takes place, capable of influencing mechanism and rate. | Screening solvent effects on yield and selectivity as part of a Design of Experiment (DoE) approach [28] [4]. |
| Machine Learning Software | Algorithmic-guided platforms for experimental design and multi-objective optimization. | Replaces traditional one-factor-at-a-time searches; efficiently navigates complex parameter spaces to find optimal conditions in fewer experiments [4]. |