Precision Thermal Control in Parallel Reactors: A Guide for Pharmaceutical and Biomedical Research

Abstract

This guide provides researchers and drug development professionals with a comprehensive framework for implementing precision thermal control in parallel reactor systems. It covers foundational principles of temperature management, advanced methodological setups for diverse chemical reactions, practical troubleshooting and optimization strategies, and robust validation techniques to ensure data integrity and reproducibility. The content is designed to help scientists overcome common challenges in high-throughput experimentation, improve catalyst testing accuracy, and accelerate reaction optimization and kinetics studies in pharmaceutical development.

Understanding Parallel Reactor Thermal Fundamentals: Principles, Components, and System Architecture

In thermal and fluid control systems for parallel reactor research, the distinct yet complementary concepts of precision and accuracy are foundational to data integrity and experimental reproducibility. This section delineates these core concepts and details their importance in reactor physics, temperature measurement, and microfluidic control. It also provides methodologies to quantify and mitigate measurement error and to achieve the high measurement standards required for advanced drug development and materials research.

In scientific research, the terms "accuracy" and "precision" are often used interchangeably in casual conversation; however, in metrology—the science of measurement—they describe fundamentally different concepts. For researchers working with parallel reactor thermal control systems, a rigorous understanding of this distinction is non-negotiable for ensuring reliable and meaningful experimental outcomes.

- Accuracy describes the closeness of agreement between a measured quantity value and a true quantity value of a measurand [1]. In essence, it measures correctness by quantifying how near a single measurement is to the actual or accepted reference value. It is primarily affected by systematic error, which introduces a consistent, reproducible bias into measurements [2].

- Precision, in contrast, is the closeness of agreement between measured quantity values obtained by replicate measurements on the same or similar objects under specified conditions [1]. It measures reproducibility and repeatability, regardless of whether the results are correct. It is primarily influenced by random error, which causes scatter in the data [2].

A classic analogy is a dartboard. If a player throws three darts that cluster tightly in the upper left corner of the board, the throws are precise (repeatable) but not accurate. If the darts cluster in the bullseye, the throws are both precise and accurate. If they are scattered randomly across the board, they are neither [3]. In thermal and fluid systems, this translates to maintaining consistent reactor temperatures (precision) that also match the true setpoint temperature (accuracy), a cornerstone of valid parallel experimentation.
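The dartboard picture maps directly onto logged reactor data: the offset of a channel's mean reading from its setpoint measures accuracy, and the scatter of its readings measures precision. The sketch below computes both for a few channels. The channel names, readings, and the 0.5°C and 0.2°C tolerances are hypothetical values chosen for illustration.

```python
from statistics import mean, stdev


def channel_stats(readings, setpoint):
    """Return (bias, spread) for one reactor channel.

    bias   = mean reading - setpoint     (accuracy; systematic error)
    spread = sample standard deviation   (precision; random error)
    """
    return mean(readings) - setpoint, stdev(readings)


def classify_channels(channels, setpoint, max_bias=0.5, max_spread=0.2):
    """Label each channel accurate and/or precise against illustrative tolerances (°C)."""
    report = {}
    for name, readings in channels.items():
        bias, spread = channel_stats(readings, setpoint)
        report[name] = {
            "bias": round(bias, 3),
            "spread": round(spread, 3),
            "accurate": abs(bias) <= max_bias,
            "precise": spread <= max_spread,
        }
    return report


# Hypothetical replicate readings (°C) logged at a 50 °C setpoint
channels = {
    "R1": [50.1, 49.9, 50.0, 50.1, 49.9],  # tight cluster on the bullseye
    "R2": [48.2, 51.9, 50.3, 47.8, 51.8],  # centered but scattered
    "R3": [55.0, 55.1, 54.9, 55.0, 55.0],  # tight cluster, offset by +5 °C
}

for name, stats in classify_channels(channels, 50.0).items():
    print(name, stats)
```

Channel R3 is the "precise but inaccurate" case: collecting more readings would not reduce its bias; only recalibration would.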

The Critical Distinction in Thermal and Fluid Systems

The theoretical definitions of accuracy and precision manifest in very specific, high-stakes ways within thermal and fluid control environments.

Impact on Data Integrity and Process Control

In parallel reactor studies, where multiple experiments run concurrently, a lack of precision between reactor units makes comparative analysis meaningless. If one reactor channel consistently operates at 50°C ± 0.1°C (precise) while another operates at 50°C ± 2°C (imprecise), researchers cannot determine if different outcomes are due to the experimental variable or the uncontrolled thermal fluctuation. Similarly, if all reactors are precisely controlled but inaccurately calibrated to run 5°C above the setpoint, the entire dataset is systematically biased, potentially leading to incorrect conclusions about reaction kinetics or catalyst performance.

The same distinction applies to reagent dispensing in biotech and pharmaceutical research, where precise reagent addition directly influences reaction kinetics and product yield [4]. Accuracy in dispensing ensures that concentrations are correct, while precision ensures that the same results can be replicated across multiple tests or production batches, a fundamental requirement for regulatory compliance.

Quantifying Performance: Standards and Specifications

The performance of fluid control and temperature measurement devices is quantified using standardized metrics.

- For fluid handling, precision is often expressed as the coefficient of variation (CV), the ratio of the standard deviation to the mean volume across a run of dispenses, which measures reproducibility. Accuracy is reported as the deviation of the actual mean volume from the target volume, for example +3 nL (+3%) for a 100 nL target [2]. Modern high-performance syringe pumps can achieve volumetric accuracies better than ±0.35% [4].

- For battery cyclers (an electrical analogue of thermal control systems), accuracy is often specified as "0.1% of the value measured plus 0.1% of the full scale." Because the full-scale term dominates when small values are measured on a large range, this form highlights the importance of selecting an appropriate measurement range [1].
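Both specifications reduce to short calculations. The sketch below assumes a run of dispensed volumes in nL and a cycler reading with a known full-scale range. The function names and example numbers are hypothetical, and the 0.1% + 0.1% coefficients are simply the example specification quoted above.

```python
from statistics import mean, stdev


def dispense_metrics(volumes_nl, target_nl):
    """Accuracy (deviation of the mean from target) and precision (CV) of a dispense run."""
    avg = mean(volumes_nl)
    deviation = avg - target_nl
    return {
        "deviation_nl": deviation,
        "deviation_pct": 100.0 * deviation / target_nl,
        "cv_pct": 100.0 * stdev(volumes_nl) / avg,
    }


def spec_error(reading, full_scale, pct_of_reading=0.1, pct_of_range=0.1):
    """Worst-case error for a '% of reading + % of full scale' specification."""
    return reading * pct_of_reading / 100.0 + full_scale * pct_of_range / 100.0


# Hypothetical run of five 100 nL dispenses
print(dispense_metrics([103, 102, 104, 103, 103], target_nl=100))

# The same 1 A reading measured on a 10 A range versus a 1 A range
for full_scale in (10.0, 1.0):
    err = spec_error(1.0, full_scale)
    print(f"range {full_scale} A: ±{err:.4f} A ({100 * err:.2f}% of reading)")
```

On the 10 A range the full-scale term contributes most of the error, so the same 1 A reading carries roughly five times the uncertainty it would on the 1 A range.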

Table 1: Performance Parameter Examples in Different Systems

| System Type | Accuracy Metric | Precision Metric | Key Standard/Example |
|---|---|---|---|
| Liquid Handling | Deviation from target volume (e.g., +3%) [2] | Coefficient of Variation (CV) [2] | Volumetric accuracy better than ±0.35% in syringe pumps [4] |
| Battery Cycler (Electrical) | 0.1% of value + 0.1% of range [1] | Measurement noise level [1] | High Precision Coulometry (HPC) [1] |
| Temperature Measurement | Closeness to true value (e.g., <0.001°C) [5] | Standard deviation of repeated measurements [5] | Ultra-high precision for coulometry [1] |

The Dominant Challenge: Thermal Effects on Measurement

Heat represents the single largest source of systematic error and non-repeatability in nearly all ultra-precision manufacturing and measurement processes [6]. Its impact is two-fold, affecting both the instruments and the workpieces or samples themselves.

Mechanisms of Thermal Interference

The primary mechanism through which heat degrades measurement quality is thermal expansion. Materials, including metals used in measurement instruments and reactor components, expand when heated and contract when cooled. This change in dimension directly alters measurement readings [7] [8]. For example:

- A micrometer used in a lab where the temperature rises from 73°F to 82°F will produce different readings for the same part simply due to its own expansion [7].

- A 200-mm long aluminum gauge block will change length by 0.0046 mm with a one-degree Celsius temperature change [6].

Furthermore, heat degrades the performance of electronic components, such as sensors and amplifiers, leading to signal drift and increased noise, which directly harms both accuracy and precision [7]. For battery cyclers, temperature stability is a critical parameter, with drift expressed as a percentage of the full-scale measurement per degree Celsius (e.g., 0.01%/°C) [1].
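The gauge-block figure above follows from the linear expansion relation ΔL = α·L·ΔT. The sketch below assumes a typical handbook coefficient for aluminum of about 23 × 10⁻⁶ per °C (a value not stated in the source) and also converts the micrometer example's 73°F to 82°F rise into a Celsius difference.

```python
ALPHA_ALUMINUM = 23e-6  # 1/°C, typical handbook value (assumption, not from the source)


def expansion_mm(length_mm, alpha_per_c, delta_t_c):
    """Linear thermal expansion: dL = alpha * L * dT."""
    return alpha_per_c * length_mm * delta_t_c


def fahrenheit_delta_to_celsius(delta_f):
    """Convert a temperature *difference* (not an absolute temperature) from °F to °C."""
    return delta_f * 5.0 / 9.0


# 200 mm aluminum gauge block, 1 °C change
print(f"{expansion_mm(200.0, ALPHA_ALUMINUM, 1.0):.4f} mm per °C")
# Micrometer example: 73 °F -> 82 °F
print(f"{fahrenheit_delta_to_celsius(82 - 73):.1f} °C rise")
```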

Consequences for Parallel Reactor Systems

In a parallel reactor setup, thermal effects can create cross-talk and invalidate comparisons. If heat from one reactor module influences the temperature sensor of a neighboring module, it introduces a systematic bias (reducing accuracy) in the second module while increasing variation in its readings (reducing precision). This undermines the core advantage of parallelization. The following diagram illustrates how thermal factors influence the measurement pathway in such a system.

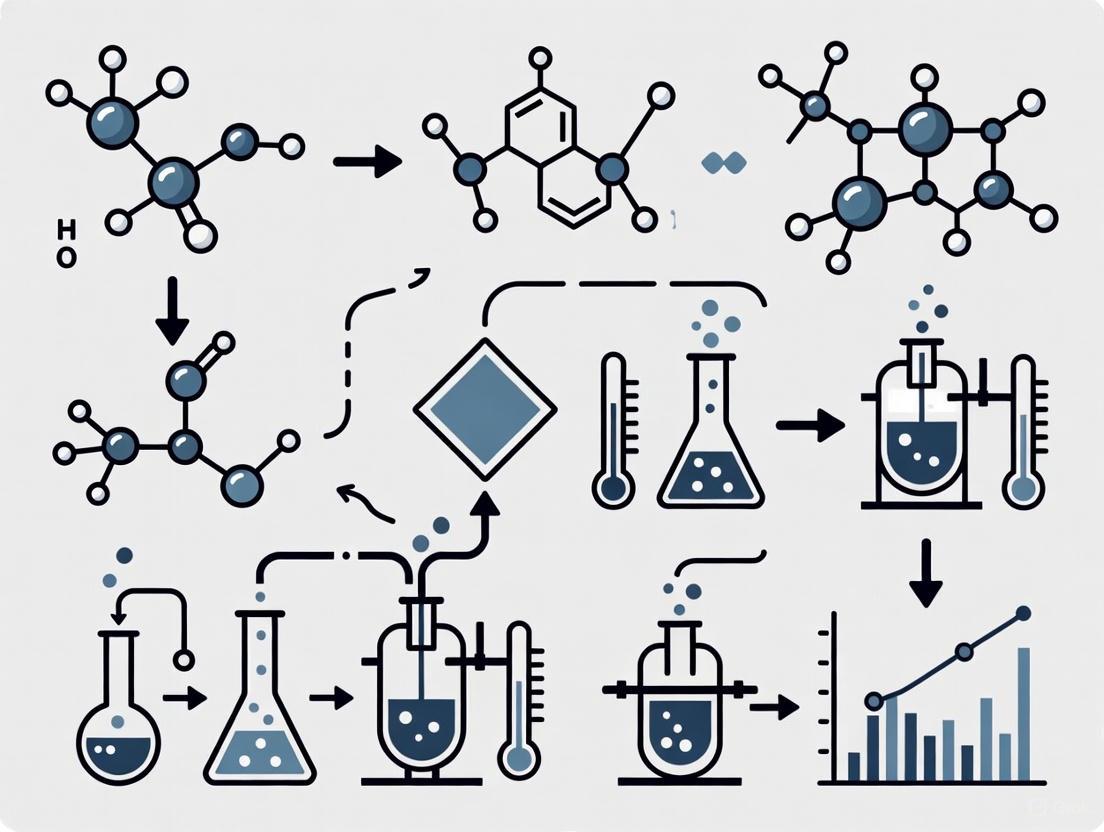

Figure 1: The Impact of Thermal Effects on Measurement Output. Heat from internal or external sources causes physical and electronic changes in the measurement instrument, leading to errors that degrade both precision (random error) and accuracy (systematic error).

Methodologies for Enhanced Measurement

Achieving high levels of accuracy and precision requires deliberate strategies, from system design to data analysis.

Experimental Protocol: High-Accuracy Temperature Measurement

The following methodology, adapted from [5], leverages the Central Limit Theorem (CLT) to statistically improve the accuracy and precision of temperature measurements in a liquid.

1. Principle: The CLT states that the mean of a sufficiently large number of independent and identically distributed (IID) random variables with finite variance has an approximately normal distribution, regardless of the original distribution. Oversampling and averaging therefore improve the precision of the mean value.

2. Procedure:

- Setup: Immerse a high-accuracy thermometer (e.g., a platinum resistance thermometer) in the liquid within a thermally insulated system to minimize external influences.

- Data Acquisition: Configure a data acquisition system to collect a large number of temperature samples. Let N be the number of samples in one measurement group.

- Group Averaging: Calculate the mean temperature of each group of N samples. This mean value, T_mean, is a single data point with higher precision. According to the CLT, the standard deviation of the mean (the standard error) is σ/√N, where σ is the population standard deviation.

- Sequential Averaging: Repeat the process to obtain M such mean values (T_mean1, T_mean2, ..., T_meanM).

- Final Calculation: The best overall estimate of the temperature is the grand mean of the M group means. The precision of this final value is further enhanced by a factor of √M, giving a standard error of σ/√(N·M).

3. Key Consideration: Averaging reduces only random error and leaves any systematic error untouched. For the CLT approach to be effective, the systematic error (bias, Δμ) must therefore be much smaller than the random error (standard deviation, σ), satisfying the condition Δμ ≪ σ [5].
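A simulation makes the procedure and its key consideration concrete. The sketch below replaces the platinum resistance thermometer with a hypothetical sensor that adds Gaussian noise (σ = 0.05°C) to a true value of 25°C. It groups N = 100 samples, repeats this M = 400 times, and compares the observed scatter of the group means with σ/√N. A second run adds a fixed +0.01°C bias to show that averaging leaves systematic error in place. All numbers are illustrative assumptions.

```python
import math
import random
from statistics import fmean, stdev


def make_sensor(true_temp_c, sigma_c, bias_c=0.0, seed=0):
    """Hypothetical sensor: true value + fixed bias (systematic) + Gaussian noise (random)."""
    rng = random.Random(seed)
    return lambda: true_temp_c + bias_c + rng.gauss(0.0, sigma_c)


def oversampled_estimate(read_sample, n, m):
    """Average N samples per group over M groups; return (grand mean, list of group means)."""
    group_means = [fmean(read_sample() for _ in range(n)) for _ in range(m)]
    return fmean(group_means), group_means


N, M, SIGMA = 100, 400, 0.05
grand, groups = oversampled_estimate(make_sensor(25.0, SIGMA), N, M)
print(f"grand mean: {grand:.4f} °C")
print(f"scatter of group means: {stdev(groups):.4f} °C (CLT predicts {SIGMA / math.sqrt(N):.4f})")
print(f"standard error of grand mean: {SIGMA / math.sqrt(N * M):.5f} °C")

# Averaging shrinks random error only: a +0.01 °C bias passes straight through.
biased, _ = oversampled_estimate(make_sensor(25.0, SIGMA, bias_c=0.01, seed=1), N, M)
print(f"biased grand mean: {biased:.4f} °C")
```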

Mitigation Strategies for Thermal Errors

Proactive mitigation of thermal effects is essential for maintaining measurement integrity.

- Temperature Control: Maintaining a stable temperature environment is the most critical strategy. Metrology labs are often held at a standard reference temperature (e.g., 20°C). Precision manufacturing processes can show a two- to tenfold improvement in accuracy when temperature is controlled 100 times more tightly than ambient [6].

- Thermal Equilibrium: Allowing both the measurement instrument and the workpiece (e.g., a reactor vessel or sample) to acclimate to the measurement environment for a sufficient period is necessary to reduce errors caused by transient thermal expansion [8].

- Calibration: Regular calibration against a reference standard of higher accuracy is essential for correcting systematic errors and maintaining accuracy [7] [1]. The frequency of calibration should be increased if instruments are used in environments with significant temperature fluctuations [7].

- Material Selection: Using materials with low coefficients of thermal expansion for critical components of measurement instruments and reactor fixtures minimizes dimensional changes with temperature [7] [8].

- Heat Shielding: Shielding instruments from direct exposure to radiant heat sources (e.g., reactors, motors) helps minimize thermal gradients and drift [7].

- Software Compensation: Advanced measurement systems can employ algorithms to compensate for known thermal expansion effects by adjusting the readings based on input from temperature sensors and known material properties [8].
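Software compensation commonly refers readings back to the 20°C reference temperature. The sketch below shows one simple way to do this for a length measurement. It assumes linear expansion, a known part temperature, and, optionally, a scale or instrument at a different temperature with its own coefficient. The function name and the coefficients in the example are illustrative assumptions, not a specific vendor's algorithm.

```python
REFERENCE_TEMP_C = 20.0  # standard metrology reference temperature


def compensate_to_reference(reading_mm, part_temp_c, alpha_part,
                            scale_temp_c=REFERENCE_TEMP_C, alpha_scale=0.0):
    """Refer a length reading back to 20 °C, assuming linear expansion.

    A scale that has itself expanded reads short, so the reading is first
    scaled up by the scale's expansion; the result is then shrunk back to
    20 °C using the part's own coefficient.
    """
    length_at_part_temp = reading_mm * (1 + alpha_scale * (scale_temp_c - REFERENCE_TEMP_C))
    return length_at_part_temp / (1 + alpha_part * (part_temp_c - REFERENCE_TEMP_C))


# Hypothetical case: 200 mm aluminum part measured with a steel scale, both at 25 °C
ALPHA_AL, ALPHA_STEEL = 23e-6, 11.5e-6  # typical handbook values (assumption)
true_length_20c = 200.0
actual_at_25c = true_length_20c * (1 + ALPHA_AL * 5.0)
raw_reading = actual_at_25c / (1 + ALPHA_STEEL * 5.0)  # what the warm steel scale shows

print(f"raw reading: {raw_reading:.4f} mm")
corrected = compensate_to_reference(raw_reading, 25.0, ALPHA_AL, 25.0, ALPHA_STEEL)
print(f"compensated: {corrected:.4f} mm")
```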

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key components and instruments essential for achieving high accuracy and precision in thermal and fluid control research.

Table 2: Essential Tools for Precision Thermal and Fluid Control Research

| Item | Function & Importance | Key Performance Parameters |
|---|---|---|
| High-Precision Syringe Pump | Precisely controls the infusion/withdrawal of fluids for reagents, catalysts, or pH control in microreactors. Essential for reproducible flow rates. [4] | Volumetric accuracy (e.g., better than ±0.35%), flow rate range (e.g., nL/min to mL/min), minimal pulsation. [4] |
| Platinum Resistance Thermometer | Provides high-accuracy temperature sensing within a reactor vessel or fluid line. The foundation for reliable thermal data. [5] | High accuracy (e.g., referenced to within 0.13 mK), stability, compatibility with data acquisition systems. [5] |
| Temperature-Controlled Enclosure | Maintains a stable thermal environment for parallel reactor arrays or measurement instrumentation, mitigating thermal drift. [6] | Temperature stability (e.g., ±0.01°C), uniformity across the workspace. [6] |
| Data Acquisition & Control System | Interfaces with sensors and actuators to execute control algorithms (e.g., PID), log data, and implement protocols like oversampling. [5] | Resolution (bits), sampling rate, time base (responsiveness), software integration (e.g., LabVIEW, MATLAB). [4] [1] |
| Inline Degasser | Removes dissolved gases from fluids to prevent bubble formation, which can disrupt flow patterns, cause measurement artifacts, and interfere with sensors. [4] | Efficiency of gas removal, compatibility with solvents, operational backpressure. |
| Calibration Reference Standards | Certified materials or devices used to calibrate temperature sensors and flow meters, ensuring traceability and correcting systematic error. [1] | Certified uncertainty, traceability to national standards (e.g., NIST). |

In the demanding field of parallel reactor research, a thorough understanding of accuracy and precision is not merely academic; it is a practical necessity for generating valid, reproducible data. Thermal effects present the most significant challenge to these metrological ideals, but through robust system design, disciplined experimental protocols, and high-performance instrumentation, researchers can effectively mitigate these errors. By applying the principles and methodologies outlined in this section, scientists and engineers can enhance the reliability of their thermal and fluid control systems and accelerate innovation in drug development and beyond.

Modern thermal control systems are engineered networks that maintain specific temperature conditions in applications ranging from parallel chemical reactors to spacecraft. Across many industries they support both environmental comfort and the precise, efficient functioning of sensitive equipment [9]. The core principle of any thermal control system is to actively manage the flow of thermal energy to maintain a desired temperature setpoint despite varying internal heat loads and external environmental conditions [10]. In parallel reactor research for drug development, thermal control is paramount for ensuring reaction reproducibility, optimizing yields, and enabling scale-up.

The fundamental structure of these systems typically comprises sensors to monitor temperature, controllers to process this data and determine necessary adjustments, and actuators (such as heaters and circulators) to execute these thermal adjustments [9]. This creates a closed-loop feedback system that constantly works to maintain thermal equilibrium. The design and integration of these components—specifically heaters, sensors, and circulators—directly impact the system's precision, stability, and energy efficiency, making their selection and configuration a critical focus for researchers and engineers [9] [11].

Fundamental Principles of Thermal Control

The Core Objective and Heat Transfer Mechanisms

The primary objective of a thermal control system is to balance the heat flows within a system. This is elegantly captured by the fundamental energy balance equation used in spacecraft thermal control, which is equally applicable to terrestrial reactor systems [12]:

$$q_{\text{solar}} + q_{\text{albedo}} + q_{\text{planetshine}} + Q_{\text{gen}} = Q_{\text{stored}} + Q_{\text{out,rad}}$$

In this equation, $Q_{\text{gen}}$ represents the heat generated internally by the spacecraft or, by analogy, the heat generated by reactions in a reactor vessel; $Q_{\text{stored}}$ is the heat stored in the system mass; and $Q_{\text{out,rad}}$ is the heat emitted by radiation to the surroundings [12]. For Earth-based reactor systems, the solar, albedo, and planetshine terms are often replaced by other environmental heat exchange mechanisms, such as heat transfer through the reactor jacket and convective losses to the room, but the core principle of balancing energy inputs and outputs is unchanged.
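For a jacketed laboratory reactor, one simple terrestrial reading of this balance treats the vessel as a single well-mixed lump and replaces the radiative loss with heat exchange to the jacket: m·c_p·dT/dt = Q_gen − UA·(T − T_jacket), where the left-hand side is the rate of heat storage. The lumped model, the UA heat-transfer term, and all parameter values below are assumptions for illustration, not taken from [12]. The sketch integrates the model with forward Euler and compares the result with the analytical steady state T_jacket + Q_gen/UA.

```python
def simulate_lumped_reactor(q_gen_w, ua_w_per_k, t_jacket_c, mass_kg, cp_j_per_kg_k,
                            t0_c, t_end_s, dt_s=0.5):
    """Forward-Euler integration of m*cp*dT/dt = Q_gen - UA*(T - T_jacket).

    The left-hand side is the rate of heat storage; the UA term is the heat
    carried away by the jacket, standing in for the radiative loss in the
    spacecraft balance. Returns the temperature after t_end_s seconds.
    """
    temp = t0_c
    heat_capacity = mass_kg * cp_j_per_kg_k
    for _ in range(int(t_end_s / dt_s)):
        q_out = ua_w_per_k * (temp - t_jacket_c)
        temp += dt_s * (q_gen_w - q_out) / heat_capacity
    return temp


# Hypothetical 50 g aqueous reaction releasing 5 W, jacket held at 40 °C
params = dict(q_gen_w=5.0, ua_w_per_k=2.0, t_jacket_c=40.0,
              mass_kg=0.05, cp_j_per_kg_k=4180.0, t0_c=40.0)
tau_s = params["mass_kg"] * params["cp_j_per_kg_k"] / params["ua_w_per_k"]
t_final = simulate_lumped_reactor(t_end_s=20 * tau_s, **params)
t_steady = params["t_jacket_c"] + params["q_gen_w"] / params["ua_w_per_k"]

print(f"time constant {tau_s:.1f} s, simulated {t_final:.3f} °C, steady state {t_steady:.3f} °C")
```

The time constant m·c_p/UA indicates how quickly the vessel settles; a time step far smaller than this keeps the explicit Euler scheme stable and accurate.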

Thermal control systems leverage the core principles of thermodynamics to manage heat flow, employing conduction, convection, and radiation [9]. In a vacuum, such as in space, heat transfer is limited to radiation and conduction, with no convective medium [12]. However, for most laboratory and industrial reactor systems on Earth, all three mechanisms are at play, with active systems often using forced convection to enhance heat transfer.

Active versus Passive Thermal Control

A critical distinction in thermal management is between active and passive control.

- Passive Thermal Control relies on innate material properties and natural phenomena—such as natural convection, conduction, and radiation—without consuming external power. Examples include heat sinks, thermal coatings, and multi-layer insulation [12] [10]. These systems are characterized by high reliability, low cost, and simplicity but offer limited thermal capacity and no direct control over temperature setpoints [10].

- Active Thermal Control, implemented as an Active Thermal Control System (ATCS) and the focus of this guide, consumes external energy to move and reject heat. Any system that uses electricity to power a pump, fan, or heater falls into this category [10]. Active systems are more complex and costly but are essential for handling high heat loads, achieving temperatures below ambient, or maintaining precise, stable setpoints, as required in rigorous parallel reactor research [10].

Table 1: Comparison of Active and Passive Thermal Control Strategies

| Feature | Passive Thermal Control | Active Thermal Control |
|---|---|---|
| Energy Consumption | None; relies on natural phenomena | Requires energy for fans, pumps, or heaters |
| Thermal Capacity | Low to moderate | High to very high |
| System Complexity | Simple; fewer components | Complex; more parts and control logic |
| Reliability (MTBF) | Extremely high (no moving parts) | Lower (dependent on component lifespan) |
| Cost | Low | Higher |
| Control Level | None; temperature floats with load | Precise; can target a specific setpoint |
| Common Example | Spacecraft MLI, SSD heat spreaders | CPU liquid coolers, reactor heating circulators |

Core Component Deep Dive: Heaters, Sensors, and Circulators

Heaters: Precision Energy Input

Heaters are the primary actuators for adding thermal energy to a system. In the context of parallel reactors and industrial processes, they are often integrated into a larger circulation unit. The heating element is the core of this subsystem, typically an electrical resistor that converts electrical energy into heat with high efficiency [13]. For chemical reactor jackets, the heater raises the temperature of a circulating fluid to a defined setpoint, initiating and maintaining endothermic reactions [13]. Advanced thermal control systems integrate heaters with sophisticated controllers that allow for ramp and dwell profiles, enabling complex temperature-time recipes that are essential for optimizing reaction kinetics and ensuring process consistency across multiple parallel reactors [13].
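A ramp-and-dwell recipe is essentially a piecewise-linear setpoint schedule. The sketch below evaluates such a schedule at any time t. The (target, ramp rate, dwell) segment format and the example recipe are hypothetical and do not reflect any particular controller's recipe format.

```python
def recipe_setpoint(t_s, start_c, segments):
    """Setpoint (°C) at time t_s for a ramp-and-dwell recipe.

    segments: list of (target_c, ramp_rate_c_per_min, dwell_min) tuples, run in order.
    """
    if t_s <= 0:
        return start_c
    current, elapsed = start_c, 0.0
    for target_c, rate_c_per_min, dwell_min in segments:
        ramp_s = abs(target_c - current) / rate_c_per_min * 60.0
        if t_s < elapsed + ramp_s:  # still ramping: interpolate linearly
            frac = (t_s - elapsed) / ramp_s
            return current + frac * (target_c - current)
        elapsed += ramp_s
        dwell_s = dwell_min * 60.0
        if t_s < elapsed + dwell_s:  # holding at the target
            return target_c
        elapsed += dwell_s
        current = target_c
    return current  # recipe finished: hold the last target


# Hypothetical recipe: 25 -> 60 °C at 5 °C/min, hold 30 min; -> 80 °C at 2 °C/min, hold 60 min;
# then cool to 25 °C at 5 °C/min
recipe = [(60.0, 5.0, 30.0), (80.0, 2.0, 60.0), (25.0, 5.0, 0.0)]
for minutes in (0, 3.5, 20, 42, 100, 200):
    print(f"t = {minutes:>5} min -> setpoint {recipe_setpoint(minutes * 60, 25.0, recipe):.1f} °C")
```

Running the same recipe on every reactor of a parallel array is one way to keep temperature histories identical across channels.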

Sensors: The Feedback Loop Foundation

Sensors are the critical feedback components that monitor the system's thermal state and supply the data the controller uses to make decisions. In electronic thermal management, and by extension in reactor systems, highly accurate temperature sensors (e.g., ±0.1°C) are strongly recommended for monitoring temperature changes [14]. In systems designed to maintain human skin temperature, for instance, sensors must be placed in close contact with the skin to read accurately [14]. In a reactor setup, this translates to sensors in direct contact with the reaction vessel or the heat transfer fluid.

The principle of the feedback loop is paramount: sensors constantly monitor the temperature, and the system adjusts its actuator settings based on this real-time data [9]. This iterative process allows the system to adapt to changes in the environment or the internal heat load, maintaining the desired temperature with high precision. Many systems utilize Proportional-Integral-Derivative (PID) control algorithms, which dynamically combine responses to current, past, and anticipated future temperature errors to achieve stable and responsive regulation [9].
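The PID law described above can be sketched in a few lines. This is a minimal discrete implementation under stated assumptions: a fixed sample time, output clamping to the heater's power range, and conditional integration (the integral only accumulates while the output is unsaturated) as a simple anti-windup measure. The gains and the single-node heated-vessel model used to exercise it are hypothetical.

```python
class PID:
    """Minimal discrete PID with output clamping and conditional-integration anti-windup."""

    def __init__(self, kp, ki, kd, out_min, out_max):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint, measurement, dt):
        error = setpoint - measurement                      # present error -> P term
        candidate = self.integral + error * dt              # accumulated past error -> I term
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt  # trend -> D
        self.prev_error = error
        out = self.kp * error + self.ki * candidate + self.kd * derivative
        if self.out_min < out < self.out_max:               # integrate only when not saturated
            self.integral = candidate
        return min(max(out, self.out_min), self.out_max)


def simulate_heated_vessel(pid, setpoint_c=60.0, t_amb_c=20.0, heat_capacity_j_per_k=200.0,
                           ua_w_per_k=0.5, dt_s=1.0, steps=3000):
    """Hypothetical single-node vessel: C*dT/dt = P_heater - UA*(T - T_amb)."""
    temp = t_amb_c
    powers = []
    for _ in range(steps):
        power = pid.update(setpoint_c, temp, dt_s)
        powers.append(power)
        temp += dt_s * (power - ua_w_per_k * (temp - t_amb_c)) / heat_capacity_j_per_k
    return temp, powers


controller = PID(kp=5.0, ki=0.05, kd=0.0, out_min=0.0, out_max=50.0)
final_temp, powers = simulate_heated_vessel(controller)
print(f"final temperature {final_temp:.3f} °C, final heater power {powers[-1]:.2f} W")
```

With kd=0 the example runs as a PI controller, a common choice for slow thermal loads; when a derivative term is used, it is usually filtered because it amplifies sensor noise.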

Circulators: Active Heat Transport

Circulators are the workhorses of active thermal transport in liquid-based systems. A heating circulator is a quintessential example of an integrated active thermal control unit, combining a heater, a circulation pump, a temperature controller, and sensors into a single device [13]. Its primary function is to accurately set and maintain the temperature of a fluid and circulate it through an external system, such as a reactor jacket [15].

The core components of a heating circulator are:

- Heating Element: Raises the fluid temperature.

- Circulation Pump: Drives the heated fluid through the closed loop, providing the necessary pressure and flow rate [13].

- Temperature Controller: The brain of the unit, which processes sensor data and modulates the heater and pump.

- Expansion and Safety Components: Manage fluid expansion and ensure safe operation.

- Sensors and Piping: Monitor fluid temperature and provide the pathway for heat transport [13].

Heating circulators can be fluid-specific, with water-based circulators used for temperatures up to 100°C or higher with pressurization, and oil-based circulators for applications requiring a higher temperature range [13] [15]. This makes them exceptionally versatile for parallel reactor systems where different reactions may have varying thermal requirements.

Diagram 1: This diagram illustrates the closed-loop feedback control within a heating circulator, demonstrating the interaction between sensors, the controller, and the actuators (heater and pump).

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Selecting the right components and materials is critical for designing and executing reliable thermal control experiments. The following table details key items essential for researchers in this field.

Table 2: Essential Materials and Reagents for Thermal Control Research

| Item | Function & Application | Key Considerations |
|---|---|---|
| Heating Circulator | Provides precise temperature control and fluid circulation for reactor jackets and external heat exchangers [13]. | Temperature range, pump pressure/flow rate, stability (±0.01°C), and compatibility with thermal fluids. |
| Thermal Interface Material (TIM) | Bridges microscopic gaps between heat sources and sinks (e.g., sensor and surface), enhancing conductive heat transfer [11]. | Thermal conductivity (W/(m·K)), application method (paste, pad, adhesive), and long-term stability. |
| PID Controller | The computational core that provides precise temperature regulation by dynamically adjusting power to heaters based on sensor feedback [9]. | Tuning parameters, communication interface (e.g., Ethernet, RS-485), and control algorithm sophistication. |
| Pt100/Pt1000 RTD Sensor | A highly accurate temperature sensor that infers temperature from the electrical resistance of a platinum element. | Accuracy class (e.g., ±0.1°C), response time, and physical packaging for the application. |
| Thermal Management Fluid | The working fluid in a circulator or liquid cooling loop; acts as the medium for acquiring, transporting, and rejecting heat [13]. | Operating temperature range, viscosity, thermal capacity, and chemical compatibility (e.g., water, oil, glycol mix). |
| Data Acquisition System | Logs temperature data from multiple sensors for post-process analysis, validation, and optimization of thermal protocols. | Sampling rate, channel count, and software integration capabilities. |
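For the platinum RTD entry, the resistance-temperature relation is standardized. A minimal sketch, assuming the IEC 60751 Callendar-Van Dusen coefficients and handling only the range from 0°C upward (below 0°C the equation gains an extra C term that is not implemented here):

```python
import math

# Callendar-Van Dusen coefficients for industrial platinum RTDs per IEC 60751
A = 3.9083e-3   # 1/°C
B = -5.775e-7   # 1/°C^2


def rtd_resistance(temp_c, r0=100.0):
    """Resistance (ohm) at temp_c >= 0 °C; r0 = 100 for Pt100, 1000 for Pt1000."""
    return r0 * (1 + A * temp_c + B * temp_c ** 2)


def rtd_temperature(resistance_ohm, r0=100.0):
    """Invert the quadratic R = r0*(1 + A*T + B*T^2) for T >= 0 °C (resistance >= r0)."""
    if resistance_ohm < r0:
        raise ValueError("below 0 °C the C-coefficient term is required; not handled here")
    return (-A + math.sqrt(A * A - 4 * B * (1 - resistance_ohm / r0))) / (2 * B)


print(f"Pt100 at 100 °C: {rtd_resistance(100.0):.4f} ohm")
print(f"Pt100 reading 119.40 ohm: {rtd_temperature(119.40):.2f} °C")
```

In practice the same conversion is often done inside the data acquisition system; applying an individual calibration on top of the standard curve can tighten accuracy beyond the nominal class tolerance.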

Experimental Protocols for Thermal Performance Validation

Rigorous experimental validation is indispensable for characterizing thermal control components and system-level performance. The following protocols provide a framework for quantitative assessment.

Protocol for Transient Thermal Measurement

Objective: To determine the dynamic thermal response and time constant spectrum of a component or assembly, which is crucial for predicting behavior under fluctuating loads [16].

Methodology:

- Setup: Attach a heating element and a temperature sensor (e.g., thermocouple or RTD) to the Device Under Test (DUT). Ensure minimal thermal interference.

- Stabilization: Allow the DUT to reach a known equilibrium temperature, T0.

- Excitation: Apply a controlled step in heating power, P, to the heating element.

- Data Acquisition: Record the temperature response, T(t), of the DUT at a high sampling rate throughout the transient until a new steady-state is reached.

- Analysis: Compute the thermal impedance, Zth(t) = (T(t) - T0) / P. The resulting curve reveals the thermal capacitance and resistance network of the DUT. For deeper analysis, techniques like Network Identification by Deconvolution can be used to derive a Foster or Cauer model from the transient response, though this process is sensitive to measurement noise [16].
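The analysis step can be sketched as follows. As a deliberate simplification (this is not Network Identification by Deconvolution), it fits a single time constant: R_th is taken as the final value of Zth(t), and τ as the first time Zth reaches 63.2% of it. The synthetic step response (R_th = 2 K/W, τ = 60 s, P = 5 W, T0 = 25°C) is hypothetical test data.

```python
import math


def thermal_impedance(temps_c, t0_c, power_w):
    """Zth(t) = (T(t) - T0) / P for a heating-power step of P watts."""
    return [(temp - t0_c) / power_w for temp in temps_c]


def single_pole_fit(times_s, zth):
    """Crude one-time-constant model: Rth = final Zth, tau = first time Zth >= 63.2 % of Rth.

    Assumes the record ends close to the new steady state.
    """
    rth = zth[-1]
    threshold = (1.0 - math.exp(-1.0)) * rth
    for t, z in zip(times_s, zth):
        if z >= threshold:
            return rth, t
    return rth, None


# Synthetic response: T0 = 25 °C, P = 5 W, true Rth = 2 K/W, tau = 60 s, 1 s sampling
times = list(range(0, 601))
temps = [25.0 + 5.0 * 2.0 * (1.0 - math.exp(-t / 60.0)) for t in times]
rth, tau = single_pole_fit(times, thermal_impedance(temps, 25.0, 5.0))
print(f"Rth = {rth:.3f} K/W, tau = {tau} s")
```

Real assemblies usually show several time constants; a single-pole fit only summarizes the dominant one, which is where the Foster or Cauer network methods mentioned above become necessary.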

Protocol for Steady-State Performance & Efficiency

Objective: To measure the steady-state thermal resistance and maximum temperature under continuous operation, validating the system's ability to handle a continuous heat load [11].

Methodology:

- Setup: Place a known heat source (e.g., a calibrated power resistor) in the system. Integrate temperature sensors at the heat source (Tsource) and the heat sink outlet (Tsink).

- Conditioning: Apply a fixed power load, Q, to the heat source. Allow the system to stabilize until all temperatures remain constant (steady-state).

- Measurement: Record Tsource, Tsink, and ambient temperature (T_amb). For fluid systems, also record flow rate.

- Calculation: Compute the overall thermal resistance, Rth = (Tsource - Tsink) / Q. A lower Rth indicates better thermal performance.
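The conditioning and calculation steps can be combined so that Rth is only reported once the readings have settled. In this sketch, the steady-state test (the last `window` samples all lie within `max_drift` of each other) and its default thresholds are illustrative assumptions, not a criterion taken from [11].

```python
from statistics import mean


def is_steady(samples, window=60, max_drift=0.05):
    """True if the last `window` samples span less than `max_drift` (°C)."""
    recent = samples[-window:]
    return len(recent) == window and max(recent) - min(recent) < max_drift


def steady_state_rth(t_source, t_sink, q_w, window=60, max_drift=0.05):
    """Rth = (Tsource - Tsink) / Q, averaged over the final window once both traces are steady."""
    if not (is_steady(t_source, window, max_drift) and is_steady(t_sink, window, max_drift)):
        raise RuntimeError("temperatures still drifting; continue conditioning")
    return (mean(t_source[-window:]) - mean(t_sink[-window:])) / q_w


# Hypothetical logs: 10 W load, source settled at 50 °C, sink outlet at 30 °C
print(steady_state_rth([50.0] * 120, [30.0] * 120, q_w=10.0), "K/W")
```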

Protocol for Sensor Calibration and System Verification

Objective: To ensure the accuracy of the temperature feedback loop, which is the foundation of reliable control.

Methodology:

- Reference Standard: Use a calibrated, high-accuracy temperature sensor (traceable to a national standard) as a reference.

- Co-location: Place the sensor under test and the reference sensor in a stable, uniform temperature environment (e.g., a calibrated thermal bath).

- Data Collection: Record the readings from both sensors across the operating temperature range of interest.

- Analysis: Create a calibration curve, correlating the sensor-under-test reading to the reference standard. Apply necessary correction factors or offsets in the data acquisition software or controller.
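A linear calibration curve (gain and offset) is the simplest form the analysis step can take. The sketch fits it by ordinary least squares. The paired bath readings are hypothetical, and sensors that are nonlinear over the range of interest may need a higher-order curve.

```python
def fit_linear_calibration(sensor_readings, reference_readings):
    """Least-squares fit of reference ≈ gain * sensor + offset."""
    n = len(sensor_readings)
    mean_x = sum(sensor_readings) / n
    mean_y = sum(reference_readings) / n
    sxx = sum((x - mean_x) ** 2 for x in sensor_readings)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(sensor_readings, reference_readings))
    gain = sxy / sxx
    return gain, mean_y - gain * mean_x


def apply_calibration(reading, gain, offset):
    """Correct a raw sensor reading with the fitted gain and offset."""
    return gain * reading + offset


# Hypothetical bath points: reference thermometer vs. sensor under test (°C)
reference = [20.0, 40.0, 60.0, 80.0]
sensor = [20.4, 40.5, 60.6, 80.7]
gain, offset = fit_linear_calibration(sensor, reference)
print(f"gain {gain:.5f}, offset {offset:+.4f} °C")
print(f"raw 50.55 °C -> corrected {apply_calibration(50.55, gain, offset):.3f} °C")
```

The fitted gain and offset are what would be entered as correction factors in the data acquisition software or controller.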

Diagram 2: A generalized workflow for thermal performance validation, outlining the key steps for both transient and steady-state experimental protocols.

The seamless integration of high-performance heaters, sensors, and circulators forms the backbone of modern, precise thermal control systems. As described above, the interplay of these components, governed by feedback control principles and verified through rigorous experimental validation, is what enables researchers to achieve and maintain the exacting thermal environments required for advanced parallel reactor research. The move from passive to active thermal control, while adding complexity, is necessary to manage the increasing power densities and precision demands of modern scientific and industrial processes [10]. By understanding the function, selection criteria, and characterization methods for these core components, scientists and engineers can design more reliable, efficient, and robust thermal management solutions that directly contribute to the success and reproducibility of their research and development.

In parallel reactor systems used for high-throughput experimentation in pharmaceutical and chemical development, precise thermal management is a critical determinant of success. These systems enable the simultaneous screening of numerous reaction conditions, dramatically shortening research and development timelines. Their thermal control architectures directly affect data quality, experimental reproducibility, and ultimately the validity of scientific conclusions. This section examines the two predominant thermal control methodologies, Individual Reactor Control and Block Reactor Control, and analyzes their operational principles, comparative performance, and implementation protocols to help researchers, scientists, and drug development professionals select and optimize their experimental setups.

Core Control Architectures and Their Principles

Individual Reactor Control

The Individual Reactor Control architecture provides dedicated sensing and actuation for each reaction vessel within a parallel system. This approach facilitates independent temperature management for every reactor, allowing for unique thermal profiles to be run simultaneously. The core principle involves a closed-loop feedback system for each unit.

Advanced implementations, such as modern temperature-controlled reactors (TCRs), achieve high uniformity by using computational fluid dynamics (CFD) to design intricate internal cooling channels. This design counters the warming of coolant along its flow path and keeps temperature variation across the reactor block within ±1°C [17]. This is crucial for sensitive applications such as photocatalysis, where waste heat can create "heat islands" and cause reaction rates to vary by orders of magnitude [17].

Block Reactor Control

In contrast, the Block Reactor Control methodology manages a group of reactors as a single thermal unit. A common heating or cooling source, such as a temperature-controlled bath or a Peltier element, services all reactors in the block. The temperature is typically measured at one or a few points within the block, and the control system acts to maintain this set-point temperature.

The primary challenge with this architecture is thermal inequality. Reactors in different physical locations within the block can experience varying temperatures due to factors like proximity to the heat source and coolant flow distribution. As one study notes, poorly designed systems can exhibit temperature variations as large as 30°C [17]. This architecture is generally less complex and lower in cost than individual control but sacrifices flexibility and precision.
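A simple energy balance shows why coolant flow distribution matters. Assuming the coolant passes the reactor positions in series and absorbs all of each position's waste heat, it warms by Q/(ṁ·c_p) at every position, so downstream reactors see warmer coolant. The heat loads, flow rates, and water-like heat capacity below are hypothetical.

```python
def coolant_inlet_temps(t_inlet_c, heat_loads_w, flow_kg_per_s, cp_j_per_kg_k=4180.0):
    """Coolant temperature arriving at each position of a series flow path.

    Each position adds Q / (m_dot * cp) to the coolant before it reaches the next one.
    """
    temps = []
    temp = t_inlet_c
    for q_w in heat_loads_w:
        temps.append(temp)
        temp += q_w / (flow_kg_per_s * cp_j_per_kg_k)
    return temps


loads = [20.0] * 8  # hypothetical: eight positions each shedding 20 W of waste heat
for flow in (0.002, 0.010):  # 2 g/s and 10 g/s of water
    temps = coolant_inlet_temps(20.0, loads, flow)
    print(f"{flow * 1000:.0f} g/s: first {temps[0]:.1f} °C, last {temps[-1]:.1f} °C, "
          f"spread {temps[-1] - temps[0]:.1f} °C")
```

In this idealized model, raising the flow rate fivefold shrinks the position-to-position spread fivefold. This is the coolant-warming effect that the CFD-designed channels described earlier are intended to counter.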

Foundational Control Topologies

Both individual and block control architectures leverage fundamental control topologies to achieve their objectives:

- Feedback Control: The most common topology, where a sensor's measurement (e.g., temperature) is "fed back" to a controller, which adjusts an actuator (e.g., a heater or coolant valve) to minimize the error between the measurement and a set-point [18].

- Cascade Control: This involves multiple control loops, where a primary controller's output sets the set-point for a secondary controller. For example, a reactor's outlet temperature could set the set-point for a steam flow controller feeding a heating jacket, improving disturbance rejection [18].

- Ratio Control: Used when an optimal ratio between two process variables must be maintained, such as the flow rates of two reactant feeds entering a reactor [18].
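To make the cascade idea concrete, the Python sketch below simulates a two-loop cascade for a single jacketed reactor. It is a minimal illustration under stated assumptions, not a model of any particular instrument: the primary (outer) PI loop reads the reactor temperature and adjusts the jacket set-point, and the secondary (inner) PI loop drives a bipolar heating/cooling power (as a Peltier element would) to hold the jacket at that set-point. The steam flow controller from the example above is replaced by direct power for simplicity, and all heat capacities, conductances, gains, and limits are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PIController:
    """PI controller with output clamping; the integrator is frozen while saturated."""
    kp: float
    ki: float
    out_min: float
    out_max: float
    integral: float = 0.0

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        error = setpoint - measured
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate
        if self.out_min <= output <= self.out_max:
            self.integral = candidate  # accept integration only when unsaturated
            return output
        return min(max(output, self.out_min), self.out_max)


def simulate_cascade(reactor_setpoint=60.0, ambient=20.0, duration_s=3600.0, dt=1.0):
    # Hypothetical lumped-parameter plant (assumed values, not from the source)
    c_reactor, c_jacket = 500.0, 200.0   # heat capacities, J/K
    ua_jacket_reactor = 5.0              # jacket-to-contents conductance, W/K
    ua_jacket_ambient = 1.0              # jacket-to-ambient loss, W/K

    # Primary loop: reactor temperature -> offset added to the jacket set-point (K)
    primary = PIController(kp=3.0, ki=0.03, out_min=-20.0, out_max=20.0)
    # Secondary loop: jacket temperature -> heating (+) / cooling (-) power (W)
    secondary = PIController(kp=100.0, ki=10.0, out_min=-300.0, out_max=300.0)

    t_reactor = t_jacket = ambient
    for _ in range(int(duration_s / dt)):
        jacket_setpoint = reactor_setpoint + primary.update(reactor_setpoint, t_reactor, dt)
        power = secondary.update(jacket_setpoint, t_jacket, dt)
        q_to_reactor = ua_jacket_reactor * (t_jacket - t_reactor)
        q_loss = ua_jacket_ambient * (t_jacket - ambient)
        # Explicit Euler integration of the jacket and contents energy balances
        t_jacket += dt * (power - q_to_reactor - q_loss) / c_jacket
        t_reactor += dt * q_to_reactor / c_reactor
    return t_reactor, t_jacket


if __name__ == "__main__":
    t_r, t_j = simulate_cascade()
    print(f"reactor {t_r:.2f} °C, jacket {t_j:.2f} °C")
```

The inner loop is tuned to respond roughly ten times faster than the outer loop; that separation is what lets the outer controller treat the jacket as if it followed its set-point almost immediately.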

Table 1: Comparison of Core Control Architectures

| Feature | Individual Reactor Control | Block Reactor Control |
|---|---|---|
| Control Principle | Dedicated sensor & actuator per reactor [17] | Single control point for multiple reactors |
| Temperature Uniformity | High (e.g., ±1°C) [17] | Lower (gradients of 10-30°C possible) [17] |
| Experimental Flexibility | High; allows different temperatures per reactor | Low; all reactors run at the same temperature |
| System Complexity & Cost | High (more sensors, actuators, channels) | Low (simpler hardware and wiring) |
| Ideal Use Case | High-throughput screening with varied conditions | Parallel replication of the same condition |

Quantitative Performance Analysis

The choice between individual and block control has quantifiable impacts on mass transfer, heat transfer, and overall reactor efficiency. Research comparing reactor types for processes like Fischer-Tropsch synthesis provides illustrative data. While these are larger-scale industrial reactors, the underlying principles of thermal and mass transfer management are directly analogous to the challenges in laboratory-scale parallel systems.

Studies show that reactors with superior temperature control and minimized mass transfer resistances achieve significantly higher productivity. For instance, slurry bubble column reactors, which offer more isothermal operation, can be up to an order of magnitude more effective in terms of required reactor volume compared to fixed-bed reactors with less efficient heat removal [19]. This underscores the critical importance of the thermal control architecture on system performance.

Table 2: Reactor Performance Metrics Influenced by Control Architecture

| Performance Metric | Impact of Individual/Precise Control | Impact of Block/Less Precise Control |
|---|---|---|
| Catalyst Specific Productivity | Higher due to optimal thermal environment [19] | Lower due to thermal gradients and non-optimal conditions |
| Mass Transfer Resistance | Can be minimized with optimized design [19] | Often higher, limiting reaction rates [19] |
| Heat Transfer Efficiency | High; enables near-isothermal operation [19] | Lower; risk of hot/cold spots [19] |
| Reaction Rate Consistency | High; eliminates temperature-based rate differences [17] | Low; reactions proceed at different rates [17] |

Advanced System Implementation and Protocols

Experimental Protocol for Control System Characterization

To validate and characterize a parallel reactor thermal control system, the following experimental protocol is recommended:

- Setup and Instrument Calibration: Install the parallel reactor system according to manufacturer specifications. Prior to experimentation, calibrate all temperature sensors (e.g., RTDs, thermocouples) against a traceable standard across the intended operating temperature range.

- Static Uniformity Test: Set the control system to a target temperature (e.g., 25°C, 70°C). Without running a chemical reaction, allow the system to reach a steady state. Record the temperature from each reactor's sensor (for individual control) or from multiple strategically placed sensors within the block (for block control). The standard deviation of these measurements quantifies the system's temperature uniformity [17].

- Dynamic Response Test: Introduce a set-point change (e.g., a 20°C ramp). Record the time each reactor takes to reach within 5% of the new set-point. This measures the response time. Also, record the maximum overshoot (if any) for each reactor.

- In-Process Performance Test: Run a standardized, temperature-sensitive chemical reaction in all vessels. A model reaction with a well-characterized kinetic profile is ideal. After a fixed time, quench the reactions and analyze yields (e.g., by HPLC or GC). The standard deviation of yields across the reactors is a functional measure of the control system's efficacy under realistic experimental load [17].
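The spread statistics named in the static uniformity and in-process tests take only a few lines to compute. The Python sketch below assumes one steady-state reading and one quenched-reaction yield per reactor; the readings and yields in the example are hypothetical, and the coefficient of variation is an added convenience metric rather than something the protocol prescribes.

```python
from statistics import mean, stdev


def characterization_summary(steady_temps_c: list[float], yields_pct: list[float]) -> dict[str, float]:
    """Summarize inter-reactor spread from the static and in-process tests."""
    return {
        "temp_mean_c": mean(steady_temps_c),
        "temp_sd_c": stdev(steady_temps_c),             # uniformity metric (sample SD)
        "temp_range_c": max(steady_temps_c) - min(steady_temps_c),
        "yield_sd_pct": stdev(yields_pct),              # functional uniformity metric
        "yield_cv_pct": 100.0 * stdev(yields_pct) / mean(yields_pct),
    }


# Hypothetical data for a four-reactor block held at 70 °C
summary = characterization_summary([70.0, 70.2, 69.8, 70.0], [80.0, 82.0, 78.0, 80.0])
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```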

A Modern Control System Architecture: The Copenhagen Atomics Example

Pushing the boundaries of control system design, Copenhagen Atomics has developed an open-source, redundant architecture for molten salt reactors, whose principles are transferable to complex chemical plant control. This system abandons traditional programmable logic controllers (PLCs) in favor of a network of Raspberry Pi computers (PiHubs) and STM32 microcontrollers [20].

- Data Handling: All measurement data (temperature, pressure, etc.) from input/output (IO) boxes is collected ten times per second (10 Hz) and assembled into a single data vector [20].

- Redundancy and Consensus: This vector is shared across all PiHubs in the network using the Raft consensus protocol. Each node independently calculates the required output actions (e.g., valve control). The system uses a voting mechanism to agree on actions, so that operation continues even if a node fails [20].

- Execution: Output commands are executed by the node connected to the relevant actuator. Critical components like valves are connected in series or parallel with control from multiple independent IO boxes to eliminate single points of failure [20].
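The source does not specify the voting rule, so the Python sketch below assumes a simple per-actuator majority vote over the commands each node computes from the shared data vector. It does not implement Raft itself, which in the described system handles replicating that vector. The actuator names and command values are hypothetical.

```python
from collections import Counter
from collections.abc import Hashable


def majority_vote(proposals: list[dict[str, Hashable]]) -> dict[str, Hashable]:
    """Return the commands that a strict majority of nodes agree on.

    Each proposal maps actuator name -> command computed independently by one node.
    Actuators without a strict majority are omitted, so the caller can hold the
    last safe state instead of acting on a disputed command.
    """
    quorum = len(proposals) // 2 + 1
    agreed = {}
    for actuator in sorted(set().union(*proposals)):
        counts = Counter(p[actuator] for p in proposals if actuator in p)
        command, votes = counts.most_common(1)[0]
        if votes >= quorum:
            agreed[actuator] = command
    return agreed


# Three nodes; node 3 has a faulty input and proposes closing valve V1
nodes = [
    {"V1": "open", "H1": 40},
    {"V1": "open", "H1": 40},
    {"V1": "closed", "H1": 40},
]
print(majority_vote(nodes))  # {'H1': 40, 'V1': 'open'}
```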

The following diagram illustrates the logical flow of this decentralized and fault-tolerant control system.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key components and reagents essential for implementing and experimenting with advanced reactor thermal control systems.

Table 3: Key Materials and Reagents for Thermal Control Research

| Item | Function/Explanation |
|---|---|
| Calibrated Temperature Sensors (e.g., RTDs) | High-precision sensors are fundamental for accurate temperature feedback in both individual and block control systems. They provide the critical data point for the control algorithm [21]. |
| PID Controller | A standard feedback controller that calculates the error between a set-point and a measured value and applies a correction based on proportional, integral, and derivative terms. It forms the core of most temperature control loops [18] [21]. |
| Programmable Logic Controller (PLC) / Raspberry Pi | The computational brain of the system. Traditional industrial systems use PLCs, while modern, open-source architectures may use platforms like Raspberry Pi for greater flexibility and lower cost [20]. |
| Heat Transfer Fluid | A fluid (e.g., silicone oil, water) circulated through jacketing or internal channels to add or remove heat from the reactor block. Its properties (heat capacity, viscosity) impact control performance [17]. |
| Model Reaction Kit | A well-characterized chemical reaction with known kinetics and temperature sensitivity (e.g., a hydrolysis or catalytic reaction). Used to functionally validate the performance and uniformity of the thermal control system [17]. |

The selection between Individual and Block Reactor Control methodologies is a fundamental decision in designing parallel reactor thermal control systems. Individual control offers superior precision, flexibility, and consistency, making it indispensable for high-stakes, variable-condition screening where data integrity is paramount. Block control provides a cost-effective and simpler alternative for applications with lower precision requirements. The emerging trend, as evidenced by recent implementations in both chemical and nuclear fields, is toward more sophisticated, decentralized, and fault-tolerant digital architectures. These systems use open-source technologies and consensus protocols to remove single points of failure and improve reliability. As high-throughput experimentation continues to be a cornerstone of scientific advancement, the evolution of these thermal control architectures will remain a critical area of research and development.

The Impact of Thermal Management on Reaction Outcomes and Data Quality

Thermal management is a critical engineering discipline that extends far beyond simple temperature control. In parallel reactor systems used for research, development, and quality control, precise thermal management directly dictates the success, reproducibility, and scalability of chemical and biological processes. Effective thermal control ensures consistent reaction kinetics, predictable product yields, and reliable data acquisition across multiple simultaneous experiments. The strategic implementation of advanced thermal management systems enables researchers to achieve desired reaction pathways, minimize by-products, and generate high-quality, reproducible data essential for informed decision-making. This technical guide examines the profound impact of thermal management on experimental outcomes, providing detailed methodologies for achieving superior temperature control in parallel reactor configurations across pharmaceutical, materials, and chemical development applications.

Fundamental Thermal Principles in Reaction Engineering

Thermodynamic Foundations of Reaction Control

Thermal management directly influences the thermodynamic parameters that govern chemical reactions. The Gibbs free energy equation (ΔG = ΔH − TΔS) defines the spontaneity and extent of a chemical process; because temperature (T) multiplies the entropic term (ΔS), it sets the balance between enthalpic (ΔH) and entropic contributions [22]. Even minor temperature variations can shift this balance, moving equilibrium positions and altering reaction outcomes. For parallel reactor systems, maintaining identical thermodynamic conditions across all vessels is therefore essential for obtaining comparable, statistically meaningful results.
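A short worked example shows how temperature acts through the entropic term. The Python sketch below evaluates ΔG = ΔH − TΔS and the corresponding equilibrium constant K = exp(−ΔG/RT); the ΔH and ΔS values are hypothetical, chosen to represent an exothermic reaction with an unfavorable entropy change.

```python
import math

R = 8.314  # gas constant, J/(mol·K)


def gibbs_energy(delta_h: float, delta_s: float, temp_k: float) -> float:
    """ΔG = ΔH − TΔS, with ΔH in J/mol and ΔS in J/(mol·K)."""
    return delta_h - temp_k * delta_s


def equilibrium_constant(delta_g: float, temp_k: float) -> float:
    """K = exp(−ΔG / RT)."""
    return math.exp(-delta_g / (R * temp_k))


# Hypothetical exothermic reaction: ΔH = −50 kJ/mol, ΔS = −100 J/(mol·K)
dh, ds = -50_000.0, -100.0
for t in (298.15, 308.15):  # 25 °C and 35 °C
    dg = gibbs_energy(dh, ds, t)
    print(f"T = {t:.2f} K: ΔG = {dg / 1000:.2f} kJ/mol, K = {equilibrium_constant(dg, t):.0f}")
```

With these assumed values, a 10 K rise makes ΔG about 1 kJ/mol less negative and lowers K by almost half, shifting the equilibrium back toward the reactants.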

Temperature fluctuations as small as 0.5°C can introduce significant errors in kinetic parameter determination and yield calculations, particularly for highly exothermic or endothermic processes [23]. Reaction rates depend exponentially on temperature through the Arrhenius equation; as a common rule of thumb near room temperature, a 10°C increase roughly doubles the rate, which in an exothermic system can escalate into a runaway reaction if heat removal does not keep pace. Thermal management systems must therefore provide both precise setpoint maintenance and adequate heat transfer capacity to handle the heat generated or consumed by chemical transformations.
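The Arrhenius relation makes this sensitivity quantitative: k2/k1 = exp[−(Ea/R)(1/T2 − 1/T1)]. In the Python sketch below, an activation energy of 53 kJ/mol is assumed because it approximately reproduces the "doubling per 10°C" rule near room temperature; real reactions can be considerably more or less sensitive.

```python
import math

R = 8.314  # gas constant, J/(mol·K)


def rate_ratio(ea_j_per_mol: float, t1_c: float, t2_c: float) -> float:
    """Arrhenius ratio k(T2)/k(T1) for temperatures given in °C."""
    t1, t2 = t1_c + 273.15, t2_c + 273.15
    return math.exp(-ea_j_per_mol / R * (1.0 / t2 - 1.0 / t1))


EA = 53_000.0  # assumed activation energy, J/mol
print(f"+10 °C:  x{rate_ratio(EA, 25.0, 35.0):.2f}")                 # about a doubling
print(f"+0.5 °C: {100 * (rate_ratio(EA, 25.0, 25.5) - 1):.1f} % faster")
```

Under this assumption, a 0.5°C offset between two vessels changes the rate by roughly 3.6%, enough to bias comparisons of kinetics or conversion across a parallel array.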

Heat Transfer Considerations in Parallel Reactor Design

Parallel reactor configurations introduce unique heat transfer challenges that must be addressed through careful thermal system design. The principal mechanisms of heat transfer—conduction, convection, and radiation—each contribute differently to the overall thermal profile of multi-reactor systems. Convective heat transfer through jacketed reactors or immersion circulators typically provides the most efficient and uniform temperature control for parallel setups [23].

Table 1: Heat Transfer Properties of Common Reactor Cooling/Heating Methods

| Method | Typical Heat Transfer Coefficient (W/m²·K) | Temperature Uniformity | Response Time | Scalability |
|---|---|---|---|---|
| Jacketed Reactors | 500-1,500 | Moderate | Moderate | Excellent |
| Immersion Circulators | 1,000-3,000 | High | Fast | Good |
| Direct Electrical Heating | 2,000-5,000 | Low | Very Fast | Poor |
| Forced Air Convection | 50-200 | Low | Slow | Excellent |
| Peltier Elements | 500-1,500 | High | Fast | Moderate |

Advanced thermal management systems incorporate multiple heat transfer mechanisms to maintain temperature uniformity across all reactors in parallel configurations. Computational fluid dynamics (CFD) simulations often reveal thermal cross-talk between adjacent reactors, necessitating strategic insulation or active isolation to prevent interference between experimental conditions [24]. The thermal mass of the system, including reactors, fittings, and sensors, must be balanced against responsiveness requirements to ensure both stability and agility during temperature ramping phases.

Thermal Management System Implementation

Core Components of Precision Thermal Control

Implementing robust thermal management for parallel reactor systems requires the integration of several critical components, each contributing to overall system performance. These elements form a cohesive ecosystem that maintains thermal stability across multiple simultaneous experiments.

Table 2: Essential Components for Parallel Reactor Thermal Management

| Component | Function | Performance Considerations |
|---|---|---|
| Temperature Sensor (RTD/Thermocouple) | Accurate temperature measurement | Precision (±0.01°C), response time, placement |
| Circulating Bath/Heat Exchanger | Add/remove heat from reactor | Stability (±0.05°C), capacity (W), pumping pressure |
| PID Control Algorithm | Maintain setpoint against disturbances | Tuning parameters, adaptive capabilities |
| Thermal Interface | Transfer heat to/from reaction vessel | Contact efficiency, corrosion resistance |
| System Insulation | Minimize environmental heat loss | Thermal conductivity, operating temperature range |
| Data Acquisition System | Record thermal profiles | Sampling rate, synchronization, resolution |

Modern thermal management systems employ high-precision PT100 resistance temperature detectors (RTDs) for their superior accuracy and stability over thermocouples, particularly in the critical process range of -50°C to 200°C common to many chemical and pharmaceutical applications [23]. These sensors interface with sophisticated proportional-integral-derivative (PID) control algorithms that continuously adjust heating and cooling outputs to maintain target temperatures. Advanced systems incorporate self-tuning PID functions that automatically optimize control parameters without manual intervention, significantly reducing setup time for parallel reactor configurations with varying thermal loads [23].

Control System Architecture and Algorithms

The control architecture represents the intelligence behind thermal management, transforming simple temperature regulation into a sophisticated process optimization tool. Modern systems implement cascade control strategies where primary and secondary control loops work in concert to reject disturbances before they impact reaction conditions. For parallel reactor systems, this often involves master-slave configurations where a central control unit coordinates individual reactor thermal profiles while managing shared utilities like chilled water or electrical power [25].

Proportional-Integral-Derivative (PID) algorithms form the foundation of most industrial thermal control systems, with each component addressing specific aspects of the control challenge:

- Proportional (P): Provides immediate response proportional to the current error

- Integral (I): Eliminates steady-state offset through continuous error correction

- Derivative (D): Anticipates future error based on rate of change
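A discrete-time version of these three terms is sketched below in Python. It assumes a fixed sample interval, takes the derivative of the measurement rather than of the error (a common way to avoid a "derivative kick" when the setpoint changes), and freezes the integrator while the output is saturated. The single-vessel plant model and all gains are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PID:
    kp: float          # proportional gain, W/K
    ki: float          # integral gain, W/(K·s)
    kd: float          # derivative gain, W·s/K
    out_min: float
    out_max: float
    integral: float = 0.0
    prev_measurement: Optional[float] = None

    def update(self, setpoint: float, measurement: float, dt: float) -> float:
        error = setpoint - measurement                      # P: current error
        # D: rate of change of the measurement (zero on the first call)
        slope = 0.0 if self.prev_measurement is None else (measurement - self.prev_measurement) / dt
        self.prev_measurement = measurement
        candidate = self.integral + error * dt              # I: accumulated error
        output = self.kp * error + self.ki * candidate - self.kd * slope
        if self.out_min <= output <= self.out_max:
            self.integral = candidate                       # integrate only when unsaturated
            return output
        return min(max(output, self.out_min), self.out_max)


def simulate(setpoint=50.0, ambient=20.0, duration_s=3600.0, dt=1.0):
    heat_capacity, ua_loss = 500.0, 2.0                      # hypothetical vessel: J/K, W/K
    pid = PID(kp=20.0, ki=0.2, kd=50.0, out_min=-100.0, out_max=100.0)
    temp = ambient
    for _ in range(int(duration_s / dt)):
        power = pid.update(setpoint, temp, dt)              # heating (+) or cooling (-), W
        temp += dt * (power - ua_loss * (temp - ambient)) / heat_capacity
    return temp


print(f"final temperature: {simulate():.3f} °C")
```

At steady state the proportional and derivative terms vanish and the integral term alone supplies the power needed to offset heat loss to the surroundings, which is why the I term is what removes the steady-state offset.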

Advanced implementations incorporate model predictive control (MPC) and adaptive algorithms that dynamically adjust to changing process conditions, such as the varying heat generation rates during different phases of chemical reactions [23]. These sophisticated approaches enable temperature stabilities under 0.06°C, even during exothermic reaction phases or when implementing complex temperature ramps [25].

Diagram: Thermal Control System Architecture.

Experimental Protocols for Thermal System Validation

Temperature Uniformity Mapping Protocol

Validating thermal performance across parallel reactor systems requires systematic characterization to identify and address temperature gradients. The following protocol provides a comprehensive methodology for quantifying thermal uniformity and establishing performance baselines.

Materials and Equipment:

- Multi-channel data acquisition system with minimum 0.1°C resolution

- Certified reference temperature sensors (PT100 RTDs recommended)

- Calibrated heating/cooling system with documented stability

- Insulated reactor vessels identical to production units

- Heat transfer fluid with known thermal properties

Procedure:

- Install reference sensors at critical locations within each reactor vessel, including top, middle, and bottom positions, plus any identified dead zones.

- Fill reactors with a thermally representative fluid matching the heat capacity and viscosity of typical reaction mixtures.

- Program the thermal control system to execute a temperature ramp from ambient to 50°C at 1°C/minute, holding for 30 minutes once stabilized.

- Record temperatures from all sensors at 10-second intervals throughout the ramp and hold phases.

- Repeat the procedure for additional relevant temperature setpoints (e.g., 80°C, 100°C).

- Calculate mean temperature, standard deviation, and maximum observed deviation for each reactor and across the entire parallel system.

- Generate a thermal uniformity map identifying any reactors or zones requiring calibration or hardware modification.

Acceptance Criteria:

- Individual reactor stability: ±0.1°C of setpoint during hold phases

- Reactor-to-reactor consistency: ±0.25°C across all parallel vessels

- Internal reactor gradient: Maximum 0.5°C top-to-bottom

This validation protocol should be performed during system commissioning, after any significant hardware modifications, and at regular intervals (recommended quarterly) as part of preventive maintenance to ensure ongoing thermal performance [25] [23].
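The acceptance checks can be scripted once the hold-phase data are logged. The Python sketch below makes explicit several interpretations that the protocol leaves open: a reactor's bulk temperature at each time step is the mean of its top, middle, and bottom sensors; "stability" is the maximum deviation of that bulk value from the setpoint; "consistency" compares each reactor's mean with the mean of all reactors; and "gradient" is the difference between the mean top and bottom readings. The data in the example are hypothetical.

```python
from statistics import mean


def check_acceptance(readings, setpoint, stability_tol=0.1, consistency_tol=0.25, gradient_tol=0.5):
    """readings: {reactor_id: {"top": [...], "middle": [...], "bottom": [...]}} in °C,
    with equal-length series sampled over the hold phase."""
    results, reactor_means = {}, {}
    for reactor, series in readings.items():
        # Bulk temperature per time step = mean over all sensor positions
        bulk = [mean(sample) for sample in zip(*series.values())]
        reactor_means[reactor] = mean(bulk)
        results[reactor] = {
            "stable": max(abs(t - setpoint) for t in bulk) <= stability_tol,
            "gradient_ok": abs(mean(series["top"]) - mean(series["bottom"])) <= gradient_tol,
        }
    system_mean = mean(list(reactor_means.values()))
    for reactor, reactor_mean in reactor_means.items():
        results[reactor]["consistent"] = abs(reactor_mean - system_mean) <= consistency_tol
    return results


data = {
    "R1": {"top": [50.05, 50.04], "middle": [50.00, 50.00], "bottom": [49.95, 49.96]},
    "R2": {"top": [50.60, 50.60], "middle": [50.30, 50.30], "bottom": [50.00, 50.00]},
}
for reactor, verdict in check_acceptance(data, setpoint=50.0).items():
    print(reactor, verdict)
```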

Dynamic Response Characterization Protocol

Chemical reactions often involve complex temperature profiles including ramps, holds, and cool-down phases. This protocol characterizes the system's ability to track dynamic temperature changes, a critical capability for modern reaction optimization.

Procedure:

- Configure the parallel reactor system with reference temperature sensors as described in the Temperature Uniformity Mapping Protocol above.

- Program the following temperature profile:
  - Ramp from 30°C to 70°C at maximum achievable rate
  - Hold at 70°C for 15 minutes
  - Ramp down to 25°C at maximum cooling rate
  - Hold at 25°C for 10 minutes

- Execute the profile while recording all temperatures at 5-second intervals.

- Analyze the data to determine:
  - Average ramp rates for heating and cooling phases
  - Overshoot/undershoot as percentage of setpoint change
  - Settling time to within ±0.1°C of setpoint after each transition

- Repeat with a simulated exothermic event by introducing a controlled heat pulse to one reactor while monitoring cross-talk to adjacent vessels.

This characterization enables fine-tuning of PID parameters specifically for the thermal mass and heat transfer characteristics of the parallel reactor configuration, optimizing both responsiveness and stability [25].
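The three dynamic metrics can be extracted from each logged transition. The Python sketch below assumes one transition per call, defines the average ramp rate between the 10% and 90% points of the change (one common convention; the protocol does not specify one), measures overshoot past the target in the direction of travel, and reports settling time from the first sample to the point after which every sample stays within the ±0.1°C band. The trace in the example is hypothetical and abbreviated to one point every 2 minutes instead of every 5 seconds.

```python
def transition_metrics(times_s, temps_c, start_c, target_c, band_c=0.1):
    """Ramp rate (°C/min), overshoot (% of change) and settling time (s) for one transition."""
    change = target_c - start_c
    sign = 1.0 if change > 0 else -1.0  # +1 for heating, -1 for cooling

    def first_crossing(fraction):
        level = start_c + fraction * change
        return next((t for t, y in zip(times_s, temps_c) if sign * (y - level) >= 0), None)

    t10, t90 = first_crossing(0.1), first_crossing(0.9)
    ramp = None
    if t10 is not None and t90 is not None and t90 > t10:
        ramp = 0.8 * change / (t90 - t10) * 60.0

    beyond = max(sign * (y - target_c) for y in temps_c)
    overshoot_pct = max(beyond, 0.0) / abs(change) * 100.0

    # Settling: the sample after the last out-of-band point (None if never settled)
    outside = [i for i, y in enumerate(temps_c) if abs(y - target_c) > band_c]
    if not outside:
        settling = 0.0
    elif outside[-1] + 1 < len(temps_c):
        settling = times_s[outside[-1] + 1] - times_s[0]
    else:
        settling = None
    return {"ramp_c_per_min": ramp, "overshoot_pct": overshoot_pct, "settling_s": settling}


# Hypothetical heating transition from 30 °C to 70 °C
times = [0, 120, 240, 360, 480, 600, 720]
temps = [30.0, 40.0, 60.0, 71.0, 70.05, 69.98, 70.0]
print(transition_metrics(times, temps, start_c=30.0, target_c=70.0))
```

For cooling transitions the ramp rate comes out negative and "overshoot" measures how far the temperature dips below the lower target, matching the undershoot metric in the protocol.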

Diagram: Thermal System Validation Workflow.

Impact on Pharmaceutical Development and Quality Control

Thermal Influence on Reaction Outcomes

In pharmaceutical development, thermal management directly affects critical reaction outcomes, including yield, selectivity, and impurity profiles. Thermodynamic characterization of molecular interactions also informs drug design, where the balance between enthalpic (ΔH) and entropic (ΔS) contributions to binding affinity shifts with temperature [22]. In crystallization steps, even minor thermal variations can favor different polymorphic forms with distinct physicochemical properties.

Case studies demonstrate that temperature fluctuations as small as 2°C during catalytic hydrogenation can shift enantiomeric excess by up to 5%, dramatically impacting drug efficacy and safety profiles [23]. Similarly, exothermic reactions in parallel reactor systems require precise thermal control to prevent thermal runaway scenarios where escalating temperatures accelerate reaction rates, generating additional heat in a dangerous positive feedback loop. Advanced thermal management systems incorporate predictive algorithms that detect early signs of excursion and implement corrective actions before critical conditions develop [23].

Table 3: Thermal Impact on Pharmaceutical Reaction Parameters

| Reaction Type | Critical Thermal Parameter | Outcome Influence | Control Tolerance |
|---|---|---|---|
| Catalytic Asymmetric Synthesis | Enantiomeric Excess | Therapeutic Efficacy | ±0.5°C |
| Polymorphic Crystallization | Nucleation Temperature | Bioavailability | ±0.2°C |
| Enzymatic Biotransformation | Enzyme Stability | Reaction Rate/Yield | ±1.0°C |
| Polymerization | Molecular Weight Distribution | Drug Release Profile | ±0.8°C |
| Oxidation | Selectivity vs. Over-oxidation | Impurity Profile | ±1.5°C |

Thermal Analysis in Pharmaceutical Characterization

Thermal analysis techniques provide essential data for pharmaceutical development, with differential scanning calorimetry (DSC), thermogravimetric analysis (TGA), and sorption analysis serving as critical tools for understanding API properties and excipient compatibility [26]. DSC measures heat flow associated with phase transitions, revealing polymorphic forms, glass transition temperatures (Tg), and amorphous content that directly influence dissolution rates and bioavailability. TGA characterizes thermal stability and decomposition behavior, identifying optimal storage conditions and packaging materials to prevent drug degradation [26].

These thermal analysis techniques are particularly valuable when integrated directly with parallel reactor systems, enabling real-time characterization of reaction products and immediate feedback for process optimization. The combination of DSC and TGA allows detailed examination of decomposition behavior and melting points, providing comprehensive thermal profiles that inform both development and quality control decisions [26]. For lyophilization processes, precise knowledge of thermal transitions enables optimization of freeze-drying cycles while maintaining protein stability and other delicate biological structures.

Essential Research Reagents and Materials

Table 4: Key Research Reagent Solutions for Thermal Management Studies

| Material/Reagent | Function | Application Notes |
|---|---|---|
| Silicone Heat Transfer Fluids | Heat transfer medium (approx. -40°C to 200°C) | Low viscosity, high thermal stability |
| PT100 Resistance Temperature Detectors | Precision temperature sensing | ±0.01°C accuracy, 3-wire or 4-wire configuration |
| Thermal Interface Compounds | Enhance heat transfer efficiency | High thermal conductivity, electrically insulating |
| Calibration Reference Standards | System validation | Certified melting point standards (e.g., gallium, indium) |
| Jacketed Reactor Systems | Uniform heat transfer | Glass or stainless steel, various volumes |
| Phase Change Materials | Isothermal operation | Constant temperature during phase transition |
| Graphene-enhanced TIMs | Thermal interface materials | High conductivity for electronics cooling [24] |
| Nanostructured Oxides | Thermal barrier coatings | High-temperature systems protection [24] |

Emerging Trends and Future Directions

Thermal management technology continues to evolve, with several emerging trends poised to impact parallel reactor research and development. The convergence of advanced sensors, digital simulation, and artificial intelligence enables predictive thermal management systems that anticipate and prevent thermal excursions before they impact reaction outcomes [24]. These systems process real-time temperature data from multiple points within parallel reactor configurations, using machine learning algorithms to identify patterns indicative of developing problems and implementing corrective actions automatically.

Advanced materials, particularly graphene-based thermal interface materials and nanostructured oxides, are transforming thermal management capabilities in high-performance applications [24]. Graphene-enhanced TIMs demonstrate dramatically improved thermal conductivity compared to conventional materials, enabling more efficient heat transfer in miniaturized reactor systems and microfluidic devices. Similarly, developments in two-phase immersion cooling, initially pioneered for data center applications, show promise for managing extreme thermal loads in high-throughput parallel reactor systems performing highly exothermic reactions [27] [24].

The growing emphasis on sustainability and energy efficiency is driving adoption of thermal energy storage systems that capture and reuse waste heat from exothermic reactions, improving overall process economics while reducing environmental impact [24]. These developments, combined with increasingly sophisticated control algorithms and high-precision sensing technologies, promise continued advancement in thermal management capabilities for parallel reactor systems, enabling more complex reactions, improved data quality, and accelerated development timelines across pharmaceutical, chemical, and materials science domains.

Efficient thermal management is a cornerstone of effective process control in parallel reactor systems, particularly in sensitive applications such as pharmaceutical development and chemical synthesis. The composition of the reactor vessel itself is a critical, yet often underestimated, determinant of overall thermal transfer efficiency. The material interface between the reaction mixture and the heating or cooling source directly influences heat flux, temperature uniformity, and ultimately, reaction kinetics and product quality. This guide provides an in-depth analysis of how reactor vessel composition affects thermal performance, offering researchers a scientific framework for material selection and system optimization within parallel reactor platforms. By understanding these fundamental principles, scientists and engineers can improve the reliability and scalability of experimental results and generate robust data for broader research on thermal control systems.

Fundamentals of Heat Transfer in Reactor Vessels

The efficiency of heat transfer through a reactor wall is governed by the principles of conduction and convection. The overall heat transfer coefficient (U-value) quantifies how effectively the system as a whole transfers heat, combining the resistances of the internal fluid film, the reactor wall itself, and the external fluid film [28]. This relationship is central to reactor design and is described by the general heat transfer equation Q = U × A × ΔT, where Q is the rate of heat transfer, U is the overall heat transfer coefficient, A is the heat transfer area, and ΔT is the temperature driving force [28].

A higher U-value indicates more efficient heat transfer, which is crucial for controlling exothermic reactions and achieving consistent temperature profiles across multiple reactors in a parallel setup. The U-value is intrinsically linked to the thermal conductivity (k) of the wall material—a material's inherent ability to conduct heat [29] [28]. Materials with high thermal conductivity, such as metals, facilitate rapid heat conduction, whereas low-conductivity materials act as thermal barriers. In practice, the choice of reactor material is a balance between this thermal performance and other critical factors such as chemical corrosion resistance, mechanical strength, and cost [29] [28]. Factors like flow configuration, fouling, and fluid velocity further modulate the final heat transfer efficiency achieved in a system [29].
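For a thin, flat wall the individual resistances add in series: 1/U = 1/h_i + Σ(x/k) + 1/h_o, where h_i and h_o are the inner and outer film coefficients and each wall or lining layer contributes its thickness x divided by its conductivity k (wall curvature and fouling are neglected). The Python sketch below applies this with assumed film coefficients and layer thicknesses; these are illustrative values, not figures from the cited sources.

```python
def overall_u(h_inner: float, h_outer: float, layers: list[tuple[float, float]]) -> float:
    """Overall U in W/(m²·K) for a flat wall: film resistances plus conduction layers.

    layers: (thickness_m, conductivity_W_per_mK) for each wall or lining layer.
    """
    resistance = 1.0 / h_inner + 1.0 / h_outer
    resistance += sum(thickness / k for thickness, k in layers)
    return 1.0 / resistance


H_IN, H_OUT = 1000.0, 2000.0   # assumed film coefficients, W/(m²·K)
STEEL = (0.006, 16.0)          # assumed 6 mm stainless steel wall
walls = {
    "stainless steel": [STEEL],
    "glass-lined steel": [(0.0015, 1.0), STEEL],   # assumed 1.5 mm glass lining
    "PTFE-lined steel": [(0.002, 0.25), STEEL],    # assumed 2 mm PTFE liner
}
for name, layers in walls.items():
    u = overall_u(H_IN, H_OUT, layers)
    # Q = U × A × ΔT for an assumed 0.05 m² area and 20 K driving force
    print(f"{name:18s} U = {u:5.0f} W/(m²·K), Q = {u * 0.05 * 20:5.0f} W")
```

With these assumptions the results (about 533, 296, and 101 W/(m²·K)) fall close to the ranges in Table 1 (Thermal Properties of Common Reactor Vessel Materials), and in the lined vessels the liner, not the steel, dominates the total resistance.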

Comparative Analysis of Reactor Materials

The selection of reactor construction material presents a direct trade-off between chemical compatibility and thermal performance. The following table summarizes key properties of common materials, providing a basis for quantitative comparison.

Table 1: Thermal Properties of Common Reactor Vessel Materials

| Material | Thermal Conductivity (W/m·K) | Typical Overall Heat Transfer Coefficient, U (W/m²·K) | Primary Application Rationale |
|---|---|---|---|
| Stainless Steel | 15 - 25 | 500 - 650 | Excellent combination of thermal efficiency, cost, and mechanical strength [28]. |
| Hastelloy | 10 - 15 | 400 - 550 | Superior corrosion resistance with a moderate penalty on thermal performance [28]. |
| Glass-Lined Steel | 0.8 - 1.5 | 200 - 300 | Exceptional chemical inertness for highly corrosive processes, but very poor heat transfer [28]. |
| PTFE-Lined Steel | ~0.25 | 50 - 100 | Maximum chemical resistance; thermal performance is severely limited [28]. |

The practical implication of these differences is profound. For instance, under identical conditions, a stainless steel reactor can remove heat approximately ten times more effectively than a PTFE-lined reactor [28]. This disparity directly impacts process safety and efficiency, especially in exothermic reactions where inadequate heat removal can lead to temperature overshoot, hot spots, or thermal runaway [28]. Consequently, the use of low-conductivity materials like glass-lined or PTFE-lined steel necessitates design compensations, such as larger heat transfer surfaces, higher coolant flow rates, or greater temperature differentials (ΔT) to achieve the required thermal control [28].
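The compensations described above can be sized directly from Q = U × A × ΔT. The Python sketch below uses the midpoints of the U ranges in Table 1 above together with an assumed heat load, transfer area, and allowable ΔT (all three hypothetical) to estimate either the driving force needed at fixed area or the area needed at fixed ΔT.

```python
U_MIDPOINT = {                 # W/(m²·K), midpoints of the Table 1 ranges
    "Stainless Steel": 575.0,
    "Hastelloy": 475.0,
    "Glass-Lined Steel": 250.0,
    "PTFE-Lined Steel": 75.0,
}

HEAT_LOAD_W = 150.0            # assumed exotherm to be removed
AREA_M2 = 0.03                 # assumed wetted jacket area
MAX_DELTA_T_K = 15.0           # assumed allowable coolant-to-contents difference


def required_delta_t(heat_w: float, u: float, area_m2: float) -> float:
    """ΔT = Q / (U·A)."""
    return heat_w / (u * area_m2)


def required_area(heat_w: float, u: float, delta_t_k: float) -> float:
    """A = Q / (U·ΔT)."""
    return heat_w / (u * delta_t_k)


for material, u in U_MIDPOINT.items():
    dt_needed = required_delta_t(HEAT_LOAD_W, u, AREA_M2)
    area_needed = required_area(HEAT_LOAD_W, u, MAX_DELTA_T_K)
    print(f"{material:18s} ΔT needed: {dt_needed:5.1f} K   area at {MAX_DELTA_T_K:.0f} K: {area_needed:.3f} m²")
```

Under these assumptions the PTFE-lined vessel needs about 7.7 times the ΔT (or area) of the stainless steel vessel, which is simply the ratio of their midpoint U values.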

Experimental Methodologies for Thermal Analysis

Validating and optimizing thermal performance requires rigorous experimental protocols. The following methodologies are critical for characterizing and benchmarking reactor systems.

High-Throughput Thermal Validation Protocol

A proven method for evaluating thermal performance across multiple reactors involves a system with individual temperature control for each vessel. In one documented setup, eight parallel quartz reactors (23.5 mm diameter) were each equipped with a separate K-type thermocouple and radiant heater, allowing for independent measurement and control [30]. This configuration achieved steady-state temperature distributions within 0.5°C of a common setpoint across a range of 50°C to 700°C [30].

Procedure:

- System Calibration: Calibrate all thermocouples against a traceable standard prior to installation.

- Isothermal Equilibrium: Set all reactors to an identical target temperature. Without any reaction load, monitor the temperatures until all reactors reach a stable state.

- Data Collection: Record the temperature of each reactor over a defined period (e.g., 60 minutes) to assess stability and inter-reactor variance.

- Performance Metric: Calculate the standard deviation of temperatures across all reactors to quantify the system's thermal uniformity. The goal is to minimize this value.

This protocol directly validates the capability of a parallel system to maintain uniform temperatures, a prerequisite for reliable comparative experimentation.

Computational Modeling for Core Thermal Analysis

For systems where direct measurement is challenging, such as nuclear reactors or highly hazardous processes, computational modeling provides an indispensable tool. A high-fidelity model of the Impulse Graphite Reactor (IGR) demonstrates this approach, coupling neutronic (MCNP) and thermal (ANSYS Mechanical APDL) models to simulate core behavior under various operational modes [31].

Procedure:

- Model Development: Create a detailed 3D geometric model of the reactor core and experimental channels.

- Physics Coupling: Develop software (e.g., in a VB.Net environment) to facilitate data exchange between the neutronic and thermal models, capturing their mutual influence [31].

- Simulation Execution: Run transient simulations to model the reactor's response to operational changes, analyzing key parameters like neutron flux distribution and thermal stress.

- Model Validation: Validate the computational tool by comparing simulation results, such as neutron field data and core thermal state, with empirical data from actual reactor experiments [31].

This methodology enables the analysis of time-dependent irradiation effects and thermal stresses, providing a computational foundation for experimental safety and design [31].

Real-Time 3D Material Failure Monitoring

Advanced techniques now allow for the real-time observation of material degradation under extreme conditions. MIT researchers developed a method using high-intensity X-rays to image corrosion and cracking in 3D, simulating the intense radiation environment inside a nuclear reactor [32].

Procedure:

- Sample Preparation: Deposit a thin film of the material of interest (e.g., nickel) onto a substrate. A critical step involves adding a buffer layer of silicon dioxide to prevent unwanted chemical reactions between the film and substrate during heating [32].

- Dewetting and Crystal Formation: Heat the sample to a high temperature in a furnace to form isolated single crystals via solid-state dewetting [32].

- In-Situ Irradiation and Imaging: Subject the stable sample to a focused, high-intensity X-ray beam while applying environmental stressors. The X-rays mimic neutron irradiation and enable imaging [32].

- Image Reconstruction and Analysis: Use phase retrieval algorithms on the X-ray data to reconstruct the 3D shape and size of the crystals as they evolve, monitoring strain and failure mechanisms in real time [32].

This technique provides unprecedented insight into how materials fail, informing the development of more resilient alloys for reactor vessels and other high-stress applications [32].
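The source does not name the reconstruction algorithm, so the following only illustrates the general idea behind iterative phase retrieval. The detector records diffraction intensities (Fourier magnitudes) but not phases. The phases are recovered by alternating between a Fourier-magnitude constraint and an object-domain constraint. This sketch implements the classic error-reduction algorithm (Gerchberg-Saxton, as generalized by Fienup) on a hypothetical 1D toy object, using a plain O(N²) DFT. Real 3D reconstructions operate on large diffraction volumes with FFT libraries and typically mix in other update rules such as hybrid input-output.

```python
import cmath
import math
import random


def dft(x):
    """Plain O(N^2) discrete Fourier transform (no FFT library needed)."""
    n = len(x)
    return [sum(x[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
            for k in range(n)]


def idft(spectrum):
    """Inverse of dft(), including the 1/N normalization."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * j / n) for k in range(n)) / n
            for j in range(n)]


def error_reduction(measured_mag, support, n_iter=100, seed=0):
    """Recover a real, non-negative object from Fourier magnitudes and a support mask."""
    rng = random.Random(seed)
    # Start from a random guess that already satisfies the object-domain constraints.
    g = [rng.random() if inside else 0.0 for inside in support]
    errors = []
    for _ in range(n_iter):
        spectrum = dft(g)
        # Fourier-domain error: mismatch between current and measured magnitudes.
        errors.append(math.sqrt(sum((abs(z) - m) ** 2
                                    for z, m in zip(spectrum, measured_mag))))
        # Fourier constraint: keep the current phases, impose the measured magnitudes.
        constrained = [m * cmath.exp(1j * cmath.phase(z))
                       for z, m in zip(spectrum, measured_mag)]
        g_prime = idft(constrained)
        # Object constraint: zero outside the support, real and non-negative inside.
        g = [max(v.real, 0.0) if inside else 0.0 for v, inside in zip(g_prime, support)]
    return g, errors


# Hypothetical 1-D "crystal" occupying positions 10-15 of a 32-point grid.
true_obj = [0.0] * 32
true_obj[10:16] = [0.2, 0.7, 1.0, 0.9, 0.5, 0.1]
support = [8 <= i < 18 for i in range(32)]    # loose support around the object
measured = [abs(z) for z in dft(true_obj)]    # magnitudes only; phases are lost
recon, errors = error_reduction(measured, support)
print(f"Fourier-domain error: {errors[0]:.4f} -> {errors[-1]:.4f}")
```

Error reduction never increases the Fourier-domain error, which the recorded error history reflects. It is also known to stagnate in local minima, and that weakness is the main motivation for hybrid input-output variants.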

Implementation in Parallel Reactor Systems

The principles of material selection and thermal analysis converge in the design and operation of parallel reactor systems for research. Effective implementation requires a systems-level approach to thermal management.

Workflow for Thermal System Design

The following diagram outlines a logical workflow for integrating material considerations into the design of a parallel reactor thermal control system.

Diagram 1: Reactor Thermal Design Workflow.

Key Research Reagents and Materials

Selecting the appropriate materials and reagents is fundamental to executing the described experimental methodologies.

Table 2: Essential Research Reagent Solutions for Thermal Studies

| Item | Function/Description | Application Context |
|---|---|---|
| K-type Thermocouples | Temperature sensors for independent measurement and control of individual reactor temperatures [30]. | High-throughput thermal validation in parallel reactor systems [30]. |
| Silicon Dioxide (SiO₂) Buffer Layer | A thin film layer preventing chemical reaction between a sample material (e.g., nickel) and its substrate during high-temperature studies [32]. | Real-time 3D imaging of material failure under simulated reactor conditions [32]. |
| Liquid Metal Coolant (e.g., Lead-Bismuth Eutectic) | A coolant with high thermal conductivity and low Prandtl number, enabling efficient heat transfer in high-temperature systems [33]. | Thermal-hydraulic studies in advanced reactor designs like the Dual Fluid Reactor [33]. |
| Polymer-Plasticizer Blends (e.g., HPMC with Triacetin) | Materials used to study the effect of thermal properties (e.g., glass transition temperature) on processability in thermal systems like hot-melt extrusion [34]. | Analogous studies of heat transfer and material behavior in controlled thermal processes. |

Reactor vessel composition is a decisive factor in the heat-transfer efficiency of parallel reactor systems. As demonstrated, the inherent thermal conductivity of materials such as stainless steel, Hastelloy, and glass-lined steel directly governs the achievable heat flux and, with it, control precision. By applying structured experimental protocols, from high-throughput validation and computational fluid dynamics to advanced real-time imaging, researchers can make informed decisions that balance chemical compatibility against thermal demands. Integrating these material considerations into a systematic design workflow ensures robust thermal management. This underpins reliable, reproducible, and scalable data in pharmaceutical development and chemical research, and it supports safer, more efficient process development.
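The link between wall conductivity and achievable heat flux can be made concrete with Fourier's law for a plane wall of layers in series, q = ΔT / Σ(tᵢ/kᵢ). The sketch below is illustrative only. The thicknesses and conductivities are approximate, assumed values; check vendor data for a real vessel. Wall curvature is ignored. The fluid-side film resistances are also omitted, although they often dominate the overall heat-transfer coefficient.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    thickness_m: float
    conductivity_w_per_m_k: float


def wall_heat_flux(layers: list[Layer], delta_t_k: float) -> float:
    """Steady 1-D conduction through plane layers in series, in W/m^2."""
    # Conductive resistances of the layers add in series (m^2*K/W).
    resistance = sum(layer.thickness_m / layer.conductivity_w_per_m_k for layer in layers)
    return delta_t_k / resistance


# Approximate, illustrative property values (assumptions, not vendor data).
WALLS = {
    "stainless steel 316": [Layer(0.003, 16.0)],
    "Hastelloy C-276": [Layer(0.003, 10.0)],
    # Assumed ~1.5 mm glass enamel on a 3 mm carbon-steel shell.
    "glass-lined steel": [Layer(0.0015, 1.0), Layer(0.003, 50.0)],
}

for name, layers in WALLS.items():
    print(f"{name:<20} {wall_heat_flux(layers, 10.0):>8.0f} W/m^2 at dT = 10 K")
```

Under these assumptions the enamel layer accounts for almost all of the glass-lined wall's conductive resistance. As a result, its conductive flux is roughly eight times lower than that of the stainless-steel wall.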

Implementing Thermal Control: Setup, Operation, and Advanced Application Strategies

Step-by-Step System Configuration for Different Reactor Types and Scales

The design and configuration of nuclear reactor systems are critical for ensuring safe, efficient, and predictable operation across a diverse range of reactor types and scales. This guide provides a structured, step-by-step framework for configuring these complex systems, with a specific focus on parallel thermal control systems essential for research applications. A properly configured thermal control system maintains the reactor core within its safe operating envelope, manages heat removal, and ensures the stability of the nuclear chain reaction. For researchers and drug development professionals, understanding these principles is foundational for utilizing nuclear technologies in material science, isotope production, and other advanced research domains. The following sections detail the core configuration parameters, provide comparative analysis of reactor types, and outline explicit experimental protocols for system characterization and control.

Core Configuration Parameters and Comparative Analysis

The performance and safety of any reactor system are governed by a set of interdependent core parameters. These parameters must be carefully balanced during the system design and configuration phase.

Table 1: Fundamental Reactor Configuration Parameters

| Parameter | Description | Impact on System Operation |
|---|---|---|
| Reactor Type | The physical design and principles of operation (e.g., PWR, BWR, MSR) [35]. | Determines coolant, fuel type, moderating material, and overall system architecture. |
| Thermal Power | The total rate of heat generation in the core (MWth). | Dictates the required heat removal capacity and the sizing of the coolant system. |
| Coolant & Properties | The substance (e.g., H₂O, Na, He, Molten Salt) and its thermo-physical properties [35]. | Impacts heat transfer efficiency, operating pressure, and chemical compatibility. |
| Core Inlet/Outlet Temperature | The temperature of the coolant as it enters and exits the core [36]. | Defines the thermodynamic efficiency and influences material thermal stresses. |
| System Pressure | The operational pressure of the primary coolant circuit. | Prevents coolant boiling (in PWRs) or is managed to allow boiling (in BWRs). |
| Mass Flow Rate | The rate of coolant mass passing through the core [36]. | Directly affects the core outlet temperature and the peak cladding temperature. |
| Fuel Assembly Design | The geometric arrangement of fuel pins, cladding, and spacing. | Influences power distribution, heat transfer surface area, and hydraulic resistance. |
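The Thermal Power, Mass Flow Rate, and Core Inlet/Outlet Temperature parameters are tied together by the steady-state energy balance Q = ṁ·c_p·(T_out - T_in). The sketch below assumes a single-phase coolant with a constant average specific heat. It does not apply to boiling (BWR) cores or to supercritical water near its pseudo-critical point, where c_p varies strongly. The numbers are assumed, PWR-like values, not data for any specific plant.

```python
def outlet_temperature_c(power_w: float, mass_flow_kg_s: float,
                         cp_j_per_kg_k: float, inlet_temp_c: float) -> float:
    """Mixed-mean core outlet temperature from Q = m_dot * cp * (T_out - T_in)."""
    return inlet_temp_c + power_w / (mass_flow_kg_s * cp_j_per_kg_k)


def required_mass_flow_kg_s(power_w: float, cp_j_per_kg_k: float,
                            inlet_temp_c: float, outlet_limit_c: float) -> float:
    """Minimum mass flow that keeps the mixed-mean outlet temperature at the limit."""
    return power_w / (cp_j_per_kg_k * (outlet_limit_c - inlet_temp_c))


# Assumed, illustrative values: 3400 MWth, 290 deg C inlet, cp ~ 5.5 kJ/(kg*K).
Q, CP, T_IN = 3.4e9, 5500.0, 290.0
print(outlet_temperature_c(Q, 18_000.0, CP, T_IN))   # about 324 deg C
print(outlet_temperature_c(Q, 21_600.0, CP, T_IN))   # 20 % more flow -> smaller rise
print(required_mass_flow_kg_s(Q, CP, T_IN, 320.0))
```

The result is a mixed-mean outlet temperature. Individual hot channels run hotter, which is why peak cladding temperature is tracked as a separate limit.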

Different reactor types leverage these parameters in distinct ways. The table below provides a comparative analysis of major reactor families, highlighting their key characteristics and primary research applications.

Table 2: Comparison of Reactor Types and Scales

| Reactor Type | Coolant / Moderator | Common Scale | Typical Configuration Notes | Primary Applications |
|---|---|---|---|---|
| Pressurized Water Reactor (PWR) | Light Water / Light Water [35] | Large (Gigawatt-scale) | Two-loop system: primary loop at high pressure, secondary loop generates steam [35]. | Base-load power generation, neutron beamline experiments. |
| Boiling Water Reactor (BWR) | Light Water / Light Water [35] | Large (Gigawatt-scale) | Single-loop system; steam is generated directly in the core and fed to the turbine [35]. | Base-load power generation. |
| Pressurized Heavy Water Reactor (PHWR) | Heavy Water / Heavy Water [35] | Large (Gigawatt-scale) | Uses natural uranium fuel; online refueling allows for high availability [35]. | Production of medical isotopes (e.g., Co-60). |
| Small Modular Reactor (SMR) | Often Light Water [35] | Small (up to ~300 MWe) | Integrated design or compact loop; emphasis on passive safety systems and modularity [35]. | Remote power, process heat, desalination. |
| Liquid Metal Fast Reactor (LMFR) | Sodium or Lead / None (Fast Spectrum) [35] | Demonstration & Commercial | Pool-type or loop-type design; requires intermediate heat exchanger to isolate reactive coolant [35]. | Fuel cycle closure, waste transmutation. |
| Molten Salt Reactor (MSR) | Molten Fluoride Salt / Graphite [35] | Experimental & Prototype | Fuel may be dissolved in coolant; high-temperature operation for thermal or fast spectrum [35]. | Advanced fuel cycle, high-temperature process heat. |
| High-Temperature Gas-Cooled Reactor (HTGR) | Helium / Graphite [35] | Demonstration & Prototype | Prismatic block or pebble-bed core; very high outlet temperatures (>750°C) [35]. | Hydrogen production, industrial process heat. |
| Lab-Scale Fixed-Bed | N/A (gas-phase reactants) | Lab-Scale | Simple construction; small catalyst quantities; operable under isothermal conditions [37]. | Catalyst screening and evaluation [37]. |
| Lab-Scale CSTR | N/A (liquid- or gas-phase reactants) | Lab-Scale | Perfectly mixed vessel; composition uniform throughout and equal to exit stream [37]. | Intrinsic kinetic studies [37]. |
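The Lab-Scale CSTR entry's note that vessel composition equals the exit stream is what makes it useful for intrinsic kinetics. At steady state, the reaction rate at the exit composition follows directly from a mole balance, r = (C_in - C_out)/τ with τ = V/v₀. The sketch below assumes a liquid-phase reaction at constant density and steady state. The first-order rate law used to extract k is an assumption for illustration, and the numbers are hypothetical.

```python
def space_time_min(volume_l: float, flow_l_per_min: float) -> float:
    """Mean residence time tau = V / v0."""
    return volume_l / flow_l_per_min


def cstr_rate(c_in: float, c_out: float, tau_min: float) -> float:
    """Consumption rate (mol/(L*min)) evaluated at the exit composition.

    Steady-state mole balance for a constant-density CSTR: v0*C_in - v0*C_out = r*V.
    """
    return (c_in - c_out) / tau_min


def first_order_k(c_in: float, c_out: float, tau_min: float) -> float:
    """Rate constant if the rate law is assumed first order: r = k * C_out."""
    return cstr_rate(c_in, c_out, tau_min) / c_out


# Hypothetical run: 50 mL reactor, 10 mL/min feed at 1.0 mol/L, 0.5 mol/L at the outlet.
tau = space_time_min(0.050, 0.010)
print(tau, cstr_rate(1.0, 0.5, tau), first_order_k(1.0, 0.5, tau))
```

Repeating the measurement at several residence times and temperatures yields rate data across a range of concentrations without integrating a rate law. This holds provided mass-transfer limitations have been ruled out.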

Step-by-Step System Configuration Workflow

Configuring a reactor system, whether for large-scale power generation or lab-scale research, follows a logical sequence from initial definition to final validation. The steps below outline this overarching workflow.

Define Reactor Purpose, Scale, and Performance Goals

The first step involves a clear definition of the system's objectives. This foundational decision influences all subsequent configuration choices.

- Determine the Primary Function: Is the system intended for base-load electricity generation, process heat for industrial applications, advanced materials testing, or isotope production? For example, an HTGR is suited for high-temperature process heat, while a PWR is optimized for electricity generation [35].

- Establish the Power Scale: Determine the required thermal (MWth) and, if applicable, electrical (MWe) power output. This differentiates between large-scale power reactors (e.g., 1000+ MWe PWRs) and small modular reactors (SMRs), which are designed for smaller, more flexible deployment [35].

- Identify Key Performance Metrics: Define the target metrics, such as fuel burnup, capacity factor, outlet temperature, and overall thermal efficiency.
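Capacity factor and thermal efficiency are simple ratios. The Carnot limit shows why outlet temperature sits alongside them as a design target: a hotter heat source raises the ceiling on conversion efficiency. The sketch below uses hypothetical round numbers and treats the core outlet temperature as the hot reservoir, which gives only an upper bound; real plants fall well below it.

```python
def thermal_efficiency(electric_mw: float, thermal_mw: float) -> float:
    """Net electrical output divided by core thermal power."""
    return electric_mw / thermal_mw


def capacity_factor(energy_mwh: float, rated_mw: float, hours: float = 8760.0) -> float:
    """Actual energy delivered divided by energy at rated power over the period."""
    return energy_mwh / (rated_mw * hours)


def carnot_limit(hot_c: float, cold_c: float) -> float:
    """Upper bound on efficiency for heat-source and heat-sink temperatures in deg C."""
    return 1.0 - (cold_c + 273.15) / (hot_c + 273.15)


# Hypothetical round numbers for illustration only.
print(thermal_efficiency(1000.0, 3000.0))        # ~0.33
print(capacity_factor(7.9e6, 1000.0))            # ~0.90 over one year
print(carnot_limit(325.0, 30.0), carnot_limit(750.0, 30.0))
```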

Select Fundamental Reactor Type

Based on the goals defined in the previous step, select a fundamental reactor type.

- Coolant and Moderator Selection: Choose the coolant (water, heavy water, gas, liquid metal, molten salt) and moderator (light water, heavy water, graphite) based on the neutron spectrum (thermal or fast) and desired operating temperatures [35]. For instance, liquid metal coolants are used in fast neutron reactors to avoid moderating the neutrons [35].

- Fuel Cycle Considerations: Select the fuel form (oxide, metal, ceramic) and enrichment, or consider alternative fuels like thorium. Heavy water reactors (PHWRs), for example, can use natural uranium, while most LWRs require enriched fuel [35].

- Evaluate Economic and Licensing Factors: Consider the technological maturity, fuel availability, waste management, and regulatory pathway for the chosen design.

Specify Core Design and Thermal-Hydraulic Parameters

This step involves the detailed engineering of the reactor core and its cooling characteristics.

- Fuel Lattice Design: Define the fuel assembly geometry, including fuel pin pitch, diameter, and arrangement. This affects the power density and heat transfer surface area. Introducing features like inlet orifice plates in fuel assemblies can help optimize flow distribution and reduce thermal inequalities across the core [36].

- Set Operational Envelopes: Define the target core inlet and outlet coolant temperatures and system pressure. For a supercritical water-cooled SMR (SCW-SMR), increasing the system mass flow rate was used as a specific design measure to reduce the core outlet temperature [36].

- Conduct Neutronic and Thermal-Hydraulic Coupling Analysis: Perform preliminary calculations to ensure that the power distribution generated by the neutron physics model can be adequately removed by the thermal-hydraulic design without exceeding safety limits. This often requires coupled calculations, as demonstrated in the SCW-SMR analysis using the Apros and Serpent 2 codes [36].
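The orifice-plate measure in the Fuel Lattice Design step can be illustrated with a simplified model of parallel channels fed from a common plenum. Every channel sees the same pressure drop, so with a quadratic loss ΔP = Kᵢṁᵢ² the flow divides as ṁᵢ ∝ 1/√Kᵢ. Each channel's outlet temperature then follows from its own energy balance. The sketch below is hypothetical: it assumes turbulent flow with lumped loss coefficients, ignores buoyancy and property changes with temperature, and uses made-up channel powers. Sizing the coefficients so that flow is proportional to channel power (Kᵢ ∝ 1/Qᵢ²) equalizes the outlet temperatures.

```python
import math


def flow_split(total_flow_kg_s: float, loss_coeffs: list[float]) -> list[float]:
    """Divide flow among parallel channels sharing one pressure drop (dP = K * m_dot^2)."""
    weights = [1.0 / math.sqrt(k) for k in loss_coeffs]
    total_weight = sum(weights)
    return [total_flow_kg_s * w / total_weight for w in weights]


def outlet_temps_c(powers_w: list[float], flows_kg_s: list[float],
                   cp_j_per_kg_k: float, inlet_c: float) -> list[float]:
    """Per-channel energy balance: T_out = T_in + Q / (m_dot * cp)."""
    return [inlet_c + q / (m * cp_j_per_kg_k) for q, m in zip(powers_w, flows_kg_s)]


def orificed_loss_coeffs(powers_w: list[float], k_bare: float) -> list[float]:
    """Loss coefficients that make flow proportional to power (K proportional to 1/Q^2).

    The hottest channel keeps its bare coefficient; cooler channels get extra
    orifice resistance of K - k_bare.
    """
    q_max = max(powers_w)
    return [k_bare * (q_max / q) ** 2 for q in powers_w]


# Hypothetical three-channel example with a radial power tilt.
powers = [1.2e6, 1.0e6, 0.8e6]   # W per channel
flow, cp, t_in, k_bare = 30.0, 5500.0, 290.0, 1.0

bare = outlet_temps_c(powers, flow_split(flow, [k_bare] * 3), cp, t_in)
orificed = outlet_temps_c(powers, flow_split(flow, orificed_loss_coeffs(powers, k_bare)), cp, t_in)
print([round(t, 1) for t in bare])       # outlet temperatures differ by channel
print([round(t, 1) for t in orificed])   # all channels at the same outlet temperature
```

In practice, the added orifice resistance raises the pressure drop the pumps must supply. The fixed total flow assumed here therefore comes at a pumping cost.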

Design Primary Coolant System and Safety Systems

The core design is integrated with the broader plant systems.

- Size Major Components: Design and size the primary coolant pumps, piping, pressurizer (for PWRs), and steam generators (for PWRs and some SMRs) based on the required flow rates and heat duty.

- Implement Redundant and Diverse Safety Systems: Design engineered safety features, including emergency core cooling systems (ECCS), shutdown systems, and containment. SMRs often leverage passive safety systems that rely on natural forces like gravity and convection [35].

- Configure the Balance of Plant: Design the secondary (power conversion) and tertiary (heat rejection) systems. For a BWR, this is a direct cycle from the core to the turbine. For a PWR, this involves steam generators and a separate secondary loop [35].
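For the pump-sizing item, a first estimate of shaft power follows from the required mass flow and the loop pressure drop: P = ṁ·ΔP / (ρ·η). The sketch below uses assumed values for one loop of a PWR-like primary circuit. The coolant density, loop pressure drop, and pump efficiency are all illustrative assumptions, not design data.

```python
def pump_shaft_power_w(mass_flow_kg_s: float, pressure_rise_pa: float,
                       density_kg_m3: float, efficiency: float) -> float:
    """Shaft power: hydraulic power (volumetric flow x pressure rise) over pump efficiency."""
    volumetric_flow_m3_s = mass_flow_kg_s / density_kg_m3
    return volumetric_flow_m3_s * pressure_rise_pa / efficiency


# Assumed single-loop values: 4500 kg/s, 0.6 MPa loop loss, ~740 kg/m^3 hot water, 80 % efficiency.
p_w = pump_shaft_power_w(4500.0, 0.6e6, 740.0, 0.80)
print(f"{p_w / 1e6:.2f} MW")
```

In a turbulent loop, ΔP rises roughly with the square of flow. Raising mass flow to lower the outlet temperature therefore increases pumping power roughly with the cube of flow.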

Integrate Instrumentation and Control Logic

The control system is the nervous system of the reactor, responsible for safe and stable operation.

- Select Sensor Suite: Choose appropriate in-core and ex-core instrumentation for monitoring neutron flux, core exit temperature, system pressure, and coolant flow rate.