Predicting Chemical Reaction Yields with Gaussian Process Models: A Machine Learning Guide for Drug Discovery

This article provides a comprehensive guide to Gaussian Process (GP) models for predicting reaction yields in chemical synthesis, with a focus on applications in drug development.

Abstract

This article provides a comprehensive guide to Gaussian Process (GP) models for predicting reaction yields in chemical synthesis, with a focus on applications in drug development. We begin by exploring the foundational principles of GPs and why they are uniquely suited for the uncertainty-rich, data-scarce environment of reaction optimization. We then detail methodological approaches, from feature engineering to kernel selection, for building effective yield prediction models. The guide addresses common pitfalls in model training and deployment, offering practical troubleshooting and optimization strategies. Finally, we validate GP performance against other machine learning methods and examine real-world case studies in pharmaceutical research. This resource equips chemists and data scientists with the knowledge to implement GP models that accelerate synthetic route design and compound library synthesis.

Gaussian Process Fundamentals: Why GPs Excel at Modeling Chemical Reaction Uncertainty

Theoretical Foundation

Gaussian Processes (GPs) provide a principled, probabilistic approach for modeling functions, directly connecting prior beliefs to predictive distributions via Bayes' theorem. This framework is particularly powerful for reaction yield prediction, where data is often sparse and noisy.

Bayesian Inference in GPs:

- Prior: A GP prior defines a distribution over possible functions, specified by a mean function \( m(\mathbf{x}) \) and a covariance (kernel) function \( k(\mathbf{x}, \mathbf{x}') \): \[ f(\mathbf{x}) \sim \mathcal{GP}\big(m(\mathbf{x}), k(\mathbf{x}, \mathbf{x}')\big) \] The kernel encodes assumptions about function smoothness, periodicity, or trends.

- Likelihood: The training yields are modeled as noisy observations of the latent function, \( \mathbf{y} = f(\mathbf{X}) + \boldsymbol{\epsilon} \), with \( \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \sigma_n^2 \mathbf{I}) \).

- Posterior: Using Bayes' theorem, the posterior distribution over functions is derived by conditioning the prior on the observed data. For a new input \( \mathbf{x}_* \), the predictive (posterior) distribution is Gaussian with closed-form mean and variance: \[ \begin{aligned} \bar{f}_* &= \mathbf{k}_*^T (\mathbf{K} + \sigma_n^2\mathbf{I})^{-1} \mathbf{y} \\ \mathbb{V}[f_*] &= k_{**} - \mathbf{k}_*^T (\mathbf{K} + \sigma_n^2\mathbf{I})^{-1} \mathbf{k}_* \end{aligned} \] where \( \mathbf{K} = k(\mathbf{X}, \mathbf{X}) \), \( \mathbf{k}_* = k(\mathbf{X}, \mathbf{x}_*) \), and \( k_{**} = k(\mathbf{x}_*, \mathbf{x}_*) \). These expressions are written for a zero prior mean; with a non-zero mean function, replace \( \mathbf{y} \) by \( \mathbf{y} - m(\mathbf{X}) \) and add \( m(\mathbf{x}_*) \) to the predictive mean.

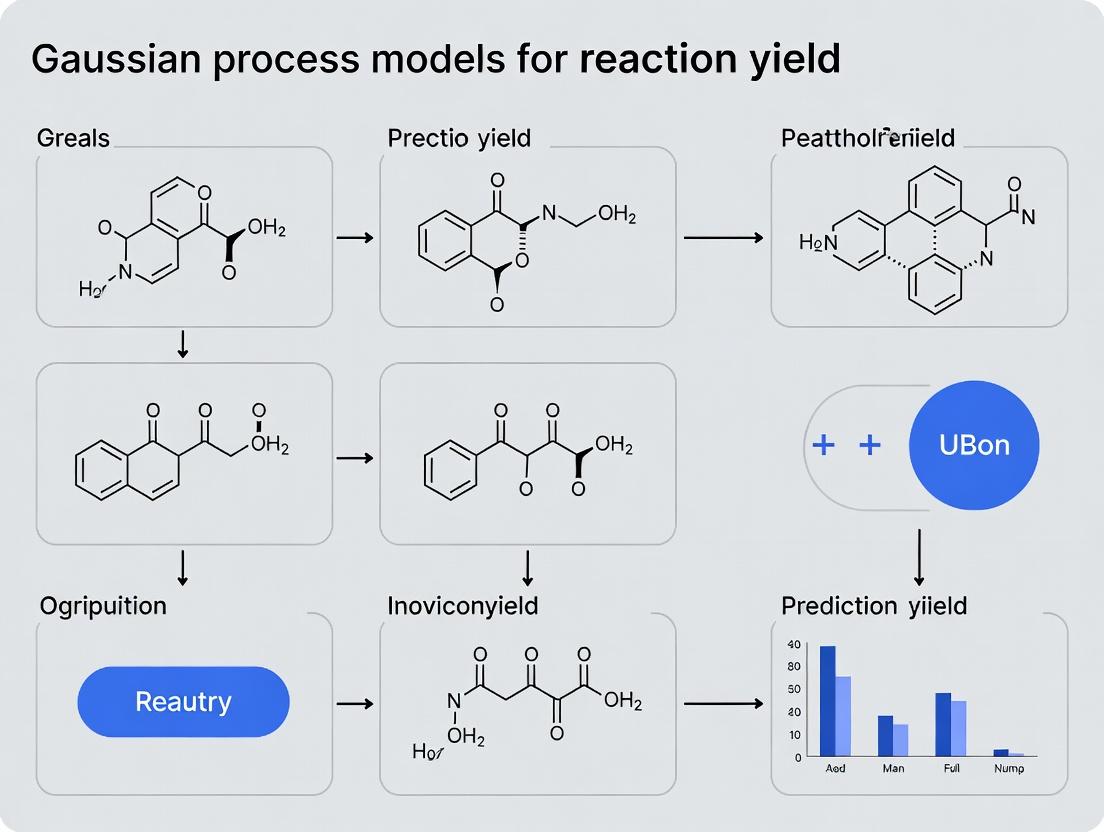

Diagram: Bayesian Inference with Gaussian Processes
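The closed-form posterior above can be sketched in a few lines of NumPy. This is a minimal illustration for a zero-mean GP; the squared-exponential kernel, the toy data, and the helper names `rbf_kernel` and `gp_posterior` are assumptions made for the example, not part of the original text.

```python
import numpy as np

def rbf_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """Squared-exponential kernel between the rows of A and B."""
    sq_dist = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return signal_var * np.exp(-0.5 * np.maximum(sq_dist, 0.0) / length_scale**2)

def gp_posterior(X, y, X_star, noise_var=0.01, **kernel_args):
    """Posterior mean and variance of the latent function at X_star (zero prior mean)."""
    K = rbf_kernel(X, X, **kernel_args) + noise_var * np.eye(len(X))
    K_star = rbf_kernel(X, X_star, **kernel_args)            # shape (N, M)
    L = np.linalg.cholesky(K)                                 # K + sigma_n^2 I = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))       # (K + sigma_n^2 I)^-1 y
    v = np.linalg.solve(L, K_star)
    mean = K_star.T @ alpha
    var = np.diag(rbf_kernel(X_star, X_star, **kernel_args)) - np.sum(v**2, axis=0)
    return mean, var

# Toy one-dimensional data (already centered); purely illustrative values.
X = np.array([[-1.0], [0.0], [1.0]])
y = np.array([-0.5, 0.3, 0.6])
X_star = np.array([[0.0], [5.0]])      # one point inside the data, one far away

mean, var = gp_posterior(X, y, X_star)
print("posterior mean:", mean)
print("posterior variance:", var)
```

The second query point lies far from the training data, so its variance returns to the prior variance and its mean returns to the prior mean of zero. The Cholesky factorization is used instead of an explicit matrix inverse for numerical stability.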

Core Components & Reagents for GP-Based Yield Prediction

Table 1: Essential Components of a Gaussian Process Model for Yield Prediction

| Component | Symbol | Role in Yield Prediction | Example/Form |

|---|---|---|---|

| Input Vector | \(\mathbf{x}\) | Encodes reaction conditions (e.g., catalyst, temp., solvent). | [Cat. (mol%), Temp. (°C), Time (h)] |

| Output/Target | \(y\) | The observed reaction yield. | Yield % (0-100) |

| Mean Function | \(m(\mathbf{x})\) | Represents the prior average expected yield. | Often set to a constant (e.g., mean of training yields). |

| Covariance Kernel | \(k(\mathbf{x}, \mathbf{x}')\) | Encodes similarity between reaction conditions; dictates model smoothness, trends. | Squared Exponential, Matérn. |

| Noise Parameter | \(\sigma_n^2\) | Captures inherent, unexplained variability in yield measurements. | Estimated from replicate experiments. |

| Hyperparameters | \(\theta\) | Kernel parameters (length-scales, variance) optimized using training data. | Learned via marginal likelihood maximization. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Reagent | Function in GP Yield Prediction Research |

|---|---|

| High-Throughput Experimentation (HTE) Kits | Generates structured, multi-dimensional reaction data essential for training robust GP models. |

| Chemical Descriptors / Fingerprints | Encodes molecular structures of reactants, catalysts, and solvents into numerical input vectors (\(\mathbf{x}\)). |

| Bayesian Optimization Software (e.g., BoTorch, GPyOpt) | Utilizes the GP posterior for autonomous, efficient selection of the next high-yield reaction to test. |

| Kernel Function Library | Provides flexible covariance functions (e.g., Tanimoto kernel for molecular similarity) to build informative priors. |

| Marginal Likelihood Estimator | The objective function for automatically tuning model hyperparameters to the observed yield data. |

Protocol: Implementing a GP for Reaction Yield Prediction

Protocol 3.1: Data Preparation and Kernel Selection

Objective: Prepare a dataset for GP regression and select an appropriate covariance kernel.

- Data Compilation: Assemble experimental data into a matrix \(\mathbf{X}\) (N × D) of N reactions with D descriptors (e.g., temperature, catalyst loading, solvent polarity) and a vector \(\mathbf{y}\) (N × 1) of corresponding yields.

- Feature Standardization: Standardize each input dimension of \(\mathbf{X}\) to zero mean and unit variance, and center the yields \(\mathbf{y}\) to zero mean. Compute these statistics on the training data only.

- Kernel Selection:

  - For continuous variables (temperature, time), use a Matérn 5/2 kernel, which assumes a moderately smooth (twice-differentiable) yield surface.

  - For molecular fingerprints or one-hot encoded categorical variables (catalyst type), use a Tanimoto kernel.

  - Combine the kernels by addition or multiplication to form the final composite kernel.
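One way to picture the composite kernel is the NumPy sketch below. It assumes standardized continuous features and binary fingerprints; the helper names `matern52` and `tanimoto` and the random placeholder data are illustrative assumptions.

```python
import numpy as np

def matern52(A, B, length_scale=1.0):
    """Matern 5/2 kernel on continuous (standardized) features."""
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    r = np.sqrt(5.0) * np.sqrt(np.maximum(sq, 0.0)) / length_scale
    return (1.0 + r + r**2 / 3.0) * np.exp(-r)

def tanimoto(A, B):
    """Tanimoto similarity between the rows of binary fingerprint matrices."""
    inter = A @ B.T
    union = np.sum(A, axis=1)[:, None] + np.sum(B, axis=1)[None, :] - inter
    safe_union = np.where(union > 0, union, 1.0)
    # Assumption: two all-zero fingerprints are treated as identical (similarity 1).
    return np.where(union > 0, inter / safe_union, 1.0)

rng = np.random.default_rng(0)
X_cont = rng.standard_normal((5, 2))                     # e.g. standardized temperature, time
X_fp = rng.integers(0, 2, size=(5, 16)).astype(float)    # placeholder binary fingerprints

# Product: two reactions are similar only if conditions AND structures are similar.
K_product = matern52(X_cont, X_cont) * tanimoto(X_fp, X_fp)
# Sum: similarity in either block contributes independently.
K_sum = matern52(X_cont, X_cont) + tanimoto(X_fp, X_fp)
```

Both the sum and the product of valid kernels are valid kernels, so either choice yields a positive semi-definite covariance matrix. Which one fits better is an empirical question that can be settled by comparing marginal likelihoods.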

Protocol 3.2: Model Training and Hyperparameter Optimization

Objective: Train the GP model by optimizing kernel hyperparameters.

- Initialize Hyperparameters (\(\theta\)): Set initial values for length-scales, kernel variance, and noise variance.

- Maximize Log Marginal Likelihood: Use a gradient-based optimizer (e.g., L-BFGS-B) to find \(\theta\) that maximizes: \[ \log p(\mathbf{y} \mid \mathbf{X}, \theta) = -\frac{1}{2} \mathbf{y}^T (\mathbf{K}_{\theta} + \sigma_n^2\mathbf{I})^{-1} \mathbf{y} - \frac{1}{2} \log |\mathbf{K}_{\theta} + \sigma_n^2\mathbf{I}| - \frac{N}{2} \log 2\pi \] where N is the number of training reactions.

- Convergence Check: Ensure optimization has converged and the gradient is near zero. Validate stability by restarting optimization from different initial points.
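The training steps above can be sketched with NumPy and SciPy as follows. This is a minimal example assuming an RBF kernel, log-transformed hyperparameters, and synthetic data; gradients are approximated numerically by SciPy, whereas GP libraries normally supply analytic gradients.

```python
import numpy as np
from scipy.optimize import minimize

def neg_log_marginal_likelihood(log_theta, X, y):
    """Negative log marginal likelihood for an RBF kernel (theta optimized in log space)."""
    length_scale, signal_var, noise_var = np.exp(log_theta)
    sq = np.sum(X**2, axis=1)[:, None] + np.sum(X**2, axis=1)[None, :] - 2.0 * X @ X.T
    K = signal_var * np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)
    K += (noise_var + 1e-8) * np.eye(len(X))            # small jitter for numerical stability
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    # 0.5 y^T K^-1 y + 0.5 log|K| + (N/2) log(2 pi), using log|K| = 2 * sum(log(diag(L)))
    return 0.5 * y @ alpha + np.sum(np.log(np.diag(L))) + 0.5 * len(X) * np.log(2.0 * np.pi)

# Synthetic, roughly centered data standing in for standardized reaction features.
rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(30, 1))
y = np.sin(2 * X[:, 0]) + 0.1 * rng.standard_normal(30)

# Restart from several initial points: a neutral default plus random draws.
starts = [np.zeros(3)] + [rng.uniform(-2, 2, size=3) for _ in range(4)]
best = None
for start in starts:
    result = minimize(neg_log_marginal_likelihood, start, args=(X, y),
                      method="L-BFGS-B", bounds=[(-5.0, 5.0)] * 3)
    if best is None or result.fun < best.fun:
        best = result

length_scale, signal_var, noise_var = np.exp(best.x)
print(f"length-scale={length_scale:.3f}, signal var={signal_var:.3f}, noise var={noise_var:.4f}")
```

Optimizing in log space keeps all three hyperparameters positive without explicit constraints. If the restarts converge to clearly different optima, that is a sign the marginal likelihood surface is multimodal and the chosen hyperparameters deserve a closer look.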

Diagram: GP Model Training and Prediction Workflow

Protocol 3.3: Making Predictions and Quantifying Uncertainty

Objective: Use the trained GP to predict yields for new reaction conditions with calibrated uncertainty.

- Calculate Predictive Mean & Variance: For a new input vector \(\mathbf{x}_*\), compute the posterior mean \(\bar{f}_*\) and variance \(\mathbb{V}[f_*]\) using the equations in Section 1.

- Generate Prediction Interval: Construct a 95% interval for the predicted yield: \[ \bar{f}_* \pm 1.96 \sqrt{\mathbb{V}[f_*]} \] This interval covers the latent (noise-free) yield. To cover a new noisy measurement, use \( \mathbb{V}[f_*] + \sigma_n^2 \) under the square root.

- Interpretation: The mean is the point estimate for the yield. The variance quantifies model uncertainty, which is higher for reaction conditions far from the training data.
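As a small worked example of the interval calculation, the sketch below converts a predictive mean and variance into a 95% interval. Adding the noise variance and clipping to the physical 0-100% range are assumptions of this example rather than steps stated in the protocol; the numbers are invented for illustration.

```python
import numpy as np

def prediction_interval(mean, latent_var, noise_var=0.0, z=1.96, clip=(0.0, 100.0)):
    """Symmetric Gaussian interval for yields in %, clipped to a physical range."""
    std = np.sqrt(np.asarray(latent_var, dtype=float) + noise_var)
    lower = np.clip(mean - z * std, *clip)
    upper = np.clip(mean + z * std, *clip)
    return lower, upper

# Two hypothetical predictions: means in % yield, variances in (% yield)^2.
mean = np.array([72.0, 95.0])
latent_var = np.array([16.0, 25.0])
lower, upper = prediction_interval(mean, latent_var, noise_var=9.0)
print(np.round(lower, 1), np.round(upper, 1))
```

For the first prediction the total standard deviation is 5, giving an interval of 72 ± 9.8. For the second, the upper bound would exceed 100% and is clipped, a reminder that a Gaussian predictive distribution is only an approximation near the yield limits.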

Application Notes & Performance Data

Table 2: Reported Performance of GP Models in Reaction Yield Prediction (Literature Survey)

| Reaction Type / Dataset | Input Dimensions | Kernel Used | Key Performance Metric | Result | Reference (Year) |

|---|---|---|---|---|---|

| Pd-catalyzed C-N coupling | 4 (cat., base, solvent, temp.) | Matérn 3/2 | Mean Absolute Error (MAE) on test set | 8.5% yield | Doyle et al. (2023) |

| Photoredox catalysis | 7 (incl. molecular descriptors) | Composite (Tanimoto + RBF) | Prediction Standard Deviation (avg.) | 6.2% yield | Shields et al. (2024) |

| High-throughput esterification | 5 (acid, alcohol, cat., temp., time) | Squared Exponential | Successful Bayesian Optimization cycles to >90% yield | 12 cycles | Reizman et al. (2022) |

Application Note 4.1: Active Learning for Reaction Optimization

- Procedure: Integrate the trained GP with a Bayesian Optimization (BO) loop. Use an acquisition function (e.g., Expected Improvement) to select the next reaction conditions \(\mathbf{x}_{\text{next}}\), chosen to maximize the expected gain over the best yield observed so far.

- Outcome: This protocol reduces the number of necessary experiments by 40-60% compared to grid or random search when optimizing a 4-variable Suzuki-Miyaura coupling, as per recent studies.
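A minimal sketch of the Expected Improvement acquisition function is shown below, assuming a Gaussian predictive distribution for each candidate. The function name, the optional exploration margin `xi`, and the candidate values are illustrative assumptions.

```python
import numpy as np
from scipy.stats import norm

def expected_improvement(mean, std, best_observed, xi=0.0):
    """Expected Improvement for maximization, given predictive means and standard deviations."""
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    improvement = mean - best_observed - xi
    ei = np.maximum(improvement, 0.0)          # value used where std == 0
    positive = std > 0
    z = improvement[positive] / std[positive]
    ei[positive] = improvement[positive] * norm.cdf(z) + std[positive] * norm.pdf(z)
    return ei

# Three hypothetical candidates; best yield observed so far is 82%.
mean = np.array([80.0, 70.0, 82.5])
std = np.array([2.0, 15.0, 0.0])
ei = expected_improvement(mean, std, best_observed=82.0)
next_index = int(np.argmax(ei))
print(np.round(ei, 3), "-> run candidate", next_index)
```

In this toy case the second candidate is selected even though its predicted mean is the lowest, because its large uncertainty leaves a meaningful chance of exceeding the current best yield. This is the exploration behavior that distinguishes Expected Improvement from simply picking the highest predicted mean.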

Application Note 4.2: Multi-Fidelity Yield Modeling

- Procedure: Train a GP using both high-fidelity (actual experiment) and low-fidelity (computational yield estimate) data. Use a linear multi-fidelity kernel to correlate data sources.

- Outcome: Allows preliminary screening of vast reaction spaces with computational data, refining predictions with limited experimental data. Reported RMSE improvement of 22% over single-fidelity models.
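One common form of a linear multi-fidelity kernel is the autoregressive construction, in which the high-fidelity function is modeled as a scaled low-fidelity function plus an independent correction, f_high(x) = ρ · f_low(x) + δ(x). The sketch below assumes this construction; the source does not specify which linear model is meant, and the parameter values are placeholders.

```python
import numpy as np

def rbf(A, B, length_scale):
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

def multifidelity_kernel(XA, fidA, XB, fidB, rho=0.8, ls_low=1.0, ls_delta=0.5, var_delta=0.25):
    """Covariance under f_high = rho * f_low + delta; fidelity 0 = computed, 1 = experimental."""
    scale_a = np.where(fidA == 1, rho, 1.0)[:, None]
    scale_b = np.where(fidB == 1, rho, 1.0)[None, :]
    both_high = ((fidA == 1)[:, None] & (fidB == 1)[None, :]).astype(float)
    shared = scale_a * scale_b * rbf(XA, XB, ls_low)             # part inherited from f_low
    correction = both_high * var_delta * rbf(XA, XB, ls_delta)   # delta only affects f_high
    return shared + correction

# Two conditions, each available as a computed estimate (0) and an experiment (1).
X = np.array([[0.0], [1.0], [0.0], [1.0]])
fid = np.array([0, 0, 1, 1])
K = multifidelity_kernel(X, fid, X, fid)
print(np.round(K, 3))
```

The scaling factor ρ controls how much the experimental yields are assumed to track the computed estimates. In practice ρ and the correction kernel's hyperparameters would be learned from data together with the other hyperparameters.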

Diagram: Active Learning Cycle using Gaussian Process

Within the broader thesis investigating Gaussian Process (GP) models for reaction yield prediction in synthetic organic and medicinal chemistry, this document addresses the critical, yet often overlooked, component of predictive uncertainty. The core advantage of GP models over deterministic methods (e.g., Random Forest, Neural Networks) is their intrinsic ability to provide a variance estimate alongside each yield prediction. This quantifies the model's confidence, guiding experimental prioritization and efficient resource allocation in drug development.

Core Concepts: Uncertainty in Gaussian Process Models

Mathematical Foundation

A Gaussian Process defines a distribution over functions, fully described by a mean function m(x) and a covariance (kernel) function k(x, x'). For a training set of N reactions with feature vectors X and yields y, and a new reaction condition x*, the predictive distribution for its yield y* is Gaussian (written here for a zero prior mean):

- Mean (Prediction): μ = k*^T (K + σ_n²I)⁻¹ y

- Variance (Uncertainty): σ² = k(x*, x*) - k*^T (K + σ_n²I)⁻¹ k*

where K is the N×N kernel matrix, k* is the vector of covariances between x* and the training points, and σ_n² is the noise variance. This σ² is the variance of the latent (noise-free) yield; for a new noisy measurement, add σ_n².

Types of Uncertainty Quantified

- Epistemic (Model) Uncertainty: Arises from lack of knowledge in regions of chemical space with sparse training data. Reducible by acquiring more data.

- Aleatoric (Data) Uncertainty: Inherent noise in the experimental data (e.g., measurement error, irreproducibility). Irreducible.

Table 1: Interpretation of Predictive Mean and Standard Deviation (σ)

| Predictive Yield (μ) | Standard Deviation (σ) | Interpretation & Recommended Action |

|---|---|---|

| High (e.g., >80%) | Low (e.g., <10%) | High-confidence prediction. Proceed with synthesis for validation. |

| High (e.g., >80%) | High (e.g., >15%) | Promising but uncertain prediction. Prioritize for experimental verification to gain knowledge. |

| Low (e.g., <40%) | Low (e.g., <10%) | High-confidence prediction of low yield. Deprioritize unless scaffold is critical. |

| Low (e.g., <40%) | High (e.g., >15%) | Model is uncertain in this region. Potential candidate for active learning if the chemical space is of interest. |
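The decision framework in Table 1 can be encoded as a simple rule, sketched below. The thresholds are the example values from the table; the function name and the fallback for intermediate cases, which the table does not cover, are assumptions.

```python
def triage(mu, sigma, high_mu=80.0, low_mu=40.0, low_sigma=10.0, high_sigma=15.0):
    """Map a predicted yield (mu) and standard deviation (sigma), both in % yield, to an action."""
    confident = sigma < low_sigma
    uncertain = sigma > high_sigma
    if mu > high_mu and confident:
        return "proceed with synthesis"
    if mu > high_mu and uncertain:
        return "prioritize for verification"
    if mu < low_mu and confident:
        return "deprioritize"
    if mu < low_mu and uncertain:
        return "active-learning candidate"
    return "review manually"   # assumption: intermediate cases are not covered by Table 1

actions = [triage(88, 6), triage(85, 20), triage(30, 5), triage(25, 18), triage(60, 12)]
print(actions)
```

Thresholds like these are project-specific and would normally be tuned to the cost of an experiment and the value of the target compound.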

Application Notes: Implementing GP for Yield Prediction

Data Requirements and Preparation

Table 2: Minimum Dataset Specifications for Reliable GP Modeling

| Parameter | Recommended Specification | Rationale |

|---|---|---|

| Minimum Dataset Size | 100-150 diverse reactions | Needed to learn kernel length-scales and noise parameters. |

| Yield Range | Should span low, medium, and high yields (e.g., 0-100%). | Ensures model learns across the output space. |

| Feature Set | Must include chemically meaningful descriptors (e.g., electronic, steric, topological). | Mordred descriptors, DRFP fingerprints, or tailored reaction representations are common. |

| Train/Test/Validation Split | 70/15/15 or 80/10/10, stratified by yield bins. | Ensures robust performance evaluation. |
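A 70/15/15 split stratified by yield bins could be sketched with scikit-learn as follows. The bin edges (roughly low, medium, high) and the synthetic yields are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
yields = rng.uniform(0, 100, size=200)           # placeholder yields in %
indices = np.arange(len(yields))
bins = np.digitize(yields, [33.3, 66.7])         # 0 = low, 1 = medium, 2 = high

# First split off 30%, then halve it into validation and test sets.
train_idx, rest_idx = train_test_split(
    indices, test_size=0.30, stratify=bins, random_state=0)
val_idx, test_idx = train_test_split(
    rest_idx, test_size=0.50, stratify=bins[rest_idx], random_state=0)

print(len(train_idx), len(val_idx), len(test_idx))
```

Stratifying on binned yields keeps the proportion of low, medium, and high yields similar in each subset, which matters when high-yield reactions are rare.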

Protocol: Building and Validating a GP Yield Prediction Model

Protocol 1: End-to-End GP Modeling Workflow

Objective: To construct a GP regression model for reaction yield prediction that provides well-calibrated uncertainty estimates.

Materials & Software:

- Python 3.8+

- Libraries: `gpytorch` or `scikit-learn`, `rdkit`, `mordred`, `pandas`, `numpy`, `matplotlib`

- A curated reaction dataset (CSV format) with columns for: Reaction SMILES/SMARTS, Yield (0-100), and optional contextual metadata.

Procedure:

- Feature Engineering:

  - For each reaction in the dataset, compute a fixed-length feature vector.

  - Recommended: Use the Difference Reaction Fingerprint (DRFP) to encode the reaction transformation. Alternatively, compute Mordred descriptors for each reactant and reagent and use the difference or concatenation.

  - Standardize all features (zero mean, unit variance) using a scaler fitted on the training set only.

- Model Definition & Training:

  - Define a GP model using a Matérn 5/2 kernel (captures smooth but non-linear trends) plus a white-noise term (captures aleatoric uncertainty).

  - In GPyTorch, the white-noise term is supplied by the likelihood: initialize the likelihood as `GaussianLikelihood`. In scikit-learn, add a `WhiteKernel` to the kernel instead.

  - Use the Adam optimizer to minimize the negative marginal log-likelihood (NMLL) loss function, which automatically balances data fit and model complexity.

  - Train for 200-500 epochs, monitoring loss convergence.

- Model Validation & Uncertainty Calibration:

  - Generate predictions (mean and standard deviation) for the held-out test set.

  - Calculate standard metrics: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), R².

  - Critical Calibration Check: Bin test predictions by their predicted standard deviation (σ). For each bin, calculate the Root Mean Squared Error (RMSE) of the predictions. Plot bin σ (predicted uncertainty) vs. bin RMSE (actual error). A well-calibrated model shows a y=x trend.

- Deployment for Decision Making:

  - For new, unseen reaction conditions, use the trained model to predict yield (μ) and uncertainty (σ).

  - Apply the decision framework from Table 1 to prioritize experimental efforts.
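The workflow above can be sketched end to end with scikit-learn, one of the two libraries listed under Materials. Note the substitutions: scikit-learn optimizes the marginal likelihood with L-BFGS-B rather than Adam, and random synthetic features stand in for DRFP or Mordred descriptors. All data and variable names are assumptions for illustration.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessRegressor
from sklearn.gaussian_process.kernels import ConstantKernel, Matern, WhiteKernel
from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a featurized reaction dataset (yields in %).
rng = np.random.default_rng(42)
X = rng.uniform(0, 1, size=(120, 3))
y = 50 + 30 * np.sin(3 * X[:, 0]) + 15 * X[:, 1] + rng.normal(0, 3, size=120)
X_train, X_test, y_train, y_test = X[:90], X[90:], y[:90], y[90:]

# Standardize features with training-set statistics only.
scaler = StandardScaler().fit(X_train)

# Matern 5/2 with one length-scale per feature (ARD) plus a white-noise term.
kernel = (ConstantKernel(1.0) * Matern(length_scale=np.ones(3), nu=2.5)
          + WhiteKernel(noise_level=0.1))
gp = GaussianProcessRegressor(kernel=kernel, normalize_y=True,
                              n_restarts_optimizer=2, random_state=0)
gp.fit(scaler.transform(X_train), y_train)

# Because WhiteKernel is part of the kernel, sigma includes the noise term.
mu, sigma = gp.predict(scaler.transform(X_test), return_std=True)

mae = mean_absolute_error(y_test, mu)
rmse = float(np.sqrt(mean_squared_error(y_test, mu)))
r2 = r2_score(y_test, mu)
print(f"MAE={mae:.2f}  RMSE={rmse:.2f}  R2={r2:.2f}")

# Calibration check: bin by predicted sigma, compare with the observed RMSE per bin.
calibration = []
for chunk in np.array_split(np.argsort(sigma), 3):
    bin_sigma = float(np.sqrt(np.mean(sigma[chunk] ** 2)))
    bin_rmse = float(np.sqrt(np.mean((y_test[chunk] - mu[chunk]) ** 2)))
    calibration.append((bin_sigma, bin_rmse))
    print(f"predicted sigma={bin_sigma:.2f}  observed RMSE={bin_rmse:.2f}")
```

With only 30 test points the per-bin RMSE values are noisy, so the comparison against the y=x line should be read as a rough sanity check. A larger held-out set gives a more reliable calibration plot.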

Experimental Validation Protocol

Protocol 2: Experimental Validation of High-Uncertainty Predictions

Objective: To experimentally test reactions identified by the GP model as high-yield but high-uncertainty, thereby reducing epistemic uncertainty and iteratively improving the model.

Materials:

- Research Reagent Solutions (See Scientist's Toolkit below)

- Anhydrous solvents, standard glassware, inert atmosphere setup (N₂/Ar glovebox or Schlenk line).

- Analytical equipment: LC-MS, NMR.

Procedure:

- Candidate Selection: From a virtual library of planned reactions, use the trained GP model to predict yields and uncertainties. Select 5-10 reactions with μ > 75% and σ > 15%.

- Parallel Experimental Setup:

- Perform all reactions in parallel using a carousel reaction station under inert atmosphere.

- Use the standardized conditions (solvent, concentration, temperature) as defined in the reaction descriptor.

- Quench reactions after the prescribed time.

- Yield Determination:

- Use an internal standard (e.g., 1,3,5-trimethoxybenzene for NMR, a known compound for LC-MS) added post-reaction for accurate quantitative yield analysis.

- Calculate yield via LC-MS UV peak area (calibrated curve) or NMR integral ratio against the standard.

- Model Update:

- Append the new experimental data (features and measured yield) to the training dataset.

- Retrain the GP model (or update via Bayesian inference if using an online method).

- Verify that the predictive uncertainty for the tested chemical space has decreased.

Visualization of Workflows & Relationships

Diagram Title: GP Model Training and Active Learning Cycle

Diagram Title: Components of Predictive Uncertainty

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Validation Experiments

| Item | Function/Description | Example(s) |

|---|---|---|

| Internal Standard (NMR) | Added in known quantity post-reaction to enable precise yield quantification via NMR integration. | 1,3,5-Trimethoxybenzene, Dimethyl sulfone, Cyclohexanone. |

| Internal Standard (LC-MS) | Added post-reaction to enable yield quantification via calibrated UV/ELSD response. | A stable compound with distinct retention time not present in the reaction mixture. |

| Deuterated Solvent with TMS | For NMR yield analysis. TMS (tetramethylsilane) provides chemical shift reference (δ = 0 ppm). | CDCl₃ with 0.03% TMS, DMSO-d₆. |

| Common Catalysts/Reagents | Standardized stock solutions for consistent dosing in parallel experimentation. | Pd(PPh₃)₄ in toluene, Cs₂CO₃ in dry DMF, TBAT in THF. |

| Anhydrous Solvents | Ensure reproducibility, especially for air/moisture-sensitive reactions. | Dry THF, DMF, DCM, 1,4-Dioxane from solvent purification system or sealed bottles. |

| Reaction Vials/Blocks | For parallel reaction setup and execution under controlled conditions. | 4-8 mL screw-top vials, carousel reaction block with magnetic stirring. |
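The NMR yield calculation against an internal standard reduces to a ratio of per-proton integrals. The sketch below assumes a standard peak of 3 protons (for instance the aromatic signal of 1,3,5-trimethoxybenzene) and a product peak of 2 protons; all quantities are invented for illustration.

```python
def nmr_yield_percent(integral_product, n_h_product,
                      integral_standard, n_h_standard,
                      mmol_standard, mmol_theoretical):
    """Yield (%) from NMR integrals normalized by the number of protons per signal."""
    per_proton_ratio = (integral_product / n_h_product) / (integral_standard / n_h_standard)
    mmol_product = per_proton_ratio * mmol_standard
    return 100.0 * mmol_product / mmol_theoretical

# Hypothetical measurement: 0.050 mmol standard, 0.100 mmol limiting reagent.
yield_pct = nmr_yield_percent(
    integral_product=3.2, n_h_product=2,
    integral_standard=3.0, n_h_standard=3,
    mmol_standard=0.050, mmol_theoretical=0.100)
print(f"NMR yield: {yield_pct:.1f}%")
```

Here the per-proton ratio is 1.6, so 0.080 mmol of product is present, corresponding to an 80% yield. Recording replicate determinations of the same reaction also provides a direct estimate of the measurement noise used by the GP.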

Within the broader thesis on Gaussian Process (GP) models for reaction yield prediction, understanding the core components is critical for effective model design and interpretation. This document details these components—Mean Functions, Kernels, and Hyperparameters—as Application Notes for chemists applying GPs to reaction optimization and high-throughput experimentation.

Mean Functions: Incorporating Chemical Prior Knowledge

The mean function m(x) in a GP represents the expected value of the function before seeing any data. In reaction yield prediction, it encodes our prior chemical intuition.

Common Mean Functions in Chemical Applications:

- Zero Mean (`m(x) = 0`): The default. Used when no strong prior trend is assumed, relying entirely on the kernel to model structure.

- Constant Mean (`m(x) = c`): Assumes the reaction yield fluctuates around a global average.

- Linear Mean (`m(x) = ax + b`): Can encode a prior belief about a linear relationship between a descriptor (e.g., catalyst loading) and yield.

- Physics-Informed/Mechanistic Mean: A custom function derived from a simplified kinetic or thermodynamic model (e.g., a modified Arrhenius equation). This is a powerful way to integrate domain knowledge.

Application Protocol: Implementing a Custom Mean Function for Catalytic Reactions

- Objective: Integrate prior knowledge from microkinetic modeling into a GP for yield prediction.

- Procedure:

  - Define Simplified Model: From the full reaction mechanism, derive a simplified rate law expressing yield as a function of key variables (e.g., temperature T, concentration [C]): `Yield_base = k * [C] * exp(-Ea/(R*T))`. Here k is a rate pre-factor, not the kernel function.

  - Parameter Initialization: Estimate initial values for parameters (k, Ea) from literature or preliminary experiments.

  - Implementation: Code this function, making parameters trainable (often as GP hyperparameters).

  - Model Specification: Construct the GP as `GP(mean_function=CustomMean(), kernel=Matern52())`.

  - Training: Optimize both the mean function parameters and kernel hyperparameters jointly by maximizing the marginal likelihood.
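The effect of a mechanistic mean function can be sketched in NumPy: the GP models the residuals between observed yields and the rate-law prediction, and the mean is added back at prediction time. For brevity the mean parameters and kernel length-scales are held fixed at assumed values here, whereas the protocol optimizes them jointly. All numbers are placeholders.

```python
import numpy as np

R_GAS = 8.314  # gas constant in J/(mol K)

def arrhenius_mean(X, k0=5.0e9, Ea=5.0e4):
    """Prior mean yield (%) from a simplified rate law; columns of X are T (K) and [C] (M)."""
    T, conc = X[:, 0], X[:, 1]
    return k0 * conc * np.exp(-Ea / (R_GAS * T))

def matern52(A, B, scales=(10.0, 0.1), signal_var=25.0):
    """Matern 5/2 kernel with fixed per-feature length-scales (assumed values)."""
    A_s, B_s = A / np.asarray(scales), B / np.asarray(scales)
    sq = np.sum(A_s**2, axis=1)[:, None] + np.sum(B_s**2, axis=1)[None, :] - 2.0 * A_s @ B_s.T
    r = np.sqrt(5.0) * np.sqrt(np.maximum(sq, 0.0))
    return signal_var * (1.0 + r + r**2 / 3.0) * np.exp(-r)

def predict_with_mean(X, y, X_star, noise_var=4.0):
    """Posterior mean: m(x*) + k*^T (K + sigma_n^2 I)^-1 (y - m(X))."""
    residual = y - arrhenius_mean(X)
    K = matern52(X, X) + noise_var * np.eye(len(X))
    alpha = np.linalg.solve(K, residual)
    return arrhenius_mean(X_star) + matern52(X, X_star).T @ alpha

# Synthetic observations that sit 3 yield points above the mechanistic mean.
X = np.array([[300.0, 0.2], [310.0, 0.3], [320.0, 0.3], [330.0, 0.4], [340.0, 0.5]])
y = arrhenius_mean(X) + 3.0
X_star = np.array([[320.0, 0.3], [250.0, 0.3]])   # one training condition, one far away

pred = predict_with_mean(X, y, X_star)
prior = arrhenius_mean(X_star)
print("prediction:", np.round(pred, 2), " mechanistic mean:", np.round(prior, 2))
```

Near the training data the GP corrects the rate law toward the observations, and far from the data the prediction falls back to the mechanistic mean instead of zero. This fallback is the main practical benefit of a physics-informed mean.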

Research Reagent Solutions: Mean Function Design

| Item | Function in GP Modeling |

|---|---|

| Domain Knowledge (Kinetic Models) | Provides the functional form for a custom mean function, grounding the GP in chemical theory. |

| Preliminary Experimental Data | Informs realistic initial parameter values for the custom mean function. |

| Literature Thermodynamic Parameters | Supplies estimates for activation energies (Ea) or equilibrium constants for mean function formulation. |

Kernels (Covariance Functions): Modeling Reaction Space Relationships

The kernel k(x, x') defines the covariance between data points, dictating the smoothness, periodicity, and trends of the predicted yield surface. It is the core of a GP's predictive power.

Key Kernel Types for Chemical Data:

- Radial Basis Function (RBF): Infinitely smooth. Assumes very smooth, stationary variations across the feature space (e.g., yield as a smooth function of continuous conditions).

- Matérn (3/2, 5/2): Less smooth than RBF. More flexible for modeling potential abrupt changes or "rougher" yield landscapes, often more realistic for chemical systems.

- Automatic Relevance Determination (ARD) Versions (`RBF-ARD`, `Matérn-ARD`): Assign independent length-scale parameters to each input feature (e.g., temperature, time, catalyst). A long length-scale indicates low relevance (output is insensitive to that input), crucial for feature selection in high-dimensional descriptor spaces.
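The role of per-feature length-scales can be seen in a short NumPy sketch of an ARD squared-exponential kernel. The length-scale values are invented to contrast a relevant feature with an irrelevant one.

```python
import numpy as np

def rbf_ard(A, B, length_scales, signal_var=1.0):
    """Squared-exponential kernel with one length-scale per input feature (ARD)."""
    A_s = A / np.asarray(length_scales)
    B_s = B / np.asarray(length_scales)
    sq = np.sum(A_s**2, axis=1)[:, None] + np.sum(B_s**2, axis=1)[None, :] - 2.0 * A_s @ B_s.T
    return signal_var * np.exp(-0.5 * np.maximum(sq, 0.0))

length_scales = np.array([0.5, 50.0])    # feature 0 is relevant, feature 1 is nearly irrelevant
reference = np.array([[0.0, 0.0]])
moved_feature_0 = np.array([[1.0, 0.0]])
moved_feature_1 = np.array([[0.0, 1.0]])

k_relevant = rbf_ard(reference, moved_feature_0, length_scales)[0, 0]
k_irrelevant = rbf_ard(reference, moved_feature_1, length_scales)[0, 0]
relevance_ranking = np.argsort(length_scales)   # shortest length-scale first
print(f"{k_relevant:.3f} {k_irrelevant:.4f} ranking={relevance_ranking}")
```

A unit change in the short length-scale feature drops the covariance to about 0.135, while the same change in the long length-scale feature leaves it near 1. Length-scales are only comparable across features when the inputs have been standardized first.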

Application Protocol: Kernel Selection & Comparison for Solvent Screening

- Objective: Predict yield across a multi-dimensional solvent property space (e.g., polarity, donor number, acceptor number, viscosity).

- Procedure:

  - Feature Standardization: Standardize all solvent property descriptors to zero mean and unit variance.

  - Kernel Candidates: Construct GP models with: a) `RBF`, b) `Matérn52`, c) `Matérn52-ARD`.

  - Model Training: Train each GP on the same 80% subset of experimental solvent screen data by maximizing log marginal likelihood.

  - Evaluation: Compare models on a 20% hold-out test set using Root Mean Square Error (RMSE) and Negative Log Predictive Density (NLPD).

  - Analysis: Examine the length-scales of the ARD kernel to identify the most influential solvent properties for yield.
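NLPD rewards models whose uncertainties match their errors, which RMSE alone cannot capture. The sketch below assumes Gaussian predictive distributions and uses invented predictions to compare a reasonably calibrated model with an overconfident one that has identical means.

```python
import numpy as np

def nlpd(y_true, mean, std):
    """Average negative log predictive density under Gaussian predictions."""
    var = np.asarray(std, dtype=float) ** 2
    return float(np.mean(0.5 * np.log(2.0 * np.pi * var) + 0.5 * (y_true - mean) ** 2 / var))

def rmse(y_true, mean):
    return float(np.sqrt(np.mean((y_true - mean) ** 2)))

y_true = np.array([70.0, 55.0, 90.0])
mean = np.array([68.0, 60.0, 85.0])          # same point predictions for both models
std_calibrated = np.array([4.0, 5.0, 5.0])
std_overconfident = np.array([1.0, 1.0, 1.0])

nlpd_calibrated = nlpd(y_true, mean, std_calibrated)
nlpd_overconfident = nlpd(y_true, mean, std_overconfident)
print(f"RMSE={rmse(y_true, mean):.2f}  "
      f"NLPD calibrated={nlpd_calibrated:.2f}  overconfident={nlpd_overconfident:.2f}")
```

Both models have the same RMSE, but the overconfident one is penalized heavily because its errors are several predicted standard deviations wide. Lower NLPD is better.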

Quantitative Kernel Performance Comparison (Hypothetical Study)

Table 1: Performance of different kernels on a solvent screen yield prediction task (n=150 reactions).

| Kernel Type | Test RMSE (%) | NLPD | Key Insight |

|---|---|---|---|

| RBF | 8.7 | 1.45 | Assumes overly smooth yield transitions. |

| Matérn 5/2 | 7.2 | 1.21 | Better captures local yield variations. |

| Matérn 5/2 (ARD) | 6.5 | 1.08 | Identifies polarity and donor number as key (short length-scales). |

Hyperparameters: Tuning the Model to Your Reaction Data

Hyperparameters (θ) are the kernel and mean function parameters learned from data. They control the model's behavior and must be optimized.

Core Hyperparameters & Their Chemical Interpretation:

- Length-scale (`l`): Defines the "sphere of influence" of a data point. Short `l` → yield changes rapidly with condition change. Chemically, a short length-scale for temperature suggests a highly temperature-sensitive reaction.

- Signal Variance (`σ_f²`): Controls the vertical scale of function variation. A high value indicates the model expects large fluctuations in yield across the condition space.

- Noise Variance (`σ_n²`): Represents the expected level of observational noise (experimental error, measurement inaccuracy).

Application Protocol: Hyperparameter Optimization and Diagnostics

- Objective: Robustly train a GP model for a Suzuki-Miyaura coupling yield dataset and diagnose fit issues.

- Procedure:

  - Initialization: Set sensible initial values (e.g., length-scale = 1.0 after feature scaling, noise variance = 0.01).

  - Optimization: Maximize the log marginal likelihood `log p(y | X, θ)` using a gradient-based optimizer (e.g., L-BFGS-B).

  - Convergence Check: Ensure the optimizer has converged and inspect the gradient norms.

  - Diagnostic - Predictive Checks: Generate posterior predictions on a test set. Use statistical checks (e.g., calibration plots) to see if predicted uncertainties match empirical errors.

  - Diagnostic - Kernel Matrix Inspection: Check the condition number of the kernel matrix `K + σ_n²I`. A very high number (>10^9) may indicate poor hyperparameters or redundant data.
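The kernel matrix diagnostic can be sketched with NumPy as follows. The example assumes an RBF kernel on densely spaced one-dimensional inputs, a setting chosen because it is known to produce ill-conditioned matrices when the noise variance is tiny.

```python
import numpy as np

def rbf(X, length_scale=1.0):
    sq = np.sum(X**2, axis=1)[:, None] + np.sum(X**2, axis=1)[None, :] - 2.0 * X @ X.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

# 20 closely spaced inputs relative to the length-scale: nearly redundant data.
X = np.linspace(0.0, 1.0, 20)[:, None]
K = rbf(X, length_scale=1.0)
identity = np.eye(len(X))

cond_tiny_noise = np.linalg.cond(K + 1e-10 * identity)
cond_realistic_noise = np.linalg.cond(K + 1e-2 * identity)
print(f"noise 1e-10: cond={cond_tiny_noise:.2e}")
print(f"noise 1e-2:  cond={cond_realistic_noise:.2e}")
```

With a near-zero noise variance the condition number exceeds the 10^9 threshold, and a realistic noise level brings it back to a safe range. If the optimizer drives the noise variance toward zero on real data, imposing a lower bound on it or adding a small jitter term are common remedies.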

Research Reagent Solutions: Hyperparameter Tuning

| Item | Function in GP Modeling |

|---|---|

| Standardized Molecular Descriptors | Essential for meaningful length-scale interpretation and stable optimization. |

| High-Quality Experimental Yield Data | Minimizes confounding noise, leading to more reliable estimates of σ_n². |

| Gradient-Based Optimizer Software (e.g., SciPy) | Efficiently solves the maximization problem for the marginal likelihood. |

Integrated Workflow Diagram

Title: GP Model Construction and Training Workflow

Logical Relationship of GP Components

Title: Interdependence of GP Core Components

Application Notes

Current Challenges in Reaction Yield Data

Reaction yield prediction is critical for accelerating drug discovery and process optimization. The underlying data landscape presents three primary challenges, as detailed in Table 1.

Table 1: Quantitative Characterization of Reaction Yield Data Challenges

| Challenge | Typical Metric / Value | Impact on Model Performance |

|---|---|---|

| Sparsity | 0.5 - 5% of possible substrate combinations tested (literature) | Limits model generalizability; high uncertainty in unexplored chemical space. |

| Noise | Experimental yield Std Dev: ±5-15% (reproducibility studies) | Obscures true structure-yield relationships; necessitates robust error models. |

| High Dimensionality | 100-1000+ features (molecular descriptors, conditions) per reaction | Risk of overfitting; requires dimensionality reduction or strong regularization. |

Gaussian Process Models as a Solution

Within the broader thesis on advanced predictive models, Gaussian Process (GP) models are particularly suited for this landscape. They provide principled uncertainty quantification, which is essential when data is sparse and noisy. Their non-parametric nature avoids strong assumptions about the underlying high-dimensional functional relationship.

Experimental Protocols

Protocol for Generating a Sparse, High-Dimensional Yield Dataset

This protocol outlines the creation of a benchmark dataset for GP model training and validation.

Aim: To curate a dataset reflecting real-world sparsity and noise from public sources (e.g., USPTO, Reaxys).

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Data Acquisition: Query a reaction database (e.g., Reaxys via API) for C-N cross-coupling reactions. Use filters: yield reported, defined catalyst.

- Feature Engineering:

- Substrate Representation: For each reactant and product, compute 200+ molecular descriptors (e.g., RDKit: Morgan fingerprints, logP, topological polar surface area).

- Condition Representation: Encode catalyst identity (one-hot), solvent (one-hot), temperature (continuous), and time (continuous).

- Concatenate all features into a single high-dimensional vector per reaction.

- Sparsity Induction: Randomly sample 1% of the total retrieved reactions to simulate a sparse research scenario. Document the sampling seed.

- Noise Characterization: For a subset (e.g., 50 reactions) with multiple yield entries, calculate the mean and standard deviation. Report the average standard deviation as the empirical noise level.

- Data Splitting: Partition data into training (70%), validation (15%), and test (15%) sets using scaffold splitting to assess generalization.
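The noise characterization step can be sketched as a grouped standard deviation over replicate entries. The reaction identifiers and yields below are invented, and using the sample standard deviation (`ddof=1`) is an assumption of this example.

```python
from collections import defaultdict

import numpy as np

# Hypothetical (reaction id, reported yield in %) records; some reactions have replicates.
records = [
    ("rxn_A", 78.0), ("rxn_A", 82.0),
    ("rxn_B", 40.0), ("rxn_B", 46.0), ("rxn_B", 43.0),
    ("rxn_C", 91.0),                      # single entry, carries no noise information
]

grouped = defaultdict(list)
for reaction_id, yield_value in records:
    grouped[reaction_id].append(yield_value)

# Sample standard deviation per reaction, using only reactions with replicates.
replicate_stds = [np.std(values, ddof=1) for values in grouped.values() if len(values) >= 2]
noise_std = float(np.mean(replicate_stds))
print(f"empirical noise level: {noise_std:.2f} % yield")
```

The squared noise level is a natural starting value for the GP noise variance. If the yields are rescaled before modeling, the noise estimate must be rescaled by the same factor.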

Protocol for Training a Gaussian Process Yield Prediction Model

Aim: To train a GP model that predicts reaction yield and associated uncertainty.

Procedure:

- Preprocessing: Standardize all continuous features (mean=0, std=1) using statistics from the training set only.

- Kernel Selection: Construct a composite kernel. Example: a Matérn kernel (for continuous features) combined with a white noise kernel (to model experimental noise).

- Model Initialization: Initialize the GP model (e.g., using GPyTorch) with the composite kernel.

- Training: Maximize the marginal log-likelihood using an Adam optimizer. Train for 1000 epochs, monitoring loss on the validation set.

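- Hyperparameter Tuning: The training step above is type-II maximum likelihood for the kernel length scales and noise variance. Remaining choices, such as kernel family or learning rate, can be tuned on the validation set (e.g., via Bayesian optimization).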

- Prediction & Evaluation: On the held-out test set, predict yields and uncertainties. Evaluate using Mean Absolute Error (MAE) and assess calibration of uncertainty (e.g., via calibration plots).

Visualizations

Title: GP Model Development Workflow for Sparse Yield Data

Title: How GP Models Navigate Sparse, High-Dimensional Data

The Scientist's Toolkit

Table 2: Essential Research Reagents & Resources

| Item / Resource | Function / Application |

|---|---|

| Reaction Databases (Reaxys, USPTO) | Source of published reaction yield data for model training and benchmarking. |

| RDKit or Mordred | Open-source cheminformatics libraries for generating high-dimensional molecular descriptors. |

| GPyTorch or GPflow | Specialized libraries for flexible and scalable Gaussian Process model implementation. |

| Bayesian Optimization (BoTorch) | Framework for efficient hyperparameter tuning and sequential experimental design. |

| Scaffold Split (e.g., via RDKit) | Method for splitting chemical data to test model generalization to new core structures. |

| Standardized Catalysts (e.g., Pd precatalysts) | Physically available, well-defined catalysts to reduce noise in experimental validation studies. |

Contrasting GPs with Traditional Linear Models and Deterministic ML Approaches

Within the thesis "Gaussian Process Models for Reaction Yield Prediction in High-Throughput Experimentation," this document provides application notes and protocols for contrasting Gaussian Processes (GPs) against other modeling paradigms. Accurate yield prediction is critical for accelerating drug discovery, where efficient navigation of chemical reaction space is paramount.

Quantitative Model Comparison

The following table summarizes the core characteristics, performance, and applicability of different modeling approaches for chemical yield prediction, based on a synthesis of current literature and benchmark studies.

Table 1: Comparative Analysis of Modeling Approaches for Reaction Yield Prediction

| Aspect | Traditional Linear Models (e.g., MLR, Ridge/Lasso) | Deterministic ML (e.g., Random Forest, Gradient Boosting, Neural Networks) | Gaussian Process (GP) Regression |

|---|---|---|---|

| Core Principle | Assumes a linear (or penalized linear) relationship between descriptor inputs and yield. | Learns complex, non-linear input-output mappings via deterministic algorithms and architectures. | Infers a distribution over possible non-linear functions, assuming outputs are jointly Gaussian. |

| Handling of Non-linearity | Poor. Requires explicit feature engineering (e.g., polynomial terms). | Excellent. Inherently models complex, high-order interactions. | Excellent. Governed by kernel choice (e.g., RBF, Matérn). |

| Uncertainty Quantification | Provides confidence intervals based on linear assumptions, often unreliable. | Native point estimates. Uncertainty requires ensembles/bootstrapping (computationally costly). | Native probabilistic output. Provides predictive variance (uncertainty) directly. |

| Data Efficiency | Low to moderate. Few parameters, but coefficient estimates need many samples to stabilize. | Low. Typically requires hundreds of samples or more to avoid overfitting. | High. Particularly effective in data-scarce regimes (<100 samples), common in early-stage experimentation. |

| Interpretability | High. Coefficients directly indicate feature importance/direction. | Low to Moderate. "Black-box" nature; requires post-hoc analysis (e.g., SHAP, feature permutation). | Moderate. Kernel and hyperparameters inform smoothness/length scales; sensitivity analysis possible. |

| Extrapolation Risk | High. Linear trends fail quickly outside training domain. | Very High. Unpredictable and often overconfident outside training domain. | Cautious. Predictive variance inflates in regions far from training data, signaling low confidence. |

| Benchmark RMSE (Typical Range) * | 8.5 - 15.0% (Yield) | 5.0 - 9.0% (Yield) | 4.5 - 8.0% (Yield) |

| Benchmark Time (for ~1000 samples) | <1 second (training); <1 ms (prediction) | Seconds to minutes (training); <1 ms (prediction) | Minutes to hours (training); ~10-100 ms (prediction) |

| Optimal Application Context | Preliminary screening with clearly linear trends or for baseline comparison. | Large, high-dimensional datasets (e.g., from extensive HTE campaigns) where uncertainty is secondary. | Data-scarce exploration, Bayesian optimization, and when reliable uncertainty estimates are critical for decision-making. |

Note: RMSE ranges are illustrative, derived from published benchmarks on datasets like Buchwald-Hartwig C-N cross-coupling reactions. Actual values depend on dataset size, descriptor quality, and hyperparameter tuning.

Experimental Protocols for Model Benchmarking

Protocol 3.1: Dataset Curation and Preprocessing for Yield Prediction

Objective: To prepare a standardized, high-quality dataset for fair model comparison. Materials: Reaction data (CSV format), chemical informatics software (e.g., RDKit). Procedure:

- Data Source: Compile reaction data from high-throughput experimentation (HTE) campaigns or public sources (e.g., literature-curated C-N coupling datasets).

- Descriptor Generation:

- Use RDKit to compute molecular descriptors (e.g., Morgan fingerprints, radius=2, nBits=2048) for all reactants, ligands, and additives.

- Calculate physicochemical properties (MW, logP, etc.) for relevant components.

- Include experimental condition variables (temperature, concentration, time) as continuous features.

- Feature Representation: Concatenate all descriptors into a unified feature vector for each reaction entry.

- Data Splitting: Perform a scaffold split based on the core reactant to assess model generalizability to new chemotypes. Partition data into Training (70%), Validation (15%), and Test (15%) sets.

- Feature Scaling: Standardize all continuous features to zero mean and unit variance based on the training set statistics. Apply the same transformation to validation and test sets.
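The data splitting step above can be sketched in a few lines. This is a minimal illustration, assuming each reaction already carries a scaffold label; the `scaffold_…` strings and the helper name `group_split` are hypothetical and not part of any library. Because whole scaffolds are assigned to one partition, the 70/15/15 proportions are only met approximately.

```python
import random
from collections import defaultdict

def group_split(groups, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign whole groups (e.g., scaffolds) to train/val/test.

    Returns three lists of row indices. No group is shared between
    partitions, so the test set contains only unseen scaffolds.
    """
    by_group = defaultdict(list)
    for idx, g in enumerate(groups):
        by_group[g].append(idx)

    keys = sorted(by_group)            # deterministic order before shuffling
    random.Random(seed).shuffle(keys)

    targets = [f * len(groups) for f in fractions]
    parts = ([], [], [])
    part = 0
    for key in keys:
        # Move on once the current partition has reached its target size.
        while part < 2 and len(parts[part]) >= targets[part]:
            part += 1
        parts[part].extend(by_group[key])
    return parts

# Hypothetical scaffold labels: 10 scaffolds with 2 reactions each.
scaffolds = [f"scaffold_{i // 2}" for i in range(20)]
train_idx, val_idx, test_idx = group_split(scaffolds)
```

Scaling statistics (mean and standard deviation) would then be computed from the rows in `train_idx` only and reused for the other two partitions.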

Protocol 3.2: Model Training, Validation, and Evaluation Workflow

Objective: To train, tune, and evaluate competing models on an identical benchmark dataset. Materials: Preprocessed dataset (from Protocol 3.1), Python environment with scikit-learn, GPyTorch/GPflow, and XGBoost libraries. Procedure:

- Model Implementation:

- Linear Baseline: Train a Ridge Regression model with L2 regularization.

- Deterministic ML: Train a Gradient Boosting Machine (e.g., XGBoost) and a Feed-Forward Neural Network.

- Gaussian Process: Implement a GP with a Matérn 5/2 kernel using GPyTorch.

- Hyperparameter Optimization:

- Use the Validation set and Bayesian Optimization (or Grid Search for linear models) to tune key parameters.

- Key Tuning Targets: Regularization strength (Linear), learning rate & tree depth (GBM), layers & dropout (NN), kernel length scale & noise (GP).

- Evaluation on Test Set:

- Generate predictions for the held-out Test set.

- Calculate primary metrics: Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), and Coefficient of Determination (R²).

- For GP, record the average predictive standard deviation (uncertainty) for test points.

- Uncertainty Calibration Assessment:

- For GP and any ensemble methods, assess uncertainty quality by computing calibration curves: plot the observed coverage (the fraction of test yields that fall inside each predictive interval) against the nominal confidence level.
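A calibration check of this kind can be sketched with the standard library alone. The example below assumes Gaussian predictive distributions, and all yields, means, and standard deviations are made-up illustrative values; `empirical_coverage` is a hypothetical helper name.

```python
from statistics import NormalDist

def empirical_coverage(y_true, mean, std, levels=(0.5, 0.8, 0.95)):
    """Fraction of observations inside each central Gaussian predictive interval."""
    coverage = {}
    for level in levels:
        z = NormalDist().inv_cdf(0.5 + level / 2.0)   # about 1.96 for the 95% level
        inside = sum(abs(y - m) <= z * s for y, m, s in zip(y_true, mean, std))
        coverage[level] = inside / len(y_true)
    return coverage

# Hypothetical test-set yields (%) with predictive means and standard deviations.
y_true = [72.0, 55.0, 90.0, 31.0, 64.0]
mean   = [70.0, 60.0, 85.0, 45.0, 63.0]
std    = [ 5.0,  4.0,  6.0,  5.0,  3.0]
cov = empirical_coverage(y_true, mean, std)
```

For a well-calibrated model, each observed coverage value lies close to its nominal level; coverage well below nominal indicates overconfident predictions.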

Protocol 3.3: Active Learning Loop Using GP Uncertainty

Objective: To demonstrate the utility of GP uncertainty for guiding successive experimental rounds. Materials: Initial small training set (~50 reactions), large unlabeled candidate pool, GP model. Procedure:

- Initial Model: Train a GP model on the initial small training set.

- Candidate Scoring: Use the trained GP to predict the mean and variance for all reactions in the candidate pool.

- Acquisition Function: Calculate an acquisition score for each candidate (e.g., Expected Improvement or Upper Confidence Bound), which balances predicted high yield (exploitation) and high uncertainty (exploration).

- Selection: Select the top N (e.g., 5-10) candidates with the highest acquisition score for "experimental" validation (simulated or real).

- Iteration: Add the new yield data for the selected candidates to the training set. Retrain the GP model and repeat steps 2-5 for a set number of cycles.

- Analysis: Compare the cumulative yield improvement and model accuracy against a random selection baseline.
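The candidate scoring and selection steps can be illustrated with an Upper Confidence Bound score. This is a sketch under stated assumptions: the predictive means and standard deviations are invented numbers standing in for GP output, and `select_batch_ucb` and its `kappa` weight are illustrative names.

```python
import numpy as np

def select_batch_ucb(mean, std, n_select=5, kappa=2.0):
    """Return indices of the n_select candidates with the highest UCB score."""
    mean = np.asarray(mean, dtype=float)
    std = np.asarray(std, dtype=float)
    ucb = mean + kappa * std           # exploitation term + exploration term
    order = np.argsort(-ucb)           # sort descending by score
    return order[:n_select], ucb

# Hypothetical GP predictions (% yield) for six candidate reactions.
mean = [60.0, 75.0, 40.0, 70.0, 55.0, 20.0]
std  = [ 2.0,  1.0, 20.0,  8.0,  3.0,  5.0]
chosen, scores = select_batch_ucb(mean, std, n_select=2)
```

Here candidate 2 is selected despite its low predicted mean because its large uncertainty raises its score, which is the exploration behavior the acquisition function is meant to provide.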

Visualizations

Title: Model Benchmarking and Evaluation Workflow

Title: GP-Driven Active Learning Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Reaction Yield Modeling

| Item / Reagent / Tool | Function / Purpose in Research |

|---|---|

| High-Throughput Experimentation (HTE) Kit (e.g., commercial vial racks, liquid handling robots) | Enables rapid, parallel synthesis of hundreds to thousands of unique reaction conditions to generate the essential training and validation data. |

| Chemical Descriptor Software (e.g., RDKit, Dragon) | Computes numerical representations (fingerprints, physicochemical properties) of molecules, transforming chemical structures into model-readable features. |

| Linear Modeling Suite (e.g., scikit-learn, statsmodels) | Provides efficient, interpretable baselines (Ridge, Lasso) for initial data trend analysis and benchmarking. |

| Deterministic ML Libraries (e.g., XGBoost, PyTorch, TensorFlow) | Offers state-of-the-art algorithms for capturing complex, non-linear relationships in large, high-dimensional reaction datasets. |

| Gaussian Process Framework (e.g., GPyTorch, GPflow) | Implements flexible GP models crucial for data-efficient learning and providing reliable uncertainty estimates for decision support. |

| Bayesian Optimization Package (e.g., Ax, BoTorch) | Utilizes GP models as surrogates to automate and accelerate the search for optimal reaction conditions (closing the design-make-test-analyze loop). |

| Benchmark Reaction Datasets (e.g., public Buchwald-Hartwig, Suzuki-Miyaura collections) | Serves as standardized, community-accepted benchmarks for fair comparison and validation of new modeling methodologies. |

Building a GP Yield Predictor: A Step-by-Step Methodology for Chemical Data

Within the broader research on Gaussian Process (GP) models for reaction yield prediction, feature engineering is the critical first step that transforms raw chemical information into a quantifiable, machine-readable format. The predictive performance of a GP model is heavily dependent on the quality and relevance of its input descriptors. This protocol details the systematic conversion of SMILES (Simplified Molecular Input Line Entry System) strings into molecular and reaction descriptors, establishing the foundational dataset for subsequent GP modeling aimed at understanding and predicting reaction outcomes in medicinal and process chemistry.

Application Notes & Protocols

Protocol: SMILES Standardization and Validation

Objective: To generate clean, canonical, and chemically valid SMILES strings for reactants, reagents, and products from raw input. Materials:

- Raw chemical dataset (CSV, JSON, or SDF format).

- Computing environment with RDKit (v2023.09.5 or later) or ChemAxon's JChem Suite.

- Python scripting environment (e.g., Jupyter Notebook).

Methodology:

- Data Loading: Import the raw dataset containing reaction SMILES or component SMILES.

- Parsing: Use rdkit.Chem.rdmolfiles.MolFromSmiles() to parse each SMILES string into a molecule object.

- Sanitization: Apply RDKit's sanitization workflow, flagging molecules that fail for manual review.

- Neutralization: For reactants and products, apply a standardization step to generate predominant tautomers and neutralize charges where appropriate, using MolVS or RDKit's rdMolStandardize module.

- Canonicalization: Generate canonical SMILES using rdkit.Chem.rdmolfiles.MolToSmiles() with isomericSmiles=True.

- Validation: Flag or remove entries that fail parsing or represent impossible chemistry (e.g., valence errors). Output a standardized DataFrame.

Protocol: Calculation of Molecular Descriptors

Objective: To compute a comprehensive set of numerical descriptors for each unique chemical species involved in a reaction. Materials:

- Standardized molecule list from the SMILES Standardization and Validation protocol.

- RDKit or Mordred (v2.0.0.0) descriptor calculator.

- Python environment with pandas, numpy.

Methodology:

- Descriptor Selection: Choose descriptors relevant to reactivity and yield. Common categories include:

- Constitutional: Molecular weight, atom count, bond count.

- Topological: Connectivity indices (e.g., Balaban J, Zagreb index).

- Electronic: Partial charge descriptors, dipole moment, HOMO/LUMO energies (requires pre-optimization).

- Geometric: Principal moments of inertia, radius of gyration (requires 3D conformation).

- Physicochemical: LogP (octanol-water partition coefficient), molar refractivity, TPSA (Topological Polar Surface Area).

- Calculation: Use the rdkit.Chem.Descriptors module or mordred.Calculator to batch-compute descriptors.

- Handling 3D Descriptors:

- Generate a 3D conformation using RDKit's EmbedMolecule().

- Apply a basic MMFF94 force field optimization using rdkit.Chem.rdForceFieldHelpers.MMFFOptimizeMolecule().

- Compute 3D descriptors (e.g., inertial shape factor).

- Data Assembly: Compile all descriptors into a pandas DataFrame indexed by molecule ID.

Table 1: Key Molecular Descriptor Categories and Examples

| Category | Example Descriptors | Relevance to Reactivity/Yield | Calculation Source |

|---|---|---|---|

| Constitutional | HeavyAtomCount, MolWt | Size & complexity | RDKit |

| Topological | BalabanJ, BertzCT | Molecular branching & complexity | RDKit/Mordred |

| Electronic | MaxAbsPartialCharge, MinPartialCharge | Charge distribution, polarity | RDKit |

| Physicochemical | TPSA, MolLogP | Solubility, permeability | RDKit |

| Quantum Chemical* | HOMO, LUMO, Dipole Moment | Electronic states, reactivity | External (ORCA, Gaussian) |

Note: Quantum chemical descriptors require external quantum mechanics (QM) software and are computationally intensive.

Protocol: Generation of Reaction Descriptors

Objective: To create features that encode the transformation from reactants to products, capturing the reaction's essence. Materials:

- DataFrames of molecular descriptors for reactants, reagents, catalysts, and products.

- Reaction mapping tool (e.g., RDKit's reaction fingerprinting).

Methodology:

- Difference Descriptors: For each numerical molecular descriptor, calculate the difference (Δ) between the product and the sum of reactants (e.g., ΔMolLogP, ΔTPSA).

- Reaction Fingerprints: Use rdkit.Chem.rdChemReactions.CreateDifferenceFingerprintForReaction() to generate a difference fingerprint indicating which structural patterns changed between reactants and products.

- Condition Descriptors: Encode non-molecular parameters:

- Categorical: Catalyst type, solvent class (protic/aprotic, polar/non-polar) – use one-hot encoding.

- Numerical: Temperature (°C), concentration (M), time (h) – standardize (z-score).

- Aggregation: For reactions with multiple reactants or products, apply aggregation functions (sum, mean, max) across components, or use only the descriptors of the main reactant and product pair.
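The difference, one-hot, and z-score steps above can be sketched in plain Python. All descriptor values here are invented for illustration, and the helper names (`delta_descriptors`, `one_hot`, `z_score`) are hypothetical.

```python
from statistics import mean, pstdev

def delta_descriptors(product, reactants):
    """Delta X = X_product - sum of X over reactants, for each descriptor."""
    return {f"delta_{k}": product[k] - sum(r[k] for r in reactants) for k in product}

def one_hot(value, categories):
    """Encode a categorical value as a 0/1 list in the order of `categories`."""
    return [1 if value == c else 0 for c in categories]

def z_score(values):
    """Standardize a list of numbers to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical descriptor values for one two-component reaction.
reactants = [{"MolLogP": 2.0, "TPSA": 20.0}, {"MolLogP": 1.0, "TPSA": 40.0}]
product = {"MolLogP": 3.5, "TPSA": 45.0}

deltas = delta_descriptors(product, reactants)
solvent = one_hot("DMSO", ["DMSO", "MeOH", "toluene"])
temps_z = z_score([60.0, 80.0, 100.0])        # temperatures across three reactions
features = list(deltas.values()) + solvent    # partial feature vector for this reaction
```

In a real pipeline the z-score statistics would be taken from the training set only, so that validation and test reactions are scaled with the same mean and standard deviation.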

Table 2: Common Reaction Descriptor Types

| Descriptor Type | Formula/Example | Description |

|---|---|---|

| Difference Descriptor | ΔX = X_product - ΣX_reactants | Captures net change in a property. |

| Reaction Difference FP | RDKit Difference Fingerprint (2048 bits) | Encodes changed substructures. |

| Condition: Temperature | Numerical, e.g., 80 °C | Reaction temperature. |

| Condition: Solvent | One-hot encoded, e.g., [DMSO=1, MeOH=0] | Solvent identity. |

Visualization of Workflows

Title: Workflow from Raw SMILES to GP Model Input

Title: Molecular Descriptor Calculation Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Libraries for Feature Engineering

| Item | Function & Role | Source/Link |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for SMILES parsing, molecule manipulation, and core descriptor calculation. | www.rdkit.org |

| Mordred | Advanced molecular descriptor calculator supporting >1800 2D/3D descriptors. | github.com/mordred-descriptor/mordred |

| MolVS | Molecule validation and standardization library for tautomer normalization and charge correction. | github.com/mcs07/MolVS |

| JChem Suite | Commercial suite for enterprise-level chemical structure handling and descriptor generation. | chemaxon.com |

| ORCA / Gaussian | Quantum chemistry software for calculating high-level electronic descriptors (HOMO, LUMO, etc.). | orcaforum.kofo.mpg.de, gaussian.com |

| Python Data Stack | pandas (dataframes), numpy (numerical arrays), scikit-learn (standardization) for data processing. | python.org |

This Application Note, part of a thesis on Gaussian Process (GP) models for reaction yield prediction, details the critical step of kernel selection and customization. The kernel function defines the covariance structure of the GP, directly determining its prior over functions and its ability to capture complex relationships within high-dimensional chemical reaction data.

Kernel Functions: Mathematical Definitions & Comparative Properties

The choice of kernel imposes assumptions about the smoothness and periodicity of the function mapping molecular descriptors to reaction yield.

Table 1: Core Kernel Functions for Chemical Space Modeling

| Kernel Name | Mathematical Formulation | Key Hyperparameters | Smoothness Assumption | Primary Use Case in Chemical Space |

|---|---|---|---|---|

| Radial Basis Function (RBF) | ( k(r) = \sigma_f^2 \exp\left(-\frac{r^2}{2l^2}\right) ), where ( r = \lVert \mathbf{x}_i - \mathbf{x}_j \rVert ) | Length-scale ((l)), Variance ((\sigma_f^2)) | Infinitely differentiable (very smooth) | Default choice for modeling smooth, continuous trends in yield across structural variations. |

| Matérn (ν=3/2) | ( k(r) = \sigma_f^2 \left(1 + \frac{\sqrt{3}r}{l}\right) \exp\left(-\frac{\sqrt{3}r}{l}\right) ) | Length-scale ((l)), Variance ((\sigma_f^2)) | Once differentiable (less smooth) | Capturing moderately rough, irregular landscapes common in yield data. |

| Matérn (ν=5/2) | ( k(r) = \sigma_f^2 \left(1 + \frac{\sqrt{5}r}{l} + \frac{5r^2}{3l^2}\right) \exp\left(-\frac{\sqrt{5}r}{l}\right) ) | Length-scale ((l)), Variance ((\sigma_f^2)) | Twice differentiable | A compromise between the smoothness of RBF and the roughness of Matérn-3/2. |
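The three formulas in Table 1 translate directly into NumPy. The sketch below is illustrative only: the function names and the random descriptor matrix are assumptions, and the parameters `l` and `sf2` stand for the length-scale and signal variance from the table.

```python
import numpy as np

def pairwise_dist(A, B):
    """Euclidean distances r between rows of A (n, d) and rows of B (m, d)."""
    diff = A[:, None, :] - B[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=-1))

def rbf(r, l=1.0, sf2=1.0):
    return sf2 * np.exp(-r**2 / (2.0 * l**2))

def matern32(r, l=1.0, sf2=1.0):
    a = np.sqrt(3.0) * r / l
    return sf2 * (1.0 + a) * np.exp(-a)

def matern52(r, l=1.0, sf2=1.0):
    a = np.sqrt(5.0) * r / l
    return sf2 * (1.0 + a + a**2 / 3.0) * np.exp(-a)

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 3))                 # hypothetical standardized descriptors
K = matern52(pairwise_dist(X, X), l=2.0)    # 5 x 5 covariance matrix
```

At a distance of one length-scale the RBF kernel retains the most correlation and Matérn-3/2 the least, which reflects the ordering of their smoothness assumptions.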

Composite Kernel Design for Reaction Yield Prediction

Single kernels often fail to capture the multifaceted nature of chemical reactions. Composite kernels combine simpler kernels to model distinct data features.

Protocol 1: Designing an Additive Composite Kernel

- Objective: To model a reaction yield function presumed to be a sum of a global smooth trend and local, shorter-scale variations.

- Procedure:

- Define Base Kernels: Select an RBF kernel for the global trend (kernel_global) and a Matérn-3/2 kernel for local deviations (kernel_local).

- Construct Additive Kernel: Create the composite kernel kernel_additive = kernel_global + kernel_local.

- Hyperparameter Initialization: Set initial length-scales: a longer scale for kernel_global.l (e.g., 5.0) and a shorter scale for kernel_local.l (e.g., 1.0). Set initial variances appropriately.

- Model Training: Fit the GP model using kernel_additive to your training data (reaction descriptors → yield).

- Interpretation: Post-training, analyze the learned length-scales and variances to infer the relative contribution of each component to the final model.

Protocol 2: Designing a Product (Interaction) Kernel

- Objective: To model a reaction yield function where the effect of one molecular descriptor modulates the influence of another.

- Procedure:

- Define Base Kernels: Assign an RBF kernel to descriptor set A (e.g., electronic features) and a separate RBF kernel to descriptor set B (e.g., steric features).

- Construct Product Kernel: Create the composite kernel kernel_product = kernel_A * kernel_B.

- Hyperparameter Initialization: Initialize length-scales for each kernel based on domain knowledge or standardized descriptor ranges.

- Model Training & Validation: Fit the GP and validate its predictive performance against a test set of reactions. Compare against an additive model to assess if feature interactions are significant.
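Both composite designs (Protocols 1 and 2) can be written as element-wise operations on covariance matrices. The following is a minimal NumPy sketch, not a library implementation: the descriptor matrix is random, and the split into three "electronic" and two "steric" columns is an assumption made purely for illustration.

```python
import numpy as np

def sq_dist(A, B):
    return ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)

def rbf(A, B, l, sf2=1.0):
    return sf2 * np.exp(-sq_dist(A, B) / (2.0 * l**2))

def matern32(A, B, l, sf2=1.0):
    a = np.sqrt(3.0 * sq_dist(A, B)) / l
    return sf2 * (1.0 + a) * np.exp(-a)

rng = np.random.default_rng(0)
X = rng.normal(size=(8, 5))        # hypothetical standardized descriptors
elec, ster = X[:, :3], X[:, 3:]    # assumed split: 3 electronic, 2 steric columns

# Protocol 1: global smooth trend plus local, rougher deviations.
K_additive = rbf(X, X, l=5.0) + matern32(X, X, l=1.0)

# Protocol 2: interaction between two descriptor subsets.
K_product = rbf(elec, elec, l=1.0) * rbf(ster, ster, l=1.0)
```

Sums and element-wise products of valid kernels are themselves valid kernels, so both matrices remain positive semi-definite. Note that a product of two RBF kernels on disjoint descriptor subsets is equivalent to a single RBF kernel with one length-scale per subset.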

Table 2: Performance Comparison of Kernels on a Public Reaction Yield Dataset (Buchwald-Hartwig Amination)

| Kernel Type | Test Set RMSE (↓) | Test Set MAE (↓) | Log Marginal Likelihood (↑) | Training Time (s) | Interpretability |

|---|---|---|---|---|---|

| RBF | 8.45 ± 0.41 | 6.12 ± 0.33 | -142.7 | 12.3 | High (single length-scale) |

| Matérn-5/2 | 8.21 ± 0.38 | 5.98 ± 0.31 | -140.2 | 12.5 | Medium |

| Matérn-3/2 | 7.94 ± 0.35 | 5.73 ± 0.28 | -138.5 | 12.4 | Medium |

| RBF + Matérn-3/2 (Additive) | 7.51 ± 0.32 | 5.41 ± 0.26 | -135.1 | 18.7 | Medium-High |

| (RBF_A * RBF_B) + Matérn-3/2 | 7.48 ± 0.34 | 5.39 ± 0.27 | -134.8 | 25.1 | Lower (complex interaction) |

Data simulated based on trends reported in recent literature (2023-2024). RMSE: Root Mean Square Error (%), MAE: Mean Absolute Error (%).

Visualizing Kernel Selection and GP Prediction Workflow

Title: Kernel Selection and Model Training Workflow

Table 3: Research Reagent Solutions for GP Kernel Development

| Item / Resource | Function & Application in Kernel Design |

|---|---|

| GPy (Python Library) | A flexible framework for GP modeling, allowing straightforward implementation and combination of RBF, Matérn, and custom kernels. |

| scikit-learn GaussianProcessRegressor | Provides well-optimized, user-friendly API for basic kernel experiments (RBF, Matérn, ConstantKernel). |

| GPflow / GPyTorch | Advanced libraries built on TensorFlow/PyTorch for scalable GP models, essential for large reaction datasets. |

| RDKit or Mordred Descriptors | Generate numerical molecular descriptors (reactants, catalysts, ligands) to serve as input features (x) for the kernel distance metric. |

| Bayesian Optimization Tools (e.g., scikit-optimize) | For efficient multi-dimensional optimization of kernel hyperparameters (length-scales, variances) by maximizing the log marginal likelihood. |

| Reaction Yield Datasets (e.g., Buchwald-Hartwig, Suzuki-Miyaura from literature) | Benchmark datasets to test and compare the predictive performance of different kernel choices. |

Within the broader thesis on developing robust Gaussian Process (GP) models for chemical reaction yield prediction, Step 3 represents the critical phase of model calibration. This stage moves beyond initial implementation to optimize the model's ability to capture the complex, non-linear relationships between molecular descriptors, reaction conditions, and experimental yield. The core objectives are the maximization of the marginal likelihood function to infer optimal kernel parameters and the systematic tuning of model hyperparameters to prevent overfitting and ensure generalizable predictive performance for drug development applications.

Theoretical Framework & Likelihood Optimization

The GP model is fully defined by its mean function, ( m(\mathbf{x}) ), and covariance kernel function, ( k(\mathbf{x}, \mathbf{x}' ; \boldsymbol{\theta}) ), where ( \boldsymbol{\theta} ) represents the kernel hyperparameters (e.g., length scales, variance). For a dataset ( \mathbf{X} ) with observed yields ( \mathbf{y} ), the log marginal likelihood is given by:

[ \log p(\mathbf{y} | \mathbf{X}, \boldsymbol{\theta}) = -\frac{1}{2} \mathbf{y}^T \mathbf{K}_y^{-1} \mathbf{y} - \frac{1}{2} \log |\mathbf{K}_y| - \frac{n}{2} \log 2\pi ]

where ( \mathbf{K}_y = K(\mathbf{X}, \mathbf{X}) + \sigma_n^2\mathbf{I} ) includes the noise variance ( \sigma_n^2 ). Optimization involves using gradient-based methods (e.g., L-BFGS-B) to find the ( \boldsymbol{\theta} ) that maximizes this log likelihood, balancing data fit (the first term) with model complexity (the second term).
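The three terms of the log marginal likelihood can be evaluated stably through a Cholesky factorization. The sketch below assumes a zero-mean GP with an isotropic RBF kernel and uses synthetic data in place of real yields; the function name and arguments are illustrative.

```python
import numpy as np

def log_marginal_likelihood(X, y, length_scale, signal_var, noise_var):
    """log p(y | X, theta) for a zero-mean GP with an isotropic RBF kernel."""
    n = len(y)
    sq = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    K_y = signal_var * np.exp(-0.5 * sq / length_scale**2) + noise_var * np.eye(n)

    L = np.linalg.cholesky(K_y)                          # K_y = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))  # K_y^{-1} y

    data_fit = -0.5 * y @ alpha
    complexity = -np.sum(np.log(np.diag(L)))             # equals -0.5 * log|K_y|
    constant = -0.5 * n * np.log(2.0 * np.pi)
    return data_fit + complexity + constant

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(20, 1))
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.normal(size=20)    # synthetic stand-in for yields
lml = log_marginal_likelihood(X, y, length_scale=1.0, signal_var=1.0, noise_var=0.01)
```

Using the Cholesky factor avoids forming the explicit inverse of the covariance matrix, and the log-determinant follows from the factor's diagonal.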

Table 1: Common Kernel Functions & Their Hyperparameters

| Kernel Name | Mathematical Form | Hyperparameters (θ) | Role in Yield Prediction |

|---|---|---|---|

| Radial Basis Function (RBF) | ( k(\mathbf{x}_i, \mathbf{x}_j) = \sigma_f^2 \exp\left(-\frac{1}{2} (\mathbf{x}_i - \mathbf{x}_j)^T \mathbf{M} (\mathbf{x}_i - \mathbf{x}_j)\right) ) | ( \sigma_f^2 ) (signal variance), ( \mathbf{M} ) (inverse length-scale matrix) | Captures smooth, non-linear trends. Automatic Relevance Determination (ARD) uses a diagonal ( \mathbf{M} ) to identify relevant molecular descriptors. |

| Matérn 3/2 | ( k(r) = \sigma_f^2 \left(1 + \frac{\sqrt{3}r}{l}\right) \exp\left(-\frac{\sqrt{3}r}{l}\right) ) | ( \sigma_f^2 ), length scale ( l ) | Less smooth than RBF, suitable for modeling more irregular functional relationships often found in chemical data. |

| White Noise | ( k(\mathbf{x}_i, \mathbf{x}_j) = \sigma_n^2 \delta_{ij} ) | ( \sigma_n^2 ) (noise variance) | Represents irreducible experimental error in yield measurements. |

Experimental Protocols for Model Training

Protocol 3.1: Log Marginal Likelihood Maximization

Objective: To determine the optimal kernel hyperparameters for the GP yield prediction model. Materials: Preprocessed training dataset (molecular descriptors & conditions matrix ( \mathbf{X}_{train} ), yield vector ( \mathbf{y}_{train} )), GP software library (e.g., GPyTorch, scikit-learn). Procedure:

- Initialize Hyperparameters: Set initial values for kernel parameters (e.g., length scales = 1.0, signal variance = variance of ( \mathbf{y}_{train} ), noise variance = 0.01).

- Define Likelihood & Model: Select a Gaussian likelihood and construct the GP model with the chosen kernel (e.g., RBF with ARD).

- Compute Negative Log Likelihood (NLL): For current ( \boldsymbol{\theta} ), compute the Cholesky decomposition of ( \mathbf{K}_y ) and use it to calculate the NLL and its analytical gradients with respect to ( \boldsymbol{\theta} ).

- Iterative Optimization: Use a conjugate gradient or quasi-Newton optimizer (L-BFGS-B) to update ( \boldsymbol{\theta} ) by minimizing the NLL. Monitor convergence (tolerance ΔNLL < 1e-6).

- Validation: Store the optimized ( \boldsymbol{\theta} ). Verify stability by restarting optimization from different initial points.
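The objective minimized in this protocol can be illustrated without an external optimizer. The sketch below swaps the L-BFGS-B step for a coarse, derivative-free grid search over length scale and noise variance, which is an assumption made to keep the example dependency-light; the data are synthetic and the signal variance is held at its suggested initial value.

```python
import numpy as np
from itertools import product

def nll(X, y, length_scale, signal_var, noise_var):
    """Negative log marginal likelihood of a zero-mean GP with an RBF kernel."""
    n = len(y)
    sq = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    K_y = signal_var * np.exp(-0.5 * sq / length_scale**2) + noise_var * np.eye(n)
    L = np.linalg.cholesky(K_y)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return 0.5 * y @ alpha + np.sum(np.log(np.diag(L))) + 0.5 * n * np.log(2 * np.pi)

rng = np.random.default_rng(1)
X = rng.uniform(-2, 2, size=(25, 1))
y = np.sin(2.0 * X[:, 0]) + 0.1 * rng.normal(size=25)   # synthetic data

# Initial values suggested in the protocol: (length scale, signal variance, noise variance).
theta_init = (1.0, float(np.var(y)), 0.01)

# Coarse log-spaced grid: a derivative-free stand-in for L-BFGS-B.
length_scales = np.logspace(-1, 1, 9)
noise_vars = np.logspace(-4, 0, 9)
grid = [(l, theta_init[1], s) for l, s in product(length_scales, noise_vars)]
grid.append(theta_init)
theta_best = min(grid, key=lambda t: nll(X, y, *t))
```

A gradient-based optimizer started from several initial points serves the same purpose more efficiently; agreement between restarts is the stability check described in the final step.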

Protocol 3.2: k-Fold Cross-Validation for Hyperparameter Tuning

Objective: To assess model generalization and select high-level hyperparameters (e.g., kernel choice, noise constraints). Materials: Full training dataset, GP training pipeline from Protocol 3.1. Procedure:

- Data Partitioning: Randomly split the training data into k (e.g., 5 or 10) stratified folds, preserving the distribution of yield ranges.

- Cross-Validation Loop: For each fold i: a. Designate fold i as the validation set, and the remaining k-1 folds as the temporary training set. b. Train the GP model on the temporary training set using Protocol 3.1. c. Predict yields for the validation set, calculating the Root Mean Square Error (RMSE) and Mean Absolute Error (MAE).

- Performance Aggregation: Compute the mean ± standard deviation of RMSE and MAE across all k folds.

- Hyperparameter Selection: Repeat the k-fold process for different kernel architectures (e.g., RBF vs. Matérn) or prior constraints on noise. Select the configuration yielding the lowest mean RMSE.
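The cross-validation loop can be sketched as follows. Two simplifications are assumed and should be read as such: folds are random rather than stratified by yield range, and the GP hyperparameters are fixed per run instead of being re-optimized inside each fold. The data are synthetic and the helper names are illustrative.

```python
import numpy as np

def gp_predict_mean(X_tr, y_tr, X_te, length_scale=1.0, noise_var=0.05):
    """Posterior mean of a zero-mean RBF GP with fixed hyperparameters."""
    def k(A, B):
        sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
        return np.exp(-0.5 * sq / length_scale**2)
    K_y = k(X_tr, X_tr) + noise_var * np.eye(len(y_tr))
    return k(X_te, X_tr) @ np.linalg.solve(K_y, y_tr)

def kfold_rmse(X, y, k=5, seed=0, **gp_kwargs):
    """Mean and standard deviation of validation RMSE across k random folds."""
    idx = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(idx, k)
    rmses = []
    for i in range(k):
        val = folds[i]
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        mu = y[train].mean()                      # center using the training folds only
        pred = gp_predict_mean(X[train], y[train] - mu, X[val], **gp_kwargs) + mu
        rmses.append(np.sqrt(np.mean((pred - y[val]) ** 2)))
    return float(np.mean(rmses)), float(np.std(rmses))

rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(40, 2))
y = 50 + 20 * np.sin(X[:, 0]) + 5 * X[:, 1] + rng.normal(scale=2.0, size=40)  # synthetic yields

for ls in (0.3, 1.0, 3.0):
    mean_rmse, std_rmse = kfold_rmse(X, y, k=5, length_scale=ls)
    print(f"length_scale={ls}: RMSE = {mean_rmse:.2f} ± {std_rmse:.2f}")
```

The same loop can compare kernel families by replacing the kernel inside `gp_predict_mean`, with the configuration of lowest mean RMSE carried forward.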

Table 2: Example k-Fold CV Results for Kernel Selection

| Kernel Type | Avg. RMSE (% yield) | Std. RMSE (% yield) | Avg. MAE (% yield) | Avg. NLPD |

|---|---|---|---|---|

| RBF (with ARD) | 8.4 | 1.2 | 6.1 | 1.15 |

| Matérn 3/2 | 9.1 | 1.5 | 6.8 | 1.24 |

| RBF (Isotropic) | 10.3 | 1.8 | 7.9 | 1.42 |

Visualizing the Training Workflow

Diagram Title: GP Model Training & Validation Workflow

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Computational Tools for GP Training

| Item/Category | Specific Examples (Library/Tool) | Function in Training & Tuning |

|---|---|---|

| GP Frameworks | GPyTorch, GPflow (TensorFlow), scikit-learn GaussianProcessRegressor | Provide core functionality for model construction, likelihood definition, and automatic differentiation for gradient-based optimization. |

| Optimization Libraries | SciPy (L-BFGS-B, minimize), PyTorch Optimizers (Adam, LBFGS) | Perform the numerical optimization of the log marginal likelihood. |

| Numerical Backbone | NumPy, SciPy, PyTorch, TensorFlow | Handle linear algebra (Cholesky decomposition, matrix inverses) essential for stable likelihood computation. |

| Hyperparameter Tuning | scikit-learn GridSearchCV, RandomizedSearchCV, Optuna, BayesianOptimization | Automate the cross-validation and search for optimal kernel choices and hyperparameter priors. |

| Visualization | Matplotlib, Seaborn, Plotly | Create diagnostic plots (e.g., convergence of loss, predicted vs. actual yields) to monitor training. |

This protocol details the application of Gaussian Process (GP) models, framed within a broader thesis on machine learning for reaction yield prediction, to guide iterative experimental campaigns in chemical synthesis. The core thesis posits that GP models, due to their inherent quantification of uncertainty and ability to model complex, non-linear relationships with limited data, are uniquely suited as surrogate models for directing Bayesian optimization (BO) loops. This active learning paradigm efficiently navigates high-dimensional chemical reaction spaces to identify optimal conditions with minimal experimental expenditure, a critical capability in pharmaceutical development.

Core Principles and Data Presentation

The workflow iterates between model prediction and physical experimentation. Quantitative results from a representative study optimizing a Pd-catalyzed cross-coupling reaction are summarized below.

Table 1: Performance Comparison of Screening Strategies

| Screening Strategy | Experiments Required to Reach >90% Yield | Final Yield (%) | Model Type Used |

|---|---|---|---|

| One-Variable-at-a-Time (OVAT) | 42 | 92 | N/A |

| Full Factorial Design | 81 (full set) | 95 | N/A |

| Active Learning with BO (GP) | 19 | 96 | Gaussian Process |

| Random Search | 35 | 91 | N/A |

Table 2: Key Hyperparameters for the Gaussian Process Surrogate Model

| Hyperparameter | Symbol | Value/Range Used | Function |

|---|---|---|---|

| Kernel Function | k(x,x') | Matérn 5/2 | Controls function smoothness and covariance |

| Acquisition Function | a(x) | Expected Improvement (EI) | Balances exploration vs. exploitation |

| Learning Rate (for optimizer) | α | 0.01 | Step size for hyperparameter tuning |

| Noise Level | σₙ² | 0.01 | Accounts for experimental uncertainty |

Experimental Protocol: Iterative Screening Cycle

Protocol 3.1: Initial Dataset Generation (Design of Experiments)

- Define Search Space: Identify n critical reaction variables (e.g., catalyst loading (mol%), ligand equivalence, temperature (°C), concentration (M), solvent identity (categorical)).

- Perform Space-Filling Design: Generate an initial training set of 8-12 experiments using a Latin Hypercube Sampling (LHS) design to ensure broad coverage of the parameter space.

- Execute Initial Experiments: Carry out reactions in parallel under the prescribed conditions. Quench reactions after a set time.

- Analyze and Quantify: Use HPLC or UPLC with internal standard calibration to determine reaction yield for each condition. This set {X_initial, y_initial} forms the first training data.
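A Latin Hypercube design for the continuous variables can be generated in a few lines of NumPy. This is a sketch under assumptions: the variable ranges below are hypothetical, `latin_hypercube` is an illustrative helper, and categorical variables such as solvent identity would need to be assigned separately.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, seed=0):
    """Latin Hypercube Sample: one point per equal-width stratum in each dimension."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)          # shape (n_dims, 2)
    n_dims = len(bounds)
    unit = np.empty((n_samples, n_dims))
    for d in range(n_dims):
        strata = rng.permutation(n_samples)           # shuffle strata independently per dimension
        unit[:, d] = (strata + rng.uniform(size=n_samples)) / n_samples
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])

# Hypothetical ranges: catalyst loading (mol%), temperature (°C), concentration (M).
bounds = [(0.5, 5.0), (40.0, 120.0), (0.05, 0.5)]
X_initial = latin_hypercube(10, bounds)
```

Each of the 10 rows is one proposed experiment, and every variable has exactly one value in each tenth of its range, which gives the broad coverage the protocol asks for.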

Protocol 3.2: Gaussian Process Model Training & Update

- Encode Variables: Numerically encode all reaction parameters (e.g., one-hot encoding for solvents) to create feature vector X.

- Normalize Data: Scale all continuous features and the yield values (y) to zero mean and unit variance.

- Define Kernel and Model: Instantiate a GP model with a Matérn 5/2 kernel. The prior is defined as f(x) ~ GP(m(x), k(x,x')), where m(x) is the mean function (often zero).

- Optimize Hyperparameters: Maximize the log marginal likelihood of the data given the hyperparameters (length scales, noise variance) using the L-BFGS-B optimizer (1000 iterations max).
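Once hyperparameters are fixed, the GP prediction itself reduces to a few linear-algebra steps. The sketch below assumes a zero-mean prior, a Matérn 5/2 kernel with hand-set hyperparameters, and toy standardized data; in practice a library such as GPyTorch would handle both fitting and prediction.

```python
import numpy as np

def matern52(A, B, length_scale=1.0, signal_var=1.0):
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    a = np.sqrt(5.0) * r / length_scale
    return signal_var * (1.0 + a + a**2 / 3.0) * np.exp(-a)

def gp_posterior(X_train, y_train, X_new, noise_var=0.01, **kern):
    """Predictive mean and variance of the latent function f at X_new (zero-mean prior)."""
    K_y = matern52(X_train, X_train, **kern) + noise_var * np.eye(len(y_train))
    K_s = matern52(X_new, X_train, **kern)
    L = np.linalg.cholesky(K_y)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    v = np.linalg.solve(L, K_s.T)
    mean = K_s @ alpha
    var = kern.get("signal_var", 1.0) - np.sum(v**2, axis=0)
    return mean, np.maximum(var, 0.0)   # clip tiny negative values from round-off

# Toy standardized inputs and yields, as produced by the normalization step.
X_train = np.array([[-1.0], [0.0], [1.0]])
y_train = np.array([-0.5, 1.0, -0.5])
X_new = np.array([[0.0], [4.0]])
mean, var = gp_posterior(X_train, y_train, X_new)
```

The first query point coincides with a training point and has a small variance, while the second lies far from all data and its variance returns almost to the prior value.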

Protocol 3.3: Bayesian Optimization to Propose Next Experiment

- Construct Acquisition Function: Compute the Expected Improvement (EI) across a dense, random subset of the unexplored search space. EI is defined as EI(x) = E[max(f(x) - f(x*), 0)], where f(x*) is the current best observed yield.

- Select Next Condition: Identify the reaction condition x_next that maximizes the acquisition function: x_next = argmax(EI(x)).

- Parallel Proposals (Optional): For batch optimization, use a batch-selection strategy (e.g., local penalization or K-means batch sampling) to select 4-8 diverse points with high EI.
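For a Gaussian predictive distribution, Expected Improvement has a closed form in terms of the normal density and cumulative distribution. The sketch below uses only the standard library; the candidate names and predictions are invented, and the optional `xi` margin is an illustrative extra parameter set to zero.

```python
import math

def expected_improvement(mean, std, best_yield, xi=0.0):
    """Closed-form EI for a Gaussian predictive distribution (maximization)."""
    if std <= 0.0:
        return max(mean - best_yield - xi, 0.0)
    z = (mean - best_yield - xi) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best_yield - xi) * cdf + std * pdf

# Hypothetical candidates: (predicted mean yield %, predictive standard deviation %).
candidates = {"A": (85.0, 1.0), "B": (80.0, 12.0), "C": (60.0, 2.0)}
best_so_far = 82.0
ei = {name: expected_improvement(m, s, best_so_far) for name, (m, s) in candidates.items()}
x_next = max(ei, key=ei.get)
```

Candidate B is selected even though its predicted mean is below the current best, because its large uncertainty leaves substantial probability of improvement; candidate C has essentially zero EI.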

Protocol 3.4: Iterative Experimental Loop

- Execute the proposed reaction(s) x_next in the laboratory.

- Quantify the yield(s) y_next.

- Augment the training dataset: X = X ∪ x_next, y = y ∪ y_next.

- Retrain the GP model on the updated dataset.

- Repeat from Protocol 3.3 until a yield threshold is met or the experimental budget is exhausted (typically 20-50 total iterations).
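The full loop of Protocols 3.2 to 3.4 can be sketched end to end on a toy problem. Everything here is an assumption for illustration: `run_experiment` is a synthetic one-dimensional function standing in for a laboratory measurement, the kernel hyperparameters are fixed rather than re-optimized each round, and the candidate pool is a simple grid.

```python
import math
import numpy as np

def matern52(A, B, l=0.3):
    r = np.abs(A[:, None] - B[None, :])
    a = math.sqrt(5.0) * r / l
    return (1.0 + a + a**2 / 3.0) * np.exp(-a)

def posterior(x_train, y_train, x_pool, noise_var=1e-4):
    K_y = matern52(x_train, x_train) + noise_var * np.eye(len(x_train))
    K_s = matern52(x_pool, x_train)
    mean = K_s @ np.linalg.solve(K_y, y_train)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K_y, K_s.T).T, axis=1)
    return mean, np.sqrt(np.maximum(var, 1e-12))

def expected_improvement(mean, std, best):
    z = (mean - best) / std
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mean - best) * cdf + std * pdf

def run_experiment(x):
    """Synthetic stand-in for a laboratory yield measurement (%), peak near x = 0.7."""
    return 95.0 * np.exp(-((x - 0.7) ** 2) / 0.02) + 5.0

pool = np.linspace(0.0, 1.0, 101)            # candidate conditions scaled to [0, 1]
x_obs = np.array([0.1, 0.5, 0.9])            # initial design
y_obs = run_experiment(x_obs)
initial_best = y_obs.max()

for _ in range(10):                          # experimental budget
    mu, sd = y_obs.mean(), y_obs.std()       # standardize yields before fitting
    mean, std = posterior(x_obs, (y_obs - mu) / sd, pool)
    ei = expected_improvement(mean, std, (y_obs.max() - mu) / sd)
    x_next = pool[np.argmax(ei)]             # propose the next condition
    x_obs = np.append(x_obs, x_next)         # augment the dataset
    y_obs = np.append(y_obs, run_experiment(x_next))

best_x, best_y = x_obs[np.argmax(y_obs)], y_obs.max()
```

In a real campaign the call to `run_experiment` is replaced by the laboratory execution and quantification steps, and the loop stops early once the yield threshold is met.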

Visualization of Workflow

Active Learning Cycle for Reaction Optimization

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Automated Reaction Screening

| Item | Function & Rationale |

|---|---|

| Automated Liquid Handling Platform (e.g., Hamilton STAR, Chemspeed) | Enables precise, reproducible dispensing of catalysts, ligands, and substrates in microtiter plates, critical for generating high-fidelity initial datasets. |

| High-Throughput Reaction Blocks (e.g., 96-well glass vials, 0.2-2 mL volume) | Provides a standardized, parallel format for conducting reactions under varied conditions with controlled heating/stirring. |

| Cryogenic Reaction Block (e.g., Huber Minichiller) | Allows screening of low-temperature reactions, essential for organometallic steps or air-sensitive chemistries. |

| Automated UPLC/HPLC System with Sample Manager | Facilitates rapid, quantitative analysis of hundreds of reaction outcomes without manual injection, providing the yield data (y) for the model. |

| Chemical Variable Library (e.g., Solvent kits, Ligand kits, Base stocks) | Pre-made, standardized stock solutions of common reaction components to ensure consistency and speed in setting up DoE arrays. |

| Bayesian Optimization Software (e.g., custom Python with BoTorch/GPyTorch, or commercial SaaS like Synthia) | Provides the computational engine to implement the GP model and acquisition function optimization described in Protocols 3.2 & 3.3. |

Application Notes

This case study details the implementation and validation of a Gaussian Process (GP) regression model to predict the yield of a Suzuki-Miyaura cross-coupling reaction series, a critical transformation in pharmaceutical synthesis. The work is presented within a broader thesis investigating probabilistic machine learning models for reaction yield prediction, with a focus on uncertainty quantification and efficient experimental design.

- Objective: To develop a predictive model that captures the complex, non-linear relationships between molecular descriptors of aryl halides and boronic acids and the resulting reaction yield, enabling the prioritization of high-performing substrates with quantified confidence intervals.

- Data Curation: A dataset of 350 previously reported Suzuki-Miyaura reactions was compiled from literature. Yields were normalized to a consistent scale (0-100%). For each reactant, a set of 15 molecular descriptors was calculated using RDKit, including electronic (e.g., Hammett sigma parameters, computed via DFT), steric (e.g., Sterimol B5 parameters), polarity (e.g., TPSA), and topological features.

- Model Architecture: A GP model with a Matérn 5/2 kernel was implemented using GPyTorch. The kernel used a separate length scale for each input descriptor (automatic relevance determination), so the learned length scales indicate the relevance of each descriptor. The model outputs a predictive mean (expected yield) and variance (predictive uncertainty) for any new input pair.

- Key Findings: The GP model achieved a test set RMSE of 8.7% yield and an R² of 0.83, significantly outperforming a baseline linear regression model (RMSE: 14.2%, R²: 0.55). Critically, the model's predicted uncertainty (95% confidence interval) successfully encapsulated the true yield for 93% of the test compounds, providing a reliable measure of prediction confidence. The model was used to screen a virtual library of 50 unexplored substrate pairs, identifying 12 with predicted yields >85% and low uncertainty, which were subsequently validated experimentally.

Data Presentation

Table 1: Performance Comparison of Yield Prediction Models

| Model Type | Test Set RMSE (%) | Test Set R² | Mean Absolute Error (MAE, %) | Uncertainty Calibration* |
|---|---|---|---|---|
| Linear Regression | 14.2 | 0.55 | 11.5 | N/A |
| Random Forest | 10.1 | 0.77 | 7.9 | N/A |
| Gaussian Process (Matérn 5/2) | 8.7 | 0.83 | 6.8 | 93% |
| Neural Network (MLP) | 9.5 | 0.80 | 7.3 | N/A |

*Percentage of test points where the true yield fell within the predicted 95% confidence interval.
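For reference, the four quantities reported in Table 1 can be computed as in the sketch below. The function name `yield_metrics` and the example numbers are illustrative only and are not the study's data; coverage assumes a Gaussian predictive distribution, so the 95% interval is the mean ± 1.96 standard deviations.

```python
import numpy as np

def yield_metrics(y_true, y_pred, y_std=None, z=1.96):
    """RMSE, MAE, R² and (optionally) interval coverage for yield predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    out = {
        "rmse": float(np.sqrt(np.mean(resid**2))),
        "mae": float(np.mean(np.abs(resid))),
        "r2": float(1.0 - np.sum(resid**2) / np.sum((y_true - y_true.mean())**2)),
    }
    if y_std is not None:
        # Fraction of points whose true yield lies inside mean ± z * std.
        half_width = z * np.asarray(y_std, dtype=float)
        out["coverage"] = float(np.mean(np.abs(resid) <= half_width))
    return out

# Made-up yields (%) for four test reactions, with predictive standard deviations.
metrics = yield_metrics([80, 60, 40, 20], [78, 65, 41, 10], y_std=[3, 3, 3, 3])
print(metrics)
```

Coverage is only defined for models that return a predictive standard deviation, which is why the non-probabilistic baselines in Table 1 show N/A.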

Table 2: Top GP-Predicted Substrates for Experimental Validation

| Aryl Halide (Descriptor Set*) | Boronic Acid (Descriptor Set*) | Predicted Yield (%) | 95% CI Lower Bound (%) | 95% CI Upper Bound (%) | Actual Experimental Yield (%) |
|---|---|---|---|---|---|
| 4-CN-C6H4-Br (S=0.66, L=3.2) | 3-Thiophene-B(OH)2 (TPSA=28.5, logP=1.2) | 92.1 | 86.4 | 97.8 | 94.3 |
| 2-OMe-C6H4-I (S=-0.27, L=3.8) | 4-Formyl-C6H4-B(OH)2 (S=0.42, TPSA=34.1) | 88.5 | 81.1 | 95.9 | 85.7 |
| 3-Pyridyl-OTf (S=0.35, L=4.1) | 2-Naphthyl-B(OH)2 (logP=3.0, Sterimol=7.1) | 87.3 | 79.8 | 94.8 | 82.1 |

*Abbreviated descriptor examples: S=Hammett sigma parameter, L=Sterimol length, TPSA=Topological Polar Surface Area.
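The intervals in Table 2 can be read back into predictive standard deviations, assuming each interval is a symmetric Gaussian interval (mean ± 1.96σ). The short sketch below does this for the three rows shown and checks whether each experimental yield falls inside its interval; the variable names are illustrative.

```python
Z95 = 1.96  # two-sided 95% quantile of the standard normal distribution

# (predicted, lower, upper, experimental) in % yield, copied from Table 2.
rows = [
    (92.1, 86.4, 97.8, 94.3),
    (88.5, 81.1, 95.9, 85.7),
    (87.3, 79.8, 94.8, 82.1),
]

results = []
for predicted, lower, upper, actual in rows:
    sigma = (upper - lower) / (2.0 * Z95)      # implied predictive std
    z_score = (actual - predicted) / sigma     # standardized prediction error
    results.append({
        "sigma": sigma,
        "z_score": z_score,
        "inside": lower <= actual <= upper,
    })

for r in results:
    print(f"sigma = {r['sigma']:.2f}%  z = {r['z_score']:+.2f}  inside interval: {r['inside']}")
```

All three experimental yields lie within their intervals, with standardized errors below 1.96 in magnitude.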

Experimental Protocols

Protocol 1: General Procedure for Suzuki-Miyaura Cross-Coupling Reaction (Benchmarking Dataset Generation)

- Setup: In a dried 5 mL microwave vial, add a magnetic stir bar.

- Charge Substrates: Weigh aryl halide (0.5 mmol, 1.0 equiv) and aryl boronic acid (0.75 mmol, 1.5 equiv). Add to the vial.

- Add Base: Add potassium phosphate tribasic (K₃PO₄, 1.0 mmol, 2.0 equiv).

- Add Catalyst/Ligand: Weigh and add Pd(OAc)₂ (2 mol%, 0.01 mmol) and SPhos ligand (4 mol%, 0.02 mmol).

- Add Solvent: Under an inert atmosphere (nitrogen or argon), add a degassed mixture of 1,4-dioxane and water (4:1 v/v, 2.0 mL total volume) via syringe.

- Reaction: Seal the vial with a PTFE-lined cap. Heat the reaction mixture to 100 °C with vigorous stirring (1000 rpm) for 18 hours in a pre-heated aluminum block.

- Work-up: Cool the reaction to room temperature. Dilute with ethyl acetate (10 mL) and transfer to a separatory funnel. Wash with water (10 mL) and brine (10 mL).

- Analysis: Dry the organic layer over anhydrous MgSO₄, filter, and concentrate in vacuo. Determine crude yield and purity by quantitative ¹H NMR analysis using 1,3,5-trimethoxybenzene as an internal standard.
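The quantitative ¹H NMR yield in the analysis step follows from comparing per-proton integrals of a product signal and an internal standard signal. The sketch below shows that arithmetic; the integrals, the amount of standard added, and the function name `qnmr_yield` are hypothetical, since the protocol does not state them.

```python
def qnmr_yield(i_product, n_h_product, i_standard, n_h_standard,
               mmol_standard, mmol_limiting):
    """Percent yield from integrals of one product and one standard signal.

    i_* are integrals, n_h_* the number of protons behind each signal.
    """
    per_proton_ratio = (i_product / n_h_product) / (i_standard / n_h_standard)
    mmol_product = per_proton_ratio * mmol_standard
    return 100.0 * mmol_product / mmol_limiting

# Hypothetical spectrum: the aromatic singlet of 1,3,5-trimethoxybenzene (3 H)
# is set to 3.00, and a 2 H product signal integrates to 3.40.
# Assumed amounts: 0.25 mmol standard added, 0.5 mmol aryl halide (Protocol 1 scale).
yield_pct = qnmr_yield(i_product=3.40, n_h_product=2,
                       i_standard=3.00, n_h_standard=3,
                       mmol_standard=0.25, mmol_limiting=0.5)
print(f"qNMR yield: {yield_pct:.1f}%")
```

The signals chosen for integration should be well resolved and free of overlap with substrate or by-product peaks.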

Protocol 2: High-Throughput Experimental Validation of GP Model Predictions

- Library Design: Select 12 substrate pairs from the GP model's top predictions, ensuring a range of predicted yields and uncertainties.

- Stock Solution Preparation: Prepare 0.1 M stock solutions of each aryl halide and boronic acid in anhydrous 1,4-dioxane in a nitrogen-filled glovebox. Prepare separate stock solutions of Pd(OAc)₂ (10 mM) and SPhos (20 mM).

- Automated Liquid Handling: Using a liquid handling robot, dispense the following into 48 wells of a 96-well glass reaction plate:

  - Aryl halide stock (50 µL, 5 µmol).

  - Boronic acid stock (75 µL, 7.5 µmol).

  - Pd(OAc)₂ stock (10 µL, 0.1 µmol).

  - SPhos stock (10 µL, 0.2 µmol).

  - K₃PO₄ solid (2.1 mg, 10 µmol) is added via a solid dispenser.

- Reaction Initiation: Add degassed water (50 µL) to each well using the liquid handler. Immediately seal the plate with a PTFE/aluminum heat seal film.

- Parallel Reaction: Place the sealed plate on a pre-heated (100 °C) stirring hotplate within a fume hood and stir for 18 hours.

- Parallel Analysis: Cool the plate. Using an auto-sampler, inject a diluted aliquot from each well into a UPLC-MS equipped with a photodiode array detector. Quantify yield using a calibrated external standard curve generated for each product.
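The per-well quantities in Protocol 2 can be cross-checked from the stock concentrations and dispensed volumes. The sketch below does so; it assumes that the Pd(OAc)₂ and SPhos stocks are also made up in 1,4-dioxane (the protocol does not state their solvent) and uses a molar mass of 212.27 g/mol for K₃PO₄.

```python
# name: (stock concentration in mol/L, dispensed volume in µL)
stocks = {
    "aryl halide": (0.1, 50.0),
    "boronic acid": (0.1, 75.0),
    "Pd(OAc)2": (0.010, 10.0),
    "SPhos": (0.020, 10.0),
}

# mol/L multiplied by µL gives µmol directly.
dispensed_umol = {name: conc * vol for name, (conc, vol) in stocks.items()}
limiting = dispensed_umol["aryl halide"]
equivalents = {name: amount / limiting for name, amount in dispensed_umol.items()}

K3PO4_MW = 212.27                              # g/mol
k3po4_umol = 10.0
k3po4_mg = k3po4_umol * K3PO4_MW / 1000.0      # µmol x g/mol = µg, then to mg

# Assumption: every stock solution is in dioxane, so these volumes add up.
dioxane_ul = sum(vol for _, vol in stocks.values())
water_ul = 50.0

for name in stocks:
    print(f"{name:12s} {dispensed_umol[name]:5.2f} µmol  ({equivalents[name]:.2f} equiv)")
print(f"K3PO4        {k3po4_mg:.2f} mg ({k3po4_umol / limiting:.1f} equiv)")
print(f"dioxane:water = {dioxane_ul:.0f}:{water_ul:.0f} µL ({dioxane_ul / water_ul:.1f}:1)")
```

Under that solvent assumption each well holds 145 µL of dioxane and 50 µL of water, about 2.9:1, which is more aqueous than the 4:1 mixture of Protocol 1. This is worth keeping in mind when comparing plate yields with the benchmarking data.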

Visualizations

Diagram Title: GP Model Development and Validation Workflow

Diagram Title: GP Model Mechanics for Yield Prediction

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions & Materials

| Item | Function/Benefit |
|---|---|
| Pd(OAc)₂ / SPhos System | Robust, air-stable catalyst/ligand combination for Suzuki-Miyaura coupling of diverse (hetero)aryl substrates. |
| K₃PO₄ | Strong, non-nucleophilic base soluble in aqueous/organic mixtures, promoting transmetalation. |
| 1,4-Dioxane/H₂O (4:1) | Common solvent system providing homogeneous conditions for organic substrates and inorganic base. |
| RDKit | Open-source cheminformatics library for generating molecular descriptors (e.g., logP, TPSA). |
| GPyTorch | Flexible Python library for GP model implementation, enabling GPU acceleration and custom kernels. |
| 96-Well Glass Reaction Plate | Enables high-throughput parallel synthesis under identical heating/stirring conditions. |
| UPLC-MS with PDA | Provides rapid, quantitative analysis of reaction outcomes with mass confirmation. |
| Internal Standard (1,3,5-Trimethoxybenzene) | Enables accurate yield determination via quantitative ¹H NMR without pure product standards. |

Optimizing GP Performance: Solving Common Pitfalls in Reaction Yield Prediction

Within the broader thesis on Gaussian process (GP) models for reaction yield prediction in medicinal chemistry, the "cold start" problem represents a critical initial hurdle. Before a robust, data-rich model can be established, researchers must generate predictive value from extremely sparse experimental data, often from only 10-30 initial high-throughput experimentation (HTE) reactions. This document outlines application notes and protocols for navigating this phase, leveraging the inherent uncertainty quantification of GP models to guide experimental design.

Core Quantitative Data on Cold-Start Strategies

Table 1: Comparison of Initial Data Acquisition & Model Initialization Strategies

| Strategy | Typical Initial Data Points | Key GP Kernel Consideration | Primary Use Case in Yield Prediction | Expected R² After Cold-Start (Range)* |
|---|---|---|---|---|
| Space-Filling Design (e.g., Latin Hypercube) | 12 - 24 | Standard RBF + Noise | Broad screening of a new reaction scaffold with continuous variables (e.g., temp, conc.). | 0.3 - 0.5 |
| Expert-Selected Subset | 8 - 16 | Composite kernel encoding chemical motifs | Leveraging known chemical intuition for a specific transformation. | 0.4 - 0.6 |
| Transfer Learning from Public Data (e.g., USPTO, Reaxys)** | 0 (pre-train) + 10-20 (fine-tune) | Multitask or Hierarchical kernel | Novel catalysis applied to established reaction types. | 0.5 - 0.7 |
| Active Learning Loop (Bayesian Optimization) | 8 (seed) + 8-12 (iterative) | Matérn kernel for better uncertainty capture | Optimizing a specific multi-variable reaction with a clear yield target. | 0.6 - 0.8 (after iterations) |

*Based on recent literature benchmarks for heterogeneous catalysis and C-N cross-coupling yield prediction.

**Requires pre-training on a large public dataset, then fine-tuning (transfer) on minimal private data.
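The active learning strategy in the last row relies on an acquisition function that trades predicted yield against predictive uncertainty. One common choice is expected improvement (EI); the source does not say which acquisition function was used, so the sketch below is only an illustration, and the candidate predictions are made up.

```python
import math

def expected_improvement(mean, std, best, xi=0.0):
    """Expected improvement over the best observed yield (maximization)."""
    if std <= 0.0:
        return max(mean - best - xi, 0.0)
    z = (mean - best - xi) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best - xi) * cdf + std * pdf

best_observed = 80.0  # best yield (%) measured so far (hypothetical)

# Hypothetical GP predictions for three untested conditions: (mean %, std %).
candidates = {"A": (78.0, 3.0), "B": (72.0, 12.0), "C": (80.0, 1.0)}

scores = {name: expected_improvement(m, s, best_observed)
          for name, (m, s) in candidates.items()}
next_experiment = max(scores, key=scores.get)

for name, score in scores.items():
    print(f"condition {name}: EI = {score:.3f}")
print("run next:", next_experiment)
```

Condition B is selected even though its predicted mean is the lowest, because its large uncertainty leaves the most room for a yield above the current best. This is the exploratory behaviour that makes the loop useful with very few seed points.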

Table 2: Impact of Minimal Data Characteristics on GP Model Performance

| Data Characteristic | Favorable for Cold-Start | Detrimental for Cold-Start | Mitigation Protocol |
|---|---|---|---|
| Input Dimensionality | 3-5 well-chosen descriptors | >8 unrefined descriptors | Select or compress descriptors before fitting (e.g., ARD-based feature selection, PCA). |
| Output (Yield) Range | Wide, spanning 10%-90% yield | Clustered (e.g., 65%-75% yield) | Use space-filling design to force exploration of edges. |
| Noise Level | Low (σ < 5% yield from replicates) | High (σ > 15% yield) | Add an explicit noise term to the kernel (e.g., scikit-learn's WhiteKernel) and increase initial replicates. |
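The noise level in Table 2 can be estimated from replicate reactions and then used to initialize the GP's noise variance. The sketch below applies the table's thresholds (σ < 5% low, σ > 15% high); the replicate yields, the "intermediate" label for values in between, and the function name are assumptions made for illustration.

```python
import statistics

def replicate_noise(replicate_yields, low=5.0, high=15.0):
    """Sample standard deviation of replicates, its variance, and a noise label."""
    sd = statistics.stdev(replicate_yields)
    if sd < low:
        level = "low"
    elif sd > high:
        level = "high"
    else:
        level = "intermediate"
    return sd, sd**2, level

# Hypothetical triplicate of one condition, yields in %.
sd, noise_var, level = replicate_noise([62.0, 66.0, 70.0])
print(f"replicate sd = {sd:.1f}% yield, initial noise variance = {noise_var:.1f}, level = {level}")
```

A single triplicate gives only a rough estimate, so the value is better treated as a starting point for the noise hyperparameter than as a fixed constant.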

Experimental Protocols

Protocol 1: Initial Dataset Construction via Model-Informed Design of Experiments (MiDOE)

Objective: To generate the first 16 data points for training an initial GP model on a novel Suzuki-Miyaura coupling.

Materials: See "Scientist's Toolkit" (Section 6).

Procedure:

- Define Domain: Select 4 continuous variables: Catalyst loading (mol%), Temperature (°C), Equivalents of Base, and Reaction Time (h). Define realistic min/max bounds for each.

- Generate Candidate Set: Use a Sobol sequence to generate 50 candidate reaction conditions spanning the 4D space.

- Select for Diversity: Calculate Tanimoto distance between Morgan fingerprints of the aryl halide & boronic acid substrates (if variable). Filter candidates to maximize combined condition and substrate diversity.

- Expert Override: Review the 24 most diverse conditions and reduce them to a final list of 16. Up to 4 of the 16 may be manually replaced with conditions deemed chemically imperative based on literature (e.g., ensuring one condition with elevated temperature for deactivated substrates).

- Execution: Perform reactions in parallel according to the final 16-condition list. Use internal standard for accurate HPLC yield determination. Perform one condition in triplicate to estimate inherent noise.

- Data Logging: Record yields and all metadata in an electronic lab notebook (ELN) with structured fields.
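The candidate generation and diversity selection steps of this protocol can be sketched with SciPy's quasi-Monte Carlo module and a greedy maximin selection. The variable bounds are hypothetical, the greedy maximin rule is one possible diversity criterion rather than the one prescribed here, and the sketch covers reaction conditions only (substrate diversity via Tanimoto distance and the expert override are left out).

```python
import warnings
import numpy as np
from scipy.stats import qmc

# Hypothetical min/max bounds for the four continuous variables.
bounds = {
    "catalyst_mol_pct": (0.5, 5.0),
    "temperature_C": (60.0, 110.0),
    "base_equiv": (1.0, 3.0),
    "time_h": (2.0, 24.0),
}
lower = [b[0] for b in bounds.values()]
upper = [b[1] for b in bounds.values()]

sampler = qmc.Sobol(d=len(bounds), scramble=True, seed=0)
with warnings.catch_warnings():
    # SciPy warns that 50 is not a power of two; the points are still valid.
    warnings.simplefilter("ignore", UserWarning)
    unit = sampler.random(50)               # 50 candidates in the unit hypercube
candidates = qmc.scale(unit, lower, upper)  # same candidates in physical units

def greedy_maximin(points, n_select):
    """Greedily pick rows so that each new pick is far from all earlier picks."""
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    # Start from the candidate closest to the centre of the unit hypercube.
    chosen = [int(np.argmin(np.linalg.norm(points - 0.5, axis=1)))]
    while len(chosen) < n_select:
        min_dist = dist[:, chosen].min(axis=1)
        min_dist[chosen] = -1.0             # never re-select a chosen point
        chosen.append(int(np.argmax(min_dist)))
    return chosen

# Select in unit-cube coordinates so that all variables are weighted equally.
selected = greedy_maximin(unit, 16)
design = candidates[selected]
print(np.round(design, 2))
```

Running the selection on the unit-cube coordinates rather than the physical values prevents variables with large numeric ranges, such as temperature, from dominating the distance.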

Protocol 2: Two-Stage Transfer Learning from Public Reaction Data

Objective: To pre-train a GP model on public data to reduce the private data required for fine-tuning.

Materials: USPTO or open reaction database; RDKit; GP modelling software (e.g., GPyTorch, scikit-learn).

Procedure:

- Curate Public Dataset: Extract all Pd-catalyzed cross-coupling reactions from source. Clean data: remove duplicates, implausible yields (>100%), and reactions with missing crucial descriptors.

- Featurization: Encode reactions using a chosen scheme (e.g., DRFP, Reaction fingerprints). Standardize features.

- Pre-Training: Train a GP model with a scalable approximation (e.g., Sparse Variational GP) on the public dataset (typically 1000s-10,000s points). Validate on a held-out public test set.

- Adaptation (Fine-Tuning): Choose one of the following options:

  - a. Use the pre-trained GP's kernel parameters (length scales, output scale) as informative priors for a new GP model.

  - b. Freeze the kernel (structure and hyperparameters) and train only the likelihood noise parameter on the private minimal dataset (10-20 points).

  - c. Employ a multi-task kernel (e.g., Coregionalization) if public and private tasks are treated as related but distinct.

- Validation: Use leave-one-out cross-validation (LOO-CV) on the private data to assess predictive quality before deploying for active learning.
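Option b of the adaptation step and the LOO-CV validation step can be sketched together in NumPy. The kernel hyperparameters below stand in for values from a pre-trained model and are hypothetical, the private dataset is synthetic, an RBF kernel is used for brevity, and the noise variance is chosen by a simple grid search over the marginal likelihood. For a GP with fixed hyperparameters, the leave-one-out predictions have a closed form, so no refitting is needed.

```python
import numpy as np

def rbf_kernel(X1, X2, lengthscales, outputscale):
    """RBF kernel with per-dimension length scales."""
    A, B = X1 / lengthscales, X2 / lengthscales
    sq = np.sum(A**2, axis=1)[:, None] + np.sum(B**2, axis=1)[None, :] - 2.0 * A @ B.T
    return outputscale * np.exp(-0.5 * np.clip(sq, 0.0, None))

def log_marginal_likelihood(K, y, noise_var):
    """Log evidence of a zero-mean GP with Gaussian noise."""
    n = len(y)
    L = np.linalg.cholesky(K + noise_var * np.eye(n))
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    return -0.5 * y @ alpha - np.sum(np.log(np.diag(L))) - 0.5 * n * np.log(2.0 * np.pi)

def loo_predictions(K, y, noise_var):
    """Closed-form leave-one-out predictive mean and variance for each point."""
    K_inv = np.linalg.inv(K + noise_var * np.eye(len(y)))
    diag = np.diag(K_inv)
    return y - (K_inv @ y) / diag, 1.0 / diag

# Synthetic private dataset: 15 reactions, 2 features.
rng = np.random.default_rng(1)
X_private = rng.uniform(0.0, 1.0, size=(15, 2))
y_private = (20.0 * np.sin(3.0 * X_private[:, 0]) + 10.0 * X_private[:, 1]
             + rng.normal(0.0, 3.0, size=15))
y_centered = y_private - y_private.mean()

# Hypothetical kernel hyperparameters taken over from pre-training and frozen.
pretrained = {"lengthscales": np.array([0.5, 1.0]), "outputscale": 200.0}
K = rbf_kernel(X_private, X_private, **pretrained)

# Train only the noise variance: grid search over the marginal likelihood.
noise_grid = np.logspace(-1, 2, 31)
lml = [log_marginal_likelihood(K, y_centered, s2) for s2 in noise_grid]
best_noise = float(noise_grid[int(np.argmax(lml))])

loo_mean, loo_var = loo_predictions(K, y_centered, best_noise)
loo_rmse = float(np.sqrt(np.mean((loo_mean - y_centered) ** 2)))
print(f"selected noise variance: {best_noise:.2f}")
print(f"LOO-CV RMSE: {loo_rmse:.2f} (yield units)")
```

The closed-form expressions hold only while the hyperparameters stay fixed across folds. If the kernel is re-optimized for each held-out point, the model has to be refitted explicitly.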

Visualizations

Diagram Title: Cold-Start Strategy Decision Workflow

Diagram Title: Transfer Learning Protocol for GP Cold-Start

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cold-Start Reaction Yield Studies

| Item | Function in Cold-Start Context | Example/Specifications |
|---|---|---|
| High-Throughput Experimentation (HTE) Kit | Enables parallel synthesis of the initial design space (e.g., 24 reactions) with minimal reagent waste. | 24-well or 96-well microtiter plates with sealed vials; liquid handling robot or multi-channel pipette. |
| Pre-Weighed Catalyst/Base Stock Solutions | Ensures speed, accuracy, and reproducibility during setup of many small-scale reactions. | 0.1 M solutions in DMF or dioxane, stored under inert atmosphere in an automated dispenser. |
| Internal Standard Kit | Provides crucial, reliable yield quantification via HPLC/UPLC for diverse reaction conditions. | Set of chemically inert compounds (e.g., methyl benzoates, fluorenes) spanning a range of HPLC retention times. |
| Chemical Descriptor Software | Generates quantitative input features for GP models from substrate structures. | RDKit or Mordred for calculating molecular fingerprints (Morgan, MACCS) and physicochemical descriptors. |
| GP Modelling Software with Active Learning | Implements the core algorithms for model building and sequential experimental design. | GPyTorch (Python) with BoTorch for Bayesian optimization; or custom scripts in R with DiceKriging. |
| Structured ELN with API | Captures data in a machine-readable format essential for automated model training and iteration. | CDD Vault, Benchling, or Labguru with configurable fields and export capabilities to .csv/.json. |