Reaction Yield Optimization with Design of Experiments: A Strategic Guide for Pharmaceutical Scientists

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on leveraging Design of Experiments (DoE) to optimize chemical reaction yields. It covers foundational principles, contrasting DoE with inefficient one-factor-at-a-time (OFAT) approaches. The guide explores key methodological frameworks, including screening and optimization designs, and presents real-world case studies from pharmaceutical synthesis. It also addresses advanced troubleshooting, model validation techniques, and compares DoE with modern machine learning methods like Bayesian Optimization. The objective is to equip scientists with a structured methodology to enhance process efficiency, reduce experimental costs, and accelerate development timelines in biomedical research.

Beyond Trial and Error: Why DoE is Fundamental to Modern Reaction Optimization

The Critical Limitations of One-Factor-at-a-Time (OFAT) Optimization

Any case for optimizing reaction yield with Design of Experiments (DoE) starts with a critical look at the traditional alternative. The One-Factor-at-a-Time (OFAT) approach varies a single factor while holding all others constant [1] [2]. Although intuitively simple, it has significant and often overlooked limitations that impede efficient process development, particularly in complex systems such as drug development and chemical synthesis [3] [4]. This application note sets out the critical drawbacks of OFAT, provides structured experimental data for comparison, and outlines DoE-based protocols that overcome them.

Key Limitations and Quantitative Comparison of OFAT

The primary critiques of OFAT are its failure to capture interaction effects between factors, its inefficiency, and its unreliability in locating true optimal conditions [1] [3] [2]. The following table synthesizes quantitative and qualitative evidence from case studies comparing OFAT with DoE approaches.

Table 1: Comparative Analysis of OFAT vs. DoE/RSM in Optimization Studies

| Aspect | OFAT Performance / Outcome | DoE/RSM Performance / Outcome | Data Source & Context |
|---|---|---|---|
| Experimental Efficiency | Required 19 runs for a 2-factor problem [3]. | Required 14 runs for a full model (main effects, 2-way, squared, cubed terms) for a 2-factor problem [3]. | Simulation study on finding a process maximum. |
| Success Rate in Finding Optimum | Found the true process "sweet spot" only ~25-30% of the time in a 2-factor space [3]. | Consistently identified the optimal region and generated a predictive model [3]. | Simulation study using an interactive add-in. |
| Final Optimized Yield | Achieved LA production of 25.4 ± 0.42 g L⁻¹ [5]. | Achieved LA production of 40.69 g L⁻¹, a ~60% increase over OFAT result [5]. | Lactic acid fermentation optimization using beet molasses. |
| Interaction Effects | Cannot estimate or detect interactions between factors [1] [2]. | Explicitly models and quantifies interaction effects (e.g., catalyst load × pressure) [1] [6]. | Fundamental methodological limitation vs. DoE case study in reaction optimization. |
| Modeling & Prediction | Provides no predictive model for the response surface; new conditions require re-experimentation [3]. | Generates a mathematical model (e.g., quadratic) to predict outcomes across the design space [1] [3]. | Core advantage of DoE/Response Surface Methodology (RSM). |
| Scalability with Factors | Runs increase linearly but strategy becomes exponentially impractical and misleading [1]. | Uses fractional factorial or screening designs to manage many factors efficiently [1] [7]. | Discussion on limitations and modern ML-enhanced DoE. |

Detailed Experimental Protocols

Protocol 1: Traditional OFAT Optimization for Fermentation Parameters

Based on the lactic acid production case study [5].

Objective: To determine the optimal levels of four key factors (sugar concentration, inoculum size, pH, temperature) for maximizing lactic acid (LA) yield using the OFAT approach.

Materials: Fermentation broth (e.g., treated beet molasses medium), Enterococcus hirae ds10 culture, pH adjusters, incubator/shaker, LA quantification assay (e.g., HPLC).

Procedure:

1. Baseline Establishment: Conduct a control fermentation with initial guessed conditions (e.g., 2% sugar, 5% inoculum, pH 7.0, 37°C).

2. Factor Variation:
   a. Sugar Concentration: Hold inoculum size, pH, and temperature constant at baseline levels. Perform fermentations across a range of sugar concentrations (e.g., 2%, 4%, 6%, 8% w/v). Measure final LA yield.
   b. Identify Best Sugar Level: Select the concentration yielding the highest LA.
   c. Inoculum Size: Fix sugar at the new optimal level from step 2b. Hold pH and temperature constant. Perform fermentations across inoculum sizes (e.g., 5%, 10%, 15% v/v). Select the optimal size.
   d. pH: Fix sugar and inoculum at their optimal levels. Vary pH (e.g., 6.0, 7.0, 8.0, 9.0) at constant temperature. Select optimal pH.
   e. Temperature: Fix the first three factors at their optimal levels. Vary temperature (e.g., 35°C, 40°C, 45°C). Select optimal temperature.

3. Conclusion: The combination of factors identified in steps 2b-2e is declared the OFAT-optimized condition.

Note: This protocol is time-consuming, ignores interactions, and risks converging on a local, not global, optimum [5] [3].
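The local-optimum risk can be made concrete with a small simulation. The sketch below runs a single OFAT pass over two coded factors on a purely hypothetical response surface (`hypothetical_yield` is invented for illustration and is not fitted to the lactic acid data in [5]) that contains an interaction, then compares the result with the best point on the full grid. The exhaustive grid serves only as a reference here; it is not itself a recommended design.

```python
from itertools import product

# Hypothetical response surface with a strong x-y interaction (diagonal ridge).
# Illustrative only: it is NOT fitted to any data cited in this article.
def hypothetical_yield(x, y):
    return 80 - 5 * (x - y) ** 2 - (x + y - 2) ** 2

levels = [-1.0, -0.5, 0.0, 0.5, 1.0]  # coded factor levels

# OFAT pass: optimise x at the baseline y, then optimise y at the chosen x.
baseline_y = -1.0
best_x = max(levels, key=lambda x: hypothetical_yield(x, baseline_y))
best_y = max(levels, key=lambda y: hypothetical_yield(best_x, y))
ofat_point = (best_x, best_y)
ofat_yield = hypothetical_yield(*ofat_point)

# Reference: best point on the full 5 x 5 grid.
grid_point = max(product(levels, levels), key=lambda p: hypothetical_yield(*p))
grid_yield = hypothetical_yield(*grid_point)

print("OFAT result:", ofat_point, ofat_yield)
print("Grid optimum:", grid_point, grid_yield)
```

On this assumed surface the OFAT pass stops at (-0.5, 0.0) with a yield of 72.5, while the grid optimum sits at (1.0, 1.0) with a yield of 80, because the best level of each factor depends on the level of the other.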

Protocol 2: DoE-Based Reaction Optimization (Screening & RSM)

Based on the catalytic reduction and pharmaceutical optimization case studies [6] [7].

Objective: To efficiently screen multiple factors and then optimize critical ones for a chemical reaction (e.g., a catalytic coupling) to maximize yield/selectivity.

Materials: Reactants, catalyst library, solvent selection map [8] [9], automated reaction platform (optional but recommended for HTE), analytical equipment (e.g., UPLC).

Procedure:

Phase A: Initial Screening with Factorial Design

- Define Factors & Levels: Select 3-5 potentially influential factors (e.g., Catalyst Type (A, B, C), Solvent (DMAc, THF, Toluene), Temperature (Low, High), Concentration). Use chemical intuition and a solvent property map [8] [9].

- Design: Construct a fractional factorial or Plackett-Burman design using statistical software (e.g., JMP, Design-Expert). This explores multiple factors simultaneously with minimal runs.

- Execution & Analysis: Run experiments in randomized order. Analyze results using ANOVA to identify statistically significant main effects.

Phase B: Optimization with Response Surface Methodology (RSM)

- Refine Focus: Select 2-3 of the most significant continuous factors (e.g., catalyst loading, temperature) identified in Phase A.

- Design: Create a Central Composite or Box-Behnken design around a promising region to model curvature and interactions [1].

- Execution & Modeling: Run the RSM design. Fit a quadratic model (e.g., Yield = β₀ + β₁A + β₂B + β₁₁A² + β₂₂B² + β₁₂AB) to the data.

- Optimization & Validation: Use the model's profiler to locate factor settings predicting maximum yield. Run 1-3 confirmation experiments at the predicted optimum to validate the model.
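As a rough illustration of the modeling and optimization steps in Phase B, the sketch below fits the quadratic model to a two-factor central composite design by ordinary least squares and solves for the stationary point. Everything numeric is an assumption: the responses come from a made-up, noise-free function (`hypothetical_yield`), and the code uses only the standard library, whereas in practice the fit would be done in the statistical package named in the protocol.

```python
import math

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - tail) / M[i][i]
    return x

def model_terms(a, b):
    """Columns of the quadratic model: 1, A, B, A^2, B^2, AB."""
    return [1.0, a, b, a * a, b * b, a * b]

# Two-factor central composite design in coded units (alpha = sqrt(2)).
alpha = math.sqrt(2)
design = [(-1, -1), (1, -1), (-1, 1), (1, 1),
          (-alpha, 0), (alpha, 0), (0, -alpha), (0, alpha), (0, 0)]

# Hypothetical, noise-free response used only to exercise the fit.
def hypothetical_yield(a, b):
    return 70 + 4 * a + 6 * b - 5 * a * a - 3 * b * b + 2 * a * b

X = [model_terms(a, b) for a, b in design]
y = [hypothetical_yield(a, b) for a, b in design]

# Least squares via the normal equations (X'X) beta = X'y.
p = len(X[0])
XtX = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
Xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
b0, b1, b2, b11, b22, b12 = solve(XtX, Xty)

# Stationary point: set both partial derivatives of the model to zero.
a_opt, b_opt = solve([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])
is_maximum = b11 < 0 and 4 * b11 * b22 - b12 ** 2 > 0

print("coefficients:", [round(v, 3) for v in (b0, b1, b2, b11, b22, b12)])
print("stationary point (coded):", round(a_opt, 3), round(b_opt, 3), "max:", is_maximum)
```

With real data the responses are noisy, so the predicted optimum should be treated as a candidate and confirmed experimentally, as the validation step above describes.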

Advanced Integration: For high-dimensional spaces, this DoE workflow can be integrated with Machine Learning (ML) and Bayesian optimization to guide highly parallel HTE campaigns, as demonstrated in recent pharmaceutical process development [7].

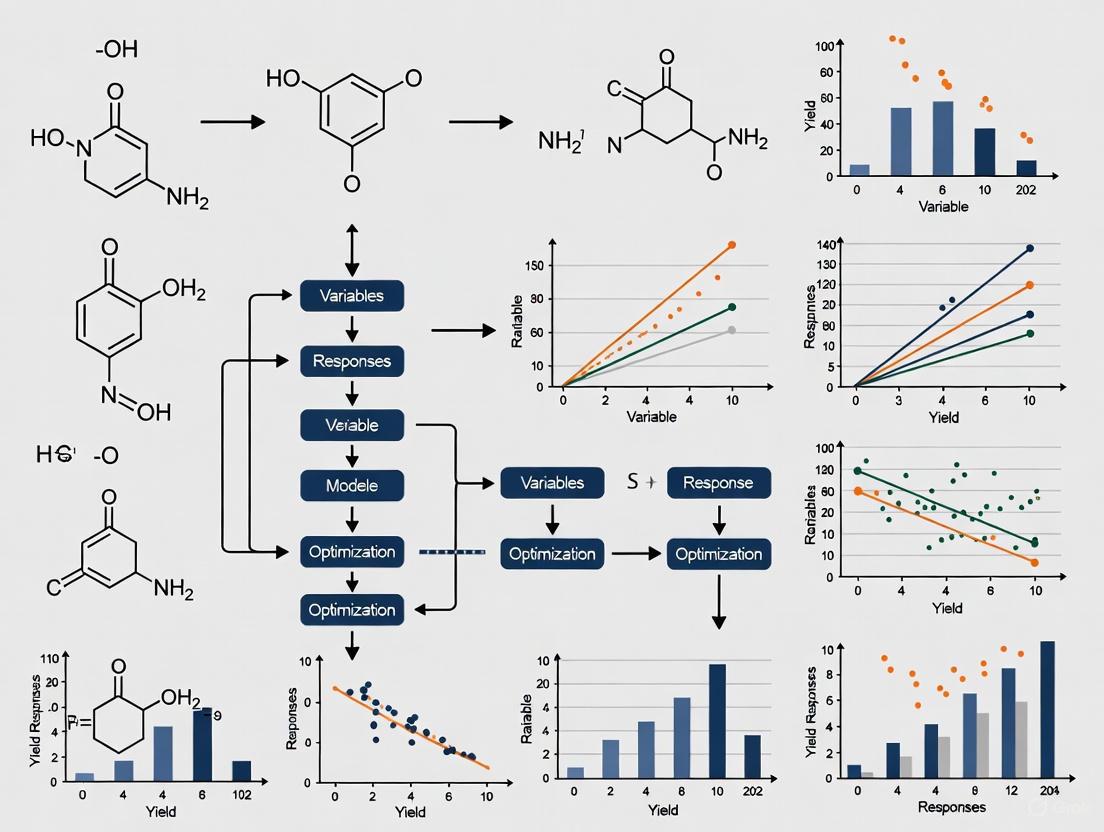

Visualization of Workflows

Diagram 1: Linear OFAT Optimization Pathway

Diagram 2: Integrated DoE & ML Optimization Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Modern Reaction Optimization Studies

| Item | Function & Relevance | Example/Note |
|---|---|---|
| DoE Software | Enables statistical design creation, randomization, data analysis (ANOVA), and model visualization (profilers, contour plots). Critical for implementing DoE protocols. | JMP, Design-Expert, Minitab, Python (SciPy, scikit-learn) [3]. |
| Solvent Property Map | A multi-dimensional map based on Principal Component Analysis (PCA) of solvent properties. Guides systematic solvent selection away from intuition-based OFAT [8] [9]. | A PCA map incorporating 136 solvents with diverse properties [8]. |
| Catalyst Library | A curated collection of diverse catalysts (e.g., varying metals, ligands) for high-throughput screening in early DoE stages to identify lead candidates [6]. | Commercial libraries from suppliers (e.g., 15 catalysts from 3 suppliers screened) [6]. |
| High-Throughput Experimentation (HTE) Platform | Automated robotic systems for parallel synthesis and analysis. Enables execution of large DoE arrays or ML-proposed batches efficiently [7]. | 96-well plate reactors for parallel reaction setup and analysis [7]. |
| Machine Learning Framework | For handling complex, high-dimensional optimization beyond standard RSM. Uses algorithms like Bayesian Optimization to guide experiment selection [7] [4]. | Frameworks like "Minerva" for multi-objective, batch-parallel optimization [7]. |
| Defined Culture Media Components | For bioprocess optimization. Precise components (salts, carbon sources, nitrogen like yeast extract) allow DoE-based media optimization, contrasting OFAT's sequential testing [5] [4]. | MRS medium components, ammonium chloride, yeast extract [5]. |

Core Principles of Design of Experiments (DoE) for Efficient Screening

In the early stages of reaction development, researchers often face a large number of potential factors that could influence key outcomes such as reaction yield and selectivity. Screening designs in Design of Experiments (DoE) provide a systematic methodology for identifying the most influential factors among many candidates, effectively separating "the vital few from the trivial many" [10]. This approach is markedly superior to the traditional One-Variable-At-a-Time method (OVAT, used here interchangeably with OFAT), which is inefficient, fails to capture interaction effects between factors, and can lead to erroneous conclusions about the true optimal reaction conditions [11].

For researchers in drug development, where time and material resources are often limited, implementing a rigorous screening DoE is a crucial first step in the optimization workflow. It ensures that subsequent, more detailed experimental efforts are focused exclusively on the factors that truly impact process performance, thereby accelerating development timelines and reducing costs [12].

Core Principles of Screening DoE

The effectiveness of screening designs and analysis methods rests on four key statistical principles that are commonly observed in practice [10].

Table 1: Core Principles of Screening Designs

| Principle | Description | Implication for Reaction Optimization |
|---|---|---|
| Sparsity of Effects | Only a small fraction of a large number of potential factors will have a significant effect on the response. | While many factors (e.g., temp., catalyst, solvent) can be proposed, only a few (e.g., temp., pH) will control yield [10]. |
| Hierarchy | Lower-order effects (main effects) are more likely to be important than higher-order effects (interactions, quadratic effects). | Main effects are analyzed first; two-factor interactions are considered less frequently, and three-factor interactions are rare [10]. |
| Heredity | For a higher-order interaction to be significant, it is likely that at least one of its parent factors (main effects) is also significant. | If a catalyst-solvent interaction is important, it is probable that the main effect of the catalyst or solvent is also important [10]. |
| Projection | A design that starts with many factors can be projected into a simpler, robust design if only a few factors are found important. | A screening design with 8 factors can be projected into a full factorial design for the 2 or 3 vital factors identified, enabling deeper study [10]. |

Experimental Protocols for Screening

A Generic Workflow for Screening DoE

The following workflow provides a structured protocol for planning and executing a screening design in the context of reaction yield optimization.

Step 1: Define the Problem and Responses

Clearly articulate the experimental goal. In synthetic chemistry, the primary response is often reaction yield (%) [11]. For asymmetric transformations, selectivity factors (e.g., enantiomeric excess) become concurrent critical responses. A major benefit of DoE is the ability to systematically optimize multiple responses simultaneously [11]. Ensure your analytical methods for quantifying these responses are stable and repeatable [13].

Step 2: Select Factors and Levels

Assemble a team, including subject matter experts, to brainstorm all potential factors affecting the reaction [10]. These typically include continuous factors (e.g., temperature, pressure, concentration, stoichiometry) and categorical factors (e.g., solvent type, catalyst class, ligand type) [11]. For each continuous factor, select a high (+1) and low (-1) level that represents a realistic but sufficiently wide range expected to cause a detectable change in the response [13].
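The coded levels map each continuous factor onto a common scale via coded = (value - center) / half-range, so that the low setting becomes -1 and the high setting +1. A minimal sketch of the conversion follows; the helper names `to_coded` and `to_natural` and the 15-45 temperature range are illustrative assumptions.

```python
def to_coded(value, low, high):
    """Map a natural-unit setting onto the coded scale (low -> -1, high -> +1)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (value - center) / half_range

def to_natural(coded, low, high):
    """Inverse mapping: coded level back to natural units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range

# Illustrative range: temperature between 15 and 45 (degrees C)
print(to_coded(45, 15, 45))    # 1.0
print(to_coded(30, 15, 45))    # 0.0 (the center point)
print(to_natural(-1, 15, 45))  # 15.0
```

Working in coded units makes effect sizes for different factors directly comparable, regardless of their physical units.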

Step 3: Choose an Experimental Design

Select a design that efficiently fits your budget and goal. Common screening designs include [10] [12]:

- Fractional Factorial Designs: Efficient for estimating main effects and some two-factor interactions with fewer runs than a full factorial. The design's resolution indicates which effects are confounded (aliased) with one another, and therefore which interactions can be estimated [12].

- Plackett-Burman Designs: Very economical designs used primarily for estimating main effects only when the number of factors is large [10] [12]. They assume interactions are negligible.

- Definitive Screening Designs (Modern): A powerful modern alternative that can estimate main effects and identify active two-factor interactions in a very efficient number of runs [10].
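To show what such a design looks like in practice, the sketch below builds the classic 12-run Plackett-Burman design from its standard generator row by cyclic shifting, then checks that every column is balanced and that all column pairs are orthogonal. It accommodates up to 11 two-level factors; for fewer factors one would simply use a subset of the columns. Variable names are illustrative, and in practice the statistical software generates this matrix.

```python
# Standard 12-run Plackett-Burman generator row: + + - + + + - - - + -
generator = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

# Rows 1-11 are cyclic shifts of the generator; row 12 sets every factor low.
design = [generator[-shift:] + generator[:-shift] for shift in range(11)]
design.append([-1] * 11)

def column(j):
    return [row[j] for row in design]

# Balance: each factor is run six times high and six times low.
balanced = all(sum(column(j)) == 0 for j in range(11))

# Orthogonality: every pair of columns has a zero dot product,
# so main effects are estimated independently of one another.
orthogonal = all(
    sum(a * b for a, b in zip(column(i), column(j))) == 0
    for i in range(11)
    for j in range(i + 1, 11)
)

for row in design:
    print(" ".join("+" if v > 0 else "-" for v in row))
print("balanced:", balanced, "orthogonal:", orthogonal)
```

Orthogonality applies to main effects only. Two-factor interactions are partially aliased with main effects in this design, which is why it is used under the assumption that interactions are negligible.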

Step 4: Conduct the Experiment

Run the experiments in a fully randomized order to avoid confounding the effects of factors with systematic trends over time [13]. Include replication (e.g., center points) to estimate pure error and enable statistical significance testing [10] [13]. For reaction screening, this means executing the reactions according to the randomized run order provided by the design.
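A randomized run sheet with replicated center points can be produced in a few lines. The sketch below assumes a small hypothetical two-factor design in coded units and a fixed seed (an arbitrary choice) so that the same run order can be regenerated later for the record.

```python
import random

# Hypothetical 2^2 factorial in coded units plus three center-point replicates.
factorial_runs = [(-1, -1), (+1, -1), (-1, +1), (+1, +1)]
center_points = [(0, 0)] * 3
standard_order = factorial_runs + center_points

rng = random.Random(2024)  # fixed seed so the run sheet is reproducible
run_order = standard_order[:]
rng.shuffle(run_order)

for run_number, settings in enumerate(run_order, start=1):
    print(run_number, settings)
```

Shuffling the complete list, center points included, spreads the replicates through the campaign rather than clustering them at the start or end, which helps expose drift over time.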

Step 5: Analyze the Data and Interpret Results

Use multiple linear regression to fit a model for each response (e.g., Yield, Selectivity) [10]. Analyze the results using:

- Analysis of Variance (ANOVA): To determine the statistical significance of the model and its terms.

- Half-Normal/Pareto Plots: To visually identify the few significant effects from the many negligible ones.

- Coefficient Plots: To visualize the estimated effect size and direction (positive or negative) for each factor.
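The quantity behind Pareto and coefficient plots is the estimated effect of each factor. For a balanced two-level design, a main effect is the mean response at the high level minus the mean response at the low level. The sketch below computes and ranks main effects for a 2^3 full factorial; the factor names and the noise-free `hypothetical_yield` function are assumptions made for illustration.

```python
from itertools import product

factors = ["temperature", "catalyst", "stir_rate"]
design = list(product([-1, +1], repeat=3))  # 2^3 full factorial, coded units

# Hypothetical, noise-free yields; illustrative only.
def hypothetical_yield(t, c, s):
    return 60 + 8 * t + 1 * c - 0.5 * s

yields = [hypothetical_yield(*run) for run in design]

def main_effect(j):
    """Mean response at the high level minus mean response at the low level."""
    high = [y for run, y in zip(design, yields) if run[j] == +1]
    low = [y for run, y in zip(design, yields) if run[j] == -1]
    return sum(high) / len(high) - sum(low) / len(low)

effects = {name: main_effect(j) for j, name in enumerate(factors)}

# Pareto-style ranking by absolute effect size.
ranked = sorted(effects.items(), key=lambda kv: abs(kv[1]), reverse=True)
for name, effect in ranked:
    print(f"{name:12s} {effect:+.2f}")
```

Each effect equals twice the corresponding regression coefficient in coded units, so ranking by effect and ranking by coefficient give the same order.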

Step 6: Plan Subsequent Experiments

Use the results to refine your model and design the next set of experiments. This may involve a more detailed study of the vital few factors using a Response Surface Methodology (e.g., Central Composite Design) to locate the precise optimum [13] [11].

Case Study Protocol: Screening for Yield and Impurity

The following protocol is adapted from a manufacturing process example, illustrating the practical application of the screening workflow [10].

Objective: To identify the factors, among nine candidates, that significantly affect the Yield and Impurity of a chemical reaction.

Response Variables:

- Yield (%): The percentage of desired product formed.

- Impurity (%): The percentage of a key undesired by-product.

Factors and Levels:

Table 2: Research Reagent Solutions for Case Study

| Factor Name | Factor Type | Low Level (-1) | High Level (+1) | Function/Justification |
|---|---|---|---|---|
| Temperature | Continuous | 15 °C | 45 °C | Controls reaction kinetics and pathway. |
| pH | Continuous | 5 | 8 | Impacts reactivity and selectivity in aqueous systems. |
| Catalyst | Continuous | 1% | 2% | Influences reaction rate and mechanism. |
| Vendor | Categorical | Cheap, Fast, Good | N/A | Tests raw material source as a potential critical factor. |
| Stir Rate | Continuous | 100 rpm | 120 rpm | Affects mass transfer in heterogeneous systems. |
| Pressure | Continuous | 60 kPa | 80 kPa | Critical for reactions involving gases. |
| Blend Time | Continuous | 10 min | 30 min | Determines reaction residence time. |
| Feed Rate | Continuous | 10 L/min | 15 L/min | Controls reactant addition profile. |
| Particle Size | Categorical | Small, Large | N/A | Tests physical form impact on solid reagents. |

Experimental Design:

- A main-effects-only Plackett-Burman design was selected due to a small experimental budget and a large number of factors.

- The design comprised 22 runs, including 4 center points.

- Center points (all continuous factors set to their middle levels) were included to estimate pure error and test for the presence of curvature in the response via a lack-of-fit test [10].
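One common form of the curvature check compares the mean response of the factorial points with the mean of the center points, using the center-point replicates as the pure-error estimate. The sketch below applies the single-degree-of-freedom test F = SS_curvature / MS_pure_error with SS_curvature = n_F n_C (ȳ_F − ȳ_C)² / (n_F + n_C). All yields are hypothetical and the design is deliberately smaller than the case-study design; the critical value is the tabulated F(0.05; 1, 3) and would change with the number of center points.

```python
from statistics import mean, variance

# Hypothetical yields (%), for illustration only.
factorial_yields = [62.0, 70.0, 65.0, 75.0]  # corner (factorial) runs
center_yields = [74.0, 75.0, 73.5, 74.5]     # replicated center points

n_f, n_c = len(factorial_yields), len(center_yields)
diff = mean(factorial_yields) - mean(center_yields)

# Single-degree-of-freedom sum of squares for curvature.
ss_curvature = n_f * n_c * diff ** 2 / (n_f + n_c)

# Pure error: sample variance of the center replicates (n_c - 1 df).
ms_pure_error = variance(center_yields)

f_ratio = ss_curvature / ms_pure_error
F_CRIT = 10.13  # tabulated F(0.05; 1, 3); depends on n_c - 1
curvature_detected = f_ratio > F_CRIT

print(f"F = {f_ratio:.1f}, curvature detected: {curvature_detected}")
```

A significant result indicates that a purely linear model is inadequate in the studied region, which is the usual trigger for moving on to a response surface design.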

Procedure:

1. Setup: Prepare all reagents and equipment according to the factor levels specified for the first experimental run.

2. Execution: Carry out the reaction following the standardized procedure, randomizing the run order to minimize bias.

3. Analysis: Upon completion, quench the reaction and analyze the mixture using a pre-validated analytical method (e.g., HPLC) to determine Yield and Impurity values.

4. Recording: Record the responses for the run.

5. Repetition: Repeat steps 1-4 for all 22 experimental runs in the randomized sequence.

Data Analysis:

- Model Fitting: Use multiple linear regression to fit a model for both Yield and Impurity.

- Significance Testing: Employ ANOVA to assess the significance of the overall model and individual factor effects.

- Effect Ranking: Rank the factors by importance for each response using statistical measures (e.g., p-value, Logworth).
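LogWorth is defined as -log10(p-value), so smaller p-values give larger LogWorth values and p = 0.05 corresponds to roughly 1.3. The sketch below ranks factors on that scale; the factor names and p-values are hypothetical placeholders, not results from the case study.

```python
import math

# Hypothetical p-values for illustration; not results from the case study.
p_values = {"factor_A": 0.0004, "factor_B": 0.03, "factor_C": 0.41}

logworth = {name: -math.log10(p) for name, p in p_values.items()}
ranked = sorted(logworth.items(), key=lambda kv: kv[1], reverse=True)

threshold = -math.log10(0.05)  # about 1.30
significant = [name for name, lw in ranked if lw > threshold]

for name, lw in ranked:
    print(f"{name}: LogWorth = {lw:.2f}")
print("above the 0.05 threshold:", significant)
```

The log scale makes very small p-values easier to compare on a bar chart than the raw probabilities.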

Results and Conclusion: In this case study, analysis revealed that Temperature and pH were the largest effects for Yield, while Temperature, pH, and Vendor were the largest effects for Impurity [10]. This outcome narrowed the field of critical factors from nine to two or three, providing a clear direction for the next phase of experimentation, which would involve a full factorial or response surface design focused on these vital few factors.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Reaction Optimization

| Item / Factor | Type | Primary Function in Screening |
|---|---|---|
| Solvent | Categorical | Screens solvent polarity, protic/aprotic nature, and coordinating ability, which dramatically influence mechanism, rate, and selectivity [11]. |
| Catalyst & Ligand | Categorical / Continuous | Screens catalyst identity, metal-ligand combinations, and loading (mol%) to find the most effective system for the transformation [11]. |
| Temperature | Continuous | Probes reaction kinetics, thermodynamics, and stability; often one of the most critical factors [10] [11]. |
| Reagent Stoichiometry | Continuous | Determines the optimal balance of reactants to maximize yield of the desired product while minimizing side-reactions [11]. |
| Concentration | Continuous | Impacts reaction rate and can influence pathway selectivity by modulating intermediate stability or interaction [11]. |
| Agitation Rate | Continuous | Critical for heterogeneous reactions (solid-liquid, liquid-liquid); ensures efficient mass and heat transfer [14]. |
| Additive | Categorical | Screens the effect of acids, bases, or other modifiers that can alter reactivity or suppress decomposition pathways. |
| Residence/Reaction Time | Continuous | Defines the time required for the reaction to reach completion and can impact the formation of late-stage by-products [10]. |

Design of Experiments (DoE) has emerged as a foundational statistical methodology that systematically transforms the approach to reaction yield optimization in chemical and pharmaceutical research. Unlike traditional one-variable-at-a-time (OVAT) methods, DoE enables the simultaneous investigation of multiple factors and their complex interactions, leading to profound resource savings and deeper process understanding. This application note details the key advantages of DoE, provides structured quantitative comparisons, outlines a standardized protocol for implementation, and visualizes the core workflow, serving as a practical guide for researchers and development professionals engaged in optimizing synthetic reactions.

In the competitive landscape of drug development and chemical synthesis, achieving maximum reaction yield is paramount. The traditional OVAT approach, while intuitive, is inefficient and fundamentally flawed as it fails to capture interaction effects between variables and can lead to misleading optimal conditions [15] [16]. Design of Experiments (DoE) is a structured, statistical methodology that overcomes these limitations. By systematically planning, conducting, and analyzing controlled tests, DoE allows researchers to efficiently explore the entire experimental space, quantify the impact of multiple input factors on output responses like yield, and build predictive models for process optimization [17] [15]. This document frames the application of DoE within a broader research thesis on reaction yield optimization, highlighting its transformative advantages through quantitative data, practical protocols, and clear visualizations.

Key Advantages of DoE in Practice

The strategic adoption of DoE provides a multi-faceted advantage over conventional optimization methods, ranging from direct cost savings to the generation of robust, transferable knowledge.

Significant Resource Efficiency

DoE dramatically reduces the number of experiments required to obtain comprehensive process understanding. This efficiency conserves valuable materials, time, and laboratory resources. For instance, a two-level full factorial design for 7 factors would require 2^7 = 128 experiments. A fractional factorial design can screen the same 7 factors for significant main effects in only 8 experiments, a 94% reduction in experimental load [15]. This efficiency is further demonstrated in a study optimizing the direct Wacker-type oxidation of 1-decene to n-decanal, where a systematic DoE approach successfully navigated seven factors to maximize selectivity and conversion without requiring an impractically large number of experimental runs [18].
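The 8-run figure corresponds to a 2^(7-4) fractional factorial. One common construction, sketched below, starts from a full factorial in three base factors and defines the remaining four columns as products of the base columns. The generators D = AB, E = AC, F = BC, G = ABC are an assumed, conventional choice rather than the design used in [15].

```python
from itertools import product

# Base 2^3 full factorial in A, B, C; remaining columns come from generators
# D = AB, E = AC, F = BC, G = ABC (a conventional resolution III choice).
design = [
    (a, b, c, a * b, a * c, b * c, a * b * c)
    for a, b, c in product([-1, +1], repeat=3)
]

full_factorial_runs = 2 ** 7
reduction = 1 - len(design) / full_factorial_runs

# All seven factor columns are mutually orthogonal.
orthogonal = all(
    sum(row[i] * row[j] for row in design) == 0
    for i in range(7)
    for j in range(i + 1, 7)
)

print(f"{len(design)} runs instead of {full_factorial_runs}: "
      f"{reduction:.2%} fewer experiments")
print("columns orthogonal:", orthogonal)
```

The saving has a price: in a resolution III design every main effect is aliased with two-factor interactions, so the design is suited to screening and should be followed by further experiments on the factors that stand out.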

Revelation of Critical Factor Interactions

This is arguably the most powerful advantage of DoE. Interactions occur when the effect of one factor on the response depends on the level of another factor. For example, the effect of a change in reaction temperature on yield might be different at a high catalyst concentration than at a low one. OVAT methodologies are blind to these interactions, whereas DoE systematically uncovers them, preventing process failures and revealing synergistic effects that can be leveraged for superior performance [17] [15]. The inability to detect interactions is a critical shortcoming of the OVAT approach [16].
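The temperature and catalyst example can be put into numbers with a 2 x 2 factorial. In the sketch below the four yields are hypothetical; the interaction effect is computed as half the difference between the temperature effect at high catalyst loading and the temperature effect at low catalyst loading.

```python
# Hypothetical yields (%) from a 2 x 2 factorial; keys are (temperature, catalyst).
yields = {
    ("low", "low"): 50.0,
    ("high", "low"): 55.0,
    ("low", "high"): 60.0,
    ("high", "high"): 85.0,
}

# Effect of raising temperature at each catalyst level.
temp_effect_low_cat = yields[("high", "low")] - yields[("low", "low")]
temp_effect_high_cat = yields[("high", "high")] - yields[("low", "high")]

# Main effect: the average of the two conditional effects.
main_effect_temp = (temp_effect_low_cat + temp_effect_high_cat) / 2

# Interaction: half the difference between the conditional effects.
interaction = (temp_effect_high_cat - temp_effect_low_cat) / 2

# What an additive (no-interaction) view from the low/low baseline would predict.
catalyst_effect_low_temp = yields[("low", "high")] - yields[("low", "low")]
additive_prediction = yields[("low", "low")] + temp_effect_low_cat + catalyst_effect_low_temp

print("temperature effect at low catalyst:", temp_effect_low_cat)
print("temperature effect at high catalyst:", temp_effect_high_cat)
print("interaction effect:", interaction)
print("additive prediction vs observed:", additive_prediction, yields[("high", "high")])
```

With these assumed numbers, changing one factor at a time from the low/low baseline suggests a yield of 65% at the high/high setting, whereas the factorial shows 85%. The 20-point gap is what a nonzero interaction looks like in practice.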

Enhanced Process Robustness and Quality

By mapping the relationship between factors and responses, DoE helps identify a design space—a multidimensional combination of input variables that consistently delivers a high-quality output. Processes optimized using DoE are inherently more robust, meaning they are less sensitive to minor, uncontrollable variations in raw materials or environmental conditions, ensuring consistent product quality and yield [17] [15]. This aligns perfectly with the Quality by Design (QbD) principles advocated by regulatory bodies like the FDA [15].

Accelerated Development Timelines

The efficiency of DoE directly translates to faster project cycles. By obtaining maximum information from a minimal number of experiments, researchers can accelerate the reaction optimization phase, reducing the time from initial discovery to process transfer and commercialization. This faster time-to-market provides a significant competitive advantage [17].

Table 1: Quantitative Comparison of DoE vs. One-Variable-at-a-Time (OVAT) Approach

| Feature | Design of Experiments (DoE) | One-Variable-at-a-Time (OVAT) |
|---|---|---|
| Experimental Efficiency | High (e.g., 7 factors screened in 8 runs) [15] | Low (requires many more runs for equivalent factors) |
| Detection of Interactions | Yes, a core capability [17] [15] | No, fundamentally unable to detect [16] |
| Process Understanding | Deep, builds a predictive model of the system [15] | Superficial, only reveals main effects in isolation |
| Process Robustness | High, identifies a stable design space [17] | Low, optimal point may be fragile to variation |
| Regulatory Compliance | Supported, aligns with QbD principles [15] | Not favored for demonstrating deep process understanding |

Experimental Protocol for Reaction Yield Optimization

The following protocol provides a generalized, step-by-step guide for implementing a DoE to optimize a chemical reaction, drawing from established best practices and recent applications in synthetic chemistry [17] [15] [16].

Stage 1: Pre-Experimental Planning

Step 1.1: Define the Problem and Objectives

Clearly articulate the experimental goal. For yield optimization, the primary objective is typically to "maximize the reaction yield of Product P." Ensure the objective is specific and measurable.

- Input: Knowledge of the reaction and its challenges.

- Output: A single-sentence objective statement.

Step 1.2: Identify and Select Factors and Responses

Brainstorm all potential variables (factors) that could influence the reaction yield using a cross-functional team. Common factors include catalyst loading, temperature, reaction time, solvent identity/volume, and reactant stoichiometry.

- Factors: Select 4-7 potentially critical factors for an initial screening design. Define a realistic high and low level for each continuous factor (e.g., Temperature: 25°C and 75°C).

- Responses: Define the measurable outputs. The primary response will be Reaction Yield (e.g., determined by NMR or HPLC). Secondary responses can include purity, selectivity, or conversion [18].

- Output: A finalized list of factors with their levels and defined responses.

Step 1.3: Choose the Experimental Design

The choice of design depends on the number of factors and the study's goal.

- Screening: For identifying the most important factors from a larger set (e.g., 5-7), use a Fractional Factorial or Plackett-Burman design [17] [15].

- Optimization: For refining the levels of a smaller number of critical factors (e.g., 2-4), use Response Surface Methodology (RSM) designs like Central Composite or Box-Behnken to model curvature and locate the true optimum [17] [18].

- Output: A design matrix (a table listing the factor level settings for each experimental run) generated by statistical software (e.g., JMP, Minitab, Design-Expert).

Stage 2: Execution and Analysis

Step 2.1: Execute the Experiments

Run the experiments in a randomized order to minimize the impact of lurking variables (e.g., ambient humidity, reagent batch). Use automated workstations and inline analytics (e.g., benchtop NMR) if available to enhance reproducibility and throughput [19].

- Protocol: Precisely follow the factor settings for each run as defined in the design matrix. Accurately measure and record all response values.

Step 2.2: Analyze the Data and Interpret the Results

Input the experimental results into the statistical software for analysis.

- Analysis of Variance (ANOVA): Use ANOVA to identify which factors and interactions have a statistically significant effect on the reaction yield. Look for low p-values (typically <0.05).

- Model Generation: The software will generate a mathematical model (often a polynomial equation) that describes the relationship between the factors and the yield.

- Visualization: Examine contour plots and 3D response surface plots to understand the nature of the effects and interactions and to identify the region of optimal yield.

- Output: A list of significant effects, a predictive model, and graphical plots indicating the optimal direction.

Stage 3: Validation and Implementation

Step 3.1: Validate the Model with Confirmatory Runs

Perform a small number of additional experiments (typically 3-5) at the predicted optimal conditions. This critical step verifies that the model accurately predicts reality.

- Protocol: Run the reaction at the suggested optimum and measure the yield. Compare the experimental result with the model's prediction.

- Output: Validation data confirming the model's accuracy and the reproducibility of the high-yield conditions.

Step 3.2: Implement and Document

Formally document the optimized reaction conditions and incorporate them into standard operating procedures (SOPs) for future use. The entire DoE process, from design to validation, should be thoroughly documented for internal knowledge sharing and regulatory compliance [15].

Visualization of the DoE Workflow for Yield Optimization

The following diagram illustrates the iterative, staged workflow of a typical DoE project for reaction optimization, integrating the key steps outlined in the protocol.

Diagram 1: Staged DoE Workflow for Reaction Optimization. This chart outlines the sequential and iterative stages of a DoE project, from initial problem definition through to final implementation and documentation.

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of a DoE requires both strategic planning and practical laboratory tools. The following table details key resources and their functions in the context of a reaction yield optimization study.

Table 2: Essential Reagents, Materials, and Tools for DoE-Driven Optimization

| Category/Item | Function in DoE for Yield Optimization |
|---|---|
| Statistical Software | Tools like JMP, Minitab, or Design-Expert are critical for generating design matrices, analyzing results via ANOVA, and creating visualizations like response surface plots [17] [20]. |
| Parallel Reactor Systems | Automated workstations (e.g., Chemspeed platforms) enable the high-throughput execution of multiple reaction conditions in parallel, ensuring consistency and saving significant time [19]. |
| In-line/At-line Analytics | Benchtop NMR (e.g., Bruker Fourier 80) or HPLC systems provide rapid, quantitative yield data for immediate feedback and analysis, closing the loop on automated optimization workflows [19]. |
| Catalyst/Ligand Libraries | A diverse collection of catalysts and ligands is essential for screening these critical factors in metal-catalyzed reactions (e.g., Buchwald-Hartwig, Suzuki couplings) to find the highest-performing combination [21]. |
| Solvent & Additive Kits | Pre-prepared kits of common solvents (e.g., DMF, THF, MeCN) and additives (e.g., bases, acids) streamline the preparation of many different reaction conditions defined by the DoE matrix. |

The transition from one-variable-at-a-time experimentation to a structured Design of Experiments approach represents a paradigm shift in chemical research. The key advantages of DoE—dramatic resource savings, the critical revelation of factor interactions, and the establishment of robust, well-understood processes—make it an indispensable tool for modern scientists, particularly in the demanding field of drug development. By adopting the protocols and principles outlined in this application note, researchers can systematically unlock superior reaction yields, accelerate development timelines, and build a deeper, more predictive understanding of their chemistry.

Identifying the Right Scenarios for Applying DoE in Reaction Development

Design of Experiments (DoE) is a powerful statistical methodology for planning, conducting, and analyzing controlled experiments to efficiently explore the relationship between multiple input factors and desired outputs [22]. In the context of reaction development and optimization, DoE provides a systematic approach to understanding complex chemical processes, enabling researchers to move beyond traditional, inefficient one-variable-at-a-time (OVAT) approaches [23] [24]. This application note outlines key scenarios where DoE delivers significant advantages in pharmaceutical development and synthetic chemistry, providing structured protocols for implementation.

The fundamental strength of DoE lies in its ability to simultaneously vary multiple experimental factors, which allows for the identification of critical interactions that would likely be missed when experimenting with one factor at a time [22]. By creating a carefully prepared set of representative experiments where all relevant factors are varied simultaneously, researchers can construct a map of the experimental region that returns maximum information about how factors influence responses [23]. This organized approach enables more precise information acquisition in fewer experiments while accounting for experimental variability [23] [25].

When to Apply DoE: Key Scenarios

Primary Application Scenarios

Table 1: Key Scenarios for DoE Application in Reaction Development

| Scenario | Traditional Approach Limitations | DoE Advantages | Typical DoE Design |

|---|---|---|---|

| Initial Reaction Screening | Inefficient identification of critical factors from many candidates | Identifies key influencing factors from many variables with minimal experiments [26] | Fractional Factorial or Plackett-Burman designs [26] |

| Process Optimization | Risk of missing true optimum due to factor interactions; requires many experiments [24] | Models complex response surfaces and identifies optimal conditions even with interactions [24] [27] | Response Surface Methodology (Central Composite, Box-Behnken) [22] [27] |

| Solvent Optimization | Trial-and-error based on limited experience; potentially overlooking superior solvents [24] | Systematically explores "solvent space" using PCA-based maps to identify optimal solvent properties [24] | Specialized mixture designs or PCA-based solvent selection [24] |

| Robustness Testing | Inability to predict performance under variable conditions | Quantifies effect of minor variations on process performance and defines control strategies [23] | Full or Fractional Factorial designs with center points [22] |

| Multistep Synthesis Optimization | Optimizing steps independently may miss cross-step interactions and global optimum | Identifies critical interactions between steps and optimizes overall process yield [26] | Sequential DoE approaches (Screening → Optimization) [22] |

| Biological Assay Development | Unreliable results due to unrecognized factor interactions affecting assay performance | Identifies optimal assay conditions and critical factors affecting robustness [26] | Screening designs followed by optimization designs [26] |

Limitations of Traditional OVAT Approaches

The traditional One-Variable-at-a-Time (OVAT) approach, sometimes called the COST (Change One Separate factor at a Time) approach, presents significant limitations in reaction development [23]. This method involves varying just one factor while keeping others constant, which can lead to several problems:

Failure to Identify Optima: OVAT can easily miss the true optimum conditions when interactions between factors exist [24]. For example, as shown in Figure 1, optimizing reagent equivalents and temperature separately may settle on suboptimal conditions and miss the true optimum combination [24].

Inefficient Resource Use: The OVAT approach typically requires more experiments to obtain less information about the system [23]. It explores only a small portion of the possible experimental space, potentially requiring repetition when interactions are discovered later [23].

False Confidence: Researchers may perceive they have found an optimum with OVAT when in reality, continuing experiments in different regions of the experimental space might yield significantly better results [23].

Figure 1: Comparison of Traditional OVAT vs. DoE Experimental Approaches
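The first failure mode can be illustrated with a minimal sketch on a made-up yield surface. The function `yield_pct`, the coded 0-4 grid, and the starting point are all illustrative assumptions and do not come from the cited studies; the surface is simply built so that reagent equivalents and temperature interact strongly.

```python
def yield_pct(equiv, temp):
    """Hypothetical yield surface with a strong equivalents x temperature interaction."""
    return 90 - 4 * (equiv - temp) ** 2 - (equiv + temp - 8) ** 2

levels = [0, 1, 2, 3, 4]  # coded settings for each factor (assumed)

# OVAT: vary equivalents at the starting temperature (coded 0),
# then vary temperature with equivalents fixed at that "best" value.
best_equiv = max(levels, key=lambda e: yield_pct(e, 0))
best_temp = max(levels, key=lambda t: yield_pct(best_equiv, t))
ovat_point = (best_equiv, best_temp)
ovat_yield = yield_pct(*ovat_point)

# Two-level full factorial: only the four corners of the same region.
corners = [(e, t) for e in (0, 4) for t in (0, 4)]
corner_yields = {pt: yield_pct(*pt) for pt in corners}
factorial_point = max(corner_yields, key=corner_yields.get)
factorial_yield = corner_yields[factorial_point]

# Interaction effect: difference between the two diagonal averages.
interaction = ((corner_yields[(0, 0)] + corner_yields[(4, 4)])
               - (corner_yields[(4, 0)] + corner_yields[(0, 4)])) / 2

print("OVAT:", ovat_point, ovat_yield)
print("Factorial:", factorial_point, factorial_yield, "interaction =", interaction)
```

On this invented surface the OVAT pass stops at coded point (2, 3) with a yield of 77, while the four-run factorial reaches the corner (4, 4) with a yield of 90 and exposes a large interaction term. A real surface will behave differently, but the mechanism is the same.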

Experimental Protocols

Protocol 1: Initial Reaction Screening

Objective: Identify the most critical factors affecting reaction yield from a larger set of potential variables [26].

Step-by-Step Workflow:

Define Objective and Responses

- Clearly state the primary objective (e.g., "Identify factors most critical for achieving >80% yield")

- Identify the response measurements (e.g., yield, purity, selectivity) and ensure reliable analytical methods [25]

Select Factors and Levels

- Choose factors to investigate (typically 4-8 factors for initial screening)

- Select appropriate high/low levels for each factor based on prior knowledge or preliminary experiments

- Example factors: catalyst loading, temperature, solvent dielectric, concentration, reaction time

Choose Experimental Design

- For 4-6 factors: Use a fractional factorial design (Resolution IV or higher, so that main effects are not aliased with two-factor interactions)

- For 7+ factors: Consider a Plackett-Burman design for highly efficient screening

- Include 3-5 center point replicates to estimate experimental error and check for curvature [22]

Execute Experimental Plan

- Randomize run order to minimize confounding with external factors [22]

- Conduct experiments according to the randomized sequence

- Precisely record all response data and any observations

Statistical Analysis

- Perform Analysis of Variance (ANOVA) to identify statistically significant effects

- Create Pareto charts of standardized effects to visualize factor importance [22]

- Check model adequacy using residual plots and center point analysis

Interpretation and Next Steps

- Identify the 2-4 most critical factors for further optimization

- Document insignificant factors that can be fixed at economical levels

- Proceed to optimization designs for critical factors
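The design and execution steps of this protocol can be sketched in a few lines. This is a minimal sketch, assuming five continuous factors named after the example list above, a half-fraction with the generator E = ABCD, three center points, and an arbitrary random seed; none of these choices is prescribed by the cited sources.

```python
import itertools
import random

# Factor names follow the example list above; they are illustrative only.
factors = ["catalyst_loading", "temperature", "solvent_dielectric",
           "concentration", "reaction_time"]

# Half fraction 2^(5-1): full factorial in the first four factors,
# fifth column set by the generator E = ABCD.
factorial_runs = [(a, b, c, d, a * b * c * d)
                  for a, b, c, d in itertools.product((-1, 1), repeat=4)]

# Three center-point replicates (every factor at the coded mid-level 0).
center_runs = [(0, 0, 0, 0, 0)] * 3

design = factorial_runs + center_runs

# Randomize the execution order; a fixed seed keeps the plan reproducible.
rng = random.Random(2024)
run_order = list(range(len(design)))
rng.shuffle(run_order)

for position, idx in enumerate(run_order, start=1):
    settings = dict(zip(factors, design[idx]))
    print(position, settings)
```

The coded values (-1, 0, +1) would then be mapped to the actual low, mid, and high settings chosen for each factor before the runs are executed.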

Protocol 2: Reaction Optimization Using Response Surface Methodology

Objective: Model the relationship between critical factors and responses to identify optimal reaction conditions [22].

Step-by-Step Workflow:

Define Optimization Criteria

- Establish clear criteria for success (e.g., yield >90%, impurity <2%, cost constraints)

- Select the 2-4 critical factors identified in the screening experiments

Select Response Surface Design

- For 2-3 factors: Central Composite Design (CCD) or Box-Behnken Design [27]

- For 4+ factors: Consider a CCD built on a fractional factorial core to keep the experiment count practical

- Include 5-8 center points to estimate pure error and model adequacy

Experimental Execution

- Execute all design points in randomized order

- Include additional center points throughout the sequence to monitor stability

- Consider blocking if experiments must be performed in multiple sessions

Model Development

- Fit experimental data to quadratic model: Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣβᵢⱼXᵢXⱼ

- Evaluate model significance using ANOVA (check F-statistic and p-values)

- Assess model adequacy (R², adjusted R², prediction R², residual analysis)

Optimization and Validation

- Use contour plots and response surface plots to visualize factor-response relationships [23]

- Apply numerical optimization to identify optimum conditions meeting all criteria

- Conduct 3-5 confirmation experiments at predicted optimum to validate model
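The design, model-fitting, and optimization steps above can be sketched for two factors as follows. This is a minimal sketch, assuming NumPy is available, a rotatable CCD with five center points, and a noise-free invented response function `true_yield` standing in for laboratory data; in practice the `y` values come from the experiments.

```python
import numpy as np

# Two-factor rotatable central composite design in coded units.
alpha = np.sqrt(2.0)
factorial_pts = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
axial_pts = [(-alpha, 0), (alpha, 0), (0, -alpha), (0, alpha)]
center_pts = [(0, 0)] * 5
X = np.array(factorial_pts + axial_pts + center_pts, dtype=float)

def true_yield(x1, x2):
    """Invented quadratic response used in place of measured yields."""
    return 80 + 5 * x1 + 3 * x2 - 4 * x1**2 - 6 * x2**2 + 2 * x1 * x2

y = true_yield(X[:, 0], X[:, 1])

def model_matrix(points):
    """Columns: intercept, x1, x2, x1^2, x2^2, x1*x2 (full quadratic model)."""
    x1, x2 = points[:, 0], points[:, 1]
    return np.column_stack([np.ones(len(points)), x1, x2, x1**2, x2**2, x1 * x2])

# Least-squares fit of the quadratic model.
coef, *_ = np.linalg.lstsq(model_matrix(X), y, rcond=None)
b0, b1, b2, b11, b22, b12 = coef

# Stationary point: set the gradient of the fitted surface to zero.
hessian = np.array([[2 * b11, b12], [b12, 2 * b22]])
x_opt = np.linalg.solve(hessian, -np.array([b1, b2]))
y_opt = (model_matrix(x_opt.reshape(1, 2)) @ coef).item()

# Both Hessian eigenvalues negative means the stationary point is a maximum.
is_maximum = bool(np.all(np.linalg.eigvalsh(hessian) < 0))
print("coefficients:", np.round(coef, 3))
print("predicted optimum (coded):", np.round(x_opt, 3), "yield:", round(y_opt, 2))
```

The predicted optimum is in coded units and must be converted back to real settings; the confirmation experiments in the last step then test whether the model's prediction holds at that point.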

Protocol 3: Solvent Optimization Using PCA-Based Solvent Maps

Objective: Systematically identify optimal solvent(s) for a reaction using principal component analysis of solvent properties [24].

Step-by-Step Workflow:

Define Solvent Selection Criteria

- Identify key reaction requirements (e.g., polarity, hydrogen bonding, coordinating ability)

- Consider practical constraints (safety, environmental impact, cost, availability)

Select Solvent Set

- Choose 5-8 solvents spanning different regions of PCA-based solvent space [24]

- Include solvents from different chemical classes (ethers, esters, hydrocarbons, amides, etc.)

- Consider including potentially "green" solvent alternatives

Experimental Design

- Use a specialized mixture design or categorical design for solvent screening

- If studying solvent mixtures, employ simplex-lattice or simplex-centroid designs

- Include additional factors as needed (concentration, temperature, etc.)

Execution and Analysis

- Conduct reactions in selected solvents using randomized order

- Measure key responses (conversion, yield, selectivity, etc.)

- Analyze data to identify optimal solvent region in PCA space

Optimization and Application

- Select additional solvents from promising regions for further testing

- Validate optimal solvent choice across multiple substrate types

- Document solvent-performance relationships for future reaction development
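For the solvent-mixture case mentioned in the experimental design step, a simplex-lattice design can be enumerated directly. The sketch below assumes three solvents chosen only for illustration and a {3, 2} lattice (proportions in steps of 1/2); the helper name `simplex_lattice` is hypothetical.

```python
from fractions import Fraction
from itertools import product

def simplex_lattice(q, m):
    """All blends of q components with proportions in {0, 1/m, ..., 1} that sum to 1."""
    points = []
    for combo in product(range(m + 1), repeat=q):
        if sum(combo) == m:
            points.append(tuple(Fraction(c, m) for c in combo))
    return points

solvents = ("THF", "MeCN", "DMF")  # illustrative choice of three solvents
blends = simplex_lattice(len(solvents), 2)

for blend in blends:
    recipe = ", ".join(f"{name} {float(frac):.2f}" for name, frac in zip(solvents, blend))
    print(recipe)
```

A {3, 2} lattice contains the three pure solvents and the three 50:50 binary blends. A simplex-centroid design would add the equal three-way blend as a further point.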

Research Reagent Solutions

Table 2: Essential Research Reagents and Materials for DoE Studies

| Reagent/Material | Function in DoE Studies | Application Notes |

|---|---|---|

| Catalyst Libraries | Systematic variation of catalyst type and loading | Maintain consistent ligand-to-metal ratios; consider stability under reaction conditions [24] |

| Solvent Kits | Exploration of solvent effects using PCA-based selection | Include diverse chemical classes covering principal component space [24] |

| Substrate Pairs | Evaluation of substrate generality and scope | Include electronically and sterically diverse examples [24] |

| Temperature Control Systems | Precise maintenance of reaction temperature | Critical for reproducible results across experimental series [22] |

| Analytical Standards | Accurate quantification of reaction outcomes | Essential for reliable response measurements [25] |

| Reagent Stocks | Controlled variation of reagent equivalents | Prepare concentrated stock solutions for accurate dispensing [24] |

| Inert Atmosphere Equipment | Exclusion of oxygen and moisture when required | Maintain consistent reaction conditions across all experiments [24] |

Data Analysis and Interpretation

Statistical Analysis Methods

Proper statistical analysis is crucial for extracting meaningful information from DoE studies. Key analysis methods include:

Analysis of Variance (ANOVA): Determines the statistical significance of factor effects and model terms [25]. Look for p-values <0.05 to identify significant effects, though this threshold may be adjusted based on practical significance.

Regression Analysis: Develops mathematical models relating factors to responses [25]. For optimization studies, quadratic models are typically employed: Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣβᵢⱼXᵢXⱼ.

Residual Analysis: Checks model adequacy by examining patterns in the differences between observed and predicted values. Random scatter in residual plots indicates a well-fitting model.

Contour and Response Surface Plots: Visualizes the relationship between factors and responses [23]. These plots are invaluable for identifying optimal conditions and understanding factor interactions.
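The regression and adequacy checks described above can be sketched numerically. This is a minimal sketch, assuming NumPy and a small invented data set (a 2^2 factorial with three center points); the yields are made up for illustration. It fits a model with main effects and one interaction, then computes R², adjusted R², and a PRESS-based prediction R².

```python
import numpy as np

# Coded 2^2 factorial plus three center points; yields are invented.
x1 = np.array([-1, 1, -1, 1, 0, 0, 0], dtype=float)
x2 = np.array([-1, -1, 1, 1, 0, 0, 0], dtype=float)
y = np.array([62, 75, 68, 88, 73, 74, 72], dtype=float)

# Model: intercept + x1 + x2 + x1*x2.
X = np.column_stack([np.ones_like(x1), x1, x2, x1 * x2])
n, p = X.shape

coef, *_ = np.linalg.lstsq(X, y, rcond=None)
residuals = y - X @ coef

ss_res = float(residuals @ residuals)
ss_tot = float(((y - y.mean()) ** 2).sum())

r2 = 1 - ss_res / ss_tot
adj_r2 = 1 - (ss_res / (n - p)) / (ss_tot / (n - 1))

# Prediction R^2 from PRESS: leave-one-out residuals via the hat-matrix diagonal.
leverage = np.diag(X @ np.linalg.inv(X.T @ X) @ X.T)
press = float(((residuals / (1 - leverage)) ** 2).sum())
pred_r2 = 1 - press / ss_tot

print("coefficients:", np.round(coef, 3))
print(f"R2={r2:.4f}  adjusted R2={adj_r2:.4f}  prediction R2={pred_r2:.4f}")
```

A large gap between adjusted R² and prediction R² is a common warning sign of overfitting. Dedicated DoE software reports the same quantities alongside the full ANOVA table.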

Interpretation Guidelines

Effective interpretation of DoE results requires both statistical and practical reasoning:

Statistical vs. Practical Significance: An effect may be statistically significant but too small to be practically important. Consider the magnitude of effects alongside p-values.

Model Hierarchy: Respect the principle of hierarchy when reducing a model: if a higher-order term (an interaction or squared term) is retained, keep the lower-order terms it is built from, even when they are not statistically significant on their own.

Leveraging Interactions: Significant interaction effects indicate that the impact of one factor depends on the level of another. These interactions often reveal opportunities for process optimization that would be missed with OVAT approaches.

Multiple Responses: When optimizing for multiple responses, use desirability functions or overlay contour plots to identify conditions that balance all requirements.
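The desirability approach for multiple responses can be sketched as follows. This is a minimal sketch of one-sided desirability functions combined by a geometric mean; the function names, the limits (yield between 80% and 95%, impurity between 0.5% and 2.0%), and the two candidate conditions are illustrative assumptions.

```python
def d_maximize(value, low, target, weight=1.0):
    """Desirability for a larger-is-better response: 0 at or below low, 1 at or above target."""
    if value <= low:
        return 0.0
    if value >= target:
        return 1.0
    return ((value - low) / (target - low)) ** weight

def d_minimize(value, target, high, weight=1.0):
    """Desirability for a smaller-is-better response: 1 at or below target, 0 at or above high."""
    if value <= target:
        return 1.0
    if value >= high:
        return 0.0
    return ((high - value) / (high - target)) ** weight

def overall_desirability(values):
    """Geometric mean, so any single unacceptable response drives the result to zero."""
    product = 1.0
    for d in values:
        product *= d
    return product ** (1.0 / len(values))

# Two hypothetical candidate conditions: (yield %, impurity %).
candidates = {"A": (90.0, 1.0), "B": (94.0, 2.5)}
scores = {}
for name, (yld, imp) in candidates.items():
    scores[name] = overall_desirability([
        d_maximize(yld, low=80.0, target=95.0),
        d_minimize(imp, target=0.5, high=2.0),
    ])
    print(name, round(scores[name], 3))
```

Candidate B has the higher yield but an impurity level above the acceptable limit, so its overall desirability is zero and candidate A is preferred. In practice the predicted responses from the fitted models, not single measurements, are fed into these functions.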

Design of Experiments provides a structured, efficient framework for reaction development that surpasses traditional OVAT approaches, particularly in scenarios requiring the identification of critical factors, process optimization, and understanding complex factor interactions. By implementing the protocols outlined in this application note, researchers can systematically explore experimental spaces, develop predictive models, and identify robust optimal conditions with fewer resources than conventional approaches. The sequential application of screening followed by optimization designs represents a particularly powerful strategy for comprehensive reaction development. As the pharmaceutical industry faces increasing pressure to accelerate development timelines while maintaining quality standards, adopting DoE methodologies provides a competitive advantage through more efficient and informative experimentation.

A Practical Framework: Implementing Screening and Optimization DoE Designs

In the development and optimization of chemical reactions, particularly in pharmaceutical research, researchers are often confronted with a vast array of potential factors that could influence critical outcomes such as reaction yield and purity. Screening designs provide a systematic, efficient methodology for identifying the "vital few" key variables from the "trivial many" potential factors, enabling focused optimization efforts [28] [10]. These experimental strategies are founded on the principle of effect sparsity, which posits that only a small subset of factors will have substantial effects on the response [29]. For drug development professionals working to maximize reaction yield while controlling impurities, screening designs offer a scientifically rigorous approach to experimental planning that conserves valuable resources—time, materials, and labor—by reducing the number of experiments required to identify significant factors [12] [14].

The hierarchy principle further supports the use of screening designs, suggesting that main effects (the individual effect of each factor) are more likely to be important than two-factor interactions, which in turn are more likely to be important than higher-order interactions [10]. This hierarchy guides the strategic selection of appropriate screening methodologies. Within this framework, two predominant screening approaches emerge: Fractional Factorial Designs (FFDs) and Plackett-Burman Designs [28] [30]. Both methodologies enable researchers to study numerous factors simultaneously with a fraction of the experimental runs required for full factorial experimentation, making them particularly valuable in the early stages of reaction optimization when many factors must be evaluated with limited resources [28] [29].

Fundamental Principles of Screening Designs

Core Concepts and Terminology

- Factors: Independent variables suspected of influencing the reaction outcome (e.g., temperature, catalyst concentration, solvent type) [12]. In screening designs, factors are typically investigated at two levels (high/low) to estimate main effects efficiently [31].

- Levels: The specific settings or values at which each factor is tested [12]. For continuous factors like temperature, this might be 50°C (low) and 80°C (high). For categorical factors like solvent type, this could be Solvent A and Solvent B.

- Responses: The dependent variables or measured outcomes of the experiment [12]. In reaction optimization, key responses typically include reaction yield (percentage of desired product formed) and impurity profile (types and amounts of byproducts) [32] [10].

- Aliasing: A fundamental concept in screening designs where certain effects are mathematically confounded or inseparable from others due to the reduced number of experimental runs [31] [33]. This is a deliberate trade-off that enables experimental efficiency.

- Resolution: A classification system (Roman numerals III, IV, V) that describes the degree to which estimated effects are aliased with one another [31]. Resolution III designs confound main effects with two-factor interactions, while Resolution IV designs confound two-factor interactions with each other but not with main effects [31].

Statistical Principles Underpinning Screening Efficiency

Screening designs derive their efficiency from several key statistical principles that align well with practical experimentation in chemical development:

- Sparsity of Effects: This principle states that while many factors may be investigated, typically only a few have substantial effects on the response [10] [29]. This is particularly relevant in reaction optimization, where experience shows that typically only a subset of reaction parameters truly drives yield and selectivity.

- Projection Property: A screening design with good projection properties retains its statistical integrity when unimportant factors are dropped, effectively collapsing into a higher-resolution (in some cases full factorial) design in the remaining important factors [10]. This allows for a seamless transition from screening to optimization.

- Heredity Principle: This principle suggests that important interactions (e.g., between temperature and catalyst) are more likely to occur between factors that also have significant main effects [10]. This guides both experimental design and subsequent analysis.

Table 1: Key Statistical Principles in Screening Designs

| Principle | Description | Implication for Reaction Optimization |

|---|---|---|

| Effect Sparsity | Few factors and interactions have substantial effects | Enables efficient screening of many variables to find the critical few |

| Hierarchy | Lower-order effects (main effects) are more likely important than higher-order effects | Justifies focusing on main effects in initial screening |

| Heredity | Important interactions typically involve factors with significant main effects | Guides follow-up experiments to investigate specific interactions |

| Projection | Design maintains good properties when ignoring unimportant factors | Allows seamless progression from screening to optimization |

Fractional Factorial Designs (FFDs)

Theoretical Foundation and Design Structure

Fractional Factorial Designs (FFDs) are a class of screening designs that systematically select a subset (fraction) of the runs from a full factorial design [31]. The notation for a two-level FFD is 2^{k-p}, where k represents the number of factors, p determines the fraction of the full factorial (1/2^p), and the total number of runs is 2^{k-p} [31]. For example, a 2^{5-2} design studies 5 factors in 8 runs, which is 1/4 of the 32 runs required for a full factorial design [31]. The structure of FFDs is controlled by generators, mathematical relationships that determine which effects are intentionally confounded to reduce the number of runs [31]. The collection of these generators forms the defining relation, which is essential for determining the alias structure of the design [31].
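The role of generators can be made concrete with a short sketch that builds the 8-run, 5-factor design from the example. The generators D = AB and E = AC are one common choice and are assumed here for illustration; other generators give a different alias structure.

```python
from itertools import product

# Full 2^3 factorial in the base factors A, B, C (8 runs).
base_runs = list(product((-1, 1), repeat=3))

# Generators (assumed for illustration): D = AB and E = AC.
design = [(a, b, c, a * b, a * c) for a, b, c in base_runs]

full_factorial_runs = 2 ** 5
fraction = len(design) / full_factorial_runs

# The defining relation I = ABD = ACE = BCDE: each word's column product is +1 in every run.
abd = [a * b * d for a, b, c, d, e in design]
ace = [a * c * e for a, b, c, d, e in design]
bcde = [b * c * d * e for a, b, c, d, e in design]

print("runs:", len(design), "of", full_factorial_runs, "fraction:", fraction)
for run in design:
    print(run)
```

Because the shortest word in this defining relation has three letters, the design is Resolution III: main effects are aliased with two-factor interactions (for example, D with AB).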

Resolution Levels and Their Interpretation

The resolution of a fractional factorial design indicates its ability to separate main effects and low-order interactions [31]:

- Resolution III: Main effects are clear of each other but are aliased with two-factor interactions [31]. Useful for initial screening of many factors when interactions are presumed negligible.

- Resolution IV: Main effects are clear of two-factor interactions, but two-factor interactions are aliased with each other [31]. Preferred when there is concern that interactions might be present.

- Resolution V: Main effects and two-factor interactions are clear of each other, but two-factor interactions are aliased with three-factor interactions [31]. Provides more detailed information but requires more runs.

Table 2: Fractional Factorial Design Resolution Guide

| Resolution | Ability | Example | Use Case in Reaction Optimization |

|---|---|---|---|

| III | Estimate main effects, but they may be confounded with two-factor interactions | (2^{3-1}) with defining relation I = ABC | Initial screening with many factors (>5) where interactions are considered unlikely |

| IV | Estimate main effects unconfounded by two-factor interactions; two-factor interactions are aliased with each other | (2^{4-1}) with defining relation I = ABCD | Screening when some interactions are suspected but cannot be estimated separately |

| V | Estimate main effects and two-factor interactions unconfounded by each other | (2^{5-1}) with defining relation I = ABCDE | Later screening stages when key factors have been identified and interaction information is needed |
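The resolution of a design can be read off its defining relation as the length of the shortest word. The sketch below computes this from a list of generator words; the helper names are hypothetical, and the 2^{6-2} generators E = ABC and F = BCD are a commonly tabulated choice assumed here for illustration.

```python
from itertools import combinations

def multiply(word1, word2):
    """Multiply two effect words; squared letters cancel (e.g. AB * BC = AC)."""
    return "".join(sorted(set(word1) ^ set(word2)))

def defining_relation(generator_words):
    """All words obtained by multiplying any non-empty subset of the generator words."""
    words = set()
    for size in range(1, len(generator_words) + 1):
        for subset in combinations(generator_words, size):
            word = ""
            for generator in subset:
                word = multiply(word, generator)
            words.add(word)
    return sorted(words, key=lambda w: (len(w), w))

def resolution(generator_words):
    """Resolution equals the length of the shortest word in the defining relation."""
    return min(len(word) for word in defining_relation(generator_words))

def aliases(effect, generator_words):
    """Effects confounded with the given effect."""
    return [multiply(effect, word) for word in defining_relation(generator_words)]

print(resolution(["ABC"]), aliases("A", ["ABC"]))       # 2^(3-1)
print(resolution(["ABCD"]), aliases("AB", ["ABCD"]))    # 2^(4-1)
print(resolution(["ABCDE"]))                            # 2^(5-1)
print(defining_relation(["ABCE", "BCDF"]))              # 2^(6-2), E = ABC, F = BCD
```

The three single-generator examples reproduce the rows of the table above; for the 2^{6-2} design the product of the two generator words, ADEF, must be included before the shortest word length is taken.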

Application Protocol: Implementing Fractional Factorial Designs

Step 1: Design Selection and Setup

- Identify all potential factors (k) influencing the reaction yield [12]. For example, in a catalytic hydrogenation optimization, factors might include catalyst type, temperature, pressure, concentration, solvent, and agitation rate [32].

- Determine the appropriate resolution based on the number of factors and the importance of detecting interactions [28] [31]. For initial screening of 6 factors, a Resolution IV (2^{6-2}) design with 16 runs would be appropriate.

- Select the specific design generators to define the alias structure [31]. Standard generators are available in statistical references and software.

- Define factor ranges (levels) that are sufficiently different to detect an effect but remain within practical operating conditions [10].

Step 2: Experimental Execution

- Randomize the run order to protect against systematic bias and uncontrolled environmental factors [30].

- Execute experiments according to the design matrix, carefully controlling factor levels as specified.

- Measure response variables (e.g., reaction yield, impurity levels) for each experimental run [32] [10].

- Include center points (where all continuous factors are set at their mid-level) to check for curvature in the response and estimate experimental error [10].

Step 3: Data Analysis and Interpretation

- Calculate main effects by contrasting the average response at high and low levels for each factor [30].

- Use statistical significance testing (ANOVA) or half-normal probability plots to identify active factors [30] [12].

- Interpret the alias structure to understand what interactions are confounded with significant main effects [31] [33].

- Based on the results, reduce the model by removing unimportant factors and refit with significant terms [10].
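The contrast calculation in Step 3 can be sketched on a small Resolution IV design. This is a minimal sketch, assuming a 2^{4-1} design with D = ABC and a noise-free invented response `simulated_yield` in place of measured yields, so that the recovered effects can be checked against known values.

```python
from itertools import product

# 2^(4-1) design with generator D = ABC (defining relation I = ABCD).
design = [(a, b, c, a * b * c) for a, b, c in product((-1, 1), repeat=3)]

def simulated_yield(a, b, c, d):
    """Invented response: A and C matter, B slightly, D not at all, plus an AB interaction."""
    return 70 + 6 * a - 1 * b + 4 * c + 3 * a * b

yields = [simulated_yield(*run) for run in design]

def contrast_effect(signs, responses):
    """Average response at the high level minus average response at the low level."""
    high = [y for s, y in zip(signs, responses) if s == +1]
    low = [y for s, y in zip(signs, responses) if s == -1]
    return sum(high) / len(high) - sum(low) / len(low)

columns = dict(zip("ABCD", zip(*design)))
effects = {name: contrast_effect(col, yields) for name, col in columns.items()}

# With I = ABCD the AB and CD interactions share one contrast and cannot be separated.
ab_signs = [a * b for a, b, c, d in design]
effects["AB+CD"] = contrast_effect(ab_signs, yields)

ranked = sorted(effects, key=lambda name: abs(effects[name]), reverse=True)
for name in ranked:
    print(name, effects[name])
```

Each effect is twice the corresponding model coefficient, since it spans the full step from the low to the high level. The combined AB+CD contrast shows why the alias structure has to be consulted before attributing an interaction to a specific factor pair.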

Plackett-Burman Designs

Theoretical Foundation and Design Structure

Plackett-Burman designs are a specialized class of highly fractional factorial designs developed in the 1940s by statisticians Robin Plackett and J.P. Burman [30]. These designs are particularly valuable for screening a large number of factors when resources are limited, allowing the study of up to N-1 factors in N experimental runs, where N is a multiple of 4 (e.g., 12, 20, 24, 28) [33] [30]. Unlike traditional fractional factorial designs with run counts that are powers of two (8, 16, 32), Plackett-Burman designs fill the gaps between these numbers, providing greater flexibility in experimental planning [33]. These designs are Resolution III, meaning that main effects are not confounded with other main effects but are aliased with two-factor interactions [30]. The design matrix consists of orthogonal columns with an equal number of +1 and -1 entries, ensuring that all main effects can be estimated independently [33].

Comparative Advantages and Limitations

Plackett-Burman designs offer several distinct advantages for reaction screening applications. Their exceptional economic efficiency enables researchers to evaluate numerous factors with minimal experimental runs, making them ideal for early-stage reaction screening when many parameters must be investigated [30]. The availability of designs with run numbers that are multiples of 4 (12, 20, 24) provides greater flexibility compared to the power-of-two run counts in traditional fractional factorials [33]. The orthogonal structure ensures that all main effects are estimated independently, providing clear information on each factor's individual impact [33].

However, these designs have important limitations that must be considered. As Resolution III designs, they cannot estimate interaction effects independently: two-factor interactions are aliased with main effects, and in the designs whose run counts are not powers of two (such as 12, 20, or 24 runs) each interaction is partially aliased with several main effects rather than completely confounded with a single one [33] [30]. They also assume that three-factor and higher interactions are negligible, which is generally reasonable for screening but should be verified in follow-up experiments [30]. The analysis can be challenging when effect sparsity doesn't hold (when many factors are important), as the alias structure becomes more complex to interpret [29].

Application Protocol: Implementing Plackett-Burman Designs

Step 1: Design Selection and Setup

- Determine the number of factors (k) to be screened and select an appropriate design size (N) where N is a multiple of 4 and greater than k [33] [30]. For 9 factors, a 12-run design would be appropriate [34].

- Generate the design matrix using available tables, statistical software, or the cyclical generation method described in the literature [33] [34].

- Assign factors to columns in the design matrix, typically leaving any unused columns as dummy factors to estimate error [30].

- Include center points (typically 3-5) to estimate pure error and detect curvature [10] [34].
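The cyclical generation method can be sketched for the 12-run design. The sketch assumes the commonly tabulated first row for N = 12 and the usual construction (eleven cyclic shifts plus a final row of all low levels); it then checks column balance and orthogonality and looks at how main-effect columns overlap with two-factor interaction columns.

```python
from itertools import combinations

# Commonly tabulated generating row for the 12-run Plackett-Burman design.
first_row = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def plackett_burman_12():
    """Eleven cyclic shifts of the generating row plus one row of all -1."""
    rows = [first_row[-shift:] + first_row[:-shift] for shift in range(11)]
    rows.append([-1] * 11)
    return rows

design = plackett_burman_12()
columns = list(zip(*design))

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Balance: each column has six +1 and six -1. Orthogonality: all column pairs have zero dot product.
balanced = all(sum(col) == 0 for col in columns)
orthogonal = all(dot(columns[i], columns[j]) == 0
                 for i, j in combinations(range(11), 2))

# Overlap of each main-effect column k with each interaction column i*j (i, j, k distinct).
overlaps = set()
for i, j, k in combinations(range(11), 3):
    interaction = [a * b for a, b in zip(columns[i], columns[j])]
    overlaps.add(abs(dot(columns[k], interaction)))

print("balanced:", balanced, "orthogonal:", orthogonal)
print("absolute overlap values:", overlaps, "out of", len(design), "runs")
```

For nine factors, nine of the eleven columns would be assigned to real factors and the remaining two left as dummy columns. The overlap check illustrates the alias behavior of this design: the interaction columns are neither orthogonal to nor identical with the main-effect columns.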

Step 2: Experimental Execution

- Randomize the run order to minimize the impact of uncontrolled variables [30] [34].

- Conduct experiments according to the design matrix, maintaining careful control of factor levels.

- Measure all relevant response variables, with particular emphasis on reaction yield and impurity profiles in chemical applications [32] [10].

- Document any observations or potential anomalies during experimentation.

Step 3: Data Analysis and Interpretation

- Calculate main effects for each factor by comparing the average response at high and low levels [30].

- Use statistical methods such as normal probability plots, Pareto charts, or t-tests to identify significant effects [30].

- Recognize that significant effects could represent either main effects or two-factor interactions due to the alias structure [33].

- Identify the "vital few" factors that demonstrate substantial effects on the response for further investigation [10].

Comparative Analysis and Selection Guide

Direct Comparison of Screening Methodologies

Table 3: Fractional Factorial vs. Plackett-Burman Designs

| Characteristic | Fractional Factorial Designs | Plackett-Burman Designs |

|---|---|---|

| Design Notation | (2^{k-p}) (powers of 2) | N (multiples of 4: 12, 20, 24) |

| Run Requirements | 8, 16, 32, 64, 128 runs | 12, 20, 24, 28, 36 runs |

| Factor Efficiency | Up to k factors in (2^{k-p}) runs | Up to N-1 factors in N runs |

| Resolution | III, IV, V (selectable) | III primarily |

| Aliasing Structure | Clear, systematic confounding patterns | Complex partial aliasing |

| Interaction Assessment | Possible in higher resolution designs | Not separately estimable (partially aliased with main effects) |

| Projection Properties | Excellent | Good |

| Optimal Use Case | When some interaction information is needed | Pure main effect screening with many factors |

Selection Guidelines for Reaction Optimization

The choice between fractional factorial and Plackett-Burman designs depends on several factors specific to the reaction optimization context:

- Number of Factors: For 5-7 factors, fractional factorial designs typically offer better properties. For 8 or more factors, Plackett-Burman designs become increasingly attractive due to their higher efficiency [33] [30].

- Resource Constraints: When material, time, or cost limitations are severe, Plackett-Burman designs provide the most economical screening approach [30] [14].

- Prior Knowledge: When there is strong theoretical or empirical reason to believe that specific interactions might be important, Resolution IV or V fractional factorial designs are preferable [28] [31].

- Experimental Sequence: For a single screening phase followed immediately by optimization, Plackett-Burman may suffice. For a more comprehensive understanding with less follow-up experimentation, fractional factorial designs provide more information [29].
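The run-count side of this choice is simple arithmetic and can be tabulated with a short sketch. The helper names are hypothetical, and the counts are for main-effects-only (Resolution III) screening without center points or replicates.

```python
def plackett_burman_runs(k):
    """Smallest multiple of 4 that is strictly greater than the number of factors k."""
    return 4 * (k // 4 + 1)

def saturated_two_level_runs(k):
    """Smallest power of two strictly greater than k (smallest Resolution III fraction)."""
    runs = 4
    while runs <= k:
        runs *= 2
    return runs

comparison = {k: (plackett_burman_runs(k), saturated_two_level_runs(k))
              for k in (7, 9, 11, 15, 19)}

print("factors  PB runs  2^(k-p) runs")
for k, (pb, ff) in comparison.items():
    print(f"{k:>7}  {pb:>7}  {ff:>12}")
```

Where the two counts coincide (for example at 7 or 15 factors) the run count offers no reason to prefer one family over the other; the saving appears when the factor count falls between powers of two, as with 9 or 19 factors.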

Case Study: Catalytic Hydrogenation Optimization

Background and Experimental Challenge

A case study from a generic API producer illustrates the practical application of screening designs in pharmaceutical development [32]. The challenge involved optimizing a catalytic hydrogenation reaction of a halonitroheterocycle that initially produced an impure amine product with approximately 60% yield over 24 hours and an unacceptable impurity profile [32]. The development team needed to identify the key factors influencing both yield and purity from a potentially large set of reaction parameters, including catalyst type, concentration, temperature, pressure, and solvent composition.

Screening Approach and Implementation

The optimization followed a two-stage approach representative of best practices in reaction optimization [32]. First, discrete variables (14 different catalysts) were screened to identify the most promising candidates. Subsequently, a two-level factorial design was employed to optimize continuous parameters including concentration, temperature, and pressure [32]. While the specific screening design type isn't detailed in the source, this systematic approach exemplifies the strategic application of screening methodologies to separate the catalyst screening (a discrete selection process) from the optimization of continuous reaction parameters.

Results and Impact

The implementation of this screening and optimization strategy delivered substantial improvements in the reaction performance [32]. The yield was dramatically improved to 98.8% in just 6 hours (compared to the original 60% in 24 hours), while impurities were reduced to below 0.1% [32]. Additionally, the systematic approach resolved poor solubility and instability issues that had plagued the original process [32]. The entire optimization, from initial screening to final report and samples, was completed within two months, demonstrating the efficiency gains achievable through well-designed screening experiments [32].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for Reaction Screening

| Reagent/Material | Function in Screening Experiments | Application Notes |

|---|---|---|

| Catalyst Library | Screening of different catalytic systems for reaction initiation and selectivity | Essential for identifying optimal catalyst; example: 14 catalysts screened in hydrogenation case [32] |

| Solvent Systems | Variation of reaction medium to optimize solubility, stability, and selectivity | Include polar, non-polar, protic, and aprotic solvents for comprehensive screening |

| Temperature Control System | Precise maintenance of reaction temperature at specified levels | Critical for reproducible results; temperature often identified as key factor [10] |

| Pressure Regulation Apparatus | Control of reaction pressure, particularly for gas-involved reactions | Important for hydrogenation, carbonylation, and other pressure-sensitive reactions [32] |

| Analytical Standards | Quantification of yield, conversion, and impurity profiles | HPLC/GC standards for product and key impurities essential for response measurement |

| Statistical Software | Design generation, randomization, and data analysis | Packages like Minitab, JMP, or R enable proper design implementation and analysis [10] [34] |

Screening designs represent a powerful methodology for efficiently identifying critical factors in reaction optimization, enabling researchers to focus resources on the parameters that truly impact reaction yield and selectivity. Fractional Factorial Designs provide a structured approach with selectable resolution levels, while Plackett-Burman designs offer exceptional economic efficiency for pure main effect screening. The successful application of these methodologies in pharmaceutical development, as demonstrated in the catalytic hydrogenation case study, highlights their practical value in accelerating process development while improving reaction outcomes. By integrating these statistical experimental strategies early in reaction optimization workflows, drug development professionals can systematically navigate complex factor spaces, reduce experimental burden, and ultimately develop more robust and efficient synthetic processes.
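To make the Plackett-Burman idea concrete, the sketch below builds the 12-run design from its standard generator row by cyclic shifting. The function name `plackett_burman_12` and the choice of seven factors are illustrative assumptions, not details of the hydrogenation case study.

```python
# Standard 12-run Plackett-Burman generator row (+1 = high level, -1 = low level).
PB12_GENERATOR = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12(n_factors):
    """Return a 12-run Plackett-Burman design for up to 11 two-level factors."""
    if not 1 <= n_factors <= 11:
        raise ValueError("the 12-run design accommodates 1 to 11 factors")
    n = len(PB12_GENERATOR)
    # Rows 1-11 are cyclic shifts of the generator row; row 12 sets every factor low.
    rows = [[PB12_GENERATOR[(j - i) % n] for j in range(n)] for i in range(n)]
    rows.append([-1] * n)
    # Keep only as many columns as there are factors to screen.
    return [row[:n_factors] for row in rows]


# Hypothetical example: screen seven factors in twelve runs.
design = plackett_burman_12(7)
for run_number, levels in enumerate(design, start=1):
    print(run_number, levels)
```

Each column is balanced (six high, six low) and every pair of columns is orthogonal, which is what allows main effects to be estimated independently of one another in so few runs.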

Within the framework of a broader thesis on optimizing chemical and pharmaceutical reaction yields using Design of Experiments (DoE), Response Surface Methodology (RSM) stands as a critical statistical tool. It moves beyond simple screening to model complex, curved relationships between critical process parameters (CPPs) and key performance outcomes, such as reaction yield or purity [35] [36]. For drug development professionals, this is indispensable for defining a robust design space that ensures consistent product quality. Two predominant RSM designs are the Central Composite Design (CCD) and the Box-Behnken Design (BBD). This article provides detailed application notes and experimental protocols for implementing these designs, framed within the context of reaction optimization research [27].

Design Comparison and Selection Guidelines

The choice between CCD and BBD hinges on the experimental objectives, process constraints, and stage of development. The table below synthesizes their key characteristics to guide selection.

Table 1: Comparative Summary of Central Composite Design (CCD) and Box-Behnken Design (BBD)

| Feature | Central Composite Design (CCD) | Box-Behnken Design (BBD) |
|---|---|---|
| Core Structure | Built upon a factorial (full or fractional) core, augmented with axial ("star") points and center points [35] [37]. | An independent quadratic design with points at the midpoints of edges of the factorial hypercube and at the center; no embedded factorial design [35] [38]. |
| Factor Levels | Typically 5 levels per factor (-α, -1, 0, +1, +α). A face-centered CCD (α=1) uses 3 levels [35] [39]. | Always 3 levels per factor (-1, 0, +1) [35] [38]. |
| Design Points | Number of runs = 2^(k-f) + 2k + C₀ (where k=factors, f=fraction, C₀=center points). Run count grows significantly for k>6 [37] [39]. | Generally more run-efficient for the same number of factors, especially beyond k=4 [37]. |
| Sequential Experimentation | Highly suited. One can begin with a factorial study and later add axial/center points to model curvature, allowing for progressive learning [35] [37]. | Not suited. Requires committing to a full quadratic model from the start; cannot be built upon a prior factorial experiment [35] [37]. |
| Exploration of Space | Tests extreme factorial corners and points beyond the original cube (via α >1), useful for locating an optimum outside initial bounds [35] [37]. | Never includes points where all factors are simultaneously at extreme high/low levels. All points lie within the defined low/high factor ranges, so no run exceeds the chosen operating limits [35] [37]. |
| Primary Applications | Ideal for early-stage process understanding, sequential optimization, and when exploring beyond predefined limits is safe and desirable [27] [37]. | Preferred for optimizing well-characterized systems where testing extreme combinations is risky, expensive, or impractical, and for staying within strict operational limits [40] [37] [41]. |
| Example Run Count (k=3) | 14-20 runs (depending on center points) [37]. | 15 runs (typically) [40] [37]. |

A recent comparative study on optimizing nano-emulsion formulations found that while both designs yielded similar optimal conditions, the CCD model provided predictions slightly closer to the actual experimental values [42].
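The run-count entries in Table 1 can be checked with a few lines of arithmetic. The sketch below is illustrative only: the CCD formula is the one given in the table, while the BBD count of 2k(k-1) + C₀ is not stated in the text and is assumed here for the standard designs with k = 3 to 5 factors. The function names and default center-point counts are also assumptions.

```python
def ccd_runs(k, fraction=0, center_points=6):
    """Runs in a CCD: 2^(k-f) factorial points + 2k axial points + C0 center points."""
    return 2 ** (k - fraction) + 2 * k + center_points


def bbd_runs(k, center_points=3):
    """Runs in a standard BBD for k = 3 to 5: 2k(k-1) edge midpoints + C0 center points."""
    if not 3 <= k <= 5:
        raise ValueError("the 2k(k-1) count is assumed only for k = 3 to 5")
    return 2 * k * (k - 1) + center_points


for k in (3, 4, 5):
    print(f"k={k}: full CCD = {ccd_runs(k)} runs, BBD = {bbd_runs(k)} runs")
```

For k = 3 this reproduces the table: a CCD ranges from 14 runs (no center points) to 20 runs (six center points), and a BBD with three center points needs 15 runs.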

Experimental Protocols for Reaction Yield Optimization

Generic RSM Workflow Protocol

The following step-by-step protocol is applicable to both CCD and BBD within a reaction optimization thesis.

Problem Definition & Response Selection:

Factor Screening & Level Selection:

- Identify potential Critical Process Parameters (CPPs) from prior knowledge (e.g., catalyst loading, temperature, residence time, ligand equivalence) [43].

- Conduct preliminary screening (e.g., using a Plackett-Burman or fractional factorial design) to identify the most influential factors for the detailed RSM study [27] [36].

- Define the low (-1) and high (+1) levels for each continuous factor based on practical and safe operating ranges.
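Coded levels are a linear rescaling of the real factor settings, so converting between the two is straightforward. The following is a minimal sketch; the helper names and the 60-100 °C temperature range are hypothetical and not taken from any cited study.

```python
def to_coded(actual, low, high):
    """Map a real factor setting onto the coded scale, where low -> -1 and high -> +1."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (actual - center) / half_range


def to_actual(coded, low, high):
    """Map a coded level back to the real factor setting."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


# Hypothetical temperature factor ranging from 60 to 100 degrees C.
print(to_coded(80, 60, 100))    # center point on the coded scale
print(to_actual(-1, 60, 100))   # low level in degrees C
```

The same conversion shows how far an axial point of a CCD reaches in real units, which is worth checking against safe operating limits before committing to a value of α greater than 1.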

Design Selection & Matrix Generation:

- Choose between CCD or BBD based on Table 1. For a thesis, justifying this choice is crucial.

- Use statistical software (e.g., Design-Expert, STATISTICA, Minitab) to generate the experimental design matrix. The software will assign coded factor levels for each experimental run [40] [43].

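- For CCD: Decide on the axial distance (α). A rotatable CCD is common; it sets α to the fourth root of the number of factorial runs, which gives α = 2^(k/4) for a full factorial core (α ≈ 1.68 for k = 3). Specify the number of center points (typically 3-6) to estimate pure error [38] [37].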

- For BBD: The software generates runs at the midpoints of edges. Specify the number of center points [40] [38].
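Although dedicated software normally generates the matrix, the structure of both designs is simple enough to sketch by hand. The code below is an illustrative construction in coded units, not the output of any particular package; the function names, default center-point counts, and the restriction of the BBD builder to k = 3 to 5 are assumptions.

```python
from itertools import combinations, product


def central_composite(k, center_points=6, alpha=None):
    """Coded CCD matrix: full factorial corners, 2k axial points, and center points."""
    if alpha is None:
        alpha = 2 ** (k / 4)  # rotatable choice for a full factorial core
    factorial = [list(point) for point in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for distance in (-alpha, alpha):
            point = [0.0] * k
            point[i] = distance
            axial.append(point)
    center = [[0.0] * k for _ in range(center_points)]
    return factorial + axial + center


def box_behnken(k, center_points=3):
    """Coded BBD matrix for k = 3 to 5: a 2x2 factorial on each factor pair, others at 0."""
    if not 3 <= k <= 5:
        raise ValueError("this pairwise construction is assumed only for k = 3 to 5")
    runs = []
    for i, j in combinations(range(k), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            point = [0.0] * k
            point[i], point[j] = a, b
            runs.append(point)
    runs += [[0.0] * k for _ in range(center_points)]
    return runs


ccd = central_composite(3)
bbd = box_behnken(3)
print(len(ccd), len(bbd))
```

Inspecting the two matrices makes the contrast in Table 1 visible: the CCD contains every corner plus points at ±α, whereas no BBD run has all factors at an extreme level at once.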

Randomized Experiment Execution:

- Randomize the run order provided by the design matrix to minimize the effects of lurking variables.

- Execute the reactions meticulously, adhering to the specified factor levels for each run.

- Accurately measure and record the response(s) for each experimental run.
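Randomizing the run order is a one-line shuffle, but recording the seed keeps the plan reproducible and auditable. This is a minimal sketch; the function name, the seed value, and the small example design are hypothetical.

```python
import random


def randomize_run_order(design, seed=None):
    """Return (run_order, standard_order, levels) tuples in randomized execution order."""
    rng = random.Random(seed)  # a fixed seed makes the plan reproducible
    standard_orders = list(range(1, len(design) + 1))
    rng.shuffle(standard_orders)
    return [
        (run_order, std, design[std - 1])
        for run_order, std in enumerate(standard_orders, start=1)
    ]


# Hypothetical 2^2 factorial with one center point, in coded units.
example_design = [[-1, -1], [1, -1], [-1, 1], [1, 1], [0, 0]]
for run_order, standard_order, levels in randomize_run_order(example_design, seed=2024):
    print(run_order, standard_order, levels)
```

Keeping the standard order alongside the run order lets the results be sorted back into the design's original layout for analysis.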

Model Fitting & Statistical Analysis:

- Input the experimental data into the software.

- Fit a second-order polynomial (quadratic) model:

Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣβᵢⱼXᵢXⱼ + ε

- Perform Analysis of Variance (ANOVA) to assess the model's significance. Key metrics include:

- Model p-value: Should be < 0.05.

- Lack-of-Fit p-value: Should be non-significant (> 0.05).

- R² and Adjusted R²: Indicate the proportion of variation explained by the model.

- Perform diagnostic checks (e.g., residual plots) to validate model assumptions (normality, constant variance) [38] [36].
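The model-fitting step can be sketched with ordinary least squares. The example below assumes NumPy is available, and it uses synthetic, noise-free data generated from made-up coefficients purely to show that the fit recovers them; it is not data from any cited study. It reports R² and adjusted R² only; ANOVA p-values and lack-of-fit tests are left to dedicated statistical software.

```python
from itertools import combinations, product

import numpy as np


def quadratic_model_matrix(X):
    """Columns: intercept, linear terms, squared terms, two-factor interactions."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    columns = [np.ones(n)]
    columns += [X[:, i] for i in range(k)]
    columns += [X[:, i] ** 2 for i in range(k)]
    columns += [X[:, i] * X[:, j] for i, j in combinations(range(k), 2)]
    return np.column_stack(columns)


def fit_quadratic(X, y):
    """Least-squares fit of the second-order model; returns (beta, R^2, adjusted R^2)."""
    M = quadratic_model_matrix(X)
    y = np.asarray(y, dtype=float)
    beta, *_ = np.linalg.lstsq(M, y, rcond=None)
    residuals = y - M @ beta
    ss_res = float(residuals @ residuals)
    ss_tot = float(((y - y.mean()) ** 2).sum())
    n, p = M.shape
    r2 = 1 - ss_res / ss_tot
    adj_r2 = 1 - (ss_res / (n - p)) / (ss_tot / (n - 1))
    return beta, r2, adj_r2


# Synthetic example: face-centered two-factor design plus two extra center points.
X_demo = list(product((-1, 0, 1), repeat=2)) + [(0, 0), (0, 0)]
true_beta = np.array([80.0, 5.0, 3.0, -4.0, -2.0, 1.5])  # made-up coefficients
y_demo = quadratic_model_matrix(X_demo) @ true_beta       # noise-free responses
beta, r2, adj_r2 = fit_quadratic(X_demo, y_demo)
print(np.round(beta, 3), round(r2, 4), round(adj_r2, 4))
```

With real, noisy data the residuals `y - M @ beta` are the quantities to inspect in the diagnostic plots mentioned above.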

Response Surface Analysis & Optimization:

- Use the software's graphical tools (3D surface plots, 2D contour plots) to visualize the relationship between factors and the response.

- Employ numerical optimization techniques (e.g., desirability function) to identify factor level combinations that predict an optimal response [40] [36].

- The software will suggest one or more optimal solutions.
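The desirability approach mentioned above converts each predicted response to a 0-1 score and combines the scores with a geometric mean. The sketch below shows one common form of these functions; the function names and the acceptance limits for yield and impurity are hypothetical values chosen for illustration.

```python
import math


def desirability_maximize(y, low, target, weight=1.0):
    """Larger-is-better desirability: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def desirability_minimize(y, target, high, weight=1.0):
    """Smaller-is-better desirability: 1 at or below `target`, 0 at or above `high`."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight


def overall_desirability(scores):
    """Geometric mean of the individual scores; any zero makes the overall score zero."""
    if any(score == 0.0 for score in scores):
        return 0.0
    return math.exp(sum(math.log(score) for score in scores) / len(scores))


# Hypothetical predictions at one candidate setting: 90% yield, 0.3% impurity.
d_yield = desirability_maximize(90.0, low=60.0, target=100.0)
d_impurity = desirability_minimize(0.3, target=0.1, high=0.5)
print(d_yield, d_impurity, overall_desirability([d_yield, d_impurity]))
```

Evaluating the overall score across a grid of factor settings, using the fitted model for the predictions, is one simple way to shortlist candidate optima for confirmation runs.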

Model Validation & Verification:

Specific Protocol: Optimizing a Flow Reaction using CCD

This protocol is adapted from a published DoE study on a Pd-catalyzed aerobic oxidation [43].

- Objective: Maximize the yield of aldehyde 3 in a continuous flow system.

- Selected Factors (k=6): Catalyst loading (mol%), Pyridine equivalence, Temperature (°C), O₂ Pressure (bar), O₂ Flow rate (mL/min), Reagent Flow rate (mL/min).

- Design: A six-parameter, two-level fractional factorial design (2^(6-3)) was used for initial screening, which can be augmented with axial points to form a CCD for full optimization [43].

- Procedure:

- Prepare stock solutions of substrate and catalyst/Pyridine in the appropriate solvent system (e.g., toluene/caprolactone).

- Set up the flow reactor system with mass flow controllers for gases and pumps for liquids.

- Program the reactor conditions (temperature, pressure) according to the design matrix.

- For each run, initiate flows of the substrate stream and O₂, followed by merging with the catalyst stream as per the defined configuration.

- Collect the output stream and analyze by UHPLC to determine conversion and yield.

- Analyze data using DoE software to generate a predictive model and locate the optimum.
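For orientation, a 2^(6-3) design needs only eight runs: three factors form a full factorial and the other three are set from their products. The sketch below uses the generators D = AB, E = AC, F = BC, a common resolution III choice that is assumed here because the generators used in the published study [43] are not given in this text. The factor keys are shorthand labels for the six factors listed above.

```python
from itertools import product

# Shorthand labels for the six factors listed in the protocol.
FACTORS = [
    "catalyst_loading",
    "pyridine_equiv",
    "temperature",
    "o2_pressure",
    "o2_flow",
    "reagent_flow",
]


def fractional_factorial_2_6_3():
    """Eight-run 2^(6-3) design with assumed generators D = AB, E = AC, F = BC."""
    runs = []
    for a, b, c in product((-1, 1), repeat=3):
        d, e, f = a * b, a * c, b * c  # generated columns
        runs.append(dict(zip(FACTORS, (a, b, c, d, e, f))))
    return runs


screening_design = fractional_factorial_2_6_3()
for run in screening_design:
    print(run)
```

In a resolution III design like this one, main effects are aliased with two-factor interactions, so it suits initial screening; adding further factorial, axial, and center runs is what turns it into a CCD capable of supporting a quadratic model.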

Visualization of Workflows and Design Structures

(Note: The diagrams are not reproduced here. The second diagram conceptually represents a 2D projection of a 3-factor BBD, showing that points lie on edge midpoints.)

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for DoE-Driven Reaction Optimization

| Item | Function & Role in DoE Context | Example from Literature |
|---|---|---|
| Statistical Software | Used to generate design matrices, randomize runs, perform ANOVA, fit models, create response surfaces, and perform numerical optimization. Essential for data analysis. | Design-Expert [40], STATISTICA [43], Minitab [35]. |
| Catalyst Systems | A critical continuous or categorical factor. Variation in loading (mol%) is a common parameter to optimize for yield and cost. | Pd(OAc)₂/Pyridine for aerobic oxidation [43]. |
| Ligands/Additives | Can be a qualitative (type) or quantitative (equivalents) factor. Optimizing their type and amount is crucial for selectivity and yield. | Pyridine as a ligand/co-catalyst [43]. |
| Solvents | Often a categorical factor. Screening and optimizing solvent systems can dramatically affect solubility and reaction outcome. | Toluene/Caprolactone mixture [43]. |
| Analytical Standards & HPLC/UHPLC | Critical for accurate, quantitative measurement of the response variables (yield, conversion, impurity profile). Data quality is paramount for model accuracy. | Used for quantifying febuxostat [41] and oxidation products [43]. |
| Process Analytical Technology (PAT) | In-line sensors (e.g., FTIR, Raman) enable real-time data collection, facilitating high-throughput DoE and kinetic studies. | (Implied as best practice for advanced studies). |
| Continuous Flow Reactor System | Enables precise control of factors like residence time, temperature, and mixing. Ideal for executing designed experiments with high reproducibility. | Vapourtec system with PFA tubular reactors [43]. |
| Designated Lab Notebook/ELN | For meticulously recording the randomized run order, exact conditions for each experiment, and all raw response data. | Essential for traceability and reproducibility. |

The optimization of chemical reactions to maximize the yield of Active Pharmaceutical Ingredients (APIs) is a fundamental challenge in pharmaceutical development [44]. Traditionally, this process has been dominated by the One-Variable-At-a-Time (OVAT) approach, where a single parameter is altered while others are held constant [11]. While intuitive, this method is inefficient, fails to capture interaction effects between variables, and often misses the true optimum conditions, leading to suboptimal yields and extended development timelines [11].

This application note details a case study where Design of Experiments (DoE) was implemented to overcome the limitations of OVAT and achieve a three-fold yield increase in the synthesis of a model API. DoE is a statistical methodology that systematically varies all relevant factors simultaneously across a defined experimental space, enabling the efficient identification of optimal conditions and a deeper understanding of factor interactions [11]. Framed within a broader thesis on reaction yield optimization, this report provides detailed protocols, data, and workflows to guide researchers in applying DoE to their own synthetic challenges.

Experimental Design and Workflow

The DoE Optimization Workflow

A structured, multi-stage workflow is critical for the successful application of DoE in reaction optimization. The process, adapted from a practical guide for synthetic chemists, is designed to move from initial screening to a validated optimum with maximal efficiency [11]. The following diagram illustrates this sequential workflow.

Figure 1: A sequential workflow for implementing Design of Experiments (DoE) in reaction optimization. The process allows for iterative refinement if the initial model proves inadequate [11].

Case Study: Nucleophilic Substitution API

For this case study, we focused on a nucleophilic aromatic substitution reaction, a common step in the synthesis of many drug substances. The model transformation involves the reaction of a chlorinated heteroarene (Substrate A) with a secondary amine (Nucleophile B) to produce the target API.

- Initial Challenge: Initial OVAT optimization, which varied catalyst loading, temperature, and solvent individually, resulted in a maximum yield of 25%. This was economically unviable for scale-up and was suspected to be a false optimum due to unaccounted-for variable interactions [11].

- DoE Objective: To systematically optimize the reaction using DoE, achieving a significant yield improvement by identifying the true optimal conditions and understanding factor interactions.

Materials and Methods

Research Reagent Solutions

The table below catalogues the key reagents, solvents, and equipment essential for executing the described API synthesis and DoE optimization.

Table 1: Essential research reagents and equipment for the API synthesis and optimization.

| Item Name | Function/Description | Key Considerations |

|---|---|---|