Response Surface Methodology in Synthesis: A Complete Guide for Pharmaceutical Optimization

This comprehensive guide explores Response Surface Methodology (RSM) as a powerful statistical framework for optimizing synthesis processes in pharmaceutical development and drug formulation.


Abstract

This comprehensive guide explores Response Surface Methodology (RSM) as a powerful statistical framework for optimizing synthesis processes in pharmaceutical development and drug formulation. Covering both foundational principles and advanced applications, the article details how RSM enables researchers to systematically model complex relationships between multiple input variables and critical quality responses. Through methodological guidance, troubleshooting insights, and comparative analysis with emerging AI techniques, this resource provides pharmaceutical scientists with practical strategies for enhancing yield, purity, and process robustness while reducing experimental burden and development costs.

Understanding Response Surface Methodology: Core Principles for Synthesis Optimization

Definition and Historical Development of RSM in Scientific Research

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques fundamental to modeling and optimizing processes in scientific research and development. This whitepaper delineates the core principles, historical evolution, and methodological framework of RSM, with a particular emphasis on its application in synthesis research, including pharmaceutical development. We provide a comprehensive examination of its foundational statistical concepts, a detailed guide to its experimental protocols, and an analysis of its implementation across diverse scientific disciplines. Structured tables compare quantitative design attributes, and visualized workflows illustrate the sequential nature of RSM. This guide serves as a technical resource for researchers and scientists seeking to employ RSM for efficient empirical model-building and optimization.

Response Surface Methodology (RSM) is defined as a collection of statistical and mathematical techniques designed for developing, improving, and optimizing processes and products by modeling the relationships between several explanatory variables (factors) and one or more response variables [1] [2]. Its primary objective is to identify the factor levels that produce the most desirable response values, often by approximating the true underlying response surface near an optimal point [2]. As an empirical model-building approach, RSM occupies a critical role in the broader framework of Design of Experiments (DOE), specifically focusing on optimization when the response of interest is influenced by multiple variables [3] [4].

Within the context of synthesis research—encompassing drug formulation, chemical synthesis, and biomolecule production—RSM provides a structured approach to understanding complex factor interactions and identifying optimal operational conditions. It moves beyond inefficient one-factor-at-a-time (OFAT) approaches, which fail to explain interactions between factors and can require a large number of experiments [5]. By systematically exploring the experimental space, RSM enables scientists to maximize yield, improve product quality, and reduce variability and costs with a minimal number of experimental runs [6] [7].

Historical Development and Evolution

The development of RSM is rooted in the convergence of statistical theory and industrial practicality. Table 1 outlines the key milestones in its evolution.

Table 1: Historical Milestones in the Development of RSM

| Time Period | Key Contributor(s) | Contribution | Impact on RSM |
|---|---|---|---|
| 1920s-1930s | Sir Ronald A. Fisher | Pioneered factorial designs and analysis of variance (ANOVA) at Rothamsted Experimental Station [8] [2]. | Laid the statistical foundations for modern experimental design, introducing concepts of randomization and multi-factor studies [8]. |
| 1951 | George E. P. Box and K. B. Wilson | Published seminal paper "On the Experimental Attainment of Optimum Conditions," formally introducing RSM [1] [2]. | Introduced central composite designs and the method of steepest ascent for sequential optimization in industrial processes, shifting focus to curved response surfaces [8] [2]. |
| 1960 | George E. P. Box and Donald Behnken | Introduced the Box-Behnken Design (BBD) [8] [5]. | Provided efficient, rotatable or nearly rotatable three-level designs that required fewer runs than central composite designs for fitting quadratic models [8] [6]. |
| 1980s | Genichi Taguchi | Popularized robust parameter design [2]. | Emphasized optimizing processes to make them insensitive to uncontrollable "noise" factors, extending RSM's application to quality engineering [8] [7]. |
| 1987 | Box and Draper | Published "Empirical Model-Building and Response Surfaces" [2]. | Synthesized RSM developments into a comprehensive theoretical and applied guide [2]. |
| 1990s-Present | - | Integration with statistical software (e.g., JMP, Minitab, Design-Expert) [2]. | Democratized access to RSM, automating design construction and analysis for non-statisticians [5] [2]. |
| 2000s-Present | - | Emergence of hybrid models with machine learning (e.g., Gaussian processes, neural networks) [2]. | Addressing high-dimensional and highly non-linear problems beyond the scope of traditional polynomial models [9] [2]. |

The formal inception of RSM is credited to George E. P. Box and K. B. Wilson in 1951. Their work, conducted in an industrial context at Imperial Chemical Industries (ICI), was driven by the need to optimize chemical processes efficiently [8] [2]. They proposed using a sequence of designed experiments and a second-degree polynomial model to approximate the response surface, a technique that was easy to estimate and apply even with limited process knowledge [1]. A key innovation was the Central Composite Design (CCD), which combined factorial and axial points to efficiently estimate curvature [8].

The subsequent development of the Box-Behnken Design (BBD) in 1960 offered a more resource-efficient alternative for fitting quadratic models, further solidifying RSM's practicality [8] [6]. The methodology's expansion was fueled by the work of figures like Genichi Taguchi, who integrated the concept of robustness against uncontrollable noise factors [8] [7]. The advent of powerful statistical software in the 1990s and the ongoing integration with machine learning algorithms represent the modern computational evolution of RSM, enabling its application to increasingly complex scientific challenges [9] [2].

Core Principles and Methodological Framework

Polynomial Response Models

At the heart of RSM is the approximation of the true, unknown functional relationship between factors and responses using low-order polynomial models. This approximation is valid within a localized experimental region [2].

The first-order model, used in initial screening or when the system is assumed linear, is expressed as:

y = β₀ + ∑βᵢxᵢ + ε [2]

Here y is the observed response, β₀ is the intercept, βᵢ are the linear coefficients, xᵢ are the coded factor levels, and ε is the random error term.

When curvature is present in the system—a prerequisite for locating a maximum or minimum—a second-order (quadratic) model is employed. This model incorporates interaction and quadratic terms:

y = β₀ + ∑βᵢxᵢ + ∑βᵢᵢxᵢ² + ∑∑βᵢⱼxᵢxⱼ + ε [3] [2]

The quadratic terms (βᵢᵢxᵢ²) capture the curvature of the response surface along each factor, while the interaction terms (βᵢⱼxᵢxⱼ) account for instances where the effect of one factor depends on the level of another [3]. This model is sufficient to identify stationary points (maxima, minima, or saddle points) on the response surface [2].
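
To make the link between the quadratic model and its stationary point explicit, the fitted model can be written in matrix form. The derivation below is the standard textbook result; the symbols b, B, x_s, and λᵢ are notation introduced here for illustration and are not used elsewhere in this guide.

```latex
\hat{y} = \hat\beta_0 + \mathbf{x}^{\top}\mathbf{b} + \mathbf{x}^{\top}\mathbf{B}\,\mathbf{x},
\qquad
\mathbf{b} = \begin{pmatrix}\hat\beta_1\\ \vdots\\ \hat\beta_k\end{pmatrix},
\qquad
\mathbf{B} = \begin{pmatrix}
\hat\beta_{11} & \hat\beta_{12}/2 & \cdots & \hat\beta_{1k}/2\\
\hat\beta_{12}/2 & \hat\beta_{22} & \cdots & \hat\beta_{2k}/2\\
\vdots & \vdots & \ddots & \vdots\\
\hat\beta_{1k}/2 & \hat\beta_{2k}/2 & \cdots & \hat\beta_{kk}
\end{pmatrix}

\frac{\partial \hat{y}}{\partial \mathbf{x}} = \mathbf{b} + 2\,\mathbf{B}\,\mathbf{x} = \mathbf{0}
\;\Longrightarrow\;
\mathbf{x}_s = -\tfrac{1}{2}\,\mathbf{B}^{-1}\mathbf{b},
\qquad
\hat{y}_s = \hat\beta_0 + \tfrac{1}{2}\,\mathbf{x}_s^{\top}\mathbf{b}
```

The eigenvalues λᵢ of B determine the nature of x_s: all negative indicates a maximum, all positive a minimum, and mixed signs a saddle point. The result is only meaningful when x_s lies inside, or close to, the experimental region in which the model was fitted.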

Key Experimental Designs in RSM

Selecting an appropriate experimental design is critical for efficiently estimating the model coefficients. The most prevalent designs in RSM are compared in Table 2.

Table 2: Comparison of Primary RSM Experimental Designs

| Design | Key Components | Number of Runs (for k=3 factors) | Key Characteristics | Best Use Cases |
|---|---|---|---|---|
| Central Composite Design (CCD) [3] [6] | Factorial points (2ᵏ or fraction); axial (star) points (2k); center points (nₚ) | 14-20, depending on center points [10] | Rotatable variant provides constant prediction variance at points equidistant from the center [3] [1]; can be circumscribed, inscribed, or face-centered; estimates all model coefficients efficiently. | The most widely used design; ideal for sequential experimentation as it can augment a pre-existing factorial design [3] [4]. |
| Box-Behnken Design (BBD) [8] [6] | Treatment combinations at midpoints of process space edges; center points. | 13 (for k=3, nₚ=1) [3] | Spherical design, all points lie on a sphere; requires only 3 levels per factor; inefficient for studying factor extremes; near-rotatable. | A strong choice when the area of interest is known to be within a spherical experimental region and extremes are to be avoided [6]. |
| Full Factorial Design (FFD) | All possible combinations of factor levels. | 27 (for a 3³ design) [10] | Requires a large number of runs as factors increase; can estimate complex models but is often inefficient for quadratic models. | Less common for pure RSM; used when a very detailed model is needed and resources are not constrained. |
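
The run counts in Table 2 follow from simple arithmetic, which the short Python sketch below reproduces for the CCD and the three-level full factorial, alongside the number of coefficients in a full quadratic model. The function names are illustrative only. Box-Behnken counts are omitted because they depend on the underlying incomplete block structure rather than on a single closed-form expression.

```python
def quadratic_terms(k: int) -> int:
    """Coefficients in a full second-order model:
    1 intercept + k linear + k quadratic + k(k-1)/2 interaction terms."""
    return (k + 1) * (k + 2) // 2


def ccd_runs(k: int, n_center: int, fraction: int = 0) -> int:
    """Runs in a central composite design:
    2^(k - fraction) factorial points + 2k axial points + center points."""
    return 2 ** (k - fraction) + 2 * k + n_center


def full_factorial_3level_runs(k: int) -> int:
    """Runs in a three-level full factorial design."""
    return 3 ** k


# Compare model size with design size for a few factor counts.
for k in (2, 3, 4, 5):
    print(
        f"k={k}: quadratic terms={quadratic_terms(k)}, "
        f"CCD runs (1 center)={ccd_runs(k, n_center=1)}, "
        f"3^k runs={full_factorial_3level_runs(k)}"
    )
```

For k = 3 this gives 10 model coefficients, 14 CCD runs before any center points are added, and 27 runs for the 3³ factorial, which is why the CCD is usually the more economical route to a quadratic model.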

The Sequential Nature of RSM

RSM is inherently a sequential learning process. The typical workflow for implementing RSM in a research setting proceeds in stages, as described below.

This workflow begins with a screening phase to identify the few critical factors from a potentially large list using designs like factorial or Plackett-Burman designs [7] [2]. Once key factors are identified, a first-order model is fitted. If this model shows a significant lack-of-fit, particularly curvature, the analysis transitions to the RSM phase, employing a second-order design like CCD or BBD to model the complex response surface and locate the optimum [4] [2]. Throughout this process, techniques like the method of steepest ascent guide the experimenter toward the optimal region of the factor space in the most efficient manner [3] [2].
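
As a minimal sketch of the method of steepest ascent mentioned above, the Python snippet below generates candidate run locations along the gradient of a fitted first-order model in coded units. The coefficients, step length, and function name are hypothetical and chosen only for illustration; in practice the experimenter runs each point in turn and stops when the response no longer improves.

```python
import math


def steepest_ascent_path(coeffs, step=0.5, n_steps=5):
    """Return points along the direction of steepest ascent.

    coeffs  : linear coefficients [b1, ..., bk] of the first-order model
              (intercept excluded), in coded units
    step    : Euclidean length of each step, in coded units
    n_steps : number of points to generate, starting one step from the center
    """
    norm = math.sqrt(sum(b * b for b in coeffs))
    if norm == 0:
        raise ValueError("all coefficients are zero; no ascent direction")
    direction = [b / norm for b in coeffs]  # unit vector along the gradient
    return [
        [round(i * step * d, 4) for d in direction]
        for i in range(1, n_steps + 1)
    ]


# Hypothetical fitted model: y = 60 + 3.0*x1 + 4.0*x2
path = steepest_ascent_path([3.0, 4.0], step=0.5, n_steps=3)
for point in path:
    print(point)
```

Each factor moves in proportion to its coefficient, so the factor with the larger estimated effect changes faster. To minimize a response instead, the same path is followed with the signs of the coefficients reversed.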

Experimental Protocols and the Scientist's Toolkit

Detailed Methodology for a Central Composite Design (CCD)

The following protocol outlines the key steps for executing an RSM study using a CCD, one of the most common designs.

- Problem Definition and Factor Selection: Clearly define the response variable(s) to be optimized (e.g., reaction yield, product purity). Select the continuous input factors to be studied (e.g., temperature, pH, concentration) and their ranges based on prior knowledge or screening experiments [6] [7].

- Design Construction: For k factors, a CCD consists of three parts:

  - Factorial points: a full or fractional factorial design (2ᵏ or 2ᵏ⁻¹) built from the high and low levels of each factor.

  - Axial (or star) points: 2k points positioned at a distance ±α from the center along each factor axis. The value of α is chosen to achieve rotatability (α = 2ᵏ⁄⁴) or other properties [3] [6].

  - Center points (nₚ ≥ 2): repeated runs at the midpoint of all factor ranges, to estimate pure error and check for curvature [3].

- Randomization and Experimentation: Randomize the order of all experimental runs to avoid confounding the effects of factors with systematic trends over time. Execute the experiments and record the response data [7].

- Model Fitting and Regression Analysis: Use multiple linear regression (typically via the least squares method) to fit a second-order polynomial model to the experimental data [3] [7]. The model's form for two factors (x₁, x₂) is:

y = β₀ + β₁x₁ + β₂x₂ + β₁₁x₁² + β₂₂x₂² + β₁₂x₁x₂ + ε

- Model Adequacy Checking: Validate the fitted model using:

  - Analysis of Variance (ANOVA): To test the overall significance of the model.

  - Lack-of-Fit Test: To determine if the model form is adequate.

  - Coefficient of Determination (R² and Adjusted R²): To measure the proportion of variance explained by the model [6] [7] [10].

  - Residual Analysis: To check the assumptions of normality, independence, and constant variance of the errors [6] [10].

- Optimization and Validation: Use the validated model to locate the optimal factor settings. This can be done graphically using contour plots and 3D surface plots, or numerically using optimization algorithms [4]. Finally, perform confirmation experiments at the predicted optimal conditions to verify the model's predictive accuracy [7].
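
The protocol above can be sketched end to end in a few lines of Python with NumPy. This is an illustration under stated assumptions, not a substitute for dedicated DOE software: the response is simulated by a hypothetical function (`simulated_yield`) standing in for laboratory measurements, the design uses two factors with the rotatable α, and all function names are invented for this example.

```python
import itertools
import numpy as np


def central_composite(k, n_center=4):
    """Rotatable CCD in coded units: 2^k factorial + 2k axial + center runs."""
    alpha = 2 ** (k / 4)  # rotatable axial distance for a full factorial core
    factorial = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    axial = np.vstack(
        [s * alpha * np.eye(k)[i] for i in range(k) for s in (-1.0, 1.0)]
    )
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])


def quadratic_model_matrix(X):
    """Columns: intercept, x_i, x_i^2, then x_i*x_j for i < j."""
    n, k = X.shape
    cols = [np.ones(n)]
    cols += [X[:, i] for i in range(k)]
    cols += [X[:, i] ** 2 for i in range(k)]
    cols += [X[:, i] * X[:, j] for i, j in itertools.combinations(range(k), 2)]
    return np.column_stack(cols)


def simulated_yield(X, rng):
    """Hypothetical stand-in for measured yield; NOT real data."""
    x1, x2 = X[:, 0], X[:, 1]
    noise = rng.normal(0.0, 0.1, size=len(X))
    return 80 + 4 * x1 + 2 * x2 - 3 * x1**2 - 2 * x2**2 + 1.0 * x1 * x2 + noise


rng = np.random.default_rng(0)
design = central_composite(k=2, n_center=4)
run_order = rng.permutation(len(design))  # randomized order for bench execution
y = simulated_yield(design, rng)

# Least-squares fit of the second-order model.
M = quadratic_model_matrix(design)
beta, *_ = np.linalg.lstsq(M, y, rcond=None)
residuals = y - M @ beta
r_squared = 1 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)

# Stationary point: solve b + 2*B*x = 0 for the two-factor model.
b = beta[1:3]
B = np.array([[beta[3], beta[5] / 2], [beta[5] / 2, beta[4]]])
x_stationary = np.linalg.solve(-2 * B, b)
is_maximum = bool(np.all(np.linalg.eigvalsh(B) < 0))

print("coefficients:", np.round(beta, 2))
print("R^2:", round(float(r_squared), 4))
print("stationary point (coded):", np.round(x_stationary, 2), "maximum:", is_maximum)
```

A high R² alone does not establish adequacy; the lack-of-fit test, residual checks, and confirmation runs listed in the protocol still apply to any model fitted this way.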

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful execution of an RSM study, particularly in synthesis research, relies on a foundation of precise materials and analytical techniques. Table 3 details key items in the researcher's toolkit.

Table 3: Essential Research Reagent Solutions for RSM in Synthesis

| Item/Category | Function in RSM Studies | Technical Considerations |
|---|---|---|
| High-Purity Chemical Reactants | Serve as the independent variables (factors) whose concentrations are systematically varied. Impurities can introduce uncontrollable noise. | Purity ≥ 98% is typically required to ensure reproducible responses and minimize confounding variability in the model [5]. |
| Buffers & pH Modulators | Control and maintain the pH of the reaction environment, a critical continuous factor in many biochemical and chemical syntheses. | Buffer capacity must be sufficient to maintain the desired pH level throughout the experiment, as drift can invalidate results. |
| Analytical Standards (e.g., HPLC, GC) | Enable accurate quantification of the response variable, such as product yield, impurity profile, or reactant conversion. | Certified reference materials (CRMs) are essential for calibrating instruments and ensuring the accuracy of response measurements [10]. |
| Catalysts & Enzymes | Act as factors whose type or concentration can be optimized to maximize reaction rate and yield. | Biological catalysts (enzymes) require controlled temperature and pH conditions, which themselves may be factors in the RSM design [5]. |
| Spectrophotometers / Chromatographs (HPLC, GC) | Primary instruments for measuring quantitative response data (e.g., concentration, purity). | Instrument precision and accuracy are paramount; the "response" data fed into the RSM model is only as good as its measurement [10]. |
| Statistical Software (e.g., JMP, Design-Expert, Minitab) | Used to create experimental designs, randomize runs, perform regression analysis, analyze variance (ANOVA), and generate optimization plots. | Modern software automates complex calculations, making RSM accessible and ensuring statistical rigor [4] [5] [2]. |

Applications in Scientific Research and Challenges

Cross-Disciplinary Adoption

RSM has seen widespread adoption across scientific and engineering disciplines due to its general-purpose utility in optimization.

- Pharmaceutical Development and Drug Formulation: RSM is extensively used to optimize drug formulations, ensuring desired properties like dissolution rate, stability, and bioavailability. It helps in balancing multiple excipient and process variables to achieve the target product profile [7] [5].

- Biotechnology and Fermentation Processes: Optimizing microbial growth and metabolite production (e.g., antibiotics, enzymes, organic acids) by modeling the effects of media composition (carbon, nitrogen sources) and cultivation conditions (pH, temperature, aeration) [5].

- Chemical Engineering and Reaction Optimization: A classic application area, used to maximize chemical reaction yield and selectivity while minimizing by-products and optimizing process parameters like temperature, pressure, and catalyst loading [8] [7].

- Food Science and Technology: Applied to optimize extrusion processes, maximize sensory qualities, and model the degradation kinetics of nutrients during processing [7].

- Environmental Engineering: Used to model the adsorption of pollutants and optimize photocatalytic degradation processes for wastewater treatment [7].

Challenges and Limitations

Despite its power, practitioners must be aware of RSM's limitations and associated challenges.

- Approximation Nature: The polynomial models are approximations of reality. An estimated optimum may not be the true optimum, especially if the model is inadequate or the experimental region is poorly chosen [1] [9].

- Sensitivity to Initial Design: The methodology's success is sensitive to the selection of the initial experimental range. A range that is too narrow may miss the optimum, while one that is too broad may make a second-order model a poor fit [8] [5].

- High-Dimensional Systems: The efficiency of RSM decreases as the number of factors increases, as the required number of experimental runs grows rapidly [9]. Screening designs are crucial for mitigating this.

- Model Validation: A common challenge is the inadequate validation of models. Researchers may fail to properly check for violations of statistical assumptions (normality, constant variance) or to run essential confirmation experiments [10].

- Multiple Responses: Optimizing for several responses simultaneously can be complex, as the optimal conditions for one response may be poor for another. Techniques like the desirability function approach are required to balance these competing goals [4] [7].
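
To illustrate the desirability function approach named in the last item, the sketch below implements one-sided desirability functions of the Derringer-Suich type and combines them with a geometric mean. The limits, targets, and predicted values are hypothetical, and the function names are chosen for this example only.

```python
import math


def desirability_maximize(y, low, target, weight=1.0):
    """Larger-is-better: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def desirability_minimize(y, target, high, weight=1.0):
    """Smaller-is-better: 1 at or below `target`, 0 at or above `high`."""
    if y <= target:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - target)) ** weight


def overall_desirability(values):
    """Geometric mean; a single unacceptable response (d = 0) gives D = 0."""
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))


# Hypothetical model predictions at one candidate factor setting.
d_yield = desirability_maximize(85.0, low=70.0, target=90.0)
d_impurity = desirability_minimize(0.4, target=0.2, high=1.0)
D = overall_desirability([d_yield, d_impurity])
print(round(d_yield, 3), round(d_impurity, 3), round(D, 3))
```

The geometric mean is the key design choice: unlike an arithmetic mean, it prevents an excellent value of one response from compensating for an unacceptable value of another. Numerical optimization then searches the factor space for the setting with the highest D.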

Response Surface Methodology stands as a cornerstone of empirical optimization in scientific research. From its historical origins in the work of Box and Wilson, it has evolved into a sophisticated, yet accessible, methodology supported by modern statistical software. Its power lies in its structured, sequential approach to experimentation, which efficiently leverages resources to build predictive models and locate optimal process conditions. For researchers in drug development and synthesis, a rigorous understanding of RSM's principles—from the selection of an appropriate experimental design to the thorough validation of the fitted model—is indispensable. While challenges such as model adequacy and multiple response optimization remain, the ongoing integration of RSM with advanced computational techniques ensures its continued relevance and capability in tackling the complex optimization problems that define modern scientific innovation.

This technical guide examines the integral role of Response Surface Methodology (RSM) within model-based optimization and robustness strategies in pharmaceutical development. Framed within the broader context of synthesis research, we detail how RSM provides a structured empirical approach for modeling complex processes, optimizing Critical Process Parameters (CPPs), and establishing robust design spaces. The content outlines fundamental statistical principles, provides detailed experimental protocols, and presents advanced applications aligned with Quality by Design (QbD) frameworks. Designed for researchers and drug development professionals, this whitepaper integrates current methodologies with practical implementation workflows to enhance process understanding and control, thereby reducing development times and improving product quality.

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques essential for modeling and analyzing problems in which multiple independent variables influence a dependent response or a set of responses [1]. The primary objective of RSM is to optimize this response through a structured sequence of designed experiments [7]. In the context of pharmaceutical synthesis research, RSM has become an indispensable component of the modern Quality by Design (QbD) paradigm, facilitating a systematic approach to development that begins with predefined objectives and emphasizes product and process understanding based on sound science and quality risk management [11].

The methodology was formally introduced by George E. P. Box and K. B. Wilson in 1951, who proposed using a second-degree polynomial model to approximate process behavior [1]. This empirical model-based approach is particularly valuable when theoretical models are cumbersome, time-consuming, or unreliable. For pharmaceutical development, RSM enables researchers to efficiently map the relationship between input factors—such as material attributes and process parameters—and Critical Quality Attributes (CQAs), thereby identifying the design space where product quality is assured [11]. This represents a significant advancement over traditional one-factor-at-a-time (OFAT) or empirical trial-and-error approaches, which often fail to capture interaction effects between variables and are inefficient in resource utilization.

The core value of RSM in synthesis research lies in its ability to:

- Quantify Joint Effects: Systematically quantify how multiple input variables jointly affect a critical quality response [3].

- Identify Optimal Conditions: Determine the optimal factor settings that maximize or minimize a response, or bring it to a desired target value [7].

- Assess Sensitivity: Evaluate the sensitivity of the response to changes in input variables, which is crucial for understanding process robustness [3].

- Support QbD Implementation: Provide the statistical foundation for defining a design space, as outlined in ICH Q8(R2), enabling flexible and regulatory-approved process adjustments [11].

Fundamental Principles and Statistical Foundations

Core Concepts of RSM

The implementation of Response Surface Methodology is built upon several fundamental statistical concepts and design properties that ensure the reliability and validity of the generated models.

- Experimental Design: The heart of RSM lies in the principles of experimental design. Systematic methods like factorial designs and Central Composite Designs (CCD) allow for planned changes to input factors to observe corresponding output responses. Factorial designs are effective for exploring factor interactions, while CCDs are highly efficient for fitting quadratic response surface models [7].

- Regression Analysis: RSM heavily utilizes regression analysis, particularly multiple linear regression and polynomial regression. The goal is to model the functional relationship between responses and independent input variables. Polynomial regression is key as it allows for curvature in the response surfaces, accounting for quadratic effects and interactions that are common in real-world processes [7].

- Response Surface Models: The primary output of an RSM study is a mathematical model that describes how input variables influence the response(s) of interest. Common models include first-order (linear) and second-order (quadratic) models. An accurate model is essential for navigating the design space for optimization and enhancing process understanding [7].

- Model Validation: It is critical to evaluate the suitability and accuracy of the generated response surface models. Techniques like Analysis of Variance (ANOVA), lack-of-fit tests, R-squared values, and residual analysis are employed to validate models and identify potential issues or violations of underlying statistical assumptions [7].

Key Properties of RSM Designs

To ensure the collection of high-quality, analyzable data, RSM experimental designs possess several important properties:

- Orthogonality: This property allows the individual effects of the k factors to be estimated independently, without confounding. In an orthogonal design the coefficient estimates are uncorrelated and have minimum variance [1].

- Rotatability: A rotatable design has moments of the distribution of design points that are invariant to rotation about the center of the factor space. As a result, the variance of the predicted response depends only on the distance from the center, so prediction precision is the same at all points equidistant from the center, in every direction [1].

- Uniform Precision: In a uniform-precision CCD, the number of center points is chosen so that the variance of the predicted response at the origin is nearly the same as the variance at a unit distance from the origin [1].
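
Rotatability can be checked numerically. The sketch below, which assumes NumPy and a two-factor quadratic model, computes the scaled prediction variance x₀ᵀ(MᵀM)⁻¹x₀ at points around a circle of fixed radius and compares a rotatable CCD (α = √2) with a face-centered one (α = 1). The function names and the choice of three center points are illustrative assumptions.

```python
import itertools
import numpy as np


def ccd_two_factor(alpha, n_center=3):
    """Two-factor CCD in coded units with axial distance `alpha`."""
    factorial = list(itertools.product([-1.0, 1.0], repeat=2))
    axial = [(-alpha, 0.0), (alpha, 0.0), (0.0, -alpha), (0.0, alpha)]
    center = [(0.0, 0.0)] * n_center
    return np.array(factorial + axial + center)


def quadratic_row(x1, x2):
    """Model terms for one point: 1, x1, x2, x1^2, x2^2, x1*x2."""
    return np.array([1.0, x1, x2, x1**2, x2**2, x1 * x2])


def scaled_prediction_variance(design, point):
    """x0' (M'M)^-1 x0, i.e. Var[y_hat(x0)] divided by sigma^2."""
    M = np.array([quadratic_row(*row) for row in design])
    x0 = quadratic_row(*point)
    return float(x0 @ np.linalg.solve(M.T @ M, x0))


def variance_spread_on_circle(design, radius=1.0, n_angles=12):
    """Max minus min prediction variance over points at the same radius."""
    angles = np.linspace(0.0, 2.0 * np.pi, n_angles, endpoint=False)
    values = [
        scaled_prediction_variance(
            design, (radius * np.cos(a), radius * np.sin(a))
        )
        for a in angles
    ]
    return max(values) - min(values)


rotatable = ccd_two_factor(alpha=2 ** (2 / 4))  # alpha = sqrt(2) for k = 2
face_centered = ccd_two_factor(alpha=1.0)

spread_rotatable = variance_spread_on_circle(rotatable)
spread_face_centered = variance_spread_on_circle(face_centered)
print("rotatable spread:", spread_rotatable)          # numerically zero
print("face-centered spread:", spread_face_centered)  # clearly non-zero
```

For the rotatable design the spread is zero up to floating-point error, while the face-centered design predicts with different precision in different directions at the same distance from the center.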

Experimental Design and Workflow for RSM

Implementing Response Surface Methodology involves a systematic series of steps to build an empirical model and optimize the response variables of interest. The following workflow provides a structured approach for pharmaceutical applications.

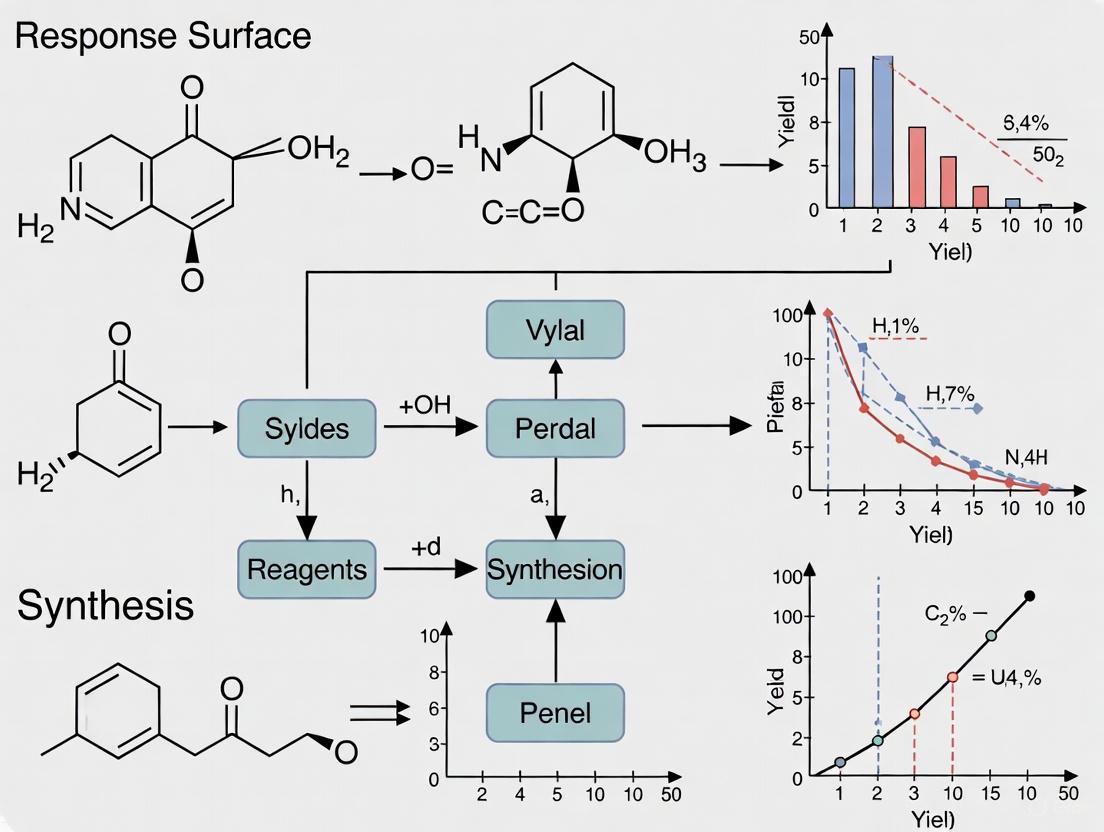

Figure 1: RSM Implementation Workflow in Pharmaceutical Development

Step-by-Step Implementation Protocol

Define the Problem and Response Variables: The initial step involves clearly defining the problem statement, goals, and identifying the critical response variable(s) to optimize. In pharmaceutical contexts, responses are typically Critical Quality Attributes (CQAs) such as yield, impurity level, dissolution rate, or content uniformity [7] [11].

Screen Potential Factor Variables: Identify the key input factors (process parameters and material attributes) that may influence the response(s) through prior knowledge and screening experiments using techniques like Plackett-Burman designs. This step reduces the number of variables to a manageable set for more detailed study [7].

Code and Scale Factor Levels: Selected factors are coded and scaled to low and high levels spanning the experimental region of interest. Coding techniques, such as those used in central composite designs, place factors on a common scale, improving model computation and enabling regression coefficients to be interpreted as main effects and interactions [7].

Select an Experimental Design: Choose an appropriate experimental design based on the number of factors, resources, and objectives. Common RSM designs include Central Composite Design (CCD), Box-Behnken Design (BBD), and D-optimal designs. These designs enable the efficient fitting of a quadratic polynomial regression model [7] [3].

Conduct Experiments: Run the experiments according to the chosen design matrix by setting factors at specified levels and measuring the response(s). Randomization is critical to minimize the effects of lurking variables [7].

Develop the Response Surface Model: Fit a multiple regression model, typically a second-order polynomial equation, to the experimental data. This model relates the response to the factor variables using regression analysis techniques. The general form of a quadratic model for k factors is:

Y = β₀ + ΣβᵢXᵢ + ΣβᵢᵢXᵢ² + ΣβᵢⱼXᵢXⱼ + ε

where Y is the response, Xᵢ and Xⱼ are the factors, β are the coefficients, and ε is the error term [3].

Check Model Adequacy: Analyze the fitted model for accuracy and significance using statistical tests like ANOVA, lack-of-fit tests, R² values, and residual analysis. This ensures the model provides an adequate approximation of the real process [7].

Optimize and Validate the Model: Use optimization techniques like steepest ascent, canonical analysis, or numerical optimization to determine the factor settings that optimize the response(s) based on the fitted model. Validate these optimum conditions through confirmatory experimental runs [7].

Iterate if Needed: If the current experimental region is unsatisfactory or the model is inadequate, plan additional experiments in an updated region to refine and improve the model iteratively until satisfactory results are achieved [7].
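
The coding step in the protocol above is a simple linear transformation, sketched here in Python. The temperature range is a hypothetical example, and the function names are illustrative.

```python
def to_coded(actual, low, high):
    """Map an actual factor value to coded units (low -> -1, high -> +1)."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return (actual - center) / half_range


def to_actual(coded, low, high):
    """Map a coded value back to the factor's actual units."""
    center = (high + low) / 2
    half_range = (high - low) / 2
    return center + coded * half_range


# Hypothetical factor: reaction temperature studied between 60 and 80 degrees C.
print(to_coded(60, 60, 80))  # low level
print(to_coded(70, 60, 80))  # center point
print(to_coded(80, 60, 80))  # high level

# Axial point of a rotatable two-factor CCD (alpha = sqrt(2)) in actual units.
axial_temperature = to_actual(2 ** 0.5, 60, 80)
print(round(axial_temperature, 2))
```

Converting axial points back to actual units before running the design is a useful check, because for α > 1 they fall outside the nominal low and high levels and may not be operable.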

Common Experimental Designs in RSM

Table 1: Comparison of Common RSM Experimental Designs

| Design Type | Key Characteristics | Number of Runs (for k=3 factors) | Advantages | Limitations | Pharmaceutical Application Examples |
|---|---|---|---|---|---|
| Central Composite Design (CCD) | Includes factorial points, center points, and axial (star) points; can be rotatable [3] | 15-20 runs (depending on center points) | Estimates pure error; captures curvature; rotatable properties [3] | Higher number of runs compared to BBD; axial points may be outside operable range [3] | Formulation optimization; process parameter characterization [11] |
| Box-Behnken Design (BBD) | Three-level design based on incomplete factorial designs; all points lie on a sphere [3] | 13-15 runs (depending on center points) | Fewer runs than CCD; avoids extreme factor combinations [3] | Cannot estimate full cubic model; poor prediction at corners of cube [3] | Lyophilization cycle development; granulation process optimization [12] |
| Face-Centered CCD | Variation of CCD where axial points are at the faces of the cube (α=±1) [3] | 15-20 runs (depending on center points) | All design points are at three levels (-1, 0, +1); easier to execute in practice [3] | Not rotatable; prediction variance higher than spherical designs [3] | Biopharmaceutical process development where factor ranges are constrained |

Model-Based Optimization in Pharmaceutical Processes

Integration of RSM with QbD Framework

Response Surface Methodology serves as a critical enabler for implementing Quality by Design in pharmaceutical development. Within the QbD framework, RSM provides the statistical foundation for several key elements:

Defining the Design Space: The design space, as defined in ICH Q8(R2), is the multidimensional combination and interaction of input variables demonstrated to provide assurance of quality [11]. RSM is the primary methodology for characterizing this space through empirical modeling, establishing proven acceptable ranges (PARs) for Critical Process Parameters (CPPs) and Critical Material Attributes (CMAs) [11].

Establishing Control Strategies: RSM models help identify which process parameters and material attributes have the greatest impact on CQAs, enabling the development of risk-based control strategies. This may include real-time monitoring through Process Analytical Technology (PAT) and parametric controls to ensure operation within the design space [13] [11].

Supporting Regulatory Flexibility: Once a design space is approved, changes within it are not considered regulatory variations. This flexibility, supported by RSM-derived models, allows for continuous improvement without requiring post-approval submissions [11].

Advanced Model-Based Optimization Applications

Beyond traditional RSM applications, recent advances have integrated mechanistic modeling with statistical approaches for enhanced pharmaceutical process optimization:

Mechanistic Modeling in Freeze-Drying: A model-based optimization strategy has been developed to achieve fast and robust freeze-drying cycles for biopharmaceuticals. This approach uses mechanistic models of heat and mass transfer to optimize the primary drying phase, maximizing sublimation rates while maintaining product temperature below the critical collapse temperature. The method incorporates variability data of process parameters into an uncertainty analysis to estimate the risk of failure, resulting in protocols that are both faster and more robust than classical approaches [12].

Hierarchical Time-Oriented Robust Design: For complex pharmaceutical problems with time-oriented, multiple, and hierarchical responses, advanced robust design optimization algorithms have been developed. These approaches create customized experimental frameworks for representing pharmaceutical quality characteristics and the functional relationships between input factors and hierarchical time-oriented output responses. The resulting Hierarchical Time-Oriented Robust Design (HTRD) optimization models are reported to yield optimal solutions with small biases and variances, addressing the interdisciplinary optimization challenges in drug development [14].

Integrated Continuous Manufacturing: Model-based optimization, supported by RSM, enables the implementation of end-to-end continuous manufacturing processes. This includes the integration of synthesis, purification, and final dosage formation, reducing development times and manufacturing costs while improving productivity and quality control [13].

Visualization and Interpretation of Response Surfaces

Effective visualization is crucial for interpreting response surface models and communicating results to stakeholders. The following techniques are commonly used in pharmaceutical RSM applications.

Figure 2: RSM Optimization and Visualization Process

Visualization Techniques

Contour Plots: These two-dimensional graphs show lines of constant response (similar to topographic maps) for two factors while holding other factors constant. They are particularly useful for identifying ranges of factor settings that achieve a desired response value and for understanding the relationship between two factors and a response [3].

3D Surface Plots: Three-dimensional representations of the response surface showing the relationship between two factors and the response. These plots provide an intuitive understanding of the response behavior, including the location of maxima, minima, and saddle points [3].

Overlaid Contour Plots: When multiple responses need to be optimized simultaneously, overlaid contour plots display the acceptable regions for each response on the same graph. The overlapping region that satisfies all constraints represents the design space where all responses meet their required specifications [3].
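The overlay idea can be sketched numerically without any plotting library: evaluate each fitted model on a grid and keep the points where every specification holds. The two quadratic models, their coefficients, and the specification limits below are hypothetical placeholders in coded units, not values from any cited study.

```python
# Minimal sketch of an "overlaid contour" feasibility check.
# Both models and both limits are hypothetical, for illustration only.

def yield_pct(x1, x2):
    """Hypothetical fitted model for yield (%), coded factors in [-1, 1]."""
    return 85.0 + 4.0 * x1 + 2.5 * x2 - 3.0 * x1**2 - 2.0 * x2**2 + 1.0 * x1 * x2


def impurity_pct(x1, x2):
    """Hypothetical fitted model for impurity (%), coded factors in [-1, 1]."""
    return 1.2 + 0.5 * x1 - 0.3 * x2 + 0.2 * x1**2 + 0.1 * x2**2


def feasible_region(steps=21, min_yield=85.0, max_impurity=1.5):
    """Return the coded (x1, x2) grid points where all specifications are met."""
    grid = [-1.0 + 2.0 * i / (steps - 1) for i in range(steps)]
    return [
        (x1, x2)
        for x1 in grid
        for x2 in grid
        if yield_pct(x1, x2) >= min_yield and impurity_pct(x1, x2) <= max_impurity
    ]


region = feasible_region()
print(f"{len(region)} of {21 * 21} grid points satisfy both specifications")
```

The retained grid points approximate the overlapping region of an overlaid contour plot; a finer grid gives a sharper boundary at the cost of more evaluations.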

Interpretation of Response Surfaces

The interpretation of response surfaces involves analyzing the shape and features of the modeled relationship:

- Stationary Points: Locations on the response surface where the slope is zero in all directions. These can represent maximum, minimum, or saddle points.

- Ridge Systems: The response surface shows an elongated maximum or minimum, indicating that factors can be adjusted together to maintain the same optimal response.

- Simple Maximum/Minimum: A peak or valley where optimal conditions are found at specific factor settings.

- Interaction Effects: When the effect of one factor depends on the level of another factor, visible as twisting in the contour lines.
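For a two-factor quadratic model, the features listed above can be read off the fitted coefficients: the stationary point is where both partial derivatives vanish, and the signs of the Hessian eigenvalues distinguish a maximum, a minimum, and a saddle. The sketch below assumes a model of the form y = b0 + b1·x1 + b2·x2 + b11·x1² + b22·x2² + b12·x1·x2; the coefficient values in the example call are hypothetical.

```python
import math


def stationary_point(b1, b2, b11, b22, b12):
    """Locate and classify the stationary point of a two-factor quadratic model."""
    # Hessian of the model: [[2*b11, b12], [b12, 2*b22]]
    a, b, d = 2.0 * b11, b12, 2.0 * b22
    det = a * d - b * b
    if abs(det) < 1e-12:
        raise ValueError("singular Hessian: ridge system, no unique stationary point")
    # Solve gradient = 0 with Cramer's rule.
    xs1 = (-b1 * d + b2 * b) / det
    xs2 = (-b2 * a + b1 * b) / det
    # Eigenvalues of a symmetric 2x2 matrix.
    mean = (a + d) / 2.0
    radius = math.sqrt(((a - d) / 2.0) ** 2 + b * b)
    low, high = mean - radius, mean + radius
    if high < 0:
        kind = "maximum"
    elif low > 0:
        kind = "minimum"
    else:
        kind = "saddle"
    return (xs1, xs2), kind


# Hypothetical coefficients in coded units.
point, kind = stationary_point(b1=4.0, b2=2.5, b11=-3.0, b22=-2.0, b12=1.0)
print(point, kind)
```

A near-zero eigenvalue (relative to the other) signals a ridge system, in which case the stationary point is poorly determined and a ridge analysis is more informative than the point itself.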

Robustness and Reliability in Pharmaceutical Optimization

Robust Design Strategies

Achieving robustness in pharmaceutical processes involves designing systems that are insensitive to variability in input factors and environmental conditions. RSM contributes to robust design through several approaches:

Dual Response Surface Methodology: This technique involves modeling both the mean response and the variability (standard deviation) of the response. Optimization then focuses on finding factor settings that achieve the target mean while minimizing variability [7] [14].

Robust Parameter Design: Pioneered by Genichi Taguchi and adapted for use with RSM, this approach aims to minimize the effects of uncontrollable noise factors by choosing levels for controllable factors that make the process robust to external variability [7].

Incorporating Noise Factors: Advanced RSM designs can explicitly include noise factors in the experiment, enabling the modeling of control-by-noise interactions and identifying control factor settings that reduce sensitivity to noise [7].
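A dual response optimization can be sketched as a constrained search: minimize the modeled standard deviation over the settings whose modeled mean is close enough to target. The mean model, standard-deviation model, target, and tolerance below are all hypothetical, and a grid search stands in for whatever optimizer a statistics package would use.

```python
# Minimal dual-response sketch in coded units; all models and limits are hypothetical.

def mean_model(x1, x2):
    """Hypothetical fitted model for the mean response."""
    return 90.0 + 5.0 * x1 + 3.0 * x2 - 2.0 * x1 * x2


def sd_model(x1, x2):
    """Hypothetical fitted model for the response standard deviation."""
    return 2.0 + 0.8 * x1 - 0.5 * x2 + 0.6 * x1**2 + 0.4 * x2**2


def dual_response_search(target=92.0, tol=0.5, steps=41):
    """Return (sd, x1, x2) with the smallest sd among points with mean within tol of target."""
    grid = [-1.0 + 2.0 * i / (steps - 1) for i in range(steps)]
    candidates = [
        (sd_model(x1, x2), x1, x2)
        for x1 in grid
        for x2 in grid
        if abs(mean_model(x1, x2) - target) <= tol
    ]
    if not candidates:
        raise ValueError("no grid point meets the mean constraint")
    return min(candidates)


best_sd, best_x1, best_x2 = dual_response_search()
print(f"sd={best_sd:.3f} at x1={best_x1:.2f}, x2={best_x2:.2f}")
```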

Uncertainty Analysis and Reliability Assessment

In model-based optimization, it is essential to account for uncertainty in parameter estimates and model predictions:

Propagation of Error: Using the fitted response model, the propagation of error (POE) technique calculates how variability in the input factors propagates through the model to create variability in the response. This helps identify factor settings that minimize transmitted variability [14].

Monte Carlo Simulation: By simulating multiple scenarios based on the distributions of input parameters, Monte Carlo methods can estimate the probability of meeting specifications and assess the reliability of the process under optimal conditions [12].

Bayesian Approaches: These methods incorporate prior knowledge and uncertainty in parameter estimates directly into the optimization framework, providing probabilistic statements about the reliability of optimal solutions [13].
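The first two techniques can be compared on a small example. The sketch below uses a first-order (delta-method) propagation of error, in which each partial derivative of the fitted model is multiplied by the corresponding input standard deviation, alongside a Monte Carlo estimate of the probability of meeting a lower specification. The model, the input standard deviations, the residual standard deviation, and the specification limit are hypothetical, and inputs are assumed independent and normally distributed.

```python
import math
import random


def model(x1, x2):
    """Hypothetical fitted quadratic model in coded units."""
    return 85.0 + 4.0 * x1 + 2.5 * x2 - 3.0 * x1**2 - 2.0 * x2**2 + 1.0 * x1 * x2


def poe_sd(x1, x2, s1, s2, s_resid):
    """First-order propagation of error: transmitted plus residual standard deviation."""
    d1 = 4.0 - 6.0 * x1 + 1.0 * x2  # partial derivative of model with respect to x1
    d2 = 2.5 - 4.0 * x2 + 1.0 * x1  # partial derivative of model with respect to x2
    return math.sqrt((d1 * s1) ** 2 + (d2 * s2) ** 2 + s_resid**2)


def prob_meeting_spec(x1, x2, s1, s2, s_resid, lower_spec, n=20000, seed=1):
    """Monte Carlo estimate of P(response >= lower_spec) around a set point."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n):
        y = model(rng.gauss(x1, s1), rng.gauss(x2, s2)) + rng.gauss(0.0, s_resid)
        if y >= lower_spec:
            hits += 1
    return hits / n


for label, (x1, x2) in {"center": (0.0, 0.0), "stationary": (18.5 / 23, 19.0 / 23)}.items():
    sd = poe_sd(x1, x2, s1=0.1, s2=0.1, s_resid=0.5)
    p = prob_meeting_spec(x1, x2, 0.1, 0.1, 0.5, lower_spec=86.0)
    print(f"{label}: POE sd={sd:.3f}, P(y >= 86)={p:.3f}")
```

At the stationary point of this model both slopes are zero, so the first-order transmitted variation vanishes and only the residual term remains. This is the sense in which flat regions of the surface are robust.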

The Scientist's Toolkit: Essential Materials and Reagents

Table 2: Key Research Reagent Solutions for Pharmaceutical RSM Studies

| Category | Specific Items/Techniques | Function in RSM Studies | Application Examples |

|---|---|---|---|

| Statistical Software | JMP, Design-Expert, Minitab, R with specific packages (rsm, DoE.base) | Experimental design generation, model fitting, optimization, and visualization [7] [3] | Creating Central Composite Designs; performing regression analysis; generating contour plots |

| Process Analytical Technology (PAT) | NIR spectroscopy, Raman spectroscopy, FBRM (Focused Beam Reflectance Measurement) | Real-time monitoring of CQAs during process development studies [13] [11] | In-line monitoring of blend uniformity; particle size distribution during granulation |

| Material Characterization Tools | Laser diffraction particle size analyzers, DSC (Differential Scanning Calorimetry), surface area analyzers | Quantifying Critical Material Attributes (CMAs) as input factors in RSM studies [11] | Measuring API particle size distribution; excipient moisture content |

| Unit Operation Simulators | Custom MATLAB/Python scripts, gPROMS, Aspen Plus | Mechanistic modeling of unit operations for hybrid model-based optimization [13] [12] | Freeze-drying cycle optimization [12]; chemical reactor modeling |

| Risk Assessment Tools | FMEA (Failure Mode and Effects Analysis), Fishbone diagrams, Risk estimation matrices | Systematic evaluation of material attributes and process parameters impacting CQAs prior to RSM studies [11] | Prioritizing factors for inclusion in DoE studies |

Response Surface Methodology represents a powerful statistical framework that aligns perfectly with the modern QbD approach in pharmaceutical development. By enabling systematic experimentation, empirical modeling, and multi-objective optimization, RSM provides researchers and scientists with a structured methodology to enhance process understanding, define operable design spaces, and establish robust control strategies. The integration of RSM with mechanistic modeling and advanced optimization algorithms further extends its capability to address complex, hierarchical pharmaceutical problems with time-dependent responses. As the industry continues to advance toward continuous manufacturing and personalized medicines, the principles and applications of RSM outlined in this technical guide will remain fundamental to achieving efficient, reliable, and quality-focused pharmaceutical processes.

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques designed for developing, improving, and optimizing processes, with widespread application in synthesis research across chemical, material, and pharmaceutical domains [2]. Introduced by George E. P. Box and K. B. Wilson in 1951, its primary goal is to identify the levels of input variables (factors) that produce the most desirable output values (responses) by fitting empirical models, typically second-order polynomials, to experimental data [2]. The methodology is sequential, often beginning with screening designs to identify significant factors before progressing to more complex designs for optimization [2]. The efficiency and success of RSM heavily rely on the strategic choice of experimental design, which dictates how data points are distributed within the experimental region. Orthogonality, rotatability, and uniform precision are three fundamental statistical properties that guide the construction of these designs, particularly Central Composite Designs (CCDs), ensuring that the collected data yields a model with reliable and interpretable predictions [1] [15]. For researchers in synthesis, understanding these properties is crucial for designing experiments that efficiently lead to optimal conditions—such as maximum yield, purity, or performance—while minimizing experimental effort and cost.

Theoretical Foundations of Key Properties

Orthogonality

Orthogonality is a property that allows for the independent estimation of the individual effects of the k-factors in a model [1]. In an orthogonal design, the model coefficients are uncorrelated, meaning that the estimate of one coefficient is not confounded or influenced by the estimate of another [1]. This property is paramount during the initial stages of experimentation, such as when using factorial designs, to clearly separate the main effects of each factor from their interaction effects. From a computational standpoint, orthogonality ensures that the design matrix (X) is structured such that the information matrix (X'X) is diagonal, which simplifies the calculation of the regression coefficients via least squares estimation. The practical benefit for researchers is minimum variance estimates of the model coefficients, leading to more precise and interpretable effect estimates, which is critical for accurately identifying the key drivers in a synthetic process [1].
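The diagonal structure of X'X is easy to check directly. As a minimal sketch, the code below builds the model matrix (intercept, main effects, two-factor interactions) for a two-level full factorial in coded ±1 units and verifies that every off-diagonal element of X'X is zero. The function names are illustrative and not taken from any package.

```python
from itertools import product


def model_matrix_2k(k):
    """Intercept, main-effect, and two-factor-interaction columns of a 2^k factorial."""
    rows = []
    for levels in product((-1, 1), repeat=k):
        interactions = [levels[i] * levels[j] for i in range(k) for j in range(i + 1, k)]
        rows.append([1, *levels, *interactions])
    return rows


def information_matrix(X):
    """Compute X'X for a matrix given as a list of rows."""
    p = len(X[0])
    return [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]


XtX = information_matrix(model_matrix_2k(3))
p = len(XtX)
is_diagonal = all(XtX[i][j] == 0 for i in range(p) for j in range(p) if i != j)
print("X'X diagonal:", is_diagonal, "| diagonal entries:", [XtX[i][i] for i in range(p)])
```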

Rotatability

Rotatability is a property that ensures the variance of the predicted response remains constant at all points equidistant from the center of the design space [1] [15]. A design is rotatable if the moments of the distribution of the design points are constant [1]. This is a highly desirable property because it means that the precision of the predictions made by the fitted model is the same in all directions from the center point. The design does not favor one direction over another, providing a consistent and stable basis for exploration and optimization across the entire experimental region. Rotatability is achieved in a Central Composite Design (CCD) by setting the axial (star) points at a specific distance α from the center. When the factorial portion is a full 2^k design, where k is the number of factors, the value is α = 2^(k/4); for example, α ≈ 1.414 for k = 2 and α ≈ 1.682 for k = 3. This placement makes the design rotatable [3].
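Rotatability can be checked numerically by computing the scaled prediction variance x0'(X'X)⁻¹x0 of a full quadratic model at several points on the same circle. The sketch below does this for a two-factor CCD using only the standard library; the choice of five center points and the helper names are assumptions made for illustration.

```python
import math
from itertools import product


def ccd_points(k, n_center, alpha=None):
    """Coded CCD points; alpha defaults to the rotatable value (2^k)^(1/4)."""
    if alpha is None:
        alpha = (2**k) ** 0.25
    pts = [[float(v) for v in p] for p in product((-1, 1), repeat=k)]
    for i in range(k):
        for sign in (-1.0, 1.0):
            axial = [0.0] * k
            axial[i] = sign * alpha
            pts.append(axial)
    return pts + [[0.0] * k for _ in range(n_center)]


def quad_terms(x):
    """Terms of the full second-order model: 1, x_i, x_i^2, x_i*x_j."""
    k = len(x)
    cross = [x[i] * x[j] for i in range(k) for j in range(i + 1, k)]
    return [1.0] + list(x) + [v * v for v in x] + cross


def invert(A):
    """Gauss-Jordan matrix inverse with partial pivoting."""
    n = len(A)
    M = [list(row) + [float(i == j) for j in range(n)] for i, row in enumerate(A)]
    for c in range(n):
        pivot_row = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[pivot_row] = M[pivot_row], M[c]
        pivot = M[c][c]
        M[c] = [v / pivot for v in M[c]]
        for r in range(n):
            if r != c:
                factor = M[r][c]
                M[r] = [a - factor * b for a, b in zip(M[r], M[c])]
    return [row[n:] for row in M]


def scaled_variance(design, x0):
    """Prediction variance at x0 in units of sigma^2."""
    X = [quad_terms(pt) for pt in design]
    p = len(X[0])
    XtX = [[sum(r[i] * r[j] for r in X) for j in range(p)] for i in range(p)]
    inv = invert(XtX)
    f = quad_terms(x0)
    return sum(f[i] * inv[i][j] * f[j] for i in range(p) for j in range(p))


rotatable = ccd_points(2, n_center=5)
face_centered = ccd_points(2, n_center=5, alpha=1.0)
on_axis = [1.0, 0.0]
diagonal = [math.cos(math.pi / 4), math.sin(math.pi / 4)]
for name, design in (("rotatable", rotatable), ("face-centered", face_centered)):
    print(name, scaled_variance(design, on_axis), scaled_variance(design, diagonal))
```

With α = √2 the two variances agree, while the face-centered design (α = 1) gives different values along the axis and along the diagonal, which is what losing rotatability means in practice.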

Uniform Precision

Uniform Precision (also called Uniformity) is a property that controls the number of center points in a CCD to make the prediction variance at the center of the design region approximately equal to the prediction variance at a unit distance from the center [1]. In essence, a uniform precision design aims to flatten the prediction variance profile within the immediate, most relevant area of the design space (often coded from -1 to +1) [15]. It does not mean the variance is perfectly constant across this entire cube, but that it is "very low and flat for a large proportion" of it [15]. This prevents the undesirable situation where the prediction error is significantly lower at the center points than at the edge points of the factorial cube, providing a more balanced level of confidence for predictions throughout the core region of interest.

Property Interrelationships and Comparisons

While these properties are distinct, they are often pursued in combination to create a robust experimental design. A common misconception is that Uniform Precision makes Rotatability redundant, but this is not the case [15]. Rotatability ensures consistent prediction variance on spherical contours, while Uniform Precision adjusts the variance profile within the spherical region of primary interest. A rotatable design with uniform precision offers superior overall performance in prediction variance compared to a design lacking one or both properties [15].

The table below provides a consolidated comparison of these three core properties.

Table 1: Comparative Overview of Fundamental RSM Properties

| Property | Primary Function | Key Statistical Implication | Primary Method of Achievement |

|---|---|---|---|

| Orthogonality [1] | Allows independent estimation of factor effects. | Model coefficients are uncorrelated, providing minimum variance estimates. | Proper design of the factorial portion of the CCD. |

| Rotatability [1] [15] | Ensures consistent prediction precision in all directions from the center. | Variance of predicted response is constant at points equidistant from the design center. | Setting axial points at α = (2^(k/4)) from the center in a CCD. |

| Uniform Precision [1] [15] | Balances prediction variance across the core design region. | Prediction variance at the center is roughly equal to the variance at a unit distance from the center. | Adding an appropriate number of center points to the CCD. |

The following diagram illustrates the geometric interpretation of these properties in a two-factor design space, showing the arrangement of points and the idealized behavior of prediction variance.

Experimental Implementation in Synthesis Research

A Case Study: Optimizing Biogenic Silica Extraction

A recent study on extracting biogenic silica from a mixture of rice husk (RH) and rice straw (RS) ash provides an excellent, real-world example of implementing a CCD with these properties in a synthesis context [16]. The research aimed to optimize the ash digesting process to maximize silica production, a valuable material for applications in construction, ceramics, and pharmaceuticals.

Table 2: Research Reagent Solutions for Silica Extraction Optimization [16]

| Reagent/Material | Specification | Function in the Experiment |

|---|---|---|

| Rice Husk (RH) & Rice Straw (RS) | Washed, dried (110°C), ground, and sieved (<2 mm); used as a 70:30 hybrid blend. | Primary biological source of silica; the precursor material for the synthesis. |

| Hydrochloric Acid (HCl) | 1 M solution in distilled water. | Acid pre-treatment agent to remove metal impurities (K, Na, Ca, etc.) for higher silica purity. |

| Sodium Hydroxide (NaOH) | 1-3 M solution in distilled water (analytical grade). | Alkaline digesting agent to dissolve silica from the ash into sodium silicate. |

| Distilled Water | N/A | Solvent for preparing acid and alkali solutions; used for washing and precipitation. |

Experimental Protocol and Workflow:

- Raw Material Preparation: RH and RS were washed to remove impurities, dried at 110°C for 12 hours, ground, and sieved to obtain particles smaller than 2 mm. A hybrid blend of 70% RH and 30% RS was selected based on preliminary tests [16].

- Acid Pre-treatment: The RH/RS blend was leached with 1 M HCl solution at 90°C. This critical step removes alkali and alkaline earth metals, enhancing the final purity and whiteness of the silica [16].

- Combustion and Ash Formation: The acid-leached material was combusted to produce ash, which is rich in amorphous silica [16].

- RSM-Driven Alkaline Digestion (Core Experiment): The ash was digested in NaOH solution under conditions determined by a Central Composite Design (CCD). The independent variables were:

  - NaOH Concentration (1-3 M)

  - Temperature (60-120 °C)

  - Time (1-3 hours)

  The experimental runs, as defined by the CCD, were executed, and the yield of silica was measured as the response [16].

- Silica Precipitation and Characterization: The digested sodium silicate solution was precipitated, and the resulting silica was characterized using techniques like FTIR, XRF, and BET to confirm its purity (>97.35%) and properties [16].
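To make the design step concrete, the following sketch generates a CCD run list in natural units for the three digestion factors. The factor ranges come from the protocol above; everything else is an assumption, since the summary does not state the CCD variant or the number of center points. The sketch assumes a face-centered design (α = 1), so that the quoted ranges are the lowest and highest levels run, and three center points.

```python
from itertools import product

# (low, high) levels taken from the ranges quoted in the protocol.
FACTORS = {
    "NaOH_M": (1.0, 3.0),
    "temperature_C": (60.0, 120.0),
    "time_h": (1.0, 3.0),
}


def decode(coded, low, high):
    """Convert a coded level (-1..+1) to natural units."""
    return (high + low) / 2.0 + coded * (high - low) / 2.0


def ccd_runs(factors, alpha=1.0, n_center=3):
    """Return CCD runs as dicts of natural-unit settings.

    alpha=1.0 (face-centered) and n_center=3 are assumptions, not study values.
    """
    k = len(factors)
    coded = [list(p) for p in product((-1.0, 1.0), repeat=k)]  # factorial points
    for i in range(k):  # axial points
        for level in (-alpha, alpha):
            point = [0.0] * k
            point[i] = level
            coded.append(point)
    coded += [[0.0] * k for _ in range(n_center)]  # center points
    return [
        {name: decode(c, low, high) for c, (name, (low, high)) in zip(point, factors.items())}
        for point in coded
    ]


runs = ccd_runs(FACTORS)
for number, run in enumerate(runs, start=1):
    print(number, run)
```

In practice the run order would be randomized before execution, and a circumscribed design (α > 1) would place the axial runs outside the quoted ranges.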

The workflow for this optimized synthesis process, driven by the RSM experimental design, is outlined below.

Analysis and Outcomes

The researchers used RSM to fit a quadratic model relating the three independent variables, including their interactions, to the silica yield. Analysis of Variance (ANOVA) revealed that temperature was the most statistically significant parameter, followed by NaOH concentration and then digestion time [16]. The model was then used to identify the combination of process parameters within the experimental range that maximizes silica production. This systematic approach, facilitated by a well-designed experiment, successfully transformed agricultural waste into a high-value material with confirmed purity exceeding 97.35% [16].

In the realm of synthesis research, from optimizing porous carbon materials for energy storage to fine-tuning biogenic silica extraction, the theoretical properties of RSM designs are not mere statistical abstractions [17] [16]. Orthogonality, rotatability, and uniform precision are foundational to constructing efficient and reliable experiments. They ensure that the empirical models derived from costly and time-consuming laboratory work provide clear insights into factor effects and generate robust predictions for locating optimal process conditions. Mastering these properties enables scientists and drug development professionals to strategically plan experiments that maximize information yield while minimizing resource expenditure, ultimately accelerating the development and optimization of synthetic processes.

Response Surface Methodology (RSM) is a collection of statistical and mathematical techniques specifically designed for modeling and analyzing problems in which a response of interest is influenced by several variables, with the ultimate goal of optimizing this response [1]. In the context of synthesis research—particularly in pharmaceutical development and material science—RSM provides a systematic framework for efficiently exploring the relationship between multiple input factors and critical quality attributes of the final product [3] [18]. Unlike traditional one-factor-at-a-time (OFAT) approaches, which are inefficient and incapable of detecting factor interactions, RSM enables researchers to understand complex interactions while minimizing experimental runs [19] [20].

The fundamental principle of RSM involves using experimental data to fit empirical models, typically second-order polynomials, that describe how input variables collectively affect the response [1]. These models are then used to generate contour and surface plots that visually represent the behavior of the response within the experimental region, allowing researchers to identify optimal conditions, robust operating ranges, and sensitivity to process parameter variations [10] [3]. For drug development professionals, this methodology is invaluable for accelerating formulation optimization, enhancing process robustness, and ensuring consistent product quality while reducing development costs [19] [3].

Theoretical Foundation of RSM

Mathematical Principles

The core mathematical model underlying RSM is a second-order polynomial equation that approximates the relationship between k input factors (x₁, x₂, ..., xₖ) and the response variable (y). For a system with three factors, the quadratic model takes the following form [10]:

y = β₀ + β₁x₁ + β₂x₂ + β₃x₃ + β₁₁x₁² + β₂₂x₂² + β₃₃x₃² + β₁₂x₁x₂ + β₁₃x₁x₃ + β₂₃x₂x₃ + ε

Where y represents the predicted response, β₀ is the constant term, β₁, β₂, β₃ are the linear coefficients, β₁₁, β₂₂, β₃₃ are the quadratic coefficients, β₁₂, β₁₃, β₂₃ are the interaction coefficients, and ε represents the error term [3]. This model structure enables RSM to capture not only the individual linear effects of each factor but also curvature (through quadratic terms) and synergistic/antagonistic effects between factors (through interaction terms) [20].

The assumption that a second-order model provides adequate approximation in the optimal region is fundamental to RSM [1]. This approximation holds particularly well when the region of interest is small enough or when the true response function is smoothly varying. The model parameters are typically estimated using least squares regression, which minimizes the sum of squared differences between observed and predicted response values [10] [3].
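For reference, the three-factor equation above is one instance of the general k-factor second-order model, and the least squares estimate has a standard closed form. The notation here is introduced for illustration and is not taken from the cited sources: X is the model matrix whose columns are the intercept, linear, quadratic, and interaction terms, **y** is the vector of observed responses, and p is the number of coefficients.

```latex
y = \beta_0 + \sum_{i=1}^{k} \beta_i x_i + \sum_{i=1}^{k} \beta_{ii} x_i^2
    + \sum_{i<j} \beta_{ij} x_i x_j + \varepsilon,
\qquad p = \frac{(k+1)(k+2)}{2}

\hat{\boldsymbol{\beta}} = (X^{\top} X)^{-1} X^{\top} \mathbf{y}
```

For k = 3 this gives p = 10, matching the ten coefficients in the three-factor equation, so a design needs at least ten distinct runs before the model can be estimated.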

When RSM is Appropriate: Key Indicators

RSM is particularly valuable in specific research scenarios commonly encountered in synthesis and development workflows. The methodology is most appropriate when [19] [3] [20]:

The goal is optimization: When researchers need to find factor settings that maximize, minimize, or achieve a specific target value for one or more responses. For pharmaceutical synthesis, this could include maximizing yield, minimizing impurities, or achieving specific dissolution characteristics.

Factor interactions are suspected: When the effect of one factor depends on the level of another factor, which OFAT approaches cannot detect.

The process exhibits curvature: When the relationship between factors and response is nonlinear, requiring quadratic terms for adequate modeling.

The experimental region contains an optimum: When preliminary evidence suggests that the current operating conditions are near-optimal but require refinement.

Multiple responses must be balanced: When several critical quality attributes must be simultaneously optimized, requiring compromise solutions.

Table 1: Scenarios Warranting RSM Application in Synthesis Research

| Scenario | Traditional Approach Limitations | RSM Advantages |

|---|---|---|

| Formulation Optimization | Inefficient, misses interactions | Models complex interactions, finds optimal ratios |

| Process Parameter Tuning | Sequential adjustment, suboptimal | Simultaneous optimization of multiple parameters |

| Robustness Testing | Limited understanding of parameter sensitivity | Maps entire response surface, identifies robust regions |

| Quality by Design (QbD) | Difficulty establishing design space | Statistically-derived design space with known confidence |

| Scale-up Studies | Parameter adjustments based on limited data | Systematic approach to transfer optimal conditions |

Experimental Design Strategies for RSM

Core Design Types for Experimental Regions

The selection of an appropriate experimental design is critical for efficient and effective response surface exploration. Three primary designs dominate RSM applications in synthesis research, each with distinct characteristics and advantages [10] [3]:

Central Composite Design (CCD) is the most widely used RSM design, consisting of three components: factorial points (all combinations of factor levels), center points (repeated runs at midpoint levels), and axial points (points along each factor axis beyond the factorial range) [3]. CCD can be implemented in three variations: circumscribed (axial points outside factorial cube), inscribed (factorial points scaled inside axial range), and face-centered (axial points on factorial cube faces) [3]. The design is particularly valued for its rotatability property, which ensures uniform prediction variance at all points equidistant from the center [1].

Box-Behnken Design (BBD) is a spherical design, rotatable or nearly rotatable, that combines two-level factorial arrangements with incomplete block designs [3]. Unlike CCD, BBD does not contain an embedded factorial or fractional factorial design; for three factors, all non-center points lie at the midpoints of the cube edges, on a sphere of radius √2. For three factors, BBD requires only 13 experiments (12 edge-midpoint runs plus one center point, with more if the center point is replicated) compared to 15-20 for CCD. Because it never sets all factors to their extreme levels at the same time, it is well suited to cases where such corner runs are costly or impractical [3].

Three-Level Full Factorial Design tests all possible combinations of factors at three levels each [10]. While this design provides comprehensive information about the response surface, the number of experimental runs increases exponentially with additional factors (3ᵏ for k factors), making it impractical for studies with more than 3-4 factors [10].
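The run counts quoted for the three designs can be reproduced with a few lines. The sketch below builds Box-Behnken points from pairwise ±1 combinations, a construction that holds for three to five factors, and uses the usual counting formulas for the other two designs. The function names and the default of one center point are illustrative choices.

```python
from itertools import combinations, product


def box_behnken(k, n_center=1):
    """Box-Behnken points in coded units (pairwise construction, k = 3, 4, or 5)."""
    if k not in (3, 4, 5):
        raise ValueError("pairwise construction shown here covers k = 3, 4, 5 only")
    points = []
    for i, j in combinations(range(k), 2):
        for a, b in product((-1, 1), repeat=2):
            point = [0] * k
            point[i], point[j] = a, b
            points.append(tuple(point))
    return points + [(0,) * k] * n_center


def ccd_run_count(k, n_center):
    """Full-factorial CCD: 2^k factorial + 2k axial + center points."""
    return 2**k + 2 * k + n_center


def three_level_factorial_count(k):
    """Three-level full factorial: 3^k runs."""
    return 3**k


bbd = box_behnken(3)
print("BBD runs:", len(bbd))
print("CCD runs:", ccd_run_count(3, 1), "to", ccd_run_count(3, 6))
print("3-level full factorial runs:", three_level_factorial_count(3))
```

Every non-center Box-Behnken point has exactly two factors at ±1, which is why these points sit at squared distance 2 from the center and never reach the cube corners.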

Table 2: Comparison of Primary RSM Experimental Designs

| Design Characteristic | Central Composite Design (CCD) | Box-Behnken Design (BBD) | 3-Level Full Factorial |

|---|---|---|---|

| Number of Runs (3 factors) | 15-20 (varies with α and center points) | 13 | 27 |

| Region of Exploration | Cuboidal or spherical | Spherical | Cuboidal |

| Ability to Estimate Pure Error | Excellent (multiple center points) | Good (multiple center points) | Limited (unless replicated) |

| Factor Level Settings | 5 levels per factor (3 if face-centered) | 3 levels per factor | 3 levels per factor |

| Efficiency for Quadratic Models | High | Very High | Low |

| Rotatability | Achievable with proper α selection | Rotatable or nearly rotatable | Not rotatable |

| Practical Implementation | Suitable for sequential experimentation | Efficient when extreme points are costly | Comprehensive but resource-intensive |

Design Selection Guidelines

The choice among available RSM designs depends on several considerations specific to the research context [19] [3]:

Choose CCD when the experimental region is flexible and can be extended beyond the original factorial boundaries, the research follows a sequential approach (building on previous factorial experiments), and rotatability is a priority for uniform prediction variance.

Choose BBD when the experimental region is fixed and cannot exceed current boundaries, the number of experimental runs must be minimized due to cost or time constraints, and extreme factor level combinations are impractical or hazardous.

Choose Full Factorial when only a small number of factors (typically 2-3) are being studied, a comprehensive understanding of the entire experimental region is required, and resources permit a larger number of experimental runs.

For drug synthesis applications where materials may be expensive or scarce, BBD often provides the most efficient approach for initial optimization studies [3]. CCD is particularly valuable when preliminary experiments suggest the optimum may lie outside the current experimental region, as the axial points enable exploration beyond the initial boundaries [1].

Implementation Workflow and Protocol

Systematic RSM Implementation Framework

Implementing RSM effectively requires a structured approach consisting of sequential stages, each with specific objectives and deliverables. The following workflow diagram illustrates the complete RSM implementation process from problem definition through optimization and validation:

Detailed Experimental Protocol

Based on the implementation framework, the following step-by-step protocol provides specific guidance for executing RSM in synthesis research:

Step 1: Problem Definition and Objective Formulation. Clearly articulate the research goal, specifying whether the objective is to maximize, minimize, or achieve a target value for the response variable. In pharmaceutical synthesis, this typically involves defining critical quality attributes (CQAs) that must be optimized, such as percentage yield, purity, particle size, or dissolution rate [19].

Step 2: Factor Screening and Response Selection. Identify all potential factors that might influence the response, then use screening designs (e.g., fractional factorial or Plackett-Burman) to distinguish significant factors from negligible ones. Select measurable responses with appropriate precision and relevance to the research objective. A Pareto chart or half-normal probability plot can assist in identifying statistically significant effects [18].

Step 3: Experimental Region Definition. Establish appropriate ranges for each factor based on prior knowledge, preliminary experiments, or theoretical constraints. The region should be sufficiently large to detect curvature and potential optimum points but not so large that the second-order model becomes inadequate [20].

Step 4: Design Selection and Randomization. Choose an appropriate RSM design (CCD, BBD, or other) based on the considerations discussed under Design Selection Guidelines. Randomize the order of experimental runs to minimize the effects of lurking variables and external influences [3].

Step 5: Model Fitting and Validation. Once the designed runs have been executed, conduct regression analysis to estimate the coefficients of the second-order model. Evaluate model adequacy using analysis of variance (ANOVA), with particular attention to the coefficient of determination (R²), adjusted R², predicted R², and lack-of-fit test [10]. Examine residual plots to verify assumptions of normality, constant variance, and independence [10].

Step 6: Optimization and Validation. Use the fitted model to locate optimal conditions through analytical methods (setting the partial derivatives to zero and solving) or numerical optimization techniques. Conduct confirmatory experiments at the predicted optimal conditions to validate model predictions and verify optimization success [19].
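The randomization called for in Step 4 is simple to script. The sketch below shuffles the standard-order run numbers with a recorded seed so the executed order can be reproduced and audited later. The run count of 15 and the seed value are arbitrary examples.

```python
import random


def randomized_run_order(n_runs, seed=None):
    """Return run numbers 1..n_runs in random execution order."""
    order = list(range(1, n_runs + 1))
    random.Random(seed).shuffle(order)  # seeded generator keeps the order reproducible
    return order


order = randomized_run_order(15, seed=42)
print("Execute standard-order runs in this sequence:", order)
```

Center points are shuffled along with the other runs, which spreads them through the experiment and lets them double as a check for drift over time.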

Data Analysis and Interpretation

Model Evaluation Metrics and Criteria

The adequacy of a fitted response surface model must be rigorously evaluated using multiple statistical metrics before proceeding with optimization. The following table summarizes key evaluation criteria and their interpretation:

Table 3: Key Statistical Metrics for RSM Model Evaluation

| Metric | Calculation/Definition | Interpretation | Acceptance Criteria |

|---|---|---|---|

| R² (Coefficient of Determination) | SSregression/SStotal | Proportion of variance explained by the model | >0.80 (closer to 1.0 indicates better fit) |

| Adjusted R² | Adjusted for number of terms in model | Prevents artificial inflation from adding terms | Value should be close to R² |

| Predicted R² | Based on PRESS statistic | Measure of model's predictive ability | >0.70, close to adjusted R² |

| Adequate Precision | Signal-to-noise ratio | Compares the range of predicted values to the average prediction error | >4 (indicates adequate signal) |

| Lack-of-Fit Test | F-test for model adequacy | Tests if model adequately fits data | p-value >0.05 (not significant) |

| Coefficient of Variation (CV) | (SD/mean)×100 | Relative measure of experimental error | <10% preferred |

| PRESS (Predicted Residual Error Sum of Squares) | Sum of squared prediction errors | Measure of model's prediction capability | Smaller values indicate better prediction |

Beyond these quantitative metrics, residual analysis provides critical diagnostic information about model adequacy. Residuals (differences between observed and predicted values) should be randomly distributed without patterns when plotted against predicted values or run order [10]. Normal probability plots of residuals should approximate a straight line, confirming the normality assumption [10].
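Several of the metrics in Table 3 follow directly from the observed and fitted values. The sketch below computes R², adjusted R², and the coefficient of variation. It assumes a least squares fit with an intercept (so that R² equals 1 minus the ratio of residual to total sum of squares) and takes the standard deviation in the CV as the root mean square error. The data in the example are made up for illustration.

```python
import math


def fit_metrics(observed, predicted, n_terms):
    """R^2, adjusted R^2, and CV (%) for a fitted model with n_terms coefficients."""
    n = len(observed)
    mean_y = sum(observed) / n
    ss_total = sum((y - mean_y) ** 2 for y in observed)
    ss_resid = sum((y - f) ** 2 for y, f in zip(observed, predicted))
    df_resid = n - n_terms  # residual degrees of freedom (n_terms includes the intercept)
    if df_resid <= 0:
        raise ValueError("need more runs than model terms")
    return {
        "r2": 1.0 - ss_resid / ss_total,
        "adj_r2": 1.0 - (ss_resid / df_resid) / (ss_total / (n - 1)),
        "cv_pct": 100.0 * math.sqrt(ss_resid / df_resid) / mean_y,
    }


# Made-up observed and fitted values, for illustration only.
observed = [10.0, 12.0, 14.0, 16.0, 18.0, 20.0]
predicted = [10.5, 11.5, 14.5, 15.5, 18.5, 19.5]
metrics = fit_metrics(observed, predicted, n_terms=2)
print(metrics)
```

Predicted R² and PRESS additionally require the leverage of each run (the diagonal of the hat matrix), so they need the model matrix and not just the fitted values.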

Interpretation of Response Surface Plots

Response surface plots and their two-dimensional counterparts (contour plots) provide powerful visual tools for interpreting the relationship between factors and responses [3]. The following diagram illustrates the interpretation of different contour plot patterns and their implications for optimization:

When interpreting response surfaces, researchers should note [3] [20]:

Elliptical contours indicate the presence of a stationary point (maximum, minimum, or saddle point) within the experimental region. The orientation of the ellipse reveals factor interactions.

Elongated ridges suggest that multiple factor combinations can produce similar response values, providing flexibility in selecting optimal conditions.

Circular contours indicate minimal interaction between the factors being plotted.

Steep gradients show regions where the response is highly sensitive to factor changes, while flat regions indicate robust operating conditions.

For pharmaceutical synthesis applications, the identification of robust regions (where response variation is minimal despite small factor fluctuations) is often as valuable as locating the theoretical optimum [19].

Case Study: Optimization of SnO₂ Thin Film Synthesis

Experimental Application of RSM

A recent study exemplifies the practical application of RSM in materials synthesis, specifically for optimizing the deposition parameters of SnO₂ thin films via ultrasonic spray pyrolysis [18]. This case study demonstrates the complete RSM workflow and its effectiveness in identifying optimal conditions within a defined experimental region.

The research employed a 2³ full factorial design with two replicates (total of 16 experimental runs) to investigate three critical factors: suspension concentration (0.001-0.002 g/mL), substrate temperature (60-80°C), and deposition height (10-15 cm) [18]. The response variable was defined as the net intensity of the principal X-ray diffraction peak, serving as a metric for the quality of the deposited crystalline phase.

Statistical analysis of the experimental data revealed that suspension concentration was the most influential factor, followed by significant two-factor and three-factor interactions [18]. The developed model exhibited a high coefficient of determination (R² = 0.9908) and low standard deviation (12.53), confirming its strong predictive capability [18].
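The ranking of factors and interactions in a replicated 2³ design comes from contrast-based effect estimates. The sketch below shows the calculation on made-up data generated from an invented model: the numbers are not the study's measurements, and the factor labels A, B, and C are placeholders for concentration, temperature, and height.

```python
from itertools import product


def factorial_effects(responses):
    """Estimate main and interaction effects from a replicated 2^3 factorial.

    responses maps coded (a, b, c) tuples of -1/+1 to a list of replicate values.
    """
    columns = {"A": (0,), "B": (1,), "C": (2,),
               "AB": (0, 1), "AC": (0, 2), "BC": (1, 2), "ABC": (0, 1, 2)}
    means = {run: sum(values) / len(values) for run, values in responses.items()}
    half = len(means) / 2.0
    effects = {}
    for name, idx in columns.items():
        contrast = 0.0
        for run, mean in means.items():
            sign = 1
            for i in idx:
                sign *= run[i]
            contrast += sign * mean
        effects[name] = contrast / half  # mean at +1 minus mean at -1
    return effects


def made_up_response(a, b, c):
    """Invented model used only to generate illustrative data."""
    return 100.0 + 20.0 * a - 5.0 * b - 3.0 * c + 4.0 * a * b


responses = {
    run: [made_up_response(*run) + 1.0, made_up_response(*run) - 1.0]  # two replicates
    for run in product((-1, 1), repeat=3)
}
effects = factorial_effects(responses)
for name, value in sorted(effects.items(), key=lambda item: -abs(item[1])):
    print(f"{name}: {value:+.1f}")
```

Each effect is twice the corresponding regression coefficient in coded units, so sorting by absolute effect gives the same ranking an ANOVA table or Pareto chart would show.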

Optimization Outcomes and Research Reagent Solutions

The response surface analysis identified the optimal deposition process conditions as the highest suspension concentration (0.002 g/mL), lowest substrate temperature (60°C), and shortest deposition height (10 cm) [18]. These conditions maximized the diffraction peak intensity, indicating superior crystalline quality of the SnO₂ thin films.

Table 4: Research Reagent Solutions for SnO₂ Thin Film Synthesis

| Material/Reagent | Specifications | Function in Synthesis | Supplier/Preparation |
|---|---|---|---|
| SnO₂ Powder | High purity, crystalline starting material | Primary precursor for thin film formation | Sigma-Aldrich |
| Distilled Water | Deionized, purified | Solvent for suspension preparation | Laboratory purification system |
| Agate Milling Container | 12 mL capacity, chemically inert | Homogenization of suspension | Fritsch Pulverisette system |
| Agate Milling Balls | 10 mm diameter, 1.39 g each | Mechanical energy transfer for dispersion | Fritsch Pulverisette system |
| SiO₂ Substrate | 25 × 75 × 1.3 mm dimensions | Support surface for film deposition | Commercial supplier |
| Ultrasonic Generator | 108 kHz frequency, 2 W power | Ultrasonic excitation for aerosol generation | Custom deposition system |

This case study demonstrates how RSM enables researchers to not only identify optimal factor settings but also quantify the relative importance of each factor and their interactions. The methodology provided a robust statistical framework that guided the synthesis of SnO₂ films with controlled crystallographic properties suitable for advanced functional applications [18].

Response Surface Methodology provides synthesis researchers with a powerful statistical framework for efficiently exploring experimental regions and identifying optimal conditions. By employing strategically designed experiments and empirical modeling, RSM enables comprehensive understanding of complex factor-response relationships while minimizing experimental resource requirements. The methodology's ability to model curvature and factor interactions makes it particularly valuable for pharmaceutical development, materials synthesis, and process optimization where multiple variables simultaneously influence critical quality attributes.

When properly implemented with appropriate design selection, rigorous model validation, and careful interpretation of response surfaces, RSM moves beyond traditional trial-and-error approaches to provide a scientifically rigorous pathway to process understanding and optimization. The integration of RSM into quality by design frameworks further enhances its value in regulated environments, supporting the development of robust, well-characterized synthesis processes with clearly defined operating ranges.

Response Surface Methodology (RSM) has emerged as a powerful empirical modeling approach that offers distinct advantages over theoretical models for optimizing complex synthesis systems in pharmaceutical and chemical research. This technical analysis demonstrates how RSM's structured experimentation and polynomial approximation capabilities provide researchers with a practical framework for navigating multivariate processes where mechanistic understanding remains incomplete. Through comparative evaluation and case studies, we establish RSM's value in accelerating process development while acknowledging its limitations in extrapolative prediction and fundamental mechanistic insight.

Response Surface Methodology (RSM) constitutes "a helpful statistical tool that uses math and statistics to model problems with multiple influencing factors and their results" [7]. This methodology explores how independent variables impact dependent outcome variables through carefully designed experiments and empirical modeling [7]. In synthesis research, RSM serves as a bridge between theoretical understanding and practical optimization, particularly when processes involve complex, nonlinear relationships that challenge conventional theoretical models.

The foundational premise of RSM lies in its ability to approximate complex systems using polynomial functions fitted to experimental data. As a comprehensive toolkit combining mathematical techniques and advanced statistics, "RSM holds a prominent position in both prediction and optimization" [21]. Its application involves a series of critical steps, encompassing experiment design, statistical analysis, and variable optimization, making it particularly valuable for researchers dealing with multivariate synthesis systems where theoretical models may be insufficient or impractical to develop.

Theoretical Foundations of RSM

Mathematical Framework

RSM operates on the principle that a response variable of interest (y) can be approximated as a function of multiple input variables (ξ₁, ξ₂, ..., ξₖ) plus statistical error (ε): y = f(ξ₁, ξ₂, ..., ξₖ) + ε [22]. Since the true response function f is typically unknown, RSM employs empirical polynomial models to approximate this relationship within specified operating regions. These models are usually expressed in coded variables (x₁, x₂, ..., xₖ), which are dimensionless representations of the natural variables, scaled to have zero mean and the same standard deviation [22].
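For reference, the conventional way to obtain coded variables from natural ones is to center each factor on the midpoint of its range and divide by the half-range. The notation ξᵢ,₀ (midpoint) and Δᵢ (half-range) is introduced here for illustration and does not appear in the cited source.

```latex
x_i = \frac{\xi_i - \xi_{i,0}}{\Delta_i}, \qquad
\xi_{i,0} = \frac{\xi_i^{\max} + \xi_i^{\min}}{2}, \qquad
\Delta_i = \frac{\xi_i^{\max} - \xi_i^{\min}}{2}
```

As a worked illustration, a factor studied over 60-80 °C has midpoint 70 °C and half-range 10 °C, so the low and high settings code to x = -1 and x = +1, and a setting of 75 °C codes to x = (75 - 70) / 10 = 0.5.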

The methodology utilizes sequential experimentation, often beginning with first-order models to identify important factors before progressing to more complex second-order models that capture curvature and interaction effects. For two independent variables, the first-order model with interaction takes the form: η = β₀ + β₁x₁ + β₂x₂ + β₁₂x₁x₂ [22]. When curvature becomes significant, a second-order model is employed: η = β₀ + β₁x₁ + β₂x₂ + β₁₁x₁² + β₂₂x₂² + β₁₂x₁x₂ [22]. This quadratic model provides the flexibility to represent various surface configurations, including maxima, minima, and saddle points, making it particularly useful for optimization in synthesis systems.
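A second-order model of this form is linear in its coefficients, so it can be fitted by ordinary least squares. The sketch below shows one way to do this with NumPy on a 3² grid in coded units. The response values are synthetic, generated from assumed coefficients plus noise purely for illustration, and the helper name is hypothetical.

```python
import numpy as np

def quadratic_design_matrix(x1, x2):
    """Model matrix with columns 1, x1, x2, x1^2, x2^2, x1*x2."""
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])

# Synthetic data only: assumed "true" coefficients in the order
# beta0, beta1, beta2, beta11, beta22, beta12
true_beta = np.array([80.0, 2.0, 1.0, -3.0, -2.0, 1.0])

# 3^2 factorial grid in coded units (9 runs, enough for 6 coefficients)
levels = (-1.0, 0.0, 1.0)
g1, g2 = np.meshgrid(levels, levels)
x1, x2 = g1.ravel(), g2.ravel()

X = quadratic_design_matrix(x1, x2)
rng = np.random.default_rng(0)
y = X @ true_beta + rng.normal(scale=0.1, size=len(x1))

# Ordinary least-squares estimate of the coefficients
beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)

# Coefficient of determination for the fitted model
residuals = y - X @ beta_hat
r_squared = 1.0 - (residuals @ residuals) / np.sum((y - y.mean()) ** 2)
print(np.round(beta_hat, 2), round(r_squared, 4))
```

With real data, the significance of the quadratic and interaction terms would be assessed before interpreting the surface, since a high R² on few runs does not by itself confirm predictive capability.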

Experimental Design Strategies

The experimental design component is crucial to RSM's effectiveness. Various designs facilitate efficient exploration of the factor space while enabling statistical inference:

Table 1: Common Experimental Designs in RSM

| Design Type | Key Characteristics | Optimal Use Cases |
|---|---|---|
| Central Composite Design (CCD) | Combines factorial, axial, and center points; estimates curvature | General second-order modeling; sequential experimentation |
| Box-Behnken Design (BBD) | Three-level spherical design avoiding extreme factor combinations | Resource-constrained studies; avoidance of extreme conditions |
| 3ᵏ Factorial Design | Comprehensive assessment of all factor level combinations | Small factor sets (k≤3); detailed surface mapping |
| Plackett-Burman Design | Efficient screening design for identifying important factors | Preliminary factor screening with many potential variables |

Central Composite Designs (CCD) are particularly valuable as "they incorporate a full or fractional factorial design with center points, augmented by a group of axial points, which enables the estimation of the curvature in the model" [21]. The strategic arrangement of design points allows researchers to efficiently explore the factor space while maintaining statistical robustness.
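The three groups of points in a CCD can be generated in a few lines. This is a minimal sketch for a full-factorial core in coded units; the function name, the default number of center points, and the use of the rotatable axial distance α = (2ᵏ)^(1/4) are choices made here for illustration, not prescriptions from the cited sources.

```python
from itertools import product
import numpy as np

def central_composite_design(k, n_center=4, alpha=None):
    """Coded design points for a CCD with a full 2^k factorial core."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable axial distance for a full factorial core
    # Factorial (corner) points at +/-1 on every factor
    factorial = np.array(list(product((-1.0, 1.0), repeat=k)))
    # Axial (star) points at +/-alpha on one factor, zero on the others
    axial = np.vstack([
        sign * alpha * np.eye(k)[i]
        for i in range(k)
        for sign in (-1.0, 1.0)
    ])
    # Replicated center points, used to estimate pure error and curvature
    center = np.zeros((n_center, k))
    return np.vstack([factorial, axial, center])

design = central_composite_design(k=3, n_center=6)
print(design.shape)  # (20, 3)
```

Setting `alpha=1.0` instead gives a face-centered variant, which keeps every run inside the original factor ranges at the cost of rotatability.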

Comparative Analysis: RSM vs. Theoretical Models

Fundamental Philosophical Differences

RSM and theoretical models approach complex systems from fundamentally different perspectives. Theoretical models seek to represent underlying mechanistic principles through mathematical equations derived from first principles, such as mass transfer kinetics, reaction thermodynamics, or quantum chemical calculations. In contrast, RSM employs empirical approximation, using statistical fitting to establish input-output relationships without requiring deep mechanistic understanding.

This distinction becomes particularly significant in complex synthesis systems where "relationships between variables and outcomes are unknown or complex, making traditional optimization tough" [7]. Theoretical models excel when system mechanisms are well-understood and can be accurately represented mathematically, while RSM provides a practical alternative when complexity overwhelms theoretical representation.

Practical Implementation Comparison

The implementation requirements and outputs of RSM versus theoretical models differ substantially, influencing their applicability to various research scenarios:

Table 2: Practical Implementation Comparison

| Aspect | RSM Approach | Theoretical Modeling Approach |
|---|---|---|
| Knowledge Requirement | Empirical relationships; statistical principles | Fundamental mechanisms; first principles |
| Data Requirements | Designed experiments within operational range | Comprehensive characterization across conditions |
| Computational Demand | Moderate (regression analysis) | High (solution of complex equations) |
| Output Provided | Empirical optimization conditions; factor effects | Mechanistic understanding; predictive capability |
| Extrapolation Reliability | Limited to experimental region | Potentially broader if mechanisms are correct |
| Development Time | Relatively short | Often extensive |

A key advantage of RSM lies in its ability to "determine an accurate model showing what's happening in a process or system" without requiring complete mechanistic understanding [7]. This empirical approach enables researchers to make progress even when theoretical foundations remain incomplete.

Advantages of RSM for Complex Synthesis Systems

Handling Multivariate Complexity

Complex synthesis systems typically involve multiple interacting factors that collectively influence outcomes. RSM excels in this environment by systematically investigating "the connections between multiple influencing factors and related outcomes" [7]. Unlike one-factor-at-a-time approaches, RSM captures interaction effects between variables, which often prove critical in synthetic processes.

The methodology "not only assesses the individual effects of independent variables but also accounts for their interactive responses" [21]. This capability is particularly valuable in pharmaceutical synthesis where factors such as temperature, catalyst concentration, reaction time, and solvent composition may interact in non-additive ways to influence yield, purity, and selectivity.

Efficiency in Experimental Resource Utilization

RSM provides structured approaches to maximize information gain while minimizing experimental effort. Through careful experimental design, RSM "helps deeply understand production influences" while optimizing resource allocation [7]. The strategic arrangement of experimental points in designs such as CCD and BBD enables efficient exploration of the factor space with fewer experiments than comprehensive grid searches.
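The saving relative to a full grid can be made concrete by counting runs. The sketch below compares a CCD with a full-factorial core against a three-level full factorial, alongside the number of coefficients in a full second-order model. The function names and the single center point are assumptions for illustration. Box-Behnken designs are left out because their run counts depend on the specific construction rather than a single closed-form expression.

```python
def ccd_runs(k, n_center=1):
    """Runs in a CCD: 2^k factorial + 2k axial + center points."""
    return 2 ** k + 2 * k + n_center

def three_level_grid_runs(k):
    """Runs in a full 3^k factorial (every combination of three levels)."""
    return 3 ** k

def second_order_terms(k):
    """Coefficients in a full quadratic model: intercept, linear,
    pure quadratic, and two-factor interaction terms."""
    return (k + 1) * (k + 2) // 2

print("k  terms  CCD  3^k")
for k in range(2, 6):
    print(k, second_order_terms(k), ccd_runs(k), three_level_grid_runs(k))
```

For three factors this gives 15 CCD runs against 27 grid runs for a model with 10 coefficients, and the gap widens quickly as factors are added.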

This efficiency is evidenced in applications such as silica extraction from rice husk and straw ash, where RSM successfully optimized "sodium hydroxide concentration (1-3 M), temperature (60-120 °C) and time (1-3 h)" through a structured experimental plan [16]. The methodology enabled researchers to identify optimal conditions while systematically exploring the three-dimensional factor space.

Empirical Optimization Without Complete Mechanistic Understanding

Perhaps the most significant advantage of RSM in complex synthesis systems is its ability to facilitate optimization even when mechanistic understanding remains incomplete. In pharmaceutical development, where "compounds that operate through the same mechanism of action should induce similar patterns of interaction," RSM provides a framework for empirical optimization while gradually building mechanistic insight [23].

This capability is particularly valuable in early-stage development where theoretical models may be unavailable or unreliable. RSM enables researchers to "find the perfect settings to get the best results or acceptable performance ranges for a system" [7] without requiring complete theoretical understanding of underlying mechanisms.

Visualization and Interpretation Capabilities

RSM generates visual representations that enhance researcher understanding of complex systems. The methodology "builds visual response surfaces – graphs portraying input-output links" [7] that provide intuitive understanding of factor effects and optimal regions. These visualizations help researchers identify robust operating conditions and understand sensitivity to factor variations.

Contour plots and response surfaces enable researchers to simultaneously consider multiple factors while identifying optimal operating regions. This visualization capability supports more informed decision-making compared to theoretical models that may produce outputs less readily interpretable by non-specialists.

Case Studies: RSM Applications in Synthesis and Drug Development

Bioactive Compound Extraction Optimization

In a study comparing RSM and Artificial Neural Networks (ANN) for optimizing ultrasound-assisted extraction of bioactive compounds from Mimosa Wattle tree bark, researchers varied "temperature (30-70 °C), extraction time (10-60 min), and solvent-to-solid ratio (0.075-0.125 mL/g)" to maximize extraction yield and total phenolic content [24]. The RSM approach successfully identified optimum extraction conditions of "50 °C, 35 min, and a solvent-to-solid ratio of 0.1," predicting an extraction yield of 27.61% with total phenolic content of 81.84 mg GAE/g [24].