Scaling Up Organic Synthesis: A Practical DoE Guide for Robust Process Development

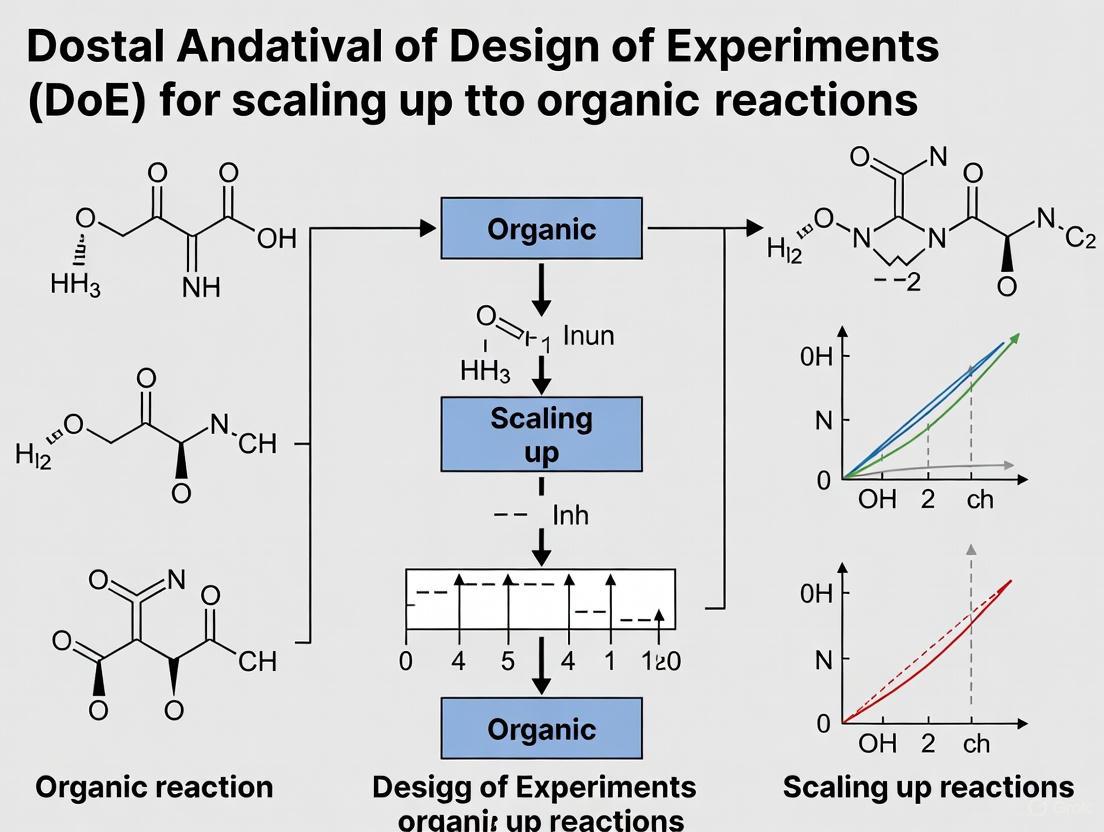


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for applying Design of Experiments (DoE) to scale up organic reactions. It covers foundational principles, demonstrating how a systematic DoE approach overcomes the limitations of traditional one-variable-at-a-time (OVAT) optimization by efficiently exploring complex factor interactions. The content details practical methodologies, including High-Throughput Experimentation (HTE) and solvent optimization, alongside strategies for troubleshooting common scaling challenges. It further validates the DoE approach through comparative case studies from pharmaceutical research and discusses the growing role of machine learning and large-scale datasets in building predictive models for accelerated process development.

Why One-Variable-at-a-Time Fails: The Foundational Principles of DoE for Scale-Up

The Critical Limitations of OVAT Optimization in Complex Chemical Systems

In synthetic chemistry, the One-Variable-At-a-Time (OVAT) approach has been the traditional method for reaction optimization. This method involves holding all variables constant while systematically altering one factor—such as temperature, catalyst loading, or solvent—to observe its effect on the outcome, typically yield or selectivity [1]. While intuitively simple, this methodology contains critical flaws that become profoundly limiting when scaling up complex organic reactions, particularly in pharmaceutical development.

The OVAT approach treats variables as independent entities, completely ignoring the interaction effects between them [1]. In reality, chemical processes are complex systems where variables often have interdependent effects. For instance, the optimal temperature for a reaction may shift significantly depending on the catalyst loading, a nuance OVAT cannot capture. This frequently leads researchers to local optima rather than the true global optimum for the reaction [2] [3]. Consequently, the fraction of chemical space actually probed during an OVAT optimization remains minimal, risking erroneous conclusions about the true optimal reaction conditions [1].

Troubleshooting Guide: OVAT Limitations and DoE Solutions

FAQ: What are the most common symptoms of a failed OVAT optimization?

| Symptom | Underlying Cause | DoE-Based Solution |

|---|---|---|

| Poor Reproducibility | Unidentified factor interactions; optimal condition for one variable depends on the level of another [1] [3]. | Use Full Factorial or Response Surface designs to model and quantify interaction effects [1] [4]. |

| Failed Scale-Up | OVAT finds local, narrow optima that are not robust to slight variations in process parameters [2]. | Use DoE to map a robust operating region (e.g., via Response Surface Methodology) [1] [2]. |

| Inability to Optimize Multiple Responses | OVAT cannot systematically balance competing goals (e.g., high yield and high selectivity) [1]. | Use multi-response optimization and desirability functions in DoE [1] [5]. |

| Lengthy, Inefficient Optimization | Each run informs only one factor, so little is learned per experiment and the run count keeps growing with every added variable [1] [2]. | Screen many factors simultaneously with Fractional Factorial or Definitive Screening Designs (DSD) [2] [4]. |
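The desirability functions named in the multi-response row can be illustrated with a minimal sketch. It assumes a larger-is-better desirability of the Derringer-Suich type and combines the individual scores with a geometric mean; the candidate conditions, limits, and targets are hypothetical values chosen only for illustration.

```python
from math import prod


def desirability_max(y, low, target, weight=1.0):
    """Larger-is-better desirability: 0 at or below `low`, 1 at or above `target`."""
    if y <= low:
        return 0.0
    if y >= target:
        return 1.0
    return ((y - low) / (target - low)) ** weight


def overall_desirability(values):
    """Geometric mean of individual desirabilities; any 0 makes the overall score 0."""
    return prod(values) ** (1.0 / len(values))


# Hypothetical candidate conditions: (yield %, selectivity %)
candidates = {"A": (92.0, 85.0), "B": (84.0, 97.0), "C": (89.0, 93.0)}

scores = {}
for name, (yield_pct, selectivity_pct) in candidates.items():
    d_yield = desirability_max(yield_pct, low=70.0, target=95.0)
    d_selectivity = desirability_max(selectivity_pct, low=80.0, target=98.0)
    scores[name] = overall_desirability([d_yield, d_selectivity])

best = max(scores, key=scores.get)
print(best, round(scores[best], 3))
```

Because the geometric mean penalizes any single poor response, the balanced candidate scores higher than conditions that excel in only one response.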

FAQ: How do I know if my reaction is suffering from significant factor interactions?

Problem: You have optimized each variable in isolation, but the combined "optimal" conditions do not deliver the expected performance.

Diagnosis: This is a classic sign of factor interactions. In statistical terms, an interaction occurs when the effect of one factor (e.g., Temperature) on the response (e.g., Yield) depends on the level of another factor (e.g., Catalyst Loading) [1] [3].

Experimental Protocol to Test for Interactions:

- Select Two Critical Variables: Choose the two factors you suspect might interact (e.g., Temperature and Catalyst Loading).

- Run a Two-Factor Factorial Design: Conduct experiments at all combinations of low and high levels for both factors. A minimum of 4 experiments (2²) is required [4].

- Analyze the Results: Calculate the average effect of changing each factor. Then, examine the four data points. If the effect of Temperature at low Catalyst Loading differs from its effect at high Catalyst Loading by more than the run-to-run noise (estimated from replicate runs), an interaction is present.

Example of a significant interaction: A high catalyst loading might give excellent yield only at high temperatures, while at low temperatures, it performs worse than a low catalyst loading. OVAT would completely miss this nuanced relationship.
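The interaction check described in the protocol can be sketched in a few lines. The four yields below are hypothetical numbers chosen to mimic the example just given; they are not data from any cited study.

```python
# Hypothetical yields (%) from a 2x2 factorial. Keys are
# (temperature, catalyst loading) in coded units: -1 = low, +1 = high.
yields = {(-1, -1): 62.0, (+1, -1): 66.0, (-1, +1): 55.0, (+1, +1): 88.0}

# Effect of raising the temperature, evaluated separately at each catalyst loading
temp_effect_low_cat = yields[(+1, -1)] - yields[(-1, -1)]
temp_effect_high_cat = yields[(+1, +1)] - yields[(-1, +1)]

# Main effect: the average of the two conditional effects
temp_main_effect = (temp_effect_low_cat + temp_effect_high_cat) / 2

# Interaction: half the difference between the two conditional effects
interaction = (temp_effect_high_cat - temp_effect_low_cat) / 2

print(f"Temperature effect at low catalyst loading:  {temp_effect_low_cat:+.1f}")
print(f"Temperature effect at high catalyst loading: {temp_effect_high_cat:+.1f}")
print(f"Temperature main effect: {temp_main_effect:+.1f}")
print(f"Temperature x catalyst interaction: {interaction:+.1f}")
```

With these numbers the temperature effect is small at low catalyst loading and large at high loading, which is exactly the pattern an OVAT series run at a single catalyst loading would not reveal.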

Quantitative Comparison: OVAT vs. DoE Efficiency

The two approaches differ less in raw run count than in what each run delivers. The table below compares the number of experiments typically required by each method, assuming OVAT tests three levels (low, middle, high) per variable [1].

| Number of Variables | Typical OVAT Experiments (3 levels/variable) | Typical DoE Screening Experiments | How DoE Compares |

|---|---|---|---|

| 3 | 9 (3+3+3) | 8 (2³ Full Factorial) | Comparable run count; all interactions can be estimated |

| 4 | 12 (3x4) | 12-16 (e.g., 2⁴ Full Factorial) | Comparable run count; all interactions can be estimated |

| 5 | 15 (3x5) | 16-20 (e.g., 2⁵⁻¹ Half-Fraction) | Comparable run count; the resolution V half-fraction separates main effects from two-factor interactions |

| 6 | 18 (3x6) | 16-24 (e.g., 2⁶⁻² Fractional Factorial) | Comparable run count; main effects are not aliased with two-factor interactions (resolution IV) |

| 8 | 24 (3x8) | 20-32 (e.g., Definitive Screening Design) | Comparable run count; main effects are estimated independently of two-factor interactions |

Beyond sheer efficiency, DoE provides a structured data set capable of modeling interaction effects, which OVAT data cannot [1] [2]. A study optimizing a copper-mediated radiofluorination reaction found that DoE identified critical factors and modeled their behavior with more than two-fold greater experimental efficiency than the traditional OVAT approach [2].
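The run counts in the comparison table follow from simple formulas. The sketch below reproduces them for OVAT (counted as three runs per variable, as in the table) and for two-level full and fractional factorial designs; the function names are illustrative and not taken from any DoE package.

```python
def ovat_runs(n_factors, levels=3):
    """OVAT as counted in the table: `levels` runs for each variable."""
    return levels * n_factors


def full_factorial_runs(n_factors):
    """Two-level full factorial: 2^k runs."""
    return 2 ** n_factors


def fractional_factorial_runs(n_factors, p):
    """2^(k-p) design: a 1/2^p fraction of the full factorial."""
    return 2 ** (n_factors - p)


# (number of factors k, fraction exponent p); p = 0 is the full factorial
cases = [(3, 0), (4, 0), (5, 1), (6, 2)]
counts = [(k, ovat_runs(k), fractional_factorial_runs(k, p)) for k, p in cases]

for k, ovat, doe in counts:
    print(f"{k} factors: OVAT {ovat} runs, two-level design {doe} runs")
```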

The Scientist's Toolkit: Essential Reagents and Materials for DoE

When setting up a DoE for reaction optimization, certain classes of reagents and variables are frequently explored. The following table details key "Research Reagent Solutions" and their common functions in catalytic reaction systems.

| Reagent / Material | Function in Optimization | Example / Note |

|---|---|---|

| Earth-Abundant Metal Catalysts (e.g., Co, Fe, Ni complexes) | Catalyze key bond-forming steps (e.g., C-H functionalization, cross-coupling); often provide unique selectivity vs. precious metals [6]. | Air-stable Ni(0) catalysts enable practical cross-coupling without inert atmospheres [7]. |

| Ligands (e.g., Phosphines, N-Heterocyclic Carbenes) | Modulate catalyst activity, stability, and selectivity; crucial for asymmetric induction [1]. | Often optimized in conjunction with metal catalyst and solvent. |

| Solvents | Affect solubility, stability of intermediates, reaction rate, and selectivity [3]. | DoE can be used with a "solvent map" to efficiently explore diverse chemical space [3]. |

| Additives (e.g., Salts, Acids, Bases) | Can accelerate reactions, suppress side pathways, or control selectivity (e.g., Li salts in glycosylations) [5]. | Bayesian optimization discovered Li salt-directed stereoselective glycosylations [5]. |

| Substrate / Reagent Stoichiometry | The relative amount of starting materials and reagents. | Optimizing this is critical for cost reduction and minimizing waste on scale-up. |

Implementing DoE: A Practical Workflow

Transitioning from OVAT to DoE involves a shift in mindset and practice. The following workflow, derived from synthetic chemistry case studies, provides a roadmap for implementation [1] [2].

Detailed Experimental Protocols:

- Define Objective and Responses: Clearly state the goal (e.g., "maximize yield while maintaining >98% enantiomeric excess"). Identify measurable responses (e.g., yield, selectivity, cost, impurity level) [1] [8].

- Select Factors and Ranges: Choose which variables to study (e.g., temperature, solvent, catalyst loading). Define feasible upper and lower limits for each based on chemical intuition and preliminary data [1] [2].

- Choose Experimental Design:

  - Screening: For exploring 5+ variables, use Fractional Factorial or Definitive Screening Designs (DSD) to identify the most influential ("vital few") factors with minimal runs [2] [4].

  - Optimization: For 2-4 critical variables, use Response Surface Methodology (RSM) designs like Central Composite Design to model curvature and find the true optimum [1] [2].

- Execute and Collect Data: Run experiments in a randomized order to avoid confounding from lurking variables (e.g., ambient humidity, catalyst degradation). Use a detailed checklist for each run to ensure consistency [8].

- Analyze Data and Build Model: Use statistical software to perform multiple linear regression. Analyze the model to identify significant main effects and interaction terms. The model will often take the form:

Response = β₀ + (β₁A + β₂B + ...) + (β₁₂AB + ...)

where β are coefficients and A, B are variables [1].

- Validate the Model: Run 2-3 confirmation experiments at the predicted optimal conditions. If the experimental results match the model's predictions, the model is validated and the conditions are ready for scale-up [1] [4].
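The model-building and validation steps can be sketched without statistical software for the special case of a balanced two-level design in coded (-1/+1) units, where each least-squares coefficient reduces to a simple column average. The yields, confirmation results, and tolerance below are hypothetical. With four runs and four coefficients the model is saturated, so in practice replicates or center points would be needed to judge significance.

```python
from itertools import combinations


def fit_two_level_model(design, response):
    """Coefficients for intercept, main effects, and two-factor interactions.

    Valid for an orthogonal, balanced design coded as -1/+1, where each
    least-squares coefficient equals sum(column * response) / number of runs.
    """
    n_runs = len(design)
    n_factors = len(design[0])
    coef = {(): sum(response) / n_runs}  # () is the intercept term
    for i in range(n_factors):
        coef[(i,)] = sum(row[i] * y for row, y in zip(design, response)) / n_runs
    for i, j in combinations(range(n_factors), 2):
        coef[(i, j)] = sum(row[i] * row[j] * y for row, y in zip(design, response)) / n_runs
    return coef


def predict(coef, x):
    """Evaluate the fitted model at the coded point x."""
    total = 0.0
    for term, beta in coef.items():
        value = 1.0
        for index in term:
            value *= x[index]
        total += beta * value
    return total


# Hypothetical 2x2 design: factor 0 = A (temperature), factor 1 = B (catalyst loading)
design = [(-1, -1), (1, -1), (-1, 1), (1, 1)]
yields = [62.0, 66.0, 55.0, 88.0]
coef = fit_two_level_model(design, yields)

# Validation: compare hypothetical confirmation runs with the prediction
predicted = predict(coef, (1, 1))
confirmation_runs = [87.1, 88.6, 88.9]
observed = sum(confirmation_runs) / len(confirmation_runs)
tolerance = 2.0  # assumed acceptance window in yield %
print(f"predicted {predicted:.1f}, observed {observed:.1f}, "
      f"validated: {abs(observed - predicted) <= tolerance}")
```

Each coefficient is half of the corresponding effect, because a coded factor moves two units when it goes from its low to its high level.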

Advanced Alternatives: Beyond Traditional DoE

For particularly complex systems with a high number of variables or expensive experiments, advanced optimization strategies have emerged:

- Bayesian Optimization (BO): A machine-learning approach that treats the reaction as a "black box" and uses an acquisition function to intelligently suggest the next most informative experiments. It is highly efficient for optimizing noisy systems with many variables and has been successfully used for reaction discovery, such as finding novel stereoselective glycosylation conditions [5].

- High-Throughput Experimentation (HTE): Involves the miniaturization and parallelization of reactions, allowing hundreds to thousands of conditions to be tested simultaneously. HTE generates large, rich datasets ideal for training machine learning models and can dramatically accelerate both optimization and discovery cycles [9].

The OVAT approach to reaction optimization is fundamentally limited for complex chemical systems due to its inability to detect factor interactions, its inefficiency, and its high risk of converging on a local optimum. This creates significant risks during scale-up in pharmaceutical and process chemistry. The adoption of Design of Experiments (DoE) provides a structured, efficient, and statistically sound framework to overcome these limitations. By varying factors simultaneously, DoE maps the studied region of reaction space, reveals critical interactions, and identifies robust, scalable conditions more reliably. For the modern researcher, moving from OVAT to DoE, together with complementary approaches such as Bayesian Optimization and HTE, is not just an optimization step but a necessary evolution for tackling the intricate challenges of synthetic chemistry.

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary advantage of a factorial design over a one-factor-at-a-time (OFAT) approach?

Factorial designs allow you to study multiple factors (process variables) simultaneously. This is more efficient than OFAT (the approach called OVAT earlier in this guide) and, crucially, enables the detection of interaction effects between factors, which OFAT completely misses [10] [11]. An interaction occurs when the effect of one factor (e.g., Bath Time) on the response (e.g., Residual Surface Contaminants) depends on the level of another factor (e.g., Solution Type) [12]. Ignoring interactions can lead to incorrect conclusions about how a process truly works.

FAQ 2: My initial reaction has many potential factors. How can I efficiently identify the most important ones?

When facing a large number of process variables, a Screening Design of Experiments (Screening DOE) is the appropriate tool [13]. Its purpose is to quickly and efficiently identify the most significant factors influencing your response. Common screening designs include 2-level fractional factorial designs and Plackett-Burman designs, which use a carefully selected subset of runs from a full factorial to estimate main effects while saving time and resources [13]. This allows you to "screen out" insignificant factors and focus subsequent, more detailed optimization studies on the critical few.

FAQ 3: Why is randomization critical in my experimental runs?

Randomization refers to running your experimental trials in a random order. It is a fundamental principle that helps average out the effects of uncontrolled, or lurking, variables (e.g., ambient temperature, humidity, instrument drift) [12] [10]. If you don't randomize, and these uncontrolled variables change systematically with your factor levels, their effects become confounded with the factor you are studying. This means you cannot separate the true effect of your factor from the effect of the nuisance variable, compromising your conclusions [12].
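A randomized run order is easy to generate and record. The sketch below shuffles the eight runs of a 2³ design with Python's standard library; the seed value is arbitrary and is fixed only so that the same plan can be regenerated later.

```python
import random

standard_order = list(range(1, 9))  # run labels 1..8 of a 2^3 design

rng = random.Random(2024)  # arbitrary fixed seed for a reproducible plan
run_order = standard_order[:]
rng.shuffle(run_order)

for position, run in enumerate(run_order, start=1):
    print(f"Position {position}: execute standard-order run {run}")
```

Executing the runs in this order means that a slow drift, such as catalyst degradation over the day, is spread across factor levels instead of lining up with one of them.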

FAQ 4: What are the key physical changes when scaling up an organic synthesis that DoE must address?

Scaling up organic synthesis from the laboratory to production introduces several physical parameter changes that a well-designed DoE must investigate. Key factors include [14]:

- Reaction Kinetics and Heat Transfer: Laboratory-scale reactions have high surface-area-to-volume ratios, facilitating heat dissipation. Industrial reactors do not, which raises the danger of thermal runaways for exothermic reactions.

- Mixing Performance: The efficiency of mass transfer and mixing can change dramatically with scale, impacting reaction rates and selectivity.

- Work-up and Purification Scalability: Techniques like column chromatography are often impractical at scale. The DoE should help identify scalable alternatives like distillation, extraction, or crystallization.
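The loss of heat-transfer area on scale-up can be made concrete with a rough geometric sketch. It assumes a cylindrical vessel whose liquid height equals its diameter and counts only the side wall as heat-transfer surface; real reactors differ, so the numbers indicate the trend only.

```python
from math import pi


def wall_area_to_volume(volume_m3):
    """Side-wall area divided by volume (1/m) for a cylinder with height = diameter."""
    diameter = (4 * volume_m3 / pi) ** (1 / 3)  # from V = (pi / 4) * D^2 * H with H = D
    wall_area = pi * diameter * diameter        # pi * D * H with H = D
    return wall_area / volume_m3


lab = wall_area_to_volume(0.001)  # 1 L vessel
plant = wall_area_to_volume(1.0)  # 1000 L reactor
ratio = lab / plant

print(f"1 L: {lab:.1f} m^-1, 1000 L: {plant:.2f} m^-1, ratio: {ratio:.1f}")
```

Under these assumptions the area available per unit volume falls by a factor of ten for a thousand-fold increase in volume, which is why addition time and cooling capacity are natural DoE factors for exothermic steps.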

Troubleshooting Guides

Issue 1: Unreproducible or Noisy Data

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Uncontrolled Nuisance Variables | Check if environmental conditions (temperature, humidity) or raw material sources were consistent. | Implement randomization in the run order to average out these effects [12] [10]. |

| Faulty Measurement System | Perform a Gage R&R (Repeatability & Reproducibility) study on your analytical method. | Ensure the measurement system is stable and repeatable before starting the DoE [10]. |

| Spatial Bias in High-Throughput Experimentation (HTE) | Check for patterns in results correlated to well location (e.g., edge vs. center wells). | Use equipment with even temperature and mixing control. Validate that light irradiation is consistent across all wells for photochemistry [9]. |

Issue 2: Failed Optimization or Inability to Find a Robust Solution

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Undetected Factor Interactions | Analyze your data for significant two-factor interactions. A 2-level factorial design is ideal for this. | Use a full factorial or a resolution V fractional factorial design that can estimate interaction effects without confounding them with main effects [11] [13]. |

| Ignored Curvature in Response | Check if a linear model is a poor fit. If the optimum appears to be inside the experimental region, there is likely curvature. | Move from a screening design to a Response Surface Methodology (RSM) design, like a Central Composite Design, which uses more than two levels to model curvature [12] [15]. |

| Process Not Robust to Noise | Check if minor variations in raw material quality or process settings cause large shifts in the response. | Use DoE to find factor settings where the response variation is minimized despite the presence of uncontrollable "noise" variables [14]. |
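The curvature check in the table above is commonly done by comparing replicated center points with the corner points of a two-level design. The sketch below uses hypothetical yields and treats the center-point replicates as the only estimate of run-to-run noise.

```python
from statistics import mean, stdev

factorial_yields = [62.0, 66.0, 55.0, 88.0]  # hypothetical corner points of a 2^2 design
center_yields = [78.0, 80.0, 79.0]           # hypothetical replicated center points

# A purely linear model predicts that the center equals the corner average
curvature = mean(center_yields) - mean(factorial_yields)

# Noise estimate and standard error of the difference between the two means
noise_sd = stdev(center_yields)
n_f, n_c = len(factorial_yields), len(center_yields)
standard_error = noise_sd * (1 / n_f + 1 / n_c) ** 0.5
curvature_ratio = curvature / standard_error

print(f"curvature = {curvature:.2f}, t-like ratio = {curvature_ratio:.1f}")
```

A ratio well above the critical t value for the available degrees of freedom (about 4.3 for two degrees of freedom at the 95% level) suggests that a linear model is inadequate and that a response surface design is warranted.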

Issue 3: Scaling Up a Successful Lab-Scale Reaction

This is a common challenge in process chemistry. The table below outlines a systematic DoE-based approach to troubleshooting scale-up.

| Scale-Up Challenge | DoE-Based Troubleshooting Strategy | Key Factors to Investigate |

|---|---|---|

| Change in Reaction Kinetics & Heat Transfer [14] | Use a factorial DoE to model the relationship between scale-dependent factors and critical responses like yield or impurity levels. | Reaction Temperature, Addition Time, Agitation Speed, Cooling Rate. |

| Altered Impurity Profile | Employ a screening DoE to identify which process parameters most strongly influence the formation of key impurities. | Solvent Composition, Reagent Stoichiometry, Catalyst Loading, Reaction Time. |

| Inefficient Work-up/Purification [14] | Design experiments to optimize isolation steps for the larger scale. | Antisolvent Addition Rate, Crystallization Cooling Rate, Wash Solvent Volumes. |

Experimental Protocols

Protocol 1: Setting Up a Basic 2-Level Full Factorial Design

A 2-level full factorial design is the foundation for understanding main effects and interactions.

1. Define Objective and Scope:

- Clearly state the goal (e.g., "Understand the effect of Temperature, Pressure, and Catalyst Type on Reaction Yield").

- Identify all relevant factors (independent variables) and the response (dependent variable) you will measure [12] [10].

2. Select Factors and Levels:

- For each continuous factor (e.g., Temperature), choose a realistic high (+1) and low (-1) level to investigate.

- For categorical factors (e.g., Catalyst Type), assign the two types to +1 and -1 [11].

- Example:

  - Factor A: Temperature | -1 level: 100°C | +1 level: 150°C

  - Factor B: Pressure | -1 level: 50 psi | +1 level: 100 psi

  - Factor C: Catalyst Type | -1 level: Catalyst X | +1 level: Catalyst Y

3. Create the Design Matrix:

- The number of experimental runs required is 2^k, where k is the number of factors.

- For 3 factors, this requires 8 runs. The standard design matrix is shown below. The run order should be randomized in practice.

Table: 2^3 Full Factorial Design Matrix

| Standard Run Order | Temperature (A) | Pressure (B) | Catalyst Type (C) | Response: Yield (%) |

|---|---|---|---|---|

| 1 | -1 (100°C) | -1 (50 psi) | -1 (Catalyst X) | ... |

| 2 | +1 (150°C) | -1 (50 psi) | -1 (Catalyst X) | ... |

| 3 | -1 (100°C) | +1 (100 psi) | -1 (Catalyst X) | ... |

| 4 | +1 (150°C) | +1 (100 psi) | -1 (Catalyst X) | ... |

| 5 | -1 (100°C) | -1 (50 psi) | +1 (Catalyst Y) | ... |

| 6 | +1 (150°C) | -1 (50 psi) | +1 (Catalyst Y) | ... |

| 7 | -1 (100°C) | +1 (100 psi) | +1 (Catalyst Y) | ... |

| 8 | +1 (150°C) | +1 (100 psi) | +1 (Catalyst Y) | ... |

4. Run Experiment and Analyze:

- Execute the runs in a randomized order to prevent confounding [11].

- Calculate the main effect of a factor as the average change in response when the factor moves from its low to high level.

Effect of Temperature = (Average Yield at High Temp) - (Average Yield at Low Temp) [10]

- Analyze the data to determine significant main effects and interaction effects.
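Steps 3 and 4 of this protocol can be sketched as follows. The code builds the 2³ design matrix in the same standard order as the table and computes each main effect as the difference between the high-level and low-level averages. The eight yields are hypothetical placeholders for the "..." entries in the table.

```python
from itertools import product

factors = ["Temperature", "Pressure", "Catalyst Type"]

# itertools.product varies the last position fastest, so each tuple is reversed
# to obtain standard order, in which the first factor alternates fastest.
design = [tuple(reversed(levels)) for levels in product((-1, 1), repeat=len(factors))]

# Hypothetical yields (%) listed in standard run order 1..8
yields = [60.0, 72.0, 58.0, 74.0, 65.0, 85.0, 63.0, 87.0]


def main_effect(column):
    """Average response at the high level minus average response at the low level."""
    high = [y for row, y in zip(design, yields) if row[column] == 1]
    low = [y for row, y in zip(design, yields) if row[column] == -1]
    return sum(high) / len(high) - sum(low) / len(low)


effects = {name: main_effect(i) for i, name in enumerate(factors)}
for name, effect in effects.items():
    print(f"{name}: {effect:+.1f}")
```

In this made-up data set pressure has no main effect, although it could still matter through an interaction, which is why the interaction terms should be examined before a factor is dropped.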

Protocol 2: Screening Design for Scale-Up Studies

When scaling a reaction, many factors may seem important. This protocol uses a screening design to find the vital few.

1. Identify a Large Set of Potential Factors:

- Gather a team of subject matter experts and use a process map to identify all potential factors. In scale-up, these could include chemical (Catalyst Loading, Solvent Ratio) and physical/engineering (Agitation Speed, Heating/Cooling Rate) parameters [14] [13].

2. Choose a Screening Design:

- For 5 to 10 factors, a Fractional Factorial or Plackett-Burman design is appropriate. These designs use a fraction of the runs of a full factorial (e.g., 8 runs for 5 factors instead of 32) to estimate main effects. Be aware that such highly fractionated designs alias main effects with two-factor interactions, so a large apparent main effect may partly reflect an interaction [13].

3. Execute and Analyze to Downselect:

- Run the screening design and analyze the results using Pareto charts or statistical significance tests (p-values).

- The goal is to identify 2-4 factors that have the largest and most significant impact on your key responses (e.g., Yield, Purity, Safety).

4. Proceed to Optimization:

- Use the downselected factors in a more detailed optimization design, such as a Response Surface Method (RSM) like a Central Composite Design, which is highly effective for final optimization [15].
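The 8-run design for 5 factors mentioned in step 2 can be constructed by hand. The sketch below builds a 2⁵⁻² fractional factorial from a 2³ base design using the generators D = AB and E = AC, which is one common textbook choice and not necessarily what a given software package would select.

```python
from itertools import product

# Base 2^3 full factorial in factors A, B, C (8 runs, standard order)
base = [tuple(reversed(levels)) for levels in product((-1, 1), repeat=3)]

# The generators D = AB and E = AC define the two additional columns
design = [(a, b, c, a * b, a * c) for a, b, c in base]

print(" A  B  C  D  E")
for run in design:
    print(" ".join(f"{level:+d}" for level in run))

# Aliasing check: column D is identical to the A*B interaction column,
# so the two effects cannot be separated in this design.
d_aliased_with_ab = all(run[3] == run[0] * run[1] for run in design)
print("D aliased with AB:", d_aliased_with_ab)
```

Every column is balanced, with four runs at each level, but the aliasing shown for D and AB is the price of the reduced run count and is the reason influential factors are followed up in a higher-resolution design.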

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Elements for a DoE-based Scale-Up Study

| Item / Category | Function in DoE for Scale-Up | Example & Notes |

|---|---|---|

| High-Throughput Experimentation (HTE) Platforms [9] | Enables rapid parallel testing of numerous reaction condition combinations (solvents, catalysts, ligands) in miniaturized format, accelerating data generation. | Uses microtiter plates (MTPs). Crucial for building comprehensive datasets for machine learning and robust optimization. |

| Process Analytical Technology (PAT) [14] | Provides real-time, in-situ monitoring of reactions (e.g., concentration, particle size) for rich, time-dependent data on multiple responses. | Includes tools like FTIR, Raman spectroscopy. Enhances process understanding and supports Quality by Design (QbD). |

| Reaction Calorimetry [14] | Measures heat flow of a reaction under controlled conditions. Critical for identifying and quantifying thermal hazards for safe scale-up. | Data on heat accumulation and potential for runaway reactions informs the design of safe operating spaces in the DoE. |

| Automated Work-up & Purification Systems | Scales the post-reaction steps (extraction, crystallization, chromatography) that are often bottlenecks and sources of yield loss. | Integrated with HTE platforms to create end-to-end automated workflows, ensuring purification is included in the optimization [14]. |

| Design of Experiments Software | Statistically sound software for designing experiments, randomizing runs, analyzing complex data, and visualizing interaction effects and response surfaces. | JMP, Minitab, or built-in functions in R/Python. Essential for correct design generation and powerful data analysis. |

Defining Critical Process Parameters (CPPs) and Critical Quality Attributes (CQAs)

Frequently Asked Questions (FAQs)

1. What is the fundamental relationship between a CQA and a CPP?

A Critical Quality Attribute (CQA) is a physical, chemical, biological, or microbiological property or characteristic that must be within an appropriate limit, range, or distribution to ensure the desired product quality, safety, and efficacy [16] [17]. A Critical Process Parameter (CPP) is a process parameter whose variability has a direct impact on a CQA and therefore must be monitored or controlled to ensure the process produces the desired quality [18] [17]. In essence, CPPs are the inputs you control to consistently achieve the output CQAs [19].

2. How is "criticality" determined? Is it a simple yes/no classification?

Modern regulatory guidance advocates that criticality should be viewed as a continuum rather than a simple binary state [17]. The level of criticality is a risk-based assessment of the impact a parameter has on a CQA. This means some parameters may have a high-impact criticality, while others have a medium or low-impact criticality. This continuum allows for control strategies to focus where the greatest impact on product quality is achieved [17] [20].

3. What is the role of Design of Experiments (DoE) in defining CPPs and CQAs?

Traditional "one factor at a time" (OFAT) experimentation is inefficient and can fail to identify interactions between process parameters [21] [3] [20]. Design of Experiments (DoE) is a structured, statistical approach that allows for the simultaneous variation of multiple factors [21] [22]. It is used to:

- Screen a large number of parameters to identify those with a significant impact (potentially critical ones) [20].

- Refine understanding of the key parameters and quantify their impact on CQAs.

- Optimize the process by modeling the relationship between CPPs and CQAs to establish a robust "design space" [21] [20].

Using DoE provides a higher level of process understanding and is a core component of the Quality by Design (QbD) framework [21] [23].

4. What is a common pitfall during solvent optimization, and how can it be avoided?

A common pitfall is selecting solvents based solely on a chemist's intuition and previous experience, which is a non-systematic, trial-and-error approach [3]. This can lead to the use of suboptimal or problematic solvents. A more robust method is to use a "map of solvent space" within a DoE. This approach uses principal component analysis (PCA) to classify solvents based on a range of properties, allowing researchers to systematically select solvents from different regions of the map to explore a wide range of solvent properties efficiently and identify the optimal solvent for the reaction [3].

Troubleshooting Guides

Problem 1: Inability to Distinguish Between Critical and Non-Critical Parameters

Symptoms: Every parameter is designated as "critical," leading to an overly complex and resource-intensive control strategy. Alternatively, parameters that later cause batch failures are missed during initial assessment.

| Root Cause | Recommended Solution |

|---|---|

| Reliance on binary (yes/no) criticality assessment | Adopt a risk-based continuum of criticality with multiple levels (e.g., High, Medium, Low). Use a Failure Mode and Effects Analysis (FMEA) to score parameters based on Severity, Occurrence, and Detectability [17]. |

| Insufficient process knowledge and data | Implement a staged DoE approach. Begin with a screening design (e.g., fractional factorial) to identify potentially critical parameters from a large list, then use refining designs (e.g., full factorial) to characterize their impact [20]. |

| Poor understanding of the relationship between process parameters and patient safety | Always link parameter assessment back to the Quality Target Product Profile (QTPP) and CQAs. A parameter is only critical if its variability impacts an attribute that affects product safety or efficacy [16] [17]. |
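The FMEA scoring mentioned in the first row is often summarized as a Risk Priority Number (RPN), the product of the three scores. The sketch below ranks a few parameters on that basis. The parameter names, the 1-10 scores, and the cut-offs for High/Medium/Low are all illustrative assumptions and are not values from any guideline.

```python
# Hypothetical 1-10 scores: (severity, occurrence, detectability).
# A higher detectability score means the failure is harder to detect.
parameters = {
    "Reaction temperature": (9, 6, 4),
    "Addition time": (7, 4, 3),
    "Agitation speed": (4, 3, 2),
}


def risk_priority_number(severity, occurrence, detectability):
    """RPN = severity x occurrence x detectability."""
    return severity * occurrence * detectability


def criticality_level(rpn, high=150, medium=50):
    """Map an RPN to a level; the cut-offs are illustrative assumptions."""
    if rpn >= high:
        return "High"
    if rpn >= medium:
        return "Medium"
    return "Low"


ranking = sorted(
    ((name, risk_priority_number(*scores)) for name, scores in parameters.items()),
    key=lambda item: item[1],
    reverse=True,
)
levels = {name: criticality_level(rpn) for name, rpn in ranking}

for name, rpn in ranking:
    print(f"{name}: RPN {rpn} -> {levels[name]}")
```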

Problem 2: Process Fails Upon Scale-Up Despite Successful Lab-Scale Results

Symptoms: The reaction performs well at small scale but yields different results (e.g., lower purity, different impurity profile, reduced yield) when moved to a larger reactor.

| Root Cause | Recommended Solution |

|---|---|

| Ignoring scale-dependent parameters | Identify parameters that are likely to change with scale (e.g., mixing, heat transfer, mass transfer, gas dissolution) and include them as factors in your DoE studies. Use dimensionless numbers (e.g., Reynolds for mixing) to maintain consistency [20]. |

| OFAT studies that miss parameter interactions | Use multivariate DoE to model complex interactions. A parameter that is non-critical at small scale might become critical at large scale due to an interaction with another parameter that is harder to control consistently in a larger vessel [3] [20]. |

| Inadequate design space | The lab-scale design space was not representative of the full-scale operating space. Develop the design space using studies that model scale-dependent effects or conduct confirmation runs at pilot scale to verify the model [21] [20]. |
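As an example of the dimensionless numbers mentioned in the first row, the impeller Reynolds number for a stirred vessel is Re = ρND²/μ. The sketch below compares a lab and a plant vessel; the fluid properties, stirring speeds, and impeller diameters are hypothetical.

```python
def impeller_reynolds(density, speed_rps, impeller_diameter, viscosity):
    """Re = rho * N * D^2 / mu, in SI units with N in revolutions per second."""
    return density * speed_rps * impeller_diameter ** 2 / viscosity


# Hypothetical properties of a low-viscosity organic solvent
density = 870.0     # kg/m^3
viscosity = 0.0006  # Pa*s

lab = impeller_reynolds(density, 5.0, 0.05, viscosity)    # 300 rpm, 5 cm impeller
plant = impeller_reynolds(density, 1.5, 0.60, viscosity)  # 90 rpm, 60 cm impeller

print(f"Lab Re = {lab:.0f}, plant Re = {plant:.0f}")
```

Both values lie in the turbulent regime commonly quoted for stirred tanks (Re above roughly 10⁴), but they differ by more than an order of magnitude, so agitation speed is worth including as a DoE factor when mixing-sensitive steps are scaled.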

Problem 3: High Variability in CQA Results Even When CPPs are Controlled

Symptoms: CPPs are kept within their proven acceptable ranges (PAR), but the resulting CQAs (e.g., impurity levels, assay) still show unacceptable batch-to-batch variation.

| Root Cause | Recommended Solution |

|---|---|

| Uncontrolled Critical Material Attributes (CMAs) | Raw material attributes can be a source of variability. Identify and control CMAs by including different lots of key raw materials in your DoE studies or using a statistical blocking technique [20]. |

| Poor measurement system accuracy | The analytical method used to measure the CQA may be too variable. Perform a Gage R&R study to quantify the measurement system's variability. A percent contribution from R&R variability should be <20% for the measurements to be meaningful [20]. |

| Insufficient replication in DoE studies | The underlying process variability was not properly quantified. Include replicate runs (especially center points) in your experimental design to estimate "noise." This helps discern true "signal" responses from inherent variability [20]. |

Experimental Protocols for Identification and Characterization

Protocol 1: Staged DoE for CPP Screening and Characterization

This methodology efficiently identifies and quantifies the impact of process parameters [20].

Objective: To screen a large number of potential process parameters and characterize their impact on CQAs to define the process design space.

Methodology:

Screening Phase:

- Design: Use a fractional factorial or Plackett-Burman design.

- Purpose: To test a large number of parameters with the fewest runs and identify the main effects (individual impact) of each. The goal is to eliminate non-significant parameters.

- Levels: Typically 2 levels (high and low) for each parameter.

Refining Phase:

- Design: Use a full factorial design.

- Purpose: Having dropped out non-significant parameters, this phase tests both main effects and interactions between the remaining parameters. It generates first-order (linear) models.

- Levels: 2 levels, plus center points to estimate variability and detect curvature.

Optimization Phase:

- Design: Use a Central Composite Design (CCD) or Box-Behnken design.

- Purpose: To generate non-linear (quadratic) response surfaces. This allows for the identification of optimal set points and the formal definition of the design space.

- Levels: Typically 3 or 5 levels for key parameters.
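The Central Composite Design named in the optimization phase consists of factorial corners, axial points, and replicated center points. A minimal sketch in coded units follows; it assumes the rotatable choice of axial distance, α = (2^k)^(1/4), and three center replicates, both of which are design decisions and not requirements.

```python
from itertools import product


def central_composite(n_factors, n_center=3):
    """Coded points of a rotatable CCD: corners, axial points, center replicates."""
    alpha = (2 ** n_factors) ** 0.25  # rotatable axial distance
    corners = [
        tuple(float(v) for v in reversed(levels))
        for levels in product((-1, 1), repeat=n_factors)
    ]
    axial = []
    for i in range(n_factors):
        for sign in (-1.0, 1.0):
            point = [0.0] * n_factors
            point[i] = sign * alpha
            axial.append(tuple(point))
    center = [tuple([0.0] * n_factors)] * n_center
    return corners + axial + center


design = central_composite(2)
for point in design:
    print(tuple(round(value, 3) for value in point))
```

For two factors this gives 11 runs with five distinct levels per factor (-1.414, -1, 0, +1, +1.414), which is what allows quadratic terms to be fitted.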

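Taken together, the phases form an iterative workflow: screening narrows the factor list, refining quantifies main effects and interactions, and optimization models curvature to define the design space.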

Protocol 2: Lifecycle DoE (LDoE) for Holistic Process Knowledge

Objective: To integrate data from multiple, independently run development studies into a single, unified model, enhancing predictive capability and enabling early identification of potential CPPs [21].

Methodology:

- Initial DoE: Begin with an initial optimal design (e.g., D-optimal) to investigate key parameters.

- Design Augmentation: As new development work packages arise, augment the existing DoE model with new experiments rather than starting from scratch. This incorporates new parameters or adjusts the ranges of existing ones.

- Unified Model: All data generated throughout the development lifecycle is consolidated into a single model file.

- Continuous Refinement: The model is continuously refined with each augmentation cycle, improving its predictions and robustness. This approach allows for a Process Characterization Study (PCS) to be performed primarily with development data [21].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function / Relevance to CPP & CQA Definition |

|---|---|

| DoE Software | Statistical software (e.g., JMP, Design-Expert, Minitab) is essential for generating optimal experimental designs, analyzing results, building models, and creating visualizations of the design space [21] [22]. |

| In-line/On-line Sensors | For real-time monitoring of CPPs like pH, Dissolved Oxygen (DO), and Dissolved CO2 in bioreactors. Reliable monitoring is the foundation for process knowledge and control [18]. |

| At-line/Off-line Analyzers | Used for monitoring nutrients and metabolites (e.g., glucose, lactate). Techniques include HPLC, glucose oxidase assays, and biochemical analyzers. These are often necessary for measuring attributes that lack robust in-line sensors [18]. |

| Solvent Map | A principal component analysis (PCA)-based map of solvent properties. Used within a DoE to systematically select solvents from different chemical spaces, moving beyond trial-and-error for solvent optimization [3]. |

| Gage R&R Tools | A methodology and associated tools to perform Measurement System Analysis. This ensures that the analytical methods used to measure CQAs are sufficiently accurate and precise, preventing erroneous conclusions from noisy data [20]. |

Leveraging DoE for Proactive Risk Assessment in Process Scale-Up

Troubleshooting Guides & FAQs

FAQ 1: What is the primary advantage of using DoE over traditional One-Factor-at-a-Time (OFAT) methods during scale-up?

DoE efficiently identifies interactions between critical process parameters (CPPs) that OFAT approaches miss. During scale-up, factors like heat and mass transfer behave differently than at lab scale; their interaction can critically impact quality attributes. Testing factors simultaneously with DoE reveals these interactions, preventing unexpected failures and providing a predictive model for process performance, ultimately leading to a more robust and reliable scaled-up process [24] [25].
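To make the interaction argument concrete, the sketch below computes main effects and the interaction effect from a two-factor, two-level factorial. The yield values and the factor names (temperature, catalyst loading) are hypothetical and chosen only to show a case where changing either factor alone from the baseline looks unhelpful, while changing both together gives the best result.

```python
# Hypothetical yields (%) from a 2x2 factorial.
# Keys are coded (temperature, catalyst loading) levels: -1 = low, +1 = high.
yields = {(-1, -1): 60.0, (+1, -1): 58.0, (-1, +1): 59.0, (+1, +1): 75.0}


def effect(contrast):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [y for run, y in yields.items() if contrast(run) > 0]
    low = [y for run, y in yields.items() if contrast(run) < 0]
    return sum(high) / len(high) - sum(low) / len(low)


temp_effect = effect(lambda run: run[0])           # main effect of temperature
cat_effect = effect(lambda run: run[1])            # main effect of catalyst loading
interaction = effect(lambda run: run[0] * run[1])  # temperature x catalyst interaction

# OFAT from the baseline (-1, -1): each single change lowers the yield,
# so the high/high corner would never be tested.
ofat_best = max(yields[(-1, -1)], yields[(+1, -1)], yields[(-1, +1)])
doe_best = max(yields.values())

print(temp_effect, cat_effect, interaction, ofat_best, doe_best)
```

In this invented data set the interaction effect is larger than either main effect, which is the situation OFAT cannot detect.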

FAQ 2: How can we proactively assess risks when scaling up a new organic synthesis route?

DoE enables proactive risk assessment by systematically mapping the relationship between your input factors and your Critical Quality Attributes (CQAs). By identifying these cause-and-effect relationships early, you can:

- Define a Proven Acceptable Range (PAR) for each CPP.

- Predict the impact of normal process variation on your CQAs.

- Identify which factor interactions pose the greatest risk to product quality.

This data-driven approach replaces guesswork, allowing you to focus control strategies on the highest-risk parameters and ensure consistency from the lab to the manufacturing plant [24] [8].
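One way to predict the impact of normal process variation on a CQA is to push simulated CPP variation through a fitted DoE model. The following is a minimal sketch: the model coefficients, the nominal set points, the standard deviations, and the 0.80% impurity limit are all assumed values for illustration, not results from any study cited here.

```python
import random


def predict_impurity(temp_c, cat_mol_pct):
    """Hypothetical fitted model: impurity (%) as a function of two CPPs."""
    t = (temp_c - 60.0) / 10.0        # coded temperature: 50 C -> -1, 70 C -> +1
    c = (cat_mol_pct - 1.25) / 0.25   # coded loading: 1.0 mol% -> -1, 1.5 mol% -> +1
    return 0.50 + 0.15 * t - 0.05 * c + 0.08 * t * c


def fraction_out_of_spec(n_runs=10000, limit=0.80, seed=1):
    """Monte Carlo estimate of how often normal CPP variation exceeds the limit."""
    rng = random.Random(seed)
    failures = 0
    for _ in range(n_runs):
        temp = rng.gauss(60.0, 3.0)    # assumed plant temperature variation
        cat = rng.gauss(1.25, 0.05)    # assumed charging variation
        if predict_impurity(temp, cat) > limit:
            failures += 1
    return failures / n_runs


risk = fraction_out_of_spec()
print(f"Estimated fraction of batches above the limit: {risk:.4f}")
```

Repeating the simulation with tighter or wider assumed variation shows how sensitive the predicted failure rate is to each parameter, which helps when proposing a PAR.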

FAQ 3: Our DoE results were inconclusive. What are the most common preparation errors that cause this?

Inconclusive results often stem from inadequate process preparation. The most common errors are:

- Lack of Process Stability: Running a DoE on a process not in a state of statistical control. Unstable baseline performance (noise) masks the effect of your controlled factors (signal) [8].

- Inconsistent Input Conditions: Uncontrolled variation in raw material batches, different operators, or fluctuating environmental conditions introduces noise that confounds your results [8].

- Unreliable Measurement System: If your measurement method lacks precision (poor Gage R&R), you cannot trust the data you collect, making it impossible to detect real process changes [8].

FAQ 4: Which DoE design should I start with for a new process with many potential factors?

For an early-stage process with numerous potential factors, begin with a screening design.

- Fractional Factorial or Definitive Screening Designs (DSD) are ideal. They allow you to efficiently screen a large number of factors (e.g., 6-10) with a minimal number of experimental runs to identify the few that are truly significant. This helps you focus your optimization efforts on the most impactful variables, saving time and resources [4] [24].
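As an illustration of how a fractional factorial saves runs, the sketch below builds a 16-run, two-level design for six factors instead of the 64 runs of the full factorial. The generators E = ABC and F = BCD are one common textbook choice and are an assumption here; DoE software may select different generators.

```python
from itertools import product


def fractional_factorial_2_6_2():
    """Build a 2^(6-2) design: four base factors plus two generated columns."""
    runs = []
    for a, b, c, d in product((-1, 1), repeat=4):
        e = a * b * c   # generator E = ABC (assumed)
        f = b * c * d   # generator F = BCD (assumed)
        runs.append((a, b, c, d, e, f))
    return runs


design = fractional_factorial_2_6_2()
columns = list(zip(*design))  # one tuple of levels per factor

print(len(design), "runs instead of", 2 ** 6)
```

Every factor column is balanced and orthogonal to the others, so main effects can be estimated independently of one another; the price is that some interactions are aliased with each other.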

FAQ 5: How does DoE support regulatory compliance, specifically Quality by Design (QbD)?

DoE is a fundamental pillar of the QbD framework. It provides the scientific evidence to:

- Define your Design Space: The multidimensional combination of input variables demonstrated to provide assurance of quality.

- Identify Critical Process Parameters (CPPs): DoE objectively determines which parameters have a significant impact on your CQAs.

By using DoE, you can present regulators with a data-rich understanding of your process, showing that quality is built into the design, not just tested into the product [24] [25].

Experimental Protocols & Methodologies

Protocol: Implementing a Definitive Screening Design (DSD) for Early-Phase Process Understanding

1. Objective Definition: Clearly state the goal, e.g., "Identify the three most critical factors affecting reaction yield and impurity levels during the scale-up of the hydrolysis reaction." Define measurable responses (Yield %, Impurity A %) [24] [8].

2. Factor and Level Selection: Brainstorm with a cross-functional team (R&D, Engineering, Analytics) to identify 5-7 potential factors. Select a low and high level for each continuous factor (e.g., Temperature: 50°C vs. 70°C; Catalyst Loading: 1.0 mol% vs. 1.5 mol%) [24] [8]. A DSD also runs each continuous factor at the midpoint of its range, which is what allows it to detect curvature.

3. Experimental Execution & Control

- Process Stabilization: Before starting the DSD, run the process at midpoint conditions to ensure stability and repeatability [8].

- Input Control: Use a single, homogeneous batch of starting material for all experiments. Keep all equipment and non-tested parameters constant [8].

- Randomization: Randomize the run order of all experiments to avoid confounding from lurking variables (e.g., ambient humidity) [4].

- Data Collection: Use a pre-defined data sheet. Ensure analytical methods are calibrated and capable (e.g., via MSA) [8].

4. Data Analysis

- Use statistical software (e.g., JMP, Minitab, Design-Expert) to fit a model to the data.

- Perform Analysis of Variance (ANOVA) to identify statistically significant factors (p-value < 0.05).

- Examine main effects and interaction plots to understand the direction and magnitude of each factor's effect.

5. Validation: Conduct 2-3 confirmation runs at the optimal factor settings predicted by the model to verify that the responses fall within the predicted ranges [24].
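Two of the steps above lend themselves to a short sketch: randomizing the run order (step 3) and checking confirmation runs against a predicted range (step 5). The function names, the 13-run design size, and the yield values below are hypothetical; in practice the prediction interval would come from the fitted model in the DoE software.

```python
import random


def randomized_run_order(n_runs, seed=42):
    """Return run numbers 1..n_runs in a reproducible random order."""
    rng = random.Random(seed)
    order = list(range(1, n_runs + 1))
    rng.shuffle(order)
    return order


def confirmation_passes(observed, lower, upper):
    """True if every confirmation result lies inside the predicted interval."""
    return all(lower <= y <= upper for y in observed)


# Example: a 13-run screening design and three confirmation runs (invented values).
run_order = randomized_run_order(13)
confirmed = confirmation_passes([88.1, 89.4, 87.9], lower=86.0, upper=91.0)

print(run_order)
print("Confirmation runs within predicted range:", confirmed)
```

Fixing the seed keeps the randomized order reproducible, so it can be recorded in the experimental plan before any run is performed.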

Workflow: Strategic DoE Implementation for Scale-Up

The following workflow outlines a structured, multi-stage approach to applying Design of Experiments for successful process scale-up.

Quantitative Data & The Scientist's Toolkit

DoE Design Selection Guide

The table below summarizes the key DoE designs and their appropriate applications in a scale-up context.

| DoE Design | Primary Objective | Typical Factors | Key Advantage for Scale-Up |

|---|---|---|---|

| Full Factorial | Understand all factor interactions | 2 - 4 | Provides a complete interaction map for a small number of critical parameters [24]. |

| Fractional Factorial | Screening; identify vital factors | 5 - 8 | Highly efficient for reducing a large number of potential factors to a manageable few [4] [24]. |

| Definitive Screening | Screening with curvature detection | 6 - 12 | Requires very few runs; can detect nonlinear effects, ideal for early development [4]. |

| Response Surface (e.g., Central Composite) | Optimization; map response surfaces | 2 - 5 | Models curvature to find a true optimum and define the design space [24]. |
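The run counts behind this table follow from simple formulas, sketched below. The DSD figure uses the commonly quoted minimum of 2k + 1 runs for an even number of factors and 2k + 3 for an odd number; treat that, and the default of six center points for the central composite design, as assumptions to check against your software.

```python
def full_factorial_runs(k):
    """Two-level full factorial: every combination of k factors."""
    return 2 ** k


def fractional_factorial_runs(k, p):
    """Two-level fractional factorial 2^(k-p)."""
    return 2 ** (k - p)


def definitive_screening_runs(k):
    """Commonly quoted minimum DSD size (assumption: 2k+1 even, 2k+3 odd)."""
    return 2 * k + 1 if k % 2 == 0 else 2 * k + 3


def central_composite_runs(k, center_points=6):
    """Factorial points + axial points + center points."""
    return 2 ** k + 2 * k + center_points


for k in (4, 6, 8):
    print(k, full_factorial_runs(k), definitive_screening_runs(k))
```

For six factors this gives 64 runs for the full factorial against 13 for a DSD, which is why screening designs are the usual starting point.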

Research Reagent & Material Solutions

Essential materials and tools for executing a successful DoE in process chemistry.

| Item / Solution | Function in DoE for Scale-Up |

|---|---|

| Statistical Software (e.g., JMP, Minitab, Design-Expert) | Used to design the experiment, randomize runs, analyze complex data (ANOVA), and create predictive models and visualizations [24] [25]. |

| Homogeneous Raw Material Batch | A single, well-characterized batch of starting material ensures that variation in the response is due to the factors being tested, not raw material inconsistency [8]. |

| Process Analytical Technology (PAT) | Tools like in-situ FTIR or HPLC allow for real-time monitoring of reactions, providing rich, high-quality response data for each experimental run [26]. |

| Calibrated Measurement Systems | All analytical instruments (scales, calipers, HPLC) must be calibrated with a verified Measurement System Analysis (MSA/Gage R&R) to ensure data integrity [8]. |

| Flow Chemistry Reactor | A modular flow reactor system enables precise control of factors like residence time and temperature, facilitating the implementation and automation of DoE protocols [26]. |

DoE Readiness Checklist

A pre-experiment checklist is critical for success. Use the table below to verify your process and systems are prepared.

| Checkpoint Category | Specific Verification Item | Status (Y/N/NA) |

|---|---|---|

| Process Stability | Process exhibits statistical control via control charts on key parameters [8]. | |

| | Preliminary trial runs show consistent and repeatable results [8]. | |

| Input Control | A single batch of raw materials is secured for the entire DoE [8]. | |

| | All non-tested equipment parameters are documented and fixed [8]. | |

| Measurement System | All instruments are within calibration dates [8]. | |

| | Gage R&R study is performed for critical measurements (<10% is ideal) [8]. | |

| Experimental Protocol | A detailed, step-by-step procedure for each run is prepared [8]. | |

| | A run-order randomization plan is created [4]. | |

From Microtiter Plates to Pilot Plants: Practical DoE Methodologies and Applications

Integrating High-Throughput Experimentation (HTE) with DoE for Rapid Screening

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical steps to prepare a process for a DoE within an HTE workflow?

Proper preparation is crucial for successful DoE. The key steps include [8]:

- Ensure Process Stability and Repeatability: The process must be under statistical control before starting DoE. Use control charts to verify that results are consistent and investigate any special causes of variation. This includes calibrating all equipment and standardizing operations [8].

- Maintain Consistent and Controlled Input Conditions: All factors not being actively investigated must be kept constant. Use a single batch of materials whenever possible, control environmental conditions, and use checklists or Poka-Yoke (mistake-proofing) to ensure starting conditions are identical for every experimental run [8].

- Ensure Measurement System Reliability: Verify that all instruments are calibrated. For critical measurements, perform a Measurement System Analysis (e.g., Gage R&R) to confirm your data is accurate and reliable [8].
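The stability check in the first step can be sketched with an individuals control chart, whose limits are conventionally set at the mean plus or minus 2.66 times the average moving range. The baseline yields below are invented for illustration; the function names are hypothetical.

```python
def individuals_chart_limits(values):
    """Return (LCL, center line, UCL) for an individuals (I) control chart."""
    center = sum(values) / len(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    mr_bar = sum(moving_ranges) / len(moving_ranges)
    return center - 2.66 * mr_bar, center, center + 2.66 * mr_bar


def out_of_control_points(values):
    """Indices of points outside the control limits."""
    lcl, _, ucl = individuals_chart_limits(values)
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


# Invented baseline yields (%) from repeated runs at fixed conditions.
baseline = [85.1, 84.8, 85.3, 85.0, 84.9, 85.2, 85.0, 84.7]
stable_flags = out_of_control_points(baseline)

# The same series with one aberrant run appended.
unstable_flags = out_of_control_points(baseline + [88.0])

print(stable_flags, unstable_flags)
```

A flagged point signals a special cause that should be investigated and removed before the DoE starts.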

FAQ 2: Our HTE data is generated quickly, but analysis is a bottleneck. How can we manage this effectively?

This is a common challenge. Success requires a plan to connect data seamlessly from generation to analysis [27].

- Use Integrated Software Platforms: Specialized software can automate the link between experimental setup, analytical results (like LC/MS data), and data analysis. This avoids the need for manual data transcription from multiple systems, which is tedious and error-prone [28] [27].

- Structure Data for Secondary Use: From the outset, capture and curate data with future analysis in mind. Ensure metadata (e.g., factor levels, material batches) is consistently recorded and tied to results. Organized, standardized data is a prerequisite for machine learning and other advanced analyses [27].

FAQ 3: Why did our DoE rollout fail to be adopted by our research team?

Successful adoption often depends more on cultural and operational change than on the science itself [29].

- Focus on the "Why": Clearly and regularly communicate the purpose of using DoE. Is it to screen factors, optimize a process, or improve robustness? An inspirational message helps maintain team buy-in [29].

- Start Small: Avoid a "kitchen sink" approach. Begin with a small, manageable DoE project instead of a massive, complex one. Use an iterative framework where you can build on small successes [29].

- Consider Your People: Understand the human impact of the new workflow. Involve users early, provide proper training, and choose tools that simplify their work, not complicate it [29] [27].

FAQ 4: When should I use DoE instead of a One-Factor-at-a-Time (OFAT) approach?

DoE should be your default when [30]:

- More than one factor could influence the outcome.

- You need to test many factors with limited resources.

- Your goal is to understand interactions between factors.

OFAT may only be suitable when you are certain there is a single variable and no interactions [30].

Troubleshooting Guides

Issue 1: Inconclusive or Misleading DoE Results

This is a common problem often traced back to issues before the experiment even began.

| Symptom | Possible Cause | Solution |

|---|---|---|

| Difficulty distinguishing factor effects from random noise [8]. | Lack of process stability or repeatability. | Stabilize the process using statistical process control (SPC) before DoE. Conduct trial runs without changing factors to establish a predictable baseline [8]. |

| Effects of factors are masked or distorted [8] [31]. | Inconsistent input conditions (e.g., varying raw material batches, different operators). | Control all inputs not part of the DoE. Use a single material batch, standardize procedures, and employ blocking or randomization to account for operator or day-to-day variation [8]. |

| Apparent differences where none exist, or failure to detect real changes [8]. | Inadequate or unverified measurement system. | Calibrate instruments before the experiment. Perform a Measurement System Analysis (MSA) to ensure measurement variation is small relative to process changes [8]. |

| Unexplained anomalies in results; hard-to-trace errors [8]. | Lack of standard procedures and human error. | Use detailed checklists for each trial run and implement mistake-proofing (Poka-Yoke) devices or procedures to prevent incorrect setups [8]. |

| Inability to model effects or identify optimal conditions [31]. | An important factor was not investigated or was investigated in the wrong region. | Consult with process experts and review historical data during the planning phase to select meaningful factors and levels. Consider a sequential approach to narrow in on the important experimental region [8] [29]. |

Issue 2: Failure to Scale Optimal Conditions from HTE

You find excellent conditions in a microplate, but they don't work at a larger preparative scale.

| Symptom | Possible Cause | Solution |

|---|---|---|

| Reaction performance (e.g., yield, selectivity) differs significantly between HTE and scale-up. | Physical process differences: Factors like heat transfer, mixing efficiency, or mass transfer, which are constant in a microplate, become critical variables upon scaling [32]. | Design DoE to include scale-dependent factors: During HTE, proactively include and vary factors like agitation speed or heating/cooling rate. This builds a model that understands their effect, making scale-up more predictive. |

| | Inaccurate quantification in HTE: The analytical method used at HTE scale may not be representative of the standard analytical methods used at larger scales [32]. | Validate HTE analysis methods: Correlate rapid, parallel analysis methods (e.g., plate readers) with standard analytical techniques (e.g., HPLC) during method development to ensure data reliability [32]. |

Issue 3: Overcoming Barriers to Implementing DoE

Many researchers face hurdles when first adopting a DoE methodology.

| Barrier | Description | Solution |

|---|---|---|

| Statistical Complexity [30] | The statistical foundation of DoE appears daunting to non-specialists. | Use modern DoE software that handles the mathematical burden. Foster collaboration between biologists/chemists and statisticians/bioinformaticians [30]. |

| Experimental Complexity [30] | Translating a DoE design into manual liquid handling instructions is time-consuming and prone to error. | Leverage lab automation and liquid handling robots. Collaborate with automation engineers to integrate DoE software output with robotic systems [30]. |

| Data Modeling Complexity [30] | Highly multidimensional data from DoE is difficult to visualize and interpret. | Use data analysis software with multidimensional plotting (contour plots, 3D surfaces). Continue collaboration with statisticians for advanced modeling and interpretation [30]. |

Essential Research Reagent Solutions for HTE-DoE

This table details key materials and tools commonly used in HTE platforms for running parallel DoEs, especially in chemical synthesis.

| Item | Function in HTE-DoE |

|---|---|

| 96-Well Reaction Blocks | The standard reactor for running up to 96 parallel reactions simultaneously. They are designed to fit heating/cooling and agitation systems [32]. |

| Glass Micro-insert Vials | Small-volume, chemically resistant vials that sit inside the wells of a reaction block, allowing for reactions at the 1-2 mL scale [32]. |

| Multichannel Pipettes | Essential for rapid and consistent dispensing of reagents, solvents, and stock solutions across multiple wells in a single action [32]. |

| Pre-made Stock Solutions | Preparing master mixes of catalysts, ligands, or substrates as solutions ensures homogeneity and dramatically speeds up experimental setup while improving reproducibility [8] [32]. |

| Solid-Phase Extraction (SPE) Plates | Enable parallel work-up and purification of reaction mixtures from a 96-well plate, a key step for cleaning samples before analysis [32]. |

| Automated Liquid Handling Systems | Robots that can accurately dispense sub-microliter to milliliter volumes, eliminating manual pipetting errors and enabling the execution of complex DoE protocols [30]. |

Experimental Workflow and Protocols

Detailed Protocol: A Representative HTE Workflow for Reaction Optimization

The following workflow is adapted from a published procedure for copper-mediated radiofluorination, demonstrating a robust HTE-DoE integration [32].

Objective: To optimize reaction conditions (Factors: Solvent, Copper Source, Ligand, Additive) for maximizing radiochemical conversion (Response) of multiple substrates.

Step-by-Step Methodology:

- DoE Design and Plate Layout: Using DoE software, select an appropriate design (e.g., fractional factorial, Plackett-Burman) to screen the factors. The software will generate a randomized run order. Use HTE software to map this design onto a 96-well plate layout, defining the contents of each well [28] [32].

- Stock Solution Preparation: Prepare homogeneous stock solutions or suspensions of all reagents (Cu(OTf)₂, ligands, additives, and substrate libraries) at specified concentrations. This is critical for reproducibility [32].

- Parallel Reaction Setup:

  - Manual: Using a multichannel pipette, dispense reagents into 1 mL glass vials seated in a 96-well block in a specified order (e.g., Cu solution first, then substrate, then isotope solution) [32].

  - Automated: Transfer the plate layout file from the HTE software to an automated liquid handler, which will perform all dispensing steps [30].

- Parallel Reaction Execution: Use a custom transfer plate to simultaneously place all vials into a preheated aluminum reaction block. Seal the block and heat for the designated time [32].

- Parallel Work-up: After heating, use the transfer plate to move all vials to a cooling block. Then, use a multichannel pipette or robot to transfer reaction mixtures to a Solid-Phase Extraction (SPE) plate for parallel purification [32].

- High-Throughput Analysis:

  - Option A (Radiochemistry): Quantify conversion by measuring radioactivity of product fractions using a gamma counter or autoradiography imaging of the entire plate [32].

  - Option B (General Chemistry): Use parallel LC/MS or UHPLC systems with rapid injection cycles to analyze samples. Integrated software can automatically quantify yields [28].

- Data Analysis and Modeling: Transfer the response data (e.g., % conversion) back into the DoE software. Fit the data to a statistical model, identify significant factors and interactions, and generate contour plots to visualize the optimal reaction space [30].
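The first step of this workflow, mapping a randomized design onto a 96-well plate, can be sketched as follows. The factor level labels (`solvent_A`, `Cu_1`, and so on) are placeholders rather than the reagents used in the published procedure, and the function name is hypothetical; dedicated HTE software would normally produce this layout.

```python
import random
import string
from itertools import product


def plate_layout(runs, seed=0, rows=8, cols=12):
    """Assign randomized runs to wells A1..H12, filling the plate row by row."""
    wells = [f"{string.ascii_uppercase[r]}{c + 1}" for r in range(rows) for c in range(cols)]
    if len(runs) > len(wells):
        raise ValueError("More runs than wells on the plate")
    shuffled = list(runs)
    random.Random(seed).shuffle(shuffled)  # randomize which run lands in which well
    return dict(zip(wells, shuffled))


# Placeholder two-level design over the four factors named in the objective.
runs = list(product(
    ("solvent_A", "solvent_B"),
    ("Cu_1", "Cu_2"),
    ("ligand_1", "ligand_2"),
    ("additive_0", "additive_1"),
))
layout = plate_layout(runs)

for well, conditions in list(layout.items())[:3]:
    print(well, conditions)
```

Exporting this dictionary as a CSV file is one simple way to hand the layout to a liquid handler or to a colleague pipetting manually.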

Workflow Diagram

The diagram below illustrates the integrated, cyclical nature of a robust HTE-DoE workflow.

Scientist's Toolkit: Key Research Reagent Solutions

Table 1: Essential Materials and Software for DoE-based Solvent Optimization

| Item Name | Type | Function/Explanation |

|---|---|---|

| Solvent Map | Statistical Tool | A map of solvent space created via Principal Component Analysis (PCA), incorporating 136 solvents with a wide range of properties. It groups solvents with similar properties, enabling systematic exploration and identification of safer alternatives. [33] [3] |

| Principal Component Analysis (PCA) | Statistical Method | Converts a large set of solvent properties into a smaller set of numerical parameters, allowing solvents to be incorporated into an experimental design as factors. [3] |

| Design of Experiments (DoE) Software | Software Tool | Facilitates the design of the experiment, statistical analysis of results, and building of predictive models to understand factor interactions and identify optimal conditions. [34] [35] |

| Solvent Selection Guide | Reference Tool | Used to identify and select safer, less toxic solvents from the optimal region of the solvent map to improve the safety and sustainability profile of the synthetic method. [33] |

Experimental Protocol: Creating and Applying a Solvent Map

The following workflow outlines the key stages of systematic solvent optimization.

Detailed Methodology

Step 1: Define Solvent Properties

- Gather a wide range of physical and chemical properties for a comprehensive set of 136 solvents. The properties can include dipole moment, dielectric constant, hydrogen bonding parameters, toxicity data, and environmental impact metrics. [33] [3]

Step 2: Perform Principal Component Analysis (PCA)

- Use statistical software to perform PCA on the solvent property data matrix. This analysis reduces the many correlated solvent properties down to 2 or 3 independent Principal Components (PCs), which become the new coordinates for the solvent map. [3]

Step 3: Create the Solvent Map

- Plot the solvents on a 2-dimensional graph using the first two Principal Components as the X and Y axes. Solvents with similar properties will cluster together, creating a "map of solvent space." [3]

Step 4: Select Solvents for the DoE

- To explore the entire solvent space, select solvents from the vertices (corners) of the map and a solvent from the center point. This selection ensures a diverse and representative sampling of all solvent types. [3]

Step 5: Run the DoE Experiments

- Incorporate the selected solvents into a broader DoE study, representing each solvent by its principal component scores so that the model can relate performance to position on the solvent map. The study may also include continuous variables like temperature, concentration, and catalyst loading. Run the experiments in a randomized order to avoid bias. [3] [34]

Step 6: Analyze Results and Find the Optimum

- Analyze the experimental data (e.g., reaction yield) using the DoE software. The model will identify which area of the solvent map (which type of solvent) leads to the best performance and can also reveal significant interactions between the solvent and other reaction parameters. [3] [34]
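Steps 2 to 4 can be sketched with a small PCA computed by singular value decomposition. The eight solvents and three properties below are a toy stand-in for the 136-solvent data set, and the property values are approximate figures included only for illustration; they should be checked against a reliable source before any real use. Picking the extreme solvent along each principal component is a simplification of choosing the map's vertices.

```python
import numpy as np

solvents = ["water", "DMSO", "acetonitrile", "ethanol",
            "THF", "dichloromethane", "toluene", "hexane"]

# Columns: dielectric constant, dipole moment (D), boiling point (C); approximate values.
props = np.array([
    [80.1, 1.85, 100.0],
    [46.7, 3.96, 189.0],
    [37.5, 3.92, 82.0],
    [24.5, 1.69, 78.0],
    [7.6, 1.75, 66.0],
    [8.9, 1.60, 40.0],
    [2.4, 0.36, 111.0],
    [1.9, 0.00, 69.0],
])


def pca_scores(x, n_components=2):
    """Autoscale the property matrix and return PC scores and explained variance."""
    z = (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = (s ** 2) / np.sum(s ** 2)
    return scores, explained[:n_components]


def pick_map_extremes(names, scores):
    """Pick the lowest and highest solvent on each PC plus the one nearest the center."""
    picks = []
    for pc in range(scores.shape[1]):
        for idx in (int(np.argmin(scores[:, pc])), int(np.argmax(scores[:, pc]))):
            if names[idx] not in picks:
                picks.append(names[idx])
    center = names[int(np.argmin(np.linalg.norm(scores, axis=1)))]
    if center not in picks:
        picks.append(center)
    return picks


scores, explained = pca_scores(props)
selected = pick_map_extremes(solvents, scores)
print(selected, explained.round(2))
```

The resulting score columns are the coordinates that would be plotted as the X and Y axes of the solvent map.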

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: Why should I use a solvent map with DoE instead of just testing a few common solvents?

A1: Traditional, non-systematic solvent selection is based on intuition and can easily miss the true optimal solvent, especially if interactions with other factors (like temperature) exist. A solvent map allows you to efficiently explore a much wider and more diverse chemical space with fewer experiments, often leading to the discovery of superior and sometimes safer solvent choices. [33] [3]

Q2: What is the main advantage of DoE over the "One-Variable-at-a-Time" (OVAT) approach?

A2: The key advantage is the ability to detect interactions between factors. In an OVAT approach, you might miss the true optimum because you never test the right combination of variables. DoE systematically explores the multi-dimensional "reaction space," allowing you to build a predictive model and find optimal conditions that OVAT would miss. [3] [34]

Q3: My reaction involves expensive catalysts. Is DoE still practical?

A3: Yes. In fact, DoE is highly valuable for minimizing the use of expensive materials. By revealing the significance and interactions of factors like catalyst loading, temperature, and pressure, a DoE study can often identify conditions that use lower catalyst loadings without sacrificing yield, which might not be found using OVAT. [35]

Q4: How many solvents do I need to select from the map for an effective screening?

A4: For an initial screening to cover the entire solvent space, you should select solvents from each vertex (corner) of the map, plus at least one solvent from the center region. This approach ensures you sample the full range of solvent properties in your experimental design. [3]

Troubleshooting Common Experimental Issues

Table 2: Troubleshooting Common Problems in Solvent Optimization

| Problem | Potential Cause | Solution |

|---|---|---|

| Poor Model Fit | High variability (noise) in experimental results obscuring the signal from the factors. | Ensure experimental precision and include replicate experiments (e.g., multiple runs at the center point) to estimate and account for experimental error. [34] [36] |

| Failed Prediction | The model's prediction at the "optimal" point does not match a confirmation experiment. | The model may be extrapolating beyond the studied region. Confirm the optimal point lies within the experimental boundaries. The presence of curvature not captured by a linear model can also be a cause; adding center points helps detect this. [34] |

| Low Reproducibility | Uncontrolled variables affecting the reaction outcome. | Use randomization when running the experimental order to prevent lurking variables (e.g., ambient humidity, reagent age) from biasing the results. [36] |

| Solvent Incompatibility | The selected solvent from the map reacts with the starting material or catalyst. | Consult solvent stability data before final selection. The case study on reducing a halogenated nitroheterocycle found the starting material was incompatible with nucleophilic solvents, which informed the final solvent choice. [35] |
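The table's advice on center points can be illustrated with a simple curvature check: compare the mean response at the factorial (corner) points with the mean at replicated center points, using the center replicates to estimate experimental error. The yields below are invented, and the function name is hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def curvature_check(factorial_y, center_y):
    """Return (difference in means, t-like statistic) for a curvature test.

    The standard deviation of the center replicates is used as the
    estimate of pure experimental error.
    """
    diff = mean(factorial_y) - mean(center_y)
    s = stdev(center_y)
    se = s * sqrt(1 / len(factorial_y) + 1 / len(center_y))
    return diff, diff / se


# Invented yields (%): four corner runs and three center-point replicates.
corner_yields = [62.0, 70.0, 66.0, 74.0]
center_yields = [75.0, 76.0, 74.0]

difference, t_stat = curvature_check(corner_yields, center_yields)
print(f"difference = {difference:.1f}, t = {t_stat:.2f}")
```

A large statistic relative to the t distribution with (number of center points minus 1) degrees of freedom suggests that a linear model is missing curvature, in which case a response surface design is the natural follow-up.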

The optimization of pharmaceutical hydrogenation reactions presents a significant challenge for researchers and process chemists, requiring careful balance of reaction efficiency, safety, and scalability. Traditional One-Variable-At-a-Time (OVAT) approaches often fail to capture critical parameter interactions and require extensive experimental resources [37]. In contrast, Design of Experiments (DoE) provides a systematic framework for exploring multiple factors simultaneously, enabling efficient identification of optimal conditions while understanding complex variable interactions [38] [39].

This case study establishes a technical support framework for DoE-driven hydrogenation optimization, addressing common challenges through targeted troubleshooting guides, detailed experimental protocols, and comprehensive FAQs. By integrating modern approaches such as High-Throughput Experimentation (HTE) and Process Analytical Technology (PAT), we demonstrate a structured pathway to robust, scalable hydrogenation processes that meet the stringent demands of pharmaceutical development [26] [9].

Troubleshooting Guide: Common Hydrogenation Challenges

Pressure Drop Increase in Reactors

Problem: Steady increase in reactor pressure drop (dP) over months of operation, particularly in severe service conditions (360°C+).

Investigation & Solution:

| Investigation Step | Key Actions | Expected Outcome |

|---|---|---|

| Feedstock Analysis | Characterize feedstock for contaminants, metals, and asphaltenic compounds [40]. | Identify foulants causing bed plugging. |

| Catalyst Grading Assessment | Implement macroporous guard beds as contaminant traps [40]. | Reduce pressure drop increase rate. |

| Tank & Filtration Check | Ensure feedstock tanks have adequate settling time (>24h); verify automatic backwash filter function [40]. | Prevent tank sump carryover to reactors. |

Catalyst Deactivation and Coking

Problem: Unexpected coke deposition despite low Conradson Carbon Residue (CCR ≈ 0.01 wt ppm) in feed.

Investigation & Solution:

| Parameter | Recommendation | Rationale |

|---|---|---|

| Aromatics Management | High aromatics (31.5%) increase coke laydown; optimize hydrogen partial pressure [40]. | Suppresses dehydrogenation pathways leading to coke. |

| Temperature Control | Implement or optimize an inter-bed quench strategy to eliminate hot spots [40]. | Prevents localized cracking/dehydrogenation. |

| Catalyst Selection | Use catalysts designed for complex feeds with appropriate metal distribution [41]. | Improves resistance to fouling. |

Nitrogen Compound Inhibition

Problem: Poor hydrodesulfurization (HDS) performance due to competitive adsorption.

Investigation & Solution:

- Basic Nitrogen Compounds: Strongly adsorb to active acid sites, directly inhibiting catalyst activity [40].

- Non-basic Nitrogen Compounds: Compete for active sites, reducing overall HDS efficiency [40].

- Mitigation Strategy: Implement upstream hydrotreating section or specialized catalyst grading to protect primary catalyst beds [40].

Frequently Asked Questions (FAQs)

DoE and Methodology

Q1: What are the key advantages of DoE over OVAT for hydrogenation optimization?

A1: DoE provides superior efficiency and insight generation:

- Factor Interaction Mapping: Identifies synergistic or antagonistic effects between parameters (e.g., temperature-pressure-concentration relationships) that OVAT misses [37].

- Experimental Efficiency: Reduces total number of experiments required by exploring multiple variables simultaneously, accelerating process development [38] [37].

- Predictive Modeling: Enables creation of response surface models for predicting outcomes across the design space and identifying robust operating regions [39] [37].

Q2: How can machine learning enhance traditional DoE approaches?

A2: ML algorithms create powerful synergies with DoE:

- Predictive Optimization: ML models predict reaction outcomes and guide optimization algorithms to identify global optimum conditions with fewer experiments [38].

- Closed-Loop Systems: Enable autonomous experimental platforms that execute DoE campaigns with minimal human intervention [38].

- Pattern Recognition: Uncover complex, non-linear relationships between reaction variables that may not be captured by traditional polynomial models [38] [9].

Safety and Operational Considerations

Q3: What are the primary safety risks in hydrogenation scale-up?

A3: Key risks require systematic management:

- Hydrogen Gas Handling: Highly flammable nature demands precise pressure/temperature control and robust engineering controls [41] [42].

- Catalyst Hazards: Pyrophoric catalysts (e.g., Pd/C, Raney Ni) require special handling and inactivation procedures [41].

- Thermal Decomposition: Adiabatic calorimetry studies show concentrated reagents can undergo exothermic decomposition above 170°C, necessitating strict temperature limits [42].

- Scale-Dependent Effects: Mass transfer limitations become critical at larger scales, potentially creating localized hot spots or runaway reactions [41].

Q4: How can hydrogenation processes be safely intensified?

A4: Intensification strategies balance productivity and safety:

- Continuous Flow Systems: Offer improved heat transfer, smaller reactor volumes, and precise parameter control compared to batch [26].

- Process Analytical Technology: Real-time monitoring (e.g., FT-IR) enables immediate detection of deviations and facilitates self-optimizing systems [26] [37].

- Modular Equipment Design: Laboratory-scale reactors that mimic plant-scale equipment reduce scale-up risk [41].

Experimental Protocols

DoE-Driven Hydrogenation Optimization Workflow

The following diagram illustrates the integrated workflow for DoE-driven hydrogenation optimization:

Detailed Experimental Methodology

Protocol: DoE-Optimized Catalytic Hydrogenation of a Prostaglandin Intermediate [39]

Objective: Minimize Ullmann-type side product formation during catalytic hydrogenation.

Experimental Design:

- Initial Screening: Fractional factorial design to identify significant factors.

- Response Surface Methodology: Central composite design to model curvature and locate optimum.

- Factors Studied:

  - Catalyst status (fresh vs. recycled)

  - Water content in solvent system

  - Hydrogen pressure

  - Temperature

  - Catalyst loading

- Response Variables: Yield of target intermediate, level of dimer side product.

Materials & Equipment:

| Category | Specific Items | Purpose & Notes |
|---|---|---|
| Reactor System | Parallel pressure reactors (25 mL to 5 L scale) | Enable small-scale condition screening under representative conditions [41]. |
| Catalysts | Pd-based catalysts (Pd/C, Pd(OH)₂/C); Ru/Rh/Mn/Fe-based alternatives | Determine reaction selectivity and rate; screened in parallel [41]. |
| Process Controls | Design of Experiments software; temperature/pressure monitoring systems | Enable systematic parameter exploration and ensure safe operation [41]. |
| Analytical Tools | Inline FT-IR spectroscopy; online HPLC; GC-MS | Real-time reaction monitoring and product quantification [37]. |

Procedure:

- DoE Setup: Define factor ranges based on preliminary experiments and safety limits.

- Reaction Execution:

  - Charge reactor with substrate, catalyst, and solvent system according to DoE matrix

  - Purge system with inert gas followed by hydrogen

  - Pressurize to target hydrogen pressure (e.g., 10-100 bar)

  - Heat to target temperature with continuous stirring

  - Monitor pressure drop to track hydrogen consumption

- Reaction Monitoring: Use inline FT-IR to track reaction progress in real-time [37].

- Workup & Analysis:

  - Filter to remove catalyst

  - Analyze reaction mixture by HPLC/GC against standards

  - Quantify main product and key impurities (especially dimer side product)

- Data Analysis:

  - Fit experimental data to response surface models

  - Identify significant factors and factor interactions

  - Determine optimum conditions for validation

Key Findings: Response surface analysis revealed that water content and catalyst status were the dominant factors controlling dimer side product formation, supporting the mechanistic hypothesis of dimer production occurring on the catalyst surface [39].

Essential Research Reagent Solutions

| Category | Specific Items | Function & Application Notes |
|---|---|---|
| Catalysts | Pd/C, Pd(OH)₂/C, Pt/C, Raney Ni, Wilkinson's catalyst | Hydrogenation catalysts with varying selectivity (all heterogeneous except Wilkinson's catalyst, which is homogeneous); Pd/C most common for pharmaceutical applications [41]. |
| Specialty Catalysts | Ru/Rh/Mn/Fe-based catalysts | Alternative metals for specific selectivity requirements or cost considerations [41]. |
| Process Aids | Carbon filtration systems, scavengers | Catalyst removal and impurity control in final product streams [41]. |
| Safety Equipment | Hydrogen detection systems, pressure release valves | Essential engineering controls for hazardous gas handling [41]. |
| Analytical Tools | Inline FT-IR spectrometers, automated sampling systems | Real-time reaction monitoring and kinetic data collection [37]. |

Advanced Methodologies: Integration of DoE with Emerging Technologies

Flow Chemistry and Continuous Manufacturing

The integration of DoE with continuous flow systems represents a paradigm shift in hydrogenation process development:

- Process Intensification: Continuous systems enable precise control of parameters such as residence time, temperature, and pressure, which can be systematically optimized using DoE [26].

- Enhanced Safety: Small reactor volumes minimize inventory of hazardous materials, allowing exploration of more aggressive conditions within the safety envelope [26] [37].

- Real-Time Optimization: Combined with PAT, these systems can implement self-optimizing feedback loops where DoE models are continuously updated based on real-time process data [26] [37].

High-Throughput Experimentation Platforms

HTE systems dramatically accelerate DoE execution for hydrogenation optimization:

- Parallel Reactor Systems: Enable simultaneous testing of multiple catalysts and conditions, generating the comprehensive datasets required for robust DoE models [38] [9].

- Automated Workflows: Integrate liquid handling, reaction execution, and analysis to minimize human intervention and maximize reproducibility [38].

- Data-Rich Experimentation: Generate high-quality data for ML algorithms that can identify complex patterns beyond traditional DoE models [38] [9].

The synergy between DoE, HTE, and continuous flow technologies creates a powerful framework for accelerating pharmaceutical process development while enhancing safety and robustness. This integrated approach represents the current state of the art in hydrogenation optimization for pharmaceutical applications.

Troubleshooting Guides

Troubleshooting Guide: Screening Design Issues

Problem: Inconclusive or Conflicting Main Effects

- Symptoms: Analysis shows several factors with similar, borderline p-values and small effect estimates, or the direction of a factor's effect seems inconsistent.

- Potential Cause: High levels of random noise or uncontrolled variables (e.g., ambient humidity, reagent supplier variability) are obscuring the true factor effects [13].

- Solution:

  - Re-evaluate Experimental Controls: Identify and standardize procedures for potential noise factors.

  - Implement Blocking: If a known nuisance variable exists (e.g., different batches of starting material), use blocking in the experimental design to account for its effect.

  - Increase Replication: Adding more replicates for each experimental run can help average out random noise and provide a clearer signal.

Problem: Suspected Significant Interactions Were Confounded

- Symptoms: The model has a good fit, but predictions are inaccurate when changing multiple factors simultaneously. A follow-up experiment yields unexpected results.

- Potential Cause: The screening design used (e.g., a low-resolution fractional factorial) aliased two-factor interactions with each other or with main effects, making them impossible to distinguish [43] [13].

- Solution:

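  - Perform a 'Fold-over' Design: Augment your original screening design with a second set of runs in which the levels of all factors are reversed. This is a standard technique for breaking the aliasing between main effects and two-factor interactions [13]. Applied to a Resolution III design, a full fold-over yields a Resolution IV design, in which two-factor interactions can still be aliased with one another; separating those requires further augmentation.

  - Switch to a Definitive Screening Design (DSD): For the next iteration, consider a DSD, in which main effects are not aliased with two-factor interactions and two-factor interactions are only partially confounded with one another, though it may require slightly more runs [13].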
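The effect of a fold-over can be checked numerically on the smallest possible case. The sketch below is only an illustration, assuming a three-factor Resolution III half-fraction generated as C = A×B; the helper names `dot` and `alias_check` are hypothetical and not taken from any DoE package.

```python
import itertools

def dot(u, v):
    """Inner product of two columns of coded levels."""
    return sum(x * y for x, y in zip(u, v))

# Resolution III half-fraction for three factors: C is generated as A*B,
# so the main effect of C is completely aliased with the A*B interaction.
original = [(a, b, a * b) for a, b in itertools.product((-1, 1), repeat=2)]

# Full fold-over: repeat every run with the sign of every factor reversed.
foldover = [tuple(-x for x in run) for run in original]
combined = original + foldover

def alias_check(design):
    """Inner product of column C with the A*B column (0 means de-aliased)."""
    c = [run[2] for run in design]
    ab = [run[0] * run[1] for run in design]
    return dot(c, ab)

print(alias_check(original))  # 4: C and A*B are indistinguishable
print(alias_check(combined))  # 0: the fold-over separates them
```

In this small case the combined eight runs form the full 2^3 factorial, which is why the main effect of C becomes orthogonal to the A×B interaction.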

Problem: The Model Shows Significant Curvature

- Symptoms: Analysis of the model residuals indicates a non-linear relationship, or a center point (a run with all factors at their mid-level) shows a significantly different result than the model predicts.

- Potential Cause: The response to a factor is not linear but curved, which a standard 2-level screening design cannot model [43].

- Solution:

  - Add Axial Points: To model curvature, add runs in which one factor at a time is set to a distance ±α from the center while all other factors are held at their mid-levels. This transitions the design towards a Response Surface Methodology (RSM) design such as a Central Composite Design [43] [13].

  - Proceed to an RSM Design: If curvature is confirmed, move to the optimization stage using a dedicated RSM design such as a Box-Behnken or Central Composite Design [43].
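A quick way to judge the center-point symptom described above is to compare the mean of the factorial runs with the mean of the replicated center runs. The sketch below is one minimal way to do this, assuming at least two center replicates; the function name `curvature_check` and all yield values are hypothetical.

```python
import math
import statistics

def curvature_check(factorial_y, center_y):
    """Return (curvature estimate, t-ratio) from factorial and center runs.

    The estimate is mean(factorial) - mean(center); its standard error uses
    the pure-error standard deviation from the replicated center points.
    """
    n_f, n_c = len(factorial_y), len(center_y)
    diff = statistics.mean(factorial_y) - statistics.mean(center_y)
    s = statistics.stdev(center_y)  # pure error, n_c - 1 degrees of freedom
    se = s * math.sqrt(1 / n_f + 1 / n_c)
    return diff, diff / se

# Hypothetical yields (%): four factorial corners and three center replicates.
factorial_y = [62.0, 70.0, 68.0, 76.0]
center_y = [78.0, 79.0, 80.0]
diff, t_ratio = curvature_check(factorial_y, center_y)
print(f"curvature estimate = {diff:.1f}, t-ratio = {t_ratio:.2f}")
```

The t-ratio is compared with the critical t value for n_c - 1 degrees of freedom (about 4.3 at the 5% level with three center replicates). A ratio far beyond that, as in this made-up data set, suggests that a purely linear model is inadequate.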

Troubleshooting Guide: Mapping & Optimization Design Issues

Problem: Poor Model Precision or "Inexact" Optima

- Symptoms: The model's prediction intervals are wide, leading to uncertainty about the exact location of the optimal factor settings.

- Potential Cause: Insufficient replication or an overly ambitious design that tries to fit too many model terms with too few experimental runs [43].

- Solution:

  - Add Replicate Points: Include replicate runs, especially at the center point, to get a better estimate of pure error. This improves the power of statistical tests and narrows prediction intervals.

  - Increase Design Resolution: If the design was a fractional factorial, consider "folding over" or expanding it to a full factorial to de-alias interactions [13]. For RSM designs, ensure an adequate number of axial and center points are included [43].

Problem: Failure to Achieve Predicted Performance at Scale

- Symptoms: The optimized conditions from a small-scale DoE fail to produce the same results when scaled up to pilot or production scale.

- Potential Cause: A key scale-dependent factor (e.g., mixing efficiency, heat transfer rate) was not included as a variable in the original DoE. The model is only valid for the specific scale and equipment it was developed on.

- Solution:

  - Include Scale-Dependent Factors Early: In the screening stage, proactively include factors known to be sensitive to scale, even if they are hard to change at the small scale (e.g., by using specialized lab equipment to simulate mixing times).

  - Use a Split-Plot Design (SPD): For experiments where some factors are hard to change (like reactor type or scale), an SPD groups runs so that these factors are reset less often, allowing them to be included efficiently and their effects on the response to be modeled explicitly.

Frequently Asked Questions (FAQs)

Q1: I have over 10 potential factors to study. Where should I even begin?

A1: Start with a highly fractional design such as a Plackett-Burman or a Resolution III fractional factorial design [13]. These designs are specifically intended to screen a large number of factors with a minimal number of experimental runs, helping you identify the 2-4 most critical factors for further investigation.
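As a concrete illustration of the Plackett-Burman option, the sketch below builds the 12-run design for up to 11 factors from its commonly tabulated generating row (cyclic shifts plus a final row of all low levels). The function names are hypothetical; in practice a statistical package would generate and randomize the design.

```python
import itertools

# Commonly tabulated first row of the 12-run Plackett-Burman design.
FIRST_ROW = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

def plackett_burman_12():
    """Build the design: 11 cyclic shifts of FIRST_ROW plus a row of -1."""
    n = len(FIRST_ROW)
    rows = [[FIRST_ROW[(j - i) % n] for j in range(n)] for i in range(n)]
    rows.append([-1] * n)
    return rows

def columns_orthogonal(design):
    """True if every pair of factor columns has a zero inner product."""
    cols = list(zip(*design))
    return all(
        sum(x * y for x, y in zip(u, v)) == 0
        for u, v in itertools.combinations(cols, 2)
    )

design = plackett_burman_12()
print(len(design), "runs x", len(design[0]), "factors")
print("columns orthogonal:", columns_orthogonal(design))
```

Because the columns are mutually orthogonal and balanced, each main effect is estimated independently of the others. With fewer than 11 real factors, the unused columns are simply left unassigned.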

Q2: What is the single most common mistake in a Screening DOE?

A2: The most common mistake is failing to control for noise and contamination, which can lead to misidentifying insignificant factors as important [13]. Before starting, list all potential sources of variability (e.g., operator, raw material lot, instrument calibration) and implement controls to minimize their impact.

Q3: When should I stop iterating with screening designs and move to optimization?

A3: You should move to optimization when you have a small, manageable set of critical factors (typically 3-5), and you have evidence (e.g., from a center point) that the optimal conditions likely lie within the experimental region you are studying, not at its boundary [43].

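Q4: My RSM model suggests an "optimum" that is a saddle point or a ridge. What does this mean?

A4: The two cases differ. A ridge means the response is nearly flat along one direction, so the system is insensitive to changes in the factors along that ridge. In practice, this can be an advantage, as it provides a range of factor settings that yield similar, near-optimal performance. You can choose the specific settings within this range that are most cost-effective or easiest to control in a manufacturing environment [43]. A saddle point, by contrast, is a stationary point but not an optimum: the response increases in some directions and decreases in others, so the best conditions within the studied region lie away from it, typically toward a boundary, and further experiments in the direction of improvement are warranted.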
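For a two-factor quadratic model, the nature of the stationary point can be read from the eigenvalues of the matrix of second-order coefficients. The sketch below is a minimal illustration in coded units; the function name, the coefficient values, and the tolerance used to call a direction "flat" are all assumptions chosen for the example.

```python
import math

def classify_stationary_point(b1, b2, b11, b22, b12, tol=1e-6):
    """Locate and classify the stationary point of the fitted model

        y = b0 + b1*x1 + b2*x2 + b11*x1**2 + b22*x2**2 + b12*x1*x2

    Returns ((x1, x2) or None, label).
    """
    # Eigenvalues of the symmetric matrix B = [[b11, b12/2], [b12/2, b22]].
    mean = (b11 + b22) / 2
    spread = math.hypot((b11 - b22) / 2, b12 / 2)
    lam_lo, lam_hi = mean - spread, mean + spread

    if min(abs(lam_lo), abs(lam_hi)) < tol:
        return None, "ridge"  # flat along one direction, no unique point

    # Setting the gradient to zero gives the linear system 2*B*x = -b.
    det = 4 * b11 * b22 - b12 ** 2
    x1 = (-2 * b22 * b1 + b12 * b2) / det
    x2 = (-2 * b11 * b2 + b12 * b1) / det

    if lam_hi < 0:
        label = "maximum"
    elif lam_lo > 0:
        label = "minimum"
    else:
        label = "saddle"
    return (x1, x2), label

# Hypothetical coefficients from three different fitted models.
print(classify_stationary_point(2, 1, -1, -2, 0))  # ((1.0, 0.25), 'maximum')
print(classify_stationary_point(0, 0, 1, -1, 0)[1])  # saddle
print(classify_stationary_point(1, 0, -1, 0, 0))     # (None, 'ridge')
```

Eigenvalues of mixed sign indicate a saddle, and an eigenvalue near zero indicates a ridge. With real data the near-zero threshold should be judged against the uncertainty of the fitted coefficients, not a fixed tolerance.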

Q5: How do I validate a model from a Robust Process Optimization study?

A5: The gold standard is to run 3-5 additional confirmation experiments at the predicted optimal conditions. The average response from these confirmation runs should fall within the prediction intervals of your model. If it does, you have strong evidence that the model is valid and robust.

Experimental Protocols & Data

Protocol 1: Screening with a Fractional Factorial Design

Objective: To efficiently identify the critical factors (from a list of 5-7) affecting the yield of an organic reaction.

Methodology:

- Factor Selection: Define all factors to be investigated (e.g., Catalyst Load, Temperature, Solvent Equivalents, Reaction Time) and assign a high (+1) and low (-1) level to each.

- Design Generation: Use statistical software to generate a Resolution V fractional factorial design. A Resolution V design ensures that no main effect or two-factor interaction is aliased with any other main effect or two-factor interaction [13].

- Randomization: Randomize the order of all experimental runs to mitigate the effects of lurking variables [44].

- Execution: Perform all reactions according to the randomized run order.

- Analysis: Analyze the yield data using linear regression to estimate the main effects and two-factor interactions. A Pareto chart of the standardized effects can visually highlight the most significant factors.
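The steps of Protocol 1 can be sketched for the five-factor case, where a 16-run half-fraction with generator E = A×B×C×D is Resolution V. This is a minimal illustration and not a substitute for statistical software; the function names and the simulated, noise-free yields are hypothetical.

```python
import itertools
import random

def half_fraction_5():
    """16-run 2^(5-1) design: full factorial in A-D, with E = A*B*C*D."""
    return [
        (a, b, c, d, a * b * c * d)
        for a, b, c, d in itertools.product((-1, 1), repeat=4)
    ]

def effect(design, response, cols):
    """Effect for the product of the given columns.

    One column gives a main effect, two columns a two-factor interaction.
    The effect is mean(y at +1) - mean(y at -1) for that contrast.
    """
    total = 0.0
    for row, y in zip(design, response):
        sign = 1
        for j in cols:
            sign *= row[j]
        total += sign * y
    return total / (len(design) / 2)

design = half_fraction_5()

# Randomize the run order (fixed seed so the plan is reproducible).
order = list(range(len(design)))
random.Random(0).shuffle(order)
run_plan = [design[i] for i in order]

# Hypothetical noise-free yields: only A and the A*B interaction matter.
yields = [70 + 5 * r[0] + 3 * r[0] * r[1] for r in design]

print("A  :", effect(design, yields, (0,)))    # 10.0
print("A*B:", effect(design, yields, (0, 1)))  # 6.0
print("C  :", effect(design, yields, (2,)))    # 0.0
```

Each effect is twice the corresponding regression coefficient in coded units, which is why a coefficient of 5 for A appears as an effect of 10.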

Protocol 2: Optimization with a Central Composite Design (CCD)

Objective: To model the response surface and locate the optimal conditions for reaction yield, focusing on 2-3 critical factors identified from screening.

Methodology:

- Design Structure: A CCD is built upon a full factorial or fractional factorial core, augmented with axial (or "star") points and multiple center points [43].

- Axial Points: Axial points are placed at a distance ±α from the center on each factor axis, allowing for estimation of curvature.

- Center Points: Several replicates at the center point are included to estimate pure error and check for model lack-of-fit.

- Execution: Perform all runs in a randomized order.

- Analysis: Fit the data to a second-order polynomial model using regression analysis. The model can be visualized as a 3D surface or contour plot to identify maxima, minima, or saddle points.
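The structure of a CCD described in Protocol 2 can be written out directly in coded units. The sketch below assumes a full factorial core and, when no α is supplied, uses the rotatable choice α = (2^k)^(1/4); the function name and default number of center points are illustrative assumptions.

```python
import itertools

def central_composite(k, n_center=4, alpha=None):
    """Coded design points for a CCD with a full 2^k factorial core."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design
    # Factorial (corner) points at the +/-1 levels.
    factorial = [
        tuple(float(x) for x in point)
        for point in itertools.product((-1, 1), repeat=k)
    ]
    # Axial (star) points: one factor at +/-alpha, all others at the center.
    axial = []
    for j in range(k):
        for sign in (-1, 1):
            point = [0.0] * k
            point[j] = sign * alpha
            axial.append(tuple(point))
    # Replicated center points for pure error and lack-of-fit checks.
    center = [tuple([0.0] * k) for _ in range(n_center)]
    return factorial + axial + center

design = central_composite(2)
for point in design:
    print(point)
print(len(design), "runs")  # 4 factorial + 4 axial + 4 center = 12
```

For two factors the rotatable α is √2 ≈ 1.414, so the axial points lie outside the factorial range. If those settings are not physically or safely reachable, passing `alpha=1.0` gives a face-centered design instead. The run order should still be randomized before execution.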

Table 1: Comparison of Common DOE Design Types and Their Properties

| Design Type | Primary Stage | Typical Run Number for k Factors | What It Estimates | Key Limitation |

|---|---|---|---|---|

| Plackett-Burman | Screening | k + 1 (for k=11, N=12) | Main effects only | Assumes all interactions are negligible [13] |