Scaling Up Precision: A Comparative Analysis of Scalable Temperature Control Methods for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive comparative analysis of temperature control methods, with a specific focus on their scalability for biomedical and clinical research applications. It explores foundational principles of precision temperature regulation and examines the transition from traditional PID controllers to advanced AI-driven and model-free adaptive strategies. The content details practical methodologies for implementing these systems in environments ranging from laboratory-scale bioreactors to large-scale industrial processes, addressing common operational challenges and optimization techniques. Through rigorous validation frameworks and comparative performance metrics, the analysis equips researchers and drug development professionals with the knowledge to select, implement, and optimize scalable temperature control systems that ensure experimental integrity, enhance process reliability, and accelerate therapeutic development.

Fundamentals of Scalable Temperature Control: From Physical Principles to System Architecture

The Critical Role of Precision Temperature Control in Biomedical Applications

Precision temperature control is a foundational element in modern biomedical research and drug development, directly affecting experimental validity, product safety, and therapeutic efficacy. In temperature-sensitive processes ranging from cell culture and protein characterization to vaccine production and long-term sample storage, even minor thermal deviations can compromise cellular viability, alter reaction kinetics, and invalidate research outcomes. This comparative analysis examines the performance of prevailing temperature control methodologies against emerging advanced strategies. By evaluating these approaches through experimental data and application-specific case studies, this guide provides researchers with the evidence necessary to select appropriately scalable and precise thermal management solutions for their biomedical projects.

Comparative Analysis of Temperature Control Methods

Established Control Methodologies

Traditional temperature control methods remain widely implemented in biomedical laboratories due to their operational simplicity and proven reliability. The on-off controller represents the most basic approach, activating heating or cooling systems when temperatures deviate from a setpoint. While simple and cost-effective, this method results in continuous temperature cycling and relatively wide fluctuations around the desired setpoint [1]. A more refined conventional approach employs Proportional-Integral-Derivative (PID) control, which calculates corrective actions based on the present error (Proportional), the accumulation of past errors (Integral), and the rate of change of the error, used as an estimate of its near-future trend (Derivative) [1]. When combined with Pulse Width Modulation (PWM), a PID controller delivers power as pulses of variable width rather than as a continuously varying analog signal, achieving more stable temperature maintenance. Experimental evaluations using Integral of Absolute Error (IAE), Integral of Square Error (ISE), and Integral of Time-weighted Absolute Error (ITAE) indices demonstrate that PID-driven PWM significantly outperforms basic on-off control, particularly when implemented with DC fans for improved heat distribution [1].
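To make the PID-with-PWM idea concrete, the following is a minimal sketch, not the controller used in [1]. It assumes a hypothetical first-order chamber (the heater power, thermal resistance, and heat capacity are invented for illustration), untuned example gains, and a PWM stage represented only by its average power (duty cycle times rated heater power). The names `PIDController` and `simulate` are illustrative.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PIDController:
    kp: float  # proportional gain (duty per K)
    ki: float  # integral gain (duty per K*s)
    kd: float  # derivative gain (duty*s per K)
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, setpoint: float, measured: float, dt: float) -> float:
        """Return a PWM duty cycle in [0, 1] for one control step."""
        error = setpoint - measured
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        candidate = self.integral + error * dt
        raw = self.kp * error + self.ki * candidate + self.kd * derivative
        duty = min(1.0, max(0.0, raw))
        # Anti-windup: accumulate the integral only while the output is unsaturated.
        if duty == raw:
            self.integral = candidate
        self.prev_error = error
        return duty


def simulate(setpoint=37.0, ambient=22.0, steps=3000, dt=1.0):
    """Heat a hypothetical first-order chamber; return (temperatures, duties)."""
    heater_w, r_k_per_w, c_j_per_k = 100.0, 0.5, 500.0  # assumed plant values
    pid = PIDController(kp=0.5, ki=0.01, kd=0.5)  # untuned, illustrative gains
    temp, temps, duties = ambient, [], []
    for _ in range(steps):
        duty = pid.update(setpoint, temp, dt)
        # PWM is modeled by its average power: duty * rated heater power.
        net_w = duty * heater_w - (temp - ambient) / r_k_per_w
        temp += net_w * dt / c_j_per_k
        temps.append(temp)
        duties.append(duty)
    return temps, duties
```

In this sketch the heater starts fully on (duty 1.0) and the duty cycle then settles toward the fraction needed to balance heat losses. An on-off controller would instead keep switching between 0 and 1, which is the source of the cycling described above.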

Advanced Data-Driven Control Strategies

Emerging data-driven methodologies represent a paradigm shift in precision temperature management, leveraging artificial intelligence and predictive modeling to achieve unprecedented control accuracy and energy efficiency. Model Predictive Control (MPC) stands out as a particularly advanced strategy that employs a dynamic process model to forecast future system behavior and proactively optimize control actions [2] [3]. Unlike reactive conventional controllers, MPC utilizes weather forecasts, occupancy patterns, and system dynamics to anticipate thermal demands and adjust operations accordingly [3].
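The receding-horizon idea behind MPC can be sketched in a few lines. The example below is a toy illustration under stated assumptions, not the method of [2] or [3]. It uses a hypothetical first-order chamber model with a 10 s step, a small set of allowed heater duty levels, and exhaustive search over a four-step horizon in place of a real optimizer. The names `predict`, `mpc_step`, and `run_closed_loop` are illustrative.

```python
from itertools import product

AMBIENT_C = 22.0
LEVELS = (0.0, 0.25, 0.5, 0.75, 1.0)  # allowed heater duty levels (assumed)


def predict(temp_c: float, duty: float) -> float:
    """One 10 s step of an assumed first-order chamber model."""
    # Heating adds up to 2 K per step; losses remove 4% of the excess over ambient.
    return temp_c + 2.0 * duty - 0.04 * (temp_c - AMBIENT_C)


def mpc_step(temp_c: float, setpoint_c: float, horizon: int = 4) -> float:
    """Return the first move of the duty sequence with the lowest predicted cost."""
    best_cost, best_first = float("inf"), LEVELS[0]
    for sequence in product(LEVELS, repeat=horizon):
        t, cost = temp_c, 0.0
        for duty in sequence:
            t = predict(t, duty)
            cost += (t - setpoint_c) ** 2  # squared tracking error over the horizon
        if cost < best_cost:
            best_cost, best_first = cost, sequence[0]
    return best_first


def run_closed_loop(setpoint_c: float = 37.0, steps: int = 200) -> list:
    """Apply only the first planned move each step, then re-plan."""
    temp_c, history = AMBIENT_C, []
    for _ in range(steps):
        duty = mpc_step(temp_c, setpoint_c)
        temp_c = predict(temp_c, duty)  # the plant equals the model in this sketch
        history.append(temp_c)
    return history
```

Practical MPC implementations replace the exhaustive search with a numerical optimizer, add constraints and an energy term to the cost, and feed forecast disturbances into the prediction model. The plan, apply the first move, re-plan loop is the same.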

A groundbreaking development in this domain is the dual-layer MPC framework, which combines a primary controller establishing nominal trajectories with an ancillary controller that dynamically compensates for uncertainties and disturbances [2]. When implemented in a high-tech greenhouse environment (a relevant analog for many biomedical incubation systems), this approach demonstrated remarkable precision with mean absolute errors of just 0.09°C in winter and 0.10°C in summer, while simultaneously reducing energy consumption by 13.34-20.01% compared to conventional systems [2].

Further advancing this field, Artificial Neural Network (ANN)-based controllers trained via the Levenberg-Marquardt method have exhibited exceptional capability in modeling complex non-linear thermal systems. These networks have demonstrated "remarkable prediction accuracy" with mean squared error values approaching zero when applied to phase change energy storage systems, accurately capturing intricate nonlinear heat transfer dynamics despite complex thermal interactions [4].

Table 1: Performance Comparison of Temperature Control Strategies

| Control Strategy | Temperature Accuracy | Energy Efficiency | Implementation Complexity | Best Suited Applications |
|---|---|---|---|---|
| On-Off Control | ±1.0-2.0°C | Low | Low | Non-critical storage, basic heating baths |
| PID with PWM | ±0.2-0.5°C | Medium | Medium | Bioreactors, chromatography columns |
| Model Predictive Control (MPC) | ±0.1-0.2°C | High (11-20% savings) | High | Vaccine production, sensitive cell cultures |
| Dual-Layer MPC with ANN | ±0.09-0.10°C | Very High (13-20% savings) | Very High | Large-scale pharmaceutical production |

Experimental Protocols and Validation Methodologies

Performance Evaluation Metrics

Rigorous assessment of temperature control systems requires standardized metrics that quantitatively evaluate stability, accuracy, and efficiency. Research institutions typically employ three primary error indices for comparative analysis: Integral of Absolute Error (IAE), which sums the absolute value of error over time and provides a direct measure of total controller deviation; Integral of Square Error (ISE), which squares the error before integration, thereby penalizing larger deviations more severely; and Integral of Time-weighted Absolute Error (ITAE), which multiplies the absolute error by time before integration, emphasizing persistent errors over transient fluctuations [1]. These metrics collectively provide a comprehensive profile of controller performance under dynamic operating conditions.
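The three indices can be computed directly from a logged error signal e(t) = setpoint − measurement. The sketch below assumes sampled data and trapezoidal integration; the function name `error_indices` is illustrative and not taken from [1].

```python
def error_indices(times, errors):
    """Return (IAE, ISE, ITAE) for sampled errors using the trapezoidal rule.

    times  -- sample times in seconds, strictly increasing
    errors -- setpoint minus measured temperature at each sample time
    """
    if len(times) != len(errors):
        raise ValueError("times and errors must have the same length")
    iae = ise = itae = 0.0
    for i in range(1, len(times)):
        dt = times[i] - times[i - 1]
        a0, a1 = abs(errors[i - 1]), abs(errors[i])
        iae += 0.5 * (a0 + a1) * dt                              # integral of |e|
        ise += 0.5 * (a0 ** 2 + a1 ** 2) * dt                    # integral of e^2
        itae += 0.5 * (times[i - 1] * a0 + times[i] * a1) * dt   # integral of t*|e|
    return iae, ise, itae
```

Because ITAE weights each error by the time at which it occurs, an offset that persists late in a run raises ITAE far more than an equally large transient at start-up.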

Complementing these error metrics, thermal distribution analysis evaluates uniformity across the controlled space, a critical factor in applications like bioreactor control and sample incubation. Studies commonly implement K-type thermocouples connected to data acquisition systems (e.g., Agilent 34970A) to simultaneously monitor multiple locations, with circulating fans often deployed to enhance uniformity [1]. The coefficient of performance (COP) serves as the paramount metric for energy efficiency evaluation, particularly when comparing thermoelectric systems against conventional vapor-compression technologies [5].

Validation Case Studies

Bioreactor Temperature Control

Precise thermal management is particularly crucial in bioreactor operations, where temperature directly influences cellular metabolism, product quality, and process reliability. Experimental protocols typically involve jacketed bioreactors connected to precision circulators (e.g., JULABO DYNEO series) with species-specific temperature setpoints [6]. Eukaryotic and prokaryotic cells require tightly controlled environments, as deviations of just 1-2°C can disrupt metabolic pathways, reduce yield, and potentially cause protein denaturation or cell lysis [6]. Validation involves maintaining setpoints between 20-40°C for extended periods while monitoring cell viability and product expression, with regulatory compliance requiring documentation of strict temperature control throughout production and storage [6].

Protein Crystallization Studies

Protein crystallization represents an exceptionally temperature-sensitive process typically conducted between 20°C and 0°C, sometimes extending to -40°C, with critically slow cooling gradients of 0.1-1.0°C per hour to ensure proper crystal formation and purity [6]. Experimental protocols employ incubators or Peltier elements in microfluidic cells for small-scale work, while larger setups utilize jacketed reactors with high-precision circulators. Success validation involves X-ray diffraction quality assessment of the resulting crystals, directly correlating crystal purity and structural integrity with thermal control precision during the crystallization process [6].
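A slow cooling gradient of this kind is usually expressed as a setpoint ramp. The sketch below, with illustrative function names, computes how long a linear ramp takes and what the setpoint should be at a given elapsed time. It assumes a constant rate and does not model any particular circulator's programming interface.

```python
def ramp_duration_hours(start_c: float, end_c: float, rate_c_per_h: float) -> float:
    """Hours needed to move linearly from start_c to end_c at the given rate."""
    if rate_c_per_h <= 0:
        raise ValueError("rate_c_per_h must be positive")
    return abs(start_c - end_c) / rate_c_per_h


def ramp_setpoint(elapsed_h: float, start_c: float, end_c: float,
                  rate_c_per_h: float) -> float:
    """Setpoint after elapsed_h hours of a linear ramp, held at end_c once reached."""
    if elapsed_h >= ramp_duration_hours(start_c, end_c, rate_c_per_h):
        return end_c
    direction = -1.0 if end_c < start_c else 1.0
    return start_c + direction * rate_c_per_h * max(0.0, elapsed_h)
```

For example, cooling from 20°C to 0°C at 0.5°C per hour takes 40 hours, while the slowest rate quoted above (0.1°C per hour) stretches the same ramp to roughly 200 hours. This is why long-term setpoint stability matters as much as short-term accuracy.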

Thermoelectric Heat Pump Wall Systems

Innovative Thermoelectric Heat Pump Wall Systems (THPWS) present a promising alternative to conventional HVAC technologies through compact, refrigerant-free thermal management. Experimental analysis involves dual-channel designs with multiple thermoelectric modules, aluminum heat sinks, and inlet fans driving airflow [5]. Validation protocols assess impacts of electrical current (0.1-4.0A), inlet air velocity (0.5-0.9 m/s), and ambient temperature on system performance, including flow fields, heating output, and COP [5]. Numerical simulations solving Navier-Stokes, turbulence, and energy equations are validated against experimental measurements, with studies reporting maximum deviation of 7.4% and average deviation of 3.6% between models and empirical data [5].

Table 2: Experimental Performance Data for Advanced Control Systems

| System/Application | Control Method | Performance Metrics | Experimental Conditions |
|---|---|---|---|
| Greenhouse (Biomedical Analog) | Dual-Layer MPC with ANN | MAE: 0.09°C (winter), 0.10°C (summer); Energy reduction: 20.01% (winter), 13.34% (summer) [2] | 4-day simulation period with system uncertainties |
| Heat Pump System | Data-Driven MPC | 11% energy reduction; 3% SCOP increase; Compressor speed: 46 Hz (MPC) vs 63 Hz (conventional) [3] | Typical winter day, Potsdam test reference year |
| Guarded Hot Box Facility | PID with PWM + DC Fans | Superior performance in IAE, ISE, and ITAE indices vs. on-off control [1] | Ambient temperature: 22.6°C |
| Thermoelectric HP Wall | Dual-channel TE System | Heating load reduction: 61.5% (0.1A), 44.7% (1.0A), 40.3% (4.0A) with velocity increase (0.5 to 0.9 m/s) [5] | Temperature drops up to 29.3°C in hot channel |

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful implementation of precision temperature control requires appropriate selection of both control methodologies and physical hardware components. The following essential materials represent critical elements in biomedical thermal management systems:

Table 3: Essential Research Reagent Solutions for Precision Temperature Control

| Item | Function | Application Examples |
|---|---|---|
| High-Precision Circulators (e.g., JULABO CORIO, DYNEO, MAGIO series) | Provide precise temperature control for jacketed reactors and baths via external circulation [6] | Bioreactor control, chromatography, protein refolding |
| Recirculating Chillers (e.g., JULABO FL Series) | Deliver stable cooling for instrumentation with PID regulation (±0.5°C stability) [6] | HPLC systems, rotary evaporators, vacuum pumps |
| Shaking Water Baths (e.g., JULABO SW Series) | Combine precise temperature control (±0.02°C) with mechanical agitation for sample incubation [6] | Cell culture, enzymatic reactions, solubility studies |
| Optical Fiber Temperature Sensors (FBG, Fabry-Pérot) | Enable minimally invasive temperature monitoring with electromagnetic immunity and small dimensions [7] | Intracellular measurements, MRI environments, miniature bioreactors |
| Thermoelectric Modules | Solid-state heat pumps enabling precise heating/cooling without refrigerants or moving parts [5] | Portable medical devices, point-of-care diagnostics, compact incubators |
| PID with PWM Controllers | Digital control technique delivering power in precise pulses for superior temperature stability [1] | Guarded hot boxes, stability testing chambers, thermal cyclers |
| Data Acquisition Systems (e.g., Agilent 34970A) | Log and convert thermocouple signals for multi-point temperature monitoring and validation [1] | Experimental validation, thermal mapping, compliance documentation |

Technological Workflows and System Architectures

Advanced Control System Architecture

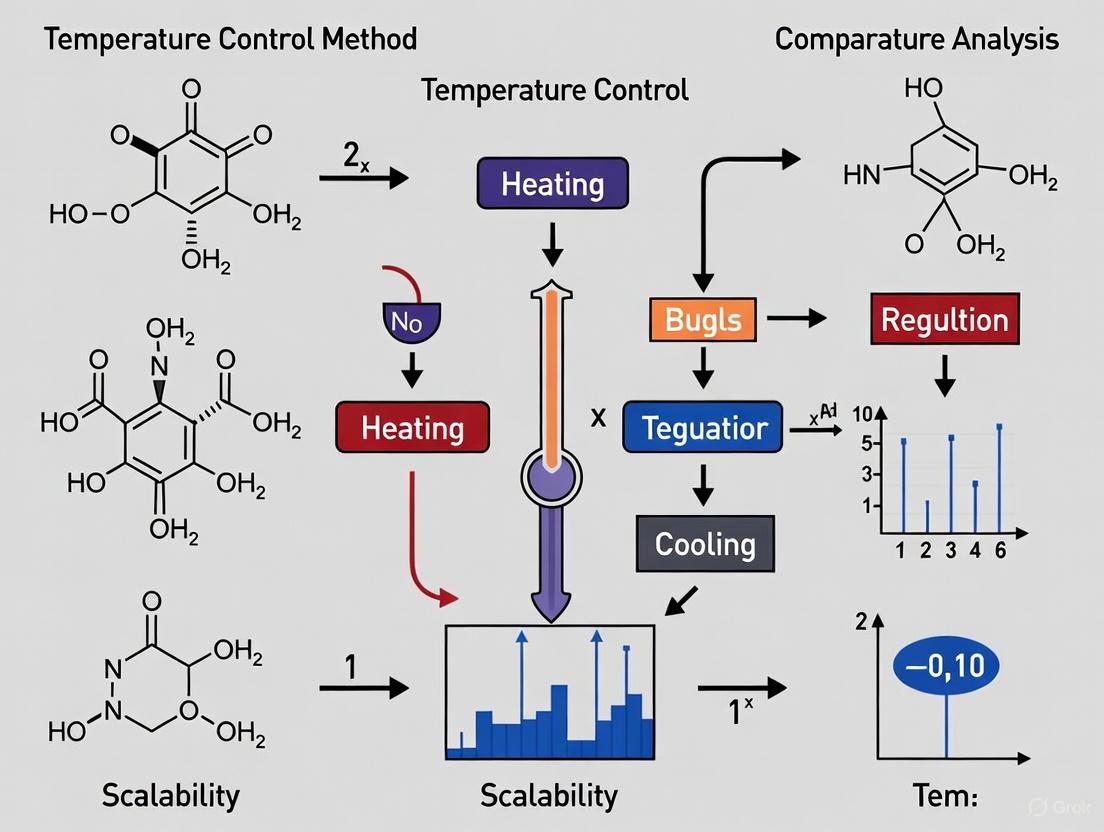

The implementation of data-driven control strategies follows a sophisticated architectural framework that integrates physical systems with computational intelligence. The diagram below illustrates the interconnected components of an advanced model predictive control system:

Biomedical Temperature Control Workflow

Temperature-sensitive biomedical processes require carefully orchestrated sequences of thermal control actions. The workflow below represents a generalized protocol for applications such as protein crystallization or vaccine production:

Precision temperature control represents a critical enabling technology across the biomedical spectrum, from basic research to commercial pharmaceutical production. This comparative analysis demonstrates a clear performance hierarchy among control strategies, with advanced data-driven approaches consistently outperforming conventional methodologies in both accuracy and energy efficiency. The experimental data presented reveals that dual-layer MPC with artificial neural network support can achieve temperature accuracies within ±0.1°C while reducing energy consumption by 13-20% compared to traditional systems [2]. Similarly, PID controllers with PWM techniques demonstrate significantly improved performance over basic on-off control when properly implemented with DC circulating fans [1].

Selection of appropriate temperature control technology must be guided by specific application requirements, with basic storage applications potentially tolerating simpler on-off control, while critical processes like vaccine production and protein characterization demand the precision of advanced MPC or dual-layer control systems. As biomedical applications continue to advance toward miniaturization, point-of-care implementation, and personalized medicine, emerging technologies like thermoelectric systems and optical fiber sensors will play increasingly important roles in providing the precise, scalable thermal management required for next-generation biomedical innovations.

In the context of temperature control methods, scalability refers to a thermal management system's capacity to maintain performance, efficiency, and reliability while adapting to varying thermal loads, physical sizes, and operational conditions. For researchers and scientists, particularly in fields like drug development where precision is critical, understanding scalability is essential for selecting systems that can accommodate evolving research needs, from laboratory-scale prototypes to full-scale production. A scalable thermal management system must effectively handle increases in heat flux density, spatial constraints, and dynamic workloads without compromising temperature stability or incurring disproportionate efficiency penalties. This comparative guide examines scalability metrics and challenges across multiple thermal management technologies, providing a framework for objective evaluation grounded in experimental data and comparative analysis.

Key Scalability Metrics for Comparative Analysis

Evaluating thermal management systems for research applications requires quantifying scalability through specific, measurable parameters. The table below summarizes the core metrics essential for comparative assessment.

Table 1: Key Scalability Metrics for Thermal Management Systems

| Metric Category | Specific Metric | Definition & Significance | Target for Scalability |
|---|---|---|---|
| Thermal Performance | Heat Removal Capacity (W) | Maximum power dissipation per unit or system [8] | Linear scaling with power density |
| Thermal Performance | Thermal Resistance (K/W) | Temperature difference per unit heat flow [9] | Minimal increase with system size |
| Thermal Performance | Temperature Uniformity (°C) | Spatial temperature variation across a system [10] [11] | Maintained homogeneity at larger scales |
| Energy Efficiency | Coefficient of Performance (COP) | Ratio of heat removed to energy consumed [2] | Maintained or improved at scale |
| Energy Efficiency | Power Usage Effectiveness (PUEcooling) | DCi-specific metric for cooling overhead [11] | Approaches 1.0 (ideal) |
| Energy Efficiency | Energy Consumption per Heat Unit (kWh/W) | Total energy used per unit of heat managed [12] | Decreases or remains stable |
| Spatial & Physical | Volumetric/Areal Power Density (W/cm³, W/cm²) | Power dissipation per unit volume/area [9] [11] | Increases with miniaturization |
| Spatial & Physical | Counter-Gravity Performance (W at angle/height) | Heat removal capability against gravity [8] | Maintained across orientations |
| Operational & Control | Response Time to Thermal Transients | Time to stabilize temperature after a disturbance [2] | Fast response despite increased inertia |
| Operational & Control | Part-Load Efficiency | Performance at fractional design loads [12] | High efficiency across load range |
| Operational & Control | Control Stability & Accuracy (°C) | Precision in maintaining setpoint [2] [13] | High precision across operational range |

Comparative Analysis of Thermal Management Technologies

Different thermal management strategies exhibit distinct scalability profiles. The following section provides a comparative analysis of prominent technologies, supported by experimental data.

Table 2: Comparative Scalability Analysis of Thermal Management Technologies

| Technology | Typical Application Scale | Reported Performance Data | Key Scalability Strengths | Key Scalability Challenges |
|---|---|---|---|---|
| Advanced Air Cooling (Rack-Based) | Data Centers (150 kW module) [11] | PUEcooling: 1.28 [11] | Modular architecture simplifies capacity expansion; good temperature uniformity (validated by CFD) [11] | Performance plateaus at very high power densities (>40 kW/rack) [11]; limited heat flux handling (~100 W/cm²) [9] |
| Microfluidic Cooling | 3D Advanced Semiconductor Packaging [9] | Forecast: Commercial scaling 2026-2036 [9] | Exceptional heat flux capability (>500 W/cm²) [9]; enables direct integration into 3D IC stacks | High manufacturing complexity and cost [9]; reliability data for large-scale deployment is limited |
| Latent Thermal Energy Storage (LTES) | Residential HP/AC Systems (5 kW unit, 18 kWh storage) [12] | Energy use reduction: 13-20% vs. conventional [12] | Decouples energy supply from demand, enhancing grid-level scalability [12]; high energy density (per unit volume) | Dynamic response degraded by compressor modulation at part-load [12]; control complexity increases with system size |
| Additively Manufactured Heat Pipes | Satellite Electronics (Target: 20 W/pipe) [8] | Demonstrated: 24 W at 0° inclination; 18 W at 15° [8] | Custom lattice wicks optimize capillary/permeability trade-off [8]; geometric freedom enables embedded, shape-conforming designs | Mechanical integrity under vibration must be validated for larger arrays [8]; powder bed fusion process may limit maximum unit size |
| AI-Predictive Control (Blockchain Framework) | Smart Home Zones [13] | Energy reduction: 15.8% vs. traditional thermostat [13] | Software-based scaling with minimal physical infrastructure; improves efficiency via predictive load shifting | Computational overhead for security (blockchain) may limit control frequency [13]; model retraining required for significant system expansion |

Experimental Protocols for Scalability Assessment

Protocol 1: Flow Field Design for Proton Exchange Membrane Fuel Cells (PEMFCs)

This protocol quantitatively assesses how flow field geometry impacts performance, a key scalability factor for fuel cell stacks [10].

- Objective: To evaluate the impact of six different cathode-side flow field geometries (Designs A-F) on the performance, water management, and thermal homogeneity of a PEMFC with an active area of 25 cm².

- Methodology:

  - A three-dimensional computational fluid dynamics (CFD) model was developed to simulate the multi-physics phenomena within the PEMFC.

  - The model was experimentally validated by comparing its predictions for temperature and pressure drop against physical measurements from a single cell, achieving a discrepancy of less than 6% for temperature and 4% for pressure drop.

  - Key parameters measured included the polarization curve, peak power density, pressure drop, oxygen concentration at the cathode exit, and temperature distribution uniformity index.

- Key Scalability Insight: The optimized flow field (Design E) achieved a 47.08% higher peak power density (0.85 W cm⁻²) than the reference design (0.58 W cm⁻²) [10]. This demonstrates that component-level design optimization is a critical lever for scaling system-level performance, as it directly improves efficiency and thermal management.

Protocol 2: Data-Driven Model Predictive Control (MPC) for Greenhouses

This protocol evaluates a control strategy's scalability by its ability to maintain precision and efficiency under varying climatic conditions [2].

- Objective: To assess the performance of an improved, data-driven Model Predictive Control (MPC) framework for temperature regulation in a high-tech greenhouse, with a focus on handling system uncertainties.

- Methodology:

  - An Artificial Neural Network (ANN) was developed using historical greenhouse data to create a dynamic model of the system.

  - A dual-layer controller was implemented: a primary controller established the nominal temperature trajectory, and an ancillary controller compensated for predictive model errors and external disturbances (e.g., weather).

  - The system was tested over 4-day simulation periods in both winter and summer conditions. Its performance was compared against an existing greenhouse climate system, a deterministic MPC, and a robust MPC.

  - Metrics included mean absolute error (MAE) and root mean squared error (RMSE) for temperature control, and total energy consumption.

- Key Scalability Insight: The dual-layer MPC maintained exceptional temperature control (MAE of 0.09°C in winter and 0.10°C in summer) while reducing energy consumption by 20.01% (winter) and 13.34% (summer) compared to the existing system [2]. This shows that intelligent control algorithms can enhance scalability by improving adaptability and efficiency without changes to physical hardware.
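The metrics named in this protocol are straightforward to reproduce from logged data. The following sketch assumes a constant setpoint and a list of temperature samples; the function names are illustrative and no data from [2] is reproduced.

```python
import math


def mae(setpoint: float, measured: list) -> float:
    """Mean absolute error between a constant setpoint and measured samples."""
    return sum(abs(m - setpoint) for m in measured) / len(measured)


def rmse(setpoint: float, measured: list) -> float:
    """Root mean squared error; penalizes large excursions more than MAE."""
    return math.sqrt(sum((m - setpoint) ** 2 for m in measured) / len(measured))


def percent_reduction(baseline: float, improved: float) -> float:
    """Relative saving of `improved` versus `baseline`, in percent."""
    return 100.0 * (baseline - improved) / baseline
```

Reporting MAE and RMSE together is informative. When RMSE is much larger than MAE, the error is dominated by a few large excursions rather than a steady small offset.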

Protocol 3: Characterization of Lattice Structures for Additive Heat Pipes

This protocol outlines a material- and structure-level approach to scaling the performance of passive thermal components [8].

- Objective: To identify the optimal lattice structure for use as a wick in an additively manufactured heat pipe by comparing their fluidic and thermal properties.

- Methodology:

  - Multiple lattice variants (e.g., L1, L2, L3) with a diamond topology were manufactured from AlSi10Mg using Laser-Based Powder Bed Fusion (PBF-LB/M).

  - Capillary Rise Test: Dedicated specimens were used to measure the rate and height of capillary rise of acetone, from which an equivalent pore radius (rp) was computed.

  - Permeability Test: The permeability (Kf) of the lattice structures was measured using a specialized experimental setup to quantify how easily fluid can pass through the wick.

  - Thermal Performance Test: Full heat pipes with the selected lattices were tested for heat removal capacity (W) at various inclinations and for temperature difference along their length.

- Key Scalability Insight: The study found a trade-off between permeability and capillary performance. Lattice L2 offered a superior balance, enabling a heat pipe that could remove 24 W at 0° inclination and maintain near-isothermal operation at 18 W against a 15° incline [8]. This highlights that optimizing internal microstructure is fundamental to scaling the performance of compact thermal management systems.
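The source does not state how the equivalent pore radius was derived from the capillary rise data. One common approach, shown here purely as an assumption, is to apply Jurin's law to the equilibrium rise height: rp = 2σ·cos(θ) / (ρ·g·h). The acetone properties in the usage note are approximate room-temperature values, and the rise height is hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def equivalent_pore_radius(rise_height_m: float, surface_tension_n_per_m: float,
                           density_kg_per_m3: float,
                           contact_angle_deg: float = 0.0,
                           gravity: float = G) -> float:
    """Equivalent pore radius in metres from equilibrium capillary rise (Jurin's law).

    Assumes a static equilibrium height and a single effective contact angle;
    this is a sketch, not the evaluation procedure used in the cited study.
    """
    if rise_height_m <= 0:
        raise ValueError("rise_height_m must be positive")
    cos_theta = math.cos(math.radians(contact_angle_deg))
    return 2.0 * surface_tension_n_per_m * cos_theta / (
        density_kg_per_m3 * gravity * rise_height_m)
```

With acetone taken as roughly 0.0237 N/m surface tension and 790 kg/m³ density, and an assumed fully wetting wick, a hypothetical 30 mm rise corresponds to an equivalent pore radius on the order of 0.2 mm. Smaller pores raise the liquid higher but reduce permeability, which is the trade-off the protocol describes.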

Visualizing Scalability Analysis and Challenges

The following diagrams map the core relationships and workflows involved in assessing the scalability of thermal management systems.

Diagram 1: Scalability Assessment Framework

Diagram 2: Experimental Workflow for System-Level Testing

The Scientist's Toolkit: Key Research Reagents and Materials

For researchers designing experiments to evaluate thermal management system scalability, the following materials and tools are essential.

Table 3: Essential Research Reagents and Materials for Scalability Experiments

| Item | Primary Function in Experiments | Specific Application Example |
|---|---|---|
| Phase Change Materials (PCMs) | High-density latent thermal energy storage. | Bio-based PCM with melting point of 9°C for cold storage in HP/AC systems [12]. |
| Advanced Thermal Interface Materials (TIMs) | Reduce thermal resistance between solid surfaces. | Liquid metal, graphene sheets, or indium foil as TIM1/TIM1.5 in 3D semiconductor packaging [9]. |
| Additively Manufactured Lattice Structures | Serve as optimized wicks for capillary-driven fluid return. | AlSi10Mg diamond lattice structures in heat pipes for satellite thermal control [8]. |
| Computational Fluid Dynamics (CFD) Software | Model multi-physics phenomena for system design and scaling predictions. | Predicting temperature distribution and pressure drops in PEMFC flow fields with <6% error [10]. |
| Artificial Neural Network (ANN) Models | Create data-driven predictive models for system control. | Modeling greenhouse dynamics for a dual-layer Model Predictive Control (MPC) system [2]. |
| Wireless Sensor Networks (WSNs) | Enable dense, real-time monitoring of environmental parameters. | Tracking room temperature and radiator activity for AI-powered predictive control in smart homes [13]. |

The scalability of thermal management systems is constrained by several interconnected challenges. Thermal-Physical Coupling is pronounced in 3D integrated circuits, where thinner dies limit lateral heat spreading and inter-die materials with low thermal conductivity create severe thermal bottlenecks [9]. Control System Complexity escalates with system size, as demonstrated in LTES systems where compressor modulation and anti-frost cycles cause significant cooling capacity fluctuations under part-load conditions [12]. Material and Manufacturing Limits are evident in advanced packaging, where trade-offs between TSV density, manufacturing complexity, and defect rates directly impact thermal performance [9].

Future research must focus on co-design and integration strategies. The successful coupling of LTES with HP/AC units requires co-optimized design to avoid performance degradation [12]. Similarly, the transition from 2.5D to 3D semiconductor packaging demands holistic solutions encompassing backside power delivery, advanced TIMs, and microfluidic cooling [9]. For researchers in drug development and other precision-dependent fields, selecting a thermal management system requires careful analysis of these scalability metrics and challenges, with particular attention to the control stability and temperature uniformity essential for reproducible scientific results.

In the domain of temperature control for critical applications such as pharmaceutical development, the selection of a system's methodology is paramount for ensuring efficacy, scalability, and energy efficiency. The core physical principles of heat transfer, thermal inertia, and dynamic response govern the performance of these systems. Static insulation, a traditional mainstay, provides constant thermal resistance but lacks the adaptability to fluctuating environmental conditions or internal heat loads [14]. In contrast, emerging adaptive technologies leverage dynamic thermal properties to optimize performance in real-time.

This guide provides a comparative analysis of three distinct temperature control methods: the conventional static wall, an advanced adaptive building envelope, and a smart air-conditioning control system. The comparison is framed within the context of scalability research, offering scientists and researchers a data-driven foundation for selecting appropriate temperature control strategies for laboratory environments, pilot plants, and large-scale production facilities.

Fundamental Principles

Thermal Inertia and Dynamic Response

Thermal inertia describes a material's inherent resistance to changes in temperature. It is the property that causes a delay in a body's temperature response during heat transfer, effectively acting as a "thermal flywheel" [15]. This phenomenon exists because of a material's dual ability to store and transport heat [15].

In practical terms, materials with high thermal inertia, such as concrete or brick, heat up and cool down slowly. This capacity to store heat and delay its transmission helps moderate indoor temperature swings by attenuating and shifting peak thermal loads [16]. The dynamic response of a system—how quickly it reacts to a change in heating or cooling demand—is intrinsically linked to its thermal inertia. Systems with high inertia respond more sluggishly, while those with low inertia can react more rapidly but may be more susceptible to temperature fluctuations.

A key quantitative property related to thermal inertia is thermal effusivity \(e\), which measures a material's ability to exchange thermal energy with its surroundings. It is defined as:

\[ e = \sqrt{k \rho c_p} \]

where \(k\) is thermal conductivity (W/m·K), \(\rho\) is density (kg/m³), and \(c_p\) is specific heat capacity (J/kg·K) [15] [17]. A higher effusivity value generally indicates a greater surface-level thermal inertia, meaning the material will feel hotter or colder to the touch for a longer period when exposed to a heat flux.
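As a quick numerical illustration of the effusivity definition above, the sketch below evaluates it for two materials. The property values are rough, order-of-magnitude assumptions chosen for illustration (a dense concrete and a light fibrous insulation), not measurements from [15] or [17].

```python
import math


def thermal_effusivity(k_w_per_mk: float, rho_kg_per_m3: float,
                       cp_j_per_kgk: float) -> float:
    """Thermal effusivity e = sqrt(k * rho * cp), in W*s^0.5/(m^2*K)."""
    return math.sqrt(k_w_per_mk * rho_kg_per_m3 * cp_j_per_kgk)


# Assumed, illustrative property sets: (k, rho, cp)
ASSUMED_MATERIALS = {
    "concrete (assumed)": (1.4, 2300.0, 880.0),
    "fibrous insulation (assumed)": (0.04, 50.0, 1000.0),
}

if __name__ == "__main__":
    for name, props in ASSUMED_MATERIALS.items():
        print(f"{name}: e = {thermal_effusivity(*props):.0f} W*s^0.5/(m^2*K)")
```

With these assumed inputs the concrete's effusivity comes out more than an order of magnitude above the insulation's, consistent with the "thermal flywheel" behavior described for heavy masonry.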

Heat Transfer in Adaptive Systems

Adaptive temperature control systems move beyond static principles by actively modulating the rate and direction of heat transfer. A prime example is the Heat Pipe-Embedded Wall (HPEW), which can switch between being a highly efficient thermal conductor and an effective insulator [14]. When activated, the heat pipes facilitate rapid phase-change heat transfer, drastically lowering the wall's effective thermal resistance. When deactivated, the system reverts to the innate insulation properties of the wall structure [14]. This capability allows for climate-adaptive building envelopes that can utilize favorable outdoor thermal conditions year-round.

Comparative Analysis of Temperature Control Methods

The following table summarizes the core characteristics, performance data, and scalability of three distinct temperature control approaches.

Table 1: Comparative Performance of Temperature Control Methods

| Feature | Static Insulation Wall (Conventional) | Heat Pipe-Embedded Wall (HPEW) [14] | ANN-Based Smart HVAC Control [18] |
|---|---|---|---|
| Core Principle | Static thermal resistance | Dynamic, reversible heat transfer via phase change | Real-time setpoint optimization using artificial neural networks |
| Operational Mode | Passive, immutable | Switchable between active/passive states | Active, predictive control |
| Typical Application | Building envelopes, basic insulation | Climate-adaptive building envelopes | Building HVAC systems |
| Thermal Resistance | Static (~1.55 (m²·K)/W) | Tunable from 1.55 to 0.04 (m²·K)/W | Not Applicable (System-level control) |
| Dynamic Performance | High thermal inertia, slow response | Rapid thermal response; inner surface temp up to 4.5°C higher in winter and 1.5°C lower in summer vs. conventional | Maintains adaptive comfort range via real-time adjustment |
| Key Experimental Data | Baseline for comparison | Thermal resistance in active mode is 3% of conventional wall | Cooling energy reduction: 8.4–12.4% |
| Scalability for Research | Simple but inflexible | High potential for energy-efficient, climate-adaptive spaces | Highly scalable control logic; requires data and integration |

Experimental Protocols and Methodologies

Protocol for HPEW Dynamic Thermal Performance

The experimental validation of the Heat Pipe-Embedded Wall provides a robust methodology for assessing dynamic thermal systems [14].

- Objective: To quantify the dynamic thermal performance and tunable thermal resistance of a novel HPEW with reversible valves under both constant-power and real-world dynamic conditions.

- Apparatus: A prototype HPEW featuring a symmetric two-phase loop thermosiphon integrated with an intelligent valve group for reversible heat transfer. Data acquisition systems for temperature and heat flux measurement.

- Procedure:

  1. Constant-Power Heating Tests: Apply a range of constant heat fluxes (25 to 400 W/m²) to the wall surface.

  2. System Activation: For each power level, activate the heat pipe system to initiate phase-change heat transfer.

  3. Data Recording: Measure the temperature distribution across the wall and the heat flux through it to calculate the effective thermal resistance in both active and inactive states.

  4. Dynamic Climate Tests: Expose the wall prototype to typical summer and winter climatic conditions in a controlled environment or field setting.

  5. Comparative Analysis: Record the inner surface temperature of the HPEW and a conventional wall simultaneously under identical conditions.

- Key Metrics: Thermal resistance (m²·K/W), inner surface temperature differential (°C), and response time to switching events.
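The data-recording step reduces to a simple steady-state calculation: effective thermal resistance is the temperature difference across the wall divided by the heat flux through it. The sketch below is a minimal illustration under that steady, one-dimensional assumption. The surface temperatures are hypothetical values chosen so the results reproduce the resistances reported in Table 1; they are not measurements from [14].

```python
def effective_thermal_resistance(t_hot_c, t_cold_c, heat_flux_w_m2):
    """R = ΔT / q in (m²·K)/W for steady one-dimensional heat flow."""
    if heat_flux_w_m2 <= 0:
        raise ValueError("heat flux must be positive")
    return (t_hot_c - t_cold_c) / heat_flux_w_m2


# Hypothetical surface temperatures at the lowest test flux (25 W/m²).
r_passive = effective_thermal_resistance(58.75, 20.0, 25.0)  # heat pipes off
r_active = effective_thermal_resistance(21.0, 20.0, 25.0)    # heat pipes on

ratio = r_active / r_passive
print(f"passive: {r_passive:.2f} (m²·K)/W")
print(f"active:  {r_active:.2f} (m²·K)/W")
print(f"active / passive: {ratio:.1%}")
```

With the reported end points of 1.55 and 0.04 (m²·K)/W, the active-mode resistance is roughly 3% of the passive value, consistent with the figure quoted in Table 1.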

Protocol for ANN-Based Setpoint Control

The development of the real-time setpoint control method demonstrates a data-driven approach to system optimization [18].

- Objective: To define an optimum HVAC setpoint temperature that minimizes cooling energy consumption while maintaining indoor temperature within the adaptive comfort range.

- Apparatus: A case study building instrumented with sensors for indoor and outdoor conditions, energy meters, and a building automation system.

- Procedure:

  1. Data Collection: Gather historical data on indoor temperature, outdoor conditions, and cooling energy consumption from the building.

  2. Model Development: Train an Artificial Neural Network (ANN) predictive model using the collected data. The model learns to forecast the next hour's indoor temperature and energy consumption based on current indoor/outdoor conditions and setpoint.

  3. Control Algorithm Implementation: Integrate the trained ANN model into the building's control system. The algorithm uses real-time sensor information as input to the ANN to identify the setpoint temperature for the upcoming hour that minimizes energy use while keeping the indoor temperature within the adaptive comfort band.

  4. Validation: Deploy the control system and compare energy consumption and occupant comfort surveys against periods of conventional static setpoint operation.

- Key Metrics: Percentage reduction in cooling energy consumption, adherence to adaptive comfort standards (e.g., ASHRAE 55), and occupant satisfaction scores.
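The control step of this protocol can be sketched as a constrained search over candidate setpoints. In the minimal example below, `toy_predictor` is a hypothetical linear stand-in for the trained ANN, and the comfort band limits and candidate grid are assumed values. Only the selection logic (lowest predicted energy subject to the comfort constraint) follows the description above.

```python
def choose_setpoint(candidates, predict, comfort_low, comfort_high):
    """Return the candidate setpoint with the lowest predicted energy use
    whose predicted indoor temperature stays inside the comfort band.

    predict(setpoint) must return (indoor_temp_c, energy_kwh) for the next
    hour. Returns None if no candidate is predicted to be comfortable.
    """
    feasible = []
    for setpoint in candidates:
        indoor_temp, energy = predict(setpoint)
        if comfort_low <= indoor_temp <= comfort_high:
            feasible.append((energy, setpoint))
    return min(feasible)[1] if feasible else None


def toy_predictor(outdoor_c):
    """Hypothetical stand-in for the trained ANN (simple linear model)."""
    def predict(setpoint_c):
        indoor = setpoint_c + 0.05 * (outdoor_c - setpoint_c)
        energy = max(0.0, 0.8 * (outdoor_c - setpoint_c))
        return indoor, energy
    return predict


candidates = [22.0 + 0.5 * i for i in range(11)]  # 22.0 to 27.0 °C
best = choose_setpoint(candidates, toy_predictor(outdoor_c=33.0), 23.0, 26.5)
print(best)
```

In a deployment, the same function would be called once per hour with the real model's predictions, which keeps the optimization logic independent of how the forecast is produced.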

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential components and their functions in the study of advanced temperature control systems.

Table 2: Essential Materials and Components for Thermal Systems Research

| Item | Function in Research Context |
|---|---|
| Heat Pipes / Thermosiphons | Core element for passive, high-efficiency heat transfer via phase change; enables dynamic thermal resistance in adaptive envelopes [14]. |
| Reversible Valve Systems | Allows control over the direction of heat flow in a thermal circuit, facilitating year-round operation of systems like the HPEW [14]. |
| Artificial Neural Network (ANN) Software | A "black box" predictive model used to forecast system states (e.g., indoor temperature) and optimize control parameters for energy efficiency and comfort [18]. |
| Temperature & Heat Flux Sensors | Critical for empirical data collection; used to validate simulation models and measure real-world performance of prototypes. |
| Data Acquisition System | Hardware and software for collecting, logging, and processing real-time data from multiple sensors during experimental protocols. |
| High Thermal Mass Materials | Substances with high effusivity (e.g., concrete, water) used to provide thermal inertia, dampen temperature swings, and store thermal energy [17]. |

System Comparison and Workflow Visualization

The diagram below illustrates the logical relationship and fundamental operational differences between the three temperature control methods discussed, highlighting their approach to managing environmental thermal loads.

Diagram 1: A comparison of temperature control system operational principles. The diagram shows how each system processes an environmental thermal load through its unique core principle to produce a distinct output.

The comparative analysis reveals a clear evolution from static to intelligent, dynamic temperature control. Static insulation remains a simple, passive solution but offers no adaptability. The Heat Pipe-Embedded Wall represents a significant leap in materials science, providing a tunable building envelope with an experimentally validated, rapid thermal response and significant potential for energy savings in climate-adaptive structures [14]. ANN-based smart HVAC control, by contrast, operates at the system level, using data and prediction to optimize energy use without compromising comfort, demonstrating that intelligence can be layered onto existing infrastructure [18].

For researchers in drug development and other fields requiring precise thermal environments, the choice of method depends on the application's specific scalability needs. The HPEW is promising for constructing new, highly efficient laboratory spaces, while ANN-based control offers a path to optimize existing facilities. A hybrid approach, combining adaptive envelopes with intelligent system-level control, likely represents the future of scalable, energy-efficient temperature management in scientific research.

In the pursuit of scalable, efficient, and robust temperature control systems for applications ranging from industrial manufacturing to smart buildings, the choice of architectural paradigm is fundamental. This guide provides a comparative analysis of centralized and distributed control systems, framed within scalability research for temperature regulation. The evaluation is grounded in experimental data and methodologies relevant to researchers and scientists engaged in process optimization and drug development, where precise environmental control is critical [19] [20].

Architectural Comparison: Core Principles and Trade-offs

The fundamental distinction lies in the locus of decision-making and system organization. A Centralized Control System relies on a single control node (e.g., a central server or ground station) that collects global system data, computes control actions, and dispatches commands to all actuators [21] [22]. This traditional hub-and-spoke model simplifies oversight and can achieve global optimality under static conditions. However, it introduces a single point of failure, creates communication bottlenecks as the system scales, and exhibits limited real-time responsiveness to local disturbances [23] [22].

In contrast, a Distributed Control System (DCS) or a Distributed Multi-Agent System (MAS) decentralizes intelligence. Control is allocated to multiple autonomous or semi-autonomous agents (e.g., smart thermostats, UAVs, heat exchanger controllers) that interact with neighbors to achieve a global objective [19] [21]. This paradigm enhances scalability, fault tolerance, and adaptability to dynamic changes, as the failure of one node does not cripple the network and decisions can be made based on local information [22]. The trade-off often involves accepting near-optimal solutions and managing the complexity of coordination protocols [21].

Performance Analysis: Quantitative Comparison

Experimental studies across domains, including central heating and multi-UAV coordination, provide quantitative metrics for comparison. The following table synthesizes key performance data from empirical research.

Table 1: Comparative Performance Metrics of Control Architectures

| Performance Metric | Centralized Control | Distributed (Multi-Agent) Control | Experimental Context & Source |
|---|---|---|---|
| Energy Efficiency | Baseline | 15.8–25.27% improvement in energy consumption | Smart home predictive control [24]; User-following heating strategy [19] |
| System Stability under Demand Fluctuation | Low adaptability; supply-demand imbalance | Dynamically adjusts heat distribution; improves stability & coordination | Central heating system simulation under demand fluctuation [19] |
| Response to Topology Change/Fault | Limited ability; system paralysis if center fails | Maintains operation; re-negotiates tasks or heat allocation | Heating system simulation [19]; UAV resilience analysis [21] |
| Scalability (Communication Overhead) | High; scales O(m·n), causing bottlenecks [21] | Low; peer-to-peer communication scales better | Multi-UAV task allocation framework [21] |
| Mission Completion Time / Responsiveness | Potentially optimal but slower in dynamic settings | Faster real-time response; suitable for dynamic environments | UAV task allocation in dynamic settings [21] |
| Implementation & Hardware Cost | Higher cost for central server and complex terminals [23] | Lower cost per node; simpler terminal hardware | Cost comparison of temperature system architectures [23] |

Detailed Experimental Protocols

To contextualize the data in Table 1, below are the methodologies from key cited experiments.

Protocol 1: Evaluating Multi-Agent Control for Central Heating [19]

- Objective: To assess the robustness, stability, and energy-saving effect of a distributed multi-agent consensus algorithm versus traditional centralized control.

- System Model: A mathematical model of a centralized heat supply system was constructed based on a first-order discrete consensus algorithm. Each heat exchange station or user node was modeled as an intelligent agent.

- Simulation Conditions: The system was tested under four scenarios: 1) Supply-demand equilibrium, 2) Heat shortage, 3) Demand fluctuation, and 4) Communication topology change.

- Metrics Collected: Heat distribution efficiency, energy waste, system stabilization time, and coordination quality were measured across conditions.

- Comparison: Outcomes were directly compared against a traditional centralized control method's performance in the same scenarios.
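To make the "first-order discrete consensus algorithm" in this protocol concrete, the sketch below implements the textbook update rule, in which each agent moves toward the values of its neighbors. The four-station ring topology, the initial values, and the step size `epsilon` are illustrative assumptions. The exact model, weights, and constraints used in [19] are not given here and may differ.

```python
def consensus_step(values, neighbors, epsilon):
    """One first-order discrete consensus update:
    x_i(k+1) = x_i(k) + epsilon * sum_j (x_j(k) - x_i(k)), j in neighbors of i.
    """
    return [
        x_i + epsilon * sum(values[j] - x_i for j in neighbors[i])
        for i, x_i in enumerate(values)
    ]


def run_consensus(values, neighbors, epsilon, steps):
    """Apply the consensus update repeatedly and return the final states."""
    for _ in range(steps):
        values = consensus_step(values, neighbors, epsilon)
    return values


# Four hypothetical heat-exchange stations connected in a ring.
neighbors = {0: [1, 3], 1: [0, 2], 2: [1, 3], 3: [2, 0]}
initial = [10.0, 20.0, 30.0, 40.0]   # e.g. locally available heat, arbitrary units

# epsilon must stay below 1 / (maximum number of neighbors) for stability.
final = run_consensus(initial, neighbors, epsilon=0.3, steps=50)
print([round(x, 3) for x in final])
```

On a connected, undirected topology this update preserves the sum of the states and drives every agent toward the network average. That is why no central node is needed to balance the allocation.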

Protocol 2: Framework for Comparing UAV Task Allocation Algorithms [21]

- Objective: To compare centralized and distributed Multi-UAV Task Allocation (MUTA) algorithms in terms of optimality, scalability, and resilience.

- Algorithms Tested: Centralized: Hungarian algorithm, Bertsekas auction algorithm. Distributed: Consensus-Based Bundle Algorithm (CBBA), distributed auction refinement.

- Simulation Environment: Simulations incorporated UAV-specific constraints (flight time, energy capacity, comms range) and dynamic elements like real-time task arrivals and intermittent connectivity.

- Metrics Collected: Mission completion time, total energy expenditure, communication overhead, and resilience to UAV failures were quantified.

- Analysis: Trade-offs between strict optimality (favored by centralized methods in small, static fleets) and scalable, robust coordination (favored by distributed methods in large, dynamic deployments) were analyzed.

Protocol 3: AI-Blockchain Smart Home Temperature Control [24]

- Objective: To evaluate a distributed framework integrating AI prediction and blockchain for secure, efficient temperature control.

- System Design: A wireless sensor network (WSN) collected temperature/radiator data. Machine Learning (ML) algorithms on edge devices predicted heating/cooling needs. Blockchain secured data and managed decentralized energy trading.

- Experimental Setup: The system's predictive scheduling was activated in a smart home environment. Detection accuracy for heating/cooling events and reliability of scheduled triggers were measured.

- Metrics Collected: Energy consumption reduction vs. traditional thermostats, accuracy of event detection (28.5% heat-on, 37.3% cool-down), scheduling reliability (68.4%), and computational load reduction via time-shifted analysis (22%).

- Comparison Baseline: Performance was benchmarked against conventional reactive thermostat control systems.

System Architecture and Workflow Visualization

Diagram 1: Control Architecture Data Flow Comparison

Diagram 2: Distributed System Evaluation Protocol Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Research Materials for Control System Scalability Experiments

| Item / Solution | Function in Research | Exemplary Use Case / Reference |
|---|---|---|
| Multi-Agent System (MAS) Simulation Platforms (e.g., JADE, MATLAB) | Provides the software environment to model autonomous agents, define interaction rules, and simulate consensus algorithms. | Used for simulating short-term generation scheduling in microgrids and district heating agent models [19]. |
| Deep Operator Networks (DeepONet) / ScaleONet | A deep learning framework for creating scalable, control-oriented surrogate models of complex system dynamics (e.g., building thermal response). | Enables fast, accurate thermal forecasting for large building clusters to train control policies [25]. |
| Programmable Logic Controller (PLC) with DCS Architecture | The hardware core for implementing distributed control in industrial settings; reduces wiring and offers modular, reliable control. | Basis for designing the temperature control system of an industrial sintering furnace with edge computing [20]. |
| Wireless Sensor Network (WSN) Kits | Provides the physical layer for distributed data acquisition, enabling real-time monitoring of temperature, occupancy, etc., across a spatial domain. | Fundamental for data collection in AI-powered smart home temperature control and industrial IoT systems [24] [20]. |
| Model Predictive Control (MPC) Software Toolboxes | Implements advanced predictive control algorithms that optimize future system behavior, crucial for both centralized and distributed optimal control. | Used in centralized heat network control based on load prediction [19]. |
| Blockchain Development Framework (e.g., for Ethereum, Hyperledger) | Enables the implementation of secure, decentralized data ledgers and smart contracts for trustworthy automation in distributed systems. | Integrates with AI for secure data handling and decentralized energy trading in smart home experiments [24]. |

Scaling profoundly influences the dynamics of physical systems, fundamentally altering time delays and making sensor placement not merely a logistical task but a critical component of system design and controllability. In scalable systems, particularly those governed by thermal-hydraulic or advection-dominated processes, the relationship between system size and temporal dynamics is paramount. As systems scale up, transport delays increase, and spatial gradients become more pronounced, which can degrade the performance of control systems and reduce the accuracy of state estimation. This comparative analysis examines temperature control and monitoring methodologies across different scales, from laboratory models to full-scale industrial and research facilities. We objectively evaluate the performance of various sensor placement strategies and scaling frameworks, supported by experimental data, to provide researchers and drug development professionals with validated approaches for managing scale-induced dynamic effects. The findings offer critical insights for applications where precise environmental control is essential, such as in pharmaceutical process development, bioreactor control, and large-scale experimental halls.

Theoretical Framework: Scaling Laws and Time-Delay Emergence

The Finite Similitude Theory for Scaled Systems

Traditional dimensional analysis, while useful, provides limited insight into scale effects. The modern finite similitude theory offers a more robust framework, connecting systems at different scales through a countably infinite number of similitude rules. This theory repurposes scaled experimentation to relate models of different sizes while automatically accounting for all scale effects. The zeroth-order rule captures everything possible with conventional dimensional analysis, but higher-order rules necessitate investigations at multiple scales, giving rise to additional systems of equations that must be solved [26]. This approach provides a practical framework for designing and analyzing mechanical components that operate over a range of sizes, directly representing how system-level scale effects manifest in dynamic responses.

Time-Delay Dynamics in Scaled Systems

Time delays are pivotal components in accurate dynamical system models, representing the transfer of material, energy, or information between subsystems that does not occur instantaneously. In the context of scaling, these delays become particularly significant. As system size increases, several phenomena occur:

- Transport Delay Scaling: In flow-based systems, fluid transit times increase linearly with physical dimension, creating longer delays between actuator action and sensor response.

- Thermal Inertia Effects: The thermal mass of a system scales with volume, while heat transfer often occurs through surfaces that scale with area, creating non-linear relationships in thermal dynamics.

- Control-Induced Delays: In large-scale networked systems, delays arise from sensor latency, data transfer to processors, and actuator execution time, all of which are often magnified in larger systems [27].
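The first two effects in this list can be made concrete with a short sketch. It assumes plug flow for the transport delay and a lumped, well-mixed thermal mass for the time constant. The cube geometry, fluid properties, and heat transfer coefficient are illustrative assumptions, not values from the cited work.

```python
def transport_delay_s(path_length_m, velocity_m_s):
    """Plug-flow transit time between actuator and sensor."""
    return path_length_m / velocity_m_s


def lumped_time_constant_s(rho, cp, volume_m3, h, area_m2):
    """tau = rho * cp * V / (h * A) for a lumped thermal mass.

    Stored energy scales with volume V; heat exchange scales with area A.
    """
    return rho * cp * volume_m3 / (h * area_m2)


def cube_time_constant_s(side_m, rho=1000.0, cp=4180.0, h=500.0):
    """Time constant of a cube-shaped, well-mixed volume (assumed values)."""
    return lumped_time_constant_s(rho, cp, side_m ** 3, h, 6 * side_m ** 2)


for side in (0.1, 1.0):
    print(f"side {side} m: "
          f"delay {transport_delay_s(side, 0.5):.1f} s, "
          f"tau {cube_time_constant_s(side):.0f} s")
```

Under these assumptions, both the transit time and the thermal time constant grow linearly with the characteristic length. A tenfold geometric scale-up therefore makes the process roughly ten times slower to respond unless flow velocity or heat transfer area is increased to compensate.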

These scale-dependent delays are not merely inconveniences; they can fundamentally alter system stability. In traffic flow models, for instance, reaction delays are pivotal in the mechanisms that lead to traffic jams. Similarly, in platooning of autonomous vehicles, eliminating human reaction delay doesn't eliminate the problem but transforms it into one of managing electronic system delays to ensure string stability [27].

Table 1: Scaling Impact on System Dynamics and Time Delays

| System Aspect | Small-Scale Behavior | Large-Scale Behavior | Practical Implications |
|---|---|---|---|
| Transport Delays | Negligible or short | Significant and long | Control systems require longer prediction horizons |
| Thermal Response Time | Fast dynamics | Slow dynamics with significant inertia | Thermal management requires proactive strategies |
| Sensor-Actuator Coordination | Nearly instantaneous | Noticeable latency | Network architecture critically impacts performance |
| Information Propagation | Rapid throughout system | Delayed across domains | Subsystem coordination becomes challenging |
| Stability Margins | Generally robust | Often compromised | Requires specialized control approaches |

Comparative Analysis of Sensor Placement Methodologies

Physics-Driven Sensor Placement Optimization (PSPO)

The Physics-Driven Sensor Placement Optimization (PSPO) method addresses a critical challenge in large-scale systems: determining optimal sensor locations before experimental data is available. This methodology derives theoretical upper and lower bounds of reconstruction error under noise scenarios, proving these bounds correlate with the condition number determined by sensor locations [28].

The PSPO framework employs three key components:

- Physics-Based Criterion: Uses the condition number of the coefficient matrix derived from discretizing the mathematical model as the optimization criterion.

- Genetic Algorithm Optimization: Iteratively improves sensor placement by minimizing the condition number.

- Reconstruction Validation: Validates placements using non-invasive end-to-end models, non-invasive reduced-order models, and physics-informed models [28].

Experimental results demonstrate that PSPO significantly outperforms random and uniform selection methods, improving reconstruction accuracy by nearly an order of magnitude. Importantly, it achieves comparable reconstruction accuracy to data-driven placement optimization methods, despite operating in data-free scenarios [28].
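The core idea of the physics-based criterion, choosing sensor locations that minimize the condition number of the resulting coefficient matrix, can be illustrated on a deliberately small problem. In the sketch below, each candidate location contributes one row of a hypothetical two-unknown coefficient matrix, and an exhaustive search stands in for the genetic algorithm. Both simplifications are assumptions for illustration and do not reproduce the PSPO implementation in [28].

```python
import math
from itertools import combinations


def condition_number_two_columns(rows):
    """2-norm condition number of an (m x 2) matrix given as a list of rows.

    Uses the closed-form eigenvalues of the 2 x 2 Gram matrix A^T A.
    """
    a = sum(r[0] * r[0] for r in rows)
    b = sum(r[0] * r[1] for r in rows)
    c = sum(r[1] * r[1] for r in rows)
    mean = 0.5 * (a + c)
    radius = math.sqrt((0.5 * (a - c)) ** 2 + b * b)
    lam_min, lam_max = mean - radius, mean + radius
    if lam_min <= 0.0:
        return math.inf  # rank-deficient placement: unknowns not recoverable
    return math.sqrt(lam_max / lam_min)


def best_placement(candidate_rows, n_sensors):
    """Exhaustive search (stand-in for a genetic algorithm) for the subset of
    candidate locations with the smallest condition number."""
    return min(
        combinations(range(len(candidate_rows)), n_sensors),
        key=lambda idx: condition_number_two_columns(
            [candidate_rows[i] for i in idx]
        ),
    )


# Hypothetical rows: locations 0 and 1 carry nearly the same information.
candidate_rows = [(1.0, 0.0), (1.0, 0.01), (0.0, 1.0), (0.7, 0.7)]
chosen = best_placement(candidate_rows, n_sensors=2)
print(chosen)
```

Exhaustive search is only feasible for a handful of candidates. The number of subsets grows combinatorially, which is the practical motivation for a heuristic optimizer in larger problems.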

Offline Sensor Placement for Flow Estimation

For advection-dominated flows, an efficient offline sensor placement method leverages time-delay embedding to enrich sensor information. This approach identifies promising sensor positions using solely preliminary flow field measurements with non-time-resolved Particle Image Velocimetry (PIV), without introducing physical probes during the optimization phase [29] [30].

The methodology exploits the principle that in advection-dominated flows, rows of vectors from PIV fields embed similar information to that of probe time series located at the downstream end of the domain. The optimization uses row data from non-time-resolved PIV measurements as a surrogate for data that real probes would capture over time [30]. This approach is particularly valuable for large-scale systems where performing online combinatorial searches to identify optimal sensor placement is often prohibitive due to cost and complexity.

Data-Driven Sensor Placement Framework

For thermal-hydraulic experiments, a comprehensive data-driven framework optimizes sensor placement through three systematic steps:

- Sensitivity analysis to construct datasets

- Proper Orthogonal Decomposition (POD) for dimensionality reduction

- QR factorization with column pivoting to determine optimal sensor configuration under spatial constraints [31]

This framework proved particularly valuable in TALL-3D Lead-bismuth eutectic (LBE) loop experiments, where optical techniques like PIV are impractical, and quantification of momentum and energy transport relies heavily on thermocouple readings [31].
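The final step of this framework can be sketched without external libraries. The function below reproduces the pivot order of a QR factorization with column pivoting by greedy Gram-Schmidt: it repeatedly selects the candidate location whose mode values have the largest remaining norm, then removes that direction from all candidates. The mode values and the `allowed` argument (a simple way to express spatial constraints) are illustrative assumptions, not the data or code used in [31].

```python
import math


def pivoted_qr_sensor_selection(mode_values, n_sensors, allowed=None):
    """Select sensor locations in the pivot order of column-pivoted QR.

    mode_values[i] holds the retained POD mode values at candidate location i.
    allowed optionally restricts selection to a set of permitted indices.
    """
    residual = [list(v) for v in mode_values]
    candidates = list(range(len(residual))) if allowed is None else sorted(allowed)
    selected = []
    for _ in range(n_sensors):
        norms = [math.sqrt(sum(x * x for x in v)) for v in residual]
        pivot = max(candidates, key=lambda i: norms[i])
        if norms[pivot] < 1e-12:
            break  # remaining locations add no independent information
        selected.append(pivot)
        q = [x / norms[pivot] for x in residual[pivot]]
        # Remove the component along q from every candidate location.
        for v in residual:
            proj = sum(a * b for a, b in zip(v, q))
            for k in range(len(v)):
                v[k] -= proj * q[k]
    return selected


# Hypothetical values of two POD modes at five candidate thermocouple sites.
modes = [(1.0, 0.0), (0.9, 0.1), (0.0, 0.5), (0.1, 0.1), (0.5, 0.4)]
print(pivoted_qr_sensor_selection(modes, n_sensors=2))
print(pivoted_qr_sensor_selection(modes, n_sensors=2, allowed={1, 2, 3, 4}))
```

In practice a library routine would normally replace this loop. The pure-Python version is shown only to expose the selection logic.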

Table 2: Comparative Performance of Sensor Placement Methodologies

| Methodology | Required Data | Computational Load | Optimization Approach | Reported Accuracy Improvement |
|---|---|---|---|---|
| Physics-Driven Sensor Placement Optimization (PSPO) | Mathematical model only | Moderate (Genetic Algorithm) | Condition number minimization | Nearly one order of magnitude over uniform placement [28] |
| Offline Flow Estimation | Non-time-resolved PIV snapshots | Moderate (SVD-based) | Greedy optimization or QR pivoting | Outperforms equidistant positioning and greedy techniques [29] |
| Data-Driven Thermal-Hydraulic Framework | Simulation or preliminary experimental data | High (Sensitivity analysis + POD + QR) | QR factorization with column pivoting | Enables accurate full-field reconstruction with noise robustness [31] |
| Genetic Algorithm-Based Guided Wave | Analytical/numerical models | High (Population-based optimization) | Multi-objective cost function optimization | Effective coverage-complexity trade-off (Pareto front) [32] |

Experimental Protocols and Case Studies

Large-Space Precision Temperature Control

The Jiangmen Experimental Hall case study demonstrates the challenges of precise temperature control (±0.5°C) in large-space buildings with complex thermal disturbances. Researchers employed a 1:38 scaled physical model with Archimedes number similarity to ensure thermal similitude between the scaled model and prototype [33].

Experimental Protocol:

- Construct a geometrically scaled model (1:38) of the large experimental space

- Establish boundary conditions through similarity theory scaling

- Employ unsteady Computational Fluid Dynamics (CFD) with RNG k-ε turbulence model

- Validate numerical model against scaled physical measurements

- Analyze dynamic response characteristics of multiple monitoring points

- Identify optimal sensor placement based on sensitivity and delay metrics [33]
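Archimedes number similarity fixes the relationship between model and prototype air velocities. The sketch below assumes the common room-airflow definition Ar = gβΔT·L/u² (buoyancy relative to inertia), which is not spelled out in the source. The prototype dimensions, velocity, and temperature difference are hypothetical values, and only the 1:38 length ratio comes from the case study.

```python
G = 9.81  # gravitational acceleration, m/s²


def archimedes_number(beta_per_k, delta_t_k, length_m, velocity_m_s):
    """Ar = g * beta * ΔT * L / u² (assumed definition for room airflow)."""
    return G * beta_per_k * delta_t_k * length_m / velocity_m_s ** 2


def model_velocity(prototype_velocity_m_s, length_ratio, delta_t_ratio=1.0):
    """Velocity in the scaled model that keeps Ar equal to the prototype.

    length_ratio  = L_model / L_prototype
    delta_t_ratio = ΔT_model / ΔT_prototype
    """
    return prototype_velocity_m_s * (length_ratio * delta_t_ratio) ** 0.5


# Hypothetical prototype conditions; only the 1:38 ratio is from the source.
beta = 1.0 / 293.0                      # ideal-gas expansion coefficient, 1/K
l_proto, u_proto, dt_proto = 38.0, 2.0, 5.0
l_model = l_proto / 38.0
u_model = model_velocity(u_proto, length_ratio=1.0 / 38.0)

ar_proto = archimedes_number(beta, dt_proto, l_proto, u_proto)
ar_model = archimedes_number(beta, dt_proto, l_model, u_model)
print(f"model velocity: {u_model:.3f} m/s")
print(f"Ar prototype: {ar_proto:.3f}, Ar model: {ar_model:.3f}")
```

With the temperature difference held equal, matching Ar at 1:38 scale requires the model velocity to be the prototype velocity divided by the square root of 38, about 16% of the full-scale value.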

Results revealed that thermal stratification and heat accumulation near the equatorial heating zone and upper-right spherical region caused localized temperature deviations. Through dynamic response analysis, "Monitoring Point B" – located at the cold-hot airflow interface – was identified as optimal, exhibiting the highest temperature fluctuation sensitivity, minimal delay (4.5 minutes), and low system time constant (45-46 minutes) [33].
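A reported delay and time constant are often interpreted through a first-order-plus-dead-time (FOPDT) model. The sketch below assumes that model form, which the source does not state explicitly, and plugs in the values reported for Monitoring Point B (4.5-minute delay, 45-minute time constant) with a unit gain chosen for illustration.

```python
import math


def fopdt_step_response(t_min, gain, delay_min, tau_min):
    """Output at time t after a unit step applied at t = 0, for a
    first-order-plus-dead-time model (assumed model form)."""
    if t_min < delay_min:
        return 0.0  # nothing is observed until the dead time has elapsed
    return gain * (1.0 - math.exp(-(t_min - delay_min) / tau_min))


DELAY_MIN, TAU_MIN = 4.5, 45.0   # values reported for Monitoring Point B

for t in (4.0, 4.5 + TAU_MIN, 4.5 + 3 * TAU_MIN):
    y = fopdt_step_response(t, gain=1.0, delay_min=DELAY_MIN, tau_min=TAU_MIN)
    print(f"t = {t:6.1f} min -> {y:.3f} of final change")
```

Under this model, the sensor sees about 63% of a step change one time constant after the dead time ends, and about 95% after three. This gives a rough sense of how far ahead a controller at this scale needs to act.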

Offline Sensor Placement for Flow Estimation

Experimental Protocol for Advection-Dominated Flows:

- Conduct a single preliminary experiment with standard non-time-resolved PIV

- Extract rows of vectors from PIV fields as surrogates for probe time series

- Use Extended Proper Orthogonal Decomposition (EPOD) to establish correlations between temporal modes of velocity field and synthetic probe data

- Reconstruct flow fields with different combinations of sensor locations on the downstream edge of the domain

- Identify optimal positioning with highest reconstruction accuracy

- Install physical probes at identified locations and operate simultaneously with PIV for time-resolved field estimation [29] [30]

This protocol successfully avoids the need for multiple experimental runs with different probe configurations, significantly reducing the cost and complexity of optimal sensor placement in large-scale flow systems.

Structural Health Monitoring with Guided Waves

For structural health monitoring of plate-like structures, researchers developed a genetic algorithm-based optimization strategy for sensor placement of guided wave transducers.

Experimental Protocol:

- Define application demands: maximum coverage with sensor-actuator pairs, minimum number of sensors

- Establish operational parameters: minimum distance between sensors based on outer diameter, distance from edges

- Create grid of candidate locations (10×10 as trade-off between thoroughness and computation time)

- Implement multi-objective optimization with scalarized cost function:

\[ \text{cost} = -1 \times \left( \beta \, \frac{\text{coverage}_3}{s^{\gamma}} + (1-\beta) \, \frac{\text{coverage}_1}{s^{\delta}} \right) \]

where coverage₃ is the area covered by ≥3 sensor-actuator pairs, coverage₁ is the area covered by ≥1 pair, and s is the number of sensors [32]

- Validate optimal placement through analytical, numerical, and experimental approaches
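The scalarized cost in this protocol is straightforward to evaluate for a candidate layout. The sketch below assumes that γ and δ act as exponents on the sensor count s and that coverage is expressed as a fraction of the plate area. Both are interpretations of the formula rather than details confirmed by [32], and the numerical values are hypothetical.

```python
def placement_cost(coverage3, coverage1, n_sensors, beta, gamma, delta):
    """Scalarized cost: more coverage lowers the cost, more sensors raise it.

    coverage3 : fraction of area covered by at least 3 sensor-actuator pairs
    coverage1 : fraction of area covered by at least 1 pair
    beta      : weight between the two coverage terms (0 to 1)
    gamma, delta : assumed exponents penalizing the sensor count
    """
    if n_sensors <= 0:
        raise ValueError("n_sensors must be positive")
    return -1.0 * (
        beta * coverage3 / n_sensors ** gamma
        + (1.0 - beta) * coverage1 / n_sensors ** delta
    )


# Two hypothetical layouts: a sparse one and a denser one.
sparse = placement_cost(0.50, 0.90, n_sensors=4, beta=0.5, gamma=1.0, delta=1.0)
dense = placement_cost(0.80, 1.00, n_sensors=8, beta=0.5, gamma=1.0, delta=1.0)
print(f"sparse: {sparse:.4f}, dense: {dense:.4f}")
```

A genetic algorithm would minimize this value over candidate layouts. Varying β, γ, and δ shifts the balance between coverage and sensor count and traces out the trade-off between them.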

This methodology successfully balanced coverage requirements against sensor count constraints, providing a framework applicable to complex structures with non-convex shapes and anisotropic materials.

Research Reagent Solutions: Essential Tools for Scaling and Sensor Placement Research

Table 3: Essential Research Tools for Scaling and Sensor Placement Studies

| Research Tool | Function | Application Context |
|---|---|---|
| Particle Image Velocimetry (PIV) | Non-intrusive flow field measurement | Provides velocity field data for offline sensor placement optimization in fluid systems [29] [30] |
| Proper Orthogonal Decomposition (POD) | Dimensionality reduction technique | Identifies dominant modes in system response for efficient sensor placement [29] [28] [31] |
| Genetic Algorithm (GA) | Heuristic optimization method | Solves NP-hard sensor placement problems through population-based search [28] [32] |
| QR Factorization with Column Pivoting | Deterministic sensor selection | Identifies sensor locations that maximize observability of dominant modes [31] |
| Thermoelectric Heat Pump Wall Systems (THPWS) | Active thermal management technology | Provides precise temperature control in building-scale environments [5] |
| Finite Similitude Framework | Scaling analysis theory | Connects system behavior across different scales while accounting for scale effects [26] |
| RNG k-ε Turbulence Model | Computational fluid dynamics approach | Models complex turbulent flows in large-scale thermal environments [33] |

Visualization of Methodologies and Relationships

Finite Similitude in Scaling Analysis

Diagram: Scaling Analysis Methodology. The diagram illustrates how finite similitude theory connects prototype systems with scaled models through mathematical relationships that explicitly account for scale effects, enabling accurate full-scale performance prediction.

Sensor Placement Optimization Workflow

Diagram: Sensor Placement Workflow. The diagram shows the systematic process for determining optimal sensor locations, from problem definition through methodology selection, criterion optimization, and experimental validation.

This comparative analysis demonstrates that scaling effects fundamentally alter system dynamics, particularly through the introduction of significant time delays that complicate control and monitoring. The evaluated sensor placement methodologies show distinct advantages for different application contexts. Physics-Driven Sensor Placement Optimization offers robust performance in data-scarce environments, while data-driven approaches provide optimal results when sufficient preliminary data is available. For advection-dominated systems, offline methods using PIV data as proxies for physical sensors present a cost-effective solution.

The experimental protocols and case studies provide validated frameworks for implementing these methodologies across various domains, from large-scale thermal management to structural health monitoring. As systems continue to scale in complexity and size, the integration of these sensor placement strategies with scaling-aware control architectures will become increasingly critical for maintaining performance, stability, and efficiency across domains ranging from industrial processing to pharmaceutical development and energy systems.

Methodologies for Scalable Control: From Traditional PID to AI-Driven Frameworks

In the domain of process control, particularly for temperature regulation in critical applications such as pharmaceutical development, traditional control strategies often prove inadequate when confronted with highly nonlinear processes, significant time delays, and persistent disturbances. Among such challenging systems, the Continuous Stirred-Tank Heater (CSTH) serves as a classical benchmark for evaluating advanced control strategies, representing a category of systems with complex dynamics and inherent instabilities [34]. While conventional Proportional-Integral-Derivative (PID) controllers have been widely applied due to their simplicity and reliability, they frequently fail to deliver optimal performance for highly nonlinear environments, creating a compelling need for more sophisticated control architectures [34] [35].

This comparative analysis examines two advanced control strategies that extend traditional PID control: the Two Degrees of Freedom PID Acceleration (2DOF-PIDA) controller and Cascade Control architectures. The 2DOF-PIDA represents an evolutionary enhancement of the PID algorithm, incorporating an additional degree of freedom to decouple setpoint tracking from disturbance rejection, while the Acceleration term provides improved dynamic response [34]. Cascade Control, conversely, employs a multi-loop architecture where a secondary, faster loop is nested within a primary control loop to address disturbances before they significantly impact the process variable of interest [36] [37]. Within the context of scalable temperature control research for drug development, understanding the comparative performance, implementation complexity, and applicability of these advanced controllers is paramount for designing robust, efficient, and reproducible processes.

Theoretical Foundations and Operational Principles

Two Degrees of Freedom PIDA (2DOF-PIDA) Control

The 2DOF-PIDA controller is a significant architectural advance over conventional PID controllers. Its fundamental innovation is the decoupling of setpoint tracking (servo response) and disturbance rejection (regulatory response) into two separate degrees of freedom [34] [38]. This separation lets the control designer optimize both aspects independently. Single-degree-of-freedom PID controllers lack this capability: tuning them for aggressive setpoint tracking often compromises disturbance rejection, and vice versa.

The "A" in PIDA denotes an "Acceleration" term, which extends the standard Proportional, Integral, and Derivative actions. This term acts on the second derivative of the error (the rate of change of the error derivative), improving the handling of systems with complex nonlinear dynamics and fast-changing disturbances [34]. In practice, this architecture often incorporates a setpoint filter that modifies the reference signal seen by the primary PIDA controller, shaping the closed-loop response to setpoint changes without affecting the controller's ability to reject load disturbances [38]. For nonlinear temperature control applications such as CSTH systems, this decoupling is particularly valuable because it allows researchers to prioritize either precise reference following or robust disturbance attenuation, depending on process requirements.
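The decoupling described above can be illustrated with a minimal discrete-time sketch. It assumes the common setpoint-weighting form of a 2DOF controller rather than the exact structure used in [34], which the text does not give. The class name `TwoDofPida`, the weights `b` and `c`, and the backward-difference approximations are illustrative choices.

```python
from dataclasses import dataclass, field


@dataclass
class TwoDofPida:
    """Discrete 2DOF-PIDA sketch using setpoint weighting (assumed form)."""
    kp: float
    ki: float
    kd: float
    ka: float
    b: float = 1.0   # setpoint weight on the proportional term
    c: float = 0.0   # setpoint weight on the derivative and acceleration terms
    dt: float = 0.1  # sample period in seconds
    _integral: float = 0.0
    _prev: list = field(default_factory=list)  # last two weighted errors

    def update(self, setpoint: float, measurement: float) -> float:
        e = setpoint - measurement             # true error drives the integral
        ep = self.b * setpoint - measurement   # weighted error for P
        ed = self.c * setpoint - measurement   # weighted error for D and A
        self._integral += e * self.dt
        hist = self._prev
        # Backward differences; each term is zero until enough history exists.
        d1 = (ed - hist[-1]) / self.dt if len(hist) >= 1 else 0.0
        d2 = (ed - 2 * hist[-1] + hist[-2]) / self.dt ** 2 if len(hist) >= 2 else 0.0
        self._prev = (hist + [ed])[-2:]
        return (self.kp * ep + self.ki * self._integral
                + self.kd * d1 + self.ka * d2)
```

With `c = 0`, a setpoint step produces no derivative or acceleration kick, while a change in the measured temperature still does. Lowering `b` softens the proportional response to setpoint changes without altering how the loop reacts to load disturbances, because disturbances enter only through the measurement.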

Cascade Control Architecture

Cascade control employs a nested architecture comprising two distinct control loops: an inner secondary loop and an outer primary loop [36] [37]. These loops operate in concert but with different objectives and response characteristics. The inner loop, typically faster and responsible for controlling a secondary process variable, is nested within the outer loop, which controls the primary variable of interest [39]. The output of the primary controller becomes the setpoint for the secondary controller, creating a master-slave relationship that enables early disturbance rejection [36].

For cascade control to function effectively, several critical criteria must be met. The secondary process variable must be measurable, must respond more rapidly to actuator manipulations and disturbances than the primary variable, and must be manipulated by the same final control element [36] [37]. A classic implementation example is a heat exchanger temperature control system, where the outer loop maintains the fluid outlet temperature (primary variable) while the inner loop regulates steam flow rate (secondary variable) [37]. When header pressure disturbances affect steam flow, the inner flow loop initiates corrective action immediately, preventing the disturbance from significantly impacting the outlet temperature [36] [37]. This "early warning" capability forms the core advantage of cascade control, allowing disturbances to be addressed before they propagate through the entire process.

The following diagram illustrates the fundamental architecture and signal flow of a cascade control system:

[Figure: Cascade Control Architecture with Inner and Outer Loops]
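To make the "early warning" behaviour concrete, the sketch below simulates the heat exchanger example in deviation variables. Everything numeric here is an assumption chosen for illustration, not data from [36] or [37]: the flow and temperature are modelled as first-order lags with time constants of 1 s and 20 s, both controllers are PI, and a unit step in supply pressure acts on the steam flow at t = 10 s.

```python
def simulate(cascade: bool, t_end: float = 300.0, dt: float = 0.05):
    """Return (peak, final) absolute temperature deviation after a supply disturbance."""
    tau_f, tau_t = 1.0, 20.0   # assumed flow and temperature time constants (s)
    flow = temp = 0.0          # deviation variables; the temperature setpoint is 0
    i_outer = i_inner = 0.0    # integrator states of the two PI controllers
    peak = 0.0
    for k in range(int(t_end / dt)):
        d = 1.0 if k * dt >= 10.0 else 0.0          # step disturbance on the steam supply
        e_outer = 0.0 - temp
        i_outer += e_outer * dt
        outer_out = 2.0 * e_outer + 0.1 * i_outer   # primary (temperature) PI
        if cascade:
            e_inner = outer_out - flow              # primary output is the flow setpoint
            i_inner += e_inner * dt
            valve = 2.0 * e_inner + 2.0 * i_inner   # secondary (flow) PI
        else:
            valve = outer_out                       # single loop drives the valve directly
        # Forward-Euler update of the two first-order processes.
        flow += dt * (valve + d - flow) / tau_f
        temp += dt * (flow - temp) / tau_t
        peak = max(peak, abs(temp))
    return peak, abs(temp)


if __name__ == "__main__":
    print("cascade     peak, final:", simulate(True))
    print("single loop peak, final:", simulate(False))
```

In this toy model the inner loop corrects the flow within a few seconds, so the temperature excursion is far smaller than with the single loop, which only reacts once the outlet temperature has already drifted. The size of the improvement depends entirely on the assumed time constants and gains.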

Experimental Performance Comparison

Quantitative Performance Metrics

To objectively evaluate the performance of 2DOF-PIDA and Cascade Control architectures against conventional PID controllers, we have compiled experimental data from multiple studies involving temperature control applications, particularly focusing on Continuous Stirred-Tank Heater (CSTH) systems. The table below summarizes key performance indicators including tracking accuracy, disturbance rejection, robustness, and implementation complexity:

Table 1: Comprehensive Performance Comparison of Advanced Control Architectures

| Performance Metric | Conventional PID | Cascade PID Control | 2DOF-PIDA with SFOA |
|---|---|---|---|
| Setpoint Tracking Accuracy | Moderate overshoot (Typical: 10-15%) | Improved stability, reduced overshoot [37] | Superior tracking with minimal overshoot [34] |
| Disturbance Rejection | Slow recovery, significant deviation | Fast rejection via inner loop [36] [39] | Enhanced rejection through decoupled architecture [34] |
| Steady-State Error | Possible with improper tuning | Eliminated through integral action in both loops | Effectively eliminated with optimized parameters [34] |
| Robustness to Nonlinearities | Limited performance in highly nonlinear conditions [34] | Inner loop compensates for some nonlinearities (e.g., valve stiction) [40] | High robustness via metaheuristic optimization [34] |
| Implementation Complexity | Low: Single loop tuning | Moderate: Requires sequential tuning of two controllers [36] [39] | High: Requires optimization algorithms for parameter tuning [34] |
| Hardware Requirements | Standard: 1 sensor, 1 controller | Increased: 2 sensors, 2 controllers [40] [36] | Standard: 1 sensor, 1 controller (advanced computation) |
| Experimental IAE (Disturbance) | Baseline | 40-60% reduction compared to single loop [39] | 55-75% reduction compared to conventional PID [34] |
| Experimental Settling Time | Baseline | 30-50% faster disturbance recovery [37] [39] | 45-65% faster for setpoint changes [34] |
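The error-based indicators in Table 1 (IAE, overshoot, settling time) can be computed from any recorded step response. The following is a minimal sketch, assuming a response sampled at times `t`, a nonzero step from the first sample `y[0]` to `setpoint`, and a 2% settling band. The function name `step_metrics` and the dictionary keys are illustrative.

```python
import numpy as np


def step_metrics(t, y, setpoint, band=0.02):
    """Compute IAE, ISE, percent overshoot and settling time of a step response."""
    t = np.asarray(t, dtype=float)
    y = np.asarray(y, dtype=float)
    err = setpoint - y
    dt = np.diff(t)

    def trapezoid(f):
        # Trapezoidal integration over the (possibly non-uniform) time grid.
        return float(np.sum(0.5 * (f[1:] + f[:-1]) * dt))

    step = setpoint - y[0]  # assumed nonzero
    # Overshoot is measured in the direction of the step, as a percentage of its size.
    excess = float(np.max((y - setpoint) * np.sign(step)))
    overshoot_pct = max(0.0, excess) / abs(step) * 100.0

    # Settling time: first sample after the last excursion outside the band.
    outside = np.nonzero(np.abs(err) > band * abs(step))[0]
    if outside.size == 0:
        settling_time = float(t[0])
    elif outside[-1] == len(t) - 1:
        settling_time = float("nan")  # still outside the band at the end of the record
    else:
        settling_time = float(t[outside[-1] + 1])

    return {
        "iae": trapezoid(np.abs(err)),
        "ise": trapezoid(err ** 2),
        "overshoot_pct": overshoot_pct,
        "settling_time": settling_time,
    }
```

For example, `step_metrics(t, 1 - np.exp(-t), setpoint=1.0)` on a grid from 0 to 10 s gives an IAE close to 1 and a settling time close to ln(50) ≈ 3.9 s, which matches the analytical values for a first-order lag with unit time constant.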

Experimental Protocols and Methodologies

CSTH Temperature Control with 2DOF-PIDA

The experimental validation of the 2DOF-PIDA controller for CSTH temperature regulation employs a metaheuristic optimization approach using the Starfish Optimization Algorithm (SFOA) for parameter tuning [34]. The methodology follows these key stages:

System Identification: Developing a nonlinear mathematical model of the CSTH process based on mass balance, energy balance, and heat transfer equations [34]. The transfer function model is derived using Laplace transforms to represent the dynamic relationship between heater power and tank temperature.

Controller Parameterization: Implementing the 2DOF-PIDA controller structure with separate tuning parameters for setpoint response and disturbance rejection. The acceleration term provides additional capability to handle the CSTH's nonlinear dynamics.

Optimization Framework: Applying SFOA to optimize controller parameters by leveraging its powerful exploration and exploitation capabilities. The optimization objective typically minimizes integrated absolute error (IAE) while maintaining specified robustness margins.

Performance Validation: Comparing the optimized 2DOF-PIDA against conventional methods through simulation studies evaluating tracking accuracy, disturbance rejection, and robustness to model uncertainties [34].
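The optimization stage above can be sketched as a cost function plus a search loop. The example below is not SFOA: it uses a plain uniform random search as a stand-in, so that only the structure (simulate, score by IAE, keep the best candidate) is shown. The plant is an assumed first-order heater, the controller is an ordinary PID rather than a 2DOF-PIDA, and the names `iae_cost` and `random_search` are hypothetical.

```python
import random


def iae_cost(gains, dt=0.05, t_end=60.0):
    """IAE of a PID loop on an assumed first-order heater (unit setpoint step)."""
    kp, ki, kd = gains
    tau, gain = 10.0, 1.0          # assumed heater time constant (s) and static gain
    temp = prev_temp = integ = 0.0
    cost = 0.0
    for k in range(int(t_end / dt)):
        load = -0.5 if k * dt >= 30.0 else 0.0   # load disturbance halfway through
        err = 1.0 - temp
        integ += err * dt
        deriv = -(temp - prev_temp) / dt         # derivative acts on the measurement
        prev_temp = temp
        u = kp * err + ki * integ + kd * deriv
        temp += dt * (gain * (u + load) - temp) / tau   # forward-Euler plant update
        cost += abs(err) * dt
        if abs(temp) > 1e6:                      # treat a diverging loop as infeasible
            return float("inf")
    return cost


def random_search(cost, bounds, n_iter=200, seed=0):
    """Stand-in optimizer: uniform random sampling inside the parameter bounds."""
    rng = random.Random(seed)
    best = [0.5 * (lo + hi) for lo, hi in bounds]   # start from the box midpoint
    best_cost = cost(best)
    for _ in range(n_iter):
        cand = [rng.uniform(lo, hi) for lo, hi in bounds]
        c = cost(cand)
        if c < best_cost:
            best, best_cost = cand, c
    return best, best_cost


if __name__ == "__main__":
    bounds = [(0.1, 10.0), (0.01, 2.0), (0.0, 1.0)]   # assumed ranges for kp, ki, kd
    gains, cost = random_search(iae_cost, bounds)
    print("best gains:", gains, "IAE:", cost)
```

A metaheuristic such as SFOA would replace `random_search` while leaving `iae_cost` unchanged. The robustness margins mentioned above are not enforced in this sketch and would need to be added as constraints or penalty terms.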

Cascade Control Implementation

The experimental protocol for cascade control system design follows a structured methodology that ensures proper loop interaction and stability [36] [39]:

Inner Loop Design: The secondary controller is tuned first, with a focus on rapid disturbance rejection. The inner loop bandwidth is typically set 5-10 times higher than that of the outer loop so that the two loops do not interact adversely [39].

Outer Loop Design: With the inner loop closed, the primary controller is tuned to regulate the main process variable. The outer loop can be tuned more conservatively as the inner loop handles most disturbances [40] [39].

Performance Evaluation: The complete cascade system is tested for both setpoint tracking and disturbance rejection. In the heat exchanger example, this involves introducing disturbances in steam header pressure and evaluating temperature deviation and recovery time [37] [39].

The workflow below illustrates the comparative experimental methodology for evaluating these advanced control architectures:

[Figure: Experimental Methodology for Advanced Controller Evaluation]

The Researcher's Toolkit: Implementation Essentials

Successful implementation of advanced control architectures requires both hardware components and computational tools. The following table lists the essential components and tools for developing and deploying these control systems in experimental temperature control applications:

Table 2: Essential Research Tools for Advanced Controller Implementation

| Category | Item | Specification/Function | Application Notes |
|---|---|---|---|
| Hardware Components | Temperature Sensors | High-accuracy RTD or thermocouple for primary variable measurement | Cascade control additionally requires a secondary sensor [40] |
| | Flow Sensors | For cascade inner loop (e.g., Coriolis flow meters) | Must have fast response time relative to temperature dynamics [36] |
| | Final Control Element | Control valve with precision actuator or solid-state relay | Should exhibit minimal stiction and hysteresis [40] |
| | Data Acquisition System | High-resolution ADC with appropriate sampling rates | Sampling period should be 10-20x shorter than the process time constant [34] |
| Computational Tools | Optimization Toolbox | Implementation of metaheuristic algorithms (SFOA, GA, HBA) | Essential for 2DOF-PIDA parameter tuning [34] |
| | System Identification Tools | For developing process models from experimental data | Required for both controller design and simulation [34] |
| | Control Design Software | MATLAB/Simulink, Python Control Systems Library | Cascade design requires proper tools for multi-loop analysis [39] |
| Implementation Resources | Tuning Guidelines | Methodical procedures for controller parameter adjustment | Systematic inner-then-outer loop tuning for cascade [39] |
| | Performance Metrics | Quantitative measures (IAE, ISE, Settling Time, Overshoot) | Enable objective comparison of different control strategies [34] |

This comparative analysis demonstrates that both 2DOF-PIDA and Cascade Control architectures offer significant performance advantages over conventional PID controllers for complex temperature regulation tasks, particularly in demanding applications such as pharmaceutical manufacturing and chemical processing. The 2DOF-PIDA controller with metaheuristic optimization excels in applications where system nonlinearities are pronounced, and where decoupling of setpoint tracking from disturbance rejection provides tangible benefits for process performance. However, this approach demands substantial expertise in optimization algorithms and may involve considerable computational resources for parameter tuning [34].

Conversely, Cascade Control provides a more structured approach to disturbance rejection, particularly when secondary process variables can be measured and controlled effectively. Its ability to address disturbances before they significantly impact the primary output variable makes it invaluable for processes with significant time delays or slow dynamics [36] [37]. While cascade implementation increases hardware requirements and tuning complexity, its conceptual framework remains accessible to practitioners familiar with single-loop PID control [40].

For research in scalable temperature control methods, particularly in drug development contexts where reproducibility and precision are paramount, both architectures warrant consideration. The 2DOF-PIDA approach offers a sophisticated software-based solution that maximizes performance from existing hardware, while cascade control provides a robust hardware-inclusive architecture that physically contains disturbances before they propagate. Future research directions should explore hybrid approaches that combine elements of both architectures and investigate machine learning techniques for autonomous tuning and adaptation of these advanced control strategies in the face of changing process dynamics.

Parameter tuning for control systems represents a significant challenge in process engineering, particularly for complex, nonlinear systems like temperature regulation. Metaheuristic optimization algorithms provide powerful solutions for automatically determining optimal controller parameters, overcoming the limitations of manual tuning methods. Among the numerous available algorithms, Genetic Algorithms (GA) and the more recently developed Starfish Optimization Algorithm (SFOA) have demonstrated notable effectiveness for control applications [41] [42] [43]. This guide provides an objective comparison of these two algorithms, focusing on their application in temperature control systems, to support researchers and engineers in selecting appropriate optimization methods for their specific control challenges.

Starfish Optimization Algorithm (SFOA)

The SFOA is a metaheuristic algorithm inspired by the foraging, predation, and regeneration behaviors of starfish in nature [41] [44]. A key innovation of SFOA is its dimension-adaptive search strategy during the exploration phase. For problems with more than five dimensions, it employs a coordinated five-dimensional search that mimics the five-armed structure of starfish, while for problems with five or fewer dimensions it uses a one-dimensional search pattern [44]. This adaptive approach helps address the limitations of other algorithms on non-separable functions. During the exploitation phase, SFOA implements predation and regeneration strategies, using a parallel bidirectional search that draws on information from two candidate solutions to encourage movement toward better positions [44].
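The dimension-adaptive rule can be sketched as follows. Only the selection logic (five coordinates when the problem has more than five dimensions, one coordinate otherwise) is taken from the description above. The coordinate update itself is an illustrative placeholder that moves toward the best known position with a random, iteration-dependent step, and should not be read as the published SFOA equations. The function name `explore_step` and its parameters are hypothetical.

```python
import math
import random


def explore_step(position, best, bounds, progress, rng):
    """One exploration move; `progress` is iteration / max_iterations in [0, 1]."""
    dim = len(position)
    n_update = 5 if dim > 5 else 1            # dimension-adaptive selection
    idx = rng.sample(range(dim), n_update)    # distinct coordinates to perturb
    theta = 0.5 * math.pi * progress          # shrinks the step as the search advances
    candidate = list(position)
    for p in idx:
        a = (2.0 * rng.random() - 1.0) * math.pi   # random signed step scale
        # Placeholder update: move relative to the best known position.
        trial = position[p] + a * (best[p] - position[p]) * math.cos(theta)
        lo, hi = bounds[p]
        # Keep the old coordinate if the trial leaves the search box.
        candidate[p] = trial if lo <= trial <= hi else position[p]
    return candidate


if __name__ == "__main__":
    rng = random.Random(1)
    start = [0.0] * 10
    moved = explore_step(start, [1.0] * 10, [(-5.0, 5.0)] * 10, 0.2, rng)
    print(sum(m != s for m, s in zip(moved, start)), "coordinates changed")
```

For controller tuning this distinction matters in practice: a PID has three parameters and falls into the one-dimensional branch, whereas a 2DOF-PIDA with setpoint weights can exceed five parameters and would use the five-coordinate branch.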

Recent enhancements to SFOA have incorporated multiple strategies to improve performance:

- Sine chaotic mapping for population initialization to increase diversity [44]

- T-distribution mutation to enhance local search capabilities [44]

- Logarithmic spiral reverse learning to update positions and avoid local optima [44]
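Of the enhancements listed above, chaotic initialization is the simplest to illustrate. The sketch below uses one common form of the sine map, x_{k+1} = mu * sin(pi * x_k), to spread the initial population over the search box. The exact map and constants used in [44] are not given in the text, so `mu`, `x0`, and the function name `sine_map_population` are assumptions.

```python
import math


def sine_map_population(n_agents, bounds, x0=0.7, mu=0.99):
    """Initial population driven by a sine chaotic map (assumed form and constants)."""
    x = x0
    population = []
    for _ in range(n_agents):
        agent = []
        for lo, hi in bounds:
            x = mu * math.sin(math.pi * x)     # iterate stays in [0, mu] for x in [0, 1]
            agent.append(lo + x * (hi - lo))   # scale the chaotic value into the bounds
        population.append(agent)
    return population


if __name__ == "__main__":
    # Example: 5 candidate (kp, ki, kd) triples inside assumed tuning ranges.
    for agent in sine_map_population(5, [(0.1, 10.0), (0.01, 2.0), (0.0, 1.0)]):
        print(agent)
```

The resulting population is deterministic for a given `x0`, which can help reproducibility when comparing tuning runs.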

Genetic Algorithms (GA)