Screening Designs for Reaction Discovery: A Guide to Efficient Experimentation in Drug Development

Abstract

This article provides a comprehensive guide to screening designs for researchers, scientists, and drug development professionals. It covers the foundational principles of Design of Experiments (DOE) for efficiently identifying critical reaction variables from a large set of candidates. The scope extends to methodological applications, including fractional factorial and Plackett-Burman designs, best practices for troubleshooting and optimizing experimental protocols, and strategies for validating results and comparing different design approaches. Together, these topics make the article a strategic resource for accelerating reaction discovery and optimization, particularly in the context of modern, AI-enhanced drug discovery pipelines.

What Are Screening Designs and When to Use Them in Reaction Discovery?

Screening Design of Experiments (DOE) represents a foundational statistical methodology employed in the early stages of research and process development to efficiently identify the most influential factors from a large set of potential variables. In the context of reaction discovery and optimization, where numerous parameters such as temperature, catalyst loading, solvent, and concentration may affect the outcome, screening designs provide a systematic and rigorous framework for separating the "vital few" factors from the "trivial many" [1] [2]. This in-depth technical guide elucidates the core principles, methodologies, and practical applications of screening designs, underscoring their critical role in accelerating scientific research and drug development.

In the development of new synthetic methodology or manufacturing processes, researchers are often confronted with a vast array of potential factors that could influence the desired outcome. Investigating all these factors using a full factorial design, which tests every possible combination of variables, would be prohibitively time-consuming and resource-intensive [1]. Screening designs address this challenge by enabling the efficient and systematic evaluation of a large number of factors in a relatively small number of experimental runs [3].
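To make the cost argument concrete, the short sketch below compares the number of runs in a two-level full factorial with the smallest Plackett-Burman design that can hold the same factors. The function names are illustrative, and the Plackett-Burman count assumes the usual rule that an N-run design accommodates at most N - 1 factors.

```python
import math

def full_factorial_runs(k: int) -> int:
    """Runs needed to test every combination of k two-level factors."""
    return 2 ** k

def plackett_burman_runs(k: int) -> int:
    """Smallest multiple of 4 that leaves room for k factors (N - 1 >= k)."""
    return 4 * math.ceil((k + 1) / 4)

if __name__ == "__main__":
    for k in (5, 7, 11, 15):
        print(f"{k:>2} factors: full factorial = {full_factorial_runs(k):>6}, "
              f"Plackett-Burman = {plackett_burman_runs(k):>3}")
```

The gap widens quickly: the full factorial doubles with every added factor, while the screening design grows roughly linearly.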

The primary objective of a screening study is factor selection: to identify which factors have significant main effects on one or more responses, thereby allowing researchers to focus subsequent, more detailed investigations on these critical parameters [3]. This is particularly crucial in reaction discovery research, where the initial "optimization" phase is often a time-consuming part of the project, traditionally performed via non-systematic trial-and-error or one-factor-at-a-time (OFAT) approaches [4]. These traditional methods can fail to identify true optimum conditions, especially when interactions between factors are present, and they often lead to the investigation of only a narrow substrate scope [4]. The power of screening DOE lies in its ability to explore a multi-dimensional "reaction space" efficiently, providing a robust basis for understanding the factors underpinning a new chemical reaction [4].

Core Principles of Screening Designs

The effectiveness of screening designs and their associated analysis methods rests on four key principles that are commonly observed in practice [1]:

- Sparsity of Effects: While a process may have many candidate factors, only a small fraction of them will have a substantial impact on any given response. This principle justifies the screening approach: studying every candidate in detail would spend most of the experimental budget on inconsequential variables [1].

- Hierarchy: Lower-order effects (e.g., main effects) are more likely to be important than higher-order effects (e.g., two-factor interactions), which are, in turn, more likely to be important than three-factor interactions. This principle guides the initial model specification [1].

- Heredity: For a higher-order interaction to be significant, it is more likely that at least one of its parent factors (i.e., a main effect or lower-order interaction) is also significant. This principle aids in the interpretation of complex models [1].

- Projection: A well-designed screening experiment can be "projected" into a smaller, follow-up experiment focusing only on the significant factors. This property ensures that the initial screening effort is not wasted and provides a clear path for further optimization [1].

Key Types of Screening Designs

Several statistical designs are commonly used for screening purposes. The choice among them depends on the number of factors to be screened, the experimental budget, and the need to estimate potential interactions between factors.

Table 1: Comparison of Common Screening Designs

| Design Type | Key Characteristics | Optimal Use Case | Strengths | Limitations |
|---|---|---|---|---|
| Plackett-Burman (P-B) | Non-geometric designs; run count is a multiple of 4 (e.g., 12, 20, 24); estimates main effects only [3]. | Screening a very large number of factors when the assumption of negligible interactions is valid. | Highly efficient for main effects; all main effects are estimated with the same precision [3]. | Cannot estimate two-factor interactions; they are partially confounded (aliased) with main effects [2]. |
| Fractional Factorial | Geometric designs; run count is a power of 2 (e.g., 8, 16, 32) [2]. | Screening a moderate number of factors where some information about interactions is needed. | Allows estimation of main effects and some interactions, depending on the design's resolution [2]. | Higher-resolution designs requiring more runs are needed to clearly separate interactions from main effects. |
| Definitive Screening Design (DSD) | Modern, algorithmic design; requires a minimum of 2k + 1 runs for k continuous factors (e.g., 6 factors require 13 runs), with a few extra runs for odd factor counts or categorical factors [1] [5]. | Screening where curvature or second-order effects are suspected, and some interaction estimation is desired. | Highly efficient; estimates all main effects clear of two-factor interactions and can detect curvature; robust to the choice of factor ranges [1] [5]. | Limited ability to fully model all quadratic effects compared to a Response Surface Methodology (RSM) design. |
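As an illustration of how compact these designs are, the following sketch builds the 12-run Plackett-Burman design from its standard cyclic generator row and checks that every column is balanced and orthogonal to every other. The helper names are assumptions made for this example; statistical packages generate the same design directly.

```python
from itertools import combinations

# Standard generator row for the 12-run Plackett-Burman design
# (+1 = high level, -1 = low level).
GENERATOR_12 = [+1, +1, -1, +1, +1, +1, -1, -1, -1, +1, -1]

def plackett_burman_12():
    """Return 12 runs x 11 columns: 11 cyclic shifts of the generator plus an all-low run."""
    n = len(GENERATOR_12)
    rows = [GENERATOR_12[-shift:] + GENERATOR_12[:-shift] for shift in range(n)]
    rows.append([-1] * n)
    return rows

def is_balanced_and_orthogonal(design):
    """True if each column has equal highs and lows and all column pairs are orthogonal."""
    columns = list(zip(*design))
    balanced = all(sum(col) == 0 for col in columns)
    orthogonal = all(
        sum(x * y for x, y in zip(c1, c2)) == 0
        for c1, c2 in combinations(columns, 2)
    )
    return balanced and orthogonal

if __name__ == "__main__":
    design = plackett_burman_12()
    for run in design:
        print(" ".join(f"{level:+d}" for level in run))
    print("balanced and orthogonal:", is_balanced_and_orthogonal(design))
```

With fewer than 11 real factors, the unused columns can be left as dummy factors whose apparent effects give a rough sense of the noise level.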

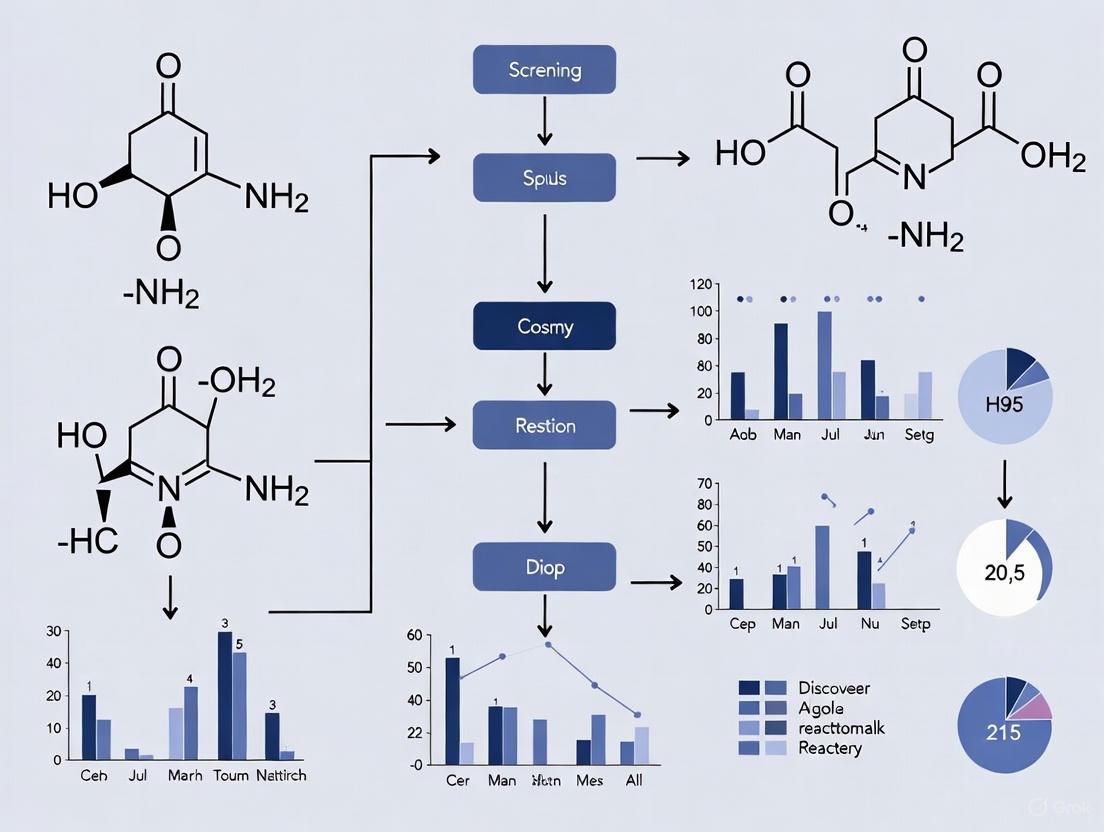

The workflow for implementing a screening design, from planning to application, follows a logical sequence that ensures rigorous and actionable results, as illustrated below.

Experimental Protocols and Methodologies

A Generic Protocol for Screening in Reaction Optimization

The following protocol provides a detailed methodology for applying a screening design to a reaction discovery or optimization project, adaptable to various design types.

- Define the Objective and Response(s): Clearly state the goal of the experiment (e.g., "maximize reaction yield" or "minimize impurity formation"). Identify the quantitative measures (responses) that will determine success [1].

- Identify and Select Factors: Assemble a team to brainstorm all potential factors that might influence the response(s). This includes continuous factors (e.g., temperature, concentration) and categorical factors (e.g., catalyst type, solvent vendor). Based on subject matter expertise, select the factors and their high/low levels for the initial screen. The ranges should be large enough to potentially produce a detectable effect [1] [4].

- Choose a Screening Design: Based on the number of factors (k) and the available resources (number of experimental runs, N), select an appropriate design.

  - For a quick, main-effects-only screen, a Plackett-Burman or Resolution III fractional factorial design is suitable [3] [2].

  - If some information on two-factor interactions is desired and resources allow, a higher-resolution fractional factorial design is preferable [2].

  - To efficiently detect curvature and some interactions with a minimal number of runs, a Definitive Screening Design is an excellent modern choice [5].

- Generate the Design Matrix and Randomize: Use statistical software (e.g., JMP, R, or other dedicated packages) to generate the design matrix, which specifies the factor settings for each experimental run [6]. Randomize the run order to protect against the influence of lurking variables and ensure the validity of statistical conclusions.

- Include Center Points (Recommended): Incorporate several replicate experiments where all continuous factors are set at their mid-points. Center points provide three key benefits:

  - An estimate of pure experimental error.

  - A check for process stability during the experiment.

  - A test for the presence of curvature in the response, which may indicate a quadratic effect not captured by a linear model [1].

- Execute the Experiments and Collect Data: Perform the reactions according to the randomized run order, carefully controlling all non-studied variables. Accurately record the response(s) for each run.

- Analyze the Data: Use multiple linear regression or analysis of variance (ANOVA) to fit a model to the data.

  - Create Pareto charts or half-normal plots to visually identify the factors that stand out from the noise.

  - Assess the statistical significance of each factor using p-values or other measures such as the LogWorth value [1].

- Interpret Results and Plan Next Steps: Reduce the model by removing statistically insignificant terms. The significant factors identified become the "vital few" for the next round of experimentation, which may involve a more detailed factorial design or an optimization design like Response Surface Methodology (RSM) to find the optimal process settings [1] [3].
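The protocol above can be sketched end to end in a few lines. This is a minimal illustration, not a substitute for statistical software: the four factor names, the half-fraction generator D = ABC, and the `simulated_yield` response function are all hypothetical choices made for the example.

```python
import itertools
import random
import statistics

# Hypothetical factors, coded -1 (low), 0 (center), +1 (high).
FACTORS = ["temperature", "catalyst_loading", "concentration", "time"]

def half_fraction():
    """2^(4-1) fractional factorial with the fourth factor set to D = A*B*C."""
    return [(a, b, c, a * b * c) for a, b, c in itertools.product((-1, 1), repeat=3)]

def build_run_sheet(n_center=3, seed=0):
    """Add replicate center points and randomize the run order."""
    runs = half_fraction() + [(0, 0, 0, 0)] * n_center
    random.Random(seed).shuffle(runs)
    return runs

def simulated_yield(run):
    """Stand-in for a real experiment: two active factors plus curvature in the first."""
    a, b, _, _ = run
    return 60 + 8 * a + 3 * b - 5 * a * a

def main_effects(runs, responses):
    """Main effect = mean response at the high level minus mean at the low level."""
    corners = [(r, y) for r, y in zip(runs, responses) if all(v != 0 for v in r)]
    effects = {}
    for j, name in enumerate(FACTORS):
        high = [y for r, y in corners if r[j] == 1]
        low = [y for r, y in corners if r[j] == -1]
        effects[name] = statistics.mean(high) - statistics.mean(low)
    return effects

def curvature(runs, responses):
    """Mean of the center points minus mean of the factorial (corner) points."""
    center = [y for r, y in zip(runs, responses) if all(v == 0 for v in r)]
    corner = [y for r, y in zip(runs, responses) if all(v != 0 for v in r)]
    return statistics.mean(center) - statistics.mean(corner)

if __name__ == "__main__":
    runs = build_run_sheet()
    ys = [simulated_yield(r) for r in runs]
    print(main_effects(runs, ys))
    print("curvature estimate:", curvature(runs, ys))
```

In a real study the responses would carry noise, so the effects would be judged against the scatter of the replicate center points rather than read off directly.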

Case Study: Optimizing a Mass Spectrometry DIA Method

A study demonstrates the power of a Definitive Screening Design (DSD) to optimize a complex analytical method for identifying crustacean neuropeptides using data-independent acquisition (DIA) mass spectrometry [5]. With seven critical acquisition parameters to optimize, a full factorial approach would have been infeasible. The DSD allowed the researchers to evaluate all seven parameters in just 17 experimental runs.

Table 2: Research Reagent Solutions for DIA Method Optimization

| Item / Parameter | Function / Description | Levels Tested in DSD |
|---|---|---|
| m/z Range | Defines the precursor mass-to-charge range for fragmentation. | 400, 600, 800 m/z (from a base of 400 m/z) [5] |
| Isolation Window Width | Width (in m/z) of each fragmentation window. Affects spectral complexity. | 16, 26, 36 m/z [5] |
| Collision Energy (CE) | The energy applied to fragment precursor ions. | 25, 30, 35 V [5] |
| MS2 Maximum Ion Injection Time (IT) | Maximum time spent accumulating ions for MS/MS scan. | 100, 200, 300 ms [5] |
| MS2 Target AGC | Automatic Gain Control target value for MS/MS scans. | 5e5, 1e6 (categorical) [5] |
| MS1 Scans per Cycle | Number of MS1 scans collected per instrument cycle. | 3, 4 (categorical) [5] |
| Library-Free Software | Data analysis tool that deconvolutes DIA spectra without a pre-existing spectral library, crucial for discovering novel peptides [5]. | N/A |

Results and Impact: The DSD analysis identified several parameters with significant first- and second-order effects. The model predicted optimal parameter values, which, when implemented, resulted in the identification of 461 peptides—a substantial improvement over the 375 and 262 peptides identified through standard data-dependent acquisition (DDA) and a previously published DIA method, respectively. This case highlights how a screening DOE can optimize a multi-parameter system with limited experimental resources, leading to a superior methodological outcome [5].

The Scientist's Toolkit: Implementation Guide

Successfully implementing a screening DOE requires careful planning and the right tools. The following table outlines key considerations and resources for researchers.

Table 3: Essential Considerations for Implementing Screening DOE

| Aspect | Guidance |
|---|---|
| Software | Modern statistical software (e.g., JMP, R, Minitab, Python with relevant libraries) is essential for designing experiments and analyzing the resulting data. These tools automate the complex statistical calculations and provide intuitive visualization of results [6]. |
| Resource Planning | The number of factors to be screened must be balanced against the available experimental budget (time, materials, cost). Screening designs are chosen specifically when this budget is constrained [1] [6]. |
| Design Selection | The choice of design (e.g., Plackett-Burman, Fractional Factorial, DSD) depends on the number of factors, the need to detect interactions, and the suspicion of curvature. There is no one-size-fits-all solution, and the selection should be guided by the experimental objectives [7]. |
| Avoiding Pitfalls | A key pitfall is ignoring the "confounding" or "aliasing" structure of a design. In Resolution III designs, for example, main effects are confounded with two-factor interactions. If a significant effect is found, a subsequent experiment (for example, a fold-over of the original design) is needed to determine whether it is a true main effect or the result of a confounded interaction [2]. |
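The aliasing pitfall is easy to see in the smallest Resolution III design, a half fraction of three two-level factors in which the third factor is set equal to the product of the first two. The sketch below (helper names are illustrative) searches for two-factor interaction columns that are identical to a main-effect column; any such pair cannot be separated by the data.

```python
import itertools

def resolution_iii_design():
    """2^(3-1) design in coded units with the generator C = A*B."""
    return [(a, b, a * b) for a, b in itertools.product((-1, 1), repeat=2)]

def aliased_interactions(design, names):
    """For each main effect, list the two-factor interactions with an identical column."""
    columns = list(zip(*design))
    aliases = {name: [] for name in names}
    for j, name in enumerate(names):
        for p, q in itertools.combinations(range(len(names)), 2):
            if j in (p, q):
                continue
            interaction = tuple(x * y for x, y in zip(columns[p], columns[q]))
            if interaction == columns[j]:
                aliases[name].append(f"{names[p]}*{names[q]}")
    return aliases

if __name__ == "__main__":
    print(aliased_interactions(resolution_iii_design(), ["A", "B", "C"]))
```

Every main effect in this four-run design shares its column with one interaction, which is why an apparently strong factor from a Resolution III screen should be confirmed before it is acted on.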

Screening Design of Experiments is an indispensable methodology in the toolkit of modern researchers and drug development professionals. By providing a structured and highly efficient framework for identifying the critical few factors that drive process outcomes, screening DOE enables a more focused and effective research strategy. Moving beyond the inefficient one-factor-at-a-time approach, it empowers scientists to rapidly characterize complex systems, optimize reaction conditions, and accelerate the pace of discovery. As the case study in analytical method development illustrates, the rigorous application of screening designs can translate directly into better performance with fewer experiments and a deeper understanding of the system, which is why it is a cornerstone of efficient R&D.

In reaction discovery research, efficiently identifying critical factors from a vast set of possibilities is a fundamental challenge. Screening designs, a specialized class of designed experiments, provide a powerful methodology for this purpose. The underlying properties sought in an ideal screening design are effectively summarized by three core principles: effect sparsity, effect hierarchy, and effect heredity [8]. These principles serve as guiding assumptions that help researchers navigate complex experimental spaces, allowing them to focus limited resources on the most significant factors and interactions. Understanding them is paramount for designing efficient experiments that accelerate innovation in drug development and materials science.

The Three Core Principles

Detailed Explanation of Each Principle

Effect Sparsity: This principle posits that in any factorial experiment, only a small fraction of the potential effects (factors and their interactions) are truly significant; the majority are negligible and can be considered random noise [8]. This is particularly relevant in early-stage reaction discovery where researchers may investigate dozens of factors simultaneously—such as catalyst type, temperature, solvent, and concentration—with the expectation that only a few will have a substantial impact on the reaction outcome, such as yield or purity. The principle justifies the use of highly fractional factorial designs, as it allows researchers to screen a large number of factors with a relatively small number of experimental runs.

Effect Hierarchy: This principle states that main effects (the primary influence of a single factor) are generally more likely to be important than second-order interaction effects (the combined influence of two factors), which in turn are more likely to be important than third-order interactions, and so on [8]. Furthermore, effects of the same order are considered equally likely to be important. In practice, this means that when resources are limited, the search for significant effects should prioritize main effects and lower-order interactions. For a researcher optimizing a synthetic pathway, this principle suggests that identifying the key reagents (main effects) is typically more crucial than understanding complex, multi-factor interdependencies in the initial screening phases.

Effect Heredity: This principle provides a rule for interpreting interactions. It states that an interaction effect is unlikely to be significant unless its parent main effects are also significant [8]. Two versions are in common use: strong heredity requires both parents to be significant, while weak heredity requires at least one. For example, a significant temperature-solvent interaction is unlikely to exist if neither temperature nor solvent has a significant main effect. This principle helps to constrain the model selection process, ruling out models with complex interactions that lack support from simpler effects, thereby enhancing the model's interpretability and physical plausibility.

Interrelationship and Practical Implications

These principles are not mutually exclusive but are deeply interconnected. Effect hierarchy guides the initial design of a screening experiment, leading to the selection of a resolution that prioritizes the estimation of main effects. Effect sparsity then simplifies the subsequent statistical analysis, as the researcher can focus on identifying a small subset of active effects from a potentially large set of possibilities. Finally, effect heredity acts as a logical filter during model building, ensuring that the final statistical model is both parsimonious and scientifically coherent. Collectively, they form a philosophical and practical foundation for efficient empirical inquiry in complex systems.
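The role of heredity as a filter during model building can be sketched as follows. The function takes the main effects judged active in a screen and returns the two-factor interactions that remain admissible, under strong heredity (both parents active) or weak heredity (at least one parent active). The function name and the factor names in the usage example are hypothetical.

```python
from itertools import combinations

def candidate_interactions(factors, active_mains, heredity="weak"):
    """Return the two-factor interactions allowed by the chosen heredity rule."""
    active = set(active_mains)
    allowed = []
    for a, b in combinations(factors, 2):
        n_active_parents = (a in active) + (b in active)
        if heredity == "strong":
            keep = n_active_parents == 2
        elif heredity == "weak":
            keep = n_active_parents >= 1
        elif heredity == "none":
            keep = True
        else:
            raise ValueError(f"unknown heredity rule: {heredity!r}")
        if keep:
            allowed.append((a, b))
    return allowed

if __name__ == "__main__":
    factors = ["temperature", "solvent", "catalyst", "concentration"]
    active = ["temperature", "catalyst"]
    for rule in ("none", "weak", "strong"):
        print(rule, len(candidate_interactions(factors, active, rule)))
```

Even with four factors the candidate set shrinks from six interactions to five under weak heredity and to one under strong heredity; with dozens of factors the reduction is what makes model search tractable.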

Quantitative Data and Experimental Analysis

The following table synthesizes quantitative data and findings from studies that have applied or validated these core principles in various contexts.

| Study / Method | Application Context | Key Finding Related to Core Principles | Impact on Factor Identification |
|---|---|---|---|
| Factor-Effect Bayesian Quantile Regression (FEBQR) [9] | Reliability improvement with unknown lifetime distribution. | Integration of effect sparsity, weak effect hierarchy, and effect heredity via factor indicator variables. | Provides more accurate factor identification, especially with small sample sizes and censored data. |
| Hierarchical Selection in Genetic Studies [10] | Selection of gene-environment interaction (GEI) effects. | A GEI effect is selected only if the corresponding genetic main effect is also selected (Hierarchical Heredity). | Increases statistical power and model interpretability; reduces false positives for GEI effects. |
| Traditional Screening Designs [8] | General factorial experiments in process and product design. | Empirical evidence supports that these principles are reasonable assumptions for guiding experimentation. | Underpins the effectiveness of fractional factorial designs and the custom design platform for screening. |

Detailed Experimental Protocol: FEBQR Method

The Factor-Effect Bayesian Quantile Regression (FEBQR) model presents a modern methodology that formally integrates the core principles into a statistical framework for reliability analysis, which is analogous to reaction optimization [9]. The protocol is as follows:

- Problem Formulation: The goal is to identify significant factors affecting a response variable (e.g., reaction yield, product lifetime) when its underlying distribution is unknown.

- Model Construction:

- A set of novel factor indicator variables is constructed. These variables are designed to incorporate the principles of effect sparsity, weak effect hierarchy, and effect heredity directly into the model's structure.

- The model extends the Bayesian quantile regression framework. It describes the relationship between a specific percentile (e.g., the 50th percentile or median) of the response variable's distribution and the experimental factor effects. The general form of the model for the τ-th quantile is:

$$t_{ij} = Q_\tau(t_{ij} \mid x_{ik}) + \varepsilon_{ij} = X'_{ik}\,\beta_\tau + \varepsilon_{ij}$$

where $t_{ij}$ is the observed response for the j-th sample under the i-th treatment combination, $Q_\tau$ is the conditional quantile function, $X_{ik}$ represents the factor settings, and $\beta_\tau$ are the parameters to be estimated [9].

- Parameter Estimation: The unknown parameters, including the factor indicator variables and the coefficients in β_τ, are estimated using a Gibbs sampling algorithm. This is a Markov Chain Monte Carlo (MCMC) method that generates samples from the posterior distribution of the parameters.

- Significant Factor Identification: Based on the posterior mean of the factor indicator variables, significant main effects, interaction effects, and higher-order effects are identified. A factor is deemed significant if its associated indicator provides strong posterior evidence.
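The quantile-regression idea underlying this protocol can be illustrated without the Bayesian machinery. Standard quantile regression minimizes the check (pinball) loss, ρ_τ(u) = u(τ − 1[u < 0]), and for a model with only an intercept the minimizer is the τ-th sample quantile. The sketch below demonstrates that fact on a small made-up data set; it is not the FEBQR sampler described in [9], and the function names are illustrative.

```python
def check_loss(residual, tau):
    """Pinball loss: weight tau on positive residuals, (1 - tau) on negative ones."""
    return residual * (tau - (1.0 if residual < 0 else 0.0))

def total_loss(q, data, tau):
    """Sum of check losses when every observation is predicted by the constant q."""
    return sum(check_loss(y - q, tau) for y in data)

def best_constant_quantile(data, tau):
    """Search the observed values for the constant that minimizes the total check loss."""
    return min(sorted(data), key=lambda q: total_loss(q, data, tau))

if __name__ == "__main__":
    lifetimes = [2, 4, 7, 10, 50]  # made-up responses with one extreme value
    for tau in (0.25, 0.5, 0.9):
        print(tau, best_constant_quantile(lifetimes, tau))
```

Because the loss depends only on the sign and size of each residual, no distribution has to be assumed for the response, which is the property the protocol relies on when the lifetime distribution is unknown.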

Visualization of Principles and Workflows

Logical Framework of Core Principles

The following diagram illustrates the logical relationship and workflow between the three core principles within a typical screening design process.

Hierarchical Selection Workflow

This diagram details the specific process of hierarchical selection, a direct application of the effect heredity principle, as used in genetic studies and other fields.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key computational and methodological "reagents" essential for implementing experiments based on the core principles of sparsity, hierarchy, and heredity.

| Tool/Reagent | Function/Description | Relevance to Core Principles |
|---|---|---|
| Fractional Factorial Designs | Experimental designs that test a carefully chosen fraction of all possible factor combinations. | Directly exploits Effect Sparsity and Hierarchy to screen many factors efficiently [8]. |
| Bayesian Quantile Regression (BQR) | A distribution-free statistical modeling technique that relates factors to specific percentiles of the response. | Provides a flexible framework for analysis when Effect Sparsity is assumed but the response distribution is unknown [9]. |
| Factor Indicator Variables | Binary variables in a model that act as gates, turning effects "on" or "off". | The primary mechanism for formally integrating Sparsity, Hierarchy, and Heredity into a statistical model like FEBQR [9]. |
| Gibbs Sampling | A Markov Chain Monte Carlo (MCMC) algorithm used for estimating complex posterior distributions. | Enables estimation of parameters in sophisticated models (e.g., FEBQR) that incorporate the core principles [9]. |
| Composite Absolute Penalty (CAP) | A regularization penalty used in variable selection models. | Used to enforce Hierarchical Heredity in models, ensuring interactions are only selected with their parent main effects [10]. |
| Custom Design Algorithms | Computer-based algorithms for generating optimal experimental designs for given objectives and constraints. | Allows researchers to build screening designs with these principles as underlying assumptions, optimizing for the estimation of main effects [8]. |

In the field of reaction discovery and process development, researchers frequently encounter a common challenge: an overwhelmingly large number of potential variables that can influence reaction outcomes. These variables include catalysts, ligands, solvents, temperature, concentration, and other parameters that collectively create a multidimensional optimization space. Screening provides a systematic approach to navigate this complexity, enabling scientists to efficiently identify promising regions of chemical space for further investigation. Within the broader context of screening designs for reaction discovery research, this guide examines the strategic implementation of screening methodologies to accelerate the identification of viable reaction pathways and optimal process conditions. By employing well-designed screening strategies, researchers can transform the daunting task of exploring vast experimental landscapes into a manageable, data-driven process that maximizes resource efficiency while minimizing blind alleys.

The critical importance of screening in modern chemical research is underscored by its central role in major scientific advances. Nobel Prize-winning work on asymmetric hydrogenation and oxidation reactions relied heavily on empirical screening approaches [11]. Similarly, industrial process development for pharmaceuticals such as Sitagliptin utilized both transition metal catalysis and biocatalysis screening, with both approaches receiving Presidential Green Chemistry Challenge awards [11]. These successes highlight how strategic screening methodologies can lead to transformative advances in synthetic chemistry.

Screening Methodologies in Reaction Discovery

Biomacromolecule-Assisted Screening

Biomacromolecule-assisted screening methods leverage the inherent molecular recognition capabilities of biological macromolecules to provide sensitive and selective readouts for reaction discovery and optimization. These approaches capitalize on the chiral nature of enzymes, antibodies, and nucleic acids to sense product stereochemistry and binding events [11].

Enzymatic sensing methods typically yield UV-spectrophotometric or visible colorimetric readouts, enabling rapid detection of reaction products. For instance, the in situ enzymatic screening (ISES) method has been employed to discover novel transformations such as the first Ni(0)-mediated asymmetric allylic amination and a new thiocyanopalladation/carbocyclization transformation where both C-SCN and C-C bonds are formed sequentially [11].

Antibody-based sensors provide alternative detection mechanisms, typically generating direct fluorescent readouts upon analyte binding or employing cat-ELISA (Enzyme-Linked ImmunoSorbent Assay)-type readouts. This approach has proven valuable in identifying new classes of sydnone-alkyne cycloadditions [11].

DNA-based screening methods offer unique advantages through templation effects that facilitate reaction discovery by converting bimolecular reactions into pseudo-unimolecular formats. The DNA-encoded library (DEL) technology allows barcoding of reactants, enabling screening of billions of compounds in a single experiment. This method has been instrumental in uncovering oxidative Pd-mediated amido-alkyne/alkene coupling reactions [11]. The sensitivity of DEL screening depends heavily on selection coverage, as insufficient sequencing depth can obscure useful ligands, potentially causing researchers to miss critical hits for drug discovery programs [12].

High-Throughput Experimentation (HTE) and Automation

Modern high-throughput screening (HTS) incorporates automation, miniaturization, and sophisticated software algorithms to dramatically increase throughput and accuracy. HTS enables the rapid testing of numerous compounds against biological targets throughout the entire drug development path, from initial discovery to process development [13].

Key technological advances in HTS include:

- Automated liquid-handling robots that work with extremely low volumes of reagents

- Multi-plate handling systems integrated with incubators, centrifuges, and imagers

- Miniaturized platforms like microfluidics and nanodispensing

- High-content imaging and automated electrophysiology for multi-parametric cellular data [13]

Advanced detection methodologies have significantly expanded HTS capabilities:

- Affinity selection mass spectrometry (ASMS)-based platforms including self-assembled monolayer desorption ionization (SAMDI)

- Target protein degradation (TPD) and molecular glue platforms

- CRISPR-based functional screening for elucidating biological pathways in disease processes [13]

For process development, HTS helps scientists rapidly evaluate different synthetic routes and optimal chemical combinations, including solvents, catalysts, and bases. This approach is particularly valuable during early stages of candidate development when synthetic pathways remain flexible [13].

Computational and Machine Learning Approaches

Computational screening methods have emerged as powerful tools for guiding experimental efforts, significantly reducing the experimental burden associated with reaction screening. The artificial force induced reaction (AFIR) method uses quantum chemical calculations to screen for viable reaction pathways computationally before laboratory verification [14].

Active machine learning approaches iteratively select maximally informative experiments from all possible experiments in a domain, dramatically reducing the number of experiments required. This method is particularly effective when datasets are heavily skewed toward low- or zero-yielding reactions, potentially achieving very low test set errors with minimal experimental effort [15].
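The iterative select-run-update loop can be sketched with a deliberately simple acquisition rule. In place of a trained model's uncertainty, this example uses the distance to the nearest already-tested condition as a crude proxy for how informative an untested condition is; that substitution, the grid of coded conditions, and the `fake_yield` function are all assumptions made for illustration, not the method of [15].

```python
import itertools
import math

def select_next(pool, tested):
    """Pick the untested condition farthest from every tested one (novelty proxy)."""
    def novelty(x):
        return min(math.dist(x, t) for t in tested)
    untested = [x for x in pool if x not in tested]
    return max(untested, key=novelty)

def active_loop(pool, seed_points, run_experiment, budget):
    """Run the seed experiments, then add one selected experiment per iteration."""
    results = {x: run_experiment(x) for x in seed_points}
    for _ in range(budget):
        x = select_next(pool, list(results))
        results[x] = run_experiment(x)
    return results

def fake_yield(condition):
    """Hypothetical response surface standing in for a real reaction outcome."""
    temperature, loading = condition
    return 10 * temperature + 5 * loading

if __name__ == "__main__":
    pool = list(itertools.product(range(5), repeat=2))  # 5 x 5 grid of coded conditions
    results = active_loop(pool, seed_points=[(0, 0)], run_experiment=fake_yield, budget=3)
    for condition, y in results.items():
        print(condition, y)
```

A practical implementation would replace `novelty` with the predictive uncertainty of a model refitted after each round, so that selection reflects what the model has learned and not only where experiments have already been run.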

Integrated computational and experimental workflows have demonstrated remarkable success in reaction discovery. Researchers at the Institute for Chemical Reaction Design and Discovery (ICReDD) in Japan used computational simulations to suggest previously unimagined three-component reactions involving difluorocarbene molecules, leading to the development of 48 new reactions that produce compounds potentially useful for novel drug development [14]. This approach successfully addressed the challenging transformation of breaking the aromatic electron system in pyridine molecules to attach fluorine atoms at previously inaccessible positions [14].

Table 1: Comparison of Screening Methodologies in Reaction Discovery

| Methodology | Key Features | Applications | Throughput | Information Content |
|---|---|---|---|---|
| Biomacromolecule-Assisted | High sensitivity and selectivity; chiral recognition | Asymmetric reaction discovery; catalyst optimization | Medium | Product chirality; binding affinity |
| High-Throughput Experimentation | Automation; miniaturization; multiple detection modes | Compound library screening; process optimization | Very High | Multiple parameters simultaneously |
| Computational Screening | In silico prediction; minimal experimental resources | Reaction pathway discovery; variable space mapping | Highest | Reaction mechanisms; transition states |
| Active Machine Learning | Iterative experimental design; maximal information gain | Reaction optimization; catalyst screening | High (focused) | Predictive models with uncertainty |

Experimental Protocols and Workflows

Biomacromolecule-Assisted Screening Protocol

Objective: To discover and optimize catalytic reactions using biomacromolecular sensors for product detection and enantioselectivity determination.

Materials:

- Purified enzymes, antibodies, or nucleic acid sequences appropriate for target analyte

- Potential catalyst libraries (metal complexes, organocatalysts, etc.)

- Substrates for the reaction of interest

- Appropriate buffers and solvents

- Microplates (96-well or 384-well format)

- Plate reader capable of absorbance, fluorescence, or luminescence detection

Procedure:

- Sensor Preparation: Select and characterize the biomacromolecular sensor based on the anticipated reaction product. For enzymatic sensors, this may involve monitoring cofactor conversion (e.g., NADH to NAD+). For antibody-based sensors, immobilize the capture antibody on microplate wells.

- Reaction Setup: In a 96-well or 384-well microplate, set up reactions containing potential catalysts, substrates, and necessary additives in appropriate solvents. Include positive and negative controls.

- Reaction Execution: Allow reactions to proceed under specified conditions (temperature, time, atmosphere).

- Product Detection:

  - For enzymatic detection: Add enzyme solution and monitor spectrophotometric changes.

  - For cat-ELISA: Transfer reaction mixture to antibody-coated plates, add detection antibody, then enzyme-conjugated secondary antibody and substrate.

  - For DNA-encoded screening: Amplify and sequence DNA barcodes to identify successful reactions.

- Data Analysis: Quantify reaction outcomes based on signal intensity. For enantioselectivity determinations, use chiral sensors or competition experiments.
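
The data analysis step above can be sketched in a few lines. This is a minimal illustration, not the protocol's prescribed calculation: it assumes a linear sensor response, scales raw well signals against the plate's negative and positive controls, and computes enantiomeric excess from calibrated amounts of the two enantiomers. The function names and readings are hypothetical.

```python
from statistics import mean


def percent_conversion(signal, neg_controls, pos_controls):
    """Scale a raw well signal to 0-100 % using the plate controls.

    Assumes the sensor response is linear between the two control levels.
    """
    low = mean(neg_controls)
    high = mean(pos_controls)
    if high == low:
        raise ValueError("positive and negative controls are indistinguishable")
    return 100.0 * (signal - low) / (high - low)


def enantiomeric_excess(amount_r, amount_s):
    """ee in percent from calibrated amounts (or signals) of the two enantiomers."""
    total = amount_r + amount_s
    if total <= 0:
        raise ValueError("total amount must be positive")
    return 100.0 * abs(amount_r - amount_s) / total


# Hypothetical plate-reader values (arbitrary absorbance units)
neg = [0.05, 0.07, 0.06]
pos = [1.05, 1.07, 1.06]
conversion = percent_conversion(0.56, neg, pos)
ee = enantiomeric_excess(95.0, 5.0)
print(f"conversion = {conversion:.1f} %, ee = {ee:.1f} %")
```

If the sensor saturates or responds non-linearly, a calibration curve would be needed in place of the two-point scaling.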

Troubleshooting:

- Low signal-to-noise: Optimize sensor concentration or reaction time.

- High background: Include additional wash steps or adjust blocking conditions.

- Poor reproducibility: Ensure consistent mixing and temperature control.

High-Throughput Screening Workflow for Process Optimization

Objective: To rapidly identify optimal process conditions for a chemical reaction by testing multiple variables simultaneously.

Materials:

- Automated liquid handling system

- Library of potential catalysts, ligands, solvents, and additives

- Stock solutions of substrates

- Microtiter plates appropriate for reaction conditions

- High-throughput LC-MS, GC-MS, or other analytical systems

- Data analysis software with visualization capabilities

Procedure:

- Experimental Design: Define the experimental space using statistical Design of Experiments (DOE) principles. Identify key variables and their ranges.

- Reaction Assembly: Use automated liquid handlers to dispense catalysts, ligands, solvents, and additives into microtiter plates according to the experimental design.

- Reaction Initiation: Add substrates to initiate reactions, maintaining appropriate temperature control.

- Quenching and Analysis: After specified reaction times, quench reactions and analyze using high-throughput analytical methods.

- Data Processing: Automate data extraction and processing. Apply statistical analysis to identify significant factors and interactions.

- Hit Validation: Confirm promising conditions in scaled-up experiments.
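
As a rough sketch of the experimental design and reaction assembly steps, the snippet below enumerates every combination of a few categorical variables and assigns each one to a well of a 96-well plate. The factor names and levels are placeholders, and `plate_map` is a hypothetical helper, not part of any liquid-handler software; a real campaign would export a layout like this to the instrument's own worklist format.

```python
import random
from itertools import product
from string import ascii_uppercase


def plate_map(factors, rows=8, cols=12, seed=None):
    """Assign every combination of factor levels to a well (A1, A2, ...).

    If `seed` is given, the run order is shuffled reproducibly so that
    position effects on the plate are not confounded with factor levels.
    """
    names = list(factors)
    combos = list(product(*(factors[name] for name in names)))
    if len(combos) > rows * cols:
        raise ValueError("design does not fit on one plate")
    if seed is not None:
        random.Random(seed).shuffle(combos)
    layout = {}
    for idx, combo in enumerate(combos):
        well = f"{ascii_uppercase[idx // cols]}{idx % cols + 1}"
        layout[well] = dict(zip(names, combo))
    return layout


# Placeholder levels: 4 x 4 x 3 x 2 = 96 conditions, exactly one plate
factors = {
    "catalyst": ["cat1", "cat2", "cat3", "cat4"],
    "ligand": ["L1", "L2", "L3", "L4"],
    "solvent": ["DMF", "MeCN", "THF"],
    "base": ["K2CO3", "Et3N"],
}
layout = plate_map(factors)
print(layout["A1"])
print(layout["H12"])
```

This enumerates a full factorial, which fills the plate quickly; with more variables, a screening design would replace the `product` call.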

Key Considerations:

- Miniaturization reduces reagent consumption but may introduce mass/heat transfer limitations.

- Ensure adequate controls for normalization between plates.

- Consider using standardized compound libraries to exclude problematic chemotypes [13].

Computational Screening and Experimental Verification Protocol

Objective: To use computational methods to identify promising reactions followed by experimental verification.

Materials:

- Quantum chemistry software (e.g., Gaussian, ORCA)

- Computational resources adequate for the screening scale

- Laboratory equipment for synthetic chemistry

- Standard analytical instruments (NMR, LC-MS, etc.)

Procedure:

- Reaction Selection: Define the scope of potential reactions and components to screen computationally.

- Quantum Chemical Calculations: Use methods such as the artificial force induced reaction (AFIR) method to simulate reaction pathways and evaluate thermodynamic and kinetic parameters.

- Virtual Screening: Rank potential reactions based on computed feasibility, selectivity, and other relevant parameters.

- Experimental Verification: Select top candidates from computational screening for laboratory testing.

- Mechanistic Studies: For successful reactions, perform additional computations to understand mechanism and guide optimization.

- Reaction Scope: Explore substrate scope and functional group compatibility for promising reactions.
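
One simple way to carry out the virtual screening step is to rank candidates by their computed activation free energies and convert each barrier into an approximate rate constant with the Eyring equation. The sketch below assumes a transmission coefficient of 1 and uses invented barrier values; it does not perform any pathway search itself, and the candidate names are hypothetical.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K
H = 6.62607015e-34   # Planck constant, J*s
R = 8.314462618      # gas constant, J/(mol*K)
KCAL_TO_J = 4184.0


def eyring_rate(dg_kcal_mol, temperature_k=298.15):
    """First-order rate constant (s^-1) from an activation free energy.

    Assumes a transmission coefficient of 1.
    """
    exponent = -dg_kcal_mol * KCAL_TO_J / (R * temperature_k)
    return (K_B * temperature_k / H) * math.exp(exponent)


# Invented barriers (kcal/mol) for three hypothetical candidate reactions
barriers = {"candidate_A": 18.5, "candidate_B": 24.0, "candidate_C": 31.2}

# Lowest barrier first: the most kinetically accessible candidate leads the list
ranked = sorted(barriers, key=barriers.get)
for name in ranked:
    dg = barriers[name]
    print(f"{name}: dG = {dg:.1f} kcal/mol, k = {eyring_rate(dg):.2e} s^-1")
```

Computed barriers carry errors of a few kcal/mol with common methods, so a ranking like this is better used to shortlist candidates than to predict rates.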

Application Example: The discovery of difluorocarbene-based three-component reactions for alpha-fluorination of N-heterocycles began with computational screening of various unsaturated molecules, followed by targeted experimental verification and optimization [14].

Visualization of Screening Workflows

Biomacromolecule-Assisted Screening Workflow

Integrated Computational-Experimental Screening

High-Throughput Screening Decision Pathway

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Screening Applications

| Reagent/Material | Function | Application Examples | Considerations |

|---|---|---|---|

| DNA-Encoded Libraries (DEL) | Barcoding of reactants for multiplexed screening | Hit identification for drug discovery; reaction discovery | Selection coverage critical for detecting weak ligands [12] |

| Enzyme Libraries | Biocatalytic screening; enzymatic detection | Asymmetric synthesis; enzymatic sensors | Thermostability; solvent tolerance; substrate specificity [11] |

| Catalyst Libraries | Variable ligand-metal complexes | Transition metal catalysis optimization | Structural diversity; stability under reaction conditions |

| Fragment Libraries | Low molecular weight starting points | Fragment-based drug discovery | Complexity; three-dimensionality [13] |

| Specialized Solvents | Solvation properties; reaction medium | Solvent screening for process optimization | Green chemistry principles; viscosity; boiling point |

| CRISPR-Modified Cell Lines | Functional screening of biological pathways | Target validation; phenotypic screening | Specificity; off-target effects [13] |

| Affinity Selection Mass Spectrometry (ASMS) | Label-free detection of binding events | Protein-protein interactions; RNA binders | Throughput; sensitivity [13] |

Screening methodologies are indispensable tools for navigating the complex landscape of potential process variables in reaction discovery and optimization. Biomacromolecule-assisted screening, high-throughput experimentation, and computational approaches enable researchers to explore parameter spaces that would otherwise be too large to investigate systematically. As screening technologies continue to advance through improvements in automation, miniaturization, and data analysis, their application throughout the drug discovery and process development pipeline is likely to expand. The integration of machine learning and artificial intelligence with experimental screening promises to further improve the efficiency of these approaches, making reaction discovery and optimization increasingly predictive. By selecting screening strategies that match the specific research context and available resources, scientists can substantially shorten the journey from conceptual chemistry to practical processes.

In reaction discovery research, efficiently identifying critical factors that influence chemical outcomes is paramount. Design of Experiments (DOE) provides a structured methodology for this purpose, with Screening DOE and Full Factorial DOE representing two fundamental approaches with distinct trade-offs. Screening DOE serves as an efficient tool for rapidly identifying the most significant process variables from a large set of candidates, making it invaluable during early exploratory phases [16]. In contrast, Full Factorial DOE provides a comprehensive investigation of all possible factor combinations, delivering complete information on main effects and interaction effects but at a significantly higher experimental cost [16]. For researchers in drug development facing complex reaction spaces with numerous potential factors, understanding this balance is crucial for allocating resources effectively and accelerating the discovery pipeline.

Core Conceptual Comparison

The fundamental difference between these approaches lies in their experimental philosophy. Screening DOE uses a carefully selected subset of runs from a full factorial design, creating a fractional factorial structure that sacrifices information about interactions to achieve efficiency [16]. This makes it ideal for the initial phase of investigation when the number of potential factors is large, and the primary goal is to separate the vital few influential factors from the trivial many.

Full Factorial DOE, by testing every possible combination of factor levels, provides a complete picture of the experimental space. This comprehensive data allows for precise estimation of all main effects and all interaction effects between factors, which is critical when factors may influence each other in complex ways [16].

Table 1: Quantitative Comparison Between Screening and Full Factorial DOE

| Characteristic | Screening DOE | Full Factorial DOE |

|---|---|---|

| Primary Purpose | Identify significant main effects from many factors [16] | Characterize all main effects and interactions [16] |

| Experimental Runs | Fewer runs; highly efficient [16] | All possible combinations; can be prohibitively large [16] |

| Main Effects | Estimated efficiently [16] | Precisely estimated |

| Interaction Effects | Often confounded with main effects or other interactions [16] | All can be independently estimated [16] |

| Resolution | Lower (e.g., III, IV) [16] | Full; no effects are aliased |

| Best Application Stage | Early discovery, factor selection [16] | Later-stage optimization, detailed characterization |

| Resource Requirement | Lower cost and time [16] | High cost and time [16] |

Table 2: Types of Screening Designs and Their Properties

| Design Type | Key Features | Optimal Use Case |

|---|---|---|

| 2-Level Fractional Factorial | Fractions of a full factorial; main effects are estimable, while interactions are confounded to a degree set by the design resolution [16] | Screening when some interaction information is needed and can be de-aliased [16] |

| Plackett-Burman | Very high efficiency for main effects; assumes interactions are negligible [16] | Screening a very large number of factors where the main effect assumption holds [17] |

| Definitive Screening Design (DSD) | 3-level design; can estimate main effects, quadratic effects, and some two-way interactions with few runs [18] | Screening when curvature is suspected or for quantitative factors prior to optimization [18] |
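
To make the efficiency differences in the two tables concrete, the following sketch compares minimum run counts for the same number of two-level factors. The formulas are the usual textbook ones and are stated here as assumptions: a Plackett-Burman design uses the smallest multiple of 4 greater than the number of factors, and a definitive screening design is commonly quoted as 2k + 1 runs for an even number of factors k and 2k + 3 for an odd number. Replicates and center points are ignored.

```python
def full_factorial_runs(k, levels=2):
    """Every combination of `levels` settings for k factors."""
    return levels ** k


def fractional_factorial_runs(k, p):
    """Runs in a 2^(k-p) fractional factorial."""
    if not 0 <= p < k:
        raise ValueError("p must satisfy 0 <= p < k")
    return 2 ** (k - p)


def plackett_burman_runs(k):
    """Smallest multiple of 4 strictly greater than the number of factors."""
    return 4 * (k // 4 + 1)


def dsd_runs(k):
    """Commonly quoted minimum for a definitive screening design."""
    return 2 * k + 1 if k % 2 == 0 else 2 * k + 3


k = 8
print("full factorial     :", full_factorial_runs(k))
print("2^(8-4) fractional :", fractional_factorial_runs(k, 4))
print("Plackett-Burman    :", plackett_burman_runs(k))
print("definitive screen  :", dsd_runs(k))
```

For eight factors this gives 256, 16, 12, and 17 runs respectively, which is the gap the tables describe qualitatively.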

Experimental Workflow and Decision Pathways

The choice between a screening and full factorial design is not merely a selection but a strategic decision within a larger experimental sequence. The following workflow outlines a systematic path for reaction discovery research, from initial factor screening to detailed characterization.

Diagram 1: Experimental Design Workflow

The process begins with a Screening DOE when the number of potential factors is large. This initial screen efficiently identifies the subset of factors that have a statistically significant impact on the reaction outcome. If successful, the process proceeds to a Full Factorial DOE on the reduced set of factors. This sequential approach leverages the strengths of both methods: the efficiency of screening to narrow the focus, followed by the comprehensive analysis of a full factorial to fully understand interactions and optimize conditions [16]. If the screening design does not yield clear significant factors, the researcher must re-evaluate the initial factor set before proceeding.

Practical Application: A Case Study in Analytical Chemistry

A recent study developing a high-performance liquid chromatography (HPLC) method for quantifying N-acetylmuramoyl-L-alanine amidase (NAM-amidase) activity provides an excellent example of a sequential DOE strategy in a biochemical context [17].

Experimental Protocol and Workflow

The researchers employed a two-stage optimization process guided by DOE principles:

- Initial Screening Phase: A Plackett-Burman design was used to screen a wide range of method variables. This design efficiently identified the most critical factors influencing the chromatographic separation and detection of the enzymatic product, p-nitroaniline [17].

- Subsequent Optimization Phase: The critical factors identified from the Plackett-Burman screen were then investigated using a Box-Behnken design (a type of Response Surface Methodology) to model curvature and locate the optimal method conditions [17].
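
For readers unfamiliar with how a Plackett-Burman screen is built and analyzed, here is a minimal sketch of the standard 12-run design, constructed by cyclically shifting the usual generator row and appending a row of low levels. The response values are synthetic and noise-free, chosen only to show how main effects are recovered; they are not data from the cited study, and the study's actual design size and factors are not assumed here.

```python
# Standard generator row for the 12-run Plackett-Burman design
GENERATOR_12 = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """12 runs x 11 columns: 11 cyclic shifts of the generator plus a row of -1."""
    n = len(GENERATOR_12)
    rows = [[GENERATOR_12[(j - i) % n] for j in range(n)] for i in range(n)]
    rows.append([-1] * n)
    return rows


def main_effects(design, response):
    """Effect of each column: mean response at +1 minus mean response at -1."""
    half = len(design) / 2
    return [
        sum(row[j] * y for row, y in zip(design, response)) / half
        for j in range(len(design[0]))
    ]


design = plackett_burman_12()

# Synthetic response in which only columns 0 and 3 are active (illustrative)
response = [50 + 5 * row[0] - 3 * row[3] for row in design]
effects = main_effects(design, response)

for j, effect in enumerate(effects):
    print(f"column {j:2d}: effect = {effect:+.1f}")
```

Because every pair of columns is orthogonal, the two active columns are recovered exactly (effects of +10 and -6 for coded coefficients of 5 and -3) and the rest come out as zero. With real, noisy data the inactive effects would scatter around zero and would need a significance test or a half-normal plot to separate from the active ones.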

This hierarchical approach is a classic and powerful application of screening designs to conserve resources while building a robust and optimized final method. The workflow for this specific case study is detailed below.

Diagram 2: HPLC Method Development Case

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents and materials used in the featured HPLC method development case study, which are also common in related reaction discovery and analytical research [17].

Table 3: Key Research Reagent Solutions for HPLC Method Development

| Reagent/Material | Function in the Experiment |

|---|---|

| NAM-amidase Enzyme | The target protein whose enzymatic activity is being measured [17]. |

| p-Nitroaniline (pNA) | The enzymatic product of the reaction; its quantification serves as a direct measure of enzyme activity [17]. |

| Methanol & o-Phosphoric Acid | Components of the isocratic mobile phase used to elute the analyte from the HPLC column [17]. |

| RP-18 Column | A reversed-phase C18 chromatography column (10 cm) used for the separation of the reaction mixture [17]. |

| UV-vis Detector | Standard detector used to quantify p-nitroaniline based on its absorbance [17]. |

Strategic Implementation and Best Practices

When to Use Each Design

- Use a Screening DOE when: Dealing with a large number of process variables (e.g., more than 5) [16], preparing for a subsequent optimization study [16], or when resources are limited and the primary goal is to identify the most significant variables quickly [16].

- Use a Full Factorial DOE when: The number of factors is small (typically ≤ 4) and manageable [16], interactions between factors are suspected to be critical [16], or a complete understanding of the system within the experimental region is required.

Interpreting Results and Avoiding Pitfalls

Successful implementation requires careful interpretation of results. For screening designs, it is crucial to understand the concept of resolution. A design's resolution indicates the degree to which estimated main effects are confounded (aliased) with interaction terms [16]. For example, in a Resolution III design, main effects are confounded with two-factor interactions, whereas in a Resolution IV design, main effects are clear but two-factor interactions are confounded with each other [16].
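
The difference between Resolution III and Resolution IV can be checked directly by building two small half-fractions and comparing contrast columns. This is a sketch using the textbook generators C = AB (a 2^(3-1) design) and D = ABC (a 2^(4-1) design); the helper names are hypothetical.

```python
from itertools import combinations, product


def half_fraction(base_names, new_name, generator):
    """Full factorial in the base factors plus one factor set to a product of base columns."""
    runs = []
    for levels in product((-1, 1), repeat=len(base_names)):
        run = dict(zip(base_names, levels))
        value = 1
        for name in generator:
            value *= run[name]
        run[new_name] = value
        runs.append(run)
    return runs


def column(runs, term):
    """Contrast column for a main effect ('A') or an interaction ('AB')."""
    col = []
    for run in runs:
        value = 1
        for name in term:
            value *= run[name]
        col.append(value)
    return col


def aliases(runs, term, candidates):
    """Candidate terms whose contrast column equals that of `term`, up to sign."""
    target = column(runs, term)
    negated = [-v for v in target]
    return [c for c in candidates if c != term and column(runs, c) in (target, negated)]


res3 = half_fraction("AB", "C", "AB")      # 2^(3-1), Resolution III
res4 = half_fraction("ABC", "D", "ABC")    # 2^(4-1), Resolution IV

two_fi_3 = ["".join(pair) for pair in combinations("ABC", 2)]
two_fi_4 = ["".join(pair) for pair in combinations("ABCD", 2)]

print(aliases(res3, "C", two_fi_3))    # ['AB']: main effect aliased with a 2FI
print(aliases(res4, "A", two_fi_4))    # []: main effect clear of all 2FIs
print(aliases(res4, "AB", two_fi_4))   # ['CD']: 2FIs aliased with each other
```

An identical column means the two effects cannot be separated from the data of that design alone, which is why a Resolution III estimate of C is really an estimate of C plus AB.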

A key best practice is to assess the importance of interactions before selecting a design. If prior knowledge or fundamental principles suggest interactions are likely to be significant, a Plackett-Burman design may be risky, because its main-effect estimates are partially aliased with two-factor interactions that the analysis assumes to be negligible. In such cases, a higher-resolution fractional factorial or a Definitive Screening Design (DSD) is more appropriate [16] [18]. DSDs are particularly powerful as they can estimate quadratic effects and are robust to the presence of two-factor interactions, all while maintaining a relatively low run count [18].

Furthermore, all DOEs should be planned to minimize noise and contamination by controlling for known sources of variation and using robust measurement systems [16]. The analysis should always include an assessment of model adequacy, such as a lack-of-fit test, when the data contain replicates [19].

The strategic choice between Screening DOE and Full Factorial DOE is a cornerstone of efficient reaction discovery research. Screening designs offer a powerful, resource-conscious method for navigating vast experimental landscapes and identifying critical factors. In contrast, full factorial designs provide an uncompromisingly detailed map of a more confined but highly important experimental region. The most effective research strategies do not view these methods in isolation but employ them sequentially: using screening to illuminate the path forward and full factorial designs to fully characterize the destination. By mastering this balance between efficiency and information, researchers and drug development professionals can significantly accelerate the journey from initial discovery to optimized, well-understood chemical processes.

The Role of Screening in the Broader Drug Discovery Workflow

Screening represents a critical, foundational pillar in the modern drug discovery process, serving as the essential bridge between target identification and the development of clinical candidates. This methodological approach encompasses a range of technologies designed to identify initial hit compounds that modulate biologically validated targets. Within the broader context of reaction discovery research, screening designs provide systematic frameworks for exploring chemical space, optimizing reaction conditions, and identifying novel synthetic pathways with efficiency and precision. The integration of advanced screening methodologies has transformed early drug discovery from a serendipitous process to a rigorous, data-driven science, significantly impacting timelines and success rates [20] [21].

The drug discovery pathway remains long and resource-intensive, spanning an average of 12-13 years with costs reaching $2.5-3 billion per approved medicine. Attrition presents the greatest challenge, with only 10-15% of compounds that enter clinical trials ultimately achieving regulatory approval [20]. Within this complex landscape, screening technologies serve as crucial gatekeepers, helping to ensure that only the most promising chemical starting points progress to later development stages. This section examines screening methodologies, their integration within the drug discovery workflow, and their growing relevance to reaction discovery research.

The Drug Discovery Workflow: Context for Screening

The journey from target identification to marketed therapeutic follows a structured, albeit iterative, pathway. Screening operations occupy a central position in the early discovery phases, transitioning the process from biological hypothesis to chemical starting points [20] [21].

Figure 1: Drug Discovery Workflow with Screening Integration. Screening operations occur early in the discovery phase, transitioning the process from biological targets to chemical starting points.

Pre-Screening Stages: Establishing Foundation

Target Identification initiates the drug discovery process by selecting biological molecules (typically proteins) with significant disease involvement. Approaches include genomic and transcriptomic technologies (GWAS, RNA sequencing), proteomics (mass spectrometry), and phenotypic screening in disease models [20]. The ideal target must be both "druggable" (accessible to pharmacological modulation) and demonstrate clear disease relevance [21].

Target Validation confirms that modulating the identified target produces therapeutic benefit without unacceptable toxicity. Validation employs genetic tools (CRISPR/Cas9, RNAi), pharmacological approaches (tool compounds, antibodies), and transgenic models [20] [21]. Multi-validation strategies increase confidence in the target-disease relationship before committing to resource-intensive screening campaigns.

Screening Methodologies: Technical Approaches

High-Throughput Screening (HTS)

High-Throughput Screening represents the most established screening paradigm, involving the rapid testing of large compound libraries (often hundreds of thousands to millions of compounds) against biological targets in automated formats [22]. HTS campaigns generate massive datasets from which researchers identify initial hit compounds based on predefined activity thresholds.

Key HTS Characteristics:

- Library Size: 10⁴–10⁶ compounds typically screened

- Format: Miniaturized assays (96, 384, or 1536-well plates)

- Automation: Fully automated platforms enable rapid screening

- Readouts: Biochemical, cell-based, or phenotypic endpoints [20] [22]

Evotec's screening platform exemplifies industrial-scale HTS capabilities, with a curated library of >850,000 compounds and infrastructure supporting >750 biochemical, cellular, or microorganism-based campaigns [22].

Virtual Screening

Virtual screening employs computational methods to prioritize compounds for experimental testing, significantly reducing resource requirements. Structure-based approaches use molecular docking to predict binding affinity, while ligand-based methods leverage known active compounds to identify structurally similar candidates [23] [20].

Table 1: Virtual Screening Hit Identification Criteria Analysis (2007-2011) [23]

| Hit Identification Metric | Studies Using Metric | Typical Activity Range | Ligand Efficiency Application |

|---|---|---|---|

| Percentage Inhibition | 85 studies | Varies by study | Not routinely applied |

| IC50 | 30 studies | 1-25 μM (most common) | Rarely used |

| EC50 | 4 studies | 25-50 μM | Not employed |

| Ki/Kd | 4 studies | 50-100 μM | Not utilized |

| Other/Not Reported | 290 studies | Not specified | Occasionally considered |

Analysis of 421 virtual screening studies reveals limited standardization in hit identification criteria. Only approximately 30% of studies reported clear, predefined hit cutoffs, with significant variation in activity thresholds employed. Ligand efficiency metrics, which normalize activity to molecular size, were notably underutilized despite their value in identifying optimized starting points [23].

Specialized Screening Approaches

Fragment-Based Drug Discovery (FBDD) screens low molecular weight compounds (<300 Da) using sensitive biophysical methods. While fragments typically exhibit weak binding affinity, they often show high ligand efficiency and leave more room for optimization than larger hits [20] [22].

DNA-Encoded Library (DEL) Technology represents a transformative approach where each compound is tagged with a DNA barcode encoding its structure. This enables screening of extraordinarily large libraries (up to 10¹² compounds) against protein targets using minimal quantities and time [20].

Affinity Selection Mass Spectrometry (ASMS) directly detects binding between compounds and targets without requiring a functional readout, which makes it particularly valuable for challenging target classes [22].

Experimental Protocols: Screening Cascade Design

Assay Development and Validation

Robust assay development forms the critical foundation for successful screening campaigns. Assays must be optimized for sensitivity, reproducibility, and scalability while maintaining physiological relevance [20].

Protocol: Biochemical Assay Development for Kinase Targets [24]

- Target Preparation: Produce and purify recombinant kinase domain, confirming enzymatic activity via phosphotransfer assays.

- Substrate Selection: Identify physiologically relevant peptide substrates with optimal kinetic parameters (KM < 100 μM).

- Detection Method: Implement radioisotopic (³³P-ATP) or luminescent detection systems measuring phosphate transfer.

- Miniaturization & Optimization: Transition assay to 384-well format, optimizing DMSO tolerance, incubation time, and reagent concentrations.

- Validation: Establish Z' factor >0.5, signal-to-background ratio >3:1, and coefficient of variation <10% using control inhibitors.
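
The validation criteria in the last step can be computed from control wells alone. The sketch below uses the standard definition Z' = 1 - 3(sd_high + sd_low) / |mean_high - mean_low| together with the signal-to-background ratio and coefficient of variation, and applies the thresholds quoted above. The well readings are invented for illustration.

```python
from statistics import mean, stdev


def z_prime(high, low):
    """Z' factor from high-signal and low-signal control wells."""
    return 1 - 3 * (stdev(high) + stdev(low)) / abs(mean(high) - mean(low))


def signal_to_background(high, low):
    """Ratio of mean high-signal control to mean low-signal control."""
    return mean(high) / mean(low)


def cv_percent(values):
    """Coefficient of variation in percent."""
    return 100 * stdev(values) / mean(values)


def passes_validation(high, low):
    """Apply the thresholds from the protocol: Z' > 0.5, S/B > 3, CV < 10 %."""
    return (
        z_prime(high, low) > 0.5
        and signal_to_background(high, low) > 3
        and max(cv_percent(high), cv_percent(low)) < 10
    )


# Invented control-well readings (arbitrary luminescence units)
high_controls = [1000, 980, 1020, 990, 1010]
low_controls = [100, 95, 105, 98, 102]

print(f"Z' = {z_prime(high_controls, low_controls):.2f}")
print(f"S/B = {signal_to_background(high_controls, low_controls):.1f}")
print("passes:", passes_validation(high_controls, low_controls))
```

In practice these statistics are usually tracked per plate across several days, since a single good plate says little about day-to-day robustness.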

Protocol: Cell-Based Phenotypic Screening [24] [22]

- Cell Line Development: Engineer reporter cell lines expressing fluorescent or luminescent markers under control of pathway-responsive elements.

- Assay Conditions: Define serum concentration, cell density, and compound incubation time through systematic optimization.

- Counter-Screening: Implement orthogonal assays to identify technology-interfering compounds (e.g., auto-fluorescent molecules, luciferase inhibitors).

- Validation: Confirm assay performance using known pathway modulators across multiple cell passages.

Primary Screening and Hit Confirmation

The screening cascade employs sequential filters to identify and validate genuine hits while eliminating false positives.

Figure 2: Screening Cascade for Hit Identification. Multi-stage screening process progressively filters compound libraries to identify validated hits with desired properties.

Protocol: Hit Confirmation Cascade [22]

- Confirmatory Screening: Re-test active compounds from primary screen under identical conditions to assess reproducibility.

- Dose-Response Analysis: Generate concentration-response curves (typically 8-10 point dilutions) to determine potency (IC50, EC50 values).

- Orthogonal Assays: Employ biophysical methods (SPR, ITC, X-ray crystallography) to confirm direct target binding and determine mechanism of action.

- Counter-Screening: Test compounds against related targets and assay technology controls to establish selectivity and eliminate technology artifacts.

- Secondary Assays: Evaluate compounds in functionally relevant cellular models to confirm pharmacological activity.

Hit Criteria and Prioritization

Establishing systematic hit selection criteria is essential for identifying chemical starting points with optimal development potential. While activity thresholds vary by project scope and target class, best practices incorporate multiple parameters [23].

Table 2: Hit Selection Criteria and Optimization Metrics [23] [20]

| Parameter | Typical Hit Threshold | Lead Optimization Target | Measurement Method |

|---|---|---|---|

| Potency | IC50 < 10-50 μM | IC50 < 100 nM | Concentration-response assays |

| Ligand Efficiency | ≥ 0.3 kcal/mol/HA (fragments) | Maintained or improved | Calculated from potency and size |

| Selectivity | >10-100 fold vs. related targets | >100-fold selectivity | Counter-screening panel |

| Solubility | >10 μM in PBS | >100 μM | Kinetic solubility assay |

| Chemical Tractability | Presence of synthetic handles | Robust SAR established | Medicinal chemistry assessment |

| Cellular Activity | Consistent with biochemical potency | <1 μM in cellular assays | Cell-based secondary assays |
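
Ligand efficiency in the table above is calculated from potency and size. A minimal sketch follows, assuming that IC50 can stand in for the dissociation constant (an approximation that depends on assay conditions) and using LE = -RT ln(IC50) / heavy-atom count. The two compounds are hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def ligand_efficiency(ic50_molar, heavy_atoms, temperature_k=298.15):
    """Ligand efficiency in kcal/mol per heavy atom.

    Treats IC50 (in mol/L) as an approximation of the dissociation constant.
    """
    if ic50_molar <= 0 or heavy_atoms <= 0:
        raise ValueError("IC50 and heavy-atom count must be positive")
    delta_g = R_KCAL * temperature_k * math.log(ic50_molar)
    return -delta_g / heavy_atoms


# Hypothetical compounds: a weak but small fragment and a more potent, larger hit
le_fragment = ligand_efficiency(100e-6, heavy_atoms=13)   # 100 uM, 13 heavy atoms
le_hts_hit = ligand_efficiency(10e-6, heavy_atoms=30)     # 10 uM, 30 heavy atoms

print(f"fragment LE = {le_fragment:.2f} kcal/mol/HA")
print(f"HTS hit  LE = {le_hts_hit:.2f} kcal/mol/HA")
```

Here the fragment clears the 0.3 kcal/mol/HA threshold despite being ten times less potent, which illustrates why size-normalized metrics can reorder a hit list.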

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagents for Screening Operations [24] [22]

| Reagent Category | Specific Examples | Function in Screening | Considerations |

|---|---|---|---|

| Compound Libraries | Diverse small molecules, Fragments, Natural products | Source of chemical starting points | Diversity, quality, drug-likeness |

| Detection Reagents | ³³P-ATP, Fluorescent probes, Luminescent substrates | Enable measurement of biological activity | Signal-to-background, interference |

| Cellular Systems | Reporter cell lines, Primary cells, Engineered tissues | Provide physiological context | Relevance, stability, scalability |

| Protein Targets | Recombinant enzymes, Purified receptors, Membrane preparations | Biological targets for screening | Activity, purity, stability |

| Assay Platforms | Radioisotopic filtration, Fluorescence polarization, TR-FRET | Technology for detecting activity | Sensitivity, robustness, cost |

| Biophysical Tools | SPR chips, Crystallography plates, ITC reagents | Confirm binding and characterize interactions | Throughput, information content |

Screening in Reaction Discovery Research

While biological screening dominates drug discovery, analogous methodologies are increasingly applied to reaction discovery and optimization. The principles of systematic exploration, robust detection, and iterative optimization translate effectively to chemical reaction development.

Parallels Between Biological and Reaction Screening

Reaction discovery employs screening methodologies to identify optimal catalysts, conditions, and substrate combinations. High-Throughput Experimentation (HTE) in chemistry mirrors biological HTS, enabling rapid evaluation of thousands of reaction conditions [25]. Recent advances integrate artificial intelligence with experimental screening to prioritize promising reaction spaces, directly analogous to virtual screening in drug discovery [25].

Self-Driving Laboratories for Reaction Optimization

The Reac-Discovery platform exemplifies the convergence of screening and automation in reaction engineering. This integrated system combines:

- Reac-Gen: Parametric design of reactor geometries using mathematical models

- Reac-Fab: High-resolution 3D printing of customized reactors

- Reac-Eval: Automated evaluation with real-time NMR monitoring and machine learning optimization [26]

This closed-loop approach simultaneously optimizes both process parameters (temperature, flow rates, concentration) and topological descriptors (reactor geometry), dramatically accelerating the discovery of efficient catalytic systems [26].

Data-Driven Reaction Discovery

Advanced data analytics transform reaction screening from empirical optimization to predictive science. Large Language Models (LLMs) process extensive chemical literature to extract trends, substrate combinations, and reaction conditions, generating testable hypotheses for experimental validation [25]. This methodology, exemplified in cross-electrophile coupling (XEC) case studies, identifies unexplored substrate pairs and designs efficient screening strategies that minimize reliance on serendipity [25].

Emerging Technologies and Future Directions

Screening methodologies continue to evolve through integration of advanced computational and engineering technologies. Artificial intelligence and machine learning now augment multiple screening stages, from virtual compound prioritization to experimental design [20] [26]. These tools analyze complex datasets to identify patterns beyond human discernment, improving prediction accuracy and reducing experimental requirements.

Self-driving laboratories represent the frontier of integrated screening, combining automated experimentation with real-time analysis and adaptive decision-making [26]. These systems continuously refine experimental parameters based on incoming results, dramatically accelerating optimization cycles. The Reac-Discovery platform demonstrates this principle in catalytic reactor optimization, achieving record performance in multiphase reactions through simultaneous process and topological optimization [26].

DNA-encoded library technology continues to expand accessible chemical space, with libraries now exceeding 150 billion compounds in some screening platforms [22]. Combined with advanced detection methods and computational analysis, DEL screening provides unprecedented access to novel chemotypes for challenging biological targets.

Screening methodologies occupy a central, indispensable role in the drug discovery workflow, providing the critical transition from biological targets to chemical starting points. The continued evolution of screening technologies—from HTS to virtual screening, fragment-based approaches, and DEL screening—has progressively enhanced the efficiency and success rates of early drug discovery. As these methodologies mature, their principles and applications increasingly extend to reaction discovery research, creating parallel frameworks for biological and chemical exploration.

The future of screening lies in the deeper integration of experimental and computational approaches, with AI-driven prioritization guiding automated experimentation in iterative optimization cycles. These advanced screening paradigms will continue to reduce discovery timelines, improve success rates, and expand the accessible frontiers of both therapeutic and chemical space. For researchers engaged in both biological and reaction discovery, mastering these screening methodologies remains essential for success in an increasingly complex and competitive landscape.

Selecting and Executing the Right Screening Design for Your Reaction

In reaction discovery and pharmaceutical development, researchers routinely face the challenge of evaluating numerous potential factors to identify those with significant effects on critical outcomes such as yield, purity, or potency. Screening designs provide a systematic, statistically grounded framework for this initial investigation, allowing for the efficient evaluation of multiple factors simultaneously. These designs are employed early in experimental processes when the primary goal is to identify the most influential factors from a large set of candidates, thereby conserving resources and guiding subsequent optimization efforts [16]. The fundamental principle underpinning screening experiments is effect sparsity: the assumption that only a small subset of factors will have substantial effects on the response [27]. This principle justifies the use of fractional designs that strategically sacrifice some information to achieve efficiency.

This guide focuses on three predominant screening design types—Fractional Factorial, Plackett-Burman, and Definitive Screening Designs—framed within the context of reaction discovery research. Each design offers a distinct balance of run efficiency, confounding structure, and ability to detect interactions and curvature, making them suitable for different stages and objectives within the drug development pipeline. By understanding the properties and appropriate applications of each design, researchers and scientists can make informed decisions that accelerate the identification of promising reaction pathways and drug candidates.

Core Concepts and Terminology

Before delving into specific designs, it is essential to establish a foundation in the key concepts that govern screening experiments.

- Factors and Levels: A factor is an independent variable suspected of influencing the response (e.g., temperature, catalyst loading, solvent type). The specific values at which a factor is set are its levels. In screening, factors are typically investigated at two levels (low and high), though Definitive Screening Designs introduce a middle level [28] [29].

- Confounding (Aliasing): This occurs when the statistical effect of one factor or interaction is indistinguishable from that of another. Confounding is a direct consequence of not running a full factorial experiment. The goal is to select a fraction where main effects are confounded only with higher-order interactions presumed negligible [30].

- Resolution: The resolution of a design (denoted by Roman numerals) indicates the degree of confounding.

  - Resolution III: Main effects are not confounded with each other but are confounded with two-factor interactions. Use with the assumption that interactions are negligible [30] [31].

  - Resolution IV: Main effects are not confounded with each other or with two-factor interactions, but two-factor interactions are confounded with one another. This allows for unbiased estimation of main effects even if interactions are present [30] [29].

  - Resolution V: Main effects and two-factor interactions are not confounded with each other, though two-factor interactions may be confounded with three-factor interactions [30].

- Design Generators: These are the rules (often based on multiplying higher-order interactions) used to select the specific subset of runs from the full factorial set. They define the alias structure of the design [30].
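To make confounding, resolution, and generators concrete, the following minimal Python sketch builds a 2^(4-1) half fraction and lists which effects share a contrast column. The generator D = ABC is an assumption chosen for illustration (a common choice that gives resolution IV), and the factor names A through D are placeholders; statistical software may select a different generator.

```python
from itertools import combinations, product

# Base factors A, B, C form a full 2^3 factorial; the assumed generator
# D = ABC defines the fourth column, giving 8 runs instead of 16.
runs = []
for a, b, c in product((-1, 1), repeat=3):
    runs.append({"A": a, "B": b, "C": c, "D": a * b * c})

def column(term):
    """Contrast column for a term such as 'AB': product of its factor columns."""
    col = []
    for run in runs:
        value = 1
        for name in term:
            value *= run[name]
        col.append(value)
    return tuple(col)

main_effects = ["A", "B", "C", "D"]
two_factor = ["".join(pair) for pair in combinations("ABCD", 2)]

# Terms with identical contrast columns are completely aliased.
alias_groups = {}
for term in main_effects + two_factor:
    alias_groups.setdefault(column(term), []).append(term)

for terms in alias_groups.values():
    print(" = ".join(terms))
```

With this generator each main effect has its own column, while the six two-factor interactions collapse into three aliased pairs (AB with CD, AC with BD, AD with BC), which is the resolution IV pattern described above.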

The following workflow outlines the strategic decision-making process for selecting and implementing a screening design in a research setting.

Fractional Factorial Designs

Definition and Rationale

A Fractional Factorial Design is a carefully chosen subset (a fraction) of a full factorial design. For k factors each at two levels, a full factorial requires 2^k runs. A fractional factorial design, denoted as 2^(k-r), requires only a fraction of these runs (e.g., 1/2, 1/4, 1/8), making it practical for studying multiple factors with limited resources [30]. Its primary use is to screen a moderate number of factors where some information about interactions is desired, but running a full factorial is impractical.

Key Characteristics and Applications

Fractional factorial designs are characterized by their resolution, which dictates the alias structure. For example, in a resolution III design (e.g., a 2^(3-1) design with 4 runs), main effects are not confounded with each other but are confounded with two-factor interactions [30]. In a resolution IV design, main effects are clear of two-factor interactions, but the two-factor interactions themselves are confounded with each other [30]. These designs are well suited to early-stage reaction discovery, where the aim is to identify critical process parameters. The same approach is used in other process industries: in one semiconductor manufacturing example, Gas Flow, Temp, LF Power, and HF Power were screened to understand their impact on film thickness [30].

Experimental Protocol

A generalized protocol for executing a fractional factorial design is as follows:

- Define the System: Identify k factors to be investigated and assign practical low (-1) and high (+1) levels to each.

- Select the Fraction and Resolution: Choose a 2^(k-r) design with a resolution appropriate for the goals. Resolution IV is often preferred for screening as it protects main effects from two-factor interaction bias [30].

- Generate the Design Matrix: Use statistical software to generate the set of experimental runs. The software will use generators to create the design and define its alias structure.

- Randomize and Execute: Randomize the order of the experimental runs to mitigate the effects of lurking variables. Execute the runs and record the response data.

- Analyze the Data: Fit a statistical model to the data. For saturated models (where the number of estimated terms equals the number of runs), no degrees of freedom remain to estimate error, so conventional p-values are unavailable. In that case, use tools such as half-normal plots and Lenth's pseudo standard error (PSE) to identify active effects [30].
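The analysis step for a saturated design can be sketched as follows. This minimal example computes Lenth's PSE, taken here as 1.5 times the median of the absolute effects that fall below 2.5 times an initial estimate s0 = 1.5 × median(|effect|). The effect values are hypothetical numbers invented for illustration, not results from any experiment in this guide, and the interaction labels stand for alias strings.

```python
from statistics import median

def lenth_pse(effects):
    """Lenth's pseudo standard error for a collection of effect estimates."""
    abs_effects = [abs(e) for e in effects]
    s0 = 1.5 * median(abs_effects)                      # initial robust scale
    trimmed = [e for e in abs_effects if e < 2.5 * s0]  # drop likely-active effects
    return 1.5 * median(trimmed)

# Hypothetical effect estimates from a saturated 8-run design (7 effects).
effects = {
    "A": 11.5, "B": 2.4, "C": -1.9, "D": 14.5,
    "AB": 3.0, "AC": -13.0, "AD": 0.8,
}

pse = lenth_pse(effects.values())
print(f"PSE = {pse:.3f}")
for name, est in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>3}: effect = {est:6.1f}   |effect|/PSE = {abs(est) / pse:5.2f}")
```

In Lenth's method the ratios are then compared with a t reference distribution on roughly m/3 degrees of freedom, where m is the number of effects. That comparison is left out here to keep the sketch free of third-party dependencies.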

Table 1: Analysis of a 2^(4-1) Fractional Factorial Design for a Polymerization Reaction

| Factor | Low Level (-1) | High Level (+1) | Standardized Effect Estimate | Status (α=0.10) |
|---|---|---|---|---|
| Catalyst Type (A) | Type I | Type II | 5.75 | Active |
| Temperature (B) | 80 °C | 100 °C | 1.20 | Not Active |
| Concentration (C) | 0.5 M | 1.0 M | -0.95 | Not Active |
| Stir Rate (D) | 200 rpm | 400 rpm | 7.25 | Active |
| A*B (Interaction) | - | - | 1.50 | Not Active |
| C*D (Interaction) | - | - | -6.50 | Active (Aliased) |

Because two-factor interactions in a half fraction are aliased with other effects, an interaction flagged as active (here C*D) identifies an alias string rather than a single interaction, and follow-up runs are needed to resolve it.

Plackett-Burman Designs

Definition and Rationale

Plackett-Burman Designs are a specific class of two-level resolution III screening designs used to study up to n-1 factors in n experimental runs, where n is a multiple of 4 (e.g., 4, 8, 12, 16, 20) [31]. Their key advantage is run-number flexibility: sizes such as 12 and 20 fall between the powers of two required by traditional fractional factorials, allowing researchers to screen a large number of factors without jumping to the next power of two. This makes them well suited to situations with severe resource constraints.

Key Characteristics and Applications

These designs are resolution III, meaning main effects are not confounded with each other but are confounded with two-factor interactions. A critical feature is that the confounding is partial: a main effect is partially confounded with many two-factor interactions rather than completely confounded with a single one [31]. An active interaction therefore biases several main-effect estimates by a fraction of its size instead of masking one of them entirely, which still allows large main effects to be detected. The analysis of Plackett-Burman designs relies heavily on the assumption that two-factor interactions are negligible. They have been applied in diverse fields, from screening ten factors affecting polymer hardness in 12 runs [31] to identifying key parameters in cross-coupling reactions [32].
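The partial confounding described above can be checked numerically. The sketch below builds a 12-run Plackett-Burman design from the standard cyclic generator row and measures how strongly one main-effect column correlates with each two-factor interaction among the other ten columns. The column indices are arbitrary placeholders, and the row-wise cyclic layout is one conventional way to write the design; software output may order rows and columns differently.

```python
from itertools import combinations

# Standard generator row for the 12-run Plackett-Burman design (11 entries).
generator = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]

# Rows 1-11 are cyclic shifts of the generator; row 12 is all -1.
design = [generator[-i:] + generator[:-i] for i in range(11)]
design.append([-1] * 11)

def col(j):
    return [row[j] for row in design]

def corr(u, v):
    # Both vectors are balanced +/-1 columns, so correlation = mean product.
    return sum(a * b for a, b in zip(u, v)) / len(u)

# Main-effect columns are mutually orthogonal.
assert all(corr(col(i), col(j)) == 0 for i, j in combinations(range(11), 2))

# Correlation of main effect 0 with each two-factor interaction of columns 1-10.
x0 = col(0)
aliasing = {
    (i, j): corr(x0, [a * b for a, b in zip(col(i), col(j))])
    for i, j in combinations(range(1, 11), 2)
}
print(len(aliasing), "interactions; |correlation| values:",
      sorted({round(abs(r), 3) for r in aliasing.values()}))
```

In the 12-run design every one of these correlations has magnitude 1/3, so each interaction leaks a third of its effect, with varying sign, into the main-effect estimate rather than being indistinguishable from it.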

Experimental Protocol

- Define Factors and Levels: Select the factors to be screened and set their two levels.

- Determine Run Size: Choose a Plackett-Burman design whose number of runs n is the smallest multiple of 4 that exceeds the number of factors, so that its n-1 columns can accommodate them. Choosing a larger n leaves unused (dummy) columns that can be used to estimate error.

- Construct the Design: Statistical software can generate the design matrix. The runs are typically presented in a standardized order and must be randomized before execution.

- Run the Experiment and Collect Data: Conduct the experiments in the randomized order and measure the response(s).

- Analyze the Data: Fit a main-effects-only model. When fewer than n-1 factors are assigned, the design is not saturated and the unused columns supply degrees of freedom for error, so p-values can usually be calculated for the effects. A common strategy is to use a higher significance level (e.g., α=0.10) to avoid missing potentially important factors [31].

Table 2: Plackett-Burman Design for Screening 6 Factors in 12 Runs

| Run # | Catalyst (A) | Ligand (B) | Temp (C) | Solvent (D) | Conc (E) | Time (F) | Dummy (G) | Yield (%) |
|---|---|---|---|---|---|---|---|---|
| 1 | +1 | -1 | +1 | -1 | -1 | -1 | +1 | 85 |
| 2 | +1 | +1 | -1 | +1 | -1 | -1 | -1 | 62 |
| 3 | -1 | +1 | +1 | -1 | +1 | -1 | -1 | 78 |
| 4 | +1 | -1 | +1 | +1 | -1 | +1 | -1 | 81 |
| 5 | +1 | +1 | -1 | +1 | +1 | -1 | +1 | 65 |
| 6 | +1 | +1 | +1 | -1 | +1 | +1 | -1 | 90 |
| 7 | -1 | +1 | +1 | +1 | -1 | +1 | +1 | 74 |
| 8 | -1 | -1 | +1 | +1 | +1 | -1 | +1 | 70 |
| 9 | -1 | -1 | -1 | +1 | +1 | +1 | -1 | 55 |
| 10 | +1 | -1 | -1 | -1 | +1 | +1 | +1 | 58 |
| 11 | -1 | +1 | -1 | -1 | -1 | +1 | +1 | 60 |
| 12 | -1 | -1 | -1 | -1 | -1 | -1 | -1 | 48 |
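As a worked illustration of the main-effects analysis, the sketch below estimates each effect from the Table 2 data as the mean yield at the high level minus the mean yield at the low level. It covers only the contrast calculation; p-values and model fitting would normally be handled by statistical software, and the short factor labels are taken from the table headers.

```python
factors = ["Catalyst", "Ligand", "Temp", "Solvent", "Conc", "Time", "Dummy"]

# Rows copied from Table 2: seven design columns followed by yield (%).
table2 = [
    (+1, -1, +1, -1, -1, -1, +1, 85),
    (+1, +1, -1, +1, -1, -1, -1, 62),
    (-1, +1, +1, -1, +1, -1, -1, 78),
    (+1, -1, +1, +1, -1, +1, -1, 81),
    (+1, +1, -1, +1, +1, -1, +1, 65),
    (+1, +1, +1, -1, +1, +1, -1, 90),
    (-1, +1, +1, +1, -1, +1, +1, 74),
    (-1, -1, +1, +1, +1, -1, +1, 70),
    (-1, -1, -1, +1, +1, +1, -1, 55),
    (+1, -1, -1, -1, +1, +1, +1, 58),
    (-1, +1, -1, -1, -1, +1, +1, 60),
    (-1, -1, -1, -1, -1, -1, -1, 48),
]

def main_effect(j):
    """Mean response at the high level minus mean response at the low level."""
    high = [row[-1] for row in table2 if row[j] == +1]
    low = [row[-1] for row in table2 if row[j] == -1]
    return sum(high) / len(high) - sum(low) / len(low)

effects = {name: main_effect(j) for j, name in enumerate(factors)}

# List effects from largest to smallest magnitude.
for name, est in sorted(effects.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:<9}{est:+7.2f}")
```

On the tabulated yields, the temperature contrast is the largest by a wide margin and the dummy contrast is close to zero, which is the pattern one would hope to see if the main-effects-only assumption is reasonable.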

Definitive Screening Designs

Definition and Rationale

Definitive Screening Designs (DSDs) are a modern class of screening designs that offer distinct advantages for reaction discovery. Each continuous factor in a DSD is studied at three levels: low (-1), center (0), and high (+1) [28]. DSDs are efficient in both statistical and practical terms, requiring only slightly more than twice as many runs as there are factors [28] [29].

Key Characteristics and Applications

DSDs possess several powerful properties that make them exceptionally useful for screening:

- Orthogonality: Main effects are completely independent of (orthogonal to) both two-factor interactions and quadratic effects. This means the estimate of a main effect is not biased even if interactions or curvature are present [28].

- No Complete Confounding: No two-factor interactions are completely confounded with one another, reducing ambiguity in identifying active interactions [28] [29].

- Curvature Detection: The three-level structure allows for the estimation of quadratic effects, helping researchers identify factors where the optimal setting is at an intermediate level, not at an extreme [28] [29].

- Path to Optimization: If only a few active factors are found, the DSD data can often be used directly to fit a full quadratic model for optimization, eliminating the need for a follow-up experiment [28].
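The properties listed above can be verified on a small case. The sketch below assembles a 13-run DSD for six factors by stacking a 6×6 conference matrix, its sign-reversed foldover, and one center run. It then checks that main effects are orthogonal to each other, to quadratic columns, and to two-factor interaction columns. Building the conference matrix by the Paley method is an assumption made for this illustration; statistical software may produce an equivalent design with different row and column ordering.

```python
from itertools import combinations

q = 5  # Paley construction: conference matrix of order q + 1 = 6

def chi(x):
    """Quadratic character mod 5: 0 for 0, +1 for squares (1, 4), -1 otherwise."""
    x %= q
    if x == 0:
        return 0
    return 1 if x in (1, 4) else -1

# Conference matrix C: zero diagonal, +/-1 elsewhere, orthogonal columns.
C = [[0] + [1] * q] + [[1] + [chi(i - j) for j in range(q)] for i in range(q)]

# DSD = C, its foldover -C, and a center run: 2k + 1 = 13 runs for k = 6.
design = C + [[-v for v in row] for row in C] + [[0] * (q + 1)]
k = q + 1

def col(j):
    return [row[j] for row in design]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

pairs = list(combinations(range(k), 2))
interactions = {p: [x * y for x, y in zip(col(p[0]), col(p[1]))] for p in pairs}

# Main effects are orthogonal to each other, to quadratics, and to interactions.
assert all(dot(col(i), col(j)) == 0 for i, j in pairs)
assert all(dot(col(i), [v * v for v in col(j)]) == 0
           for i in range(k) for j in range(k))
assert all(dot(col(i), inter) == 0 for i in range(k) for inter in interactions.values())

# No two interaction columns are identical or exact negatives of each other.
for p, r in combinations(pairs, 2):
    u, v = interactions[p], interactions[r]
    assert u != v and u != [-x for x in v]

print(len(design), "runs for", k, "factors")
```

The foldover structure is what removes the interaction and quadratic terms from the main-effect estimates: every run is paired with its mirror image, so any product of an odd number of factor columns sums to zero.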

Experimental Protocol

- Define Continuous Factors: DSDs are ideal for continuous factors (e.g., temperature, time, concentration). Set the low, middle, and high levels for each.

- Generate the Design: Use statistical software to create the DSD. The design will consist of 2k + 1 runs for k factors, often augmented with a few extra runs for better precision [28].

- Execute the Experiment: Run the experiments in a randomized order. The design includes foldover pairs and a center point.

- Analyze the Data: Begin by fitting a main effects model. Use Pareto charts or other methods to identify active main effects. Then, refine the model by adding potential interaction and quadratic terms for the active factors, using variable selection techniques to arrive at a final model [28] [29].

Table 3: Comparison of Screening Design Properties

| Characteristic | Fractional Factorial | Plackett-Burman | Definitive Screening |
|---|---|---|---|
| Typical Runs for k=6 | 8 (1/8 fraction) | 12 | 13 |
| Factor Levels | 2 | 2 | 3 |
| Resolution | III, IV, V | III | IV |
| Main Effects Aliasing | Confounded with interactions in Res III | Partially confounded with many 2FI | Not confounded with 2FI or quadratic |
| 2FI Aliasing | Confounded with other 2FI or main effects | Partially confounded with many other 2FI | Partially confounded, but not completely |
| Quadratic Effects | Not estimable | Not estimable | Estimable |
| Best Use Case | Moderate factor count, some run flexibility | High factor count, severe run constraints | Suspected curvature, a path to optimization |

The Scientist's Toolkit: Essential Reagents and Materials

The following table details key reagents and materials commonly employed in screening experiments for reaction discovery and pharmaceutical development.

Table 4: Key Research Reagent Solutions for Screening Experiments

| Reagent/Material | Function in Screening Experiments | Application Example |
|---|---|---|
| Phosphine Ligands | Modulate steric and electronic properties of metal catalysts, directly influencing activity and selectivity. | Screening ligand effects in Pd-catalyzed cross-coupling reactions (e.g., Suzuki, Heck) [32]. |
| Palladium Catalysts (e.g., Pd(OAc)₂, K₂PdCl₄) | Serve as precatalysts for a wide range of carbon-carbon bond-forming reactions. | Catalyst loading is a common continuous factor to screen in reaction discovery [32]. |
| Polar Aprotic Solvents (e.g., DMSO, MeCN) | Affect reaction rate, solubility, and mechanism through polarity and solvation without acting as proton donors. | Solvent polarity is a key categorical or continuous factor in screening designs [32]. |
| Inorganic and Organic Bases (e.g., NaOH, Et₃N) | Scavenge acids, facilitate key mechanistic steps (e.g., transmetalation), and impact reaction kinetics. | Base strength and number of equivalents are critical factors to screen in base-promoted reactions [32]. |
| Internal Standards (e.g., Dodecane) | Added to reaction mixtures to enable precise quantification of yield and conversion via GC or LC analysis. | Used for accurate, reproducible measurement of the response variable (e.g., yield) in all run types [32]. |