Strategic Byproduct Reduction: A Design of Experiments Framework for Pharmaceutical R&D

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying Design of Experiments (DoE) to minimize byproduct formation. It covers the foundational principles of identifying byproduct mechanisms, details practical DoE methodologies for screening and optimization, addresses common troubleshooting scenarios, and outlines strategies for validation and regulatory alignment. By integrating concepts from Quality by Design (QbD), the content demonstrates how a systematic DoE approach can enhance process robustness, reduce development costs, and ensure the quality and safety of pharmaceutical products.

Understanding Byproduct Formation: Mechanisms, Sources, and Impact on Drug Quality

In drug development and research, controlling byproduct formation is critical for ensuring product safety, stability, and efficacy. Three common pathways—autoxidation, hydrolysis, and peroxide-mediated reactions—are frequently responsible for the generation of undesirable byproducts that can compromise pharmaceutical quality. Autoxidation involves the spontaneous oxidation of compounds by molecular oxygen, while hydrolysis entails cleavage of chemical bonds by water. Peroxide-mediated reactions utilize hydrogen peroxide or organic peroxides as oxidizing agents, which can be introduced as impurities or formed in situ through other chemical processes. Understanding the mechanisms, influencing factors, and detection methods for these pathways enables researchers to design robust experimental protocols that minimize byproduct formation and enhance product quality.

Frequently Asked Questions (FAQs)

Q1: What are the primary factors that accelerate autoxidation in pharmaceutical formulations? Several key factors influence autoxidation rates:

- pH: Alkaline conditions significantly accelerate autoxidation of many phenolic compounds, because the phenolate anion is more reactive than the undissociated phenol [1].

- Temperature: Elevated temperatures increase reaction rates; studies show heating phosphate buffer from room temperature to 75°C increased H₂O₂ formation [1].

- Oxygen concentration: Higher oxygen availability promotes autoxidation.

- Light exposure: UV and visible light can initiate radical chains.

- Metal ion contaminants: Transition metals like iron and copper catalyze autoxidation, even at trace concentrations [1].
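
The pH effect noted above can be illustrated with the Henderson-Hasselbalch relationship, which gives the fraction of a monoprotic phenol present as the more reactive phenolate. The following is a minimal sketch; the pKa of 9.0 is an assumed, hypothetical value chosen for illustration and is not taken from the cited sources.

```python
def phenolate_fraction(ph: float, pka: float) -> float:
    """Fraction of a monoprotic phenol present as phenolate (Henderson-Hasselbalch)."""
    return 1.0 / (1.0 + 10.0 ** (pka - ph))


# Hypothetical pKa for illustration only; use a measured or literature value.
ASSUMED_PKA = 9.0

fractions = {ph: phenolate_fraction(ph, ASSUMED_PKA) for ph in (4.0, 6.0, 7.0, 8.0)}
for ph, frac in fractions.items():
    print(f"pH {ph:.1f}: phenolate fraction = {frac:.5f}")

# Well below the pKa, each pH unit raises the phenolate fraction about tenfold.
ratio_8_vs_6 = fractions[8.0] / fractions[6.0]
print(f"Fold increase in phenolate fraction from pH 6 to pH 8: {ratio_8_vs_6:.0f}x")
```

Under this assumption the phenolate fraction rises by roughly two orders of magnitude between pH 6 and pH 8, which is one plausible reason a small pH shift can change autoxidation rates so strongly.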

Q2: How does hydrogen peroxide form spontaneously in common laboratory reagents and buffers? Hydrogen peroxide can form through multiple mechanisms:

- Microdroplet formation: Atomizing bulk water into microdroplets (1-20 μm) can generate ~30 μM H₂O₂ via hydroxyl radical recombination [1].

- Mechanical agitation: Shaking air-saturated water at 30 Hz can produce H₂O₂ at ~1 nM/min [1].

- Thermal effects: Heating air-saturated phosphate buffer (pH 6.8) to 75°C for 4 hours increased H₂O₂ from 5.7 nM to approximately 8.5 nM [1].

- Photochemical generation: Exposure to light can promote H₂O₂ formation in various solutions.

Q3: Which amino acids are most susceptible to oxidation via peroxide-mediated pathways? Methionine and cysteine are highly vulnerable to peroxide-mediated oxidation:

- Methionine: Can experience 25-75% loss, primarily forming methionine sulfoxide through a two-electron oxidation pathway [2].

- Cysteine: Effectively scavenges H₂O₂ and is prone to oxidation [2].

- Aromatic amino acids: Tryptophan can be oxidized to dioxindolyl-ʟ-alanine, kynurenine, N′-formylkynurenine, and 5-hydroxytryptophan, and tyrosine to 3,4-dihydroxyphenylalanine, though typically in lower amounts (nmol/mol to mmol/mol range) [2].

Q4: What analytical approaches are most effective for detecting and quantifying byproducts from these pathways?

- LC-Orbitrap-MS/MS: Effectively identifies protein-polyphenol adducts (>177 distinct adducts identified in one study) and oxidation products [2].

- Cellular Thermal Shift Assay (CETSA): Validates direct target engagement in intact cells and tissues, helping identify unintended modifications [3].

- HPLC with UV/Vis detection: Monitors polyphenol oxidation and related byproducts.

- Spectrophotometric assays: Detect hydrogen peroxide formation using peroxidase-coupled reactions.

Troubleshooting Guides

Problem: Unexpected Hydrogen Peroxide Formation in Solutions

Symptoms: Solution discoloration, precipitation, decreased API potency, unexpected cytotoxicity in biological assays.

Investigation Steps:

- Test for H₂O₂ using commercial peroxide test strips or spectrophotometric assays.

- Review recent handling procedures: check for vigorous shaking, heating, or exposure to light.

- Analyze solution composition for known H₂O₂ precursors (e.g., polyphenols, ascorbate, thiols).

- Evaluate pH dependence, as autoxidation rates often increase significantly above pH 6 [1].

Resolution Strategies:

- Add chelators: Include EDTA or deferoxamine (0.1-1 mM) to chelate metal catalysts [1].

- Adjust pH: When possible, formulate at lower pH (pH 4-6) to minimize autoxidation.

- Use oxygen-free atmosphere: Sparge solutions with nitrogen or argon and maintain under inert gas.

- Include antioxidants: Add appropriate radical scavengers like tocopherol or ascorbate (considering their potential pro-oxidant effects).

Problem: Protein Oxidation and Aggregation

Symptoms: Protein aggregation, loss of enzymatic activity, unusual migration on SDS-PAGE, particulates in formulations.

Investigation Steps:

- Perform LC-MS to identify specific amino acid modifications (e.g., methionine sulfoxide).

- Test for reactive oxygen species in buffer components.

- Evaluate metal contamination in buffers and storage containers.

- Assess correlation between mechanical stress (shipping, handling) and oxidation.

Resolution Strategies:

- Add protective excipients: Include methionine (1-10 mM) as a sacrificial antioxidant for methionine residues [2].

- Use metal-free containers: Store solutions in plastic rather than glass when possible.

- Implement cryoprotection: For freeze-thaw sensitive proteins, add sucrose or trehalose.

- Purge with inert gas: Reduce oxygen content in headspace of storage containers.

Problem: Hydrolysis of Active Pharmaceutical Ingredients

Symptoms: pH drift, loss of potency, appearance of new peaks in chromatograms, particularly after storage.

Investigation Steps:

- Conduct forced degradation studies at different pH values.

- Identify hydrolysis products using LC-MS.

- Evaluate moisture content in solid formulations.

- Assess packaging integrity and moisture barrier properties.

Resolution Strategies:

- Optimize formulation pH: Identify and use pH of maximum stability.

- Use appropriate packaging: Implement moisture-resistant containers with desiccants.

- Employ lyophilization: Convert to solid state for moisture-sensitive compounds.

- Modify molecular structure: When possible, incorporate hydrolytically stable bioisosteres.

Quantitative Data on Byproduct Formation

Table 1: Hydrogen Peroxide Generation from Polyphenol Autoxidation

| Polyphenol (4 mM) | H₂O₂ Produced (μM) | Incubation Conditions | Key Influencing Factors |
|---|---|---|---|
| Epigallocatechin gallate (EGCG) | Varies up to ~242 | pH-dependent, 37°C | 100x increase from pH 6→8 [1] |
| General Polyphenols | 0.2 - 242 | Time, temperature, pH dependent | Higher pH, transition metals [2] |
| Catechin derivatives | Variable | Metal-catalyzed | Enhanced by Cu²⁺, Fe²⁺ [1] |

Table 2: Amino Acid Susceptibility to Peroxide-Mediated Oxidation

| Amino Acid | Oxidation Products | Relative Susceptibility | Scavenging Efficiency |
|---|---|---|---|
| Methionine | Methionine sulfoxide | High (25-75% loss) | Moderate |
| Cysteine | Cystine, higher oxides | High | Complete H₂O₂ scavenging [2] |
| Tryptophan | Dioxindolyl-ʟ-alanine, kynurenine, N′-formylkynurenine | Moderate | Low |
| Tyrosine | 3,4-Dihydroxyphenylalanine | Moderate | Low |

Experimental Protocols for Byproduct Investigation

Protocol: Quantifying Hydrogen Peroxide Formation from Compound Autoxidation

Purpose: Measure H₂O₂ generation from test compounds under various conditions.

Materials:

- Test compounds (e.g., polyphenols, ascorbate, thiols)

- Phosphate or other appropriate buffers (10-100 mM)

- Horseradish peroxidase (HRP, 10 U/mL)

- Colorimetric substrate (e.g., Amplex Red, 100 μM)

- Microplate reader or spectrophotometer

- Chelators (EDTA, deferoxamine) for metal-free conditions

Procedure:

- Prepare compound solutions (typically 1-10 mM) in appropriate buffers at desired pH.

- Incubate at relevant temperatures (25-37°C) for predetermined times (1-24 hours).

- Remove aliquots at time points and mix with HRP and substrate solution.

- Measure absorbance or fluorescence according to substrate specifications.

- Quantify H₂O₂ using a standard curve (0-100 μM).

- Repeat under metal-free conditions (with chelators) and at different pH values.

Variations:

- Include transition metals (Fe²⁺, Cu²⁺, 1-100 μM) to assess catalytic effects.

- Exclude oxygen by purging with nitrogen to confirm oxidative mechanism.

- Test the impact of light exposure versus dark conditions.
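
The standard-curve step in the procedure above can be sketched as a simple linear calibration. This is an illustrative example only: the standard concentrations and signal values are hypothetical, and the function names `fit_line` and `signal_to_conc` are introduced here for the sketch. A linear response over 0-100 μM is assumed and should be confirmed for the substrate actually used.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def signal_to_conc(signal, slope, intercept, max_conc):
    """Convert a blank-inclusive signal to concentration; refuse to extrapolate upward."""
    conc = (signal - intercept) / slope
    if conc > max_conc:
        raise ValueError("Signal above the highest standard; dilute and re-measure.")
    return conc


# Hypothetical H2O2 standards (uM) and their measured signals (arbitrary units).
std_conc_um = [0.0, 10.0, 25.0, 50.0, 100.0]
std_signal = [0.02, 0.12, 0.27, 0.52, 1.02]

slope, intercept = fit_line(std_conc_um, std_signal)
sample_conc = signal_to_conc(0.32, slope, intercept, max_conc=max(std_conc_um))
print(f"slope={slope:.4f} per uM, intercept={intercept:.4f}, sample={sample_conc:.1f} uM")
```

Raising an error for signals above the top standard is a deliberate choice in this sketch, since values outside the calibrated range should be diluted and re-measured rather than extrapolated.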

Protocol: Assessing Protein Oxidation and Adduct Formation

Purpose: Evaluate protein modification resulting from autoxidation or peroxide-mediated reactions.

Materials:

- Target protein (e.g., BSA, β-lactoglobulin, therapeutic protein)

- Oxidizing agents (polyphenols, H₂O₂, radical initiators)

- LC-MS/MS system (Orbitrap preferred)

- Proteomic digestion reagents (trypsin, digestion buffer)

- Solid-phase extraction materials for cleanup

Procedure:

- Incubate protein (1-10 mg/mL) with oxidizing compounds or under oxidizing conditions.

- At time points, remove aliquots and stop reaction with specific inhibitors.

- Digest protein with trypsin using standard proteomic protocols.

- Analyze peptides by LC-MS/MS with data-dependent acquisition.

- Search data for specific modifications: methionine sulfoxide (+16 Da), kynurenine (+4 Da), protein-polyphenol adducts.

- Quantify modification extent using label-free or labeled quantitation methods.
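
For the search and quantitation steps above, the following minimal sketch shows how a modification's mass shift translates to an expected precursor m/z and how a label-free percent-modified value is commonly estimated from peak areas. The peptide m/z and peak areas are hypothetical, the helper names are introduced here for illustration, and the calculation assumes the modified and unmodified peptides ionize with similar efficiency, which should be verified.

```python
# Monoisotopic mass shifts in Da (nominal +16 and +4 in the protocol text).
MASS_SHIFTS_DA = {
    "Met sulfoxide": 15.9949,
    "Trp -> kynurenine": 3.9949,
}


def modified_mz(unmodified_mz: float, shift_da: float, charge: int) -> float:
    """Expected m/z of the modified peptide at the same charge state."""
    return unmodified_mz + shift_da / charge


def percent_modified(modified_area: float, unmodified_area: float) -> float:
    """Label-free estimate: modified area as a percentage of modified + unmodified."""
    total = modified_area + unmodified_area
    if total <= 0:
        raise ValueError("Peak areas must sum to a positive value.")
    return 100.0 * modified_area / total


# Hypothetical doubly charged Met-containing peptide and extracted peak areas.
oxidized_mz = modified_mz(600.3000, MASS_SHIFTS_DA["Met sulfoxide"], charge=2)
oxidation_pct = percent_modified(modified_area=2.5e6, unmodified_area=7.5e6)
print(f"Expected oxidized m/z: {oxidized_mz:.4f}; estimated oxidation: {oxidation_pct:.1f}%")
```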

Pathway Diagrams

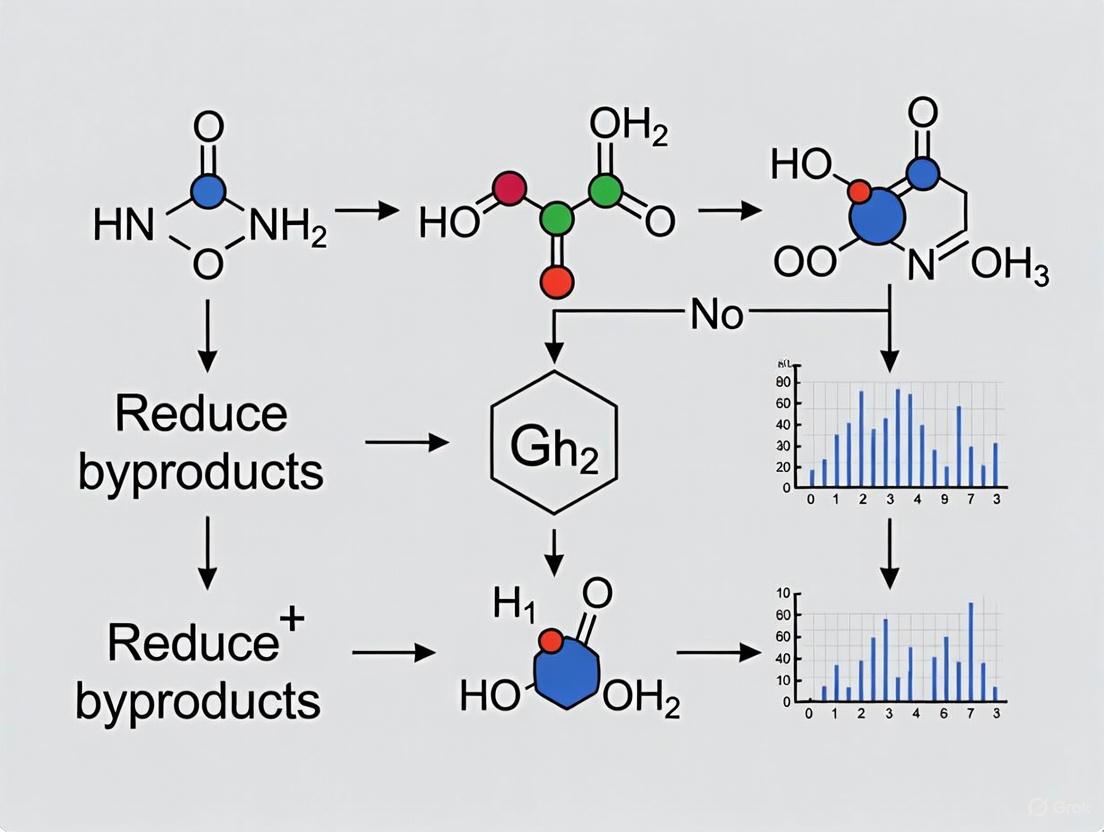

Diagram 1: Pathways of Autoxidation and Peroxide-Mediated Protein Modification. This diagram illustrates how molecular oxygen initiates polyphenol autoxidation, generating hydrogen peroxide and quinones that subsequently mediate protein oxidation and adduct formation through multiple mechanisms.

Diagram 2: Experimental Workflow for Byproduct Investigation and Control. This workflow integrates Quality by Design principles with specific analytical techniques to systematically identify, characterize, and control byproducts throughout development.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Investigating Byproduct Formation Pathways

| Reagent/Category | Specific Examples | Function/Application | Key Considerations |
|---|---|---|---|
| Metal Chelators | EDTA, deferoxamine, DTPA | Inhibit metal-catalyzed autoxidation | Deferoxamine is more specific for iron; EDTA may redox cycle under certain conditions [1] |
| H₂O₂ Detection | Amplex Red/UltraRed, peroxidase, ferrous oxidation-xylenol orange (FOX) assay | Quantify H₂O₂ formation | Amplex Red is more sensitive (nM range); avoid peroxidase inhibition by test compounds |
| Radical Scavengers | Trolox, tocopherol, ascorbate, glutathione | Trap radical intermediates | Ascorbate can be pro-oxidant in some contexts; consider combination approaches |
| Analytical Standards | Methionine sulfoxide, kynurenine, 3,4-dihydroxyphenylalanine | Quantify specific amino acid oxidation products | Essential for LC-MS/MS quantification and method validation |
| Enzymatic Scavengers | Catalase, superoxide dismutase (SOD), glutathione peroxidase | Specific H₂O₂ and superoxide removal | Catalase confirms H₂O₂ involvement; SOD distinguishes superoxide vs. H₂O₂ effects |
| MS-Compatible Buffers | Ammonium bicarbonate, ammonium acetate | LC-MS sample preparation | Avoid non-volatile salts that interfere with MS detection |

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary sources of variability in biopharmaceutical manufacturing? The primary sources of variability include raw material impurities, excipient interactions, and process parameters. Raw materials, even those of the same grade, can have divergent chemical or physical characteristics, contaminants, and impurities between lots, leading to process inconsistencies and yield loss [4]. Excipients and other process components can exhibit lot-to-lot variability that impacts cell growth, stability, and interactions with other processing components [5]. Unoptimized process parameters during upstream production can further introduce variability that challenges downstream purification [6].

FAQ 2: How can risk assessment help in managing raw material variability? Risk assessment provides a structured methodology to systematically identify, evaluate, and mitigate risks associated with raw materials [6]. It enables businesses to make informed decisions, allocate resources effectively, and pinpoint inefficiencies without compromising quality. By implementing effective risk-assessment strategies and working with reliable, selected solution providers, biopharmaceutical manufacturers can minimize these challenges and improve product quality [5].

FAQ 3: Why is high-quality raw material selection crucial for downstream processing? The use of high-quality, consistent raw materials is crucial because many impurities introduced upstream are difficult and costly to remove downstream. For instance, removing lipopolysaccharides and endotoxins downstream is complex and expensive; selecting endotoxin-free starting materials can significantly simplify this step and reduce risk [5]. Downstream processing currently accounts for the majority (about 80%) of the cost to produce and purify a biopharmaceutical active molecule [5].

FAQ 4: What is a mixture design, and how can it help reduce byproducts? A mixture design (experimental design for mixtures) is a Design of Experiments (DoE) approach, rooted in chemometrics, for studying the effects of ingredients in formulations whose proportions must sum to a constant total (100%) [7]. It is particularly useful for understanding how varying the proportions of ingredients affects outcomes such as byproduct formation. Modeling the response(s) gives researchers a global understanding of the system within the defined experimental domain, enabling the optimization of formulations to minimize undesirable byproducts [7].
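
For reference, the mixture constraint and one commonly used model form (the Scheffé quadratic polynomial) can be written as below. The notation is introduced here for illustration and is not taken from [7]: x_i is the proportion of component i, q is the number of components, and the β terms are fitted coefficients. The model has no separate intercept because the proportions sum to one.

```latex
\sum_{i=1}^{q} x_i = 1, \qquad 0 \le x_i \le 1

\hat{y} = \sum_{i=1}^{q} \beta_i x_i + \sum_{i<j} \beta_{ij}\, x_i x_j
```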

Troubleshooting Guides

Problem: Inconsistent Cell Culture Performance

| Potential Cause | Investigation Method | Corrective & Preventive Action |
|---|---|---|
| Raw Material Lot Variability | Test new lots against existing specifications. Perform side-by-side bioreactor runs comparing different lots. | Strengthen supplier qualification and implement raw material risk assessment [6] [5]. Use application-specific raw materials (e.g., Kolliphor P188 Cell Culture) to reduce performance variations [4]. |
| Impurities (e.g., Endotoxins) | Test raw materials for endotoxin levels and other critical impurities. | Source compendial (e.g., Ph. Eur., USP) GMP-grade raw materials where possible [5]. Implement raw material testing strategies aligned with pharmacopeial standards [5]. |

Problem: Increased Levels of Process-Related Impurities (e.g., HCPs, DNA)

| Potential Cause | Investigation Method | Corrective & Preventive Action |
|---|---|---|
| Inefficient Downstream Purification | Track impurity clearance across each purification unit operation. | Re-optimize chromatography steps and cleaning-in-place (CIP) procedures. Consider next-generation flocculants for downstream intensification [4]. |
| Upstream Process Drift | Correlate impurity levels with upstream process parameter data (e.g., cell viability, metabolite profiles). | Control critical process parameters (CPPs) within a tighter design space. Use risk assessment to forecast potential issues and prioritize corrective actions [6]. |
| Copurifying Impurities (e.g., PLBL2) | Use specific ELISA assays to monitor difficult-to-remove host-cell proteins like PLBL2 [6]. | Adjust purification conditions (e.g., pH, conductivity) to disrupt protein-protein interactions. Ensure precise HCP monitoring is in place [6]. |
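
Tracking impurity clearance across unit operations, as suggested in the table above, is often summarized as a log reduction value (LRV) per step. The sketch below is illustrative: the step names and HCP levels (expressed as ng HCP per mg product) are hypothetical, and `log_reduction` is a helper name introduced here.

```python
import math


def log_reduction(level_before: float, level_after: float) -> float:
    """Log reduction value: log10 of the impurity level before vs. after a step."""
    if level_before <= 0 or level_after <= 0:
        raise ValueError("Impurity levels must be positive.")
    return math.log10(level_before / level_after)


# Hypothetical HCP levels (ng HCP per mg product) measured after each step.
hcp_levels = [
    ("Harvest", 100000.0),
    ("Protein A", 1000.0),
    ("Ion exchange", 50.0),
    ("Polishing", 5.0),
]

# Pair each step with the one before it to get per-step clearance.
step_lrv = {}
for (_, before), (name, after) in zip(hcp_levels, hcp_levels[1:]):
    step_lrv[name] = log_reduction(before, after)

total_lrv = sum(step_lrv.values())
for name, lrv in step_lrv.items():
    print(f"{name}: LRV = {lrv:.2f}")
print(f"Overall LRV = {total_lrv:.2f}")
```

A step whose LRV drops between batches is a natural starting point for the re-optimization described in the corrective action column.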

Problem: Inconsistent Final Drug Product Quality

| Potential Cause | Investigation Method | Corrective & Preventive Action |
|---|---|---|
| Excipient-Drug Product Interactions | Conduct formulation compatibility studies using mixture design (DoE) [7]. | Optimize the formulation using a structured DoE approach to understand the effect of excipient proportions on product stability and quality [7]. |
| Unoptimized Formulation | Study the stability of the drug product under various stress conditions (e.g., thermal, mechanical). | Select excipients known for their stabilizing properties, such as sucrose, which serves as an excellent stabilizer for mAb products and as a cryoprotectant [6]. |

Experimental Protocols for Key Investigations

Protocol 1: Assessing Raw Material Lot-to-Lot Variability Using a Risk-Based Approach

Objective: To evaluate the impact of a new lot of a critical raw material (e.g., a cell culture medium component) on process performance and product quality.

Materials:

- New lot and qualified reference lot of the raw material.

- Relevant cell line.

- Bioreactor or shake flask systems.

- Analytics for key quality attributes (e.g., cell viability, titer, product aggregation, HCP levels).

Methodology:

- Risk Ranking: Based on prior knowledge and literature, assign a risk score to the raw material. High-risk materials (e.g., those directly contacting cells or product) proceed to experimental assessment.

- Experimental Design: Perform parallel bioreactor experiments (n≥3) using the new lot (Test) and the qualified reference lot (Control).

- In-Process Monitoring: Monitor and record cell growth (VCD, viability), metabolite profiles (glucose, lactate), and product titer throughout the run.

- Product Quality Analysis: At harvest, analyze the product for critical quality attributes (CQAs) such as:

  - Purity: SEC-HPLC for aggregates and fragments.

  - Impurities: ELISA for HCP; qPCR (or an equivalent nucleic acid assay) for residual DNA.

  - Potency: Relevant bioassay or binding assay.

- Data Analysis: Use statistical tools (e.g., t-test, ANOVA) to compare the performance and CQAs of the Test and Control groups. The new lot is considered equivalent if all CQAs fall within pre-defined acceptance ranges.
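
The data analysis step above can be sketched with the standard library alone. The example computes Welch's t statistic with its degrees of freedom for one CQA and, separately, checks every run against an acceptance range. All values, the acceptance limits, and the function names are hypothetical; the p-value would be read from statistical software, and a non-significant difference on its own does not demonstrate equivalence, which is why the range check is kept separate.

```python
import math
from statistics import mean, variance


def welch_t(test, control):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    n1, n2 = len(test), len(control)
    v1 = variance(test) / n1      # squared standard error, test group
    v2 = variance(control) / n2   # squared standard error, control group
    t_stat = (mean(test) - mean(control)) / math.sqrt(v1 + v2)
    dof = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t_stat, dof


def within_acceptance(values, low, high):
    """True only if every individual run falls inside the acceptance range."""
    return all(low <= v <= high for v in values)


# Hypothetical aggregate levels (%) from n = 3 bioreactor runs per lot.
test_lot = [1.2, 1.4, 1.3]
control_lot = [1.1, 1.3, 1.2]

t_stat, dof = welch_t(test_lot, control_lot)
lot_ok = within_acceptance(test_lot, low=0.0, high=2.0)  # hypothetical limits
print(f"Welch t = {t_stat:.3f}, df = {dof:.1f}, within acceptance range: {lot_ok}")
```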

Protocol 2: Mixture Design (DoE) for Formulation Optimization to Minimize Byproducts

Objective: To systematically determine the optimal proportions of key excipients (e.g., stabilizers, buffers) in a formulation to minimize degradation products (e.g., aggregates).

Materials:

- Drug Substance.

- Excipients (e.g., Sucrose, Polysorbate 80, Histidine buffer).

- Analytical equipment (e.g., SEC-HPLC, visual inspection station).

Methodology:

- Define Goal and Factors: The goal is to minimize aggregation. The factors are the proportions of three excipients (A, B, C), subject to the constraint that A + B + C = 100% of the excipient system.

- Select Design: A simplex-centroid mixture design is often suitable for exploring the full experimental region [7]. This design includes points representing pure components, binary mixtures, and a ternary mixture.

- Prepare Formulations: Prepare the drug product formulations according to the experimental points defined by the design.

- Apply Stress: Subject all formulations to a controlled stress condition (e.g., 40°C for 4 weeks) to accelerate degradation.

- Analyze Response: Measure the percentage of aggregates in each stressed sample using SEC-HPLC.

- Model and Optimize: Use statistical software to fit a regression model (e.g., a special cubic model) to the data. The model will generate a response surface that predicts the aggregation level for any combination of A, B, and C. Identify the excipient proportion that minimizes the aggregation response.
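
The design, modeling, and optimization steps above can be sketched for three components as follows. This is a minimal illustration, not the cited method: the aggregate responses are hypothetical, the function names are introduced here, and the coefficients use the closed-form solution that applies when a special cubic model is fitted to an unreplicated simplex-centroid design (seven runs, seven coefficients). In practice, replicates and statistical software would be used to assess model adequacy.

```python
from itertools import combinations


def simplex_centroid(q):
    """Map each non-empty component subset to its equal-proportion blend."""
    design = {}
    for k in range(1, q + 1):
        for subset in combinations(range(q), k):
            design[subset] = tuple(1.0 / k if i in subset else 0.0 for i in range(q))
    return design


def fit_special_cubic(y):
    """Closed-form special cubic coefficients for a 3-component simplex-centroid design."""
    pairs = list(combinations(range(3), 2))
    b = {(i,): y[(i,)] for i in range(3)}
    for i, j in pairs:
        b[(i, j)] = 4 * y[(i, j)] - 2 * (y[(i,)] + y[(j,)])
    b[(0, 1, 2)] = (27 * y[(0, 1, 2)]
                    - 12 * sum(y[p] for p in pairs)
                    + 3 * sum(y[(i,)] for i in range(3)))
    return b


def predict(b, x):
    """Evaluate the fitted model at blend x = (xA, xB, xC)."""
    total = 0.0
    for term, coef in b.items():
        value = coef
        for i in term:
            value *= x[i]
        total += value
    return total


def best_blend(b, step=0.05):
    """Grid search over the simplex for the lowest predicted response."""
    n = round(1 / step)
    grid = [(i / n, j / n, (n - i - j) / n)
            for i in range(n + 1) for j in range(n + 1 - i)]
    return min(grid, key=lambda x: predict(b, x))


design = simplex_centroid(3)  # 7 runs: 3 pure, 3 binary, 1 ternary

# Hypothetical % aggregates after stress, keyed by the components in each blend.
observed = {(0,): 5.0, (1,): 4.0, (2,): 6.0,
            (0, 1): 3.0, (0, 2): 4.5, (1, 2): 4.0,
            (0, 1, 2): 3.2}

coeffs = fit_special_cubic(observed)
optimum = best_blend(coeffs)
print("Coefficients:", coeffs)
print("Predicted-minimum blend (A, B, C):", optimum, "->", round(predict(coeffs, optimum), 3))
```

Because the model is saturated, it reproduces the seven observed responses exactly, so any predicted optimum should be confirmed with an additional verification run.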

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |
|---|---|
| Application-Specific Raw Materials (e.g., Kolliphor P188 Bio) | Developed to address performance variability in cell culture, providing more consistent shear stress protection and reducing process risks [4]. |
| Compendial GMP Raw Materials (e.g., Kollipro Urea Granules) | A compendial GMP product for use in inclusion body solubilization and chromatography column cleaning. The granule form offers improved flowability, reduced agglomeration, and decreased handling time [4]. |
| Sucrose | A pharmaceutical excipient with a long history of use as an excellent stabilizer for monoclonal antibody (mAb) products, peptide-based drugs, and vaccines. It acts as a cryoprotectant in mRNA-based vaccines [6]. |
| Reference Standards | Used as calibrators to ensure that substances are used consistently, meet the same specifications, and are transferred to consistent production, bridging the gap in raw material guidelines [5]. |
| PLBL2-Specific ELISA Kits | Enable precise monitoring of this high-risk, difficult-to-remove host-cell protein, which is known for its immunogenicity and tendency to copurify with recombinant proteins [6]. |

Workflow and Relationship Diagrams

Diagram 1: A high-level workflow illustrating the logical process for identifying and controlling critical sources of variability to reduce byproducts.

Diagram 2: A detailed workflow for applying a Mixture Design (DoE) to optimize a multi-excipient formulation, with the goal of minimizing degradation byproducts like aggregates.

The Impact of Byproducts on Drug Safety, Efficacy, and Regulatory Compliance

Frequently Asked Questions (FAQs)

Q1: What are the primary sources of byproducts in pharmaceutical products? In a finished drug product, byproducts are often degradation products, and they can originate from multiple sources. Environmental factors like temperature, moisture, light, and oxygen can cause the active pharmaceutical ingredient (API) to break down through processes like hydrolysis and oxidation [8]. Furthermore, interactions between the API and excipients (inactive ingredients), or impurities within the excipients themselves, can catalyze degradation reactions, leading to the formation of unwanted byproducts [8] [9].

Q2: How can byproducts impact drug safety and efficacy? Byproducts can compromise patient safety by introducing toxic or allergenic impurities into the drug product [8] [9]. For example, some degradation products may be carcinogenic or cause hypersensitivity reactions. Regarding efficacy, byproducts often signify that the API itself is degrading, which reduces the potency of the drug and can lead to sub-therapeutic dosing, treatment failure, and diminished shelf life [8] [9].

Q3: What analytical techniques are used to identify and quantify byproducts? A combination of analytical techniques is typically employed. Chromatographic methods like High-Performance Liquid Chromatography (HPLC) are standard for separating and quantifying byproducts [8]. Spectroscopic methods such as Raman spectroscopy and Mass Spectrometry (MS) are used for structural elucidation [10]. For particulate contamination, physical methods like Scanning Electron Microscopy with Energy-Dispersive X-ray spectroscopy (SEM-EDX) can identify inorganic compounds, while techniques like LC-UV-SPE coupled with NMR are powerful for isolating and characterizing unknown organic impurities [10].

Q4: How does regulatory guidance address the control of byproducts? Regulatory agencies like the FDA mandate strict adherence to Current Good Manufacturing Practice (CGMP) regulations, which are the minimum requirements for methods, facilities, and controls used in manufacturing [11]. These regulations ensure a product is safe and has the ingredients and strength it claims to have. Furthermore, for combination products (e.g., drug-device combinations), a rigorous regulatory framework exists to evaluate safety and efficacy, which includes assessing potential risks from interactions between the components [12].

Troubleshooting Guides

Guide 1: Investigating Particulate Contamination in a Vial

Problem: Visible particles are observed in a liquid drug product during a routine quality check.

Investigation Protocol:

- Initial Assessment & Documentation: Visually inspect the affected vials under controlled lighting. Document the size, color, and approximate number of particles. Isolate the affected batch to prevent further use [10].

- Non-Destructive Physical Analysis: Begin with techniques that preserve the sample. Use Light Microscopy to examine particle morphology. Proceed with SEM-EDX to obtain high-resolution images of the particle surface and determine its elemental composition (e.g., to identify metals, silica) [10].

- Chemical Identification: If particles are soluble, proceed with chemical structure elucidation. Perform qualitative solubility tests in various media. Use coupled techniques like LC-HRMS (Liquid Chromatography-High Resolution Mass Spectrometry) or GC-MS (Gas Chromatography-Mass Spectrometry) to separate components and identify their molecular structure. NMR can provide definitive structural information [10].

- Root Cause Analysis & Corrective Action: Correlate the analytical findings with the manufacturing process. The identified chemical (e.g., a plasticizer, lubricant, or API degradation product) can be traced back to a specific source, such as a faulty seal, equipment abrasion, or an unstable formulation. Implement corrective actions, which may include replacing a component, modifying the manufacturing process, or reformulating the product [10].

Table: Key Analytical Techniques for Particulate Contamination

| Technique | Primary Function | Application Example |
|---|---|---|
| SEM-EDX | Provides surface topology and elemental composition. | Identifying metallic abrasion from machinery or inorganic residues [10]. |
| Raman Spectroscopy | Provides a molecular fingerprint for identification. | Identifying organic particles like polymer fragments from single-use equipment [10]. |
| LC-HRMS | Separates mixtures and provides precise molecular weight and structure. | Identifying and characterizing soluble organic byproducts or degradants [10]. |
| LC-UV-SPE-NMR | Traps, separates, and isolates individual impurities for definitive structure elucidation. | Identifying unknown degradants when a reference standard is unavailable [10]. |

Guide 2: Addressing Drug-Excipient Incompatibility

Problem: A solid dosage formulation shows discoloration and a decrease in potency during stability studies.

Investigation Protocol:

- Compatibility Screening: Review the chemical structures of the API and all excipients. Is the API prone to oxidation? Does it contain a primary amine that could react with a reducing sugar? Use techniques like Differential Scanning Calorimetry (DSC) to screen for physical interactions [8] [9].

- Identify Degradation Pathway: Based on the suspected pathway (e.g., Maillard reaction, oxidation), design experiments to confirm.

  - For Maillard Reaction: Check if the formulation contains a reducing sugar like lactose. Stress the API-excipient mixture with heat and humidity and monitor for browning and the formation of new peaks in HPLC analysis [9].

  - For Oxidation: Check excipient specifications for peroxide or aldehyde impurity levels. Stress the product under an oxygen-rich atmosphere and use HPLC to monitor for oxidative degradants [9].

- Formulation Optimization: Implement mitigation strategies based on the root cause.

  - Switch Excipients: Replace a problematic excipient (e.g., replace lactose with a non-reducing filler such as mannitol) [9].

  - Use Stabilizers: Incorporate antioxidants, chelators (e.g., EDTA), or pH-stabilizing buffers to control the microenvironment [8].

  - Advanced Techniques: Consider technologies like microencapsulation to create a protective barrier around the API, shielding it from reactive excipients or moisture [8].

Table: Common Drug-Excipient Interactions and Mitigation Strategies

| Interaction Type | Mechanism | Mitigation Strategy |
|---|---|---|
| Maillard Reaction | Reaction between a primary amine (API) and a reducing sugar (excipient, e.g., lactose). | Replace lactose with mannitol or starch. Use excipient grades with low reducing sugar content [9]. |
| Oxidation | Peroxide or aldehyde impurities in excipients (e.g., Povidone, PEG) oxidize the API. | Select excipient grades with low peroxide/aldehyde limits. Add antioxidants like ascorbic acid or chelators like EDTA [8] [9]. |
| Physical Over-lubrication | Excessive mixing with hydrophobic lubricants (e.g., Mg Stearate) coats API particles. | Optimize mixing time and shear force during the blending step [9]. |

Experimental Protocols

Protocol 1: Forced Degradation Studies (Stress Testing)

Objective: To identify likely degradation products and elucidate the degradation pathways of an API, establishing the intrinsic stability of the molecule and validating analytical methods.

Materials:

- API sample

- Reagents: Acid (e.g., 0.1 M HCl), Base (e.g., 0.1 M NaOH), Hydrogen Peroxide (e.g., 3%), Solvents

- Equipment: HPLC system with UV/PDA detector, controlled stability chambers, thermal analysis equipment.

Methodology:

- Acidic/Basic Hydrolysis: Prepare solutions of the API in acidic and basic conditions. Heat these solutions at an elevated temperature (e.g., 60°C) for a defined period (e.g., 1-7 days). Periodically sample and neutralize the solution before HPLC analysis [8].

- Oxidative Stress: Expose the API to an oxidizing agent like hydrogen peroxide. This can be done in solution at ambient or slightly elevated temperatures. Monitor the reaction by HPLC for the appearance of new peaks [8] [9].

- Photostability: Expose solid API and the formulated product to controlled UV-Vis light (as per ICH Q1B guidelines). Compare against a dark control. Use a UV spectrophotometer to assess changes [8].

- Thermal Stress: Subject the solid API and formulation to elevated temperatures (e.g., 40°C, 60°C) in stability chambers. Analyze samples at intervals for potency and related substances [8].

Data Analysis: Compare HPLC chromatograms of stressed samples with unstressed controls. The new peaks that appear are degradation products. Their formation under different stress conditions helps map the degradation pathway of the API.
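
The chromatogram comparison described above can be sketched as retention-time matching between a stressed sample and its unstressed control. The peak lists, the 0.05 min tolerance, and the function names below are hypothetical, and area percent is used on the assumption that the API and its degradants have similar detector response factors.

```python
def find_new_peaks(stressed, control, rt_tol=0.05):
    """Return stressed-sample peaks with no control peak within rt_tol minutes."""
    control_rts = [rt for rt, _ in control]
    return [(rt, area) for rt, area in stressed
            if all(abs(rt - c_rt) > rt_tol for c_rt in control_rts)]


def area_percent(peaks):
    """Express each peak area as a percentage of the total integrated area."""
    total = sum(area for _, area in peaks)
    return [(rt, 100.0 * area / total) for rt, area in peaks]


# Hypothetical peak lists: (retention time in min, integrated area).
control = [(5.20, 1000.0)]
stressed = [(3.10, 30.0), (5.21, 900.0), (7.45, 70.0)]

new_peaks = find_new_peaks(stressed, control)
percents = area_percent(stressed)
mass_balance = 100.0 * sum(a for _, a in stressed) / sum(a for _, a in control)

print("Candidate degradation products (RT, area):", new_peaks)
print("Area % in stressed sample:", [(rt, round(p, 1)) for rt, p in percents])
print(f"Mass balance vs. control: {mass_balance:.1f}%")
```

A mass balance well below 100% in such a comparison would suggest degradants that are not detected under the chosen conditions, for example species lacking a chromophore.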

Protocol 2: Root Cause Analysis for a Manufacturing Deviation

Objective: To systematically investigate a quality defect (e.g., out-of-specification assay result) detected during manufacturing, identify the root cause, and implement a corrective and preventive action (CAPA).

Materials:

- Batch manufacturing records

- Samples from the affected batch and reference batches

- Relevant analytical equipment (HPLC, etc.)

Methodology:

- Problem Definition: Clearly describe the problem (What?), including the timing (When?) and the personnel, equipment, and materials involved (Who?) [10].

- Information Gathering: Collect all relevant data, including batch records, IPC data, equipment logs, and environmental monitoring records. Transfer this information completely to the analytical team [10].

- Hypothesis & Testing: Brainstorm potential root causes. Design an analytical strategy using parallel techniques (e.g., physical and chemical methods) to test these hypotheses efficiently [10].

- Localization & Cause Determination: Use analytical results to pinpoint where the incident happened in the manufacturing process (Where?) and how it happened (e.g., a specific malfunction) [10].

- Root Cause Identification & CAPA: Determine why the incident occurred (e.g., a risk that was not previously obvious). Define and implement preventive measures to avoid recurrence [10].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Investigating and Mitigating Byproducts

| Item / Reagent | Function / Purpose |

|---|---|

| Buffers (Citrate, Phosphate) | To maintain a stable pH in liquid formulations, preventing acid/base-catalyzed degradation [8]. |

| Antioxidants & Chelators (e.g., EDTA) | To bind metal ions and prevent oxidative degradation of the API [8]. |

| Stabilizers (e.g., HPMC, PVP) | To improve the physical stability and solubility of the product, potentially protecting the API [8]. |

| Alternative Excipients (e.g., Mannitol) | Non-reducing fillers (mannitol is a sugar alcohol) used in place of reducing sugars such as lactose to avoid Maillard reactions with amine-containing APIs [9]. |

| Lyophilizer (Freeze Dryer) | To remove water from heat-sensitive products, stabilizing them against hydrolysis [8]. |

| Reference Standards for Byproducts | Pure substances used to identify and quantify specific degradation products in analytical methods (e.g., HPLC) [10]. |

Experimental Workflow Visualizations

Diagram: Byproduct investigation workflow.

Diagram: Byproduct risk mitigation framework.

Frequently Asked Questions

Q1: Our degradation studies are generating unexpectedly high levels of a particular byproduct. What are the primary experimental factors we should investigate?

- A: High byproduct levels often stem from suboptimal control of stress agent intensity or solution pH. An overly aggressive stress condition (e.g., excessive temperature or oxidant concentration) can push reactions down alternative pathways. Similarly, a small shift in pH can dramatically alter the degradation mechanism of an active pharmaceutical ingredient (API). We recommend a systematic review of your stress parameters and a Design of Experiments (DoE) approach to identify the precise factors influencing this byproduct's formation [13].

Q2: During acidic stress, we are seeing multiple new peaks in our HPLC chromatogram. How can we determine if these are all relevant degradation products?

- A: Not all chromatographic peaks are significant. To prioritize, correlate the peak area of each new substance with the main peak's decrease. Any degradation product exceeding the identification threshold (generally ≥0.1% of the drug substance) must be identified structurally using techniques like LC-MS/MS. Products below this threshold should still be monitored for growth over time [14].

Q3: The color of our drug solution changes significantly during photostability testing, but no new degradation products are detected by our HPLC method. What could be the cause?

- A: This is a common issue. The color change likely indicates the formation of low-level, polymeric degradation products or chromophoric impurities that your current analytical method is not capturing. Consider modifying your HPLC method with a different column chemistry (e.g., HILIC) or a broader gradient. Additionally, employ UV-Vis spectroscopy to quantify the color change and confirm the method is fit-for-purpose [15].

Q4: How can we use forced degradation results to improve the formulation design and reduce byproducts in the final drug product?

- A: Forced degradation is a predictive tool. By identifying the API's key degradation pathways, you can design a formulation that is inherently more stable. For example, if oxidation is a major pathway, the use of antioxidants like BHT or chelating agents like EDTA is warranted. If hydrolysis is the issue, a lyophilized (freeze-dried) powder instead of an aqueous solution may be the optimal strategy to minimize byproduct formation throughout the product's shelf life.

Troubleshooting Guides

Issue: Poor Mass Balance in HPLC Analysis

A poor mass balance (e.g., <98%) occurs when the sum of the area percentages of the parent drug and all detected degradation products is significantly less than 100% of the initial drug area. This indicates that not all degradation products are being detected.

- Potential Cause 1: The analytical method is not detecting all degradation products.

- Solution: Develop an orthogonal screening method. If using reversed-phase HPLC (RP-HPLC) with UV detection, try a different detection method (e.g., Charged Aerosol Detection or Evaporative Light Scattering Detection), which is better at detecting compounds with weak chromophores. Also screen with a different separation mechanism, such as Hydrophilic Interaction Chromatography (HILIC), to capture highly polar degradants.

- Potential Cause 2: Degradation products are volatile or have been lost during sample preparation.

- Solution: Review sample preparation steps. Avoid drying steps if volatile degradants are suspected. Use lower temperatures during sample concentration.

- Potential Cause 3: The degradation product co-elutes with the parent drug or another peak.

- Solution: Employ LC-MS to check for peak purity. Re-develop the HPLC method to improve resolution, potentially by adjusting the mobile phase pH, gradient profile, or column temperature.

Issue: Unreproducible Degradation Kinetics

The rate of degradation varies significantly between different experimental runs, making data unreliable.

- Potential Cause 1: Inconsistent control of stress conditions.

- Solution: For thermal studies, ensure the use of a calibrated temperature-controlled oven or stability chamber, not a hot plate. For photostability, use a qualified light source that meets ICH Q1B requirements. Document environmental conditions meticulously.

- Potential Cause 2: Uncontrolled solution pH.

- Solution: Always use adequately buffered solutions and confirm the initial pH. Remember that pH can shift with temperature changes (e.g., during thermal stress). Use buffers with appropriate pKa values for the stress condition.

- Potential Cause 3: The presence of trace metals catalyzing reactions.

- Solution: Use high-purity reagents and solvents. Consider performing studies in containers that minimize leachables (e.g., Type I glass) or adding a chelating agent like EDTA (0.01-0.05%) to sequester catalytic metal ions [13].

Quantitative Data from Forced Degradation Studies

The following table summarizes typical stress conditions and the quantitative data they generate, which is crucial for understanding degradation pathways and kinetics.

Table 1: Standard Forced Degradation Stress Conditions and Key Metrics

| Stress Condition | Typical Parameters | Key Quantitative Metrics | Target Degradation (for method validation) | Common Byproducts Monitored |

|---|---|---|---|---|

| Acidic Hydrolysis | 0.1-1M HCl, 40-70°C, 1-7 days | Purity (% main peak); % Total Related Substances; Mass Balance (%) | 5-20% Degradation | Deamidation products, Hydrolysis products (e.g., from esters/amides) |

| Basic Hydrolysis | 0.1-1M NaOH, 40-70°C, 1-7 days | Purity (% main peak); % Total Related Substances; Mass Balance (%) | 5-20% Degradation | Hydrolysis products, Diketopiperazine (for peptides) |

| Oxidative Stress | 0.1-3% H₂O₂, room temperature, 1-24 hours | Purity (% main peak); % of Major Oxidation Product; Mass Balance (%) | 5-20% Degradation | Sulfoxides, N-oxides, Hydroperoxides |

| Thermal Stress (Solid) | 70-105°C, 1-4 weeks | Purity (% main peak); % Total Related Substances; Appearance/Color | 5-20% Degradation | Degradation products from pyrolysis, dehydration |

| Photostability | ≥1.2 million lux hours (Visible), ≥200 W·h/m² (UV) | Purity (% main peak); % Total Related Substances; Color Change (ΔE) | Evidence of change | Photolysis dimers, Isomers (e.g., cis/trans), Decarboxylation products |

Table 2: Example Degradation Kinetics Data for a Hypothetical API (BY-2024) Under Thermal Stress at 80°C

| Time Point (Days) | Potency (% of Label) | Total Related Substances (%) | Mass Balance (%) | Observation |

|---|---|---|---|---|

| 0 (Initial) | 100.2 | 0.15 | 100.4 | White, free-flowing powder |

| 7 | 98.5 | 1.2 | 99.7 | Slight off-white color |

| 14 | 95.8 | 3.5 | 99.3 | Light yellow tint |

| 21 | 92.1 | 6.8 | 98.9 | Yellow color |

| 28 | 87.4 | 11.1 | 98.5 | Brownish-yellow color |
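The Table 2 values can be checked and summarized numerically. The sketch below recomputes mass balance as potency plus total related substances and fits an apparent first-order rate constant to the potency data. First-order kinetics is an assumption made here for illustration; the table does not state the reaction order, and the function names are hypothetical.

```python
import math

# Data copied from Table 2 (hypothetical API BY-2024, thermal stress at 80 °C).
days = [0, 7, 14, 21, 28]
potency = [100.2, 98.5, 95.8, 92.1, 87.4]          # % of label
related_substances = [0.15, 1.2, 3.5, 6.8, 11.1]   # % total related substances


def mass_balance(potency_pct, related_pct):
    """Mass balance (%) taken as potency plus total related substances."""
    return potency_pct + related_pct


def first_order_rate_constant(times, values):
    """Ordinary least-squares fit of ln(value) against time.

    Returns (k, ln_c0), where k is the apparent first-order rate constant
    (per time unit) and ln_c0 is the fitted intercept.
    """
    y = [math.log(v) for v in values]
    n = len(times)
    t_mean = sum(times) / n
    y_mean = sum(y) / n
    sxx = sum((t - t_mean) ** 2 for t in times)
    sxy = sum((t - t_mean) * (yi - y_mean) for t, yi in zip(times, y))
    slope = sxy / sxx
    return -slope, y_mean - slope * t_mean


balances = [mass_balance(p, r) for p, r in zip(potency, related_substances)]
k, ln_c0 = first_order_rate_constant(days, potency)

# Time for potency to fall to 90 % of its initial value, valid only if the
# first-order assumption holds.
t90 = math.log(100 / 90) / k

print("mass balance (%):", [f"{b:.2f}" for b in balances])
print(f"apparent k = {k:.4f} per day, t90 = {t90:.1f} days")
```

Because the color change and the accelerating loss between days 21 and 28 may indicate more complex kinetics, the fitted constant is best read as a rough summary, not as a mechanistic result.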

Experimental Protocols

Protocol 1: Forced Degradation Study for an API in Solution

Objective: To elucidate the inherent stability characteristics of an API and identify likely degradation products under hydrolytic and oxidative conditions.

Materials:

- API powder (High Purity)

- Diluent (e.g., Water, Acetonitrile, Methanol)

- Stress Agents: 1M Hydrochloric Acid (HCl), 1M Sodium Hydroxide (NaOH), 3% w/v Hydrogen Peroxide (H₂O₂)

- Neutralization Agents: 1M Sodium Hydroxide (for acid stress), 1M Hydrochloric Acid (for base stress)

- Lab Equipment: Volumetric flasks, micropipettes, controlled temperature water bath or oven, HPLC system with PDA and MS detectors.

Methodology:

- Solution Preparation: Prepare a stock solution of the API at a concentration of 1 mg/mL in a suitable diluent.

- Stress Application:

- Acidic Hydrolysis: Transfer 1 mL of stock solution to a vial. Add 1 mL of 1M HCl. Mix well. Seal and place in a 70°C water bath.

- Basic Hydrolysis: Transfer 1 mL of stock solution to a vial. Add 1 mL of 1M NaOH. Mix well. Seal and place in a 70°C water bath.

- Oxidative Stress: Transfer 1 mL of stock solution to a vial. Add 1 mL of 3% H₂O₂. Mix well. Store at room temperature protected from light.

- Control: Prepare a control sample in diluent and store under the same conditions as the stressed samples.

- Sampling and Quenching: At predetermined time points (e.g., 24, 48, 72 hours), withdraw samples.

- For acid and base stresses, neutralize the sample immediately using an equivalent molar amount of base or acid, respectively.

- Dilute all samples with the mobile phase to a concentration suitable for HPLC analysis.

- Analysis: Analyze all samples using a validated stability-indicating HPLC method (e.g., RP-HPLC with a C18 column and UV detection). Inject the control sample and stressed samples to track the appearance of new peaks and the disappearance of the main peak.
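Mixing equal volumes of stock solution and stress agent halves both concentrations, which is easy to overlook when reporting stress conditions. The following minimal sketch works through that arithmetic and the equimolar neutralization step. It assumes additive volumes and a monoprotic acid or base; the 0.5 mL aliquot volume is a hypothetical value not given in the protocol.

```python
def mix(stock_conc, stock_vol_ml, agent_conc, agent_vol_ml):
    """Concentrations after mixing stock and stress agent.

    Returns (API concentration, stress-agent concentration) in the units
    supplied, assuming volumes are additive.
    """
    total_ml = stock_vol_ml + agent_vol_ml
    return (stock_conc * stock_vol_ml / total_ml,
            agent_conc * agent_vol_ml / total_ml)


def neutralizer_volume_ml(agent_conc_m, aliquot_vol_ml, neutralizer_conc_m):
    """Volume of neutralizer containing the same number of moles as the
    acid or base present in the withdrawn aliquot (monoprotic case)."""
    return agent_conc_m * aliquot_vol_ml / neutralizer_conc_m


# Acid stress: 1 mL of 1 mg/mL stock + 1 mL of 1 M HCl.
api_mg_ml, hcl_m = mix(1.0, 1.0, 1.0, 1.0)

# Oxidative stress: 1 mL of stock + 1 mL of 3 % H2O2.
_, h2o2_pct = mix(1.0, 1.0, 3.0, 1.0)

# Hypothetical 0.5 mL aliquot neutralized with 1 M NaOH.
naoh_ml = neutralizer_volume_ml(hcl_m, 0.5, 1.0)

print(f"API: {api_mg_ml} mg/mL, HCl: {hcl_m} M, H2O2: {h2o2_pct} %")
print(f"1 M NaOH needed for a 0.5 mL aliquot: {naoh_ml} mL")
```

In this setup the effective stress conditions are 0.5 M acid or base and 1.5% peroxide, with the API at 0.5 mg/mL before any further dilution for HPLC.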

Protocol 2: Photostability Testing per ICH Q1B

Objective: To evaluate the photosensitivity of a drug substance and generate relevant degradation products.

Materials:

- API powder in a clear glass vial or as a thin film in a Petri dish.

- Qualified photostability chamber meeting ICH Q1B requirements.

- UV and visible light sources providing the required illumination.

- Lux meter and radiometer for calibration.

Methodology:

- Sample Preparation: Prepare a representative sample of the API, ensuring a surface area of at least 1 cm² is exposed.

- Calibration: Confirm the chamber delivers the minimum required light exposure: 1.2 million lux hours for visible light and 200 watt-hours per square meter for UV light.

- Exposure:

- Place the sample and a dark control (wrapped in aluminum foil) inside the chamber.

- Expose the samples to both visible and UV light for a sufficient duration to meet the required total exposure.

- Analysis: After exposure, analyze the sample and dark control for changes in appearance, color, and purity using visual inspection, HPLC, and other relevant techniques (e.g., UV-Vis for color).
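The calibration step translates directly into a minimum exposure time once the chamber output has been measured. The sketch below divides the two exposure targets stated above by measured intensities; the readings of 10,000 lux and 5 W/m² are hypothetical values chosen only for illustration, and a constant lamp output is assumed.

```python
VISIBLE_TARGET_LUX_H = 1.2e6   # minimum visible exposure, lux hours
UV_TARGET_WH_M2 = 200.0        # minimum UV exposure, watt-hours per square meter


def exposure_hours(illuminance_lux, uv_irradiance_w_m2):
    """Hours needed to reach each minimum exposure at constant output.

    Returns (visible_hours, uv_hours, hours_to_meet_both).
    """
    visible_h = VISIBLE_TARGET_LUX_H / illuminance_lux
    uv_h = UV_TARGET_WH_M2 / uv_irradiance_w_m2
    return visible_h, uv_h, max(visible_h, uv_h)


# Hypothetical lux-meter and radiometer readings at the sample position.
visible_h, uv_h, total_h = exposure_hours(illuminance_lux=10_000,
                                          uv_irradiance_w_m2=5.0)

print(f"visible: {visible_h:.0f} h, UV: {uv_h:.0f} h, run for at least {total_h:.0f} h")
```

If the two sources run simultaneously, the longer of the two times governs; the dark control stays in the chamber for the same duration.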

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Forced Degradation Studies

| Reagent/Material | Primary Function in Forced Degradation | Key Consideration for Byproduct Reduction |

|---|---|---|

| Buffer Salts (e.g., Phosphate, Acetate) | To maintain a constant pH during stress studies, ensuring reproducible kinetics. | The choice of buffer can catalyze certain reactions; always include a control and consider multiple buffers. |

| Antioxidants (e.g., BHT, BHA) | To investigate the potential for oxidative degradation and test protective strategies in formulations. | Effectiveness is highly dependent on the drug molecule and the formulation matrix; screening is essential. |

| Chelating Agents (e.g., EDTA) | To bind trace metal ions (e.g., Fe²⁺, Cu²⁺) that can catalyze oxidation reactions. | Crucial for biologics and metal-sensitive small molecules. Can significantly reduce oxidation-related byproducts. |

| High-Purity Solvents (HPLC Grade) | To prepare solutions and mobile phases, minimizing interference from impurities. | Solvent impurities can react with the API under stress, generating misleading degradation products. |

| LC-MS Grade Additives (e.g., Formic Acid) | To enhance ionization in mass spectrometric detection for the identification of degradants. | Essential for obtaining clear, interpretable mass spectra to elucidate the structure of unknown byproducts. |

Experimental Workflow and Pathway Visualization

Diagram 1: Forced degradation study workflow.

Diagram 2: API degradation pathways and mitigation.

Implementing DoE for Byproduct Control: From Screening to Optimization

Frequently Asked Questions

1. What is the most important initial step before running any DoE? Before launching any experiment, the most critical step is ensuring your process is stable and that you have controlled all input conditions not being actively tested [16]. A DoE performed on an unstable process will not be able to distinguish the effects of your factors from random background noise, leading to false conclusions [16]. Key preparatory activities include:

- Process Stabilization: Use Statistical Process Control (SPC) to confirm the process is consistent and repeatable under normal settings [16].

- Input Control: Secure a single, consistent batch of raw materials and ensure all machine settings not part of the experiment are locked in [16].

- Measurement System Analysis (MSA): Verify that your measuring instruments are calibrated and reliable. A Gage R&R study is recommended for critical measurements [16].

2. I have 5 or more factors to screen. Which design should I start with? For screening 5 or more factors, a Fractional Factorial or D-Optimal design is typically the best starting point [17] [18] [19]. A full factorial design with 5 factors, each at 2 levels, requires 32 runs. This number can be halved to 16 runs with a Resolution V fractional factorial design, which still allows you to estimate all main effects and two-factor interactions without confusing them with each other [17]. If you have unusual constraints (e.g., a specific maximum number of runs, or certain factor combinations are impossible), a D-optimal design can create a custom, efficient screening plan [20] [19].

3. When is a Full Factorial design necessary? A Full Factorial design is most appropriate when you have identified a few critical factors (typically 2 to 4) and need to fully characterize their interactions and optimize the process [21] [17]. It is the only design that investigates all possible combinations of factors and levels, allowing you to estimate all main effects and every interaction, no matter how high the order [22]. However, be cautious as the number of runs grows exponentially with each additional factor [21].

4. What does "aliasing" mean in Fractional Factorial designs? Aliasing (or confounding) occurs when a fractional factorial design is intentionally constructed so that two or more effects cannot be distinguished from one another [21] [17]. For example, a main effect might be aliased with a four-factor interaction, or a two-factor interaction might be aliased with a three-factor interaction [17]. This is a trade-off for reducing the number of experimental runs. The assumption is that higher-order interactions (involving three or more factors) are rare and can be safely ignored [17].

5. How do I choose between a classical design (Full or Fractional Factorial) and an "Optimal" design like D-optimal? The choice often depends on the constraints and specific goals of your experiment [20] [19].

- Choose Classical Designs (Full or Fractional Factorial) when your experimental situation fits a standard, tabulated design. They are statistically optimal (orthogonal), easy to set up and analyze, and their aliasing structure (for fractional factorials) is clear and well-understood [20] [19].

- Choose D-Optimal Designs when you face practical constraints that classical designs cannot accommodate. This includes a specific, limited number of runs; the need to avoid impossible factor combinations; or the inclusion of both continuous and categorical factors in non-standard ways [20] [19]. A key disadvantage is that D-optimal designs require you to specify a model in advance and can result in partially correlated (non-orthogonal) factor effects [20] [19].

6. My goal is to find the optimal settings for a reaction to minimize a byproduct. Which design should I use? Once screening has identified a few vital factors (e.g., 2-4), and you suspect there might be curvature in the response (i.e., the optimum is not at the edge of your experimental space), a Response Surface Methodology (RSM) design is the correct choice [21]. Common RSM designs include Central Composite Designs (CCD) and Box-Behnken Designs [21] [18]. These designs are specifically created to fit a quadratic model, which allows you to locate a maximum, minimum, or saddle point—exactly what is needed for optimization tasks like minimizing an unwanted byproduct [21].
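To make the structure of a Central Composite Design concrete, the sketch below lists its coded design points: the two-level factorial core, the axial ("star") points, and replicated center points. It assumes a full-factorial core and uses the common rotatable axial distance α = (2^k)^(1/4); other choices of α (for example, face-centered with α = 1) are equally legitimate, and the function name is hypothetical.

```python
from itertools import product


def central_composite(k, n_center=3):
    """Coded design points for a CCD with k factors.

    Returns (points, alpha). Points are lists of coded levels, ordered as
    factorial runs, axial runs, then center runs.
    """
    alpha = (2 ** k) ** 0.25  # rotatable axial distance for a full 2^k core

    factorial = [[float(x) for x in combo] for combo in product((-1, 1), repeat=k)]

    axial = []
    for i in range(k):
        for sign in (-1.0, 1.0):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(point)

    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center, alpha


points, alpha = central_composite(k=3, n_center=3)
print(f"runs: {len(points)}, alpha = {alpha:.4f}")
for p in points:
    print(p)
```

The run count is 2^k + 2k + n_center, so three factors with three center points need 17 runs. In practice the run order would be randomized before execution.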

Experimental Design Comparison Table

The table below summarizes the key characteristics of the three design types to help you make an informed selection.

| Feature | Full Factorial | Fractional Factorial | D-Optimal |

|---|---|---|---|

| Primary Goal | Optimization; understanding all interactions [17] | Factor screening; identifying vital few factors [21] [17] | Screening & modeling with constraints [20] |

| Key Principle | Runs all possible factor combinations [22] | Runs a carefully chosen subset (fraction) of full factorial [17] | Uses algorithm to select runs that minimize parameter variance [20] |

| Number of Runs | 2^k (for k factors at 2 levels). Grows exponentially [21] | 2^(k-p) (e.g., half, quarter). Grows much slower [21] | User-specified; can be any number [20] |

| Interactions | Can estimate ALL interactions [22] | Higher-order interactions are aliased/confounded [21] | User-specified in the model [20] |

| Efficiency | Low for many factors [21] | High for screening [17] | Highly efficient for given number of runs [20] |

| Best Use Case | Few factors (<5); when all interactions must be studied [21] [17] | Many factors (>4); initial screening to reduce factor set [21] [18] | Unusual constraints; mixed factor types; disallowed combinations [20] [19] |

| Key Limitation | Impractical for many factors due to run count [21] | Aliasing of effects; may require follow-up experiments [17] | Model-dependent; can produce correlated estimates [20] |

Detailed Experimental Protocols

Protocol 1: Screening with a Fractional Factorial Design

Objective: To efficiently identify which of several factors (e.g., temperature, catalyst concentration, raw material supplier, mixing speed) have a significant effect on the yield and byproduct formation of an Active Pharmaceutical Ingredient (API) [18].

Methodology:

- Define Factors and Levels: Select 5-6 potential critical factors. Set each to a "high" (+1) and "low" (-1) level that represents a reasonable and safe operating range [23].

- Select Design Resolution: Choose a Resolution V (or higher) design. This ensures that main effects and two-factor interactions are not aliased with each other, preventing misleading conclusions [17].

- Randomize Runs: Execute the experimental runs in a randomized order to avoid systematic bias from lurking variables [24].

- Replicate Center Points: Add 3-5 replicate runs at the center point (the midpoint between the high and low levels for all continuous factors). This provides a check for curvature and an estimate of pure experimental error [21].

- Analysis: Use statistical software to perform an Analysis of Variance (ANOVA). Focus on identifying factors with statistically significant main effects. Examine significant two-factor interactions to understand how factors influence each other.
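The design-construction steps above can be sketched in a few lines. The example builds a 16-run half fraction for five factors using the generator E = ABCD (defining relation I = ABCDE, Resolution V), appends center points, and randomizes the run order. It assumes all five factors are continuous so that a center point exists; with a categorical factor such as supplier, center points would need to be handled per category. The function names and the fixed seed are illustrative choices.

```python
import random
from itertools import product


def half_fraction_5():
    """2^(5-1) design in coded units with generator E = A*B*C*D."""
    return [(a, b, c, d, a * b * c * d)
            for a, b, c, d in product((-1, 1), repeat=4)]


def screening_plan(n_center=3, seed=0):
    """Factorial runs plus replicated center points, in randomized order."""
    runs = [tuple(float(x) for x in run) for run in half_fraction_5()]
    runs += [(0.0,) * 5] * n_center
    rng = random.Random(seed)   # fixed seed only so the plan is reproducible
    rng.shuffle(runs)
    return runs


plan = screening_plan()
print("run  A     B     C     D     E")
for i, run in enumerate(plan, start=1):
    print(f"{i:>3}  " + "  ".join(f"{x:+.0f}".rjust(4) for x in run))
```

The coded levels −1, 0, and +1 map back to real settings through each factor's low, middle, and high values before the runs are executed.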

Protocol 2: Optimization with a D-Optimal Design

Objective: To model and optimize a process with constraints, such as a limited budget for runs or the existence of factor combinations that are impossible or unsafe to run [20] [19].

Methodology:

- Define the Model: Specify the factors and the model you wish to fit (e.g., a main effects model, a model with interactions, or a quadratic model for RSM).

- Specify Constraints: Define any disallowed combinations of factors (e.g., "High Temperature and High Pressure cannot be run simultaneously") [19].

- Generate the Design: Use statistical software to generate the D-optimal design. The algorithm will select the set of runs from a candidate set that provides the most precise estimates for your specified model [20].

- Execute and Analyze: Run the experiments in a randomized order. Analyze the data to fit the model and create a response surface plot. This plot will visually guide you towards the factor settings that minimize byproducts or maximize yield [25].
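The idea behind design generation can be illustrated on a toy problem. The sketch below searches a small candidate set for the five runs that maximize det(XᵀX) for a two-factor model with an interaction, while excluding a disallowed combination (both factors at their high level). It uses an exhaustive search without repeated runs, which is feasible only for tiny problems; statistical software typically uses exchange algorithms instead. The factor names, the constraint, and the run budget are all hypothetical.

```python
from itertools import combinations, product


def model_row(a, b):
    """Model terms for one run: intercept, A, B, and the A*B interaction."""
    return [1.0, float(a), float(b), float(a * b)]


def det(matrix):
    """Determinant by Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    n = len(m)
    result = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            return 0.0
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            result = -result
        result *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for j in range(col, n):
                m[r][j] -= factor * m[col][j]
    return result


def information_det(runs):
    """D-criterion: determinant of X'X for the chosen runs."""
    x = [model_row(a, b) for a, b in runs]
    p = len(x[0])
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(p)] for i in range(p)]
    return det(xtx)


# Candidate set: 3 x 3 grid in coded units, minus the disallowed (+1, +1) run.
candidates = [pt for pt in product((-1, 0, 1), repeat=2) if pt != (1, 1)]

N_RUNS = 5  # hypothetical run budget
best = max(combinations(candidates, N_RUNS), key=information_det)

print("selected runs:", best)
print("det(X'X):", information_det(best))
```

Changing `model_row` changes which design is best, which is the model dependence noted for D-optimal designs.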

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Consistent Raw Material Batch | Using a single, verified batch of materials (e.g., a specific lot of an excipient) eliminates variability from material composition, ensuring observed effects are due to the tested factors [16]. |

| Calibrated Measurement Instruments | Reliable data collection depends on instruments (e.g., HPLC, scales) that are recently calibrated and verified via a Measurement System Analysis (MSA) [16]. |

| Center Points | Replicate runs at the middle of the factor ranges help detect curvature and provide an estimate of pure experimental error [21]. |

| Checklists & Poka-Yoke | Standardized checklists and mistake-proofing procedures ensure each experimental run is set up identically, preventing human error from contaminating the results [16]. |

| Blocking Plans | A plan for grouping runs (e.g., by day or operator) accounts for known sources of nuisance variation (like different production shifts) that cannot be controlled directly [16] [24]. |

DoE Selection Workflow

The following diagram outlines a logical decision pathway to select the most appropriate Design of Experiments based on your project goals and constraints.

Diagram 1: A logical workflow to guide the selection of an experimental design.

In the critical pursuit of reducing process byproducts in pharmaceutical development, screening designs serve as a powerful statistical methodology for efficiently identifying the "vital few" factors from the "trivial many" that significantly influence your desired output and byproduct formation [26]. When developing a new drug substance, researchers often face numerous potential factors—such as temperature, catalyst concentration, solvent composition, and mixing rate—that could affect the yield and purity of the final product. Testing all possible combinations of these factors would be prohibitively time-consuming and resource-intensive. Screening designs address this challenge by using a strategically selected subset of experimental runs to distinguish significant main effects from less influential factors, providing a cost-effective approach for initial experimentation [27] [26].

The fundamental principles that make screening designs particularly effective for byproduct reduction include the sparsity of effects (relatively few factors actually have significant effects), hierarchy (main effects are more likely to be important than interactions, and lower-order interactions are more likely than higher-order ones), and heredity (important interactions are most likely to occur between factors that have significant main effects) [26]. By applying these principles, researchers can rapidly focus their optimization efforts on the critical parameters that most impact byproduct formation, ultimately leading to cleaner, more efficient manufacturing processes with reduced impurity profiles.

Troubleshooting Guides & FAQs

Common Experimental Issues and Solutions

| Problem Scenario | Possible Causes | Recommended Solutions |

|---|---|---|

| Inconsistent results between experimental runs | Uncontrolled noise variables; measurement system variability; improper randomization [27] | Replicate center points to estimate pure error; randomize run order; control environmental factors [26] |

| No statistically significant factors identified | Factor ranges too narrow; large experimental error; important factors not included [27] | Widen factor levels; increase replication; include additional factors based on process knowledge |

| Cannot separate effects of two factors (aliasing) | Resolution III design where main effects are confounded with two-factor interactions [28] | Use design folding to increase resolution; augment with additional runs; select higher-resolution design initially |

| Unexpected curvature in response | Linear model insufficient; optimal conditions within experimental range [26] | Add center points to detect curvature; follow with response surface methodology for optimization [29] |

| Model fails validation tests | Important interactions or quadratic effects missing from model; unreliable effect estimates [28] | Conduct confirmation runs; augment design to estimate interactions; use sequential experimentation approach |

Frequently Asked Questions

What is the primary purpose of a screening design in pharmaceutical development? The primary purpose is to efficiently identify the most critical factors affecting your process response—particularly beneficial when working with complex reactions where multiple parameters may influence both main product yield and byproduct formation. This approach saves considerable time and resources compared to one-factor-at-a-time (OFAT) experimentation, which additionally fails to detect factor interactions [27] [30].

How many factors can I screen in a single design? Screening designs can typically handle from 4 to over 20 factors, though the practical limit depends on your experimental budget and willingness to accept some confounding of effects [26]. For example, a 12-run Plackett-Burman design can screen up to 11 factors, with the limitation that main effects are partially aliased with two-factor interactions, so it is best suited to estimating main effects only [30].

When should I choose a Plackett-Burman design over a fractional factorial design? Plackett-Burman designs are particularly useful when you need to screen a large number of factors (e.g., more than 5) with a very small number of runs and are primarily interested in main effects only [29]. Fractional factorial designs offer more flexibility in terms of resolution and ability to estimate some interactions, though they typically require more runs for the same number of factors [28].

How do I handle both continuous and categorical factors in my screening design? When dealing with both continuous factors (e.g., temperature, concentration) and categorical factors (e.g., catalyst type, solvent supplier), a recommended approach is to first use a Taguchi design or similar approach to handle the categorical factors and represent continuous factors in a two-level format. After determining optimal levels for categorical factors, use a central composite design for final optimization of the continuous factors [31].

What should I do after my screening experiment identifies important factors? Once key factors are identified, the next steps typically include: (1) conducting confirmation runs to verify the findings, (2) reducing the model by removing unimportant factors, (3) designing a follow-up experiment (often a response surface methodology) to fully characterize the response landscape and identify optimal factor settings [28] [26].

Quantitative Comparison of Screening Design Methods

Performance Characteristics of Different Screening Designs

| Design Type | Number of Runs for 6 Factors | Maximum Factors for 16 Runs | Resolution | Ability to Detect Curvature | Best Use Cases |

|---|---|---|---|---|---|

| Plackett-Burman | 12 runs [30] | 15 factors | III (main effects aliased with 2FI) [29] | No (unless center points added) [26] | Initial screening with many factors, main effects only [30] |

| Fractional Factorial (½ fraction) | 32 runs [28] | 5 factors (in 16 runs) | V for 5 factors in 16 runs; VI for 6 factors in 32 runs (main effects and 2FI clear) [28] | No (unless center points added) [26] | Screening when some 2FI estimation needed [28] |

| Definitive Screening | 13 runs | 6 factors | Special structure (main effects clear of 2FI) | Yes (estimates quadratic effects) | Screening when curvature is suspected [27] |

| Taguchi OA | Varies by array | Varies by array | III or higher [29] | Limited | Robust parameter design, multiple categorical factors [31] |

Statistical Performance Metrics for Byproduct Reduction

| Design Characteristic | Impact on Byproduct Reduction | Recommended Approach |

|---|---|---|

| Aliasing Structure | Critical for identifying true byproduct causes vs. accidental correlations [28] | Use Resolution IV or higher when interactions likely; understand confounding pattern [29] |

| Projection Properties | Ensures design remains useful after eliminating unimportant factors [26] | Select designs with good projection properties for sequential experimentation |

| Design Efficiency | Enables more factors to be studied with limited experimental resources [27] | Balance number of factors vs. runs; 1.5 to 3 times as many runs as factors often effective |

| Power for Effect Detection | Determines ability to detect practically significant effects on byproduct formation [26] | Consider expected effect size and process variability when determining number of runs |

Experimental Protocols for Screening Designs

Protocol: Plackett-Burman Screening Design for Reaction Byproduct Reduction

Objective: Identify critical factors influencing byproduct yield in a catalytic cross-coupling reaction [30].

Materials and Equipment:

- Reaction substrates and reagents

- Catalyst system (e.g., palladium-based catalyst)

- Ligands with varying electronic and steric properties

- Solvents of different polarity (e.g., DMSO, acetonitrile)

- Temperature-controlled reaction vessels

- Analytical equipment (HPLC, GC-MS) for yield and byproduct quantification

Procedure:

- Factor Selection: Identify 5-11 potential factors that may influence byproduct formation (e.g., catalyst loading, ligand electronic properties, solvent polarity, temperature, base strength) [30].

- Level Setting: Define high (+1) and low (-1) levels for each factor based on prior knowledge or preliminary experiments.

- Design Construction: Select an appropriate Plackett-Burman design matrix using statistical software. For 7 factors, a 12-run design is appropriate; the unused columns are left unassigned [30].

- Randomization: Randomize the run order to minimize bias from uncontrolled variables.

- Experimental Execution: Conduct reactions according to the design matrix, ensuring careful control of factor levels.

- Response Measurement: Quantify both main product yield and byproduct formation using analytical methods.

- Statistical Analysis:

- Fit a linear model to the experimental data

- Use half-normal plots or Lenth's method to identify significant effects [28]

- Validate model assumptions through residual analysis

Troubleshooting Notes:

- If significant curvature is detected (via center points), consider adding axial points or transitioning to a response surface design [26].

- If aliasing prevents clear interpretation of significant effects, consider design augmentation through folding or adding additional runs [28].
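For readers who want to see what the design-construction step produces, the sketch below builds the 12-run Plackett-Burman matrix by cyclically shifting the standard generator row (+ + − + + + − − − + −) and appending a row of all low levels. Statistical software would normally do this; the function names here are hypothetical, and assigning the seven factors to the first seven columns is an arbitrary choice.

```python
# Standard first row for the 12-run Plackett-Burman design (11 columns).
GENERATOR_12 = [1, 1, -1, 1, 1, 1, -1, -1, -1, 1, -1]


def plackett_burman_12():
    """12 x 11 design matrix in coded units (+1 = high, -1 = low)."""
    rows = []
    row = GENERATOR_12[:]
    for _ in range(11):
        rows.append(row[:])
        row = [row[-1]] + row[:-1]   # cyclic shift by one position
    rows.append([-1] * 11)           # final run: every factor at its low level
    return rows


def select_columns(design, n_factors):
    """Keep the first n_factors columns; the rest stay unassigned."""
    return [run[:n_factors] for run in design]


design = plackett_burman_12()
seven_factor_design = select_columns(design, 7)

for run_number, run in enumerate(seven_factor_design, start=1):
    print(f"{run_number:>2}  " + " ".join("+" if x > 0 else "-" for x in run))
```

Every column is balanced (six high, six low) and every pair of columns is orthogonal, which is what allows main effects to be estimated independently of one another. The run order would still be randomized before execution.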

Protocol: Fractional Factorial Screening for Bioprocess Byproduct Optimization

Objective: Screen key nutrients and process parameters affecting lactic acid production and byproduct formation in a fermentation process [32].

Materials and Equipment:

- Microbial strain (e.g., Lactobacillus delbrueckii)

- Fermentation media components

- Carbon source (e.g., cane molasses)

- Nitrogen sources (e.g., yeast extract, peptone)

- Bioreactor or shake flasks

- pH and temperature control system

- Analytics for product and byproduct quantification (HPLC, spectrophotometer)

Procedure:

- Factor Identification: Select 4-8 potential factors (e.g., carbon source concentration, nitrogen source type, pH, temperature, trace elements) [32].

- Design Selection: Choose an appropriate fractional factorial design (e.g., 2⁴⁻¹ with 8 runs for 4 factors) with resolution IV or higher, so that main effects are not aliased with two-factor interactions [28].

- Center Points: Include 3-4 center points to estimate pure error and detect curvature [26].

- Randomization and Execution: Randomize run order and conduct fermentations.

- Response Monitoring: Track multiple responses including main product titer, byproduct formation, and cell biomass [32].

- Data Analysis:

  - Use multiple linear regression to model each response

  - Apply the hierarchical ordering principle (prioritize main effects over interactions)

  - Identify significant factors using both statistical significance (p-value) and practical significance (effect size)
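As a rough sketch of the design and analysis steps above, the following code constructs the 2⁴⁻¹ half fraction with generator D = ABC, appends three center points, and computes main effects and a curvature estimate. The response values are invented for illustration and do not come from the cited fermentation study.

```python
import itertools
import numpy as np

# Half fraction 2^(4-1): full factorial in A, B, C with generator D = ABC.
base = np.array(list(itertools.product([-1, 1], repeat=3)))
A, B, C = base.T
factorial_runs = np.column_stack([A, B, C, A * B * C])   # 8 runs, resolution IV
center_runs = np.zeros((3, 4), dtype=int)                 # 3 center points
design = np.vstack([factorial_runs, center_runs])         # 11 runs in total

# Resolution IV alias check: AB is completely confounded with CD.
D = factorial_runs[:, 3]
ab_equals_cd = np.array_equal(A * B, C * D)

# Hypothetical byproduct titers (g/L), for illustration only.
y_factorial = np.array([2.1, 3.0, 2.4, 3.5, 1.8, 2.9, 2.2, 3.3])
y_center = np.array([3.4, 3.6, 3.5])

# Main effects from the factorial runs.
main_effects = factorial_runs.T @ y_factorial / 4

# Curvature estimate: center-point mean minus factorial-point mean.
curvature = y_center.mean() - y_factorial.mean()
pure_error_sd = y_center.std(ddof=1)   # replicate-based noise estimate
```

A curvature estimate that is large relative to the pure-error standard deviation, as in this made-up data set, suggests that a two-level model is not sufficient and that a response surface design may be needed.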

Validation:

- Conduct confirmation runs at predicted optimal conditions

- Compare predicted vs. actual response values

- If agreement is poor, consider additional experiments to resolve aliased interactions

Visualization of Screening Design Workflows

Screening Design Selection Algorithm

Screening to Optimization Workflow

Research Reagent Solutions for Byproduct Reduction Studies

Essential Materials for Screening Experiments

| Reagent/Resource | Function in Screening Experiments | Application Example |

|---|---|---|

| Plackett-Burman Design Templates | Provides experimental layout for efficient main effects screening [30] | Screening 11 factors in only 12 runs to identify critical process parameters |

| Fractional Factorial Design Arrays | Balanced subsets of full factorial designs for estimating main effects and some interactions [28] | Studying 4 factors in 8 runs (2⁴⁻¹, resolution IV) while estimating main effects clear of two-factor interactions |

| Center Points | Replicate runs at middle factor levels to estimate pure error and detect curvature [26] | Detecting nonlinear relationships between catalyst loading and byproduct formation |

| Statistical Analysis Software | Tools for designing experiments and analyzing results (e.g., JMP, Minitab, R) [28] [26] | Generating half-normal plots to distinguish significant effects from noise |

| Definitive Screening Designs | Modern three-level screening approach that estimates main effects unaliased with two-factor interactions and can also detect quadratic effects [27] | Identifying factors with nonlinear effects on reaction yield in a single experiment |

| Taguchi Orthogonal Arrays | Specialized designs for handling multiple categorical factors and robust parameter design [31] | Screening different catalyst types and solvent combinations simultaneously |

Advanced Applications in Pharmaceutical Development

Screening designs have proven particularly valuable in pharmaceutical development, where byproduct reduction is critical for regulatory approval and patient safety. In synthetic chemistry applications, these designs have successfully identified key factors in cross-coupling reactions (including phosphine ligand properties, catalyst loading, base strength, and solvent polarity) that influence both yield and impurity profiles [30]. By varying these parameters simultaneously rather than through traditional one-factor-at-a-time (OFAT) approaches, researchers can also detect interaction effects, where the impact of one factor depends on the level of another, leading to more robust process understanding.
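A small worked example may help show why interactions matter. The numbers below are hypothetical byproduct levels at the four corners of a two-factor, two-level design; they are chosen so that an OFAT search starting from the low/low baseline never visits the best corner.

```python
# Hypothetical byproduct levels (%) at the four corners of a 2x2 design.
# Keys are (catalyst loading, temperature) in coded units.
byproduct = {(-1, -1): 4.0, (+1, -1): 6.0, (-1, +1): 5.0, (+1, +1): 3.0}

# OFAT from the baseline (-1, -1): change one factor while holding the other.
ofat_candidates = [(-1, -1), (+1, -1), (-1, +1)]
ofat_best = min(ofat_candidates, key=byproduct.get)

# Full factorial: all four corners are observed.
factorial_best = min(byproduct, key=byproduct.get)

# Effect of catalyst loading at each temperature level.
effect_at_low_temp = byproduct[(+1, -1)] - byproduct[(-1, -1)]    # +2.0
effect_at_high_temp = byproduct[(+1, +1)] - byproduct[(-1, +1)]   # -2.0

main_effect = (effect_at_low_temp + effect_at_high_temp) / 2
interaction = (effect_at_high_temp - effect_at_low_temp) / 2
```

Here the main effect of catalyst loading averages to zero while the interaction is large, so an analysis that ignored interactions would wrongly conclude that catalyst loading does not matter.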

In biopharmaceutical applications, screening designs have optimized fermentation processes by identifying critical media components and process parameters that maximize product titer while minimizing undesirable byproducts [32]. For instance, in lactic acid production, factors such as amino acid supplementation, surfactant concentration (Tween 80), and carbon source levels were efficiently screened using statistical designs, leading to significant yield improvements and potentially reduced impurity formation. This approach is directly applicable to microbial production of antibiotics, therapeutic proteins, and other biopharmaceuticals where byproduct profiles impact both efficacy and safety.

The sequential nature of screening designs makes them particularly valuable for quality by design (QbD) initiatives in pharmaceutical development. By first screening broadly across many potential factors, then focusing on critical parameters for optimization, developers can establish proven acceptable ranges and design space boundaries that ensure consistent product quality with minimal byproducts—addressing key regulatory expectations for modern pharmaceutical manufacturing.

Response Surface Methodologies (RSM) for Mapping and Optimizing Process Parameters

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when implementing Response Surface Methodology (RSM) to reduce process byproducts.

Frequently Asked Questions (FAQs)

Q1: What is the primary value of RSM in process optimization, particularly for reducing byproducts? RSM is a collection of mathematical and statistical techniques that models the relationship between multiple input variables (factors) and one or more output responses (e.g., yield, byproducts). Its main value lies in efficiently identifying the optimal factor settings that maximize desired outcomes (like product yield) while minimizing undesired ones (like byproducts), without requiring a prohibitively large number of experiments. It combines design of experiments, regression analysis, and optimization methods into a unified strategy [33] [34] [35].

Q2: My quadratic model shows a high R-squared value, but its predictions are poor. What might be wrong? A high R-squared alone does not guarantee a good model: R-squared never decreases as terms are added, so it can reflect overfitting rather than predictive ability. Poor predictions usually point to model inadequacy. To diagnose this [33] [35]:

- Check for Lack-of-Fit: Perform a lack-of-fit test. A significant p-value indicates the model does not adequately represent the data.

- Analyze Residuals: Plot residuals against predicted values. Patterns in this plot (e.g., funnel shape) suggest violation of statistical assumptions like constant variance, which may require data transformation.

- Validate with Confirmation Runs: Always run additional experiments at the predicted optimal conditions to verify the model's accuracy in practice.
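The lack-of-fit check in the first bullet can be sketched as follows. The example uses a single coded factor with replicated levels and simulated data, and it returns only the F statistic; obtaining a p-value would additionally require an F distribution from a statistics package. `lack_of_fit_f` is a name introduced here for illustration.

```python
import numpy as np

def lack_of_fit_f(x, y, degree):
    """F statistic for lack of fit of a polynomial of the given degree,
    using replicated x levels to estimate pure error."""
    X = np.vander(x, degree + 1, increasing=True)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    ss_resid = np.sum((y - X @ beta) ** 2)

    levels = np.unique(x)
    # Pure error: variation of replicates around their own level mean.
    ss_pe = sum(np.sum((y[x == lv] - y[x == lv].mean()) ** 2) for lv in levels)
    df_pe = len(y) - len(levels)
    df_lof = len(levels) - (degree + 1)

    ss_lof = ss_resid - ss_pe
    return (ss_lof / df_lof) / (ss_pe / df_pe)

# Simulated data: 5 coded levels, 2 replicates each, true response quadratic.
x = np.repeat([-2.0, -1.0, 0.0, 1.0, 2.0], 2)
noise = np.tile([0.1, -0.1], 5)
y = 1 + 2 * x + 3 * x**2 + noise

f_linear = lack_of_fit_f(x, y, degree=1)      # large: linear model is inadequate
f_quadratic = lack_of_fit_f(x, y, degree=2)   # near zero: curvature is captured
```

The test needs replicated runs (typically center points) to separate pure error from lack of fit, which is one reason replicates are built into response surface designs.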

Q3: How do I choose between a Central Composite Design (CCD) and a Box-Behnken Design (BBD)? The choice depends on your experimental constraints and the factor space you need to explore. The table below compares key attributes [36] [35]:

| Feature | Central Composite Design (CCD) | Box-Behnken Design (BBD) |

|---|---|---|

| Design Points | Factorial points + Center points + Axial (star) points | Points at the midpoints of the edges of the factor space + Center points |

| Factor Levels | Typically 5 levels | 3 levels |

| Runs Required | Often more runs than BBD for the same number of factors (e.g., 14 non-center runs for 3 factors) | Often fewer runs than CCD for the same number of factors (e.g., 12 non-center runs for 3 factors) |

| Best For | Fitting a full quadratic model and exploring a wide, rotatable region | Efficiently fitting a quadratic model when experimentation at the extreme corners (factorial points) is difficult or expensive |
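The differences in the table can be checked by constructing both designs for three factors. This is a sketch under stated assumptions: the CCD uses the rotatable axial distance α = (2ᵏ)^¼, three center points are added to each design, and `box_behnken_3` is a hypothetical helper valid only for exactly three factors.

```python
import itertools
import numpy as np

def central_composite(k, alpha, n_center):
    """Factorial corners + axial (star) points at +/-alpha + center points."""
    corners = np.array(list(itertools.product([-1.0, 1.0], repeat=k)))
    axial = np.vstack([sign * alpha * np.eye(k)[i]
                       for i in range(k) for sign in (-1.0, 1.0)])
    center = np.zeros((n_center, k))
    return np.vstack([corners, axial, center])

def box_behnken_3(n_center):
    """Box-Behnken design for exactly 3 factors: each pair of factors at
    +/-1 with the third held at 0 (edge midpoints), plus center points."""
    runs = []
    for i, j in itertools.combinations(range(3), 2):
        for a, b in itertools.product([-1.0, 1.0], repeat=2):
            point = [0.0, 0.0, 0.0]
            point[i], point[j] = a, b
            runs.append(point)
    return np.vstack([np.array(runs), np.zeros((n_center, 3))])

k = 3
alpha_rotatable = (2 ** k) ** 0.25          # about 1.682 for 3 factors
ccd = central_composite(k, alpha_rotatable, n_center=3)
bbd = box_behnken_3(n_center=3)

ccd_levels = np.unique(np.round(ccd[:, 0], 6))   # 5 distinct levels
bbd_levels = np.unique(bbd[:, 0])                # 3 distinct levels
```

With these settings the CCD has 17 runs and the BBD has 15, and no BBD run sets all three factors to an extreme simultaneously.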

Q4: I have multiple responses to optimize (e.g., maximize yield and minimize impurity). How can RSM handle this? This is a common multiple response optimization problem. A standard approach is the Desirability Function Method [36]. This method converts each response into an individual desirability function (a value between 0 for undesirable and 1 for fully desirable). These individual functions are then combined into a single overall desirability score, which is subsequently optimized.
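A minimal sketch of this idea is shown below, assuming the common linear (Derringer-Suich style) one-sided desirability shapes and a geometric mean for the overall score. The limits and response values are hypothetical, and the function names are introduced here for illustration.

```python
import numpy as np

def d_maximize(y, low, target, weight=1.0):
    """Desirability for a larger-is-better response: 0 at or below `low`,
    1 at or above `target`."""
    return float(np.clip((y - low) / (target - low), 0.0, 1.0) ** weight)

def d_minimize(y, target, high, weight=1.0):
    """Desirability for a smaller-is-better response: 1 at or below `target`,
    0 at or above `high`."""
    return float(np.clip((high - y) / (high - target), 0.0, 1.0) ** weight)

def overall_desirability(*d):
    """Geometric mean; any single zero makes the overall score zero."""
    return float(np.prod(d) ** (1.0 / len(d)))

# Hypothetical limits: yield acceptable from 70 %, ideal at 90 %;
# impurity ideal at 0.5 %, unacceptable at 2.5 %.
d_yield = d_maximize(85.0, low=70.0, target=90.0)
d_impurity = d_minimize(1.0, target=0.5, high=2.5)
D = overall_desirability(d_yield, d_impurity)
```

Because the overall score is a geometric mean, a candidate condition that fails any one response completely receives a score of zero regardless of how well it performs on the others.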

Q5: My process factors have physical constraints. How can I ensure the RSM solution is practical? Ignoring constraints can lead to optimal conditions that are impossible to implement. The solution is to incorporate constraints directly into the optimization phase [33]. Techniques like the Dual Response Surface Method or the use of penalty functions can be employed to find the best possible operating conditions that satisfy all experimental and system constraints.

Key Research Reagent Solutions

The following table details essential "reagents" or components for a successful RSM experiment in a research context.

| Item / Solution | Function in RSM Experiment |

|---|---|

| Screening Design (e.g., Fractional Factorial) | Identifies the few critical factors from a large pool of potential variables, saving resources by focusing subsequent RSM on what truly matters [35]. |

| Statistical Software | Used to design the experiment, perform regression analysis, fit the response surface model, check its adequacy, and perform numerical optimization [33]. |

| Central Composite Design (CCD) | An experimental design that efficiently estimates first-order and second-order (quadratic) terms for building an accurate response surface model, crucial for locating an optimum [34] [35]. |

| Quadratic Regression Model | The core mathematical model (Y = β₀ + ∑βᵢXᵢ + ∑βᵢᵢXᵢ² + ∑βᵢⱼXᵢXⱼ) that captures curvature and interaction effects in the process, allowing for the prediction of responses [36] [34]. |

| Desirability Function | A multi-objective optimization technique that simultaneously optimizes multiple, potentially conflicting, responses (e.g., maximizing yield while minimizing a key byproduct) [36]. |

Experimental Protocol for Byproduct Reduction

This detailed protocol outlines the application of RSM to minimize byproduct formation in a chemical or biochemical process.

Objective: To determine the optimal levels of temperature (X₁), catalyst concentration (X₂), and reaction time (X₃) that minimize the concentration of a specified byproduct (Y₁) while maintaining a satisfactory level of primary product yield (Y₂).

Step-by-Step Methodology

1. Problem Definition and Screening

- Clearly define the response variables: Byproduct Concentration (Y₁) (to be minimized) and Product Yield (Y₂) (to be maximized or kept above a threshold).

- Identify all potential influencing factors through literature review and process knowledge.

- Use a Plackett-Burman or a 2-level Fractional Factorial design to screen and identify the most significant factors (e.g., X₁, X₂, X₃) for the detailed RSM study [33] [35].

2. Selection of Experimental Design

- For the three critical factors, select a Central Composite Design (CCD). A face-centered CCD (axial points placed on the cube faces, so each factor takes only three levels) with 3 center points is often a practical choice.

- This design will require 17 experimental runs (8 factorial points, 6 axial points, and 3 center points) [35].

- Code the factor levels into -1 (low), 0 (center), and +1 (high) units for analysis.
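The design described in this step can be generated directly. The sketch below builds the 17-run face-centered CCD in coded units and decodes it into natural units; the factor ranges are placeholders chosen for illustration, not values from the protocol.

```python
import itertools
import numpy as np

# Face-centered CCD for 3 factors: axial points sit on the cube faces (alpha = 1).
corners = np.array(list(itertools.product([-1, 1], repeat=3)))        # 8 runs
axial = np.vstack([s * np.eye(3, dtype=int)[i]
                   for i in range(3) for s in (-1, 1)])               # 6 runs
center = np.zeros((3, 3), dtype=int)                                  # 3 runs
coded = np.vstack([corners, axial, center])                           # 17 runs

# Hypothetical factor ranges (low, high): temperature in deg C,
# catalyst concentration in mol %, reaction time in h.
ranges = np.array([[60.0, 100.0], [1.0, 5.0], [2.0, 8.0]])
midpoint = ranges.mean(axis=1)
half_range = (ranges[:, 1] - ranges[:, 0]) / 2

# Decode: natural = midpoint + coded * half_range.
natural = midpoint + coded * half_range

# Randomize the execution order with a fixed seed for reproducibility.
rng = np.random.default_rng(seed=7)
run_sheet = natural[rng.permutation(len(natural))]
```

Working in coded units keeps the regression coefficients on a common scale, so their magnitudes can be compared directly across factors.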

3. Model Fitting and Analysis

- Conduct the 17 experiments in a randomized order to avoid bias.

- Measure the responses (Y₁ and Y₂) for each run.

- Use multiple regression analysis to fit a second-order polynomial model for each response [33] [36].

- The generic model form is:

Y = β₀ + β₁X₁ + β₂X₂ + β₃X₃ + β₁₂X₁X₂ + β₁₃X₁X₃ + β₂₃X₂X₃ + β₁₁X₁² + β₂₂X₂² + β₃₃X₃²

- Perform Analysis of Variance (ANOVA) for each model to check its statistical significance and the significance of individual terms [33] [35].
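The fitting step can be sketched with ordinary least squares. In this illustration the "true" coefficients and noise level are invented solely to simulate responses on the 17-run face-centered CCD; with real data, `y` would hold the measured byproduct concentrations, and dedicated statistical software would supply the full ANOVA table.

```python
import itertools
import numpy as np

def quadratic_model_matrix(X):
    """Columns: 1, X1, X2, X3, X1X2, X1X3, X2X3, X1^2, X2^2, X3^2."""
    x1, x2, x3 = X.T
    return np.column_stack([np.ones(len(X)), x1, x2, x3,
                            x1 * x2, x1 * x3, x2 * x3,
                            x1**2, x2**2, x3**2])

# Face-centered CCD in coded units (8 corners, 6 axial, 3 center = 17 runs).
corners = np.array(list(itertools.product([-1.0, 1.0], repeat=3)))
axial = np.vstack([s * np.eye(3)[i] for i in range(3) for s in (-1.0, 1.0)])
design = np.vstack([corners, axial, np.zeros((3, 3))])

# Hypothetical "true" coefficients used only to simulate byproduct data.
true_beta = np.array([2.0, 0.5, -0.3, 0.2, 0.4, 0.0, -0.1, 0.6, 0.3, 0.1])
rng = np.random.default_rng(seed=3)
M = quadratic_model_matrix(design)
y = M @ true_beta + rng.normal(0, 0.05, size=len(design))

# Ordinary least squares fit of the second-order model.
beta_hat, *_ = np.linalg.lstsq(M, y, rcond=None)

# Basic fit summary: residual degrees of freedom and R^2.
residuals = y - M @ beta_hat
df_resid = len(y) - M.shape[1]                        # 17 - 10 = 7
r_squared = 1 - residuals @ residuals / np.sum((y - y.mean()) ** 2)
```

Ten coefficients estimated from 17 runs leave 7 residual degrees of freedom, of which 2 come from the replicated center points and are available as pure error.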

4. Model Validation and Optimization

- Validate the fitted models using residual analysis and lack-of-fit tests [35].

- If the models are adequate, use the Desirability Function Approach to find the factor settings that simultaneously minimize Y₁ and keep Y₂ within a desirable range [36].

- Perform 2-3 confirmation experiments at the recommended optimum conditions to verify the model's predictions. The average response from these runs should fall within the model's prediction interval at those settings.
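For a single response, one common complement to the desirability approach is to locate the stationary point of the fitted quadratic model and check whether it is a minimum (often called canonical analysis; it is not named in the protocol above and is shown here only as an illustration). The coefficients below are hypothetical, for a two-factor byproduct model in coded units.

```python
import numpy as np

def stationary_point(b, B):
    """Stationary point of y = b0 + x'b + x'Bx and the eigenvalues of B.
    B holds pure quadratic coefficients on the diagonal and half of each
    interaction coefficient off the diagonal."""
    x_s = -0.5 * np.linalg.solve(B, b)
    eigenvalues = np.linalg.eigvalsh(B)
    return x_s, eigenvalues

# Hypothetical fitted byproduct model in two coded factors:
# y = 5 + 0.4*x1 - 0.8*x2 + 1.0*x1^2 + 2.0*x2^2 + 1.0*x1*x2
b0 = 5.0
b = np.array([0.4, -0.8])
B = np.array([[1.0, 0.5],
              [0.5, 2.0]])

x_s, eigenvalues = stationary_point(b, B)
is_minimum = bool(np.all(eigenvalues > 0))        # all positive: a minimum
inside_region = bool(np.all(np.abs(x_s) <= 1))    # within the coded design cube
y_at_optimum = b0 + x_s @ b + x_s @ B @ x_s
```

If the stationary point falls outside the experimental region or is a saddle point, the optimum should instead be sought on the boundary of the region, and any prediction there deserves extra confirmation runs.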

The workflow below visualizes this iterative RSM process.

Frequently Asked Questions

What is a Fractional Factorial Design and when should I use it? A Fractional Factorial Design (FFD) is a type of screening experiment that tests only a carefully selected subset, or fraction, of all the possible combinations of factors and levels from a full factorial design [28] [37]. You should use it in the early stages of experimentation, such as media optimization, when your goal is to efficiently screen a large number of factors (e.g., media components, process parameters) to identify the few that are most important [28] [38]. This approach is ideal when conducting a full factorial experiment would be too time-consuming, costly, or resource-prohibitive [39] [28].

How do I choose the right Resolution for my design? The choice of Resolution is a balance between experimental economy and the clarity of your results [39]. The table below summarizes common design Resolutions.

| Resolution | Key Characteristics | Best Use Case |

|---|---|---|