Thermal Management in Parallel Reactors: Fundamentals, Optimization, and Validation for Advanced Applications



Abstract

This article provides a comprehensive overview of thermal management strategies for parallel reactor systems, a critical technology for enhancing process efficiency and safety in chemical synthesis and drug development. It explores the foundational principles of heat transfer and system architecture, delves into advanced methodological approaches including AI-driven control and multi-objective optimization, and addresses key troubleshooting and validation techniques. By synthesizing the latest research, this guide offers scientists and engineers a structured framework for designing, optimizing, and validating robust thermal management systems to accelerate R&D timelines and improve product yields.

Core Principles and System Architectures of Parallel Reactor Thermal Management

Fundamental Heat Transfer and Thermal-Hydraulic Phenomena in Reactor Systems

The study of thermal-hydraulic phenomena is fundamental to the design, operation, and safety analysis of nuclear reactor systems. Thermal-hydraulics encompasses the combined study of heat transfer and fluid flow, which directly impacts a reactor's efficiency, power density, and safety margins. In nuclear systems, thermal-hydraulics plays a critical role in removing heat generated from nuclear fission, maintaining fuel temperatures within safe limits, and ensuring reliable performance under both normal operation and postulated accident conditions. The primary challenge lies in managing extremely high power densities while preventing thermal failure of fuel cladding and structural materials.

Quantitative parameters representing the heat removal capacity in reactor systems are generally the maximum fuel temperature and the maximum cladding temperature. In water-cooled reactors with two-phase conditions, boiling crisis and post-dryout (PDO) heat transfer become limiting phenomena that can lead to unexpectedly high cladding temperatures [1]. The reliability of thermal-hydraulic analysis depends strongly on the accuracy of applied closure models describing key phenomena such as transversal exchange between sub-channels, critical heat flux (CHF), and post-CHF heat transfer [1].

For reactors with single-phase flow conditions, such as those cooled with liquid metals or supercritical water, the main challenge remains the reliable prediction of heat transfer coefficients under various flow regimes [1]. This technical guide examines the fundamental phenomena, modeling approaches, and experimental methodologies that underpin thermal management research for parallel reactors.

Fundamental Heat Transfer Phenomena

Heat Transfer Mechanisms and Governing Equations

Heat transfer in reactor systems occurs through three primary mechanisms: conduction, convection, and radiation. The energy balance for a body between two parallel isothermal plates at different temperatures under steady-state conditions can be expressed through a one-dimensional equation where edge effects are ignored [2]:

\[\frac{d}{dx}\left(k(T)\frac{dT}{dx}\right) - \frac{dq_R}{dx} = 0\]

With boundary conditions:

\[T(0) = T_0, \qquad T(L) = T_L\]

Where \(k\) is the thermal conductivity, \(T\) is temperature, \(x\) is the dimensional coordinate parallel to the heat flow, \(q_R\) is the radiative heat flux, \(L\) is the length of the body, and \(T_0\) and \(T_L\) are the temperatures at the cold and hot plates, respectively [2].

Heat conduction through gas and solid fibers is well described by applying the Fourier law for modeling the interaction of gas and solid conductivities. For fibrous media, such as insulation materials in reactor systems, the main differences in models primarily come from the evaluation of the radiation term [2].

Key Thermal-Hydraulic Phenomena in Reactor Cores

Table 1: Key Thermal-Hydraulic Phenomena in Nuclear Reactor Systems

| Phenomenon | Description | Impact on Reactor Safety & Performance |
|---|---|---|
| Transversal Exchange between Sub-channels | Includes turbulent mixing, void drift, and wire-wrap-induced sweeping flow | Affects temperature distribution and hot spot formation in fuel assemblies [1] |
| Circumferential Non-uniform Heat Transfer | Non-uniform heat transfer behavior inside single sub-channels in tight lattice fuel assemblies | Influences local hot spot formation on fuel pin surface; critical in assemblies with pitch-to-diameter ratio < 1.25 [1] |
| Post-Dryout (PDO) Heat Transfer | Heat transfer regime after critical heat flux is exceeded | Leads to unexpectedly high cladding temperatures; requires accurate prediction models [1] |
| Critical Heat Flux (CHF) | Point where heat transfer coefficient deteriorates rapidly | Limiting phenomenon for reactor power levels; determines safety margins [1] |
| Flow Configuration Effects | Parallel vs. counter flow arrangements in heat exchangers and core design | Impacts temperature gradients, heat transfer efficiency, and mechanical stresses [3] |

Modeling Approaches for Reactor Thermal-Hydraulics

Multi-Scale Numerical Approaches

Three different types of numerical approaches are typically applied to analyze thermal-hydraulic behavior in reactor cores and fuel assemblies [1]:

- System Thermal-Hydraulics (STH): Focuses on overall plant behavior and transient response

- Sub-Channel Thermal-Hydraulics (SCTH): The most widely applied approach for fuel assembly and core analysis due to extensive validation, relatively high accuracy, and reasonable computational efforts

- Computational Fluid Dynamics (CFD): Provides detailed three-dimensional analysis of flow and heat transfer phenomena

A promising perspective is combining SCTH methods with STH or CFD approaches to fulfill diverse numerical analysis needs [1]. For instance, one study coupled a sub-channel code with a system code to enhance simulation capabilities for supercritical water-cooled reactors [1].

Advanced Computational Frameworks

Recent advances in computational frameworks have enabled more accurate and efficient thermal-hydraulic simulations. The YHACT software represents one such general-purpose CFD tool developed specifically for thermal-hydraulic analysis of nuclear reactors. It employs a modular development architecture based on scalability, incorporating key CFD solver components such as data loading, physical pre-processing, iterative solving, and result output [4].

For large-scale simulations, parallel decomposition of grid data is essential. The grid is divided into non-overlapping blocks of cells, and each process reads only its own block. After decomposition, dummy cells are generated on the physical boundaries adjacent to each sub-grid, solely for data communication [4]. This approach has enabled parallel tests of turbulence models on grids of up to 39.5 million cells, demonstrated on a pressurized water reactor engineering case with 3×3 rod bundles [4].
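
The decomposition idea can be illustrated with a minimal one-dimensional sketch. It is not the YHACT implementation [4]: the function names `decompose_1d` and `ghost_cells` are hypothetical, and a real solver partitions a three-dimensional unstructured grid. The sketch only shows how non-overlapping blocks and a single layer of dummy (ghost) cells relate to each other.

```python
def decompose_1d(n_cells, n_procs):
    """Split cell indices 0..n_cells-1 into contiguous, non-overlapping blocks."""
    base, extra = divmod(n_cells, n_procs)
    blocks = []
    start = 0
    for rank in range(n_procs):
        # The first `extra` ranks take one additional cell to balance the load.
        size = base + (1 if rank < extra else 0)
        blocks.append(range(start, start + size))
        start += size
    return blocks


def ghost_cells(blocks, rank):
    """Neighbour-owned cells that `rank` mirrors for communication only."""
    own = blocks[rank]
    ghosts = []
    if rank > 0:
        ghosts.append(own.start - 1)  # last cell of the left neighbour
    if rank < len(blocks) - 1:
        ghosts.append(own.stop)       # first cell of the right neighbour
    return ghosts


blocks = decompose_1d(10, 3)
for rank, own in enumerate(blocks):
    print(rank, list(own), ghost_cells(blocks, rank))
```

Each process updates only the cells it owns; the ghost entries are overwritten with neighbour data before every iteration.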

Table 2: Comparison of Radiative Heat Transfer Models for Fibrous Media

| Model | Mathematical Formulation | Applications & Limitations |
|---|---|---|
| Diffusion Approximation | \(q_R = -\frac{16\sigma T^3}{3\beta}\frac{dT}{dx}\) | Suitable for optically dense media; incorporates Rosseland diffusion approximation [2] |
| Schuster-Schwarzschild Approximation | \(\frac{dG^+}{dx} = -\beta G^+ + \beta\sigma T^4\); \(\frac{dG^-}{dx} = \beta G^- - \beta\sigma T^4\) | Based on two-flux approach with negligible scattering; validated for multilayer thermal insulators [2] |
| Milne-Eddington Approximation | \(\frac{dq_R}{dx} = \beta(1-\omega_0)(4\sigma T^4 - G)\); \(\frac{dG}{dx} = -3\beta q_R\) | Assumes gray body behavior; reported to agree with experimental data to within 13.5% at high temperatures under vacuum conditions [2] |
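
As a worked illustration of the diffusion approximation in the first row, the sketch below combines it with the one-dimensional energy balance given earlier. It assumes a temperature-independent conductivity \(k\) and extinction coefficient \(\beta\), which the source does not state; under that assumption the total flux \(q = -\left(k + \frac{16\sigma T^3}{3\beta}\right)\frac{dT}{dx}\) is constant and integrates in closed form. The function names and the numerical inputs are hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def effective_conductivity(k, beta, temperature):
    """Conduction plus Rosseland radiative conductivity, W/(m K)."""
    return k + 16.0 * SIGMA * temperature**3 / (3.0 * beta)


def total_heat_flux(k, beta, t0, tl, length):
    """Constant total flux (W/m^2) between T(0)=t0 and T(L)=tl.

    Obtained by integrating q = -(k + 16*sigma*T^3/(3*beta)) dT/dx over x,
    assuming k and beta do not depend on temperature (an assumption).
    """
    conduction = k * (t0 - tl)
    radiation = 4.0 * SIGMA / (3.0 * beta) * (t0**4 - tl**4)
    return (conduction + radiation) / length


# Hypothetical insulation sample: cold face 300 K, hot face 800 K, 5 cm thick.
q = total_heat_flux(k=0.05, beta=5000.0, t0=300.0, tl=800.0, length=0.05)
print(f"total flux: {q:.1f} W/m^2 (negative means heat flows toward x = 0)")
```

Because the radiative contribution scales with \(T^3\), the effective conductivity rises steeply at the hot face, which is why the choice of radiation model dominates the differences between the approaches in the table.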

Renumbering Algorithms for Computational Efficiency

To enhance computational performance for large-scale fluid simulations, effective grid renumbering algorithms can be integrated into CFD software:

- Greedy Algorithm: A heuristic approach for optimizing grid numbering

- RCM (Reverse Cuthill-McKee): Reduces the bandwidth of sparse matrices

- CQ (Cell Quotient): An alternative method for optimizing data access patterns

These algorithms significantly impact computational efficiency when solving sparse linear systems. An important judgment metric, called median point average distance (MDMP), serves as a discriminant of sparse matrix quality to select the most effective renumbering method for different physical models [4]. Experiments demonstrate that this approach can achieve acceleration effects up to 56.72% at parallel scales of 1536 processes [4].
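
To make the effect of renumbering concrete, the following sketch implements a simplified Reverse Cuthill-McKee ordering in pure Python and compares the matrix bandwidth before and after. It is an illustration only: it starts each component from a minimum-degree node rather than a pseudo-peripheral one, it is unrelated to the implementation in [4], and the five-cell connectivity is a made-up example.

```python
from collections import deque


def bandwidth(adj, order):
    """Largest index distance between connected cells under a given ordering."""
    pos = {node: i for i, node in enumerate(order)}
    return max((abs(pos[u] - pos[v]) for u in adj for v in adj[u]), default=0)


def reverse_cuthill_mckee(adj):
    """Breadth-first ordering by ascending degree, then reversed."""
    visited = set()
    order = []
    # Start every connected component from a low-degree node (simplification).
    for start in sorted(adj, key=lambda n: len(adj[n])):
        if start in visited:
            continue
        visited.add(start)
        queue = deque([start])
        while queue:
            node = queue.popleft()
            order.append(node)
            for nb in sorted(adj[node], key=lambda n: len(adj[n])):
                if nb not in visited:
                    visited.add(nb)
                    queue.append(nb)
    return order[::-1]


# Hypothetical chain of cells 0-3-1-4-2 with scrambled numbering.
adj = {0: [3], 1: [3, 4], 2: [4], 3: [0, 1], 4: [1, 2]}
print(bandwidth(adj, sorted(adj)), bandwidth(adj, reverse_cuthill_mckee(adj)))
# prints "3 1"
```

A smaller bandwidth keeps the nonzeros of the sparse matrix close to the diagonal, which tends to improve cache behaviour in iterative solvers. For production use, SciPy provides `scipy.sparse.csgraph.reverse_cuthill_mckee`.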

Experimental Methodologies and Protocols

Measurement of Thermal-Hydraulic Parameters

Experimental validation remains crucial for verifying theoretical models and computational simulations. In the Bandung TRIGA research reactor, thermal-hydraulic parameters were investigated both theoretically and experimentally, focusing on the maximum powered channel concerning coolant temperature, void fraction, heat flux, and coolant velocities [5].

Theoretical investigations using the STAT computer code determined that with a core condition and water inlet temperature of 28°C, the maximum flow velocity is 34.0 cm/s and 26.4 cm/s for thermal powers of 2000 kW and 1000 kW, respectively. These results correspond to exit coolant channel temperatures of 70.3°C and 55.0°C [5].

Experimental measurements were conducted by inserting a temperature probe into the Central Thimble hole, allowing measurement of coolant temperature in sub-channels. Results indicated that the exit coolant temperature in the maximum powered channel for a thermal power of 1000 kW was almost the same as the exit coolant temperature for a thermal power of 2000 kW from theoretical investigations (approximately 70°C) [5].

Comparative Flow Configuration Analysis

Detailed computational fluid dynamics (CFD) simulations have been employed to compare parallel and counter flow configurations in advanced reactor designs like the Dual Fluid Reactor (DFR) mini demonstrator. For such analyses, incorporating a variable turbulent Prandtl number model is essential when dealing with liquid metals with uniquely low Prandtl numbers [3].

The research methodology typically involves:

- Geometric Modeling: Creating a detailed 3D model of the reactor core, often leveraging geometric symmetry to optimize computational resources (e.g., simulating only a quarter of the domain) [3]

- Governing Equations: Solving the time-averaged mass, momentum, and energy conservation equations. The continuity and momentum equations read (the energy equation is not reproduced here):

\[\frac{\partial \rho}{\partial t} + \frac{\partial (\rho U_i)}{\partial x_i} = 0\]

\[\frac{\partial (\rho U_i)}{\partial t} + \frac{\partial (\rho U_j U_i)}{\partial x_j} = -\frac{\partial P}{\partial x_i} + \frac{\partial}{\partial x_j}\left[\mu\left(\frac{\partial U_i}{\partial x_j} + \frac{\partial U_j}{\partial x_i}\right) - \rho \overline{u'_i u'_j}\right]\]

- Turbulence Modeling: Implementing appropriate turbulence models such as k-ε or k-ω SST with modifications for low Prandtl number fluids

- Heat Transfer Analysis: Evaluating temperature distributions, heat transfer efficiency, and identifying potential hotspots
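
The text does not say which variable turbulent Prandtl number model was used in [3]. Purely as an illustration of what such a model looks like, the sketch below evaluates the widely cited Kays correlation, \(Pr_t = 0.85 + 0.7/Pe_t\) with \(Pe_t = (\nu_t/\nu)\,Pr\). This is a swapped-in example, not the cited study's method, and the function name is hypothetical.

```python
def turbulent_prandtl_kays(nu_t_over_nu, prandtl):
    """Kays correlation: Pr_t = 0.85 + 0.7 / Pe_t, with Pe_t = (nu_t / nu) * Pr.

    nu_t_over_nu: local ratio of turbulent to molecular viscosity.
    prandtl: molecular Prandtl number of the coolant.
    """
    pe_t = nu_t_over_nu * prandtl
    if pe_t <= 0.0:
        raise ValueError("turbulent Peclet number must be positive")
    return 0.85 + 0.7 / pe_t


# Assumed molecular Prandtl number of order 0.025 for a liquid metal.
for ratio in (1.0, 10.0, 100.0):
    print(ratio, round(turbulent_prandtl_kays(ratio, 0.025), 2))
```

For low Prandtl number fluids the correlation gives values well above the constant of roughly 0.85 to 0.9 commonly used for air and water, which is the reason a fixed value is considered inadequate for liquid metals.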

Results from such studies demonstrate that counter flow configurations yield higher heat transfer efficiency and more uniform flow velocity while reducing swirling and mechanical stresses compared to parallel flow arrangements [3].

Visualization of Thermal-Hydraulic Phenomena

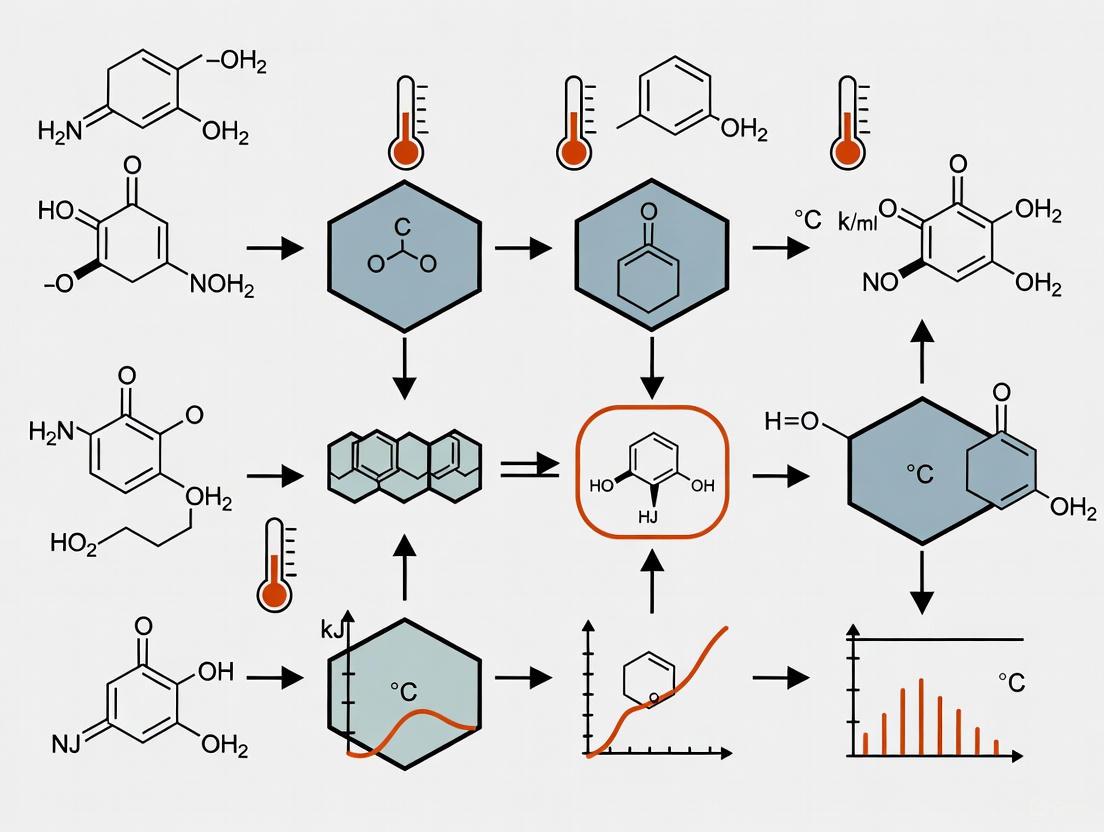

[Figure: Sub-channel thermal-hydraulic analysis workflow]

[Figure: Parallel vs. counter flow configuration thermal profiles]

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Thermal-Hydraulic Experiments

| Material/Component | Function/Significance | Application Context |
|---|---|---|
| TRIGA Fuel Elements | Contain 8.5 w/o, 12 w/o, or 20 w/o uranium enriched to 20%; provide fission heat source | Experimental research reactors (e.g., Bandung TRIGA) for thermal-hydraulic parameter measurement [5] |
| Liquid Lead/LBE Coolant | Low Prandtl number liquid metal coolant with high thermal conductivity; enables high-temperature operation | Advanced reactor designs (DFR, LFR); requires specialized turbulent Prandtl number models [3] |
| Thermocouple Probes | Measure temperature distribution in sub-channels and at fuel element surfaces | Experimental validation of computational models in reactor cores [5] |
| Fibrous Insulation Materials | Reduce heat loss; studied for coupled radiation-conduction heat transfer | Insulation systems; models evaluate effective thermal conductivity [2] |
| Wire Wrap Spacers | Provide mechanical support and enhance turbulent mixing between sub-channels | Fuel assembly design; induces sweeping flow that improves heat transfer [1] |
| CFD Mesh Generation Tools | Create structured/unstructured grids for numerical simulation | Pre-processing for thermal-hydraulic codes; impacts computational efficiency and accuracy [4] |

Thermal-hydraulic phenomena fundamentally influence the performance, efficiency, and safety of nuclear reactor systems. Continued research into fundamental heat transfer mechanisms, advanced modeling approaches, and experimental validation remains essential for advancing nuclear technology. The integration of multi-scale simulation methods, enhanced computational frameworks, and detailed experimental protocols enables researchers to address increasingly complex thermal management challenges in advanced reactor designs.

Recent progress in reactor core thermal-hydraulics modeling has yielded improved understanding of key phenomena such as transversal exchange between sub-channels, circumferential non-uniform heat transfer, post-dryout heat transfer, and critical heat flux prediction. These advances, coupled with emerging capabilities in large-scale parallel numerical simulation, provide powerful tools for optimizing thermal management in parallel reactors research. Future work should focus on further integrating advanced data fusion methods and digital twin technologies to enhance predictive capabilities and support the development of safer, more efficient nuclear energy systems.

Effective thermal management is a cornerstone of advanced engineering systems, from nuclear reactors to electric vehicles and high-performance electronics. The architecture of cooling loops, particularly parallel configurations, plays a pivotal role in determining the efficiency, safety, and reliability of these systems. In parallel cooling architectures, multiple cooling loops or pathways operate simultaneously to manage thermal loads. These systems can incorporate active components that consume energy (e.g., pumps, compressors), passive components that rely on natural phenomena (e.g., heat pipes, natural convection), or hybrid strategies that combine both approaches to optimize performance [6] [7].

The thermal management challenge is particularly acute in advanced reactor systems, where heat fluxes can be extreme and safety margins paramount. Research into parallel cooling architectures aims to balance competing demands of thermal performance, system reliability, energy efficiency, and operational flexibility. This whitepaper provides a comprehensive technical examination of active, passive, and hybrid parallel cooling strategies, with specific application to thermal management in parallel reactors research.

Fundamental Cooling Strategies

Passive Cooling Systems

Passive cooling leverages fundamental laws of physics to transport thermal energy without consuming additional power. These systems rely on conduction, natural convection, and radiation to move heat from sources to the environment [6].

The process begins with conduction, where heat moves through solid materials according to Fourier's Law (\(q = -k\nabla T\)). The heat then transfers to surroundings via natural convection and radiation. The latter is described by the Stefan-Boltzmann Law (\(P = \epsilon\sigma A\,(T_{\text{hot}}^4 - T_{\text{cold}}^4)\)), explaining why heat sinks are often anodized black to increase emissivity (\(\epsilon\)) and maximize heat dissipation [6].
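
The effect of emissivity can be seen by evaluating the Stefan-Boltzmann expression directly. The sketch below is a minimal example; the emissivity values for a bare and an anodized surface, the area, and the temperatures are illustrative assumptions, not data from [6].

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W/(m^2 K^4)


def radiated_power(emissivity, area_m2, t_hot_k, t_cold_k):
    """Net radiative power P = eps * sigma * A * (T_hot^4 - T_cold^4), in watts."""
    return emissivity * SIGMA * area_m2 * (t_hot_k**4 - t_cold_k**4)


# Assumed values: 0.02 m^2 heat sink at 350 K facing 300 K surroundings.
bare = radiated_power(0.10, 0.02, 350.0, 300.0)      # assumed bare-metal emissivity
anodized = radiated_power(0.85, 0.02, 350.0, 300.0)  # assumed anodized emissivity
print(f"bare: {bare:.2f} W, anodized: {anodized:.2f} W")
```

Since the power is linear in \(\epsilon\), the ratio between the two cases equals the ratio of the emissivities regardless of area or temperature.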

Advanced passive systems employ two-phase heat transfer mechanisms through heat pipes and vapor chambers. These sealed systems contain a working fluid that evaporates at the hot interface (absorbing latent heat) and condenses at the cold interface (releasing heat), achieving effective thermal conductivity orders of magnitude higher than solid copper [6].

Table: Passive Cooling Components and Characteristics

| Component | Mechanism | Applications | Advantages |
|---|---|---|---|
| Extruded Heat Sinks | Conduction, Natural Convection | Electronics, Routers | Low cost, Simple design |
| Heat Pipes | Two-Phase Heat Transfer | Laptops, Compact PCs | High conductivity, Compact |
| Vapor Chambers | Two-Phase Heat Transfer | High-performance Computing | Uniform spreading, High flux |
| Phase Change Materials (PCMs) | Latent Heat Absorption | Thermal Buffering | Manages transient loads |
| Skin Heat Exchangers | Convection, Radiation | Aircraft, Vehicles | No parasitic power |

Active Cooling Systems

Active cooling systems consume energy to enhance heat transfer beyond what passive methods can achieve. These systems overcome natural convection limitations through forced convection mechanisms, enabling management of much higher heat fluxes within compact form factors [6].

The most common active cooling approach uses fans or blowers to move air across heat sinks at high velocity. This turbulent flow dramatically increases the heat transfer coefficient (h), enhancing cooling performance. For more demanding applications, active liquid cooling employs pumps to circulate coolant through cold plates mounted on heat sources. The heated liquid then flows to a radiator where fans dissipate the heat into the air [6].

In specialized applications, thermoelectric coolers (TECs) use the Peltier effect to "pump" heat electrically. These solid-state devices can achieve precise temperature control for spot cooling in sensitive equipment [6].

Table: Active Cooling Performance Comparison

| Cooling Method | Heat Flux Capacity | Power Consumption | Complexity | Typical Applications |
|---|---|---|---|---|
| Air Cooling (Fans) | Low-Moderate | Low | Low | Computers, Servers |
| Liquid Cooling (Pumps) | High | Moderate | High | High-performance Computing, EVs |
| Thermoelectric Cooling | Low | High | Moderate | Laboratory Equipment |
| Refrigeration Cycles | Very High | High | Very High | Precision Environmental Control |

Hybrid Cooling Systems

Hybrid cooling systems strategically combine passive and active approaches to leverage the benefits of both while mitigating their limitations. These systems typically use passive methods for base-load thermal management and activate powered components only when thermal loads exceed passive capacity [7] [8].

A prominent example is the dual-loop system developed for data centers, which technically decouples vapor compression (active) and gravity heat pipe (passive) loops. This architecture eliminates refrigerant-lubricant mixing problems while enabling seamless mode switching based on cooling demands [8]. Similarly, research on hybrid-electric aircraft has demonstrated integrated power and thermal management systems (IPTMS) that shift between operating modes depending on cooling requirements during flight missions [7].

Parallel Cooling Loop Architectures

Fundamental Flow Configurations

In parallel cooling systems, the arrangement of fluid paths significantly influences thermal performance. Two primary configurations dominate engineering applications: parallel flow and counter flow arrangements [3].

In parallel flow systems, hot and cold fluids move in the same direction, leading to gradual temperature equalization along the flow path. This configuration generates smoother thermal gradients but typically offers lower heat transfer rates as the temperature differential decreases along the exchanger length [3].

Counter flow arrangements, where fluids enter from opposite ends, maintain a more consistent temperature gradient across the entire exchanger length. This setup typically achieves higher heat transfer efficiency, making it particularly valuable in high-temperature systems where maintaining substantial temperature differentials is essential [3].
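
The efficiency difference between the two arrangements can be quantified with the standard textbook effectiveness-NTU relations. These relations are not taken from [3]; they are included as a hedged illustration, and the function names are hypothetical. Here `ntu` is the number of transfer units and `c_r` is the ratio of the smaller to the larger heat capacity rate.

```python
import math


def effectiveness_parallel(ntu, c_r):
    """Effectiveness of a parallel-flow exchanger (textbook e-NTU relation)."""
    return (1.0 - math.exp(-ntu * (1.0 + c_r))) / (1.0 + c_r)


def effectiveness_counter(ntu, c_r):
    """Effectiveness of a counter-flow exchanger (textbook e-NTU relation)."""
    if math.isclose(c_r, 1.0):
        return ntu / (1.0 + ntu)  # limiting form for balanced streams
    e = math.exp(-ntu * (1.0 - c_r))
    return (1.0 - e) / (1.0 - c_r * e)


for ntu in (0.5, 1.0, 2.0, 4.0):
    print(ntu,
          round(effectiveness_parallel(ntu, 1.0), 3),
          round(effectiveness_counter(ntu, 1.0), 3))
```

For balanced streams the parallel-flow effectiveness saturates at 0.5, while the counter-flow value keeps rising with exchanger size, which matches the qualitative comparison above.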

Table: Comparison of Flow Configurations in Nuclear Reactor Applications

| Parameter | Parallel Flow | Counter Flow |
|---|---|---|
| Heat Transfer Efficiency | Moderate | High |
| Temperature Distribution | Gradual equalization | Consistent gradient |
| Mechanical Stress | Higher in specific zones | More uniform distribution |
| Swirling Effects | Intense in some pipes | Reduced |
| Thermal Hotspots | More likely | Less likely |

System-Level Parallel Architectures

Beyond individual heat exchangers, parallel architectures can be implemented at the system level where multiple independent cooling loops serve different components or subsystems. For example, in hybrid-electric aircraft, components are divided into three cooling loops: motor-inverter, bus, and battery-converter loops, categorized by their heat load magnitudes and installation requirements [7].

This modular approach allows customized thermal management for different components while maintaining system-level integration. Research indicates that in such integrated systems, the motor-inverter loop may account for up to 95% of pump power and 97% of ram air drag, highlighting the importance of prioritizing optimization efforts on the most demanding loops [7].

Experimental Analysis and Performance Metrics

Thermal-Hydraulic Analysis in Nuclear Applications

Computational fluid dynamics (CFD) simulations provide critical insights into thermal-hydraulic behavior in parallel cooling systems. Recent comparative studies of parallel and counter flow configurations in Dual Fluid Reactor (DFR) designs reveal significant differences in performance characteristics [3].

In parallel flow configurations, heat exchange occurs gradually along the core, generating smoother thermal gradients but producing intense swirling in some fuel pipes. This swirling enhances local heat transfer but increases mechanical stress and complicates flow uniformity. Counter flow arrangements demonstrate more uniform flow velocity distribution while reducing swirling and mechanical stresses [3].

For nuclear applications using liquid metal coolants with uniquely low Prandtl numbers, specialized modeling approaches incorporating variable turbulent Prandtl numbers are essential for accurate simulation results [3].

Performance Metrics and Evaluation

Quantitative assessment of cooling system performance employs several key metrics:

- Energy Efficiency Ratio (EER): Particularly valuable for active and hybrid systems, representing cooling output per unit energy input [8]

- Power Usage Effectiveness (PUE): Critical for data center applications, measuring total facility energy divided by IT equipment energy [8]

- Cooling Load Factor (CLF): Represents the fraction of total energy consumption dedicated to cooling [8]

- Thermal Resistance (Rth): Characterizes the temperature difference per unit heat flow [6]

- Mean Time Between Failures (MTBF): Especially relevant for comparing reliability of active versus passive components [6]

Experimental studies of dual-loop active-passive data center cooling systems have demonstrated annual average PUE values of 1.27, with winter PUE as low as 1.23, significantly outperforming traditional vapor compression systems [8].
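
The energy-based metrics above reduce to simple ratios. The sketch below follows the definitions as worded in this section; note that some industry definitions normalize CLF by IT energy rather than total energy, so the choice here is an assumption. The energy figures are hypothetical and were picked only so that the PUE matches the reported annual average of 1.27.

```python
def pue(total_facility_energy, it_energy):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    return total_facility_energy / it_energy


def eer(cooling_output, energy_input):
    """Energy Efficiency Ratio: cooling delivered per unit of energy consumed."""
    return cooling_output / energy_input


def clf(cooling_energy, total_energy):
    """Cooling Load Factor as worded here: cooling share of total energy."""
    return cooling_energy / total_energy


# Hypothetical annual figures in MWh (not measured data).
it_energy, cooling_energy, other_energy = 1000.0, 230.0, 40.0
total = it_energy + cooling_energy + other_energy

print(f"PUE = {pue(total, it_energy):.2f}")
print(f"CLF = {clf(cooling_energy, total):.3f}")
print(f"EER = {eer(1000.0, cooling_energy):.2f}")  # assumes all IT power becomes heat
```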

Implementation Protocols

Dual-Loop Cooling System Experimental Setup

Objective: To evaluate the performance of a parallel active-passive cooling system under varying thermal loads and ambient conditions.

Apparatus:

- Vapor Compression (VC) Loop: Compressor, air-cooled condenser, thermal expansion valve, evaporator

- Gravity Heat Pipe (GHP) Loop: Evaporator, condenser, working fluid reservoir

- Instrumentation: Temperature sensors, flow meters, power meters, data acquisition system

- Thermal load simulator with adjustable power input

Procedure:

- Assemble the dual-loop system with completely separate VC and GHP loops to prevent refrigerant-lubricant mixing [8]

- Position the GHP condenser above the evaporator with sufficient height difference to drive natural circulation

- Implement control system with mode-switching logic based on outdoor temperature and cooling demand:

  - VC Mode: Activate when outdoor temperature > 24°C

  - Hybrid Mode: Engage when outdoor temperature is between 18°C and 24°C

  - GHP Mode: Switch when outdoor temperature < 18°C (down to the 10°C ventilation threshold)

  - Ventilation Mode: Initiate when outdoor temperature < 10°C [8]

- Apply thermal loads incrementally from 20% to 100% of system capacity

- Measure key parameters at steady-state conditions:

  - Temperature distribution across critical components

  - Power consumption of active components

  - Flow rates in both loops

  - Heat rejection capacity

- Calculate performance metrics (EER, PUE, CLF) for each operating mode
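
The mode-switching step of the procedure can be sketched as a small selection function. The thresholds are those listed above [8]; everything else is an assumption made for illustration: the function name, the handling of exact boundary values, the precedence of ventilation over GHP below 10°C, and the omission of cooling demand and hysteresis, which a real controller would need.

```python
def select_mode(outdoor_temp_c):
    """Pick an operating mode from outdoor temperature alone (simplified).

    Boundary handling (e.g. exactly 18 or 24 degrees C) is an assumption;
    the protocol does not specify it.
    """
    if outdoor_temp_c < 10.0:
        return "ventilation"
    if outdoor_temp_c < 18.0:
        return "ghp"
    if outdoor_temp_c <= 24.0:
        return "hybrid"
    return "vc"


for temp in (5.0, 15.0, 20.0, 30.0):
    print(temp, select_mode(temp))
```

Checking the coldest threshold first resolves the overlap between the GHP and ventilation conditions in the list.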

Photovoltaic Thermal Management with Hybrid Cooling

Objective: To analyze the enhancement of electrical efficiency through parallel active-passive cooling of concentrated photovoltaic panels.

Apparatus:

- Photovoltaic panel with concentration ratio measurement

- Water channel integrated with panel base

- Phase Change Material (PCM) container beneath water channel

- Flow control system with variable speed pump

- Irradiance simulator with adjustable intensity

- Thermal imaging camera and I-V characteristic tracer

Procedure:

- Configure two test cases:

  - Case 1: PV panel with active water channel cooling only

  - Case 2: PV panel with both water channel and PCM container [9]

- Apply insulation to side and bottom surfaces to minimize parasitic heat loss

- Subject panels to standardized irradiance conditions while monitoring:

  - Cell temperature distribution

  - Electrical output power

  - Water outlet temperature

  - PCM melting rate and interface position [9]

- Vary parameters systematically:

  - Water inlet temperature (5°C to 25°C)

  - Water flow rate (0.1 to 1.0 L/min)

  - Ambient temperature (15°C to 35°C)

  - Concentration ratio (1 to 5 suns)

- Calculate electrical efficiency improvement compared to uncooled baseline

- Perform economic analysis including Levelized Cost of Energy (LCOE)
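
One way to vary the four parameters systematically is a full factorial test matrix over the ranges listed in the procedure. The sketch below is an assumption about how such a plan could be generated: the protocol does not prescribe the number of levels or the experimental design, and `build_test_matrix` and the dictionary keys are hypothetical names.

```python
from itertools import product


def linspace(lo, hi, n):
    """n evenly spaced values from lo to hi inclusive (n >= 2)."""
    return [lo + (hi - lo) * i / (n - 1) for i in range(n)]


def build_test_matrix(levels_per_factor=3):
    """Full factorial combination of the four parameter ranges."""
    levels = {
        "water_inlet_c": linspace(5.0, 25.0, levels_per_factor),
        "flow_l_per_min": linspace(0.1, 1.0, levels_per_factor),
        "ambient_c": linspace(15.0, 35.0, levels_per_factor),
        "concentration_suns": linspace(1.0, 5.0, levels_per_factor),
    }
    return [dict(zip(levels, combo)) for combo in product(*levels.values())]


runs = build_test_matrix()
print(len(runs))  # 3 levels ** 4 factors = 81 runs per test case
print(runs[0])
```

With three levels per factor the plan already requires 81 runs for each of the two test cases, so a fractional design may be preferable in practice.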

Visualization of System Architectures

[Figure: Parallel cooling loop configuration (system flow)]

[Figure: Mode switching logic (control logic diagram)]

Research Reagent Solutions and Materials

Table: Essential Materials for Parallel Cooling Loop Research

| Material/Component | Function | Application Examples |
|---|---|---|
| Liquid Lead/LBE Coolant | High-temperature heat transfer | Nuclear reactor cores [3] |
| Phase Change Materials (PCMs) | Thermal energy storage, Buffer transient loads | Photovoltaic cooling, Battery thermal management [9] |
| Nano-Enhanced PCMs | Enhanced thermal conductivity | Improved heat transfer rates [9] |
| Micro-channel Heat Exchangers | High surface-area-to-volume ratio | Compact electronics cooling [10] |
| Thermoelectric Modules | Solid-state active cooling | Precision temperature control [6] |
| Thermal Interface Materials | Reduce contact resistance | Component-level heat transfer [6] |
| Dielectric Coolants | Electrically insulating liquid cooling | Direct immersion cooling [10] |

Parallel cooling loop architectures represent a sophisticated approach to thermal management that enables customization, redundancy, and optimization across diverse operating conditions. The integration of active, passive, and hybrid strategies within parallel configurations provides researchers and engineers with a versatile toolkit for addressing escalating thermal challenges in advanced reactor systems.

The experimental protocols and analytical frameworks presented in this work establish a foundation for continued innovation in parallel cooling technologies. As thermal densities increase across energy, transportation, and computing applications, the architectural principles of parallel cooling loops will play an increasingly critical role in enabling safe, efficient, and reliable system operation.

In parallel reactor systems, effective thermal management is a cornerstone of operational safety, efficiency, and experimental reproducibility. These systems, whether used for chemical synthesis, pharmaceutical development, or energy research, generate significant heat loads that must be precisely controlled. The thermal management system's core function is to maintain the reactor within a defined temperature range, ensuring consistent reaction kinetics and product yield. This guide details the three key components that form the backbone of this system: coolant pumps, which drive the heat-transfer fluid; heat exchangers, which facilitate the actual heat removal; and sensor networks, which provide the critical data for control and monitoring. The integrated performance of these components directly impacts the reactor's stability, as studies have shown that proper maintenance of these elements can reduce failure probabilities to as low as 2.5% for valves and 3.2% for sensors [11]. The following sections provide a technical deep-dive into each component, supported by quantitative data, experimental protocols, and system visualizations.

Coolant Pumps

Coolant pumps are the heart of any active thermal management system, responsible for circulating the heat-transfer fluid through the reactor blocks and the broader cooling loop. Their primary function is to ensure a consistent and adequate volumetric flow rate, which directly determines the heat removal capacity.
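
The link between flow rate and heat removal capacity follows from the sensible-heat balance \(Q = \rho \dot{V} c_p \Delta T\). The sketch below applies it to size a flow rate; the default fluid properties are approximate values for water near room temperature, and the 500 W load and 2 K temperature rise are hypothetical inputs rather than figures from the source.

```python
def heat_removal_w(flow_m3_s, delta_t_k, density=998.0, cp=4182.0):
    """Heat carried away by the coolant, Q = rho * V_dot * cp * dT, in watts."""
    return density * flow_m3_s * cp * delta_t_k


def required_flow_m3_s(heat_load_w, delta_t_k, density=998.0, cp=4182.0):
    """Volumetric flow needed to remove heat_load_w with a coolant rise of delta_t_k."""
    if delta_t_k <= 0.0:
        raise ValueError("coolant temperature rise must be positive")
    return heat_load_w / (density * cp * delta_t_k)


# Hypothetical reactor block: 500 W to remove with a 2 K coolant temperature rise.
flow = required_flow_m3_s(500.0, 2.0)
print(f"required flow: {flow * 60_000:.2f} L/min")
```

Halving the allowed temperature rise doubles the required flow, which is why tight thermal uniformity targets drive pump sizing.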

Performance Metrics and Selection Criteria

When selecting a coolant pump for a parallel reactor setup, engineers must balance several key parameters:

- Flow Rate and Pressure Head: The pump must overcome the system's total pressure drop, which includes friction in pipes, valves, and the reactor block itself, while delivering the required flow.

- Chemical Compatibility: The pump's wetted materials must be resistant to the selected coolant, whether it is water, a glycol-water mixture, or a specialized silicone-based fluid [12].

- Precision and Controllability: The ability to precisely adjust flow rates is essential for maintaining thermal stability, especially during reaction phases with exothermic or endothermic characteristics.

Advanced systems, such as those in high-performance AI data centers, often employ Coolant Distribution Units (CDUs) with redundant pumping systems at the base of each rack to ensure reliability [13]. This principle of redundancy is equally critical in research reactors to prevent single points of failure.

Quantitative Performance Data

The table below summarizes key parameters for coolant pumps in different application scales, from laboratory reactors to large-scale industrial systems.

Table 1: Coolant Pump Performance Characteristics Across Applications

| Application Scale | Typical Flow Rate Range | Primary Function | Key Characteristic |
|---|---|---|---|
| Laboratory Parallel Reactor [12] | System Dependent | Circulate fluid through a temperature-controlled reactor block. | Precision control for thermal uniformity of ±1°C. |
| Industrial Nuclear Reactor [14] | System Dependent | Remove 30 MW of heat via primary pressurized water cooler. | High-reliability design for safety-critical systems. |
| AI Data Center Rack [13] | System Dependent | Provide redundant coolant flow to server cold plates. | Integrated CDU with redundant pumps for high availability. |

Heat Exchangers

Heat exchangers are the components where waste heat from the reactor is transferred to a secondary coolant or the environment. The configuration of the heat exchanger profoundly impacts the overall efficiency of the thermal management system.

Flow Configuration and Performance

In parallel reactor systems, heat exchangers can be arranged in different flow configurations, each with distinct advantages:

- Parallel Flow: The hot (reactor coolant) and cold (utility coolant) fluids enter at the same end and move in the same direction. This configuration provides a stable outlet temperature and minimizes thermal stress but offers lower thermal efficiency because the temperature difference between the fluids decreases along the length [15].

- Counter Flow: The two fluids enter from opposite ends. This maintains a more consistent and higher temperature difference across the entire exchanger, resulting in superior thermal efficiency compared to parallel flow [15].

- Cross Flow: One fluid moves perpendicular to the other. This is often a space-efficient design used in applications like cooling towers [15].
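The efficiency difference between parallel and counter flow can be quantified with the log-mean temperature difference (LMTD), the standard driving force in the relation Q = U · A · ΔT_lm. The sketch below compares both arrangements for the same terminal temperatures. The stream temperatures (hot 80 → 50 °C, cold 20 → 40 °C) are assumed purely for illustration.

```python
import math


def lmtd(dt_a, dt_b):
    """Log-mean temperature difference from the two terminal differences (K)."""
    if dt_a <= 0 or dt_b <= 0:
        raise ValueError("terminal temperature differences must be positive")
    if math.isclose(dt_a, dt_b):
        return dt_a  # limit of the LMTD expression when both ends are equal
    return (dt_a - dt_b) / math.log(dt_a / dt_b)


def lmtd_parallel(th_in, th_out, tc_in, tc_out):
    """Both fluids enter at the same end: pair inlet with inlet, outlet with outlet."""
    return lmtd(th_in - tc_in, th_out - tc_out)


def lmtd_counter(th_in, th_out, tc_in, tc_out):
    """Fluids enter at opposite ends: hot inlet faces cold outlet."""
    return lmtd(th_in - tc_out, th_out - tc_in)


# Assumed stream temperatures in degrees Celsius (illustrative only).
par = lmtd_parallel(80.0, 50.0, 20.0, 40.0)
ctr = lmtd_counter(80.0, 50.0, 20.0, 40.0)

print(f"Parallel-flow LMTD: {par:.2f} K")
print(f"Counter-flow LMTD:  {ctr:.2f} K")
```

For the same duty and overall heat transfer coefficient, the larger counter-flow LMTD translates into a smaller required exchange area.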

A critical challenge in systems with multiple parallel channels is flow maldistribution, where an uneven flow rate through each channel leads to temperature gradients and heat transfer deterioration. Research on two-phase flow in parallel systems has shown that decreasing the channel-to-header area ratio (AR) significantly improves flow distribution, an effect that persists until AR falls below 0.3 [16].

Heat Exchanger Types and Specifications

Table 2: Heat Exchanger Types and Their Applications in Thermal Management

| Heat Exchanger Type | Common Application | Advantages | Disadvantages |
|---|---|---|---|
| Shell and Tube [14] | Nuclear Reactor Cooling (PPWC) | Robust design, handles high pressures. | Large footprint, less efficient than compact designs. |
| Plate [15] | Process Industries, Compact Systems | High efficiency in a small volume, easy to maintain. | Pressure and temperature limitations. |
| U-Tube [14] | Nuclear Reactor Cooling (PPWC) | Accommodates thermal expansion. | More complex to manufacture than straight-tube designs. |

Sensor Networks

Sensor networks provide the digital nervous system for the thermal management loop, delivering the real-time data required for process control, safety monitoring, and experimental validation.

Key Measurands and Sensor Types

A comprehensive sensor network for a parallel reactor system will monitor several physical quantities:

- Temperature: Typically measured by thermocouples or Resistance Temperature Detectors (RTDs) at multiple points, including reactor inlets/outlets and individual reactor vessels [17]. Calibration is critical, as studies attribute 59.3% of the variance in reactor performance to this factor [11].

- Pressure: Pressure transducers monitor the health of the cooling loop, detect blockages, and ensure the system remains within safe operating limits [17].

- Flow Rate: Flow meters verify that the coolant pump is delivering the required volumetric flow to achieve the necessary heat transfer.

- Component Health: Vibration sensors and other dedicated instruments monitor the status of pumps and valves.

The trend is toward increasingly automated metrology, using data fusion and machine learning to ensure the reliability, accuracy, and traceability of the measurements from these sensor networks [18].

Integrated Control and Data Acquisition

Modern systems integrate these sensors with a central Process Controller [17]. This controller not only records data like temperature, pressure, and stirring speed but also uses this information in a closed-loop feedback system to actuate components like control valves and pump speeds, maintaining the reactor at its set-point. The Bayesian Network analysis highlighted that proper maintenance of sensors and valves significantly reduces system failure risks [11].
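A closed-loop feedback scheme of this kind can be sketched with a discrete proportional-integral (PI) controller that adjusts pump speed from a measured reactor temperature. Everything below is a toy illustration: the lumped thermal model, its parameters (50 W heat release, 500 J/K heat capacity, 5 W/K maximum cooling conductance), and the controller gains are assumptions chosen for a stable demonstration, not a description of any vendor's process controller.

```python
class PIController:
    """Discrete PI controller with a clamped output and simple anti-windup."""

    def __init__(self, kp, ki, out_min=0.0, out_max=1.0):
        self.kp, self.ki = kp, ki
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0

    def update(self, setpoint, measured, dt):
        # Reverse-acting for cooling: a reactor above set-point needs MORE flow.
        error = measured - setpoint
        candidate = self.integral + error * dt
        output = self.kp * error + self.ki * candidate
        # Anti-windup: only accumulate the integral while the output is unsaturated.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate
        return min(max(output, self.out_min), self.out_max)


def simulate(controller, setpoint_c=60.0, start_c=70.0, steps=2000, dt=1.0):
    """Euler simulation of a lumped reactor block (all parameters assumed)."""
    heat_w = 50.0                  # constant heat release of the reaction
    coolant_c = 20.0               # coolant supply temperature
    ua_max_w_per_k = 5.0           # cooling conductance at full pump speed
    heat_capacity_j_per_k = 500.0  # lumped heat capacity of the block
    temp = start_c
    history = []
    for _ in range(steps):
        pump = controller.update(setpoint_c, temp, dt)  # pump speed, 0..1
        cooling_w = pump * ua_max_w_per_k * (temp - coolant_c)
        temp += (heat_w - cooling_w) / heat_capacity_j_per_k * dt
        history.append((temp, pump))
    return history


history = simulate(PIController(kp=0.5, ki=0.01))
final_temp, final_pump = history[-1]
print(f"Final temperature: {final_temp:.2f} C, pump speed: {final_pump:.2f}")
```

The integral term is what removes the steady-state offset: with proportional action alone, the pump would only run while an error persists.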

Integrated System Operation and Experimental Protocols

The true performance of a thermal management system emerges from the seamless interaction of its components. This section outlines how these parts work together and provides a methodology for evaluating their performance.

System Workflow and Interaction

The following diagram illustrates the logical flow of information and coolant within an integrated thermal management system for a parallel reactor.

Experimental Protocol for System Characterization

Researchers can characterize the performance of their thermal management system using a structured experimental design. The following protocol, inspired by factorial design approaches used in reactor stability studies [11], provides a methodology for identifying key performance factors.

Objective: To quantify the individual and interactive effects of coolant flow rate, heat exchanger configuration, and sensor calibration on the thermal stability of a parallel reactor system.

Methodology:

- Experimental Design: Employ a 2³ factorial design. The three factors are:

- Factor A: Coolant Pump Flow Rate (Low vs. High, specific values depend on system).

- Factor B: Heat Exchanger Configuration (Parallel Flow vs. Counter Flow).

- Factor C: Sensor Calibration State (Nominal vs. Optimized/Recently Calibrated).

This design requires 8 unique experimental runs.

Procedure:

- For each of the 8 experimental conditions, initiate the reactor system with a standardized exothermic or heat-generating process.

- Use the integrated sensor network to log temperature data from each reactor vessel at a high frequency (e.g., 1 Hz).

- Run each experiment until the system reaches a steady-state or for a fixed duration.

Data Analysis:

- Response Variable: Calculate the temperature spread (standard deviation) across all reactor vessels over the steady-state period for each run.

- Statistical Analysis: Perform an Analysis of Variance (ANOVA) to determine the F-statistic and p-value for each main effect (A, B, C) and their interaction effects (AB, AC, BC). This will identify which factors explain the most variance in thermal stability. Prior research indicates that factors like sensor calibration can explain over 59% of performance variance [11].
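As a minimal sketch of the analysis step, the code below builds the 2³ design in coded units (-1/+1) and estimates main and two-factor interaction effects by contrasts, which is the quantity the ANOVA subsequently tests. The response values are synthetic, generated from an assumed noise-free model so that the recovered effects can be checked by hand; they are not experimental data. Note that F-statistics and p-values additionally require an error estimate, typically from replicated runs or from pooling higher-order interactions.

```python
from itertools import product

# Column indices for each effect in the coded design (A=0, B=1, C=2).
EFFECT_COLUMNS = {
    "A": (0,), "B": (1,), "C": (2,),
    "AB": (0, 1), "AC": (0, 2), "BC": (1, 2),
}


def full_factorial(n_factors=3):
    """All 2**n combinations of low (-1) and high (+1) levels."""
    return list(product((-1, +1), repeat=n_factors))


def effect(design, responses, columns):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    total = 0.0
    for run, y in zip(design, responses):
        sign = 1
        for col in columns:
            sign *= run[col]
        total += sign * y
    return total / (len(design) / 2)


def synthetic_response(a, b, c):
    """Assumed model for temperature standard deviation (K); illustration only."""
    return 1.0 - 0.2 * a - 0.1 * b - 0.3 * c + 0.05 * a * c


design = full_factorial(3)
responses = [synthetic_response(*run) for run in design]
effects = {name: effect(design, responses, cols)
           for name, cols in EFFECT_COLUMNS.items()}

for name, value in effects.items():
    print(f"Effect {name}: {value:+.3f} K")
```

Because the design is orthogonal, each estimated effect equals twice the corresponding coefficient of the coded model.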

The Researcher's Toolkit: Essential Materials and Reagents

Equipping a laboratory for parallel reactor research requires specific materials and reagents for the thermal management system. The following table details key items.

Table 3: Essential Research Reagent Solutions for Thermal Management Systems

| Item Name | Function/Brief Explanation | Application Notes |
|---|---|---|
| Silicone-based Heat Transfer Fluid [12] | Circulates through reactor jacket/block to add or remove heat; offers a wide operating temperature range. | Stable over a broad temperature range (-40°C to 200°C+). Example: SYLTHERM. |
| Ethylene Glycol / Water Mixture [12] | Common coolant fluid for moderate temperature ranges; provides freeze protection. | Cost-effective; requires careful consideration of concentration for optimal thermal properties and corrosion inhibition. |
| Thermal Interface Material (TIM) [19] | Improves thermal contact between a heat source (e.g., reactor base) and a cold plate or heat exchanger. | Critical for minimizing thermal resistance at material interfaces. |
| Calibration Standards [11] | Reference materials or devices used to calibrate temperature and pressure sensors in the network. | Essential for ensuring data accuracy and reactor control; regular calibration is a key maintenance activity. |
| Redundant Coolant Pump [13] | A backup pump system to ensure continuous coolant flow in case of primary pump failure. | A key design feature for high-reliability and safety-critical reactor systems. |

Coolant pumps, heat exchangers, and sensor networks are not isolated components but deeply interconnected elements of a sophisticated thermal management system. The performance of parallel reactors in research and development is directly contingent on the optimized selection, integration, and maintenance of these core components. As evidenced by advanced fields from nuclear engineering to high-performance computing, the principles of redundant pumping, efficient counter-flow heat exchange, and high-fidelity, automated sensor metrology are universal drivers of stability and efficiency [11] [13] [18]. By applying the quantitative data, experimental protocols, and system-level understanding outlined in this guide, researchers and engineers can design and operate more reliable, reproducible, and safe parallel reactor systems.

Defining Thermal Stability and Performance Metrics for Reactor Safety and Efficiency

Thermal management represents a critical enabling technology for modern chemical research and development, particularly within automated reaction platforms. In parallel reactor systems, thermal stability ensures that reaction outcomes accurately reflect specified conditions. This is paramount for generating high-fidelity, reproducible data for kinetic studies and reaction optimization. Effective thermal performance is characterized by a system's ability to maintain precise, uniform, and stable temperatures across all independent reactor channels. This guide establishes the core metrics and methodologies essential for evaluating and ensuring thermal safety and efficiency within the context of parallel reactor research, a field vital for advancing drug development and chemical synthesis [20].

The transition from traditional single-channel reactors to parallelized systems introduces significant thermal management challenges. Each independent reactor channel must operate within a broad temperature range while maintaining isolation from its neighbors. Furthermore, the integration of online analytics necessitates minimal delay between reaction completion and evaluation, placing additional demands on thermal control systems to ensure sample integrity. For researchers and scientists, a deep understanding of these metrics is not merely an engineering concern but a fundamental prerequisite for obtaining reliable and scalable chemical data [20].

Core Thermal Performance Metrics

Quantifying thermal performance requires tracking specific, measurable parameters. The table below summarizes the key metrics vital for assessing reactor safety and operational efficiency.

Table 1: Key Performance Metrics for Parallel Reactor Thermal Management

| Metric Category | Specific Metric | Target Value/Standard | Impact on Safety & Efficiency |
|---|---|---|---|
| Temperature Control | Operational Temperature Range [20] | 0 to 200 °C (solvent-dependent) [20] | Defines the breadth of chemically accessible reaction space. |
| Temperature Control | Temperature Uniformity (across channels) | < ±1.0 °C | Ensures experimental consistency and reproducibility between parallel experiments. |
| System Stability | Reproducibility of Reaction Outcomes [20] | <5% standard deviation [20] | A direct measure of the platform's control over reaction conditions, including temperature. |
| System Stability | Pressure Tolerance [20] | Up to 20 atm [20] | Allows for higher-temperature reactions and expands compatible solvent systems. |
| Thermal Load Management | Heat Load from System Components | Varies by component (e.g., motors, inverters) [7] | Dictates the required cooling capacity; excessive loads diminish efficiency. |
| Thermal Load Management | Cooling Power Consumption [7] | Minimized (e.g., via passive cooling) [7] | Reduces the parasitic energy draw of the TMS, improving overall system efficiency. |
| Thermal Load Management | Induced Ram Air Drag (for air-cooled systems) [7] | Minimized | In aerospace or mobile applications, this drag is a direct efficiency penalty. |

These metrics are interdependent. For instance, poor temperature uniformity often leads to unacceptable reproducibility, while inadequate management of heat loads from electrical components can force a system to operate outside its stable temperature window, compromising both safety and data quality [20] [7].

Experimental Protocols for Thermal Analysis

Protocol for Assessing Temperature Uniformity and Reproducibility

This protocol is designed to validate that all channels in a parallel reactor system maintain consistent and repeatable temperatures.

- Instrumentation Preparation: Calibrate all thermocouples using a traceable standard. Position each thermocouple in the same relative location within each reactor channel (e.g., immersed in a thermally conductive fluid at the reactor's geometric center) [20].

- System Baseline: With the reactor system empty, set all channels to a common target temperature (e.g., 50 °C). Record the temperature readout from each channel's sensor once the system reaches a steady state.

- Loaded System Test: Load each reactor channel with a standard solvent (e.g., acetonitrile) of a defined volume. Program the system to execute a temperature ramp from 30 °C to 150 °C across all channels simultaneously.

- Data Collection: At 10 °C intervals, log the temperature from every channel sensor. Repeat this process for three independent experimental runs.

- Data Analysis:

- Calculate Uniformity: For each temperature setpoint, calculate the mean and standard deviation of the measured temperatures across all channels.

- Calculate Reproducibility: For each individual channel, calculate the standard deviation of its temperature across the three experimental runs at a single, fixed setpoint (e.g., 100 °C). The overall system reproducibility is the average of these per-channel standard deviations. The target is a standard deviation of less than 5% in final reaction outcomes, which demands even tighter control on temperature [20].
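The two calculations in the data analysis step can be sketched as follows. The temperature readings are invented for illustration (four channels at a 100 °C setpoint, and three channels each measured over three runs); the function names are likewise hypothetical.

```python
from statistics import mean, stdev


def uniformity(channel_temps_c):
    """Mean and sample standard deviation across channels at one setpoint."""
    return mean(channel_temps_c), stdev(channel_temps_c)


def reproducibility(runs_by_channel_c):
    """Average of the per-channel standard deviations across repeated runs."""
    return mean([stdev(runs) for runs in runs_by_channel_c])


# Illustrative readings (degrees Celsius) at a 100 C setpoint; not real data.
channels_at_setpoint = [99.8, 100.1, 100.3, 99.8]
runs_by_channel = [
    [99.9, 100.0, 100.1],   # channel 1, runs 1-3
    [100.2, 100.2, 100.2],  # channel 2, runs 1-3
    [99.7, 100.0, 100.3],   # channel 3, runs 1-3
]

mean_t, spread_t = uniformity(channels_at_setpoint)
repro = reproducibility(runs_by_channel)

print(f"Uniformity: mean {mean_t:.2f} C, std {spread_t:.3f} C")
print(f"Reproducibility (avg per-channel std): {repro:.3f} C")
```

The sample standard deviation (n - 1 denominator) is used here because the channels and runs are treated as samples; a population standard deviation would give slightly smaller values.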

Protocol for Quantifying Heat Load and Cooling Efficiency

This methodology identifies major heat sources and evaluates the efficiency of the Thermal Management System (TMS).

- Component Isolation: Operate individual high-power components (e.g., motors, inverters, pumps) independently while the reactor channels are idle.

- Thermal Mapping: Use a thermal imaging camera or a distributed sensor network to map surface temperatures of components and identify hotspots.

- Power Consumption Measurement: For each active component, measure the electrical power input using a power meter. Simultaneously, measure the temperature of the coolant at the inlet and outlet of the component's cooling loop.

- Heat Load Calculation: The heat load \( Q \) dissipated by a component can be calculated using the thermodynamic formula \( Q = \dot{m} \, C_p \, (T_{\text{out}} - T_{\text{in}}) \), where \( \dot{m} \) is the coolant mass flow rate, \( C_p \) is the specific heat capacity of the coolant, and \( T_{\text{out}} \) and \( T_{\text{in}} \) are the outlet and inlet coolant temperatures, respectively.

- Efficiency Analysis: Compare the calculated heat load \( Q \) to the electrical power input. The difference represents losses. The dominant factors affecting system-level efficiency, such as coolant pump power and induced ram air drag, can be analyzed by comparing their power consumption across different operating modes [7].
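A worked instance of the heat load calculation and the subsequent comparison might look like the sketch below. The coolant is assumed to be water with a specific heat of roughly 4180 J/(kg·K); the flow rate, temperatures, and electrical input are illustrative numbers only.

```python
def heat_load_w(mass_flow_kg_s, cp_j_per_kg_k, t_in_c, t_out_c):
    """Heat picked up by the coolant: Q = m_dot * Cp * (T_out - T_in), in watts."""
    return mass_flow_kg_s * cp_j_per_kg_k * (t_out_c - t_in_c)


# Assumed measurements for one component's cooling loop (illustrative only).
mass_flow = 0.05       # kg/s
cp_water = 4180.0      # J/(kg K), approximate value for liquid water
t_in, t_out = 25.0, 27.0  # coolant inlet and outlet temperatures, C
electrical_input_w = 450.0

q = heat_load_w(mass_flow, cp_water, t_in, t_out)
difference_w = electrical_input_w - q  # power not captured by the coolant
fraction_to_coolant = q / electrical_input_w

print(f"Heat load to coolant:     {q:.1f} W")
print(f"Difference from input:    {difference_w:.1f} W")
print(f"Fraction reaching coolant: {fraction_to_coolant:.1%}")
```

Because the temperature rise is small, the result is sensitive to sensor error: a 0.1 K offset on either sensor changes the computed load by about 5% in this example.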

Visualization of Thermal Management Systems

TMS Architecture and Mode Switching Logic

The following diagram illustrates a typical TMS architecture for a parallel system and the logical workflow for switching between passive, active, and temperature control modes to optimize energy use.

Integrated Power and Thermal Management (IPTMS) Workflow

This diagram outlines the core control logic of an IPTMS, which balances power allocation between propulsion (or primary function) and cooling to manage heat loads from various components.

The Scientist's Toolkit: Research Reagent and Material Solutions

The following table details essential materials and reagents used in the development and operation of advanced parallel reactor platforms.

Table 2: Key Research Reagent Solutions for Parallel Reactor Systems

| Item | Function | Technical Specification / Rationale |
|---|---|---|
| Fluoropolymer Tubing [20] | Reactor channel material. | Provides broad chemical compatibility with organic solvents and operates at pressures up to 20 atm, unlike many polycarbonate or PDMS microfluidic devices [20]. |
| Coolants (Engine Oil) [7] | Heat transfer fluid for cooling high-power components. | Traditionally used for cooling gas turbine system components (gearboxes, bearings, generators), offering both cooling and lubrication [7]. |
| Phase-Change Materials (PCMs) [7] | Passive thermal management. | Used in hybrid cooling strategies; absorbs heat during phase transition, consuming less power than active cooling, though it can add weight [7]. |
| Selector Valves [20] | Fluidic routing to parallel reactor channels. | Enables distribution of reagent droplets to assigned reactors and collection for analysis, crucial for decoupling parallel synthesis steps [20]. |
| Nanoliter Injection Rotors [20] | On-line analytical sampling. | Swappable rotors (20-100 nL) enable minuscule injection volumes for HPLC, eliminating the need to dilute concentrated reactions prior to analysis [20]. |
| Skin Heat Exchangers (SHXs) [7] | Passive terminal heat exchanger. | Uses the aircraft skin to reject heat to ambient air without requiring power or inducing ram air drag, though it can have area limitations [7]. |

Advanced Modeling, AI, and High-Throughput Experimental Methods

Computational Fluid Dynamics (CFD) and Multi-Physics Simulation for Thermal Analysis

Computational Fluid Dynamics (CFD) has emerged as an indispensable tool for thermal analysis in complex engineering systems, particularly in the domain of parallel reactors and advanced energy systems. These high-fidelity simulations enable researchers to obtain intricate details of flow fields and thermal characteristics that are often difficult or impossible to measure experimentally [21]. The role of CFD is especially critical in nuclear reactor design and optimization, where it serves as an essential component of "virtual reactor" projects globally, supporting reactor safety analysis, thermal-hydraulic system design, and performance optimization [21]. The transition from traditional system-level thermal-hydraulic codes to refined CFD calculations represents a significant advancement in the field, yielding high-resolution thermal data that allow analysts to locate and focus on regions with large parameter values or large spatial gradients [22].

The multi-physics nature of thermal analysis in reactor systems involves the complex interplay of fluid dynamics, heat transfer, structural mechanics, and in many cases, electrochemical phenomena. This complexity is exemplified in specialized reactors such as small modular reactors (SMRs) and space reactor power systems (SRPSs), where compact geometries and unique operating conditions including microgravity result in complex flow behaviors like flow separation and convective instability [21]. Similarly, in hybrid-electric propulsion systems and electrochemical energy storage devices, thermal management becomes a critical challenge that necessitates integrated multi-physics approaches [23] [7] [24]. The fundamental challenge in these simulations lies in accurately capturing the coupled physics while managing computational costs, a balance that requires sophisticated numerical techniques and advanced computational resources.

Computational Frameworks and Parallel Implementation

Distributed Parallel Computing Schemes

The computational demands of high-fidelity CFD simulations for reactor thermal analysis necessitate innovative parallel computing strategies. A notable advancement is the Distributed Parallel (DP) computing scheme specifically tailored for reactor cores using plate-type fuel assemblies [22]. This approach enables the completion of extensive domain CFD calculations using modestly equipped personal workstations (8 cores, 128GB RAM), which traditionally would require supercomputing platforms [22]. The implementation of this scheme for the China Advanced Research Reactor (CARR) demonstrated that detailed results could be obtained with reduced computational resources, representing a significant breakthrough for CFD engineering analysis.

The DP scheme operates by decomposing the computational domain according to the structural characteristics of plate-type fuel assemblies. In the CARR reactor implementation, the single calculation object of the distributed parallel scheme is one fuel assembly, while the CFD calculation of the entire core covers 17 fuel assemblies [22]. This domain decomposition strategy significantly reduces memory requirements, making large-scale simulations feasible on limited hardware. Verification studies demonstrated that the computed coolant-channel mass flow rates agreed with reference literature values to within 5% for most channels, with somewhat larger errors (about 10%) in peripheral channels, indicating a high level of accuracy in the calculations [22].

Mesh Generation and Renumbering Techniques

Mesh generation and optimization represent critical components in the CFD workflow that directly impact simulation accuracy and computational efficiency. For large-scale nuclear reactor thermal-hydraulic models, researchers have developed sophisticated frameworks that integrate meshing techniques with mesh renumbering algorithms to enhance computational performance [4]. The effectiveness of Greedy, Reverse Cuthill-McKee (RCM), and Cell Quotient (CQ) grid renumbering algorithms has been demonstrated in the YHACT software, a specialized CFD tool for nuclear reactor thermal-hydraulic analysis [4].

A key innovation in this domain is the introduction of the median point average distance (MDMP) metric, which serves as a discriminant of sparse matrix quality to select the most effective renumbering method for different physical models [4]. Experimental results show that these optimization techniques can yield significant acceleration, with the renumbering acceleration effect reaching a maximum of 56.72% at a parallel scale of 1536 processes [4]. This enhancement enables the simulation of increasingly complex geometries, such as a pressurized water reactor engineering case consisting of 3×3 rod bundles discretized into 39.5 million grid volumes [4].

Table 1: Computational Performance of Advanced CFD Techniques

| Technique | Application | Performance Improvement | Computational Scale |
|---|---|---|---|
| Distributed Parallel (DP) Scheme | Plate-type fuel assemblies in CARR reactor | Enables large-domain CFD on workstations vs. supercomputers | 17 fuel assemblies on 8-core workstation [22] |
| Mesh Renumbering Algorithms (RCM, Greedy, CQ) | PWR 3×3 rod bundles | Maximum 56.72% acceleration at 1536 processes | 39.5 million grid volumes, up to 3072 processes [4] |
| Verification & Validation (V&V) Process | Reactor thermal-hydraulic systems | Improved simulation credibility | Dependent on specific application [21] |

Experimental Protocols and Methodologies

Verification, Validation, and Uncertainty Quantification (V&V&UQ)

A rigorous V&V&UQ process is widely acknowledged as essential for assessing the credibility of CFD simulation results [21]. The verification process involves determining that a computational model accurately represents the underlying mathematical model and its solution, while validation focuses on assessing the accuracy of the computational model in representing the real world. Uncertainty quantification characterizes the statistical uncertainty in the simulation results due to input uncertainties.

The general process of parallel CFD simulation of reactors can be divided into four distinct stages, each with specific error and uncertainty considerations [21]:

- Input: Geometric modeling, material properties, and boundary/initial conditions

- Pre-processing: Mesh generation, physical model selection, and numerical scheme determination

- Solving: Parallel computation involving linear and nonlinear system solutions

- Post-processing: Data analysis and visualization

Key sources of error and uncertainty include mesh quality, turbulence modeling, numerical discretization, and boundary condition selection, all of which contribute to user effects where results vary significantly depending on the user's choices [21]. For specialized reactors with compact cooling circuits and complex operational environments, the V&V&UQ process faces a paradox: while CFD is often used to model complex flow phenomena that are difficult to measure experimentally, the V&V&UQ process relies heavily on high-quality experimental data for validation [21].

Multi-Physics Integration Methodologies

Multi-physics integration represents a sophisticated approach to simulating complex coupled phenomena. In the context of battery thermal management, researchers have developed comprehensive models that simulate electrochemical, thermal, and thermal runaway behaviors [25]. These models utilize established frameworks like the pseudo-2-dimensional model by Newman et al., which provides a well-established foundation for comprehending electrochemical behavior [25].

The methodology typically involves several interconnected modules:

- Electrochemical Model: Provides insights into the relationship between current distribution, different current densities, and the system's overpotential

- Thermal Model: Uses the strong temperature dependence of electrochemical systems to relate performance to electrochemical-thermal behavior

- Thermal Runaway Simulation: Models the series of decomposition reactions that occur when the system loses its ability to control temperature escalation

For nuclear reactor applications, multi-physics coupling extends to neutronics-thermal-hydraulics interactions, where the Consortium for Advanced Simulation of Light Water Reactors (CASL) and Nuclear Energy Advanced Modeling and Simulation (NEAMS) projects have driven the development of advanced simulation tools like Nek5000 and NekRS for full-core CFD simulations [21]. The China Virtual Reactor (CVR) project has similarly developed specialized tools, including CVR-PACA, a large-scale parallel CFD software for pressurized water reactors and fast reactors [21].

Diagram 1: CFD Simulation Workflow with V&V&UQ Integration

Uncertainty Quantification and Error Reduction

The credibility challenges in CFD simulations stem from various sources of error and uncertainty that can lead to inaccurate or erroneous results, posing significant challenges for reactor safety analysis [21]. From a software developer's perspective, these sources can be categorized based on their occurrence throughout the CFD simulation process:

- Modeling Uncertainties: These arise from approximations in physical models, particularly turbulence models, and the inherent limitations in representing complex physical phenomena with simplified mathematical representations

- Numerical Uncertainties: Discretization errors, iterative convergence errors, and round-off errors contribute to numerical uncertainties, with discretization errors being particularly significant in complex flow domains

- Input Uncertainties: Inaccurate material properties, imprecise boundary conditions, and geometric simplifications introduce input uncertainties that propagate through the simulation

- Code Implementation Uncertainties: Programming errors, parallel communication issues, and algorithm implementations can introduce unexpected uncertainties

The presence of these uncertainties is particularly problematic for specialized reactors where experimental data for validation is scarce due to the challenging operating conditions and complex flow phenomena [21].
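One common way to see how input uncertainties propagate is Monte Carlo sampling: draw the uncertain inputs from assumed distributions, evaluate the model for each draw, and summarize the spread of the output. The sketch below applies this to a deliberately trivial stand-in model (coolant temperature rise from a steady energy balance) rather than a CFD solver. The nominal values and the 5% standard deviations are assumptions for illustration.

```python
import random
from statistics import mean, stdev


def coolant_temperature_rise(heat_w, mass_flow_kg_s, cp_j_per_kg_k=4180.0):
    """Steady-state coolant temperature rise (K) for a given heat load."""
    return heat_w / (mass_flow_kg_s * cp_j_per_kg_k)


def propagate(n_samples=20000, seed=0):
    """Sample uncertain inputs and return mean and std of the model output."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    samples = []
    for _ in range(n_samples):
        heat_w = rng.gauss(1000.0, 50.0)     # assumed: 1 kW with 5% std
        mass_flow = rng.gauss(0.05, 0.0025)  # assumed: 0.05 kg/s with 5% std
        samples.append(coolant_temperature_rise(heat_w, mass_flow))
    return mean(samples), stdev(samples)


nominal = coolant_temperature_rise(1000.0, 0.05)
mc_mean, mc_std = propagate()

print(f"Nominal temperature rise: {nominal:.3f} K")
print(f"Monte Carlo mean:         {mc_mean:.3f} K")
print(f"Monte Carlo std:          {mc_std:.3f} K")
```

For an expensive CFD model, the same idea is usually applied through a surrogate or a small designed set of runs, since tens of thousands of direct evaluations are rarely affordable.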

Approaches for Improved Reliability

Several strategic approaches have been identified to enhance the reliability of CFD simulations for reactor thermal analysis [21]:

- Minimizing Model Uncertainty: This involves improving physical models, particularly for complex flow phenomena, and developing more comprehensive validation databases

- Reducing Numerical Uncertainty: Advanced discretization schemes, improved convergence criteria, and careful mesh quality assessment contribute to reduced numerical uncertainties

- Establishing Robust Mesh Quality Evaluation: Implementing standardized metrics for mesh quality assessment and developing adaptive meshing techniques that respond to solution characteristics

- Advancing Supportive Tools and Datasets: Enhancing V&V&UQ tools and making validation data more accessible to the research community

These approaches are particularly important for advanced reactor systems where the compact and complex flow channels present unique challenges for thermal-hydraulic analysis. The China Virtual Reactor (CVR) project experience highlights the importance of addressing these uncertainty sources throughout the development and application of reactor CFD simulation software [21].
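A widely used way to estimate discretization error in practice is a grid convergence study with Richardson extrapolation, which infers the observed order of accuracy from solutions on three systematically refined meshes. The sketch below is a generic illustration, not the procedure of the cited work. It uses a synthetic "solution" f(h) = 1 + 0.5 h² so that the expected answers (order 2, extrapolated value 1) are known in advance.

```python
import math


def observed_order(f_fine, f_medium, f_coarse, ratio):
    """Observed order of convergence from three grids with constant refinement ratio.

    Valid only for monotonic convergence, i.e. when both differences share a sign.
    """
    return math.log((f_coarse - f_medium) / (f_medium - f_fine)) / math.log(ratio)


def richardson_extrapolate(f_fine, f_medium, ratio, order):
    """Estimate of the zero-grid-spacing value from the two finest grids."""
    return f_fine + (f_fine - f_medium) / (ratio ** order - 1.0)


def synthetic_solution(h):
    """Stand-in for a CFD result at grid spacing h (assumed second-order error)."""
    return 1.0 + 0.5 * h ** 2


# Three grids, each twice as coarse as the previous one.
f_fine, f_medium, f_coarse = (synthetic_solution(h) for h in (0.1, 0.2, 0.4))

p = observed_order(f_fine, f_medium, f_coarse, ratio=2.0)
f_zero = richardson_extrapolate(f_fine, f_medium, ratio=2.0, order=p)
fine_grid_error = abs(f_fine - f_zero)

print(f"Observed order:       {p:.3f}")
print(f"Extrapolated value:   {f_zero:.6f}")
print(f"Fine-grid error est.: {fine_grid_error:.6f}")
```

An observed order far from the scheme's formal order is itself a useful warning that the meshes are not yet in the asymptotic range.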

Table 2: Uncertainty Sources and Mitigation Strategies in Reactor CFD Simulations

| Uncertainty Category | Specific Sources | Mitigation Strategies |
|---|---|---|
| Modeling Uncertainties | Turbulence models, multiphase flow models, physical approximations | Model improvement, validation against experimental data [21] |
| Numerical Uncertainties | Discretization errors, iterative convergence, round-off errors | Higher-order schemes, rigorous convergence criteria [21] |
| Input Uncertainties | Material properties, boundary conditions, geometric inaccuracies | Sensitivity analysis, uncertainty propagation studies [21] |
| User Effects | Mesh generation, model selection, boundary condition specification | Comprehensive guidelines, training, automation [21] |

The Scientist's Toolkit: Research Reagent Solutions

The computational infrastructure required for advanced CFD and multi-physics simulations varies significantly based on the scope and fidelity of the analysis. For large-scale reactor simulations, high-performance computing (HPC) resources are typically essential, though innovative approaches like the Distributed Parallel scheme have enabled certain analyses on workstations with 8 cores and 128GB RAM [22]. At the extreme end of the spectrum, full-core CFD simulations may require thousands of processors, with parallel tests demonstrating capabilities up to 3072 processes [4].

Specialized CFD software tools have been developed specifically for nuclear reactor applications:

- YHACT: Parallel analysis code of thermohydraulics with modular development architecture based on scalability, designed for thermal-hydraulic analysis of nuclear reactors [4]

- CVR-PACA: Large-scale parallel CFD software developed under the China Virtual Reactor project for pressurized water reactors and fast reactors [21]

- Nek5000/NekRS: Spectral element codes developed under the CASL and Exascale Computing Project for full-core CFD simulations [21]

In addition to specialized tools, general-purpose multi-physics platforms like COMSOL Multiphysics are employed for specific applications, including battery thermal management and biomedical device analysis [25] [26]. These platforms provide integrated environments for solving coupled physics phenomena, though they may have limitations for the largest-scale reactor simulations.

Meshing and Discretization Tools

Mesh generation represents a critical preprocessing step that significantly influences simulation accuracy and computational efficiency. For complex reactor geometries, unstructured meshing techniques are often employed, with recent research focusing on methods that transition from triangular/tetrahedral meshes to quadrilateral/hexahedral meshes for improved accuracy and efficiency [4]. The relationship between cells and neighbors can be abstracted as graph partitioning problems, where the large-scale physical model is divided into multiple blocks through coarse-grained and fine-grained lattice partitioning to facilitate parallel computation [4].

Advanced renumbering algorithms play a crucial role in optimizing memory access patterns and improving cache utilization:

- Reverse Cuthill-McKee (RCM): Reorders matrices to reduce bandwidth, improving computational efficiency

- Greedy Algorithm: Provides an alternative approach to matrix reordering for optimized memory access

- Cell Quotient (CQ): Additional renumbering strategy integrated into advanced CFD software like YHACT

These algorithms significantly impact the solving phase of CFD simulations, particularly for the large sparse linear systems that arise in finite volume discretizations of the governing equations [4].
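As an illustration of how renumbering reduces matrix bandwidth, the following is a minimal pure-Python sketch of Reverse Cuthill-McKee on a cell-neighbor graph. It is a textbook-style version written for this example, not the implementation used in YHACT; the six-cell chain and its scrambled numbering are hypothetical.

```python
from collections import deque


def reverse_cuthill_mckee(adj):
    """Return an RCM ordering for an undirected graph given as adjacency lists."""
    n = len(adj)
    degree = [len(neighbours) for neighbours in adj]
    visited = [False] * n
    order = []
    # Handle every connected component, starting from a lowest-degree vertex.
    for start in sorted(range(n), key=lambda v: degree[v]):
        if visited[start]:
            continue
        visited[start] = True
        queue = deque([start])
        while queue:
            v = queue.popleft()
            order.append(v)
            # Breadth-first search, visiting neighbours by increasing degree.
            for w in sorted(adj[v], key=lambda u: degree[u]):
                if not visited[w]:
                    visited[w] = True
                    queue.append(w)
    order.reverse()  # the "reverse" step of RCM
    return order


def bandwidth(adj, order):
    """Largest index distance between neighbouring cells after renumbering."""
    new_index = {old: new for new, old in enumerate(order)}
    return max(
        (abs(new_index[v] - new_index[w]) for v in range(len(adj)) for w in adj[v]),
        default=0,
    )


# Hypothetical chain of six cells, physically connected as 0-3-1-5-2-4,
# so the original numbering places neighbours far apart in memory.
adj = [[3], [3, 5], [5, 4], [0, 1], [2], [1, 2]]
natural = list(range(len(adj)))
rcm = reverse_cuthill_mckee(adj)
print("bandwidth before:", bandwidth(adj, natural))
print("bandwidth after :", bandwidth(adj, rcm))
```

A smaller bandwidth means that the nonzeros of the sparse matrix sit closer to the diagonal, so neighboring cells are also close in memory during the linear solve.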

Diagram 2: Computational Framework Architecture for Reactor Thermal Analysis

The integration of advanced CFD methodologies with multi-physics simulation capabilities has fundamentally transformed thermal analysis in parallel reactors and energy systems. The development of distributed parallel computing schemes, sophisticated mesh optimization techniques, and comprehensive V&V&UQ frameworks has enabled researchers to address increasingly complex thermal management challenges with greater confidence in simulation results. These computational advances are particularly valuable for specialized reactor systems where experimental data is limited and the consequences of design errors are significant.

Future advancements in this field will likely focus on enhanced multi-physics coupling, improved uncertainty quantification methods, and greater integration of machine learning techniques to further accelerate simulations and improve model fidelity. As computational resources continue to evolve, the role of high-fidelity CFD and multi-physics simulation in thermal analysis will expand, enabling more sophisticated virtual prototyping and reducing reliance on physical experiments for reactor design and safety assessment.

AI-Enhanced Energy Management Systems (EMS) for Real-Time Thermal Control

The management of heat within parallel reactors—a critical system in advanced chemical and pharmaceutical processes—presents a formidable engineering challenge. These systems, characterized by simultaneous, independent reactions, require precise thermal conditions to ensure optimal yield, product quality, and operational safety. Even minor temperature deviations can lead to failed experiments, inconsistent products, or hazardous situations. Artificial Intelligence (AI) and Machine Learning (ML) are revolutionizing Energy Management Systems (EMS) by transforming thermal control from a static, reactive process to a dynamic, predictive, and self-optimizing function [27]. This paradigm shift is essential for supporting the complex and sensitive operations inherent to parallel reactor research and development.

The integration of AI into EMS marks a significant departure from conventional thermal management. Traditional systems often rely on predefined setpoints and simple feedback loops, which struggle with the nonlinear dynamics, variable loads, and complex heat transfer phenomena present in multi-reactor setups. AI-enhanced systems, by contrast, can process vast amounts of operational data in real-time, learn from historical trends, and predict thermal behavior to proactively adjust cooling or heating inputs [27] [28]. This capability is vital for maintaining the strict temperature uniformity and stability required in drug development, where reproducibility is paramount. This technical guide explores the core algorithms, implementation protocols, and practical applications of AI-driven EMS, framing them within the specific context of thermal management for parallel reactor systems.

Core AI and ML Techniques for Thermal Management

The application of AI for thermal control leverages a suite of machine learning techniques, each suited to specific aspects of the energy management problem. These algorithms form the computational backbone of an intelligent EMS.

Supervised Learning for State Estimation and Prediction: Supervised learning models are trained on historical data to predict key thermal variables. Deep learning models, particularly Long Short-Term Memory (LSTM) networks, are exceptionally effective for time-series forecasting of reactor core temperatures, heat exchanger performance, and coolant demand based on scheduled reactions [27]. Furthermore, ML models like support vector machines and ensemble methods are instrumental in predictive maintenance, analyzing sensor data to forecast equipment failures, such as those of pumps or chillers, before they disrupt critical reactions [27] [29].

Reinforcement Learning (RL) for Real-Time Optimization: RL is a powerful paradigm for adaptive control. In a parallel reactor EMS, an RL agent learns optimal control policies—such as adjusting coolant flow rates or valve positions—by continuously interacting with the system. The agent is rewarded for actions that minimize energy consumption while maintaining all reactors within their target temperature bands. Research has demonstrated that distributed reinforcement learning frameworks can reduce operational costs by 12.2% and significantly improve system stability by learning to balance multiple, competing objectives [27].
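The reward signal described above can be sketched as a single function. This is an illustrative assumption about how such a reward might be shaped, not the formulation used in the cited work; the function name `thermal_reward`, the band width, and the weights are all hypothetical and would need tuning for a real system.

```python
def thermal_reward(temps_c, setpoints_c, cooling_power_kw,
                   band_c=0.5, energy_weight=0.01, violation_weight=1.0):
    """Reward for one control step (hypothetical shaping).

    Penalises energy use plus the total amount by which any reactor
    leaves its target temperature band.
    """
    violation_k = sum(
        max(0.0, abs(temp - setpoint) - band_c)
        for temp, setpoint in zip(temps_c, setpoints_c)
    )
    return -(energy_weight * cooling_power_kw + violation_weight * violation_k)


# Two reactors with a 60 degC setpoint: the first is inside its band,
# the second is 1.5 K too hot (1.0 K beyond the 0.5 K band).
reward = thermal_reward([60.2, 61.5], [60.0, 60.0], cooling_power_kw=2.0)
print(f"reward: {reward:.2f}")
```

Weighting band violations much more heavily than energy use encodes the priority that temperature limits come first and energy savings second; the relative weights are a design choice rather than a fixed rule.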

Neural Networks for Modeling Complex Nonlinear Systems: Physics-informed neural networks (PINNs) and other deep learning architectures can model the complex, nonlinear relationship between a reactor's energy input, chemical processes, and heat generation. These models can serve as digital twins for the thermal system, allowing for safe simulation and testing of control strategies under extreme or hazardous conditions without risking actual experiments [30].
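To make the physics-informed idea concrete, the block below gives one possible formulation, assuming a single well-mixed jacketed reactor with first-order Arrhenius kinetics. The symbols are assumptions introduced for this illustration: $m c_p$ is the lumped thermal mass, $UA$ the overall heat transfer coefficient times area, $T_c$ the coolant temperature, $\Delta H_r$ the reaction enthalpy, $V$ the reaction volume, $C$ the reactant concentration, $T_\theta$ the network prediction, and $\lambda$ a weighting factor.

```latex
% Lumped energy balance for one reactor (illustrative assumption)
m c_p \frac{dT}{dt} = \dot{Q}_{\mathrm{rxn}}(T) - UA\,\bigl(T - T_c\bigr),
\qquad
\dot{Q}_{\mathrm{rxn}}(T) = (-\Delta H_r)\, V\, k_0\, e^{-E_a/(RT)}\, C

% Physics-informed training loss: data misfit plus energy-balance residual
\mathcal{L}(\theta) = \frac{1}{N}\sum_{i=1}^{N}\bigl(T_\theta(t_i) - T_i\bigr)^2
  + \lambda\,\frac{1}{M}\sum_{j=1}^{M} r_\theta(t_j)^2,
\qquad
r_\theta(t) = m c_p \frac{dT_\theta}{dt} - \dot{Q}_{\mathrm{rxn}}(T_\theta) + UA\,\bigl(T_\theta - T_c\bigr)
```

The residual term penalizes predictions that violate the energy balance even at times where no measurement exists, which is what allows such a model to extrapolate more safely than a purely data-driven network.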

Table 1: Key AI Algorithms and Their Applications in Thermal EMS

| AI Technique | Primary Function | Key Advantage | Quantified Benefit |
|---|---|---|---|
| Long Short-Term Memory (LSTM) | State of Charge/Temperature Forecasting | Captures long-term temporal dependencies | Mean Absolute Error of 0.10 for state estimations [27] |
| Reinforcement Learning (RL) | Real-time Control Optimization | Adapts to changing conditions without explicit programming | Reduces operational costs by 12.2% and grid disruptions by 40% [27] |
| Multi-Objective Optimization | System Design & Planning | Balances competing goals (e.g., energy use, safety, cost) | Reduces power losses by 22.8% and voltage fluctuations by 71% [27] |
| Genetic Algorithms | Parameter Optimization | Efficiently searches large parameter spaces for optimal solutions | Optimizes coolant parameters for immersion boiling heat transfer [31] |

Implementation and Experimental Protocols

Implementing an AI-enhanced EMS requires a structured methodology, from data acquisition to model deployment. The following protocol outlines the key stages for developing and validating such a system for parallel reactor thermal control.

System Architecture and Data Acquisition

The foundation of any AI system is high-quality, high-frequency data. A comprehensive sensor network must be deployed across the parallel reactor facility.

- Sensor Deployment: Temperature sensors (e.g., RTDs, thermocouples) must be strategically placed at critical points: on each reactor vessel, at inlets and outlets of cooling jackets, within heat exchangers, and along coolant distribution lines. Additional sensors should monitor ambient conditions, coolant flow rates, and pump power consumption.

- Data Infrastructure: A robust data acquisition (DAQ) system must collect this sensor data in real-time, with timestamps for synchronization. Data is then aggregated into a central platform, such as a time-series database, where it is accessible for model training and real-time inference.

The architecture for this system involves a closed-loop control logic where AI decisions directly influence the thermal environment, which is then measured again, creating a cycle of continuous learning and adjustment.
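The measure, predict, decide, and act cycle can be sketched with a toy simulation. Everything here is an assumption made for illustration: the plant is a first-order lumped thermal model with made-up parameters, and `predict_next` is a trivial linear extrapolation standing in for a trained forecaster such as an LSTM.

```python
def predict_next(prev_c, current_c):
    """Stand-in forecaster: linear extrapolation one step ahead.

    A trained model would replace this hypothetical function.
    """
    return current_c + (current_c - prev_c)


def run_closed_loop(setpoint_c=60.0, steps=600, dt_s=1.0):
    # All plant and controller parameters are illustrative assumptions.
    heat_capacity_j_per_k = 500.0
    loss_w_per_k = 2.0
    ambient_c = 25.0
    max_heater_w = 200.0
    gain_w_per_k = 40.0
    # Power needed to hold the setpoint against ambient losses.
    feedforward_w = loss_w_per_k * (setpoint_c - ambient_c)

    temp_c = prev_c = ambient_c
    history = []
    for _ in range(steps):
        measured_c = temp_c                                # 1. measure
        forecast_c = predict_next(prev_c, measured_c)      # 2. predict
        demand_w = feedforward_w + gain_w_per_k * (setpoint_c - forecast_c)
        heater_w = min(max(demand_w, 0.0), max_heater_w)   # 3. decide (clamped)
        prev_c = measured_c
        net_w = heater_w - loss_w_per_k * (temp_c - ambient_c)
        temp_c += dt_s * net_w / heat_capacity_j_per_k     # 4. act on the plant
        history.append(temp_c)
    return history


history = run_closed_loop()
print(f"final temperature: {history[-1]:.2f} degC")
```

In a real deployment the plant update would be replaced by the physical reactor and its sensors, and the logged measurements would feed back into retraining the forecaster.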

Model Training and Validation Protocol

The core intelligence of the EMS is developed through a rigorous process of model training and validation.

- Data Preprocessing: The collected historical data is cleaned, handling missing values and removing outliers. It is then normalized to ensure all features contribute equally to the model.

- Feature Engineering: Relevant features are identified and created. These may include rolling averages of temperature, rate-of-change calculations, time-of-day indicators, and scheduled reaction profiles.

- Model Selection and Training: Based on the objective (e.g., prediction vs. control), an appropriate algorithm (e.g., LSTM, RL) is selected. The preprocessed data is split into training and validation sets (e.g., 80/20 split). The model is trained on the training set, and its hyperparameters are tuned to optimize performance on the validation set, using metrics like Mean Absolute Error (MAE) for predictors or cumulative reward for RL agents.

- Experimental Validation: The trained model is deployed in a controlled experimental setup. A series of reactions is run in parallel reactors, with the AI-EMS managing thermal control. Its performance is benchmarked against a traditional PID-controlled system. Key Performance Indicators (KPIs) include:

  - Temperature Stability: Standard deviation of reactor temperature from setpoint.

  - Energy Consumption: Total kWh used by the cooling system.

  - Uniformity: Maximum temperature differential between parallel reactors.

  - Response Time: Time taken to recover from a simulated thermal disturbance.
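The preprocessing, feature-engineering, and KPI steps in the protocol above can be sketched with the Python standard library alone. The function names, the two temperature traces, and the choice of a chronological (rather than shuffled) split are assumptions made for this example; the traces are assumed to begin at the moment of the disturbance.

```python
import math
from statistics import mean, pstdev


# --- Preprocessing and feature engineering ---
def zscore(values):
    """Normalise a series to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma if sigma else 0.0 for v in values]


def rolling_mean(values, window):
    """Trailing rolling average; early samples use the data available so far."""
    return [mean(values[max(0, i - window + 1): i + 1]) for i in range(len(values))]


def rate_of_change(values, dt_s):
    """Finite-difference rate of change; the first sample has no predecessor."""
    return [0.0] + [(b - a) / dt_s for a, b in zip(values, values[1:])]


def chronological_split(rows, train_fraction=0.8):
    """Split without shuffling so validation data lies after training data."""
    cut = int(len(rows) * train_fraction)
    return rows[:cut], rows[cut:]


# --- Key performance indicators ---
def temperature_stability(temps_c, setpoint_c):
    """Root-mean-square deviation of temperature from the setpoint."""
    return math.sqrt(mean((t - setpoint_c) ** 2 for t in temps_c))


def energy_kwh(power_kw, dt_s):
    """Energy from evenly sampled power readings."""
    return sum(power_kw) * dt_s / 3600.0


def uniformity(temps_by_reactor):
    """Largest simultaneous temperature difference between reactors."""
    return max(max(sample) - min(sample) for sample in zip(*temps_by_reactor))


def response_time_s(temps_c, setpoint_c, band_c, dt_s):
    """Time from the start of the trace until the band is entered for good."""
    last_outside = -1
    for i, temp in enumerate(temps_c):
        if abs(temp - setpoint_c) > band_c:
            last_outside = i
    return (last_outside + 1) * dt_s


# Hypothetical traces sampled every 10 s after a disturbance.
reactor_a = [62.0, 61.0, 60.4, 60.1, 60.0, 60.0]
reactor_b = [60.5, 60.2, 60.0, 59.9, 60.0, 60.0]
rows = list(zip(reactor_a, rolling_mean(reactor_a, 3), rate_of_change(reactor_a, 10.0)))
train, validation = chronological_split(rows)
print("train/validation rows:", len(train), len(validation))
print("uniformity (K):", uniformity([reactor_a, reactor_b]))
print("response time (s):", response_time_s(reactor_a, 60.0, 0.5, 10.0))
```

A chronological split is used here because shuffling time-series samples lets information from the future leak into training; whether that matters depends on how the model is ultimately deployed.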

Table 2: Key Reagent Solutions for AI-EMS Experimental Research

| Research Reagent / Tool | Function in AI-EMS Development |
|---|---|
| Long Short-Term Memory (LSTM) Network | Models complex temporal sequences for predicting reactor temperature drift and cooling demand. |
| Reinforcement Learning (RL) Framework | Provides the environment and algorithms for training an autonomous control agent that optimizes for energy use and stability. |
| Digital Twin Platform | Creates a physics-based virtual replica of the reactor system for safe, low-risk testing and validation of AI control strategies. |
| Genetic Algorithm | Used for multi-objective optimization, such as identifying the ideal coolant parameters or hardware setpoints. |
| Sensor Fusion Software | Integrates data from disparate sensor types (temperature, flow, power) to create a unified state representation for the AI model. |

Advanced Applications and Performance Analysis

Advanced cooling technologies, when coupled with AI, yield remarkable performance gains. Immersion boiling heat transfer is one such method, where reactor components or entire modules are submerged in a dielectric coolant [32] [31]. The AI's role is to optimize the coolant parameters and manage the system to maximize heat transfer efficiency. Research shows that AI algorithms can optimize key coolant parameters—with density, viscosity, and specific heat capacity being the most critical—to significantly reinforce immersion boiling heat transfer performance, thereby preventing dangerous thermal runaway conditions [31].

The experimental workflow for integrating AI with such an advanced system tightly couples physical testing with computational optimization.

The quantitative outcomes of implementing AI-driven thermal management are substantial. Studies in energy storage, a field with analogous thermal challenges, show that AI-enabled real options analysis can achieve 45-81% cost reductions compared to conventional planning approaches [27]. This underscores the significant economic advantage of flexible, intelligent systems. Furthermore, AI's predictive capabilities are key to safety. By accurately forecasting thermal states, these systems can initiate preemptive cooling or safely shut down reactions before critical temperatures are reached, directly addressing the risk of thermal runaway in exothermic processes [31] [28].

The future of AI-enhanced EMS for thermal control is poised for further innovation. Key emerging trends include the development of explainable AI (XAI) to build trust in model decisions, the use of federated learning to train models across multiple secure facilities without sharing proprietary data, and the integration of physics-informed neural networks to ensure model predictions adhere to fundamental laws of thermodynamics [27]. The market for these intelligent thermal solutions is projected to grow steadily, with a CAGR of 7.8%, reaching approximately USD 35.3 billion by 2035, indicating strong industrial adoption and technological advancement [33].

In conclusion, the integration of AI and ML into Energy Management Systems represents a transformative leap for real-time thermal control in parallel reactors. By moving beyond reactive strategies to embrace predictive, adaptive, and optimizing control, AI-enhanced EMS directly supports the core objectives of modern research and drug development: enhanced reproducibility, superior safety, and improved operational efficiency. As these intelligent systems continue to evolve, they will become an indispensable component of the research infrastructure, enabling more complex, sensitive, and high-throughput research processes.

Machine Learning Frameworks for Highly Parallel Reaction Optimization