Validating Optimal Reaction Conditions: A Practical DoE Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on validating optimal reaction conditions using Design of Experiments (DoE). It covers the foundational principles of moving beyond one-variable-at-a-time (OVAT) approaches, practical methodologies for implementing screening and optimization designs, strategies for troubleshooting and enhancing robustness, and advanced techniques integrating machine learning for superior outcomes. Through real-world case studies and comparative analysis, the content demonstrates how a structured DoE validation strategy can accelerate process development, improve yield and selectivity, and ensure reliable scale-up in pharmaceutical synthesis.

Beyond One-Variable-at-a-Time: Laying the Groundwork for Effective DoE

The Critical Limitations of OVAT Optimization in Complex Syntheses

In the pursuit of optimal reaction conditions across chemical synthesis and bioprocessing, the one-variable-at-a-time (OVAT) approach has historically been the default investigative method. This traditional technique holds all process variables constant while systematically altering a single factor until its optimal value is identified, then repeats the process sequentially for each remaining variable [1]. While intuitively simple and straightforward to implement, OVAT has fundamental flaws that become critically limiting in complex, multidimensional synthesis environments where factors interact in non-linear ways. The pharmaceutical industry in particular faces mounting pressure to reform its optimization paradigms, as evidenced by initiatives such as the FDA's Project Optimus, which seeks to ensure that patients receive cancer therapeutics at dosages that maximize efficacy while minimizing toxicity through more sophisticated optimization approaches [2].

This article objectively examines the critical limitations of OVAT optimization when applied to complex syntheses, comparing its performance against statistically rigorous alternatives like Design of Experiments (DoE). Through experimental data and case studies, we demonstrate how OVAT's methodological constraints compromise both scientific understanding and practical outcomes in sophisticated synthesis environments.

Fundamental Methodological Flaws of OVAT

Inability to Detect Factor Interactions

The most significant limitation of OVAT optimization lies in its systematic failure to detect interactions between process variables. In complex chemical and biological systems, factors rarely operate in isolation; rather, they frequently interact in ways that profoundly influence outcomes. For example, the optimal level of a catalyst may depend on the reaction temperature, or the ideal nutrient concentration may shift with pH variations [1].

- Non-additive effects: OVAT assumes that factor effects are purely additive, an assumption that rarely holds in complex syntheses

- Missed optima: True optimal conditions often exist at specific combinations of factor levels that OVAT cannot discover

- Incomplete understanding: The method provides no insight into how factors jointly influence responses

As demonstrated in combinatorial chemistry and pharmaceutical development, these interaction effects are not merely academic concerns—they directly impact critical quality attributes including yield, purity, and selectivity [1] [3].
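
The effect of an interaction on an OVAT search can be illustrated with a small simulation. The sketch below is not taken from any cited study: the response function `yield_model`, its coefficients, and the coded levels are all hypothetical, chosen only so that the interaction term dominates.

```python
from itertools import product

LEVELS = (-1, 0, 1)  # coded low / mid / high settings (assumed)


def yield_model(temp, cat):
    """Hypothetical percent yield on coded factor levels.

    The temp * cat term is the interaction: the benefit of raising one
    factor depends on the level of the other.
    """
    return 60 + 2 * temp + 2 * cat + 10 * temp * cat


def ovat(start_cat):
    """Optimize temperature with catalyst fixed, then catalyst."""
    best_temp = max(LEVELS, key=lambda t: yield_model(t, start_cat))
    best_cat = max(LEVELS, key=lambda c: yield_model(best_temp, c))
    return best_temp, best_cat


def factorial_search():
    """Evaluate every combination of levels and keep the best one."""
    return max(product(LEVELS, LEVELS), key=lambda tc: yield_model(*tc))


ovat_point = ovat(start_cat=-1)
grid_point = factorial_search()
print("OVAT:", ovat_point, yield_model(*ovat_point))
print("Full grid:", grid_point, yield_model(*grid_point))
```

Under these assumptions, the OVAT search that starts at low catalyst loading settles on the low/low corner, while the full grid finds the high/high corner. Starting the same OVAT search from the mid catalyst level happens to reach the better corner, which shows how strongly the OVAT result depends on the starting conditions.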

Inefficient Exploration of Experimental Space

OVAT represents an exceptionally resource-intensive approach to process optimization, requiring numerous experimental runs to investigate even a modest number of factors. This inefficiency stems from its sequential nature, where each variable must be investigated independently while others remain fixed [4].

Table: Experimental Efficiency Comparison - OVAT vs. DoE

| Methodology | Number of Factors | Experimental Runs Required | Information Gained | Optimization Reliability |
|---|---|---|---|---|
| OVAT | 5 | 25-50 | Main effects only | Local optima likely |
| DoE Screening | 5 | 8-16 | Main effects + key interactions | Directional guidance |
| DoE Optimization | 3 | 15-20 | Full model with interactions | Global optima identified |

This experimental inefficiency has tangible consequences: extended development timelines, increased consumption of valuable materials, and delayed process implementation [4]. In radiochemistry, where researchers work with short-lived isotopes and expensive precursors, these limitations become particularly acute [4].

Comparative Performance in Complex Synthesis Environments

Case Study: Pharmaceutical Process Development

In pharmaceutical development, where synthesis complexity is high and timelines are compressed, OVAT's limitations have significant practical implications. Studies indicate that combinatorial library preparation groups spend the majority of their time optimizing chemistry rather than conducting actual synthesis when using traditional approaches [1].

A comparative analysis revealed that DoE approaches provided more than two-fold greater experimental efficiency than traditional OVAT optimization while simultaneously generating more comprehensive process understanding [4]. The statistical approach enabled researchers to simultaneously evaluate multiple variables according to a predefined experimental matrix, mapping process behavior across the entire experimental space rather than along isolated axes [4].

Case Study: Microbial Metabolite Production

The limitations of OVAT become particularly evident in bioprocess optimization, where multiple nutrients and environmental factors interact in complex ways to influence productivity. In a study optimizing pigment production from the marine-derived fungus Talaromyces albobiverticillius 30548, initial OVAT analysis provided preliminary insights but failed to identify optimal conditions [5].

Table: Performance Comparison in Fungal Pigment Production

| Optimization Method | Biomass Production (g/L) | Red Pigment Yield (g/L) | Experimental Runs | Key Interactions Identified |
|---|---|---|---|---|
| Initial OVAT | 6.60 | 2.44 | ~30 | None |
| Response Surface Methodology | 20.95 | 9.35 | ~25 | Yeast extract × MgSO₄, K₂HPO₄ × MgSO₄ |

When researchers applied Response Surface Methodology (a DoE technique) following initial OVAT screening, biomass rose from 6.60 to 20.95 g/L and red pigment yield from 2.44 to 9.35 g/L, roughly 3.2-fold and 3.8-fold improvements, respectively. This demonstrates OVAT's inability to locate true optima even after extensive experimentation [5].

Similar results emerged in bioprocessing, where OVAT optimization of edible oil production by Rhodotorula glutinis initially increased biomass and lipid production by 4.4-fold and 6-fold respectively, but subsequent statistical optimization through Plackett-Burman and Box-Behnken designs led to far more significant 11-fold and 16.7-fold improvements overall [6].

Practical Consequences in Research and Development

Suboptimal Process Conditions

The failure to detect factor interactions and efficiently explore experimental space inevitably leads to the identification of local rather than global optima. In OVAT optimization, the identified "optimum" is heavily dependent on the starting conditions selected for the investigation, often representing merely the best conditions along the limited paths investigated rather than the true optimum within the multidimensional space [4].

In copper-mediated radiofluorination reactions, OVAT approaches resulted in poor reproducibility and synthesis performance at larger scales, ultimately failing to establish robust, scalable conditions. Only through DoE could researchers understand the nuanced, precursor-specific experimental factors and their interactions that controlled reaction performance [4].

Incomplete Process Understanding and Control

OVAT optimization generates fragmented process knowledge that provides limited guidance for troubleshooting, scale-up, or regulatory justification. Without understanding how factors interact, researchers cannot predict how process adjustments will affect outcomes or how to compensate for raw material variability [1].

This limitation has significant quality implications, prompting regulatory bodies to encourage more systematic approaches like Quality by Design (QbD), which employs DoE to establish a design space within which critical process parameters can be varied while maintaining product quality [3]. The ICH Q8(R2) guideline specifically recommends this approach for pharmaceutical development, representing a fundamental shift from the OVAT-based paradigm [3].

The Modern Alternative: Design of Experiments

Fundamental Methodological Advantages

DoE addresses OVAT's core limitations through structured, simultaneous variation of multiple factors according to mathematical principles that enable efficient space exploration and interaction detection [1] [4]. Key advantages include:

- Factorial efficiency: Multiple factors investigated simultaneously in balanced arrays

- Interaction detection: Experimental structures capable of quantifying how factor effects depend on other factor levels

- Model building: Statistical models that predict responses throughout the experimental space

- Error estimation: Proper accounting of experimental variability without excessive replication

These capabilities make DoE particularly valuable for optimizing complex synthetic transformations, where the relationship between process inputs and outputs is often multivariate and non-linear [4].

Implementation Workflow

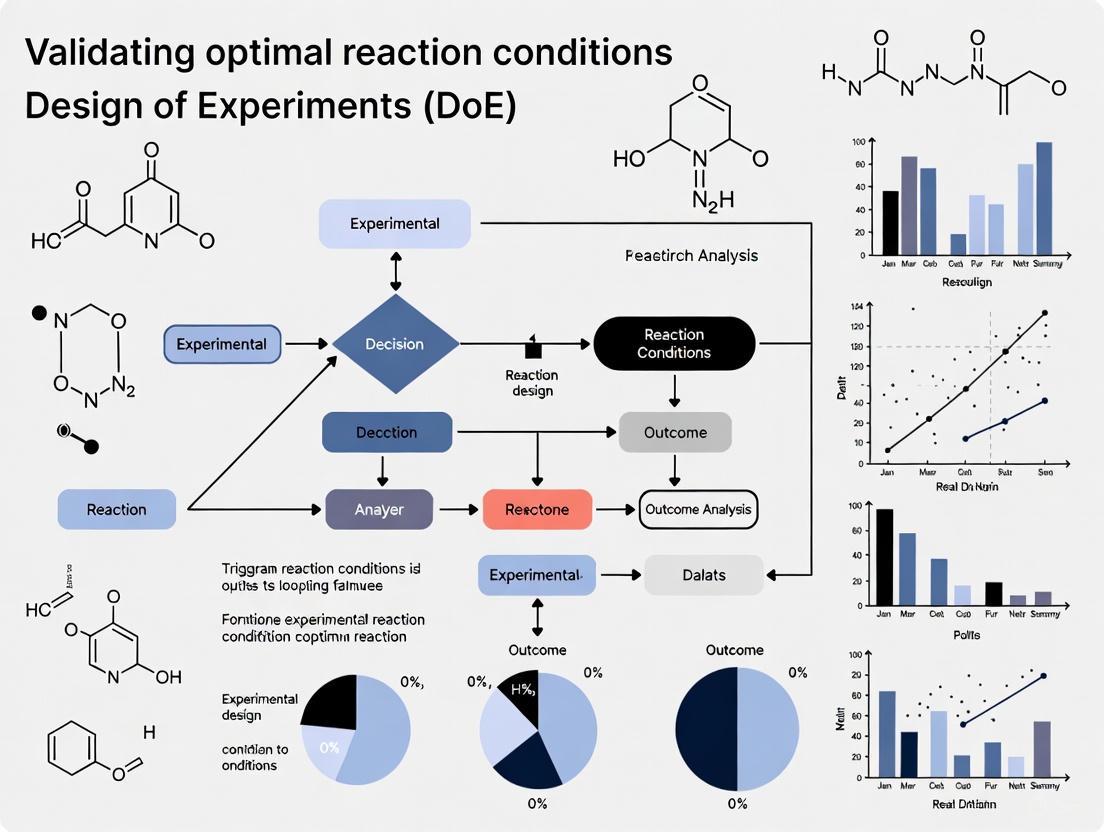

The following diagram illustrates the fundamental differences in how OVAT and DoE approaches explore experimental space, with OVAT examining one dimension at a time while DoE investigates multiple dimensions simultaneously:

Essential Research Reagent Solutions for Effective Optimization

Implementing effective optimization strategies requires specific reagents and tools designed for systematic experimentation:

Table: Essential Research Reagent Solutions for Synthesis Optimization

| Reagent/Tool Category | Specific Examples | Function in Optimization | Application Notes |
|---|---|---|---|
| Parallel Synthesis Equipment | Automated dispensing robots, parallel reaction devices | Enables simultaneous execution of multiple experimental conditions | Critical for efficient DoE implementation [1] |
| High-Throughput Analytics | MS, HPLC, plate readers | Rapid analysis of multiple samples from parallel experiments | Enables quick turnaround between experimental phases [7] |
| Experimental Design Software | Modde, JMP | Statistical design creation and data analysis | Reduces barrier to implementation; provides statistical rigor [4] |
| Specialized Reactors | Controlled parallel microreactors | Maintains consistent conditions across multiple experiments | Minimizes spatial bias in HTE [7] |
| Chemical Libraries | Diverse catalyst/ligand sets, substrate arrays | Broad exploration of chemical space | Enables comprehensive rather than limited screening [7] |

The critical limitations of OVAT optimization in complex syntheses—including its inability to detect factor interactions, inefficient exploration of experimental space, tendency to find local optima, and generation of fragmented process understanding—render it inadequate for modern chemical and pharmaceutical development. As synthesis complexity increases and development timelines compress, these limitations become increasingly consequential [1].

Alternative methodologies centered on statistical design of experiments offer not only practical efficiency advantages but, more importantly, generate the profound process understanding necessary for robust, scalable, and well-controlled syntheses. The transition from OVAT to DoE represents more than a technical improvement—it constitutes a fundamental shift toward a more scientific approach to process optimization that properly accounts for the multidimensional, interactive nature of complex syntheses [1] [4].

While OVAT may retain value for preliminary screening of individual factors, its role should be recognized as limited to this initial exploratory phase rather than the primary method for comprehensive optimization [5] [6]. As the field continues to advance, embracing more sophisticated optimization strategies will be essential for addressing the increasingly complex challenges of modern chemical synthesis and bioprocessing.

For researchers, scientists, and drug development professionals, validating optimal reaction conditions represents a fundamental challenge in process development and optimization. The traditional "one-factor-at-a-time" (OFAT) approach, also known as OVAT, is intuitively simple but suffers from critical limitations, including experimental inefficiency, an inability to detect factor interactions, and a tendency to identify only local optima rather than true optimal conditions [4]. In contrast, Design of Experiments (DoE) provides a systematic, statistical framework for planning and executing experiments that can simultaneously investigate multiple factors and their complex interactions [8] [9]. This methodology has demonstrated particular value in complex optimization scenarios such as copper-mediated radiofluorination reactions in PET tracer development, where it has enabled more efficient identification of critical factors and their optimal settings compared to traditional approaches [4].

The core strength of factorial experiments is their ability to capture systems in which variables interact in intricate, nonlinear ways [8]. By modeling these interactions, DoE guards against oversimplification and yields insights that guide troubleshooting and process refinement in applications ranging from pharmaceutical development to manufacturing optimization [8]. This article examines core DoE principles, with particular emphasis on factorial designs and the critical role of interaction effects in validating optimal reaction conditions.

Fundamental DoE Principles and Comparative Efficiency

Core Principles of DoE

DoE methodology rests upon several foundational principles that ensure robust, reliable experimental outcomes:

- Randomization: The process of randomly assigning experimental runs to different factor level combinations helps mitigate potential impacts of nuisance variables and ensures observed effects can be attributed to the factors under investigation [8].

- Replication: Repeating experimental runs under identical conditions allows researchers to estimate inherent process variability and provides a measure of experimental error, ensuring result reliability [8].

- Blocking: This technique accounts for known sources of variability by grouping experimental runs into homogeneous blocks, enabling researchers to isolate and quantify effects of nuisance variables for more precise factor effect estimation [8].
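
The three principles above can be combined in a short run-planning sketch. It is a minimal illustration under assumed conditions, not a prescribed procedure: the factor names, their levels, the helper `blocked_run_plan`, and the idea of treating each block as one day or reagent batch are all hypothetical.

```python
import random
from itertools import product


def blocked_run_plan(factors, n_blocks, seed=0):
    """Return a list of (block, settings) pairs.

    Each block holds one full replicate of the design (replication),
    blocks stand in for a known nuisance source such as day or reagent
    batch (blocking), and run order is shuffled inside every block
    (randomization).
    """
    rng = random.Random(seed)  # fixed seed keeps the plan reproducible
    names = list(factors)
    plan = []
    for block in range(1, n_blocks + 1):
        runs = [
            dict(zip(names, combo))
            for combo in product(*(factors[name] for name in names))
        ]
        rng.shuffle(runs)
        plan.extend((block, run) for run in runs)
    return plan


# Hypothetical factors and levels
factors = {"temperature_C": (60, 80), "catalyst_mol_pct": (1, 5)}
plan = blocked_run_plan(factors, n_blocks=2)
for block, settings in plan:
    print(block, settings)
```

With two factors at two levels and two blocks, the plan contains eight runs: every factor combination appears once per block, in a different random order.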

DoE vs. OFAT: A Quantitative Comparison

Table 1: Experimental Efficiency Comparison Between OFAT and DoE Approaches

| Aspect | OFAT Approach | DoE Approach |
|---|---|---|
| Experimental Efficiency | Less efficient; requires more runs for same precision [10] | More efficient; provides more information at similar or lower cost [10] |
| Interaction Detection | Cannot detect interactions between factors [10] | Specifically designed to detect and quantify interactions [8] |
| Optima Identification | Prone to finding local optima [4] | Better at identifying true optimal conditions [10] |
| Validity Range | Conclusions valid only at specific experimental conditions [10] | Conclusions valid over range of experimental conditions [10] |
| Resource Requirements | Resource-intensive for multiple factors [4] | More information with fewer experimental runs [4] |

The efficiency advantage of DoE becomes particularly evident in complex optimization scenarios. In the optimization of copper-mediated 18F-fluorination reactions, DoE identified critical factors and modeled their behavior with more than two-fold greater experimental efficiency than the traditional OFAT approach [4]. Similarly, factorial designs have been shown to provide more information at similar or lower cost compared to OFAT experiments, enabling researchers to find optimal conditions faster [10].

Factorial Designs: Structure and Applications

Types of Factorial Designs

Full factorial designs systematically examine all possible combinations of factors and their levels, providing comprehensive insights into system behavior [8] [11]. These designs can be categorized based on their structure and application:

- 2-Level Full Factorial Designs: These designs, where each factor has two levels (typically labeled "low" and "high"), are commonly employed in screening experiments to identify the most significant factors influencing the response variable [8] [9]. The number of experimental runs required for a full factorial design with k factors is 2^k [9].

- 3-Level Full Factorial Designs: Unlike 2-level designs that assume linear relationships, 3-level designs allow investigation of quadratic effects and can model curvature in the response surface more accurately [8].

- Mixed-Level Full Factorial Designs: These accommodate combinations of categorical factors (e.g., material type, production method) and continuous factors (e.g., temperature, pressure), providing comprehensive understanding of systems with different factor types [8] [11].
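
The run counts for these three design types follow from multiplying the number of levels of each factor. The sketch below shows the arithmetic; the function name `full_factorial_runs` and the example level counts are illustrative choices, not part of any cited protocol.

```python
from math import prod


def full_factorial_runs(levels_per_factor):
    """Runs in one replicate of a full factorial design."""
    return prod(levels_per_factor)


two_level = full_factorial_runs([2, 2, 2])    # three factors, 2 levels each
three_level = full_factorial_runs([3, 3, 3])  # three factors, 3 levels each
mixed_level = full_factorial_runs([2, 3, 4])  # e.g. 2 solvents, 3 temperatures, 4 loadings

print(two_level, three_level, mixed_level)

# Growth of a 2-level design as factors are added
for k in range(2, 7):
    print(k, "factors:", full_factorial_runs([2] * k), "runs")
```

The rapid growth in runs as factors or levels are added is the usual reason for screening with 2-level designs before committing to 3-level or response surface designs.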

Table 2: Factorial Design Types and Their Characteristics

| Design Type | Factor Levels | Key Applications | Key Advantages | Limitations |
|---|---|---|---|---|
| 2-Level Full Factorial | 2 levels per factor (high/low) [8] | Screening experiments [8] | Identifies significant factors efficiently [8] | Cannot detect curvature [8] |
| 3-Level Full Factorial | 3 levels per factor (low/medium/high) [8] | Modeling nonlinear responses [8] | Captures quadratic effects [8] | Requires more experimental runs [8] |
| Mixed-Level Full Factorial | Different levels for different factors [8] | Combined categorical/continuous factors [8] | Handles different factor types [8] | Complex analysis and interpretation [8] |

Experimental Protocol for Full Factorial Design

Implementing a full factorial design involves a structured methodology:

- Identify Factors and Levels: Determine which variables (factors) may affect the response variable and select appropriate levels for each factor. The choice of factor levels is crucial as it determines the range of conditions under which the experiment is conducted [8] [11].

- Create Experimental Design Matrix: Construct a matrix that specifies all possible combinations of factors and levels to be tested [11].

- Calculate Total Number of Experiments: Multiply the number of levels for each factor to determine the total experimental runs required. For example, a design with three factors at two levels each requires 2 × 2 × 2 = 8 experimental runs [11] [9].

- Determine Replication Strategy: Plan additional experiments under identical conditions to estimate experimental error. The number of replicates depends on the experimental design and desired statistical confidence [11].

- Randomize Run Order: Randomly assign the order of experimental runs to mitigate potential confounding effects of external variables [8].

- Execute Experiments and Collect Data: Conduct experiments according to the design matrix while carefully controlling experimental conditions.

- Analyze Results: Evaluate main effects and interaction effects using statistical methods such as Analysis of Variance (ANOVA) to identify significant factors and optimize settings [11].
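
The protocol above can be sketched end to end for a three-factor, two-level design. This is a minimal illustration only: the factor names and the eight response values are hypothetical, replication is omitted for brevity, and effects are estimated as simple differences of means rather than through a full ANOVA.

```python
import random
from itertools import product
from statistics import mean

factor_names = ("temperature", "catalyst", "time")  # hypothetical factors

# Design matrix: coded levels -1/+1, 2 x 2 x 2 = 8 runs
design = list(product((-1, 1), repeat=len(factor_names)))

# Randomized execution order (fixed seed so the plan is reproducible)
run_order = list(range(len(design)))
random.Random(42).shuffle(run_order)

# Hypothetical yields (%), stored in design-matrix order, not run order
responses = [53.5, 61.5, 53.5, 69.5, 54.5, 62.5, 54.5, 70.5]


def effect(contrast, y):
    """Mean response where the contrast is +1 minus mean where it is -1."""
    high = mean(v for c, v in zip(contrast, y) if c > 0)
    low = mean(v for c, v in zip(contrast, y) if c < 0)
    return high - low


main_effects = {
    name: effect([run[i] for run in design], responses)
    for i, name in enumerate(factor_names)
}

# Two-factor interaction: contrast is the product of the two coded columns
catalyst_x_time = effect([run[1] * run[2] for run in design], responses)

print(main_effects)
print("catalyst x time:", catalyst_x_time)
```

For this made-up data set, time has the largest main effect and the catalyst × time interaction is as large as the catalyst main effect. Judging which effects are statistically significant would still require replicates and an ANOVA in dedicated software.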

Figure 1: Factorial Design Experimental Workflow

Interaction Effects: The Critical Differentiator

Understanding Interaction Effects

Interaction effects represent perhaps the most significant advantage of factorial designs over OFAT approaches. An interaction occurs when the effect of one factor on the response variable depends on the level of another factor [12] [11]. In practical terms, this means factors do not act independently, but rather their combined effect differs from what would be expected based on their individual effects.

A concrete example demonstrates this concept: temperature and humidity may interact to affect human comfort. At low humidity (0%), comfort might increase by 5 units as temperature increases from 0°F to 75°F. However, at high humidity (35%), the same temperature increase might increase comfort by 7 units. Because the effect of temperature differs between the two humidity levels, the factors interact [12].

Calculating and Visualizing Interaction Effects

The calculation of interaction effects involves comparing the differences in response across factor levels. Using the temperature/humidity example:

- At low humidity: Temperature effect = 5 units

- At high humidity: Temperature effect = 7 units

- Interaction effect AB = (7 - 5)/2 = 1 unit [12]

This result indicates that the temperature effect on comfort is 2 units larger at high humidity than at low humidity; by convention, the interaction effect is reported as half of that difference, here 1 unit [12].
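
The same calculation can be written out in a few lines. The temperature effects of 5 and 7 units come from the example above; the four individual comfort scores in `cells` are hypothetical values chosen only to be consistent with those effects.

```python
def interaction_effect(effect_at_high_b, effect_at_low_b):
    """Half the difference between the effect of A at high B and at low B."""
    return (effect_at_high_b - effect_at_low_b) / 2


ab_from_effects = interaction_effect(effect_at_high_b=7, effect_at_low_b=5)

# Equivalent contrast form using the four cell responses.
# Keys are (temperature, humidity) coded levels; values are assumed
# comfort scores that reproduce the effects of 5 and 7 units.
cells = {(-1, -1): 0.0, (1, -1): 5.0, (-1, 1): 1.0, (1, 1): 8.0}
ab_from_cells = (
    cells[(1, 1)] - cells[(-1, 1)] - cells[(1, -1)] + cells[(-1, -1)]
) / 2

print(ab_from_effects, ab_from_cells)
```

Both routes give an interaction effect of 1 unit. An interaction of zero would mean the temperature effect is identical at both humidity levels, which appears as parallel lines on an interaction plot.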

Figure 2: Types of Interaction Effects

Case Study: Bearing Lifespan Optimization

A compelling example from the bearing manufacturer SKF demonstrates the practical importance of interaction effects. Engineers initially planned to test a modified cage design using an OFAT approach with four runs each for standard and modified designs. A statistician showed how they could test two additional factors (heat treatment and outer ring osculation) "for free" using a 2×2×2 factorial design with the same eight runs [10].

The results revealed that cage design alone had minimal impact on bearing lifespan. However, the analysis discovered a dramatic interaction: when outer ring osculation and heat treatment were increased together, bearing life increased fivefold [10]. This extraordinary discovery, which had been missed during decades of bearing production, highlights how OFAT approaches can miss critical interactions that significantly impact process outcomes.

Analytical Approaches for Factorial Experiments

Statistical Analysis Methods

The analysis of factorial experiments employs several statistical techniques to extract meaningful insights from experimental data:

- Analysis of Variance (ANOVA): ANOVA is used to determine the significance of main effects and interaction effects on the response variable. By partitioning total variability into components attributable to each factor and their interactions, ANOVA identifies the most influential factors and their relationships [8]. The p-value obtained from ANOVA (typically compared against α = 0.05) indicates whether the association between a term and the response is statistically significant [13].

- Regression Analysis: This technique involves fitting a mathematical model to experimental data, relating the response variable to the independent variables and their interactions. The resulting model can predict response values for any factor combination within the experimental region and support optimization efforts [8].

- Graphical Analysis: Interaction plots display the response variable as a function of one factor at different levels of another factor, helping researchers visualize and understand complex relationships within the system [8].
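
As a rough illustration of the regression step, the sketch below fits a two-factor model with an interaction term to a 2 × 2 design. The four yields are hypothetical. The shortcut of dividing each contrast sum by the number of runs is valid only because the coded columns of a balanced 2-level design are orthogonal; unbalanced or more general designs need a proper least-squares fit in statistical software.

```python
# (A, B, response): coded temperature, coded catalyst loading, hypothetical yield (%)
runs = [(-1, -1, 50.0), (1, -1, 60.0), (-1, 1, 54.0), (1, 1, 76.0)]


def fit_two_factor_model(data):
    """Fit y = b0 + bA*A + bB*B + bAB*A*B on a balanced coded 2-level design."""
    n = len(data)
    return {
        "intercept": sum(y for _, _, y in data) / n,
        "A": sum(a * y for a, _, y in data) / n,
        "B": sum(b * y for _, b, y in data) / n,
        "AB": sum(a * b * y for a, b, y in data) / n,
    }


def predict(coef, a, b):
    """Predicted response at coded settings inside the experimental region."""
    return coef["intercept"] + coef["A"] * a + coef["B"] * b + coef["AB"] * a * b


coef = fit_two_factor_model(runs)
print(coef)
print("Predicted yield at A=0.5, B=0.5:", predict(coef, 0.5, 0.5))

# Interaction-plot numbers: effect of A at each level of B
effect_a_low_b = predict(coef, 1, -1) - predict(coef, -1, -1)
effect_a_high_b = predict(coef, 1, 1) - predict(coef, -1, 1)
print(effect_a_low_b, effect_a_high_b)
```

With four runs and four coefficients the model is saturated, so it reproduces the data exactly and leaves no degrees of freedom to estimate error. Replicates or center points would be needed before ANOVA p-values could be computed.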

Key Analytical Outputs and Interpretation

When analyzing factorial design results, several key outputs guide interpretation:

- Pareto Chart of Standardized Effects: This chart compares the relative magnitude and statistical significance of both main and interaction effects, helping identify which terms contribute most to response variability [13].

- Model Summary Statistics: Goodness-of-fit statistics including S, R-squared (R²), adjusted R-squared, and predicted R-squared indicate how well the model describes the response data [13].

- Coefficients Table: The estimated coefficients for each factor and interaction indicate the direction and magnitude of their effects on the response variable [13].

- Residual Plots: These plots help verify that model assumptions are met, checking for random distribution of residuals, constant variance, and normality [13].
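
The model summary statistics listed above can be computed directly from observed and predicted responses. The sketch below uses the standard definitions of S, R² and adjusted R²; the observed and predicted values are hypothetical, and predicted R² is left out because it requires refitting the model with each run held out.

```python
from math import sqrt


def model_summary(observed, predicted, n_terms):
    """Return S, R-squared and adjusted R-squared.

    n_terms is the number of model terms excluding the intercept.
    """
    n = len(observed)
    mean_y = sum(observed) / n
    residuals = [o - p for o, p in zip(observed, predicted)]
    ss_res = sum(r ** 2 for r in residuals)
    ss_tot = sum((o - mean_y) ** 2 for o in observed)
    dof = n - n_terms - 1  # residual degrees of freedom
    r2 = 1 - ss_res / ss_tot
    return {
        "S": sqrt(ss_res / dof),
        "R2": r2,
        "R2_adj": 1 - (1 - r2) * (n - 1) / dof,
        "residuals": residuals,
    }


# Hypothetical data: five runs, one model term
observed = [10.0, 12.0, 14.0, 16.0, 18.0]
predicted = [10.5, 11.5, 14.0, 16.5, 17.5]
summary = model_summary(observed, predicted, n_terms=1)
print(summary)
```

Adjusted R² is always at or below R² because it penalizes each added term. The `residuals` list is what a residual plot would display against fitted values or run order.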

Table 3: Essential Research Reagent Solutions for DoE Implementation

| Reagent/Category | Function/Purpose | Application Context |
|---|---|---|
| Statistical Software | Data analysis, model fitting, visualization | JMP, Minitab, R, SPSS for experimental design and analysis [14] [13] |
| Experimental Design Platforms | DoE construction, randomization, blocking | Specialized software for creating factorial, fractional factorial designs [4] |
| Coefficient Estimates | Quantify factor effect direction and magnitude | Determining how changes in factors affect the response variable [13] |
| P-value Indicators | Assess statistical significance of effects | Hypothesis testing for factor significance (typically α = 0.05) [13] |
| Model Diagnostics | Verify model adequacy and assumptions | Residual plots, lack-of-fit tests, normality checks [13] |

Advanced Applications and Future Directions

Integration with Machine Learning

Recent advances have demonstrated the powerful synergy between DoE and machine learning (ML) approaches. In tissue engineering, ML offers potential to overcome limitations of traditional DoE, particularly for processing complex data types such as images, video, audio, and high-dimensional data where the number of features exceeds observations [9]. The integration of these methodologies shows promise for enhancing optimization processes in biomaterials and tissue engineering research [9].

A notable application in organic light-emitting device (OLED) development combined DoE with machine learning predictions to correlate reaction conditions with device performance. Researchers used support vector regression (SVR), partial least squares regression (PLSR), and multilayer perceptron (MLP) methods to generate predictive heatmaps, with the SVR model successfully identifying optimal conditions that yielded high-performance OLEDs surpassing purified materials [15].

Response Surface Methodology

For advanced optimization beyond initial screening, Response Surface Methodology (RSM) provides powerful techniques for modeling and optimizing systems influenced by multiple variables [16]. RSM builds upon factorial designs by adding center points and axial points to estimate curvature and build second-order polynomial models, enabling more sophisticated optimization of process conditions [16].

Central Composite Designs (CCD) and Box-Behnken Designs (BBD) represent two common RSM approaches that extend basic factorial structures to efficiently explore quadratic response surfaces while managing experimental resource requirements [16].
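
To make the structure of a central composite design concrete, the sketch below generates its three groups of points in coded units. The function name `central_composite` and the choice of six center points are assumptions for illustration. The default axial distance is the common rotatable choice, the fourth root of the number of factorial points; other choices such as face-centered designs (alpha = 1) are also used.

```python
from itertools import product


def central_composite(k, n_center=1, alpha=None):
    """Coded design points of a central composite design for k factors."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable design (assumed default)

    # Factorial (cube) points: every combination of -1 and +1
    factorial = [tuple(float(v) for v in p) for p in product((-1, 1), repeat=k)]

    # Axial (star) points: one factor at -alpha or +alpha, all others at 0
    axial = []
    for i in range(k):
        for sign in (-1, 1):
            point = [0.0] * k
            point[i] = sign * alpha
            axial.append(tuple(point))

    # Center points: replicated runs at the middle of the region
    center = [tuple([0.0] * k)] * n_center
    return factorial + axial + center


ccd = central_composite(k=3, n_center=6)
print(len(ccd), "runs")
for point in ccd:
    print(point)
```

For three factors this gives 8 factorial, 6 axial and 6 center runs, 20 in total. The axial points allow quadratic terms to be estimated, and the replicated center points provide an estimate of pure error.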

Factorial designs and the understanding of interaction effects represent cornerstone principles in the Design of Experiments methodology. By enabling simultaneous investigation of multiple factors and their interactions, these approaches provide a more comprehensive, efficient pathway to process optimization compared to traditional one-factor-at-a-time experimentation. The ability to detect and quantify interaction effects is particularly valuable, as these interactions often reveal the most significant opportunities for process improvement, as demonstrated in the bearing lifespan case where a previously unknown interaction led to a fivefold improvement.

For researchers, scientists, and drug development professionals focused on validating optimal reaction conditions, mastering these core DoE principles provides a robust framework for efficient, effective process optimization. The structured methodology of factorial designs, coupled with rigorous statistical analysis and emerging integrations with machine learning, offers powerful tools for advancing research and development across diverse scientific and industrial domains.

In the development of chemical reactions, particularly for the pharmaceutical industry, validating optimal conditions requires a clear framework of objectives. The key metrics of Yield, Selectivity, and Purity form the traditional triad for assessing reaction efficiency and product quality. Meanwhile, Green Metrics provide a crucial lens for evaluating environmental and economic sustainability. Within modern Design of Experiments (DoE) research, these objectives are not pursued in isolation but are optimized simultaneously. This guide compares these critical validation parameters, detailing their distinct roles and interrelationships, and provides methodologies for their integrated assessment to guide researchers in validating robust, efficient, and sustainable chemical processes.

Comparative Analysis of Core Validation Objectives

The following table defines the four core validation objectives, their quantitative measures, and their primary significance in reaction validation.

Table 1: Comparison of Core Validation Objectives in Reaction Optimization

| Objective | Definition & Measurement | Primary Significance |
|---|---|---|
| Yield [17] [18] | Percent Yield = (Actual Mass of Product / Theoretical Mass of Product) × 100 [17]. | Measures the efficiency of a reaction in converting reactants to a desired product. A high yield indicates minimal material loss during the reaction itself [18]. |
| Selectivity [19] | The ability of a reaction to preferentially form a specific desired product over other by-products. It is crucial for minimizing the formation of undesired compounds [19]. | Determines the purity potential and directly impacts the cost and difficulty of downstream purification. High selectivity reduces waste [19]. |
| Purity | The proportion of the target molecule within the isolated product mixture, often assessed by chromatography (e.g., HPLC) or spectroscopy (e.g., NMR). | Ensures product quality and safety. Critical for pharmaceuticals, where impurities can have toxicological consequences. |
| Green Metrics [20] [21] | A set of metrics to quantify environmental performance, including Atom Economy (molecular weight of desired product / total molecular weight of all reactants × 100) [21] and E-Factor (mass of total waste / mass of product) [21]. | Evaluates the environmental and economic sustainability of a process. A lower E-Factor and higher Atom Economy signify less waste generation [21]. |
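
The two green metrics can be computed as shown in the sketch below. Atom economy is calculated here from molecular weights, and the E-factor example makes the simplifying assumption that every input not recovered as product counts as waste. The esterification molecular weights are standard rounded values; the process masses are hypothetical.

```python
def atom_economy(product_mw, reactant_mws):
    """Percent of reactant molecular weight that ends up in the desired product.

    Assumes 1:1 stoichiometry for every reactant listed.
    """
    return product_mw / sum(reactant_mws) * 100


def e_factor(total_input_mass, product_mass):
    """Mass of waste per mass of product (all non-product input treated as waste)."""
    return (total_input_mass - product_mass) / product_mass


# Illustrative reaction: acetic acid + ethanol -> ethyl acetate + water
ae = atom_economy(product_mw=88.11, reactant_mws=[60.05, 46.07])

# Hypothetical process: 250 g of reactants, solvents and auxiliaries give 50 g of product
ef = e_factor(total_input_mass=250.0, product_mass=50.0)

print(f"Atom economy: {ae:.1f} %")
print(f"E-factor: {ef:.1f}")
```

Atom economy depends only on the reaction stoichiometry, so it does not change with reaction conditions. The E-factor does change with solvent use, yield and workup, which makes it the more useful of the two as a DoE response.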

Experimental Protocols for Measurement

This section outlines standard and advanced methodologies for determining these critical metrics.

Standard Measurement Protocols

Yield Determination (Isolated Yield)

- Reaction Execution: Perform the reaction using precise quantities of reactants, noting the mass or moles of the limiting reagent.

- Workup and Purification: Isolate the crude product through standard workup procedures (e.g., extraction, filtration). Purify the product via techniques such as recrystallization, distillation, or column chromatography [17].

- Calculation: Weigh the final, purified product. The percent yield is calculated as (mass of isolated pure product / theoretical mass of product) × 100, where the theoretical mass is based on the limiting reagent [17] [18].
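
The yield calculation in the last step can be sketched as follows. The amounts, the product molecular weight and the helper names are hypothetical; `stoich_ratio` is the moles of product formed per mole of limiting reagent and is assumed to be 1 here.

```python
def theoretical_mass(limiting_moles, product_mw, stoich_ratio=1.0):
    """Mass of product (g) expected if all limiting reagent is converted."""
    return limiting_moles * stoich_ratio * product_mw


def percent_yield(isolated_mass, theoretical):
    """Isolated yield as a percentage of the theoretical mass."""
    return isolated_mass / theoretical * 100


# Hypothetical run: 10 mmol of limiting reagent, product MW 250 g/mol, 2.0 g isolated
theory_g = theoretical_mass(limiting_moles=0.010, product_mw=250.0)
yield_pct = percent_yield(isolated_mass=2.0, theoretical=theory_g)

print(f"Theoretical mass: {theory_g:.2f} g")
print(f"Isolated yield: {yield_pct:.0f} %")
```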

Selectivity and Purity Assessment (Chromatographic Analysis)

- Sample Preparation: Dissolve a small sample of the crude or purified product in a suitable solvent.

- Analysis: Inject the sample into an HPLC or GC system equipped with a UV or mass spectrometry detector.

- Data Interpretation: The relative area of the peak corresponding to the desired product compared to the areas of all other peaks provides a measure of selectivity (in the crude mixture) and purity (in the final isolated product).
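
The area-percent calculation described in the last step is sketched below. The peak names and areas are hypothetical. The result is only an estimate of composition, since it assumes that all components give a comparable detector response; calibrated response factors would be needed for a quantitative result.

```python
def area_percent(peak_areas, target):
    """Area of the target peak as a percentage of all integrated peaks."""
    total = sum(peak_areas.values())
    return peak_areas[target] / total * 100


# Hypothetical integrated peak areas from one chromatogram of a crude mixture
crude_peaks = {"product": 920.0, "byproduct_A": 50.0, "byproduct_B": 30.0}
selectivity_estimate = area_percent(crude_peaks, "product")

print(f"Product area percent: {selectivity_estimate:.1f} %")
```

Applied to the crude mixture, the value approximates selectivity. Applied to the isolated material, the same calculation approximates purity.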

Advanced Protocol: Implementing Design of Experiments (DoE)

The one-variable-at-a-time (OVAT) approach is inefficient for optimizing multiple objectives and fails to capture interaction effects between variables [22]. DoE is a statistical methodology that varies several factors simultaneously, so that their individual and combined effects on every response (e.g., yield, selectivity, green metrics) can be estimated from a single planned set of runs [22].

Table 2: Key Steps for a DoE Optimization Protocol

| Step | Description | Consideration for Multiple Objectives |

|---|---|---|

| 1. Define Variables | Select independent variables to study (e.g., temperature, catalyst loading, concentration) and set their high/low bounds [22]. | Ensure the chosen range is feasible for all responses of interest. |

| 2. Choose Experimental Design | Select a statistical design (e.g., full factorial, fractional factorial) that defines the set of experimental runs [22]. | The design must capture enough data to model all desired responses. |

| 3. Run Experiments & Measure Responses | Execute the experiments in the designed order and measure the outcomes for each run (e.g., yield, selectivity, E-Factor) [22]. | All responses must be measured for every experiment to build comprehensive models. |

| 4. Statistical Analysis & Modeling | Use software to analyze the data and generate mathematical models linking the variables to each response [22]. | Models will show how variables affect yield, selectivity, and green metrics individually and interactively. |

| 5. Find Optimum Conditions | Use optimization algorithms (e.g., desirability functions) to find the variable settings that deliver the best balance of all objectives [22]. | This allows for finding a compromise that maximizes yield and selectivity while minimizing environmental impact (E-Factor). |
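Step 5 mentions desirability functions. One common form, sketched here under stated assumptions, maps each response onto a 0 to 1 scale and combines the individual scores with a geometric mean, so that a completely unacceptable response drives the overall score to zero. The acceptable ranges, the candidate runs, and all function names below are hypothetical.

```python
import math


def d_maximize(y, low, high, weight=1.0):
    """Desirability for a response to maximize: 0 at or below low, 1 at or above high."""
    if y <= low:
        return 0.0
    if y >= high:
        return 1.0
    return ((y - low) / (high - low)) ** weight


def d_minimize(y, low, high, weight=1.0):
    """Desirability for a response to minimize: 1 at or below low, 0 at or above high."""
    if y <= low:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - low)) ** weight


def overall_desirability(ds):
    """Geometric mean of individual desirabilities; zero if any one is zero."""
    if any(d == 0.0 for d in ds):
        return 0.0
    return math.exp(sum(math.log(d) for d in ds) / len(ds))


def score(responses):
    """Combine yield, selectivity, and E-Factor using hypothetical target ranges."""
    return overall_desirability([
        d_maximize(responses["yield"], 50.0, 100.0),
        d_maximize(responses["selectivity"], 70.0, 100.0),
        d_minimize(responses["e_factor"], 10.0, 50.0),
    ])


# Hypothetical measured responses for two candidate settings
candidates = {
    "run_1": {"yield": 90.0, "selectivity": 80.0, "e_factor": 30.0},
    "run_2": {"yield": 80.0, "selectivity": 95.0, "e_factor": 15.0},
}

best = max(candidates, key=lambda name: score(candidates[name]))
for name, responses in candidates.items():
    print(name, round(score(responses), 3))
print("Best compromise:", best)
```

In this made-up comparison the run with the lower yield wins because it is far better on selectivity and E-Factor, which is the kind of compromise the table describes.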

The workflow for a typical DoE-based optimization is visualized below.

Case Study & Data Presentation

A recent study on recycling platinum group metals (PGMs) via bioleaching provides an excellent example of how green metrics are quantified and used for validation [20].

Experimental Summary: The study used cyanogenic bacteria (Pseudomonas fluorescens, Bacillus megaterium, Chromobacterium violaceum) in a two-step bioleaching process to recover PGMs from spent automotive catalysts. Experiments were conducted at different pulp densities (0.5-4% w/v) [20].

Methodology for Green Metrics: Green metrics, including atom economy and E-Factor, were calculated for the process under four different boundary conditions defined by limiting reactants and desired metals [20]. This rigorous approach allows for a comprehensive environmental impact assessment.

Table 3: Quantitative Green Metrics from Platinum Group Metal Bioleaching Study [20]

| Experimental Condition (Pulp Density) | PGM Extraction Efficiency | E-Factor (Mass Waste/Mass Product) | Atom Economy |

|---|---|---|---|

| 0.5% w/v | Reported data for Pt, Pd, Rh | Calculated for overall process | Calculated for overall process |

| 1% w/v | Reported data for Pt, Pd, Rh | Calculated for overall process | Calculated for overall process |

| 2% w/v | Reported data for Pt, Pd, Rh | Calculated for overall process | Calculated for overall process |

| 4% w/v | Reported data for Pt, Pd, Rh | Calculated for overall process | Calculated for overall process |

Note: The original study [20] contains the specific numerical data for extraction efficiency and the calculated green metrics, which would be populated in a table like this for comparison. The key finding is that metrics were successfully quantified for each condition, enabling a data-driven sustainability comparison.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table lists key reagents and tools essential for experiments focused on these validation objectives, especially in the context of green chemistry and DoE.

Table 4: Essential Reagents and Tools for Reaction Validation and Optimization

| Reagent / Tool | Function / Application |

|---|---|

| Cyanogenic Bacteria (e.g., P. fluorescens) [20] | Used in sustainable leaching processes; produce cyanide as a metabolite to form complexes with metals for recovery [20]. |

| Green Metrics Calculation Software | Enables the quantification of sustainability indicators like Atom Economy and E-Factor from experimental data [20] [21]. |

| Statistical Software Suite | Essential for designing experiments (DoE), analyzing complex datasets, and building models to optimize multiple objectives simultaneously [22]. |

| Analytical Standards | High-purity compounds used to calibrate instruments like HPLC and GC for accurate assessment of yield, selectivity, and purity. |

| HPLC with UV/Vis Detector | A core analytical instrument for separating mixture components and quantifying the target compound's purity and selectivity. |

Integrated Workflow for Validated Optimization

The interplay between the traditional objectives of yield, selectivity, and purity with the modern imperative of green metrics creates a multi-dimensional optimization challenge. The following diagram synthesizes these concepts into a single, integrated validation workflow.

Essential Software and Statistical Tools for the Modern Chemist

The validation of optimal reaction conditions is a cornerstone of chemical research and development. In this pursuit, Design of Experiments (DoE) has emerged as a critical, systematic methodology for efficiently exploring multiple factors and their complex interactions. This guide objectively compares the performance of modern software and statistical tools that empower chemists to implement robust DoE strategies, accelerate discovery, and streamline process optimization.

Software Solutions for Design of Experiments (DoE)

DoE software provides a structured environment for designing, executing, and analyzing experiments. These tools help chemists move beyond the inefficient one-factor-at-a-time approach, enabling them to uncover complex interactions between variables with fewer experimental runs [23].

The table below summarizes the core features, strengths, and costs of leading DoE software platforms relevant to chemical applications.

Table 1: Comparison of Leading DoE Software for Chemical Applications

| Software | Primary Use Case | Standout Features | Pricing (Starts at) | Experimental Design Support |

|---|---|---|---|---|

| Design-Expert [24] | Product and process optimization | Intuitive interface; strong visualization tools (2D/3D graphs); optimization functionality [25] [24] [26] | ~$1,035/year [25] [26] | Factorial, Response Surface (RSM), Mixture, Optimal designs [24] |

| JMP [25] [26] | Advanced statistical analysis & data exploration | Powerful visual analytics; seamless SAS integration; diverse statistical models [25] [26] | ~$1,200/year [26] | Broad range of screening and optimization designs [25] |

| Minitab [25] [26] | Statistical analysis and quality improvement | Comprehensive statistical tools; strong training resources; widely used in industry [25] [26] | ~$1,780/year [26] | Factorial, Response Surface, Taguchi designs [25] |

| MODDE [25] | Biopharmaceutical process optimization | Automated analysis wizard; robust optimum identification; tailored for biopharma [25] | Custom Pricing [25] | Classical factorial and RSM designs [25] |

| SafetyCulture (iAuditor) [25] | Mobile-friendly quality control & data collection | Real-time data collection via sensors; quality control checklists; offline mobile capabilities [25] | $24/seat/month [25] | Basic design templates for quality control [25] |

| Quantum Boost [25] [26] | AI-driven R&D acceleration | AI-powered to reduce experiment count; project flexibility; cloud-based platform [25] [26] | $95/month [25] [26] | AI-suggested optimal designs [26] |

Performance Comparison and Experimental Data

Selecting the right software often depends on the specific stage of research and the user's statistical expertise.

- For Screening and Optimization: A comparative review of software platforms highlights that Design-Expert is frequently praised for its specialized focus on DoE and user-friendliness, making it particularly suitable for researchers who need to apply multifactor testing tools without a steep learning curve [26]. Its intuitive layout and design wizards efficiently guide users from initial screening to advanced optimization [25].

- For Advanced Statistical Analysis: JMP and Minitab are recognized for their extensive statistical capabilities, which are valuable for in-depth, data-rich exploration [25] [26]. However, their full potential is best unlocked with a solid foundation in statistics. JMP's strengths lie in its interactive visualizations that link data with graphics, while Minitab is an industry standard for quality control and improvement projects [25].

- For AI-Powered Efficiency: Quantum Boost leverages artificial intelligence to minimize the number of experiments required to reach a target, claiming to accelerate project development by 2-5 times compared to traditional DoE [26]. This approach can lead to significant resource savings in high-cost or time-consuming experimental settings.

Advanced Statistical Tools for Complex Mixture Analysis

In environmental chemistry and toxicology, chemists frequently face the challenge of characterizing complex chemical mixtures. Traditional statistical methods often fall short in this setting, which has driven the development of more sophisticated methodologies. A 2025 simulation study provides empirical evidence on the performance of various methods for different analytical goals [27].

Table 2: Statistical Methods for Analyzing Chemical Mixtures Based on a 2025 Simulation Study

| Analytical Goal | Recommended Methods | Key Performance Findings [27] |

|---|---|---|

| Identifying Important Mixture Components | Elastic Net (Enet), Lasso, Group Lasso [27] | These penalized regression methods showed stable performance in accurately selecting toxicants associated with a health outcome across various simulation settings. |

| Detecting Interactions Among Components | HierNet, SNIF [27] | These methods were specifically designed or demonstrated effectiveness in uncovering interaction effects between different pollutants in a mixture. |

| Creating a Summary Risk Score | Super Learner, WQS, Q-gcomp [27] | Using the Super Learner ensemble method to combine multiple environmental risk scores led to improved risk stratification and prediction properties. |

Experimental Protocol for Mixture Analysis

To ensure robust and reproducible analysis of chemical mixtures, researchers can follow this standardized protocol, which leverages the "CompMix" R package mentioned in the 2025 study [27]:

- Data Preprocessing: Prepare exposure data by handling missing values, log-transforming skewed chemical concentrations, and standardizing variables.

- Method Selection and Implementation: Based on the primary research question (from Table 2), select one or more appropriate methods. The CompMix R package provides a unified platform to implement a pipeline of these methods, including Enet, HierNet, SNIF, and Super Learner [27].

- Model Fitting and Validation: Execute the chosen methods within the CompMix framework. Use built-in functions to perform cross-validation and assess model stability.

- Result Synthesis and Interpretation: Compare outputs from different methods. Consensus findings across multiple methods, such as a chemical being consistently flagged as important, provide stronger evidence for conclusion drawing [27].
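The data preprocessing step of the protocol above can be illustrated with a short, generic sketch. It does not use the CompMix package or reproduce its interface; the concentration values are hypothetical, and dropping missing values is only one simple option (imputation is a common alternative).

```python
import math
from statistics import mean, stdev


def preprocess(concentrations):
    """Drop missing values, natural-log transform, then z-score standardize."""
    observed = [c for c in concentrations if c is not None]
    logged = [math.log(c) for c in observed]
    mu, sigma = mean(logged), stdev(logged)
    return [(value - mu) / sigma for value in logged]


# Hypothetical right-skewed concentrations with one missing measurement
raw = [0.8, 1.5, None, 12.0, 3.2, 45.0]
z = preprocess(raw)

print([round(value, 2) for value in z])
```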

Experimental Workflow for DoE in Reaction Optimization

The following diagram illustrates a generalized, iterative workflow for applying DoE to validate optimal reaction conditions, from initial planning to final verification.

Diagram 1: DoE Workflow for Reaction Optimization. This chart outlines the key stages, from initial problem definition through screening, optimization, and final verification.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Beyond software, a modern chemist's toolkit includes both computational and methodological "reagents" essential for conducting a robust DoE study.

Table 3: Essential Reagents for a DoE-Driven Research Project

| Tool/Reagent | Function in DoE Research |

|---|---|

| OECD Test Guidelines [28] | Provide internationally accepted standard methods for safety testing of chemicals, ensuring regulatory relevance and data acceptance. |

| R Package 'CompMix' [27] | A comprehensive software toolkit that provides a unified platform for implementing various statistical methods for environmental mixture analysis. |

| Elastic Net (Enet) [27] | A statistical method that performs variable selection and regularization, ideal for identifying key components in a high-dimensional chemical mixture. |

| Super Learner [27] | An ensemble machine learning algorithm used to create a composite summary risk score from multiple models, improving prediction accuracy. |

| Response Surface Methodology (RSM) | A core set of DoE techniques (e.g., Central Composite Designs) used to model and optimize a response based on multiple factors. |

The modern chemist has a powerful arsenal of software and statistical tools at their disposal. Platforms like Design-Expert and JMP streamline the classic DoE workflow, while emerging AI-driven tools like Quantum Boost offer new pathways to efficiency. For the complex challenge of analyzing chemical mixtures, statistical methods such as Elastic Net and the Super Learner, accessible through platforms like the CompMix R package, provide data-driven solutions. Mastering this integrated toolkit is essential for efficiently validating optimal reaction conditions and advancing research in chemistry and drug development.

From Screening to Optimization: A Stepwise DoE Methodology for Reaction Validation

In the initial stages of investigating a complex process—such as optimizing reaction conditions in drug development—researchers often face a large number of potential influencing factors. A 2-level full factorial design is a powerful statistical strategy used specifically for screening these factors to efficiently distinguish the critical few from the trivial many [29]. This method involves experimentally testing each factor at two levels (typically coded as -1 for low and +1 for high) across all possible combinations [10] [30]. Its primary strength lies in its ability to not only estimate the individual (main) effect of each factor but also to detect interactions between factors—situations where the effect of one factor depends on the level of another [8] [10]. This capability is crucial, as interactions are common in complex biological and chemical systems and cannot be detected by traditional one-factor-at-a-time (OFAT) experimentation [10].

Using this design as a screening tool allows research teams to conserve valuable resources. By focusing subsequent, more detailed optimization studies only on the factors proven to be significant, the overall research process becomes faster, more cost-effective, and more likely to succeed in identifying truly optimal conditions, such as those required for a robust drug formulation process [29] [31].

The "Screening" Mindset: A Strategic Workflow

The following diagram illustrates the strategic position of the screening step within a broader experimental workflow for validating optimal reaction conditions.

Core Principles of 2-Level Factorial Designs

Core Concepts and Notation

A 2-level full factorial design for k factors, denoted as a 2^k design, requires 2^k experimental runs for a single replicate [29]. For example, with 3 factors, 2^3 = 8 runs are needed. The design is highly efficient, providing estimates for k main effects and all possible two-factor, three-factor, and higher-order interactions from a relatively small number of runs [30].
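As a minimal sketch of how the 2^k run list can be enumerated, the snippet below builds the coded design matrix for three factors. The function name and factor labels are illustrative; the runs are listed in standard order, with the first factor alternating fastest.

```python
from itertools import product


def full_factorial_2level(factor_names):
    """Return all 2^k runs as dicts of coded levels (-1 = low, +1 = high)."""
    runs = []
    for levels in product((-1, +1), repeat=len(factor_names)):
        # product() varies the last position fastest; reversing the tuple
        # makes the first factor alternate fastest (standard order).
        runs.append(dict(zip(factor_names, reversed(levels))))
    return runs


design = full_factorial_2level(["A", "B", "C"])
for run_number, run in enumerate(design, start=1):
    print(run_number, run)
```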

A specialized notation, known as Yates notation, is often used to conveniently represent the treatment combinations [30]:

- (1) represents the run where all factors are at their low level.

- a represents the run where factor A is high and all others are low.

- b represents the run where factor B is high and all others are low.

- ab represents the run where both A and B are high, and so on for higher numbers of factors.
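The labelling rule is mechanical: write the lowercase letter of every factor at its high level, and use (1) when none is high. A small sketch (the function name is illustrative) generates the labels in standard order:

```python
from itertools import product


def yates_labels(factor_letters):
    """Treatment-combination labels in standard order for the given factors."""
    labels = []
    for levels in product((-1, +1), repeat=len(factor_letters)):
        levels = levels[::-1]  # make the first factor alternate fastest
        label = "".join(
            letter.lower()
            for letter, level in zip(factor_letters, levels)
            if level == +1
        )
        labels.append(label or "(1)")
    return labels


print(yates_labels("AB"))
print(yates_labels("ABC"))
```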

Calculating and Interpreting Effects

The core objective of screening is to calculate the effect of a factor, which quantifies how much the response variable changes when the factor is moved from its low to its high level [30]. Mathematically, the effect of factor A is defined as the difference between the average response when A is high and the average response when A is low [30]:

Effect A = ȳ(A+) - ȳ(A-)

Similarly, an interaction effect (e.g., AB) measures the extent to which the effect of factor A changes across the different levels of factor B. A significant interaction effect indicates that the factors do not act independently on the response: their combined effect differs from the sum of their individual effects [10].
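Both definitions reduce to differences of averages, which the following sketch works through for an unreplicated 2^2 design. The four yield values are hypothetical; an interaction is computed the same way as a main effect, using the product of the coded levels as the sign.

```python
from statistics import mean

# Hypothetical yields (%) for the four runs of a 2^2 design
runs = [
    {"A": -1, "B": -1, "y": 60.0},  # (1)
    {"A": +1, "B": -1, "y": 72.0},  # a
    {"A": -1, "B": +1, "y": 65.0},  # b
    {"A": +1, "B": +1, "y": 85.0},  # ab
]


def effect(runs, *factors):
    """Average response where the sign column is +1 minus where it is -1.

    For one factor the sign column is its coded level; for an interaction
    it is the product of the coded levels of the factors involved.
    """
    def sign(run):
        s = 1
        for factor in factors:
            s *= run[factor]
        return s

    high = mean(r["y"] for r in runs if sign(r) == +1)
    low = mean(r["y"] for r in runs if sign(r) == -1)
    return high - low


effect_a = effect(runs, "A")
effect_b = effect(runs, "B")
effect_ab = effect(runs, "A", "B")
print(effect_a, effect_b, effect_ab)
```

With these numbers, raising A increases the yield by 12 points when B is low but by 20 points when B is high; the AB effect is half of that difference.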

The following table summarizes the types of effects that can be estimated in a 2^k design and their interpretation.

Table: Types of Effects in a 2-Level Factorial Design

| Effect Type | Description | Interpretation in a Screening Context |

|---|---|---|

| Main Effect | The average change in the response caused by moving a factor from its low to its high level, averaged over all levels of other factors [30]. | A large absolute value indicates a vital factor that strongly influences the outcome. |

| Two-Factor Interaction | Measures how the effect of one factor depends on the level of another factor [8]. | Reveals interdependencies; critical for understanding complex system behavior missed by OFAT. |

| Higher-Order Interaction | An interaction between three or more factors. | These are often, but not always, negligible. A significant effect can reveal complex synergies. |

Advantages Over One-Factor-at-a-Time (OFAT)

The 2-level factorial design offers profound advantages over the still-common One-Factor-at-a-Time approach. The following diagram contrasts the experimental patterns and informational outcomes of the two methods.

As summarized in the table below, the factorial approach is not just statistically superior but also more resource-efficient and reliable for process optimization.

Table: Comparison of OFAT vs. 2-Level Factorial Design

| Characteristic | One-Factor-at-a-Time (OFAT) | 2-Level Full Factorial |

|---|---|---|

| Experimental Efficiency | Inefficient; requires more runs to obtain less information [10]. | Highly efficient; provides more information per experimental run [10]. |

| Detection of Interactions | Cannot detect interactions, leading to potentially flawed conclusions [10]. | Explicitly estimates and tests all two-factor and higher-order interactions [8] [10]. |

| Scope of Conclusion | Conclusions are only valid at the single fixed level of other factors [10]. | Conclusions about main effects are valid over a range of experimental conditions [10]. |

| Optimal Condition Search | Slow and unreliable, as it may miss regions of improved performance due to interactions [10]. | Faster and more effective path to optimal conditions [8] [11]. |

Practical Experimental Protocol

A Step-by-Step Workflow for Screening

Implementing a screening design involves a sequence of logical steps, from planning to analysis, as detailed below.

- Define Objective and Select Factors: Clearly state the goal (e.g., "Identify factors most affecting drug yield"). Assemble a cross-functional team to brainstorm and select k potential factors (e.g., temperature, catalyst concentration, reaction time, raw material source) based on scientific knowledge and process experience [11].

- Set Factor Levels: For each of the k factors, choose a high (+1) and low (-1) level. These should represent a sufficiently wide, but realistic and safe, range of operation expected to cause a measurable change in the response. Levels can be quantitative (e.g., 50°C vs. 70°C) or qualitative (e.g., Catalyst Type A vs. Catalyst Type B) [29] [30].

- Build the Design Matrix and Randomize: Create a table listing all 2^k unique treatment combinations. The run order for these combinations should be randomized to protect against the influence of lurking variables and ensure the validity of statistical conclusions [8].

- Run Experiment and Collect Data: Execute the experiments according to the randomized run order, carefully measuring the primary response variable(s) (e.g., yield, purity) for each run. If resources allow, running more than one replicate (e.g., n = 2 or 3) improves the estimate of experimental error.

- Calculate Effects: For each factor and interaction, calculate the effect using the contrast of the response data [30]. Modern statistical software packages automate this process, but the underlying calculation is a difference of averages.

- Identify Vital Factors: Use statistical methods like Analysis of Variance (ANOVA) and graphical tools like the Normal Probability Plot of Effects or Pareto Chart to distinguish significant effects from noise [29] [30]. Factors and interactions with large, statistically significant effects are deemed "vital" and selected for further study.
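The design-matrix and randomization steps of this workflow can be sketched as follows. The factor names, the number of replicates, and the seed are illustrative assumptions; a fixed seed is used only so that the plan can be regenerated and documented.

```python
import random
from itertools import product


def randomized_plan(factors, replicates=1, seed=None):
    """Build a replicated 2^k design and shuffle the run order."""
    base = [
        dict(zip(factors, levels))
        for levels in product((-1, +1), repeat=len(factors))
    ]
    # Copy each treatment combination once per replicate
    plan = [dict(run, replicate=r + 1) for r in range(replicates) for run in base]
    # Shuffle all runs together (complete randomization)
    random.Random(seed).shuffle(plan)
    return plan


plan = randomized_plan(["temperature", "catalyst", "time"], replicates=2, seed=42)
for order, run in enumerate(plan, start=1):
    print(order, run)
```

If replicates have to be run on different days or with different reagent lots, shuffling within each replicate and treating the replicate as a block is a common alternative to complete randomization.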

The Scientist's Toolkit: Essential Reagents and Materials

The following table lists common categories of materials and reagents used in pharmaceutical development experiments, along with their core functions in a screening context.

Table: Key Research Reagent Solutions for Reaction Condition Screening

| Reagent/Material Category | Function in Screening Experiments |

|---|---|

| Chemical Reactants & Substrates | The core materials undergoing transformation; their quality and source are often themselves factors screened for impact on yield and impurity profile. |

| Catalysts (e.g., metal-ligand complexes, enzymes) | Substances that accelerate the reaction rate and improve selectivity; catalyst type and loading are among the most frequently screened factors. |

| Solvents | The reaction medium; solvent choice can profoundly influence reaction kinetics, selectivity, and mechanism, making it a critical screening factor. |

| Reagents & Ligands | Used to facilitate specific chemical transformations or modify catalyst properties; their structure and stoichiometry are common factors. |

| Acids/Bases (pH Modifiers) | Used to control reaction pH, which can drastically impact reaction pathway, rate, and decomposition of products or reactants. |

Presenting and Interpreting Screening Data

Statistical Analysis and Data Presentation

After conducting the experiment, the calculated effects must be formally analyzed. Analysis of Variance (ANOVA) is the primary statistical method used to partition the total variability in the response data into components attributable to each main effect and interaction, and then test them for statistical significance [8]. The key question it answers is whether the effect of a factor is larger than would be expected from random experimental variation alone.

The results of a screening study are often effectively communicated through a summary table of estimated effects.

Table: Example Summary of Effects from a 3-Factor (2³) Screening Study on Reaction Yield

| Factor | Effect Estimate (%) | Sum of Squares | p-value | Conclusion |

|---|---|---|---|---|

| A (Temperature) | +12.5 | 312.5 | 0.001 | Significant, Vital |

| B (Catalyst Load) | +8.2 | 134.5 | 0.015 | Significant, Vital |

| C (Stirring Rate) | +1.1 | 2.4 | 0.452 | Not Significant |

| AB (Interaction) | -5.8 | 67.3 | 0.042 | Significant, Vital |

| AC | -0.7 | 1.0 | 0.602 | Not Significant |

| BC | +1.3 | 3.4 | 0.410 | Not Significant |

| ABC | -0.9 | 1.6 | 0.532 | Not Significant |

Note: This table presents illustrative data. The positive effect for Temperature (A) means yield increased when moving Temperature from low to high. The significant negative AB interaction indicates that the effect of Temperature depends on the Catalyst Load, a critical finding that would be missed by OFAT.
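In a two-level design, the sum of squares of an effect follows directly from the effect estimate: SS = N × effect² / 4, where N is the total number of runs. The sketch below applies this to the illustrative effects in the table, assuming N = 8 (a single replicate of the 2³ design, which is the value consistent with the tabulated sums of squares). It does not reproduce the p-values, which require an estimate of experimental error.

```python
# Effect estimates (%) taken from the illustrative table
effects = {
    "A": 12.5, "B": 8.2, "C": 1.1,
    "AB": -5.8, "AC": -0.7, "BC": 1.3, "ABC": -0.9,
}
N = 8  # assumed total number of runs (one replicate of a 2^3 design)

# Sum of squares for each effect in a two-level design
sum_of_squares = {name: N * value ** 2 / 4 for name, value in effects.items()}

# Share of the total effect sum of squares attributable to each term
total_ss = sum(sum_of_squares.values())
contribution = {name: 100.0 * ss / total_ss for name, ss in sum_of_squares.items()}

for name in sorted(effects, key=lambda n: abs(effects[n]), reverse=True):
    print(f"{name:>3}  SS = {sum_of_squares[name]:6.1f}  ({contribution[name]:4.1f} % of total)")
```

Ranking the terms by their share of the total sum of squares is essentially the information a Pareto chart of effects displays.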

Case Study Example: Bearing Lifespan Improvement

A classic example from the literature demonstrates the power of factorial designs. Engineers investigated three factors on bearing lifespan: Cage Design (A), Heat Treatment (B), and Outer Ring Osculation (C). A full 2³ factorial experiment revealed that the main effect of Cage Design was negligible. However, a dramatic interaction between Heat Treatment and Osculation was discovered. The data showed that increasing both factors together resulted in a fivefold increase in bearing life—an "extraordinary discovery" that had been missed for decades because previous experiments had varied only one factor at a time [10]. This powerfully illustrates how screening designs can reveal optimal conditions that are invisible to simpler methods.

In the pursuit of validating optimal reaction conditions, researchers traditionally relied on the one-factor-at-a-time (OFAT) approach. While intuitive, this method is inefficient and carries a significant risk: missing critical interaction effects between factors [32]. In pharmaceutical development, where multiple parameters like temperature, concentration, and catalyst type can interdependently influence yield and purity, such oversights can compromise process validation.

Factorial design addresses this fundamental limitation. It is a systematic Design of Experiments (DoE) method that allows for the simultaneous investigation of multiple factors and their interactions [33]. This guide provides a practical, step-by-step framework for implementing your first factorial design, enabling a more efficient and comprehensive path to process optimization.

What is a Factorial Design? Core Concepts

A factorial design is an experimental construct that tests all possible combinations of the levels of two or more factors [8]. This approach allows researchers to determine not only the main effect of each individual factor but also how factors interact with one another [34].

- Factor: An independent variable that is deliberately varied during an experiment (e.g., temperature, reaction time, catalyst amount) [8] [34].

- Level: The specific values or settings a factor takes on (e.g., for temperature: 50°C and 70°C) [34].

- Treatment Combination: A unique experimental run defined by a specific combination of the levels of each factor.

- Main Effect: The average change in a response variable caused by moving a single factor from its low to high level, averaged across the levels of all other factors [8] [34].

- Interaction Effect: Occurs when the effect of one factor on the response depends on the level of another factor [32] [34]. This is a key insight that OFAT experiments cannot provide.

The most common type is the 2-level factorial design (e.g., 2^3 for three factors), where each factor is studied at a high and low level. This design is highly efficient for screening a large number of factors to identify the most influential ones [8] [32].

A Step-by-Step Guide to Implementing a Factorial Design

The following workflow outlines the key stages for planning, executing, and analyzing a factorial design experiment. Adhering to this structure ensures a methodologically sound approach.

Step 1: Define Objective and Select Factors & Levels

Clearly define the research problem and the response variable you want to optimize (e.g., reaction yield, purity, cost) [33]. Subsequently, select the factors you wish to investigate. For a screening design, limit each factor to two levels (high/low), chosen to represent a realistic and meaningful range [32]. The total number of unique experimental runs is the product of the number of levels of each factor (e.g., three two-level factors give 2 × 2 × 2 = 8 runs, while a mixed 2 × 3 design has 6 runs).
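The run count is worth checking before committing resources. A minimal sketch (the function name is illustrative):

```python
import math


def total_runs(levels_per_factor, replicates=1):
    """Number of runs in a full factorial: product of levels, times replicates."""
    return math.prod(levels_per_factor) * replicates


print(total_runs([2, 3]))                   # one 2-level and one 3-level factor
print(total_runs([2, 2, 2]))                # three 2-level factors
print(total_runs([2, 2, 2], replicates=2))  # the same design run in duplicate
```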

Step 2: Select a Design and Create a Design Matrix

For a first experiment, a full factorial design is often appropriate. This design tests all possible combinations of your factors and levels, ensuring all main effects and interactions can be estimated [8]. The design is often represented in a worksheet or matrix that outlines the specific settings for each experimental run [35] [32].

Step 3: Execute the Experiment with Randomization

Once the design matrix is set, the experiments must be executed. A critical practice here is randomization—running the trials in a random order rather than in a structured sequence. This helps to minimize the impact of confounding "nuisance" variables (e.g., ambient humidity, reagent degradation) and ensures that the factor effects are not biased by external conditions [8] [32].

Step 4: Analyze Data and Model the Response

After collecting data for the response variable for each run, statistical analysis is performed. Analysis of Variance (ANOVA) is used to determine the statistical significance of the main effects and interaction effects [8]. Furthermore, regression analysis can be used to fit a mathematical model that relates the factors to the response, creating a predictive equation for the process [8] [4].
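For a two-level design in coded units (-1/+1), the least-squares regression coefficients can be computed directly, because the design columns are orthogonal: the intercept is the grand mean and each coefficient is half the corresponding effect. The sketch below uses hypothetical yields from a 2^2 design; the variable names are illustrative.

```python
# Hypothetical 2^2 results: (coded A, coded B, yield in %)
runs = [(-1, -1, 60.0), (+1, -1, 72.0), (-1, +1, 65.0), (+1, +1, 85.0)]
n = len(runs)

# Least-squares coefficients for an orthogonal two-level design
b0 = sum(y for _, _, y in runs) / n            # intercept = grand mean
b_a = sum(a * y for a, _, y in runs) / n       # half the main effect of A
b_b = sum(b * y for _, b, y in runs) / n       # half the main effect of B
b_ab = sum(a * b * y for a, b, y in runs) / n  # half the AB interaction effect


def predict(a, b):
    """Predicted yield at coded settings a and b, each within [-1, +1]."""
    return b0 + b_a * a + b_b * b + b_ab * a * b


print(f"yield = {b0} + {b_a}*A + {b_b}*B + {b_ab}*A*B")
print("Predicted yield at the design centre:", predict(0, 0))
```

Because this model has four coefficients and only four runs, it reproduces the data exactly and leaves no degrees of freedom for error; replicates or centre points are needed before significance can be tested.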

Step 5: Optimize and Predict

Use the model generated in the previous step to identify the factor level settings that produce the optimal response [35] [8]. The model can predict the outcome for any combination of factor levels within the studied range, allowing you to validate the predicted optimum with confirmatory experiments.
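One simple way to locate the predicted optimum is to evaluate the fitted model over a grid of coded settings inside the studied range. The model coefficients below are hypothetical and stand in for whatever the analysis step produced; the grid spacing of 0.1 coded units is also an arbitrary choice.

```python
def predict(a, b):
    """Hypothetical fitted model in coded units (coefficients are assumed)."""
    return 70.5 + 8.0 * a + 4.5 * b + 2.0 * a * b


# Evaluate the model on a grid from -1.0 to +1.0 in steps of 0.1
grid = [i / 10 for i in range(-10, 11)]
best_setting = max(
    ((a, b) for a in grid for b in grid),
    key=lambda setting: predict(*setting),
)
best_prediction = predict(*best_setting)

print("Best coded setting:", best_setting)
print("Predicted response:", best_prediction)
```

A model with only main effects and two-factor interactions always places its optimum on the boundary of the studied region, as here. Such a result suggests either confirming the corner experimentally or extending the range in a follow-up design, and predictions outside the studied range should not be trusted.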

Factorial Design in Action: A Pharmaceutical Case Study

A study published in Scientific Reports perfectly illustrates the power of DoE. Researchers aimed to optimize a copper-mediated 18F-fluorination reaction, a critical process for developing new PET imaging tracers [4].

- Traditional Approach (OVAT): The authors noted that optimizing this multi-component reaction using a one-factor-at-a-time approach was complex, time-consuming, and prone to finding local, rather than global, optimum conditions [4].

- DoE Approach: They employed a factorial-based DoE approach to screen multiple factors simultaneously. This included an initial screening design to identify critical factors, followed by a more detailed optimization study [4].

- Outcome: The researchers successfully identified significant factor interactions that an OFAT approach would have missed. This led to the development of a robust predictive model and optimized reaction conditions with more than two-fold greater experimental efficiency than the traditional OFAT method [4].

Quantitative Data Comparison: OFAT vs. Factorial Design

The table below summarizes a core advantage of factorial design: its superior efficiency as the number of factors increases.

| Number of Factors | Experimental Runs Required (OFAT) | Experimental Runs Required (2-Level Factorial) | Relative Efficiency of Factorial Design |

|---|---|---|---|

| 2 | 8 [32] | 4 | 2.0x |

| 3 | 16 [32] | 8 | 2.0x |

| 5 | Not explicitly stated, but significantly higher [32] | 32 | Increases substantially |

Key Reagent Solutions for a Successful Experiment

The following table details essential components and methodologies that form the foundation of a well-executed factorial design study in a chemical or pharmaceutical context.

| Item / Solution | Function / Role in the Experiment |

|---|---|

| Statistical Software (e.g., JMP, MODDE, OriginLab) | Provides a platform to create the experimental design matrix, randomize run order, and perform ANOVA and regression analysis [35] [4]. |

| Response Variable | The measurable outcome (e.g., % yield, impurity level) used to evaluate the effect of the factors [8]. |

| Coded Factor Levels | A unitless scale (e.g., -1 for low level, +1 for high level) that allows for direct comparison of factor effects regardless of their original units [35]. |

| Randomization Algorithm | A procedure to determine the random run order, mitigating the effect of confounding variables and ensuring statistical validity [8] [32]. |

| ANOVA (Analysis of Variance) | A statistical test used to determine which factors and interactions have a statistically significant effect on the response variable [8]. |
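The coded factor levels listed in the table come from a linear rescaling of each factor's range, so that the low level maps to -1 and the high level to +1. A minimal sketch, using a hypothetical temperature range of 50 to 70 °C and illustrative function names:

```python
def to_coded(value, low, high):
    """Convert a natural-unit setting to coded units (low -> -1, high -> +1)."""
    center = (low + high) / 2.0
    half_range = (high - low) / 2.0
    return (value - center) / half_range


def to_natural(coded, low, high):
    """Convert a coded setting back to natural units."""
    center = (low + high) / 2.0
    half_range = (high - low) / 2.0
    return center + coded * half_range


# Hypothetical temperature factor studied between 50 and 70 degrees C
print(to_coded(50.0, 50.0, 70.0))   # low level
print(to_coded(70.0, 50.0, 70.0))   # high level
print(to_coded(55.0, 50.0, 70.0))   # an intermediate setting
print(to_natural(0.5, 50.0, 70.0))  # coded optimum converted back to degrees C
```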

For researchers and drug development professionals tasked with validating optimal reaction conditions, transitioning from a one-factor-at-a-time approach to a factorial design is a critical step toward robust, data-driven science. The methodology's ability to uncover complex factor interactions while maintaining high experimental efficiency provides a more complete and accurate map of the process landscape [8] [32] [4]. By following the structured guide outlined above, you can confidently implement your first factorial design, leading to more reliable, optimized, and thoroughly understood processes in your research.

Response Surface Methodology (RSM) is a powerful collection of statistical and mathematical techniques for modeling and analyzing problems in which a response of interest is influenced by several variables, with the primary goal of optimizing this response [36] [37]. Within a broader Design of Experiments (DoE) framework for validating optimal reaction conditions, RSM serves a critical function in the later stages of experimentation. After initial screening experiments have identified the few key factors from a larger set, RSM provides a structured approach for locating the true optimum conditions, particularly when the response surface exhibits curvature and interaction effects that simple linear models cannot capture [36] [38].

This methodology was pioneered in the 1950s by Box and Wilson and has since become an indispensable tool in technical and scientific fields, including pharmaceutical manufacturing, chemical engineering, and analytical method development [36] [39] [37]. Its unique value lies in its ability to build empirical models using data from a strategically designed set of experiments, then graphically represent the relationship between factors and responses through contour plots and 3D surface plots, enabling researchers to visualize the path to optimal conditions [36] [40].

Core Principles and Experimental Designs in RSM

Fundamental Concepts and Mathematical Basis

RSM is fundamentally based on the concept that a response variable (y) can be modeled as a function of several input factors (x₁, x₂, ..., xₖ) plus an experimental error term (ε) [38] [40]. This relationship is expressed as:

y = f(x₁, x₂, ..., xₖ) + ε

While the true functional relationship f is typically unknown, RSM approximates it using low-degree polynomial models, most commonly first-order or second-order models [38]. For a system with two independent variables, a second-order model including interaction effects describes the expected response η (the value of y without the error term ε) as:

η = β₀ + β₁x₁ + β₂x₂ + β₁₁x₁² + β₂₂x₂² + β₁₂x₁x₂

This quadratic model is particularly valuable for optimization as it can represent the curvature of the response surface, including maximum, minimum, and saddle points [38]. The coefficients (β) are estimated from experimental data using regression analysis techniques, primarily the method of least squares [38] [40].
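
As a minimal sketch of the least-squares step, the following Python code fits the six coefficients of the two-factor second-order model with NumPy. The data are synthetic and noise-free, generated from assumed coefficient values on a 3x3 grid of coded levels, so the fit should simply recover those values. Nothing here comes from the cited studies.

```python
import numpy as np

def quadratic_design_matrix(x1, x2):
    """Columns: 1, x1, x2, x1^2, x2^2, x1*x2 (same term order as the model)."""
    x1 = np.asarray(x1, dtype=float)
    x2 = np.asarray(x2, dtype=float)
    return np.column_stack([np.ones_like(x1), x1, x2, x1**2, x2**2, x1 * x2])

def fit_second_order(x1, x2, y):
    """Estimate (b0, b1, b2, b11, b22, b12) by ordinary least squares."""
    X = quadratic_design_matrix(x1, x2)
    beta, *_ = np.linalg.lstsq(X, np.asarray(y, dtype=float), rcond=None)
    return beta

# Synthetic example: 3x3 grid in coded units, assumed "true" coefficients.
levels = [-1.0, 0.0, 1.0]
x1 = [a for a in levels for b in levels]
x2 = [b for a in levels for b in levels]
true_beta = np.array([80.0, 3.0, -2.0, -4.0, -1.5, 1.0])  # assumption
y = quadratic_design_matrix(x1, x2) @ true_beta

beta_hat = fit_second_order(x1, x2, y)
print(np.round(beta_hat, 3))
```

With real, noisy data the estimates would differ from the underlying values, which is why the fitted model is then checked with ANOVA and a lack-of-fit test.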

Key Experimental Designs for RSM

The choice of experimental design is critical for efficiently building accurate response surface models. Different designs offer varying balances between experimental effort and model capability.

Table 1: Key Experimental Designs Used in Response Surface Methodology

| Design Type | Characteristics | Best Use Cases | Sample Requirements |

|---|---|---|---|

| Central Composite Design (CCD) | Consists of factorial points, axial points, and center points; tests up to five levels per factor (three if face-centered); good for fitting second-order models [38] [39] | General optimization with 3+ factors; sequential experimentation [38] [39] | 15+ runs for 3 factors (8 factorial + 6 axial + center points) [40] [41] |

| Box-Behnken Design (BBD) | Three-level spherical design based on balanced incomplete block designs; no corner points [38] [39] | Smaller number of factors (typically 3-7); avoids extreme conditions [38] [39] | 13-15 runs for 3 factors (12 edge midpoints + center points) [40] [41] |

| Full Factorial Design | All possible combinations of factors and levels; number of runs increases exponentially with factors [40] | When resources permit; studying all interactions [40] | 27 runs (for 3 factors at 3 levels) [40] |

| Taguchi Design | Uses orthogonal arrays to study many factors with few runs; focuses on robustness [42] | Screening; parameter design for quality; cost-constrained studies [42] | Varies by orthogonal array [42] |

According to a survey of published literature, Central Composite Design is the most frequently used RSM design, followed by Full Factorial Design, with Box-Behnken Design being the least common among the three major approaches [40]. However, the use of Box-Behnken designs has been increasing in recent years [40].
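
To make the run counts concrete, the sketch below builds the coded points of a CCD and a BBD in plain Python. It assumes a rotatable axial distance for the CCD and the pairwise construction of the BBD, which applies to 3 to 5 factors. The number of center points is a free choice, so the totals depend on that assumption.

```python
from itertools import combinations, product

def central_composite(k, alpha=None, n_center=1):
    """Coded CCD points: 2^k factorial + 2k axial + n_center center runs."""
    if alpha is None:
        alpha = (2 ** k) ** 0.25  # rotatable axial distance (an assumption)
    factorial = [list(p) for p in product((-1.0, 1.0), repeat=k)]
    axial = []
    for i in range(k):
        for value in (-alpha, alpha):
            point = [0.0] * k
            point[i] = value
            axial.append(point)
    center = [[0.0] * k for _ in range(n_center)]
    return factorial + axial + center

def box_behnken(k, n_center=1):
    """Coded BBD points from all factor pairs (construction valid for k = 3 to 5)."""
    points = []
    for i, j in combinations(range(k), 2):
        for a, b in product((-1.0, 1.0), repeat=2):
            point = [0.0] * k
            point[i], point[j] = a, b
            points.append(point)
    points += [[0.0] * k for _ in range(n_center)]
    return points

print(len(central_composite(3)), len(box_behnken(3)))              # 3 factors
print(len(central_composite(4, n_center=6)), len(box_behnken(4)))  # 4 factors
```

With one center point, three factors give 15 CCD runs and 13 BBD runs. For four factors, six CCD center points and one BBD center point give 30 and 25 runs. Every BBD point keeps at least one factor at its mid level, which is why the design never visits the corners of the experimental region.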

Comparative Performance: RSM vs. Alternative Optimization Methods

RSM vs. Artificial Neural Networks (ANN)

A 2025 comparative study on the removal of Diclofenac Potassium from synthesized pharmaceutical wastewater provides a direct experimental comparison between RSM and Artificial Neural Networks (ANN) [43]. The researchers used a palm sheath fiber nano-filtration membrane and evaluated the influence of four process factors: temperature (30-50°C), pH (6-10), flow rate (1-5 mL/min), and initial concentration (40-120 mg/L) [43].

Table 2: Performance Comparison of RSM and ANN for Pharmaceutical Wastewater Treatment Optimization

| Metric | RSM Model | ANN Model |

|---|---|---|

| Predictive Accuracy | Strong correlation with experimental data | Best predictive accuracy |

| Validation Result | - | 84.67% (experimental) vs. 84.78% (predicted) |

| Statistical Metrics | Good R² value | Higher R², Lower AARD and MAE |

| Optimal Conditions | - | Initial concentration: 102 mg/L, pH: 8.8, Temperature: 40.6°C, Flow rate: 3.6 mL/min |

The study concluded that while both models demonstrated strong correlation with experimental data, the ANN model provided superior predictive accuracy according to statistical metrics including correlation coefficients (R²), Absolute Average Relative Deviation (AARD), and Mean Absolute Error (MAE) [43].
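
The three metrics named above can be computed directly from paired observed and predicted values. The sketch below uses their common textbook definitions, which is an assumption because the study's exact formulas are not reproduced here. The only data taken from the text are the reported validation values, 84.67% experimental and 84.78% predicted.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1 - ss_res / ss_tot

def mae(observed, predicted):
    """Mean Absolute Error, in the units of the response."""
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)

def aard_percent(observed, predicted):
    """Absolute Average Relative Deviation, expressed in percent."""
    n = len(observed)
    return 100 * sum(abs((o - p) / o) for o, p in zip(observed, predicted)) / n

# Relative deviation of the single validation run reported in the text.
validation_deviation = aard_percent([84.67], [84.78])
print(f"{validation_deviation:.2f}%")
```

For the reported validation run this gives a relative deviation of about 0.13%. Comparing two models fairly requires evaluating all three metrics on the same set of runs, ideally runs that were not used for fitting.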

RSM vs. Taguchi Method

A comprehensive 2025 study compared the performance of RSM (specifically Box-Behnken and Central Composite designs) with the Taguchi method for optimizing process parameters in fabric manufacturing [42]. The research focused on four factors at three levels each, with the goal of maximizing color strength in cotton knit fabric dyeing.

Table 3: Comparison of RSM and Taguchi Method for Dyeing Process Optimization

| Method | Experimental Runs | Optimization Accuracy | Key Strengths | Limitations |

|---|---|---|---|---|

| Taguchi Method | Fewer runs (L9 orthogonal array for 4 factors) [42] | 92% [42] | Cost-effective; robust parameter design [42] | Less accurate for complex interactions [42] |

| Box-Behnken Design (RSM) | Moderate (25 runs for 4 factors) [42] | 96% [42] | Good accuracy with reasonable experimental load [42] | Not suitable for extreme factor levels [38] |

| Central Composite Design (RSM) | More runs (30 runs for 4 factors) [42] | 98% [42] | Highest accuracy; captures curvature well [42] | More resource-intensive [42] |

The Taguchi method required fewer experimental runs, providing a more cost-effective solution, while both BBD and CCD delivered higher optimization accuracy with greater precision [42]. The most significant factor affecting color strength was Evercion Red EXL Concentration (62.6% contribution), followed by Temperature (22.4%), Na₂SO₄ Concentration (11.3%), and Na₂CO₃ Concentration (3.69%) [42].
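
Percent contributions of this kind are typically obtained by dividing each factor's sum of squares by the total sum of squares. The sketch below does this for the standard L9 orthogonal array. The response values are made up for illustration and are not the color strength data from the study, so the printed percentages will not match the figures quoted above.

```python
# Standard L9 orthogonal array: 9 runs, 4 factors, 3 levels each.
L9 = [
    (1, 1, 1, 1), (1, 2, 2, 2), (1, 3, 3, 3),
    (2, 1, 2, 3), (2, 2, 3, 1), (2, 3, 1, 2),
    (3, 1, 3, 2), (3, 2, 1, 3), (3, 3, 2, 1),
]

def percent_contribution(array, responses, factor_names):
    """Return each factor's share (%) of the total sum of squares."""
    grand_mean = sum(responses) / len(responses)
    ss_total = sum((y - grand_mean) ** 2 for y in responses)
    contributions = {}
    for col, name in enumerate(factor_names):
        ss_factor = 0.0
        for level in sorted({row[col] for row in array}):
            ys = [y for row, y in zip(array, responses) if row[col] == level]
            ss_factor += len(ys) * (sum(ys) / len(ys) - grand_mean) ** 2
        contributions[name] = 100 * ss_factor / ss_total
    return contributions

factors = ["dye_conc", "temperature", "Na2SO4_conc", "Na2CO3_conc"]
# Hypothetical responses, one per L9 run (illustrative values only).
responses = [10.2, 12.5, 14.1, 15.8, 18.9, 16.4, 21.0, 19.7, 23.3]

contrib = percent_contribution(L9, responses, factors)
for name, share in contrib.items():
    print(f"{name}: {share:.1f}%")
```

Because an unreplicated L9 with four three-level factors uses all eight degrees of freedom, the four contributions sum to 100% and leave no estimate of experimental error.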

RSM vs. Kriging for Computational Optimization

A 2025 study of injection molding simulations compared the performance of RSM and Kriging surrogate models for optimizing process parameters to minimize deformation, shrinkage, and cycle time [44]. Both surrogates reduced the computational cost per evaluation by several orders of magnitude compared with full injection molding simulations [44].

Table 4: RSM vs. Kriging for Injection Molding Optimization

| Performance Aspect | RSM | Kriging |

|---|---|---|

| Prediction Accuracy | Good for simpler geometries | Superior for complex geometries |

| Error Rates | Higher, especially for complex systems | Lower error rates |

| Computational Efficiency | Fast, efficient for iterative optimization | Slightly more computationally intensive |

| Implementation Complexity | Straightforward polynomial approach | More complex Gaussian process approach |

The findings indicated that Kriging outperformed RSM, especially in complex geometries, by providing more accurate predictions with lower error rates, making it preferable for applications requiring high precision in process optimization [44].
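
The difference between the two surrogate types can be illustrated on a toy problem. The sketch below compares a quadratic polynomial with a simple Kriging interpolator on a one-dimensional sine function that stands in for an expensive simulation. The test function, the Gaussian kernel, and the fixed length scale are all assumptions. This is not the model or the data from the cited study.

```python
import numpy as np

def rbf_kernel(a, b, length_scale):
    """Gaussian (RBF) correlation between two sets of points."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(axis=2)
    return np.exp(-0.5 * d2 / length_scale**2)

def kriging_predict(x_train, y_train, x_new, length_scale=0.2, jitter=1e-10):
    """Simple Kriging with a constant mean and a fixed length scale."""
    mean = y_train.mean()
    K = rbf_kernel(x_train, x_train, length_scale) + jitter * np.eye(len(x_train))
    weights = np.linalg.solve(K, y_train - mean)
    return mean + rbf_kernel(x_new, x_train, length_scale) @ weights

def quadratic_predict(x_train, y_train, x_new):
    """Second-order polynomial (RSM-style) fit in one variable."""
    def basis(x):
        return np.column_stack([np.ones(len(x)), x[:, 0], x[:, 0] ** 2])
    beta, *_ = np.linalg.lstsq(basis(x_train), y_train, rcond=None)
    return basis(x_new) @ beta

def expensive_simulation(x):
    """Stand-in for a costly simulation (assumed, strongly non-quadratic)."""
    return np.sin(2 * np.pi * x[:, 0])

def rmse(a, b):
    return float(np.sqrt(np.mean((a - b) ** 2)))

x_train = np.linspace(0.0, 1.0, 9).reshape(-1, 1)
y_train = expensive_simulation(x_train)
x_test = np.linspace(0.0, 1.0, 101).reshape(-1, 1)
y_test = expensive_simulation(x_test)

rmse_rsm = rmse(quadratic_predict(x_train, y_train, x_test), y_test)
rmse_kriging = rmse(kriging_predict(x_train, y_train, x_test), y_test)
print(f"RSM RMSE: {rmse_rsm:.3f}, Kriging RMSE: {rmse_kriging:.3f}")
```

On this deliberately non-quadratic function the Kriging error is much smaller. On a response that really is close to quadratic, the polynomial would be hard to beat and easier to interpret.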

Experimental Protocols and Methodologies

General Workflow for RSM Implementation

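The implementation of Response Surface Methodology follows a systematic sequence of steps to ensure reliable model development and validation [36] [38]: define the responses and screen for the key factors, select a design such as a CCD or BBD, run the experiments in randomized order, fit a polynomial model by least squares, check the model with ANOVA and a lack-of-fit test, locate the optimum using contour or surface plots, and confirm the predicted optimum experimentally.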

Case Study: RSM in Low-Dose CT Imaging Optimization

A 2025 study demonstrated the application of RSM for predicting optimal conditions in very low-dose chest CT imaging [45]. The experimental protocol was designed to minimize the number of experiments while ensuring diagnostic quality.

Experimental Objective: To determine optimal reconstruction parameters (noise index and percentage of ASIR-V) and reconstruction techniques (iterative and deep learning-based) that ensure diagnostic quality while minimizing radiation dose [45].

Methodology:

- Experimental Design: Doehlert matrix was used to define the experiments [45]

- Phantom: Anthropomorphic chest phantom with a 5 mm diameter lesion (HU of -800) [45]

- Scanner: 128-slice CT scanner [45]

- Reconstruction Techniques: Iterative (ASIR-V) and deep learning-based reconstruction at low (DLIR-L) and high (DLIR-H) strengths [45]

- Response Assessment: Lesion detectability assessed using self-supervised learning-based model observers and six human observers [45]

- Modeling: Second-order polynomial functions established to model the combined effect of noise index (NI) and percentage of ASIR-V on dose and model observers' performances [45]

Results: The optimal conditions predicted by RSM were NI = 64, % ASIR-V = 60, and DLIR-H reconstruction, which showed good agreement with experimental results from human observers [45]. The method suggested an approximately 64% dose reduction potential for DLIR-H without compromising lesion detection [45].

Case Study: RSM in Chemical Reaction Optimization

A 2025 study applied RSM to optimize the gas-phase hydrogenation of carbon dioxide on nickel-based catalysts [37]. The research aimed to determine optimal reaction conditions with mild reaction parameters and stoichiometric hydrogen deficiency.

Experimental Design:

- Factors: Temperature and H₂ to CO₂ molar ratio [37]

- Design: Central Composite Design [37]

- Experiments: 36 experiments total [37]

- Response: Carbon dioxide conversion [37]

Results: The maximum carbon dioxide conversion was obtained at 318°C with a molar H₂ to CO₂ ratio of 3.5 [37]. The RSM approach successfully identified optimal conditions with a minimal number of experiments, confirming the method's efficiency for chemical process optimization [37].
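
Once a two-factor second-order model has been fitted, the candidate optimum is its stationary point, found by setting both partial derivatives to zero. The sketch below solves that 2x2 system in coded units and classifies the point. The coefficients are hypothetical and are not the fitted values from the CO₂ hydrogenation study.

```python
def stationary_point(b1, b2, b11, b22, b12):
    """Solve d(eta)/dx1 = 0 and d(eta)/dx2 = 0 for the quadratic model.

    eta = b0 + b1*x1 + b2*x2 + b11*x1^2 + b22*x2^2 + b12*x1*x2
    """
    det = 4 * b11 * b22 - b12**2
    if det == 0:
        raise ValueError("No unique stationary point (ridge system).")
    x1 = (b12 * b2 - 2 * b22 * b1) / det
    x2 = (b12 * b1 - 2 * b11 * b2) / det
    if det < 0:
        kind = "saddle"
    elif b11 < 0:
        kind = "maximum"
    else:
        kind = "minimum"
    return x1, x2, kind

def predict(b0, b1, b2, b11, b22, b12, x1, x2):
    """Evaluate the fitted second-order model at (x1, x2)."""
    return b0 + b1 * x1 + b2 * x2 + b11 * x1**2 + b22 * x2**2 + b12 * x1 * x2

# Hypothetical coefficients in coded units (illustration only).
b0, b1, b2, b11, b22, b12 = 80.0, 3.0, -2.0, -4.0, -1.5, 1.0

x1_s, x2_s, kind = stationary_point(b1, b2, b11, b22, b12)
print(f"{kind} at x1 = {x1_s:.3f}, x2 = {x2_s:.3f}")
print(f"predicted response: {predict(b0, b1, b2, b11, b22, b12, x1_s, x2_s):.2f}")
```

For these assumed coefficients the stationary point is a maximum at x1 = 7/23 and x2 = -13/23 in coded units. A stationary point outside the experimental region, or a saddle, means the optimum should be sought along the boundary or with further experiments.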

The Researcher's Toolkit: Essential Reagents and Solutions

Table 5: Essential Research Reagent Solutions for RSM Experiments

| Reagent/Solution | Function in RSM Experiments | Example Application |

|---|---|---|

| Statistical Software | Model development, experimental design, regression analysis, visualization [40] [41] | All RSM applications |

| Central Composite Design Matrix | Defines experimental points for efficient model building [38] [39] | General optimization with 3+ factors [38] |

| Box-Behnken Design Matrix | Three-level design avoiding extreme conditions [38] [39] | Processes where extreme factor levels are problematic [38] |

| ANOVA (Analysis of Variance) | Determines statistical significance of model terms [36] [40] | Model adequacy checking in all RSM studies |

| Lack-of-Fit Test | Evaluates whether model adequately fits experimental data [36] [40] | Model validation in all RSM studies |

| Contour and 3D Surface Plots | Visualizes relationship between factors and responses [36] [40] | Identifying optimal conditions in all RSM studies |

| Desirability Functions | Simultaneously optimizes multiple responses [36] | Pharmaceutical formulations with multiple quality targets |
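
The desirability approach listed in the table can be sketched as follows. Each response is mapped onto a 0-1 scale, and the individual scores are combined with a geometric mean. The code assumes the common Derringer-Suich form. The responses, limits, and predicted values are hypothetical.

```python
import math

def desirability_maximize(y, low, high, weight=1.0):
    """Larger-is-better: 0 at or below low, 1 at or above high."""
    if y <= low:
        return 0.0
    if y >= high:
        return 1.0
    return ((y - low) / (high - low)) ** weight

def desirability_minimize(y, low, high, weight=1.0):
    """Smaller-is-better: 1 at or below low, 0 at or above high."""
    if y <= low:
        return 1.0
    if y >= high:
        return 0.0
    return ((high - y) / (high - low)) ** weight

def overall_desirability(values):
    """Geometric mean; any fully unacceptable response gives 0 overall."""
    if any(d == 0.0 for d in values):
        return 0.0
    return math.exp(sum(math.log(d) for d in values) / len(values))

# Hypothetical predicted responses at one candidate set of conditions.
d_yield = desirability_maximize(85.0, low=70.0, high=95.0)    # % yield
d_impurity = desirability_minimize(0.4, low=0.1, high=1.0)    # % impurity
D = overall_desirability([d_yield, d_impurity])
print(round(d_yield, 3), round(d_impurity, 3), round(D, 3))
```

Evaluating D over a grid of factor settings, using the fitted model for each response, turns a multi-response problem into a single surface to maximize.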

Response Surface Methodology remains an essential component of the Design of Experiments toolkit for locating true optimum conditions in complex systems. The comparative analysis shows that RSM, particularly with Central Composite Designs, provides excellent optimization accuracy (up to 98% in the dyeing study), although at the largest experimental load of the designs compared there (30 runs for four factors) [42]. While alternative methods such as Artificial Neural Networks may offer superior predictive accuracy in some applications, and Kriging may perform better for highly complex, nonlinear systems, RSM retains distinct advantages in interpretability, implementation simplicity, and visualization capabilities [43] [44].

For researchers and drug development professionals validating optimal reaction conditions, RSM is particularly valuable when:

- The relationship between factors and responses is expected to exhibit curvature

- Interaction effects between factors are significant

- Visualization of the response surface would aid process understanding

- Resources allow for 15-30 experimental runs depending on factor number